AI and Multi-Omics Integration: New Frontiers in Predicting Emergent Tumor Behavior

Abstract

This article synthesizes the latest advancements in predicting dynamic tumor phenotypes such as metastasis, therapeutic resistance, and relapse. It explores the foundational biology of cancer stem cells and tumor heterogeneity before detailing cutting-edge methodologies, including artificial intelligence (AI)-driven analysis of histopathology and liquid biopsies. The content addresses critical challenges in model interpretability and data standardization while evaluating the clinical validation of these predictive tools. Aimed at researchers and drug development professionals, this review provides a comprehensive framework for developing robust, clinically actionable models to forecast cancer progression and optimize therapeutic intervention.

Decoding the Biological Drivers of Unpredictable Tumor Phenotypes

The Central Role of Cancer Stem Cells (CSCs) in Tumor Initiation and Relapse

Cancer Stem Cells (CSCs) represent a small subpopulation of cells within tumors that possess stem cell-like properties, including self-renewal, multi-lineage differentiation, and enhanced survival mechanisms [1] [2]. Although rare, CSCs are now recognized as a central force driving tumorigenesis, metastasis, recurrence, and resistance to therapy [2]. Their ability to evade conventional treatments and remain dormant for extended periods makes them critical targets for improving cancer therapies and predicting emergent tumor behavior [1].

The CSC concept has evolved significantly from its initial proposals in the 19th century to modern validation through advanced technologies. Current understanding suggests CSCs are not always a fixed subpopulation but rather a dynamic functional state that tumor cells can enter or exit, driven by intrinsic programs, epigenetic reprogramming, and microenvironmental cues [3]. This plasticity complicates their identification and targeting but offers new avenues for therapeutic intervention.

Frequently Asked Questions (FAQs) on CSC Biology and Technical Challenges

Q1: What are the core functional properties that define CSCs?

CSCs are primarily defined by three key functional properties:

- Tumor-initiating capacity: The ability to initiate and sustain tumor growth when transplanted into immunodeficient mice, often requiring significantly fewer cells than non-CSC populations [2].

- Self-renewal: The capacity to generate identical copies of themselves through cell division, maintaining the CSC pool long-term [2].

- Multi-lineage differentiation: The potential to produce the heterogeneous cell types that constitute the tumor bulk, thereby establishing and maintaining tumor heterogeneity [2].

Q2: Why is CSC identification so challenging, and how reliable are common markers?

CSC identification faces several significant challenges:

- Lack of universal markers: No single marker is consistently expressed across all cancer types [1].

- Marker specificity issues: Commonly used markers like CD133 are also expressed in normal stem cells and non-tumorigenic cancer cells [1] [4].

- Dynamic plasticity: CSC identity is shaped by both intrinsic genetic programs and extrinsic cues from the tumor microenvironment [1].

- Context-dependent expression: Marker expression varies across tumor types, reflecting tissue origin and microenvironmental influences [1].

Q3: How does CSC plasticity contribute to therapy resistance and relapse?

CSC plasticity enables several adaptive mechanisms:

- Phenotypic switching: CSCs can reversibly transition between epithelial and mesenchymal states, as well as between quiescent and proliferative phases [2].

- Metabolic adaptability: They can switch between glycolysis, oxidative phosphorylation, and alternative fuel sources to survive under diverse environmental conditions [1].

- Microenvironmental interaction: Bidirectional crosstalk with stromal components creates specialized survival niches that protect CSCs from therapeutic insults [2].

- Epigenetic reprogramming: Rapid changes in gene expression patterns in response to therapeutic pressure enable resistance mechanisms [3].

Q4: What advanced technologies are improving CSC research predictability?

Emerging technologies are transforming CSC research:

- Single-cell sequencing: Enables characterization of CSC heterogeneity and stem-like features at unprecedented resolution [1].

- Spatial transcriptomics: Reveals how CSC biology is influenced by geographical positioning within the tumor ecosystem [2].

- AI-driven multiomics analysis: Facilitates the identification of CSC-specific features and vulnerabilities across various cancer types [1].

- 3D organoid models: Provide more physiologically relevant systems for studying CSC behavior and therapeutic responses [1].

- CRISPR-based functional screens: Enable systematic identification of genes essential for CSC maintenance and function [1].

Troubleshooting Common Experimental Challenges in CSC Research

Challenge: Inconsistent CSC Isolation and Purity

Problem: Low purity and yield when isolating CSCs using surface markers, leading to inconsistent experimental results.

Solutions:

- Combine multiple markers: Use a combination of surface markers and functional assays rather than relying on a single marker. For example, recent protocols successfully combine CD133 with α-1,2-high-mannose type glycan chains for more specific isolation of CSCs from intrahepatic cholangiocarcinoma [4].

- Verify with functional assays: Always confirm tumor-initiating capacity through in vivo limiting dilution assays after isolation [5].

- Standardize handling procedures: Ensure consistent tissue processing, enzymatic digestion times, and temperature controls during cell isolation [4].

Challenge: Maintaining CSC Phenotype In Vitro

Problem: CSCs frequently lose their stem-like properties during in vitro culture.

Solutions:

- Use specialized culture conditions: Employ serum-free media supplemented with B27, epidermal growth factor (EGF), and fibroblast growth factor 2 (FGF-2) to maintain stemness [4].

- Implement 3D culture systems: Grow cells as floating spheres (tumor sphere assay) to enrich for and maintain cells with self-renewal capacity [5].

- Limit passaging: Use low-passage cells and cryopreserve early passages to prevent phenotypic drift [5].

- Control oxygenation: Maintain physiological oxygen tensions (often 1-5% O2) as oxygen concentration can affect CSC marker expression and tumorigenic potential [4].

Challenge: Validating Tumor-Initiating Capacity

Problem: Difficulty in establishing reliable xenograft models with consistent engraftment rates.

Solutions:

- Use immunocompromised hosts: Select appropriate mouse models (e.g., NSG, NOG) with sufficient immunodeficiency to support human tumor cell engraftment [5].

- Apply limiting dilution analysis: Systematically quantify tumor-initiating cell frequency through calculated dilutions and endpoint analysis [5].

- Include stromal support: Co-inject with Matrigel or supportive stromal cells to provide essential microenvironmental cues [5].

- Monitor appropriate timeframes: Allow sufficient time for tumor development, as CSCs may have delayed growth kinetics compared to bulk tumor cells [5].
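The limiting dilution analysis described above can be sketched in a few lines. The example below is a minimal illustration, assuming the standard single-hit Poisson model (one tumor-initiating cell is enough to form a tumor); it is not the ELDA software itself, and the function names, dose levels, and engraftment counts are hypothetical.

```python
import math


def log_likelihood(freq, doses, tested, positive):
    """Single-hit Poisson log-likelihood for a tumor-initiating cell frequency."""
    total = 0.0
    for dose, n, k in zip(doses, tested, positive):
        # P(no tumor) = exp(-freq * dose); P(tumor) = 1 - exp(-freq * dose)
        log_p_tumor = math.log(-math.expm1(-freq * dose))
        total += k * log_p_tumor + (n - k) * (-freq * dose)
    return total


def estimate_tic_frequency(doses, tested, positive, lo=1e-7, hi=1.0, iters=200):
    """Maximum-likelihood frequency via golden-section search on log(frequency).

    Assumes at least one dose group has both tumor-bearing and tumor-free
    animals; otherwise the estimate runs to a search boundary.
    """
    a, b = math.log(lo), math.log(hi)
    inv_phi = (math.sqrt(5) - 1) / 2

    def objective(x):
        return log_likelihood(math.exp(x), doses, tested, positive)

    for _ in range(iters):
        x1 = b - inv_phi * (b - a)
        x2 = a + inv_phi * (b - a)
        if objective(x1) > objective(x2):
            b = x2  # maximum lies in [a, x2]
        else:
            a = x1  # maximum lies in [x1, b]
    return math.exp((a + b) / 2)


# Hypothetical experiment: cells injected per mouse, mice injected, mice with tumors
doses = [1000, 100, 10]
tested = [10, 10, 10]
positive = [10, 6, 1]
freq = estimate_tic_frequency(doses, tested, positive)
print(f"Estimated frequency: 1 in {1 / freq:.0f} cells")
```

A dedicated tool remains preferable for reporting, since it also provides confidence intervals and goodness-of-fit tests that this sketch omits.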

CSC Markers and Technical Specifications

Table 1: Key CSC Markers and Their Applications Across Different Cancer Types

| Marker | Common Cancer Types | Isolation Method | Technical Considerations | Limitations |
|---|---|---|---|---|
| CD133 | Glioblastoma, colon cancer, intrahepatic cholangiocarcinoma [1] [4] | Immunomagnetic beads, FACS [4] | Antibody recognition depends on glycosylation state; use AC133 antibody for glycosylated epitopes [4] | Also expressed in normal bile ducts; structural ambiguity of N-glycan limits specificity [4] |
| CD44 | Breast cancer, head and neck squamous cell carcinoma [1] | FACS, immunomagnetic separation | Multiple isoforms exist; standardize antibody clones across experiments | Expressed in many normal cell types; not sufficient alone for isolation |
| CD34+/CD38- | Acute Myeloid Leukemia (AML) [1] [2] | FACS | First validated CSC population; well-established in hematopoietic malignancies | Limited to hematopoietic malignancies |
| ALDH1 | Breast cancer, multiple solid tumors [2] | ALDEFLUOR assay, enzymatic activity | Measures aldehyde dehydrogenase activity; often combined with surface markers | Activity can be influenced by cell state and metabolism |
| α-1,2-Man+/CD133+ | Intrahepatic cholangiocarcinoma [4] | Cyanovirin-N (CVN) lectin binding with CD133 | Uses bacterial lectin specific for α-1,2-mannose chains; improved specificity | Emerging method; limited validation across cancer types |

Table 2: Quantitative Parameters for CSC Functional Assays

| Assay Type | Key Readout Parameters | Optimal Experimental Conditions | Validation Requirements |
|---|---|---|---|
| Tumor sphere formation | Sphere number and size after 7-14 days [5] | Serum-free medium, low-attachment plates, growth factors | Limiting dilution to ensure clonality; confirm secondary sphere formation |
| In vivo limiting dilution | Tumor-initiating cell frequency, calculated using ELDA software [5] | Immunocompromised mice (NSG preferred), Matrigel support, 3-6 month monitoring | Statistical analysis of engraftment rates across multiple dilutions |
| Chemoresistance assays | IC50 values, recovery potential post-treatment [5] | Physiologically relevant drug concentrations, assessment of residual cells | Compare to bulk tumor cells; evaluate colony formation post-treatment |
| Differentiation capacity | Lineage marker expression, morphological changes [5] | Serum-induced differentiation, time-course analysis | Verify loss of self-renewal in differentiated progeny |

Essential Experimental Protocols

Protocol: CSC Isolation Using Combined CD133 and α-1,2-Mannose Recognition

This protocol provides enhanced specificity for CSC isolation by addressing limitations of CD133 alone [4].

Materials and Equipment:

- Tumor tissue samples (freshly collected or properly preserved)

- CD133 MicroBead Kit (Miltenyi Biotec) [4]

- Purified and biotinylated Cyanovirin-N (CVN) protein [4]

- MACS buffer (Miltenyi Biotec) [4]

- MS columns (Miltenyi Biotec) [4]

- Cell strainer (70 μm)

- Collagenase Type IV and Dispase II enzymes [4]

- DMEM/F12 medium supplemented with B27, EGF, FGF-2, heparin, and antibiotics [4]

Step-by-Step Procedure:

Tissue Processing:

- Mechanically dissociate tumor tissue using sterile scalpel and scissors in cold DPBS.

- Enzymatically digest using collagenase IV (1-2 mg/mL) and dispase II (1-2 U/mL) for 30-60 minutes at 37°C with gentle agitation.

- Filter through 70 μm cell strainer to obtain single-cell suspension.

- Lyse red blood cells if necessary using appropriate buffer.

CD133 Positive Selection:

- Resuspend up to 10^8 cells in 500 μL MACS buffer.

- Add 100 μL FcR Blocking Reagent and 100 μL CD133 MicroBeads per 10^8 cells.

- Mix well and incubate for 30 minutes in the refrigerator (2-8°C).

- Wash cells with 10-20× the labeling volume of MACS buffer and centrifuge.

- Place MS column in magnetic field and prepare with 500 μL MACS buffer.

- Apply cell suspension to column, collecting flow-through containing CD133- cells.

- Wash column 3× with 500 μL MACS buffer.

- Remove column from magnetic field and elute CD133+ cells with 1 mL MACS buffer.

α-1,2-Mannose Positive Selection:

- Incubate CD133+ cells with biotinylated CVN (5-10 μg/mL) in MACS buffer for 20 minutes at 4°C.

- Wash cells to remove unbound CVN.

- Incubate with anti-biotin MicroBeads for 15 minutes at 4°C.

- Perform a second magnetic separation following the same procedure as for CD133.

- The positively selected cells represent the CD133+α-1,2-Man+ CSC population.

Validation and Culture:

- Assess viability using trypan blue exclusion.

- Plate cells in specialized serum-free medium for tumor sphere formation or functional assays.

- Verify purity by flow cytometry using appropriate antibodies.

Protocol: Tumor Sphere Formation Assay for Self-Renewal Assessment

The tumor sphere assay enables in vitro evaluation of self-renewal capacity and CSC enrichment [5].

Critical Considerations:

- Use extreme limiting dilutions to ensure clonality of resulting spheres.

- Include appropriate controls for cell viability and background aggregation.

- Standardize quantification methods (automated imaging preferred over manual counting).

- Always passage spheres to assess self-renewal capacity in secondary and tertiary generations.
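To make quantification across passages comparable, sphere counts are often normalized to the number of cells seeded. The sketch below computes sphere-forming efficiency (spheres per 100 cells seeded) for primary, secondary, and tertiary generations; the function name and the counts are hypothetical and serve only to illustrate the arithmetic.

```python
def sphere_forming_efficiency(spheres_counted, cells_seeded):
    """Return sphere-forming efficiency as a percentage of seeded cells."""
    if cells_seeded <= 0:
        raise ValueError("cells_seeded must be positive")
    return 100.0 * spheres_counted / cells_seeded


# Hypothetical counts: (spheres counted, cells seeded) per generation
passages = {
    "primary": (12, 1000),
    "secondary": (18, 1000),
    "tertiary": (21, 1000),
}

sfe = {name: sphere_forming_efficiency(s, n) for name, (s, n) in passages.items()}
for name, value in sfe.items():
    print(f"{name}: {value:.1f}%")
```

An efficiency that is maintained or rises over serial passages is commonly read as consistent with self-renewal, although it does not replace in vivo validation.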

CSC Signaling Pathways and Microenvironment Interactions

CSCs maintain their properties through complex signaling networks and bidirectional communication with the tumor microenvironment (TME). Key pathways include Wnt/β-catenin, Notch, Hedgehog, and Hippo signaling, which are often dysregulated in CSCs [2]. Additionally, metabolic pathways involving glycolysis, oxidative phosphorylation, and alternative fuel sources like glutamine and fatty acids contribute to CSC maintenance and therapy resistance [1].

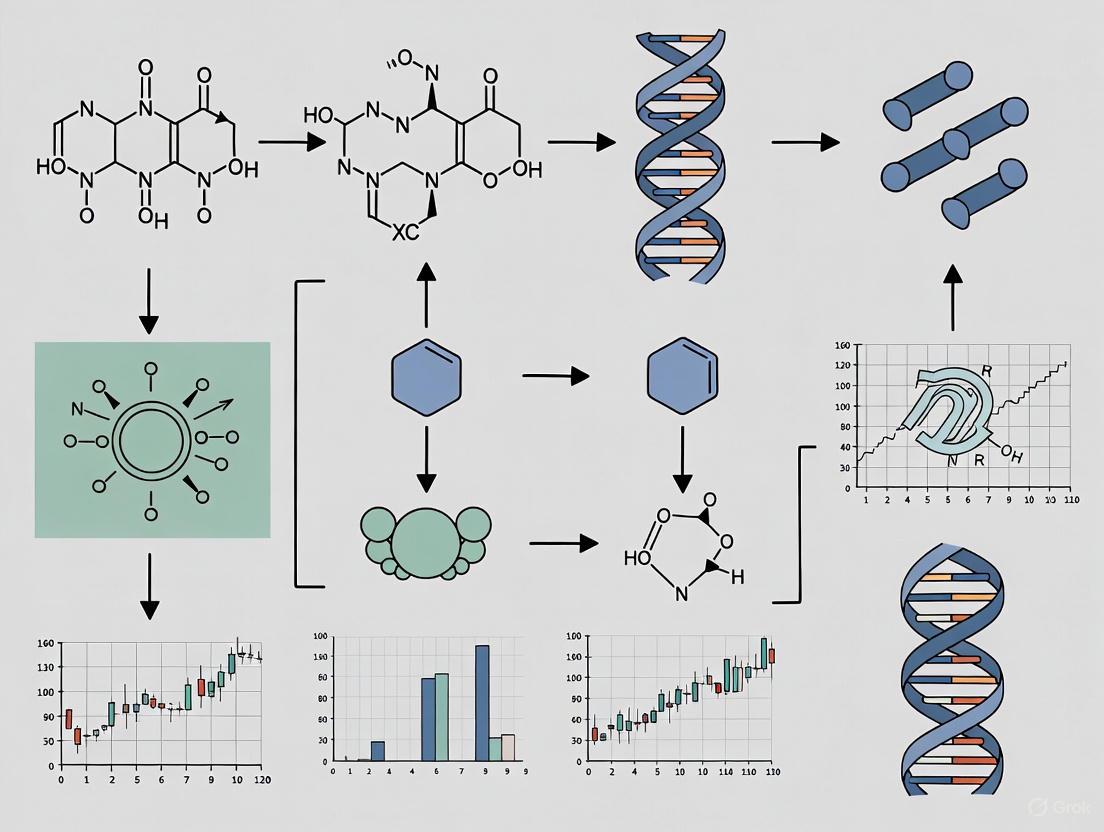

The diagram below illustrates the core signaling networks and microenvironmental interactions that sustain CSCs:

The TME creates specialized niches that protect CSCs and maintain their stemness through:

- Metabolic symbiosis: Stromal cells provide metabolites like methionine or activate pro-survival pathways such as PDGFR-β/GPR91 [2].

- Immune evasion: Tumor-associated macrophages (TAMs) polarized toward M2 phenotype release immunosuppressive cytokines (TGF-β, IL-10), shielding CSCs from immune surveillance [2].

- Microbial influences: In colorectal cancer, colibactin-producing Escherichia coli strains induce genomic instability and upregulate stemness markers (CD133, OCT4) [2].

Research Reagent Solutions for CSC Studies

Table 3: Essential Research Reagents for CSC Investigation

| Reagent Category | Specific Examples | Research Application | Technical Notes |
|---|---|---|---|
| Surface Marker Detection | CD133 MicroBeads, CD44 antibodies, EpCAM antibodies [5] [4] | Isolation and purification of CSC populations | Combine multiple markers for improved specificity; verify with functional assays |
| Lectins for Glycan Recognition | Biotinylated Cyanovirin-N (CVN) [4] | Detection of specific glycosylation patterns on CSC markers | Particularly useful for recognizing α-1,2-mannosylated CD133 |
| Culture Supplements | B27 supplement (minus vitamin A), EGF, FGF-2, heparin [4] | Maintenance of stemness in serum-free conditions | Essential for tumor sphere assays and long-term CSC culture |
| Enzymatic Dissociation | Collagenase Type IV, Dispase II, DNase I [4] | Tissue processing and single-cell suspension preparation | Critical for maximizing cell viability and preserving surface markers |
| Magnetic Separation | MS columns, MACS buffer [4] | Immunomagnetic cell separation | Enables high-purity isolation with minimal equipment requirements |
| In Vivo Modeling | Matrigel, immunocompromised mice (NSG, NOG) [5] | Tumor-initiating capacity assessment | Essential for functional validation of CSCs |

Emerging Technologies and Future Directions

The field of CSC research is rapidly evolving with several promising technological advances:

Single-Cell Multiomics: Integration of transcriptomic, epigenomic, and proteomic data at single-cell resolution is revealing previously unappreciated heterogeneity within CSC populations [1] [2]. This approach enables the identification of rare subpopulations and transitional states that may be critical for therapeutic resistance.

Spatial Biology Technologies: Techniques such as spatial transcriptomics and multiplexed immunohistochemistry are mapping CSC positions within the tumor architecture, revealing how niche-specific signals influence CSC behavior [2] [6].

AI-Driven Predictive Modeling: Machine learning algorithms are being applied to multiomics data to predict CSC dynamics, therapeutic vulnerabilities, and emergent resistance patterns [1] [6]. These approaches show promise for identifying optimal combination therapies that prevent CSC-driven relapse.

Synthetic Biology Approaches: Engineered cellular therapies, such as CAR-T cells with Boolean logic gates that require multiple CSC markers for activation, are being developed to enhance specificity and reduce off-target effects [6].

Advanced Imaging Biomarkers: Techniques like dynamic contrast-enhanced MRI (DCE-MRI) are being explored to non-invasively monitor CSC-rich areas based on distinct microvascular features, potentially enabling real-time assessment of treatment response [7].

These emerging approaches, combined with the established methodologies detailed in this guide, provide researchers with an expanding toolkit to address the challenges of CSC research and develop more effective strategies for predicting and preventing tumor relapse.

Core Mechanisms: The Metastatic Cascade

This section breaks down the key biological processes that cancer cells use to spread, become dormant, and reactivate.

What are the defined steps of the invasion-metastasis cascade?

The metastatic cascade is a multi-step process that disseminated tumor cells (DTCs) must complete to form secondary tumors [8].

- Invasion: Cancer cells dissociate from the primary tumor, degrade the basement membrane, and penetrate the underlying interstitial matrix [8].

- Intravasation: Tumor cells enter the bloodstream or lymphatic system [8].

- Circulation: Circulating tumor cells (CTCs) travel through the circulatory system. Most CTCs undergo apoptosis due to shear stress or anoikis, but some survive as single cells or clusters [9].

- Extravasation: Surviving CTCs exit the circulation and infiltrate the stroma of a distant organ [8].

- Colonization: DTCs survive in the new microenvironment and initiate proliferative outgrowth to form macroscopic metastases. This step often includes a protracted period of dormancy [8].

What is metastatic dormancy and why is it clinically significant?

Metastatic dormancy is a condition in which DTCs remain quiescent and growth-arrested at a secondary site for months, years, or even decades before potentially reactivating [10] [11]. There are two primary models:

- Tumor Cell Dormancy (Cellular Quiescence): Solitary cells exist in a reversible, quiescent state (G0/G1 cell cycle arrest) with low metabolic activity [11] [12] [13].

- Tumor Mass Dormancy: A microscopic cluster of cells where proliferation is balanced by apoptosis, often due to a lack of angiogenesis or effective immune surveillance [12] [13].

Significance: Dormancy is a major clinical challenge. It allows cancer cells to evade conventional therapies that target rapidly dividing cells, leading to late-term recurrences that account for the majority of cancer-related deaths [10] [11] [9].

What mechanisms govern dormancy and reactivation?

The balance between dormancy and proliferation is regulated by intrinsic and extrinsic signals.

Key Signaling Pathways and Microenvironment Cues

| Mechanism | Key Players | Role in Dormancy/Reactivation |
|---|---|---|
| ERK/p38 Signaling Ratio | ERK, p38 MAPK | A high ERK/p38 activity ratio promotes proliferation; a low ERK/p38 ratio (that is, a high p38/ERK ratio) induces and maintains dormancy [11] [12]. |
| Microenvironment Signaling | TGF-β2, BMP-7, GAS6 | Bone marrow stromal cells secrete these factors, inducing dormancy in prostate and breast cancer cells via p38 and cell cycle inhibitors [10] [11] [12]. |
| Immune Surveillance | Natural Killer (NK) cells, Macrophages | Immune cells can suppress awakening. Alveolar macrophages in the lungs keep breast cancer cells dormant via TGF-β2. NK cells control dormant cells in the bone marrow [10]. |
| Metabolic Reprogramming | OXPHOS, FAO, Autophagy | Dormant cells shift from glycolysis to oxidative phosphorylation (OXPHOS) and fatty acid oxidation (FAO) to survive stress. Autophagy recycles nutrients [13]. |
| Extracellular Matrix (ECM) Engagement | uPAR, β1-integrin | Inefficient engagement with the ECM (low uPAR signaling) leads to poor adhesion and activation of dormancy pathways (p38) [11]. |

Diagram: The Metastatic Journey from Primary Tumor to Reactivation. The diagram illustrates the multi-step cascade of metastasis, highlighting the critical juncture at a secondary site where disseminated tumor cells (DTCs) enter a dormant state based on local signals. Key pro-dormancy signals (green) include immune surveillance and specific microenvironmental cues, while reactivation (red) is driven by factors like immune escape and angiogenesis.

The Scientist's Toolkit: Key Reagents & Experimental Models

This table details essential reagents and models for studying metastasis and dormancy.

Table 1: Key Research Reagent Solutions

| Reagent / Model | Function / Application | Key Findings Enabled |
|---|---|---|
| D2.0R & D2A1 Cell Lines (Breast Cancer) | In vivo models for studying dormancy vs. rapid growth. D2.0R remain dormant, D2A1 form tumors. | Identified fibronectin and β1-integrin signaling as crucial for breaking dormancy via ECM engagement [11]. |
| HC-5404 (Experimental Drug) | Targets a signaling pathway essential for dormant cell survival. | Prevented dormant cancer cells in mice from causing metastases; granted FDA Fast Track designation [10]. |
| STING Agonists (e.g., MSA-2) | Boosts the STING pathway, activating the immune system against dormant cells. | Made dormant mouse cancer cells vulnerable to attack by natural killer (NK) cells, suppressing metastatic progression [10]. |
| BMP-7 (Recombinant Protein) | Bone morphogenetic protein used to treat cancer cells in vitro/in vivo. | Induces dormancy in prostate cancer cells via p38 pathway and upregulation of the metastasis suppressor NDRG1 [11] [12]. |
| Patient-Derived Xenografts (PDX) | Immunodeficient mice implanted with human tumor tissue. | Allows study of human cancer dormancy and reactivation in a living system, preserving tumor heterogeneity. |

Troubleshooting Guide & FAQs

Dormancy Model Challenges

Problem: Inconsistent entry into or exit from dormancy in in vivo models.

- Solution A: Measure the ERK/p38 activity ratio in your cells. A low ERK/p38 ratio (equivalently, a high p38/ERK ratio) is a reliable indicator of the dormant state [11] [12]. Quantify it with phospho-specific antibodies by western blot or flow cytometry.

- Solution B: Characterize the microenvironment. Use models with functional immune systems and profile stromal cell secretions (e.g., TGF-β, BMP-7) in the target organ, as these are critical dormancy signals [10] [12].

- Solution C: Ensure your model allows for sufficient time. Dormancy can extend for a significant portion of an animal's lifespan; short-term experiments may not capture reactivation [10].
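The ERK/p38 readout in Solution A reduces to simple arithmetic once band or fluorescence intensities are available. The sketch below normalizes each phospho-signal to its total protein and then takes the ratio; the function names, the example intensities, and the cutoff of 1.0 are all hypothetical and would need calibration against known dormant and proliferative controls.

```python
def erk_p38_ratio(p_erk, total_erk, p_p38, total_p38):
    """Ratio of normalized phospho-ERK to normalized phospho-p38 signal."""
    if min(total_erk, total_p38, p_p38) <= 0:
        raise ValueError("total ERK, total p38, and phospho-p38 must be positive")
    erk_activity = p_erk / total_erk
    p38_activity = p_p38 / total_p38
    return erk_activity / p38_activity


def classify_state(ratio, threshold=1.0):
    """Label a sample; the threshold is an illustrative assumption, not a standard."""
    return "proliferative-like" if ratio > threshold else "dormant-like"


# Hypothetical densitometry values (arbitrary units)
ratio = erk_p38_ratio(p_erk=0.2, total_erk=1.0, p_p38=0.8, total_p38=1.0)
print(f"ERK/p38 ratio = {ratio:.2f} -> {classify_state(ratio)}")
```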

Detecting and Quantifying Dormant Cells

Problem: How can I identify and track rare, quiescent DTCs in a complex tissue?

- Protocol: Immunohistochemistry/Fluorescence-Based Detection

- Stain for Proliferation Markers: Detect Ki-67 with antibodies or measure EdU incorporation (EdU is detected by click chemistry rather than by an antibody). Dormant cells will be negative for both markers [11].

- Stain for Dormancy-Associated Signals: Co-stain for upregulated proteins like p21, p27, or p53, which are associated with cell cycle arrest [12].

- Use a Reporter System: Engineer cancer cells with a fluorescent reporter (e.g., GFP) under the control of a proliferation-specific promoter (e.g., Ki-67). Dormant cells will not express GFP, allowing for isolation and analysis [8].

Therapeutic Targeting of Dormancy

Problem: My therapeutic is effective against the primary tumor but relapse occurs from dormant cells.

- Strategy 1: "Kill" Strategy: Sensitize dormant cells to immune attack. STING agonists have shown promise in pre-clinical models by activating NK cells to eliminate dormant DTCs [10].

- Strategy 2: "Lock-in" Strategy: Therapeutically enforce dormancy. Target pathways that are essential for reactivation but not for maintenance, aiming to permanently "lock" cells in a harmless dormant state [10]. This requires a deep understanding of the reactivation triggers.

- Strategy 3: Target Dormancy Metabolism: Exploit the metabolic vulnerabilities of dormant cells. Investigate inhibitors of fatty acid oxidation (FAO) or oxidative phosphorylation (OXPHOS) to selectively target their unique metabolic dependencies [13].

Predictive Modeling & Future Directions

How can we improve predictability of metastatic behavior?

Emerging computational approaches are key to forecasting tumor progression.

- Reaction-Diffusion Models: These mathematical models simulate the spatiotemporal growth of tumors as a function of cell proliferation and diffusion rates. They can be initialized with patient MRI data to predict glioma growth and treatment response [14].

- Hypothesis-Driven "Grammar" for Modeling: A new approach uses a plain-language "hypothesis grammar" to build digital representations of cellular ecosystems. By combining this with genomic data from patient samples, researchers can simulate how cancer and immune cells interact over time, creating a "digital twin" to predict individual patient responses to therapies like immunotherapy [15].
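As an illustration of the reaction-diffusion idea, the sketch below integrates a one-dimensional Fisher-KPP equation, dc/dt = D d²c/dx² + rho c (1 - c), where c is normalized tumor cell density, D is a diffusion coefficient, and rho is a proliferation rate. This is a common textbook form and is assumed here for illustration; it is not necessarily the exact model of [14], and all parameter values are arbitrary rather than patient-derived.

```python
def simulate_fisher_kpp(n_cells=101, dx=0.1, dt=0.01, steps=500, D=0.05, rho=1.0):
    """Explicit finite-difference solution of dc/dt = D*c_xx + rho*c*(1 - c).

    Uses no-flux (mirror) boundaries and a small seed of tumor cells at the center.
    """
    if D * dt / dx ** 2 > 0.5:
        raise ValueError("time step too large for a stable explicit scheme")

    density = [0.0] * n_cells
    density[n_cells // 2] = 0.5  # initial tumor seed

    for _ in range(steps):
        updated = density[:]
        for i in range(n_cells):
            # Mirror the neighbor at each edge to enforce zero flux
            left = density[i - 1] if i > 0 else density[i + 1]
            right = density[i + 1] if i < n_cells - 1 else density[i - 1]
            laplacian = (left - 2.0 * density[i] + right) / dx ** 2
            growth = rho * density[i] * (1.0 - density[i])
            updated[i] = density[i] + dt * (D * laplacian + growth)
        density = updated
    return density


profile = simulate_fisher_kpp()
center = len(profile) // 2
print(f"density at center: {profile[center]:.3f}")
print(f"density 10 grid points away: {profile[center + 10]:.3f}")
```

In a patient-specific setting, the initial density and the parameters D and rho would be estimated from imaging data rather than fixed by hand, and the domain would be two- or three-dimensional.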

Diagram: Predictive Modeling Workflow for Tumor Behavior. This workflow integrates multi-faceted patient data into computational models to run simulations and generate predictions about future tumor dynamics, including the risk of dormancy escape.

What are the key future research directions?

- Defining the Dormancy "Niche": Precisely characterize the molecular and cellular components of the microenvironment that house and protect dormant cells [10] [8].

- Understanding Reactivation Triggers: Identify the systemic and local signals (e.g., chronic inflammation, aging) that awaken DTCs, which is critical for intervention [8] [13].

- Developing Dormancy-Specific Biomarkers: Discover biomarkers to detect the presence of dormant cells or predict the risk of recurrence, enabling early intervention [12].

- Combination Therapies: Design treatments that simultaneously target proliferating cells and dormant populations, such as combining standard chemotherapy with dormancy-metabolism disruptors or immune-stimulating agents [10] [12] [13].

Genetic and Epigenetic Landscapes Underlying Tumor Heterogeneity and Plasticity

This technical support center provides FAQs and troubleshooting guides to help researchers address common experimental challenges in the study of tumor heterogeneity and plasticity. The content is framed within the broader thesis that improving the predictability of emergent tumor behavior requires a deep, mechanistic understanding of the interconnected genetic and epigenetic landscapes.

Frequently Asked Questions (FAQs)

What are the main drivers of tumor heterogeneity?

Tumor heterogeneity arises from both genetic and non-genetic sources. Key drivers include:

- Genetic Alterations: Accumulation of DNA mutations (e.g., in oncogenes like KRAS or tumor suppressor genes like TP53) leads to subpopulations of cells with distinct genotypes [16].

- Non-genetic Cell States: Within a tumor, diverse cell states coexist, maintained by specific chromatin landscapes and gene regulatory networks. These states include cancer stem cells (CSCs) which are associated with therapy resistance and tumor progression [17] [18].

- Cell Plasticity: Cancer cells can adapt to stresses, like therapy, by transitioning between states. This phenomenon is a major source of drug-resistant adaptation and is heavily influenced by epigenetic remodeling, such as histone modifications [17].

Experimental Consideration: To model this, move beyond bulk sequencing. Utilize single-cell RNA sequencing (scRNA-seq) to deconvolute the cellular composition of the tumor microenvironment (TME), identifying distinct neoplastic, immune, and stromal subpopulations [19]. For spatial context, integrate spatial transcriptomics to understand how these subpopulations are organized and interact [19] [20].

How does the epigenome directly influence the DNA damage response and repair pathway choice?

The chromatin state is a significant factor in DNA damage and repair, creating a bidirectional relationship:

- Epigenome Guides Repair: The spatial mapping of DNA double-strand breaks (DSBs) is not random. DSBs are enriched in regions with specific epigenetic marks, such as those for transcriptionally active genes (H3K4me2/3) or enhancers (H3K27ac) [17]. Furthermore, cell identity safeguards like the Polycomb group complexes (PRC1 and PRC2) have a dual role. For example, the PRC1 component BMI1 deposits H2AK119ub at DNA lesions to promote repair via Homologous Recombination (HR), a pathway often associated with CSCs and therapy resistance [17].

- Repair Reshapes Epigenome: Conversely, the DNA repair process itself can induce chromatin changes. DNA damage and repair can reshape chromatin organization, altering intracellular signaling pathways and influencing cell plasticity and adaptive capacity [17].

Experimental Consideration: When studying DNA damage response, profile histone modifications (e.g., H3K27me3, H2AK119ub) before and after inducing damage. Inhibition of epigenetic regulators like EZH2 (a component of PRC2) can be used to test their functional role in repair and cell survival [17].

Our single-cell RNA-seq data is highly complex. How can we better predict dynamic cell state transitions from this static data?

Static snapshots from scRNA-seq can be leveraged to predict dynamics through computational modeling.

- Pseudotime Analysis: This computational method uses scRNA-seq data to order cells along a hypothetical continuum of a biological process, such as differentiation or state transition. For instance, in breast cancer, pseudotime analysis can reveal tumor subpopulations that occupy early differentiation states [19].

- Digital Twin Framework: A cutting-edge approach combines genomics data with computational modeling to simulate cell activity over time. Researchers can use a "hypothesis grammar" to build digital representations of biological systems using plain-language sentences, creating in silico models to test hypotheses about cell behavior and treatment response [21].

Experimental Consideration: Use trajectory inference algorithms (e.g., Monocle, PAGA) on your scRNA-seq data to reconstruct potential cell state transitions. For more complex, predictive simulations, explore computational frameworks that allow for the creation of agent-based models informed by your genomic data [21].
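The core idea behind ordering cells along a trajectory can be shown with a toy example. The sketch below builds a k-nearest-neighbor graph over cells and assigns each cell a pseudotime equal to its shortest-path distance from a chosen root cell. It is a deliberately simplified stand-in for graph-based trajectory inference, not the Monocle or PAGA algorithm, and the two-dimensional coordinates stand in for a low-dimensional embedding of real expression data.

```python
import heapq
import math


def knn_graph(cells, k=3):
    """Symmetric k-nearest-neighbor graph with Euclidean edge weights."""
    graph = {i: {} for i in range(len(cells))}
    for i, cell in enumerate(cells):
        neighbors = sorted(
            (math.dist(cell, other), j) for j, other in enumerate(cells) if j != i
        )
        for distance, j in neighbors[:k]:
            graph[i][j] = distance
            graph[j][i] = distance
    return graph


def pseudotime(cells, root=0, k=3):
    """Shortest-path distance from the root cell, scaled to [0, 1].

    Cells not connected to the root keep an infinite value.
    """
    graph = knn_graph(cells, k)
    dist = {i: math.inf for i in graph}
    dist[root] = 0.0
    heap = [(0.0, root)]
    while heap:  # Dijkstra's algorithm
        d, i = heapq.heappop(heap)
        if d > dist[i]:
            continue
        for j, weight in graph[i].items():
            if d + weight < dist[j]:
                dist[j] = d + weight
                heapq.heappush(heap, (d + weight, j))
    longest = max(v for v in dist.values() if math.isfinite(v)) or 1.0
    return [dist[i] / longest for i in range(len(cells))]


# Hypothetical embedding: cells spread along a noisy differentiation axis
cells = [(0.0, 0.0), (1.0, 0.1), (2.0, 0.0), (3.0, 0.1), (4.0, 0.0), (5.0, 0.1)]
print([round(t, 2) for t in pseudotime(cells, root=0, k=2)])
```

The choice of root cell determines the direction of the ordering, so in practice it should be justified biologically, for example by selecting a cell from a known progenitor-like cluster.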

Troubleshooting Guides

Guide 1: Addressing Challenges in scRNA-seq to Decode Tumor Microenvironment Heterogeneity

Problem: Cell type annotation is inconsistent, and rare cell populations are missed.

- Solution & Protocol:

- Comprehensive Marker Gene Panels: Do not rely on a single marker. Annotate clusters using validated, canonical gene sets for major cell types [19]:

- Neoplastic Epithelial: EPCAM, KRT18, KRT19, CDH1

- T cells: CD3D, CD3E, CD3G

- Myeloid cells: LYZ, CD68, FCGR3A

- Fibroblasts: DCN, THY1, COL1A1

- Secondary Clustering: After initial annotation, re-cluster major cell types (e.g., all T cells or all fibroblasts) to uncover transcriptionally distinct subtypes that may have functional significance [19] [20].

- Cross-Reference with Pan-Cancer Atlases: Validate your findings against established pan-cancer single-cell atlases that have identified 70+ shared cell subtypes across cancer types. This helps confirm rare populations and their co-occurrence patterns [20].

Problem: Technical variability confounds biological signals.

- Solution: Implement rigorous batch correction methods (e.g., Harmony, Seurat's CCA) during data integration, especially when pooling data from multiple patients [19].
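The marker-panel annotation step in Guide 1 can be sketched as a simple scoring routine. The example below averages the expression of each panel's genes within a cluster and assigns the best-scoring label; the gene lists come from the panels above, while the function name, the minimum-score cutoff, and the expression values are hypothetical. Dedicated single-cell toolkits offer more robust scoring that corrects for background expression.

```python
MARKER_PANELS = {
    "Neoplastic epithelial": ["EPCAM", "KRT18", "KRT19", "CDH1"],
    "T cells": ["CD3D", "CD3E", "CD3G"],
    "Myeloid cells": ["LYZ", "CD68", "FCGR3A"],
    "Fibroblasts": ["DCN", "THY1", "COL1A1"],
}


def annotate_cluster(mean_expression, panels=MARKER_PANELS, min_score=0.5):
    """Assign the panel with the highest mean marker expression.

    mean_expression maps gene symbols to a cluster's average normalized
    expression; genes missing from the mapping count as zero. The min_score
    cutoff is an illustrative assumption.
    """
    scores = {
        label: sum(mean_expression.get(gene, 0.0) for gene in genes) / len(genes)
        for label, genes in panels.items()
    }
    best = max(scores, key=scores.get)
    label = best if scores[best] >= min_score else "Unassigned"
    return label, scores


# Hypothetical cluster averages (log-normalized expression)
cluster = {"CD3D": 2.1, "CD3E": 1.8, "CD3G": 1.2, "EPCAM": 0.05, "LYZ": 0.1}
label, scores = annotate_cluster(cluster)
print(label)
```

Scoring a whole panel rather than a single gene follows the guidance above, and returning all scores makes ambiguous clusters easy to flag for secondary clustering.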

Guide 2: Resolving Issues in Linking Epigenetic States to Functional Plasticity

Problem: An observed epigenetic mark does not correlate with expected gene expression or phenotype.

- Solution & Protocol:

- Multi-Omics Integration: Profile the epigenome and transcriptome simultaneously from the same cells (e.g., scATAC-seq + scRNA-seq) or from parallel samples from the same tumor. This directly links chromatin accessibility or histone marks to regulatory networks.

- Functional Validation via Perturbation: Use small molecule inhibitors or CRISPR-based epigenetic editing to directly manipulate the epigenetic regulator in question (e.g., an EZH2 inhibitor) [17]. Then assay whether target gene expression and the phenotype of interest change as expected.

Problem: Difficulty in tracking plastic cell state transitions in real-time.

- Solution: Employ lineage tracing technologies in vitro or in vivo. Combine with barcoding strategies to clonally track daughter cells and their state transitions in response to therapeutic pressure.
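One way to summarize barcoded lineage-tracing data is a state-transition table per founder state. The sketch below assumes each barcode has one annotated founder state before treatment and a list of progeny states after treatment. The barcodes, state labels, and function names are hypothetical.

```python
from collections import Counter, defaultdict


def transition_counts(pre, post):
    """Count (state_before -> state_after) pairs for barcodes seen at both timepoints.

    pre:  barcode -> founder state before treatment.
    post: barcode -> list of states observed among its progeny after treatment.
    """
    counts = defaultdict(Counter)
    for barcode, before in pre.items():
        for after in post.get(barcode, []):
            counts[before][after] += 1
    return counts


def transition_fractions(counts):
    """Normalize each founder state's counts to fractions that sum to 1."""
    return {
        before: {after: n / sum(row.values()) for after, n in row.items()}
        for before, row in counts.items()
    }


# Hypothetical barcodes and state labels, for illustration only.
pre = {"BC1": "epithelial", "BC2": "epithelial", "BC3": "mesenchymal"}
post = {
    "BC1": ["epithelial", "mesenchymal", "mesenchymal"],
    "BC2": ["epithelial"],
    "BC3": ["mesenchymal", "mesenchymal"],
    # BC4 has no pre-treatment record, so it is ignored.
    "BC4": ["epithelial"],
}
fractions = transition_fractions(transition_counts(pre, post))
print(fractions)
```

Comparing these fractions between treated and untreated arms gives a first estimate of how much of a state shift reflects plasticity rather than selection of pre-existing clones.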

Key Signaling Pathways and Molecular Interplay

The core relationship between DNA damage, epigenetics, and tumor heterogeneity can be viewed as a dynamic, self-reinforcing cycle, summarized in Diagram 1.

Diagram 1: The Interplay of DNA Damage, Epigenetics, and Heterogeneity. This cycle shows how DNA damage induces epigenetic changes that drive heterogeneity and resistance, which in turn create conditions for further DNA damage.

The diagram above shows the core feedback loop. The following workflow details the experimental approach for investigating these relationships using modern multi-omics technologies.

Diagram 2: Multi-Omics Experimental Workflow. A recommended pipeline integrating single-cell, spatial, and epigenetic data with computational modeling to achieve predictive insights.

Research Reagent Solutions

The table below summarizes key reagents and their applications for studying tumor heterogeneity and plasticity.

| Reagent / Material | Primary Function | Example Application in Research |

|---|---|---|

| Single-Cell RNA-seq Kits | Profiling transcriptomes of individual cells to map cellular heterogeneity. | Identifying 15+ distinct cell clusters in the breast cancer TME, including neoplastic, immune, and stromal populations [19]. |

| Spatial Transcriptomics Slides | Capturing gene expression data within the two-dimensional spatial context of a tissue section. | Visualizing region-specific cell distribution and confirming the co-localization of immune-reactive cell subtypes in tertiary lymphoid structures [19] [20]. |

| HDAC / HMT Inhibitors | Chemical inhibition of histone deacetylases (HDACs) or histone methyltransferases (HMTs) to alter the epigenetic landscape. | Testing the role of specific histone marks (e.g., H3K27me3) in drug tolerance and cell state transitions [17]. |

| CRISPR-based Epigenetic Editors | Targeted activation or repression of genes without altering the DNA sequence. | Functionally validating the role of specific enhancers or promoters in maintaining cancer stem cell identity and plasticity [16]. |

| CSC Marker Antibodies | Isolation and identification of cancer stem cell populations via FACS or immunohistochemistry. | Enriching for CD44+, CD133+, or ALDH1+ cells to study their enhanced DNA repair capacity and therapy resistance [17] [23]. |

Recent studies provide quantitative insights into the cellular composition of tumors and the distribution of specific subtypes. The table below consolidates key findings from pan-cancer and breast cancer-specific analyses.

| Cancer Type / Scope | Key Quantitative Finding | Clinical/Therapeutic Association |

|---|---|---|

| Pan-Cancer Atlas (9 cancer types) | Identification of 70 pan-cancer single-cell subtypes within the TME. Discovery of 2 TME hubs of co-occurring, spatially co-localized subtypes [20]. | Hub abundance correlates with early and long-term response to immune checkpoint blockade (ICB) therapy [20]. |

| Breast Cancer (BRCA) | scRNA-seq revealed 15 major cell clusters and 7 transcriptionally distinct tumor epithelial subpopulations [19]. | SCGB2A2+ neoplastic cells (enriched in low-grade tumors) display a distinct lipid metabolism phenotype [19]. |

| Breast Cancer (BRCA) | Low-grade tumors paradoxically show enriched stromal/immune subtypes (e.g., CXCR4+ fibroblasts, IGKC+ myeloid cells) linked to reduced immunotherapy responsiveness despite favorable clinical features [19]. | Highlights the complex, non-linear relationship between immune cell presence and therapy response. |

The Tumor Microenvironment (TME) as a Key Modulator of Cancer Cell Behavior

Frequently Asked Questions (FAQs)

Q1: Why do my in vitro drug sensitivity results fail to predict in vivo therapeutic outcomes? A common issue is the oversimplification of the tumor model. Traditional 2D cell cultures lack the complex cellular and non-cellular components of the TME that significantly influence cancer cell behavior and drug response [24]. The TME includes cancer-associated fibroblasts (CAFs), immune cells, endothelial cells, and the extracellular matrix (ECM), all of which contribute to creating a pro-tumorigenic, immunosuppressive, and therapy-resistant environment [25] [26]. To improve predictability, consider adopting more physiologically relevant models such as patient-derived organoids or 3D co-culture systems that incorporate key stromal cells.

Q2: How does hypoxia invalidate the assumptions of my standard cell proliferation and cytotoxicity assays? Hypoxia, a hallmark of most solid tumors, triggers profound molecular changes in cancer cells. It activates hypoxia-inducible factors (HIFs), which in turn drive metabolic reprogramming (like the Warburg effect), enhance invasive potential, and promote resistance to chemotherapy and radiotherapy [27] [28]. Standard assays performed under normoxic conditions (21% O₂) do not capture these critical adaptations. To troubleshoot, incorporate hypoxic chambers (maintaining 1-5% O₂) into your experimental workflow and utilize HIF-pathway reporters or inhibitors to validate the role of hypoxia-specific signaling in your findings.

Q3: What are the major stromal cell types I should account for when modeling tumor behavior? The most impactful stromal cells within the TME are:

- Cancer-Associated Fibroblasts (CAFs): They remodel the ECM, promote angiogenesis, and secrete growth factors/cytokines (e.g., TGF-β) that enhance tumor cell invasion and metastasis [25] [24].

- Tumor-Associated Macrophages (TAMs), particularly the M2 phenotype: They suppress immune responses, foster angiogenesis, and aid in metastasis [25] [26].

- Tumor Endothelial Cells (TECs): They form disorganized and leaky blood vessels, leading to aberrant perfusion, hypoxia, and impaired drug delivery [24].

Neglecting these interactions in your model can lead to inaccurate predictions of tumor growth and treatment response.

Q4: My engineered T cells show potent activity in flow cytometry but fail to infiltrate and kill solid tumors. What TME factors should I investigate? This is a classic problem of the TME acting as a physical and chemical barrier. Key factors to investigate include:

- Physical Barrier: The altered ECM, often stiffened and denser due to CAF activity, can physically impede T-cell infiltration [25] [26].

- Chemical Barrier: The TME is often acidic due to high lactate production from glycolytic tumor cells (Warburg effect). This low pH can directly suppress the cytotoxic function of T cells and other immune effector cells [27] [26].

- Immunosuppressive Signals: Checkpoint molecules like PD-L1 on tumor and stromal cells engage with PD-1 on your engineered T cells, inducing an exhausted, non-functional state [26].

Experimental Protocols & Troubleshooting Guides

Protocol: Establishing a 3D Co-Culture Spheroid Model to Study TME-Mediated Drug Resistance

Objective: To create a more predictive in vitro model that recapitulates the cell-cell interactions and gradient conditions of the in vivo TME.

Materials:

- Cell Lines: Your cancer cell line of interest, human foreskin fibroblast line (e.g., BJ-5ta) or patient-derived CAFs, and THP-1 monocyte line (to differentiate into macrophages).

- Research Reagent Solutions: See Table 1.

- Equipment: Low-attachment U-bottom 96-well plates, standard cell culture incubator, hypoxic chamber (or gas-controlled incubator), confocal microscope.

Methodology:

- CAF Conditioning: Culture fibroblasts in the presence of conditioned medium from your cancer cell line for 7-10 days to induce a CAF-like phenotype. Validate by increased expression of α-SMA and FAP via western blot or immunofluorescence [25] [24].

- Spheroid Formation:

- Prepare a single-cell suspension containing your cancer cells and conditioned CAFs at a desired ratio (e.g., 1:1). Include monocytes if modeling immune interaction.

- Seed 200 µL of the cell suspension (e.g., at 5,000 cells total per well) into a low-attachment U-bottom 96-well plate.

- Centrifuge the plate at 300 x g for 3 minutes to aggregate cells at the well bottom.

- Culture for 72-96 hours to allow for compact spheroid formation.

- Drug Treatment & Hypoxia Challenge:

- Once spheroids are formed, add the drug of interest at various concentrations.

- Immediately transfer one set of plates to a hypoxic chamber (1% O₂, 5% CO₂, balanced N₂). Maintain another set under normoxia as a control.

- Incubate for 48-72 hours.

- Viability Assessment:

- Use an ATP-based luminescence viability assay (e.g., CellTiter-Glo 3D). Transfer spheroids to a white-walled plate, add reagent, and lyse on an orbital shaker before reading luminescence.

- For spatial resolution of cell death, perform live/dead staining (e.g., Calcein AM for live cells, Propidium Iodide for dead cells) and image using confocal microscopy.
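The seeding step reduces to simple arithmetic, sketched below using the example values from the protocol (5,000 cells in 200 µL per well, 1:1 cancer cells to CAFs). The 10% pipetting overage and the function name are assumptions added for illustration.

```python
def seeding_plan(cells_per_well=5000, volume_per_well_ul=200.0,
                 ratio=(1, 1), n_wells=96, overage=1.1):
    """Compute suspension density and per-cell-type totals for one plate.

    ratio is (cancer cells : CAFs). overage is an assumed multiplier that
    covers pipetting dead volume; it is not part of the protocol.
    """
    cancer_part, caf_part = ratio
    total_parts = cancer_part + caf_part
    # Density of the mixed suspension needed to hit the per-well cell number.
    cells_per_ml = cells_per_well / (volume_per_well_ul / 1000.0)
    total_volume_ml = n_wells * volume_per_well_ul / 1000.0 * overage
    total_cells = cells_per_ml * total_volume_ml
    return {
        "cells_per_ml": cells_per_ml,
        "total_volume_ml": total_volume_ml,
        "cancer_cells": total_cells * cancer_part / total_parts,
        "cafs": total_cells * caf_part / total_parts,
    }


plan = seeding_plan()
for key, value in plan.items():
    print(f"{key}: {value:,.1f}")
```

Changing `ratio` (for example to `(1, 3)`) is a quick way to plan the stromal-to-cancer cell titration suggested in the troubleshooting notes.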

Troubleshooting:

- Problem: Spheroids are irregular in shape and size.

- Solution: Ensure a consistent single-cell suspension at seeding. Use plates specifically designed for spheroid formation and confirm centrifugation steps are uniform.

- Problem: No difference in drug response between 2D and 3D models.

- Solution: Optimize the stromal cell-to-cancer cell ratio. Extend the pre-treatment incubation time to allow for stronger ECM deposition and cell-cell contact-mediated survival signaling.

Protocol: Predictive Drug Screening Using Patient-Derived Cells and Machine Learning

Objective: To functionally identify patient-specific drug sensitivities by screening a small panel of drugs and using a machine learning model to impute responses to a larger library.

Materials:

- Patient-Derived Cells: From biopsies, cultivated as primary cultures or organoids [29].

- Drug Libraries: A full library (e.g., 200+ compounds) and a smaller, strategically selected probing panel (e.g., 30 drugs).

- Equipment: High-throughput screening systems, automated liquid handlers, plate readers.

- Software: Machine learning environment (e.g., Python/R) with random forest or similar algorithms.

Methodology:

- Generate Historical Dataset: Screen a diverse set of patient-derived cell lines against the full drug library to establish a historical database of dose-response curves [29].

- Screen New Patient Sample: For a new patient-derived cell line, screen only against the small, predefined 30-drug probing panel.

- Machine Learning Imputation:

- Train a random forest model (with 50+ trees) on the historical dataset to learn the relationships between drug responses.

- Input the new cell line's response profile from the 30-drug panel into the trained model.

- The model will then predict the new cell line's sensitivity to all other drugs in the full library.

- Validation: Experimentally validate the top hits (e.g., top 10 predicted most active drugs) from the imputation on the new cell line.
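The data flow of the imputation step can be sketched without any machine learning library. Note that the example below deliberately swaps the random forest for a much simpler similarity-weighted nearest-neighbour average, so it illustrates the inputs and outputs rather than the method in [29]. The sample IDs, drug names, response values, and function name are hypothetical; responses are treated as normalized viability, where lower means more sensitive.

```python
import math


def impute_responses(historical, probe_drugs, new_probe, k=3):
    """Predict unscreened drug responses for a new sample.

    Simplified stand-in for the random forest: a distance-weighted mean over
    the k historical samples whose probe-panel responses are most similar.

    historical:  {sample_id: {drug: response}} covering the full library.
    probe_drugs: drugs actually screened on the new sample.
    new_probe:   {drug: response} for the new sample on the probe panel.
    """
    def distance(profile):
        return math.sqrt(sum((profile[d] - new_probe[d]) ** 2 for d in probe_drugs))

    neighbours = sorted(historical.values(), key=distance)[:k]
    weights = [1.0 / (distance(p) + 1e-9) for p in neighbours]
    all_drugs = set().union(*(p.keys() for p in historical.values()))
    targets = sorted(all_drugs - set(probe_drugs))
    return {
        drug: sum(w * p[drug] for w, p in zip(weights, neighbours)) / sum(weights)
        for drug in targets
    }


# Hypothetical historical screen: drugs A and B form the probe panel.
historical = {
    "S1": {"A": 0.20, "B": 0.90, "C": 0.30, "D": 0.80},
    "S2": {"A": 0.25, "B": 0.85, "C": 0.35, "D": 0.75},
    "S3": {"A": 0.90, "B": 0.20, "C": 0.80, "D": 0.10},
    "S4": {"A": 0.80, "B": 0.30, "C": 0.90, "D": 0.20},
}
new_probe = {"A": 0.22, "B": 0.88}
predicted = impute_responses(historical, ["A", "B"], new_probe, k=2)
top_hit = min(predicted, key=predicted.get)  # lowest viability = most active
print(predicted, top_hit)
```

With a real historical dataset, the same inputs and outputs would feed a random forest regressor instead, and the ranked predictions would define the hits taken into experimental validation.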

Troubleshooting:

- Problem: The model's predictions are inaccurate.

- Solution: Curate the historical training set to include cell lines that are biologically relevant (e.g., same tissue of origin or genetic background). Ensure the probing panel drugs are mechanistically diverse to capture a wide range of cellular phenotypes [29].

- Problem: Patient-derived cells have low viability or proliferation rate for screening.

- Solution: Optimize the culture conditions using specialized media and extracellular matrix coatings (e.g., Matrigel). Use whole-tumour cell cultures that include native TME cells, which can be more robust than purified cancer cells alone [29].

Data Presentation

Table 1: Key Research Reagent Solutions for TME Research

| Reagent / Material | Function / Target | Key Application in TME Research |

|---|---|---|

| Recombinant TGF-β | Activates SMAD signaling pathway | To induce Epithelial-Mesenchymal Transition (EMT) in cancer cells and differentiate fibroblasts into CAFs [25] |

| Dimethyloxalylglycine (DMOG) | Inhibits HIF-PHDs, stabilizing HIF-α | To chemically mimic a hypoxic response in cells, even under normoxic conditions [27] |

| HIF-2α Inhibitor (e.g., PT2385) | Selectively blocks HIF-2α heterodimerization with ARNT (HIF-1β), preventing HIF-2α target gene transcription | To investigate the specific role of HIF-2α in chronic hypoxia and validate it as a therapeutic target [27] [28] |

| Collagenase Type I/IV | Degrades collagen types I and IV | To digest tumor tissue for the isolation of primary cells, including those from the stroma [25] |

| Anti-PD-1/PD-L1 Antibody | Blocks PD-1/PD-L1 immune checkpoint | To reverse T-cell exhaustion in co-culture assays with tumor-infiltrating lymphocytes (TILs) [26] |

| CAF-Conditioned Medium | Contains secretome from activated CAFs | To study the paracrine effects of CAFs on cancer cell proliferation, migration, and drug resistance [25] [24] |

Table 2: Quantifying Hypoxic Influence on Cancer Cell Behavior

| Cellular Process | Normoxia (21% O₂) | Acute Hypoxia (1% O₂, 24h) | Chronic Hypoxia (0.5% O₂, 72h) | Key Mediators & Notes |

|---|---|---|---|---|

| Glucose Uptake | Baseline | 2-3 fold increase | 3-5 fold increase | Measured via 2-NBDG assay; driven by HIF-1α upregulation of GLUT1 [27] [28] |

| Lactate Production | Baseline | 3-4 fold increase | 5-8 fold increase | Warburg effect; extracellular pH drops to ~6.5-6.8, suppressing immune cell function [27] [26] |

| Invasive Capacity | Baseline | 1.5-2 fold increase | 3-4 fold increase | Matrigel invasion assay; linked to HIF-induced MMP secretion and EMT [25] [28] |

| Radiation IC₅₀ | 2 Gy | 4-6 Gy | 6-8 Gy | Hypoxia confers radioresistance by reducing ROS-induced DNA damage [28] |

Signaling Pathways and Workflow Diagrams

TME Mediated Metastasis

Predictive Drug Screening

Leveraging AI and Novel Biomarkers for Predictive Modeling

AI-Powered Analysis of Histopathology Images for Prognostic Insight

Frequently Asked Questions & Troubleshooting Guide

This guide addresses common challenges researchers face when developing AI models for prognostic insight from histopathology images.

Q1: My deep learning model for survival prediction is underperforming (AUC < 0.80). What factors should I investigate?

- A: Models for prognostic tasks like survival prediction and treatment design often show lower performance (AUC ~0.80) compared to diagnostic tasks [30]. To improve your model:

- Incorporate Multimodal Data: Enhance your WSIs with clinical data, genomic information, or treatment regimens to create a more comprehensive patient profile [31] [32].

- Verify Annotation Quality: Ensure your ground-truth annotations for outcomes like survival are accurate and consistently defined across your dataset.

- Address Data Variability: Implement strategies to make your model robust to biological, pathological, and technological variations in your slides [33].

Q2: What are the main data-related challenges in AI for histopathology, and how can I mitigate them?

- A: The primary challenges fall into three categories [33]:

- Sample Variation: Manage biological diversity, a wide range of pathological presentations, and technological differences in slide preparation and scanning.

- Mitigation: Use data augmentation techniques and ensure your training data is representative.

- Data Size and Complexity: WSIs are gigapixel-sized, making storage, transfer, and whole-slide analysis computationally difficult.

- Mitigation: Use patch-based analysis or leverage weakly supervised learning methods like Multiple Instance Learning (MIL) that can operate on slide-level labels [30].

- Annotation Scarcity: Expert pathologist annotations are time-consuming and expensive to obtain.

- Mitigation: Explore self-supervised pre-training or utilize emerging vision transformers designed for weakly supervised learning [31] [30].
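To make the patch-based and MIL mitigations concrete, the sketch below tiles a slide into non-overlapping patch coordinates and then pools per-patch scores into one slide-level score. The slide dimensions, patch scores, and function names are hypothetical; real patch scores would come from a trained patch-level model, and attention-based MIL would replace the fixed pooling rules with learned weights.

```python
def patch_grid(slide_width, slide_height, patch_size=256, stride=256):
    """Yield (x, y) top-left corners of patches that fit fully inside the slide."""
    for y in range(0, slide_height - patch_size + 1, stride):
        for x in range(0, slide_width - patch_size + 1, stride):
            yield (x, y)


def mil_max_pool(patch_scores):
    """Max pooling: the slide is as positive as its most suspicious patch."""
    return max(patch_scores)


def mil_mean_pool(patch_scores):
    """Mean pooling: every patch contributes equally to the slide score."""
    return sum(patch_scores) / len(patch_scores)


# A toy 1024 x 768 pixel region; real WSIs are orders of magnitude larger.
coords = list(patch_grid(1024, 768))
print(len(coords), coords[0], coords[-1])

# Hypothetical patch-level tumor probabilities for one slide.
patch_scores = [0.10, 0.05, 0.92, 0.20]
print(mil_max_pool(patch_scores), mil_mean_pool(patch_scores))
```

Only the slide-level label is needed to train against the pooled score, which is what lets MIL avoid patch-level annotation.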

Q3: Which open-source software is best for analyzing Whole Slide Images (WSIs)?

- A: The best tool depends on your specific task and expertise. Here are some of the most popular open-source options:

| Software | Primary Function | WSI Capability | Skill Level |

|---|---|---|---|

| QuPath [34] | Biomarker analysis, cell detection, tumor analysis | Yes, specifically designed for WSI | Pathologists & researchers (no coding required) |

| ImageJ / Fiji [34] | General biological image analysis, prototyping | Yes, with SlideJ plugin | Researchers (minimal to advanced skills) |

| Ilastik [34] | Interactive pixel-based classification & segmentation | Yes | Researchers (minimal coding skills) |

| CellProfiler [34] | Automated cell identification & analysis | Only when integrated with other tools (e.g., Orbit) | Biologists (no coding required) |

Q4: My model performs well on internal validation but fails on external datasets. How can I improve generalizability?

- A: This is often caused by domain shift (e.g., different scanners, staining protocols, or patient populations) [33].

- Stain Normalization: Apply stain normalization techniques to standardize the color appearance of H&E images across different sources.

- Diverse Training Data: Train your model on multi-institutional datasets that reflect real-world variability [35].

- Data Augmentation: Use aggressive data augmentation during training to simulate variations in color, rotation, and magnification.

- External Validation: Always test your final model on a completely held-out dataset from a different institution to estimate real-world performance [36].
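A quick way to quantify a generalization gap is to compute the same metric on internal and external cohorts. The sketch below implements AUC directly from its rank interpretation; the labels and scores are made-up numbers for illustration, not results from any study.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive case outscores a
    random negative case (ties count as 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical model scores on an internal and an external cohort.
internal_auc = roc_auc([1, 1, 1, 0, 0, 0], [0.9, 0.8, 0.7, 0.3, 0.2, 0.6])
external_auc = roc_auc([1, 1, 1, 0, 0, 0], [0.9, 0.4, 0.6, 0.5, 0.2, 0.7])
print(f"internal AUC = {internal_auc:.2f}, external AUC = {external_auc:.2f}")
print(f"generalization gap = {internal_auc - external_auc:.2f}")
```

Tracking this gap before and after stain normalization or augmentation changes shows whether a mitigation actually helps on external data.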

Q5: What are the emerging AI trends in histopathology for predicting tumor behavior?

- A: The field is rapidly evolving beyond basic classification. Key trends include [31] [30]:

- Multimodal Integration: Fusing histopathology with genomic and clinical data for a holistic view [32].

- Explainable AI (XAI): Developing models that provide visual explanations for their predictions, building trust with pathologists. Techniques like GANs can create inherently explainable biomarkers, such as the Multinucleation Index (MuNI) [35].

- Self-Supervised Learning: Leveraging large unlabeled datasets for pre-training, reducing reliance on extensive annotations.

- Vision Transformers: Adopting transformer architectures, which have shown great success in capturing long-range dependencies in WSIs [31] [30].

- Autonomous AI Agents: Developing systems that can chain multiple tools (e.g., a genetic mutation detector + a clinical database) to autonomously reason through complex patient cases [32].

Performance Metrics of AI Models in Histopathology

The following table summarizes the performance of deep learning models across different clinical tasks, based on an analysis of over 1,400 studies (2015-2023) [30].

| Clinical Task | Prevalence in Literature | Reported AUC (Range) | Key Challenges |

|---|---|---|---|

| Diagnosis & Subtyping | 55.1% (Most common) | Up to 0.96 | Inter-observer variability, granular subclassification |

| Detection | 24.2% | High (specific values not reported) | Handling large WSI areas, false positives |

| Segmentation & Object Detection | 21.0% | Varies by structure | Pixel-level annotation cost, complex tissue morphology |

| Risk Prediction | 9.2% | Varies by mutation | Linking morphology to genetic events |

| Survival Prediction | 5.9% | ~0.80 (Lowest) | Integrating treatment regimen data, long-term follow-up |

| Treatment Design | 2.4% | ~0.80 | Modeling complex treatment-response relationships |

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists key resources for building and validating AI models in digital pathology.

| Item / Reagent | Function in AI Experiment |

|---|---|

| Haematoxylin & Eosin (H&E) Stained Slides | The foundational data source; provides structural and cytological detail for most deep learning models. H&E slides are used in ~70% of studies [30]. |

| Immunohistochemistry (IHC) Stained Slides | Enables models to identify specific protein biomarkers (e.g., PD-L1, Ki-67) for segmentation, quantification, and multimodal integration [30]. |

| Public Datasets (e.g., TCGA) | Provide large volumes of WSI data, often with associated clinical and genomic information, for model training and validation [31]. |

| Vision Transformers (ViTs) | A modern neural network architecture effective for slide-level classification by modeling relationships between image patches [30]. |

| Multiple Instance Learning (MIL) | A weakly supervised learning framework that allows model training using only slide-level labels, bypassing the need for extensive patch-level annotations [30]. |

| Generative Adversarial Networks (GANs) | Used for image-to-image translation tasks, such as generating segmentation masks from H&E images in an explainable manner (e.g., for calculating the MuNI) [35]. |

| Digital Slide Storage (DICOM Standard) | The emerging standard file format for WSIs; ensures interoperability, secure storage, and integration with hospital information systems [33]. |

Experimental Protocol: Predicting Prognosis via the Multinucleation Index (MuNI)

This protocol details the methodology for developing an explainable AI-based prognostic biomarker, as demonstrated in p16+ oropharyngeal squamous cell carcinoma (OPSCC) [35].

Objective: To automate the calculation of the Multinucleation Index (MuNI) from H&E-stained whole-slide images (WSIs) for prognostication of disease-free survival (DFS), overall survival (OS), and distant metastasis–free survival (DMFS).

Materials:

- H&E-stained WSIs from a retrospective, multi-institutional cohort of patients with p16+ OPSCC.

- Access to clinical outcome data (DFS, OS, DMFS).

- Computational resources (GPU recommended).

- Image analysis software (e.g., QuPath for initial annotations) [34].

- Deep learning framework (e.g., TensorFlow, PyTorch).

Methodology:

Data Curation & Cohort Definition:

- Acquire WSIs from a large cohort (e.g., N=1094 across 6 institutions) to ensure statistical power and generalizability.

- Collect corresponding clinical and outcome data for all patients.

Model Development with GANs:

- Architecture: Employ a Generative Adversarial Network (GAN) designed for image-to-image translation.

- Training: Train the GAN to translate patches of H&E-stained tumor tissue directly into a binary segmentation mask. In this mask, multinucleated tumor cells are highlighted.

- Input: H&E image patch.

- Output: Segmentation mask where pixels belonging to multinucleated cells are labeled.

Inference & Index Calculation:

- Apply the trained GAN to the entire tumor region on all WSIs in the validation set.

- Calculate the MuNI: For each patient, the MuNI is defined as the ratio of the area of segmented multinucleated tumor cells to the total area of tumor epithelium analyzed.
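The index definition above reduces to a ratio of two pixel counts. The sketch below assumes two aligned binary masks per analysed region, one from the GAN (multinucleated cells) and one for tumor epithelium. The tiny 4 x 4 masks and the function name are hypothetical.

```python
def multinucleation_index(multinucleated_mask, epithelium_mask):
    """Ratio of multinucleated-cell pixels to tumor-epithelium pixels.

    Both masks are equal-sized 2D lists of 0/1 values. Multinucleated
    pixels outside the epithelium mask are not counted.
    """
    epithelium_area = 0
    multinucleated_area = 0
    for mn_row, epi_row in zip(multinucleated_mask, epithelium_mask):
        for mn, epi in zip(mn_row, epi_row):
            if epi:
                epithelium_area += 1
                if mn:
                    multinucleated_area += 1
    if epithelium_area == 0:
        raise ValueError("no tumor epithelium in the analysed region")
    return multinucleated_area / epithelium_area


# Hypothetical masks for illustration.
epithelium = [
    [1, 1, 1, 0],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [0, 0, 0, 0],
]
multinucleated = [
    [0, 1, 0, 0],
    [0, 1, 0, 1],  # the last pixel lies outside the epithelium and is ignored
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
muni = multinucleation_index(multinucleated, epithelium)
print(muni)
```

For a whole slide, summing the two areas over all tumor patches before dividing keeps large and small patches weighted by their actual epithelial area.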

Statistical Validation:

- Perform multivariable Cox proportional hazards regression analysis to evaluate the MuNI as an independent prognostic factor.

- Covariates: Include standard clinical variables such as age, smoking status, treatment type, and tumor stage (T/N categories).

- Endpoint: The MuNI should be significantly prognostic for DFS, OS, and DMFS (with Hazard Ratios >1.7, as in the original study) [35].
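For reference, the multivariable Cox model used in this validation step has the standard form below. Here $x_j$ denotes the clinical covariates listed above. How the MuNI is coded (continuous or dichotomized) is not specified here, so the hazard ratio should be read per unit of whichever coding is used.

```latex
h(t \mid \mathrm{MuNI}, \mathbf{x})
  = h_0(t)\,\exp\!\Big(\beta_{\mathrm{MuNI}}\,\mathrm{MuNI} + \sum_{j} \beta_j x_j\Big),
\qquad
\mathrm{HR}_{\mathrm{MuNI}} = \exp\!\big(\beta_{\mathrm{MuNI}}\big)
```

Under this model, a hazard ratio above 1.7 corresponds to a fitted coefficient $\beta_{\mathrm{MuNI}} > \ln 1.7 \approx 0.53$ after adjustment for the other covariates.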

Troubleshooting:

- Poor Segmentation: If the GAN fails to segment multinucleated cells accurately, review and refine the training annotations with a pathologist.

- Lack of Statistical Significance: Ensure the cohort is sufficiently large. Re-evaluate the MuNI calculation to confirm it is accurately capturing the biological signal.

Workflow Visualization

The following diagrams, created with Graphviz, illustrate key signaling pathways, experimental workflows, and logical relationships in this field.

AI Histopathology Prognosis Workflow

MuNI Biomarker Development

Frequently Asked Questions (FAQs)

Q1: What are the key differences between ctDNA and CTCs as liquid biopsy biomarkers?

| Characteristic | Circulating Tumor DNA (ctDNA) | Circulating Tumor Cells (CTCs) |

|---|---|---|

| Origin | DNA fragments released from apoptotic or necrotic tumor cells [37] [38] | Intact cells shed from primary or metastatic tumors into the bloodstream [37] [38] |

| Composition | Short DNA fragments (typically 160-200 base pairs) [38] | Whole cells containing DNA, RNA, and proteins [39] |

| Half-Life | Short (15 minutes to 2.5 hours) [38] | Short (approximately 1-2.5 hours) [37] |

| Primary Analysis | Genomic alterations (mutations, methylation), fragmentation patterns [40] [41] | Cell enumeration, phenotypic characterization, molecular profiling of intact cells [42] [39] |

| Key Advantage | Captures tumor genetic heterogeneity; real-time snapshot of tumor burden [37] [38] | Provides functional information on metastatic potential and therapeutic targets [39] [43] |
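The short half-lives in the table are what make liquid biopsy a near real-time readout. The sketch below shows the arithmetic under a deliberately simple assumption of first-order clearance with no new shedding; the function name and that assumption are illustrative, not taken from the cited sources.

```python
def fraction_remaining(elapsed_min, half_life_min):
    """Fraction of an analyte left after elapsed_min, assuming first-order
    clearance and no new release into the blood."""
    return 0.5 ** (elapsed_min / half_life_min)


# ctDNA half-life bounds from the table: 15 minutes to 2.5 hours (150 minutes).
fast = fraction_remaining(150, 15)
slow = fraction_remaining(150, 150)
print(f"after 150 min: {fast:.4%} (15 min half-life), {slow:.0%} (150 min half-life)")
```

Even at the slow end of the range, little of the ctDNA present at one time point persists a day later, so measured levels mostly reflect recent release from the tumor.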

Q2: My ctDNA yields are low, even from patients with advanced cancer. What could be the cause?

Low ctDNA yield is a common challenge, often attributed to the biological nature of the tumor. The ctDNA tumor fraction (TF) can vary widely, comprising between 0.01% and 90% of the total cell-free DNA (cfDNA) [38]. Factors influencing this include:

- Tumor Type and Location: Some cancers, such as certain brain tumors, shed less DNA into the bloodstream [40].

- Tumor Burden: Smaller or early-stage tumors naturally release less ctDNA [44].

- Blood Collection and Processing: Use specialized blood collection tubes designed to stabilize nucleated cells and prevent contamination from genomic DNA released by white blood cells. Ensure plasma is separated from blood cells within a few hours of collection so that genomic DNA from lysed white blood cells does not dilute the ctDNA fraction.

Q3: I am isolating CTCs, but the cell viability is poor for downstream culture. How can I improve this?

The method of CTC enrichment significantly impacts cell viability. The Parsortix PC1 system, which enriches CTCs based on size and deformability rather than relying on surface epitopes like EpCAM, is designed to preserve cell viability for subsequent molecular analyses and culture [38]. Immunomagnetic methods that use harsh lysis steps or fixatives can compromise cell membrane integrity and viability. Switching to a gentler, label-free enrichment technology can greatly enhance the success of functional studies and in vitro culture of CTCs.

Q4: How can I distinguish a true tumor-derived mutation from a clonal hematopoiesis signal in my ctDNA data?

Clonal hematopoiesis of indeterminate potential (CHIP) is a major source of false positives, where mutations from blood cells are detected in cfDNA. To mitigate this:

- Paired White Blood Cell Sequencing: The most robust method is to sequence the patient's white blood cells (e.g., from the buffy coat) in parallel with the plasma cfDNA. Any mutation found in both is likely of hematopoietic origin [38].

- Methylation-Based Analysis: Some advanced platforms, like an updated Guardant360 assay, incorporate methylation-based analysis of ctDNA, which can help minimize signal contamination from clonal hematopoiesis [38].

- Bioinformatic Filtering: Use databases of common CHIP mutations to filter out suspect variants.
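The paired-sequencing and database-filtering steps can be combined into a simple triage rule, sketched below. The variant lists are made up, the three-gene set is only an illustrative subset of genes commonly mutated in clonal hematopoiesis rather than a curated database, and the function name is hypothetical.

```python
# Illustrative subset of genes frequently mutated in clonal hematopoiesis.
CHIP_GENES = {"DNMT3A", "TET2", "ASXL1"}


def classify_variants(plasma_variants, wbc_variants, chip_genes=CHIP_GENES):
    """Label each plasma variant by its likely origin.

    Variants are (gene, change) tuples. A variant also found in matched white
    blood cells is called hematopoietic; a plasma-only variant in a listed CHIP
    gene is flagged for review; the rest are kept as putatively tumor-derived.
    """
    wbc = set(wbc_variants)
    calls = {}
    for variant in plasma_variants:
        gene, _ = variant
        if variant in wbc:
            calls[variant] = "hematopoietic"
        elif gene in chip_genes:
            calls[variant] = "review (CHIP gene)"
        else:
            calls[variant] = "putative tumor"
    return calls


# Hypothetical calls from plasma cfDNA and the matched buffy coat.
plasma = [("EGFR", "L858R"), ("DNMT3A", "R882H"), ("KRAS", "G12D"), ("TET2", "Q1034*")]
buffy_coat = [("DNMT3A", "R882H")]
calls = classify_variants(plasma, buffy_coat)
for variant, call in calls.items():
    print(variant, "->", call)
```

Matched white blood cell evidence is checked first because it is direct, while the gene list only raises a flag and cannot by itself prove hematopoietic origin.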

Troubleshooting Guides

Issue 1: Low Sensitivity for Early-Stage Cancer Detection

Problem: Liquid biopsy fails to detect ctDNA or CTCs in patients with early-stage disease.

| Potential Cause | Solution | Technical Tip |

|---|---|---|

| Low abundance of tumor-derived material in blood. | Use highly sensitive detection methods. | Employ tumor-informed ctDNA sequencing (e.g., Signatera test), which designs personalized assays based on the patient's tumor tissue genotype to track minimal residual disease (MRD) with high sensitivity [38] [45]. |

| Biomarker is present but not captured by the assay. | Utilize multi-analyte approaches. | Combine ctDNA mutation analysis with other markers like cfDNA fragmentomics or methylation patterns. Machine learning analysis of genome-wide cfDNA fragmentation patterns has shown promise for detecting early-stage cancers, including hard-to-detect types like brain cancer [40] [41]. |

| CTC heterogeneity; epithelial marker-based capture misses cells that have undergone EMT. | Use size-based or marker-independent CTC enrichment platforms. | Platforms like the Parsortix PC1 system, which captures CTCs based on size and deformability, can isolate a broader range of CTC phenotypes, including those with low or no EpCAM expression [38]. |

Issue 2: Handling Tumor Heterogeneity and Clonal Evolution

Problem: A single liquid biopsy does not reflect the full genetic diversity of the tumor, leading to an incomplete picture for therapy selection.

Solutions:

- Implement Serial Monitoring: The key advantage of liquid biopsy is its ability to monitor tumor evolution in real-time [42] [37]. Collect blood samples at multiple timepoints: before treatment (baseline), during treatment to monitor response, and at progression to identify emerging resistance mechanisms.

- Combine with Tissue Biopsy: Use an initial tissue biopsy to define the core mutational landscape, then use liquid biopsies to track how this landscape changes under therapeutic pressure [45].

- Multi-Omics Analysis: Go beyond genomic sequencing. On CTCs, perform single-cell RNA sequencing to understand transcriptional heterogeneity and identify subpopulations with metastatic potential or resistance signatures [39]. For ctDNA, analyze epigenetic modifications like DNA methylation, which can provide information on the tumor's tissue of origin and biological state [41].

Experimental Protocols for Key Applications

Protocol 1: Monitoring Treatment Response via ctDNA Dynamics

Objective: Quantify changes in ctDNA levels to assess early response to therapy.

Materials:

- Cell-stabilizing blood collection tubes (e.g., Streck Cell-Free DNA BCT).

- DNA extraction kit for plasma (e.g., QIAamp Circulating Nucleic Acid Kit).

- Next-generation sequencing platform (e.g., for Guardant360 CDx or FoundationOne Liquid CDx) or droplet digital PCR (ddPCR) for target-specific monitoring [38].

Method:

- Blood Collection & Processing: Collect 10-20 mL of peripheral blood in stabilizing tubes. Centrifuge twice (first at 1600 x g for 10 min to isolate plasma, then at 16,000 x g for 10 min to remove residual cells) within 2-6 hours of draw [38] [43].

- cfDNA Extraction: Extract cfDNA from 2-5 mL of plasma according to the manufacturer's protocol. Quantify using a fluorometer.

- Analysis:

- For NGS: Use a validated panel like Guardant360 CDx or FoundationOne Liquid CDx to sequence and calculate the ctDNA tumor fraction (TF) [38].

- For ddPCR: If a known mutation is tracked, design assays to quantify mutant allele frequency.

- Interpretation: A rapid decline in ctDNA TF or mutant allele frequency after treatment initiation correlates with a positive therapeutic response. A rising level indicates potential resistance [37] [38].
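The interpretation step can be sketched as a comparison of mutant allele frequency between time points. The copy numbers below are invented, and the 50% relative-change threshold and function names are hypothetical placeholders rather than validated clinical cut-offs.

```python
def mutant_allele_frequency(mutant_copies, wildtype_copies):
    """Mutant allele frequency from ddPCR copy numbers at one locus."""
    total = mutant_copies + wildtype_copies
    if total == 0:
        raise ValueError("no copies detected")
    return mutant_copies / total


def ctdna_trend(baseline_maf, followup_maf, threshold=0.5):
    """Classify the relative change from baseline.

    threshold is a hypothetical fractional change (0.5 = 50%) used only to
    illustrate the logic; it is not a validated cut-off.
    """
    if baseline_maf <= 0:
        raise ValueError("baseline must be positive to compute a relative change")
    change = (followup_maf - baseline_maf) / baseline_maf
    if change <= -threshold:
        return "declining"
    if change >= threshold:
        return "rising"
    return "stable"


# Hypothetical serial measurements for one tracked mutation.
baseline = mutant_allele_frequency(120, 2880)      # 4.0%
on_treatment = mutant_allele_frequency(12, 2988)   # 0.4%
at_progression = mutant_allele_frequency(300, 2700)  # 10.0%

print(ctdna_trend(baseline, on_treatment))
print(ctdna_trend(baseline, at_progression))
```

Expressing the change relative to each patient's own baseline makes serial samples comparable across patients whose absolute ctDNA levels differ widely.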

Protocol 2: Isolating and Molecularly Profiling CTCs

Objective: Enrich, enumerate, and perform genomic analysis of CTCs from whole blood.

Materials:

- EDTA tubes for blood collection.

- CTC enrichment platform (e.g., CellSearch for EpCAM-positive CTCs or Parsortix PC1 for size-based, EpCAM-independent capture) [38].

- Lysis buffer for nucleic acid extraction or fixative for immunocytochemistry.

Method:

- Blood Collection & Enrichment:

- CellSearch: Uses immunomagnetic beads coated with anti-EpCAM antibodies to capture CTCs. Subsequent staining with cytokeratin (CK) antibodies, CD45 (leukocyte marker), and DAPI allows for automated enumeration [38].

- Parsortix: Uses a microfluidic cassette to capture CTCs based on their size and deformability. This method preserves cell viability, allowing for subsequent RNA sequencing, FISH, or protein profiling [38].

- Downstream Analysis:

- Genomic DNA: Lyse isolated CTCs and perform whole genome amplification (WGA) followed by NGS to identify mutations.

- RNA: Extract RNA for transcriptomic analysis (e.g., single-cell RNA-seq) to study gene expression profiles and heterogeneity [39].

- Protein: Perform immunocytochemistry to characterize protein biomarkers on the CTC surface or cytoplasm.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example Use Case |

|---|---|---|

| Cell-free DNA BCT Tubes | Chemical stabilization of nucleated blood cells for up to 14 days, preventing release of genomic DNA and preserving the native cfDNA profile. | Ensures pre-analytical stability for multi-site clinical trials where immediate plasma processing is not feasible [43]. |

| EpCAM-coated Magnetic Beads | Immunoaffinity capture of epithelial-derived CTCs from whole blood. | Standardized CTC enumeration in metastatic breast, prostate, and colorectal cancer using the CellSearch system [38]. |

| Microfluidic Cassette (Parsortix) | Label-free isolation of CTCs from whole blood based on their larger size and lower deformability compared to leukocytes. | Captures CTC populations that have undergone epithelial-to-mesenchymal transition (EMT) and lost EpCAM expression, enabling broader phenotypic analysis [38]. |

| Bisulfite Conversion Kit | Chemical treatment of DNA that converts unmethylated cytosines to uracils, while leaving methylated cytosines unchanged. | Enables detection of cancer-specific hypermethylation patterns in ctDNA (e.g., CDKN2A, RASSF1A) for early detection and monitoring [41]. |

| Tumor-Informed ctDNA Assay | Custom-built, PCR-based NGS assay designed to track a set of 16-50 somatic mutations unique to an individual's tumor (from prior tissue sequencing). | Ultra-sensitive detection of Molecular Residual Disease (MRD) and recurrence in solid tumors (e.g., via the Signatera test) [38] [45]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What is the primary challenge when integrating unmatched multi-omics data from different cells? The core challenge is the absence of a direct biological anchor, like the same cell, to link the different data types. Instead, computational methods must project cells from different modalities into a shared, co-embedded space using manifold alignment or other machine learning techniques to find commonalities [46].

Q2: My multi-omics data is "matched" from the same cells. Which integration tools are most suitable? For matched data, several powerful tools are available. Seurat v4 is effective for integrating mRNA, protein, and accessible chromatin data [46]. MOFA+ uses factor analysis to integrate data types like mRNA, DNA methylation, and chromatin accessibility, and is excellent for identifying the principal sources of variation across your datasets [46].

Q3: Why is there often a poor correlation between transcriptomics and proteomics data in my experiments? This is a common challenge due to biological complexity. A highly transcribed gene may not always result in abundant protein due to post-transcriptional regulation, varying protein degradation rates, and the limited sensitivity of some proteomic methods, which might miss proteins even when their RNA is detected [46].

Q4: Which public repositories are essential for accessing multi-omics data for cancer research? Key repositories include The Cancer Genome Atlas (TCGA), which offers data for 33 cancer types [47], and the International Cancer Genome Consortium (ICGC), which focuses on genomic alterations [47]. The Clinical Proteomic Tumor Analysis Consortium (CPTAC) provides complementary proteomics data for TCGA cohorts [47].

Q5: How can I visually explore my integrated multi-omics datasets? Tools like PaintOmics 3 are web resources for pathway analysis and visualization [48]. Another approach involves using organism-scale metabolic network diagrams that paint different omics data (e.g., transcriptomics as reaction arrow color, proteomics as arrow thickness) onto different visual channels of the same chart [48].

Troubleshooting Common Experimental Issues

Problem: Technical Variance Obscures Biological Signals

A frequent issue is high technical noise from different sequencing platforms or batch effects overwhelming true biological variation.

| Troubleshooting Step | Action | Objective |

|---|---|---|

| Pre-processing | Apply platform-specific normalization (e.g., CPM for RNA-Seq). | Remove technology-driven noise. |

| Batch Correction | Use methods like ComBat or tools with built-in correction (e.g., Seurat). | Minimize non-biological variation from different experimental runs. |

| Feature Selection | Focus on highly variable genes/proteins and known biological pathways. | Reduce dimensionality and highlight relevant features. |
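
The pre-processing and feature selection rows above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the input is a hypothetical gene-to-counts dictionary with samples in the same order for every gene, and the feature ranking uses plain variance, which is simpler than the mean-variance modelling that dedicated tools apply. Batch correction is deliberately left out because it should be done with an established method such as ComBat.

```python
import math
from statistics import pvariance


def log2_cpm(counts: dict) -> dict:
    """Convert raw counts (gene -> list of per-sample counts) to log2(CPM + 1)."""
    n_samples = len(next(iter(counts.values())))
    # Library size = total counts in each sample across all genes.
    library_sizes = [
        sum(gene_counts[j] for gene_counts in counts.values())
        for j in range(n_samples)
    ]
    if any(size == 0 for size in library_sizes):
        raise ValueError("a sample has zero total counts")
    return {
        gene: [
            math.log2(count / library_sizes[j] * 1e6 + 1.0)
            for j, count in enumerate(row)
        ]
        for gene, row in counts.items()
    }


def top_variable_features(matrix: dict, n_top: int) -> list:
    """Rank features by variance across samples and keep the n_top highest."""
    ranked = sorted(matrix, key=lambda gene: pvariance(matrix[gene]), reverse=True)
    return ranked[:n_top]


if __name__ == "__main__":
    # Toy counts for three hypothetical genes in two samples.
    raw = {"GENE_A": [10, 90], "GENE_B": [90, 10], "GENE_C": [900, 900]}
    normalized = log2_cpm(raw)
    print(top_variable_features(normalized, n_top=2))
```

In the toy input, `GENE_C` has identical normalized values in both samples, so it is the feature dropped when only the two most variable genes are kept.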

Problem: Disconnect Between Omics Layers

As noted in the FAQs, a direct correlation between RNA and protein abundance is often not present.

| Troubleshooting Step | Action | Objective |

|---|---|---|

| Causal Modeling | Use tools like CellOracle to model gene regulatory networks [46]. | Assess whether chromatin accessibility (epigenomics) plausibly explains transcriptomic changes. |

| Pathway Enrichment | Perform over-representation analysis on each dataset separately, then compare. | Identify convergent biological pathways across omics layers. |

| Prior Knowledge Integration | Employ tools like GLUE that use prior biological knowledge to anchor features [46]. | Leverage established relationships to guide integration. |
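
The pathway enrichment row above (run over-representation analysis per layer, then compare) can be illustrated with a one-sided hypergeometric test. This is a sketch under assumptions: the function names, the pathway dictionary layout, and the 0.05 threshold are hypothetical choices for illustration, and no multiple-testing correction is applied, which a real analysis would need.

```python
from math import comb


def ora_p_value(hits: int, query_size: int, pathway_size: int, background_size: int) -> float:
    """P(X >= hits) when drawing query_size genes from a background that
    contains pathway_size pathway members (hypergeometric upper tail)."""
    max_hits = min(query_size, pathway_size)
    total = comb(background_size, query_size)
    tail = sum(
        comb(pathway_size, k) * comb(background_size - pathway_size, query_size - k)
        for k in range(hits, max_hits + 1)
    )
    return tail / total


def enrich(query: set, pathways: dict, background: set) -> dict:
    """Return pathway name -> p-value for one omics layer."""
    query = query & background
    results = {}
    for name, members in pathways.items():
        members = members & background  # ignore genes that were never measured
        hits = len(query & members)
        results[name] = ora_p_value(hits, len(query), len(members), len(background))
    return results


def convergent_pathways(per_layer: dict, alpha: float = 0.05) -> set:
    """Pathways with p < alpha in every layer (uncorrected p-values)."""
    significant = [
        {name for name, p in layer.items() if p < alpha}
        for layer in per_layer.values()
    ]
    return set.intersection(*significant) if significant else set()
```

Running `enrich` once per omics layer, each with its own background of measured features, and passing the results to `convergent_pathways` gives the pathways supported by every layer. Using a layer-specific background matters because proteomics typically measures fewer features than transcriptomics.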

Problem: Sparse or Missing Data in Specific Modalities

This is particularly common in proteomics, which may profile far fewer features than transcriptomics.

| Troubleshooting Step | Action | Objective |

|---|---|---|

| Mosaic Integration | Use tools like StabMap [46] or Cobolt [46] if you have datasets with partial overlap. | Leverage shared modalities across sample sets to create a unified representation. |

| Imputation | Apply careful, modality-specific data imputation (e.g., MAGIC for RNA-seq). | Fill in plausible values for missing data, acknowledging inherent uncertainty. |
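
One way to respect the "acknowledging inherent uncertainty" caveat in the imputation row is to keep a record of which values were filled in. The sketch below uses per-feature median imputation purely as a simple stand-in; it is not MAGIC or any modality-specific method, and the function name and data layout (rows as samples, `None` for missing) are assumptions made for illustration. Median filling can bias results when values are missing because they fell below a detection limit, so it should be treated as a baseline only.

```python
from statistics import median


def impute_with_mask(matrix: list) -> tuple:
    """Fill missing values (None) with the per-feature median.

    Rows are samples and columns are features. Returns the imputed matrix
    and a boolean mask that is True wherever a value was imputed.
    """
    n_features = len(matrix[0])
    medians = []
    for j in range(n_features):
        observed = [row[j] for row in matrix if row[j] is not None]
        if not observed:
            raise ValueError(f"feature {j} has no observed values")
        medians.append(median(observed))
    imputed = [
        [medians[j] if value is None else value for j, value in enumerate(row)]
        for row in matrix
    ]
    mask = [[value is None for value in row] for row in matrix]
    return imputed, mask


if __name__ == "__main__":
    # Toy protein abundance matrix: 3 samples x 2 features.
    abundance = [[1.0, None], [3.0, 4.0], [None, 6.0]]
    filled, was_imputed = impute_with_mask(abundance)
    print(filled)
    print(was_imputed)
```

Carrying the mask through downstream analysis makes it possible to down-weight or exclude imputed entries when checking whether a finding depends on them.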

Key Data Repositories

The table below summarizes essential public data repositories for multi-omics cancer research.

| Repository Name | Primary Focus | Available Data Types |

|---|---|---|

| The Cancer Genome Atlas (TCGA) | Pan-Cancer Atlas | RNA-Seq, DNA-Seq, miRNA-Seq, SNV, CNV, DNA Methylation, RPPA [47] |

| Clinical Proteomic Tumor Analysis Consortium (CPTAC) | Cancer Proteomics | Proteomics data corresponding to TCGA tumor samples [47] |

| International Cancer Genome Consortium (ICGC) | Cancer Genomics | Whole genome sequencing, somatic and germline mutation data [47] |

| Cancer Cell Line Encyclopedia (CCLE) | Cancer Cell Lines | Gene expression, copy number, sequencing data, drug response profiles [47] |

Multi-Omics Integration Tools

This table provides a selection of computational tools for different integration scenarios.

| Tool Name | Year | Integration Capacity | Ideal Use Case |

|---|---|---|---|

| MOFA+ [46] | 2020 | mRNA, DNA Methylation, Chromatin Accessibility | Identifying latent factors of variation across matched omics data. |

| Seurat v4 [46] | 2020 | mRNA, Spatial, Protein, Chromatin | Matched integration and weighted nearest-neighbor analysis. |

| GLUE [46] | 2022 | Chromatin, DNA Methylation, mRNA | Unmatched integration using prior knowledge graphs. |

| StabMap [46] | 2022 | mRNA, Chromatin Accessibility | Mosaic integration of datasets with partial feature overlap. |

Experimental Protocols & Methodologies

Protocol 1: A Basic Workflow for Vertical (Matched) Multi-Omics Integration

This protocol outlines the steps for integrating genomics, transcriptomics, and proteomics data obtained from the same tumor sample set.

1. Data Acquisition & Pre-processing

- Genomics (e.g., WGS/WES): Process raw FASTQ files. Align to a reference genome (e.g., hg38). Call somatic variants and copy number alterations using tools like GATK.

- Transcriptomics (e.g., RNA-Seq): Align reads and generate a count matrix. Normalize using a method like DESeq2 or log2(CPM + 1).

- Proteomics (e.g., LC-MS/MS): Identify and quantify peptides and proteins. Normalize protein abundance data.

2. Data Concatenation & Batch Effect Correction

- Objective: Create a unified data matrix while minimizing non-biological technical noise.

- Method: Use the IntegrateData function in Seurat, or similar functions in other tools, to identify "anchors" between datasets and correct for batch effects.

3. Joint Dimensionality Reduction & Analysis

- Objective: Visualize and explore the integrated data to identify patterns, such as novel tumor subtypes.

- Method: Apply MOFA+ to decompose the multi-omics data into a set of latent factors. These factors represent the dominant sources of biological and technical variation across all data layers. The resulting factors can be used for downstream clustering and visualization.

Protocol 2: A Workflow for Diagonal (Unmatched) Data Integration

This protocol is for integrating data from different sets of cells, a common scenario when combining public datasets.

1. Individual Modality Processing

- Process each omics dataset (e.g., a transcriptomics matrix and a separate ATAC-seq matrix) independently through their standard pre-processing and dimensionality reduction pipelines (e.g., PCA).

2. Manifold Alignment & Co-Embedding

- Objective: Project the cells from different modalities into a shared low-dimensional space where cells with similar biological states are close, despite originating from different datasets.

- Method: Use a tool like GLUE (Graph-Linked Unified Embedding), which employs a graph-linked variational autoencoder. GLUE uses a prior biological knowledge graph to link features across omics, guiding the alignment process to be more biologically meaningful [46].

3. Joint Clustering & Subtype Identification

- Objective: Identify cell clusters (e.g., tumor subpopulations) based on the integrated, co-embedded space.

- Method: Perform clustering (e.g., Louvain, Leiden) on the shared latent space derived from GLUE. These clusters represent cell groups defined by coordinated patterns across all input omics modalities.

Visualizing Multi-Omics Workflows & Signaling

Diagram 1: Multi-Omics Fusion Workflow

Diagram 2: Simplified EGFR Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Multi-Omics Experiment |

|---|---|

| TCGA Tumor Sample RNA & DNA | Benchmarking and validation using well-characterized, publicly available multi-omics data from a large number of patients [47]. |

| CPTAC Proteomics Data | Provides corresponding protein abundance data for TCGA samples, enabling true tri-omics integration (Genomics, Transcriptomics, Proteomics) [47]. |

| Single-Cell Multi-Omics Kit (e.g., CITE-seq) | Allows for simultaneous measurement of transcriptome and surface proteins from the same single cell, generating perfectly matched data for vertical integration [46]. |

| MOFA+ Software Package | A computational tool that performs factor analysis to decompose multiple omics data sets and identify the principal sources of variation [46]. |

| Seurat v4/v5 R Toolkit | An essential analytical suite for the integration and analysis of multimodal single-cell data, including matched and unmatched integration strategies [46]. |

Radiomics is a rapidly developing field in oncology that converts medical images from modalities like CT, MRI, and PET into mineable, high-dimensional data [49]. This process extracts quantitative features that can reveal hidden patterns and complex tumor characteristics which are imperceptible to the human eye [49]. Within the context of predicting emergent tumor behavior, radiomics provides a non-invasive method to understand tumor heterogeneity, phenotype, and the tumor microenvironment, thereby offering valuable biomarkers for prognosis prediction and personalized treatment planning [49].

The typical radiomics workflow involves several key stages: image acquisition and preprocessing, tumor segmentation, feature extraction, feature selection, and model building for correlation with clinical outcomes [50]. This technical support guide addresses common challenges and provides troubleshooting advice for researchers and drug development professionals implementing this workflow to improve the predictability of tumor behavior in their studies.
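
To make the feature extraction stage of this workflow more tangible, the sketch below computes a handful of first-order intensity statistics from a segmented region. It is a simplified illustration under assumptions: the image and mask are plain 2D lists, the function name and the fixed bin count are hypothetical, and dedicated radiomics software computes many more feature classes (shape, texture) with standardized discretization.

```python
import math
from collections import Counter


def first_order_features(image: list, mask: list, n_bins: int = 8) -> dict:
    """First-order intensity statistics of the pixels where mask == 1."""
    roi = [
        pixel
        for image_row, mask_row in zip(image, mask)
        for pixel, inside in zip(image_row, mask_row)
        if inside == 1
    ]
    if not roi:
        raise ValueError("segmentation mask is empty")
    n = len(roi)
    mean = sum(roi) / n
    variance = sum((pixel - mean) ** 2 for pixel in roi) / n
    lowest, highest = min(roi), max(roi)
    # Discretize intensities into equal-width bins before computing entropy.
    width = (highest - lowest) / n_bins
    if width == 0:
        width = 1.0  # uniform region: every pixel falls into bin 0
    bins = Counter(min(int((pixel - lowest) / width), n_bins - 1) for pixel in roi)
    entropy = -sum((c / n) * math.log2(c / n) for c in bins.values())
    return {
        "mean": mean,
        "variance": variance,
        "minimum": lowest,
        "maximum": highest,
        "entropy": entropy,
    }


if __name__ == "__main__":
    # Toy 3x3 "image" with a 2x2 segmented region in the top-left corner.
    toy_image = [[0, 0, 9], [4, 8, 9], [9, 9, 9]]
    toy_mask = [[1, 1, 0], [1, 1, 0], [0, 0, 0]]
    print(first_order_features(toy_image, toy_mask, n_bins=2))
```

Only pixels inside the mask contribute, which is why segmentation quality directly affects every downstream feature. Entropy here depends on the chosen bin count, so discretization settings should be fixed and reported when features are compared across studies.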