AI-Powered Predictive Analytics in Neurological Disorders: Transforming Early Diagnosis and Precision Medicine

Abstract

This article explores the transformative role of artificial intelligence (AI) and predictive analytics in the diagnosis of neurological disorders. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how machine learning and deep learning models are revolutionizing early detection, prognostic assessment, and personalized treatment strategies for conditions like Alzheimer's and Parkinson's disease. The scope encompasses foundational concepts, advanced methodological applications, critical challenges in model optimization and clinical translation, and rigorous validation frameworks. By synthesizing recent advancements and identifying future trajectories, this review serves as a strategic guide for accelerating the integration of data-driven diagnostics into neurological research and clinical practice.

The New Paradigm: Foundations of Predictive Analytics in Neurology

Defining the Shift from Reactive to Proactive Neurological Care

The management of neurological disorders (NDs) is undergoing a fundamental transformation, shifting from a reactive model that addresses symptoms after clinical manifestation to a proactive framework focused on early prediction and intervention. This paradigm shift is critically important for conditions like Alzheimer's disease (AD) and brain tumors (BTs), where early treatment can substantially minimize disease spread and improve quality of life [1]. Traditional diagnostic methods reliant on subjective human interpretation of medical images like Magnetic Resonance Imaging (MRI) present significant limitations, including diagnostic inaccuracy, inter-rater variability, and the frequent failure to detect subtle early-stage anatomical changes [2] [1]. The emergence of predictive analytics, powered by advanced machine learning (ML) and deep learning (DL) models applied to rich data sources such as structural MRI, is enabling this transition by identifying at-risk individuals and facilitating timely therapeutic strategies long before overt clinical symptoms emerge [3].

The Limitation of Reactive Care and the Imperative for Change

Reactive approaches to neurological care, which initiate treatment only after symptom manifestation, face several critical drawbacks, particularly for neurodegenerative diseases.

- Symptom Latency and Irreversible Damage: In many NDs, the underlying pathology begins years or even decades before clinical symptoms become apparent. By the time a diagnosis is made, significant and often irreversible neurological damage may have already occurred, drastically limiting treatment efficacy [1].

- Symptom-Based Management: Reactive care often focuses on compensatory strategies and diet modifications after dysphagia (swallowing impairment) has already developed, disempowering patients and failing to address the progressive nature of the disease [4].

- Poor Outcomes and High Burdens: For conditions like Amyotrophic Lateral Sclerosis (ALS) and AD, dysphagia is a common and serious complication. A reactive approach leads to high rates of life-threatening sequelae such as aspiration pneumonia, malnutrition, and dehydration. Dysphagia accounts for 26% of mortality in persons with ALS, resulting in a 7.7-fold increase in risk of death [4].

Table: Consequences of Reactive Dysphagia Management in Neurodegenerative Disease

| Condition | Dysphagia Prevalence | Major Complication | Impact on Mortality |
|---|---|---|---|
| ALS | 48% - 86% (up to 85% during disease progression) | Aspiration, Malnutrition | 26% of ALS mortality; 7.7x increased risk [4] |
| Alzheimer's Disease | 32% - 84% | Aspiration Pneumonia | Most common cause of death in AD [4] |

Predictive Analytics as the Foundation of Proactive Care

Predictive analytics in healthcare is the process of analyzing historical data to identify patterns and trends predictive of future events [3]. In neurology, this translates to analyzing data from sources like electronic health records (EHRs) and medical images to identify patients at high risk of developing or progressing in a neurological disorder. This allows healthcare providers to "anticipate problems before they occur and provide interventions that prevent complications," fundamentally shifting the care model from passive to active [3].

The core promise of predictive analytics lies in its ability to turn data into foresight. By leveraging artificial intelligence (AI) and machine learning, these models can detect complex, subtle patterns in large datasets that are often imperceptible to the human eye [3]. For neurological disorders, this means that minor changes in brain anatomy visible on an MRI can be detected at their earliest stages, enabling intervention when it is most likely to be effective [2] [1].

Technical Core: Deep Learning Models for Early ND Diagnosis

The technical engine driving this shift is the application of sophisticated DL models to structural neuroimaging data, particularly MRI. Convolutional Neural Networks (CNNs), a class of DL models designed for image processing, have become increasingly popular for this research [5]. Their architecture uses filters and feature maps to detect spatial patterns and increasingly abstract representations of brain structure, making them ideal for identifying anatomical anomalies associated with NDs [5].
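To make the idea of filters and feature maps concrete, the following is a minimal sketch of a single convolution step in plain Python. The 4x4 `toy_slice` and the hand-picked `edge_filter` are illustrative assumptions, not data or weights from any cited study; in a trained CNN the filter weights are learned from data.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            # Slide the filter over the image and sum the element-wise products.
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Toy 4x4 "slice" with a vertical intensity edge between columns 1 and 2 (hypothetical data).
toy_slice = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked vertical-edge filter (an assumption for illustration).
edge_filter = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(toy_slice, edge_filter)
for row in feature_map:
    print(row)
```

The feature map responds only where the filter straddles the edge, which is the sense in which a filter "detects" a spatial pattern. Stacking many such layers yields the increasingly abstract representations described above.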

The STGCN-ViT Hybrid Model: Addressing Spatial and Temporal Dynamics

While CNNs excel at spatial feature extraction, they often fail to capture temporal dynamics, which are crucial for understanding disease progression. A state-of-the-art hybrid model, the STGCN-ViT, was developed to address this gap by integrating spatial, temporal, and attentional mechanisms [2] [1]. This model combines three powerful components:

- EfficientNet-B0: A CNN used for preliminary spatial feature extraction from high-resolution MRI scans [1].

- Spatial-Temporal Graph Convolutional Networks (STGCN): Models the temporal dependencies and progression of anatomical changes across different brain regions over time [2] [1].

- Vision Transformer (ViT): Employs a self-attention mechanism (AM) to focus on the most critical spatial patterns and regions in the MRI scans, refining the feature extraction process [1].

This integrated approach allows for a comprehensive analysis of the brain's changing anatomy, which is vital for the accurate early diagnosis of progressive neurological disorders [1].
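The self-attention mechanism used by the ViT component can be sketched in a few lines. This is a simplified illustration, assuming identity query/key/value projections and three hypothetical two-dimensional patch embeddings. A real Vision Transformer learns separate projection matrices and uses multiple heads, and nothing here reproduces the cited model.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with identity Q/K/V projections (simplification)."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        # Similarity of this token (query) to every token (key), scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the token values.
        outputs.append(
            [sum(w[t] * tokens[t][c] for t in range(len(tokens))) for c in range(d)]
        )
    return outputs, weights


# Three hypothetical patch embeddings.
patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended, attn_weights = self_attention(patches)
for row in attn_weights:
    print([round(v, 3) for v in row])
```

Each row of `attn_weights` sums to one and indicates how strongly one patch draws on the others, which is what allows the model to concentrate on the most informative regions.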

Experimental Protocol and Performance

The STGCN-ViT model was validated using benchmark datasets like the Open Access Series of Imaging Studies (OASIS) and data from Harvard Medical School (HMS). The experimental workflow typically involves a structured pipeline from data preprocessing to model evaluation [2] [5].

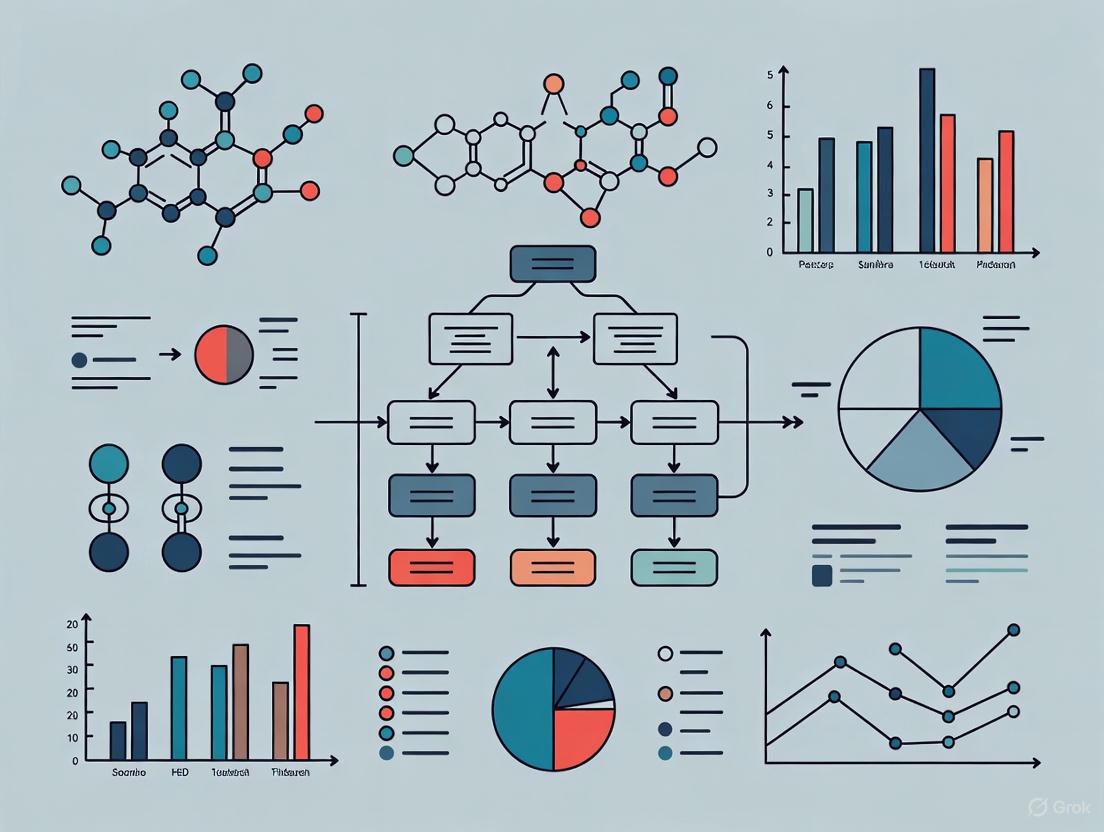

Diagram 1: Experimental workflow for the STGCN-ViT model, illustrating the pipeline from raw data to diagnostic output.

The model's performance demonstrates its potential for real-world clinical application. Quantitative results from the study show a significant improvement over standard and transformer-based models [2] [1].

Table: Performance Metrics of the STGCN-ViT Hybrid Model on Benchmark Datasets [2]

| Metric | Group A | Group B |
|---|---|---|
| Accuracy | 93.56% | 94.52% |
| Precision | 94.41% | 95.03% |
| AUC-ROC | 94.63% | 95.24% |
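For readers less familiar with these metrics, the sketch below shows how accuracy, precision, and AUC-ROC are computed from binary labels and model scores. The labels and scores are made-up toy values, not results from the study, and the 0.5 decision threshold is an assumption.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def precision(y_true, y_pred):
    """Fraction of positive predictions that are truly positive."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    return tp / (tp + fp) if (tp + fp) else 0.0


def auc_roc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy example (hypothetical values): 1 = disease, 0 = control.
y_true = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.4, 0.6, 0.3, 0.1]
y_pred = [int(s >= 0.5) for s in scores]  # assumed decision threshold of 0.5

print(accuracy(y_true, y_pred), precision(y_true, y_pred), auc_roc(y_true, scores))
```

Accuracy and precision depend on the chosen threshold, whereas AUC-ROC summarizes ranking quality across all thresholds, which is why the three are usually reported together.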

Beyond standalone accuracy, a systematic review of 55 CNN-based studies for brain disorder classification highlights three critical principles for ensuring the clinical value of such models [5]:

- Modelling Practices: Use of robust validation methods like k-fold cross-validation to ensure reliability and mitigate overfitting.

- Transparency: Detailed reporting of methodologies to enable comparison and reproduction.

- Interpretability: Providing explanations for model outputs, which is crucial for building clinician trust and facilitating integration into clinical care [5].
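The k-fold cross-validation mentioned under modelling practices can be sketched as follows. The function name `k_fold_indices` and the choice to split over subject indices are assumptions for illustration. With imaging data it is generally advisable to split by subject rather than by scan, so that scans from one person never appear in both training and test folds.

```python
import random


def k_fold_indices(n_subjects, k, seed=0):
    """Yield (train_idx, test_idx) pairs; each subject is in the test fold exactly once."""
    indices = list(range(n_subjects))
    random.Random(seed).shuffle(indices)  # fixed seed for reproducibility
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test_idx = folds[i]
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train_idx, test_idx


for fold_no, (train_idx, test_idx) in enumerate(k_fold_indices(10, 5), start=1):
    print(f"fold {fold_no}: train={sorted(train_idx)} test={sorted(test_idx)}")
```

Averaging a metric over the k test folds gives a more stable performance estimate than a single train/test split, and the spread across folds hints at how sensitive the model is to the particular sample.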

Implementing predictive models for neurological care requires a suite of data, software, and computational resources.

Table: Essential Research Resources for Predictive Modeling in Neurology

| Resource / Reagent | Function / Application | Specific Examples / Notes |
|---|---|---|
| Neuroimaging Datasets | Provides large-scale, standardized structural MRI data for model training and validation. | Open Access Series of Imaging Studies (OASIS) [2]; Alzheimer's Disease Neuroimaging Initiative (ADNI) [5]; UK Biobank [5]. |
| Deep Learning Frameworks | Software libraries providing the building blocks for designing, training, and deploying complex deep learning models. | TensorFlow, PyTorch. Essential for implementing CNN, STGCN, and ViT architectures. |
| High-Performance Computing (HPC) | Computational power necessary for processing high-dimensional MRI data and training parameter-dense models. | GPUs (Graphics Processing Units). Critical for reducing computation time in deep learning workflows [5]. |
| Preprocessing Tools | Software for standardizing raw MRI data before model input, improving consistency and model performance. | Tools for skull stripping, image registration, cropping, resizing, and contrast normalization [5]. |
| Predictive Model Architecture | The mathematical blueprint of the algorithm that performs spatial-temporal feature extraction and classification. | Hybrid models (e.g., STGCN-ViT [2] [1]), CNNs [5], Vision Transformers [1]. |

Implementation Challenges and Future Directions

Despite promising results, integrating predictive models into routine clinical practice presents several challenges. A systematic review of implemented EHR-based predictive models identified common obstacles, including alert fatigue among clinicians, lack of adequate training for end-users, and perceptions of increased work burden on the care team [6]. Furthermore, the "black box" nature of some complex models creates a barrier to adoption, underscoring the need for transparency and interpretability to build trust [5].

Future efforts must focus on workflow integration, embedding risk scores via dashboards or non-interruptive alerts that fit seamlessly into clinical routines [6]. As these challenges are addressed, predictive analytics could substantially reshape neurological care, paving the way for personalized medicine and improved population health outcomes [3]. The shift from reactive to proactive neurological care, powered by predictive analytics, points toward a future in which diagnosis anticipates disease and intervention begins at the earliest possible moment.

The Critical Role of Early Intervention in Alzheimer's, Parkinson's, and Brain Tumors

Neurological disorders represent one of the most challenging frontiers in modern medicine, with Alzheimer's disease (AD), Parkinson's disease (PD), and brain tumors posing significant threats to global health. The public health impact of Alzheimer's alone is substantial, with an estimated 7.2 million Americans age 65 and older currently living with Alzheimer's dementia, a figure projected to grow to 13.8 million by 2060 barring medical breakthroughs [7]. Early intervention is critically important because the brain changes that cause Alzheimer's symptoms are thought to begin 20 years or more before symptoms start, creating a substantial window for potential intervention [7].

The emergence of artificial intelligence (AI) and machine learning (ML) technologies has opened new frontiers in neurological disease diagnosis and management by identifying subtle patterns in complex, multidimensional data that may escape human observation [8]. This technical review examines cutting-edge predictive analytics approaches for these neurological disorders, focusing on experimental protocols, performance metrics, and research methodologies that enable earlier detection and intervention. By framing this examination within the broader context of predictive analytics research, we aim to provide researchers, scientists, and drug development professionals with a comprehensive technical foundation for advancing early intervention strategies.

Alzheimer's Disease: Predictive Modeling of Progression

Integrated Predictive Models for Disease Trajectory

Recent advances in Alzheimer's disease prediction have focused on integrating multiple data modalities and modeling techniques to achieve earlier and more accurate prognosis. One innovative approach employs a three-stage process: (1) estimating the probability of transitioning from cognitively normal (CN) to mild cognitive impairment (MCI) using ensemble transfer learning; (2) generating future MRI images using Transformer-based Generative Adversarial Networks (ViT-GANs) to simulate disease progression after two years; and (3) predicting AD using a 3D convolutional neural network with calibrated probabilities using isotonic regression [9]. This method addresses the challenge of limited longitudinal data by creating high-quality synthetic images and improves model transparency by identifying key brain regions involved in disease progression through Gradient-weighted Class Activation Mapping (Grad-CAM) [9].

The reported performance of this integrated framework is noteworthy: an accuracy of 0.85 and an F1-score of 0.86 in predicting conversion from cognitively normal to Alzheimer's disease up to 10 years before clinical diagnosis [9]. The approach is particularly valuable because it does not force a definitive classification of each subject; instead it reports a calibrated probability, acknowledging the diagnostic uncertainty inherent in long-term predictions.

Table 1: Performance Metrics of Recent Alzheimer's Disease Prediction Models

| Study | Methodology | Dataset | Accuracy | AUC | Key Predictors |
|---|---|---|---|---|---|
| Integrated Predictive Model [9] | Ensemble Transfer Learning + ViT-GAN + 3D CNN | ADNI | 0.85 | - | Synthetic MRI features, CN to MCI probability |
| Explainable ML Model [10] | Random Forest with Ant Colony Optimization | Multimodal clinical data (2,149 patients) | 0.95 | 0.98 | Functional assessment, ADL, memory complaints, MMSE |
| Hybrid Deep Learning Framework [11] | LSTM + FNN for structured data | NACC | 0.998 | - | Temporal dependencies, static correlations |
| MRI-based Model [11] | ResNet50 + MobileNetV2 | ADNI | 0.962 | - | Spatial patterns in MRI images |
| STGCN-ViT Hybrid Model [1] | CNN + STGCN + Vision Transformer | OASIS, HMS | 0.936 | 0.946 | Spatial-temporal dependencies |

Multimodal Data Integration and Explainability

Beyond neuroimaging, successful Alzheimer's prediction leverages multimodal data integration. Recent research achieving 95% accuracy and 98% AUC utilized a comprehensive dataset of 2,149 patients encompassing demographic, medical history, lifestyle, clinical measurements, cognitive assessments, and symptom data [10]. Through rigorous preprocessing including MinMax normalization, Synthetic Minority Over-sampling Technique for class imbalance, and Backward Elimination Feature Selection, 32 initial features were reduced to 26 optimal predictors [10].
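Two of the preprocessing steps named above, MinMax normalization and SMOTE-style oversampling, can be illustrated with a short sketch. The function names and toy values are assumptions, and `smote_like_sample` is a simplified single-sample version of the interpolation idea behind SMOTE rather than the full algorithm used in the cited study.

```python
import random


def min_max_scale(column):
    """Rescale a feature column to the [0, 1] range."""
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]  # constant column carries no information
    return [(v - lo) / (hi - lo) for v in column]


def smote_like_sample(minority, k=2, rng=None):
    """Create one synthetic point on the segment between a minority sample
    and one of its k nearest minority-class neighbours."""
    rng = rng or random.Random(0)
    base = rng.choice(minority)
    neighbours = sorted(
        (p for p in minority if p is not base),
        key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
    )[:k]
    neighbour = rng.choice(neighbours)
    gap = rng.random()  # interpolation factor in [0, 1)
    return [a + gap * (b - a) for a, b in zip(base, neighbour)]


scaled = min_max_scale([10, 20, 30])            # hypothetical raw scores
minority = [[0.1, 0.2], [0.2, 0.1], [0.9, 0.8]]  # hypothetical minority-class rows
synthetic = smote_like_sample(minority, k=2, rng=random.Random(0))
print(scaled, synthetic)
```

Because each synthetic point lies between two real minority samples, oversampling should be applied to the training folds only. Generating synthetic points before the train/test split would leak information into the evaluation.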

The explainability of predictive models is crucial for clinical adoption. SHAP analysis has identified functional assessment, activities of daily living, memory complaints, and Mini-Mental State Examination scores as the most influential predictors, while LIME provides complementary local explanations that validate the clinical relevance of identified features [10]. This transparency bridges the gap between model accuracy and clinical trust, fostering potential real-world deployment.
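The quantity that SHAP approximates, the Shapley value of each feature, can be computed exactly for a small model by enumerating feature subsets. The sketch below assumes a hypothetical three-feature linear risk score and a zero baseline that stands in for "missing" features. The weights are invented for illustration and do not come from the cited study.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values; features outside a subset are set to the baseline."""
    n = len(x)

    def value(subset):
        mixed = [x[i] if i in subset else baseline[i] for i in range(n)]
        return model(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            for subset in combinations(others, size):
                # Weight of this subset in the Shapley average.
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


def toy_risk_score(features):
    """Hypothetical linear score over three features (weights are assumptions)."""
    return 2.0 * features[0] + 1.0 * features[1] - 3.0 * features[2]


patient = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
contributions = shapley_values(toy_risk_score, patient, baseline)
print(contributions)
```

The contributions sum to the difference between the patient's score and the baseline score, which is the additivity property that makes such attributions straightforward to present to clinicians. Exact enumeration grows exponentially with the number of features, which is why practical tools rely on approximations.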

Diagram 1: Alzheimer's Disease 10-Year Predictive Framework. This workflow illustrates the integrated approach for predicting Alzheimer's disease progression from cognitively normal subjects using ensemble transfer learning and generative modeling [9].

Parkinson's Disease: Multimodal AI Diagnostic Approaches

Comprehensive Framework for Early Detection

Parkinson's disease detection has been revolutionized by multimodal AI frameworks that integrate diverse data sources. A recent comprehensive review of 133 papers published between 2021 and April 2024 classified PD diagnostic approaches into five categories: acoustic data, biomarkers, medical imaging, movement data, and multimodal datasets [12]. This systematic analysis reveals that ML and DL approaches can assess patient data such as motor symptoms, imaging scans, and genetic information to recognize patterns over time and estimate disease progression [12].

Experimental results from a novel multimodal AI diagnostic framework demonstrate the power of this integrated approach. Combining deep learning, computer vision, and natural language processing techniques for PD assessment using motor symptom analysis, voice pattern recognition, and gait analysis achieved 94.2% accuracy in early-stage PD detection, outperforming traditional clinical assessment methods [8]. The integrated approach showed particular strength in identifying subtle motor fluctuations and predicting treatment response patterns [8].

Table 2: Parkinson's Disease Diagnostic Modalities and Performance

| Modality | Technology | Key Features | Reported Accuracy | Strengths |
|---|---|---|---|---|
| Neuroimaging [8] [12] | CNN analysis of DaTscan, Graph Neural Networks | Functional connectivity, dopamine transporter density | 88-96% | High specificity, differential diagnosis |
| Voice Analysis [8] | Acoustic feature extraction | Fundamental frequency variation, jitter, shimmer, harmonics-to-noise ratio | 85-93% | Early detection, non-invasive |
| Gait Analysis [8] [12] | Wearable sensors, computer vision | Step length, rhythm, arm swing, postural stability | 85-90% | Continuous monitoring, quantitative |
| Multimodal Framework [8] | Hybrid ML integrating multiple inputs | Motor symptoms, voice patterns, sensor-derived metrics | 94.2% | Comprehensive assessment, early detection |
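Two of the acoustic features listed for voice analysis, jitter and shimmer, have simple definitions that a short sketch can make concrete. It uses the common "local" formulation: the mean absolute difference between consecutive cycles, divided by the mean value. The glottal periods and peak amplitudes are hypothetical numbers, and real pipelines first have to estimate them from the audio signal.

```python
def relative_perturbation(values):
    """Mean absolute difference between consecutive cycles, relative to the mean value."""
    if len(values) < 2:
        raise ValueError("need at least two cycles")
    diffs = [abs(a - b) for a, b in zip(values, values[1:])]
    return (sum(diffs) / len(diffs)) / (sum(values) / len(values))


# Hypothetical per-cycle measurements from a sustained vowel.
periods_s = [0.0100, 0.0102, 0.0098, 0.0101]   # glottal cycle durations in seconds
amplitudes = [0.80, 0.78, 0.82, 0.79]          # peak amplitude per cycle (arbitrary units)

jitter = relative_perturbation(periods_s)      # cycle-to-cycle frequency instability
shimmer = relative_perturbation(amplitudes)    # cycle-to-cycle amplitude instability
print(f"jitter={jitter:.4f}, shimmer={shimmer:.4f}")
```

Both measures are zero for a perfectly periodic voice and rise as phonation becomes less stable, which is why they are candidate markers of the vocal changes associated with PD.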

Neuroimaging and Digital Biomarkers

Neuroimaging represents one of the most extensively studied domains for AI application in PD diagnosis. Dopamine transporter imaging combined with convolutional neural networks has demonstrated remarkable success in distinguishing PD patients from healthy controls, with recent studies reporting accuracies exceeding 95% using deep learning analysis of DaTscan images [8]. Structural and functional magnetic resonance imaging applications have shown promising results in both diagnosis and progression monitoring, with graph neural networks applied to resting-state functional connectivity data achieving classification accuracies of 88-92% in distinguishing PD patients from controls [8].

Beyond traditional clinical assessments, digital biomarkers derived from wearable sensors and smartphone applications provide unprecedented opportunities for continuous monitoring. These technologies can identify subtle alterations in motor functions that may precede clinical symptom onset, creating opportunities for earlier intervention [8]. The integration of these digital biomarkers within deep learning frameworks enables a more holistic view of patient health, fostering a shift from symptom-based to data-driven precision neurology.

Diagram 2: Parkinson's Disease Multimodal Diagnostic Framework. This workflow illustrates the integration of multiple data modalities for enhanced PD detection accuracy [8].

Brain Tumors: AI-Driven Classification and Precision Treatment

Deep Learning for Automated Tumor Classification

The application of deep learning in brain tumor diagnosis has yielded remarkable classification accuracy. Recent research proposes a smart monitoring system that employs a custom CNN model and two pre-trained models for classification of brain tumor cases into ten categories: Meningioma, Pituitary, No tumor, Astrocytoma, Ependymoma, Glioblastoma, Oligodendroglioma, Medulloblastoma, Germinoma, and Schwannoma [13]. The results demonstrate exceptional accuracy, with the custom CNN achieving 97.58%, Inception-v4 reaching 99.56%, and EfficientNet-B4 attaining 99.76% classification accuracy [13].

This high performance is particularly significant given the heterogeneity of brain tumors, which present substantial diagnostic challenges. The custom CNN model was specifically designed to focus on computational efficiency and adaptability to address the unique challenges of brain tumor classification, making it suitable for deployment in resource-constrained settings [13]. Furthermore, the integration of IoT and edge computing technologies enables real-time health monitoring, potentially shifting non-critical patient monitoring from hospitals to homes and easing the burden on hospital resources [13].

Radiomics and Molecular Characterization

Artificial intelligence has the potential to reshape neuro-oncology through deep learning-driven radiomics and radiogenomics, improving glioma detection, image segmentation, and non-invasive molecular characterization beyond what conventional diagnostic modalities offer [14]. Radiomics extracts large numbers of features from radiological images, using characterization algorithms that transform complex qualitative data into quantifiable, reproducible, and analyzable features [14].

These quantitative metrics, obtained by applying computational algorithms to MRI or CT scans, can characterize tumor biological behavior, morphology, and microenvironment in ways that exceed visual inspection [14]. Key applications include non-invasive lesion characterization, for example using diffusion-weighted imaging or perfusion MRI to extract features of tissue architecture that differentiate low-grade from high-grade lesions [14].
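As a rough illustration of what "quantifiable features" means in practice, the sketch below computes three first-order radiomic features (mean, variance, and histogram entropy) from the voxel intensities of a region of interest. The intensity values and the bin width of 10 are assumptions. Production radiomics toolkits compute many more feature families (shape, texture, wavelet) under standardized definitions.

```python
import math
from collections import Counter


def first_order_features(roi_intensities, bin_width=10):
    """Mean, variance, and Shannon entropy (bits) of a region's intensity histogram."""
    n = len(roi_intensities)
    mean = sum(roi_intensities) / n
    variance = sum((v - mean) ** 2 for v in roi_intensities) / n
    # Discretize intensities into fixed-width bins before computing entropy.
    counts = Counter(int(v // bin_width) for v in roi_intensities)
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {"mean": mean, "variance": variance, "entropy": entropy}


homogeneous_roi = [50] * 8            # hypothetical uniform lesion
heterogeneous_roi = [5, 5, 15, 15]    # hypothetical lesion with two intensity populations

print(first_order_features(homogeneous_roi))
print(first_order_features(heterogeneous_roi))
```

Higher entropy and variance indicate a more heterogeneous region. Heterogeneity of this kind is one of the properties radiomic models draw on when separating lesion grades.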

Radiogenomics represents the integration of radiomics with genomic and molecular data, linking imaging phenotypes with genetic and molecular tumor characteristics traditionally determined through invasive tissue sampling [14]. Specific imaging phenotypes including tumor texture patterns, apparent diffusion coefficient values, and the degree of contrast enhancement have been found to correlate with molecular subtypes, enabling non-invasive prediction of genetic markers [14].

Table 3: Brain Tumor Classification Models and Performance

| Model | Tumor Classes | Dataset | Accuracy | Clinical Application |
|---|---|---|---|---|
| Custom CNN [13] | 10 classes | Diverse brain MRI datasets | 97.58% | Computational efficiency, adaptable system |
| Inception-v4 [13] | 10 classes | Diverse brain MRI datasets | 99.56% | High-accuracy classification |
| EfficientNet-B4 [13] | 10 classes | Diverse brain MRI datasets | 99.76% | State-of-the-art performance |
| Deep Learning Radiomics [14] | Glioma subtypes | Multimodal imaging | 88-95% | Molecular characterization, treatment planning |

Experimental Protocols and Research Reagent Solutions

Standardized Methodologies for Predictive Modeling

The experimental protocols for developing predictive models in neurological disorders follow rigorous methodologies. For Alzheimer's disease prediction using integrated frameworks, the process involves:

Data Acquisition and Preprocessing: Utilizing the Alzheimer's Disease Neuroimaging Initiative dataset, images undergo skull stripping, intensity normalization, and registration to a standard template [9].

Ensemble Transfer Learning: Implementing a combination of two pre-trained models - a brain age estimation model and an sMCI/pMCI classifier - to estimate the probability of transitioning from CN to MCI [9].

Synthetic Image Generation: Employing Transformer-based Generative Adversarial Networks to generate future MRI images simulating disease progression after two years, addressing limited longitudinal data [9].

3D CNN Architecture: Implementing a 3D convolutional neural network with Grad-CAM interpretability for AD prediction from synthetic images [9].

Probability Calibration: Applying isotonic regression to calibrate probabilities and correct biased predictions [9].
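The probability calibration step can be illustrated with the Pool Adjacent Violators algorithm, the standard way to fit an isotonic (non-decreasing) regression. This is a minimal sketch on made-up scores and outcomes. It is not the authors' implementation, and in practice a library routine would normally be used and fitted on held-out data.

```python
def isotonic_fit(scores, labels):
    """Pool Adjacent Violators: returns (sorted_scores, calibrated_probabilities)."""
    pairs = sorted(zip(scores, labels))
    blocks = []  # each block holds [sum_of_labels, count]
    for _, y in pairs:
        blocks.append([float(y), 1])
        # Merge backwards while the previous block's mean exceeds the current one.
        while len(blocks) > 1 and (
            blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]
        ):
            s, c = blocks.pop()
            blocks[-1][0] += s
            blocks[-1][1] += c
    fitted = []
    for s, c in blocks:
        fitted.extend([s / c] * c)
    return [p[0] for p in pairs], fitted


# Hypothetical raw model scores and observed outcomes (1 = converted to AD).
raw_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
outcomes = [0, 1, 0, 1, 1, 1]

sorted_scores, calibrated = isotonic_fit(raw_scores, outcomes)
print(list(zip(sorted_scores, calibrated)))
```

The fitted values never decrease as the raw score increases, so the ranking of subjects is preserved while the probabilities are pulled toward the observed event rates.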

For Parkinson's disease multimodal diagnosis, the protocol includes:

Multimodal Data Collection: Acquiring voice recordings, gait sensor data, DaTscan images, and motor examination videos from 847 participants (423 PD patients, 424 age-matched controls) [8].

Feature Extraction: Implementing specialized feature extraction pipelines for each modality, including acoustic features, sensor-derived motor metrics, and imaging features [8].

Hybrid Model Architecture: Developing a framework that integrates computer vision, voice pattern recognition, and gait analysis through deep learning fusion [8].

Validation: Employing rigorous cross-validation against established clinical rating scales and movement disorder specialist diagnoses [8].
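The source does not specify how the modalities are fused, so the following is only one plausible reading: a late-fusion sketch in which each modality produces its own PD probability and the final score is a weighted average. The modality names, probabilities, and weights are all hypothetical.

```python
def late_fusion(modality_probs, weights=None):
    """Weighted average of per-modality probabilities (equal weights by default)."""
    names = list(modality_probs)
    if weights is None:
        weights = {name: 1.0 for name in names}
    total = sum(weights[name] for name in names)
    return sum(weights[name] * modality_probs[name] for name in names) / total


# Hypothetical per-modality outputs for one participant.
probs = {"voice": 0.6, "gait": 0.8, "imaging": 0.9}

equal_weight_score = late_fusion(probs)
imaging_heavy_score = late_fusion(probs, {"voice": 1.0, "gait": 1.0, "imaging": 2.0})
print(round(equal_weight_score, 4), round(imaging_heavy_score, 4))
```

Late fusion keeps each modality's pipeline independent and tolerates a missing modality by simply omitting it from the dictionary. Feature-level fusion inside a single network can instead capture cross-modal interactions, at the cost of requiring complete data.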

Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions for Neurological Disorder Prediction

| Reagent/Resource | Function | Application Context |
|---|---|---|
| ADNI Dataset [9] [11] | Standardized multimodal data for Alzheimer's research | Model training and validation for AD prediction |
| NACC Dataset [11] | Comprehensive clinical, demographic, cognitive data | Structured data analysis for AD progression |
| DaTscan Imaging Agents [8] [12] | Dopamine transporter visualization | PD differential diagnosis and progression monitoring |
| Gradient-Weighted Class Activation Mapping [9] | Deep learning model interpretability | Identification of critical regions in MRI for AD |
| SHAP/LIME Frameworks [10] | Explainable AI for model decisions | Clinical validation and trust in predictive models |
| Synthetic Minority Over-sampling Technique [10] | Addressing class imbalance in medical data | Improving model performance on underrepresented classes |
| Ant Colony Optimization [10] | Hyperparameter tuning for machine learning | Optimizing model performance without manual search |
| Vision Transformers [9] [1] | Advanced image analysis using self-attention | MRI classification and synthetic image generation |

The integration of artificial intelligence and predictive analytics represents a paradigm shift in the early intervention landscape for Alzheimer's disease, Parkinson's disease, and brain tumors. The technical approaches detailed in this review demonstrate unprecedented accuracy in detecting these neurological disorders at earlier stages than previously possible. For researchers and drug development professionals, these advances create opportunities for identifying candidate populations for clinical trials during prodromal stages when interventions may be most effective.

The critical challenges moving forward include ensuring model generalizability across diverse populations, addressing computational requirements for real-world deployment, and establishing regulatory frameworks for clinical implementation. Future research should prioritize the development of interpretable AI models that maintain high predictive accuracy while providing clinically meaningful insights that healthcare professionals can trust and utilize in patient care decisions.

As these technologies continue to evolve, the potential for significantly impacting the trajectory of neurological disorders through early intervention becomes increasingly attainable. By leveraging multimodal data, advanced machine learning architectures, and explainable AI techniques, the field is poised to transform how we diagnose, monitor, and ultimately treat these devastating neurological conditions.

The integration of neuroimaging, multi-omics, and clinical records represents a paradigm shift in neurological research and drug development. These complementary data ecosystems provide unprecedented insights into disease mechanisms, enabling precise predictive analytics for diagnosis, subtyping, and treatment monitoring. This technical guide examines the foundational architectures, methodologies, and experimental protocols that underpin successful data integration, focusing on practical implementation for research and clinical translation. We demonstrate how unified frameworks are advancing the diagnosis of complex neurological disorders including Alzheimer's disease (AD) and vascular dementia (VaD), with specific examples achieving diagnostic accuracy up to 89.25% through sophisticated multi-omics integration [15].

The Integrated Data Ecosystem: Components and Architecture

Modern neuroimaging data ecosystems encompass diverse modalities stored across specialized repositories. The BRAIN Initiative coordinates seven primary archives forming a distributed data-sharing network, each optimized for specific data types and analytical approaches [16].

Table: BRAIN Initiative Data Archives and Specifications

| Archive | Host Institution | Primary Data Types | Supported Formats | Public Datasets |
|---|---|---|---|---|
| Brain Image Library (BIL) | Carnegie Mellon University | Confocal microscopy | DICOM, NIfTI | 8,418 |
| DANDI | Massachusetts Institute of Technology | Cellular neurophysiology, neuroimaging, microscopy | BIDS, NWB | 640 |
| OpenNeuro | Stanford University | MRI, PET, MEG, EEG, iEEG | BIDS | 1,076 |
| NeMO Archive | University of Maryland, Baltimore | Multi-omics | FASTQ, BAM, TSV, LOOM | 49 |
| NEMAR | University of California, San Diego | EEG, MEG | BIDS | 297 |
| BossDB | Johns Hopkins University | Electron microscopy, x-ray microtomography | PNG, JPG, BMP, GIF | 50 |
| DABI | University of Southern California | Invasive neurophysiology, brain signal data | EDF, BrainVision, NWB | 110 |

The interoperability of this ecosystem is facilitated by standardized data formats, particularly Neurodata Without Borders (NWB) for neurophysiology and the Brain Imaging Data Structure (BIDS) for neuroimaging data. These standards enable data pooling, re-analysis, and experimental replication across distributed archives [16] [17].
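BIDS achieves much of this interoperability through a predictable directory and file naming scheme. The helper below is a small sketch of how an anatomical image path is assembled from subject and session labels. The function name `bids_anat_path` and the labels are illustrative assumptions, and the sketch covers only the `sub`/`ses` entities and the `anat` data type.

```python
def bids_anat_path(subject, session=None, suffix="T1w", extension=".nii.gz"):
    """Build a BIDS-style relative path for an anatomical image."""
    entities = [f"sub-{subject}"]
    if session:
        entities.append(f"ses-{session}")
    # The file name repeats the entities, followed by the suffix and extension.
    filename = "_".join(entities) + f"_{suffix}{extension}"
    return "/".join(entities + ["anat", filename])


print(bids_anat_path("01", "baseline"))
print(bids_anat_path("01"))
```

Because every tool can infer the subject, session, and modality from the path alone, datasets organized this way can be pooled or re-analyzed without per-study parsing code.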

Multi-Omics Data Landscapes

Multi-omics data integration provides complementary molecular perspectives on neurological mechanisms, encompassing genomic, transcriptomic, proteomic, and metabolomic dimensions. Major repositories include The Cancer Genome Atlas (TCGA), the International Cancer Genome Consortium (ICGC), and METABRIC, which collectively house molecular profiles from thousands of patients [18]. These resources enable researchers to identify driver genes, molecular signatures, and pathway alterations underlying neurological pathologies.

Clinical Records and Phenotypic Data

Electronic Health Records (EHR) systems provide rich phenotypic data including clinical assessments, cognitive testing results, treatment histories, and demographic information. When structured and standardized, these records offer crucial clinical context for molecular and imaging findings, enabling correlation between biological mechanisms and clinical manifestations [6].

Methodological Frameworks for Data Integration

Structural Bayesian Factor Analysis (SBFA) for Multi-Omics Integration

The Structural Bayesian Factor Analysis (SBFA) framework represents an advanced methodology for integrating genotyping data, gene expression data, and neuroimaging phenotypes while incorporating prior biological network knowledge [19].

Experimental Protocol: SBFA Implementation

Data Preparation and Inputs

- Collect multi-modal datasets: $X = [X_1, X_2, \ldots, X_m]$, where $X_i$ represents a different omics or imaging modality

- Format genotyping data (discrete), gene expression (continuous), and neuroimaging phenotypes (continuous)

- Obtain biological network information from databases (KEGG, HumanBase) as adjacency matrices

Model Specification

- Decompose mean parameters: ( \theta = WZ ) where ( W ) is the sparse factor loading matrix and ( Z ) represents latent factors

- Employ Laplace priors for sparsity: ( W_{i,j} \sim \text{Laplace}(0, \lambda_{i,j}^{-1}) )

- Assign standard Gaussian priors for factors: ( Z_{i,j} \sim N(0,1) )

- Incorporate biological network structure through graph Laplacian prior on precision matrix
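The model specification above can be illustrated with a small generative sketch. This is not the SBFA implementation: it only draws loadings from a Laplace prior, draws factors from a standard Gaussian, forms the mean parameters as the product WZ, and builds a graph Laplacian from a toy adjacency matrix. The dimensions, the shared rate `lam`, and the three-node network are hypothetical, and how the Laplacian enters the precision-matrix prior is not reproduced here.

```python
import random

def sample_laplace(rng, rate):
    """Draw from Laplace(0, 1/rate): an exponential magnitude with a random sign."""
    return rng.expovariate(rate) * rng.choice((-1.0, 1.0))

def matmul(a, b):
    """Plain-Python matrix product of a (p x k) and b (k x n)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def graph_laplacian(adj):
    """Unnormalised graph Laplacian L = D - A of a symmetric adjacency matrix."""
    size = len(adj)
    return [[(sum(adj[i]) if i == j else 0) - adj[i][j] for j in range(size)]
            for i in range(size)]

rng = random.Random(0)
p, k, n = 6, 2, 4   # toy sizes (assumed): features, latent factors, subjects
lam = 2.0           # one shared rate for simplicity; the model allows one per entry

# Loadings drawn from the sparsity-favouring Laplace prior.
W = [[sample_laplace(rng, lam) for _ in range(k)] for _ in range(p)]
# Latent factors drawn from the standard Gaussian prior.
Z = [[rng.gauss(0.0, 1.0) for _ in range(n)] for _ in range(k)]
# Mean parameters theta = W Z, one row per feature and one column per subject.
theta = matmul(W, Z)

# Hypothetical 3-gene network (path 0 - 1 - 2) standing in for KEGG/HumanBase input.
adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
L = graph_laplacian(adjacency)
```

Each row of `L` sums to zero, which is the property that lets a Laplacian-based prior penalise differences between connected features.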

Parameter Estimation and Inference

- Implement Bayesian inference using Markov Chain Monte Carlo (MCMC) methods

- Extract latent factors representing shared information across modalities

- Identify biologically relevant features through structured sparsity patterns

Validation and Application

- Apply latent factors to predict clinical outcomes (e.g., Functional Activities Questionnaire scores)

- Compare prediction accuracy against alternative factor analysis methods (iCluster+, JIVE, SLIDE)

- Perform biological interpretation through pathway enrichment analysis of selected features [19]

The SBFA framework successfully overcomes the phase transition problem of previous Bayesian integrative methods (e.g., GBFA) while incorporating biological network information to produce more interpretable results [19].

Figure 1: Structural Bayesian Factor Analysis (SBFA) Framework for Multi-omics Integration

MINDSETS: Multi-omics Integration with Neuroimaging for Dementia Subtyping

The MINDSETS framework provides a comprehensive methodology for differentiating Alzheimer's disease from vascular dementia using integrated multi-omics data, achieving 89.25% diagnostic accuracy in validation studies [15].

Experimental Protocol: MINDSETS Implementation

Data Acquisition and Preprocessing

- Obtain longitudinal MRI scans from ADNI database or similar resources

- Segment MRI data to extract advanced radiomics features

- Collect genetic data (SNP arrays, sequencing), proteomic profiles, and clinical assessments

- Perform quality control and normalization for each data modality

Feature Engineering and Selection

- Extract radiomics features from segmented brain regions

- Identify genetic variants associated with dementia subtypes

- Select relevant clinical and cognitive assessment measures

- Apply dimensionality reduction techniques to manage feature space

Multi-omics Data Integration

- Implement data-level fusion combining clinical data, MRI segmentation, and psychological assessments

- Apply feature-level fusion using neuropsychological tests, MRI biomarkers, and clinical risk factors

- Utilize hybrid fusion strategies where genetic data enhances early prediction and MRI data characterizes progression

Predictive Modeling and Interpretation

- Train an ensemble of classifiers (Random Forest, SVM, KNN) on integrated features

- Incorporate SHapley Additive exPlanations (SHAP) for model interpretability

- Develop longitudinal models to monitor diagnostic confidence and treatment efficacy

- Validate using cross-validation and external datasets to prevent overfitting [15]

The MINDSETS approach demonstrates that semantic fluency measures are more impaired in AD, while patients with vascular dementia (VaD) perform worse on phonemic fluency tasks, reflecting distinct neuroanatomical patterns of degeneration [15].

Data Standards and Interoperability Frameworks

Neurodata Without Borders (NWB) Ecosystem

The Neurodata Without Borders (NWB) data language provides a standardized framework for neurophysiology data, enabling integration across diverse experiments and species [17].

Core Components of NWB:

- Hierarchical Data Modeling Framework (HDMF): Modular, extensible architecture for complex data relationships

- NWB:N Format: Standardized container for neurophysiology data and metadata

- Extension Mechanism: Community-driven schema extensions for novel experiment types

- API and Tooling: Comprehensive software ecosystem for data I/O, visualization, and analysis

NWB facilitates the entire data lifecycle from acquisition to publication, supporting data from intracellular patch clamp recordings to human ECoG signals. The framework is foundational to archives like DANDI, enabling collaborative data sharing and analysis [17].

BRAIN Initiative Ecosystem Interoperability

The BRAIN Initiative's distributed archive network achieves interoperability through several mechanisms:

- Standardized Data Formats: NWB for neurophysiology, BIDS for neuroimaging

- Cross-Archive Indexing: Data from multiple archives (NeMO, BossDB, BIL, DANDI) indexed through the Brain Cell Data Center (BCDC)

- Federated Query Capabilities: Ability to find and access data across archive boundaries

- Common Access Tiers: Standardized controlled-access protocols for human data [16]

Figure 2: BRAIN Initiative Data Ecosystem Architecture

Table: Core Resources for Multi-omics Neuroscience Research

| Resource Category | Specific Tools/Platforms | Primary Function | Access Information |
|---|---|---|---|
| Data Archives | DANDI, OpenNeuro, NeMO Archive | Storage, sharing, and discovery of neuroimaging and omics data | Public access with tiered authentication for controlled data |
| Data Standards | NWB, BIDS, FHIR | Standardization and interoperability across data types | Open-source specifications and APIs |
| Analytical Frameworks | SBFA, MINDSETS, iCluster+ | Multi-omics data integration and dimension reduction | Open-source implementations (e.g., SBFA: github.com/JingxuanBao/SBFA) |
| Biological Networks | KEGG, HumanBase, IMP | Prior knowledge for biological interpretation | Public databases with programmatic access |
| Clinical Data Tools | EHR APIs, OMOP Common Data Model | Extraction and standardization of clinical records | Institution-specific implementations with FHIR interfaces |
| Computational Environments | Brain Knowledge Platform, Bridges-2 supercomputer | Large-scale analysis and visualization | Web-based interfaces and HPC resource allocations |

Validation and Clinical Implementation Frameworks

Predictive Model Implementation in Clinical Settings

Implementing predictive models in clinical practice requires careful attention to workflow integration and validation. Systematic review evidence indicates that 69% of implemented EHR-based predictive models (22 of 32 studies) demonstrated improved clinical outcomes [6].

Key Implementation Considerations:

Workflow Integration

- Non-interruptive Alerts: Present risk scores through dashboards rather than modal alerts

- Role-Based Presentation: Tailor information displays to different clinical team members

- Timing and Context: Deliver predictions at clinically relevant decision points

Interpretability and Trust

- Provide model explanations using techniques like SHAP values

- Include confidence estimates with predictions

- Offer accessible training for clinical end-users

Performance Monitoring

- Implement continuous model validation against incoming data

- Establish feedback mechanisms for model refinement

- Monitor for concept drift and data quality issues [6]
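One way to make the drift-monitoring step concrete is the Population Stability Index (PSI), which compares the distribution of risk scores seen at validation time with the scores arriving in production. The source does not name a specific drift statistic, so PSI is an assumption here, as are the bin count, the `eps` floor, and the toy score samples.

```python
import math

def psi(reference, current, n_bins=10, eps=1e-6):
    """Population Stability Index between two samples of scores in [0, 1]."""
    def proportions(scores):
        counts = [0] * n_bins
        for s in scores:
            idx = min(int(s * n_bins), n_bins - 1)   # put s == 1.0 in the last bin
            counts[idx] += 1
        total = len(scores)
        # Floor empty bins at eps so the logarithm below stays finite.
        return [max(c / total, eps) for c in counts]

    ref = proportions(reference)
    cur = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

# Hypothetical risk scores: validation-time scores spread over [0, 1),
# incoming scores concentrated in the upper half.
reference_scores = [i / 100 for i in range(100)]
incoming_scores = [0.5 + i / 200 for i in range(100)]

drift = psi(reference_scores, incoming_scores)
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a substantial shift, but any alerting threshold should be chosen and validated locally.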

Validation Protocols for Integrated Models

Rigorous validation is essential for models integrating neuroimaging, multi-omics, and clinical data:

Technical Validation

- Internal validation using cross-validation and bootstrapping

- External validation on independent datasets

- Comparison against established clinical benchmarks

Clinical Validation

- Prospective evaluation in clinical settings

- Assessment of clinical utility through randomized trials

- Evaluation of implementation barriers and facilitators

Biological Validation

- Pathway enrichment analysis of selected features

- Correlation with established pathological markers

- Experimental validation of novel mechanistic insights
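The bootstrapping mentioned under technical validation can be sketched as a percentile confidence interval for accuracy. This is a minimal illustration with made-up labels and predictions; the number of resamples, the seed, and the function name are assumptions, not part of any cited protocol.

```python
import random

def bootstrap_accuracy_ci(y_true, y_pred, n_boot=2000, alpha=0.05, seed=0):
    """Return (accuracy, lower, upper) using a percentile bootstrap interval."""
    rng = random.Random(seed)
    n = len(y_true)
    correct = [int(t == p) for t, p in zip(y_true, y_pred)]
    stats = []
    for _ in range(n_boot):
        # Resample subjects with replacement and recompute accuracy.
        resample = [correct[rng.randrange(n)] for _ in range(n)]
        stats.append(sum(resample) / n)
    stats.sort()
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[int((1 - alpha / 2) * n_boot) - 1]
    return sum(correct) / n, lower, upper

# Hypothetical test-set labels and predictions (16 of 20 correct).
labels = [1] * 10 + [0] * 10
preds = [1] * 8 + [0] * 2 + [0] * 8 + [1] * 2

accuracy, ci_low, ci_high = bootstrap_accuracy_ci(labels, preds)
```

Reporting the interval alongside the point estimate makes it easier to judge whether a difference from a clinical benchmark is larger than sampling noise.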

The integration of neuroimaging, multi-omics, and clinical records within structured data ecosystems represents a transformative approach to neurological research and drug development. As these ecosystems mature, several emerging trends will shape their evolution:

- Enhanced Interoperability: Development of cross-archive query federations and analytical workflows

- AI-Driven Discovery: Application of deep learning approaches to integrated data spaces

- Real-Time Clinical Integration: Streamlined pathways from research insights to clinical implementation

- Patient-Centered Outcomes: Incorporation of patient-reported outcomes and digital health data

The foundational assets described in this section (neuroimaging, multi-omics, and clinical records), when integrated through sophisticated computational frameworks, provide unprecedented opportunities for understanding neurological disease mechanisms and developing targeted interventions. Continued investment in both the technological infrastructure and the methodological frameworks will be essential to realizing the full potential of these integrated data ecosystems for advancing human health.

The exponential growth of scientific literature presents both unprecedented opportunities and significant challenges for researchers. This phenomenon is particularly pronounced in cutting-edge, interdisciplinary fields such as the application of artificial intelligence (AI) in healthcare. Within this domain, AI-powered predictive analytics for neurological disorder diagnosis represents a rapidly evolving research frontier that demands comprehensive quantitative assessment. The overwhelming volume of publications—exceeding 2.5 million articles annually in science alone—has necessitated the development of sophisticated bibliometric analysis tools to map intellectual landscapes, identify emerging trends, and quantify collaborative networks [20].

This bibliometric analysis examines the growth trajectory of research focused on AI applications in neurological disorder diagnosis, with particular emphasis on predictive analytics. By applying quantitative methods to the analysis of scientific literature, this study aims to delineate the development of this field, identify key contributors and collaborative networks, pinpoint research hotspots, and forecast future directions. Such analysis is crucial for researchers, clinicians, and policymakers seeking to navigate this rapidly expanding domain and allocate resources efficiently [21].

Methodology

Data Source and Search Strategy

This bibliometric analysis employed a systematic approach to data collection from the Web of Science Core Collection (WoSCC), widely recognized as an authoritative global database for academic literature [22] [23] [24]. To ensure comprehensive coverage of relevant publications, a search strategy was implemented using targeted queries combining terminology related to artificial intelligence, neurological disorders, and diagnostic applications.

The primary search query was structured as follows: TS = (("artificial intelligence" OR "AI" OR "machine learning" OR "deep learning" OR "convolutional neural network" OR "CNN" OR "neural network") AND ("neurological disorder" OR "Alzheimer" OR "Parkinson" OR "epilepsy" OR "brain disorder" OR "depression" OR "major depressive disorder") AND ("diagnos*" OR "detection" OR "predict*" OR "classification"))

Additional validation was performed through sensitivity analysis using alternative search string configurations to ensure robustness and comprehensiveness of the retrieved dataset [22].

Inclusion and Exclusion Criteria

The literature screening process applied strict inclusion and exclusion criteria to maintain methodological rigor:

Inclusion Criteria:

- Peer-reviewed research articles and reviews published between 2015 and 2024

- Publications explicitly focusing on AI applications for neurological disorder diagnosis

- Studies involving predictive analytics, neuroimaging analysis, or diagnostic biomarker discovery

- English-language publications

Exclusion Criteria:

- Non-peer-reviewed publications, editorials, conference abstracts, and book chapters

- Studies focusing exclusively on non-neurological conditions

- Publications without empirical validation or methodological innovation

- Duplicate publications or retracted articles

Data Extraction and Analytical Framework

Following the initial search, all retrieved records underwent deduplication and systematic screening based on titles and abstracts. The final dataset comprising 1,208 qualified publications was exported in plain text format for subsequent analysis [23].

Bibliometric analysis was conducted using CiteSpace (version 6.3.R1) and Bibliometrix (R package), specialized software tools designed for scientometric analysis and visualization [22] [23]. The analytical framework incorporated multiple dimensions:

- Temporal analysis: Publication growth trends, citation bursts, and historical evolution

- Network analysis: Collaboration patterns among countries, institutions, and authors

- Content analysis: Keyword co-occurrence, cluster identification, and research front mapping

- Intellectual base analysis: Co-citation networks of references, authors, and journals

Key metrics included betweenness centrality (identifying pivotal nodes that bridge research communities), citation burst strength (detecting sudden surges of interest), and modularity (Q) and silhouette (S) scores for cluster validation [22].
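To show what the modularity score measures, the sketch below computes Q for a fixed partition of a toy network: two triangles joined by a single edge. The graph and the community labels are hypothetical; tools such as CiteSpace compute Q on the actual co-citation or co-occurrence network.

```python
def modularity(edges, community):
    """Newman modularity Q of a partition of an undirected, unweighted graph."""
    m = len(edges)
    internal_edges = {}    # edges with both endpoints in the same community
    degree_sum = {}        # total degree of the nodes in each community
    for u, v in edges:
        cu, cv = community[u], community[v]
        degree_sum[cu] = degree_sum.get(cu, 0) + 1
        degree_sum[cv] = degree_sum.get(cv, 0) + 1
        if cu == cv:
            internal_edges[cu] = internal_edges.get(cu, 0) + 1
    # Q = sum over communities of (fraction of internal edges - expected fraction).
    return sum(internal_edges.get(c, 0) / m - (d / (2 * m)) ** 2
               for c, d in degree_sum.items())

# Two tightly connected keyword clusters linked by one bridging edge (2, 3).
toy_edges = [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5), (2, 3)]
toy_partition = {0: "A", 1: "A", 2: "A", 3: "B", 4: "B", 5: "B"}

q_score = modularity(toy_edges, toy_partition)
```

For this toy graph Q equals 5/14, or about 0.357. Values closer to 1 indicate that most links fall inside clusters rather than between them.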

Results

Temporal Trends and Growth Trajectory

The analysis revealed a pronounced exponential growth pattern in publications focusing on AI applications for neurological disorder diagnosis, particularly accelerating after 2018 [22]. The field's development followed a distinct three-phase trajectory:

Table 1: Evolutionary Stages of AI in Neurological Disorder Diagnosis Research

| Phase | Time Period | Annual Publications | Characteristics |
|---|---|---|---|
| Incubation Phase | 2015-2017 | <100 | Early exploratory studies, proof-of-concept applications |
| Acceleration Phase | 2018-2021 | 100-500 | Methodological refinement, increased clinical validation |
| Exponential Growth Phase | 2022-2024 | >500 | Clinical translation focus, multimodal data integration |

This growth trajectory significantly outpaces the overall expansion of scientific literature, which has itself seen exponential growth with over 2.5 million articles published annually across all scientific disciplines [20]. The specific research domain of AI in neurological diagnosis demonstrates an annual growth rate exceeding 25% in recent years, reflecting intense academic and clinical interest [23].

Geographical and Institutional Contributions

The research landscape is characterized by strong international collaboration, with contributions from 85+ countries worldwide [24]. Analysis of publication output and citation impact revealed distinct geographical patterns of productivity and influence.

Table 2: Leading Countries in AI-Neurology Research (2015-2024)

| Country | Publications | Percentage | Citation Impact | Centrality |
|---|---|---|---|---|
| United States | 515 | 35.23% | High | 0.48 |
| China | 352 | 24.09% | High | 0.32 |
| Germany | 235 | 16.07% | Medium | 0.41 |
| United Kingdom | 172 | 11.77% | High | 0.35 |
| Canada | 98 | 6.70% | Medium | 0.52 |

Note: Centrality values >0.1 indicate a significant role as a knowledge broker in collaborative networks.

The United States maintains a dominant position in both publication volume and influence, while China has demonstrated the most rapid growth in recent years. Notably, countries with high betweenness centrality scores, particularly Canada (0.52), serve as crucial bridges in international collaboration networks, facilitating knowledge exchange across geographical boundaries [24].
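The bridging role that betweenness centrality captures can be illustrated on a toy collaboration network. The sketch below uses Brandes' algorithm for an undirected, unweighted graph. The node names are hypothetical placeholders, not the countries in Table 2, and the result is unnormalised. A normalised score in [0, 1], as bibliometric tools typically report, divides by (n-1)(n-2)/2.

```python
from collections import deque

def betweenness_centrality(adj):
    """Unnormalised betweenness for an undirected, unweighted graph (Brandes)."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        order = []                               # nodes in order of discovery
        preds = {v: [] for v in adj}             # shortest-path predecessors
        sigma = {v: 0 for v in adj}              # number of shortest paths from s
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                             # breadth-first search from s
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while order:                             # accumulate dependencies backwards
            w = order.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    return {v: b / 2 for v, b in bc.items()}     # each pair was counted twice

# Two collaboration clusters (A, B, C) and (D, E, F) connected only through X.
network = {
    "A": ["B", "C"], "B": ["A", "C"], "C": ["A", "B", "X"],
    "X": ["C", "D"],
    "D": ["X", "E", "F"], "E": ["D", "F"], "F": ["D", "E"],
}
scores = betweenness_centrality(network)
```

Node `X` has few links of its own, yet every shortest path between the two clusters passes through it, so it receives the highest score. A country with modest output but a high centrality value is in the same position.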

At the institutional level, the Max Planck Society (Germany), Harvard Medical School (USA), and Chinese Academy of Sciences emerged as the most prolific research organizations. A clear pattern of interdisciplinary collaboration was evident, with computer science departments increasingly partnering with clinical neuroscience units and medical imaging facilities [23].

Intellectual Structure and Research Fronts

Co-citation analysis of references and keyword co-occurrence mapping revealed the intellectual structure and evolving research fronts within the field. The knowledge base draws heavily from computer science, neuroscience, and clinical medicine, with a notable surge in engineering and translational research since 2020 [22].

Keyword burst detection identified several emerging research fronts with strong growth potential:

- Multimodal data fusion (burst strength: 12.45, 2021-2024)

- Explainable AI (burst strength: 10.83, 2022-2024)

- Transformer architectures (burst strength: 9.76, 2022-2024)

- Digital biomarkers (burst strength: 8.94, 2020-2024)

- Federated learning (burst strength: 7.65, 2023-2024)

The analysis of keyword clusters revealed several dominant research themes, with the largest clusters focusing on "neuroimaging analysis," "early diagnosis," "deep learning," and "biomarker discovery." The high modularity (Q=0.7843) and silhouette scores (S=0.9126) indicated well-defined cluster structure with strong internal coherence [23].

Experimental Protocols in AI-Enhanced Neurological Diagnosis

Protocol 1: Hybrid Deep Learning for Neuroimaging Analysis

Background: Conventional approaches to neurological disorder diagnosis using structural MRI often fail to capture subtle early-stage changes and temporal disease dynamics [1]. The STGCN-ViT model represents an advanced hybrid architecture designed to address these limitations through integrated spatial-temporal feature extraction [1].

Methodology:

- Data Acquisition and Preprocessing: The protocol utilizes the Open Access Series of Imaging Studies (OASIS) and Alzheimer's Disease Neuroimaging Initiative (ADNI) datasets. Standard preprocessing includes skull stripping, spatial normalization, intensity correction, and data augmentation to enhance model robustness [1] [5].

- Spatial Feature Extraction: Implementation of EfficientNet-B0 as the foundational convolutional neural network for extracting discriminative spatial features from structural MRI scans. This component identifies region-specific anatomical alterations associated with neurological conditions [1].

- Temporal Dynamics Modeling: Application of Spatial-Temporal Graph Convolutional Networks (STGCN) to model disease progression patterns across multiple timepoints. Brain regions are represented as graph nodes with anatomical connectivity defining edges, enabling capture of progressive pathological changes [1].

- Feature Integration and Classification: The Vision Transformer (ViT) module employs self-attention mechanisms to weight the importance of different brain regions and features, followed by a multilayer perceptron for final classification [1].

Validation Framework: The protocol implements rigorous k-fold cross-validation (k=5) with strict separation of training, validation, and test sets. Performance metrics including accuracy, precision, recall, F1-score, and AUC-ROC are reported alongside computational efficiency measures [1] [5].
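The validation framework can be sketched as a 5-fold split together with the reported classification metrics. This is an illustrative outline only: the splitting is done over generic sample indices (in longitudinal imaging data it would be safer to split by subject so that scans from one person never appear in both training and test folds), and the labels and predictions are made up.

```python
import random

def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k disjoint folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, folds[i]

def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels coded 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}

splits = list(k_fold_indices(23, k=5))

# Hypothetical labels and predictions for one held-out fold.
fold_metrics = binary_metrics([1, 1, 1, 0, 0, 0, 0, 1], [1, 1, 0, 0, 0, 1, 0, 1])
```

AUC-ROC needs predicted probabilities rather than hard labels, so it is left out of this sketch.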

Figure 1: STGCN-ViT Architecture for Neurological Disorder Diagnosis

Protocol 2: Multimodal Data Fusion for Depression Detection

Background: Depression diagnosis traditionally relies on subjective assessment methods with limitations in reliability and objectivity [22]. This protocol integrates multiple data modalities to develop robust AI-driven diagnostic tools.

Methodology:

- Multimodal Data Collection: Simultaneous acquisition of electroencephalography (EEG), facial expression video, and speech samples during structured clinical interviews. Additionally, digital phenotyping data from mobile devices is collected for longitudinal monitoring [22] [25].

- Signal Processing and Feature Extraction:

  - EEG Analysis: Computation of band power ratios, functional connectivity metrics, and nonlinear dynamics from resting-state and task-based EEG recordings

  - Visual Analysis: Implementation of Convolutional Neural Networks (CNNs) for facial expression dynamics and eye gaze patterns

  - Acoustic Analysis: Extraction of prosodic features, speech rate, pause patterns, and voice quality metrics from speech samples

- Fusion and Classification: Late (decision-level) fusion architectures with attention mechanisms weight the contribution of each modality according to context and signal quality. Ensemble methods then combine the predictions of the modality-specific classifiers [22].
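The attention-weighted combination of modality-specific predictions in the final step can be sketched as follows. In a trained system the attention scores would be learned. Here they are supplied directly as hypothetical signal-quality scores, and the modality names and probabilities are illustrative only.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def late_fusion(modality_probs, quality_scores):
    """Fuse per-modality probabilities with softmax attention weights."""
    names = list(modality_probs)
    weights = softmax([quality_scores[name] for name in names])
    fused = sum(w * modality_probs[name] for w, name in zip(weights, names))
    return fused, dict(zip(names, weights))

# Hypothetical outputs of three modality-specific classifiers (P(depressed)).
probs = {"eeg": 0.8, "face": 0.6, "speech": 0.4}

# Equal quality scores reduce the fusion to a plain average.
fused_equal, weights_equal = late_fusion(probs, {"eeg": 0.0, "face": 0.0, "speech": 0.0})

# A noisy speech recording (low score) is down-weighted.
fused_noisy, weights_noisy = late_fusion(probs, {"eeg": 1.0, "face": 1.0, "speech": -1.0})
```

Because the weights always sum to one, the fused value stays a valid probability, and a degraded modality lowers its own influence without being discarded.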

Validation Approach: The protocol employs leave-one-subject-out cross-validation and external validation on completely independent cohorts to assess generalizability across diverse demographic and clinical populations [22].

Key Research Reagent Solutions

Table 3: Essential Research Resources for AI-Enhanced Neurological Diagnosis

| Resource Category | Specific Examples | Function/Application |
|---|---|---|
| Neuroimaging Datasets | ADNI, OASIS, UK Biobank, ABIDE | Provide large-scale, well-curated neuroimaging data for model training and validation [1] [5] |
| Software Libraries | TensorFlow, PyTorch, Scikit-learn, NiPy, FSL, AFNI | Enable implementation of deep learning architectures and preprocessing of neuroimaging data [23] |
| Biomarker Databases | AMP-AD, Parkinson's Progression Markers Initiative | Offer multi-omics data and clinical biomarkers for multimodal model development [24] |
| Clinical Assessment Tools | MMSE, UPDRS, HAM-D, MoCA | Provide standardized clinical metrics for model validation and ground truth establishment [26] |
| Computational Infrastructure | GPU clusters, Cloud computing platforms, Secure data enclaves | Support computationally intensive deep learning workflows and protect sensitive patient data [5] |

Discussion

Interpretation of Key Findings

This bibliometric analysis reveals a field in a phase of rapid maturation and specialization. The exponential growth trajectory observed in AI applications for neurological disorder diagnosis reflects both technological advancement and urgent clinical need. The progression from proof-of-concept studies to clinically validated applications follows the typical pattern of emerging technologies, with an initial lag phase followed by accelerated adoption [20] [21].

The geographical distribution of research output highlights the dominance of developed nations with strong investments in both healthcare infrastructure and technology sectors. The bridging role played by countries with high betweenness centrality underscores the importance of international knowledge exchange in driving innovation in this interdisciplinary domain [24]. The rapid ascent of China in publication output demonstrates effective research investment and strategic priority-setting in AI healthcare applications.

The intellectual structure analysis reveals a field transitioning from technological demonstration to clinical implementation. The emergence of research fronts focused on explainability, multimodal fusion, and federated learning indicates increasing attention to the practical challenges of clinical deployment, including model interpretability, data integration, and privacy preservation [27] [5].

Challenges and Limitations

Despite the promising growth trajectory, several significant challenges threaten to impede the translation of AI technologies into routine clinical practice:

- Methodological Heterogeneity: Substantial variation exists in study designs, data preprocessing pipelines, validation approaches, and performance metrics, complicating cross-study comparisons and meta-analyses [21] [5].

- Data Quality and Standardization: Inconsistencies in data acquisition protocols, small sample sizes for rare conditions, and dataset-specific biases limit model generalizability across diverse populations and clinical settings [5].

- Black Box Problem: The inherent opacity of many deep learning models creates barriers to clinical adoption, particularly in high-stakes medical applications where explanatory justification is required [27].

- Regulatory and Ethical Considerations: Ambiguity surrounding regulatory pathways for AI-based medical devices, concerns about data privacy, and potential algorithmic bias necessitate careful consideration [27] [23].

Future Research Directions

Based on the bibliometric trends and emerging research fronts, several promising directions warrant focused attention:

- Development of Standardized Reporting Frameworks: Creation of domain-specific guidelines for transparent reporting of AI model development and validation, analogous to PRISMA for systematic reviews but tailored to AI-based diagnostic studies [21].

- Federated Learning Approaches: Implementation of privacy-preserving distributed learning techniques to leverage diverse datasets while maintaining data security and complying with evolving regulations [22].

- Causal AI Frameworks: Advancement beyond correlational pattern recognition toward causal models that can elucidate disease mechanisms and support intervention planning [27].

- Longitudinal Validation Studies: Conduct of prospective trials assessing the real-world clinical impact and economic value of AI-assisted diagnostic pathways across diverse care settings [27].

This bibliometric analysis demonstrates an unambiguous exponential growth trajectory in research applying artificial intelligence to neurological disorder diagnosis. The field has evolved from nascent explorations to a sophisticated interdisciplinary domain with distinct research fronts and collaborative networks. The increasing emphasis on multimodal data integration, model interpretability, and clinical translation reflects maturation toward practical healthcare applications.

The findings underscore the critical importance of international collaboration and standardized methodologies to maximize the potential of AI in addressing the growing global burden of neurological disorders. Future progress will depend on balancing technological innovation with thoughtful attention to clinical implementation challenges, ethical considerations, and equitable access. As the field continues its rapid expansion, bibliometric analysis will remain an indispensable tool for navigating the complex landscape and strategically guiding research investment and policy development.

Architectures in Action: Methodologies and Real-World Applications

The early and accurate diagnosis of neurological disorders (NDs) such as Alzheimer's disease (AD), Parkinson's disease (PD), and brain tumors (BT) represents a significant challenge in modern healthcare [2] [26]. These conditions often manifest with subtle changes in the brain's anatomy and functionality, making them difficult to detect with traditional diagnostic methods in their initial stages [1]. The integration of advanced machine learning (ML) and deep learning (DL) architectures into predictive analytics has ushered in a new era for ND diagnosis, enabling the identification of complex patterns within multi-dimensional data that escape human observation [2] [28]. This technical guide provides an in-depth analysis of four pivotal neural network architectures—Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), Graph Neural Networks (GNNs), and Transformers—framed within the critical context of predictive analytics for neurological disorder diagnosis. By dissecting the operational mechanisms, applications, and integration strategies of these architectures, this document aims to equip researchers, scientists, and drug development professionals with the knowledge to develop sophisticated, data-driven diagnostic tools.

Core Architectural Principles and Neurological Applications

Convolutional Neural Networks (CNNs)

CNNs are deep learning architectures specifically designed for processing structured, grid-like data, such as images. Their core strength in medical imaging lies in their ability to perform automatic spatial feature extraction through a hierarchy of learned filters [29].

- Architectural Mechanics: CNNs utilize convolutional layers that apply learnable kernels across input images (e.g., MRI, CT scans) to produce feature maps. These maps highlight salient regions indicative of pathology, such as areas of atrophy in AD or hyperintense signals in BT [2]. This is typically followed by non-linear activation functions (e.g., ReLU) and pooling layers that progressively reduce spatial dimensionality while retaining critical information, enhancing translation invariance and computational efficiency [29].

- Diagnostic Application: In ND diagnosis, pre-trained architectures like EfficientNet-B0 are leveraged for transfer learning, effectively extracting discriminative features from high-resolution neuroimages [2] [1]. Standard CNN models, however, are limited by their fixed receptive fields, which can restrict their ability to capture long-range dependencies in image data—a shortcoming addressed by newer hybrid and transformer models [2].
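The convolution, activation, and pooling sequence described under architectural mechanics can be traced on a tiny example. The sketch below is plain Python on a 5x5 toy "image" with a hand-set edge-detecting kernel. In a real CNN the kernel values are learned, and frameworks such as PyTorch or TensorFlow perform these operations on full volumes.

```python
def conv2d_valid(image, kernel):
    """2-D cross-correlation without padding (what deep learning calls convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)] for i in range(out_h)]

def relu(feature_map):
    """Element-wise ReLU: negative responses are set to zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]

def max_pool_2x2(feature_map):
    """Non-overlapping 2x2 max pooling, halving each spatial dimension."""
    return [[max(feature_map[i][j], feature_map[i][j + 1],
                 feature_map[i + 1][j], feature_map[i + 1][j + 1])
             for j in range(0, len(feature_map[0]) - 1, 2)]
            for i in range(0, len(feature_map) - 1, 2)]

# Toy image: dark region (0) on the left, bright region (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hand-set kernel that responds to a dark-to-bright vertical boundary.
edge_kernel = [[-1, 1], [-1, 1]]

feature_map = relu(conv2d_valid(image, edge_kernel))   # 4 x 4 map
pooled = max_pool_2x2(feature_map)                     # 2 x 2 map
```

The pooled map keeps the strong response at the boundary while shrinking from 4x4 to 2x2, which is the dimensionality reduction described above.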

Recurrent Neural Networks (RNNs) & their Variants

RNNs are a class of neural networks engineered for sequential data. They maintain an internal state or "memory" that captures information about previous elements in a sequence, making them suitable for analyzing temporal dynamics in neurological data [30] [31].

- Architectural Mechanics: Basic RNNs compute the current hidden state ( h_t ) as a function of the current input ( x_t ) and the previous hidden state ( h_{t-1} ): ( h_t = g(W \cdot x_t + U \cdot h_{t-1} + b) ), where ( g ) is an activation function, and ( W ), ( U ), ( b ) are learned parameters [30]. A significant limitation of vanilla RNNs is the vanishing/exploding gradient problem, which hinders learning long-term dependencies.

- Advanced Variants: LSTM & GRU: Long Short-Term Memory (LSTM) networks introduce a gating mechanism (input, forget, and output gates) and a cell state to regulate information flow, enabling the network to retain information over long periods [30] [31]. The Gated Recurrent Unit (GRU) is a simplified variant that combines the input and forget gates into a single update gate, often achieving comparable performance to LSTM with greater computational efficiency [30].

- Diagnostic Application: In prognostic modeling for conditions like Traumatic Brain Injury (TBI), recurrent models, including attention-based RNNs and GRU-D (a GRU variant with learned decay terms for missing and irregularly sampled measurements), have been employed. These models utilize longitudinal, time-series data from ICU stays (e.g., vital signs, lab results) to predict functional outcomes (e.g., GOSE scores) at six months post-injury, significantly outperforming models based solely on admission data [31].
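The vanilla RNN recurrence given under architectural mechanics can be written out directly. The sketch below uses tanh as the activation g and plain Python lists. The weights and the two-step input sequence are arbitrary illustrative values, not parameters from any cited model.

```python
import math

def rnn_step(x_t, h_prev, W, U, b):
    """One recurrence step: h_t = tanh(W x_t + U h_prev + b)."""
    h_t = []
    for i in range(len(b)):
        pre = sum(W[i][j] * x_t[j] for j in range(len(x_t)))
        pre += sum(U[i][j] * h_prev[j] for j in range(len(h_prev)))
        h_t.append(math.tanh(pre + b[i]))
    return h_t

def run_rnn(sequence, W, U, b):
    """Apply the recurrence over a whole sequence, starting from a zero state."""
    h = [0.0] * len(b)
    states = []
    for x_t in sequence:
        h = rnn_step(x_t, h, W, U, b)
        states.append(h)
    return states

# One input feature, one hidden unit; values chosen only for illustration.
W = [[1.0]]
U = [[0.5]]
b = [0.0]
states = run_rnn([[1.0], [0.0]], W, U, b)
```

The second state is non-zero even though the second input is zero, because the hidden state carries information forward from the first step. Repeated multiplication by `U` across many steps is also where vanishing or exploding gradients originate.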

Graph Neural Networks (GNNs)

GNNs are deep learning models specifically designed to operate on graph-structured data, making them exceptionally well-suited for analyzing the complex network organization of the human brain [32] [28].

- Architectural Mechanics: In brain connectivity analysis, the graph (G) is defined by a set of nodes (V) (representing brain Regions of Interest, ROIs) and edges (E) (representing structural or functional connectivity) [32]. The core operation in GNNs is message passing, where each node aggregates feature information from its neighboring nodes to update its own representation. This allows GNNs to learn from the rich, relational structure of brain networks [32].

- Common Variants:

  - Graph Convolutional Networks (GCNs): Apply convolutional operations in the spectral domain of the graph [32].

  - Graph Attention Networks (GATs): Incorporate attention mechanisms to assign varying levels of importance to connections from different neighbors, which is crucial for identifying critical brain hubs affected by disease [32].

  - Dynamic GCNNs (DGCNNs): Extend GCNs to model temporal evolution in dynamic brain connectivity [32].

- Diagnostic Application: GNNs excel in identifying altered connectivity patterns in NDs. They can integrate multimodal data—such as fMRI (functional), DTI (structural), and EEG (dynamic functional)—to provide a comprehensive view of network disruptions in disorders like epilepsy and AD [32] [28].
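The message-passing operation described under architectural mechanics can be sketched with a single mean-aggregation layer. This is a simplified stand-in, not a specific published GNN variant: each node averages its own features with those of its neighbours, applies a weight matrix, and passes the result through ReLU. The three-region graph, the features, and the weights are hypothetical.

```python
def message_passing_layer(features, adjacency, weight):
    """One layer: h_i' = ReLU(mean of h_j over node i and its neighbours, times W)."""
    n_nodes = len(features)
    in_dim = len(features[0])
    out_dim = len(weight[0])
    updated = []
    for i in range(n_nodes):
        # Neighbourhood of node i, including a self-loop.
        hood = [j for j in range(n_nodes) if adjacency[i][j] or j == i]
        mean = [sum(features[j][d] for j in hood) / len(hood) for d in range(in_dim)]
        updated.append([max(0.0, sum(mean[d] * weight[d][o] for d in range(in_dim)))
                        for o in range(out_dim)])
    return updated

# Three ROIs connected in a chain: ROI 0 - ROI 1 - ROI 2.
roi_adjacency = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
roi_features = [[1.0], [2.0], [3.0]]     # one scalar feature per ROI
identity_weight = [[1.0]]                # keeps the example easy to verify by hand

hidden = message_passing_layer(roi_features, roi_adjacency, identity_weight)
```

After one layer each ROI's representation mixes in its direct neighbours. Stacking layers extends the reach to more distant regions of the connectome.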

Transformers and the Attention Mechanism

Originally developed for natural language processing, Transformer architectures have been rapidly adopted in medical image analysis due to their powerful self-attention mechanism [33].

- Architectural Mechanics: The self-attention mechanism allows the model to weigh the importance of all other elements in a sequence (or regions in an image) when encoding a particular element. This enables it to capture global dependencies and long-range interactions directly, which the local receptive fields of CNNs make difficult. Models like the Vision Transformer (ViT) split an image into patches, treat the patches as a sequence, and process them using the standard Transformer encoder [2] [33].

- Diagnostic Application: Transformers are particularly effective for early ND diagnosis where subtle, distributed changes are key indicators. Hybrid CNN-Transformer models use the CNN for local feature extraction and the Transformer's self-attention for global context modeling [33]. A meta-analysis of AD diagnosis studies found that hybrid Transformer models achieved a pooled AUC of 0.924, significantly outperforming traditional single-modality methods [33].
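The patch-and-attend idea can be sketched in a few lines. The example below is a simplified single-head illustration in NumPy, not a full ViT: it omits the patch embedding layer, positional encodings, the class token, multi-head attention, and the feed-forward sublayers. The 8x8 "image", the patch size, and the projection matrices are illustrative assumptions.

```python
import numpy as np

def patchify(image, patch):
    """Split a 2D image into non-overlapping patch x patch tiles, one row per tile."""
    H, W = image.shape
    assert H % patch == 0 and W % patch == 0
    tiles = image.reshape(H // patch, patch, W // patch, patch).transpose(0, 2, 1, 3)
    return tiles.reshape(-1, patch * patch)

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv):
    """Scaled dot-product self-attention over a sequence of patch vectors."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])  # similarity of every patch to every patch
    weights = softmax(scores, axis=-1)       # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
image = rng.normal(size=(8, 8))              # stand-in for one MRI slice
patches = patchify(image, 4)                 # 4 patches, 16 values each
Wq, Wk, Wv = (rng.normal(scale=0.1, size=(16, 8)) for _ in range(3))
out, weights = self_attention(patches, Wq, Wk, Wv)
```

Row i of `weights` shows how strongly patch i attends to every other patch, including distant ones, which is the global-context property the text contrasts with CNN receptive fields.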

Table 1: Performance Comparison of Neural Network Architectures in Neurological Disorder Diagnosis

| Architecture | Primary Data Type | Key Strength | Example ND Application | Reported Performance |
|---|---|---|---|---|
| CNN | Images (MRI, CT) | Spatial feature extraction | Brain Tumor segmentation from MRI | Accuracy up to 97% on ADNI dataset [2] |
| RNN/LSTM/GRU | Time Series (EEG, ICU data) | Modeling temporal dependencies | TBI outcome prediction (GOSE) | AUC: 0.86 (95% CI: 0.83-0.89) [31] |
| GNN | Graph-structured (Brain Connectomes) | Modeling relational dependencies | Epilepsy focus identification using EEG | High accuracy in classifying brain network states [28] |
| Transformer | Sequences, Images | Capturing global dependencies | Early Alzheimer's disease diagnosis | Pooled AUC: 0.924, Sensitivity: 0.887, Specificity: 0.892 [33] |
| Hybrid (STGCN-ViT) | Spatial-Temporal | Integrated spatial & temporal analysis | Early diagnosis of AD and Brain Tumors | Accuracy: 94.52%, Precision: 95.03%, AUC-ROC: 95.24% [2] [1] |

Advanced Hybrid Architectures and Integration Strategies

The limitations of individual architectures have driven the development of sophisticated hybrid models that integrate their complementary strengths. These models represent the cutting edge of predictive analytics for NDs.

The STGCN-ViT Model: A Case Study in Integration

A representative example is the STGCN-ViT model, which integrates CNN, Spatial-Temporal Graph Convolutional Network (STGCN), and Vision Transformer (ViT) components [2] [1].

- Model Rationale: Standard CNN-based analyses often fail to account for temporal dynamics, which are crucial for tracking disease progression. Conversely, RNN-based hybrids can struggle with vanishing gradients over long sequences. The STGCN-ViT model was designed to overcome these gaps by providing a balanced and powerful integration of spatial and temporal feature extraction [2].

- Workflow and Component Functions:

- Spatial Feature Extraction (CNN): The model uses EfficientNet-B0 as a backbone to extract high-level spatial features from individual MRI frames. This step identifies anatomical structures and potential regions of interest [2] [1].

- Temporal Dynamics Modeling (STGCN): The spatial features are partitioned into regions of interest (ROIs) and used to construct a spatial-temporal graph. The STGCN component processes this graph, capturing the evolving relationships and functional dynamics between different brain regions over time [2].

- Global Context and Classification (ViT): The refined features are then passed to a Vision Transformer. The ViT's self-attention mechanism assigns importance weights to different features, focusing the model's capacity on the most discriminative spatial-temporal patterns for final classification [2] [1].

- Experimental Outcome: When validated on benchmark datasets (OASIS and HMS), the STGCN-ViT model achieved an accuracy of 94.52%, a precision of 95.03%, and an AUC-ROC score of 95.24%, surpassing the performance of both standard and other transformer-based models [2] [1].
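For readers less familiar with the reported metrics, the sketch below shows how accuracy, precision, and AUC-ROC are computed for a binary classifier. The labels and scores are invented toy values for illustration only and have no connection to the STGCN-ViT results; the 0.5 decision threshold is likewise an assumption.

```python
import numpy as np

def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return float(np.mean(y_true == y_pred))

def precision(y_true, y_pred):
    """Fraction of predicted positives that are truly positive."""
    tp = np.sum((y_pred == 1) & (y_true == 1))
    fp = np.sum((y_pred == 1) & (y_true == 0))
    return float(tp / (tp + fp)) if (tp + fp) > 0 else 0.0

def auc_roc(y_true, scores):
    """Probability that a random positive scores above a random negative (ties count half)."""
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]
    return float(((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (len(pos) * len(neg)))

# Toy example: 3 patients with disease (1) and 3 controls (0).
y_true = np.array([1, 1, 1, 0, 0, 0])
scores = np.array([0.9, 0.8, 0.4, 0.6, 0.3, 0.2])  # hypothetical model outputs
y_pred = (scores >= 0.5).astype(int)                # assumed decision threshold

acc = accuracy(y_true, y_pred)     # 4 of 6 correct
prec = precision(y_true, y_pred)   # 2 true positives out of 3 predicted positives
auc = auc_roc(y_true, scores)      # 8 of 9 positive-negative pairs ranked correctly
```

Accuracy and precision depend on the chosen threshold, whereas AUC-ROC summarizes ranking quality across all thresholds, which is why the three figures are usually reported together.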

Diagram 1: STGCN-ViT hybrid model workflow.

Multimodal Fusion Strategies

The integration of diverse data types—such as MRI, PET, genetic, and clinical data—through multimodal fusion is a key factor in boosting diagnostic accuracy. Transformers have proven particularly effective in this domain, with fusion strategies being a critical differentiator [33].

- Early Fusion: Data from different modalities (e.g., MRI and PET images) are combined at the input level. This approach is simple but can be sensitive to noise and misalignment between modalities [33].

- Intermediate (Feature-Level) Fusion: This is the most effective strategy, as identified by the meta-analysis. Features are first extracted independently from each modality and then combined in a shared latent space (e.g., within a Transformer encoder) where cross-modal interactions are modeled. This allows the model to learn complex, non-linear relationships between modalities [33].

- Late Fusion: Decisions are made independently on each modality, and the results are combined at the end (e.g., by averaging probabilities). This is robust to missing modalities but cannot capture fine-grained inter-modal relationships [33].
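The three strategies differ mainly in where the modalities are combined. The sketch below makes that difference explicit with toy NumPy functions. The feature vectors, encoder matrices, and classifier weights are random placeholders (assumptions for illustration), and the intermediate-fusion step uses a simple concatenation of latent vectors where the reviewed models would use a Transformer encoder.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def early_fusion(mri, pet, w):
    """Concatenate the raw inputs, then apply a single classifier."""
    return float(sigmoid(np.concatenate([mri, pet]) @ w))

def intermediate_fusion(mri, pet, enc_mri, enc_pet, w_joint):
    """Encode each modality separately, then classify on the joint latent vector."""
    z = np.concatenate([np.tanh(mri @ enc_mri), np.tanh(pet @ enc_pet)])
    return float(sigmoid(z @ w_joint))

def late_fusion(probabilities):
    """Average per-modality probabilities, skipping missing modalities (None)."""
    available = [p for p in probabilities if p is not None]
    if not available:
        raise ValueError("at least one modality is required")
    return sum(available) / len(available)

rng = np.random.default_rng(0)
mri = rng.normal(size=16)   # hypothetical MRI-derived features for one subject
pet = rng.normal(size=8)    # hypothetical PET-derived features

p_early = early_fusion(mri, pet, rng.normal(scale=0.1, size=24))
p_mid = intermediate_fusion(
    mri, pet,
    rng.normal(scale=0.1, size=(16, 4)),   # MRI encoder (placeholder)
    rng.normal(scale=0.1, size=(8, 4)),    # PET encoder (placeholder)
    rng.normal(scale=0.1, size=8),         # classifier on the joint latent space
)
p_late = late_fusion([0.8, 0.6])           # both modalities available
p_late_missing = late_fusion([0.8, None])  # PET missing: falls back to MRI alone
```

The `late_fusion` fallback illustrates the robustness to missing modalities noted above, while only `intermediate_fusion` gives the classifier a shared representation in which cross-modal relationships can be learned.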

Table 2: Key Research Reagents and Computational Resources

| Category | Item / Solution | Function / Description in Research |
|---|---|---|
| Datasets | OASIS (Open Access Series of Imaging Studies) | Large-scale neuroimaging dataset used for training and validating models on AD and normal aging [2]. |
| Datasets | ADNI (Alzheimer's Disease Neuroimaging Initiative) | Provides longitudinal MRI, PET, genetic, and clinical data to aid in AD prevention and treatment research [2]. |
| Datasets | TRACK-TBI | Prospective, multicenter study providing detailed clinical and time-series data for Traumatic Brain Injury prognosis [31]. |
| Software & Libraries | TensorFlow / Keras | Open-source libraries for building and training deep learning models (e.g., CNN, RNN architectures) [29]. |
| Software & Libraries | PyTorch Geometric | A library for deep learning on irregularly structured input data such as graphs, used for implementing GNNs [32]. |
| Software & Libraries | Hyperas | A Python package for performing hyperparameter optimization with Keras, crucial for model tuning [29]. |
| Computational Hardware | NVIDIA GPUs (e.g., RTX 2080 Ti, A100) | Essential for accelerating the training of large-scale deep learning models, reducing computation time from weeks to days or hours [29]. |

Experimental Protocols and Methodological Considerations

Protocol: Benchmarking RNN-based Architectures with Monte Carlo Simulation

Objective: To ensure reliable and consistent benchmarking of various RNN architectures (RNN, LSTM, GRU) and their hybrid combinations for time-series forecasting in neurological data [30].

- Architecture Definition: Define nine distinct neural network architectures, each with two hidden layers. These include the three core types (RNN, LSTM, GRU) and six hybrid configurations (e.g., RNN-LSTM, LSTM-RNN, LSTM-GRU) [30].

- Data Preparation: Curate relevant time-series datasets. For neurological applications, this could include longitudinal EEG measurements or dissolved oxygen levels in cerebral tissue. Preprocess the data (normalization, handling missing values) and partition it into training and testing sets [30].

- Monte Carlo Iterations: For each architecture, execute a high number of training iterations (e.g., N=100). In each iteration, the model is initialized with different random weights. This accounts for performance variability due to stochastic weight initialization [30].

- Performance Evaluation: In each iteration, evaluate the model on the test set using multiple metrics (e.g., Mean Absolute Error, Accuracy, F1-Score). Record the results for every run [30].

- Statistical Analysis: After all iterations, perform statistical analysis (e.g., the Friedman test) on the collected results to determine if there are significant performance differences between the architectures. Analyze the consistency and robustness of each model based on the distribution of its performance over the 100 runs [30].

Key Insight: This protocol revealed that while no single architecture was universally optimal, LSTM-based hybrids (LSTM-RNN and LSTM-GRU) consistently demonstrated superior performance and robustness across diverse temporal patterns, providing evidence-based guidance for model selection [30].
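The structure of this protocol (repeated runs with different random initializations, followed by a Friedman test across architectures) can be sketched as follows. To stay short and dependency-light, the sketch does not train RNN, LSTM, or GRU models: `train_and_evaluate` is a hypothetical stand-in that uses a randomly initialized recurrent layer with a least-squares readout, and the three "architectures" differ only in hidden size. The synthetic sine series, the 20 runs, and the tie-free Friedman formula are also simplifying assumptions.

```python
import numpy as np

def make_series(n=300):
    """Synthetic noisy sine wave standing in for a neurological time series."""
    t = np.arange(n)
    return np.sin(0.1 * t) + 0.1 * np.random.default_rng(0).normal(size=n)

def train_and_evaluate(hidden, seed, series, split=200):
    """Stand-in for one training run; returns test MAE for one-step-ahead forecasts."""
    rng = np.random.default_rng(seed)          # the seed controls weight initialization
    W_in = rng.normal(scale=0.5, size=hidden)
    W = 0.9 * rng.normal(scale=1.0 / np.sqrt(hidden), size=(hidden, hidden))
    h, states = np.zeros(hidden), []
    for x in series[:-1]:
        h = np.tanh(W_in * x + W @ h)          # fixed random recurrent layer
        states.append(h)
    S, y = np.array(states), series[1:]
    w, *_ = np.linalg.lstsq(S[:split], y[:split], rcond=None)  # fit readout only
    return float(np.mean(np.abs(S[split:] @ w - y[split:])))

def friedman_statistic(results):
    """Friedman chi-square for results of shape (n_runs, k_models); no tie correction."""
    n, k = results.shape
    ranks = results.argsort(axis=1).argsort(axis=1) + 1  # rank 1 = lowest error per run
    R = ranks.sum(axis=0)
    return float(12.0 / (n * k * (k + 1)) * np.sum(R ** 2) - 3.0 * n * (k + 1))

series = make_series()
hidden_sizes = [4, 8, 16]   # three hypothetical "architectures"
n_runs = 20                 # the protocol above suggests on the order of 100
results = np.array([[train_and_evaluate(h, seed, series) for h in hidden_sizes]
                    for seed in range(n_runs)])

mean_mae, std_mae = results.mean(axis=0), results.std(axis=0)  # accuracy and consistency
chi2 = friedman_statistic(results)  # compare with chi-square, k - 1 = 2 degrees of freedom
```

Each row of `results` is one Monte Carlo iteration, so the per-column standard deviation captures sensitivity to initialization. For three models, a statistic above roughly 5.99 would indicate a significant difference at the 5% level under the chi-square approximation.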

Protocol: Developing a GNN for Brain Connectivity Analysis

Objective: To diagnose a neurological disorder by analyzing functional or structural brain connectivity derived from neuroimaging data (e.g., fMRI, DTI) [32] [28].

- Graph Construction:

- Node Definition: Parcellate the brain into distinct Regions of Interest (ROIs) using a standard atlas. Each ROI becomes a node in the graph.

- Node Feature Assignment: Assign features to each node, which could be ROI-specific measurements such as average fMRI BOLD signal intensity or gray matter volume from sMRI [32].

- Edge Definition: Construct the adjacency matrix that defines the connections between nodes. For functional connectivity, edges are typically weighted by the correlation coefficient (e.g., Pearson) between the time-series of two ROIs. For structural connectivity, DTI-based tractography can define edge weights as the number of connecting fiber tracts [32].

- Model Training:

- Select a GNN variant (e.g., GCN, GAT) suitable for the task.

- Train the model in a supervised manner for graph-level classification (e.g., AD vs. Healthy Control) or node-level prediction (e.g., identifying pathological hubs) [28].

- Interpretation: Use the trained model's attention weights (in the case of GAT) or other post-hoc interpretability techniques to identify which brain connections (edges) or regions (nodes) were most influential in the diagnosis. This can provide valuable biomarkers and insights into the pathophysiology of the disorder [32] [28].
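The graph-construction step can be sketched as follows, assuming the ROI time series have already been extracted with an atlas. The function name `build_brain_graph`, the 0.3 correlation threshold, the choice of mean and standard deviation as node features, and the random toy signals are illustrative assumptions rather than part of the cited protocols.

```python
import numpy as np

def build_brain_graph(ts, threshold=0.3):
    """Build a functional-connectivity graph from ROI time series.

    ts: array of shape (n_timepoints, n_rois), one column per ROI.
    Returns (adjacency, node_features).
    """
    corr = np.corrcoef(ts, rowvar=False)                  # Pearson correlation between ROIs
    adj = np.where(np.abs(corr) >= threshold, corr, 0.0)  # drop weak connections
    np.fill_diagonal(adj, 0.0)                            # no self-connections
    node_features = np.stack([ts.mean(axis=0), ts.std(axis=0)], axis=1)
    return adj, node_features

rng = np.random.default_rng(0)
ts = rng.normal(size=(200, 5))                      # toy signals: 200 time points, 5 ROIs
ts[:, 1] = ts[:, 0] + 0.1 * rng.normal(size=200)    # make ROI 0 and ROI 1 strongly coupled
adj, feats = build_brain_graph(ts)
```

The resulting `adj` and `feats` correspond to the edge and node definitions above and could be passed to a GNN library for graph-level or node-level training. The threshold controls graph sparsity and would normally be chosen or validated per study.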

Diagram 2: GNN-based brain connectivity analysis workflow.

The convergence of advanced neural network architectures (CNNs, RNNs, GNNs, and Transformers) with multimodal medical data is transforming predictive analytics for neurological disorders. Each architecture contributes distinct capabilities, but the future of the field lies in integrating these components into hybrid models. Architectures like STGCN-ViT, which combine spatial feature extraction, temporal dynamics modeling, and global contextual attention, report state-of-the-art performance, with diagnostic accuracy and AUC-ROC both exceeding 94% [2] [1]. Robust experimental protocols, such as Monte Carlo benchmarking for RNNs and standardized graph construction for GNNs, are essential for validating these models and establishing their reliability. Challenges remain in data scarcity, model interpretability, and clinical integration. Future research should therefore focus on creating large, shared, multimodal datasets, developing more transparent and interpretable AI systems, and conducting rigorous multicenter clinical trials, so that these computational tools can move from the research bench to the clinical bedside and enable earlier intervention and improved patient outcomes in neurological care.

Neurological disorders (NDs), such as Alzheimer's disease (AD) and Parkinson's disease (PD), present a significant and growing global health challenge. The early and accurate diagnosis of these conditions is critical for initiating timely therapeutic interventions and slowing disease progression. Magnetic Resonance Imaging (MRI) serves as a vital tool for visualizing the brain's anatomy in ND diagnosis. However, traditional diagnostic methods that rely on subjective human interpretation of MRI scans are often prone to inaccuracy, time-consuming, and lack the sensitivity to detect the subtle anatomical changes characteristic of early-stage neurological pathology [1]. The complex spatiotemporal dynamics of brain degeneration further complicate diagnosis, as these progressive changes involve intricate interactions across different brain regions over time [1].

The field of medical imaging has witnessed a paradigm shift with the adoption of artificial intelligence (AI), particularly deep learning models [34]. Convolutional Neural Networks (CNNs) have demonstrated remarkable success in spatial feature extraction from medical images, while transformer architectures, with their self-attention mechanisms, excel at capturing long-range dependencies [1]. Despite their individual strengths, these models face limitations when applied to the spatiotemporal dynamics of neurological disorders. CNNs struggle with temporal dynamics and long-range dependencies, and transformers may overlook fine-grained local details [1]. To address these limitations, a novel hybrid architecture—the Spatio-Temporal Graph Convolutional Network combined with a Vision Transformer (STGCN-ViT)—has been developed. This framework is specifically designed to capture the complex spatiotemporal dependencies inherent in brain network disorders, offering a powerful tool for enhancing the accuracy of early ND diagnosis [1].

Theoretical Foundations of STGCN-ViT Components

Spatio-Temporal Graph Convolutional Network (STGCN)