Benchmarking Single-Cell CNV Detection: A Comprehensive Guide to Algorithm Performance and Selection

Abstract

This article provides a comprehensive analysis of the current landscape of computational tools for detecting copy number variations (CNVs) from single-cell RNA sequencing (scRNA-seq) data. Drawing from recent large-scale benchmarking studies, we explore the foundational principles of CNV inference, categorize methodological approaches, and present performance evaluations across diverse datasets and conditions. Key findings reveal significant performance differences among popular tools like CaSpER, CopyKAT, and inferCNV, with optimal selection dependent on specific research goals, data types, and technical parameters including sequencing depth, platform selection, and tumor purity. We offer practical troubleshooting guidance and optimization strategies to address common challenges including batch effects, reference selection, and data quality issues. This resource equips researchers and drug development professionals with evidence-based recommendations for selecting and implementing CNV detection methods in cancer genomics and clinical research applications.

The Landscape of Single-Cell CNV Detection: Principles, Challenges, and Biological Significance

Copy Number Variations (CNVs), the gain or loss of genomic regions, are a hallmark of cancer and play a crucial role in tumor evolution and heterogeneity by amplifying oncogenes or inactivating tumor suppressor genes [1] [2]. The emergence of single-cell RNA sequencing (scRNA-seq) has provided an unprecedented opportunity to study this genetic heterogeneity within tumors. Consequently, several computational methods have been developed to infer CNVs directly from scRNA-seq data, extending its use from transcriptional profiling to the study of genetic heterogeneity [3] [2]. This guide summarizes how these single-cell CNV detection algorithms performed in recent, comprehensive benchmarking studies, providing researchers with data-driven insights for tool selection.

Benchmarking Single-Cell CNV Detection Algorithms

Multiple independent benchmarking studies have evaluated the performance of popular scCNV inference tools, revealing that their performance varies significantly depending on the specific research application, scRNA-seq platform, and data quality [3] [2] [4].

The table below summarizes the overall findings from these benchmarking efforts, highlighting the recommended use cases for each method.

| Tool Name | Primary Methodology | Performance & Recommended Use Cases | Key Limitations |
|---|---|---|---|
| CopyKAT [3] [2] [4] | Statistical model, segmentation approach [3] | Overall CNV inference: top performer for sensitivity/specificity [2] [4]; subclone identification: excels at identifying tumor subpopulations [2] [4]. | Performance affected by batch effects in multi-platform data [2]. |
| CaSpER [3] [2] [4] | Hidden Markov Model (HMM) integrating gene expression and allele frequency (AF) [3] | Overall CNV inference: top performer for sensitivity/specificity, especially in large droplet-based datasets [3] [2] [4]. | Requires higher runtime [3]. |
| InferCNV [1] [2] [4] | Hidden Markov Model (HMM) on expression [3] [2] | Subclone identification: excels in identifying tumor subpopulations from a single platform [2] [4]; sensitivity: high with sufficient sequenced cells [4]. | Does not directly predict tumor cells (infers CNV scores) [1]; highly affected by batch effects [2] [4]. |
| SCEVAN [1] [3] | Segmentation approach [3] | Prediction: moderate sensitivity but may overestimate tumor cells [1]. | Overestimates true number of tumor cells; requires epithelial filtering [1]. |
| sciCNV [1] [2] | Calculates expression disparity and concordance scores [2] | Subclone identification: good performance from a single platform [2]. | Does not directly predict tumor cells (computes CNV scores) [1]; score distribution may not clearly separate malignant and non-malignant cells [1]. |
| HoneyBADGER [2] [4] | HMM + Bayesian method; can use expression and allele frequency [2] | Batch resilience: allelic version more resilient to batch effects [4]; sensitivity: lower for rare tumor populations [4]. | Lower overall sensitivity [4]. |

Quantitative Performance Data from Benchmarking Studies

A major benchmarking study published in Nature Communications (2025) evaluated six tools on 21 scRNA-seq datasets using ground truth from whole-genome or whole-exome sequencing [3]. The study assessed the ability to correctly identify ground truth CNVs and euploid cells. The results, summarized in the table below, show that methods incorporating allelic information (like CaSpER and Numbat) performed more robustly for large droplet-based datasets, though they required higher runtime [3].

| Method | Data Type Used | Performance on Euploid (PBMC) Dataset | Impact of Reference Dataset | Runtime & Memory |
|---|---|---|---|---|
| InferCNV [3] [2] | Expression only [3] | Performance varies with reference choice [3]. | Significant impact [3]. | Varies by method and data size [3]. |
| CopyKAT [3] | Expression only [3] | Performance varies with reference choice [3]. | Significant impact [3]. | Varies by method and data size [3]. |
| SCEVAN [3] | Expression only [3] | Performance varies with reference choice [3]. | Significant impact [3]. | Varies by method and data size [3]. |
| CONICSmat [3] | Expression only (per chromosome arm) [3] | Performance varies with reference choice [3]. | Significant impact [3]. | Varies by method and data size [3]. |
| CaSpER [3] | Expression + Allele Frequency [3] | More robust performance [3]. | Lower impact; more robust [3]. | Higher runtime requirements [3]. |
| Numbat [3] | Expression + Allele Frequency [3] | More robust performance [3]. | Lower impact; more robust [3]. | Higher runtime requirements [3]. |

Another study in Precision Clinical Medicine (2025) focused on five tools, evaluating their sensitivity and specificity on a breast cancer cell line (HCC1395) versus a matched normal B-cell line across four scRNA-seq platforms (10x, C1, C1 HT, ICELL8) [2]. The following table synthesizes the key findings.

| Tool | Sensitivity & Specificity (Overall) | Performance on 10x Data (0.5M reads/cell) | Performance on Full-Length Data (C1, ICELL8) |
|---|---|---|---|
| HoneyBADGER [2] | Lower than top performers [2]. | N/A | N/A |
| InferCNV [2] | Varied, not top tier for overall inference [2]. | Good performance for subclone identification [2]. | Good performance for subclone identification [2]. |
| sciCNV [2] | Varied, not top tier for overall inference [2]. | Good performance for subclone identification [2]. | Good performance for subclone identification [2]. |
| CaSpER [2] | Among the best (with CopyKAT) [2]. | Good sensitivity and specificity [2]. | Good sensitivity and specificity [2]. |
| CopyKAT [2] | Among the best (with CaSpER) [2]. | Good sensitivity and specificity [2]. | Good sensitivity and specificity [2]. |

Note: N/A indicates that results for this combination were not reported in the sources summarized here.

Experimental Protocols for Key Benchmarking Studies

The conclusions drawn above are based on rigorous experimental designs. The following protocols outline the methodologies used in the major benchmarking studies.

Experimental Workflow for scRNA-seq CNV Caller Benchmarking

This workflow, based on the Nature Communications study [3], involved applying six CNV callers to 21 diverse scRNA-seq datasets. Performance was benchmarked against a ground truth from whole-genome or whole-exome sequencing using metrics like correlation, AUC, and F1 score. The study also evaluated performance on a euploid PBMC dataset and the impact of reference dataset selection [3].

Protocol for Multi-Platform and Clinical Validation

This protocol, from the Precision Clinical Medicine study [2], first evaluated tools on mixed cell line samples to assess subclone identification accuracy using metrics like the Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI). Findings were then validated using a clinical dataset from a small cell lung cancer (SCLC) patient, with scRNA-seq results corroborated by single-cell whole-exome sequencing (scWES) and bulk whole-genome sequencing (WGS) [2].
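Subclone identification in such cell-line mixtures is scored by comparing the clusters a tool produces with the known cell-line identities. The sketch below is a minimal illustration using scikit-learn's `adjusted_rand_score` and `normalized_mutual_info_score`; the `score_subclones` helper and the toy label vectors are hypothetical, not data or code from the study.

```python
from sklearn.metrics import adjusted_rand_score, normalized_mutual_info_score


def score_subclones(true_labels, predicted_clusters):
    """Compare inferred CNV clusters with known cell-line identities.

    Both metrics ignore how cluster IDs are named, so a tool that labels
    cell line "A" as cluster 2 is not penalized for the naming.
    """
    return {
        "ARI": adjusted_rand_score(true_labels, predicted_clusters),
        "NMI": normalized_mutual_info_score(true_labels, predicted_clusters),
    }


# Toy example: three cell lines, one cell of line "B" assigned to the wrong cluster.
truth = ["A", "A", "A", "B", "B", "B", "C", "C", "C"]
pred = [0, 0, 0, 1, 1, 2, 2, 2, 2]
print(score_subclones(truth, pred))
```

A perfect recovery of the mixture (up to relabeling) gives 1.0 for both scores, while random cluster assignments give an ARI near 0.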

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key materials and resources used in the benchmarking experiments, which are also essential for conducting robust scCNV analysis.

| Item Name | Function/Description | Example/Reference |
|---|---|---|
| Reference Euploid Cells | A set of normal cells used for expression normalization in CNV inference; critical for accuracy. | Matched B-cell line (HCC1395BL) [2]; PBMCs from healthy donor [3]. |
| Orthogonal Validation Data | Data from a different modality (not scRNA-seq) used as ground truth to validate CNV calls. | Single-cell or bulk whole-genome sequencing (scWGS/WGS); whole-exome sequencing (WES) [3] [2]. |
| Benchmarking Pipeline | A computational workflow to systematically test and compare CNV callers on new datasets. | Snakemake pipeline from the Nature Communications benchmarking study (https://github.com/colomemaria/benchmarkscrnaseqcnv_callers) [3]. |
| Batch Effect Correction Tool | Software to correct for technical variation between datasets from different platforms or batches. | ComBat [4]. |
| Cell Type Annotation Tools | Methods to classify cell types (e.g., tumor vs. normal), which are needed to select reference cells. | Louvain clustering and marker gene expression [3]. |

The benchmarking data leads to several clear recommendations for researchers:

- For overall CNV inference where the goal is accurate detection of gains and losses, CaSpER and CopyKAT are the most reliable choices [2] [4].

- For subclone identification within a tumor from a single scRNA-seq platform, InferCNV and CopyKAT deliver superior results [2] [4].

- The selection of reference euploid cells is a critical parameter that significantly influences the results of expression-based methods; careful annotation of normal cell types is essential [1] [3].

- Be cautious of batch effects, which can severely impact subclone identification when integrating datasets from different scRNA-seq platforms. Consider using batch correction tools or allele-frequency-based methods like the allelic version of HoneyBADGER for more robust integration [2] [4].
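These recommendations can be encoded as a simple lookup that an analysis script might use to pre-select candidate tools. The `suggest_tools` helper and its goal names are hypothetical; the function only restates the bullets above and is no substitute for checking results on your own data.

```python
def suggest_tools(goal, multi_platform=False):
    """Return candidate scCNV tools for a research goal (hypothetical helper).

    Encodes the benchmark-derived recommendations summarized above.
    """
    if goal == "cnv_inference":
        # Overall gain/loss detection: CaSpER and CopyKAT were most reliable.
        return ["CaSpER", "CopyKAT"]
    if goal == "subclones":
        if multi_platform:
            # Batch effects degrade subclone calls across platforms, so pair
            # expression-based tools with batch correction, or use an
            # allele-based method.
            return ["HoneyBADGER (allelic)",
                    "InferCNV + batch correction",
                    "CopyKAT + batch correction"]
        # Single platform: InferCNV and CopyKAT gave the best subclone results.
        return ["InferCNV", "CopyKAT"]
    raise ValueError(f"unknown goal: {goal!r}")


print(suggest_tools("subclones", multi_platform=True))
```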

In conclusion, there is no single "best" tool for all scenarios. The choice of algorithm must be guided by the specific biological question, the scRNA-seq technology used, and the available computational resources. As the field progresses, the development of more accurate and robust algorithms remains a priority.

The study of copy number variations (CNVs) is crucial for understanding cancer evolution, tumor heterogeneity, and therapeutic resistance. While single-cell DNA sequencing represents the gold standard for CNV detection, single-cell RNA sequencing (scRNA-seq) has emerged as a powerful alternative that enables simultaneous analysis of genomic alterations and transcriptional states from the same dataset [3]. This dual-information capability has driven the development of numerous computational methods designed to infer CNVs from transcriptomic data, creating a critical need for comprehensive benchmarking to guide researchers through the rapidly expanding methodological landscape.

Several independent benchmarking studies conducted in 2025 have systematically evaluated these computational tools, revealing substantial differences in their performance, strengths, and limitations [3] [2] [5]. These evaluations provide critical insights for researchers seeking to select appropriate methods for specific experimental contexts, from basic research to clinical applications. This review synthesizes these recent findings to offer evidence-based guidance for leveraging scRNA-seq data in CNV analysis, with a particular focus on practical implementation considerations for cancer research and drug development.

Performance Comparison of scRNA-seq CNV Callers

Computational methods for inferring CNVs from scRNA-seq data employ diverse algorithmic strategies, which can be broadly categorized into expression-based approaches and allele-frequency-integrated approaches. Expression-based methods (InferCNV, CopyKAT, SCEVAN, CONICSmat, sciCNV) operate on the principle that genes in amplified genomic regions show elevated expression, while those in deleted regions show reduced expression compared to diploid regions [3]. In contrast, allele-frequency methods (CaSpER, Numbat, HoneyBADGER) integrate single nucleotide polymorphism (SNP) information from scRNA-seq reads with expression signals, implementing hidden Markov models to call CNVs [3] [2].

Recent benchmarking studies have evaluated these tools across multiple dimensions, including CNV prediction accuracy, ability to identify euploid cells, subclone detection performance, computational efficiency, and robustness to technical variables. The most comprehensive analysis evaluated six popular methods (InferCNV, CopyKAT, SCEVAN, CONICSmat, CaSpER, Numbat) across 21 scRNA-seq datasets generated from different technologies (droplet-based and plate-based) and organisms (human and mouse) [3]. Another independent study published in June 2025 compared five methods (HoneyBADGER, inferCNV, sciCNV, CaSpER, and CopyKAT) across multiple scRNA-seq platforms and included clinical validation [2].

Quantitative Performance Metrics

Table 1: Performance Metrics of scRNA-seq CNV Callers Based on Independent Benchmarking Studies

| Method | Primary Strategy | CNV Calling Accuracy | Subclone Identification | Euploid Detection | Computational Demand |
|---|---|---|---|---|---|
| CaSpER | Expression + Allele frequency | High (AUC: 0.72-0.89) [2] | Moderate [2] | Good [3] | High runtime [3] |
| CopyKAT | Expression-based segmentation | High (AUC: 0.75-0.91) [2] | Excellent [2] [4] | Moderate [3] | Moderate [3] |
| InferCNV | HMM on expression | Moderate [3] | Excellent [2] [4] | Good [3] | Moderate [3] |
| SCEVAN | Segmentation-based | Variable across datasets [3] | Good [3] | Moderate [5] | Low-Moderate [3] |
| Numbat | Expression + Allele frequency | Moderate-High [3] | Good [3] | Good [3] | High runtime [3] |
| HoneyBADGER | HMM + Bayesian | Moderate [2] | Moderate [2] | Not comprehensively evaluated | Moderate [2] |
| sciCNV | Expression disparity scoring | Moderate [2] | Good (single platform) [2] | Challenging [5] | Low [2] |

Table 2: Performance in Specific Research Contexts Based on Application Studies

| Method | Tumor Cell Identification | Rare Population Detection | Batch Effect Sensitivity | Optimal Use Case |
|---|---|---|---|---|
| CaSpER | High sensitivity [2] | Moderate [2] | Moderate [2] | Large droplet-based datasets [3] |
| CopyKAT | Moderate sensitivity, overestimates tumor cells [5] | Good [2] | High [2] | Subclone identification in homogeneous data [2] |
| InferCNV | Does not directly predict tumor cells [5] | Excellent with sufficient cells [4] | High [2] | Subclone resolution in complex tumors [2] |
| SCEVAN | Moderate sensitivity, overestimates tumor cells [5] | Good [3] | Moderate [3] | Automated tumor/normal classification [5] |
| Numbat | Good [3] | Good [3] | Lower [3] | Datasets with good SNP coverage [3] |
| HoneyBADGER | Allele-based version more robust [4] | Poor [4] | Low (allele-based) [4] | Multi-platform integrated analysis [2] |
| sciCNV | Does not directly predict tumor cells [5] | Poor [4] | High [2] | Analyses with a low computational budget [2] |

Performance metrics reveal that no single method outperforms others across all evaluation criteria. CaSpER and CopyKAT consistently demonstrate superior performance in overall CNV inference accuracy, while InferCNV and CopyKAT excel specifically in subclone identification tasks [2] [4]. Methods incorporating allelic information (CaSpER, Numbat) generally perform more robustly for large droplet-based datasets but require higher computational resources [3].

The benchmarking analyses further highlight the significant impact of technical and biological variables on method performance. Specifically, sequencing depth, read length, choice of reference dataset, and tumor purity substantially influence accuracy metrics [3] [2]. For example, a study evaluating CNV identification in endometrial cancer found that SCEVAN and CopyKAT demonstrated moderate sensitivity but significantly overestimated the true number of tumor cells, emphasizing the importance of complementary validation through epithelial marker expression [5].
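One way to act on this in carcinomas such as the endometrial cancer study, where malignant cells are expected to be epithelial, is to call a cell malignant only when a high CNV score agrees with epithelial marker expression. This is a minimal sketch, assuming a per-cell CNV score already produced by some caller and a per-cell epithelial marker score (for example, averaged expression of markers such as EPCAM or keratin genes); the `call_tumor_cells` helper and both cutoffs are hypothetical and would need tuning for each dataset.

```python
import numpy as np


def call_tumor_cells(cnv_score, epithelial_score, cnv_cutoff, epi_cutoff):
    """Call a cell malignant only if CNV and epithelial signals agree.

    cnv_score: per-cell CNV burden from any caller (higher = more aneuploid).
    epithelial_score: per-cell epithelial marker expression score.
    Both cutoffs are hypothetical and must be tuned for each dataset.
    """
    cnv_high = np.asarray(cnv_score, dtype=float) > cnv_cutoff
    epithelial = np.asarray(epithelial_score, dtype=float) > epi_cutoff
    return cnv_high & epithelial


cnv = [0.9, 0.8, 0.7, 0.1]
epi = [2.5, 0.1, 3.0, 2.8]
# The second cell has a high CNV score but no epithelial signal, so it is not called.
print(call_tumor_cells(cnv, epi, cnv_cutoff=0.5, epi_cutoff=1.0))
```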

Experimental Design and Methodologies in Benchmarking Studies

Benchmarking Frameworks and Validation Strategies

The 2025 benchmarking studies employed rigorous experimental designs to evaluate method performance under controlled conditions and real-world scenarios. The most comprehensive assessment utilized 21 scRNA-seq datasets with orthogonal CNV validation through either single-cell or bulk whole-genome sequencing (scWGS/WGS) or whole exome sequencing (WES) [3]. This design enabled direct comparison between computationally inferred CNVs and experimentally determined ground truth across diverse biological contexts, including cancer cell lines, primary tumors, and diploid reference samples.

Evaluation metrics were carefully selected to assess different aspects of performance. Threshold-independent metrics included correlation analysis and area under the curve (AUC) scores, with separate evaluations for gain versus all and loss versus all regions [3]. Additionally, partial AUC values were calculated to focus on biologically meaningful threshold ranges [3]. For classification performance, F1 scores were computed based on optimal gain and loss thresholds identified through systematic testing of biologically meaningful values [3].
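The gain-versus-all and loss-versus-all AUCs can be illustrated with scikit-learn's `roc_auc_score`. This is a minimal sketch, assuming one continuous inferred CNV score per genomic region (positive for gain, negative for loss) and a ground-truth state encoded as -1/0/+1; the encoding and the `gain_loss_auc` helper are assumptions for illustration, not the study's code. Losses are scored with the negated prediction so that more negative values rank as more loss-like.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def gain_loss_auc(truth_state, predicted_score):
    """Return (AUC gain-vs-all, AUC loss-vs-all) over genomic regions.

    truth_state: ground-truth copy state per region (-1 loss, 0 neutral, +1 gain).
    predicted_score: continuous inferred CNV signal per region.
    """
    truth_state = np.asarray(truth_state)
    predicted_score = np.asarray(predicted_score, dtype=float)
    # Gain vs all: gained regions are positives, everything else negatives.
    auc_gain = roc_auc_score((truth_state == 1).astype(int), predicted_score)
    # Loss vs all: lower scores should rank higher, hence the sign flip.
    auc_loss = roc_auc_score((truth_state == -1).astype(int), -predicted_score)
    return auc_gain, auc_loss


truth = [1, 1, 0, 0, 0, -1, -1]
score = [0.40, 0.10, 0.05, -0.02, 0.12, -0.30, -0.25]
print(gain_loss_auc(truth, score))
```

In this toy input one neutral region (0.12) outscores a true gain (0.10), so the gain AUC drops below 1 while the loss AUC stays perfect.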

Table 3: Key Experimental Resources in scRNA-seq CNV Benchmarking

| Resource Type | Specific Examples | Function in Experimental Design |
|---|---|---|
| Reference Datasets | PBMCs from healthy donors [3], HCC1395/HCC1395BL cell lines [2] | Provide diploid controls for normalization and baseline establishment |
| Cell Line Mixtures | 5 human lung adenocarcinoma line mixtures [2], Gastric cancer spike-ins [6] | Enable controlled evaluation of subclone detection accuracy |
| Orthogonal Validation | scWGS, bulk WGS, WES [3], Array CGH [6], Karyotyping [7] | Provide ground truth for CNV calls and method validation |
| Software Pipelines | Snakemake benchmarking pipeline [3], SCOPE [6], SCYN [6] | Standardize analysis workflows and enable reproducible comparisons |
| Sequencing Platforms | 10x Genomics, Fluidigm C1, ICELL8, Drop-seq, CEL-seq2 [2] | Assess platform-specific performance and technical variability |

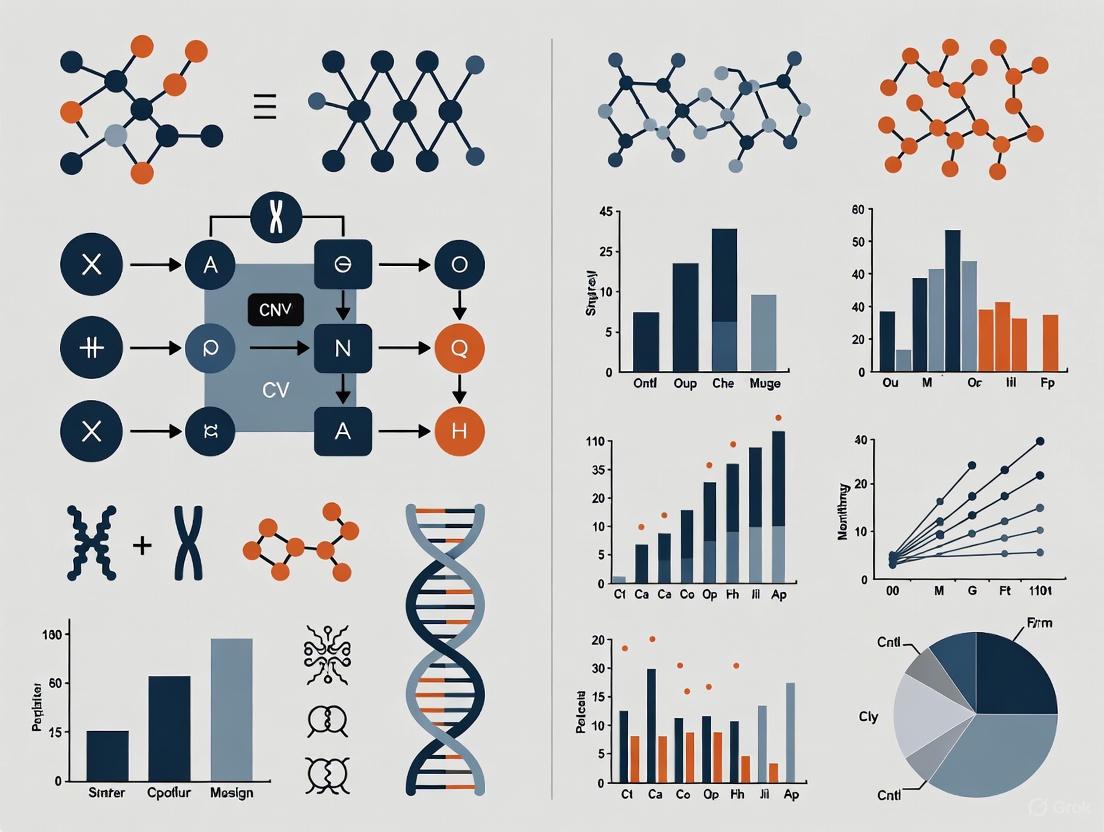

scRNA-seq CNV Calling Workflow

In the general computational workflow for inferring CNVs from scRNA-seq data, as implemented across the benchmarked methods, two steps significantly impact performance: reference selection and algorithm selection. The benchmarking studies consistently demonstrated that the choice of reference diploid cells for normalization profoundly influences CNV calling accuracy, with performance varying substantially depending on whether internal or external references were used [3]. For cancer cell lines where matched normal cells are unavailable, the selection of appropriate external reference datasets becomes particularly important [3].

Factors Influencing Method Performance

Technical and Biological Considerations

Benchmarking studies have identified several key factors that significantly impact the performance of scRNA-seq CNV callers:

Sequencing Depth and Platform: Methods perform differently across scRNA-seq platforms (10x Genomics, Fluidigm C1, ICELL8, Drop-seq) with varying sensitivity to sequencing depth [2] [8]. CaSpER and CopyKAT maintain more consistent performance across platforms, while sciCNV and HoneyBADGER show greater platform-specific variability [2].

Tumor Purity and Composition: Complex tumor samples with low purity or high stromal contamination present challenges for all methods, though allele-frequency integrated approaches generally show better robustness in these scenarios [3] [9]. Methods vary in their ability to distinguish euploid from aneuploid cells, with several tools overestimating tumor cells in heterogeneous samples [5].

CNV Size and Complexity: Large chromosomal alterations are more reliably detected than focal CNVs across all methods [3]. The number and type of CNVs in the sample (gains versus losses) also influence detection accuracy, with most methods showing better performance for gained regions [3].

Batch Effects: When integrating datasets across multiple scRNA-seq platforms, batch effects significantly degrade the performance of most methods for subclone identification unless corrected using tools like ComBat [2]. The allele-based version of HoneyBADGER demonstrates greater resilience to such technical variability [4].
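ComBat fits a location/scale model per batch and stabilizes the per-batch estimates with empirical Bayes. As a much simpler stand-in that shows the underlying idea, the sketch below standardizes each batch gene by gene and maps it back onto the pooled per-gene mean and standard deviation. This is not ComBat, and the `center_batches` helper is hypothetical; real analyses should use an established implementation.

```python
import numpy as np


def center_batches(expr, batches):
    """Simplified per-batch location/scale adjustment (NOT ComBat).

    expr: genes x cells matrix of log-normalized expression.
    batches: one batch label per cell (for example, the platform name).
    Each batch is standardized gene by gene, then mapped back onto the
    pooled per-gene mean and standard deviation. ComBat additionally
    shrinks the per-batch estimates with empirical Bayes.
    """
    expr = np.asarray(expr, dtype=float)
    batches = np.asarray(batches)
    pooled_mean = expr.mean(axis=1, keepdims=True)
    pooled_sd = expr.std(axis=1, keepdims=True)
    adjusted = np.empty_like(expr)
    for label in np.unique(batches):
        cols = batches == label
        sub = expr[:, cols]
        mu = sub.mean(axis=1, keepdims=True)
        sd = sub.std(axis=1, keepdims=True)
        sd[sd == 0] = 1.0  # constant genes: avoid division by zero
        adjusted[:, cols] = (sub - mu) / sd * pooled_sd + pooled_mean
    return adjusted


# One gene measured on two platforms with a strong offset between them.
expr = np.array([[1.0, 2.0, 3.0, 11.0, 12.0, 13.0]])
platform = ["10x", "10x", "10x", "C1", "C1", "C1"]
print(center_batches(expr, platform).round(2))
```

Because the adjustment forces every batch onto the same per-gene distribution, any biology confounded with batch (for example, a subclone sequenced on only one platform) is removed along with the technical shift.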

Analytical Considerations for Experimental Design

Successful implementation of scRNA-seq CNV analysis requires careful selection of experimental and computational resources. The following table details key reagents and their functions based on the benchmarking studies:

Table 4: Essential Research Reagents and Resources for scRNA-seq CNV Studies

| Category | Specific Resource | Function & Importance |
|---|---|---|
| Reference Cells | PBMCs from healthy donors [3] | Critical normalization control for identifying aneuploid cells in tumor samples |
| Validated Cell Lines | HCC1395/HCC1395BL pair [2] | Provide matched tumor-normal system for method validation and optimization |
| Spike-in Controls | Gastric cancer cell spike-ins [6], Lung adenocarcinoma mixtures [2] | Enable controlled assessment of detection sensitivity and specificity |
| Orthogonal Validation | scWGS, bulk WGS, Array CGH [3] | Establish ground truth for benchmarking and performance verification |
| Analysis Pipelines | Snakemake benchmarking pipeline [3], SCYN [6] | Standardize analytical workflows and ensure reproducible results |
| Computational Infrastructure | High-memory computing nodes [3] | Essential for processing large datasets, especially allele-frequency methods |

The comprehensive benchmarking of scRNA-seq CNV callers reveals a complex performance landscape where method selection must be guided by specific research objectives and experimental constraints. For general CNV detection in large droplet-based datasets, CaSpER and CopyKAT emerge as top performers with balanced sensitivity and specificity [3] [2]. When subclone identification is the primary goal, particularly in complex tumor ecosystems, InferCNV and CopyKAT provide superior resolution of cellular subpopulations [2] [4].

The integration of allelic information with expression signals generally enhances robustness, though at increased computational cost [3]. Importantly, all methods show performance dependence on technical factors including sequencing depth, platform selection, and reference quality, underscoring the importance of appropriate experimental design. Future method development should address current limitations in detecting focal CNVs, managing batch effects in multi-platform studies, and improving accessibility for researchers without specialized computational expertise.

As single-cell genomics continues its transition toward clinical applications, accurate CNV detection from scRNA-seq data will play an increasingly important role in cancer diagnostics, biomarker discovery, and therapeutic monitoring. The benchmarking frameworks and performance insights summarized here provide a foundation for selecting optimal analytical strategies while highlighting critical areas for future methodological innovation.

Expression-Based vs. Allele-Frequency Based Approaches

Copy number variations (CNVs) are genomic alterations involving the gain or loss of DNA segments, playing crucial roles in cancer development and tumor heterogeneity [3] [2]. Single-cell RNA sequencing (scRNA-seq) has emerged as a powerful tool not only for characterizing cellular transcriptomes but also for inferring CNVs, enabling the simultaneous analysis of genetic alterations and their functional consequences in the same cells [3] [4]. Computational methods for CNV detection from scRNA-seq data primarily fall into two methodological categories: those utilizing only gene expression levels and those incorporating allelic imbalance information [3]. This guide provides a comprehensive comparison of these approaches, supported by recent benchmarking studies, to assist researchers in selecting optimal tools for their specific applications.

Methodological Categories and Representative Tools

Core Computational Principles

Expression-based approaches operate on the fundamental assumption that genes located in genomically amplified regions exhibit higher expression levels, while those in deleted regions show reduced expression compared to diploid regions [3]. These methods employ sophisticated normalization strategies using reference diploid cells, followed by various computational techniques to infer CNV patterns from expression outliers.
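The core expression-based idea can be sketched in a few lines: center each gene on the reference diploid cells, then smooth along the genome so that broad regional shifts stand out from single-gene noise. This is a simplified illustration under stated assumptions (log-normalized input, genes already sorted by position along one stretch of genome with no chromosome boundaries, a plain moving average with a window chosen for illustration); the `relative_expression_profile` helper is hypothetical and does not reproduce the exact procedure of any benchmarked tool.

```python
import numpy as np


def relative_expression_profile(tumor, reference, window=101):
    """Reference-centered, smoothed expression along the genome.

    tumor, reference: genes x cells arrays of log-normalized expression,
    with rows (genes) sorted by genomic position.
    Returns a genes x tumor-cells array: values above 0 suggest a gain and
    values below 0 a loss, relative to the reference cells.
    """
    tumor = np.asarray(tumor, dtype=float)
    reference = np.asarray(reference, dtype=float)
    # 1. Center each gene on its mean expression in the diploid reference.
    centered = tumor - reference.mean(axis=1, keepdims=True)
    # 2. Moving average over neighboring genes, separately for each cell.
    #    mode="same" zero-pads, so genes at the ends are pulled toward 0.
    window = min(window, centered.shape[0])
    kernel = np.ones(window) / window
    return np.apply_along_axis(
        lambda col: np.convolve(col, kernel, mode="same"), 0, centered
    )


rng = np.random.default_rng(0)
reference = rng.normal(0.0, 0.5, size=(200, 20))  # 200 genes, 20 normal cells
tumor = rng.normal(0.0, 0.5, size=(200, 5))       # 200 genes, 5 tumor cells
tumor[:100] += 0.5                                # simulated gain over the first 100 genes
profile = relative_expression_profile(tumor, reference, window=21)
print(profile[:100].mean().round(2), profile[100:].mean().round(2))
```

The benchmarked tools layer further steps on top of this, such as HMM-based state calling in InferCNV or segmentation in CopyKAT and SCEVAN.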

Allele-frequency based approaches integrate both gene expression data and single nucleotide polymorphism (SNP) information called from scRNA-seq reads [3]. These methods leverage minor allele frequency (AF) patterns that deviate from expected heterozygous distributions in regions with copy number alterations, providing an orthogonal signal to validate expression-based inferences.
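The allelic signal can be illustrated with the B-allele frequency (BAF) at heterozygous SNPs. In a balanced diploid region reads split roughly 50:50 between the two alleles; a single-copy gain (2:1 copies) shifts the expected BAF to about 1/3 or 2/3, and loss of heterozygosity leaves only one allele (BAF near 0 or 1). The sketch below computes a mirrored deviation |BAF - 0.5| per SNP and averages it per segment. It assumes per-SNP counts already pooled across the cells of one putative clone and segment labels supplied externally; the `segment_allelic_imbalance` helper is hypothetical, and real methods such as Numbat additionally phase SNPs into haplotypes.

```python
import numpy as np


def segment_allelic_imbalance(alt_counts, total_counts, segment_ids):
    """Mean mirrored BAF deviation |BAF - 0.5| per genomic segment.

    alt_counts, total_counts: per-SNP read counts at heterozygous SNPs,
    already summed across the cells of one putative clone.
    segment_ids: segment label for each SNP.
    Values near 0 suggest balanced alleles, near 1/6 a single-copy gain
    (BAF 1/3 or 2/3), and near 0.5 loss of heterozygosity.
    """
    alt = np.asarray(alt_counts, dtype=float)
    total = np.asarray(total_counts, dtype=float)
    seg = np.asarray(segment_ids)
    keep = total > 0  # SNPs without coverage carry no allelic information
    dev = np.abs(alt[keep] / total[keep] - 0.5)
    return {str(s): float(dev[seg[keep] == s].mean()) for s in np.unique(seg[keep])}


alt = [10, 11, 20, 10, 0, 30]
total = [20, 21, 30, 30, 25, 30]
segments = ["chr1p", "chr1p", "chr2q", "chr2q", "chr3p", "chr3p"]
print(segment_allelic_imbalance(alt, total, segments))
```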

Table 1: Classification of Single-Cell CNV Detection Methods

| Method Category | Representative Tools | Core Algorithm | Primary Output |
|---|---|---|---|
| Expression-Based | InferCNV [3] [2], CopyKAT [3] [2], SCEVAN [3], CONICSmat [3] | Hidden Markov Models [3], Segmentation approaches [3], Mixture Models [3] | Discrete CNV calls or normalized expression scores [3] |
| Allele-Frequency Integrated | CaSpER [3] [2], Numbat [3], HoneyBADGER [2] [4] | Hidden Markov Models integrating expression and allele frequency [3] [2] | CNV predictions with allelic imbalance support |

Performance Benchmarking and Experimental Data

Experimental Design in Benchmarking Studies

Recent independent benchmarking studies have employed rigorous experimental designs to evaluate the performance of single-cell CNV detection methods. The primary evaluation schemes include:

Sensitivity and specificity analysis using scRNA-seq datasets from cancer cell lines with matched normal B-cell lines from the same donor, generated across multiple scRNA-seq platforms (10x Genomics, Fluidigm C1, C1 HT, and ICELL8) [2] [4].

Subclone identification accuracy assessed using mixed scRNA-seq datasets comprising three or five human lung adenocarcinoma cell lines with known genetic profiles, mimicking tumor subpopulations [2] [4].

Clinical validation employing scRNA-seq data from 92 primary and 39 relapse small cell lung cancer cells, with orthogonal validation using single-cell whole exome sequencing (scWES) and bulk whole genome sequencing (WGS) [2] [4].

These benchmarking studies evaluated performance using multiple metrics including sensitivity, specificity, area under the curve (AUC), F1 scores, and clustering accuracy metrics such as Adjusted Rand Index (ARI) and Normalized Mutual Information (NMI) [3] [2].

Table 2: Quantitative Performance Comparison Across Methodological Categories

| Performance Metric | Top Performing Tools | Performance Characteristics | Method Category |
|---|---|---|---|
| Overall CNV Detection | CaSpER [2] [4], CopyKAT [2] [4] | Balanced sensitivity and specificity across platforms [2] | Allele-Frequency Integrated & Expression-Based |
| Subclone Identification | InferCNV [2] [4], CopyKAT [2] [4] | High accuracy in distinguishing tumor subpopulations [2] | Expression-Based |
| Rare Population Detection | InferCNV [4] [10] | Strong sensitivity with sufficient cell numbers [4] [10] | Expression-Based |
| Batch Effect Resilience | HoneyBADGER (allele-based) [4] [10] | More resilient to technical batch effects [4] [10] | Allele-Frequency Integrated |
| Runtime Efficiency | Expression-based methods (generally) [3] | Lower computational requirements [3] | Expression-Based |

Impact of Experimental Factors

Benchmarking studies have identified several critical factors that significantly impact method performance:

Reference dataset selection: The choice of euploid reference cells for normalization substantially affects CNV calling accuracy, particularly for expression-based methods [3].

Sequencing depth and platform: Allele-frequency integrated methods generally require higher sequencing depths for reliable SNP calling, while expression-based methods show variable performance across different scRNA-seq platforms [2].

Dataset size: Methods incorporating allelic information perform more robustly for large droplet-based datasets but require higher computational resources [3].

Batch effects: When combining datasets across multiple platforms, batch effects significantly impact most methods, particularly expression-based approaches, unless corrected using specialized tools like ComBat [2].
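The sequencing-depth point for allele-frequency methods can be made concrete with a back-of-the-envelope calculation. If reads at a heterozygous SNP are modeled as binomial draws, the standard error of the observed BAF is sqrt(p(1 - p)/n), so telling a balanced region (BAF 0.5) from a single-copy gain (BAF 1/3) requires enough reads that the gap of about 0.17 spans several standard errors. The sketch assumes a plain binomial model with no allele-specific expression or sequencing error, and the two-standard-error margin is an arbitrary illustrative choice; both helper names are hypothetical.

```python
import math


def baf_standard_error(n_reads, p=0.5):
    """Binomial standard error of the observed B-allele fraction."""
    return math.sqrt(p * (1 - p) / n_reads)


def min_reads_to_separate(p_null=0.5, p_alt=1 / 3, z=2.0):
    """Smallest read count at which |p_null - p_alt| exceeds z standard
    errors (computed under the null BAF). Illustrative rule of thumb only."""
    gap = abs(p_null - p_alt)
    n = 1
    while gap <= z * baf_standard_error(n, p_null):
        n += 1
    return n


for n in (1, 5, 20, 100):
    print(n, round(baf_standard_error(n), 3))
print(min_reads_to_separate())
```

Under these assumptions roughly three dozen reads are needed, usually more than a single cell yields at one SNP in sparse droplet data, which is one reason allele-aware methods aggregate counts across SNPs and cells.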

Experimental Protocols for Method Evaluation

Standardized Benchmarking Pipeline

The benchmarking study published in Nature Communications provides a reproducible Snakemake pipeline for evaluating scRNA-seq CNV callers (https://github.com/colomemaria/benchmarkscrnaseqcnv_callers) [3]. The key methodological steps include:

Data Preprocessing: Raw scRNA-seq data from 21 different datasets (13 human cancer cell lines, 6 human primary tumor samples, 1 mouse primary tumor, and 1 human diploid dataset) were processed using consistent quality control metrics [3].

Ground Truth Establishment: Orthogonal CNV measurements from (sc)WGS or WES data were used to establish validation sets. For plate-based datasets where scRNA-seq and scWGS were measured in the same cells, cell-by-cell comparison was performed [3].

Pseudobulk Analysis: For most datasets where ground truth was not measured in the same cells as scRNA-seq, per-cell results were combined into an average CNV profile (pseudobulk) before comparison with validation data [3].

Metric Calculation: Threshold-independent evaluation metrics included correlation analysis, area under the curve (AUC) scores, and partial AUC values with biologically meaningful thresholds. Sensitivity and specificity values for gains and losses were calculated using optimal thresholds determined by multi-class F1 scores [3].
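The pseudobulk step and the correlation metric can be sketched as follows: average the per-cell predictions into one profile, then correlate it with the orthogonal ground truth over the same genomic bins. This minimal sketch assumes per-cell CNV scores on a shared set of bins and one ground-truth value per bin (for example, a log2 copy ratio from WGS); the `pseudobulk_correlation` helper, the toy values, and the use of Pearson correlation are illustrative assumptions.

```python
import numpy as np


def pseudobulk_correlation(cell_by_bin, truth_by_bin):
    """Average per-cell CNV scores into a pseudobulk profile and correlate it
    with a ground-truth profile over the same genomic bins.

    cell_by_bin: cells x bins array of inferred CNV scores (NaN = missing).
    truth_by_bin: one value per bin, e.g. log2 copy ratio from (sc)WGS or WES.
    """
    cells = np.asarray(cell_by_bin, dtype=float)
    truth = np.asarray(truth_by_bin, dtype=float)
    pseudobulk = np.nanmean(cells, axis=0)  # average over cells, skipping NaNs
    ok = ~np.isnan(pseudobulk) & ~np.isnan(truth)
    r = np.corrcoef(pseudobulk[ok], truth[ok])[0, 1]  # Pearson correlation
    return pseudobulk, float(r)


per_cell = [[0.2, 0.0, -0.3],
            [0.4, 0.1, -0.1]]
wgs_log2_ratio = [0.58, 0.0, -1.0]
print(pseudobulk_correlation(per_cell, wgs_log2_ratio))
```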

Evaluation Metrics and Statistical Analysis

Performance evaluation included both threshold-dependent and threshold-independent metrics. For AUC calculations, predictions were evaluated separately for gain versus all and loss versus all, resulting in two scores. Partial AUC values were calculated with maximal sensitivity defined by baseline scores to focus on biologically meaningful thresholds [3].
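For the threshold-dependent side, selecting gain and loss thresholds by multi-class F1 can be sketched as a grid search: discretize the continuous prediction into loss/neutral/gain with a candidate pair of thresholds, and keep the pair that maximizes macro-averaged F1 against the ground truth. The candidate grids, the macro averaging, and the `discretize`/`best_thresholds` helpers are assumptions for illustration; the study's exact candidate values are not reproduced here.

```python
import itertools

import numpy as np
from sklearn.metrics import f1_score


def discretize(score, loss_thr, gain_thr):
    """Map continuous CNV scores to -1 (loss), 0 (neutral) or +1 (gain)."""
    score = np.asarray(score, dtype=float)
    return np.where(score >= gain_thr, 1, np.where(score <= loss_thr, -1, 0))


def best_thresholds(truth_state, score, loss_grid, gain_grid):
    """Grid-search (loss_thr, gain_thr) maximizing macro-averaged F1."""
    best = (None, None, -1.0)
    for loss_thr, gain_thr in itertools.product(loss_grid, gain_grid):
        pred = discretize(score, loss_thr, gain_thr)
        f1 = f1_score(truth_state, pred, labels=[-1, 0, 1],
                      average="macro", zero_division=0)
        if f1 > best[2]:  # keep the first pair found on ties
            best = (loss_thr, gain_thr, f1)
    return best


truth = [1, 1, 0, 0, 0, -1, -1]
score = [0.40, 0.10, 0.05, -0.02, 0.12, -0.30, -0.25]
print(best_thresholds(truth, score, [-0.4, -0.2, -0.1], [0.1, 0.2, 0.3]))
```

Once the thresholds are fixed, per-class sensitivity and specificity for gains and losses follow directly from the discretized predictions.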

Table 3: Key Research Reagents and Computational Resources for scCNV Analysis

| Resource Category | Specific Items | Function/Purpose | Example Sources/References |
|---|---|---|---|
| Reference Cell Lines | HCC1395/HCC1395BL (breast cancer/B-lymphocyte) [2] | Paired tumor-normal model for method validation | Coriell Institute [2] |
| | 25 Coriell cell lines with known CNVs [11] | Validation set for CNV detection performance | Coriell Institute [11] |
| scRNA-seq Platforms | 10x Genomics, Fluidigm C1, ICELL8, C1 HT [2] | Generate scRNA-seq data for CNV inference | Multiple manufacturers [2] |
| Orthogonal Validation | scWGS, bulk WGS, WES [3] [2] | Establish ground truth for benchmarking | Various platforms [3] [2] |
| Benchmark Datasets | 21 scRNA-seq datasets [3] | Comprehensive performance evaluation | Public repositories [3] |
| | Mixed lung adenocarcinoma cell lines [2] [4] | Subclone identification assessment | Tian et al. dataset (GSE118767) [2] |
| Computational Resources | High-performance computing clusters | Handle memory-intensive algorithms (especially allele-based methods) | Institutional resources [3] |
| | Reproducible workflow tools | Snakemake pipeline for standardized benchmarking [3] | https://github.com/colomemaria/benchmarkscrnaseqcnv_callers [3] |

The comparative analysis of expression-based and allele-frequency integrated approaches for single-cell CNV detection reveals distinct strengths and limitations for each methodological category. Expression-based methods generally offer computational efficiency and robust performance in subclone identification, while allele-frequency integrated approaches provide enhanced robustness in large datasets and resilience to certain technical artifacts at the cost of higher computational requirements [3] [2].

Selection of the optimal approach should consider specific research goals, experimental design, and computational resources. For studies focusing primarily on subpopulation identification in datasets from single platforms, expression-based methods like InferCNV and CopyKAT offer excellent performance [2] [4]. For large-scale studies integrating multiple datasets or requiring high confidence in CNV calls, allele-frequency integrated methods like CaSpER may be preferable despite their computational intensity [3] [2].

Future methodological developments will likely focus on hybrid approaches that optimally leverage both expression and allele frequency signals while addressing current limitations in computational efficiency and batch effect sensitivity.

The detection of Copy Number Variations (CNVs) from single-cell RNA sequencing (scRNA-seq) data has emerged as a powerful, indirect approach to study genetic heterogeneity in complex tissues, most notably cancer. Several computational methods have been developed to infer CNVs from transcriptomic data, operating on the core assumption that genes in amplified genomic regions show elevated expression, while those in deleted regions show reduced expression [3]. However, the path from raw scRNA-seq data to accurate CNV profiles is fraught with technical challenges that directly impact the reliability and biological interpretability of the results. Independent benchmarking studies have become essential for guiding researchers through the complex landscape of method selection and application [3] [12] [2].

This guide objectively compares the performance of leading scRNA-seq CNV callers, focusing on three interconnected technical hurdles: data normalization, technical noise, and resolution limitations. By synthesizing evidence from recent, large-scale benchmarking efforts, we provide a data-driven framework for selecting the optimal CNV inference method based on specific experimental conditions and research goals. The insights presented here are critical for researchers, scientists, and drug development professionals seeking to leverage scRNA-seq data for genomic studies.

Performance Benchmarking of scRNA-seq CNV Callers

The computational methods for inferring CNVs from scRNA-seq data can be broadly categorized into those using only gene expression information and those that integrate expression with allelic imbalance data from single nucleotide polymorphisms (SNPs) [3]. Each method employs distinct strategies for normalization, noise reduction, and segmentation.

Table 1: Classification and Key Characteristics of scRNA-seq CNV Callers

| Method | Core Data Input | Primary Algorithm | Reported Resolution | Output Type |
|---|---|---|---|---|
| InferCNV | Gene Expression | Hidden Markov Model (HMM) | Per gene or segment | Subclone groups [3] |
| CopyKAT | Gene Expression | Segmentation | Per gene or segment | Per cell [3] |
| SCEVAN | Gene Expression | Segmentation | Per gene or segment | Subclone groups [3] |
| CONICSmat | Gene Expression | Mixture Model | Per chromosome arm | Per cell [3] |
| CaSpER | Expression + Allelic Frequency | HMM + Multiscale Smoothing | Per gene or segment | Per cell [3] [2] |
| Numbat | Expression + Allelic Frequency | Hidden Markov Model (HMM) | Per gene or segment | Subclone groups [3] |
| HoneyBADGER | Expression + Allelic Frequency | Bayesian HMM | Per gene or segment | Subclone groups [2] |
| sciCNV | Gene Expression | Expression Disparity Score | Per gene or segment | Subclone groups [2] |

Comparative Performance Across Benchmarking Studies

Recent independent evaluations have revealed significant performance variations among methods, influenced by dataset-specific factors including technology platform, sequencing depth, and the choice of reference cells.

Table 2: Quantitative Performance Summary from Benchmarking Studies

| Method | Overall CNV Accuracy | Subclone Identification | Performance with Batch Effects | Sensitivity on Rare Populations | Key Strengths |
|---|---|---|---|---|---|
| CaSpER | Top performer [13] [2] | Moderate | Highly affected in multi-platform data [2] | Not reported | Robust for large droplet-based datasets [3] |
| CopyKAT | Top performer [12] [13] [2] | Excellent [12] [2] | Highly affected in multi-platform data [2] | Not reported | Accurate subpopulation characterization [2] |
| InferCNV | Moderate | Excellent [12] [13] [2] | Highly affected in multi-platform data [2] | Strong with sufficient cells [13] | Identifies tumor subclones effectively [13] |
| sciCNV | Lower than top performers | Good [2] | Highly affected in multi-platform data [2] | Falls short [13] | Effective on single-platform data [13] |
| HoneyBADGER | Lower than top performers | Falls short [13] | More resilient (allele-based) [13] [2] | Falls short [13] | Resilient to batch effects [13] |

Experimental Protocols in Benchmarking Studies

Standardized Evaluation Framework

The benchmarking methodologies employed in recent studies provide a template for rigorous CNV caller validation. Understanding these protocols is essential for interpreting performance claims and designing new evaluations.

Detailed Methodological Components

Dataset Selection and Ground Truth Establishment

Benchmarking studies utilized diverse datasets representing various biological contexts and technical platforms. The 2025 Nature Communications benchmarking included 21 scRNA-seq datasets encompassing human cancer cell lines (gastric, colorectal, breast, melanoma), primary tumor samples (leukemia, basal cell carcinoma, multiple myeloma), and diploid controls (PBMCs) [3]. Technologies included both droplet-based (17 datasets) and plate-based (4 datasets) platforms [3].

Ground truth CNV profiles were established using orthogonal genomic measurements, including single-cell or bulk whole-genome sequencing ((sc)WGS) and whole exome sequencing (WES) [3]. For cell line mixtures, the known proportions of different lines provided a truth standard for subclone identification accuracy [2]. This approach enabled both pseudobulk comparisons (averaging CNV profiles across cells) and, for plate-based datasets where scRNA-seq and scWGS were measured in the same cells, direct cell-by-cell comparisons [3].
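The pseudobulk comparison reduces to averaging per-cell scores bin by bin and correlating the result with the orthogonal profile. The sketch below assumes both inputs have already been mapped onto the same genomic bins (that mapping step is omitted) and uses Pearson correlation; the function names are hypothetical.

```python
import numpy as np


def pseudobulk(cell_by_bin):
    """Average per-cell CNV scores (cells x bins) into one profile per bin."""
    return np.nanmean(np.asarray(cell_by_bin, dtype=float), axis=0)


def pseudobulk_correlation(cell_by_bin, truth_by_bin):
    """Pearson correlation between the pseudobulk profile and a ground-truth profile.

    Assumption: both arrays share the same bin order; bins missing in either
    profile (NaN) are dropped before correlating."""
    profile = pseudobulk(cell_by_bin)
    truth = np.asarray(truth_by_bin, dtype=float)
    keep = ~np.isnan(profile) & ~np.isnan(truth)
    return float(np.corrcoef(profile[keep], truth[keep])[0, 1])
```

Averaging cancels much of the per-cell noise, which is why pseudobulk agreement is typically a more forgiving test than the cell-by-cell comparison possible for the plate-based datasets.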

Method Application and Parameterization

All evaluated methods were run according to their respective tutorials or with default parameters as specified in the benchmarking studies [3]. A critical standardized aspect was the selection of reference cells for normalization. To ensure fair comparison, normal (diploid) cells were manually annotated for each sample using Louvain clustering and known marker genes, with the same healthy cells used as reference across all methods [3]. For cancer cell lines where no directly matched reference exists, external reference datasets with healthy cells from similar cell types were selected [3].

Performance Quantification

Multiple complementary metrics were employed to evaluate different aspects of CNV calling performance:

Threshold-independent metrics: Correlation and Area Under the Curve (AUC) scores evaluated the agreement between inferred and ground truth CNVs, with separate analyses for gains versus all and losses versus all [3]. Partial AUC values were calculated to focus on biologically meaningful threshold ranges [3].

Classification accuracy: Optimal gain and loss thresholds were determined using a multi-class F1 score, from which sensitivity and specificity values were derived [3].

Subclone identification: Metrics including Adjusted Rand Index (ARI), Fowlkes-Mallows index (FM), Normalized Mutual Information (NMI), and V-Measure quantified the accuracy of cellular subpopulation identification compared to known cell line identities in mixed samples [2].

Computational efficiency: Runtime and memory requirements were assessed to evaluate practical scalability [3].
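For the subclone metrics, scikit-learn already provides `adjusted_rand_score`, `fowlkes_mallows_score`, `normalized_mutual_info_score` and `v_measure_score` in `sklearn.metrics`. To show what two of them measure, the dependency-free sketch below computes the Adjusted Rand Index and the Fowlkes-Mallows index from pair counts in the contingency table between known cell-line identities and inferred subclones. Function names are illustrative.

```python
import numpy as np


def contingency(true_labels, pred_labels):
    """Cross-tabulate known identities (rows) against inferred clusters (columns)."""
    _, t = np.unique(true_labels, return_inverse=True)
    _, p = np.unique(pred_labels, return_inverse=True)
    table = np.zeros((t.max() + 1, p.max() + 1), dtype=np.int64)
    np.add.at(table, (t, p), 1)
    return table


def pairs(x):
    """Number of unordered pairs, n choose 2, elementwise."""
    x = np.asarray(x, dtype=float)
    return x * (x - 1) / 2


def ari_and_fm(true_labels, pred_labels):
    """Adjusted Rand Index and Fowlkes-Mallows index from pair counts."""
    table = contingency(true_labels, pred_labels)
    same_both = pairs(table).sum()              # pairs grouped together in both
    same_true = pairs(table.sum(axis=1)).sum()  # pairs sharing a true identity
    same_pred = pairs(table.sum(axis=0)).sum()  # pairs sharing an inferred cluster
    total = pairs(table.sum())
    expected = same_true * same_pred / total    # chance agreement
    max_index = (same_true + same_pred) / 2
    ari = 1.0 if max_index == expected else (same_both - expected) / (max_index - expected)
    fm = same_both / np.sqrt(same_true * same_pred) if same_true * same_pred > 0 else 0.0
    return float(ari), float(fm)
```

Both scores ignore how clusters are named, so an inferred subclone labelled "1" can match a cell line labelled "0" without penalty.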

Critical Technical Hurdles in Detail

The Normalization Challenge

Normalization presents a fundamental hurdle for scRNA-seq CNV detection, as methods must distinguish true copy-number-driven expression changes from overwhelming technical and biological confounding factors.

The normalization challenge is particularly acute because global-scaling methods inherited from bulk RNA-seq analysis make assumptions that are frequently violated in single-cell data [14]. These methods assume that the expected read count for a gene in a cell is proportional to a gene-specific expression level and a cell-specific scaling factor representing technical effects [14]. However, scRNA-seq data exhibits unique features including high sparsity (zero-inflation) and substantial technical noise that complicate this relationship [14] [15].
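The global-scaling model described above, in which the expected count of gene g in cell c is proportional to a gene level mu_g times a cell factor s_c, can be sketched as library-size normalization. Taking s_c as each cell's total count divided by the median total is one common choice, assumed here, as is the log1p transform. Nothing in this step accounts for zero inflation, which is exactly the limitation the paragraph raises.

```python
import numpy as np


def library_size_normalize(counts):
    """Global-scaling normalization of a cells x genes count matrix.

    Assumption: s_c = total counts of cell c / median total over cells.
    Returns log1p-transformed normalized values and the size factors."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1)
    size_factors = totals / np.median(totals)   # cell-specific scaling factor s_c
    normalized = counts / size_factors[:, None]  # remove the s_c term from E[count]
    return np.log1p(normalized), size_factors
```

A cell sequenced twice as deeply gets a size factor of 2 and ends up with the same normalized profile, but a gene that dropped out in one cell stays at zero regardless of depth.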

The choice of reference cells for normalization profoundly impacts results. Methods that include allelic information (CaSpER, Numbat) generally perform more robustly for large droplet-based datasets, potentially because allele frequency data provides an orthogonal signal that is less dependent on reference selection [3]. Benchmarking revealed that dataset-specific factors including dataset size, the number and type of CNVs in the sample, and reference dataset choice significantly influence performance [3].
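Reference dependence is easiest to see in the centring step that expression-based callers share in some form: each gene's log-expression is expressed relative to its mean in the annotated diploid cells. The minimal sketch below (hypothetical function name, no clipping or noise filtering) shows why choosing a different reference set directly shifts every cell's baseline.

```python
import numpy as np


def center_on_reference(log_expr, is_reference):
    """Express each cell (rows) relative to the mean of the reference cells.

    Assumption: is_reference is a boolean mask marking annotated diploid
    cells; genes (columns) are already log-normalized."""
    log_expr = np.asarray(log_expr, dtype=float)
    mask = np.asarray(is_reference, dtype=bool)
    baseline = log_expr[mask].mean(axis=0)  # per-gene diploid baseline
    return log_expr - baseline
```

If the reference cells come from a different tissue or batch, their gene-specific expression differences are absorbed into the baseline and later read as copy-number signal, which is one reason an orthogonal allelic signal helps.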

Technical Noise and Data Sparsity

The high technical noise and frequent dropouts characteristic of scRNA-seq data directly challenge the sensitivity and specificity of CNV detection. The sparsity of scRNA-seq data, which manifests as a high proportion of zero read counts, arises from both biological causes (genuine lack of expression in some subpopulations) and technical causes (dropouts, where expressed genes fail to be detected) [14].

The benchmarking studies found that performance variations between methods were significantly influenced by sequencing depth and read length [12] [2]. Methods incorporating allelic information generally require higher runtime but demonstrate improved robustness to technical noise in larger datasets [3]. The ability to distinguish signal from noise also depends on the underlying algorithm, with HMM-based approaches (InferCNV, Numbat) and segmentation methods (CopyKAT, SCEVAN) employing different denoising strategies [3].
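A simple illustration of denoising is to average reference-centred expression over a window of neighbouring genes along each chromosome. This moving average is a deliberately simplified stand-in, assuming genes are already sorted by genomic position within each chromosome; the HMM and segmentation methods named above add a state or breakpoint model on top of, or instead of, such smoothing. The 101-gene default window is an arbitrary choice for this sketch.

```python
import numpy as np


def smooth_along_genome(rel_expr, chrom, window=101):
    """Moving average over neighbouring genes, computed separately per chromosome.

    Assumption: columns of rel_expr (cells x genes) are sorted by genomic
    position within each chromosome. Windows shrink at chromosome ends."""
    rel_expr = np.asarray(rel_expr, dtype=float)
    chrom = np.asarray(chrom)
    out = np.empty_like(rel_expr)
    half = window // 2
    for c in np.unique(chrom):
        idx = np.nonzero(chrom == c)[0]
        block = rel_expr[:, idx]
        # prefix sums give each window's sum without an inner loop
        csum = np.concatenate(
            [np.zeros((block.shape[0], 1)), np.cumsum(block, axis=1)], axis=1)
        n = len(idx)
        lo = np.clip(np.arange(n) - half, 0, n)
        hi = np.clip(np.arange(n) + half + 1, 0, n)
        out[:, idx] = (csum[:, hi] - csum[:, lo]) / (hi - lo)
    return out
```

Smoothing per chromosome keeps a gain on one chromosome from bleeding into the first genes of the next, but a wide window also blurs focal events, which is the resolution trade-off discussed below.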

Batch effects represent a particularly pernicious form of technical noise. When datasets from different scRNA-seq platforms were combined, the subpopulation identification accuracy of most methods tested (InferCNV, CaSpER, sciCNV, and CopyKAT) was strongly affected [2]. Only the allele-based version of HoneyBADGER showed relative resilience to these batch-related distortions [13] [2].

Resolution Limitations

The resolution of CNV detection, both in genomic scale and in the ability to detect minority cell populations, represents a third critical hurdle. Resolution limitations manifest in several dimensions:

Genomic resolution: Methods vary in their reporting resolution, from CONICSmat (chromosome arm level) to other methods that report per gene or across segments of multiple genes [3]. Focal CNVs affecting small genomic regions are particularly challenging to detect reliably from scRNA-seq data due to the sparse nature of gene coverage.

Cellular resolution: The ability to identify rare tumor subpopulations varies significantly between methods. InferCNV showed strong sensitivity for detecting rare populations when sufficient cells were sequenced, while sciCNV and HoneyBADGER fell short in this regard [13].

Ploidy estimation: Methods struggle with accurate ploidy determination, particularly in highly aneuploid samples. More broadly, the benchmarking found that no method consistently outperformed the others across all datasets; performance was highly context-dependent [3].

Table 3: Key Research Reagents and Computational Tools for scRNA-seq CNV Analysis

| Resource Category | Specific Item | Function/Role in CNV Analysis |
|---|---|---|
| Experimental Platforms | 10x Genomics Chromium | Droplet-based scRNA-seq platform for high-throughput cell capture [15] |
| | Fluidigm C1 | Automated microfluidic system for plate-based scRNA-seq [15] [2] |
| | SMART-seq2/3 | Full-length transcript protocol for higher sensitivity [15] |
| Reference Data | Human PBMC scRNA-seq | Common source of normal reference cells for blood-derived samples [3] |
| | Cell line atlases (e.g., HCC1395BL) | Matched "normal" control cell lines for benchmarking [2] |
| | External diploid references | Healthy cells from similar tissues for normalizing cell line data [3] |
| Bioinformatics Tools | InferCNV | Widely used HMM-based method for subclone identification [3] [12] |
| | CopyKAT | High-performance method for tumor subpopulation characterization [12] [2] |
| | CaSpER | Integrates expression and allele frequency for robust calling [3] [2] |
| | Seurat/Scanpy | Standard scRNA-seq preprocessing and cell type annotation [3] |
| Validation Methods | scWGS (single-cell whole-genome sequencing) | Gold-standard orthogonal validation for CNV profiles [3] |
| | Bulk WES/WGS | Ground truth establishment for pseudobulk comparisons [3] [2] |
| | Chromosomal Microarray | Traditional CNV detection for validation [7] |
| Benchmarking Resources | Benchmarking pipeline [3] | Snakemake workflow for method comparison on new datasets [3] |
| | Mixed cell line datasets | Controlled samples with known proportions for accuracy assessment [2] |

The comprehensive benchmarking of scRNA-seq CNV callers reveals that method selection involves navigating critical trade-offs across the three technical hurdles of normalization, noise, and resolution. Based on the consolidated findings:

For large droplet-based datasets, CaSpER and CopyKAT generally provide the most balanced performance, with CaSpER particularly benefiting from its integration of allelic information for normalization robustness [3] [13] [2].

For precise subclone identification in data from a single platform, InferCNV and CopyKAT deliver superior performance, making them ideal for studying tumor heterogeneity [12] [13] [2].

When analyzing datasets combined across multiple platforms, researchers should anticipate significant batch effects on most expression-based methods and consider allele-based approaches or implement batch correction strategies [2].

For euploid samples or studies requiring null detection, careful validation is essential, as methods vary in their ability to correctly identify the absence of CNVs [3].

The field continues to evolve with new methods like msCNVS [7] and SCOPE [16] emerging, though these were not included in the comprehensive benchmarks discussed here. Researchers should consult the benchmarking pipeline provided by Colomé-Tatché et al. [3] to determine the optimal method for their specific datasets and biological questions. As single-cell genomics progresses toward clinical applications, addressing these technical hurdles will be paramount for reliable biomarker discovery and therapeutic monitoring.

A Practical Guide to scRNA-seq CNV Callers: From Theory to Implementation

Copy number variations (CNVs), defined as the gain or loss of genomic regions, are fundamental drivers of disease, particularly in cancer, where they contribute to tumor initiation, progression, and therapeutic resistance [3]. The inherent heterogeneity of tumors means that distinct cellular subclones with unique CNV profiles coexist within the same sample, complicating treatment and influencing clinical outcomes. Single-cell RNA sequencing (scRNA-seq) has emerged as a powerful technology that enables researchers to dissect this complexity by capturing gene expression at the individual cell level. Computational methods that infer CNVs from scRNA-seq data leverage the principle that genes within amplified genomic regions tend to show elevated expression, while those in deleted regions show reduced expression compared to diploid regions.

Several tools have been developed to decode CNV signals from transcriptomic data, allowing scientists to study genetic and functional heterogeneity simultaneously from a single assay [3] [17]. However, these methods employ distinct algorithmic strategies, have different input requirements, and demonstrate variable performance across diverse datasets. This guide provides an objective, data-driven comparison of six prominent tools—InferCNV, CopyKAT, CaSpER, Numbat, SCEVAN, and CONICSmat—framed within the context of comprehensive benchmarking studies. By synthesizing empirical evidence from large-scale evaluations, we aim to equip researchers, scientists, and drug development professionals with the insights needed to select the optimal CNV detection tool for their specific experimental context and biological questions.

Methodologies of scRNA-seq CNV Callers

The computational tools for inferring CNVs from scRNA-seq data can be broadly classified into two categories based on their input data and underlying algorithms.

Algorithmic Approaches and Input Requirements

Expression-Based Methods: This category includes tools that rely solely on gene expression data. They operate on the core assumption that copy number amplifications lead to upregulated gene expression, while deletions result in downregulation within the affected genomic regions.

- InferCNV utilizes a corrected moving average over gene windows and employs a Hidden Markov Model (HMM) for CNV calling [3] [17].

- CopyKAT applies an integrative Bayesian segmentation approach to infer CNV profiles [17] [4].

- SCEVAN implements a multi-channel segmentation algorithm designed to distinguish tumor cells from normal cells and identify clonal subpopulations [17].

- CONICSmat estimates CNVs based on a Mixture Model and reports results per chromosome arm [3].
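The mixture-model idea can be sketched as a two-component, one-dimensional Gaussian mixture fitted by expectation-maximization to each cell's average relative expression over one chromosome arm. This is a simplified illustration under that assumption, not CONICSmat's actual model or code; cells whose posterior favours the shifted component would be candidates for carrying the arm-level change.

```python
import numpy as np


def fit_two_gaussians(x, n_iter=200):
    """EM for a one-dimensional, two-component Gaussian mixture.

    Assumption: x holds one value per cell (e.g. mean relative expression
    over a chromosome arm). Returns mixture weights, means, standard
    deviations and per-cell posterior probabilities (responsibilities)."""
    x = np.asarray(x, dtype=float)
    # initialize the two components at the lower and upper quartiles
    mu = np.percentile(x, [25, 75]).astype(float)
    sd = np.full(2, x.std() + 1e-6)
    w = np.array([0.5, 0.5])
    for _ in range(n_iter):
        # E-step in log space to avoid underflow for cells far from a component
        log_p = (np.log(w) - np.log(sd * np.sqrt(2 * np.pi))
                 - 0.5 * ((x[:, None] - mu) / sd) ** 2)
        log_p -= log_p.max(axis=1, keepdims=True)
        resp = np.exp(log_p)
        resp /= resp.sum(axis=1, keepdims=True)
        # M-step: re-estimate weights, means and standard deviations
        nk = resp.sum(axis=0)
        w = nk / len(x)
        mu = (resp * x[:, None]).sum(axis=0) / nk
        sd = np.sqrt((resp * (x[:, None] - mu) ** 2).sum(axis=0) / nk) + 1e-6
    return w, mu, sd, resp
```

Working at arm level averages over hundreds of genes, which makes the per-cell signal far less noisy but explains why this class of method cannot resolve sub-arm events.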

Expression + Allelic Information Methods: These more advanced tools integrate gene expression data with allelic imbalance information derived from single nucleotide polymorphisms (SNPs).

- Numbat employs a haplotype-aware Hidden Markov Model that integrates signals from gene expression, allelic ratio from scRNA-seq reads, and population-derived haplotypes to infer allele-specific CNAs and copy-number neutral loss of heterozygosity (cnLOH) [17] [3].

- CaSpER utilizes a multiscale signal-processing framework that integrates gene expression and allelic shift signal profiles for CNV calling [17] [3].
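Allelic imbalance can be summarized per segment from reference and alternate read counts at heterozygous SNPs. The sketch below mirrors each SNP's B-allele frequency around 0.5 so that unphased SNPs can be pooled; this is a simplification, since Numbat instead uses population-derived haplotypes for phasing. The `min_depth` cut-off and the function name are hypothetical, and at low depth the mirrored value is biased upward by sampling noise.

```python
import numpy as np


def segment_allelic_imbalance(ref_counts, alt_counts, segment_ids, min_depth=5):
    """Mean mirrored B-allele frequency per segment at heterozygous SNPs.

    Values near 0 suggest balanced alleles; values toward 0.5 suggest
    allelic imbalance (e.g. a one-copy loss or copy-neutral LOH).
    Assumption: SNPs are already filtered to heterozygous sites."""
    ref = np.asarray(ref_counts, dtype=float)
    alt = np.asarray(alt_counts, dtype=float)
    seg = np.asarray(segment_ids)
    depth = ref + alt
    ok = depth >= min_depth                   # drop poorly covered SNPs
    baf = np.abs(alt[ok] / depth[ok] - 0.5)   # mirrored, so phase is ignored
    seg_ok = seg[ok]
    return {s.item(): float(baf[seg_ok == s].mean()) for s in np.unique(seg_ok)}
```

Because a balanced segment and a copy-neutral gain of both alleles both score near zero, this signal complements rather than replaces the expression signal.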

Benchmarking Experimental Designs

Independent benchmarking studies have evaluated these tools using rigorous frameworks to assess their real-world performance. The key aspects of these experimental designs include:

Ground Truth Validation: Performance was assessed by comparing scRNA-seq CNV predictions to orthogonal CNV measurements obtained from either (single-cell) whole-genome sequencing ((sc)WGS) or whole-exome sequencing (WES) data [3] [17]. In some studies, single-cell multi-omics datasets enabling simultaneous interrogation of DNA and RNA within the same cell provided the most accurate validation [17].

Diverse Dataset Composition: Benchmarking studies utilized multiple scRNA-seq datasets spanning various contexts, including:

- Human cancer cell lines (e.g., gastric, colorectal, breast, melanoma)

- Primary tumor samples (e.g., acute lymphoblastic leukemia, basal cell carcinoma, multiple myeloma, colorectal cancer, glioma)

- Diploid control samples (e.g., peripheral blood mononuclear cells - PBMCs)

- Different sequencing technologies (droplet-based and plate-based) [3] [17]

Performance Metrics: Multiple quantitative metrics were employed to evaluate different aspects of performance:

- CNV Prediction Accuracy: Correlation, area under the curve (AUC), partial AUC, and F1 scores for gains and losses [3]

- Tumor/Normal Classification: Sensitivity, specificity, and F1 scores for distinguishing malignant from normal cells [17]

- Subclone Identification: Accuracy in reconstructing clonal architecture [3] [4]

- Computational Efficiency: Runtime and memory requirements [3] [18]
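Tumor/normal classification metrics are standard binary-classification quantities. A minimal sketch, assuming boolean labels where True marks a malignant cell in both the ground truth and the caller's output (function name hypothetical):

```python
import numpy as np


def tumor_normal_metrics(is_tumor_true, is_tumor_pred):
    """Sensitivity, specificity and F1 for calling malignant (True) vs normal cells."""
    t = np.asarray(is_tumor_true, dtype=bool)
    p = np.asarray(is_tumor_pred, dtype=bool)
    tp = np.sum(t & p)    # malignant cells called malignant
    tn = np.sum(~t & ~p)  # normal cells called normal
    fp = np.sum(~t & p)
    fn = np.sum(t & ~p)
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    return float(sensitivity), float(specificity), float(f1)
```

F1 ignores true negatives, so at very high tumor purity it can stay high even when most of the few normal cells are misclassified; reporting specificity alongside it exposes that case.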

The following diagram illustrates a typical benchmarking workflow for evaluating scRNA-seq CNV callers:

Performance Comparison Across Benchmarking Studies

Quantitative Performance Metrics

Independent benchmarking studies have systematically evaluated CNV callers across multiple dimensions. The table below summarizes key performance metrics from these comprehensive assessments:

Table 1: Comprehensive Performance Comparison of scRNA-seq CNV Callers

| Tool | Algorithm Type | Tumor/Normal Classification F1 Score | CNV Profile Accuracy | Subclone Identification | Aneuploidy Detection in Normal Cells | Runtime & Memory |
|---|---|---|---|---|---|---|
| Numbat | Expression + Allelic | 0.95-0.99 [17] | High [17] | Good [3] | High sensitivity [17] | High runtime [3] [18] |
| CopyKAT | Expression | 0.80-0.90 [17] | High [4] [12] | Excellent [4] [12] | Moderate [17] | Fast, low memory [3] [18] |
| CaSpER | Expression + Allelic | 0.75-0.85 [17] | High [4] [12] | Moderate [3] | Moderate [17] | High runtime [3] |
| InferCNV | Expression | 0.70-0.85 [17] | Variable [3] | Good [4] | Low [17] | Moderate to high runtime [3] |
| SCEVAN | Expression | 0.65-0.80 [17] | Moderate [3] | Good [3] | Performs well; also best at clonal breakpoint detection [17] | Fast [18] |
| CONICSmat | Expression | Not reported | Low resolution [3] | Poor [3] | Not reported | Fastest, low memory [3] [18] |

Tool Performance Across Different Applications

The benchmarking studies reveal that each tool has distinct strengths depending on the specific analytical task:

For Overall CNV Inference: CaSpER and CopyKAT consistently delivered the most balanced CNV inference results across multiple datasets and sequencing platforms [4] [12]. However, their effectiveness varied with sequencing depth and platform type.

For Tumor/Normal Cell Classification: Numbat demonstrated superior performance in distinguishing tumor cells from normal cells, achieving F1 scores of 0.95-0.99 across multiple solid tumor types [17]. Among expression-only methods, CopyKAT achieved the best classification performance.

For Subclone Identification: InferCNV and CopyKAT excelled in identifying tumor subpopulations, particularly when analyzing data from a single platform [4]. SCEVAN showed the best performance in clonal breakpoint detection [17].

For Specialized Detection: Numbat showed high sensitivity in detecting copy-number neutral loss of heterozygosity (cnLOH) [17], while SCEVAN performed well in identifying aneuploidy in non-malignant cells within the tumor microenvironment [17].

Experimental Factors Influencing Tool Performance

Critical Experimental Considerations

Benchmarking studies have identified several experimental factors that significantly impact the performance of scRNA-seq CNV callers:

Reference Dataset Selection: All methods require a set of euploid reference cells for normalization, and the choice of reference significantly affects performance [3] [18]. When T cells from the same dataset were used as the reference, all methods performed well. With external references (e.g., monocytes or T cells from another dataset), however, Numbat and CaSpER outperformed the other methods, likely because they incorporate allelic information [18].

Tumor Purity: The ratio of tumor to normal cells in the sample strongly affects performance. Numbat consistently outperformed other tools across a wide range of tumor/normal cell ratios (from 1:100 to 100:1), while InferCNV misclassified cells when tumor purity was high, sometimes treating the tumor cells' own gains and losses as the copy-neutral baseline [17].

Sequencing Depth: As sequencing depth decreases, the overall classification accuracy for all tools drops significantly. One study showed that when median unique molecular identifiers (UMIs) per cell were down-sampled to ~10k, 3k, and 1k, all tools showed reduced F1 scores, with Numbat experiencing the most pronounced drop [17].
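Depth titrations of this kind are commonly simulated by binomial thinning: every UMI is kept independently with a single probability chosen so that the median per-cell total drops to the target. The cited study's exact down-sampling procedure is not described here, so treat this as one plausible approach; `downsample_to_median` is a hypothetical helper.

```python
import numpy as np


def downsample_to_median(counts, target_median, seed=0):
    """Binomially thin a cells x genes UMI matrix so that the median
    per-cell total is approximately target_median.

    Assumption: one keep-probability is applied to every UMI, which
    preserves each cell's expected relative composition."""
    counts = np.asarray(counts, dtype=np.int64)
    current = np.median(counts.sum(axis=1))
    p = target_median / current
    if p >= 1.0:
        return counts.copy()  # already at or below the target depth
    rng = np.random.default_rng(seed)
    return rng.binomial(counts, p)
```

Thinning reproduces the extra dropouts of shallow sequencing (low counts become zeros), which is what erodes both the expression and the allelic signals.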

Inclusion of Tumor Microenvironment (TME) Cells: For samples with imbalanced tumor versus normal ratios, including TME cells (immune, endothelial, and fibroblast cells) significantly improved the accuracy of tumor cell prediction for SCEVAN and InferCNV [17].

The following diagram illustrates how these factors influence the CNV calling workflow and results:

Table 2: Key Research Reagent Solutions for scRNA-seq CNV Analysis

| Resource Category | Specific Examples | Function in CNV Analysis |
|---|---|---|
| Reference Datasets | Healthy PBMCs, matched normal tissue samples | Provides euploid baseline for normalization of gene expression signals [3] [18] |
| Validation Technologies | (sc)WGS, WES, single-cell multi-omics | Generates ground truth data for benchmarking CNV predictions [3] [17] |
| Platform-Specific Kits | 10x Genomics Chromium, SMART-seq2 | Produces scRNA-seq data with characteristics that affect tool performance [4] [19] |
| Computational Infrastructure | High-performance computing clusters | Enables running computationally intensive tools like Numbat and CaSpER [3] [18] |
| Bioinformatics Pipelines | Snakemake workflow, Conda environments | Ensures reproducible installation and execution of CNV callers [18] |

Based on the comprehensive benchmarking evidence, we recommend:

For most applications with available allelic information: Numbat demonstrates the best overall performance across multiple evaluation criteria, including tumor/normal classification and CNV profile accuracy [17].

When only expression matrix is available: CopyKAT is recommended, as it outperforms other expression-only methods in overall CNV inference and subclone identification [17] [4] [12].

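For specific applications:

- Subclone identification in data from a single platform: InferCNV or CopyKAT [4]

- Clonal breakpoint detection: SCEVAN [17]

- Copy-number neutral loss of heterozygosity (cnLOH): Numbat [17]

- Aneuploidy in non-malignant cells of the tumor microenvironment: SCEVAN [17]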

Critical implementation considerations:

- Use matched reference cells from the same sample when possible [18]

- Include tumor microenvironment cells for analysis of samples with high tumor purity [17]

- Ensure adequate sequencing depth (median UMIs >10k per cell) for optimal performance [17]

- Account for batch effects when combining datasets across different platforms [4] [12]

No single scRNA-seq CNV caller outperforms all others in every scenario. The choice of tool should be guided by the specific research question, data characteristics, and analytical requirements. As the field evolves, we anticipate that continued benchmarking efforts will further refine these recommendations and drive improvements in computational methods for detecting copy number variations from single-cell transcriptomic data.

The characterization of copy number variations (CNVs) at single-cell resolution is crucial for deciphering tumor heterogeneity, identifying rare subclones, and understanding cancer evolution. Single-cell RNA sequencing (scRNA-seq) has emerged as a powerful tool for inferring CNVs, allowing researchers to connect genetic alterations with transcriptional phenotypes from the same dataset. Several computational methods have been developed to tackle the challenge of inferring CNVs from scRNA-seq data, employing distinct algorithmic approaches including Hidden Markov Models (HMMs), segmentation techniques, and mixture models [3].

These methods operate on the fundamental principle that genes located in genomic regions with copy number gains tend to show higher expression levels, while those in deleted regions show lower expression compared to diploid regions. However, the indirect nature of this inference requires sophisticated normalization strategies and robust statistical models to distinguish true CNV signals from technical noise and biological variation [3]. This review provides a comprehensive comparison of the leading scCNV detection methods, focusing on their underlying algorithms, performance characteristics, and optimal use cases based on recent benchmarking studies.

Algorithmic Approaches and Method Classification

Algorithm Categories and Representatives

Single-cell CNV detection methods can be broadly categorized by their underlying computational frameworks. Hidden Markov Models (HMMs) are probabilistic models that treat the genome as a sequence of hidden states (copy number states) with probabilistic transitions between them. Segmentation approaches partition the genome into non-overlapping segments with homogeneous copy number profiles. Mixture models assume the data is generated from a mixture of probability distributions, each representing a distinct cell subpopulation or copy number state [3] [20].
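To make the HMM description concrete, here is a minimal three-state (loss, neutral, gain) Gaussian HMM decoded with the Viterbi algorithm on a smoothed, reference-centred signal along one chromosome. The state means, shared standard deviation and self-transition probability are hand-set hypothetical values; real callers estimate parameters and may use more states, so this is not the implementation of InferCNV, CaSpER or Numbat.

```python
import numpy as np


def viterbi_cnv(obs, means=(-1.0, 0.0, 1.0), sd=0.5, stay=0.99):
    """Most likely loss/neutral/gain path for a 1-D signal along one chromosome.

    Assumption: exactly three states, indexed 0 = loss, 1 = neutral,
    2 = gain; the returned path is shifted to -1 / 0 / +1."""
    obs = np.asarray(obs, dtype=float)
    means = np.asarray(means, dtype=float)
    k, n = len(means), len(obs)
    # transition matrix: a high self-transition probability favours long segments
    trans = np.full((k, k), (1.0 - stay) / (k - 1))
    np.fill_diagonal(trans, stay)
    log_trans = np.log(trans)
    # Gaussian log-emission probabilities (shared constant term dropped)
    log_emit = -0.5 * ((obs[:, None] - means) / sd) ** 2
    score = np.log(np.full(k, 1.0 / k)) + log_emit[0]
    back = np.zeros((n, k), dtype=int)
    for t in range(1, n):
        cand = score[:, None] + log_trans  # cand[i, j]: best path moving i -> j
        back[t] = cand.argmax(axis=0)
        score = cand.max(axis=0) + log_emit[t]
    # trace the best path backwards from the most likely final state
    path = np.empty(n, dtype=int)
    path[-1] = score.argmax()
    for t in range(n - 1, 0, -1):
        path[t - 1] = back[t, path[t]]
    return path - 1
```

A high `stay` probability penalizes state switches, so an isolated outlier gene is absorbed into the surrounding state instead of being called as a one-gene CNV; this is the spatial dependency along the genome that the HMM row of the table below refers to.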

Table 1: Classification of Single-Cell CNV Detection Methods by Algorithmic Approach

| Algorithmic Approach | Representative Methods | Core Methodology | Key Advantages |
|---|---|---|---|
| Hidden Markov Models (HMMs) | InferCNV, CaSpER, Numbat | Uses probabilistic transitions between hidden states (CNV states) across the genome | Robust to noise, models spatial dependencies along genome |
| Segmentation Approaches | CopyKAT, SCEVAN, SCYN | Partitions genome into segments with homogeneous CNV profiles using change-point detection | Efficient for large datasets, identifies clear breakpoints |
| Mixture Models | CONICSmat, CopyMix | Models data as mixture of distributions representing cell subpopulations | Simultaneously clusters cells and infers CNV profiles |
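Change-point segmentation can be illustrated with least-squares binary segmentation: split a profile where the reduction in squared error is largest, then recurse on each half. This generic algorithm is a stand-in chosen for brevity; CopyKAT and SCEVAN use their own (Bayesian and multi-channel) segmentation schemes, and `min_size` and `min_gain` are hypothetical stopping rules.

```python
import numpy as np


def binary_segmentation(signal, min_size=5, min_gain=1.0):
    """Recursive least-squares binary segmentation of a 1-D CNV profile.

    Returns sorted breakpoint indices (start of each new segment).
    Assumption: a split is accepted only if it lowers the total squared
    error by more than min_gain and leaves at least min_size points per side."""
    x = np.asarray(signal, dtype=float)
    breakpoints = []

    def sse(seg):
        return float(((seg - seg.mean()) ** 2).sum())

    def split(start, end):
        seg = x[start:end]
        if len(seg) < 2 * min_size:
            return
        total = sse(seg)
        best_cost, best_k = total, None
        for k in range(min_size, len(seg) - min_size + 1):
            cost = sse(seg[:k]) + sse(seg[k:])
            if cost < best_cost:
                best_cost, best_k = cost, k
        if best_k is not None and total - best_cost > min_gain:
            breakpoints.append(start + best_k)
            split(start, start + best_k)
            split(start + best_k, end)

    split(0, len(x))
    return sorted(breakpoints)
```

Each split scans every candidate position, so this naive version is quadratic in profile length; it is meant to show the change-point idea, not the efficiency the table attributes to segmentation methods.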

Technical Implementation Details

Methods also differ in their data requirements and output resolutions. Some tools, like CaSpER and Numbat, incorporate allelic information from single nucleotide polymorphisms (SNPs) in addition to expression data, which can improve accuracy but requires higher sequencing depth [3]. Output resolution varies from chromosome-arm level (CONICSmat) to gene-level or segmented regions (InferCNV, CopyKAT, SCEVAN) [3]. Some methods provide discrete CNV calls, while others output continuous scores that require thresholding for interpretation [3].
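For callers that output continuous scores, one simple way to derive discrete calls is to set cut-offs from the spread of scores in the diploid reference cells. The sketch below uses the reference mean plus or minus z standard deviations with a hypothetical z = 3; it is an illustration, not the thresholding rule of any particular tool.

```python
import numpy as np


def reference_thresholds(ref_scores, z=3.0):
    """Loss/gain cut-offs from the spread of scores in diploid reference cells.

    Assumption: reference scores are roughly symmetric around the neutral
    level; z is a hypothetical tuning parameter."""
    ref = np.asarray(ref_scores, dtype=float).ravel()
    mu, sd = ref.mean(), ref.std()
    return mu - z * sd, mu + z * sd


def discretize(scores, loss_thr, gain_thr):
    """Map continuous CNV scores to -1 (loss), 0 (neutral) or 1 (gain)."""
    scores = np.asarray(scores, dtype=float)
    return np.where(scores < loss_thr, -1, np.where(scores > gain_thr, 1, 0))
```

Raising z trades sensitivity for specificity; the benchmarks sidestep this choice by also reporting threshold-independent metrics.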

Performance Benchmarking and Comparative Analysis

Comprehensive Performance Evaluation

Recent large-scale benchmarking studies have systematically evaluated the performance of scCNV detection methods across diverse datasets. A study published in Nature Communications assessed six popular methods on 21 scRNA-seq datasets using ground truth CNV measurements from orthogonal techniques such as single-cell or bulk whole-genome sequencing ((sc)WGS) or whole-exome sequencing (WES) [3]. Performance was evaluated using metrics including correlation with ground truth, area under the curve (AUC) values for gain versus all and loss versus all classifications, and F1 scores [3].

Table 2: Comprehensive Performance Comparison of scCNV Detection Methods

| Method | Algorithm Type | Overall CNV Accuracy | Subclone Identification | Runtime Efficiency | Key Strengths | Optimal Use Cases |
|---|---|---|---|---|---|---|
| CaSpER | HMM + Allelic Info | High [2] [13] | Moderate [2] | Moderate [3] | Integrates expression and allele frequency | Large droplet-based datasets |
| CopyKAT | Segmentation | High [2] [13] | High [2] [13] | High [3] | Robust subclone identification | Tumor subpopulation detection |
| InferCNV | HMM | Moderate [2] | High [2] [13] | Low [3] | Flexible HMM framework | Single-platform studies |
| SCEVAN | Segmentation | Variable [5] | Variable [5] | Moderate | Automatic malignant cell detection | Datasets with clear normal reference |
| Numbat | HMM + Allelic Info | Moderate [3] | High [3] | Low [3] | Allele-aware modeling | Datasets with sufficient SNP information |
| sciCNV | Not Specified | Moderate [2] | Moderate [2] | Moderate | Expression disparity scoring | Full-length transcript datasets |

Platform-Specific and Context-Dependent Performance

Method performance shows significant dependence on sequencing platform and data characteristics. Methods incorporating allelic information (CaSpER, Numbat) generally perform more robustly on large droplet-based datasets but require higher computational resources [3]. A 2025 benchmarking study found that CaSpER and CopyKAT delivered the most balanced CNV inference results across platforms, though their effectiveness varied with sequencing depth and platform type [2] [13]. For subclone identification, inferCNV and CopyKAT excelled with data from a single platform [2] [4].

Batch effects significantly impact most methods when combining datasets across different scRNA-seq platforms. Unless corrected using tools like ComBat, batch effects can severely degrade performance, though the allele-based version of HoneyBADGER has shown more resilience to such technical variations [2]. For detecting rare tumor populations, inferCNV demonstrates strong sensitivity, particularly with sufficient cell numbers, while sciCNV and HoneyBADGER generally fall short in this application [13].
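ComBat models additive and multiplicative batch effects for each gene and shrinks the per-batch estimates with empirical Bayes. The minimal sketch below shows only the unshrunk location-scale idea for a single gene. Values are standardized within each batch and then rescaled to the pooled mean and standard deviation. It is not ComBat: it omits covariates and shrinkage. The function name is hypothetical.

```python
import statistics


def location_scale_adjust(values, batches):
    """Standardize one gene within each batch, then rescale to the pooled mean and SD."""
    pooled_mean = statistics.fmean(values)
    pooled_sd = statistics.pstdev(values)
    by_batch = {}
    for v, b in zip(values, batches):
        by_batch.setdefault(b, []).append(v)
    # Per-batch location (mean) and scale (population SD).
    params = {b: (statistics.fmean(vs), statistics.pstdev(vs)) for b, vs in by_batch.items()}
    adjusted = []
    for v, b in zip(values, batches):
        mean, sd = params[b]
        z = (v - mean) / sd if sd > 0 else 0.0
        adjusted.append(pooled_mean + z * pooled_sd)
    return adjusted
```

After adjustment, every batch has the same mean and spread for that gene, so a platform-wide offset can no longer masquerade as a gain or loss. The same step can also remove genuine between-batch biological differences, which is one reason to prefer a properly specified tool.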

Experimental Design and Methodologies

Benchmarking Frameworks and Validation Strategies

Robust benchmarking of scCNV detection methods requires careful experimental design incorporating orthogonal validation. The Nature Communications study utilized 21 scRNA-seq datasets comprising 13 human cancer cell lines, six human primary tumor samples, one mouse primary tumor sample, and one human diploid dataset (PBMCs) [3]. Seventeen datasets were generated with droplet-based technologies and four with plate-based technology [3]. Ground truth CNV profiles were obtained from either (sc)WGS or WES data [3].

To enable comparison between scRNA-seq inferences and ground truth, the per-cell results from scRNA-seq methods were combined to create an average CNV profile (pseudobulk) before comparison [3]. For plate-based datasets where scRNA-seq and scWGS were measured in the same cells, direct cell-by-cell comparison was performed [3]. Threshold-independent evaluation metrics included correlation analysis and AUC scores, with separate evaluations for gain versus all and loss versus all classifications [3].
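Both comparison modes can be sketched in a few lines, assuming per-cell results are stored as a cells × regions list of scores (or discrete calls) aligned to the ground-truth regions. The pseudobulk profile is the region-wise mean across cells. For matched plate-based data, per-cell agreement can be summarized as the fraction of regions where the RNA-derived and DNA-derived calls coincide. Both function names and the agreement summary are hypothetical illustrations.

```python
def pseudobulk(per_cell_profiles):
    """Average per-cell CNV scores region by region (cells x regions -> regions)."""
    n_cells = len(per_cell_profiles)
    n_regions = len(per_cell_profiles[0])
    return [sum(cell[j] for cell in per_cell_profiles) / n_cells for j in range(n_regions)]


def per_cell_agreement(rna_calls, dna_calls):
    """Fraction of regions with identical discrete calls, for each matched cell."""
    return [
        sum(r == d for r, d in zip(rna_cell, dna_cell)) / len(rna_cell)
        for rna_cell, dna_cell in zip(rna_calls, dna_calls)
    ]
```

Averaging suppresses per-cell noise but also blends subclones, so a pseudobulk comparison mainly tests whether the dominant CNV profile is recovered.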

Diagram 1: Benchmarking workflow for scCNV detection methods. The process begins with scRNA-seq data and reference cells, progresses through CNV prediction using different algorithms, and concludes with performance evaluation against orthogonal ground truth data.

Critical Experimental Considerations

The choice of reference euploid cells significantly impacts method performance. For primary tissue samples, the common assumption is that tissues contain mixtures of tumor and normal cells, with the latter serving as reference [3]. Some methods require user-provided cell type annotations to specify reference cells, while others offer automatic detection of normal cells [3]. For cancer cell lines where no directly matched reference cells exist, researchers must select matched external reference datasets with healthy cells from similar cell types [3].

Performance evaluation requires careful metric selection. The Nature Communications study used partial AUC values with biologically meaningful thresholds to account for method-specific baseline scores [3]. Sensitivity and specificity values for gains and losses were obtained using optimal thresholds determined via multi-class F1 score optimization [3]. For subclone identification, studies often use metrics such as Adjusted Rand Index (ARI), Fowlkes-Mallows index (FM), Normalized Mutual Information (NMI), and V-Measure to compare estimated tumor subpopulations against known cell line identities [2].
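As an example of the clustering metrics, the sketch below computes the Adjusted Rand Index from a contingency table of true and estimated labels using pair counts. Cell labels are assumed to be aligned lists, for example known cell-line identities versus inferred subclone IDs. The ARI is invariant to how clusters are named, so `["A", "A", "B", "B"]` and `[1, 1, 0, 0]` score 1.0. The function name is hypothetical. In practice, scikit-learn's `adjusted_rand_score` computes the same quantity.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two partitions of the same cells."""
    n = len(labels_true)
    if n < 2:
        return 1.0  # trivial partitions agree perfectly
    contingency = Counter(zip(labels_true, labels_pred))
    # Number of cell pairs placed together in both partitions.
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:
        return 1.0
    return (sum_cells - expected) / (max_index - expected)
```

Splitting one true population into two estimated clusters is penalized but not to zero. For example, `[0, 0, 1, 1]` against `[0, 0, 1, 2]` gives 4/7 ≈ 0.57.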

Research Reagent Solutions and Computational Tools

Successful implementation of scCNV detection methods requires specific computational tools and resources. The following table outlines key solutions used in benchmarking studies and their functions in the analysis workflow.

Table 3: Essential Research Reagents and Computational Tools for scCNV Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| Reference Genome | Provides genomic coordinate system | Essential for all methods for gene positioning |
| Orthogonal Validation Data (scWGS/WES) | Ground truth for performance evaluation | Benchmarking studies and method validation |
| Cell Type Annotations | Identifies normal reference cells | Critical for normalization in most methods |
| Harmony/ComBat | Batch effect correction | Essential when integrating datasets from multiple platforms |
| Snakemake Pipeline | Workflow management | Reproducible benchmarking of multiple methods [3] |
| SCSsim | Single-cell sequencing simulator | Generating synthetic data for controlled evaluations [21] |

Implementation and Practical Considerations

For researchers implementing these methods, several practical considerations emerge from benchmarking studies. Computational requirements vary significantly, with methods incorporating allelic information generally requiring more runtime and memory [3]. The availability of high-quality reference cells is paramount, as reference choice dramatically impacts result quality [3]. Dataset size also influences performance, with some methods scaling better to large cell numbers than others [3].

Diagram 2: Decision workflow for scCNV detection method selection. The process guides researchers from data input through algorithm selection to final interpretation, with key considerations at each step.

The benchmarking studies reveal that no single method outperforms others across all scenarios and metrics. Method selection should be guided by specific research goals, data characteristics, and computational resources. For general-purpose CNV detection, CaSpER and CopyKAT provide the most balanced performance [2] [13]. For subclone identification where batch effects are minimized, InferCNV and CopyKAT excel [2] [4]. When allelic information is available and computational resources are sufficient, Numbat provides robust performance by integrating expression and allele frequency data [3].
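These recommendations can be condensed into a small, hypothetical helper. It paraphrases the paragraph above and adds the batch-correction advice from the platform discussion. It is a reading aid under those assumptions, not a validated decision rule, and it ignores factors such as sequencing depth and tumor purity.

```python
def suggest_methods(goal, multi_platform=False, allelic_data=False, ample_compute=False):
    """Paraphrase of the benchmarking recommendations; returns (methods, notes).

    goal: "subclones" for subclone identification, anything else for general CNV detection.
    """
    notes = []
    if multi_platform:
        # Batch effects degrade most methods unless corrected first.
        notes.append("correct batch effects (e.g. ComBat) before inference")
    if goal == "subclones":
        methods = ["InferCNV", "CopyKAT"]  # strongest when batch effects are minimized
    else:
        methods = ["CaSpER", "CopyKAT"]    # most balanced general-purpose performance
    if allelic_data and ample_compute:
        methods.append("Numbat")           # integrates expression and allele frequency
    return methods, notes
```

For instance, `suggest_methods("general", multi_platform=True, allelic_data=True, ample_compute=True)` returns CaSpER, CopyKAT and Numbat together with a reminder to correct batch effects.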

Future method development should address current limitations, including sensitivity to batch effects, computational efficiency for large datasets, and improved detection of focal CNVs. The availability of standardized benchmarking pipelines, such as the Snakemake pipeline provided by Colomé-Tatché et al., will facilitate systematic evaluation of new methods as they emerge [3]. As single-cell genomics continues to advance toward clinical applications, accurate and robust CNV detection will play an increasingly important role in understanding tumor evolution and developing targeted therapies.

In the analysis of single-cell sequencing data, accurately interpreting the output of copy number variation (CNV) detection algorithms is as crucial as the analysis itself. These computational tools generally provide results in one of two forms: discrete CNV calls or continuous CNV scores. Discrete calls represent a definitive classification of genomic regions into states such as "loss," "normal," or "gain," providing a clear, categorical interpretation ideal for downstream analyses like clonal grouping. In contrast, continuous scores offer a quantitative measure of the relative copy number signal, allowing researchers to apply their own thresholds and assess the strength or confidence of the prediction [3].
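Converting continuous scores into discrete calls comes down to choosing cutoffs. The sketch below maps scores to loss/neutral/gain using two cutoffs. It then picks a symmetric cutoff from a candidate grid by maximizing macro-averaged F1 against ground-truth calls, a simplified version of F1-based threshold selection. The cutoff values, the symmetric grid and the function names are hypothetical. Score scales differ between tools, so cutoffs must be chosen per method.

```python
def call_cnvs(scores, loss_cutoff=-0.2, gain_cutoff=0.2):
    """Map continuous CNV scores to discrete loss/neutral/gain calls."""
    calls = []
    for s in scores:
        if s <= loss_cutoff:
            calls.append("loss")
        elif s >= gain_cutoff:
            calls.append("gain")
        else:
            calls.append("neutral")
    return calls


def macro_f1(pred, truth):
    """Unweighted mean of per-class F1 over classes seen in pred or truth."""
    f1s = []
    for c in sorted(set(truth) | set(pred)):
        tp = sum(p == c and t == c for p, t in zip(pred, truth))
        fp = sum(p == c and t != c for p, t in zip(pred, truth))
        fn = sum(p != c and t == c for p, t in zip(pred, truth))
        f1s.append(2 * tp / (2 * tp + fp + fn))
    return sum(f1s) / len(f1s)


def best_symmetric_cutoff(scores, truth, grid):
    """Cutoff c from grid that maximizes macro-F1 when calling with (-c, +c)."""
    return max(grid, key=lambda c: macro_f1(call_cnvs(scores, -c, c), truth))
```

A cutoff that is too low turns noise into gains and losses, and one that is too high misses real events. Macro-F1 balances both errors across the three classes.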

This distinction is not merely an output formatting difference but reflects fundamental methodological approaches and underlying assumptions. The choice between these output types impacts everything from experimental design to biological interpretation, particularly in complex tumor ecosystems where genetic heterogeneity prevails. Understanding the input requirements that lead to these different outputs, and how to properly interpret them, forms a critical component of benchmarking single-cell CNV detection algorithms [2] [4].

Algorithm Classifications and Methodological Approaches

Conceptual Framework of CNV Detection Methods

Single-cell CNV detection methods can be broadly categorized based on their input data requirements and analytical approaches. Expression-based methods rely on the fundamental assumption that genes in amplified regions show elevated expression compared to diploid regions, while deleted regions show reduced expression. These methods require sophisticated normalization strategies against reference diploid cells to distinguish true CNVs from regulatory variation [3]. Allele-based methods incorporate single nucleotide polymorphism (SNP) information from sequencing reads, using allele-specific signals to infer copy number changes. A third category employs hybrid approaches that integrate both expression and allele frequency information for improved accuracy [3] [2].
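The core of the expression-based approach can be sketched in two steps. First, each gene of a cell is compared with the mean of reference diploid cells as a log2 ratio. Second, the ratios are smoothed along genomic order so that neighboring genes share a regional copy-number signal. This sketch assumes genes are already sorted by chromosome position and that inputs are normalized expression values. The pseudocount and window size are hypothetical. Actual tools add their own filtering, centering and denoising steps.

```python
import math


def relative_expression(cell, reference_cells, pseudocount=1.0):
    """Per-gene log2 ratio of one cell's expression to the reference-cell mean."""
    n_ref = len(reference_cells)
    ratios = []
    for g, value in enumerate(cell):
        ref_mean = sum(ref[g] for ref in reference_cells) / n_ref
        ratios.append(math.log2((value + pseudocount) / (ref_mean + pseudocount)))
    return ratios


def smooth(values, window=5):
    """Centered moving average along genome order; the window shrinks at the ends."""
    half = window // 2
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        out.append(sum(chunk) / len(chunk))
    return out
```

Smoothing is what lets a regional gain stand out from gene-level regulatory variation. A single highly induced gene is diluted by its neighbors, while a block of co-elevated genes is not.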

The computational frameworks underlying these methods vary considerably. Several algorithms implement Hidden Markov Models (HMMs) to segment the genome into regions with distinct copy number states, while others apply segmentation approaches such as circular binary segmentation or dynamic programming to identify breakpoints. Additional strategies include mixture models for estimating CNV states and signal processing techniques that perform multiscale smoothing of input data [3] [6].
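To illustrate the segmentation idea, the sketch below implements plain binary segmentation, a simpler relative of circular binary segmentation (CBS). It recursively splits a genome-ordered signal at the point that most reduces within-segment squared error and stops when the reduction no longer exceeds a penalty. CBS additionally considers pairs of change points and assesses significance by permutation, so this is not CBS. The penalty and minimum segment size are hypothetical tuning values.

```python
def _sse(values):
    """Sum of squared deviations from the segment mean."""
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values)


def binary_segmentation(values, penalty=0.5, min_size=3, offset=0):
    """Return breakpoint indices (start of each new segment) in the original coordinates."""
    n = len(values)
    if n < 2 * min_size:
        return []
    total = _sse(values)
    best_gain, best_split = 0.0, None
    for k in range(min_size, n - min_size + 1):
        gain = total - _sse(values[:k]) - _sse(values[k:])
        if gain > best_gain:
            best_gain, best_split = gain, k
    if best_split is None or best_gain <= penalty:
        return []
    # Recurse on both halves, shifting indices back to the original coordinates.
    left = binary_segmentation(values[:best_split], penalty, min_size, offset)
    right = binary_segmentation(values[best_split:], penalty, min_size, offset + best_split)
    return left + [offset + best_split] + right
```

For example, a signal of six zeros, six ones and six zeros yields breakpoints `[6, 12]`. Each resulting segment can then be assigned a copy-number state from its mean.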

Comprehensive Method Categorization

Table 1: Classification of Single-Cell CNV Detection Methods

| Method | Primary Input Data | Computational Approach | Output Type | Cell Grouping |
|---|---|---|---|---|
| InferCNV | Gene expression | HMM | Both discrete & continuous | Subclones |
| CopyKAT | Gene expression | Segmentation | Both discrete & continuous | Per cell |
| SCEVAN | Gene expression | Segmentation | Both discrete & continuous | Subclones |
| CONICSmat | Gene expression | Mixture Model | Discrete | Per cell |
| CaSpER | Expression + Allelic information | HMM + Signal processing | Both discrete & continuous | Per cell |
| Numbat | Expression + Allelic information | HMM | Both discrete & continuous | Subclones |
| HoneyBADGER | Expression ± Allelic information | HMM + Bayesian | CNV probabilities | Per cell |
| sciCNV | Gene expression | Expression disparity scoring | Continuous scores | Per cell |

Methods also differ in whether they report results for individual cells or group cells into subclones with similar CNV profiles. This distinction matters for interpretation: subclonal groupings provide immediate biological context but may mask rare cell populations or continuous evolutionary processes. Among the six methods evaluated in the Nature Communications benchmark, three (CONICSmat, CopyKAT, CaSpER) report results per cell, while InferCNV, SCEVAN, and Numbat group cells into subclones that share the same CNV profile [3].
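The trade-off can be seen in the simplest possible grouping rule: cells whose discrete call vectors are identical form one group. This is a hypothetical illustration. Tools that report subclones use clustering or probabilistic models rather than exact matching. Even this toy version shows that a rare cell with a distinct profile ends up as a singleton, while coarser grouping would absorb it into a larger clone.

```python
def group_identical_profiles(cell_calls):
    """Group cells whose discrete CNV call vectors are identical.

    cell_calls: dict mapping cell_id -> sequence of per-region calls.
    Returns a list of (profile, [cell_ids]) pairs, largest group first.
    """
    groups = {}
    for cell_id, calls in cell_calls.items():
        groups.setdefault(tuple(calls), []).append(cell_id)
    return sorted(groups.items(), key=lambda item: len(item[1]), reverse=True)
```

Because a single noisy call is enough to split a clone under exact matching, practical subclone methods must tolerate some per-cell disagreement. The price is resolution for rare populations.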

Input Requirements and Data Processing

Fundamental Input Requirements

All scRNA-seq CNV callers require a set of reference diploid cells for normalization, which serves to distinguish technical artifacts from biological signals. For primary tissue samples, the common assumption is that measured tissues contain a mixture of tumor and normal cells, with the latter providing an internal control. However, for cancer cell lines or highly purified samples, researchers must identify matched external reference datasets from healthy cells of similar types [3]. Reference choice is one of several dataset-specific factors, alongside dataset size and the number and type of CNVs in the sample, that considerably influence performance [3] [2].

The sequencing technology represents another critical input consideration. Methods perform differently on droplet-based versus plate-based technologies, with allelic-information-based approaches generally requiring higher sequencing depths to reliably call SNPs from expression data [3]. The number of cells analyzed also affects performance, with some methods requiring minimum cell numbers for robust statistical analysis, while others are specifically designed for large-scale datasets [2].

Experimental Design Considerations

Table 2: Input Requirements Across Platform Types

| Requirement | Droplet-Based Platforms | Plate-Based Platforms |
|---|---|---|
| Minimum Cells | 100+ recommended | Can work with fewer cells |
| Sequencing Depth | Variable impact by method | Higher depth beneficial for allele-based methods |
| Reference Cells | Critical for normalization | Critical for normalization |
| SNP Information | Required for allele-based methods | Required for allele-based methods |
| Data Normalization | Essential for all methods | Essential for all methods |