Benchmarking TME Scoring Algorithms: A 2025 Guide to Performance, Validation, and Clinical Integration

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to benchmark Tumor Microenvironment (TME) scoring algorithms. It covers foundational concepts, current methodologies, and real-world applications, drawing on the latest 2025 clinical data. A strong focus is placed on troubleshooting common algorithm failures, optimizing for clinical reliability, and implementing rigorous validation protocols that compare AI performance against human expert pathologists. The guide synthesizes key performance metrics and future directions to ensure TME algorithms are robust, reproducible, and ready for impactful clinical decision-making.

The Tumor Microenvironment and the Critical Need for Algorithmic Scoring

Defining the Tumor Microenvironment (TME) and Its Clinical Significance

The tumor microenvironment (TME) is the cellular ecosystem in which cancer cells exist, comprising blood vessels, immune cells, fibroblasts, signaling molecules, and the extracellular matrix (ECM) [1]. Rather than being a passive bystander, the TME actively determines cancer behavior through dynamic interactions that influence all aspects of cancer biology, including growth, angiogenesis, metastasis, and therapeutic resistance [2] [1]. The conceptual understanding of the TME dates back to Stephen Paget's 1889 "seed and soil" theory, which proposed that metastatic success depends not only on tumor cell properties (the seed) but also on the host environment (the soil) [2]. This concept has evolved into a modern understanding of cancer as a complex, evolving ecosystem rather than merely a cell-autonomous disease [2].

The clinical significance of the TME lies in its fundamental roles in immune evasion, angiogenesis, metabolism, and therapy resistance [3]. These processes occur through diverse pathways that are increasingly being targeted therapeutically. The TME provides the immediate "soil" that sustains tumor cell survival and expansion, while simultaneously interacting with broader systemic factors in what is termed the tumor macroenvironment (TMaE) [3]. This local-systemic interplay creates both constraints and opportunities for cancer intervention, making TME characterization essential for advancing precision oncology.

TME Composition and Key Components

Cellular Constituents

The TME contains numerous cellular components that collectively create a permissive niche for tumor progression. Cancer-associated fibroblasts (CAFs) are among the most prevalent and diverse cell types in the TME, originating from various sources including resident fibroblasts, pericytes, mesenchymal stem cells, and cells undergoing transdifferentiation [2]. Once activated by signals such as TGF-β, PDGF, and IL-1 from tumor cells, CAFs express markers including platelet-derived growth factor receptor beta (PDGFRB), fibroblast activation protein (FAP), and α-smooth muscle actin (α-SMA) [2]. Their functions extend from ECM remodeling to immune suppression through the secretion of factors like CXCL12, which excludes CD8+ T cells from tumor nests [2].

Immune cells within the TME display considerable functional plasticity. Tumor-associated macrophages (TAMs) often polarize to an M2-like phenotype that promotes angiogenesis, matrix remodeling, and immune evasion [2]. Myeloid-derived suppressor cells (MDSCs) and regulatory T cells (Tregs) further contribute to immunosuppression, while varying proportions of cytotoxic T cells and natural killer (NK) cells determine the potential for effective anti-tumor immunity [4]. Endothelial cells and pericytes are essential for tumor vasculature development and stabilization, affecting both perfusion and metastatic dissemination [2]. The functional diversity of these cellular components creates spatial heterogeneity within tumors, with hypoxic niches overlapping with dense ECM and immunosuppressive zones, while perivascular regions may harbor infiltrating immune cells and cancer stem cells [2].

Non-cellular Components

The extracellular matrix (ECM) provides structural support and modulates cellular behavior through adhesion, polarity, and receptor-mediated signaling [2]. Composed of laminins, collagens, fibronectin, and hyaluronan, the ECM undergoes continuous remodeling during tumor progression through enzymes including matrix metalloproteinases (MMPs) and lysyl oxidase (LOX) [2]. These alterations increase matrix stiffness, create physical barriers to drug penetration, and release soluble factors that guide cell migration and angiogenesis.

A complex network of soluble factors including cytokines, chemokines, and growth factors (e.g., TGF-β, VEGF, interleukins, and CXCL12) forms core signaling axes in the TME [2]. These mediators maintain inflammatory states and inhibit productive immune surveillance. For example, CAF-secreted CXCL12 establishes paracrine loops with cancer cell CXCR4 that promote immune cell exclusion and metastatic spread [2]. Additionally, exosomes transfer bioactive molecules between cells, mediating therapy resistance and regulating intercellular communication [2].

Table 1: Key Components of the Tumor Microenvironment

| Component Type | Key Elements | Primary Functions |
|---|---|---|
| Cellular | Cancer-associated fibroblasts (CAFs), Tumor-associated macrophages (TAMs), Myeloid-derived suppressor cells (MDSCs), Regulatory T cells (Tregs), Endothelial cells, Pericytes | ECM remodeling, immune suppression, angiogenesis, metabolic reprogramming |
| Non-cellular | Collagens, fibronectin, laminins, hyaluronan, matrix metalloproteinases (MMPs) | Structural support, mechanotransduction, drug penetration barrier, migration pathways |
| Soluble Factors | TGF-β, VEGF, CXCL12, interleukins, growth factors | Immune cell recruitment/exclusion, angiogenesis induction, inflammation maintenance |
| Vesicles | Exosomes, extracellular vesicles | Transfer of resistance traits, intercellular communication, signaling regulation |

Computational Frameworks for TME Analysis

TME Scoring Algorithms and Comparison

The heterogeneity of the TME has prompted the development of computational frameworks for systematic characterization. These tools aim to quantify TME features and predict therapeutic responses, particularly to immunotherapy. Below is a comparative analysis of representative approaches:

Table 2: Comparison of TME Scoring Algorithms and Their Performance

| Algorithm/Model | Key Components | Cancer Types Validated | Performance Metrics | Clinical Utility |
|---|---|---|---|---|
| TMEtyper [5] | Pan-cancer TME signature integrating cellular composition, pathway activities, and intercellular communication networks | Pan-cancer (11 immunotherapy cohorts) | Defined 7 TME subtypes; Lymphocyte-Rich Hot subtype associated with superior outcomes | Predicts immunotherapy response; identifies causal regulators via structural causal modeling |
| ARGS Model [6] | 12 angiogenesis-related gene signatures; integrated machine learning system | Bladder cancer (BLCA) | Stratifies patients into high/low-risk groups; significant TME remodeling in high-risk group | Assesses angiogenic activity; predicts chemotherapy sensitivity; identifies MYH11 as post-treatment biomarker |
| TIIC Signature Score [4] | 137 TIIC-related genes refined to a 5-gene key signature; multiple machine learning techniques | Colorectal cancer (CRC); also validated across solid tumors | Outperformed 22 existing prognostic models; correlated with metabolic characteristics and chromosomal instability | Predicts immunotherapy efficacy; correlates with immune infiltration patterns |

These computational approaches demonstrate the evolving sophistication in TME characterization, moving beyond simple cell type enumeration to integrated network-based analyses. The TMEtyper framework employs consensus clustering with topological feature extraction to define seven distinct TME subtypes with prognostic implications [5]. Its analytical pipeline combines ensemble machine learning with a convolutional neural network for robust subtype classification and uses structural causal modeling to reconstruct underlying regulatory networks [5]. Similarly, the TIIC signature score leverages multiple machine learning techniques—including Random Survival Forest (RSF), LASSO regression, and Cox proportional hazards regression—to refine prognostic gene selection from single-cell RNA sequencing data [4].

Methodological Workflows for TME Analysis

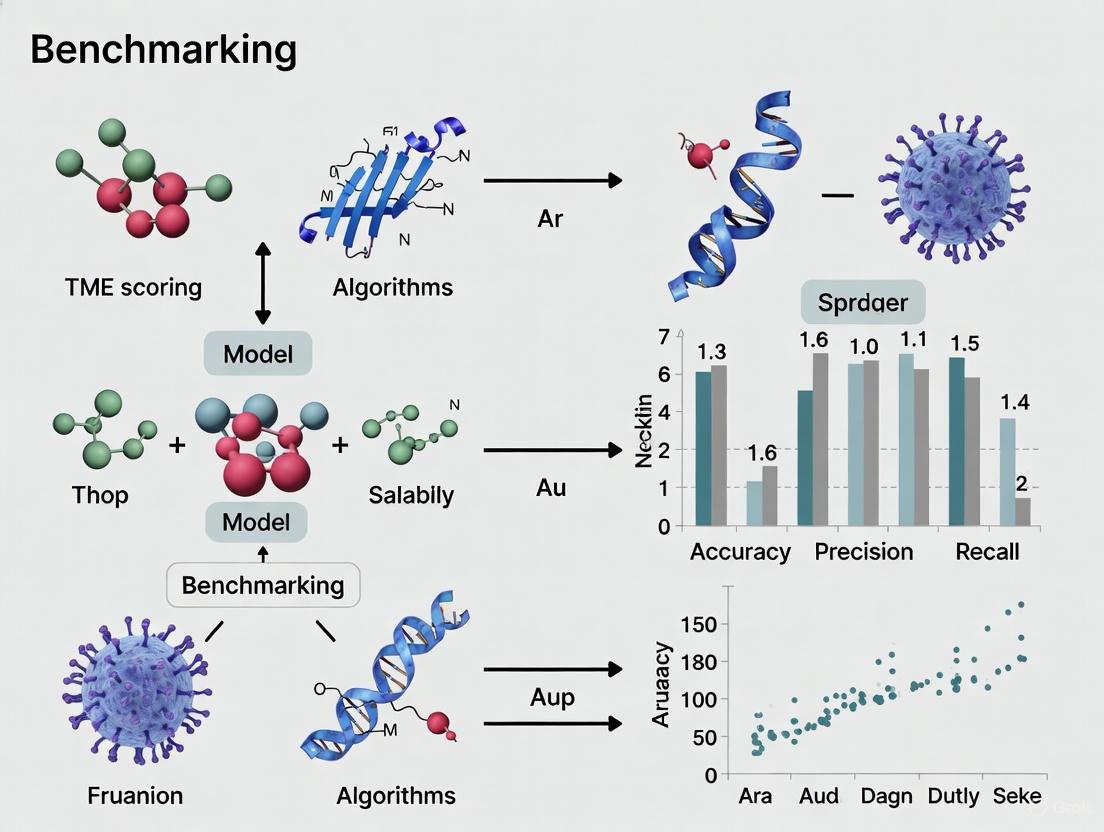

The experimental and computational workflows for TME characterization typically follow a multi-stage process that integrates diverse data types and analytical techniques.

This workflow begins with data acquisition from multiple sources, including single-cell RNA sequencing (scRNA-seq), bulk transcriptomics, and clinical annotations [4]. The preprocessing and quality control stage involves normalization, batch effect correction, and filtering using tools such as the Seurat package for scRNA-seq data [4]. Feature extraction encompasses differential expression analysis, pathway enrichment (GO, KEGG, GSVA), and cell type deconvolution [6] [4]. Model training employs various machine learning approaches including LASSO regression, random survival forests, and neural networks to build predictive signatures [5] [6]. Finally, rigorous validation across independent cohorts precedes clinical application for prognosis and treatment response prediction [5] [4].

Key Signaling Pathways in TME Biology

The functional properties of the TME are governed by intricate signaling networks that mediate communication between cellular components. The core pathways involved in TME regulation are described below.

The CXCL12-CXCR4 axis represents a crucial signaling mechanism where CAF-secreted CXCL12 creates chemokine gradients that physically exclude CD8+ T cells from tumor nests while promoting metastatic spread [2] [1]. This pathway is clinically targeted by agents such as NOX-A12, which disrupts CXCL12 signaling to facilitate immune cell infiltration into tumors [1]. The TGF-β pathway serves pleiotropic functions, inducing epithelial-mesenchymal transition (EMT), stimulating CAF activation, and promoting immune suppression through multiple mechanisms including Treg induction and CD8+ T cell inhibition [2].

VEGF-mediated angiogenesis drives the development of abnormal tumor vasculature characterized by leakiness and poor perfusion, which in turn exacerbates hypoxia and metastatic potential [2] [6]. Anti-angiogenic therapies targeting VEGF signaling have shown transient benefits, with more durable responses observed when combined with immune checkpoint inhibitors [2]. Immune checkpoint molecules including PD-L1 engage with PD-1 on T cells to attenuate anti-tumor immunity, with PD-L1 expression levels serving as predictive biomarkers for immune checkpoint inhibitor response in cancers such as non-small cell lung cancer (NSCLC) [7]. Hypoxia-inducible factors (HIFs) activate transcriptional programs that promote glycolytic metabolism, angiogenesis, and stemness, further adapting both tumor and stromal cells to thrive in nutrient-deprived conditions [2].

Experimental Protocols for TME Characterization

scRNA-seq Data Processing and TIIC Signature Development

The development of tumor-infiltrating immune cell (TIIC) signatures exemplifies a comprehensive methodology for TME characterization [4]. The protocol involves:

Data Acquisition and Quality Control: Single-cell RNA sequencing data from CRC tumor specimens (e.g., GSE166555 from the GEO database) are processed using the Seurat package. Quality thresholds are applied: mitochondrial content <10%, UMI counts between 200 and 20,000, and gene counts between 200 and 5,000 [4].
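These thresholds can be expressed as a simple per-cell mask. The sketch below is a minimal Python/NumPy illustration, not the Seurat implementation. It assumes a cells × genes matrix of raw UMI counts, human mitochondrial genes prefixed "MT-", and inclusive bounds on the UMI and gene ranges (the source does not say whether the limits are inclusive). The function name `qc_filter` is hypothetical.

```python
import numpy as np


def qc_filter(counts, gene_names, max_mito_pct=10.0,
              umi_range=(200, 20_000), gene_range=(200, 5_000)):
    """Return a boolean mask of cells that pass the QC thresholds.

    counts: cells x genes matrix of raw UMI counts.
    gene_names: gene symbols; mitochondrial genes are assumed to start with "MT-".
    Range bounds are treated as inclusive (an assumption).
    """
    counts = np.asarray(counts)
    mito = np.array([g.upper().startswith("MT-") for g in gene_names])
    umis = counts.sum(axis=1)                 # total UMIs per cell
    n_genes = (counts > 0).sum(axis=1)        # detected genes per cell
    # Percentage of each cell's UMIs from mitochondrial genes (0 for empty cells)
    mito_pct = np.divide(100.0 * counts[:, mito].sum(axis=1), umis,
                         out=np.zeros(len(umis)), where=umis > 0)
    return ((mito_pct < max_mito_pct)
            & (umis >= umi_range[0]) & (umis <= umi_range[1])
            & (n_genes >= gene_range[0]) & (n_genes <= gene_range[1]))
```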

Normalization and Batch Correction: After normalization, the top 2,000 highly variable genes are identified. The ScaleData function then scales the data while regressing out cell cycle effects (S.Score, G2M.Score), and the harmony package corrects batch effects across specimens [4].
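A rough NumPy analogue of the variable-gene and scaling steps is sketched below, assuming log-normalized cells × genes input and precomputed S and G2M scores. Gene selection here ranks raw variance, which is a simplification: Seurat's default `vst` method ranks genes by standardized variance after fitting a mean-variance trend. Seurat's `ScaleData` also clips scaled values (at 10 by default), which this sketch omits, and harmony batch correction is not shown. Both function names are illustrative.

```python
import numpy as np


def top_variable_genes(log_expr, n_top=2000):
    """Column indices of the n_top genes with the highest variance across cells.
    (Plain variance ranking; a simplification of Seurat's 'vst' selection.)"""
    var = log_expr.var(axis=0)
    return np.argsort(var)[::-1][:n_top]


def scale_regress(log_expr, covariates):
    """Regress covariates (e.g. S and G2M scores) out of every gene by ordinary
    least squares, then z-score the residuals gene by gene."""
    X = np.column_stack([np.ones(len(covariates)), covariates])  # intercept + covariates
    beta, *_ = np.linalg.lstsq(X, log_expr, rcond=None)
    resid = log_expr - X @ beta
    sd = resid.std(axis=0)
    sd[sd == 0] = 1.0  # leave constant genes at zero instead of dividing by zero
    return (resid - resid.mean(axis=0)) / sd
```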

Cell Type Annotation: Canonical markers define major cell populations: EPCAM, KRT18, KRT19 for epithelial cells; DCN, THY1, COL1A1 for fibroblasts; PECAM1, CLDN5 for endothelial cells; CD3D, CD3E for T cells; NKG7, GNLY for NK cells; CD79A for B cells; LYZ, CD68 for myeloid cells; and KIT for mast cells [4].
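Marker-based annotation is usually done by inspecting marker expression cluster by cluster. The sketch below automates that idea under a simple assumption: each cluster receives the cell type whose canonical markers (taken from the list above) have the highest mean expression. In practice, shared markers (for example, NKG7 and GNLY are also expressed by cytotoxic T cells) call for manual review. `annotate_clusters` is a hypothetical helper.

```python
import numpy as np

# Canonical markers listed in the annotation step above
MARKERS = {
    "Epithelial": ["EPCAM", "KRT18", "KRT19"],
    "Fibroblast": ["DCN", "THY1", "COL1A1"],
    "Endothelial": ["PECAM1", "CLDN5"],
    "T cell": ["CD3D", "CD3E"],
    "NK cell": ["NKG7", "GNLY"],
    "B cell": ["CD79A"],
    "Myeloid": ["LYZ", "CD68"],
    "Mast cell": ["KIT"],
}


def annotate_clusters(cluster_means, gene_names, markers=MARKERS):
    """Label each cluster with the cell type whose markers have the highest
    mean expression. cluster_means: clusters x genes average expression."""
    cluster_means = np.asarray(cluster_means, dtype=float)
    idx = {g: i for i, g in enumerate(gene_names)}
    types, scores = [], []
    for cell_type, genes in markers.items():
        cols = [idx[g] for g in genes if g in idx]
        if cols:  # skip cell types with no markers present in the matrix
            types.append(cell_type)
            scores.append(cluster_means[:, cols].mean(axis=1))
    best = np.argmax(np.column_stack(scores), axis=1)
    return [types[i] for i in best]
```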

Differential Expression Analysis: The FindAllMarkers function identifies differentially expressed genes between immune cells and CRC cells using thresholds of p-value <0.05, |log2FC| >0.25, and expression ratio >0.1 [4].
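These thresholds can be applied to a table of per-gene test results as shown below. The sketch assumes the statistics have already been computed (for example by `FindAllMarkers`) and interprets "expression ratio > 0.1" as a minimum detection fraction in either group, analogous to Seurat's `min.pct`; that reading is an assumption. `DEResult` and `filter_markers` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class DEResult:
    gene: str
    p_value: float
    log2fc: float
    pct_in_group: float  # fraction of cells in the tested group expressing the gene
    pct_in_rest: float   # fraction of cells in the comparison group expressing it


def filter_markers(results, p_max=0.05, min_abs_log2fc=0.25, min_pct=0.1):
    """Keep genes with p < p_max, |log2FC| > min_abs_log2fc, and detection
    above min_pct in at least one of the two groups (assumed interpretation)."""
    return [r.gene for r in results
            if r.p_value < p_max
            and abs(r.log2fc) > min_abs_log2fc
            and max(r.pct_in_group, r.pct_in_rest) > min_pct]
```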

Machine Learning-Based Signature Refinement: Multiple algorithms including Random Survival Forest (RSF), LASSO regression, and Cox proportional hazards regression refine TIIC-related genes from initial candidates down to a focused prognostic signature [4].
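The Cox step typically starts with univariate screening of each candidate gene. Below is a minimal pure-Python sketch of a single-covariate Cox proportional hazards fit: Newton-Raphson on the Breslow partial likelihood, reporting the hazard ratio and a Wald p-value. It is illustrative only. It diverges when a covariate perfectly separates the order of events, offers no ties handling beyond the Breslow approximation, and is not a substitute for R's `survival::coxph`. Random Survival Forest is not shown. The function name is hypothetical.

```python
import math


def univariate_cox(x, time, event, n_iter=25, tol=1e-9):
    """Fit h(t | x) = h0(t) * exp(beta * x) for one covariate.

    Uses Newton-Raphson on the Breslow partial likelihood and returns
    (beta, hazard_ratio, two-sided Wald p-value)."""
    beta, info = 0.0, 0.0
    for _ in range(n_iter):
        score, info = 0.0, 0.0
        for i, (ti, di) in enumerate(zip(time, event)):
            if not di:
                continue  # censored subjects contribute only to risk sets
            # Risk set: everyone still under observation at time ti
            w = [(xj, math.exp(beta * xj)) for xj, tj in zip(x, time) if tj >= ti]
            s0 = sum(e for _, e in w)
            s1 = sum(xj * e for xj, e in w)
            s2 = sum(xj * xj * e for xj, e in w)
            score += x[i] - s1 / s0               # gradient of log partial likelihood
            info += s2 / s0 - (s1 / s0) ** 2      # observed information
        step = score / info
        beta += step
        if abs(step) < tol:
            break
    z = beta * math.sqrt(info)                    # Wald z, with SE = 1 / sqrt(info)
    p = math.erfc(abs(z) / math.sqrt(2))
    return beta, math.exp(beta), p
```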

Angiogenesis-Related Gene Signature Construction

The methodology for developing angiogenesis-related gene signatures (ARGS) employs an integrated machine learning framework [6]:

Differential Expression Analysis: Compare 19 normal and 412 tumor tissues in TCGA-BLCA to identify angiogenesis-related genes with |log2FC| >1 and FDR <0.05.
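Assuming per-gene log2 fold changes and raw p-values are already available, this selection rule reduces to a multiple-testing adjustment followed by two thresholds. The source states an FDR cutoff but not the adjustment procedure; the Benjamini-Hochberg method is assumed here. Function names are illustrative.

```python
def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values (FDR), returned in input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotone
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def select_degs(genes, log2fc, pvals, min_abs_lfc=1.0, max_fdr=0.05):
    """Genes with |log2FC| > min_abs_lfc and BH-adjusted FDR < max_fdr."""
    fdr = benjamini_hochberg(pvals)
    return [g for g, lfc, q in zip(genes, log2fc, fdr)
            if abs(lfc) > min_abs_lfc and q < max_fdr]
```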

Functional Enrichment: Perform Gene Ontology (GO) and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway analysis using the clusterProfiler R package to elucidate biological functions.

Feature Selection: Apply univariate Cox (Unicox) and multivariate Cox (Multicox) regression, followed by LASSO regression (1,000 iterations) with the glmnet R package, to select prognostic genes while limiting overfitting.
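The sketch below illustrates the LASSO step with repeated subsampling, counting how often each gene keeps a non-zero coefficient. Two assumptions apply. First, it uses a squared-error loss with coordinate descent for brevity, whereas glmnet's survival models (`family = "cox"`) penalize the Cox partial likelihood. Second, it reads "1,000 iterations" as 1,000 subsampled refits. The penalty `lam`, the subsample fraction, and the function names are hypothetical.

```python
import numpy as np


def soft_threshold(z, lam):
    return np.sign(z) * max(abs(z) - lam, 0.0)


def lasso_cd(X, y, lam, n_sweeps=200):
    """Coordinate-descent LASSO minimizing (1/2n)||y - Xb||^2 + lam * ||b||_1.
    X and y are assumed to be centered (no intercept is fitted)."""
    n, p = X.shape
    b = np.zeros(p)
    col_sq = (X ** 2).sum(axis=0) / n
    r = y - X @ b                                  # current residual
    for _ in range(n_sweeps):
        for j in range(p):
            if col_sq[j] == 0:
                continue
            r += X[:, j] * b[j]                    # remove feature j's contribution
            b[j] = soft_threshold(X[:, j] @ r / n, lam) / col_sq[j]
            r -= X[:, j] * b[j]                    # add the updated contribution back
    return b


def selection_frequency(X, y, lam, n_repeats=1000, frac=0.8, seed=0):
    """Refit the LASSO on random subsamples and return, per feature, the
    fraction of refits in which its coefficient is non-zero."""
    rng = np.random.default_rng(seed)
    n = len(y)
    counts = np.zeros(X.shape[1])
    for _ in range(n_repeats):
        idx = rng.choice(n, size=int(frac * n), replace=False)
        Xs = X[idx] - X[idx].mean(axis=0)          # center within the subsample
        ys = y[idx] - y[idx].mean()
        counts += lasso_cd(Xs, ys, lam) != 0
    return counts / n_repeats
```

Genes selected in a high fraction of refits are the stable candidates; the selection cutoff itself is a design choice the source does not specify.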

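ARGS Score Calculation: Compute each patient's score as $\text{ARGS score} = \sum_{n} \text{Expr}_n \times \text{Coef}_n$, where $\text{Expr}_n$ is the expression level of gene $n$ and $\text{Coef}_n$ is its regression coefficient. Define risk groups using the median score as the cutoff.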
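Once the coefficients are fixed, scoring and dichotomization take only a few lines. The sketch assumes expression is supplied as one dictionary per patient; assigning scores exactly equal to the median to the low-risk group is an assumption the source does not address. Function names are illustrative.

```python
from statistics import median


def risk_scores(expr, coefs):
    """Linear risk score per patient: sum of expression x coefficient over the
    signature genes. expr: list of {gene: expression}; coefs: {gene: coefficient}."""
    return [sum(sample[g] * c for g, c in coefs.items()) for sample in expr]


def median_split(scores):
    """'high' if a score is above the cohort median, otherwise 'low'."""
    cut = median(scores)
    return ["high" if s > cut else "low" for s in scores]
```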

Validation: Assess prognostic capacity with receiver operating characteristic (ROC) curve analysis, and examine risk-group separation with principal component analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE).
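For a binary endpoint (for example, death within a fixed follow-up window, which is an assumption here), the ROC AUC equals the probability that a randomly chosen event case scores higher than a randomly chosen non-event case. The sketch computes it directly from that definition. Survival studies often use time-dependent ROC methods instead, which this does not implement.

```python
def roc_auc(scores, labels):
    """ROC AUC via the Mann-Whitney statistic: the probability that a random
    positive (label 1) outscores a random negative (label 0); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```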

Model Comparison: Evaluate performance against 113 algorithms from 18 machine learning methods including glmBoost, random forests, gradient boosting machines, survival SVMs, and XGBoost.
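The source does not name the metric used to rank the competing algorithms. Harrell's concordance index (C-index) is a common choice for survival models, so the sketch below assumes it. Pairs with tied survival times are treated as non-comparable, which is one of several conventions.

```python
def harrell_c_index(risk, time, event):
    """Fraction of comparable pairs in which the patient with the higher risk
    score has the shorter survival. A pair (i, j) is comparable when i has an
    observed event and time[i] < time[j]; tied risk scores count one half."""
    concordant, comparable = 0.0, 0
    n = len(risk)
    for i in range(n):
        if not event[i]:
            continue
        for j in range(n):
            if time[i] < time[j]:          # i had the event before j was last seen
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1
                elif risk[i] == risk[j]:
                    concordant += 0.5
    return concordant / comparable
```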

Table 3: Essential Research Reagents and Computational Tools for TME Analysis

| Category | Specific Tools/Reagents | Primary Application | Key Features |
|---|---|---|---|
| Computational Packages | TMEtyper R package [5] | TME subtyping and classification | Integrates 231 TME signatures; employs consensus clustering and neural networks |
| | Seurat [4] | scRNA-seq data analysis | Quality control, normalization, cell type annotation, differential expression |
| | clusterProfiler [6] | Functional enrichment analysis | GO, KEGG, and GSEA analysis for pathway interpretation |
| | glmnet [6] [4] | Feature selection | LASSO and Cox regression for prognostic gene selection |
| Databases | TCGA [6] [4] | Genomic and clinical data | Multi-omics data from >20,000 primary cancer and matched normal samples across 33 cancer types |
| | GEO [6] [4] | Transcriptomic data | Public repository for microarray and sequencing data |
| | Molecular Signatures Database [6] | Gene sets | Curated collections of angiogenesis and immune-related genes |
| | CistromeDB [6] | Transcription factor data | Genome-wide mapping of regulatory elements |
| Experimental Reagents | DMEM medium with FBS [4] | Cell culture | Maintenance of CRC cell lines (LoVo, SW480) and normal epithelial cells (NCM460) |
| | TRIzol reagent [4] | RNA extraction | Isolation of high-quality total RNA for transcriptomic analysis |
| | SYBR Premix Ex Taq [4] | qRT-PCR | Quantitative assessment of gene expression with high sensitivity |
| Therapeutic Agents | NOX-A12 [1] | CXCL12 signaling inhibition | Disrupts chemokine gradients to enhance immune cell infiltration |
| | Immune checkpoint inhibitors [7] | PD-1/PD-L1 axis blockade | Reverses T-cell exhaustion; requires PD-L1 scoring for patient selection |

Clinical Translation and Therapeutic Implications

TME characterization has profound clinical implications, enabling more precise prognostication, treatment stratification, and therapeutic development. The established correlation between specific TME subtypes and clinical outcomes underscores their translational relevance. For instance, the Lymphocyte-Rich Hot subtype identified by TMEtyper consistently associates with superior outcomes following immunotherapy [5]. Similarly, high TIIC signature scores in colorectal cancer correlate with improved survival and enhanced response to immune checkpoint blockade [4].

Therapeutic strategies targeting the TME encompass several approaches:

Immune Checkpoint Blockade: Anti-PD-1/PD-L1 antibodies reverse T-cell exhaustion, with treatment selection guided by PD-L1 tumor proportion scoring (TPS) in cancers like NSCLC [7]. Pathologist evaluation remains the gold standard, though AI algorithms show emerging potential for scoring consistency [7].

Stromal-Targeting Agents: CXCL12 inhibition with NOX-A12 disrupts chemokine gradients to facilitate immune cell infiltration, while CAF-directed approaches aim to counteract ECM remodeling and immunosuppressive signaling [2] [1].

Anti-angiogenic Therapies: VEGF pathway inhibitors normalize tumor vasculature and modulate immune cell trafficking, with enhanced efficacy when combined with immunotherapy in cancers such as renal cell carcinoma and hepatocellular carcinoma [2].

Emerging Modalities: Artificial intelligence-driven drug design (AIDD) can help identify candidate therapeutic compounds; for example, Saikosaponin D (SSD) was identified as a potential anti-angiogenic agent for bladder cancer treatment [6].

The integration of TME classification into clinical trial designs and routine practice holds promise for advancing personalized oncology. However, challenges remain in standardizing analytical frameworks, validating biomarkers across diverse populations, and effectively targeting the dynamic interplay between cancer cells and their microenvironmental niche.

Key TME Components and Biomarkers for Algorithmic Assessment

The tumor microenvironment (TME) is a complex ecosystem comprising immune cells, stromal cells, blood vessels, and extracellular matrix that surrounds tumor cells. This dynamic interface plays a critical role in cancer progression, immune evasion, and therapeutic response [8]. TME biomarker research systematically investigates cellular, molecular, spatial, and functional features within this non-tumor cellular niche to identify measurable indicators that can predict treatment outcomes and guide therapeutic strategies [9].

The limitations of traditional single-analyte biomarkers such as PD-L1 expression, microsatellite instability (MSI), or tumor mutational burden (TMB) have driven the development of sophisticated algorithmic approaches that capture the TME's complexity [10] [11]. These multi-dimensional biomarkers leverage transcriptomic data, machine learning algorithms, and spatial profiling to classify TME phenotypes with greater predictive power for response to immunotherapies and anti-angiogenic agents [10] [11]. The integration of high-throughput data generation with advanced computational methods represents a paradigm shift in precision oncology, enabling more accurate patient stratification and treatment selection [10].

Key TME Components for Algorithmic Assessment

Algorithmic assessment of the TME focuses on two dominant biological axes: immune infiltration/activation and pathological angiogenesis. These core components form the foundation for classifying TME phenotypes and predicting therapeutic vulnerabilities [10] [11].

Immune Biology Axis

The immune component of the TME encompasses multiple cell types and signaling pathways that collectively determine anti-tumor immune activity:

- Cytotoxic Immune Cells: CD8+ T cells represent the primary effector population responsible for direct tumor cell killing. Their presence, particularly in the tumor core, correlates with improved responses to immune checkpoint inhibitors (ICIs) [12].

- Immune Checkpoint Molecules: Proteins such as PD-1, PD-L1, CTLA-4, LAG-3, and TIM-3 function as regulatory mechanisms that can be co-opted by tumors to suppress anti-tumor immunity [12] [13].

- Macrophage Polarization States: M1 macrophages typically exhibit anti-tumor functions and secrete pro-inflammatory cytokines, while M2 macrophages promote immunosuppression, angiogenesis, and tissue remodeling [10] [12].

- Myeloid-Derived Suppressor Cells (MDSCs): These immature myeloid cells create a strongly immunosuppressive milieu through multiple mechanisms including nutrient depletion, T-cell inhibition, and recruitment of regulatory T cells [10].

- Cytokine and Chemokine Signaling: IFNγ plays a particularly crucial role in coordinating anti-tumor immune responses by enhancing antigen presentation, activating effector cells, and upregulating PD-L1 expression [12].

Angiogenesis Biology Axis

The vascular component of the TME consists of abnormal blood vessels that support tumor growth and create a hostile microenvironment:

- Pathological Vasculature: Tumor blood vessels are typically disorganized, leaky, and inefficient, contributing to hypoxia, acidosis, and impaired drug delivery [10].

- Pro-Angiogenic Signaling: VEGF signaling represents the dominant pathway driving pathological angiogenesis, with multiple family members (VEGF-A, -B, -C, -D) and receptors (VEGFR1-3) contributing to vascular abnormalities [10].

- Hypoxia Response Pathways: Regions of low oxygen tension activate HIF-1α signaling, which further stimulates VEGF production and creates a feed-forward loop promoting angiogenesis [10].

Stromal and Metabolic Components

Beyond immune and vascular elements, additional TME features contribute to tumor progression and therapy resistance:

- Cancer-Associated Fibroblasts (CAFs): These activated fibroblasts produce dense fibrotic stroma that physically impedes drug penetration and creates immunosuppressive niches [8].

- Metabolic Alterations: The TME exhibits distinct metabolic profiles including areas of hypoxia, nutrient deprivation, and acidic pH that influence immune cell function and therapeutic efficacy [9].

- Spatial Organization: The topographic distribution of cells within the TME—whether immune cells are excluded, infiltrated, or clustered in tertiary lymphoid structures—provides critical prognostic information beyond mere cell abundance [9].

Major Algorithmic Approaches for TME Assessment

Multiple computational frameworks have been developed to quantify and classify TME states using transcriptomic, proteomic, and imaging data. These approaches range from gene signature-based methods to complex machine learning models that integrate multiple data modalities.

Table 4: Comparison of Major TME Scoring Algorithms

| Algorithm/Biomarker | Core Methodology | TME Components Assessed | Cancer Types Validated | Therapeutic Predictions |
|---|---|---|---|---|
| Xerna TME Panel [10] [11] | Artificial neural network (ANN) with 124-gene input | Angiogenesis, immune activity across 4 subtypes | Pan-tumor (gastric, ovarian, melanoma, colorectal) | Anti-angiogenics, immunotherapies |
| TIDE (Tumor Immune Dysfunction and Exclusion) [13] | Gene-set-like competitive method | T-cell dysfunction, exclusion, myeloid-derived suppressor cells | Multiple cancer types | Anti-PD-1, anti-CTLA-4 response |
| CYT Score [13] | Self-contained average expression of GZMA and PRF1 | Cytotoxic T-cell activity | Multiple cancer types | Anti-CTLA-4, anti-PD-1 response |
| Immunophenoscore (IPS) [13] | Self-contained weighted sum of 162 genes | Multiple immune cell types, immunomodulators | Multiple cancer types | Anti-CTLA-4, anti-PD-1 response |
| IFN-γ Score [13] | Self-contained average of 6 genes | IFN-γ signaling pathway activity | Multiple cancer types | Anti-PD-1 response |
| TGFβ/IFNγ-based Classifier [12] | Unsupervised clustering of immune cell subsets | TGFβ1 and IFNγ-related immune cell populations | Soft tissue sarcomas, rhabdomyosarcoma (RMS) | Immune checkpoint blockade |
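The two simplest self-contained scores in this table can be computed in a few lines. The sketch assumes CYT follows its commonly used definition, the geometric mean of GZMA and PRF1 in TPM with a 0.01 offset, and that the IFN-γ score is a plain average of six genes. The gene list shown (IFNG, STAT1, IDO1, CXCL9, CXCL10, HLA-DRA) is an assumption to verify against the definition used in [13].

```python
import math

# Assumed six-gene IFN-γ set; check against the definition used in the benchmark
IFNG_GENES = ["IFNG", "STAT1", "IDO1", "CXCL9", "CXCL10", "HLA-DRA"]


def cyt_score(tpm):
    """Cytolytic activity: geometric mean of GZMA and PRF1 (TPM, 0.01 offset)."""
    return math.sqrt((tpm["GZMA"] + 0.01) * (tpm["PRF1"] + 0.01))


def ifng_score(log_expr, genes=IFNG_GENES):
    """Self-contained score: average expression of the signature genes only,
    with no reference to the rest of the transcriptome."""
    return sum(log_expr[g] for g in genes) / len(genes)
```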

The Xerna TME Panel: A Machine Learning Framework

The Xerna TME Panel employs a sophisticated artificial neural network (ANN) architecture to classify tumors into four distinct TME subtypes based on the relative dominance of immune and angiogenic signatures [10] [11]:

- Angiogenic (A) Subtype: Characterized by a dense, pathological vasculature with minimal immune cell infiltration. These tumors are theoretically most susceptible to anti-angiogenic therapies [10] [11].

- Immune Active (IA) Subtype: Features robust immune infiltration with activated lymphocytes and M1-polarized macrophages. This phenotype predicts response to immunotherapies [10] [11].

- Immune Suppressed (IS) Subtype: Contains immunosuppressive cell populations (M2 macrophages, MDSCs, Tregs) combined with prominent angiogenic features. This subtype may benefit from combination approaches targeting both pathways [10] [11].

- Immune Desert (ID) Subtype: Lacks significant gene expression related to either immune or angiogenic processes, presenting a microenvironment largely devoid of both vasculature and immune infiltration [10] [11].

Xerna TME Panel Neural Network Architecture

Transcriptomic Biomarkers in Benchmark Studies

Large-scale benchmarking efforts have systematically evaluated the performance of transcriptomic biomarkers for predicting response to immune checkpoint blockade. The ICB-Portal study curated 29 published datasets with matched transcriptome and clinical data from over 1,400 patients treated with ICBs, assessing 48 scoring systems derived from 39 transcriptomic biomarkers [13]. These biomarkers were categorized into:

- Gene-set-like methods (self-contained hypothesis): Rely on predefined lists of marker genes without reference to non-marker genes in the transcriptome (e.g., CYT score, IFN-γ score) [13].

- Gene-set-like methods (competitive hypothesis): Calculate scores based on ranks of marker genes compared to non-marker genes (e.g., ssGSEA-based methods) [13].

- Deconvolution-like methods: Infer cellular composition and interactions from whole transcriptome data [13].
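The difference between self-contained and competitive scoring can be illustrated with a simplified rank-based score: each signature gene is ranked against every gene in the sample, so adding a constant to all genes changes a self-contained average but leaves this score unchanged. This is not ssGSEA, which integrates a weighted running-sum statistic; it is a minimal sketch in the spirit of rank-based scores, and `rank_score` is a hypothetical name.

```python
def rank_score(sample_expr, gene_set):
    """Competitive score for one sample: mean within-sample rank of the
    signature genes, rescaled to [0, 1] against all genes. Ties share the
    average rank. sample_expr: {gene: expression} for the whole transcriptome."""
    ordered = sorted(sample_expr, key=sample_expr.get)
    ranks, i = {}, 0
    while i < len(ordered):
        j = i
        # Extend j over a run of tied expression values
        while j + 1 < len(ordered) and sample_expr[ordered[j + 1]] == sample_expr[ordered[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[ordered[k]] = (i + j) / 2 + 1   # average 1-based rank of the run
        i = j + 1
    members = [g for g in gene_set if g in sample_expr]
    mean_rank = sum(ranks[g] for g in members) / len(members)
    return (mean_rank - 1) / (len(sample_expr) - 1)
```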

This comprehensive benchmark revealed that most biomarkers showed poor stability and robustness across different datasets, with TIDE and CYT scores demonstrating competitive performance for ICB response prediction, while PASS-ON and EIGS_ssGSEA showed the strongest association with clinical outcomes [13].

Table 5: Performance Metrics of Selected TME Biomarkers from Independent Validations

| Biomarker | Accuracy | Sensitivity | Specificity | PPV | NPV | Validation Context |
|---|---|---|---|---|---|---|
| Xerna TME Panel [10] [11] | Superior to PD-L1 CPS | Superior to MSI-H | Superior to PD-L1 CPS | Superior to PD-L1 CPS | Superior to MSI-H | Gastric cancer immunotherapy cohort |
| PD-L1 CPS (>1) [10] | Benchmark | Benchmark | Benchmark | Benchmark | Benchmark | Gastric cancer immunotherapy cohort |
| MSI-H [10] | Benchmark | Benchmark | Benchmark | Benchmark | Benchmark | Gastric cancer immunotherapy cohort |
| TIDE [13] | Competitive | - | - | - | - | Pan-cancer ICB response prediction |
| CYT Score [13] | Competitive | - | - | - | - | Pan-cancer ICB response prediction |

Experimental Protocols for TME Biomarker Development

The development and validation of robust TME biomarkers requires standardized experimental workflows spanning data generation, algorithm training, and clinical validation.

Xerna TME Panel Development Protocol

The development of the Xerna TME Panel followed a rigorous methodology aligned with Good Machine Learning Practice guidelines [10] [11]:

Dataset Curation and Preprocessing:

- Training cohort consisted of 298 patients from the Asian Cancer Research Group (ACRG) gastric cancer dataset (GSE62254) [10] [11].

- Data from raw microarray expression (CEL) files were processed using the expresso function from the affy R package with robust multi-array average (RMA) background correction, quantile normalization, and median polish summarization [10] [11].

- Validation datasets included four independent cohorts representing different cancer types (gastric, ovarian, melanoma) and treatment modalities (anti-angiogenic agents, immunotherapies) [10] [11].
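Of the three RMA stages named above, quantile normalization is the easiest to show in isolation. The NumPy sketch below makes every array share the mean sorted distribution. Background correction and median-polish summarization are omitted, and tied values are not averaged, which is a simplification; in practice the affy package performs all three steps.

```python
import numpy as np


def quantile_normalize(X):
    """Quantile-normalize a probes x arrays matrix so that every array (column)
    has the same empirical distribution: the mean of the sorted columns."""
    X = np.asarray(X, dtype=float)
    order = np.argsort(X, axis=0)                  # row positions of each rank, per array
    reference = np.sort(X, axis=0).mean(axis=1)    # mean value at each rank
    out = np.empty_like(X)
    for j in range(X.shape[1]):
        out[order[:, j], j] = reference            # put the reference values back by rank
    return out
```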

Feature Set Optimization:

- A novel "feature transferability" metric was developed to quantify the consistency of each gene's expression across different platforms (microarray, RNA-seq) and tissue types [10] [11].

- The final feature set consisted of 124 genes roughly evenly split between angiogenic and immune biological axes, with a subset of genes not weighted in the model [10] [11].

Model Training and Architecture:

- An artificial neural network (ANN) of multilayer perceptron type with two neurons in the hidden layer was trained on the ACRG data [10] [11].

- Hyperparameters were tuned using repeated 10-fold cross-validation [10] [11].

- The model employs a hyperbolic tangent (tanh) activation function, $f_i(\mathbf{x}) = \tanh(\mathbf{w}_i \cdot \mathbf{x} + b_i)$, where $f_i$ is the output of the $i$-th hidden neuron, $\mathbf{w}_i \cdot \mathbf{x}$ is the weighted sum of its input connections, and $b_i$ is the intercept (bias) [10] [11].

- Training iterated until the loss failed to improve by at least 1e-4 for 10 consecutive iterations, with a maximum of 1000 epochs [10] [11].
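The described architecture can be sketched in NumPy as a single tanh hidden layer with two neurons. The source specifies the activation, hidden-layer size, stopping tolerance, patience, and epoch cap. Everything else here is an assumption: a softmax output over subtype classes, cross-entropy loss, full-batch gradient descent with a fixed learning rate, and the initialization scheme. This is not the Xerna implementation, and the function names are hypothetical.

```python
import numpy as np


def train_mlp(X, y, n_classes, hidden=2, lr=0.5, max_epochs=1000,
              tol=1e-4, n_no_change=10, seed=0):
    """Train a one-hidden-layer tanh MLP with a softmax output by full-batch
    gradient descent. Stops once the loss has failed to beat the best loss
    so far by at least `tol` for `n_no_change` consecutive epochs."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W1 = rng.normal(scale=1 / np.sqrt(d), size=(d, hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(scale=1 / np.sqrt(hidden), size=(hidden, n_classes))
    b2 = np.zeros(n_classes)
    Y = np.eye(n_classes)[y]                         # one-hot targets
    best, stale, epochs = np.inf, 0, 0
    for epochs in range(1, max_epochs + 1):
        H = np.tanh(X @ W1 + b1)                     # hidden outputs f_i(x) = tanh(w_i . x + b_i)
        logits = H @ W2 + b2
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        P = np.exp(logits)
        P /= P.sum(axis=1, keepdims=True)
        loss = -np.mean(np.log(P[np.arange(n), y] + 1e-12))
        stale = stale + 1 if loss > best - tol else 0
        best = min(best, loss)
        if stale >= n_no_change:
            break
        # Backpropagate the mean cross-entropy loss
        G2 = (P - Y) / n
        G1 = (G2 @ W2.T) * (1 - H ** 2)              # tanh'(z) = 1 - tanh(z)^2
        W2 -= lr * (H.T @ G2)
        b2 -= lr * G2.sum(axis=0)
        W1 -= lr * (X.T @ G1)
        b1 -= lr * G1.sum(axis=0)
    return (W1, b1, W2, b2), epochs


def predict(params, X):
    W1, b1, W2, b2 = params
    return np.argmax(np.tanh(X @ W1 + b1) @ W2 + b2, axis=1)
```

The stopping rule mirrors the `tol` and `n_iter_no_change` parameters of scikit-learn's `MLPClassifier`, which would be a practical alternative to a hand-written training loop.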

TME Biomarker Development Workflow

TGFβ/IFNγ-Based Immune Phenotyping Protocol

A distinct approach focused on soft tissue sarcomas employed TGFβ1 and IFNγ-related immune cell subsets to define TME phenotypes [12]:

Data Acquisition and Immune Deconvolution:

- Analyzed publicly available RNA sequencing data (GSE108022) from primary rhabdomyosarcoma samples [12].

- Utilized CIBERSORTx deconvolution algorithm to assess relative fractions of distinct immune cell subtypes [12].

- Performed Spearman correlation analysis between TGFB1/IFNG transcript levels and individual immune cell scores [12].
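Spearman correlation is the Pearson correlation of ranks, with tied values receiving their average rank. The pure-Python sketch below returns the coefficient only; significance testing (for example, a permutation test) is not shown. Typical inputs would be TGFB1 transcript levels and one CIBERSORTx immune cell fraction across the same samples.

```python
def average_ranks(values):
    """1-based ranks in which tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)
```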

Immune Cluster Identification:

- Selected significantly correlated immune cell subtypes (CD8+ T cells, naïve B cells, M1 & M0 macrophages, activated NK cells, resting mast cells, monocytes, eosinophils) for further analysis [12].

- Conducted unsupervised hierarchical cluster analysis to identify distinct immune clusters [12].

- Validated findings in the TCGA-SARC cohort using similar analytical approaches [12].
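The source does not specify the linkage or distance used for clustering. The sketch below assumes average linkage on Euclidean distances between samples' immune cell profiles and stops merging at a chosen number of clusters `k`. It is a naive O(n³) version meant for illustration; on real data, R's `hclust` or SciPy's `scipy.cluster.hierarchy` would be used. The function name is hypothetical.

```python
import numpy as np


def average_linkage_clusters(X, k):
    """Agglomerative clustering with average linkage on Euclidean distance,
    merging the closest pair of clusters until k remain. Returns one integer
    label per row of X."""
    X = np.asarray(X, dtype=float)
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    clusters = [[i] for i in range(len(X))]
    while len(clusters) > k:
        best, pair = np.inf, None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Average pairwise distance between members of the two clusters
                d = D[np.ix_(clusters[a], clusters[b])].mean()
                if d < best:
                    best, pair = d, (a, b)
        a, b = pair
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]                            # b > a, so index a stays valid
    labels = np.empty(len(X), dtype=int)
    for c, members in enumerate(clusters):
        labels[members] = c
    return labels
```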

Functional Characterization:

- Evaluated transcript levels of immune checkpoint and IFNγ-related genes across identified clusters [12].

- Applied a 25-gene signature including CD274, PDCD1, CTLA4, and various chemokines/cytokines to characterize immune phenotypes [12].

- Compared IFNγ immune signature scores between clusters with different cellular compositions [12].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 6: Key Research Reagent Solutions for TME Biomarker Studies

| Reagent/Platform | Function | Application Context |
|---|---|---|
| CIBERSORTx [12] | Digital cytometry for deconvoluting immune cell fractions from bulk RNA-seq data | Immune phenotyping in sarcomas, pan-cancer analyses |
| Single-sample GSEA (ssGSEA) [13] [9] | Competitive gene-set enrichment analysis for pathway activity quantification | Immune and stromal signature scoring, TME subtyping |
| Affy R Package with RMA [10] [11] | Microarray data preprocessing with background correction and normalization | Transcriptomic data standardization for model training |
| Multiplex Immunofluorescence [9] | Simultaneous detection of multiple protein markers in tissue sections | Spatial TME analysis, immune cell localization |
| NanoString GeoMx Digital Spatial Profiler [9] | Spatially resolved whole transcriptome analysis from tissue sections | Region-specific TME characterization, tumor-immune interface |
| TIDE Algorithm [13] | Computational framework modeling tumor immune evasion mechanisms | ICB response prediction in multiple cancer types |
| Artificial Neural Network Frameworks [10] [11] | Machine learning architecture for complex pattern recognition | TME phenotype classification, response prediction |

Comparative Performance Across Cancer Types

The clinical utility of TME biomarkers depends on their performance across diverse cancer types and therapeutic contexts. Validation studies have demonstrated variable predictive value depending on cancer histology and treatment modality.

Performance in Gastric Cancer

In gastric cancer cohorts, the Xerna TME Panel demonstrated superior performance compared to established biomarkers [10] [11]:

- Outperformed PD-L1 combined positive score (>1) in accuracy, specificity, and positive predictive value for predicting response to anti-PD-1/PD-L1 immunotherapies [10] [11].

- Surpassed microsatellite instability-high (MSI-H) status in sensitivity and negative predictive value [10] [11].

- Showed 1.6-to-7-fold enrichment of clinical benefit across multiple therapeutic hypotheses [10] [11].
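The metrics used in these comparisons follow directly from a 2×2 table of biomarker call versus observed response, and the sketch below computes them. The `enrichment` helper uses one possible definition of clinical-benefit enrichment: the response rate among biomarker-positive patients divided by the overall response rate. This definition is an assumption, because the exact one used in the cited studies is not given here.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    sensitivity: float
    specificity: float
    ppv: float
    npv: float
    accuracy: float


def diagnostic_metrics(predicted_positive, responded):
    """Compare a binary biomarker call with observed response.

    predicted_positive, responded: equal-length sequences of booleans.
    Undefined ratios (zero denominator) are returned as NaN.
    """
    pairs = list(zip(predicted_positive, responded))
    tp = sum(bool(p and r) for p, r in pairs)
    fp = sum(bool(p and not r) for p, r in pairs)
    fn = sum(bool((not p) and r) for p, r in pairs)
    tn = sum(bool((not p) and (not r)) for p, r in pairs)

    def ratio(num, den):
        return num / den if den else float("nan")

    return Metrics(
        sensitivity=ratio(tp, tp + fn),
        specificity=ratio(tn, tn + fp),
        ppv=ratio(tp, tp + fp),
        npv=ratio(tn, tn + fn),
        accuracy=ratio(tp + tn, len(pairs)),
    )


def enrichment(predicted_positive, responded):
    """Hypothetical definition: response rate in biomarker-positives / overall response rate."""
    m = diagnostic_metrics(predicted_positive, responded)
    overall = sum(bool(r) for r in responded) / len(responded)
    return m.ppv / overall if overall else float("nan")
```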

Performance in Soft Tissue Sarcomas

The TGFβ/IFNγ-based classifier identified distinct immune phenotypes with differential clinical outcomes [12]:

- Immune-high clusters demonstrated enriched immune cell infiltration, elevated IFNγ-related signatures, and favorable clinical outcomes [12].

- Immune-low clusters were enriched for immunosuppressive cell types and exhibited poor survival [12].

- CHEK1 emerged as a key node associated with immunosuppressive phenotypes, suggesting potential as a therapeutic target in combination with immune checkpoint inhibition [12].

Pan-Cancer Applicability

Large-scale benchmarking efforts have revealed important considerations for pan-cancer application of TME biomarkers [13]:

- Most biomarkers show variable performance across different cancer types, highlighting the need for histology-specific validation [13].

- Only a limited number of biomarkers (TIDE, CYT) demonstrated consistent predictive value across multiple cancer types [13].

- The integration of multiple biomarker approaches may be necessary to achieve robust predictive performance across diverse cancer contexts [13].

Algorithmic assessment of TME components represents a transformative approach in precision oncology, moving beyond single-analyte biomarkers to capture the complex interplay of immune, stromal, and vascular elements within the tumor ecosystem. The Xerna TME Panel and similar multi-dimensional biomarkers demonstrate superior predictive performance compared to traditional approaches, with validated utility across multiple cancer types and therapeutic modalities [10] [11].

Future developments in TME biomarker research will likely focus on several key areas [8] [9]:

- Spatial Profiling Integration: Incorporating spatial relationships between cellular components through technologies like multiplex immunofluorescence and spatial transcriptomics [9].

- Multi-Modal Data Fusion: Combining transcriptomic, genomic, proteomic, and clinical data within unified machine learning frameworks [8] [9].

- Dynamic Biomarker Monitoring: Developing approaches to track TME evolution during therapy through liquid biopsy and serial assessment [8].

- Improved Clinical Trial Design: Implementing adaptive enrichment strategies that incorporate TME biomarkers to enhance patient selection and trial efficiency [9].

As these technologies mature, algorithmic assessment of TME components is poised to become standard practice in oncology, enabling more precise matching of patients to optimal therapies based on the unique biological context of their tumors.

The Challenge of Variability in Manual Pathologist Scoring

In oncology, precise assessment of tumor microenvironment (TME) biomarkers is the cornerstone of patient stratification and therapy selection. Among these biomarkers, programmed death-ligand 1 (PD-L1) expression in non-small cell lung cancer (NSCLC) has emerged as a critical predictive biomarker for response to immune checkpoint inhibitor therapy [7] [14]. The tumor proportion score (TPS) is the percentage of tumor cells that are PD-L1-positive, and it directly drives therapeutic decisions at the established clinical cutoffs of ≥1% and ≥50% [14]. The current gold standard is manual scoring by pathologists, which is limited by inherent subjectivity. The resulting interobserver variability can materially affect clinical trial outcomes and patient care [7] [15] [16]. This guide compares the performance of manual pathologist scoring with that of artificial intelligence (AI) algorithms, and frames the analysis within broader efforts to benchmark TME scoring algorithms for research and clinical applications.

Quantitative Performance Comparison: Pathologists vs. AI Algorithms

Key Performance Metrics at Clinical Cutoffs

A direct comparative study evaluated the performance of six pathologists and two commercial AI algorithms in scoring PD-L1 expression across 51 NSCLC cases [7] [14]. The interobserver agreement among pathologists and the agreement between AI algorithms and the median pathologist score were quantified using Fleiss' kappa statistics, interpreted as follows: <0.20 (slight), 0.21-0.40 (fair), 0.41-0.60 (moderate), 0.61-0.80 (substantial), 0.81-1.00 (almost perfect) [7].
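Fleiss' kappa for a fixed number of raters per case can be computed directly from category counts. The sketch below is minimal and assumes each pathologist's TPS has already been dichotomised at the cutoff of interest (for example `tps >= 50`). The interpretation helper uses the bands quoted above.

```python
import numpy as np


def fleiss_kappa(ratings, categories=(False, True)):
    """Fleiss' kappa for n_subjects cases, each rated by the same n_raters.

    ratings: (n_subjects, n_raters) array of category labels, e.g. the
    boolean result of tps >= 50 for every pathologist on every case.
    Returns NaN (with a RuntimeWarning) if every rating falls in one category.
    """
    ratings = np.asarray(ratings)
    n_subjects, n_raters = ratings.shape
    # counts[i, j]: number of raters who put case i in category j
    counts = np.stack([(ratings == c).sum(axis=1) for c in categories], axis=1)
    # Overall share of all ratings that fall in each category
    p_j = counts.sum(axis=0) / (n_subjects * n_raters)
    # Per-case agreement: fraction of rater pairs that agree
    p_i = (counts * (counts - 1)).sum(axis=1) / (n_raters * (n_raters - 1))
    p_bar = p_i.mean()
    p_e = np.sum(p_j ** 2)  # agreement expected by chance
    return (p_bar - p_e) / (1 - p_e)


def landis_koch(kappa):
    """Verbal label using the bands quoted in the text (values <= 0.20 are 'slight')."""
    for upper, label in ((0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")):
        if kappa <= upper:
            return label
    return "almost perfect"
```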

Table 1: Interobserver Agreement Among Pathologists at Different TPS Cutoffs

| Assessment Method | TPS <1% (Kappa) | Agreement Level | TPS ≥50% (Kappa) | Agreement Level |
|---|---|---|---|---|
| Pathologists (Light Microscopy) | 0.558 | Moderate | 0.873 | Almost Perfect |
| Pathologists (Whole Slide Images) | Similar to light microscopy | Moderate | Similar to light microscopy | Almost Perfect |

Table 2: AI Algorithm Agreement with Median Pathologist Score

| AI Algorithm | TPS ≥50% (Kappa) | Agreement Level |
|---|---|---|
| uPath Software (Roche) | 0.354 | Fair |
| PD-L1 Lung Cancer TME App (Visiopharm) | 0.672 | Substantial |

These data show two things. First, pathologists agree far more closely at the clinically critical TPS ≥50% cutoff (κ = 0.873) than at the <1% cutoff (κ = 0.558). Second, AI agreement with the median pathologist score at ≥50% varies substantially between commercial solutions (κ = 0.354 versus 0.672) [7] [14]. This variability is not confined to PD-L1 scoring. Similar challenges arise in other diagnostic areas, such as the grading of oral epithelial dysplasia (OED): a survey of 132 pathologists found that reporting frequency and attendance at continuing medical education significantly affect grading consistency [15].

Intraobserver Consistency and AI Performance Gaps

The same study provided critical data on the self-consistency of individual pathologists and the comparative performance of AI.

Table 3: Intraobserver Consistency and AI Performance Metrics

| Performance Aspect | Metric | Result / Value |
|---|---|---|
| Pathologist Intraobserver Consistency | Cohen's Kappa (Range) | 0.726 to 1.0 |
| AI Performance (uPath Software) | Agreement at TPS ≥50% | Fair (Kappa: 0.354) |
| AI Performance (Visiopharm App) | Agreement at TPS ≥50% | Substantial (Kappa: 0.672) |

Pathologists demonstrated high intraobserver consistency, indicating that individual pathologists are generally reproducible in their own scoring over time [7]. The variable performance between the two AI algorithms highlights that AI solutions are not universally equivalent and require rigorous, independent validation before deployment in research or clinical settings [7] [14]. This performance gap underscores the need for continued refinement of AI tools to match the reliability of expert human evaluation, particularly in critical clinical decision-making contexts [7].

Experimental Protocols for Benchmarking TME Scoring Algorithms

Study Design and Cohort Specifications

The referenced comparative study employed a rigorous protocol designed to enable direct comparison between human and algorithmic performance [7] [14].

Table 4: Experimental Study Design and Cohort Details

| Parameter | Specification |
|---|---|
| Study Design | Retrospective, blinded comparison |
| Cohort Size | 51 consecutive NSCLC patients (2020) |
| Tumor Types | 34 adenocarcinomas, 17 squamous cell carcinomas |
| Sample Types | 26 bronchoscopy biopsies, 25 surgical resections |
| Pathologists | 6 (5 pulmonary specialists, 1 in training) |
| AI Algorithms | uPath PD-L1 (SP263) software (Roche), PD-L1 Lung Cancer TME application (Visiopharm) |
| Washout Period | Minimum 1 month between scoring sessions |

The study utilized formalin-fixed paraffin-embedded (FFPE) samples stained with PD-L1 (SP263 clone) according to manufacturer protocols [14]. All samples contained a minimum of 100 tumour cells, as confirmed by haematoxylin-eosin (H&E) re-evaluation, ensuring adequate material for reliable scoring [14].

Scoring Methodology and Statistical Analysis

The scoring protocol and statistical methods were designed to mirror real-world clinical practice while enabling robust quantitative comparisons.

Scoring Procedures:

- Pathologist Scoring: Evaluations were performed using both light microscopy and whole slide images (WSI) after a washout period. Any intensity of partial or complete membranous staining was considered positive. Pathologists recorded the percentage of positively stained tumour cells using a standardized scale: 0%, 1%, 5%, 10%, and up to 100% in 10% increments [14].

- AI Algorithm Scoring: The two AI algorithms were applied to digitally scanned WSIs. Algorithm 1 (Roche uPath) required manual selection of the tumour area by a pathologist before analysis, while Algorithm 2 (Visiopharm) operated without this requirement [14].
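The TPS itself is a simple ratio, sketched below with hypothetical helper names. The 100-cell adequacy check mirrors the study's inclusion criterion. The categories follow the 1% and 50% cutoffs discussed above.

```python
def tumor_proportion_score(positive_tumor_cells, total_tumor_cells):
    """TPS (%) = PD-L1 membrane-positive tumour cells / all scored tumour cells x 100."""
    if total_tumor_cells < 100:
        raise ValueError("fewer than 100 tumour cells: sample inadequate for TPS")
    if not 0 <= positive_tumor_cells <= total_tumor_cells:
        raise ValueError("positive count must lie between 0 and the total count")
    return 100.0 * positive_tumor_cells / total_tumor_cells


def tps_category(tps):
    """Clinical category at the 1% and 50% cutoffs."""
    if tps < 1:
        return "<1%"
    if tps < 50:
        return "1-49%"
    return ">=50%"
```

The category is computed from the raw score rather than from a value rounded to the pathologists' 0/1/5/10...% reporting scale. Rounding could move borderline cases across a cutoff.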

Statistical Analysis: Agreement metrics were calculated using Fleiss' kappa for interobserver agreement and Cohen's kappa for intraobserver consistency. The median pathologist score served as the reference standard for comparing AI algorithm performance [7].
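The two agreement analyses can be sketched as follows:

* Cohen's kappa between a pathologist's two scoring sessions.
* Comparison of an algorithm against the per-case median pathologist TPS, after both are dichotomised at a cutoff.

The function names are hypothetical. The study reports Fleiss' kappa for the AI-versus-median comparison. The Cohen form used here is a stand-in and can differ slightly from a two-rater Fleiss value.

```python
import numpy as np


def cohens_kappa(a, b):
    """Cohen's kappa between two raters' categorical calls on the same cases."""
    a, b = np.asarray(a), np.asarray(b)
    cats = np.union1d(a, b)
    p_o = np.mean(a == b)  # observed agreement
    p_e = sum(np.mean(a == c) * np.mean(b == c) for c in cats)  # chance agreement
    return (p_o - p_e) / (1 - p_e)


def median_reference(pathologist_tps):
    """Per-case median TPS across pathologists: (n_cases, n_pathologists) -> (n_cases,)."""
    return np.median(np.asarray(pathologist_tps, dtype=float), axis=1)


def agreement_at_cutoff(x_tps, y_tps, cutoff):
    """Kappa after dichotomising both sets of scores at a TPS cutoff (e.g. 1 or 50)."""
    return cohens_kappa(np.asarray(x_tps) >= cutoff, np.asarray(y_tps) >= cutoff)
```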

Signaling Pathways and Workflow Diagrams

PD-1/PD-L1 Signaling Pathway in the Tumor Microenvironment

Diagram 1: PD-1/PD-L1 Checkpoint Pathway. This diagram illustrates the mechanism by which tumor cells expressing PD-L1 engage with PD-1 receptors on T-cells, leading to T-cell inhibition and ultimately tumor immune evasion. This pathway is the therapeutic target of immune checkpoint inhibitors, making accurate PD-L1 scoring critical for patient selection [14].

Experimental Workflow for Scoring Variability Assessment

Diagram 2: Scoring Variability Study Workflow. This workflow outlines the parallel assessment of the same sample set by human pathologists and AI algorithms, enabling direct comparison of scoring consistency and agreement metrics [7] [14].

Research Reagent Solutions for TME Scoring Studies

Table 5: Essential Research Reagents and Platforms for TME Scoring Validation

| Reagent / Platform | Function / Application | Example Products / Clones |
|---|---|---|
| IHC Antibody Clones | Detection of PD-L1 expression on tumor and immune cells | SP263, 22C3, 28-8, SP142 [14] |
| Digital Slide Scanners | Creation of whole slide images for digital analysis | PANORAMIC1000 (3DHISTECH), Ventana DP200 (Roche) [14] |
| AI Analysis Software | Automated quantification of biomarker expression | uPath PD-L1 (Roche), PD-L1 Lung Cancer TME (Visiopharm) [7] [14] |
| Slide Management Systems | Storage, viewing, and management of digital pathology images | CaseCenter (3DHISTECH) [14] |
| Reference Standards | Validation of staining quality and scoring accuracy | Positive and negative control tissues [14] |

The comparative data presented in this guide demonstrates that while AI algorithms show promise for standardizing TME scoring, they currently exhibit variable performance and have not yet consistently matched the reliability of expert pathologists, particularly at clinically critical decision thresholds [7] [14]. This variability in both manual and digital scoring has profound implications for clinical trial design and drug development. In therapeutic areas like metabolic dysfunction-associated steatohepatitis (MASH), where histologic scoring is the gold standard for clinical trial endpoints, reader variability can confound the measurement of true drug effects and potentially cause promising therapies to fail due to assessment inconsistency rather than lack of efficacy [16].

For researchers and drug development professionals, these findings highlight the critical need for:

- Rigorous validation of any AI scoring tool against expert pathologist consensus before implementation

- Standardized scoring protocols that minimize subjective interpretation of staining patterns

- Multi-reader strategies for critical endpoint assessment in clinical trials to mitigate individual reader variability

As AI technology continues to evolve, the optimal path forward appears to be a synergistic approach that leverages the computational power and consistency of AI algorithms while maintaining human expert oversight for complex cases and quality control [16] [14]. This balanced methodology promises to enhance the reliability of TME scoring in both research and clinical practice, ultimately supporting more robust drug development and more precise patient stratification for immunotherapy.

The quantitative assessment of the Tumor Microenvironment (TME) is a cornerstone of modern oncology, with critical implications for drug development and patient stratification. Immune checkpoint inhibitors targeting the PD-1/PD-L1 axis have revolutionized non-small cell lung cancer (NSCLC) treatment, where PD-L1 expression, measured as the Tumor Proportion Score (TPS), serves as a critical predictive biomarker for therapeutic response [14] [7]. However, traditional pathological assessment, reliant on manual microscopy, is challenged by subjectivity, labor-intensiveness, and significant inter-observer variability. Artificial Intelligence (AI) promises to overcome these limitations by offering standardized, scalable analytical pipelines capable of extracting novel insights from complex tissue architecture. This guide objectively compares the current performance of AI algorithms against human pathologists in TME scoring, providing researchers and drug development professionals with a data-driven evaluation of this rapidly evolving field.

Performance Comparison: Pathologists vs. AI Algorithms

A 2025 comparative study evaluated the effectiveness of six pathologists versus two commercially available AI algorithms in scoring PD-L1 expression in 51 SP263-stained NSCLC cases [14] [7]. The results, summarized in the table below, reveal key performance differentiators.

Table 1: Comparative Performance at Critical PD-L1 TPS Cutoffs

| Evaluator | Metric | TPS <1% (Kappa) | TPS ≥50% (Kappa) | Intra-observer Consistency (Kappa Range) |
|---|---|---|---|---|
| Pathologists (Group) | Interobserver Agreement | 0.558 (Moderate) | 0.873 (Almost Perfect) | 0.726 to 1.0 (High) [14] |
| AI: uPath (Roche) | Agreement with Median Pathologist | — | 0.354 (Fair) | — |
| AI: Visiopharm | Agreement with Median Pathologist | — | 0.672 (Substantial) | — |

These data indicate that AI algorithms can achieve substantial agreement with expert pathologists (κ = 0.672 for Visiopharm), but their performance is not yet consistent across platforms (κ = 0.354 for uPath). Pathologists show higher consensus, particularly at the clinically critical high (≥50%) TPS cutoff [14]. This underscores the continued need for human expertise in the diagnostic loop. It also shows that AI tools require further refinement before they match the reliability of expert evaluation in clinical decision-making.

Experimental Protocols and Methodologies

Understanding the experimental design is crucial for interpreting the comparative data and applying these insights to novel research.

Study Cohort and Sample Preparation

The referenced analysis utilized a cohort of 51 consecutive NSCLC patient samples (34 adenocarcinomas, 17 squamous cell carcinomas) from 2020, comprising 26 bronchoscopy biopsies and 25 surgical resections [14]. All samples were formalin-fixed and paraffin-embedded (FFPE). PD-L1 staining was performed on 4-μm-thick sections using the VENTANA PD-L1 (SP263) Assay on a BenchMark ULTRA platform, with appropriate controls. A critical pre-analysis step was the re-evaluation of Haematoxylin & Eosin (H&E)-stained slides to confirm the presence of a minimum of 100 tumour cells, ensuring sample adequacy [14].

Evaluation Workflow: Human vs. AI Scoring

The scoring process involved parallel paths for human and AI assessment, facilitating a direct comparison.

Diagram 1: PD-L1 Scoring Experimental Workflow

Scoring Criteria and Statistical Analysis

For both pathologists and AI, PD-L1 expression was evaluated only on tumour cells, with any intensity of partial or complete membranous staining regarded as positive [14]. Pathologists recorded the percentage of positive cells in specific increments (0%, 1%, 5%, 10%, then 10% increments to 100%). The study's statistical core lay in measuring interobserver agreement (consistency between different pathologists/AI) and intraobserver agreement (self-consistency of pathologists after a washout period) using Fleiss' Kappa and Cohen's Kappa, respectively [14]. These metrics were calculated at the clinically decisive TPS cutoffs of 1% and 50%.

The Scientist's Toolkit: Essential Research Reagents and Materials

The transition of TME scoring from manual to AI-augmented workflows relies on a suite of specialized reagents and software. The following table details key components used in the featured study that are essential for replicating or designing similar research.

Table 2: Key Research Reagent Solutions for AI-based TME Scoring

| Item | Function / Role | Example from Study |
|---|---|---|
| PD-L1 IHC Assay | Specific detection of PD-L1 protein expression on tumor and immune cells. | VENTANA PD-L1 (SP263) Assay [14] |
| Automated IHC Stainer | Ensures standardized, reproducible staining conditions crucial for quantitative analysis. | BenchMark ULTRA platform (Ventana/Roche) [14] |
| Whole-Slide Scanner | Converts glass slides into high-resolution digital images for AI analysis. | PANORAMIC1000 (3DHISTECH), Ventana DP200 (Roche) [14] |
| AI Scoring Software | Automated image analysis algorithm for quantifying biomarkers like PD-L1 TPS. | uPath PD-L1 (Roche), Visiopharm PD-L1 Lung Cancer TME App [14] |
| Digital Pathology Viewer | Software platform to manage, view, and annotate whole-slide images. | CaseCenter (3DHISTECH) [14] |

The integration of AI into TME scoring holds immense promise for standardizing biomarker quantification, scaling analysis to meet growing diagnostic demands, and potentially uncovering novel histological insights beyond human perception. Current data demonstrates that while advanced AI algorithms can achieve substantial agreement with expert pathologists, human expertise remains the benchmark for reliability, particularly in borderline or complex cases. The future of TME research in drug development lies not in AI replacing pathologists, but in the synergistic combination of computational power and human diagnostic acumen, leading to more precise, reproducible, and insightful patient stratification.

The tumor microenvironment (TME) is a critical determinant of cancer progression, treatment response, and patient outcomes. TME scoring algorithms have emerged as essential computational tools that systematically quantify the cellular composition, spatial architecture, and functional state of the TME. These algorithms transform complex multi-omics and imaging data into reproducible, quantitative scores that can predict immunotherapy responses and patient survival. The field has progressed from purely research-oriented tools to clinically validated systems, with 2025 marking a significant inflection point in their adoption for precision oncology and drug development. This evolution is characterized by the integration of artificial intelligence (AI), extensive multi-omics data, and rigorous benchmarking frameworks that validate their clinical utility.

Classification and Comparison of Major TME Scoring Algorithms

TME scoring algorithms can be broadly categorized into three main types: transcriptomics-based deconvolution methods, spatial image analysis tools, and integrated multi-omics platforms. The table below summarizes the key characteristics, technologies, and clinical applications of major algorithms available in 2025.

Table 1: Overview of Major TME Scoring Algorithms in 2025

| Algorithm Name | Algorithm Type | Input Data | Core Technology | Primary Output | Clinical Application |
|---|---|---|---|---|---|
| TMEtyper [5] | Integrated Computational Framework | Transcriptomics | Pan-cancer TME signature + CNN + Structural Causal Modeling | 7 TME Subtypes | Immunotherapy response prediction |
| TMEscore [17] | Signature-based Scoring | Transcriptomics | PCA/z-score from gene signatures | Continuous TMEscore | Prognosis in gastric cancer |
| TME-Analyzer [18] | Spatial Image Analysis | Multiplexed immunofluorescence | Interactive GUI with Voronoi segmentation | Cellular distances & densities | Survival prediction in TNBC |
| AIM-MASH [16] | AI-Pathology Tool | H&E & Trichrome stained slides | Deep learning-based feature detection | Histological component scores | MASH clinical trial endpoints |
| CIBERSORT-based Scheme [19] | Immune Deconvolution | Transcriptomics | Support vector regression | 22 immune cell fractions | Ovarian cancer subtyping |

Performance Benchmarking and Experimental Data

Predictive Performance for Immunotherapy Response

Robust validation across independent cohorts is essential for establishing clinical utility of TME scoring algorithms. The following table summarizes the demonstrated predictive performance of major algorithms in key clinical contexts.

Table 2: Clinical Predictive Performance of TME Scoring Algorithms

| Algorithm | Validation Cohort | Clinical Endpoint | Performance | Key Biomarker |
|---|---|---|---|---|
| TMEtyper [5] | 11 immunotherapy cohorts | ICB treatment response | Strong predictive power | Lymphocyte-Rich Hot subtype associated with superior outcomes |
| TME-Analyzer [18] | Independent TNBC cohort (MIBI-TOF) | Overall survival | Significant prediction | 10-parameter classifier based on cellular distances |
| TMEscore [17] | Gastric cancer cohort | Prognosis & immunotherapy relevance | Significant stratification | TMEscore correlated with TME phenotypes & genomic traits |
| Ovarian Cancer TME Scheme [19] | TCGA & GEO cohorts | Overall survival | Significant differences | TMEC3 subtype showed longest OS |

Concordance with Established Methods

As new algorithms emerge, their concordance with established methods must be rigorously evaluated. The TME-Analyzer demonstrated less than 20% root mean square error when compared to commercial software inForm and open-source tool QuPath for quantifying cellular densities and distances [18]. This level of concordance with established platforms provides confidence for translational adoption while offering enhanced usability and interactive features.
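Concordance of this kind is often summarised as a relative RMSE over paired measurements of the same regions, as sketched below. Normalising by the mean reference value is an assumption; the cited comparison may have normalised differently.

```python
import numpy as np


def relative_rmse(candidate, reference):
    """RMSE between paired measurements, as a percentage of the mean reference value.

    candidate, reference: same-length arrays, e.g. cell densities per region
    from a new tool and from an established tool.
    """
    c = np.asarray(candidate, dtype=float)
    r = np.asarray(reference, dtype=float)
    rmse = np.sqrt(np.mean((c - r) ** 2))
    return 100.0 * rmse / np.mean(r)
```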

Detailed Experimental Protocols and Methodologies

Computational Framework Implementation (TMEtyper)

TMEtyper represents a comprehensive approach that integrates multiple analytical components into a unified framework for TME subtyping [5]:

Signature Integration: Combines 231 TME signatures encompassing cellular compositions, pathway activities, and intercellular communication networks.

Network-Based Clustering: Applies consensus clustering coupled with topological feature extraction to delineate TME subtypes.

Machine Learning Classification: Implements an ensemble machine learning approach combined with a convolutional neural network (CNN) for robust subtype classification.

Causal Inference: Utilizes structural causal modeling to reconstruct underlying regulatory networks and identify key hub genes specific to each subtype.

Validation Framework: Employs cross-validation across 11 independent immunotherapy cohorts to verify predictive power.

The workflow can be visualized as follows:
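The consensus-clustering step can be illustrated with a Monti-style resampling scheme:

1. Cluster many random subsamples of the data.
2. Record how often each pair of samples lands in the same cluster.
3. Cluster the resulting consensus matrix.

This sketch uses average-linkage hierarchical clustering from SciPy as the base clusterer. It is a stand-in for consensus-clustering tools such as ConsensusClusterPlus, not TMEtyper's actual implementation, and it omits the topological feature extraction.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import squareform


def consensus_matrix(x, k, n_iter=100, frac=0.8, seed=0):
    """Fraction of subsamples in which each pair of samples was clustered together.

    x: (n_samples, n_features) array, e.g. per-sample TME signature scores.
    Each iteration clusters a random `frac` subsample into k groups.
    """
    x = np.asarray(x, dtype=float)
    rng = np.random.default_rng(seed)
    n = x.shape[0]
    together = np.zeros((n, n))  # times a pair fell in the same cluster
    sampled = np.zeros((n, n))   # times a pair was drawn together
    for _ in range(n_iter):
        idx = rng.choice(n, size=int(frac * n), replace=False)
        labels = fcluster(linkage(x[idx], method="average"), t=k, criterion="maxclust")
        together[np.ix_(idx, idx)] += labels[:, None] == labels[None, :]
        sampled[np.ix_(idx, idx)] += 1
    # Pairs never drawn together get consensus 0
    return np.divide(together, sampled, out=np.zeros_like(together), where=sampled > 0)


def consensus_clusters(x, k, **kwargs):
    """Final labels from average-linkage clustering on the distance 1 - consensus."""
    d = 1.0 - consensus_matrix(x, k, **kwargs)
    np.fill_diagonal(d, 0.0)
    tree = linkage(squareform(d, checks=False), method="average")
    return fcluster(tree, t=k, criterion="maxclust")
```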

Spatial Image Analysis Workflow (TME-Analyzer)

The TME-Analyzer implements an interactive, customizable workflow for analyzing multiplexed imaging data [18]:

Image Loading: Compatible with various fluorescence and high-dimensional images containing a nuclear marker.

Foreground Selection: Intensity histograms per channel guide threshold selection and background correction.

Compartment Segmentation: Defines tumor and stroma regions based on marker expression.

Nucleus/Cell Segmentation: Utilizes either manual watershed algorithms or machine learning approaches, followed by Voronoi cell segmentation.

Cell Phenotyping: Implements flow cytometry-like gating with real-time back-projection to tissue images for visualization and adjustment.

Data Analysis and Export: Quantifies tissue areas, cellular numbers, densities, and intercellular distances, exporting single-cell and tissue-level information.

This comprehensive protocol enables researchers to account for the high inter- and intra-patient heterogeneity inherent in cancer tissue images.
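After segmentation and phenotyping, distance and density readouts reduce to nearest-neighbour queries on cell centroids. The sketch below is minimal and assumes centroids in micrometres and region areas in square micrometres. The helper names are hypothetical and are not part of the TME-Analyzer API.

```python
import numpy as np
from scipy.spatial import cKDTree


def nearest_distances(source_xy, target_xy):
    """Distance from each source cell (e.g. CD8+ T cell) to its nearest target cell (e.g. tumour cell)."""
    tree = cKDTree(np.asarray(target_xy, dtype=float))
    dist, _ = tree.query(np.asarray(source_xy, dtype=float), k=1)
    return dist


def density_per_mm2(n_cells, area_um2):
    """Cell density in cells/mm^2 from a count and a region area in um^2."""
    return n_cells / (area_um2 / 1e6)


def fraction_within(source_xy, target_xy, radius_um):
    """Fraction of source cells with at least one target cell within radius_um."""
    return float(np.mean(nearest_distances(source_xy, target_xy) <= radius_um))
```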

TME Scoring Scheme Development

The methodology for developing a TME scoring scheme typically involves [19]:

Immune Cell Infiltration Quantification: Using deconvolution algorithms like CIBERSORT to estimate scores of 22 immune cell types based on LM22 signature matrix.

TME Subtype Identification: Applying ConsensusClusterPlus for unsupervised clustering of samples based on immune infiltration patterns.

Differential Expression Analysis: Identifying genes differentially expressed between TME subtypes using DESeq2.

Genomic Subtyping: Employing non-negative matrix factorization (NMF) based on differentially expressed genes to identify genomic subtypes.

Scoring Scheme Construction: Using k-means algorithm and principal component analysis (PCA) to develop a quantitative TME score that summarizes the TME infiltration pattern of individual patients.
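One common way to turn PCA into a per-patient score is to take the first principal component of each of two gene sets and subtract one from the other. The sketch below assumes the genes have already been split into a set A and a set B, for example by their association with the k-means TME subtypes. The split and the function names are hypothetical, and the sketch is not the published scheme's code.

```python
import numpy as np


def pc1_scores(expr):
    """Per-sample projection onto the first principal component of a gene set.

    expr: (n_samples, n_genes) matrix; genes are z-scored first (constant genes
    must be removed beforehand). PC1's sign is arbitrary, so it is oriented to
    correlate positively with the mean standardised expression of the set.
    """
    x = np.asarray(expr, dtype=float)
    x = (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)
    _, _, vt = np.linalg.svd(x, full_matrices=False)
    scores = x @ vt[0]
    if np.corrcoef(scores, x.mean(axis=1))[0, 1] < 0:
        scores = -scores
    return scores


def tme_score(expr_a, expr_b):
    """Hypothetical score: PC1 of gene set A minus PC1 of gene set B."""
    return pc1_scores(expr_a) - pc1_scores(expr_b)
```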

Successful implementation of TME scoring algorithms requires specific computational tools and data resources. The following table details essential components of the TME researcher's toolkit in 2025.

Table 3: Essential Research Reagents and Computational Tools for TME Scoring

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| CIBERSORT [19] | Deconvolution Algorithm | Estimates 22 immune cell type fractions from transcriptomic data | Web portal |
| ConsensusClusterPlus [19] | R Package | Unsupervised clustering for defining TME subtypes | Bioconductor |
| TMEtyper [5] | R Package | Comprehensive TME characterization and subtyping | Open-source |
| TME-Analyzer [18] | Python GUI | Interactive spatial analysis of multiplexed images | Open-source |
| TMEscore [17] | R Package | Calculates TMEscore using PCA or z-score | GitHub |
| LM22 [19] | Signature Matrix | Gene signatures for 22 immune cell types | CIBERSORT portal |
| TCGA/GEO Datasets [19] | Data Resources | Multi-omics data for validation | Public repositories |

Clinical Translation and Validation Frameworks

Regulatory Validation Pathways

The transition of TME scoring algorithms from research tools to clinically validated systems requires rigorous regulatory validation. The AIM-MASH system represents a pioneering example of this pathway, having undergone comprehensive multisite analytical and clinical validation across approximately 13,000 independent reads from over 1,400 biopsies across four completed global MASH clinical trials [16]. This validation framework, developed in partnership with the FDA and EMA, demonstrates the stringent requirements for clinical implementation.

Benchmarking Standards

Effective benchmarking of TME scoring algorithms must address several critical dimensions [20]:

All-in-One Training Paradigm: Evaluating performance when a single unified model is trained across all samples, rather than maintaining separate models for each cancer type or cohort.

Zero-Shot Inference: Assessing performance on previously unseen data without retraining or fine-tuning.

Event-Based Evaluation Metrics: Moving beyond simple accuracy metrics to event-based evaluation that aligns with clinical endpoints.

Comprehensive Leaderboards: Maintaining continuously updated evaluation platforms similar to the GLUE Leaderboard in NLP, but tailored for TME scoring tasks.
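Zero-shot generalisation across cohorts can be approximated with a leave-one-cohort-out loop. A single all-in-one model is fitted on the pooled remaining cohorts, and the held-out cohort is then scored without any refitting. The sketch below computes ROC AUC via its Mann-Whitney rank formulation. `fit` is a hypothetical user-supplied training function. AUC is an example metric here, not an event-based one.

```python
import numpy as np
from scipy.stats import rankdata


def auc(scores, labels):
    """ROC AUC via the Mann-Whitney rank formulation (ties receive average ranks)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=bool)
    n_pos, n_neg = labels.sum(), (~labels).sum()
    if n_pos == 0 or n_neg == 0:
        return float("nan")
    ranks = rankdata(scores)
    return (ranks[labels].sum() - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


def leave_one_cohort_out(x, y, cohort, fit):
    """Train on all cohorts but one, then score the held-out cohort untouched.

    fit: hypothetical callable (x_train, y_train) -> scorer, where scorer(x_test) -> scores.
    Returns {cohort_id: AUC on that held-out cohort}.
    """
    x, y, cohort = np.asarray(x), np.asarray(y), np.asarray(cohort)
    results = {}
    for c in np.unique(cohort):
        test = cohort == c
        scorer = fit(x[~test], y[~test])
        results[c] = auc(scorer(x[test]), y[test])
    return results
```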

Future Directions and Implementation Challenges

While TME scoring algorithms have made significant advances, several challenges remain for widespread clinical implementation. The integration of multi-modal data sources, including transcriptomics, proteomics, and digital pathology, represents the next frontier for algorithm development. Standardization of scoring thresholds across different cancer types and demonstration of clinical utility in prospective trials are essential for regulatory approval. Furthermore, the development of user-friendly interfaces that enable pathologists and clinicians to interact with and trust algorithmic outputs will be crucial for real-world adoption. As these challenges are addressed, TME scoring algorithms are poised to become integral components of precision oncology, guiding therapeutic decisions and accelerating drug development.

Inside the Black Box: How Leading TME Scoring Algorithms Work

The tumor microenvironment (TME) plays a fundamental role in cancer progression, with its soluble and cellular components significantly influencing the efficacy of advanced therapies like CAR-T cells [21]. As of 2025, computational algorithms for quantifying and interpreting the TME have become indispensable tools in oncology research and drug development. These algorithms transform complex multimodal data—from genomic sequencing to digital pathology—into actionable biological insights. This guide provides an objective comparison of leading TME scoring algorithm architectures, framing their performance within a rigorous benchmarking context essential for researchers, scientists, and drug development professionals. The focus is on architectural principles, quantitative performance metrics under standardized experimental conditions, and the practical research reagents that enable their application.

Algorithm Architectures and Methodologies

TME algorithms can be broadly categorized by their core computational approach and primary data input. The following subsections detail the architectures of prominent approaches, not all of which are commercial products.

Deep Learning-Based Quantitative Analysis

This architecture employs deep learning models for the end-to-end analysis of complex biological images, particularly whole-slide images (WSIs) from immunohistochemistry (IHC).

- Core Principle: The algorithm utilizes a fully automated pipeline based on deep learning to precisely identify and quantify subcellular compartments—nuclei, membrane, and cytoplasm—from IHC-stained tissue sections [22].

- Architectural Workflow:

- Input: A whole-slide image (WSI) of an IHC-stained tissue sample.

- Preprocessing: Optical density separation is applied to differentiate between hematoxylin and 3,3'-diaminobenzidine (DAB) staining components [22].

- Segmentation: A specialized CellViT nuclear segmentation algorithm precisely identifies cell nuclei. This is complemented by a region growing algorithm to delineate membranes and cytoplasmic regions [22].

- Quantification: The expression intensities for each segmented cellular component (nuclear, membrane, cytoplasmic) are calculated based on the staining parameters.

- Output: Automated, quantitative metrics for biomarker expression across the entire tissue sample.

- Key Advantage: This method achieves greater accuracy in specific quantitative metrics compared to traditional manual interpretation, reducing subjectivity and increasing throughput [22].
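The optical-density separation step can be sketched with classic colour deconvolution (Ruifrok and Johnston). RGB values are converted to optical density via the Beer-Lambert relation and then unmixed with the inverse of a stain matrix. The stain vectors below are the haematoxylin/eosin/DAB values tabulated in scikit-image. A given scanner and stain batch may need calibrated vectors, and the DAB threshold is a hypothetical placeholder. The CellViT segmentation and region-growing steps are not reproduced.

```python
import numpy as np

# Stain optical-density vectors (rows: haematoxylin, eosin, DAB), as tabulated
# in scikit-image for its HED conversion; assumed, not calibrated per scanner.
RGB_FROM_HED = np.array([
    [0.65, 0.70, 0.29],
    [0.07, 0.99, 0.11],
    [0.27, 0.57, 0.78],
])
HED_FROM_RGB = np.linalg.inv(RGB_FROM_HED)


def separate_stains(rgb):
    """Colour deconvolution of an RGB image with values in 0-255.

    Returns an array of the same shape whose last axis holds the
    haematoxylin, eosin and DAB concentrations.
    """
    rgb = np.clip(np.asarray(rgb, dtype=float), 1.0, 255.0)  # avoid log(0)
    od = -np.log10(rgb / 255.0)  # Beer-Lambert optical density per channel
    return od @ HED_FROM_RGB     # unmix: od = concentrations @ RGB_FROM_HED


def dab_positive_fraction(rgb, threshold=0.15):
    """Fraction of pixels whose DAB concentration exceeds a hypothetical threshold."""
    return float(np.mean(separate_stains(rgb)[..., 2] > threshold))
```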

Computational Protein Design and De Novo Receptor Engineering

Moving beyond descriptive scoring, a more interventionist architectural paradigm involves the de novo computational design of synthetic biosensors that actively interpret TME signals.

- Core Principle: This platform performs de novo bottom-up assembly of allosteric receptors with programmable input-output behaviors. These receptors are engineered to respond to specific soluble TME factors, such as vascular endothelial growth factor (VEGF) or colony-stimulating factor 1 (CSF1), by initiating desired intracellular co-stimulation or cytokine signals in T cells [21].

- Architectural Workflow:

  - Input Definition: Specification of the target TME ligand (e.g., VEGF, CSF1) and the desired output signaling pathway.

  - Computational Modeling: A protein design platform is used to model and assemble receptor structures in silico, often using existing Protein Data Bank (PDB) structures as foundational components [21].

  - Allosteric Design: The receptor is engineered to undergo conformational changes upon ligand binding, thereby triggering the pre-programmed intracellular signal [21].

  - Output: A designed synthetic receptor, such as a TME-sensing switch receptor for enhanced response to tumors (T-SenSER), which can be genetically encoded into therapeutic cells [21].

- Key Advantage: It enables custom therapeutic circuits that logically process TME inputs, moving from passive scoring to active, targeted cellular intervention. Combining a CAR with a T-SenSER in human T cells has been shown to enhance anti-tumor responses in models of lung cancer and multiple myeloma in a VEGF- or CSF1-dependent manner [21].
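To make "programmable input-output behavior" concrete, a receptor's ligand response can be summarized with a Hill-type dose-response curve. The sketch below is a hypothetical phenomenological model, not the design method of [21]; the parameter names and defaults (`basal`, `max_output`, `ec50_nM`, `hill_n`) are illustrative assumptions rather than measured T-SenSER properties.

```python
import numpy as np


def receptor_output(ligand_nM, basal=0.05, max_output=1.0, ec50_nM=1.0, hill_n=1.5):
    """Hypothetical Hill-type response of a ligand-gated synthetic receptor.

    output = basal + (max_output - basal) * L^n / (EC50^n + L^n)
    All parameters are illustrative placeholders, not measured values.
    """
    ligand = np.asarray(ligand_nM, dtype=float)
    occupancy = ligand**hill_n / (ec50_nM**hill_n + ligand**hill_n)
    return basal + (max_output - basal) * occupancy


def fold_induction(ligand_nM, **params):
    """Signal at a given ligand level relative to the ligand-free baseline."""
    return receptor_output(ligand_nM, **params) / receptor_output(0.0, **params)


# Example: response across a ligand titration (nM).
doses = np.array([0.0, 0.1, 1.0, 10.0])
print(receptor_output(doses))
print(fold_induction(10.0))
```

In this framing, "programming" a receptor corresponds to shifting parameters such as the EC50 or the dynamic range between basal and maximal output.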

The diagram below illustrates the core computational and experimental workflow for developing and validating these algorithms.

Performance Benchmarking and Experimental Data

Objective comparison requires standardized evaluation. The table below summarizes key performance metrics for the described algorithmic architectures, based on published experimental validations.

Table 1: Quantitative Performance Benchmarking of TME Algorithm Architectures

| Algorithm Architecture | Primary Input Data | Key Performance Metric | Reported Result | Experimental Model | Citation |
|---|---|---|---|---|---|
| Deep Learning-Based IHC Quantification | Whole-Slide IHC Images | Accuracy & Recall in Nuclear/Membrane/Cytoplasmic Segmentation | "Excellent" performance in accuracy and recall | Animal cell whole-slide images | [22] |
| T-SenSER (VEGF-Targeting) | Soluble VEGF in TME | Enhancement of Anti-Tumor Response | Enhanced anti-tumor response | Human T cells in lung cancer and multiple myeloma models | [21] |
| T-SenSER (CSF1-Targeting) | Soluble CSF1 in TME | Enhancement of Anti-Tumor Response | Enhanced anti-tumor response | Human T cells in lung cancer and multiple myeloma models | [21] |

Detailed Experimental Protocols

To ensure reproducibility, the core experimental methodologies used to generate the benchmark data are outlined below.

Protocol for Validating Deep Learning-Based IHC Quantification:

- Sample Preparation: Tissue sections are stained using standard IHC protocols with hematoxylin and DAB.

- Image Acquisition: Whole-slide images (WSIs) of the stained sections are captured at high resolution using a digital slide scanner.

- Algorithm Processing: The WSIs are processed through the deep learning pipeline, which involves:

  - Optical Density Separation: Deconvolving the image to separate the hematoxylin (nuclear) and DAB (biomarker) signals [22].

  - Nuclear Segmentation: Applying the CellViT algorithm to identify and segment individual cell nuclei [22].

  - Region Growing: Using the segmented nuclei as seeds, a region growing algorithm delineates the cell membrane and cytoplasmic boundaries [22].

  - Quantification: The intensity of biomarker expression is quantified within each segmented cellular compartment.

- Validation: Algorithmic outputs for segmentation accuracy and expression quantification are compared against manual pathologist interpretation or pre-established ground truth datasets. Performance is measured using standard metrics like accuracy, precision, and recall [22].
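The validation step can be sketched as a pixel-level comparison between a predicted compartment map and a ground-truth map. This minimal NumPy example assumes a hypothetical integer encoding (0 background, 1 nucleus, 2 cytoplasm, 3 membrane). Nuclear instance segmentation is often scored at the object level instead (for example, by matching nuclei at an IoU threshold), which this sketch does not attempt.

```python
import numpy as np

# Hypothetical label encoding for compartment maps.
LABELS = {0: "background", 1: "nucleus", 2: "cytoplasm", 3: "membrane"}


def compartment_metrics(pred: np.ndarray, truth: np.ndarray) -> dict:
    """Pixel-level accuracy plus per-compartment precision and recall."""
    if pred.shape != truth.shape:
        raise ValueError("prediction and ground truth must have the same shape")
    results = {"accuracy": float(np.mean(pred == truth))}
    for label, name in LABELS.items():
        if label == 0:
            continue  # background contributes only to overall accuracy here
        tp = np.sum((pred == label) & (truth == label))
        fp = np.sum((pred == label) & (truth != label))
        fn = np.sum((pred != label) & (truth == label))
        # NaN signals an undefined metric (no predicted or no true pixels).
        results[f"{name}_precision"] = float(tp / (tp + fp)) if tp + fp else float("nan")
        results[f"{name}_recall"] = float(tp / (tp + fn)) if tp + fn else float("nan")
    return results


# Example with a tiny 2 x 4 map.
truth = np.array([[1, 1, 2, 3], [0, 0, 2, 3]])
pred = np.array([[1, 2, 2, 3], [0, 0, 2, 0]])
print(compartment_metrics(pred, truth))
```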

Protocol for Validating Computationally Designed T-SenSERs:

- Receptor Design & Cloning: The T-SenSER targeting VEGF or CSF1 is computationally designed and its genetic sequence is cloned into a lentiviral or retroviral vector [21].

- T Cell Engineering: Human primary T cells are activated and transduced with the viral vector to express the T-SenSER construct, often in combination with a CAR.

- In Vitro Functional Assay: Transduced T cells are co-cultured with tumor cells in the presence of the target ligand (VEGF or CSF1). T cell activation, cytokine production (e.g., IFN-γ, IL-2), and cytotoxic activity are measured to confirm ligand-dependent functionality [21].

- In Vivo Efficacy Model:

  - Animal Model: Immunodeficient mice are engrafted with human tumor cell lines (e.g., lung cancer, multiple myeloma).

  - Treatment: Mice are infused with the T-SenSER-engineered T cells.

  - Monitoring: Tumor volume is tracked over time and compared to control groups (e.g., T cells with CAR only). Tumor infiltration and persistence of T cells may be analyzed ex vivo [21].

- Dependency Confirmation: The specific dependency on the target ligand (VEGF or CSF1) is confirmed using appropriate controls, demonstrating that the enhanced anti-tumor response is directly linked to the engineered sensing pathway [21].
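Tumor volume comparisons in such models are often summarized as percent tumor growth inhibition (TGI) relative to the control arm. The sketch below assumes subcutaneous tumors measured with calipers and the common modified-ellipsoid formula V = L × W² / 2; disseminated models such as multiple myeloma are frequently tracked by bioluminescence imaging instead. This is one common summary, not necessarily the one used in [21], and the function names and example numbers are illustrative.

```python
import numpy as np


def caliper_volume(length_mm, width_mm):
    """Modified ellipsoid estimate V = L * W^2 / 2 (mm^3), with W the shorter axis."""
    length = np.asarray(length_mm, dtype=float)
    width = np.asarray(width_mm, dtype=float)
    return length * width**2 / 2.0


def tumor_growth_inhibition(treated_start, treated_end, control_start, control_end) -> float:
    """Percent TGI = (1 - mean change in treated / mean change in control) * 100."""
    delta_treated = np.mean(treated_end) - np.mean(treated_start)
    delta_control = np.mean(control_end) - np.mean(control_start)
    if delta_control <= 0:
        raise ValueError("control tumors must grow for TGI to be meaningful")
    return float((1.0 - delta_treated / delta_control) * 100.0)


# Example: CAR + T-SenSER arm versus a CAR-only control arm (illustrative volumes, mm^3).
volumes = caliper_volume([10.0, 12.0], [4.0, 5.0])
tgi = tumor_growth_inhibition([100, 110], [200, 210], [100, 110], [500, 510])
print(volumes, tgi)
```

A ligand-dependency control would repeat the same comparison in a setting lacking the target ligand, where a VEGF- or CSF1-dependent receptor should show little added benefit over the CAR-only arm.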

The signaling logic of a computationally designed synthetic receptor is complex. The following diagram details the input-output relationship of a T-SenSER.

The Scientist's Toolkit: Essential Research Reagents

Implementing and validating these TME algorithms requires a suite of specialized research reagents and tools. The following table catalogs key solutions for researchers in this field.

Table 2: Key Research Reagent Solutions for TME Algorithm Development and Validation

| Reagent / Material | Function in TME Algorithm Workflow | Specific Application Example |
|---|---|---|
| IHC Staining Kits (Hematoxylin & DAB) | Enables visualization of target biomarkers on tissue sections for subsequent image analysis and algorithm training/validation. | Generating whole-slide images for deep learning-based quantification of protein expression [22]. |
| CellViT Nuclear Segmentation Algorithm | A deep learning-based tool for precisely identifying and segmenting cell nuclei in whole-slide images; a core component of the image analysis architecture. | Automated nuclear segmentation as the first step in quantifying cellular compartments [22]. |
| Lentiviral/Retroviral Vector Systems | Delivery vehicles for stably introducing genes encoding synthetic receptors (like T-SenSERs) into primary human T cells. | Engineering T cells to express computationally designed biosensors for functional validation [21]. |
| Recombinant Human Cytokines/Growth Factors (e.g., VEGF, CSF1) | Purified ligands used for in vitro stimulation to test the specificity and functionality of engineered biosensing receptors. | Validating the input-output behavior of T-SenSERs in controlled cell culture assays [21]. |
| Protein Data Bank (PDB) Structural Data | Provides atomic-level protein structures used as templates or building blocks for computational protein design and de novo receptor assembly. | Informing the computational modeling and design of allosteric receptor domains [21]. |
| Dimeric MultiDomain Biosensor Builder Software | Custom computational platform for the modeling and de novo assembly of multi-domain protein biosensors. | Designing the T-SenSER receptors with programmable signaling activity [21]. |

Comparative Performance of Multi-Omic Profiling and Analysis Techniques

Integrating hematoxylin and eosin (H&E) staining, immunohistochemistry (IHC), and molecular profiling data (DNA, RNA) is reshaping quantitative analysis of the TME. The table below summarizes the performance of platforms and algorithms used in this multi-omic workflow.

| Technology / Method | Primary Function | Key Performance Metrics | Notable Findings / Advantages |
|---|---|---|---|
| Same-Section ST/SP (Xenium/COMET) [23] [24] | Integrated Spatial Transcriptomics & Proteomics | Enables single-cell RNA-protein correlation; Low systematic transcript-protein correlations observed [23] [24] | Eliminates section-to-section variation; Facilitates direct concordance studies and region-specific marker analysis [23] [24]. |
| Imaging-Based ST Platforms (Xenium, CosMx, MERFISH) [25] | Spatial Transcriptomics Profiling | Variable transcripts per cell and unique gene counts; Performance depends on panel design and tissue age [25]. | CosMx detected the highest transcript counts; Xenium multimodal segmentation yielded lower counts than unimodal [25]. |
| AI for PD-L1 Scoring [7] | Automated Biomarker Quantification | Fair to substantial agreement with pathologists (Fleiss' kappa: 0.354 to 0.672 at 50% TPS cutoff) [7]. | Highlights the need for further AI refinement to match expert reliability in clinical decision-making [7]. |
| Multi-Omics Data Integration Methods (e.g., SNF, MOFA+) [26] [27] | Computational Data Fusion | Accuracy, robustness, and clinical significance of identified cancer subtypes [26]. | Integrating more omics data does not always improve performance; selection of data types and methods is critical [26]. |
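The agreement figures for AI-based PD-L1 scoring are Fleiss' kappa values, which compare observed agreement among multiple raters with the agreement expected by chance. The sketch below computes kappa from per-case rating counts after binarizing tumor proportion scores (TPS) at the 50% cutoff. Treating the AI as one additional rater alongside pathologists is an assumption for illustration, and the helper names are hypothetical.

```python
import numpy as np


def fleiss_kappa(counts) -> float:
    """Fleiss' kappa from an (N cases x k categories) matrix of rating counts.

    kappa = (P_bar - P_e) / (1 - P_e); every row must sum to the same n raters.
    """
    counts = np.asarray(counts, dtype=float)
    n_per_case = counts.sum(axis=1)
    if not np.allclose(n_per_case, n_per_case[0]):
        raise ValueError("each case must be rated by the same number of raters")
    n = n_per_case[0]
    # Observed agreement per case, then averaged over cases.
    p_i = (np.sum(counts**2, axis=1) - n) / (n * (n - 1))
    p_bar = p_i.mean()
    # Chance agreement from the marginal category proportions.
    p_j = counts.sum(axis=0) / (counts.shape[0] * n)
    p_e = np.sum(p_j**2)
    if np.isclose(p_e, 1.0):
        raise ValueError("all ratings fall in one category; kappa is undefined")
    return float((p_bar - p_e) / (1.0 - p_e))


def tps_counts(tps_scores, cutoff: float = 50.0) -> np.ndarray:
    """Binarize TPS (%) per case x rater; return per-case counts [below, at/above cutoff]."""
    positive = np.asarray(tps_scores, dtype=float) >= cutoff
    n_pos = positive.sum(axis=1)
    return np.column_stack([positive.shape[1] - n_pos, n_pos])


# Example: 4 cases scored by 4 raters (e.g., three pathologists plus an AI), illustrative TPS values.
tps = np.array([[60, 55, 40, 70], [10, 20, 5, 15], [80, 90, 75, 85], [45, 55, 30, 20]])
print(fleiss_kappa(tps_counts(tps)))
```

Under the Landis and Koch convention, kappa values of 0.21 to 0.40 are read as fair and 0.61 to 0.80 as substantial agreement, which is how the 0.354 to 0.672 range above is described.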

Detailed Experimental Protocols for Multi-Omic Integration

Same-Tissue-Section Spatial Multi-Omics Workflow

This protocol co-profiles RNA and protein from a single tissue section, eliminating section-to-section variation and simplifying spatial registration between modalities.

- Sample Preparation: Use consecutive 5 µm sections from Formalin-Fixed Paraffin-Embedded (FFPE) human lung carcinoma tissue [23] [24].

- Spatial Transcriptomics:

  - Perform Xenium In Situ Gene Expression analysis per the manufacturer's instructions [23] [24].

  - Use a targeted gene panel (e.g., 289-plex human lung cancer panel) [23] [24].

  - The process involves hybridization, ligation, and amplification of gene-specific barcodes, followed by cyclic imaging [23] [24].

- Spatial Proteomics:

  - Perform hyperplex immunohistochemistry on the same section using the COMET platform, which applies cyclical antibody staining and elution [23] [24].

- Histology and Registration:

  - Conduct H&E staining on the post-assayed section [23] [24].

  - Co-register DAPI images from Xenium and COMET to the H&E image using a non-rigid, spline-based algorithm in software such as Weave [23] [24].

  - Apply cell segmentation masks to generate an integrated dataset of gene expression and protein intensity for the same cells [23] [24].
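Once RNA and protein measurements share one segmentation mask, transcript-protein concordance can be checked per marker pair across cells. This sketch assumes row-aligned cell-by-gene and cell-by-marker arrays and a hypothetical gene-to-protein mapping (for example, `CD8A` to CD8); it uses SciPy's `spearmanr` and does not perform the spline-based image registration itself.

```python
import numpy as np
from scipy.stats import spearmanr


def paired_spearman(rna, genes, protein, markers, pairs) -> dict:
    """Spearman rho across cells for each (gene, protein marker) pair.

    Assumes row i of `rna` (cells x genes) and `protein` (cells x markers)
    describe the same segmented cell, as a shared segmentation mask provides.
    """
    rna = np.asarray(rna, dtype=float)
    protein = np.asarray(protein, dtype=float)
    if rna.shape[0] != protein.shape[0]:
        raise ValueError("RNA and protein tables must cover the same cells")
    gene_col = {g: i for i, g in enumerate(genes)}
    marker_col = {m: i for i, m in enumerate(markers)}
    results = {}
    for gene, marker in pairs.items():
        rho, _ = spearmanr(rna[:, gene_col[gene]], protein[:, marker_col[marker]])
        results[f"{gene}/{marker}"] = float(rho)
    return results


# Example with four cells (illustrative values).
rna = np.array([[0, 5], [1, 3], [2, 1], [3, 0]], dtype=float)
protein = np.array([[0.1, 6.0], [0.2, 7.0], [0.3, 8.0], [0.4, 9.0]])
rho = paired_spearman(rna, ["CD8A", "PTPRC"], protein, ["CD8", "CD45"],
                      {"CD8A": "CD8", "PTPRC": "CD45"})
print(rho)
```

A rank-based correlation is a reasonable default here because transcript counts are sparse and discrete while protein intensities are continuous.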

Cross-Platform Spatial Transcriptomics Comparison

This methodology provides an objective assessment of different ST platforms for TME profiling.

- Sample Design: Use serial sections from FFPE tissue microarrays (TMAs) containing lung adenocarcinoma and pleural mesothelioma samples [25].

- Platform Profiling: Subject serial TMA sections to multiple commercial ST platforms, such as CosMx (1,000-plex panel), MERFISH (500-plex panel), and Xenium (e.g., 289-plex + 50 custom genes) [25].

- Data Analysis and Benchmarking:

  - Compare transcripts per cell and unique gene counts, normalized for panel size [25].

  - Assess signal-to-noise by comparing target gene probe expression to negative control probes [25].

  - Evaluate cell segmentation accuracy by examining transcript presence in cells and cell area sizes [25].

  - Validate cell type annotations against pathologists' evaluations of multiplex immunofluorescence (mIF) and H&E-stained sections [25].
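The count and signal-to-noise comparisons can be computed per platform from cell-by-probe count matrices. In this sketch the input layout, the use of medians, and the target-to-negative-control ratio are assumptions for illustration rather than the exact definitions used in [25].

```python
import numpy as np


def platform_summary(target_counts, negative_counts, panel_size: int) -> dict:
    """Per-platform summary from cell-by-probe count matrices (hypothetical layout).

    target_counts:   cells x target-gene probes
    negative_counts: cells x negative-control probes (same cells)
    """
    target = np.asarray(target_counts, dtype=float)
    negative = np.asarray(negative_counts, dtype=float)
    transcripts_per_cell = target.sum(axis=1)
    genes_per_cell = (target > 0).sum(axis=1)
    mean_negative = negative.mean()
    return {
        "median_transcripts_per_cell": float(np.median(transcripts_per_cell)),
        "median_genes_per_cell": float(np.median(genes_per_cell)),
        # Dividing by panel size puts, e.g., 289-plex and 1,000-plex panels on one scale.
        "median_transcripts_per_panel_gene": float(np.median(transcripts_per_cell) / panel_size),
        # Mean counts per target probe relative to mean counts per negative-control probe.
        "target_to_negative_ratio": (
            float(target.mean() / mean_negative) if mean_negative > 0 else float("inf")
        ),
    }


# Example: two cells, a 3-gene panel, and two negative-control probes (illustrative counts).
summary = platform_summary([[2, 0, 4], [1, 1, 0]], [[0, 1], [1, 0]], panel_size=3)
print(summary)
```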

Computational Multi-Omics Integration for Cancer Subtyping

This protocol outlines a computational approach for integrating bulk omics data to discover molecular subtypes.

- Data Pre-processing: Collect and pre-process multiple omics data types (e.g., genome, epigenome, transcriptome) from the same patient cohort (e.g., TCGA) [26].

- Method Selection and Integration:

  - Select representative integration algorithms from different methodological categories [26].

  - Apply these methods to all possible combinations of the available omics data types [26].

- Performance Evaluation:

  - Assess the accuracy of the identified subtypes using clustering accuracy metrics and clinical significance (e.g., survival analysis) [26].

  - Evaluate the robustness and computational efficiency of the methods [26].

  - Investigate the influence of different omics data types and their combinations on subtyping effectiveness [26].
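The combination-and-evaluation loop can be sketched as follows. As a simple stand-in for dedicated methods such as SNF or MOFA+, it uses early fusion (z-scored, size-balanced blocks concatenated and clustered with a small deterministic k-means) and scores each combination of omics types against reference subtype labels with the adjusted Rand index (ARI). All names are hypothetical, and clinical significance would be assessed separately, for example with a log-rank test on survival data.

```python
from itertools import combinations

import numpy as np
from scipy.special import comb


def adjusted_rand_index(labels_a, labels_b) -> float:
    """Adjusted Rand index between two partitions (1.0 = identical up to relabeling)."""
    a = np.unique(np.asarray(labels_a), return_inverse=True)[1].ravel()
    b = np.unique(np.asarray(labels_b), return_inverse=True)[1].ravel()
    table = np.zeros((a.max() + 1, b.max() + 1))
    np.add.at(table, (a, b), 1)  # contingency table of co-assignments
    sum_cells = comb(table, 2).sum()
    sum_rows = comb(table.sum(axis=1), 2).sum()
    sum_cols = comb(table.sum(axis=0), 2).sum()
    expected = sum_rows * sum_cols / comb(len(a), 2)
    max_index = 0.5 * (sum_rows + sum_cols)
    return float((sum_cells - expected) / (max_index - expected))


def kmeans(x: np.ndarray, k: int, n_iter: int = 100) -> np.ndarray:
    """Small deterministic k-means (farthest-point initialization, Lloyd updates)."""
    centers = [x[0]]
    for _ in range(1, k):
        dist = np.min([((x - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(x[np.argmax(dist)])
    centers = np.array(centers)
    for _ in range(n_iter):
        labels = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)
        updated = np.array([x[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                            for j in range(k)])
        if np.allclose(updated, centers):
            break
        centers = updated
    return labels


def zscore(block: np.ndarray) -> np.ndarray:
    """Standardize each feature; constant features are left centered at zero."""
    sd = block.std(axis=0)
    sd[sd == 0] = 1.0
    return (block - block.mean(axis=0)) / sd


def evaluate_combinations(omics: dict, k: int, reference) -> dict:
    """Early-fuse every non-empty subset of omics blocks, cluster it, and score it by ARI."""
    names = sorted(omics)
    scores = {}
    for r in range(1, len(names) + 1):
        for combo in combinations(names, r):
            # Divide by sqrt(n_features) so large blocks do not dominate the distance.
            fused = np.hstack([zscore(omics[n]) / np.sqrt(omics[n].shape[1]) for n in combo])
            scores[combo] = adjusted_rand_index(kmeans(fused, k), reference)
    return scores


# Example: six samples from two subtypes, two omics blocks (illustrative values).
omics = {
    "mrna": np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [5.0, 5.0], [5.1, 5.0], [5.0, 5.1]]),
    "methylation": np.array([[1.0], [1.1], [0.9], [-1.0], [-1.1], [-0.9]]),
}
reference = np.array([0, 0, 0, 1, 1, 1])
for combo, ari in evaluate_combinations(omics, k=2, reference=reference).items():
    print(combo, round(ari, 3))
```

With real cohorts, comparing the per-combination scores is what reveals whether adding an omics layer helps or hurts subtyping.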

Integrated Multi-Omic Analysis Workflow

The Scientist's Toolkit: Key Reagents and Platforms

The following table details essential reagents, technologies, and software critical for executing robust multi-omic TME studies.

| Item / Technology | Function in Workflow | Specifications / Examples |
|---|---|---|
| FFPE Tissue Sections [23] [25] | Standard biospecimen for preserving tissue architecture and biomolecules. | Typically 5 µm thick sections mounted on specialized slides [23] [25]. |
| Spatial Transcriptomics Platforms [23] [25] | In-situ profiling of RNA expression with spatial context. | Xenium (10x Genomics), CosMx (NanoString), MERFISH (Vizgen); utilize targeted gene panels (e.g., 289-plex to 1,000-plex) [23] [25]. |
| Hyperplex IHC / Spatial Proteomics [23] [24] | In-situ profiling of protein expression with spatial context. | COMET platform (Lunaphore); uses cyclical staining/elution with antibody panels (e.g., 40 markers) [23] [24]. |