Beyond the Mouse: Addressing Species Mismatch in Animal Models with Human-Relevant New Approach Methodologies


Abstract

This article examines the critical challenge of species mismatch in biomedical research, where traditional animal models often fail to accurately predict human responses. It explores the scientific and regulatory drivers, led by recent FDA initiatives, that are accelerating a paradigm shift toward New Approach Methodologies (NAMs). The scope includes a foundational review of the limitations of animal models, a methodological guide to implementing advanced tools like organ-on-chip and organoids, strategies for troubleshooting and validating these systems, and a comparative analysis of their predictive power versus conventional approaches. Tailored for researchers and drug development professionals, this resource provides a comprehensive roadmap for adopting human-relevant research models to enhance drug discovery and development.

The Problem of Species Mismatch: Why Animal Models Are Failing Drug Development

Welcome to the Technical Support Center for Species Mismatch Research. This resource is designed for researchers and drug development professionals navigating the poor predictive power of preclinical research. Its foundational thesis is that a widespread mismatch between traditional animal models and human biology is a primary contributor to this problem, leading to failed clinical trials, wasted resources, and delayed treatments.

This guide provides direct, actionable troubleshooting advice and FAQs to help you identify, understand, and overcome the specific issues posed by species mismatch in your experiments.

Frequently Asked Questions (FAQs)

What is species mismatch, and how does it impact my research?

Answer: Species mismatch refers to the fundamental biological differences between animal models and humans that undermine the external validity of preclinical research. These differences can be genetic, immunological, physiological, or related to disease presentation.

The impact is significant and quantifiable. It directly leads to the poor translation of findings from the bench to the bedside. For example, despite over a thousand drugs showing promise in animal models of stroke, only one has translated to clinical use, with its benefits still being controversial [1]. This high failure rate demonstrates the costly "predictability gap" created by species mismatch.

My drug candidate works perfectly in animal models but fails in human trials. What went wrong?

Answer: This is a classic symptom of species mismatch. The problem likely occurred at one or more of these stages:

- Unrepresentative Animal Samples: Laboratory animals are often young, genetically identical, and healthy, lacking the comorbidities (e.g., hypertension, obesity) and genetic diversity of human patient populations [2] [1]. Your drug may not work in older, genetically diverse humans with multiple health conditions.

- Fundamental Physiological Differences: Key systems may operate differently. For instance, the immune system of a mouse is adapted to deal with ground-level pathogens, while the human system is better at managing airborne viruses [3]. A therapy targeting an immune pathway might not engage the same way in humans.

- Poorly Modeled Disease Complexity: Animal models often induce a disease state rapidly in a controlled setting, failing to capture the slow, progressive, and complex nature of many human chronic diseases [1].

How can I improve the translatability of my preclinical findings?

Answer: You can take several steps to mitigate the risk of species mismatch:

- Incorporate Human-Relevant Models Early: Integrate New Approach Methodologies (NAMs) like organ-on-chip (OOC) systems or organoids into your workflow before moving to complex animal models [4] [5]. These systems use human cells and can provide more human-relevant data on efficacy and toxicity.

- Use Humanized Mouse Models: For research requiring an in vivo context, consider "humanized" mouse models. These are immunodeficient mice engrafted with human hematopoietic stem cells (HSCs) or peripheral blood mononuclear cells (PBMCs) to create a more accurate model of the human immune system for studying cancer, autoimmune diseases, and infections [3].

- Rigorously Design Animal Studies: Ensure your animal studies are both internally and externally valid. This includes using animals of appropriate age and sex, modeling comorbidities, and aligning treatment timelines with clinically relevant scenarios (e.g., treating after disease onset, not just prophylactically) [2] [1].

Troubleshooting Guides

Problem: Unexpected Immune Response or Lack of Efficacy

Symptoms: A therapeutic shows no effect or causes an adverse immune reaction in a humanized model or in clinical trials, despite success in standard animal models.

Diagnosis: This is frequently due to immunological species differences. The mouse and human Major Histocompatibility Complex (MHC) / Human Leukocyte Antigen (HLA) systems have important functional differences; for example, HLA molecules in humans can bind to a broader range of pathogen-derived peptides [3]. A treatment designed around a mouse-specific immune pathway is likely to fail in humans.

Solution:

- Verify Your Model: If using a humanized mouse model, confirm successful engraftment and reconstitution of the human immune system. A failed or low-efficiency engraftment will not provide useful data [3].

- Check Cell Quality: The quality of the starting human cells (HSCs or PBMCs) is critical. Use a reliable vendor to ensure accurate cell counts and high cell viability to avoid underpowered or failed experiments [3].

- Switch to a Human-Based System: For early-stage screening, use human immune cell cultures or immune-compatible OOC models to de-risk your pipeline before proceeding to in vivo studies [6] [4].

Problem: Inability to Model Complex Human Disease Pathology

Symptoms: Your animal model does not replicate the key cellular or molecular hallmarks of the human disease you are studying.

Diagnosis: The animal model lacks the complexity of the human condition. Many animal models are acute and do not capture the chronic, multi-factorial progression of human diseases like neurodegenerative disorders or complex metabolic syndromes [1].

Solution:

- Adopt a Multi-Model Approach: Do not rely on a single animal model. Use a tiered strategy combining several NAMs to answer specific biological questions [4]. For example, use inner ear organoids to study hair cell differentiation and in vitro synapse models to study functional neuronal connections, rather than a single complex animal model [4].

- Utilize Patient-Derived Organoids: Create disease-specific models using induced pluripotent stem cells (iPSCs) derived from patients. These organoids can reflect the underlying human biology and disease mechanisms in a reproducible, patient-specific manner without the confounding variables of species differences [6].

- Leverage Computational Models: Use artificial intelligence (AI) and machine learning to create "digital twins" that simulate disease progression or drug effects in human biological systems, helping to prioritize the most promising candidates for further testing [5].

Key Quantitative Data on the Translation Crisis

The following table summarizes the stark reality of the predictability gap in preclinical research, highlighting why addressing species mismatch is urgent.

Table 1: The Preclinical Translation Crisis at a Glance

| Aspect of Crisis | Quantitative Evidence | Implication for Researchers |
|---|---|---|
| Overall Drug Development Success | Only ~500 of several thousand human diseases have any approved treatments [1]. | High unmet medical need; current models are failing to produce cures. |
| Clinical Trial Failure Rates | 52% of failures in Phase II/III trials are due to efficacy issues; 24% due to safety [1]. | Animal models are poor predictors of both whether a drug will work in humans and if it is safe. |
| Specific Example: Stroke Research | Over 1,000 drugs successful in animal models; only 1 translated to clinical use [1]. | The predictive value of traditional animal models for neurology is exceptionally low. |
| Specific Example: Liver Toxicity | Liver chips detected 87% of drugs that were hepatotoxic in humans but not in animal models [4]. | Human-based OOC models can outperform animals in critical safety assessments. |
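The liver-chip figure in the last row reads like a sensitivity-style metric: the share of truly hepatotoxic drugs that a model flags. A minimal sketch of how sensitivity and specificity could be computed when benchmarking a model against known human outcomes is shown below. The counts are hypothetical placeholders chosen for illustration, not data from [4].

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Benchmark outcome counts for a toxicity model against known human outcomes."""
    true_pos: int   # toxic in humans, flagged by the model
    false_neg: int  # toxic in humans, missed by the model
    true_neg: int   # safe in humans, not flagged by the model
    false_pos: int  # safe in humans, flagged by the model


def sensitivity(c: ConfusionCounts) -> float:
    """Fraction of human-toxic drugs that the model detects."""
    return c.true_pos / (c.true_pos + c.false_neg)


def specificity(c: ConfusionCounts) -> float:
    """Fraction of human-safe drugs that the model correctly clears."""
    return c.true_neg / (c.true_neg + c.false_pos)


# Hypothetical counts for illustration only (NOT taken from the cited study).
counts = ConfusionCounts(true_pos=8, false_neg=2, true_neg=9, false_pos=1)
print(f"sensitivity = {sensitivity(counts):.2f}")
print(f"specificity = {specificity(counts):.2f}")
```

Reporting both numbers matters: a model that flags every compound has perfect sensitivity but no practical value for prioritizing candidates.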

Experimental Protocols & Workflows

Protocol: Establishing a Humanized Mouse Model for Immunological Studies

This protocol outlines the key steps for creating a humanized mouse model via CD34+ hematopoietic stem cell (HSC) engraftment, a common method for studying the human immune system in vivo [3].

1. Principle: Immunodeficient mice are preconditioned to create space in the bone marrow and then injected with human HSCs. These cells engraft and reconstitute a human immune system within the mouse, allowing for the study of human-specific pathogens, cancer immunotherapies, and autoimmune diseases.

2. Reagents and Materials:

- Immunodeficient mice (e.g., NSG strain)

- Source of human CD34+ HSCs (e.g., umbilical cord blood, bone marrow)

- Irradiation equipment (for preconditioning)

- Myeloablative chemotherapeutic agent (alternative preconditioning)

- Sterile PBS for cell resuspension

- Flow cytometry reagents for immune phenotyping (e.g., antibodies against human CD45, CD3, CD19)

3. Procedure:

- Step 1: Preconditioning. Subject immunodeficient mice to sublethal irradiation or myeloablative chemotherapy. This eliminates the mouse's own bone marrow cells, creating a niche for the human cells to engraft [3].

- Step 2: Cell Preparation. Isolate or thaw a high-quality, viable batch of human CD34+ HSCs. Critical Step: All cells for a given experiment should ideally come from the same donor to minimize batch effects. Ensure the cell count is accurate and viability is high [3].

- Step 3: Engraftment. Intravenously inject the prepared CD34+ HSCs into the preconditioned mice.

- Step 4: Reconstitution and Validation. Allow 12-16 weeks for the human immune system to reconstitute. Validate successful engraftment by periodically testing mouse peripheral blood via flow cytometry for the presence of human immune cells (e.g., human CD45+ cells) [3].

4. Key Troubleshooting:

- Low Engraftment: This is often due to inadequate preconditioning, low quality/viability of the starting HSCs, or an insufficient number of cells injected [3].

- Graft-versus-Host Disease (GvHD): More common when using peripheral blood mononuclear cells (PBMCs). Using naïve CD34+ cells from cord blood can reduce this risk [3].
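The engraftment check in Step 4 can be expressed as a simple chimerism calculation on flow cytometry event counts. The sketch below assumes that both human CD45+ and mouse CD45+ events are gated (the protocol above only names human CD45) and uses a placeholder acceptance threshold of 25%, which is an illustrative assumption and should be replaced by the cutoff your own study defines.

```python
def human_chimerism_pct(human_cd45_events: int, mouse_cd45_events: int) -> float:
    """Percent human CD45+ cells among all CD45+ (human + mouse) events."""
    total = human_cd45_events + mouse_cd45_events
    if total == 0:
        raise ValueError("No CD45+ events recorded; check gating or sample quality.")
    return 100.0 * human_cd45_events / total


def is_engrafted(chimerism_pct: float, threshold_pct: float = 25.0) -> bool:
    """Apply a study-defined cutoff (the 25% default is a hypothetical placeholder)."""
    return chimerism_pct >= threshold_pct


# Example with made-up event counts from one peripheral blood sample.
pct = human_chimerism_pct(human_cd45_events=3000, mouse_cd45_events=7000)
print(f"human chimerism: {pct:.1f}%  engrafted: {is_engrafted(pct)}")
```

Tracking this value per animal at each bleed makes it easier to spot low-engraftment animals before they are assigned to treatment groups.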

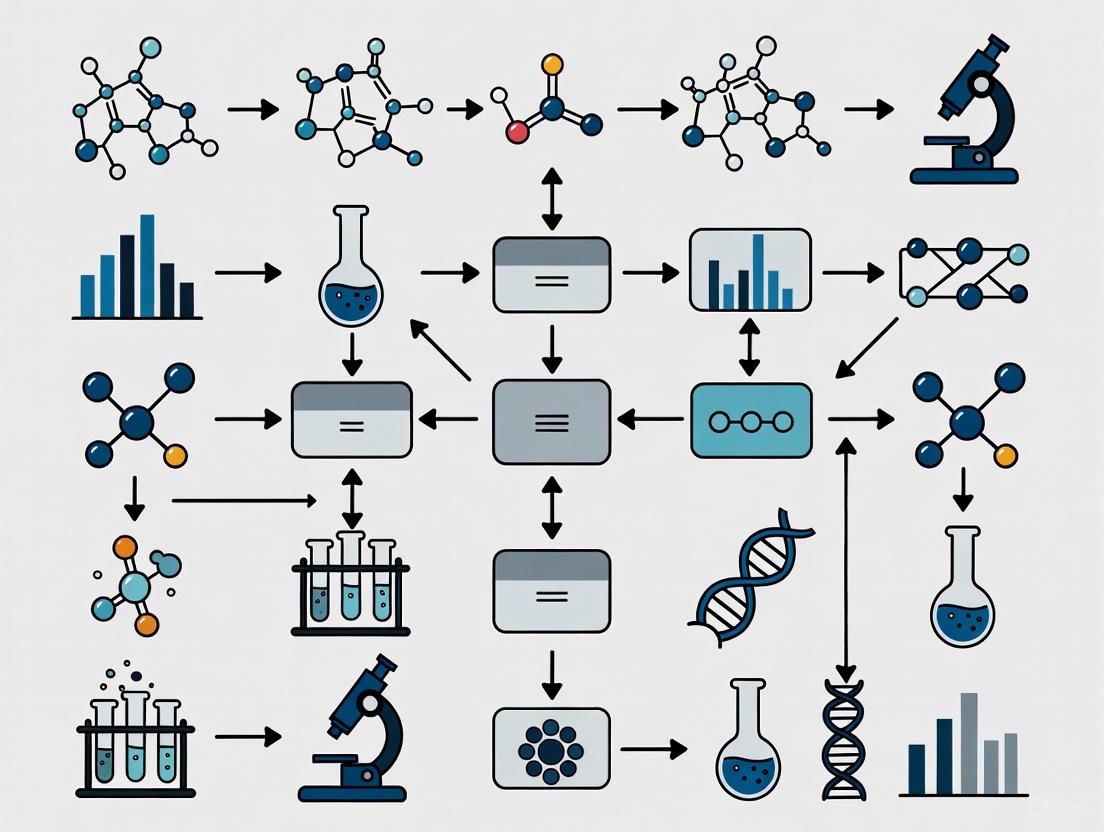

The workflow for creating and validating a humanized mouse model can be visualized as follows:

Workflow: Integrating New Approach Methodologies (NAMs) in Drug Discovery

This workflow describes a strategy to reduce reliance on animal models by front-loading human-relevant testing, thereby mitigating species mismatch early in the discovery process [4] [5].

1. Principle: Use a combination of AI, organ-on-chip (OOC), and organoid technologies to create a more predictive, human-relevant pipeline for evaluating drug safety and efficacy before considering animal studies.

2. Procedure:

- Step 1: AI-Powered In Silico Screening. Use machine learning models to screen virtual compound libraries for desired activity and predict potential absorption, distribution, and toxicity profiles [5].

- Step 2: Human Organoid Testing. Take hits from the AI screen and test them on patient-derived organoids. This provides a 3D, human-cell-based model for initial efficacy and mechanistic studies [6] [4].

- Step 3: Single-Organ Chip Validation. Progress the most promising candidates to single-organ chips (e.g., liver-chip, heart-chip) for more sophisticated safety and toxicity profiling under dynamic fluid flow conditions [4].

- Step 4: Multi-Organ Chip Analysis. For candidates requiring systemic understanding, use multi-organ chips (e.g., liver-heart-chip) to study inter-organ cross-talk and detect off-target effects [4].

- Step 5: Targeted, Hypothesis-Driven Animal Testing. Only after successful passage through previous stages, use animal models for final, specific questions that require a whole-body context (e.g., systemic toxicity, behavior), with study designs informed by the human-relevant NAM data [4].
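The tiered gating logic of Steps 1 to 5 can be sketched as a small decision function. This is an illustration only: the stage names, the `Candidate` class, and the `needs_systemic` flag are hypothetical, and real go/no-go criteria are far richer than a single pass/fail per stage.

```python
from dataclasses import dataclass, field

# Stage order mirrors Steps 1-5 above; the identifiers themselves are hypothetical.
STAGES = ["in_silico", "organoid", "single_organ_chip", "multi_organ_chip", "animal"]


@dataclass
class Candidate:
    name: str
    results: dict[str, bool] = field(default_factory=dict)  # stage -> passed?
    needs_systemic: bool = False  # True if inter-organ effects must be studied


def next_stage(candidate: Candidate) -> str:
    """Return the next stage to run, or 'dropped' / 'complete'."""
    for stage in STAGES:
        # Multi-organ chips are only used when systemic understanding is required.
        if stage == "multi_organ_chip" and not candidate.needs_systemic:
            continue
        passed = candidate.results.get(stage)
        if passed is None:
            return stage      # first stage not yet run
        if not passed:
            return "dropped"  # failed an earlier gate; do not escalate
    return "complete"


a = Candidate("cmpd-A", {"in_silico": True, "organoid": True})
b = Candidate("cmpd-B", {"in_silico": True, "organoid": False})
print(next_stage(a), next_stage(b))
```

The point of the structure is that animal testing is only ever reached after every applicable human-relevant gate has been passed.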

[Figure: integrated, human-first NAM workflow]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Overcoming Species Mismatch

| Item | Function & Rationale |
|---|---|
| CD34+ Hematopoietic Stem Cells (HSCs) | The starting material for creating humanized mouse models. Sourced from umbilical cord blood or bone marrow, these cells reconstitute the human immune system in immunodeficient mice, allowing for the study of human-specific immunology [3]. |
| Induced Pluripotent Stem Cells (iPSCs) | Patient-derived cells that can be reprogrammed into any cell type. Used to create patient-specific organoids that model human disease without species differences, ideal for drug screening and mechanistic studies [6]. |
| Organ-on-Chip (OOC) Systems | Microfluidic devices lined with human cells that simulate organ-level physiology and mechanics (e.g., blood flow, breathing motions). They provide human-relevant data on drug efficacy, toxicity, and disease mechanisms that animal models often miss [4]. |
| AI/ML Software for Digital Twins | Computational models that simulate a biological process or entire organ. They use AI to predict drug safety and efficacy in a human context based on existing data, helping to prioritize experiments and reduce animal testing [5]. |
| High-Quality Cell Culture Matrices | Advanced hydrogels and extracellular matrix (ECM) substitutes that provide a human-relevant 3D microenvironment for growing organoids and OOC systems, crucial for maintaining realistic cell function and morphology [6]. |

FAQs: Troubleshooting Preclinical Development

Q1: Why do monoclonal antibodies (mAbs) that show promise in preclinical models often fail in late-stage human trials?

Several key factors, often related to translational gaps between animal models and human biology, contribute to this failure:

- Incomplete Understanding of Mechanism of Action (MOA): Proceeding to Phase III trials without a complete understanding of the drug's MOA and its interaction with the human disease pathway is a common reason for failure. For instance, a failure to fully understand the biology of targets previously thought to be essential for cancer has led to late-stage trial termination [7].

- Suboptimal Dosing Strategies: Initial dosing regimens established in clinical trials are often refined post-approval as real-world data is gathered. A review of FDA-approved mAbs found that 21% underwent dosing changes for their initial indication, with a median time to change of 37.5 months. These changes include dose increases or decreases, and adjustments for specific patient populations [8].

- Limitations of Animal Models: Neurotoxin-based animal models (e.g., using MPTP, rotenone for Parkinson's disease, or scopolamine for Alzheimer's disease) may not fully recapitulate the complex, systemic pathology of human neurodegenerative diseases. While useful for screening, they often cannot match the predictive value of newer genetic models for diseases like Alzheimer's [9] [10].

Q2: What are the common pitfalls in modeling neuroinflammation for drug discovery?

The primary pitfall is the failure of a single model to capture the entirety of human disease pathology.

- Over-Reliance on Single Models: Animal models of Alzheimer's disease (AD) based on specific frameworks like amyloid-β plaques or neurofibrillary tangles may not accurately represent the disease in people. This has contributed to the poor translation of preclinical results to the clinic [10].

- Species-Specific Immune Responses: The immune response in rodents can differ significantly from humans. For example, the first AD vaccine, AN-1792, showed promise in mouse models but caused encephalitis in human trials. The immune response was inconsistent among patients and varied drastically from the murine models, highlighting a critical species mismatch [11].

- Model Selection: For neuroinflammation, different inductors like Lipopolysaccharide (LPS) and poly I:C are used to mimic bacterial or viral infections, respectively. However, the resulting neuroinflammation might only represent an acute response, not the chronic, low-grade inflammation seen in many human diseases [12].

Q3: How can we better validate the translational relevance of our animal models?

A multi-faceted approach is necessary:

- Use Multiple Models: No single model is perfect. Research should utilize a spectrum of models (e.g., neurotoxin-based, genetic, and neuroinflammation-induced) to test therapeutic candidates. The field is encouraged to use all available tools, including in vivo, in vitro, and in silico models [9] [10].

- Incorporate Human Biomarkers: Where possible, validate findings against human biomarkers. In Alzheimer's research, the hypothetical biomarker model posits that Aβ accumulation is an early event, followed by tau pathology and neuroinflammation, which are more closely correlated with clinical symptoms. Effective therapies should demonstrate target engagement on these relevant biomarkers [11].

- Focus on Patient Selection in Model Design: Models should be evaluated based on how well they inform patient selection for clinical trials. Factors like genetic risk (e.g., APOE ε4 status in AD), stage of disease, and presence of comorbidities are critical for trial success and should be reflected in modeling strategies [13] [11].

Troubleshooting Guide: Late-Stage Failures

This guide analyzes specific failure cases to identify root causes and preventive strategies.

Table: Analysis of Late-Stage Monoclonal Antibody Failures

| Case Study (Therapeutic Area) | Agent (Company) | Reported Failure Reason | Root Cause & Lessons Learned |
|---|---|---|---|
| Small Cell Lung Cancer (Oncology) [7] | Rovalpituzumab tesirine - Rova-T (AbbVie) | Did not improve overall survival compared to standard chemotherapy; toxicity issues. | • Strategic/Commercial Factors: Proceeded to Phase III based on poor-quality Phase II data. • Toxicity: Off-target toxicities were a major limiting factor. • Trial Design: Incomplete understanding of the drug's mechanism and the biological context of its target (DLL3). |
| Recurrent Glioblastoma (Oncology) [7] | Depatuxizumab mafodotin - Depatux-M (AbbVie) | Lack of survival benefit compared to placebo. | • Efficacy: Despite targeting a relevant receptor (EGFR), the antibody-drug conjugate did not provide a survival advantage in a difficult-to-treat cancer. • Toxicity: Ocular toxicity was a noted adverse event, highlighting the importance of a favorable risk-benefit profile. |
| Alzheimer's Disease (Neuroscience) [11] | Bapineuzumab (Early anti-amyloid mAb) | Failed to demonstrate clinical efficacy. | • Dosing: Concerns over adverse events (ARIA) led to inadequate drug exposure (<3% the amount of later mAbs). • Patient Selection: ~25% of the trial sample did not have the target amyloid pathology, diluting the potential treatment effect. |

Experimental Protocols for Model Validation

Protocol 1: Establishing a Neuroinflammation Model using Systemic LPS Administration

Purpose: To induce systemic and central neuroinflammation in rodents, mimicking aspects of sickness behavior and neuroinflammation seen in conditions like ME/CFS [12].

Materials:

- Lipopolysaccharide (LPS) from E. coli (e.g., serotype 055:B5).

- Sterile phosphate-buffered saline (PBS).

- Adult C57BL/6 mice or Sprague-Dawley rats.

- Syringes and needles for intraperitoneal (i.p.) injection.

Procedure:

- Preparation: Reconstitute LPS in sterile PBS to a working concentration. Prepare a vehicle control of PBS alone.

- Dosing: Administer LPS via i.p. injection. A typical dose for mice is 0.5-1 mg/kg. Control animals receive an equal volume of PBS.

- Post-Injection Monitoring:

- Behavioral Analysis: Measure locomotor activity and exploratory behavior in an open field apparatus at 2-4 hours and 24 hours post-injection. A significant decrease in activity is expected in the LPS group.

- Tissue Collection: At the peak of the cytokine response (e.g., 2-4 hours post-injection), euthanize animals and collect brain regions of interest (e.g., hippocampus, cortex) and blood plasma.

- Biomarker Analysis:

- Cytokines: Measure pro-inflammatory cytokines (IL-1β, IL-6, TNF-α) in brain homogenates and plasma using ELISA.

- Microglial Activation: Analyze brain sections using IBA-1 immunohistochemistry to assess microglial morphology and activation state.

Troubleshooting: If the inflammatory response is too severe or lethal, titrate the LPS dose downward. Ensure all solutions are prepared endotoxin-free to avoid unintended activation.
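The dosing step above is specified in mg/kg, so each animal's injection volume depends on its body weight and on the working concentration of the LPS solution. A minimal sketch of that arithmetic follows; the 0.1 mg/mL working concentration is an assumption chosen for illustration, since the protocol does not specify one.

```python
def injection_volume_ul(body_weight_g: float,
                        dose_mg_per_kg: float,
                        stock_mg_per_ml: float) -> float:
    """Volume (in microliters) that delivers the target mg/kg dose."""
    if stock_mg_per_ml <= 0:
        raise ValueError("Stock concentration must be positive.")
    dose_mg = dose_mg_per_kg * body_weight_g / 1000.0  # g -> kg
    return dose_mg / stock_mg_per_ml * 1000.0           # mL -> uL


# Example: 25 g mouse, 1 mg/kg LPS, assumed 0.1 mg/mL working solution.
volume = injection_volume_ul(body_weight_g=25.0, dose_mg_per_kg=1.0, stock_mg_per_ml=0.1)
print(f"inject {volume:.0f} uL i.p.")
```

Control animals would receive the same calculated volume of PBS, which keeps injection volume matched between groups.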

Protocol 2: Validating a Parkinson's Disease Model using Systemic Rotenone Administration

Purpose: To create a rodent model that reproduces dopaminergic neurodegeneration and key pathological features of Parkinson's disease [9].

Materials:

- Rotenone (e.g., dissolved in DMSO and then mixed with a carrier like sunflower oil).

- Sunflower oil (vehicle).

- Osmotic minipumps or equipment for daily subcutaneous (s.c.) injections.

- Adult C57BL/6 mice.

Procedure:

- Preparation: For continuous infusion, load rotenone solution into osmotic minipumps. For intermittent dosing, prepare daily s.c. injection solutions.

- Dosing: Systemically administer rotenone. A common regimen for mice is a continuous s.c. infusion at 2-3 mg/kg/day for 2-4 weeks via minipump. Control animals receive the vehicle.

- Post-Treatment Validation:

- Motor Function: Conduct behavioral tests such as the rotarod test, pole test, and open field assay to quantify motor deficits.

- Post-Mortem Analysis:

- Immunohistochemistry: Process brain sections (substantia nigra and striatum) for tyrosine hydroxylase (TH) to quantify the loss of dopaminergic neurons and terminals.

- Biochemistry: Assess markers of oxidative stress and mitochondrial complex I inhibition in brain tissues.

Troubleshooting: The model is sensitive to rotenone batch and animal strain. Monitor animals closely for weight loss and general health. Adjust the dose or duration if mortality is high.
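For continuous infusion, the rotenone concentration loaded into the pump follows from the target dose, the animal's body weight, and the pump's delivery rate. The sketch below shows that calculation. The 0.25 µL/h flow rate is a hypothetical value; use the rate stated on the specification sheet of the pump lot you actually have.

```python
def pump_concentration_mg_per_ml(dose_mg_per_kg_day: float,
                                 body_weight_g: float,
                                 flow_rate_ul_per_h: float) -> float:
    """Drug concentration needed in the pump reservoir to hit the daily dose."""
    if flow_rate_ul_per_h <= 0:
        raise ValueError("Flow rate must be positive.")
    daily_dose_mg = dose_mg_per_kg_day * body_weight_g / 1000.0  # g -> kg
    daily_volume_ml = flow_rate_ul_per_h * 24.0 / 1000.0          # uL/h -> mL/day
    return daily_dose_mg / daily_volume_ml


# Example: 2.5 mg/kg/day in a 25 g mouse, assumed pump flow rate of 0.25 uL/h.
conc = pump_concentration_mg_per_ml(2.5, 25.0, 0.25)
print(f"load pump at {conc:.2f} mg/mL")
```

Because body weight often falls during rotenone treatment, the delivered mg/kg dose drifts upward over time, which is one reason to monitor weight as noted in the troubleshooting step.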

Signaling Pathways & Failure Analysis

[Figure: Therapeutic Development Pathway and Failure Points. The diagram traces the pathway from therapeutic concept to market and highlights the key failure points discussed in the case studies.]

The Scientist's Toolkit: Research Reagent Solutions

This table details key reagents and their functions in the experiments and case studies discussed.

Table: Essential Research Reagents for mAb and Neuroinflammation Research

| Reagent / Material | Function / Application | Considerations for Species Mismatch |
|---|---|---|
| Lipopolysaccharide (LPS) [12] | A TLR4 ligand used to induce systemic and central neuroinflammation in rodent models, mimicking bacterial infection. | Rodent immune responses to LPS can differ in sensitivity and cytokine profile from humans. Results may not translate directly. |
| Poly I:C [12] | A synthetic double-stranded RNA that acts as a TLR3 ligand, used to model viral infection-induced neuroinflammation. | As with LPS, the neuroinflammatory cascade and subsequent behavioral changes in rodents may not fully represent human conditions. |
| Rotenone [9] | A natural toxin that inhibits mitochondrial complex I, used to create a rodent model of dopaminergic neurodegeneration for Parkinson's disease. | The systemic administration in rodents produces a model with key features of PD, but may not replicate the slow, progressive etiology of the human disease. |
| Anti-Aβ Monoclonal Antibodies (e.g., Aducanumab, Lecanemab) [13] [11] | Passive immunotherapies designed to target and facilitate the clearance of amyloid-β in Alzheimer's disease. | Early failures (e.g., Bapineuzumab) highlight that target engagement in mouse models does not guarantee clinical efficacy without optimal dosing and patient selection. |
| Single Antigen Beads (SAB) [14] | Luminex-based beads used for highly sensitive detection of HLA antibodies, crucial in transplant immunology. | Discrepancies in antigen quantity on these beads versus human cell surfaces can lead to false positives/negatives, impacting clinical decisions. |

Conceptual Foundations of Mismatch

What is evolutionary mismatch and why is it relevant to animal model research?

Evolutionary mismatch describes a state of disequilibrium whereby an organism that evolved in one environment develops a phenotype that is harmful to its fitness or well-being in another environment [15]. In translational biomedical research, this concept is crucial because it explains how physiological and behavioral traits adapted to a species' ancestral environment may become maladaptive in laboratory settings, potentially compromising research validity [16]. For animal models, this means that species-specific adaptations to their natural evolutionary environments may create significant divergences when these animals are used to model human conditions [17].

How does developmental mismatch differ from evolutionary mismatch?

Developmental mismatch occurs at the individual level when there's a discrepancy between the environment experienced during early development and the environment encountered later in life [17]. This contrasts with evolutionary mismatch, which operates across generations and involves adaptations to ancestral environments becoming maladaptive in modern environments [18]. Both concepts are relevant to animal research: evolutionary mismatch affects species selection, while developmental mismatch can impact how laboratory conditions (e.g., early life stress vs. standard housing) influence experimental outcomes [17].

Troubleshooting Guides for Species Mismatch

How to identify potential species mismatch in experimental design?

Table 1: Common Indicators of Species Mismatch in Preclinical Research

| Indicator | Description | Potential Impact |
|---|---|---|
| Differential Drug Metabolism | Significant variations in metabolic pathways or rates compared to humans | Incorrect dosing predictions, toxicology misses |
| Immune System Divergence | Fundamental differences in immune cell populations or response patterns | Failed translation of inflammatory or immunology findings |
| Physiological Process Variation | Different mechanisms for similar biological functions (e.g., bone healing, neural repair) | Misidentification of therapeutic mechanisms |
| Behavioral Response Disparity | Species-specific behaviors that confound behavioral tests | Invalid assessment of neuroactive compounds or interventions |

What strategies can minimize mismatch-related experimental bias?

- Implement Rigorous Controls: Always include appropriate positive and negative control groups, and consider using littermate controls for genetically modified strains to maintain consistent genetic background [19].

- Standardize Environmental Factors: Control for variables such as diet type, light cycles, housing density, and noise levels that may interact with species-specific adaptations [20] [19].

- Account for Developmental Stage: Recognize that age drives major biological changes; ensure consistent age matching across experimental groups [20].

- Monitor Microbiome Effects: Be aware that pathogen exclusion status and microbiome composition can significantly influence experimental outcomes, particularly in metabolic and immunological studies [19].

- Validate Across Multiple Species: When possible, confirm critical findings in multiple species with different evolutionary trajectories to identify conserved biological mechanisms [20].

Experimental Protocols for Mismatch Evaluation

Protocol: Assessing Metabolic Mismatch in Animal Models

Background: Modern humans experience metabolic diseases like diabetes and heart disease that may result from mismatch between evolved physiology and modern environments [16]. This protocol helps evaluate similar mismatches in animal models.

Materials:

- Research species (e.g., mice, rats, non-human primates)

- Control diet (approximating evolutionary diet)

- Challenge diet (high fats/sugars, representing modern diet)

- Metabolic cages for energy expenditure measurement

- Body composition analyzer (DEXA, MRI)

- Glucose and insulin tolerance test supplies

- Tissue preservation reagents

Methodology:

- Acclimatization Phase: House animals under standardized conditions for 2 weeks with control diet.

- Baseline Measurements: Record body weight, body composition, fasting glucose, and insulin levels.

- Dietary Intervention: Randomize animals into control diet and challenge diet groups using proper randomization methods.

- Monitoring Phase: Track food intake, body weight, and energy expenditure weekly for 8-12 weeks.

- Endpoint Assessments: Perform glucose and insulin tolerance tests, collect tissues for histological analysis.

- Data Analysis: Compare metabolic parameters between groups, specifically looking for exaggerated responses in challenge diet groups that may indicate evolutionary mismatch.
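Two steps in this methodology lend themselves to a short script: the randomization in the dietary intervention step and the summary of glucose tolerance test curves at the endpoint. The sketch below uses a seeded shuffle for reproducible, balanced allocation and the trapezoidal rule for area under the curve. The animal IDs, group names, seed, and glucose readings are made up for illustration, and the protocol itself does not prescribe either method.

```python
import random


def randomize(animal_ids, groups=("control", "challenge"), seed=0):
    """Shuffle animals with a fixed seed, then deal them into groups in turn."""
    rng = random.Random(seed)  # fixed seed keeps the allocation reproducible
    ids = list(animal_ids)
    rng.shuffle(ids)
    return {animal: groups[i % len(groups)] for i, animal in enumerate(ids)}


def glucose_auc(times_min, glucose_mg_dl):
    """Area under the glucose curve (mg/dL x min) via the trapezoidal rule."""
    if len(times_min) != len(glucose_mg_dl) or len(times_min) < 2:
        raise ValueError("Need matching time and glucose lists with >= 2 points.")
    points = list(zip(times_min, glucose_mg_dl))
    return sum((t1 - t0) * (g0 + g1) / 2.0
               for (t0, g0), (t1, g1) in zip(points, points[1:]))


assignment = randomize([f"M{i:02d}" for i in range(1, 9)])
auc = glucose_auc([0, 30, 60], [100, 200, 100])
print(assignment)
print(f"AUC = {auc:.0f} mg/dL x min")
```

Dealing shuffled animals into groups in turn guarantees equal group sizes when the animal count is a multiple of the number of groups; stratifying by baseline body weight would be a natural extension.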

Table 2: Research Reagent Solutions for Mismatch Studies

| Reagent/Resource | Function in Mismatch Research | Validation Requirements |
|---|---|---|
| Genetically Defined Animal Strains | Controls for genetic background effects on phenotypic expression | Regular backcrossing to defined background; genetic monitoring every 4 generations [19] |
| Species-Appropriate Diets | Mimics ancestral or modern nutritional environments for metabolic mismatch studies | Macronutrient analysis; consistency across batches |
| Behavioral Test Apparatus | Quantifies species-specific behavioral responses to experimental conditions | Calibration against known pharmacological controls; environment standardization |
| Pathogen Monitoring Systems | Controls for microbiome and immune status differences affecting experimental outcomes | Regular serological and molecular screening; barrier facility maintenance |

Visualization of Mismatch Concepts

[Figure: Evolutionary Mismatch Mechanism]

[Figure: Species Selection Decision Framework]

Frequently Asked Questions

How can we distinguish true evolutionary mismatch from poor experimental design?

True evolutionary mismatch manifests as consistent, biologically plausible divergences that align with known species-specific evolutionary histories. Poor experimental design typically produces random or inconsistent results that improve with methodological refinements. To distinguish between them:

- Conduct systematic literature reviews to establish consistent patterns across studies [21]

- Compare results across multiple species with different evolutionary trajectories

- Verify that environmental conditions appropriately match the research question

- Consult with evolutionary biologists when interpreting species-specific responses

What are the most prevalent mismatch issues in rodent research?

The most prevalent mismatch issues in rodent research include:

- Metabolic mismatches: Laboratory diets versus evolutionary diets leading to altered metabolic responses [16]

- Social structure disruptions: Artificial housing conditions that disrupt natural social hierarchies and stress responses [17] [16]

- Immunological differences: Divergent immune system development and function due to pathogen-controlled environments [19]

- Circadian rhythm disruptions: Artificial light cycles interfering with natural circadian biology [17]

How does developmental plasticity contribute to mismatch in animal models?

Developmental plasticity allows organisms to adjust their phenotype based on early environmental cues, preparing them for similar conditions in adulthood [18] [17]. In research settings, early-life conditions (e.g., standard laboratory housing) that differ dramatically from the experimental conditions applied later in life can create developmental mismatches that confound results. For example, animals raised in enriched versus deprived environments may respond differently to the same experimental intervention later in life [17].

What documentation practices help identify mismatch issues?

Implement comprehensive documentation of:

- Genetic background: Complete breeding records and genetic monitoring procedures [19]

- Environmental conditions: Detailed records of housing, diet, light cycles, and environmental enrichment [22] [20]

- Developmental history: Early life experiences and any pre-experimental manipulations

- Procedure variations: Any deviations from standardized protocols that might interact with species-specific traits [20]

- Unexpected responses: Careful documentation of anomalous findings that might indicate mismatch phenomena

The journey from a promising discovery in an animal model to an approved human therapy is long and complex. A foundational analysis of this pipeline, published in PLOS Biology, examined 122 systematic reviews encompassing 367 therapeutic interventions across 54 human diseases [23]. The study quantified the transition rates at key stages and found that only 5% of therapies that show efficacy in animal studies ultimately achieve regulatory approval for human use [23]. This low rate has become a central concern in biomedical research.

However, this figure tells only part of the story. The same analysis found that the transition rate from animal studies to human trials is much higher, at 50%, and 40% of interventions advance to randomized controlled trials (RCTs) in humans [23]. Furthermore, a meta-analysis showed an 86% concordance between positive results in animal studies and subsequent positive results in human clinical trials [23] [24]. This indicates that when an animal study is well-designed and shows a clear benefit, those findings are very often replicated in human studies. The core problem, therefore, is not necessarily that animal findings are universally wrong, but that the entire path from bench to bedside is fraught with challenges, many of which stem from a fundamental species mismatch [1].

Table: Key Transition Rates in the Drug Development Pipeline

| Development Stage | Transition Rate | Typical Timeframe (Years) |
|---|---|---|
| From animal studies to any human study | 50% | 5 |
| From animal studies to a Randomized Controlled Trial (RCT) | 40% | 7 |
| From animal studies to regulatory approval | 5% | 10 |
| Concordance of positive results (animal to human) | 86% | - |
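The cumulative rates in the table can be converted into stage-to-stage rates to show where attrition is concentrated. The sketch below assumes the stages are nested (every RCT counts as a human study, and every approval follows an RCT), which is a simplification; the function name is illustrative.

```python
# Cumulative transition rates from the table (fraction of animal-tested therapies).
CUMULATIVE_RATES = {
    "any human study": 0.50,
    "randomized controlled trial": 0.40,
    "regulatory approval": 0.05,
}


def conditional_rates(cumulative_rates):
    """Return stage-to-stage rates, assuming each stage is a subset of the previous one."""
    rates = {}
    previous = 1.0  # every therapy starts at the animal-study stage
    for stage, reached in cumulative_rates.items():
        rates[stage] = reached / previous
        previous = reached
    return rates


if __name__ == "__main__":
    for stage, rate in conditional_rates(CUMULATIVE_RATES).items():
        print(f"{stage}: {rate:.1%} of therapies that reached the previous stage")
```

Under that assumption, about 80% of therapies that enter human studies reach an RCT, but only about 12.5% of those reaching an RCT are approved, so most of the attrition occurs late in the pipeline.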

FAQ: The Core Problem of Species Mismatch

This section addresses the most common questions researchers have about the translational success rate and its underlying causes.

Q1: If 86% of positive animal results are confirmed in humans, why is the final approval rate only 5%?

The high concordance rate for efficacy does not guarantee a drug's ultimate success. Therapies can fail for numerous reasons after showing initial promise:

- Safety and Toxicity: A compound may be effective but cause unforeseen adverse reactions in humans that were not present in the animal model due to species-specific physiology or metabolism [1].

- Commercial and Strategic Decisions: Some programs are terminated for non-scientific reasons, such as lack of funding, strategic portfolio shifts by a pharmaceutical company, or insufficient market potential.

- Efficacy in Larger Trials: An intervention might show a small but statistically significant effect in early, smaller human trials but fail to demonstrate a clinically meaningful benefit in the larger, more rigorous Phase III trials required for approval [25].

Q2: What are the primary limitations in animal model design that contribute to this gap?

Several recurring issues in the design and execution of animal studies undermine their predictive value:

- Lack of Robust Study Design: Many animal studies do not implement key measures to reduce bias, such as blinding of investigators and randomization of animals to treatment groups [23] [24].

- Inadequate Statistical Power: Studies often use too few animals, making them underpowered to detect a true treatment effect or to reliably estimate its size [24].

- Unrepresentative Animal Subjects: Laboratory animals are typically young, healthy, and genetically homogeneous. In contrast, human patients are often older, have multiple health conditions (comorbidities), and are genetically diverse [24] [1]. Testing a neuroprotective drug in a healthy young mouse, for example, may not predict its effect in an elderly human stroke patient with hypertension and diabetes [1].

- Misaligned Outcomes: Animal studies frequently focus on molecular or mechanistic endpoints, while human trials prioritize clinically relevant outcomes that matter to patients, such as overall survival or functional improvement [24].
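The statistical power issue above can be made concrete with an a priori sample-size estimate. This is a minimal sketch for a two-group comparison of means using the normal approximation; the function name and default values are illustrative, and an exact t-test calculation returns slightly larger group sizes.

```python
import math
from statistics import NormalDist


def animals_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate animals needed per group for a two-sided, two-group comparison.

    effect_size_d is the standardized mean difference (Cohen's d) the study
    should be able to detect. Uses n = 2 * ((z_alpha + z_power) / d) ** 2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


if __name__ == "__main__":
    for d in (0.5, 0.8, 1.0):
        print(f"d = {d}: about {animals_per_group(d)} animals per group")
```

Halving the detectable effect size roughly quadruples the required group size, which is why studies planned around optimistic effect sizes end up underpowered.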

Q3: Beyond general design, what are the inherent problems of "species mismatch"?

Even a perfectly designed animal study faces inherent biological challenges when translating to humans:

- Physiological and Genetic Differences: Fundamental differences in metabolism, immune system function, drug receptor specificity, and anatomy exist between species [26] [3] [1]. For instance, the human immune system is more complex than that of mice and better manages airborne pathogens, while mice are more adept at handling ground-level pathogens [3].

- Inability to Model Complex Human Diseases: Many animal models capture only a single facet of a complex human disease. They often cannot replicate the slow progression of chronic diseases (e.g., Alzheimer's), the influence of a patient's lifetime of environmental exposures, or the complexity of having multiple simultaneous diseases [2] [1].

- Poor Clinical Applicability: The timing and method of treatment administration in animal models can be highly unrealistic. A drug might be given to an animal before or immediately after disease induction, whereas humans often begin treatment long after the disease has developed [1].

Troubleshooting Guide: Mitigating Species Mismatch in Your Research

This guide provides actionable strategies to help researchers design more predictive and translatable animal studies.

| Challenge | Root Cause | Solutions & Best Practices |
|---|---|---|
| Poor Predictive Power for Efficacy | Unrealistic animal models; misaligned endpoints. | • Incorporate Comorbidities: Use aged animals or induce common co-existing conditions (e.g., hypertension in stroke models) [1].<br>• Align Endpoints: Select outcome measures that are directly relevant to the human clinical condition (e.g., functional recovery over purely molecular changes) [24]. |
| Unexpected Human Toxicity | Fundamental species differences in physiology and immunology. | • Utilize Humanized Models: Employ "humanized" mouse models engrafted with human immune cells to better predict immunogenic responses [3].<br>• Leverage Human Ex Vivo Systems: Use technologies like Ex Vivo Metrics, which involve perfusing and testing drugs on intact, ethically donated human organs (e.g., liver, lung) to study human-specific absorption, metabolism, and toxicity [27]. |
| Irreproducible & Biased Results | Flawed experimental design and low statistical power. | • Implement Experimental Rigor: Adhere to the ARRIVE guidelines. Always use randomization and blinding, and calculate the necessary sample size in advance (a priori power analysis) to ensure the study is adequately powered [24].<br>• Improve Model Validity: Critically assess whether your model has strong face validity (resembles the human condition) and construct validity (based on a similar underlying cause) [2]. |
| Failure in Late-Stage Clinical Trials | The animal model does not reflect the target human population or clinical setting. | • Mimic Clinical Timing: Administer treatments after the onset of symptoms in the animal model, not prophylactically, to better simulate the human treatment scenario [1].<br>• Test in Multiple Models: Confirm key findings in more than one animal model or species to increase confidence in the robustness of the therapeutic effect. |
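Randomization and blinding, as recommended in the table, can be implemented with a short allocation script. The sketch below is one possible approach, not a prescribed procedure: the function name, the code format (`S001`, ...), and the animal identifiers are hypothetical.

```python
import random
from collections import Counter


def allocate_blinded(animal_ids, groups, seed):
    """Randomly assign animals to equal-sized groups and give each a blinded code."""
    if len(animal_ids) % len(groups) != 0:
        raise ValueError("animal count must be a multiple of the number of groups")
    rng = random.Random(seed)  # a recorded seed makes the allocation auditable
    shuffled = list(animal_ids)
    rng.shuffle(shuffled)
    codes = [f"S{n:03d}" for n in range(1, len(shuffled) + 1)]
    rng.shuffle(codes)  # codes carry no information about group or cage order
    per_group = len(shuffled) // len(groups)
    return {
        animal: {"group": groups[i // per_group], "code": codes[i]}
        for i, animal in enumerate(shuffled)
    }


if __name__ == "__main__":
    animals = [f"mouse-{n}" for n in range(1, 13)]
    key = allocate_blinded(animals, ["vehicle", "treatment"], seed=2024)
    print(Counter(entry["group"] for entry in key.values()))
    # Outcome assessors see only the codes; the full key stays with a third party.
    print(sorted(entry["code"] for entry in key.values()))
```

Keeping the allocation key with someone who does not score outcomes is what preserves blinding; the script only generates the key.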

The following diagram maps the key failure points in the translational pipeline and the corresponding solutions to overcome them.

The Scientist's Toolkit: Essential Research Reagents & Models

This table details key materials and models that can be employed to bridge the species gap.

Table: Key Resources for Enhancing Translational Research

| Tool / Reagent | Function & Application | Key Consideration |
|---|---|---|
| Humanized Mouse Models | Immunodeficient mice engrafted with human hematopoietic stem cells (HSCs) or peripheral blood mononuclear cells (PBMCs) to create a "human-like" immune system for studying cancer immunotherapies, infectious diseases, and graft-versus-host disease [3]. | CD34+ HSCs from cord blood support broader immune reconstitution; PBMCs are easier to harvest but primarily engraft T cells. Source from high-quality vendors to ensure cell viability and consistent engraftment [3]. |
| Ex Vivo Human Organ Perfusion (Ex Vivo Metrics) | Intact, ethically donated human organs (e.g., liver, lung, intestine) are kept viable by blood perfusion. This system allows for direct study of human-specific drug absorption, metabolism, and toxicity without species extrapolation [27]. | Provides near-human physiological context but is not a high-throughput system. Organs are severed from central nervous and full immune systems, which may limit the study of some complex toxicities [27]. |
| Aged or Genetically Diverse Animal Strains | Using older animals or outbred (genetically diverse) strains instead of standard young, inbred mice better models the aged and heterogeneous human patient population, improving the predictive value for chronic diseases [1]. | More costly and logistically challenging to maintain than standard lab strains. Phenotypes may be less uniform, requiring larger sample sizes. |
| CRISPR-Cas9 Gene Editing Systems | Used to create more genetically accurate animal models by introducing human disease-associated mutations or "humanizing" specific genes or pathways to better reflect human biology [3]. | Requires careful validation to ensure the edited gene function aligns with human physiology and does not cause unintended compensatory effects in the animal. |

A New Toolkit: Implementing Organ-Chips, Organoids, and AI in Preclinical Research

New Approach Methodologies (NAMs) are defined as any in vitro, in chemico, or computational (in silico) method that, when used alone or in combination, enables improved chemical safety assessment through more protective and/or relevant models, thereby contributing to the replacement, reduction, and refinement (3Rs) of animal testing [28] [29]. The driving force behind their development is the growing recognition of the inherent limitations of traditional animal models, particularly the problem of species mismatch, where physiological differences between animals and humans lead to unreliable predictions for human health [1].

A predominant reason for the poor rate of translation from bench to bedside is the failure of preclinical animal models to predict clinical efficacy and safety [1]. This is largely an issue of external validity—the extent to which findings from one species can be reliably applied to another [1]. Issues such as unrepresentative animal samples, the inability to mimic complex human conditions, and fundamental species differences consistently undermine the translational value of animal data [1]. Furthermore, traditional animal studies can be time-consuming, expensive, and often do not reveal the underlying physiological mechanisms of toxicity [30]. NAMs present an opportunity to overcome these challenges by providing human-relevant data that can lead to more predictive safety assessments and a new paradigm for risk assessment [28].

NAMs Fundamentals: Core Components and Workflows

NAMs encompass a broad spectrum of technologies and approaches. The following diagram illustrates a generalized workflow for implementing these methodologies in a safety assessment context.

The core components of NAMs can be categorized as follows:

- Computational Methods (In Silico): These include tools like quantitative structure-activity relationship (QSAR) models and chemical databases for toxicity prediction and chemical prioritization [28]. The Toxicity Estimation Software Tool (TEST) is one such example that predicts toxicity from a chemical's physical structure [31].

- Bioactivity and Toxicity Profiling (In Vitro): This involves using human-based in vitro assays, ranging from simple 2D cell cultures to complex 3D models and microphysiological systems (MPS), such as organ-on-a-chip devices [28]. These systems are designed to model pertinent human biological pathways and mechanisms of action.

- Toxicokinetics and Dosimetry: This critical component uses methods like the high-throughput toxicokinetics (httk) R package to evaluate how the body absorbs, distributes, metabolizes, and excretes a chemical, and to extrapolate effective doses from in vitro assays to relevant human exposures [31].

- Exposure Science: Exposure-based safety assessment is a fundamental premise of NAMs. Tools like the Stochastic Human Exposure and Dose Simulation (SHEDS-HT) model and the ChemExpo Knowledgebase are used to generate robust exposure estimates for risk assessment [28] [31].
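The extrapolation step described under Toxicokinetics and Dosimetry is often done by reverse dosimetry: an in vitro active concentration is divided by the steady-state plasma concentration predicted for a unit dose. The sketch below shows only that arithmetic under an assumption of linear kinetics. It does not call the httk package, and the function name and input values are hypothetical.

```python
def administered_equivalent_dose(active_conc_uM, css_uM_per_mg_kg_day):
    """Convert an in vitro active concentration into an external dose (mg/kg/day).

    css_uM_per_mg_kg_day is the steady-state plasma concentration (uM) predicted
    for a constant dose of 1 mg/kg/day. Linear kinetics are assumed, so the
    plasma concentration scales proportionally with dose.
    """
    if active_conc_uM <= 0 or css_uM_per_mg_kg_day <= 0:
        raise ValueError("concentrations must be positive")
    return active_conc_uM / css_uM_per_mg_kg_day


if __name__ == "__main__":
    # Hypothetical inputs: an AC50 of 3 uM and a Css of 1.5 uM per 1 mg/kg/day.
    aed = administered_equivalent_dose(3.0, 1.5)
    print(f"Administered equivalent dose: {aed:.2f} mg/kg/day")
```

In practice the steady-state concentration would come from a toxicokinetic model, and an upper-percentile value across a simulated population is commonly used so that the resulting dose is protective.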

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential resources and tools used in the development and application of NAMs.

| Tool/Resource Name | Type | Primary Function & Application |
|---|---|---|
| C. elegans [30] [32] | Non-Mammalian Model Organism | A tiny transparent roundworm used as a screening model to identify chemicals potentially toxic to mammals, helping to reduce mammalian animal use. |
| Organoids & Microphysiological Systems (MPS) [28] [32] | Advanced In Vitro Model | Complex 3D tissue cultures (organoids) or multi-cellular systems on chips (MPS) that better mimic human organ structure and function for more relevant toxicity testing. |
| ToxCast Database/InvitroDB [31] | Bioactivity Database | A high-throughput screening database that provides bioactivity data for thousands of chemicals across hundreds of automated in vitro assays. |
| CompTox Chemicals Dashboard [31] | Computational Database | A web-based application providing access to data for hundreds of thousands of chemicals, including physicochemical properties, hazard, exposure, and toxicity data. |
| httk R Package [31] | Toxicokinetic Tool | An open-source software package used to perform high-throughput toxicokinetic modeling and in vitro to in vivo extrapolation (IVIVE). |
| Defined Approaches (DAs) [28] | Testing Strategy | Fixed data interpretation procedures that combine information from specified in silico, in chemico, and/or in vitro sources to reach a defined prediction without animal testing. |

Troubleshooting Common NAMs Challenges: FAQs

Q1: Our NAMs data is often questioned for not perfectly replicating historical animal study results. How should we address this?

This is a common challenge rooted in a misunderstanding of NAMs' purpose. It is critical to clarify that NAMs do not aim to recapitulate the animal test without the animal [28]. Instead, they provide more human-relevant information to enable an exposure-based safety assessment.

- Recommended Protocol: When facing this challenge, implement the following validation and communication steps:

- Benchmark for Protection, Not Correlation: Evaluate your NAMs based on their ability to identify human-relevant hazards and provide a protective point of departure for risk assessment, rather than their correlation with rodent data [28].

- Cite Performance Data: Reference existing success stories. For example, for defined endpoints like skin sensitization, combinations of human-based in vitro approaches have demonstrated similar or better performance compared to the traditional mouse Local Lymph Node Assay (LLNA) [28].

- Contextualize Animal Model Limitations: Proactively educate stakeholders that the rodent "gold standard" itself has a poor human toxicity predictivity rate, estimated to be between 40% and 65% [28]. Framing NAMs as a solution to the limitations of animal models, rather than a failed replica, is key.
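When citing performance data, predictivity is usually reported as sensitivity, specificity, and balanced accuracy against a human reference classification. The following sketch computes these from paired binary hazard calls; the function name and example calls are illustrative and are not data from the cited studies.

```python
def predictivity(predicted, reference):
    """Sensitivity, specificity, and balanced accuracy of binary hazard calls.

    predicted: calls from the test method (True = hazard identified).
    reference: human reference classification for the same chemicals.
    """
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must have the same length")
    pairs = list(zip(predicted, reference))
    tp = sum(1 for p, r in pairs if p and r)
    tn = sum(1 for p, r in pairs if not p and not r)
    fp = sum(1 for p, r in pairs if p and not r)
    fn = sum(1 for p, r in pairs if not p and r)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("reference set needs both positives and negatives")
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "balanced_accuracy": (sensitivity + specificity) / 2,
    }


if __name__ == "__main__":
    # Hypothetical calls for six chemicals.
    method_calls = [True, True, False, False, True, False]
    human_reference = [True, False, False, False, True, True]
    print(predictivity(method_calls, human_reference))
```

Running the same calculation for the NAM and for the animal test against the same human reference set gives a like-for-like comparison, which is the framing recommended above.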

Q2: What is the best strategy to gain regulatory acceptance for NAMs-based safety assessments?

Regulatory acceptance is a significant barrier, but progress is being made through strategic data generation and submission.

- Recommended Protocol:

- Use OECD-Validated Defined Approaches (DAs): For specific endpoints like skin sensitization or eye irritation, begin by adopting DAs that are already incorporated into OECD Test Guidelines (e.g., TG 497) [28]. This provides a clear and accepted regulatory pathway.

- Generate and Submit Data for "Safe-to-Use" Decisions: Initially, use NAMs to build a case that a chemical is "safe to use" for a specific exposure scenario, rather than attempting a full hazard characterization for classification and labeling [28]. A risk-based approach that integrates robust exposure science is often more readily applicable under current frameworks.

- Leverage Public Training Resources: Direct your team and regulators to training resources from agencies like the U.S. EPA, which offers extensive materials on tools like the CompTox Chemicals Dashboard, ToxCast, and httk [31]. This builds confidence and familiarity with the methodologies.

Q3: How can we integrate data from multiple NAMs sources into a single safety assessment?

Effective data integration is one of the most critical technical challenges in implementing NAMs.

- Recommended Protocol:

- Adopt a Defined Approach Framework: For a given endpoint, pre-define the specific battery of tests (in silico, in chemico, in vitro) and the data interpretation procedure (DIP) that will be used to integrate the results into a final prediction [28]. This eliminates ad-hoc and potentially biased integration.

- Utilize Bioactivity-Exposure Ratios (BER): Integrate your in vitro bioactivity data (e.g., from ToxCast) with high-throughput exposure predictions (e.g., from the SHEDS-HT model or SEEM exposure model) to calculate a BER. A sufficiently high BER indicates a wide margin of safety and can support a risk-based conclusion [31].

- Invest in Computational Workflows: Leverage advanced data analytics tools, application programming interfaces (APIs), and potentially machine learning techniques to automate data aggregation and analysis from various sources like the CompTox Dashboard and invitroDB [31] [33].
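The bioactivity-exposure ratio mentioned above is a simple quotient once both quantities are expressed in the same dose units. The sketch below assumes the bioactivity value has already been converted to an external dose in mg/kg/day; the chemical names and values are hypothetical, and no decision threshold is built in because the acceptable margin depends on the regulatory context.

```python
def bioactivity_exposure_ratio(bioactivity_dose_mg_kg_day, exposure_mg_kg_day):
    """Ratio of a bioactivity-derived dose to an estimated exposure (same units)."""
    if bioactivity_dose_mg_kg_day <= 0 or exposure_mg_kg_day <= 0:
        raise ValueError("doses must be positive")
    return bioactivity_dose_mg_kg_day / exposure_mg_kg_day


def rank_by_ber(chemicals):
    """Sort chemicals from smallest to largest BER (smallest margin first)."""
    scored = [
        (name, bioactivity_exposure_ratio(dose, exposure))
        for name, (dose, exposure) in chemicals.items()
    ]
    return sorted(scored, key=lambda item: item[1])


if __name__ == "__main__":
    # Hypothetical (bioactivity dose, exposure estimate) pairs in mg/kg/day.
    example = {
        "chemical-A": (2.0, 0.001),
        "chemical-B": (0.5, 0.05),
        "chemical-C": (10.0, 0.0002),
    }
    for name, ber in rank_by_ber(example):
        print(f"{name}: BER = {ber:,.0f}")
```

Ranking by ascending BER puts the chemicals with the narrowest margin at the top, which is a common way to prioritize follow-up testing.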

Q4: Our complex 3D in vitro models are highly variable. How can we improve reliability?

Variability in advanced in vitro models is a known issue that can be managed through rigorous quality control.

- Recommended Protocol:

- Implement Strict QC Measures: Establish strict, standardized protocols for cell culture, including the use of characterized cell sources, consistent media formulations, and controlled passage numbers [33].

- Use Integrated Quality Metrics: Incorporate functional and structural quality control checkpoints relevant to your model's biology. For example, in a liver model, regularly monitor the expression and activity of key cytochrome P450 enzymes, albumin secretion, and urea synthesis.

- Benchmark with Reference Chemicals: Validate each new batch of your model against a set of reference chemicals with known positive and negative outcomes to ensure consistent performance over time [33]. Documenting this reproducibility is essential for building confidence in your data.
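Batch benchmarking against reference chemicals can be recorded as a simple concordance check. This is a minimal sketch: the reference chemical labels, the pass criterion, and the function names are hypothetical and should be replaced by your own acceptance criteria.

```python
def batch_concordance(expected_calls, observed_calls):
    """Fraction of reference chemicals whose observed call matches the expected call."""
    if not expected_calls:
        raise ValueError("at least one reference chemical is required")
    missing = set(expected_calls) - set(observed_calls)
    if missing:
        raise ValueError(f"reference chemicals not tested: {sorted(missing)}")
    matches = sum(
        1 for name, call in expected_calls.items() if observed_calls[name] == call
    )
    return matches / len(expected_calls)


def batch_passes(expected_calls, observed_calls, min_concordance=1.0):
    """Accept the batch only if concordance meets the chosen threshold."""
    return batch_concordance(expected_calls, observed_calls) >= min_concordance


if __name__ == "__main__":
    # Hypothetical reference set: True = known positive, False = known negative.
    expected = {"ref-pos-1": True, "ref-pos-2": True, "ref-neg-1": False, "ref-neg-2": False}
    new_batch = {"ref-pos-1": True, "ref-pos-2": False, "ref-neg-1": False, "ref-neg-2": False}
    print(f"Concordance: {batch_concordance(expected, new_batch):.0%}")
    print("Batch accepted" if batch_passes(expected, new_batch) else "Batch rejected")
```

Storing the concordance value for every batch creates the reproducibility record that the last step calls for.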

Visual Guide: The Defined Approach (DA) for Regulatory Safety Assessment

For specific endpoints, Defined Approaches offer a standardized method for integrating NAMs data. The following diagram outlines the general logic flow for a DA, such as those used for skin sensitization.
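As an illustration of a fixed data interpretation procedure, the sketch below implements a simplified "2 out of 3" rule of the kind used for skin sensitization hazard identification, in which two concordant results from three key-event assays determine the call. The assay names used as parameters (DPRA, KeratinoSens, h-CLAT) reflect a common configuration and are an assumption here. The borderline-range handling defined in the actual test guideline is omitted.

```python
from typing import Optional


def two_out_of_three(
    dpra: Optional[bool],
    keratinosens: Optional[bool],
    hclat: Optional[bool] = None,
) -> str:
    """Simplified 2-out-of-3 call: True = positive assay result, None = not run.

    Two concordant results decide the outcome. If only two assays were run and
    they disagree, the result is inconclusive until the third assay is performed.
    """
    results = [r for r in (dpra, keratinosens, hclat) if r is not None]
    positives = sum(1 for r in results if r)
    negatives = len(results) - positives
    if positives >= 2:
        return "sensitizer"
    if negatives >= 2:
        return "non-sensitizer"
    return "inconclusive"


if __name__ == "__main__":
    print(two_out_of_three(True, True))          # two concordant positives
    print(two_out_of_three(True, False))         # discordant, third assay needed
    print(two_out_of_three(True, False, False))  # third assay resolves the call
```

Because the rule is fixed in advance, the same inputs always produce the same prediction, which is what distinguishes a Defined Approach from case-by-case weight-of-evidence judgment.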

A major challenge in biomedical research and drug development is the reliance on animal models, which often fail to accurately predict human physiological responses. This species mismatch is a root cause of high drug candidate failure rates in clinical trials, as data from animals frequently do not translate to humans [34]. Organ-on-a-Chip (OoC) technology presents a transformative alternative. These microfluidic devices are lined with living human cells cultured under dynamic fluid flow, recapitulating organ-level physiology and pathophysiology with high fidelity [34]. This technical support center provides troubleshooting guidance for implementing these systems to advance more human-relevant research.

Troubleshooting Guides

Common Experimental Issues and Solutions

| Problem Category | Specific Symptom | Possible Causes | Recommended Solutions | Prevention Tips |
|---|---|---|---|---|
| Bubble Formation | Visible bubbles in microchannels; Sudden changes in flow resistance or TEER. | - Rapid pressure changes.<br>- Inadequate medium degassing.<br>- Temperature fluctuations. | 1. Stop flow immediately.<br>2. Flush channels slowly with degassed, pre-warmed medium.<br>3. Use integrated bubble traps if available. | - Always degas medium before use.<br>- Use pressure-driven flow control for stability.<br>- Pre-warm medium before perfusion. |
| Cell Viability Issues | Low viability post-seeding; Detachment in channels. | - High shear stress during seeding.<br>- Incorrect medium composition.<br>- Bubble events.<br>- Contamination. | - Quantify and document shear stress during seeding.<br>- Verify cell-specific medium requirements.<br>- Check for sterility breaches. | - Use a syringe pump for low, steady flow during seeding.<br>- Validate perfusion flow rates for each cell type.<br>- Implement strict sterile technique. |
| Barrier Function Failure | Low or declining Transepithelial/Transendothelial Electrical Resistance (TEER). | - Inappropriate cell seeding density.<br>- Non-physiological shear stress.<br>- Imperfect cell differentiation. | - Optimize initial cell density for confluency.<br>- Calibrate flow to apply physiological shear stress (e.g., 1–10 dyn/cm² for endothelium).<br>- Confirm differentiation status before assay. | - Integrate real-time TEER measurement.<br>- Establish a timeline for expected barrier maturation. |
| Material & Contamination | Unusual cell morphology; Cloudy medium. | - Bacterial or fungal contamination.<br>- Cytotoxicity from device material (e.g., PDMS leaching). | - Discard contaminated chips and cultures.<br>- For suspected cytotoxicity: precondition device by soaking in medium or switch to glass/alternative polymer chips. | - Use devices certified for cell culture.<br>- Follow established sterilization protocols. |
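Calibrating flow to a target shear stress requires converting between flow rate and wall shear stress. The sketch below uses the standard parallel-plate approximation for a wide, shallow rectangular channel, τ = 6μQ / (w·h²). The default viscosity of 0.0007 Pa·s is an assumed value for aqueous medium near 37°C, and the channel dimensions in the example are hypothetical.

```python
PA_TO_DYN_PER_CM2 = 10.0  # 1 Pa = 10 dyn/cm^2
UL_PER_H_TO_M3_PER_S = 1e-9 / 3600.0


def wall_shear_dyn_cm2(flow_ul_per_h, width_mm, height_mm, viscosity_pa_s=0.0007):
    """Wall shear stress in a wide rectangular channel (valid when width >> height)."""
    flow_m3_s = flow_ul_per_h * UL_PER_H_TO_M3_PER_S
    width_m = width_mm * 1e-3
    height_m = height_mm * 1e-3
    tau_pa = 6.0 * viscosity_pa_s * flow_m3_s / (width_m * height_m ** 2)
    return tau_pa * PA_TO_DYN_PER_CM2


def flow_for_shear_ul_per_h(target_dyn_cm2, width_mm, height_mm, viscosity_pa_s=0.0007):
    """Flow rate needed to reach a target wall shear stress (inverse of the above)."""
    tau_pa = target_dyn_cm2 / PA_TO_DYN_PER_CM2
    width_m = width_mm * 1e-3
    height_m = height_mm * 1e-3
    flow_m3_s = tau_pa * width_m * height_m ** 2 / (6.0 * viscosity_pa_s)
    return flow_m3_s / UL_PER_H_TO_M3_PER_S


if __name__ == "__main__":
    # Hypothetical channel: 1 mm wide, 0.1 mm high.
    print(f"Shear at 60 uL/h: {wall_shear_dyn_cm2(60, 1.0, 0.1):.3f} dyn/cm^2")
    print(f"Flow for 1 dyn/cm^2: {flow_for_shear_ul_per_h(1.0, 1.0, 0.1):.0f} uL/h")
```

Because shear stress scales with the inverse square of channel height, small differences in channel geometry between chip designs change the required flow rate substantially, so the calculation should be repeated for each device.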

Multi-Organ Chip System Challenges

| Problem | Specific Symptom | Possible Causes | Recommended Solutions |
|---|---|---|---|
| Communication Failure | Expected organ crosstalk not observed; Metabolite not detected in target organ. | - Incorrect flow direction between organs.<br>- Unsuitable common medium.<br>- Tubing causing excessive dilution. | - Map flow to match human physiology (e.g., gut to liver).<br>- Test and tailor a universal medium that supports all organ types.<br>- Minimize interconnecting tubing dead volume. |
| Viability in One Organ | One organ model degenerates while others are healthy. | - Organ-specific medium requirements not met.<br>- Toxic metabolite accumulation. | - Consider using a multi-organ platform that allows for some compartment-specific medium customization. |
| Scalability & Data Variation | High chip-to-chip variability in large experiments. | - Inconsistent cell seeding.<br>- Manual protocol steps open to interpretation.<br>- Batch-to-batch cell variation. | - Automate cell seeding and medium exchange where possible.<br>- Develop and adhere to Standard Operating Procedures (SOPs).<br>- Use well-characterized, consistent cell sources. |

Frequently Asked Questions (FAQs)

Q1: How do I justify using an Organ-on-Chip model over a traditional animal model for my research?

The primary justification is human biological relevance. OoCs use human cells, replicate human tissue-tissue interfaces, and experience human-relevant mechanical cues, directly addressing the species mismatch of animal models [34] [35]. This can lead to better prediction of human drug efficacy, toxicity, and disease mechanisms. Furthermore, OoCs can be derived from patient-specific cells, enabling personalized medicine approaches not feasible with inbred animal models [34].

Q2: My team is new to this technology. Should we start with a single-organ or a multi-organ system?

Begin with a single-organ chip. A focused model allows your team to master the fundamentals of microfluidic cell culture (such as bubble management, flow control, and assay integration) without the added complexity of inter-organ interactions [36]. Once you have established robust protocols and baseline data for a single organ, you can progress to multi-organ systems.

Q3: What are the key considerations for selecting cells for my Organ-on-Chip?

The choice involves a trade-off between physiological relevance and practicality:

- Primary human cells: Offer the highest functionality but have limited availability, show donor-to-donor variability, and can be difficult to culture long-term [37] [38].

- Immortalized cell lines: Offer consistency and ease of use but may have reduced functionality compared to primary cells [38].

- Induced Pluripotent Stem Cells (iPSCs): Provide a powerful source for patient-specific or disease-specific cells, but differentiation protocols must be robust and yield mature cell types [34] [37].

The key is to align your cell source with your experimental question.

Q4: Our organ-on-chip data sometimes conflicts with our historical animal data. Which should we trust?

This conflict often reveals the core limitation of animal models. When this occurs, prioritize investigating the human-specific biology in your OoC model. Use the OoC to perform mechanistic studies (e.g., probing specific human signaling pathways) to explain the discrepancy [37]. This human-relevant insight is a key advantage of the technology, though further validation against human clinical data is the ultimate goal.

Q5: What are the biggest hurdles in scaling up Organ-on-Chip technology for routine drug screening?

The main challenges are standardization, scalability, and validation [37].

- Standardization: Moving from custom, lab-made PDMS devices to mass-produced, consistent platforms.

- Scalability: Ensuring reliable, large-scale sourcing of high-quality human cells (e.g., via iPSC biomanufacturing) and developing fully documented, "plug-and-play" protocols.

- Validation: Systematically demonstrating that OoCs can consistently and accurately predict human clinical outcomes for regulatory bodies and the pharmaceutical industry.

Essential Experimental Protocols

Protocol 1: Establishing a Basic Barrier Model (e.g., Vascular or Intestinal)

This protocol outlines the steps to create a functional tissue barrier, a fundamental unit in many Organ-on-Chip models.

1. Device Preparation:

- Select a chip with at least one chamber and an integrated porous membrane.

- Sterilize the chip according to manufacturer's instructions (e.g., UV light, ethanol flush).

- Coat the membrane with the appropriate extracellular matrix (ECM) protein (e.g., Collagen IV for endothelium) and incubate (typically 1-2 hours at 37°C).

2. Cell Seeding:

- Aspirate the coating solution from the device.

- Prepare a high-density cell suspension (e.g., 5-10 million cells/mL for endothelial cells).

- Introduce the cell suspension into the target channel and incubate under static conditions for a period (e.g., 1-4 hours) to allow for initial cell attachment.

- Gently initiate low-flow perfusion with culture medium to remove non-adherent cells and provide nutrients.

3. Barrier Maturation:

- Continue perfusion culture for several days to allow the cells to form a confluent monolayer.

- If real-time Transepithelial/Transendothelial Electrical Resistance (TEER) measurement is available, monitor the values daily. A steady increase followed by a plateau indicates the formation of a tight barrier.

- The model is ready for experimentation once a stable, high TEER value is achieved.
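The maturation step relies on two small calculations: normalizing raw resistance to membrane area, and deciding when the daily readings have levelled off. The sketch below shows one way to do both. The blank-subtraction formula is the standard unit-area TEER calculation, while the three-reading window and 5% tolerance used for the plateau check are assumed values to be tuned for each model.

```python
def unit_area_teer(total_resistance_ohm, blank_resistance_ohm, membrane_area_cm2):
    """Unit-area TEER in ohm*cm^2: blank-corrected resistance times membrane area."""
    if membrane_area_cm2 <= 0:
        raise ValueError("membrane_area_cm2 must be positive")
    return (total_resistance_ohm - blank_resistance_ohm) * membrane_area_cm2


def has_plateaued(daily_teer, window=3, tolerance=0.05):
    """True if the last `window` readings vary by no more than `tolerance` of their mean."""
    if len(daily_teer) < window:
        return False
    recent = daily_teer[-window:]
    mean_recent = sum(recent) / window
    if mean_recent <= 0:
        return False
    return (max(recent) - min(recent)) / mean_recent <= tolerance


if __name__ == "__main__":
    # Hypothetical daily readings (ohm*cm^2) from one chip.
    readings = [20, 45, 80, 98, 100, 101]
    print(f"Unit-area TEER: {unit_area_teer(1200, 200, 0.1):.1f} ohm*cm^2")
    print("Plateau reached" if has_plateaued(readings) else "Still maturing")
```

A plateau check alone does not confirm that the value is high enough for the tissue type, so it should be paired with a model-specific minimum TEER.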

Protocol 2: Linking Two Organs in a Multi-Organ System

This protocol describes the fluidic coupling of two established single-organ models.

1. Pre-culture and Individual Validation:

- Culture each organ chip (e.g., a Gut-Chip and a Liver-Chip) separately until they are functionally mature.

- Confirm the functionality of each organ individually (e.g., gut barrier integrity, liver albumin production).

2. Fluidic Connection:

- Connect the "outlet" channel of the first organ (Gut-Chip) to the "inlet" channel of the second organ (Liver-Chip) using sterile, gas-impermeable tubing. This mimics the physiological portal vein connection from the intestine to the liver.

- Use a common medium circulation reservoir that is compatible with both organ types.

- Initiate perfusion through the entire system at a flow rate calculated based on physiological scaling laws [39].

3. System Equilibration and Monitoring:

- Allow the connected system to equilibrate for 24-48 hours.

- Monitor the viability and function of both organs during this period.

- The system can then be dosed with a compound (e.g., an oral drug) in the first organ (Gut-Chip), and its effect and metabolism can be studied as it is transported to the second organ (Liver-Chip).
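When coupling chips, the dead volume of the connecting tubing determines how long a compound or metabolite takes to travel from the first organ to the second. The sketch below estimates both quantities from tubing geometry; the function names and the example dimensions are hypothetical.

```python
import math


def tubing_dead_volume_ul(inner_diameter_mm, length_mm):
    """Internal volume of cylindrical tubing in microlitres (1 mm^3 = 1 uL)."""
    radius_mm = inner_diameter_mm / 2.0
    return math.pi * radius_mm ** 2 * length_mm


def transit_time_min(dead_volume_ul, flow_ul_per_h):
    """Time for medium to traverse the tubing at a constant flow rate, in minutes."""
    if flow_ul_per_h <= 0:
        raise ValueError("flow_ul_per_h must be positive")
    return dead_volume_ul / (flow_ul_per_h / 60.0)


if __name__ == "__main__":
    # Hypothetical connection: 0.5 mm inner diameter, 100 mm long, 60 uL/h flow.
    volume = tubing_dead_volume_ul(0.5, 100)
    print(f"Dead volume: {volume:.1f} uL")
    print(f"Transit time: {transit_time_min(volume, 60):.1f} min")
```

Shorter or narrower tubing reduces both dilution and delay, which matters most for short-lived metabolites.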

Critical Workflow and Signaling Visualization

Organ-on-Chip Experimentation Workflow

The diagram below outlines the key decision points and stages in a typical Organ-on-Chip experiment, from planning to data interpretation.

Signaling Pathways in Inflammatory Crosstalk

This diagram illustrates an example of complex cell signaling that can be modeled in an Organ-on-Chip, such as a Lung Alveolus Chip responding to an inflammatory trigger.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Importance in OoC | Technical Notes |

|---|---|---|

| Microfluidic Chip | The physical platform housing the cell culture. Typically made of PDMS for its oxygen permeability and optical clarity, or other polymers/glass. | PDMS can absorb small molecules; consider surface coating or alternative materials for specific drug studies [37]. |

| Extracellular Matrix (ECM) | A hydrogel scaffold (e.g., Collagen, Matrigel) that provides structural support and biochemical signals to cells, promoting 3D organization and function. | The choice of ECM is organ-specific and critical for mimicking the native cellular microenvironment [36]. |

| Human Cells | The biological component that defines the organ's function. Sources include primary cells, iPSC-derived cells, or immortalized cell lines. | iPSCs are powerful for creating patient-specific disease models [34]. Ensure cell maturity and functionality for the target organ. |

| Flow Control System | A pump (e.g., pressure-driven or syringe pump) that perfuses culture medium through the chip, providing nutrients, removing waste, and applying physiological shear stress. | Pressure-driven systems offer more stable, pulse-free flow, which is beneficial for controlling shear stress [36]. |

| Specialized Culture Medium | A fluid formulation that supplies essential nutrients, growth factors, and hormones to sustain the cells. May be shared in multi-organ systems or compartment-specific. | Low-serum or defined formulations help reduce experimental variability [36]. |

| Integrated Sensors | Probes that enable real-time, non-invasive monitoring of physiological parameters (e.g., TEER for barrier integrity, oxygen, pH). | Integration moves the platform from a simple Organ-on-a-Chip to a more powerful "Lab-on-a-Chip" [40]. |
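
As a rough illustration of two quantities named in the table, the sketch below estimates wall shear stress in a rectangular microchannel and normalizes a TEER reading. It assumes a wide, shallow channel (width much greater than height), for which τ ≈ 6μQ/(wh²) is a common approximation, a medium viscosity of about 0.7 mPa·s at 37°C, and a simple blank-subtracted, area-scaled TEER. The function names, the default viscosity, and the example dimensions are illustrative assumptions, not values taken from the cited sources.

```python
def wall_shear_stress_pa(flow_ul_per_min, width_um, height_um,
                         viscosity_pa_s=0.0007):
    """Approximate wall shear stress (Pa) in a wide, shallow rectangular channel.

    Uses tau = 6 * mu * Q / (w * h^2), which is only a reasonable
    approximation when the channel width is much larger than its height.
    The default viscosity (~0.7 mPa*s) is an assumed value for medium at 37 C.
    """
    if width_um <= 0 or height_um <= 0:
        raise ValueError("channel dimensions must be positive")
    flow_m3_per_s = flow_ul_per_min * 1e-9 / 60.0  # 1 uL = 1e-9 m^3
    width_m = width_um * 1e-6
    height_m = height_um * 1e-6
    return 6.0 * viscosity_pa_s * flow_m3_per_s / (width_m * height_m ** 2)


def teer_ohm_cm2(measured_ohm, blank_ohm, membrane_area_cm2):
    """Normalize a TEER reading: subtract the cell-free blank, scale by area."""
    if membrane_area_cm2 <= 0:
        raise ValueError("membrane area must be positive")
    return (measured_ohm - blank_ohm) * membrane_area_cm2


if __name__ == "__main__":
    # Hypothetical chip: 1000 um wide, 100 um high channel perfused at 60 uL/min.
    tau = wall_shear_stress_pa(60, 1000, 100)
    print(f"shear stress: {tau:.2f} Pa ({tau * 10:.1f} dyn/cm^2)")
    print(f"TEER: {teer_ohm_cm2(350.0, 100.0, 0.5):.1f} ohm*cm^2")
```

For the hypothetical geometry above, the estimate is roughly 0.42 Pa (about 4.2 dyn/cm²). In real chips, non-uniform current distribution and electrode placement can make TEER values hard to compare across platforms, so treat the normalized number as a within-platform indicator.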

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using human organoids over traditional animal models in drug development? Human organoids are 3D cultures derived from human stem cells that replicate the structure and function of human organs. They address the critical limitation of species mismatch inherent in animal models, which often leads to poor translation of drug safety and efficacy findings to humans [41] [42]. Organoids incorporate human genetic diversity and can be personalized from specific patients, providing a more cost-effective and human-relevant system for disease modeling and drug screening [43] [41].

Q2: My patient-derived organoid cultures are failing due to delays in getting tissue samples from the clinic to the lab. What can I do? This is a common challenge. You can implement these two preservation methods to improve viability:

- Short-term refrigerated storage: If the processing delay is 6-10 hours, wash the tissue with an antibiotic solution and store it at 4°C in Dulbecco’s Modified Eagle Medium (DMEM)/F12 medium supplemented with antibiotics [44].

- Cryopreservation: For delays exceeding 14 hours, cryopreservation is preferable. After an antibiotic wash, preserve the tissue in a freezing medium (e.g., 10% fetal bovine serum, 10% DMSO in 50% L-WRN conditioned medium) [44]. Expect live-cell viability to differ by roughly 20-30% between the two methods [44].
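
The decision rule above can be written down as a small helper for a sample-tracking script. This is only a sketch: the function name and return labels are hypothetical, treating any delay up to 10 hours as the refrigerated case is an assumption, and the text gives no recommendation for delays between 10 and 14 hours, so the sketch flags that window rather than guessing.

```python
def choose_preservation(delay_hours):
    """Map an expected processing delay (hours) to a preservation approach.

    Thresholds follow the FAQ text: refrigerated storage for delays of
    about 6-10 h, cryopreservation for delays exceeding 14 h.
    """
    if delay_hours < 0:
        raise ValueError("delay_hours must be non-negative")
    if delay_hours > 14:
        return "cryopreserve"
    if delay_hours <= 10:
        # Assumption: shorter delays are also kept cold at 4 C with antibiotics.
        return "refrigerate_4C"
    # 10-14 h is not covered by the guidance; leave the call to the lab.
    return "unspecified"


if __name__ == "__main__":
    for hours in (8, 12, 20):
        print(hours, "h ->", choose_preservation(hours))
```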

Q3: A major criticism of organoids is their lack of standardization and reproducibility. How is the field addressing this? The field is actively tackling this through several key strategies:

- Automation and AI: Automated, AI-assisted workflows standardize protocols and reduce operator bias, so cultures are handled consistently and models are generated more reliably [41].

- Validated, Assay-Ready Models: There is a growing availability of pre-validated organoids that have undergone rigorous testing to confirm they accurately mimic biological processes, allowing researchers to start experiments immediately [41].

- Advanced Imaging Frameworks: Unified computational pipelines, like the LSTree workflow, are being developed to standardize the analysis of organoid imaging data, turning complex images into quantifiable "digital organoids" [45].

Q4: How can I model drug absorption or host-microbiome interactions when the organoid lumen is inaccessible? You can generate "apical-out" organoids. This protocol involves a transition from the typical "basolateral-out" polarity to a configuration where the apical surface faces the culture medium. This provides direct access to the luminal surface for assays studying drug permeability, pathogen interactions, and co-culture with microbes or immune cells [44].

Q5: Organoids develop a necrotic core when they grow beyond a certain size. What are the proposed solutions? Size limitation due to poor nutrient diffusion is a recognized challenge. Current strategies to overcome this include:

- Bioreactors: Using stirred bioreactor systems to improve diffusion and allow for scale-up production [41].

- Vascularization: A major trend is the development of vascularized organoid models by co-culturing with endothelial cells, which enhances nutrient delivery and mimics human physiology more closely [41].

- Integration with Organ-Chips: Combining organoids with microfluidic Organ-Chips incorporates dynamic fluid flow and mechanical cues, which enhances cellular differentiation and function while improving nutrient access [41].

Troubleshooting Guides

Guide 1: Low Organoid Formation Efficiency

| Symptom | Possible Cause | Solution |

|---|---|---|

| Low cell viability leading to poor organoid formation. | Delays in tissue processing after collection. | Implement strict cold-chain logistics. For delays of about 6-10 h, use refrigerated storage (4°C) with antibiotics. For delays exceeding 14 h, use cryopreservation [44]. |

| | Incorrect tissue sampling site, failing to capture stem cell niches. | Ensure strategic sampling from crypt regions for intestinal organoids, as these contain the most active stem cells [44]. |

| Contamination of cultures. | Inadequate antibiotic wash during initial tissue processing. | Perform a thorough wash of the tissue sample with a cold antibiotic solution (e.g., penicillin-streptomycin) in Advanced DMEM/F12 medium before processing or storage [44]. |

Guide 2: Overcoming Physiological Limitations

| Challenge | Impact on Research | Advanced Solution |

|---|---|---|

| Lack of Vascularization | Limits organoid size, leads to necrotic cores; prevents study of systemic drug delivery [41]. | Co-culture with endothelial cells to induce blood vessel formation [41]. Integrate with Organ-Chips that provide microfluidic channels to simulate blood flow [41]. |

| Inaccessible Luminal Space | Prevents direct study of nutrient absorption, host-microbiome interactions, and apical drug exposure [44]. | Generate "apical-out" organoids using culture manipulations that reverse epithelial polarity, so the apical (luminal) surface faces the culture medium [44]. |

| Limited Scalability & Reproducibility | Hinders high-throughput drug screening and consistent experimental outcomes [41]. | Adopt automated bioreactors and AI-driven image analysis (e.g., convolutional neural networks) to standardize production and characterization [41] [45]. |

Anatomical Distribution of Colorectal Tissues for Organoid Development

Strategic sampling is crucial for building representative disease models. The table below outlines the distribution of advanced colorectal neoplasms, which should guide the collection of normal, polyp, and tumor tissues for organoid generation [44].

| Anatomical Site | Percentage of Advanced Neoplasms |

|---|---|

| Rectum | 34.1% |

| Sigmoid Colon | 10.7% |

| Descending Colon | 36.0% |

| Transverse Colon | 2.5% |

| Ascending Colon | 16.6% |

Note for Researchers: Proximal and distal colon cancers have distinct molecular profiles. Proximal cancers (cecum to transverse colon) show a higher prevalence of MSI-H status, CIMP-H, and BRAF mutations. Ensure your sampling and biobanking strategy accounts for this heterogeneity to enable meaningful clinical correlations [44].
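
To relate the table to the proximal/distal distinction in the note, the short sketch below sums the reported percentages by region. The grouping of table rows into proximal and distal sites is an assumption based on the note's definition (cecum to transverse colon); the cecum has no row in the table, and the variable and function names are illustrative.

```python
# Percentages of advanced neoplasms by site, copied from the table above.
ADVANCED_NEOPLASM_PCT = {
    "Rectum": 34.1,
    "Sigmoid Colon": 10.7,
    "Descending Colon": 36.0,
    "Transverse Colon": 2.5,
    "Ascending Colon": 16.6,
}

# Assumed grouping: table rows that fall within "cecum to transverse colon".
PROXIMAL_SITES = {"Ascending Colon", "Transverse Colon"}


def share_by_region(pct_by_site, proximal_sites):
    """Sum site percentages into proximal and distal totals (1 decimal place)."""
    proximal = sum(v for site, v in pct_by_site.items() if site in proximal_sites)
    distal = sum(v for site, v in pct_by_site.items() if site not in proximal_sites)
    return {"proximal": round(proximal, 1), "distal": round(distal, 1)}


if __name__ == "__main__":
    print(share_by_region(ADVANCED_NEOPLASM_PCT, PROXIMAL_SITES))
```

With the table's figures, roughly 19% of advanced neoplasms fall in the proximal rows and roughly 81% in the distal rows, so a biobank that samples in proportion to incidence will contain relatively few proximal lesions unless they are deliberately enriched.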

Experimental Protocol: Generating Patient-Derived Colorectal Organoids

This detailed protocol for generating organoids from colorectal tissues (normal, polyps, and tumors) is adapted from a standardized, high-efficiency method [44].

Materials and Reagents

| Research Reagent | Function / Explanation |

|---|---|

| Advanced DMEM/F12 | Base culture medium providing essential nutrients for cell growth. |

| Penicillin-Streptomycin | Antibiotic solution to prevent microbial contamination during tissue collection and processing. |

| L-WRN Conditioned Medium | Conditioned medium containing Wnt3a, R-spondin, and Noggin—essential growth factors for maintaining intestinal stem cells and promoting organoid formation and growth [44]. |

| Matrigel | A proprietary extracellular matrix (ECM) that provides a 3D scaffold mimicking the in vivo basement membrane, crucial for organoid structure and development. |

| EGF (Epidermal Growth Factor) | A growth factor that stimulates cell proliferation within the organoid. |

| Noggin | A BMP signaling pathway inhibitor that promotes the formation and growth of intestinal epithelial organoids. |

| R-spondin 1 | A protein that potentiates Wnt signaling, essential for the maintenance and self-renewal of intestinal stem cells. |

Step-by-Step Methodology

Tissue Procurement and Transport:

- Collect human colorectal tissue samples under sterile conditions immediately after colonoscopy or surgical resection, following IRB-approved protocols and with patient consent.

- CRITICAL STEP: Transfer the tissue in a tube containing 5–10 mL of cold Advanced DMEM/F12 medium supplemented with antibiotics (e.g., penicillin-streptomycin). Prompt handling is vital to preserve tissue integrity and cell viability [44].

Tissue Processing and Crypt Isolation:

- Wash the tissue thoroughly with an antibiotic solution to minimize contamination.

- Mechanically and enzymatically dissociate the tissue to isolate intact crypts, which contain the intestinal stem cells necessary for organoid formation.

Culture Establishment:

- Embed the isolated crypts in Matrigel droplets, which provide the necessary 3D environment.

- Culture the embedded crypts in a specialized medium supplemented with key growth factors: EGF together with Wnt3a, R-spondin, and Noggin (the latter three often supplied as L-WRN conditioned medium) [44]. This combination supports the long-term expansion and maintenance of the epithelial cell diversity found in the original tissue.

Generating Apical-Out Organoids (for luminal access):

- To study drug permeability or host-microbe interactions, established basolateral-out organoids can be transitioned to an apical-out polarity.

- This involves specific culture manipulations that disrupt cell-extracellular matrix interactions, leading to a reversal of polarity so the apical surface faces the outside environment [44].

Workflow and Signaling Diagrams

Organoid Generation and Analysis Workflow

Core Signaling Pathway for Intestinal Organoids

Integrating AI and Machine Learning for Predictive Toxicology and Discovery

Frequently Asked Questions (FAQs)

FAQ 1: How can AI models specifically address the problem of species mismatch in traditional toxicology? AI models mitigate species mismatch by leveraging human-relevant data, such as in vitro human cell assays, toxicogenomics, and human-specific omics profiles, to predict human toxicity directly. This reduces the reliance on animal models, which often fail to accurately predict human-specific toxicities due to physiological and metabolic differences. Machine learning (ML) integrates these diverse datasets to uncover complex, human-specific toxicity mechanisms, providing a more accurate safety assessment for human populations [46] [47].

FAQ 2: What are the most critical data quality considerations when training an ML model for toxicity prediction? The key considerations are Veracity (quality and reliability of the source data) and Variety (integration of diverse data types). "Big data" does not equate to "good data." It is crucial to use high-quality, well-curated datasets for training. This involves rigorous data quality checks, addressing data biases, and ensuring the data is representative of the human biological context to which the model will be applied. Using poor-quality data will lead to unreliable and non-generalizable model predictions [47] [48].
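
A first-pass data audit along these lines might look like the sketch below. It is a minimal example under stated assumptions: the record layout (`compound_id`, `toxic`) and the function name are hypothetical, and a real audit would also cover assay provenance, units, and structure standardization.

```python
from collections import defaultdict


def audit_toxicity_records(records):
    """Flag basic quality problems in a list of labeled toxicity records.

    Each record is a dict with a 'compound_id' and a 'toxic' label
    (True, False, or None when the label is missing).
    """
    missing = [r["compound_id"] for r in records if r.get("toxic") is None]

    # Collect every label seen for each compound to find contradictions.
    labels_seen = defaultdict(set)
    for r in records:
        if r.get("toxic") is not None:
            labels_seen[r["compound_id"]].add(r["toxic"])
    conflicting = sorted(cid for cid, seen in labels_seen.items() if len(seen) > 1)

    # Class balance among labeled records; strong imbalance is a bias warning.
    labeled = [r for r in records if r.get("toxic") is not None]
    positives = sum(1 for r in labeled if r["toxic"] is True)
    positive_fraction = positives / len(labeled) if labeled else None

    return {
        "missing_label": missing,
        "conflicting_label": conflicting,
        "positive_fraction": positive_fraction,
    }


if __name__ == "__main__":
    demo = [
        {"compound_id": "C1", "toxic": True},
        {"compound_id": "C1", "toxic": False},  # contradicts the row above
        {"compound_id": "C2", "toxic": None},   # label missing
        {"compound_id": "C3", "toxic": False},
    ]
    print(audit_toxicity_records(demo))
```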

FAQ 3: Our QSAR model is accurate on training data but performs poorly on new chemicals. What might be the cause? This typically indicates overfitting, new chemicals that fall outside the chemical space covered by the training set, or both. The model may have learned noise or training-set-specific patterns that do not generalize, and the black-box nature of some complex ML models makes such failures hard to diagnose. Solutions include:

- Applying stricter validation techniques like cross-validation and external validation sets [46].

- Ensuring the chemical space of your new compounds is well-represented in the training data.

- Using simpler models or methods for feature selection to improve interpretability and generalizability [48].

- Leveraging explainable AI (XAI) techniques to unravel the mechanisms behind predictions and identify potential reasons for failure [47].
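
One simple way to check whether a new compound is represented in the training data is a nearest-neighbor similarity test, sketched below. It assumes each compound has already been converted to a set of "on" fingerprint bits by a cheminformatics toolkit of your choice; the function names and the 0.3 similarity cutoff are illustrative assumptions, and the cutoff should be calibrated for your fingerprint type and dataset.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def outside_domain(query_fp, training_fps, min_similarity=0.3):
    """Return (is_outside, best_similarity) for a query compound.

    A query is flagged as outside the applicability domain when its most
    similar training compound falls below min_similarity (assumed cutoff).
    """
    best = max((tanimoto(query_fp, fp) for fp in training_fps), default=0.0)
    return best < min_similarity, best


if __name__ == "__main__":
    training = [{1, 2, 3, 4}, {2, 3, 5}]        # toy fingerprints
    print(outside_domain({1, 2, 3}, training))  # close to a training compound
    print(outside_domain({7, 8, 9}, training))  # shares no bits with training
```

Predictions for compounds flagged as outside the domain are best reported with a warning or withheld, rather than treated as equally reliable.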

FAQ 4: Can AI-generated toxicity predictions be submitted to regulatory bodies? While AI is increasingly used to support internal decision-making in drug discovery, regulatory endorsement is still evolving. A major limitation is the lack of interpretability of some "black box" AI models. Regulatory agencies require transparent and mechanistic understanding for safety assessments. To improve acceptability, researchers should:

- Focus on developing interpretable models.

- Provide robust validation data against traditional methods.

- Use AI predictions as part of a weight-of-evidence approach, complemented by other data sources [47] [49].

- Adhere to established guidance, such as that from the Society of Toxicology (SOT), which emphasizes human oversight and review of AI-generated content [49].

Troubleshooting Guides

Problem: Low Predictive Accuracy in Adverse Drug Reaction (ADR) Models

| Step | Action | Rationale |

|---|---|---|

| 1 | Audit input data for quality and relevance. | Model accuracy is capped by data quality. Inconsistent or irrelevant data is a primary cause of failure [46] [48]. |

| 2 | Integrate diverse data types (e.g., chemical properties, omics data, EHRs). | ADRs are complex; multi-modal data provides a more complete biological picture and improves model robustness [46]. |

| 3 | Test multiple ML algorithms (e.g., Random Forest, Support Vector Machines, Neural Networks). | No single algorithm is optimal for all data types or problems. Comparative testing identifies the best-performing model for your specific dataset [50]. |

| 4 | Validate the model using rigorous techniques like k-fold cross-validation and an external hold-out test set. | Ensures the model is not overfitted and can generalize to new, unseen data [46]. |
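
Step 4 can be sketched with index bookkeeping alone, as below. This is a minimal illustration rather than a prescribed procedure: the function name, the 20% hold-out fraction, and the fixed seed are assumptions, and purely random splits can give optimistic estimates when structurally similar compounds land on both sides of a split.

```python
import random


def split_holdout_and_folds(n_samples, k=5, holdout_fraction=0.2, seed=0):
    """Reserve an external hold-out set, then build k cross-validation folds.

    Returns (holdout_indices, splits), where splits is a list of
    (train_indices, validation_indices) pairs drawn only from the
    non-hold-out samples, so the hold-out set is never seen during tuning.
    """
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)

    n_holdout = int(round(n_samples * holdout_fraction))
    holdout, development = indices[:n_holdout], indices[n_holdout:]
    if k < 2 or len(development) < k:
        raise ValueError("need k >= 2 and at least k development samples")

    # Deal development samples into k folds of near-equal size.
    folds = [development[i::k] for i in range(k)]
    splits = []
    for i in range(k):
        validation = folds[i]
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        splits.append((train, validation))
    return holdout, splits


if __name__ == "__main__":
    holdout, splits = split_holdout_and_folds(50, k=5)
    print("hold-out size:", len(holdout))
    for train, validation in splits:
        print("train:", len(train), "validation:", len(validation))
```

Tune and compare algorithms using only the cross-validation splits, then evaluate the chosen model once on the hold-out indices.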

Problem: High Uncertainty in PBPK Parameter Predictions

| Step | Action | Rationale |

|---|---|---|