Bridging the Diagnostic Divide: Innovative Strategies and Technologies for Low-Resource Settings

Abstract

This article provides a comprehensive analysis of the diagnostic challenges prevalent in low- and middle-income countries (LMICs) and outlines a multi-faceted framework for developing effective solutions. It explores the foundational burden of diseases and systemic gaps, reviews established and emerging point-of-care (POC) technologies like lateral flow assays and molecular diagnostics, and delves into critical design criteria for usability and integration. Furthermore, it examines methodological approaches for validating diagnostic tools in real-world contexts and compares their impact on patient safety and health outcomes. Designed for researchers, scientists, and drug development professionals, this review synthesizes current evidence and expert consensus to guide the creation of accessible, accurate, and affordable diagnostic interventions.

The Diagnostic Landscape in LMICs: Understanding the Burden and Systemic Gaps

The Dual Burden of Communicable and Non-Communicable Diseases

Technical Support Center: Troubleshooting Guides and FAQs

This section provides practical solutions for common operational and diagnostic challenges faced by researchers working on integrated disease management in low-resource settings (LRS).

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical barriers to implementing integrated diagnostic protocols in LRS? Research highlights several interconnected barriers. Systemic challenges include inadequate financing, lack of essential equipment, and human resource shortages (high workload, inadequate training) [1]. From a patient perspective, key barriers are inaccessibility, unaffordability, lack of medications, and inadequate health-related information from providers [2].

FAQ 2: How can we improve diagnostic accuracy in low-prevalence settings where prior probability of serious disease is low? Relying solely on hypothesis-driven, deductive testing can be inefficient. A more effective method involves inductive foraging, where the patient is allowed to describe their problem without interruption, followed by triggered routines—general, non-hypothesis-specific questions about related symptoms. This process explores the problem space more efficiently and helps gather diagnostic cues that might otherwise be missed [3].
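
To make the role of prior probability concrete, the sketch below applies Bayes' theorem to show how the positive predictive value (PPV) of a test falls as prevalence drops. The sensitivity (90%) and specificity (95%) are illustrative assumptions rather than figures from the cited study, and `predictive_values` is a hypothetical helper name.

```python
def predictive_values(sensitivity: float, specificity: float,
                      prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) for a test applied at a given prevalence (Bayes' theorem)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    return true_pos / (true_pos + false_pos), true_neg / (true_neg + false_neg)


if __name__ == "__main__":
    # Assumed test characteristics, for illustration only.
    for prevalence in (0.01, 0.10, 0.30):
        ppv, npv = predictive_values(0.90, 0.95, prevalence)
        print(f"prevalence={prevalence:.0%}  PPV={ppv:.1%}  NPV={npv:.1%}")
```

Under these assumed values, a positive result at 1% prevalence is correct only about 15% of the time, which is why the extra diagnostic cues gathered through inductive foraging carry so much weight when serious disease is rare.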

FAQ 3: What core criteria should be considered when designing an integrated diagnosis intervention for a primary care setting in an LRS? A recent Delphi study established 18 consensus criteria. Critical design criteria include ensuring the availability of effective treatments after diagnosis, aligning the intervention with national policies and local priorities, securing sustainable financing, and integrating with existing primary healthcare services and data systems rather than creating parallel, vertical programs [4].

FAQ 4: What strategies can mitigate diagnostic errors in complex cases? Diagnostic errors stem from both cognitive biases and system failures. Mitigation strategies include enhancing clinician training on diagnostic reasoning, implementing standardized diagnostic protocols where possible, and fostering effective communication within healthcare teams. Technological advancements, including artificial intelligence (AI) and machine learning, are also showing promise in enhancing diagnostic precision [5] [6].

Troubleshooting Common Experimental and Field Challenges

Challenge 1: High Rate of Unreliable Results from Manual Blood Culture Techniques.

- Issue: Inconsistent microbial growth or contamination in equipment-free blood culture bottles.

- Solution: Implement a short, standardized protocol for evaluating blood culture bottles. A proposed protocol includes assessing bottle media composition, volume of blood inoculated, and incubation conditions against a reference standard to validate performance before widespread field use [7].

Challenge 2: Low Patient Uptake and Adherence to NCD Screening Programs.

- Issue: Target population is not utilizing available screening services for diseases like hypertension or diabetes.

- Solution: Address modifying factors as outlined in the Health Belief Model. Key facilitators include providing free or low-cost medicines and services, ensuring geographical accessibility, reducing waiting times, and fostering positive patient-provider interactions [2]. Community engagement and education to improve knowledge and self-efficacy are also crucial.

Challenge 3: Research Participation Hindered by Low Literacy and Logistical Barriers.

- Issue: Potential participants cannot read consent forms or have difficulty traveling to study sites.

- Solution: Adapt research methodologies to the local context. Obtain informed consent using oral or pictorial messages. Compensate participants for transportation costs and time, and be flexible in the face of disruptive environmental factors like poor road conditions or political unrest [8].

Summarized Quantitative Data

| Challenge Category | Specific Subthemes |
|---|---|
| Financing | Poor financial management, Lack of a defined budget |
| Equipment & Infrastructure | Lack of diagnostic tests, Inadequate physical space |
| Human Resources | High workload, Inadequate training, Low motivation, Burnout |
| Payment Mechanism | Issues with per-capita allocation, method of payment, and incentives |
| Information System | Multiple databases, Poor data sharing, Low data quality |
| Referral System | Weak provider coordination, Problems with electronic referral |
| Health Insurance | Insufficient service coverage, Low attention to quality of care |
| Community Engagement | Weak education initiatives, Underutilization of local capacities |

| Category | Facilitators | Barriers |
|---|---|---|
| Economic | Free medicines, Low-cost services | Service unaffordability |
| Access & Convenience | Geographical accessibility, Less waiting time | Geographical inaccessibility |
| Clinical Interaction | Positive provider interaction, Health improvement | Inadequate health information from providers |
| Knowledge & Support | Support from family and peers | Low knowledge of NCD care, Lack of reminders/follow-up |
| Health Systems | - | Lack of medications and equipment |

Experimental Protocols for Key Methodologies

- Aim: To qualitatively explore challenges of managing NCDs from the perspective of family physicians.

- Methodology:

  - Study Design: Conventional qualitative content analysis.

  - Participant Selection: Purposive sampling with snowball method. Select 17 general practitioners with at least 5 years of experience in the public-private partnership model.

  - Data Collection: Conduct semi-structured, in-depth interviews (30-60 minutes each). Audio-record and transcribe interviews verbatim.

  - Data Analysis: Use the Graneheim and Lundman approach. Import transcripts into qualitative data analysis software (e.g., MAXQDA 2020). Perform coding to identify meaning units, condensed meaning units, codes, subthemes, and main themes.

  - Ethical Considerations: Obtain ethical approval from a relevant institutional review board. Secure informed consent from all participants before interviews.

- Aim: To establish core criteria for designing integrated diagnosis interventions in primary care settings in LRS.

- Methodology:

  - Expert Panel Assembly: Recruit an international panel of ~55 experts from diverse professions (implementers, policymakers, academics) and geographical regions, with a focus on Africa.

  - Process: Conduct a two-round online Delphi process.

    - Round 1: Present an initial list of criteria derived from a realist synthesis. Experts rate the importance of each criterion. A predefined consensus threshold (e.g., 70%) is used to retain, remove, or re-rate criteria.

    - Round 2: Experts re-rate criteria that did not reach consensus in Round 1.

  - Data Analysis: Calculate the percentage of experts rating each criterion as "critical to include." Criteria meeting the consensus threshold are included in the final set of core design criteria.

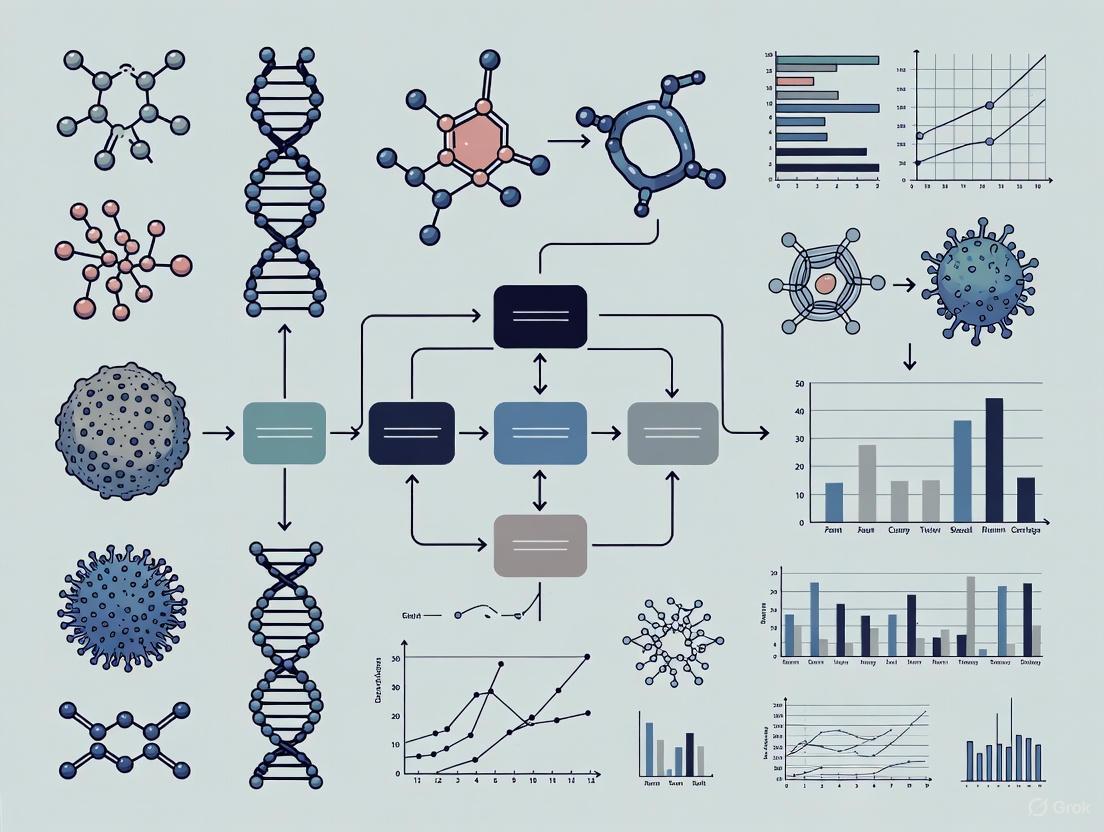

Visualized Workflows and Pathways

Diagnostic Pathway

Integrated Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Diagnostic Research in Low-Resource Settings

| Item | Function/Application | Key Considerations for LRS |
|---|---|---|
| 3D-Printed Molecular Devices [7] | Low-cost, automated sample preparation and molecular detection (e.g., qPCR). | Enables local production and repair; reduces dependency on complex supply chains. |
| Open-Source Slide-Scanning Microscope [7] | Automated digital pathology for remote diagnosis and telemedicine. | Modular and cost-effective alternative to commercial slide scanners. |
| Antigen-Based Rapid Diagnostic Tests (RDTs) [7] | Quick, equipment-free detection of pathogens (e.g., Salmonella, Malaria). | Ideal for point-of-care use; requires training for correct interpretation and reading. |
| Multiplex Nucleic Acid Amplification Tests (NAATs) [7] | Simultaneous detection of multiple pathogens (e.g., HIV/TB) from a single sample. | Maximizes efficiency and conserves patient sample; can be more complex to run. |
| MPT64 Antigen Detection Test [7] | Improves rapid diagnosis of extrapulmonary tuberculosis (EPTB). | Simple immunochromatographic test; crucial where culture confirmation is slow/unavailable. |
| Equipment-Free Blood Culture Bottles [7] | Microbial culture without continuous electricity (incubators). | Widespread use; requires rigorous protocol to ensure reliability. |

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center is designed for researchers and professionals tackling the unique challenges of diagnostic development and drug discovery for low-resource settings (LRS). The guidance below is framed within a broader thesis on overcoming diagnostic challenges in these environments, focusing on practical, actionable solutions.

Frequently Asked Questions (FAQs)

Q1: What are the essential characteristics of an ideal diagnostic test for a low-resource setting? An ideal diagnostic test for a limited-resource setting must balance performance with practical constraints. Key characteristics include [9]:

- User-Friendly: Requires minimal training to operate and interpret.

- Rapid Results: Short turnaround time to enable immediate treatment decisions.

- Robust & Stable: No requirement for refrigerated storage; stable at high temperatures for over a year.

- Minimal Sample Pre-processing: Works with simple biological samples like whole blood, serum, or saliva with little to no preparation.

- Portable and Inexpensive: Low cost per test and equipment that is easy to transport.

- High Sensitivity and Specificity: Accurate and reliable performance to ensure correct diagnosis.

Q2: Our research team is facing high costs and delays in the drug discovery phase. What strategies can we adopt to improve efficiency? The early stages of drug discovery are notoriously expensive and long, often taking 8-12 years and costing over $1 billion per successful drug [10]. To improve efficiency, consider these approaches [11]:

- Insource Specialized Talent: Integrate skilled scientists into your team as a mobile, embedded unit to capitalize on existing laboratory space and equipment without the long-term commitment of a full hire, especially during intense, short-term projects.

- Strengthen Business and Management Skills: Equip your scientific team with crucial soft skills like project management, communication, and critical thinking to minimize errors and delays that have high financial costs.

- Leverage AI for Hit Identification: Use artificial intelligence software to accelerate the identification and optimization of novel drug candidates, while carefully evaluating and mitigating associated risks to data security and intellectual property.

Q3: What are the most significant barriers to adopting digital health technologies in emerging economies, and how can they be overcome? The digital transformation of healthcare in emerging economies faces deep-rooted challenges. A systematic analysis identifies the most critical barriers and their interconnected nature [12]:

- Primary Causal Challenges: The most significant barriers are "digital literacy, training, and upgrading deficits" and "cultural and behavioral adaptation to digital health." These act as causal factors that exacerbate other problems.

- The Interconnected Nature of Barriers: Challenges like limited infrastructure, high costs, and inadequate regulatory support are often effects driven by the primary deficits in digital literacy and cultural adaptation. Targeted interventions on the primary causes can lead to systemic improvements.

Q4: How can we address the severe talent and skill shortages in our research organization? Skipping a skills gap analysis can lead to significant financial and performance costs [13]. A proactive strategy is essential:

- Conduct a Skills Gap Analysis: Begin by identifying the specific skills your employees possess today and the skills that will be in demand for your future projects. This clarity is the first step in building a development and growth culture.

- Invest in Continuous Training: Address the "short half-life" of technical skills by implementing ongoing development programs. This increases employee engagement and retention, as people are more likely to stay with employers who invest in their professional growth.

- Develop Agile Teams: Build a resilient workforce capable of reacting rapidly to unprecedented changes in the research landscape, such as shifts in the drug supply chain or new technological disruptions.

Troubleshooting Common Experimental and Implementation Hurdles

Problem: High failure rate of drug candidates in late-stage clinical trials due to lack of efficacy.

- Background: Approximately 90% of drug candidates that enter clinical trials fail, with lack of efficacy accounting for ~40-50% of these failures [10].

- Solution:

  - Improve Preclinical Models: Evolve traditional screening systems and translational assays to better predict human efficacy and minimize late-stage attrition.

  - Utilize Biomarkers: Incorporate biomarker-driven development to stratify patient populations and increase the probability of trial success. Trials that utilize biomarkers have roughly double the success rate of those that do not [10].

  - Adopt Adaptive Trial Designs: Implement clinical trial designs that allow for modifications based on interim data, making the process more efficient and informative.

Problem: Difficulty in achieving high-quality molecular diagnostics in settings with unstable electrical power and limited infrastructure.

- Background: Standard laboratory instruments require sophisticated infrastructure and stable electrical power, which is often unavailable in rural areas [9].

- Solution: Develop or Leverage Low-Cost, Equipment-Free or Portable Platforms.

  - Protocol for Equipment-Free Blood Cultures: In settings without automated incubators and shakers, "manual" blood culture bottles can be used. A protocol for their use involves [7]:

    1. Inoculate the blood culture bottle with the patient's sample.

    2. Incubate at 35±2°C using a simple, low-cost incubator or even ambient temperature with careful monitoring.

    3. Perform daily visual inspection for signs of growth (turbidity, hemolysis, gas production).

    4. Subculture to solid media upon indication of growth or routinely at 24-48 hours.

  - Adopt 3D-Printed or Open-Source Devices: Utilize low-cost, 3D-printed automated microscopes or slide-scanners [7] and open-source, modular microscope systems [7] that can be built and repaired locally, reducing cost and infrastructure dependence.
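
The daily inspection and subculture rule in the equipment-free blood culture protocol above can be written as a small decision helper, for example as part of a bench logbook script. This is a minimal sketch: `Inspection` and `subculture_decision` are hypothetical names, and triggering the routine subculture at 24 hours (the start of the 24-48 hour window) is an assumption.

```python
from dataclasses import dataclass, field

# Signs of growth named in the protocol above.
GROWTH_SIGNS = frozenset({"turbidity", "hemolysis", "gas production"})


@dataclass
class Inspection:
    hours_since_inoculation: float
    observed_signs: frozenset = field(default_factory=frozenset)


def subculture_decision(inspection: Inspection,
                        routine_done: bool = False,
                        routine_after_hours: float = 24.0) -> tuple[bool, str]:
    """Decide whether to subculture to solid media at this inspection."""
    # Any visible sign of growth triggers an immediate subculture.
    if inspection.observed_signs & GROWTH_SIGNS:
        return True, "visible sign of growth"
    # Otherwise perform the routine subculture once the window opens (assumed 24 h).
    if not routine_done and inspection.hours_since_inoculation >= routine_after_hours:
        return True, "routine subculture"
    return False, "continue daily visual inspection"


if __name__ == "__main__":
    print(subculture_decision(Inspection(6, frozenset({"turbidity"}))))
    print(subculture_decision(Inspection(30)))
```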

Problem: Low sensitivity of a rapid diagnostic test for a specific pathogen.

- Background: Lateral Flow Immunoassays (LFIA) are the cornerstone of POC testing but may sometimes lack sufficient sensitivity.

- Solution: Investigate Alternative Nucleic Acid Amplification Technologies (NAATs) that are suited to low-resource settings.

  - Experimental Protocol for Reverse Transcription Recombinase Polymerase Amplification (RT-RPA) [7]:

    - Sample Preparation: Lyse the sample (e.g., from a swab or tissue) to release RNA. Simple, heater-based lysis methods are sufficient.

    - Amplification Reaction:

      - Prepare a master mix containing recombinase, reverse transcriptase, primers specific to the target pathogen (e.g., rabies virus), polymerase, and nucleotides.

      - Add the extracted RNA to the master mix.

      - Incubate at a constant low temperature (e.g., 39-42°C) for 15-20 minutes. A simple heat block or even body heat can be used.

    - Detection: Results can be visualized using a simple lateral flow dipstick, eliminating the need for expensive fluorescent scanners.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents and materials essential for developing and deploying diagnostics for low-resource settings.

| Item | Function in Diagnostics | Application Example in LRS |
|---|---|---|
| Lateral Flow Strips | Platform for rapid, immunoassay-based detection of antigens or antibodies. The sample moves via capillary action. | Core technology in rapid tests for malaria, HIV, dengue, and tuberculosis [9]. |
| Nucleic Acid Amplification Tests (NAATs) | Enzymatic systems that amplify specific DNA or RNA sequences for highly sensitive pathogen detection. | Used in portable PCR and isothermal (e.g., RPA, LAMP) devices for detecting infectious diseases like SARS-CoV-2, rabies, and tuberculosis [7]. |
| MPT64 Antigen | A specific antigen secreted by M. tuberculosis complex bacteria. | Used in immunochromatographic tests for the rapid and accurate diagnosis of extrapulmonary tuberculosis from tissue samples [7]. |
| Open-Source Software (OpenPhControl) | Provides a low-cost, customizable platform for controlling laboratory instruments and experiments. | Enables the construction of a reliable and inexpensive pH-stat device using available hardware [7]. |
| 3D-Printing Filaments (e.g., PLA, ABS) | Raw material for fabricating custom labware, device housings, and mechanical parts at very low cost. | Used to create components for automated microscopes, sample preparation devices, and even real-time PCR machines [7]. |

Experimental Workflow and Diagnostic Pathways

Diagnostic Test Integration Pathway

The following diagram outlines the logical workflow for integrating a new diagnostic tool within a low-resource health system, highlighting key decision points and potential bottlenecks.

Technology Selection Logic

This diagram provides a high-level decision tree for selecting an appropriate diagnostic technology platform based on the needs and constraints of the target environment.

The Impact of Diagnostic Errors on Patient Safety and Mortality

Diagnostic errors represent a significant threat to patient safety, contributing to substantial mortality and preventable harm. The table below summarizes key quantitative data on their prevalence and impact [6] [14] [15].

Table 1: Epidemiological Impact of Diagnostic Errors

| Metric | Estimated Figure | Context / Population |
|---|---|---|
| Annual Deaths (US) | 40,000 - 80,000 | Attributable to diagnostic errors in hospitals [6] [14]. |
| Affected Patients (US) | Over 250,000 | Americans experiencing a diagnostic error in hospitals annually [14]. |
| Emergency Department Error Rate | 5.7% of visits | Affecting approximately 7 million patients annually in the US [15]. |
| Serious Harm from ED Errors | 0.3% of visits | Resulting in preventable disability or death [15]. |
| Overall Diagnostic Error Rate | 10-15% | Approximated across most areas of clinical medicine [6]. |
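
As a back-of-the-envelope check, the two emergency department rows can be combined to estimate the absolute number of serious harms they imply. The sketch below is arithmetic on the table's own figures only; it assumes both percentages share the same denominator (total annual ED visits), and `implied_ed_figures` is a hypothetical helper name.

```python
def implied_ed_figures(patients_with_error: float,
                       error_rate: float,
                       serious_harm_rate: float) -> tuple[float, float]:
    """Return (implied annual ED visits, implied visits with serious harm)."""
    # Total visits implied by "error_rate of visits = patients_with_error".
    implied_visits = patients_with_error / error_rate
    # Apply the serious-harm rate to the same denominator (an assumption).
    return implied_visits, implied_visits * serious_harm_rate


if __name__ == "__main__":
    visits, serious = implied_ed_figures(7_000_000, 0.057, 0.003)
    print(f"Implied ED visits per year: {visits:,.0f}")
    print(f"Implied visits with serious harm: {serious:,.0f}")
```

With the table's inputs this works out to roughly 123 million visits and roughly 370,000 serious harms per year. Both are derived estimates, not figures reported in the cited sources.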

Understanding and Categorizing Diagnostic Errors

Definitions and Framing

A diagnostic error is defined as "the failure to (a) establish an accurate and timely explanation of the patient’s health problem(s) or (b) communicate that explanation to the patient" [15]. A broader definition encompasses "any mistake or failure in the diagnostic process leading to a misdiagnosis, a missed diagnosis, or a delayed diagnosis" [15].

Categories of Diagnostic Error

Diagnostic errors can be partitioned into three primary categories, a framework crucial for developing targeted troubleshooting guides [15].

Diagnostic Error Categories Diagram

Troubleshooting Guides and FAQs for Researchers

This section provides a structured framework for identifying and addressing the root causes of diagnostic errors, tailored for researchers and developers working in low-resource settings.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common disease areas associated with serious diagnostic errors? Over half of all serious diagnostic errors are related to cardiovascular diseases, infections, and cancers [15].

FAQ 2: What is a "closed-loop" communication system and why is it critical? This is a recommended practice where the process of ordering a test, reviewing the result, and communicating that result to the patient is formally completed and verified. It ensures that abnormal results are not missed or lost to follow-up [14].
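
One way to picture a closed loop is as a small state machine in which every test order must pass through each stage before it counts as complete. The sketch below is illustrative only: the stage names follow the description above, while `LabOrder`, `Stage`, and `open_loops` are hypothetical names and not part of any cited system.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    ORDERED = 1
    RESULTED = 2
    REVIEWED = 3
    COMMUNICATED = 4  # result communicated to the patient: loop closed


@dataclass
class LabOrder:
    patient_id: str
    test_name: str
    stage: Stage = Stage.ORDERED

    def advance(self) -> None:
        """Move the order to the next stage; stages cannot be skipped."""
        if self.stage is Stage.COMMUNICATED:
            raise ValueError("loop already closed")
        self.stage = Stage(self.stage + 1)


def open_loops(orders: list[LabOrder]) -> list[LabOrder]:
    """Return every order whose result has not yet reached the patient."""
    return [order for order in orders if order.stage is not Stage.COMMUNICATED]


if __name__ == "__main__":
    orders = [LabOrder("P001", "HbA1c"), LabOrder("P002", "malaria RDT")]
    for _ in range(3):
        orders[0].advance()
    for order in open_loops(orders):
        print(f"Follow up: {order.patient_id} {order.test_name} ({order.stage.name})")
```

A periodic review of `open_loops` is the verification step: anything still listed is a result at risk of being lost to follow-up.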

FAQ 3: How can we mitigate cognitive biases in the diagnostic process? Mitigation strategies include fostering multidisciplinary team reviews, implementing diagnostic time-outs to re-assess initial assumptions, and using cognitive aids or checklists to broaden the differential diagnosis [6] [15].

FAQ 4: What are the key challenges in developing diagnostic tests for emerging threats in low-resource settings? Key challenges include delayed access to well-characterized clinical samples and reagents, lack of centralized logistical support, difficulties with international material transfer agreements, and the absence of a "gold standard" method for validation during early outbreak stages [16].

Systematic Troubleshooting Protocol for Diagnostic Processes

When a diagnostic process fails (e.g., high rate of false negatives), follow this systematic protocol to identify the source of error [17] [18].

Diagnostic Troubleshooting Workflow

Step-by-Step Guide:

- Verify and Replicate: Unless cost or time prohibitive, repeat the assay or diagnostic procedure. A simple human error in execution (e.g., pipetting error, incorrect timing) may be the cause [17].

- Assess Result Validity: Critically evaluate whether the unexpected result is a true failure or a scientifically valid outcome. Revisit the literature to determine if the result, while unexpected, is biologically plausible (e.g., low analyte expression in a specific tissue) [17].

- Check Controls: Scrutinize the results of all positive and negative controls. If a positive control fails, it strongly indicates a problem with the protocol, reagents, or equipment rather than the patient samples [17].

- Audit Materials and Equipment:

  - Reagents: Check expiration dates and storage conditions. Reagents can be sensitive to improper storage or may come from a compromised batch [17] [16].

  - Equipment: Verify calibration and proper function of all instruments used in the process [18].

  - Compatibility: Ensure all components (e.g., primary and secondary antibodies, enzymes and substrates) are compatible [17].

- Isolate Variables Systematically: Generate a list of potential variables that could cause the observed error (e.g., sample preparation time, incubation temperature, reagent concentration, washing steps). Change only one variable at a time in subsequent experiments to conclusively identify the root cause. Document every change and outcome meticulously [17] [18].
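
The "change only one variable at a time" rule in the final step can be turned into an explicit run plan before any bench work starts. The following is a minimal sketch; the variable names and values are hypothetical placeholders, and `one_factor_at_a_time` is an illustrative helper rather than a method from the cited sources.

```python
def one_factor_at_a_time(baseline: dict, alternatives: dict) -> list[tuple]:
    """Build a run plan in which each run differs from baseline in one variable.

    Returns a list of (changed_variable, settings) tuples; the first entry is
    the unmodified baseline run, with changed_variable set to None.
    """
    plan = [(None, dict(baseline))]
    for variable, values in alternatives.items():
        if variable not in baseline:
            raise KeyError(f"{variable!r} is not a baseline variable")
        for value in values:
            if value == baseline[variable]:
                continue  # identical to baseline, nothing to test
            settings = dict(baseline)
            settings[variable] = value
            plan.append((variable, settings))
    return plan


if __name__ == "__main__":
    # Hypothetical assay settings, for illustration only.
    baseline = {"incubation_temp_c": 37, "incubation_min": 30, "wash_steps": 3}
    alternatives = {"incubation_temp_c": [35, 39], "wash_steps": [2, 4]}
    for changed, settings in one_factor_at_a_time(baseline, alternatives):
        print(changed or "baseline", settings)
```

Writing the plan down first also gives a ready-made log sheet for documenting each change and its outcome.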

The Scientist's Toolkit: Essential Research Reagent Solutions

For researchers developing and validating diagnostic tests, particularly for use in low-resource settings, access to well-characterized materials is paramount. The table below details essential sample types and their critical function in ensuring test accuracy and reliability [16].

Table 2: Key Research Reagent Solutions for Diagnostic Test Validation

| Reagent / Sample Type | Critical Function in Validation |
|---|---|
| Clinical Samples (High/Low Analyte) | Assess analytical sensitivity, limit of detection, and test reproducibility [16]. |
| Pre-symptomatic Patient Samples | Determine test ability to diagnose early infection, often associated with low pathogen levels [16]. |
| Cross-Reactivity Panels | Assess test specificity using samples from patients with similar symptoms but a different infection [16]. |
| Acute & Convalescent Samples | Verify test performance across different stages of infection and immune response [16]. |
| Diverse Demographic Samples | Evaluate diagnostic accuracy across varying ethnicities, ages, and genders to ensure equitable performance [16]. |
| Quantified Pathogen Stocks | Create standardized "contrived" samples by spiking a known amount of pathogen into a clinical matrix for controlled experiments [16]. |
| Common Interfering Substances | Identify potential false positives or negatives caused by common compounds (e.g., lipids, bilirubin) or medications [16]. |
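
When results from such panels are compared against a reference method, sensitivity and specificity are usually reported with confidence intervals, because validation panels in early outbreak stages tend to be small. The sketch below uses the Wilson score interval, a standard choice for proportions; the counts in the example are invented for illustration, and `wilson_interval` and `accuracy_summary` are hypothetical helper names.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half_width, centre + half_width


def accuracy_summary(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Sensitivity and specificity, each paired with its Wilson interval."""
    return {
        "sensitivity": (tp / (tp + fn), wilson_interval(tp, tp + fn)),
        "specificity": (tn / (tn + fp), wilson_interval(tn, tn + fp)),
    }


if __name__ == "__main__":
    # Invented 2x2 counts: index test versus reference standard.
    for name, (value, (low, high)) in accuracy_summary(tp=45, fp=5, fn=5, tn=95).items():
        print(f"{name}: {value:.1%} (95% CI {low:.1%} to {high:.1%})")
```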

Frequently Asked Questions

FAQ 1: What is the primary diagnostic challenge caused by disease-specific vertical programs in low-resource settings? The core challenge is the inability to manage comorbidities and chronic conditions effectively. Health systems designed around single-disease programs create significant gaps in care for patients with multiple health needs. This fragmented approach often results in missed opportunities for diagnosis and poor continuity of care. For example, while HIV/TB co-infection is commonly addressed, the emerging challenge of managing non-communicable diseases (NCDs) and mental health disorders in this population remains largely unaddressed by vertical programs [4].

FAQ 2: What criteria are critical for designing successful integrated diagnosis interventions? International expert consensus has established 18 core criteria for effective integrated diagnosis. Key criteria include ensuring the intervention is purpose-driven for the local context, developing an effective treatment linkage system, and securing political and financial commitment. Other critical factors include workforce capabilities, practical requirements like reliable electricity for equipment, and clearly defined treatment pathways that exist beyond the diagnostic moment [4].

FAQ 3: How does the current global research landscape affect diagnostic challenges in low-resource settings? A significant misalignment exists between global research efforts and actual disease burden. Research production disproportionately focuses on diseases affecting high-income, research-intensive regions, while conditions representing the largest share of the disability-adjusted life years (DALYs) in low- and middle-income countries (LMICs), such as cardiovascular diseases and respiratory infections, receive less attention. This divergence challenges the principle that research should be a public good responsive to societal needs [19].

FAQ 4: What impact do recent global funding shifts have on diagnostic systems? The end of the "golden age" of global health funding poses severe risks to diagnostic continuity. Recent U.S. administration aid freezes have blocked billions in global health funding, halting programs for HIV (PEPFAR), malaria trials, and WHO contributions. This retreat from multilateralism, combined with post-COVID austerity and debt crises in LMICs, constrains domestic health investments and threatens to deepen disparities in health outcomes [20].

Troubleshooting Guides

Problem: High rates of missed diagnoses and low service uptake at the primary care level.

- Step 1: Contextual Analysis: Assess the facility-specific context. Do not introduce diagnostic tools without first considering enabling aspects of the health system [4].

- Step 2: Check Enabling Factors: Evaluate practical barriers, including the electricity requirements of instruments versus availability at the facility, healthcare worker skills, and the functionality of referral and treatment pathways [4].

- Step 3: Integrate Patient-Centric Design: Design the diagnostic intervention to provide same-day, multiple-disease testing during a single patient visit to increase convenience and access [4].

Problem: Research and development efforts do not align with the most pressing diagnostic needs in the target population.

- Step 1: Conduct a Disease Burden Alignment Check: Analyze the local distribution of DALYs and compare it to the focus of current research and diagnostic service offerings. Link your research questions directly to identified gaps [19] [21].

- Step 2: Adopt an Interdisciplinary Approach: Review literature and seek collaborations outside your primary discipline to construct a more comprehensive understanding of complex health issues [21].

- Step 3: Engage Local Practitioners: Hold discussions with frontline healthcare providers to identify "real world" problems that may be understudied in academic literature but are critical for effective diagnosis and care [21].

Data Presentation

Table 1: Critical Criteria for Designing Integrated Diagnosis Interventions

This table summarizes the core criteria established by international expert consensus for designing effective same-day integrated diagnosis interventions in primary care settings in LMICs [4].

| Criterion Category | Specific Requirement | Rationale & Impact |
|---|---|---|
| Intervention Purpose & Model | Must be purpose-driven for the specific local context and population served. | Prevents one-size-fits-all approaches that fail due to unaddressed local needs and resource constraints. |
| Health System Linkage | Requires an effective system for linking diagnosis to treatment and care. | Diagnosis is only one step in the care pathway; without a functional linkage, the intervention fails to improve health outcomes. |
| Governance & Funding | Needs sustained political and financial commitment. | Ensures program longevity and resilience against shifting donor priorities and funding cuts. |
| Workforce & Infrastructure | Must align with healthcare workforce capabilities and existing infrastructure (e.g., electricity). | Prevents suboptimal outcomes and device abandonment resulting from unrealistic technical or operational demands. |

Table 2: Divergence Between Global Research Effort and Disease Burden

This table illustrates the misalignment between the proportion of global research publications and the global burden of disease (measured in Disability-Adjusted Life Years, or DALYs) for selected disease areas, based on an analysis of 8.6 million articles (1999-2021) [19].

| Disease Area | Global Research Proportion | Global Disease Burden (DALYs) Proportion | Alignment Status |
|---|---|---|---|
| Neoplasms | Higher | Lower | Over-researched relative to burden |
| Neurological Disorders | Higher | Lower | Over-researched relative to burden |
| Cardiovascular Diseases | Lower | Higher | Under-researched relative to burden |
| Maternal & Neonatal Disorders | Lower | Higher | Under-researched relative to burden |
| Respiratory Infections & TB | Lower | Higher | Under-researched relative to burden (pre-2020) |
| Diabetes & Kidney Diseases | Approximately Equal | Approximately Equal | Aligned |
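
For a local alignment check, the "Alignment Status" column can be reproduced from two shares per disease area: the share of research output and the share of DALYs. The sketch below is one possible rule, not the method of the cited analysis; the ±20% tolerance band and the example shares are assumptions chosen purely for illustration.

```python
def alignment_status(research_share: float, burden_share: float,
                     tolerance: float = 0.2) -> str:
    """Classify a disease area by the ratio of research share to DALY share."""
    if burden_share <= 0:
        raise ValueError("burden_share must be positive")
    ratio = research_share / burden_share
    if ratio > 1 + tolerance:
        return "Over-researched relative to burden"
    if ratio < 1 - tolerance:
        return "Under-researched relative to burden"
    return "Aligned"


if __name__ == "__main__":
    # Hypothetical (research share, DALY share) pairs, not data from [19].
    examples = {"Area A": (0.20, 0.10), "Area B": (0.05, 0.15), "Area C": (0.08, 0.08)}
    for area, (research, burden) in examples.items():
        print(f"{area}: {alignment_status(research, burden)}")
```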

Experimental Protocols

Protocol: Delphi Method for Establishing Expert Consensus on Diagnostic Criteria

This methodology is used to establish formal consensus among experts on critical criteria for complex health interventions, such as integrated diagnosis [4].

1. Objective: To establish core criteria for designing same-day integrated diagnosis interventions in primary care settings in LMICs.

2. Expert Panel Recruitment:

- Panel Composition: Engage an international panel of experts (typically 10-50) from diverse professions and geographies. The panel should include:

- Implementers: Frontline providers (clinicians, nurses, laboratory specialists).

- Policymakers/Funders: Decision-makers from global health organizations (e.g., WHO, Global Fund) and ministries of health.

- Academics: Researchers from academic institutions with a focus on integrated healthcare or diagnostics.

- Sampling: Use purposeful sampling based on knowledge and experience, ensuring geographic and occupational diversity.

3. Delphi Process:

- Round 1:

- Present an initial set of criteria derived from a prior evidence synthesis (e.g., a realist review).

- Experts rate each criterion based on how critical it is for inclusion (e.g., on a Likert scale).

- Analysis: Predefined consensus thresholds are applied (e.g., >70% agreement for "critical to include").

- Outcome: Criteria reaching consensus are retained; those not reaching consensus are revised or removed.

- Round 2 (and subsequent rounds):

- Experts re-rate the revised list of criteria, often with feedback on the group's initial responses.

- The process continues iteratively until a stable consensus is reached on a final set of criteria.

- Consensus Measurement: The final output is a list of criteria that have met the predefined agreement threshold.
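The consensus step applied after each round can be sketched in a few lines of Python. This is a minimal illustration, not the analysis code of the cited study: it assumes a 1-5 Likert scale on which ratings of 4 or 5 count as "critical to include" and uses the >70% threshold given as an example above. The function name, the criterion labels, and the ratings are all hypothetical.

```python
from typing import Dict, List


def classify_criteria(
    ratings: Dict[str, List[int]],
    critical_min: int = 4,     # assumption: Likert scores >= 4 mean "critical to include"
    threshold: float = 0.70,   # example consensus threshold from the protocol
) -> Dict[str, dict]:
    """Return, per criterion, the share of 'critical' ratings and whether consensus was reached."""
    results = {}
    for criterion, scores in ratings.items():
        if not scores:
            raise ValueError(f"No ratings supplied for {criterion!r}")
        share = sum(score >= critical_min for score in scores) / len(scores)
        # Strictly greater than the threshold, matching the ">70%" wording
        results[criterion] = {"share_critical": share, "consensus": share > threshold}
    return results


# Hypothetical ratings from ten panelists (illustrative only)
round_1 = {
    "Link to care and treatment": [5, 5, 4, 5, 4, 4, 5, 3, 5, 4],
    "Dedicated on-site laboratory": [3, 2, 4, 3, 5, 2, 3, 4, 3, 2],
}
for name, outcome in classify_criteria(round_1).items():
    status = "retain" if outcome["consensus"] else "revise or remove"
    print(f"{name}: {outcome['share_critical']:.0%} critical -> {status}")
```

Criteria that fall short of the threshold would be carried into the next round in revised form or dropped, as described in the outcome step above.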

The Scientist's Toolkit

Research Reagent Solutions for Health Systems Investigation

| Item/Tool | Function in Health Systems Research |

|---|---|

| Delphi Method Protocol | A structured communication technique used to systematically transform expert opinion into group consensus on complex topics like diagnostic integration criteria [4]. |

| Disability-Adjusted Life Year (DALY) Metrics | A standardized quantitative measure of disease burden that combines years of life lost due to premature mortality and years lived with disability. Used to align research priorities with population health needs [19]. |

| Large Language Models (LLMs) for Data Triangulation | Used to create a comprehensive crosswalk between vast scientific publication databases and global disease burden data, enabling large-scale analysis of research-disease alignment [19]. |

| Kullback-Leibler Divergence (KLD) | An information-theoretic metric used to quantify the degree of divergence between the distribution of research publications and the distribution of disease burden (DALYs) over time [19]. |
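For readers unfamiliar with the metric, the following is a minimal sketch of how a Kullback-Leibler divergence between a research distribution and a burden distribution could be computed. The direction of the comparison (research share relative to burden share), the use of base-2 logarithms, and the four illustrative shares are assumptions made here for illustration; they are not values or conventions taken from the cited analysis [19].

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """D_KL(P || Q) in bits for two discrete distributions over the same categories."""
    if len(p) != len(q):
        raise ValueError("Distributions must cover the same categories")
    p_total, q_total = sum(p), sum(q)
    divergence = 0.0
    for p_raw, q_raw in zip(p, q):
        # Normalize so that each distribution sums to 1
        p_i, q_i = p_raw / p_total, q_raw / q_total
        if p_i == 0:
            continue  # terms with p_i = 0 contribute nothing
        if q_i == 0:
            return math.inf  # P has mass where Q has none
        divergence += p_i * math.log2(p_i / q_i)
    return divergence


# Hypothetical shares for four disease areas (not data from the cited analysis)
research_share = [0.40, 0.30, 0.20, 0.10]
burden_share = [0.20, 0.20, 0.30, 0.30]
print(round(kl_divergence(research_share, burden_share), 3))
```

A value of zero means the two distributions match exactly; larger values indicate greater misalignment. Note that the measure is asymmetric, so swapping the two arguments generally gives a different number.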

Diagnostic Integration Workflow

Research-Disease Burden Alignment Analysis

From Theory to Practice: Designing and Implementing Effective Point-of-Care Diagnostics

FAQs: Addressing Key Challenges in Integrated Diagnosis

FAQ 1: What are the most critical design criteria for integrated diagnosis interventions in low-resource settings? An international Delphi consensus study established 18 core criteria deemed critical for designing integrated diagnosis interventions in primary care settings in low- and middle-income countries (LMICs). The study engaged 55 experts from diverse professions and regions, particularly Africa. Key criteria that reached consensus include considerations for robust health system integration, patient-centered design, and practical implementation factors tailored to local contexts and resource constraints [4].

FAQ 2: How can diagnostic tests be better designed for use in low-resource settings? Diagnostic tests for low-resource settings must be designed as fit-for-purpose, considering specific local challenges. Key requirements include [22]:

- Robustness and Ease of Use: Equipment must be reliable and operable with minimal training.

- Cost-Effectiveness: Tests must be affordable for health systems and patients.

- Environmental Suitability: Designs must account for factors like unreliable electricity, heat, dust, and humidity.

- Local Pathogen Relevance: Test targets (e.g., for AMR) must be relevant to local circulating pathogens and resistance profiles, requiring thorough local evaluation.

FAQ 3: What are the common pitfalls when introducing new diagnostic tools in LMICs? A common failure pattern is introducing diagnostic tools without fully considering the enabling aspects of the health system. Typical oversights include the electricity requirements of instruments relative to facility capacity, the capabilities of the healthcare workforce, and the need for functional referral and treatment pathways after diagnosis. Success requires a holistic view of the entire diagnostic and care pathway [4].

FAQ 4: What is the role of Point-of-Care (POC) tests in integrated diagnosis? POC tests are a crucial component. Lateral Flow Immunoassays (LFIAs), for example, are impactful due to their low cost, ruggedness, rapid results, and ease of use, making them well-suited for remote settings with poor laboratory infrastructure. They allow for immediate clinical decision-making at the site of patient care [9].

FAQ 5: How does "Integrative Diagnostics" differ from simply using multiple diagnostic tests? Integrative Diagnostics (ID) is a vision where data from various diagnostic sources (radiology, pathology, laboratory medicine) are aggregated and contextualized using informatics tools, rather than remaining in separate "silos." This synthesis provides a unified, holistic view to facilitate more accurate diagnosis and direct clinical action, helping to overcome the fragmentation that can lead to diagnostic errors [23].

Core Criteria for Intervention Design

The following table summarizes the 18 criteria established by expert consensus for designing integrated diagnosis interventions [4].

| Criterion Category | Core Design Consideration | Brief Explanation |

|---|---|---|

| Health System Integration | Link to care and treatment | Ensures a functional pathway for positive diagnoses, including treatment access. |

| Health System Integration | Laboratory system and network | Establishes robust specimen referral mechanisms and quality assurance. |

| Health System Integration | Supply chain management | Secures reliable delivery of diagnostic commodities and reagents. |

| Health System Integration | Data management and use | Implements systems for recording, reporting, and utilizing diagnostic data. |

| Technology & Infrastructure | Equipment and infrastructure | Considers placement, maintenance, and utility requirements (e.g., power, water). |

| Technology & Infrastructure | Choice of diagnostic technology | Selects tools appropriate for the specific use case and health system tier. |

| Personnel & Training | Training and competency | Ensures healthcare workers have the skills to perform tests and interpret results. |

| Personnel & Training | Staffing and workload | Allocates sufficient human resources to manage the integrated service workload. |

| Personnel & Training | Supportive supervision | Provides ongoing oversight and support for quality service delivery. |

| Patient-Centered Design | Service accessibility | Designs services to be physically, financially, and culturally accessible. |

| Patient-Centered Design | Patient communication and support | Provides clear communication of results and appropriate counseling. |

| Patient-Centered Design | Patient follow-up | Establishes mechanisms for tracking patients and ensuring continuity of care. |

| Financing & Sustainability | Financing and resources | Secures sustainable funding for both initial setup and ongoing operational costs. |

| Financing & Sustainability | Cost and cost-effectiveness | Evaluates the affordability and economic value of the intervention. |

| Governance & Planning | Leadership and governance | Ensures clear accountability and management structures. |

| Governance & Planning | Planning and preparation | Involves thorough situational analysis and stakeholder engagement before rollout. |

| Governance & Planning | Policy and regulatory environment | Operates within a supportive policy, legal, and regulatory framework. |

| Governance & Planning | Strategic alignment | Aligns the intervention with national health strategies and priorities. |

Experimental Protocol: Establishing Expert Consensus

Objective: To establish international consensus on the core criteria for designing integrated diagnosis interventions for primary care in low-resource settings [4].

Methodology: A Two-Round Delphi Process

- Expert Panel Recruitment: Fifty-five experts were purposefully sampled for diversity across profession (implementers, policymakers/funders, researchers) and geography (with a focus on Africa).

- Survey Rounds:

- Round 1: Experts rated an initial set of 33 criteria. A pre-determined consensus threshold of 70% for "critical to include" was used.

- Result: 14 criteria reached consensus as critical, and 9 were removed.

- Round 2: 48 of the original 55 experts rated the remaining criteria.

- Result: 4 additional criteria reached consensus.

- Final Output: Consensus was achieved on a final set of 18 core criteria.

Diagnostic Pathway and Intervention Design

Integrated Diagnosis Intervention Framework

Research Reagent Solutions for Low-Resource Diagnostics

| Reagent / Material | Function in Diagnostic Testing | Key Considerations for Low-Resource Settings |

|---|---|---|

| Lateral Flow Strips | Rapid, qualitative/quantitative detection of antigens/antibodies. | No refrigeration needed; long shelf-life; minimal training required; low cost per test [9]. |

| Point-of-Care Nucleic Acid Tests | Isothermal amplification (e.g., RPA, LAMP) for pathogen DNA/RNA. | Can be packaged for use without complex lab infrastructure; faster than traditional PCR [7]. |

| Stabilized Reagents | Lyophilized (freeze-dried) enzymes and chemicals for reactions. | Remain stable at higher temperatures, reducing cold chain dependency [22]. |

| Multiplex Assay Panels | Simultaneous detection of multiple pathogens from a single sample. | Increases diagnostic efficiency and can reduce overall cost by testing for several diseases at once [4] [23]. |

| Sample Preparation Kits | Simplified extraction and purification of nucleic acids or antigens. | Designed for minimal steps and without the need for centrifuges or other heavy equipment [7]. |

Technical Support Center: Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between the ASSURED and REASSURED frameworks? The core difference lies in the addition of real-time connectivity and ease of specimen collection to the original criteria. The ASSURED framework, established by the World Health Organization (WHO), defined the ideal test for developing countries as being Affordable, Sensitive, Specific, User-friendly, Rapid and robust, Equipment-free, and Deliverable to end-users [24]. The updated REASSURED framework incorporates advances in digital technology and mobile health (m-health), emphasizing diagnostics that can inform disease control strategies in real-time [25]. The full REASSURED acronym stands for: Real-time connectivity, Ease of specimen collection, Affordable, Sensitive, Specific, User-friendly, Rapid and robust, Equipment-free or simple, and Deliverable to end-users [26] [27].

FAQ 2: Why is "ease of specimen collection" now a critical criterion? A diagnostic test that requires hard-to-obtain samples, such as venous blood, is of limited value in a low-resource setting where no trained professional is available to collect them. Tests that use easy-to-obtain and non-invasive samples, such as finger-prick blood, nasal or oral swabs, or urine, are far more accessible and practical for point-of-care (POC) use [27]. This enhances the test's deliverability and ultimate impact.

FAQ 3: How does "real-time connectivity" strengthen health systems? The ability to transmit results globally in real-time is crucial for rapid outbreak response and informed decision-making at both individual and population levels [26]. It enables better disease surveillance, enhances the efficiency of healthcare systems, allows for remote consultation, and ensures that results can be tracked and aggregated for public health action, even from the most remote locations [25].

FAQ 4: What are common trade-offs when developing a REASSURED diagnostic? It is challenging for any single diagnostic to perfectly fulfill all REASSURED criteria, and trade-offs are often necessary [27]. For example:

- Nucleic Acid Tests (NATs) typically offer high sensitivity and specificity but often require equipment and complex sample preparation, conflicting with the equipment-free and user-friendly goals.

- Antigen-based lateral flow assays are highly user-friendly, rapid, and largely equipment-free but may have lower sensitivity and specificity compared to NATs [27].

The key is to optimize the test for its specific intended use and context.

FAQ 5: What is a major challenge in ensuring "user-friendliness"? Even the simplest tests can be performed incorrectly without adequate and ongoing training. A study in South Africa found that only 3% of HIV rapid diagnostic tests (RDTs) were performed correctly [24]. With an estimated 150 million tests performed annually worldwide, even a 99% accuracy rate would still generate about 1.5 million incorrect results each year [24]. This highlights the critical need for clear instructions, minimal steps, and robust training programs.
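The arithmetic behind the 1.5 million figure can be checked with a short calculation. The sketch below simply multiplies annual test volume by the error fraction (one minus accuracy); the function name is hypothetical, and treating accuracy as a single overall proportion is a simplifying assumption.

```python
def expected_incorrect_results(tests_per_year: int, accuracy: float) -> float:
    """Expected number of incorrect results per year for a given overall accuracy."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return tests_per_year * (1.0 - accuracy)


# Figures quoted in the text: 150 million tests per year at 99% accuracy
print(f"{expected_incorrect_results(150_000_000, 0.99):,.0f}")
```

The same function makes it easy to see how quickly the absolute number of errors grows as accuracy slips by even a fraction of a percentage point at this testing volume.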

Troubleshooting Common Experimental & Implementation Challenges

| Challenge | Potential Root Cause | Recommended Solution |

|---|---|---|

| Low test sensitivity in field settings | Sample degradation during transport/storage; incorrect sample collection; deviation from protocol. | Implement stable, ambient-temperature reagents; simplify sample collection (e.g., finger-prick); use integrated, all-in-one devices to minimize user steps [24] [27]. |

| High false-positive rate | Cross-reactivity with non-target analytes or organisms; insufficient test specificity for local pathogen strains. | Conduct thorough validation using samples from the target population and region; employ a two-test algorithm for confirmation where resources allow [24]. |

| Poor user adoption & high error rate | Complex multi-step protocols; lack of or insufficient training for end-users. | Design tests requiring 2-3 simple steps; develop visual job aids and instructions; establish ongoing quality assurance and proficiency testing programs [24] [9]. |

| Results not being communicated or acted upon | Fragmented health systems; lack of integrated data management; no connectivity. | Incorporate real-time connectivity features; use readers or mobile platforms to standardize results and automatically transmit data to health information systems [26] [25]. |

| Test failure in high-temperature/humidity | Lack of robustness; reagents not stable outside cold chain. | Perform rigorous environmental stress testing during development; use lyophilized reagents and materials that withstand supply chain stresses [24]. |

Experimental Protocols for Key Diagnostic Evaluations

Protocol 1: Assessing Diagnostic Sensitivity and Specificity against a Reference Standard

Objective: To determine the analytical and clinical performance of a new REASSURED diagnostic test by comparing it to an accepted laboratory-based reference method.

Methodology:

- Sample Collection: Collect a panel of well-characterized clinical samples (e.g., blood, swab, urine) from the target population. The panel should include samples positive and negative for the target condition, confirmed by the reference standard.

- Blinded Testing: Test all samples using the new diagnostic test and the reference standard in a blinded manner. Personnel performing the tests should be unaware of the reference results.

- Data Analysis: Construct a 2x2 contingency table to compare the results.

- Calculation: Calculate key performance metrics:

- Sensitivity = [A / (A + C)] x 100

- Specificity = [D / (B + D)] x 100

- Positive Predictive Value (PPV) = [A / (A + B)] x 100

- Negative Predictive Value (NPV) = [D / (C + D)] x 100

Table: Contingency Table for Diagnostic Accuracy

| | Reference Standard Positive | Reference Standard Negative |

|---|---|---|

| New Test Positive | True Positives (A) | False Positives (B) |

| New Test Negative | False Negatives (C) | True Negatives (D) |
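The four formulas in Protocol 1 translate directly into code. The following is a minimal sketch that uses the same cell labels as the contingency table (A, B, C, D); the function name and the example counts are hypothetical and serve only to illustrate the calculation.

```python
from typing import Dict


def diagnostic_accuracy(a: int, b: int, c: int, d: int) -> Dict[str, float]:
    """Compute accuracy metrics (in percent) from 2x2 contingency counts.

    a = true positives, b = false positives, c = false negatives, d = true negatives.
    """
    if min(a, b, c, d) < 0:
        raise ValueError("Counts must be non-negative")

    def percent(numerator: int, denominator: int) -> float:
        # A metric is undefined when its denominator is zero
        # (e.g., no reference-positive samples in the panel)
        return float("nan") if denominator == 0 else 100.0 * numerator / denominator

    return {
        "sensitivity": percent(a, a + c),
        "specificity": percent(d, b + d),
        "ppv": percent(a, a + b),
        "npv": percent(d, c + d),
    }


# Hypothetical panel: 100 reference-positive and 200 reference-negative samples
metrics = diagnostic_accuracy(a=90, b=5, c=10, d=195)
for name, value in metrics.items():
    print(f"{name}: {value:.1f}%")
```

Sensitivity and specificity are properties of the test on the sampled population, whereas PPV and NPV also depend on the proportion of positives in the panel, so predictive values from an enriched sample panel should not be assumed to hold at field prevalence.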

Protocol 2: Field Evaluation of User-Friendliness and Robustness

Objective: To evaluate the practical usability and durability of the diagnostic test under real-world, low-resource conditions.

Methodology:

- Site Selection: Select field sites that represent the intended use environment, considering factors like climate, infrastructure, and available expertise.

- User Recruitment: Enlist end-users with varying levels of training (e.g., community health workers, nurses, technicians) to perform the test.

- Observation & Data Collection:

- Observe and record the time taken to perform the test from start to result (Rapid).

- Note any errors or difficulties encountered during each step of the protocol (User-friendly).

- Expose test kits to local environmental conditions (temperature, humidity) for a defined period and then re-test known samples to check performance (Robust).

- Administer a short questionnaire to users to assess their confidence and perception of the test's ease of use.

- Analysis: Summarize the success rate, error frequency, time-to-result, and failure modes. The test should be easy to perform in a few steps and withstand supply chain challenges without requiring refrigeration [24].
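The analysis step above could be organized along the following lines. This is a sketch under stated assumptions: the record structure (`FieldRun`), its field names, and the example observations are hypothetical, and a real evaluation would typically also stratify results by user cadre and site.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import List, Optional


@dataclass
class FieldRun:
    user_role: str               # e.g., "community health worker"
    minutes_to_result: float     # time from start of the test to a readable result
    error_step: Optional[str]    # protocol step where an error was observed, or None
    correct_result: bool         # did the run give the expected result for the known sample?


def summarize_runs(runs: List[FieldRun]) -> dict:
    """Summarize success rate, error frequency, time-to-result, and failure modes."""
    if not runs:
        raise ValueError("At least one observed run is required")
    errors = Counter(run.error_step for run in runs if run.error_step is not None)
    return {
        "n_runs": len(runs),
        "success_rate": sum(run.correct_result for run in runs) / len(runs),
        "error_rate": sum(errors.values()) / len(runs),
        "median_minutes": median(run.minutes_to_result for run in runs),
        "failure_modes": errors.most_common(),
    }


# Hypothetical observations (illustrative only)
observed = [
    FieldRun("community health worker", 12.0, None, True),
    FieldRun("community health worker", 20.0, "buffer volume", False),
    FieldRun("nurse", 15.0, "read time", True),
    FieldRun("technician", 14.0, None, True),
]
print(summarize_runs(observed))
```

Recording the protocol step at which each error occurred, rather than only whether an error happened, is what allows the failure modes to be ranked and fed back into test or job-aid redesign.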

Visualization of Framework Evolution and Workflow

ASSURED to REASSURED Evolution

REASSURED Diagnostic Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents and Materials for REASSURED Diagnostic Development

| Item | Function | Key Considerations for Low-Resource Settings |

|---|---|---|

| Lateral Flow Nitrocellulose Membrane | The platform for capillary flow and the immobilization of capture reagents (e.g., antibodies, oligonucleotides). | Must have consistent pore size and flow characteristics for robustness and reproducibility [9]. |

| Gold Nanoparticles / Colored Latex Beads | Visual labels for detection. Conjugated to detection antibodies or oligonucleotides. | Provide an equipment-free, visual readout. Gold nanoparticles often offer higher sensitivity [9]. |

| Lyophilized Reagents | Stable, dry-form enzymes, primers, and probes for nucleic acid amplification tests (NAATs). | Eliminates the cold chain, critical for deliverability and robustness in settings without reliable refrigeration [27]. |

| Stabilization Buffers | Protect biological reagents (e.g., antibodies) from denaturation due to heat and humidity. | Essential for maintaining test sensitivity and specificity throughout the product's shelf life in challenging environments [24]. |

| Low-Cost Polymerase | Enzyme for nucleic acid amplification in isothermal or PCR-based tests. | Must be affordable and stable at ambient temperatures to meet affordability and equipment-free/simple goals [27]. |

Troubleshooting Guide: Resolving Common LFIA Development Challenges

This guide addresses frequent technical issues encountered during the development and optimization of Lateral Flow Immunoassays (LFIAs), with particular consideration for their application in low-resource settings.

Flow and Membrane Issues

Problem: Slow or incomplete flow on the strip.

- Possible Causes & Solutions:

- Cause: Improper membrane selection (pore size too small). Solution: Select a membrane with a larger pore size (e.g., 15-25 µm) to increase flow rate, balancing the need for adequate analyte interaction time [28].

- Cause: High humidity degrading the nitrocellulose membrane. Solution: Handle and store NC membranes in a controlled environment with relative humidity (RH) below 40%. Use desiccants in packaging [28] [29].

- Cause: Inadequate sample pad pre-treatment. Solution: Optimize pre-treatment of the sample pad with surfactants (e.g., <0.05% Tween-20) and blockers (e.g., 1% BSA) in carbonate or tris buffer to ensure steady, uniform sample flow [28].

Problem: Backflow of liquid on the strip.

- Possible Causes & Solutions:

- Cause: Insufficient absorbent pad capacity. Solution: Increase the thickness or length of the cellulose absorbent pad to enhance liquid uptake and maintain consistent capillary flow [28].

Signal and Detection Issues

Problem: False positive results in sandwich assays.

- Possible Causes & Solutions:

- Cause: Non-specific binding of the conjugate to the test line. Solution: Add non-ionic surfactants to pads to minimize hydrophobic interactions, or increase NaCl concentration to reduce hydrophilic interactions [30].

- Cause: Specific interference from human anti-animal antibodies (HAAA). Solution: Incorporate heteroblockers into the sample pad pre-treatment buffer to prevent this interference [30].

- Cause: Antibody cross-reactivity. Solution: Perform epitope mapping for capture and detector antibodies to confirm specificity. Use monoclonal antibodies with minimal cross-reactivity [28] [29].

Problem: False negative results in sandwich assays.

- Possible Causes & Solutions:

- Cause: Conjugate released too quickly and runs ahead of the analyte. Solution: Optimize the sugar concentration (e.g., sucrose) in the conjugate dispensing buffer to control release kinetics [28] [30].

- Cause: Detector or capture antibodies have insufficient binding rates (on-rates). Solution: Use a slower membrane to increase interaction time and/or optimize the position of the test line [30].

- Cause: Antibody activity destroyed by membrane surfactants. Solution: Test membrane compatibility and consider replacing the antibody or membrane type [30].

Problem: Weak or no signal at the control line.

- Possible Causes & Solutions:

- Cause: Faulty conjugation or inactivation of control line antibodies. Solution: Re-optimize the conjugation process for control antibodies and ensure proper stability with carbohydrates in the conjugate pad [28] [31].

- Cause: Insufficient control line antibody concentration. Solution: Titrate and increase the concentration of the antibody immobilized at the control line [28].

Problem: High background noise across the membrane.

- Possible Causes & Solutions:

- Cause: Inadequate blocking of the membrane or pads. Solution: Incorporate blockers like BSA (1%), casein (0.1-0.5%), or gelatin (0.05-0.1%) into the sample pad or conjugate pad pretreatment protocols [28].

- Cause: Conjugate aggregation. Solution: Improve conjugate blocking and optimize the dispensing buffer to prevent particle aggregation. Using monoclonal antibodies can also help reduce mechanical retention [30].

Consistency and Manufacturing Issues

Problem: Test-to-test variability with artificial samples.

- Possible Causes & Solutions:

- Cause: Non-uniform coating of the conjugate pad. Solution: Ensure uniform spreading of detector particles during the coating process, using either dipping or spraying methods [28].

- Cause: Inconsistent overlapping of membrane components. Solution: Ensure precise, consistent overlapping of the sample pad, conjugate pad, nitrocellulose membrane, and absorbent pad on the backing card [31].

Table: Summary of Critical Membrane Properties

| Parameter | Considerations | Impact on Assay |

|---|---|---|

| Pore Size | Ranges from 1-20 µm [28]. | Smaller pores increase wicking time and interaction, potentially enhancing sensitivity [28]. |

| Capillary Flow Time | Time for liquid to travel and fill the membrane strip [31]. | A more accurate parameter than pore size for selecting membrane material and ensuring consistent flow [31]. |

| Wicking Rate | Speed at which fluid moves through the membrane. | Affects the time available for antigen-antibody binding; can be optimized for sensitivity [28] [32]. |

| Protein Holding Capacity | The amount of protein the membrane can immobilize. | Directly impacts the amount of capture antibody that can be bound to the test and control lines [32]. |

Frequently Asked Questions (FAQs)

Q1: What are the key considerations when selecting a nitrocellulose membrane for an LFIA destined for a low-resource setting? The selection must balance performance with environmental robustness. Key factors include:

- Pore Size/Flow Rate: Choose a pore size (typically 8-15 µm for serum, 15 µm for whole blood) that provides an optimal flow rate, balancing speed with sufficient analyte-antibody interaction time for sensitivity [28].

- Stability: NC membranes are vulnerable to moisture. Select membranes with consistent quality and ensure robust, moisture-proof packaging with desiccants to maintain stability in high-humidity environments [28] [29].

- Protein Binding Capacity: Ensure the membrane has adequate capacity to immobilize the required amount of capture antibody (typically 50-500 ng per strip) for a strong, reliable signal [28] [32].

Q2: How can I improve the thermal stability and shelf-life of my LFIA in locations without reliable cold chain storage?

- Reagent Formulation: Use heat-stable reagent formulations, such as antibodies known for their robustness [29].

- Conjugate Pad Preservation: Incorporate carbohydrates (e.g., sucrose) into the conjugate pad buffer. When dried, these form a protective matrix around the detector particles, stabilizing them during storage at elevated temperatures [31].

- Packaging: Employ multi-layered, moisture-barrier packaging and include desiccants to protect against heat and humidity, aiming for a shelf life of 12-24 months [29].

Q3: Why is the pH of the conjugation buffer so critical, and how do I optimize it? The pH of the conjugation buffer determines the electrostatic interaction between the antibody and the nanoparticle (e.g., colloidal gold). An incorrect pH will lead to incomplete conjugation or particle aggregation, reducing sensitivity and increasing background [28].

- Optimization Method: Perform a salt aggregation test. Mix conjugation buffer, antibody, and colloidal gold across a range of pH values, then challenge each mixture with 2M sodium chloride. The optimum pH is the lowest pH at which no color change or precipitation occurs, indicating that the antibody has bound to the particles and protects them from salt-induced aggregation [28].

Q4: What are the primary causes of non-specific binding, and how can they be mitigated? Non-specific binding manifests as high background or false positives.

- Causes: Hydrophobic or hydrophilic interactions between the conjugate and membrane; interfering substances in samples (e.g., lipids, proteins); cross-reactivity of antibodies [30].

- Mitigation Strategies:

- Blocking: Use blockers like BSA, casein, or commercial blocking proteins in the sample pad and conjugate buffer [28].

- Surfactants: Add non-ionic surfactants (e.g., Tween-20, Triton X-100) to the running buffer or pad pretreatment to minimize hydrophobic interactions [28] [30].

- Antibody Selection: Use high-affinity, high-specificity monoclonal antibodies. For some challenges, using F(ab')2 fragments in the conjugate can help significantly [30].

Experimental Protocols for Key Optimizations

Protocol 1: Optimizing Conjugate Pad Release Kinetics

Objective: To ensure the conjugate is released uniformly and in sync with the sample flow, preventing false negatives.

- Preparation: Prepare conjugate pads with a series of sucrose concentrations (e.g., 2%, 5%, 10%) in the dispensing buffer.

- Coating: Apply a fixed amount of gold nanoparticle-antibody conjugate to the pre-treated pads and dry at 37°C for 1 hour.

- Testing: Assemble test strips with the different conjugate pads. Run a standard sample containing a known concentration of the analyte.

- Evaluation: Visually or using a strip reader, assess the intensity of the test line and the cleanliness of the background. The optimal sugar concentration provides a strong, clear test line with minimal background.

- Rationale: Sugar acts as a preservative and resolubilization agent. The right concentration ensures the conjugate re-suspends completely and migrates with the sample front [28] [31].
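When a strip reader is available, the evaluation step can be made less subjective by comparing conditions numerically. The sketch below ranks sucrose concentrations by mean signal-to-background ratio across replicate strips. Using this ratio as the selection criterion is an assumption made here for illustration (the protocol itself only calls for a strong test line with minimal background), and the reader values are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple

# (test-line intensity, background intensity) in arbitrary reader units
Reading = Tuple[float, float]


def rank_sucrose_conditions(readings: Dict[float, List[Reading]]) -> List[Tuple[float, float]]:
    """Rank sucrose concentrations (percent) by mean signal-to-background ratio, best first."""
    ranked = []
    for concentration, replicates in readings.items():
        # Skip replicates with a zero background reading to avoid division by zero
        ratios = [signal / background for signal, background in replicates if background > 0]
        if not ratios:
            raise ValueError(f"No usable replicates for {concentration}% sucrose")
        ranked.append((concentration, mean(ratios)))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Hypothetical reader values for the 2%, 5%, and 10% conditions
example = {
    2.0: [(120.0, 30.0), (110.0, 28.0)],
    5.0: [(200.0, 20.0), (190.0, 19.0)],
    10.0: [(150.0, 25.0), (160.0, 25.0)],
}
best_concentration, best_ratio = rank_sucrose_conditions(example)[0]
print(f"Best condition: {best_concentration}% sucrose (signal/background = {best_ratio:.1f})")
```

A ranking of this kind is only a starting point; the leading condition should still be confirmed visually and checked for release behavior after storage.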

Protocol 2: Verifying Conjugation Efficiency via Salt Aggregation Test

Objective: To empirically determine the ideal pH for conjugating an antibody to colloidal gold nanoparticles.

- Preparation: Prepare a series of small tubes with colloidal gold solutions adjusted to different pH values (e.g., from 7.0 to 9.5, in 0.5 increments) using 0.1M K2CO3.

- Addition: To each tube, add a fixed, small volume of the antibody solution and mix gently.

- Incubation: Let stand for 2-5 minutes.

- Stress Test: Add a fixed volume of 10% NaCl solution to each tube and mix.

- Analysis: Observe the tubes for color change from red to blue/gray, which indicates aggregation.

- Determination: The optimal pH for conjugation is the lowest pH value at which the solution remains red and shows no sign of aggregation after salt addition. Using a pH 0.5 higher than this is recommended for the actual conjugation [28].

Visualization of LFIA Architecture and Flow

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Reagents and Materials for LFIA Development

| Item | Function | Key Considerations |

|---|---|---|

| Nitrocellulose Membrane | The platform for capillary flow and immobilization of capture antibodies at test and control lines [28] [31]. | Pore size (1-20 µm), capillary flow time, protein binding capacity, and humidity sensitivity are critical selection parameters [28] [32]. |

| Colloidal Gold Nanoparticles | The most common label (detector particle); provides red color upon accumulation at test lines [28] [31]. | Particle size (20-80 nm, 40 nm is common); requires precise pH control during antibody conjugation for stability [28]. |

| Monoclonal Antibodies | Primary biorecognition elements for both capture and detection; provide high specificity [28] [31]. | Must have high affinity and low cross-reactivity. Epitope mapping for sandwich assays is essential to ensure distinct binding sites [28]. |

| Blocking Agents (BSA, Casein) | Proteins used to coat membranes and pads to minimize non-specific binding and reduce background noise [28]. | Typical concentrations: BSA (1%), Casein (0.1-0.5%). Optimize to block all non-specific sites without interfering with specific binding [28]. |

| Surfactants (Tween-20) | Added to buffer systems to control flow characteristics and reduce hydrophobic interactions that cause non-specific binding [28] [30]. | Use at low concentrations (<0.05%). Critical for ensuring uniform sample wicking and release of conjugate from the pad [28]. |

| Carbohydrates (Sucrose) | Used as a stabilizer and resolubilization agent in the conjugate pad to protect detector antibodies during drying and storage [31]. | Concentration must be optimized to ensure complete release of conjugate upon sample application without delaying flow [28] [30]. |

| Conjugation Buffer | The medium in which antibodies are conjugated to nanoparticles; its pH and molarity are critical for success [28]. | pH must be optimized for each antibody-nanoparticle pair, typically near or slightly above the isoelectric point of the antibody [28]. |

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center is designed for researchers and scientists employing Lab-in-a-Cartridge and smartphone-based diagnostic technologies in low-resource settings. The guides below address common experimental and operational challenges.

Frequently Asked Questions (FAQs)

Q1: What do the REASSURED criteria for modern Point-of-Care Testing (POCT) devices stand for? The updated REASSURED criteria define the standards for ideal POCT devices suitable for low-resource environments. The acronym stands for Real-time connectivity, Ease of specimen collection, Affordable, Sensitive, Specific, User-friendly, Rapid and Robust, Equipment-free, and Deliverable to end-users [33].

Q2: How can Machine Learning (ML) improve the accuracy of test line interpretation in Lateral Flow Assays (LFAs)? Self-administration and reading of POCT by less trained staff can lead to diagnostic inaccuracies. ML algorithms, particularly supervised learning models like Convolutional Neural Networks (CNNs), can be embedded into smartphone-based readers to process images of the LFA. This reduces false positives and negatives by providing a quantitative interpretation of results, including faint test lines that are difficult for the human eye to classify [33].

Q3: Our cartridge-based nucleic acid test is displaying connectivity issues, failing to transmit results to the central surveillance database. What are the first steps in troubleshooting? Connectivity is critical for real-time surveillance. Please follow this initial troubleshooting protocol:

- Verify Data Link: Ensure the POCT device or the connected smartphone has an active cellular or Wi-Fi connection.

- Power Cycle: Turn the device off and on again. This simple step can often resolve minor software glitches affecting connectivity [34].

- Inspect the Cartridge Reader: Check the physical ports and cables connecting the cartridge reader to the smartphone or data transmitter for any visible damage.

- Check Server Status: Confirm that the central database server is operational and accessible.
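
The checklist above can be scripted so that an operator sees which step fails first. The following is a minimal Python sketch under stated assumptions, not part of any vendor's software: the function names `can_reach` and `first_failure` are hypothetical, the hosts in the commented example are placeholders, and a plain TCP connection attempt is only a rough stand-in for a real health check of the surveillance server.

```python
import socket
from typing import Callable, List, Tuple


def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False


def first_failure(checks: List[Tuple[str, Callable[[], bool]]]) -> str:
    """Run named checks in order and return the name of the first one that fails.

    Returns "ok" when every check passes (or when no checks are given).
    """
    for name, probe in checks:
        if not probe():
            return name
    return "ok"


# Example wiring (hypothetical hosts; replace with your deployment's values):
# checks = [
#     ("data link", lambda: can_reach("example.org", 443)),
#     ("server status", lambda: can_reach("surveillance.example.org", 443)),
# ]
# print(first_failure(checks))
```

Running the checks in the same order as the checklist keeps the report aligned with what the operator would do by hand; the power cycle and cable inspection remain manual steps.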

Troubleshooting Common Experimental Issues

Issue: Consistently Faint Test Lines on Lateral Flow Assays (LFAs) Leading to Ambiguous Results

| Potential Cause | Explanation | Resolution Protocol |
|---|---|---|
| Suboptimal Antibody Pair | The capture/detection antibody pair may have low affinity or specificity for the target antigen. | Re-optimize the assay conditions or source a new, validated antibody pair. Run a standard series with a known antigen concentration to recalibrate. |
| Insufficient Sample Volume | The sample flow is inadequate to deliver a sufficient number of target analytes to the test line. | Precisely calibrate and adhere to the required sample volume. Use a calibrated pipette for loading the sample. |
| Incorrect Buffer Formulation | The running buffer may not effectively support the antibody-antigen interaction or the flow dynamics. | Prepare a fresh batch of buffer according to the exact protocol, ensuring correct pH and salt concentration. |
| Hardware Imperfection | The smartphone reader or optical sensor may not be capturing the image under consistent lighting conditions. | Implement a standardized imaging box to control ambient light. Use an ML algorithm trained to account for and correct such variations in the image data [33]. |

Issue: Low Signal-to-Noise Ratio in Smartphone-based Microfluidic Immunoassays

| Potential Cause | Explanation | Resolution Protocol |
|---|---|---|
| Non-Specific Binding | Proteins or other components in the sample are binding to the microfluidic channel walls, creating background noise. | Include blocking agents like BSA or casein in the buffer. Increase the stringency of wash steps. |
| Suboptimal Image Capture | Images are taken with motion blur, inconsistent focus, or improper white balance. | Use a fixed-position smartphone holder. Employ an app that allows for manual control of focus and exposure. |
| Background Fluorescence | The cartridge material or reagents have inherent autofluorescence. | Switch to low-fluorescence plastics. Include a "no-analyte" negative control to establish and computationally subtract the background signal. |
| Complex Sample Matrix | Biological samples (e.g., blood, saliva) contain many components that interfere with the signal. | Incorporate sample preparation/filtration steps into the cartridge design. Use sample purification columns or filters prior to loading [35]. |
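
The "no-analyte" control mentioned in the table can be used to put a number on signal-to-noise. The sketch below is one simple convention and an assumption of this example, not a method taken from the cited sources: the signal is the mean sample intensity minus the mean blank intensity, and the noise is the sample standard deviation of the blank readings. The function name `background_corrected_snr` is hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def background_corrected_snr(sample: Sequence[float], blank: Sequence[float]) -> float:
    """Return (mean(sample) - mean(blank)) / stdev(blank).

    `sample` holds intensity readings from the test zone; `blank` holds
    readings from the no-analyte negative control.
    """
    if len(sample) < 1 or len(blank) < 2:
        raise ValueError("need at least one sample reading and two blank readings")
    noise = stdev(blank)  # sample standard deviation of the background
    if noise == 0:
        raise ValueError("blank readings are identical; noise cannot be estimated")
    signal = mean(sample) - mean(blank)  # background-subtracted signal
    return signal / noise


# Illustrative (made-up) intensity values:
ratio = background_corrected_snr([120, 130, 125], [20, 22, 18])
print(f"SNR = {ratio:.1f}")
```

Tracking this ratio across runs gives a quick way to see whether a change in blocking agent, wash stringency, or cartridge plastic actually improved the readout.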

The Scientist's Toolkit: Research Reagent Solutions

The following table details key reagents and materials essential for developing and running experiments with Lab-in-a-Cartridge and smartphone-based diagnostics.

| Item | Function/Explanation |
|---|---|
| Bead-based Immunoassay Kits | These kits use beads in solution (as opposed to a solid phase) which offer more sites for ligand binding, potentially yielding higher sensitivity and allowing for multiplexed, quantitative detection of multiple targets from a single specimen [35]. |
| Microfluidic Discs/Cartridges | These are miniaturized platforms, often in disc form, that reproduce all steps of a traditional ELISA. They are rapid and inexpensive to manufacture, and when paired with a reader, allow for high-throughput, automated analysis with small sample volumes [35]. |
| Quantum Dots | These are fluorescent semiconductor nanoparticles (10-100 atoms in diameter) used as labels in ultrasensitive tests. They can be applied to detect low-abundance pathogens and for high-throughput screening of drug resistance mutations [35]. |
| Multiplexed Vertical Flow Assay (VFA) | A paper-based sensor platform that allows for the simultaneous detection of multiple biomarkers. Its design can be computationally optimized using machine learning to determine the best immunoreaction conditions, enhancing performance and reducing cost per test [33]. |
| ML-Enhanced Diagnostic Software | Software incorporating supervised learning algorithms (e.g., CNNs, SVMs) for automated analysis of POCT data. It improves sensitivity, enables multiplexing, and provides quantitative results from complex signals, reducing user interpretation errors [33]. |

Experimental Protocol: ML-Assisted Analysis for a Multiplexed Vertical Flow Assay (VFA)

This protocol details the methodology for processing and interpreting results from a multiplexed VFA using a smartphone and machine learning, suitable for low-resource settings.

Materials:

- Developed multiplexed VFA cartridge.

- Smartphone with camera and custom image capture app.

- Standardized imaging box to control lighting.

- Pre-trained machine learning model (e.g., a Convolutional Neural Network) integrated into a smartphone app or connected software.

Methodology:

- Image Acquisition: After running the VFA, place the cartridge into the standardized imaging box. Use the smartphone app to capture a high-resolution image of the assay result under consistent lighting conditions [33].

- Data Preprocessing: The captured image is automatically preprocessed by the software. This step involves:

  - Denoising: Reducing visual noise from the image.

  - Background Subtraction: Correcting for uneven illumination or background color.

  - Normalization: Scaling image intensities to a standard range.

  - Augmentation (if needed): Artificially expanding the training dataset by creating modified versions of the image (e.g., rotated, scaled) to improve model robustness [33].

- Model Optimization & Feature Selection: The preprocessed image is fed into the pre-trained ML model. That model is optimized beforehand, during training: the dataset is typically split into 60% for training, 20% for validation, and 20% for blind testing, and the model's configuration is tuned to learn the relationship between the input image patterns and the target outcomes (e.g., analyte concentration) [33].

- Blind Testing & Result Interpretation: The optimized model analyzes the new, unseen image from the VFA. It extracts features from the test zones and provides a quantitative interpretation of the results (e.g., concentration values for multiple biomarkers) along with a confidence score for the prediction. This eliminates the subjectivity of visual interpretation [33].

- Data Transmission: The quantitative results, along with the image and metadata (e.g., GPS location, timestamp), are transmitted via the smartphone's data connection to a central surveillance database for real-time monitoring and analysis [35].
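
Two of the steps above, background subtraction with normalization and the 60/20/20 dataset split, can be sketched in a few lines. This is an illustrative Python outline using only the standard library, not the software described in [33]: images are represented as nested lists of pixel intensities, the background is assumed to be a single scalar level, denoising and augmentation are left out, and the function names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple

Image = List[List[float]]


def subtract_background(pixels: Image, background: float) -> Image:
    """Subtract a scalar background level from every pixel, clipping at zero."""
    return [[max(p - background, 0.0) for p in row] for row in pixels]


def normalize(pixels: Image) -> Image:
    """Min-max scale intensities to [0, 1]; a constant image maps to all zeros."""
    flat = [p for row in pixels for p in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return [[0.0 for _ in row] for row in pixels]
    return [[(p - lo) / (hi - lo) for p in row] for row in pixels]


def split_60_20_20(items: Sequence, seed: int = 0) -> Tuple[list, list, list]:
    """Shuffle reproducibly, then split into train, validation, and blind-test sets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n = len(shuffled)
    n_train = n * 60 // 100  # integer arithmetic avoids float rounding surprises
    n_val = n * 20 // 100
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder goes to blind testing
    return train, val, test


# Illustrative (made-up) 2x2 image and a dataset of 10 image identifiers:
clean = normalize(subtract_background([[10, 20], [30, 50]], background=10))
train, val, test = split_60_20_20(range(10))
print(clean, len(train), len(val), len(test))
```

Fixing the shuffle seed keeps the blind-test set the same between training runs, so a reported accuracy always refers to images the model has never seen.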

The Role of User-Centered Design and Human Factors Engineering

In low-resource settings research, diagnostic challenges such as equipment variability, limited user training, and environmental constraints can compromise data integrity. User-Centered Design (UCD) and Human Factors Engineering provide a systematic framework to develop resilient diagnostic tools and protocols. By focusing on the needs, capabilities, and contexts of researchers, these approaches reduce human error and ensure reliable results despite infrastructural limitations [36] [37]. This technical support center applies these principles to create effective troubleshooting guides and FAQs, empowering scientists to overcome common experimental hurdles.

Frequently Asked Questions (FAQs)

Human-Centered Design

What is Human-Centered Design (HCD) and why is it critical for diagnostics in low-resource settings?

HCD is an approach that makes interactive systems more usable by focusing on the user and applying human factors, ergonomics, and usability techniques [36]. For diagnostics, it ensures that tools and protocols are designed around the specific constraints of the field—such as unstable power, limited technical expertise, or dusty environments—making them safer, more effective, and more likely to be adopted correctly [36] [37].

What are the phases of a Human-Centered Design process?

The ISO 9241-210 standard defines four iterative activity phases [36]:

- Understand the context of use: Identify the users, their tasks, and their operating environment.

- Specify user requirements: Detail the user needs that must be met for the product to be successful.

- Produce design solutions: Create prototypes and mock-ups.

- Evaluate the design: Assess the solutions against the user requirements [36].

Troubleshooting Guide Fundamentals

How does a troubleshooting guide align with UCD principles?

A troubleshooting guide is a practical application of UCD. It is a structured, user-focused tool that helps researchers independently diagnose and resolve problems, reducing downtime and frustration. By providing clear, step-by-step instructions, it empowers users of all skill levels, making the entire diagnostic system more usable and reliable [38] [39].

What are the key features of an effective troubleshooting guide?

A well-designed guide should have [38] [39]:

- Clear problem identification: Precise descriptions of symptoms and error messages.

- Step-by-step instructions: A logical sequence to follow without skipping steps.

- Visual aids: Diagrams or screenshots to clarify complex steps.

- Common pitfalls: Warnings about frequent mistakes to avoid.

When should a troubleshooting issue be escalated to the engineering team?

Escalate when all solutions in the guide have been exhausted, the issue affects critical operations, or it is a novel problem not covered in existing documentation. Always provide the engineering team with detailed evidence, such as logs, error codes, and the steps already taken [39] [40].

Troubleshooting Guides

Guide 1: Inconsistent Assay Results

Issue or Problem Statement

A diagnostic assay (e.g., ELISA, lateral flow) produces inconsistent or variable results between users or test runs [39].

Symptoms or Error Indicators

- High coefficient of variation between replicate samples.

- Control samples falling outside acceptable ranges.

- Faint, uneven, or absent test bands in visual readouts [39].
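
The first symptom, a high coefficient of variation (CV), is straightforward to compute from replicate readings. The sketch below uses the usual definition, CV% = 100 × sample standard deviation / mean. The 15% default limit is only a placeholder assumed for this example and should be replaced with the acceptance criterion from the assay's own documentation; the function names are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def coefficient_of_variation(replicates: Sequence[float]) -> float:
    """Return the percent CV: 100 * sample standard deviation / mean."""
    if len(replicates) < 2:
        raise ValueError("need at least two replicate readings")
    m = mean(replicates)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return 100.0 * stdev(replicates) / m


def flag_high_cv(replicates: Sequence[float], limit_percent: float = 15.0) -> bool:
    """Return True when the replicate CV exceeds the (assumed) acceptance limit."""
    return coefficient_of_variation(replicates) > limit_percent


# Illustrative (made-up) replicate readings:
print(coefficient_of_variation([98, 100, 102]))
print(flag_high_cv([50, 100, 150]))
```

Computing the CV separately per operator and per run makes it easier to tell user variability apart from reagent or equipment problems.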

Environment Details

- Document the ambient temperature and humidity.

- Note the specific assay kit, manufacturer, and lot number.

- Record the equipment used (e.g., pipettes, plate reader) and their calibration dates [39].

Possible Causes

- Improper sample storage or handling.

- Pipetting technique inconsistencies.

- Deviations from the recommended incubation timings or temperatures.

- Reagent degradation or contamination [39].

Step-by-Step Resolution Process

- Verify reagent integrity: Check expiration dates and ensure reagents have been stored correctly. Visually inspect for precipitates or discoloration.

- Confirm protocol adherence: Review the standard operating procedure (SOP) with the user. Watch them perform key steps to identify technique deviations.

- Check equipment calibration: Confirm that pipettes, timers, and incubators are within calibration specifications.

- Run a controlled experiment: Repeat the assay using a fresh set of reagents and a single, trained operator to isolate user variability.

- Document findings: Record all observations, measurements, and any corrective actions taken [39].

Escalation Path or Next Steps

If the problem persists after controlled testing, escalate to the lab manager or the assay manufacturer's technical support. Provide the full dataset, calibration records, and details of the controlled experiment [39].

Guide 2: Portable Analyzer Connectivity Failure

Issue or Problem Statement

A portable analyzer (e.g., spectrophotometer, qPCR machine) fails to sync or transmit data to a laptop or tablet [38].

Symptoms or Error Indicators

- "Device not found" or "Connection failed" error messages on the computer.

- The analyzer appears to be functioning but data is not received.

- Intermittent connection that drops during data transfer [38].

Environment Details

- Type of connection: USB, Bluetooth, Wi-Fi.

- Operating system and version of the computer/tablet.