Comparative Analysis of Imaging Modalities for Early Detection: Advancing Precision in Disease Diagnosis and Drug Development

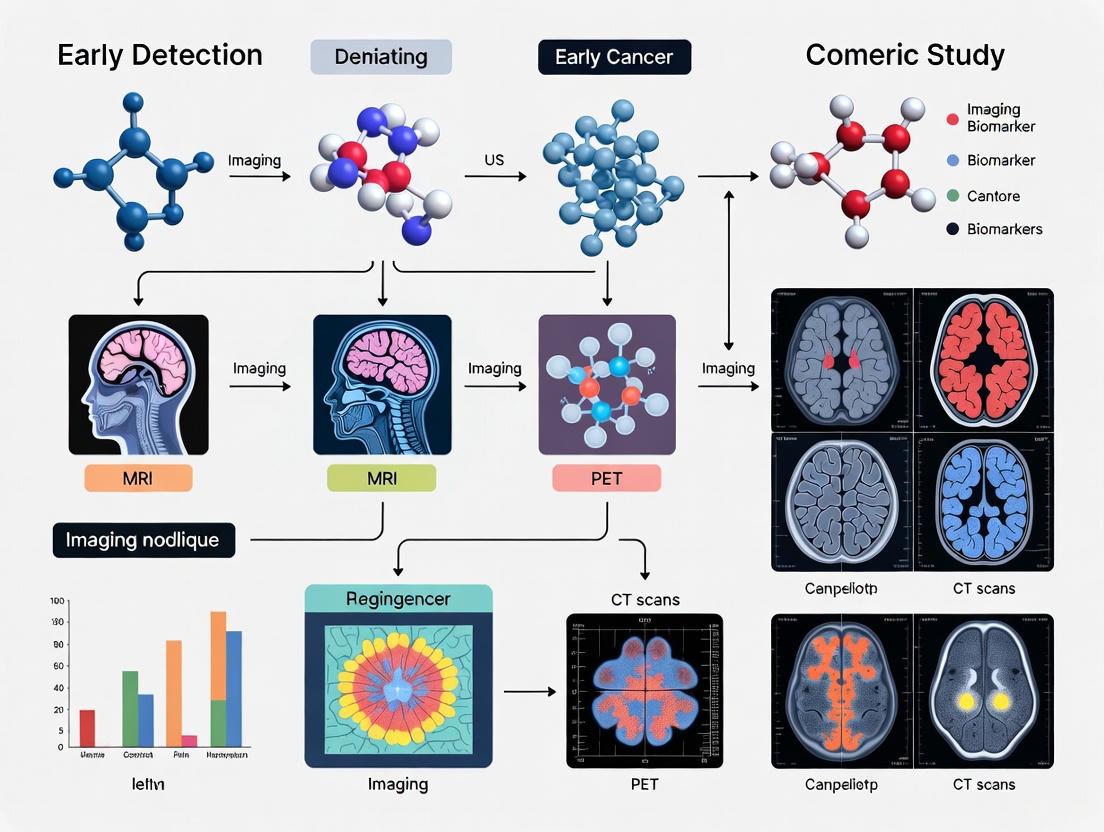



Abstract

This article provides a comprehensive comparative analysis of established and emerging imaging modalities for early disease detection, with a specific focus on applications in drug discovery and development. It explores the foundational principles, technical capabilities, and limitations of key technologies including CT, MRI, PET, ultrasound, and advanced hybrid systems. The content examines methodological applications across therapeutic areas, addresses optimization strategies through AI and multimodal fusion, and critically evaluates validation frameworks for imaging biomarkers. Designed for researchers, scientists, and drug development professionals, this review synthesizes current trends, technological innovations, and practical considerations for implementing imaging technologies in preclinical and clinical research to enhance diagnostic precision and accelerate therapeutic development.

Fundamental Principles and Evolving Landscape of Diagnostic Imaging Technologies

The selection of an appropriate imaging modality is a critical determinant of success in early detection research and therapeutic development. Technological advancements have given researchers a sophisticated toolkit for non-invasively probing living systems, yet each modality possesses distinct strengths and limitations rooted in its underlying physical principles. This guide provides a comparative analysis of three core imaging modalities—Magnetic Resonance Imaging (MRI), Positron Emission Tomography (PET), and Computed Tomography (CT)—focusing on their technical mechanisms, diagnostic performance, and specific applications in a research context. By objectively comparing their performance using recent experimental data, this resource aims to inform evidence-based decision-making for researchers, scientists, and drug development professionals engaged in the comparative study of imaging biomarkers for early disease detection.

Technical Principles and Physical Mechanisms

Understanding the fundamental physics underlying each imaging technology is essential for selecting the right tool for a specific research question and for accurately interpreting the resulting data.

Magnetic Resonance Imaging (MRI)

MRI functions by harnessing the magnetic properties of atomic nuclei, most commonly hydrogen protons found abundantly in water and fat molecules within the body [1]. When placed in a strong, static magnetic field (B0), these randomly oriented proton spins align either parallel or antiparallel to the field, creating a net magnetization vector [2]. The application of a radiofrequency (RF) pulse at the specific resonant frequency (Larmor frequency) tips this net magnetization away from its alignment with B0. After the RF pulse is turned off, the protons release the absorbed energy as they return to their equilibrium state; this emitted signal is detected by RF coils [1] [2]. The contrast in an MR image is determined by the rate at which the magnetization recovers along the longitudinal axis (T1 relaxation) and loses phase coherence in the transverse plane (T2 relaxation) [2]. By manipulating the sequence of RF pulses (pulse sequences), researchers can generate images that are weighted to emphasize differences in tissue-specific T1 or T2 relaxation times, or proton density.
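
The relationships described above can be sketched numerically. The following is a minimal illustration, not a scanner model: it assumes the standard mono-exponential relaxation equations after a 90-degree pulse and an approximate proton gyromagnetic ratio of 42.577 MHz/T. The tissue names and relaxation times are hypothetical values chosen only to show how T1 and T2 differences translate into signal differences.

```python
import math

# Proton gyromagnetic ratio divided by 2*pi, in MHz per tesla (approximate).
GAMMA_BAR_1H_MHZ_PER_T = 42.577


def larmor_frequency_mhz(b0_tesla: float) -> float:
    """Resonant (Larmor) frequency of hydrogen protons at field strength B0."""
    return GAMMA_BAR_1H_MHZ_PER_T * b0_tesla


def longitudinal_recovery(t_ms: float, t1_ms: float, m0: float = 1.0) -> float:
    """Mz(t) after a 90-degree pulse: M0 * (1 - exp(-t / T1))."""
    return m0 * (1.0 - math.exp(-t_ms / t1_ms))


def transverse_decay(t_ms: float, t2_ms: float, m0: float = 1.0) -> float:
    """Mxy(t) after a 90-degree pulse: M0 * exp(-t / T2)."""
    return m0 * math.exp(-t_ms / t2_ms)


# Hypothetical (assumed) T1/T2 values in ms, for illustration only.
tissues = {"tissue_a": (900.0, 80.0), "tissue_b": (1400.0, 110.0)}
for name, (t1, t2) in tissues.items():
    mz = longitudinal_recovery(500.0, t1)  # short repetition time: T1 differences dominate
    mxy = transverse_decay(90.0, t2)       # long echo time: T2 differences dominate
    print(f"{name}: Mz={mz:.3f}, Mxy={mxy:.3f}")
print(f"Larmor frequency at 3 T: {larmor_frequency_mhz(3.0):.2f} MHz")
```

Sampling the recovery curve early emphasizes T1 differences, while sampling the decay curve late emphasizes T2 differences, which is the intuition behind T1- and T2-weighted pulse sequences.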

Positron Emission Tomography (PET)

PET is a molecular imaging technique that detects pairs of gamma rays emitted indirectly by a positron-emitting radiotracer, most commonly ¹⁸F-fluorodeoxyglucose ([¹⁸F]FDG), which is introduced into the body [3]. [¹⁸F]FDG is a glucose analog that is taken up by cells with high metabolic activity, such as cancer cells. Once inside the cell, it becomes trapped after phosphorylation, accumulating in proportion to the tissue's glucose metabolic rate [4]. As the radiotracer decays, it emits a positron that annihilates with a nearby electron, producing two 511-keV gamma photons traveling in nearly opposite directions. The PET scanner detects these photon pairs simultaneously ("coincidence detection"), allowing the system to pinpoint the line along which the annihilation occurred. By collecting millions of these events, the system reconstructs a three-dimensional map of radiotracer concentration, which reflects functional processes like glucose metabolism [3].
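
Because the tracer decays continuously between injection and acquisition, quantitative PET values are normally decay-corrected to a common reference time. The sketch below shows the underlying arithmetic under simple assumptions: a single radionuclide, a fluorine-18 half-life of about 109.77 minutes, and hypothetical activities and delays. The function names are illustrative and do not come from any scanner software.

```python
# Physical half-life of fluorine-18 in minutes (approximate).
F18_HALF_LIFE_MIN = 109.77


def remaining_activity(a0_mbq: float, elapsed_min: float,
                       half_life_min: float = F18_HALF_LIFE_MIN) -> float:
    """Activity left after elapsed_min: A0 * 2^(-t / T_half)."""
    return a0_mbq * 2.0 ** (-elapsed_min / half_life_min)


def decay_correct(measured_mbq: float, elapsed_min: float,
                  half_life_min: float = F18_HALF_LIFE_MIN) -> float:
    """Scale a measurement back to the reference time (inverse of the decay)."""
    return measured_mbq * 2.0 ** (elapsed_min / half_life_min)


# Hypothetical example: 300 MBq injected, scan starts 60 minutes later.
at_scan = remaining_activity(300.0, 60.0)
print(f"Activity at scan start: {at_scan:.1f} MBq")
print(f"Decay-corrected to injection time: {decay_correct(at_scan, 60.0):.1f} MBq")
```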

Computed Tomography (CT)

CT imaging is based on the principle of X-ray attenuation. An X-ray source rotates around the patient, and an array of detectors on the opposite side measures the intensity of the X-rays after they have passed through the body [5]. Different body tissues attenuate the X-ray beam to varying degrees; dense materials like bone absorb more radiation than soft tissues. The attenuation data from multiple angles are then processed using reconstruction algorithms to generate cross-sectional images [5]. The degree of attenuation is quantified in Hounsfield Units (HU), which form the basis of CT image contrast. The move from single-slice to multislice CT (MSCT) scanners has dramatically increased the speed and resolution of image acquisition, enabling rapid volumetric assessment of anatomy [5].
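
The Hounsfield scale is a linear rescaling of the measured attenuation coefficient so that water maps to 0 HU and air to about -1000 HU. The sketch below shows that conversion. The attenuation coefficients are rough, assumed values for illustration only (real values depend on beam energy), and the function name is hypothetical.

```python
def hounsfield_units(mu: float, mu_water: float, mu_air: float) -> float:
    """Convert a linear attenuation coefficient to HU: 1000 * (mu - mu_water) / (mu_water - mu_air)."""
    return 1000.0 * (mu - mu_water) / (mu_water - mu_air)


# Assumed, illustrative attenuation coefficients in 1/cm (energy dependent in practice).
MU_WATER = 0.19
MU_AIR = 0.0002

for label, mu in [("air", MU_AIR), ("water", MU_WATER), ("denser tissue", 0.38)]:
    print(f"{label}: {hounsfield_units(mu, MU_WATER, MU_AIR):.0f} HU")
```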

Table 1: Fundamental Physical Principles of Core Imaging Modalities

| Modality | Signal Origin | Contrast Mechanism | Energy Source | Primary Information |
|---|---|---|---|---|
| MRI | Nuclear spin of protons (e.g., in H₂O) | T1/T2 relaxation times, proton density, flow | Magnetic fields & radio waves | Anatomical / Functional (e.g., diffusion, perfusion) |
| PET | Positron emission from radionuclide | Concentration of radioactive tracer | Administered radiopharmaceutical | Metabolic / Molecular function |
| CT | Attenuation of X-rays | Tissue electron density | Ionizing radiation (X-rays) | Anatomical / Structural |

Performance Comparison in Clinical Research

Direct, head-to-head comparisons are invaluable for understanding the relative strengths of these modalities in specific research scenarios, such as oncology.

PET vs. MRI in Detecting Tumor Recurrence

A 2024 meta-analysis provides a quantitative comparison of [¹⁸F]FDG PET/CT and MRI for detecting recurrent or residual tumor at the primary site in patients with nasopharyngeal carcinoma (NPC) [6]. The analysis, which included five studies encompassing 1,908 patients, found that PET/CT demonstrated significantly higher pooled sensitivity (93.3%) than MRI (80.1%) [6]. This indicates that PET/CT is more reliable for correctly identifying patients who truly have a recurrent or residual tumor. The specificities of the two modalities, however, were statistically similar (PET/CT: 93.8% vs. MRI: 91.8%), suggesting comparable ability to rule out disease in patients without recurrence, although the MRI specificity estimates varied considerably between studies (I² = 94.3%) [6]. The area under the curve (AUC), a measure of overall diagnostic performance, was 0.978 for PET/CT and 0.924 for MRI, a difference that was not statistically significant (p=0.23) [6].

Table 2: Diagnostic Performance in Nasopharyngeal Carcinoma Recurrence (Meta-Analysis Data) [6]

| Diagnostic Metric | [¹⁸F]FDG PET/CT | MRI |
|---|---|---|
| Pooled Sensitivity | 93.3% (95% CI: 91.3–94.9%) | 80.1% (95% CI: 77.2–82.8%) |
| Pooled Specificity | 93.8% (95% CI: 92.2–95.2%) | 91.8% (95% CI: 90.1–93.4%) |
| Area Under the Curve (AUC) | 0.978 | 0.924 |
| Heterogeneity (I²) for Sensitivity | 52.6% | 68.3% |
| Heterogeneity (I²) for Specificity | 0% | 94.3% |

Synergistic Potential of Hybrid Systems

The complementary nature of metabolic and anatomical imaging has driven the development of hybrid systems, most notably PET/CT and, more recently, PET/MRI. PET/CT synergistically combines the high functional sensitivity of PET with the detailed anatomical reference provided by CT, improving the anatomical localization of functional abnormalities [3]. PET/MRI represents a further advancement, offering the same metabolic information with the superior soft-tissue contrast of MRI and a reduced radiation dose compared to PET/CT [4]. In brain imaging, for example, PET/MRI allows for the correlation of metabolic information from PET with detailed anatomical, functional, and microstructural information from advanced MRI sequences (e.g., diffusion-weighted and perfusion-weighted imaging) [4]. This is particularly powerful in neuro-oncology for differentiating tumor recurrence from treatment-related changes and for precise tumor characterization [4].

Experimental Protocols for Validation Studies

To ensure the validity and reproducibility of comparative imaging studies, rigorous experimental protocols must be followed.

Protocol for a Head-to-Head Comparison Study

The meta-analysis on NPC provides a template for a robust comparative study design [6]. Key methodological considerations include:

- Patient Population: Clearly defined patient cohort (e.g., post-treatment NPC patients suspected of recurrence). Studies should include a minimum of 20 patients to reduce small-study effects [6].

- Imaging Acquisition: Both imaging modalities ([¹⁸F]FDG PET/CT and MRI) must be performed within a short, predefined interval (e.g., a maximum of 2 months) to ensure disease status remains unchanged between scans [6]. A minimum interval (e.g., 2 months) should be enforced between the completion of therapy and post-treatment imaging to allow for treatment-related inflammation to subside [6].

- Reference Standard: A reliable reference standard is required to classify true positive, true negative, false positive, and false negative cases. This is often based on histopathology from biopsy/surgery or long-term clinical follow-up.

- Data Analysis: Data extraction should be performed on a per-patient basis, calculating true positive (TP), false negative (FN), false positive (FP), and true negative (TN) values for each modality. Pooled estimates for sensitivity, specificity, and AUC can then be calculated and compared using appropriate statistical software [6].
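
The per-patient analysis step can be sketched as follows. This is a minimal single-study illustration, assuming a simple 2×2 table and a Wilson score interval for the confidence bounds; the counts are hypothetical. Pooling across studies, as done in the meta-analysis, requires dedicated meta-analytic models that are not reproduced here.

```python
import math
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    tp: int  # true positives
    fn: int  # false negatives
    fp: int  # false positives
    tn: int  # true negatives


def sensitivity(c: ConfusionCounts) -> float:
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    return c.tn / (c.tn + c.fp)


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 gives about 95% coverage)."""
    p = successes / n
    denom = 1.0 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


# Hypothetical per-patient counts for one modality in one study.
counts = ConfusionCounts(tp=90, fn=10, fp=5, tn=95)
low, high = wilson_interval(counts.tp, counts.tp + counts.fn)
print(f"Sensitivity: {sensitivity(counts):.3f} (95% CI {low:.3f} to {high:.3f})")
print(f"Specificity: {specificity(counts):.3f}")
```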

Quantitative Imaging Analysis Techniques

Beyond visual assessment, quantitative analysis of image data provides objective biomarkers.

- CT Texture and Density Analysis: In interstitial lung disease (ILD), quantitative CT (qCT) techniques can objectively measure disease burden. These include:

  - Histogram Analysis: Calculating global metrics like mean lung density (MLD) or the lowest fifth percentile of the density histogram, which correlate with physiological measures of disease severity [7].

  - Texture-Based Analysis: Using software like the Computer Aided Lung Informatics for Pathology Evaluation and Rating (CALIPER) to identify and quantify specific parenchymal patterns (e.g., honeycombing, ground-glass opacification) based on 3D histogram features and morphological analysis [7].

- PET Standardized Uptake Value (SUV): The SUV is a semi-quantitative measure of the radiotracer concentration within a tissue, normalized to the injected dose and patient body weight. It is a fundamental metric for assessing metabolic activity in PET studies [4] [7].
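
Two of the metrics above reduce to short calculations. The sketch below assumes the common body-weight-normalized SUV definition and a decay-corrected injected dose, and it computes histogram metrics from a plain list of lung voxel values in HU. All input numbers are hypothetical, and the function names are illustrative rather than taken from any analysis package.

```python
from statistics import fmean, quantiles


def suv_body_weight(tissue_kbq_per_ml: float, injected_dose_mbq: float,
                    body_weight_kg: float) -> float:
    """SUV = tissue concentration / (injected dose / body weight).

    MBq/kg is numerically equal to kBq/g, so with tissue density near 1 g/mL
    the result is dimensionless.
    """
    return tissue_kbq_per_ml / (injected_dose_mbq / body_weight_kg)


def lung_histogram_metrics(voxels_hu: list[float]) -> tuple[float, float]:
    """Return mean lung density and the 5th percentile of the HU histogram."""
    mean_density = fmean(voxels_hu)
    fifth_percentile = quantiles(voxels_hu, n=20, method="inclusive")[0]
    return mean_density, fifth_percentile


# Hypothetical inputs for illustration only.
print(f"SUV: {suv_body_weight(5.0, 350.0, 70.0):.2f}")
mld, p5 = lung_histogram_metrics([-900.0, -850.0, -800.0, -750.0, -700.0])
print(f"Mean lung density: {mld:.0f} HU, 5th percentile: {p5:.0f} HU")
```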

The Scientist's Toolkit: Research Reagents and Essential Materials

The following table details key reagents and materials essential for conducting research with these imaging modalities.

Table 3: Essential Research Reagents and Materials for Core Imaging Modalities

| Item | Function/Application | Exemplars / Notes |
|---|---|---|
| PET Radiotracers | Target specific biological processes to provide functional/metabolic data. | [¹⁸F]FDG: Glucose metabolism marker [6] [4]; [¹⁸F]FET / [¹¹C]MET: Amino acid transport, brain tumor imaging [4]; [¹⁸F]FLT: Marker of cellular proliferation [4]. |
| MRI Contrast Agents | Alter local magnetic properties to enhance tissue contrast in T1- or T2-weighted images. | Gadolinium-based agents: Assess blood-brain barrier integrity, perfusion, and lesion enhancement [4] [2]. |
| CT Contrast Media | Intravenous or oral agents that increase X-ray attenuation to enhance vascular and tissue contrast. | Iodinated contrast agents: Visualize blood vessels, organ perfusion, and tissue vascularity. |
| Texture Analysis Software | Computer-based tools for objective quantification of imaging features and patterns. | CALIPER: Quantifies lung parenchymal pathology in CT [7]; AMFM: Recognizes and quantifies HRCT patterns [7]. |
| Hybrid Imaging Probes | Emerging multimodal agents designed for use with combined systems like PET/MRI. | Multimodal probes that contain both a radionuclide for PET and a metallic ion for MRI contrast [4]. |

The early detection of diseases like breast cancer is a cornerstone of modern healthcare, directly influencing patient survival and treatment outcomes. For researchers and clinicians, selecting the appropriate imaging modality is a critical decision, balancing factors such as diagnostic accuracy, cost, accessibility, and patient-specific characteristics. Traditional methods, including mammography, ultrasound, and magnetic resonance imaging (MRI), each possess distinct strengths and limitations, making them suited for particular clinical scenarios. The emergence of artificial intelligence (AI) as a powerful decision-support tool is now fundamentally reshaping this landscape. This guide provides an objective, data-driven comparison of established imaging modalities for early breast cancer detection, with a specific focus on their integration with AI systems. It synthesizes recent advances and experimental data to serve as a reference for researchers, scientists, and drug development professionals engaged in comparative imaging research.

Comparative Analysis of Imaging Modalities

The diagnostic performance of each modality is influenced by its inherent technological principles. The following section offers a detailed, data-centric comparison of the primary imaging techniques used in breast cancer detection.

Table 1: Comparative Performance of Breast Cancer Imaging Modalities

| Modality | Best Use Case | Reported Sensitivity | Reported Specificity | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| Mammography (X-Ray) | Population-based screening | 54.5% [8] | Not reported | Cornerstone of population screening; widely available and cost-effective [8]. | Limited sensitivity, particularly in dense breast tissue [8]. |
| Digital Breast Tomosynthesis (DBT) | Enhancing lesion conspicuity in screening | Increases vs. mammography [8] | Increases vs. mammography (reduces recall rates) [8] | Increases detection of invasive cancers; reduces recall rates for normal findings [8]. | Higher radiation dose than standard mammography; requires specialized equipment. |
| Ultrasound | Complementary test for dense breasts; cyst/solid differentiation | 67.2% [8] | Lower than mammography [8] | Effective in dense breasts; useful for targeted evaluation and biopsy guidance [8]. | Operator dependence; modestly lower specificity when used for screening [8]. |
| Magnetic Resonance Imaging (MRI) | High-risk screening; diagnostic problem-solving | 94.6% [8] | Lower than mammography (higher false positives) [8] | Highest sensitivity of all modalities; excellent for soft tissue characterization [8]. | Lower specificity, high cost, and limited availability restrict use in average-risk populations [8]. |
| AI-Assisted Mammography | Radiologist decision-support, particularly for less experienced readers | 86.5% without AI vs. 88.0% with AI (senior radiologist) [9] | 60.5% without AI vs. 59.2% with AI (senior radiologist) [9] | Significantly improves radiologists' diagnostic accuracy and consistency; especially beneficial for dense breasts [9]. | Performance depends on training data; risks of miscalibration and domain shift across institutions [8]. |

Table 2: Impact of AI Assistance on Radiologist Performance (Malaysian Reader Study Data)

This table summarizes key quantitative findings from a 2025 reader study, demonstrating the tangible impact of AI assistance across radiologists of varying experience [9].

| Reader Group | Metric | Without AI | With AI |
|---|---|---|---|
| Senior Radiologist (12 years exp.) | Sensitivity | 86.5% | 88.0% |
| Senior Radiologist (12 years exp.) | Specificity | 60.5% | 59.2% |
| General Radiologist (6 years exp.) | Sensitivity | 83.1% | 85.3% |
| General Radiologist (6 years exp.) | Specificity | 58.6% | 59.7% |
| Trainee Radiologist (2 years exp.) | Positive Predictive Value (PPV) | 56.9% | 74.6% |
| Trainee Radiologist (2 years exp.) | Negative Predictive Value (NPV) | 90.3% | 92.2% |

Advanced AI Architectures in Medical Imaging

The integration of AI into medical imaging is propelled by sophisticated deep learning architectures, each with unique capabilities for analyzing image data.

Core Deep Learning Models

- Convolutional Neural Networks (CNNs): Architectures like AlexNet, VGGNet, and ResNet have fundamentally transformed medical image analysis. They excel at detecting localized patterns, such as microcalcifications or masses. ResNet, with its skip connections, mitigates the vanishing gradient problem, enabling the training of very deep networks for analyzing complex datasets like DBT [8].

- Vision Transformers (ViTs): ViTs represent a shift from convolutional operations to self-attention mechanisms. They process images as sequences of patches, allowing them to capture complex morphological and spatial relationships across the entire image. This makes them particularly effective for analyzing breast tumors that span multiple regions. Studies report ViTs achieving accuracy rates of up to 99.92% in mammography classification and 99.99% on the BreakHis histopathology dataset [8].

- Generative Adversarial Networks (GANs): GANs are used for data augmentation, helping to address the common challenge of data scarcity and class imbalance in medical datasets. They can generate synthetic medical images that are used to augment training data, improving model robustness, though this requires rigorous quality control [8].
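
The idea of treating an image as a sequence of patches can be made concrete with a few lines of code. The sketch below only illustrates the tokenization step that precedes a Vision Transformer's self-attention layers. It assumes a single-channel image stored as a nested list and non-overlapping square patches, and it omits the learned linear projection and position embeddings that a real model would add. The function name is hypothetical.

```python
def image_to_patch_tokens(image: list[list[float]], patch: int) -> list[list[float]]:
    """Split an H x W image into non-overlapping patch x patch tiles.

    Each tile is flattened row by row into one token, and tokens are returned
    in raster order (left to right, top to bottom).
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be divisible by the patch size")
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            tokens.append([image[r][c]
                           for r in range(top, top + patch)
                           for c in range(left, left + patch)])
    return tokens


# A toy 4 x 4 "image" with pixel values 0..15.
toy = [[float(4 * r + c) for c in range(4)] for r in range(4)]
tokens = image_to_patch_tokens(toy, patch=2)
print(len(tokens), "tokens of length", len(tokens[0]))
```

For an image of height H and width W, a patch size P yields (H / P) × (W / P) tokens, so the sequence length, and with it the cost of self-attention, grows quickly as patches get smaller.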

Multimodal Integration Frameworks

The frontier of AI in diagnostics involves integrating multiple data sources. Frameworks like MultiParkNet (developed for Parkinson's disease) illustrate the power of this approach. While not a breast cancer model, its architecture is informative: it uses specialized sub-networks (e.g., CNNs for images, LSTM networks for sequential data) to process different data types (e.g., audio, drawing tasks, neuroimaging), followed by a fusion mechanism like multi-head attention to create a comprehensive diagnostic picture [10]. This principle of multi-modal fusion is directly applicable to integrating mammography, MRI, and genomic data for a holistic breast cancer assessment.
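
The fusion step can be illustrated with a deliberately simplified sketch. This is not the MultiParkNet implementation: it assumes each modality-specific sub-network has already produced a fixed-length embedding, and it uses a single attention head with a given query vector in place of learned multi-head attention. The modality names, the vectors, and the function names are all hypothetical.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(query: list[float], embeddings: dict[str, list[float]]):
    """Weight each modality embedding by scaled dot-product similarity to the query."""
    dim = len(query)
    names = list(embeddings)
    scores = [sum(q * k for q, k in zip(query, embeddings[n])) / math.sqrt(dim)
              for n in names]
    weights = softmax(scores)
    fused = [sum(w * embeddings[n][i] for w, n in zip(weights, names))
             for i in range(dim)]
    return dict(zip(names, weights)), fused


# Hypothetical 3-dimensional embeddings from modality-specific sub-networks.
modalities = {
    "mammography": [0.9, 0.1, 0.3],
    "mri": [0.7, 0.8, 0.2],
    "genomics": [0.1, 0.4, 0.9],
}
weights, fused = attention_fuse([1.0, 0.5, 0.0], modalities)
print({name: round(w, 3) for name, w in weights.items()})
print([round(v, 3) for v in fused])
```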

Experimental Protocols & Workflows

A 2025 reader study provides a robust experimental model for evaluating AI assistance in mammography interpretation [9]. The workflow and AI analysis pathway are detailed below.

Detailed Methodology

- Study Design: A retrospective, cross-sectional study was conducted using 434 digital mammograms. A key feature was the use of a six-week washout period between reading sessions to minimize recall bias [9].

- AI Software & Algorithm: The study employed Lunit INSIGHT MMG, an FDA-cleared AI system. The software analyzes mammograms and assigns a malignancy probability score from 0% to 100%. A pre-validated threshold of 10% was used to triage findings; scores below this were deemed clinically insignificant [9].

- Image Assessment Protocol: Four readers with varying experience levels independently reviewed the same set of images in two separate, blinded sessions: first without and then with AI assistance. They assigned BI-RADS classifications based on the ACR BI-RADS 5th edition lexicon [9].

- Statistical Analysis: Diagnostic performance was quantified using sensitivity, specificity, Positive Predictive Value (PPV), Negative Predictive Value (NPV), and the Area Under the receiver operating characteristic Curve (AUC). The gold standard for final diagnosis was histopathological results or a minimum two-year follow-up for stability [9].
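
The triage rule and the summary statistics named above can be sketched as follows. This is a minimal illustration under stated assumptions: scores at or above the 10% threshold are treated as flagged, AUC is computed with the rank-based (Mann-Whitney) formulation, and all scores and counts are hypothetical. It does not reproduce the study's software or data.

```python
def is_flagged(score_percent: float, threshold: float = 10.0) -> bool:
    """Scores below the threshold are treated as clinically insignificant."""
    return score_percent >= threshold


def ppv_npv(tp: int, fp: int, tn: int, fn: int) -> tuple[float, float]:
    """Positive and negative predictive values from a 2x2 table."""
    return tp / (tp + fp), tn / (tn + fn)


def auc_from_scores(positive_scores: list[float], negative_scores: list[float]) -> float:
    """AUC as the probability that a malignant case outscores a benign one (ties count half)."""
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positive_scores for n in negative_scores)
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical malignancy scores (percent) for confirmed malignant and benign cases.
malignant = [80.0, 60.0]
benign = [5.0, 60.0]
print([is_flagged(s) for s in malignant + benign])
print(f"AUC: {auc_from_scores(malignant, benign):.3f}")
ppv, npv = ppv_npv(tp=30, fp=10, tn=50, fn=10)
print(f"PPV: {ppv:.3f}, NPV: {npv:.3f}")
```

Unlike sensitivity and specificity, PPV and NPV depend on disease prevalence in the study sample, so they should be compared across studies with caution.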

The Scientist's Toolkit: Research Reagent Solutions

For researchers aiming to replicate or build upon such studies, the following table details key computational and data resources.

Table 3: Essential Research Resources for AI in Medical Imaging

| Item / Resource | Function / Description | Example in Context |

|---|---|---|

| Annotated Medical Image Datasets | Curated sets of medical images with expert annotations (e.g., lesion boundaries, BI-RADS scores) for training and validating AI models. | Datasets like BreakHis for histopathology or proprietary mammography collections from hospitals used to train CNNs and ViTs [8]. |

| Deep Learning Frameworks | Software libraries that provide the building blocks for designing, training, and deploying deep neural networks. | TensorFlow and PyTorch are used to implement architectures like ResNet, DenseNet, and Vision Transformers (ViTs) [8]. |

| AI-Based Analysis Software | Specialized software that integrates with clinical systems to provide real-time decision support. | Lunit INSIGHT MMG was used in the reader study to provide malignancy scores to radiologists [9]. |

| High-Performance Computing (HPC) | Computing systems with powerful GPUs (Graphics Processing Units) essential for processing large medical images and training complex models. | Cloud GPU rentals or on-premise clusters are used to train multi-modal frameworks like MultiParkNet, with costs estimated at $50-100/month [10]. |

| Interoperability Standards (HL7/FHIR) | Standards for data exchange that enable the integration of AI tools into existing hospital IT systems like Picture Archiving and Communication System (PACS). | Critical for clinical deployment, allowing AI frameworks to seamlessly interface with Electronic Medical Record (EMR) systems [10]. |

This guide provides a comparative analysis of three advanced imaging technologies—Photon-Counting CT (PCCT), Advanced PET Tracers, and Novel MRI Techniques—focusing on their performance metrics, underlying methodologies, and potential to enhance early detection research.

The following tables summarize the core operating principles and key performance data for the featured technologies, providing a basis for objective comparison.

Table 1: Technical Specifications and Performance Data of Emerging Imaging Technologies

| Technology | Key Technical Principle | Key Performance Metrics (vs. Alternative) | Clinical/Research Application Highlights |
|---|---|---|---|
| Photon-Counting CT (PCCT) | Direct conversion of individual X-ray photons to electrical signals with energy discrimination [11]. | Radiation Dose: 40-60% reduction in cardiovascular imaging [12]; 90.55% effective dose reduction for lung nodule detection [13]. Spatial Resolution: Ultra-high-resolution (UHR) at 0.2 mm [12] [14]. Coronary Stenosis (UHR): 100% sensitivity, 98.8% specificity [14]. | Superior visualization of small anatomical structures; spectral imaging for tissue characterization; virtual non-contrast imaging [12] [11]. |
| Advanced PET Tracers | Radiolabeled molecules targeting specific biological pathways (e.g., Aβ plaques, FAP proteins). | [18F]D3FSP vs [18F]AV-45: Near-identical binding (SUVR: 1.65±0.23 vs 1.65±0.21; DVR: 1.37±0.13 vs 1.36±0.14) [15]. 68Ga-FAPI-PET/CT: Improved detection of pancreatic ductal adenocarcinoma (PDA) vs FDG-PET/CT [16]. | Enables in vivo molecular profiling. Critical for patient selection in targeted therapies (e.g., anti-Aβ treatments) [15] [16]. |
| Novel MRI Techniques | Advanced acquisition sequences (e.g., 3D qDESS) paired with sophisticated computational reconstruction (e.g., Deep Learning, HODMD). | 6-Minute Knee MRI (3D qDESS): Sensitivity: 95% (menisci), 83% (cartilage); high inter-reader agreement (0.86 menisci) [17]. HODMD Algorithm: High-fidelity 3D heart reconstruction and recovery of corrupted data from limited snapshots [18]. | Dramatically reduced scan times while maintaining diagnostic quality; enables 3D dynamic organ modeling from sparse data [18] [17]. |

Table 2: Impact on Workflow, Cost, and Quantitative Research

| Technology | Workflow & Economic Impact | Role in Quantitative Biomarker & Radiomics Research |
|---|---|---|
| Photon-Counting CT (PCCT) | Cost Savings: Projected saving of \$794.50 per patient in stable chest pain evaluation over 10 years [14]. Contrast Reduction: Protocols using 30-40 mL of contrast are feasible [12]. | Provides high-resolution conventional and spectral datasets (e.g., iodine maps, VMI), enriching feature extraction. Improves plaque characterization and enables myocardial tissue analysis [11]. |
| Advanced PET Tracers | Requires robust, prospective, multi-center head-to-head studies for validation, which are complex and costly [19]. | Superior diagnostic performance does not automatically translate to improved patient outcomes. Comprehensive evaluation across technical, clinical, and cost-effectiveness domains is required [19]. |
| Novel MRI Techniques | Throughput: 6-minute protocols significantly improve patient comfort and scanner capacity [17]. Data Recovery: HODMD provides a reduced-order model (ROM) to generate new data for machine learning training sets [18]. | Deep learning reconstruction enables fast, quantitative imaging. Algorithms can extract subtle, data-driven patterns for disease classification and organ dynamics modeling [18]. |

Detailed Experimental Protocols

To ensure reproducibility and critical evaluation, this section details the specific methodologies from key cited studies.

Photon-Counting CT for Coronary Artery Disease

A prospective study evaluated PCCT in diagnosing coronary artery stenosis using invasive coronary angiography (ICA) as a reference standard [14].

- Scanner and Protocol: Imaging was performed using a clinical PCCT system. Each patient was scanned in both Standard-Resolution (SR) and Ultra-High-Resolution (UHR) modes.

- Image Reconstruction: Spectral datasets were reconstructed into virtual monoenergetic images (VMI) and virtual non-contrast (VNCa) images using quantum iterative reconstruction (QIR).

- Data Analysis: Two blinded readers evaluated the images for the presence of significant stenosis (≥50% lumen reduction). Diagnostic accuracy metrics (sensitivity, specificity) were calculated per vessel segment against ICA. Quantitative plaque analysis, including volume and characterization, was also performed.

Head-to-Head Comparison of PET Tracers in Alzheimer's Disease

A study directly compared the deuterated tracer [18F]D3FSP with the FDA-approved [18F]AV-45 (florbetapir) in patients with Alzheimer's Disease (AD) [15].

- Study Population: Eight patients with a clinical diagnosis of probable AD.

- Imaging Protocol: Each patient underwent two separate 90-minute dynamic PET/CT scans on a GE Advance scanner, one with each tracer, within a four-week period. Administered activity was approximately 300 MBq for both.

- Image and Metabolite Analysis: Standardized uptake value ratios (SUVRs) from 50-70 minutes post-injection and distribution volume ratios (DVRs) for 43 brain regions were calculated, using cerebellar gray matter as a reference. Plasma metabolite analysis was performed to assess tracer stability.

- Statistical Comparison: Linear regression and correlation analysis were used to compare the SUVR and DVR values obtained from the two tracers across all brain regions.
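
The ratio and regression steps can be sketched with standard-library Python. This is an illustration only: the regional values are hypothetical, an SUVR is taken as a target-region uptake divided by the reference-region uptake, and the comparison uses an ordinary least-squares fit with a Pearson correlation. DVR estimation requires kinetic modeling of the dynamic data and is not shown.

```python
import math
from statistics import fmean


def suvr(target_suv: float, reference_suv: float) -> float:
    """Standardized uptake value ratio relative to a reference region."""
    return target_suv / reference_suv


def linear_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Ordinary least-squares slope and intercept, plus Pearson correlation r."""
    mean_x, mean_y = fmean(x), fmean(y)
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x, sxy / math.sqrt(sxx * syy)


# Hypothetical regional uptake for two tracers; the reference region is listed last.
tracer_a = [1.9, 1.6, 1.4, 1.0]
tracer_b = [2.0, 1.7, 1.5, 1.1]
suvr_a = [suvr(v, tracer_a[-1]) for v in tracer_a[:-1]]
suvr_b = [suvr(v, tracer_b[-1]) for v in tracer_b[:-1]]
slope, intercept, r = linear_fit(suvr_a, suvr_b)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, r={r:.3f}")
```

A slope near 1, an intercept near 0, and a high correlation would together suggest that the two tracers yield interchangeable regional values.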

Accelerated Knee MRI with Deep Learning

A prospective study compared the diagnostic performance of several accelerated ~6-minute knee MRI protocols against a conventional 15-30 minute 2D FSE protocol [17].

- Protocols: Five MRI sequences were performed on each participant:

  - Conventional 2D FSE (reference standard).

  - 2D FSE with DL reconstruction.

  - 3D Cube.

  - 3D quantitative Double-Echo Steady-State (qDESS).

  - Thin-slice 2D FSE.

- Reader Evaluation: Five radiologists, blinded to the protocol, evaluated the images for pathologies in menisci, cartilage, ligaments, and bone marrow. They also scored overall diagnostic image quality.

- Statistical Analysis: Inter-reader agreement and inter-method agreement were assessed using Gwet's AC. Sensitivity and specificity for detecting pathologies were calculated for each accelerated protocol using the conventional protocol as a reference.
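
The agreement statistic can be illustrated for the simplest case. The source does not say which variant of Gwet's coefficient was used, so the sketch below assumes the unweighted AC1 for two raters and nominal categories; the study's five-reader analysis would need the multi-rater generalization. The ratings are hypothetical.

```python
def gwet_ac1(rater_a: list[int], rater_b: list[int], categories: list[int]) -> float:
    """Gwet's AC1 for two raters: (pa - pe) / (1 - pe).

    pa is the observed agreement; pe = sum_k pi_k * (1 - pi_k) / (q - 1), where
    pi_k is the mean proportion of ratings in category k across both raters.
    """
    n = len(rater_a)
    q = len(categories)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    chance = 0.0
    for k in categories:
        pi_k = (rater_a.count(k) + rater_b.count(k)) / (2 * n)
        chance += pi_k * (1.0 - pi_k)
    chance /= (q - 1)
    return (observed - chance) / (1.0 - chance)


# Hypothetical binary ratings (1 = pathology present) from two readers on ten knees.
reader_1 = [1, 1, 1, 0, 0, 1, 1, 1, 1, 1]
reader_2 = [1, 1, 1, 0, 1, 1, 1, 1, 1, 1]
print(f"AC1 = {gwet_ac1(reader_1, reader_2, categories=[0, 1]):.3f}")
```

AC1 is often preferred over Cohen's kappa when one category dominates, because kappa can be low despite high raw agreement in that situation.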

Data-Driven Analysis and Reconstruction of Cardiac Cine MRI

A novel computational technique, Higher Order Dynamic Mode Decomposition (HODMD), was applied to analyze and reconstruct cardiac cine MRI data [18].

- Data Acquisition: Cine MRI datasets from multiple slices of a mouse heart were used, with each slice comprising 20 snapshots (K=20) covering one cardiac cycle.

- Algorithm Application:

  - Data Organization: Image data for each slice was organized into a fourth-order tensor.

  - Dimensionality Reduction & Denoising: High Order Singular Value Decomposition (HOSVD) was applied with a tolerance (ε_SVD = 5×10⁻⁴) to reduce data dimensionality and clean noise.

  - Pattern Identification: The DMD algorithm was applied to the reduced data (with ε_DMD = 5×10⁻⁴) to identify the dominant spatial modes, frequencies, and growth rates governing the heart's motion.

  - Reconstruction & Expansion: A reduced-order model (ROM) was built from the identified modes and frequencies. This ROM was used for 3D reconstruction by interpolating between slices, repairing corrupted data, and generating new, synthetic data snapshots to expand the original database.
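
The pattern-identification step can be illustrated with standard (first-order) dynamic mode decomposition. This is a simplification, not the HODMD algorithm of [18]: it works on a plain snapshot matrix rather than a fourth-order tensor, replaces HOSVD with an ordinary truncated SVD, and omits the time-delay embedding that distinguishes HODMD. The tolerance mirrors the value quoted above, while the synthetic data, the time step, and the function name are assumptions. NumPy is required.

```python
import numpy as np


def dmd(snapshots: np.ndarray, dt: float, svd_tol: float = 5e-4):
    """Standard DMD on a (space x time) snapshot matrix.

    Returns the spatial modes, growth rates, and frequencies (cycles per time unit).
    """
    x1, x2 = snapshots[:, :-1], snapshots[:, 1:]
    u, s, vh = np.linalg.svd(x1, full_matrices=False)
    # Keep singular values above the relative tolerance (dimension reduction, denoising).
    rank = int(np.sum(s / s[0] > svd_tol))
    u, s, v = u[:, :rank], s[:rank], vh[:rank].conj().T
    # Reduced linear operator advancing one snapshot to the next.
    a_tilde = u.conj().T @ x2 @ v / s
    eigvals, w = np.linalg.eig(a_tilde)
    modes = x2 @ (v / s) @ w
    omega = np.log(eigvals.astype(complex)) / dt
    return modes, omega.real, omega.imag / (2 * np.pi)


# Synthetic stand-in for one periodic cycle sampled with K = 20 snapshots.
dt = 1.0 / 20
t = np.arange(20) * dt
data = (np.outer([1.0, 0.5, -0.3], np.cos(2 * np.pi * t))
        + np.outer([0.2, -1.0, 0.7], np.sin(2 * np.pi * t)))
modes, growth, freq = dmd(data, dt)
print("frequencies:", np.round(freq, 3), "growth rates:", np.round(growth, 6))
```

For purely periodic data the growth rates should be close to zero and the frequencies should appear as a plus/minus pair, which is the signature a reduced-order model of cyclic cardiac motion would rely on.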

Visualizing Workflows and Relationships

The following diagrams illustrate the logical workflows and key technological differentiators described in the experimental protocols.

[Diagram: PCCT's Intrinsic Spectral Advantage]

[Diagram: PET Tracer Comparison Methodology]

[Diagram: Data-Driven MRI Analysis Pipeline]

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Featured Imaging Technologies

| Category | Item | Primary Function in Research |
|---|---|---|
| PCCT | Quantum Iterative Reconstruction (QIR) | Advanced software algorithm that reduces noise and corrects geometric distortions in PCCT images, preserving fine anatomical detail, especially in low-dose protocols [14]. |
| PCCT | Virtual Monoenergetic Image (VMI) Reconstructions | Spectral dataset derived from PCCT's multi-energy data, allowing researchers to retrospectively generate images at different keV levels to optimize contrast-to-noise ratio for specific tissues [12] [11]. |
| PET Tracers | [18F]D3FSP ([18F]P16-129) | Deuterated version of florbetapir; used in head-to-head studies to evaluate the impact of deuteration on pharmacokinetics and binding metrics for Aβ plaque imaging [15]. |
| PET Tracers | 68Ga-FAPI | A radiotracer targeting fibroblast activation protein (FAP), which is overexpressed in the stroma of many carcinomas. Used to investigate improved detection and staging of cancers like pancreatic ductal adenocarcinoma [16]. |
| Novel MRI | 3D qDESS (Quantitative Double-Echo Steady-State) | An accelerated 3D MRI sequence that provides high-resolution images and quantitative T2 maps simultaneously, enabling fast and comprehensive assessment of joint tissues like menisci and cartilage [17]. |
| Novel MRI | HODMD (Higher Order Dynamic Mode Decomposition) | A linear data-driven algorithm adapted from fluid dynamics. Used to analyze dynamic MRI data, identify underlying spatio-temporal patterns, and reconstruct or extrapolate image data [18]. |

Radiation Safety, Biological Considerations, and Risk-Benefit Analysis

Radiation safety is a paramount concern in medical imaging, balancing the undeniable diagnostic benefits of ionizing radiation against its potential biological risks. For researchers and clinicians engaged in early detection research, a thorough understanding of these principles is fundamental to designing ethical and effective studies. Radiation protection in medicine operates on three core principles: justification (ensuring the procedure does more good than harm), optimization (keeping doses As Low As Reasonably Achievable, the ALARA principle), and dose limitation (applying limits to occupational and public exposure) [20]. This guide provides a comparative analysis of imaging modalities, framed within these safety and risk-benefit considerations, to inform protocol development in early detection research.

Comparative Analysis of Imaging Modalities for Early Detection

The selection of an imaging modality involves weighing factors including diagnostic accuracy, radiation dose, and inherent risks and benefits. The following section provides a data-driven comparison of several key imaging technologies.

Comparison of Breast Cancer Detection in Dense Breast Tissue

The challenge of detecting breast carcinoma in dense breast tissue illustrates the critical need for comparative modality analysis. Dense tissue can mask tumors on mammography, necessitating supplemental imaging techniques [21].

Table 1: Comparative Diagnostic Performance of Breast Imaging Modalities

| Imaging Modality | Technical Principle | Key Strength | Key Limitation | Reported Sensitivity in Dense Breasts | Reported Specificity & False Positive Notes |

|---|---|---|---|---|---|

| Full-Field Digital Mammography (FFDM) | Low-dose X-rays | Gold standard for population-based screening; effective for detecting calcifications. | Reduced sensitivity in dense breast tissue, as tumors and dense tissue both appear white. | Found to detect only 19% of cancers in one study [21]. | N/A |

| Abbreviated MRI (aMRI) | Powerful magnets and radio waves, often with contrast. | Exceptional precision and differentiation capabilities; high sensitivity in dense tissue. | Longer scan time than mammography/ultrasound; higher cost; propensity for false positives. | 96.55% [21]; Cancer detection rate of 17.4 per 1000 examinations [22]. | Slightly elevated false-positive rates [21]. |

| Automated Whole Breast Ultrasound (ABUS) | Sound waves | Strong differentiation prowess; not compromised by breast density. | Operator-dependent variability; can yield false positives. | 37.5% in high-risk cohorts [21]; Cancer detection rate of 4.2 per 1000 examinations [22]. | Can yield false positives [21]. |

| Contrast-Enhanced Mammography (CEM) | Combination of low-dose X-rays and iodinated contrast. | High diagnostic yield similar to MRI. | Involves ionizing radiation and risk of contrast reactions. | Cancer detection rate of 19.2 per 1000 examinations [22]. | N/A |

Interim results from the BRAID randomized controlled trial highlight significant performance differences between these supplemental techniques. The cancer detection rates for Abbreviated MRI (17.4 per 1000) and Contrast-Enhanced Mammography (19.2 per 1000) were significantly higher than the rate for ABUS (4.2 per 1000). Furthermore, the cancers detected by MRI and CEM were half the size of those found with ABUS, indicating a clear advantage for earlier detection [22].
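
The per-1000 rates quoted above are simple proportions, and their uncertainty depends on how many examinations sit behind them. The following is a minimal sketch, assuming hypothetical counts (the function names and the numbers in `arms` are illustrative and are not taken from the BRAID trial), of how a cancer detection rate and an approximate 95% Wilson score interval might be computed.

```python
import math


def detection_rate_per_1000(cancers: int, examinations: int) -> float:
    """Cancer detection rate expressed per 1000 examinations."""
    if examinations <= 0:
        raise ValueError("examinations must be positive")
    return 1000.0 * cancers / examinations


def wilson_interval(events: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = events / n
    denom = 1.0 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Hypothetical counts for illustration only; NOT the BRAID trial data.
arms = {"aMRI": (35, 2000), "CEM": (38, 2000), "ABUS": (8, 2000)}

for name, (cancers, exams) in arms.items():
    rate = detection_rate_per_1000(cancers, exams)
    low, high = wilson_interval(cancers, exams)
    print(f"{name}: {rate:.1f} per 1000 (95% CI {1000 * low:.1f} to {1000 * high:.1f})")
```

Reporting the interval alongside the point estimate makes it easier to judge whether a difference between arms could plausibly reflect sampling variation.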

Radiation Dose and Biological Effects Across Modalities

Understanding the biological effects of radiation is crucial for risk-benefit analysis. Effects are categorized as either deterministic or stochastic [20].

- Deterministic Effects: These are dose-dependent phenomena that occur when a specific exposure threshold is exceeded. Examples include skin reddening, hair loss, and cataracts. Dose limits for radiation workers are set below these thresholds to prevent such effects [20] [23].

- Stochastic Effects: These effects, primarily cancer and genetic mutations, occur with a certain probability without a defined safe threshold. The risk is assumed to increase linearly with dose, forming the basis for the conservative linear no-threshold (LNT) model used in radiation protection [20] [23]. It is estimated that a 1 rem (10 mSv) whole-body dose could result in approximately 4 additional fatal cancers in a population of 10,000 individuals [23].
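
The linear no-threshold estimate quoted above can be turned into a small calculation. This is a sketch under stated assumptions: the risk coefficient is derived only from the figure in the text (about 4 fatal cancers per 10,000 people per 10 mSv), and the function name is illustrative. It is a population-level approximation, not an individual risk prediction.

```python
# Coefficient implied by the text: ~4 fatal cancers per 10,000 people per 10 mSv.
FATAL_CANCER_RISK_PER_PERSON_MSV = 4 / 10_000 / 10  # 4e-5 per person per mSv


def expected_excess_fatal_cancers(dose_msv: float, population: int) -> float:
    """Expected excess fatal cancers under a linear no-threshold assumption."""
    if dose_msv < 0 or population < 0:
        raise ValueError("dose and population must be non-negative")
    return FATAL_CANCER_RISK_PER_PERSON_MSV * dose_msv * population


# Reproduces the figure in the text: 10 mSv to each of 10,000 people.
print(expected_excess_fatal_cancers(10, 10_000))
# Because the model is linear, a 15 mSv dose scales the estimate by 1.5.
print(expected_excess_fatal_cancers(15, 10_000))
```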

Radiation dose from medical imaging can be contextualized against natural background radiation, which averages about 3.1 mSv (310 mrem) per year in the United States [24].

Table 2: Typical Effective Doses of Common Imaging Procedures

| Procedure or Source | Typical Effective Dose | Equivalent Time of Natural Background Exposure |

|---|---|---|

| Natural Background Radiation (Annual) | 3.1 mSv [24] | N/A |

| Chest X-ray (2-view) | 0.2 mrem (0.002 mSv) [23] | < 1 day |

| CT Scan (Whole Trunk) | 1.5 rem (15 mSv) [23] | ~5 years |

| Regulatory Public Dose Limit (Annual) | 1 mSv [24] | ~4 months |

| Regulatory Occupational Dose Limit (Annual) | 50 mSv [24] | ~16 years |
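
The "equivalent time" column in Table 2 follows from dividing each dose by the annual background figure of 3.1 mSv cited above. A minimal sketch of that arithmetic is shown here; the function names are illustrative, and the conversion 1 rem = 10 mSv is the one used in the table itself.

```python
BACKGROUND_MSV_PER_YEAR = 3.1  # US average cited in the text [24]


def rem_to_msv(dose_rem: float) -> float:
    """Convert rem to millisieverts (1 rem = 10 mSv)."""
    return dose_rem * 10.0


def background_equivalent(dose_msv: float) -> str:
    """Express a dose as the time needed to accrue it from natural background."""
    years = dose_msv / BACKGROUND_MSV_PER_YEAR
    if years >= 1:
        return f"~{years:.1f} years"
    months = years * 12
    if months >= 1:
        return f"~{months:.1f} months"
    return f"~{years * 365.25:.1f} days"


for label, dose in [
    ("Chest X-ray (2-view)", 0.002),
    ("CT scan (whole trunk)", rem_to_msv(1.5)),
    ("Public dose limit", 1.0),
    ("Occupational dose limit", 50.0),
]:
    print(f"{label}: {dose} mSv is {background_equivalent(dose)} of background")
```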

Experimental Protocols and Risk-Benefit Framework

Methodology of a Comparative Imaging Trial

The BRAID trial provides a robust model for designing comparative imaging studies. Key methodological elements include [22]:

- Study Design: A multi-center randomized controlled trial (RCT), the gold standard for evaluating diagnostic efficacy.

- Population: Recruitment of women (age 50-70) with dense breasts and a negative mammogram.

- Interventions & Randomization: Independent allocation of participants to one of several intervention arms (e.g., aMRI, ABUS, CEM) or a standard-of-care control group (FFDM), with the arms offered varying by modality availability at each center.

- Primary Outcome: Cancer detection rate, defined as the proportion of women whose positive supplemental imaging result led to a histologically confirmed breast cancer, reported per 1000 examinations.

- Analysis: Intention-to-treat analysis using network meta-analysis, treating each site as a separate study to account for center-level variations.

BRAID Trial Workflow

The Risk-Benefit Analysis Framework

A formal cost-risk-benefit analysis, as recommended by the International Commission on Radiological Protection (ICRP), provides a structure for justifying and optimizing imaging protocols. The net benefit (B) of a procedure can be conceptualized as [25]:

B = V - (P + X + Y)

Where:

- V is the gross benefit of the examination, derived from true-positive and true-negative diagnoses.

- P is the production cost of the operation (equipment, maintenance, staff).

- X is the cost of achieving a selected level of protection (training, optimization, radiation safety infrastructure).

- Y is the cost of the radiation detriment, which includes both the radiation risk (RX) and the diagnostic detriment (RD) from false-positive and false-negative outcomes [25].

This framework forces explicit consideration of not only the direct clinical benefit and financial cost but also the costs associated with ensuring safety and the potential harms from both radiation and diagnostic inaccuracy.
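
The net-benefit expression above can be sketched as a small data structure. This is an illustration rather than an ICRP-specified implementation: it assumes all terms are expressed in the same arbitrary cost units, that the detriment Y is the simple sum of RX and RD, and the class name and example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProtocolAssessment:
    gross_benefit: float         # V: value of true-positive and true-negative diagnoses
    production_cost: float       # P: equipment, maintenance, staff
    protection_cost: float       # X: training, optimization, safety infrastructure
    radiation_risk: float        # RX: cost assigned to radiation risk
    diagnostic_detriment: float  # RD: cost of false-positive and false-negative outcomes

    @property
    def detriment(self) -> float:
        """Y, assumed here to be additive: Y = RX + RD."""
        return self.radiation_risk + self.diagnostic_detriment

    def net_benefit(self) -> float:
        """B = V - (P + X + Y)."""
        return self.gross_benefit - (
            self.production_cost + self.protection_cost + self.detriment
        )


# Hypothetical values in arbitrary units, for illustration only.
protocol = ProtocolAssessment(
    gross_benefit=100.0,
    production_cost=30.0,
    protection_cost=10.0,
    radiation_risk=5.0,
    diagnostic_detriment=15.0,
)
print(protocol.net_benefit())
```

Keeping RX and RD as separate fields makes it explicit that a non-ionizing modality can still carry a substantial detriment through false positives and false negatives.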

Essential Research Toolkit for Imaging Studies

Table 3: Research Reagent Solutions and Essential Materials

| Item / Solution | Function in Research Context |

|---|---|

| Personal Protective Equipment (PPE) | Lead aprons (0.25-0.5 mm thickness), thyroid shields, and leaded glasses (≥0.25 mm lead equivalent) are critical for protecting researchers and staff in fluoroscopic environments. Leaded glasses can reduce lens exposure by 90% [20]. |

| Physical Shielding | Ceiling-suspended lead acrylic shields and portable rolling shields can reduce effective radiation dose to staff by over 90% when used correctly [20]. |

| Dosimeters | Devices worn by research staff to measure cumulative radiation exposure. They should be worn both inside and outside lead aprons to monitor effectiveness of PPE and are essential for auditing exposure and ensuring ALARA compliance [20]. |

| Iodinated Contrast Agents | Used in modalities like Contrast-Enhanced Mammography (CEM) to improve vascular visualization. Researchers must account for potential adverse reactions, which can range from minor to severe [22]. |

| Gadolinium-Based Contrast Agents | Used in Magnetic Resonance Imaging (MRI) to enhance image contrast by altering the magnetic properties of nearby water molecules. Safety profiles are generally favorable compared to iodinated contrast [22]. |

The comparative analysis of imaging modalities reveals a landscape defined by trade-offs. In breast cancer detection, Abbreviated MRI and Contrast-Enhanced Mammography offer superior sensitivity in dense tissue compared to ultrasound, but with associated increases in cost and the potential for false positives or contrast reactions [21] [22]. A rigorous, ethical approach to early detection research must be grounded in the core principles of radiation safety: justification, optimization, and dose limitation. By employing structured frameworks like cost-risk-benefit analysis and adhering to robust experimental protocols, researchers can generate evidence that maximizes diagnostic benefits while minimizing radiation risks, thereby advancing the field of early disease detection in a safe and responsible manner.

The integration of Artificial Intelligence (AI) into medical imaging represents a paradigm shift, moving from a focus on isolated diagnostic tools to the development of optimized, data-driven assessment pathways. This transformation is critically important for early detection research, where the precise and timely identification of pathology can significantly alter patient outcomes and clinical trial endpoints. The selection of an imaging modality is no longer solely based on its inherent capabilities but also on how its performance is augmented by AI algorithms. This guide provides an objective comparison of contemporary imaging modalities, framed within the broader thesis of optimizing early detection strategies. It synthesizes current experimental data and detailed methodologies to serve researchers, scientists, and drug development professionals in making evidence-based decisions for their investigative workflows.

Comparative Performance of Imaging Modalities

Diagnostic Performance in Breast Lesion Assessment

The evaluation of screen-recalled breast lesions is a common challenge in early detection. A 2024 meta-analysis of 54 studies provides a direct comparison of five imaging modalities, offering crucial data for selecting assessment pathways [26].

Table 1: Diagnostic Performance of Imaging Modalities in Assessing Screen-Recalled Breast Lesions [26]

| Imaging Modality | Pooled Sensitivity (%) | 95% CI for Sensitivity | Pooled Specificity (%) | 95% CI for Specificity |

|---|---|---|---|---|

| Contrast-Enhanced Mammography (CEM) | 95 | 90 – 97 | 73 | 63 – 81 |

| Magnetic Resonance Imaging (MRI) | 93 | 88 – 96 | 69 | 55 – 81 |

| Digital Breast Tomosynthesis (DBT) | 91 | 87 – 94 | 85 | 75 – 91 |

| Handheld Ultrasound (HHUS) | 90 | 86 – 93 | 65 | 46 – 80 |

| Digital Mammography (DM) | 85 | 78 – 90 | 77 | 66 – 85 |

The data indicate that CEM, MRI, DBT, and HHUS all demonstrate excellent sensitivity (≥90%) in correctly identifying cancerous lesions, outperforming DM alone (85%) [26]. For specificity, which is the ability to correctly dismiss benign lesions, DBT (85%) and DM (77%) show the strongest performance [26]. This trade-off highlights the importance of modality selection based on the clinical question: high-sensitivity tests like CEM and MRI may be preferable for ruling out disease, while high-specificity tests like DBT are valuable for confirming a malignant diagnosis and reducing false positives.
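
One way to make the rule-out versus rule-in trade-off concrete is to convert the pooled sensitivity and specificity into likelihood ratios. The sketch below uses the pooled point estimates from the table above; the 10% pre-test probability is a hypothetical value chosen for illustration, and the function names are not from the cited meta-analysis.

```python
def likelihood_ratios(sensitivity: float, specificity: float) -> tuple[float, float]:
    """Return (LR+, LR-) for a test with the given sensitivity and specificity."""
    if not (0 < sensitivity < 1 and 0 < specificity < 1):
        raise ValueError("sensitivity and specificity must lie strictly between 0 and 1")
    return sensitivity / (1 - specificity), (1 - sensitivity) / specificity


def post_test_probability(pre_test: float, likelihood_ratio: float) -> float:
    """Update a pre-test probability using odds times likelihood ratio."""
    odds = pre_test / (1 - pre_test) * likelihood_ratio
    return odds / (1 + odds)


# Pooled point estimates from the table above [26].
POOLED = {
    "CEM": (0.95, 0.73),
    "MRI": (0.93, 0.69),
    "DBT": (0.91, 0.85),
    "HHUS": (0.90, 0.65),
    "DM": (0.85, 0.77),
}
PRE_TEST = 0.10  # hypothetical pre-test probability of malignancy

for modality, (sens, spec) in POOLED.items():
    lr_pos, lr_neg = likelihood_ratios(sens, spec)
    after_pos = post_test_probability(PRE_TEST, lr_pos)
    after_neg = post_test_probability(PRE_TEST, lr_neg)
    print(f"{modality}: LR+ {lr_pos:.2f}, LR- {lr_neg:.3f}, "
          f"P(cancer | +) {after_pos:.2f}, P(cancer | -) {after_neg:.3f}")
```

A lower LR- means a negative result is more reassuring, while a higher LR+ means a positive result is more informative, which mirrors the qualitative distinction drawn in the paragraph above.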

AI-Assisted Performance in Neurological Disorders

AI's impact is particularly pronounced in complex diagnostic areas like neurology. A 2024 systematic review and meta-analysis evaluated the performance of AI-assisted PET imaging in diagnosing Parkinson's Disease (PD) [27].

Table 2: Diagnostic Performance of AI-Assisted PET Imaging for Parkinson's Disease [27]

| Classification Task | PET Tracer Type | Pooled AUC | 95% CI for AUC | Pooled Sensitivity (%) | Pooled Specificity (%) |

|---|---|---|---|---|---|

| PD vs. Normal Control | Presynaptic Dopamine | 0.96 | 0.94 – 0.97 | 91.47 | 88.23 |

| PD vs. Normal Control | Glucose Metabolism (18F-FDG) | 0.90 | 0.87 – 0.93 | 83.66 | 83.81 |

| PD vs. Atypical Parkinsonism | Presynaptic Dopamine | 0.93 | 0.91 – 0.95 | 89.54 | 89.07 |

| PD vs. Atypical Parkinsonism | Glucose Metabolism (18F-FDG) | 0.97 | 0.96 – 0.99 | Information Not Extracted | Information Not Extracted |

The study concluded that AI-assisted PET imaging provides acceptable to excellent diagnostic performance across different classification tasks and tracer types [27]. Subgroup analyses further revealed that deep learning (DL) algorithms applied to 18F-FDG PET data showed a higher pooled AUC (0.93) compared to machine learning (ML) algorithms (0.87), and that studies with larger sample sizes (>100) demonstrated better performance (AUC 0.94) than those with smaller samples (AUC 0.86) [27].
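
For readers less familiar with the AUC metric reported above, it can be read as the probability that a randomly chosen positive case receives a higher classifier score than a randomly chosen negative case. The following minimal sketch computes it by direct pairwise comparison; the scores are hypothetical and the function is illustrative, not the method used in the cited meta-analysis.

```python
def auc_from_scores(positive_scores: list[float], negative_scores: list[float]) -> float:
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct ranking (Mann-Whitney formulation).
    """
    if not positive_scores or not negative_scores:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for pos in positive_scores:
        for neg in negative_scores:
            if pos > neg:
                wins += 1.0
            elif pos == neg:
                wins += 0.5
    return wins / (len(positive_scores) * len(negative_scores))


# Hypothetical classifier outputs (higher means "more likely PD").
pd_scores = [0.91, 0.85, 0.78, 0.40]
control_scores = [0.52, 0.33, 0.21, 0.10]
print(f"AUC = {auc_from_scores(pd_scores, control_scores):.3f}")
```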

Experimental Protocols and Methodologies

Systematic Review Methodology for Modality Comparison

The comparative data presented in this guide is largely derived from rigorous systematic reviews and meta-analyses. The methodology for such studies is standardized to ensure comprehensive and unbiased evidence synthesis.

Systematic Review Workflow

Key steps of the protocol include [26] [27] [28]:

- Search Strategy: A systematic search is conducted across multiple electronic databases (e.g., PubMed, Scopus, Web of Science, Embase) using predefined search terms combined with Boolean operators.

- Inclusion/Exclusion Criteria: Strict eligibility criteria are applied. For example, studies might be included if they provide data to calculate sensitivity and specificity and use an established reference standard like histopathology. Studies are typically excluded if they focus on symptomatic populations or are not published in English.

- Study Selection and Quality Assessment: The selection process follows the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flow diagram. The quality of included studies is assessed using tools like QUADAS-C (Quality Assessment of Diagnostic Accuracy Studies-Comparative) to evaluate risk of bias.

- Data Extraction and Synthesis: Data is extracted independently by multiple reviewers using a pre-designed template. Extracted information includes study characteristics, population details, imaging protocols, and diagnostic performance metrics. Meta-analysis is performed using specialized software (e.g., MetaDisc 2.0, R) to derive pooled estimates of sensitivity, specificity, and area under the curve (AUC).
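
The pooling step in the final item can be illustrated with a deliberately simplified calculation. Published diagnostic meta-analyses generally fit bivariate random-effects models in dedicated software; the sketch below instead assumes a univariate fixed-effect, inverse-variance pooling on the logit scale, with a 0.5 continuity correction applied to every study for simplicity. The study counts are hypothetical.

```python
import math


def inv_logit(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def pooled_proportion(
    counts: list[tuple[int, int]], z: float = 1.96
) -> tuple[float, float, float]:
    """Fixed-effect pooled proportion with an approximate confidence interval.

    counts: (events, non_events) per study, e.g. (TP, FN) for sensitivity
    or (TN, FP) for specificity.
    """
    if not counts:
        raise ValueError("at least one study is required")
    weighted_sum = 0.0
    weight_total = 0.0
    for events, non_events in counts:
        a, b = events + 0.5, non_events + 0.5   # continuity correction
        logit = math.log(a / b)
        variance = 1.0 / a + 1.0 / b            # variance of the logit
        weight = 1.0 / variance
        weighted_sum += weight * logit
        weight_total += weight
    pooled_logit = weighted_sum / weight_total
    se = math.sqrt(1.0 / weight_total)
    return (
        inv_logit(pooled_logit),
        inv_logit(pooled_logit - z * se),
        inv_logit(pooled_logit + z * se),
    )


# Hypothetical (TP, FN) counts from three studies, for illustration only.
studies = [(90, 10), (45, 5), (170, 30)]
estimate, low, high = pooled_proportion(studies)
print(f"Pooled sensitivity {estimate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

A fixed-effect model ignores between-study heterogeneity, so it will usually give narrower intervals than the random-effects approaches used in practice.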

AI Model Development and Validation Workflow

The development of AI models for image analysis follows a structured pipeline to ensure robustness and generalizability.

AI Model Development

Detailed methodology for AI-assisted imaging studies [27] [28]:

- Data Curation: Researchers collect a dataset of medical images (e.g., PET, CT, MRI) from patients with confirmed diagnoses (e.g., PD, cancer) and normal controls. Data is often sourced from clinical archives or public databases.

- Image Preprocessing: Images are preprocessed to standardize formats, correct for artifacts, and normalize intensity values. This step is crucial for model performance.

- Algorithm Development and Training: The dataset is partitioned into training, validation, and test sets. Various AI algorithms, including Machine Learning (ML) and Deep Learning (DL) models such as Convolutional Neural Networks (CNNs), are trained on the training set to learn the mapping between image features and diagnosis.

- Validation and Testing: Model performance is evaluated on the held-out test set to obtain unbiased estimates of accuracy, sensitivity, and specificity. The highest standard of validation involves external testing on a completely independent dataset from a different institution.
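
The partitioning step described above is a common source of optimistic bias when scans from the same patient end up in both training and test sets. The sketch below assumes a patient-level split with a 70/15/15 ratio; the ratio, the seed, and the identifiers are illustrative choices, not values from the cited studies.

```python
import random


def split_by_patient(
    patient_ids: list[str],
    train_frac: float = 0.70,
    val_frac: float = 0.15,
    seed: int = 42,
) -> dict[str, set[str]]:
    """Assign each unique patient to exactly one of train, val, or test."""
    if not (0 < train_frac < 1 and 0 <= val_frac < 1 and train_frac + val_frac < 1):
        raise ValueError("fractions must be positive and leave room for a test set")
    unique = sorted(set(patient_ids))      # deduplicate so each patient appears once
    random.Random(seed).shuffle(unique)    # seeded for reproducibility
    n_train = round(len(unique) * train_frac)
    n_val = round(len(unique) * val_frac)
    return {
        "train": set(unique[:n_train]),
        "val": set(unique[n_train:n_train + n_val]),
        "test": set(unique[n_train + n_val:]),
    }


# Hypothetical cohort: 100 patients with two scans each.
scan_owner = [f"P{i:03d}" for i in range(100) for _ in range(2)]
splits = split_by_patient(scan_owner)
print({name: len(ids) for name, ids in splits.items()})
```

Each scan is then routed to the partition of its owning patient, so no individual contributes images to more than one set. External testing on data from a different institution, as noted above, remains a separate and stronger check.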

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Tools for Imaging and AI Studies

| Item/Solution | Function/Description | Example Application in Research |

|---|---|---|

| PET Imaging Tracers | Radiolabeled molecules that target specific physiological processes. | 18F-FDG (glucose metabolism) and presynaptic dopamine tracers are used as quantitative biomarkers for differentiating neurodegenerative disorders [27]. |

| AI/ML Algorithms | Computational models that learn patterns from data. | Deep Learning (DL) and Machine Learning (ML) algorithms are trained on imaging datasets to automate diagnosis and classification tasks [27] [28]. |

| Meta-Analysis Software | Statistical software packages for synthesizing study data. | MetaDisc 2.0 and R packages (e.g., metafor) are used to calculate pooled diagnostic performance metrics and generate forest plots [26]. |

| Cloud-Native Platforms & FHIR Standards | Enables seamless data exchange and integration of AI tools. | Foundational infrastructure for building integrated, scalable research ecosystems that connect PACS, EHRs, and analytics tools [29]. |

| Convolutional Neural Networks (CNNs) | A class of deep neural networks most commonly applied to analyzing visual imagery. | Used for noise reduction and artifact removal in low-dose CT and MRI scans, enhancing image quality without increasing radiation dose [28]. |

The comparative analysis presented in this guide underscores a central theme in modern imaging research: the convergence of advanced imaging hardware and intelligent software is creating new paradigms for early detection. The data reveals that no single modality is universally superior; rather, each offers a unique profile of sensitivity and specificity that can be selected and enhanced based on the clinical or research context. The integration of AI is not a distant future but a present reality, demonstrably improving diagnostic accuracy from breast cancer screening to neurological differential diagnosis. For researchers and drug developers, this evolving landscape offers powerful tools but also demands a sophisticated understanding of comparative modality performance, rigorous experimental methodologies, and the computational toolkit required to leverage these technologies fully. The future of early detection lies in personalized, AI-optimized imaging pathways that are both precise and efficient.

Methodological Implementations and Therapeutic Applications in Drug Development Pipelines

Target identification and validation represent critical, early-phase hurdles in the drug discovery pipeline. Within this framework, molecular imaging has emerged as an indispensable technology, enabling researchers to non-invasively visualize and quantify biological processes in living systems. Two particularly powerful approaches are probe development, which involves designing agents to bind specific molecular targets, and reporter gene imaging, which genetically engineers cells to report on specific physiological activities. This guide provides a comparative analysis of these imaging modalities, evaluating their performance against other alternatives by synthesizing current experimental data and protocols. The objective is to furnish researchers, scientists, and drug development professionals with a clear, data-driven understanding of the capabilities, requirements, and optimal applications of each technology within the context of early detection research. By integrating recent advancements, including artificial intelligence (AI) and novel functional representation methods, this guide aims to illuminate the path toward more precise and efficient target validation strategies.

Comparative Analysis of Imaging Modalities

The selection of an appropriate imaging modality is fundamental to the success of a target validation study. Each technology offers a unique balance of strengths and limitations in terms of sensitivity, resolution, cost, and translational potential. The following section provides an objective, data-oriented comparison of the most prominent modalities used in probe development and reporter gene imaging.

Table 1: Performance Comparison of Key Imaging Modalities in Target Validation

| Imaging Modality | Spatial Resolution | Depth Penetration | Key Strengths | Primary Limitations | Translatability to Clinic |

|---|---|---|---|---|---|

| Radionuclide Reporter Imaging | 1-2 mm (PET) | Unlimited | High sensitivity; quantitative; clinically established | Requires reporter gene delivery; radiation exposure; expensive [30] | High [30] |

| Fluorescence Imaging | 2-3 mm | < 1 cm | High-throughput; low cost; multiplexing capability | Limited tissue penetration; high autofluorescence [30] | Low (primarily preclinical) |

| Bioluminescence Imaging | 3-5 mm | 1-2 cm | Very high sensitivity; low background | Requires substrate injection; low spatial resolution [30] | Low (primarily preclinical) |

| Magnetic Resonance Imaging | 25-100 μm | Unlimited | Excellent anatomical detail; high soft-tissue contrast | Low sensitivity for molecular targets; high cost [31] | High |

| Photoacoustic Imaging | < 100 μm | 1-3 cm | High optical contrast with ultrasound depth | Limited by skull interference for brain imaging [31] | Emerging |

Developments reported in mid-2025 highlight the growing integration of artificial intelligence (AI) across these modalities. For instance, AI-native imaging viewers are now delivering sub-second load times and workflow automation for PET/CT, while new AI tools like ProCUSNet have demonstrated a 44% improvement in lesion detection on ultrasound [32]. Furthermore, advanced modalities like photon-counting CT are gaining traction for their ability to deliver higher resolution at lower radiation doses, enhancing both safety and data quality in longitudinal studies [32].

Probe Development: Mechanisms and Experimental Protocols

Probe development focuses on creating molecular agents that can bind with high specificity to a target of interest, such as a cell surface receptor, enzyme, or pathological aggregate. The efficacy of a probe is contingent on its binding affinity, specificity, and pharmacokinetic profile.

Key Probe Types and Their Applications

- Amyloid-Targeting PET Tracers: In Alzheimer's disease (AD) research, PET tracers like Pittsburgh compound B (PiB) and florbetapir are used to detect and quantify amyloid-β (Aβ) plaques in the brain. This remains the only non-invasive clinical method for quantifying cortical Aβ load, crucial for patient stratification and monitoring therapeutic efficacy [31].

- Novel PET Tracers: Emerging tracers continue to improve diagnostic precision. A recent example is 68Ga-Trivehexin, a novel PET tracer that has shown promise in more accurately detecting breast cancer lesions and fibrotic lung tissue compared to traditional agents [32].

- Nanoparticle-Based Probes: The integration of nanotechnology with imaging platforms is a significant trend. Nanomaterials can enhance diagnostic specificity, sensitivity, and stability. They are often engineered as multifunctional agents capable of both diagnosis (as a contrast agent) and therapy (as a drug carrier), a paradigm known as theranostics [31].

Experimental Protocol for Probe Validation

A standard protocol for validating a novel imaging probe involves a series of in vitro, ex vivo, and in vivo experiments.

- In Vitro Binding Assays: Determine the affinity (Kd) and specificity of the probe for its target using methods like surface plasmon resonance or radioligand binding assays on purified proteins or cell membranes.

- Cell Uptake and Blocking Studies: Incubate the probe with cells expressing the target (and target-negative controls). A specific blocking study, where cells are pre-treated with an unlabeled competitor molecule, should show significant reduction in probe uptake, confirming specificity.

- In Vivo Biodistribution and Kinetics: Administer the radiolabeled or otherwise labeled probe to animal models (e.g., wild-type and disease models). At predetermined time points, euthanize the animals, harvest organs, and measure the radioactivity or probe concentration in each tissue to characterize pharmacokinetics and off-target accumulation.

- In Vivo Imaging and Histological Correlation: Perform longitudinal imaging (e.g., PET, fluorescence) in live animals. Post-imaging, brains or tissues are collected for histological analysis (e.g., immunohistochemistry for the target). A strong correlation between the imaging signal and the histologically confirmed target density validates the probe's accuracy [31].
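
Two quantities from the validation steps above lend themselves to a short worked example: the fraction of target occupied at a given probe concentration, and the percentage reduction in uptake seen in a blocking study. The sketch assumes a simple one-site equilibrium binding model, which is a standard textbook simplification rather than something specified in the source; all numbers are hypothetical.

```python
def fraction_bound(ligand_nm: float, kd_nm: float) -> float:
    """Fractional target occupancy under a one-site equilibrium model: L / (Kd + L)."""
    if ligand_nm < 0 or kd_nm <= 0:
        raise ValueError("ligand must be non-negative and Kd positive")
    return ligand_nm / (kd_nm + ligand_nm)


def percent_blocking(control_uptake: float, blocked_uptake: float) -> float:
    """Percentage reduction in probe uptake after pre-treatment with a competitor."""
    if control_uptake <= 0:
        raise ValueError("control uptake must be positive")
    return 100.0 * (control_uptake - blocked_uptake) / control_uptake


# Hypothetical probe with Kd = 5 nM, tested at several concentrations.
for concentration in (0.5, 5.0, 50.0):
    print(f"{concentration} nM gives {fraction_bound(concentration, 5.0):.2f} occupancy")

# Hypothetical uptake values (percent of added dose) with and without competitor.
print(f"Blocking: {percent_blocking(10.0, 2.0):.0f}% reduction")
```

In this model the Kd is the concentration at which half of the target sites are occupied, and a large blocking percentage is the pattern expected when uptake is target-specific.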

Reporter Gene Imaging: Principles, Protocols, and Data Analysis

Reporter gene imaging involves the genetic modification of cells to express a "reporter gene." The protein product of this gene can then interact with an externally administered probe to generate a detectable signal, serving as a surrogate for the activity of a specific promoter or biological pathway of interest [30].

Classes of Reporter Systems

Reporter systems are broadly categorized into two classes:

- Constitutive Reporters: These are always "on" and are primarily used for tracking the location and survival of transfected or transplanted cells in vivo [30].

- Inducible Reporters: These function as molecular-genetic sensors. Their expression is regulated by specific endogenous transcription factors or signaling pathways, allowing researchers to monitor biological processes like specific signaling pathway activation [30].

Common Reporter Gene/Probe Pairs

The choice of reporter gene is dictated by the imaging modality and the biological question.

- Radionuclide-Based Pairs: The herpes simplex virus type 1 thymidine kinase (HSV1-tk) reporter gene is a well-established example. The enzyme it encodes phosphorylates radiolabeled probes like 18F-FEAU, trapping them inside transduced cells and allowing detection with PET [30].

- Bioluminescent Pairs: The firefly luciferase (FLuc) enzyme reacts with its substrate, D-luciferin, in an ATP-dependent reaction to emit visible light, detectable with sensitive CCD cameras [30].

- Fluorescent Pairs: The green fluorescent protein (GFP) and its variants fluoresce when exposed to light of a specific wavelength. While extremely useful for in vitro and intravital microscopy, their clinical translation is limited by poor tissue penetration and autofluorescence [30].

Experimental Protocol for Reporter Gene Imaging

A standard workflow for a reporter gene study using a radionuclide-based system is detailed below.

- Reporter Gene Delivery (Pretargeting): The reporter gene cassette must be delivered to the target cells in vivo. This can be achieved via:

  - Mechanical methods (e.g., electroporation).

  - Chemical methods (e.g., lipid or nanoparticle carriers).

  - Biological methods (e.g., viral vectors like adenovirus or lentivirus) [30].

- Reporter Probe Administration: After allowing sufficient time for gene expression (e.g., 24-48 hours), the complementary imaging probe (e.g., 18F-FEAU for HSV1-tk) is administered systemically.

- Image Acquisition and Reconstruction: Imaging (e.g., PET/CT) is performed at optimal time points post-injection to capture probe accumulation. CT provides anatomical context for the functional PET signal.

- Data Analysis and Quantification: Regions of interest (ROIs) are drawn over the target area and reference tissues (e.g., muscle) to quantify the signal. Standardized Uptake Values (SUVs) or target-to-background ratios are calculated to objectively measure reporter gene expression.
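
The quantification step above can be sketched in a few lines. The example assumes the body-weight-normalized form of the SUV with a tissue density of 1 g/mL, decay correction of the injected activity to scan time, and an 18F half-life of about 109.77 minutes; the function names and the mouse-scale numbers are hypothetical.

```python
F18_HALF_LIFE_MIN = 109.77  # physical half-life of fluorine-18, in minutes


def decay_corrected_activity(
    activity_kbq: float, elapsed_min: float, half_life_min: float = F18_HALF_LIFE_MIN
) -> float:
    """Activity remaining after elapsed_min of physical decay."""
    return activity_kbq * 0.5 ** (elapsed_min / half_life_min)


def suv(tissue_kbq_per_ml: float, injected_kbq: float, body_weight_g: float) -> float:
    """Body-weight SUV: tissue concentration / (injected activity / body weight)."""
    if injected_kbq <= 0 or body_weight_g <= 0:
        raise ValueError("injected activity and body weight must be positive")
    return tissue_kbq_per_ml / (injected_kbq / body_weight_g)


def target_to_background(target_mean: float, reference_mean: float) -> float:
    """Ratio of mean ROI signal in the target to that in a reference tissue."""
    if reference_mean <= 0:
        raise ValueError("reference signal must be positive")
    return target_mean / reference_mean


# Hypothetical mouse study: 10 MBq injected, 25 g animal, scan 60 min post-injection.
injected_at_scan = decay_corrected_activity(10_000.0, elapsed_min=60.0)
tumor_suv = suv(tissue_kbq_per_ml=300.0, injected_kbq=injected_at_scan, body_weight_g=25.0)
muscle_suv = suv(tissue_kbq_per_ml=60.0, injected_kbq=injected_at_scan, body_weight_g=25.0)
print(f"Tumor SUV {tumor_suv:.2f}, muscle SUV {muscle_suv:.2f}, "
      f"ratio {target_to_background(tumor_suv, muscle_suv):.1f}")
```

The target-to-background ratio is independent of the injected activity and body weight, which is one reason it is often reported alongside SUV in preclinical reporter studies.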

Diagram 1: Reporter gene imaging workflow.

The Scientist's Toolkit: Key Reagents and Materials

Successful execution of imaging experiments requires a suite of specialized reagents and tools. The following table details essential components for probe development and reporter gene studies.

Table 2: Essential Research Reagent Solutions for Imaging Studies

| Reagent/Material | Function/Description | Example Application |

|---|---|---|

| Reporter Gene Constructs | Plasmids or viral vectors containing genes like HSV1-tk, FLuc, or GFP under constitutive or inducible promoters. | Engineered into cells to serve as a detectable marker for a biological process of interest [30]. |

| Imaging Probes/Substrates | Radiolabeled compounds (e.g., 18F-FEAU), luciferin, or fluorescent dyes that interact with the reporter protein. | Administered to animals to generate the imaging signal corresponding to reporter gene expression [30]. |

| AI-Assisted Analysis Software | Tools like ProCUSNet or AI-native PET viewers that automate and enhance image interpretation. | Improving lesion detection rates (e.g., 44% improvement with ProCUSNet) and accelerating workflow [32]. |

| Advanced Data Analysis Platforms | Software such as FlowJo, which employs dimensionality reduction (t-SNE, UMAP) and clustering algorithms. | Used for analyzing complex, high-parameter data from cytometry or, by extension, imaging-based single-cell analyses [33]. |

| Functional Representation Tools (FRoGS) | A deep learning model that represents gene signatures by their biological functions rather than just identities. | Enhances compound-target prediction by extracting weak pathway signals from sparse gene signatures [34]. |

Emerging Trends and Future Outlook

The field of imaging for target validation is rapidly evolving, driven by several key technological convergences. First, the rise of AI and machine learning is no longer limited to image analysis but is now being applied to the functional interpretation of the underlying biology. The FRoGS (Functional Representation of Gene Signatures) approach exemplifies this, using a deep learning model to project gene signatures onto a functional space, dramatically improving the sensitivity of identifying shared pathways and compound-target pairs from transcriptomic data [34]. This addresses a fundamental limitation of traditional gene-identity-based comparison methods.

Second, multimodality imaging is becoming standard practice, combining the high sensitivity of optical or nuclear imaging with the detailed anatomy provided by MRI or CT. This is particularly evident in complex disease research like Alzheimer's, where the integration of PET (for Aβ) and quantitative MRI (for atrophy) provides a more comprehensive pathological picture [31] [32]. Finally, the line between diagnostics and therapeutics is blurring with the advancement of "imaging-guided therapy" or theranostics. This approach uses the targeting mechanism of an imaging probe to deliver therapeutic agents, enabling highly precise treatment and simultaneous monitoring of efficacy [31]. These trends, combined with continuous improvements in hardware like photon-counting CT, promise a future where imaging provides even deeper, more functional, and actionable insights into the earliest stages of disease.

Diagram 2: Functional representation of gene signatures.

High-throughput imaging (HTI), often termed high-content analysis (HCA), has become an indispensable tool in modern drug discovery pipelines. This approach combines automated microscopy with multi-parametric image analysis to visualize and quantitatively capture cellular features at a massive scale [35] [36]. Unlike conventional plate-reader-based methods that provide a single data point per well, HTI preserves cellular integrity and spatial information while generating rich, single-cell data on complex morphological and functional phenotypes [35]. This capability is particularly valuable for early detection research in oncology, where subtle cellular changes precede macroscopic disease manifestations. The HTI market, valued at approximately $32 billion in 2025 and projected to reach $82.9 billion by 2035, reflects the widespread adoption of these technologies across the pharmaceutical and biotechnology sectors [37]. This growth is fueled by advancements in automation, analytical technologies, and the rising need for more physiologically relevant screening models that can better predict compound efficacy and toxicity in early development phases.

Comparative Analysis of High-Throughput Screening Modalities

Technology Performance Benchmarking

High-throughput screening encompasses several technological approaches, each with distinct advantages for specific applications in early detection research. The table below provides a structured comparison of the primary screening modalities used in compound screening and optimization.

Table 1: Performance Comparison of High-Throughput Screening Modalities

| Screening Modality | Throughput Capacity | Key Strengths | Detection Limitations | Optimal Application in Early Detection |
|---|---|---|---|---|
| Cell-Based Assays [37] | Moderate-High (39.4% market share) | Provides physiologically relevant data; predictive accuracy in live-cell systems [37] | Limited to observable cellular phenotypes; potential for false positives [37] | Functional assessment of compound effects in biological systems; target identification [37] |
| Ultra-High-Throughput Screening [37] | Very High (12% projected CAGR) | Unprecedented ability to screen millions of compounds quickly; explores chemical space thoroughly [37] | Requires sophisticated automation and data management infrastructure [37] | Primary screening of vast compound libraries; identification of novel therapeutic options [37] |
| High-Content Analysis/Imaging [35] [36] | Moderate-High (Multiplexed) | Multiparametric data at single-cell level; spatial and kinetic information; unbiased phenotypic discovery [35] | Complex data analysis; computational intensity; specialized expertise required [35] | Discovery of cellular disease mechanisms; morphological profiling; complex phenotype analysis [35] |
| Label-Free Technologies [38] | Moderate | No label interference with biology; measures native cellular properties | Limited specificity for molecular targets | Functional cellular responses; receptor signaling pathways |
| High-Throughput Mass Spectrometry [38] | Emerging (High potential) | Label-free detection of enzymatic reactions and cellular metabolites; rich, high-resolution outputs [38] | Higher cost per sample; specialized instrumentation | Detection of previously difficult-to-detect reactions; metabolic profiling |

Quantitative Data on Cellular Feature Extraction

The power of high-throughput imaging lies in its ability to quantitatively measure diverse cellular parameters. The following data, compiled from experimental studies, illustrates the scope of features that can be extracted through automated image analysis.

Table 2: Quantitative Cellular Features Extracted via High-Throughput Imaging

| Cellular Feature Category | Specific Measurable Parameters | Typical Assay Readouts | Data Points per Experiment (Example) |
|---|---|---|---|
| Morphological Features [35] | Cell area, perimeter, shape factors, neurite length, branching [35] | Cell rounding, membrane blebbing, neurite outgrowth [35] | Up to 1,000 features per cell [35] |
| Intensity-Based Features [36] | Marker expression levels, phosphorylation status, compound accumulation [36] | Protein expression, post-translational modifications, drug uptake [36] | Multiple fluorescence channels |
| Textural Features [35] | Granularity, spatial pattern organization, chromatin condensation [35] | Cytoplasmic granularity, nuclear morphology, cytoskeletal organization [35] | Complex pattern analysis |
| Spatial/Topological Features [35] | Organelle positioning, protein co-localization, nuclear/cytoplasmic ratio [35] | Mitochondrial network integrity, receptor internalization, transcription factor translocation [35] | Relative distance measurements |
| Temporal/Dynamic Features [38] | Calcium oscillations, ion flux kinetics, membrane potential changes [38] | GPCR signaling, channel gating, metabolic activity [38] | Time-series data from live-cell imaging |
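
To make two of the tabulated features concrete, the sketch below computes a shape factor (circularity, 4πA/P²) and a nuclear/cytoplasmic intensity ratio from per-cell measurements that a segmentation step is assumed to have already produced. The function names, the dictionary keys, and the example values are hypothetical and are not taken from the cited studies; real pipelines typically derive many more features with dedicated image analysis software.

```python
import math


def form_factor(area, perimeter):
    """Circularity 4*pi*A / P^2: close to 1.0 for a circle, lower for irregular outlines."""
    if perimeter <= 0:
        raise ValueError("perimeter must be positive")
    return 4.0 * math.pi * area / perimeter ** 2


def nuc_cyto_ratio(nuclear_mean, cytoplasmic_mean):
    """Ratio of mean nuclear to mean cytoplasmic intensity (e.g. for translocation assays)."""
    if cytoplasmic_mean <= 0:
        raise ValueError("cytoplasmic intensity must be positive")
    return nuclear_mean / cytoplasmic_mean


# Hypothetical per-cell measurements (pixels and arbitrary intensity units).
cells = [
    {"area": 314.16, "perimeter": 62.83, "nuc": 1200.0, "cyto": 400.0},  # round cell
    {"area": 300.0, "perimeter": 90.0, "nuc": 500.0, "cyto": 500.0},     # irregular cell
]

features = [
    {
        "form_factor": form_factor(c["area"], c["perimeter"]),
        "nc_ratio": nuc_cyto_ratio(c["nuc"], c["cyto"]),
    }
    for c in cells
]

for f in features:
    print(f"form factor = {f['form_factor']:.3f}, N/C ratio = {f['nc_ratio']:.2f}")
```

A drop in form factor can flag loss of cell rounding or membrane irregularity, while a rising N/C ratio is one common readout for transcription factor translocation; both interpretations depend on the assay and should be validated against controls.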

Experimental Protocols for High-Throughput Imaging

Standardized Workflow for Phenotypic Screening

A robust HTI pipeline integrates multiple steps from assay preparation to data analysis. The following protocol outlines a generalized workflow suitable for most phenotypic screening applications.

Table 3: Detailed HTI Experimental Protocol for Compound Screening

| Protocol Step | Technical Specifications | Quality Control Measures | Critical Parameters |
|---|---|---|---|
| Assay Development & Plate Preparation [35] | 384-well or 1536-well microplates; cell seeding density optimization; controls placement [35] | Uniform cell confluence verification; edge effect minimization; positive/negative controls [35] | Cell viability >95%; Z' factor >0.5 [35] |
| Compound Treatment & Perturbation [35] | Automated liquid handling; concentration-response curves (e.g., 10-point, 1:3 serial dilution); DMSO normalization [35] | Pin tool transfer verification; compound solubility assessment; plate mapping accuracy | Final DMSO concentration ≤0.1%; vehicle control inclusion |
| Cell Staining & Fixation [36] | Multiplexed fluorescence labeling; fixation (e.g., 4% PFA); permeabilization (0.1% Triton X-100); antibody validation [36] | Signal-to-background ratio optimization; antibody titration; dye stability assessment [36] | Minimal spectral overlap; appropriate filter sets |
| Image Acquisition [35] | Automated microscopy (20x objective typical); 4-9 sites per well; multiple fluorescence channels; autofocus algorithms [35] | Focus quality assessment; illumination uniformity; camera linearity; fluorophore bleaching monitoring [35] | >500 cells per condition; minimal well-to-well variation |
| Image Analysis & Feature Extraction [35] | Segmentation algorithms (nuclear, cytoplasmic, membrane); intensity thresholding; morphological operations [35] | Segmentation accuracy verification; outlier detection; batch effect correction [35] | >90% segmentation accuracy; minimal false positives/negatives |
| Data Analysis & Hit Selection [38] | Multi-parametric statistical analysis; Z-score normalization; machine learning classification [38] | Assay robustness (Z'-factor); replicate correlation; hit confirmation rate assessment [38] | Statistical significance (p<0.01); effect size thresholding |
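
Three of the numerical steps in the protocol above lend themselves to a short sketch: laying out a 10-point, 1:3 dilution series, checking assay robustness with the Z'-factor (1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|), and Z-score normalization against negative controls for hit calling. The code is a minimal illustration under stated assumptions: the control and sample values are invented, sample standard deviations are used, and the |z| ≥ 3 hit threshold is an illustrative choice rather than a value from the source.

```python
import statistics


def serial_dilution(top_conc, n_points=10, factor=3.0):
    """Concentrations for an n-point series, each `factor`-fold below the previous one."""
    return [top_conc / factor ** i for i in range(n_points)]


def z_prime(pos_controls, neg_controls):
    """Z'-factor: 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    window = abs(statistics.mean(pos_controls) - statistics.mean(neg_controls))
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = statistics.stdev(pos_controls) + statistics.stdev(neg_controls)
    return 1.0 - 3.0 * spread / window


def z_scores(values, reference):
    """Z-score each value against the mean and SD of a reference population."""
    mu = statistics.mean(reference)
    sd = statistics.stdev(reference)
    if sd == 0:
        raise ValueError("reference population has zero variance")
    return [(v - mu) / sd for v in values]


# Hypothetical per-well readouts (arbitrary units), not data from the source.
pos = [100.0, 102.0, 98.0, 101.0, 99.0]
neg = [10.0, 12.0, 8.0, 11.0, 9.0]
samples = [10.5, 55.0]

doses = serial_dilution(10.0)        # e.g. 10 µM top dose, 1:3 steps
quality = z_prime(pos, neg)
plate_ok = quality > 0.5             # acceptance criterion from the protocol table
hits = [abs(z) >= 3.0 for z in z_scores(samples, neg)]  # assumed threshold

print(f"Z' = {quality:.3f}, plate accepted: {plate_ok}, hits: {hits}")
```

In practice, plates failing the Z' criterion are usually repeated rather than analyzed, and median-based (robust) statistics are often preferred when outlier wells are expected.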

Workflow Visualization

The following diagram illustrates the complete high-throughput imaging workflow, from experimental setup to data analysis and hit identification.

Research Reagent Solutions for High-Throughput Imaging

The quality and selection of reagents critically determine the success of high-throughput imaging experiments. The following table details essential materials and their specific functions in HTI workflows.

Table 4: Essential Research Reagents for High-Throughput Imaging Applications

| Reagent Category | Specific Examples | Primary Function in HTI | Application Notes |
|---|---|---|---|
| Viability & Cytotoxicity Markers [36] | HCS LIVE/DEAD Green Kit, Propidium Iodide, Annexin V [36] | Distinguish live/dead cells; quantify compound toxicity [36] | Multiplex with phenotypic markers; early apoptosis detection |
| Nuclear & Cytoplasmic Stains [36] | Hoechst 33342, DAPI, HCS NuclearMask stains, HCS CellMask [36] | Cell segmentation; nuclear morphology analysis; plating uniformity QC [36] | Hoechst for live-cell; DAPI for fixed cells; concentration titration critical |
| Organelle-Specific Probes [36] | MitoTracker, ER-Tracker, LysoTracker, HCS Mitochondrial Health Kit [36] | Assess organelle morphology and function; mitotoxicity screening [36] | Validate specificity per cell type; consider compartment pH differences |
| Antibodies & Immunofluorescence [36] | Phospho-specific antibodies, Cell Signaling antibodies, Alexa Fluor conjugates [36] | Detect post-translational modifications; protein localization and expression [36] | Extensive validation required; species cross-reactivity checking |
| Functional Assay Reagents [36] | CellROX oxidative stress, Fluo-4 calcium, FluxOR potassium assay [36] | Measure reactive oxygen species; ion flux; signaling pathway activation [36] | Kinetic measurements; dye-loading optimization; signal stability |
| Cell Painting Kits [35] | Multiplexed dye sets (nuclei, Golgi, actin, mitochondria, ER) [35] | Generate morphological fingerprints for phenotypic profiling [35] | Standardized protocol enables cross-experiment comparison |
| Secondary Detection Reagents [36] | Cross-adsorbed fluorescent secondary antibodies, Fab fragments [36] | Signal amplification with minimal background; multiplexing capability [36] | Host species matching; minimal cross-talk between channels |
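
The morphological fingerprints mentioned for Cell Painting are, in essence, feature vectors that can be compared across compounds. As a rough illustration of that comparison step, the sketch below scores pairs of profiles with cosine similarity. The compound names and feature values are hypothetical, the vectors are assumed to be already normalized per feature, and cosine similarity is only one of several metrics used for profile comparison.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("profiles must have the same number of features")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cannot compare an all-zero profile")
    return dot / norm


# Hypothetical normalized profiles (four features each, for brevity).
profiles = {
    "compound_A": [1.2, -0.8, 0.1, 2.0],
    "compound_B": [1.0, -1.0, 0.0, 1.8],
    "compound_C": [-1.1, 0.9, 0.2, -2.1],
}

sim_ab = cosine_similarity(profiles["compound_A"], profiles["compound_B"])
sim_ac = cosine_similarity(profiles["compound_A"], profiles["compound_C"])

print(f"A vs B: {sim_ab:.3f}")  # similar phenotype
print(f"A vs C: {sim_ac:.3f}")  # opposing phenotype
```

Compounds with highly similar profiles are often hypothesized to share a mechanism of action, though that inference needs orthogonal confirmation.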

Advanced Applications in Early Detection Research

Pathway-Centric Screening Approaches

High-throughput imaging enables researchers to move beyond single-target screening to pathway-centric approaches that better reflect disease complexity. Pharmacotranscriptomics-based drug screening (PTDS) represents a particularly advanced application, where gene expression changes following drug perturbation are measured at scale [39]. This approach generates high-dimensional data that, when combined with artificial intelligence, can elucidate compound effects on signaling pathways and complex disease networks [39]. For early cancer detection, HTI modalities can visualize cellular and molecular alterations that precede anatomical changes, offering a window for pre-symptomatic detection when combined with radiomics and AI algorithms [40]. These pathway-centric approaches are especially valuable for traditional Chinese medicine research, where HTI helps deconvolute the complex efficacy of multi-component natural products [39].

Signaling Pathway Analysis

The following diagram illustrates how high-throughput imaging enables the dissection of complex signaling pathways through multiparametric phenotypic measurements.

High-throughput imaging represents a transformative approach for compound screening and optimization, particularly in the context of early detection research. The technology's ability to provide multiparametric data at single-cell resolution enables researchers to move beyond simplistic efficacy measures to comprehensive mechanistic profiling. While cell-based assays currently dominate the market share for high-throughput screening, ultra-high-throughput screening and high-content imaging are experiencing the most rapid growth, reflecting the field's trajectory toward more information-rich screening paradigms [37]. The integration of artificial intelligence with HTI data analysis, coupled with advancements in 3D cell culture models and CRISPR-based perturbation tools, promises to further enhance the predictive power of these approaches [35] [39]. For researchers focused on early disease detection, high-throughput imaging offers an unparalleled window into the subtle cellular changes that precede overt pathology, enabling the identification of therapeutic interventions at increasingly early stages of disease progression.

Small animal imaging is an indispensable tool in preclinical research, enabling non-invasive visualization of disease processes and treatment effects over time. These technologies provide insights into anatomical, physiological, and molecular changes, thereby strengthening the translational power of biomedical research [41]. The choice of imaging modality involves careful consideration of resolution, sensitivity, cost, and application suitability. This guide provides an objective comparison of three core modalities—Micro-CT, Micro-PET, and Optical Imaging—focusing on their performance characteristics, experimental protocols, and applications in early detection research to inform researchers, scientists, and drug development professionals.

Key Characteristics of Small Animal Imaging Modalities

The table below summarizes the fundamental technical attributes and primary applications of Micro-CT, Micro-PET, and Optical Imaging modalities.

Table 1: Fundamental characteristics of small animal imaging modalities.

| Feature | Micro-CT | Micro-PET | Optical Imaging |
|---|---|---|---|
| Primary Measurement | X-ray attenuation (anatomy) | Radiotracer concentration (metabolism/function) | Photon emission (bioluminescence/fluorescence) |
| Spatial Resolution | 11 µm to <100 µm [42] [43] | ~1.5 mm (preclinical); 3-4 mm (clinical TB-PET) [44] | Millimeters (in vivo) [45] |
| Key Strength | High-resolution bone/structural anatomy | Quantitative metabolic/functional data | High sensitivity, low cost, throughput |
| Primary Applications | Bone architecture, cancer monitoring, organ volumes [46] [42] | Cancer, neurology, cardiology, therapy response [47] [48] | Gene expression, cell tracking, infection studies [45] |
| Ionizing Radiation | Yes [49] | Yes | No |

Quantitative Performance Comparison

Performance metrics, often assessed using standardized phantoms, provide critical data for modality selection. The following table compares quantitative performance data from recent evaluations.

Table 2: Quantitative performance metrics for Micro-CT and Micro-PET systems.

| Modality & System | Spatial Resolution | Sensitivity | Key Metric & Result | Source |
|---|---|---|---|---|
| Micro-CT (Photon-Counting) | Up to 11 µm [43] | Not Applicable | Material decomposition for calcium content analysis [43] | [43] |
| Preclinical PET (easyPET.3D) | ~0.97 mm (tangential) [47] | 0.23% (absolute) [47] | Scatter Fraction: 16% [47] | [47] |
| Clinical TB-PET (Biograph Quadra) | 3-4 mm [44] | Very High (long axial FOV) [44] | Recovery Coefficient (5 mm rod): 1.17 [44] | [44] |
| Preclinical PET (Inveon DPET) | ~1.5 mm [44] | High [44] | Recovery Coefficient (5 mm rod): 1.09 [44] | [44] |
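
The recovery coefficient and scatter fraction reported above are both simple ratios, and a small sketch may help in reading the table. It assumes the common simplified definitions: recovery coefficient as measured over true activity concentration, and scatter fraction as scattered counts over the sum of scattered and true counts. The full phantom procedures in the standard are more involved, and the input numbers below are hypothetical values chosen only to reproduce ratios of the same size as those tabulated.

```python
def recovery_coefficient(measured_conc, true_conc):
    """Measured / true activity concentration; 1.0 means perfect recovery."""
    if true_conc <= 0:
        raise ValueError("true concentration must be positive")
    return measured_conc / true_conc


def scatter_fraction(scatter_counts, true_counts):
    """Scattered coincidences as a fraction of scattered plus true coincidences."""
    total = scatter_counts + true_counts
    if total <= 0:
        raise ValueError("total counts must be positive")
    return scatter_counts / total


# Hypothetical inputs (kBq/mL and counts), not measurements from the cited studies.
rc_rod = recovery_coefficient(measured_conc=117.0, true_conc=100.0)
sf = scatter_fraction(scatter_counts=16_000, true_counts=84_000)

print(f"Recovery coefficient: {rc_rod:.2f}")  # values above 1 indicate overestimation
print(f"Scatter fraction: {sf:.0%}")
```

Recovery coefficients below 1 usually reflect partial-volume losses in small structures, while values above 1, as in the table, indicate that the reconstruction overestimates activity in the rod.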

Detailed Experimental Protocols

NEMA NU-4 Protocol for PET Performance Evaluation

The National Electrical Manufacturers Association (NEMA) NU 4-2008 standard provides specific procedures for evaluating the performance of small animal PET scanners [47]. Key measurements include: