CONCH and Beyond: How Vision-Language Models Are Revolutionizing Computational Pathology

This article explores the transformative impact of vision-language foundation models (VLMs), with a focus on CONCH, in computational pathology.

Abstract

This article explores the transformative impact of vision-language foundation models (VLMs), with a focus on CONCH, in computational pathology. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive overview of how these models leverage massive image-text datasets to overcome data scarcity and enable versatile AI applications. We delve into the foundational architecture of models like CONCH, detail their methodological applications in tasks from classification to slide retrieval, and address critical optimization challenges such as prompt engineering. The article further validates their performance through comparative benchmarking against other foundation models and concludes with an analysis of future directions for integrating these powerful tools into biomedical research and clinical workflows.

The Foundation Model Revolution: What Are VLMs and Why Do They Matter for Pathology?

The development of robust artificial intelligence (AI) models for medical imaging, particularly in computational pathology, faces a fundamental constraint: the scarcity of high-quality, expert-annotated data. Deep learning methods are notoriously data-hungry, requiring large amounts of pixel-by-pixel annotated images to learn effectively [1]. Creating such datasets demands specialized expert labor, substantial time, and significant cost—resources that are particularly scarce for many medical conditions and clinical settings [1]. This data scarcity problem creates a critical bottleneck in developing accurate, generalizable AI tools for disease diagnosis, prognosis, and biomarker prediction from medical images.

Within this challenging landscape, computational pathology presents unique complexities. Whole Slide Images (WSIs) are gigapixel-sized digital pathology scans that contain immense amounts of visual information at cellular and sub-cellular levels. Traditional supervised learning approaches require pathologists to meticulously label these massive images, a process that is both time-consuming and economically prohibitive at scale. This limitation is especially acute for rare diseases, where collecting large patient cohorts is particularly challenging [2], and for complex tasks such as cancer subtyping, biomarker prediction, and outcome prognosis [3].

Vision-Language Models as a Solution: The CONCH Framework

Core Architecture and Training Methodology

Vision-language models (VLMs) represent a paradigm shift in addressing data scarcity by learning from both images and their corresponding textual descriptions. The CONCH (CONtrastive learning from Captions for Histopathology) framework exemplifies this approach through task-agnostic pretraining on diverse sources of histopathology images and biomedical text [4] [5]. This model is specifically designed to overcome labeled data limitations by leveraging over 1.17 million image-caption pairs, creating a foundation model that can be transferred to a wide range of downstream tasks with minimal or no further supervised fine-tuning [5].

The CONCH architecture employs contrastive learning to align visual representations from histopathology images with textual embeddings from corresponding captions in a shared latent space. This training methodology enables the model to learn rich, transferable representations without requiring explicit manual annotations for specific diagnostic tasks. By learning the relationships between visual patterns in tissue samples and their textual descriptions in medical literature and reports, CONCH develops a foundational understanding of histopathologic entities that mirrors how human pathologists teach each other and reason about morphological features [5].

Comparative Performance Benchmarks

Recent comprehensive benchmarking studies demonstrate how VLMs like CONCH effectively overcome data scarcity challenges. When evaluated on 31 clinically relevant tasks across morphology, biomarkers, and prognostication, CONCH achieved state-of-the-art performance despite being trained on significantly less data than some competing approaches [3].

Table 1: Performance Comparison of Pathology Foundation Models Across Task Types

| Model Type | Model Name | Morphology Tasks (Mean AUROC) | Biomarker Tasks (Mean AUROC) | Prognosis Tasks (Mean AUROC) | Overall Mean AUROC |
|---|---|---|---|---|---|
| Vision-Language | CONCH | 0.77 | 0.73 | 0.63 | 0.71 |
| Vision-Only | Virchow2 | 0.76 | 0.73 | 0.61 | 0.71 |
| Vision-Only | Prov-GigaPath | 0.69 | 0.72 | 0.61 | 0.69 |
| Vision-Only | DinoSSLPath | 0.76 | 0.68 | 0.59 | 0.69 |

Notably, CONCH's vision-language pretraining enables superior performance in data-scarce settings. In evaluations of low-prevalence biomarkers (such as BRAF mutations present in only 10% of cases), CONCH maintained robust performance where vision-only models typically struggled [3]. This capability is particularly valuable for real-world clinical applications where positive cases for specific molecular subtypes may be naturally rare.
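To make the AUROC figures above concrete, here is a minimal sketch of a rank-based AUROC computation on a toy low-prevalence cohort. The labels and scores are invented for illustration and are not taken from the benchmark in [3]; the function name `auroc` is likewise just an illustrative choice.

```python
def auroc(labels, scores):
    """Rank-based AUROC: the probability that a randomly chosen positive
    case receives a higher score than a randomly chosen negative case
    (ties count as half a win)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy cohort with 10% prevalence: 2 positives among 20 cases (made-up numbers).
labels = [1, 1] + [0] * 18
scores = [0.9, 0.4] + [0.5, 0.3] + [0.1] * 16
print(round(auroc(labels, scores), 3))
```

Because AUROC depends only on how positives rank against negatives, it stays well defined when positives are rare, although with so few positive cases the estimate becomes noisy.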

Experimental Protocols and Methodologies

CONCH Pretraining Framework

The CONCH model was developed using a multi-stage pretraining approach designed to maximize learning from limited labeled data:

Data Curation and Preparation: Collected 1.17 million histopathology image-caption pairs from diverse sources, ensuring representation across multiple tissue types, stain types (H&E, IHC, special stains), and disease conditions [4]. The training corpus intentionally excluded large public slide collections like TCGA, PAIP, and GTEX to minimize risks of data contamination in future benchmark evaluations [4].

Contrastive Pretraining: Implemented contrastive learning objectives to align image and text embeddings using a dual-encoder architecture. The training employed a temperature-scaled cross-entropy loss to maximize the similarity between corresponding image-text pairs while minimizing similarity between non-matching pairs [5].

Multi-scale Image Encoding: Processed whole slide images at multiple magnifications (20×, 10×, 5×) to capture both cellular-level details and tissue-level architectural patterns. This approach enabled the model to learn morphological features at different hierarchical levels, from subcellular structures to tissue organization [4].

Text Encoder Specialization: Utilized a biomedical-domain adapted transformer architecture for processing captions, which was pretrained on medical literature and pathology reports to develop domain-specific language understanding [5].
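The contrastive pretraining step described above can be sketched as a symmetric, temperature-scaled cross-entropy over a batch of image and text embeddings. This is a minimal pure-Python illustration, not CONCH's actual training code: the function names, the toy embeddings, and the temperature value of 0.07 are all assumptions made for the example.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def log_softmax_at(row, index):
    """Log-probability of entry `index` under a softmax over `row` (stable form)."""
    m = max(row)
    log_sum = m + math.log(sum(math.exp(x - m) for x in row))
    return row[index] - log_sum


def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss: pair k of images and texts is the positive,
    every other combination in the batch is a negative."""
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    n = len(imgs)
    # logits[i][j] = cosine similarity of image i and text j, divided by temperature
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts] for i in imgs]
    image_to_text = -sum(log_softmax_at(logits[k], k) for k in range(n)) / n
    text_to_image = -sum(
        log_softmax_at([logits[r][k] for r in range(n)], k) for k in range(n)
    ) / n
    return 0.5 * (image_to_text + text_to_image)


aligned = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
shuffled = contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < shuffled)  # matched pairs should give the lower loss
```

A lower temperature sharpens the softmax, so mismatched pairs are penalized more heavily; in practice this value is usually learned rather than fixed.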

Zero-Shot Evaluation Methodology

To validate CONCH's capability to overcome data scarcity, researchers employed rigorous zero-shot evaluation protocols across 14 diverse benchmarks [5]:

Image Classification: Evaluated on multiple tissue classification tasks without task-specific fine-tuning, using text prompts such as "a histopathology image of [class name]" to generate classification weights from the text encoder.

Cross-Modal Retrieval: Measured performance on both image-to-text and text-to-image retrieval tasks, assessing the model's ability to associate visual patterns with appropriate pathological descriptions.

Segmentation: Applied the model to tissue segmentation tasks through text-guided inference using prompts describing different tissue types and morphological structures.

Captioning: Generated descriptive captions for histopathology images by leveraging the aligned vision-language representations to produce morphologically accurate descriptions.

The evaluation framework comprehensively assessed the model's transferability across different disease types, tissue sites, and diagnostic tasks, consistently demonstrating that CONCH could be applied to downstream tasks with minimal or no additional labeled data [5].
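The prompt-based image classification protocol above can be sketched as follows. The text encoder here is a stand-in lookup table (`toy_embed_text`) with made-up three-dimensional embeddings; in a real setting it would be replaced by the model's text encoder, and the class names are only examples.

```python
import math


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def zero_shot_classify(image_emb, class_names, embed_text,
                       template="a histopathology image of {}"):
    """Pick the class whose prompt embedding is most similar to the image embedding."""
    prompts = [template.format(name) for name in class_names]
    sims = [cosine(image_emb, embed_text(p)) for p in prompts]
    best = max(range(len(class_names)), key=lambda i: sims[i])
    return class_names[best], sims


# Hypothetical stand-in for a text encoder: fixed toy embeddings per prompt.
TOY_TEXT_EMBEDDINGS = {
    "a histopathology image of adenocarcinoma": [0.9, 0.1, 0.0],
    "a histopathology image of squamous cell carcinoma": [0.1, 0.9, 0.0],
}


def toy_embed_text(prompt):
    return TOY_TEXT_EMBEDDINGS[prompt]


label, sims = zero_shot_classify(
    [0.8, 0.2, 0.1],  # toy image embedding
    ["adenocarcinoma", "squamous cell carcinoma"],
    toy_embed_text,
)
print(label)
```

No classifier weights are trained here: the text embeddings of the prompts act as the classification weights, which is why the approach needs no labeled examples for the target task.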

Complementary Approaches for Data Scarcity

Multi-Task Learning Frameworks

Multi-task learning (MTL) provides another powerful approach to addressing data scarcity by enabling simultaneous training of a single model that generalizes across multiple tasks [2]. The UMedPT (Universal Biomedical Pretrained Model) framework demonstrates how MTL can efficiently utilize different label types and data sources to pretrain image representations applicable to all tasks:

Table 2: Multi-Task Learning Performance with Limited Data

| Task Type | Dataset | Metric | ImageNet Performance (100% Data) | UMedPT Performance (Reduced Data) | Data Reduction |
|---|---|---|---|---|---|
| Tissue Classification | CRC-WSI | F1 Score | 95.2% | 95.4% | 99% |
| Disease Diagnosis | Pneumo-CXR | F1 Score | 90.3% | 93.5% | 95% |
| Object Detection | NucleiDet-WSI | mAP | 0.71 | 0.71 | 50% |

The UMedPT architecture incorporates shared blocks (including an encoder, segmentation decoder, and localization decoder) along with task-specific heads [2]. This design enables the model to leverage annotations from multiple sources and types (classification, segmentation, object detection) while maintaining task-specific performance. In rigorous evaluations, UMedPT matched ImageNet pretraining performance with only 1-50% of the training data across various biomedical imaging tasks [2].

Synthetic Data Generation

Advanced synthetic data generation techniques provide another pathway to overcome data scarcity. A novel AI tool developed by UC San Diego researchers demonstrates how generating synthetic image-mask pairs can augment small datasets effectively [1]:

Generator Training: Train a generative model to produce synthetic images from segmentation masks, which are color-coded overlays indicating healthy or diseased regions.

Data Augmentation: Create artificial image-mask pairs to augment small datasets of real expert-annotated examples.

Feedback Loop Implementation: Establish a continuous feedback loop where the system refines generated images based on how well they improve model performance, ensuring synthetic data is specifically tailored to enhance segmentation capabilities rather than merely appearing realistic [1].

This approach has been shown to reduce data requirements by 8-20 times while maintaining or improving performance compared to standard methods, making it particularly valuable for rare conditions with limited available data [1].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Resources for VLM Development

| Resource Name | Type | Function in Research | Application in Data Scarcity Context |
|---|---|---|---|
| CONCH Model Weights | Pre-trained Model | Provides foundational vision-language understanding for histopathology | Enables zero-shot transfer to new tasks without additional labeled data [4] |
| Virchow2 | Vision Foundation Model | Offers competitive alternative for vision-only tasks | Serves as strong baseline for biomarker prediction tasks [3] |
| UMedPT Framework | Multi-task Model | Supports learning across multiple biomedical imaging modalities | Reduces data requirements by leveraging complementary tasks [2] |
| Mass-340K Dataset | Whole Slide Image Collection | Provides large-scale pretraining corpus for slide-level models | Enables training of general-purpose slide representations without manual annotations [6] |
| PathChat | Generative AI Copilot | Generates synthetic fine-grained captions for histopathology images | Creates training data for vision-language alignment without manual annotation [6] |
| TCGA/CPTAC | Public Cancer Datasets | Serves as benchmarking resources for model evaluation | Provides standardized evaluation frameworks despite data scarcity in target domains [7] |

Implementation Workflows: Technical Diagrams

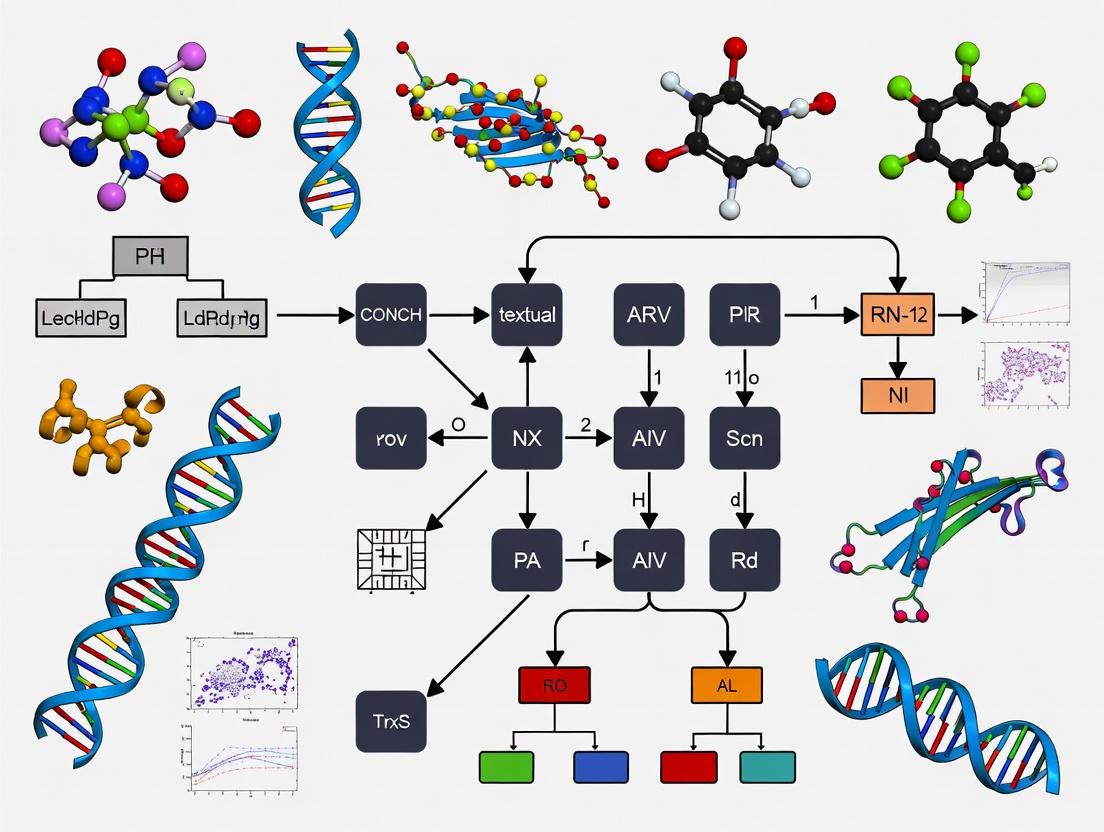

Diagram: CONCH Vision-Language Pretraining Workflow

Diagram: Multi-Task Learning for Data Efficiency

Vision-language models like CONCH represent a fundamental shift in addressing data scarcity challenges in computational pathology and medical AI. By leveraging contrastive learning on image-text pairs and multi-task training strategies, these approaches significantly reduce dependency on large, expensively annotated datasets while maintaining robust performance across diverse clinical tasks. The demonstrated ability of CONCH to achieve state-of-the-art results with minimal fine-tuning across 31 clinical tasks underscores the transformative potential of this paradigm [3].

Future research directions should focus on enhancing model generalization across rare diseases, improving integration with multimodal patient data, and developing more efficient adaptation techniques for specialized clinical applications. As these foundation models continue to evolve, they promise to accelerate the development of accurate, accessible, and deployable AI tools that can overcome the fundamental constraint of data scarcity in medical imaging and ultimately enhance patient care across diverse healthcare settings.

What is a Vision-Language Model? Bridging Visual and Textual Understanding

Vision-Language Models (VLMs) represent a transformative class of artificial intelligence (AI) that seamlessly integrates computer vision with natural language processing. These multimodal systems learn the complex relationships between visual data and textual information, enabling them to generate text from visual inputs or comprehend natural language prompts within a visual context [8]. This technical guide delves into the core architecture, training methodologies, and evolving capabilities of VLMs, with a specific focus on their groundbreaking applications in computational pathology research. We examine how specialized models like CONCH and TITAN are leveraging VLM technology to advance cancer diagnosis, prognosis, and biomarker discovery from gigapixel whole-slide images (WSIs), thereby bridging the critical gap between visual histopathological patterns and expert clinical language.

At their foundation, Vision-Language Models are built upon a synergistic architecture that processes and aligns information from two distinct modalities: images and text. The core components work in concert to create a unified understanding [8] [9] [10].

- Vision Encoder: This component, often a Vision Transformer (ViT) or a convolutional neural network (CNN), is responsible for extracting vital visual features from an image or video input. It transforms the input into a structured numerical representation known as visual embeddings ($Z_i$). A ViT, for instance, processes an image by dividing it into patches and treating them as sequences, akin to tokens in a language model, and then implements self-attention across these patches [8].

- Language Encoder: Typically a transformer-based Large Language Model (LLM) such as GPT or BERT, this module captures the semantic meaning and contextual associations within the text. It converts textual prompts into dense vector representations called textual embeddings ($Z_t$) [8] [9].

- Projection/Fusion Layer: A critical component that aligns the visual and textual embeddings into a shared, joint multimodal embedding space. This is usually implemented as a small multilayer perceptron (MLP) or a transformer block. This shared space allows the model to perform cross-modality reasoning, linking textual concepts with concrete visual evidence [9] [10].

- Autoregressive Decoder: In the final step, the combined multimodal embeddings serve as an input prefix for an autoregressive language model. This decoder generates a text-based response one token at a time, with each new token conditioned on the previously generated tokens and the multimodal context [9].
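As a small illustration of the patch-splitting step that a ViT vision encoder performs, the sketch below cuts a single-channel image into non-overlapping 16×16 patches and flattens each one into a token. The 224×224 input size and the function name `patchify` are assumptions chosen for the example; a real encoder would also apply a learned linear projection and positional embeddings, which are omitted here.

```python
def patchify(image, patch_size=16):
    """Split a 2D image (list of rows) into flattened, non-overlapping square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten one patch row by row into a single token vector.
            patch = [
                image[r][c]
                for r in range(top, top + patch_size)
                for c in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


image = [[0.0] * 224 for _ in range(224)]  # blank single-channel 224x224 image
tokens = patchify(image)
print(len(tokens), len(tokens[0]))  # prints "196 256"
```

The 196 tokens (a 14×14 grid) are what the self-attention layers operate on, in the same way a language model attends over word tokens.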

The following diagram illustrates the flow of information through these core components:

Training Paradigms and Technical Evolution

The effectiveness of VLMs is largely determined by their training strategies. These methods enable the model to learn the intricate correlations between images and text, forming the basis of their multimodal capabilities [8].

Foundational Training Methods

- Contrastive Learning: This approach, exemplified by models like CLIP (Contrastive Language-Image Pretraining), maps image and text embeddings from their respective encoders into a joint embedding space. The model is trained on datasets of image-text pairs to minimize the distance between embeddings of matching pairs and maximize it for non-matching pairs. This creates a powerful shared space where semantically similar concepts from different modalities are close together [8] [11]. SigLIP is a recent evolution that replaces the softmax-based contrastive loss with a pairwise sigmoid loss, improving training parallelization [11].

- Generative Model Training: This strategy focuses on learning to generate new data. For VLMs, this includes image-to-text generation (producing captions or descriptions from an input image) and text-to-image generation (producing images from input text) [8].

- Masking: In this self-supervised technique, the model learns to predict randomly obscured parts of an input text or image. In masked language modeling, VLMs learn to fill in missing words in a text caption given an unmasked image, and in masked image modeling, they learn to reconstruct hidden pixels in an image given an unmasked caption [8].

- Hybrid Approaches: Modern models often combine multiple objectives. CoCa (Contrastive Captioner), for instance, uses a multi-task loss function that incorporates both contrastive learning and captioning objectives, aiming to get the benefits of both worlds [11].
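To illustrate the pairwise sigmoid loss mentioned in the contrastive learning item above, the sketch below scores every image-text combination in a batch as an independent binary decision (match or no match) instead of normalizing over the batch with a softmax. The embeddings are assumed to be unit-normalized already, and the `scale` and `bias` defaults are illustrative assumptions rather than values taken from this article.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def sigmoid_pairwise_loss(image_embs, text_embs, scale=10.0, bias=-10.0):
    """Sigmoid loss over all image-text pairs: label +1 on the diagonal
    (matching pairs), -1 everywhere else. Averaged over the batch size."""
    n = len(image_embs)
    total = 0.0
    for i, img in enumerate(image_embs):
        for j, txt in enumerate(text_embs):
            logit = scale * sum(a * b for a, b in zip(img, txt)) + bias
            sign = 1.0 if i == j else -1.0
            total += log_sigmoid(sign * logit)
    return -total / n


matched = sigmoid_pairwise_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
mismatched = sigmoid_pairwise_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(matched < mismatched)
```

Because each pair contributes its own term, no batch-wide normalization is needed, which is the property that makes the loss easier to split across devices.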

The Evolution of VLM Capabilities

The VLM landscape has rapidly evolved from simple matching models to sophisticated reasoning systems [12] [9].

Table: Evolution of Vision-Language Models

| Era | Representative Models | Key Innovation | Capabilities |
|---|---|---|---|
| ~2015-2019 | Show & Tell, ViLBERT | CNN+RNN; Early Transformer architectures | Basic image captioning |
| ~2021 | CLIP, SimVLM | Effective zero-shot alignment via contrastive learning | Zero-shot image classification |
| ~2022 | Flamingo, BLIP-1 | Cross-attention layers for few-shot learning | Few-shot multimodal prompting |
| ~2023-2024 | GPT-4V, Gemini, LLaVA | Fully integrated multimodal architectures | Advanced multimodal reasoning, dialog |
| 2025+ | Gemini 2.5, Qwen2.5-Omni, Any-to-any models | "Thinker-Talker" architectures, MoE decoders | Any-to-any modality, agentic capabilities, long-context understanding |

Recent architectural trends are pushing the boundaries of what VLMs can achieve. The emergence of any-to-any models like Qwen2.5-Omni allows for input and output across any combination of modalities (image, text, audio, video) through novel architectures that may employ multiple encoders and decoders [12]. The use of Mixture-of-Experts (MoE) decoders, where a router dynamically selects specialized sub-networks ("experts") to process different inputs, has shown enhanced performance and inference efficiency in models like Kimi-VL and DeepSeek-VL2 [12]. Furthermore, the field is seeing a rise of powerful yet smaller models (e.g., SmolVLM, Gemma3-4b), with roughly 4 billion parameters or fewer, making advanced VLM capabilities feasible for deployment on consumer hardware and edge devices [12].
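The routing idea behind MoE decoders can be sketched in a few lines. In this toy version a linear router scores each expert for a token, the top-k experts are run, and their outputs are mixed using a softmax over the selected scores. The experts, router weights, and the choice to renormalize over only the selected experts are assumptions for illustration; the models named above may route differently.

```python
import math


def softmax(xs):
    """Stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token, router_weights, experts, top_k=2):
    """Route one token through its top_k experts and mix their outputs."""
    # One router logit per expert: dot product of that expert's router row with the token.
    router_logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    chosen = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)[:top_k]
    gates = softmax([router_logits[i] for i in chosen])
    output = [0.0] * len(token)
    for gate, idx in zip(gates, chosen):
        expert_out = experts[idx](token)  # only the selected experts are evaluated
        output = [o + gate * e for o, e in zip(output, expert_out)]
    return output, chosen


# Three toy "experts" acting on 2-dimensional tokens (hypothetical).
experts = [
    lambda v: [2.0 * x for x in v],
    lambda v: [x + 1.0 for x in v],
    lambda v: [-x for x in v],
]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
output, chosen = moe_forward([3.0, 1.0], router_weights, experts, top_k=2)
print(chosen, [round(x, 3) for x in output])
```

The efficiency gain comes from the fact that the unselected experts are never evaluated, so compute per token grows with `top_k` rather than with the total number of experts.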

VLMs in Computational Pathology: A Case Study

Computational pathology presents a unique set of challenges that VLMs are uniquely suited to address. The field involves the analysis of gigapixel Whole Slide Images (WSIs), which are massive digital files of tissue sections, and correlating their complex visual patterns with rich textual descriptions found in pathology reports [6] [13].

The Critical Need for VLMs in Pathology

Traditional AI models in pathology often rely solely on image data, which is a stark contrast to how human pathologists teach and reason—by integrating visual morphology with descriptive language [4]. Translating the capabilities of patch-based foundation models to address patient- and slide-level clinical challenges remains complex due to the immense scale of WSIs and the frequent scarcity of labeled data for rare diseases [6]. VLMs bridge this gap by enabling zero-shot and few-shot learning, where models can perform tasks without or with minimal task-specific training data, guided instead by natural language prompts [11] [13].

Specialized Pathology VLMs: CONCH and TITAN

Several specialized VLMs have been developed to tackle the specific needs of computational pathology.

- CONCH (CONtrastive learning from Captions for Histopathology): A visual-language foundation model pretrained on the largest histopathology-specific vision-language dataset at its time of release—1.17 million image-caption pairs [4]. CONCH is based on the CoCa (Contrastive Captioner) architecture, which unifies contrastive and generative learning objectives [13]. This allows CONCH to be transferred to a wide range of downstream tasks involving histopathology images and text, achieving state-of-the-art performance on image classification, segmentation, captioning, and cross-modal retrieval [4]. Its training did not use large public slide collections like TCGA, reducing the risk of data contamination when evaluating on public benchmarks or private datasets [4].

- TITAN (Transformer-based pathology Image and Text Alignment Network): A more recent multimodal whole-slide foundation model pretrained on 335,645 WSIs [6]. Its pretraining strategy involves three stages: 1) vision-only unimodal pretraining on region-of-interest (ROI) crops, 2) cross-modal alignment with synthetic fine-grained morphological descriptions at the ROI-level (423k pairs), and 3) cross-modal alignment at the WSI-level with clinical reports (183k pairs) [6]. This extensive training enables TITAN to generate general-purpose slide representations for tasks like cancer subtyping, prognosis, and even generate pathology reports, demonstrating strong performance in low-data regimes and zero-shot settings [6].

The workflow for developing and applying such foundational models in pathology is complex, involving both visual and linguistic data at multiple scales, as shown below:

Benchmarking and Performance in Pathology Tasks

Comprehensive benchmarking studies have evaluated the performance of general and pathology-specific VLMs across a wide array of diagnostic tasks. One such study evaluated 31 foundation models—including general vision models (VM), general vision-language models (VLM), pathology-specific vision models (Path-VM), and pathology-specific vision-language models (Path-VLM)—across 41 tasks from TCGA, CPTAC, and external datasets [14].

Table: Benchmarking Model Performance on TCGA Tasks (Adapted from [14])

| Model Category | Example Models | Mean Average Performance* on 19 TCGA Tasks | Key Finding |
|---|---|---|---|
| Pathology-specific VM | Virchow2, UNI, Prov-GigaPath | Virchow2: 0.706 ± 0.10 | Pathology-specific vision models (Path-VM) secured top rankings. |
| Pathology-specific VLM | CONCH, PLIP, Quilt-Net | Evaluated but overall Path-VM outperformed Path-VLM. | Effective domain alignment is critical; model complexity alone does not guarantee superiority. |
| General VLM | CLIP, iBOT | Lower than pathology-specific models. | Domain-specific training provides a significant performance advantage. |
| Fusion Model | Integration of top models | Superior generalization across external tasks and tissues. | Combining models can enhance robustness and generalization. |

*Performance Metric: Average of balanced accuracy, precision, recall, and F1 score.
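For readers who want to reproduce a composite score of this kind, the sketch below averages balanced accuracy, precision, recall, and F1 for a binary task from its confusion-matrix counts. The binary setting and the function name are assumptions; how the benchmark in [14] aggregates multi-class tasks is not described here, and the counts in the example are made up.

```python
def composite_score(tp, fp, tn, fn):
    """Average of balanced accuracy, precision, recall, and F1 for a binary task.
    Assumes every denominator is non-zero (both classes present and predicted)."""
    recall = tp / (tp + fn)        # sensitivity
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    balanced_accuracy = 0.5 * (recall + specificity)
    f1 = 2 * precision * recall / (precision + recall)
    return (balanced_accuracy + precision + recall + f1) / 4


# Made-up confusion counts: 40 true positives, 10 false positives,
# 40 true negatives, 10 false negatives.
print(round(composite_score(tp=40, fp=10, tn=40, fn=10), 3))
```

Averaging several metrics smooths over cases where a model looks strong on one measure (for example recall) only because it over-predicts the positive class.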

A key insight from these benchmarks is that model size and pretraining data size do not consistently correlate with improved performance in pathology, challenging assumptions about scaling in histopathological applications [14]. The study also found that a fusion model, which integrated top-performing foundation models, achieved superior generalization across external tasks and diverse tissues [14].

Furthermore, research has shown that prompt engineering is a critical factor in the real-world application of pathology VLMs. A systematic study on zero-shot diagnostic pathology found that prompt design significantly impacts model performance, with the CONCH model achieving the highest accuracy when provided with precise anatomical references [13]. Performance consistently degraded when anatomical precision in the prompt was reduced, highlighting the importance of domain-appropriate, precise language when instructing these models for diagnostic tasks [13].
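One simple way to probe this sensitivity is to generate prompt variants at increasing levels of anatomical precision and compare zero-shot accuracy across them. The templates and example terms below are hypothetical and are not the prompts used in [13]; they only show how such a comparison could be set up.

```python
def build_prompts(diagnosis, organ=None, site=None):
    """Return prompts ordered from least to most anatomically specific.
    The wording of each template is an illustrative assumption."""
    prompts = [f"a histopathology image of {diagnosis}"]
    if organ:
        prompts.append(f"a histopathology image of {diagnosis} arising in the {organ}")
    if organ and site:
        prompts.append(
            f"a histopathology image of {diagnosis} arising in the {site} of the {organ}"
        )
    return prompts


for prompt in build_prompts("adenocarcinoma", organ="colon", site="sigmoid region"):
    print(prompt)
```

Each variant would then be passed through the text encoder and evaluated separately, so that any drop in accuracy can be attributed to the missing anatomical detail rather than to other wording changes.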

The Scientist's Toolkit: Research Reagent Solutions

For researchers and drug development professionals aiming to work with VLMs in computational pathology, the following table details key resources and their functions.

Table: Essential Research Reagents for VLM Development in Computational Pathology

| Resource / Tool | Type | Primary Function | Relevance to Pathology |
|---|---|---|---|
| CONCH [4] | Pre-trained VLM | Provides a foundation model for transfer learning on histopathology tasks. | Excels at classification, segmentation, captioning, and retrieval; avoids TCGA data contamination. |
| TITAN [6] | Pre-trained Slide Foundation Model | Generates general-purpose slide-level representations from WSIs. | Enables zero-shot classification, cancer retrieval, and report generation without fine-tuning. |
| Quilt-1M / Quilt-Instruct [13] | Dataset | Provides 1M image-text pairs and 107k Q/A pairs for training/finetuning. | Supplies large-scale, diverse histopathology data for contrastive and instruction-tuning. |
| LAION-5B [10] | Dataset | Massive dataset of 5B+ general image-text pairs. | Used for pretraining general vision encoders; supports multilingual VLM training. |
| ImageNet [8] [10] | Dataset | Over 14M labeled images for general object recognition. | Foundational pretraining for vision backbones, though less specific than pathology datasets. |
| Hugging Face Transformers / vLLM [12] [9] | Software Library / Inference Engine | Simplifies model integration, fine-tuning, and efficient serving of large models. | Accelerates experimentation and deployment of pathology VLMs in research and clinical workflows. |
| Virchow2 [14] | Pre-trained Pathology VM | A top-performing pathology-specific vision model for slide encoding. | Delivered highest performance in cross-task benchmarking; robust feature extractor. |

Vision-Language Models represent a paradigm shift in AI, moving from unimodal understanding to integrated, multimodal reasoning that more closely mirrors human cognition. Their architecture, which hinges on the creation of a joint embedding space for visual and textual information, enables a powerful set of capabilities from visual question answering to complex contextual reasoning. In computational pathology, specialized VLMs like CONCH and TITAN are not merely incremental improvements but foundational technologies that address core challenges in the field. By learning from vast corpora of image-text pairs, they bridge the gap between the subtle visual language of histopathology and the explicit descriptions used by experts, enabling more accurate, generalizable, and accessible AI tools for cancer diagnosis, prognosis, and biomarker discovery. As research continues to focus on improving efficiency, reducing hallucinations, and enhancing agentic capabilities, VLMs are poised to become an indispensable tool in the pursuit of precision medicine.

The diagnosis and treatment of complex diseases, notably cancer, rely heavily on the histological examination of tissue samples by pathologists. The recent digitization of pathology has opened the door for artificial intelligence (AI) to augment and enhance this process, a field known as computational pathology [15]. However, traditional AI models in this domain face significant challenges. They are typically trained for a single, specific task using large volumes of meticulously labeled data, which is labor-intensive and difficult to acquire for thousands of potential diagnoses and rare diseases. Moreover, these models utilize only image data, ignoring the rich semantic context found in pathology reports, textbooks, and scientific literature—a stark contrast to how human pathologists teach and reason [15] [16].

Vision-Language Models (VLMs) pretrained on large-scale image-text pairs from the general web have demonstrated remarkable capabilities. Applying these general-purpose models to histopathology, however, often leads to subpar performance due to the vast domain shift between natural images and complex medical histology [15]. CONCH (CONtrastive learning from Captions for Histopathology) was developed to address this gap. It is a visual-language foundation model specifically designed for computational pathology, pretrained on the largest collection of histopathology-specific image-caption pairs to date [4] [15]. By learning a shared representation space for both tissue images and medical text, CONCH achieves state-of-the-art performance across a wide array of tasks without requiring task-specific labels, marking a substantial leap toward versatile and accessible AI for pathology [4] [15] [17].

CONCH Architecture & Pretraining Methodology

CONCH's design and training regimen are engineered to develop a deep, contextual understanding of histopathology imagery through language.

Model Architecture

CONCH is built upon the CoCa (Contrastive Captioners) framework, a state-of-the-art VLM architecture [15] [18]. It consists of three core components:

- A Vision Encoder: A Vision Transformer (ViT-B/16) that processes input histopathology images, breaking them down into patches to generate image embeddings [18].

- A Text Encoder: A 12-layer transformer network (L12-E768-H12) that processes corresponding text captions [18].

- A Multimodal Fusion Decoder: A transformer that attends to both the image embeddings from the vision encoder and the text representations, enabling deep cross-modal interaction [15].

This architecture allows CONCH to be trained with multiple objectives, leading to a more robust and versatile model compared to those trained with a single objective.

Pretraining Framework

The model was trained using a combination of two distinct objectives, as illustrated in the workflow below.

Diagram 1: CONCH pretraining workflow.

- Image-Text Contrastive Loss: This objective forces the model to align matched image-caption pairs closely in a shared embedding space while pushing non-matching pairs apart. It enables tasks like image-text retrieval and zero-shot classification by learning a unified representation [15] [18].

- Captioning Loss: This generative objective trains the multimodal decoder to predict the text caption autoregressively given the input image. This enhances the model's ability to understand fine-grained visual details and describe them in natural language [15].
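The two objectives above can be written compactly, following the general CoCa formulation. Here $x_i$ and $y_i$ denote the normalized image and text embeddings of the $i$-th pair in a batch of size $N$, $\tau$ is a temperature, $w_t$ is the $t$-th caption token, and $\lambda_{\text{con}}$, $\lambda_{\text{cap}}$ are weighting coefficients. The symbols, the $1/2N$ averaging convention, and the weights are notational assumptions; the specific values used for CONCH are not stated in this article.

```latex
\mathcal{L}_{\text{con}} = -\frac{1}{2N}\sum_{i=1}^{N}\left[
  \log\frac{\exp\left(x_i^{\top} y_i/\tau\right)}{\sum_{j=1}^{N}\exp\left(x_i^{\top} y_j/\tau\right)}
  + \log\frac{\exp\left(y_i^{\top} x_i/\tau\right)}{\sum_{j=1}^{N}\exp\left(y_i^{\top} x_j/\tau\right)}
\right]

\mathcal{L}_{\text{cap}} = -\sum_{t=1}^{T}\log P_{\theta}\left(w_t \mid w_{<t},\, x\right)

\mathcal{L} = \lambda_{\text{con}}\,\mathcal{L}_{\text{con}} + \lambda_{\text{cap}}\,\mathcal{L}_{\text{cap}}
```

The first term rewards matched pairs for scoring higher than every mismatched pair in both retrieval directions, while the second is the usual next-token likelihood of the caption conditioned on the image $x$.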

Pretraining Dataset

A key differentiator for CONCH is its pretraining dataset. The model was trained on 1.17 million histopathology image-caption pairs curated from diverse sources, including:

- PubMed Central Open Access (PMC-OA): A vast repository of biomedical literature [18].

- Internally curated sources: Ensuring diversity in stains (H&E, IHC, special stains) and tissue types [4] [18].

Notably, CONCH was not pretrained on large public slide collections like TCGA, which minimizes the risk of data contamination when evaluating on popular benchmarks [4] [18].

Quantitative Performance Evaluation

CONCH has been rigorously evaluated on a suite of 14 diverse benchmarks, consistently outperforming other general-purpose and biomedical VLMs.

Zero-Shot Classification

Zero-shot classification tests a model's ability to make predictions on novel tasks without any further task-specific training. As shown in the table below, CONCH demonstrates superior performance across both slide-level and region-of-interest (ROI)-level tasks.

Table 1: Zero-shot classification performance of CONCH versus other vision-language models. Accuracy is reported as balanced accuracy for most tasks; Cohen's κ (KC) and Quadratic κ (QK) are used for subjective grading tasks.

| Task Level | Task Name (Dataset) | CONCH | PLIP | BiomedCLIP | OpenAI CLIP |

|---|---|---|---|---|---|

| Slide-Level | NSCLC Subtyping (TCGA) | 90.7% | 78.7% | 75.9% | 73.3% |

| Slide-Level | RCC Subtyping (TCGA) | 90.2% | 80.4% | 76.7% | 74.6% |

| Slide-Level | BRCA Subtyping (TCGA) | 91.3% | 50.7% | 55.3% | 53.2% |

| Slide-Level | LUAD Pattern (DHMC) | KC: 0.200 | KC: 0.080 | KC: 0.040 | KC: 0.010 |

| ROI-Level | CRC Tissue (CRC100k) | 79.1% | 67.4% | 66.7% | 65.2% |

| ROI-Level | LUAD Tissue (WSSS4LUAD) | 71.9% | 62.4% | 60.1% | 58.8% |

| ROI-Level | Gleason Pattern (SICAP) | QK: 0.690 | QK: 0.580 | QK: 0.550 | QK: 0.510 |

CONCH achieves a dramatic improvement on challenging tasks like BRCA subtyping, outperforming the next-best model (BiomedCLIP, 55.3%) by 36.0 percentage points [15]. This suggests a robust capability to capture clinically relevant morphological features directly from language-aligned visual representations.
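For readers less familiar with the metrics named in the table caption, the sketch below shows how balanced accuracy, Cohen's κ, and quadratic-weighted κ are typically computed from predicted and true labels. It is a minimal illustration in plain Python, not the evaluation code used for CONCH. The function names and toy labels are hypothetical, and labels are assumed to be integers from 0 to `n_classes - 1`.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so rare classes count as much as common ones."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls.append(sum(1 for i in idx if y_pred[i] == c) / len(idx))
    return sum(recalls) / len(recalls)


def cohen_kappa(y_true, y_pred, n_classes, weights=None):
    """Cohen's kappa; weights="quadratic" penalizes errors by squared label distance."""
    n = len(y_true)
    observed = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        observed[t][p] += 1
    row = [sum(observed[i]) for i in range(n_classes)]
    col = [sum(observed[i][j] for i in range(n_classes)) for j in range(n_classes)]
    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            if weights == "quadratic":
                w = (i - j) ** 2 / (n_classes - 1) ** 2
            else:
                w = 0.0 if i == j else 1.0
            num += w * observed[i][j]            # weighted observed disagreement
            den += w * row[i] * col[j] / n       # weighted disagreement expected by chance
    return 1.0 - num / den


# Toy ordinal labels (e.g., three grades), purely illustrative.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 0, 1, 2, 2, 2]
print(balanced_accuracy(y_true, y_pred))
print(cohen_kappa(y_true, y_pred, 3))
print(cohen_kappa(y_true, y_pred, 3, weights="quadratic"))
```

Quadratic weighting is the usual choice for ordinal grading tasks because confusing adjacent grades is penalized less than confusing distant ones.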

Beyond Classification: Diverse Task Performance

CONCH's capabilities extend far beyond classification, enabling a wide spectrum of applications.

Table 2: CONCH performance on retrieval, segmentation, and captioning tasks.

| Task Category | Benchmark | CONCH Performance | Comparative Performance |

|---|---|---|---|

| Image-to-Text Retrieval | Pathology-specific Retrieval | State-of-the-Art | Outperforms PLIP, BiomedCLIP, and OpenAI CLIP [15] |

| Text-to-Image Retrieval | Pathology-specific Retrieval | State-of-the-Art | Outperforms PLIP, BiomedCLIP, and OpenAI CLIP [15] |

| Tissue Segmentation | Multi-tissue Segmentation | State-of-the-Art | Achieves superior performance in segmenting various tissue types [4] [15] |

| Image Captioning | Pathology Captioning | State-of-the-Art | Generates accurate descriptions of histopathology images [15] |

Experimental Protocols for Downstream Application

A principal advantage of CONCH is its adaptability to various downstream tasks with minimal effort. Below are detailed methodologies for key applications.

Zero-Shot ROI and Whole-Slide Image (WSI) Classification

For zero-shot classification, the class names are converted into a set of text prompts (e.g., "a histology image of invasive ductal carcinoma"). CONCH computes the similarity between the image embedding and all text prompt embeddings, assigning the class of the most similar prompt.

Protocol:

- Prompt Engineering: Create an ensemble of text prompts for each class (e.g., "photo of breast ILC", "microscopic view of invasive lobular carcinoma"). Ensembling multiple prompts per class significantly boosts performance [15].

- Feature Encoding: For a given image, compute its embedding using the CONCH vision encoder. Simultaneously, compute the text embeddings for all prompts using the CONCH text encoder.

- Similarity Calculation: Calculate the cosine similarity between the image embedding and all text embeddings.

- Prediction: For ROI-level classification, assign the class label associated with the prompt yielding the highest similarity score [15].

- WSI Aggregation (MI-Zero): For gigapixel WSIs, the slide is divided into tiles, and the encoding, similarity, and prediction steps above are performed on each tile. The tile-level scores are then aggregated (e.g., by averaging or taking the maximum) to produce a final slide-level prediction [15].
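The ROI-level part of this protocol can be sketched in a few lines. The example below uses toy three-dimensional vectors in place of real CONCH embeddings, so it runs without the model. The helper names are hypothetical, and averaging the normalized prompt embeddings per class is one common way to ensemble prompts, assumed here rather than confirmed as the exact CONCH procedure.

```python
import math


def l2_normalize(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def class_embedding(prompt_embeddings):
    """Ensemble step: average the normalized prompt embeddings of one class, then renormalize."""
    normed = [l2_normalize(e) for e in prompt_embeddings]
    dim = len(normed[0])
    mean = [sum(e[d] for e in normed) / len(normed) for d in range(dim)]
    return l2_normalize(mean)


def zero_shot_predict(image_embedding, prompts_by_class):
    """Return (best_class, scores), scoring by cosine similarity to each class embedding."""
    img = l2_normalize(image_embedding)
    scores = {}
    for name, embs in prompts_by_class.items():
        cls = class_embedding(embs)
        scores[name] = sum(a * b for a, b in zip(img, cls))  # cosine similarity of unit vectors
    return max(scores, key=scores.get), scores


# Toy embeddings standing in for text-encoder outputs (two prompts per class).
prompts_by_class = {
    "IDC": [[0.9, 0.1, 0.0], [1.0, 0.2, 0.1]],
    "ILC": [[0.1, 0.9, 0.0], [0.0, 1.0, 0.2]],
}
# Toy embedding standing in for the vision-encoder output of one ROI.
label, scores = zero_shot_predict([0.8, 0.3, 0.1], prompts_by_class)
print(label, scores)
```

In a real pipeline the vectors would come from the CONCH vision and text encoders; everything downstream of the encoders is plain linear algebra.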

Diagram 2: Zero-shot WSI classification with CONCH (MI-Zero).

Linear Probing and Fine-Tuning

When labeled data is available, CONCH's representations can serve as a powerful foundation for supervised learning.

Protocol:

- Feature Extraction: Use the frozen CONCH vision encoder to extract feature embeddings from all images in the dataset.

- Classifier Training (Linear Probing): Train a simple linear classifier (e.g., a logistic regression model or a single linear layer) on top of the frozen CONCH features. This tests the quality of the representations themselves [18].

- End-to-End Fine-Tuning: For maximum performance, the entire CONCH model (or parts of it) can be unfrozen and fine-tuned on the labeled downstream task. This allows the model to adapt its representations to the specific task at hand [18].
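A linear probe amounts to logistic regression on fixed feature vectors. The sketch below trains one with plain gradient descent on synthetic features that stand in for frozen CONCH embeddings. The data, learning rate, and epoch count are illustrative assumptions; in practice an off-the-shelf logistic regression implementation would usually replace the hand-written loop.

```python
import math
import random


def train_linear_probe(features, labels, lr=0.5, epochs=200):
    """Fit binary logistic regression with batch gradient descent on frozen features."""
    dim, n = len(features[0]), len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))   # predicted probability of class 1
            err = p - y                      # gradient of cross-entropy w.r.t. z
            for d in range(dim):
                grad_w[d] += err * x[d]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    return int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0)


# Synthetic 4-dimensional "embeddings": two classes drawn around different centers.
rng = random.Random(0)
features, labels = [], []
for label, center in [(0, -1.0), (1, 1.0)]:
    for _ in range(50):
        features.append([rng.gauss(center, 0.5) for _ in range(4)])
        labels.append(label)

w, b = train_linear_probe(features, labels)
accuracy = sum(predict(w, b, x) == y for x, y in zip(features, labels)) / len(labels)
print(f"training accuracy: {accuracy:.2f}")
```

Because the encoder stays frozen, features can be extracted once and cached, which keeps linear probing cheap compared with end-to-end fine-tuning.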

The Scientist's Toolkit: Essential Research Reagents

To implement CONCH in a research environment, the following tools and resources are essential.

Table 3: Key resources for working with the CONCH model.

| Resource Name | Type | Description & Function |

|---|---|---|

| CONCH GitHub Repository [4] | Code & Documentation | The official repository containing installation instructions, usage examples, and links to the model weights. |

| CONCH Hugging Face Hub [18] | Model Weights | The gated repository where researchers can request access to download the pretrained model weights. |

| PyTorch [18] | Software Framework | The deep learning framework required to run CONCH. |

| Python 3.10+ | Programming Language | The supported programming language for the CONCH codebase. |

| TIMM & OpenCLIP [18] | Software Libraries | Core libraries upon which CONCH is built for model implementation and training. |

| Histopathology Datasets (e.g., TCGA, CRC100K) [15] | Benchmark Data | Public and private datasets for evaluating model performance on various downstream tasks. |

Access and Licensing

Access to the CONCH model weights is managed through Hugging Face Hub. Researchers must:

- Agree to a license that permits non-commercial, academic research use only (CC-BY-NC-ND 4.0) [18].

- Register with an institutional email address; requests from personal email domains are denied [18].

- Contact the authors for any commercial use or licensing inquiries [18].

Limitations, Future Directions, and the Evolving Landscape

While CONCH represents a significant advancement, several limitations and future directions are noteworthy.

- Computational Burden: Processing gigapixel WSIs requires significant memory and computational resources, often necessitating tiling and feature aggregation techniques [15].

- Decoder Weights: The multimodal decoder weights are not included in the public release as an added precaution to prevent potential leakage of proprietary or protected health information [18]. This limits the ability to reproduce the captioning results.

- Focus on ROI-level: The original CONCH model primarily processes image patches (ROIs). While it can be used for WSIs via aggregation methods like MI-Zero, newer models like TITAN are being developed as native whole-slide foundation models that build upon CONCH's capabilities [6].

- Annotation-Free Specialization: Recent research shows that CONCH's performance on specific downstream tasks can be further enhanced through continued pretraining on task-relevant image-caption pairs, even without manual annotations [19]. This presents a promising pathway for effortless model specialization.

The field is rapidly evolving, with models like TITAN [6] and PathologyVLM [20] pushing the boundaries further. CONCH, however, remains a foundational pillar—a powerful, publicly available, and extensively validated VLM that has set a new standard for general-purpose representation learning in computational pathology.

Computational pathology, a field at the intersection of computer science and pathology, leverages digital technology and artificial intelligence (AI) to enhance diagnostic accuracy and efficiency [21]. The field has made significant strides in automatically analyzing pathology images for tasks ranging from pathological structure segmentation to tumor classification and prognosis analysis [22]. However, traditional AI models in histopathology have faced fundamental limitations: they typically leverage only image data, require labor-intensive labeling by expert pathologists, and are designed for specific tasks and diseases, making their development unscalable across thousands of possible diagnoses [23] [15].

The practice of pathology fundamentally integrates visual and linguistic information—pathologists examine tissue morphology and communicate findings through written reports, journal articles, and educational textbooks [15]. CONCH (CONtrastive learning from Captions for Histopathology) addresses this gap by introducing a visual-language foundation model that mirrors how human pathologists teach and reason about histopathologic entities [23]. By pretraining on 1.17 million histopathology image-caption pairs through task-agnostic learning, CONCH represents a paradigm shift from task-specific models toward a general-purpose foundation model capable of multiple downstream applications with minimal or no further supervised fine-tuning [18] [4].

CONCH Architecture and Training Methodology

Model Architecture Components

CONCH is built on a multi-component architecture that processes and aligns visual and textual information:

- Vision Encoder: A Vision Transformer (ViT-B/16) with 90 million parameters processes histopathology images by splitting them into 16×16 pixel patches and converting them into numerical representations [18].

- Text Encoder: A 12-layer transformer model with 768-dimensional hidden states and 12 attention heads (110 million parameters) processes biomedical text [18].

- Multimodal Fusion Mechanism: Based on the CoCa (Contrastive Captioners) framework, CONCH uses a combination of contrastive alignment objectives that seek to align the image and text modalities in the model's representation space and a captioning objective that learns to predict the caption corresponding to an image [15].

This architectural approach enables rich cross-modal reasoning, allowing the model to understand the relationships between visual patterns in tissue samples and their textual descriptions in medical literature and reports.

Training Data Composition

CONCH was pretrained on diverse sources of histopathology images and biomedical text, creating the largest histopathology-specific vision-language dataset to date [4]. The training data comprised:

- 1.17 million histopathology image-caption pairs curated from publicly available PubMed Central Open Access (PMC-OA) and internally curated educational resources [18] [15].

- Diverse stain types including not only traditional H&E (hematoxylin and eosin) but also IHC (immunohistochemical) stains and special stains, enabling more robust representation learning across different staining protocols [18].

- Deliberate exclusion of large public histology slide collections such as TCGA, PAIP, and GTEx, which are routinely used in benchmark development, thereby minimizing the risk of data contamination when evaluating on public benchmarks or private histopathology slide collections [18].

The model was trained for approximately 21.5 hours on 8 NVIDIA A100 GPUs using fp16 automatic mixed precision (about 172 A100 GPU-hours in total), a modest compute budget for a foundation model [18].

Figure 1: CONCH Architecture and Training Objectives. The model processes images and text through separate encoders, aligning them in a shared multimodal representation space using contrastive and captioning losses.

Experimental Framework and Benchmarking

Evaluation Benchmarks and Datasets

CONCH was comprehensively evaluated on a suite of 14 diverse benchmarks spanning multiple task types and anatomical sites [15]. The evaluation framework was designed to test the model's capabilities across different levels of complexity and clinical relevance:

Slide-level Classification Tasks:

- TCGA BRCA: Invasive breast carcinoma subtyping

- TCGA NSCLC: Non-small-cell lung cancer subtyping

- TCGA RCC: Renal cell carcinoma subtyping

- DHMC LUAD: Lung adenocarcinoma histologic pattern classification

Region-of-Interest (ROI) Level Tasks:

- CRC100k: Colorectal cancer tissue classification

- WSSS4LUAD: LUAD tissue classification

- SICAP: Gleason pattern classification

Additional tasks included cross-modal image-to-text and text-to-image retrieval, image segmentation, and image captioning, providing a comprehensive assessment of the model's multimodal capabilities [15].

Zero-Shot Transfer Methodology

A key innovation of CONCH is its zero-shot transfer capability, allowing the model to be directly applied to downstream classification tasks without requiring further labeled examples for supervised learning or fine-tuning [15]. The experimental methodology for zero-shot evaluation involved:

- Text Prompt Engineering: Representing class names using predetermined text prompts, with each prompt corresponding to a class. An ensemble of multiple text prompts for each class was created to capture different phrasings of the same concept (e.g., "invasive lobular carcinoma (ILC) of the breast" and "breast ILC") [15].

- Similarity Matching: Classifying images by matching them with the most similar text prompt in the model's shared image-text representation space using cosine similarity [15].

- Whole Slide Image (WSI) Processing: For gigapixel WSIs, CONCH utilized MI-Zero, which divides a WSI into smaller tiles and subsequently aggregates individual tile-level scores into a slide-level prediction [15].

This approach enables a single pretrained foundation model to be applied to different downstream datasets with an arbitrary number of classes, overcoming the limitation of traditional models that require training anew for every task [15].
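The tile-to-slide aggregation step can be illustrated with a small sketch. Top-K mean pooling is used here as one plausible aggregation rule; the value of K, the function name, and the toy similarity scores are assumptions made for illustration, not details taken from the CONCH evaluation.

```python
def aggregate_slide_scores(tile_scores, top_k=2):
    """Pool per-tile class similarities into slide-level scores.

    tile_scores: list of dicts mapping class name -> similarity for one tile.
    For each class, the top_k highest tile scores are averaged.
    """
    slide_scores = {}
    for cls in tile_scores[0]:
        ranked = sorted((tile[cls] for tile in tile_scores), reverse=True)
        top = ranked[:top_k]
        slide_scores[cls] = sum(top) / len(top)
    return max(slide_scores, key=slide_scores.get), slide_scores


# Toy similarity scores for four tiles of one slide.
tiles = [
    {"LUAD": 0.21, "LUSC": 0.35},
    {"LUAD": 0.62, "LUSC": 0.30},
    {"LUAD": 0.58, "LUSC": 0.33},
    {"LUAD": 0.18, "LUSC": 0.36},
]
label, slide_scores = aggregate_slide_scores(tiles, top_k=2)
print(label, slide_scores)
```

Setting `top_k=1` reduces this to max pooling, and setting it to the number of tiles gives mean pooling, so the same function covers the common aggregation variants.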

Table 1: CONCH Zero-Shot Performance on Slide-Level Classification Tasks

| Task | Dataset | CONCH Performance | Next Best Model | Performance Gap |

|---|---|---|---|---|

| NSCLC Subtyping | TCGA NSCLC | 90.7% accuracy | PLIP: 78.7% | +12.0% |

| RCC Subtyping | TCGA RCC | 90.2% accuracy | PLIP: 80.4% | +9.8% |

| BRCA Subtyping | TCGA BRCA | 91.3% accuracy | BiomedCLIP: 55.3% | +36.0% |

| LUAD Pattern Classification | DHMC LUAD | κ = 0.200 | PLIP: κ = 0.080 | +0.120 |

Table 2: CONCH Zero-Shot Performance on ROI-Level Classification Tasks

| Task | Dataset | CONCH Performance | Next Best Model | Performance Gap |

|---|---|---|---|---|

| Gleason Pattern Classification | SICAP | Quadratic κ = 0.690 | BiomedCLIP: κ = 0.550 | +0.140 |

| Colorectal Cancer Tissue Classification | CRC100k | 79.1% accuracy | PLIP: 67.4% | +11.7% |

| LUAD Tissue Classification | WSSS4LUAD | 71.9% accuracy | PLIP: 62.4% | +9.5% |

Key Research Applications and Workflows

Multi-Scale Analysis Capabilities

CONCH enables histopathology analysis at multiple resolutions, from subcellular features to whole-slide level patterns:

- ROI-level Classification: Direct application to region-of-interest images for tasks like Gleason pattern grading in prostate cancer or tissue type classification in colorectal cancer [15].

- Whole Slide Image Analysis: Using multiple instance learning (MIL) approaches to aggregate tile-level predictions for slide-level classification, crucial for clinical diagnostic applications where entire slides must be assessed [15].

- Cross-Modal Retrieval: Both image-to-text and text-to-image retrieval, allowing pathologists to find similar cases based on either visual patterns or textual descriptions [23] [15].
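Cross-modal retrieval reduces to nearest-neighbor search in the shared embedding space. The sketch below ranks a gallery of image embeddings against a text query and computes recall@K. The embeddings are toy values, and the convention that query i matches gallery item i is an assumption of this example.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def retrieve(query, gallery, k=2):
    """Return the indices of the k gallery embeddings most similar to the query."""
    ranked = sorted(range(len(gallery)), key=lambda i: cosine(query, gallery[i]), reverse=True)
    return ranked[:k]


def recall_at_k(queries, gallery, k):
    """Fraction of queries whose true match (same index) appears in the top k results."""
    hits = sum(1 for i, q in enumerate(queries) if i in retrieve(q, gallery, k))
    return hits / len(queries)


# Toy embeddings: text_embeddings[i] describes image_embeddings[i].
text_embeddings = [[1.0, 0.0, 0.1], [0.0, 1.0, 0.1], [0.1, 0.1, 1.0]]
image_embeddings = [[0.9, 0.2, 0.0], [0.6, 0.7, 0.0], [0.0, 0.3, 0.9]]

print(retrieve(text_embeddings[1], image_embeddings, k=2))  # text-to-image
print(recall_at_k(image_embeddings, text_embeddings, k=1))  # image-to-text
```

Swapping the roles of queries and gallery switches between text-to-image and image-to-text retrieval without any other change.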

Visualization and Interpretability

A significant advantage of CONCH's approach is the inherent interpretability it offers:

- Similarity Heatmaps: When classifying a WSI, CONCH can generate heatmaps visualizing the cosine-similarity score between each tile in the slide and text prompts corresponding to diagnostic labels [15].

- Region Identification: Regions with high similarity scores are deemed by the model to be close matches with specific diagnoses (e.g., invasive ductal carcinoma), providing visual explanations for the model's predictions and helping pathologists understand which tissue regions contributed most to the classification [15].
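A similarity heatmap is simply the per-tile scores laid back onto the slide grid. The sketch below shows that bookkeeping with toy data. The grid coordinates, function names, and min-max scaling for display are illustrative assumptions; rendering the grid as a color overlay is left to whatever plotting tool is in use.

```python
def similarity_heatmap(tile_coords, tile_scores, grid_shape):
    """Place per-tile similarity scores on a (rows, cols) grid.

    Cells with no tissue tile (e.g., background) remain None.
    """
    rows, cols = grid_shape
    heatmap = [[None] * cols for _ in range(rows)]
    for (r, c), score in zip(tile_coords, tile_scores):
        heatmap[r][c] = score
    return heatmap


def normalize(heatmap):
    """Min-max scale scores to [0, 1] for display, leaving empty cells as None."""
    values = [v for row in heatmap for v in row if v is not None]
    lo, hi = min(values), max(values)
    span = (hi - lo) or 1.0  # avoid division by zero when all scores are equal
    return [[None if v is None else (v - lo) / span for v in row] for row in heatmap]


# Three tissue tiles on a 2x2 grid; the bottom-left cell is background.
coords = [(0, 0), (0, 1), (1, 1)]
scores = [0.25, 0.5, 0.75]  # toy cosine similarities to one diagnostic prompt
heatmap = normalize(similarity_heatmap(coords, scores, (2, 2)))
print(heatmap)
```

High values mark the tiles the model considers closest to the prompt, which is what makes the overlay useful as a visual explanation.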

Figure 2: Zero-Shot Whole Slide Image Analysis Workflow. CONCH processes gigapixel WSIs by tiling, feature extraction, and similarity calculation, generating both diagnostic predictions and interpretable heatmaps.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Resources for CONCH Implementation

| Resource | Type | Specification | Function/Purpose |

|---|---|---|---|

| CONCH Model Weights | Pretrained Model | ViT-B/16 (90M) + Text Encoder (110M) | Foundation model for transfer learning and zero-shot tasks [18] |

| Histopathology Image Data | Dataset | 1.17M image-caption pairs from PMC-OA & educational resources | Pretraining and fine-tuning data [15] |

| Whole Slide Images | Dataset | TCGA, DHMC LUAD, SICAP, CRC100k, WSSS4LUAD | Benchmark evaluation and clinical validation [23] |

| Computational Hardware | Infrastructure | 8 × Nvidia A100 GPUs | Model training and inference [18] |

| CONCH Python Package | Software Library | pip install git+https://github.com/Mahmoodlab/CONCH.git | Model implementation and integration [4] |

| Hugging Face Hub | Model Repository | huggingface.co/MahmoodLab/CONCH | Model weight distribution and access control [18] |

Impact and Future Directions

The development of CONCH represents a substantial leap over concurrent visual-language pretrained systems for histopathology [23]. By demonstrating state-of-the-art performance across 14 diverse benchmarks—including histology image classification, segmentation, captioning, and cross-modal retrieval—CONCH has established a new paradigm for general-purpose foundation models in computational pathology [15].

The model's impact is evidenced by its rapid adoption in the research community, with numerous studies building upon CONCH for applications including:

- Multimodal survival prediction in oncology [4]

- Spatial transcriptomics integration with histopathology images [4]

- Rare cancer subtyping and classification [4]

- Explainable AI systems for pathological diagnosis [4]

Future directions for visual-language foundation models in pathology include addressing remaining challenges in model reliability and reproducibility, developing more efficient architectures for clinical deployment, and advancing multimodal reasoning capabilities that more closely mimic human pathological reasoning [22] [21]. As noted in recent analyses, the field is moving toward building increasingly comprehensive foundation models to reach more general applications, with generative methods providing new perspectives on addressing long-standing challenges in computational pathology [22].

The training data advantage achieved through 1.17 million carefully curated image-caption pairs has proven foundational to CONCH's success, enabling a single model to facilitate a wide array of machine learning-based workflows while requiring minimal or no further supervised fine-tuning [23]. This approach dramatically reduces the annotation burden that has traditionally constrained computational pathology research and brings the field closer to realizing AI systems that can genuinely assist pathologists across the full spectrum of diagnostic challenges.

The Shift from Task-Specific Models to General-Purpose Foundational AI

The field of computational pathology is undergoing a fundamental transformation, moving away from isolated task-specific models toward versatile general-purpose foundation models. This paradigm shift addresses critical limitations in traditional approaches, where artificial intelligence (AI) models were typically designed for single tasks—such as cancer subtyping or metastasis detection—using carefully labeled datasets. These task-specific models proved difficult to scale across thousands of potential diagnoses and were particularly constrained for rare diseases where annotated data is scarce [15]. The emergence of vision-language models (VLMs) represents a pivotal advancement, mirroring how human pathologists teach and reason about histopathologic entities by integrating visual patterns with textual knowledge [15]. Within this context, foundation models like CONCH (CONtrastive learning from Captions for Histopathology) and TITAN (Transformer-based pathology Image and Text Alignment Network) are redefining the possibilities of AI in pathology by enabling zero-shot transfer learning, cross-modal retrieval, and multimodal reasoning without task-specific fine-tuning [4] [6] [15]. This technical guide examines the architectural principles, training methodologies, and experimental evidence driving this transformative shift, with particular focus on applications for pathology research and drug development.

Foundation Model Architectures: Beyond Single-Task Design

Core Architectural Principles

General-purpose foundation models for computational pathology share several defining characteristics that differentiate them from their task-specific predecessors. Unlike conventional deep learning models that process only images, VLMs jointly learn from both histopathology images and corresponding textual data, creating aligned representation spaces where visual and linguistic concepts share a common embedding [24] [15]. The CONCH model, for instance, employs three core components: an image encoder, a text encoder, and a multimodal fusion decoder [15]. This architecture enables the model to learn rich, transferable representations from large collections of image-text pairs, which are far more abundant online than expert-annotated datasets, easing the data scarcity that constrained earlier approaches [25].

The transition from patch-level to whole-slide image analysis represents another critical architectural advancement. While early foundation models focused on region-of-interest (ROI) level analysis, newer architectures like TITAN operate directly on entire whole-slide images (WSIs), which present significant computational challenges due to their gigapixel size [6]. TITAN addresses this by dividing each WSI into non-overlapping patches of 512×512 pixels at 20× magnification, extracting 768-dimensional features for each patch using CONCHv1.5, and then processing the resulting feature grid with a Vision Transformer (ViT) architecture [6]. This approach preserves spatial context while managing computational complexity through attention with linear bias (ALiBi) for long-context extrapolation [6].
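To give a sense of scale, the sketch below computes the feature grid that results from tiling a slide into non-overlapping 512×512 patches with one 768-dimensional feature per patch. The slide dimensions are hypothetical, the function names are illustrative, and real pipelines typically discard background tiles, so actual token counts are lower.

```python
def feature_grid_shape(width_px, height_px, patch_size=512, feature_dim=768):
    """Shape (rows, cols, feature_dim) of the patch-feature grid for one slide."""
    cols = width_px // patch_size
    rows = height_px // patch_size
    return rows, cols, feature_dim


def patch_origins(width_px, height_px, patch_size=512):
    """Yield the top-left (x, y) of every non-overlapping patch fully inside the slide."""
    for y in range(0, height_px - patch_size + 1, patch_size):
        for x in range(0, width_px - patch_size + 1, patch_size):
            yield x, y


# Hypothetical slide of 100,000 x 80,000 pixels at 20x magnification.
rows, cols, dim = feature_grid_shape(100_000, 80_000)
n_tokens = rows * cols
print(rows, cols, dim, n_tokens)
```

Tens of thousands of patch tokens per slide is far beyond typical Vision Transformer sequence lengths, which is why long-context mechanisms matter for slide-level encoders.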

Multimodal Pretraining Strategies

Vision-language foundation models employ sophisticated pretraining strategies that simultaneously leverage visual and textual data. CONCH utilizes a framework based on CoCa (Contrastive Captioners), combining contrastive alignment objectives that align image and text modalities in the model's representation space with a captioning objective that learns to predict captions corresponding to images [15]. This dual approach enables the model to develop a deep semantic understanding of histopathologic entities and their textual descriptions.

TITAN implements an even more comprehensive three-stage pretraining paradigm: (1) vision-only unimodal pretraining on ROI crops using the iBOT framework for masked image modeling and knowledge distillation; (2) cross-modal alignment of generated morphological descriptions at ROI-level; and (3) cross-modal alignment at WSI-level with clinical reports [6]. This multistage approach allows the model to capture histomorphological semantics at multiple scales of resolution and abstraction, from individual cellular features to slide-level diagnostic patterns.

Table 1: Comparison of Major Foundation Models in Computational Pathology

| Model | Architecture Type | Training Data Scale | Core Pretraining Objectives | Key Capabilities |

|---|---|---|---|---|

| CONCH | Vision-language (patch-based) | 1.17M image-caption pairs [4] | Contrastive alignment, captioning [15] | Zero-shot classification, cross-modal retrieval, segmentation [4] |

| TITAN | Vision-language (slide-level) | 335,645 WSIs, 423k synthetic captions [6] | Masked image modeling, knowledge distillation, vision-language alignment [6] | Slide-level representation, report generation, rare cancer retrieval [6] |

| PLIP | Vision-language (patch-based) | Not specified | Contrastive learning [15] | Image-text retrieval, zero-shot classification [15] |

| BiomedCLIP | Vision-language (general biomedical) | Not specified | Domain-adapted contrastive learning [15] | Zero-shot classification on medical images [15] |

Experimental Evidence: Performance Benchmarks and Validation

Quantitative Performance Assessment

Rigorous evaluation across diverse benchmarks demonstrates the superior capabilities of foundation models compared to traditional approaches. CONCH has been extensively evaluated on 14 diverse benchmarks encompassing image classification, segmentation, captioning, and cross-modal retrieval tasks [15]. In slide-level cancer subtyping tasks, CONCH achieved zero-shot accuracy of 90.7% on non-small cell lung cancer (NSCLC) subtyping and 90.2% on renal cell carcinoma (RCC) subtyping, outperforming the next-best model (PLIP) by 12.0 and 9.8 percentage points, respectively [15]. On the more challenging task of invasive breast carcinoma (BRCA) subtyping, CONCH attained 91.3% accuracy while other models performed at near-random chance levels (50.7%-55.3%) [15].

The TITAN model demonstrates similarly impressive performance, particularly in resource-limited clinical scenarios involving rare diseases [6]. By leveraging both real pathology reports and synthetic captions generated through a multimodal generative AI copilot, TITAN produces general-purpose slide representations that excel in few-shot and zero-shot learning settings [6]. This capability is particularly valuable for rare cancer retrieval and cross-modal retrieval between histology slides and clinical reports, where traditional supervised approaches struggle due to insufficient training examples.

Table 2: Zero-Shot Classification Performance of CONCH Across Cancer Types

| Cancer Type | Task Description | CONCH Performance | Next-Best Model Performance | Performance Gap |

|---|---|---|---|---|

| NSCLC | Non-small cell lung cancer subtyping | 90.7% accuracy [15] | 78.7% (PLIP) [15] | +12.0% [15] |

| RCC | Renal cell carcinoma subtyping | 90.2% accuracy [15] | 80.4% (PLIP) [15] | +9.8% [15] |

| BRCA | Invasive breast carcinoma subtyping | 91.3% accuracy [15] | 55.3% (BiomedCLIP) [15] | +36.0% [15] |

| LUAD | Lung adenocarcinoma pattern classification | Cohen's κ of 0.200 [15] | κ of 0.080 (PLIP) [15] | +0.120 [15] |

Protocol for Zero-Shot Evaluation

The experimental protocol for evaluating zero-shot capabilities of foundation models involves specific methodologies that differ from traditional supervised learning. For classification tasks, researchers first represent class names using predetermined text prompts, where each prompt corresponds to a class [15]. An image is then classified by matching it with the most similar text prompt in the model's shared image-text representation space using cosine similarity [15]. To improve robustness, ensembles of multiple text prompts are often created for each class, as different phrasings of the same concept (e.g., "invasive lobular carcinoma of the breast" vs. "breast ILC") can significantly impact performance [15].
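Building a prompt ensemble is mostly string templating. The sketch below crosses a few phrasing templates with class-name synonyms to produce the prompt set for each class. The templates and synonym lists are hypothetical examples, not the prompts used in the CONCH evaluation.

```python
from itertools import product


def build_prompt_ensemble(class_synonyms, templates):
    """Return {class: [prompt, ...]} with every template applied to every synonym."""
    return {
        cls: [template.format(name) for template, name in product(templates, names)]
        for cls, names in class_synonyms.items()
    }


# Hypothetical templates and synonyms for a two-class breast subtyping task.
templates = ["a histology image of {}.", "microscopic view of {}."]
class_synonyms = {
    "ILC": ["invasive lobular carcinoma of the breast", "breast ILC"],
    "IDC": ["invasive ductal carcinoma of the breast", "breast IDC"],
}

prompts = build_prompt_ensemble(class_synonyms, templates)
for cls, texts in prompts.items():
    print(cls, texts)
```

Each prompt would then be passed through the text encoder, and the resulting embeddings or similarity scores combined per class.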

For whole-slide image analysis, the MI-Zero framework is employed, which divides a WSI into smaller tiles and aggregates individual tile-level scores into a slide-level prediction [15]. This approach not only generates diagnostic predictions but also produces similarity heatmaps that visualize regions of high agreement between image tiles and text prompts, offering interpretability insights [15]. This capability for visual explanation represents a significant advantage over black-box task-specific models.

Practical Implementation: The Scientist's Toolkit

Implementing foundation models in computational pathology research requires specific computational resources and methodological components. The table below details key elements of the research toolkit for working with models like CONCH and TITAN.

Table 3: Essential Research Reagent Solutions for Foundation Model Implementation

| Component | Specifications | Function/Purpose |

|---|---|---|

| Whole-Slide Images | Gigapixel resolution (≥ 8,192 × 8,192 pixels at 20× magnification) [6] | Primary visual data input for slide-level analysis |

| Patch Encoders | CONCHv1.5 (768-dimensional features) [6] | Feature extraction from individual image patches |

| Text Prompts | Anatomically precise descriptions with domain-specific terminology [26] | Enabling zero-shot classification through textual guidance |

| Synthetic Captions | Generated via PathChat or similar multimodal generative AI [6] | Augmenting training data with fine-grained morphological descriptions |

| Vision Transformers | ViT architecture with ALiBi for long-context extrapolation [6] | Processing sequences of patch features for slide-level encoding |

Prompt Engineering Framework

Effective deployment of vision-language models requires systematic prompt engineering, particularly for specialized domains like pathology. Research demonstrates that prompt design significantly impacts model performance, with precise anatomical references and domain-specific terminology dramatically improving diagnostic accuracy [26]. A structured ablative study on cancer invasiveness and dysplasia status revealed that the CONCH model achieves highest accuracy when provided with detailed anatomical context, and performance consistently degrades when anatomical precision is reduced [26].

This research further indicates that model complexity alone does not guarantee superior performance; instead, effective domain alignment and domain-specific training are critical for optimal results [26]. These findings establish foundational guidelines for prompt engineering in computational pathology, highlighting the importance of incorporating precise clinical terminology and anatomical references when formulating text prompts for zero-shot evaluation.

Visualizing Workflows and Architectures

CONCH Training and Inference Workflow

TITAN Multi-Stage Pretraining Architecture

Implications for Research and Drug Development

The shift to general-purpose foundation models has profound implications for pathology research and drug development. These models enable unprecedented scalability across diverse pathological tasks without retraining, significantly reducing the resources required to develop AI tools for new diseases or biomarkers [15]. For pharmaceutical research, this capability is particularly valuable for biomarker discovery and clinical trial analysis, where multiple tissue-based biomarkers often need evaluation across different cancer types.

The cross-modal retrieval capabilities of models like CONCH and TITAN facilitate novel research approaches, allowing scientists to search vast histopathology databases using textual queries or to generate descriptive reports for unusual morphological patterns [6] [15]. This functionality accelerates histopathology data mining for drug response biomarkers and enables more efficient correlation of morphological patterns with clinical outcomes. Furthermore, the strong performance of these models in low-data regimes makes them particularly suitable for rare disease research, where collecting large annotated datasets has traditionally been challenging [6].

As these foundation models continue to evolve, they are poised to become indispensable tools in computational pathology, potentially transforming how pathologists and researchers interact with histopathology data. Future developments will likely focus on integrating additional data modalities, such as genomic profiles and spatial transcriptomics, creating even more comprehensive multimodal foundation models for precision oncology [24].

From Theory to Practice: How CONCH is Applied in Computational Pathology Workflows

Zero-shot classification represents a paradigm shift in machine learning applied to computational pathology. Unlike traditional supervised models that require extensive labeled datasets for each new diagnostic task, zero-shot learning enables models to recognize and categorize diseases without having seen any labeled examples of those specific conditions beforehand [27]. This capability is particularly transformative for pathology, where labeled data for rare diseases or novel tissue morphologies is often scarce, costly to produce, and requires expert annotation. The core mechanism enabling this capability is the use of auxiliary information—typically in the form of textual descriptions, semantic attributes, or embedded representations—that allows models to bridge the gap between seen and unseen classes [27]. For vision-language models in pathology, this means aligning visual patterns in histology images with textual descriptions of diseases in a shared semantic space, creating a foundational understanding that can generalize to new diagnostic challenges without task-specific fine-tuning.

This approach matters for computational pathology because traditional deep learning models for whole slide image (WSI) analysis depend on large, expertly annotated datasets for each new diagnostic category, which creates a substantial bottleneck [6] [28]. Foundation models like CONCH and TITAN reduce this dependency by pretraining on massive, diverse datasets of histopathology images and corresponding textual data [4] [6]. This pretraining enables generalized zero-shot learning (GZSL), in which a model handles both categories seen during pretraining and entirely novel categories, making these models versatile tools for diagnostic pathology across diverse tissues and diseases [27].

Vision-Language Foundation Models for Pathology

Core Architecture and Alignment Mechanisms

Vision-language foundation models for pathology, such as CONCH (CONtrastive learning from Captions for Histopathology) and TITAN (Transformer-based pathology Image and Text Alignment Network), employ architectures designed to align visual patterns in tissue images with clinical or morphological concepts expressed in text [4] [6]. These models typically consist of two parallel encoder networks: a vision encoder that processes histopathology regions of interest (ROIs) or whole slide images, and a text encoder that processes corresponding pathological descriptions, reports, or synthetic captions.

The fundamental innovation lies in how these models learn a joint embedding space where representations from both modalities can be directly compared [27] [29]. During pretraining, contrastive learning objectives train the model to maximize the similarity between embeddings of matching image-text pairs while minimizing the similarity between non-matching pairs [6] [28]. For instance, an image of lymph node tissue with metastatic carcinoma would be brought closer to its textual description ("poorly differentiated carcinoma cells with irregular nuclei") in this shared space, while being pushed away from unrelated descriptions. This alignment creates a rich semantic representation where visual morphological patterns are directly linked to clinical concepts, enabling the model to perform zero-shot classification by measuring the similarity between an unseen image's visual embedding and embeddings of various disease descriptions [26] [28].
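The contrastive objective described above can be sketched with a CLIP-style symmetric loss over a batch of paired embeddings. This is a minimal NumPy illustration under stated assumptions, not the training code of CONCH or TITAN: the function names, the temperature value of 0.07, and the toy embeddings are all hypothetical, and the actual models may combine this term with other objectives.

```python
import numpy as np

def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors to unit length so dot products equal cosine similarities."""
    return x / (np.linalg.norm(x, axis=axis, keepdims=True) + eps)

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def symmetric_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """CLIP-style loss: row i of image_emb is assumed to match row i of text_emb.

    temperature=0.07 is an illustrative default, not a value from the source.
    """
    img = l2_normalize(image_emb)
    txt = l2_normalize(text_emb)
    logits = img @ txt.T / temperature           # (batch, batch) similarity matrix
    idx = np.arange(logits.shape[0])             # matching pairs sit on the diagonal
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()  # image -> text
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()  # text -> image
    return 0.5 * (loss_i2t + loss_t2i)

# Toy check: perfectly aligned pairs versus deliberately mismatched pairs.
aligned = symmetric_contrastive_loss(np.eye(4), np.eye(4))
mismatched = symmetric_contrastive_loss(np.eye(4), np.roll(np.eye(4), 1, axis=0))
print(f"aligned loss={aligned:.4f}, mismatched loss={mismatched:.4f}")
```

Minimizing this quantity pulls each image embedding toward its own caption and away from the other captions in the batch, which is the behavior the lymph node example describes.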

Key Models: CONCH and TITAN

CONCH is a vision-language foundation model specifically designed for computational pathology, pretrained on 1.17 million image-caption pairs, which at the time of its development was the largest histopathology-specific vision-language dataset [4]. Unlike models pretrained solely on natural images, CONCH captures fine-grained histopathological semantics, making its representations particularly suited for medical tasks. The model demonstrates state-of-the-art performance across diverse benchmarks including image classification, segmentation, captioning, and cross-modal retrieval, all without requiring task-specific fine-tuning [4].

TITAN is a more recent multimodal whole-slide foundation model, trained on 335,645 whole-slide images with corresponding pathology reports and 423,122 synthetic captions generated by a multimodal generative AI copilot for pathology [6]. TITAN introduces a three-stage pretraining paradigm: (1) vision-only unimodal pretraining on ROI crops, (2) cross-modal alignment with generated morphological descriptions at the ROI level, and (3) cross-modal alignment with clinical reports at the WSI level [6]. This approach enables TITAN to extract general-purpose slide representations and generate pathology reports that generalize to resource-limited clinical scenarios, including rare disease retrieval and cancer prognosis.

Table 1: Key Vision-Language Foundation Models for Computational Pathology

| Model | Training Data Scale | Architecture | Key Capabilities | Unique Advantages |
|---|---|---|---|---|
| CONCH | 1.17M image-caption pairs [4] | Vision-Language Transformer | Image classification, segmentation, captioning, cross-modal retrieval [4] | Did not use large public slide collections (TCGA, PAIP) for pretraining, reducing contamination risk [4] |
| TITAN | 335,645 WSIs + 423k synthetic captions [6] | Transformer with ALiBi for long-context | Slide representation, zero-shot classification, rare cancer retrieval, report generation [6] | Leverages synthetic captions and attention with linear bias for handling large WSIs [6] |

Experimental Protocols and Methodologies

Data Preparation and Preprocessing

The experimental pipeline for evaluating zero-shot classification in pathology begins with comprehensive data preparation. For whole slide image analysis, models like TITAN process WSIs by dividing them into non-overlapping patches of 512×512 pixels at 20× magnification [6]. Each patch is then encoded into a 768-dimensional feature vector using a pretrained patch encoder such as CONCH v1.5 [6]. These patch features are spatially arranged in a two-dimensional grid that replicates their original positions within the tissue, preserving crucial spatial context [6]. To handle the computational challenge of processing gigapixel WSIs, the methodology employs random cropping of the feature grid, typically sampling region crops of 16×16 features covering an area of 8,192×8,192 pixels [6]. For vision-language alignment, textual descriptions of diseases and morphological features are tokenized and encoded using transformer-based text encoders. The specific descriptive prompts used for zero-shot classification are critically important, as demonstrated in systematic prompt engineering studies [26].
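The patch-grid arithmetic in this paragraph can be made concrete with a short sketch. The patch size (512), feature dimension (768), and crop size (16×16) come from the text above; the slide dimensions, the function names, and the zero-filled feature grid are hypothetical stand-ins, since a real pipeline would fill the grid with patch-encoder outputs.

```python
import numpy as np

PATCH_SIZE = 512    # pixels per patch side at 20x, as stated in the text
FEATURE_DIM = 768   # dimensionality of each patch feature, as stated in the text
CROP_SIZE = 16      # features per crop side, as stated in the text

def grid_shape(slide_width_px, slide_height_px, patch_size=PATCH_SIZE):
    """Return (rows, cols) of whole non-overlapping patches that fit in the slide."""
    return slide_height_px // patch_size, slide_width_px // patch_size

def random_feature_crop(feature_grid, crop_size=CROP_SIZE, rng=None):
    """Sample a crop_size x crop_size window from a (rows, cols, dim) feature grid."""
    rng = np.random.default_rng() if rng is None else rng
    rows, cols, _ = feature_grid.shape
    if rows < crop_size or cols < crop_size:
        raise ValueError("feature grid is smaller than the requested crop")
    top = int(rng.integers(0, rows - crop_size + 1))    # upper bound is exclusive
    left = int(rng.integers(0, cols - crop_size + 1))
    return feature_grid[top:top + crop_size, left:left + crop_size]

# Hypothetical slide of 40,960 x 30,720 pixels at 20x magnification.
rows, cols = grid_shape(40_960, 30_720)
feature_grid = np.zeros((rows, cols, FEATURE_DIM), dtype=np.float32)  # placeholder features
crop = random_feature_crop(feature_grid, rng=np.random.default_rng(0))
print(rows, cols, crop.shape, CROP_SIZE * PATCH_SIZE)
```

A 16×16 crop of 512-pixel patches spans 16 × 512 = 8,192 pixels per side, which matches the 8,192×8,192 region quoted in the text.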

Zero-Shot Inference Protocol

In zero-shot evaluation, models are assessed on their ability to classify images into novel categories without any task-specific training examples. The experimental protocol follows a standardized process: First, textual descriptions (prompts) for all target classes are encoded into the joint embedding space using the pretrained text encoder [26] [30]. These class embeddings remain fixed during inference. Next, the target histopathology image (either ROI or entire WSI) is processed through the vision encoder to generate its visual embedding [28]. The classification decision is then made by computing similarity scores (typically using cosine similarity) between the visual embedding and all class text embeddings [27] [29]. The class with the highest similarity score is assigned as the prediction. This approach enables remarkable flexibility, as new diseases can be added to the classification system simply by providing new textual descriptions, without any retraining or fine-tuning [26] [30].
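The inference steps above reduce to a few lines once the embeddings exist. The sketch below assumes the image and class-prompt embeddings have already been produced by the pretrained encoders; the function name, class names, and three-dimensional toy vectors are hypothetical, and no real CONCH or TITAN API is called.

```python
import numpy as np

def zero_shot_classify(image_embedding, class_text_embeddings, class_names):
    """Return the class whose prompt embedding is most cosine-similar to the image.

    image_embedding: shape (dim,)
    class_text_embeddings: shape (num_classes, dim), one row per class prompt
    """
    img = image_embedding / np.linalg.norm(image_embedding)
    txt = class_text_embeddings / np.linalg.norm(
        class_text_embeddings, axis=1, keepdims=True
    )
    similarities = txt @ img              # cosine similarity per class
    best = int(np.argmax(similarities))   # highest similarity wins
    return class_names[best], similarities

# Toy embeddings standing in for encoder outputs.
class_names = ["invasive adenocarcinoma", "normal colon mucosa"]
class_text_embeddings = np.array([[1.0, 0.0, 0.0],
                                  [0.0, 1.0, 0.0]])
image_embedding = np.array([0.9, 0.1, 0.0])

label, scores = zero_shot_classify(image_embedding, class_text_embeddings, class_names)
print(label, scores)
```

Adding a new disease under this scheme means appending one more row to `class_text_embeddings` and one more entry to `class_names`; the vision encoder and the existing rows stay untouched.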

Evaluation Metrics and Benchmarking

Comprehensive evaluation of zero-shot classification performance employs standard metrics including precision, recall, accuracy, and area under the receiver operating characteristic curve (AUC-ROC) [31] [26]. For medical applications, additional metrics such as sensitivity, specificity, and F1-score are often reported to provide a complete picture of diagnostic capability. Benchmarking typically involves comparing zero-shot performance against supervised baselines and other foundation models across multiple tissue types and disease categories [6] [26]. Crucially, evaluation datasets are carefully curated to include both common and rare conditions to properly assess generalization capability [6]. Studies often employ cross-validation strategies that test models on completely unseen disease categories or tissue types to rigorously evaluate true zero-shot performance [26] [30].
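For a binary diagnostic task, the threshold-based metrics named above all follow from the four confusion-matrix counts. The sketch below is a plain-Python illustration with made-up labels; the function name and data are hypothetical, and AUC-ROC is left out because it needs continuous scores rather than hard predictions.

```python
def binary_metrics(y_true, y_pred):
    """Compute common diagnostic metrics from binary labels (1 = disease present)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    def safe_div(numerator, denominator):
        # Report 0.0 rather than raising when a class is absent.
        return numerator / denominator if denominator else 0.0

    sensitivity = safe_div(tp, tp + fn)   # also called recall
    specificity = safe_div(tn, tn + fp)
    precision = safe_div(tp, tp + fp)
    accuracy = safe_div(tp + tn, len(pairs))
    f1 = safe_div(2 * precision * sensitivity, precision + sensitivity)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "accuracy": accuracy,
        "f1": f1,
    }

# Made-up predictions for eight slides.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
print(binary_metrics(y_true, y_pred))
```

Reporting sensitivity and specificity alongside accuracy matters most when classes are imbalanced, as is typical for rare conditions.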

Quantitative Performance Analysis

Diagnostic Accuracy Across Disease Types

Zero-shot classification models have demonstrated impressive performance across diverse diagnostic tasks in pathology. In a comprehensive evaluation of a GPT-based foundational model for electronic health records (extended to pathology concepts), researchers reported an average top-1 precision of 0.614 and recall of 0.524 for predicting next medical concepts [31]. For 12 major diagnostic conditions, the model demonstrated strong zero-shot performance with high true positive rates while maintaining low false positives [31]. In specialized pathology applications, vision-language models like CONCH and TITAN have shown particular strength in classifying cancers and rare diseases. For instance, in cross-modal retrieval tasks, TITAN significantly outperformed previous slide foundation models, enabling effective retrieval of rare cancer types based on both image and text queries [6]. The performance advantage was especially pronounced in low-data regimes and for rare conditions where supervised models typically struggle due to insufficient training examples.

Comparative Performance Across Model Types

Systematic comparisons between model architectures reveal important patterns in zero-shot capability. In a study investigating zero-shot diagnostic pathology across 3,507 WSIs of digestive pathology, CONCH achieved the highest accuracy when provided with precise anatomical references in the prompts [26]. The research demonstrated that prompt engineering significantly impacts model performance, with detailed anatomical and morphological descriptions yielding superior results compared to generic disease names [26]. Similarly, in plant pathology (serving as a proxy for general tissue diagnostics), CLIP-based models demonstrated remarkable robustness when tested on real-world field images, significantly outperforming conventional CNN models trained on curated datasets like PlantVillage [30]. This suggests that the zero-shot approach offers particular advantages for real-world applications where image variability is high and controlled training datasets are unavailable.

Table 2: Zero-Shot Classification Performance Across Domains

| Domain/Model | Task | Performance Metrics | Key Findings |
|---|---|---|---|
| EHR GPT Model [31] | Medical concept prediction | Average top-1 precision: 0.614, recall: 0.524 [31] | Strong performance across 12 diagnostic conditions with high true positive rates and low false positives |
| CONCH [26] | Digestive pathology diagnosis | Highest accuracy with precise anatomical prompts [26] | Prompt engineering significantly impacts performance; anatomical context is critical |
| CLIP-based Models [30] | Plant disease classification | Superior performance on real-world field images [30] | Outperformed CNN models trained on curated datasets, demonstrating strong domain adaptation |

The Scientist's Toolkit: Essential Research Reagents

Implementing and researching zero-shot classification in pathology requires specific computational "reagents" and resources. The table below details key components and their functions in the experimental pipeline.

Table 3: Essential Research Reagents for Zero-Shot Pathology Classification

| Research Reagent | Function | Examples/Specifications |
|---|---|---|
| Whole Slide Images (WSIs) | Primary data source for model training and evaluation | 335,645 WSIs across multiple organ types for TITAN [6]; CONCH instead uses 1.17M image-caption pairs [4] |
| Patch Encoders | Feature extraction from image patches | CONCH v1.5 (768-dimensional features) [6] |
| Text Encoders | Processing disease descriptions and prompts | Transformer-based architectures (BERT, CLIP text encoder) [27] [29] |
| Vision-Language Models | Core classification architecture | CONCH, TITAN, Quilt-Net, Quilt-LLAVA [4] [6] [26] |
| Annotation Tools | Dataset creation and validation | Software for ROI annotation, text-image pairing |
| Prompt Templates | Structured disease descriptions | Anatomically precise prompts with morphological details [26] |
| Similarity Metrics | Decision function for classification | Cosine similarity, Euclidean distance [27] |
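
The two similarity metrics listed in the last row are closely related when embeddings are L2-normalized. That normalization is an assumption here: it is common practice for contrastive models, but the text does not state it. For unit vectors $u$ and $v$ (symbols introduced only for this illustration):

```latex
\|u - v\|_2^2
  = \|u\|_2^2 + \|v\|_2^2 - 2\,u^\top v
  = 2 - 2\cos(u, v),
\qquad \text{when } \|u\|_2 = \|v\|_2 = 1 .
```

Under that assumption, choosing the class with the highest cosine similarity and choosing the class with the smallest Euclidean distance produce the same prediction. Without normalization the two metrics can disagree.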

Workflow Visualization

[Figure: Zero-Shot Classification Workflow in Computational Pathology]

Prompt Engineering Framework

Systematic Prompt Design

Prompt engineering has emerged as a critical factor influencing zero-shot classification performance in computational pathology. Research demonstrates that systematically designed prompts significantly enhance model accuracy by providing richer contextual information [26]. Effective prompt engineering involves structured variation across multiple dimensions: domain specificity (including precise medical terminology), anatomical precision (specifying tissue types and locations), instructional framing (directing the model's analytical approach), and output constraints (defining the classification task clearly) [26]. For instance, a prompt like "A photomicrograph of colon mucosa showing invasive adenocarcinoma characterized by irregular glandular structures and desmoplastic reaction" substantially outperforms a generic prompt like "colon cancer" because it provides specific morphological features that the vision encoder can match against visual patterns in the tissue [26].
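One way to vary prompts systematically along the anatomical and morphological dimensions is a small template builder. The sketch below is hypothetical: the function names and the three-level variant scheme are illustrative choices, not the protocol of the cited study, and only the example wording is taken from the paragraph above.

```python
def join_features(features):
    """Join morphological features into a readable phrase."""
    features = list(features)
    if not features:
        return ""
    if len(features) == 1:
        return features[0]
    if len(features) == 2:
        return f"{features[0]} and {features[1]}"
    return ", ".join(features[:-1]) + f", and {features[-1]}"

def build_prompt(diagnosis, tissue=None, features=()):
    """Compose a descriptive prompt from a diagnosis, optional tissue, and features."""
    prompt = "A photomicrograph"
    if tissue:
        prompt += f" of {tissue}"
    prompt += f" showing {diagnosis}"
    described = join_features(features)
    if described:
        prompt += f" characterized by {described}"
    return prompt

def prompt_variants(diagnosis, tissue, features):
    """Return prompts of increasing specificity for an ablation-style comparison."""
    return {
        "generic": diagnosis,
        "anatomical": build_prompt(diagnosis, tissue=tissue),
        "morphological": build_prompt(diagnosis, tissue=tissue, features=features),
    }

variants = prompt_variants(
    "invasive adenocarcinoma",
    "colon mucosa",
    ["irregular glandular structures", "desmoplastic reaction"],
)
for level, text in variants.items():
    print(f"{level}: {text}")
```

Each variant can then be encoded with the text encoder and scored against the same images, which isolates the effect of added anatomical or morphological detail.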

Ablation Studies and Prompt Optimization