Digital Sensing and AI: Revolutionizing Point-of-Care Cancer Diagnosis

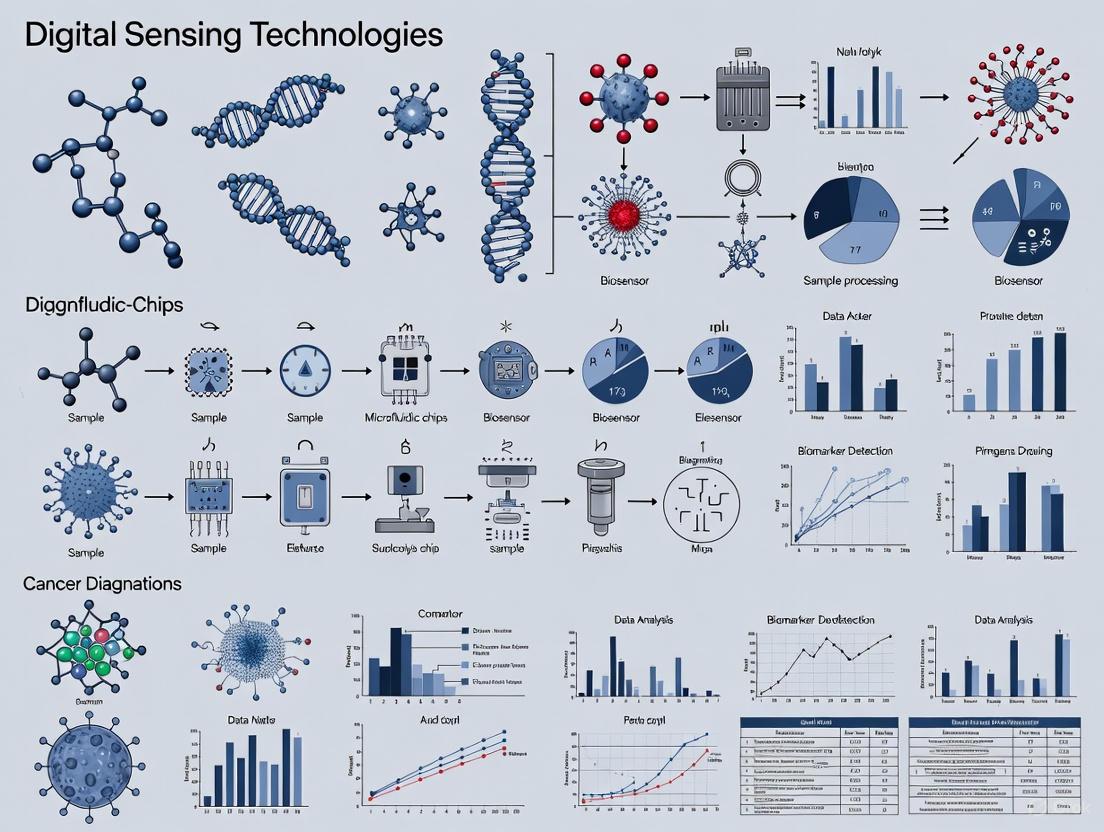

This article explores the transformative impact of digital sensing technologies integrated with artificial intelligence for point-of-care (POC) cancer diagnostics.

Abstract

This article explores the transformative impact of digital sensing technologies integrated with artificial intelligence for point-of-care (POC) cancer diagnostics. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning foundational principles, methodological applications, optimization challenges, and comparative validation. The review covers cutting-edge advancements in biosensors, liquid biopsies, and portable imaging systems, alongside AI-driven data interpretation that enhances diagnostic accuracy and accessibility. It critically addresses current technical and implementation barriers while evaluating performance against traditional laboratory methods, offering a forward-looking perspective on integrating these technologies into clinical workflows and precision oncology.

The New Frontier: Core Principles and Technologies Shaping POC Cancer Diagnostics

Application Notes: Digital Sensor Platforms in Point-of-Care Cancer Diagnostics

Digital sensor platforms represent a transformative class of diagnostic systems that integrate advanced sensors with digital technology to revolutionize data collection, processing, and transmission. These platforms serve as the backbone of modern monitoring systems, enabling real-time, high-precision, and automated diagnostics that are particularly crucial for point-of-care (POC) cancer applications [1]. By leveraging nanotechnology, biorecognition elements, and artificial intelligence (AI), these systems facilitate early detection, precise diagnosis, and personalized treatment methods for cancer, directly at or near the site of patient care [1] [2].

The operational framework of a digital sensor platform typically involves a biosensor component for biological recognition, a transducer that converts the biological response into a digital signal, and a data processing unit where AI algorithms interpret the results. This integration enables the conversion of molecular recognition of target analytes into actionable diagnostic insights [2]. The convergence of these technologies is paving the way for proactive cancer management, improving survival rates and quality of life through timely and targeted interventions [1].

Key Technology Categories and Applications

Digital sensor platforms for cancer diagnostics manifest primarily in three forms, each with distinct applications and value propositions in point-of-care settings.

Table 1: Key Digital Sensor Platform Categories for Cancer Diagnostics

| Technology Category | Primary Function | Key Applications in Cancer Care | Representative Examples |
|---|---|---|---|
| Biosensors & Lab-on-a-Chip (LOC) [1] | Miniaturized devices for analyzing biomarkers in bodily fluids | Liquid biopsies for circulating tumor DNA (ctDNA); detection of tumor-associated antigens [1] [3] | Multiplexed lateral flow immunoassays (LFIAs); electrochemical sensors for ctDNA [3] |
| Wearable Sensors [1] [2] | Continuous, non-invasive physiological monitoring | Tracking patient activity, vital signs, and metabolic markers for treatment monitoring and early complication detection [2] [4] | Smart shirts or patches for monitoring respiration, heart rate, and gait [4] |
| Portable Imaging Systems [3] | Non-invasive, high-resolution visualization of tissues | Detection of epithelial precancers; ensuring complete tumor resection with negative margins [3] | Optical coherence tomography (OCT); fluorescence-guided microscopy [3] |

Quantitative Performance Data of Select POC Assays

The performance of molecular diagnostics adapted for point-of-care use is critical for their clinical adoption. The following table summarizes key metrics for emerging technologies.

Table 2: Performance Metrics of Emerging POC Molecular Diagnostics for Cancer

| Assay Technology | Target Biomarker | Reported Sensitivity | Reported Specificity | Time-to-Result |
|---|---|---|---|---|
| Loop-mediated isothermal amplification (LAMP) [3] | Nucleic acids (e.g., viral oncogenes, ctDNA) | High (comparable to PCR) | High (robust against inhibitors) | ~30-60 minutes [3] |
| Multiplexed Lateral Flow Immunoassays (LFIAs) [3] | Proteins (e.g., CEA, AFP, CA-125) | Enhanced via nanomaterials (quantum dots) | Enhanced via nanomaterials; challenges with cross-reactivity | < 30 minutes [3] |
| Fluorescence-based LFIA Readouts [3] | Proteins and nucleic acids | Higher than visual LFIAs | Higher than visual LFIAs | < 30 minutes [3] |
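The table reports sensitivity and specificity only qualitatively. As a reminder of how these metrics are derived when a POC assay is compared against a laboratory reference, the following minimal sketch computes them from confusion-matrix counts. The function name and the example counts are hypothetical and are not taken from the cited studies.

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute standard diagnostic accuracy metrics from confusion-matrix counts.

    tp/fn: reference-positive samples called positive/negative by the POC assay.
    tn/fp: reference-negative samples called negative/positive by the POC assay.
    """
    if min(tp, fp, tn, fn) < 0:
        raise ValueError("counts must be non-negative")

    def ratio(numerator: int, denominator: int) -> float:
        # Return NaN rather than raising when a class has no samples.
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),  # true-positive rate
        "specificity": ratio(tn, tn + fp),  # true-negative rate
        "ppv": ratio(tp, tp + fp),          # positive predictive value
        "npv": ratio(tn, tn + fn),          # negative predictive value
    }


# Hypothetical validation counts, for illustration only.
metrics = diagnostic_metrics(tp=45, fp=5, tn=95, fn=5)
print(metrics)
```

Unlike sensitivity and specificity, the predictive values depend on disease prevalence in the tested cohort, so they should not be carried over from a case-control validation set to a screening population.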

Experimental Protocols

Protocol 1: Detection of Circulating Tumor DNA using LAMP-based Liquid Biopsy

Principle: This protocol describes a method for detecting tumor-specific genetic mutations (e.g., from KRAS or EGFR) in circulating cell-free DNA (cfDNA) from blood samples using Loop-Mediated Isothermal Amplification (LAMP). LAMP is ideal for POC settings as it operates at a constant temperature (60-70°C), eliminating the need for complex thermal cycling equipment used in traditional PCR [3].

Materials:

- Reagents:

- Plasma sample (100 µL).

- LAMP master mix (containing Bst DNA polymerase with strand-displacing activity, dNTPs, and reaction buffer).

- Sequence-specific LAMP primers (F3, B3, FIP, BIP) designed against the target mutation.

- Fluorescent intercalating dye (e.g., SYBR Green) or colorimetric dye (e.g., hydroxy naphthol blue).

- Nuclease-free water.

- Equipment:

- Portable, low-cost isothermal heater or dry bath.

- Microcentrifuge.

- Micropipettes and tips.

- Portable fluorometer or smartphone-based reader for result interpretation [3].

Procedure:

- Sample Preparation: Isolate plasma from fresh whole blood via centrifugation. Extract cfDNA from the plasma using a quick spin-column or crude lysis protocol. The robustness of LAMP allows for minimal nucleic acid purification [3].

- Reaction Setup: In a 0.2 mL tube, combine the following on ice:

- 12.5 µL of 2x LAMP master mix.

- 5 µL of primer mix (containing all four LAMP primers).

- 2.5 µL of extracted cfDNA template.

- Nuclease-free water to a final volume of 25 µL.

- Amplification: Place the reaction tube in a pre-heated isothermal block at 65°C for 45 minutes.

- Detection:

- Fluorescent Method: Include a fluorescent dye in the master mix. After amplification, use a portable fluorometer to measure fluorescence. A significant increase over the baseline indicates a positive result.

- Colorimetric Method: Include a metal indicator like hydroxy naphthol blue. A color change from violet to sky blue indicates a positive amplification.

- Data Interpretation: Integrate the readout device with a smartphone or tablet running a machine learning algorithm. The AI model can automate the interpretation of positive/negative results, reducing reliance on trained personnel and providing a clear diagnostic output [2] [3].
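Two steps of the procedure above reduce to simple arithmetic: the water volume needed to reach the 25 µL reaction, and the decision that an endpoint fluorescence reading is a "significant increase over the baseline". The sketch below illustrates both. The threshold rule (baseline mean plus three standard deviations) is an assumed, commonly used convention, not a criterion stated in the protocol, and all readings are hypothetical.

```python
from statistics import mean, stdev


def water_volume_ul(total_ul: float = 25.0, master_mix_ul: float = 12.5,
                    primer_ul: float = 5.0, template_ul: float = 2.5) -> float:
    """Volume of nuclease-free water needed to reach the final reaction volume."""
    water = total_ul - (master_mix_ul + primer_ul + template_ul)
    if water < 0:
        raise ValueError("component volumes exceed the final reaction volume")
    return water


def call_lamp_result(baseline_readings: list, endpoint_reading: float,
                     n_sd: float = 3.0) -> str:
    """Call a reaction positive if the endpoint exceeds baseline mean + n_sd * SD.

    baseline_readings: fluorescence of no-template controls or pre-amplification
    reads (at least two values are needed to estimate the SD).
    """
    threshold = mean(baseline_readings) + n_sd * stdev(baseline_readings)
    return "positive" if endpoint_reading > threshold else "negative"


print(water_volume_ul())  # water for the volumes listed in the protocol

# Hypothetical fluorometer readings in arbitrary units.
baseline = [100, 102, 98, 101, 99]
print(call_lamp_result(baseline, endpoint_reading=450))
print(call_lamp_result(baseline, endpoint_reading=103))
```

With the volumes listed in the reaction setup, 5 µL of water completes the 25 µL reaction. A fixed-threshold rule like this is also a useful baseline against which a machine learning classifier can be compared.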

Protocol 2: Multiplexed Lateral Flow Immunoassay for Cancer Antigen Detection

Principle: This protocol outlines the procedure for simultaneously detecting multiple tumor-associated antigens (e.g., Carcinoembryonic Antigen (CEA), Alpha-fetoprotein (AFP)) in serum or plasma using a multiplexed Lateral Flow Immunoassay (LFIA). The assay uses specific capture antibodies immobilized in distinct test lines on a nitrocellulose membrane and detection antibodies conjugated to colored or fluorescent nanoparticles [3].

Materials:

- Reagents:

- Patient serum or plasma sample (50-100 µL).

- Multiplexed LFIA test strip with test lines (T1 for CEA, T2 for AFP) and a control line (C).

- Running buffer.

- Equipment:

- Flat surface for test strip development.

- Timer.

- Smartphone with a dedicated app and, if using fluorescent nanoparticles, a simple external optical attachment [3].

Procedure:

- Assay Initiation: Place the multiplexed LFIA strip on a flat, dry surface. Apply 100 µL of the patient serum sample to the sample pad.

- Liquid Migration: Immediately add 2-3 drops of running buffer to the sample pad. The liquid will migrate via capillary action across the conjugate pad, membrane, and into the absorbent pad.

- Complex Formation and Capture: As the sample migrates, it rehydrates the nanoparticle-antibody conjugates in the conjugate pad. If present, the target antigens (CEA, AFP) bind to these conjugates. The complexes continue to flow across the membrane until they are captured by the immobilized antibodies at the respective test lines, generating a visible or fluorescent line.

- Control Verification: The control line should always appear, indicating proper fluid flow and assay validity.

- Result Reading and Interpretation: After 15 minutes, use a smartphone camera to capture an image of the test strip. A dedicated app, powered by a deep learning model (e.g., a convolutional neural network), should be used to:

The Scientist's Toolkit: Research Reagent Solutions

The development and execution of advanced digital sensor platforms require a suite of specialized reagents and materials.

Table 3: Essential Research Reagents and Materials for Digital Sensor Platforms

| Item | Function/Description | Application Example |
|---|---|---|
| Biorecognition Elements [2] | Molecules (antibodies, aptamers, DNA probes) that specifically bind to the target analyte. | Immobilization on sensor surfaces or conjugation to nanoparticles for capturing cancer biomarkers. |
| Nanomaterial Labels [3] | Quantum dots, lanthanide-doped nanoparticles, or gold nanoparticles that serve as signal reporters. | Used in multiplexed LFIAs to enhance sensitivity and enable simultaneous detection of multiple targets. |
| Bst DNA Polymerase [3] | A strand-displacing DNA polymerase essential for isothermal amplification. | Key enzyme in LAMP assays for amplifying ctDNA targets without thermal cycling. |
| Lyophilized Reagent Pellets [3] | Pre-mixed, freeze-dried reagents to enhance stability and ease-of-use. | Used in POC test kits to eliminate cold-chain requirements and simplify assay steps in the field. |
| AI/ML Software Platforms [2] [5] | Machine learning (e.g., CNN for images) and deep learning algorithms for data analysis. | Used for interpreting complex sensor data, imaging results, and strip tests to improve diagnostic accuracy. |

Cancer biomarkers, including proteins, circulating tumor DNA (ctDNA), and exosomes, are biological molecules that provide crucial information about the presence, progression, and behavior of cancer [6]. The advent of digital sensing technologies has catalyzed a paradigm shift in oncology, enabling minimally invasive monitoring and point-of-care (POC) diagnostics [7] [1]. These technologies leverage advancements in biosensors, lab-on-a-chip (LOC) devices, and artificial intelligence (AI) to facilitate early detection, precise diagnosis, and personalized treatment planning [1]. The integration of these tools is transforming the landscape of cancer care by making diagnostics more accessible, efficient, and tailored to individual patient profiles.

Established and Emerging Cancer Biomarkers

Classification and Clinical Utility

Biomarkers are indispensable across the entire cancer care continuum, from screening and early detection to diagnosis, treatment selection, and monitoring of therapeutic responses [6]. They can be broadly classified based on their molecular nature and clinical application.

Table 1: Key Classes of Cancer Biomarkers and Their Characteristics

| Biomarker Class | Examples | Associated Cancers | Key Characteristics & Clinical Role |
|---|---|---|---|
| Protein Biomarkers | PSA, CA-125, CEA, AFP [8] [6] | Prostate, Ovarian, Colorectal, Liver [8] [6] | Widely used but often lack specificity; can be elevated in benign conditions [6]. |
| Circulating Tumor DNA (ctDNA) | Mutations in KRAS, EGFR, TP53 [7] [6] | Lung, Breast, Colorectal, and many others [7] [6] | Fragments of tumor-derived DNA in blood; enables non-invasive genotyping, therapy monitoring, and early detection [7]. |
| Exosomes | Exosomal PD-L1, CD63, EpCAM [7] [9] | Melanoma, NSCLC, Colorectal, and others [7] [9] | Nanovesicles (30-150 nm) carrying proteins, lipids, and nucleic acids; reflect functional activity of parental tumor cells [7] [9]. |
| Circulating Tumor Cells (CTCs) | CTCs detected by CellSearch [7] | Metastatic Breast, Prostate, Colorectal [7] | Intact tumor cells in circulation; used for prognosis in metastatic disease [7]. |

Performance Metrics of Emerging Biomarker Assays

The analytical performance of assays for novel biomarkers is critical for their clinical translation. Sensitivity and limit of detection (LOD) are key parameters.

Table 2: Analytical Performance of Selected Emerging Biomarker Detection Technologies

| Biomarker | Detection Technology | Limit of Detection (LOD) | Reported Sensitivity / Specificity |
|---|---|---|---|
| TNFα (Model Protein) | 3D-Printed Smartphone Colorimetric Biosensor [10] | 19 pg/mL [10] | Well correlated with standard ELISA [10]. |
| Ferritin | Graphene FET-based ELISA (G-ELISA) [11] | Lower than unamplified gFET (specific value not stated) [11] | Enhanced sensitivity via enzymatic amplification [11]. |
| Exosomal miRNA | Not Specified [7] | Not Applicable | 97% sensitivity for distinguishing early-stage pancreatic cancer [7]. |
| ctDNA Methylation | Sequencing [7] | Not Applicable | Detected breast cancer up to 2 years before diagnosis with 100% specificity [7]. |
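Because the limit of detection is central to the comparison above, it helps to see one common way it is estimated. The sketch below uses the widely applied convention LOD = 3.3 × (standard deviation of blank replicates) / (calibration slope), with a linear least-squares calibration. This is an assumption for illustration: the cited studies may have used a different definition, immunoassay calibration curves are often nonlinear (for example four-parameter logistic), and every number below is hypothetical.

```python
from statistics import mean, stdev


def fit_line(x: list, y: list) -> tuple:
    """Ordinary least-squares fit y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


def limit_of_detection(blank_signals: list, concentrations: list,
                       signals: list, k: float = 3.3) -> float:
    """Estimate the LOD as k * SD(blank) / |slope|, in concentration units."""
    slope, _ = fit_line(concentrations, signals)
    return k * stdev(blank_signals) / abs(slope)


# Hypothetical calibration data: concentration in pg/mL, signal in absorbance units.
conc = [0, 50, 100, 200]
signal = [0.05, 0.15, 0.25, 0.45]
blanks = [0.048, 0.052, 0.050, 0.049, 0.051]

lod = limit_of_detection(blanks, conc, signal)
print(f"Estimated LOD: {lod:.2f} pg/mL")
```

The estimate is only meaningful within the linear range of the calibration, and it inherits the noise of however many blank replicates were measured.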

Experimental Protocols for Biomarker Analysis

Protocol 1: Exosome Isolation and Detection via Immunoaffinity

Principle: This protocol isolates exosomes from biofluids using antibodies against specific surface markers (e.g., CD63, CD81) and detects them via an enzyme-linked immunosorbent assay (ELISA) format, which can be adapted for colorimetric or electrochemical readouts [9].

Materials:

- Sample: Blood plasma, serum, or cell culture medium.

- Antibodies: Capture antibody (e.g., anti-CD63), detection antibody (e.g., biotinylated anti-target antigen), and enzyme-conjugated streptavidin (e.g., streptavidin-urease) [9] [11].

- Buffers: Phosphate-buffered saline (PBS), washing buffer (PBS with 0.05% Tween 20), blocking buffer (e.g., 0.1% gelatin or BSA in PBS) [10].

- Equipment: Microplate reader, or for POC use, a smartphone-based colorimetric sensor or graphene field-effect transistor (gFET) setup [10] [11].

Procedure:

- Coating: Immobilize the capture antibody on a solid surface (e.g., microplate well or gFET sensor) and incubate overnight at 4°C.

- Blocking: Wash the surface and add blocking buffer for 1 hour at 37°C to prevent non-specific binding [10].

- Sample Incubation: Add the pre-cleared biofluid sample and incubate for 1-2 hours. Exosomes expressing the target surface marker will be captured.

- Washing: Wash thoroughly to remove unbound material.

- Detection: Incubate with a biotinylated detection antibody specific to a different epitope on the exosome or target antigen.

- Signal Amplification: Incubate with enzyme-conjugated streptavidin (e.g., urease).

- Readout:

- Colorimetric: Add an enzyme substrate (e.g., 3,3',5,5'-Tetramethylbenzidine (TMB) when an HRP conjugate is used). Stop the reaction with acid and measure the absorbance [10].

- gFET-based (G-ELISA): Add urea. The urease enzyme hydrolyzes urea, causing a local pH change that is transduced by the gFET as a shift in the Dirac point (VI), providing a digital readout [11].
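The gFET readout step reduces to locating the Dirac point (the gate voltage at which the drain current is minimal) before and after urea is added, and reporting the difference. The sketch below shows that computation on a sampled transfer curve. The function names and the synthetic V-shaped curves are hypothetical, and the sign and size of a real shift depend on the device and buffer.

```python
def dirac_point(gate_voltages: list, drain_currents: list) -> float:
    """Return the gate voltage at which the measured drain current is minimal."""
    if not gate_voltages or len(gate_voltages) != len(drain_currents):
        raise ValueError("voltage and current sweeps must be non-empty and equal length")
    idx = min(range(len(drain_currents)), key=lambda i: drain_currents[i])
    return gate_voltages[idx]


def dirac_shift(gate_voltages: list, current_before: list, current_after: list) -> float:
    """Shift of the Dirac point between two sweeps taken on the same voltage grid."""
    return dirac_point(gate_voltages, current_after) - dirac_point(gate_voltages, current_before)


# Synthetic transfer curves (gate voltage in mV, current in arbitrary units).
vg_mv = list(range(-1000, 1001, 50))
before = [abs(v - 100) + 10 for v in vg_mv]  # minimum placed at 100 mV
after = [abs(v - 250) + 10 for v in vg_mv]   # minimum placed at 250 mV

print(dirac_shift(vg_mv, before, after), "mV")
```

Taking the raw minimum limits resolution to the sweep step size. On noisy data, fitting a parabola around the minimum is a common refinement.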

Protocol 2: ctDNA Analysis via Liquid Biopsy and Sequencing

Principle: This protocol involves the extraction of cell-free DNA (cfDNA) from blood, followed by the enrichment and analysis of ctDNA using next-generation sequencing (NGS) to identify tumor-specific mutations or methylation patterns [7] [6].

Materials:

- Sample: Blood collected in cell-stabilizing tubes.

- Reagents: cfDNA extraction kit, library preparation kit, sequencing buffers, and bioinformatics software.

- Equipment: Centrifuge, thermocycler, and next-generation sequencer [7].

Procedure:

- Blood Collection and Processing: Draw blood and separate plasma via centrifugation to remove cells and debris.

- cfDNA Extraction: Isolate total cfDNA from the plasma using a commercial extraction kit.

- Library Preparation: Prepare a sequencing library from the cfDNA. For ultra-sensitive detection, use targeted sequencing panels; droplet digital PCR (ddPCR) is an alternative to sequencing when the mutations of interest are already known.

- Sequencing: Perform NGS on the prepared libraries.

- Bioinformatic Analysis: Align sequences to a reference genome and use specialized algorithms to identify low-frequency tumor-derived mutations, copy number alterations, or methylation profiles against a background of wild-type DNA [7] [6].
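To illustrate what "identifying low-frequency tumor-derived mutations against a background of wild-type DNA" can mean at a single genomic position, the sketch below computes the variant allele frequency (VAF) and a one-sided binomial test against an assumed per-base error rate. This is a deliberately simplified model, not the algorithm used in the cited work. Production callers also model strand bias, molecular barcodes, and position-specific error. The error rate, significance level, and read counts are hypothetical.

```python
import math


def _log_binom_pmf(k: int, n: int, p: float) -> float:
    """Log of the Binomial(n, p) probability mass at k, computed in log space."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log1p(-p))


def binom_sf(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p)."""
    if k <= 0:
        return 1.0
    total = sum(math.exp(_log_binom_pmf(i, n, p)) for i in range(k, n + 1))
    return min(1.0, total)


def call_variant(alt_reads: int, depth: int, error_rate: float = 1e-3,
                 alpha: float = 1e-6) -> dict:
    """Flag a position as variant if alt_reads is unlikely under sequencing error alone."""
    if not 0 < error_rate < 1:
        raise ValueError("error_rate must be strictly between 0 and 1")
    if depth <= 0 or not 0 <= alt_reads <= depth:
        raise ValueError("need depth > 0 and 0 <= alt_reads <= depth")
    p_value = binom_sf(alt_reads, depth, error_rate)
    return {"vaf": alt_reads / depth, "p_value": p_value, "is_variant": p_value < alpha}


# Hypothetical pileups at 10,000x depth.
print(call_variant(alt_reads=40, depth=10_000))  # VAF 0.4%, well above background
print(call_variant(alt_reads=12, depth=10_000))  # consistent with error alone
```

The stringent `alpha` stands in for multiple-testing correction across the many positions covered by a panel. The appropriate value depends on panel size and the acceptable false-positive rate.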

The Scientist's Toolkit: Key Research Reagent Solutions

Successful experimentation in cancer biomarker research relies on a suite of specialized reagents and materials.

Table 3: Essential Research Reagents and Materials for Biomarker Analysis

| Item | Function/Application | Specific Examples |
|---|---|---|
| Specific Antibodies | Recognition elements for immunoassays; used for capture and detection of protein biomarkers and exosomal surface proteins. | Anti-human TNFα antibody [10]; Anti-ferritin monoclonal antibody [11]; Anti-CD63/CD81 for exosome capture [9]. |
| Enzyme Conjugates | Signal generation and amplification in ELISA and related assays. | Horseradish peroxidase (HRP) [10]; Streptavidin-urease for G-ELISA [11]. |
| Specialized Substrates | Generate measurable signal (colorimetric, electrochemical) upon enzyme action. | 3,3',5,5'-Tetramethylbenzidine (TMB) for HRP [10]; Urea for urease enzyme in G-ELISA [11]. |
| Surface Chemistry Reagents | Functionalize sensor surfaces for robust biomolecule immobilization and to reduce non-specific binding. | Vinylsulfonated polyethyleneimine (VS-PEI) for graphene functionalization [11]; Polyethylene glycol (PEG) as an antifouling agent [11]. |
| NGS Library Prep Kits | Prepare cfDNA/ctDNA for sequencing; enable target enrichment and adapter ligation. | Kits for preparing 3'-end enriched cDNA libraries for sequencing [12]. |
| Exosome Isolation Kits | Isolate and purify exosomes from complex biofluids based on size, precipitation, or immunoaffinity. | Kits based on polymeric precipitation or ultrafiltration [9]. |

Biosensors are analytical devices that combine a biological recognition element with a physicochemical transducer to detect specific analytes with remarkable sensitivity and specificity [13]. The convergence of nanotechnology and advanced biorecognition materials has profoundly transformed these tools, enabling unprecedented capabilities in point-of-care (POC) cancer diagnostics. These technologies facilitate early detection, continuous monitoring, and personalized treatment strategies by detecting biomarkers at ultralow concentrations, significantly improving diagnostic accuracy and prognostic assessments [13] [1]. This document details application notes and experimental protocols for leveraging these advanced biosensors within digital sensing frameworks for cancer research and diagnostics.

Key Technology Platforms and Performance Metrics

Advanced biosensor platforms are characterized by their high sensitivity, miniaturization, and multiplexing capabilities. The table below summarizes the performance of prominent nanotechnology-enhanced biosensors relevant to cancer diagnostics.

Table 1: Performance Metrics of Advanced Biosensing Platforms

| Technology Platform | Sensitivity | Detection Limit (RIU) | Figure of Merit (RIU⁻¹) | Key Applications in Oncology |
|---|---|---|---|---|
| PCF-SPR Biosensor [14] | 125,000 nm/RIU (Wavelength) | 8 × 10⁻⁷ | 2112.15 | Label-free cancer cell detection, chemical sensing |
| Graphene Metasurface Biosensor [15] | 4000 nm/RIU | 0.078 | 16.000 | Viral detection (e.g., COVID-19 biomarkers) |
| Dual-Gist PCF Biosensor [15] | 115,999 nm/RIU | 8.66 × 10⁻⁷ | Information Missing | General bioanalytical sensing |
| X-shaped SPR-PCF Biosensor [15] | 29,000 nm/RIU | 1.72 × 10⁻⁶ | 558 | Broad-range biomarker detection |
| MoS₂-based SPR Sensor [15] | 25,800 nm/RIU (Dual-mode) | Information Missing | Information Missing | Refractive index sensing and temperature response |

Application Notes

Note 001: AI-Optimized Design of PCF-SPR Biosensors

Background: Photonic Crystal Fiber-based Surface Plasmon Resonance (PCF-SPR) biosensors are sophisticated optical platforms that detect minute refractive index variations near the sensor surface, which occur when biomarkers bind to the recognition layer [14].

Significance for Cancer Diagnosis: This technology is particularly valuable for label-free cancer cell detection and monitoring biomarker-antibody interactions in real-time, providing insights into tumor progression [13] [14].

Key Advantages:

- Machine Learning Integration: ML regression models (Random Forest, XGBoost) can predict optical properties like effective index and confinement loss with high accuracy, drastically accelerating sensor optimization and reducing computational costs compared to traditional simulation methods [14].

- Explainable AI (XAI): SHAP analysis identifies critical design parameters, revealing that wavelength, analyte refractive index, gold thickness, and pitch are the most influential factors for maximizing sensitivity [14].

- Performance: Achieves high wavelength sensitivity and low confinement loss, making it suitable for detecting low-abundance cancer biomarkers [14].

Note 002: Integrated Systems for Point-of-Care Cancer Diagnostics

Background: The conceptual "OncoCheck" model exemplifies an integrated approach designed for resource-limited settings. It combines liquid biopsy, point-of-care testing (POCT), and AI-driven data analysis [16].

Significance for Cancer Diagnosis: This system aims to bridge global cancer care disparities by enabling early, sensitive, and affordable cancer screening outside centralized laboratories, potentially transforming cancer management in low- and middle-income countries (LMICs) [16].

Key Advantages:

- Accessibility: POCT integration allows for rapid diagnostics in non-laboratory settings, improving access for remote and underserved populations [16].

- Cost-Effectiveness: Liquid biopsy is a more economical option than traditional screening methods like MRI, significantly reducing detection costs [16].

- Comprehensive Profiling: Liquid biopsy analyzes various components, including circulating tumor DNA (ctDNA) and extracellular vesicles, providing a non-invasive window into tumor heterogeneity and treatment response [16].

Experimental Protocols

Protocol 001: Fabrication and Functionalization of a PCF-SPR Biosensor

Objective: To fabricate a high-sensitivity PCF-SPR biosensor and functionalize its surface for the specific detection of a target cancer biomarker.

Workflow: The experimental procedure for biosensor preparation and measurement is outlined below.

Materials:

- Photonic Crystal Fiber (PCF)

- Metallic deposition source (Gold, 99.99% purity)

- Biorecognition elements (e.g., monoclonal antibodies specific to the target cancer biomarker, DNA aptamers)

- Surface activation reagents (e.g., 11-Mercaptoundecanoic acid for gold surface)

- Coupling agents (e.g., EDC/NHS for carboxyl group activation)

- Blocking buffer (e.g., 1% Bovine Serum Albumin in PBS)

- Washing buffer (e.g., PBS with 0.05% Tween 20)

- Analyte samples (e.g., purified protein, synthetic biomarkers spiked in buffer, or patient serum)

Procedure:

- Sensor Fabrication:

- Begin with a standard PCF substrate.

- Using a sputter coater or thermal evaporator, deposit a thin, uniform film of gold (typically 40-60 nm) onto the exterior or interior channels of the PCF.

- Surface Functionalization:

- Clean the gold-coated sensor with oxygen plasma and ethanol.

- Immerse the sensor in a 1 mM solution of 11-Mercaptoundecanoic acid in ethanol for 12-16 hours to form a self-assembled monolayer (SAM).

- Rinse thoroughly with ethanol and deionized water to remove unbound thiols.

- Activate the carboxyl terminal groups by treating with a fresh mixture of EDC (0.4 M) and NHS (0.1 M) in water for 30 minutes.

- Rinse with buffer and immediately incubate with the biorecognition element (e.g., 50 µg/mL antibody in phosphate buffer, pH 7.4) for 2 hours.

- Rinse again to remove unbound antibodies.

- Block non-specific binding sites by incubating with 1% BSA for 1 hour.

- Perform a final rinse, and store the functionalized sensor in PBS at 4°C until use.

- Sample Introduction and Measurement:

- Place the functionalized sensor in a flow cell apparatus.

- Establish a stable baseline by flowing running buffer.

- Introduce the sample containing the target analyte and allow binding to proceed for a predetermined time.

- Monitor the output light spectrum using a spectrometer. The binding of the target biomarker will cause a shift in the resonance wavelength.

- Use the wavelength shift to calculate sensitivity and analyte concentration.
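The final measurement step can be made concrete with the standard definitions used for wavelength-interrogated SPR sensors: wavelength sensitivity S = Δλ_peak / Δn (nm/RIU), resolution R = Δn × Δλ_min / Δλ_peak (RIU), and figure of merit FOM = S / FWHM (RIU⁻¹). The sketch below implements them. The input values, including the 0.1 nm minimum resolvable wavelength shift, are illustrative assumptions rather than measurements from the cited work.

```python
def wavelength_sensitivity(delta_lambda_peak_nm: float, delta_n_riu: float) -> float:
    """S = resonance wavelength shift / refractive index change, in nm/RIU."""
    return delta_lambda_peak_nm / delta_n_riu


def sensor_resolution(delta_n_riu: float, delta_lambda_min_nm: float,
                      delta_lambda_peak_nm: float) -> float:
    """Smallest detectable refractive index change, in RIU.

    delta_lambda_min_nm is the smallest wavelength shift the spectrometer can resolve.
    """
    return delta_n_riu * delta_lambda_min_nm / delta_lambda_peak_nm


def figure_of_merit(sensitivity_nm_per_riu: float, fwhm_nm: float) -> float:
    """FOM = sensitivity / full width at half maximum of the resonance dip, in 1/RIU."""
    return sensitivity_nm_per_riu / fwhm_nm


# Illustrative inputs: a 1250 nm resonance shift for a refractive index change of 0.01,
# an assumed spectrometer resolution of 0.1 nm, and an assumed 60 nm wide resonance dip.
s = wavelength_sensitivity(delta_lambda_peak_nm=1250.0, delta_n_riu=0.01)
r = sensor_resolution(delta_n_riu=0.01, delta_lambda_min_nm=0.1, delta_lambda_peak_nm=1250.0)
fom = figure_of_merit(s, fwhm_nm=60.0)
print(f"S = {s:.0f} nm/RIU, R = {r:.1e} RIU, FOM = {fom:.0f} 1/RIU")
```

Converting a measured shift into analyte concentration additionally requires a calibration curve recorded with standards of known concentration, since the refractive index change per bound molecule depends on the surface chemistry.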

AI Integration:

- Use a dataset of sensor design parameters and their corresponding performance metrics to train ML regression models (e.g., Random Forest, XGBoost).

- Employ the trained model to predict the performance of new design configurations.

- Apply SHAP analysis to the model's predictions to interpret the impact of each design parameter (e.g., gold thickness, pitch) on the final sensor performance [14].

Protocol 002: OncoCheck-Inspired Liquid Biopsy Analysis at Point-of-Care

Objective: To detect cancer biomarkers from a liquid biopsy sample (e.g., blood) using an integrated POCT device coupled with AI-based interpretation.

Workflow: The streamlined process for point-of-care liquid biopsy analysis is outlined in the procedure below.

Materials:

- Portable POCT device (e.g., electrochemical reader, compact fluorescence detector)

- Single-use test cartridges/strips pre-functionalized with capture probes (e.g., antibodies for CTCs, probes for ctDNA)

- Blood collection kit (venipuncture or fingerstick)

- Microcentrifuge (portable, for plasma separation if required)

- Lysis and wash buffers (provided with the test kit)

- Smartphone or tablet for data collection and transmission

Procedure:

- Sample Preparation:

- Collect a peripheral blood sample (1-10 mL in EDTA tubes).

- Process the sample within 2 hours of collection. Centrifuge at 2000 × g for 10 minutes to separate plasma.

- Carefully transfer the plasma layer to a new tube without disturbing the buffy coat.

- POCT Analysis:

- Load the prepared plasma sample into the sample chamber of the POCT cartridge.

- Insert the cartridge into the portable reader device.

- The device automatically mixes the sample with internal reagents, facilitates the binding of biomarkers to the capture elements, and generates a quantifiable signal (e.g., electrical current, optical density).

- The assay is typically completed within 10-30 minutes.

- Data Digitization and AI Analysis:

- The reader digitizes the raw signal and transmits it via Bluetooth or USB to a connected smartphone or local hub.

- The data is uploaded to a cloud-based or local AI analytics platform.

- Pre-trained machine learning models analyze the multiplexed biomarker data, correcting for potential assay variations and patient-specific factors.

- The AI system generates a diagnostic report indicating the probability of disease, potential cancer subtype, or level of treatment response.
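The sample preparation step specifies centrifugation in units of relative centrifugal force (2000 × g), whereas many portable microcentrifuges are set in revolutions per minute. The conversion follows the standard relation RCF = 1.118 × 10⁻⁵ × r × RPM², with the rotor radius r in centimeters. The sketch below applies it. The 7 cm rotor radius is a hypothetical example and must be replaced with the value for the actual rotor.

```python
import math

# Constant of the standard RCF formula when the radius is expressed in centimeters.
RCF_CONSTANT = 1.118e-5


def rcf_to_rpm(rcf_g: float, rotor_radius_cm: float) -> float:
    """Rotor speed (rpm) needed to reach a given relative centrifugal force."""
    if rcf_g <= 0 or rotor_radius_cm <= 0:
        raise ValueError("rcf_g and rotor_radius_cm must be positive")
    return math.sqrt(rcf_g / (RCF_CONSTANT * rotor_radius_cm))


def rpm_to_rcf(rpm: float, rotor_radius_cm: float) -> float:
    """Relative centrifugal force produced at a given rotor speed."""
    return RCF_CONSTANT * rotor_radius_cm * rpm ** 2


# Plasma separation at 2000 x g with a hypothetical 7 cm rotor radius.
rpm = rcf_to_rpm(2000, rotor_radius_cm=7.0)
print(f"Set the centrifuge to about {rpm:.0f} rpm")
```

For this hypothetical rotor the setting comes out at roughly 5,055 rpm. A smaller rotor needs a higher speed to reach the same force.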

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Nanobiosensor Development and Cancer Diagnostics

| Research Reagent / Material | Function / Application | Examples / Specifications |
|---|---|---|
| Plasmonic Nanomaterials [13] [14] | Enhances signal transduction in optical biosensors via Surface Plasmon Resonance. | Gold nanoparticles (40-60 nm), thin gold films (50 nm). |
| 2D Materials & Metasurfaces [15] | Provides high surface area and tunable optoelectronic properties for enhanced sensitivity. | Graphene, MoS₂ (Molybdenum Disulfide), Black Phosphorus. |
| Biorecognition Elements [13] | Provides high specificity for binding to target cancer biomarkers. | Monoclonal Antibodies, Single-Stranded DNA Aptamers, Engineered Proteins. |
| Phase-Change Materials [15] | Enables dynamic tunability and reconfigurability of sensor properties. | GST (Germanium-Antimony-Tellurium, amorphous/crystalline phases). |
| Liquid Biopsy Components [16] | Serves as the analyte for non-invasive cancer detection and monitoring. | Circulating Tumor DNA (ctDNA), Extracellular Vesicles/Exosomes. |
| AI/ML Software Tools [14] [16] | Used for sensor design optimization, data analysis, and predictive diagnostics. | Python with Scikit-learn, XGBoost, SHAP library for model interpretation. |

Liquid biopsy represents a transformative approach in oncology, enabling the minimally invasive detection and analysis of tumor-derived components from biofluids. The three primary biomarkers—Circulating Tumor DNA (ctDNA), Circulating Tumor Cells (CTCs), and Extracellular Vesicles (EVs)—provide complementary information for cancer diagnosis, prognosis, and treatment monitoring [17] [18]. These biomarkers originate from tumors and circulate in bodily fluids such as blood, offering a real-time snapshot of tumor heterogeneity and evolution [19].

Compared to traditional tissue biopsy, liquid biopsy provides significant clinical advantages: it is minimally invasive, allows for real-time monitoring of treatment response and resistance, captures tumor heterogeneity, and enables early detection of recurrence [18] [19]. The integration of these liquid biopsy approaches with emerging digital sensing technologies is paving the way for advanced point-of-care cancer diagnostics.

Table 1: Core Liquid Biopsy Biomarkers and Characteristics

| Biomarker | Origin | Key Components | Half-Life | Primary Clinical Applications |
|---|---|---|---|---|
| CTC | Cells shed from primary or metastatic tumors | Intact tumor cells with DNA, RNA, proteins | 1-2.5 hours [18] | Prognostic assessment, metastasis research, drug resistance studies [17] [20] |
| ctDNA | Apoptotic or necrotic tumor cells [17] | Tumor-derived DNA fragments | ~2 hours [18] | Treatment monitoring, mutation detection, minimal residual disease detection [17] [20] |
| EV | Secreted by tumor cells [17] | DNA, RNA, proteins, lipids, metabolites [17] | Varies | Early cancer detection, monitoring tumor dynamics [17] |
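The short half-lives in the table are what make these analytes a near real-time readout. The sketch below shows the arithmetic under a simple first-order clearance model with no ongoing release from the tumor. That model is an idealizing assumption for illustration, not a claim made by the cited sources.

```python
def fraction_remaining(elapsed_hours: float, half_life_hours: float) -> float:
    """Fraction of an analyte left after first-order clearance with the given half-life."""
    if elapsed_hours < 0 or half_life_hours <= 0:
        raise ValueError("elapsed_hours must be >= 0 and half_life_hours > 0")
    return 0.5 ** (elapsed_hours / half_life_hours)


# ctDNA with a half-life of about 2 hours, as listed in the table.
for hours in (2, 6, 24):
    print(f"after {hours:>2} h: {fraction_remaining(hours, 2.0):.4%} remaining")
```

Under this model, less than 0.1% of the ctDNA present at a given moment remains a day later, so a measurement largely reflects what the tumor released within the preceding hours.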

Analysis of Circulating Tumor Cells (CTCs)

CTCs are intact tumor cells that detach from primary or metastatic sites and enter the circulation [17]. They are exceptionally rare, with approximately 1-10 CTCs per 10^9 hematological cells in patient blood, which makes their isolation and detection technically challenging [19]. Because each CTC is an intact cell, its analysis can yield dynamic information on DNA, RNA, and proteins, an advantage over cell-free analytes in transcriptomic, proteomic, and signal colocalization assessments [17].
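One practical consequence of this rarity is sampling noise: a small blood draw may contain no CTCs at all even when they are present in circulation. If CTCs are assumed to be randomly and independently distributed in blood (a Poisson model, which is an assumption made here for illustration), the chance of capturing at least one can be sketched as follows. The concentrations used are hypothetical, and the model ignores losses during enrichment.

```python
import math


def prob_at_least_one(ctc_per_ml: float, volume_ml: float) -> float:
    """Probability that a draw of volume_ml contains at least one CTC (Poisson model)."""
    if ctc_per_ml < 0 or volume_ml < 0:
        raise ValueError("inputs must be non-negative")
    return 1.0 - math.exp(-ctc_per_ml * volume_ml)


def volume_for_probability(ctc_per_ml: float, target_prob: float) -> float:
    """Blood volume (mL) needed to contain at least one CTC with probability target_prob."""
    if ctc_per_ml <= 0 or not 0 < target_prob < 1:
        raise ValueError("need ctc_per_ml > 0 and 0 < target_prob < 1")
    return -math.log(1.0 - target_prob) / ctc_per_ml


# Hypothetical concentrations, for illustration only.
print(f"{prob_at_least_one(0.5, 1.0):.3f}")   # 1 mL draw at 0.5 CTC/mL
print(f"{prob_at_least_one(1.0, 7.5):.5f}")   # 7.5 mL draw at 1 CTC/mL
print(f"{volume_for_probability(1.0, 0.95):.2f} mL for a 95% chance at 1 CTC/mL")
```

This is one reason fingerstick-scale volumes are harder to use for CTC assays than for ctDNA or protein markers.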

Detailed Experimental Protocols

CTC Enrichment and Isolation Methods

CTC isolation strategies exploit differences between tumor cells and blood cells based on biological properties (e.g., surface protein expression) or physical properties (e.g., size, density, deformability) [17] [19].

Table 2: CTC Enrichment and Isolation Technologies

| Method | Principle | Advantages | Limitations | Examples/Systems |
|---|---|---|---|---|
| Immunomagnetic Positive Enrichment | Uses antibody-labeled magnetic beads targeting epithelial markers (e.g., EpCAM) [17] | High purity; FDA-cleared | Misses CTCs undergoing epithelial-mesenchymal transition (EMT) | CellSearch [17] [20] |
| Immunomagnetic Negative Enrichment | Removes hematopoietic cells using CD45-targeted antibodies [17] | Independent of CTC surface markers | Risk of CTC loss during white blood cell removal | EasySep depletion kit [19] |
| Microfluidic Technology | Uses fluid mechanics and surface markers for separation [17] | High sensitivity; improved capture efficiency | Limited to EpCAM-positive CTCs | CTC-Chip, HB-Chip [19] [20] |
| Membrane Filtration | Separates by cell size (CTCs are generally larger) [17] | Good cell integrity; not limited by surface markers | Low purity; misses small CTCs | ISET [20] |
| Density Gradient Centrifugation | Separates by density differences [17] | Can separate CK positive and negative cells | Low separation efficiency | Ficoll-based methods [17] |

CTC Detection and Characterization Methods

Following enrichment, CTCs are typically identified using:

- Immunofluorescence (IF): Staining for epithelial markers (CK8, CK18, CK19), a leukocyte exclusion marker (CD45), and nuclei (DAPI) [17] [19]

- Molecular Characterization: Using PCR to detect tumor-specific transcripts (e.g., EpCAM, MUC-1, HER-2) [19]

- scMet-Seq Protocol: A recently developed method combining metabolic marker (HK2) immunostaining with single-cell low-pass whole genome sequencing for copy number alteration (CNA) profiling [21]

scMet-Seq Protocol for CTC Detection

The scMet-Seq protocol represents an advanced approach for sensitive and accurate CTC detection:

- HK2-Based Immunostaining: Suspicious CTCs (sCTCs) are rapidly identified in body fluids using immunostaining for hexokinase 2 (HK2), a metabolic function-associated marker, combined with cytokeratin (CK), CD45, and DAPI [21]

- Click Chemistry-Based Cell Fixation: Amine- and sulfhydryl-reactive crosslinkers form amine-to-amine and amine-to-sulfhydryl crosslinks among biomolecules, significantly improving genome integrity compared to traditional PFA fixation [21]

- Single-Cell Retrieval: Individual fixed sCTCs are retrieved for sequencing

- Tn5 Transposome-Based WGS: A low-cost transposome-based whole genome sequencing (WGS) protocol combines whole genome amplification and library construction into a single step, reducing processing time from 8.5 hours to 3 hours and cutting cost by 97% relative to the MALBAC protocol [21]

- CNA Profiling and Analysis: Single-cell low-pass WGS (mean depth: 0.2×) profiles copy number alterations; a positive test requires ≥2 sCTCs with CNA burden >0.02 exhibiting concordant CNA profiles [21]
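
The positivity rule in the final step can be written as a small decision function. The sketch below is illustrative only: the thresholds (at least 2 sCTCs, CNA burden > 0.02) come from the text, while the `Cell` record, the use of Pearson correlation as the concordance measure, and the 0.8 cutoff are assumptions that are not taken from [21].

```python
from dataclasses import dataclass
from itertools import combinations
from math import sqrt
from typing import List, Sequence


@dataclass
class Cell:
    """One sequenced suspicious CTC (hypothetical record layout)."""
    cna_burden: float          # fraction of the genome with altered copy number
    profile: Sequence[float]   # per-bin copy-number values from low-pass WGS


def pearson(a: Sequence[float], b: Sequence[float]) -> float:
    """Pearson correlation of two equal-length profiles (0.0 if either is flat)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def is_positive(cells: List[Cell],
                min_burden: float = 0.02,   # from the text
                min_corr: float = 0.8       # assumed concordance cutoff
                ) -> bool:
    """Positive if at least two cells exceed the CNA burden threshold
    and at least one pair of them has concordant profiles."""
    altered = [c for c in cells if c.cna_burden > min_burden]
    if len(altered) < 2:
        return False
    return any(pearson(x.profile, y.profile) >= min_corr
               for x, y in combinations(altered, 2))
```

A real pipeline would define concordance on segmented copy-number calls rather than raw bin values; the correlation here only stands in for that comparison.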

Research Reagent Solutions

Table 3: Essential Reagents for CTC Analysis

| Reagent/Category | Specific Examples | Function/Application |

|---|---|---|

| Immunomagnetic Enrichment Reagents | EpCAM-coated magnetic beads; CD45 depletion beads | CTC enrichment positive/negative selection [17] [19] |

| Immunofluorescence Staining Reagents | Anti-pan-CK, anti-CD45, DAPI | CTC identification and enumeration [17] [19] |

| Cell Fixation Reagents | Amine- and sulfhydryl-reactive crosslinkers | Preserving cellular integrity and nucleic acids [21] |

| Nucleic Acid Amplification Kits | Tn5 transposome-based WGA kits | Whole genome amplification from single cells [21] |

CTC Analysis Workflow: From Sample Collection to Clinical Application

Analysis of Circulating Tumor DNA (ctDNA)

ctDNA consists of short DNA fragments (approximately 20-50 base pairs) released into the circulation through apoptosis, necrosis, or active secretion from tumor cells [18] [20]. It typically represents 0.1-1.0% of total cell-free DNA in cancer patients, with higher concentrations observed in advanced disease [18]. ctDNA has a short half-life of approximately 2 hours, enabling real-time monitoring of tumor dynamics [18].
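
To make the monitoring implication of the half-life concrete, the sketch below computes the fraction of ctDNA remaining after a given time. It assumes simple first-order clearance with no ongoing release from the tumor (for example, immediately after complete resection); that model and the function name are assumptions, and only the 2-hour half-life comes from the text.

```python
def fraction_remaining(hours: float, half_life_hours: float = 2.0) -> float:
    """Fraction of the starting ctDNA still in circulation after `hours`,
    assuming first-order decay and no new release from tumor cells."""
    if hours < 0 or half_life_hours <= 0:
        raise ValueError("hours must be >= 0 and half_life_hours must be > 0")
    return 0.5 ** (hours / half_life_hours)


# After three half-lives (6 hours) one eighth of the signal remains.
print(fraction_remaining(6.0))
```

Under this model, a blood draw taken a day after an intervention reflects almost entirely newly released ctDNA, which is why the short half-life supports near real-time monitoring.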

Detailed Experimental Protocols

Sample Collection and Processing

- Blood Collection: Collect peripheral blood (typically 10-20 mL) in Streck or EDTA tubes to prevent nucleic acid degradation

- Plasma Separation: Centrifuge blood within 2-4 hours of collection at 800-1600 × g for 10-20 minutes to separate plasma from blood cells

- Secondary Centrifugation: Perform high-speed centrifugation (16,000 × g for 10 minutes) to remove residual cells and debris

- Storage: Store plasma at -80°C until DNA extraction
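
The centrifugation steps above are specified in relative centrifugal force (× g), whereas many benchtop centrifuges are set in RPM. A minimal conversion sketch follows, using the standard relation RCF = 1.118 × 10^-5 × r × RPM^2 with r in centimetres. The 10 cm rotor radius in the example is an assumption; use the value from the rotor's documentation.

```python
from math import sqrt

RCF_CONSTANT = 1.118e-5  # standard factor for radius in cm and speed in RPM


def rcf_to_rpm(rcf: float, radius_cm: float) -> float:
    """Rotor speed (RPM) needed to reach a given relative centrifugal force."""
    if rcf < 0 or radius_cm <= 0:
        raise ValueError("rcf must be >= 0 and radius_cm must be > 0")
    return sqrt(rcf / (RCF_CONSTANT * radius_cm))


def rpm_to_rcf(rpm: float, radius_cm: float) -> float:
    """Relative centrifugal force (x g) produced at a given rotor speed."""
    return RCF_CONSTANT * radius_cm * rpm ** 2


# Example with an assumed 10 cm rotor radius.
for target in (1600, 16000):
    print(target, "x g ->", round(rcf_to_rpm(target, 10.0)), "RPM")
```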

ctDNA Extraction and Quantification

Extract ctDNA using commercial kits optimized for low-abundance cell-free DNA:

- QIAamp Circulating Nucleic Acid Kit (Qiagen)

- Maxwell RSC ccfDNA Plasma Kit (Promega)

- NEXTprep-Mag cfDNA Isolation Kit (Bioo Scientific)

Quantify ctDNA using fluorometric methods (Qubit dsDNA HS Assay) or quantitative PCR (e.g., Alu sequence amplification).
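
A measured cfDNA mass can be translated into an approximate number of genome copies, which bounds how low a variant allele fraction can be detected from a given input. The sketch below uses the widely quoted approximation of about 3.3 pg per haploid human genome; the function names are illustrative, and extraction or library-preparation losses are ignored.

```python
HAPLOID_GENOME_PG = 3.3  # approximate mass of one haploid human genome, in pg


def genome_equivalents(cfdna_ng: float) -> float:
    """Approximate number of haploid genome copies in a cfDNA input."""
    return cfdna_ng * 1000.0 / HAPLOID_GENOME_PG


def expected_mutant_copies(cfdna_ng: float, vaf: float) -> float:
    """Expected mutant copies at a locus for a given variant allele fraction."""
    if not 0.0 <= vaf <= 1.0:
        raise ValueError("vaf must be between 0 and 1")
    return genome_equivalents(cfdna_ng) * vaf


# 10 ng of cfDNA at a 0.1% variant allele fraction
print(round(expected_mutant_copies(10.0, 0.001), 1))
```

With only a handful of mutant molecules expected in such a sample, sampling noise alone can produce a negative result, regardless of the assay's nominal sensitivity.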

ctDNA Analysis Methods

Table 4: ctDNA Detection and Analysis Technologies

| Method | Principle | Sensitivity | Applications | Examples |

|---|---|---|---|---|

| Next-Generation Sequencing (NGS) | High-throughput sequencing of multiple genomic regions | Varies (0.1%-5%) [20] | Comprehensive mutation profiling, discovery | Whole genome, targeted panels [20] |

| Droplet Digital PCR (ddPCR) | Partitioning samples into nanoliter droplets for absolute quantification | 0.01%-0.1% [20] | Monitoring known mutations, treatment response | EGFR mutation detection [20] |

| BEAMing | Beads, Emulsion, Amplification, and Magnetics | ~0.01% | Ultrasensitive detection of rare mutations | KRAS, TP53 mutations [18] |

| ARMS-PCR | Amplification Refractory Mutation System | ~1% | Detection of specific point mutations | EGFR T790M [20] |
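
The absolute quantification listed for ddPCR in Table 4 rests on a Poisson correction: because a droplet can contain more than one template, the mean copies per droplet is estimated from the fraction of positive droplets as λ = -ln(1 - p). The sketch below applies that correction to mutant and wild-type channels to estimate a variant allele fraction. The droplet counts in the example are invented for illustration.

```python
from math import log


def copies_per_droplet(positive: int, total: int) -> float:
    """Poisson-corrected mean template copies per droplet."""
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need 0 <= positive < total (saturated runs are not quantifiable)")
    return -log(1.0 - positive / total)


def variant_allele_fraction(mutant_positive: int,
                            wildtype_positive: int,
                            total: int) -> float:
    """Mutant copies divided by all copies, each channel corrected separately."""
    lam_mut = copies_per_droplet(mutant_positive, total)
    lam_wt = copies_per_droplet(wildtype_positive, total)
    if lam_mut + lam_wt == 0:
        raise ValueError("no positive droplets in either channel")
    return lam_mut / (lam_mut + lam_wt)


# Illustrative run: 20,000 accepted droplets, 10 mutant-positive, 5,000 wild-type-positive
print(f"{variant_allele_fraction(10, 5000, 20000):.4%}")
```

Multiplying λ by the number of droplets and dividing by the analyzed volume gives copies per microlitre; the droplet volume needed for that step is instrument-specific and is therefore left out here.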

Research Reagent Solutions

Table 5: Essential Reagents for ctDNA Analysis

| Reagent/Category | Specific Examples | Function/Application |

|---|---|---|

| Blood Collection Tubes | Streck Cell-Free DNA BCT, EDTA tubes | Sample stabilization and preservation |

| Nucleic Acid Extraction Kits | QIAamp Circulating Nucleic Acid Kit | ctDNA isolation from plasma |

| Library Preparation Kits | KAPA HyperPrep Kit, Illumina cfDNA Library Prep | NGS library construction |

| Target Enrichment Panels | IDT xGen Panels, Twist Panels | Capture cancer-relevant genes |

ctDNA Analysis Workflow: From Blood Draw to Clinical Interpretation

Analysis of Extracellular Vesicles (EVs)

EVs are lipid-bilayer enclosed particles secreted by cells that carry molecular cargo including DNA, RNA, proteins, lipids, and metabolites [17]. Tumor-derived EVs play crucial roles in driving malignant cell behavior, including stimulating tumor growth, suppressing immune responses, inducing angiogenesis, and facilitating metastasis [17]. EV analysis provides a comprehensive snapshot of tumor-derived material, making them particularly attractive as cancer biomarkers.

Detailed Experimental Protocols

EV Isolation and Enrichment Methods

- Ultracentrifugation: The gold standard method involving sequential centrifugation steps culminating at 100,000-200,000 × g for 70-120 minutes

- Precipitation-Based Kits: Commercial polymer-based precipitation kits (e.g., ExoQuick, Total Exosome Isolation Reagent) offering simplicity but potential co-precipitation of contaminants

- Size-Exclusion Chromatography: Separates EVs from soluble proteins based on size, providing high purity but potential sample dilution

- Immunoaffinity Capture: Uses antibodies against EV surface markers (CD9, CD63, CD81) for specific subpopulation isolation

- Microfluidic Isolation: Emerging technologies using immunoaffinity or size-based capture in microfluidic devices

EV Characterization and Cargo Analysis

- Nanoparticle Tracking Analysis: Determines EV size distribution and concentration

- Transmission Electron Microscopy: Visualizes EV morphology and structure

- Western Blotting: Confirms presence of EV markers (CD9, CD63, CD81, TSG101) and absence of negative markers (calnexin)

- RNA Extraction and Analysis: Isolate EV RNA using commercial kits (e.g., miRCURY RNA Isolation Kit) followed by RNA sequencing or qRT-PCR for miRNA/mRNA profiling

- Protein Analysis: Mass spectrometry or immunoassays for protein cargo characterization

Research Reagent Solutions

Table 6: Essential Reagents for EV Analysis

| Reagent/Category | Specific Examples | Function/Application |

|---|---|---|

| EV Isolation Kits | ExoQuick, Total Exosome Isolation Reagent | Rapid EV precipitation from biofluids |

| Immunoaffinity Beads | CD63/CD81/CD9 magnetic beads | Specific subpopulation isolation |

| EV Characterization Kits | ExoELISA kits, MACSPlex exosome kits | EV quantification and phenotyping |

| RNA Extraction Kits | miRCURY RNA Isolation Kit | RNA isolation from EV preparations |

Integration with Digital Sensing and Point-of-Care Technologies

The convergence of liquid biopsy with digital sensing technologies is creating transformative opportunities for point-of-care cancer diagnostics [22] [1]. Microfluidic biosensors have emerged as powerful platforms for detecting liquid biopsy biomarkers, providing enhanced sensitivity, specificity, and rapid analysis in compact formats [23].

Microfluidic and Sensor Integration

Advanced microfluidic devices integrate multiple operational elements to manipulate liquid samples at nano- or micro-scales, offering advantages of compact size, portability, minimal sample consumption, and shortened processing time [23]. These devices can be integrated with various detection modalities:

- Electrochemical Sensors: High sensitivity for detecting low-concentration biomarkers [23]

- Fluorescence Detection: Utilizing fluorescent labeling for high specificity [23]

- Surface Enhanced Raman Scattering (SERS): Nanoparticle-enhanced signal amplification for improved detection capabilities [23]

- Multiplexed Lateral Flow Immunoassays: Simultaneous detection of multiple cancer biomarkers in low-cost, portable formats [3]

Artificial Intelligence and Machine Learning Integration

AI and ML algorithms are being embedded into point-of-care testing platforms to enhance the accuracy, sensitivity, and efficiency of liquid biopsy analysis [24]. ML applications include:

- Image Analysis: Automated interpretation of fluorescence signals or cell morphology using convolutional neural networks [24]

- Signal Processing: Enhanced detection of low-abundance biomarkers through advanced signal processing algorithms [24]

- Predictive Modeling: Identification of complex patterns in multi-omics data for improved diagnostic classification [24]

- Sensor Optimization: Computational co-optimization of sensor hardware and design parameters [24]

Emerging Point-of-Care Platforms

- Portable Nucleic Acid Amplification: Loop-mediated isothermal amplification (LAMP) provides practical alternatives to PCR in decentralized settings, operating at constant temperatures (60°C-70°C) without thermal cycling [3]

- Integrated Imaging Systems: Portable optical coherence tomography and fluorescence-guided microscopy systems for noninvasive visualization of cellular changes [3]

- Wearable Sensors: Continuous monitoring devices for tracking cancer biomarkers in real-time [1]

Technical Considerations and Challenges

While liquid biopsy technologies show tremendous promise, several challenges remain for their widespread clinical implementation:

- Sensitivity and Specificity: Detecting rare biomarkers (e.g., CTCs, low-frequency mutations) against high background noise requires ongoing improvement in detection limits [17] [23].

- Standardization: Lack of standardized protocols across platforms affects reproducibility and comparability of results [19].

- Analytical Validation: Rigorous validation of pre-analytical, analytical, and post-analytical phases is essential for clinical adoption [19].

- Cost and Accessibility: Reducing costs and complexity is crucial for implementation in resource-limited settings [3].

- Regulatory Approval: Navigating regulatory pathways for novel diagnostic platforms requires substantial evidence of clinical utility [24].

The ongoing integration of liquid biopsy with advanced digital sensing technologies, microfluidics, and artificial intelligence promises to address these challenges, ultimately enabling more accessible, affordable, and precise cancer diagnostics for point-of-care applications.

The REASSURED criteria represent a modern blueprint for developing ideal point-of-care (POC) diagnostic tests, establishing a benchmark for accessibility, affordability, and accuracy in disease detection. Originally introduced by the World Health Organization (WHO) as the ASSURED criteria (Affordable, Sensitive, Specific, User-friendly, Rapid and robust, Equipment-free, and Deliverable), the framework has been updated to reflect technological advancements, evolving into REASSURED with the addition of Real-time connectivity and Ease of specimen collection [25] [26]. This expanded framework now serves as a critical guideline for designing next-generation POC devices, particularly for complex applications such as cancer diagnosis and management where timely, accurate, and accessible testing can significantly impact patient outcomes [27].

The transition from ASSURED to REASSURED marks a significant evolution in diagnostic standards, incorporating digital technology and mobile health (m-health) capabilities to create connected diagnostic systems [25]. These innovations are particularly relevant for cancer care, where continuous monitoring and real-time data transmission can enable proactive management and personalized treatment strategies [1]. The REASSURED framework aims to ensure that diagnostic tests not only detect diseases accurately but also deliver this information promptly to healthcare providers, enabling immediate clinical decision-making even in remote or resource-limited settings [28] [27].

Table 1: The Evolution from ASSURED to REASSURED Criteria

| ASSURED Criteria | Additional REASSURED Elements | Description |

|---|---|---|

| Affordable | Real-time connectivity | Integration with digital networks for instant data transmission |

| Sensitive | Ease of specimen collection | Use of non-invasive or easily obtainable samples |

| Specific | | |

| User-friendly | | |

| Rapid & Robust | | |

| Equipment-free | | |

| Deliverable | | |

Comprehensive Analysis of REASSURED Components

Real-time Connectivity

Real-time connectivity enables the instantaneous transmission of diagnostic results to healthcare professionals and central databases, facilitating remote consultation and timely medical intervention. This capability is particularly valuable for cancer management in underserved regions where specialist access is limited [25]. The integration of Internet of Things (IoT) technologies with POC devices allows for continuous monitoring of cancer biomarkers, creating a dynamic data stream for personalized treatment adjustments [27]. Furthermore, connected diagnostic systems contribute to broader public health initiatives by enabling real-time disease surveillance and epidemiological tracking [26].

Ease of Specimen Collection

This criterion emphasizes the importance of non-invasive or minimally invasive sample collection methods such as finger-prick blood, saliva, urine, or nasal swabs [26]. For cancer diagnostics, this translates to developing tests that utilize liquid biopsies (blood) instead of traditional tissue biopsies, significantly reducing patient discomfort and improving testing accessibility [27]. Simplified specimen collection is particularly crucial for decentralized testing scenarios where trained phlebotomists may not be available, enabling patient self-collection or testing by individuals with minimal technical training [29].

Affordable

Affordability remains a cornerstone of the REASSURED framework, ensuring diagnostic tests are accessible across diverse socioeconomic settings. This is especially important for cancer screening programs implemented in low-resource regions [28] [25]. Affordable diagnostics enable widespread screening, potentially detecting cancers at earlier, more treatable stages. Cost-effectiveness also facilitates frequent monitoring of cancer recurrence or treatment response, which is crucial for long-term disease management [27].

Sensitive

High sensitivity ensures that diagnostic tests can detect low concentrations of cancer biomarkers, enabling early disease identification when treatment is most effective [26]. Achieving sufficient sensitivity is particularly challenging for cancer biomarkers that may be present in minute quantities during early disease stages. Advanced detection methodologies including CRISPR-based systems, isothermal amplification, and enhanced signal amplification strategies are being employed to improve detection limits without compromising test simplicity or cost [30].

Specific

Specificity refers to a test's ability to accurately distinguish target biomarkers from non-target molecules in complex biological samples, minimizing false-positive results [26]. For cancer diagnostics, high specificity is crucial due to the heterogeneous nature of cancer biomarkers and their potential cross-reactivity with other molecules. Multiplexed detection systems that simultaneously identify multiple biomarker signatures can enhance diagnostic specificity by providing confirmatory data points within the same test platform [26].

User-friendly

User-friendly designs enable operation by individuals with minimal technical training, making sophisticated cancer diagnostics accessible in primary care settings or even patient homes [28] [27]. This characteristic is essential for democratizing cancer screening beyond specialized oncology centers. Simple, intuitive interfaces with clear instructions reduce operator error and ensure result reliability regardless of the testing environment or user expertise [29].

Rapid and Robust

Rapid results delivery enables immediate clinical decision-making, while robustness ensures consistent performance across varying environmental conditions [26]. For cancer diagnostics, rapid turnaround times facilitate same-day consultation and treatment planning, potentially reducing patient anxiety and improving adherence to follow-up care. Robustness is particularly important for POC devices deployed in field settings where controlled laboratory conditions cannot be maintained [29].

Equipment-free or Simple

This criterion emphasizes minimal reliance on complex instrumentation, favoring self-contained test formats that require little to no additional equipment [25]. While some cancer diagnostic platforms may incorporate simple readers for quantitative results, the core assay should function without sophisticated laboratory infrastructure. Paper-based microfluidic devices and lateral flow platforms represent promising approaches that meet this criterion while maintaining analytical performance [30] [27].

Deliverable to End-users

Deliverability encompasses the entire test distribution pipeline, ensuring that diagnostics reach their intended users while maintaining stability during storage and transport [26]. For cancer diagnostics targeting remote populations, this requires robust packaging, extended shelf-life without refrigeration, and seamless integration into existing supply chains. This criterion completes the framework by addressing the practical challenges of implementing POC diagnostics in real-world settings [28].

Experimental Protocols for REASSURED-Compliant Assay Development

Protocol 1: Development of a Multiplex Lateral Flow Immunoassay for Cancer Biomarkers

Objective: To develop a multiplex lateral flow assay (LFA) capable of simultaneously detecting three cancer biomarkers (CEA, CA-125, and PSA) in serum samples, complying with REASSURED criteria.

Materials:

- Nitrocellulose membrane (25 mm width)

- Conjugate pad (glass fiber)

- Sample pad (cellulose)

- Absorption pad (cellulose)

- Gold nanoparticle-antibody conjugates (anti-CEA, anti-CA-125, anti-PSA)

- Test line antibodies (capture antibodies for each biomarker)

- Control line antibody (goat anti-mouse IgG)

- Phosphate buffered saline (PBS), pH 7.4

- Blocking solution (1% BSA in PBS)

- Serum samples (patient and control)

Procedure:

- Membrane Preparation: Cut nitrocellulose membrane to appropriate size (approximately 30 cm length).

- Test Line Deposition: Using a lateral flow reagent dispenser, deposit three distinct test lines for CEA, CA-125, and PSA at a dispense rate of 1.5 μL/cm, with 5 mm spacing between lines.

- Control Line Deposition: Deposit control line (goat anti-mouse IgG) 5 mm downstream from the last test line.

- Membrane Drying: Dry membrane at 37°C for 12 hours in a low-humidity environment.

- Conjugate Pad Preparation: Apply gold nanoparticle-antibody conjugates (mixed solution of all three detection antibodies) to conjugate pad at 5 μL/cm, then dry at 37°C for 2 hours.

- Assembly: Layer components in order: sample pad, conjugate pad, nitrocellulose membrane, absorption pad on backing card with 2 mm overlaps between components.

- Lamination: Laminate assembled card using a lateral flow laminator.

- Cutting: Cut laminated card into 4 mm wide strips using an automated cutter.

- Cassette Housing: Place individual strips into plastic cassettes.

- Quality Control: Test each batch with positive and negative controls to verify performance.
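
The fabrication parameters above fix the yield and reagent consumption of one card, which is useful when budgeting antibody for a batch. The sketch below works through that arithmetic using the values in the procedure (30 cm card, 4 mm strips, 1.5 μL/cm line dispensing, 5 μL/cm conjugate). It assumes the control line is dispensed at the same rate as the test lines, that the conjugate pad runs the full card length, and that dispenser dead volume is negligible; none of these is stated in the protocol.

```python
def batch_plan(card_length_cm: float = 30.0,
               strip_width_mm: float = 4.0,
               line_rate_ul_per_cm: float = 1.5,
               conjugate_rate_ul_per_cm: float = 5.0,
               n_lines: int = 4) -> dict:
    """Strip yield and reagent volumes for one laminated card.

    n_lines counts three test lines plus one control line.
    """
    strips = int((card_length_cm * 10.0) // strip_width_mm)  # whole strips only
    volume_per_line = line_rate_ul_per_cm * card_length_cm
    return {
        "strips": strips,
        "volume_per_line_ul": volume_per_line,
        "total_line_volume_ul": volume_per_line * n_lines,
        "conjugate_ul": conjugate_rate_ul_per_cm * card_length_cm,
    }


plan = batch_plan()
print(plan)
```

With the default values, one card yields 75 strips and consumes 45 μL of each capture antibody solution and 150 μL of mixed conjugate.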

Implementation Considerations:

- For real-time connectivity, integrate with a smartphone reader application that captures test line intensities and transmits results to healthcare providers.

- Ensure equipment-free operation by designing the test for visual interpretation with clear positive/negative reference guides.

- Maintain affordability by optimizing reagent volumes and using cost-effective materials without compromising performance.

Protocol 2: Smartphone-Based Quantitative Analysis of POC Cancer Tests

Objective: To implement a smartphone-based reader system for quantitative interpretation of POC cancer diagnostic tests, enabling real-time connectivity and data transmission.

Materials:

- Smartphone with camera (minimum 12 MP)

- 3D-printed test holder with consistent lighting

- Image processing application (custom-developed)

- Color calibration card

- Reference test strips with known biomarker concentrations

Procedure:

Apparatus Setup:

- 3D-print test holder that positions the test strip at a fixed distance from the smartphone camera.

- Incorporate LED lighting with diffuser to ensure consistent illumination.

- Include a slot that holds the color calibration card within the camera's field of view, so the card appears in every image.

Software Development:

- Develop image capture interface with manual and automatic capture options.

- Implement color calibration algorithm using reference card in each image.

- Create test line detection algorithm using edge detection and pattern recognition.

- Incorporate intensity quantification relative to control line.

- Develop concentration calculation based on pre-established calibration curve.
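
The intensity quantification step can be sketched without any imaging library once the strip image has been reduced to a one-dimensional darkness profile along the flow direction. The example below is a simplified stand-in for the pipeline described above: it assumes the line positions are known from the fixed holder geometry (rather than found by edge detection) and uses the profile median as the membrane background. Both choices, and all names, are assumptions.

```python
from statistics import median
from typing import Sequence, Tuple


def line_signal(profile: Sequence[float], window: Tuple[int, int]) -> float:
    """Background-subtracted peak darkness inside a line window.

    profile: darkness per pixel row along the strip (higher = darker)
    window:  (start, stop) indices bracketing the expected line position
    """
    start, stop = window
    background = median(profile)  # most of the membrane is blank
    return max(0.0, max(profile[start:stop]) - background)


def tc_ratio(profile: Sequence[float],
             test_window: Tuple[int, int],
             control_window: Tuple[int, int]) -> float:
    """Test-line signal normalized to the control line."""
    control = line_signal(profile, control_window)
    if control == 0:
        raise ValueError("control line not detected; the test is invalid")
    return line_signal(profile, test_window) / control


# Synthetic profile: blank membrane at 5, test line at 45, control line at 85
profile = [5] * 10 + [45] * 3 + [5] * 10 + [85] * 3 + [5] * 10
print(tc_ratio(profile, (10, 13), (23, 26)))
```

Normalizing to the control line compensates for strip-to-strip differences in flow and illumination, and rejecting strips without a control signal enforces the validity check that a visual reader would apply.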

Calibration:

- Image reference strips with known biomarker concentrations (0, 1, 5, 10, 25, 50 ng/mL).

- Establish calibration curve for each biomarker using signal intensity ratios (test/control).

- Validate calibration with independent test set.
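
Once calibrators have been imaged, an unknown test/control ratio can be converted to a concentration. The sketch below uses piecewise linear interpolation between calibrator points, chosen here for simplicity; immunoassay curves are often fitted with a four-parameter logistic model instead. The calibrator concentrations follow the protocol, while the ratio values are invented for illustration.

```python
from bisect import bisect_left
from typing import List, Tuple


def concentration_from_ratio(ratio: float,
                             calibrators: List[Tuple[float, float]]) -> float:
    """Interpolate a concentration (ng/mL) from a test/control ratio.

    calibrators: (concentration, ratio) pairs; ratios must increase strictly
    with concentration. Values outside the curve are clamped to its limits.
    """
    points = sorted(calibrators, key=lambda p: p[1])
    ratios = [r for _, r in points]
    if any(b <= a for a, b in zip(ratios, ratios[1:])):
        raise ValueError("calibrator ratios must be strictly increasing")
    if ratio <= ratios[0]:
        return points[0][0]
    if ratio >= ratios[-1]:
        return points[-1][0]
    i = bisect_left(ratios, ratio)
    (c0, r0), (c1, r1) = points[i - 1], points[i]
    return c0 + (c1 - c0) * (ratio - r0) / (r1 - r0)


# Hypothetical calibration data for one biomarker
curve = [(0, 0.02), (1, 0.10), (5, 0.35), (10, 0.60), (25, 1.10), (50, 1.50)]
print(concentration_from_ratio(0.475, curve))
```

Clamped results should be reported as below or above the measuring range rather than as exact values.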

Testing Protocol:

- Place test strip in holder after 15-minute development time.

- Position color calibration card in designated slot.

- Open application and capture image.

- Allow automated analysis: color correction, line detection, intensity measurement.

- Record quantitative result for each biomarker.

- Transmit encrypted results to electronic health record or healthcare provider.

Validation:

- Compare smartphone quantification results with laboratory standard (ELISA).

- Assess inter-operator and inter-device variability.

- Validate data transmission security and reliability.

Implementation Considerations:

- This protocol enhances REASSURED compliance by adding real-time connectivity while maintaining equipment simplicity through use of ubiquitous smartphones.

- The approach supports multiplex detection through precise quantification of multiple test lines.

- User-friendly design is maintained through automated analysis and intuitive interface.

Table 2: Research Reagent Solutions for REASSURED-Compliant Cancer Diagnostics

| Reagent/Material | Function | Application Examples | REASSURED Criteria Enhanced |

|---|---|---|---|

| Gold nanoparticles | Signal generation in lateral flow assays | Conjugate with detection antibodies for visual test lines | Affordable, Equipment-free, User-friendly |

| Cell-free expression systems | Synthetic biology-based detection | Engineered biosensors for cancer biomarkers | Affordable, Deliverable, Equipment-free |

| CRISPR-Cas systems | Nucleic acid detection | Detection of cancer-specific mutations | Sensitive, Specific |

| Paper-based microfluidics | Liquid handling platform | Microfluidic paper analytical devices (μPADs) | Equipment-free, Affordable, Deliverable |

| Smartphone readers | Result quantification and connectivity | Quantitative analysis and data transmission | Real-time connectivity, Affordable |

Application in Cancer Diagnosis: Specific Considerations

The implementation of REASSURED criteria in cancer diagnostics addresses several unique challenges in oncology care, particularly regarding early detection, monitoring, and accessibility. Cancer management presents distinct requirements compared to infectious disease diagnostics, including the need for quantitative results, multiplexed detection, and longitudinal monitoring [27].

Early Detection Challenges

Many cancers, including pancreatic, ovarian, and prostate cancer, show no obvious symptoms in early stages, making screening programs essential for timely intervention [27]. REASSURED-compliant POC devices can enable widespread screening, particularly in regions with limited access to advanced medical imaging or laboratory facilities. For example, tests detecting cancer-specific biomarkers in easily obtainable samples like blood, urine, or saliva could facilitate regular screening at the primary care level [27].

Multiplexed Detection for Cancer Heterogeneity

Tumor heterogeneity presents a significant challenge in cancer diagnosis and treatment selection. REASSURED-compliant multiplexed diagnostics that simultaneously detect multiple cancer biomarkers or mutation signatures can provide more comprehensive diagnostic information from a single sample [26]. This approach is particularly valuable for identifying appropriate targeted therapies and assessing treatment response. Multiplexed vertical flow assays and advanced lateral flow platforms with multiple test lines represent promising formats for such applications [24].

Integration with Digital Health Technologies

The incorporation of real-time connectivity in cancer POC devices enables seamless integration with broader digital health ecosystems. This connectivity supports tele-oncology consultations, remote monitoring of cancer survivors, and population-level cancer surveillance [1] [27]. When combined with artificial intelligence algorithms for result interpretation, connected POC devices can enhance diagnostic accuracy while maintaining accessibility [24].

Technical Considerations for Cancer Biomarker Detection

Implementing REASSURED criteria for cancer biomarkers presents unique technical challenges compared to infectious disease targets. Cancer biomarkers often exist at lower concentrations, requiring enhanced sensitivity without compromising other criteria. Additionally, the quantitative nature of many cancer biomarkers necessitates accurate measurement rather than simple presence/absence detection [30]. Approaches such as signal amplification strategies, improved reporter systems, and integrated readers can address these challenges while maintaining REASSURED compliance [30] [26].

The REASSURED framework provides a comprehensive set of criteria to guide the development of next-generation POC devices optimized for cancer diagnosis and management. By addressing all aspects from specimen collection to result delivery, REASSURED-compliant devices have the potential to transform cancer care through improved accessibility, timely diagnosis, and personalized monitoring. Continued innovation in diagnostic technologies, particularly in multiplexing capabilities, connectivity features, and user-centered design, will further enhance the implementation of this framework in oncology practice. As these technologies evolve, REASSURED criteria will serve as an essential benchmark ensuring that advances in cancer diagnostics translate to meaningful improvements in patient outcomes across diverse healthcare settings.

The disproportionate burden of cancer in low- and middle-income countries (LMICs), which account for nearly two-thirds of global cancer deaths, underscores an urgent need for diagnostic innovation [3]. Point-of-care technologies (POCTs) represent a paradigm shift in cancer management by decentralizing complex diagnostic procedures, thus providing rapid, cost-effective testing directly at or near the site of patient evaluation [3]. The World Health Organization's ASSURED criteria (Affordable, Sensitive, Specific, User-friendly, Rapid and Robust, Equipment-free, and Deliverable) provide a foundational framework for developing these technologies for low-resource settings [30]. The integration of digital sensing with artificial intelligence (AI) and machine learning (ML) is now poised to transform these platforms from simple diagnostic tools into sophisticated clinical decision-support systems capable of matching the analytical performance of centralized laboratories [31]. This application note details the current protocols and technological advances in POC cancer diagnostics, providing researchers with practical methodologies for implementation in resource-constrained environments.

Technological Platforms and Performance Metrics

Established and Emerging POC Platforms

Table 1: Comparison of Major POC Diagnostic Platforms

| Device Type | Advantages | Disadvantages | Primary Cancer Applications |

|---|---|---|---|

| Lateral Flow Test (LFT) | Fast, Inexpensive, Stable, Versatile, Equipment-free [30] | Primarily qualitative output, Poor sensitivity [30] | Detection of tumor antigens (e.g., CEA, AFP, CA-125) [3] |

| Microfluidic Paper-Based Device (μPAD) | Fast, Inexpensive, Very small sample volume, Enables multiplexing [30] | Sample evaporation, Non-uniform sample distribution, Sensitivity challenges [30] | Multiplexed detection of protein biomarkers [30] |

| Nucleic Acid-Based (e.g., LAMP) | Isothermal (no thermal cycler needed), Robust, High sensitivity, Works with crude samples [3] | Added assay complexity, Challenges in accurate quantification [30] | Detection of viral oncogenes (HPV, HBV), circulating tumor DNA [3] |

| Portable Imaging Systems | Non-invasive, High-resolution visualization, Real-time decision support [3] | Cost can be prohibitive, Requires some user training | Optical coherence tomography for epithelial cancers [3] |

| Wearable Sensors | Continuous monitoring, Minimally invasive, Real-time data streaming [1] [31] | Limited to small molecules and electrophysiological signals [30] [31] | Monitoring of metabolic markers, potentially liquid biopsy components [31] |

Performance of Commercially Available Lateral Flow Tests

Table 2: Example Performance of Commercial Lateral Flow Assays

| Company | Product Name | Target Disease/Condition | Analyte/Antigen | Sensitivity | Specificity |

|---|---|---|---|---|---|

| Alere | Alere Determine TB LAM | Tuberculosis in HIV+ patients | Lipoarabinomannan (LAM) Ag | - | - |

| Alere | BinaxNOW | Malaria | Plasmodium Ag | P. falciparum: 99.7%, P. vivax: 93.5% | P. falciparum: 94.2%, P. vivax: 99.8% |

| Alere | Alere Determine HIV-1/2 Ag/Ab Combo | AIDS | HIV-1/2 antibodies and free HIV-1 p24 Ag | - | 99.75% |

| Quidel Corp. | QuickVue RSV Test | Infantile bronchiolitis | Respiratory syncytial virus (RSV) Ag | 92% (swab) | 92% (swab) |

| IMMY | CrAg A | Cryptococcal meningitis | C. neoformans, C. gattii | 100% | 94% |
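
Sensitivity and specificity figures such as those in Table 2 do not by themselves indicate how trustworthy a positive result is; that depends on disease prevalence in the tested population. The sketch below computes both metrics from confusion-matrix counts and derives predictive values via Bayes' rule. The 1% prevalence in the example is an arbitrary illustration of a screening setting, not a figure from the table.

```python
def sensitivity(tp: int, fn: int) -> float:
    """Fraction of diseased cases that test positive."""
    return tp / (tp + fn)


def specificity(tn: int, fp: int) -> float:
    """Fraction of non-diseased cases that test negative."""
    return tn / (tn + fp)


def ppv(sens: float, spec: float, prevalence: float) -> float:
    """Probability of disease given a positive result."""
    true_pos = sens * prevalence
    false_pos = (1.0 - spec) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def npv(sens: float, spec: float, prevalence: float) -> float:
    """Probability of no disease given a negative result."""
    true_neg = spec * (1.0 - prevalence)
    false_neg = (1.0 - sens) * prevalence
    return true_neg / (true_neg + false_neg)


# A test with 92% sensitivity and 92% specificity at an assumed 1% prevalence
print(f"PPV = {ppv(0.92, 0.92, 0.01):.1%}, NPV = {npv(0.92, 0.92, 0.01):.2%}")
```

At low prevalence, most positives from such a test are false positives, which is why POC cancer screening assays typically need very high specificity or a confirmatory step.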

Detailed Experimental Protocols

Protocol: Loop-Mediated Isothermal Amplification (LAMP) for Cancer-Associated Viral DNA

Principle: This protocol describes the detection of oncogenic viral DNA (e.g., HPV16/18, Hepatitis B) using isothermal amplification, eliminating the need for complex thermal cycling equipment [3]. LAMP uses a strand-displacing DNA polymerase and 4-6 primers recognizing distinct regions of the target DNA to achieve high amplification efficiency at a constant temperature of 60-70°C.

Workflow Diagram:

Materials & Reagents:

- Sample: Crude lysate from swab or liquid biopsy (e.g., plasma).

- LAMP Primer Mix: Custom designed primers (F3, B3, FIP, BIP) for target DNA.

- Isothermal Master Mix: Contains Bst 2.0 or 3.0 DNA polymerase, betaine, dNTPs, MgSO4, and reaction buffer.

- Detection Dye: SYTO-9 or calcein (fluorescent), or hydroxynaphthol blue (HNB, colorimetric) for visual detection.

- Equipment: Portable dry bath or block heater (65°C), UV light (if using calcein), or naked eye for color change.

Procedure:

- Sample Preparation: Mix 50 µL of crude sample with 10 µL of prepared lysis buffer. Incubate at room temperature for 5 minutes. Centrifuge briefly to pellet debris.

- Reaction Setup: In a 0.2 mL tube, combine:

  - 12.5 µL of 2x LAMP Master Mix

  - 5 µL of Primer Mix (final concentrations: FIP/BIP 1.6 µM each; F3/B3 0.2 µM each)

  - 1 µL of detection dye (if used)

  - 2.5 µL of sample supernatant

  - Nuclease-free water to a final volume of 25 µL (4 µL when all components above are included)

- Amplification: Place tubes in a pre-heated dry bath at 65°C for 30 minutes.

- Detection:

  - Visual (HNB): A color change from violet to sky blue indicates a positive result.

  - UV (Calcein): Green fluorescence under UV light indicates a positive result.

  - Turbidity: Positive amplification leads to a white precipitate (magnesium pyrophosphate), visible as turbidity.
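The reaction setup in this protocol is simple volume arithmetic, which the following Python sketch makes explicit. It takes the per-reaction volumes from the procedure, computes the water needed to reach 25 µL, and scales a sample-free premix for several reactions. The `batch_premix` helper and its 10% pipetting overage are illustrative assumptions and are not part of the protocol.

```python
# Per-reaction volumes (µL) taken from the Reaction Setup step of the protocol.
PER_REACTION_UL = {
    "2x LAMP Master Mix": 12.5,
    "Primer Mix": 5.0,
    "Dye (if used)": 1.0,
    "Sample supernatant": 2.5,
}
FINAL_VOLUME_UL = 25.0


def water_volume_ul(components=PER_REACTION_UL, final_ul=FINAL_VOLUME_UL):
    """Return the nuclease-free water needed to reach the final volume."""
    used = sum(components.values())
    if used > final_ul:
        raise ValueError(f"components total {used} µL, exceeding {final_ul} µL")
    return final_ul - used


def batch_premix(n_reactions, overage=0.10):
    """Scale every component except the sample for n reactions.

    The 10% overage is a hypothetical allowance for pipetting loss.
    """
    if n_reactions < 1:
        raise ValueError("n_reactions must be at least 1")
    factor = n_reactions * (1.0 + overage)
    premix = {
        name: volume * factor
        for name, volume in PER_REACTION_UL.items()
        if name != "Sample supernatant"
    }
    premix["Nuclease-free water"] = water_volume_ul() * factor
    return premix


if __name__ == "__main__":
    print(f"Water per reaction: {water_volume_ul():.1f} µL")
    for name, volume in batch_premix(8).items():
        print(f"{name}: {volume:.1f} µL")
```

Each tube then receives 22.5 µL of premix plus 2.5 µL of sample supernatant, which keeps the sample as the only component added individually.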

Protocol: Multiplexed Lateral Flow Immunoassay for Protein Biomarkers

Principle: This protocol enables the simultaneous detection of multiple cancer-associated protein biomarkers (e.g., CEA, AFP, CA-125) on a single test strip [3]. It employs specific capture antibodies immobilized in distinct test lines and detection antibodies conjugated to colored nanoparticles (e.g., gold, latex).

Workflow Diagram:

Materials & Reagents:

- Lateral Flow Strip: Comprising sample pad, conjugate pad, nitrocellulose membrane with test and control lines, and absorbent pad.

- Capture Antibodies: Monoclonal antibodies specific to target biomarkers (e.g., anti-CEA, anti-AFP) for test lines.

- Detection Antibodies: Different monoclonal antibodies for the same biomarkers, conjugated to gold nanoparticles (AuNPs) or fluorescent latex beads.

- Running Buffer: Phosphate buffer with surfactants (e.g., Tween-20) to ensure optimal flow and binding.

- Sample: Serum or plasma (50-100 µL).

- Reader (Optional): Smartphone-based reader or portable fluorescence scanner for quantification.

Procedure:

- Strip Preparation: Test and control lines are dispensed onto the nitrocellulose membrane using a precision dispenser. The conjugate pad is pre-treated with the lyophilized antibody-nanoparticle conjugates. The strip components are assembled and cut to size.

- Assay Execution: Apply 100 µL of serum sample to the sample pad. Add 2-3 drops of running buffer to facilitate sample movement.

- Incubation: Allow the strip to develop at room temperature for 15-20 minutes.

- Result Interpretation:

  - Qualitative: The control line must appear for the test to be valid. A colored test line indicates that the corresponding biomarker was detected; each test line reports one biomarker.

  - Quantitative: Capture an image of the strip with a smartphone camera fitted with a dedicated adapter and app. The app analyzes the pixel intensity of each test line and converts it to a concentration using a pre-loaded calibration curve.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for POC Development

| Reagent/Material | Function | Example Application | Considerations for Low-Resource Settings |

|---|---|---|---|

| Gold Nanoparticles (AuNPs) | Visual detection label; conjugate to antibodies or oligonucleotides. | Colorimetric reporter in lateral flow assays [32]. | Highly stable, do not require refrigeration, cost-effective. |

| Bst DNA Polymerase 2.0/3.0 | Strand-displacing enzyme for isothermal DNA amplification. | Core enzyme in LAMP assays for nucleic acid detection [3]. | Robust, works at constant 65°C, tolerant to sample inhibitors. |

| Lyophilized Reagents | Pre-mixed, dried reaction components for long-term storage. | Master mixes for amplification or antibody conjugates in LFTs [3]. | Eliminates cold chain, extends shelf-life, enhances portability. |

| Cell-Free Expression (CFE) Systems | Synthetic biology system using cellular machinery without intact cells. | Biosensing of small molecules, ions, and nucleic acids [30]. | Can be lyophilized and rehydrated on demand; highly programmable. |

| Quantum Dots / Lanthanide-doped Nanoparticles | Fluorescent reporters for enhanced sensitivity and multiplexing. | Signal amplification in multiplexed LFIAs and μPADs [3]. | Enable quantitative readout and lower limits of detection. |

| CRISPR-Cas Systems (e.g., Cas13a) | Nucleic acid detection with high specificity; collateral cleavage activity. | Specific detection of amplified DNA/RNA products; SHERLOCK protocol [30]. | Provides single-base specificity; can be coupled with isothermal amplification. |

Data Integration and AI-Enhanced Analysis

The integration of AI, particularly machine learning (ML), elevates POC devices from simple readers to intelligent diagnostic systems [31]. Figure 3 below outlines the workflow for integrating POC data with AI analysis.

AI-Enhanced POC Data Workflow:

Protocol for Smartphone-Based Quantification of Lateral Flow Assays:

- Image Capture: Place the developed lateral flow strip in a simple, 3D-printed cradle that minimizes ambient light interference. Use a smartphone to capture an image of the detection zone.

- Pre-processing: The dedicated smartphone application automatically corrects for perspective, identifies the test and control lines, and converts the image to a suitable color space (e.g., HSV).

- Feature Extraction: The app extracts the mean pixel intensity or integrated density for each test line.

- ML-Based Quantification: A pre-trained model (e.g., a regression model) maps the extracted features to biomarker concentrations. This model is calibrated against a set of standards with known concentrations, accounting for sample matrix variability where possible [30].

- Output: The app displays the quantitative result, provides an interpretation (e.g., "elevated"), and can optionally store the data or transmit it to a central health information system.
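The feature-extraction and quantification steps above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the strip image is reduced to a one-dimensional darkness profile, the line window positions are known in advance, and the "pre-trained model" is an ordinary least-squares line fitted to hypothetical standards. Real lateral flow responses are often non-linear, so a deployed app would likely use a different model.

```python
from statistics import mean, median


def line_signal(profile, start, stop):
    """Mean darkness inside a line window minus the strip background.

    The background is estimated as the median of the whole profile, which
    assumes that the lines cover only a minority of the strip length.
    """
    return mean(profile[start:stop]) - median(profile)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical standards: background-corrected signal vs. concentration (ng/mL).
STANDARD_SIGNALS = [10.0, 30.0, 50.0, 70.0]
STANDARD_CONCS = [5.0, 15.0, 25.0, 35.0]

# Synthetic darkness profile along the strip: background 10,
# test line at indices 5-8, control line at indices 14-17.
PROFILE = [10.0] * 5 + [60.0] * 4 + [10.0] * 5 + [90.0] * 4 + [10.0] * 2

if __name__ == "__main__":
    slope, intercept = fit_line(STANDARD_SIGNALS, STANDARD_CONCS)
    signal = line_signal(PROFILE, 5, 9)
    print(f"Test line signal: {signal:.1f}")
    print(f"Estimated concentration: {slope * signal + intercept:.1f} ng/mL")
```

Keeping feature extraction separate from the model makes it easier to replace the linear fit with another regression model without touching the image-handling code.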

The convergence of microfluidics, nanomaterials, synthetic biology, and artificial intelligence is rapidly advancing the capabilities of point-of-care technologies for cancer diagnosis. The protocols and tools detailed in this application note provide a practical roadmap for researchers and drug development professionals to develop and implement these life-saving technologies. By adhering to the ASSURED/REASSURED criteria and focusing on robust, user-centric design, the next generation of POC devices holds the potential to dramatically narrow global health disparities and make quantitative, early cancer diagnosis a reality for all populations, regardless of resource setting.

From Lab to Clinic: Operational Technologies and AI-Driven Workflows

Loop-mediated isothermal amplification (LAMP) as a PCR alternative

Loop-mediated isothermal amplification (LAMP) represents a paradigm shift in nucleic acid amplification technology, offering a rapid, sensitive, and cost-effective alternative to conventional polymerase chain reaction (PCR). As molecular diagnostics increasingly transition toward point-of-care (POC) settings, particularly in the realm of cancer detection and infectious disease diagnosis, LAMP technology addresses critical limitations of PCR-based methods, including instrument dependency, operational complexity, and prolonged turnaround times [33] [34]. The isothermal nature of LAMP eliminates the need for thermal cyclers, thereby reducing both operational costs and technical barriers for implementation in resource-limited environments [35].

This application note provides a comprehensive technical overview of LAMP methodology, detailing its fundamental principles, comparative advantages over PCR, and detailed protocols for assay development and validation. With a specific focus on applications in cancer research and diagnostics, we frame LAMP within the broader context of digital sensing technologies for point-of-care cancer diagnosis, highlighting its potential to revolutionize molecular detection paradigms through simplified workflows without compromising analytical performance [34].

Fundamental Mechanism

LAMP is an autocatalytic nucleic acid amplification process that operates at a constant temperature of 60-65°C, using a strand-displacing DNA polymerase (typically Bst polymerase) and four to six specifically designed primers that recognize distinct regions of the target sequence [35] [36]. The amplification mechanism involves three primary stages: (1) initial structure generation, (2) cycling amplification, and (3) elongation and recycling. Unlike PCR, which relies on thermal denaturation cycles to separate DNA strands, LAMP relies on strand displacement to initiate and sustain amplification, forming characteristic loop structures that serve as initiation sites for subsequent amplification cycles [35].

The core primer set includes the Forward Inner Primer (FIP), Backward Inner Primer (BIP), Forward Outer Primer (F3), and Backward Outer Primer (B3), with the optional addition of loop primers (LF and LB) to accelerate reaction kinetics. Together, these primers generate stem-loop DNA structures with inverted repeats at each end, enabling exponential amplification through self-primed strand-displacement DNA synthesis [36]. The process yields a large amount of product: approximately 11 μg of DNA in a 25 μL reaction, a 55-fold greater yield than conventional PCR [37].
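As a quick check on these figures, the stated yield and fold difference imply the following values. This is only arithmetic on the numbers quoted above, not additional measured data.

```latex
m_{\mathrm{PCR}} \approx \frac{11\ \mu\mathrm{g}}{55} = 0.2\ \mu\mathrm{g},
\qquad
c_{\mathrm{LAMP}} \approx \frac{11\ \mu\mathrm{g}}{25\ \mu\mathrm{L}} = 0.44\ \mu\mathrm{g}/\mu\mathrm{L}
```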

Comparative Analysis: LAMP vs. PCR Technologies

Table 1: Performance comparison of LAMP with various PCR methodologies

| Parameter | LAMP | Conventional PCR | Nested PCR | Real-time PCR |

|---|---|---|---|---|

| Amplification Temperature | Constant (60-65°C) | Thermal cycling (30-40 cycles) | Two-step thermal cycling | Thermal cycling (40-45 cycles) |

| Reaction Time | 15-60 minutes [35] [38] | 2-4 hours | 4-6 hours | 1-2 hours [35] |

| Limit of Detection (Entamoeba histolytica) | 1 trophozoite [36] | 1,000 trophozoites [36] | 100 trophozoites [36] | 100 trophozoites [36] |

| Instrument Requirement | Heating block or water bath | Thermal cycler | Thermal cycler | Real-time thermal cycler |

| Sample Purity Tolerance | High (resistant to inhibitors) [39] | Moderate | Low | Low |

| Primer Specificity | High (6-8 binding regions) | Moderate (2 binding regions) | High (4 binding regions) | Moderate (2 binding regions) |

| Amplicon Detection | Colorimetric, turbidity, fluorescence, lateral flow [37] [35] | Gel electrophoresis | Gel electrophoresis | Fluorescence |

| Quantification Capability | Possible with real-time monitoring [40] | No | No | Yes |

| Throughput Potential | Moderate to High | Moderate | Low | High |

| Cost per Reaction | Low | Low to Moderate | Moderate | High |

The comparative data show that LAMP was the most sensitive method in this comparison, detecting a single Entamoeba histolytica trophozoite versus 100-1,000 trophozoites for the PCR methods [36]. This sensitivity, together with short turnaround times and minimal instrumentation requirements, makes LAMP well suited to point-of-care diagnostic applications.

LAMP Protocol for Molecular Detection

Primer Design Considerations

Effective LAMP assay design begins with strategic primer selection targeting 6-8 distinct regions within a 150-300 bp sequence. The following criteria ensure optimal amplification efficiency:

- Target Selection: Identify highly conserved genomic regions with minimal sequence variation. For cancer applications, focus on mutation hotspots, fusion genes, or differentially expressed transcripts [34].

- Primer Design Tools: Use specialized software such as PrimerExplorer V4 or open-source alternatives with LAMP-specific algorithms [35].

- Sequence Parameters: Primers should exhibit 40-60% GC content, melting temperatures of 60-65°C for FIP/BIP, and 55-60°C for F3/B3, with minimal secondary structure or self-complementarity [36].

- Validation: Confirm specificity through BLAST analysis against relevant genomic databases before synthesis [35].
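The GC-content criterion above is easy to check programmatically before primers are ordered. The Python sketch below does so for the two outer primers listed in Table 2 of this section. The function names are illustrative, and melting temperature is deliberately left out because its value depends on the estimation method and buffer conditions.

```python
def gc_percent(seq: str) -> float:
    """Return the GC content of a DNA sequence as a percentage."""
    seq = seq.upper()
    if not seq or set(seq) - set("ACGT"):
        raise ValueError("sequence must be non-empty and contain only A, C, G, T")
    return 100.0 * sum(base in "GC" for base in seq) / len(seq)


def within_gc_guideline(seq: str, low: float = 40.0, high: float = 60.0) -> bool:
    """Check a primer against the 40-60% GC guideline stated in the text."""
    return low <= gc_percent(seq) <= high


# Outer primers as listed in Table 2 of this section.
PRIMERS = {
    "N2-F3": "ACCAGGAACTAATCAGACAAG",
    "N2-B3": "GACTTGATCTTTGAAATTTGGATCT",
}

if __name__ == "__main__":
    for name, seq in PRIMERS.items():
        status = "within" if within_gc_guideline(seq) else "outside"
        print(f"{name}: {len(seq)} nt, GC {gc_percent(seq):.1f}% ({status} 40-60%)")
```

With these sequences, N2-F3 falls inside the 40-60% window and N2-B3 falls below it, which suggests the range is better treated as a design guideline than as a hard limit.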

Table 2: LAMP primer sequences for SARS-CoV-2 detection

| Primer Name | Sequence (5' → 3') | Modification | Function |

|---|---|---|---|

| N2-F3 | ACCAGGAACTAATCAGACAAG | None | Outer forward primer |

| N2-B3 | GACTTGATCTTTGAAATTTGGATCT | None | Outer reverse primer |