Foundation Models for Biomarker Prediction from H&E Slides: Methods, Applications, and Clinical Translation


Abstract

This article explores the transformative role of foundation models in predicting biomarkers directly from routine H&E-stained histopathology slides. Aimed at researchers, scientists, and drug development professionals, it covers the foundational concepts of pathology-specific foundation models such as PLUTO and Virchow2 and details methodologies for fine-tuning them and applying them to tasks such as predicting EGFR, PD-L1, and MSI status. It also addresses key challenges in model optimization and troubleshooting, and critically examines the validation frameworks, including real-world silent trials and multi-reader studies, that are essential for clinical adoption. By synthesizing the latest research, this article serves as a comprehensive guide for developing robust, clinically impactful computational pathology tools.

The Rise of Pathology Foundation Models: Core Concepts and Pretraining Strategies

Foundation models are transforming computational pathology by providing versatile, pre-trained deep learning networks that serve as a starting point for developing specialized tools. These models are trained on massive, diverse datasets of histopathology whole-slide images (WSIs) using self-supervised learning (SSL) techniques, allowing them to learn general-purpose representations of histomorphological patterns without requiring manual annotations [1] [2]. A key application driving their adoption is biomarker prediction from routine hematoxylin and eosin (H&E) stained slides, which creates opportunities for more accessible and cost-effective precision oncology [3] [4]. By analyzing morphological patterns in H&E images that are invisible to the human eye, these models can predict molecular alterations, genomic subtypes, and protein biomarkers directly from standard tissue sections [3]. This capability is particularly valuable when tissue is limited for additional molecular tests or when rapid screening is needed before confirmatory testing. The transition from generic encoders to specialized tools represents a paradigm shift in how computational pathology approaches clinical problem-solving, moving from task-specific model development to adaptation of powerful foundational representations.

Taxonomy of Pathology Foundation Models

Architectural Paradigms and Training Approaches

Pathology foundation models employ distinct architectural paradigms and training methodologies, each with specific advantages for biomarker prediction tasks. Vision-only models like Virchow2 are trained exclusively on WSIs using SSL techniques such as contrastive learning and masked image modeling, learning morphological features without textual guidance [2]. These models typically process gigapixel WSIs by dividing them into smaller patches, encoding each patch into an embedding, and then aggregating these embeddings using attention mechanisms to form slide-level representations [3]. Vision-language models like CONCH and TITAN incorporate both histology images and corresponding pathology reports during training, enabling cross-modal alignment where visual patterns are linked with semantic descriptions [1] [2]. This approach allows the models to not only recognize morphological patterns but also understand their diagnostic significance. The multimodal whole-slide foundation model TITAN employs a three-stage pretraining strategy: vision-only unimodal pretraining on region crops, cross-modal alignment with synthetic morphological descriptions at the region level, and finally cross-modal alignment with clinical reports at the whole-slide level [1].

Table: Major Pathology Foundation Models and Their Characteristics

| Model Name | Model Type | Pretraining Data Scale | Key Architectural Features | Notable Applications |
|---|---|---|---|---|
| CONCH | Vision-Language | 1.17M image-caption pairs | Cross-modal alignment | Overall highest performer across morphology, biomarker, and prognosis tasks [2] |
| Virchow2 | Vision-Only | 3.1M WSIs | Self-supervised learning | Superior performance in biomarker prediction tasks [2] |
| TITAN | Multimodal Vision-Language | 335,645 WSIs + 182,862 reports | Three-stage pretraining with knowledge distillation | Zero-shot classification, cross-modal retrieval, report generation [1] |
| Prov-GigaPath | Vision-Only | 171,000 WSIs | Transformer-based whole-slide encoding | Strong performance in biomarker prediction [2] |

Performance Benchmarking Across Clinical Tasks

Independent benchmarking studies have evaluated foundation models across diverse clinical tasks including morphological classification, biomarker prediction, and prognostic analysis. In comprehensive assessments spanning 31 tasks across 6,818 patients and 9,528 slides, CONCH and Virchow2 demonstrated the highest overall performance, with mean AUROCs of 0.71 across all tasks [2]. For biomarker-specific prediction (19 tasks including mutation status and molecular subtypes), Virchow2 and CONCH both achieved mean AUROCs of 0.73, followed closely by Prov-GigaPath at 0.72 [2]. Performance varies significantly based on task characteristics, with vision-language models generally excelling in tasks requiring conceptual understanding of tissue morphology, while vision-only models show particular strength in pure pattern recognition for biomarker prediction. Importantly, models trained on diverse tissue sites consistently outperform those trained on single cancer types, suggesting that morphological diversity in pretraining enhances feature learning and generalizability [2].

Table: Foundation Model Performance Across Task Categories

| Task Category | Top Performing Model(s) | Mean AUROC | Key Strengths |
|---|---|---|---|
| Morphological Tasks (n=5) | CONCH | 0.77 | Tissue classification, anomaly detection [2] |
| Biomarker Prediction (n=19) | Virchow2, CONCH | 0.73 | Mutation prediction, molecular subtype classification [2] |
| Prognostic Tasks (n=7) | CONCH | 0.63 | Survival analysis, treatment response prediction [2] |
| Low-Data Scenarios | Virchow2, PRISM | Varies by task | Maintaining performance with limited training samples [2] |

Application Note: Biomarker Prediction from H&E Slides

Experimental Protocols for Predictive Model Development

Protocol 1: Weakly-Supervised Biomarker Prediction Using Multiple Instance Learning

Purpose: To predict patient-level biomarker status from H&E whole-slide images using weakly supervised learning, without requiring detailed manual annotations [3].

Materials:

- Whole-slide images: Formalin-fixed, paraffin-embedded (FFPE) tissue sections stained with H&E, scanned at 20× or 40× magnification [5]

- Biomarker labels: Patient-level genomic or protein expression data from sequencing, PCR, or IHC [5]

- Computational resources: High-performance GPU workstations with ≥16GB VRAM

- Software frameworks: Python with PyTorch or TensorFlow, and specialized libraries like CLAM or HIStology warehousing toolkit

Procedure:

Whole-Slide Image Preprocessing:

Feature Extraction:

Multiple Instance Learning:

- Implement an attention-based aggregation mechanism to combine patch-level features into slide-level representations [3]

- Train with patient-level labels using weak supervision, allowing the model to identify informative regions

- Use transformer-based architectures for modeling long-range dependencies between tissue regions [1]

Model Validation:

- Perform rigorous external validation on cohorts from different institutions [5]

- Assess generalizability across scanner types, staining protocols, and patient populations

- Use bootstrap sampling to compute confidence intervals for performance metrics
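The bootstrap step in the validation list above can be illustrated with a small, dependency-free sketch. It computes AUROC from pairwise rankings and a percentile confidence interval by resampling patients with replacement. The function names, the toy labels and scores, and the choice of the percentile method are assumptions made for illustration; they are not taken from the cited studies.

```python
import random


def auroc(labels, scores):
    """AUROC as the fraction of positive/negative pairs ranked correctly (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def bootstrap_auroc_ci(labels, scores, n_boot=1000, alpha=0.05, seed=0):
    """Point estimate plus a percentile bootstrap interval over resampled patients."""
    point = auroc(labels, scores)  # also validates that both classes are present
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if len(set(ys)) < 2:  # a resample with one class has no defined AUROC
            continue
        stats.append(auroc(ys, [scores[i] for i in idx]))
    stats.sort()
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[min(n_boot - 1, int((1 - alpha / 2) * n_boot))]
    return point, lower, upper


# Toy patient-level labels and model scores (illustrative values only).
labels = [0, 0, 1, 1, 0, 1, 0, 1]
scores = [0.10, 0.40, 0.35, 0.80, 0.20, 0.70, 0.50, 0.90]
point, lower, upper = bootstrap_auroc_ci(labels, scores)
print(f"AUROC {point:.3f} (95% CI {lower:.3f} to {upper:.3f})")
```

Resampling should be done at the patient level, not the slide or tile level, so that the interval reflects the unit on which the biomarker label is defined.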

Protocol 2: Multimodal Integration of H&E and IHC Using Dual-Modality Transformers

Purpose: To enhance biomarker prediction accuracy by integrating features from both H&E and immunohistochemistry (IHC) whole-slide images [6].

Materials:

- Paired H&E and IHC slides: From the same tissue block with spatial correspondence

- Computational resources: High-memory GPU servers capable of processing large multimodal inputs

- Registration algorithms: For aligning H&E and IHC tissue sections

Procedure:

Dual-Modality Preprocessing:

- Process H&E and IHC slides through separate tissue segmentation pipelines [6]

- Apply rigid or non-rigid registration to align corresponding tissue regions between modalities

- Extract matched patch pairs from both modalities

Modality-Specific Feature Extraction:

- Use foundation models optimized for each stain type

- Process H&E patches through models pre-trained on large H&E datasets

- Use IHC-specific encoders or adapt foundation models for IHC processing

Cross-Modality Fusion:

- Implement dual-transformer architecture with cross-attention mechanisms [6]

- Allow information exchange between H&E and IHC feature representations

- Use late fusion with learned weighting for optimal modality integration

Joint Training and Validation:

- Train with combined loss functions addressing both modality alignment and prediction accuracy

- Validate on held-out test sets with ablation studies to quantify modality contributions

- Assess clinical utility through survival analysis and treatment response correlation [6]
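To make the cross-modality fusion step more concrete, the following is a minimal NumPy sketch of single-head cross-attention between H&E and IHC patch embeddings, followed by a weighted late fusion. It is not the published dual-transformer implementation [6]: the embedding sizes, the random projection matrices standing in for learned weights, and the equal modality weighting are all assumptions made for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    shifted = x - x.max(axis=axis, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(queries, context, w_q, w_k, w_v):
    """Single-head cross-attention: every query token attends over all context tokens."""
    q = queries @ w_q
    k = context @ w_k
    v = context @ w_v
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    return weights @ v, weights


rng = np.random.default_rng(0)
d_he, d_ihc, d_model = 16, 12, 8          # hypothetical embedding sizes
he_tokens = rng.normal(size=(5, d_he))    # 5 H&E patch embeddings
ihc_tokens = rng.normal(size=(7, d_ihc))  # 7 IHC patch embeddings

# Random matrices stand in for learned projection weights.
he_from_ihc, attn_he = cross_attention(
    he_tokens, ihc_tokens,
    rng.normal(size=(d_he, d_model)),
    rng.normal(size=(d_ihc, d_model)),
    rng.normal(size=(d_ihc, d_model)),
)
ihc_from_he, attn_ihc = cross_attention(
    ihc_tokens, he_tokens,
    rng.normal(size=(d_ihc, d_model)),
    rng.normal(size=(d_he, d_model)),
    rng.normal(size=(d_he, d_model)),
)

# Late fusion: mean-pool each stream, then mix with softmax-normalised modality weights.
modality_logits = np.array([0.0, 0.0])    # would be learned; equal weighting here
alpha = softmax(modality_logits)
fused = alpha[0] * he_from_ihc.mean(axis=0) + alpha[1] * ihc_from_he.mean(axis=0)
print(fused.shape)
```

In a trained model the projections and modality weights would be optimised jointly with the prediction head; the sketch only shows how information flows from one stain to the other.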

Quantitative Performance of Biomarker Prediction Models

Real-world performance of foundation models for biomarker prediction varies by cancer type, biomarker class, and model architecture. The EAGLE model, fine-tuned for EGFR mutation prediction in lung adenocarcinoma, achieved AUCs of 0.847 on internal validation and 0.870 on external validation across multiple international institutions [5]. In a prospective silent trial simulating real-world deployment, EAGLE maintained an AUC of 0.890, demonstrating robust generalization to novel cases [5]. For microsatellite instability (MSI) prediction in colorectal cancer, dual-modality approaches integrating H&E and IHC have achieved exceptional performance, with AUROCs exceeding 0.97 [6]. Similarly, PD-L1 prediction in breast cancer has reached AUROCs of 0.96 using combined H&E and IHC information [6]. Cross-modality learning approaches like HistoStainAlign, which predicts IHC staining patterns directly from H&E images, have demonstrated weighted F1 scores of 0.830 for PD-L1, 0.735 for P53, and 0.723 for Ki-67 in gastrointestinal and lung tissues [7].

Table: Performance of Specialized Biomarker Prediction Models

| Model | Biomarker | Cancer Type | Performance | Validation Cohort |
|---|---|---|---|---|
| EAGLE [5] | EGFR mutation | Lung adenocarcinoma | AUC: 0.847 (internal), 0.870 (external) | 8,461 slides across 5 institutions |
| DuoHistoNet [6] | MSI/MMRd | Colorectal cancer | AUROC: >0.97 | 20,820 cases |
| DuoHistoNet [6] | PD-L1 | Triple-negative breast cancer | AUROC: >0.96 | 15,173 cases |
| HistoStainAlign [7] | PD-L1 (from H&E) | Gastrointestinal/Lung | F1: 0.830 | Paired H&E-IHC slides |

Successful implementation of foundation models for biomarker prediction requires both computational resources and carefully curated biomedical data. The following table outlines key components of the research toolkit for developing and validating these models.

Table: Essential Research Reagents and Computational Resources

| Resource Category | Specific Items | Function/Application | Implementation Notes |
|---|---|---|---|
| Data Resources | Curated whole-slide image repositories with paired genomic data | Model training and validation | MSKCC, TCGA, institutional biobanks; requires IRB approval [5] |
| Foundation Models | CONCH, Virchow2, TITAN, Prov-GigaPath | Feature extraction and transfer learning | Select based on task: CONCH for multimodal, Virchow2 for biomarker prediction [2] |
| Software Frameworks | PyTorch, TensorFlow, MONAI, Whole Slide Processing libraries | Model development and inference | Optimize for multi-GPU training and large-scale inference |
| Validation Frameworks | Statistical analysis packages, bootstrap resampling tools | Performance assessment and confidence interval estimation | Implement cross-validation at patient level to prevent data leakage |
| Computational Infrastructure | High-performance GPUs (NVIDIA A100, H100), cloud computing platforms | Handling large-scale whole-slide image processing | Require ≥16GB VRAM for processing gigapixel whole-slide images |
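The table's note on patient-level cross-validation can be sketched as follows. The idea is to assign folds by patient, so that slides from one patient never appear in both training and test data. The function name and the slide and patient identifiers are hypothetical, and the round-robin assignment is one simple choice among several.

```python
import random
from collections import defaultdict


def patient_level_folds(slide_to_patient, n_folds=5, seed=0):
    """Split slides into folds so that all slides of one patient share a fold."""
    patients = sorted(set(slide_to_patient.values()))
    random.Random(seed).shuffle(patients)
    # Round-robin assignment of shuffled patients to folds.
    fold_of_patient = {p: i % n_folds for i, p in enumerate(patients)}
    folds = defaultdict(list)
    for slide, patient in slide_to_patient.items():
        folds[fold_of_patient[patient]].append(slide)
    return [sorted(folds[i]) for i in range(n_folds)]


# Hypothetical slide and patient identifiers.
slide_to_patient = {
    "s1": "P1", "s2": "P1", "s3": "P2", "s4": "P3",
    "s5": "P3", "s6": "P4", "s7": "P5", "s8": "P6",
}
folds = patient_level_folds(slide_to_patient, n_folds=3)
for i, fold in enumerate(folds):
    print(i, fold)
```

Splitting at the slide or tile level instead would let near-identical tissue from one patient leak across folds and inflate the reported performance.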

Foundation models represent a transformative advancement in computational pathology, providing powerful base architectures that can be adapted for diverse biomarker prediction tasks. The evolution from generic encoders to specialized tools has been accelerated by large-scale pretraining and innovative multimodal approaches. Current research demonstrates that these models can achieve clinical-grade performance for predicting molecular biomarkers including EGFR, MSI, PD-L1, and others directly from H&E images [5] [6]. The emerging paradigm of "precision pathology" leverages these computational tools to extract maximal information from standard histology slides, potentially reducing reliance on more costly and tissue-consuming molecular assays [4]. Future development will likely focus on improving model interpretability, enhancing generalizability across diverse patient populations and laboratory protocols, and integrating multimodal data sources for comprehensive tissue analysis. As these technologies mature, foundation models are poised to become indispensable tools in both diagnostic pathology and oncology drug development, enabling more personalized treatment approaches through accessible biomarker assessment.

The advent of foundation models (FMs) in computational pathology represents a paradigm shift, enabling the extraction of biomarkers from routine hematoxylin and eosin (H&E)-stained whole slide images (WSIs) without extensive task-specific labeling [8] [9]. These models, pretrained on millions of histopathology images using self-supervised learning (SSL), learn generalizable representations that can be fine-tuned for specific predictive tasks. This document details the application of three significant architectures—Virchow2, TITAN, and PLUTO-4—within the context of biomarker prediction research, providing structured data, experimental protocols, and analytical workflows for scientific practitioners.

Model Architectures and Technical Specifications

Virchow2: A Scalable Vision Transformer for Pathology

Virchow2 is a vision transformer (ViT)-based foundation model specifically designed for computational pathology. It exemplifies the scaling of both data and model size to achieve state-of-the-art performance on tile-level tasks [8].

- Architecture and Training: Virchow2 is a 632 million parameter ViT-H model. Its larger variant, Virchow2G, scales to 1.85 billion parameters (ViT-G). Both models were trained using a domain-adapted DINOv2 self-supervised learning algorithm on a massive dataset of 1.7 billion tiles extracted from 3.1 million WSIs [8] [9]. These slides were sourced from a diverse, global cohort of 225,401 patients and included nearly 200 tissue types, as well as both H&E and immunohistochemistry (IHC) stains, scanned at multiple magnifications (5x, 10x, 20x, 40x) [9].

- Domain-Specific Innovations: A key innovation in Virchow2's training is the incorporation of domain-specific augmentations and regularization techniques to address the unique characteristics of histopathology data, which is repetitive, pose-invariant, and contains minimal but meaningful color variation compared to natural images [8].

Table 1: Technical Specifications of Featured Foundation Models

| Model | Architecture | Parameters | Training Data (Tiles) | Training Data (WSIs) | Core Algorithm | Context/Key Feature |
|---|---|---|---|---|---|---|
| Virchow2 | Vision Transformer (ViT-H) | 632 Million | 1.7 Billion | 3.1 Million [8] [9] | DINOv2 [9] | Mixed magnification (5x, 10x, 20x, 40x); Diverse stains (H&E, IHC) [8] [9] |
| Virchow2G | Vision Transformer (ViT-G) | 1.85 Billion | 1.9 Billion [9] | 3.1 Million [8] | DINOv2 [9] | Scaled-up version of Virchow2 [8] |
| TITAN | Memory-driven Transformer | Not reported | Not reported | Not reported | Neural Long-Term Memory [10] [11] | "Surprise metric" for memory retention [11] [12] |
| PLUTO-4 | Not reported | Not reported | Not reported | Not reported | Not reported | Not reported |

TITAN: A Memory-Driven AI Architecture

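The TITAN architecture discussed in this section is a general-purpose, memory-driven sequence model [10] [11]. As described here, it differs from the multimodal whole-slide pathology model of the same name covered earlier [1], and the two should not be conflated. It moves beyond the stateless nature of standard Transformers, takes inspiration from the human brain's memory system, and is designed to handle long-context sequences more effectively, which has potential implications for complex data analysis such as multimodal biomarker integration [10] [11].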

- Core Innovation: TITAN incorporates a neural long-term memory module that works in tandem with the standard attention mechanism (short-term memory). This allows the model to persist and utilize historical information beyond a fixed context window, much like a student referring to semester notes rather than relying solely on immediate recall [11].

- The "Surprise Metric": A critical feature for memory management is a "surprise metric," which prioritizes storing information that violates the model's expectations. This mimics human cognitive processes and ensures efficient use of memory resources by focusing on novel or anomalous data points [11] [12]. This is particularly relevant for biomarker discovery, where rare or unexpected morphological patterns could be of critical importance.

- Implementation: Practical implementations of these memory principles, such as the Titan Memory MCP Server, demonstrate its use as an external neural memory system for AI agents, enabling online learning and adaptation across sessions [12].

PLUTO-4

Detailed architectural and training-data information for PLUTO-4 was not available in the sources reviewed for this document.

Application Notes for Biomarker Prediction

Performance Benchmarking

Foundation models are typically evaluated on a battery of downstream tasks to assess their generalizability and potency for biomarker-related applications.

- Virchow2 Performance: Virchow2 and Virchow2G have demonstrated state-of-the-art performance on twelve tile-level tasks, surpassing other top-performing models. This robust performance across a variety of tasks underscores their utility as powerful feature extractors for histopathology images [8].

- Domain Generalization and Scanner Bias: A significant challenge in deploying models clinically is their performance on out-of-domain data, such as images from a different scanner. A benchmark study evaluating multiple FMs, including UNI, Virchow2, and Prov-GigaPath, found that most are susceptible to scanner bias, manifesting as differences in feature embeddings and a drop in classification performance on data from a held-out scanner [13]. This highlights the critical need for rigorous domain generalization testing in biomarker prediction workflows.

Table 2: Model Performance and Benchmarking Insights

| Model | Reported Performance | Key Strengths | Limitations & Considerations |
|---|---|---|---|
| Virchow2 | State-of-the-art on 12 tile-level tasks [8] | Massive, diverse dataset; Multi-magnification and multi-stain training; Proven strong feature extractor. | Susceptible to scanner bias, like most FMs [13]. |
| TITAN | Not reported | Potential for long-context analysis of multi-modal data; Novelty detection via "surprise metric". | Practical application in computational pathology is still exploratory. |
| PLUTO-4 | Not reported | Not reported | Not reported |
| General FM Insight | SSL-trained pathology encoders outperform models pretrained on natural images [9]. | Reduces dependency on labeled data; Can be fine-tuned for numerous downstream tasks. | High computational demand for training and inference [13]. |

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational "reagents" and resources required for working with pathology foundation models.

Table 3: Essential Research Reagents and Resources

| Item | Function/Description | Example/Note |
|---|---|---|
| Whole Slide Images (WSIs) | The primary raw data; gigapixel digital scans of stained tissue sections. | H&E-stained are standard; IHC-stained add diversity [8]. |
| Tile Datasets | Small, fixed-size image crops extracted from WSIs used for model training and inference. | Virchow2 was trained on 1.7B tiles [8]. |
| Self-Supervised Learning (SSL) Algorithm | The method used to pretrain the model on unlabeled data by creating a pretext task. | DINOv2 is a prevalent choice for pathology FMs [8] [9]. |
| Vision Transformer (ViT) Architecture | A neural network architecture that uses self-attention mechanisms to process images. | Base architecture for Virchow2 and many other FMs [8] [9]. |
| Computational Hardware (GPUs) | High-performance graphics processing units are essential for training and fine-tuning large FMs. | Can be a barrier to entry; noted environmental concern [13]. |
| Benchmarking Datasets | Curated datasets with labels for specific tasks used to evaluate model performance and generalizability. | Critical for assessing biomarker prediction capability [9]. |

Experimental Protocols

Protocol 1: Tile-Level Feature Extraction for Downstream Task Fine-Tuning

This is a standard workflow for leveraging a pretrained foundation model like Virchow2 for a specific biomarker prediction task.

Tile-Level Feature Extraction and Fine-Tuning Workflow

Procedure:

- Input & Preprocessing: Obtain gigapixel WSIs. Use a tissue detection algorithm to identify and mask out irrelevant background areas [9].

- Tiling: Extract representative image tiles (e.g., 512x512 pixels) from the foreground tissue regions at a specified magnification (e.g., 20x). This step is computationally necessary due to the immense size of WSIs [8] [9].

- Feature Extraction: Pass each tile through the pretrained foundation model (e.g., Virchow2). Extract the feature embeddings from the model's output layer. These embeddings are high-dimensional, dense vector representations of the tile's morphological content [9].

- Aggregation: For slide-level prediction tasks, aggregate the feature embeddings from all tiles of a single WSI. This can be done via methods like averaging, max-pooling, or using a more advanced attention-based Multiple Instance Learning (MIL) aggregator.

- Fine-Tuning: Use the extracted feature embeddings (tile-level or slide-level) to train a downstream predictive model. This can be a simple classifier like a logistic regression model or a shallow neural network. For optimal performance, the entire foundation model can be fine-tuned end-to-end on the labeled biomarker data, which allows the model's weights to adapt to the specific task.
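The tiling and aggregation steps above can be sketched in a few lines of NumPy. The example selects non-overlapping tiles from a binary tissue mask and pools tile embeddings into a slide-level vector by averaging or max-pooling. The function names, the 50% tissue threshold, and the random vectors standing in for foundation-model embeddings are assumptions made for illustration; a real pipeline would read the mask and tiles from a WSI library and obtain embeddings from the pretrained encoder.

```python
import numpy as np


def tile_coordinates(tissue_mask, tile_px, min_tissue=0.5):
    """Top-left (row, col) of non-overlapping tiles whose tissue fraction is high enough."""
    rows, cols = tissue_mask.shape
    coords = []
    for r in range(0, rows - tile_px + 1, tile_px):
        for c in range(0, cols - tile_px + 1, tile_px):
            if tissue_mask[r:r + tile_px, c:c + tile_px].mean() >= min_tissue:
                coords.append((r, c))
    return coords


def pool_embeddings(tile_embeddings, how="mean"):
    """Collapse (n_tiles, dim) tile embeddings into one slide-level vector."""
    if how == "mean":
        return tile_embeddings.mean(axis=0)
    if how == "max":
        return tile_embeddings.max(axis=0)
    raise ValueError(f"unknown pooling: {how}")


# Toy 8x8 binary mask with tissue in the left half; real masks come from a tissue detector.
mask = np.zeros((8, 8))
mask[:, :4] = 1
coords = tile_coordinates(mask, tile_px=4)

# Random vectors stand in for foundation-model embeddings of the kept tiles.
rng = np.random.default_rng(0)
embeddings = rng.normal(size=(len(coords), 6))
slide_vector = pool_embeddings(embeddings, how="mean")
print(coords, slide_vector.shape)
```

Mean and max pooling treat every tile as equally informative, which is why attention-based MIL aggregators are often preferred when only a small region carries the biomarker signal.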

Protocol 2: Benchmarking Model Robustness to Scanner-Induced Domain Shift

This protocol assesses a model's susceptibility to technical variation, a critical step for ensuring equitable clinical deployment.

Benchmarking Model Robustness to Scanner Variation

Procedure:

- Dataset Curation: A novel dataset is required, comprising the same glass histological slides scanned using two different scanner platforms (Scanner A and Scanner B). This setup allows for a targeted analysis of covariate shift due to scanner bias alone [13].

- Feature Extraction: Use the foundation model (e.g., Virchow2, PLUTO-4) in inference mode to extract feature embeddings from all tiles of all slides from both scanners.

- Quantify Representation Shift: Calculate the distributional shift between the feature embeddings from Scanner A and Scanner B. This can be done using metrics like Maximum Mean Discrepancy (MMD) or a novel "Robustness Index" [13].

- Performance Assessment: Designate slides from Scanner A as the in-domain (ID) training set and slides from Scanner B as the out-of-domain (OOD) test set. Train a biomarker classifier on the ID features and evaluate its performance on the OOD features. A significant drop in performance (e.g., accuracy, AUC) indicates model sensitivity to scanner bias [13].
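The representation-shift step can be illustrated with a small NumPy sketch of squared Maximum Mean Discrepancy using an RBF kernel. This is the standard biased estimator, not the "Robustness Index" from [13], whose definition is not given here. The kernel bandwidth default and the synthetic embeddings simulating a scanner offset are assumptions made for illustration.

```python
import numpy as np


def rbf_kernel(x, y, gamma):
    """Pairwise RBF kernel values between rows of x and rows of y."""
    sq_dist = (x ** 2).sum(axis=1)[:, None] + (y ** 2).sum(axis=1)[None, :] - 2.0 * x @ y.T
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))


def mmd2(x, y, gamma=None):
    """Biased estimate of squared MMD between two embedding sets of shape (n, dim)."""
    if gamma is None:
        gamma = 1.0 / x.shape[1]  # simple bandwidth heuristic (an assumption)
    k_xx = rbf_kernel(x, x, gamma).mean()
    k_yy = rbf_kernel(y, y, gamma).mean()
    k_xy = rbf_kernel(x, y, gamma).mean()
    return k_xx + k_yy - 2.0 * k_xy


# Synthetic embeddings: a second draw from the same distribution versus a shifted one.
rng = np.random.default_rng(0)
scanner_a = rng.normal(size=(200, 16))
scanner_a_rescan = rng.normal(size=(200, 16))   # same distribution as scanner A
scanner_b = rng.normal(size=(200, 16)) + 1.0    # simulated scanner-induced offset

print("A vs A (rescan):", mmd2(scanner_a, scanner_a_rescan))
print("A vs B         :", mmd2(scanner_a, scanner_b))
```

A larger value for the A versus B comparison than for the same-scanner baseline suggests that the encoder's embeddings carry scanner-specific information, which should then be checked against the drop in downstream classification performance.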

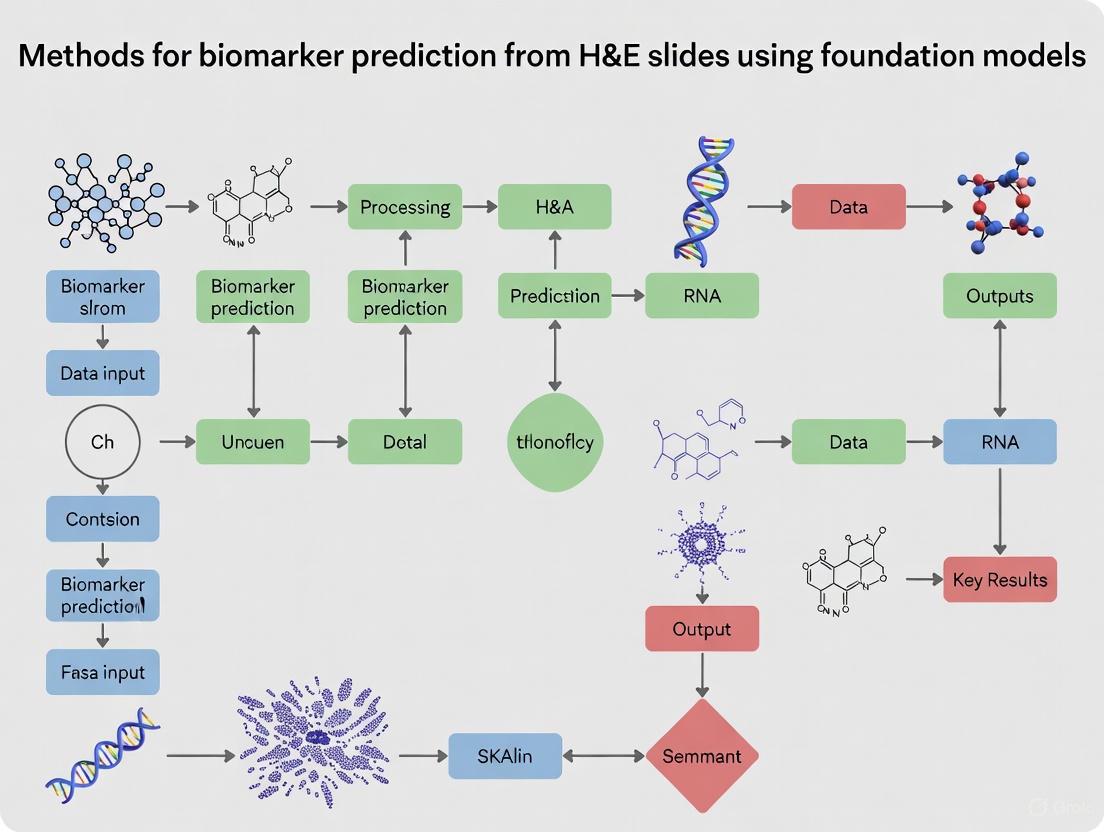

Visualized Workflows and Logical Frameworks

High-Level Logical Framework for Biomarker Discovery

This diagram outlines the overarching process from model pretraining to clinical insight.

Foundation Model Workflow for Biomarker Discovery

The advent of self-supervised learning (SSL) has initiated a paradigm shift in computational pathology, directly addressing the critical bottleneck of manual annotation for histopathological whole-slide images (WSIs). By leveraging vast repositories of unlabeled data, SSL enables the development of foundation models that learn powerful, transferable representations of tissue morphology. These models, pretrained on multi-million slide datasets, form the cornerstone of modern approaches for biomarker prediction from routine H&E stains, thereby accelerating precision oncology and drug development [14] [15].

Foundation models like Prov-GigaPath, Virchow, and CONCH represent a new class of tools that move beyond single-task models. They are characterized by their pretraining on extraordinarily diverse and large-scale datasets, often encompassing millions of slides and billions of image tiles, and their ability to be adapted with high data efficiency to a wide array of downstream clinical tasks, from mutation prediction to cancer subtyping [2] [15]. This document delineates the core pretraining paradigms, provides protocols for their application, and offers a toolkit for researchers engaged in the development of biomarker prediction models.

Core Pretraining Paradigms & Model Architectures

The landscape of pathology foundation models is shaped by a few dominant SSL pretraining paradigms, each with distinct architectural implications. The table below summarizes the core characteristics of these approaches.

Table 1: Core Self-Supervised Pretraining Paradigms in Computational Pathology

| Pretraining Paradigm | Core Mechanism | Key Advantage | Exemplar Models |
|---|---|---|---|
| Masked Image Modeling (MIM) | Reconstructs randomly masked portions of the input image. | Excels at learning robust, contextual feature representations of tissue structures. | UNI [14], Prov-GigaPath (partial) [15] |
| Contrastive Learning | Learns by maximizing agreement between differently augmented views of the same image and minimizing it for different images. | Produces feature spaces where semantically similar samples are clustered together. | DINOv2-based models (Athena, Virchow) [16] |
| Multi-Modal Learning | Aligns representations from different modalities (e.g., image and text) into a shared embedding space. | Enables zero-shot reasoning and leverages rich semantic information from paired text. | CONCH [2], PLIP [17] |
| Hierarchical Modeling | Employs multi-stage encoding to capture features from cell-, tissue-, and slide-level contexts. | Specifically designed for the gigapixel nature of WSIs, capturing both local and global context. | Prov-GigaPath [15], HIPT [14] |

A critical architectural challenge in computational pathology is processing gigapixel WSIs, which can contain tens of thousands of image tiles. The GigaPath architecture, which leverages LongNet's dilated attention mechanism, represents a state-of-the-art solution to this problem. It allows the model to efficiently process entire slides as long sequences of tokens, capturing both local patterns in individual tiles and global morphological patterns across the whole slide [15]. The following diagram illustrates the workflow of a typical hierarchical foundation model.

Benchmarking Foundation Models for Biomarker Prediction

Independent benchmarking is crucial for selecting the appropriate foundation model for a specific research goal. A comprehensive evaluation of 19 foundation models across 31 clinical tasks on external cohorts revealed key performance trends. The vision-language model CONCH and the vision-only model Virchow2 consistently achieved top-tier performance across morphological, biomarker, and prognostic tasks [2].

Table 2: Benchmarking Performance of Select Pathology Foundation Models (Adapted from [2])

| Foundation Model | Model Type | Avg. AUROC (All Tasks) | Avg. AUROC (Biomarker Tasks) | Key Characteristic |
|---|---|---|---|---|
| CONCH | Vision-Language | 0.71 | 0.73 | Trained on 1.17M image-caption pairs [2]. |
| Virchow2 | Vision-Only | 0.71 | 0.73 | Trained on 3.1M WSIs; strong all-around performer [2]. |
| Prov-GigaPath | Vision-Only | 0.69 | 0.72 | Open-weight model; excels in long-context, whole-slide modeling [15]. |
| UNI | Vision-Only | 0.68 | N/A | General-purpose model trained on 100M+ patches from 100k slides [14]. |
| PLIP | Vision-Language | 0.64 | N/A | Pretrained on histology images and text from social media [17]. |

A critical finding for drug development and research in rare biomarkers is that foundation models demonstrate remarkable data efficiency. In low-data scenarios simulating rare molecular events, models like PRISM and Virchow2 maintained robust performance even when downstream training cohorts were reduced to 75 patients [2]. Furthermore, an ensemble of complementary models (e.g., CONCH and Virchow2) was shown to outperform individual models in 55% of tasks, highlighting a practical strategy to boost predictive accuracy [2].
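One simple way to build such an ensemble is to average the per-patient probabilities produced by classifiers trained on different foundation-model features. The sketch below shows that approach; it is an assumption chosen for illustration and is not necessarily the ensembling rule used in [2]. The model names and probability values are placeholders.

```python
def ensemble_average(per_model_probs, weights=None):
    """Average per-patient probabilities across models, optionally with model weights."""
    names = list(per_model_probs)
    n = len(per_model_probs[names[0]])
    if any(len(per_model_probs[m]) != n for m in names):
        raise ValueError("all models must score the same patients in the same order")
    if weights is None:
        weights = {m: 1.0 for m in names}
    total = sum(weights[m] for m in names)
    return [
        sum(weights[m] * per_model_probs[m][i] for m in names) / total
        for i in range(n)
    ]


# Placeholder outputs of two downstream classifiers for three patients.
probs = {
    "model_a": [0.2, 0.8, 0.6],
    "model_b": [0.4, 0.6, 0.9],
}
print(ensemble_average(probs))
print(ensemble_average(probs, weights={"model_a": 3.0, "model_b": 1.0}))
```

Any model weights should be chosen on a validation split, not on the test cohort, to avoid an optimistic estimate of the ensemble's benefit.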

Detailed Experimental Protocols

Protocol 1: Feature Extraction for Downstream Biomarker Prediction

This protocol describes how to use a pretrained foundation model as a feature extractor to train a classifier for a specific biomarker prediction task (e.g., Microsatellite Instability (MSI) status).

Input Data Preparation:

- WSI Tiling: For each whole-slide image in your cohort, perform tissue segmentation to exclude background areas. Tile the remaining tissue regions into non-overlapping 256x256 or 224x224 pixel patches at a specified magnification (e.g., 20x) [3].

- Patch Sampling (Optional): For computational feasibility, you may randomly sample a representative subset of patches per WSI (e.g., 410 patches as in Athena [16]) or use all patches.

Feature Extraction:

- Load a pretrained foundation model (e.g., CONCH, Virchow2, or a publicly available model like Prov-GigaPath).

- Using the model's patch encoder, compute a feature vector for each valid tile from the previous step. This results in a set of feature vectors for each WSI.

Multiple Instance Learning (MIL) Aggregation:

- Model Training: The set of feature vectors for a WSI constitutes a "bag" of instances. Train an attention-based multiple instance learning (ABMIL) model, such as a transformer aggregator, on these bags using the patient-level biomarker labels [3] [17].

- Inference: The trained MIL model will learn to assign attention weights to the most diagnostically relevant tiles and aggregate their features to produce a final slide-level prediction for the biomarker.
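The attention-based aggregation described above can be sketched as a single forward pass in NumPy. The attention form follows the widely used ABMIL formulation, in which each tile receives a score from a small tanh network and the scores are normalised with a softmax over the bag. Random matrices stand in for trained parameters, and the dimensions and variable names are assumptions made for illustration; in practice these weights are learned with a deep learning framework from patient-level labels.

```python
import numpy as np


def abmil_forward(bag, attn_v, attn_w, clf_w, clf_b):
    """Attention-pooled slide prediction for one bag of tile embeddings (n_tiles, dim)."""
    scores = np.tanh(bag @ attn_v) @ attn_w          # one scalar score per tile
    scores = scores - scores.max()                   # stabilise the softmax
    attn = np.exp(scores) / np.exp(scores).sum()     # attention weights sum to 1
    slide_embedding = attn @ bag                     # weighted average of tile features
    logit = slide_embedding @ clf_w + clf_b
    prob = 1.0 / (1.0 + np.exp(-logit))              # probability of biomarker-positive
    return prob, attn


rng = np.random.default_rng(0)
n_tiles, dim, hidden = 50, 32, 16                    # hypothetical sizes
bag = rng.normal(size=(n_tiles, dim))                # stand-in for encoder embeddings
attn_v = rng.normal(size=(dim, hidden)) * 0.1        # stand-ins for trained parameters
attn_w = rng.normal(size=hidden)
clf_w = rng.normal(size=dim) * 0.1
clf_b = 0.0

prob, attn = abmil_forward(bag, attn_v, attn_w, clf_w, clf_b)
top_tiles = np.argsort(attn)[::-1][:5]               # tiles with the highest attention
print(float(prob), top_tiles)
```

Because the pooled embedding is a weighted sum over tiles, the prediction does not depend on tile order, and the attention weights can be mapped back to slide coordinates as a heatmap of influential regions.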

The workflow for this protocol, along with the alternative end-to-end approach, is summarized below.

Protocol 2: Self-Supervised Pretraining with Limited Data

For researchers aiming to develop a domain-specific model where large-scale pretraining data is scarce, this protocol outlines a data-efficient strategy.

Leverage Transfer Learning:

- Model Initialization: Begin with a model already pretrained on a large, diverse dataset, such as a DINOv2 model trained on natural images or a general histopathology model like UNI. This provides a strong feature prior [16].

Maximize Data Diversity:

- Focus on WSI Variety: Prioritize the diversity of whole-slide images over the sheer number of patches extracted from each. A collection of 282,000 WSIs from multiple institutions, countries, and scanner types (even with only 115 million total patches) can yield a highly robust model like Athena [16].

- Random Patch Sampling: Instead of complex sampling heuristics, employ a random patch selection strategy from tissue regions across the diverse WSI set. This simple approach efficiently captures the underlying data distribution.

Continued Self-Supervised Pretraining:

- Use a self-supervised framework like DINOv2 to continue pretraining the initialized model on your target domain dataset. Incorporate domain-appropriate augmentations (e.g., vertical flips) [16].

- The resulting domain-adapted model can then be used for downstream tasks via Protocol 1.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential "research reagents" – key software and data components – required for working with pathology foundation models.

Table 3: Essential Research Reagents for Biomarker Prediction Research

| Item | Function & Utility | Exemplars / Notes |

|---|---|---|

| Pretrained Foundation Models | Provides off-the-shelf, powerful feature extractors for H&E images, eliminating the need for pretraining from scratch. | Prov-GigaPath (open-weight), CONCH, Virchow2. Access often requires a license or research agreement. |

| Feature Extraction Pipelines | Software to standardize the process of WSI tiling, patch selection, and feature vector serialization. | CLAM [17], TIAToolbox, or custom scripts based on PyTorch/TensorFlow. |

| Multiple Instance Learning (MIL) Aggregators | Algorithms to combine patch-level features into a single slide-level prediction using weak labels. | Attention-based MIL (ABMIL) [3], Transformer-MIL (TransMIL) [17]. |

| Whole-Slide Image (WSI) Datasets | Public and proprietary datasets for training and, more importantly, benchmarking model performance. | TCGA (The Cancer Genome Atlas), CAMELYON16 [14] [16], GTEx [16]. |

| Computational Resources | Hardware necessary for processing gigapixel images and running large transformer models. | High-performance GPUs (e.g., H200, A100) with substantial VRAM (>40GB). Distributed training across multiple nodes is often essential [16]. |

Within the field of computational pathology, the prediction of biomarkers from routinely acquired Hematoxylin & Eosin (H&E) stained whole-slide images (WSIs) using foundation models represents a paradigm shift in precision oncology. While H&E images contain a wealth of morphological information, their true predictive power is often unlocked through multimodal integration with complementary data sources, such as pathology reports and genomic profiles. This integration addresses the intrinsic limitations of any single data modality, creating a more comprehensive representation of the tumor microenvironment [18] [19]. Foundation models, pretrained on massive datasets via self-supervised learning (SSL), provide a powerful basis for this endeavor, as they learn versatile and transferable feature representations that can be adapted with limited labeled data for downstream biomarker prediction tasks [1] [9]. This document outlines the key methodologies and experimental protocols for aligning H&E images with pathology reports and genomic data to enhance the accuracy and generalizability of biomarker prediction models.

Foundation Models Enabling Multimodal Integration

The development of large-scale pathology foundation models (PFMs) is a critical first step for multimodal learning. These models are typically pretrained on millions of histopathology image patches in a self-supervised manner, learning robust feature representations without the need for manual annotations [9]. The table below summarizes several key foundation models relevant for multimodal integration.

Table 1: Key Pathology Foundation Models for Multimodal Learning

| Model Name | Architecture | Pretraining Data Scale | Key Pretraining Algorithm(s) | Multimodal Capabilities |

|---|---|---|---|---|

| TITAN [1] | Vision Transformer (ViT) | 335,645 WSIs | Visual SSL + Vision-Language Alignment | Generates slide representations; cross-modal retrieval; report generation. |

| Prov-GigaPath [15] | Vision Transformer (LongNet) | 1.3 billion tiles from 171,189 WSIs | DINOv2 + Masked Autoencoder | Vision-language pretraining; whole-slide context modeling. |

| UNI [9] | ViT-Large | 100 million tiles from 100,000 WSIs | DINOv2 | Strong baseline features for various tasks. |

| PathoDuet [20] | ViT with pretext token | Not Specified | Cross-scale positioning; Cross-stain transferring | Covers both H&E and IHC stains. |

| Phikon [9] | ViT-Base | 43 million tiles from 6,093 WSIs | iBOT | Publicly available model for transfer learning. |

Protocols for Multimodal Data Alignment and Integration

Effective multimodal integration requires carefully designed protocols to process each data modality and align them in a shared representation space. The following sections detail these methodologies.

Protocol 1: Vision-Language Pretraining with Pathology Reports

This protocol describes how to align WSI representations with their corresponding pathology reports, enabling cross-modal search and zero-shot classification [1].

A. Materials and Data Preparation

- H&E Whole-Slide Images (WSIs): A large dataset of WSIs, ideally spanning multiple organ sites and cancer types.

- Pathology Reports: The paired clinical text reports for each WSI.

- Synthetic Captions: (Optional) For finer-grained alignment, generate detailed morphological descriptions of image regions using a multimodal generative AI copilot (e.g., PathChat) [1].

- Pretrained Patch Encoder: A model like CONCH, pre-trained on histopathology patches, to convert image patches into feature vectors [1].

B. Experimental Workflow

- Feature Extraction: Process each WSI by dividing it into non-overlapping patches (e.g., 512x512 pixels at 20x magnification). Use the pretrained patch encoder to extract a feature vector for each patch, arranging them spatially into a 2D feature grid.

- Slide-Level Encoding: Employ a Vision Transformer (ViT) model, such as TITAN, to process the 2D feature grid. Use a cropping strategy to create multiple views of the WSI for self-supervised learning and leverage attention with linear biases (ALiBi) to handle long sequences [1].

- Text Encoding: Process the pathology reports (and synthetic captions) with a language model encoder (e.g., a transformer) to obtain text embeddings.

- Contrastive Alignment: Fine-tune the slide encoder and text encoder using a contrastive learning objective (e.g., a vision-language contrastive loss). The goal is to minimize the distance between the slide representation and its paired report representation in the shared embedding space while maximizing the distance from unpaired reports [1] [15].
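The contrastive alignment step can be illustrated with a symmetric, CLIP-style InfoNCE loss over a batch of paired slide and report embeddings. This is a sketch under stated assumptions, not the exact objective used by TITAN or Prov-GigaPath: the function name `contrastive_loss` and the temperature value of 0.07 are illustrative choices.

```python
import math

def _normalize(v):
    """Scale a vector to unit length (zero vectors are returned unchanged)."""
    n = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / n for x in v]

def _cross_entropy_diag(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    loss = 0.0
    for i, row in enumerate(logits):
        m = max(row)
        log_z = m + math.log(sum(math.exp(x - m) for x in row))
        loss += log_z - row[i]
    return loss / len(logits)

def contrastive_loss(slide_emb, text_emb, temperature=0.07):
    """Symmetric contrastive loss; row i of each input is one slide/report pair."""
    s = [_normalize(v) for v in slide_emb]
    t = [_normalize(v) for v in text_emb]
    # Cosine similarity of every slide with every report, scaled by temperature.
    logits = [[sum(a * b for a, b in zip(si, tj)) / temperature for tj in t] for si in s]
    logits_t = [list(col) for col in zip(*logits)]
    # Average the slide-to-text and text-to-slide directions.
    return 0.5 * (_cross_entropy_diag(logits) + _cross_entropy_diag(logits_t))
```

The loss is near zero when each slide is most similar to its own report and grows when unpaired reports score higher, which matches the pull-together, push-apart goal described above.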

C. Outcome Assessment

- Perform cross-modal retrieval: query with a slide to find relevant reports and vice versa.

- Evaluate on zero-shot classification tasks by using text prompts for different disease subtypes.
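Both assessments reduce to similarity search in the shared embedding space. The sketch below assumes the slide, report, and prompt embeddings have already been computed by the aligned encoders; `zero_shot_classify` and `recall_at_k` are hypothetical helper names, and recall@k is one common retrieval metric rather than the specific metric reported in [1].

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0

def zero_shot_classify(slide_embedding, prompt_embeddings):
    """Pick the class whose text-prompt embedding is closest to the slide.

    prompt_embeddings: dict mapping class name -> text embedding.
    """
    scores = {name: cosine(slide_embedding, emb) for name, emb in prompt_embeddings.items()}
    return max(scores, key=scores.get), scores

def recall_at_k(slide_embs, report_embs, k=1):
    """Fraction of slides whose paired report (same index) ranks in the top k."""
    hits = 0
    for i, s in enumerate(slide_embs):
        ranked = sorted(range(len(report_embs)),
                        key=lambda j: cosine(s, report_embs[j]), reverse=True)
        hits += i in ranked[:k]
    return hits / len(slide_embs)
```

Swapping the two arguments of `recall_at_k` gives the report-to-slide direction.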

Diagram 1: Vision-Language Pretraining Workflow.

Protocol 2: Integrating Genomic Data for Survival Analysis

This protocol outlines the integration of WSIs and genomic data for a clinically relevant task such as survival prediction, using a Mixture of Experts (MoE) architecture [21] [22].

A. Materials and Data Preparation

- WSIs and Genomic Profiles: Paired data from cohorts like The Cancer Genome Atlas (TCGA).

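- Genomic Processing: Convert raw genomic data into biologically interpretable features. This can be achieved through pathway enrichment scoring (e.g., gene set enrichment analysis against KEGG or Reactome gene sets) or curated gene signatures [21] [18].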

B. Experimental Workflow

- WSI Representation Learning:

- Patch Feature Extraction: Use a pretrained PFM (e.g., Phikon, UNI) to extract features from all patches in a WSI.

- Patch Clustering: Cluster similar patch features to identify morphological prototypes, reducing complexity and enhancing feature robustness [21].

- Attention Pooling: Aggregate the patch-level features into a slide-level representation using an attention mechanism [21].

- Genomic Representation Learning: Process the pathway enrichment scores or gene signatures through a fully connected neural network to obtain a genomic embedding.

- Multimodal Fusion with MoE:

- Implement a MoE architecture (e.g., as in SurMoE or MICE) containing multiple "expert" networks [21] [22].

- The MoE layer dynamically routes the slide-level and genomic embeddings to specialized experts. A gating network determines the combination of experts for each input, capturing both cancer-specific and cross-cancer patterns [22].

- Use cross-modal attention to model the intricate relationships between the pathological and genomic features [21].

- Prediction: The fused multimodal representation is fed into a final output layer for survival prediction, typically using a Cox proportional hazards model.
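The gating idea behind the fusion step can be shown with a deliberately simplified soft mixture of experts. This sketch is not the SurMoE or MICE architecture: it concatenates the two embeddings instead of using cross-modal attention, uses linear experts, and weights all experts densely. The names `moe_fuse`, `gate`, and `experts` are hypothetical.

```python
import math

def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    e = [math.exp(x - m) for x in xs]
    z = sum(e)
    return [v / z for v in e]

def linear(x, W, b):
    """Affine map: one output per row of W."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

def moe_fuse(slide_emb, genomic_emb, gate, experts):
    """Soft mixture of experts over the concatenated multimodal input.

    gate:    (W, b) producing one logit per expert
    experts: list of (W, b) linear experts with equal output size
    Returns (fused_representation, expert_weights).
    """
    x = list(slide_emb) + list(genomic_emb)
    weights = softmax(linear(x, *gate))           # gating network
    outputs = [linear(x, W, b) for W, b in experts]
    fused = [sum(w * out[j] for w, out in zip(weights, outputs))
             for j in range(len(outputs[0]))]
    return fused, weights
```

The fused vector would then feed the survival head; inspecting `weights` per patient shows which expert the gate relied on.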

C. Outcome Assessment

- Evaluate model performance using the Concordance Index (C-index) on held-out test sets and independent external cohorts to validate generalizability.

- Perform ablation studies to quantify the contribution of each modality.
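For reference, the concordance index used in the assessment above can be computed directly from its definition. The following is a minimal O(n²) sketch of Harrell's C-index that ignores pairs with tied survival times; production analyses would typically rely on an established survival analysis library.

```python
def concordance_index(times, events, risks):
    """Harrell's C-index for right-censored survival data.

    times:  observed follow-up times
    events: 1 if the event was observed, 0 if censored
    risks:  predicted risk scores (higher risk should mean shorter survival)
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a pair is comparable only if the earlier time is an event
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5  # tied predictions count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, so rerunning this with one modality's embedding removed gives a simple ablation readout.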

Table 2: Key Reagent Solutions for Multimodal Integration Research

| Research Reagent / Resource | Type | Function in Experiment | Example Source / Implementation |

|---|---|---|---|

| Pretrained Patch Encoder | Software Model | Extracts foundational feature representations from H&E image patches. | CONCH [1], CTransPath [9] |

| Whole-Slide Foundation Model | Software Model | Encodes entire gigapixel WSIs into a single, general-purpose slide-level representation. | TITAN [1], Prov-GigaPath [15] |

| Vision-Language Model | Software Model | Aligns image and text data into a shared semantic space for cross-modal tasks. | TITAN (vision-language fine-tuned) [1] |

| Mixture of Experts (MoE) Layer | Algorithm / Architecture | Dynamically selects specialized sub-networks to handle heterogeneous data patterns. | SurMoE [21], MICE [22] |

| Gene Set Enrichment Analysis | Bioinformatics Method | Converts high-dimensional genomic data into interpretable pathway-level features. | GSEA software, KEGG/Reactome databases [21] [18] |

Diagram 2: Genomic Data Integration via Mixture of Experts.

Performance Benchmarking of Multimodal Approaches

Evaluating the performance of multimodal models against unimodal baselines and existing state-of-the-art methods is crucial. The following table synthesizes quantitative results from recent studies.

Table 3: Benchmarking Performance of Multimodal Models on Clinical Tasks

| Model / Approach | Task | Key Metric & Performance | Comparison vs. Baselines |

|---|---|---|---|

| MICE [22] | Pan-cancer Prognosis Prediction (Internal Cohorts) | Average C-index: 0.710 | Outperformed unimodal and other multimodal models by 3.8% to 11.2% in C-index. |

| MICE [22] | Pan-cancer Prognosis Prediction (Independent Cohorts) | C-index Improvement | Outperformed comparators by 5.8% to 8.8% in C-index, demonstrating strong generalizability. |

| Prov-GigaPath [15] | EGFR Mutation Prediction (on TCGA) | AUROC / AUPRC | Attained an improvement of 23.5% in AUROC and 66.4% in AUPRC compared to the second-best model. |

| SurMoE [21] | Multi-modal Survival Analysis (5 TCGA datasets) | C-index | Outperformed state-of-the-art methods with an average increase of 2.29% in C-index. |

| JWTH [23] | Biomarker Detection (8 cohorts, 4 biomarkers) | Balanced Accuracy | Achieved up to 8.3% higher balanced accuracy, with an average improvement of 1.2% over prior PFMs. |

| TITAN [1] | Rare Disease Retrieval & Cancer Prognosis | Not Specified | Outperformed both region-of-interest (ROI) and slide foundation models in few-shot and zero-shot settings. |

The integration of H&E images with pathology reports and genomic data represents the frontier of computational pathology. Foundation models serve as the cornerstone for this integration, providing a pathway to develop robust, generalizable, and data-efficient AI tools for biomarker discovery and patient stratification. The protocols outlined herein for vision-language pretraining and genomic integration via advanced architectures like Mixture of Experts provide an actionable roadmap for researchers. As the field evolves, focusing on the standardization of multimodal benchmarks and the development of more sophisticated fusion techniques will be critical for translating these powerful models into clinical practice to support personalized therapy decisions and improve patient outcomes.

The prediction of biomarkers from standard hematoxylin and eosin (H&E)-stained whole slide images (WSIs) represents a transformative advancement in computational pathology, enabling unprecedented efficiency in precision oncology. This paradigm leverages foundation models trained through self-supervised learning (SSL) on vast amounts of unannotated data, serving as a base for diverse downstream tasks with minimal task-specific labeling [24]. The core advantages driving this revolution include transfer learning, which allows knowledge acquired from large, diverse datasets to be applied to specific clinical problems; data efficiency, which enables robust model performance even with limited annotated examples; and enhanced generalization, which ensures consistent performance across varied datasets and clinical settings. These capabilities are particularly crucial in biomedical contexts where large, labeled datasets are scarce, and clinical translation demands models that are both accurate and reliable [24] [25]. The integration of these principles facilitates the discovery and validation of novel imaging biomarkers, accelerating their widespread translation into clinical settings for improved patient diagnosis, prognosis, and treatment selection.

Key Advantages and Quantitative Performance

Foundation models pretrained using self-supervised learning on extensive, unlabeled datasets create a robust starting point for developing task-specific biomarkers. This approach significantly reduces the demand for large, expensively annotated training samples in downstream applications [24]. Evaluations across multiple clinical tasks consistently demonstrate that foundation model implementations achieve superior performance compared to conventional supervised learning and other state-of-the-art pretrained models, particularly when training dataset sizes are very limited [24].

Table 1: Performance of Foundation Models in Biomarker Prediction Tasks

| Cancer Type | Prediction Task | Model/Approach | Performance (AUC unless noted) | Key Advantage Demonstrated |

|---|---|---|---|---|

| Non-Small Cell Lung Cancer (NSCLC) [26] | ROS1 Fusion | Vision Transformer + Two-Stage Fine-Tuning | 0.85 | Transfer Learning for rare biomarkers |

| Non-Small Cell Lung Cancer (NSCLC) [26] | ALK Fusion | Vision Transformer + Two-Stage Fine-Tuning | 0.84 | Transfer Learning for rare biomarkers |

| Multiple [24] | Lesion Anatomical Site | Foundation Model (Fine-Tuned) | mAP: 0.857 | Data Efficiency & Generalization |

| Multiple [24] | Lung Nodule Malignancy | Foundation Model (Fine-Tuned) | AUC: 0.944 | Generalization to out-of-distribution tasks |

| Colorectal Cancer (CRC) & Breast Cancer (BRCA) [6] | MSI/MMRd Status | DuoHistoNet (H&E + IHC) | AUROC > 0.97 | Enhanced via multi-modal transfer |

| Breast Cancer (BRCA) [6] | PD-L1 Status | DuoHistoNet (H&E + IHC) | AUROC: 0.96 | Enhanced via multi-modal transfer |

The power of transfer learning is exemplified in scenarios involving rare biomarkers. For instance, predicting rare ROS1 and ALK fusions in NSCLC is challenging due to the low prevalence (1-2% for ROS1, <5% for ALK) of these events. A two-stage specialized training procedure—first training a model on a composite biomarker label (RAN: ROS1, ALK, or NTRK fusions) and then fine-tuning on the specific target biomarker—achieved excellent ROC AUCs of 0.85 for ROS1 and 0.84 for ALK. This method consistently outperformed models trained directly on the target biomarker, especially for ROS1, demonstrating effective knowledge transfer from a related, larger task [26].

Furthermore, foundation models show remarkable stability to input variations and strong associations with underlying biology, providing confidence in their clinical applicability. A foundation model for cancer imaging biomarkers demonstrated significantly less performance degradation compared to baseline methods when the amount of training data for the downstream task was progressively reduced from 100% to 10%. In some cases, a simple linear classifier applied to features extracted from the frozen foundation model even outperformed compute-intensive, fully supervised deep learning models, highlighting a highly data-efficient pathway for biomarker development [24].

Experimental Protocols and Workflows

Protocol 1: Foundation Model Pretraining and Application

This protocol outlines the procedure for self-supervised pretraining of a foundation model on a diverse set of radiographic lesions and its subsequent application to a downstream biomarker prediction task, such as distinguishing malignant from benign lung nodules [24].

Materials and Reagents:

- Dataset of Lesion ROIs: A large, diverse cohort of lesion regions of interest (ROIs) identified on medical images (e.g., 11,467 CT lesions from 2,312 patients) [24].

- Computational Resources: High-performance computing cluster with multiple GPUs (e.g., NVIDIA A100 or V100).

- Software Frameworks: Python libraries, including PyTorch or TensorFlow, and specialized libraries for SSL (e.g., VISSL).

Procedure:

- Data Curation: Collect a large, diverse set of unlabeled medical images. Extract and curate lesion ROIs from these images to form the pretraining dataset.

- Self-Supervised Pretraining: Train a convolutional encoder using a contrastive SSL strategy like the modified SimCLR approach.

- Generate augmented views of each lesion ROI by applying random transformations (e.g., cropping, rotation, color jitter, blurring).

- The model learns to produce similar feature embeddings for different augmented views of the same lesion and dissimilar embeddings for views of different lesions.

- Downstream Application (Two Methods):

- A) Feature Extraction: Use the pretrained foundation model as a fixed feature extractor. Process input images through the encoder to generate a feature vector. Train a simple linear classifier (e.g., logistic regression) on these features using a small, labeled dataset for the specific biomarker task.

- B) Fine-Tuning: Initialize a new model for the downstream task with the weights from the pretrained foundation model. The entire model is then trained end-to-end on the labeled biomarker dataset, allowing the initial layers to adapt slightly to the new task.
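Method A (feature extraction with a linear classifier) can be sketched as below. The example assumes feature vectors have already been produced by the frozen encoder and trains a logistic regression by plain batch gradient descent; the function names and hyperparameters (`lr`, `epochs`) are illustrative, and a library implementation of logistic regression would normally be used instead.

```python
import math

def predict_proba(x, w, b):
    """Probability of the positive class for one feature vector."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def train_linear_probe(features, labels, lr=0.5, epochs=500):
    """Fit logistic regression on frozen features; the encoder is never updated."""
    d = len(features[0])
    n = len(features)
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for x, y in zip(features, labels):
            err = predict_proba(x, w, b) - y  # gradient of the log loss w.r.t. the logit
            for j in range(d):
                grad_w[j] += err * x[j]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b
```

Because only `w` and `b` are trained, this path needs far less labeled data and compute than Method B, which is consistent with the data-efficiency results described earlier.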

Protocol 2: Two-Stage Training for Rare Biomarkers

This protocol is designed for predicting rare genetic alterations, such as gene fusions, where positive cases are scarce. It leverages transfer learning from a related, larger task to boost performance [26].

Materials and Reagents:

- WSI Dataset: A large cohort of H&E-stained WSIs with slide-level labels for fusions (e.g., 33,014 NSCLC patients).

- Feature Extractor: A vision transformer pretrained with a self-supervised method (e.g., MoCo-V3) for converting WSIs into feature matrices.

- Computational Resources: GPU servers with ample memory for handling whole slide images.

Procedure:

- Composite Model Training:

- Create a composite label (e.g., "RAN") for samples positive for any of the related rare fusions (ROS1, ALK, or NTRK).

- Train a transformer-based feature aggregation model using this composite dataset. This model learns general features associated with the presence of any fusion driver.

- Target-Specific Fine-Tuning:

- Take the model trained in Step 1 and use its weights to initialize a new model for the specific target biomarker (e.g., ROS1-only).

- Fine-tune this model on the dataset labeled specifically for the target biomarker. Use a learning rate 10 times smaller than that used for direct training to avoid catastrophic forgetting.

- This two-stage approach (train-finetune) has been shown to achieve higher ROC AUC than direct training on the small target dataset.
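The data side of the two-stage procedure can be sketched as follows: building the composite "RAN" label for stage 1, the target-specific label for stage 2, and the reduced fine-tuning learning rate. The record layout (`slide_id`, `ros1`, `alk`, `ntrk` keys) and function names are assumptions for illustration; the training loop itself is omitted.

```python
def composite_label(ros1, alk, ntrk):
    """RAN label: positive if any of the three fusions is present."""
    return int(bool(ros1) or bool(alk) or bool(ntrk))

def build_stage_datasets(records, target="ros1"):
    """Return (stage1, stage2) lists of (slide_id, label) pairs.

    records: list of dicts with keys 'slide_id', 'ros1', 'alk', 'ntrk' (0/1).
    Stage 1 uses the composite label; stage 2 uses only the target biomarker.
    """
    stage1 = [(r["slide_id"], composite_label(r["ros1"], r["alk"], r["ntrk"]))
              for r in records]
    stage2 = [(r["slide_id"], int(r[target])) for r in records]
    return stage1, stage2

def finetune_learning_rate(direct_lr, factor=10.0):
    """Stage-2 learning rate, reduced relative to direct training."""
    return direct_lr / factor
```

Stage 1 sees more positives than stage 2 because every ALK- or NTRK-positive slide also counts as RAN-positive, which is the source of the extra training signal.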

Foundation Model Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Biomarker Prediction Research

| Item Name | Function/Application | Specification Notes |

|---|---|---|

| Formalin-Fixed Paraffin-Embedded (FFPE) Tissue Sections [6] [26] | The standard source material for generating H&E and IHC whole slide images in retrospective and prospective studies. | Ensure consistent tissue processing protocols. Block age and quality can impact DNA/RNA integrity for molecular correlation. |

| H&E Staining Reagents [27] [26] | Routine staining for morphological assessment; the primary input for most AI-based biomarker prediction models. | Standardize staining protocols across participating sites to minimize technical variation and improve model generalizability. |

| Immunohistochemistry (IHC) Kits [6] | Provide protein-level biomarker status for model training and validation (e.g., PD-L1 22C3 pharmDx, MMR antibodies). | Use FDA-approved/validated kits for clinical-grade validation. Key for creating ground truth labels. |

| Multiplexed Immunofluorescence (mIF) Panels [27] | High-plex method for definitive cell type identification using lineage markers (e.g., pan-CK, CD3, CD68); creates high-quality ground truth for cell classification models. | Allows for labeling multiple markers on a single tissue section, crucial for spatial biology and understanding the tumor microenvironment. |

| Next-Generation Sequencing (NGS) Assays [6] [26] | Molecular profiling to define genomic ground truth (e.g., MSI status, ROS1/ALK fusions, TMB) for training and validating predictive models. | Targeted panels or whole-exome sequencing can be used. Essential for linking morphology to genotype. |

| Whole Slide Image Scanners [6] | Digitize glass slides to create gigapixel whole slide images (WSIs) for computational analysis. | Use scanners from major vendors (e.g., Philips, Leica) at high magnification (40x). Ensure consistent calibration. |

Visualization of Complex Workflows and Relationships

Cross-Modality and Cell Classification Workflow

Advanced frameworks extend beyond H&E analysis to integrate multiple data types, enhancing predictive accuracy and enabling novel discovery. The HistoStainAlign framework exemplifies cross-modality learning, which predicts IHC staining patterns directly from H&E WSIs using a contrastive training strategy to align feature embeddings from paired H&E and IHC images [28]. This eliminates the need for costly and time-consuming IHC staining in some prescreening scenarios. At the cellular level, automated cell annotation leverages multiplexed immunofluorescence (mIF) to define cell types based on protein markers. These labels are transferred to co-registered H&E images at single-cell resolution, creating a large, accurately labeled dataset to train a robust deep learning model for classifying major cell types (tumor cells, lymphocytes, etc.) on standard H&E images [27].

Advanced Analysis Workflows

The integration of transfer learning, data-efficient model design, and rigorous validation protocols establishes a powerful new paradigm for biomarker discovery from routine H&E slides. Foundation models, pretrained on large, diverse datasets, provide a versatile and robust starting point for developing a wide array of diagnostic, prognostic, and predictive biomarkers, significantly reducing the barrier of limited annotated data [24]. Future efforts will focus on expanding these approaches to rare diseases, incorporating dynamic health indicators, strengthening multi-omics integration, and leveraging edge computing for low-resource settings [29]. As these models continue to evolve, they hold the strong potential to become indispensable tools in clinical pathology, enhancing the precision and efficiency of cancer patient evaluation and contributing to more personalized patient care [6].

From Model to Microscope: Fine-Tuning and Application in Biomarker Discovery

The emergence of pathology foundation models (PFMs), pre-trained on millions of histopathology images, has revolutionized the development of artificial intelligence (AI) biomarkers for precision oncology. These models learn powerful, general-purpose representations of tissue morphology that can be efficiently adapted to specific predictive tasks. Fine-tuning has therefore become a critical bridge, transforming these foundational representations into robust clinical tools capable of predicting key biomarkers—such as gene mutations, protein expression, and immune markers—directly from routine hematoxylin and eosin (H&E)-stained whole slide images (WSIs). This document outlines the principal fine-tuning strategies and provides detailed protocols for adapting PFMs to biomarker prediction tasks, enabling researchers to leverage these powerful models effectively within their own research and development pipelines.

Core Fine-Tuning Strategies and Performance

The adaptation of PFMs for biomarker prediction employs a spectrum of strategies, ranging from simple linear probing to complex, hierarchically integrated approaches. The choice of strategy is dictated by factors such as dataset size, computational resources, and the biological scale of the morphological features relevant to the biomarker.

Table 1: Comparative Performance of Fine-Tuning Strategies on Various Biomarkers

| Biomarker | Cancer Type | Strategy | Key Architecture | Performance (AUC) | Cohort Size (N) |

|---|---|---|---|---|---|

| EGFR Mutation [5] | Lung Adenocarcinoma | Fine-tuning Foundation Model | Custom CNN | 0.847 (Internal); 0.890 (Prospective) | 8,461 Slides |

| MSI Status [30] | Colorectal Cancer | Feature-based MIL | Deepath-MSI | 0.976 (Test); 0.978 (Real-world) | 5,070 WSIs |

| ROS1 Fusion [26] | NSCLC | Two-Stage Fine-tuning | Vision Transformer (ViT) | 0.85 (Holdout) | 33,014 Patients |

| ALK Fusion [26] | NSCLC | Two-Stage Fine-tuning | Vision Transformer (ViT) | 0.84 (Holdout) | 33,014 Patients |

| IHC Biomarkers [31] | GI Cancers | Supervised Learning | ResNet-50 | 0.90 - 0.96 (P40, Pan-CK, etc.) | 134 WSIs |

| Spatial Gene Expression [32] | Pan-Cancer | Generative Pretraining | STPath Transformer | PCC: 0.266 (Top 200 HVGs) | 983 WSIs |

From Linear Probing to Hierarchical Integration

Early approaches for leveraging PFMs often relied on linear probing, where the pre-trained encoder is frozen, and only a simple linear classifier (e.g., logistic regression) attached to the global [CLS] token is trained. While computationally efficient, this method fails to leverage the rich local and cellular morphological information encoded in the patch tokens, limiting its performance for biomarkers reliant on fine-grained features [23].

To overcome this, advanced strategies like the Joint-Weighted Token Hierarchy (JWTH) have been developed. JWTH integrates large-scale self-supervised pretraining with cell-centric post-tuning. It uses an attention pooling mechanism to fuse the global class token with refined local/cellular tokens, creating a comprehensive representation. This hierarchical integration has been shown to outperform standard linear probing, achieving up to an 8.3% higher balanced accuracy in biomarker detection tasks [23].

Feature Extraction with Multiple Instance Learning (MIL)

For tasks with only slide-level labels, feature extraction coupled with Multiple Instance Learning (MIL) is a dominant strategy. In this paradigm, a pre-trained PFM acts as a fixed feature extractor, converting image tiles into feature vectors. An aggregator model (e.g., a transformer or attention-based MIL) then processes these features to produce a slide-level prediction. This weakly supervised approach is highly effective and computationally less intensive than full fine-tuning. For instance, the Deepath-MSI model for microsatellite instability in colorectal cancer uses this strategy to achieve an AUC of 0.98, demonstrating clinical-grade specificity of 92% at a 95% sensitivity threshold [30].
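An operating point such as "specificity at 95% sensitivity" is obtained by choosing the decision threshold on slide-level scores. The sketch below shows one way to compute it from labels and predicted scores; the function name is hypothetical and this is not the evaluation code from [30].

```python
def specificity_at_sensitivity(labels, scores, target_sensitivity=0.95):
    """Best specificity among thresholds whose sensitivity meets the target.

    A slide is called positive when score >= threshold.
    Returns (threshold, sensitivity, specificity).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    # Lowering the threshold raises sensitivity and lowers specificity, so the
    # first (highest) threshold that meets the target has the best specificity.
    for thr in sorted(set(scores), reverse=True):
        sens = sum(s >= thr for s in pos) / len(pos)
        if sens >= target_sensitivity:
            spec = sum(s < thr for s in neg) / len(neg)
            return thr, sens, spec
```

For a prescreening use case the threshold should be fixed on a validation set and then applied unchanged to the test cohort.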

Two-Stage and Composite-Task Fine-Tuning

For predicting rare biomarkers—such as ROS1 fusions in NSCLC, which occur in only 1-2% of patients—a two-stage fine-tuning strategy is highly beneficial. This method involves first training the model on a larger, related task before fine-tuning on the specific, low-prevalence target.

A proven protocol is to first train a model on a composite label (e.g., "RAN" - positive for any ROS1, ALK, or NTRK fusion) to teach the model general features of kinase fusions. The model is then fine-tuned specifically on the rare biomarker of interest. This approach has been shown to increase the ROC AUC for ROS1 fusion prediction from 0.83 (direct training) to 0.86, effectively mitigating the challenges of class imbalance [26].

Cell-Centric and Spatial Fine-Tuning

Some biomarkers require understanding of cellular morphology and spatial relationships. Cell-centric fine-tuning enhances a PFM's ability to capture nuclear and cellular details by incorporating a regularization objective during post-tuning that reinforces biologically meaningful cues [23]. This is often enabled by automated cell annotation and classification models trained using multiplexed immunofluorescence (mIF), which generate high-quality cell labels on H&E images without manual annotation and achieve an overall cell classification accuracy of 86-89% [27].

For predicting complex biomarkers like spatial gene expression, generative pretraining on paired WSI and spatial transcriptomics data is used. Models like STPath are trained on a masked gene expression prediction objective, learning to infer the expression of thousands of genes across tissue spots directly from histology. This allows them to predict spatial gene expression without dataset-specific fine-tuning, achieving a 6.9% improvement in Pearson correlation over baseline methods [32].

Diagram 1: Finetuning strategy workflow for biomarker tasks.

Detailed Experimental Protocols

Protocol: Fine-Tuning a Foundation Model for EGFR Mutation Prediction

This protocol is adapted from the development of the EAGLE model for predicting EGFR mutational status in lung adenocarcinoma from H&E slides [5].

- Objective: To adapt a pre-trained pathology foundation model to predict EGFR mutation status in lung adenocarcinoma biopsies and resection specimens.

Materials:

- Dataset: A large, multi-institutional cohort of H&E-stained whole slide images (WSIs) from lung adenocarcinoma patients, with ground truth EGFR status confirmed by next-generation sequencing (e.g., MSK-IMPACT) or PCR. The cohort should include primary and metastatic specimens to ensure robustness (N > 5,000 slides recommended).

- Foundation Model: A publicly available PFM (e.g., UNI, Prov-GigaPath, or an open-source model like the one used in [5]).

- Computational Resources: High-performance computing cluster with multiple GPUs (e.g., NVIDIA A100 or V100), sufficient VRAM (>40GB recommended), and storage for large-scale WSIs.

Methods:

Data Preprocessing:

- Tiling: Segment tissue regions using Otsu's thresholding or a similar algorithm. Subdivide the tissue into non-overlapping image tiles (e.g., 256x256 or 512x512 pixels) at a target magnification (e.g., 20x or 40x).

- Stain Normalization & Augmentation: Apply stain normalization (e.g., Vahadane or Macenko method) to minimize inter-site variation. Implement staining augmentation (e.g., RandStainNA [23]) during training to improve model robustness to color shifts.

- Quality Control: Filter out tiles with insufficient tissue, excessive blur, or artifacts.

Model Fine-Tuning:

- Architecture: The PFM serves as the feature encoder. Attach a task-specific head (e.g., a multi-layer perceptron) to the encoder output, replacing any existing classification head, for binary classification (EGFR mutant vs. wild-type).

- Training Regime:

- Loss Function: Use binary cross-entropy loss.

- Optimizer: Use Adam or AdamW optimizer with a carefully tuned learning rate (typically a small value, e.g., 1e-5 to 1e-4, as the pre-trained weights are already well-initialized).

- Handling Multiple Tiles: Use an attention-based multiple instance learning (MIL) aggregator to combine tile-level features into a single slide-level prediction and loss.

- Validation: Monitor performance on a held-out validation set, using AUC as the primary metric. Employ early stopping to prevent overfitting.
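
The aggregation step in the training regime above can be sketched as a forward pass of attention-based MIL pooling followed by binary cross-entropy. This is a NumPy illustration only: the embedding size (768), hidden size (128), and random weights are assumptions, and a real implementation would use a deep learning framework so that the attention and classifier weights (and optionally the encoder) are trained by backpropagation.

```python
import numpy as np

def attention_mil_forward(H, V, w, w_cls, b_cls):
    """Aggregate tile embeddings H (n_tiles, d) into one slide-level probability."""
    scores = np.tanh(H @ V) @ w        # one attention score per tile
    scores = scores - scores.max()     # stabilise the softmax
    attn = np.exp(scores)
    attn /= attn.sum()                 # attention weights sum to 1
    z = attn @ H                       # weighted slide embedding, shape (d,)
    logit = z @ w_cls + b_cls
    prob = 1.0 / (1.0 + np.exp(-logit))
    return float(prob), attn

def bce(prob, y, eps=1e-7):
    """Binary cross-entropy for a single slide-level prediction."""
    p = min(max(prob, eps), 1.0 - eps)
    return float(-(y * np.log(p) + (1.0 - y) * np.log(1.0 - p)))

# Hypothetical slide: 500 tiles with 768-dimensional embeddings
rng = np.random.default_rng(0)
n_tiles, d, hidden = 500, 768, 128
H = rng.normal(size=(n_tiles, d))
V = rng.normal(scale=0.01, size=(d, hidden))
w = rng.normal(scale=0.01, size=hidden)
w_cls = rng.normal(scale=0.01, size=d)

prob, attn = attention_mil_forward(H, V, w, w_cls, b_cls=0.0)
loss = bce(prob, y=1.0)  # y = 1 for an EGFR-mutant slide
```

Because the loss is computed once per slide, only slide-level labels are needed; the attention weights can also be inspected afterwards to see which tiles drove the prediction.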

Validation and Deployment:

- Internal & External Validation: Rigorously evaluate the final model on a completely held-out internal test set and multiple external cohorts from different institutions and scanner types to assess generalization [5].

- Prospective Clinical Validation: Conduct a silent prospective trial where the model is run on consecutive, new cases in real-time to simulate clinical deployment and confirm performance under real-world conditions [5].

Protocol: Two-Stage Fine-Tuning for Rare Fusions (ROS1/ALK)

This protocol details the specialized training procedure for predicting rare biomarkers like ROS1 and ALK fusions in NSCLC, where positive cases are scarce [26].

- Objective: To develop a predictive model for a rare biomarker (e.g., ROS1 fusion, prevalence 1-2%) by first learning from a larger, related task.

Materials:

- Dataset: A large NSCLC cohort (e.g., >30,000 patients) with slide-level labels for fusions. For the composite task, create a "RAN" label (positive for any ROS1, ALK, or NTRK fusion). Ensure the holdout set is strictly isolated.

- Model: A vision transformer (ViT) pre-trained with a self-supervised method (e.g., MoCo-V3) on a large histopathology corpus.

Methods:

- Stage 1: Composite Model Training:

- Objective: Train a model to predict the composite "RAN" label.

- Procedure: Use the standard feature extraction and aggregation pipeline. Train the model until convergence on the RAN prediction task. This model learns generalizable features associated with kinase fusions.

- Stage 2: Target-Specific Fine-Tuning:

- Objective: Adapt the composite model to the specific rare biomarker (e.g., ROS1).

- Procedure: Initialize the model weights with the pre-trained RAN model. Fine-tune the entire model using only the data for the target biomarker (e.g., ROS1-positive and negative slides).

- Hyperparameters: Use a significantly smaller learning rate (e.g., 10x smaller) than in Stage 1 to allow for gentle refinement without catastrophic forgetting.

- Evaluation:

- Compare the performance (ROC AUC) of this two-stage "train-finetune" model against a model trained directly on the rare biomarker. The two-stage model should show a superior and more stable ROC AUC [26].
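
The two-stage schedule can be illustrated with a deliberately small stand-in: a logistic-regression head trained on synthetic features. The data, label prevalence, learning rates, and epoch counts are all assumptions for illustration; the protocol above fine-tunes the entire model rather than a linear head.

```python
import numpy as np

def train_logreg(X, y, w_init, lr, epochs=200):
    """Plain gradient descent on the logistic loss, starting from w_init."""
    w = w_init.copy()
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w)))
        w -= lr * X.T @ (p - y) / len(y)
    return w

# Synthetic slide-level features with a bias column (hypothetical data)
rng = np.random.default_rng(0)
n, d = 2000, 16
X = np.hstack([rng.normal(size=(n, d)), np.ones((n, 1))])
signal = X[:, 0] + 0.5 * X[:, 1]
y_ran = (signal + rng.normal(scale=0.5, size=n) > 1.5).astype(float)
# The rare target is a subset of the composite positives
y_ros1 = y_ran * (rng.random(n) < 0.3)

stage1_lr = 0.1
stage2_lr = stage1_lr / 10  # gentler updates during target-specific tuning

# Stage 1: learn the composite "RAN" label from scratch
w_ran = train_logreg(X, y_ran, np.zeros(d + 1), stage1_lr)
# Stage 2: initialise from the RAN weights and fine-tune on the rare label
w_ros1 = train_logreg(X, y_ros1, w_ran, stage2_lr, epochs=100)
```

The baseline for the comparison in the evaluation step would be the same training call on `y_ros1` starting from zeros instead of `w_ran`.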

Table 2: The Scientist's Toolkit - Key Research Reagents and Resources

| Resource/Reagent | Function/Application | Specifications & Notes |
|---|---|---|
| H&E Whole Slide Images | Primary input data for model development. | Formalin-fixed, paraffin-embedded (FFPE) tissue; scanned at 20x or 40x magnification; formats: .svs, .tiff [5] [30]. |
| Molecular Ground Truth | Gold standard labels for model training and validation. | Derived from NGS, PCR, IHC, or FISH. Critical for supervised learning [5] [26]. |
| Multiplexed Immunofluorescence | Automated, high-quality cell type annotation for cell-centric models. | Defines cell types (tumor, lymphocyte, etc.) via protein markers (pan-CK, CD3, etc.) for transfer to H&E [27]. |
| Spatial Transcriptomics Data | Enables training of models for spatial gene expression prediction. | Paired H&E and ST data for generative pretraining of models like STPath [32]. |
| Pre-trained Pathology Foundation Model | Base model for transfer learning. | Models include UNI, Gigapath, or CONCH. Can be used as a frozen feature extractor or for full fine-tuning [23] [32]. |
| Stain Normalization Tool | Reduces technical variance between slides from different sources. | Algorithms like Vahadane or Macenko; crucial for multi-center studies [31]. |
| Multiple Instance Learning Aggregator | Combines tile-level features for slide-level prediction. | Attention-based MIL or transformer aggregators are standard for weakly supervised learning [30] [26]. |

Diagram 2: Two-stage finetuning for rare biomarkers.

The prediction of biomarkers from routine hematoxylin and eosin (H&E)-stained whole-slide images (WSIs) using foundation models represents a paradigm shift in computational pathology. This approach allows for the detection of subtle morphological features associated with molecular alterations, potentially reducing the need for additional costly molecular testing while preserving valuable tissue for comprehensive genomic sequencing [33]. The workflow from raw WSI to predictive biomarker signatures involves multiple critical steps, each with unique technical considerations that significantly impact downstream model performance and clinical applicability. This application note provides a detailed breakdown of the core processing pipeline, focusing on the transition from gigapixel WSIs to analyzable feature representations suitable for foundation model training and inference.

Whole-Slide Image Processing Pipeline

Whole-slide images present unique computational challenges due to their massive size: a single slide can occupy several gigabytes of memory when unpacked [34], and a standard gigapixel slide may contain between 10,000 and 70,121 image tiles [15]. This scale prevents direct analysis of entire slides, necessitating specialized processing pipelines that balance computational efficiency with preservation of biologically relevant information.

The primary challenges in WSI analysis include:

- Memory constraints: Standard computational hardware cannot process entire WSIs simultaneously

- Data variability: Differences in tissue preparation, staining protocols, and scanner models introduce unwanted technical variance

- Artifact contamination: Presence of pen marks, folding artifacts, out-of-focus regions, and background tissue can confound analysis

- Information preservation: Critical morphological features must be retained despite necessary data reduction steps

Workflow Diagram

Diagram 1: Whole-slide image processing workflow from raw image to feature embedding.

Detailed Protocol: Slide Pre-processing

Tissue Detection and Masking

Purpose: To identify and segment relevant tissue regions from slide background, reducing computational load and minimizing false positives from non-tissue areas.

Methods:

- Otsu's thresholding: Automatic global thresholding method that separates foreground (tissue) from background by minimizing intra-class intensity variance [35]

- Manual annotation: Using tools like QuPath [35] or Slideflow Studio [35] to delineate specific regions of interest (ROIs)

- Deep learning-based segmentation: Custom models (e.g., U-Net architectures) trained to identify specific tissue types or pathological structures

Protocol Parameters:

- Implementation: scikit-image or OpenCV libraries

- Default Otsu's threshold: Determined automatically from image histogram

- Morphological operations: Optional post-processing to remove small holes (closing) or isolate connected regions (opening)
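
To make the thresholding step concrete, here is a minimal NumPy sketch of Otsu's method applied to a synthetic grayscale thumbnail. It maximizes between-class variance, which is equivalent to minimizing intra-class variance. The thumbnail, its intensity values, and the "tissue is darker than background" assumption are illustrative; in practice the scikit-image or OpenCV implementations listed above would normally be used on a low-resolution slide thumbnail.

```python
import numpy as np

def otsu_threshold(gray):
    """Return the 0-255 threshold that maximizes between-class variance."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(float)
    prob = hist / hist.sum()
    levels = np.arange(256)
    w0 = np.cumsum(prob)               # weight of the class with values <= t
    w1 = 1.0 - w0                      # weight of the class with values > t
    mu_cum = np.cumsum(prob * levels)
    mu_total = mu_cum[-1]
    with np.errstate(divide="ignore", invalid="ignore"):
        between = (mu_total * w0 - mu_cum) ** 2 / (w0 * w1)
    between = np.nan_to_num(between, nan=0.0, posinf=0.0, neginf=0.0)
    return int(np.argmax(between))

# Synthetic thumbnail: bright background with a darker tissue block (25% of area)
rng = np.random.default_rng(0)
thumb = rng.normal(235, 4, size=(100, 100))
thumb[20:70, 30:80] = rng.normal(150, 12, size=(50, 50))
thumb = np.clip(thumb, 0, 255).astype(np.uint8)

t = otsu_threshold(thumb)
tissue_mask = thumb <= t               # tissue assumed darker than background
```

The resulting boolean mask can then be cleaned with the optional morphological opening and closing before tile coordinates are generated.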

Artifact Detection and Removal

Purpose: To identify and exclude regions with technical artifacts that may confound downstream analysis.

Common Artifacts and Detection Methods:

Table 1: Common whole-slide image artifacts and detection methods

| Artifact Type | Detection Method | Implementation |
|---|---|---|
| Out-of-focus regions | Gaussian blur filtering [35] or DeepFocus model [35] | scikit-image Gaussian filter with σ=3-5 or custom CNN |
| Pen marks | Color thresholding in HSV space | OpenCV inRange() function with hue-specific thresholds |
| Folding artifacts | Texture analysis and intensity variance | Local binary patterns (LBP) or Gabor filters |
| Air bubbles | Circular Hough transform | OpenCV HoughCircles() function |

Protocol:

- Apply Gaussian blur filter with kernel size adapted to magnification level

- Calculate focus metric (variance of Laplacian) for each tile

- Exclude tiles with focus metric below empirically determined threshold (e.g., <100 for 20× magnification)

- For pen mark detection, convert RGB to HSV color space and apply hue-specific masking

- Remove connected components identified as artifacts using morphological operations
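
The focus-metric step of this protocol can be sketched as follows. This is a NumPy-only illustration of the variance-of-Laplacian measure using a 4-neighbour Laplacian. The `box_blur` helper exists only to simulate an out-of-focus tile, and the random tile is synthetic. Absolute values of the metric depend on the kernel, bit depth, and magnification, so a cut-off such as the example value above should be calibrated on real tiles.

```python
import numpy as np

def variance_of_laplacian(gray):
    """Focus metric: variance of the 4-neighbour Laplacian (higher = sharper)."""
    g = gray.astype(float)
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return float(lap.var())

def box_blur(gray, k=5):
    """Crude k x k mean filter, used here only to mimic defocus."""
    g = gray.astype(float)
    padded = np.pad(g, k // 2, mode="edge")
    out = np.zeros_like(g)
    for dy in range(k):
        for dx in range(k):
            out += padded[dy:dy + g.shape[0], dx:dx + g.shape[1]]
    return out / (k * k)

# Synthetic tile with strong high-frequency content, and a blurred copy
rng = np.random.default_rng(0)
sharp_tile = rng.integers(0, 256, size=(64, 64))
blurred_tile = box_blur(sharp_tile)

focus_sharp = variance_of_laplacian(sharp_tile)
focus_blurred = variance_of_laplacian(blurred_tile)
keep_sharp = focus_sharp > focus_blurred  # blurring lowers the metric
```

Tiles whose metric falls below the chosen threshold would be excluded before feature extraction.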

Stain Normalization

Purpose: To minimize technical variance introduced by differences in staining protocols, scanner models, and laboratory procedures.

Methods:

- Color deconvolution: Separates H&E channels using predefined or learned stain vectors [34]

- Histogram matching: Adjusts intensity distributions to match a reference slide [34]

- Deep learning-based normalization: Cycle-consistent generative adversarial networks (CycleGANs) for unsupervised stain transfer

Protocol (Color Deconvolution):

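- Convert the RGB image to optical density (OD) space using the Beer-Lambert law: OD = -log10(I/I_white), where I_white is the background (white) intensity

- Define stain vectors for hematoxylin and eosin (typically [0.65, 0.70, 0.29] for H and [0.07, 0.99, 0.11] for E)

- Separate stain concentrations by projecting the OD values onto the stain vectors (i.e., applying the inverse of the stain matrix)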

- Normalize stain intensities across slides using reference values

- Reconstruct normalized RGB image from adjusted stain concentrations
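
The color deconvolution protocol can be sketched in NumPy as shown below, using the stain vectors quoted above. Several details are assumptions made for illustration: I_white is taken as 255, a third "residual" vector is formed as the cross product of the H and E vectors so that the stain matrix is invertible, and normalization rescales each stain so that its 99th-percentile concentration matches a hypothetical reference value `ref_p99`.

```python
import numpy as np

H_VEC = np.array([0.65, 0.70, 0.29])   # hematoxylin OD vector (RGB)
E_VEC = np.array([0.07, 0.99, 0.11])   # eosin OD vector (RGB)

def stain_matrix():
    """Rows are unit stain vectors: H, E, and an orthogonal residual."""
    h = H_VEC / np.linalg.norm(H_VEC)
    e = E_VEC / np.linalg.norm(E_VEC)
    r = np.cross(h, e)
    r /= np.linalg.norm(r)
    return np.stack([h, e, r])

def rgb_to_concentrations(rgb, i_white=255.0):
    i = np.clip(rgb.astype(float), 1.0, i_white)   # avoid log(0)
    od = -np.log10(i / i_white)                    # Beer-Lambert OD
    return od @ np.linalg.inv(stain_matrix())      # per-pixel (H, E, residual)

def concentrations_to_rgb(conc, i_white=255.0):
    return i_white * 10.0 ** (-(conc @ stain_matrix()))

def normalize(conc, ref_p99):
    """Scale H and E so their 99th percentiles match the reference values."""
    p99 = np.percentile(conc.reshape(-1, 3)[:, :2], 99, axis=0)
    out = conc.copy()
    out[..., :2] *= np.asarray(ref_p99) / np.maximum(p99, 1e-6)
    return out

# Round trip on a synthetic tile, then normalization to hypothetical references
rng = np.random.default_rng(0)
tile = rng.integers(1, 256, size=(32, 32, 3))
conc = rgb_to_concentrations(tile)
restored = concentrations_to_rgb(conc)
normalized_rgb = concentrations_to_rgb(normalize(conc, ref_p99=[1.0, 0.8]))
```

Methods such as Macenko or Vahadane estimate the stain vectors from each slide instead of fixing them, which is usually preferable when staining varies strongly between sites.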

Tiling Strategies and Implementation

Technical Considerations for Tiling

The conversion of whole-slide images into smaller, manageable tiles is necessitated by both computational constraints and the requirements of deep learning architectures. Proper tiling strategies must balance several competing factors, including context preservation, computational efficiency, and morphological feature integrity.

Key Tiling Parameters:

- Tile size: Typically 256×256 or 512×512 pixels at target magnification

- Magnification level: Usually 20× for cellular-level features, 10× for tissue architecture, or 5× for global context

- Overlap: Optional overlapping tiles (e.g., 10-25%) to ensure continuous feature extraction and reduce edge artifacts

- Jitter: Random positional variations during training for data augmentation
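
The interaction between tile size, magnification, and overlap can be made concrete with a short sketch. The function names and the example region size are illustrative, not part of any particular library. The code assumes tiles are laid out on a regular grid, that partial tiles at the border are dropped, and that the scanner's native resolution is given in microns per pixel (mpp).

```python
def downsample_factor(slide_mpp, target_mpp=0.5):
    """Factor by which native pixels are reduced to reach the target resolution."""
    return target_mpp / slide_mpp

def tile_origins(width, height, tile_size=512, overlap=0.0):
    """Top-left (x, y) of every full tile in a width x height region.

    Coordinates are in pixels at the target magnification.
    """
    stride = max(1, int(round(tile_size * (1.0 - overlap))))
    xs = range(0, width - tile_size + 1, stride)
    ys = range(0, height - tile_size + 1, stride)
    return [(x, y) for y in ys for x in xs]

# A slide scanned at 0.25 mpp (40x) must be downsampled 2x to reach 0.5 mpp (20x)
factor = downsample_factor(slide_mpp=0.25)

# Hypothetical 2048 x 1024 tissue region at the target magnification
grid_no_overlap = tile_origins(2048, 1024, tile_size=512, overlap=0.0)
grid_overlap = tile_origins(2048, 1024, tile_size=512, overlap=0.25)
```

With 25% overlap the stride drops from 512 to 384 pixels, so the same region yields more tiles; this trades extra storage and compute for denser sampling.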

Tiling Protocol

Purpose: To extract representative sub-regions from whole-slide images suitable for deep learning model input while preserving biologically relevant information.

Equipment and Software:

- Slideflow [35], TIAToolbox [35], or custom Python scripts with OpenSlide/VIPS

- GPU acceleration (cuCIM [35]) for improved performance

Step-by-Step Protocol:

- Set extraction parameters:

- Target magnification: 20× (0.5 microns/pixel equivalent)

- Tile size: 512×512 pixels

- Overlap: 0% for inference, 25% for training with data augmentation

- Format: JPEG (lossy, smaller size) or PNG (lossless, larger size)

Filter non-informative tiles:

Store tiles efficiently:

- Use TFRecord format for optimized data loading during training [35]

- Include spatial metadata (slide coordinates, magnification level) with each tile

Quality control:

- Randomly sample 1% of tiles from each slide for visual inspection

- Verify tissue preservation and focus across different slide regions

Performance Metrics:

- Slideflow can extract tiles at 40× magnification in approximately 2.5 seconds per slide [35]

- Typical extraction rates: 200-500 tiles per minute depending on hardware and slide complexity

Feature Embedding with Foundation Models

Foundation Model Architectures for Digital Pathology

Foundation models pre-trained on large-scale histopathology datasets have emerged as powerful tools for generating informative feature embeddings from pathology images. These models capture hierarchical morphological patterns that can be transferred to various downstream prediction tasks, including biomarker detection.

Table 2: Comparison of pathology foundation models for feature embedding

| Model | Architecture | Training Data | Embedding Dimension | Key Features |
|---|---|---|---|---|
| Prov-GigaPath [15] | Vision Transformer with LongNet | 1.3B tiles from 171K slides | 768-1024 | Whole-slide context with dilated attention |
| TITAN [1] | Vision Transformer | 335K WSIs across 20 organs | 768 | Multimodal alignment with pathology reports |
| CONCH [1] | Vision Transformer | 100M+ histology patches | 768 | ROI-level feature representation |
| CTransPath [15] | Transformer-CNN hybrid | 15M tissue patches | 768 | Combined local and global features |

Embedding Generation Protocol

Purpose: To convert image tiles into compact, semantically meaningful feature vectors that capture morphologic patterns relevant to biomarker status.

Equipment and Software:

- Pre-trained foundation model (e.g., Prov-GigaPath, TITAN, CONCH)

- GPU with ≥12GB VRAM for efficient inference

- Python deep learning frameworks (PyTorch, TensorFlow)

Step-by-Step Protocol:

Tile preprocessing: