Foundation Models for Weakly Supervised Whole Slide Image Classification: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive exploration of using foundation models for weakly supervised classification of histopathological Whole Slide Images (WSIs). Tailored for researchers and drug development professionals, it covers the foundational principles of self-supervised learning and Multiple Instance Learning (MIL) that underpin this approach. The content details state-of-the-art methodologies, including specific foundation models like CONCH and Virchow2, and frameworks such as CLAM. It addresses critical challenges like data scarcity and model selection, offering practical optimization strategies. Finally, the article synthesizes evidence from recent large-scale clinical benchmarks, providing performance comparisons and validation techniques to guide the development of robust, clinically applicable computational pathology tools.

Core Concepts: Demystifying Foundation Models and Weak Supervision in Computational Pathology

Whole Slide Imaging (WSI) is a transformative technology that involves the high-resolution digitization of entire glass pathology slides to create digital images that can be viewed, shared, and analyzed computationally [1] [2]. These gigapixel-sized digital files, known as Whole Slide Images (WSIs), have become fundamental to modern digital pathology, enabling remote diagnostics, collaborative reviews, and the application of artificial intelligence (AI) in pathological analysis [1] [3].

The creation of a WSI involves scanning glass slides using a motorized microscope with a digital camera system that captures numerous individual image tiles at high magnification, which are then computationally stitched together to form a single, comprehensive digital image [3]. This process allows pathologists and researchers to examine specimens on computer screens with the ability to zoom, pan, and annotate specific regions of interest, replicating and enhancing the capabilities of traditional light microscopy [1].

The Annotation Bottleneck in Computational Pathology

Despite the advantages of digitization, a significant challenge hindering the development of AI models for computational pathology is the annotation bottleneck. This refers to the immense difficulty and cost associated with obtaining pixel-level or region-level annotations required for training supervised deep learning models [4].

The annotation bottleneck arises from several factors:

- WSI Complexity and Size: A single WSI can exceed 10 billion pixels, making comprehensive annotation extremely time-consuming [4].

- Specialized Expertise Required: Accurate pathological annotation demands highly trained pathologists whose time is limited and valuable [1].

- Inter-observer Variability: Annotation consistency suffers from subjective interpretations between different pathologists [4].

- Data Heterogeneity: Variations in staining protocols, scanner models, and tissue processing create domain shifts that complicate annotation standardization [4].

This bottleneck severely constrains the scalability of AI solutions in pathology and has motivated research into alternative learning paradigms, particularly weakly supervised learning approaches that can utilize more readily available annotation types [4].
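To give a sense of scale, the arithmetic behind the size argument can be sketched in a few lines. The 100,000 × 100,000 pixel slide and the 256-pixel patch size are illustrative assumptions, not figures taken from a specific dataset, and `patch_grid` is a hypothetical helper name.

```python
import math

def patch_grid(width_px: int, height_px: int, patch_px: int = 256) -> int:
    """Number of non-overlapping square patches needed to tile a slide."""
    return math.ceil(width_px / patch_px) * math.ceil(height_px / patch_px)

# Hypothetical slide of 100,000 x 100,000 pixels (10 billion pixels in total).
n_patches = patch_grid(100_000, 100_000, patch_px=256)
print(n_patches)  # 152881
```

Even before background is discarded, a single slide of this size yields on the order of 150,000 patches, which is why exhaustive manual annotation does not scale.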

Foundation Models and Weakly Supervised Learning

Foundation Models (FMs) and Weakly Supervised Learning represent promising approaches to address the annotation bottleneck in computational pathology [4]. Foundation Models are large-scale models pre-trained on broad data that can be adapted to various downstream tasks, while weakly supervised learning utilizes imperfect or higher-level annotations (such as slide-level labels) instead of detailed pixel-level annotations [5].

The integration of these approaches enables researchers to develop AI models for WSI classification with significantly reduced annotation requirements. Current research explores multiple paradigms:

- Foundation Model + Multiple Instance Learning (MIL): This established paradigm learns mappings from image patterns to slide-level labels without requiring detailed local annotations [4].

- Vision-Language Models (VLM): These models align image representations with pathological text descriptions to learn richer semantic understanding [4].

- Multi-Agent Systems: Frameworks like WSI-Agents utilize specialized collaborative agents for different analytical tasks, balancing versatility and accuracy [6].

Table 1: Quantitative Performance of Deep Learning Models in CRC Molecular Subtyping

| Model | Sub-image Level Accuracy | Slide Level Accuracy | CMS2 Subtype Accuracy |
|---|---|---|---|
| VGG16 | 53.04% | 51.72% | 75.00% |
| VGG19+Dropout | 53.04% | 51.72% | 75.00% |
| VGG24+Dropout | 53.04% | 51.72% | 75.00% |
| VGG24+BN+Dropout | 53.04% | 51.72% | 75.00% |
| Inception v3 | 53.04% | 51.72% | 75.00% |
| Resnet18 | 53.04% | 51.72% | 75.00% |
| Resnet34 | 53.04% | 51.72% | 75.00% |
| Resnet50 | 53.04% | 51.72% | 75.00% |

Note: Data adapted from a study on colorectal cancer (CRC) consensus molecular subtype (CMS) classification using WSIs [7].

Experimental Protocols for Weakly Supervised WSI Classification

Protocol: Weakly Supervised Classification Using Slide-Level Labels

This protocol outlines a methodology for training classification models using only slide-level labels, based on established approaches in computational pathology research [4] [7].

Materials and Equipment

- Whole Slide Images (WSIs) in compatible formats (.svs, .ndpi, .tiff)

- High-performance computing workstation with GPU acceleration

- WSI processing software (e.g., ASAP, QuPath)

- Deep learning framework (PyTorch or TensorFlow)

Procedure

Data Preprocessing

- Convert WSIs to manageable patches (e.g., 256×256 pixels)

- Filter out non-tissue regions using HSV color space transformation and thresholding

- Perform data augmentation (flipping, rotation, color variation)

- Normalize staining variations across different scanners
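The tissue-filtering step can be sketched as follows. This is a minimal illustration that assumes patches arrive as RGB `uint8` arrays; the helper names `saturation` and `is_tissue` and both threshold values are assumptions chosen for the example and would need tuning per scanner and stain.

```python
import numpy as np

def saturation(rgb: np.ndarray) -> np.ndarray:
    """HSV saturation channel, in [0, 1], for an RGB uint8 array of shape (H, W, 3)."""
    rgb = rgb.astype(np.float64) / 255.0
    c_max = rgb.max(axis=-1)
    c_min = rgb.min(axis=-1)
    # Saturation is (max - min) / max, defined as 0 where max == 0 (pure black).
    return np.divide(c_max - c_min, c_max, out=np.zeros_like(c_max), where=c_max > 0)

def is_tissue(patch: np.ndarray, sat_threshold: float = 0.07, min_fraction: float = 0.25) -> bool:
    """Keep a patch if enough of its pixels are saturated (stained tissue rather than glass)."""
    return bool((saturation(patch) > sat_threshold).mean() >= min_fraction)

# Toy patches: blank white background versus a uniformly pink, eosin-like patch.
white = np.full((32, 32, 3), 255, dtype=np.uint8)
pink = np.zeros((32, 32, 3), dtype=np.uint8)
pink[...] = (200, 120, 160)
print(is_tissue(white), is_tissue(pink))
```

Background glass is close to white and therefore has near-zero saturation, so thresholding the saturation channel separates it from stained tissue without any learned model.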

Feature Extraction

- Utilize pre-trained foundation models (e.g., CONCH, Virchow) for patch-level feature extraction

- Process all patches from each WSI through the feature extractor

- Aggregate patch-level features into slide-level representations

Model Training with Multiple Instance Learning

- Implement attention-based MIL pooling mechanisms

- Train classifier using only slide-level labels

- Optimize using Adam optimizer with learning rate 0.0001

- Monitor performance on a held-out validation set during training

Interpretation and Analysis

- Generate attention heatmaps to identify informative regions

- Perform quantitative evaluation on independent test set

- Compare against ground truth and pathologist interpretations

Protocol: Foundation Model Assisted Weak Segmentation

This protocol adapts methods from foundation model research to generate segmentation seeds using only image-level supervision [5].

Procedure

Foundation Model Integration

- Initialize with pre-trained CLIP and SAM models

- Design task-specific prompts for classification and segmentation

- Keep foundation model weights frozen during initial training

Prompt Learning

- Learn classification-specific prompts using image-level labels

- Learn segmentation-specific prompts using contrastive losses

- Incorporate activation loss supervised by coarse seed maps

Seed Generation

- Process images through prompt-enhanced foundation models

- Generate high-quality segmentation seeds

- Use seeds as pseudo-labels for training segmentation networks

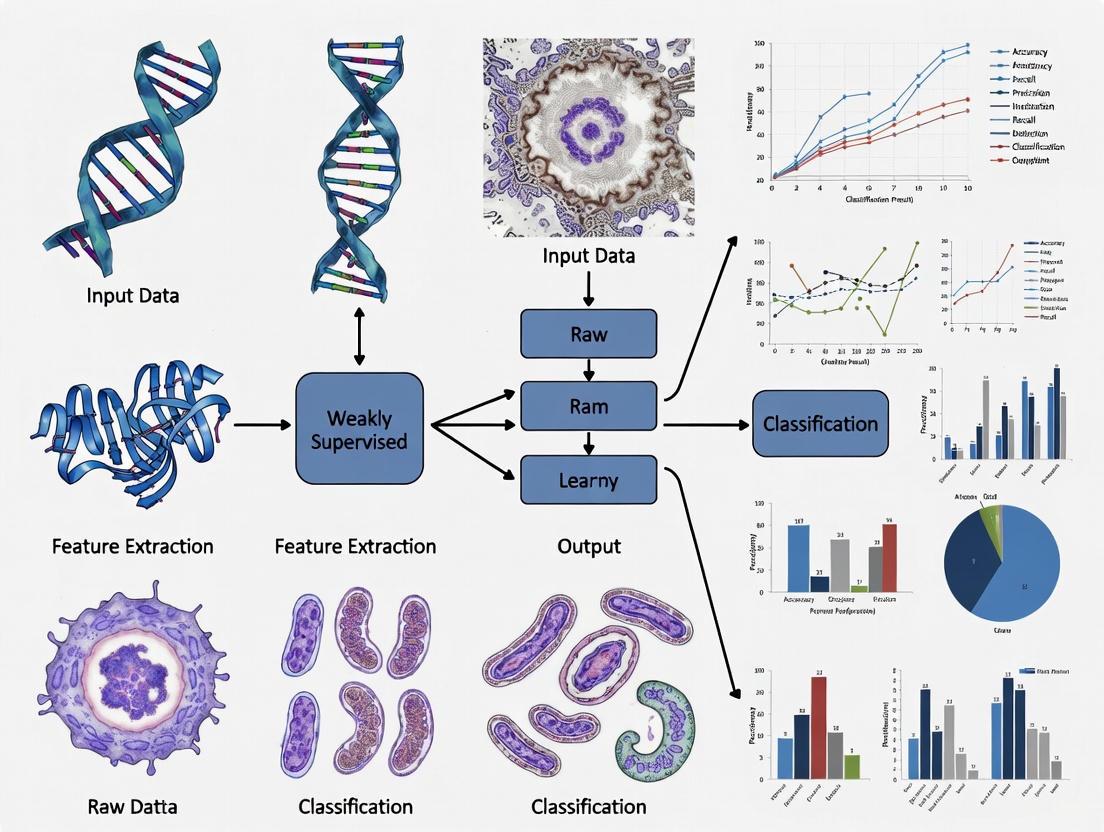

Visualization of Methodologies

Weakly Supervised WSI Classification Workflow

The Annotation Bottleneck in Pathology AI

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Computational Pathology

| Item | Function | Application Notes |
|---|---|---|
| Whole Slide Scanners (e.g., Aperio GT 450) | Digitizes glass slides into WSIs | For research use only; not for diagnostic procedures [3] |
| WSI Viewing Software (e.g., ImageScope) | Enables visualization and basic annotation of digital slides | Supports remote collaboration and multi-user access [2] |
| Deep Learning Frameworks (PyTorch, TensorFlow) | Provides infrastructure for model development and training | Essential for implementing weakly supervised algorithms [7] |
| Pre-trained Foundation Models (e.g., CONCH, Virchow) | Offers pre-learned feature representations for pathology images | Reduces need for extensive task-specific training data [4] |
| Computational Pathology Platforms (e.g., paiwsit.com) | Data management and analysis systems for WSI datasets | Facilitates storage, organization, and analysis of large WSI collections [7] |
| Annotation Tools (e.g., ASAP, QuPath) | Enables region-of-interest marking and labeling | Critical for generating ground truth data for validation [7] |
| High-Performance Computing (GPU servers) | Accelerates model training and inference | Necessary for processing gigapixel-sized WSIs efficiently [7] |

Future Perspectives

The integration of foundation models with weakly supervised learning approaches represents a promising path forward for addressing the annotation bottleneck in computational pathology [4]. Emerging research directions include:

- Unified Generative and Understanding Models: Developing models that can simultaneously perform comprehension and generation tasks for virtual staining and data augmentation [4].

- AI Agent Frameworks: Creating specialized AI agents that can simulate pathological reasoning through multi-step processes, enhancing interpretability and trust [6].

- Enhanced Vision-Language Models: Improving the alignment between visual features and pathological semantics to bridge the gap between pixel recognition and true semantic understanding [4].

As these technologies mature, they hold the potential to significantly reduce the dependency on extensive manual annotation while maintaining diagnostic accuracy, ultimately accelerating the adoption of AI-assisted tools in clinical and research pathology workflows.

What are Foundation Models? From Self-Supervised Learning (SSL) to Pre-trained Feature Extractors

Foundation models represent a paradigm shift in artificial intelligence (AI), characterized by their training on vast, broad datasets using self-supervised learning (SSL) techniques, which enables them to serve as versatile base models for a wide array of downstream tasks [8] [9]. These models, typically built on transformer architectures, have demonstrated remarkable adaptability across multiple domains including natural language processing, computer vision, and—more recently—computational pathology [8] [9] [10]. The term "foundation model" was coined by researchers at Stanford University to describe models that are "incomplete but serve as the common basis from which many task-specific models are built via adaptation" [9]. In the context of computational pathology, these models are particularly valuable due to their ability to learn generalized representations from unlabeled data, which can then be fine-tuned for specific diagnostic tasks with limited annotations [11] [10].

The significance of foundation models stems from their scalability and adaptability. Rather than developing AI systems from scratch for each specific task, researchers can use foundation models as starting points to develop specialized applications more quickly and cost-effectively [8]. This is especially relevant in medical imaging domains like whole slide image (WSI) analysis, where labeled data is scarce and expensive to obtain [11] [12]. Foundation models employ transfer learning to apply knowledge gained from one task to others, making them suitable for expansive domains including computer vision and natural language processing [9]. Their self-supervised learning approach allows them to create labels directly from input data without human annotation, setting them apart from previous machine learning architectures that relied on supervised or unsupervised learning [8].

Foundation Models in Computational Pathology

The Unique Challenges of Whole Slide Images

Computational pathology presents distinctive challenges that foundation models are particularly well-suited to address. Whole slide images (WSIs) are gigapixel-sized digital scans of tissue sections, creating significant computational hurdles for analysis [11]. These images exhibit tremendous spatial heterogeneity, with diagnostic regions often representing only tiny fractions of the entire slide—creating what researchers describe as "needles in a haystack" problems [11]. Traditional supervised deep learning approaches require extensive manual annotation of regions of interest, which is time-consuming, expensive, and subject to inter-observer variability among pathologists [11] [13].

The field has increasingly adopted weakly supervised learning paradigms, particularly multiple-instance learning (MIL), where only slide-level labels are available during training without precise localization of diagnostically relevant regions [11] [13]. This approach aligns well with clinical practice where pathologists provide overall diagnoses without pixel-level annotations. However, these methods often require large datasets of WSIs with slide-level labels and can suffer from poor domain adaptation and interpretability issues [11]. Foundation models pretrained using self-supervised learning on large, diverse histopathology datasets offer a promising solution to these challenges by providing robust, transferable feature representations that can be adapted to various diagnostic tasks with limited labeled data [10] [14].

Self-Supervised Learning Approaches for Pathology

Self-supervised learning has emerged as a powerful paradigm for training foundation models in computational pathology, effectively addressing the annotation bottleneck. SSL methods create supervisory signals directly from the data itself without human annotation, allowing models to learn rich visual representations from large-scale unlabeled WSI datasets [10] [14]. The SPT (Slide Pre-trained Transformers) framework exemplifies this approach by treating WSIs as collections of tokens and applying NLP-inspired transformation strategies including splitting, cropping, and masking to generate different "views" for self-supervised pretraining [14].

Multiple SSL objectives have been adapted for histopathology, including:

- Contrastive learning (e.g., SimCLR): Maximizes agreement between differently augmented views of the same image while minimizing agreement with other images [14]

- Self-distillation (e.g., BYOL): Trains an online network to predict the representations that a momentum (target) encoder produces for a differently augmented view of the same image, without negative samples [14]

- Regularization-based methods (e.g., VICReg): Applies variance, invariance, and covariance constraints to learn robust representations [14]

- Masked image modeling: Randomly masks portions of the input and trains the model to reconstruct the missing parts [10]

These approaches enable foundation models to capture morphologically meaningful patterns in histology images, forming a robust basis for downstream diagnostic tasks.
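As one concrete instance of the objectives listed above, the contrastive (SimCLR-style) loss can be sketched in NumPy. This is an illustrative, forward-only computation on random vectors, not the training code of any model discussed here; the function name `nt_xent_loss` and the temperature value are assumptions.

```python
import numpy as np

def nt_xent_loss(z1: np.ndarray, z2: np.ndarray, temperature: float = 0.5) -> float:
    """Normalized temperature-scaled cross-entropy over two views, each of shape (N, D)."""
    n = z1.shape[0]
    z = np.concatenate([z1, z2], axis=0)                  # (2N, D)
    z = z / np.linalg.norm(z, axis=1, keepdims=True)      # cosine similarity via unit vectors
    sim = z @ z.T / temperature                           # (2N, 2N)
    np.fill_diagonal(sim, -np.inf)                        # a view is never its own negative
    # Row i's positive is the other augmented view of the same image.
    positives = np.concatenate([np.arange(n, 2 * n), np.arange(0, n)])
    row_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - row_max - np.log(np.exp(sim - row_max).sum(axis=1, keepdims=True))
    return float(-log_prob[np.arange(2 * n), positives].mean())

rng = np.random.default_rng(0)
view_a = rng.normal(size=(8, 16))
aligned = nt_xent_loss(view_a, view_a + 0.01 * rng.normal(size=(8, 16)))
unrelated = nt_xent_loss(view_a, rng.normal(size=(8, 16)))
print(aligned < unrelated)
```

The loss is lower when the two views of each image map to nearby embeddings, which is the behaviour the encoder is trained to produce.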

Pre-trained Feature Extractors for WSI Classification

Architectural Approaches

Table 1: Comparison of Foundation Model Architectures in Computational Pathology

| Model | Architecture | Modality | Pretraining Data | Key Features |
|---|---|---|---|---|
| TITAN [10] | Vision Transformer | Multimodal (image + text) | 335,645 WSIs + reports | Cross-modal alignment, slide-level representations |
| CLAM [11] | Attention-based MIL | Visual | Task-specific datasets | Data-efficient, attention-based localization |
| SPT [14] | Transformer | Visual | Diverse WSI collections | Multiple view generation, Fourier positional encoding |
| UNI [15] | Transformer | Visual | Large-scale histology patches | Patch-level foundational representations |
| Virchow2 [15] | Transformer | Visual | Large-scale histology patches | Competitive feature extraction capabilities |

Foundation models for computational pathology employ diverse architectural strategies to handle the unique challenges of WSIs. The TITAN (Transformer-based pathology Image and Text Alignment Network) model represents a comprehensive approach, using a Vision Transformer (ViT) backbone pretrained on 335,645 WSIs through visual self-supervised learning and vision-language alignment with corresponding pathology reports [10]. TITAN introduces several innovations to address computational challenges, including processing non-overlapping patches of 512×512 pixels at 20× magnification, using random cropping of feature grids, and employing attention with linear bias (ALiBi) for long-context extrapolation [10].

The CLAM (Clustering-constrained Attention Multiple-instance Learning) framework takes a different approach, specifically designed for data-efficient WSI processing using only slide-level labels [11]. CLAM uses attention-based learning to identify subregions of high diagnostic value and instance-level clustering to refine the feature space [11]. This method generates interpretable heatmaps that highlight regions contributing to classifications, enhancing model transparency without requiring pixel-level annotations during training.

Diagram 1: Weakly Supervised WSI Classification Workflow

Benchmarking Performance

Table 2: Performance Comparison of Weakly Supervised Methods on Cancer Subtyping Tasks

| Method | Architecture | RCC Subtyping (AUROC) | NSCLC Subtyping (AUROC) | Lymph Node Metastasis Detection (AUROC) | Data Efficiency |
|---|---|---|---|---|---|
| CLAM [11] | Attention-based MIL | 0.99 | 0.97 | 0.96 | High |
| ViT-based [13] | Vision Transformer | >0.90 | >0.90 | >0.90 | Medium |
| CNN-based MIL [13] | Convolutional Neural Network | >0.90 | >0.90 | >0.90 | Low-Medium |
| Classical Weakly Supervised [13] | CNN with averaging | >0.90 | >0.90 | >0.90 | Low |

Recent benchmarking studies have demonstrated the effectiveness of foundation models in various computational pathology tasks. In a comprehensive comparison of six AI algorithms across six clinical problems, Vision Transformers (ViTs) were found to outperform convolutional neural networks (CNNs) in clinically relevant prediction tasks, suggesting they could become the new standard in the field [13]. Surprisingly, for predicting molecular alterations, classical weakly-supervised workflows consistently outperformed more complex multiple-instance learning approaches, highlighting the importance of method selection based on specific clinical tasks [13].

Data efficiency is a particular strength of this approach. CLAM has demonstrated high performance with limited annotations, achieving AUROC scores of 0.96 or higher in renal cell carcinoma (RCC) subtyping, non-small-cell lung cancer (NSCLC) subtyping, and lymph node metastasis detection [11]. This efficiency is crucial for rare cancers where large annotated datasets are unavailable.

Experimental Protocols

Protocol 1: Implementing CLAM for WSI Classification

Purpose: To implement a weakly supervised classification pipeline for whole slide images using the CLAM framework with foundation model feature extractors.

Materials and Reagents:

- Whole slide images (WSIs) in SVS or other standard formats

- High-performance computing environment with GPU acceleration

- Python 3.8+ with PyTorch and CLAM repository

Procedure:

Data Preparation:

- Organize WSIs into directory structure with slide-level labels

- Perform tissue segmentation to exclude background regions

- Extract patches of size 256×256 or 512×512 at desired magnification (typically 20×)

Feature Extraction:

- Use pretrained foundation models (UNI, Virchow2, or PLIP) to convert patches into feature embeddings

- Save features as .h5 or .pt files with spatial coordinates preserved

- Example code snippet:
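A minimal sketch of this step, under stated assumptions: `toy_encoder` is a hypothetical stand-in for a real foundation model encoder (UNI, Virchow2, or PLIP would be loaded through their own libraries), `embed_patches` is an illustrative helper, and NumPy's `.npz` format is swapped in for `.h5` or `.pt` purely to keep the example free of extra dependencies.

```python
import io
import numpy as np

def embed_patches(patches: np.ndarray, encoder, batch_size: int = 64) -> np.ndarray:
    """Run every patch through `encoder` in batches and stack the results into (N, D)."""
    chunks = [encoder(patches[i:i + batch_size]) for i in range(0, len(patches), batch_size)]
    return np.concatenate(chunks, axis=0)

def toy_encoder(batch: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in encoder: mean RGB colour per patch as a 3-d 'embedding'."""
    return batch.reshape(len(batch), -1, 3).mean(axis=1)

rng = np.random.default_rng(0)
patches = rng.integers(0, 256, size=(10, 32, 32, 3), dtype=np.uint8)  # toy patches
coords = np.array([[x * 32, 0] for x in range(10)])                   # top-left (x, y) per patch

features = embed_patches(patches, toy_encoder, batch_size=4)

# Store features together with their coordinates so heatmaps can be rebuilt later.
buffer = io.BytesIO()            # an in-memory file; a real pipeline would write to disk
np.savez(buffer, features=features, coords=coords)
buffer.seek(0)
loaded = np.load(buffer)
print(loaded["features"].shape, loaded["coords"].shape)
```

Keeping the coordinates alongside the embeddings is what later allows attention scores to be mapped back onto the slide.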

Model Training:

- Split data into training/validation sets (typically 80/20 or 70/30)

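- Configure CLAM with appropriate parameters (e.g., single- or multi-branch attention, the bag-level and instance-level loss functions, and the weight balancing the two losses)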

- Train with slide-level labels using attention-based multiple instance learning

- Monitor training with accuracy and loss metrics
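The train/validation split can be sketched as a stratified split at the slide level. The function name `stratified_split`, the slide identifiers, and the class names are illustrative assumptions; when a patient contributes several slides, the same idea is usually applied to patient identifiers instead so that no patient appears in both sets.

```python
import random
from collections import defaultdict

def stratified_split(slide_labels: dict, val_fraction: float = 0.2, seed: int = 0):
    """Split slide IDs into train/validation lists while preserving class proportions."""
    by_class = defaultdict(list)
    for slide_id, label in slide_labels.items():
        by_class[label].append(slide_id)

    rng = random.Random(seed)
    train, val = [], []
    for _, ids in sorted(by_class.items()):
        ids = sorted(ids)          # fixed order first, so the shuffle is reproducible
        rng.shuffle(ids)
        n_val = max(1, round(len(ids) * val_fraction))
        val.extend(ids[:n_val])
        train.extend(ids[n_val:])
    return train, val

# Hypothetical cohort: 20 slides, alternating between two classes.
labels = {f"slide_{i:02d}": ("tumor" if i % 2 else "normal") for i in range(20)}
train_ids, val_ids = stratified_split(labels, val_fraction=0.2)
print(len(train_ids), len(val_ids))  # 16 4
```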

Interpretation and Evaluation:

- Generate attention heatmaps to visualize diagnostically relevant regions

- Calculate slide-level predictions and performance metrics (AUROC, accuracy)

- Perform statistical analysis of model performance
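For the evaluation step, AUROC for a binary task can be computed directly from slide-level scores. The sketch below uses the rank-based (Mann-Whitney) formulation in plain Python; the function name `auroc` and the toy labels and scores are assumptions for illustration, and an established library implementation would normally be preferred in practice.

```python
def auroc(labels, scores) -> float:
    """AUROC as the probability that a positive slide outscores a negative one (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative slide")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy example: four slides with predicted probabilities of the positive class.
print(auroc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))  # 0.75
```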

Troubleshooting Tips:

- For large datasets, use data loaders with appropriate batch sizes

- If attention maps are diffuse, adjust clustering strength parameters

- For poor performance, try different foundation model feature extractors

Protocol 2: Cross-modal Foundation Model Pretraining

Purpose: To pretrain a multimodal foundation model for pathology images and text using self-supervised learning.

Materials and Reagents:

- Large-scale dataset of WSIs (100,000+ slides)

- Corresponding pathology reports or synthetic captions

- High-performance computing cluster with multiple GPUs

Procedure:

Data Collection and Curation:

- Gather diverse WSIs across multiple organ systems and stain types

- Collect corresponding pathology reports or generate synthetic captions using generative AI

- Ensure data privacy compliance and ethical approvals

Vision-Only Pretraining:

- Apply iBOT framework for masked image modeling at patch level

- Use knowledge distillation with teacher-student framework

- Train with random cropping and feature grid sampling

Cross-modal Alignment:

- Implement image-text contrastive learning (ITC)

- Use masked language modeling (MLM) for text encoding

- Apply image-text matching (ITM) objectives
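The image-text contrastive (ITC) objective listed above can be sketched as a symmetric cross-entropy over a similarity matrix, in the style popularized by CLIP. This is a forward-only NumPy illustration on random embeddings; the function names and the temperature value are assumptions, and it is not the training code of TITAN or any other model named here.

```python
import numpy as np

def log_softmax(x: np.ndarray, axis: int) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def itc_loss(img: np.ndarray, txt: np.ndarray, temperature: float = 0.07) -> float:
    """Symmetric contrastive loss; row i of `img` is paired with row i of `txt`."""
    img = img / np.linalg.norm(img, axis=1, keepdims=True)
    txt = txt / np.linalg.norm(txt, axis=1, keepdims=True)
    logits = img @ txt.T / temperature                     # (N, N) pairwise similarities
    idx = np.arange(len(img))
    image_to_text = -log_softmax(logits, axis=1)[idx, idx].mean()
    text_to_image = -log_softmax(logits, axis=0)[idx, idx].mean()
    return float((image_to_text + text_to_image) / 2)

rng = np.random.default_rng(0)
slide_emb = rng.normal(size=(8, 16))
matched = itc_loss(slide_emb, slide_emb)                        # perfectly aligned pairs
mismatched = itc_loss(slide_emb, np.roll(slide_emb, 1, axis=0)) # every pair shifted by one
print(matched < mismatched)
```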

Evaluation and Validation:

- Assess representation quality through linear probing on multiple tasks

- Perform zero-shot classification and cross-modal retrieval tests

- Validate on rare cancer retrieval to test generalization

Timeline: 4-8 weeks depending on dataset size and computational resources

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| CLAM [11] | Software Framework | Weakly supervised WSI classification with interpretability | GitHub: mahmoodlab/CLAM |
| TITAN [10] | Foundation Model | Multimodal slide representation learning | Upon request |
| UNI [15] | Feature Extractor | Patch-level foundational representations | Open source |
| Virchow2 [15] | Feature Extractor | Competitive pathology-specific features | Research license |
| Hugging Face [8] | Model Repository | Access to community-shared models and datasets | huggingface.co |
| Amazon Bedrock [8] | API Service | Access to foundation models through API | AWS console |
| PMC [11] [16] | Data Repository | Access to scientific literature and datasets | pmc.ncbi.nlm.nih.gov |

The integration of foundation models with weakly supervised learning approaches represents a transformative development in computational pathology. These models address critical challenges in the field, including data efficiency, interpretability, and generalizability across domains and institutions [11] [10]. The ability to leverage self-supervised learning on large unlabeled datasets followed by fine-tuning on small annotated collections makes foundation models particularly valuable for medical applications where expert annotations are scarce and expensive [12] [10].

Future research directions include developing more sophisticated multimodal foundation models that integrate histology with genomic, transcriptomic, and clinical data [10]. There is also growing interest in federated learning approaches that enable model training across institutions without sharing sensitive patient data [13]. As noted in benchmarking studies, the field would benefit from standardized evaluation frameworks and more comprehensive comparisons of emerging foundation models against established baselines [13].

In conclusion, foundation models trained through self-supervised learning have established a new paradigm for computational pathology research. By serving as versatile, powerful feature extractors, these models enable more data-efficient and interpretable whole slide image classification, accelerating the development of AI-assisted diagnostic tools that can ultimately enhance patient care. The protocols and frameworks described in this article provide researchers with practical guidance for leveraging these advanced techniques in their own computational pathology workflows.

Understanding Weakly Supervised Learning and the Multiple Instance Learning (MIL) Paradigm

The advancement of computational pathology is significantly constrained by the enormous cost and expertise required for detailed annotation of gigapixel Whole Slide Images (WSIs). Weakly Supervised Learning (WSL) has emerged as a powerful paradigm to overcome this bottleneck by enabling model development using only slide-level labels, which are more readily available from diagnostic reports, rather than exhaustive, expert-made region-of-interest or pixel-level annotations [11] [17]. This approach is particularly vital for medical image analysis, where acquiring large-scale, fully-annotated datasets is often impractical [18]. Within WSL, Multiple Instance Learning (MIL) has become the predominant framework, treating each WSI as a "bag" containing thousands of unlabeled image patches ("instances") and learning from a single label assigned to the entire bag [19] [11].

The application of MIL in pathology is typically governed by the standard MIL assumption: a bag (slide) is positive if at least one of its instances (patches) is positive, and negative if all instances are negative [19] [17]. This formulation naturally fits various diagnostic tasks, such as detecting cancer metastasis or subtyping tumours, where a slide is positive for a disease if it contains at least one region with malignant cells [11] [17]. The primary challenge lies in effectively aggregating information from thousands of instances to make accurate slide-level predictions while also identifying which instances were most critical for the decision—a key to model interpretability.
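The standard MIL assumption can be written down in a few lines. In this sketch the instance labels are shown only to illustrate the definition (they are not available during training), and `bag_label` and `max_pool_prediction` are hypothetical helper names.

```python
def bag_label(instance_labels) -> int:
    """Standard MIL assumption: a bag is positive if any instance is positive."""
    return int(any(instance_labels))

def max_pool_prediction(instance_probs) -> float:
    """Instance-based MIL: the slide score is the highest patch-level probability."""
    return max(instance_probs)

# A slide with one malignant patch among benign ones is a positive slide.
print(bag_label([0, 0, 1, 0]))                 # 1
print(bag_label([0, 0, 0, 0]))                 # 0
print(max_pool_prediction([0.02, 0.10, 0.93])) # 0.93
```

Max-pooling mirrors the assumption directly, which is why it is the natural aggregation rule for instance-based MIL, while attention-based methods replace it with a learned weighting.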

Fundamental MIL Architectures and Benchmark Performance

Multiple architectural approaches have been developed to implement the MIL paradigm for WSIs. The following table summarizes the core characteristics and representative performance of key methods.

Table 1: Comparison of Fundamental MIL Architectures for WSI Classification

| Architecture | Key Principle | Advantages | Limitations | Representative Performance (AUC) |
|---|---|---|---|---|
| Instance-based MIL | Classifies each instance individually; aggregates predictions via max-pooling [19]. | Simple, intuitive implementation. | Instance-level classifier may be poorly trained due to lack of labels; can introduce error [19]. | ~0.92-0.95 for lung carcinoma classification [17] |
| Embedding-based MIL | Maps instances to embeddings; aggregates into a single slide-level representation for classification [19]. | More flexible and often higher performance; end-to-end training [19]. | Lacks inherent interpretability [19]. | High performance on various subtyping tasks [11] |
| Attention-based MIL (AMIL) | Uses a neural network to assign an attention score to each instance, weighting their contribution to the bag-level representation [19] [11]. | Differentiable; provides instance-level interpretability via attention scores [19]. | Computationally more complex than max-pooling. | 0.97 for tumor detection in TCGA-LUSC [20] |
| Clustering-constrained Attention MIL (CLAM) | Adds an instance-level clustering loss to attention-based MIL to refine the feature space [11]. | Data-efficient; provides interpretable heatmaps; suitable for multi-class tasks [11]. | Requires additional loss hyperparameter tuning. | 0.975-0.988 across four independent test sets for lung carcinoma [11] |
| HybridMIL | Combines CNNs with Broad Learning Systems (BLS) to capture multi-level features and global semantics [18]. | Captures multi-level feature correlations; good for classification and localization [18]. | Complex architecture design. | Surpasses other MIL models by up to 8.5% in classification accuracy [18] |

The transition from simpler instance-based methods to more advanced attention-based and hybrid models has led to significant gains in both accuracy and interpretability. For example, in a landmark study on lung carcinoma classification, a weakly supervised MIL model outperformed a fully-supervised approach, achieving AUCs between 0.974 and 0.988 on four independent test sets, demonstrating exceptional generalization [11] [17]. The adoption of attention mechanisms has been particularly transformative, as it allows models to learn which regions of a slide are most indicative of the diagnosis without any spatial labels during training [19] [11].

The Emergence of Whole-Slide Foundation Models

A recent paradigm shift in the field is the development of whole-slide foundation models pre-trained on massive, diverse datasets of histopathology images. These models aim to learn general-purpose, transferable representations of WSIs that can be readily adapted to various downstream tasks with minimal task-specific labeling.

Table 2: Overview of Whole-Slide Foundation Models for Digital Pathology

| Model Name | Key Innovation | Pre-training Scale | Modality | Reported Performance |
|---|---|---|---|---|
| Prov-GigaPath [21] | Adapts LongNet for ultra-long-context modeling of entire WSIs (tens of thousands of tiles). | 171,189 WSIs (1.3B image tiles) [21] | Vision & Vision-Language | SOTA on 25/26 tasks; 23.5% AUROC improvement for EGFR mutation prediction vs. second-best [21]. |
| TITAN [10] | A multimodal vision-language model aligned with pathology reports and synthetic captions. | 335,645 WSIs [10] | Vision-Language | Excels in zero-shot classification, rare cancer retrieval, and report generation [10]. |
| CLAM [11] | An attention-based MIL framework with instance-level clustering for data-efficient learning. | Not a foundation model; a method designed for data-efficient learning on smaller datasets [11]. | Vision | Highly data-efficient; achieves high performance with hundreds, not thousands, of labeled WSIs [11]. |

These foundation models address key limitations of earlier patch-based approaches by explicitly modeling the long-range context and global tissue architecture within a slide [10] [21]. For instance, Prov-GigaPath uses a novel Vision Transformer (ViT) architecture with dilated self-attention to process sequences of tens of thousands of tile embeddings, effectively capturing both local and global patterns across the gigapixel slide [21]. In benchmarks spanning cancer subtyping and mutation prediction, Prov-GigaPath attained state-of-the-art performance on 25 out of 26 tasks, demonstrating the power of large-scale, whole-slide pre-training [21].

Integrated Experimental Protocols

This section outlines detailed protocols for implementing a modern, foundation-model-enhanced MIL pipeline for WSI classification.

Protocol: WSI Preprocessing and Feature Extraction

Purpose: To standardize gigapixel WSIs and convert them into a structured set of feature vectors suitable for MIL model input.

Tissue Segmentation:

- Process the WSI using automated tissue segmentation algorithms to exclude background regions (e.g., glass slide, air bubbles) [11]. This drastically reduces the number of patches to process.

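Patch Extraction:

- Tile the retained tissue regions into fixed-size patches (e.g., 256×256 pixels) at the chosen magnification.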

Feature Embedding:

- Instead of using raw pixel values, extract a low-dimensional feature vector for each patch using a pre-trained encoder.

- Recommended Encoder: Use a foundation model encoder like Prov-GigaPath's tile encoder or other powerful pre-trained networks (e.g., CONCH) [10] [21]. This transfer learning step is critical for performance.

- The output is a set of feature vectors {h_1, h_2, ..., h_K}, where K is the number of patches; this set constitutes the "bag" for the WSI [19] [11].

WSI Preprocessing and Feature Extraction Workflow

Protocol: Attention-Based Deep MIL with a Foundation Model Encoder

Purpose: To train an interpretable, high-performance classifier using slide-level labels.

Input Preparation:

- Input the bag of feature vectors {h_1, h_2, ..., h_K} obtained from Protocol 4.1 (WSI Preprocessing and Feature Extraction).

Attention Network:

- Pass each feature vector h_k through a small, fully-connected neural network to generate a scalar attention score a_k [19] [11]. The scores measure the relative importance of each patch:

a_k = w^T (tanh(V h_k^T) ⊙ sigm(U h_k^T))

where V, U, and w are learnable parameters [19].

- Normalize the scores across all patches in the slide using a softmax function to create attention weights [19].

MIL Pooling:

Classification and Output:

- Pass the slide-level representation z through a final classification layer g (e.g., a fully-connected network) to predict the slide-level class probability ϴ(X) [19].

- The model is trained end-to-end by minimizing a standard classification loss (e.g., cross-entropy) using the slide-level labels [11].

Attention-based MIL Architecture
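The forward pass of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the dimensions, the random parameters, and the function name `gated_attention_mil` are assumptions. The attention-weighted sum used for pooling is the usual attention-based MIL form, assumed here because the pooling step is not spelled out in the protocol.

```python
import numpy as np

def gated_attention_mil(H, V, U, w, W_cls, b_cls):
    """Forward pass of gated-attention MIL pooling for one slide.

    H: (K, D) bag of patch features; V, U: (L, D); w: (L,);
    W_cls: (C, D); b_cls: (C,). All shapes are illustrative assumptions.
    """
    # Unnormalized scores: a_k = w^T (tanh(V h_k) * sigmoid(U h_k))
    gate = 1.0 / (1.0 + np.exp(-(H @ U.T)))       # (K, L)
    scores = (np.tanh(H @ V.T) * gate) @ w        # (K,)
    # Softmax over the K patches (shifted for numerical stability)
    exp_s = np.exp(scores - scores.max())
    attn = exp_s / exp_s.sum()                    # (K,), sums to 1
    # MIL pooling: attention-weighted sum of patch features
    z = attn @ H                                  # (D,)
    # Linear classifier g followed by a softmax over classes
    logits = W_cls @ z + b_cls
    exp_l = np.exp(logits - logits.max())
    return attn, z, exp_l / exp_l.sum()

rng = np.random.default_rng(0)
K, D, L, C = 500, 64, 32, 2                       # hypothetical sizes
H = rng.normal(size=(K, D))
attn, z, probs = gated_attention_mil(
    H, rng.normal(size=(L, D)) * 0.1, rng.normal(size=(L, D)) * 0.1,
    rng.normal(size=L), rng.normal(size=(C, D)) * 0.1, np.zeros(C))
```

The returned `attn` vector is what would typically be mapped back to patch coordinates to draw an attention heatmap.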

Protocol: Data-Efficient Learning with CLAM

Purpose: To achieve high performance with limited labeled data, a common scenario in clinical research.

Steps 1-3 of Protocol 4.2: Follow the same feature extraction and attention-based MIL process [11].

Instance-Level Clustering:

- In addition to the slide-level classification loss, introduce an instance-level clustering loss [11].

- For a given slide, use the model's attention scores to identify "high-attention" and "low-attention" patches.

- Apply a clustering loss (e.g., a form of cluster center distance loss) to encourage the model to separate the features of high-attention patches from low-attention patches, effectively refining the feature space [11].

- When the classes are mutually exclusive, the highest-attention patches in the attention branches of the non-ground-truth classes can be treated as "false positives" and supervised as negative evidence through additional loss terms [11].

Joint Training:

- The total loss is a weighted sum of the slide-level classification loss and the instance-level clustering loss.

- This auxiliary task acts as a strong regularizer and enables the model to learn effectively from fewer labeled slides [11].
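The pseudo-labeling and joint-loss steps above can be sketched as follows. This is a simplified illustration under stated assumptions: binary cross-entropy stands in for the instance-level clustering loss, the weight `bag_weight=0.7` is an illustrative value, and `inst_probs` is a hypothetical stand-in for the outputs of an instance classifier.

```python
import numpy as np

def instance_pseudo_labels(attn, k):
    """Pick the k highest- and k lowest-attention patches of one slide."""
    order = np.argsort(attn)                      # ascending attention
    low_idx, high_idx = order[:k], order[-k:]
    idx = np.concatenate([high_idx, low_idx])
    labels = np.concatenate([np.ones(k), np.zeros(k)])  # 1 = high attention
    return idx, labels

def binary_cross_entropy(p, y, eps=1e-12):
    """Stand-in instance loss (assumption; not the loss used by CLAM)."""
    p = np.clip(p, eps, 1 - eps)
    return float(-np.mean(y * np.log(p) + (1 - y) * np.log(1 - p)))

def joint_loss(bag_loss, inst_loss, bag_weight=0.7):
    """Weighted sum of slide-level and instance-level losses."""
    return bag_weight * bag_loss + (1.0 - bag_weight) * inst_loss

attn = np.array([0.02, 0.30, 0.01, 0.25, 0.05, 0.37])
idx, labels = instance_pseudo_labels(attn, k=2)
inst_probs = np.array([0.9, 0.8, 0.2, 0.1])       # hypothetical outputs for idx
total = joint_loss(bag_loss=0.45,
                   inst_loss=binary_cross_entropy(inst_probs, labels))
```

Patches 1 and 5 receive the "high-attention" pseudo-label and patches 0 and 2 the "low-attention" one; only these selected patches contribute to the instance-level term.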

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Tools and Resources for MIL-based WSI Research

| Category / Item | Specific Examples | Function & Utility |

|---|---|---|

| Public WSI Datasets | The Cancer Genome Atlas (TCGA), CAMELYON-16, TCGA-LUNG, BRACS [22] [11] [20] | Provide large, well-characterized, and often publicly available WSI datasets with slide-level and sometimes pixel-level labels for training and benchmarking. |

| Pre-trained Patch Encoders | CONCH, CTransPath, DINOv2 [10] [21] | Act as powerful feature extractors for image patches. Using models pre-trained on large histopathology or natural image datasets is a form of transfer learning that significantly boosts performance. |

| Whole-Slide Foundation Models | Prov-GigaPath, TITAN [10] [21] [23] | Provide off-the-shelf, general-purpose slide-level representations. Can be used as strong feature extractors for entire WSIs or fine-tuned for specific tasks, often achieving state-of-the-art results. |

| MIL Software Frameworks | CLAM [11] | Open-source Python packages that provide high-throughput, easy-to-use pipelines for WSI processing and MIL model training, facilitating rapid prototyping and experimentation. |

| Computational Hardware | High-Performance Compute (HPC) Clusters, GPUs (NVIDIA) | Essential for processing gigapixel WSIs and training large deep-learning models, especially foundation models with billions of parameters. |

The fusion of the Multiple Instance Learning paradigm with large-scale foundation models represents the cutting edge of computational pathology. MIL provides a principled framework for learning from weak, slide-level labels, while foundation models like Prov-GigaPath and TITAN inject powerful, general-purpose representations pre-trained on hundreds of thousands of slides. This synergy enables the development of more accurate, data-efficient, and interpretable AI tools for tasks ranging from cancer diagnosis and subtyping to mutation prediction. As these technologies mature, they hold the profound potential to become integral components of the digital pathology workflow, assisting pathologists and accelerating the pace of biomedical discovery.

The analysis of Whole Slide Images (WSIs) in digital pathology presents a unique computational challenge due to the gigapixel size of the images, which can comprise tens of thousands of individual image tiles. Foundation models, trained on broad data using self-supervision and adapted to various downstream tasks, have emerged as powerful solutions, particularly in scenarios with scarce labeled data. Within this paradigm, three key architectural families have proven instrumental: Convolutional Neural Networks (CNNs), Vision Transformers (ViTs), and Vision-Language Models (VLMs). CNNs bring inductive biases beneficial for learning local hierarchical features, while ViTs excel at capturing long-range dependencies and global context through self-attention mechanisms. VLMs extend these capabilities by integrating visual and textual information, enabling tasks that require semantic understanding. In the context of weakly supervised learning for WSI classification—where only slide-level labels are available—these architectures form the backbone of Multiple Instance Learning (MIL) frameworks, allowing models to learn from bags of instances (patches) without costly pixel-level or patch-level annotations. Their adaptation and scaling for gigapixel images represent an active and critical area of research [24] [25].

Core Architectural Principles

Convolutional Neural Networks (CNNs): CNNs, such as ResNet, operate on the principles of locality and weight sharing. Their inductive bias assumes that nearby pixels are related, making them highly effective at extracting hierarchical local features like edges and textures from image data. They process images through sliding convolutional filters and are known for their parameter efficiency [25]. In WSI analysis, they are often used as tile-level feature extractors.

Vision Transformers (ViTs): ViTs split an image into a sequence of fixed-size patches, linearly embed them, and process them through a standard Transformer encoder. The core of the ViT is the self-attention mechanism, which allows the model to weigh the importance of all other patches when encoding a specific patch. This enables ViTs to capture global context and long-range dependencies across the entire image, a significant advantage for understanding complex tissue structures [26] [25].
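As a rough illustration of the self-attention mechanism described above, the following NumPy sketch computes single-head scaled dot-product attention over a sequence of patch tokens. The token count, embedding size, and random projection matrices are assumptions chosen for the example; real ViTs use multiple heads, learned weights, and additional layers.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over N patch tokens."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])         # (N, N) pairwise affinities
    scores -= scores.max(axis=-1, keepdims=True)    # stabilize the softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
N, D = 16, 8                                        # hypothetical sizes
X = rng.normal(size=(N, D))
out, weights = self_attention(X, *(rng.normal(size=(D, D)) for _ in range(3)))
```

Row i of `weights` shows how strongly token i attends to every other token, which is what lets each patch embedding incorporate context from the whole image.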

Vision-Language Models (VLMs): VLMs like CONCH and PLIP are typically dual-encoder models that process image and text inputs independently, projecting them into a shared latent representation space. They are trained using contrastive learning objectives that pull the embeddings of matching image-text pairs closer together while pushing non-matching pairs apart. This alignment allows for capabilities such as zero-shot classification, image-to-text retrieval, and text-to-image retrieval by using natural language prompts to query visual data [27] [24].
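The contrastive alignment described above can be illustrated with a CLIP-style symmetric loss. This is a generic sketch: the temperature value and the function name `clip_style_loss` are assumptions, and the actual training objectives of CONCH or PLIP may include further terms.

```python
import numpy as np

def clip_style_loss(img_emb, txt_emb, temperature=0.07):
    """Symmetric contrastive loss; row i of each input is a matching pair."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature   # (B, B); diagonal = matching pairs

    def cross_entropy_diag(lg):
        lg = lg - lg.max(axis=1, keepdims=True)
        log_probs = lg - np.log(np.exp(lg).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_probs))

    # Average the image-to-text and text-to-image directions
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits.T))

rng = np.random.default_rng(0)
img = rng.normal(size=(8, 32))           # synthetic embeddings
aligned = clip_style_loss(img, img + 0.05 * rng.normal(size=(8, 32)))
shuffled = clip_style_loss(img, np.roll(img, 1, axis=0))
```

The loss is low when matching pairs sit close together (`aligned`) and high when each image is paired with the wrong text (`shuffled`).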

Quantitative Performance Comparison

The table below summarizes the reported performance of these architectures across various pathology tasks, highlighting their respective strengths.

Table 1: Performance Comparison of Key Architectures on Pathology Tasks

| Architecture | Model Example | Task | Dataset | Performance Metric | Score | Key Advantage |

|---|---|---|---|---|---|---|

| CNN-based | ResNet (Weakly-Supervised) | Microorganism Enumeration [26] | Multiple Microbiology Datasets | Overall Performance | Better | Proven reliability, strong local feature extraction |

| ViT-based | CrossViT (Weakly-Supervised) [26] | Microorganism Enumeration [26] | Multiple Microbiology Datasets | Competent Results | Competent | Computational efficiency on homogeneous data |

| ViT-based | ViT-WSI [28] | Brain Tumor Subtyping | TCGA, In-house | Patient-Level AUC | 0.960 (IDH1) | High interpretability, powerful feature discovery |

| ViT-based | ViT-WSI [28] | Molecular Marker Prediction | TCGA, In-house | Patient-Level AUC | 0.874 (p53), 0.845 (MGMT) | Predicts molecular status from H&E stains |

| VLM | CONCH (Zero-shot) [27] | NSCLC Subtyping | TCGA NSCLC | Accuracy | 90.7% | Large margin over other VLMs, no task-specific training |

| VLM | CONCH (Zero-shot) [27] | RCC Subtyping | TCGA RCC | Accuracy | 90.2% | +9.8% over next-best (PLIP) |

| VLM | CONCH (Zero-shot) [27] | BRCA Subtyping | TCGA BRCA | Accuracy | 91.3% | ~35% improvement over other VLMs |

| Foundation Model | Prov-GigaPath [24] | Pan-Cancer Mutation Prediction | TCGA (18 genes) | Macro AUROC / AUPRC | +3.3% / +8.9% (vs. SOTA) | Whole-slide context modeling, scales to billions of tiles |

Application Notes and Experimental Protocols

Protocol 1: Weakly-Supervised WSI Classification using a ViT-based MIL Framework

This protocol outlines the methodology for major primary brain tumor classification using the ViT-WSI model, a representative ViT-based approach for weakly supervised learning [28].

- Objective: To perform end-to-end classification of WSIs (e.g., brain tumor typing and subtyping) using only slide-level labels and to identify discriminative histological features.

Materials:

- Dataset: WSIs from The Cancer Genome Atlas (TCGA) and/or institutional archives.

- Label Type: Slide-level diagnostic labels (e.g., glioblastoma, astrocytoma).

- Computing: High-memory GPU servers capable of processing thousands of image patches per WSI.

Methodology:

- WSI Preprocessing: Tissue patches are extracted from the WSI at a specified magnification (e.g., 20x). Each patch is processed through a pre-trained feature extractor (e.g., a CNN trained on histopathology data) to obtain a feature vector. The entire WSI is represented as a bag of these feature vectors.

- Feature Projection: The feature vectors are projected into a lower-dimensional space and combined with positional encoding to retain spatial information.

- Vision Transformer Encoder: The sequence of projected patch features is fed into a standard Vision Transformer encoder. The self-attention mechanism within the ViT models the interrelationships between all patches in the WSI, creating a contextualized embedding for each patch.

- Classification Head: The [CLS] token output from the ViT encoder, which aggregates global slide information, is connected to a multi-layer perceptron (MLP) for the final slide-level classification.

- Interpretation via Attribution: A gradient-based attribution analysis (e.g., calculating gradients of the output class with respect to the input patch features) is performed. This highlights the patches that most contributed to the prediction, effectively localizing diagnostic histological features without any pixel-level supervision.

Key Considerations: The Transformer aggregator and classification head are trained end-to-end from slide-level labels. Patch features, however, still come from a pre-trained feature extractor, and its quality can significantly impact final performance.

Protocol 2: Zero-Shot WSI Classification using a Vision-Language Foundation Model

This protocol describes how to use a VLM like CONCH for zero-shot classification of WSIs, which requires no task-specific training data [27].

- Objective: To classify WSIs into diagnostic categories using only natural language descriptions of the classes.

- Materials:

- Pre-trained VLM: A model like CONCH, pretrained on histopathology image-caption pairs.

- Text Prompts: A set of hand-crafted text descriptions for each diagnostic class (e.g., "A whole slide image of invasive ductal carcinoma of the breast").

Methodology:

- Prompt Engineering: Create an ensemble of multiple text prompts for each class to improve robustness (e.g., "breast invasive ductal carcinoma," "a histology image of IDC").

- Text Embedding: Use the VLM's text encoder to generate embedding vectors for all prompts across all classes.

- WSI Tiling and Image Embedding: Divide the target WSI into tiles. Use the VLM's image encoder to generate an embedding vector for each tile.

- Similarity Calculation: Compute the cosine similarity between each tile's image embedding and the text embeddings of all class prompts.

- Score Aggregation: For each class, aggregate the similarity scores across all tiles in the WSI (e.g., by averaging the top-k most similar tiles, or using a method like MI-Zero). The class with the highest aggregated score is the predicted label.

- Heatmap Visualization (Optional): To create an interpretable heatmap, visualize the cosine similarity scores between tiles and the predicted class's text prompt across the WSI canvas.

Key Considerations: Performance is highly dependent on the quality and diversity of the text prompts. This method is particularly powerful for rare diseases or new tasks where collecting labeled data is infeasible.
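The similarity and aggregation steps of this protocol can be sketched with synthetic embeddings. This is a minimal sketch under assumptions: the random vectors stand in for real VLM outputs, `top_k=5` is an illustrative choice, and top-k mean pooling is only one of the aggregation options mentioned above.

```python
import numpy as np

def l2_normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_slide_prediction(tile_emb, prompt_emb_per_class, top_k=5):
    """tile_emb: (N, D); prompt_emb_per_class: list of (P_c, D) arrays."""
    tiles = l2_normalize(tile_emb)
    # Prompt ensembling: average the normalized prompt embeddings per class
    class_emb = l2_normalize(np.stack(
        [l2_normalize(p).mean(axis=0) for p in prompt_emb_per_class]))
    sim = tiles @ class_emb.T                 # (N, C) cosine similarities
    k = min(top_k, sim.shape[0])
    top = np.sort(sim, axis=0)[-k:]           # k most similar tiles per class
    slide_scores = top.mean(axis=0)           # (C,)
    return int(np.argmax(slide_scores)), slide_scores, sim

rng = np.random.default_rng(0)
D = 16
class_dirs = rng.normal(size=(3, D))          # synthetic class "concepts"
prompts = [c + 0.1 * rng.normal(size=(4, D)) for c in class_dirs]
tiles = np.concatenate([rng.normal(size=(50, D)),
                        class_dirs[1] + 0.1 * rng.normal(size=(10, D))])
pred, slide_scores, sim = zero_shot_slide_prediction(tiles, prompts)
```

The column of `sim` for the predicted class holds the per-tile similarity values that would be painted onto the slide canvas for the optional heatmap.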

Table 2: Essential Research Reagents for Weakly Supervised WSI Analysis

| Reagent / Resource | Type | Description | Representative Examples |

|---|---|---|---|

| TCGA (The Cancer Genome Atlas) | Dataset | A large, publicly available repository of WSIs with associated genomic and clinical data, serving as a primary benchmark. | TCGA-BRCA, TCGA-LUAD, TCGA-RCC [27] [28] |

| Prov-Path | Dataset | A very large-scale, real-world dataset from a health network; used for pre-training foundation models. | Prov-GigaPath was pretrained on 1.3 billion tiles from 171k slides [24] |

| CONCH | Vision-Language Model | A VLM pretrained on 1.17M histopathology image-caption pairs; enables zero-shot transfer. | Used for classification, segmentation, and retrieval [27] |

| Prov-GigaPath | Foundation Model | An open-weight, ViT-based foundation model pretrained on Prov-Path; excels at whole-slide context modeling. | Used for cancer subtyping and mutation prediction [24] |

| DINOv2 | Algorithm | A self-supervised learning method used for pre-training feature extractors without labels. | Used to pretrain the tile encoder of Prov-GigaPath [24] |

| MI-Zero | Algorithm | A method for aggregating tile-level predictions in a WSI for zero-shot VLM classification. | Used with CONCH for gigapixel WSI classification [27] |

Advanced Integrated Workflow and Emerging Paradigms

Building on the core protocols, this section outlines a sophisticated, integrated workflow that leverages the strengths of both ViTs and VLMs, and introduces emerging paradigms like weakly semi-supervised learning.

Integrated Workflow: Combining ViT Feature Encoding with VLM Zero-Shot Triage

A powerful approach for real-world deployment involves creating a multi-stage pipeline. This protocol uses a VLM for high-throughput, zero-shot triage of easy cases, and a fine-tuned ViT model for complex diagnostics on uncertain cases [27] [28] [24].

- Objective: To maximize diagnostic accuracy and efficiency by combining the broad, zero-shot capabilities of VLMs with the task-optimized performance of fine-tuned ViTs.

- Materials: A pre-trained VLM, a ViT architecture (e.g., ViT-WSI, Prov-GigaPath), and a dataset with slide-level labels for fine-tuning.

- Methodology:

- Initial Triage: Process all incoming WSIs through the zero-shot VLM pipeline (Protocol 2).

- Confidence Thresholding: Assign cases where the VLM's prediction confidence exceeds a pre-defined, high threshold to the "Automated Diagnosis" stream. These cases are reported automatically.

- Expert Routing: Route all low-confidence cases (e.g., difficult or rare subtypes) for fine-tuned analysis. These WSIs are processed by a specialized, fine-tuned ViT model (following Protocol 1), which was trained on a curated dataset of complex cases.

- Pathologist in the Loop: The predictions from the fine-tuned ViT, along with its interpretability heatmaps, are presented to a pathologist for final review and diagnosis.

- Key Considerations: This workflow optimizes pathologist workload by automating clear-cut cases and providing decision support for challenging ones. The confidence threshold is a critical parameter that requires careful calibration based on clinical requirements.
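The confidence-thresholding and routing logic can be sketched as below. The threshold of 0.95, the class names, and the `TriageDecision` structure are hypothetical; in practice the threshold would be calibrated on held-out data against clinical requirements.

```python
from dataclasses import dataclass

@dataclass
class TriageDecision:
    slide_id: str
    predicted_class: str
    confidence: float
    route: str  # "automated" or "expert_review"

def triage(slide_id, class_probs, threshold=0.95):
    """Route a slide based on the top class probability from the VLM."""
    label, conf = max(class_probs.items(), key=lambda kv: kv[1])
    route = "automated" if conf > threshold else "expert_review"
    return TriageDecision(slide_id, label, conf, route)

# Hypothetical zero-shot outputs for two slides
easy = triage("slide_001", {"LUAD": 0.98, "LUSC": 0.02})
hard = triage("slide_002", {"LUAD": 0.61, "LUSC": 0.39})
```

Here `slide_001` goes to the automated stream, while `slide_002` is routed to the fine-tuned model and pathologist review.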

Protocol 3: Weakly Semi-Supervised Learning with Cross-Consistency (CroCo)

The CroCo framework addresses a realistic clinical scenario where only a small fraction of WSIs have bag-level labels, and the rest are unlabeled. It moves beyond standard weakly supervised learning into a weakly semi-supervised paradigm [29].

- Objective: To achieve robust bag-level and instance-level classification using a limited set of labeled WSIs and a large set of unlabeled WSIs.

- Materials:

- Dataset: A collection of WSIs where only a small subset has slide-level labels.

- Model: A dual-branch architecture with heterogeneous classifiers.

- Methodology:

- Dual-Branch Architecture: The CroCo framework consists of two branches:

- Upper Branch (Bag-based): An attention-based MIL pooler that aggregates instance features to make a bag-level prediction.

- Lower Branch (Instance-based): A classifier that assigns a pseudo-label to each instance.

- Two-Level Cross Consistency:

- Bag-Level Consistency: The bag-level predictions from both branches are enforced to be consistent for the same unlabeled WSI.

- Instance-Level Consistency: The attention scores from the upper branch are used as pseudo-labels to supervise the instance classifier in the lower branch, and vice-versa. For labeled positive bags, the instance-level pseudo-labels are exchanged between branches.

- Training: The model is trained jointly on the labeled data (using true labels) and the unlabeled data (using the consistency constraints).

- Key Considerations: This method efficiently leverages the unlabeled data by exploiting the multi-granularity (bag and instance) consistency between two different model perspectives, significantly improving performance when labeled data is scarce.

In computational pathology, the classification of Whole Slide Images (WSIs) presents a unique computational challenge due to their gigapixel size, often comprising tens of thousands of image tiles [24]. Multiple Instance Learning (MIL) has emerged as the predominant weakly supervised framework for this task, where a WSI is treated as a "bag" containing numerous patches or "instances" [30]. A significant breakthrough has been the integration of foundation models as powerful feature extractors for these instances. These models, pre-trained on massive datasets, provide generalized, information-rich tile embeddings that dramatically enhance the performance of MIL frameworks on critical tasks such as cancer subtyping, biomarker prediction, and prognosis stratification [31]. This application note explores the synergy between pathology foundation models and MIL, providing a quantitative benchmark of current models, detailed protocols for their implementation, and visualization of the leading architectures driving innovation in the field.

Benchmarking Pathology Foundation Models

Recent independent benchmarking studies have comprehensively evaluated numerous pathology foundation models across thousands of slides and dozens of clinically relevant tasks. The table below summarizes the performance of top-performing models based on their mean Area Under the Receiver Operating Characteristic curve (AUROC) across different task categories.

Table 1: Benchmarking Performance of Leading Pathology Foundation Models

| Foundation Model | Model Type | Morphology Tasks (Mean AUROC) | Biomarker Tasks (Mean AUROC) | Prognosis Tasks (Mean AUROC) | Overall Mean AUROC |

|---|---|---|---|---|---|

| CONCH | Vision-Language | 0.77 | 0.73 | 0.63 | 0.71 |

| Virchow2 | Vision-Only | 0.76 | 0.73 | 0.61 | 0.71 |

| Prov-GigaPath | Vision-Only | - | 0.72 | - | 0.69 |

| DinoSSLPath | Vision-Only | 0.76 | - | - | 0.69 |

| BiomedCLIP | Vision-Language | - | - | 0.61 | 0.66 |

This benchmarking study, which involved 19 foundation models and 31 tasks across 6,818 patients, revealed that CONCH, a vision-language model trained on 1.17 million image-caption pairs, and Virchow2, a vision-only model trained on 3.1 million WSIs, achieved the highest overall performance [31]. A key finding was that foundation models trained on distinct cohorts learn complementary features; an ensemble combining CONCH and Virchow2 predictions outperformed individual models in 55% of tasks [31]. Furthermore, the research indicated that data diversity in pre-training may outweigh data volume, as CONCH outperformed BiomedCLIP despite being trained on far fewer image-caption pairs [31].
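The idea of ensembling two models' predictions and comparing AUROC can be illustrated on toy data. The scores below are synthetic values invented for the example, not results from the benchmark, and simple probability averaging is assumed as the ensembling rule.

```python
def auroc(labels, scores):
    """AUROC via the rank-sum (Mann-Whitney U) formulation; ties count 0.5."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Synthetic slide-level labels and predicted probabilities (illustrative only)
labels = [1, 1, 1, 0, 0, 0]
model_a = [0.9, 0.4, 0.7, 0.5, 0.2, 0.1]
model_b = [0.6, 0.8, 0.3, 0.2, 0.4, 0.1]
ensemble = [(a + b) / 2 for a, b in zip(model_a, model_b)]

for name, scores in [("A", model_a), ("B", model_b), ("A+B", ensemble)]:
    print(name, round(auroc(labels, scores), 3))
```

In this toy case each model misranks a different pair of slides, so averaging corrects both errors; this mirrors, in miniature, how models with complementary features can benefit from ensembling.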

Table 2: Key Characteristics of Top-Performing Foundation Models

| Foundation Model | Pre-training Dataset Scale | Architecture | Key Strengths |

|---|---|---|---|

| CONCH | 1.17M image-caption pairs [31] | Vision-Language [31] | Highest overall performance, excels in morphology tasks [31] |

| Virchow2 | 3.1M WSIs [31] | Vision-Only [31] | Top performance in biomarker prediction, strong in low-data settings [31] |

| Prov-GigaPath | 171,189 WSIs (1.3B tiles) [24] | Vision-Transformer (LongNet) [24] | State-of-the-art whole-slide context modeling [24] |

| TCv2 | Openly available data (TCGA, CPTAC) [32] | Supervised Multi-task Learning [32] | Resource-efficient training, state-of-the-art in cancer subtyping [32] |

Experimental Protocols for MIL-Based WSI Classification

Feature Extraction Using Foundation Models

Objective: To generate high-quality tile-level feature embeddings from a gigapixel WSI using a pre-trained foundation model for downstream MIL aggregation.

Materials and Reagents:

- Whole Slide Image (WSI): A gigapixel image file (e.g., in .svs, .ndpi format).

- Pre-trained Foundation Model: Such as CONCH, Virchow2, or Prov-GigaPath.

- Computational Resources: A GPU-equipped workstation (e.g., NVIDIA A100) with sufficient VRAM (>16GB recommended).

Procedure:

- WSI Pre-processing:

- Load the WSI using a library like OpenSlide.

- Tile the WSI at a specified magnification level (e.g., 20x) into non-overlapping patches of standard size (e.g., 256x256 pixels).

- Perform background detection and filtering to remove non-tissue tiles using thresholding algorithms (e.g., based on color or intensity).

- Feature Extraction:

- Load the pre-trained weights of the chosen foundation model.

- Pass each valid image tile through the model to extract a feature vector.

- For vision transformer-based models like Prov-GigaPath, this yields an embedding for each tile [24].

- The output is a feature matrix of dimensions N x D, where N is the number of tiles and D is the dimensionality of the feature vector.
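The tiling, background filtering, and feature-matrix steps can be sketched on a synthetic image. This is a simplified stand-in: a NumPy array replaces the slide that would normally be read with a library such as OpenSlide, the intensity threshold of 220 is an assumed value, and `encode` is a hypothetical placeholder for a foundation-model encoder.

```python
import numpy as np

def tile_coords(width, height, tile=256):
    """Top-left corners of non-overlapping tiles fully inside the image."""
    return [(x, y) for y in range(0, height - tile + 1, tile)
                   for x in range(0, width - tile + 1, tile)]

def is_tissue(rgb_tile, white_thresh=220, min_tissue_frac=0.5):
    """Keep a tile if enough pixels are darker than near-white background."""
    gray = rgb_tile.mean(axis=-1)
    return (gray < white_thresh).mean() >= min_tissue_frac

def encode(rgb_tile):
    """Placeholder encoder (assumption): per-channel mean and std."""
    flat = rgb_tile.reshape(-1, 3).astype(np.float64)
    return np.concatenate([flat.mean(axis=0), flat.std(axis=0)])

# Synthetic 1024x768 "slide": white background with one stained rectangle
slide = np.full((768, 1024, 3), 245, dtype=np.uint8)
slide[256:512, 256:768] = (180, 90, 160)

kept = [(x, y) for x, y in tile_coords(1024, 768)
        if is_tissue(slide[y:y + 256, x:x + 256])]
features = np.stack([encode(slide[y:y + 256, x:x + 256]) for x, y in kept])
```

Here `features` is the N x D matrix (2 x 6 in this toy case); with a real encoder, D would be the model's embedding dimension.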

MIL Aggregation and Classification

Objective: To aggregate tile-level features into a slide-level representation and perform a classification task (e.g., mutation prediction).

Materials and Reagents:

- Feature Matrix: The N x D matrix from the previous protocol.

- MIL Framework: Such as an Attention-based MIL (ABMIL) or a Transformer-based aggregator.

Procedure:

- Feature Aggregation:

- Input the feature matrix into the MIL model.

- The model learns to assign attention weights to each tile, identifying diagnostically relevant regions.

- A slide-level embedding is generated as a weighted sum of all tile features.

Classification Head:

- The slide-level embedding is passed through a classifier, typically a fully connected neural network layer, to produce a prediction (e.g., BRAF mutation positive/negative).

Training and Evaluation:

- Train the MIL model end-to-end using slide-level labels. The foundation model's feature extractor can be frozen or fine-tuned.

- Evaluate performance on a held-out test set using metrics such as AUROC and AUPRC.

Advanced Methodologies and Visualization

Workflow Diagram

The following diagram illustrates the end-to-end pipeline for WSI classification using foundation models within an MIL framework.

The CAMCSA Architecture

To address limitations of standard attention-based MIL, novel architectures like CAMCSA (Class Activation Map with Cross-Slide Augmentation) have been developed [30] [33]. The diagram below details its structure.

The WSICAM module introduces Class Activation Map (CAM) theory to generate instance scores that more accurately represent each tile's contribution to the final slide-level classification, moving beyond simple feature similarity [30] [33]. The CSA module leverages these scores to select significant instances from two different slides, creating a "mixed bag" with a blended label. This augmentation technique enhances model generalization and is particularly effective for imbalanced datasets [30].
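The cross-slide mixing idea can be sketched as follows. The exact selection and blending rules of CAMCSA are not given here, so this sketch assumes a mixup-style scheme: the highest-scoring instances of each slide are concatenated, and the label is blended in proportion to each slide's contribution. The function name and parameters are hypothetical.

```python
import numpy as np

def cross_slide_mix(feats_a, scores_a, label_a, feats_b, scores_b, label_b,
                    lam=0.5, bag_size=8):
    """Build a mixed bag from the top-scoring instances of two slides."""
    n_a = int(round(lam * bag_size))
    n_b = bag_size - n_a
    top_a = np.argsort(scores_a)[::-1][:n_a]      # highest scores first
    top_b = np.argsort(scores_b)[::-1][:n_b]
    mixed = np.concatenate([feats_a[top_a], feats_b[top_b]])
    frac_a = len(top_a) / len(mixed)
    # Blend the one-hot labels by each slide's share of the mixed bag
    mixed_label = frac_a * label_a + (1 - frac_a) * label_b
    return mixed, mixed_label

rng = np.random.default_rng(0)
feats_a, feats_b = rng.normal(size=(20, 4)), rng.normal(size=(30, 4))
mixed, mixed_label = cross_slide_mix(
    feats_a, rng.random(20), np.array([1.0, 0.0]),
    feats_b, rng.random(30), np.array([0.0, 1.0]), lam=0.75, bag_size=8)
```

With `lam=0.75`, six instances come from the first slide and two from the second, giving a soft label of [0.75, 0.25] for training.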

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Resources for Implementing Foundation Model-Driven WSI Analysis

| Category / Item | Specification / Example | Function & Application Note |

|---|---|---|

| Foundation Models | CONCH, Virchow2, Prov-GigaPath, TCv2 [31] [24] [32] | Pre-trained feature extractors. Select based on task: vision-language for morphology, large-scale vision-only for biomarkers. |

| MIL Aggregators | ABMIL, Transformer-based, CAMCSA [31] [30] | Algorithms to aggregate tile features. Transformer-based and advanced methods like CAMCSA often outperform simple attention. |

| Computational Hardware | NVIDIA A100/A6000 GPU [32] | Accelerates feature extraction and model training. Essential for processing gigapixel WSIs in a feasible time. |

| WSI Datasets | TCGA, CPTAC, Camelyon16, in-house cohorts [32] | Source of WSIs for training and validation. Ensure external validation cohorts to test generalizability [31]. |

| Software Libraries | Python, PyTorch, OpenSlide, HiMLS | Core programming environment and tools for WSI handling, model implementation, and data management. |

The synergy between foundation models and MIL frameworks represents a paradigm shift in computational pathology. By providing powerful, general-purpose feature representations, foundation models like CONCH and Virchow2 have significantly elevated the performance ceiling for weakly supervised WSI classification on tasks ranging from biomarker prediction to prognosis. The experimental protocols and advanced methodologies detailed herein provide a roadmap for researchers to leverage these tools effectively. Future progress will likely be driven by more data-efficient and explainable architectures, such as CAMCSA and TCv2, further cementing the role of foundation models as the cornerstone of digital pathology analysis.

From Theory to Practice: Implementing Weakly Supervised Pipelines with Foundation Models

Whole Slide Image (WSI) classification is a cornerstone of computational pathology, enabling automated diagnosis, prognosis, and biomarker prediction from gigapixel tissue scans. A typical WSI analysis pipeline involves a sequence of critical steps: tissue segmentation to distinguish relevant tissue from background, patching to divide the gigapixel image into manageable segments, and feature extraction to convert pixel data into meaningful numerical representations. The emergence of foundation models, pre-trained on massive datasets via self-supervised learning, has revolutionized feature extraction, facilitating robust and data-efficient downstream analysis. Furthermore, the paradigm of weakly supervised learning, which utilizes only slide-level labels for training, has gained significant traction as it alleviates the need for expensive and tedious pixel-level annotations. This document details the application notes and protocols for constructing a WSI classification pipeline within the context of using foundation models for weakly supervised whole-slide image classification research.

Core Building Blocks of the WSI Pipeline

The processing of a WSI involves a multi-stage pipeline where each stage is critical for the overall performance of the classification system. The workflow progresses from raw WSI input to a final slide-level prediction, with intermediate steps of tissue segmentation, patching, and feature extraction feeding into a weakly supervised classification model. The following diagram illustrates the logical flow and key decision points within this pipeline.

Tissue Segmentation

Purpose and Goal: The initial and crucial step in WSI analysis is tissue segmentation, which involves identifying and separating biologically relevant tissue regions from the background (e.g., glass slide, air bubbles, pen marks). This process drastically reduces the computational load by ensuring that subsequent processing is focused only on meaningful areas.

Detailed Protocol:

- Input: A gigapixel WSI at its highest magnification level.

- Thumbnail Generation: Generate a low-resolution thumbnail (e.g., 5x or 10x magnification) of the entire WSI to simplify the segmentation task.

- Color Normalization (Optional): Apply color normalization techniques to standardize stain variations across different scanners and laboratories, improving segmentation robustness.

- Segmentation Model:

- Model Architecture: A U-Net is a commonly used architecture for this semantic segmentation task. An in-house trained U-Net model can be utilized to segment tissue versus background on the low-magnification thumbnail [34].

- Output: A binary mask where pixels corresponding to tissue are labeled 1, and background pixels are labeled 0.

- Mask Application: The generated binary mask is used to filter out background regions during the patching step. Only patches that fall within the tissue mask are processed further.

Patching and Representative Patch Selection

Purpose and Goal: Given the gigapixel size of WSIs, they must be divided into smaller, manageable patches for analysis. A key challenge is selecting a representative subset of patches that capture the morphological diversity of the tissue while minimizing redundancy, particularly from uninformative normal regions.

Detailed Protocol:

- Input: The original WSI and its corresponding tissue segmentation mask.

- Grid-based Patching: Overlay a grid on the WSI at a specified magnification (typically 20x) and extract patches of a fixed size (e.g., 256x256 or 512x512 pixels).

- Foreground Filtering: Discard any patch where less than a certain threshold (e.g., 50%) of its area is covered by the tissue mask.

- Representative Patch Selection: Several strategies exist to select the most informative patches and avoid processing thousands of redundant ones.

- Clustering-based Mosaics: As implemented in the Yottixel tool, patches can be clustered based on color and texture features. A representative subset (a "mosaic") is selected from each cluster to ensure morphological diversity [34].

- Anomaly Detection with a "Normal Atlas": For tasks focused on pathology, a powerful strategy is to prune patches of normal tissue. An atlas of normal tissue can be created using one-class classifiers (e.g., Isolation Forest, one-class SVM) trained on deep features from confirmed normal WSIs. This model can then identify and filter out "normal" patches from a new WSI, leaving a concise set of patches enriched for abnormal morphology [34]. This can reduce the number of patches by 30-50% without sacrificing performance.
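The grid-based patching and foreground filtering steps can be sketched as below. A frequent source of bugs is that the tissue mask lives at thumbnail resolution while patch coordinates are at full resolution, so the sketch maps each patch footprint into mask coordinates first. The mask, slide size, and downsample factor are synthetic assumptions.

```python
import numpy as np

def filter_patches_by_mask(mask, downsample, slide_w, slide_h,
                           patch=256, min_tissue=0.5):
    """Return level-0 (x, y) corners of patches with enough tissue.

    mask: binary thumbnail mask (1 = tissue); downsample: number of
    level-0 pixels per mask pixel.
    """
    kept = []
    for y in range(0, slide_h - patch + 1, patch):
        for x in range(0, slide_w - patch + 1, patch):
            # Map the level-0 patch footprint into mask coordinates
            x0, y0 = x // downsample, y // downsample
            x1 = max(x0 + 1, (x + patch) // downsample)
            y1 = max(y0 + 1, (y + patch) // downsample)
            if mask[y0:y1, x0:x1].mean() >= min_tissue:
                kept.append((x, y))
    return kept

mask = np.zeros((32, 32), dtype=np.uint8)   # thumbnail at 1/32 resolution
mask[8:24, 8:20] = 1                        # synthetic tissue region
kept = filter_patches_by_mask(mask, downsample=32, slide_w=1024, slide_h=1024)
```

Four of the sixteen grid patches survive here, including two that are exactly 50% tissue; raising `min_tissue` would trade coverage of tissue borders for fewer near-empty patches.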

Feature Extraction Using Foundation Models

Purpose and Goal: Feature extraction transforms image patches into a low-dimensional, numerical vector that encapsulates the salient visual and semantic information. Foundation models, pre-trained on vast histopathology datasets, have become the preferred method for this, providing highly transferable and powerful features.

Detailed Protocol:

- Input: The selected representative patches from the previous step.

- Foundation Model Selection: Choose a pre-trained foundation model based on the task and data availability. Recent benchmarks indicate that vision-language models like CONCH and vision-only models like Virchow2 are top performers across various tasks, including morphology assessment, biomarker prediction, and prognosis [31].

- Feature Extraction:

- Process: Pass each image patch through the foundation model's encoder. The output of the penultimate layer, i.e., the representation just before any task-specific head (e.g., a 768-dimensional or 1024-dimensional vector), is extracted as the feature embedding for that patch.

- Output: A set of feature vectors, one for each patch, which collectively represent the WSI. These features serve as input to the weakly supervised classification models.
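The extraction loop described above can be sketched as below. The encoder here is a hypothetical stand-in (a fixed random projection of per-channel means) so that the example runs without downloading model weights; in a real pipeline it would be replaced by the frozen encoder of the chosen foundation model, loaded according to that model's own documentation. The names `extract_features` and `make_toy_encoder` are illustrative only.

```python
import numpy as np

def extract_features(patches, encoder, batch_size=32):
    """Encode patches in batches and stack the embeddings into an (N, D) array."""
    embeddings = []
    for start in range(0, len(patches), batch_size):
        batch = np.stack(patches[start:start + batch_size])  # (B, H, W, 3)
        embeddings.append(encoder(batch))                     # (B, D)
    return np.concatenate(embeddings, axis=0)

def make_toy_encoder(embed_dim=768, seed=0):
    """Hypothetical stand-in for a foundation model encoder (NOT a real model)."""
    rng = np.random.default_rng(seed)
    projection = rng.normal(size=(3, embed_dim))

    def encoder(batch):
        # Per-channel mean of each patch, projected to embed_dim dimensions.
        channel_means = batch.mean(axis=(1, 2))  # (B, 3)
        return channel_means @ projection        # (B, embed_dim)

    return encoder

# Toy usage: 70 small random "patches" become a bag of 70 feature vectors.
rng = np.random.default_rng(1)
toy_patches = [rng.random((16, 16, 3)) for _ in range(70)]
toy_encoder = make_toy_encoder()
features = extract_features(toy_patches, toy_encoder, batch_size=32)
print(features.shape)  # (70, 768)
```

Because the encoder stays frozen, the resulting array can be computed once per slide and cached on disk, which is what makes comparing several aggregation models on the same features inexpensive.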

Table 1: Benchmarking of Select Pathology Foundation Models for Feature Extraction

| Foundation Model | Model Type | Key Strength | Reported Avg. AUROC (Morphology) | Reported Avg. AUROC (Biomarker) | Reported Avg. AUROC (Prognosis) |
|---|---|---|---|---|---|
| CONCH [31] | Vision-Language | Highest overall performance, especially in morphology and prognosis | 0.77 | 0.73 | 0.63 |
| Virchow2 [31] | Vision-Only | Top performance in biomarker prediction, close second overall | 0.76 | 0.73 | 0.61 |
| TITAN [10] | Multimodal WSI | Generates general-purpose slide representations; excels in low-data scenarios | N/A | N/A | N/A |
| DinoSSLPath [31] | Vision-Only | Strong all-around performer | 0.76 | 0.69 | N/A |

Weakly Supervised Classification with Slide-Level Labels

With feature embeddings extracted for every patch in a WSI, the next step is to aggregate them to make a single slide-level prediction using only the overall slide label. This is typically framed as a Multiple Instance Learning (MIL) problem.

Attention-based Multiple Instance Learning

Clustering-constrained Attention Multiple Instance Learning (CLAM) is a seminal data-efficient method that creates a slide-level representation from patch features [11].

Detailed Protocol:

- Input: A bag of feature vectors {x₁, x₂, ..., xₙ} for a WSI and its slide-level label Y.

- Attention Branch: The model uses an attention-based pooling module to assign an attention score aₖ to each patch, representing its importance for the slide's classification.

- Slide-level Representation: A weighted average of all patch features is computed using the attention scores, resulting in a single, refined slide-level feature vector.

- Classification: This slide-level vector is passed through a fully connected layer for classification.

- Instance-level Clustering (Key Innovation): CLAM introduces an auxiliary learning objective. During training, it uses the attention scores to generate pseudo-labels for the most and least attended patches, encouraging the model to cluster patch-level features in a semantically meaningful way. This provides additional supervision and improves data efficiency [11].
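The attention pooling and pseudo-labeling steps above can be illustrated with a small NumPy sketch. It assumes a gated attention form (a tanh branch multiplied by a sigmoid gate), which is one common choice for attention-based MIL; the weight matrices `V`, `U`, and `w` are random here purely for illustration, whereas in a trained model they are learned. This is a sketch in the spirit of the protocol, not the CLAM codebase.

```python
import numpy as np

def softmax(z):
    z = z - z.max()  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

def gated_attention_pool(features, V, U, w):
    """features: (n, d); V, U: (d, h); w: (h,).

    Returns the attention scores (n,) and the slide-level vector (d,).
    """
    gate = np.tanh(features @ V) * (1.0 / (1.0 + np.exp(-(features @ U))))
    attention = softmax(gate @ w)         # one score per patch, sums to 1
    slide_vector = attention @ features   # attention-weighted average
    return attention, slide_vector

def instance_pseudo_labels(attention, k):
    """Indices of the k most attended and the k least attended patches."""
    order = np.argsort(attention)
    return order[-k:], order[:k]

# Toy bag: 50 patches with 16-dimensional features (illustrative sizes).
rng = np.random.default_rng(0)
bag = rng.normal(size=(50, 16))
V = rng.normal(size=(16, 8))
U = rng.normal(size=(16, 8))
w = rng.normal(size=8)

attention, slide_vector = gated_attention_pool(bag, V, U, w)
top_idx, bottom_idx = instance_pseudo_labels(attention, k=5)
print(slide_vector.shape, top_idx, bottom_idx)
```

During training, the most attended patches would serve as positive pseudo-labeled instances and the least attended as negatives for the auxiliary clustering objective, while `slide_vector` feeds the slide-level classifier.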

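Taken together, these steps form CLAM's internal workflow: patch embeddings are aggregated by attention into a slide-level representation for classification, while the auxiliary clustering objective shapes the patch-level feature space during training.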

Joint Segmentation and Classification

WholeSIGHT is a graph-based method that performs weakly supervised segmentation and classification simultaneously, using only slide-level labels [35] [36].

Detailed Protocol:

- Input: A WSI.

- Tissue Graph Construction: The WSI is converted into a graph where nodes represent superpixels (small, homogeneous tissue regions) and edges represent the interactions between adjacent regions [36].

- Graph Neural Network (GNN): A GNN processes the tissue graph to contextualize node embeddings.

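- Dual-Headed Architecture: The model has two heads. A graph classification head predicts the slide-level class and is trained with the slide-level label. A node classification head predicts a class for each superpixel node and is trained with pseudo-labels derived from the graph classification head through graph attribution.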

- Output: At inference time, the model outputs both the slide-level class and a segmentation map highlighting the histological regions of interest.

Table 2: Comparison of Weakly Supervised Methods for WSI Analysis

| Method | Core Architecture | Key Innovation | Outputs | Notable Application |
|---|---|---|---|---|
| CLAM [11] | Attention-based MIL | Instance-level clustering for data efficiency | Slide-level classification & attention heatmaps | Subtyping of RCC and NSCLC; lymph node metastasis detection |
| WholeSIGHT [35] [36] | Graph Neural Network | Generates node pseudo-labels from graph attribution | Joint slide-level classification and semantic segmentation | Gleason pattern segmentation and grading in prostate cancer |
| DG-WSDH [37] | Dynamic Graph + Hashing | Deep hashing for classification and image retrieval | Slide-level classification & binary codes for retrieval | WSI and patch-level retrieval tasks |

Experimental Protocols

Protocol: Benchmarking Foundation Models as Feature Extractors

Objective: To evaluate and select the most suitable foundation model for a specific weakly supervised task (e.g., cancer subtyping).

Materials:

- Dataset: A cohort of WSIs with slide-level labels, split into training, validation, and test sets.

- Foundation Models: Pre-trained models such as CONCH, Virchow2, UNI, etc.

- Software: A weakly supervised framework like CLAM.

Procedure:

- Pre-processing: For all WSIs in the cohort, perform tissue segmentation and patching at 20x magnification (e.g., 256x256 px).

- Feature Extraction: Extract feature embeddings for each patch using each foundation model under evaluation.

- Model Training: For each set of features, train an identical weakly supervised model (e.g., CLAM) using the same training/validation split and hyperparameters.

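- Evaluation: Compare the performance of the models on the held-out test set using metrics such as Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC). Assess whether the differences between models are statistically significant, for example with bootstrap confidence intervals or a paired test such as DeLong's.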

Expected Output: A performance ranking of foundation models for the specific task, guiding the optimal model selection [31].
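The evaluation step can be sketched as follows, under the assumption that each foundation model yields one predicted score per test slide. AUROC is computed from pairwise comparisons of positive and negative slides (equivalent to the Mann-Whitney statistic), and the difference between two models is assessed with a paired percentile bootstrap. The function names and the synthetic scores are illustrative; the bootstrap is one reasonable choice, not the procedure prescribed by the benchmark in [31].

```python
import numpy as np

def auroc(y_true, y_score):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly."""
    y_true = np.asarray(y_true, dtype=bool)
    y_score = np.asarray(y_score, dtype=float)
    pos, neg = y_score[y_true], y_score[~y_true]
    if len(pos) == 0 or len(neg) == 0:
        raise ValueError("AUROC needs at least one positive and one negative slide")
    diff = pos[:, None] - neg[None, :]
    # Ties between a positive and a negative slide count as half correct.
    return float((diff > 0).mean() + 0.5 * (diff == 0).mean())

def bootstrap_auroc_difference(y_true, scores_a, scores_b, n_boot=1000, seed=0):
    """Percentile 95% CI for AUROC(a) - AUROC(b), resampling slides in pairs."""
    y_true = np.asarray(y_true, dtype=bool)
    scores_a = np.asarray(scores_a, dtype=float)
    scores_b = np.asarray(scores_b, dtype=float)
    if y_true.all() or not y_true.any():
        raise ValueError("Both classes must be present in the test set")
    rng = np.random.default_rng(seed)
    n = len(y_true)
    diffs = []
    while len(diffs) < n_boot:
        idx = rng.integers(0, n, size=n)
        if y_true[idx].all() or not y_true[idx].any():
            continue  # skip resamples that contain a single class
        diffs.append(auroc(y_true[idx], scores_a[idx]) - auroc(y_true[idx], scores_b[idx]))
    low, high = np.percentile(diffs, [2.5, 97.5])
    return float(low), float(high)

# Small hand-checkable example.
labels = [0, 0, 1, 1]
print(auroc(labels, [0.1, 0.4, 0.35, 0.8]), auroc(labels, [0.2, 0.3, 0.6, 0.9]))  # 0.75 1.0

# Synthetic cohort of 200 slides scored by two hypothetical feature extractors.
rng = np.random.default_rng(0)
cohort_labels = rng.integers(0, 2, size=200)
scores_a = cohort_labels + rng.normal(scale=1.0, size=200)
scores_b = cohort_labels + rng.normal(scale=2.0, size=200)
low, high = bootstrap_auroc_difference(cohort_labels, scores_a, scores_b, n_boot=500)
print(f"95% CI for AUROC difference: [{low:.3f}, {high:.3f}]")
```

If the interval excludes zero, the data support a difference between the two feature extractors on this task. Resampling slides in pairs keeps the comparison matched, since both models are always scored on the same resampled cohort.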

Protocol: Implementing Weakly Supervised Segmentation with WholeSIGHT

Objective: To train a model that can simultaneously classify a WSI and segment its diagnostically relevant regions using only slide-level labels.

Materials:

- Dataset: WSIs with slide-level labels (e.g., Gleason grade). Pixel-level annotations for a test set are required for segmentation evaluation.

- Software: The WholeSIGHT codebase.

Procedure:

- Graph Construction: For each WSI, generate a tissue graph. This involves:

- Superpixel Generation: Use an algorithm like SLIC to oversegment the WSI into superpixels.

- Node Feature Extraction: Extract features from each superpixel region, potentially using a pre-trained CNN.

- Edge Definition: Connect nodes based on spatial proximity of superpixels.

- Model Training: Train the WholeSIGHT model end-to-end.

- The graph classification head is trained via the slide-level label.

- The node classification head is trained using pseudo-labels generated from the graph classification head via a method like Grad-CAM.

- Inference and Evaluation:

- Classification: Evaluate slide-level prediction accuracy on the test set.

- Segmentation: Generate the segmentation map by inferring node classes and compare against pixel-level ground truth using metrics like Dice Similarity Coefficient (DSC) [36].
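Two pieces of this protocol lend themselves to a short sketch: defining graph edges from spatially adjacent superpixels, and scoring a predicted segmentation with the Dice Similarity Coefficient. The code below assumes a superpixel label map is already available (for example from SLIC) and uses 4-connectivity to decide adjacency; both function names are hypothetical and the sketch is independent of the WholeSIGHT codebase.

```python
import numpy as np

def region_adjacency_edges(labels):
    """Undirected edges between superpixels that touch horizontally or vertically."""
    labels = np.asarray(labels)
    pairs = np.concatenate([
        # Horizontally neighbouring pixels.
        np.stack([labels[:, :-1].ravel(), labels[:, 1:].ravel()], axis=1),
        # Vertically neighbouring pixels.
        np.stack([labels[:-1, :].ravel(), labels[1:, :].ravel()], axis=1),
    ])
    pairs = pairs[pairs[:, 0] != pairs[:, 1]]  # drop pairs inside one superpixel
    pairs = np.sort(pairs, axis=1)             # make (a, b) and (b, a) identical
    return sorted({(int(a), int(b)) for a, b in pairs})

def dice_coefficient(pred, truth):
    """Dice Similarity Coefficient between two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    denom = pred.sum() + truth.sum()
    if denom == 0:
        return 1.0  # both masks empty: treated as perfect agreement
    return float(2.0 * np.logical_and(pred, truth).sum() / denom)

# Toy label map with three superpixels (0, 1, 2).
toy_labels = np.array([
    [0, 0, 1],
    [0, 2, 1],
    [2, 2, 1],
])
print(region_adjacency_edges(toy_labels))  # [(0, 1), (0, 2), (1, 2)]

pred_mask = np.array([[1, 1], [0, 0]])
true_mask = np.array([[1, 0], [0, 0]])
print(round(dice_coefficient(pred_mask, true_mask), 3))  # 0.667
```

For multi-class segmentation such as Gleason patterns, the Dice score would typically be computed per class on binarized masks and then averaged.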

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources for WSI Analysis

| Resource Name | Type | Function/Purpose | Reference/Resource |
|---|---|---|---|
| CLAM | Software Package | A high-throughput, data-efficient framework for weakly supervised WSI classification using attention-based MIL. | GitHub Repository [11] |
| WholeSIGHT | Software Package | A graph-based method for joint weakly supervised segmentation and classification of WSIs. | GitHub Repository [35] |
| CONCH / Virchow2 | Foundation Model | Pre-trained models for extracting state-of-the-art feature embeddings from histopathology patches. | [Publication & Model Zoos] [31] |
| Yottixel | Software Tool | Creates a mosaic of representative patches from a WSI for efficient downstream processing. | [Publication] [34] |
| Normal Tissue Atlas | Method/Protocol | A one-class classifier approach to filter out normal tissue patches, improving patch selection efficiency. | [Publication] [34] |

The advent of digital pathology has generated vast quantities of whole slide images (WSIs), creating unprecedented opportunities for artificial intelligence to transform diagnostic practices. Foundation models, pre-trained on massive datasets using self-supervised learning (SSL), have emerged as powerful tools that learn general-purpose feature representations from unlabeled histopathology images. These models address a critical bottleneck in computational pathology: the scarcity of expensive, expert-curated labels. Unlike task-specific models that require extensive labeled data for each new application, foundation models can be adapted to diverse downstream tasks with minimal fine-tuning, making them particularly valuable for weakly supervised learning scenarios where only slide-level labels are available.

This paradigm is especially relevant for WSI classification, where gigapixel images are too large to process directly. Weakly supervised multiple instance learning (MIL) approaches overcome this by treating a WSI as a "bag" of smaller image patches ("instances"), allowing models to predict slide-level labels while potentially identifying diagnostically relevant regions. Foundation models serve as superior feature extractors for these patches, capturing rich morphological patterns that generalize across various diagnostic tasks, from cancer subtyping and biomarker prediction to rare disease detection. This document provides a detailed overview of five leading pathology foundation models—CONCH, Virchow2, UNI, CTransPath, and Phikon—focusing on their architectures, performance, and practical applications in weakly supervised WSI classification research.

Model Specifications and Comparative Analysis

Technical Specifications of Leading Pathology Foundation Models

| Model Name | Architecture Type | Training Data Scale | Parameters | Primary Training Data Sources | Key Innovation / Focus |
|---|---|---|---|---|---|
| CONCH [27] [38] | Vision-Language (Multimodal) | 1.17M image-caption pairs [27] | 86 million [39] | Diverse public sources (PubMed, Twitter); No TCGA/PAIP [38] | Contrastive learning aligning images with biomedical text |
| Virchow2 [31] | Vision-Only (ViT) | 3.1 million WSIs [31] | Information Missing | Proprietary (MSKCC) [40] | Extreme scale; DINOv2 self-supervised learning |
| UNI [41] | Vision-Only (ViT) | 100k WSIs (UNI v1); >200M images from 350k+ WSIs (UNI2) [41] | 307 million (UNI v1) [40] | Mass-100K (proprietary) [39] | General-purpose feature extraction; Scalability |
| CTransPath [42] | Hybrid CNN-Transformer | 15.6M patches from 32.2k WSIs [43] | Information Missing | TCGA, PAIP (public) [42] | Combines local (CNN) and global (Transformer) feature learning |
| Phikon [40] [31] | Vision-Only (ViT) | 6,000 WSIs [39] | 86.4 million [39] | TCGA (public) [39] | SSL adaptation for pathology with a smaller dataset |

Performance Benchmarking Across Key Domains

Recent independent benchmarking studies provide critical insights into the real-world performance of these models. A comprehensive evaluation of 19 foundation models across 31 clinical tasks—including morphology, biomarkers, and prognostication—revealed that CONCH and Virchow2 achieved the highest overall performance [31].

Table: Model Performance Across Task Types (Mean AUROC) [31]

| Model | Morphology (5 tasks) | Biomarkers (19 tasks) | Prognosis (7 tasks) | Overall (31 tasks) |
|---|---|---|---|---|
| CONCH | 0.77 | 0.73 | 0.63 | 0.71 |
| Virchow2 | 0.76 | 0.73 | 0.61 | 0.71 |
| Prov-GigaPath | 0.72 | 0.72 | 0.60 | 0.69 |
| DinoSSLPath | 0.76 | 0.68 | 0.59 | 0.69 |
| UNI | 0.71 | 0.68 | 0.60 | 0.68 |
| Virchow | 0.70 | 0.67 | 0.60 | 0.67 |
| CTransPath | 0.70 | 0.67 | 0.59 | 0.67 |
| Phikon | 0.69 | 0.65 | 0.58 | 0.65 |
| PLIP | 0.67 | 0.63 | 0.58 | 0.64 |

This benchmark shows that CONCH, a vision-language model pre-trained on 1.17 million image-caption pairs, performs on par with Virchow2, a vision-only model pre-trained on 3.1 million WSIs. The result suggests that incorporating textual information during pre-training can offset a much smaller image corpus [31].

Experimental Protocols for Weakly Supervised WSI Classification

Standardized Workflow for Downstream Task Evaluation

Implementing a foundation model for a weakly supervised classification task involves a multi-stage pipeline that runs from WSI processing to slide-level prediction. The key steps are described below.

Detailed Protocol Steps

Step 1: WSI Preprocessing and Patching

- Tissue Segmentation: Use saturation thresholding or other algorithms to distinguish tissue regions from background [44]. This critical step eliminates non-informative image areas.