Foundation Models in Computational Pathology: A Comprehensive Guide for Biomedical Research

Abstract

Foundation models are transforming computational pathology by providing versatile AI trained on massive datasets of histopathology images. This article explores how these models, pretrained via self-supervised learning on millions of whole slide images, enable powerful applications in cancer diagnosis, biomarker prediction, and patient prognosis with minimal task-specific labeling. We detail the core methodologies, from vision-only to multimodal architectures, and address key implementation challenges like data scarcity and computational cost. Through rigorous benchmarking and validation studies, we compare leading models like Virchow, CONCH, and PathOrchestra, providing insights for researchers and drug development professionals to select and optimize these tools for precision medicine and therapeutic R&D.

What Are Pathology Foundation Models? Core Concepts and Evolutionary Shift

Foundation models (FMs) are transforming computational pathology by serving as large-scale, adaptable artificial intelligence (AI) systems trained on extensive datasets. These models leverage self-supervised learning on diverse histopathology images and, in many cases, paired textual data to develop general-purpose feature representations. Once trained, they can be efficiently adapted to a wide array of downstream clinical and research tasks with minimal task-specific labeling, thereby addressing critical challenges such as data scarcity, annotation costs, and the need for generalizable tools in diagnostic pathology. This whitepaper delineates the core architectural principles, pretraining methodologies, and adaptation techniques of pathology FMs. It further provides a quantitative analysis of current state-of-the-art models, detailed experimental protocols for their application, and a curated toolkit of essential research resources, offering a comprehensive technical guide for researchers and drug development professionals in the field.

The field of computational pathology has been revolutionized by the advent of whole-slide scanners, which convert glass slides into high-resolution digital images [1]. Traditional AI models in pathology were typically designed for a single, specific task—such as cancer grading or metastasis detection—and required large, expensively annotated datasets for training [2] [3]. This paradigm proved difficult to scale across the thousands of possible diagnoses and complex tasks in pathology.

Foundation models represent a fundamental shift. As defined by researchers at Stanford's Center for Research on Foundation Models, a foundation model is "any model that is trained on broad data (generally using self-supervision at scale) that can be adapted (e.g., fine-tuned) to a wide range of downstream tasks" [2]. In contrast to traditional deep learning models, FMs are characterized by their very large model size, their use of transformer architectures, and their ability to achieve state-of-the-art performance on adapted tasks while also demonstrating medium to high performance on tasks they were not trained for [2]. As shown in Table 1, the key differentiators of FMs include pretraining on very large datasets without labeled data and adaptability to many tasks.

Table 1: Core Differentiators: Foundation Models vs. Non-Foundation Models

| Characteristics | Foundation Model | Non-Foundation Model (Deep Learning Model) |
|---|---|---|
| Model Architecture | (Mainly) Transformer | Convolutional Neural Network |
| Model Size | Very Large | Medium to Large |
| Applicable Task Scope | Many | Single |
| Performance on Untrained Tasks | Medium to High | Low |
| Data Amount for Model Training | Very Large | Medium to Large |
| Use of Labeled Data for Training | No | Yes |

In computational pathology, FMs are trained on hundreds of thousands of whole-slide images (WSIs) and histopathology regions of interest (ROIs) [4] [5]. This large-scale pretraining captures the vast morphological diversity of tissue structures and cellular patterns, encoding them into versatile and transferable feature representations [4]. These representations serve as a "foundation" for models that predict clinical endpoints from WSIs, such as diagnosis, biomarker status, or patient prognosis [4] [1]. The resulting models demonstrate remarkable capabilities in low-data regimes and for rare diseases, which are common challenges in clinical practice [4] [6].

Architectural Frameworks and Training Methodologies

Core Model Architectures

Pathology foundation models employ sophisticated architectures designed to handle the unique challenges of gigapixel whole-slide images.

Vision Transformer (ViT)-based Slide Encoders: Models like TITAN (Transformer-based pathology Image and Text Alignment Network) use a Vision Transformer as a core component to create a general-purpose slide representation [4]. A pivotal innovation is the handling of the long, variable-length input sequences of WSIs, which can exceed 10,000 tokens. To manage this computational complexity, TITAN constructs its input embedding space by dividing each WSI into non-overlapping patches and extracting features from each patch with a pretrained patch encoder. These features are spatially arranged into a 2D grid, and the model uses Attention with Linear Biases (ALiBi) to enable long-context extrapolation during inference [4].
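The grid construction can be illustrated with a minimal NumPy sketch. It assumes each patch's top-left pixel coordinates are available and uses TITAN's reported 512-pixel patch size as a default; the function name `build_feature_grid` is hypothetical, and this is not TITAN's released code. The number of occupied cells is the number of tokens the slide encoder must attend over, which is how large resections reach tens of thousands of tokens.

```python
import numpy as np


def build_feature_grid(features, coords, patch_size=512):
    """Arrange patch embeddings in a 2D grid that mirrors the tissue layout.

    features: (N, D) patch embeddings from a pretrained patch encoder.
    coords:   (N, 2) top-left (x, y) pixel coordinates of each non-overlapping
              patch at the working magnification (hypothetical input format).
    Returns an (H, W, D) feature grid and an (H, W) boolean mask that is True
    where a tissue patch exists (background cells can be masked in attention).
    """
    features = np.asarray(features, dtype=np.float32)
    coords = np.asarray(coords, dtype=np.int64)
    # Non-overlapping patches: integer division yields grid cell indices.
    cols = coords[:, 0] // patch_size
    rows = coords[:, 1] // patch_size
    # Shift so the top-left tissue patch sits at cell (0, 0).
    rows = rows - rows.min()
    cols = cols - cols.min()
    grid = np.zeros((rows.max() + 1, cols.max() + 1, features.shape[1]), dtype=np.float32)
    mask = np.zeros(grid.shape[:2], dtype=bool)
    grid[rows, cols] = features
    mask[rows, cols] = True
    return grid, mask


# Toy example: three tissue patches on a sparse slide.
feats = np.random.default_rng(0).normal(size=(3, 768))
coords = [(0, 0), (512, 0), (0, 1024)]
grid, mask = build_feature_grid(feats, coords)
print(grid.shape, int(mask.sum()))  # (3, 2, 768) 3
```

Keeping empty cells in the grid (rather than packing tokens into a flat list) preserves the spatial neighborhood relationships that a distance-based positional bias relies on.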

Multimodal Visual-Language Models: Models like CONCH (CONtrastive learning from Captions for Histopathology) and the multimodal version of TITAN integrate image and text data [4] [6]. CONCH, for instance, is based on the CoCa (Contrastive Captioners) framework and comprises an image encoder, a text encoder, and a multimodal fusion decoder [6]. It is trained using a combination of contrastive alignment objectives, which align the image and text modalities in a shared representation space, and a captioning objective that learns to generate captions corresponding to an image [6].
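The contrastive alignment objective can be sketched as a symmetric InfoNCE loss over a batch of matched image-caption pairs. This is a minimal NumPy illustration, assuming a fixed temperature of 0.07 (the initial value used by CLIP; CONCH's actual settings are not given here); it omits the captioning objective and the fusion decoder entirely.

```python
import numpy as np


def log_softmax(logits, axis):
    shifted = logits - logits.max(axis=axis, keepdims=True)  # numerical stability
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric image-text contrastive (InfoNCE) loss.

    Row i of image_emb and row i of text_emb form a matched image-caption pair;
    all other rows in the batch act as negatives.
    """
    img = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    txt = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature          # (B, B) scaled cosine similarities
    diag = np.arange(len(img))
    loss_i2t = -log_softmax(logits, axis=1)[diag, diag].mean()  # each image picks its caption
    loss_t2i = -log_softmax(logits, axis=0)[diag, diag].mean()  # each caption picks its image
    return 0.5 * (loss_i2t + loss_t2i)


rng = np.random.default_rng(0)
emb = rng.normal(size=(8, 16))
# Correctly paired embeddings yield a lower loss than mismatched pairs.
print(contrastive_loss(emb, emb) < contrastive_loss(emb, emb[::-1]))  # True
```

Minimizing this loss pulls each image toward its own caption and away from the other captions in the batch, which is what produces the shared representation space used later for zero-shot classification and retrieval.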

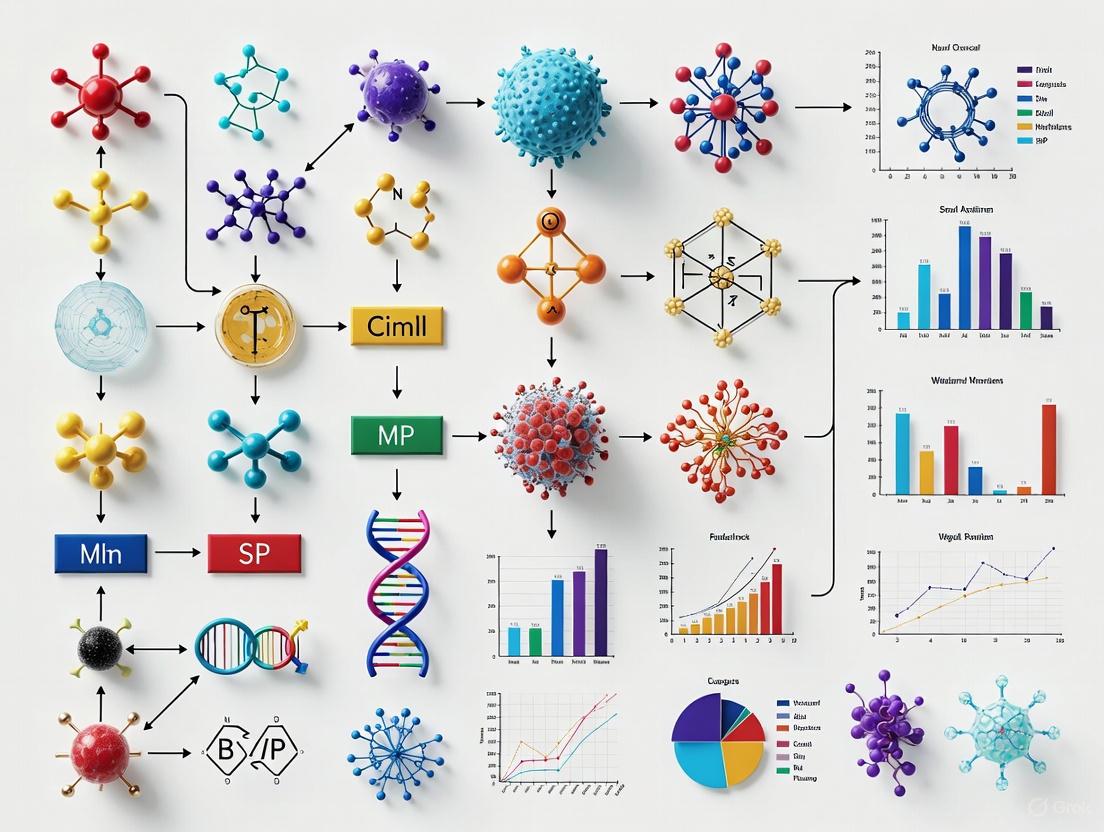

The following diagram illustrates the high-level conceptual workflow of a multimodal foundation model like CONCH or TITAN, from data processing to task-agnostic pretraining.

Large-Scale Pretraining Strategies

The pretraining of pathology FMs is a multi-stage process that leverages vast datasets to instill robust, generalizable knowledge.

Unimodal Visual Pretraining: The initial stage often involves self-supervised learning (SSL) on large collections of WSIs without labels. For example, TITAN's first stage is a vision-only pretraining on 335,645 WSIs using the iBOT framework, which combines masked image modeling and knowledge distillation [4]. This teaches the model fundamental histomorphological patterns.

Multimodal Alignment: To equip the model with language capabilities, a subsequent stage aligns visual features with textual descriptions. TITAN undergoes two cross-modal alignment stages: one with 423,122 synthetic fine-grained ROI captions generated by a generative AI copilot, and another with 182,862 slide-level clinical reports [4]. This process enables capabilities like text-to-image retrieval and pathology report generation.
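Text-to-image retrieval in an aligned embedding space reduces to ranking images by cosine similarity to a text query, and it is commonly scored with Recall@K. The sketch below assumes query i is paired with gallery item i; the embeddings are simulated stand-ins, not outputs of TITAN or any other model.

```python
import numpy as np


def recall_at_k(query_emb, gallery_emb, k=5):
    """Fraction of queries whose paired gallery item (same row index) ranks
    among the k most cosine-similar gallery items."""
    q = query_emb / np.linalg.norm(query_emb, axis=1, keepdims=True)
    g = gallery_emb / np.linalg.norm(gallery_emb, axis=1, keepdims=True)
    sims = q @ g.T                                   # (num_queries, num_gallery)
    top_k = np.argsort(-sims, axis=1)[:, :k]         # indices of the k best matches
    hits = (top_k == np.arange(len(q))[:, None]).any(axis=1)
    return float(hits.mean())


rng = np.random.default_rng(0)
image_emb = rng.normal(size=(100, 64))
# Simulated caption embeddings: noisy copies of their paired image embeddings.
text_emb = image_emb + 0.5 * rng.normal(size=(100, 64))
print(recall_at_k(text_emb, image_emb, k=1), recall_at_k(text_emb, image_emb, k=5))
```

Swapping the arguments gives image-to-text retrieval with the same function.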

PathOrchestra, another comprehensive FM, was trained on 287,424 slides from 21 tissue types across three centers [5]. The diversity of the pretraining data—covering multiple organs, stains, scanner types, and specimen types (FFPE and frozen)—is a critical factor in the model's subsequent generalization capability [4] [5].

Quantitative Analysis of State-of-the-Art Foundation Models

The performance of pathology FMs has been rigorously evaluated across a wide spectrum of tasks. The table below summarizes the scale and key capabilities of several leading models.

Table 2: Comparative Analysis of Pathology Foundation Models

| Model | Training Data Scale | Key Architectures/Techniques | Reported Performance Highlights |
|---|---|---|---|
| TITAN [4] | 335,645 WSIs; 423k synthetic captions; 183k reports | Vision Transformer (ViT), iBOT SSL, ALiBi, multimodal alignment | Outperforms ROI and slide FMs in linear probing, few-shot/zero-shot classification, rare cancer retrieval, and report generation. |
| PathOrchestra [5] | 287,424 WSIs from 21 tissues | Self-supervised vision encoder | Accuracy >0.950 in 47/112 tasks, including pan-cancer classification and lymphoma subtyping; generates structured reports. |
| CONCH [6] | 1.17M image-caption pairs | Contrastive learning & captioning (CoCa framework) | SOTA zero-shot accuracy: 90.7% (NSCLC), 90.2% (RCC), 91.3% (BRCA); excels at cross-modal retrieval and segmentation. |
| Tissue Concepts [7] | 912,000 patches from 16 tasks | Supervised multi-task learning | Matches self-supervised FM performance on major cancers using only ~6% of the training data. |

Key performance insights from these models include:

- CONCH demonstrated a significant leap in zero-shot classification, outperforming other visual-language models such as PLIP and BiomedCLIP by margins of roughly 10-35% on tasks such as non-small cell lung cancer (NSCLC) and renal cell carcinoma (RCC) subtyping [6].

- PathOrchestra showcased exceptional breadth, achieving high accuracy across 112 tasks spanning digital slide preprocessing, pan-cancer classification, and biomarker assessment, which supports its potential for clinical deployment [5].

- TITAN emphasizes handling resource-limited scenarios, showing strong performance in rare disease retrieval and cancer prognosis without requiring fine-tuning or clinical labels [4].

- The Tissue Concepts model highlights that supervised multi-task learning can be a data-efficient path to building capable FMs, achieving performance comparable to large-scale self-supervised models with drastically less data [7].

Experimental Protocols for Downstream Task Adaptation

Applying a pretrained foundation model to a specific problem involves several established protocols. The choice of method depends on the amount of labeled data available for the downstream task.

Zero-Shot and Few-Shot Evaluation

In the zero-shot setting, the model performs a task without any further task-specific training. For classification, this is typically achieved by leveraging the model's multimodal alignment.

- Protocol: Class names are converted into a set of text prompts (e.g., "invasive ductal carcinoma of the breast," "breast IDC"). The image (or image tiles from a WSI) is then matched with the most similar text prompt in the model's shared image-text representation space using cosine similarity [6]. For WSIs, an aggregation method like MI-Zero can be used to combine tile-level scores into a slide-level prediction [6].

- Application: This method is ideal for rapid prototyping, generating initial hypotheses, or applications where acquiring labeled data is impractical.
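The zero-shot protocol above can be sketched in a few lines. This is a minimal NumPy illustration under stated assumptions: text and tile embeddings are taken as already computed by a vision-language model such as CONCH, several prompt phrasings per class are averaged (prompt ensembling), and tile scores are aggregated by top-K mean pooling as one MI-Zero-style pooling choice. The exact configuration used in [6] may differ, and `zero_shot_slide` is a hypothetical name.

```python
import numpy as np


def l2_normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def zero_shot_slide(tile_emb, prompt_emb_per_class, top_k=5):
    """Zero-shot slide classification from tile embeddings and class prompts.

    tile_emb: (N, D) image embeddings of the tiles of one WSI.
    prompt_emb_per_class: list with one (P_c, D) array of text embeddings per
        class, one row per prompt phrasing (e.g. "breast IDC").
    Returns (predicted class index, slide-level score per class).
    """
    # Prompt ensembling: average the normalized prompt embeddings of each class.
    class_emb = l2_normalize(np.stack(
        [l2_normalize(p).mean(axis=0) for p in prompt_emb_per_class]))
    tile_scores = l2_normalize(tile_emb) @ class_emb.T   # (N, C) cosine similarities
    # Top-K pooling: average each class's K highest-scoring tiles.
    k = min(top_k, len(tile_emb))
    top = np.sort(tile_scores, axis=0)[-k:]              # sorts each class column
    slide_scores = top.mean(axis=0)
    return int(slide_scores.argmax()), slide_scores


# Toy check with hand-made 8-d embeddings: class 1 prompts point along axis 1,
# and most tiles point the same way.
axes = np.eye(8)
prompts = [np.stack([axes[0], axes[0] + 0.1 * axes[2]]),
           np.stack([axes[1], axes[1] + 0.1 * axes[3]])]
tiles = np.stack([axes[1] + 0.05 * axes[4]] * 6 + [axes[0]] * 2)
pred, scores = zero_shot_slide(tiles, prompts, top_k=3)
print(pred)  # 1
```

Top-K pooling lets a slide be called positive when only a minority of its tiles show the relevant morphology, which a plain mean over all tiles would dilute.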

Linear Probing and Fine-Tuning

For tasks with limited labeled data, linear probing and fine-tuning are standard approaches.

- Linear Probing Protocol: The weights of the pretrained FM are frozen. A simple linear classifier (e.g., a single fully connected layer) is then trained on top of the fixed features extracted by the FM for the new task. This tests the quality of the learned representations [4] [6].

- Full Fine-Tuning Protocol: The entire FM (or a significant portion of it) is further trained on the labeled data for the downstream task. This allows the model to adjust its pre-learned features to the specifics of the new task. Fine-tuning typically requires more labeled data and compute than linear probing, but it can achieve higher performance.

- Application: These methods are the most common for adapting a general FM to a specific clinical task, such as grading a specific cancer type or predicting a specific biomarker.
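The linear probing protocol can be sketched as a softmax (multinomial logistic regression) classifier trained on features that the frozen FM has already extracted. The NumPy version below uses plain full-batch gradient descent; the learning rate, epoch count, and weight decay are hypothetical defaults, and published benchmarks often use an off-the-shelf logistic-regression solver instead.

```python
import numpy as np


def train_linear_probe(features, labels, num_classes, lr=0.5, epochs=300, weight_decay=1e-4):
    """Fit a softmax classifier on frozen features.

    features: (N, D) embeddings already extracted by the frozen foundation model.
    labels:   (N,) integer class labels in [0, num_classes).
    Returns a predict(features) -> labels function.
    """
    mean = features.mean(axis=0)
    std = features.std(axis=0) + 1e-6            # standardize each feature dimension
    x = (features - mean) / std
    n, d = x.shape
    w = np.zeros((d, num_classes))
    b = np.zeros(num_classes)
    onehot = np.eye(num_classes)[labels]
    for _ in range(epochs):                      # full-batch gradient descent
        logits = x @ w + b
        logits -= logits.max(axis=1, keepdims=True)
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad_logits = (probs - onehot) / n       # gradient of mean cross-entropy
        w -= lr * (x.T @ grad_logits + weight_decay * w)
        b -= lr * grad_logits.sum(axis=0)

    def predict(new_features):
        return (((new_features - mean) / std) @ w + b).argmax(axis=1)

    return predict


# Toy usage: two well-separated clusters stand in for frozen FM embeddings.
rng = np.random.default_rng(0)
train_x = np.vstack([rng.normal(-2, 1, (50, 16)), rng.normal(2, 1, (50, 16))])
train_y = np.array([0] * 50 + [1] * 50)
probe = train_linear_probe(train_x, train_y, num_classes=2)
print("train accuracy:", (probe(train_x) == train_y).mean())
```

Because the encoder is never updated, the features can be extracted once and cached, which makes probing cheap enough to repeat across many tasks and label budgets. Full fine-tuning would instead backpropagate the same loss into the encoder weights.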

The following diagram outlines the decision workflow for selecting the appropriate adaptation protocol based on data availability and task goals.

The development and application of pathology FMs rely on a suite of key "reagent" solutions: computational tools, datasets, and infrastructure.

Table 3: Essential Research Reagents for Pathology Foundation Model Research

| Research Reagent | Function/Description | Exemplars in Literature |
|---|---|---|
| Pre-trained Patch Encoders | Extracts foundational feature representations from small image patches; often used as a preprocessing step for slide-level FMs. | CONCH [6] |
| Large-Scale WSI Datasets | Diverse, multi-organ collections of whole-slide images used for large-scale self-supervised pretraining. | Mass-340K (335,645 WSIs) [4], PathOrchestra Dataset (287,424 WSIs) [5] |
| Multimodal Datasets (Image-Text Pairs) | Paired histopathology images and textual descriptions (reports, synthetic captions) for training visual-language models. | 1.17M image-caption pairs (CONCH) [6], 423k synthetic captions + 183k reports (TITAN) [4] |
| Synthetic Caption Generators | Multimodal generative AI copilots that generate fine-grained morphological descriptions for ROIs, providing scalable supervision. | PathChat (used by TITAN) [4] |
| Benchmark Suites | Curated collections of public and private datasets for standardized evaluation of FMs across multiple tasks. | 14 diverse benchmarks (CONCH) [6], 112 tasks (PathOrchestra) [5] |
| Multiple Instance Learning (MIL) Frameworks | Algorithms for aggregating patch-level or tile-level predictions to form a slide-level diagnosis or score. | Attention-based MIL (ABMIL) used in PathOrchestra [5] |
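The core of attention-based MIL can be sketched as a learned weighted average over a slide's patch features. The NumPy version below shows the non-gated attention pooling form; the parameters `V`, `w`, and the two-class head `W_cls` are random placeholders for weights that would be learned jointly in practice, and this is not PathOrchestra's implementation.

```python
import numpy as np


def abmil_pool(instance_feats, V, w):
    """Attention-based MIL pooling (non-gated form).

    instance_feats: (N, D) patch embeddings from one slide (the "bag").
    V: (D, H) and w: (H,) attention parameters, learned jointly with the
       slide-level classifier in practice (random placeholders here).
    Returns the (D,) slide embedding and the (N,) attention weights.
    """
    scores = np.tanh(instance_feats @ V) @ w      # one relevance score per patch
    scores = scores - scores.max()                # stabilize the softmax
    attn = np.exp(scores) / np.exp(scores).sum()  # weights sum to 1 over patches
    return attn @ instance_feats, attn


rng = np.random.default_rng(0)
patches = rng.normal(size=(500, 768))             # e.g. 500 patches, 768-d features
V, w = rng.normal(size=(768, 128)) * 0.05, rng.normal(size=128)
slide_emb, attn = abmil_pool(patches, V, w)
W_cls = rng.normal(size=(768, 2)) * 0.05          # hypothetical 2-class head
print(slide_emb.shape, (slide_emb @ W_cls).shape)  # (768,) (2,)
```

The attention weights double as a coarse heatmap: patches with high weight are the ones that drove the slide-level prediction.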

Foundation models represent a paradigm shift in computational pathology, moving from a one-model-one-task approach to a versatile, scalable framework where a single, broadly trained model can be efficiently adapted to countless downstream applications. Their demonstrated success in tasks ranging from pan-cancer classification and rare disease retrieval to biomarker assessment and report generation underscores their transformative potential for both research and clinical practice [4] [5] [6].

The future of pathology FMs lies in several key directions: the development of generalist medical AI that integrates pathology FMs with models from other medical domains (e.g., radiology, genomics) [2]; continued scaling of model and dataset size; improved efficiency for clinical deployment; and rigorous real-world validation to address challenges related to diagnostic accuracy, cost, patient confidentiality, and regulatory ethics [1]. As these models continue to evolve, they are poised to become an indispensable tool in the pathologist's arsenal, enhancing diagnostic precision, personalizing treatment plans, and ultimately improving patient outcomes.

The field of computational pathology stands at a pivotal moment, poised to revolutionize cancer diagnosis, prognosis, and treatment planning through artificial intelligence. However, for years, progress has been constrained by the fundamental limitations of traditional supervised learning approaches. These models, which learn from vast amounts of meticulously labeled data, face particular challenges in histopathology where annotation costs are prohibitive and inter-observer variability among pathologists complicates ground truth establishment [2]. The average pathologist earns approximately $149 per hour, with annotation costs reaching $12 per slide when assuming just five minutes of annotation time [2]. This economic reality, coupled with the gigapixel complexity of whole-slide images (WSIs), has created a significant bottleneck in developing robust AI systems for histopathology.
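The per-slide figure follows directly from the hourly rate, and scaling it to a cohort shows why annotation becomes a bottleneck. The 10,000-slide cohort below is a hypothetical example for a single task, not a number from [2]:

```latex
\begin{aligned}
\text{cost per slide} &= \$149\ \text{h}^{-1} \times \tfrac{5}{60}\ \text{h} \approx \$12.42 \\
\text{cost for } 10{,}000 \text{ slides} &\approx 10{,}000 \times \$12.42 = \$124{,}200 \quad (\approx 833\ \text{pathologist-hours})
\end{aligned}
```

Every additional task that needs its own labels repeats this cost, which is the scaling problem self-supervised pretraining is meant to avoid.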

Foundation models represent a paradigm shift in computational pathology, moving from task-specific models to general-purpose AI systems trained on broad data that can be adapted to diverse downstream tasks [8] [2]. These models leverage self-supervised learning (SSL) to learn transferable feature representations from unlabeled pathology images, fundamentally overcoming the annotation dependency that has plagued traditional supervised approaches. The emergence of models like TITAN [4], UNI [9], and Virchow [9] demonstrates how this new paradigm enables applications ranging from rare disease retrieval to cancer prognosis without task-specific fine-tuning.

Table 1: Comparison of Traditional Supervised Learning vs. Foundation Models in Computational Pathology

| Characteristic | Traditional Supervised Learning | Foundation Models |
|---|---|---|
| Model Architecture | Convolutional Neural Networks (CNN) [2] | Transformer-based [4] [2] |
| Training Data | Medium to large labeled datasets [2] | Very large unlabeled datasets (335k+ WSIs) [4] [9] |
| Applicable Tasks | Single task [2] | Many downstream tasks [2] |
| Performance on Untrained Tasks | Low [2] | Medium to high [2] |
| Annotation Dependency | High (pathologist-intensive) [2] | Minimal (self-supervised) [4] [9] |
| Generalization | Limited to training distribution | Strong out-of-distribution performance [4] |

The Limitations of Traditional Supervised Learning

Annotation Burden and Scalability Challenges

In traditional supervised learning, each diagnostic task requires pathologists to manually annotate thousands of histopathology images, creating an unsustainable scalability barrier. This process is not only time-consuming but also economically prohibitive for healthcare institutions [2]. The problem intensifies for rare diseases where cases are scarce, and for complex tasks like predicting patient prognosis or genetic mutations from histology, where ground truth labels may require expensive molecular testing or long-term clinical follow-up.

Technical Limitations in Model Performance

Beyond resource constraints, supervised learning models face fundamental technical limitations. These models frequently suffer from overfitting—learning patterns too specifically from training data and failing to generalize to unseen data [10]. In dynamic clinical environments where data distribution frequently changes, supervised models often fail to adapt without retraining [10]. Additionally, these models demonstrate limited adaptability to completely new scenarios unseen during training, unlike human pathologists who can apply reasoning to novel cases [10].

Foundation Models: A New Paradigm for Computational Pathology

Core Architectural Principles

Foundation models in computational pathology represent a fundamental shift in approach, centered on three key principles: self-supervised learning on massive unlabeled datasets, transformer architectures for whole-slide representation, and multimodal alignment.

The cornerstone of this paradigm is self-supervised learning (SSL), which enables models to learn visual representations from the inherent structure of histopathology data without manual annotations [9]. Algorithms like DINOv2 [9], iBOT [4], and masked autoencoders [9] have proven particularly effective for pathology images. These methods derive learning objectives from the data itself, such as predicting masked parts of an image or recognizing that differently augmented views originate from the same tissue region.
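The "predict masked parts of an image" objective can be sketched as random token masking plus a loss computed only on the hidden tokens. This minimal NumPy illustration uses a pixel- or feature-regression target in the style of masked autoencoders and a 75% mask ratio as in the original MAE recipe for natural images; iBOT instead predicts the teacher network's token distributions, which is not shown here.

```python
import numpy as np


def random_token_mask(num_tokens, mask_ratio, rng):
    """Boolean mask over patch tokens: True = hidden from the encoder and
    predicted from the visible context, False = visible."""
    num_masked = int(round(num_tokens * mask_ratio))
    mask = np.zeros(num_tokens, dtype=bool)
    mask[rng.permutation(num_tokens)[:num_masked]] = True
    return mask


def masked_reconstruction_loss(pred, target, mask):
    """Mean squared error over masked tokens only; visible tokens do not count."""
    return float(((pred[mask] - target[mask]) ** 2).mean())


rng = np.random.default_rng(0)
mask = random_token_mask(196, 0.75, rng)      # e.g. a 14 x 14 token grid
print(int(mask.sum()))                         # 147
```

Restricting the loss to masked tokens forces the model to infer missing tissue from its surroundings rather than copy its input.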

Transformer architectures form the backbone of modern pathology foundation models, enabling long-range context modeling across gigapixel whole-slide images [4]. Convolutional neural networks build up context only gradually through stacked local filters, whereas transformers use self-attention to relate any pair of tissue regions directly, mirroring how pathologists integrate local cytological features with global architectural patterns.

Multimodal learning represents the third pillar, with models like TITAN aligning visual representations with corresponding pathology reports and synthetic captions [4]. This cross-modal alignment enables capabilities such as text-based image retrieval, pathology report generation, and zero-shot classification without explicit training.

Implementation Framework: The TITAN Model

The TITAN (Transformer-based pathology Image and Text Alignment Network) model exemplifies the foundation model paradigm in practice [4]. Its implementation involves a sophisticated three-stage framework:

Stage 1: Vision-only Pretraining. TITAN employs the iBOT framework for visual self-supervised learning on 335,645 whole-slide images [4]. The model processes WSIs by first dividing them into non-overlapping 512×512 pixel patches at 20× magnification, encoding each patch into 768-dimensional features using a pretrained patch encoder [4]. These features are spatially arranged in a 2D grid preserving tissue topography. To handle variable WSI sizes, the model randomly crops 16×16 feature regions (covering 8,192×8,192 pixels), then samples multiple global (14×14) and local (6×6) crops from them for self-supervised learning [4]. The architecture uses Attention with Linear Biases (ALiBi) extended to 2D, enabling extrapolation to long contexts at inference by biasing attention based on the Euclidean distance between features in the tissue [4].

Stage 2: ROI-Level Cross-Modal Alignment. The vision model is aligned with 423,122 synthetic fine-grained captions generated using PathChat, a multimodal generative AI copilot for pathology [4]. This enables the model to understand regional morphological descriptions.

Stage 3: WSI-Level Cross-Modal Alignment. Finally, the model aligns whole-slide representations with 182,862 clinical pathology reports, enabling slide-level reasoning and report generation capabilities [4].

Figure 1: TITAN Foundation Model Architecture and Training Pipeline
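The 2D ALiBi mechanism from Stage 1 can be sketched as an additive attention bias proportional to the negative Euclidean distance between token positions on the feature grid. This NumPy sketch assumes the per-head slope schedule of the original ALiBi paper (a geometric sequence for power-of-two head counts); how TITAN assigns slopes to heads is an assumption here, and the function names are hypothetical.

```python
import numpy as np


def alibi_slopes(num_heads):
    """Per-head slopes as a geometric sequence, following the original ALiBi
    recipe for power-of-two head counts: 2^(-8/n), 2^(-16/n), ..."""
    return 2.0 ** (-8.0 * np.arange(1, num_heads + 1) / num_heads)


def alibi_2d_bias(height, width, slope):
    """Additive attention bias for tokens on a height x width grid:
    -slope * Euclidean distance between the tokens' grid positions."""
    ys, xs = np.meshgrid(np.arange(height), np.arange(width), indexing="ij")
    pos = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(float)     # (T, 2), row-major
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)  # (T, T)
    return -slope * dist


def attention_with_bias(q, k, v, bias):
    """Single-head scaled dot-product attention with an additive positional bias."""
    logits = q @ k.T / np.sqrt(q.shape[-1]) + bias
    logits -= logits.max(axis=-1, keepdims=True)
    weights = np.exp(logits)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


# A 16 x 16 feature crop (256 tokens); in TITAN each token is a 512 x 512 px patch.
rng = np.random.default_rng(0)
tokens = rng.normal(size=(256, 64))
bias = alibi_2d_bias(16, 16, slope=alibi_slopes(8)[0])
out = attention_with_bias(tokens, tokens, tokens, bias)
print(out.shape)  # (256, 64)
```

Because the bias depends only on relative distance rather than on position embeddings learned for a fixed grid size, the same function applies unchanged to the much larger grids of whole slides at inference, which is what allows training on 16×16 crops yet extrapolating to long contexts.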

Experimental Validation and Benchmarking

Performance Across Clinical Tasks

Rigorous evaluation of pathology foundation models reveals their substantial advantages over traditional supervised approaches. In comprehensive benchmarks assessing disease detection and biomarker prediction across multiple institutions, SSL-trained pathology models consistently outperform models pretrained on natural images [9]. For disease detection, foundation models achieve AUCs above 0.9 on every evaluated task, demonstrating robust diagnostic capability [9].

The TITAN model specifically excels in resource-limited clinical scenarios including rare disease retrieval and cancer prognosis, operating without any fine-tuning or clinical labels [4]. It outperforms both region-of-interest (ROI) and slide foundation models across multiple machine learning settings: linear probing, few-shot and zero-shot classification, rare cancer retrieval, cross-modal retrieval, and pathology report generation [4]. This generalizability is particularly valuable for rare cancers where collecting large annotated datasets is impractical.

Table 2: Benchmark Performance of Public Pathology Foundation Models on Clinical Tasks

| Model | Training Data | Key Architectures | Clinical Task Performance |
|---|---|---|---|
| TITAN [4] | 335,645 WSIs + 423K synthetic captions + 183K reports | ViT with ALiBi, iBOT, vision-language alignment | Superior performance on zero-shot classification, rare cancer retrieval, report generation |
| UNI [9] | 100M tiles from 20 tissue types | ViT-L, DINOv2 | State-of-the-art across 33 tasks including classification, segmentation, retrieval |
| Phikon [9] | 43.3M tiles from 13 anatomic sites | ViT-B, iBOT | Strong performance on 17 downstream tasks across 7 cancer indications |
| Virchow [9] | 2B tiles from 1.5M slides | ViT-H, DINOv2 | SOTA on tile-level and slide-level benchmarks for tissue classification and biomarker prediction |
| Prov-GigaPath [9] | 1.3B tiles from 171K WSIs | DINOv2, MAE, LongNet | Strong performance on 17 genomic prediction and 9 cancer subtyping tasks |

Methodologies for Model Evaluation

Robust evaluation of pathology foundation models requires standardized benchmarking protocols. Recent initiatives have established comprehensive clinical benchmarks using real-world data from multiple medical centers [9]. The evaluation methodology typically encompasses:

Linear Probing: Assessing representation quality by training a linear classifier on frozen features while varying training set sizes (from 1% to 100% of available labels) [9]. This measures how well the model captures diagnostically relevant features.

Few-Shot Learning: Evaluating model performance with very limited labeled examples (e.g., 1-100 samples per class) to simulate rare disease scenarios [4].

Zero-Shot Classification: Testing model capability to recognize disease categories without any task-specific training, particularly for multimodal models using text prompts [4].

Cross-Modal Retrieval: Measuring the model's ability to retrieve relevant histology images given text queries, and vice versa [4].

Slide Retrieval: Assessing retrieval of diagnostically similar slides from a database, valuable for identifying rare cases and clinical decision support [4].
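The few-shot setting can be sketched by drawing k labeled examples per class and classifying with class prototypes (the mean embedding of each class), a simple and common few-shot baseline. The NumPy sketch below is an illustration under that assumption, not the exact protocol of [4]; the toy embeddings are simulated, and in practice results are averaged over several random draws of the support set.

```python
import numpy as np


def sample_k_shot(labels, k, rng):
    """Indices of k randomly chosen labeled examples per class."""
    return np.concatenate([
        rng.choice(np.flatnonzero(labels == c), size=k, replace=False)
        for c in np.unique(labels)
    ])


def prototype_classifier(support_feats, support_labels):
    """Nearest-centroid classifier on L2-normalized embeddings: each class
    prototype is the mean of its support embeddings, and a query is assigned
    to the prototype with the highest cosine similarity."""
    def normalize(x):
        return x / np.linalg.norm(x, axis=-1, keepdims=True)

    feats = normalize(support_feats)
    classes = np.unique(support_labels)
    protos = normalize(np.stack([feats[support_labels == c].mean(axis=0) for c in classes]))

    def predict(query_feats):
        return classes[(normalize(query_feats) @ protos.T).argmax(axis=1)]

    return predict


# Toy run: 3 classes with 40 embeddings each; evaluate a 4-shot classifier.
rng = np.random.default_rng(0)
labels = np.repeat(np.arange(3), 40)
feats = rng.normal(size=(3, 32))[labels] * 3 + rng.normal(size=(120, 32))
support = sample_k_shot(labels, k=4, rng=rng)
predict = prototype_classifier(feats[support], labels[support])
print("accuracy:", (predict(feats) == labels).mean())
```

Varying `k` (for example 1, 4, 16) traces how quickly performance saturates as labels are added, which is the same question the linear-probing sweep over label fractions asks.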

External validation across multiple institutions is crucial for assessing model generalizability and mitigating dataset-specific biases [9]. Performance should be measured on clinical data generated during standard hospital operations rather than curated research datasets alone.

Essential Research Toolkit for Pathology Foundation Models

Table 3: Research Reagent Solutions for Pathology Foundation Model Development

| Component | Function | Examples & Specifications |
|---|---|---|
| Whole-Slide Image Data | Model pretraining and validation | Mass-340K (335,645 WSIs) [4], TCGA [9], multi-institutional clinical cohorts [9] |
| Computational Infrastructure | Handling gigapixel images and model training | High-end GPUs, distributed training frameworks, patch encoding pipelines [4] [9] |
| Patch Encoders | Feature extraction from image patches | CONCH [4], self-supervised models (DINOv2, iBOT) [9] |
| Annotation Platforms | Limited supervision for fine-tuning | Digital pathology annotation tools, slide-level labels from reports [4] |
| Multimodal Data | Vision-language pretraining | Pathology reports [4], synthetic captions [4], genomic data [2] |
| Benchmarking Frameworks | Standardized model evaluation | Clinical benchmark datasets [9], automated evaluation pipelines [9] |

Future Directions and Clinical Implementation

Emerging Research Frontiers

The development of pathology foundation models continues to evolve rapidly, with several promising research directions emerging. Increased scale and diversity in pretraining data remains a priority, with recent models expanding to millions of slides across hundreds of tissue types [9]. Multimodal integration represents another frontier, with models incorporating not only pathology images and reports but also genomic, transcriptomic, and clinical data to enable more comprehensive patient characterization [2].

The rise of generative capabilities in pathology foundation models opens new possibilities for synthetic data generation, augmentation of rare diseases, and educational applications [11]. Additionally, research into explainability and interpretability is crucial for clinical adoption, helping pathologists understand model predictions and building trust in AI-assisted diagnoses [12].

Pathways to Clinical Deployment

Translating pathology foundation models from research to clinical practice requires addressing several critical challenges. Regulatory approval pathways must be established for these general-purpose models, which differ fundamentally from single-task devices [1]. Integration with clinical workflows presents technical and usability challenges, requiring seamless incorporation into digital pathology platforms and laboratory information systems.

Ongoing validation and monitoring is essential to ensure model performance generalizes across diverse patient populations and institution-specific practices [9]. Finally, education and training for pathologists will be crucial for effective human-AI collaboration, ensuring that clinicians can appropriately interpret model outputs and maintain ultimate diagnostic responsibility.

The ultimate vision is the development of generalist medical AI that integrates pathology foundation models with models from other medical domains (radiology, genomics, electronic health records) to provide comprehensive diagnostic support and enable truly personalized medicine [2]. As these technologies mature, they have the potential to transform pathology from a predominantly descriptive discipline to a quantitative, predictive science that enhances patient care through more accurate diagnoses, prognostic insights, and tailored treatment recommendations.

Computational pathology is undergoing a revolutionary transformation, driven by the emergence of foundation models capable of analyzing gigapixel whole-slide images (WSIs) with unprecedented sophistication [11]. These models represent a paradigm shift from task-specific algorithms to general-purpose visual encoders that learn transferable feature representations from vast repositories of histopathology data [13]. The development of these models is propelled by three interconnected technological forces: unprecedented data scale, advanced self-supervised learning (SSL) algorithms, and specialized transformer architectures [4] [13]. This convergence addresses critical challenges in pathology artificial intelligence (AI), including data imbalance, annotation dependency, and the need for robust generalization across diverse tissue types and disease conditions [12]. Foundation models are increasingly demonstrating remarkable capabilities across diagnostic, prognostic, and predictive tasks, establishing a new cornerstone for computational pathology research and clinical application [1].

The Critical Role of Data Scale in Model Performance

The performance of pathology foundation models exhibits a strong correlation with the scale and diversity of their pretraining datasets. Model generalization improves significantly when trained on larger datasets encompassing varied tissue types, staining protocols, and scanner variations [13].

Table 1: Data Scale of Representative Pathology Foundation Models

| Model Name | Tiles (Billions) | Whole-Slide Images (WSIs) | Tissue Types | Primary Algorithm |
|---|---|---|---|---|
| Virchow2 [13] | 1.7 | 3.1 million | ~200 | DINOv2 |
| Prov-GigaPath [13] | 1.3 | 171,189 | 31 | DINOv2, LongNet |
| UNI [13] | 0.1 | 100,000 | 20 | DINOv2 |
| TITAN [4] | - | 335,645 | 20 | iBOT, Vision-Language |
| Phikon [13] | 0.043 | 6,093 | 13 | iBOT |

Massive datasets enable models to learn invariant features across technical variations (e.g., stain heterogeneity) and biological variations (e.g., tissue morphology) [14]. For instance, the Virchow2 model, trained on 3.1 million WSIs across nearly 200 tissue types, achieves state-of-the-art performance by capturing a vast spectrum of histopathological patterns [13]. Similarly, the TITAN model leverages 335,645 WSIs and 423,122 synthetic captions to create general-purpose slide representations applicable to rare disease retrieval and cancer prognosis without further fine-tuning [4]. This scaling trend underscores the critical importance of large-scale, curated data repositories for developing robust pathology foundation models.

Self-Supervised Learning Algorithms for Annotation-Efficient Training

Self-supervised learning has emerged as the dominant paradigm for pretraining pathology foundation models, effectively addressing the annotation bottleneck in medical imaging. SSL algorithms learn powerful feature representations by formulating pretext tasks from unlabeled data, eliminating the need for costly manual annotations [13] [14].

Core SSL Algorithms and Their Adaptations

The table below summarizes key SSL algorithms and their implementation in computational pathology.

Table 2: Self-Supervised Learning Algorithms in Computational Pathology

| Algorithm | Core Mechanism | Key Pathology Adaptations | Representative Models |
|---|---|---|---|
| DINOv2 [13] [14] | Student-teacher self-distillation combining image-level (DINO) and masked patch-level (iBOT) objectives | Multi-magnification training, stain normalization augmentations | UNI, Virchow, Prov-GigaPath, Phikon-v2 |
| iBOT [4] [13] | Combines masked image modeling with an online tokenizer | Hierarchical masking strategies, tissue-aware cropping | TITAN, Phikon |
| Masked Autoencoders (MAE) [13] | Reconstructs randomly masked image patches | Semantic-aware masking preserving tissue structures | Prov-GigaPath (slide-level) |

Domain-Specific Optimizations for Histopathology

Effective application of SSL in pathology requires domain-specific optimizations. Unlike natural images, WSIs exhibit unique characteristics including gigapixel resolutions, known physical scale of pixels, and redundant morphological patterns across populations [14]. Key adaptations include:

- Morphology-Preserving Augmentations: Replacement of standard crop-and-resize operations with Extended-Context Translation (ECT) to prevent distortion of cell and tissue shapes [14]. ECT uses a larger source field of view to minimize resizing artifacts while maintaining view overlap.

- Multi-Scale Learning: Training on tiles extracted from multiple magnifications (5×, 10×, 20×, 40×) to capture features manifesting at different resolutions [13] [14].

- Stain-Invariant Color Augmentations: Color perturbations that simulate differences in staining protocols and scanner types, so that the model learns to ignore color variation that does not reflect underlying pathology [14].
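The translation-only view sampling behind ECT can be sketched in a few lines of NumPy. This is a minimal illustration, not the published Virchow2 implementation: the function name `extended_context_views` is hypothetical, and the rule that each side of the source region must be smaller than twice the view size (which guarantees the two views overlap) is our own assumption.

```python
import numpy as np


def extended_context_views(region: np.ndarray, out_size: int, rng: np.random.Generator):
    """Sample two out_size x out_size views from a larger tissue region.

    Hypothetical sketch of Extended-Context Translation: the views differ only
    by translation within the larger field of view, so nothing is resized and
    cell and tissue shapes are not distorted.
    """
    h, w = region.shape[:2]
    # Assumption: side lengths in [out_size, 2 * out_size) guarantee that any
    # two crops overlap, so the views always share some tissue content.
    if not (out_size <= h < 2 * out_size and out_size <= w < 2 * out_size):
        raise ValueError("region sides must lie in [out_size, 2 * out_size)")
    views = []
    for _ in range(2):
        top = int(rng.integers(0, h - out_size + 1))
        left = int(rng.integers(0, w - out_size + 1))
        views.append(region[top:top + out_size, left:left + out_size])
    return views


rng = np.random.default_rng(0)
region = rng.random((320, 320, 3))      # stand-in for an RGB tile plus extra context
view_a, view_b = extended_context_views(region, 224, rng)
print(view_a.shape, view_b.shape)       # (224, 224, 3) (224, 224, 3)
```

Compared with a standard random-resized crop, the only randomness here is position, which is why physical scale (microns per pixel) is preserved across views.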

These domain-specific modifications enable models to learn features that are invariant to technical artifacts while remaining sensitive to biologically relevant morphological patterns.

Transformer Architectures for Whole-Slide Image Analysis

Transformer architectures have revolutionized computational pathology by enabling long-range context modeling across gigapixel WSIs. While convolutional neural networks (CNNs) remain effective for local feature extraction, transformers excel at capturing global tissue microenvironment relationships [4] [13].

Architectural Innovations for Gigapixel Images

Standard transformer architectures face computational challenges when processing WSIs due to the quadratic complexity of self-attention mechanisms. Several innovative approaches have emerged to address this limitation:

- Hierarchical Processing: Models like TITAN employ a two-stage architecture where a patch encoder first extracts features from local image regions, followed by a slide-level transformer that aggregates these features into a whole-slide representation [4].

- Long-Range Context Modeling: The TITAN model incorporates Attention with Linear Biases (ALiBi) extended to 2D, enabling extrapolation to longer contexts during inference based on relative Euclidean distances between tissue regions [4].

- Multi-Resolution Architectures: Some frameworks implement hierarchical backbones that capture both cellular-level details and tissue-level context, optimized for transformer-based processing of WSIs [15].
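The 2D ALiBi idea can be illustrated as a distance-dependent penalty added to attention logits. This minimal NumPy sketch assumes patches lie on a regular (row, column) grid and borrows the geometric per-head slopes of the original 1D ALiBi; TITAN's exact slope schedule and implementation may differ, and all function names are hypothetical.

```python
import numpy as np


def alibi_2d_bias(coords: np.ndarray, slope: float) -> np.ndarray:
    """Bias[i, j] = -slope * Euclidean distance between patch i and patch j."""
    coords = np.asarray(coords, dtype=float)            # (n, 2) grid positions
    diff = coords[:, None, :] - coords[None, :, :]      # (n, n, 2) pairwise offsets
    return -slope * np.sqrt((diff ** 2).sum(axis=-1))   # (n, n)


def alibi_slopes(num_heads: int) -> np.ndarray:
    """Geometric slopes 2^(-8/H), 2^(-16/H), ... as in 1D ALiBi (power-of-two H)."""
    return 2.0 ** (-8.0 * np.arange(1, num_heads + 1) / num_heads)


def biased_attention(q, k, v, bias):
    """Softmax attention with an additive positional bias (no learned position embeddings)."""
    logits = q @ k.T / np.sqrt(q.shape[-1]) + bias
    logits -= logits.max(axis=-1, keepdims=True)
    weights = np.exp(logits)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v


rows, cols = np.meshgrid(np.arange(4), np.arange(4), indexing="ij")
coords = np.stack([rows.ravel(), cols.ravel()], axis=1)   # 16 patches on a 4x4 grid
bias = alibi_2d_bias(coords, slope=alibi_slopes(8)[0])    # first head: slope 0.5
rng = np.random.default_rng(0)
q = k = v = rng.normal(size=(16, 32))                     # placeholder patch features
out = biased_attention(q, k, v, bias)
```

Because the bias depends only on relative distance, the same function can be evaluated on a larger grid at inference time than was seen during training, which is what makes length extrapolation possible.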

Vision-Language Multimodal Integration

Multimodal transformer architectures represent a significant advancement in pathology AI. The TITAN model demonstrates how vision-language pretraining aligns image representations with pathological concepts [4]. By incorporating pathology reports and synthetic captions generated from AI copilots, these models enable cross-modal retrieval, zero-shot classification, and pathology report generation [4]. This multimodal alignment creates more clinically relevant representations that capture the semantic relationships between morphological features and diagnostic interpretations.
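Zero-shot classification with an aligned vision-language model reduces to comparing an image or slide embedding against text-prompt embeddings in the shared space. The sketch below assumes the embeddings were already produced by the model's image and text encoders; the random vectors, prompt wording, and temperature are placeholders, not outputs of TITAN or any specific API.

```python
import numpy as np


def l2_normalize(x: np.ndarray) -> np.ndarray:
    return x / np.linalg.norm(x, axis=-1, keepdims=True)


def zero_shot_probs(image_emb: np.ndarray, text_embs: np.ndarray, temperature: float = 0.01):
    """Softmax over cosine similarities between one image and each class prompt."""
    sims = l2_normalize(text_embs) @ l2_normalize(image_emb)   # (num_classes,)
    logits = sims / temperature
    logits -= logits.max()                                      # numerical stability
    probs = np.exp(logits)
    return probs / probs.sum()


prompts = ["an H&E image of lung adenocarcinoma",              # hypothetical class prompts
           "an H&E image of lung squamous cell carcinoma",
           "an H&E image of normal lung tissue"]
rng = np.random.default_rng(0)
text_embs = rng.normal(size=(len(prompts), 512))          # placeholder text-encoder outputs
image_emb = text_embs[1] + 0.1 * rng.normal(size=512)     # placeholder slide embedding
probs = zero_shot_probs(image_emb, text_embs)
print(prompts[int(np.argmax(probs))])
```

No classifier is trained: adding a new class only requires writing a new prompt, which is the practical appeal of vision-language alignment.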

Experimental Protocols and Benchmarking

Rigorous evaluation frameworks are essential for assessing the clinical relevance and generalizability of pathology foundation models. Standardized benchmarks enable comparative analysis across different architectures and training approaches.

Performance Metrics and Downstream Tasks

Comprehensive model evaluation encompasses diverse clinical tasks including cancer subtyping, biomarker prediction, survival analysis, and rare cancer retrieval [4] [13]. The table below summarizes key evaluation metrics and benchmarks for pathology foundation models.

Table 3: Performance Benchmarks of Pathology Foundation Models on Clinical Tasks

| Model | Linear Probing | Few-Shot Learning | Zero-Shot Classification | Slide Retrieval | Report Generation |
|---|---|---|---|---|---|
| TITAN [4] | Outperforms ROI- and slide-level foundation models | State-of-the-art | Enabled via vision-language alignment | Superior rare cancer retrieval | Generates clinical reports |
| Virchow2 [13] | State-of-the-art on 12 tasks | High data efficiency | Not specified | Not specified | Not applicable |
| UNI [13] | Strong performance across 34 tasks | Effective with limited labels | Limited capability | Good performance | Not applicable |
| Prov-GigaPath [13] | Excellent for genomics & subtyping | Not specified | Not specified | Not specified | Not applicable |

Clinical Workflow Integration and Efficiency Gains

Beyond traditional performance metrics, foundation models can add value by optimizing clinical workflows. Reported studies indicate that AI integration can reduce diagnostic time by approximately 90% in some pathology and radiology workflows [16]. These efficiency gains arise through several mechanisms:

- Workload Reduction: AI models filter out non-diagnostic regions, substantially reducing the volume of tissue that requires pathologist review [16].

- Decision Support: Foundation models provide annotated images highlighting suspicious regions, enhancing diagnostic confidence without fully automating the process [16].

- Independent Diagnosis: In limited scenarios, AI systems can achieve diagnostic performance comparable to human experts, particularly for well-defined classification tasks [16].

Successful development and application of pathology foundation models require specialized computational resources and data infrastructure. The following toolkit outlines essential components for researchers in this field.

Table 4: Research Reagent Solutions for Pathology Foundation Model Development

| Resource Category | Specific Examples | Function & Application |
|---|---|---|
| Pretrained Models | TITAN, UNI, Virchow, Prov-GigaPath, Phikon, CTransPath | Transfer learning, feature extraction, model fine-tuning for specific tasks |
| SSL Algorithms | DINOv2, iBOT, Masked Autoencoders (MAE) | Self-supervised pretraining on unlabeled whole-slide images |
| Architecture Components | Vision Transformers (ViT), Swin Transformers, ALiBi Positional Encoding | Long-range context modeling, gigapixel image processing |
| Pathology Datasets | TCGA, CAMELYON16, PanNuke, Proprietary Institutional Repositories | Model training, validation, and benchmarking across diverse tissue types |
| Computational Infrastructure | High-memory GPU clusters, Distributed Training Frameworks, Large-scale Storage | Handling gigapixel images, training billion-parameter models |
| Domain-Specific Augmentations | Extended-Context Translation, Stain Normalization, Multi-Magnification Sampling | Preserving histological semantics while enhancing data diversity |

The convergence of data scale, self-supervised learning, and transformer architectures has established a powerful foundation for computational pathology research. These technological drivers enable models that generalize across diverse clinical scenarios, particularly in data-limited settings such as rare disease diagnosis [4]. As the field evolves, key challenges remain in standardizing model evaluation, ensuring regulatory compliance, and addressing ethical considerations around data privacy and algorithmic bias [1]. Future research directions will likely focus on multimodal integration with genomic and clinical data, federated learning to leverage decentralized data sources, and more efficient architectures for real-time clinical deployment. The ongoing maturation of pathology foundation models promises to significantly enhance diagnostic accuracy, personalize treatment strategies, and deepen our understanding of disease biology through AI-powered histomorphological analysis.

The field of computational pathology is undergoing a fundamental transformation, moving from specialized, task-specific deep learning models toward large-scale, adaptable foundation models (FMs). This shift mirrors the revolution witnessed in natural language processing and computer vision, representing a new paradigm for developing artificial intelligence (AI) in healthcare [2] [17]. Traditional deep learning models have provided substantial benefits in automating pathology tasks but face inherent limitations in scalability, generalization, and annotation dependency. Foundation models, trained on vast quantities of unlabeled data through self-supervised learning (SSL), overcome these barriers by learning universal histopathological representations that can be adapted to numerous downstream tasks with minimal fine-tuning [18] [17]. This whitepaper provides an in-depth technical analysis of the core differences between these two approaches, focusing on architectural principles, training methodologies, performance characteristics, and practical implementation for researchers, scientists, and drug development professionals engaged in precision oncology and computational pathology research.

Fundamental Architectural and Methodological Divergences

The distinction between foundation models and traditional deep learning in pathology begins at the most fundamental level of architecture, training data utilization, and learning paradigms. These differences explain the divergent capabilities and applications of each approach.

Model Architecture and Scalability

Traditional deep learning models in pathology typically employ Convolutional Neural Networks (CNNs) as their backbone architecture. These models are designed with a specific task in mind, such as tumor classification or cell segmentation, and their architecture is optimized accordingly [2] [19]. CNNs excel at capturing local spatial features through their convolutional filters but have limited capacity for modeling long-range dependencies in whole-slide images (WSIs) due to their inherent locality bias.

Foundation models predominantly utilize Vision Transformer (ViT) architectures, which leverage self-attention mechanisms to capture global context across entire image regions [4] [18]. The transformer architecture enables processing of variable-length sequences of image patches, making it particularly suitable for gigapixel WSIs that must be divided into thousands of patches. This architectural advantage allows FMs to model relationships between spatially distant tissue structures that may be pathologically significant but can be missed by CNN-based approaches [20] [18].
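To make the contrast with local convolution concrete, the sketch below implements single-head scaled dot-product self-attention over a sequence of patch embeddings in NumPy. The projection matrices are random placeholders, and a real ViT adds multiple heads, layer normalization, and MLP blocks. The n × n attention matrix is what lets every patch attend to every other patch, and also why cost grows quadratically with the number of patches.

```python
import numpy as np


def self_attention(x: np.ndarray, wq: np.ndarray, wk: np.ndarray, wv: np.ndarray):
    """Single-head scaled dot-product self-attention over n patch tokens."""
    q, k, v = x @ wq, x @ wk, x @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])          # (n, n): all patch pairs
    scores -= scores.max(axis=-1, keepdims=True)     # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=-1, keepdims=True)         # each row sums to 1
    return attn @ v, attn


rng = np.random.default_rng(0)
n_patches, dim = 64, 32
x = rng.normal(size=(n_patches, dim))                # placeholder patch embeddings
wq, wk, wv = (rng.normal(size=(dim, dim)) / np.sqrt(dim) for _ in range(3))
out, attn = self_attention(x, wq, wk, wv)
print(out.shape, attn.shape)                         # (64, 32) (64, 64)

# The attention matrix has n^2 entries: 10,000 patches -> 100,000,000 scores per head.
print(f"{10_000 ** 2:,}")                            # 100,000,000
```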

Table 1: Core Architectural Differences Between Traditional Deep Learning and Foundation Models

| Characteristic | Traditional Deep Learning Model | Foundation Model |
|---|---|---|
| Primary Architecture | Convolutional Neural Networks (CNNs) | Vision Transformer (ViT) |
| Model Size | Medium to large | Very large |
| Context Processing | Local receptive fields | Global self-attention |
| Input Flexibility | Fixed input dimensions | Variable sequence length |
| Parameter Count | Millions to hundreds of millions | Hundreds of millions to billions |

Training Paradigms and Data Requirements

The training methodologies for these two approaches differ radically in their fundamental objectives and data requirements:

Traditional deep learning models rely exclusively on supervised learning, requiring large datasets of histopathology images with expert annotations for each specific task [2] [21]. This creates a significant development bottleneck, as pathology annotations are time-consuming and expensive to acquire. The annotation cost alone is substantial: approximately $12 per slide, assuming a pathologist salary of $149 per hour and 5 minutes of annotation time per slide [2]. These models learn only from the labeled examples provided, so their knowledge is bounded by the diversity and quality of the annotated dataset.
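The per-slide figure follows directly from the stated assumptions. The short calculation below reproduces it and extrapolates to a hypothetical 10,000-slide cohort; the cohort size is our illustration, not a figure from [2].

```python
def annotation_cost_per_slide(hourly_rate_usd: float, minutes_per_slide: float) -> float:
    """Pathologist time cost of annotating one slide."""
    return hourly_rate_usd * minutes_per_slide / 60.0


per_slide = annotation_cost_per_slide(149, 5)
print(f"${per_slide:.2f} per slide")                # $12.42 per slide

cohort_slides = 10_000                              # hypothetical cohort size
print(f"${per_slide * cohort_slides:,.2f} for {cohort_slides:,} slides")
# $124,166.67 for 10,000 slides
```

Because a task-specific model needs its own labels, this cost recurs for every new task rather than being paid once.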

Foundation models employ self-supervised learning (SSL) during pre-training, allowing them to learn from massive volumes of unlabeled histopathology images [18] [17]. SSL techniques include:

- Masked Image Modeling (MIM): Randomly masking portions of input images and training the model to reconstruct the missing parts [4] [18].

- Contrastive Learning: Learning representations by maximizing agreement between differently augmented views of the same image while distinguishing them from other images [20] [18].

- Self-Distillation: Using a student-teacher framework where the student network learns to match the output of the teacher network when presented with different augmented views of the same image [20].
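Contrastive objectives of this kind are commonly written as an InfoNCE loss. Below is a simplified, one-directional NumPy version, assuming row i of `z1` and `z2` holds embeddings of two augmented views of the same tile, with the other rows in the batch acting as negatives. Frameworks such as SimCLR and CLIP use symmetric or multi-view variants, and the temperature here is an arbitrary choice.

```python
import numpy as np


def info_nce_loss(z1: np.ndarray, z2: np.ndarray, temperature: float = 0.1) -> float:
    """Cross-entropy of picking the matching view z2[i] for each anchor z1[i]."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature                  # (n, n); diagonal = positive pairs
    logits -= logits.max(axis=1, keepdims=True)       # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))


rng = np.random.default_rng(0)
z1 = rng.normal(size=(32, 128))                       # view-1 embeddings (placeholder)
z2 = z1 + 0.05 * rng.normal(size=z1.shape)            # view 2: a slightly perturbed copy
aligned = info_nce_loss(z1, z2)
shuffled = info_nce_loss(z1, z2[rng.permutation(32)]) # positives no longer matched
print(aligned < shuffled)                             # True
```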

This self-supervised pre-training phase allows FMs to learn general-purpose visual representations of histopathological morphology without any manual annotations. Once pre-trained, FMs can be adapted to specific tasks with minimal labeled examples through fine-tuning, few-shot learning, or even zero-shot learning in some cases [4] [22].

Table 2: Training Paradigm Comparison

| Aspect | Traditional Deep Learning Model | Foundation Model |
|---|---|---|
| Learning Paradigm | Supervised learning | Self-supervised learning + transfer learning |
| Data Requirements | Large labeled datasets for each task | Massive unlabeled datasets + minimal labels for adaptation |
| Annotation Dependency | High | Low |
| Primary Training Objective | Task-specific loss minimization | Pre-training: SSL objective; Fine-tuning: Task-specific objective |
| Example SSL Algorithms | Not applicable | DINO, iBOT, Masked Autoencoding, Contrastive Learning |

Multimodal Integration Capabilities

Multimodal integration is a distinctive capability of many foundation models and remains challenging for traditional deep learning approaches.

Traditional deep learning models typically operate on single data modalities (e.g., H&E stained WSIs) and require specialized architectures to incorporate additional data types. Integrating pathology images with genomic data or clinical text often requires complex, custom-designed fusion networks that are difficult to optimize and scale [2] [23].

Foundation models can be designed from the ground up to process and align multiple data modalities through architectures that create joint embedding spaces [4] [18]. For example:

- CONCH and PLIP create aligned representations of histopathology images and textual descriptions [20].

- TITAN integrates whole-slide images with pathology reports and synthetic captions through cross-modal alignment [4].

- CLOVER integrates pathology images with multi-omics data for improved prognosis prediction [17].

This multimodal capability allows FMs to capture complex relationships between tissue morphology, clinical context, and molecular features, enabling more comprehensive pathological analysis [2] [17].

Performance and Functional Capabilities

The architectural and methodological differences between traditional deep learning and foundation models translate directly to divergent performance characteristics and functional capabilities across various pathology tasks.

Accuracy and Generalization

Comprehensive benchmarking studies reveal significant performance differences between these approaches:

Traditional deep learning models typically achieve high performance on the specific tasks and datasets they were trained on but often suffer from performance degradation when applied to data from different institutions, staining protocols, or scanner types [19] [22]. This limited generalization capability stems from their narrower training data distribution and architectural constraints.

Foundation models demonstrate superior generalization across diverse datasets and tissue types [22]. In a comprehensive benchmark evaluating 31 AI foundation models across 41 tasks, pathology-specific foundation models consistently outperformed general vision models and traditional approaches [22]. Notably, Virchow2 achieved the highest performance across multiple tasks and datasets, demonstrating the generalization capability of large-scale FMs [22]. FMs also show remarkable performance in low-data regimes, achieving state-of-the-art results on rare cancer types with minimal fine-tuning [4] [17].

Table 3: Performance Comparison on Pathology Tasks

| Performance Metric | Traditional Deep Learning Model | Foundation Model |
|---|---|---|
| Task Specificity | Single task | Multiple downstream tasks |
| Performance on Trained Tasks | High to state-of-the-art | State-of-the-art |
| Performance on Untrained Tasks | Low | Medium to high |
| Data Efficiency | Requires large labeled datasets per task | High efficiency with few-shot learning |
| Cross-Institutional Generalization | Limited without explicit domain adaptation | Superior due to diverse pre-training |
| Rare Disease Performance | Limited by annotated examples | Strong even with minimal examples |

Operational Efficiency and Clinical Applicability

The operational characteristics of these models have significant implications for their clinical integration:

Traditional deep learning models incur high initial development costs due to annotation requirements but may have lower computational demands during inference. However, developing separate models for each task creates maintenance challenges and workflow integration complexity in clinical environments [2] [21].

Foundation models have extremely high pre-training costs—reaching millions of dollars for the largest models—but offer significantly reduced adaptation costs for new tasks [17]. Once deployed, a single FM can serve multiple clinical applications, simplifying integration and maintenance. The emerging capability for zero-shot and few-shot learning further enhances their operational efficiency in clinical settings where labeled data may be scarce [4].

Experimental Framework and Validation

Rigorous experimental validation is essential for evaluating both traditional deep learning models and foundation models in pathology. This section outlines key methodologies and benchmarks used to assess model performance and robustness.

Representative Experimental Protocols

Benchmarking Foundation Models: A comprehensive evaluation framework for pathology FMs should include multiple assessment dimensions [22]:

- Linear Probing: Freezing the pre-trained backbone and training a linear classifier on top of the extracted features to evaluate representation quality.

- End-to-End Fine-Tuning: Unfreezing all parameters and fine-tuning the entire model on downstream tasks.

- Few-Shot Learning: Evaluating performance with limited labeled examples (e.g., 1, 5, 10, or 20 samples per class).

- Cross-Modal Retrieval: For multimodal FMs, testing the ability to retrieve relevant images given text queries and vice versa.

- Slide-Level Representation Learning: Assessing the quality of whole-slide representations for tasks such as cancer subtyping, biomarker prediction, and survival prognosis [4].
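Linear probing is simple enough to sketch end to end. The version below trains a multinomial logistic regression with plain gradient descent on frozen features; it assumes the features were already extracted by the foundation model and uses synthetic clustered vectors as stand-ins. Many studies instead use an off-the-shelf solver such as scikit-learn's `LogisticRegression`, and the learning rate, epoch count, and weight decay here are illustrative assumptions.

```python
import numpy as np


def train_linear_probe(feats, labels, num_classes, lr=0.1, epochs=300, weight_decay=1e-4):
    """Fit softmax regression on frozen features; the backbone is never updated."""
    mu, sigma = feats.mean(axis=0), feats.std(axis=0) + 1e-8   # standardize features
    x = (feats - mu) / sigma
    n, d = x.shape
    w, b = np.zeros((d, num_classes)), np.zeros(num_classes)
    onehot = np.eye(num_classes)[labels]
    for _ in range(epochs):
        logits = x @ w + b
        logits -= logits.max(axis=1, keepdims=True)
        probs = np.exp(logits)
        probs /= probs.sum(axis=1, keepdims=True)
        grad = (probs - onehot) / n                              # d(mean loss)/d(logits)
        w -= lr * (x.T @ grad + weight_decay * w)
        b -= lr * grad.sum(axis=0)
    return {"w": w, "b": b, "mu": mu, "sigma": sigma}


def predict(probe, feats):
    x = (feats - probe["mu"]) / probe["sigma"]
    return np.argmax(x @ probe["w"] + probe["b"], axis=1)


rng = np.random.default_rng(0)
centers = 3.0 * rng.normal(size=(3, 64))           # synthetic class centers


def sample(n):
    """Synthetic stand-in for frozen FM embeddings of n labeled tiles."""
    y = rng.integers(0, 3, size=n)
    return centers[y] + rng.normal(size=(n, 64)), y


train_x, train_y = sample(300)
test_x, test_y = sample(100)
probe = train_linear_probe(train_x, train_y, num_classes=3)
accuracy = np.mean(predict(probe, test_x) == test_y)
```

Because only `w` and `b` are learned, the probe's accuracy mainly reflects how linearly separable the frozen representation already is, which is why it serves as a representation-quality metric.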

Representational Similarity Analysis (RSA): This methodology, borrowed from computational neuroscience, enables quantitative comparison of the internal representations learned by different models [20]. RSA involves:

- Extracting embeddings from multiple models for a standardized set of histopathology image patches.

- Computing Representational Dissimilarity Matrices (RDMs) that capture pairwise distances between embeddings.

- Comparing RDMs across models to quantify representational similarity and diversity.

- Analyzing slide-dependence and disease-dependence of representations to understand model robustness.
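The RSA steps can be sketched in a few lines of NumPy. This illustrative version assumes 1 minus Pearson correlation as the dissimilarity measure and Spearman correlation for comparing RDMs, both common choices in the neuroscience RSA literature; the study cited in [20] may use different metrics. The two models may have different embedding widths, because the comparison happens between n × n RDMs rather than between raw embeddings.

```python
import numpy as np


def rdm(embeddings: np.ndarray) -> np.ndarray:
    """Dissimilarity between every pair of patches: 1 - Pearson correlation."""
    return 1.0 - np.corrcoef(embeddings)             # rows = patches


def _ranks(x: np.ndarray) -> np.ndarray:
    """Ranks 0..n-1 (assumes no ties, which holds for continuous values)."""
    ranks = np.empty(len(x))
    ranks[np.argsort(x)] = np.arange(len(x))
    return ranks


def rsa_score(emb_a: np.ndarray, emb_b: np.ndarray) -> float:
    """Spearman correlation between the upper triangles of two models' RDMs."""
    iu = np.triu_indices(emb_a.shape[0], k=1)
    a, b = rdm(emb_a)[iu], rdm(emb_b)[iu]
    return float(np.corrcoef(_ranks(a), _ranks(b))[0, 1])


rng = np.random.default_rng(0)
model_a = rng.normal(size=(20, 64))           # 20 patches embedded by model A (placeholder)
model_b = 2.0 * model_a + 3.0                 # rescaled copy: identical representational geometry
model_c = rng.normal(size=(20, 32))           # unrelated model with a different width
print(round(rsa_score(model_a, model_b), 3))  # 1.0
print(rsa_score(model_a, model_c))            # near 0 for unrelated representations
```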

Recent RSA studies have found that models sharing a training paradigm (e.g., two vision-only models or two vision-language models) do not necessarily learn similar representations, and that stain normalization can reduce slide-specific biases in FM representations [20].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Experimental Resources for Pathology Foundation Model Research

| Resource Category | Examples | Function and Application |
|---|---|---|
| Pre-Trained Models | UNI, Virchow, CONCH, PLIP, Prov-GigaPath, TITAN | Provide foundational representations for downstream pathology tasks without training from scratch |
| Benchmark Datasets | TCGA, CPTAC, Camelyon, internal validation sets | Standardized evaluation of model performance and generalization capabilities |
| Evaluation Frameworks | Linear probing, few-shot evaluation, cross-modal retrieval, survival analysis | Systematic assessment of model capabilities across diverse task types |
| Computational Infrastructure | High-end GPUs (NVIDIA A100/H100), distributed training frameworks, cloud computing platforms | Enable model training, fine-tuning, and deployment at scale |
| Pathology-Specific Libraries | TIAToolbox, QuPath, Whole-Slide Imaging processing libraries | Facilitate preprocessing, annotation, and analysis of whole-slide images |

Implementation Workflows

The implementation of foundation models in pathology research follows distinct workflows that differ significantly from traditional deep learning approaches. The following diagram illustrates the core architectural and workflow differences between these paradigms:

Diagram 1: Architectural and Workflow Comparison Between Traditional Deep Learning and Foundation Models in Pathology

Technical Implementation Considerations

Implementing foundation models in pathology research requires addressing several technical considerations:

Data Preprocessing and Augmentation:

- Whole-slide images must be divided into patches (typically 256×256 or 512×512 pixels at 20× magnification) [4] [18].

- Stain normalization techniques are often applied to reduce domain shift between institutions [20].

- For multimodal FMs, text data (pathology reports, captions) requires tokenization and preprocessing to align with image patches.
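Patch extraction amounts to laying a grid over the slide. The sketch below computes non-overlapping tile coordinates, assuming slide dimensions already expressed at the target magnification (e.g., 20×), and ignores tissue detection, which real pipelines use to discard background tiles. The slide size and function names are hypothetical; libraries such as OpenSlide or TIAToolbox would handle the actual pixel reading.

```python
from typing import List, Optional, Tuple


def tile_grid(width: int, height: int, tile_size: int = 256,
              stride: Optional[int] = None) -> List[Tuple[int, int]]:
    """Top-left (x, y) coordinates of full tiles covering a slide at one magnification."""
    stride = stride or tile_size                  # stride == tile_size -> no overlap
    return [(x, y)
            for y in range(0, height - tile_size + 1, stride)
            for x in range(0, width - tile_size + 1, stride)]


def level0_tile_size(tile_size: int, target_mag: float, scan_mag: float) -> int:
    """Pixels to read at full resolution so the tile matches target_mag after downsampling."""
    return int(round(tile_size * scan_mag / target_mag))


# Hypothetical 100,000 x 80,000-pixel slide (dimensions at 20x), before tissue filtering.
coords = tile_grid(100_000, 80_000, tile_size=256)
print(len(coords))                                          # 121680
print(level0_tile_size(256, target_mag=20, scan_mag=40))    # 512
```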

Model Selection Criteria:

- Vision-only vs. Vision-Language: Vision-language models (e.g., CONCH, PLIP) enable zero-shot capabilities and cross-modal retrieval, while vision-only models (e.g., UNI, Virchow) may offer superior performance on standard vision tasks [20] [22].

- Model Scale: Larger models generally perform better but require more computational resources for fine-tuning and inference [22].

- Pre-training Data: Models pre-trained on diverse, multi-institutional datasets typically generalize better [4] [22].

Fine-tuning Strategies:

- Linear Probing: Training only a linear classifier on frozen features provides a rapid performance baseline.

- Full Fine-tuning: Updating all parameters typically achieves the best performance but requires more computational resources and careful regularization to prevent overfitting.

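- Parameter-Efficient Fine-tuning (PEFT): Techniques like LoRA (Low-Rank Adaptation) adapt large FMs by training small low-rank update matrices while the pretrained weights stay frozen, so only a small fraction of parameters is updated.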
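The LoRA idea can be shown on a single linear layer: the pretrained weight stays frozen and only two small matrices are trained. This NumPy sketch assumes a 1024-dimensional projection (the hidden size of ViT-Large) and rank 8; the zero-initialized `B` and the `alpha / rank` scaling follow the LoRA paper's convention, but the class itself is a hypothetical illustration, not a specific library's API.

```python
import numpy as np


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight: np.ndarray, rank: int = 8, alpha: float = 16.0, seed: int = 0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                                  # frozen, never updated
        self.A = rng.normal(0.0, 0.01, size=(rank, d_in))     # trainable
        self.B = np.zeros((d_out, rank))                      # trainable; zero init -> no change at start
        self.scale = alpha / rank

    def __call__(self, x: np.ndarray) -> np.ndarray:
        return x @ self.weight.T + self.scale * (x @ self.A.T) @ self.B.T

    def num_trainable(self) -> int:
        return self.A.size + self.B.size


rng = np.random.default_rng(1)
w = rng.normal(size=(1024, 1024))                 # stand-in for a pretrained projection
layer = LoRALinear(w, rank=8)
x = rng.normal(size=(4, 1024))
print(np.allclose(layer(x), x @ w.T))             # True: the update starts at zero
print(layer.num_trainable(), w.size)              # 16384 1048576
```

Here the trainable update is about 1.6% of the layer's parameters, which is the source of LoRA's memory savings during fine-tuning.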

Future Directions and Research Opportunities

The development of foundation models in computational pathology is rapidly evolving, with several promising research directions emerging:

Generalist Medical AI: The integration of pathology FMs with foundation models from other medical domains (radiology, genomics, clinical notes) to create comprehensive diagnostic systems that leverage complementary information [2] [23].

Improved Multimodal Alignment: Developing more sophisticated techniques for aligning histopathological image features with textual descriptions, genomic data, and clinical outcomes to enhance model interpretability and clinical utility [4] [18].

Federated Learning: Enabling multi-institutional collaboration on FM development without sharing sensitive patient data, addressing data privacy concerns while improving model generalization [23] [17].

Explainability and Interpretability: Developing specialized explainable AI (XAI) techniques tailored to the unique characteristics of pathology FMs, enabling pathologists to understand and trust model predictions [21] [17].

Resource-Efficient Adaptation: Creating methods to adapt large FMs for clinical deployment in resource-constrained environments, including model compression, knowledge distillation, and efficient fine-tuning techniques.

Foundation models represent a paradigm shift in computational pathology, offering significant advantages over traditional deep learning approaches in terms of generalization, data efficiency, and multimodal capabilities. While traditional CNN-based models excel at specific tasks with sufficient labeled data, their specialized nature limits their scalability and adaptability across the diverse challenges of pathological diagnosis and research. Foundation models, built on transformer architectures and pre-trained through self-supervised learning on massive datasets, provide a versatile foundation that can be efficiently adapted to numerous downstream tasks with minimal fine-tuning. The emerging capabilities of these models in whole-slide representation learning, cross-modal understanding, and few-shot adaptation position them as transformative tools for advancing precision oncology and pathology research. However, challenges remain in computational requirements, explainability, and clinical validation that warrant continued research and development. As the field evolves, foundation models are poised to become indispensable components of the pathology research toolkit, enabling more accurate, efficient, and comprehensive analysis of histopathological data for drug development and clinical research.

How Pathology Foundation Models Work: Architectures and Real-World Applications

Computational pathology foundation models (CPathFMs) are large-scale deep learning models trained on vast amounts of unlabeled histopathology data using self-supervised learning (SSL) techniques [24]. Unlike traditional models that require extensive manual annotations for each specific task, foundation models learn general-purpose representations of tissue morphology that can be adapted to various downstream applications through transfer learning [25]. The emergence of CPathFMs represents a paradigm shift in digital pathology, enabling robust performance across diverse diagnostic tasks including tumor detection, subtyping, grading, and biomarker prediction [26] [27].

The development of effective CPathFMs faces significant challenges due to the inherent complexity of histopathological data. Whole-slide images (WSIs) are gigapixel-sized, present remarkable variability in tissue morphology, and exhibit differences in staining protocols and scanning equipment across institutions [24] [25]. Two core SSL techniques have proven particularly effective in addressing these challenges: contrastive learning and masked image modeling. These approaches enable models to learn rich, generalizable feature representations without relying on costly manual annotations, forming the technical foundation for next-generation computational pathology tools [26] [27] [25].

Technical Foundations

Self-Supervised Learning in Computational Pathology

Self-supervised learning has emerged as a foundational paradigm for pre-training CPathFMs by leveraging the inherent structure of unlabeled histopathological images [25]. SSL methods create supervisory signals directly from the data itself, bypassing the need for manual annotations that are expensive, time-consuming, and subject to inter-observer variability [24]. This approach is particularly valuable in computational pathology, where expert pathologist annotations are a scarce resource [25].

The SSL framework typically involves two phases: pre-training on large-scale unlabeled datasets to learn general visual representations, followed by fine-tuning on smaller labeled datasets for specific downstream tasks [25]. This paradigm has demonstrated remarkable success in natural image analysis and has been effectively adapted to the computational pathology domain [27] [25]. Among various SSL techniques, contrastive learning and masked image modeling have shown the most promise for histopathology image analysis due to their ability to capture both global and local tissue patterns [25].

Contrastive Learning Frameworks

Contrastive learning operates on the principle of measuring similarities and differences between data points [25]. In computational pathology, this typically involves maximizing agreement between differently augmented views of the same image while minimizing agreement with other images [28] [25]. Several specialized contrastive frameworks have been adapted for pathology image analysis:

DINO (self-distillation with no labels) employs a student-teacher paradigm in which the student network learns to match the output distribution of a teacher network; the teacher's outputs are centered and sharpened to prevent representational collapse [25]. The teacher network is updated via an exponential moving average (EMA) of the student weights, providing stable training without labels [25]. This approach has been successfully scaled to million-image datasets in pathology, demonstrating strong representation learning capabilities [26] [27].
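The core DINO mechanisms, a centered and sharpened teacher distribution plus an EMA teacher update, can be sketched as follows. This is a simplified NumPy illustration for a single pair of views; the temperatures (0.1 student, 0.04 teacher) and momenta (0.9 center, 0.996 teacher) mirror commonly cited DINO defaults but are assumptions here, and real implementations operate on network parameters, many crops, and stop gradients through the teacher.

```python
import numpy as np


def log_softmax(x: np.ndarray, temp: float) -> np.ndarray:
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def dino_loss(student_out, teacher_out, center, t_student=0.1, t_teacher=0.04):
    """Cross-entropy between the centered, sharpened teacher and the student."""
    teacher_probs = np.exp(log_softmax(teacher_out - center, t_teacher))
    return float(-(teacher_probs * log_softmax(student_out, t_student)).sum(axis=-1).mean())


def update_center(center, teacher_out, momentum=0.9):
    """Running mean of teacher outputs; subtracting it discourages collapse to one dimension."""
    return momentum * center + (1 - momentum) * teacher_out.mean(axis=0)


def ema_update(teacher, student, momentum=0.996):
    """Teacher weights track an exponential moving average of student weights."""
    return {name: momentum * teacher[name] + (1 - momentum) * student[name] for name in teacher}


rng = np.random.default_rng(0)
student_out = rng.normal(size=(16, 256))   # student projections of view 1 (placeholder)
teacher_out = rng.normal(size=(16, 256))   # teacher projections of view 2 (placeholder)
center = np.zeros(256)
loss = dino_loss(student_out, teacher_out, center)
center = update_center(center, teacher_out)
teacher = ema_update({"w": np.zeros(3)}, {"w": np.ones(3)})
print(teacher["w"])                        # [0.004 0.004 0.004]
```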

DINOv2 extends DINO by integrating the iBOT objective, which adds Masked Image Modeling (MIM) [25]. MIM masks portions of the input image and trains the model to predict the masked content (in iBOT, the teacher network's features for the masked patches rather than raw pixels), so the model learns representations that capture both local cellular structures and broader tissue context [25]. This combination has proven particularly effective for histopathology applications, improving generalization across diverse pathology datasets [25].

Supervised Contrastive Learning (SCL) extends the contrastive framework to leverage available labels by pulling together samples from the same class while pushing apart samples from different classes [29]. HistopathAI implements this approach through a hybrid network that merges SCL strategies with cross-entropy loss, specifically tailored for imbalanced histopathology datasets [29].
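A compact NumPy sketch of a supervised contrastive loss in the common SupCon formulation is shown below, assuming one embedding per image and integer class labels. HistopathAI's actual formulation, augmentation scheme, and weighting against cross-entropy are not reproduced here; the function name is hypothetical.

```python
import numpy as np


def supcon_loss(z: np.ndarray, labels: np.ndarray, temperature: float = 0.1) -> float:
    """Average, over anchors, of -mean log-probability assigned to same-class samples."""
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    n = z.shape[0]
    self_mask = np.eye(n, dtype=bool)
    sim = np.where(self_mask, -np.inf, z @ z.T / temperature)   # exclude self-pairs
    sim = sim - sim.max(axis=1, keepdims=True)
    log_prob = sim - np.log(np.exp(sim).sum(axis=1, keepdims=True))
    pos_mask = (labels[:, None] == labels[None, :]) & ~self_mask
    pos_count = pos_mask.sum(axis=1)
    has_pos = pos_count > 0                                      # anchors with at least one positive
    pos_log_prob = np.where(pos_mask, log_prob, 0.0).sum(axis=1)
    return float(-(pos_log_prob[has_pos] / pos_count[has_pos]).mean())


rng = np.random.default_rng(0)
labels = np.repeat(np.arange(4), 8)                     # 4 classes x 8 samples
centers = rng.normal(size=(4, 64))
z = centers[labels] + 0.1 * rng.normal(size=(32, 64))   # embeddings clustered by class
clustered = supcon_loss(z, labels)
scrambled = supcon_loss(z, rng.permutation(labels))     # labels no longer match clusters
print(clustered < scrambled)                            # True
```

A hybrid objective in the spirit of HistopathAI would add this term to a standard cross-entropy loss with some weighting factor; that weighting is a design choice not specified here.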

Prototypical Contrastive Learning, as implemented in the SongCi model for forensic pathology, learns a set of prototype representations that capture both tissue-specific and cross-tissue features [30]. This approach distills redundant information from high-resolution WSIs into a lower-dimensional prototype space, enabling efficient representation of diverse tissue patterns [30].

Masked Image Modeling Architectures

Masked Image Modeling (MIM) has emerged as a powerful self-supervised pre-training strategy inspired by masked language modeling in natural language processing [25]. In MIM, random portions of input images are masked, and the model is trained to reconstruct the missing portions based on the surrounding context [25]. This approach forces the model to learn meaningful representations of tissue structures and their spatial relationships.

The Masked Autoencoder (MAE) architecture implements MIM through an asymmetric encoder-decoder design [25]. The encoder operates only on visible patches, making the process computationally efficient, while the lightweight decoder reconstructs the original image from the encoded representations and mask tokens [25]. For histopathology images, this approach enables models to learn hierarchical features spanning cellular morphology to tissue architecture.
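The masking step that makes MAE efficient can be sketched in NumPy: choose a random subset of patches to keep, pass only those to the encoder, and compute the reconstruction loss on the masked patches. The 75% mask ratio and 16-pixel patches follow the original MAE defaults for natural images; they are assumptions here rather than settings of any pathology model, and the encoder and decoder themselves are omitted.

```python
import numpy as np


def patchify(img: np.ndarray, patch: int = 16) -> np.ndarray:
    """(H, W, C) image -> (num_patches, patch * patch * C) flattened patches."""
    h, w, c = img.shape
    grid = img.reshape(h // patch, patch, w // patch, patch, c).transpose(0, 2, 1, 3, 4)
    return grid.reshape(-1, patch * patch * c)


def random_masking(patches: np.ndarray, mask_ratio: float, rng: np.random.Generator):
    """Keep a random subset of patches; mask[i] is True where patch i is hidden."""
    n = patches.shape[0]
    num_keep = int(round(n * (1 - mask_ratio)))
    keep_idx = np.sort(rng.permutation(n)[:num_keep])
    mask = np.ones(n, dtype=bool)
    mask[keep_idx] = False
    return patches[keep_idx], keep_idx, mask


def masked_mse(pred: np.ndarray, target: np.ndarray, mask: np.ndarray) -> float:
    """Reconstruction error averaged over masked patches only."""
    return float(((pred - target) ** 2).mean(axis=1)[mask].mean())


rng = np.random.default_rng(0)
img = rng.random((224, 224, 3))                        # stand-in for an H&E tile
patches = patchify(img)                                # (196, 768)
visible, keep_idx, mask = random_masking(patches, 0.75, rng)
print(patches.shape, visible.shape, int(mask.sum()))   # (196, 768) (49, 768) 147
```

Only the 49 visible patches would enter the encoder, roughly a quarter of the sequence, which is where the computational savings come from.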

iBOT integrates MIM with online tokenization, combining the benefits of masked reconstruction with the representation stability of contrastive learning [25]. This hybrid approach has been incorporated into DINOv2, which has served as the foundation for several state-of-the-art pathology models, including UNI and Virchow [26] [27] [25].

Key Methodologies and Implementations

Foundation Models in Computational Pathology

Recent years have witnessed the development of several pioneering foundation models that implement contrastive learning and MIM at unprecedented scales in computational pathology:

Virchow is a 632-million-parameter Vision Transformer (ViT) trained with the DINOv2 algorithm on approximately 1.5 million H&E-stained WSIs from 100,000 patients at Memorial Sloan Kettering Cancer Center [26]. This is 4-10× more WSIs than prior pathology training datasets [26]. The model uses a multiview student-teacher self-supervised approach that draws on both global and local crops of tissue tiles to learn tile embeddings [26]. For pan-cancer detection, Virchow achieved a specimen-level area under the receiver operating characteristic curve (AUC) of 0.95 across nine common and seven rare cancers [26].

UNI is a general-purpose self-supervised model pretrained on the "Mass-100K" dataset, which comprises more than 100 million tissue patches from 100,426 diagnostic H&E WSIs across 20 major tissue types [27]. UNI uses a ViT-Large architecture trained with DINOv2 and was evaluated on 34 computational pathology tasks of varying diagnostic difficulty [27]. Its capabilities include resolution-agnostic tissue classification and slide classification using few-shot class prototypes. It also outperformed the baseline encoders it was compared against when classifying up to 108 cancer types in the OncoTree classification system [27].

SongCi is a vision-language model tailored to forensic pathology and trained with prototypical, cross-modal, self-supervised contrastive learning [30]. It was pretrained on a multi-center dataset comprising over 16 million high-resolution image patches and 471 unique diagnostic outcomes. SongCi uses a prototypical contrastive learning strategy to distill WSI patches into a lower-dimensional prototype space [30]. It then applies cross-modal contrastive learning to align image representations with textual descriptions of gross findings and diagnostic outcomes [30].
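Cross-modal contrastive alignment is commonly implemented as a symmetric, CLIP-style InfoNCE loss over paired image and text embeddings. The sketch below shows that generic form in NumPy. It assumes the i-th image and i-th text in a batch are a matched pair and that both encoders already produce same-sized vectors. SongCi's exact objective is not given here, so treat this as a stand-in and not the model's actual loss.

```python
import numpy as np

def _log_softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def cross_modal_infonce(img_emb: np.ndarray, txt_emb: np.ndarray, temperature: float = 0.07) -> float:
    """Symmetric image-text InfoNCE; row i of each input forms the positive pair."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature                            # (batch, batch) similarities
    diag = np.arange(len(logits))
    loss_i2t = -_log_softmax(logits, axis=1)[diag, diag].mean()   # each image picks its text
    loss_t2i = -_log_softmax(logits, axis=0)[diag, diag].mean()   # each text picks its image
    return float((loss_i2t + loss_t2i) / 2)
```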

HistopathAI implements a hybrid network structure that merges supervised contrastive learning strategies with cross-entropy loss, specifically designed for imbalanced histopathology datasets [29]. The framework employs Hybrid Deep Feature Fusion (HDFF) to combine feature vectors from both EfficientNetB3 and ResNet50, creating comprehensive representations of histopathology images [29]. Using a stepwise methodology that transitions from feature learning to classifier learning, HistopathAI has achieved state-of-the-art classification accuracy across multiple public datasets [29].

Quantitative Comparison of Foundation Models

Table 1: Performance Comparison of Major Foundation Models on Pan-Cancer Detection

| Model | Architecture | Pretraining Data | Pan-Cancer AUC | Rare Cancer AUC | Key Innovation |
|---|---|---|---|---|---|
| Virchow [26] | ViT (632M params) | 1.5M WSIs, 100K patients | 0.950 | 0.937 | Largest pathology foundation model at publication; DINOv2 training |
| UNI [27] | ViT-Large | 100M patches, 100K WSIs | 0.940 | - | General-purpose model; 34 downstream tasks |
| HistopathAI [29] | EfficientNetB3 + ResNet50 | 7 public + 1 private datasets | - (reported state-of-the-art classification accuracy on all tested datasets) | - | Supervised contrastive learning; hybrid feature fusion |
| SongCi [30] | Vision-language model | 16M patches, 2,228 vision-language pairs | - | - | Prototypical cross-modal contrastive learning |

Table 2: Technical Specifications of Major Foundation Model Training Approaches

| Model | SSL Algorithm | Multimodal | Batch Size | Training Iterations | Embedding Dimension |
|---|---|---|---|---|---|
| Virchow [26] | DINOv2 | No | - | - | - |
| UNI [27] | DINOv2 | No | - | 50K-125K | - |
| SongCi [30] | Prototypical contrastive learning + cross-modal contrastive learning | Yes (vision + language) | - | - | - (933 prototypes) |
| CTransPath [27] | Contrastive learning | No | - | - | - |

Experimental Protocols and Methodologies

Model Pre-training with DINOv2

The DINOv2 self-supervised learning framework has been widely adopted for pre-training computational pathology foundation models [26] [27] [25]. The following protocol outlines the key methodological steps:

Data Preparation: Collect large-scale whole-slide images (WSIs) spanning diverse tissue types and preparation protocols. Virchow used 1.5 million H&E-stained WSIs from 100,000 patients, and UNI used 100,426 diagnostic H&E WSIs across 20 major tissue types [26] [27]. Extract tissue patches at one or more magnification levels (e.g., 20×, 10×, and 5×) to capture both cellular and architectural features.
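Patch extraction usually starts by discarding background glass. The following is a minimal sketch, assuming the slide region is already loaded as an RGB NumPy array at the target magnification (in practice this would be read with a WSI library such as OpenSlide). It keeps grid tiles whose pixels are mostly "colored", using a simple saturation threshold. The function name and both thresholds are hypothetical, chosen for illustration. Many pipelines use Otsu thresholding instead.

```python
import numpy as np

def tissue_tile_coords(region_rgb: np.ndarray, tile_px: int,
                       min_tissue: float = 0.5, sat_thresh: float = 0.07):
    """Return (row, col) top-left corners of non-overlapping tiles containing enough tissue.

    region_rgb: (H, W, 3) uint8 image. Background glass is bright and nearly grey,
    so its saturation (max - min) / max is close to zero.
    """
    rgb = region_rgb.astype(np.float32) / 255.0
    cmax, cmin = rgb.max(axis=2), rgb.min(axis=2)
    saturation = np.where(cmax > 0, (cmax - cmin) / np.maximum(cmax, 1e-8), 0.0)
    tissue = saturation > sat_thresh                      # per-pixel tissue mask
    h, w = tissue.shape
    coords = []
    for r in range(0, h - tile_px + 1, tile_px):
        for c in range(0, w - tile_px + 1, tile_px):
            if tissue[r:r + tile_px, c:c + tile_px].mean() >= min_tissue:
                coords.append((r, c))
    return coords
```

To extract patches at several magnifications, the same filter could be run on each pyramid level with the tile size fixed in pixels.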

Multi-crop Data Augmentation: Generate multiple augmented views of each patch by combining random resized crops (two large "global" crops and several smaller "local" crops) with photometric transformations such as color jittering, Gaussian blur, and solarization [25]. In the DINO family, the teacher network receives only the global views, while the student receives all views. This asymmetry sets up the self-distillation process.
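The cropping side of multi-crop can be sketched in NumPy as follows. The crop sizes, scale ranges, and local-crop count are illustrative DINO-like values, not settings taken from Virchow or UNI. Resizing uses nearest-neighbour sampling for brevity. Photometric augmentations (color jitter, blur, solarization) are omitted.

```python
import numpy as np

def random_resized_crop(img, out_size, scale, rng):
    """Crop a random square covering a `scale` fraction of the area, then resize (nearest neighbour)."""
    h, w = img.shape[:2]
    side = int(round(np.sqrt(rng.uniform(*scale) * h * w)))
    side = max(1, min(side, h, w))
    top = rng.integers(0, h - side + 1)
    left = rng.integers(0, w - side + 1)
    crop = img[top:top + side, left:left + side]
    idx = (np.arange(out_size) * side / out_size).astype(int)   # sampling grid into the crop
    return crop[idx][:, idx]

def multi_crop(img, rng, n_local=8, global_size=224, local_size=96):
    """Two large global views plus several small local views of one tile."""
    views = []
    for _ in range(2):
        v = random_resized_crop(img, global_size, (0.32, 1.0), rng)
        if rng.random() < 0.5:
            v = v[:, ::-1]                                     # random horizontal flip
        views.append(v)
    for _ in range(n_local):
        views.append(random_resized_crop(img, local_size, (0.05, 0.32), rng))
    teacher_views = views[:2]   # teacher sees only the global crops
    student_views = views       # student sees global and local crops
    return teacher_views, student_views
```

Matching small local crops against the teacher's view of large global crops encourages "local-to-global" correspondence. In tissue, this can mean relating a few cells to the surrounding architecture.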

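Self-Distillation Training: Implement the student-teacher framework, in which the student network learns to match the teacher network's output distributions across different augmented views of the same image [25]. The teacher's parameters are not trained by backpropagation. Instead, they are updated as an exponential moving average (EMA) of the student's parameters [25]. The training objective minimizes the cross-entropy between the teacher and student output distributions. To avoid representational collapse, DINO centers the teacher outputs and sharpens them with a low softmax temperature, while DINOv2 replaces the centering step with Sinkhorn-Knopp normalization.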
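The loss and the two running updates can be written in a few lines of NumPy. This sketch follows the original DINO recipe (centering plus temperature sharpening) rather than DINOv2's Sinkhorn-Knopp variant. It assumes the projection-head outputs for each view are already available as arrays, with the global views listed first in the student's list. Temperatures and momenta are DINO-style illustrative defaults.

```python
import numpy as np

def _softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def _log_softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def dino_loss(student_out, teacher_out, center, t_student=0.1, t_teacher=0.04):
    """Mean cross-entropy between teacher targets and student predictions over view pairs.

    student_out: list of (batch, K) outputs, global views first, then local views.
    teacher_out: list of (batch, K) outputs for the global views only.
    A view is never compared with itself.
    """
    total, n_terms = 0.0, 0
    for ti, t in enumerate(teacher_out):
        targets = _softmax(t - center, t_teacher)      # centring, then sharpening
        for si, s in enumerate(student_out):
            if si == ti:
                continue
            total += -(targets * _log_softmax(s, t_student)).sum(axis=-1).mean()
            n_terms += 1
    return total / n_terms

def update_center(center, teacher_out, momentum=0.9):
    """Running mean of teacher outputs, subtracted before the teacher softmax."""
    return momentum * center + (1 - momentum) * np.concatenate(teacher_out).mean(axis=0)

def ema_update(teacher_params, student_params, m=0.996):
    """Teacher weights track an exponential moving average of the student weights."""
    return {k: m * teacher_params[k] + (1 - m) * student_params[k] for k in teacher_params}
```

Only the student receives gradients from `dino_loss`. `ema_update` and `update_center` would be called once per step after the optimizer update.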

Multi-scale Feature Learning: Process image patches at multiple resolutions to capture both fine-grained cellular details and broader tissue architecture. This is particularly important in histopathology where diagnostic features span multiple scales [26].

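Masked Image Modeling Integration: DINOv2 adds an iBOT-style masked image modeling objective [25]. A random subset of the ViT patch tokens in the student's input is replaced with a learnable mask token. The student is then trained to predict the teacher's output distribution at those positions, where the teacher sees the unmasked tile. Unlike MAE, the target is the teacher's patch-token prediction rather than raw pixels. This encourages the model to learn robust representations based on contextual understanding of tissue structures.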
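The key difference from pixel reconstruction is that the loss is a cross-entropy against teacher distributions, evaluated only at masked positions. The minimal NumPy sketch below assumes per-patch head outputs of shape (batch, patches, K) and a boolean mask. Teacher centering is omitted for brevity, and the temperatures are illustrative.

```python
import numpy as np

def _softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def _log_softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def masked_token_loss(student_patch_logits, teacher_patch_logits, mask,
                      t_student=0.1, t_teacher=0.04):
    """iBOT-style loss: the student (given masked input) predicts the teacher's
    per-patch distribution (teacher given the full input) at masked positions only.

    *_patch_logits: (batch, num_patches, K); mask: (batch, num_patches) bool, True = masked.
    """
    targets = _softmax(teacher_patch_logits, t_teacher)
    ce = -(targets * _log_softmax(student_patch_logits, t_student)).sum(axis=-1)  # per patch
    return float(ce[mask].mean())
```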

Prototypical Contrastive Learning for Forensic Pathology

The SongCi model introduces a specialized prototypical contrastive learning approach for forensic pathology applications [30]:

Prototype Learning: Each WSI is tiled into a collection of patches, and an image encoder extracts patch-level representations. These representations are projected into a low-dimensional space defined by prototypes shared across WSIs [30]. SongCi learned 933 prototypes using this self-supervised method.
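One simple way to picture "projecting patches into a prototype space" is to soft-assign each patch embedding to a shared prototype set and pool the assignments into a per-WSI vector. The sketch below does that with cosine similarity and a softmax. Both the assignment rule and the temperature are assumptions, since SongCi's exact projection and prototype-learning procedure is not detailed here. The prototypes themselves are taken as given, not learned.

```python
import numpy as np

def prototype_histogram(patch_emb: np.ndarray, prototypes: np.ndarray,
                        temperature: float = 0.1) -> np.ndarray:
    """Soft-assign patches to shared prototypes and pool to a WSI-level vector.

    patch_emb: (num_patches, d); prototypes: (num_prototypes, d), shared by all WSIs.
    Returns a (num_prototypes,) vector summing to 1.
    """
    z = patch_emb / np.linalg.norm(patch_emb, axis=1, keepdims=True)
    c = prototypes / np.linalg.norm(prototypes, axis=1, keepdims=True)
    logits = z @ c.T / temperature                 # cosine similarity to each prototype
    logits -= logits.max(axis=1, keepdims=True)
    assign = np.exp(logits)
    assign /= assign.sum(axis=1, keepdims=True)    # per-patch soft assignment
    return assign.mean(axis=0)                     # WSI summary in prototype space
```

With 933 prototypes, every WSI, however many patches it contains, maps to a fixed 933-dimensional vector. This is one way to read "distilling redundant information" from gigapixel slides.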

Prototype Visualization and Analysis: Organize prototypes using dimensionality reduction techniques (UMAP) to identify both intra-tissue prototypes (encoding tissue-specific features) and inter-tissue prototypes (encoding shared histopathological features across organs) [30]. This enables the model to capture both specialized and generalizable patterns.

Cross-modal Alignment: Implement a gated-attention-boosted multi-modal block that integrates representations from paired WSI and gross key findings to align with forensic examination outcomes [30]. This creates a shared embedding space for visual and textual representations.
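The internal design of SongCi's gated-attention-boosted block is not described here. As a hedged illustration of the general idea, the sketch below uses gated attention pooling in the style of Ilse et al. (2018) to summarize a WSI's patch embeddings. It then concatenates the result with a gross-findings text embedding. The concatenation step, the function `fuse_wsi_and_text`, and all weight shapes are hypothetical.

```python
import numpy as np

def gated_attention_pool(h, V, U, w):
    """Gated attention pooling over a bag of patch embeddings.

    h: (num_patches, d); V, U: (d_att, d); w: (d_att,).
    Returns the attention-weighted (d,) vector and the per-patch weights.
    """
    gate = 1.0 / (1.0 + np.exp(-(h @ U.T)))          # sigmoid gating branch
    scores = (np.tanh(h @ V.T) * gate) @ w           # one score per patch
    scores = scores - scores.max()
    a = np.exp(scores) / np.exp(scores).sum()        # weights sum to 1
    return a @ h, a

def fuse_wsi_and_text(wsi_patches, text_emb, V, U, w):
    """Hypothetical fusion: pooled WSI vector concatenated with a text embedding."""
    pooled, attn = gated_attention_pool(wsi_patches, V, U, w)
    return np.concatenate([pooled, text_emb]), attn
```

The attention weights also give a per-patch relevance map. This is one common route to the kind of explanatory output mentioned for zero-shot inference.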

Zero-shot Inference: For an unseen subject, the gross key findings and corresponding WSIs serve as input, and the potential diagnostic outcomes are supplied as textual queries. The model predicts the final diagnostic result and provides explanatory factors highlighting the elements most associated with the prediction [30].
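In a shared embedding space, zero-shot prediction reduces to ranking candidate text queries by similarity to the case representation. The NumPy sketch below assumes a fused case embedding and one embedding per candidate outcome already exist in the same space. The outcome names are placeholders.

```python
import numpy as np

def zero_shot_rank(query_emb, candidate_text_embs, candidate_names):
    """Rank candidate outcome descriptions by cosine similarity to a case embedding."""
    q = query_emb / np.linalg.norm(query_emb)
    c = candidate_text_embs / np.linalg.norm(candidate_text_embs, axis=1, keepdims=True)
    sims = c @ q
    order = np.argsort(-sims)                       # most similar first
    return [(candidate_names[i], float(sims[i])) for i in order]
```

No task-specific classifier is trained. Adding a new candidate outcome only requires encoding one more text query.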

Evaluation Methodologies for Foundation Models

Robust evaluation of computational pathology foundation models requires diverse tasks and datasets:

Pan-Cancer Detection: Evaluate model performance on detecting both common and rare cancers across various tissues [26]. Use specimen-level labels and measure area under the receiver operating characteristic curve (AUC) at both slide and specimen levels [26]. Include out-of-distribution data from external institutions to assess generalization capability.
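Specimen-level evaluation requires first aggregating slide predictions for each specimen. The sketch below assumes max pooling, so a specimen is as suspicious as its most suspicious slide. The aggregation Virchow actually used is not stated here, so this choice is an assumption. AUC is computed with the rank (Mann-Whitney) formulation so the example needs only NumPy.

```python
import numpy as np

def roc_auc(labels, scores):
    """ROC AUC as the probability that a random positive outranks a random negative (ties = 0.5)."""
    labels = np.asarray(labels, bool)
    scores = np.asarray(scores, float)
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))

def specimen_scores(slide_scores, slide_to_specimen):
    """Aggregate slide-level cancer scores to specimen level by taking the maximum."""
    agg = {}
    for score, spec in zip(slide_scores, slide_to_specimen):
        agg[spec] = max(score, agg.get(spec, -np.inf))
    return agg
```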

Large-scale Multi-class Classification: Construct hierarchical cancer classification tasks following established oncology classification systems (e.g., OncoTree) [27]. Include both common and rare cancer types to evaluate model performance across diverse disease entities. Report top-K accuracy (K = 1, 3, 5), weighted F1 score, and AUROC performance metrics [27].
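Two of the listed metrics are easy to get subtly wrong, so here is a minimal NumPy sketch. Top-K accuracy counts a sample as correct if its true class is among the K highest-scoring classes. Weighted F1 averages per-class F1 using each class's frequency in the ground truth as the weight. The inputs are assumed to be a (samples, classes) score matrix and integer labels.

```python
import numpy as np

def top_k_accuracy(probs: np.ndarray, y_true: np.ndarray, k: int) -> float:
    """Fraction of samples whose true class is among the k highest-scoring classes."""
    top_k = np.argsort(-probs, axis=1)[:, :k]
    return float((top_k == y_true[:, None]).any(axis=1).mean())

def weighted_f1(y_true: np.ndarray, y_pred: np.ndarray) -> float:
    """Per-class F1 averaged with weights proportional to class support in y_true."""
    classes, support = np.unique(y_true, return_counts=True)
    f1s = []
    for c in classes:
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return float(np.average(f1s, weights=support))
```

Weighting by support means rare OncoTree classes contribute little to weighted F1. Reporting it alongside top-K accuracy and AUROC gives a fuller picture for long-tailed class distributions.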

Few-shot and Zero-shot Learning: Assess model capability to adapt to new tasks with limited labeled examples [27]. Use class prototypes for prompt-based slide classification and evaluate performance with varying numbers of training examples [27].
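Classification with class prototypes can be illustrated with a nearest-centroid rule over frozen embeddings. Average the normalized embeddings of the few labeled examples per class, then assign each query to the class with the most similar prototype. This is a generic sketch that assumes embeddings have already been extracted. It does not reproduce UNI's exact slide-level procedure, for example how patch-level similarities are turned into a slide prediction.

```python
import numpy as np

def build_class_prototypes(support_emb: np.ndarray, support_labels: np.ndarray):
    """Mean of L2-normalised support embeddings per class, re-normalised."""
    z = support_emb / np.linalg.norm(support_emb, axis=1, keepdims=True)
    classes = np.unique(support_labels)
    protos = np.stack([z[support_labels == c].mean(axis=0) for c in classes])
    return classes, protos / np.linalg.norm(protos, axis=1, keepdims=True)

def predict_with_prototypes(query_emb: np.ndarray, classes: np.ndarray, protos: np.ndarray):
    """Assign each query to the class whose prototype has the highest cosine similarity."""
    q = query_emb / np.linalg.norm(query_emb, axis=1, keepdims=True)
    return classes[np.argmax(q @ protos.T, axis=1)]
```

Evaluating with 1, 2, 4, 8, ... examples per class then only requires rebuilding the prototypes, with no retraining of the encoder.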

Biomarker Prediction: Evaluate model performance on predicting molecular biomarkers from routine H&E images [26] [28]. This includes predicting genetic mutations, gene expression levels, and molecular subtypes based solely on morphological patterns in H&E-stained sections [28].
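A common way to test whether frozen foundation-model embeddings encode a biomarker is a linear probe. This is a logistic regression trained on the embeddings while the encoder stays fixed. The NumPy sketch below assumes a binary target (for example, mutation present or absent) and precomputed embeddings. The learning rate, epoch count, and L2 strength are illustrative.

```python
import numpy as np

def train_linear_probe(x: np.ndarray, y: np.ndarray, lr: float = 0.1,
                       epochs: int = 500, l2: float = 1e-3):
    """Fit a binary logistic-regression probe on frozen embeddings by gradient descent."""
    mu, sd = x.mean(axis=0), x.std(axis=0) + 1e-8
    xs = (x - mu) / sd                                   # standardise features
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(xs @ w + b)))          # predicted probabilities
        grad = p - y                                     # gradient of log-loss w.r.t. logits
        w -= lr * (xs.T @ grad / len(y) + l2 * w)
        b -= lr * grad.mean()

    def predict_proba(x_new: np.ndarray) -> np.ndarray:
        return 1.0 / (1.0 + np.exp(-(((x_new - mu) / sd) @ w + b)))

    return predict_proba
```

Keeping the probe linear means that good performance reflects information already present in the embeddings rather than capacity added by the downstream model.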

Performance Comparison and Scaling Laws

Foundation models in computational pathology show clear scaling trends: performance improves with larger models, more diverse data, and longer training [27].

Data Scaling: UNI demonstrated a +4.2% performance increase in top-1 accuracy when scaling from Mass-1K (1 million images) to Mass-22K (16 million images), and a further +3.7% increase when scaling to Mass-100K (100 million images) on the 43-class OncoTree cancer type classification task [27]. Similar trends were observed for the more challenging 108-class OncoTree code classification task [27].

Model Scaling: Comparing ViT-Base and ViT-Large architectures revealed that larger model architectures continue to benefit from increased data size, while smaller models may plateau in performance with very large datasets [27]. This highlights the importance of matching model capacity to dataset scale.

Algorithm Selection: DINOv2 consistently outperformed alternative self-supervised learning algorithms such as MoCo v3 across the data scales and model architectures tested [27], making it a leading choice for pathology foundation models at present.

Table 3: Impact of Scaling on Model Performance (OncoTree Classification Tasks)

| Configuration | Data Scale | OT-43 Top-1 Accuracy (change vs. Mass-1K) | OT-108 Top-1 Accuracy (change vs. Mass-1K) | Training Iterations |
|---|---|---|---|---|
| ViT-L / Mass-1K [27] | 1M images, 1,404 WSIs | Baseline | Baseline | 50,000 |
| ViT-L / Mass-22K [27] | 16M images, 21,444 WSIs | +4.2% | +3.5% | 50,000 |
| ViT-L / Mass-100K [27] | 100M images, 100,426 WSIs | +7.9% | +6.5% | 125,000 |

The Scientist's Toolkit

Table 4: Essential Research Reagents and Computational Resources for Pathology Foundation Models

| Resource Category | Specific Tools/Components | Function/Purpose | Examples/Notes |
|---|---|---|---|
| Model Architectures | Vision Transformer (ViT) [26] [27] | Base architecture for processing image patches using self-attention mechanisms | Scalable to hundreds of millions of parameters |
| | Convolutional Neural Networks (CNNs) [29] | Alternative backbone for feature extraction, often used in hybrid approaches | EfficientNetB3, ResNet50 in HistopathAI [29] |
| SSL Frameworks | DINO/DINOv2 [26] [27] [25] | Self-distillation with no labels; DINOv2 adds iBOT-style masked image modeling to DINO's image-level self-distillation | Used in Virchow, UNI, and other state-of-the-art models |
| | Prototypical Contrastive Learning [30] | Learns prototype representations for efficient encoding of diverse tissue patterns | Implemented in SongCi for forensic pathology |
| | Supervised Contrastive Learning (SCL) [29] | Leverages available labels to improve feature separation in embedding space | Used in HistopathAI for imbalanced datasets |
| Data Processing Tools | WSInfer [31] | Toolbox for deploying deep learning models on whole-slide images | Provides an end-to-end workflow for patch extraction and inference |
| | QuPath [31] | Open-source platform for digital pathology image analysis | Used to visualize model predictions as heatmaps |
| Computational Resources | High-Performance GPUs [31] | Accelerate training of large foundation models | AMD Instinct MI210 (64 GB GPU memory) or similar [31] |
| | Network-Attached Storage (NAS) [31] | Store and manage large whole-slide image collections | QNAP NAS or similar systems with high-speed connectivity |

Foundation models (FMs) in computational pathology are large-scale artificial intelligence models pre-trained on vast datasets of histopathology images and, in some cases, associated text. These models learn universal feature representations that can be adapted to diverse downstream diagnostic tasks with minimal additional training, thereby addressing critical challenges such as data imbalance and heavy annotation dependency in medical AI [32] [12]. The development of these models is primarily organized around three distinct architectural paradigms: vision-only encoders that process image data alone, vision-language models (VLMs) that align visual and textual information, and whole-slide encoders designed to handle gigapixel whole-slide images (WSIs). Each architecture offers unique capabilities and addresses different aspects of the computational pathology workflow, from basic morphological analysis to integrated diagnostic reporting [33] [32].

Vision-Only Encoders: Learning from Images Alone

Core Architecture and Pre-training Methodology