Foundation Models vs. CNNs in Computational Pathology: A Paradigm Shift in Medical AI

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the pivotal differences between foundation models (FMs) and traditional convolutional neural networks (CNNs) in computational pathology. We explore the foundational shift from supervised, task-specific CNNs to large-scale, self-supervised FMs, detailing their distinct architectural principles, training methodologies, and data requirements. The scope extends to practical applications in diagnosis, prognosis, and biomarker prediction, while critically addressing performance benchmarking, computational burdens, and robustness challenges such as site-specific bias and geometric fragility. Finally, we synthesize validation evidence and discuss the future trajectory of generalist AI in advancing precision medicine.

From Task-Specific Tools to General-Purpose Foundations: Core Paradigms in Pathology AI

The emergence of digital pathology, characterized by the digitization of histopathological slides into high-resolution whole-slide images (WSIs), has created unprecedented opportunities for artificial intelligence (AI) to transform diagnostic workflows [1]. Within this domain, two deep neural network architectures have proven particularly influential: Convolutional Neural Networks (CNNs) and Transformer models. These architectures possess fundamentally different inductive biases—the inherent assumptions and preferences a model embeds to guide its learning process. CNNs are intrinsically biased toward processing local spatial correlations, making them highly effective for analyzing cellular morphology and tissue texture. In contrast, Transformers leverage self-attention mechanisms to model global contextual relationships, enabling them to capture long-range dependencies across disparate tissue regions—a capability critical for understanding complex tissue architecture and tumor microenvironment interactions [2]. The recent advent of foundation models, large-scale neural networks pre-trained on vast datasets, has further accentuated this architectural dichotomy, presenting researchers and clinicians with critical choices for model selection and development. This technical guide examines the core inductive biases of CNNs and Transformers, their implications for computational pathology tasks, and how their integration is shaping the next generation of pathology AI.

Core Architectural Principles and Inductive Biases

Convolutional Neural Networks: The Locality Prior

CNNs are fundamentally designed around the principles of locality and translation equivariance. These inductive biases are hard-coded through operations that explicitly assume the importance of local spatial patterns.

Local Receptive Fields: Convolutional operations use filters that traverse the input image with small, localized receptive fields (e.g., 3×3 or 5×5 pixels). This design forces the network to focus on local features such as edges, corners, and texture patterns in its initial layers, progressively building more complex, hierarchical representations in deeper layers [2]. In histopathology, this makes CNNs exceptionally adept at identifying nuclear morphology, mitotic figures, and local glandular structures.

Translation Equivariance: The weight sharing characteristic of convolutional filters means the same filter is applied across all spatial positions of the input. This creates translation equivariance, where shifting the input results in a corresponding shift in the feature map output. This property is invaluable in pathology because a malignant cell or architectural pattern remains diagnostically significant regardless of its position within a tissue section [2].

Hierarchical Feature Extraction: CNNs naturally extract features in a hierarchical manner, with early layers capturing simple patterns and subsequent layers combining these into increasingly complex constructs. This bottom-up processing mirrors how pathologists first identify individual cellular characteristics before assessing tissue-level organization.
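As a minimal illustration of the translation equivariance property described above, the following NumPy sketch convolves a toy image and a shifted copy with the same filter and compares the results. The image, kernel, and shift values are hypothetical, and the loop-based convolution is written for clarity rather than speed.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Plain 2D cross-correlation, stride 1, no padding ('valid')."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

rng = np.random.default_rng(0)
kernel = rng.normal(size=(3, 3))          # one shared filter (weight sharing)

# Toy "tissue" image: a single bright blob on an empty background.
image = np.zeros((12, 12))
image[2:5, 3:6] = rng.random((3, 3)) + 1.0

# Move the blob by (dy, dx) pixels; it stays fully inside the frame.
dy, dx = 4, 3
shifted = np.roll(image, shift=(dy, dx), axis=(0, 1))

fmap = conv2d_valid(image, kernel)
fmap_shifted = conv2d_valid(shifted, kernel)

# Equivariance: shifting the input shifts the feature map by the same amount.
equivariant = np.allclose(np.roll(fmap, shift=(dy, dx), axis=(0, 1)), fmap_shifted)

# A global max-pool on top turns equivariance into invariance.
invariant_after_pooling = np.isclose(fmap.max(), fmap_shifted.max())
```

The check holds here because the blob never touches the image border; with padding or strided layers, equivariance is only approximate.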

The primary limitation of CNNs lies in their constrained receptive fields. Even deep networks with many layers have difficulty modeling long-range spatial relationships without additional architectural modifications, as their fundamental operation remains locally bounded [2].
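The receptive field constraint can be quantified with simple arithmetic. The sketch below applies the standard recurrence for stacked convolution and pooling layers; the two layer stacks are hypothetical examples, not configurations taken from any cited model.

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of a stack of conv/pool layers.

    `layers` is a list of (kernel_size, stride) pairs applied in order.
    """
    rf = 1    # one output unit initially sees one input pixel
    jump = 1  # spacing, in input pixels, between neighbouring units
    for kernel_size, stride in layers:
        rf += (kernel_size - 1) * jump
        jump *= stride
    return rf

# Ten stacked 3x3 convolutions with stride 1.
ten_convs = receptive_field([(3, 1)] * 10)

# Five blocks of (3x3 conv, 2x2 max-pool with stride 2).
conv_pool_blocks = receptive_field([(3, 1), (2, 2)] * 5)
```

Under these assumptions, ten plain 3×3 layers see only a 21-pixel window, and five conv-pool blocks see 94 pixels. Both are small relative to a 256×256 patch, let alone a gigapixel slide.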

Transformer Architectures: The Global Context Prior

Transformers, originally developed for natural language processing, operate on a fundamentally different principle: the self-attention mechanism. This mechanism allows the model to weigh the importance of all elements in the input sequence when processing each individual element.

Global Context via Self-Attention: The self-attention mechanism computes pairwise interactions between all patches (tokens) in an image. For an input sequence of tokens, it calculates query, key, and value vectors for each token, then computes attention weights as:

\[ \mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right) V \] [2]

This operation enables each patch to directly influence, and be influenced by, every other patch in the image, regardless of their relative positions. For WSIs, this allows the model to identify relationships between geographically distant but diagnostically linked tissue regions.

Positional Encoding: Unlike CNNs that inherently understand spatial relationships through convolution, Transformers require explicit positional encodings to incorporate spatial information. These encodings, added to the patch embeddings, inform the model about the relative or absolute positions of patches in the original image [2].

Minimal Spatial Inductive Bias: Transformers intentionally incorporate minimal prior assumptions about spatial relationships, enabling them to learn complex, non-local patterns directly from data. This flexibility comes at the cost of requiring substantial training data to discover spatial relationships that CNNs assume by design.
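The scaled dot-product attention described in this section can be sketched in a few lines of NumPy. This is a single-head illustration with randomly initialized projection matrices; the token count and dimensions are hypothetical, and real Transformers add multiple heads, residual connections, and normalization.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head self-attention over a sequence of patch tokens.

    tokens: (n_tokens, d_model); w_q, w_k, w_v: (d_model, d_k).
    Returns the attended outputs and the (n_tokens, n_tokens) weight matrix.
    """
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    d_k = q.shape[-1]
    weights = softmax(q @ k.T / np.sqrt(d_k), axis=-1)
    return weights @ v, weights

rng = np.random.default_rng(0)
n_tokens, d_model, d_k = 6, 8, 4              # hypothetical toy sizes
tokens = rng.normal(size=(n_tokens, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

outputs, weights = self_attention(tokens, w_q, w_k, w_v)
```

Each row of `weights` is a probability distribution over all patches, which is what lets any patch attend to any other regardless of distance. The weight matrix grows quadratically with the number of tokens.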

Vision Transformers (ViTs) adapt this architecture for images by dividing the input into fixed-size, non-overlapping patches, linearly embedding each patch, and processing the resulting sequence through standard Transformer encoder layers [2].
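The patch-splitting and linear-embedding step can be illustrated as follows. This is a minimal NumPy sketch assuming a 224×224 RGB input and 16×16 patches; the embedding width is a toy value, the weights are random stand-ins for learned parameters, and the class token used by many ViTs is omitted.

```python
import numpy as np

def patchify(image, patch_size):
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    h, w, c = image.shape
    if h % patch_size or w % patch_size:
        raise ValueError("image size must be a multiple of patch_size")
    gh, gw = h // patch_size, w // patch_size
    patches = image.reshape(gh, patch_size, gw, patch_size, c)
    patches = patches.transpose(0, 2, 1, 3, 4)       # (gh, gw, p, p, c)
    return patches.reshape(gh * gw, patch_size * patch_size * c)

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))      # hypothetical RGB tile
patch_size, embed_dim = 16, 64         # toy embedding width

patches = patchify(image, patch_size)  # 14 x 14 = 196 patches, 768 values each

# Linear patch embedding plus additive positional encoding
# (both would be learned during training; random here).
w_embed = rng.normal(scale=0.02, size=(patches.shape[1], embed_dim))
pos_embed = rng.normal(scale=0.02, size=(patches.shape[0], embed_dim))
tokens = patches @ w_embed + pos_embed
```

The resulting `tokens` array is the sequence that the Transformer encoder layers consume.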

Comparative Analysis of Architectural Properties

Table 1: Core Architectural Properties of CNNs vs. Transformers

| Property | Convolutional Neural Networks (CNNs) | Transformer Models |
|---|---|---|
| Primary Inductive Bias | Locality & Translation Equivariance | Global context & Minimal spatial assumptions |
| Receptive Field | Local (grows hierarchically but remains limited) | Global (from first layer) |
| Core Operation | Convolution & Pooling | Self-Attention & Layer Normalization |
| Spatial Understanding | Built-in via convolution kernels | Learned via positional encodings |
| Parameter Efficiency | High (weight sharing) | Lower (dense attention matrices) |
| Data Requirements | Moderate | Substantial |
| Feature Integration | Hierarchical & local-to-global | Any-to-any & context-aware |

Empirical Performance in Pathology Applications

Benchmarking Studies and Direct Comparisons

Rigorous empirical evaluations have quantified the performance differences between CNN and Transformer architectures across various pathology tasks. A comprehensive 2025 study trained and evaluated 14 deep learning models—including both CNN-based and Transformer-based architectures—on the BreakHis breast cancer dataset [3]. The findings reveal nuanced performance characteristics:

Binary Classification Performance: In the less complex binary classification task (benign vs. malignant), multiple models achieved excellent performance. CNN-based models including ResNet50, RegNet, and ConvNeXT, along with the Transformer-based foundation model UNI, all reached an area under the curve (AUC) of 0.999. The best overall performance was achieved by ConvNeXT (a CNN variant), which attained an accuracy of 99.2%, specificity of 99.6%, and F1-score of 99.1% [3].

Multi-class Classification Performance: In the more challenging eight-class classification task, performance differences became more pronounced. The best-performing model was the fine-tuned foundation model UNI (Transformer-based), which attained an accuracy of 95.5%, specificity of 95.6%, and F1-score of 95.0% [3]. This suggests that Transformer architectures may particularly excel in complex classification scenarios requiring discrimination between subtle morphological subtypes.

Micro-Metastasis Detection: For particularly challenging tasks like lymph node micro-metastasis detection in breast cancer, hybrid approaches have shown promise. One study developed MetaTrans, a novel network combining meta-learning with Transformer and CNN components, which demonstrated superior performance on multi-center datasets compared to pure CNN or Transformer baselines [4].
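For reference, the accuracy, specificity, and F1-score figures quoted above all derive from the confusion matrix. The sketch below computes them for a binary task on a small hypothetical set of labels; it does not reproduce any result from the cited studies.

```python
import numpy as np

def binary_metrics(y_true, y_pred):
    """Accuracy, specificity, recall, precision and F1 for labels in {0, 1}."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    tn = int(np.sum((y_true == 0) & (y_pred == 0)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))

    def safe_div(num, den):
        return num / den if den else 0.0

    precision = safe_div(tp, tp + fp)
    recall = safe_div(tp, tp + fn)
    return {
        "accuracy": safe_div(tp + tn, tp + tn + fp + fn),
        "specificity": safe_div(tn, tn + fp),
        "recall": recall,
        "precision": precision,
        "f1": safe_div(2 * precision * recall, precision + recall),
    }

# Hypothetical labels: 1 = malignant, 0 = benign.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
metrics = binary_metrics(y_true, y_pred)
```

For multi-class settings such as the eight-class task, the same quantities are typically computed per class and then averaged.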

Table 2: Experimental Performance Comparison on Pathology Tasks

| Model Architecture | Task | Dataset | Key Metric | Performance |
|---|---|---|---|---|
| ConvNeXT (CNN) | Binary Classification | BreakHis | Accuracy | 99.2% |
| UNI (Transformer) | 8-class Classification | BreakHis | Accuracy | 95.5% |
| Virchow (Foundation) | Pan-Cancer Detection | Multi-Cancer | AUC | 0.950 |
| MetaTrans (Hybrid) | Micro-Metastasis Detection | BLCN-MiD | Recall | ~95% (inferred) |
| MSNet (Hybrid) | Lung Adenocarcinoma | Private Dataset | Accuracy | 96.55% |

Foundation Models in Pathology

Foundation models represent a paradigm shift in computational pathology. These models are pre-trained on massive, diverse datasets using self-supervised learning, producing versatile feature representations that can be adapted to various downstream tasks with minimal fine-tuning.

Virchow: Trained on approximately 1.5 million H&E-stained WSIs from 100,000 patients, Virchow is a 632 million parameter Vision Transformer. It enables pan-cancer detection with an AUC of 0.950 across nine common and seven rare cancers, demonstrating remarkable generalization capability [5]. This performance highlights how scale and diversity in pre-training can overcome Transformers' traditional data hunger.

TITAN: The Transformer-based pathology Image and Text Alignment Network is a multimodal whole-slide foundation model pre-trained on 335,645 WSIs. TITAN incorporates both visual self-supervised learning and vision-language alignment with corresponding pathology reports, enabling capabilities like zero-shot classification and cross-modal retrieval without task-specific fine-tuning [6].

UNI: One of the first general-purpose pathology models, UNI was trained via self-supervised learning on more than 100,000 diagnostic-grade H&E-stained whole slide images. It demonstrated strong performance across 34 computational pathology tasks, with notable capabilities in resolution-agnostic classification and few-shot learning [3].

These foundation models overwhelmingly leverage Transformer architectures due to their superior scalability and ability to capture the long-range dependencies necessary for whole-slide analysis.

Implementation and Workflow Methodologies

Experimental Protocols for Model Development

The development of performant models in computational pathology follows carefully designed experimental protocols that account for the unique challenges of histopathological data.

Whole-Slide Image Processing: WSIs are gigapixel-sized images that cannot be processed directly. The standard methodology divides each WSI into smaller patches (typically 256×256 or 512×512 pixels at 20× magnification) and encodes every patch as a feature vector. For example, the TITAN whole-slide foundation model operates on 768-dimensional features extracted from 512×512-pixel patches at 20× magnification [5] [6].

Multi-Magnification Analysis: Many approaches employ a multi-stage process inspired by pathological practice. The MetaTrans network, for instance, uses separate models for different magnification levels: a tissue-recognition model for low magnification (4×) regions of interest and a cell-recognition model for high magnification (10×) images [4]. This approach mirrors how pathologists first scan at low power to identify suspicious areas before examining cellular details at higher magnification.

Weakly Supervised Learning: Given the difficulty of pixel-level annotations, slide-level labels are often used in a multiple instance learning framework. Features from individual patches are aggregated to make slide-level predictions, using methods like attention-based pooling or transformer aggregators [5].

Data Augmentation and Normalization: Histopathology images exhibit significant variability in staining protocols and scanner characteristics. Standard preprocessing includes color normalization techniques like histogram matching or more advanced deep learning approaches to improve model robustness and generalization [1].
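The weakly supervised aggregation step described above can be sketched as attention-based pooling over patch features. The NumPy example below follows the common tanh-attention formulation of multiple instance learning; the parameter names `v` and `w`, the feature dimensions, and the random inputs are all illustrative assumptions, and in practice the parameters are learned from slide-level labels.

```python
import numpy as np

def attention_mil_pool(patch_features, v, w):
    """Aggregate patch features into one slide embedding.

    patch_features: (n_patches, feature_dim)
    v: (feature_dim, hidden_dim), w: (hidden_dim,) attention parameters.
    """
    hidden = np.tanh(patch_features @ v)          # (n_patches, hidden_dim)
    scores = hidden @ w                           # one score per patch
    scores = scores - scores.max()                # stabilise the softmax
    weights = np.exp(scores) / np.exp(scores).sum()
    slide_embedding = weights @ patch_features    # weighted average of patches
    return slide_embedding, weights

rng = np.random.default_rng(0)
n_patches, feature_dim, hidden_dim = 1000, 768, 128   # hypothetical sizes
patch_features = rng.normal(size=(n_patches, feature_dim))
v = rng.normal(scale=0.05, size=(feature_dim, hidden_dim))
w = rng.normal(scale=0.05, size=hidden_dim)

slide_embedding, weights = attention_mil_pool(patch_features, v, w)
```

The per-patch `weights` double as a coarse interpretability signal, since they indicate which regions contributed most to the slide-level prediction.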

Hybrid Architectures: Integrating Local and Global Context

Recognizing the complementary strengths of CNNs and Transformers, researchers have developed hybrid architectures that leverage both local feature extraction and global contextual modeling.

Fusion Architectures: One approach jointly combines CNN and Vision Transformer modules, where the CNN processes the input image to produce local feature embeddings, while the ViT computes global embeddings from patch sequences. These representations are then concatenated to form a joint feature vector for classification [2]. Empirical results on breast cancer histopathology images demonstrate that ViT+CNN fusion models consistently outperform standalone CNN or ViT models [2].

MSNet for Lung Adenocarcinoma: This framework employs a dual data stream input method, combining Swin Transformer and MLP-Mixer models to address global information between patches and local information within each patch. The model uses a Multilayer Perceptron (MLP) module to fuse these local and global features for classification, achieving 96.55% accuracy on lung adenocarcinoma pathology images [7].

MetaTrans for Micro-Metastasis: Designed for limited-data scenarios, MetaTrans optimizes Transformer architecture with meta-learning and CNN components to improve detection of lymph node micro-metastasis in breast cancer. The network is trained end-to-end and remains effective even when sample sizes are significantly smaller than those available for macro-metastases [4].
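The concatenation-based fusion strategy can be illustrated with a short NumPy sketch. The embeddings here are random stand-ins for the outputs of a CNN branch and a ViT branch, and the dimensions and linear head are hypothetical; this is not the implementation of any of the cited hybrid models.

```python
import numpy as np

def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

def fuse_and_classify(cnn_embedding, vit_embedding, weights, bias):
    """Concatenate local (CNN) and global (ViT) embeddings, then apply a linear head."""
    joint = np.concatenate([cnn_embedding, vit_embedding], axis=-1)
    return softmax(joint @ weights + bias), joint

rng = np.random.default_rng(0)
batch, cnn_dim, vit_dim, n_classes = 4, 512, 768, 2   # hypothetical sizes

cnn_embedding = rng.normal(size=(batch, cnn_dim))     # stand-in for CNN branch output
vit_embedding = rng.normal(size=(batch, vit_dim))     # stand-in for ViT branch output
weights = rng.normal(scale=0.01, size=(cnn_dim + vit_dim, n_classes))
bias = np.zeros(n_classes)

probs, joint = fuse_and_classify(cnn_embedding, vit_embedding, weights, bias)
```

Concatenation is the simplest fusion choice; alternatives such as an MLP fusion module, as described for MSNet, add learnable interaction between the two feature sets.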

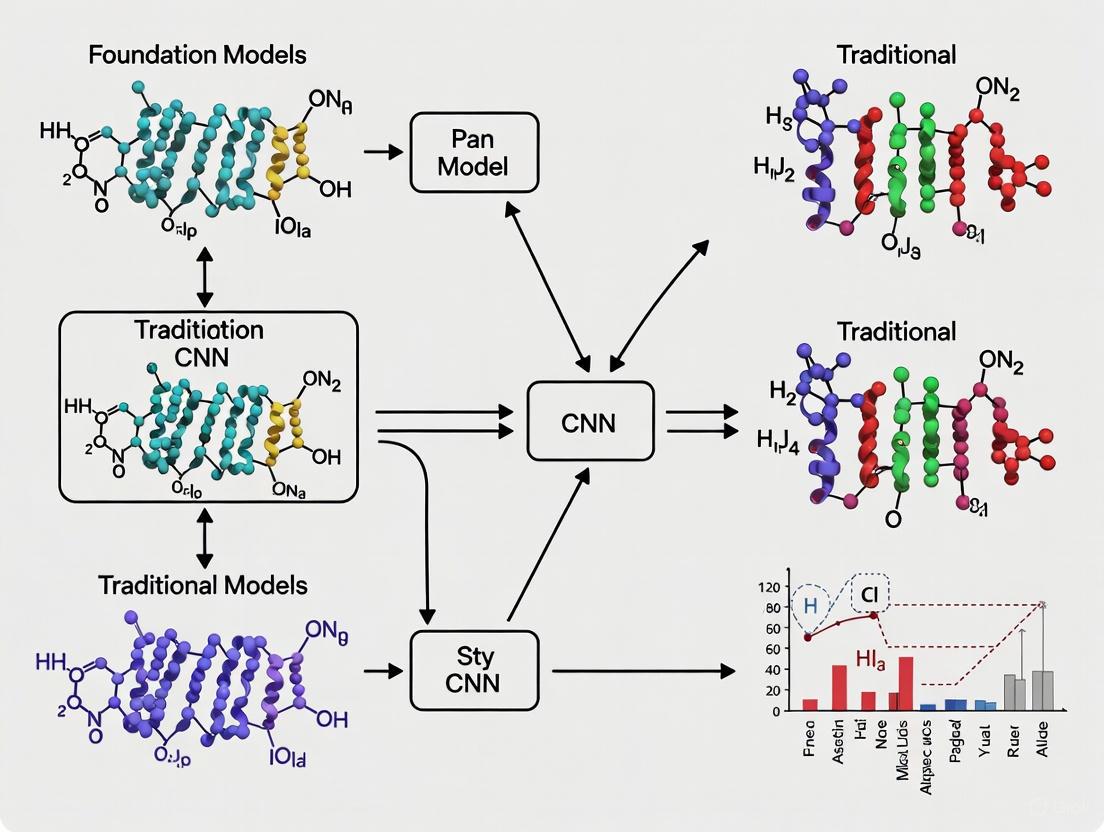

Diagram 1: Hybrid CNN-Transformer Workflow for Digital Pathology

Successful implementation of CNN and Transformer models in pathology requires careful selection of computational frameworks, datasets, and evaluation methodologies.

Table 3: Essential Research Reagents for Pathology AI Development

| Resource Category | Specific Examples | Function & Application |
|---|---|---|
| Public Datasets | BreakHis, CAMELYON, TCGA, LC25000 | Benchmark model performance on standardized datasets [3] [7] [4] |
| Foundation Models | Virchow, UNI, TITAN, CONCH | Pre-trained feature extractors for transfer learning [3] [5] [6] |
| Computational Frameworks | PyTorch, TensorFlow, MONAI | Model development, training, and inference pipelines |
| Whole-Slide Processing | OpenSlide, CuCIM, HistomicsUI | Efficient handling and patch extraction from gigapixel WSIs [4] |
| Annotation Tools | ASAP, QuPath, Digital Slide Archive | Region-of-interest marking and label creation |
| Evaluation Metrics | AUC, Accuracy, F1-Score, Cohen's Kappa | Quantitative performance assessment [3] |
| Interpretability Methods | Grad-CAM, Attention Rollout, Attention Maps | Model decision explanation and verification [2] |

Diagram 2: Architecture Selection Framework for Pathology Tasks

The inductive biases of CNNs and Transformers create a complementary relationship in computational pathology rather than a competitive one. CNNs provide efficient, locally-biased feature extraction well-suited to cellular-level analysis and scenarios with limited data. Transformers offer global contextual modeling capabilities essential for understanding tissue architecture and tumor microenvironment interactions, particularly valuable in complex diagnostic tasks. Foundation models, predominantly Transformer-based, are demonstrating remarkable capabilities in pan-cancer detection and rare cancer identification, achieving clinical-grade performance that matches or exceeds specialized models [5].

The future of pathology AI lies not in choosing between these architectures but in strategically combining them. Hybrid models that leverage CNN-Transformer fusion, multimodal approaches integrating histopathology with genomic and clinical data, and foundation models adapted through efficient fine-tuning represent the most promising directions. As these technologies mature, their successful clinical integration will depend not only on architectural advancements but also on addressing challenges in interpretability, robustness, and seamless workflow integration—ultimately fulfilling the promise of AI-powered precision pathology.

The field of computational pathology is undergoing a profound transformation, driven by a paradigm shift from traditional, supervised convolutional neural networks (CNNs) to large-scale foundation models trained with self-supervised learning. This transition represents more than merely a technical improvement—it constitutes a fundamental reimagining of how artificial intelligence models learn from histopathology data. Traditional CNNs, while revolutionary in their own right, face inherent limitations in generalizability, annotation dependency, and computational efficiency when applied to the gigapixel-scale complexity of whole slide images. Foundation models address these challenges through pre-training on massive, uncurated datasets, capturing fundamental biological representations rather than merely memorizing annotated patterns. This technical guide examines the architectural, methodological, and practical distinctions between these approaches within pathology research, providing researchers and drug development professionals with a comprehensive framework for understanding this critical evolution in digital pathology.

Fundamental Architectural Divergence

Traditional CNN Architectures in Pathology

Convolutional Neural Networks defined the first wave of deep learning success in computational pathology. Their architecture is fundamentally grounded in inductive biases well-suited to image data—local connectivity, spatial invariance, and hierarchical feature learning. CNNs process images through stacked convolutional layers that progressively extract features from low-level edges and textures to high-level morphological patterns, with pooling operations providing spatial invariance and reducing dimensionality [8]. This architectural approach enables CNNs to effectively learn from pixel-level annotations for tasks such as nuclei segmentation, mitosis detection, and tumor region identification [9].

In pathology specifically, the U-Net architecture with its encoder-decoder structure and skip connections became the benchmark for segmentation tasks, while variants like ResNet and VGG were widely adopted for classification [10]. However, a critical limitation of these architectures is their fixed receptive field, which restricts their ability to capture long-range dependencies in histopathology images—a significant drawback when analyzing tissue microenvironments and architectural patterns that extend across large spatial distances [10].

Foundation Model Architectures

Pathology foundation models predominantly leverage transformer-based architectures, specifically Vision Transformers (ViTs), which process images as sequences of patches using self-attention mechanisms. Unlike the local processing of CNNs, self-attention enables global contextual understanding from the initial layers, capturing relationships between distant tissue regions that are clinically significant but challenging for CNNs to model effectively [11]. These models are also substantially larger: parameter counts grow from tens of millions in traditional CNNs to hundreds of millions, and in some cases over a billion, in foundation models such as Prov-GigaPath [11].

Table 1: Architectural Comparison Between CNNs and Pathology Foundation Models

| Characteristic | Traditional CNNs | Pathology Foundation Models |
|---|---|---|
| Core Architecture | Convolutional layers with pooling | Vision Transformers (ViTs) with self-attention |
| Receptive Field | Local, increases hierarchically | Global from first layer |
| Parameter Scale | Millions (e.g., ResNet-50: ~25M) | Hundreds of millions to billions (e.g., UNI: 303M, Virchow: 631M) [11] |
| Primary Strengths | Local feature extraction, translation invariance | Long-range dependency modeling, contextual understanding |
| Handling Whole Slide Images | Requires tiling and separate processing | Can process patch sequences with positional encoding |

This architectural evolution enables foundation models to develop a more comprehensive understanding of tissue architecture and cellular relationships, mirroring how human pathologists integrate local cytological details with global tissue patterns to reach diagnoses.
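The parameter scales quoted for ViT-based models can be sanity-checked with a back-of-the-envelope count. The sketch below assumes the standard ViT-Large/16 and ViT-Huge/14 hyperparameters at 224×224 input, with a class token, learned positional embeddings, biases on all linear layers, and no classification head; these configurations are assumptions for illustration, and released models may differ in details such as register tokens or gated MLPs.

```python
def vit_encoder_params(dim, depth, mlp_dim, patch_size, image_size=224, channels=3):
    """Approximate parameter count of a plain ViT encoder (no classifier head)."""
    n_tokens = (image_size // patch_size) ** 2 + 1          # patches + class token
    patch_embed = patch_size * patch_size * channels * dim + dim
    pos_and_cls = n_tokens * dim + dim                      # positional table + class token
    attention = 4 * (dim * dim + dim)                       # Q, K, V and output projections
    mlp = dim * mlp_dim + mlp_dim + mlp_dim * dim + dim     # two linear layers
    layer_norms = 2 * 2 * dim                               # two LayerNorms per block
    per_block = attention + mlp + layer_norms
    final_norm = 2 * dim
    return patch_embed + pos_and_cls + depth * per_block + final_norm

vit_large = vit_encoder_params(dim=1024, depth=24, mlp_dim=4096, patch_size=16)
vit_huge = vit_encoder_params(dim=1280, depth=32, mlp_dim=5120, patch_size=14)

print(f"ViT-L/16: {vit_large / 1e6:.1f}M parameters")
print(f"ViT-H/14: {vit_huge / 1e6:.1f}M parameters")
```

Under these assumptions the counts come to roughly 303M and 631M, in line with the figures listed for UNI and Virchow, and more than ten times the roughly 25M parameters of ResNet-50.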

Training Paradigms: From Supervision to Self-Supervision

Traditional Supervised Learning

Supervised learning for CNNs requires extensive datasets with pixel-level or tile-level annotations meticulously labeled by pathologists. This paradigm dominated early computational pathology research, with models trained to map input images to specific outputs based on these annotations. The process involves forward propagation of image data through the network, calculation of loss between predictions and ground truth labels, and backward propagation for weight optimization [8]. While effective for specific tasks, this approach suffers from several critical limitations: (1) annotation bottleneck—the time and expertise required to label datasets limits scale; (2) task specificity—models excel only at the tasks they were trained on, with poor transfer learning capabilities; and (3) annotation bias—models inherit the subjective interpretations and potential biases of the annotating pathologists [9].

Self-Supervised Learning for Foundation Models

Self-supervised learning represents a fundamental shift from task-specific supervision to pretext task learning on unlabeled data. SSL methods create learning signals directly from the data itself, enabling models to learn generally useful representations without manual annotation. The core principle involves pre-training on vast unlabeled datasets—often millions of whole slide images—followed by minimal fine-tuning on specific downstream tasks [11].

Table 2: Self-Supervised Learning Methods in Pathology Foundation Models

| SSL Category | Representative Algorithms | Mechanism | Pathology Examples |
|---|---|---|---|
| Contrastive Learning | MoCo v3, DINO, DINOv2 | Learning representations by contrasting similar and dissimilar samples | CTransPath (SRCL), Virchow (DINOv2) [11] |
| Masked Image Modeling | MAE, iBOT | Reconstructing masked portions of input images | Phikon (iBOT) [11], MAE-based models [11] |
| Self-Distillation | DINO, BYOL | Student network learning from teacher network without labels | UNI, Virchow (DINOv2) [11] |

The scale of data used for pre-training pathology foundation models dwarfs that available for supervised approaches. For instance, UNI was trained on 100 million tiles from 100,000 diagnostic whole slide images [11], while Virchow utilized 2 billion tiles from 1.5 million slides [11]. This massive scale enables the learning of robust, generalizable representations that capture the fundamental biological and morphological patterns in histopathology images across diverse tissue types, staining protocols, and disease states.
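To make the self-distillation mechanism in Table 2 more concrete, the following NumPy sketch shows a DINO-style objective: the student is trained to match a sharpened, centered teacher distribution, and the teacher is updated as an exponential moving average of the student. The temperatures, momentum, and array shapes are illustrative defaults rather than the settings of any cited model, and gradient computation is omitted.

```python
import numpy as np

def softmax(logits, temperature):
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def self_distillation_loss(student_logits, teacher_logits, center,
                           student_temp=0.1, teacher_temp=0.04):
    """Cross-entropy between teacher and student distributions.

    The teacher output is centered and sharpened; in real training it is
    treated as a constant (no gradient flows through the teacher).
    """
    teacher_probs = softmax(teacher_logits - center, teacher_temp)
    student_log_probs = np.log(softmax(student_logits, student_temp) + 1e-12)
    return float(-(teacher_probs * student_log_probs).sum(axis=-1).mean())

def ema_update(teacher_params, student_params, momentum=0.996):
    """Teacher weights track the student as an exponential moving average."""
    return momentum * teacher_params + (1.0 - momentum) * student_params

rng = np.random.default_rng(0)
batch, out_dim = 8, 16                                   # hypothetical sizes
teacher_logits = rng.normal(size=(batch, out_dim))       # teacher output for view A
student_logits = teacher_logits + 0.1 * rng.normal(size=(batch, out_dim))  # view B
center = teacher_logits.mean(axis=0)                     # running mean in practice

loss = self_distillation_loss(student_logits, teacher_logits, center)
```

In a full pipeline the two sets of logits come from different augmented views of the same tile, which is what forces the representation to be stable under those augmentations.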

Quantitative Performance Benchmarks

Recent comprehensive benchmarking studies reveal the substantial performance advantages of foundation models across diverse pathology tasks. Campanella et al. demonstrated that SSL-trained pathology models consistently outperform both supervised models and models pre-trained on natural images across six clinical tasks spanning three anatomical sites and two institutions [11]. Similarly, a clinical benchmark of public SSL pathology foundation models showed that models pre-trained with DINOv2, such as UNI and Phikon-v2, achieve state-of-the-art performance on tissue classification, mutation prediction, and survival analysis tasks [11].

Table 3: Performance Comparison of Selected Pathology Foundation Models

| Model | Architecture | SSL Algorithm | Training Data | Reported Performance Advantages |
|---|---|---|---|---|
| CTransPath | Hybrid CNN-Transformer | SRCL (MoCo-based) | 15.6M tiles, 32K slides | Superior to ImageNet pre-trained models for WSI classification [11] |
| UNI | ViT-Large | DINOv2 | 100M tiles, 100K slides | SOTA across 34 diverse tasks [11] |
| Virchow | ViT-Huge | DINOv2 | 2B tiles, 1.5M slides | Outperforms previous models for rare cancer detection [11] |
| Phikon-v2 | ViT | DINOv2 | 460M tiles, 58K slides | Robust performance across 8 slide-level tasks with external validation [11] |

A critical advantage of foundation models is their data efficiency in downstream tasks. SSL pre-trained models achieve strong performance with limited labeled examples: one study reported that such models need only 25% of the labeled data to reach 95.6% of full performance, compared with 85.2% for supervised baselines, a result reported as a 70% reduction in annotation requirements [12]. This data efficiency significantly accelerates research and development cycles in both academic and pharmaceutical settings.

Robustness and Generalization Challenges

Despite their impressive capabilities, pathology foundation models face significant robustness challenges, particularly regarding sensitivity to technical variations between medical centers. A recent landmark study evaluated ten publicly available pathology foundation models and found that most current models remain unrobust to medical center differences, with their embedding spaces more strongly organized by medical center signatures than by biological features [13].

The study introduced a novel Robustness Index (RI) that measures the degree to which biological features dominate confounding features in model embeddings. Formally defined as:

\[ R_k = \frac{\sum_{i=1}^{n} \sum_{j=1}^{k} \mathbf{1}(y_j = y_i)}{\sum_{i=1}^{n} \sum_{j=1}^{k} \mathbf{1}(c_j = c_i)} \]

where \(y\) represents biological class, \(c\) represents medical center, \(k\) is the number of nearest neighbors considered, and the inner sum runs over the \(k\) nearest neighbors \(j\) of sample \(i\) in the embedding space [13]. Alarmingly, only one of the ten evaluated models achieved a robustness index greater than one, indicating that for most models, medical center confounders dominated over biological features in their representation spaces [13]. This technical confounder sensitivity poses significant challenges for clinical deployment where models must perform consistently across diverse healthcare settings with variations in staining protocols, scanner equipment, and tissue processing methods.
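The Robustness Index can be computed directly from an embedding matrix and two label vectors. The sketch below follows the ratio defined above; the use of Euclidean distance for the nearest-neighbor search and the tiny four-sample example are assumptions made for illustration, and the original study may use a different distance metric.

```python
import numpy as np

def robustness_index(embeddings, bio_labels, center_labels, k):
    """Ratio of same-biology to same-center neighbours among the k nearest."""
    x = np.asarray(embeddings, dtype=float)
    bio = np.asarray(bio_labels)
    center = np.asarray(center_labels)

    # Pairwise squared Euclidean distances (assumed metric).
    sq_norms = np.sum(x ** 2, axis=1)
    dists = sq_norms[:, None] + sq_norms[None, :] - 2.0 * x @ x.T
    np.fill_diagonal(dists, np.inf)                 # a sample is not its own neighbour

    neighbors = np.argsort(dists, axis=1)[:, :k]    # (n, k) neighbour indices
    same_bio = int(np.sum(bio[neighbors] == bio[:, None]))
    same_center = int(np.sum(center[neighbors] == center[:, None]))
    return same_bio / same_center if same_center else float("inf")

# Hypothetical embeddings forming two tight clusters.
embeddings = [[0.0, 0.0], [0.0, 0.1], [5.0, 5.0], [5.0, 5.1]]

# Case 1: clusters align with biology, centers are mixed within clusters.
ri_biological = robustness_index(embeddings, [0, 0, 1, 1], [0, 1, 0, 1], k=2)

# Case 2: clusters align with medical center instead.
ri_confounded = robustness_index(embeddings, [0, 1, 0, 1], [0, 0, 1, 1], k=2)
```

In this toy example the first case gives an index of 2.0 and the second 0.5, matching the interpretation that values above one indicate biology-dominated embeddings and values below one indicate center-dominated embeddings.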

Experimental Protocols and Methodologies

Protocol for Traditional Supervised CNN Training

A standardized protocol for training CNNs for pathology image analysis involves these critical steps:

Data Preparation: Extract patches from whole slide images (WSIs) at appropriate magnification (typically 20×). Standard patch sizes range from 256×256 to 1024×1024 pixels. Apply stain normalization to reduce color variation between institutions.

Annotation: Generate pixel-level or patch-level annotations through expert pathologist review. Common annotation types include segmentation masks for nuclei or tumor regions, and categorical labels for tissue types or disease states.

Data Augmentation: Apply transformations including rotation, flipping, color jittering, and elastic deformations to increase dataset diversity and improve model robustness.

Model Selection: Choose appropriate CNN architecture (U-Net for segmentation, ResNet for classification) either training from scratch or using transfer learning from ImageNet pre-trained weights.

Training: Optimize model parameters using supervised loss functions (cross-entropy for classification, Dice loss for segmentation) with appropriate batch sizes and learning rate schedules.

Validation: Evaluate performance on held-out test sets from the same institution, with careful monitoring for overfitting using techniques such as early stopping.
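The two supervised loss functions named in the training step can be written compactly. The following NumPy sketch shows a cross-entropy loss for patch classification and a soft Dice loss for segmentation masks; the smoothing constants and the toy inputs are illustrative assumptions, and deep learning frameworks provide differentiable versions of both.

```python
import numpy as np

def cross_entropy(probs, labels, eps=1e-12):
    """Mean negative log-likelihood of the true class.

    probs: (n_samples, n_classes) predicted probabilities.
    labels: (n_samples,) integer class indices.
    """
    probs = np.asarray(probs, dtype=float)
    labels = np.asarray(labels)
    picked = probs[np.arange(len(labels)), labels]
    return float(-np.mean(np.log(picked + eps)))

def soft_dice_loss(pred_mask, true_mask, eps=1e-6):
    """1 - Dice coefficient between a predicted (soft) mask and a binary mask."""
    pred = np.asarray(pred_mask, dtype=float).ravel()
    true = np.asarray(true_mask, dtype=float).ravel()
    intersection = np.sum(pred * true)
    dice = (2.0 * intersection + eps) / (pred.sum() + true.sum() + eps)
    return float(1.0 - dice)

# Toy classification batch: two patches, two classes.
ce = cross_entropy([[0.9, 0.1], [0.2, 0.8]], [0, 1])

# Toy 2x2 segmentation masks.
true_mask = [[1, 0], [0, 1]]
perfect = soft_dice_loss(true_mask, true_mask)
disjoint = soft_dice_loss([[0, 1], [1, 0]], true_mask)
```

Dice loss is often preferred for segmentation because it is less sensitive to the class imbalance between small structures, such as nuclei, and the background.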

Protocol for Self-Supervised Foundation Model Pre-training

The emerging standard methodology for pre-training pathology foundation models comprises:

Unlabeled Data Curation: Collect large-scale, diverse histopathology datasets spanning multiple cancer types, tissue sources, and medical centers. Models like Virchow2 incorporate 3.1 million WSIs from nearly 200 tissue types [11].

Pretext Task Design: Implement SSL algorithms such as DINOv2 or MAE that create supervisory signals from the data itself without human annotation.

Multi-Scale Processing: Extract image patches at multiple magnifications (e.g., 5×, 10×, 20×, 40×) to capture both tissue-level architecture and cellular-level details [11].

Large-Scale Distributed Training: Leverage substantial computational resources (typically hundreds of GPUs) for extended training periods (days to weeks) on billion-patch datasets.

Embedding Space Validation: Analyze resulting feature spaces using methodologies like the Robustness Index to quantify organization by biological versus confounding features [13].
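The multi-scale processing step can be illustrated by computing tile grids at several magnifications. The sketch below is pure Python and assumes a hypothetical slide scanned at 40× with known pixel dimensions; it only generates top-left coordinates in base-resolution pixels and leaves out tissue masking and the actual image reads, which a WSI library would handle.

```python
def tile_grid(slide_width, slide_height, tile_size,
              base_magnification, target_magnification):
    """Top-left coordinates (in base-resolution pixels) of non-overlapping tiles.

    A tile of `tile_size` pixels at the target magnification covers
    tile_size * (base / target) pixels at the base resolution.
    """
    downsample = base_magnification / target_magnification
    footprint = int(round(tile_size * downsample))
    coords = [
        (x, y)
        for y in range(0, slide_height - footprint + 1, footprint)
        for x in range(0, slide_width - footprint + 1, footprint)
    ]
    return coords, footprint

# Hypothetical slide: 100,000 x 80,000 pixels scanned at 40x.
width, height, base_mag = 100_000, 80_000, 40

tiles_per_magnification = {}
for magnification in (5, 10, 20, 40):
    coords, footprint = tile_grid(width, height, 256, base_mag, magnification)
    tiles_per_magnification[magnification] = len(coords)
```

For this example, a 256-pixel tile grid yields 1,872 tiles at 5× and 30,420 tiles at 20×, which shows how quickly patch counts grow at the magnifications used for cellular detail.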

Protocol for Robustness Evaluation

To assess model robustness to medical center variations, researchers should implement:

Multi-Center Dataset Curation: Collect data from at least 3-5 independent medical centers with documented variations in staining protocols and scanning equipment.

Robustness Index Calculation: For each sample, identify k-nearest neighbors in the model's embedding space (typically k=50). Calculate the ratio of neighbors sharing biological class versus medical center identity [13].

Cross-Center Performance Evaluation: Measure model performance separately for each medical center, analyzing performance degradation relative to the training center.

Confusion Matrix Analysis: Examine whether classification errors correlate with medical center identity rather than biological similarity.
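The cross-center evaluation step can be sketched with the standard library alone. The example below computes accuracy per medical center and the drop relative to a designated training center; the center names and predictions are hypothetical.

```python
from collections import defaultdict

def accuracy_by_center(y_true, y_pred, centers):
    """Accuracy computed separately for each medical center."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, center in zip(y_true, y_pred, centers):
        total[center] += 1
        correct[center] += int(truth == pred)
    return {center: correct[center] / total[center] for center in total}

def degradation(per_center_accuracy, training_center):
    """Accuracy drop of each external center relative to the training center."""
    reference = per_center_accuracy[training_center]
    return {
        center: reference - acc
        for center, acc in per_center_accuracy.items()
        if center != training_center
    }

# Hypothetical predictions from three centers; "A" is the training center.
y_true  = [1, 0, 1, 0, 1, 0, 1, 0, 1, 0, 1, 0]
y_pred  = [1, 0, 1, 0, 1, 0, 1, 1, 0, 0, 0, 1]
centers = ["A", "A", "A", "A", "B", "B", "B", "B", "C", "C", "C", "C"]

per_center = accuracy_by_center(y_true, y_pred, centers)
drops = degradation(per_center, training_center="A")
```

Reporting the per-center breakdown alongside the pooled metric makes site-specific failures visible that an aggregate score would hide.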

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Computational Tools for Pathology Foundation Model Research

| Tool Category | Specific Solutions | Primary Function | Application in Research |
|---|---|---|---|
| Deep Learning Frameworks | PyTorch, TensorFlow | Model implementation and training | Core infrastructure for developing and training both CNNs and foundation models |
| Whole Slide Image Processing | QuPath, PyHIST, OpenSlide | WSI annotation, tiling, and preprocessing | Essential for data preparation and patch extraction from gigapixel WSIs [8] |
| Computational Pathology Libraries | TIAToolbox, HistoML | Domain-specific algorithms and utilities | Provides standardized implementations of pathology-specific processing pipelines |
| Self-Supervised Learning Implementations | DINOv2, MAE reference code | SSL algorithm implementations | Critical for reproducing state-of-the-art pre-training methodologies |
| Benchmarking Platforms | Clinical pathology benchmarks [11] | Standardized model evaluation | Enables fair comparison across different architectures and training regimes |
| Computational Resources | GPU clusters (H100, A100), cloud computing | Large-scale model training | Essential for training foundation models on million-slide datasets |

The transition from supervised learning on labeled data to large-scale self-supervision represents a fundamental paradigm shift in computational pathology. While foundation models demonstrate remarkable capabilities and generalization potential, important challenges remain in achieving true robustness to technical variations between medical centers. Future research directions include: (1) developing novel SSL objectives specifically designed to learn stain-invariant and scanner-invariant representations; (2) creating efficient fine-tuning methodologies that preserve pre-trained knowledge while adapting to new tasks and domains; and (3) establishing standardized benchmarking frameworks that rigorously evaluate model performance across diverse clinical settings [13] [11].

The ongoing SLC-PFM NeurIPS 2025 competition highlights the continued momentum in this field, providing researchers with unprecedented access to large-scale pathology datasets and establishing standardized evaluation protocols across 23 clinically relevant tasks [14]. As foundation models continue to evolve, they hold immense promise for transforming pathology research and clinical practice—enabling more accurate diagnoses, revealing novel biomarkers, and ultimately improving patient outcomes through computational advances that capture the complex biological reality of disease processes.

The field of computational pathology is undergoing a profound transformation, driven by a fundamental shift in its underlying data philosophy. The traditional approach, reliant on medium-scale, meticulously curated datasets for training task-specific Convolutional Neural Networks (CNNs), is being challenged by a new paradigm that leverages very large, unlabeled corpora of whole slide images (WSIs) to train foundational vision models. This shift mirrors the revolution witnessed in natural language processing and general computer vision but is uniquely complex due to the gigapixel size, structural heterogeneity, and clinical stakes of pathology data. This whitepaper delineates the core differences between traditional CNNs and foundation models within pathology research, examining the technical, methodological, and philosophical underpinnings of this transition. It provides a comprehensive guide for researchers and drug development professionals navigating this new landscape, complete with quantitative benchmarks, experimental protocols, and essential toolkits.

Core Architectural and Methodological Differences

The transition from CNNs to foundation models represents more than a simple improvement in scale; it constitutes a fundamental redesign of model architecture, learning objectives, and data utilization.

Traditional CNNs: The Curated Data Paradigm

Traditional CNNs, such as ResNet50, have been the workhorses of early computational pathology. Their development follows a supervised learning approach that is heavily dependent on human expertise and curation.

- Architecture: CNNs are characterized by their inductive biases, such as translation equivariance, built directly into their architecture through convolutional layers. These biases make them highly data-efficient, enabling effective learning from smaller, annotated datasets [15].

- Data Requirements: These models require extensive pixel-level annotations for tasks like segmentation and detection. Creating these datasets is time-consuming, expensive, and represents a significant bottleneck for scaling applications [16] [17].

- Scope and Limitations: CNNs are typically designed as task-specific models. A model trained for prostate cancer grading cannot be applied to lung cancer subtyping without retraining. This narrow focus limits their generalizability and increases the cumulative resource investment for developing multiple diagnostic tools [16].

Foundation Models: The Unlabeled Corpora Paradigm

Pathology foundation models, such as UNI, Prov-GigaPath, and Virchow, represent a paradigm shift toward large-scale, self-supervised learning on diverse, unlabeled data.

- Architecture: Most modern foundation models are based on Vision Transformers (ViTs). Unlike CNNs, ViTs have minimal inherent inductive biases, which allows them to learn more complex, generalizable patterns directly from data when trained on a massive scale [15] [18].

- Data Utilization: These models are pretrained using self-supervised learning (SSL) algorithms like DINOv2 or masked autoencoding on vast, unlabeled datasets comprising millions of tissue tiles. This process creates a universal feature extractor without the need for manual annotations [18] [11].

- Scope and Promise: The goal is a general-purpose model that can be adapted with minimal effort (e.g., via linear probing or light fine-tuning) to a wide array of downstream tasks—from cancer subtyping and mutation prediction to biomarker identification—across different organs and diseases [11].
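
Linear probing, mentioned above as a lightweight adaptation route, amounts to fitting a simple classifier on embeddings from a frozen encoder. The following is a minimal sketch using logistic regression with plain gradient descent; the feature vectors stand in for encoder outputs and, like the function names and hyperparameters, are assumptions for illustration.

```python
import math


def train_linear_probe(features, labels, lr=0.5, epochs=200):
    """Fit logistic regression on frozen embeddings with batch gradient descent."""
    dim = len(features[0])
    n = len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            z = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-z))
            err = p - y  # gradient of the log-loss with respect to the logit
            for d in range(dim):
                grad_w[d] += err * x[d]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Return 1 if the logit is positive, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


# Hypothetical 2-D embeddings; a real encoder would emit hundreds of dimensions.
frozen = [(0.9, 0.1), (0.8, 0.2), (0.1, 0.9), (0.2, 0.8)]
y = [1, 1, 0, 0]
w, b = train_linear_probe(frozen, y)
print([predict(w, b, x) for x in frozen])
```

Only `w` and `b` are trained; the encoder weights are never touched, which is what keeps this form of adaptation cheap.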

Table 1: Fundamental Differences Between Traditional CNNs and Pathology Foundation Models

| Feature | Traditional CNNs | Pathology Foundation Models |
|---|---|---|
| Core Architecture | Convolutional layers with strong inductive biases | Vision Transformers with minimal inductive biases |
| Learning Paradigm | Supervised learning | Self-supervised learning (SSL) |
| Primary Data Source | Medium-scale, curated, labeled datasets | Very large-scale, unlabeled whole slide image corpora |
| Annotation Requirement | High (pixel-/slide-level) | None for pre-training |
| Typical Model Scope | Task-specific | General-purpose, adaptable to many tasks |
| Representative Examples | ResNet50, U-Net [16] | Prov-GigaPath, UNI, Virchow [15] [18] [11] |

Quantitative Performance Benchmarks

Empirical evidence demonstrates the tangible benefits of the foundation model approach, particularly in performance and robustness, though not without caveats.

Diagnostic Accuracy and Task Performance

Foundation models have consistently demonstrated superior performance on standardized benchmarks. In a systematic assessment on clinical datasets, foundation models outperformed models pretrained on natural images (e.g., ImageNet) across a variety of tasks [11]. A specific study on kidney disease classification reported that foundation models achieved an Area Under the Receiver Operating Characteristic curve (AUROC) of over 0.980 on internal validation for diagnosing healthy controls, acute interstitial nephritis, and diabetic kidney disease. Crucially, in external validation, the performance of a traditional ImageNet-pretrained ResNet50 "markedly dropped," while the foundation models maintained robust performance [15]. Prov-GigaPath attained state-of-the-art performance on 25 out of 26 benchmark tasks, including cancer subtyping and genomic mutation prediction, showing significant improvements over the next-best methods [18].

Data Scale and Model Size

The power of foundation models is unlocked by training on datasets that are orders of magnitude larger than those used for traditional CNNs.

Table 2: Scale Comparison of Representative Pathology Models

| Model | Architecture | Training Data (Tiles / Slides) | Parameters | SSL Algorithm |
|---|---|---|---|---|
| CTransPath | Hybrid CNN-Transformer | 16M / 32K [11] | 28M [11] | SRCL (MoCo) |
| Phikon | Vision Transformer (ViT-Base) | 43M / ~6K [11] | 86M [11] | iBOT |
| UNI | ViT-Large | 100M / 100K [15] [11] | 303M [11] | DINOv2 |
| Virchow | ViT-Huge | 2B / ~1.5M [11] | 631M [11] | DINOv2 |
| Prov-GigaPath | GigaPath (LongNet) | 1.3B / 171K [15] [18] | 1.1B [11] | DINOv2 + MAE |

Critical Analysis: Challenges and Limitations of the New Paradigm

Despite their promise, foundation models in pathology face significant challenges that temper the enthusiasm around a simple "bigger is better" narrative.

- Domain Mismatch and Robustness: A core failure mode is domain mismatch. Models can be sensitive to variations in staining protocols, scanner types, and tissue preparation across different medical centers. One multi-center study found that for most foundation models, embeddings grouped more strongly by the source hospital than by biological class, leading to performance drops of 15-25% on external validation [19]. This highlights a critical lack of robustness for direct clinical deployment.

- Information Bottleneck and Task Complexity: Foundation models often function by compressing large image patches into small embedding vectors, which can create an information bottleneck. While these compressed representations excel at simple tasks like disease detection, their performance can drop to near-chance levels for more complex tasks such as biomarker prediction or immunotherapy response forecasting, which require nuanced tissue context [20].

- Computational Cost and Adaptation Issues: The immense size of foundation models creates a practical barrier to adaptation. Full fine-tuning is often unstable and computationally prohibitive, forcing researchers to rely on "linear probing" (training a simple classifier on frozen embeddings). This contradicts the foundation model premise of easy adaptation and can yield suboptimal performance compared to end-to-end training of smaller, task-specific models [19]. One study noted that foundation models could consume up to 35× more energy than a task-specific model [19].

Experimental Protocols for Model Evaluation

For researchers seeking to validate and compare these models, a standardized experimental protocol is essential. The following workflow, specifically for slide-level classification, is widely adopted and can be applied to both public and proprietary datasets.

Step 1: Data Curation and Preprocessing

- Dataset Curation: Assemble a dataset of WSIs with slide-level labels (e.g., diagnosis, mutation status). Ensure patient-level splits to avoid data leakage. Multi-center datasets are preferred for robust evaluation [15] [11].

- Patch Extraction: Use a tool like Slideflow to tile WSIs at a standard magnification (e.g., 20x) into non-overlapping patches (e.g., 256x256 pixels) [15].

- Background Filtering: Apply filters (e.g., Otsu's thresholding, Gaussian blur) to remove non-tissue background patches [15].
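
The tiling and background-filtering steps above can be sketched without any imaging library. The example assumes an 8-bit grayscale thumbnail held as nested lists, computes an Otsu threshold, and keeps non-overlapping tiles that are mostly darker than that threshold (tissue is darker than glass in brightfield H&E). The function names, tile size, and `min_tissue` cutoff are illustrative assumptions; the Gaussian blur step is omitted, and this is not how Slideflow implements filtering.

```python
def otsu_threshold(gray_values, levels=256):
    """Return the intensity threshold that maximises between-class variance."""
    hist = [0] * levels
    for v in gray_values:
        hist[v] += 1
    total = len(gray_values)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0 = sum0 = 0
    for t in range(levels):
        w0 += hist[t]
        sum0 += t * hist[t]
        w1 = total - w0
        if w0 == 0 or w1 == 0:
            continue
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        between = w0 * w1 * (mu0 - mu1) ** 2
        if between > best_var:
            best_t, best_var = t, between
    return best_t


def tissue_tiles(gray, tile=2, min_tissue=0.5):
    """Return (row, col) origins of non-overlapping tiles that are mostly tissue."""
    threshold = otsu_threshold([v for row in gray for v in row])
    kept = []
    for r in range(0, len(gray) - tile + 1, tile):
        for c in range(0, len(gray[0]) - tile + 1, tile):
            pixels = [gray[r + i][c + j] for i in range(tile) for j in range(tile)]
            tissue_fraction = sum(p <= threshold for p in pixels) / len(pixels)
            if tissue_fraction >= min_tissue:
                kept.append((r, c))
    return kept


# Toy 4x4 thumbnail (assumption): dark tissue on the left, bright glass on the right.
thumb = [
    [60, 70, 240, 250],
    [65, 75, 245, 250],
    [60, 70, 240, 250],
    [65, 75, 245, 250],
]
print(tissue_tiles(thumb))
```

On a real slide the same logic would typically run on a low-resolution thumbnail, with the kept tile coordinates scaled up to the extraction magnification.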

Step 2: Feature Extraction

- Encoder Selection: Choose a pretrained foundation model (e.g., UNI, Phikon, Prov-GigaPath) or a baseline CNN (e.g., ImageNet-pretrained ResNet50) as the feature extractor.

- Embedding Generation: Process each tissue patch through the encoder to generate a feature vector (embedding). This converts a WSI from a bag of pixels into a bag of feature vectors [15] [11].

Step 3: Slide-Level Aggregation and Classification

- Multiple Instance Learning (MIL): Since WSIs are too large for direct processing, treat each WSI as a "bag" of patch instances. Use an MIL aggregator to combine patch features into a single slide-level representation. Common aggregators include ABMIL, CLAM, and TransMIL [15].

- Model Training & Validation: Train the MIL model (aggregator + classifier) on the slide-level features and labels. Perform k-fold cross-validation and, critically, external validation on a held-out dataset from a different institution to assess generalizability [15].
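
To make the aggregation step concrete, the sketch below shows the forward pass of attention-based pooling in the style of ABMIL: each patch embedding receives a score, the scores are normalized with a softmax, and the slide representation is the attention-weighted sum of patch embeddings. The matrices `V` and `w` would normally be learned during training; here they are fixed, hypothetical values, and other aggregators such as CLAM or TransMIL differ in their details.

```python
import math


def abmil_pool(bag, V, w):
    """Attention pooling: a_k = softmax_k(w . tanh(V h_k)), z = sum_k a_k h_k."""
    scores = []
    for h in bag:
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(wi * ui for wi, ui in zip(w, hidden)))
    # Numerically stable softmax over the patches in the bag.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    attention = [e / total for e in exps]
    dim = len(bag[0])
    slide = [sum(a * h[d] for a, h in zip(attention, bag)) for d in range(dim)]
    return slide, attention


# Toy bag of three 2-D patch embeddings (assumption).
bag = [(1.0, 0.0), (0.0, 1.0), (3.0, 0.0)]
V = [(1.0, 0.0), (0.0, 1.0)]
w = (2.0, 0.0)
slide_vec, attention = abmil_pool(bag, V, w)
print(attention)
```

The returned attention weights are also what is commonly visualized as a slide heatmap, since they indicate which patches drove the slide-level representation.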

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of foundation models requires a suite of computational tools and resources. The table below details key components for building and evaluating pathology foundation models.

Table 3: Essential Research Reagents for Pathology Foundation Model Research

| Reagent / Resource | Type | Function & Application | Exemplars / Notes |
|---|---|---|---|
| Large-Scale WSI Datasets | Data | Pretraining and benchmarking foundation models. Scale and diversity are critical. | TCGA, KPMP, JP-AID [15]; Proprietary datasets (e.g., Prov-Path, MSKCC data) [18] [11]. |
| Self-Supervised Learning (SSL) Algorithms | Algorithm | Enables pretraining on unlabeled images by defining a pretext task. | DINOv2, iBOT, Masked Autoencoder (MAE) [11]. |
| Vision Transformer (ViT) | Model Architecture | Backbone network for most foundation models; processes images as sequences of patches. | ViT-Base, ViT-Large, ViT-Huge [11]; GigaPath for whole-slide context [18]. |
| Multiple Instance Learning (MIL) Frameworks | Model Architecture | Aggregates patch-level features for slide-level prediction without patch-level labels. | ABMIL, CLAM, TransMIL [15]. |
| Computational Framework | Software | Libraries for WSI processing, model training, and visualization. | Slideflow, PyTorch, TIAToolbox [15]. |

The shift from medium-scale curated datasets to very large unlabeled corpora represents a fundamental evolution in the data philosophy of computational pathology. Foundation models, pretrained with self-supervised learning on massive WSI datasets, offer a powerful and versatile alternative to traditional, task-specific CNNs. Their demonstrated superiority in benchmark tasks and improved robustness mark a significant leap forward.

However, this new paradigm is not a panacea. Challenges related to domain robustness, computational burden, and effective adaptation remain active areas of research. The path forward likely involves a synthesis of scale and specificity—developing larger, more diverse pretraining datasets while also innovating in domain-robust architectures and efficient fine-tuning methods. For researchers and drug developers, mastering this new paradigm is essential. It promises to unlock deeper biological insights from pathology images, accelerate biomarker discovery, and ultimately, contribute to more personalized and effective patient therapies.

The field of computational pathology is undergoing a fundamental transformation, moving from specialized, task-specific models to general-purpose, scalable foundations. Traditional Convolutional Neural Networks (CNNs) have long been the workhorse of digital pathology image analysis, but their architectural limitations constrain their ability to leverage massive datasets and develop generalized representations of histological structures. Foundation models, trained on broad data at unprecedented scale, represent a paradigm shift not merely in size but in core capability and application philosophy. These models differ fundamentally in their approach to expressiveness—the ability to capture and represent complex pathological patterns—and scalability—the capacity to improve performance with increasing model and data size. Understanding these differences is crucial for researchers, scientists, and drug development professionals seeking to leverage artificial intelligence for advancing precision medicine and therapeutic discovery.

The term "foundation model," popularized by the 2021 Stanford Report, refers to any model "trained on broad data (generally using self-supervision at scale) that can be adapted to a wide range of downstream tasks" [21]. What makes these models foundational is not their specific architecture but their applicability across diverse domains through adaptation mechanisms like prompting and fine-tuning. In pathology, this transition mirrors the broader AI landscape where companies are increasingly training foundation models to solve highly specialized problems, meet compliance requirements, and build core competency in the technology [21].

Architectural Foundations: CNN vs. Foundation Model Design

Traditional CNN Architecture in Pathology

Convolutional Neural Networks have demonstrated remarkable success in pathological image analysis through their hierarchical feature extraction approach. CNN-based architectures such as ResNet50, VGG16, DenseNet121, and EfficientNet employ a series of convolutional layers that progressively detect increasingly complex patterns, from edges and textures in early layers to specific cellular structures and tissue organizations in deeper layers [3]. This inductive bias toward translation invariance and local feature detection makes CNNs particularly well-suited for identifying morphological patterns in histopathological images where local cellular arrangements carry significant diagnostic meaning.

The expressiveness of CNNs is fundamentally constrained by their receptive fields, meaning the region of the input image that affects a particular neuron's activation. Although deeper networks expand receptive fields through pooling and strided convolutions, they primarily capture local spatial hierarchies rather than global contextual relationships across entire whole slide images (WSIs). This limitation becomes particularly significant in pathology, where diagnostic interpretation often requires understanding spatial relationships between distant tissue regions and integrating contextual information across multiple scales.
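
How slowly a receptive field grows can be checked with the standard recurrence for stacked convolution and pooling layers: each layer adds `(kernel - 1) * jump` input pixels, where `jump` is the product of the strides of all earlier layers. The sketch below applies it to two hypothetical layer stacks; dilation and padding effects are ignored.

```python
def receptive_field(layers):
    """layers: iterable of (kernel_size, stride); returns the RF size in input pixels."""
    rf, jump = 1, 1
    for kernel, stride in layers:
        rf += (kernel - 1) * jump  # growth contributed by this layer
        jump *= stride             # spacing between adjacent outputs, in input pixels
    return rf


# A ResNet-style stem: 7x7 convolution with stride 2, then 3x3 pooling with stride 2.
print(receptive_field([(7, 2), (3, 2)]))   # 11
# Three stacked 3x3 convolutions with stride 1.
print(receptive_field([(3, 1)] * 3))       # 7
```

Even after many such layers the receptive field covers a small fraction of a gigapixel WSI, which is the limitation the paragraph above describes.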

Foundation Model Architecture and Scaling Principles

Foundation models in pathology, particularly transformer-based architectures like UNI and Prov-GigaPath, employ self-attention mechanisms that enable global receptive fields from the initial processing stages [3] [22]. Unlike CNNs that process images through local convolutional filters, vision transformers typically divide images into patches and process them through self-attention layers that can model relationships between all patches simultaneously. This architectural difference fundamentally enhances expressiveness by capturing long-range dependencies across entire tissue sections without being constrained by local receptive fields.
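
The mechanism described above can be illustrated with a single-head scaled dot-product attention over a handful of patch tokens. This is a deliberately simplified sketch: the query, key, and value projections are taken to be the identity, there is no positional encoding, and the token values are made up. It is not the attention variant of any specific pathology model.

```python
import math


def self_attention(tokens):
    """Single-head scaled dot-product attention with identity Q/K/V projections."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        # Every token is compared with every other token, regardless of distance.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        row = [e / total for e in exps]
        weights.append(row)
        outputs.append([sum(w * v[j] for w, v in zip(row, tokens)) for j in range(d)])
    return outputs, weights


# Three patch tokens (assumption); the first and last are similar but not adjacent.
tokens = [(1.0, 0.0), (0.0, 1.0), (1.0, 0.0)]
_, attn = self_attention(tokens)
print(attn[0])
```

The first token attends to the third as strongly as to itself even though another token sits between them, which is the long-range behavior a local convolution filter cannot reproduce in a single layer.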

The scalability of foundation models emerges from both architectural considerations and training methodology. The transformer architecture demonstrates remarkably consistent scaling behavior—performance predictably improves with increased model parameters, training data, and computational budget [21]. This scalability enables foundation models to leverage massive unlabeled datasets through self-supervised learning objectives, learning rich representations of histopathological structures without requiring expensive manual annotations. Prov-GigaPath, for instance, was trained on 1.3 billion image patches extracted from 171,189 whole slide images, demonstrating the massive data scaling potential of these approaches [3].

Table 1: Architectural Comparison Between CNN and Foundation Models in Pathology

| Characteristic | Traditional CNN | Pathology Foundation Model |
|---|---|---|
| Core Architecture | Convolutional layers with local receptive fields | Transformer blocks with self-attention mechanisms |
| Receptive Field | Local, expands through network depth | Global from initial layers |
| Training Data Scale | Thousands to hundreds of thousands of images | Millions to billions of image patches [3] |
| Parameter Count | Typically millions to low hundreds of millions | Hundreds of millions to billions |
| Primary Learning Approach | Supervised learning with labeled data | Self-supervised pre-training followed by fine-tuning |
| Context Integration | Limited to local spatial hierarchies | Whole-slide and cross-slide relationships |
| Representative Models | ResNet50, VGG16, EfficientNet [3] | UNI, Prov-GigaPath, GigaPath [3] [22] |

Quantitative Performance Comparison

Experimental Benchmarking in Diagnostic Tasks

Comparative studies provide compelling evidence for the performance advantages of scaled foundation models in complex pathology tasks. A 2025 comprehensive analysis evaluated 14 deep learning models—including both CNN-based and transformer-based architectures—on the BreakHis v1 dataset for breast cancer classification [3]. In binary classification tasks, which present relatively low complexity, multiple models achieved excellent performance, with CNN-based models (ResNet50, RegNet, ConvNeXT) and the transformer-based foundation model UNI all reaching an AUC of 0.999 [3].

The expressiveness advantage of foundation models becomes particularly evident in more complex diagnostic scenarios. In eight-class classification tasks with increased complexity, performance differences among architectures became more pronounced. The best-performing model was the fine-tuned foundation model UNI, which attained an accuracy of 95.5% (95% CI: 94.4–96.6%), a specificity of 95.6% (95% CI: 94.2–96.9%), an F1-score of 95.0% (95% CI: 93.9–96.1%), and an AUC of 0.998 (95% CI: 0.997–0.999) [3]. This superior performance in complex multi-class scenarios demonstrates how foundation models leverage their expansive pre-training to maintain discriminative power across fine-grained diagnostic categories.
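
Confidence intervals like those quoted above are often obtained by resampling the test set. The source does not state how the intervals in [3] were computed, so the following percentile bootstrap is only one plausible approach, shown on made-up correctness flags; the function name and parameters are assumptions.

```python
import random


def bootstrap_ci(correct, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for accuracy from per-sample 0/1 correctness flags."""
    rng = random.Random(seed)
    n = len(correct)
    # Resample the test set with replacement and recompute accuracy each time.
    stats = sorted(sum(rng.choices(correct, k=n)) / n for _ in range(n_boot))
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Hypothetical test set: 90 correct predictions out of 100.
flags = [1] * 90 + [0] * 10
low, high = bootstrap_ci(flags)
print(f"accuracy={sum(flags) / len(flags):.2f}, 95% CI=({low:.3f}, {high:.3f})")
```

The same resampling loop works for F1-score or AUC by swapping the statistic computed on each resample.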

Table 2: Performance Comparison on Breast Cancer Classification (BreakHis v1 Dataset)

| Model Type | Model Name | Binary Classification AUC | Eight-Class Classification Accuracy | Eight-Class F1-Score |
|---|---|---|---|---|
| CNN-Based | ConvNeXT | 0.999 | Not reported | Not reported |
| CNN-Based | ResNet50 | 0.999 | Not reported | Not reported |
| CNN-Based | RegNet | 0.999 | Not reported | Not reported |
| Foundation Model | UNI (fine-tuned) | 0.999 | 95.5% | 95.0% |
| Foundation Model | UNI (zero-shot) | Poor performance | Poor performance | Poor performance |

Beyond Classification: Advanced Predictive Capabilities

The scalability of foundation models enables capabilities that extend far beyond traditional classification tasks. The HE2RNA model demonstrates how deep learning can predict RNA-Seq expression profiles from H&E-stained whole slide images alone, creating a bridge between histological morphology and molecular profiling [23]. Through a multitask weakly supervised approach trained on matched WSIs and RNA-Seq data from TCGA, HE2RNA learned to predict expression levels for thousands of genes with statistically significant correlation to ground truth measurements [23].

This capability to map histological patterns to molecular phenotypes represents a substantial step beyond traditional CNN applications. For instance, HE2RNA accurately predicted expression of immune-related genes (C1QB, NKG7, ARHGAP9) across multiple cancer types and identified pathway-level activities including angiogenesis, hypoxia, DNA repair, and immune responses [23]. Similarly, a 2021 study demonstrated that attention-based multiple instance learning could predict gene expression from H&E-stained tissues with sufficient accuracy to discriminate fulminant-like pulmonary tuberculosis in murine models, achieving sensitivity and specificity of 0.88 and 0.95, respectively [24].

Experimental Protocols and Methodologies

Foundation Model Pre-training Protocol

The development of pathology foundation models follows a rigorous multi-stage protocol centered on self-supervised learning at scale. The pre-training phase typically leverages massive unlabeled datasets comprising hundreds of thousands to millions of whole slide images from diverse tissue types and disease states [3] [22].

Data Curation and Preprocessing: Whole slide images are partitioned into smaller patches (typically 256×256 pixels) at multiple magnification levels. UNI, for instance, was trained on more than 100 million image tiles extracted from over 100,000 diagnostic-grade H&E-stained whole slide images across 20 major tissue types [3]. Quality control procedures remove artifacts, blurry regions, and non-tissue areas.

Self-Supervised Learning Objective: Models learn through pretext tasks that don't require manual annotations. Common approaches include masked image modeling (where the model learns to predict randomly masked portions of the image) and contrastive learning (where the model learns to identify different augmentations of the same image). Prov-GigaPath employed the novel GigaPath architecture incorporating LongNet to handle giga-pixel scale context [3].

Training Infrastructure and Scale: Training occurs on specialized hardware, typically GPU clusters, for weeks or months. Because computational requirements grow steeply as model size and data quantity increase together, substantial infrastructure investment is required. Companies increasingly invest in on-premises training infrastructure, trading flexibility for predictable architecture and availability [21].
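
The contrastive objective mentioned in the protocol above can be written down compactly. The sketch below implements a generic InfoNCE loss over two augmented views of each tile: embeddings are L2-normalized, and each tile's second view must be picked out from all other tiles in the batch. It is an illustrative stand-in, not the DINOv2 or masked-autoencoder losses used by the models named in this article, and the temperature value is an assumption.

```python
import math


def info_nce(view_a, view_b, temperature=0.1):
    """Mean InfoNCE loss; view_a[i] and view_b[i] embed two augmentations of tile i."""
    def unit(v):
        norm = math.sqrt(sum(x * x for x in v)) or 1.0
        return [x / norm for x in v]

    a = [unit(v) for v in view_a]
    b = [unit(v) for v in view_b]
    loss = 0.0
    for i, ai in enumerate(a):
        # Cosine similarity of tile i (view A) with every tile in view B.
        logits = [sum(x * y for x, y in zip(ai, bj)) / temperature for bj in b]
        top = max(logits)
        log_denominator = top + math.log(sum(math.exp(l - top) for l in logits))
        loss += log_denominator - logits[i]  # negative log-probability of the true pair
    return loss / len(a)


# Matching views give a low loss; mismatched views give a high one.
print(info_nce([(1.0, 0.0), (0.0, 1.0)], [(1.0, 0.0), (0.0, 1.0)]))
print(info_nce([(1.0, 0.0), (0.0, 1.0)], [(0.0, 1.0), (1.0, 0.0)]))
```

Minimizing this loss pulls the two views of the same tile together and pushes different tiles apart, which is how the encoder learns without any manual labels.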

Transfer Learning and Fine-tuning Protocol

The true power of foundation models emerges through adaptation to specific downstream tasks. The fine-tuning protocol enables researchers to leverage pre-trained representations for specialized applications with limited labeled data.

Task-Specific Data Preparation: Depending on the target application, researchers curate labeled datasets typically ranging from hundreds to thousands of annotated examples. For classification tasks, slide-level or region-level labels are prepared; for segmentation tasks, pixel-level annotations are required.

Model Adaptation: The pre-trained foundation model serves as a feature extractor, with the final layers modified or replaced to suit the specific task. During fine-tuning, all or most model weights are updated using task-specific data. The learning rate is typically set lower than during pre-training to avoid catastrophic forgetting of general representations.

Performance Validation: Models are evaluated using standard metrics appropriate to the task (accuracy, AUC, F1-score for classification; Dice coefficient for segmentation) with rigorous cross-validation or hold-out testing. Clinical validation often involves multiple independent datasets to assess generalizability across institutions and staining protocols.
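
Two of the metrics named above are simple enough to compute by hand, which can be useful for sanity-checking library output. The sketch below assumes flat binary masks for Dice and per-sample scores with 0/1 labels for AUROC; the pairwise AUROC formulation is quadratic in the number of samples and intended for illustration only.

```python
def dice(pred_mask, true_mask):
    """Dice coefficient: 2 * |intersection| / (|pred| + |true|) for flat binary masks."""
    intersection = sum(p and t for p, t in zip(pred_mask, true_mask))
    size = sum(pred_mask) + sum(true_mask)
    return 2 * intersection / size if size else 1.0


def auroc(scores, labels):
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


print(dice([1, 1, 0, 0], [1, 0, 0, 0]))           # 2 * 1 / (2 + 1)
print(auroc([0.9, 0.8, 0.3, 0.1], [1, 0, 1, 0]))  # 3 of 4 positive-negative pairs ordered correctly
```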

Molecular Prediction Protocol

The prediction of molecular features from histology represents one of the most advanced applications of foundation models in pathology. The HE2RNA protocol exemplifies this approach [23]:

Multi-modal Data Alignment: Whole slide images are aligned with matched molecular data (RNA-Seq, protein expression, genetic mutations) from the same samples. The TCGA database frequently serves as this data source, providing paired histology and molecular profiling.

Weakly-Supervised Training: Models learn to predict molecular features using only slide-level labels without regional annotations. The HE2RNA model employs a multitask approach where each task corresponds to predicting the expression level of a specific gene [23].

Spatial Expression Mapping: Through interpretable design, these models can generate virtual spatialization of gene expression, creating heatmaps that localize molecular activity to specific tissue regions. This spatial prediction is validated through comparison with immunohistochemistry staining on independent datasets [23].
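
Evaluation of such multitask predictions is typically done gene by gene, correlating predicted with measured expression across slides. The sketch below computes a Pearson correlation per gene; the expression values are invented for illustration, the gene symbols are borrowed from the text, and the significance testing and multiple-comparison correction that a real analysis would need are not shown.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y) if sd_x and sd_y else 0.0


def per_gene_correlation(predicted, measured, genes):
    """predicted and measured hold one row per slide and one column per gene."""
    return {
        gene: pearson([row[j] for row in predicted], [row[j] for row in measured])
        for j, gene in enumerate(genes)
    }


# Hypothetical expression values for three slides and two genes.
genes = ["C1QB", "NKG7"]
measured = [[1.0, 5.0], [2.0, 3.0], [3.0, 4.0]]
predicted = [[1.1, 4.0], [2.1, 4.5], [3.1, 3.5]]
print(per_gene_correlation(predicted, measured, genes))
```

Genes whose correlation is high and survives correction would be reported as predictable from morphology; in this toy data the first gene is and the second is not.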

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for Pathology Foundation Model Research

| Reagent / Resource | Function and Application | Example Specifications |
|---|---|---|
| Whole Slide Images (WSIs) | Digital representations of histopathology slides for model training and validation | H&E-stained, 40x magnification, gigapixel resolution [22] |
| Annotation Software | Tools for labeling regions of interest, cell types, and pathological structures | Digital pathology platforms with collaborative annotation features |
| Computational Infrastructure | Hardware for training and deploying large-scale models | High-performance GPU clusters with specialized memory [21] |
| Molecular Datasets | Paired genomic, transcriptomic, or proteomic data for multi-modal learning | RNA-Seq, mutation calls, protein expression data [23] |
| Benchmark Datasets | Standardized datasets for model evaluation and comparison | Publicly available datasets like BreakHis, TCGA [3] [23] |
| Experiment Tracking Systems | Software for managing training runs, hyperparameters, and results | Specialized MLops platforms (e.g., Neptune.ai) [21] |

Implementation Challenges and Considerations

Data Scalability and Infrastructure Requirements

The scalability advantages of foundation models come with substantial computational costs that present implementation challenges. Training foundation models requires specialized hardware infrastructure, virtually always utilizing GPUs with extensive memory and processing capabilities [21]. The scale of data processing is immense—Prov-GigaPath processed 1.3 billion image patches, requiring sophisticated data pipelines and distributed training approaches [3]. Organizations must weigh investments in on-premises infrastructure against cloud-based solutions, balancing computational demands against data privacy and compliance requirements, particularly in healthcare settings with strict regulatory frameworks [21] [25].

Domain Adaptation and Generalization

While foundation models demonstrate remarkable generalization capabilities, their application to specific pathology domains requires careful adaptation. Studies consistently show that foundation model encoders used without fine-tuning generally perform poorly on specialized classification tasks [3]. The zero-shot capabilities that appear in natural language foundation models are less pronounced in computational pathology, necessitating targeted fine-tuning with domain-specific data. This adaptation process requires both technical expertise in machine learning and domain knowledge in pathology to ensure models learn clinically relevant features and maintain diagnostic accuracy across tissue types, staining protocols, and scanner variations.

Future Directions and Strategic Implications

The evolution toward foundation models in computational pathology represents more than a technical improvement—it constitutes a fundamental shift in how pathological analysis is conceptualized and implemented. The scalability of these models enables continuous improvement with additional data and computational resources, creating virtuous cycles of capability enhancement [21]. For pharmaceutical development, this progression offers opportunities to identify novel biomarkers, predict treatment response, and accelerate therapeutic discovery through more sophisticated analysis of histological patterns [25].

The strategic implications for healthcare institutions and drug development companies are substantial. Successful foundation model strategies are characterized by early proof-of-concept projects, building deep expertise across all aspects of training, application-based performance evaluation, and maintaining focus on core objectives [21]. As these technologies mature, they promise to transform pathology from a primarily descriptive discipline to a quantitative, predictive science capable of extracting profound insights from the morphological patterns that underlie disease processes.

The trajectory is clear: while traditional CNNs will continue to serve specific, limited-scope applications, foundation models represent the future of computational pathology—more expressive, more scalable, and ultimately more capable of capturing the extraordinary complexity of human disease through histopathological analysis.

Harnessing Foundation Models and CNNs for Real-World Pathology Tasks

The analysis of whole-slide images (WSIs) presents a unique computational challenge due to their gigapixel size and the complex, context-dependent nature of pathological diagnosis. Traditional convolutional neural networks (CNNs) have provided a foundation for automated analysis but face significant limitations in handling WSIs' extreme resolution and weak supervision requirements. The emergence of foundation models (FMs) pretrained on massive datasets represents a paradigm shift, offering more powerful and transferable feature representations. This whitepaper examines the critical technical evolution from traditional CNNs to foundation models within multiple instance learning (MIL) frameworks for computational pathology. We provide a comprehensive analysis of how this integration enhances diagnostic accuracy, robustness, and generalization across diverse clinical scenarios, supported by quantitative benchmarks, implementation protocols, and practical research frameworks.

Foundation Models vs. Traditional CNNs: A Technical Paradigm Shift

Fundamental Architectural and Training Differences

The transition from traditional CNNs to foundation models in pathology represents more than incremental improvement—it constitutes a fundamental shift in approach. Traditional CNNs such as ResNet and VGG, typically pretrained on natural image datasets like ImageNet, operate as feature extractors that capture general texture patterns but lack domain-specific morphological understanding [19]. These models struggle with the exceptional complexity of tissue morphology, where diagnostic interpretation depends on multi-scale contextual relationships that natural image-trained models fail to capture adequately [19].

Foundation models address these limitations through self-supervised learning on massive histopathology-specific datasets. Unlike CNNs trained with supervised learning on limited annotated data, FMs are pretrained on millions of histology image patches using self-supervised objectives such as masked image modeling and contrastive learning [6] [26]. This enables them to learn rich, transferable representations of tissue microstructure without relying on scarce manual annotations. Architecturally, whereas CNN-based pipelines process individual patches in isolation, newer FMs such as TITAN employ Vision Transformers (ViTs) that capture long-range dependencies across entire WSIs by processing sequences of patch embeddings in a spatially aware manner [6].
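To make the contrastive objective concrete, the following is a minimal NumPy sketch of an InfoNCE-style loss, assuming two augmented views of each patch have already been embedded by an encoder. The function name `info_nce_loss`, the temperature value, and the random toy embeddings are illustrative assumptions and are not taken from any of the cited models.

```python
import numpy as np

def info_nce_loss(z_a, z_b, temperature=0.1):
    """InfoNCE-style contrastive loss (illustrative sketch).

    z_a, z_b: arrays of shape (N, D); row i of each is a different
    augmented view of the same patch (the positive pair).
    """
    # L2-normalize so the dot product is cosine similarity.
    z_a = z_a / np.linalg.norm(z_a, axis=1, keepdims=True)
    z_b = z_b / np.linalg.norm(z_b, axis=1, keepdims=True)
    logits = (z_a @ z_b.T) / temperature          # (N, N) similarity matrix
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positives sit on the diagonal; all other columns act as negatives.
    return float(-np.mean(np.diag(log_prob)))

# Toy usage with random stand-ins for encoder outputs (assumption).
rng = np.random.default_rng(0)
patches = rng.normal(size=(8, 16))
view_a = patches + 0.05 * rng.normal(size=patches.shape)
view_b = patches + 0.05 * rng.normal(size=patches.shape)
print("matched views:  ", info_nce_loss(view_a, view_b))
print("unrelated views:", info_nce_loss(view_a, rng.normal(size=(8, 16))))
```

The loss is low when the two views of a patch are closer to each other than to other patches in the batch, which is how such an objective can shape representations without manual labels.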

Performance and Generalization Benchmarks

Table 1: Quantitative Performance Comparison Between CNN and Foundation Models

| Model Category | Representative Models | AUROC Range | Key Strengths | Major Limitations |
|---|---|---|---|---|
| Traditional CNNs | ResNet, VGG | 0.909-0.916 [27] | Computational efficiency, architectural simplicity | Limited domain specificity, poor cross-site generalization |
| Pathology Foundation Models | CONCH, Virchow, UNI, TITAN | 0.984-0.992 [27] [6] | Superior transfer learning, multimodal capabilities, site robustness | Computational intensity, training instability, security vulnerabilities [19] |

Empirical evaluations demonstrate the significant performance advantage of foundation models over traditional approaches. The PEAN system, which incorporates pathologists' visual attention patterns, achieved an accuracy of 96.3% and AUC of 0.992 on internal testing, outperforming CNN-based models by substantial margins [27]. Similarly, the TITAN foundation model outperformed supervised baselines and existing multimodal slide foundation models across diverse tasks including cancer subtyping, biomarker prediction, and outcome prognosis [6].

Critically for clinical deployment, foundation models exhibit enhanced robustness across healthcare institutions. While traditional CNNs often suffer performance degradation from site-specific biases, uncertainty-aware FM ensembles such as PICTURE maintained diagnostic accuracy (AUROC 0.924-0.996) across five independent international cohorts [26]. However, systematic evaluations reveal that pathology FMs still exhibit fundamental weaknesses, including low absolute accuracy in some contexts (F1 scores of roughly 40-42% in zero-shot retrieval), geometric instability, and vulnerability to adversarial attacks [19].

MIL Frameworks: The Bridge Between FMs and WSI Classification

The MIL Paradigm in Computational Pathology

Multiple instance learning provides the essential computational framework for managing the extreme dimensionality of WSIs by treating each slide as a "bag" containing thousands to millions of individual patch "instances." Standard MIL approaches aggregate patch-level predictions to generate slide-level diagnoses while identifying diagnostically relevant regions [28]. Traditional attention-based MIL (ABMIL) frameworks process patch embeddings independently, overlooking critical spatial relationships between neighboring tissue regions [29].

Recent advances have addressed this limitation through spatially aware architectures. The GABMIL framework explicitly captures inter-instance dependencies while maintaining computational efficiency, improving AUPRC by up to 7 percentage points over standard ABMIL [29]. Similarly, SMMILe leverages instance-based MIL to achieve superior spatial quantification without compromising WSI classification performance, demonstrating that explicit modeling of patch relationships is essential for accurate morphological interpretation [28].
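For reference, the attention pooling at the core of standard ABMIL can be sketched in a few lines. This is a forward pass only, written in NumPy under the assumption that patch embeddings are already available; the parameter names `V` and `w`, the dimensions, and the random inputs are illustrative. Note that each patch is scored independently, which is exactly the limitation that the spatially aware variants above target.

```python
import numpy as np

def abmil_pool(H, V, w):
    """Attention-based MIL pooling (non-gated form), forward pass only.

    H: (N, D) patch embeddings for one slide (the "bag").
    V: (D, L) projection matrix, w: (L,) scoring vector.
    Returns the slide embedding (D,) and attention weights (N,).
    """
    scores = np.tanh(H @ V) @ w        # one scalar score per patch
    scores = scores - scores.max()     # numerical stability for softmax
    attn = np.exp(scores)
    attn = attn / attn.sum()           # softmax over patches in the bag
    slide_embedding = attn @ H         # attention-weighted average
    return slide_embedding, attn

# Toy usage: 1,000 patches with 768-dimensional embeddings (assumed sizes).
rng = np.random.default_rng(0)
H = rng.normal(size=(1000, 768))
V = rng.normal(size=(768, 128)) * 0.01
w = rng.normal(size=128)
z, attn = abmil_pool(H, V, w)
print(z.shape, attn.shape)
```

The attention weights double as a coarse heatmap over patches, which is one reason this family of methods is used to highlight diagnostically relevant regions.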

Integrating Foundation Models with MIL Frameworks

The integration of FMs with MIL frameworks creates a powerful synergy for WSI analysis. FMs provide superior patch embeddings that capture rich morphological features, while MIL frameworks enable effective aggregation of these features for slide-level prediction. This combination has proven particularly effective in challenging diagnostic scenarios. For instance, the PICTURE system integrates nine different pathology foundation models within an ensemble MIL framework to differentiate glioblastoma from primary central nervous system lymphoma, achieving an AUROC of 0.989 with validation across five independent cohorts [26].
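One simple way to combine several FM-specific MIL heads is to average their predicted probabilities and treat disagreement between members as an uncertainty signal. The sketch below illustrates that generic idea only; it is not the published PICTURE procedure, and the function name `ensemble_predict` and the toy probabilities are assumptions.

```python
import numpy as np

def ensemble_predict(member_probs):
    """Combine per-member positive-class probabilities (illustrative).

    member_probs: (M, S) array, M ensemble members by S slides.
    Returns the mean probability, the across-member standard deviation
    (disagreement), and the binary entropy of the mean, each of shape (S,).
    """
    p = np.asarray(member_probs, dtype=float)
    mean = p.mean(axis=0)
    spread = p.std(axis=0)
    eps = 1e-12  # avoids log(0)
    entropy = -(mean * np.log(mean + eps) + (1 - mean) * np.log(1 - mean + eps))
    return mean, spread, entropy

# Toy usage: three members, two slides. Members agree on the first slide
# and disagree strongly on the second.
probs = [[0.9, 0.5],
         [0.9, 0.1],
         [0.9, 0.9]]
mean, spread, entropy = ensemble_predict(probs)
print(mean, spread, entropy)
```

Slides with high spread or entropy could be routed to expert review rather than reported automatically; the thresholds for doing so would need to be set on validation data.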

Table 2: Performance of Integrated FM-MIL Frameworks Across Cancer Types

| Framework | Architecture | Datasets | Key Results | Clinical Application |
|---|---|---|---|---|
| SMMILe [28] | Superpatch-based measurable MIL | 6 cancer types, 3,850 WSIs | Matches/exceeds SOTA classification with outstanding spatial quantification | Metastasis detection, subtype prediction, grading |
| AttriMIL [30] | Attribute-aware MIL with multi-branch scoring | 5 public datasets | Superior bag classification and disease localization | Differentiating subtle tissue variations |
| PICTURE [26] | Uncertainty-aware FM ensemble | 2,141 CNS slides | AUROC 0.989, validated across 5 cohorts | Differentiating glioblastoma from mimics |
| TITAN [6] | Multimodal vision-language FM | 335,645 WSIs | Superior few-shot and zero-shot classification | Rare disease retrieval, cancer prognosis |

The AttriMIL framework demonstrates how MIL can be enhanced through attribute-aware mechanisms that quantify pathological attributes of individual instances, establishing region-wise and slide-wise constraints to model instance correlations during training [30]. This approach captures intrinsic spatial patterns and semantic similarities between patches, enhancing sensitivity to challenging instances and subtle tissue variations that are critical for accurate diagnosis.

Experimental Protocols and Implementation Frameworks

WSI Preprocessing and Feature Extraction

A standardized preprocessing pipeline is essential for reproducible WSI analysis. The following protocol, synthesized from multiple studies, ensures consistent input data quality:

- Whole-Slide Scanning: Utilize FDA-approved WSI scanners (e.g., Philips Ultra-Fast Scanners) with quality control procedures to maintain consistent sharpness and color fidelity [31].

- Tissue Segmentation: Apply automated algorithms to detect and segment tissue regions, excluding background areas. The PICTURE system identifies patches containing mostly blank backgrounds using color and cell density profiles [26].

- Patch Extraction: Tessellate WSIs into non-overlapping patches, typically 256×256 or 512×512 pixels at 20× magnification. Studies indicate that using larger patches (512×512) reduces sequence length without compromising morphological information [6].

- Color Normalization: Standardize stain variations using statistical methods (e.g., based on mean and standard deviation of pixel intensities) or deep learning-based normalization to mitigate site-specific batch effects [26].

- Feature Extraction: Process patches through frozen foundation model encoders to generate feature embeddings. The TITAN model uses CONCHv1.5 to extract 768-dimensional features for each 512×512 patch [6].
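The patch extraction and statistical color normalization steps above can be sketched as follows. The slide dimensions (100,000 × 80,000 pixels), the function names, and the target statistics are hypothetical, and the normalization shown works per RGB channel for simplicity, whereas published stain-normalization methods often operate in other color spaces.

```python
import numpy as np

def tile_coordinates(width, height, patch_size):
    """Top-left (x, y) corners of non-overlapping patches that fit
    entirely inside a slide of the given pixel dimensions."""
    return [(x, y)
            for y in range(0, height - patch_size + 1, patch_size)
            for x in range(0, width - patch_size + 1, patch_size)]

def match_mean_std(patch, target_mean, target_std):
    """Shift and scale each RGB channel of a uint8 patch (H, W, 3) so its
    mean and standard deviation match the target statistics."""
    patch = patch.astype(np.float64)
    mean = patch.mean(axis=(0, 1))
    std = patch.std(axis=(0, 1)) + 1e-8   # guard against flat patches
    out = (patch - mean) / std * target_std + target_mean
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)

# Sequence length for a hypothetical 100,000 x 80,000 pixel slide.
print(len(tile_coordinates(100_000, 80_000, 256)))  # 121680
print(len(tile_coordinates(100_000, 80_000, 512)))  # 30420

# Normalize a synthetic patch to assumed reference statistics.
rng = np.random.default_rng(0)
patch = rng.integers(100, 201, size=(512, 512, 3)).astype(np.uint8)
normalized = match_mean_std(patch,
                            target_mean=np.array([150.0, 150.0, 150.0]),
                            target_std=np.array([20.0, 20.0, 20.0]))
print(normalized.mean(axis=(0, 1)))
```

Doubling the patch edge from 256 to 512 pixels cuts the number of patches, and therefore the sequence length seen by a slide-level model, by a factor of four in this example. In practice the coordinate list would also be filtered by the tissue mask before feature extraction.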

MIL Model Training and Optimization

Following feature extraction, implement MIL training with the following considerations:

- Feature Grid Construction: Spatially arrange patch embeddings into a 2D grid replicating their original positions in the tissue [6]. This preserves spatial context essential for morphological assessment.

- Data Augmentation: Apply feature-space augmentations including vertical and horizontal flipping, and posterization to enhance robustness [6]. The iBOT framework uses global and local cropping from feature grids for self-supervised pretraining [6].

- Spatial Context Integration: Implement spatially-aware MIL aggregation. GABMIL captures inter-instance dependencies through interaction-aware representations, while TransMIL employs transformer architectures to model long-range dependencies [29].

- Uncertainty Quantification: Integrate epistemic uncertainty measures using Bayesian inference, deep ensembles, and normalizing flows to identify out-of-distribution samples and enhance model reliability [26].
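As an illustration of the feature grid construction and flip augmentation described above, the sketch below places patch embeddings on a 2D grid according to their slide coordinates and applies random flips to the grid. It is a simplified reading of these steps, not the TITAN implementation; the function names, the background mask, and the toy coordinates are assumptions.

```python
import numpy as np

def build_feature_grid(coords, features, patch_size):
    """Arrange patch embeddings on a 2D grid matching tissue layout.

    coords: (N, 2) top-left pixel positions (x, y) of each patch.
    features: (N, D) patch embeddings.
    Returns grid (rows, cols, D) and a boolean mask marking tissue cells;
    cells without a patch (background) stay zero.
    """
    coords = np.asarray(coords)
    features = np.asarray(features)
    cols = coords[:, 0] // patch_size
    rows = coords[:, 1] // patch_size
    rows -= rows.min()   # crop empty margins so the grid starts at (0, 0)
    cols -= cols.min()
    grid = np.zeros((rows.max() + 1, cols.max() + 1, features.shape[1]),
                    dtype=features.dtype)
    mask = np.zeros(grid.shape[:2], dtype=bool)
    grid[rows, cols] = features
    mask[rows, cols] = True
    return grid, mask

def random_flip(grid, mask, rng):
    """Apply vertical and horizontal flips, each with probability 0.5."""
    if rng.random() < 0.5:
        grid, mask = grid[::-1], mask[::-1]
    if rng.random() < 0.5:
        grid, mask = grid[:, ::-1], mask[:, ::-1]
    return grid, mask

# Toy usage: three tissue patches on a 2 x 2 grid, 4-dimensional features.
coords = [(512, 0), (0, 512), (512, 512)]
features = np.arange(12, dtype=np.float32).reshape(3, 4)
grid, mask = build_feature_grid(coords, features, patch_size=512)
aug_grid, aug_mask = random_flip(grid, mask, np.random.default_rng(0))
print(grid.shape, mask.sum(), aug_grid.shape)
```

Keeping the mask alongside the grid lets a downstream aggregator ignore background cells after augmentation, since flips move tissue and background positions together.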

Evaluation Metrics and Validation

Comprehensive model assessment should include:

- Slide-Level Classification: Area Under Receiver Operating Characteristic Curve (AUROC), accuracy, F1-score, and balanced accuracy across multiple testing cohorts [26].

- Spatial Quantification: Patch-level localization accuracy measured against pathologists' annotations or eye-tracking data [27] [28].

- Robustness Evaluation: Performance consistency across multiple institutions, scanners, and staining protocols, quantified through a Robustness Index (RI) [19].

- Clinical Utility: Diagnostic concordance with expert pathologists, time savings, and performance on rare or challenging cases [27].
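The slide-level classification metrics listed above can be computed directly from labels and scores. The following is a minimal NumPy sketch for the binary case; the function names, the four-slide toy data, and the 0.5 decision threshold are illustrative, and a production pipeline would more likely rely on an established metrics library.

```python
import numpy as np

def auroc(y_true, scores):
    """AUROC as the probability that a random positive slide scores
    higher than a random negative slide (ties count as half)."""
    y_true = np.asarray(y_true).astype(bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[y_true], scores[~y_true]
    diff = pos[:, None] - neg[None, :]          # all positive/negative pairs
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / (pos.size * neg.size))

def confusion_counts(y_true, y_pred):
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    tp = int((y_true & y_pred).sum())
    tn = int((~y_true & ~y_pred).sum())
    fp = int((~y_true & y_pred).sum())
    fn = int((y_true & ~y_pred).sum())
    return tp, tn, fp, fn

def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return 0.5 * (tp / (tp + fn) + tn / (tn + fp))

def f1(y_true, y_pred):
    """Harmonic mean of precision and recall (0.0 if no true positives)."""
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return 0.0 if tp == 0 else 2 * tp / (2 * tp + fp + fn)

# Toy usage: four slides, threshold of 0.5 (assumed).
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
preds = [s >= 0.5 for s in scores]
print(auroc(labels, scores))             # 0.75
print(balanced_accuracy(labels, preds))  # 0.75
print(f1(labels, preds))
```

Reporting these per testing cohort, rather than pooled, makes site-specific degradation visible.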

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Tools for FM-MIL Integration

| Resource Category | Specific Tools | Function | Key Features |
|---|---|---|---|
| Foundation Models | CONCH, Virchow, UNI, CTransPath, Phikon [26] | Patch feature extraction | Self-supervised learning on pathology-specific datasets |
| MIL Frameworks | ABMIL, TransMIL, GABMIL, SMMILe, AttriMIL [28] [30] [29] | Slide-level prediction from patches | Spatial context modeling, attention mechanisms |
| Whole-Slide Datasets | TCGA, Camelyon16, in-house collections [28] [26] | Model training and validation | Multi-center, multi-cancer, paired clinical data |
| Computational Tools | PyTorch, VOSviewer, Whole-Slide Processing Libraries [32] | Implementation and analysis | Support for large-scale WSI processing |

The integration of foundation models with multiple instance learning frameworks represents a significant advancement in computational pathology, enabling more accurate, robust, and interpretable analysis of whole-slide images. This technical synergy addresses critical limitations of traditional CNN-based approaches by combining domain-specific pretraining with spatially-aware aggregation mechanisms. Despite persistent challenges including computational demands, security vulnerabilities, and validation requirements, the FM-MIL paradigm shows tremendous promise for clinical translation. Future developments will likely focus on multimodal integration, federated learning approaches to enhance data privacy, and specialized architectures designed specifically for the hierarchical organization of tissue morphology. As these technologies mature, they hold the potential to transform pathological diagnosis, biomarker discovery, and personalized treatment planning in oncology and beyond.

The rapidly emerging field of computational pathology has demonstrated tremendous promise in developing objective prognostic models from histology images, yet most approaches remain limited by their unimodal focus [33]. Traditional diagnostic workflows in pathology integrate morphological assessment with molecular profiling and clinical data, creating a pressing need for computational frameworks that can similarly fuse these heterogeneous data streams. Multimodal integration represents a transformative approach that simultaneously examines pathology whole slide images (WSIs) and molecular profile data to predict patient outcomes and discover prognostic biomarkers that would remain invisible to unimodal analysis [33].

Within this paradigm, a fundamental shift is occurring from traditional Convolutional Neural Networks (CNNs) to pathology foundation models pretrained using self-supervised learning on massive datasets. This technical evolution enables more robust feature extraction and dramatically improves performance across diverse downstream tasks, particularly when integrated with multimodal data sources [15]. The capacity to align histopathological patterns with genomic alterations and clinical reports represents a critical advancement toward precision oncology, offering the potential to identify novel biomarkers and improve patient risk stratification beyond the capabilities of single-modality analysis [33] [34].

Foundation Models vs. Traditional CNNs: Core Architectural Differences

Fundamental Technical Distinctions

Pathology foundation models differ fundamentally from traditional CNNs in their architecture, training methodology, and data requirements. CNNs extract spatial patterns using small convolutional kernels across multiple layers and possess strong inductive biases, which enable high performance with limited datasets but prevent them from fully leveraging large-scale data [15]. In contrast, the Vision Transformers (ViTs) used in foundation models employ self-attention mechanisms with minimal inductive biases, allowing them to outperform CNNs when trained on extensive pathology image datasets [15].
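The contrast in inductive bias can be seen in a minimal single-head self-attention sketch: every token is compared with every other token, so the mixing pattern is learned from data rather than fixed to a local neighborhood as in a small convolutional kernel. The NumPy code below is a generic illustration with assumed dimensions and random weights, not the architecture of any cited model.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (N, D) sequence of N patch tokens.
    Wq, Wk, Wv: (D, Dh) projection matrices.
    Returns the attended tokens (N, Dh) and the (N, N) attention matrix.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[1])             # all-pairs similarity
    scores = scores - scores.max(axis=1, keepdims=True)
    attn = np.exp(scores)
    attn = attn / attn.sum(axis=1, keepdims=True)      # softmax over keys
    return attn @ V, attn

# Toy usage: 196 tokens (a 14 x 14 grid of patches) with 64 features each.
rng = np.random.default_rng(0)
X = rng.normal(size=(196, 64))
Wq, Wk, Wv = (rng.normal(size=(64, 32)) * 0.1 for _ in range(3))
out, attn = self_attention(X, Wq, Wk, Wv)
print(out.shape, attn.shape)
```

The attention matrix has one entry per pair of tokens, so a token in one corner of the grid can directly influence a token in the opposite corner within a single layer. A 3×3 convolution would need many stacked layers to connect the same two positions, which is the locality bias the paragraph above describes.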

The training approaches further differentiate these architectures. Traditional CNNs typically rely on supervised learning with ImageNet initialization and require extensive labeled data for effective training. Pathology foundation models are instead pretrained with self-supervised learning (SSL) on massive unlabeled datasets comprising millions of pathology image patches, learning generalized representations that transfer effectively to various diagnostic tasks with minimal fine-tuning [15]. This fundamental difference in training paradigm enables foundation models to develop a more comprehensive understanding of histopathological structures and their variations.
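A common way to exploit such pretrained representations with minimal fine-tuning is a linear probe: the encoder stays frozen and only a linear classifier is trained on its embeddings. The sketch below fits a logistic-regression probe by gradient descent in NumPy; the synthetic two-cluster "embeddings", the learning rate, and the function names are assumptions standing in for real frozen foundation model features.

```python
import numpy as np

def fit_linear_probe(X, y, lr=0.1, steps=500):
    """Logistic regression on frozen embeddings via gradient descent.

    X: (N, D) embeddings from a frozen encoder, y: (N,) binary labels.
    Returns the weight vector (D,) and bias.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))   # predicted probabilities
        grad = p - y                             # gradient of the log loss
        w -= lr * (X.T @ grad) / len(y)
        b -= lr * grad.mean()
    return w, b

def predict(X, w, b):
    return (np.asarray(X) @ w + b) > 0

# Toy usage: synthetic embeddings for two classes (stand-in for FM features).
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(-1.0, 1.0, size=(50, 8)),
               rng.normal(+1.0, 1.0, size=(50, 8))])
y = np.array([0] * 50 + [1] * 50)
w, b = fit_linear_probe(X, y)
print("training accuracy:", (predict(X, w, b) == y).mean())
```

Because only `w` and `b` are learned, the number of trainable parameters is tiny compared with the encoder, which is why this setup can work with far fewer labeled slides than end-to-end supervised training.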

Performance Implications in Pathology Tasks