From Barriers to Breakthroughs: A Strategic Guide to Matching Implementation Strategies with Determinants

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on systematically matching implementation strategies to contextual determinants. It covers foundational principles, practical methodological tools, and advanced approaches for troubleshooting and validation. By integrating the latest evidence and frameworks like the Consolidated Framework for Implementation Research (CFIR), this guide aims to enhance the precision and effectiveness of implementation efforts in biomedical and clinical settings, ultimately accelerating the translation of evidence-based interventions into practice.

Understanding the Core: Key Determinants and Implementation Strategy Fundamentals

Defining Implementation Determinants, Barriers, and Facilitators

The successful integration of evidence-based practices into routine care hinges on understanding the factors that influence the implementation process. Implementation determinants are the barriers and facilitators that predict or explain the success or failure of implementation efforts [1]. Within the broader context of matching implementation strategies to determinants, a critical first step is the systematic identification and categorization of these determinants. This protocol outlines standardized approaches for defining implementation determinants, with specific application notes for researchers in healthcare and drug development.

The Consolidated Framework for Implementation Research (CFIR) serves as a widely adopted determinant framework that includes 48 constructs and 19 subconstructs across five major domains: Innovation, Outer Setting, Inner Setting, Individuals, and Implementation Process [1]. This framework provides a systematic structure for identifying and categorizing determinants, offering a common language and taxonomy that enables comparability across studies and settings. Proper identification of determinants enables researchers to subsequently select tailored implementation strategies that address specific barriers and leverage existing facilitators [2].

Theoretical Framework and Definitions

Core Definitions and Conceptual Distinctions

Implementation Determinants: Factors that act as barriers or facilitators to implementation success, operating across multiple contextual levels [1]. These factors can be prospectively assessed to predict implementation outcomes or retrospectively evaluated to explain outcomes that have already occurred.

Barriers: Factors that impede the adoption, implementation, or sustainability of an evidence-based intervention. In a recent systematic review of mental health interventions in schools, barriers constituted 61.6% (N = 207) of identified implementation factors [3].

Facilitators: Factors that enhance or enable the adoption, implementation, or sustainability of an evidence-based intervention. These represented 38.4% (N = 129) of implementation factors in the same systematic review [3].

Implementation Outcomes: The effects of deliberate and purposive actions to implement new treatments, practices, and services, which can include adoption, fidelity, penetration, sustainability, and implementation cost [1] [4].

CFIR Domain Specifications

The updated CFIR framework organizes determinants into five domains with clearly defined boundaries [1]:

Table: CFIR Domains and Construct Specifications

| Domain | Key Constructs | Definition and Boundaries |
|---|---|---|
| Innovation | Evidence strength, Adaptability, Design quality | The evidence-based intervention being implemented. Must be clearly distinguished from implementation strategies. |
| Outer Setting | Patient needs, External policies, Peer pressure | The economic, political, and social context surrounding the organization. |
| Inner Setting | Structural characteristics, Networks, Culture, Implementation climate | The organizational context where implementation occurs, including resources and leadership. |
| Individuals | Knowledge, Self-efficacy, Beliefs | The roles and characteristics of individuals involved in implementation. |
| Implementation Process | Planning, Engaging, Executing, Reflecting | The activities and strategies used to implement the innovation. |

Diagram 1: CFIR Determinants Domain Structure. This diagram illustrates the five major domains of implementation determinants and their key constructs as defined by the Consolidated Framework for Implementation Research.

Methodological Protocols for Determinant Assessment

Mixed-Methods Assessment Approach

A mixed-methods approach combining qualitative and quantitative data collection provides the most comprehensive assessment of implementation determinants. The CFIR Leadership Team recommends a five-step process for conducting implementation research using the framework [1]:

Step 1: Study Design - Define research questions and implementation outcomes, then specify domain boundaries specific to the project context.

Step 2: Data Collection - Employ semi-structured interviews, focus groups, surveys, or observational methods to assess determinants.

Step 3: Data Analysis - Use directed content analysis informed by CFIR constructs, with rigorous coding procedures.

Step 4: Data Interpretation - Interpret findings to identify which determinants distinguish between implementation success and failure.

Step 5: Knowledge Dissemination - Report findings to inform implementation strategy selection.

Qualitative Assessment Protocol

Interview Guide Development:

- Develop semi-structured interview guides using CFIR constructs as a priori codes [5] [6]

- Include open-ended questions about experiences with the innovation and implementation process

- Probe for both barriers and facilitators across all relevant domains

- Pilot test and refine guides with content experts

Sampling Strategy:

- Employ purposive sampling to ensure representation of key stakeholders [6] [5]

- Target individuals with diverse roles, experiences, and perspectives

- Sample until reaching thematic saturation (typically 20-30 participants) [5]

Data Collection:

- Conduct individual interviews or focus groups

- Audio record and professionally transcribe interviews

- Collect demographic and contextual data to characterize the sample

Analysis Procedure:

- Use directed content analysis informed by CFIR constructs [6] [5]

- Develop a codebook with definitions for CFIR constructs

- Apply both a priori codes (CFIR constructs) and emergent codes

- Use multiple coders and establish inter-rater reliability

- Analyze data within and across interviews to identify themes

- Write analytic memos and hold team meetings to discuss emerging patterns [6]
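The inter-rater reliability step above can be made concrete with Cohen's kappa, one common agreement statistic for two coders. The source does not say which statistic to use, so this choice is an assumption. The sketch below assumes each coder assigned exactly one CFIR construct code per excerpt; the example codes are invented for illustration.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assigned one code per excerpt."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must rate the same non-empty set of excerpts")
    n = len(coder_a)
    # Observed agreement: share of excerpts given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of each coder's marginal code frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical code throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes for six interview excerpts.
coder_a = ["Culture", "Compatibility", "Available Resources",
           "Culture", "Compatibility", "Culture"]
coder_b = ["Culture", "Compatibility", "Available Resources",
           "Compatibility", "Compatibility", "Culture"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3))
```

Teams that allow several codes per excerpt, or that use more than two coders, would need a different statistic; this sketch covers only the simplest two-coder, single-code case.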

Quantitative Assessment Protocol

Survey Development:

- Adapt existing CFIR-based instruments when available

- Develop items that operationalize CFIR constructs

- Include both closed-ended and open-ended response options

- Ensure items measure both presence and strength of determinants

Data Collection:

- Administer to larger samples to assess generalizability

- Collect data at multiple time points to track changes

- Ensure adequate response rates through follow-up procedures

Analysis Procedure:

- Conduct descriptive analyses to characterize determinants

- Perform comparative analyses to identify determinants associated with outcomes

- Use multivariate analyses to examine complex relationships

- Integrate quantitative and qualitative findings

Application Notes: Case Examples and Data Synthesis

Case Example Synthesis

Recent applications of determinant assessment across diverse healthcare contexts demonstrate consistent methodological approaches and findings:

Table: Determinant Assessment Case Examples

| Study Context | Methodology | Key Barriers | Key Facilitators | Implementation Outcomes |
|---|---|---|---|---|
| Moral Injury Interventions for Nurses [5] | Qualitative interviews using CFIR (N=25) | Resource costs, leadership support gaps, inability to take breaks, professional image concerns | Unit-specific tailoring, team social support, desire for change, high motivation to provide quality care | Improved identification of determinants to inform intervention development |
| School-Based Mental Health Interventions [3] | Systematic review of 26 studies | Scheduling conflicts, low mental health prioritization, logistical challenges | Leadership support, age-appropriate design, staff engagement | Identified need for integration into school structures and alignment with academic priorities |
| ED Buprenorphine Initiation [6] | PRISM-guided qualitative interviews (N=28) | Organizational culture constraints, clinician training gaps, patient connection challenges | Tailored implementation, organizational commitment, training support | Informed development of multilevel implementation strategies |

Determinant Rating and Prioritization Protocol

After identifying determinants, systematic rating and prioritization enables focused strategy development:

Rating Criteria:

- Strength: Intensity of the determinant's influence (low/medium/high)

- Valence: Whether the determinant acts as a barrier (-) or facilitator (+)

- Prevalence: How widely the determinant is experienced across stakeholders

- Modifiability: The extent to which the determinant can be changed

Prioritization Matrix:

- Create a 2x2 matrix with "strength of influence" and "modifiability" as axes

- Prioritize determinants with high strength and high modifiability

- Acknowledge but deprioritize determinants with low modifiability
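The rating criteria and the 2x2 matrix can be sketched as a small classifier. This is an illustration under stated assumptions, not a validated instrument. "Medium" ratings are grouped with "low" so that three-level ratings fit two-level axes. The label for the weak-but-modifiable cell ("secondary") is invented because the protocol does not name it. The determinant names echo the case examples above, but the ratings are made up.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RatedDeterminant:
    name: str
    valence: str        # "-" for barrier, "+" for facilitator
    strength: str       # "low", "medium", or "high"
    modifiability: str  # "low", "medium", or "high"

def priority_cell(d: RatedDeterminant) -> str:
    """Place a determinant in the strength-by-modifiability matrix.

    Assumption: only "high" counts as high on either axis.
    """
    strong = d.strength == "high"
    modifiable = d.modifiability == "high"
    if strong and modifiable:
        return "prioritize"
    if not modifiable:
        return "acknowledge, deprioritize"
    return "secondary"  # modifiable but weaker influence (assumed label)

# Hypothetical ratings for illustration only.
rated = [
    RatedDeterminant("Clinician training gaps", "-", "high", "high"),
    RatedDeterminant("Resource costs", "-", "high", "low"),
    RatedDeterminant("Scheduling conflicts", "-", "medium", "high"),
    RatedDeterminant("Team social support", "+", "high", "high"),
]

for d in rated:
    print(f"{d.valence} {d.name}: {priority_cell(d)}")
```

Prevalence is left out of the matrix here because the protocol uses only strength and modifiability as axes; it could serve as a tie-breaker within a cell.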

Stakeholder Validation:

- Present preliminary findings to stakeholders for validation

- Use member checking to verify interpretation accuracy [5]

- Incorporate feedback into final determinant prioritization

Table: Implementation Determinant Research Reagent Solutions

| Tool/Resource | Function | Application Notes |
|---|---|---|
| CFIR Technical Assistance Website (www.cfirguide.org) [1] | Repository of tools, templates, and guidance | Provides interview guides, coding guidelines, and memo templates; updated regularly by CFIR Leadership Team |
| CFIR Construct Coding Guidelines [1] | Standardized definitions and coding rules | Ensures consistent application of CFIR constructs across team members and studies |
| ERIC Implementation Strategy Taxonomy [4] | Menu of 73 implementation strategies with definitions | Enables systematic linking of determinants to potential implementation strategies |
| AACTT Framework [4] | Specifies implementation outcomes by Action, Actor, Context, Target, and Time | Improves alignment between determinants, strategies, and outcomes through behavioral specification |
| Implementation Strategy Mapping Methods [2] | Step-by-step process for linking determinants to strategies | Guides systematic matching of determinants to implementation strategies using codesign approaches |

Determinant-to-Strategy Matching Framework

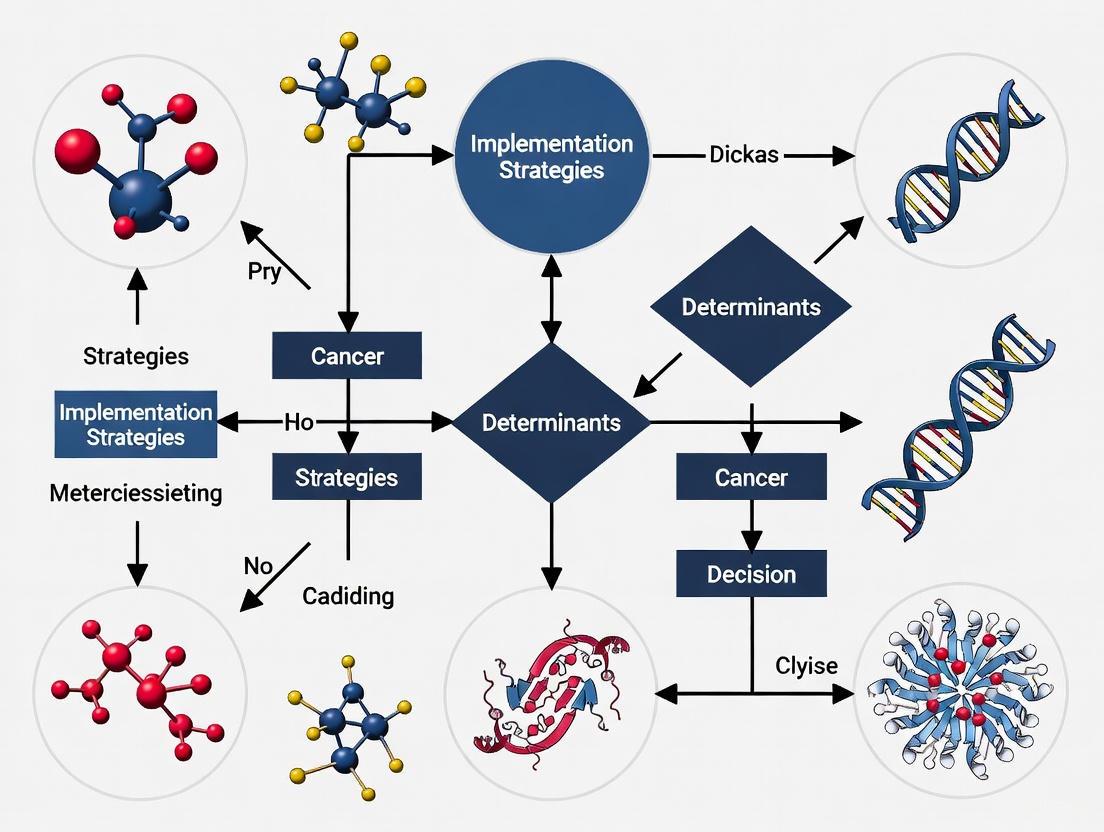

The ultimate goal of defining implementation determinants is to inform the selection and tailoring of implementation strategies. The following diagram illustrates the complete pathway from determinant assessment to strategy implementation:

Diagram 2: Determinant to Strategy Matching Pathway. This workflow illustrates the systematic process from initial determinant assessment through implementation strategy selection and evaluation.

The matching process involves:

Systematic Linking: Using established matching tools like the CFIR-ERIC Matching Tool to connect specific determinants to evidence-based implementation strategies [2]

Codesign Approach: Engaging stakeholders in collaborative sessions to contextualize and adapt strategies to local settings [2]

Specification: Clearly defining implementation strategies by naming, defining, and operationalizing them, following Proctor and colleagues' reporting recommendations [4]

Tailoring: Modifying strategy bundles to address the unique constellation of determinants in specific contexts
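The specification step lends itself to a simple completeness check. The sketch below assumes the seven operationalization dimensions commonly attributed to Proctor and colleagues (actor, action, action target, temporality, dose, implementation outcome affected, justification). The class, the function, and the example values are hypothetical.

```python
from dataclasses import dataclass, fields

@dataclass
class StrategySpec:
    name: str
    definition: str
    actor: str = ""
    action: str = ""
    action_target: str = ""
    temporality: str = ""
    dose: str = ""
    outcome_affected: str = ""
    justification: str = ""

def missing_fields(spec: StrategySpec) -> list:
    """Return the names of specification fields that are still blank."""
    return [f.name for f in fields(spec) if not getattr(spec, f.name).strip()]

# Hypothetical, partly specified strategy for illustration.
spec = StrategySpec(
    name="Identify and prepare champions",
    definition="Identify and prepare individuals who dedicate themselves "
               "to supporting and driving the implementation",
    actor="Site implementation lead",
    action="Recruit and train one champion per unit",
    action_target="Unit nursing staff",
)

print(missing_fields(spec))
```

A check like this can be run during codesign sessions to flag strategies that have been named but not yet operationalized.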

This systematic approach to defining implementation determinants provides the foundation for matching implementation strategies to determinants, enabling more effective and efficient translation of evidence into practice.

The Consolidated Framework for Implementation Research (CFIR) is one of the most highly cited determinant frameworks in implementation science, designed to predict or explain barriers and facilitators to implementation success [7] [1]. First published in 2009 and updated in 2022 based on extensive user feedback, the CFIR provides a structured, comprehensive menu of constructs that influence the implementation of evidence-based innovations across diverse settings [7] [8]. The framework consolidates constructs from multiple implementation theories and models into a single overarching framework, offering a practical guide for systematic assessment of contextual factors that impact implementation effectiveness [8].

The CFIR serves as a determinant framework that categorizes contextual factors (independent variables) that may influence implementation outcomes (dependent variables) [1]. Its overarching aim is to help researchers and practitioners identify critical barriers and facilitators that predict or explain implementation success or failure [7]. The framework has been applied extensively in healthcare settings but has also been used in education, agriculture, community settings, and low- and middle-income countries [7] [8]. As of late 2023, the original CFIR article had been cited over 10,000 times in Google Scholar and over 4,600 times in PubMed, demonstrating its substantial impact on the field [8].

The CFIR Framework: Domains and Constructs

The updated CFIR organizes 48 constructs and 19 subconstructs across five major domains that collectively capture the multidimensional nature of implementation contexts [1] [9]. The framework was revised based on feedback from experienced users obtained through both literature review and surveys, with updates addressing important critiques including better centering innovation recipients and adding determinants to equity in implementation [7]. The five domains form an interconnected system that shapes implementation outcomes.

Innovation Domain

The Innovation Domain encompasses characteristics of the "thing" being implemented—whether a clinical treatment, educational program, or service [9]. This domain includes eight key constructs that capture how stakeholders perceive the innovation itself, which significantly influences implementation success.

Table 1: Innovation Domain Constructs

| Construct Name | Construct Definition |
|---|---|
| Innovation Source | The degree to which the group that developed and/or visibly sponsored use of the innovation is reputable, credible, and/or trustable |
| Innovation Evidence-Base | The degree to which the innovation has robust evidence supporting its effectiveness |
| Innovation Relative Advantage | The degree to which the innovation is better than other available innovations or current practice |
| Innovation Adaptability | The degree to which the innovation can be modified, tailored, or refined to fit local context or needs |
| Innovation Trialability | The degree to which the innovation can be tested or piloted on a small scale and undone |
| Innovation Complexity | The degree to which the innovation is complicated, reflected by its scope and/or the nature and number of connections and steps |
| Innovation Design | The degree to which the innovation is well designed and packaged, including how it is assembled, bundled, and presented |
| Innovation Cost | The degree to which the innovation purchase and operating costs are affordable |

Outer Setting Domain

The Outer Setting Domain encompasses the larger context in which the inner setting exists, such as hospital systems, school districts, or states [9]. This domain captures external influences on implementation, including seven core constructs and associated subconstructs that shape how external factors facilitate or hinder implementation efforts.

Table 2: Outer Setting Domain Constructs

| Construct Name | Construct Definition |
|---|---|
| Critical Incidents | The degree to which large-scale and/or unanticipated events disrupt implementation and/or delivery of the innovation |
| Local Attitudes | The degree to which sociocultural values and beliefs encourage the Outer Setting to support implementation and/or delivery of the innovation |
| Local Conditions | The degree to which economic, environmental, political, and/or technological conditions enable the Outer Setting to support implementation and/or delivery of the innovation |
| Partnerships & Connections | The degree to which the Inner Setting is networked with external entities, including referral networks, academic affiliations, and professional organization networks |
| Policies & Laws | The degree to which legislation, regulations, professional group guidelines and recommendations, or accreditation standards support implementation and/or delivery of the innovation |
| Financing | The degree to which funding from external entities (e.g., grants, reimbursement) is available to implement and/or deliver the innovation |
| External Pressure | The degree to which external pressures drive implementation and/or delivery of the innovation |

Inner Setting Domain

The Inner Setting Domain encompasses the immediate environment where implementation occurs, such as specific hospitals, schools, or units within organizations [9]. This domain includes both general organizational characteristics and innovation-specific factors, with eleven key constructs that capture the institutional context that either enables or constrains implementation.

Table 3: Inner Setting Domain Constructs

| Construct Name | Construct Definition |
|---|---|
| Structural Characteristics | The degree to which infrastructure components support functional performance of the Inner Setting |
| Relational Connections | The degree to which there are high quality formal and informal relationships, networks, and teams within and across Inner Setting boundaries |
| Communications | The degree to which there are high quality formal and informal information sharing practices within and across Inner Setting boundaries |
| Culture | The degree to which there are shared values, beliefs, and norms across the Inner Setting |
| Tension for Change | The degree to which the current situation is intolerable and needs to change |
| Compatibility | The degree to which the innovation fits with workflows, systems, and processes |
| Relative Priority | The degree to which implementing and delivering the innovation is important compared to other initiatives |
| Incentive Systems | The degree to which tangible and/or intangible incentives and rewards and/or disincentives and punishments support implementation and delivery of the innovation |
| Mission Alignment | The degree to which implementing and delivering the innovation is in line with the overarching commitment, purpose, or goals in the Inner Setting |
| Available Resources | The degree to which resources are available to implement and deliver the innovation |
| Access to Knowledge & Information | The degree to which guidance and/or training is accessible to implement and deliver the innovation |

Individuals: Roles & Characteristics Domain

The Individuals Domain captures the roles and characteristics of people involved in or affected by implementation [9]. This domain is organized into two subdomains: Roles (documenting specific positions and responsibilities) and Characteristics (capturing individual attributes that influence implementation), with nine role constructs and four characteristic constructs.

Diagram: CFIR Individuals Domain Structure.

Implementation Process Domain

The Implementation Process Domain encompasses the activities and strategies used to implement the innovation [9]. Table 4 lists six constructs from this domain that capture active implementation efforts. The domain distinguishes the implementation process (activities that end after implementation) from the innovation itself (which continues when implementation is complete).

Table 4: Implementation Process Domain Constructs

| Construct Name | Construct Definition |
|---|---|
| Teaming | The degree to which individuals join together, intentionally coordinating and collaborating on interdependent tasks, to implement the innovation |
| Assessing Needs | The degree to which individuals collect information about priorities, preferences, and needs of people |
| Assessing Context | The degree to which individuals collect information to identify and appraise barriers and facilitators to implementation and delivery of the innovation |
| Planning | The degree to which individuals identify roles and responsibilities, outline specific steps and milestones, and define goals and measures for implementation success in advance |
| Tailoring Strategies | The degree to which individuals choose and operationalize implementation strategies to address barriers, leverage facilitators, and fit context |
| Engaging | The degree to which individuals attract and encourage participation in implementation and/or the innovation |

Key Determinants in Implementation Success

Research has identified specific CFIR constructs that consistently emerge as the determinants with the strongest impact on implementation outcomes. A 2025 systematic review of 48 studies that used the Damschroder & Lowery rating system identified eight key determinants that most often exert the strongest influence on implementation processes [10]. This rating system quantifies qualitative data by assessing both the valence (positive or negative effect) and the magnitude (strength of effect) of each determinant, on a scale from -2 (major barrier) to +2 (major facilitator) [10].

Table 5: Key Determinants in Implementation Processes

| Key Determinant | CFIR Domain | Impact Description |
|---|---|---|
| Leadership Engagement | Inner Setting | Commitment, involvement, and accountability of leaders at multiple levels |
| Formally Appointed Internal Implementation Leaders | Individuals: Roles | Individuals with formal responsibility for leading implementation efforts |
| Compatibility | Inner Setting | Fit between innovation and existing workflows, systems, and processes |
| Available Resources | Inner Setting | Allocation of sufficient staffing, time, space, and equipment |
| External Change Agents | Outer Setting | Individuals outside the organization who support implementation |
| Champions | Individuals: Roles | Individuals who dedicate themselves to supporting and driving the implementation |
| Relative Advantage | Innovation | Perception that the innovation is better than current practice |
| Key Stakeholders | Individuals: Roles | Individuals affected by or influencing the implementation |

These key determinants provide a strategic starting point for researchers and practitioners deciding where to focus assessment and intervention efforts when faced with the comprehensive list of CFIR constructs [10]. Their recurrence across multiple studies suggests that their influence on implementation success is substantial and fairly consistent across innovations and contexts.

Application Protocols: Using CFIR in Implementation Research

The CFIR Leadership Team has developed a structured five-step approach to guide researchers in applying CFIR throughout the research process [1]. This methodology ensures systematic application of the framework from study design through knowledge dissemination, facilitating rigorous and comprehensive assessment of implementation determinants.

Step 1: Study Design

The initial phase involves defining the research focus and implementation outcome. Researchers must specify whether they are using CFIR prospectively (to assess determinants of anticipated implementation outcomes) or retrospectively (to explain actual implementation outcomes) [1]. This critical distinction shapes all subsequent methodological decisions.

Protocol 1: Defining Implementation Outcomes

- Identify the innovation being implemented and distinguish it from implementation strategies [9]

- Select appropriate implementation outcomes that measure success or failure of implementation

- Define domain boundaries specific to the project to enable accurate attribution to implementation outcomes [1]

- Document the Inner and Outer Settings including type, location, and boundaries between them [9]

Step 2: Data Collection

This phase involves selecting appropriate methods to capture CFIR constructs. While qualitative methods like semi-structured interviews and focus groups are commonly used, quantitative surveys and mixed methods approaches are also valuable [1] [11]. The selection of data collection methods should align with research questions, resources, and epistemological orientation.

Protocol 2: CFIR-Informed Data Collection

- Develop data collection instruments with questions mapped to relevant CFIR constructs [1]

- Determine sampling strategy based on units of analysis (individual, team, organizational levels) [11]

- Select participants who have power and/or influence over implementation outcomes [9]

- Pilot test instruments to ensure comprehensiveness and clarity
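One way to check the first item in Protocol 2 is to record, for each draft question, the CFIR constructs it is meant to elicit, and then list any target constructs that no question covers. The questions and the target list below are hypothetical examples rather than items from a published guide.

```python
def uncovered_constructs(guide, target_constructs):
    """Return target constructs that no question in the guide maps to."""
    covered = set()
    for constructs in guide.values():
        covered.update(constructs)
    return sorted(set(target_constructs) - covered)

# Hypothetical draft interview guide: question -> CFIR constructs it probes.
interview_guide = {
    "How well does the innovation fit with your existing workflows?":
        ["Compatibility"],
    "What resources were available to support implementation?":
        ["Available Resources"],
    "How important is this initiative compared with other priorities?":
        ["Relative Priority"],
}
targets = ["Compatibility", "Available Resources",
           "Relative Priority", "Tension for Change"]

gaps = uncovered_constructs(interview_guide, targets)
print(gaps)
```

Running the check before pilot testing helps confirm that every construct of interest has at least one question or probe.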

Step 3: Data Analysis

Analytical approaches for CFIR-informed data include both qualitative framework analysis and quantitative methods, including rating constructs based on their influence on implementation outcomes [10] [1]. The Damschroder & Lowery rating system enables quantification of qualitative findings by assessing both the direction and strength of each construct's influence.
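As a rough illustration of how ratings on the -2 to +2 scale might be tabulated, the sketch below validates the ratings and summarizes them per construct. Averaging ratings across sites is a simplifying assumption made here, not a step prescribed by the rating system, and the example ratings are invented.

```python
from statistics import mean

VALID_RATINGS = {-2, -1, 0, 1, 2}

def summarize_ratings(ratings):
    """Summarize construct ratings given as {construct: [rating, ...]}."""
    summary = {}
    for construct, values in ratings.items():
        bad = [v for v in values if v not in VALID_RATINGS]
        if bad:
            raise ValueError(f"{construct}: ratings outside -2..+2: {bad}")
        if not values:
            continue  # construct was not rated at any site
        avg = mean(values)
        if avg > 0:
            direction = "facilitator"
        elif avg < 0:
            direction = "barrier"
        else:
            direction = "neutral or mixed"
        summary[construct] = {"n": len(values), "mean": avg,
                              "direction": direction}
    return summary

# Hypothetical ratings from four sites.
site_ratings = {
    "Leadership Engagement": [2, 1, 2, -1],
    "Available Resources": [-2, -1, -2, 0],
    "Compatibility": [1, -1, 0, 0],
}
summary = summarize_ratings(site_ratings)
for construct, stats in summary.items():
    print(construct, stats)
```

A mean of zero can hide opposing ratings, as in the Compatibility example, so the per-site values should stay available for interpretation.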

Diagram: CFIR Data Analysis Workflow.

Step 4: Data Interpretation

Interpretation involves synthesizing findings to identify which determinants are most critical to address and understanding how constructs interact across domains to influence implementation outcomes [1]. This phase moves beyond describing individual constructs to developing a comprehensive understanding of their collective impact.

Protocol 3: Interpreting CFIR Findings

- Identify difference-maker constructs that strongly distinguish between implementation success and failure [1]

- Analyze construct interactions across domains to understand systemic influences

- Compare perspectives across different stakeholder groups

- Contextualize findings within the specific implementation stage and setting
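A simple way to screen for candidate difference-maker constructs is to compare mean ratings between sites with high and low implementation success. The sketch below assumes ratings on the -2 to +2 scale. The gap threshold (`min_gap`) is an arbitrary illustrative value rather than a published cut-off, and the site data are invented.

```python
from statistics import mean

def difference_makers(high_sites, low_sites, min_gap=2.0):
    """Return (construct, gap) pairs where group mean ratings differ.

    high_sites / low_sites: lists of {construct: rating} dicts, one per site.
    gap = mean rating in high-success sites minus mean in low-success sites.
    """
    constructs = set().union(*high_sites, *low_sites)
    result = []
    for construct in sorted(constructs):
        high = [site[construct] for site in high_sites if construct in site]
        low = [site[construct] for site in low_sites if construct in site]
        if not high or not low:
            continue  # cannot compare without ratings in both groups
        gap = mean(high) - mean(low)
        if abs(gap) >= min_gap:
            result.append((construct, gap))
    return result

# Hypothetical ratings for two high-success and two low-success sites.
high_sites = [
    {"Leadership Engagement": 2, "Compatibility": 1, "Available Resources": -1},
    {"Leadership Engagement": 2, "Compatibility": 1, "Available Resources": -1},
]
low_sites = [
    {"Leadership Engagement": -1, "Compatibility": 1, "Available Resources": -2},
    {"Leadership Engagement": -1, "Compatibility": 0, "Available Resources": -1},
]

candidates = difference_makers(high_sites, low_sites)
print(candidates)
```

With only a handful of sites, a screen like this is descriptive. It suggests which constructs merit closer qualitative comparison rather than establishing causal influence.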

Step 5: Knowledge Dissemination

The final step involves sharing findings to inform implementation practice and contribute to implementation science. Effective dissemination includes both traditional academic outputs and tailored products for stakeholders involved in implementation [1] [11].

Protocol 4: Disseminating CFIR Findings

- Share rapid feedback with stakeholders after data collection to inform refinements [11]

- Develop tailored reports addressing barriers and facilitators identified

- Publish scholarly outputs to contribute to implementation science knowledge

- Archive data and instruments for potential replication or secondary analysis

Matching Implementation Strategies to CFIR Determinants

A critical application of CFIR in implementation research is guiding the selection of implementation strategies to address specific barriers identified through context assessment. The CFIR-ERIC Implementation Strategy Matching Tool provides a systematic approach for linking barriers to potential strategies [12].

The CFIR-ERIC Matching Process

The matching process connects barriers identified using CFIR with implementation strategies from the Expert Recommendations for Implementing Change (ERIC) compilation [12]. This tool was developed based on survey responses from implementation experts who identified strategies most likely to address specific CFIR barriers.

Table 6: CFIR Barrier to Strategy Matching Examples

| CFIR Barrier Domain | Example CFIR Construct | Highly Endorsed ERIC Strategies |
|---|---|---|
| Inner Setting | Available Resources | Access new funding, Change record systems, Fund and contract for clinical innovations |
| Individuals | Innovation Deliverers Capability | Conduct educational meetings, Develop educational materials, Provide ongoing consultation |
| Implementation Process | Assessing Needs | Assess for readiness and identify barriers and facilitators, Conduct local needs assessment |
| Outer Setting | Policies & Laws | Involve patients/consumers and family members, Develop partnerships |

Application in Implementation Planning

The matching tool is particularly valuable during implementation planning, to proactively address anticipated barriers, and during implementation, to refine strategies in response to emerging challenges [12]. A case illustration from the Telephone Lifestyle Coaching program in Veterans Affairs medical centers demonstrated how seven key CFIR barriers were matched with highly endorsed implementation strategies, with "Identify and Prepare Champions" emerging as the strategy with the highest cumulative endorsement across multiple barriers [12].
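The idea of cumulative endorsement can be sketched as a sum of per-barrier endorsement levels for each strategy. The percentages below are invented for illustration and are not taken from the CFIR-ERIC tool; only the barrier and strategy names come from the text above.

```python
from collections import defaultdict

# Hypothetical share of experts (%) endorsing each strategy for each barrier.
ENDORSEMENT = {
    "Available Resources": {
        "Access new funding": 70,
        "Identify and prepare champions": 15,
    },
    "Innovation Deliverers Capability": {
        "Conduct educational meetings": 55,
        "Identify and prepare champions": 30,
    },
    "Assessing Needs": {
        "Conduct local needs assessment": 65,
        "Identify and prepare champions": 35,
    },
}

def cumulative_endorsement(barriers, table=ENDORSEMENT):
    """Sum endorsement across the selected barriers and rank strategies."""
    totals = defaultdict(int)
    for barrier in barriers:
        for strategy, pct in table[barrier].items():
            totals[strategy] += pct
    # Highest cumulative endorsement first; ties broken alphabetically.
    return sorted(totals.items(), key=lambda item: (-item[1], item[0]))

ranked = cumulative_endorsement(
    ["Available Resources", "Innovation Deliverers Capability", "Assessing Needs"]
)
for strategy, total in ranked:
    print(f"{total:>3}  {strategy}")
```

In this made-up example, a strategy that is never the top choice for any single barrier still ranks first overall because it is endorsed for all three. That is the pattern the case illustration describes for champions.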

Successful application of CFIR requires leveraging available tools and resources developed by the CFIR Leadership Team and user community. These resources provide practical guidance for operationalizing the framework throughout the research process.

Table 7: Essential CFIR Research Resources

| Resource Type | Description | Source/Availability |
|---|---|---|
| CFIR Construct Coding Guidelines | Detailed guidance for applying CFIR constructs in qualitative analysis | CFIR User Guide [1] |
| CFIR Technical Assistance Website | Central repository for tools, templates, and updates | www.cfirguide.org [8] |
| CFIR-ERIC Strategy Matching Tool | Matrix linking CFIR barriers to implementation strategies | Downloadable from CFIR website [12] |
| Implementation Research Worksheet | Template for documenting CFIR application throughout research process | CFIR User Guide [1] |
| Construct Example Questions | Interview and survey questions mapped to CFIR constructs | CFIR User Guide [1] |

These resources support both novice and experienced CFIR users in applying the framework consistently and rigorously. The CFIR Leadership Team continues to develop and refine these tools based on user feedback and advancing methodological standards in implementation science [1].

The Consolidated Framework for Implementation Research provides a comprehensive, structured approach to identifying determinants that influence implementation success across diverse contexts and innovations. Its systematic organization of constructs across five domains offers researchers and practitioners a practical framework for assessing contextual factors, explaining implementation outcomes, and informing strategy selection. The continued evolution of CFIR through user feedback and methodological refinement ensures its ongoing relevance and utility for advancing implementation science and practice.

As implementation science matures, CFIR remains a foundational framework for understanding the complex interplay of factors that determine implementation success. Its application facilitates both theoretical advancement and practical improvement in implementing evidence-based innovations across healthcare and other settings, ultimately contributing to more effective translation of research into practice.

The successful implementation of evidence-based interventions in healthcare and drug development is a complex process, heavily influenced by a wide array of contextual factors. These factors, known as implementation determinants, can act as either barriers or facilitators to the adoption, integration, and sustainment of new practices [10]. While numerous determinants have been identified, the pressing challenge for researchers and practitioners is to identify which of these factors exert the strongest influence on implementation outcomes. A more systematic understanding of these key determinants enables the development of more effective, efficient, and tailored implementation strategies [13]. This application note synthesizes evidence from recent systematic reviews to identify the most critical determinants and provides structured protocols for integrating this knowledge into the implementation strategy matching process, a core component of advanced implementation research.

Key Findings from Systematic Reviews

Identification of Key Determinants

A pivotal 2025 systematic review conducted by Schmitt et al. analyzed 48 studies that utilized a standardized rating system to assess the magnitude and valence of implementation determinants, as defined by the Consolidated Framework for Implementation Research (CFIR) [10] [14]. This review identified eight key determinants that were found to play the most substantial and frequent role in implementation processes:

- Leadership Engagement: The involvement, commitment, and accountability of leaders and managers.

- Formally Appointed Internal Implementation Leaders: Individuals from within the organization who are formally designated with implementation responsibilities.

- Compatibility: The degree of fit between the intervention and existing values, workflows, and perceived needs.

- Available Resources: The allocation of sufficient funding, time, and other necessary assets.

- External Change Agents: Individuals from outside the organization who influence the implementation effort.

- Champions: Supportive individuals who actively and enthusiastically drive the implementation process.

- Relative Advantage: The perceived superiority of the new intervention compared to existing alternatives.

- Key Stakeholders: Individuals who are affected by or can influence the implementation effort [10] [14].

This review highlighted that while quantifying qualitative data can remove some nuance, focusing on these key determinants helps researchers and practitioners prioritize factors most likely to influence the success of their implementation efforts [10].

Evidence on Frequently Tested Implementation Strategies

Complementing the work on determinants, a 2024 systematic review of 129 studies that experimentally tested implementation strategies provided evidence on the most commonly applied and effective strategies across diverse health and human service settings [15]. Using the Expert Recommendations for Implementing Change (ERIC) taxonomy, the review found that strategies were often used in combination, with the most frequent being:

- Distribute Educational Materials

- Conduct Educational Meetings

- Audit and Provide Feedback

- External Facilitation [15]

The review noted that nineteen implementation strategies were frequently tested and associated with significantly improved outcomes, though many others lacked sufficient testing to draw firm conclusions [15].

The table below synthesizes the key determinants and aligns them with exemplary implementation strategies, providing a foundational guide for the matching process.

Table 1: Key Implementation Determinants and Associated Implementation Strategies

| Key Determinant (CFIR Construct) | Definition | Exemplary Implementation Strategies (from ERIC/ISAC) |

|---|---|---|

| Leadership Engagement | Involvement, commitment, and accountability of leaders and managers [10]. | Secure executive sponsorship, Identify and prepare champions [15] |

| Formally Appointed Internal Implementation Leaders | Individuals from within the organization formally designated with implementation responsibilities [10]. | Build an implementation team, Shadow other experts [13] |

| Compatibility | The fit between the intervention and existing values, workflows, and needs [10]. | Tailor strategies, Adapt and tailor to context, Develop resource sharing agreements [13] [15] |

| Available Resources | The allocation of sufficient funding, time, and other necessary assets [10]. | Access new funding, Alter incentive/allowance structures, Fund and contract for the EBI [13] |

| External Change Agents | Individuals from outside the organization who influence the implementation effort [10]. | Centralize technical assistance, Facilitation, Create a learning collaborative [15] |

| Champions | Individuals who actively and enthusiastically drive the implementation [10]. | Identify and prepare champions, Use an implementation advisor [13] [15] |

| Relative Advantage | The perceived superiority of the new intervention versus alternatives [10]. | Conduct local consensus discussions, Develop educational materials [15] |

| Key Stakeholders | Individuals who are affected by or can influence the implementation [10]. | Organize implementation teams, Conduct local needs assessment [13] [15] |
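As a minimal sketch of how Table 1 might be used during matching, the lookup below transcribes the table into a dictionary and returns a de-duplicated list of candidate strategies for a set of prioritized determinants. The names `STRATEGIES_BY_DETERMINANT` and `shortlist` are illustrative assumptions, not part of CFIR, ERIC, or ISAC.

```python
# Determinant-to-strategy lookup transcribed from Table 1.
# The names below (STRATEGIES_BY_DETERMINANT, shortlist) are illustrative only.
STRATEGIES_BY_DETERMINANT = {
    "Leadership Engagement": [
        "Secure executive sponsorship",
        "Identify and prepare champions",
    ],
    "Formally Appointed Internal Implementation Leaders": [
        "Build an implementation team",
        "Shadow other experts",
    ],
    "Compatibility": [
        "Tailor strategies",
        "Adapt and tailor to context",
        "Develop resource sharing agreements",
    ],
    "Available Resources": [
        "Access new funding",
        "Alter incentive/allowance structures",
        "Fund and contract for the EBI",
    ],
    "External Change Agents": [
        "Centralize technical assistance",
        "Facilitation",
        "Create a learning collaborative",
    ],
    "Champions": [
        "Identify and prepare champions",
        "Use an implementation advisor",
    ],
    "Relative Advantage": [
        "Conduct local consensus discussions",
        "Develop educational materials",
    ],
    "Key Stakeholders": [
        "Organize implementation teams",
        "Conduct local needs assessment",
    ],
}


def shortlist(prioritized_determinants):
    """Return candidate strategies for the given determinants, without duplicates."""
    seen, result = set(), []
    for determinant in prioritized_determinants:
        # Determinants missing from Table 1 simply contribute no candidates.
        for strategy in STRATEGIES_BY_DETERMINANT.get(determinant, []):
            if strategy not in seen:
                seen.add(strategy)
                result.append(strategy)
    return result


candidates = shortlist(["Leadership Engagement", "Champions"])
print(candidates)
```

Because "Identify and prepare champions" appears under both determinants, it is listed once; a strategy that addresses several prioritized determinants may deserve extra weight in later prioritization.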

Experimental Protocols

Protocol 1: Determinant Identification and Rating Using the CFIR Framework

Objective: To systematically identify and assess the strength and valence (positive or negative influence) of implementation determinants in a specific context prior to strategy selection.

Background: The CFIR framework offers a comprehensive, multi-level taxonomy of constructs known to influence implementation. The Damschroder & Lowery (2013) rating system allows for the quantification of these determinants, moving beyond simple identification to an assessment of their impact [10].

Materials and Reagents:

- Interview/Focus Group Guides: Semi-structured guides based on CFIR constructs.

- Data Recording Equipment: Audio recorders and transcription software.

- Qualitative Data Analysis Software: e.g., NVivo, Dedoose, or MAXQDA.

- Rating Spreadsheet: A matrix with CFIR constructs and a rating scale from -2 to +2.

Procedure:

- Planning and Preparation:

- Form a multidisciplinary assessment team including researchers and relevant practitioners.

- Select the CFIR constructs most relevant to your project from the original 2009 or updated 2022 framework. The eight key determinants provide a prioritized starting point [10].

- Data Collection:

- Conduct semi-structured interviews and/or focus groups with key stakeholder groups (e.g., leadership, clinical staff, patients).

- Probe for experiences, perceptions, and beliefs related to the implementation of the evidence-based intervention, mapping responses to CFIR constructs.

- Data Analysis and Rating:

- Transcribe and de-identify audio recordings.

- Code the qualitative data deductively using the selected CFIR constructs as a codebook.

- For each construct, assign a rating based on the criteria below. This should be done by at least two independent raters, with discrepancies resolved through consensus.

-2: Major barrier (strong negative influence)

-1: Minor barrier

0: Neutral/mixed influence

+1: Minor facilitator

+2: Major facilitator (strong positive influence) [10]

- Synthesis:

- Create a determinant profile for the implementation site, highlighting the most strongly rated barriers (-2) and facilitators (+2). These are the primary targets for strategy matching.
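The rating and synthesis steps can be sketched in a few lines of Python. This is an illustration under stated assumptions: the construct ratings are invented, and the helper names `reconcile` and `determinant_profile` are hypothetical. It compares two raters' scores on the -2 to +2 scale, flags discrepancies for consensus discussion, and extracts the major barriers and facilitators.

```python
def reconcile(rater_a, rater_b):
    """Split constructs into agreed ratings and discrepancies needing consensus."""
    agreed, discrepancies = {}, {}
    for construct, score_a in rater_a.items():
        score_b = rater_b[construct]
        if score_a == score_b:
            agreed[construct] = score_a
        else:
            discrepancies[construct] = (score_a, score_b)
    return agreed, discrepancies


def determinant_profile(final_ratings):
    """List the constructs rated as major barriers (-2) or major facilitators (+2)."""
    return {
        "major_barriers": sorted(c for c, r in final_ratings.items() if r == -2),
        "major_facilitators": sorted(c for c, r in final_ratings.items() if r == 2),
    }


# Invented ratings for illustration only
rater_a = {"Leadership Engagement": -2, "Compatibility": 1,
           "Available Resources": -2, "Champions": 2}
rater_b = {"Leadership Engagement": -2, "Compatibility": 0,
           "Available Resources": -2, "Champions": 2}

agreed, discrepancies = reconcile(rater_a, rater_b)
# The raters discuss "Compatibility" and (hypothetically) settle on +1.
final_ratings = {**agreed, "Compatibility": 1}
profile = determinant_profile(final_ratings)
print(discrepancies)
print(profile)
```

The code only surfaces disagreements; resolving them remains a discussion between raters, as the procedure requires.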

Protocol 2: The ISAC Match Process for Strategy Selection and Tailoring

Objective: To provide a pragmatic, rapid, and collaborative process for selecting and tailoring implementation strategies to address prioritized determinants in community settings, particularly within integrated research-practice partnerships (IRPPs).

Background: The ISAC Match process was developed to address the lack of community-friendly guidance for moving from determinants to strategies. It emphasizes a strength-based approach, considering both barriers and facilitators, and ensures strategies are feasible within the specific context [13].

Materials and Reagents:

- Prioritized List of Determinants: Output from Protocol 1.

- ISAC Compilation: A list of 40 implementation strategies tailored for community settings.

- ERIC Compilation: A list of 73 implementation strategies, originally developed for clinical contexts.

- Facilitation Materials: Whiteboards, sticky notes, and voting tools (e.g., dots for prioritization).

Procedure:

- Step 1: Conduct Contextual Inquiry (if needed):

- Review existing data (e.g., from Protocol 1) on barriers and facilitators. If information is sufficient, proceed. If gaps exist, conduct rapid contextual inquiry using methods like rapid qualitative analysis or a "brainwriting premortem" to identify potential failures [13].

- Output: A confirmed and comprehensive list of key determinants.

- Step 2: Identify Existing Implementation Strategies:

- Engage practitioners in a discussion to identify strategies already in use within the organization that could support implementation. This builds on existing strengths and infrastructure.

- Output: A list of current, potentially reinforcing, strategies.

- Step 3: Select New Implementation Strategies:

- Use the ISAC guidance tool to map potential new strategies to the prioritized determinants, considering the level of the determinant (e.g., individual, organizational) and the desired implementation outcome [13].

- If working in a clinical-community hybrid setting, supplement with the ERIC compilation.

- Prioritize the list of potential new strategies based on feasibility and importance using a 2x2 grid or a nominal group technique [13].

- Output: A shortlist of high-priority, candidate implementation strategies.

- Step 4: Tailor Implementation Strategies:

- For each shortlisted strategy, conduct a tailoring session using methods like a brainwriting premortem ("Why might this strategy fail in our context?") or liberating structures [13].

- Collaboratively refine the strategy's specifics—who, what, when, how—to ensure it fits the local context, culture, and resources.

- Output: A set of tailored, context-specific implementation strategies with a clear plan for deployment.
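The 2x2 prioritization in Step 3 can be illustrated with a short sketch. The 1-5 rating scale, the cutoff of 3, the quadrant labels, and the example ratings are all assumptions made for illustration; the ISAC guidance does not prescribe them.

```python
def quadrant(feasibility, importance, cutoff=3):
    """Place one strategy in a 2x2 grid from 1-5 ratings (cutoff is an assumption)."""
    high_feasibility = feasibility >= cutoff
    high_importance = importance >= cutoff
    if high_feasibility and high_importance:
        return "prioritize"
    if high_importance:
        return "important but hard"
    if high_feasibility:
        return "feasible but minor"
    return "deprioritize"


# Hypothetical team ratings: strategy -> (feasibility, importance)
ratings = {
    "Identify and prepare champions": (4, 5),
    "Access new funding": (2, 5),
    "Distribute educational materials": (5, 2),
    "Create a learning collaborative": (2, 2),
}

grid = {name: quadrant(f, i) for name, (f, i) in ratings.items()}
shortlisted = [name for name, cell in grid.items() if cell == "prioritize"]
print(grid)
print(shortlisted)
```

Strategies that land in "important but hard" are natural candidates for the tailoring session in Step 4, where the team asks what would make them feasible locally.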

Visualizations

Key Determinant Identification Workflow

The diagram below outlines the systematic process for identifying and rating key implementation determinants, from initial planning to the final synthesis used for strategy selection.

Strategy Matching and Tailoring Process

This diagram illustrates the four-step ISAC Match process, a collaborative and iterative approach for selecting and tailoring implementation strategies based on identified determinants.

The Scientist's Toolkit

Table 2: Essential Reagents and Resources for Determinants Research

| Item | Category | Function/Application in Research |

|---|---|---|

| CFIR Codebook | Conceptual Framework | Provides standardized definitions and interview prompts for implementation determinants, ensuring consistent data collection and analysis [10]. |

| Damschroder & Lowery Rating Scale | Analytical Tool | Enables quantification of qualitative data by assigning magnitude/valence scores (-2 to +2) to determinants, allowing for prioritization [10] [14]. |

| ERIC Strategy Compilation | Strategy Repository | A taxonomy of 73 implementation strategies primarily developed for clinical settings; used for selecting strategies to address barriers [13] [15]. |

| ISAC Strategy Compilation | Strategy Repository | A community-focused compilation of 40 strategies; essential for research in non-clinical settings like public health or social services [13]. |

| Qualitative Data Analysis Software (NVivo, Dedoose) | Software | Facilitates organization, coding, and analysis of large volumes of qualitative data (interviews, focus groups) collected during contextual inquiry. |

| RE-AIM Framework | Evaluation Framework | Guides the assessment of implementation outcomes (Reach, Effectiveness, Adoption, Implementation, Maintenance) to evaluate strategy impact [15]. |

What Are Implementation Strategies? Distinguishing 'What' from 'How'

Implementation strategies are the methods and techniques used to enhance the adoption, implementation, and sustainability of evidence-based interventions. This article delineates the critical distinction between the "what" (evidence-based interventions) and the "how" (implementation strategies) within implementation science. Framed for determinants research, it provides a structured overview of strategy classifications, evidence on effectiveness, and detailed protocols for selecting and tailoring strategies to address specific implementation barriers. Designed for researchers and drug development professionals, the content includes quantitative evidence summaries, experimental protocols for strategy application, and visual tools to guide the matching of strategies to contextual determinants.

In implementation science, the core challenge is not merely discovering effective interventions but ensuring their integration into routine practice. This process hinges on a fundamental distinction: the "what" versus the "how." The "what" is the Evidence-Based Intervention (EBI)—a program, practice, drug, or policy that has been empirically shown to improve outcomes [16]. The "how" is the implementation strategy—defined as methods or techniques used to enhance the adoption, implementation, and sustainability of an EBI [17] [18]. For drug development professionals, this translates to distinguishing the therapeutic agent (the "what") from the strategies required to ensure its appropriate prescription, adherence, and integration into formularies and clinical pathways (the "how"). The precise specification of these strategies is a prerequisite for scientific reproducibility, testing, and building a cumulative evidence base on how best to implement [18].

A Framework for Classifying Implementation Strategies

Organizing the multitude of documented implementation strategies into a coherent framework is essential for selecting, reporting, and researching them. The following table summarizes a classification system that categorizes strategies based on their primary purpose and function [17] [19].

Table 1: A Classification of Implementation Strategy Types

| Strategy Class | Definition | Example Actions |

|---|---|---|

| Dissemination Strategies | Target knowledge, awareness, and intentions to adopt an innovation by developing and sharing key messages [17]. | Develop and distribute educational materials; use mass media [17] [16]. |

| Implementation Process Strategies | Enable the planning and delivery of an innovation through distinct stages, including assessing context and engaging stakeholders [17]. | Assess for readiness and identify barriers; audit and provide feedback; use an implementation team [17] [16]. |

| Integration Strategies | Aim to weave an innovation into the fabric of a specific setting, often involving modifications to existing structures and roles [17] [19]. | Revise professional roles; adapt the EBI; assess and redesign workflows [17] [16] [20]. |

| Capacity-Building Strategies | Increase the motivation, capability, and general resources of individuals and organizations to engage in implementation [17] [19]. | Conduct educational meetings; provide ongoing training; identify and prepare champions [17] [16]. |

| Scale-Up Strategies | Build system-level capacity to implement a policy, practice, or service across multiple settings or populations [17]. | Use train-the-trainer models; develop system infrastructure like data systems [17]. |

Another prevalent taxonomy in the field is the Expert Recommendations for Implementing Change (ERIC), which compiles 73 discrete implementation strategies grouped into clusters [16]. The following workflow diagram synthesizes these classification systems into a practical pathway for the strategy selection process, integral to determinants research.

The Evidence Base: Effectiveness of Implementation Strategies

The selection of implementation strategies should be informed by evidence of their effectiveness. A major landscape review assessed the strength of evidence for common implementation strategies, providing critical insight for researchers designing implementation trials [20]. The following tables summarize the evidence for strategies that showed the strongest support for improving implementation and health outcomes, respectively.

Table 2: Evidence for Strategies Improving Implementation Outcomes (e.g., Adoption, Fidelity)

| Implementation Strategy | Percentage of Studies Showing Improvement | Overall Evidence Strength |

|---|---|---|

| Assess and redesign workflows | 100% (8 of 8 studies) | Moderate |

| Conduct cyclical small tests of change | 90% (9 of 10 studies) | Indirect |

| Audit and provide feedback | 88% (14 of 16 studies) | Supportive |

| Prepare patients/consumers | 86% (6 of 7 studies) | Supportive |

| Remind clinicians | 86% (6 of 7 studies) | Moderate |

| Assess for readiness/identify barriers | 85% (11 of 13 studies) | Indirect |

| Clinical decision support tools | 83% (5 of 6 studies) | Supportive |

| Facilitate collaborative learning | 83% (5 of 6 studies) | Moderate |

| Provide implementation facilitation | 79% (15 of 19 studies) | Supportive |

| Promote adaptability within the EBP | 75% (9 of 12 studies) | Supportive |

| Centralize technical assistance | 71% (5 of 7 studies) | Limited |

Table 3: Evidence for Strategies Improving Health Outcomes

| Implementation Strategy | Percentage of Studies Showing Improvement | Overall Evidence Strength |

|---|---|---|

| Centralize technical assistance | 75% (3 of 4 studies) | Limited |

| Conduct cyclical small tests of change | 57% (4 of 7 studies) | Indirect |

| Clinical decision support tools | 50% (2 of 4 studies) | Supportive |

| Prepare patients/consumers | 50% (2 of 4 studies) | Supportive |

| Assess and redesign workflows | 50% (3 of 6 studies) | Moderate |

| Provide implementation facilitation | 45% (5 of 11 studies) | Supportive |

| Facilitate collaborative learning | 40% (2 of 5 studies) | Moderate |

| Assess for readiness/identify barriers | 38% (3 of 8 studies) | Indirect |

| Audit and provide feedback | 36% (4 of 11 studies) | Supportive |

| Promote adaptability within the EBP | 33% (3 of 9 studies) | Supportive |

| Remind clinicians | 33% (1 of 3 studies) | Moderate |

A key finding from the evidence is that implementation strategies are most often used and studied in combination. For instance, "conduct educational meetings" and "distribute educational materials" are frequently bundled with other strategies [20]. This underscores the importance of multi-faceted, tailored approaches rather than relying on single, discrete strategies.
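To compare the two evidence tables side by side, the study counts can be recomputed into percentages. The sketch below copies the counts from Tables 2 and 3; the helper names are illustrative, and rounding half up to a whole percent is an assumption that happens to reproduce the published figures.

```python
# (studies showing improvement, total studies), copied from Tables 2 and 3.
IMPLEMENTATION = {
    "Assess and redesign workflows": (8, 8),
    "Conduct cyclical small tests of change": (9, 10),
    "Audit and provide feedback": (14, 16),
    "Prepare patients/consumers": (6, 7),
    "Remind clinicians": (6, 7),
    "Assess for readiness/identify barriers": (11, 13),
    "Clinical decision support tools": (5, 6),
    "Facilitate collaborative learning": (5, 6),
    "Provide implementation facilitation": (15, 19),
    "Promote adaptability within the EBP": (9, 12),
    "Centralize technical assistance": (5, 7),
}
HEALTH = {
    "Centralize technical assistance": (3, 4),
    "Conduct cyclical small tests of change": (4, 7),
    "Clinical decision support tools": (2, 4),
    "Prepare patients/consumers": (2, 4),
    "Assess and redesign workflows": (3, 6),
    "Provide implementation facilitation": (5, 11),
    "Assess for readiness/identify barriers": (3, 8),
    "Audit and provide feedback": (4, 11),
    "Promote adaptability within the EBP": (3, 9),
    "Remind clinicians": (1, 3),
    "Facilitate collaborative learning": (2, 5),
}


def percent(improved, total):
    """Share of studies showing improvement, rounded half up to a whole percent."""
    return int(100 * improved / total + 0.5)


def side_by_side():
    """Return (strategy, implementation %, health %) sorted by implementation %."""
    rows = []
    for strategy, (imp_yes, imp_n) in IMPLEMENTATION.items():
        health_yes, health_n = HEALTH[strategy]
        rows.append((strategy, percent(imp_yes, imp_n), percent(health_yes, health_n)))
    return sorted(rows, key=lambda row: row[1], reverse=True)


for strategy, imp_pct, health_pct in side_by_side():
    print(f"{strategy}: implementation {imp_pct}%, health {health_pct}%")
```

The comparison makes one pattern in the tables easy to see: for most strategies the share of studies showing improvement is lower for health outcomes than for implementation outcomes, and the denominators are small, so the percentages should be read cautiously.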

Experimental Protocols for Matching Strategies to Determinants

A critical objective in implementation science is to move from a one-size-fits-all approach to a precision-based model where strategies are systematically matched to contextual determinants. The following protocols provide a methodological roadmap for this process.

Protocol 1: Determinant Identification using the COM-B Model

The COM-B model provides a framework for diagnosing barriers to implementation, positing that for a behavior (B) to occur, individuals and teams must have the Capability (C), Opportunity (O), and Motivation (M) to perform it [21].

- Define the Target Behavior: Formulate the core implementation behavior in specific terms. Example: "Clinical pharmacists in the cardiology clinic will initiate a conversation with physicians about switching eligible patients from Drug A to the new, more cost-effective Evidence-Based Drug B within 2 weeks of its formulary addition."

- Data Collection on Determinants: Use mixed methods to gather data on barriers and enablers.

- Methods: Conduct semi-structured interviews and focus groups with key stakeholders (e.g., physicians, pharmacists, nurses). Supplement with quantitative surveys, such as the Organizational Readiness for Implementing Change (ORIC) or tailored questionnaires.

- Probes: Structure inquiries around the COM-B components:

- Capability: "Do providers know the new drug's inclusion criteria and dosing protocol?" (Psychological) "Do they have the skills to administer it?" (Physical)

- Opportunity: "Is the new drug readily available in the automated dispensing cabinet?" (Physical) "Do social norms support its use among senior clinicians?" (Social)

- Motivation: "Do providers believe the new drug offers a significant patient benefit over the old standard?" (Reflective) "Has prescribing the old drug become an automatic habit?" (Automatic)

- Analysis and Synthesis: Thematically analyze qualitative data and triangulate with quantitative findings. Create a determinant matrix categorized by COM-B and by level (e.g., individual, team, organization).
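A determinant matrix of the kind described in the analysis step can be represented as a simple grouping by COM-B component and level. The sketch below is illustrative: the coded findings are hypothetical and only loosely based on the example probes, and `build_determinant_matrix` is an assumed helper name.

```python
from collections import defaultdict

COM_B_COMPONENTS = ("Capability", "Opportunity", "Motivation")


def build_determinant_matrix(determinants):
    """Group determinant descriptions by (COM-B component, level)."""
    matrix = defaultdict(list)
    for component, level, description in determinants:
        if component not in COM_B_COMPONENTS:
            raise ValueError(f"Unknown COM-B component: {component}")
        matrix[(component, level)].append(description)
    return dict(matrix)


# Hypothetical coded findings, loosely based on the example probes
coded = [
    ("Capability", "individual", "Unsure of inclusion criteria and dosing protocol"),
    ("Opportunity", "organization", "Drug not stocked in the automated dispensing cabinet"),
    ("Opportunity", "team", "Senior clinicians do not endorse the switch"),
    ("Motivation", "individual", "Prescribing the old drug is habitual"),
    ("Motivation", "individual", "Doubts about benefit over the old standard"),
]

matrix = build_determinant_matrix(coded)
for (component, level), items in matrix.items():
    print(component, level, items)
```

Cells holding several determinants, such as individual-level Motivation here, point to where a single well-chosen strategy may address more than one barrier.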

Protocol 2: Strategy Selection using Implementation Mapping

Implementation Mapping is a systematic process for selecting and tailoring implementation strategies based on the identified determinants [22].

- Generate Strategy Options: Based on the determinant matrix from Protocol 1, brainstorm potential implementation strategies. Utilize existing compilations like the ERIC taxonomy [16] to ensure a comprehensive consideration of options.

- Example Determinant: "Lack of knowledge about the new drug's administration schedule" (Capability Barrier).

- Example Strategy: "Conduct interactive educational outreach visits."

- Select and Tailor Strategies: Evaluate the feasibility, practicality, and likely effectiveness of the generated strategies. Use a structured tool, such as the StrategEase Tool [22], to map strategies to specific determinants. This step involves customizing the broad strategy to the local context—for example, determining the content, format, and timing of the educational outreach visits.

- Specify Implementation Strategy Components: To ensure replicability and precise measurement, specify the operational details of each chosen strategy as recommended by Proctor et al. [18]:

- Actor: Who performs the strategy? (e.g., clinical pharmacy specialist)

- Action: What is the specific activity? (e.g., one-on-one, 15-minute educational visit)

- Action Target: What determinant is being addressed? (e.g., provider knowledge about administration)

- Temporality: When and how often? (e.g., within first month of launch, repeated for new staff)

- Dose: Duration and intensity? (e.g., 15-minute session)

- Implementation Outcome: What is the expected result? (e.g., increased knowledge, adoption)

- Develop a Causal Pathway Diagram (CPD): Create a visual model that hypothesizes the mechanism through which the strategy is expected to work. The CPD links the strategy to the determinant, the resultant change in behavior, and the ultimate implementation and health outcomes, making the theory of change explicit and testable [16].
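The specification components listed above lend themselves to a small structured record, which makes incomplete specifications easy to detect before a protocol is finalized. The sketch below is one possible representation; the class name `StrategySpecification` and its `missing_components` method are assumptions, and the field values reuse the educational-visit example from the list.

```python
from dataclasses import dataclass, fields


@dataclass
class StrategySpecification:
    """One implementation strategy, specified by the six components listed above."""
    actor: str
    action: str
    action_target: str
    temporality: str
    dose: str
    implementation_outcome: str

    def missing_components(self):
        """Return the names of components that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


outreach = StrategySpecification(
    actor="Clinical pharmacy specialist",
    action="One-on-one educational visit",
    action_target="Provider knowledge about administration",
    temporality="Within first month of launch, repeated for new staff",
    dose="15-minute session",
    implementation_outcome="Increased knowledge, adoption",
)

# A deliberately incomplete specification to show the check
draft = StrategySpecification(
    actor="Quality improvement team",
    action="Audit and provide feedback",
    action_target="",
    temporality="",
    dose="Monthly report",
    implementation_outcome="Adherence",
)

print(outreach.missing_components())
print(draft.missing_components())
```

A check like this does not judge whether a specification is good, only whether it is complete enough to be reported and replicated.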

The Scientist's Toolkit: Key Reagents for Implementation Research

For researchers embarking on implementation trials, the following "reagents" or core resources are essential for rigorous study design and execution.

Table 4: Essential Reagents for Implementation Science Research

| Tool/Resource Name | Function in Research | Application Context |

|---|---|---|

| ERIC Taxonomy [18] [16] | A compiled menu of 73 defined implementation strategies. | Serves as a standardized "periodic table" of strategies for selection, naming, and reporting. |

| Consolidated Framework for Implementation Research (CFIR) [19] | A meta-theoretical framework of constructs that influence implementation. | Used to systematically identify and categorize determinants (barriers and enablers) across multiple levels. |

| Interactive Systems Framework (ISF) [19] | Distinguishes three systems: Synthesis & Translation, Support, and Delivery. | Helps classify the "actor" for a strategy, clarifying roles and necessary capacities for implementation. |

| Proctor's Reporting Guidelines [18] | A checklist for specifying implementation strategies (actor, action, target, etc.). | Ensures methodological precision and reproducibility in describing intervention "how-to". |

| Causal Pathway Diagram (CPD) [16] | A visual tool for mapping hypothesized mechanistic links between a strategy and outcomes. | Makes the theory of change explicit, guiding evaluation of strategy mechanisms and effectiveness. |

The systematic application of implementation strategies is what bridges the chasm between clinical discovery and public health impact. For the drug development community, mastering the "how" is as critical as innovating the "what." This involves a disciplined, research-driven process: using frameworks like COM-B to diagnose implementation barriers, consulting evidence on strategy effectiveness, and employing structured protocols like Implementation Mapping to tailor strategies to specific determinants. By treating implementation strategies as a core, specifiable component of research and development, scientists and professionals can significantly accelerate the reliable and equitable integration of new therapies into the care that patients receive.

In implementation science, the successful adoption of evidence-based interventions (EBIs) in real-world settings hinges on a critical, often overlooked, process: the systematic matching of implementation strategies to specific contextual determinants. Implementation strategies are the "how" – the methods or techniques used to enhance the adoption, implementation, and sustainment of evidence-based interventions [16]. Their effectiveness is not universal; it is maximized when they are precisely selected to address specific barriers and leverage facilitators, known as determinants, within a given context. This application note provides researchers and drug development professionals with structured protocols and tools to master this matching process, thereby increasing the efficiency and impact of their implementation efforts.

Background and Key Concepts

Defining Core Constructs

- Evidence-Based Interventions (EBIs): The "what" that is being implemented. These are the programs, practices, principles, procedures, products, or policies that have been demonstrated to improve health behaviors or outcomes [16]. In drug development, this could be a new therapeutic protocol or a validated clinical guideline.

- Implementation Strategies: The actions taken to enhance the adoption, implementation, and sustainability of EBIs. The Expert Recommendations for Implementing Change (ERIC) project has compiled a standardized taxonomy of 73 such strategies, grouped into nine clusters for easier application [16] [23].

- Determinants of Implementation: The barriers and facilitators that influence the implementation process. These can exist at multiple levels of the social ecological model, including the individual (e.g., clinician knowledge), organizational (e.g., clinic workflow), and system levels (e.g., reimbursement policies).

The Rationale for Matching

The premise is straightforward: employing a strategy that does not target a primary barrier is an inefficient use of resources and is unlikely to succeed. For instance, if the main barrier is a lack of clinician knowledge, then an educational strategy is well-matched. However, if the barrier is a flawed payment system, the same educational strategy is likely to fail unless paired with a financial strategy such as altering incentive structures [16]. A 2024 systematic review of 129 studies that experimentally tested implementation strategies found that commonly used strategies, including Distribute Educational Materials, Conduct Educational Meetings, Audit and Provide Feedback, and External Facilitation, were often associated with significantly improved outcomes when applied appropriately [23].

Quantitative Synthesis of Experimentally Tested Strategies

The following table summarizes data from a systematic review of 129 methodologically rigorous studies (2010-2022) that experimentally tested implementation strategies, providing a quantitative evidence base for strategy selection [23].

Table 1: Experimentally Tested Implementation Strategies and Their Associated Outcomes

| Implementation Strategy (ERIC Taxonomy) | Frequency in Experimental Arms (n=129 studies) | Outcomes Most Frequently Assessed | Association with Significant Improvement |

|---|---|---|---|

| Distribute Educational Materials | 99 | Effectiveness, Implementation | Frequently associated with positive outcomes |

| Conduct Educational Meetings | 96 | Effectiveness, Implementation | Frequently associated with positive outcomes |

| Audit and Provide Feedback | 76 | Effectiveness, Implementation | Yes |

| External Facilitation | 59 | Implementation, Adoption | Yes |

| Tailor Strategies | 45 | Reach, Adoption | Data varies |

| Identify and Prepare Champions | 44 | Implementation, Maintenance | Yes |

| Use Train-the-Trainer Strategies | 39 | Implementation | Data varies |

| Develop Educational Materials | 37 | Effectiveness | Data varies |

| Build a Coalition | 36 | Adoption | Data varies |

| Conduct Ongoing Training | 36 | Implementation, Effectiveness | Yes |

Protocols for Matching Strategies to Determinants

Protocol 1: Determinant Identification and Analysis

Objective: To systematically identify and prioritize barriers and facilitators to the implementation of a specific EBI in a given context.

Methodology:

- Preparation:

- Conduct a Literature Review: Identify known determinants for the EBI or similar interventions in comparable settings.

- Form an Implementation Team: Assemble a multi-disciplinary team including clinicians, staff, administrators, and patient representatives.

- Develop a Preliminary Determinant Framework: Use an established framework (e.g., Consolidated Framework for Implementation Research - CFIR) to guide data collection.

- Data Collection (Mixed-Methods):

- Surveys: Administer quantitative surveys to a broad group of stakeholders to assess perceived barriers and facilitators across different domains (e.g., evidence strength, organizational culture).

- Focus Groups & Interviews: Conduct qualitative focus groups and individual interviews with key informants to explore determinants in depth and understand their interrelationships.

- Observation: Directly observe the implementation context to identify workflow and environmental barriers not captured through self-report.

- Data Analysis and Synthesis:

- Quantitative Analysis: Use descriptive statistics from surveys to rank-order determinants by perceived strength and prevalence. Employ factor analysis to identify underlying constructs [24].

- Qualitative Analysis: Employ thematic analysis or content analysis to code interview and focus group transcripts, identifying major themes and specific determinants [24].

- Triangulation and Prioritization: Integrate quantitative and qualitative findings to create a consolidated list of determinants. Work with the implementation team to prioritize determinants based on their perceived impact and mutability.
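The quantitative step of rank-ordering determinants by perceived strength and prevalence can be sketched with the standard library alone. The survey responses, the 1-5 scale, and the threshold of 4 for counting a respondent as "affected" are all assumptions for illustration.

```python
from statistics import mean


def summarize(responses, threshold=4):
    """Compute mean strength and prevalence (share rating >= threshold) per determinant."""
    summary = {}
    for determinant, ratings in responses.items():
        summary[determinant] = {
            "mean_strength": mean(ratings),
            "prevalence": sum(r >= threshold for r in ratings) / len(ratings),
        }
    return summary


def rank(summary):
    """Order determinants by mean strength, breaking ties by prevalence."""
    return sorted(
        summary,
        key=lambda d: (summary[d]["mean_strength"], summary[d]["prevalence"]),
        reverse=True,
    )


# Hypothetical 1-5 barrier ratings from four respondents
responses = {
    "Limited staff time": [5, 4, 5, 4],
    "Unclear reimbursement": [3, 4, 2, 3],
    "Low clinician knowledge": [4, 4, 3, 5],
}

summary = summarize(responses)
ranking = rank(summary)
print(ranking)
```

The ranking is only an input to the team discussion: mutability, which the protocol also asks teams to weigh, is a judgment that survey ratings do not capture.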

Protocol 2: Strategy Selection and Specification

Objective: To select and precisely specify the implementation strategies that will be used to address the prioritized determinants.

Methodology:

- Matching Process:

- Utilize a matching tool, such as a concept mapping approach or the ERIC compatibility matrix, to link prioritized determinants to candidate implementation strategies [16].

- For example, if a key barrier is "forgetting to use the EBI," a matched strategy would be "provide reminders." If the barrier is "lack of motivation," a matched strategy could be "alter incentive/allowance structures" [16].

- Strategy Specification:

- For each selected strategy, use the Proctor et al. (2013) guidelines for reporting to ensure replicability. Specify the actor, action, target, temporality, dose, and outcome for every strategy [16].

- Example: Instead of "we will use audit and feedback," specify "the quality improvement team (actor) will generate reports on EBI adherence that include a comparison to the clinic average (action) and email them to prescribing clinicians (target) once a month (dose) for six months (temporality) to increase adherence rates (outcome)."

- Causal Pathway Diagramming:

- Develop a visual model, such as a Causal Pathway Diagram (CPD) or Logic Model, to hypothesize how the strategies will work [16].

- This diagram should map the links between the specific strategies, the mechanisms of action (how they address determinants), intermediate outcomes (e.g., increased knowledge), and final implementation outcomes (e.g., fidelity).

Diagram Title: Causal Pathway from Determinant to Outcome
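A causal pathway diagram can also be held as a small directed graph, which makes each hypothesized chain from strategy to outcome explicit and checkable. The sketch below is illustrative only: the node labels are hypothetical examples, and `pathways` is an assumed helper that enumerates every path between two nodes.

```python
# Hypothetical causal pathway: strategy -> mechanism -> intermediate -> outcome
CPD = {
    "Strategy: educational outreach visits": ["Mechanism: knowledge transfer"],
    "Mechanism: knowledge transfer": ["Intermediate: increased knowledge"],
    "Intermediate: increased knowledge": ["Outcome: fidelity"],
    "Strategy: provide reminders": ["Mechanism: cue to action"],
    "Mechanism: cue to action": ["Outcome: fidelity"],
}


def pathways(graph, start, end, path=None):
    """Enumerate all paths from start to end in a directed graph, skipping cycles."""
    path = (path or []) + [start]
    if start == end:
        return [path]
    found = []
    for successor in graph.get(start, []):
        if successor not in path:
            found.extend(pathways(graph, successor, end, path))
    return found


routes = pathways(CPD, "Strategy: educational outreach visits", "Outcome: fidelity")
for route in routes:
    print(" -> ".join(route))
```

Each link in a printed route is a hypothesis that can be measured separately, which is what makes the theory of change testable.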

Protocol 3: Experimental Testing of Matched Strategies

Objective: To quantitatively evaluate the impact of the matched implementation strategies on implementation and effectiveness outcomes.

Methodology:

Study Design:

- Randomized Controlled Trials (RCTs): The gold standard for establishing efficacy. Implementation-focused RCTs often randomize at the cluster level (e.g., clinics, hospitals) to avoid contamination [25].

- Stepped-Wedge Designs: A pragmatic design where all clusters eventually receive the implementation strategy in a staggered fashion, allowing each cluster to serve as its own control [25].

- Interrupted Time Series (ITS): A strong quasi-experimental design used when randomization is not feasible, involving multiple measurements before and after the strategy's introduction [25].

Outcome Measurement:

- Classify and measure outcomes using the RE-AIM framework (Reach, Effectiveness, Adoption, Implementation, Maintenance) [23].

- Implementation Outcome: The primary outcome is often the degree of fidelity to the EBI. This is measured through standardized checklists, chart reviews, or electronic data extraction.

- Effectiveness Outcome: Measure the impact of the EBI on the intended patient-level outcomes (e.g., symptom reduction, biomarker levels).

Data Analysis:

- For RCTs and stepped-wedge designs, use multilevel regression models (e.g., mixed-effects models) to account for clustering of patients within sites, comparing outcomes between intervention and control conditions [24] [25].

- For ITS designs, use segmented regression analysis to test for a change in level and trend following the introduction of the implementation strategy [25].

- Report both statistical significance and effect sizes to convey the magnitude of the strategy's impact.
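
For reference, the two analytic models named above can be written in a common textbook form. The notation is introduced here for illustration and is not taken from the cited sources: $Y_{ij}$ is the outcome for patient $i$ in site $j$, $X_j$ indicates whether site $j$ received the strategy, $t$ indexes time points, and $t_0$ is the time at which the strategy is introduced.

```latex
% Random-intercept (mixed-effects) model for a cluster-randomized comparison
Y_{ij} = \beta_0 + \beta_1 X_j + u_j + \varepsilon_{ij},
\qquad u_j \sim \mathcal{N}(0, \sigma_u^2),
\quad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2)

% Segmented regression for an interrupted time series
Y_t = \beta_0 + \beta_1 t + \beta_2 D_t + \beta_3 (t - t_0) D_t + \varepsilon_t,
\qquad D_t =
\begin{cases}
0 & t < t_0 \\
1 & t \ge t_0
\end{cases}
```

In the first model, $\beta_1$ is the strategy effect and $u_j$ absorbs the correlation among patients within the same site. In the second, $\beta_2$ estimates the change in level and $\beta_3$ the change in trend after the strategy is introduced. Stepped-wedge analyses typically add fixed effects for time period to the first model.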

Diagram Title: Workflow for Experimental Testing of Strategies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Implementation Strategy and Determinant Research

| Resource Name/Type | Function/Purpose | Example Use Case |
|---|---|---|
| ERIC Taxonomy | A standardized compilation of 73 implementation strategy terms and definitions. Provides a common language for researchers and practitioners. | Used to precisely name and define the strategies being employed or tested in a study protocol or publication [16] [23]. |
| RE-AIM Framework | An evaluation framework to classify outcomes into five dimensions: Reach, Effectiveness, Adoption, Implementation, and Maintenance. | Guides the comprehensive planning and measurement of implementation outcomes beyond simple fidelity, assessing real-world impact [23]. |
| Determinant Frameworks (e.g., CFIR) | Theoretical frameworks that catalog potential barriers and facilitators across multiple domains (e.g., intervention, inner setting, outer setting). | Serves as a structured codebook for qualitative data analysis and ensures a comprehensive assessment of contextual factors. |
| Causal Pathway Diagram (CPD) | A visual tool for mapping the hypothesized causal relationships between strategies, mechanisms, and outcomes. | Used during the study design phase to articulate the theory of change and identify key mediators to measure [16]. |
| Statistical Analysis Software (e.g., R, Stata) | Platforms capable of running advanced multilevel and time-series statistical models. | Essential for analyzing clustered data from RCTs or stepped-wedge designs and accounting for within-site correlations [24] [25]. |

The Practitioner's Toolkit: Methods and Processes for Effective Matching

A Stepwise Guide to Using the CFIR-ERIC Implementation Strategy Matching Tool

A fundamental challenge in implementation science is moving from the identification of contextual barriers to the selection of appropriate implementation strategies. The CFIR-ERIC Implementation Strategy Matching Tool was developed to address this challenge by providing a systematic, evidence-informed approach for selecting strategies based on identified determinants [26]. This tool bridges two key resources: the Consolidated Framework for Implementation Research (CFIR), used to assess contextual determinants (barriers and facilitators), and the Expert Recommendations for Implementing Change (ERIC), a compilation of 73 discrete implementation strategies [12] [26].

This guide provides a detailed protocol for using the CFIR-ERIC Matching Tool and situates its use within the broader research on matching implementation strategies to determinants. The process enables researchers and implementation practitioners to tailor strategies to their specific context, thereby enhancing the potential for successful implementation of evidence-based practices [27].

Background and Development

The CFIR-ERIC Matching Tool was developed through research involving 169 implementation experts who participated in an online survey to match ERIC strategies to CFIR-based barriers [26] [28]. Participants were presented with barrier descriptions based on CFIR constructs and asked to rank up to seven ERIC strategies that would best address each barrier [26].

A key finding from this development process was the considerable heterogeneity in expert recommendations. Across the 39 CFIR barriers, an average of 47 different ERIC strategies were endorsed at least once for each barrier, indicating few consistent relationships between specific barriers and strategies [26] [28]. Despite this variability, the tool provides a structured starting point for strategy selection by aggregating expert endorsements.

Table 1: Key Development Metrics of the CFIR-ERIC Matching Tool

| Development Aspect | Description | Source |
|---|---|---|
| Expert Participants | 169 implementation researchers and practitioners | [26] |
| Response Rate | 39% (169 of 435 invited) | [26] |
| CFIR Barriers Assessed | 39 constructs from the CFIR framework | [26] |
| ERIC Strategies | 73 discrete implementation strategies | [12] |
| Endorsement Heterogeneity | Average of 47 different strategies endorsed per barrier | [26] [28] |
| Consensus Strategies | 33 strategy-barrier combinations endorsed by >50% of experts | [28] |

Phase 1: Context Assessment and Barrier Identification

Step 1: Conduct a Context Assessment

Before using the matching tool, you must first conduct a comprehensive assessment of your implementation context using the CFIR framework. The version of the CFIR on which the matching tool was developed includes 39 constructs organized across five domains: Intervention Characteristics, Outer Setting, Inner Setting, Characteristics of Individuals, and Implementation Process [26].

Protocol: Utilize CFIR-based data collection tools, such as:

- CFIR Construct Example Questions: Customizable, open-ended questions for each construct [29]

- 14-Item pCAT: A short survey for quantitative ratings of key CFIR constructs [29]

- Interview Guides: Structured questions based on CFIR constructs [30]

Data Collection Methods:

- Conduct individual interviews with key stakeholders (clinicians, administrators, patients)

- Facilitate focus groups to identify shared perspectives

- Administer surveys to assess prevalence of specific determinants

- Review relevant documents and organizational records

Step 2: Identify and Prioritize Barriers

Analyze the data collected to identify specific barriers to implementation. The CFIR Coding Guidelines can be used to systematically code qualitative data to specific CFIR constructs [29].

Protocol for Data Analysis:

- Transcribe and clean qualitative data from interviews and focus groups

- Code data to CFIR constructs using established coding guidelines [29]

- Create a determinant matrix that maps identified barriers to specific CFIR constructs using the CFIR Construct x Inner Setting Matrix Template [29]

- Prioritize barriers based on their perceived impact on implementation success, feasibility to address, and prevalence across stakeholders
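
As a rough illustration of the analysis steps above, coded excerpts can be tallied into a simple determinant matrix and ranked by prevalence across stakeholder groups. The excerpts, the group labels, and the ranking rule are hypothetical; this is not the format of the CFIR matrix template itself.

```python
from collections import defaultdict

# Hypothetical coded excerpts: (stakeholder group, CFIR construct, valence)
coded = [
    ("clinician", "Available Resources", "barrier"),
    ("clinician", "Available Resources", "barrier"),
    ("administrator", "Available Resources", "barrier"),
    ("clinician", "Leadership Engagement", "barrier"),
    ("patient", "Relative Advantage", "facilitator"),
]


def barrier_matrix(excerpts):
    """Count barrier-coded excerpts per construct and stakeholder group."""
    matrix = defaultdict(lambda: defaultdict(int))
    for group, construct, valence in excerpts:
        if valence == "barrier":
            matrix[construct][group] += 1
    return matrix


matrix = barrier_matrix(coded)

# Assumed ranking rule: number of distinct groups raising the barrier, then total mentions.
ranked = sorted(
    matrix,
    key=lambda c: (len(matrix[c]), sum(matrix[c].values())),
    reverse=True,
)
for construct in ranked:
    print(construct, dict(matrix[construct]))
```

Counts like these only capture prevalence. Perceived impact and feasibility still need to be judged by the implementation team before the final priority list is set.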

Phase 2: Strategy Selection Using the Matching Tool

Step 3: Access and Navigate the Matching Tool

The CFIR-ERIC Matching Tool is available as a downloadable file from the official CFIR website [12] [31].

Protocol:

- Navigate to the CFIR guide website (cfirguide.org)

- Access the "Strategy Design" page or use the direct link to download the Updated CFIR x ERIC Matching File [12] [31]

- Provide contact information when prompted to download the tool [31]

- Open the downloaded file, which typically appears as a matrix with CFIR constructs and ERIC strategies

Step 4: Input Identified Barriers

Enter the prioritized CFIR barriers identified in Step 2 into the matching tool.

Protocol:

- Locate the section of the tool for specifying high-priority CFIR-based barriers

- Select the specific CFIR constructs that represent your identified barriers

- If using the digital tool, these selections will automatically generate a list of potential strategies

Step 5: Interpret Strategy Recommendations

The tool provides a prioritized list of ERIC strategies based on expert endorsements from the original research [26] [28].

Interpretation Protocol:

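- Color-coded endorsements: The tool color-codes each strategy-barrier cell by the share of experts who endorsed the strategy for that barrier: green for more than 50% and yellow for 20-49% (see Table 2)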

- Cumulative Percent: The "Cumulative Percent" metric indicates the strength of endorsement for a strategy across all selected barriers [12]

- Prioritization: Strategies with higher cumulative percentages and green coding should be prioritized for consideration

Table 2: Interpretation of CFIR-ERIC Matching Tool Outputs

| Output Indicator | Interpretation | Action Implication |
|---|---|---|
| Green-coded Cell | Strategy endorsed by >50% of experts for a specific barrier | Strong candidate for inclusion in implementation plan |
| Yellow-coded Cell | Strategy endorsed by 20-49% of experts for a specific barrier | Moderate evidence; consider for inclusion |
| Cumulative Percent | Summed endorsement across all selected barriers | Higher percentage indicates broader expert support |
| Strategy Ranking | Ordered list of strategies based on expert endorsements | Prioritize higher-ranked strategies in implementation planning |
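
The logic behind these outputs can be approximated in a short sketch. The endorsement percentages below are invented for illustration and are not taken from the tool, and the handling of values that fall between the two bands in Table 2 is an assumption.

```python
# Hypothetical endorsement percentages (share of experts endorsing a strategy for a barrier).
# These values are invented for illustration; the real tool ships its own data.
endorsements = {
    "Available Resources": {
        "Access new funding": 60,
        "Conduct educational meetings": 15,
    },
    "Access to Knowledge & Information": {
        "Conduct educational meetings": 55,
        "Access new funding": 5,
        "Develop educational materials": 30,
    },
}


def level(pct):
    """Map an endorsement percentage to the color bands described in Table 2."""
    if pct > 50:
        return "green"
    if pct >= 20:  # assumption: anything from 20 up to and including 50 is yellow
        return "yellow"
    return None


def cumulative_percent(data, selected_barriers):
    """Sum each strategy's endorsement across the selected barriers, highest first."""
    totals = {}
    for barrier in selected_barriers:
        for strategy, pct in data[barrier].items():
            totals[strategy] = totals.get(strategy, 0) + pct
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


ranking = cumulative_percent(endorsements, list(endorsements))
for strategy, total in ranking:
    print(f"{total:>3}  {strategy}")
```

Because the cumulative figure is a sum across barriers, it can exceed 100 when several barriers are selected, so it is best read as a relative ranking and not as a proportion of experts.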

Diagram Title: Workflow from Context Assessment to Strategy Implementation

Phase 3: Strategy Operationalization and Tracking

Step 6: Operationalize Selected Strategies

Once strategies are selected using the matching tool, they must be fully specified and operationalized for your specific context.

Protocol for Strategy Specification: Following Proctor et al.'s recommendations for reporting implementation strategies, specify the following elements for each strategy [32] [27]:

- Actor: Who will enact the strategy?

- Action: What specific activities will be performed?

- Action Target: What is the strategy intended to change?

- Temporality: When and how often will the strategy be deployed?

- Dose: What is the duration and intensity of the strategy?

- Implementation Outcomes Affected: Which outcomes (e.g., acceptability, feasibility) is the strategy expected to influence?

- Justification: Why was this strategy selected? (Reference the CFIR-ERIC Matching Tool results)
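
A specification template along these lines can be kept as a small data structure so that incomplete entries are easy to spot. The class name, field names, and example values below are assumptions made for this sketch; only the seven elements themselves come from the list above.

```python
from dataclasses import dataclass, fields


@dataclass
class StrategySpec:
    name: str               # strategy label, e.g. an ERIC term
    actor: str              # who enacts the strategy
    action: str             # what specific activities are performed
    action_target: str      # what the strategy is intended to change
    temporality: str        # when and how often it is deployed
    dose: str               # duration and intensity
    outcomes_affected: str  # implementation outcomes expected to change
    justification: str      # why the strategy was selected

    def missing(self):
        """Return the names of any elements left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Hypothetical entry with the justification still to be written.
spec = StrategySpec(
    name="Audit and provide feedback",
    actor="Quality improvement team",
    action="Generate reports on EBI adherence and email them",
    action_target="Prescribing clinicians' adherence to the EBI",
    temporality="Starting at launch, for six months",
    dose="One report per month",
    outcomes_affected="Fidelity",
    justification="",
)

print(spec.missing())
```

Running the completeness check before a protocol is finalized is a lightweight way to confirm that every strategy has all seven elements documented.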

Implementation Mapping: Fernandez et al.'s Implementation Mapping process provides a systematic approach for operationalizing strategy choices based on the matching tool, explicitly identifying and designing strategies based on hypothesized underlying change theories [28].

Step 7: Track Strategy Implementation and Modifications

Systematically track the use of implementation strategies over time to enable evaluation of their effectiveness and documentation of adaptations.

Protocol for Strategy Tracking: The Longitudinal Implementation Strategy Tracking System (LISTS) methodology provides a structured approach for this purpose [32]. LISTS includes three components:

- Strategy Assessment: Documents strategy specification, reporting, and modification elements

- Data Capture Platform: Enables consistent documentation of strategy use

- User's Guide: Procedures for using the tracking system [32]

Tracking Elements:

- Document when each strategy is initiated and concluded

- Record any modifications to strategy delivery (dose, timing, approach)

- Note contextual factors that influence strategy implementation

- Track resources required for each strategy
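
The tracking elements above map naturally onto a simple record per strategy. The sketch below is a generic illustration and does not reproduce the LISTS data capture platform; the class names, fields, dates, and the use of staff hours as a resource measure are all assumptions.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Modification:
    when: date
    element: str       # e.g. "dose", "timing", "approach"
    description: str


@dataclass
class StrategyRecord:
    strategy: str
    started: date
    ended: Optional[date] = None
    modifications: List[Modification] = field(default_factory=list)
    context_notes: List[str] = field(default_factory=list)
    staff_hours: float = 0.0  # assumed proxy for resources used

    def days_active(self, as_of: date) -> int:
        """Days between start and end, or between start and `as_of` if still running."""
        end = self.ended or as_of
        return (end - self.started).days


# Hypothetical tracking entries for one strategy.
record = StrategyRecord("Audit and provide feedback", started=date(2024, 1, 8))
record.modifications.append(
    Modification(date(2024, 3, 4), "dose", "Reports moved from monthly to every two weeks")
)
record.context_notes.append("New clinic manager joined in February")
record.staff_hours += 6.5
record.ended = date(2024, 7, 8)

days = record.days_active(as_of=date(2024, 12, 31))
print(record.strategy, days, len(record.modifications), record.staff_hours)
```

Keeping modifications as dated entries, and not overwriting the original specification, preserves the history needed to relate changes in delivery to changes in outcomes.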

Case Illustration