From Controlled Systems to Living Organisms: A Comparative Analysis of Emergent Behaviors in In Vitro and In Vivo Models

Abstract

This article provides a comprehensive analysis of emergent behaviors—complex system-level properties arising from component interactions—across in vitro and in vivo environments. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles defining these behaviors, examines advanced methodologies for their study and application, addresses common challenges in model optimization and interpretation, and establishes frameworks for rigorous validation and correlation. By synthesizing insights from tissue engineering, microbial ecology, computational modeling, and pharmaceutical development, this review serves as a strategic guide for leveraging the distinct advantages of each model system to accelerate biomedical discovery and therapeutic translation.

Defining Emergence: Fundamental Principles Across Experimental Models

What is Emergent Behavior? From Simple Rules to Complex System Outcomes

Emergent behavior describes the phenomenon where complex, system-wide patterns arise from the relatively simple, local interactions of individual components, without being explicitly programmed or directed by a central authority [1] [2]. This concept is a cornerstone for researchers studying intricate systems, from the collective motion of cells in a tissue to the sophisticated coordination of AI agents.

In the context of drug development, understanding emergence is critical. The physiological response to a drug is not merely the sum of individual cellular reactions, but an emergent property of the complex, multi-scale interactions throughout a biological system. This article explores how different research models—in vivo (within a living organism) and in vitro (in a controlled laboratory environment)—are used to study these emergent behaviors, comparing their capabilities, applications, and limitations.

Defining Emergent Behavior

At its core, emergent behavior is unpredictable from individual components alone. One cannot deduce the complex outcome by studying a single agent in isolation [1]. The key principle is that simple, localized rules governing individual agents give rise to organized, global complexity [2].

Classic examples include:

- Flocking Birds: Each bird follows simple rules like avoiding collisions and aligning with neighbors, leading to the emergent, complex pattern of a swirling flock [1].

- Traffic Jams: Individual drivers adjusting speed based on the car ahead can collectively create "phantom jams" without a central cause [1].

- Multi-Agent AI: In AI systems, individual neurons or agents performing simple operations can, through interaction, give rise to an unexpected capacity for complex pattern recognition or strategy [2].
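The flocking example can be made concrete with a small simulation. The sketch below is a minimal Vicsek-style alignment model, not the model used in [1]: it implements only the "align with neighbors" rule and omits collision avoidance. The function names (`polarization`, `step`, `simulate`) and all parameter values (neighborhood radius, speed, agent count, seed) are assumptions chosen for illustration.

```python
import math
import random


def polarization(headings):
    """Order parameter of the flock: near 0 for random headings, 1 when fully aligned."""
    n = len(headings)
    mean_cos = sum(math.cos(h) for h in headings) / n
    mean_sin = sum(math.sin(h) for h in headings) / n
    return math.hypot(mean_cos, mean_sin)


def step(positions, headings, radius=0.35, speed=0.02):
    """One synchronous update: each agent adopts the mean heading of nearby agents, then moves."""
    new_headings = []
    for xi, yi in positions:
        sum_cos = 0.0
        sum_sin = 0.0
        for (xj, yj), hj in zip(positions, headings):
            # Shortest distance on a unit square with wrap-around (periodic) edges.
            dx = abs(xi - xj)
            dx = min(dx, 1.0 - dx)
            dy = abs(yi - yj)
            dy = min(dy, 1.0 - dy)
            if dx * dx + dy * dy <= radius * radius:
                # The agent itself is at distance 0, so it is always included.
                sum_cos += math.cos(hj)
                sum_sin += math.sin(hj)
        new_headings.append(math.atan2(sum_sin, sum_cos))
    new_positions = [
        ((x + speed * math.cos(h)) % 1.0, (y + speed * math.sin(h)) % 1.0)
        for (x, y), h in zip(positions, new_headings)
    ]
    return new_positions, new_headings


def simulate(n_agents=40, n_steps=200, seed=0):
    """Return the flock polarization before and after applying the local rule repeatedly."""
    rng = random.Random(seed)
    positions = [(rng.random(), rng.random()) for _ in range(n_agents)]
    headings = [rng.uniform(-math.pi, math.pi) for _ in range(n_agents)]
    before = polarization(headings)
    for _ in range(n_steps):
        positions, headings = step(positions, headings)
    return before, polarization(headings)


before, after = simulate()
print(f"polarization before: {before:.2f}, after: {after:.2f}")
```

No agent is told which direction the group should take. Any common heading that appears in the output comes from the repeated local averaging alone, which is the sense in which the global pattern is emergent.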

The diagram below illustrates this universal principle across biological, robotic, and AI systems.

In Vivo vs. In Vitro Research: A Primer

To understand how emergent behavior is studied, one must first distinguish between the two primary research models.

- In Vivo (from Latin "within the living"): Research conducted on whole, living organisms, such as animals or humans [3]. This approach preserves the full biological context.

- In Vitro (from Latin "in glass"): Research conducted outside a living organism in a controlled lab environment, such as in petri dishes or test tubes [3].

The Scientist's Toolkit: Key Research Models and Materials

The following table details the essential models and their components used in studying complex biological behaviors.

| Model/Material | Type | Key Components & Functions |
|---|---|---|
| Animal Models [3] | In Vivo | Drosophila (fruit fly): Genetic/behavioral studies. Zebrafish: Embryonic development & toxicology. Rodents (mice/rats): Efficacy & toxicity before human trials. |
| Complex In Vitro Models (CIVMs) [4] [5] | In Vitro | Organ-Chips: Microfluidic devices with human cells; mimic blood flow, breathing [5]. Organoids: 3D structures from stem cells; self-organize into organ-like tissues [4]. |
| Multi-Agent Reinforcement Learning (MARL) [6] | In Silico | Software Agents: Learn adaptive strategies via reward/punishment. Grid World: Simulated 2D environment for agent interaction. |
| Pluripotent Stem Cells (PSCs) [4] | Cell Source | Embryonic/Induced PSCs: Can differentiate into any cell type; used to generate organoids. |
| Extracellular Matrix (e.g., Matrigel) [4] | 3D Scaffold | Bio-polymer Scaffold: Provides structural support & biochemical cues for 3D cell growth. |

Research Approaches for Emergent Behavior

The choice between in vivo and in vitro models represents a trade-off between physiological completeness and experimental control, which directly impacts how emergent behaviors can be observed and understood.

In Vivo Research: Capturing Systemic Emergence

In vivo studies are the traditional gold standard for observing emergent behaviors in a full physiological context, such as a drug's systemic effect [3]. Researchers might use a mouse model to study the emergent immune response to a tuberculosis (TB) vaccine, which arises from complex interactions between cytokines, antibodies, and various immune cell types [7].

Key Strengths:

- Full Physiological Relevance: Captures the complete, systemic context with all metabolic processes and organ interactions intact [3].

- Ideal for Studying Chronic, Multi-organ Effects: Essential for observing emergent outcomes like systemic toxicity [3].

Inherent Limitations:

- High Cost and Low Throughput: Experiments are expensive, time-consuming, and logistically complex [3].

- Ethical Considerations: Involves significant ethical implications regarding animal use [3].

- Difficulty in Pinpointing Mechanisms: The very complexity that allows for emergent behavior can make it hard to identify the specific, simple rules that caused it [8].

In Vitro Research: Isolating Emergent Pathways

Conventional 2D cell cultures (e.g., a monolayer of liver cells in a dish) are limited in their ability to exhibit emergent tissue-level functions because they lack a realistic microenvironment [4]. This has driven the development of Complex In Vitro Models (CIVMs), such as organoids and Organ-Chips, which are designed to recapitulate enough biological structure to allow for the emergence of more physiologically relevant behaviors [4] [5].

Key Strengths:

- Enhanced Control and Reproducibility: Allows researchers to reduce systemic variables and home in on specific interactions [3].

- Human-Relevance: Uses human cells, avoiding species-translation issues that can mislead drug development [5].

- High-Throughput Potential: More amenable to scaling for rapid screening of drug candidates [5].

Inherent Limitations:

- Simplified Biology: May lack the full complexity (e.g., a complete immune system) required for some emergent behaviors [3].

- Technical Challenges: Requires strict conditions to maintain the viability and functionality of human tissues [3].

In Silico Research: Modeling Emergence from the Ground Up

Beyond wet-lab models, computational approaches like Multi-Agent Reinforcement Learning (MARL) provide a powerful platform to study the principles of emergence directly. In a 2D grid-world pursuit-evasion game, researchers can define simple fundamental actions for pursuer agents (e.g., flank, engage, ambush) [6]. Through training, more complex, composite behaviors like "pincer flank attacks" or "serpentine movement" emerge from the agents' interactions without being explicitly programmed [6]. This mirrors how simple rules in biological systems can give rise to complex outcomes.

Direct Comparison: Model Capabilities and Data

The table below summarizes the quantitative and qualitative performance of these research approaches in studying emergent behaviors, based on recent experimental data.

| Research Approach | Example System / Model | Key Emergent Behavior / Outcome Observed | Performance & Experimental Data |
|---|---|---|---|
| In Vivo [7] | Mouse model for TB vaccine | Cascade of immune interactions leading to T-cell activation & antibody production | Model revealed critical path; predicted & confirmed that B-cell elimination had little impact on vaccine response. |
| In Vitro (CIVM) [5] | Liver-Chip (Emulate Bio) | Drug-Induced Liver Injury (DILI) - a systemic toxicological outcome | Correctly identified 87% of known DILI-causing drugs (n=18), outperforming animal models & spheroids. |
| In Silico (MARL) [6] | Pursuit-Evasion on 2D Grid | Cooperative strategies (e.g., "lazy pursuit", "pincer flank attack") | Achieved 99.9% success rate in 1,000 trials; clustering analysis identified 4 key emergent strategies. |

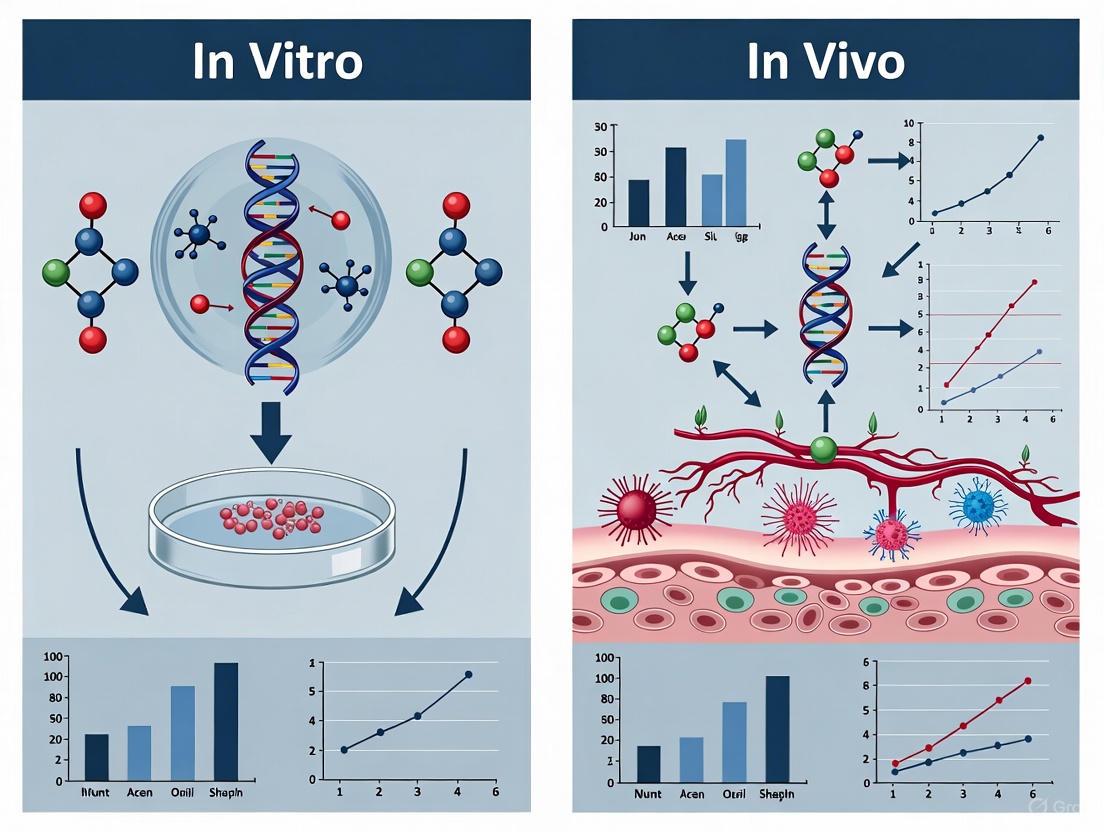

The following diagram contrasts the fundamental workflows of in vivo and advanced in vitro studies, highlighting their different paths to observing emergent behavior.

Detailed Experimental Protocols

To illustrate how data on emergent behavior is generated, here are the methodologies from two key studies cited in this guide.

Protocol 1: Analyzing Emergent Immune Response with Probabilistic Graphical Networks

This in vivo study focused on the emergent immune response to a tuberculosis vaccine in mice [7].

System Perturbation & Data Collection:

- Mice were vaccinated via different routes (intravenous, inhalation, injection).

- Longitudinal data was collected pre-vaccination, post-vaccination, and post-TB infection.

- Approximately 200 variables were measured, including levels of cytokines, antibodies, and diverse immune cell populations (n ≈ 30 animals).

Computational Modeling & Analysis:

- Data was integrated into a probabilistic graphical network model.

- A mathematical technique called graphical lasso was applied to filter out indirect correlations and identify the most essential, direct interactions between variables.

- The model output a "roadmap" of the immune response, highlighting the critical path of interactions that led to the emergent protective immunity.
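The idea of filtering out indirect correlations can be illustrated on a toy example. The sketch below does not implement graphical lasso. It uses the closely related three-variable partial correlation, relying on the fact that a zero entry in the precision matrix estimated by graphical lasso corresponds to a zero partial correlation between two variables. The data are synthetic, and the variable names (`cytokine`, `t_cell`, `antibody`) and the causal chain linking them are hypothetical and not taken from [7].

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def partial_corr(x, y, z):
    """Correlation between x and y after removing the linear effect of z."""
    rxy = pearson(x, y)
    rxz = pearson(x, z)
    ryz = pearson(y, z)
    return (rxy - rxz * ryz) / math.sqrt((1 - rxz ** 2) * (1 - ryz ** 2))


# Synthetic chain (hypothetical): cytokine -> t_cell -> antibody.
rng = random.Random(0)
cytokine = [rng.gauss(0, 1) for _ in range(2000)]
t_cell = [c + rng.gauss(0, 1) for c in cytokine]
antibody = [t + rng.gauss(0, 1) for t in t_cell]

marginal = pearson(cytokine, antibody)               # includes the indirect path
direct = partial_corr(cytokine, antibody, t_cell)    # indirect path removed
print(f"marginal: {marginal:.2f}, partial given t_cell: {direct:.2f}")
```

In this toy chain the cytokine and antibody measurements are clearly correlated, yet the correlation largely disappears once the intermediate variable is accounted for. A sparse network method would therefore keep the two direct edges and drop the indirect one.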

Model Validation:

- The model's prediction was tested by experimentally suppressing a subset of immune cells (B cells). The result confirmed the model's forecast that this would have little impact, validating its accuracy [7].

Protocol 2: Identifying Emergent Pursuit Strategies with Multi-Agent Reinforcement Learning

This in silico study investigated emergent cooperative behaviors in a 2D grid-world pursuit-evasion game [6].

Agent & Environment Setup:

- A bounded 2D grid world was created as the simulation environment.

- Both pursuer and evader agents were equipped with a set of six fundamental actions (Flank, Engage, Ambush, Drive, Chase, Intercept).

Training & Strategy Generation:

- Agents were trained using Multi-Agent Reinforcement Learning (MARL) algorithms.

- During training, agents learned to combine fundamental actions into 21 types of composite actions.

- The training success was evaluated over 1,000 randomized trials.

Behavior Identification & Clustering:

- The full set of agent trajectories from the trials was treated as statistical samples.

- A K-means clustering methodology was applied to these trajectory data to automatically group and identify distinct, recurring behavioral patterns.

- This quantitative analysis identified four key emergent cooperative strategies, such as "serpentine corner encirclement" and "pincer flank attack" [6].
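The clustering step can be sketched in a few lines. The example below is a minimal pure-Python K-means, assuming each trajectory has already been summarized as a fixed-length feature vector; how [6] encodes trajectories is not stated here, so the two-dimensional `features` list is synthetic and purely illustrative. The deterministic farthest-point initialization is also an assumption, chosen only to keep the example reproducible.

```python
import math


def kmeans(points, k, n_iter=50):
    """Cluster points (tuples of floats) into k groups; return (labels, centers)."""
    # Deterministic initialization: start from the first point, then repeatedly
    # add the point farthest from all centers chosen so far.
    centers = [points[0]]
    while len(centers) < k:
        centers.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centers))
        )

    labels = [0] * len(points)
    for _ in range(n_iter):
        # Assignment step: each point joins its nearest center.
        labels = [
            min(range(k), key=lambda j: math.dist(p, centers[j])) for p in points
        ]
        # Update step: each center moves to the mean of its members.
        new_centers = []
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                new_centers.append(
                    tuple(sum(coord) / len(members) for coord in zip(*members))
                )
            else:
                new_centers.append(centers[j])  # keep an empty cluster's center
        if new_centers == centers:
            break  # converged
        centers = new_centers
    return labels, centers


# Synthetic trajectory summaries (hypothetical features, two obvious groups).
features = [
    (0.1, 0.2), (0.2, 0.1), (0.15, 0.15),
    (5.0, 5.1), (5.2, 4.9), (4.9, 5.0),
]
labels, centers = kmeans(features, k=2)
print(labels)
```

Each resulting cluster is then a candidate recurring strategy, which still has to be inspected and named by looking at the trajectories it contains.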

The study of emergent behavior does not pit in vivo against in vitro approaches but rather highlights their powerful complementarity. In vivo research remains indispensable for observing and confirming emergent behaviors in their full, authentic biological context. Meanwhile, advanced in vitro models (CIVMs) and in silico simulations are transformative, providing the control and analytical tools to deconstruct these complex outcomes, identify their root causes, and build predictive models.

For drug development professionals, this integrated toolkit is reducing reliance on animal testing and overcoming the species-translation gap, as evidenced by regulatory programs like the FDA's ISTAND, which has now accepted its first Organ-Chip model [5]. The future of understanding and harnessing emergent behavior lies in strategically applying these models to ask the right questions at the right time, ultimately leading to safer, more effective medicines.

In vivo systems, defined as experimental approaches conducted within living organisms, provide an indispensable platform for understanding complex biological interactions that cannot be fully replicated in artificial environments. The Latin term "in vivo" literally means "within the living," [9] distinguishing these approaches from their in vitro counterparts which occur in controlled laboratory settings outside of living organisms. For researchers and drug development professionals, this distinction is crucial—in vivo experimentation reveals how biological molecules, drugs, and treatment strategies perform in the complex, integrated environment of a whole organism, where multiple biological systems interact simultaneously [10].

The fundamental advantage of in vivo systems lies in their capacity to capture emergent behaviors and system-level responses that arise from the dynamic interplay between cells, tissues, and organs. These emergent properties cannot be reliably predicted by studying isolated components alone. In drug development specifically, in vivo studies are essential for evaluating side effects, bioavailability, and disease progression within the context of a complete biological system [10]. While more expensive and time-consuming than in vitro approaches, in vivo models provide the most realistic assessment of how interventions will perform in clinical settings, making them irreplaceable in the translational research pipeline.

Comparative Analysis: In Vivo versus In Vitro Systems

Fundamental Distinctions and Complementary Applications

The choice between in vivo and in vitro methods represents a critical decision point in research design, with each approach offering distinct advantages and limitations. In vitro systems (from Latin "in glass") involve experiments conducted outside living organisms in controlled environments like test tubes or petri dishes [10]. These methods allow researchers to study isolated cells, tissues, or biological processes with high precision and minimal confounding variables. In contrast, in vivo systems embrace the complexity of whole organisms, testing hypotheses in living systems where drugs and treatments interact with multiple organs and biological processes simultaneously [10] [9].

The most effective research strategies often move from in vitro tests to in vivo studies as treatments show promise. This sequential approach allows for initial safety and efficacy screening in simplified systems before progressing to more complex whole-organism studies [10]. For example, researchers might first examine how a drug affects specific cell samples in culture before advancing to animal studies and eventual human trials. This methodological progression represents the gold standard in therapeutic development, leveraging the strengths of both approaches while mitigating their respective limitations.

Table 1: Core Differences Between In Vivo and In Vitro Research Approaches

| Parameter | In Vivo Systems | In Vitro Systems |
|---|---|---|
| Experimental Environment | Inside living organisms | Controlled laboratory settings (test tubes, petri dishes) |
| Complexity Level | High - incorporates multiple interacting systems | Low - isolated cells or components |
| Control Over Variables | Limited - numerous uncontrollable factors | High - tight control over experimental conditions |
| Cost & Duration | Expensive and time-consuming | Cost-effective and rapid results |
| Primary Strengths | Reveals whole-organism responses, bioavailability, side effects | Precise mechanism analysis, high-throughput screening |
| Key Limitations | Ethical considerations, complex data interpretation | Limited predictive value for whole-organism responses |

Emergent Properties Exclusive to In Vivo Systems

In vivo systems uniquely capture emergent behaviors that arise from the integrated functioning of multiple biological systems. These system-level properties cannot be adequately studied through reductionist in vitro approaches alone. For example, research using sophisticated in vivo tracking technologies has revealed dynamic cellular behaviors in intact organisms, such as the trafficking of immune cells to graft sites and their functional differentiation within living tissues [11]. These processes involve complex signaling and cellular interactions that only occur in the context of complete physiological systems.

Advanced imaging technologies have further expanded our ability to observe emergent properties in living systems. Dynamic in vivo imaging techniques now enable researchers to capture physiological processes in real-time, such as respiratory function, mucociliary clearance, and treatment delivery in animal models [12]. These approaches reveal complex system behaviors—like coordinated lung motion during breathing or immune cell recruitment to sites of inflammation—that emerge only at the whole-organism level. The capacity to observe these integrated processes in living organisms represents a critical advantage of in vivo systems for understanding complex physiology and disease mechanisms.

Experimental Approaches and Model Systems in In Vivo Research

Chemical, Endogenous Substance, and Heavy Metal-Induced Models

Chemical induction methods represent important approaches for creating animal models of human diseases, particularly for conditions like Alzheimer's disease (AD). These models use various substances to induce pathologies that mimic human disease processes, allowing researchers to study disease mechanisms and potential interventions. The streptozotocin model, for instance, involves intracerebroventricular (ICV) administration and induces neuroinflammation and oxidative stress, modeling sporadic AD [13]. Similarly, scopolamine models create cholinergic dysfunction without surgical procedures, while colchicine and okadaic acid models primarily induce tau hyperphosphorylation, a key pathology in several neurodegenerative diseases [13].

Endogenous substances and heavy metals also serve as important tools for disease modeling. Amyloid-β1-42 administration creates models that exhibit amyloid-β aggregation and neuroinflammation, while acrolein exposure induces oxidative stress and neuroinflammation [13]. Heavy metals like aluminum are used to create models that display oxidative stress and neurofibrillary tangle formation with relatively low mortality rates. Each of these approaches has distinct advantages and limitations, with varying degrees of construct validity for specific human disease processes.

Table 2: Selected In Vivo Disease Models and Their Key Characteristics

| Model Type | Major Pathology Induced | Key Advantages | Administration Route and Dose | Timeline for Pathology |
|---|---|---|---|---|
| Streptozotocin | Neuroinflammation, Oxidative stress | Models sporadic AD (most prevalent form) | ICV, 3 mg/kg | 21 days |
| Scopolamine | Cholinergic dysfunction | Enables multitarget therapy evaluation | ICV, 2 mg/kg | 13 days |
| Amyloid-β1-42 | Amyloid-β aggregation, Neuroinflammation | Exhibits predictive, face, and construct validity | ICV, 80 µmol/L or Intrahippocampal, 1 µg/µL | 15 days |
| Heavy Metals | Oxidative stress, Neurofibrillary tangles | Low mortality rates, easy administration | Intraperitoneal, 100 mg/kg or Oral, 150-300 mg/kg | 25 days |

Transgenic and Genetic Models

Transgenic animal models represent sophisticated tools for studying diseases with genetic components. These models are developed through knock-in or knock-out of specific genes associated with human diseases. The PDAPP model, which overexpresses human APP under the PDGF promoter, shows high pathological similarity with Alzheimer's patients but presents challenges in standardizing and differentiating between functional and pathogenic Aβ [13]. The APP23 model, expressing APP751 cDNA under a neuron-specific murine Thy-1 promoter, primarily affects the hippocampus and neocortex but does not develop neurofibrillary tangles.

More complex transgenic models include the APP/PS1 mouse (combining APPswe with PS1dE9 mutations), which develops amyloid plaque morphology similar to humans and allows production of homozygous lines, though it shows late onset of cognitive dysfunction [13]. The 3×Tg or LaFerla mouse incorporates three mutated genes (APP, PSEN1, MAPT tau) and develops both amyloid plaques and neurofibrillary tangles, though evaluation is challenging due to multiple gene stimulation. The 5×FAD model, incorporating five familial AD mutations, shows prominent amyloid plaque deposition similar to AD patients but lacks tau pathology [13]. Each model offers specific advantages for studying different aspects of disease pathogenesis.

Advanced Imaging and Tracking Technologies

Advanced in vivo imaging technologies have revolutionized our ability to observe biological processes in real-time within living organisms. For example, endoscopic confocal microscopy combined with in vivo flow cytometry enables longitudinal tracking of islet allograft-infiltrating T cells in live mice [11]. This approach allows researchers to monitor immune responses dynamically without the need for invasive tissue biopsies, providing temporal information about progressing immune reactions.

Similarly, dynamic phase-contrast X-ray imaging using compact light sources enables non-invasive visualization of physiological processes like respiratory function. This technology captures regional delivery of respiratory treatments, lung motion, and mucociliary clearance—key measures of respiratory health—in small animal models [12]. The high flux density provided by modern sources like the Munich Compact Light Source (MuCLS) allows capture of low-noise lung images at exposure times as short as 50 milliseconds, minimizing motion blur and enabling precise observation of dynamic processes [12]. These technologies greatly enhance physiological understanding and accelerate therapy development by allowing direct observation of biological processes in living systems.

Methodologies and Protocols for Key In Vivo Experiments

Color-Coded T Cell Tracking in Live Mice

The color-coded T cell tracking methodology enables real-time observation of immune cell behavior in living animals, providing powerful insights into immunologic processes [11]. The approach begins with C57BL/6 Rag1−/− recipient mice, which lack lymphocytes. These recipients receive an adoptive transfer of 1 × 10^6 nTreg cells (DsRed−CD4+GFP+) purified from C57BL/6 Foxp3-eGFP regulatory T cell reporter mice, together with 9 × 10^6 Teff cells (DsRed+CD4+GFP−) purified from C57BL/6 DsRed–knock-in mice [11]. The following day, researchers place a DBA/2 allogeneic islet graft underneath the left renal capsule.

The core innovation of this method is the color-coded system that distinguishes T cell subsets: Teff cells appear red, nTreg cells appear green, and iTreg cells (converted from Teff cells) appear yellow [11]. This color distinction enables clear identification and tracking of different cell populations. For imaging, researchers use endoscopic confocal microscopy with a 1.24-mm diameter endomicroscope inserted through a small skin incision, allowing repeated imaging of the allograft site with minimal surgical manipulation [11]. This setup enables serial imaging of the same mice at multiple time points (e.g., days 3, 5, 7, 10, 12, and 14 after transplantation), providing kinetic data on T cell infiltration patterns under different treatment conditions.
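The color code described above amounts to a simple two-marker lookup, sketched below. The mapping for Teff and nTreg cells follows the marker profiles given in the text (DsRed+GFP− and DsRed−GFP+). Treating yellow iTreg cells as co-expressing both DsRed and GFP is an assumption made for this illustration, and the function name `classify_t_cell` is hypothetical.

```python
def classify_t_cell(dsred_positive: bool, gfp_positive: bool) -> str:
    """Map the two fluorescent markers of a cell to its color-coded subset."""
    if dsred_positive and gfp_positive:
        # Assumption: yellow results from red and green signal in the same cell.
        return "iTreg (yellow)"
    if dsred_positive:
        return "Teff (red)"
    if gfp_positive:
        return "nTreg (green)"
    return "unlabeled"


# Example: tally subsets from a list of (DsRed, GFP) calls for imaged cells.
imaged_cells = [(True, False), (False, True), (True, True), (True, False)]
counts = {}
for dsred, gfp in imaged_cells:
    subset = classify_t_cell(dsred, gfp)
    counts[subset] = counts.get(subset, 0) + 1
print(counts)
```

Repeating such a tally for each imaging day would give the kind of per-subset infiltration kinetics that serial imaging of the same animal makes possible.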

In Vivo Dynamic Phase-Contrast X-Ray Imaging

Dynamic phase-contrast X-ray imaging at compact light sources enables non-invasive visualization of physiological processes in live animals [12]. The methodology utilizes the Munich Compact Light Source (MuCLS), which provides a partially spatially coherent, low divergence, quasi-monochromatic X-ray beam in a laboratory environment. The technique is particularly valuable for respiratory imaging due to the strong phase contrast between tissue and air.

The experimental setup involves positioning anesthetized mice in the X-ray beam path with a sample-detector distance of approximately 1 meter to enable propagation-based phase-contrast X-ray imaging (PB-PCXI) [12]. For respiratory studies, mice may be connected to a ventilator that provides sustained inflation, with a trigger sent to the detector to capture images at the same point of each breath cycle. This synchronization minimizes motion blur and enables monitoring over extended periods. Exposure times as short as 50 milliseconds are used to freeze motion, made possible by the high flux density of the MuCLS source [12]. For treatment delivery studies, researchers administer liquids (sometimes mixed with contrast-enhancing iodine) via micro-syringe with controlled infusion pumps, allowing real-time observation of distribution dynamics in the airways.

Essential Research Reagents and Tools for In Vivo Studies

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for In Vivo Experimentation

| Reagent/Tool | Function/Application | Example Use Cases |
|---|---|---|
| Chemical Inducers | Modeling specific disease pathologies | Streptozotocin (neuroinflammation), Scopolamine (cholinergic dysfunction) [13] |
| Endogenous Substances | Mimicking natural pathological processes | Amyloid-β1-42 (amyloid aggregation), Acrolein (oxidative stress) [13] |
| Heavy Metals | Inducing specific neurotoxic effects | Aluminum, Fluoride (oxidative stress, neurofibrillary tangles) [13] |
| Reporter Mice | Tracking specific cell populations in vivo | Foxp3-eGFP T cell reporters, DsRed-knock-in mice [11] |
| Contrast Agents | Enhancing visualization in imaging studies | Iodine mixtures for X-ray imaging, fluorescent dyes [12] |
| Immunomodulatory Agents | Manipulating immune responses | CD154-specific monoclonal antibody, rapamycin [11] |

Data Management and Experimental Design Considerations

Best Practices for In Vivo Data Science

Effective data management is crucial for robust in vivo research, particularly when aggregating results from multiple studies. Following best practices for data science enhances the value and utility of in vivo generated data [14]. Researchers should build datasets in suitable digital formats, with comma-separated values (CSV) files recommended for numerical and categorical data due to their compatibility with statistical and machine learning tools [14]. The variety of data sources from in vivo experimentation may necessitate different data structures, such as separate tables with metadata on experimental treatments, pathogens, and experimental datapoints.

A critical principle in in vivo data management is entering data at the smallest experimental unit possible, typically per-animal data, even if group means or other summary measures are the ultimate analysis goal [14]. This approach allows for future dataset expansion and makes it easy to recompute mean values with code. Each experimental unit, defined as "the biological entity subject to an intervention independently of all units" [14], should have a unique identifier. Additionally, researchers should include as many datapoints as possible when first building the dataset and enter data at the highest granularity possible, recording both non-normalized and normalized values from the start to enable greater analytical flexibility [14].
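As a minimal sketch of this practice, the example below stores one row per animal in CSV form, checks that identifiers are unique, and derives group means in code rather than storing them. The column names (`animal_id`, `treatment_group`, `body_weight_g`), the helper names, and all values are hypothetical and invented for illustration; they are not taken from [14].

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Synthetic per-animal records: one row per experimental unit.
RAW_CSV = """animal_id,treatment_group,timepoint_day,body_weight_g
M001,vehicle,0,21.0
M002,vehicle,0,23.0
M003,drug,0,20.0
M004,drug,0,22.0
"""


def read_rows(csv_text):
    """Parse CSV text into a list of dictionaries keyed by column name."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def ids_are_unique(rows, id_col="animal_id"):
    """Return True if every experimental unit has its own identifier."""
    ids = [row[id_col] for row in rows]
    return len(ids) == len(set(ids))


def group_means(rows, group_col, value_col):
    """Compute the mean of value_col for each level of group_col."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_col]].append(float(row[value_col]))
    return {group: mean(values) for group, values in groups.items()}


rows = read_rows(RAW_CSV)
print(ids_are_unique(rows))
print(group_means(rows, "treatment_group", "body_weight_g"))
```

Because the summary is derived from per-animal rows, adding animals or a new grouping column later only requires re-running the function, not re-entering the data.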

Ethical Considerations and the Three R's

In vivo experimentation carries significant ethical responsibilities that researchers must address throughout their study designs. Ethical principles of in vivo research are guided by the three R's framework: reduction, refinement, and replacement [14]. These principles compel investigators to use fewer animals, reduce animal distress, and pursue non-animal alternatives whenever possible. Sharing in vivo data and applying data science to the shared results remain underutilized ways to support these ethical goals [14].

Recent efforts to improve reporting of in vivo data, such as the ARRIVE guidelines, and to facilitate data sharing, following the FAIR guiding principles, highlight the importance of responsible research conduct [14]. These frameworks emphasize that in vivo data can benefit from increased consistency and public availability, supporting both scientific advancement and ethical research practices. Proper experimental design that minimizes animal use while maximizing data quality represents both a scientific and ethical imperative for researchers working with in vivo systems.

In vivo systems remain indispensable for capturing the systemic complexity of living organisms, providing insights that cannot be obtained through reductionist approaches alone. While in vitro methods offer valuable control and precision for studying isolated processes, in vivo approaches reveal how these processes function within the integrated environment of a whole organism. The continuing development of sophisticated in vivo models—from chemically-induced and transgenic systems to advanced imaging technologies—ensures that researchers have increasingly powerful tools for understanding complex biology and developing effective therapeutics.

The future of biological research lies in the strategic integration of both in vivo and in vitro approaches, leveraging the strengths of each to overcome their respective limitations. This dual methodology represents the gold standard for progressing from initial discoveries to clinical applications, enabling researchers to understand both detailed mechanisms and whole-system responses [10]. As in vivo technologies continue to advance, particularly in imaging and data science applications, they will further enhance our ability to observe and understand the emergent behaviors that define living systems.

In the study of complex biological behaviors, researchers are often faced with a fundamental choice: pursue physiological relevance within living organisms (in vivo) or seek mechanistic clarity in controlled laboratory environments (in vitro). This dichotomy is particularly pronounced when investigating emergent phenomena—complex behaviors that arise from relatively simple interactions between biological components, which cannot be fully predicted by studying individual parts in isolation. In vivo studies, conducted within living organisms like animals or humans, offer the advantage of observing biological processes in their natural, complex environment with full physiological relevance [15]. In contrast, in vitro studies, performed outside living organisms with isolated cells, tissues, or biological molecules, provide unprecedented experimental control but often lack the systemic complexity of whole organisms [15] [16].

The central thesis of this guide is that these approaches are not mutually exclusive but rather complementary methodologies. Advanced in vitro systems are increasingly being engineered to recapitulate key aspects of living tissues, creating controlled microenvironments that bridge the gap between simple cell cultures and whole organisms. These engineered systems enable researchers to deconstruct emergent behaviors into measurable parameters while maintaining sufficient biological complexity to study phenomena that mirror in vivo reality. The following sections provide a detailed comparison of these approaches, experimental methodologies for studying emergence, and specific protocols that illustrate how engineered microenvironments can yield insights into complex biological processes.

Comparative Analysis: In Vivo vs. In Vitro Approaches for Emergent Behavior Research

Fundamental Methodological Differences

Table 1: Core Characteristics of In Vivo and In Vitro Approaches

| Characteristic | In Vivo Systems | In Vitro Systems |

|---|---|---|

| Experimental Context | Within living organisms (animals, humans) [15] | Outside living organisms (lab containers) [15] |

| System Complexity | High - intact biological systems with all native interactions [15] | Variable - from simple 2D cultures to complex 3D models [17] |

| Environmental Control | Low - numerous confounding variables present [3] | High - precise manipulation of specific variables [3] [16] |

| Physiological Relevance | High - reflects real biological context [15] | Limited - simplified representation of biology [16] |

| Throughput Capacity | Low - time-consuming and resource-intensive [3] | High - rapid screening of multiple conditions [15] |

| Cost Considerations | High - animal maintenance, ethical oversight [3] | Lower - reduced resource requirements [15] [16] |

| Ethical Considerations | Significant - especially for animal studies [15] [3] | Reduced - aligned with 3Rs principles [3] |

Quantitative Comparison of Research Outputs

Table 2: Performance Metrics for Studying Emergent Phenomena

| Research Parameter | In Vivo Systems | Engineered In Vitro Systems |

|---|---|---|

| Observation of Systemic Effects | Comprehensive - can study multi-organ interactions [15] [3] | Limited - typically focused on specific tissue/organ models [16] |

| Temporal Resolution | Lower - limited by imaging depth, animal viability | Higher - direct visualization possible with live-cell imaging [18] |

| Spatial Resolution | Variable - limited by tissue opacity, animal motion | Excellent - super-resolution techniques applicable [18] |

| Molecular Mechanism Elucidation | Indirect - requires inference from systemic responses | Direct - precise manipulation of specific pathways [19] [16] |

| Data Reproducibility | Lower - significant biological variability [3] | Higher - controlled environment reduces variables [3] [16] |

| Translation to Human Biology | Good but species differences exist [15] | Improving with human cell-derived organoids [17] |

Experimental Focus: Biomolecular Condensates as an Emergent Phenomenon

Research Background and Significance

Biomolecular condensates represent a compelling example of emergent phenomena in cell biology. These transient, membrane-less organelles form through liquid-liquid phase separation and play crucial roles in organizing cellular biochemistry, particularly in processes like transcriptional regulation [18]. The Cissé Laboratory has pioneered approaches to study these structures, demonstrating that RNA polymerase II (Pol II) and transcription mediators form dynamic clusters in living cells [18]. These clusters exhibit properties of biomolecular condensates and are associated with super-enhancer-controlled genes, representing an emergent property of the transcription machinery that cannot be understood by studying individual molecular components in isolation.

The formation and regulation of these condensates illustrate how relatively simple interactions between proteins and nucleic acids can give rise to complex organizational structures that fundamentally influence gene expression patterns. Studying this phenomenon requires approaches that can capture both the molecular interactions and the collective behaviors that emerge in cellular environments. The following section details specific methodologies for investigating such emergent phenomena across different experimental systems.

Detailed Experimental Protocols

In Vivo Protocol: Studying Transcription Dynamics in Live Cells

Objective: To observe real-time dynamics of RNA polymerase II clustering in live mammalian cells [18].

Materials and Reagents:

- Mammalian cell lines (e.g., human cell lines)

- Fluorescent protein tags (e.g., GFP-tagged Pol II)

- Culture media appropriate for cell line

- Glass-bottom culture dishes for microscopy

- Live-cell imaging compatible environmental chamber

Procedure:

- Cell Preparation: Engineer cell lines to express fluorescently tagged RNA polymerase II subunits using CRISPR/Cas9 gene editing or transient transfection [18].

- Environmental Control: Plate cells on glass-bottom dishes and maintain at 37°C with 5% CO₂ in an environmental chamber mounted on the microscope stage.

- Image Acquisition: Use lattice light-sheet microscopy or similar advanced imaging techniques to minimize phototoxicity while capturing high-resolution temporal data [18].

- Data Collection: Acquire time-lapse images over several hours with appropriate temporal resolution (e.g., 1-5 second intervals) to capture cluster dynamics.

- Perturbation Studies: Introduce specific inhibitors or activators of transcription to observe effects on cluster formation and stability.

- Data Analysis: Quantify cluster size, lifetime, and dynamics using single-particle tracking and cluster analysis algorithms.

Key Measurements:

- Cluster formation rates under different transcriptional conditions

- Residence time of Pol II within clusters

- Correlation between cluster dynamics and gene expression outputs
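Two of the key measurements above, cluster lifetime and formation rate, reduce to simple bookkeeping once clusters have been tracked. The following is a minimal sketch rather than the cited laboratory's analysis pipeline. It assumes a hypothetical `tracks` dictionary that maps a cluster ID to the sorted frame indices in which that cluster was detected, and the example values are invented for illustration.

```python
from statistics import mean


def cluster_lifetimes(tracks, frame_interval_s):
    """Return the lifetime in seconds of each tracked cluster.

    `tracks` (hypothetical format) maps a cluster ID to the sorted frame
    indices in which that cluster was detected.
    """
    lifetimes = {}
    for cluster_id, frames in tracks.items():
        if not frames:
            continue
        # Lifetime spans the first to the last detection, inclusive.
        n_frames = frames[-1] - frames[0] + 1
        lifetimes[cluster_id] = n_frames * frame_interval_s
    return lifetimes


def formation_rate(tracks, total_time_s):
    """Number of clusters detected per second of acquisition."""
    n_formed = sum(1 for frames in tracks.values() if frames)
    return n_formed / total_time_s


# Invented example: three clusters imaged at 2 s intervals for 60 s.
example_tracks = {
    "c1": [0, 1, 2, 3],
    "c2": [5, 6],
    "c3": [10, 11, 12, 13, 14, 15],
}
lifetimes = cluster_lifetimes(example_tracks, frame_interval_s=2.0)
mean_lifetime = mean(lifetimes.values())
rate = formation_rate(example_tracks, total_time_s=60.0)
```

In practice the same summary would be computed separately for each transcriptional condition, so that lifetimes and formation rates can be compared before and after perturbation.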

Engineered In Vitro Protocol: Reconstituting Biomolecular Condensates

Objective: To reconstitute and manipulate biomolecular condensates in a controlled microenvironment to study the physical principles governing their emergence [18].

Materials and Reagents:

- Purified recombinant proteins (Pol II, Mediator complexes)

- Fluorescently labeled nucleic acids

- Microfluidic chambers or glass slides with patterned surfaces

- Buffers with controlled ionic strength and molecular crowding agents

- High-sensitivity fluorescence microscope with TIRF or confocal capabilities

Procedure:

- Surface Preparation: Pattern glass surfaces with appropriate chemical treatments to control wetting properties and mimic cellular interfaces.

- Solution Preparation: Mix purified protein components in buffers containing molecular crowders (e.g., PEG, Ficoll) to mimic intracellular conditions.

- Assembly Observation: Introduce protein solutions into observation chambers and monitor phase separation using fluorescence microscopy.

- Environmental Manipulation: Systematically vary conditions such as temperature, ionic strength, and component concentrations to determine phase boundaries.

- Dynamic Perturbation: Use light-induced targeting approaches to locally manipulate condensate formation in real-time [18].

- Functional Assays: Incorporate DNA templates and nucleotide precursors to assess transcriptional activity within condensates.

Key Measurements:

- Phase diagrams mapping condensate formation under different conditions

- Material properties of condensates (viscosity, surface tension)

- Recruitment kinetics of additional components to condensates

- Relationship between condensate physical properties and functional outputs
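A phase diagram of the kind listed above can be assembled from a grid of yes/no observations. The sketch below is one simple way to do this, under stated assumptions. It supposes that, for each salt concentration, a series of protein concentrations was scored for the presence of condensates, and it estimates the saturation concentration as the midpoint between the highest condensate-free and the lowest condensate-containing concentration. The function names, units, and grid values are illustrative assumptions, not data from the cited work.

```python
def saturation_concentration(observations):
    """Estimate the saturation concentration from scored observations.

    `observations` is a list of (protein_concentration, condensates_seen)
    pairs. Returns the midpoint between the highest concentration without
    condensates and the lowest concentration with condensates, or None if
    the series does not bracket a clean transition.
    """
    with_condensates = [c for c, seen in observations if seen]
    without = [c for c, seen in observations if not seen]
    if not with_condensates or not without:
        return None
    lower, upper = max(without), min(with_condensates)
    if lower >= upper:
        return None  # non-monotonic scoring; inspect the images manually
    return 0.5 * (lower + upper)


def phase_boundary(grid):
    """Map each salt condition to its estimated saturation concentration."""
    return {salt: saturation_concentration(obs) for salt, obs in grid.items()}


# Invented grid: keys are salt in mM, pairs are (protein in uM, condensates?).
toy_grid = {
    50: [(0.5, False), (1.0, False), (2.0, True), (4.0, True)],
    150: [(0.5, False), (1.0, False), (2.0, False), (4.0, True)],
    300: [(0.5, False), (1.0, False), (2.0, False), (4.0, False)],
}
boundary = phase_boundary(toy_grid)
```

A `None` entry marks a condition where no transition was bracketed, which signals that the concentration range should be extended before a boundary is drawn.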

Table 3: Research Reagent Solutions for Emergent Phenomena Studies

| Reagent/Technology | Function in Research | Example Applications |

|---|---|---|

| CRISPR/Cas9 Systems [17] | Precise genetic manipulation | Tagging endogenous proteins, knocking out genes to test necessity in emergent behaviors |

| Live-Cell Compatible Fluorophores [18] | Real-time visualization of molecular dynamics | Tracking protein cluster formation and dissolution in living cells |

| Organoid Culture Systems [17] | 3D tissue models with emergent tissue-level behaviors | Studying cell differentiation patterns, tissue organization, and organ-level functions |

| Molecular Crowders (PEG, Ficoll) | Mimic intracellular crowding environment | Inducing phase separation in purified systems to study condensate formation |

| Microfluidic Chambers | Precise control over cellular microenvironment | Creating spatial gradients, controlling shear forces, and patterning cell cultures |

| Super-Resolution Microscopes (PALM/STORM, STED, Minflux) [18] | Imaging beyond diffraction limit | Visualizing nanoscale organization within biomolecular condensates |

| Bioinformatics Tools | Analysis of complex datasets from emergence studies | Identifying patterns in transcriptomic data, modeling network behaviors |

Conceptual Framework: Experimental Pathways for Emergence Research

Workflow for Deconstructing Emergent Phenomena

Signaling Pathways in Biomolecular Condensate Formation

The study of emergent biological phenomena requires a sophisticated integration of both in vivo and in vitro approaches. While in vivo systems provide the essential physiological context in which these phenomena naturally occur, engineered in vitro systems offer the experimental control necessary to deconstruct the underlying mechanisms. The future of this field lies in developing increasingly sophisticated in vitro models—such as organs-on-chips, 3D bioprinted tissues, and advanced organoid systems—that capture more of the complexity of living organisms while maintaining the manipulability of traditional in vitro approaches [3] [17].

For researchers investigating emergent behaviors, a sequential approach that begins with observation in vivo, proceeds through mechanistic deconstruction in vitro, and concludes with validation back in vivo represents the most powerful strategy. This integrated methodology leverages the respective strengths of each system while mitigating their individual limitations. As engineered microenvironments become increasingly sophisticated in their ability to recapitulate tissue-level and organ-level behaviors, they will continue to expand our capacity to understand, predict, and ultimately manipulate the emergent phenomena that underlie both normal physiology and disease states.

Key Differences in Complexity, Control, and Physiological Relevance

For researchers in drug development and biomedical science, the choice between in vitro (outside a living organism) and in vivo (within a living organism) models is fundamental. This guide provides a detailed, data-driven comparison of these approaches, focusing on their complexity, control, and physiological relevance to inform your experimental design.

Defining the approaches

The core distinction lies in the experimental environment. In vivo models involve testing within a whole, living organism, such as an animal, providing a real-time, systemic context [3] [20]. In contrast, in vitro models are conducted outside the organism in controlled laboratory environments, such as petri dishes or test tubes, using isolated cells or tissues [3] [21].

A third category, ex vivo, refers to experiments using tissues or organs extracted from a living organism but maintained viable under specific conditions, retaining some native architecture and function [3].

Comparative Analysis: In Vivo vs. In Vitro

The table below summarizes the core differences between these two methodologies across key parameters important for research design.

| Aspect | In Vivo Models | In Vitro Models |

|---|---|---|

| Definition & Scope | Within a whole, living organism (e.g., rodents, zebrafish) [20]; provides a holistic, systemic view [3]. | In a controlled lab environment using isolated cells or tissues (e.g., 2D culture, organoids) [20]; focuses on specific components. |

| Complexity & Physiological Relevance | High physiological relevance; preserves complex interactions between cells, tissues, and organs [3] [20]. Accurately reflects pharmacokinetics and whole-body response [20]. | Low to moderate physiological relevance in traditional 2D cultures; lacks systemic context [21]. Advanced models (e.g., Organ-Chips) improve relevance by mimicking tissue environments [22] [21]. |

| Level of Experimental Control | Low control; high interference from systemic variables and inter-individual biological variability [3]. | High control over the cellular environment (e.g., nutrients, temperature); minimal interference from systemic variables [3] [20]. |

| Cost & Resources | High cost due to animal care, monitoring, and ethical oversight; resource-intensive [3] [20]. | Cost-effective; less expensive setup and maintenance, amenable to high-throughput screening [20] [21]. |

| Time to Results | Long duration; involves extensive planning, execution, and analysis [3] [20]. | Rapid results; quicker experiments due to controlled, simplified conditions [20]. |

| Ethical Considerations | Significant ethical concerns, particularly regarding animal use; requires stringent oversight [3] [20]. | Viewed as more ethical; aligns with the 3Rs principle (Replacement, Reduction, Refinement) to minimize animal use [3] [20]. |

Experimental Models and Protocols

In Vivo Experimental Models

In vivo research employs various animal models, each selected for specific study objectives [3]:

- Rodents (mice and rats): Used to assess pharmacokinetics, toxicity, and efficacy of new compounds before human clinical trials [3].

- Zebrafish (Danio rerio): Ideal for studying embryonic development, toxicology, and gene function due to transparent embryos and external development [3] [23].

- Drosophila melanogaster (fruit fly): Widely used in genetic and neurobehavioral research for its ease of genetic manipulation [3].

Sample In Vivo Protocol: Drug Efficacy and Toxicity in Rodents

- Lead Compound Identification: Candidates are first screened using in vitro methods for initial activity and cytotoxicity [23].

- Animal Model Selection: Choose a relevant rodent strain, often genetically engineered to model a specific human disease.

- Dosing Regimen: Administer the drug candidate to the animals via a relevant route (e.g., oral gavage, injection) at multiple dosages.

- In-Life Monitoring: Observe animals for signs of toxicity, behavioral changes, and overall health.

- Sample Collection: At defined endpoints, collect blood for pharmacokinetic (PK) analysis and tissues for histopathological examination.

- Data Analysis: Evaluate drug efficacy (e.g., tumor shrinkage) and safety (e.g., organ toxicity) to determine suitability for clinical trials.
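The pharmacokinetic analysis in the sample collection step typically starts with non-compartmental summaries such as exposure (AUC) and half-life. The sketch below shows the linear trapezoidal rule and a log-linear fit for the terminal half-life. The concentration values are invented, and the choice of which time points belong to the terminal phase is left to the analyst.

```python
import math


def auc_trapezoid(times_h, conc):
    """Area under the concentration-time curve by the linear trapezoidal rule."""
    return sum(
        0.5 * (conc[i] + conc[i + 1]) * (times_h[i + 1] - times_h[i])
        for i in range(len(times_h) - 1)
    )


def terminal_half_life(times_h, conc):
    """Half-life from a least-squares line through ln(concentration) vs time.

    Pass only the points judged to lie in the terminal elimination phase.
    """
    logs = [math.log(c) for c in conc]
    n = len(times_h)
    t_mean = sum(times_h) / n
    l_mean = sum(logs) / n
    slope = sum((t - t_mean) * (l - l_mean) for t, l in zip(times_h, logs)) / sum(
        (t - t_mean) ** 2 for t in times_h
    )
    return math.log(2) / -slope


# Invented plasma profile with a 2 h half-life (concentration in mg/L).
times_h = [0.0, 2.0, 4.0, 6.0, 8.0]
conc = [8.0, 4.0, 2.0, 1.0, 0.5]
auc = auc_trapezoid(times_h, conc)          # mg*h/L
t_half = terminal_half_life(times_h, conc)  # hours
```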

In Vitro Experimental Models

In vitro models range from simple 2D cultures to advanced, complex systems [4] [21]:

- 2D Cell Culture: Monolayers of immortalized or primary cells grown on flat plastic or glass surfaces [4] [21].

- 3D Cell Culture (Spheroids & Organoids): Cells grown in three-dimensional structures that better mimic tissue architecture and cell-cell interactions [4]. Organoids are particularly advanced as they are self-organizing and can recapitulate organ-specific functions [4].

- Organ-on-a-Chip (OOC): Microfluidic devices containing living human cells that simulate the activities, mechanics, and physiological responses of entire organs [22] [21]. These systems can incorporate fluid flow, mechanical forces, and multiple cell types.

Sample In Vitro Protocol: Establishing Patient-Derived Organoids for Drug Screening

- Tissue Acquisition & Digestion: Obtain human tissue sample via biopsy or surgery. Mechanically and enzymatically digest the tissue into small fragments or single cells.

- Matrix Embedding: Mix the cell suspension with a basement membrane extract (e.g., Matrigel) which provides a 3D scaffold for growth.

- Culture in Specialized Media: Plate the matrix-cell mixture and overlay with a defined, organ-specific culture medium. The medium is supplemented with specific growth factors, signaling agonists, and inhibitors (e.g., Wnt agonists, EGF, BMP inhibitors) to support stem cell maintenance and differentiation [4].

- Organoid Growth & Expansion: Culture for days to weeks, with regular medium changes, to allow self-organization into organoids.

- Drug Exposure & Assaying: Treat organoids with drug candidates. Assess outcomes using assays for cell viability (e.g., ATP-based assays), immunohistochemistry, or transcriptomic analysis to evaluate efficacy and toxicity [4].
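The viability readout in the final step is usually summarized as a half-maximal inhibitory concentration (IC50). The sketch below normalizes raw signal to a vehicle control and estimates IC50 by interpolating on a log-dose axis between the two doses that bracket 50% viability. This is a deliberately simple stand-in for a full four-parameter curve fit, and the luminescence values are invented.

```python
import math


def normalize_viability(signal, vehicle_signal, blank_signal=0.0):
    """Express a raw assay signal as percent of the vehicle control."""
    return 100.0 * (signal - blank_signal) / (vehicle_signal - blank_signal)


def ic50_by_interpolation(doses, viability_pct):
    """Estimate IC50 by log-linear interpolation across the 50% crossing.

    `doses` must be in ascending order. Returns None when viability never
    crosses 50% within the tested range.
    """
    points = list(zip(doses, viability_pct))
    for (d_lo, v_lo), (d_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(d_lo) + frac * (math.log10(d_hi) - math.log10(d_lo))
            return 10 ** log_ic50
    return None


# Invented ATP-assay luminescence for four doses (uM) and a vehicle control.
doses_um = [0.01, 0.1, 1.0, 10.0]
luminescence = [9500.0, 8000.0, 2000.0, 500.0]
vehicle = 10000.0
viability = [normalize_viability(s, vehicle) for s in luminescence]
ic50_um = ic50_by_interpolation(doses_um, viability)
```

Interpolation needs no curve-fitting library and fails visibly (returns `None`) when the dose range misses the transition, but a fitted Hill curve is preferable when replicate data are available.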

Research Reagent Solutions

The following table details key materials and their functions essential for conducting research in this field.

| Reagent / Material | Function in Research |

|---|---|

| Basement Membrane Extract (e.g., Matrigel) | A scaffold derived from mouse tumor tissue used to support the 3D growth and self-organization of cells into structures like organoids [4]. |

| Defined Culture Media | Tailored nutrient solutions containing specific growth factors, cytokines, and small molecules to maintain cell viability, promote differentiation, and recapitulate in vivo signaling pathways [4]. |

| Primary Human Cells | Cells isolated directly from human tissue (e.g., from surgery), which retain physiological relevance and are used in advanced in vitro models like Organ-Chips [3] [22]. |

| Immortalized Cell Lines | Cells (often derived from cancers) that have been altered to divide indefinitely, providing a consistent, scalable, and cost-effective resource for high-throughput screening, particularly in 2D culture [3] [21]. |

| Microfluidic Biochips | Engineered devices containing tiny channels and chambers that allow for dynamic fluid flow and mechanical stimulation, forming the physical basis of Organ-on-a-Chip systems [22] [24]. |

In vivo and in vitro models are not mutually exclusive but are complementary tools in the research ecosystem [3] [20]. The trend in biomedical research is moving toward the integration of these approaches. In vitro data can inform and refine in vivo studies, reducing animal use and increasing efficiency.

A significant development is the rise of advanced complex in vitro models (CIVMs) like Organ-on-Chip technology and sophisticated organoids [22] [4]. These models aim to bridge the gap between traditional in vitro simplicity and in vivo complexity by incorporating human cells, 3D architecture, mechanical forces, and multiple cell types [21] [24]. This progression is supported by evolving regulatory frameworks, such as the U.S. FDA Modernization Act 2.0, which authorizes the use of certain alternatives to animal testing for investigating drug safety and efficacy [4] [25]. For researchers, the optimal strategy involves a careful consideration of the research question, required physiological complexity, available resources, and ethical guidelines, potentially leveraging both traditional and emerging technologies to achieve the most predictive and translatable outcomes.

In the study of complex biological systems, emergent properties present a fundamental challenge for researchers and drug development professionals. These properties—including drug efficacy, systemic toxicity, and disease progression—arise from nonlinear interactions across multiple biological scales, from molecular networks to whole organisms, and cannot be fully understood by examining individual components in isolation [26]. The translational challenge in biomedical research lies in effectively bridging these scales to predict clinical outcomes from basic mechanistic knowledge [27].

The choice between in vitro and in vivo methodologies fundamentally shapes how researchers can study these emergent behaviors. In vivo approaches provide full physiological context but introduce ethical concerns and high variability, while in vitro systems offer greater control but lack the integrated complexity of whole organisms [3]. This guide compares how two powerful computational frameworks—Agent-Based Models (ABMs) and Systems Biology models—enable researchers to navigate this methodological landscape, providing objective performance comparisons and experimental protocols to inform research design.

Core Principles and Conceptual Frameworks

Agent-Based Modeling: A Bottom-Up Approach to Emergence

Agent-Based Modeling is a rule-based, discrete-event computational methodology that simulates the actions and interactions of autonomous agents to understand the emergence of system-level patterns [27]. ABMs originate from cellular automata and are characterized by several key principles:

- Focus on individual components: ABMs explicitly represent individual entities (cells, organisms) as computational objects with distinct properties and behavioral rules [28]

- Local interactions: Agents operate with "bounded knowledge," responding only to their immediate environment rather than global system states [27]

- Parallelism and stochasticity: Multiple agent instances operate in parallel, with intrinsic stochasticity generating heterogeneous behavioral trajectories across populations [27]

- Spatial explicitness: Most ABMs incorporate spatial relationships through grid-based or network-based environments [27]

- Modular structure: New agent-types or rules can be added without reengineering the entire simulation [27]

The fundamental insight of ABMs is that emergent system dynamics arise from the aggregate of individual interactions, much like flocking behavior emerges from simple rules followed by individual birds rather than a central controller [27].
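The principles listed above can be made concrete with a very small simulation. The sketch below is an illustrative toy, not a model from the cited literature. Each agent is a cell on a square grid that knows only its four neighbouring sites, divides stochastically into a free neighbour, and stops dividing when surrounded (contact inhibition). The grid size, division probability, and function name are assumptions chosen for illustration.

```python
import random


def simulate_growth(size=20, steps=30, p_divide=0.3, seed=0):
    """Grow a cell colony on a size x size grid and return cell counts per step.

    Each cell follows one local rule: with probability `p_divide`, place a
    daughter in a randomly chosen free neighbouring site. Cells with no free
    neighbour cannot divide, which is a simple form of contact inhibition.
    """
    rng = random.Random(seed)
    occupied = {(size // 2, size // 2)}  # start from a single central cell
    counts = [len(occupied)]
    for _ in range(steps):
        newborn = set()
        # Sort for a reproducible update order given the same seed.
        for x, y in sorted(occupied):
            neighbours = [
                (x + dx, y + dy)
                for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1))
                if 0 <= x + dx < size and 0 <= y + dy < size
            ]
            free = [n for n in neighbours if n not in occupied and n not in newborn]
            if free and rng.random() < p_divide:
                newborn.add(rng.choice(free))
        occupied |= newborn
        counts.append(len(occupied))
    return counts


growth_curve = simulate_growth()
```

No rule in the code mentions the colony as a whole, yet the population curve slows as interior cells become surrounded. That slowdown is an emergent property of the local rule, and changing `seed` gives the heterogeneous trajectories that the stochasticity principle describes.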

Systems Biology: A Network-Based Approach to Complexity

Systems Biology employs mathematical modeling and computational analysis to study the integrated dynamics of biological networks across multiple organizational scales [29] [30]. This framework is characterized by:

- Network-centric perspective: Biological components are represented as nodes (genes, proteins, metabolites) within interconnected networks [29]

- Multi-omics integration: Data from genomic, proteomic, transcriptional, and metabolic layers are combined to construct comprehensive interaction maps [29]

- Dynamic modeling: Ordinary differential equations (ODEs) or partial differential equations (PDEs) commonly describe the kinetic behavior of network components [30]

- Focus on regulatory motifs: Key network structures like feedback loops, feed-forward loops, and bistable switches are identified as critical control points [30]

Systems Biology aims to predict system behavior by understanding the topological properties and dynamic interactions within biological networks, moving beyond single-pathway analyses to capture cross-scale integration [29].
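As an illustration of the dynamic-modeling and regulatory-motif points above, the sketch below integrates a single ODE for a self-activating species with a fixed-step fourth-order Runge-Kutta scheme. The equation, dx/dt = basal + vmax·xⁿ/(Kⁿ + xⁿ) − deg·x, is a generic positive-feedback motif, and all parameter values are assumptions chosen so that the system is bistable. It is not a model taken from the cited references.

```python
def dxdt(x, basal=0.05, vmax=1.0, K=0.5, n=4, deg=1.0):
    """Rate of change for a self-activating species with first-order decay."""
    return basal + vmax * x**n / (K**n + x**n) - deg * x


def integrate_rk4(x0, t_end=50.0, dt=0.01):
    """Integrate dx/dt from x0 to t_end with fixed-step RK4; return final x."""
    x = x0
    for _ in range(int(round(t_end / dt))):
        k1 = dxdt(x)
        k2 = dxdt(x + 0.5 * dt * k1)
        k3 = dxdt(x + 0.5 * dt * k2)
        k4 = dxdt(x + dt * k3)
        x += dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6.0
    return x


# Two initial conditions on either side of the unstable threshold.
low_state = integrate_rk4(0.2)
high_state = integrate_rk4(0.7)
```

With these assumed parameters the two trajectories settle at different steady states, a low one near the basal level and a high one near saturation. This is the switch-like behavior that makes feedback loops critical control points, and in practice an adaptive solver from a numerical library would replace the hand-written integrator.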

Comparative Theoretical Foundations

The table below summarizes the core conceptual differences between these approaches:

Table 1: Fundamental Principles of ABM and Systems Biology Frameworks

| Aspect | Agent-Based Models | Systems Biology Models |

|---|---|---|

| Primary focus | Individual agents and local interactions | Network topology and global dynamics |

| Representation | Discrete computational objects | Continuous concentrations/differential equations |

| Spatial handling | Explicit (grids, networks) | Often implicit or continuum-based |

| Stochasticity | Intrinsic through probabilistic rules | Often deterministic; optional noise terms |

| Emergence mechanism | Bottom-up from agent interactions | System-level solutions to equation systems |

| Scale integration | Natural through agent hierarchies | Explicit through multi-scale modeling |

Performance Comparison: Predictive Capabilities Across Biological Scales

Capturing Multi-Scale Emergent Behaviors

The ability to predict emergent behaviors across biological scales represents a critical test for computational frameworks. Drug efficacy and toxicity exemplify such emergent properties, arising from interactions across molecular, cellular, tissue, and organ levels [26]. The following table compares how each framework addresses this challenge:

Table 2: Performance in Capturing Emergent Properties Across Scales

| Biological Scale | ABM Performance & Characteristics | Systems Biology Performance & Characteristics |

|---|---|---|

| Molecular Networks | Limited direct representation; rules may abstract molecular details | Excellent representation via ODE/PDE models of signaling pathways |

| Cellular Responses | Strong representation of heterogeneity and cell-state transitions | Population averages; limited single-cell heterogeneity |

| Tissue-Level Dynamics | Excellent spatial patterning; cell-cell interactions; microenvironment | Often requires separate tissue-scale models; less spatial detail |

| Organ/System Function | Emerging capability through multi-scale ABMs | Strong through physiologically-based pharmacokinetic models |

| Temporal Dynamics | Discrete time steps; potential for high resolution | Continuous time; natural for kinetic modeling |

| Experimental Validation | Growing calibration methods (e.g., SMoRe ParS) [31] | Established parameter estimation; model fitting algorithms |

Representation of Biological Heterogeneity

A key distinction emerges in how each framework handles biological heterogeneity, a crucial factor in personalized medicine and variable drug responses:

- ABMs naturally capture cell-to-cell variability and population heterogeneity through distinct agent instances with individualized properties and behavioral trajectories [27] [28]. This enables studying how minor differences in individual cells lead to significant population-level effects.

- Systems Biology models typically operate with population averages, though newer approaches incorporate variability through parameter distributions or subpopulations [30]. The emerging Enhanced Pharmacodynamic (ePD) models explicitly account for how genomic, epigenomic, and posttranslational variations affect drug response in individual patients [30].

Predictive Accuracy in Therapeutic Applications

Both frameworks show complementary strengths in predicting therapeutic outcomes:

- ABMs excel in contexts where spatial organization and local interactions drive outcomes, such as tumor growth, immune cell infiltration, and tissue regeneration [28]. For example, ABMs of cancer cell populations can predict emergent resistance patterns arising from cellular heterogeneity and microenvironmental constraints [31].

- Systems Biology approaches demonstrate superior performance when molecular network topology and kinetic parameters determine system behavior, such as in signaling pathway responses to targeted therapies [30]. ePD models of EGFR inhibition successfully predict how different genomic backgrounds lead to variable tumor responses to the same drug dose [30].

Experimental Protocols and Methodological Implementation

Agent-Based Model Development Workflow

The following diagram illustrates the core workflow for developing and validating an ABM for studying emergent behaviors:

Diagram 1: ABM Development and Validation Workflow

Detailed Protocol for ABM Implementation:

Agent Rule Definition: Translate biological knowledge into computational rules governing agent behavior, including:

- State transitions (e.g., cell cycle progression, apoptosis)

- Environmental responses (e.g., chemotaxis, contact inhibition)

- Interaction protocols (e.g., cell-cell signaling, resource competition) [28]

Model Calibration: Employ parameter estimation methods such as:

- SMoRe ParS (Surrogate Modeling for Reconstructing Parameter Surfaces): Uses explicitly formulated surrogate models to bridge ABMs with experimental data [31]

- Bayesian calibration: Incorporates prior knowledge and uncertainty quantification

- Direct inference: Compares simulation outputs with experimental time-course data [31]

Simulation Execution: Conduct multiple runs with different random seeds to:

- Account for stochasticity through Monte Carlo sampling

- Determine minimum run numbers using variance stability metrics (e.g., coefficient of variation) [32]

- Explore parameter spaces to identify behavioral regimes

Output Analysis: Apply specialized techniques for ABM outputs:

- Variance stabilization to determine appropriate sample sizes

- Spatio-temporal analysis of pattern formation

- Sensitivity analysis to identify critical parameters [32]
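The simulation-execution and variance-stabilization steps above can be sketched as follows. The code runs a stand-in stochastic simulation with different random seeds, tracks the coefficient of variation (CV) of the output as runs accumulate, and reports the first run count at which the CV has stayed within a tolerance over a sliding window. The toy simulation, the tolerance, and the window length are illustrative assumptions; reference [32] may define its stability criterion differently.

```python
import random
from statistics import mean, stdev


def toy_simulation(seed):
    """Stand-in for one ABM run: stochastic division over 20 steps."""
    rng = random.Random(seed)
    cells = 10
    for _ in range(20):
        cells += sum(1 for _ in range(cells) if rng.random() < 0.1)
    return cells


def running_cv(outputs):
    """Coefficient of variation of the first n outputs, for n = 2..len(outputs)."""
    return [stdev(outputs[:n]) / mean(outputs[:n]) for n in range(2, len(outputs) + 1)]


def minimum_runs(outputs, tolerance=0.01, window=5):
    """Smallest run count at which the CV varied by < tolerance over `window` values.

    Returns None if the CV never stabilizes within the supplied runs.
    """
    cvs = running_cv(outputs)
    for i in range(len(cvs) - window + 1):
        block = cvs[i:i + window]
        if max(block) - min(block) < tolerance:
            # cvs[i] corresponds to i + 2 runs; the window ends window - 1 later.
            return i + window + 1
    return None


outputs = [toy_simulation(seed) for seed in range(100)]
cv_trace = running_cv(outputs)
n_min = minimum_runs(outputs)
```

If `minimum_runs` returns `None`, the sensible response is to add runs rather than loosen the tolerance, since an unstable CV means the output distribution is not yet well sampled.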

Systems Biology Model Construction Protocol

The diagram below outlines the methodology for constructing systems biology models:

Diagram 2: Systems Biology Model Construction Methodology

Detailed Protocol for Systems Biology Modeling:

Network Reconstruction: Build interaction networks using:

- Protein-protein interaction (PPI) data from databases and experimental studies

- Gene co-expression networks using Pearson Correlation Coefficient (PCC) or mutual information [29]

- Regulatory interactions from transcription factor binding and chromatin accessibility data
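For the co-expression option in the network reconstruction step, the sketch below computes the Pearson Correlation Coefficient between every pair of genes and keeps pairs whose absolute correlation exceeds a threshold. The gene names, expression values, and the 0.9 threshold are invented for illustration; real analyses would also correct for multiple testing and sample size.

```python
import math
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def coexpression_edges(expression, threshold=0.9):
    """Return (gene1, gene2, r) for every pair with |r| >= threshold."""
    edges = []
    for g1, g2 in combinations(sorted(expression), 2):
        r = pearson(expression[g1], expression[g2])
        if abs(r) >= threshold:
            edges.append((g1, g2, round(r, 3)))
    return edges


# Invented expression profiles across five samples.
toy_expression = {
    "geneA": [1, 2, 3, 4, 5],
    "geneB": [2, 4, 6, 8, 10],
    "geneC": [5, 4, 3, 2, 1],
    "geneD": [3, 1, 4, 1, 5],
}
edges = coexpression_edges(toy_expression)
```

The resulting edge list can be loaded into a network analysis tool for topological analysis. Keeping the sign of `r` preserves the distinction between co-regulated and anti-correlated gene pairs.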

Mathematical Formulation: Translate networks into dynamic models through:

- Ordinary Differential Equations (ODEs) for well-mixed systems

- Partial Differential Equations (PDEs) for spatial dynamics

- Constraint-based models (e.g., flux balance analysis) for metabolic networks

Parameter Estimation: Calibrate model parameters using:

- Time-course data of molecular species and phenotypic responses

- Model-fitting algorithms (weighted least squares, maximum likelihood, Bayesian inference) [30]

- Identifiability analysis to ensure parameter determinacy
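The weighted least squares option named in the parameter estimation step can be illustrated with a one-parameter example. The sketch below fits the rate constant of a first-order decay model, x(t) = x0·exp(−k·t), by scanning a grid of candidate values and choosing the one that minimizes the variance-weighted sum of squared residuals. The model, the synthetic data, and the grid are assumptions for illustration; practical work would use a numerical optimizer and many more parameters.

```python
import math


def model(t, x0, k):
    """First-order decay: x(t) = x0 * exp(-k * t)."""
    return x0 * math.exp(-k * t)


def weighted_sse(k, times, observed, sigmas, x0):
    """Sum of squared residuals, each scaled by its measurement error."""
    return sum(
        ((obs - model(t, x0, k)) / s) ** 2
        for t, obs, s in zip(times, observed, sigmas)
    )


def fit_rate_constant(times, observed, sigmas, x0, k_grid):
    """Return the candidate k with the smallest weighted sum of squares."""
    return min(k_grid, key=lambda k: weighted_sse(k, times, observed, sigmas, x0))


# Synthetic, noise-free data generated with k = 0.5 (illustrative only).
times = [0.0, 1.0, 2.0, 4.0, 8.0]
observed = [model(t, 10.0, 0.5) for t in times]
sigmas = [0.5] * len(times)
k_grid = [i / 100 for i in range(1, 201)]  # candidates 0.01 to 2.00
k_hat = fit_rate_constant(times, observed, sigmas, x0=10.0, k_grid=k_grid)
```

Plotting `weighted_sse` against `k_grid` gives a crude check on identifiability. A sharp, single minimum suggests the data constrain the parameter, whereas a flat valley suggests they do not.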

Systems Analysis: Characterize emergent dynamics through:

- Bifurcation analysis to identify regime shifts and bistability

- Sensitivity analysis to determine critical nodes and parameters

- Control theory applications for designing therapeutic interventions [29]

Computational Frameworks and Software Tools

Table 3: Essential Computational Tools for Emergence Modeling

| Tool Category | Specific Examples | Primary Function | Framework Compatibility |

|---|---|---|---|

| ABM Platforms | MASON library [28], NetLogo [33] | Multi-agent scheduling and simulation | ABM-focused |

| ODE/PDE Solvers | DifferentialEquations.jl [33], FEniCS [33] | Numerical solution of differential equations | Systems Biology |

| Network Analysis | Cytoscape, NetworkX | Topological analysis of molecular networks | Systems Biology |

| Model Calibration | SMoRe ParS [31], Bayesian tools | Parameter estimation and uncertainty quantification | Both frameworks |

| Spatial Modeling | OpenFOAM [33], CompuCell3D | Spatial simulations and tissue modeling | Both frameworks |

| Multi-Scale Integration | FURM [27], enhanced PD models [30] | Cross-scale knowledge representation | Both frameworks |

Experimental Data Requirements for Model Parameterization

Successful implementation of both frameworks requires specific types of experimental data:

For ABM Parameterization:

For Systems Biology Parameterization:

Integrated Applications: Bridging In Vitro and In Vivo Research

Connecting Computational and Experimental Domains

The relationship between computational modeling and experimental approaches forms a continuous cycle of knowledge generation, as illustrated below:

Diagram 3: Iterative Research Cycle Integrating Modeling and Experimentation

Case Study: Cancer Cell Growth and Drug Response

A compelling example of framework integration comes from studies of cancer cell populations and their response to chemotherapeutic agents:

ABM Application: Norton et al. developed an ABM of SNU-1 human gastric cancer cells that simulated population responses to oxaliplatin, incorporating cell cycle dynamics, drug-induced arrest, and contact inhibition [31]. The model was calibrated using in vitro growth inhibition assays and flow cytometry cell cycle data.

Systems Biology Integration: Enhanced Pharmacodynamic (ePD) models of EGFR inhibition demonstrate how systems biology can predict variable drug responses based on genomic and epigenomic alterations in individual patients [30]. These models incorporate specific mutations, methylation patterns, and miRNA expression levels to forecast tumor growth outcomes under targeted therapies.

Performance Metrics and Validation Standards

Robust validation requires multiple complementary approaches:

Quantitative Metrics:

Qualitative Assessments:

- Biological plausibility of emergent patterns

- Mechanistic interpretability of model components

- Expert evaluation of system behaviors [26]

The comparison between Agent-Based and Systems Biology modeling frameworks reveals complementary strengths rather than competitive superiority. ABMs provide unparalleled capability for representing spatial heterogeneity, individual variability, and bottom-up emergence through discrete, rule-based simulations. Systems Biology approaches offer powerful tools for understanding network dynamics, multi-omics integration, and molecular mechanism through continuous mathematical formulations.

The choice between frameworks should be guided by specific research questions: ABMs excel when spatial context, individual heterogeneity, and local interactions drive emergent behaviors, while Systems Biology models prove superior when molecular network topology and kinetic parameters determine system outcomes. The most promising direction for the field involves hybrid approaches that leverage the strengths of both frameworks, creating multi-scale models that seamlessly integrate molecular details with tissue-level phenomena.

For researchers navigating the complex landscape of in vitro and in vivo studies of emergent behaviors, this comparison provides both methodological guidance and practical implementation tools. By selecting the appropriate computational framework and applying rigorous validation standards, scientists can enhance predictive accuracy in drug development and advance our fundamental understanding of biological complexity.

Tools and Techniques: Engineering and Analyzing Emergent Systems

In the comparison of in vitro and in vivo emergent behaviors, advanced in vitro platforms represent a paradigm shift in biomedical research. Traditional two-dimensional (2D) cell cultures often fail to replicate the complexity of living tissues, while animal (in vivo) studies face challenges in translating results to human physiology because of species-specific differences [35] [36]. Organ-on-a-Chip (OoC) technology bridges this gap by using microfluidic devices lined with living human cells to create three-dimensional (3D) microenvironments that simulate human organ physiology [37] [38]. These microphysiological systems provide a sophisticated platform for drug development, disease modeling, and personalized medicine, offering human-relevant data while adhering to the 3Rs principle (Replacement, Reduction, Refinement) in animal testing [3].

The fundamental advantage of these advanced systems lies in their ability to replicate not just the cellular composition but also the dynamic mechanical forces and tissue-tissue interfaces critical to organ function [35]. For instance, breathing motions in lung alveoli, peristalsis in the gut, and blood flow-induced shear stress in vessels can be incorporated into these models [37]. This capability enables researchers to study emergent behaviors—complex physiological responses that arise from the interaction of multiple cell types and tissue structures—in a controlled human-relevant system that sits between traditional in vitro models and full in vivo organisms.

Technology Comparison: OoC Platforms Versus Traditional Models

The following table summarizes the key differences between advanced OoC platforms and traditional research models across critical parameters that influence their application in drug development and disease research.

Table 1: Comparative Analysis of Research Models

| Parameter | Traditional 2D In Vitro | Advanced OoC Platforms | In Vivo Models |
|---|---|---|---|
| Physiological Relevance | Low; lacks tissue-level complexity and 3D architecture [36] | Medium-High; recapitulates tissue-tissue interfaces and mechanical cues [35] [37] | High; full biological complexity within a living organism [36] [3] |
| Systemic Interaction | None; isolated cellular responses only [3] | Emerging; via linked multi-organ systems [39] | Complete; full organ system cross-talk [36] |
| Throughput & Cost | High throughput, low cost per sample [36] | Medium-High; improving with platforms like AVA (96 chips) [40] | Low throughput, high cost [3] |
| Data Human Relevance | Limited; often uses immortalized cell lines [36] | High; utilizes primary human cells and stem cells [35] [37] | Variable; significant species-specific differences [37] |
| Temporal Resolution | High; for cellular processes | High; real-time monitoring with integrated sensors [41] | Limited by invasive measurement techniques |
| Regulatory Acceptance | Well-established for early screening | Growing; FDA Modernization Act 2.0 authorizes use [37] | Gold standard for preclinical trials [36] |

Key Design Principles and Material Considerations

Core Architectural Components

Effective OoC platforms incorporate several biomimetic design principles to recreate organ-specific microenvironments. The central design typically features hollow microfluidic channels lined with living human organ-specific cells and human blood vessel cells separated by a porous membrane, which allows for the recreation of tissue-tissue interfaces [37]. These devices incorporate dynamic fluid flow to simulate blood perfusion and deliver nutrients, while also enabling the application of mechanical forces (e.g., cyclic stretch for lungs, peristalsis for gut) that are critical for maintaining tissue function [35] [38]. The 3D extracellular matrix (ECM) environment provides biochemical and biophysical cues that direct cell differentiation and organization, surpassing the capabilities of traditional 2D cultures [35].

Biomaterials in OoC Fabrication

The selection of materials is crucial for the biological fidelity and experimental reliability of OoC devices. The table below outlines key materials and their applications in OoC development.

Table 2: Essential Biomaterials for Organ-on-Chip Platforms

| Material | Key Properties | Common Applications | Considerations |
|---|---|---|---|
| PDMS (Polydimethylsiloxane) | Transparent, gas-permeable, flexible, easy to fabricate [41] | Most widely used material for prototyping and research [38] | Can absorb small hydrophobic molecules; uncrosslinked oligomers may leach into the culture medium [41] |
| Thermoplastics (PMMA, PS) | Rigid, minimal drug absorption, optically clear [41] | High-throughput screening plates, organ-on-chip consumables (e.g., Chip-R1) [40] | Less gas-permeable than PDMS; require different fabrication methods [41] |
| Hydrogels (Natural & Synthetic) | Tunable mechanical properties, biocompatible, mimic ECM [35] [41] | 3D cell culture matrices, support tissue morphogenesis and differentiation | Batch-to-batch variability (natural hydrogels), complex characterization |
| Extracellular Matrix (ECM) Proteins | Native biochemical composition, cell adhesion motifs | Coating channels to enhance cell attachment and function | Animal-derived (e.g., Matrigel) can introduce variability |

Experimental Workflow and Protocol Guidelines

Standardized OoC Development and Operation

The development and implementation of OoC platforms follow a systematic workflow to ensure biological relevance and data reliability. The diagram below illustrates the key stages from design to data analysis.

Diagram 1: Experimental workflow for OoC platform development and application, highlighting the staged process from initial design to final data validation.

Detailed Methodological Framework

The experimental workflow for OoC systems involves several critical stages that require careful optimization:

Chip Fabrication: Devices are typically created using soft lithography with PDMS, where a silicon master mold is fabricated via photolithography. PDMS base and curing agent are mixed, poured over the mold, and baked. The cured PDMS is then bonded to a glass slide or another PDMS layer after oxygen plasma treatment [38]. For high-throughput applications, injection molding of thermoplastics like polystyrene is employed [40].

Cell Culture Protocol:

- Cell Source Selection: Use primary human cells, patient-derived induced pluripotent stem cells (iPSCs), or established cell lines, depending on the research objectives [35].

- Channel Functionalization: Coat microfluidic channels with ECM proteins (e.g., collagen IV, fibronectin) at 100-200 µg/mL for 2 hours at 37°C or overnight at 4°C to promote cell attachment.

- Cell Seeding: Introduce cell suspensions at optimized densities (e.g., 1-10 million cells/mL) into appropriate channels. Allow cell attachment for 15-60 minutes before initiating flow.

- Tissue Maturation: Gradually increase flow rates from 0.1 to 30 µL/hour over 3-10 days to allow tissue development and differentiation under physiologically relevant shear stresses [38].
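The tissue maturation step can be planned numerically. The sketch below generates a daily flow-rate schedule between the stated 0.1 and 30 µL/hour limits and estimates the resulting wall shear stress with the standard parallel-plate approximation τ = 6μQ/(wh²), which holds when the channel is much wider than it is tall. The geometric (constant-factor) ramp, the 1000 µm × 200 µm channel cross-section, and the medium viscosity of 0.7 mPa·s are assumptions for illustration, not values from the protocol.

```python
def flow_ramp(start_ul_h=0.1, end_ul_h=30.0, days=7):
    """Daily flow rates (uL/h) rising by a constant factor per day.

    A geometric ramp is an assumption; the protocol only says 'gradually'.
    Requires days >= 2.
    """
    factor = (end_ul_h / start_ul_h) ** (1.0 / (days - 1))
    return [start_ul_h * factor ** d for d in range(days)]


def wall_shear_stress(flow_ul_h, width_um, height_um, viscosity_pa_s=0.0007):
    """Wall shear stress in Pa for a wide rectangular channel.

    Uses tau = 6 * mu * Q / (w * h^2). Multiply by 10 for dyn/cm^2.
    The default viscosity approximates aqueous medium at 37 C (assumption).
    """
    q_m3_s = flow_ul_h * 1e-9 / 3600.0  # 1 uL = 1e-9 m^3, per hour -> per second
    w_m = width_um * 1e-6
    h_m = height_um * 1e-6
    return 6.0 * viscosity_pa_s * q_m3_s / (w_m * h_m ** 2)


schedule = flow_ramp(days=7)
# Hypothetical channel cross-section: 1000 um wide, 200 um high.
shear_by_day = [wall_shear_stress(q, 1000.0, 200.0) for q in schedule]
```

Because shear stress scales with the inverse square of channel height, the same flow schedule can produce very different mechanical conditions on different chip designs, so the calculation should be repeated with the actual geometry.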

Experimental Intervention: After tissue maturation, introduce test compounds (drug candidates, toxins) at physiologically relevant concentrations through the vascular channel. For pharmacokinetic studies, collect effluent at timed intervals for analysis. For multi-organ systems, compounds can be perfused through a sequential organ network [39].
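For the timed effluent collection described above, one common summary of exposure is the area under the concentration-time curve (AUC). The sketch below uses the linear trapezoidal rule; the sampling times and concentrations are hypothetical values, not measured data.

```python
def auc_trapezoid(times_h, concentrations):
    """Area under the concentration-time curve (linear trapezoidal rule).

    times_h must be in increasing order; the result has units of
    concentration x hours.
    """
    if len(times_h) != len(concentrations) or len(times_h) < 2:
        raise ValueError("need at least two matched time/concentration points")
    points = list(zip(times_h, concentrations))
    return sum((t1 - t0) * (c0 + c1) / 2.0
               for (t0, c0), (t1, c1) in zip(points, points[1:]))


# Hypothetical effluent samples: time in hours, concentration in ng/mL.
sample_times = [0.0, 1.0, 2.0, 4.0]
sample_conc = [0.0, 10.0, 6.0, 2.0]
exposure = auc_trapezoid(sample_times, sample_conc)
```

For a multi-organ network, the same function can be applied to the effluent of each organ compartment to compare exposure along the perfusion sequence.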

Analysis and Validation:

- Real-time monitoring: Use integrated sensors or microscope-based imaging to track barrier integrity (e.g., TEER), cell viability, and morphological changes.

- Endpoint assays: Fix tissues for immunohistochemistry (to detect specific proteins), extract RNA for transcriptomic analysis, or collect effluent for cytokine secretion profiling (e.g., by ELISA).

- Functional validation: Compare OoC responses to known clinical outcomes of reference compounds to validate predictive capacity [35].
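The functional validation step can be quantified by treating each reference compound as a binary classification: did the chip flag it as toxic, and is it known to be toxic clinically? The sketch below computes sensitivity and specificity from such paired calls. The function name and the example labels are hypothetical; in practice the chip call would come from a predefined assay threshold.

```python
def predictive_performance(chip_calls, clinical_labels):
    """Sensitivity and specificity of chip calls against clinical outcomes.

    Both inputs are equal-length sequences of booleans
    (True = toxic / positive). Returns NaN for a metric whose
    denominator is zero.
    """
    if len(chip_calls) != len(clinical_labels):
        raise ValueError("inputs must have the same length")
    pairs = list(zip(chip_calls, clinical_labels))
    tp = sum(1 for chip, clin in pairs if chip and clin)
    fn = sum(1 for chip, clin in pairs if not chip and clin)
    tn = sum(1 for chip, clin in pairs if not chip and not clin)
    fp = sum(1 for chip, clin in pairs if chip and not clin)
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if (tp + fn) else nan,
        "specificity": tn / (tn + fp) if (tn + fp) else nan,
    }


# Hypothetical calls for six reference compounds.
chip = [True, True, False, False, True, False]
clinic = [True, False, False, False, True, True]
performance = predictive_performance(chip, clinic)
```

Reporting both metrics matters because a model that flags every compound as toxic reaches perfect sensitivity while having no practical value.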

The Research Toolkit: Essential Reagents and Equipment

Successful implementation of OoC technology requires specific research tools and reagents. The following table details the essential components of an OoC research toolkit.

Table 3: Essential Research Reagent Solutions for OoC Platforms

| Tool Category | Specific Examples | Function & Application |
|---|---|---|
| Microfluidic Controllers | Elveflow OB1; Emulate Zoë-CM2 [40] [39] | Precisely control fluid flow and pressure to mimic physiological perfusion |
| OoC Consumables | Emulate Chip-S1 (Stretchable); Chip-R1 (Rigid); Mimetas OrganoPlate [40] [39] | Microfluidic devices that house the engineered tissues; various designs for different organs |
| Biosensors | Transepithelial/transendothelial electrical resistance (TEER) electrodes; oxygen sensors [41] [38] | Non-invasively monitor tissue barrier integrity and metabolic activity in real-time |
| ECM Hydrogels | Collagen I; Matrigel; fibrin; hyaluronic acid-based [35] [41] | Provide a 3D scaffold that supports cell growth and tissue morphogenesis |
| Cell Culture Media | Organ-specific media; serum-free formulations; differentiation cocktails | Support the viability and maintain the functional phenotype of cells within the chip |
| Imaging Compatible Equipment | Confocal microscope with environmental chamber; high-content imaging systems [40] | Enable real-time, high-resolution visualization of cellular responses within the chips |

Current Applications and Impact Assessment

Translational Applications in Drug Development

OoC technology is demonstrating significant impact across multiple domains of pharmaceutical research and development:

Drug Safety Assessment: Liver-Chip models are being used by companies including Boehringer Ingelheim and Daiichi Sankyo for cross-species drug-induced liver injury (DILI) prediction and comparative liver toxicity studies. Similarly, Kidney-Chip models have been validated for antisense oligonucleotide de-risking at UCB [40].

Disease Modeling: Institut Pasteur has developed intestinal inflammation-on-chip models to identify novel inflammatory bowel disease (IBD) therapies. Queen Mary University of London has created personalized synovium-cartilage chips for understanding patient-specific inflammation in osteoarthritis [40].

Neurovascular Studies: Bayer has developed a blood-brain barrier (BBB)-chip for translational studies to improve CNS drug development prediction. The U.S. Air Force Research Laboratory (AFRL) has utilized Brain-Chip platforms with machine learning to rapidly detect neurotoxin exposure [40].

Multi-Organ Interactions: Companies such as TissUse are pioneering Multi-Organ-Chip technology, which integrates up to ten miniaturized human organs on a single platform. This gives a systemic view of drug effects and supports pharmacokinetic studies and disease modeling [39].

Quantitative Impact Analysis

The adoption of OoC platforms is generating measurable improvements in research and development efficiency:

Cost Reduction: OoCs can reduce research, development, and innovation (RDI) costs by 10-30%, which positions the technology as a promising health innovation [35].

Throughput Enhancement: Next-generation systems like the AVA Emulation System achieve a four-fold drop in consumable spend and require up to 50% fewer cells and media per sample compared to previous generation technology. They also reduce hands-on, in-lab time by more than half through automation [40].

Data Richness: A typical 7-day experiment on advanced platforms can generate >30,000 time-stamped data points from daily imaging and effluent assays, with post-takedown omics pushing the total into the millions, providing a rich foundation for machine-learning pipelines [40].

Comparative Analysis: OoC Versus In Vivo Emergent Behaviors

The relationship between OoC and in vivo models is complementary rather than competitive. The following diagram illustrates how these approaches intersect and differ in studying emergent biological behaviors.