From Single Cells to Clinical Practice: A Roadmap for Validating Biomarkers Discovered by Single-Cell Sequencing

Abstract

Single-cell sequencing has revolutionized biomarker discovery by revealing cellular heterogeneity and identifying novel cell-type-specific signatures with high resolution. However, translating these discoveries into clinically validated tools presents significant methodological and analytical challenges. This article provides a comprehensive roadmap for researchers and drug development professionals, covering the foundational principles of single-cell biomarker discovery, methodological approaches for robust assay development, strategies for troubleshooting technical and biological variability, and rigorous statistical frameworks for clinical validation. By synthesizing current best practices and emerging trends, this guide aims to bridge the critical gap between pioneering single-cell research and clinically applicable diagnostic and predictive biomarkers, ultimately accelerating the development of precision medicine.

Unraveling Cellular Heterogeneity: The Foundation of Single-Cell Biomarker Discovery

In the evolving landscape of personalized medicine, biomarkers serve as critical molecular signposts that illuminate intricate pathways of health and disease, bridging the gap between benchside discovery and bedside application [1]. The FDA-NIH Biomarker Working Group defines a biomarker as "a characteristic that is objectively measured and evaluated as an indicator of normal biological processes, pathogenic processes, or pharmacologic responses to a therapeutic intervention" [2]. These measurable indicators can take the form of molecules, genes, proteins, cells, hormones, enzymes, or physiological traits, helping researchers and clinicians detect, diagnose, and track diseases with increasing precision [1].

Biomarkers are broadly categorized based on their functional roles and clinical applications, with diagnostic, prognostic, and predictive biomarkers representing three fundamental categories that guide clinical decision-making [3]. Understanding the distinctions between these biomarker types is essential for appropriate study design, therapeutic strategy, and patient management in clinical practice and research settings. The emergence of sophisticated technologies like single-cell RNA sequencing (scRNA-seq) has further refined our ability to discover and validate these biomarkers at unprecedented resolution, revealing cellular heterogeneity that was previously obscured by bulk analysis methods [4] [5].

Defining the Fundamental Biomarker Types

Diagnostic Biomarkers

Diagnostic biomarkers are used to detect or confirm the presence of a specific disease or medical condition [3]. These biomarkers can also provide valuable information about the characteristics of a disease, enabling clinicians to make accurate and timely diagnoses. The key function of diagnostic biomarkers is to answer the fundamental question: "Does this patient have the disease?"

For a diagnostic biomarker to be clinically useful, it must demonstrate high sensitivity (ability to correctly identify those with the disease) and specificity (ability to correctly identify those without the disease) [1]. An effective diagnostic biomarker should also be easy to measure using available technology, cost-effective for widespread implementation, and consistent in performance across diverse populations [1].
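As a minimal sketch of the two definitions above, the following Python snippet computes sensitivity and specificity from confusion-matrix counts. The function name and the cohort counts are hypothetical and chosen only for illustration.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # fraction of diseased patients correctly flagged
    specificity = tn / (tn + fp)  # fraction of disease-free patients correctly cleared
    return sensitivity, specificity


# Hypothetical validation cohort: 100 diseased and 200 disease-free patients.
sens, spec = sensitivity_specificity(tp=90, fn=10, tn=180, fp=20)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

In practice both values depend on the positivity cut-off chosen for the assay, so they are usually reported together with that threshold.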

Table 1: Key Characteristics and Examples of Diagnostic Biomarkers

| Characteristic | Description | Exemplary Biomarkers |
|---|---|---|
| Primary Function | Detect or confirm disease presence | Prostate-specific antigen (PSA), C-reactive protein (CRP) |
| Measurement Timing | At time of suspected diagnosis | Carcinoembryonic antigen (CEA), Neuron-specific enolase (NSE) |
| Sample Types | Tissue, blood, urine, other body fluids | CA-125 for ovarian cancer in blood |
| Key Attributes | High sensitivity and specificity | Elevated CRP indicates inflammation |

Prognostic Biomarkers

Prognostic biomarkers predict the likelihood of future clinical outcomes, including disease recurrence or progression, in patients who have already been diagnosed with a disease [6] [3]. Unlike diagnostic biomarkers that focus on current disease status, prognostic biomarkers look forward to anticipate the natural course of the disease, independent of any specific treatment. They address the clinical question: "What is the likely course of this patient's disease?"

These biomarkers help clinicians understand how aggressive a disease might be and identify patients who may benefit from more intensive monitoring or treatment approaches [6] [3]. Prognostic biomarkers are often identified from observational studies that track patient outcomes over time, and they regularly serve to stratify patients based on their risk profile [6].

Table 2: Key Characteristics and Examples of Prognostic Biomarkers

| Characteristic | Description | Exemplary Biomarkers |
|---|---|---|
| Primary Function | Predict disease outcome or progression | Ki-67 (MKI67), p53 (TP53) |
| Measurement Timing | After diagnosis, before treatment selection | BRAF mutation status in melanoma |
| Sample Types | Tumor tissue, blood, body fluids | High Ki-67 indicates aggressive tumors |
| Key Attributes | Correlates with disease aggressiveness | Identifies high-risk patient subgroups |

Predictive Biomarkers

Predictive biomarkers identify individuals who are more likely than similar individuals without the biomarker to experience a favorable or unfavorable effect from exposure to a specific medical product or environmental agent [6]. These biomarkers are directly linked to treatment decisions and form the cornerstone of personalized medicine by helping match the right therapy to the right patient. They answer the critical question: "Is this patient likely to respond to this specific treatment?"

The identification of predictive biomarkers generally requires a comparison of treatment to control in patients with and without the biomarker [6]. In some circumstances, compelling preclinical and early clinical evidence may justify definitive clinical trials only in populations enriched for the putative predictive biomarker, as was the case with BRAF inhibitor development for BRAF V600E-positive melanoma [6].

Table 3: Key Characteristics and Examples of Predictive Biomarkers

| Characteristic | Description | Exemplary Biomarkers |
|---|---|---|
| Primary Function | Predict response to specific therapy | HER2/neu status, EGFR mutations |
| Measurement Timing | Before treatment initiation | PD-L1 (CD274), NRAS |
| Sample Types | Tumor tissue, blood (liquid biopsy) | HER2 positivity predicts trastuzumab response |
| Key Attributes | Treatment-specific predictive value | RAS mutations predict lack of anti-EGFR response |

Comparative Analysis of Biomarker Types

Clinical Applications and Distinctions

Understanding the nuanced differences between prognostic and predictive biomarkers is particularly important, as these categories are frequently confused but have distinct clinical implications [6]. A prognostic biomarker provides information about the patient's overall disease outcome regardless of specific treatments, while a predictive biomarker provides information about the effect of a specific therapeutic intervention.

The FDA-NIH Biomarker Working Group illustrates this distinction with two scenarios, each shown in figures of that resource [6]. In the first (its Figure 1A), a difference in survival associated with biomarker status in patients receiving an experimental therapy might be misinterpreted as evidence of predictive value. However, when survival curves for patients receiving standard therapy are added (its Figure 1B), it becomes apparent that the same survival difference by biomarker status exists with standard therapy, indicating that the biomarker is prognostic but not predictive [6].

In contrast, the second scenario (its Figures 2A and 2B) shows a biomarker that initially appears non-informative but proves predictive upon full analysis: biomarker-positive patients, who do worse on standard therapy, derive clear benefit from the experimental therapy [6]. This distinction has profound implications for clinical trial design and therapeutic decision-making.

Evaluation Methodologies and Statistical Approaches

Different biomarker types require distinct methodological approaches for validation and clinical implementation. Simple methods for evaluating these biomarkers have been developed to facilitate their translation into clinical practice [2].

For prognostic biomarkers, researchers typically compare two risk prediction models in a validation sample: Model 1 based on standard predictors, and Model 2 based on standard predictors plus the new prognostic biomarker [2]. The validation sample should represent the target population, potentially using stratified nested case-control designs. Rather than relying solely on statistical measures like changes in the area under the ROC curve, a decision-analytic approach that weighs the costs of biomarker assessment against the anticipated net benefit of improved risk prediction is recommended [2].
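One common way to make the decision-analytic comparison concrete is the net benefit statistic used in decision curve analysis, which credits true positives and penalizes false positives according to the chosen risk threshold. The sketch below is illustrative only: the outcomes, predicted risks, and the 0.25 threshold are invented, and the cost of measuring the biomarker itself would still need to be weighed separately.

```python
def net_benefit(y_true, risk, threshold):
    """Net benefit of acting on patients whose predicted risk >= threshold."""
    n = len(y_true)
    tp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 1)
    fp = sum(1 for y, r in zip(y_true, risk) if r >= threshold and y == 0)
    # False positives are weighted by the odds of the threshold probability.
    return tp / n - (fp / n) * threshold / (1 - threshold)


# Hypothetical validation sample: 1 = event occurred, 0 = no event.
outcome = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
risk_model1 = [0.6, 0.3, 0.1, 0.4, 0.3, 0.2, 0.1, 0.1, 0.1, 0.1]  # standard predictors
risk_model2 = [0.7, 0.5, 0.3, 0.3, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1]  # plus new biomarker

threshold = 0.25  # assumed risk level at which a clinician would intervene
nb1 = net_benefit(outcome, risk_model1, threshold)
nb2 = net_benefit(outcome, risk_model2, threshold)
print(f"Model 1: {nb1:.3f}, Model 2: {nb2:.3f}, gain: {nb2 - nb1:.3f}")
```

Repeating the calculation across a range of plausible thresholds shows whether the biomarker adds value at the risk levels where decisions are actually made.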

For predictive biomarkers, a multivariate subpopulation treatment effect pattern plot involving risk difference or responders-only benefit function can help identify promising subgroups in randomized trials [2]. This approach is particularly valuable for determining whether a biomarker identifies patients who are most likely to benefit from a specific intervention.
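The core quantity behind such subgroup plots is the treatment effect within each biomarker-defined group. The following simplified two-subgroup sketch (not the full subpopulation treatment effect pattern plot) computes the risk difference in biomarker-positive and biomarker-negative patients and their contrast; all counts are hypothetical.

```python
def risk_difference(treated, control):
    """Difference in response rate; each argument is (responders, total)."""
    return treated[0] / treated[1] - control[0] / control[1]


# Hypothetical randomized-trial counts as (responders, total) per arm.
positive = {"treated": (30, 50), "control": (10, 50)}
negative = {"treated": (12, 50), "control": (10, 50)}

rd_pos = risk_difference(positive["treated"], positive["control"])
rd_neg = risk_difference(negative["treated"], negative["control"])

# A predictive biomarker shows a treatment effect that differs by biomarker status.
interaction = rd_pos - rd_neg
print(f"RD positive={rd_pos:.2f}, RD negative={rd_neg:.2f}, interaction={interaction:.2f}")
```

A purely prognostic biomarker would shift response rates in both arms equally, leaving the two risk differences, and hence the interaction term, close to zero. A real analysis would also attach confidence intervals to each estimate.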

Table 4: Methodological Approaches for Different Biomarker Types

| Biomarker Type | Key Evaluation Method | Statistical Considerations | Clinical Validation Requirements |
|---|---|---|---|
| Diagnostic | Sensitivity/specificity analysis | ROC curves, positive/negative predictive values | Comparison to gold standard in relevant population |
| Prognostic | Risk prediction model comparison | Decision curve analysis, net reclassification improvement | Prospective observation of natural disease history |
| Predictive | Treatment-by-biomarker interaction | Subpopulation treatment effect pattern plots | Randomized comparison of treatment vs. control in biomarker-defined groups |
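For the ROC-based evaluation listed for diagnostic biomarkers, the area under the curve can be read as the probability that a randomly chosen diseased patient has a higher biomarker value than a randomly chosen disease-free patient. The sketch below computes it directly from that definition; the biomarker values are invented for illustration.

```python
def roc_auc(scores_diseased, scores_healthy):
    """AUC as P(diseased score > healthy score), counting ties as one half."""
    wins = 0.0
    for d in scores_diseased:
        for h in scores_healthy:
            if d > h:
                wins += 1.0
            elif d == h:
                wins += 0.5
    return wins / (len(scores_diseased) * len(scores_healthy))


# Hypothetical biomarker measurements in the two groups.
diseased = [0.9, 0.8, 0.6, 0.4]
healthy = [0.7, 0.3, 0.2, 0.1]

auc = roc_auc(diseased, healthy)
print(f"AUC = {auc:.3f}")
```

The pairwise loop is quadratic in sample size, which is fine for a worked example; rank-based implementations are preferable for large cohorts.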

Single-Cell Sequencing in Biomarker Discovery and Validation

Technological Advancements and Workflow

The emergence of single-cell RNA sequencing (scRNA-seq) technology has revolutionized our capacity to study cell functions in complex tissue microenvironments [4]. Traditional transcriptomic approaches, such as microarrays and bulk RNA sequencing, lacked the resolution to distinguish signals from heterogeneous cell populations or rare cell types, limiting their clinical utility for biomarker discovery [4]. Since its inception in 2009, scRNA-seq has evolved into a powerful tool for studying somatic evolution and cellular function under physiological and pathological conditions, enabling researchers to dissect cellular heterogeneity at unprecedented resolution [4] [7].

The fundamental scRNA-seq workflow begins with sample preparation and dissociation, followed by single-cell capture, cell lysis, transcript barcoding during reverse transcription, and cDNA amplification, and culminates in library construction and sequencing [4]. The technology has diversified into multiple platforms, including droplet-based systems (e.g., 10× Genomics Chromium) and plate-based fluorescence-activated cell sorting (FACS), each with distinct advantages for particular applications [4]. For cells exceeding the size limitations of droplet-based systems (typically >30 μm), plate-based FACS employing larger nozzles offers a viable alternative [4].

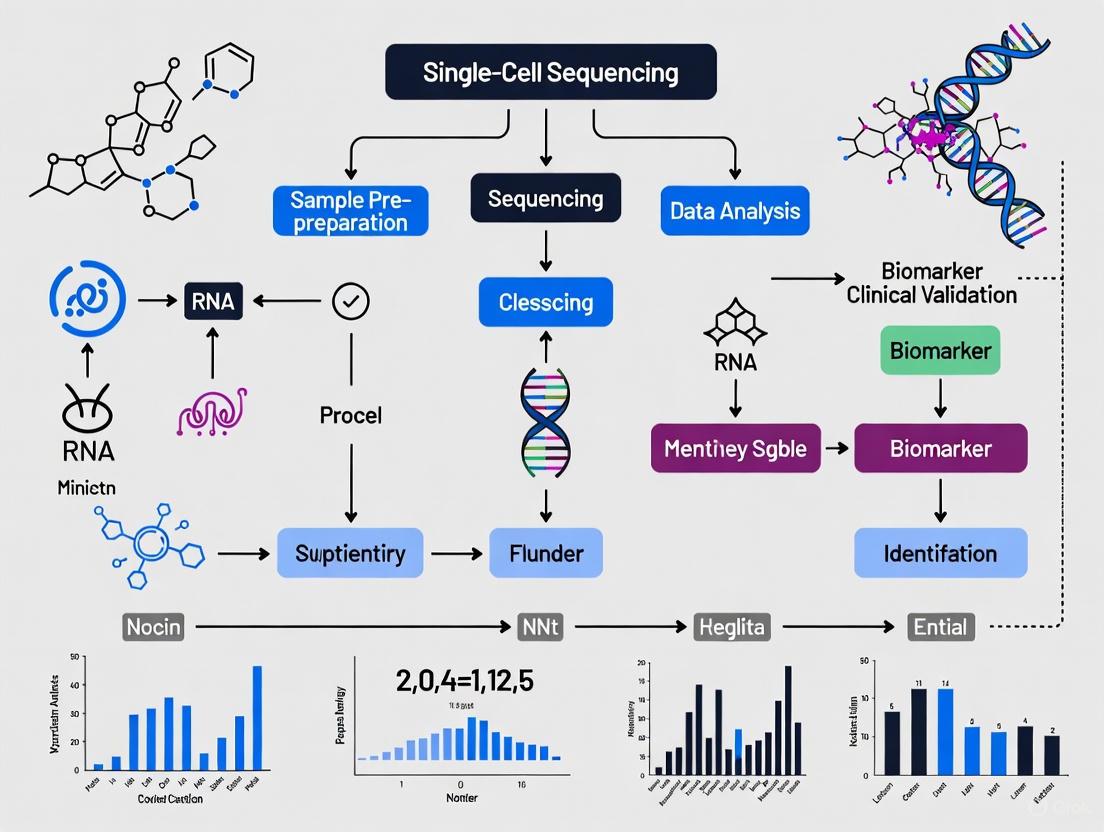

SCS Biomarker Discovery Workflow: This diagram illustrates the key steps in single-cell sequencing for biomarker discovery, from sample preparation through data analysis.

Application in Biomarker Heterogeneity Studies

Single-cell technologies have proven particularly valuable for unraveling biomarker heterogeneity, which presents a significant challenge in clinical validation. A compelling example comes from a 2025 study investigating CDK4/6 inhibitor resistance in breast cancer, where scRNA-seq revealed marked intra- and inter-cell-line heterogeneity in established resistance biomarkers [5]. Researchers performed single-cell RNA sequencing of seven palbociclib-naïve luminal breast cancer cell lines and their palbociclib-resistant derivatives, analyzing 10,557 cells total (5,116 parental and 5,441 resistant cells) [5].

This study demonstrated that transcriptional features of resistance could be observed in naïve cells and correlated with sensitivity levels (IC50) to palbociclib [5]. Resistant derivatives formed transcriptional clusters that varied significantly in proliferative signatures, estrogen response signatures, and MYC targets. The marked heterogeneity was validated in the FELINE trial, where ribociclib-resistant tumors developed higher clonal diversity and greater transcriptional variability for resistance-associated genes compared to sensitive ones [5]. This heterogeneity challenges the validation of clinical biomarkers and may facilitate resistance development.
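To make the idea of diversity across clones or transcriptional clusters concrete, the sketch below uses the Shannon index, which is one common diversity measure. It is an assumption chosen for illustration, not necessarily the metric used in the cited study, and the cluster sizes are invented.

```python
import math


def shannon_diversity(counts):
    """Shannon index H = -sum(p * ln p) over non-empty clusters."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


# Hypothetical numbers of cells assigned to each cluster in two samples.
sensitive_clusters = [950, 30, 20]    # dominated by a single cluster
resistant_clusters = [400, 350, 250]  # cells spread across several clusters

h_sensitive = shannon_diversity(sensitive_clusters)
h_resistant = shannon_diversity(resistant_clusters)
print(f"sensitive H={h_sensitive:.3f}, resistant H={h_resistant:.3f}")
```

A higher index indicates that cells are distributed more evenly across more clusters, which is the pattern the text describes for resistant tumors.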

Experimental Protocols for scRNA-seq Biomarker Studies

The methodology for scRNA-seq biomarker studies requires careful optimization at each step to generate high-quality data. Sample preparation is particularly crucial, with protocols needing adjustment for variables including cellular dimensions, viability, and cultivation conditions [4]. Single-cell suspensions are typically procured through enzymatic and mechanical dissociation techniques, followed by capture using methodologies such as droplet-based systems or FACS [4].

For the data analysis phase, specialized bioinformatic tools are essential. The SEURAT platform and Galaxy Europe Single Cell Lab provide valuable resources for processing scRNA-seq data [4]. Quality control procedures must exclude subpar data from individual cells, which may arise from compromised cell viability, inefficient mRNA recovery, or inadequate cDNA synthesis [4]. Standard QC criteria encompass evaluation of relative library size, number of detected genes, and proportion of reads aligning with mitochondrial genes [4].
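The three QC criteria above can be sketched with plain NumPy on a cells-by-genes count matrix. The thresholds and the toy matrix below are hypothetical placeholders; appropriate cut-offs are dataset- and tissue-specific, and dedicated toolkits provide equivalent, more complete functionality.

```python
import numpy as np


def qc_mask(counts, is_mito, min_counts, min_genes, max_mito_frac):
    """Return a boolean mask of cells passing all three QC criteria.

    counts:  cells x genes matrix of transcript counts
    is_mito: boolean vector marking mitochondrial genes
    """
    library_size = counts.sum(axis=1)          # total counts per cell
    genes_detected = (counts > 0).sum(axis=1)  # genes with at least one count
    mito_frac = counts[:, is_mito].sum(axis=1) / np.maximum(library_size, 1)
    return (
        (library_size >= min_counts)
        & (genes_detected >= min_genes)
        & (mito_frac <= max_mito_frac)
    )


# Toy matrix: 3 cells x 4 genes, with the last gene mitochondrial.
counts = np.array([
    [50, 40, 30, 5],    # healthy-looking cell
    [5, 0, 0, 1],       # very small library, few genes detected
    [20, 20, 20, 90],   # high mitochondrial fraction
])
is_mito = np.array([False, False, False, True])

keep = qc_mask(counts, is_mito, min_counts=100, min_genes=3, max_mito_frac=0.2)
print(keep)
```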

Following quality control, principal component analysis is commonly employed for dimensionality reduction, often augmented by advanced machine learning algorithms like t-distributed stochastic neighbor embedding (t-SNE) and Gaussian process latent variable modeling (GPLVM) [4]. Cells are then categorized into subpopulations based on transcriptome profiles, with trajectory-inference methodologies helping trace linear differentiation pathways and multifaceted fate decisions [4].
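As a minimal sketch of the PCA step, the snippet below normalizes a simulated count matrix and projects cells onto principal components using a singular value decomposition. The scaling to 10,000 counts per cell followed by a log transform is an assumed, commonly used convention, and the two simulated cell groups are synthetic.

```python
import numpy as np


def pca_scores(counts, n_components=2):
    """Log-normalize a cells x genes matrix and return PCA scores via SVD."""
    library_size = counts.sum(axis=1, keepdims=True)
    normalized = np.log1p(counts / library_size * 1e4)  # assumed normalization
    centered = normalized - normalized.mean(axis=0)     # center each gene
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]     # cell coordinates
    explained = (s ** 2) / (s ** 2).sum()               # variance fraction per PC
    return scores, explained[:n_components]


# Simulate two groups of 30 cells with opposite marker-gene expression.
rng = np.random.default_rng(0)
group_a = rng.poisson(lam=[20, 20, 1, 1, 5], size=(30, 5))
group_b = rng.poisson(lam=[1, 1, 20, 20, 5], size=(30, 5))
counts = np.vstack([group_a, group_b]).astype(float)

scores, explained = pca_scores(counts)
print(scores.shape, explained)
```

The resulting low-dimensional scores are what nonlinear methods such as t-SNE typically take as input for visualization and clustering.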

The Scientist's Toolkit: Essential Research Reagents and Platforms

Implementing robust single-cell sequencing studies for biomarker validation requires specialized reagents and platforms. The table below details key solutions essential for conducting these sophisticated analyses.

Table 5: Essential Research Reagent Solutions for Single-Cell Biomarker Studies

| Reagent/Platform | Function | Application Context |
|---|---|---|
| 10× Genomics Chromium | Droplet-based single-cell partitioning | High-throughput cell capture and barcoding |
| Parse Biosciences Evercode v3 | Combinatorial barcoding chemistry | Scalable profiling of up to 10 million cells |
| Fluidigm C1 | Automated microfluidic cell capture | Plate-based single-cell isolation |
| SEURAT | scRNA-seq data analysis platform | Quality control, clustering, and differential expression |
| BEAMing Technology | Circulating tumor DNA mutation detection | Non-invasive biomarker monitoring in plasma |
| Single-Cell Combinatorial Indexing (SCI-seq) | Low-cost library construction | Somatic cell copy number variation detection |

Signaling Pathways and Biomarker Mechanisms

Understanding the signaling pathways in which biomarkers operate provides critical insights into their biological significance and potential therapeutic implications. Biomarkers frequently function within complex interconnected networks that drive disease progression and treatment response.

Biomarker Signaling Network: This diagram illustrates key signaling pathways containing important predictive biomarkers, showing how mutations in genes like RAS can affect treatment response.

The interconnected nature of these pathways explains why biomarkers like RAS mutations serve as negative predictive biomarkers for anti-EGFR therapies in colorectal cancer [8]. When RAS is mutated, it results in permanent activation of signaling pathways that control cell proliferation, differentiation, adhesion, apoptosis, and migration, independent of EGFR status [8]. This understanding has direct clinical implications, as anti-EGFR antibodies like cetuximab and panitumumab are only effective in patients with wild-type RAS tumors [8].

Diagnostic, prognostic, and predictive biomarkers each serve distinct but complementary roles in clinical practice and research. Diagnostic biomarkers answer "What disease does the patient have?", prognostic biomarkers address "What is the likely disease course?", and predictive biomarkers determine "Which treatment is most appropriate?" [6] [3]. The emergence of single-cell sequencing technologies has dramatically enhanced our ability to discover and validate these biomarkers at unprecedented resolution, revealing heterogeneity that impacts treatment response and resistance mechanisms [4] [5].

As we look toward the future of biomarker analysis, the integration of artificial intelligence with multi-omics approaches and the advancement of liquid biopsy technologies promise to further transform this landscape [9]. Single-cell analysis in particular is expected to become more sophisticated and widely adopted, providing deeper insights into tumor microenvironments and rare cell populations that drive disease progression [9]. These technological advances, combined with evolving regulatory frameworks and patient-centric approaches, will continue to drive the field of personalized medicine forward, ultimately improving patient outcomes through more precise biomarker-guided therapeutic strategies.

Article Contents

- Introduction: The Resolution Revolution in Sequencing

- Fundamental Differences: Bulk RNA-seq vs. Single-Cell RNA-seq

- Key Applications: Where scRNA-seq Reveals What Bulk Sequencing Cannot

- Experimental Deep Dive: Protocol and Workflow

- Case Study 1: Unraveling Therapy Resistance in Breast Cancer

- Case Study 2: Deconvoluting the Pancreatic Cancer Microenvironment

- The Scientist's Toolkit: Essential Reagents and Solutions

- Conclusion and Future Perspectives

The advent of next-generation sequencing marked a significant milestone in molecular biology, with bulk RNA sequencing (bulk RNA-seq) becoming a cornerstone for profiling gene expression. However, this approach provides only a population-level average, obscuring critical cellular differences within complex tissues. The limitations of bulk sequencing become particularly consequential when studying highly heterogeneous samples like tumors, where rare but biologically critical cell populations—such as therapy-resistant clones or cancer stem cells—can drive disease progression and treatment failure. The emergence of single-cell RNA sequencing (scRNA-seq) has fundamentally addressed this blind spot by enabling researchers to profile gene expression at the resolution of individual cells. This technological shift has been transformative for biomarker discovery and clinical validation, moving the field beyond population averages to reveal the precise cellular underpinnings of disease mechanisms [10] [11].

This guide provides an objective comparison of bulk and single-cell RNA sequencing, with a focused examination of how scRNA-seq overcomes the inherent limitations of bulk approaches. Through experimental data, detailed protocols, and case studies, we will demonstrate how single-cell resolution is revealing critical but rare cell populations that were previously masked in bulk analyses, thereby advancing the development of more precise diagnostic and therapeutic strategies.

Fundamental Differences: Bulk RNA-seq vs. Single-Cell RNA-seq

The core distinction between these two methodologies lies in their fundamental unit of analysis. Bulk RNA-seq processes RNA from a mixture of thousands to millions of cells, resulting in a single, averaged gene expression profile for the entire sample. In contrast, scRNA-seq isolates, barcodes, and sequences RNA from individual cells within a sample, generating thousands of distinct transcriptome profiles [10] [12].

A common analogy is that a bulk RNA-seq readout is like viewing a forest from a distance, seeing only the collective canopy, while a scRNA-seq readout is like examining every single tree individually, understanding its species, health, and unique position [10]. This difference in resolution has profound implications for what each technology can detect, especially in the context of cellular heterogeneity [10] [11].

Table 1: Core Methodological Comparison of Bulk RNA-seq and scRNA-seq

| Feature | Bulk RNA-seq | Single-Cell RNA-seq |
|---|---|---|
| Resolution | Population average | Individual cells |
| Detection of Rare Cells | Masks rare cell types (<1-5% of population) | Capable of identifying rare cell types (<0.1% of population) [11] |
| Insight into Heterogeneity | None; provides a homogeneous signal | Reveals and quantifies cellular heterogeneity [10] |
| Ideal Application | Differential expression between conditions (e.g., diseased vs. healthy tissue) | Defining cell types/states, developmental trajectories, and rare cell populations [10] |
| Cost (per sample) | Lower | Higher |
| Data Complexity | Lower; more straightforward analysis | Higher; requires specialized bioinformatics [10] |
| Sample Input | Total RNA from cell population | Viable single-cell suspension |

The critical advantage of scRNA-seq is its ability to unmask cellular heterogeneity. In a tumor, for instance, bulk sequencing might indicate moderate expression of a specific oncogene across the sample. scRNA-seq, however, can reveal that this signal is actually driven by a small, aggressive subpopulation of cells, while the majority of tumor cells do not express it. This level of insight is indispensable for understanding complex biological systems and disease mechanisms [10] [5] [12].
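The oncogene scenario above can be illustrated with a small numerical sketch. The population size, the 5% subpopulation, and the expression values are hypothetical and serve only to show how an average hides a bimodal distribution.

```python
import numpy as np

n_cells = 1000
expression = np.zeros(n_cells)
expression[:50] = 100.0  # 5% of cells express the oncogene strongly; the rest do not

# Bulk-like readout: a single averaged value for the whole sample.
bulk_average = expression.mean()

# Single-cell readout: the per-cell distribution is still available.
fraction_expressing = (expression > 0).mean()
mean_in_expressing = expression[expression > 0].mean()

print(f"bulk average: {bulk_average}")
print(f"fraction of cells expressing: {fraction_expressing}")
print(f"mean expression in expressing cells: {mean_in_expressing}")
```

The averaged value would be the same if every cell expressed the gene at a uniformly low level, which is why the two situations cannot be told apart from bulk data alone.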

Key Applications: Where scRNA-seq Reveals What Bulk Sequencing Cannot

The applications of scRNA-seq are particularly powerful in situations where cellular identity and state are not uniform. These include:

- Characterizing Heterogeneous Cell Populations: scRNA-seq can systematically catalog all cell types and states present in a complex tissue, such as distinguishing different immune cell subtypes in a tumor or identifying novel neuronal types in the brain [10].

- Discovering Rare Cell Populations: The technology is uniquely suited for identifying and characterizing rare, functionally important cells that are invisible to bulk sequencing. This includes cancer stem cells responsible for tumor initiation and metastasis, drug-tolerant persister cells that survive therapy, and rare progenitor cells during development [11] [12].

- Reconstructing Developmental Lineages: By analyzing transcriptional relationships between individual cells, scRNA-seq can infer developmental trajectories and lineage pathways, answering questions about how cells transition from one state to another during differentiation or disease progression [10].

- Defining Disease-Specific Cell States: It can reveal specific transcriptional programs activated in subpopulations of cells under disease conditions, such as T-cell exhaustion in cancer or the pro-fibrotic state of fibroblasts in diseased lungs [13] [14].
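Whether a rare population is actually observed depends on how many cells are profiled. Assuming cells are sampled independently (a simplification that ignores dissociation and capture biases), the chance of capturing at least k rare cells follows a binomial distribution, as in this sketch with hypothetical frequencies and cell numbers.

```python
import math


def prob_at_least_k(n_cells, frequency, k):
    """P(at least k rare cells among n_cells) under binomial sampling.

    Intended for small k; very large k would need a numerically safer approach.
    """
    p_fewer = sum(
        math.comb(n_cells, i) * frequency ** i * (1 - frequency) ** (n_cells - i)
        for i in range(k)
    )
    return 1.0 - p_fewer


# Hypothetical rare population at 0.1% frequency; require at least 10 cells.
p_small = prob_at_least_k(n_cells=1_000, frequency=0.001, k=10)
p_large = prob_at_least_k(n_cells=10_000, frequency=0.001, k=10)
print(f"1,000 cells: {p_small:.2e}; 10,000 cells: {p_large:.2f}")
```

This kind of calculation is a useful sanity check when deciding how many cells to load for a study targeting a population of known approximate frequency.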

Experimental Deep Dive: Protocol and Workflow

A typical high-throughput scRNA-seq experiment, such as those performed on the 10x Genomics Chromium platform, follows a multi-step workflow that is more complex than bulk RNA-seq, primarily due to the need to handle individual cells [10] [15].

Sample Preparation and Single-Cell Suspension: The process begins with tissue dissection and dissociation into a viable single-cell suspension using enzymatic or mechanical methods. Cell viability and concentration are critical quality control points at this stage. This step is a major source of potential artifacts, as the dissociation process can induce stress-related gene expression [10] [15]. As an alternative, single-nucleus RNA sequencing (snRNA-seq) can be used for samples that are difficult to dissociate or for frozen tissues, as nuclei are more easily isolated and lack the stress response of whole cells [4] [15].

Single-Cell Partitioning and Barcoding: The single-cell suspension is loaded onto a microfluidic chip, where each cell is encapsulated in a nanoliter-scale droplet (Gel Bead-in-emulsion, or GEM) together with a gel bead. Each bead is coated with oligonucleotides containing a cell barcode (unique to each bead), a unique molecular identifier (UMI), and a poly(dT) sequence for mRNA capture. This ensures that all cDNA derived from a single cell shares the same barcode, and every unique mRNA molecule is labeled with a UMI to control for amplification biases [10] [15] [12].
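The role of the cell barcode and UMI can be sketched as a counting step: reads sharing the same barcode, UMI, and gene are treated as amplification copies of one original molecule. This simplified example uses made-up sequences and ignores the sequencing-error correction of barcodes and UMIs that production pipelines also perform.

```python
from collections import defaultdict


def count_molecules(reads):
    """Collapse reads to unique molecules.

    reads: iterable of (cell_barcode, umi, gene) tuples
    Returns {cell_barcode: {gene: number_of_unique_umis}}.
    """
    unique_molecules = set(reads)  # duplicates share barcode, UMI, and gene
    counts = defaultdict(lambda: defaultdict(int))
    for cell, _umi, gene in unique_molecules:
        counts[cell][gene] += 1
    return {cell: dict(genes) for cell, genes in counts.items()}


# Hypothetical reads; barcodes and UMIs are shortened for readability.
reads = [
    ("AAAC", "TTG", "CCNE1"),
    ("AAAC", "TTG", "CCNE1"),  # amplification duplicate of the read above
    ("AAAC", "GCA", "CCNE1"),  # same cell and gene, different molecule
    ("AAAC", "TTG", "RB1"),
    ("GGTA", "TTG", "RB1"),    # same UMI but a different cell
]

matrix = count_molecules(reads)
print(matrix)
```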

Library Preparation and Sequencing: Within the droplets, cells are lysed, and mRNA is reverse-transcribed into barcoded cDNA. The cDNA is then purified, amplified, and used to construct a sequencing library. Finally, the libraries are sequenced on a high-throughput platform [10].

The following diagram illustrates this core workflow, highlighting the steps that enable single-cell resolution.

Case Study 1: Unraveling Therapy Resistance in Breast Cancer

The marked heterogeneity of biomarkers associated with resistance to CDK4/6 inhibitors (a mainstay treatment for luminal breast cancer) has been a major clinical challenge. A 2025 study used scRNA-seq to investigate this heterogeneity at an unprecedented resolution [5].

Experimental Protocol:

- Cell Lines: Seven palbociclib-sensitive (PDS) luminal breast cancer cell lines and their palbociclib-resistant derivatives (PDR) were used.

- Single-Cell RNA Sequencing: scRNA-seq was performed on both PDS and PDR models. A total of 10,557 high-quality cells (median genes/cell >3000) were selected for analysis.

- Data Analysis: Uniform Manifold Approximation and Projection (UMAP) was used for dimensionality reduction and visualization. Established biomarkers of resistance (e.g., CCNE1, RB1, CDK6) and Hallmark gene sets were analyzed for expression. An ordinary least squares (OLS) approach was applied to predict if single cells transcriptomically resembled sensitive or resistant populations [5].
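The general idea of using ordinary least squares to score how closely a cell resembles sensitive or resistant populations can be sketched as follows. This is an illustrative toy under stated assumptions, not the study's actual model: the two marker features, the expression values, and the 0.5 cut-off are all hypothetical.

```python
import numpy as np


def fit_ols(expression, labels):
    """Fit labels (0 = sensitive, 1 = resistant) on expression with an intercept."""
    design = np.column_stack([np.ones(len(expression)), expression])
    coef, *_ = np.linalg.lstsq(design, labels, rcond=None)
    return coef


def resistance_score(coef, expression):
    """Predicted label for each cell; values near 1 resemble resistant cells."""
    design = np.column_stack([np.ones(len(expression)), expression])
    return design @ coef


# Hypothetical training cells described by two marker-gene expression values.
sensitive = np.array([[1.0, 5.0], [1.2, 4.8], [0.8, 5.2], [1.1, 5.1]])
resistant = np.array([[4.0, 1.0], [4.2, 1.2], [3.8, 0.9], [4.1, 1.1]])
train = np.vstack([sensitive, resistant])
labels = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)

coef = fit_ols(train, labels)

# Score unseen cells, e.g. cells from a treatment-naive culture.
new_cells = np.array([[1.0, 5.0], [3.9, 1.0]])
scores = resistance_score(coef, new_cells)
resistant_like = scores > 0.5  # assumed decision cut-off
print(scores, resistant_like)
```

Applied to treatment-naive cells, a score close to 1 would flag a cell as transcriptionally resistant-like, which is the kind of pre-existing subpopulation the findings below describe.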

Key Findings and Comparison to Bulk Data: The scRNA-seq analysis revealed a marked intra- and inter-cell-line heterogeneity in resistance biomarkers that is completely obscured in bulk sequencing.

- Heterogeneity of Known Markers: While bulk analysis confirmed known trends like CCNE1 upregulation in resistant lines, scRNA-seq showed the degree of upregulation varied dramatically between individual cells within the same resistant population. Similarly, the expression of other resistance markers like FAT1 and FGFR1 was highly heterogeneous [5].

- Presence of Resistant-like Cells in Naïve Populations: A critical finding was that small subpopulations of cells in the treatment-naïve (PDS) cultures already exhibited a transcriptional profile similar to resistant (PDR) cells. These "PDR-like" cells, which could serve as a reservoir for developing resistance, are impossible to detect with bulk sequencing [5].

- Diverse Pathway Enrichment: Enrichment analysis of Hallmark signatures showed that each PDR model had a distinctive pattern of pathway activation (e.g., "MTORC1 signaling," "Estrogen Response Early"), suggesting multiple convergent paths to resistance. Bulk analysis would only reveal the dominant pathway, missing this critical diversity [5].

Table 2: Heterogeneity of Resistance Markers Revealed by scRNA-seq in Breast Cancer Cell Lines

| Biomarker / Pathway | Bulk RNA-seq Finding | scRNA-seq Revelation |
|---|---|---|
| CCNE1 | Upregulated in resistant derivatives. | The level of upregulation is highly heterogeneous across cells within a resistant population [5]. |
| RB1 | Downregulated in resistant derivatives. | Expression loss is not uniform; some cells retain higher RB1 levels [5]. |
| Interferon Response | Can be elevated in resistant models. | Only a subset of resistant cell lines and a subpopulation of cells within them show strong interferon signature [5]. |
| Proliferative State | Resistant population appears homogeneous. | Resistant cells cluster into distinct transcriptional groups with varying proliferative, estrogen response, and MYC target signatures [5]. |
| Pre-existing Resistance | Not detectable. | Rare "PDR-like" cells pre-exist in drug-naïve populations, predicting adaptive response [5]. |

This study demonstrates that resistance is not a uniform state acquired by a whole cell population, but rather a heterogeneous and dynamic process driven by distinct subpopulations. This complexity likely explains the difficulty in validating a single, universal biomarker for CDK4/6 inhibitor resistance in the clinic [5].

Case Study 2: Deconvoluting the Pancreatic Cancer Microenvironment

Pancreatic ductal adenocarcinoma (PDAC) is characterized by an aggressive, therapy-resistant nature and a complex tumor immune microenvironment (TIME). A 2025 scRNA-seq study sought to better understand the immune landscape of PDAC, with a focus on T-cell exhaustion, a state of T-cell dysfunction that limits anti-tumor immunity [13].

Experimental Protocol:

- Data Source: Researchers performed a re-analysis of a publicly available human PDAC scRNA-seq dataset, which included cells from primary tumors and adjacent normal tissues.

- Cell Type Identification: Cells were clustered and annotated based on their transcriptomic profiles to identify major cell types, including cancer cells and T-cells.

- Differential Expression and Network Analysis: Upregulated genes in cancer cells and T-cells from PDAC samples versus normal were identified. Pathway analysis (Reactome) was performed, and protein-protein interaction (PPI) networks were constructed to identify hub genes.

- Validation: Hub gene expression was validated using external databases (GEPIA2, TISCH2), and overall survival analysis was performed [13].
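Hub genes in a protein-protein interaction network are often defined by how many interaction partners they have. The sketch below ranks genes by degree, which is one common criterion and an assumption here rather than the study's stated method; the gene names and edges are placeholders.

```python
from collections import Counter


def hub_genes(edges, top_n=1):
    """Rank genes by number of interaction partners (degree) in an edge list."""
    degree = Counter()
    for gene_a, gene_b in edges:
        degree[gene_a] += 1
        degree[gene_b] += 1
    return degree.most_common(top_n)


# Hypothetical PPI edges among upregulated genes.
edges = [
    ("GENE_A", "GENE_B"),
    ("GENE_A", "GENE_C"),
    ("GENE_A", "GENE_D"),
    ("GENE_B", "GENE_C"),
    ("GENE_D", "GENE_E"),
]

hubs = hub_genes(edges, top_n=1)
print(hubs)
```

Candidates ranked this way would then be carried forward to the external validation and survival analysis described in the last step.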

Key Findings and Comparison to Bulk Data: Bulk sequencing of PDAC tumors provides an averaged view of the TIME, conflating signals from cancer cells, immune cells, and stromal cells. scRNA-seq successfully deconvoluted this mixture.

- Identification of T-cell Substates: The study was able to precisely characterize the exhausted T-cell population within the PDAC TIME, distinguishing it from functional effector or memory T-cells. Bulk sequencing can indicate the overall presence of T-cells but cannot reveal their functional state [13].

- Discovery of Novel Biomarkers: The analysis unraveled 16 novel markers associated with cancer cells and T-cells in PDAC. These hub genes, central to the protein interaction networks, provide new potential targets for overcoming T-cell exhaustion and improving immunotherapy responses [13].

- Cell-Type-Specific Pathways: By analyzing pathways separately in cancer cells and T-cell clusters, the study provided a clear, cell-type-specific map of dysregulated biological processes in PDAC. This is a significant advantage over bulk sequencing, where the cellular origin of pathway activation is often ambiguous [13].

The Scientist's Toolkit: Essential Reagents and Solutions

Successfully implementing a scRNA-seq experiment requires careful selection of reagents and platforms. The following table details key solutions and their critical functions in the workflow.

Table 3: Key Research Reagent Solutions for scRNA-seq Experiments

| Reagent / Solution | Function | Key Considerations |

|---|---|---|

| Tissue Dissociation Kit | Enzymatically and/or mechanically dissociates tissue into a single-cell suspension. | Optimization is tissue-specific; harsh digestion can reduce viability and induce stress genes. Working at 4°C can minimize stress responses [15]. |

| Viability Stain (e.g., DAPI) | Distinguishes live from dead cells. | High viability (>80%) is crucial; high dead cell content can sequester barcoding beads and reduce data quality. |

| Barcoded Gel Beads | Contain cell barcodes and UMIs for labeling all mRNA from a single cell. | Platform-specific (e.g., 10x Genomics). Determines the number of cells that can be multiplexed in a single run. |

| Partitioning Chip & Reagents | Creates the microfluidic environment for generating GEMs. | Must be matched to the desired cell number recovery (e.g., Chip K for 10K cells). |

| Reverse Transcriptase & Amplification Kit | Converts barcoded RNA into stable cDNA and amplifies it for library construction. | High-fidelity enzymes are critical to minimize amplification bias and errors. |

| Library Preparation Kit | Prepares the final, barcoded cDNA pool for sequencing on a specific platform (e.g., Illumina). | |

The following diagram maps how these key tools are integrated into the workflow, from tissue to data.

The comparison presented in this guide shows that scRNA-seq overcomes a fundamental limitation of bulk RNA-seq by revealing the cellular heterogeneity inherent to biological systems. The case studies in breast and pancreatic cancers provide experimental evidence that critical, often rare, cell populations (such as pre-resistant cancer subclones and exhausted T-cells) are not just academic curiosities but central players in disease pathology and treatment response. The ability to identify these populations and define their unique transcriptional signatures is accelerating the discovery of novel, more precise biomarkers [5] [13] [11].

The future of clinical biomarker validation will increasingly rely on single-cell and spatial multi-omics technologies. While challenges in data complexity and cost remain, ongoing advancements in microfluidics, sequencing chemistry, and automated bioinformatics pipelines are making scRNA-seq more accessible and scalable [10] [4]. The integration of scRNA-seq with other omics layers, such as spatial transcriptomics and proteomics, and the application of AI for data interpretation, will further enrich our understanding of disease biology within its tissue context [14] [9]. For researchers and drug developers, embracing single-cell resolution is no longer an option but a necessity for uncovering the true drivers of disease and developing transformative, targeted therapies.

Single-cell RNA sequencing (scRNA-seq) has revolutionized biomedical science by enabling the high-throughput measurement of gene expression in individual cells, thereby revealing cellular heterogeneity that was previously masked by bulk analysis techniques [4] [16] [11]. This technology provides a high-resolution view of cellular diversity and function, making it a powerful tool for biomarker discovery across a wide spectrum of medical research [17]. By moving beyond the averaging effects of traditional bulk sequencing, scRNA-seq allows researchers to identify rare cell populations, delineate complex cellular relationships within tissues, and uncover novel biomarkers with high specificity and sensitivity [4] [11]. This article compares the performance of scRNA-seq in four key application areas (radiation dosimetry, cancer research, neurology, and immunology) by examining its specific capabilities, validated biomarkers, and experimental data supporting its clinical utility.

Experimental Protocols in Single-Cell Sequencing

The standard scRNA-seq workflow involves multiple critical steps that ensure the generation of high-quality, interpretable data. While specific protocols may vary slightly depending on the technological platform, the core methodology remains consistent across applications [4] [16].

Sample Preparation and Single-Cell Isolation

The process begins with the preparation of a viable single-cell suspension from tissue samples through a combination of enzymatic and mechanical dissociation techniques. Accurate sample preparation is crucial for generating high-quality transcriptome data, with protocols requiring optimization for variables such as cellular dimensions, viability, and cultivation conditions [4]. Individual cells are then isolated using various methodologies:

- Droplet-based systems (e.g., 10× Genomics Chromium System): Utilize microfluidic innovations to facilitate rapid, simultaneous profiling of thousands of cells within discrete droplets, though this system constrains cell diameter to less than 30 µm [4].

- Plate-based fluorescence-activated cell sorting (FACS): Employs nozzles of up to 130 µm, offering a feasible alternative for capturing larger cells [4].

- Microwell-based approaches: Provide another high-throughput option for single-cell capture [16].

For clinical research applications, single-nuclei RNA sequencing (snRNA-seq) is a viable alternative that does not require immediate processing of clinical samples, so valuable specimens can be snap-frozen and stored for later analysis [4].

Library Preparation and Sequencing

Upon cell capture, transcripts from each cell are tagged with a cell barcode, which allows pooled (multiplexed) processing while tracking each transcript's cell of origin, and with unique molecular identifiers (UMIs), which label individual molecules so that amplification duplicates can be collapsed. The subsequent steps include:

- Cell lysis and reverse transcription (RT) of mRNA into barcoded cDNA

- cDNA amplification via polymerase chain reaction (PCR)

- Construction of sequencing libraries

- High-throughput sequencing using next-generation sequencers [4]

Library construction approaches vary; 3' end enrichment methods are cost-effective and produce reduced sequencing noise, while full-length transcript libraries typically offer superior transcriptome insights, such as alternative splicing and isoforms [4].
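The role of cell barcodes and UMIs described in this subsection can be made concrete with a minimal counting sketch. It assumes reads have already been aligned and reduced to (cell barcode, gene, UMI) triples, and it treats identical triples as amplification duplicates of one molecule. The function name `umi_count_matrix` and the toy barcodes are hypothetical; production pipelines also correct sequencing errors in barcodes and UMIs, which is omitted here.

```python
from collections import defaultdict


def umi_count_matrix(reads):
    """Count unique molecules per cell and gene from (cell, gene, UMI) triples."""
    # Identical triples are treated as PCR duplicates of a single molecule.
    molecules = set(reads)
    counts = defaultdict(lambda: defaultdict(int))
    for cell, gene, _umi in molecules:
        counts[cell][gene] += 1
    return {cell: dict(genes) for cell, genes in counts.items()}


# Hypothetical reads; barcodes and gene labels are illustrative only.
reads = [
    ("CELL_A", "GENE1", "AAAC"),
    ("CELL_A", "GENE1", "AAAC"),  # duplicate of the read above
    ("CELL_A", "GENE1", "GGTT"),
    ("CELL_A", "GENE2", "AAAC"),
    ("CELL_B", "GENE1", "AAAC"),
]
print(umi_count_matrix(reads))
```

Counting molecules rather than reads is what keeps PCR amplification from inflating expression estimates for transcripts that happen to amplify efficiently.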

Data Processing and Bioinformatics Analysis

The massive datasets generated by scRNA-seq require sophisticated bioinformatic processing [4]. A standard workflow includes:

- Quality Control: Filtering data based on doublets, mitochondrial content, erythrocyte gene expression, and other parameters to exclude low-quality cells [4] [16] [18].

- Normalization and Scaling: Using functions like "NormalizeData" and "ScaleData" in Seurat to standardize expression values [19] [18].

- Feature Selection: Identifying highly variable genes (typically 2,000) for downstream analysis [19] [18].

- Dimensionality Reduction: Applying principal component analysis (PCA) followed by visualization techniques such as t-distributed stochastic neighbor embedding (t-SNE) or uniform manifold approximation and projection (UMAP) [4] [16] [19].

- Clustering and Cell Type Annotation: Using algorithms to identify cell subpopulations and annotate them with biological cell types through marker gene selection methods [4] [20].

- Differential Expression Analysis: Identifying significantly changed genes between conditions or cell types using methods like the Wilcoxon rank-sum test, Student's t-test, or logistic regression [20].

For batch correction across multiple samples, tools such as Harmony, Seurat's canonical correlation analysis (CCA), or mutual nearest neighbors (MNN) are employed to correct for technical variations [16] [19].
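As a rough illustration of the normalization and feature-selection steps listed above, the sketch below applies a log-normalization of the form ln(1 + count / total × 10,000), which mirrors the default behavior of Seurat's "NormalizeData", and then ranks genes by variance. The plain variance ranking is a simplifying assumption that stands in for the variance-stabilizing selection used by dedicated packages; the function names and toy counts are hypothetical.

```python
import math
from statistics import pvariance


def log_normalize(counts, scale_factor=10_000):
    """Scale each cell to a common total, then apply ln(1 + x)."""
    normalized = {}
    for cell, genes in counts.items():
        total = sum(genes.values())
        if total == 0:
            normalized[cell] = {gene: 0.0 for gene in genes}
            continue
        normalized[cell] = {
            gene: math.log1p(count / total * scale_factor)
            for gene, count in genes.items()
        }
    return normalized


def top_variable_genes(normalized, n_top=2000):
    """Rank genes by variance across cells (simplified feature selection)."""
    genes = sorted({gene for cell in normalized.values() for gene in cell})
    variance = {
        gene: pvariance([cell.get(gene, 0.0) for cell in normalized.values()])
        for gene in genes
    }
    return sorted(genes, key=lambda g: (-variance[g], g))[:n_top]


# Hypothetical two-cell example: MARKER is expressed in only one cell.
toy_counts = {
    "cell_1": {"STABLE": 50, "MARKER": 50},
    "cell_2": {"STABLE": 50, "MARKER": 0},
}
normalized = log_normalize(toy_counts)
print(top_variable_genes(normalized, n_top=1))  # ['MARKER']
```

The default of 2,000 genes follows the typical figure quoted in the list above; it is a convention rather than a fixed requirement.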

Comparative Performance Across Application Domains

The application of scRNA-seq technologies has led to significant advances across multiple research domains, with each field leveraging its capabilities to address domain-specific challenges. The table below summarizes key performance metrics and notable biomarkers identified through scRNA-seq across four major application areas.

| Application Domain | Key Identified Biomarkers/Cell Populations | Resolution Advantage | Clinical/Research Utility | Supporting Experimental Data |

|---|---|---|---|---|

| Radiation Dosimetry | HARS-predictive genes [17]; Specific radiation-responsive biomarkers in individual cell types [17] | Identifies cell-specific features of dose-response genes beyond bulk NGS capabilities [17] | Rapid triage in nuclear emergencies; understanding individual cell sensitivity to radiation [17] | Targeted NGS of 1000 samples in <30 hours identified 4 HARS genes; Detection within 2h-3d post-irradiation [17] |

| Cancer Research | Immunoregulatory C2 IGFBP3+ melanoma subtype; FOSL1 transcription factor [19]; Tumor antigen-specific TProlif_Tox T-cells [21] | Reveals tumor microenvironment heterogeneity and rare, immunomodulatory malignant cell subtypes [19] [21] | Identifies drug resistance mechanisms; predicts immunotherapy response; discovers novel therapeutic targets [19] | FOSL1 knockdown increased apoptosis, decreased migration/proliferation (A375, MeWo cells) [19]; Multi-omic analysis of pre/post-radiation HNSCC biopsies [21] |

| Neurology | Cell-type-specific transcriptional profiles in neurons/glia; Preclinical-stage cellular aberrations [11] | Detects early gene expression changes in nerve cells before overt symptoms [11] | Early diagnosis of neurodegenerative diseases (Alzheimer's, Parkinson's); disease monitoring [11] | Analysis of brain tissue/CSF-derived cells; Identification of molecular signatures for emerging neurodegeneration [11] |

| Immunology | Tumor-infiltrating lymphocyte (TIL) subpopulations (TProlif_Tox); Regulatory, naïve T-cell clones [21]; Immune cell repertoire diversity (scTCR-seq, scBCR-seq) [16] | Characterizes immune repertoire and identifies specific functional T-cell states driving response/resistance [16] [21] | Understanding immunotherapy resistance mechanisms; guiding immune-oncology strategies [21] | Longitudinal scRNA+TCRseq of HNSCC biopsies showed rapid depletion of TProlif_Tox post-radiation, repopulation by regulatory clones [21] |

Key Signaling Pathways and Regulatory Networks

Visualizing the molecular interactions and signaling pathways discovered through scRNA-seq is crucial for understanding disease mechanisms. The following diagram illustrates a key signaling network identified in melanoma research:

Diagram Title: Melanoma Neuro-Immune Signaling Network

The FOSL1-regulated IGFBP3+ melanoma subtype (C2) functions as a neuro-immunoregulatory hub, mediating signaling to myeloid/plasmacytoid dendritic cells via the MHC-II pathway and to fibroblasts/pericytes via the PROS pathway [19]. These interactions have roles in neuroimmunology, neuroinflammation, and pain regulation within the tumor microenvironment [19].

Research Reagent Solutions Toolkit

Successful single-cell sequencing experiments rely on a suite of specialized reagents and computational tools. The following table details essential solutions used in the featured studies.

| Product/Tool | Category | Primary Function | Application Example |

|---|---|---|---|

| 10× Genomics Chromium | Hardware/Reagents | Single-cell capture, barcoding, and library preparation | High-throughput cell profiling in cancer studies [4] |

| Seurat | Software | scRNA-seq data analysis, integration, and visualization | QC, clustering, and differential expression in melanoma and diabetes studies [4] [19] [18] |

| Scanpy | Software | scRNA-seq data analysis in Python | Alternative analysis pipeline to Seurat [20] [16] |

| Harmony | Software/Algorithm | Batch effect correction and data integration | Integrating multiple samples in melanoma studies [16] [19] |

| CellChat | Software/Algorithm | Inference and analysis of cell-cell communication | Predicting interactions between malignant cells and other cell types [19] |

| CIBERSORT | Software/Algorithm | Deconvolution of immune cell types from bulk data | Quantifying immune cell infiltration in T2D islet samples [18] |

| DoubletFinder | Software/Algorithm | Detection and removal of doublet cells | Quality control in melanoma data processing [19] |

| PySCENIC | Software/Algorithm | Inference of transcription factor regulatory networks | Revealing TF networks in melanoma subtypes [19] |

| CytoTRACE | Software/Algorithm | Prediction of cellular differentiation state | Identifying differentiation potency in melanoma subtypes [19] |

Single-cell RNA sequencing has established itself as a transformative technology across diverse research domains, from radiation biology to clinical oncology, by providing unprecedented resolution for detecting cellular heterogeneity and identifying novel biomarkers. The comparative analysis presented demonstrates that while the core technology remains consistent, its application yields field-specific insights that advance both fundamental understanding and clinical translation. In radiation dosimetry, scRNA-seq enables the identification of cell-specific radiation responses beyond the capabilities of traditional biodosimetry methods. In cancer research, it reveals intricate tumor microenvironment interactions and therapy resistance mechanisms. For neurological disorders, it offers hope for early detection by identifying subtle cellular changes preceding clinical symptoms. In immunology, it delineates the complex dynamics of immune cell populations in health and disease. The continued evolution of single-cell multi-omics approaches, integration with spatial transcriptomics, and advancement of computational analytical frameworks will further solidify scRNA-seq's role as an indispensable tool for biomarker discovery and validation in precision medicine.

The biomarker development pipeline represents a systematic, multi-stage process designed to transform raw biological data into validated, clinically actionable insights. This pipeline methodically progresses from initial discovery to full clinical implementation, with the overarching goal of identifying objectively measurable indicators of biological processes, pathological states, or responses to therapeutic interventions [22]. In the era of precision medicine, biomarkers have become indispensable tools, moving healthcare away from a one-size-fits-all model toward more personalized strategies for disease diagnosis, prognosis, and treatment selection [23].

The emergence of sophisticated technologies—particularly single-cell sequencing and artificial intelligence—has fundamentally reshaped this pipeline. These advances allow researchers to decipher disease complexity with unprecedented resolution, capturing the intricate heterogeneity within cell populations that traditional bulk analysis methods inevitably obscure [4] [11]. This technological evolution is critical for developing robust biomarkers that can successfully navigate the arduous path from discovery to clinical adoption, a journey notoriously marked by high failure rates and translational challenges [24] [25].

Pipeline Stages: From Discovery to Clinical Implementation

The biomarker development pipeline can be conceptualized as a multi-stage funnel, with numerous candidates entering at the discovery phase but only a select few emerging as clinically validated tools. The following diagram illustrates the key stages and their interconnected nature.

Stage 1: Discovery

The discovery phase initiates the pipeline, focusing on identifying potential biomarker candidates from complex biological data sources.

- Data Acquisition: Researchers collect biological samples appropriate for their disease context, which may include tissues, blood, urine, or other bodily fluids [24] [25]. For single-cell analyses, this begins with creating high-quality single-cell suspensions through combined enzymatic and mechanical dissociation techniques [4].

- Preprocessing: Raw data undergoes rigorous cleaning, harmonization, and standardization to ensure comparability and reproducibility [24] [26]. This includes quality control procedures to exclude subpar data from individual cells that may arise from compromised cell viability, inefficient mRNA recovery, or inadequate cDNA synthesis [4].

- Feature Extraction: Advanced computational methods, including AI and machine learning algorithms, identify meaningful patterns and potential biomarker signatures from high-dimensional data [24] [23]. This stage leverages techniques such as principal component analysis (PCA) and t-distributed stochastic neighbor embedding (t-SNE) for dimensionality reduction and visualization of cellular heterogeneity [4].

Stage 2: Validation

The validation stage subjects discovery-phase candidates to rigorous testing to confirm their analytical and clinical performance.

- Analytical Validation: This step establishes that the biomarker test itself is reliable, reproducible, and accurate in measuring the intended analyte [23]. It requires demonstrating adequate sensitivity, specificity, and precision according to established regulatory standards.

- Clinical Validation: Biomarker candidates must prove their clinical utility by demonstrating reliability, sensitivity, and specificity across large, diverse populations through extensive statistical analysis against established clinical endpoints [24]. This phase often involves retrospective and prospective studies using well-characterized patient cohorts [26].
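The performance measures named in the validation stage above follow directly from a two-by-two confusion table. The sketch below computes sensitivity, specificity, and the predictive values from paired reference labels and test calls; the function name `diagnostic_metrics` and the toy cohort are hypothetical, and a real validation study would also report confidence intervals.

```python
def diagnostic_metrics(y_true, y_pred):
    """Compute sensitivity, specificity, PPV and NPV from binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for truth, call in pairs if truth and call)
    tn = sum(1 for truth, call in pairs if not truth and not call)
    fp = sum(1 for truth, call in pairs if not truth and call)
    fn = sum(1 for truth, call in pairs if truth and not call)

    def ratio(numerator, denominator):
        # Return NaN when a metric is undefined (for example, no positives in the cohort).
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),  # true positive rate
        "specificity": ratio(tn, tn + fp),  # true negative rate
        "ppv": ratio(tp, tp + fp),          # positive predictive value
        "npv": ratio(tn, tn + fn),          # negative predictive value
    }


# Hypothetical cohort: 4 diseased and 6 healthy individuals.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
print(diagnostic_metrics(y_true, y_pred))
```

Unlike sensitivity and specificity, the predictive values depend on disease prevalence in the cohort, which is one reason validation in diverse, representative populations matters.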

Stage 3: Clinical Implementation

Successful clinical implementation integrates validated biomarkers into healthcare workflows to guide patient management decisions.

- Regulatory Approval: Biomarker tests must undergo review by regulatory bodies (e.g., FDA, EMA), which are increasingly implementing more streamlined approval processes for biomarkers validated through large-scale studies and real-world evidence [9].

- Clinical Integration: Implemented biomarkers are incorporated into clinical practice guidelines, requiring educational initiatives for healthcare providers and the development of decision support systems to ensure appropriate utilization [24] [23].

Single-Cell Sequencing: A Transformative Technology

Single-cell RNA sequencing (scRNA-seq) has emerged as a revolutionary technology in biomarker discovery, overcoming the limitations of traditional bulk sequencing approaches that average signals across heterogeneous cell populations [4] [11]. By providing high-resolution data at the individual cell level, scRNA-seq enables the identification of rare cell types, characterization of tumor microenvironment diversity, and dissection of cellular heterogeneity driving disease progression and treatment resistance [4] [5].

The following workflow details the core experimental protocol for scRNA-seq biomarker discovery:

Experimental Protocol: scRNA-seq for Biomarker Discovery

Sample Preparation and Single-Cell Isolation

- Sample Acquisition: Obtain fresh tissue samples or bodily fluids relevant to the disease context under study [4]. For neurological studies, cerebrospinal fluid (CSF) may be collected; for tumor studies, tissue biopsies or liquid biopsy samples (blood, urine) are appropriate [25] [11].

- Tissue Dissociation: Create single-cell suspensions using optimized enzymatic and mechanical dissociation protocols tailored to the specific tissue type [4]. Critical parameters include cellular dimensions, viability, and cultivation conditions.

- Cell Isolation: Separate individual cells using fluorescence-activated cell sorting (FACS), microfluidic systems, or droplet-based platforms [4] [11]. The Chromium system from 10× Genomics represents a widely used droplet-based platform that facilitates simultaneous profiling of thousands of cells [4].

Single-Cell Capture and Barcoding

- Cell Capture: Isolate individual cells into separate reaction chambers using microfluidic devices [4]. For cells exceeding 30 µm in diameter, plate-based FACS with nozzles of up to 130 µm provides a viable alternative [4].

- mRNA Capture and Barcoding: Within each reaction chamber, cellular mRNA transcripts are captured by poly-dT oligonucleotides containing unique molecular identifiers (UMIs) and cell barcodes that tag all transcripts from the same cell [4].

cDNA Synthesis and Amplification

- Reverse Transcription: Perform reverse transcription to convert barcoded mRNA into cDNA [4].

- cDNA Amplification: Amplify cDNA using polymerase chain reaction (PCR) to generate sufficient material for library construction [4]. Droplet-based systems employ pooled PCR coupled with cell barcoding techniques to markedly enhance throughput [4].

Library Preparation and Sequencing

- Library Construction: Prepare sequencing libraries from the amplified, barcoded cDNA. Libraries with 3' end enrichment are cost-effective and produce reduced sequencing noise, while full-length transcript libraries offer superior transcriptome insights, including alternative splicing and isoform information [4].

- High-Throughput Sequencing: Sequence libraries using next-generation sequencing platforms (e.g., Illumina) with sufficient depth to adequately capture the cellular transcriptome [4].

Bioinformatic Analysis and Biomarker Identification

- Quality Control: Process raw sequencing data through bioinformatic QC procedures to exclude low-quality cells using criteria such as relative library size, number of detected genes, and proportion of mitochondrial genes [4].

- Data Normalization and Integration: Normalize data to account for technical variability and integrate multiple datasets if applicable [4].

- Dimensionality Reduction and Clustering: Apply PCA, t-SNE, or uniform manifold approximation and projection (UMAP) algorithms to visualize cellular heterogeneity and identify distinct cell subpopulations [4] [5].

- Differential Expression Analysis: Identify genes significantly differentially expressed between conditions (e.g., healthy vs. disease, treatment-responsive vs. resistant) using specialized packages [4].

- Biomarker Candidate Selection: Select potential biomarker genes based on statistical significance, effect size, and biological relevance for further validation [5].
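The quality-control criteria named in the bioinformatic steps above (library size, number of detected genes, and proportion of mitochondrial genes) can be sketched as a per-cell filter. The thresholds below are illustrative assumptions that need tuning per tissue and platform, and the "MT-" prefix check assumes standard human or mouse gene symbols; the function name `passes_qc` is hypothetical.

```python
def passes_qc(cell_counts, min_counts=500, min_genes=200, max_mito_fraction=0.2):
    """Return True if a cell meets library size, gene count, and mitochondrial limits."""
    total = sum(cell_counts.values())
    n_genes = sum(1 for count in cell_counts.values() if count > 0)
    # Mitochondrial genes are assumed to carry an "MT-" (human) or "mt-" (mouse) prefix.
    mito = sum(
        count for gene, count in cell_counts.items() if gene.upper().startswith("MT-")
    )
    mito_fraction = mito / total if total else 1.0
    return (
        total >= min_counts
        and n_genes >= min_genes
        and mito_fraction <= max_mito_fraction
    )


# Hypothetical cells; thresholds are lowered to suit the tiny toy profiles.
cells = {
    "good": {"GENE1": 40, "GENE2": 50, "MT-CO1": 10},
    "dying": {"GENE1": 10, "MT-CO1": 90},
    "empty": {"GENE1": 2},
}
kept = [
    name
    for name, counts in cells.items()
    if passes_qc(counts, min_counts=50, min_genes=2, max_mito_fraction=0.2)
]
print(kept)  # ['good']
```

A high mitochondrial fraction is commonly read as a sign of a damaged or dying cell, while very small libraries often correspond to empty partitions.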

Research Reagent Solutions for scRNA-seq

Table 1: Essential research reagents and platforms for single-cell sequencing biomarker discovery

| Reagent Category | Specific Products/Platforms | Key Functions | Technical Considerations |

|---|---|---|---|

| Single-Cell Isolation Platforms | 10× Genomics Chromium, Fluidigm C1, Flow Cytometry with FACS | Separation of individual cells from tissues/body fluids | Throughput, cell size compatibility, viability preservation |

| Reverse Transcription & Amplification Kits | SMART-seq, Maxima H Minus Reverse Transcriptase | cDNA synthesis from single-cell mRNA with UMIs | Sensitivity, coverage bias, amplification efficiency |

| Library Prep Kits | Nextera, Illumina RNA Prep | Sequencing library construction from amplified cDNA | Insert size selection, complexity preservation, cost |

| Sequencing Reagents | Illumina NovaSeq, NextSeq | High-throughput sequencing of barcoded libraries | Read length, depth requirements, cost per cell |

| Bioinformatic Tools | Seurat, Galaxy Europe Single Cell Lab | Quality control, normalization, clustering, differential expression | Computational requirements, user expertise, reproducibility |

Comparative Performance of Biomarker Technologies

The evolving landscape of biomarker technologies offers researchers multiple platforms with distinct strengths and limitations. The following comparison highlights key technologies used in modern biomarker development:

Table 2: Comparative analysis of biomarker discovery technologies

| Technology | Resolution | Key Applications | Throughput | Cost | Limitations |

|---|---|---|---|---|---|

| Single-Cell RNA Sequencing | Single-cell | Cellular heterogeneity, rare cell populations, tumor microenvironment | Medium to High | High | Complex data analysis, high cost, technical noise |

| Spatial Transcriptomics | Single-cell with spatial context | Tissue architecture, cell-cell interactions, tumor microenvironment organization | Medium | High | Limited resolution in some platforms, high cost |

| Liquid Biopsy (ctDNA) | Bulk tissue representation | Early cancer detection, monitoring treatment response, minimal residual disease | High | Medium to High | Low analyte concentration in early disease stages |

| DNA Methylation Analysis | Base-level (bulk or single-cell) | Early cancer detection, tumor classification, origin determination | High | Medium | Tissue-of-origin challenges, bioinformatic complexity |

| Proteomic Platforms | Protein-level (bulk or single-cell) | Signaling pathway analysis, drug target engagement, functional biomarkers | Low to Medium | Medium to High | Limited multiplexing, dynamic range constraints |

Single-Cell Sequencing in Breast Cancer Resistance Biomarkers

A compelling application of scRNA-seq in biomarker discovery comes from research on CDK4/6 inhibitor resistance in breast cancer. A 2025 study performed scRNA-seq on seven palbociclib-naïve luminal breast cancer cell lines and their palbociclib-resistant derivatives, analyzing 10,557 cells total (5,116 parental and 5,441 resistant cells) [5].

Key findings demonstrated that established biomarkers and pathways related to CDK4/6 inhibitor resistance show marked intra- and inter-cell-line heterogeneity. Transcriptional features of resistance could already be observed in naïve cells, correlating with levels of sensitivity (IC50) to palbociclib [5]. Resistant derivatives formed transcriptional clusters that varied significantly in proliferative signatures, estrogen response signatures, and MYC targets [5].

This heterogeneity was validated in the FELINE trial, where ribociclib-resistant tumors developed higher clonal diversity at the genetic level and showed greater transcriptional variability for genes associated with resistance compared to sensitive ones [5]. The study successfully identified a potential signature of resistance inferred from the cell-line models that separated sensitive from resistant tumors and revealed higher heterogeneity in resistant versus sensitive cells [5].

Clinical Validation Frameworks and Challenges

The transition from discovery to clinical implementation represents the most significant hurdle in the biomarker pipeline. The clinical validation pathway requires meticulous planning and execution, as illustrated below:

Key Challenges in Biomarker Validation

Despite technological advances, significant challenges persist in biomarker development and validation:

- Data Heterogeneity and Standardization: Inconsistent data formats, processing methods, and analytical pipelines create reproducibility crises and undermine scientific trust [24] [26]. For example, different teams processing EEG data at different frequencies can produce conflicting results, invalidating comparisons [24].

- Limited Generalizability: Findings from small, homogeneous cohorts often fail to generalize across larger, diverse populations [24] [26]. This is particularly problematic for scRNA-seq studies, where specialized bioinformatic support remains indispensable for appropriate data interpretation [4].

- Clinical Translation Barriers: Substantial barriers including high implementation costs, regulatory hurdles, and workflow integration challenges impede clinical adoption [26] [25]. The "valley of death" between discovery and clinical application is evidenced by the stark contrast between thousands of biomarker publications and the minimal number achieving FDA approval [24] [25].

- Analytical Validation Requirements: Rigorous proof that a biomarker works in real-world settings, not just controlled lab environments, requires demonstration of reliability, sensitivity, and specificity across large, diverse populations [24].

Validation Strategies for Single-Cell Sequencing Biomarkers

Successful validation of scRNA-seq-derived biomarkers requires addressing these challenges through structured approaches:

- Independent Cohort Validation: Confirm biomarker performance in independent patient cohorts that reflect target population diversity [5]. The FELINE trial validation of breast cancer resistance signatures exemplifies this approach [5].

- Multi-Omics Integration: Strengthen biomarker validity through integration with complementary data types (genomic, proteomic, spatial) to develop comprehensive molecular disease maps [27] [26].

- Longitudinal Sampling: Implement serial sampling designs to capture dynamic biomarker changes over time, as trajectories often provide more comprehensive predictive information than single measurements [26] [22].

- Standardized Bioinformatics: Adopt standardized analytical frameworks and quality control metrics to enhance reproducibility [4]. Open-source initiatives like the Digital Biomarker Discovery Pipeline (DBDP) promote toolkits, reference methods, and community standards to overcome development challenges [24].
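For independent cohort validation, one common way to quantify how well a signature score separates two groups is the area under the ROC curve (AUC). The source does not state which metric was used in the FELINE comparison, so the choice of AUC here is an assumption, and the function name `roc_auc` and the scores are hypothetical. The sketch uses the pairwise definition: the probability that a randomly chosen positive case scores higher than a randomly chosen negative case, with ties counted as half.

```python
def roc_auc(scores_pos, scores_neg):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    if not scores_pos or not scores_neg:
        raise ValueError("both groups need at least one score")
    wins = 0.0
    for pos in scores_pos:
        for neg in scores_neg:
            if pos > neg:
                wins += 1.0
            elif pos == neg:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(scores_pos) * len(scores_neg))


# Hypothetical signature scores in an independent cohort.
resistant_scores = [0.9, 0.8, 0.4]
sensitive_scores = [0.5, 0.3, 0.2]
print(round(roc_auc(resistant_scores, sensitive_scores), 3))  # 0.889
```

An AUC of 0.5 corresponds to no discrimination and 1.0 to perfect separation. Computing it on a cohort that played no part in deriving the signature is what guards against overfitting to the discovery data.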

The biomarker development pipeline continues to evolve rapidly, driven by technological innovations in single-cell sequencing, multi-omics integration, and artificial intelligence. While significant challenges remain in translating discoveries to clinical practice, structured approaches that prioritize rigorous validation, standardization, and clinical utility assessment offer promising pathways forward.

The integration of AI and machine learning algorithms into biomarker analysis represents a particularly promising frontier, enabling identification of complex patterns in high-dimensional data that traditional methods overlook [23] [26]. Similarly, the emergence of multi-cancer early detection (MCED) tests based on DNA methylation patterns in liquid biopsies highlights the potential for minimally invasive biomarker platforms to transform cancer screening and monitoring [23] [25].

As single-cell technologies become more accessible and computational methods more sophisticated, the next decade will likely witness an acceleration in clinically validated biomarkers derived from these approaches. However, success will depend not only on technological advancement but also on addressing the fundamental challenges of standardization, validation, and implementation that have historically constrained biomarker translation. By learning from both successes and failures in the field, researchers can continue to advance the critical pathway from biomarker discovery to meaningful clinical impact.

From Data to Assays: Methodological Strategies for Translating Single-Cell Findings

Single-cell technologies have revolutionized biomarker discovery and clinical validation research by enabling the precise dissection of cellular heterogeneity within complex tissues. These approaches have moved beyond bulk tissue analysis to reveal distinct cellular subpopulations, rare cell types, and dynamic transitional states that were previously obscured. The integration of single-cell RNA sequencing (scRNA-seq), single-cell Assay for Transposase-Accessible Chromatin using sequencing (scATAC-seq), and Cellular Indexing of Transcriptomes and Epitopes by sequencing (CITE-seq) provides a comprehensive multi-modal framework for understanding the complex relationship between chromatin state, gene expression, and protein expression at single-cell resolution [28] [29] [4]. This technological triad forms the cornerstone of modern investigative pathology, allowing researchers to uncover novel biomarkers with enhanced predictive power for disease diagnosis, prognosis, and therapeutic response.

The clinical translation of single-cell biomarkers requires technologies capable of capturing the full complexity of the tumor microenvironment while maintaining cellular context. Spatial omics technologies have emerged as a powerful complement to single-cell methods, preserving the architectural context of cell-cell interactions that is lost in dissociated single-cell preparations [30] [31]. When integrated with single-cell multi-omics data, spatial profiling enables the validation of candidate biomarkers within their native tissue architecture, providing critical insights into cellular neighborhoods and spatial patterns of disease progression. This review provides a comprehensive comparison of core single-cell technologies, their experimental parameters, and their application in clinical biomarker research.

Core Single-Cell Technology Specifications

Table 1: Technical specifications and performance metrics of core single-cell technologies

| Technology | Measured Analytes | Key Applications in Biomarker Research | Throughput (Cells) | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| scRNA-seq | mRNA transcripts | Cell type identification, differential expression, transcriptional states, rare cell population discovery [4] | Thousands to millions [29] | High-throughput, extensive benchmarking, well-established analysis pipelines [4] [32] | Limited to transcriptome only, loses spatial context |
| scATAC-seq | Accessible chromatin regions | Regulatory element activity, transcription factor binding, epigenetic mechanisms [28] [33] | Thousands to hundreds of thousands [33] | Identifies regulatory drivers of disease, links non-coding variants to function [28] | Lower library complexity than scRNA-seq, computationally challenging [33] |
| CITE-seq | mRNA + surface proteins | Immune cell phenotyping, protein expression validation, cell surface biomarker discovery [29] [32] | Thousands to millions [32] | Direct protein measurement complements transcriptomics, validates potential targets [32] | Limited to surface proteins, antibody panel design required |
| Spatial Transcriptomics | mRNA with spatial context | Tumor microenvironment mapping, cellular neighborhood analysis, spatial biomarker validation [30] [31] | Tissue area-dependent | Preserves architectural context, validates single-cell findings in situ [30] | Lower resolution than dissociated methods, higher cost |

Experimental Protocol Details and Methodological Considerations

Sample Preparation and Quality Control

Optimal sample preparation is critical for generating high-quality single-cell data, particularly for clinical validation studies where sample quality may vary. For scRNA-seq, protocols require optimization for variables such as cell size, viability, and culture conditions [4]. Single-cell suspensions are typically obtained through a combination of enzymatic and mechanical dissociation techniques, which must be carefully optimized to preserve cell viability while achieving complete dissociation [4]. For frozen archival tissues, single-nuclei RNA sequencing (snRNA-seq) presents a viable alternative, as it does not require immediate processing of clinical samples and allows for the analysis of biobanked specimens [4].

Quality control metrics vary by technology but generally include assessments of cell viability, library complexity, and technical artifacts. For scATAC-seq data, key quality metrics include fragment number per cell, transcription start site (TSS) enrichment, and nucleosome signal [28] [33]. Low-quality cells in scRNA-seq data are typically identified based on unique gene counts, total counts, and mitochondrial percentage [28] [4]. For CITE-seq data, additional quality controls include antibody-derived tag (ADT) counts and the separation between signal and background for each protein marker [29] [32].
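The three scRNA-seq metrics named above can be computed directly from a cell's count vector. The following is a minimal sketch, not the procedure of any particular package: the function name `qc_metrics`, the toy counts, and the use of the `MT-` prefix to flag mitochondrial genes (the human gene-symbol convention) are all assumptions made for illustration.

```python
from typing import Dict


def qc_metrics(counts: Dict[str, int], mito_prefix: str = "MT-") -> Dict[str, float]:
    """Compute per-cell QC metrics from a gene -> UMI count mapping.

    mito_prefix is an assumption: human mitochondrial gene symbols start
    with "MT-"; other species use different conventions (e.g. "mt-").
    """
    total = sum(counts.values())                        # total counts
    n_genes = sum(1 for c in counts.values() if c > 0)  # unique genes detected
    mito = sum(c for g, c in counts.items() if g.startswith(mito_prefix))
    pct_mito = 100.0 * mito / total if total > 0 else 0.0
    return {"total_counts": total, "n_genes": n_genes, "pct_mito": pct_mito}


# Hypothetical toy cell: 100 UMIs in total, 30 of them mitochondrial.
cell = {"CD3E": 12, "MS4A1": 0, "ACTB": 58, "MT-CO1": 20, "MT-ND1": 10}
metrics = qc_metrics(cell)
print(metrics)
```

In practice these values are computed for every barcode and inspected as distributions before any cutoff is chosen, since appropriate thresholds depend on tissue and protocol.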

Platform Selection and Experimental Design

Droplet-based microfluidic systems, particularly the 10x Genomics Chromium platform, represent the most widely adopted approach for single-cell genomics due to their high throughput and commercial availability [29] [4]. These systems partition individual cells into nanoliter-scale droplets containing barcoded beads, enabling massively parallel barcoding of thousands of cells [29]. The choice between full-length transcript protocols (e.g., Smart-seq2) and 3'-counting methods (e.g., 10x Genomics) depends on the research objectives: the former provides fuller transcript coverage, including information on alternative splicing and isoforms, while the latter offers higher throughput and reduced sequencing noise [4].

Experimental design must carefully consider control samples, replication strategies, and cell loading concentrations. Species-mixing experiments using human and mouse cells are a gold-standard technique for benchmarking and quantifying cell doublets, which occur when two or more cells are mistakenly encapsulated together [29]. As cells are Poisson-loaded into droplets, higher cell densities raise the probability of doublet formation, requiring careful optimization of cell loading concentrations or the use of computational doublet detection methods [29]. For multi-omics studies, the higher costs and technical complexity of these approaches must be balanced against the additional biological insights gained from paired modality measurements [28] [34].
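The relationship between loading density and doublet formation can be made concrete with a short calculation. The sketch below assumes the number of cells per droplet follows a Poisson distribution with mean `lam`. It also applies the usual species-mixing correction, in which only human-mouse doublets are observable and the total is recovered by dividing by the expected heterotypic share. The function names and the example values are illustrative, not taken from the cited work.

```python
import math


def multiplet_rate(lam: float) -> float:
    """Fraction of cell-containing droplets that hold two or more cells,
    assuming cells per droplet ~ Poisson(lam)."""
    p0 = math.exp(-lam)   # empty droplets
    p1 = lam * p0         # exactly one cell
    return (1.0 - p0 - p1) / (1.0 - p0)


def total_doublet_rate_from_mixing(observed_mixed: float,
                                   human_fraction: float = 0.5) -> float:
    """Scale the observed human-mouse doublet rate up to all doublets.

    Assumes cells pair at random, so a fraction 2*p*(1-p) of doublets
    is cross-species and therefore detectable.
    """
    heterotypic_share = 2.0 * human_fraction * (1.0 - human_fraction)
    return observed_mixed / heterotypic_share


for lam in (0.05, 0.1, 0.3):
    print(f"lambda={lam:.2f}  multiplet rate={multiplet_rate(lam):.2%}")

# With a 50:50 mix, 2% observed mixed-species barcodes imply about 4% doublets.
print(total_doublet_rate_from_mixing(0.02))
```

Under this model the multiplet rate rises roughly linearly with `lam` at low loading, which is why lowering the cell concentration reduces doublets at the cost of more empty droplets.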

Experimental Workflows and Data Analysis

Integrated Multi-Omics Workflow

Diagram 1: Integrated multi-omics workflow for biomarker discovery, combining scATAC-seq, scRNA-seq, and CITE-seq technologies with spatial validation.

Computational Analysis Pipelines

Data Preprocessing and Quality Control

The computational analysis of single-cell data requires specialized pipelines for each modality. For scATAC-seq data, the PUMATAC pipeline provides a universal preprocessing approach that includes cell barcode error correction, adapter trimming, reference genome alignment, and mapping quality filtering [33]. The Signac package in R is widely used for scATAC-seq data analysis, including peak calling, dimension reduction, and integration with scRNA-seq data [28]. For scRNA-seq data, the Seurat package provides comprehensive tools for quality control, normalization, and clustering, while the DoubletFinder package can identify potential doublets [28].

Quality control thresholds vary by technology and experimental protocol. For scATAC-seq, typical QC metrics include nCount_peaks (2,000-30,000), nucleosome signal (<4), and TSS enrichment (>2) [28]. For scRNA-seq, common filters include nCount_RNA (<50,000), nFeature_RNA (500-6,000), and mitochondrial percentage (<25%) [28]. For CITE-seq data, additional quality controls focus on the antibody-derived tags, including checks for background signal and nonspecific binding [29] [32].
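As an illustration, the thresholds quoted above can be applied as simple per-cell predicates. This is a sketch in plain Python rather than Seurat or Signac code. The dictionary keys mirror the metric names in the text but are otherwise illustrative, the example cells are invented, and treating the range bounds as inclusive is an assumption.

```python
from typing import Dict, List

Cell = Dict[str, float]


def passes_rna_qc(cell: Cell) -> bool:
    """scRNA-seq filters quoted in the text [28]."""
    return (cell["nCount_RNA"] < 50_000
            and 500 <= cell["nFeature_RNA"] <= 6_000
            and cell["percent_mt"] < 25.0)


def passes_atac_qc(cell: Cell) -> bool:
    """scATAC-seq filters quoted in the text [28]."""
    return (2_000 <= cell["nCount_peaks"] <= 30_000
            and cell["nucleosome_signal"] < 4.0
            and cell["TSS_enrichment"] > 2.0)


# Hypothetical multiome cells carrying both sets of metrics.
cells: List[Cell] = [
    {"id": 1, "nCount_RNA": 8_000, "nFeature_RNA": 2_500, "percent_mt": 4.0,
     "nCount_peaks": 12_000, "nucleosome_signal": 1.1, "TSS_enrichment": 5.2},
    {"id": 2, "nCount_RNA": 900, "nFeature_RNA": 300, "percent_mt": 38.0,
     "nCount_peaks": 9_000, "nucleosome_signal": 1.0, "TSS_enrichment": 4.0},
    {"id": 3, "nCount_RNA": 15_000, "nFeature_RNA": 4_000, "percent_mt": 6.0,
     "nCount_peaks": 1_200, "nucleosome_signal": 4.8, "TSS_enrichment": 1.5},
]

# For paired measurements, keep only cells passing QC in both modalities.
kept = [c["id"] for c in cells if passes_rna_qc(c) and passes_atac_qc(c)]
print(kept)
```

Requiring a cell to pass in both modalities is a conservative choice for paired data. Cell 2 fails on the RNA side and cell 3 on the ATAC side, so only cell 1 is retained.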

Data Integration and Multi-Omic Analysis

The integration of multiple modalities presents both computational challenges and opportunities for biological discovery. Multi-omics technologies enable the joint profiling of multiple modalities within individual cells, offering the potential to uncover new cross-modality relationships [34]. However, multi-omics data remain scarcer than their single-modality counterparts due to higher costs, and often show poorer data quality for each individual modality [34]. Computational methods like scPairing have been developed to integrate and generate single-cell multi-omics data by pairing separate unimodal datasets, effectively creating artificially paired data that closely resemble true multi-omics data [34].

For clustering analysis, benchmarking studies have evaluated 28 computational algorithms on paired transcriptomic and proteomic datasets [32]. The top-performing methods across both omics modalities include scAIDE, scDCC, and FlowSOM, with FlowSOM also offering excellent robustness [32]. For users prioritizing memory efficiency, scDCC and scDeepCluster are recommended, while TSCAN, SHARP, and MarkovHC are recommended for users who prioritize time efficiency [32].

Key Research Reagent Solutions

Table 2: Essential research reagents and platforms for single-cell multi-omics studies

| Reagent/Platform | Function | Key Features | Considerations for Biomarker Studies |
|---|---|---|---|
| 10x Genomics Chromium | Single-cell partitioning and barcoding | High-throughput, multi-ome capabilities (RNA+ATAC) | Widely adopted, standardized workflows, commercial support [28] [29] |
| Chromium Next GEM Chip Kits | Microfluidic cell partitioning | Sub-Poisson loading efficiency, consistent performance | Optimal cell loading critical for doublet rates [28] [29] |
| Single Cell Multiome ATAC + Gene Expression | Simultaneous RNA and chromatin accessibility profiling | Paired measurements from same cells | Direct correlation of regulatory elements with gene expression [28] |
| CITE-seq Antibodies | Oligonucleotide-conjugated antibodies for protein detection | Multiplexed protein measurement alongside transcriptome | Panel design crucial, validation required for specificity [29] [32] |
| Nuclei Isolation Reagents | Tissue dissociation and nuclei preparation | Preservation of nuclear RNA and chromatin accessibility | Essential for frozen archival samples [28] [4] |
| Single Cell 3' Reagent Kits | Library preparation for gene expression | 3' counting method with UMIs | Higher throughput but limited splice variant information [4] [35] |

Applications in Clinical Biomarker Validation

Cancer Regulatory Programs and Therapeutic Targets

Single-cell multi-omics analysis has revealed critical cancer regulatory elements and transcriptional programs with significant clinical implications. A comprehensive study integrating scATAC-seq and scRNA-seq data from eight distinct carcinoma tissues identified extensive open chromatin regions and constructed peak-gene link networks that reveal distinct cancer gene regulation and genetic risks [28]. This approach identified cell-type-associated transcription factors that regulate key cellular functions, such as the TEAD family of TFs, which widely control cancer-related signaling pathways in tumor cells [28]. In colon cancer, tumor-specific TFs that are more highly activated in tumor cells than in normal epithelial cells were identified, including CEBPG, LEF1, SOX4, TCF7, and TEAD4, which are pivotal in driving malignant transcriptional programs and represent potential therapeutic targets [28].
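One common way to construct peak-gene links from paired accessibility and expression data is to correlate each peak's accessibility with the expression of a nearby gene across cells or cell groups. The sketch below illustrates that general idea only. Whether the cited study used this exact procedure is not stated here, and the peak and gene names, the aggregated values, and the `min_r` cutoff are all hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 if either vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / math.sqrt(vx * vy)


def link_peaks(peaks: Dict[str, List[float]],
               genes: Dict[str, List[float]],
               candidates: List[Tuple[str, str]],
               min_r: float = 0.5) -> List[Tuple[str, str, float]]:
    """Keep candidate (peak, gene) pairs whose correlation is at least min_r.

    candidates would normally be restricted to peaks within some genomic
    window of the gene; that windowing step is omitted here.
    """
    links = []
    for peak_id, gene_id in candidates:
        r = pearson(peaks[peak_id], genes[gene_id])
        if r >= min_r:
            links.append((peak_id, gene_id, r))
    return links


# Hypothetical values aggregated over five groups of cells.
peaks = {"peak_A": [0, 1, 2, 3, 4], "peak_B": [4, 1, 3, 0, 2]}
genes = {"GENE_X": [1, 2, 3, 4, 6]}
links = link_peaks(peaks, genes, [("peak_A", "GENE_X"), ("peak_B", "GENE_X")])
print(links)
```

Here only `peak_A` is linked to `GENE_X`, because `peak_B` is negatively correlated with its expression. Real analyses additionally correct for background correlation and multiple testing.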

Tumor Microenvironment and Immunotherapy Biomarkers

The application of spatial and single-cell omics has significantly advanced biomarker discovery in tumor immunotherapy by addressing critical challenges such as tumor heterogeneity, immune evasion, and variability within the tumor microenvironment (TME) [30]. Immunotherapeutic strategies, including immune checkpoint inhibitors and adoptive T-cell transfer, have demonstrated promising clinical outcomes; however, their efficacy is limited by low response rates and the incidence of immune-related adverse events (irAEs) [30]. Spatial omics integrates molecular profiling with spatial localization, providing comprehensive insights into the cellular organization and functional states within the TME, thereby enabling the identification of spatial biomarkers of therapeutic response [30].

Comparative studies of spatial transcriptomics (ST) platforms using formalin-fixed paraffin-embedded (FFPE) tumor samples have demonstrated the capability of these technologies to characterize the tumor microenvironment at single-cell resolution [31]. These studies have revealed intricate differences between ST platforms and highlighted the importance of parameters such as probe design in determining data quality [31]. The integration of spatial technologies with single-cell multi-omics data provides a powerful approach for validating candidate biomarkers within their architectural context, bridging the gap between cellular identity and tissue function.

The integration of scRNA-seq, scATAC-seq, and CITE-seq technologies provides a powerful multi-modal framework for clinical biomarker discovery and validation. Each technology offers complementary strengths: scRNA-seq reveals cellular heterogeneity and transcriptional states, scATAC-seq identifies regulatory mechanisms driving disease, and CITE-seq validates protein-level expression of candidate biomarkers. The convergence of these approaches with spatial profiling technologies and advanced computational methods creates an unprecedented opportunity to understand disease mechanisms at cellular resolution, accelerating the development of precision medicine approaches across diverse human diseases.

As these technologies continue to evolve, key challenges remain in standardization, data integration, and clinical translation. However, the rapid pace of innovation in single-cell multi-omics promises to further enhance our understanding of cellular biology in health and disease, ultimately leading to more precise diagnostic, prognostic, and predictive biomarkers for clinical application.

The study of biological systems has evolved significantly from single-omics investigations to integrated multi-omics approaches. This paradigm shift enables researchers to construct comprehensive molecular portraits of health and disease by simultaneously analyzing genomic, transcriptomic, and proteomic data layers. The integration of these diverse data types provides unprecedented insights into complex biological processes, disease mechanisms, and therapeutic opportunities, particularly in the context of single-cell sequencing biomarker validation research [36] [14]. This guide objectively compares the performance of different multi-omics integration strategies and presents supporting experimental data to inform researchers, scientists, and drug development professionals about the current landscape of holistic molecular signature discovery.

Multi-omics Integration Strategies: A Comparative Analysis

Horizontal vs. Vertical Integration Approaches

Multi-omics integration strategies can be broadly categorized into horizontal and vertical approaches, each with distinct advantages and applications in biomedical research.