Hybrid STGCN-ViT Models: Revolutionizing Early Detection of Neurological Disorders with Integrated Spatiotemporal Analysis


Abstract

This article explores the potential of hybrid STGCN-ViT (Spatial-Temporal Graph Convolutional Network and Vision Transformer) models for the early diagnosis of neurological disorders (NDs) such as Alzheimer's disease and brain tumors. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, model architecture, implementation, optimization, and validation. It synthesizes recent research, including benchmarks on the OASIS and Harvard Medical School (HMS) datasets, where the model reached 93.56-94.52% accuracy and 94.63-95.24% AUC-ROC [1]. The guide serves as both a technical deep dive and a roadmap for integrating advanced machine learning into biomedical research and clinical development pipelines in support of precision medicine.

The Critical Need for Advanced Diagnostics: Understanding Neurological Disorders and the Limits of Current Methods

Early diagnosis of neurological disorders (NDs) such as Alzheimer's disease (AD), Parkinson's disease (PD), and brain tumors (BT) represents a critical challenge in modern healthcare [1]. These conditions cause minor, progressive changes in the brain's anatomy that are often difficult to detect in initial stages using conventional diagnostic approaches [1]. Magnetic Resonance Imaging (MRI) serves as a vital tool for visualizing these disorders, yet standard techniques reliant on human analysis can be inaccurate, time-consuming, and insufficient for detecting the subtle early-stage symptoms necessary for effective treatment intervention [1]. The integration of advanced deep learning architectures, particularly hybrid models combining Spatial-Temporal Graph Convolutional Networks (STGCN) and Vision Transformers (ViT), offers promising solutions to these diagnostic limitations by enhancing analytical accuracy and enabling earlier detection through comprehensive spatial-temporal feature extraction [1].

The Critical Diagnostic Gap in Neurological Care

The Critical Importance of Early Detection

Early diagnosis of neurological disorders is fundamental for implementing timely therapeutic interventions that can slow disease progression and significantly improve patient quality of life [1]. In Alzheimer's disease, early detection at the mild cognitive impairment (MCI) stage provides the best opportunity for intervention before significant neurodegeneration occurs [2]. Similarly, for aggressive conditions like glioblastoma multiforme, early detection is crucial given the poor prognosis with median survival of less than 15 months even with advanced treatments [3]. The capacity to identify neurological disorders in their nascent stages allows healthcare providers to initiate targeted treatment strategies when they are most effective, potentially altering disease trajectories and improving long-term patient outcomes.

Fundamental Challenges in Early Diagnosis

Several intrinsic factors complicate the early diagnosis of neurological disorders. The human brain exhibits remarkable anatomical complexity, and early-stage neurological disorders often manifest through subtle changes that are difficult to distinguish from normal variations or age-related alterations [1]. Traditional diagnostic methods relying on subjective clinical assessment of motor symptoms in Parkinson's disease or cognitive evaluation in Alzheimer's disease often only identify abnormalities after significant neurodegeneration has already occurred [4]. Misdiagnosis rates can be as high as 25% in early-stage Parkinson's disease, highlighting the critical need for more objective biomarkers to support clinical decision-making [4]. Additionally, the reliance on highly specialized practitioners for image interpretation creates diagnostic bottlenecks, particularly in underserved or remote regions where access to neurological expertise is limited [1].

Table 1: Key Challenges in Early Diagnosis of Neurological Disorders

| Challenge Category | Specific Limitations | Impact on Diagnosis |
|---|---|---|
| Pathological Complexity | Subtle anatomical changes in early stages [1] | Difficult to distinguish from normal brain variations |
| Diagnostic Subjectivity | Reliance on human interpretation of MRI scans [1] | Inter-observer variability and inconsistency |
| Technical Limitations | Standard MRI analysis captures spatial but not temporal dynamics [1] | Inability to track progressive changes critical for early detection |
| Resource Constraints | Requirement for highly specialized practitioners [1] | Diagnostic delays, particularly in remote areas |
| Methodological Gaps | Inability of conventional models to capture long-range dependencies [1] | Reduced accuracy in identifying distributed patterns of neurodegeneration |

Hybrid Deep Learning Architectures: Bridging the Diagnostic Gap

The Evolution of Deep Learning in Neurological Diagnosis

Deep learning has revolutionized medical image analysis by providing automated systems capable of detecting complex patterns in medical images that human observers might miss [1]. Convolutional Neural Networks (CNNs) initially emerged as the preferred architecture for MRI-based diagnostics, leveraging their ability to learn hierarchical features through convolutional layers that detect edges, textures, and tumor-like surfaces [3]. However, traditional CNNs operating with fixed receptive fields cannot adequately capture the long-range dependencies critical for identifying distributed neurological disorders [1]. The subsequent integration of Recurrent Neural Networks (RNNs) with CNNs aimed to address temporal relationships between MRI slices, though these hybrid models often faced challenges with vanishing gradients when modeling extended temporal sequences [1].

The recent emergence of Vision Transformers (ViT) has introduced a paradigm shift in medical image analysis [2]. By replacing convolutional operations with self-attention mechanisms, ViTs can capture global relationships across entire images more effectively than traditional CNN architectures [5]. This capability is particularly valuable for analyzing the brain's complex structure, where pathological changes may be distributed across multiple regions [2]. Transformers process images as sequences of patches, allowing the model to handle global connections that CNNs cannot effectively capture [3].
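The patch-and-attend idea described above can be illustrated with a minimal NumPy sketch. It is not the implementation from any cited study: the patch size, embedding width, and random projection matrices (`w_embed`, `w_q`, `w_k`, `w_v`) are placeholder assumptions, and a real ViT adds positional embeddings, multiple heads, and learned weights.

```python
import numpy as np

def image_to_patches(image, patch_size):
    """Split a 2D image (H, W) into flattened, non-overlapping square patches."""
    h, w = image.shape
    assert h % patch_size == 0 and w % patch_size == 0
    rows, cols = h // patch_size, w // patch_size
    patches = image.reshape(rows, patch_size, cols, patch_size)
    patches = patches.transpose(0, 2, 1, 3)  # (rows, cols, patch, patch)
    return patches.reshape(rows * cols, patch_size * patch_size)

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = tokens @ w_q, tokens @ w_k, tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
image = rng.random((32, 32))                      # stand-in for one MRI slice
patches = image_to_patches(image, patch_size=8)   # 16 patches of 64 pixels
d_model = 16                                      # hypothetical embedding width
w_embed = rng.normal(scale=0.1, size=(64, d_model))
tokens = patches @ w_embed                        # linear patch embedding
w_q, w_k, w_v = (rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(3))
attended, weights = self_attention(tokens, w_q, w_k, w_v)
```

Every row of `weights` spans all 16 patches, which is the sense in which each patch can draw on any other region of the image in a single layer.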

The STGCN-ViT Hybrid Framework

The STGCN-ViT model represents a novel hybrid architecture that integrates convolutional networks, spatial-temporal graph convolutional networks, and vision transformers to address limitations of previous approaches [1]. This integrated framework leverages the strengths of each component: EfficientNet-B0 for spatial feature extraction from high-resolution images, STGCN for modeling temporal dependencies and tracking progression across brain regions, and ViT with self-attention mechanisms to focus on crucial areas and significant spatial patterns in medical scans [1].

The model generates a spatial-temporal graph representing anatomical variations by partitioning spatial features into regions and reducing them, enabling the network to monitor progression across multiple brain areas [1]. This approach addresses a critical limitation of conventional models by explicitly modeling both spatial and temporal dynamics, which is essential for capturing the progressive nature of neurological disorders [1].
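One plausible reading of "partitioning spatial features into regions and reducing them" is sketched below: a feature map is divided into a grid of regions and each region is average-pooled into a single node vector. The grid layout, the mean reduction, and the function name `regions_to_nodes` are assumptions for illustration; the source does not specify them.

```python
import numpy as np

def regions_to_nodes(feature_map, grid=(2, 2)):
    """Average-pool a (C, H, W) feature map over a grid of regions.

    Returns an array of shape (num_regions, C): one feature vector per region,
    ordered row by row across the grid.
    """
    c, h, w = feature_map.shape
    gh, gw = grid
    assert h % gh == 0 and w % gw == 0
    blocks = feature_map.reshape(c, gh, h // gh, gw, w // gw)
    pooled = blocks.mean(axis=(2, 4))          # (C, gh, gw)
    return pooled.reshape(c, gh * gw).T        # (gh * gw, C)

rng = np.random.default_rng(0)
# Stand-ins for CNN feature maps of the same subject at three timepoints.
scans = [rng.random((8, 4, 4)) for _ in range(3)]
node_series = np.stack([regions_to_nodes(fm) for fm in scans])  # (T, N, C)
```

The resulting `(timepoints, regions, channels)` array is the kind of input a spatial-temporal graph network consumes, with regions as nodes.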

Table 2: Performance Comparison of Deep Learning Architectures in Neurological Disorder Detection

| Architecture | Disorder | Dataset | Key Metrics | References |
|---|---|---|---|---|
| STGCN-ViT | Alzheimer's, Brain Tumors | OASIS, HMS | Accuracy: 93.56-94.52%, Precision: 94.41-95.03%, AUC-ROC: 94.63-95.24% | [1] |
| 2D ConvKAN | Parkinson's | PPMI | AUC: 0.973, 97% faster training than conventional CNNs | [4] |
| 3D ConvKAN | Parkinson's | PPMI | AUC: 0.600 (generalization to early-stage cases) | [4] |
| ResNet101-ViT | Alzheimer's | OASIS | Accuracy: 98.7%, Sensitivity: 99.68%, Specificity: 97.78% | [2] |
| Hybrid-RViT | Alzheimer's | OASIS | Training Accuracy: 97%, Testing Accuracy: 95% | [5] |
| ViT-CapsNet | Brain Tumors | BraTS2020 | Accuracy: 90%, Precision: 90%, Recall: 89% | [3] |
| DenseVU-ED | Brain Tumors | BraTS2020 | Segmentation Accuracy: 98.91%, Dice Scores: ET: 0.902, WT: 0.966 | [6] |

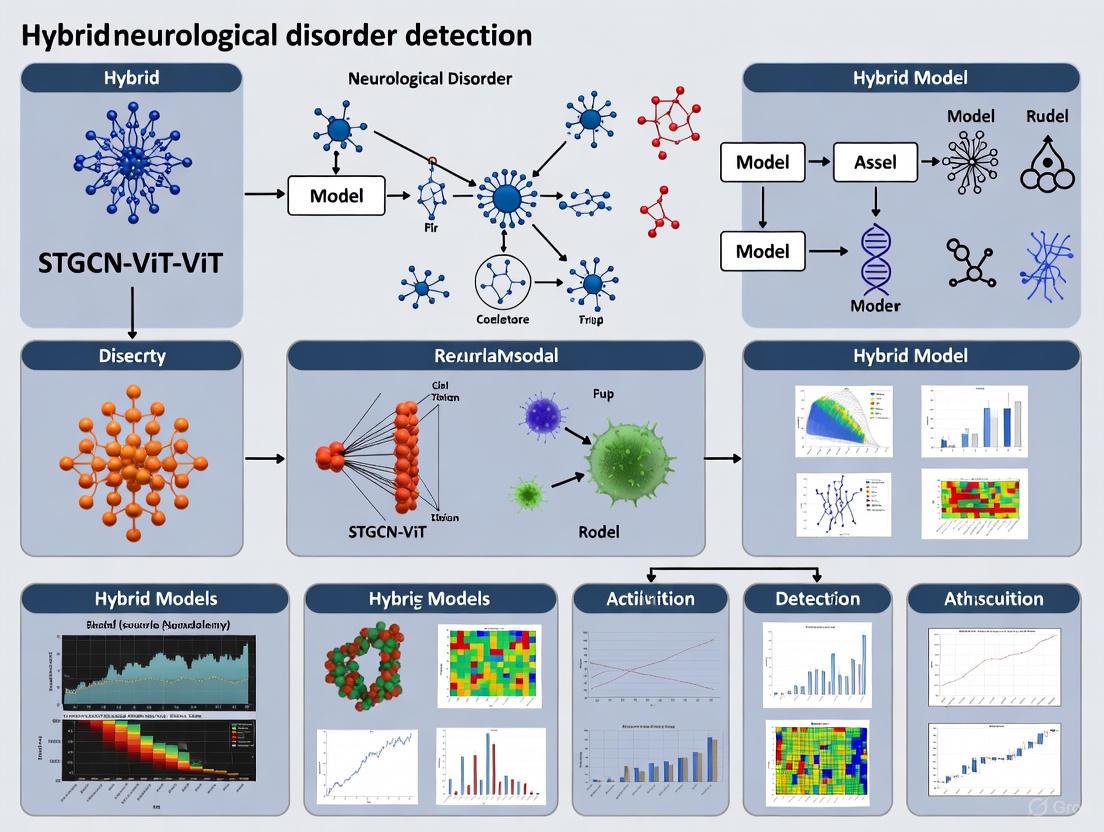

Figure 1: Diagnostic challenges and hybrid model solutions. The diagram illustrates how the STGCN-ViT architecture addresses fundamental limitations in neurological disorder diagnosis through integrated spatial-temporal analysis and attention mechanisms.

Experimental Protocols for Hybrid Model Implementation

STGCN-ViT Model Development Protocol

Dataset Preparation and Preprocessing

- Data Sources: Utilize standardized neuroimaging datasets such as OASIS (Open Access Series of Imaging Studies) for Alzheimer's disease research or BraTS2020 for brain tumor studies [1] [3]. Ensure appropriate data use agreements are in place before access [5].

- Image Enhancement: Apply preprocessing filters to improve image quality. Adaptive Median Filters (AMF) effectively reduce noise while preserving edges, and Laplacian filters enhance fine details and tissue boundaries in MRI scans [2].

- Data Augmentation: Address class imbalance through rotation, flipping, and scaling transformations. For neurological disorders with limited early-stage samples, targeted augmentation of underrepresented classes (e.g., mild dementia, early-stage tumors) is crucial [2] [3].

- Volumetric Processing: For 3D MRI data, implement specialized processing pipelines. For Parkinson's disease detection, 3D ConvKAN architectures have demonstrated superior generalization to early-stage cases compared to 2D approaches (AUC 0.600 vs. 0.378) [4].
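The image enhancement step can be sketched in NumPy as follows. Note the simplification: this uses a fixed-window median filter rather than the adaptive median filter named above (which grows its window per pixel), and a basic 4-neighbour Laplacian kernel. Window size and `strength` are illustrative assumptions.

```python
import numpy as np

def median_filter(image, size=3):
    """Fixed-window median filter (a simplification of the adaptive variant)."""
    pad = size // 2
    padded = np.pad(image, pad, mode="edge")
    out = np.empty_like(image, dtype=float)
    for i in range(image.shape[0]):
        for j in range(image.shape[1]):
            out[i, j] = np.median(padded[i:i + size, j:j + size])
    return out

def laplacian_sharpen(image, strength=1.0):
    """Sharpen by subtracting the 4-neighbour Laplacian from the image."""
    padded = np.pad(image, 1, mode="edge")
    laplacian = (
        padded[:-2, 1:-1] + padded[2:, 1:-1]      # up + down neighbours
        + padded[1:-1, :-2] + padded[1:-1, 2:]    # left + right neighbours
        - 4.0 * padded[1:-1, 1:-1]
    )
    return image - strength * laplacian

# A flat image with one impulse-noise pixel: the median filter removes it.
noisy = np.full((5, 5), 0.5)
noisy[2, 2] = 1.0
denoised = median_filter(noisy)

# A vertical step edge: sharpening exaggerates the contrast at the boundary.
step = np.tile(np.array([0.0, 0.0, 1.0, 1.0]), (4, 1))
sharpened = laplacian_sharpen(step)
```

In practice the denoising pass would normally run before sharpening, since the Laplacian amplifies any noise that remains.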

Model Architecture Configuration

- Spatial Feature Extraction: Implement EfficientNet-B0 as the foundational spatial feature extractor to analyze high-resolution images [1]. Modify standard ResNet or GoogLeNet architectures to reduce computational cost while maintaining feature extraction capabilities [2].

- Temporal Graph Construction: Partition spatial features into regional representations and transform into graph structures where nodes represent brain regions and edges represent anatomical connectivity [1]. STGCN components model these spatial-temporal dependencies to track disease progression across multiple brain regions [1].

- Attention Mechanism Implementation: Configure Vision Transformer components with multi-head self-attention to focus on clinically significant regions [1] [2]. Modify standard ViT architecture to increase attention vertices while managing computational requirements [2].
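For the graph step in the configuration above, a single graph convolution over region nodes might look like the sketch below. It uses the common symmetric-normalization form with self-loops, which is an assumption: the source does not state which graph convolution variant the STGCN component uses. The four-region chain graph and the random weights are purely illustrative.

```python
import numpy as np

def normalized_adjacency(adj):
    """Symmetric normalization with self-loops: D^-1/2 (A + I) D^-1/2."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

def graph_conv(node_features, adj_norm, weight):
    """One graph convolution layer: ReLU(A_norm @ X @ W)."""
    return np.maximum(adj_norm @ node_features @ weight, 0.0)

# Four hypothetical brain regions connected in a chain: 0 - 1 - 2 - 3.
adj = np.array([
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
], dtype=float)

rng = np.random.default_rng(0)
node_features = rng.random((4, 8))            # 8 features per region
weight = rng.normal(scale=0.1, size=(8, 2))   # placeholder for learned weights
adj_norm = normalized_adjacency(adj)
hidden = graph_conv(node_features, adj_norm, weight)
```

Each output row mixes a region's own features with those of its connected neighbours, which is how anatomical connectivity enters the representation.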

Model Training and Validation

- Training Protocol: Implement cross-validation with subject-level separation to prevent data leakage. For Parkinson's disease detection, rigorous subject-level evaluation methodologies are essential for assessing generalizability [4].

- Performance Metrics: Evaluate models using comprehensive metrics including accuracy, precision, recall, F1-score, and AUC-ROC [1] [3]. For segmentation tasks, incorporate dice similarity coefficient for tumor subregions [6].

- Interpretability Analysis: Apply Explainable AI (XAI) techniques such as Grad-CAM, SHAP, and LIME to visualize model focus areas and decision processes, enhancing clinical trust and transparency [7] [6].
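Subject-level separation, as called for in the training protocol, means that all scans from one person fall on the same side of every split. A minimal standard-library sketch follows; the function name, the round-robin fold assignment, and the toy subject IDs are illustrative assumptions rather than the procedure used in the cited studies.

```python
import random
from collections import defaultdict

def subject_level_folds(scan_subject_ids, n_folds=5, seed=0):
    """Yield (train_idx, test_idx) pairs where no subject spans both sides."""
    by_subject = defaultdict(list)
    for idx, subject in enumerate(scan_subject_ids):
        by_subject[subject].append(idx)

    subjects = sorted(by_subject)
    random.Random(seed).shuffle(subjects)
    # Deal subjects (not scans) into folds round-robin.
    folds = [subjects[i::n_folds] for i in range(n_folds)]

    for held_out in folds:
        held = set(held_out)
        test_idx = [i for s in held_out for i in by_subject[s]]
        train_idx = [i for s in subjects if s not in held for i in by_subject[s]]
        yield train_idx, test_idx

# Ten scans from six hypothetical subjects (some scanned more than once).
scan_subject_ids = ["s1", "s1", "s2", "s3", "s3", "s3", "s4", "s5", "s5", "s6"]
splits = list(subject_level_folds(scan_subject_ids, n_folds=3))
```

Splitting at the scan level instead would let repeat scans of one subject leak between training and test sets and inflate the reported accuracy.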

Cross-Disorder Validation Framework

Multi-Dataset Evaluation

- Within-Dataset Performance: Assess model performance on the same dataset used for training using appropriate cross-validation techniques [4].

- Cross-Dataset Generalizability: Evaluate trained models on independent datasets to test robustness across different imaging protocols and patient populations [4]. For Parkinson's disease detection, models trained on external cohorts (NEUROCON, Tao Wu) should be validated on early-stage PPMI datasets [4].

- Clinical Stage Stratification: Ensure representative inclusion of early-stage cases across all validation sets to properly assess early detection capabilities [4] [2].

Figure 2: STGCN-ViT experimental workflow. The diagram outlines the comprehensive protocol for implementing hybrid models from data preprocessing through multi-stage validation and interpretation.

Essential Research Reagent Solutions

Table 3: Critical Research Resources for Hybrid Model Development

| Resource Category | Specific Examples | Research Application |
|---|---|---|
| Neuroimaging Datasets | OASIS (Alzheimer's), BraTS2020 (Brain Tumors), PPMI (Parkinson's) [1] [4] [3] | Model training, validation, and benchmarking across disorders |
| Preprocessing Tools | Adaptive Median Filters, Laplacian Sharpening Filters [2] | Image quality enhancement, noise reduction, and feature preservation |
| Computational Frameworks | TensorFlow, PyTorch, MONAI | Implementation of hybrid architectures and training pipelines |
| Base Architectures | EfficientNet-B0, ResNet-50/101, Vision Transformers [1] [2] [5] | Foundational components for spatial feature extraction and attention mechanisms |
| Interpretability Tools | Grad-CAM, SHAP, LIME [7] [6] | Model decision visualization and clinical validation |
| Evaluation Metrics | AUC-ROC, Dice Score, Precision, Recall, F1-Score [1] [6] [3] | Comprehensive performance assessment across classification tasks |

The diagnostic challenge in early detection of neurological disorders stems from the complex interplay of subtle anatomical changes, limitations in conventional imaging analysis, and the progressive nature of these conditions. Hybrid deep learning architectures, particularly the STGCN-ViT framework, represent a transformative approach that bridges critical gaps in both spatial and temporal analysis of neuroimaging data. By integrating convolutional networks for spatial feature extraction, graph convolutional networks for modeling temporal dynamics, and vision transformers for global attention mechanisms, these models achieve superior accuracy in classifying Alzheimer's disease, Parkinson's disease, and brain tumors while enabling earlier detection. The experimental protocols and resource frameworks outlined provide researchers with comprehensive methodologies for implementing these advanced architectures, offering promising pathways toward clinically deployable tools that can significantly improve patient outcomes through timely intervention.

Limitations of Conventional MRI Analysis and Human Interpretation in Clinical Practice

Conventional Magnetic Resonance Imaging (MRI) serves as a cornerstone for diagnosing neurological disorders, providing invaluable, non-invasive visualization of brain anatomy. However, its reliance on qualitative, human-centric interpretation presents significant limitations for modern precision medicine and drug development. This application note details the core constraints of conventional MRI analysis, framed within the context of advancing quantitative biomarkers and artificial intelligence (AI), specifically highlighting the rationale for sophisticated models like hybrid Spatial-Temporal Graph Convolutional Networks and Vision Transformers (STGCN-ViT) in neurological research.

Core Limitations of Conventional MRI

The standard paradigm of qualitative MRI analysis is hampered by several intrinsic and operational challenges that affect diagnostic consistency, sensitivity, and quantitative tracking.

Subjectivity and Variability in Human Interpretation

The diagnostic process is inherently vulnerable to human factors, leading to inconsistent interpretations.

- Inter-reader Variability: Different radiologists can arrive at divergent conclusions from the same MRI scan. This subjectivity is particularly critical in early-stage neurological disorders (NDs) like Alzheimer's disease, where subtle anatomical changes are easily missed or interpreted differently [1].

- Lack of Standardized Guidelines: In many areas of MRI, a lack of medical society guidelines with specific imaging parameters means protocols are primarily determined by local expertise and personal preferences. This contributes to wide variability in both image acquisition and interpretation [8].

Qualitative Nature and Arbitrary Units

Conventional MRI provides contrast-weighted images in arbitrary units, limiting their utility as objective biomarkers.

- Non-Quantitative Output: Unlike quantitative MRI (qMRI), which estimates physical tissue parameters (e.g., T1 in ms, ADC in mm²/s), conventional MRI relies on relative signal intensities (T1-weighted, T2-weighted) that are not directly comparable across scanners, sites, or timepoints [9]. This hinders the precise monitoring of disease progression or treatment response essential for clinical trials.

- Focus on Macroscopic Morphology: Conventional structural MRI offers little insight into the underlying microstructure and physiology of the brain, providing measures of regional volume or cortical thickness but failing to detect microscopic changes in tissue integrity that precede gross atrophy [10].

Technical and Operational Challenges

Multiple technical and workflow factors further degrade the consistency and quality of MRI-based diagnosis.

- Artifact Vulnerability: Certain sequences, such as MR cholangiopancreatography and diffusion-weighted imaging (DWI), remain highly vulnerable to artifacts (e.g., motion, susceptibility). Results in clinical practice can be inconsistent, even at sites with a high level of expertise [8].

- Scanner and Protocol Variability: Heterogeneity across scanner vendors, platforms, and imaging protocols (e.g., differences in gradient strengths, slew rates, reconstruction filters) leads to inconsistent outputs, confounding multi-site research and reducing the generalizability of findings [11].

- Workflow Pressures: In clinical environments, competing demands of increasing patient volume and pressure to increase throughput limit the time available for staff to learn and implement new, more advanced techniques, leading to a significant delay in translating methodological advances into practice [8].

Table 1: Key Limitations of Conventional MRI Analysis and Their Impact on Research and Clinical Practice

| Limitation Category | Specific Challenge | Impact on Research & Clinical Practice |
|---|---|---|
| Human Interpretation | Inter-reader variability and subjectivity [1] | Reduces diagnostic reproducibility and agreement in multi-center trials |
| Human Interpretation | Lack of standardized reporting for artifacts [8] | Hinders systematic quality improvement and issue tracking across sites |
| Data Characteristics | Qualitative data in arbitrary units [9] | Limits utility as an objective biomarker for tracking subtle changes over time |
| Data Characteristics | Insensitive to microscopic tissue changes [10] | Unable to detect early pathology before macroscopic structural damage occurs |
| Technical & Operational | Scanner and protocol variability [11] | Confounds multi-site research findings and limits generalizability |
| Technical & Operational | High vulnerability to specific artifacts [8] | Compromises diagnostic reliability and can lead to inaccurate interpretations |

Quantitative Evidence and Experimental Validation of Limitations

Empirical data and structured experiments are crucial for quantifying these limitations and validating improved methodologies. The following protocol outlines an approach for benchmarking conventional interpretation against advanced models.

Experimental Protocol: Benchmarking Diagnostic Accuracy and Robustness

1. Objective: To quantitatively compare the performance of conventional human interpretation against an automated hybrid AI model (STGCN-ViT) in the early detection of neurological disorders from MRI data, assessing accuracy, inter-rater reliability, and robustness to technical variability.

2. Datasets:

- Primary Dataset: Open Access Series of Imaging Studies (OASIS) for Alzheimer's disease applications [1] [12].

- Secondary Dataset: Data from Harvard Medical School (HMS) for general neurological disorder validation [1] [12].

- Inclusion Criteria: T1-weighted, T2-weighted, and FLAIR MRI scans from patients with early-stage NDs and matched healthy controls.

3. Experimental Arms:

- Arm A (Conventional Analysis): A panel of radiologists will independently review conventional MRI scans, providing diagnostic classifications and confidence scores.

- Arm B (AI-Assisted Analysis): The STGCN-ViT model will process the same scans to generate classification outputs [1].

4. Key Performance Metrics:

- Diagnostic Accuracy, Precision, Recall/Sensitivity, Specificity.

- Area Under the Receiver Operating Characteristic Curve (AUC-ROC).

- Inter-rater reliability (Fleiss' Kappa for Arm A).

5. Robustness Analysis: Introduce controlled technical variations (e.g., simulated noise, minor artifacts) to a subset of images and re-evaluate the performance of both arms to assess resilience.
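The inter-rater reliability metric listed for Arm A, Fleiss' kappa, can be computed from a table of per-scan rating counts. The sketch below uses only the standard library; the toy rating table (three raters, two diagnostic categories) is invented for illustration and is not data from any study.

```python
def fleiss_kappa(ratings):
    """Fleiss' kappa for a table where ratings[i][j] is the number of raters
    who assigned subject i to category j. Every row must sum to the same
    number of raters. Undefined when all ratings fall in a single category."""
    n_subjects = len(ratings)
    n_raters = sum(ratings[0])
    assert all(sum(row) == n_raters for row in ratings)
    n_categories = len(ratings[0])

    # Share of all assignments that went to each category.
    p_j = [
        sum(row[j] for row in ratings) / (n_subjects * n_raters)
        for j in range(n_categories)
    ]
    # Observed agreement among rater pairs for each subject.
    p_i = [
        (sum(count * count for count in row) - n_raters)
        / (n_raters * (n_raters - 1))
        for row in ratings
    ]
    p_bar = sum(p_i) / n_subjects          # mean observed agreement
    p_e = sum(p * p for p in p_j)          # agreement expected by chance
    return (p_bar - p_e) / (1.0 - p_e)

# Rows are scans; columns are counts for [disorder, healthy] from 3 raters.
panel_ratings = [[2, 1], [1, 2], [3, 0], [0, 3]]
kappa = fleiss_kappa(panel_ratings)
```

A value of 1 indicates perfect agreement and values near 0 indicate agreement no better than chance, so the statistic gives Arm A a reproducibility figure that can be reported alongside the model's metrics.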

Table 2: Quantitative Comparison of Diagnostic Performance from a Representative Study

| Model / Method | Accuracy (%) | Precision (%) | AUC-ROC (%) | Reported Key Advantage |
|---|---|---|---|---|
| Conventional Human Interpretation | Not Explicitly Quantified | Not Explicitly Quantified | Not Applicable | Established clinical standard, provides holistic context |
| Proposed STGCN-ViT Model (Group A) [1] | 93.56 | 94.41 | 94.63 | Integrates spatial-temporal features for early detection |
| Proposed STGCN-ViT Model (Group B) [1] | 94.52 | 95.03 | 95.24 | Superior performance on independent validation dataset |
| Logistic Regression on MRI [1] | 97.00 (for BT) | Not Specified | Not Specified | Demonstrates baseline capability of ML for specific tasks |
| 2D-U-Net + Radiomics [1] | 95.30 | Not Specified | Not Specified | High accuracy in predicting MRI image quality |

The data in Table 2, derived from a study investigating a hybrid STGCN-ViT model, illustrates the potential of advanced AI to achieve high, quantifiable performance metrics that are not consistently reported for conventional human interpretation alone [1]. The integration of spatial feature extraction (via CNN), temporal dynamics (via STGCN), and self-attention mechanisms (via ViT) addresses the inability of conventional methods to capture the complex spatio-temporal progression of neurological diseases [1].
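For readers reproducing metrics of the kind reported in Table 2, the sketch below computes accuracy, precision, recall, specificity, and AUC-ROC for a binary classifier using only the standard library. The labels, scores, and the 0.5 decision threshold are made-up illustrative values, not results from the cited study.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and specificity from binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

def auc_roc(y_true, scores):
    """AUC as the probability that a random positive case outscores a random
    negative case, counting ties as one half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

y_true = [1, 1, 1, 0, 0, 0, 1, 0]                     # 1 = disorder present
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.1, 0.7, 0.2]     # model probabilities
y_pred = [1 if s >= 0.5 else 0 for s in scores]       # hypothetical threshold
metrics = classification_metrics(y_true, y_pred)
auc = auc_roc(y_true, scores)
```

Accuracy, precision, and recall depend on the chosen threshold, whereas AUC-ROC summarizes ranking quality across all thresholds, which is why both kinds of metric are usually reported together.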

Workflow Comparing Conventional and AI-Driven MRI Analysis

The Scientist's Toolkit: Research Reagent Solutions

Transitioning from qualitative assessment to quantitative, AI-powered analysis requires a suite of specialized tools and resources.

Table 3: Essential Research Materials and Tools for Advanced MRI Analysis

| Item / Resource | Function / Description | Relevance to Model Development |
|---|---|---|
| hMRI Toolbox [10] | Open-source toolbox for generating quantitative parameter maps (R1, R2*, MTSat, PD) from multi-parametric MRI (MPM) data. | Provides standardized input features (qMRI maps) that are more robust than conventional weighted images for model training. |
| FSL (FMRIB Software Library) [10] | A comprehensive library of analysis tools for FMRI, MRI, and DTI brain imaging data. Used for image registration, distortion correction, and diffusion metric calculation (FA, MD). | Critical for pre-processing steps, including aligning dMRI data to MPM space and extracting diffusion-based biomarkers. |
| Multi-parametric MPM Protocol [10] | A protocol using multi-echo 3D FLASH acquisitions to simultaneously capture quantitative R1, R2*, MTSat, and PD maps. | Serves as a source of co-registered, multi-contrast quantitative data that reveals different tissue properties for a holistic view. |
| High-Resolution dMRI Protocol [10] | A diffusion MRI protocol with multiple b=0 acquisitions and many diffusion directions to compute Fractional Anisotropy (FA) and Mean Diffusivity (MD). | Provides microstructural integrity metrics that are complementary to qMRI relaxometry measures, enriching the feature set for models like STGCN. |
| OASIS & ADNI Datasets [1] [12] | Large-scale, open-access neuroimaging databases containing MRI data from patients with Alzheimer's disease and other disorders, alongside healthy controls. | Essential for training and validating AI models on real-world, clinically relevant data, ensuring generalizability. |
| STGCN-ViT Model Architecture [1] [12] | A hybrid deep learning model integrating Convolutional Neural Networks (CNN), Spatial-Temporal Graph Convolutional Networks (STGCN), and Vision Transformers (ViT). | Directly addresses the limitations of conventional analysis by capturing both spatial features and temporal disease dynamics for early diagnosis. |

The field of medical imaging is undergoing a profound transformation, driven by the rapid integration of artificial intelligence (AI) and machine learning (ML) technologies. This evolution marks a significant departure from traditional, often manual interpretation of medical images toward data-driven, automated, and assistive systems that support clinical decision-making at unprecedented levels [13]. The initial adoption of conventional machine learning approaches, which relied heavily on handcrafted feature extraction and traditional classifiers, has progressively given way to sophisticated deep learning architectures capable of learning hierarchical representations directly from raw image data.

This paradigm shift is particularly evident in neurology, where the early diagnosis of neurological disorders (ND) such as Alzheimer's disease (AD) and brain tumors (BT) presents unique challenges due to subtle changes in brain anatomy that can be difficult to detect through human analysis alone [1] [12]. Magnetic Resonance Imaging (MRI) serves as a vital tool for diagnosing and visualizing these disorders, yet standard techniques contingent upon human analysis can be inaccurate, time-consuming, and may miss early-stage symptoms crucial for effective treatment [1]. The integration of ML, particularly deep learning (DL), has opened new avenues for addressing these limitations by providing automated diagnostic systems that deliver accurate findings with minimal margin for error [1].

The emergence of foundation models (FMs) represents the latest frontier in this evolution. These models, trained on broad data using self-supervision at scale, can be adapted to a wide range of downstream tasks, effectively addressing the persistent challenge of labeled data scarcity in medical imaging [14]. This review traces the technological trajectory from traditional models to contemporary deep learning approaches, with particular emphasis on hybrid architectures such as the STGCN-ViT model for neurological disorder detection, while providing detailed application notes and experimental protocols for research implementation.

Evolution of Machine Learning Approaches in Medical Imaging

Traditional Machine Learning Models

Traditional machine learning approaches in medical imaging predominantly relied on handcrafted feature extraction followed by classification using standard algorithms. These methods utilized techniques such as texture analysis, edge detection, and statistical modeling to extract diagnostic patterns from medical images [15]. In neurological applications, features derived from MRI scans—including morphological measurements, texture descriptors, and intensity-based statistics—were fed into classifiers such as Support Vector Machines (SVM), Random Forests, and k-Nearest Neighbors (k-NN) for tasks like Alzheimer's disease classification and brain tumor detection [15].

While these methods were interpretable and aligned with established medical practices, they proved labor-intensive, highly reliant on expert-driven feature engineering, and struggled to generalize across diverse datasets [15]. Their performance was ultimately constrained by the quality and comprehensiveness of the engineered features, which often failed to capture the complex, hierarchical patterns present in medical images.
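The traditional pipeline described above, handcrafted features followed by a standard classifier, can be shown in miniature with the standard library alone. The three descriptors, the toy "smooth" and "textured" images, and the class labels are all invented for illustration; real studies used far richer morphological and texture features.

```python
import math
from collections import Counter

def intensity_features(image):
    """Handcrafted descriptors: mean intensity, standard deviation, and mean
    absolute horizontal gradient (a crude texture measure)."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    std = math.sqrt(sum((p - mean) ** 2 for p in pixels) / len(pixels))
    grads = [abs(row[j + 1] - row[j]) for row in image for j in range(len(row) - 1)]
    return (mean, std, sum(grads) / len(grads))

def knn_predict(train_features, train_labels, query, k=3):
    """Classify `query` by majority vote among its k nearest training points."""
    nearest = sorted(
        range(len(train_features)),
        key=lambda i: math.dist(train_features[i], query),
    )[:k]
    votes = Counter(train_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]

def flat(value, size=4):
    return [[value] * size for _ in range(size)]

def checkerboard(a, b, size=4):
    return [[a if (i + j) % 2 == 0 else b for j in range(size)] for i in range(size)]

# Toy training set: uniform images versus high-contrast textured images.
train_images = [flat(0.2), flat(0.3), flat(0.4),
                checkerboard(0.0, 1.0), checkerboard(0.1, 0.9), checkerboard(0.2, 0.8)]
train_labels = ["smooth"] * 3 + ["textured"] * 3
train_features = [intensity_features(img) for img in train_images]

pred_textured = knn_predict(train_features, train_labels,
                            intensity_features(checkerboard(0.05, 0.95)))
pred_smooth = knn_predict(train_features, train_labels,
                          intensity_features(flat(0.35)))
```

The classifier can only separate what the three descriptors expose, which illustrates why such pipelines were bounded by the quality of the engineered features.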

Deep Learning Revolution

The advent of deep learning, particularly Convolutional Neural Networks (CNNs), transformed medical image analysis by enabling automatic learning of spatial hierarchies directly from raw image data [1] [15]. CNNs demonstrated remarkable capabilities in detecting anomalies and abnormalities within brain imaging studies, making them invaluable tools for diagnosing neurological disorders [1]. Their capacity to learn relevant features automatically from data significantly reduced the dependency on manual feature engineering and consistently outperformed traditional methods across various medical imaging tasks.

More recently, Vision Transformers (ViTs) have emerged as a powerful alternative to CNN-based architectures. By employing self-attention mechanisms, ViTs can concurrently focus on multiple image regions, capturing global contextual information that may be challenging for CNNs with their localized receptive fields [1] [7]. This capability proves particularly valuable for identifying subtle, distributed patterns associated with early-stage neurological disorders.

Hybrid and Foundation Models

The most recent advancements involve the development of hybrid models that combine the strengths of multiple architectures, and foundation models pre-trained on vast, diverse datasets. Hybrid models such as STGCN-ViT integrate spatial feature extraction capabilities of CNNs, temporal modeling of Spatial-Temporal Graph Convolutional Networks (STGCN), and global contextual understanding of Vision Transformers to achieve comprehensive analysis of neurological disorders [1]. Meanwhile, foundation models address the critical challenge of data scarcity in medical imaging by leveraging self-supervised learning on large unlabeled datasets before being fine-tuned for specific clinical tasks with limited annotations [14].

Table 1: Evolution of Machine Learning Approaches in Medical Imaging

| Approach | Key Characteristics | Advantages | Limitations | Representative Applications |
|---|---|---|---|---|
| Traditional ML | Handcrafted features, statistical classifiers | Interpretable, lower computational requirements | Limited representation learning, expert-dependent feature engineering | Brain tumor classification using texture features + SVM |
| Deep Learning (CNNs) | Hierarchical feature learning, convolutional operations | Automatic feature extraction, state-of-the-art performance on many tasks | Large labeled datasets required, limited global context | Alzheimer's detection from MRI, brain tumor segmentation |
| Vision Transformers | Self-attention mechanisms, global context modeling | Superior long-range dependency capture, scalability | Computationally intensive, data-hungry | Whole-slide image analysis, multi-scale medical image classification |
| Hybrid Models | Combined architectures (CNN + ViT + STGCN) | Leverage complementary strengths, spatiotemporal analysis | Implementation complexity, training challenges | STGCN-ViT for neurological disorder progression tracking |
| Foundation Models | Large-scale self-supervised pre-training, task adaptation | Reduced annotation needs, strong generalization | Computational resources, deployment challenges | Multi-institutional medical image analysis across modalities |

The STGCN-ViT Framework for Neurological Disorder Detection

The STGCN-ViT model represents a cutting-edge hybrid framework that strategically integrates convolutional networks, graph neural networks, and transformer architectures to address the complex challenge of neurological disorder detection from medical images. This model specifically addresses critical limitations in existing approaches, including the inadequate capture of long-range dependencies by standard CNNs, the inability to explicitly model temporal progression patterns, and the insufficient integration of both spatial and temporal features in a balanced manner [1].

The architecture employs EfficientNet-B0 for spatial feature extraction from high-resolution medical images, leveraging its proven efficiency and accuracy in visual recognition tasks [1]. The spatial-temporal graph convolutional network (STGCN) component then models temporal dependencies by representing the brain as a graph where nodes correspond to anatomical regions and edges represent structural or functional connectivity, enabling tracking of disease progression across multiple timepoints [1]. Finally, the Vision Transformer (ViT) module incorporates self-attention mechanisms to focus on clinically relevant regions and significant spatial patterns in the scans, providing global contextual understanding [1].

Experimental Protocol for STGCN-ViT Implementation

Materials and Dataset Preparation:

- Datasets: Utilize the Open Access Series of Imaging Studies (OASIS) and Harvard Medical School (HMS) benchmark datasets [1]

- Data Preprocessing: Apply standard neuroimaging preprocessing pipeline including skull stripping, intensity normalization, and spatial registration to a standardized template

- Data Augmentation: Implement random rotations, flipping, intensity variations, and elastic deformations to improve model generalization

- Hardware Requirements: High-performance computing environment with multiple GPUs (NVIDIA A100 or equivalent recommended) for efficient training of deep neural networks

Implementation Protocol:

Spatial Feature Extraction:

- Initialize EfficientNet-B0 with pre-trained weights

- Process individual MRI slices through the network

- Extract feature maps from intermediate layers

- Apply adaptive pooling to standardize feature dimensions

Spatial-Temporal Graph Construction:

- Parcellate brain images into anatomical regions using standard atlases (AAL, Harvard-Oxford)

- Construct graph nodes from regional features

- Establish edges based on structural connectivity or spatial proximity

- Incorporate temporal dimension by connecting corresponding regions across sequential scans
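The graph construction steps above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the function name `build_spatiotemporal_edges`, the node indexing scheme, and the toy connectivity are all assumptions made for the example. Each node is a (timepoint, region) pair; spatial edges are repeated within every scan, and temporal edges link a region to itself in the next scan.

```python
def build_spatiotemporal_edges(spatial_edges, num_regions, num_timepoints):
    """Return an undirected edge list over nodes indexed as t * num_regions + r."""

    def node_id(t, r):
        return t * num_regions + r

    edges = []
    for t in range(num_timepoints):
        # Spatial edges: replicate the within-scan connectivity at every timepoint.
        for r1, r2 in spatial_edges:
            edges.append((node_id(t, r1), node_id(t, r2)))
        # Temporal edges: link each region to itself in the following scan.
        if t + 1 < num_timepoints:
            for r in range(num_regions):
                edges.append((node_id(t, r), node_id(t + 1, r)))
    return edges


# Hypothetical toy example: 4 regions connected in a chain, 3 sequential scans.
toy_spatial = [(0, 1), (1, 2), (2, 3)]
toy_edges = build_spatiotemporal_edges(toy_spatial, num_regions=4, num_timepoints=3)
print(len(toy_edges))  # 9 spatial + 8 temporal edges
```

In practice the spatial edge list would come from an atlas-based connectivity matrix rather than a hand-written chain.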

STGCN Processing:

- Implement graph convolutional layers to capture spatial dependencies

- Apply temporal convolutional layers to model progression patterns

- Utilize skip connections to maintain gradient flow

- Employ batch normalization and dropout for training stability

Vision Transformer Integration:

- Partition STGCN outputs into patches and flatten

- Add positional embeddings and learnable classification token

- Process through multi-head self-attention layers

- Apply multi-layer perceptron head for final classification
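For reference, the token sequence and attention operation in these steps are usually written as follows. This is the standard Vision Transformer formulation; the source does not state that STGCN-ViT uses exactly this form, so the symbols here (patch vectors $\mathbf{x}_i$, projection $\mathbf{E}$, positional embeddings $\mathbf{E}_{\text{pos}}$, key dimension $d_k$) are assumptions for illustration.

$$
\mathbf{z}_0 = [\mathbf{x}_{\text{cls}};\ \mathbf{x}_1\mathbf{E};\ \dots;\ \mathbf{x}_N\mathbf{E}] + \mathbf{E}_{\text{pos}}
$$

$$
\operatorname{Attention}(Q, K, V) = \operatorname{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
$$

Here $N$ is the number of patches obtained from the STGCN output, and the final state of the classification token $\mathbf{x}_{\text{cls}}$ is what the multi-layer perceptron head receives.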

Model Training:

- Initialize with Xavier uniform weight initialization

- Use AdamW optimizer with learning rate of 1e-4

- Implement cosine annealing learning rate scheduler

- Employ cross-entropy loss with label smoothing

- Train for 200-300 epochs with early stopping
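Two of the training choices above, cosine annealing and label smoothing, reduce to short formulas. The sketch below writes them out in plain Python so the arithmetic is visible; the function names and default values other than the 1e-4 learning rate are assumptions, and a real training loop would normally use the framework's built-in scheduler and loss instead.

```python
import math


def cosine_annealing_lr(step, total_steps, base_lr=1e-4, min_lr=0.0):
    """Learning rate that decays from base_lr to min_lr along a half cosine."""
    cos_term = 0.5 * (1.0 + math.cos(math.pi * step / total_steps))
    return min_lr + (base_lr - min_lr) * cos_term


def smoothed_cross_entropy(logits, target, epsilon=0.1):
    """Cross-entropy against a target that puts (1 - epsilon) on the true class
    and spreads epsilon uniformly over all classes."""
    # Numerically stable log-softmax.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    log_probs = [x - log_z for x in logits]
    k = len(logits)
    loss = 0.0
    for i, lp in enumerate(log_probs):
        weight = (1.0 - epsilon) * (1.0 if i == target else 0.0) + epsilon / k
        loss -= weight * lp
    return loss


print(cosine_annealing_lr(0, 200), cosine_annealing_lr(100, 200))
print(smoothed_cross_entropy([5.0, 0.0], target=0))
```

Label smoothing penalizes over-confident predictions: for a confidently correct logit pair the smoothed loss is larger than the unsmoothed one.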

Model Evaluation:

- Perform k-fold cross-validation (typically 5-fold)

- Evaluate on hold-out test set with balanced class distribution

- Assess using multiple metrics: accuracy, precision, recall, F1-score, and AUC-ROC
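The metrics listed above can be computed directly from predictions, as in the following minimal sketch for the binary case. The function names and the toy labels are hypothetical; libraries such as scikit-learn provide equivalent, better-tested routines. AUC-ROC is computed here as the probability that a randomly chosen positive case scores higher than a randomly chosen negative one, with ties counted as one half.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for 0/1 labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(y_true),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def auc_roc(y_true, scores):
    """Pairwise (Mann-Whitney) estimate of the area under the ROC curve."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical toy data: 3 patients, 3 controls.
toy_true = [1, 1, 1, 0, 0, 0]
toy_pred = [1, 1, 0, 0, 0, 1]
toy_scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6]
toy_metrics = binary_metrics(toy_true, toy_pred)
toy_auc = auc_roc(toy_true, toy_scores)
```

The pairwise AUC estimate is quadratic in the number of samples, which is fine for illustration but slow for large test sets.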

STGCN-ViT Architecture Workflow

Performance Analysis and Comparative Evaluation

Quantitative Results of STGCN-ViT Model

The STGCN-ViT model has demonstrated exceptional performance in neurological disorder detection tasks. When evaluated on standard benchmark datasets, the model achieved remarkable metrics that underscore its potential for clinical implementation. On Group A datasets, the approach attained an accuracy of 93.56%, precision of 94.41%, and an Area under the Receiver Operating Characteristic Curve (AUC-ROC) score of 94.63% [1]. For the more challenging Group B datasets, the model attained even better results with an accuracy of 94.52%, precision of 95.03%, and AUC-ROC score of 95.24% [1].

These results exceed those of both standard and transformer-based baselines, supporting the model's utility in real-time medical applications and its potential for accurate early-stage neurological disorder diagnosis [1]. The consistently high performance across both dataset groups further supports the robustness and generalizability of the approach.

Table 2: Performance Comparison of Medical Imaging AI Models

| Model Architecture | Application Domain | Accuracy | Precision | Recall | AUC-ROC | Dataset |

|---|---|---|---|---|---|---|

| STGCN-ViT [1] | Neurological Disorders | 93.56%-94.52% | 94.41%-95.03% | - | 94.63%-95.24% | OASIS, HMS |

| PDSCNN-RRELM [16] | Brain Tumor Classification | 99.22% | 99.35% | 99.30% | - | Brain MRI |

| CNN-ViT Ensemble [7] | Cervical Cancer Diagnosis | 95.10%-99.18% | 95.01%-99.15% | 95.01%-99.18% | - | Mendeley LBC, SIPaKMeD |

| AMRI-Net + EDAL [15] | Multi-modal Integration | 94.95% | - | - | - | ISIC, HAM10000, OCT2017, Brain MRI |

| U-Net Based [13] | Liver Segmentation | - | - | - | - | CT/MRI (HCC) |

| Random Forest [13] | Prostate Cancer Lymph Node Prediction | - | - | - | - | mp-MRI |

Ablation Studies and Component Analysis

Rigorous ablation studies conducted with the STGCN-ViT framework have demonstrated the complementary value of each architectural component. When evaluated independently, the EfficientNet-B0 spatial feature extraction component provided solid baseline performance but lacked temporal understanding crucial for tracking disease progression [1]. The STGCN module alone effectively captured spatiotemporal dynamics but struggled with global contextual relationships in individual scans [1]. The Vision Transformer component excelled at identifying spatially distributed patterns through self-attention mechanisms but lacked explicit temporal modeling capabilities [1].

The integrated framework demonstrated synergistic performance exceeding that of any individual component, validating the architectural hypothesis that spatial, temporal, and global contextual features provide complementary information for neurological disorder diagnosis [1]. This comprehensive approach proved particularly advantageous for early-stage detection, where subtle changes across both spatial and temporal dimensions provide the most valuable diagnostic information [1].

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Reagents and Computational Tools

| Resource Category | Specific Tools/Platforms | Function/Purpose | Application in Neurological Disorder Detection |

|---|---|---|---|

| Medical Imaging Datasets | OASIS, HMS, ADNI | Benchmark datasets for model training and validation | Provide standardized MRI data for neurological disorder classification |

| Deep Learning Frameworks | PyTorch, TensorFlow, MONAI | Model implementation, training, and evaluation | Enable development of hybrid architectures like STGCN-ViT |

| Medical Imaging Libraries | NiBabel, pydicom (DICOM I/O), ITK-SNAP | Medical image reading, processing, and visualization | Handle neuroimaging data format conversion and preprocessing |

| Graph Neural Network Libraries | PyTorch Geometric, DGL | Implementation of graph-based components | Construct and process brain region graphs in STGCN |

| Model Interpretation Tools | SHAP, Grad-CAM, Attention Visualization | Explain model predictions and decision processes | Provide insights into regions of interest in MRI scans |

| Computational Infrastructure | NVIDIA GPUs, Google Colab, AWS | High-performance computing resources | Accelerate training of computationally intensive hybrid models |

| Evaluation Metrics | Scikit-learn, MedPy | Performance assessment and statistical analysis | Quantify classification accuracy, precision, recall, AUC-ROC |

Implementation Protocols for Key Experimental Procedures

Protocol 1: Multi-modal Data Integration and Preprocessing

Objective: To standardize the acquisition and preprocessing of multi-modal medical imaging data for robust model training.

Materials:

- Multi-modal neuroimaging data (T1-weighted, T2-weighted, DTI, fMRI)

- High-performance computing environment with adequate storage

- Medical image processing software (ANTs, FSL, FreeSurfer)

Procedure:

Data Acquisition and Quality Control

- Acquire structural and functional MRI scans following standardized protocols

- Perform quality assessment using automated tools (MRIQC)

- Exclude datasets with excessive motion artifacts or acquisition errors

Image Preprocessing

- Apply N4 bias field correction for intensity inhomogeneity

- Perform skull stripping using hybrid approach (BET + manual verification)

- Execute spatial normalization to standard template (MNI space)

- Conduct intensity normalization across all scans

Data Augmentation

- Implement geometric transformations (rotation, scaling, elastic deformations)

- Apply intensity-based augmentations (noise injection, contrast adjustment)

- Utilize generative models (GANs) for synthetic data generation if needed

Dataset Partitioning

- Split data into training (70%), validation (15%), and test (15%) sets

- Ensure stratified sampling to maintain class distribution

- Implement cross-validation splits for robust evaluation

Quality Control Measures:

- Visual inspection of preprocessing results

- Quantitative assessment of registration accuracy

- Monitoring of data leakage between splits
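The dataset partitioning step and the leakage check can be illustrated with a small stratified split. This is a sketch under stated assumptions: splitting is done per subject (so that scans from one person never land in two partitions), and the function name, the subject ID format, and the toy labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_subject_split(subject_labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split subject IDs into train/val/test while preserving class proportions.

    subject_labels maps subject ID -> class label.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for subject, label in subject_labels.items():
        by_class[label].append(subject)

    train, val, test = [], [], []
    for label in sorted(by_class):
        subjects = sorted(by_class[label])
        rng.shuffle(subjects)
        n_train = round(fractions[0] * len(subjects))
        n_val = round(fractions[1] * len(subjects))
        train += subjects[:n_train]
        val += subjects[n_train:n_train + n_val]
        test += subjects[n_train + n_val:]
    return train, val, test


# Hypothetical cohort: 40 subjects, two balanced classes.
toy_labels = {f"sub-{i:03d}": i % 2 for i in range(40)}
train_ids, val_ids, test_ids = stratified_subject_split(toy_labels)

# Leakage check: no subject may appear in more than one partition.
no_leakage = (
    not set(train_ids) & set(val_ids)
    and not set(train_ids) & set(test_ids)
    and not set(val_ids) & set(test_ids)
)
print(len(train_ids), len(val_ids), len(test_ids), no_leakage)
```

Splitting by subject rather than by scan matters for longitudinal data, because several timepoints from one patient are strongly correlated.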

Protocol 2: STGCN-ViT Model Training and Optimization

Objective: To establish a standardized protocol for training and optimizing the hybrid STGCN-ViT model.

Materials:

- Preprocessed and partitioned neuroimaging dataset

- Python 3.8+ with deep learning frameworks (PyTorch 1.9+)

- GPU cluster with minimum 16GB VRAM per GPU

Procedure:

Model Configuration

- Initialize EfficientNet-B0 with ImageNet pre-trained weights

- Configure STGCN with 64-128-256 hidden dimensions

- Set up ViT with 8 attention heads and a 512-dimensional embedding (the embedding dimension must be divisible by the number of heads)

- Implement progressive learning rate warmup

Training Procedure

- Execute mixed-precision training for memory efficiency

- Apply gradient clipping (max norm: 1.0) for training stability

- Implement label smoothing (epsilon: 0.1) for regularization

- Utilize exponential moving average of model weights
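Gradient clipping and weight averaging from the training procedure above are both simple update rules. The sketch below shows them on flat lists of floats so the logic is easy to follow; the function names and the decay value are assumptions, and a real pipeline would apply the same rules to framework tensors.

```python
import math


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale gradients so that their joint L2 norm does not exceed max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    # The small constant guards against division by zero.
    scale = min(1.0, max_norm / (total_norm + 1e-6))
    return [g * scale for g in grads], total_norm


def ema_update(ema_weights, weights, decay=0.999):
    """One exponential-moving-average step over model weights."""
    return [decay * e + (1.0 - decay) * w for e, w in zip(ema_weights, weights)]


clipped, norm_before = clip_by_global_norm([3.0, 4.0], max_norm=1.0)
print(norm_before, math.sqrt(sum(g * g for g in clipped)))
print(ema_update([0.0], [1.0], decay=0.9))
```

The averaged weights are typically used only for evaluation, while the optimizer keeps updating the raw weights.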

Hyperparameter Optimization

- Conduct Bayesian hyperparameter search

- Optimize learning rate (search space: 1e-5 to 1e-3)

- Tune dropout rates (search space: 0.1 to 0.5)

- Optimize batch size (range: 8-32 based on GPU memory)
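The search space above can be encoded as a sampling function. Note the substitution: the sketch uses plain random search as a simpler stand-in for Bayesian optimization, which would normally come from a dedicated library. The learning rate is sampled log-uniformly because its range spans two orders of magnitude. `toy_objective` is a hypothetical placeholder for a full training run that returns validation AUC-ROC.

```python
import math
import random


def sample_config(rng):
    """Draw one configuration from the search space described in the protocol."""
    log_lr = rng.uniform(math.log10(1e-5), math.log10(1e-3))
    return {
        "learning_rate": 10 ** log_lr,        # log-uniform in [1e-5, 1e-3]
        "dropout": rng.uniform(0.1, 0.5),     # uniform in [0.1, 0.5]
        "batch_size": rng.choice([8, 16, 32]),
    }


def random_search(objective, n_trials=20, seed=0):
    """Return the best configuration and score found over n_trials samples."""
    rng = random.Random(seed)
    best_config, best_score = None, float("-inf")
    for _ in range(n_trials):
        config = sample_config(rng)
        score = objective(config)
        if score > best_score:
            best_config, best_score = config, score
    return best_config, best_score


def toy_objective(config):
    # Hypothetical stand-in for validation AUC-ROC; peaks at lr = 1e-4, dropout = 0.3.
    return -abs(math.log10(config["learning_rate"]) + 4.0) - abs(config["dropout"] - 0.3)


best_config, best_score = random_search(toy_objective)
print(best_config, best_score)
```

A Bayesian optimizer would replace `sample_config` with a model-guided proposal step but could keep the same objective interface.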

Regularization Strategies

- Apply stochastic depth (rate: 0.1-0.3)

- Implement weight decay (range: 1e-4 to 1e-2)

- Use data augmentation throughout training

- Employ early stopping with patience of 20 epochs
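Early stopping with a patience counter can be sketched as a small helper class. The class name, the `min_delta` parameter, and the toy validation curve are assumptions for illustration; the monitored quantity is assumed to be a metric where higher is better, such as validation AUC-ROC.

```python
class EarlyStopping:
    """Stop training when the monitored metric has not improved for `patience` epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, metric):
        """Record one epoch's metric and return True if training should stop."""
        if metric > self.best + self.min_delta:
            self.best = metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation AUC-ROC curve, with a short patience for demonstration.
toy_curve = [0.80, 0.85, 0.84, 0.85, 0.83, 0.82]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, auc in enumerate(toy_curve):
    if stopper.step(auc):
        stopped_at = epoch
        break
print(stopped_at, stopper.best)
```

A checkpoint of the best-scoring weights is normally saved whenever `best` is updated, so that the stopped run can be rolled back to it.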

Validation Framework:

- Monitor training and validation metrics simultaneously

- Perform model selection based on validation AUC-ROC

- Conduct statistical significance testing of results

Experimental Workflow for Medical Imaging AI

Future Directions and Implementation Challenges

Emerging Trends and Research Opportunities

The field of machine learning in medical imaging continues to evolve rapidly, with several promising research directions emerging. Foundation models pre-trained on large-scale, multi-modal medical imaging datasets represent a paradigm shift from task-specific models to general-purpose visual encoders that can be adapted to various downstream applications with minimal fine-tuning [14]. The integration of imaging data with complementary information sources, including clinical records, genomic data, and proteomic profiles, presents opportunities for developing more comprehensive diagnostic and prognostic models [13].

Explainable AI (XAI) techniques are becoming increasingly important for clinical translation, with methods such as SHAP (SHapley Additive exPlanations) and attention visualization providing insights into model decision-making processes [16] [7]. The development of federated learning frameworks addresses critical concerns regarding data privacy and security, enabling multi-institutional collaboration without sharing sensitive patient data [13]. Meanwhile, the emergence of generative AI models offers potential solutions to data scarcity challenges through synthetic data generation and data augmentation [15].

Implementation Challenges and Considerations

Despite the remarkable progress, significant challenges remain in the widespread clinical implementation of ML-based medical imaging systems. Data scarcity and annotation costs continue to constrain model development, particularly for rare neurological disorders [14]. Model generalizability across different scanner types, imaging protocols, and patient populations represents a persistent challenge that requires careful attention to domain adaptation techniques [15].

The computational complexity of hybrid models like STGCN-ViT presents practical deployment challenges in resource-constrained clinical environments [1]. Regulatory approval and standardization processes for AI-based medical devices remain complex and time-consuming, necessitating robust validation across diverse clinical settings [13]. Finally, integration with existing clinical workflows and picture archiving and communication systems (PACS) requires thoughtful interface design and user experience optimization to ensure seamless adoption by healthcare professionals [15].

Addressing these challenges will require collaborative efforts between computer scientists, clinical researchers, regulatory specialists, and healthcare providers to ensure that advanced machine learning technologies can fulfill their potential to revolutionize neurological disorder diagnosis and patient care.

Application Notes: The Spatiotemporal Challenge in Neurology

The Diagnostic Gap in Current Neurological Practice

The progression of neurological disorders (NDs) unfolds across both spatial and temporal dimensions, creating a critical analytical gap in conventional diagnostic approaches. Standard neuroimaging techniques frequently capture static anatomical representations, failing to integrate the dynamic temporal patterns essential for early detection and prognosis. Spatial dynamics refer to the specific brain regions and networks affected by a disease, while temporal dynamics capture the sequence, timing, and evolution of pathological changes. The integration of these dimensions remains a significant challenge in clinical neurology, limiting both diagnostic precision and therapeutic development [1] [17].

Evidence increasingly demonstrates that distinct spatiotemporal progression patterns correlate with specific clinical outcomes across multiple neurological conditions. Research on autoimmune demyelinating diseases has identified discrete atrophy subtypes with unique prognostic implications:

- In Multiple Sclerosis (MS), three spatial atrophy subtypes emerge: cortical (severe cognitive decline), spinal cord (high relapse frequency), and subcortical (severe physical disability) [18] [19]

- In Neuromyelitis Optica Spectrum Disorders (NMOSD), spatial subtypes include cortical (severe cognitive/physical disability), spinal cord (high relapses), and cerebellar (favorable prognosis) [19]

- White Matter Hyperintensity (WMH) progression follows distinct spatiotemporal trajectories: fronto-parietal (delayed onset, more hypertension), radial (widespread progression), and temporo-occipital (more atrial fibrillation) [20]

The clinical impact of these patterns is substantial. For instance, the fronto-parietal WMH subtype shows higher 1-year ischemic stroke recurrence, while the temporo-occipital subtype correlates with worse 3-month outcomes post-stroke [20]. Similarly, advanced stages in the MS spinal cord and subcortical atrophy subtypes are associated with severe physical disability and cognitive decline [19]. These findings underscore the prognostic value of spatiotemporal analysis for stratified medicine in neurology.

Limitations of Current Analytical Methods

Conventional analytical frameworks face fundamental limitations in capturing the integrated spatiotemporal nature of neurological disease progression:

- Static Imaging Bias: Traditional MRI analysis provides spatial information but typically misses temporal dynamics essential for understanding disease evolution [1]

- Isolated Network Analysis: Most brain network studies examine isolated sequences from sliding windows, failing to capture higher-order spatiotemporal topological patterns [21]

- Temporal Modeling Deficits: Standard Convolutional Neural Networks (CNNs) excel at spatial feature extraction but lack mechanisms for modeling temporal dependencies in disease progression [1]

- Single-Modality Limitations: Approaches relying solely on functional or structural connectivity miss complementary information crucial for comprehensive assessment [21]

These limitations directly impact clinical utility, particularly for early intervention where subtle spatiotemporal signatures often precede overt symptoms. The inability to capture progressive spatial redistribution of pathology over time represents a critical diagnostic gap with implications for drug development and clinical trial design.

Experimental Protocols & Data Presentation

Quantitative Evidence for Spatiotemporal Dynamics

Table 1: Spatiotemporal Subtype Classification in Neurodegenerative and Autoimmune Disorders

| Condition | Spatiotemporal Subtypes | Key Identifying Features | Clinical Correlations |

|---|---|---|---|

| Alzheimer's Disease & MCI [17] | Dynamic Functional Connectivity Patterns | Altered connectivity in hippocampus, amygdala, precuneus, insula | 83.9% accuracy distinguishing AD from healthy controls |

| White Matter Hyperintensities [20] | Fronto-parietal (21%) | Progression from frontal to parietal lobes | Delayed onset, more hypertension, higher 1-year stroke recurrence |

| | Radial (46%) | Widespread progression across all lobes | - |

| | Temporo-occipital (33%) | Progression from temporal to occipital lobes | More atrial fibrillation, coronary heart disease, worse 3-month outcomes |

| Multiple Sclerosis [18] [19] | Cortical | Prominent cortical atrophy | Severe cognitive decline |

| | Spinal Cord | Significant cord involvement | High number of relapses |

| | Subcortical | Subcortical gray matter atrophy | Severe physical disability |

| NMOSD [18] [19] | Cortical | Cortical atrophy patterns | Severe cognitive and physical disability |

| | Spinal Cord | Longitudinally extensive cord lesions | High number of relapses |

| | Cerebellar | Cerebellar involvement | Favorable prognosis |

Table 2: Performance Metrics of Advanced Spatiotemporal Analysis Models

| Model/Approach | Disorder | Accuracy | Precision | AUC-ROC | Key Innovation |

|---|---|---|---|---|---|

| STGCN-ViT [1] [12] | General Neurological Disorders | 93.56%-94.52% | 94.41%-95.03% | 94.63%-95.24% | Integrates spatial (CNN), temporal (STGCN), and attention (ViT) mechanisms |

| Dynamic-GRNN [17] | Alzheimer's Disease | 83.9% | - | 83.1% | Combines sliding windows with spatial encoding and dynamic graph pooling |

| Multi-channel Spatio-temporal Graph Attention [21] | Epilepsy & Alzheimer's | Outperforms benchmarks | - | - | Integrates structural and functional connectivity with contrastive learning |

Detailed Experimental Protocols

Protocol 1: STGCN-ViT Model Implementation for Neurological Disorder Classification

Purpose: To implement a hybrid deep learning model that integrates spatial, temporal, and attention mechanisms for early ND detection.

Materials:

- MRI datasets (OASIS, Harvard Medical School)

- Python 3.8+, PyTorch 1.10+

- NVIDIA GPU with ≥12GB VRAM

- Medical image preprocessing tools (ANTs, FSL)

Methodology:

Data Preprocessing

- Convert DICOM to NIfTI format

- Perform skull stripping, intensity normalization, and spatial normalization to MNI space

- Apply data augmentation (rotation, flipping, intensity variations)

Spatial Feature Extraction with EfficientNet-B0

- Utilize pre-trained EfficientNet-B0 backbone for initial spatial feature extraction

- Extract feature maps from convolutional layers at multiple resolutions

- Generate spatial feature tensor of dimensions [B, C, H, W]

Spatio-Temporal Graph Construction

- Partition brain into anatomical regions based on AAL atlas

- Reduce regional features through average pooling

- Construct spatial-temporal graph where nodes represent brain regions and edges represent anatomical connectivity

- Incorporate the temporal dimension by linking corresponding regions across sequential scans
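The region-wise average pooling used to turn a feature map into node features can be sketched as follows. This is an illustrative assumption about the data layout, not the authors' code: the atlas is taken to be already resampled to the feature-map grid, label 0 is treated as background, and nested lists stand in for tensors.

```python
from collections import defaultdict


def region_average_pool(feature_map, label_map):
    """Average each channel over the pixels of every atlas region.

    feature_map: nested lists shaped [C][H][W].
    label_map:   nested lists shaped [H][W] with integer region labels (0 = background).
    Returns a dict mapping region label -> list of C averaged features.
    """
    num_channels = len(feature_map)
    sums = defaultdict(lambda: [0.0] * num_channels)
    counts = defaultdict(int)
    for i, row in enumerate(label_map):
        for j, region in enumerate(row):
            if region == 0:
                continue  # skip background pixels
            counts[region] += 1
            for c in range(num_channels):
                sums[region][c] += feature_map[c][i][j]
    return {r: [s / counts[r] for s in sums[r]] for r in sorted(counts)}


# Hypothetical 2-channel, 2x2 feature map with two labelled regions.
toy_features = [
    [[1.0, 3.0], [5.0, 7.0]],
    [[2.0, 2.0], [4.0, 9.0]],
]
toy_atlas = [[1, 1], [2, 0]]
node_features = region_average_pool(toy_features, toy_atlas)
print(node_features)
```

Each resulting vector becomes the feature of one graph node, so the node count equals the number of atlas regions present in the label map.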

Temporal Dynamics Modeling with STGCN

- Apply spatial-temporal graph convolutional layers

- Model information flow across brain regions using Chebyshev polynomial approximation

- Capture temporal evolution with temporal convolutional layers

- Output temporal feature embeddings
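The Chebyshev polynomial approximation mentioned above is commonly written as below. This is the standard spectral graph convolution formulation; the polynomial order $K$ and the notation are assumptions, since the source does not give the exact form used in STGCN-ViT.

$$
g_{\theta} \ast_{\mathcal{G}} x \approx \sum_{k=0}^{K-1} \theta_k \, T_k(\tilde{L}) \, x,
\qquad
\tilde{L} = \frac{2}{\lambda_{\max}} L - I_N,
\qquad
L = I_N - D^{-1/2} A D^{-1/2}
$$

$$
T_0(\tilde{L}) = I_N, \qquad T_1(\tilde{L}) = \tilde{L}, \qquad T_k(\tilde{L}) = 2 \tilde{L} \, T_{k-1}(\tilde{L}) - T_{k-2}(\tilde{L})
$$

Here $A$ is the adjacency matrix over brain regions, $D$ its degree matrix, $\lambda_{\max}$ the largest eigenvalue of the normalized Laplacian $L$, and $\theta_k$ the learnable coefficients. Truncating at order $K$ restricts each node's receptive field to neighbors at most $K-1$ hops away and avoids an explicit eigendecomposition.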

Attention Mechanism with Vision Transformer

- Flatten spatial-temporal features into sequence

- Add positional encodings to preserve spatial information

- Process through multi-head self-attention layers

- Enable model to focus on discriminative spatiotemporal patterns

Classification and Validation

- Final classification through fully connected layers with softmax activation

- Validate using 10-fold cross-validation

- Assess performance using accuracy, precision, recall, F1-score, and AUC-ROC

Validation Metrics: Achieved accuracy of 93.56%-94.52%, precision of 94.41%-95.03%, and AUC-ROC of 94.63%-95.24% on neurological disorder classification tasks [1] [12].

Protocol 2: Dynamic Functional Connectivity Analysis for Alzheimer's Disease

Purpose: To identify early Alzheimer's disease through spatiotemporal analysis of dynamic functional connectivity patterns.

Materials:

- Resting-state fMRI data from ADNI dataset

- Processing pipelines (DPARSF, SPM12)

- Python with NetworkX, PyTorch Geometric

- High-performance computing cluster

Methodology:

fMRI Preprocessing

- Remove first 4 volumes for magnetization equilibrium

- Apply slice timing correction and head motion realignment

- Normalize to MNI space with 3 mm isotropic voxels

- Apply band-pass temporal filtering (0.01-0.1 Hz) to remove low-frequency drift and high-frequency physiological noise

Dynamic Functional Connectivity Construction

- Apply sliding window approach (window size = 60-100 s, step = 1 TR)

- Calculate Pearson Correlation Coefficient between brain regions for each window

- Implement Slide Piecewise Aggregation to enhance node features and suppress noise

- Construct dynamic brain network sequence
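The sliding-window construction above can be sketched with a hand-written Pearson correlation. This is a minimal illustration under stated assumptions: the function names are hypothetical, the window is given in TRs (for example, a 60 s window at an assumed TR of 2 s is 30 volumes), and the aggregation and denoising step from the protocol is not included.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0


def sliding_window_connectivity(timeseries, window, step=1):
    """Return one region-by-region correlation matrix per window position.

    timeseries: list of per-region signals, each a list of T samples (one per TR).
    """
    n_regions = len(timeseries)
    n_timepoints = len(timeseries[0])
    matrices = []
    for start in range(0, n_timepoints - window + 1, step):
        segment = [ts[start:start + window] for ts in timeseries]
        matrix = [
            [1.0 if i == j else pearson(segment[i], segment[j]) for j in range(n_regions)]
            for i in range(n_regions)
        ]
        matrices.append(matrix)
    return matrices


# Hypothetical toy signals: region 1 copies region 0, region 2 is its mirror image.
toy_signal = [math.sin(0.3 * t) for t in range(40)]
toy_series = [toy_signal, list(toy_signal), [-v for v in toy_signal]]
dfc = sliding_window_connectivity(toy_series, window=30, step=1)
print(len(dfc))  # 40 - 30 + 1 window positions
```

Each matrix in the returned sequence serves as the adjacency (or edge-weight) structure of one graph in the dynamic brain network.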

Graph Neural Network Processing

- Apply spatiotemporal encoding to capture dynamic interactions

- Implement self-attention graph pooling to select key nodes

- Utilize Dynamic Graph Recurrent Neural Network architecture

- Extract spatiotemporal features from dynamic connectivity patterns

Classification and Biomarker Identification

- Train classifier to distinguish Early Mild Cognitive Impairment, AD, and healthy controls

- Identify key affected regions: hippocampus, amygdala, precuneus, insula

- Validate model accuracy through cross-validation

Validation Metrics: Achieved 83.9% accuracy and 83.1% AUC in distinguishing AD from healthy controls [17].

Visualization Framework

STGCN-ViT Model Architecture

Dynamic Functional Connectivity Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Resources for Spatiotemporal Neurological Analysis

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |

|---|---|---|---|

| Neuroimaging Datasets | OASIS [1], ADNI [17] [21], UK Biobank [20] | Provide standardized, annotated neuroimaging data for model training and validation | Multi-center studies, algorithm benchmarking, longitudinal analysis |

| Spatiotemporal ML Models | STGCN-ViT [1] [12], Dynamic-GRNN [17], Multi-channel Spatio-temporal Graph Attention [21] | Integrated analysis of spatial and temporal dynamics in brain networks | Early disease detection, progression forecasting, subtype classification |

| Software Libraries | PyTorch Geometric, TensorFlow, EEGLAB, SPM12, DPARSF, FreeSurfer | Implement graph neural networks, signal processing, and statistical analysis | Model development, neuroimaging preprocessing, feature extraction |

| Analysis Frameworks | Subtype and Stage Inference (SuStaIn) [18] [20] [19] | Identify distinct spatiotemporal trajectories of disease progression | Disease subtyping, staging, progression modeling |

| Computational Infrastructure | NVIDIA GPUs (≥12GB VRAM), HPC clusters | Accelerate model training and large-scale neuroimaging analysis | Deep learning model training, large dataset processing |

| Multi-modal Integration Tools | Spatial ARP-seq, Spatial CTRP-seq [22] | Simultaneously profile epigenome, transcriptome, and proteome in tissue sections | Molecular mechanism exploration across central dogma layers |

The early diagnosis of neurological disorders (NDs), such as Alzheimer's disease (AD) and brain tumors (BT), is highly challenging due to the subtle anatomical changes these conditions cause in the brain. Magnetic Resonance Imaging (MRI) is a vital tool for visualizing these disorders; however, standard diagnostic techniques that rely on human analysis can be inaccurate, time-consuming, and may miss the early-stage symptoms necessary for effective treatment [23] [12]. While Convolutional Neural Networks (CNNs) and other deep learning models have improved spatial feature extraction from medical images, they frequently fail to capture temporal dynamics, which are essential for a comprehensive analysis of disease progression [23].

To address these limitations, a novel hybrid model, the STGCN-ViT, has been developed. This model integrates the strengths of three powerful components: CNNs for spatial feature extraction, Spatial–Temporal Graph Convolutional Networks (STGCN) for capturing temporal dependencies, and Vision Transformers (ViT) with self-attention mechanisms for focusing on crucial spatial patterns [23]. This integration represents a conceptual leap by providing a unified framework that simultaneously models the spatial and temporal evolution of neurological disorders, leading to more accurate and early diagnosis.

Quantitative Performance Analysis

The STGCN-ViT model has been rigorously evaluated on benchmark datasets, including the Open Access Series of Imaging Studies (OASIS) and data from Harvard Medical School (HMS). The model's performance demonstrates its superiority over standard and transformer-based models [23].

Table 1: Performance Metrics of the STGCN-ViT Model on Different Datasets [23]

| Dataset Group | Accuracy (%) | Precision (%) | AUC-ROC Score (%) |

|---|---|---|---|

| Group A | 93.56 | 94.41 | 94.63 |

| Group B | 94.52 | 95.03 | 95.24 |

Table 2: Comparative Analysis of STGCN-ViT Against Other Model Components

| Model or Component | Primary Function | Key Advantage | Application in Neurology |

|---|---|---|---|

| EfficientNet-B0 | Spatial Feature Extraction | Analyzes high-resolution images accurately and efficiently [23]. | Extracts detailed anatomical features from brain MRI scans. |

| Spatial-Temporal GCN (STGCN) | Temporal Feature Extraction | Models progression patterns and dependencies across different brain regions over time [23]. | Tracks the progression of atrophy or lesion development. |

| Vision Transformer (ViT) | Feature Refinement via Self-Attention | Identifies complex, long-range dependencies and subtle patterns in image data [24] [23]. | Highlights critical, distributed biomarkers of early-stage disease. |

| Standard CNN | Spatial Feature Extraction | Effective at recognizing general visual patterns [24]. | Limited by fixed receptive fields and inability to model long-range dependencies [23]. |

Experimental Protocols

Protocol 1: Data Preprocessing and Feature Extraction

This protocol outlines the initial steps for preparing MRI data and extracting foundational features.

- Input Data: Begin with T1-weighted and T2-weighted MRI scans from datasets such as OASIS or HMS [23].

- Spatial Feature Extraction:

- Utilize a pre-trained EfficientNet-B0 model as the foundational feature extractor [23].

- Process the MRI scans through EfficientNet-B0 to generate high-quality spatial feature maps. This step effectively captures detailed anatomical structures.

- Graph Construction:

- Partition the extracted spatial features into distinct regions representing different areas of the brain.

- Construct a spatial-temporal graph where each node corresponds to a brain region. The features of each node are the reduced-dimensionality feature vectors from the CNN.

- The edges between nodes represent the anatomical or functional connections, creating a graph that encapsulates both spatial relationships and the potential for temporal tracking [23].

Protocol 2: STGCN-ViT Model Architecture and Training

This protocol details the core architecture and the procedure for training the hybrid model.

- Temporal Modeling with STGCN:

- Feed the constructed spatial-temporal graph into the STGCN component.

- The STGCN applies graph convolutions to model the temporal dependencies and progression patterns across the sequenced brain region data [23].

- Feature Refinement with Vision Transformer (ViT):

- The output from the STGCN, enriched with spatial-temporal information, is further processed by a Vision Transformer.

- The ViT employs a self-attention mechanism to weigh the importance of different features and regions, focusing on the most discriminative patterns for disease classification [23].

- Model Training and Evaluation:

- Train the integrated STGCN-ViT model using backpropagation and a suitable optimizer (e.g., Adam) with a cross-entropy loss function.

- Evaluate the model on a held-out test set, reporting standard metrics including accuracy, precision, recall, F1-score, and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC) [23].

Model Architecture and Workflow Visualization

Diagram 1: STGCN-ViT Model Workflow

Diagram 2: Logical Relationship: Problem to Solution

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for STGCN-ViT Research

| Item Name | Function / Description | Specification / Example |

|---|---|---|

| OASIS Dataset | A publicly available neuroimaging dataset providing a large set of MRI data for studying neurological disorders [23]. | Used for training and validating the model for conditions like Alzheimer's disease. |

| Harvard Medical School (HMS) Dataset | A benchmark dataset of brain MRIs, used for evaluating model performance on tasks such as brain tumor detection [23]. | Provides high-quality, annotated medical images. |

| EfficientNet-B0 | A pre-trained CNN backbone for efficient and accurate initial spatial feature extraction from high-resolution MRI scans [23]. | Provides a balance between accuracy and computational efficiency. |

| Spatial-Temporal Graph Convolutional Network (STGCN) | A specialized neural network designed to model data with both spatial and temporal dependencies, crucial for tracking disease progression [23]. | Captures how anatomical changes evolve across the brain over time. |

| Vision Transformer (ViT) | A transformer-based model for image recognition that uses self-attention to identify globally important features for classification [23]. | Excels at capturing long-range dependencies in image data. |

Architecture in Action: Deconstructing the STGCN-ViT Hybrid Model for Medical Imaging

The integration of EfficientNet-B0, Spatio-Temporal Graph Convolutional Networks (STGCN), and Vision Transformer (ViT) represents a sophisticated hybrid approach designed to overcome the limitations of individual deep learning models in analyzing complex medical imaging data. This architecture is particularly impactful in the domain of neurological disorder (ND) detection, where it addresses the critical challenge of capturing both subtle spatial features and their temporal progression in image sequences such as MRI scans. Conventional models often prioritize either spatial or temporal features but fail to synthesize both effectively, which is essential for identifying early-stage disorders like Alzheimer's disease and brain tumors, where anatomical changes are minimal and evolve over time [12] [1]. The proposed hybrid model, termed STGCN-ViT, strategically delegates tasks: EfficientNet-B0 acts as a powerful spatial feature extractor, STGCN models the temporal dynamics and relationships between anatomical regions, and the ViT leverages a self-attention mechanism to focus on the most diagnostically relevant features across the entire image [12] [1]. This combination has been reported to reach up to 94.52% accuracy and a 95.24% AUC-ROC score on benchmark datasets such as OASIS and those from Harvard Medical School, supporting its suitability for real-time clinical applications [12] [1].

Component Fundamentals and Specifications

EfficientNet-B0: The Spatial Feature Extractor

EfficientNet-B0 serves as the foundational spatial feature extractor within the hybrid model. It is the baseline model of the EfficientNet family, which introduced a compound scaling method that scales the network's depth (number of layers), width (number of channels), and input image resolution together using a fixed set of coefficients [25] [26]. This principled approach balances model complexity against performance more efficiently than scaling any single dimension in isolation.
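For reference, the compound scaling rule can be written compactly as below. The symbols follow the notation of the EfficientNet paper [25] and are not otherwise used in this article: d, w, and r are the depth, width, and resolution multipliers, φ is a user-chosen compound coefficient, and α, β, γ are constants found by a small grid search on the baseline network.

$$
d = \alpha^{\phi}, \qquad w = \beta^{\phi}, \qquad r = \gamma^{\phi}, \qquad \text{subject to } \alpha \cdot \beta^{2} \cdot \gamma^{2} \approx 2, \quad \alpha \ge 1,\ \beta \ge 1,\ \gamma \ge 1
$$

Under this constraint, the computational cost grows by roughly a factor of 2^φ as φ increases. EfficientNet-B0 is the unscaled baseline from which the larger family members are derived.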

- Architecture and Core Components: The network begins with a stem layer composed of a single 2D convolution with a 3x3 kernel and a stride of 2, followed by batch normalization and a Swish activation function, which provides an initial down-sampling and feature extraction [27]. The body of EfficientNet-B0 is composed of a series of Mobile Inverted Bottleneck Convolution (MBConv) blocks, many of which incorporate Squeeze-and-Excitation (SE) modules to adaptively recalibrate channel-wise feature responses [25] [26]. A key feature of these blocks is depthwise separable convolution, which factorizes a standard convolution into a depthwise convolution (applying a single filter per input channel) and a pointwise convolution (1x1 convolution to combine outputs), drastically reducing computational cost and parameters without significant loss in representational power [25].

- Role in the Hybrid Model: In the STGCN-ViT pipeline, EfficientNet-B0 is responsible for processing individual, high-resolution MRI images. Its primary role is to extract rich, hierarchical, and spatially meaningful features from the brain's anatomy. The model's high efficiency and accuracy, stemming from its compound scaling and MBConv design, make it ideal for this initial processing stage, setting a strong foundation for subsequent temporal and attention-based analysis [12] [1].
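The parameter savings from depthwise separable convolution described above can be checked with simple arithmetic. The sketch below counts weights for a standard convolution and for its depthwise separable factorization. The channel sizes (32 in, 64 out) are illustrative assumptions, not values taken from EfficientNet-B0, and biases are ignored.

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a standard k x k convolution (bias omitted)."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in: int, c_out: int, k: int) -> int:
    """Weights in a depthwise k x k conv followed by a 1x1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1x1 conv that mixes channels
    return depthwise + pointwise


# Illustrative layer: 32 input channels, 64 output channels, 3x3 kernel.
standard = conv_params(c_in=32, c_out=64, k=3)
separable = depthwise_separable_params(c_in=32, c_out=64, k=3)
print(standard, separable, round(standard / separable, 2))  # 18432 2336 7.89
```

For this hypothetical layer the factorized form needs roughly one eighth of the weights, which is the kind of reduction that makes MBConv blocks inexpensive.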

Table 1: Detailed Specifications of EfficientNet-B0 Base Network

| Stage | Operator | Input Resolution | #Channels | #Layers |
|---|---|---|---|---|
| 1 | Conv3x3 | 224x224 | 32 | 1 |
| 2 | MBConv1, k3x3 | 112x112 | 16 | 1 |
| 3 | MBConv6, k3x3 | 112x112 | 24 | 2 |
| 4 | MBConv6, k5x5 | 56x56 | 40 | 2 |
| 5 | MBConv6, k3x3 | 28x28 | 80 | 3 |
| 6 | MBConv6, k5x5 | 14x14 | 112 | 3 |
| 7 | MBConv6, k5x5 | 14x14 | 192 | 4 |
| 8 | MBConv6, k3x3 | 7x7 | 320 | 1 |
| 9 | Conv1x1 & Pooling & FC | 7x7 | 1280 | 1 |

Source: Adapted from [25]

Spatial-Temporal Graph Convolutional Network (STGCN): The Temporal Dynamics Model

The STGCN component is tasked with modeling the temporal dynamics and structural relationships between different brain regions over time. While traditional CNNs are powerful for spatial data, they struggle with non-Euclidean data structures like graphs. STGCN extends Graph Convolutional Networks (GCNs) by performing convolution operations on graph-structured data across both spatial and temporal dimensions [28].

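- Core Concepts: The input to the STGCN is a spatio-temporal graph constructed from the features extracted by EfficientNet-B0. In this graph, nodes correspond to anatomical brain regions and carry pooled feature vectors, spatial edges encode connectivity or proximity between regions within a single scan, and temporal links connect each region to itself across consecutive scans (see the graph construction steps in the protocol below).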

- Architecture and Mechanism: The STGCN uses a layered architecture of spatio-temporal graph convolution blocks. Each block typically consists of:

- A spatial graph convolution that aggregates feature information from a node's topological neighbors. This is often implemented using a spectral-based or message-passing approach.

- A temporal convolution (e.g., a 1D convolution with a kernel size of 9) that is applied to the sequence of each node's features, capturing its evolution over time [28].

Skip connections are frequently employed between these blocks to mitigate vanishing gradients and allow for the training of deeper networks [28]. This design enables the STGCN to track how individual brain regions change over time, which is a crucial diagnostic indicator for many neurological disorders [12] [1].
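A single spatio-temporal block of the kind described above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the implementation from [12] or [28]: the spatial step uses the common symmetric-normalized adjacency of GCNs, the temporal step shares one 1D kernel across all channels, and the function names, shapes, and the kernel size of 3 (rather than 9) are chosen only to keep the example small.

```python
import numpy as np


def normalize_adjacency(adj: np.ndarray) -> np.ndarray:
    """Return D^-1/2 (A + I) D^-1/2, a common GCN normalization."""
    a_hat = adj + np.eye(adj.shape[0])          # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def st_block(x, adj, w_spatial, w_temporal):
    """One simplified spatio-temporal block.

    x: (T, N, C_in) node features over T time steps
    adj: (N, N) adjacency matrix
    w_spatial: (C_in, C_out) projection weights
    w_temporal: (K,) temporal kernel with odd K, shared across channels
    """
    a_norm = normalize_adjacency(adj)
    # Spatial step: aggregate neighbor features per time step, then project.
    h = np.einsum("ij,tjc->tic", a_norm, x) @ w_spatial
    h = np.maximum(h, 0.0)                       # ReLU
    # Temporal step: 1D convolution along time with zero "same" padding.
    k = w_temporal.shape[0]
    pad = k // 2
    h_pad = np.pad(h, ((pad, pad), (0, 0), (0, 0)))
    out = sum(w_temporal[i] * h_pad[i:i + h.shape[0]] for i in range(k))
    # Skip connection, applied only when input and output shapes match.
    if out.shape == x.shape:
        out = out + x
    return out


rng = np.random.default_rng(0)
T, N, C = 6, 4, 3                                # visits, regions, channels
x = rng.normal(size=(T, N, C))
adj = np.array([[0, 1, 0, 1],
                [1, 0, 1, 0],
                [0, 1, 0, 1],
                [1, 0, 1, 0]], dtype=float)      # toy ring of four regions
out = st_block(x, adj, rng.normal(size=(C, C)), np.array([0.25, 0.5, 0.25]))
print(out.shape)  # (6, 4, 3)
```

In practice a framework with graph support, such as PyTorch Geometric, would supply trainable versions of both steps.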

Vision Transformer (ViT): The Attention-Based Feature Refiner

The Vision Transformer (ViT) component introduces a self-attention mechanism to the hybrid model, enabling it to weigh the importance of different features and image patches globally. ViT adapts the transformer architecture, originally designed for natural language processing, to computer vision tasks [29] [30].

- Input Processing and Architecture:

- Patch Embedding: The input image (or feature map) is decomposed into a sequence of fixed-size, non-overlapping patches. Each patch is flattened and linearly projected into an embedding vector [29] [30].

- Positional Encoding: Because self-attention has no built-in notion of token order, learnable positional encodings are added to the patch embeddings to retain spatial information about the original location of each patch [29].

- Transformer Encoder: The sequence of embedded patches is fed into a standard transformer encoder. The core of this encoder is the multi-head self-attention (MSA) mechanism, which allows the model to simultaneously focus on different patches from the entire sequence and compute their contextual relationships. This is followed by a Multi-Layer Perceptron (MLP) for further processing [30].

- Role in the Hybrid Model: In the STGCN-ViT model, the ViT module is not applied directly to raw images but to the refined spatio-temporal features. Its function is to apply a final, global attention-based feature refinement. By using self-attention, the ViT can identify and focus on the most critical spatial patterns and temporal dependencies identified by the previous components, potentially capturing long-range dependencies that convolutional filters might miss. This leads to a more comprehensive feature set for the final classification of neurological disorders [12] [1].
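The input processing steps listed above (patch embedding, positional encoding, self-attention) can be sketched in NumPy. This is a simplified illustration: it uses a single attention head rather than multi-head attention, random matrices stand in for learned weights, and the image size, patch size, and embedding width are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)


def patchify(img: np.ndarray, p: int) -> np.ndarray:
    """Split an (H, W, C) array into (num_patches, p*p*C) non-overlapping patches."""
    h, w, c = img.shape
    assert h % p == 0 and w % p == 0, "image size must be divisible by patch size"
    x = img.reshape(h // p, p, w // p, p, c)
    x = x.transpose(0, 2, 1, 3, 4)               # (h/p, w/p, p, p, c)
    return x.reshape(-1, p * p * c)


def softmax(z: np.ndarray, axis: int = -1) -> np.ndarray:
    z = z - z.max(axis=axis, keepdims=True)      # subtract max for stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]), axis=-1)
    return weights @ v, weights


img = rng.normal(size=(8, 8, 1))                 # toy single-channel input
patches = patchify(img, p=4)                     # 4 patches of 16 values each
d = 8                                            # embedding width (assumption)
w_embed = rng.normal(size=(patches.shape[1], d)) * 0.1
pos = rng.normal(size=(patches.shape[0], d)) * 0.1   # stand-in for learned encodings
tokens = patches @ w_embed + pos
w_q, w_k, w_v = (rng.normal(size=(d, d)) * 0.1 for _ in range(3))
out, attn = self_attention(tokens, w_q, w_k, w_v)
print(out.shape, attn.shape)  # (4, 8) (4, 4)
```

Each row of `attn` sums to one and shows how strongly one patch attends to every other patch, which is what allows the model to relate distant regions in a single layer.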

Table 2: Comparative Analysis of Model Components in Neurological Disorder Detection

| Feature | EfficientNet-B0 | STGCN | Vision Transformer (ViT) |
|---|---|---|---|
| Primary Role | Spatial Feature Extraction | Temporal Dynamics Modeling | Attention-Based Feature Refinement |
| Core Mechanism | MBConv Blocks & Compound Scaling | Spatial-Temporal Graph Convolutions | Multi-Head Self-Attention |
| Input Type | 2D High-Resolution MRI Slices | Spatio-Temporal Graph of Brain Regions | Sequence of Feature Embeddings |
| Output | High-Level Spatial Feature Maps | Temporal Feature Evolution Trajectories | Context-Aware, Weighted Feature Representations |
| Key Advantage | High Accuracy & Computational Efficiency | Models Complex Regional Brain Interactions | Captures Global Context & Long-Range Dependencies |
| Data Requirements | Moderate (Benefits from pre-training) | Requires longitudinal/sequence data | Large datasets for full potential |

Source: Compiled from [12] [25] [29]

Integrated Experimental Protocol for Neurological Disorder Detection

This protocol details the methodology for implementing the hybrid STGCN-ViT model to detect neurological disorders such as Alzheimer's Disease from a series of MRI scans.

Workflow and Signaling Pathway

The following diagram illustrates the logical flow and integration of the three core components within the hybrid model.

Diagram 1: STGCN-ViT Model Workflow for ND Detection. This diagram outlines the sequential processing of medical images, from spatial feature extraction through temporal modeling to final attention-based classification. AD: Alzheimer's Disease; BT: Brain Tumor.

Step-by-Step Procedure

Data Acquisition and Preprocessing

- Datasets: Utilize public benchmark datasets such as the Open Access Series of Imaging Studies (OASIS) or proprietary datasets from institutions like Harvard Medical School (HMS) [12] [1].

- Preprocessing: Standardize all 3D MRI volumes (e.g., T1-weighted). Apply standard pre-processing steps including skull-stripping, intensity normalization, and co-registration to a common template (e.g., MNI space). Data augmentation techniques like random rotation and flipping can be applied to improve model generalization.
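Two of the preprocessing steps above, intensity normalization and augmentation, can be sketched in NumPy. Skull-stripping and registration are normally handled by dedicated neuroimaging tools and are not shown. The function names are hypothetical, z-scoring over non-zero voxels is one common normalization choice among several, and rotations are restricted to multiples of 90 degrees to keep the example dependency-free.

```python
import numpy as np


def zscore_normalize(volume: np.ndarray, mask=None) -> np.ndarray:
    """Z-score intensities inside `mask` (default: non-zero voxels); background stays 0."""
    if mask is None:
        mask = volume > 0
    vals = volume[mask].astype(np.float64)
    out = np.zeros(volume.shape, dtype=np.float32)
    out[mask] = (vals - vals.mean()) / (vals.std() + 1e-8)
    return out


def random_flip_rotate(slice_2d: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Randomly flip left-right and rotate by a multiple of 90 degrees."""
    if rng.random() < 0.5:
        slice_2d = np.fliplr(slice_2d)
    k = int(rng.integers(0, 4))                  # number of 90-degree turns
    return np.rot90(slice_2d, k)


# Toy volume: a 2x2x2 "brain" with intensities 1..8 inside a zero background.
volume = np.zeros((4, 4, 4))
volume[1:3, 1:3, 1:3] = np.arange(1, 9).reshape(2, 2, 2)
normalized = zscore_normalize(volume)

rng = np.random.default_rng(0)
augmented = random_flip_rotate(normalized[:, :, 1], rng)
print(normalized[volume > 0].mean().round(4), augmented.shape)
```

Augmentation is typically applied only to training data, so that validation and test scans remain unaltered.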

Spatial Feature Extraction with EfficientNet-B0

- Input: Individual 2D slices or preprocessed 3D patches from the MRI volumes.

- Protocol: a. Initialize the EfficientNet-B0 model with weights pre-trained on a large-scale dataset like ImageNet. b. Remove the original classification head (global pooling and fully connected layers). c. Process each MRI slice through the network to generate a high-dimensional spatial feature map. d. For each brain scan, aggregate these feature maps to create a comprehensive spatial feature representation [12] [1] [26].

Spatio-Temporal Graph Construction and Modeling

- Graph Construction: a. Node Definition: Parcellate the brain into distinct anatomical regions using an atlas (e.g., AAL). b. Node Features: For each region, reduce the corresponding spatial features from EfficientNet-B0 (e.g., via global average pooling) to form the initial node feature vector. c. Edge Definition: Construct an adjacency matrix defining the connections between nodes. This can be based on structural connectivity (from DTI), functional connectivity, or simply anatomical proximity [12] [1] [28].
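The node-feature and edge-definition steps above can be sketched as follows. This is a hedged illustration: it assumes the atlas label map has already been resampled to the spatial size of the feature map, uses label 0 for background, and builds edges from centroid distance as a stand-in for anatomical proximity. The function names and the distance threshold are hypothetical.

```python
import numpy as np


def region_features(feature_map: np.ndarray, atlas_labels: np.ndarray,
                    num_regions: int) -> np.ndarray:
    """Average a (C, H, W) feature map inside each atlas region, giving (N, C)."""
    feats = np.zeros((num_regions, feature_map.shape[0]))
    for r in range(1, num_regions + 1):          # label 0 is background
        mask = atlas_labels == r
        if mask.any():
            feats[r - 1] = feature_map[:, mask].mean(axis=1)
    return feats


def proximity_adjacency(centroids: np.ndarray, radius: float) -> np.ndarray:
    """Connect regions whose centroids lie within `radius` of each other."""
    diff = centroids[:, None, :] - centroids[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    adj = (dist <= radius).astype(float)
    np.fill_diagonal(adj, 0.0)                   # no self-loops at this stage
    return adj


# Toy example: two regions splitting a 4x4 map into left and right halves.
atlas = np.zeros((4, 4), dtype=int)
atlas[:, :2] = 1
atlas[:, 2:] = 2
fmap = np.stack([np.ones((4, 4)), atlas.astype(float)])   # (C=2, H, W)
nodes = region_features(fmap, atlas, num_regions=2)
print(nodes)   # [[1. 1.] [1. 2.]]

centroids = np.array([[0.0, 0.0], [0.0, 1.0], [0.0, 5.0]])
adj = proximity_adjacency(centroids, radius=1.5)
print(adj)
```

When DTI or functional connectivity is available, the thresholded distance matrix would be replaced by the corresponding connectivity matrix, while the node features stay the same.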

- STGCN Processing: a. Build a spatio-temporal sequence by linking graph snapshots from consecutive patient visits. b. Feed this sequence into the STGCN model. c. The STGCN performs graph convolutions spatially and 1D convolutions temporally, outputting a refined feature representation that encapsulates the temporal evolution of each brain region [12] [28].

Attention-Based Feature Refinement with ViT

- Input Preparation: The output features from the STGCN are serialized into a sequence of embeddings. If necessary, a learnable [CLS] token is prepended to the sequence [30].

- ViT Processing: a. Add positional encodings to the sequence to retain order information. b. Process the sequence through multiple transformer encoder layers, each employing multi-head self-attention. c. The output corresponding to the [CLS] token (or the averaged output of all tokens) is used as the final, context-aware representation of the patient's brain scan sequence for classification [12] [1] [30].
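The serialization and readout steps of this stage can be illustrated with array shapes alone. The sketch below makes several assumptions that the source does not specify: one token per (visit, region) pair, a zero vector standing in for the learned [CLS] token, random positional encodings, and an identity placeholder where the transformer encoder layers would run.

```python
import numpy as np

rng = np.random.default_rng(0)

T, N, C = 3, 5, 8                           # visits, regions, feature width (illustrative)
stgcn_out = rng.normal(size=(T, N, C))      # stand-in for the STGCN output

# Serialize: one token per (visit, region) pair, in visit-major order.
tokens = stgcn_out.reshape(T * N, C)

# Prepend a [CLS] token; in a trained model this vector is a learned parameter.
cls_token = np.zeros((1, C))
sequence = np.concatenate([cls_token, tokens], axis=0)    # (T*N + 1, C)

# Add positional encodings (random here, learned in practice).
sequence = sequence + rng.normal(size=sequence.shape) * 0.02

# Placeholder: transformer encoder layers would transform `sequence` here.
encoded = sequence

cls_readout = encoded[0]                    # option 1: output at the [CLS] position
mean_readout = encoded[1:].mean(axis=0)     # option 2: average of the remaining tokens
print(sequence.shape, cls_readout.shape, mean_readout.shape)  # (16, 8) (8,) (8,)
```

Either readout yields a single fixed-length vector per patient, which is what the classification head consumes.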

Model Output and Evaluation

- Classification Head: The final feature vector from the ViT is passed through a fully connected layer with a softmax activation function to generate probability scores for each diagnostic class (e.g., Healthy, Alzheimer's Disease, Brain Tumor) [12].

- Evaluation Metrics: Validate the model on a held-out test set. Report standard performance metrics including Accuracy, Precision, Recall, F1-Score, and the Area Under the Receiver Operating Characteristic Curve (AUC-ROC). The model should be compared against standard and transformer-based baseline models [12] [1].
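The classification head and the listed metrics can be sketched in NumPy for the binary case. This is an illustration only: a multi-class evaluation would compute these metrics per class (one-vs-rest) or with a library such as scikit-learn, and the labels and scores below are made-up values, not results from any dataset.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Row-wise softmax turning logits into class probabilities."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


def binary_metrics(y_true: np.ndarray, y_pred: np.ndarray) -> dict:
    """Accuracy, precision, recall, and F1 for 0/1 labels."""
    tp = int(np.sum((y_true == 1) & (y_pred == 1)))
    fp = int(np.sum((y_true == 0) & (y_pred == 1)))
    fn = int(np.sum((y_true == 1) & (y_pred == 0)))
    tn = int(np.sum((y_true == 0) & (y_pred == 0)))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision,
            "recall": recall, "f1": f1}


def auc_roc(y_true: np.ndarray, scores: np.ndarray) -> float:
    """AUC as the probability that a positive outscores a negative (ties count half)."""
    pos, neg = scores[y_true == 1], scores[y_true == 0]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (len(pos) * len(neg)))


# Made-up example: four subjects, positive class = disease.
y_true = np.array([1, 1, 0, 0])
scores = np.array([0.9, 0.4, 0.6, 0.1])     # predicted probability of disease
y_pred = (scores >= 0.5).astype(int)

print(binary_metrics(y_true, y_pred))       # all four metrics equal 0.5 here
print(auc_roc(y_true, scores))              # 0.75
print(softmax(np.array([[2.0, 1.0, 0.1]])).sum())
```

AUC-ROC is computed from the continuous scores rather than the thresholded predictions, which is why it can differ from accuracy on the same data.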

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for STGCN-ViT Research

| Research Reagent | Function & Specification | Example/Tool |
|---|---|---|
| Benchmark Neuroimaging Datasets | Provides standardized, annotated data for training and validation. Must contain longitudinal MRI data. | OASIS, ADNI, Harvard Medical School (HMS) datasets [12] [1] |
| Computational Framework | Software environment for building, training, and evaluating complex deep learning models. | PyTorch, TensorFlow, Keras with specialized libraries for GNNs (e.g., PyTorch Geometric) |
| Anatomical Brain Atlas | Digital template for parcellating the brain into distinct regions of interest (Nodes for STGCN). | Automated Anatomical Labeling (AAL) Atlas, Harvard-Oxford Atlas |
| Graph Construction Tool | Utility to define spatial relationships (edges) between brain regions for building the graph input for STGCN. | Custom scripts based on DTI tractography or anatomical proximity matrices [28] |
| Pre-trained EfficientNet-B0 Weights | Provides a robust initialization for the spatial feature extractor, significantly improving convergence and performance. | Weights from ImageNet or specialized medical imaging competitions |