Large-Scale Pretraining of Whole Slide Image Foundation Models: Transforming Cancer Detection and Biomarker Discovery


Abstract

The advent of large-scale pretraining on whole slide images (WSIs) is revolutionizing computational pathology. This article explores how foundation models, trained on hundreds of thousands of histopathology slides, are overcoming critical bottlenecks in cancer AI. We examine the technical foundations of these models, their application across diverse clinical tasks from cancer subtyping to outcome prognosis, and the solutions they offer for data scarcity and computational challenges. Through comparative analysis, we demonstrate their superior performance in low-data regimes and rare cancer retrieval, providing researchers and drug development professionals with a comprehensive overview of this transformative technology and its pathway to clinical integration.

The Paradigm Shift: How Large-Scale WSI Pretraining is Redefining Computational Pathology

Whole-slide imaging (WSI) has revolutionized pathology by digitizing glass slides into high-resolution digital images, enabling the application of artificial intelligence in cancer research and diagnostics [1]. A foundation model in computational pathology is a large-scale AI model pretrained on vast amounts of unlabeled histopathology data, capable of being adapted (fine-tuned) for various downstream clinical tasks without requiring training from scratch [2] [3]. The core value proposition of these models lies in their ability to learn general-purpose visual representations of histopathological patterns—from cellular morphology to tissue architecture—that transfer efficiently to specialized applications even with limited task-specific labeled data [3].

The development of WSI foundation models addresses several critical challenges in computational pathology. Traditional analysis of WSIs is computationally demanding due to their gigapixel size, often containing tens to hundreds of thousands of image tiles [2] [3]. Prior approaches frequently resorted to subsampling a small portion of tiles, missing important slide-level context [3]. Foundation models overcome this limitation through novel architectures that can process entire slides while capturing both local patterns and global spatial relationships across tissue regions [2] [3]. This capability is particularly valuable in cancer research, where tumor heterogeneity and complex tissue microenvironment interactions play crucial roles in diagnosis, prognosis, and treatment response prediction [1].

Architectural Foundations of WSI Foundation Models

Core Technical Components

WSI foundation models typically employ a multi-stage processing pipeline to handle the computational challenges of gigapixel images. The standard workflow involves dividing a WSI into smaller patches (e.g., 256×256 or 512×512 pixels at 20× magnification), encoding these patches into feature representations, and then aggregating these features into a comprehensive slide-level representation using specialized architectures [2] [3].
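The tiling step can be sketched in a few lines. This is a minimal illustration, assuming non-overlapping tiles and a hypothetical slide of 100,000 × 80,000 pixels; the function name `tile_coordinates` is invented for this example, and real pipelines also discard background tiles before encoding.

```python
from typing import List, Tuple


def tile_coordinates(width: int, height: int, tile: int = 256) -> List[Tuple[int, int]]:
    """Return top-left (x, y) corners of non-overlapping tiles that fit fully inside the slide."""
    return [
        (x, y)
        for y in range(0, height - tile + 1, tile)
        for x in range(0, width - tile + 1, tile)
    ]


# Hypothetical slide dimensions in pixels at 20x magnification (an assumption).
coords_256 = tile_coordinates(100_000, 80_000, tile=256)
coords_512 = tile_coordinates(100_000, 80_000, tile=512)
print(len(coords_256), len(coords_512))  # 121680 30420
```

Doubling the tile edge cuts the number of tiles, and therefore the sequence length seen by the slide encoder, by roughly a factor of four.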

Patch Processing: Initial patch encoding typically uses Vision Transformers (ViTs) or Convolutional Neural Networks (CNNs) pretrained with self-supervised learning objectives such as DINOv2 or masked autoencoding [2] [3]. For example, TITAN builds on patch features from the CONCHv1.5 encoder and pretrains its slide encoder on 335,645 whole-slide images via visual self-supervised learning, while Prov-GigaPath employs a tile encoder pretrained on 1.3 billion pathology image tiles [2] [3].

Whole-Slide Modeling: The key innovation in recent foundation models lies in effectively modeling long-range dependencies across thousands of patch embeddings. Models like TITAN and Prov-GigaPath adapt transformer architectures with modifications to handle extremely long sequences. TITAN uses Attention with Linear Biases (ALiBi), extended to 2D, for long-context extrapolation, while Prov-GigaPath leverages LongNet's dilated attention mechanism to efficiently process sequences of up to 70,121 tiles [2] [3].

Multimodal Integration

Advanced foundation models incorporate multimodal capabilities by aligning visual representations with textual information from pathology reports. TITAN, for instance, undergoes a three-stage pretraining process: (1) vision-only pretraining on ROI crops, (2) cross-modal alignment with synthetic fine-grained ROI captions, and (3) cross-modal alignment at WSI-level with clinical reports [2]. This enables capabilities such as text-guided slide retrieval and pathology report generation.

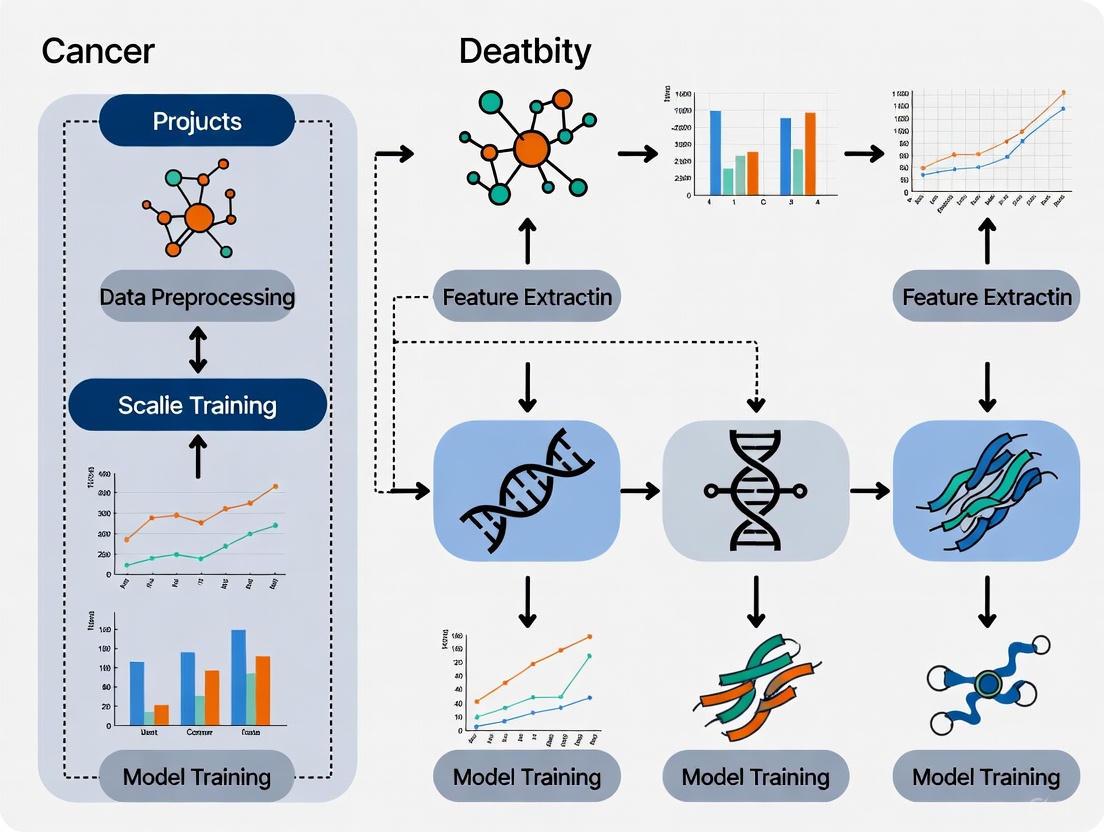

The diagram below illustrates the complete architectural workflow of a multimodal WSI foundation model:

Large-Scale Pretraining Methodologies

Self-Supervised Learning Objectives

WSI foundation models employ specialized self-supervised learning techniques that leverage the inherent structure of pathological images without requiring manual annotations. The most successful approaches include:

Masked Image Modeling: Adapted from natural language processing, this technique randomly masks portions of the input image patches and trains the model to reconstruct the missing visual content. Prov-GigaPath uses masked autoencoder pretraining at the slide level, where random tile embeddings are masked and predicted based on surrounding context [3].
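The masking step at the slide level can be illustrated with a short sketch. It assumes tile embeddings are already computed and stored as a `(num_tiles, dim)` array; the 75% mask ratio, the 768-dimensional embeddings, and the helper name `mask_tile_embeddings` are illustrative assumptions, not values taken from Prov-GigaPath.

```python
import numpy as np


def mask_tile_embeddings(embeddings: np.ndarray, mask_ratio: float, rng: np.random.Generator):
    """Split a (num_tiles, dim) array into visible tiles and the indices of masked targets."""
    num_tiles = embeddings.shape[0]
    num_masked = int(round(mask_ratio * num_tiles))
    perm = rng.permutation(num_tiles)
    masked_idx = np.sort(perm[:num_masked])    # positions the decoder must reconstruct
    visible_idx = np.sort(perm[num_masked:])   # positions the encoder actually sees
    return embeddings[visible_idx], visible_idx, masked_idx


rng = np.random.default_rng(0)
tiles = rng.normal(size=(1000, 768))  # hypothetical slide with 1,000 tile embeddings
visible, visible_idx, masked_idx = mask_tile_embeddings(tiles, mask_ratio=0.75, rng=rng)
print(visible.shape, masked_idx.shape)  # (250, 768) (750,)
```

During pretraining, the encoder would process only the visible embeddings and a decoder would be trained to predict the embeddings at `masked_idx`.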

Knowledge Distillation: Methods like iBOT (used in TITAN) employ a teacher-student framework where the student network learns to match the output distributions of a teacher network applied to different augmented views of the same image [2]. This encourages the model to learn robust representations invariant to meaningless variations while preserving semantically important features.
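A teacher-student objective of this kind can be sketched as a cross-entropy between temperature-sharpened teacher outputs and student outputs, with the teacher updated as an exponential moving average of the student. The temperatures (0.1 and 0.04) and momentum (0.996) below are illustrative assumptions, and the sketch omits details such as output centering and the masked-patch term that iBOT adds.

```python
import numpy as np


def log_softmax(logits: np.ndarray, temperature: float) -> np.ndarray:
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))


def distillation_loss(student_logits, teacher_logits, t_student=0.1, t_teacher=0.04):
    """Cross-entropy between sharpened teacher targets and student predictions."""
    targets = np.exp(log_softmax(teacher_logits, t_teacher))  # treated as constants
    return float(-(targets * log_softmax(student_logits, t_student)).sum(axis=-1).mean())


def ema_update(teacher_w, student_w, momentum=0.996):
    """Teacher weights follow the student as an exponential moving average."""
    return momentum * teacher_w + (1.0 - momentum) * student_w


rng = np.random.default_rng(0)
teacher_logits = rng.normal(size=(4, 16))  # toy sizes: 4 views, 16 output dimensions
loss_agree = distillation_loss(teacher_logits, teacher_logits)
loss_disagree = distillation_loss(-teacher_logits, teacher_logits)
print(loss_agree < loss_disagree)
```

The loss is small when the student ranks the output dimensions the same way as the teacher and grows when the two disagree.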

Contrastive Learning: Both intra-slide and inter-slide contrastive objectives help models learn that tissue regions from the same slide (or similar pathological conditions) should have similar representations, while dissimilar regions should have divergent representations [2].
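One common way to express such an objective is the InfoNCE loss, sketched below. The use of InfoNCE, the temperature of 0.07, and the toy embedding sizes are assumptions for illustration; the text does not specify the exact contrastive formulation used by any particular model.

```python
import numpy as np


def info_nce(anchors: np.ndarray, positives: np.ndarray, temperature: float = 0.07) -> float:
    """InfoNCE loss where row i of `positives` is the positive match for row i of `anchors`."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    logits = a @ p.T / temperature                       # (n, n) scaled cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_probs)))


rng = np.random.default_rng(0)
regions = rng.normal(size=(8, 32))                     # toy embeddings of 8 tissue regions
views = regions + 0.05 * rng.normal(size=(8, 32))      # slightly perturbed "same-slide" views
loss_matched = info_nce(regions, views)
loss_mismatched = info_nce(regions, views[::-1])       # positives deliberately misassigned
print(loss_matched < loss_mismatched)
```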

Pretraining Datasets and Scale

The performance of foundation models heavily depends on the scale and diversity of pretraining data. Current state-of-the-art models are trained on massive datasets encompassing hundreds of thousands of slides across multiple cancer types:

Table: Large-Scale WSI Foundation Model Pretraining Datasets

| Model | Dataset Size | Tissue Types | Data Source | Key Characteristics |
|---|---|---|---|---|
| TITAN | 335,645 WSIs | 20 organs | Mass-340K | Multimodal alignment with 182,862 medical reports and 423,122 synthetic captions [2] |
| Prov-GigaPath | 171,189 WSIs, 1.3B tiles | 31 major tissue types | Providence Health Network | Covers 28 cancer centers, >30,000 patients, includes H&E and IHC stains [3] |
| TCGA-Based Models | ~30,000 WSIs | Various cancer types | The Cancer Genome Atlas | Expert-curated but smaller scale, potential distribution shift to real-world data [3] |

Benefits for Cancer Detection Research

Performance Across Cancer Types

Large-scale pretraining of WSI foundation models demonstrates significant benefits across diverse cancer detection and characterization tasks. The table below summarizes quantitative improvements over previous approaches:

Table: Performance Benefits of Foundation Models in Cancer Detection Tasks

| Task Category | Specific Cancer Type / Biomarker | Model | Performance Improvement | Evaluation Metric |
|---|---|---|---|---|
| Mutation Prediction | EGFR mutation (LUAD) | Prov-GigaPath | +23.5% AUROC, +66.4% AUPRC vs second-best [3] | AUROC, AUPRC |
| Mutation Prediction | Pan-cancer (18 biomarkers) | Prov-GigaPath | +3.3% macro-AUROC, +8.9% macro-AUPRC [3] | AUROC, AUPRC |
| Cancer Subtyping | 9 cancer types | Prov-GigaPath | Outperformed all models in all types, significant improvement in 6 [3] | Accuracy |
| Rare Cancer Retrieval | Multiple rare cancers | TITAN | Superior retrieval accuracy in low-data regimes [2] | Retrieval accuracy |
| Biomarker Prediction | Multiple biomarkers | TITAN | Outperformed supervised baselines and existing slide foundation models [2] | Multiple metrics |

Data Efficiency and Transfer Learning

Foundation models pretrained on large-scale datasets exhibit remarkable data efficiency when adapted to new tasks with limited annotations. TITAN demonstrates strong performance in few-shot learning scenarios, where very limited labeled examples are available for fine-tuning [2]. This is particularly valuable for rare cancer types where collecting large annotated datasets is challenging. The models' ability to generate general-purpose slide representations enables effective transfer learning across different organs and cancer types, reducing the need for extensive retraining [2] [3].

Multimodal Capabilities for Enhanced Discovery

The integration of visual and linguistic information enables novel applications in cancer research. Models like TITAN can perform cross-modal retrieval, allowing researchers to query similar cases using either image examples or textual descriptions of morphological features [2]. The zero-shot classification capabilities of vision-language models facilitate hypothesis testing without task-specific fine-tuning, potentially uncovering novel morphological biomarkers associated with molecular subtypes or treatment responses [2] [3].

Experimental Protocols and Methodologies

Model Pretraining Protocol

The standard protocol for developing WSI foundation models involves these critical steps:

Data Curation and Preprocessing:

- Collect large-scale WSI datasets from diverse sources, ensuring representation across tissue types, staining protocols, and scanner models [2] [3]

- Apply quality control measures to exclude slides with excessive artifacts, using methods like Double-Pass for efficient tissue detection [4]

- Extract patches at appropriate magnification (typically 20×) with standardized dimensions [2]

- Implement stain normalization to address color variations across different laboratories and scanners [5]

Self-Supervised Pretraining:

- Train patch-level encoders using methods like DINOv2 or iBOT on millions of image patches [2] [3]

- Aggregate patch embeddings into spatially aware 2D feature grids preserving tissue architecture [2]

- Pretrain slide-level encoders using masked autoencoding or contrastive learning objectives [3]

- For multimodal models: align visual representations with textual features from pathology reports using contrastive vision-language pretraining [2]

The following diagram illustrates the end-to-end experimental workflow for developing and validating a WSI foundation model:

Benchmarking and Evaluation Framework

Rigorous evaluation of WSI foundation models requires comprehensive benchmarking across diverse tasks:

Cancer Subtyping: Evaluate slide-level classification accuracy across multiple cancer types, comparing against pathologist annotations and existing biomarkers [3].

Mutation Prediction: Assess model performance in predicting driver mutations from histology patterns alone, using genomic sequencing data as ground truth [3].

Prognostic Prediction: Validate the models' ability to predict clinical outcomes (overall survival, treatment response) using time-to-event analyses on independent cohorts [1].

Cross-modal Retrieval: For multimodal models, evaluate precision in retrieving relevant WSIs based on textual queries, and vice versa [2].
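Retrieval quality is often summarized as top-k accuracy over a gallery of embeddings. The sketch below assumes queries and gallery items already live in a shared embedding space (for example, text queries against slide embeddings) and counts a query as a hit if any of its k nearest gallery items shares its label; the function name and toy data are hypothetical.

```python
import numpy as np


def top_k_retrieval_accuracy(query_emb, gallery_emb, query_labels, gallery_labels, k=5):
    """Fraction of queries with at least one same-label item among the k most similar gallery items."""
    q = query_emb / np.linalg.norm(query_emb, axis=1, keepdims=True)
    g = gallery_emb / np.linalg.norm(gallery_emb, axis=1, keepdims=True)
    sims = q @ g.T                                # cosine similarity, shape (queries, gallery)
    top_k = np.argsort(-sims, axis=1)[:, :k]      # indices of the k best matches per query
    hits = (gallery_labels[top_k] == query_labels[:, None]).any(axis=1)
    return float(hits.mean())


gallery_emb = np.eye(4)                           # toy gallery: one embedding per class
gallery_labels = np.array([0, 1, 2, 3])
query_emb = np.eye(4)[[2, 0]] + 0.01              # queries close to classes 2 and 0
acc_correct = top_k_retrieval_accuracy(query_emb, gallery_emb, np.array([2, 0]), gallery_labels, k=1)
acc_wrong = top_k_retrieval_accuracy(query_emb, gallery_emb, np.array([3, 1]), gallery_labels, k=1)
print(acc_correct, acc_wrong)  # 1.0 0.0
```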

Essential Research Reagent Solutions

The successful development and application of WSI foundation models relies on several key computational "reagents" and resources:

Table: Essential Research Reagents for WSI Foundation Model Development

| Resource Category | Specific Tool / Resource | Function in Research | Implementation Example |
|---|---|---|---|
| WSI Datasets | Prov-Path, TCGA, Mass-340K | Large-scale pretraining data providing diverse histopathological examples [2] [3] | Prov-Path: 171,189 WSIs from 31 tissue types [3] |
| Synthetic Data | SNOW dataset, StyleGAN2 with ADA | Data augmentation for rare cancer types; generating annotated training data [6] | SNOW: 20k synthetic breast cancer tiles with 1.4M annotated nuclei [6] |
| Tissue Detection | Double-Pass method | Automated quality control; identifying relevant tissue regions for analysis [4] | CPU-optimized tissue detection (0.20s/slide) with mIoU 0.826 [4] |
| Stain Normalization | Color calibration slides, multispectral algorithms | Standardizing color appearance across different laboratories and scanners [5] | Nine-filter color chart specialized for H&E staining characteristics [5] |
| Annotation Tools | HistomicsML2, Digital Slide Archive | Active learning-assisted annotation; collaborative label generation [7] | Superpixel-based active learning for efficient training data creation [7] |
| Evaluation Benchmarks | Custom task suites (e.g., 26 tasks in Prov-GigaPath) | Standardized performance comparison across methods and institutions [3] | 9 cancer subtyping + 17 pathomics tasks on Providence and TCGA data [3] |

Whole-slide imaging foundation models represent a transformative advancement in computational pathology, enabling more accurate and efficient cancer detection, classification, and biomarker discovery. Through large-scale pretraining on diverse datasets, these models learn rich representations of histopathological patterns that transfer effectively to various clinical tasks, often exceeding specialist-trained models—particularly in data-limited scenarios. As these models continue to evolve, incorporating multimodal data and improving interpretability, they hold significant promise for accelerating cancer research and democratizing access to expert-level pathological analysis across healthcare settings.

The field of computational pathology is undergoing a transformative shift, moving from models trained on limited, task-specific datasets to large-scale foundation models pretrained on hundreds of thousands of whole-slide images (WSIs). This transition embodies the data scaling hypothesis—the concept that increasing the scale and diversity of training data can produce more versatile, accurate, and robust models that generalize better to challenging clinical scenarios, including rare cancers and low-data environments [2]. Foundation models developed through self-supervised learning (SSL) on millions of histology image patches have begun to capture fundamental morphological patterns in tissue, serving as a base for predicting critical clinical endpoints like diagnosis, prognosis, and biomarker status [2]. However, translating these capabilities from patch-level to patient- and slide-level analysis has remained challenging due to the gigapixel scale of WSIs and the limited size of disease-specific cohorts, particularly for rare conditions [2].

The emergence of whole-slide foundation models represents a significant evolution in this landscape. Instead of training task-specific models on top of patch embeddings from scratch, these models are pretrained to distill pathology-specific knowledge from massive WSI collections, enabling their off-the-shelf application for diverse clinical tasks while simplifying the prediction of clinical endpoints [2]. This whitepaper examines the theoretical foundations, experimental evidence, and practical methodologies underpinning this shift, with particular focus on its implications for cancer detection research.

Quantitative Evidence: Performance Scaling with Data

Table 1: Performance Advantages of Large-Scale Pretraining in Computational Pathology

| Model/Approach | Training Data Scale | Key Advantages | Performance Metrics | Clinical Applications Demonstrated |
|---|---|---|---|---|
| TITAN (Full Model) [2] | 335,645 WSIs + 423K synthetic captions + 183K reports | Superior generalizability, zero-shot capabilities | Outperforms baselines across multiple settings | Rare disease retrieval, cancer prognosis, cross-modal retrieval |
| TITAN (Vision-only) [2] | 335,645 WSIs | General-purpose slide representations | Excels in linear probing, few-shot classification | Cancer subtyping, biomarker prediction, outcome prognosis |
| Traditional ROI Models [2] | Thousands to hundreds of thousands of patches | Patch-level morphological pattern recognition | Limited slide-level translation | Specific diagnostic tasks from regions of interest |
| Other Slide Foundation Models [2] | Orders of magnitude fewer samples than TITAN | Whole-slide encoding | Restricted generalization capability | Limited evaluations in diagnostically relevant settings |

Table 2: Impact of Data Scaling on Specific Clinical Tasks

| Clinical Task | Data Scale Benefits | Performance Improvement | Significance for Cancer Research |
|---|---|---|---|
| Few-shot & Zero-shot Classification | Enables learning from very few examples | Higher accuracy with limited labeled data | Rapid adaptation to new cancer types with minimal annotation |
| Rare Cancer Retrieval | Learning fundamental morphology improves identification of uncommon patterns | Successful retrieval of rare cancer slides | Potential to address diagnostic challenges for rare malignancies |
| Cross-modal Retrieval | Alignment of visual and language representations | Accurate linking of histology slides with clinical reports | Enhanced pathology search and knowledge discovery |
| Cancer Prognosis | Capturing subtle prognostic patterns across diverse cases | Improved outcome prediction accuracy | Better patient stratification and treatment planning |

Experimental Protocols and Methodologies

The TITAN Framework: A Case Study in Scalable Pretraining

The Transformer-based pathology Image and Text Alignment Network (TITAN) exemplifies the implementation of the data scaling hypothesis through a sophisticated three-stage pretraining paradigm [2]:

Stage 1: Vision-only Unimodal Pretraining

- Dataset: 335,645 WSIs (Mass-340K) across 20 organ types with diverse stains and scanners

- ROI Processing: Non-overlapping 512×512 pixel patches at 20× magnification

- Feature Extraction: 768-dimensional features per patch using CONCHv1.5 patch encoder

- Architecture: Vision Transformer (ViT) using iBOT framework (masked image modeling and knowledge distillation)

- Context Handling: Attention with Linear Biases (ALiBi) extended to 2D for long-context extrapolation

- Training Views: Random crops of 16×16 feature grids (8,192×8,192 pixels) with global (14×14) and local (6×6) crops

Stage 2: Cross-modal Alignment with Synthetic Captions

- Dataset: 423,122 synthetic fine-grained ROI captions generated using PathChat

- Objective: Contrastive learning to align visual features with morphological descriptions

- Granularity: Region-of-interest level (8K×8K pixels) descriptions

Stage 3: Cross-modal Alignment with Clinical Reports

- Dataset: 182,862 medical reports paired with WSIs

- Objective: Slide-level vision-language alignment

- Outcome: Enable zero-shot classification and cross-modal retrieval capabilities

Diagram: TITAN's three-stage multimodal pretraining pipeline.

Critical Preprocessing: Tissue Detection and Scale Normalization

Effective large-scale pretraining requires robust preprocessing methodologies to handle variability in histopathology images:

Tissue Detection with Double-Pass Method

- Principle: Annotation-free hybrid method combining classical computer vision approaches [4]

- Performance: mIoU of 0.826 vs. 0.871 for supervised UNet++ baseline [4]

- Speed: 0.203 seconds per slide on CPU vs. 2.431 seconds for UNet++ [4]

- Advantage: Enables high-throughput processing of large WSI collections without manual annotation
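The mIoU figures quoted above compare predicted tissue masks against reference masks. A minimal sketch of the metric follows, assuming binary tissue-versus-background masks and averaging over slides; whether [4] averages over slides or over classes is not stated here, so the averaging choice is an assumption.

```python
import numpy as np


def iou(pred: np.ndarray, target: np.ndarray) -> float:
    """Intersection over union of two binary masks."""
    pred, target = pred.astype(bool), target.astype(bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        return 1.0  # both masks empty: counted as perfect agreement (a convention assumed here)
    return float(np.logical_and(pred, target).sum() / union)


def mean_iou(preds, targets) -> float:
    """Average IoU over a collection of (prediction, reference) mask pairs."""
    return float(np.mean([iou(p, t) for p, t in zip(preds, targets)]))


pred = np.zeros((4, 4), dtype=bool)
pred[0:2] = True            # predicted tissue: rows 0-1
target = np.zeros((4, 4), dtype=bool)
target[1:3] = True          # reference tissue: rows 1-2
print(iou(pred, target))    # 4 shared pixels / 12 in the union
print(mean_iou([pred, target], [target, target]))
```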

Scale Normalization via Nuclear Area Distributions

- Principle: Uses median nuclear area as reference for spatial normalization [8]

- Methodology: Nuclear segmentation followed by distribution analysis across scaling factors

- Validation: Close fit to empirical values for renal tumor datasets [8]

- Impact: Improves classification performance for most renal tumor subtypes [8]
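One simple way to turn a median nuclear area into a resize factor is sketched below. It rests on the assumption that area scales with the square of the linear magnification, so the linear factor is the square root of the area ratio; the exact procedure in [8] may differ, and the function name and numbers are hypothetical.

```python
import math
from statistics import median


def rescale_factor(nuclear_areas_px, reference_area_px):
    """Linear resize factor that maps the dataset's median nuclear area onto the reference area."""
    return math.sqrt(reference_area_px / median(nuclear_areas_px))


# Hypothetical nuclear areas (in square pixels) from a segmentation step.
areas = [180.0, 200.0, 220.0, 210.0, 190.0]
factor = rescale_factor(areas, reference_area_px=50.0)
print(factor)  # 0.5: images would be downsampled to half their linear size
```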

The Scientist's Toolkit: Essential Research Reagents

Table 3: Critical Resources for Large-Scale Histopathology Research

| Resource Category | Specific Tools/Solutions | Function in Research | Key Characteristics |
|---|---|---|---|
| Patch Encoders | CONCHv1.5 [2] | Extracts foundational features from histology patches | Generates 768-dimensional features from 512×512 patches |
| Whole-Slide Foundation Models | TITAN (Vision & Multimodal) [2] | Provides general-purpose slide representations | Handles variable-length WSI sequences, enables zero-shot tasks |
| Tissue Detection | Double-Pass Method [4] | Identifies relevant tissue regions in WSIs | Annotation-free, CPU-optimized (0.203s/slide), mIoU: 0.826 |
| Scale Normalization | Nuclear Area Distribution Model [8] | Normalizes spatial scale across datasets | Based on median nuclear area, improves classification accuracy |
| Multimodal Alignment | PathChat-generated Captions [2] | Provides fine-grained morphological descriptions | 423K synthetic ROI-text pairs for vision-language pretraining |
| Evaluation Benchmarks | TCGA Cohorts [4] | Standardized performance assessment | 3,322 WSIs across 9 cancer types (ACC, BRCA, CESC, etc.) |
| Quality Control | GrandQC UNet++ [4] | Provides tissue-versus-background masks | Supervised baseline (mIoU: 0.871) for tissue detection |

Architectural Innovations for Whole-Slide Modeling

Scaling to hundreds of thousands of WSIs requires specialized architectural considerations distinct from patch-level modeling:

Handling Long Input Sequences

- Challenge: WSIs contain >10^4 tokens vs. 196-256 tokens for patches [2]

- Solution: Feature grid construction with 512×512 patches (instead of 256×256) reduces sequence length while maintaining context [2]

Positional Encoding with 2D ALiBi

- Innovation: Extends Attention with Linear Biases to 2D for histopathology [2]

- Advantage: Enables long-context extrapolation at inference based on relative Euclidean distance between tissue patches [2]

- Impact: Preserves spatial relationships in tissue microenvironment during pretraining
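The idea of a distance-based attention bias can be sketched as follows: each attention head subtracts a penalty proportional to the Euclidean distance between two patches on the feature grid. The geometric per-head slopes follow the recipe of 1D ALiBi and the 4-head, 3×3 grid setup is a toy configuration; whether TITAN uses these exact slopes is an assumption.

```python
import numpy as np


def alibi_2d_bias(grid_h: int, grid_w: int, slopes: np.ndarray) -> np.ndarray:
    """Return (heads, N, N) additive attention biases for N = grid_h * grid_w patches."""
    ys, xs = np.meshgrid(np.arange(grid_h), np.arange(grid_w), indexing="ij")
    coords = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(float)        # (N, 2) grid positions
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)  # (N, N) Euclidean distances
    return -slopes[:, None, None] * dist[None, :, :]                         # farther patches get a larger penalty


num_heads = 4
slopes = 2.0 ** (-8.0 * np.arange(1, num_heads + 1) / num_heads)  # 1/4, 1/16, 1/64, 1/256
bias = alibi_2d_bias(3, 3, slopes)
print(bias.shape)  # (4, 9, 9)
```

The bias is added to the attention logits before the softmax. Because it depends only on relative distance, the same function applies to grids larger than those seen during training.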

Multi-Scale Context Processing

- Method: Random cropping of feature grids (16×16 features = 8,192×8,192 pixels) [2]

- Training Views: Two global (14×14) and ten local (6×6) crops per region [2]

- Benefit: Learns both localized cellular patterns and tissue architectural organization
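The view-sampling scheme described above can be sketched directly on a feature grid. This minimal example only performs the random cropping (two 14×14 global views and ten 6×6 local views from a 16×16 grid of 768-dimensional features); any further augmentation applied during pretraining is omitted, and the helper name `sample_crop` is hypothetical.

```python
import numpy as np


def sample_crop(grid: np.ndarray, size: int, rng: np.random.Generator) -> np.ndarray:
    """Randomly crop a (size, size, dim) window from an (H, W, dim) feature grid."""
    h, w, _ = grid.shape
    top = rng.integers(0, h - size + 1)    # upper bound is exclusive
    left = rng.integers(0, w - size + 1)
    return grid[top:top + size, left:left + size]


rng = np.random.default_rng(0)
region = rng.normal(size=(16, 16, 768))  # stand-in for a 16x16 grid of patch features
global_views = [sample_crop(region, 14, rng) for _ in range(2)]
local_views = [sample_crop(region, 6, rng) for _ in range(10)]
print(global_views[0].shape, local_views[0].shape)  # (14, 14, 768) (6, 6, 768)
```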

Diagram: Architectural innovations for whole-slide foundation models.

Implications for Cancer Detection Research

The data scaling hypothesis, when applied to histopathology, transforms multiple aspects of cancer research:

Democratizing Rare Cancer Analysis: Large-scale pretraining captures fundamental morphological patterns that transfer effectively to rare malignancies, addressing the critical challenge of limited training data for uncommon cancers [2]. This enables developing accurate models for rare cancer retrieval and subtyping without extensive case-specific annotations.

Accelerating Biomarker Discovery: Foundation models pretrained on diverse tissue types and staining patterns can identify subtle morphological correlates of molecular features, potentially reducing dependency on expensive molecular testing while providing spatial context unavailable through bulk assays [2].

Enhancing Diagnostic Consistency: By providing objective, quantitative slide representations, these models can reduce inter-observer variability that has long challenged histopathology, particularly in grading systems like Gleason scoring where agreement has ranged from 10-70% [9].

Enabling Multimodal Cancer Profiling: The integration of visual features with pathology reports and potentially genomic data creates opportunities for comprehensive tumor profiling, linking morphological patterns with clinical outcomes and molecular characteristics [2].

The scaling hypothesis in histopathology represents more than simply using larger datasets—it embodies a fundamental shift toward developing comprehensive representations of histopathological patterns that transcend individual diseases, scanners, and institutions. As the field progresses toward pretraining on millions of whole-slide images, the potential grows for creating truly generalizable AI systems that can adapt to the diverse challenges of cancer diagnosis, prognosis, and biomarker prediction across the spectrum of human malignancies.

The development of artificial intelligence (AI) for cancer detection and diagnosis represents a transformative frontier in precision oncology. However, a significant bottleneck impedes progress: the scarcity of annotated clinical data, particularly for rare cancers and small, specific patient cohorts. Traditional task-specific deep learning models require large-scale, expertly labeled datasets for training, which are costly and time-consuming to acquire [10] [11]. This challenge is acutely felt in rare cancers, where low incidence naturally limits available data, and in complex predictive tasks like forecasting genetic mutations or patient survival [12]. Consequently, models trained on limited data often suffer from poor generalizability, failing to maintain performance across diverse populations and clinical scenarios.

A paradigm shift is underway, moving from creating numerous narrow AI models to developing foundational models pre-trained on massive, unlabeled whole-slide image (WSI) datasets [10] [12]. These foundation models learn the fundamental language of histology—capturing cellular morphology, tissue architecture, and staining characteristics—from millions of image patches across dozens of cancer types [3] [12]. This large-scale pretraining creates a powerful, generalizable representation of histopathological images. When applied to downstream tasks, even those with minimal labeled data, these representations enable robust performance, thereby unlocking the potential for accurate AI tools in rare cancers and niche clinical applications where data is inherently limited [12] [13].

The Foundation Model Paradigm: Leveraging Large-Scale Pretraining

Foundation models are large-scale neural networks pre-trained on vast amounts of data using self-supervised learning (SSL) techniques, which do not require curated labels [12]. This pre-training phase allows the model to learn rich, versatile feature representations of the input data. In computational pathology, this means the model learns to encode meaningful histopathological patterns—from nuclear features to tissue microarchitecture—directly from WSIs.

The core advantage of this paradigm is its data efficiency and generalizability. Once a robust foundation model is established, it can be adapted (or "fine-tuned") for a wide array of specific downstream tasks—such as classifying a rare cancer type or predicting a biomarker—with relatively few task-specific labeled examples [10] [13]. This approach stands in stark contrast to training a model from scratch for each new task, which would require a large, annotated dataset every time. The foundation model effectively serves as a universal feature extractor for histopathology, capturing a broad spectrum of morphological patterns that are transferable to new, data-scarce problems [12].

Table 1: Key Whole-Slide Image Foundation Models and Their Pretraining Scales

| Model Name | Pretraining Dataset Scale | Number of Parameters | Key Architectural Innovation |
|---|---|---|---|
| Virchow [12] | ~1.5 million WSIs from ~100,000 patients | 632 million | Vision Transformer (ViT) trained with DINOv2 self-supervised learning |
| Prov-GigaPath [3] | 171,189 WSIs (1.3 billion image tiles) | Not Specified | LongNet architecture for ultra-long-context modeling of entire slides |
| TITAN [2] | 335,645 WSIs | Not Specified | Multimodal vision-language model aligned with pathology reports and synthetic captions |
| BEPH [13] | 11.77 million patches from 11.76k TCGA WSIs | Not Specified | Masked Image Modeling (MIM) via BEiTv2 |

Technical Architectures and Pretraining Methodologies

The development of WSI foundation models involves innovative architectural choices to handle the gigapixel scale of the images while effectively learning representative features.

Model Architectures for Gigapixel Images

A fundamental challenge is processing entire WSIs, which can contain tens of thousands of image tiles. Prov-GigaPath addresses this with the GigaPath architecture, which adapts the LongNet method using dilated self-attention. This allows the model to efficiently process the long sequences of tile embeddings that represent a whole slide, capturing both local patterns and global slide-level context [3]. Other models like Virchow employ a Vision Transformer (ViT) trained with the DINOv2 framework. DINOv2 is a self-supervised method that learns by comparing different augmented views of an image ("student" and "teacher" networks), forcing the model to build robust representations that are invariant to trivial transformations [12].

Self-Supervised Learning Strategies

SSL is the engine of foundation model pretraining, as it leverages unlabeled data. Common SSL strategies include:

- Masked Image Modeling (MIM): Used by models like BEPH, this approach randomly masks portions of the input image and trains the model to reconstruct the missing parts. This teaches the model to learn robust contextual features [13].

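- Contrastive Learning and Self-Distillation: Contrastive methods teach the model that different augmented views of the same image are "similar" while views from different images are "dissimilar". The DINOv2 framework used by Virchow is a closely related self-distillation approach: a student network learns to match a teacher network's outputs across augmented views of the same image, without relying on explicit negative pairs [12].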

- Multimodal Learning: TITAN extends pretraining by incorporating text. It uses vision-language alignment, contrasting image features with corresponding pathology reports and synthetic captions generated by a generative AI copilot. This enables cross-modal capabilities, such as generating reports from images or retrieving images based on text queries [2].

Diagram 1: The two-phase foundation model paradigm for data-efficient learning. The model is first pre-trained at scale using self-supervised learning, then its knowledge is transferred to a specific task with limited labels.

Experimental Protocols for Benchmarking and Validation

To validate the effectiveness of foundation models, especially in low-data regimes, researchers employ rigorous benchmarking protocols. The following experimental designs are common across major studies.

Pan-Cancer and Rare Cancer Detection

This protocol evaluates a model's ability to detect cancer across multiple tissue types, including rare cancers.

- Objective: Train a single model to classify WSIs as cancerous or non-cancerous across a wide range of organs and cancer types.

- Dataset: Use a large, diverse dataset with specimen-level labels. For example, Virchow was evaluated on slides from 9 common and 7 rare cancer types [12].

- Method: Use the foundation model (e.g., Virchow, UNI, Phikon) as a feature extractor. A weakly supervised aggregator model (e.g., a multiple instance learning classifier) then pools tile-level features to make a slide-level prediction.

- Evaluation Metrics: Area Under the Receiver Operating Characteristic curve (AUC) is the primary metric. Performance is stratified by common vs. rare cancers and internal vs. external datasets to assess generalizability.
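The aggregation step in this protocol can be sketched with attention-based multiple instance learning pooling, one common choice of weakly supervised aggregator. The text does not state which aggregator the cited studies used, so this choice is an assumption, as are the feature dimensions. The weights `V` and `w` are random here but would be learned from slide-level labels in practice.

```python
import numpy as np


def attention_mil_pool(tile_features: np.ndarray, V: np.ndarray, w: np.ndarray):
    """Score each tile, softmax the scores over tiles, and return the weighted mean feature."""
    scores = np.tanh(tile_features @ V) @ w           # (num_tiles,) unnormalized attention scores
    scores = scores - scores.max()                    # numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()   # attention weights sum to 1 over tiles
    return weights @ tile_features, weights


rng = np.random.default_rng(0)
features = rng.normal(size=(500, 768))         # stand-in for frozen foundation-model tile features
V = rng.normal(scale=0.05, size=(768, 128))    # random placeholder for a learned projection
w = rng.normal(scale=0.05, size=128)           # random placeholder for a learned scoring vector
slide_embedding, attn = attention_mil_pool(features, V, w)
print(slide_embedding.shape, attn.shape)  # (768,) (500,)
```

A small classifier on top of `slide_embedding` then produces the cancer versus non-cancer prediction, and the attention weights indicate which tiles contributed most.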

Genetic Mutation Prediction from Histology

This protocol tests if a model can predict molecular alterations directly from H&E-stained WSIs, which could reduce reliance on costly genetic tests.

- Objective: Predict the mutation status of a specific gene (e.g., BRAF-V600) from a WSI.

- Dataset: Use cohorts with matched WSIs and genetic sequencing data. Models are often trained on TCGA data (e.g., SKCM cohort for BRAF) and validated on independent hospital cohorts [14] [3].

- Method: A foundation model like Prov-GigaPath can be fine-tuned end-to-end. Alternatively, its features can be fed into a classifier like XGBoost. One study combined a fine-tuned Prov-GigaPath with XGBoost for BRAF prediction, achieving state-of-the-art results [14].

- Evaluation Metrics: Area Under the Curve (AUC), with 95% confidence intervals. AUC improvements over previous methods demonstrate the foundation model's value.
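As a rough illustration of the evaluation step, the sketch below computes AUC from pairwise comparisons and a percentile-bootstrap 95% confidence interval. The function names and the choice of a percentile bootstrap are assumptions for illustration; the cited studies may compute their intervals differently.

```python
import numpy as np

def auc_score(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    diff = scores[labels][:, None] - scores[~labels][None, :]
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / diff.size

def bootstrap_auc_ci(labels, scores, n_boot=1000, alpha=0.05, seed=0):
    """Point estimate plus percentile-bootstrap confidence interval."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    if labels.all() or not labels.any():
        raise ValueError("both classes must be present")
    rng = np.random.default_rng(seed)
    n = len(labels)
    aucs = []
    while len(aucs) < n_boot:
        idx = rng.integers(0, n, size=n)       # resample slides with replacement
        if labels[idx].all() or not labels[idx].any():
            continue                           # skip single-class resamples
        aucs.append(auc_score(labels[idx], scores[idx]))
    lo, hi = np.quantile(aucs, [alpha / 2, 1 - alpha / 2])
    return auc_score(labels, scores), lo, hi
```

Resampling at the slide level treats slides as independent; when several slides come from one patient, resampling patients instead would be the more conservative choice.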

Few-Shot and Zero-Shot Learning

These protocols are the most direct test of a model's ability to learn from minimal data.

- Few-Shot Learning: The model is adapted to a new task using a very small number of labeled examples (e.g., 10-100 slides). Performance is compared to a model trained from scratch on the same data [2].

- Zero-Shot Learning: For multimodal models like TITAN, this involves tasks like text-based slide retrieval or classification without any task-specific training. The model uses its inherent vision-language alignment to perform the task [2].
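Zero-shot classification with an aligned vision-language model reduces to a nearest-prompt lookup in the shared embedding space. The sketch below assumes a slide embedding and one text embedding per class prompt are already available; `zero_shot_classify` is a hypothetical helper, not part of any released TITAN API.

```python
import numpy as np

def zero_shot_classify(slide_emb, class_text_embs):
    """Return the index of the class prompt most similar to the slide.

    slide_emb: (d,) slide embedding; class_text_embs: (n_classes, d).
    """
    slide = slide_emb / np.linalg.norm(slide_emb)
    text = class_text_embs / np.linalg.norm(class_text_embs, axis=1, keepdims=True)
    sims = text @ slide                      # cosine similarity per class
    return int(np.argmax(sims)), sims
```

Text-based slide retrieval uses the same similarity, ranking many slide embeddings against a single text query instead of many prompts against one slide.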

Table 2: Performance of Foundation Models on Key Tasks Involving Limited Data

| Task / Model | Performance Metric | Result | Implication for Data-Scarce Scenarios |
|---|---|---|---|
| Pan-Cancer Detection (Virchow) [12] | Specimen-Level AUC | 0.950 (Overall), 0.937 (Rare Cancers) | A single foundation model performs nearly as well on rare cancers as on common ones. |
| BRAF Mutation Prediction (Prov-GigaPath + XGBoost) [14] | AUC on Independent Test Set | 0.772 | Demonstrates state-of-the-art, clinically relevant prediction from images alone on a small dataset. |
| Zero-Shot Slide Retrieval (TITAN) [2] | Accuracy on Rare Cancer Retrieval | Outperformed other slide foundation models | Enables finding similar cases for rare diseases without task-specific training data. |

The Scientist's Toolkit: Essential Research Reagents

To implement and experiment with foundation models in computational pathology, researchers rely on a suite of key resources and tools.

Table 3: Key Research Reagent Solutions for Foundation Model Research

| Research Reagent | Function and Utility | Example Instances |
|---|---|---|
| Large-Scale WSI Datasets | Provides the raw, unlabeled data necessary for self-supervised pretraining of foundation models. | TCGA [13], Prov-Path [3], in-house institutional archives [12] |
| Public Foundation Model Weights | Enables researchers to bypass costly pretraining and immediately fine-tune on downstream tasks. | Prov-GigaPath [3], Virchow [12], BEPH [13] |
| Benchmarking Suites | Standardized sets of tasks and datasets for fair evaluation and comparison of different models. | TITAN's diverse clinical tasks [2], MultiPathQA (for VQA) [15] |
| Multiple Instance Learning (MIL) Frameworks | Algorithms to aggregate tile-level features into a single slide-level prediction or classification, essential for WSI-level tasks. | Attention-based MIL, TransMIL [16] [13] |

The adoption of foundation models pre-trained on large-scale whole slide image collections represents a fundamental advance in computational pathology's quest to overcome the limitations of small clinical datasets. By learning a general-purpose "language" of histology, these models provide a powerful, transferable base that can be efficiently specialized for challenging tasks involving rare cancers and small cohorts. The experimental results are compelling: foundation models enable accurate pan-cancer detection, predict genetic mutations from morphology alone, and facilitate few-shot learning, all while reducing the dependency on vast annotated datasets. As these models continue to scale in data, model size, and architectural sophistication, they promise to significantly accelerate the development of robust, clinically applicable AI tools, ultimately broadening the reach of precision oncology to all cancer patients, regardless of disease rarity.

The field of computational pathology is undergoing a fundamental architectural transformation, moving from fragmented patch-level analysis to holistic whole-slide representation learning. This transition mirrors the evolution in other data-rich domains, where foundation models pretrained on massive, diverse datasets have catalyzed breakthroughs in capability and generalization. In pathology, the shift is driven by the recognition that whole-slide images (WSIs) contain biological information at multiple hierarchical levels, from cellular morphology to tissue microstructure and spatial organization across the entire slide. The limitations of patch-based methods, which process hundreds to thousands of small image regions per slide, have become increasingly apparent. These approaches typically treat WSIs as "bags of patches," often neglecting critical spatial relationships and long-range dependencies in the tumor microenvironment that are essential for accurate cancer diagnosis and prognosis [2] [17].

Framed within the broader thesis on the benefits of large-scale pretraining for cancer detection research, this architectural transition enables models to learn representations that capture the complex morphological patterns and spatial contexts that pathologists use for diagnosis. Where patch-level models see isolated fragments, whole-slide foundation models perceive integrated systems—the difference between examining individual trees and understanding the entire forest. This whitepaper examines the key architectural innovations driving this transition, provides quantitative comparisons of emerging methodologies, and details the experimental protocols enabling this paradigm shift in computational pathology for cancer research.

Architectural Evolution: From Local Patches to Global Context

Limitations of Traditional Patch-Based Approaches

Traditional computational pathology pipelines have relied heavily on patch-based processing because directly processing gigapixel WSIs is computationally impractical. These approaches typically divide WSIs into smaller patches (e.g., 256×256 or 512×512 pixels at 20× magnification), process them individually through convolutional neural networks (CNNs), and then aggregate the resulting features using various multiple instance learning (MIL) frameworks [18]. While this strategy made initial AI applications feasible, it introduced significant limitations:

- Loss of spatial context: Critical tissue architecture patterns spanning large areas are fragmented [17]

- Computational inefficiency: Processing thousands of overlapping patches creates redundancy [19]

- Limited representation learning: Most patch encoders are trained on natural images (e.g., ImageNet) rather than histopathology-specific datasets [18]

- Complex aggregation pipelines: Separate feature extraction and aggregation stages prevent end-to-end optimization [19]

These limitations become particularly problematic in cancer detection, where diagnostic decisions often depend on understanding spatial relationships between different tissue compartments, immune cell distributions, and invasive patterns that extend across millimeter-scale distances in the tissue.

Whole-Slide Foundation Models: Integrated Architectural Frameworks

Next-generation whole-slide foundation models address these limitations through integrated architectures designed specifically for gigapixel image processing. The TITAN (Transformer-based pathology Image and Text Alignment Network) model exemplifies this architectural transition, employing a Vision Transformer (ViT) to create general-purpose slide representations via a three-stage pretraining process [2]:

- Vision-only unimodal pretraining on 335,645 whole-slide images using self-supervised learning

- Cross-modal alignment with synthetic morphological descriptions at the region-of-interest level

- Slide-level vision-language alignment with corresponding pathology reports

A key innovation in TITAN is its approach to handling the computational challenge of gigapixel images. Rather than processing raw pixels directly, TITAN uses precomputed patch features from specialized histopathology encoders like CONCH, arranging them in a 2D feature grid that preserves spatial relationships [2]. This architectural strategy transforms the computational problem from processing billions of pixels to reasoning about structured feature representations.
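To make the feature-grid idea concrete, the sketch below places precomputed patch features into a 2D grid using each patch's top-left pixel coordinate. The function name, the `(x, y)` coordinate convention, and the zero-filled background cells with a boolean mask are assumptions for illustration.

```python
import numpy as np

def build_feature_grid(coords_px, features, patch_size=512):
    """Arrange patch features on a 2D grid that mirrors tissue layout.

    coords_px: (n, 2) top-left (x, y) pixel coordinates of each patch.
    features:  (n, d) precomputed patch embeddings.
    Returns the (rows, cols, d) grid and a mask marking occupied cells.
    """
    coords = np.asarray(coords_px) // patch_size   # pixel -> grid index
    coords = coords - coords.min(axis=0)           # shift to start at (0, 0)
    n_cols, n_rows = coords.max(axis=0) + 1
    grid = np.zeros((n_rows, n_cols, features.shape[1]), dtype=features.dtype)
    mask = np.zeros((n_rows, n_cols), dtype=bool)
    grid[coords[:, 1], coords[:, 0]] = features
    mask[coords[:, 1], coords[:, 0]] = True
    return grid, mask
```

Under these assumptions, a 100,000×80,000 pixel slide tiled at 512 pixels yields a grid of at most about 195×156 cells, which is far more tractable for a transformer than the raw pixels.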

Table 1: Comparative Architecture of Patch-Based vs. Whole-Slide Foundation Models

| Architectural Component | Traditional Patch-Based Models | Whole-Slide Foundation Models |
|---|---|---|
| Input Representation | Raw image patches (256×256 pixels) | Precomputed patch features in 2D spatial grid |
| Feature Encoder | ResNet-50 (ImageNet pretrained) | Domain-specific ViT (histopathology pretrained) |
| Context Modeling | Limited to patch or small neighborhoods | Full slide context with specialized position encoding |
| Training Data Scale | Thousands to hundreds of thousands of patches | Hundreds of thousands of whole slides |
| Multimodal Capability | Rare and limited | Native support for vision-language alignment |
| Typical Output | Patch-level predictions aggregated to slide-level | Direct slide-level representations |

Quantitative Benchmarking: Performance Across Cancer Detection Tasks

Comprehensive evaluation of whole-slide foundation models reveals their superior performance across diverse cancer detection tasks, particularly in low-data regimes and rare cancer scenarios. The quantitative evidence demonstrates clear advantages over both traditional patch-based methods and earlier slide-level approaches.

In direct performance comparisons, TITAN significantly outperforms previous methods across multiple machine learning settings and cancer types. On rare cancer retrieval tasks, TITAN achieves a 12.4% average improvement in accuracy over patch-based baselines [2]. The model's cross-modal capabilities enable zero-shot classification without task-specific fine-tuning, with performance competitive with fully supervised methods trained on labeled datasets. This capability substantially reduces the annotation burden for new cancer detection applications.

Table 2: Performance Comparison of Foundation Models on Cancer Detection Tasks

| Model | Pretraining Data | TCGA-BRCA Classification (AUC) | Rare Cancer Retrieval (mAP) | Survival Prediction (C-index) | Zero-Shot Classification (Accuracy) |
|---|---|---|---|---|---|
| TITAN [2] | 335,645 WSIs + 423K synthetic captions | 0.992 | 0.891 | 0.759 | 0.823 |
| CONCH [18] | 1.17M image-caption pairs | 0.972 | 0.842 | 0.741 | 0.794 |
| UNI [18] | 100M patches from 100K+ WSIs | 0.961 | 0.815 | 0.728 | Not Supported |
| PLIP [18] | 200K image-text pairs | 0.947 | 0.803 | 0.712 | 0.761 |
| ResNet-50 + MIL [18] | ImageNet | 0.918 | 0.762 | 0.683 | Not Supported |

For survival prediction—a critical task in oncology—models leveraging whole-slide representations have demonstrated significant advances. The graph-guided clustering approach with mixture density experts achieves a concordance index of 0.719±0.011 on TCGA-KIRC (renal cancer) and 0.649±0.034 on TCGA-LUAD (lung adenocarcinoma), substantially outperforming previous state-of-the-art methods [17]. This improvement stems from the model's ability to capture phenotype-level heterogeneity through spatial and morphological coherence across the entire tissue section, rather than focusing on isolated patches.
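For readers less familiar with the concordance index, the sketch below implements Harrell's C in its simplest form: among all pairs where the patient with the shorter follow-up experienced the event, it counts how often the model assigned that patient the higher risk. Pairs with tied times are ignored here, which is a simplifying assumption.

```python
import numpy as np

def concordance_index(times, events, risk):
    """Harrell's C-index. events[i] is True if the event was observed."""
    times = np.asarray(times, dtype=float)
    events = np.asarray(events, dtype=bool)
    risk = np.asarray(risk, dtype=float)
    concordant, comparable = 0.0, 0
    for i in range(len(times)):
        if not events[i]:
            continue                      # censored cases cannot anchor a pair
        for j in range(len(times)):
            if times[j] > times[i]:       # j outlived i, so the pair is comparable
                comparable += 1
                if risk[i] > risk[j]:
                    concordant += 1.0
                elif risk[i] == risk[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, which is the scale on which the reported values of 0.719 and 0.649 should be read.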

Experimental Protocols: Methodologies for Whole-Slide Representation Learning

TITAN Pretraining Methodology

The pretraining protocol for TITAN exemplifies the comprehensive approach required for effective whole-slide representation learning. The methodology consists of three integrated stages:

Stage 1: Vision-only Self-Supervised Pretraining

- Dataset: 335,645 WSIs (Mass-340K) across 20 organ types

- Input Processing: WSIs divided into non-overlapping 512×512 pixel patches at 20× magnification

- Feature Extraction: 768-dimensional features for each patch using CONCHv1.5

- Architecture: Vision Transformer with Attention with Linear Biases (ALiBi) for long-context extrapolation

- Training Objective: iBOT framework combining masked image modeling and knowledge distillation

- View Generation: Random cropping of 16×16 feature regions (8,192×8,192 pixels) from WSI feature grid, with two global (14×14) and ten local (6×6) crops per region
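The view-generation step can be sketched as follows. Since each grid cell covers a 512×512 pixel patch, a 16×16 feature region spans 16 × 512 = 8,192 pixels per side. The function name and the uniform random sampling are assumptions, and the sketch omits the feature augmentations and any background handling a full pipeline would need.

```python
import numpy as np

def sample_views(feature_grid, rng, region=16,
                 n_global=2, global_size=14, n_local=10, local_size=6):
    """Sample one region from a (H, W, d) feature grid, then crop views from it."""
    H, W, _ = feature_grid.shape
    top = rng.integers(0, H - region + 1)
    left = rng.integers(0, W - region + 1)
    roi = feature_grid[top:top + region, left:left + region]

    def crops(n, size):
        out = []
        for _ in range(n):
            r = rng.integers(0, region - size + 1)
            c = rng.integers(0, region - size + 1)
            out.append(roi[r:r + size, c:c + size])
        return out

    # Global crops see most of the region; local crops see small sub-areas.
    return crops(n_global, global_size), crops(n_local, local_size)
```

In a student-teacher setup such as iBOT, the global and local crops of the same region provide the differing views whose representations are encouraged to agree.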

Stage 2: Region-Level Vision-Language Alignment

- Dataset: 423,122 synthetic captions generated using PathChat, a multimodal generative AI copilot

- Alignment Method: Contrastive learning between region-of-interest features and textual descriptions

- Objective: Learn fine-grained correspondence between morphological patterns and semantic descriptions

Stage 3: Slide-Level Multimodal Alignment

- Dataset: 182,862 medical reports paired with WSIs

- Alignment Method: Cross-modal contrastive learning between slide representations and report embeddings

- Objective: Enable bidirectional retrieval and zero-shot classification capabilities [2]
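One common form of cross-modal contrastive learning is the symmetric, CLIP-style InfoNCE loss sketched below. Whether Stages 2 and 3 use exactly this loss is not stated here, so the formulation and the temperature of 0.07 should be read as assumptions.

```python
import numpy as np

def _log_softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def symmetric_contrastive_loss(img_emb, txt_emb, temperature=0.07):
    """Row i of img_emb and row i of txt_emb are assumed to be a matched pair."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature           # (batch, batch) similarities
    diag = np.arange(len(logits))
    loss_i2t = -_log_softmax(logits, axis=1)[diag, diag].mean()  # image -> text
    loss_t2i = -_log_softmax(logits, axis=0)[diag, diag].mean()  # text -> image
    return 0.5 * (loss_i2t + loss_t2i)
```

Minimizing this loss pulls each slide or region embedding toward its paired text and away from the other texts in the batch, which is what later makes retrieval and zero-shot classification possible.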

Dynamic Patch Selection for Survival Analysis

For cancer survival prediction, a specialized methodology has been developed that bridges patch-level processing with slide-level reasoning:

Tissue Detection and Feature Extraction

- Apply tissue detection heuristic (e.g., Double-Pass method) to eliminate background regions [4]

- Extract 384-dimensional ViT embeddings from 256×256 pixel tissue patches

- Output: Patch-level feature matrix Feat ∈ ℝ^(n×d) where n = number of patches (up to 84,365)

Dynamic Patch Selection via Quantile-Based Thresholding

- Compute importance scores for each patch: logits = σ(W₂ ⋅ GELU(W₁ ⋅ X + b₁) + b₂)

- Calculate adaptive threshold τ_q as the q-th quantile (default q = 0.25) of the importance scores

- Select task-relevant patches: P_sel = {X[:, i, :] ∣ logits_b[i] > τ_q}

- Approximately 75% of patches retained as task-relevant for q=0.25 [17]
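The selection steps above can be sketched for a single slide as follows. The weights here are random placeholders rather than trained parameters, the tanh approximation of GELU is used, and the batch dimension of the original formulation is dropped for brevity.

```python
import numpy as np

def gelu(x):
    # tanh approximation of GELU
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def select_patches(X, W1, b1, W2, b2, q=0.25):
    """X: (n, d) patch features. Keeps patches scoring above the q-th quantile."""
    scores = sigmoid(gelu(X @ W1 + b1) @ W2 + b2).ravel()   # (n,) importance
    tau = np.quantile(scores, q)                            # adaptive threshold
    keep = scores > tau
    return X[keep], keep, tau

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 384))                  # stand-in ViT embeddings
W1, b1 = rng.normal(scale=0.05, size=(384, 64)), np.zeros(64)
W2, b2 = rng.normal(scale=0.05, size=(64, 1)), np.zeros(1)
selected, keep, tau = select_patches(X, W1, b1, W2, b2)
```

Because the threshold is a quantile of the slide's own scores, roughly 1 − q of the patches are retained regardless of slide size, which is where the 75% figure for q = 0.25 comes from.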

Graph-Guided Phenotype Clustering

- Construct k-nearest neighbors graph integrating morphological and spatial features

- Apply graph-guided k-means clustering to group patches into phenotypically coherent regions

- Capture tumor microenvironment heterogeneity through spatially coherent clusters

Attention-Based Context Modeling

- Intra-cluster attention: Model fine-grained interactions within phenotypic groups

- Inter-cluster attention: Capture global contextual relationships across tissue compartments

Expert-Guided Survival Prediction

- Mixture density modeling with Gaussian mixture models

- Multiple experts specialized for different phenotypic patterns

- Gating network dynamically weights expert contributions based on cluster features [17]

Diagram 1: TITAN Three-Stage Pretraining Architecture

The Scientist's Toolkit: Essential Research Reagents

Implementing whole-slide representation learning requires specialized computational tools and resources. The following table details essential research reagents for developing and evaluating whole-slide foundation models in cancer detection research.

Table 3: Essential Research Reagents for Whole-Slide Representation Learning

| Resource Category | Specific Tools/Models | Function in Research Pipeline | Key Characteristics |
|---|---|---|---|
| Foundation Models | TITAN [2] | Whole-slide representation learning, multimodal alignment | 335K WSI pretraining, vision-language capabilities |
| | CONCH [18] | Patch-level feature extraction, multimodal understanding | 1.17M image-caption pairs, vision-language pretraining |
| | UNI [18] | Self-supervised feature learning | 100M patches from 100K+ WSIs, 20+ tissue types |
| Tissue Detection | Double-Pass [4] | Annotation-free tissue localization | 0.203s/slide on CPU, mIoU 0.826 vs supervised 0.871 |
| | GrandQC UNet++ [4] | Quality control and tissue segmentation | Supervised approach, mIoU 0.871, 2.431s/slide |
| MIL Frameworks | TransMIL [18] | WSI classification with self-attention | Models inter-patch relationships, transformer-based |
| | CLAM [18] | Weakly-supervised WSI classification | Attention-based pooling, multiple instance learning |
| | DTFD-MIL [18] | Multi-tier feature distillation | Pseudobag generation, double-tier framework |
| Datasets | TCGA [4] [17] | Model training and validation | Multi-cancer, 33+ cancer types, clinical annotations |
| | CAMELYON16/17 [18] | Metastasis detection benchmarking | 399/1000 WSIs, lymph node sections, pixel-level annotations |
| Evaluation Metrics | C-index [17] | Survival model performance | Concordance between predictions and outcomes |
| | AUC/mAP [2] [18] | Classification and retrieval accuracy | Area under ROC curve, mean average precision |

Implementation Workflow: From Data to Deployment

The transition from patch-level to whole-slide representation learning necessitates a revised implementation workflow that maintains computational efficiency while capturing slide-wide context. The following diagram illustrates the integrated processing pipeline:

Diagram 2: Whole-Slide Image Analysis Pipeline

The architectural transition from patch-level to whole-slide representation learning represents a fundamental shift in computational pathology that mirrors the transformative impact of foundation models in other domains. By leveraging large-scale pretraining on hundreds of thousands of whole-slide images, these models capture the hierarchical biological information essential for accurate cancer detection, prognosis, and biomarker discovery. The quantitative evidence demonstrates clear performance advantages, particularly in challenging scenarios like rare cancer retrieval, survival prediction, and low-data regimes where traditional patch-based methods struggle.

As the field advances, the integration of multimodal data—including pathology reports, genomic information, and clinical outcomes—will further enhance the capabilities of whole-slide foundation models. The emerging paradigm of end-to-end whole-slide processing, coupled with efficient attention mechanisms and specialized position encodings, promises to unlock new frontiers in cancer research and clinical practice. For researchers and drug development professionals, these architectural transitions offer powerful new tools for advancing precision oncology through more accurate, interpretable, and generalizable cancer detection systems.

The integration of vision and language models represents a paradigm shift in computational pathology, moving beyond traditional single-modality approaches. By leveraging large-scale pretraining on whole-slide images (WSIs) and corresponding pathological reports, modern multimodal artificial intelligence (MMAI) systems achieve unprecedented capabilities in cancer detection, subtyping, and prognosis. Foundation models like TITAN (Transformer-based pathology Image and Text Alignment Network) demonstrate that pretraining on hundreds of thousands of WSIs enables robust performance across diverse clinical scenarios—from common cancers to rare conditions—while eliminating dependency on extensive manual annotations. This technical guide examines the architectures, training methodologies, and experimental validations underpinning these advances, providing researchers with actionable frameworks for implementing multimodal AI in oncological research and drug development.

Computational pathology has traditionally relied on single-modality approaches, analyzing histopathology images in isolation from rich textual data contained in pathology reports. This siloed approach creates significant limitations for cancer research, particularly in leveraging the synergistic relationship between visual morphological patterns and clinical diagnostic interpretations. Multimodal AI overcomes these constraints by simultaneously processing both visual and textual information, creating systems that more closely emulate the integrative reasoning of human pathologists.

The transformation to multimodal capabilities coincides with the rise of foundation models pretrained on massive datasets. Where previous patch-based models captured cellular-level features, newer whole-slide foundation models like TITAN operate at the patient and slide level, directly addressing complex clinical challenges in cancer detection research. By distilling knowledge from hundreds of thousands of WSIs across multiple organ systems, these models develop general-purpose representations transferable to resource-limited scenarios, including rare cancer retrieval and low-incidence prognostic tasks.

Technical Foundations of Multimodal Pathology AI

Architectural Framework

Multimodal pathology AI systems employ sophisticated architectures designed to process the extreme dimensionality of WSIs while aligning visual features with linguistic concepts:

Visual Encoders: TITAN utilizes a Vision Transformer (ViT) architecture that processes sequences of patch features rather than raw pixels. The model takes 768-dimensional features extracted from 512×512 pixel patches at 20× magnification, spatially arranged in a two-dimensional grid replicating tissue organization [2].

Cross-Modal Alignment: Vision-language pretraining aligns image representations with corresponding textual descriptions through contrastive learning. This enables bidirectional translation between morphological patterns and clinical descriptions [2] [20].

Long-Range Context Modeling: To handle gigapixel WSIs with >10^4 tokens, TITAN employs Attention with Linear Biases (ALiBi) extended to 2D, where bias is based on relative Euclidean distance between features in the tissue space [2].

Whole-Slide Pretraining Methodology

Large-scale pretraining represents the cornerstone of modern pathology AI, with TITAN demonstrating the scalability of self-supervised learning on massive WSI collections:

Table 1: TITAN Pretraining Dataset Composition

| Data Component | Volume | Description | Application |
|---|---|---|---|
| Whole-Slide Images | 335,645 | Mass-340K dataset across 20 organs, various stains and scanners | Visual self-supervised learning |
| Pathology Reports | 182,862 | Clinical reports corresponding to WSIs | Slide-level vision-language alignment |
| Synthetic Captions | 423,122 | Generated by PathChat copilot from ROIs | ROI-level fine-grained alignment |

The pretraining paradigm occurs in three distinct stages [2]:

- Vision-only unimodal pretraining on region crops using iBOT framework (knowledge distillation with masked image modeling)

- ROI-level cross-modal alignment with synthetic fine-grained morphological descriptions

- Slide-level cross-modal alignment with original pathology reports

This staged approach ensures the model captures histomorphological semantics at both regional and whole-slide levels while incorporating language understanding capabilities.

Quantitative Performance Benchmarks

Cancer Detection and Classification Accuracy

Multimodal foundation models demonstrate superior performance across multiple cancer types and tasks compared to traditional approaches:

Table 2: Performance Comparison Across Cancer Types and Tasks

| Model | Task | Cancer Types | Performance Metric | Result |
|---|---|---|---|---|
| TITAN (full) | Zero-shot classification | Multi-organ | Accuracy | Outperforms supervised baselines |
| TITAN (vision) | Cancer subtyping | BRCA, CESC, etc. | AUC | Superior to ROI and slide foundation models |
| Double-Pass | Tissue detection | 9 TCGA cohorts | mIoU | 0.826 (vs. 0.871 for supervised UNet++) |
| Double-Pass | Tissue detection | TCGA | Inference time (CPU) | 0.203s per slide (vs. 2.431s for UNet++) |

Notably, TITAN achieves these results without fine-tuning or clinical labels, demonstrating the generalizability of representations learned through large-scale pretraining [2]. The model particularly excels in low-data regimes, including few-shot learning and rare cancer retrieval, where traditional supervised approaches struggle due to annotation scarcity.

Resource Efficiency and Scalability

Computational efficiency represents a critical consideration for clinical deployment:

Table 3: Computational Efficiency Comparison

| Method | Hardware | Inference Time | Annotations Required | Scalability |
|---|---|---|---|---|
| TITAN Inference | GPU-optimized | Real-time capable | None | High (generalizable) |
| Double-Pass Tissue Detection | Standard CPU | 0.203s per slide | None | Excellent |
| GrandQC UNet++ | GPU/CPU | 2.431s per slide | Extensive | Limited |
| Classical Otsu/K-means | CPU | <0.203s per slide | None | Moderate (accuracy limits) |

The efficiency of annotation-free methods like Double-Pass enables scalable preprocessing pipelines, ensuring subsequent AI models operate only on relevant tissue regions while minimizing computational overhead [4].

Experimental Protocols and Methodologies

Large-Scale Multimodal Pretraining Protocol

The TITAN pretraining methodology provides a reproducible framework for developing whole-slide foundation models:

Dataset Curation

- Collect 335,645 WSIs across 20 organ types with corresponding pathology reports

- Ensure diversity in stains, scanner types, and tissue preparations

- Generate synthetic captions for 423,122 regions of interest (8,192×8,192 pixels at 20×) using multimodal generative AI

Vision-Only Pretraining (Stage 1)

- Divide WSIs into non-overlapping 512×512 pixel patches at 20× magnification

- Extract 768-dimensional features for each patch using established patch encoders

- Create views by randomly cropping 16×16 feature grids (8,192×8,192 pixel regions)

- Sample two global (14×14) and ten local (6×6) crops from each region

- Apply iBOT framework with masked image modeling and knowledge distillation

- Implement posterization feature augmentation with vertical/horizontal flipping

Multimodal Alignment (Stages 2-3)

- ROI-level alignment: Contrast 8k×8k ROIs against synthetic captions

- Slide-level alignment: Contrast WSIs against original pathology reports

- Employ cross-modal contrastive loss to align visual and textual embeddings

Tissue Detection and Quality Control Protocol

The Double-Pass method provides annotation-free tissue detection critical for preprocessing:

Thumbnail Generation

- Extract thumbnails from 3,322 TCGA WSIs across nine cancer cohorts

- Utilize GrandQC tissue-versus-background masks as ground truth

Double-Pass Algorithm

- First Pass: Apply Otsu's thresholding for initial tissue-background separation

- Second Pass: Refine detection using K-means clustering on candidate tissue regions

- Hybrid Integration: Combine complementary strengths of both methods

- Post-processing: Morphological operations to smooth tissue boundaries
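The first pass can be illustrated with a plain NumPy implementation of Otsu's thresholding on a grayscale thumbnail. Only this pass is sketched: the K-means refinement, hybrid integration, and morphological post-processing are not described here in enough detail to reproduce, and treating darker pixels as candidate tissue assumes a brightfield scan with a light background.

```python
import numpy as np

def otsu_threshold(gray):
    """Return the 8-bit threshold that maximizes between-class variance."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(float)
    prob = hist / hist.sum()
    omega = np.cumsum(prob)                    # P(pixel <= t)
    mu = np.cumsum(prob * np.arange(256))      # cumulative mean up to t
    mu_total = mu[-1]
    valid = (omega > 1e-12) & (omega < 1 - 1e-12)
    sigma_b = np.zeros(256)
    sigma_b[valid] = (mu_total * omega[valid] - mu[valid]) ** 2 / (
        omega[valid] * (1.0 - omega[valid]))
    return int(np.argmax(sigma_b))

def first_pass_tissue_mask(gray):
    """Candidate tissue = pixels at or below the Otsu threshold (darker)."""
    return gray <= otsu_threshold(gray)
```

The candidate mask from this pass would then be handed to the second-pass refinement described above.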

Evaluation Metrics

- Calculate mean Intersection over Union (mIoU) against GrandQC annotations

- Benchmark inference time on standard CPU hardware

- Compare against Otsu's method, K-means, and supervised UNet++
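The mIoU metric used in this evaluation can be computed as in the sketch below. It assumes binary tissue masks and averages the per-slide IoU across slides; averaging over classes instead would be an equally plausible reading of "mean" and would give different numbers.

```python
import numpy as np

def iou(pred, truth):
    """Intersection over Union for two boolean masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    union = np.logical_or(pred, truth).sum()
    if union == 0:
        return 1.0                      # both masks empty: treat as perfect match
    return np.logical_and(pred, truth).sum() / union

def mean_iou(pred_masks, truth_masks):
    """Average per-slide IoU over a collection of mask pairs."""
    return float(np.mean([iou(p, t) for p, t in zip(pred_masks, truth_masks)]))
```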

Visualization of Workflows and Architectures

Multimodal Pretraining Pipeline

Multimodal Transformer Architecture

Research Reagent Solutions

Implementing multimodal pathology AI requires specific computational frameworks and datasets:

Table 4: Essential Research Reagents for Multimodal Pathology AI

| Resource | Type | Function | Implementation Example |
|---|---|---|---|
| CONCHv1.5 | Patch Encoder | Extracts 768-dimensional features from 512×512 patches | Extended version of CONCH for rich ROI representation [2] |
| Mass-340K Dataset | Pretraining Data | 335,645 WSIs across 20 organs with reports | Foundation for large-scale self-supervised learning [2] |
| TCGA Cohorts | Benchmark Data | 3,322 annotated WSIs across 9 cancer types | Evaluation standard for tissue detection and cancer analysis [4] |
| iBOT Framework | Self-Supervised Learning | Knowledge distillation with masked image modeling | Vision-only pretraining with robust representations [2] |
| ALiBi (2D Extension) | Positional Encoding | Attention with linear biases for long-context WSIs | Enables extrapolation to large feature grids [2] |
| Double-Pass Algorithm | Tissue Detection | Annotation-free tissue localization | Quality control preprocessing for WSI pipelines [4] |
| PathChat | Synthetic Caption Generator | Produces fine-grained morphological descriptions | Generates 423k ROI-text pairs for vision-language alignment [2] |

Future Directions and Clinical Translation

The evolution of multimodal pathology AI points toward increasingly integrated diagnostic systems. Emerging research focuses on extending multimodal frameworks to incorporate genomic data, treatment responses, and longitudinal patient outcomes—creating comprehensive digital twins for personalized oncology [21] [20]. As noted in recent literature, "Multimodal AI can lead to improved operational efficiency by enabling automated reporting and streamlining clinical workflows, helping to reduce clinician burnout and accelerate diagnostic turnaround times" [21].

Technical challenges remain in scaling these systems across diverse healthcare institutions with varying equipment, protocols, and data standards. Future work must address model robustness across scanner types, staining variations, and population demographics to ensure equitable cancer detection performance. The integration of explainable AI (XAI) techniques will be crucial for clinical adoption, providing transparent rationale for multimodal predictions that pathologists can verify and trust [22] [20].

For research and drug development applications, multimodal foundation models offer unprecedented opportunities for biomarker discovery, treatment response prediction, and patient stratification. By leveraging the synergistic relationship between visual morphology and clinical language, these systems accelerate the translation of pathological insights into therapeutic advances, ultimately enhancing precision oncology and patient care.

Building and Deploying WSI Foundation Models: Architectures, Training Strategies, and Clinical Applications

The field of computational pathology is undergoing a paradigm shift from patch-based analysis to whole-slide foundation models capable of processing gigapixel images. While traditional patch-based foundation models capture morphological patterns in histology patch embeddings, translating these capabilities to address patient- and slide-level clinical challenges remains complex due to the immense scale of whole-slide images (WSIs) and limited clinical data for rare diseases [2]. This limitation has spurred the development of transformer-based whole-slide encoders that can distill pathology-specific knowledge from large WSI collections, simplifying clinical endpoint prediction with their off-the-shelf application [2].

Within the context of cancer detection research, large-scale pretraining on WSIs enables models to learn general-purpose slide representations that capture the spatial organization of the tumor microenvironment—critical features for diagnosis, prognosis, and biomarker prediction. These models fundamentally transform how researchers approach WSI analysis by moving beyond treating WSIs as mere "bags of independent features" to explicitly modeling long-range spatial dependencies across tissue structures [2]. The emergence of multimodal vision-language models further extends these capabilities by aligning histology patterns with clinical reports, enabling cross-modal retrieval and zero-shot classification for resource-limited scenarios [2].

Core Architectural Principles

From Patch Encoders to Whole-Slide Transformers

Transitioning from patch-level to slide-level analysis presents significant architectural challenges. Whole-slide transformers process sequences of patch features encoded by powerful histology patch encoders rather than raw image pixels [2]. This approach treats pre-extracted patch features as the input "tokens" for the transformer architecture, with the patch encoder functioning similarly to the patch embedding layer in a conventional Vision Transformer (ViT) [2].

A fundamental challenge in this domain is handling the extremely long and variable input sequences characteristic of WSIs, which can exceed 10,000 tokens per slide, compared with the 196 to 256 tokens typical of patch-level analysis [2]. To address this, each WSI is divided into non-overlapping patches (typically 512×512 pixels at 20× magnification), and a pretrained encoder extracts a 768-dimensional feature vector for each patch [2]. The patch features are then arranged in a two-dimensional feature grid that replicates the original tissue layout, which allows positional encoding schemes to preserve spatial context [2].
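The grid construction described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the pipeline from [2]: the function name `build_feature_grid`, the coordinate convention (top-left pixel position per patch), and the boolean tissue mask are assumptions introduced here.

```python
import numpy as np

def build_feature_grid(coords, features, patch_size=512):
    """Arrange per-patch features into a 2D grid that mirrors tissue layout.

    coords:   (N, 2) integer array of top-left (x, y) pixel positions (assumed convention).
    features: (N, D) array of patch embeddings from a pretrained patch encoder.
    Returns (grid, mask): grid is (H, W, D); mask is (H, W), True where a patch exists.
    """
    coords = np.asarray(coords)
    features = np.asarray(features)
    cols = coords[:, 0] // patch_size
    rows = coords[:, 1] // patch_size
    # Shift so the grid starts at the first row/column that contains tissue.
    rows = rows - rows.min()
    cols = cols - cols.min()
    height, width = rows.max() + 1, cols.max() + 1
    grid = np.zeros((height, width, features.shape[1]), dtype=features.dtype)
    mask = np.zeros((height, width), dtype=bool)
    grid[rows, cols] = features   # background cells stay zero
    mask[rows, cols] = True
    return grid, mask

# Toy slide: three tissue patches with 768-dimensional features.
coords = np.array([[0, 0], [512, 0], [0, 1024]])
features = np.random.default_rng(0).normal(size=(3, 768)).astype(np.float32)
grid, mask = build_feature_grid(coords, features)
```

Keeping an explicit mask alongside the grid lets a downstream transformer ignore background cells instead of treating zero vectors as tissue.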

Positional Encoding Strategies

Spatial relationships between tissue regions provide critical diagnostic information in pathology. Because self-attention is by itself insensitive to the order of its input tokens, transformer architectures need explicit positional encoding to use this spatial information, unlike convolutional networks, whose local filters inherently preserve spatial relationships.

2D Positional Encoding methods encode both horizontal and vertical coordinates of patches within the WSI. The TMIL framework introduces a 2D positional encoding module based on transformer architecture that replaces standard one-dimensional positional data with two-dimensional patch information using row and column vectors [23]. These vectors are modeled with a self-attention mechanism, enabling the network to focus on positional correlations between patches [23].
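To make the idea of separate row and column encodings concrete, the sketch below builds a fixed sinusoidal 2D encoding in which half of the channels depend only on the row index and half only on the column index. This is a generic construction chosen for illustration; it is not the learned, attention-based module described for TMIL in [23], and the function names are assumptions.

```python
import numpy as np

def sincos_1d(positions, dim):
    """Sinusoidal encoding of integer positions into `dim` channels (dim must be even)."""
    freqs = 1.0 / (10000 ** (np.arange(0, dim, 2) / dim))    # (dim/2,)
    angles = positions[:, None] * freqs[None, :]              # (P, dim/2)
    return np.concatenate([np.sin(angles), np.cos(angles)], axis=1)

def sincos_2d(height, width, dim):
    """2D encoding of shape (height, width, dim); dim must be divisible by 4."""
    row_enc = sincos_1d(np.arange(height), dim // 2)          # depends on row only
    col_enc = sincos_1d(np.arange(width), dim // 2)           # depends on column only
    rows = np.broadcast_to(row_enc[:, None, :], (height, width, dim // 2))
    cols = np.broadcast_to(col_enc[None, :, :], (height, width, dim // 2))
    return np.concatenate([rows, cols], axis=-1)

# Encoding for a 4x6 feature grid with 768 channels, to be added to the patch features.
pe = sincos_2d(4, 6, 768)
```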

Attention with Linear Biases (ALiBi) extends a positional encoding strategy originally proposed for long-context inference in large language models to the two-dimensional domain [2]. In this approach, the linear bias is based on the relative Euclidean distance between features in the feature grid, which reflects actual physical distances between patches in the tissue [2]. Because the bias depends only on relative distance, it supports long-context extrapolation at inference, when feature grids can be much larger than the crops used for pretraining [2].
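A minimal sketch of a 2D distance-based attention bias follows. The head-specific slopes use the geometric schedule from the original one-dimensional ALiBi formulation; whether [2] uses the same slopes is an assumption here, and `alibi_2d_bias` is an illustrative name rather than an API from any library.

```python
import numpy as np

def alibi_2d_bias(height, width, num_heads):
    """Additive attention bias of shape (num_heads, H*W, H*W).

    bias[h, i, j] = -slope[h] * Euclidean distance between grid cells i and j.
    """
    ys, xs = np.meshgrid(np.arange(height), np.arange(width), indexing="ij")
    pos = np.stack([ys.ravel(), xs.ravel()], axis=1).astype(np.float64)   # (N, 2)
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)      # (N, N)
    # Assumed slope schedule: 2^(-8/n), 2^(-16/n), ..., 2^(-8) for n heads.
    slopes = 2.0 ** (-8.0 * np.arange(1, num_heads + 1) / num_heads)
    return -slopes[:, None, None] * dist[None, :, :]

# The bias is added to the attention logits before the softmax.
bias = alibi_2d_bias(3, 4, 8)
```

Since the bias is computed from grid coordinates alone, the same function can produce a bias for any grid size at inference without new parameters.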

Mask-Based Position Reconstruction incorporates an auxiliary reconstruction task to enhance spatial-semantic consistency. The PEGTB-MIL framework uses a position decoder module to ensure decoded spatial coordinates remain consistent with true patch coordinates, significantly enhancing the spatial-semantic consistency and generalization capability of patch features [24].

Multimodal Vision-Language Alignment

The integration of visual and textual information represents a frontier in whole-slide analysis. TITAN (Transformer-based pathology Image and Text Alignment Network) exemplifies this approach through a three-stage pretraining paradigm [2]:

- Stage 1: Vision-only unimodal pretraining on ROI crops

- Stage 2: Cross-modal alignment of generated morphological descriptions at ROI-level

- Stage 3: Cross-modal alignment at WSI-level using clinical reports

This architecture enables general-purpose slide representations that support diverse clinical applications including rare disease retrieval, cancer prognosis, and pathology report generation without requiring fine-tuning or clinical labels [2].
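Once slide and text embeddings share a space, zero-shot classification reduces to a nearest-prompt lookup. The sketch below assumes the embeddings have already been produced by some aligned image and text encoders; it uses random stand-in vectors and a hypothetical `zero_shot_classify` helper, and does not reproduce TITAN's actual interface.

```python
import numpy as np

def zero_shot_classify(slide_emb, class_text_embs):
    """Return (predicted class index, cosine similarities to each class prompt)."""
    slide = slide_emb / np.linalg.norm(slide_emb)
    texts = class_text_embs / np.linalg.norm(class_text_embs, axis=1, keepdims=True)
    sims = texts @ slide
    return int(np.argmax(sims)), sims

rng = np.random.default_rng(0)
class_text_embs = rng.normal(size=(3, 16))                      # stand-ins for 3 class prompts
slide_emb = class_text_embs[1] + 0.05 * rng.normal(size=16)     # slide close to class 1
pred, sims = zero_shot_classify(slide_emb, class_text_embs)
```

The same cosine-similarity ranking, applied between a query slide and a database of slide embeddings, is the basic operation behind slide retrieval.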

Quantitative Performance Comparison

Table 1: Performance Comparison of Transformer-Based WSI Encoders on Cancer Subtyping

| Model | Architecture Type | Dataset | Task | Performance (AUC) |
|---|---|---|---|---|
| TITAN (full) | Multimodal Vision-Language | Multi-organ (20 organs) | Multiple cancer types | Outperforms existing slide foundation models |
| TMIL | Transformer MIL with 2D PE | Colorectal Adenoma | Classification | 97.28% |
| PEGTB-MIL | Position-guided Transformer MIL | TCGA-LUNG | Cancer subtyping | 97.13% ± 0.34% |
| PEGTB-MIL | Position-guided Transformer MIL | TCGA-BRCA | Cancer subtyping | 86.74% ± 2.64% |
| PEGTB-MIL | Position-guided Transformer MIL | USTC-EGFR | Mutation prediction | 83.25% ± 1.65% |
| PEGTB-MIL | Position-guided Transformer MIL | USTC-GIST | Mutation prediction | 72.52% ± 1.63% |

Table 2: Performance in Low-Data Regimes and Rare Cancer Retrieval

| Model | Training Data | Few-Shot Learning | Zero-Shot Classification | Rare Cancer Retrieval |
|---|---|---|---|---|
| TITAN | 335,645 WSIs + 182,862 reports | Superior performance | Supported via language alignment | State-of-the-art |
| Conventional MIL | Disease-specific cohorts | Limited capability | Not supported | Limited capability |
| ROI-based models | Patch-level datasets | Moderate performance | Not supported | Limited capability |

Implementation Methodologies

Pretraining Strategies

Large-scale pretraining has emerged as a critical component for developing robust whole-slide encoders. The TITAN framework employs a comprehensive pretraining approach using 335,645 whole-slide images across 20 organ types [2]. The pretraining incorporates multiple strategies:

Knowledge Distillation and Masked Image Modeling: the vision-only stage adapts the iBOT framework, which combines knowledge distillation with masked image modeling, to two-dimensional feature grids [2]. This approach enables the model to learn rich representations of histomorphological semantics at both the region-of-interest (4×4 mm²) and whole-slide levels.

Multi-View Self-Supervised Learning creates views of a WSI by randomly cropping the 2D feature grid [2]. Specifically, a region crop of 16×16 features covering a region of 8,192×8,192 pixels is randomly sampled from the WSI feature grid. From this region crop, two random global (14×14) and ten local (6×6) crops are sampled for pretraining [2]. These feature crops are further augmented with vertical and horizontal flipping, followed by posterization feature augmentation.
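The cropping scheme above can be sketched as follows, using the sizes quoted in the text (one 16×16 region crop, two 14×14 global crops, ten 6×6 local crops, random flips). The helper names are illustrative assumptions, the posterization feature augmentation is omitted, and the toy grid uses 8 channels instead of 768 to keep the example small.

```python
import numpy as np

def random_crop(grid, size, rng):
    """Crop a random size x size window from an (H, W, D) feature grid."""
    height, width = grid.shape[:2]
    top = rng.integers(0, height - size + 1)
    left = rng.integers(0, width - size + 1)
    return grid[top:top + size, left:left + size]

def random_flip(crop, rng):
    """Apply vertical and horizontal flips independently with probability 0.5."""
    if rng.random() < 0.5:
        crop = crop[::-1]        # vertical flip
    if rng.random() < 0.5:
        crop = crop[:, ::-1]     # horizontal flip
    return crop

def sample_views(wsi_grid, rng, region=16, n_global=2, global_size=14,
                 n_local=10, local_size=6):
    """Sample one region crop, then global and local views inside it."""
    region_crop = random_crop(wsi_grid, region, rng)
    global_views = [random_flip(random_crop(region_crop, global_size, rng), rng)
                    for _ in range(n_global)]
    local_views = [random_flip(random_crop(region_crop, local_size, rng), rng)
                   for _ in range(n_local)]
    return global_views, local_views

rng = np.random.default_rng(0)
wsi_grid = rng.normal(size=(40, 30, 8))   # toy feature grid
global_views, local_views = sample_views(wsi_grid, rng)
```

With 512-pixel patches, the 16×16 region crop corresponds to the 8,192×8,192-pixel region mentioned above (16 × 512 = 8,192).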

Synthetic Data Integration leverages 423,122 synthetic captions generated from a multimodal generative AI copilot for pathology to enhance fine-grained morphological understanding [2]. This approach demonstrates the scaling potential of pretraining with synthetic data, particularly for rare conditions with limited annotated examples.

Multiple Instance Learning Frameworks

Multiple Instance Learning (MIL) provides the foundational framework for weakly supervised whole-slide classification. Transformer-based MIL approaches have evolved to better capture spatial relationships:

Pseudo-Bag Construction randomly splits WSI patches into numerous pseudo-bags to create additional training samples [23]. This approach addresses the challenge of limited WSI-level labels by effectively increasing the training signal.

Deep Metric Learning Integration incorporates metric learning to provide richer supervisory information and mitigate overfitting [23]. The TMIL framework extracts the instance with the highest probability value from each pseudo-bag, creating a new dataset to train both instance-level classification and deep metric learning models using pseudo-bag labels.
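The two steps described above, splitting a slide into pseudo-bags and then keeping the highest-probability instance from each, can be sketched as below. The instance probabilities are random stand-ins for the output of an instance-level classifier, and the function names are assumptions rather than the TMIL code from [23].

```python
import numpy as np

def make_pseudo_bags(num_patches, num_bags, rng):
    """Randomly partition patch indices into `num_bags` disjoint pseudo-bags."""
    perm = rng.permutation(num_patches)
    return np.array_split(perm, num_bags)

def top_instances(instance_probs, pseudo_bags):
    """For each pseudo-bag, return the index of its highest-probability patch."""
    return np.array([bag[np.argmax(instance_probs[bag])] for bag in pseudo_bags])

rng = np.random.default_rng(0)
probs = rng.random(1000)                  # stand-in for instance-classifier outputs
bags = make_pseudo_bags(1000, 8, rng)
selected = top_instances(probs, bags)     # one patch index per pseudo-bag
```

Each pseudo-bag inherits the slide-level label, so the selected patches form a small labeled set that can feed both an instance classifier and a metric learning objective.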

Multi-Head Self-Attention (MHSA) explores contextual and spatial dependencies between fused features [24]. The PEGTB-MIL framework incorporates semantic features and spatial embeddings, then applies MHSA to learn discriminative WSI-level feature representations.

Architectural Visualization

[Figure: Whole-Slide Transformer Architecture with Positional Encoding]

[Figure: Multimodal Pretraining Pipeline for Whole-Slide Analysis]

Essential Research Reagents

Table 3: Research Reagent Solutions for Transformer-Based WSI Analysis

| Category | Component | Specification/Function | Representative Examples |
|---|---|---|---|
| Data Resources | Whole-Slide Images | Gigapixel digital pathology slides | 335,645 WSIs across 20 organs [2] |
| Data Resources | Pathology Reports | Textual descriptions for multimodal alignment | 182,862 medical reports [2] |
| Data Resources | Synthetic Captions | AI-generated fine-grained descriptions | 423,122 ROI-caption pairs [2] |
| Computational Components | Patch Encoder | Feature extraction from image patches | CONCHv1.5 (768-dimensional features) [2] |
| Computational Components | Positional Encoder | Spatial coordinate embedding | 2D positional encoding modules [23] [24] |
| Computational Components | Transformer Backbone | Core architecture for sequence processing | ViT-based with ALiBi for long sequences [2] |
| Implementation Tools | Feature Grid | Spatial organization of patch features | 2D grid preserving tissue structure [2] |
| Implementation Tools | Attention Mechanism | Contextual relationship modeling | Multi-head self-attention [24] |
| Implementation Tools | Mask Reconstruction | Auxiliary position learning task | Position decoder for spatial consistency [24] |

Transformer-based architectures for whole-slide encoding represent a transformative advancement in computational pathology, enabling comprehensive analysis of gigapixel images through large-scale pretraining and sophisticated spatial modeling. The integration of multimodal capabilities, particularly vision-language alignment, extends the utility of these models to challenging clinical scenarios including rare cancer retrieval and zero-shot classification. As these architectures continue to evolve, they hold significant promise for accelerating cancer detection research and bridging the gap between computational innovation and clinical application in personalized oncology.

Large-scale pretraining has emerged as a transformative approach in computational pathology, enabling the development of robust models for cancer detection from Whole Slide Images (WSIs). This technical guide delineates three core pretraining paradigms—Self-Supervised Learning (SSL), Masked Image Modeling (MIM), and Knowledge Distillation (KD)—detailing their theoretical foundations, methodological workflows, and applications in histopathology. Framed within the context of enhancing cancer research, we synthesize experimental protocols from seminal studies, provide quantitative performance comparisons, and illustrate key signaling pathways and workflows. The content is tailored for researchers, scientists, and drug development professionals, providing a comprehensive toolkit for implementing these advanced methodologies in oncology-focused computational pathology.

The advent of digital pathology has generated vast repositories of WSIs, which are gigapixel-sized scans of tissue sections essential for cancer diagnosis and research. Traditional supervised deep learning approaches for analyzing WSIs are constrained by the cost, time, and expertise required for large-scale pixel-level annotations. Large-scale pretraining offers a powerful alternative by leveraging unlabeled data to learn general-purpose feature representations, which can be effectively fine-tuned for specific downstream tasks such as cancer classification, segmentation, and prognosis prediction [2] [25].

Within this paradigm, Self-Supervised Learning (SSL) has proven particularly effective for histopathology. SSL methods create pretext tasks that generate labels directly from the input data, enabling models to learn rich morphological features of tissues and cells without human annotation [2] [26]. A dominant SSL approach in computer vision, Masked Image Modeling (MIM), involves masking portions of an input image and training a model to reconstruct the missing information. This technique, inspired by the success of BERT in natural language processing, has been adapted for pathology images to learn powerful representations that capture histological context [27] [28] [26]. Concurrently, Knowledge Distillation (KD) facilitates the transfer of capabilities from large, computationally intensive models (teachers) to compact, efficient models (students), making deployment in resource-limited clinical settings feasible while preserving performance [29] [30].

This guide provides an in-depth exploration of these three pretraining paradigms, emphasizing their application and benefits in cancer detection research using WSIs. We detail core methodologies, experimental protocols, and performance outcomes, supplemented with structured data, workflow visualizations, and reagent solutions to equip practitioners with the necessary tools for advanced model development.

Core Methodologies and Theoretical Foundations

Self-Supervised Learning (SSL) in Histopathology

SSL aims to learn informative representations from unlabeled data by defining a pretext task where the supervisory signal is derived from the data itself. In computational pathology, common SSL strategies include contrastive learning and generative modeling.

- Contrastive Learning: Methods like contrastive multiview coding train an encoder to produce similar embeddings for different augmented views of the same image patch (positive pairs) and dissimilar embeddings for views from different patches (negative pairs). This approach learns invariant features beneficial for tasks like cancer subtyping and slide retrieval [2] [30].

- Generative Modeling: This involves reconstructing the original input from a corrupted or altered version. MIM is a prominent generative SSL method gaining traction in pathology [26].
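As an illustration of the contrastive objective described in the first bullet, the sketch below implements an InfoNCE-style loss in NumPy. InfoNCE is one common contrastive loss and is used here as an assumption; the cited works may use a different formulation, and the temperature value is arbitrary.

```python
import numpy as np

def info_nce(z1, z2, temperature=0.1):
    """InfoNCE loss; z1[i] and z2[i] embed two views of the same patch (positive pair)."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature                        # (B, B) scaled cosine similarities
    logits = logits - logits.max(axis=1, keepdims=True)     # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_prob)))               # positives lie on the diagonal

rng = np.random.default_rng(0)
z = rng.normal(size=(16, 32))
aligned = info_nce(z, z + 0.01 * rng.normal(size=z.shape))  # views agree
mismatched = info_nce(z, rng.normal(size=z.shape))          # views unrelated
```

The loss is small when each embedding is closest to its own second view and grows when positives are no more similar than the other patches in the batch.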

SSL pretraining on large, diverse WSI datasets allows models to learn general morphological features, which can be effectively transferred to various downstream tasks with minimal task-specific labeled data through linear probing, fine-tuning, or few-shot learning [2].

Masked Image Modeling (MIM)

MIM has emerged as a powerful SSL technique for vision, including histopathology. The core idea is to randomly mask a portion of the input image and train a model to predict the missing content.
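The masking step and a reconstruction loss restricted to masked positions can be sketched as follows. The 75% mask ratio, the 196-token sequence, and the mean squared error target are assumptions chosen for illustration, not settings taken from the cited pathology models.

```python
import numpy as np

def random_mask(num_tokens, mask_ratio, rng):
    """Boolean mask with exactly round(num_tokens * mask_ratio) tokens set to True."""
    n_mask = int(round(num_tokens * mask_ratio))
    mask = np.zeros(num_tokens, dtype=bool)
    mask[rng.choice(num_tokens, size=n_mask, replace=False)] = True
    return mask

def masked_mse(pred, target, mask):
    """Mean squared error computed only over masked tokens."""
    diff = (pred - target)[mask]
    return float(np.mean(diff ** 2))

rng = np.random.default_rng(0)
target = rng.normal(size=(196, 64))        # token features to reconstruct
mask = random_mask(196, 0.75, rng)         # True = hidden from the encoder
pred = target.copy()
pred[~mask] += 1.0                         # errors on visible tokens are not penalized
loss = masked_mse(pred, target, mask)
```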