Mass-100K & Mass-340K: The Pathology Foundation Model Datasets Powering a New Era in AI-Driven Diagnostics



Abstract

This article provides a comprehensive analysis of the Mass-100K and Mass-340K datasets, foundational resources revolutionizing computational pathology. Tailored for researchers and drug development professionals, it explores the scale, composition, and origins of these datasets. The content details their critical role in training versatile models like UNI and TITAN for tasks ranging from cancer subtyping to biomarker prediction. It further examines the methodologies for leveraging these datasets, addresses key computational challenges, and validates their performance against established benchmarks. Finally, the discussion synthesizes how these datasets are accelerating the development of robust, general-purpose AI tools for clinical and research applications.

Unpacking Mass-100K and Mass-340K: The Foundational Data Powering Next-Gen Pathology AI

In the rapidly evolving field of computational pathology (CPath), the development of robust foundation models is critically dependent on large-scale, diverse, and well-curated datasets. Among the most significant resources enabling recent advancements are the Mass-100K and Mass-340K datasets, which have served as the foundational pretraining corpora for pioneering models such as UNI and TITAN [1] [2]. These datasets have pushed the boundaries of scale and diversity in histopathology data, moving the field beyond the constraints of earlier collections like The Cancer Genome Atlas (TCGA). This technical guide provides a comprehensive analysis of the scale, composition, and origin of these two pivotal datasets, framing them within the broader context of pathology foundation model research. Understanding their precise characteristics is essential for researchers, scientists, and drug development professionals aiming to leverage, evaluate, or build upon these foundational resources.

The Mass-100K and Mass-340K datasets represent consecutive generations of scale and complexity in histopathology data collection. Mass-100K, introduced with the UNI model, marked a significant step up from previous benchmarks [1]. Its successor, Mass-340K, expanded this paradigm further in both volume and multimodal richness for the development of TITAN, a whole-slide foundation model [2]. The table below provides a detailed quantitative comparison of their core characteristics.

Table 1: Core Characteristics of Mass-100K and Mass-340K Datasets

| Characteristic | Mass-100K Dataset | Mass-340K Dataset |
|---|---|---|
| Total Number of Whole Slide Images (WSIs) | 100,426 diagnostic H&E-stained WSIs [1] | 335,645 WSIs [2] |
| Total Number of Image Patches | >100 million tissue patches [1] | Information Not Specified |
| Data Volume | >77 TB of data [1] | Information Not Specified |
| Major Tissue Types | 20 major tissue types [1] | 20 organs [2] |
| Data Sources | Massachusetts General Hospital (MGH), Brigham and Women's Hospital (BWH), Genotype-Tissue Expression (GTEx) consortium [1] | Internal dataset (implied from MGH/BWH), includes 182,862 medical reports [2] |
| Associated Foundation Model | UNI [1] [3] | TITAN (Transformer-based pathology Image and Text Alignment Network) [2] |
| Key Innovation | Scale and diversity for self-supervised patch-encoder pretraining [1] | Scale combined with multimodal alignment (images + reports + synthetic captions) for whole-slide representation learning [2] |

Detailed Profile of Mass-100K

Composition and Curation

The Mass-100K dataset was explicitly designed to overcome the limitations of previous datasets like TCGA, which primarily contained primary cancer histology slides [1]. Its composition of over 100 million image patches from more than 100,000 diagnostic hematoxylin and eosin (H&E)-stained whole-slide images was curated to provide a rich source of information for learning objective characterizations of histopathologic biomarkers [1]. The dataset's massive scale and diversity across 20 major tissue types were instrumental in training UNI, a general-purpose self-supervised vision encoder based on a Vision Transformer Large (ViT-L) architecture [1] [4].

Experimental Validation and Scaling Laws

The utility of Mass-100K was demonstrated through rigorous experiments establishing scaling laws in computational pathology. Researchers systematically evaluated the impact of data scale by creating subsets of the full dataset: Mass-1K (1 million images, 1,404 WSIs) and Mass-22K (16 million images, 21,444 WSIs) [1]. When used to pretrain the UNI model for a large-scale, hierarchical cancer classification task based on the OncoTree system (covering 108 cancer types), a clear positive correlation between pretraining data volume and downstream task performance was observed [1]. The model pretrained on the full Mass-100K dataset outperformed those trained on the smaller subsets, demonstrating a critical characteristic of a foundation model: improved performance on various tasks when trained on larger datasets [1].
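As a rough worked example, the subset sizes quoted above imply how densely each subset samples a slide. The sketch below only restates the article's rounded figures; the Mass-100K image count is the ">100 million" lower bound, so its ratio is a lower bound too, and the dictionary layout is purely illustrative.

```python
# Subset sizes as quoted in the text (image counts are rounded in the source).
subsets = {
    "Mass-1K": {"wsis": 1_404, "images": 1_000_000},
    "Mass-22K": {"wsis": 21_444, "images": 16_000_000},
    # ">100 million" images: treated here as a lower bound.
    "Mass-100K": {"wsis": 100_426, "images": 100_000_000},
}


def images_per_wsi(entry):
    """Average number of pretraining images drawn from one slide."""
    return entry["images"] / entry["wsis"]


for name, entry in subsets.items():
    print(f"{name}: about {images_per_wsi(entry):.0f} images per WSI")
```

Under these rounded numbers the per-slide sampling density stays within the same order of magnitude across subsets, so the comparison mostly reflects the number of slides rather than how heavily each slide is tiled.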

Table 2: Key Experiments Demonstrating Mass-100K's Utility

| Experiment Purpose | Experimental Setup | Key Findings |
|---|---|---|
| Establishing Scaling Laws | Pretraining UNI on Mass-1K, Mass-22K, and Mass-100K subsets. Evaluation on OncoTree cancer classification (OT-43 and OT-108 tasks) using an Attention-Based Multiple Instance Learning (ABMIL) classifier [1]. | Performance increased significantly with data scale. From Mass-22K to Mass-100K, top-1 accuracy increased by +3.7% on OT-43 and +3.0% on OT-108 (P < 0.001) [1]. |
| Benchmarking Against Other Models | Comparing UNI (pretrained on Mass-100K) to other encoders like CTransPath (TCGA, PAIP) and REMEDIS (TCGA) on the same OncoTree classification tasks [1]. | UNI outperformed all baseline models by a wide margin, demonstrating the advantage of its large-scale and diverse pretraining dataset [1]. |
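The ABMIL classifier used in these experiments aggregates patch embeddings into one slide vector through learned attention weights. The following is a minimal sketch of the non-gated attention pooling step only, with random, untrained parameters; the dimensions, the names `V` and `w`, and the toy inputs are assumptions for illustration and do not reproduce the UNI evaluation code.

```python
import math
import random


def abmil_pool(patch_feats, V, w):
    """Attention pooling: a_k = softmax_k(w . tanh(V h_k)), slide = sum_k a_k h_k."""
    scores = []
    for h in patch_feats:
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(wi * ui for wi, ui in zip(w, hidden)))
    # Numerically stable softmax over the patches of one slide.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    attn = [e / total for e in exps]
    dim = len(patch_feats[0])
    slide = [sum(a * h[d] for a, h in zip(attn, patch_feats)) for d in range(dim)]
    return slide, attn


# Toy inputs: 5 patches with 4-dimensional features, 3 hidden attention units.
rng = random.Random(0)
n_patches, dim, hidden_units = 5, 4, 3
patch_feats = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_patches)]
V = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(hidden_units)]
w = [rng.gauss(0, 1) for _ in range(hidden_units)]

slide_vec, attn = abmil_pool(patch_feats, V, w)
print(len(slide_vec), round(sum(attn), 6))
```

In practice `V` and `w` would be trained jointly with a classification head on top of `slide_vec`, while the patch encoder stays frozen.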

Detailed Profile of Mass-340K

Composition and Multimodal Expansion

The Mass-340K dataset represents a generational leap, not only in the number of WSIs but also in its multimodal nature. It was assembled to train TITAN, a multimodal whole-slide foundation model [2]. Beyond the 335,645 WSIs, the dataset incorporates 182,862 medical reports and 423,122 synthetic fine-grained captions generated using a multimodal generative AI copilot for pathology [2]. This structure enables a three-stage pretraining strategy: 1) vision-only unimodal pretraining, 2) cross-modal alignment with generated morphological descriptions at the region-of-interest (ROI) level, and 3) cross-modal alignment at the WSI level with clinical reports [2].

Advancements in Whole-Slide Representation

A pivotal innovation facilitated by Mass-340K is the shift from patch-level to whole-slide image representation learning. While patch-based models like UNI require an additional aggregation model (e.g., an ABMIL) for slide-level tasks, TITAN is designed to directly produce a general-purpose slide-level representation [2]. The dataset's scale and multimodal annotations were crucial for this advancement. The pretraining process involves dividing WSIs into non-overlapping patches at 20x magnification, extracting features using a powerful patch encoder, and then processing the spatially arranged 2D feature grid with a Vision Transformer to model long-range dependencies across the entire slide [2].
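To make the feature-grid idea concrete, here is a small sketch of how a slide's pixel dimensions map to a 2D grid of patch features. The 512-pixel patch size and 768-dimensional features are the values this article quotes for TITAN's pipeline; `fake_encoder` is a hypothetical stand-in that returns random vectors, and tissue segmentation (skipping background patches) is ignored for simplicity.

```python
import random

PATCH_PX = 512     # patch edge length quoted in this article for TITAN
FEATURE_DIM = 768  # per-patch feature size quoted in this article


def grid_shape(width_px, height_px, patch_px=PATCH_PX):
    """Rows and columns of whole, non-overlapping patches (partial edge patches dropped)."""
    return height_px // patch_px, width_px // patch_px


def fake_encoder(x, y, rng):
    """Hypothetical stand-in for a patch encoder: returns a random feature vector."""
    return [rng.random() for _ in range(FEATURE_DIM)]


def build_feature_grid(width_px, height_px, seed=0):
    """Arrange one feature vector per patch in a rows x cols grid."""
    rng = random.Random(seed)
    rows, cols = grid_shape(width_px, height_px)
    return [
        [fake_encoder(c * PATCH_PX, r * PATCH_PX, rng) for c in range(cols)]
        for r in range(rows)
    ]


grid = build_feature_grid(4096, 2048)
print(len(grid), len(grid[0]), len(grid[0][0]))  # 4 8 768
print(grid_shape(100_000, 80_000))               # (156, 195)
```

The second call shows why slide-level modeling is a long-sequence problem: a hypothetical 100,000 x 80,000 pixel slide already yields a 156 x 195 grid, that is, more than 30,000 feature tokens.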

Experimental Workflows and Validation Protocols

Workflow for Patch-Based Foundation Models (e.g., UNI)

The typical experimental workflow for building and validating a patch-based foundation model like UNI using Mass-100K involves a self-supervised learning approach, followed by transfer learning on downstream tasks. The following diagram illustrates this multi-stage process.

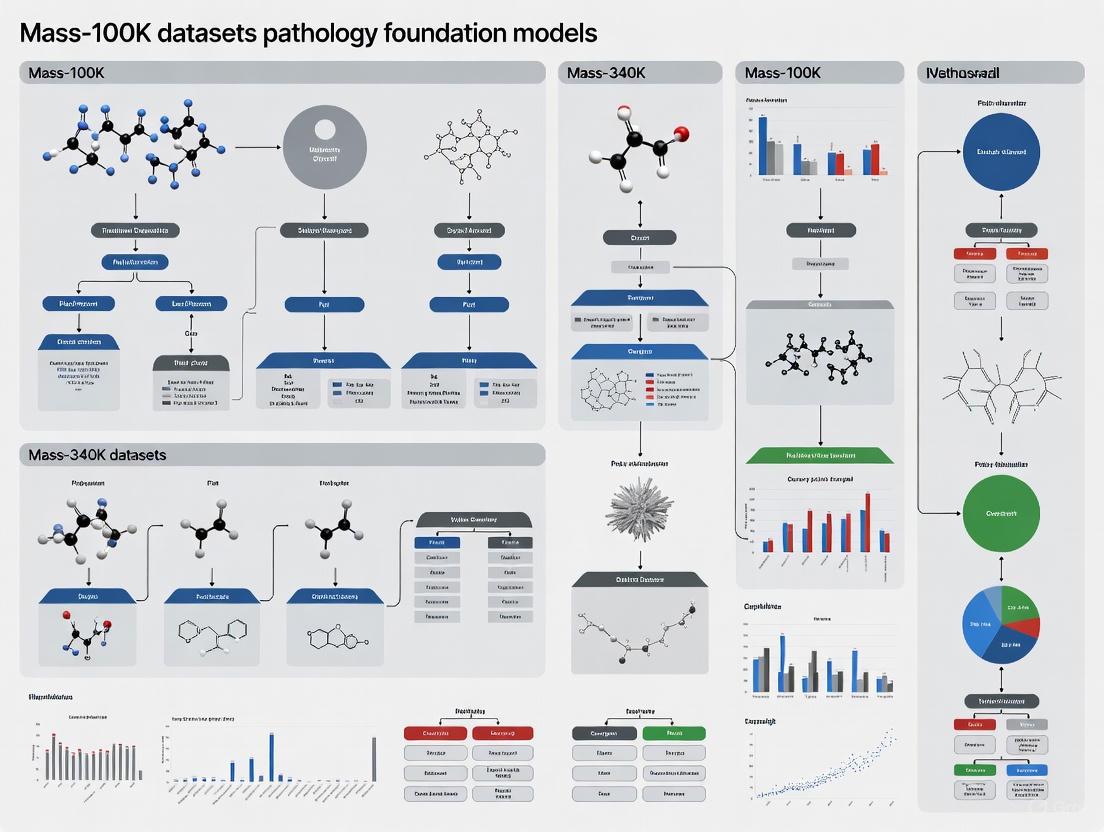

Figure 1: Workflow for training and applying a patch-based foundation model like UNI.

Validation via Whole-Slide Image Retrieval

A critical methodology for validating the quality of embeddings learned by foundation models like UNI is zero-shot whole-slide image (WSI) retrieval. This protocol tests the model's ability to find semantically similar cases in a large database without task-specific fine-tuning, directly assessing the generalizability and semantic richness of the features [4]. A standard protocol is outlined below:

- Data: The Cancer Genome Atlas (TCGA) diagnostic slides, comprising 11,444 WSIs from 9,339 patients across 23 organs and 117 cancer subtypes, serve as a standard benchmark [4].

- Search Framework: The Yottixel search engine is often used due to its flexible topology, which allows for the integration of various deep learning models. It uses an unsupervised "mosaic" patching method to create a compact, representative set of patches from each WSI [4].

- Patching: WSIs are segmented into distinct regions via color composition clustering (k-means). A small percentage (e.g., 2%) of representative 224x224 pixel patches are then selected from each region to form the WSI's mosaic [4].

- Embedding and Indexing: Each patch is passed through the foundation model (e.g., UNI) to generate an embedding. These patch embeddings are used to build a search index for the entire database [4].

- Evaluation Metric: Performance is measured using the macro-averaged F1-score for top-1, top-3, and top-5 WSI retrievals. The macro-average ensures balanced evaluation across all cancer subtypes, regardless of class prevalence [4].
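The patching step of this protocol can be sketched as follows. This is a simplified illustration, not the Yottixel implementation: each patch is summarized by a hypothetical mean-RGB tuple, a tiny k-means groups patches by color, and a random 2% of each cluster is kept (the real method selects representative patches within each region rather than sampling at random).

```python
import random


def kmeans(points, k, iters=20, seed=0):
    """Tiny k-means on tuples; returns one cluster label per point."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [
            min(range(k), key=lambda c: sum((p - q) ** 2 for p, q in zip(pt, centers[c])))
            for pt in points
        ]
        for c in range(k):
            members = [pt for pt, lab in zip(points, labels) if lab == c]
            if members:  # keep the old center if a cluster becomes empty
                centers[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return labels


def build_mosaic(patch_colors, k=3, fraction=0.02, seed=0):
    """Indices of the patches kept as the slide's mosaic."""
    labels = kmeans(patch_colors, k, seed=seed)
    rng = random.Random(seed)
    mosaic = []
    for c in range(k):
        idx = [i for i, lab in enumerate(labels) if lab == c]
        if idx:
            n_keep = max(1, round(fraction * len(idx)))
            mosaic.extend(rng.sample(idx, n_keep))
    return sorted(mosaic)


# Synthetic slide: 300 patches drawn around three made-up mean colors.
data_rng = random.Random(1)
base_colors = [(200, 120, 180), (120, 60, 140), (240, 230, 240)]
patch_colors = [
    tuple(channel + data_rng.gauss(0, 5) for channel in base_colors[i % 3])
    for i in range(300)
]
mosaic = build_mosaic(patch_colors)
print(len(mosaic), "of", len(patch_colors), "patches kept")
```

Only the patches in `mosaic` would then be embedded and indexed, which is what keeps the search index compact.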

Key Validation Result: In a comprehensive benchmark, the UNI model (Yottixel-UNI) achieved a top-5 retrieval F1 score of 42% ± 14%, outperforming the baseline DenseNet model (27% ± 13%) and demonstrating competitive performance with other contemporary foundation models like Virchow and GigaPath [4].
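For readers who want to reproduce this kind of number, the sketch below shows one way to score top-k retrieval with a macro-averaged F1. It assumes that the predicted subtype for a query is the majority label among its top-k retrieved slides, with ties broken by rank; that voting rule, the function names, and the subtype labels in the toy data are illustrative assumptions.

```python
from collections import Counter


def majority_vote(ranked_labels):
    """Most frequent label among retrieved slides; ties go to the best-ranked label."""
    counts = Counter(ranked_labels)
    best = max(counts.values())
    for label in ranked_labels:  # ranked_labels is ordered by similarity
        if counts[label] == best:
            return label


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1, so rare subtypes count as much as common ones."""
    f1_scores = []
    for cls in sorted(set(y_true)):
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)


# Toy example: four query slides, each with its top-3 retrieved subtype labels.
y_true = ["LUAD", "LUAD", "LUSC", "BRCA"]
top3 = [
    ["LUAD", "LUSC", "LUAD"],
    ["LUSC", "LUSC", "LUAD"],
    ["LUSC", "LUAD", "LUSC"],
    ["BRCA", "BRCA", "LUAD"],
]
y_pred = [majority_vote(r) for r in top3]
score = macro_f1(y_true, y_pred)
print(y_pred, round(score, 4))
```

In the toy data one LUAD query is misretrieved as LUSC, which lowers the F1 of both classes and gives a macro average of 7/9.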

The Researcher's Toolkit

The following table details key computational tools and resources essential for working with and evaluating large-scale pathology datasets and foundation models.

Table 3: Essential Research Reagents and Computational Tools

| Tool / Resource | Type | Primary Function | Relevance to Mass-100K/340K |
|---|---|---|---|
| UNI Model Weights | Foundation Model | Provides pretrained patch encoder for feature extraction from histology patches [3]. | Direct output of Mass-100K pretraining; used as a feature extractor for downstream tasks [1] [3]. |
| TITAN Model | Multimodal Whole-Slide Foundation Model | Generates general-purpose slide-level representations and enables cross-modal tasks like report generation [2]. | Direct output of Mass-340K pretraining; represents the next generation of slide-level models [2]. |
| Yottixel | Search Engine / Framework | Enables efficient whole-slide image search and retrieval using patch-based embeddings [4]. | Key framework for the zero-shot evaluation of foundation model embeddings on retrieval tasks [4]. |
| ABMIL (Attention-Based MIL) | Algorithm | Aggregates patch-level features into a slide-level representation for prediction tasks [1]. | Standard algorithm used to evaluate patch-based models like UNI on slide-level classification tasks [1]. |
| DINOv2 | Self-Supervised Learning Algorithm | Framework for self-supervised pretraining combining knowledge distillation and masked image modeling [1]. | The SSL algorithm used to pretrain the UNI model on the Mass-100K dataset [1]. |
| Vision Transformer (ViT) | Model Architecture | Neural network architecture that uses self-attention to process sequences of image patches [1] [2]. | Core architecture for both UNI (ViT-L) and TITAN [1] [2]. |
| TCGA (The Cancer Genome Atlas) | Public Dataset | A large public repository of cancer-related WSIs and molecular data [1]. | Serves as the primary benchmark dataset for evaluating models pretrained on Mass-100K/340K [1] [4]. |

The Mass-100K and Mass-340K datasets are cornerstone resources that have fundamentally shaped the landscape of computational pathology. Mass-100K established the critical importance of scale and diversity for training general-purpose patch encoders, while Mass-340K has further advanced the field by enabling multimodal, whole-slide foundation models. The rigorous experimental protocols established for their validation, particularly in challenging zero-shot retrieval settings, provide a robust framework for evaluating future models. As the field progresses, these datasets and the models they spawned serve as both a foundation and a benchmark, guiding ongoing research toward more generalizable, robust, and clinically applicable AI tools in pathology and drug development.

The development of powerful computational pathology foundation models (CPathFMs) is intrinsically linked to the scale, diversity, and quality of the histopathology data used for their training [5]. These models, which learn rich feature representations from unlabeled whole-slide images (WSIs) via self-supervised learning, have demonstrated remarkable potential in automating complex pathology tasks such as diagnosis, prognosis, and biomarker discovery [5]. However, their performance and generalizability are critically dependent on the data they are trained on. The "target population of images" an AI solution may encounter in its intended use is vast, distributed across multiple dimensions of variability including patient demographics, specimen sampling, slide processing, and imaging protocols [6]. To create models that are robust to this biological and technical heterogeneity, training datasets must be correspondingly diverse and representative. This technical guide delves into the core aspects of data compilation for CPathFMs, with a specific focus on the Mass-340K dataset, analyzing its composition, sourcing, and the methodologies it enables.

The Mass-340K dataset represents a significant scaling of its predecessor, Mass-100K, and stands as a cornerstone for training large-scale pathology foundation models. The following table summarizes the core quantitative attributes of the Mass-340K dataset as used in the development of the TITAN (Transformer-based pathology Image and Text Alignment Network) model [2].

Table 1: Composition of the Mass-340K Dataset

| Attribute | Description | Scale/Value |
|---|---|---|
| Total WSIs | Number of whole-slide images | 335,645 |
| Medical Reports | Accompanying pathology reports | 182,862 |
| Synthetic Captions | Fine-grained ROI captions generated via AI copilot (PathChat) | 423,122 |
| Organ Diversity | Number of different organ types represented | 20 |
| Stain Types | Includes H&E and other staining protocols | Multiple |
| Scanner Types | Various scanner models used for digitization | Multiple |

The Mass-340K dataset was designed with diversity as a key principle: it spans 20 organ types, multiple stains, and diverse tissue types, and it was digitized on various scanner types [2]. This diversity has proven to be a critical factor in developing patch encoders that generalize well, a principle that was successfully translated to the slide level with TITAN. The dataset is used for multi-stage pretraining, involving vision-only self-supervised learning on region-of-interest (ROI) crops, followed by cross-modal alignment using both synthetic captions and original pathology reports [2].

Data Sourcing and Institutional Partnerships

Large-scale pathology datasets are often compiled through collaborations with multiple medical and research institutions. These partnerships are essential for accessing a wide variety of cases that reflect real-world clinical practice.

The Mass-340K dataset is an internal dataset. Its specific institutional sources are not exhaustively detailed in the cited sources, but major academic medical centers like Massachusetts General Hospital (MGH) and Brigham and Women's Hospital (BWH) are consistently featured as key contributors in the computational pathology research ecosystem [5]. Furthermore, public data sources play an indispensable role in benchmarking and model development.

Table 2: Key Data Sources in Computational Pathology

| Data Source | Type | Role and Relevance |
|---|---|---|
| MGH, BWH | Academic Medical Centers | Often sources of large, diverse, real-world clinical pathology data for model training and validation [5]. |
| GTEx (Genotype-Tissue Expression) | Public Research Program | Provides a rich resource of normal, non-diseased tissue samples, crucial for understanding baseline biology and changes in disease [7]. |
| TCGA (The Cancer Genome Atlas) | Public Database | A foundational source for cancer genomics and associated histopathology images across multiple cancer types [5]. |
| Camelyon Series | Public Benchmark Dataset | Widely used for evaluating metastasis detection in breast cancer; recently refined into the "Camelyon+" dataset with cleaned labels and expanded annotations [8]. |
| HuBMAP (Human BioMolecular Atlas Program) | Public Research Consortium | Aims to construct a 3D reference atlas of the healthy human body, providing multiscale data from organs down to cells and biomarkers [7]. |

Initiatives like HuBMAP involve experts from over 20 consortia and are critical for establishing a Common Coordinate Framework (CCF) that helps harmonize multimodal data, including 3D organ models, histology images, and single-cell omics data [7]. Mapping new experimental data into such a reference atlas enables powerful comparisons between healthy and diseased tissue.

Experimental Protocols and Workflow Methodologies

The utility of a large-scale dataset like Mass-340K is realized through sophisticated experimental protocols. The pretraining of the TITAN model exemplifies a modern, multi-stage methodology for building a multimodal whole-slide foundation model.

TITAN's Multi-Stage Pretraining Workflow

The pretraining strategy for TITAN consists of three distinct stages to ensure that the resulting slide-level representations capture histomorphological semantics at both the region and whole-slide levels [2].

- Stage 1: Vision-Only Unimodal Pretraining. This stage uses the 335,645 WSIs from Mass-340K for visual self-supervised learning. The core technique adapts the iBOT framework, which combines masked image modeling and knowledge distillation, to the slide level. The input to the model is not raw pixels but a 2D grid of pre-extracted patch features (768-dimensional features from CONCHv1.5). The model is trained by creating multiple views of a WSI through random cropping of this feature grid and applying augmentations like flipping and posterization [2].

- Stage 2: Cross-Modal Alignment at ROI-Level. In this stage, the vision model is aligned with language. The training uses 423,122 pairs of high-resolution ROIs (8,192 x 8,192 pixels) and corresponding synthetic captions generated by PathChat, a multimodal generative AI copilot for pathology. This step teaches the model to associate fine-grained visual patterns with descriptive text [2].

- Stage 3: Cross-Modal Alignment at WSI-Level. The final stage aligns entire WSIs with their corresponding pathology reports. This training uses 182,862 WSI-report pairs from Mass-340K, enabling the model to understand slide-level clinical context and summaries [2].
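The view creation described for Stage 1 operates on the feature grid rather than on pixels. The sketch below illustrates random cropping and flipping of such a grid under simplifying assumptions: each cell holds its own `(row, col)` coordinate instead of a real 768-dimensional feature so the effect is easy to inspect, the crop sizes are hypothetical, and posterization is omitted.

```python
import random


def random_crop(grid, crop_rows, crop_cols, rng):
    """Cut a crop_rows x crop_cols window out of the feature grid."""
    rows, cols = len(grid), len(grid[0])
    top = rng.randrange(rows - crop_rows + 1)
    left = rng.randrange(cols - crop_cols + 1)
    return [row[left:left + crop_cols] for row in grid[top:top + crop_rows]]


def random_flip(grid, rng):
    """Flip the grid vertically and/or horizontally, each with probability 0.5."""
    if rng.random() < 0.5:
        grid = grid[::-1]
    if rng.random() < 0.5:
        grid = [row[::-1] for row in grid]
    return grid


def make_views(grid, crop_rows, crop_cols, n_views=2, seed=0):
    """Create several augmented views of one slide's feature grid."""
    rng = random.Random(seed)
    return [
        random_flip(random_crop(grid, crop_rows, crop_cols, rng), rng)
        for _ in range(n_views)
    ]


# Stand-in grid: each cell stores its coordinates instead of a feature vector.
grid = [[(r, c) for c in range(8)] for r in range(6)]
views = make_views(grid, crop_rows=4, crop_cols=4)
for view in views:
    print(view[0])
```

Because the patch features are extracted once and cached, these augmentations are cheap compared with re-encoding pixels for every view.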

The following diagram illustrates this integrated workflow, from data input to final model capabilities.

Handling Gigapixel WSIs and Long-Range Context

A significant technical challenge in slide-level modeling is handling the gigapixel size of WSIs. TITAN addresses this by:

- Feature Grid Construction: Dividing each WSI into non-overlapping 512x512 pixel patches at 20x magnification and extracting 768-dimensional features for each patch using a pre-trained patch encoder (CONCHv1.5). These features are spatially arranged in a 2D grid [2].

- Context Modeling with Transformers: Using a Vision Transformer (ViT) to process the feature grid. To handle long and variable input sequences, TITAN uses Attention with Linear Biases (ALiBi), extended to 2D. This allows the model to extrapolate to longer contexts during inference than seen in training, based on the relative Euclidean distance between features in the grid [2].
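The 2D extension of ALiBi can be illustrated with a short sketch: the attention logit between two grid positions is penalized in proportion to their Euclidean distance, with a separate slope per attention head. The slope schedule below is the geometric sequence from the original 1D ALiBi formulation; whether TITAN uses exactly this schedule is an assumption here.

```python
import math


def alibi_slopes(n_heads):
    """Head-specific slopes from the original ALiBi recipe: 2^(-8h/n) for h = 1..n."""
    return [2 ** (-8 * (h + 1) / n_heads) for h in range(n_heads)]


def alibi_2d_bias(rows, cols, slope):
    """Bias matrix added to attention logits: -slope * Euclidean grid distance."""
    coords = [(r, c) for r in range(rows) for c in range(cols)]
    return [
        [-slope * math.hypot(r1 - r2, c1 - c2) for (r2, c2) in coords]
        for (r1, c1) in coords
    ]


slopes = alibi_slopes(8)
bias = alibi_2d_bias(2, 2, slopes[0])
print(slopes[0])             # 0.5
print(round(bias[0][3], 4))  # -0.7071 between grid cells (0, 0) and (1, 1)
```

Since the bias depends only on relative distance, the same function produces a valid bias for any grid size, which is what allows inference on larger grids than those seen during training.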

The Scientist's Toolkit: Essential Research Reagents and Materials

To replicate or build upon research involving datasets like Mass-340K, scientists rely on a suite of computational tools, models, and benchmark datasets. The table below catalogues key resources referenced in the context of modern computational pathology research.

Table 3: Key Research Reagents and Solutions for Computational Pathology

| Resource Name | Type | Function and Description |
|---|---|---|
| CONCH / CONCHv1.5 | Patch Encoder Model | A foundational model trained via contrastive learning on image-caption pairs. Used to extract foundational feature representations from histology image patches [2] [8]. |
| TITAN | Whole-Slide Foundation Model | A Transformer-based multimodal model that produces general-purpose slide representations from a grid of patch features, enabling tasks like classification, retrieval, and report generation [2] [8]. |
| DINOv2 / iBOT | Self-Supervised Learning Algorithms | Self-supervised training frameworks that use knowledge distillation and masked image modeling to learn powerful visual representations without labeled data [2] [5]. |
| Camelyon+ | Benchmark Dataset | A cleaned and re-annotated version of the Camelyon-16 and -17 datasets for breast cancer metastasis detection, providing reliable labels for model evaluation [8]. |
| Protege Evaluation Datasets | Evaluation Benchmark | A set of multi-modal datasets (e.g., combining EMR, pathology slides, imaging) specifically curated for unbiased evaluation of healthcare AI models, independent of training data [9]. |
| HuBMAP CCF (Common Coordinate Framework) | Spatial Reference Framework | A 3D open-source atlas that enables registration and integration of multimodal tissue data (histology, omics) within a standardized spatial context of the human body [7]. |
| PLUTO | Pathology Foundation Model | PathAI's foundation model, used to extract biologically-relevant features from WSIs for downstream tasks like toxicology assessment [10]. |

The Mass-340K dataset exemplifies the critical trend towards large-scale, diverse, and multimodal data collection in computational pathology. Its composition—spanning hundreds of thousands of WSIs from multiple organs, stains, and scanners, and augmented with both real and synthetic textual descriptions—provides the essential fuel for training transformative foundation models like TITAN. The experimental protocols that leverage this data, including multi-stage pretraining and sophisticated context modeling, are as important as the data itself. For researchers and drug development professionals, understanding the provenance, structure, and application of these data resources is paramount. The future of robust, clinically applicable AI in pathology hinges on continued efforts to compile representative datasets, develop standardized benchmarks like Camelyon+ and Protege's offerings, and build upon the foundational tools and methodologies that this deep dive has outlined.

Addressing the Limitations of Previous Datasets like TCGA for Foundation Model Pretraining

The development of powerful foundation models in computational pathology has been historically constrained by the limited scale and diversity of available training data. Prior to the creation of recent large-scale datasets, models were primarily trained on resources like The Cancer Genome Atlas (TCGA), which contains approximately 29,000 whole-slide images (WSIs) spanning 32 cancer types [1]. While valuable, TCGA and similar collections present significant limitations for foundation model pretraining, including restricted sample sizes that inhibit the scaling laws crucial for robust feature learning, a predominant focus on primary cancer histology that limits morphological diversity, and insufficient representation of rare diseases and varied tissue types [1]. These constraints have fundamentally limited the generalizability and clinical applicability of pathology AI models across real-world diagnostic scenarios. To overcome these challenges, researchers have pioneered the creation of massively scaled, diversified histology datasets specifically designed for foundation model pretraining, notably Mass-100K and its expanded successor Mass-340K, which have enabled unprecedented advances in self-supervised learning for computational pathology.

Dataset Architectures: Mass-100K and Mass-340K

Core Specifications and Composition

The Mass-100K and Mass-340K datasets represent foundational resources specifically engineered to overcome the scaling limitations of previous pathology data collections. The table below summarizes their core architectural specifications:

Table 1: Core Specifications of Mass-100K and Mass-340K Datasets

| Specification | Mass-100K Dataset | Mass-340K Dataset |
|---|---|---|
| Total Whole-Slide Images (WSIs) | 100,426 diagnostic H&E-stained WSIs [1] | 335,645 WSIs [2] |
| Tissue Patches/ROIs | >100 million images [1] [11] | Not explicitly quantified (builds upon Mass-100K) |
| Organ/Tissue Types | 20 major tissue types [1] | 20 organ types [2] |
| Data Sources | Massachusetts General Hospital (MGH), Brigham and Women's Hospital (BWH), Genotype-Tissue Expression (GTEx) consortium [1] | Expanded institutional collection (assumed similar sources as Mass-100K) |
| Primary Application | Pretraining of UNI foundation model [1] [11] | Pretraining of TITAN multimodal foundation model [2] |
| Multimodal Pairing | Not specified | 182,862 medical reports [2] |

Methodological Advancements Over Previous Datasets

These datasets incorporate several methodological innovations that directly address TCGA's limitations. Mass-340K specifically enables multimodal vision-language pretraining by incorporating paired pathology reports and synthetic captions, facilitating cross-modal learning between histology images and clinical text [2]. The datasets employ diversified sampling strategies across multiple organ systems and tissue types, contrasting with TCGA's cancer-dominated profile [1]. They also establish scaling laws for computational pathology, demonstrating that increasing pretraining data size consistently improves downstream performance on complex diagnostic tasks [1]. Furthermore, they support rare disease representation through inclusion of diverse cancer subtypes and morphological patterns essential for robust generalizability [11].

Experimental Frameworks and Pretraining Methodologies

Foundation Model Pretraining Workflows

The Mass-100K and Mass-340K datasets have enabled the development of sophisticated pretraining methodologies that leverage self-supervised learning (SSL) at unprecedented scales. The following diagram illustrates the core pretraining workflow for models trained on these datasets:

Technical Implementation Details

The pretraining of foundation models on these datasets involves several technically sophisticated components. For visual feature extraction, WSIs are divided into non-overlapping patches of 512×512 pixels at 20× magnification, with 768-dimensional features extracted for each patch using a specialized patch encoder such as CONCHv1.5 [2]. The Transformer architecture employs Attention with Linear Biases (ALiBi) to handle long sequences of patch features while preserving spatial relationships across gigapixel WSIs [2]. For multimodal alignment, contrastive learning objectives align image features with corresponding pathology reports and synthetically generated fine-grained morphological descriptions [2]. The self-supervised objectives utilize masked image modeling and knowledge distillation (iBOT framework) to learn morphological representations without manual annotations [2].
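The contrastive alignment mentioned above can be illustrated with a generic symmetric image-text contrastive loss of the kind popularized by CLIP. This is a minimal sketch of that general technique, not the exact objective used for TITAN; the temperature value, function names, and the 2-dimensional toy embeddings are assumptions.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def log_softmax(row):
    """Numerically stable log-softmax of one row of logits."""
    top = max(row)
    lse = top + math.log(sum(math.exp(x - top) for x in row))
    return [x - lse for x in row]


def symmetric_contrastive_loss(img_embs, txt_embs, temperature=0.07):
    """Average of image-to-text and text-to-image cross-entropy over matched pairs."""
    img = [l2_normalize(v) for v in img_embs]
    txt = [l2_normalize(v) for v in txt_embs]
    n = len(img)
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txt] for i in img]
    loss_i2t = -sum(log_softmax(logits[k])[k] for k in range(n)) / n
    columns = [[logits[r][c] for r in range(n)] for c in range(n)]
    loss_t2i = -sum(log_softmax(columns[k])[k] for k in range(n)) / n
    return 0.5 * (loss_i2t + loss_t2i)


# Toy batch of two slide/report pairs.
img_embs = [[1.0, 0.0], [0.0, 1.0]]
aligned = symmetric_contrastive_loss(img_embs, [[1.0, 0.0], [0.0, 1.0]])
shuffled = symmetric_contrastive_loss(img_embs, [[0.0, 1.0], [1.0, 0.0]])
print(aligned < shuffled)  # True
```

The loss is near zero when each image embedding is closest to its own text embedding and grows large when pairs are mismatched, which is the signal that pulls the two modalities into a shared space.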

Performance Benchmarking and Validation

Experimental Protocols and Evaluation Metrics

Rigorous benchmarking against existing pathology foundation models demonstrates the performance advantages enabled by Mass-100K and Mass-340K. The evaluation framework encompasses multiple clinically relevant domains:

Table 2: Performance Benchmarking Across Clinical Tasks

| Evaluation Domain | Specific Tasks | Superior Performing Models | Key Performance Metrics |
|---|---|---|---|
| Cancer Subtyping | 43-class and 108-class OncoTree classification [1] | UNI (trained on Mass-100K) [1] | Top-1 accuracy: +7.2% over baselines [1] |
| Rare Disease Retrieval | Cross-modal retrieval and zero-shot classification [2] | TITAN (trained on Mass-340K) [2] | Outperforms existing slide foundation models [2] |
| Multi-task Benchmarking | 41 tasks across TCGA, CPTAC, and external datasets [12] | Virchow2 ranks first (0.706 mean performance) [12] | Balanced accuracy, precision, recall, F1 score [12] |
| Biomarker Prediction | Molecular alteration prediction from histology [1] | UNI and other Mass-100K trained models [1] | AUROC, F1 scores across multiple cancer types [1] |

Scaling Law Validation

Experimental validation on the Mass-100K dataset demonstrates clear scaling laws in computational pathology. When evaluating the UNI model on the 108-class OncoTree classification task, performance increased by +3.5% in top-1 accuracy when scaling from Mass-1K (1,404 WSIs) to Mass-22K (21,444 WSIs), with further gains of +3.0% when scaling to the full Mass-100K dataset (100,426 WSIs) [1]. This scaling relationship demonstrates that increased pretraining data volume directly enhances model capability on complex, clinically relevant classification tasks, validating the core hypothesis behind creating these large-scale datasets.
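A quick back-of-the-envelope calculation puts these gains on a common scale. The sketch assumes the quoted gains are absolute differences in top-1 accuracy that can be added, and it normalizes each step by the number of doublings in WSI count; the "gain per doubling" framing is an illustrative device, not a metric from the cited work.

```python
import math

# WSI counts and OT-108 top-1 accuracy gains as quoted in the text.
wsis = {"Mass-1K": 1_404, "Mass-22K": 21_444, "Mass-100K": 100_426}
ot108_gain = {("Mass-1K", "Mass-22K"): 3.5, ("Mass-22K", "Mass-100K"): 3.0}


def gain_per_doubling(small, large):
    """Accuracy gain divided by how many times the WSI count doubled."""
    doublings = math.log2(wsis[large] / wsis[small])
    return ot108_gain[(small, large)] / doublings


total_gain = sum(ot108_gain.values())
print(f"Cumulative gain, Mass-1K to Mass-100K: +{total_gain:.1f}")
for small, large in ot108_gain:
    print(f"{small} -> {large}: {gain_per_doubling(small, large):.2f} per doubling")
```

Under these assumptions the second step delivers at least as much gain per doubling as the first, which is consistent with the text's claim that performance had not saturated at the Mass-22K scale.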

Essential Research Infrastructure

The development and application of foundation models pretrained on Mass-100K/Mass-340K requires specialized computational resources and methodological components:

Table 3: Essential Research Reagents for Pathology Foundation Model Development

| Resource Category | Specific Tools/Components | Function/Purpose |
|---|---|---|
| Foundation Models | UNI, TITAN, CONCH [2] [11] | Pretrained encoders providing transferable feature representations for diverse downstream tasks |
| SSL Algorithms | DINOv2, iBOT, masked autoencoders [2] [1] | Self-supervised learning frameworks for unsupervised representation learning from unlabeled images |
| Model Architectures | Vision Transformers (ViT-Large, ViT-Huge) [2] [1] | Neural network backbones capable of processing sequences of patch embeddings from WSIs |
| Multimodal Alignment | Contrastive language-image pretraining [2] | Learning joint embeddings between histology images and textual reports/captions |
| Benchmarking Frameworks | PathoROB, clinical task collections [12] [13] | Standardized evaluation pipelines to assess model robustness and clinical utility |

The creation of Mass-100K and Mass-340K datasets represents a paradigm shift in computational pathology, directly addressing the scaling limitations of previous resources like TCGA. By providing orders of magnitude more diverse histology images across multiple tissue types and pairing them with clinical reports, these datasets have enabled the development of foundation models with significantly enhanced capabilities for cancer subtyping, rare disease identification, and multimodal reasoning. The experimental protocols and scaling laws established through their use provide a roadmap for future dataset development in medical AI. As the field progresses, increasing focus on multi-institutional data collection to reduce site-specific bias [13], incorporation of additional multimodal data sources such as genomics and proteomics [14], and development of more efficient pretraining methodologies [15] will further advance the clinical applicability of pathology foundation models. These resources collectively establish a new foundation for data-driven discovery in diagnostic pathology and precision medicine.

The field of computational pathology is undergoing a fundamental transformation, moving from specialized task-specific models toward general-purpose foundation models capable of addressing diverse clinical challenges. This paradigm shift is largely driven by the creation of massive histopathology datasets and advances in self-supervised learning techniques. Central to this transition are the Mass-100K and Mass-340K datasets—comprehensive collections of whole-slide images that have enabled the development of foundational models like UNI and TITAN. These models demonstrate unprecedented capabilities across a wide spectrum of pathology tasks, from cancer subtyping and rare disease identification to prognostic prediction and report generation. This technical review examines the architectural innovations, training methodologies, and evaluation frameworks underpinning this transformative shift, with particular focus on how large-scale datasets are redefining the boundaries of computational pathology.

Computational pathology (CPath) has traditionally relied on task-specific models trained for specialized applications such as tumor detection, cancer grading, or biomarker prediction. These conventional approaches typically utilized supervised learning on limited annotated datasets, constraining their generalizability and requiring extensive labeling efforts for each new clinical task. The emergence of foundation models represents a pivotal shift toward unified architectures pretrained on massive unlabeled datasets that can be adapted to numerous downstream tasks with minimal fine-tuning.

The limitations of task-specific models become particularly apparent when facing real-world diagnostic challenges. Pathologists routinely navigate thousands of possible diagnoses across diverse tissue types and disease categories, requiring models with broad rather than narrow expertise [1]. Early transfer learning approaches using models pretrained on natural images (e.g., ImageNet) struggled with the unique characteristics of histopathology data, including minimal color variation, rotation-agnosticism, and hierarchical tissue organization [16]. This gap prompted the development of pathology-specific foundation models trained on extensive histopathology datasets.

Two landmark datasets have catalyzed this paradigm shift: Mass-100K and Mass-340K. These datasets provide the scale and diversity necessary for training general-purpose models that capture the complex morphological patterns present in human tissues across health and disease states. The Mass-100K dataset comprises over 100,000 diagnostic H&E-stained whole-slide images (WSIs) from 20 major tissue types, while the expanded Mass-340K dataset contains 335,645 WSIs with corresponding pathology reports and synthetic captions [2] [1]. The creation of these datasets has enabled the development of foundation models that demonstrate remarkable versatility across diverse machine learning settings, including zero-shot learning, few-shot adaptation, and multimodal reasoning.

The Foundation Dataset Ecosystem: Mass-100K and Mass-340K

Dataset Composition and Scale

The Mass-100K and Mass-340K datasets represent unprecedented collections of histopathology data that have enabled the training of general-purpose foundation models. The table below summarizes the key characteristics of these datasets:

Table 1: Composition of Mass-100K and Mass-340K Datasets

| Characteristic | Mass-100K Dataset | Mass-340K Dataset |
|---|---|---|
| Total WSIs | 100,426+ | 335,645 |
| Tissue patches | >100 million | Not specified |
| Organ types | 20 | 20 |
| Data volume | >77 TB | Not specified |
| Additional data | - | 182,862 medical reports + 423,122 synthetic captions |
| Sources | MGH, BWH, GTEx consortium | Not specified |
| Stain types | H&E | Multiple stains |
| Scanner types | Various | Various |

The Mass-100K dataset was specifically designed to address the limitations of previous datasets like The Cancer Genome Atlas (TCGA), which primarily contained oncology-focused slides from a limited number of cancer types [1]. By incorporating diverse tissue types from both cancerous and non-cancerous sources, including the Genotype-Tissue Expression (GTEx) consortium, Mass-100K provides a more comprehensive representation of histopathological morphology [1]. This diversity has proven essential for developing models that generalize across various clinical scenarios and tissue types.

The Mass-340K dataset extends this concept further by incorporating not only additional WSIs but also multimodal data in the form of pathology reports and synthetically generated captions [2]. The inclusion of 423,122 synthetic captions generated using PathChat (a multimodal generative AI copilot for pathology) provides fine-grained morphological descriptions at the region-of-interest level, enabling more sophisticated vision-language pretraining [2]. This combination of visual and textual data creates a rich training environment for models learning to associate histological patterns with clinical descriptions.

Data Diversity and Clinical Representativeness

Both datasets explicitly address the critical need for diversity in foundation model pretraining. The 20 organ types encompass major tissue systems, ensuring broad coverage of human anatomy. In Mass-340K, the inclusion of stain types beyond standard H&E and of multiple scanner manufacturers additionally enhances model robustness to the technical variation commonly encountered in clinical practice [2]. This diversity is particularly valuable for rare diseases and conditions where limited data would otherwise constrain model development.

The scale of these datasets aligns with emerging principles of foundation model development, where increased data volume and diversity consistently lead to improved downstream performance [1]. In ablation studies, researchers observed performance improvements of +3.5% to +4.2% in top-1 accuracy when scaling from smaller datasets (Mass-1K) to the full Mass-100K collection for cancer classification tasks [1]. Similar scaling benefits likely extend to the even larger Mass-340K dataset, though comprehensive ablation studies have not been reported for this expanded collection.

Architectural Foundations: From Patch-Level to Slide-Level Modeling

The Multiple Instance Learning Framework

Whole-slide images in computational pathology present unique computational challenges due to their gigapixel resolution (often exceeding 100,000 × 100,000 pixels). The standard approach for handling these massive images employs a multiple instance learning framework, where WSIs are treated as "bags" of smaller patches (instances) [16]. Formally, this relationship can be expressed as:

Table 2: Multiple Instance Learning Formulation

| Component | Mathematical Representation | Description |
|---|---|---|
| WSI patches | \( \boldsymbol{X} = \{\boldsymbol{x}_i\}_{i=1}^{N} \in \mathbb{R}^{N \times h \times w \times 3} \) | N non-overlapping patches from tessellated WSI |
| Feature extraction | \( \boldsymbol{z}_i = \mathcal{M}_e(\boldsymbol{x}_i) \) | Extractor \( \mathcal{M}_e \) generates patch features |
| Feature aggregation | \( \boldsymbol{h} = \mathcal{M}_g(\boldsymbol{Z}), \quad \boldsymbol{Z} = \{\boldsymbol{z}_i\}_{i=1}^{N} \) | Aggregator \( \mathcal{M}_g \) produces slide-level features |
| Bag label assignment | \( Y = \begin{cases} 1 & \exists i,\ y_i = 1 \\ 0 & \forall i,\ y_i = 0 \end{cases} \) | Slide-level label determined by patch labels |

In conventional MIL pipelines, feature extraction typically relies on models pretrained on natural images (e.g., ImageNet-pretrained ResNet-50). However, these models struggle with pathology-specific characteristics, prompting the development of specialized pathology foundation models that serve as more effective feature extractors [16].
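The formulation in Table 2 can be made concrete with a minimal sketch. It assumes the patch features have already been extracted and uses mean pooling as the aggregator, which is only one of many possible choices for \( \mathcal{M}_g \) (attention-based aggregators are common in practice). The function names and the toy feature values are illustrative, not taken from any cited implementation.

```python
from typing import List, Sequence


def mean_pool(Z: Sequence[Sequence[float]]) -> List[float]:
    """Aggregator M_g: average N patch feature vectors into one slide-level vector."""
    n = len(Z)
    return [sum(column) / n for column in zip(*Z)]


def bag_label(instance_labels: Sequence[int]) -> int:
    """Standard MIL assumption: a slide is positive if at least one patch is positive."""
    return int(any(y == 1 for y in instance_labels))


# Toy slide with three patches and 2-dimensional features (stand-ins for encoder outputs).
Z = [[0.0, 2.0], [1.0, 4.0], [2.0, 0.0]]
h = mean_pool(Z)                 # slide-level feature vector
Y_pos = bag_label([0, 0, 1])     # one positive patch makes the slide positive
Y_neg = bag_label([0, 0, 0])     # no positive patch, so the slide is negative
```

In weakly supervised training only the slide-level label is observed; the patch labels in `bag_label` are shown here purely to illustrate the assumption behind the bag label.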

Model Architectures and Design Innovations

Foundation models in pathology have embraced transformer-based architectures, which have demonstrated remarkable success in both natural language processing and computer vision. The table below compares key architectural characteristics of prominent pathology foundation models:

Table 3: Architecture Comparison of Pathology Foundation Models

| Model | Architecture | Parameters | Base Method | Input Modality | Scale |
|---|---|---|---|---|---|
| UNI | ViT-Large | Not specified | DINOv2 | Histology patches | Large |
| CONCH | ViT-B/16 | 86.3M | iBOT/CoCa | Histology images, Text | Base |
| TITAN | ViT with ALiBi | Not specified | iBOT distillation | Whole-slide, Text | Large |
| CTransPath | Swin-T/14 | 28.3M | MoCov3 | Histology patches | Small |
| PLIP | ViT-B/32 | 87M | CLIP | Pathology, Text | Base |
| Phikon | ViT-S/B/L/16 | 21.7M/85.8M/307M | iBOT | Histology patches | Small/Base/Large |

UNI utilizes a vision transformer (ViT-Large) architecture pretrained using DINOv2 self-supervised learning on the Mass-100K dataset [1] [17]. This approach enables the model to learn powerful, transferable representations without requiring labeled data during pretraining. UNI's design focuses on creating a general-purpose visual encoder that can be applied to various tasks, from region-of-interest classification to whole-slide analysis.

TITAN (Transformer-based pathology Image and Text Alignment Network) introduces several architectural innovations to address the challenges of whole-slide modeling [2]. The model employs a vision transformer that operates on pre-extracted patch features rather than raw pixels, effectively using patch encoders as "patch embedding layers" in a conventional ViT. To handle variable-length WSI sequences, TITAN incorporates Attention with Linear Biases (ALiBi), originally developed for long-context inference in large language models, extended to 2D for preserving spatial relationships in tissue sections [2].
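To illustrate the idea behind a 2D extension of ALiBi, the sketch below builds the additive attention bias for a small grid of patch features: each pair of grid cells is penalized in proportion to their Euclidean distance. The slope schedule follows the geometric sequence of the original ALiBi recipe; whether TITAN uses exactly these slopes is an assumption, and the function names are hypothetical.

```python
import math


def alibi_slopes(num_heads):
    """Head-specific slopes from the original ALiBi recipe: 2^(-8 * (h + 1) / num_heads)."""
    return [2.0 ** (-8.0 * (h + 1) / num_heads) for h in range(num_heads)]


def alibi_2d_bias(rows, cols, slope):
    """Bias matrix B[i][j] = -slope * Euclidean distance between grid cells i and j."""
    coords = [(r, c) for r in range(rows) for c in range(cols)]
    return [[-slope * math.dist(p, q) for q in coords] for p in coords]


slopes = alibi_slopes(8)
bias = alibi_2d_bias(2, 2, slope=slopes[0])  # 4 tokens arranged as a 2 x 2 grid
```

The bias is added to the attention logits before the softmax, so distant tissue regions attend to each other less strongly. Because it depends only on relative distance, the same rule applies to grids larger than those seen during training.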

CONCH represents a multimodal approach that aligns visual and textual representations through contrastive learning [17] [11]. Trained on over 1.17 million histopathology image-text pairs, CONCH demonstrates strong performance on tasks including rare disease identification, tumor segmentation, and cross-modal retrieval. The model's architecture enables natural language interaction, allowing pathologists to search for morphologies of interest using descriptive text [11].

Training Methodologies: Self-Supervised and Multimodal Learning

Self-Supervised Learning Paradigms

Foundation models in pathology predominantly utilize self-supervised learning (SSL) to leverage large-scale unlabeled datasets. SSL generates supervisory signals automatically through pretext tasks, allowing models to learn meaningful representations without manual annotation [16]. Given an input image \( \boldsymbol{x} \), a transformation function \( \mathcal{T}(\cdot) \) generates a modified version \( \tilde{\boldsymbol{x}} = \mathcal{T}(\boldsymbol{x}) \) with corresponding pseudo-label \( \tilde{y} \). The model \( \mathcal{M}_e(\cdot) \) then extracts features and predicts \( \hat{y} = \mathcal{M}_e(\tilde{\boldsymbol{x}}) \), with the learning objective minimizing the difference between \( \hat{y} \) and \( \tilde{y} \).
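As one concrete instance of the transformation and pseudo-label pair described above, the following sketch implements a masking pretext task on a sequence of patch tokens. The transformation hides a random subset of tokens, and the pseudo-label is the hidden content the model would be asked to recover. The function name, the mask ratio, and the string tokens are illustrative assumptions, not any specific model's recipe.

```python
import random


def make_masked_view(tokens, mask_ratio, rng, mask_token=None):
    """Apply T(x): hide a random subset of patch tokens.

    Returns the corrupted sequence x_tilde and the pseudo-label y_tilde,
    a dict mapping each masked position to its original token.
    """
    n_mask = round(mask_ratio * len(tokens))
    masked_idx = sorted(rng.sample(range(len(tokens)), n_mask))
    x_tilde = list(tokens)
    for i in masked_idx:
        x_tilde[i] = mask_token
    y_tilde = {i: tokens[i] for i in masked_idx}
    return x_tilde, y_tilde


tokens = ["a", "b", "c", "d", "e", "f", "g", "h"]  # stand-ins for patch embeddings
x_tilde, y_tilde = make_masked_view(tokens, mask_ratio=0.5, rng=random.Random(0))
```

A model trained on this task predicts the entries of `y_tilde` from `x_tilde`, so the supervisory signal comes entirely from the image itself.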

Different foundation models employ distinct SSL approaches:

UNI utilizes DINOv2, a self-distillation method that learns robust representations by matching feature distributions between different augmented views of the same image [1] [17]. This approach has demonstrated remarkable transferability to downstream tasks without task-specific fine-tuning.

TITAN employs the iBOT framework, which combines masked image modeling with online tokenizer distillation [2]. This approach allows the model to learn both local and global visual contexts by reconstructing masked portions of the input while maintaining consistency between teacher and student networks.

CONCH adapts contrastive language-image pretraining to pathology, building on a CoCa-style contrastive captioning objective in the spirit of CLIP (Contrastive Language-Image Pre-training), and aligns visual and textual representations through contrastive learning [17] [11]. This enables cross-modal retrieval and zero-shot classification capabilities.
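The contrastive alignment used by vision-language models can be illustrated with a minimal symmetric InfoNCE loss over a batch of paired embeddings. This is a generic CLIP-style sketch in plain Python, not the CONCH or TITAN training code. The temperature of 0.07 is an illustrative value, and the embeddings are assumed to be L2-normalized already.

```python
import math


def _cross_entropy(logits, target):
    """Numerically stable -log softmax(logits)[target]."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[target]


def clip_style_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric InfoNCE: pair i is the positive, every other pairing is a negative."""
    n = len(image_emb)
    sim = [[sum(a * b for a, b in zip(u, v)) / temperature for v in text_emb]
           for u in image_emb]
    image_to_text = sum(_cross_entropy(sim[i], i) for i in range(n)) / n
    text_to_image = sum(_cross_entropy([sim[r][i] for r in range(n)], i)
                        for i in range(n)) / n
    return 0.5 * (image_to_text + text_to_image)


embeddings = [[1.0, 0.0], [0.0, 1.0]]
loss_aligned = clip_style_loss(embeddings, embeddings)          # captions match images
loss_swapped = clip_style_loss(embeddings, embeddings[::-1])    # captions are mismatched
```

The loss is small when each image is most similar to its own caption and large when the pairing is scrambled, which is what pulls matching image and text embeddings together in the shared space.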

Multimodal Pretraining Strategies

TITAN introduces a sophisticated three-stage pretraining approach that progressively builds capabilities from visual to multimodal understanding:

Stage 1: Vision-Only Unimodal Pretraining. TITAN first undergoes self-supervised pretraining on region-of-interest (ROI) crops using the iBOT framework [2]. The model learns to encode histomorphological patterns by processing 8,192 × 8,192 pixel regions at 20× magnification, with augmentation by random cropping, flipping, and posterization of the patch features [2].

Stage 2: Cross-Modal Alignment with Synthetic Captions. The vision encoder is aligned with textual descriptions using 423,122 synthetically generated ROI captions created through PathChat [2]. This stage enables fine-grained understanding of morphological patterns and their semantic descriptions.

Stage 3: Cross-Modal Alignment with Pathology Reports. Finally, the model learns slide-level vision-language correspondence using 182,862 pairs of WSIs and clinical reports [2]. This stage bridges whole-slide visual patterns with diagnostic terminology and clinical observations.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Research Reagents for Pathology Foundation Model Development

| Resource | Type | Function | Representative Examples |
|---|---|---|---|
| Pretraining Algorithms | Software | Self-supervised learning methods | DINOv2, iBOT, MoCoV3, CLIP |
| Model Architectures | Software | Neural network backbones | Vision Transformer (ViT), Swin Transformer |
| Whole-Slide Processing | Software | WSI handling and patch extraction | HistomicsML, CLAM, HIPT |
| Evaluation Frameworks | Software | Benchmarking and assessment | Multiple Instance Learning (MIL), Linear Probing |
| Public Datasets | Data | Pretraining and evaluation | TCGA, GTEx, CAMELYON16 |
| Computational Resources | Hardware | Model training and inference | High-memory GPUs, Distributed training systems |

Experimental Evaluation and Performance Benchmarking

Comprehensive Evaluation Frameworks

Foundation models in pathology undergo rigorous evaluation across diverse tasks to assess their generalizability and clinical utility. The experimental protocols typically encompass multiple machine learning settings:

- Linear Probing: Frozen features are used to train linear classifiers for specific tasks, testing feature quality without fine-tuning [2] [1]

- Few-Shot Learning: Models are adapted with very limited labeled examples (e.g., 1-10 samples per class) [1]

- Zero-Shot Evaluation: Models perform tasks without any task-specific training, particularly for multimodal models [2]

- Weakly Supervised Learning: Slide-level labels are used without patch-level annotations via multiple instance learning [1] [16]
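For the few-shot setting listed above, a simple baseline on frozen features is a nearest-prototype classifier: average the handful of labeled support features per class, then assign each query to the closest class mean. The sketch below is a generic illustration with made-up 2-dimensional features and class names; it is not presented as the exact protocol of any cited study.

```python
import math
from collections import defaultdict


def fit_prototypes(features, labels):
    """Average the support features of each class into one prototype vector."""
    grouped = defaultdict(list)
    for feature, label in zip(features, labels):
        grouped[label].append(feature)
    return {label: [sum(col) / len(fs) for col in zip(*fs)]
            for label, fs in grouped.items()}


def predict(prototypes, feature):
    """Assign the class whose prototype is nearest in Euclidean distance."""
    return min(prototypes, key=lambda label: math.dist(prototypes[label], feature))


# Two labeled examples per class (a "2-shot" setting) with toy frozen features.
support = [[0.0, 0.0], [0.0, 2.0], [4.0, 4.0], [6.0, 4.0]]
labels = ["benign", "benign", "tumor", "tumor"]
prototypes = fit_prototypes(support, labels)
```

Because the encoder stays frozen and no gradient steps are needed, the quality of this classifier depends almost entirely on how well the pretrained features separate the classes.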

UNI was evaluated on 34 distinct clinical tasks spanning various difficulty levels and clinical scenarios [1]. These included nuclear segmentation, primary and metastatic cancer detection, cancer grading and subtyping, biomarker screening, molecular subtyping, organ transplant assessment, and large-scale pan-cancer classification with up to 108 cancer types in the OncoTree system [1].

TITAN was assessed across diverse clinical tasks including cancer subtyping, biomarker prediction, outcome prognosis, rare cancer retrieval, cross-modal retrieval, and pathology report generation [2]. The model's performance was measured in both resource-rich and resource-limited scenarios to test its robustness in practical clinical settings.

Performance Comparison and Scaling Laws

Experimental results demonstrate the superior performance of foundation models compared to previous approaches. The table below summarizes key performance comparisons:

Table 5: Performance Comparison of Pathology Foundation Models

| Model | Evaluation Tasks | Key Results | Comparative Advantage |
|---|---|---|---|
| UNI | 34 tasks including OT-43 and OT-108 cancer classification | Outperformed CTransPath and REMEDIS by wide margin; +3.5-4.2% improvement with data scaling | Demonstrates scaling laws; effective in few-shot settings |
| TITAN | Cancer prognosis, rare disease retrieval, report generation | Outperforms ROI and slide foundation models in zero-shot and few-shot settings | Strong multimodal capabilities; effective cross-modal retrieval |
| CONCH | 14 tasks including rare disease identification, segmentation | State-of-the-art in zero-shot learning and cross-modal retrieval | Excellent vision-language alignment |

UNI demonstrates clear scaling laws, with performance improvements of +3.5% to +4.2% when increasing pretraining data from Mass-1K to Mass-100K [1]. This scaling behavior aligns with observations in natural image foundation models and underscores the importance of dataset size in developing capable pathology models.

TITAN shows particular strength in low-data regimes, outperforming both region-of-interest and slide-level foundation models across machine learning settings including linear probing, few-shot, and zero-shot classification [2]. The model also demonstrates impressive capabilities in rare cancer retrieval, successfully identifying matching cases even for uncommon cancer types with limited training examples.

The paradigm shift from task-specific models to general-purpose foundation models represents a transformative development in computational pathology. The creation of massive datasets like Mass-100K and Mass-340K has enabled the training of models with unprecedented versatility and clinical applicability. These foundation models, including UNI, TITAN, and CONCH, demonstrate strong performance across diverse tasks while reducing the need for extensive labeled data through zero-shot and few-shot learning capabilities.

Looking forward, several research directions promise to further advance the field. Federated learning approaches may enable training on even larger datasets while preserving patient privacy [16]. Multimodal integration beyond vision and text—including genomic, proteomic, and clinical data—could create more comprehensive patient representations [2]. Efficient adaptation methods like prompt tuning and adapter layers may make foundation models more accessible for clinical deployment [16]. Finally, rigorous clinical validation through prospective trials remains essential to translate these technical advances into improved patient care.

The emergence of pathology foundation models marks a significant milestone in the integration of artificial intelligence into diagnostic medicine. By capturing the complex morphological patterns present in human tissues across health and disease states, these models have the potential to augment pathological diagnosis, enhance diagnostic accuracy, and ultimately improve patient outcomes across a broad spectrum of medical conditions.

From Data to Diagnosis: Methodologies and Real-World Applications of Models Trained on Mass-Scale Datasets

The development of powerful foundation models in computational pathology has been constrained by the limited scale and diversity of available histopathology data. To address this challenge, researchers have introduced large-scale datasets such as Mass-100K and Mass-340K, which serve as critical resources for pretraining general-purpose models. These datasets enable the application of advanced self-supervised learning (SSL) methodologies like DINOv2 and vision-language alignment, moving beyond the limitations of previous approaches that relied predominantly on public datasets like The Cancer Genome Atlas (TCGA) [1] [18].

Mass-100K represents a pivotal scaling effort in histopathology pretraining, comprising over 100 million images from more than 100,000 diagnostic H&E-stained whole slide images (WSIs) across 20 major tissue types [1]. This dataset forms the foundation for UNI, a general-purpose self-supervised model that demonstrates remarkable transfer learning capabilities across diverse clinical tasks. Building upon this effort, Mass-340K scales up to 335,645 WSIs and enables the development of TITAN (Transformer-based pathology Image and Text Alignment Network), a multimodal whole-slide foundation model that combines visual self-supervised learning with vision-language alignment against corresponding pathology reports and synthetic captions [2]. These datasets provide the extensive and diverse pretraining data necessary for developing pathology foundation models that can generalize across a wide spectrum of diagnostic scenarios, including rare diseases and complex clinical conditions.

Table 1: Core Dataset Specifications for Pathology Foundation Model Pretraining

| Dataset | Whole Slide Images (WSIs) | Image Patches/ROIs | Tissue Types | Key Characteristics | Primary Models |
|---|---|---|---|---|---|
| Mass-100K | 100,402+ H&E WSIs [18] | 100,130,900 images (75.8M @256×256, 24.3M @512×512) [18] | 20 major tissue types [1] | Sourced from MGH, BWH, and GTEx; excludes public benchmarks to prevent data contamination [18] | UNI [1] |
| Mass-340K | 335,645 WSIs [2] | Not explicitly stated | 20 organ types [2] | Includes 182,862 medical reports and 423,122 synthetic captions; diverse stains and scanner types [2] | TITAN, TITAN_V (vision-only variant) [2] |

Core Technical Methodologies

Self-Supervised Learning with DINOv2 Framework

The DINOv2 framework, which builds on DINO (self-DIstillation with NO labels), represents a breakthrough in self-supervised learning for computer vision, enabling the pretraining of models without extensive labeled datasets [19] [20]. This approach is particularly valuable in computational pathology, where expert annotations are scarce and costly to obtain. DINOv2 relies on self-distillation: a "student" network is trained to match the output of a "teacher" network that shares its architecture and whose weights are an exponential moving average of the student's, so that knowledge is transferred between augmented views of the same image without manual labels [19].
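A minimal sketch of the image-level self-distillation objective may help. In DINO-style training the teacher typically shares the student's architecture and its weights track an exponential moving average of the student's; the student is trained to match the teacher's centered and sharpened output distribution on a different view of the same image. The temperatures and momentum below are illustrative defaults in the style of the original DINO recipe, not UNI's actual configuration.

```python
import math


def softmax(logits, temperature):
    """Temperature-scaled softmax, computed stably."""
    scaled = [v / temperature for v in logits]
    m = max(scaled)
    exps = [math.exp(v - m) for v in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, center,
                      t_student=0.1, t_teacher=0.04):
    """Cross-entropy H(p_teacher, p_student) for one pair of views.

    The teacher output is centered and sharpened with a low temperature;
    in a real training loop no gradient flows through the teacher.
    """
    p_teacher = softmax([v - c for v, c in zip(teacher_logits, center)], t_teacher)
    p_student = softmax(student_logits, t_student)
    return -sum(pt * math.log(ps) for pt, ps in zip(p_teacher, p_student))


def ema_update(teacher_weights, student_weights, momentum=0.996):
    """Move each teacher weight a small step toward the corresponding student weight."""
    return [momentum * t + (1.0 - momentum) * s
            for t, s in zip(teacher_weights, student_weights)]


center = [0.0, 0.0]
loss_agree = distillation_loss([2.0, 0.0], [2.0, 0.0], center)
loss_disagree = distillation_loss([0.0, 2.0], [2.0, 0.0], center)
new_teacher = ema_update([1.0], [0.0], momentum=0.9)
```

The loss is near zero when student and teacher agree on the dominant output dimension and grows when they disagree, while the slow EMA update keeps the teacher a stable target.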

The technical implementation of DINOv2 incorporates several key components that contribute to its effectiveness. The framework utilizes an image-level objective through self-distillation with multi-crop strategies, where different augmented views of the same image are processed by both teacher and student networks [19] [18]. Additionally, it employs a patch-level objective through masked image modeling, randomly masking portions of the input patches during training [19]. The approach also includes KoLeo regularization on [CLS] tokens to prevent dimensional collapse and encourage uniform distribution of features in the embedding space [18]. For model scaling, DINOv2 uses a functional distillation pipeline that compresses large models into smaller variants with minimal performance loss, enabling efficient inference [19].
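The KoLeo regularizer mentioned above can be sketched in a few lines. Following the usual Kozachenko-Leonenko formulation, it penalizes batches whose L2-normalized embeddings sit close to their nearest neighbor, which encourages features to spread out. This is a plain-Python illustration with toy 2-dimensional embeddings; the `eps` constant is an assumption added for numerical safety.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def koleo_loss(embeddings, eps=1e-8):
    """-(1/n) * sum_i log(distance from x_i to its nearest neighbor in the batch)."""
    x = [l2_normalize(v) for v in embeddings]
    total = 0.0
    for i, xi in enumerate(x):
        nearest = min(math.dist(xi, xj) for j, xj in enumerate(x) if j != i)
        total += math.log(nearest + eps)
    return -total / len(x)


spread = koleo_loss([[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]])
clumped = koleo_loss([[1.0, 0.0], [1.0, 0.01], [1.0, -0.01], [1.0, 0.02]])
```

Evenly spread embeddings give a lower loss than tightly clustered ones, so minimizing this term pushes the batch toward a more uniform use of the embedding space.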

In the context of pathology foundation models, UNI adapts the DINOv2 framework specifically for histopathology data by training on the Mass-100K dataset. The implementation utilizes a Vision Transformer Large (ViT-L/16) architecture with patch size of 16, embedding dimension of 1024, 16 attention heads, and MLP feed-forward networks, totaling approximately 300 million parameters [18]. The training regimen employs fp16 mixed precision using PyTorch-FSDP for 125,000 iterations with a substantial batch size of 3072, requiring approximately 1024 GPU hours on Nvidia A100 hardware [18].
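The figures quoted above can be sanity-checked with some back-of-the-envelope arithmetic. The embedding dimension, head count, iteration count, and batch size come from the text. The depth of 24 blocks and the MLP expansion ratio of 4 are assumptions based on the standard ViT-L configuration, and the count ignores biases, layer norms, and embedding layers.

```python
embed_dim = 1024      # from the text
num_heads = 16        # from the text
depth = 24            # assumption: standard ViT-L depth
mlp_ratio = 4         # assumption: standard ViT MLP expansion

head_dim = embed_dim // num_heads                        # width of each attention head
attn_weights = 4 * embed_dim * embed_dim                 # Q, K, V and output projections
mlp_weights = 2 * embed_dim * (mlp_ratio * embed_dim)    # two linear layers per block
block_weights = depth * (attn_weights + mlp_weights)     # weight matrices only

iterations = 125_000
batch_size = 3072
images_seen = iterations * batch_size                    # samples processed in pretraining

dataset_images = 100_000_000                             # "over 100 million" images
approx_passes = images_seen / dataset_images             # rough number of passes over the data
```

Under these assumptions the transformer blocks alone account for about 302 million weights, consistent with the "approximately 300 million parameters" quoted above, and the schedule corresponds to roughly 3.8 passes over a 100-million-image corpus.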

Diagram 1: DINOv2 Training Workflow for Pathology

Vision-Language Alignment in Histopathology

Vision-language alignment represents a sophisticated multimodal learning approach that connects histopathological visual patterns with clinical and morphological descriptions. This methodology addresses a significant limitation in vision-only models by incorporating rich supervisory signals found in pathology reports, enabling capabilities such as zero-shot visual-language understanding and cross-modal retrieval [2].
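Zero-shot classification in a shared embedding space reduces to a similarity lookup: embed one text prompt per class, embed the image, and pick the class whose prompt is most similar. The sketch below uses hypothetical 3-dimensional embeddings in place of real encoder outputs, and the class names are only examples.

```python
import math


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def zero_shot_classify(image_emb, prompt_embs):
    """Return the best-matching class name and the per-class similarity scores."""
    scores = {name: cosine(image_emb, emb) for name, emb in prompt_embs.items()}
    return max(scores, key=scores.get), scores


# Hypothetical embeddings standing in for text-encoder outputs of class prompts.
prompts = {
    "adenocarcinoma": [0.9, 0.1, 0.0],
    "squamous cell carcinoma": [0.1, 0.9, 0.0],
}
label, scores = zero_shot_classify([0.8, 0.3, 0.1], prompts)
```

Cross-modal retrieval uses the same similarity scores, ranking a database of images (or reports) against a query from the other modality instead of taking a single best class.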

The TITAN model implements vision-language alignment through a structured three-stage pretraining strategy. Stage 1 involves vision-only unimodal pretraining on Mass-340K using region-of-interest (ROI) crops, building foundational visual representations [2]. Stage 2 performs cross-modal alignment of generated morphological descriptions at the ROI-level, utilizing 423,122 pairs of high-resolution ROIs (8,192×8,192 pixels) and synthetic captions generated from PathChat, a multimodal generative AI copilot for pathology [2]. Stage 3 conducts cross-modal alignment at the whole-slide level with 182,862 pairs of WSIs and clinical reports, enabling slide-level multimodal understanding [2].

This multimodal approach requires specialized architectures to handle the unique challenges of gigapixel WSIs. TITAN employs a Vision Transformer architecture that processes sequences of patch features encoded by powerful histology patch encoders rather than raw pixels [2]. To manage computational complexity from long input sequences, the model uses attention with linear bias (ALiBi) for long-context extrapolation, where the linear bias is based on the relative Euclidean distance between features in the feature grid [2]. The model creates multiple views of a WSI by randomly cropping 2D feature grids and sampling both global (14×14) and local (6×6) crops for iBOT pretraining, with additional feature augmentation through vertical/horizontal flipping and posterization [2].
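The multi-view sampling described above can be sketched on a toy feature grid. The 14×14 global and 6×6 local crop sizes come from the text. The 16×16 grid size, the counts of two global and ten local crops, and the 50% flip probability are illustrative assumptions, and posterization is omitted for brevity.

```python
import random


def random_crop(grid, size, rng):
    """Cut a size x size window out of a 2D grid of patch features."""
    rows, cols = len(grid), len(grid[0])
    top = rng.randrange(rows - size + 1)
    left = rng.randrange(cols - size + 1)
    return [row[left:left + size] for row in grid[top:top + size]]


def random_flip(crop, rng):
    """Flip the crop horizontally and/or vertically, each with probability 0.5."""
    if rng.random() < 0.5:
        crop = [row[::-1] for row in crop]
    if rng.random() < 0.5:
        crop = crop[::-1]
    return crop


def sample_views(grid, rng, n_global=2, n_local=10):
    """Return lists of global (14 x 14) and local (6 x 6) views of one feature grid."""
    global_views = [random_flip(random_crop(grid, 14, rng), rng) for _ in range(n_global)]
    local_views = [random_flip(random_crop(grid, 6, rng), rng) for _ in range(n_local)]
    return global_views, local_views


# Each cell stands in for one patch feature; here it just records its grid position.
grid = [[(r, c) for c in range(16)] for r in range(16)]
global_views, local_views = sample_views(grid, random.Random(0))
```

Working on grids of pre-extracted features rather than pixels is what keeps this kind of multi-crop training tractable for regions that span thousands of pixels per side.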

Diagram 2: Vision-Language Alignment Architecture

Experimental Protocols and Evaluation Metrics

Benchmarking Frameworks and Tasks

The evaluation of pathology foundation models pretrained on Mass-100K and Mass-340K datasets involves comprehensive benchmarking across diverse clinical tasks to assess their generalization capabilities. For UNI, researchers conducted extensive evaluations across 34 representative computational pathology tasks of varying diagnostic difficulty [1]. These tasks include ROI-level classification for basic tissue characterization, nuclear segmentation for cellular-level analysis, primary and metastatic cancer detection for diagnostic applications, cancer grading and subtyping for prognostic assessment, biomarker screening and molecular subtyping for predictive purposes, and organ transplant assessment for specialized clinical scenarios [1].

A particularly rigorous evaluation involves large-scale, hierarchical cancer classification based on the OncoTree cancer classification system. This benchmark includes two tasks that vary in diagnostic difficulty: OT-43 (43-class OncoTree cancer type classification) and OT-108 (108-class OncoTree code classification) [1]. Notably, 90 out of the 108 cancer types are designated as rare cancers, providing a challenging test for model generalization on underrepresented conditions [1].
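Many-class benchmarks such as OT-43 and OT-108 are commonly scored with top-k accuracy. The sketch below shows the computation on toy scores for three samples and three classes, and also works out the share of rare classes quoted above; the score values are made up for illustration.

```python
def top_k_accuracy(scores, labels, k=1):
    """Fraction of samples whose true class is among the k highest-scoring classes."""
    hits = 0
    for row, true_class in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda c: row[c], reverse=True)
        hits += true_class in ranked[:k]
    return hits / len(labels)


scores = [[0.7, 0.2, 0.1],   # predicted class 0, true class 0
          [0.1, 0.3, 0.6],   # predicted class 2, true class 1
          [0.4, 0.5, 0.1]]   # predicted class 1, true class 0
labels = [0, 1, 0]

top1 = top_k_accuracy(scores, labels, k=1)
top2 = top_k_accuracy(scores, labels, k=2)
rare_share = 90 / 108        # share of OT-108 classes designated as rare
```

With 90 of 108 classes (about 83%) designated as rare, overall accuracy on OT-108 is driven largely by how well a model handles sparsely represented cancer types.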

For TITAN, evaluation encompasses diverse clinical tasks including linear probing for transfer learning assessment, few-shot and zero-shot classification for data-efficient learning scenarios, rare cancer retrieval for specialized diagnostic applications, cross-modal retrieval for vision-language integration, and pathology report generation for generative capabilities [2].

Table 2: Performance Evaluation of Pathology Foundation Models on Key Benchmarks

| Model | Pretraining Data | OncoTree-43 (Top-1 Accuracy) | OncoTree-108 (Top-1 Accuracy) | Zero-Shot Classification | Cross-Modal Retrieval |
|---|---|---|---|---|---|
| UNI | Mass-100K (100K+ WSIs) [1] | Significant improvements over previous SOTA (exact metrics not specified in sources) [1] | +3.5-4.2% performance increase with data scaling [1] | Not primary focus | Not primary focus |
| TITAN | Mass-340K (335K+ WSIs) [2] | Outperforms both ROI and slide foundation models [2] | Superior performance in rare cancer retrieval [2] | Enabled via vision-language alignment [2] | Enabled via shared embedding space [2] |
| CTransPath | TCGA + PAIP [21] | Lower performance compared to UNI [1] | Lower performance compared to UNI [1] | Not supported | Not supported |

Adaptation Strategies and Data Efficiency

A critical aspect of foundation model evaluation involves assessing their adaptability to various downstream tasks under different data constraints. Recent benchmarking studies have examined four pathology-specific foundation models (CTransPath, Lunit, Phikon, and UNI) across 14 datasets through two primary scenarios: consistency assessment and flexibility assessment [21].

In the consistency assessment scenario, which evaluates how well foundation models adapt to different datasets within the same task, researchers found that parameter-efficient fine-tuning (PEFT) approaches were both efficient and effective for adapting pathology-specific foundation models to diverse datasets [21]. In the flexibility assessment scenario under data-limited environments, foundation models benefited more from few-shot learning methods that involve modification only during the testing phase rather than during training [21].

These findings highlight the practical utility of models like UNI and TITAN in real-world clinical settings, where labeled data may be scarce for specific tasks or rare conditions. The ability to perform well in few-shot and zero-shot settings is particularly valuable for clinical applications involving rare diseases or novel biomarkers where large annotated datasets are unavailable [2] [21].

Implementation and Practical Applications

The Scientist's Toolkit: Research Reagent Solutions

Implementing SSL with DINOv2 and vision-language alignment for pathology foundation models requires specific computational tools and frameworks. The following table summarizes essential "research reagents" for this domain.

Table 3: Essential Research Reagents for Pathology Foundation Model Development

| Tool/Resource | Type | Function | Example Usage |
|---|---|---|---|
| DINOv2 Framework | Software Library | Self-supervised learning with knowledge distillation | Pretraining visual encoders on unlabeled histopathology images [22] [20] |
| UNI Model Weights | Pretrained Model | Feature extraction from histopathology images | Downloadable via Hugging Face for research use [18] |
| Timm Library | Software Library | Vision model architecture and training utilities | Loading UNI model architecture and transforms [18] |
| PyTorch-FSDP | Training Framework | Fully Sharded Data Parallel for distributed training | Efficient mixed-precision training of large models [18] |
| ViT-L/16 Architecture | Model Architecture | Vision Transformer with large configuration | Backbone network for UNI and related models [18] |
| Mass-100K/Mass-340K | Pretraining Dataset | Large-scale histopathology image collections | Training data for foundation models (access restricted) [2] [1] |
| PathChat | Generative AI Tool | Synthetic caption generation for pathology images | Creating fine-grained ROI captions for vision-language alignment [2] |

Code Implementation and Feature Extraction

For researchers seeking to utilize existing pathology foundation models, UNI provides accessible implementation pathways through the Hugging Face ecosystem. The model can be loaded using the timm library after proper authentication:
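A minimal loading sketch follows. It assumes that `timm`, `torch`, and `huggingface_hub` are installed, that access to the gated weights has been granted, and that you have already authenticated (for example with `huggingface_hub.login()`). The repository identifier and the extra keyword arguments are assumptions based on commonly shown usage and should be checked against the official model card.

```python
# Assumed repository name and constructor arguments; verify both on the model card.
UNI_HUB_ID = "hf-hub:MahmoodLab/UNI"
UNI_KWARGS = {"init_values": 1e-5, "dynamic_img_size": True}


def load_uni(hub_id=UNI_HUB_ID):
    """Return (model, transform) for a timm checkpoint hosted on the Hugging Face Hub.

    Imports are done lazily so this module can be inspected without timm installed.
    """
    import timm
    from timm.data import resolve_data_config
    from timm.data.transforms_factory import create_transform

    model = timm.create_model(hub_id, pretrained=True, **UNI_KWARGS)
    # Build the preprocessing pipeline (resize, normalization) from the checkpoint config.
    transform = create_transform(**resolve_data_config(model.pretrained_cfg, model=model))
    model.eval()  # inference mode for layers such as dropout
    return model, transform
```

Calling `load_uni()` downloads the weights on first use, so it needs network access and valid credentials.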

Feature extraction from histopathology regions of interest (ROIs) follows a straightforward process:
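The sketch below shows one way this could look, assuming a `model` and `transform` obtained from a timm-style loader and a list of PIL images of ROIs. The helper names are hypothetical, everything runs on the CPU for simplicity, and the feature dimension depends on the encoder (1024 for a ViT-L backbone).

```python
def batches(items, size):
    """Yield consecutive chunks of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def extract_features(model, transform, images, batch_size=32):
    """Embed PIL images with a frozen encoder.

    Returns a tensor of shape (len(images), feature_dim). PyTorch is imported
    lazily, so the function requires it only when called.
    """
    import torch

    outputs = []
    with torch.inference_mode():  # no gradients are needed for feature extraction
        for chunk in batches(images, batch_size):
            x = torch.stack([transform(image) for image in chunk])
            outputs.append(model(x).cpu())
    return torch.cat(outputs)


chunks = list(batches(["roi_1", "roi_2", "roi_3", "roi_4", "roi_5"], 2))
```

For GPU inference, both the model and each batch `x` would need to be moved to the same device before the forward pass.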

These pre-extracted features can then be utilized for various downstream tasks including ROI classification (via linear probing or k-nearest neighbors), slide classification (using multiple instance learning frameworks), and content-based image retrieval [18].

The development of pathology foundation models using SSL with DINOv2 and vision-language alignment on datasets like Mass-100K and Mass-340K represents a transformative advancement in computational pathology. These approaches enable the creation of general-purpose visual representations that transfer effectively across diverse clinical tasks, particularly in challenging low-data regimes and for rare disease conditions.

The integration of vision-language capabilities through models like TITAN opens new possibilities for AI-assisted pathology, including cross-modal retrieval, automated report generation, and zero-shot diagnostic inference. As these methodologies continue to evolve, we anticipate further scaling of pretraining data, refinement of multimodal alignment techniques, and expanded clinical validation across diverse healthcare settings.

Future research directions likely include the incorporation of additional modalities such as genomic data, development of more efficient adaptation techniques for clinical deployment, and creation of standardized benchmarking frameworks to ensure rigorous evaluation of model capabilities and limitations. The ongoing release of foundation models like UNI and TITAN to the research community promises to accelerate innovation in AI-driven histopathology and potentially transform diagnostic workflows in clinical practice.

The field of computational pathology is on the verge of a major shift driven by artificial intelligence and digital transformation. Traditional pathology practice has relied on manual microscopic examination of tissue specimens, a process that is both time-consuming and subject to inter-observer variability [23]. The advent of whole-slide scanners in the 1990s enabled the creation of high-resolution digital images of entire specimens, paving the way for quantitative analysis of histopathological images using computational methods [23]. However, developing a specialized AI model for each diagnostic task proved impractical because of the annotation burden it places on pathologists, whose expertise is both costly and limited in availability [23].

Foundation models represent a paradigm shift in medical artificial intelligence: a single model can be adapted to many clinically relevant downstream tasks without task-specific training from scratch [11]. These models are trained on broad data using self-supervision at scale [23]. In histopathology, where a single whole-slide image (WSI) can reach roughly 100,000 × 100,000 pixels and therefore carries an immense amount of biological information, the application of foundation models is particularly promising [24]. Vision Transformers (ViTs) have been instrumental in this transformation, because their attention-based architecture can capture both local and global tissue context once a gigapixel WSI is broken into patches [2] [1].

This technical guide explores the architectural backbones of ViTs for whole-slide image analysis, framed within the context of the Mass-100K and Mass-340K datasets developed by Mass General Brigham researchers. These datasets represent two of the largest collections of histopathology data created for self-supervised learning in computational pathology and have served as the foundation for pioneering models like UNI and TITAN that are pushing the boundaries of what's possible in diagnostic medicine [2] [1] [11].

The Foundation: Mass-100K and Mass-340K Datasets

Dataset Composition and Scaling Laws

The Mass-100K and Mass-340K datasets represent monumental achievements in data collection for computational pathology research. The Mass-100K dataset consists of more than 100 million tissue patches from 100,426 diagnostic H&E-stained whole-slide images across 20 major tissue types collected from Massachusetts General Hospital (MGH) and Brigham and Women's Hospital (BWH), as well as the Genotype-Tissue Expression (GTEx) consortium [1]. This dataset provides a rich source of information for learning objective characterizations of histopathologic biomarkers and has been instrumental in establishing scaling laws for foundation models in computational pathology [1].

The Mass-340K dataset is a substantially larger expansion, comprising 335,645 whole-slide images, 182,862 corresponding pathology reports, and 423,122 synthetic captions generated by a multimodal generative AI copilot for pathology [2]. The dataset spans 20 organs, different stains, diverse tissue types, and various scanner types, and this diversity has proven to be a key factor in successful model development [2]. The collection addresses a critical challenge in computational pathology: limited clinical data in disease-specific cohorts, especially for rare clinical conditions [2].

Table 1: Composition of Mass-100K and Mass-340K Datasets

| Dataset Metric | Mass-100K | Mass-340K |
|---|---|---|
| Total Whole-Slide Images | 100,426 WSIs | 335,645 WSIs |
| Tissue Patches/Images | >100 million | >100 million (estimated) |
| Organ Types | 20 | 20 |
| Additional Data | - | 182,862 medical reports; 423,122 synthetic captions |
| Primary Use Cases | UNI foundation model | TITAN multimodal foundation model |
| Data Sources | MGH, BWH, GTEx | MGH, BWH, and other Mass General Brigham sources |

Research has demonstrated clear scaling behavior for foundation models in computational pathology. On the challenging 43-class OncoTree cancer type classification task, UNI's performance increased by 4.2% when the pretraining set grew from Mass-1K (1 million images, 1,404 WSIs) to Mass-22K (16 million images, 21,444 WSIs), and by a further 3.7% when it grew from Mass-22K to Mass-100K [1]. Similar improvements were observed on the more complex 108-class OncoTree code classification task, indicating that increasing dataset size and diversity improves model performance on diagnostically relevant tasks [1].

Dataset Curation and Ethical Considerations

The curation of these massive datasets followed rigorous ethical standards. All experiments were conducted in accordance with the Declaration of Helsinki, the International Ethical Guidelines for Biomedical Research Involving Human Subjects (CIOMS), the Belmont Report and the U.S. Common Rule [25]. Anonymized archival tissue samples were retrieved from tissue banks in accordance with regulations and with approval from relevant ethics committees, with informed consent obtained from all patients as part of tissue bank protocols [25].

The datasets were designed to include diverse tissue types beyond just cancerous specimens, incorporating inflammatory, infectious, and normal tissue to enhance model generalizability [18]. This diversity is crucial for developing models that can operate effectively in real-world clinical settings where the range of specimens encompasses the full spectrum of pathological conditions.

ViT Architectures for Whole-Slide Image Analysis

Hierarchical Feature Extraction Approaches

Vision Transformers have emerged as the dominant architectural backbone for whole-slide image analysis in computational pathology due to their ability to capture long-range dependencies and multi-scale features. The fundamental challenge in applying ViTs to WSIs lies in the gigapixel resolution of the images, which makes direct processing computationally infeasible. To address this, researchers have developed hierarchical approaches that extract features at multiple levels.
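A short calculation makes the infeasibility concrete. The sketch below counts the tokens a ViT with 16×16 patches would see for a 224×224 tile versus a 100,000 × 100,000 pixel slide, using the figures quoted in this guide; the function name `patch_tokens` is illustrative.

```python
def patch_tokens(height_px, width_px, patch_px=16):
    """Number of non-overlapping patch tokens (excluding any [CLS] token)."""
    return (height_px // patch_px) * (width_px // patch_px)


tile_tokens = patch_tokens(224, 224)          # a standard ViT input tile
wsi_tokens = patch_tokens(100_000, 100_000)   # a full-resolution slide

# Full self-attention compares every token with every other token.
tile_attention_entries = tile_tokens ** 2
wsi_attention_entries = wsi_tokens ** 2
```

A tile yields 196 tokens, while the full slide would yield about 39 million, and the pairwise attention matrix grows with the square of that number. This is why hierarchical designs encode small patches first and reason over the resulting features afterwards.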

The UNI model employs a Vision Transformer (ViT-Large) architecture pretrained using the DINOv2 self-supervised learning framework on the Mass-100K dataset [1] [18]. The model processes individual tissue patches at 20× magnification, typically sized 256×256 or 512×512 pixels, and learns representations through a combination of DINO self-distillation loss with multi-crop, iBOT masked-image modeling loss, and KoLeo regularization on [CLS] tokens [18]. This approach enables the model to learn powerful, transferable representations without requiring labeled data during pretraining.

Table 2: Vision Transformer Architectures for Whole-Slide Image Analysis

| Architectural Component | UNI Model | TITAN Model |
|---|---|---|
| Base Architecture | ViT-L/16 (ViT-Large) | Vision Transformer (ViT) |
| Patch Size | 16×16 | Processes 512×512 patches at 20× |
| Input Resolution | 224×224 for patches | 8,192×8,192 region crops |
| Embedding Dimension | 1024 | 768 (from CONCH v1.5 patch encoder) |
| Attention Heads | 16 | Variable |
| Parameters | 0.3B (300 million) | Not specified |
| Pretraining Framework | DINOv2 | iBOT knowledge distillation + multimodal alignment |

The TITAN model introduces a more sophisticated approach specifically designed for whole-slide analysis. Instead of using tokens from partitioned image patches directly, the slide encoder takes a sequence of patch features encoded by powerful histology patch encoders like CONCH v1.5 [2] [26]. This means TITAN's pretraining occurs in the embedding space based on pre-extracted patch features, with the patch encoder functioning as the 'patch embedding layer' in a conventional ViT [2]. To preserve spatial context, patch features are arranged in a two-dimensional feature grid replicating the positions of corresponding patches within the tissue [2].

Handling Gigapixel Images and Long-Range Dependencies

A significant innovation in TITAN is its approach to handling the computational complexity of gigapixel whole-slide images. The model constructs its input embedding space by dividing each WSI into non-overlapping patches of 512×512 pixels at 20× magnification and then extracting a 768-dimensional feature for each patch with CONCH v1.5 [2]. To handle large and irregularly shaped WSIs, TITAN creates views by randomly cropping the 2D feature grid, sampling region crops of 16×16 features that each cover a region of 8,192×8,192 pixels (16 × 512 = 8,192) [2].
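The grid construction and region cropping can be sketched in a few lines. The example below is a simplified illustration, not TITAN's implementation: it assumes every patch position contains tissue, uses placeholder two-dimensional features instead of 768-dimensional CONCH v1.5 features, and the helper names are hypothetical.

```python
import random

PATCH_PX = 512       # patch side in pixels at 20x (from the text)
CROP_CELLS = 16      # region-crop side in feature-grid cells (from the text)
REGION_PX = PATCH_PX * CROP_CELLS  # pixels covered per side of a region crop


def build_feature_grid(coords_px, features):
    """Place each patch feature at (row, col) derived from its top-left pixel."""
    return {
        (y // PATCH_PX, x // PATCH_PX): feat
        for (x, y), feat in zip(coords_px, features)
    }


def random_region_crop(grid, n_rows, n_cols, rng):
    """Cut a CROP_CELLS x CROP_CELLS window from the grid, re-indexed from (0, 0)."""
    top = rng.randrange(n_rows - CROP_CELLS + 1)
    left = rng.randrange(n_cols - CROP_CELLS + 1)
    return {
        (r - top, c - left): feat
        for (r, c), feat in grid.items()
        if top <= r < top + CROP_CELLS and left <= c < left + CROP_CELLS
    }


# Toy slide: 20 x 24 patches, each with a placeholder 2-d "feature".
n_rows, n_cols = 20, 24
coords = [(c * PATCH_PX, r * PATCH_PX) for r in range(n_rows) for c in range(n_cols)]
feats = [[float(r), float(c)] for r in range(n_rows) for c in range(n_cols)]
grid = build_feature_grid(coords, feats)
crop = random_region_crop(grid, n_rows, n_cols, random.Random(0))
```

Storing the grid as a dictionary keyed by position keeps background cells implicit, which is convenient for irregular tissue shapes; a dense array with a mask would serve equally well.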

From these region crops, TITAN samples two random global (14×14) and ten local (6×6) crops for iBOT pretraining, applying vertical and horizontal flipping followed by posterization feature augmentation [2]. TITAN also uses attention with linear bias (ALiBi) for long-context extrapolation at inference time. ALiBi was originally proposed for large language models; TITAN extends it to 2D by basing the linear bias on the relative Euclidean distance between features in the feature grid [2]. The bias therefore reflects the actual distances between patches in the tissue, which supports more effective modeling of long-range dependencies in whole-slide images.
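A minimal sketch of a 2D ALiBi bias is shown below. The distance-based penalty follows the description above; the geometric slope schedule is the one from the original ALiBi paper for power-of-two head counts, and applying that same schedule here is an assumption, not something confirmed for TITAN.

```python
import math


def alibi_slopes(num_heads):
    """Per-head slopes from the original ALiBi schedule (power-of-two head counts)."""
    start = 2 ** (-8 / num_heads)
    return [start ** (h + 1) for h in range(num_heads)]


def alibi_2d_bias(grid_rows, grid_cols, slope):
    """Additive attention bias of shape (N, N), with N = grid_rows * grid_cols.

    Entry [i][j] is -slope times the Euclidean distance between grid cells i and j,
    so attention between distant patches is penalized more strongly.
    """
    positions = [(r, c) for r in range(grid_rows) for c in range(grid_cols)]
    return [[-slope * math.dist(p, q) for q in positions] for p in positions]


slopes = alibi_slopes(8)
bias = alibi_2d_bias(3, 3, slopes[0])
```

The bias is added to the attention logits before the softmax. Because it depends only on relative distance and not on learned position embeddings, the same function can be evaluated on a larger grid at inference time than was seen during pretraining.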

Experimental Protocols and Methodologies

Pretraining Strategies and Self-Supervised Learning

The development of foundation models for computational pathology relies heavily on self-supervised learning techniques that leverage unlabeled data. The UNI model employs the DINOv2 self-supervised learning framework, which has been shown to yield strong, off-the-shelf representations for downstream tasks without the need for further fine-tuning with labeled data [1]. The training regimen consists of 125,000 iterations with a batch size of 3072, using fp16 mixed-precision training via PyTorch-FSDP, totaling approximately 1024 GPU hours on 4×8 (32) Nvidia A100 80GB GPUs [18].
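The quoted training figures can be put in perspective with simple arithmetic. The sketch below derives the number of samples processed and the implied wall-clock time; treating Mass-100K as exactly 100 million images is a simplifying assumption, since the dataset is described only as containing more than 100 million.

```python
iterations = 125_000
batch_size = 3072
gpu_hours = 1024
num_gpus = 4 * 8

samples_seen = iterations * batch_size          # images processed during pretraining
wall_clock_hours = gpu_hours / num_gpus         # elapsed time if all GPUs run in parallel
approx_epochs = samples_seen / 100_000_000      # assumes exactly 100M images
```

Under these assumptions the model processes 384 million samples in about 32 hours of wall-clock time, which corresponds to fewer than four passes over the pretraining set.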

TITAN employs a more complex three-stage pretraining strategy to ensure that slide-level representations capture histomorphological semantics at both the region-of-interest (ROI) and whole-slide levels [2]:

- Vision-only unimodal pretraining: Using the Mass-340K dataset on ROI crops with iBOT framework, which combines masked image modeling and knowledge distillation [2].

- Cross-modal alignment at ROI-level: Incorporating 423,122 pairs of 8k×8k ROIs and synthetic captions generated using PathChat, a multimodal generative AI copilot for pathology [2].

- Cross-modal alignment at WSI-level: Utilizing 182,862 pairs of WSIs and clinical reports to enable slide-level vision-language understanding [2].

This multi-stage approach allows TITAN to develop both visual and linguistic understanding of histopathological features, enabling sophisticated capabilities like pathology report generation and cross-modal retrieval between images and text [2].

Evaluation Frameworks and Downstream Tasks

Comprehensive evaluation across diverse clinical tasks is essential for validating foundation models in pathology. UNI was assessed on 34 distinct clinical tasks of varying diagnostic difficulty, including nuclear segmentation, primary and metastatic cancer detection, cancer grading and subtyping, biomarker screening and molecular subtyping, organ transplant assessment, and several pan-cancer classification tasks that include subtyping to 108 cancer types in the OncoTree cancer classification system [1].

For weakly supervised slide classification, researchers followed the conventional paradigm of first pre-extracting patch-level features from tissue-containing patches in the WSI using a pretrained encoder, followed by training an attention-based multiple instance learning (ABMIL) algorithm [1]. Performance was measured using top-K accuracy (K = 1, 3, 5) as well as weighted F1 score and area under the receiver operating characteristic curve (AUROC) to reflect the label complexity challenges of these tasks [1].
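The aggregation step of attention-based multiple instance learning can be illustrated as follows. This is a plain-Python sketch of the standard (non-gated) attention pooling formulation with fixed toy weights; in a real ABMIL model the matrix `V` and vector `w` are learned, and a classifier head is applied to the pooled slide vector.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


def abmil_pool(patch_features, V, w):
    """Attention pooling: a_k = softmax_k(w . tanh(V h_k)); slide = sum_k a_k h_k."""
    scores = [
        sum(wi * math.tanh(z) for wi, z in zip(w, matvec(V, h)))
        for h in patch_features
    ]
    peak = max(scores)  # subtract the maximum for a numerically stable softmax
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    attention = [e / total for e in exps]
    dim = len(patch_features[0])
    slide = [
        sum(a * h[d] for a, h in zip(attention, patch_features))
        for d in range(dim)
    ]
    return slide, attention


# Toy bag of three 2-d patch features with hand-picked weights.
patches = [[2.0, 0.0], [0.0, 0.0], [-2.0, 0.0]]
V = [[1.0, 0.0], [0.0, 1.0]]
w = [1.0, 0.0]
slide_vector, attention = abmil_pool(patches, V, w)
```

The attention weights sum to one and indicate how much each patch contributes to the slide-level representation, which is also why ABMIL is often used to produce attention heatmaps.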

TITAN was evaluated across even more diverse machine learning settings, including linear probing, few-shot and zero-shot classification, rare cancer retrieval, cross-modal retrieval, and pathology report generation [2]. The model's performance was assessed on tasks specifically designed to test generalization to resource-limited clinical scenarios such as rare disease retrieval and cancer prognosis [2]. Without any fine-tuning or requiring clinical labels, TITAN can extract general-purpose slide representations and generate pathology reports, demonstrating remarkable versatility for clinical applications [2].
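Zero-shot classification with a vision-language model is commonly implemented by comparing an image embedding with text embeddings of class prompts. The sketch below shows that generic, CLIP-style recipe in plain Python; it assumes the slide and prompt embeddings already live in a shared space, uses toy vectors, and is not taken from TITAN's code. The temperature value is likewise an illustrative choice.

```python
import math


def l2_normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def zero_shot_probs(slide_embedding, prompt_embeddings, temperature=0.07):
    """Softmax over temperature-scaled cosine similarities to each class prompt."""
    slide = l2_normalize(slide_embedding)
    logits = {
        name: sum(a * b for a, b in zip(slide, l2_normalize(emb))) / temperature
        for name, emb in prompt_embeddings.items()
    }
    peak = max(logits.values())
    exps = {name: math.exp(v - peak) for name, v in logits.items()}
    total = sum(exps.values())
    return {name: e / total for name, e in exps.items()}


# Toy embeddings standing in for encoded class prompts and one encoded slide.
prompts = {
    "adenocarcinoma": [1.0, 0.0, 0.0],
    "squamous cell carcinoma": [0.0, 1.0, 0.0],
}
probs = zero_shot_probs([0.9, 0.2, 0.1], prompts)
predicted = max(probs, key=probs.get)
```

No labeled slides are needed for this procedure; the class definitions come entirely from the text prompts, which is what makes the approach attractive for rare conditions.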

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for ViT Development in Computational Pathology

| Research Reagent | Function | Implementation Examples |

|---|---|---|

| CONCH v1.5 Patch Encoder | Extracts visual features from histology patches at 512×512 resolution | Used in TITAN to create patch feature embeddings; provides 768-dimensional features [2] [26] |

| DINOv2 Framework | Self-supervised learning for vision transformers | Used in UNI pretraining; combines distillation with no labels, iBOT masked modeling, and KoLeo regularization [1] [18] |