Multimodal AI in Pathology: How Integrated Data is Building the Next Generation of Diagnostic Foundation Models

Abstract

The integration of multimodal data is fundamentally advancing computational pathology, enabling the development of powerful foundation models that move beyond analyzing isolated image patches to interpret whole-slide images (WSIs) in a broader clinical context. This article explores how models like TITAN and MPath-Net are leveraging combined data from histology images, pathology reports, and genomics via self-supervised and vision-language learning. We detail the technical methodologies, including transformer architectures and fusion strategies, that allow these models to excel in tasks from cancer subtyping and rare disease retrieval to prognostic prediction. The analysis further addresses critical challenges such as data standardization and model interpretability, provides comparative performance validation against unimodal and human benchmarks, and outlines the future trajectory of these technologies for enhancing diagnostic accuracy, personalizing treatment, and accelerating drug discovery.

The New Paradigm: Understanding Multimodal Pathology Foundation Models

Defining Multimodal Foundation Models in Computational Pathology

Computational pathology is undergoing a transformative shift, moving from single-modality analysis to integrated multimodal approaches. Foundation models represent a breakthrough in artificial intelligence (AI), where models are pre-trained on broad data at scale and can be adapted to a wide range of downstream tasks. In pathology, multimodal foundation models are defined as AI systems pre-trained on diverse data types—particularly histopathology images and corresponding textual reports—that learn general-purpose representations transferable to various clinical challenges without task-specific fine-tuning. These models fundamentally differ from previous AI systems in their ability to handle multiple data modalities simultaneously, leverage self-supervised learning to overcome annotation bottlenecks, and demonstrate emergent capabilities including zero-shot reasoning and cross-modal retrieval.

The development of these models addresses critical limitations in traditional computational pathology approaches, which have predominantly focused on encoding histopathology regions-of-interest (ROIs) into feature representations via self-supervised learning [1]. While effective for specific tasks, translating these patch-based advancements to address complex clinical challenges at the patient and slide level remains constrained by limited clinical data in disease-specific cohorts, especially for rare clinical conditions [1]. Multimodal foundation models represent an evolutionary leap by integrating complementary data sources to create more robust, generalizable, and clinically applicable AI systems for pathological diagnosis and research.

Core Architectural Principles and Technical Foundations

Multimodal Data Integration Framework

Multimodal foundation models in pathology are built upon sophisticated architectures designed to process and align heterogeneous data types. The core challenge lies in effectively integrating whole-slide images (WSIs), which are gigapixel in size and contain complex spatial information at multiple scales, with unstructured textual data from pathology reports and other clinical annotations. This integration occurs through several key mechanisms:

- Cross-modal alignment: Mapping visual patterns in histology to semantic concepts in text through contrastive learning objectives that bring corresponding image-text pairs closer in a shared embedding space while pushing non-corresponding pairs apart [1]

- Hierarchical feature extraction: Processing WSIs at multiple magnification levels to capture both cellular-level details and tissue-level architectural patterns

- Contextual reasoning: Leveraging transformer architectures with efficient attention mechanisms to model long-range dependencies across massive WSIs while maintaining computational feasibility

The fundamental architecture employs a dual-stream encoder framework, with separate but interacting pathways for visual and textual data, converging in a shared representation space where cross-modal reasoning occurs [1] [2].
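
The cross-modal alignment objective described above can be illustrated with a minimal NumPy sketch of a CLIP-style symmetric contrastive loss. This is a generic illustration, not the exact objective of TITAN or any other model discussed here; the function name, the temperature value, and the use of plain arrays in place of encoder outputs are all assumptions made for the example.

```python
import numpy as np

def symmetric_contrastive_loss(image_emb, text_emb, temperature=0.07):
    """CLIP-style symmetric InfoNCE loss for a batch of paired embeddings.

    image_emb, text_emb: arrays of shape (batch, dim); row i of each array
    is assumed to come from the same image-text pair.
    temperature: hypothetical value chosen for illustration.
    """
    # L2-normalise so that dot products are cosine similarities.
    img = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    txt = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature  # (batch, batch) similarity matrix

    def cross_entropy_on_diagonal(scores):
        # For row i the "correct class" is column i (the matching pair).
        scores = scores - scores.max(axis=1, keepdims=True)  # stability
        log_probs = scores - np.log(np.exp(scores).sum(axis=1, keepdims=True))
        return -np.mean(np.diag(log_probs))

    # Average the image-to-text and text-to-image directions.
    return 0.5 * (cross_entropy_on_diagonal(logits)
                  + cross_entropy_on_diagonal(logits.T))
```

Minimising this loss pulls each matching image-text pair together in the shared space and pushes every other pairing in the batch apart, which is the behaviour the alignment bullet describes.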

Technical Innovations Enabling Whole-Slide Modeling

Several technical innovations have been crucial to enabling effective foundation models for pathology WSIs:

- Handling extreme sequence lengths: WSIs can contain >100,000 patches, far exceeding standard transformer capabilities. Solutions include adaptive sampling, hierarchical modeling, and efficient attention mechanisms such as Attention with Linear Biases (ALiBi) extended to 2D, which uses relative Euclidean distance between features in the feature grid [1]

- Knowledge distillation: Leveraging pre-trained patch encoders to create meaningful feature representations before whole-slide modeling [1]

- Multi-scale cropping strategies: Employing both global (14×14 features) and local (6×6 features) crops from region crops of 16×16 features covering 8,192×8,192 pixels at 20× magnification [1]

- Synthetic data generation: Using generative AI copilots for pathology to create fine-grained morphological descriptions that expand training data [1]
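
To make the 2D extension of ALiBi more concrete, the following sketch builds an additive attention bias from the Euclidean distance between positions in a feature grid and applies it in a single attention head. It is an illustration under stated assumptions rather than TITAN's implementation: the function names and the single `slope` argument are hypothetical (the original ALiBi formulation assigns a different slope to each attention head).

```python
import numpy as np

def alibi_2d_bias(grid_h, grid_w, slope):
    """Additive attention bias for an H x W feature grid.

    Returns an (N, N) matrix with N = H * W, where entry (i, j) equals
    -slope * Euclidean distance between grid positions i and j.
    Tokens are assumed to be flattened in row-major order.
    """
    rows, cols = np.meshgrid(np.arange(grid_h), np.arange(grid_w), indexing="ij")
    coords = np.stack([rows.ravel(), cols.ravel()], axis=1).astype(float)  # (N, 2)
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    return -slope * dist

def attention_with_bias(q, k, v, bias):
    """Single-head scaled dot-product attention with an additive bias."""
    scores = q @ k.T / np.sqrt(q.shape[-1]) + bias
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v
```

Because the bias depends only on relative distance, the same function can be applied to grids of any size, which is one reason distance-based biases suit slides whose feature grids vary in shape.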

The TITAN Model: A Case Study in Implementation

Architecture and Training Methodology

The Transformer-based pathology Image and Text Alignment Network (TITAN) represents a state-of-the-art example of a multimodal whole-slide foundation model [1] [2]. TITAN's implementation provides a valuable case study in how the theoretical principles of multimodal foundation models are realized in practice. The model is pre-trained on an extensive dataset termed Mass-340K, consisting of 335,645 WSIs and 182,862 medical reports distributed across 20 organs, different stains, diverse tissue types, and various scanner types [1].

Table 1: TITAN Training Data Composition

| Data Type | Volume | Purpose | Details |
|---|---|---|---|
| Whole-Slide Images | 335,645 | Visual self-supervised learning | Mass-340K dataset, 20 organ types |
| Medical Reports | 182,862 | Slide-level vision-language alignment | Corresponding pathology reports |
| Synthetic Captions | 423,122 | ROI-level vision-language alignment | Generated via multimodal generative AI copilot |

TITAN's training occurs in three distinct stages to ensure that the resulting slide-level representations capture histomorphological semantics at both the region-of-interest (ROI) and whole-slide image levels [1]:

- Vision-only unimodal pre-training on Mass-340K using ROI crops with iBOT framework (masked image modeling and knowledge distillation)

- Cross-modal alignment of generated morphological descriptions at ROI-level (423,122 pairs of 8k×8k ROIs and captions)

- Cross-modal alignment at WSI-level (182,862 pairs of WSIs and clinical reports)

This staged approach allows the model to first learn robust visual representations before incorporating linguistic correspondences at progressively broader contextual levels.

Implementation Workflow

[Figure: TITAN's three-stage training workflow and architectural approach]

Experimental Protocols and Performance Benchmarks

Evaluation Methodologies

Multimodal foundation models in pathology are evaluated across diverse clinical tasks to assess their generalization capabilities. Standard evaluation protocols include [1]:

- Linear probing: Training a linear classifier on top of frozen features to evaluate representation quality

- Few-shot classification: Learning from very limited labeled examples (typically 1-16 samples per class)

- Zero-shot classification: Making predictions without any task-specific training examples

- Cross-modal retrieval: Finding relevant images given text queries and vice versa

- Rare cancer retrieval: Identifying rare disease subtypes based on slide similarity

- Pathology report generation: Generating textual descriptions from whole-slide images

These evaluations are conducted across multiple disease domains and organ systems to assess model robustness and generalizability beyond the training distribution.
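
As a rough illustration of the zero-shot protocol, the sketch below classifies a slide by comparing its embedding with the embeddings of one text prompt per class. It assumes both embeddings already live in a shared vision-language space; the function name and the toy vectors are hypothetical stand-ins for real encoder outputs.

```python
import numpy as np

def zero_shot_classify(slide_emb, prompt_embs, class_names):
    """Pick the class whose text-prompt embedding is most similar to the slide.

    slide_emb: (dim,) slide embedding from a vision encoder (assumed given).
    prompt_embs: (n_classes, dim) embeddings of prompts such as
        "an H&E image of <class>" from a text encoder (assumed given).
    class_names: list of n_classes labels, aligned with prompt_embs rows.
    """
    slide = slide_emb / np.linalg.norm(slide_emb)
    prompts = prompt_embs / np.linalg.norm(prompt_embs, axis=1, keepdims=True)
    sims = prompts @ slide  # cosine similarity per class
    return class_names[int(np.argmax(sims))], sims

# Toy usage with two-dimensional stand-in embeddings.
names = ["adenocarcinoma", "squamous cell carcinoma"]
prompts = np.array([[1.0, 0.0], [0.0, 1.0]])
label, sims = zero_shot_classify(np.array([0.2, 0.9]), prompts, names)
```

No labeled slides are used at any point; the only task-specific input is the wording of the prompts.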

Performance Benchmarks

Benchmarking indicates that multimodal foundation models outperform both ROI-based and vision-only slide foundation models across the evaluation settings described above. The table below summarizes key performance comparisons:

Table 2: Performance Comparison of Pathology Foundation Models

| Model Type | Linear Probing Accuracy | Few-Shot Learning (16 samples) | Zero-Shot Classification | Cross-Modal Retrieval |
|---|---|---|---|---|
| ROI Foundation Models | Baseline | Baseline | Not supported | Limited capabilities |
| Slide Foundation Models (Vision-Only) | +3-5% over ROI | +5-8% over ROI | Not supported | Not supported |
| TITAN (Multimodal) | +8-12% over ROI | +12-18% over ROI | 75-85% accuracy | 0.45-0.55 mAP |

Beyond these quantitative metrics, TITAN demonstrates particularly strong performance in resource-limited clinical scenarios such as rare disease retrieval and cancer prognosis without requiring fine-tuning or clinical labels [1]. The model generates pathology reports that closely align with human expert interpretations and enables retrieval of similar cases across institutional boundaries, addressing critical challenges in diagnostic consistency and expertise distribution.

Essential Research Toolkit for Multimodal Pathology AI

Implementing and researching multimodal foundation models in pathology requires specialized tools and resources. The following table outlines key components of the research toolkit:

Table 3: Essential Research Reagents and Computational Tools

| Resource Category | Specific Examples | Function/Purpose |
|---|---|---|
| Patch Encoders | CONCH, CTransPath, PLIP | Feature extraction from image patches (256×256 or 512×512 pixels at 20×) |
| Whole-Slide Processing | OpenSlide, CUDA-enabled whole-slide libraries | Handling gigapixel WSI loading, patching, and management |
| Multimodal Alignment | CLIP-based architectures, Cross-modal transformers | Aligning visual features with textual descriptions |
| Synthetic Data Generation | PathChat, Generative AI copilots | Creating fine-grained morphological descriptions to augment training data |
| Evaluation Frameworks | Multiple instance learning (MIL) setups, Cross-modal retrieval metrics | Assessing model performance across diverse clinical tasks |

Critical to the success of these models is the availability of large-scale multimodal datasets, though these often remain private to the institutions that curate them. The Mass-340K dataset used for TITAN training includes 335,645 WSIs across 20 organ types with corresponding pathology reports, providing the scale and diversity necessary for robust foundation model development [1]. Publicly available datasets such as The Cancer Genome Atlas (TCGA) provide valuable resources for validation, though their scale is typically insufficient for pre-training [3].

Integration of Multimodal Data: Challenges and Solutions

Technical and Methodological Challenges

The integration of multimodal data in pathology foundation models presents several significant challenges that researchers must address:

- Non-commensurability: Different data modalities quantify distinct biological phenomena using heterogeneous physical units and representations [4]

- Spatial heterogeneity: Each imaging modality has its own native spatial resolution, which persists even after images are standardized to a common coordinate system [4]

- Missing data: Multimodal medical datasets are frequently incomplete as patients may not undergo identical testing protocols [4]

- Interpretability: The complexity of multimodal analysis methods creates challenges in explaining model decisions, requiring specialized expertise in data acquisition, processing, and analysis [4]

Additional computational challenges include handling the extreme size of whole-slide images (typically exceeding 100,000×100,000 pixels), managing memory constraints during training, and developing efficient inference methods for clinical deployment.

Emerging Solutions and Architectural Innovations

The field has developed several innovative approaches to address these multimodal integration challenges:

- Knowledge distillation from patch encoders: Using pre-trained patch encoders to create meaningful feature representations before whole-slide modeling, significantly reducing computational complexity [1]

- Cross-attention mechanisms: Allowing different modalities to interact through attention layers that learn to focus on relevant information across modalities [1] [2]

- Multi-scale feature pyramids: Extracting features at multiple magnification levels (e.g., 5×, 10×, 20×, 40×) to capture both cellular details and tissue architecture

- Synthetic data augmentation: Generating realistic training examples through generative AI to address data scarcity, particularly for rare conditions [1]

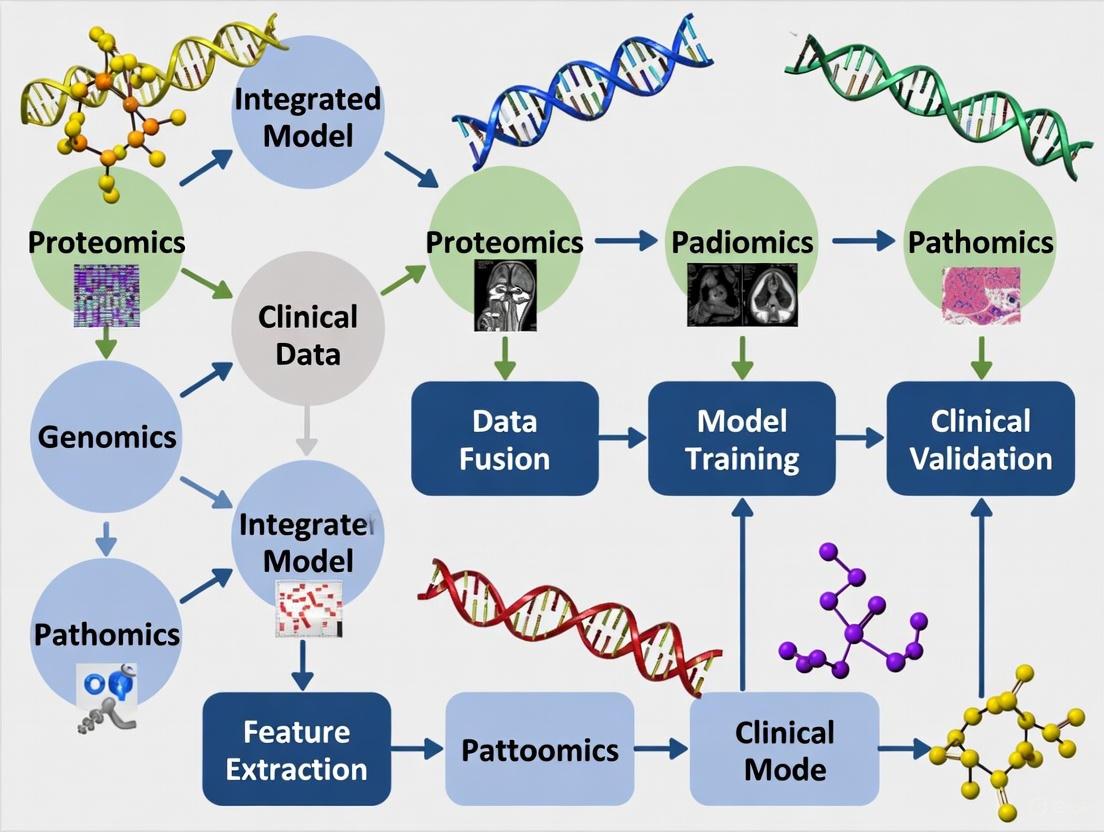

The following diagram illustrates the multimodal data integration pipeline with its key challenges and solutions:

Future Directions and Clinical Translation

Emerging Research Frontiers

Multimodal foundation models in computational pathology are rapidly evolving, with several promising research directions emerging:

- Whole-slide language models: Developing models that can generate comprehensive pathology reports directly from WSIs, describing morphological features, diagnostic impressions, and clinical correlations [1] [2]

- Multimolecular integration: Incorporating genomics, transcriptomics, and proteomics data alongside histology and text to enable more comprehensive tumor profiling [3]

- Longitudinal modeling: Tracking disease progression and treatment response across multiple time points by integrating serial biopsies with clinical data

- Causal representation learning: Moving beyond correlation to understand causal relationships between morphological features and clinical outcomes

- Federated learning frameworks: Enabling multi-institutional collaboration without sharing sensitive patient data, crucial for model generalization

The integration of synthetic data generation represents a particularly promising avenue, with models like TITAN already leveraging 423,122 synthetic captions generated from a multimodal generative AI copilot for pathology [1]. This approach significantly expands training data diversity and scale while mitigating privacy concerns associated with real patient data.

Clinical Implementation Considerations

Translating multimodal foundation models from research to clinical practice requires addressing several practical considerations:

- Regulatory approval: Navigating FDA (U.S. Food and Drug Administration) and other regulatory pathways for AI-based medical devices, as exemplified by the FDA marketing authorization of Paige Prostate Detect and the Breakthrough Device designation granted to Paige PanCancer Detect [3]

- Workflow integration: Embedding model capabilities into existing pathology workflows without disrupting clinical efficiency

- Interpretability and explainability: Providing transparent reasoning for model predictions to build clinician trust and facilitate adoption

- Generalization across institutions: Ensuring model performance remains robust across variations in staining protocols, scanner types, and reporting practices

- Computational infrastructure: Deploying efficient inference methods that can handle whole-slide images within clinically acceptable timeframes

The field is progressing toward assistive AI tools that can enhance pathologist productivity and diagnostic consistency while respecting the central role of human expertise in pathological diagnosis. As these models continue to evolve, they hold significant promise for addressing longstanding challenges in pathology, including diagnostic variability, rare disease identification, and prediction of treatment response from routine histology.

Computational pathology is moving from the analysis of isolated image patches to a holistic understanding of entire whole-slide images (WSIs). This shift is driven by the recognition that tissue architecture and long-range spatial relationships within a gigapixel WSI carry diagnostic and prognostic significance. Patch-level analysis has been the cornerstone of histopathology AI because it lets powerful deep learning models operate on manageable image regions, but it inherently fragments the biological context. Interactions between tumor cells, stroma, and immune populations, which occur across millimeter-scale distances, are often lost when tissue is divided into smaller, independently analyzed patches [1].

This evolution toward WSI-level analysis is particularly crucial within the broader thesis of multimodal data integration in pathology foundation model research. The next generation of pathological intelligence requires models that can not only process the vast visual information contained in a WSI but also semantically align this information with clinical reports, genomic data, and structured medical knowledge [5]. Foundation models like TITAN (Transformer-based pathology Image and Text Alignment Network) represent this new paradigm, having been pretrained on hundreds of thousands of whole slide images to produce general-purpose slide representations that can be applied to diverse clinical tasks without requiring task-specific fine-tuning [1] [2]. This capability is transformative for resource-limited scenarios, including rare disease analysis, where large, annotated datasets are unavailable.

Technical Foundations: From Patches to Whole Slides

The Limitations of Patch-Level Analysis

Traditional patch-based approaches in computational pathology treat a Whole Slide Image as a "bag" of hundreds or thousands of smaller image patches, typically extracted at high magnification (e.g., 256×256 pixels at 20× magnification). While this enables the application of standard convolutional neural networks (CNNs) to histology data, it introduces significant limitations:

- Context Fragmentation: Pathological diagnoses often depend on recognizing architectural patterns (e.g., gland formation in adenocarcinoma, invasion patterns in carcinoma) that extend beyond the field of view of a single patch.

- Information Loss: Critical spatial relationships between different tissue regions—such as the spatial distribution of tumor-infiltrating lymphocytes or the organization of the tumor-stroma interface—are disrupted.

- Computational Inefficiency: Processing thousands of patches per slide creates massive computational overhead and challenges in aggregating patch-level predictions into a coherent slide-level diagnosis [1] [5].
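
The "bag of patches" view can be made concrete with a small sketch of attention-based multiple instance learning (MIL) pooling, a common way to aggregate patch features into one slide embedding. This is a generic illustration rather than a method taken from the cited works; the parameter names `V` and `w` and the random inputs are hypothetical.

```python
import numpy as np

def attention_mil_pool(patch_feats, V, w):
    """Aggregate a bag of patch features into a single slide embedding.

    patch_feats: (n_patches, d) features from a patch encoder (assumed given).
    V: (d, hidden) and w: (hidden,) are learnable parameters in a real
       model; here they are plain arrays supplied by the caller.
    Returns the (d,) slide embedding and the (n_patches,) attention weights.
    """
    scores = np.tanh(patch_feats @ V) @ w  # one scalar score per patch
    scores = scores - scores.max()         # numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()
    slide_emb = weights @ patch_feats      # attention-weighted average
    return slide_emb, weights

rng = np.random.default_rng(0)
feats = rng.normal(size=(50, 8))
V = rng.normal(size=(8, 4))
w = rng.normal(size=4)
emb, wts = attention_mil_pool(feats, V, w)

# Shuffling the patches leaves the slide embedding unchanged.
perm = rng.permutation(50)
emb_shuffled, _ = attention_mil_pool(feats[perm], V, w)
```

The pooled embedding is identical for any ordering of the patches, which shows directly why spatial relationships are invisible to this kind of aggregation: patch positions never enter the computation.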

Whole-Slide Imaging Technology

Whole Slide Imaging scanners form the technological bedrock of this paradigm shift, enabling the digitization of entire glass slides into high-resolution digital images. These systems employ sophisticated hardware and software to capture and assemble gigapixel images through two primary methods:

- Tile-Based Scanning: Uses a robotics-controlled motorized slide stage to obtain numerous square image frames that are assembled into a mosaic pattern with 2-5% overlap, then "stitched" together into a seamless image.

- Line-Based Scanning: Relies on a servomotor-based slide stage that moves linearly along a single axis, producing long, uninterrupted strips of images that simplify the alignment process [6].

Modern WSI scanners can capture an entire slide at high resolution (typically using 20× or 40× objectives) in 1-3 minutes, generating files that can be several gigabytes in size. The essential components of these systems include a microscope with lens objectives, light source (bright field and/or fluorescent), robotics for slide handling, digital cameras for image capture, and specialized computers with software for image management and viewing [6].

Architectural Innovations for WSI-Level Processing

The transition to effective WSI analysis required novel neural architectures capable of handling the extraordinary scale of whole slide images. The key innovation has been the development of transformer-based models that can process long sequences of patch embeddings while modeling their spatial relationships.

Table: Key Differences Between Patch-Level and WSI-Level Analysis

| Feature | Patch-Level Analysis | WSI-Level Analysis |
|---|---|---|
| Input Size | 256×256 to 512×512 pixels | Entire gigapixel slide (10^9+ pixels) |
| Context Preservation | Limited to patch field-of-view | Preserves tissue architecture and long-range spatial relationships |
| Primary Models | CNNs, Vision Transformers (ViTs) | Multiple Instance Learning (MIL), Hierarchical Transformers, Slide Foundation Models |
| Computational Requirements | Moderate (GPU memory: 4-12GB) | High (GPU memory: 16+GB, often multi-GPU) |
| Data Annotation | Patch-level labels required | Slide-level or region-level labels sufficient |
| Multimodal Integration | Challenging | Native through cross-attention mechanisms |

The TITAN model exemplifies this architectural shift, employing a Vision Transformer (ViT) that takes as input a sequence of patch features encoded by powerful histology patch encoders. Rather than processing raw pixels, TITAN operates on pre-extracted patch features arranged in a two-dimensional grid that preserves spatial context. To handle the variable and extensive nature of WSI feature grids (often >10^4 tokens), TITAN introduces several key innovations:

- Random Cropping for Self-Supervised Learning: Creates views of a WSI by randomly cropping the 2D feature grid into region crops of 16×16 features covering 8,192×8,192 pixels.

- Multi-Scale Processing: Samples both global (14×14) and local (6×6) crops from region crops for comprehensive context understanding.

- 2D Attention with Linear Biases (ALiBi): Extends the ALiBi position encoding to two dimensions, where the linear bias is based on the relative Euclidean distance between features in the grid, reflecting actual tissue distances [1].
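
The cropping scheme in the first two bullets can be sketched as follows. The region, global, and local sizes come from the text; the function names and the numbers of global and local views per region are hypothetical choices for the example, and the random array stands in for a real grid of patch features.

```python
import numpy as np

def sample_crop(grid, size, rng):
    """Randomly crop a (size x size) window from an (H, W, D) feature grid."""
    h, w, _ = grid.shape
    top = rng.integers(0, h - size + 1)   # upper bound is exclusive
    left = rng.integers(0, w - size + 1)
    return grid[top:top + size, left:left + size]

def make_views(wsi_grid, rng, region=16, global_size=14, local_size=6,
               n_global=2, n_local=4):
    """Sample one region crop, then global and local views inside it.

    n_global and n_local are illustrative values, not taken from the source.
    """
    region_crop = sample_crop(wsi_grid, region, rng)
    global_views = [sample_crop(region_crop, global_size, rng)
                    for _ in range(n_global)]
    local_views = [sample_crop(region_crop, local_size, rng)
                   for _ in range(n_local)]
    return region_crop, global_views, local_views

rng = np.random.default_rng(0)
wsi_grid = rng.normal(size=(40, 60, 768))  # stand-in for a WSI feature grid
region_crop, global_views, local_views = make_views(wsi_grid, rng)
```

With 512×512-pixel patches, a 16×16 region crop spans 16 × 512 = 8,192 pixels per side, matching the region size quoted above.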

[Figure: WSI Analysis Workflow in Foundation Models]

Multimodal Integration: The Path to General-Purpose Pathology AI

Vision-Language Pretraining Paradigm

The integration of multiple data modalities represents a cornerstone of modern pathology foundation model research. The TITAN model demonstrates a sophisticated three-stage pretraining approach that aligns visual features with textual information at different granularities:

Stage 1: Vision-Only Unimodal Pretraining

- Objective: Learn robust visual representations from 335,645 WSIs without clinical labels

- Method: Self-supervised learning using the iBOT framework (knowledge distillation with masked image modeling) on randomly cropped feature regions

- Output: TITAN_V - a vision-only foundation model capable of generating general-purpose slide representations [1]

Stage 2: ROI-Level Vision-Language Alignment

- Objective: Ground fine-grained visual features with morphological descriptions

- Method: Contrastive learning between 423,122 synthetic ROI captions (generated by PathChat, a multimodal generative AI copilot) and corresponding image regions (8K×8K pixels at 20×)

- Result: Fine-grained understanding of histological patterns and terminology alignment [1]

Stage 3: Slide-Level Vision-Language Alignment

- Objective: Connect entire slide representations with comprehensive diagnostic reports

- Method: Contrastive learning between 182,862 medical reports and corresponding whole slide images

- Result: Holistic slide-level semantic understanding and report generation capabilities [1]

Experimental Protocols and Methodologies

Large-Scale Pretraining Protocol

The Mass-340K dataset used for TITAN pretraining exemplifies the scale requirements for effective pathology foundation models:

- Dataset Composition: 335,645 WSIs across 20 organ types with diverse stains, tissue types, and scanner variants

- Data Diversity Strategy: Intentional inclusion of variability in staining protocols, scanning systems, and tissue preservation methods to enhance model robustness

- Preprocessing Pipeline:

  - Non-overlapping patch extraction (512×512 pixels at 20× magnification)

  - Feature extraction using CONCHv1.5 patch encoder (768-dimensional features)

  - 2D feature grid construction preserving spatial relationships [1]
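
The final preprocessing step, arranging patch features in a 2D grid, might look like the sketch below. It assumes each tissue patch is described by the pixel coordinates of its top-left corner plus a feature vector from a patch encoder; the function name, the zero-filled background, and the returned tissue mask are illustrative choices rather than details from the source.

```python
import numpy as np

def build_feature_grid(coords_px, features, patch_size=512):
    """Place patch features on a 2D grid that mirrors tissue layout.

    coords_px: (N, 2) integer array of top-left (x, y) pixel coordinates
        of non-overlapping patches (assumed aligned to patch_size).
    features: (N, D) array of patch features (e.g. D = 768).
    Returns (grid, mask): grid is (rows, cols, D) with zeros where no
    tissue patch exists; mask is (rows, cols) and True at tissue positions.
    """
    coords_px = np.asarray(coords_px)
    cols = coords_px[:, 0] // patch_size
    rows = coords_px[:, 1] // patch_size
    # Shift so the grid starts at the first occupied row and column.
    rows = rows - rows.min()
    cols = cols - cols.min()
    grid = np.zeros((rows.max() + 1, cols.max() + 1, features.shape[1]),
                    dtype=features.dtype)
    mask = np.zeros(grid.shape[:2], dtype=bool)
    grid[rows, cols] = features
    mask[rows, cols] = True
    return grid, mask

coords = np.array([[1024, 512], [1536, 512], [1024, 1536]])
feats = np.arange(12, dtype=float).reshape(3, 4)
grid, mask = build_feature_grid(coords, feats)
```

Keeping an explicit mask lets downstream attention layers ignore background positions instead of treating zero vectors as tissue.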

Zero-Shot and Few-Shot Evaluation Framework

To validate the generalizability of WSI foundation models, researchers employ comprehensive evaluation protocols across diverse clinical tasks:

- Linear Probing: Training a linear classifier on frozen slide representations to assess feature quality

- Few-Shot Classification: Learning from limited labeled examples (e.g., 1-100 samples per class) to simulate low-resource scenarios

- Zero-Shot Classification: Direct inference without task-specific training using natural language prompts

- Cross-Modal Retrieval: Measuring the model's ability to retrieve relevant WSIs given text queries and vice versa

- Rare Cancer Retrieval: Testing performance on rare malignancies with minimal training data [1] [2]
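
For the retrieval protocols, a standard metric is Recall@K: the fraction of queries whose correct match appears among the top K retrieved items. The sketch below assumes paired query and gallery embeddings in a shared space, with query i matching gallery item i; the function name and this pairing convention are assumptions for the example.

```python
import numpy as np

def recall_at_k(query_embs, gallery_embs, k=1):
    """Recall@K for paired embeddings where query i matches gallery item i.

    query_embs: (n, dim), e.g. text embeddings of reports.
    gallery_embs: (n, dim), e.g. slide embeddings.
    """
    q = query_embs / np.linalg.norm(query_embs, axis=1, keepdims=True)
    g = gallery_embs / np.linalg.norm(gallery_embs, axis=1, keepdims=True)
    sims = q @ g.T                           # cosine similarities
    topk = np.argsort(-sims, axis=1)[:, :k]  # indices of the k best matches
    hits = (topk == np.arange(len(q))[:, None]).any(axis=1)
    return float(hits.mean())
```

Swapping the two arguments evaluates the opposite direction (image-to-text instead of text-to-image), so both directions of cross-modal retrieval can be scored with the same function.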

Table: Quantitative Performance Comparison of Foundation Models

| Model | Pretraining Data | Linear Probing (AUC) | Few-Shot (5-shot AUC) | Zero-Shot Accuracy | Cross-Modal Retrieval (R@1) |
|---|---|---|---|---|---|
| TITAN | 335,645 WSIs + 423K captions + 183K reports | 0.941 | 0.893 | 0.782 | 0.651 |
| TITAN_V | 335,645 WSIs (vision-only) | 0.928 | 0.865 | N/A | N/A |
| Previous SOTA | 100K-200K patches | 0.901 | 0.812 | 0.695 | 0.523 |
| Supervised Baseline | Task-specific labels | 0.895 | 0.801 | N/A | N/A |

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of WSI analysis requires a comprehensive ecosystem of specialized tools, platforms, and data resources. The following table details key solutions actively used in computational pathology research:

Table: Essential Research Reagents and Platforms for WSI Analysis

| Tool/Platform | Type | Primary Function | Key Features |
|---|---|---|---|
| TITAN Model | Foundation Model | General-purpose slide representation learning | Multimodal (vision + language), zero-shot capabilities, cross-modal retrieval |
| HALO Platform | Image Analysis Software | Quantitative tissue analysis | AI-powered segmentation, multiplex analysis, high-throughput processing |
| Aiforia Create | AI Development Platform | Deep learning model development | Cloud-based, no-code interface, pre-trained models for pathology |
| QuPath | Open-Source Software | Whole slide image analysis | Smart annotation tools, cell detection, extensible scripting |
| CONCH Patch Encoder | Feature Extractor | Patch-level feature representation | Self-supervised learning, generalizable features across tissue types |
| PathChat | Generative AI | Synthetic caption generation for training | Multimodal conversational AI for pathology, generates fine-grained descriptions |

Implementation Workflow: From Data to Deployment

The practical implementation of WSI-level analysis involves a multi-stage pipeline that transforms raw slide data into actionable insights.

[Figure: WSI Foundation Model Training Pipeline]

Future Perspectives and Challenges

The shift from patch-level to WSI analysis, while transformative, presents several significant challenges that the research community must address:

- Computational Complexity: Processing gigapixel images remains computationally intensive, requiring innovative solutions for memory efficiency and inference speed.

- Data Heterogeneity: Variability in staining protocols, scanner types, and tissue preparation methods introduces domain shift problems that impact model generalizability.

- Regulatory and Validation Hurdles: Clinical deployment of WSI foundation models requires rigorous validation and regulatory approval pathways that are still evolving.

- Interpretability and Trust: As noted in recent surveys, pathologists' adoption of AI systems depends heavily on diagnostic accuracy and interpretability, with concerns about AI diagnostic errors representing a significant barrier [7].

Future research directions will likely focus on scaling laws for pathology foundation models, improved multimodal fusion techniques, and efficient fine-tuning methods that adapt general-purpose models to specific institutional needs while maintaining performance across diverse patient populations.

The critical shift from patch-level to Whole-Slide Image analysis represents a fundamental maturation of computational pathology, enabling a more holistic understanding of tissue morphology that aligns with the complex, spatially-organized nature of disease processes. This transition, coupled with multimodal data integration through foundation models like TITAN, creates unprecedented opportunities for generalizable pathology AI that can operate in diverse clinical scenarios—from common malignancies to rare diseases where traditional supervised approaches are impractical. As the field advances, the integration of WSI analysis with complementary modalities including genomic data, proteomics, and clinical outcomes will further accelerate the development of comprehensive diagnostic and prognostic tools that ultimately enhance patient care across the global healthcare ecosystem.

The integration of histology images, textual pathology reports, and genomic data is transforming computational pathology. This multimodal approach enables the development of powerful foundation models that improve diagnostic accuracy, prognostic prediction, and biomarker discovery. By leveraging self-supervised learning and novel fusion techniques, these models can overcome the limitations of single-modality analysis, particularly in data-scarce scenarios such as rare diseases. This technical guide examines the core data modalities, integration frameworks, and experimental protocols driving innovation in pathology foundation models, providing researchers with methodologies and resources to advance precision oncology.

Computational pathology stands at the forefront of a paradigm shift from unimodal to multimodal artificial intelligence (AI) systems. While histology whole-slide images (WSIs) provide rich information on tissue morphology and cellular structure, they represent just one dimension of the complex cancer landscape [1] [8]. The integration of textual pathology reports and genomic data creates a more comprehensive representation of disease mechanisms, enabling more accurate diagnosis, prognosis, and therapeutic prediction. This integration addresses critical challenges in cancer care, including diagnostic variability, limited data for rare cancers, and the complex interplay between morphological and molecular features [8] [9].

Foundation models pretrained on large-scale multimodal datasets have emerged as a powerful solution to these challenges. Models such as TITAN (Transformer-based pathology Image and Text Alignment Network) demonstrate that visual self-supervised learning combined with vision-language alignment can produce general-purpose slide representations transferable across diverse clinical tasks without requiring fine-tuning [1] [2]. Similarly, frameworks like PS3 showcase how integrating pathology reports with histology images and biological pathways enables more accurate cancer survival prediction [10]. The resulting multimodal systems outperform traditional single-modality approaches across multiple cancer types and clinical scenarios, heralding a new era in computational pathology.

Core Data Modalities: Technical Specifications and Processing

Histology Whole-Slide Images (WSIs)

Whole-slide images are high-resolution digital scans of tissue sections, typically exceeding 1 gigapixel in size [8]. The computational challenges posed by WSIs include their immense scale, irregular tissue shapes, and need for specialized processing pipelines. Standard preprocessing involves tissue segmentation, patching, and feature extraction using pretrained encoders.

Technical Processing Pipeline:

- Region Size: Region-of-interest (ROI) crops of 8,192 × 8,192 pixels at 20× magnification [1]

- Patching: Division into non-overlapping patches of 512×512 or 256×256 pixels

- Feature Extraction: Using pretrained models (e.g., CONCH) to generate 768-dimensional feature vectors per patch [1]

- Spatial Arrangement: Patch features are arranged in a 2D grid replicating original tissue positions to preserve spatial context [1]
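
The patching and spatial-arrangement steps above can be sketched in a few lines. This is a minimal illustration, not the pipeline of any cited model: the function name `patch_grid` and the choice to drop partial edge patches are assumptions made for the example.

```python
from typing import List, Tuple


def patch_grid(width: int, height: int, patch: int = 512) -> Tuple[int, int, List[Tuple[int, int]]]:
    """Return (rows, cols, top-left pixel coordinates) of non-overlapping patches.

    Partial patches at the right and bottom edges are dropped (an assumption).
    """
    cols = width // patch
    rows = height // patch
    # Row-major order: all patches of the first row, then the second, and so on.
    coords = [(c * patch, r * patch) for r in range(rows) for c in range(cols)]
    return rows, cols, coords


rows, cols, coords = patch_grid(8192, 8192, patch=512)

# Map each patch's (x, y) pixel origin to its (row, col) cell in the 2D feature grid,
# so per-patch feature vectors can be placed back at their tissue positions.
grid_index = {xy: (i // cols, i % cols) for i, xy in enumerate(coords)}
```

With 512×512 patches, an 8,192 × 8,192 region maps to a 16×16 grid of patch features, which is the region size used in the pretraining protocol described for TITAN.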

Textual Pathology Reports

Pathology reports contain unstructured text summarizing histological findings, diagnosis, and clinical context. These reports provide high-level clinical semantics that complement the morphological details in WSIs [8] [11]. Natural language processing (NLP) techniques extract meaningful features from these textual descriptions.

Text Processing Approaches:

- Structured Annotation: Using transformer models to automatically annotate features from free-text reports, including cancer progression, tumor sites, and receptor status [9]

- Feature Encoding: Sentence-BERT or ClinicalBERT models generate 512-dimensional text embeddings [8]

- Diagnostic Prototypes: Self-attention mechanisms identify and extract diagnostically relevant text segments for standardized representation [10]

Genomic Profiling Data

Genomic data provides molecular characterization of tumors, including gene expression, mutations, and pathway activities. This modality reveals underlying biological mechanisms that may not be visible in histology images alone [10] [12].

Genomic Data Processing:

- Pathway-Based Representation: Genes are grouped into predefined biological pathways using binary masking [10]

- Expression Quantification: Gene expression vectors transformed into fixed-size embeddings using self-normalizing neural networks [10]

- Molecular Subtyping: Identification of characteristic genomic patterns (e.g., microsatellite instability, chromosomal instability) [13]
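
As a rough sketch of the pathway-based representation described above, binary masking can be written as follows. The gene list, the two pathway definitions, and the function names are toy placeholders invented for illustration, not curated pathway annotations, and the self-normalizing network that would turn each masked vector into an embedding is omitted.

```python
from typing import Dict, List


def pathway_masks(genes: List[str], pathways: Dict[str, List[str]]) -> Dict[str, List[int]]:
    """Build one binary mask per pathway: 1 where the gene is a member, else 0."""
    masks = {}
    for name, members in pathways.items():
        member_set = set(members)
        masks[name] = [1 if gene in member_set else 0 for gene in genes]
    return masks


def apply_mask(expression: List[float], mask: List[int]) -> List[float]:
    """Zero out genes outside the pathway while keeping the vector length fixed."""
    return [value * bit for value, bit in zip(expression, mask)]


# Toy inputs (hypothetical groupings, for illustration only).
genes = ["TP53", "EGFR", "KRAS", "MYC"]
toy_pathways = {"growth_signaling": ["EGFR", "KRAS"], "cell_cycle": ["TP53", "MYC"]}
expression = [0.5, 2.5, 1.0, 3.0]

masks = pathway_masks(genes, toy_pathways)
growth_view = apply_mask(expression, masks["growth_signaling"])
```

Each pathway yields a view of the same expression vector, which is what allows a downstream network to produce one fixed-size embedding per pathway.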

Table 1: Core Data Modalities in Computational Pathology

| Modality | Data Format | Key Features | Extraction Methods |
|---|---|---|---|
| Histology Images | Gigapixel whole-slide images | Tissue morphology, cellular structure, tumor microenvironment | Patched feature extraction with ViT or ResNet architectures |
| Textual Reports | Unstructured clinical text | Diagnostic summary, clinical context, morphological descriptions | NLP transformers (ClinicalBERT, Sentence-BERT) |
| Genomic Data | Gene expression, mutations | Molecular subtypes, pathway activities, biomarkers | Pathway enrichment analysis, expression quantification |

Multimodal Integration Frameworks and Architectures

Vision-Language Foundation Models

Vision-language models align histopathological images with corresponding textual descriptions to learn joint representations. TITAN employs a three-stage pretraining approach: (1) vision-only unimodal pretraining on ROI crops, (2) cross-modal alignment with synthetic morphological descriptions at ROI-level, and (3) cross-modal alignment at WSI-level with clinical reports [1]. This progressive training strategy enables the model to capture both local and global contextual relationships between images and text.

The model architecture uses a Vision Transformer (ViT) that operates on pre-extracted patch features rather than raw pixels. To handle long sequences of patch features, TITAN implements attention with linear bias (ALiBi) for long-context extrapolation, where the linear bias is based on the relative Euclidean distance between features in the 2D grid [1]. This approach preserves spatial relationships while managing computational complexity.
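
To make the 2D linear bias concrete, the following sketch computes the additive attention bias for a small feature grid. It assumes a single head with one hand-picked slope; the function name `alibi_2d_bias` and the slope value are illustrative and not taken from the TITAN implementation, where slopes would typically differ per attention head.

```python
import math
from typing import List


def alibi_2d_bias(rows: int, cols: int, slope: float) -> List[List[float]]:
    """Bias added to attention logits: -slope * Euclidean distance between grid cells.

    Tokens are the grid cells in row-major order, so the result is an
    (rows*cols) x (rows*cols) matrix.
    """
    positions = [(r, c) for r in range(rows) for c in range(cols)]
    return [[-slope * math.dist(p, q) for q in positions] for p in positions]


# A 2x2 grid gives 4 tokens; distant patches receive a larger penalty.
bias = alibi_2d_bias(2, 2, slope=0.5)
```

Because the bias depends only on relative distance, the same rule applies unchanged to grids larger than those seen during training, which is the property that makes long-context extrapolation possible.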

Prototype-Based Multimodal Fusion

The PS3 framework introduces a prototype-based approach to integrate pathology reports, histology images, and biological pathways [10]. This method addresses modality heterogeneity by creating standardized representations for each data type:

- Diagnostic Prototypes: Use self-attention mechanisms to extract diagnostically relevant sections from pathology reports

- Histological Prototypes: Apply Gaussian mixture models to cluster image patches into morphological prototypes

- Pathway Prototypes: Employ binary pathway masks and self-normalizing networks to create fixed-size genomic representations

These prototypes are fused using a multimodal transformer that models both intra-modality and cross-modality interactions through attention mechanisms between all possible modality pairs [10].

Knowledge Distillation from Unpaired Data

CLIP-IT addresses the challenge of limited paired image-text datasets by leveraging unpaired external text reports as privileged information during training [11]. The framework uses a CLIP model pretrained on histology image-text pairs from a separate dataset to retrieve the most relevant unpaired textual report for each image in the downstream unimodal dataset. Knowledge from these semantically relevant texts is distilled into the vision model during training, while LoRA-based adaptation mitigates the semantic gap between unaligned modalities [11]. This approach enables multimodal training without requiring paired annotations in the target dataset.
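
The retrieval step described above, pairing each image with its most relevant external report, reduces to a nearest-neighbor search in the shared embedding space. The sketch below assumes embeddings have already been produced by some pretrained image and text encoders; the function names and the toy vectors are hypothetical, and the distillation and LoRA components are not shown.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve_report(image_emb: Sequence[float], report_embs: List[Sequence[float]]) -> int:
    """Return the index of the report embedding most similar to the image embedding."""
    return max(range(len(report_embs)), key=lambda i: cosine(image_emb, report_embs[i]))


# Toy 2-D embeddings (illustrative values only).
image = [1.0, 0.0]
reports = [[0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
best = retrieve_report(image, reports)
```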

Diagram 1: Multimodal Integration Workflow in PS3 Framework

Experimental Protocols and Performance Analysis

Benchmark Datasets and Evaluation Metrics

Multimodal pathology models are typically evaluated on large-scale datasets encompassing multiple cancer types. The Cancer Genome Atlas (TCGA) represents a primary resource, containing WSIs, molecular profiles, and clinical data across 33 cancer types [8] [12]. Internal datasets such as Mass-340K (335,645 WSIs and 182,862 reports) provide substantial pretraining resources [1].

Key Evaluation Metrics:

- Classification Performance: Accuracy, precision, recall, F1-score, area under the curve (AUC)

- Survival Prediction: Concordance index (C-index), hazard ratios

- Retrieval Tasks: Recall@k, mean average precision (mAP)

- Prognostic Stratification: Kaplan-Meier survival analysis, log-rank test
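
Of the metrics listed above, the concordance index is the least self-explanatory, so a small reference implementation may help. This is a plain O(n²) version of Harrell's C-index written for clarity; production work would normally rely on an established survival-analysis library.

```python
from typing import Sequence


def concordance_index(times: Sequence[float], events: Sequence[int], risks: Sequence[float]) -> float:
    """Harrell's C-index.

    A pair (i, j) is comparable when patient i had an observed event
    (events[i] == 1) strictly before time j. It is concordant when the
    model assigned patient i the higher risk; risk ties count as 0.5.
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


times = [2, 4, 6, 8]
events = [1, 1, 0, 1]  # 0 marks a censored patient
c_perfect = concordance_index(times, events, [0.9, 0.7, 0.5, 0.1])
c_mixed = concordance_index(times, events, [0.9, 0.1, 0.5, 0.7])
```

A value of 1.0 means every comparable pair is ranked correctly, and 0.5 corresponds to random ranking.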

Quantitative Performance Comparison

Table 2: Performance Comparison of Multimodal Pathology Models

| Model | Integrated Modalities | Key Tasks | Performance Highlights |
|---|---|---|---|
| TITAN [1] [2] | WSIs, pathology reports, synthetic captions | Cancer subtyping, rare cancer retrieval, report generation | Outperforms ROI and slide foundation models in linear probing, few-shot/zero-shot classification |
| MPath-Net [8] | WSIs, pathology reports | Cancer subtype classification | 94.65% accuracy, 0.9553 precision, 0.9472 recall, 0.9473 F1-score on TCGA kidney and lung cancers |
| PS3 [10] | WSIs, pathology reports, transcriptomics | Cancer survival prediction | Outperforms clinical, unimodal, and multimodal baselines across 6 TCGA datasets |
| CLIP-IT [11] | WSIs, unpaired external reports | Histology image classification | Improves accuracy by up to 4.4% on PCAM, 3.6% on BACH, and 1.5% on CRC datasets |

Ablation Studies and Modality Importance

Multimodal attribution analysis reveals the relative importance of different modalities for specific prediction tasks. Across the cited studies, integrated models generally outperform unimodal approaches, although the gain is not uniform across cancer types. For example, multimodal deep learning models for pan-cancer prognosis show improved performance over image-only or genomic-only models in the majority of the 14 cancer types analyzed [12]. Similarly, models incorporating NLP-derived features from clinical notes outperform those based solely on genomic data or cancer stage for overall survival prediction [9].

The specific advantage of each modality varies by clinical task:

- Histology images provide critical information for morphological classification and tumor microenvironment assessment

- Pathology reports contribute diagnostic context and clinical synthesis

- Genomic data enables molecular subtyping and pathway-level insights for targeted therapy

Essential Research Reagents and Datasets

Table 3: Key Research Resources for Multimodal Pathology

| Resource | Type | Key Features | Application |
|---|---|---|---|
| TCGA [8] [12] | Multi-modal dataset | 20,000+ primary cancers, WSIs, genomics, clinical data | Model training and validation across cancer types |
| MSK-CHORD [9] | Clinicogenomic dataset | 24,950 patients, NLP-annotated notes, genomics, outcomes | Survival prediction, metastasis research |
| CONCH [1] [11] | Vision-language model | Pretrained on histology image-text pairs | Feature extraction, cross-modal retrieval |
| PLIP [10] | Medical vision-language model | Pretrained on pathology images and text | Text and image encoding in multimodal frameworks |

Computational Infrastructure Requirements

Implementing multimodal pathology foundation models requires substantial computational resources:

- GPU Memory: Large models like TITAN require significant VRAM, because a single gigapixel WSI yields a long sequence of patch features that must be processed alongside long text sequences

- Storage: The Mass-340K dataset alone comprises 335,645 WSIs [1], each a gigapixel-scale image, so pretraining corpora of this kind demand large-capacity storage

- Processing: Transformer architectures with efficient attention mechanisms are necessary for handling long sequences of patch features

Diagram 2: End-to-End Multimodal Foundation Model Architecture

Future Directions and Challenges

The field of multimodal computational pathology faces several important challenges and research directions. Data scarcity, particularly for rare cancers, remains a significant obstacle that may be addressed through synthetic data generation and data augmentation techniques [1]. Model interpretability is another critical area, with attention mechanisms and feature attribution methods providing insights into model decisions [8] [12].

Future research will likely focus on:

- Scalable Pretraining: Expanding model and dataset sizes to improve generalizability

- Efficient Fusion Methods: Developing more effective techniques for integrating heterogeneous modalities

- Clinical Translation: Validating models in real-world settings and streamlining regulatory approval

- Multimodal Retrieval: Enhancing cross-modal search capabilities for clinical decision support

As multimodal foundation models continue to evolve, they hold the potential to transform cancer diagnosis and treatment by providing comprehensive, AI-powered pathological analysis that integrates morphological, clinical, and molecular dimensions.

The field of computational pathology is undergoing a revolutionary shift from supervised learning on specific tasks to the development of general-purpose foundation models through self-supervised learning (SSL) and vision-language pretraining on massive datasets. This paradigm shift addresses fundamental limitations in traditional approaches, including the labor-intensive annotation of whole-slide images (WSIs) and the inability to generalize across diverse diagnostic tasks and rare diseases. Foundation models pretrained using SSL on millions of histology image patches capture morphological patterns in histology patch embeddings, such as tissue organization and cellular structure, serving as a "foundation" for models that predict clinical endpoints from WSIs [1]. The integration of multimodal data, particularly the alignment of pathology images with textual reports and captions, represents a crucial advancement that more closely mirrors how human pathologists teach and reason about histopathologic entities [14]. This technical review examines the methodologies, performance benchmarks, and implementation frameworks underpinning this transformative approach, providing researchers and drug development professionals with a comprehensive resource for leveraging these technologies in oncology and broader pathology applications.

Self-Supervised Learning Methods for Pathology Foundation Models

Core Algorithmic Approaches

Self-supervised learning for pathology foundation models employs several sophisticated algorithms designed to learn meaningful representations from unlabeled histopathology data. These methods eliminate the need for manual annotation by creating pretext tasks that enable models to learn inherent data structures and patterns.

- Contrastive Learning (SimCLR, MoCo, SwAV, VICReg): These frameworks learn representations by maximizing agreement between differently augmented views of the same image while discriminating between different images. The MoCo v3 algorithm, for instance, was used to train CTransPath, which combines convolutional layers with the Swin Transformer model [15].

- Self-Distillation (DINO, DINOv2, BYOL): These methods employ a teacher-student network architecture where the student network learns to match the output of the teacher network for different augmented views of the same image. DINOv2 has been particularly successful, forming the foundation for models including UNI, Virchow, Phikon-v2, and Prov-GigaPath [15].

- Masked Image Modeling (MAE, iBOT, BEiT): These approaches mask portions of the input image and train the model to reconstruct or predict the masked content. iBOT combines masked image modeling with self-distillation and has been successfully applied to histology data in Phikon [15] [1]. MAE has also been explored, though studies have shown DINO's superiority for pathology foundation model pretraining [15].
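
The teacher-student mechanism behind the self-distillation family can be illustrated with two small functions: an exponential moving average (EMA) update of the teacher, and a cross-entropy loss that pushes the student's output distribution toward the teacher's. This is a simplified sketch in the spirit of DINO-style training, not a reproduction of any model's code. The momentum and temperature values are illustrative assumptions, and details such as output centering and multi-crop views are omitted.

```python
import math
from typing import List, Sequence


def ema_update(teacher: Sequence[float], student: Sequence[float], momentum: float = 0.996) -> List[float]:
    """Move teacher weights slowly toward the student weights (no gradients involved)."""
    return [momentum * t + (1.0 - momentum) * s for t, s in zip(teacher, student)]


def softmax(logits: Sequence[float], temperature: float) -> List[float]:
    """Temperature-scaled softmax, shifted by the maximum for numerical stability."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: Sequence[float], teacher_logits: Sequence[float],
                      t_student: float = 0.1, t_teacher: float = 0.04) -> float:
    """Cross-entropy between the sharpened teacher distribution and the student distribution."""
    p_teacher = softmax(teacher_logits, t_teacher)
    p_student = softmax(student_logits, t_student)
    return -sum(pt * math.log(ps) for pt, ps in zip(p_teacher, p_student))


# The student sees one augmented view and the teacher another view of the same image.
agree = distillation_loss([2.0, 0.0], [2.0, 0.0])
disagree = distillation_loss([0.0, 2.0], [2.0, 0.0])
```

The loss is small when both networks favor the same output dimension and large when they disagree, which is what drives the student toward view-invariant representations.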

Table 1: Key Self-Supervised Learning Algorithms in Pathology Foundation Models

| Algorithm Category | Representative Models | Core Mechanism | Training Scale |
|---|---|---|---|
| Self-Distillation | UNI, Virchow, Phikon-v2, Prov-GigaPath | Teacher-student knowledge transfer | 100M-2B tiles [15] |
| Masked Image Modeling | Phikon (iBOT) | Reconstruction of masked image regions | 43.3M tiles [15] |
| Contrastive Learning | CTransPath (MoCo v3) | Positive/negative sample discrimination | 15.6M tiles [15] |

Architectural Innovations

The transition to foundation models has driven significant architectural evolution in computational pathology:

- Hybrid Convolutional-Transformer Models: CTransPath combines convolutional layers' inductive biases with the Swin Transformer's global attention mechanism, leveraging the strengths of both architectural paradigms [15].

- Vision Transformers (ViTs): Pure transformer architectures have demonstrated remarkable performance, with models ranging from ViT-base to ViT-giant (ViT-huge and ViT-giant in Virchow2 and Virchow2G) [15].

- Multi-Scale Processing: Models like Virchow2 explicitly leverage multiple magnifications (5x, 10x, 20x, and 40x) to capture hierarchical tissue structures [15].

- Hierarchical Pretraining: Prov-GigaPath employs a two-stage approach with tile-level pretraining using DINOv2 followed by slide-level pretraining using a masked autoencoder and LongNet [15].

Vision-Language Multimodal Integration

Multimodal Pretraining Frameworks

Vision-language foundation models represent a groundbreaking advancement by aligning histopathology images with textual descriptions, enabling cross-modal understanding and zero-shot reasoning capabilities.

- CONCH (CONtrastive learning from Captions for Histopathology): Developed using diverse sources of histopathology images, biomedical text, and over 1.17 million image-caption pairs through task-agnostic pretraining [14]. Based on the CoCa framework, CONCH uses an image encoder, a text encoder, and a multimodal fusion decoder, trained using a combination of contrastive alignment objectives and a captioning objective that learns to predict captions corresponding to images [14].

- TITAN (Transformer-based pathology Image and Text Alignment Network): A multimodal whole-slide vision-language model pretrained on 335,645 WSIs via visual self-supervised learning and vision-language alignment with corresponding pathology reports and 423,122 synthetic captions generated from a multimodal generative AI copilot for pathology [1]. TITAN's pretraining employs a sophisticated three-stage approach: (1) vision-only unimodal pretraining on region-of-interest (ROI) crops, (2) cross-modal alignment of generated morphological descriptions at ROI-level, and (3) cross-modal alignment at WSI-level with clinical reports [1].

- Quilt-1M: The largest public vision-language histopathology dataset, containing 1 million image-text pairs curated from YouTube educational histopathology videos, Twitter, research papers, and general internet sources [16]. This dataset enabled training of QuiltNet through Contrastive Language-Image Pre-training (CLIP), demonstrating strong performance on zero-shot and linear probing tasks across 13 diverse patch-level datasets [16].

Technical Implementation of Multimodal Alignment

The architecture of multimodal foundation models requires careful design decisions to handle the unique challenges of histopathology data:

- Handling Long Input Sequences: WSIs can generate >10^4 tokens at slide-level versus 196-256 tokens at patch-level. TITAN addresses this by dividing each WSI into non-overlapping patches of 512×512 pixels at 20× magnification, extracting 768-dimensional features for each patch, then randomly cropping the 2D feature grid for processing [1].

- Positional Encoding Schemes: TITAN uses attention with linear bias (ALiBi) extended to 2D for long-context extrapolation, where the linear bias is based on the relative Euclidean distance between features in the feature grid [1].

- Cross-Modal Contrastive Objectives: Models learn a shared embedding space where paired images and texts have similar representations, enabling cross-modal retrieval and zero-shot classification [14].
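
The cross-modal contrastive objective can be written down compactly. The sketch below implements a symmetric, CLIP-style InfoNCE loss over a batch of paired embeddings. It assumes the embeddings are already L2-normalized and uses an illustrative temperature; the captioning objective that CONCH adds on top of contrastive alignment is not shown.

```python
import math
from typing import List, Sequence


def _log_softmax(row: Sequence[float]) -> List[float]:
    """Numerically stable log-softmax of one row of logits."""
    peak = max(row)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in row))
    return [x - log_sum for x in row]


def clip_loss(image_embs: List[Sequence[float]], text_embs: List[Sequence[float]],
              temperature: float = 0.07) -> float:
    """Symmetric contrastive loss; image k and text k form the positive pair."""
    n = len(image_embs)
    logits = [[sum(a * b for a, b in zip(img, txt)) / temperature for txt in text_embs]
              for img in image_embs]
    # Image-to-text: each row should put its mass on the diagonal entry.
    i2t = -sum(_log_softmax(logits[k])[k] for k in range(n)) / n
    # Text-to-image: the same, computed over columns.
    columns = [[logits[r][c] for r in range(n)] for c in range(n)]
    t2i = -sum(_log_softmax(columns[k])[k] for k in range(n)) / n
    return 0.5 * (i2t + t2i)


images = [[1.0, 0.0], [0.0, 1.0]]
aligned = clip_loss(images, [[1.0, 0.0], [0.0, 1.0]])
shuffled = clip_loss(images, [[0.0, 1.0], [1.0, 0.0]])
```

Minimizing this loss pulls matched image-text pairs together and pushes mismatched pairs apart, producing the shared embedding space that retrieval and zero-shot classification rely on.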

Table 2: Major Vision-Language Models in Computational Pathology

| Model | Training Data Scale | Architecture | Key Capabilities |
|---|---|---|---|
| CONCH | 1.17M image-caption pairs | Image encoder, text encoder, multimodal decoder | Classification, segmentation, captioning, cross-modal retrieval [14] |
| TITAN | 335,645 WSIs + 423K synthetic captions | ViT with cross-modal alignment | Slide representation, report generation, zero-shot classification [1] |
| QuiltNet | 1M image-text pairs (Quilt-1M) | CLIP-based architecture | Zero-shot classification, cross-modal retrieval [16] |

Experimental Protocols and Benchmarking

Standardized Evaluation Frameworks

Robust benchmarking is essential for comparing foundation models across diverse clinical tasks. The clinical benchmark established by Campanella et al. provides a comprehensive evaluation framework built on clinical slides from three medical centers. The slides were generated during standard hospital operation and are paired with clinically relevant endpoints, including cancer diagnoses and various biomarkers [15].

Benchmarking Protocol:

- Model Encoding: Extract features from pathology images using pretrained foundation models without fine-tuning.

- Task-Specific Evaluation: Assess features on diverse downstream tasks including disease detection, biomarker prediction, and cancer subtyping.

- Performance Aggregation: Compare models using standardized metrics including AUC, accuracy, and Cohen's kappa across multiple datasets.
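
Among the aggregation metrics named above, Cohen's kappa corrects raw agreement for the agreement expected by chance. A minimal implementation, written here only to show the arithmetic, looks like this:

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Cohen's kappa: (observed agreement - chance agreement) / (1 - chance agreement).

    Undefined (division by zero) when chance agreement equals 1, for example
    when both label sequences contain a single identical class.
    """
    n = len(y_true)
    observed = sum(t == p for t, p in zip(y_true, y_pred)) / n
    true_counts = Counter(y_true)
    pred_counts = Counter(y_pred)
    # Chance agreement from the product of the marginal label frequencies.
    expected = sum(true_counts[c] * pred_counts[c] for c in true_counts) / (n * n)
    return (observed - expected) / (1.0 - expected)


labels = [1, 1, 0, 0]
kappa_partial = cohens_kappa(labels, [1, 1, 1, 0])
```

In this toy case the predictions agree with the labels on 3 of 4 slides (0.75), chance agreement is 0.5, and kappa is therefore 0.5.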

For vision-language models, the evaluation encompasses both vision-only and cross-modal tasks:

- Zero-shot Classification: Class labels are converted to text prompts, with images classified by matching them with the most similar text prompt in the model's shared image-text representation space [14].

- Cross-modal Retrieval: Measuring both text-to-image and image-to-text retrieval capabilities using recall metrics [16] [14].

- Slide-level Prediction: For gigapixel WSIs, methods like MI-Zero divide the slide into smaller tiles and aggregate individual tile-level scores into a slide-level prediction [14].
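
Zero-shot classification and tile-to-slide aggregation can be combined in one short sketch. It assumes L2-normalized tile and prompt embeddings, so the dot product equals cosine similarity, and it aggregates by averaging the top-k tile scores per class. That pooling rule is one simple choice made for illustration and is not claimed to be the exact operator used by MI-Zero.

```python
from typing import List, Sequence, Tuple


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def zero_shot_slide(tile_embs: List[Sequence[float]], prompt_embs: List[Sequence[float]],
                    k: int = 2) -> Tuple[int, List[float]]:
    """Score each class prompt against every tile, then pool the top-k tile scores."""
    slide_scores = []
    for prompt in prompt_embs:
        tile_scores = sorted((dot(tile, prompt) for tile in tile_embs), reverse=True)
        top = tile_scores[:k]
        slide_scores.append(sum(top) / len(top))
    predicted = max(range(len(slide_scores)), key=lambda c: slide_scores[c])
    return predicted, slide_scores


# Three toy tiles and two class prompts (illustrative 2-D embeddings).
tiles = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
prompts = [[1.0, 0.0], [0.0, 1.0]]
predicted_class, class_scores = zero_shot_slide(tiles, prompts, k=2)
```

Pooling only the highest-scoring tiles keeps a small diagnostic region from being averaged away by the much larger area of uninformative tissue.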

Quantitative Performance Comparison

Table 3: Performance Benchmark of Foundation Models on Clinical Tasks

| Model | BRCA Subtyping (AUC) | NSCLC Subtyping (AUC) | RCC Subtyping (AUC) | Zero-shot Retrieval (Recall@1) |
|---|---|---|---|---|
| CONCH | 91.3% [14] | 90.7% [14] | 90.2% [14] | 68.4% [14] |
| PLIP | 50.7% [14] | 78.7% [14] | 80.4% [14] | 52.1% [14] |
| BiomedCLIP | 55.3% [14] | 75.2% [14] | 77.9% [14] | 49.8% [14] |
| Phikon-v2 | >90% (across 8 tasks) [15] | >90% (across 8 tasks) [15] | >90% (across 8 tasks) [15] | N/A |

For disease detection tasks, all benchmarked foundation models reach AUCs above 0.9 on every task, significantly outperforming ImageNet-pretrained models [15]. In zero-shot settings, CONCH demonstrates substantial improvements over competing vision-language models, outperforming PLIP by 12.0 percentage points on NSCLC subtyping (90.7% vs. 78.7%) and 9.8 percentage points on RCC subtyping (90.2% vs. 80.4%) [14].

Implementation Workflows

The development and application of pathology foundation models follow structured workflows that can be visualized and implemented systematically.

Self-Supervised Pretraining Workflow

Figure 1: Self-Supervised Learning Workflow for Pathology Foundation Models

Multimodal Vision-Language Pretraining

Figure 2: Vision-Language Multimodal Pretraining Architecture

The Scientist's Toolkit: Essential Research Reagents

Implementation of pathology foundation models requires specific computational resources and datasets. The following table details key components necessary for successful experimentation and deployment.

Table 4: Essential Research Reagents for Pathology Foundation Model Development

| Resource Category | Specific Examples | Function & Utility | Access Information |
|---|---|---|---|
| Pretrained Models | CONCH, Phikon, UNI, CTransPath | Feature extraction, transfer learning, zero-shot evaluation | Publicly available on Hugging Face, GitHub [15] [14] |
| Benchmark Datasets | TCGA (BRCA, NSCLC, RCC), CRC100k, SICAP | Model evaluation, performance comparison | Publicly available with restrictions [15] [14] |
| Vision-Language Datasets | Quilt-1M, CONCH pretraining data | Multimodal model training, cross-modal learning | Quilt-1M publicly available; CONCH data requires institutional approval [16] [14] |
| SSL Frameworks | DINOv2, iBOT, MAE implementations | Self-supervised pretraining recipe implementation | Publicly available on GitHub [15] |
| Computational Resources | High-memory GPU servers (A100/H100) | Handling large-scale WSI processing and model training | Cloud providers (AWS, GCP, Azure) and institutional HPC |

Future Directions and Challenges

Despite significant progress, several challenges remain in the development and deployment of pathology foundation models. Data standardization and privacy protection require robust solutions while ensuring regulatory compliance [17]. Model training and deployment face computational bottlenecks when processing large-scale and biased multimodal datasets [17]. Additionally, model interpretability must be enhanced to provide clinically meaningful explanations that gain physician trust [17].

Future research directions include:

- Scaled-Up Training: Current models are trained on millions to billions of tiles, but natural image domains suggest continued benefits from further scaling [15].

- Whole-Slide Foundation Models: Transitioning from tile-level to whole-slide representations, as demonstrated by TITAN, will better capture tissue microenvironment context [1].

- Multimodal Integration Expansion: Incorporating additional data modalities such as genomics, transcriptomics, and spatial transcriptomics for more comprehensive tumor characterization [17].

- Synthetic Data Generation: Leveraging generative AI for creating realistic training data, as exemplified by TITAN's use of 423,122 synthetic captions [1].

The SLC-PFM NeurIPS 2025 competition represents a coordinated community effort to advance the field, providing participants with access to the MSK-SLCPFM dataset with approximately 300 million images from 39 cancer types for developing next-generation pathology foundation models [18]. Such initiatives will accelerate innovation and establish standardized benchmarks for the entire research community.

As pathology foundation models continue to evolve, they hold the potential to transform cancer diagnosis and treatment planning by providing clinicians with powerful AI-assisted tools that generalize across diverse disease presentations and patient populations, ultimately advancing the goals of precision medicine in oncology and beyond.

In the field of computational pathology, the development of robust artificial intelligence (AI) models is fundamentally constrained by a pervasive challenge: the limited availability of large, well-annotated clinical datasets. This scarcity is particularly pronounced for rare diseases and specialized clinical tasks, where collecting thousands of annotated samples is infeasible [19] [1]. Such data limitations severely compromise model generalizability, leading to performance degradation when applied to real-world patient populations with diverse characteristics.

Multimodal data integration presents a transformative pathway to overcome these constraints. By synthesizing complementary information from histopathology, genomics, radiology, and clinical reports, foundation models can learn more comprehensive representations of the tumor microenvironment [20] [21]. The central premise is that orthogonally derived data complement one another, thereby augmenting information content beyond that of any individual modality [20]. This approach mirrors how pathologists synthesize information from multiple sources to reach diagnostic conclusions [22].

This technical guide examines cutting-edge methodologies that leverage multimodal integration to address data scarcity challenges, with a focus on architectural innovations, pretraining strategies, and transfer learning protocols that enhance data efficiency in pathology AI research.

Technical Approaches for Small Cohort Challenges

Foundation Model Pretraining at Scale

Current research demonstrates that large-scale pretraining on diverse, multimodal datasets establishes a foundational representation that can be effectively adapted to specialized tasks with minimal fine-tuning. This paradigm shift addresses the core limitation of small annotated cohorts by transferring knowledge acquired from broad data sources to specific clinical applications.

Table 1: Large-Scale Multimodal Pretraining Datasets

| Dataset/Model | Sample Size | Modalities Included | Cancer Types/Areas | Key Innovations |
|---|---|---|---|---|
| TITAN [1] | 335,645 WSIs; 182,862 reports | WSIs, pathology reports, synthetic captions | 20 organs | Visual-language pretraining; synthetic data generation |
| CLIMB [23] | 4.51M patient samples | 2D/3D imaging, text, time series, genomics | 96 clinical conditions across 13 domains | Unified benchmark across diverse modalities |
| MICE [21] | 11,799 patients | WSIs, clinical reports, genomics | 30 cancer types | Collaborative expert module for pan-cancer analysis |
| Mass-340K [1] | 335,645 WSIs | WSIs, medical reports | 20 organ types | Diversity across stains, scanners, tissue types |

The TITAN (Transformer-based pathology Image and Text Alignment Network) model exemplifies this approach, employing a three-stage pretraining strategy: (1) vision-only self-supervised learning on region crops, (2) cross-modal alignment with generated morphological descriptions at the region-of-interest level, and (3) cross-modal alignment at the whole-slide image level with clinical reports [1]. This methodology enables the model to learn general-purpose slide representations that transfer effectively to resource-limited scenarios, including rare disease retrieval and cancer prognosis.

Multimodal Integration Architectures

Effective architectural design is crucial for leveraging complementary information across modalities. Advanced fusion strategies enable models to capture both shared and modality-specific patterns, enhancing robustness when annotated cohorts are small.

Table 2: Multimodal Integration Architectures for Data Efficiency

| Model | Architecture Approach | Fusion Strategy | Data Efficiency Performance |
|---|---|---|---|
| MICE [21] | Collaborative multi-expert module | Combination of MoE, specialized, and consensual experts | Achieves comparable performance with 50% less fine-tuning data |
| TITAN [1] | Vision Transformer with 2D ALiBi | Cross-modal vision-language alignment | Superior few-shot and zero-shot classification capabilities |
| Foundation Models [22] | Swarm learning architectures | Decentralized learning without centralized data sharing | Preserves privacy while improving generalizability across populations |

The MICE framework introduces a novel collaborative expert module comprising three distinct components: (1) an overlapping mixture-of-experts (MoE) group that captures cross-cancer patterns through input-conditioned routing, (2) a specialized expert group that extracts cancer-specific knowledge, and (3) a consensual expert that integrates shared patterns across all cancer types [21]. This architecture collaboratively extracts holistic representations essential for generalizable pan-cancer prognosis prediction, effectively addressing the limitations of small, cancer-type-specific datasets.
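
The three-branch structure described above can be illustrated with a deliberately small sketch. Everything here is a loose stand-in rather than the MICE implementation: experts are toy scaling functions instead of learned sub-networks, gate logits are passed in directly rather than computed from the input, and summing the three branches is an assumed combination rule.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Expert = Callable[[Sequence[float]], Vector]


def softmax(logits: Sequence[float]) -> List[float]:
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def scale_expert(factor: float) -> Expert:
    """Toy expert that scales its input (stands in for a learned sub-network)."""
    return lambda x: [factor * v for v in x]


def collaborative_forward(x: Sequence[float], gate_logits: Sequence[float],
                          moe_experts: List[Expert], specialized_expert: Expert,
                          consensual_expert: Expert, top_k: int = 2) -> Vector:
    # (1) MoE group: route the input to the top-k experts selected by the gate.
    weights = softmax(gate_logits)
    chosen = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)[:top_k]
    norm = sum(weights[i] for i in chosen)
    moe_out = [0.0] * len(x)
    for i in chosen:
        expert_out = moe_experts[i](x)
        moe_out = [m + (weights[i] / norm) * o for m, o in zip(moe_out, expert_out)]
    # (2) Cancer-specific expert and (3) consensual expert shared by all inputs.
    spec_out = specialized_expert(x)
    cons_out = consensual_expert(x)
    # Assumed combination rule: element-wise sum of the three branches.
    return [a + b + c for a, b, c in zip(moe_out, spec_out, cons_out)]


experts = [scale_expert(1.0), scale_expert(3.0), scale_expert(100.0)]
output = collaborative_forward([1.0, 2.0], [0.0, 0.0, -100.0], experts,
                               specialized_expert=scale_expert(10.0),
                               consensual_expert=scale_expert(1.0))
```

The point of the sketch is the division of labor: the routed branch varies with the input, the specialized branch depends on the cancer type, and the consensual branch is applied to every sample.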

Leveraging Synthetic Data and Augmentation

Synthetic data generation has emerged as a powerful strategy to overcome annotation scarcity. TITAN incorporates 423,122 synthetic captions generated from a multimodal generative AI copilot for pathology, enhancing the model's language alignment capabilities without requiring manual annotation of additional image-text pairs [1]. This approach demonstrates the potential of leveraging generative AI to create fine-grained morphological descriptions at scale, effectively expanding limited training datasets.

(Figure 1: Overcoming small cohort limitations through foundation model pretraining and synthetic data.)

Experimental Protocols and Methodologies

Multimodal Pretraining Protocol

The pretraining methodology for TITAN employs a sophisticated approach to learning from gigapixel whole-slide images (WSIs). The protocol involves:

- Feature Extraction: Dividing each WSI into non-overlapping 512×512 pixel patches at 20× magnification, followed by extraction of 768-dimensional features for each patch using the CONCH model [1].

- Spatial Context Preservation: Arranging patch features in a 2D feature grid replicating their spatial positions within the tissue, enabling the use of positional encoding.

- Multi-Scale Cropping: Randomly sampling region crops of 16×16 features (covering 8,192×8,192 pixels) from the WSI feature grid, then generating two random global (14×14) and ten local (6×6) crops for self-supervised learning.

- Masked Image Modeling: Applying the iBOT framework for vision-only pretraining on the 2D feature grid with posterization feature augmentation [1].

- Long-Context Extrapolation: Using Attention with Linear Biases (ALiBi) extended to 2D, where linear bias is based on the relative Euclidean distance between features in the grid, reflecting actual distances between tissue patches [1].
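
The multi-scale cropping step lends itself to a short sketch. The crop sizes (16×16 region, two 14×14 global views, ten 6×6 local views) come from the protocol above; the function names, the uniform sampling of crop positions, and the toy grid size are assumptions made for illustration.

```python
import random
from typing import List, Tuple

Crop = Tuple[int, int, int]  # (top row, left column, side length) in feature-grid units


def sample_crop(rows: int, cols: int, size: int, rng: random.Random,
                top: int = 0, left: int = 0) -> Crop:
    """Sample a size x size square uniformly inside a rows x cols area at (top, left)."""
    r = top + rng.randrange(rows - size + 1)
    c = left + rng.randrange(cols - size + 1)
    return (r, c, size)


def sample_views(grid_rows: int, grid_cols: int, rng: random.Random) -> Tuple[Crop, List[Crop], List[Crop]]:
    """One 16x16 region crop, then 2 global (14x14) and 10 local (6x6) crops within it.

    Requires a feature grid of at least 16 x 16 cells.
    """
    region = sample_crop(grid_rows, grid_cols, 16, rng)
    r0, c0, side = region
    global_views = [sample_crop(side, side, 14, rng, r0, c0) for _ in range(2)]
    local_views = [sample_crop(side, side, 6, rng, r0, c0) for _ in range(10)]
    return region, global_views, local_views


rng = random.Random(0)  # fixed seed so the example is reproducible
region, global_views, local_views = sample_views(40, 60, rng)
```

Each crop is returned as grid coordinates, so the corresponding patch features can be sliced from the 2D feature grid without touching the raw pixels.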

Evaluation Metrics and Benchmarks

Rigorous evaluation is essential for validating model performance in data-limited scenarios. Established benchmarks include:

Few-Shot Learning: Measuring model performance when fine-tuned with limited labeled examples (e.g., 1%, 10%, 50% of available data) [21].

Zero-Shot Classification: Assessing model capability to classify samples without task-specific fine-tuning, particularly through cross-modal retrieval between histology slides and clinical reports [1].

Cross-Modal Retrieval: Evaluating the model's ability to retrieve relevant histology images given text queries, and vice versa [1].

Rare Cancer Retrieval: Testing performance on diagnostically challenging cases with minimal training examples [1].
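As a rough illustration of how zero-shot classification and cross-modal retrieval are typically scored with aligned embeddings, the sketch below uses cosine similarity between slide and text vectors. The function names and toy embeddings are hypothetical; the cited models may use different prompts, ensembling, or metrics.

```python
import numpy as np

def l2_normalize(x):
    """Scale each row to unit length so dot products equal cosine similarity."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_classify(slide_embs, prompt_embs):
    """Assign each slide to the class whose text-prompt embedding is most similar."""
    sims = l2_normalize(slide_embs) @ l2_normalize(prompt_embs).T
    return sims.argmax(axis=1)

def recall_at_k(query_embs, gallery_embs, k=1):
    """Fraction of queries whose paired item (same row index) is in the top-k results."""
    sims = l2_normalize(query_embs) @ l2_normalize(gallery_embs).T
    topk = np.argsort(-sims, axis=1)[:, :k]
    targets = np.arange(len(query_embs))[:, None]
    return float((topk == targets).any(axis=1).mean())

# Toy 2-D embeddings: three slides, two class prompts.
slides = np.array([[1.0, 0.1], [0.1, 1.0], [0.9, 0.2]])
prompts = np.array([[1.0, 0.0], [0.0, 1.0]])
predicted = zero_shot_classify(slides, prompts)
```

No labeled slides are used at prediction time; the class definitions come entirely from the text side, which is what makes the setting zero-shot.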

MICE demonstrated substantial improvements in C-index ranging from 3.8% to 11.2% on internal cohorts and 5.8% to 8.8% on independent cohorts compared to unimodal and state-of-the-art multimodal models, showcasing superior generalizability despite data limitations [21].
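For readers unfamiliar with the metric, the concordance index (C-index) measures how often a model ranks patient risk in the same order as observed survival. The sketch below is a plain-Python version of Harrell's C-index under the usual right-censoring convention; it is an illustration of the metric, not the evaluation code used in [21], and the function name is an assumption.

```python
def concordance_index(times, events, risks):
    """Harrell's C-index.

    times  : observed follow-up time per patient
    events : 1 if the event (e.g., death) was observed, 0 if censored
    risks  : predicted risk score (higher = expected earlier event)
    """
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's follow-up time.
            if events[i] and times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5   # ties in predicted risk count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

c = concordance_index(times=[2, 4, 6, 8], events=[1, 1, 0, 1],
                      risks=[0.9, 0.7, 0.4, 0.1])
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, so gains of a few points can be meaningful in survival prediction.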

(Figure 2: Multimodal integration architecture for comprehensive tumor characterization.)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function/Purpose | Application in Small Cohorts |
|---|---|---|---|
| CONCH [1] | Patch Encoder | Extracts meaningful features from histology patches | Provides foundational feature representations for slide-level models |
| iBOT Framework [1] | Self-Supervised Algorithm | Masked image modeling with knowledge distillation | Enables pretraining without extensive annotations |
| ALiBi [1] | Positional Encoding | Attention with linear biases for long-context extrapolation | Handles variable-sized WSIs without retraining |
| PathChat [1] | Generative AI Copilot | Generates synthetic fine-grained morphological descriptions | Creates additional training data without manual annotation |
| Swarm Learning [22] | Decentralized Learning | Model training across institutions without data sharing | Increases effective dataset size while preserving privacy |
| Digital Slide Scanners | Hardware | Converts glass slides into high-resolution WSIs | Creates digital biobanks for large-scale pretraining |

Implementation Considerations for Clinical Translation

Successful implementation of these approaches requires addressing several practical considerations:

Computational Infrastructure: Processing gigapixel whole-slide images demands significant computational resources, particularly for transformer architectures with long input sequences [1].

Data Standardization: Variability in image acquisition across scanners and institutions necessitates robust normalization techniques. Collaborative efforts, such as those in India establishing standardized protocols for image acquisition and analysis, demonstrate pathways to address this challenge [19].

Annotation Efficiency: Active learning strategies that prioritize the most informative samples for expert annotation can maximize the value of limited pathology resources [19].

Regulatory and Privacy Concerns: Federated learning approaches enable model development without centralizing sensitive patient data, addressing privacy constraints while facilitating multi-institutional collaboration [22].
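To make the federated idea concrete, the sketch below shows federated averaging (FedAvg), one common aggregation rule in which each site trains locally and only model weights are combined. The source does not specify which aggregation scheme is used in [22], so this is an assumption for illustration; the function and parameter names are hypothetical.

```python
import numpy as np

def federated_average(site_weights, site_sizes):
    """Combine per-site model weights, weighting each site by its sample count.

    site_weights : list of dicts mapping parameter name -> NumPy array
    site_sizes   : number of local training samples at each site
    Only these weights leave each institution; patient data stays local.
    """
    total = float(sum(site_sizes))
    return {
        name: sum(weights[name] * (n / total)
                  for weights, n in zip(site_weights, site_sizes))
        for name in site_weights[0]
    }

hospital_a = {"w": np.array([1.0, 3.0])}   # trained on 100 local slides
hospital_b = {"w": np.array([3.0, 5.0])}   # trained on 300 local slides
global_model = federated_average([hospital_a, hospital_b], [100, 300])
```

In practice this step is repeated over many rounds, with the averaged model sent back to each site for further local training.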

The CLIMB benchmark demonstrates that multitask pretraining significantly improves performance on understudied domains, achieving up to 29% improvement in ultrasound and 23% in ECG analysis over single-task learning in few-shot scenarios [23]. This underscores the value of broad pretraining for enhancing data efficiency in specialized clinical applications.

Future Directions

The trajectory of multimodal foundation models points toward increasingly sophisticated approaches for overcoming data limitations:

Generative AI Integration: Advanced synthesis of multimodal patient data, including histopathology, genomics, and clinical reports, to create expansive training corpora [1] [24].

Cross-Institutional Collaborations: Federated learning frameworks that enable model development across multiple healthcare systems without sharing protected health information [22].

Automated Annotation Systems: AI-assisted tools that reduce the manual burden of data labeling while maintaining diagnostic accuracy [25].

Explainable AI (XAI) Techniques: Methods such as saliency maps and feature attribution that foster clinical trust and provide interpretability for model predictions [22].

As these technologies mature, they promise to transform the data scarcity challenge from an insurmountable barrier to a manageable consideration in computational pathology research, ultimately accelerating the development of robust AI systems that generalize across diverse patient populations and clinical scenarios.

Architectures and Clinical Implementations: Building and Applying Multimodal Models

The integration of multimodal data (combining histopathology images, genomic sequences, clinical notes, and more) is revolutionizing computational pathology. Foundation models trained on these diverse data types promise to enhance cancer diagnosis, prognostication, and biomarker discovery. However, a significant challenge lies in the inherent nature of biomedical information, which often exists as non-Euclidean data, such as graphs representing molecular structures or patient relationships. Traditional deep learning architectures like Convolutional Neural Networks (CNNs), which excel with grid-like data (e.g., images), struggle to capture the complex, irregular relationships within such data. This section explores two core architectures at the forefront of this challenge: Transformers and Graph Neural Networks (GNNs), detailing their principles, applications, and methodological protocols for multimodal integration in pathology.

Transformer Architecture

Originally designed for sequential natural language data, Transformers have been successfully adapted for computer vision and multimodal tasks. Their core mechanism is self-attention, which allows the model to weigh the importance of different parts of the input data, regardless of their order or direct proximity [26].

- Self-Attention Mechanism: The input sequence is projected into three spaces: Query (Q), Key (K), and Value (V). The attention score is computed as

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\left(\frac{QK^{\top}}{\sqrt{d_k}}\right)V$$

where $d_k$ is the dimension of the key vectors [27]. This allows each element in the sequence to interact with every other element, capturing long-range dependencies.

- Parallelization: Unlike recurrent neural networks (RNNs) that process data sequentially, Transformers process the entire input in parallel, making them highly scalable [26].

- Positional Encoding: Since self-attention is permutation-invariant, positional encodings (e.g., sinusoidal functions) are added to the input embeddings to incorporate the order of the sequence [27].
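The self-attention and positional-encoding mechanisms described above can be written out directly. The following NumPy sketch is a single-head, unbatched illustration; the random projection matrices stand in for learned parameters, and `d_model` is assumed to be even.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(q, k, v):
    """softmax(Q K^T / sqrt(d_k)) V for a single head."""
    d_k = k.shape[-1]
    weights = softmax(q @ k.T / np.sqrt(d_k), axis=-1)
    return weights @ v, weights

def sinusoidal_positions(n_positions, d_model):
    """Sinusoidal positional encodings (d_model assumed even)."""
    positions = np.arange(n_positions)[:, None]
    dims = np.arange(0, d_model, 2)[None, :]
    angles = positions / np.power(10000.0, dims / d_model)
    pe = np.zeros((n_positions, d_model))
    pe[:, 0::2] = np.sin(angles)   # even dimensions
    pe[:, 1::2] = np.cos(angles)   # odd dimensions
    return pe

rng = np.random.default_rng(0)
tokens = rng.normal(size=(5, 8)) + sinusoidal_positions(5, 8)   # 5 tokens, d_model = 8
w_q, w_k, w_v = (rng.normal(size=(8, 8)) for _ in range(3))     # stand-ins for learned weights
output, attn = scaled_dot_product_attention(tokens @ w_q, tokens @ w_k, tokens @ w_v)
```

Each row of `attn` sums to one and spans all five tokens, which is the sense in which every element can attend to every other element in a single layer.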

In pathology, Vision Transformers (ViTs) divide whole-slide images (WSIs) into patches, treating them as a sequence for analysis [1] [28]. Multimodal transformers can also integrate imaging data with clinical notes or genomic sequences by using cross-attention mechanisms between different data modalities [28].
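Cross-attention differs from self-attention only in where the queries and the keys/values come from. The sketch below lets image tokens query text tokens; the shapes, the random weights, and the choice of images as the querying modality are assumptions for illustration rather than details of any cited model.

```python
import numpy as np

def cross_attention(image_tokens, text_tokens, w_q, w_k, w_v):
    """Image tokens (queries) attend over text tokens (keys and values)."""
    q = image_tokens @ w_q
    k = text_tokens @ w_k
    v = text_tokens @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
image_tokens = rng.normal(size=(6, 16))   # 6 patch embeddings, dimension 16
text_tokens = rng.normal(size=(4, 12))    # 4 report-token embeddings, dimension 12
w_q = rng.normal(size=(16, 8))            # project both modalities to a shared width of 8
w_k = rng.normal(size=(12, 8))
w_v = rng.normal(size=(12, 8))
fused, weights = cross_attention(image_tokens, text_tokens, w_q, w_k, w_v)
```

The separate projection matrices are what allow modalities with different embedding sizes to be compared in a shared space.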

Graph Neural Network (GNN) Architecture

GNNs are specifically designed for data structured as graphs, consisting of nodes (e.g., patients, cells, genes) and edges (the relationships between them). This makes them naturally suited for non-Euclidean data [26].

- Message-Passing Framework: A common paradigm for GNNs is Message-Passing Neural Networks (MPNNs). In each layer, nodes aggregate information from their neighboring nodes, update their own features based on this aggregated "message," and a final readout function may pool information across all nodes to create a graph-level representation [27].

- Topological Modeling: GNNs do not require a fixed grid structure, allowing them to flexibly model complex and irregular topological structures, such as the intricate shapes of tumors or protein interaction networks [29].

- Inductive Bias: The structure of GNNs incorporates an inductive bias for relational data, enabling them to learn from the connections within the graph explicitly.
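A single message-passing layer with a graph-level readout can be sketched as follows. Mean aggregation, a ReLU update, and mean pooling are assumptions chosen for simplicity; real GNN variants differ in exactly these choices.

```python
import numpy as np

def message_passing_layer(features, adjacency, w_self, w_neigh):
    """One round of message passing with mean aggregation and a ReLU update.

    features  : (N, D) node features
    adjacency : (N, N) matrix, 1 where an edge exists
    """
    degree = adjacency.sum(axis=1, keepdims=True)
    neighbor_mean = (adjacency @ features) / np.maximum(degree, 1)   # aggregate
    return np.maximum(0.0, features @ w_self + neighbor_mean @ w_neigh)   # update

def readout(features):
    """Pool node features into a single graph-level vector."""
    return features.mean(axis=0)

# A 3-node path graph: 0 - 1 - 2
adjacency = np.array([[0, 1, 0],
                      [1, 0, 1],
                      [0, 1, 0]], dtype=float)
features = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
identity = np.eye(2)   # identity weights make the arithmetic easy to follow
updated = message_passing_layer(features, adjacency, identity, identity)
graph_vector = readout(updated)
```

Stacking several such layers lets information travel further across the graph, since each layer extends a node's view by one hop.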

Comparative Analysis

Table 1: Comparative properties of Transformers and GNNs for non-Euclidean data.

| Property | Transformer | Graph Neural Network (GNN) |
|---|---|---|
| Core Data Structure | Sequences, patches (adapted) | Graphs (nodes & edges) |
| Core Mechanism | Self-attention | Message passing, neighborhood aggregation |
| Receptive Field | Global from the first layer | Increases with network depth |
| Handling of Topology | Limited; relies on positional encoding | Native; inherent in graph structure |
| Computational Complexity | O(N²) with sequence length N | Approximately O(N × K) with N nodes and K avg. neighbors |
| Key Strength | Global context, parallelization | Explicit relational reasoning, irregular structure modeling |
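To give the complexity row a sense of scale, the short calculation below compares the number of pairwise interactions for a hypothetical slide. The values N = 10,000 patches and K = 8 neighbors are illustrative assumptions, not figures from the cited works.

```python
def attention_pairs(n_tokens):
    """Pairwise interactions in full self-attention: N^2."""
    return n_tokens ** 2

def message_passing_pairs(n_nodes, avg_neighbors):
    """Interactions in one message-passing layer: N * K."""
    return n_nodes * avg_neighbors

n, k = 10_000, 8                                  # hypothetical slide and graph degree
transformer_cost = attention_pairs(n)             # 100,000,000
gnn_cost = message_passing_pairs(n, k)            # 80,000
ratio = transformer_cost / gnn_cost               # 1250.0
```

The gap widens as N grows, which is why long-sequence Transformers for WSIs often rely on feature grids or other sequence-shortening strategies.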

Experimental Results and Performance Benchmarks

Empirical evaluations demonstrate the distinct advantages and application-specific performance of these architectures.

A pure GNN model, U-GNN, designed for medical image segmentation, reports gains over prior architectures. In experiments on multi-organ and cardiac segmentation datasets, U-GNN achieved a 6% improvement in the Dice Similarity Coefficient (DSC) and an 18% reduction in the Hausdorff Distance (HD) compared to state-of-the-art CNN- and Transformer-based models [29]. This highlights the capability of GNNs to capture complex topological structures such as irregular tumor boundaries.
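The two segmentation metrics cited here can be computed on binary masks as sketched below. This is a simplified illustration: it assumes non-empty 2D masks, measures the Hausdorff distance over all foreground pixels in pixel units, and does not reproduce any percentile variant or physical-spacing correction that a given benchmark may apply.

```python
import numpy as np

def dice_coefficient(pred, target):
    """Dice = 2 |A ∩ B| / (|A| + |B|) for binary masks."""
    pred, target = pred.astype(bool), target.astype(bool)
    denom = pred.sum() + target.sum()
    if denom == 0:
        return 1.0   # both masks empty: treat as perfect agreement
    return float(2.0 * np.logical_and(pred, target).sum() / denom)

def hausdorff_distance(pred, target):
    """Symmetric Hausdorff distance between foreground pixel sets (non-empty masks)."""
    a = np.argwhere(pred)     # (n_a, 2) pixel coordinates
    b = np.argwhere(target)   # (n_b, 2) pixel coordinates
    dists = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=-1)
    # Largest distance from any point in one set to its nearest point in the other.
    return float(max(dists.min(axis=1).max(), dists.min(axis=0).max()))

pred = np.zeros((5, 5), dtype=int)
target = np.zeros((5, 5), dtype=int)
pred[1:3, 1:3] = 1      # 4 predicted foreground pixels
target[1:3, 1:4] = 1    # 6 ground-truth foreground pixels
dice = dice_coefficient(pred, target)       # 2 * 4 / (4 + 6) = 0.8
hd = hausdorff_distance(pred, target)       # missed column is 1 pixel away
```

Dice rewards overlap while Hausdorff penalizes the worst boundary error, so the two metrics can move independently and are usually reported together.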

Conversely, in the domain of multimodal whole-slide foundation models, Transformer-based architectures have shown significant promise. The TITAN model, pretrained on 335,645 whole-slide images and aligned with pathology reports and synthetic captions, excels in tasks like zero-shot classification, rare cancer retrieval, and cross-modal report generation [1]. Its ability to encode entire WSIs into general-purpose slide representations simplifies downstream clinical endpoint prediction.

However, a critical evaluation of pathology foundation models reveals overarching challenges. Systematic assessments show that many models, including large-scale Transformers, suffer from low diagnostic accuracy (e.g., F1 scores around 40-42%), lack of robustness to site-specific bias, and significant geometric fragility where performance drops with simple image rotations [30]. This indicates that architectural choice alone does not guarantee success; domain-specific adaptation and rigorous validation are paramount.

Table 2: Quantitative performance of featured architectures in specific applications.

| Architecture | Model Name | Task | Key Metric | Reported Performance |
|---|---|---|---|---|
| Pure GNN | U-GNN [29] | Tumor segmentation | Dice Similarity Coefficient (DSC) | 6% improvement over SOTA |
| Pure GNN | U-GNN [29] | Tumor segmentation | Hausdorff Distance (HD) | 18% reduction |
| Transformer | ViGPT2/BEiTGPT2 [28] | Medical report generation | BLEU, ROUGE-L | Outperformed recurrent baselines |
| Multimodal Transformer | TITAN [1] | Slide retrieval, zero-shot classification | Retrieval accuracy, AUC | Outperformed existing slide foundation models |

Detailed Experimental Protocols

Protocol 1: U-GNN for Medical Image Segmentation

Objective: To segment tumors and organs from medical images using a pure GNN-based U-shaped architecture that leverages topological modeling [29].

- Data Preprocessing: Resize input medical images (e.g., from CT or MRI) to a fixed resolution of H × W. Apply standard augmentation techniques like rotation, flipping, and color jittering.

- Graph Construction:

  - Patch Node Creation: The input image is divided into non-overlapping patches. Each patch is linearly projected into an initial node feature vector.

  - Multi-Order Similarity Graph Construction: The graph topology is not fixed but learned. Instead of relying only on first-order similarity (direct feature similarity between two nodes), a higher-order similarity is computed. This involves considering whether the surrounding neighborhoods of two nodes are also similar, which is crucial for medical images with large homogeneous regions. This step establishes connections between node pairs, defining the graph's edges.

- U-GNN Model Architecture:

  - Encoder: The encoder consists of multiple stages, each containing several Vision GNN blocks. Between stages, a downsampling operation reduces the spatial resolution (H, W) by a factor of 2 and simultaneously doubles the feature dimension (C).

  - Latent Space: The bottleneck layer processes the feature map at the lowest resolution using multiple Vision GNN blocks to learn high-level abstract features.

  - Decoder: The decoder is symmetric to the encoder. It consists of multiple stages of Vision GNN blocks with upsampling operations between them to restore the spatial resolution. The feature dimension is halved after each upsampling.

  - Skip Connections: Feature maps from the encoder are concatenated with the corresponding decoder feature maps to recover spatial information lost during downsampling.

  - Node Information Aggregation: Within each Vision GNN block, after the graph is constructed, a multi-order information aggregation is performed. This is a message-passing step where each node updates its feature by aggregating information from its connected neighbors in the graph, combining both local and global cues.

- Training & Evaluation:

  - Loss Function: A combination of Dice Loss and Cross-Entropy Loss is typically used for segmentation tasks.

  - Optimization: Train using the Adam optimizer.

  - Evaluation Metrics: The model is evaluated on a hold-out test set using the Dice Similarity Coefficient (DSC) and Hausdorff Distance (HD).
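The graph-construction step is the least conventional part of this protocol, so a rough sketch may help. The code below is one plausible reading of "multi-order similarity", not the U-GNN authors' implementation: it assumes cosine similarity, defines a node's neighborhood as its `k` most similar nodes, and mixes the two orders with a hypothetical weight `alpha`.

```python
import numpy as np

def cosine_similarity(x):
    """Pairwise cosine similarity between rows of x."""
    x = x / np.linalg.norm(x, axis=1, keepdims=True)
    return x @ x.T

def build_multi_order_graph(node_features, k=4, alpha=0.5):
    """Connect each node to its k best matches under a combined similarity.

    First order : similarity between the two nodes' own features.
    Second order: similarity between the mean features of their neighborhoods
                  (assumed here to be each node's k nearest nodes).
    """
    n = len(node_features)
    first = cosine_similarity(node_features)
    np.fill_diagonal(first, -np.inf)                    # never pick a node as its own neighbor
    neighbors = np.argsort(-first, axis=1)[:, :k]       # provisional first-order neighborhoods
    context = node_features[neighbors].mean(axis=1)     # (n, d) neighborhood summaries
    second = cosine_similarity(context)
    combined = first + alpha * second                   # diagonal stays -inf
    edges = np.argsort(-combined, axis=1)[:, :k]
    adjacency = np.zeros((n, n))
    adjacency[np.arange(n)[:, None], edges] = 1.0       # directed k-NN edges
    return adjacency

rng = np.random.default_rng(0)
patch_nodes = rng.normal(size=(10, 8))   # stand-in for 10 projected patch features
adjacency = build_multi_order_graph(patch_nodes, k=4)
```

The resulting adjacency matrix is what a message-passing step would consume. In large homogeneous regions many patches look alike at first order, and the second-order term is intended to help separate those whose surroundings differ.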

Diagram 1: U-GNN segmentation workflow.

Protocol 2: Multimodal Transformer for Whole-Slide Analysis