Navigating the Computational Maze: Overcoming Key Challenges in Single-Cell Sequencing Data Analysis


Abstract

Single-cell RNA sequencing (scRNA-seq) has revolutionized biomedical research by enabling the dissection of gene expression at unprecedented resolution, but it generates complex, high-dimensional data posing significant computational challenges. This article provides a comprehensive guide for researchers and drug development professionals addressing four critical needs: understanding foundational data characteristics like sparsity and technical noise; selecting appropriate methodologies from an evolving toolkit of machine learning and bioinformatics tools; implementing optimization strategies for data quality and batch effects; and validating results through rigorous benchmarking. By synthesizing current computational approaches and highlighting emerging solutions, this resource aims to equip scientists with practical strategies to transform noisy single-cell data into biologically meaningful insights for drug discovery and clinical translation.

Understanding the Single-Cell Data Landscape: From Technical Noise to Biological Complexity

FAQs: Understanding scRNA-seq Data Characteristics

What are the defining features of scRNA-seq data? scRNA-seq data are defined by three primary characteristics: high-dimensionality, sparsity, and technical variation. High-dimensionality arises because the expression levels of tens of thousands of genes are measured across thousands to millions of individual cells [1] [2]. Sparsity, often called "dropout," results in many zero counts for genes that are actually expressed due to low mRNA quantities and technical limitations [1] [3]. Technical variation includes batch effects from differences in sample preparation, sequencing runs, or platforms, which can obscure true biological signals [4] [5].

Why does my scRNA-seq data contain so many zeros? The high number of zeros, or sparsity, is caused by "dropout events." These occur due to the stochastic nature of gene expression at the single-cell level, the very low starting amounts of mRNA in individual cells, and technical limitations in capturing and sequencing all transcripts [1] [3]. Not all zeros are biologically true; some represent technical failures to detect expressed genes.

What is the impact of high dimensionality on my analysis? High dimensionality complicates statistical analysis and visualization, increases computational demands, and can obscure genuine biological signals with noise. This is often referred to as the "curse of dimensionality" [1] [2]. Dimensionality reduction is an essential step to mitigate these issues by transforming the data into a lower-dimensional space that retains most biological information [1].

How can I distinguish technical variation from true biological variation? Batch effects, a major form of technical variation, are systematic differences in gene expression profiles caused by non-biological factors. Strategies to identify them include careful experimental design, the use of control samples, and quantitative metrics such as kBET or LISI applied after integration [4] [5]. Biological variation, by contrast, is reproducible and can be linked to sample phenotypes or known cell types.

Troubleshooting Guides

Issue: Poor Cell Clustering Due to Batch Effects

Problem: Cells from the same biological group cluster separately based on their batch of origin (e.g., processing date) rather than their cell type.

Solutions:

- Apply a batch correction method: Use algorithms designed to integrate datasets and remove technical artifacts.

- Assess correction quality: After correction, use metrics like the Local Inverse Simpson's Index (LISI) to evaluate both batch mixing (a higher batch-level score indicates better mixing) and cell type separation (a lower cell-type-level score indicates that cell types remain distinct) [5].

Recommended Tools for Batch Correction [4] [5]:

Table: Comparison of Common Batch Correction Tools

| Tool Name | Best For | Key Strength | Key Limitation |
|---|---|---|---|
| Harmony | General use, large datasets | Fast, scalable, preserves biological variation | Limited native visualization tools |
| Seurat Integration | High biological fidelity | Preserves subtle biological differences; comprehensive workflow | Computationally intensive for large datasets |
| Scanorama | Integrating complex batches | Handles non-linear batch effects effectively | Requires familiarity with Python/Scanpy |
| BBKNN | Fast, lightweight correction | Computationally efficient; fast runtime | Less effective for strong non-linear batch effects |
| scANVI | Complex integration with labels | Leverages cell labels to improve correction | Requires GPU; deep learning expertise needed |

Methodology: The typical workflow involves:

- Normalizing the count matrices for each batch separately (e.g., via log-normalization).

- Identifying highly variable genes.

- Applying the batch correction method (e.g., Harmony on PCA embeddings or Seurat's CCA/MNN).

- Visualizing the corrected data with UMAP or t-SNE and evaluating the results with clustering and metrics [5] [2].

Issue: Data Sparsity and Dropout Events

Problem: An excess of zero values in the gene expression matrix is hindering the identification of cell populations and marker genes.

Solutions:

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) compress the data, mitigating the impact of sparsity by pooling expression information across correlated genes, so that a dropout in any single gene has less influence on a cell's position [1].

- Imputation: Use specialized methods (e.g., deep learning models like variational autoencoders) to infer and fill in likely values for dropout events [1].

- Advanced Normalization: Methods like SCTransform (regularized negative binomial regression) model technical noise and can be more robust to sparsity [5].

Experimental Protocol for Dimensionality Reduction with PCA [1]:

- Input: A normalized (e.g., log-normalized) gene expression matrix (cells x genes).

- Feature Selection: Use the highly variable genes identified during preprocessing.

- Scaling: Center and scale the expression of each gene to have a mean of zero and variance of one.

- PCA Execution: Perform linear algebra computation to identify principal components (PCs)—new, uncorrelated variables that capture maximum variance.

- PC Selection: Determine the number of PCs to retain for downstream analysis (e.g., using the "elbow" method in a scree plot or selecting PCs that explain a predefined percentage of variance).

- Output: A lower-dimensional matrix of cells x PCs, which is used for clustering and visualization.
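The protocol above can be sketched in a few lines of NumPy. This is a minimal illustration, not the implementation used by Seurat or Scanpy: the function name `pca_embed`, the 90% variance target, and the simulated input are all assumptions made for the example, and the input is assumed to be already normalized and restricted to highly variable genes.

```python
import numpy as np

def pca_embed(X, var_target=0.90):
    """PCA on a normalized cells x genes matrix (hypothetical helper).

    Returns the cells x PCs score matrix, the explained-variance ratio of
    every component, and the number of PCs kept.
    """
    X = np.asarray(X, dtype=float)
    # Scaling step: centre each gene and scale it to unit variance.
    mu = X.mean(axis=0)
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # leave constant genes at zero instead of dividing by 0
    Z = (X - mu) / sd
    # PCA execution via SVD: Z = U @ diag(S) @ Vt, scores are U * S.
    U, S, Vt = np.linalg.svd(Z, full_matrices=False)
    explained = S**2 / np.sum(S**2)
    # PC selection: smallest number of PCs reaching the variance target.
    n_pcs = int(np.searchsorted(np.cumsum(explained), var_target)) + 1
    n_pcs = min(n_pcs, S.size)
    scores = U[:, :n_pcs] * S[:n_pcs]
    return scores, explained, n_pcs

# Simulated stand-in for a log-normalized matrix of 200 cells x 50 genes.
rng = np.random.default_rng(0)
X = np.log1p(rng.poisson(2.0, size=(200, 50)).astype(float))
scores, explained, n_pcs = pca_embed(X)
print(scores.shape, n_pcs)
```

A cumulative-variance cutoff is only one of the selection rules mentioned above; plotting `explained` as a scree plot and looking for the elbow is the other, and the two need not agree.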

Issue: Choosing a Normalization Method

Problem: Inconsistent results in downstream analyses like differential expression due to inappropriate normalization.

Solutions:

Table: Common scRNA-seq Normalization Methods

| Method | Principle | Best Suited For |
|---|---|---|
| Log Normalization | Counts are divided by total cellular reads, scaled (e.g., per 10,000), and log-transformed. | Standard datasets where cells have similar RNA content. Default in Seurat/Scanpy [5] [2]. |
| SCTransform | Models gene expression using a regularized negative binomial regression to account for technical covariates. | Datasets with confounding technical variables; provides variance stabilization [5]. |
| Pooling-Based (Scran) | Uses a deconvolution approach by pooling cells to estimate cell-specific size factors. | Heterogeneous datasets with diverse cell types [5] [2]. |
| CLR Normalization | Applies a centered log-ratio transformation to the data. | CITE-seq data (antibody-derived tags) or other multi-modal assays [5]. |
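To make the first and last rows of the table concrete, the sketch below writes out log normalization and a textbook centered log-ratio transform in NumPy. The function names and the toy matrix are assumptions for illustration; the CLR shown uses a pseudocount of 1 and may differ in detail from what a given package implements.

```python
import numpy as np

def log_normalize(counts, scale=1e4):
    """Divide each cell by its total count, rescale, then apply log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)
    totals[totals == 0] = 1.0  # avoid division by zero for empty cells
    return np.log1p(counts / totals * scale)

def clr(counts):
    """Textbook centered log-ratio per cell, with a pseudocount of 1."""
    logx = np.log1p(np.asarray(counts, dtype=float))
    return logx - logx.mean(axis=1, keepdims=True)

# Toy cells x genes matrix: cell 1 is cell 0 sequenced ten times deeper.
counts = np.array([[1, 0, 3],
                   [10, 0, 30],
                   [0, 5, 5]])
norm = log_normalize(counts)
ratios = clr(counts)
print(np.allclose(norm[0], norm[1]))
```

The first two cells have identical normalized profiles because they differ only in depth, which is the behavior log normalization is meant to provide when cells have similar RNA content.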

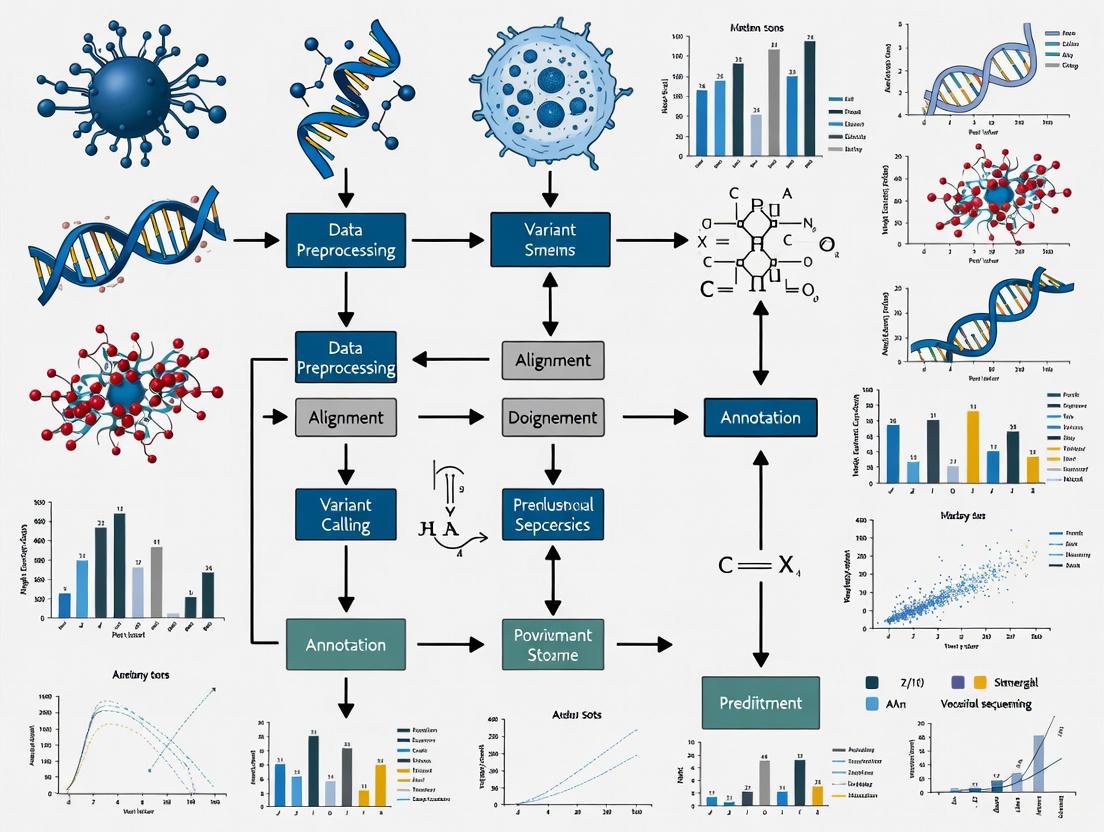

Visualizing scRNA-seq Data Nature and Workflow

scRNA-seq Data Characteristics and Impact

Core Computational Analysis Workflow

The Scientist's Toolkit: Essential Computational Reagents

Table: Key Computational Tools and Resources for scRNA-seq Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| Seurat (R) | A comprehensive toolkit for single-cell analysis. | End-to-end workflow from QC to differential expression and visualization [2]. |
| Scanpy (Python) | A scalable Python-based library for analyzing large single-cell datasets. | Preprocessing, visualization, clustering, and trajectory inference in Python environments [2]. |
| Harmony | Algorithm for batch effect correction. | Integrating datasets from different batches or experiments while preserving biological variation [4] [5]. |
| Scran | R package for normalization. | Calculating pool-based size factors for accurate normalization in heterogeneous datasets [5] [2]. |
| SCTransform | Normalization and variance stabilization method. | Modeling technical noise and improving downstream analysis results [5]. |
| Hyperdimensional Computing (HDC) | A brain-inspired computational framework. | Noise-robust classification and clustering of high-dimensional scRNA-seq data [3]. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized biological research by allowing scientists to profile gene expression at the resolution of individual cells. This capability is crucial for uncovering cellular heterogeneity, identifying rare cell types, and understanding the molecular mechanisms of development and disease. However, the powerful insights gained from scRNA-seq are accompanied by significant computational challenges. Two of the most critical hurdles are the prevalence of missing data, often called "dropout events," and the difficulty in quantifying the uncertainty of measurements and analysis results. This technical support article delves into these specific issues, providing troubleshooting guides and FAQs to help researchers navigate these complex problems during their single-cell data analysis.

## Frequently Asked Questions (FAQs)

FAQ 1: What causes the high number of zeros in my scRNA-seq data? The zeros in your data arise from a combination of biological and technical factors [6]. A gene might report a zero expression level because it was not being transcribed at the time of measurement (a true biological event, or "structural zero"). Alternatively, the gene could be expressed, but technical limitations of the experimental protocol, such as low RNA capture efficiency or insufficient sequencing depth, prevented its detection (a technical event, or "dropout") [6]. The probability of a dropout is higher for genes with low levels of expression [6].

FAQ 2: How can missing data lead to incorrect biological conclusions? The probability of a gene being detected can vary substantially from cell to cell for purely technical reasons [6]. This can become a major source of cell-to-cell variation in your data. During analyses like clustering or trajectory inference, which rely on calculating distances between cell expression profiles, this technical variability can be confused with genuine biological variation. In confounded experiments, this can result in the false discovery of what appear to be novel cell populations [6].

FAQ 3: Why is quantifying uncertainty so important in single-cell analysis? The amount of genetic material sampled from a single cell is minuscule compared to bulk sequencing experiments, leading to inherently less stable signals and more uncertain data [7]. Properly quantifying this uncertainty prevents it from propagating in an uncontrolled manner through your analysis pipeline. It provides statistically sound qualifiers for your final results, helping you discern whether a cluster of cells represents a truly distinct biological group or is merely an artifact of technical noise or sampling variability [8] [7].

FAQ 4: My scRNA-seq data has batch effects. How does this relate to missing data? Batch effects are a common source of systematic technical variation in high-throughput data [6]. In scRNA-seq, these effects occur when cells from different biological groups or conditions are processed (e.g., captured, cultured, or sequenced) in separate batches. This technical variability can intensify the missing data problem by altering the detection rate of genes between batches. Consequently, cells may appear more different from each other due to their batch of origin rather than their true biological state, which can severely confound downstream analyses [6].

## Troubleshooting Guides

### Problem 1: Identifying and Diagnosing Data Sparsity

Issue: You observe an exceptionally high number of zeros in your count matrix and are concerned about the impact of dropouts.

Steps for Diagnosis:

- Calculate Cell-wise and Gene-wise Metrics: For each cell, calculate the total number of detected genes. For each gene, calculate the number of cells in which it is expressed. Plot the distributions of these values.

- Examine the Relationship: Investigate the relationship between a gene's average expression level and the fraction of zeros observed for that gene. You will typically see that genes with lower average expression have a higher fraction of zeros, which is characteristic of technical dropouts [6].

- Check for Batch Effects: Use visualization tools like t-SNE or UMAP, colored by batch identifier, to see if the proportion of zeros is correlated with technical batches. A high correlation suggests batch effects are exacerbating the missing data problem [6].
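The first two diagnostic steps can be sketched with NumPy as follows. The helper name `sparsity_summary` and the Poisson-simulated count matrix are assumptions for illustration; with real data you would pass your own cells x genes count matrix and plot the returned vectors.

```python
import numpy as np

def sparsity_summary(counts):
    """Per-cell and per-gene detection metrics for a cells x genes matrix."""
    counts = np.asarray(counts)
    detected = counts > 0
    genes_per_cell = detected.sum(axis=1)      # detected genes in each cell
    cells_per_gene = detected.sum(axis=0)      # cells in which each gene is seen
    mean_expr = counts.mean(axis=0)            # average count per gene
    zero_frac = 1.0 - detected.mean(axis=0)    # fraction of zeros per gene
    return genes_per_cell, cells_per_gene, mean_expr, zero_frac

# Simulated counts: 500 cells, 300 genes with hypothetical means from 0.05 to 20.
rng = np.random.default_rng(1)
gene_means = np.geomspace(0.05, 20, 300)
counts = rng.poisson(gene_means, size=(500, 300))

gpc, cpg, mean_expr, zero_frac = sparsity_summary(counts)
# Relationship between average expression and zero fraction (second step).
r = np.corrcoef(np.log1p(mean_expr), zero_frac)[0, 1]
print(f"overall sparsity: {np.mean(counts == 0):.2f}, correlation: {r:.2f}")
```

In this simulation the correlation is strongly negative simply because low-mean Poisson genes produce more zeros; in real data the same pattern is expected, and departures from it that track batch are the signal to look for in the third step.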

### Problem 2: Choosing an Imputation Method

Issue: You need to impute missing values to recover biological signal but are unsure which method to select.

Steps for Resolution:

- Understand Method Assumptions: Different imputation methods are built on different statistical assumptions. Some use deep learning models (e.g., cnnImpute, DCA), others employ Bayesian frameworks (e.g., SAVER, bayNorm), and others use graph or clustering approaches (e.g., MAGIC, scImpute) [9] [10].

- Consult Performance Benchmarks: Refer to independent evaluation studies that compare methods on datasets similar to yours. Performance can vary significantly across datasets generated by different protocols (e.g., 10x Genomics vs. Smart-Seq2) [10]. The table below summarizes key findings from a recent benchmark.

- Validate on Your Data: There is no one-size-fits-all solution. Test a few top-performing methods on your data and evaluate which one best preserves known biological structures (e.g., separation of established cell types) without introducing excessive noise or false signals.

Table 1: Evaluation of Selected scRNA-seq Imputation Methods

| Method | Underlying Approach | Reported Performance | Considerations |
|---|---|---|---|
| cnnImpute | Convolutional Neural Network (CNN) | Achieved high accuracy in numerical recovery on several benchmark datasets [9]. | Demonstrates effectiveness in preserving cell cluster integrity post-imputation [9]. |
| SAVER | Bayesian-based | Tends to slightly underestimate values but showed consistent, slight improvement on real datasets and good clustering consistency [10]. | A stable and reliable choice for many real datasets. |
| scVI | Variational Autoencoder (VAE) | Tended to overestimate expression values in benchmarks [10]. | A powerful, scalable model-based framework. |
| DCA | Deep Count Autoencoder | Performance varied; it excelled on some simulated datasets but overestimated on some real Smart-Seq2 data [10]. | Can be effective, but performance should be carefully checked. |
| scImpute | Statistical Learning & Clustering | Led to extremely large expression values on some datasets, potentially indicating over-imputation [10]. | Can be powerful but may introduce strong biases. |
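One way to carry out the "validate on your data" step is a masking experiment: hide a fraction of observed non-zero counts, run the imputation method, and measure how well the hidden values are recovered. The sketch below is an assumption-laden illustration of that idea. The gene-mean imputer is only a stand-in baseline (it is not any of the methods in the table), and all function names and the simulated data are hypothetical.

```python
import numpy as np

def mask_nonzeros(counts, frac=0.1, seed=0):
    """Set a random fraction of non-zero entries to zero; return their indices."""
    rng = np.random.default_rng(seed)
    rows, cols = np.nonzero(counts)
    n_pick = max(1, int(frac * rows.size))
    pick = rng.choice(rows.size, size=n_pick, replace=False)
    masked = np.array(counts, dtype=float)
    masked[rows[pick], cols[pick]] = 0.0
    return masked, rows[pick], cols[pick]

def gene_mean_impute(masked):
    """Baseline stand-in: replace zeros with the gene's mean over non-zero cells."""
    nz = masked > 0
    sums = masked.sum(axis=0)
    n = nz.sum(axis=0)
    gene_mean = np.divide(sums, n, out=np.zeros_like(sums), where=n > 0)
    out = masked.copy()
    out[~nz] = np.broadcast_to(gene_mean, masked.shape)[~nz]
    return out

def masked_rmse(truth, imputed, rows, cols):
    """Root-mean-square error restricted to the artificially hidden entries."""
    diff = truth[rows, cols] - imputed[rows, cols]
    return float(np.sqrt(np.mean(diff**2)))

# Simulated "truth": 100 cells x 40 genes with hypothetical gene means.
rng = np.random.default_rng(2)
truth = rng.poisson(np.geomspace(0.5, 10, 40), size=(100, 40)).astype(float)
masked, r, c = mask_nonzeros(truth)
rmse_zero = masked_rmse(truth, masked, r, c)                    # no imputation
rmse_mean = masked_rmse(truth, gene_mean_impute(masked), r, c)  # baseline
print(rmse_zero, rmse_mean)
```

To compare real methods, replace `gene_mean_impute` with the output of each candidate. Numerical recovery on masked entries is only part of the picture, so it is worth pairing it with a check that known cell types still separate after imputation.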

### Problem 3: Propagating Uncertainty in Dimensionality Reduction

Issue: You want to understand the confidence in your low-dimensional embedding (e.g., from PCA) and subsequent cell clusters.

Steps for Resolution:

- Use Model-Based Methods: Consider using dimensionality reduction methods that are directly based on a probabilistic model of the count data, such as scGBM (Generalized Bilinear Model) [8]. Because these methods model the data generation process, they can naturally quantify the uncertainty in each cell's latent position.

- Leverage Uncertainty for Clustering: Methods like scGBM can use the quantified uncertainties to define a Cluster Cohesion Index (CCI). This index helps assess which clusters are robust and biologically distinct versus those that might be artifacts of sampling variability [8].

- Apply Probabilistic Distortion Operators: For advanced experiment design and analysis, you can use frameworks that incorporate Probabilistic Distortion Operators (PDOs) to explicitly model the effects of measurement errors. This allows for model inference and uncertainty quantification that accounts for these distortions [11].

## Experimental Protocols for Addressing Challenges

### Protocol 1: A Standard Workflow for scRNA-seq Quality Control

A robust quality control (QC) process is the first defense against poor data quality exacerbating missing data and uncertainty issues.

- Compute QC Metrics: For every cell barcode, calculate three key covariates:

  - Count Depth: The total number of counts (or UMIs).

  - Number of Detected Genes: The number of genes with a count > 0.

  - Mitochondrial Gene Fraction: The fraction of counts originating from mitochondrial genes.

- Visualize and Filter: Plot the distributions of these metrics. Filter out outliers that likely correspond to low-quality cells or empty droplets [12].

  - Low counts/genes & high mitochondrial fraction: Often indicates broken cells where cytoplasmic mRNA has leaked out.

  - Very high counts/genes: May indicate doublets (multiple cells captured together).

- Joint Consideration: Always consider these metrics jointly when setting thresholds to avoid inadvertently filtering out valid cell populations, such as small quiescent cells or large, metabolically active cells [12].
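The three covariates and a simple filter can be sketched as follows. The function names, the `MT-` prefix convention for mitochondrial genes, the toy matrix, and every threshold value are assumptions for illustration; real thresholds should be chosen from the plotted distributions for each dataset.

```python
import numpy as np

def qc_metrics(counts, is_mito):
    """Count depth, detected genes, and mitochondrial fraction per cell."""
    counts = np.asarray(counts, dtype=float)
    depth = counts.sum(axis=1)
    n_genes = (counts > 0).sum(axis=1)
    mito = counts[:, is_mito].sum(axis=1)
    mito_frac = np.divide(mito, depth, out=np.zeros_like(depth), where=depth > 0)
    return depth, n_genes, mito_frac

def keep_cells(depth, n_genes, mito_frac,
               min_depth, min_genes, max_genes, max_mito):
    """Boolean mask of cells passing all thresholds (placeholder cutoffs)."""
    return ((depth >= min_depth) & (n_genes >= min_genes)
            & (n_genes <= max_genes) & (mito_frac <= max_mito))

genes = np.array(["MT-CO1", "MT-ND1", "ACTB", "GAPDH"])
is_mito = np.char.startswith(genes, "MT-")   # human naming convention assumed
counts = np.array([[1, 1, 20, 18],    # intact cell: depth 40, 5% mitochondrial
                   [6, 5, 2, 1],      # likely damaged: ~79% mitochondrial
                   [0, 0, 1, 0]])     # near-empty barcode
depth, n_genes, mito_frac = qc_metrics(counts, is_mito)
keep = keep_cells(depth, n_genes, mito_frac,
                  min_depth=10, min_genes=2, max_genes=4, max_mito=0.2)
print(keep)
```

Independent cutoffs combined with a logical AND are the simplest form of filtering. The joint consideration described above usually means inspecting scatter plots of these metrics against each other before fixing any single threshold.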

### Protocol 2: An Uncertainty-Aware Framework for Multi-Omics Clustering (scUCAF)

Integrating data from multiple modalities (e.g., RNA and ATAC) is powerful but compounds uncertainty challenges. The scUCAF framework provides a methodology to address this [13].

- Feature Extraction with VAEs: Use independent Variational Autoencoders (VAEs) with a negative binomial distribution to extract latent features from each omics data type. This explicitly models the count-based nature of the data and reduces noise [13].

- Contrastive Learning with Pseudo-Labels: Implement a contrastive learning strategy guided by high-confidence cluster pseudo-labels. This ensures feature consistency across different omics and prevents cells of the same type from being incorrectly treated as negative pairs [13].

- Uncertainty-Aware Fusion: Dynamically integrate the omics features using a gating network that incorporates uncertainty estimates. This mitigates the negative impact of low-quality data from any single omics on the final, fused cell representation used for clustering [13].

Diagram 1: The scUCAF workflow for uncertainty-aware multi-omics clustering.

## The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

Table 2: Key Computational Tools for Addressing Missing Data and Uncertainty

| Tool / Resource | Type | Primary Function | Relevance to Challenges |
|---|---|---|---|
| Unique Molecular Identifiers (UMIs) | Experimental/Molecular Barcode | Tags individual mRNA molecules to correct for amplification bias [12]. | Reduces technical noise in quantification, indirectly mitigating one source of uncertainty. |
| SAVER | Software Package (R) | Bayesian-based imputation to recover true gene expression values [10]. | Directly addresses missing data; noted for reliable performance and improving clustering consistency on real datasets. |
| scVI | Software Package (Python) | Probabilistic generative model for representation learning and imputation [10]. | Handles imputation and normalization while providing a probabilistic framework that accounts for uncertainty. |
| scGBM | Software Package (R) | Model-based dimensionality reduction using a Poisson bilinear model [8]. | Directly quantifies uncertainty in the low-dimensional embedding of cells, aiding in robust cluster analysis. |
| Fisher Information Matrix (FIM) | Mathematical Framework | Quantifies the amount of information data provides about model parameters [11]. | Used for optimal experiment design, predicting how measurement errors affect parameter estimation accuracy. |

## Advanced Analysis: A Model-Based Workflow for Dimensionality Reduction

The standard practice of transforming counts (e.g., log(1+x)) and applying PCA can induce spurious heterogeneity. A model-based approach like scGBM offers a more statistically sound alternative [8].

- Model Formulation: The scGBM method fits a Poisson bilinear model directly to the UMI count matrix. It models the expected count for gene i in cell j as a function of gene-specific and cell-specific intercepts, plus a low-rank matrix factorization that captures the latent cell states [8].

- Fast Estimation: The model is fitted using a fast algorithm based on iteratively reweighted singular value decompositions (SVD), allowing it to scale to datasets with millions of cells [8].

- Uncertainty Quantification: The model quantifies the uncertainty in each cell's latent position. These uncertainties can be propagated to downstream analyses [8].

- Cluster Confidence Assessment: The latent position uncertainties are used to compute a Cluster Cohesion Index (CCI), which helps researchers assess the confidence that a given cluster represents a truly distinct biological population versus an artifact of noise [8].
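Written out, a Poisson bilinear model of the kind described in the model formulation step can take the following form. The symbol names and the log link are assumptions made here for illustration (the log link is the standard choice for Poisson models); consult [8] for the exact parameterization used by scGBM.

```latex
Y_{ij} \sim \mathrm{Poisson}(\mu_{ij}), \qquad
\log \mu_{ij} = \alpha_i + \beta_j + \sum_{m=1}^{M} \sigma_m \, u_{im} \, v_{jm}
```

Here $Y_{ij}$ is the UMI count for gene $i$ in cell $j$, $\alpha_i$ is the gene-specific intercept, $\beta_j$ is the cell-specific intercept, and the sum is a rank-$M$ factorization in which $v_{jm}$ gives the latent position of cell $j$, $u_{im}$ the loading of gene $i$, and $\sigma_m$ the weight of latent dimension $m$.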

Diagram 2: The scGBM workflow for model-based dimensionality reduction and uncertainty quantification.

By understanding these core computational hurdles and applying the troubleshooting guides, experimental protocols, and tools outlined above, researchers can enhance the robustness and reliability of their single-cell data analyses, leading to more confident biological discoveries.

Single-cell RNA sequencing (scRNA-seq) has revolutionized transcriptomic studies by allowing gene expression profiling at the single-cell resolution, enabling the dissection of cellular heterogeneity [14]. A fundamental distinction among scRNA-seq technologies lies in their transcript coverage: full-length transcript protocols (e.g., Smart-seq2, MATQ-seq) aim to sequence the entire transcript, while 3'-end (e.g., Drop-seq, 10x Chromium) or 5'-end (e.g., STRT-seq) protocols sequence only the respective ends of transcripts [14] [15]. This choice directly impacts the biological questions you can address and the subsequent computational analysis.

Frequently Asked Questions

Q1: What is the primary technical difference between full-length and 3'/5'-end protocols? Full-length protocols use SMART (template-switching) chemistry or similar approaches to amplify the entire cDNA molecule, providing coverage across all exons. In contrast, 3'-end protocols typically prime reverse transcription with poly(dT) oligos that bind the transcript's poly(A) tail and retain only the fragment adjacent to that tail for sequencing; 5'-end protocols use specific designs that capture the opposite end instead. End-tagging is often combined with Unique Molecular Identifiers (UMIs) for precise digital quantification [14] [16] [15].

Q2: I need to analyze alternative splicing in a rare cell population. Which protocol should I choose? For alternative splicing analysis, a full-length transcript protocol is mandatory. Methods like Smart-seq2 or MATQ-seq provide coverage across the entire transcript body, allowing you to identify and quantify different exon junctions [14]. If the population is rare, you may need to use a high-sensitivity, plate-based full-length protocol to ensure sufficient gene detection from each cell.

Q3: My project requires profiling 50,000 cells for cell type identification. Is a full-length protocol feasible? For high-throughput cell atlas projects aimed primarily at cell classification, a 3'-end protocol (e.g., 10x Chromium, Drop-seq) is the standard and more cost-effective choice. These droplet-based methods can process thousands to tens of thousands of cells in a single run and provide efficient gene detection for clustering, albeit with 3' bias [17] [15].

Protocol Comparison and Selection Guide

The choice between full-length and tag-based sequencing has profound implications on your data. The table below summarizes the core characteristics of the two approaches.

Table 1: Core Characteristics of Major scRNA-seq Protocol Types

| Feature | Full-Length Protocols | 3'- or 5'-End Protocols |
|---|---|---|
| Primary Applications | Alternative splicing, allele-specific expression, mutation detection, gene fusion discovery | Cell type identification, differential gene expression analysis, large-scale cell atlases |
| Transcript Coverage | Entire transcript length | Restricted to 3' or 5' end (typically ~500 bp) |
| UMI Usage | Less common (e.g., Smart-seq3) | Standard (e.g., 10x Genomics, Drop-seq) |
| Throughput | Low to medium (96 - 1,000 cells) [15] | High to very high (10,000 - 100,000 cells) [15] |
| Sensitivity (Genes/Cell) | High (e.g., 6,500 - 14,000 genes) [15] | Moderate (e.g., 2,000 - 7,000 genes) [15] |
| Strand Specificity | Varies by protocol (Smart-seq2: no; MATQ-seq: yes) [14] | Typically yes [15] |
| Cost per Cell | Higher (e.g., $0.40 - $4.21) [15] | Lower (e.g., $0.01 - $0.50) [15] |

The following workflow diagram outlines the key experimental and analytical decision points when choosing between these protocols.

Troubleshooting Common Experimental and Analytical Challenges

Low RNA Input and Amplification Bias

Problem: scRNA-seq starts with minimal RNA, leading to incomplete reverse transcription, amplification bias, and technical noise. Full-length protocols are especially susceptible to amplification bias as they often use PCR. [18]

Solutions:

- Optimize Cell Lysis: Standardize lysis and RNA extraction to maximize yield and quality. [18]

- Use UMIs: Incorporate Unique Molecular Identifiers (UMIs) to correct for amplification bias. UMIs allow quantification of original mRNA molecules rather than amplified cDNA products. This is a standard feature in most 3'-end protocols (e.g., 10x Genomics, CEL-seq2) and is available in some newer full-length methods (e.g., Smart-seq3). [16] [17]

- Employ Spike-in Controls: Use external RNA controls of known quantity to monitor technical variation and normalization efficiency. [18]
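The way UMIs correct amplification bias can be illustrated with a small counting sketch: reads that share the same cell barcode, gene, and UMI are treated as PCR copies of one original molecule and counted once. The function name, barcodes, and read list below are hypothetical, and the sketch matches UMIs exactly, whereas production pipelines typically also tolerate sequencing errors in the UMI.

```python
from collections import defaultdict

def umi_counts(reads):
    """Collapse reads into molecule counts per (cell barcode, gene).

    `reads` is an iterable of (cell_barcode, gene, umi) tuples, one per
    aligned read. Identical triples are counted as a single molecule.
    """
    seen = defaultdict(set)
    for cell, gene, umi in reads:
        seen[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in seen.items()}

reads = [
    ("AAAC", "ACTB", "GGTA"),
    ("AAAC", "ACTB", "GGTA"),   # PCR duplicate of the read above
    ("AAAC", "ACTB", "GGTA"),   # another duplicate
    ("AAAC", "ACTB", "TTCA"),   # second ACTB molecule in the same cell
    ("AAAC", "GAPDH", "CCAG"),
    ("TTTG", "ACTB", "GGTA"),   # same UMI, different cell: separate molecule
]
counts = umi_counts(reads)
print(counts[("AAAC", "ACTB")])
```

Four ACTB reads in cell `AAAC` collapse to two molecules, so a transcript that happened to amplify well does not inflate the expression estimate.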

High Dropout Rates (False Negatives)

Problem: "Dropout events" occur when a transcript is not detected in a cell, often affecting lowly-expressed genes. This is a major source of data sparsity. [18]

Solutions:

- Protocol Choice: If studying low-abundance transcripts, select a highly sensitive full-length protocol like Smart-seq2 or MATQ-seq, which generally detect more genes per cell. [14] [17]

- Computational Imputation: Use statistical models and machine learning algorithms (e.g., MAGIC, SAVER) to impute missing expression values based on patterns in the data. Treat imputed values with caution, using them for hypothesis generation rather than as confirmatory evidence. [18]

Batch Effects

Problem: Technical variation between different sequencing runs or experimental batches can confound biological differences. [18]

Solutions:

- Experimental Design: Process all comparison groups simultaneously and randomize samples across library preparations and sequencing lanes. [18]

- Batch Correction Algorithms: Apply computational tools like ComBat, Harmony, or Scanorama during data integration to remove systematic technical variation. [18]

Table 2: Summary of Common Challenges and Mitigation Strategies

| Challenge | Affected Protocols | Experimental Solutions | Computational Solutions |
|---|---|---|---|
| Amplification Bias | All, but primarily PCR-based full-length | Use of UMIs; Spike-in controls [18] | UMI-based deduplication; Normalization |
| Low RNA Capture & Dropouts | All, critical for low-expression genes | Choose high-sensitivity protocols (e.g., Smart-seq2) [17] | Imputation algorithms (e.g., MAGIC) [18] |
| Batch Effects | All | Process batches strategically; Randomization | Batch correction tools (Harmony, ComBat) [18] |
| Transcript Length Bias | Bulk & full-length scRNA-seq | Switch to 3'-end protocols [19] | Use length-aware normalization methods (e.g., TPM) |

Essential Research Reagent Solutions

Successful scRNA-seq experiments rely on key reagents and materials. The following table lists essential components and their functions.

Table 3: Key Research Reagents and Their Functions in scRNA-seq

| Reagent / Material | Function | Protocol Specific Notes |
|---|---|---|
| Poly(dT) Primers | Binds to poly(A) tail of mRNA for reverse transcription. | Universal in 3'/5'-end protocols; also used in most full-length protocols. [16] [15] |
| Template Switching Oligo (TSO) | Enables synthesis of full-length cDNA; adds universal adapter sequence. | Critical for Smart-seq2 and other full-length methods. [16] |
| Unique Molecular Identifiers (UMIs) | Short random barcodes that tag individual mRNA molecules for accurate quantification. | Standard in 3'/5'-end protocols (e.g., Drop-seq, 10x). Incorporated in primers. [16] [15] |
| Cell Barcodes | Short nucleotide sequences used to label cDNA from individual cells. | Essential for multiplexing in droplet-based (10x) and combinatorial indexing (sci-RNA-seq) methods. [15] |
| Strand-Specific Adapters | Allow determination of the original RNA strand during sequencing. | Important for annotating antisense transcription and accurate transcript assembly. Used in CEL-seq2, MARS-seq. [14] [15] |
| M-MLV Reverse Transcriptase | Enzyme for synthesizing cDNA from RNA template. | Smart-seq2 uses a mutant (RNase H-) for higher yield of full-length cDNA. [16] |

Impact on Downstream Computational Analysis

Your choice of protocol dictates the available computational toolkit. The schematic below illustrates the divergent analytical paths.

Key Analytical Implications:

- Data Normalization: Data from 3'-end protocols with UMIs typically uses count-based normalization (e.g., counts per million). Full-length data without UMIs may use length-dependent measures like FPKM or TPM, though these can be biased in single-cell data. [18] [19]

- Differential Expression: 3'-end data with UMIs provides digital gene expression counts, making it ideal for statistical models like negative binomial distributions (e.g., in Seurat, Scanpy). For full-length data, specialized tools that account for their technical noise are recommended. [14] [17]

- Advanced Analyses: Full-length data uniquely enables the investigation of alternative splicing and allelic expression, as it covers exon-exon junctions and single nucleotide variants across the transcript. These analyses are generally not possible with 3'-end data. [14]
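The difference between depth-only and length-aware normalization noted in the first point can be seen in a short sketch. The function names, gene lengths, and toy counts are assumptions for illustration, and both functions assume every cell has at least one count.

```python
import numpy as np

def cpm(counts):
    """Counts per million: rescale each cell to a total of one million."""
    counts = np.asarray(counts, dtype=float)
    return counts / counts.sum(axis=1, keepdims=True) * 1e6

def tpm(counts, gene_lengths_bp):
    """Transcripts per million: divide by gene length (kb) before rescaling."""
    counts = np.asarray(counts, dtype=float)
    length_kb = np.asarray(gene_lengths_bp, dtype=float) / 1e3
    rate = counts / length_kb
    return rate / rate.sum(axis=1, keepdims=True) * 1e6

# Two cells x two genes; the second gene is four times longer than the first.
counts = np.array([[100, 100],
                   [50, 150]])
lengths = np.array([1000, 4000])

print(cpm(counts)[0])           # equal read counts give equal CPM
print(tpm(counts, lengths)[0])  # the longer gene is down-weighted
```

For full-length data, equal read counts on a 1 kb and a 4 kb gene imply four times fewer molecules of the longer gene, which TPM reflects. For 3'-end UMI data each molecule contributes one tag regardless of length, so the length correction is not needed.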

Frequently Asked Questions (FAQs)

Scaling to Higher Dimensionalities

Q: Our analysis of a dataset with over 100,000 cells is stalling due to memory limitations. How can we overcome this?

A: This is a common scaling challenge. You can address it by:

- Using Computational Integration Tools: Employ algorithms specifically designed for large datasets. For example, Harmony can integrate up to ~1 million cells on a personal computer because of its low memory requirements, reportedly needing 30 to 50 times less memory than some other methods [20].

- Leveraging High-Performance Computing: Utilize cloud-based analysis platforms (e.g., 10x Genomics Cloud Analysis) or high-performance computing clusters to access greater computational resources for processing large FASTQ files and generating feature-barcode matrices [21].

Q: What are the key data quality metrics to check when scaling to experiments with a high number of cells?

A: Always perform quality control on each sample individually before integration. Key metrics to check include [21]:

- Cell Recovery Count: Verify the number of cells recovered is close to the experiment's target.

- Reads Confidently Mapped in Cells: This should be high (e.g., >90%).

- Median Genes per Cell: Ensure this is within the expected range for your sample type.

- Mitochondrial Read Percentage: A high percentage can indicate broken or stressed cells. For PBMCs, a threshold of 10% is often used, but this varies by cell type [21].

- Barcode Rank Plot: Inspect for the characteristic "cliff-and-knee" shape, which indicates good separation between cells and background [21].
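The per-cell metrics above can be computed directly from a count matrix. This is a minimal NumPy sketch on invented data; the gene names, counts, and the minimum-gene cutoff of 3 are illustrative assumptions, and only the 10% mitochondrial threshold comes from the text.

```python
import numpy as np

gene_names = np.array(["MT-CO1", "MT-ND1", "CD3E", "LYZ", "MS4A1"])
counts = np.array([
    [5, 5, 40, 30, 20],    # healthy-looking cell: 10% mitochondrial
    [30, 30, 20, 10, 10],  # stressed cell: 60% mitochondrial
    [0, 1, 0, 2, 0],       # near-empty barcode
])

# Mitochondrial genes are identified here by the human "MT-" name prefix.
is_mito = np.char.startswith(gene_names, "MT-")

total_counts = counts.sum(axis=1)
genes_per_cell = (counts > 0).sum(axis=1)
pct_mito = 100.0 * counts[:, is_mito].sum(axis=1) / total_counts

# Toy thresholds: 10% mitochondrial reads, at least 3 detected genes.
keep = (pct_mito <= 10.0) & (genes_per_cell >= 3)

print("median genes per cell:", np.median(genes_per_cell))
print("cells kept:", np.flatnonzero(keep))
```

Computing these metrics per sample, before integration, keeps a single poor-quality sample from shifting the thresholds for all the others.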

Data Integration Across Samples and Modalities

Q: Our dataset, combining samples from multiple patients and sequencing batches, shows clusters defined by technical source rather than cell type. How can we correct for this?

A: This is a primary motivation for data integration. The solution involves:

- Using Robust Integration Algorithms: Tools like Harmony are designed to project cells into a shared embedding where cells group by cell type rather than dataset-specific conditions. It accounts for multiple experimental and biological factors simultaneously [20].

- Quantifying Integration Success: Use metrics like Local Inverse Simpson's Index (LISI) to evaluate your integration. The integration LISI (iLISI) measures the effective number of datasets in a cell's neighborhood, while the cell-type LISI (cLISI) measures the separation of cell types. Successful integration yields a high iLISI and a low cLISI [20].
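The idea behind LISI can be illustrated with a simplified version: for each cell, take the inverse Simpson's index of the labels among its nearest neighbors. Note the simplification: this sketch uses a plain, unweighted k-nearest-neighbor set, whereas the published metric weights neighbors by distance; the function name `simple_lisi` and the toy data are hypothetical.

```python
import numpy as np

def simple_lisi(embedding, labels, k=10):
    """Inverse Simpson's index of `labels` among each cell's k nearest neighbors."""
    labels = np.asarray(labels)
    # Pairwise Euclidean distances (fine for small n; use a kNN library for real data).
    diff = embedding[:, None, :] - embedding[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # exclude the cell itself
    neighbors = np.argsort(dist, axis=1)[:, :k]
    scores = np.empty(len(labels))
    for i, idx in enumerate(neighbors):
        _, counts = np.unique(labels[idx], return_counts=True)
        p = counts / counts.sum()
        scores[i] = 1.0 / np.sum(p ** 2)
    return scores

rng = np.random.default_rng(0)
emb = rng.normal(size=(200, 2))
batch_mixed = rng.integers(0, 2, size=200)   # batches scattered at random
batch_split = (emb[:, 0] > 0).astype(int)    # batches separated along axis 0

scores_mixed = simple_lisi(emb, batch_mixed)
scores_split = simple_lisi(emb, batch_split)
print("mean score, mixed batches:", scores_mixed.mean().round(2))
print("mean score, split batches:", scores_split.mean().round(2))
```

With two batches the score ranges from 1 (all neighbors from one batch) to 2 (both batches equally represented). Passing batch labels gives an iLISI-style score, and passing cell-type labels gives a cLISI-style score.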

Q: What are the computational challenges specific to integrating single-cell ATAC-seq data?

A: Integrating scATAC-seq data presents unique hurdles due to its intrinsic data characteristics [22]:

- Data Sparsity: Low genomic coverage per cell results in highly sparse data with many missing values.

- Workflow Complexity: The analysis workflow involves numerous steps—quality control, alignment, peak calling, dimension reduction, clustering, and multiomics integration—each with its own methodological challenges.

- Emerging Methods: The field is rapidly developing, with new computational methods, including deep-learning and AI foundation models, being created to address these challenges.

Defining Levels of Resolution

Q: How can we create a cell type map that accurately represents both discrete cell types and continuous transitional states?

A: Moving beyond discrete clusters is a key challenge. You can achieve this by:

- Employing Topology-Preserving Methods: Use algorithms like PAGA (Partition-based Graph Abstraction) which generate structure-rich topologies that recapitulate tissue development and organization. These maps can represent both discrete cell types and continuous trajectories between them [7].

- Implementing Hierarchical Approaches: Tools like HSNE (Hierarchical Stochastic Neighbor Embedding) allow for consistent zooming into more detailed levels of resolution, enabling you to explore your data at multiple levels of granularity [7].

Q: Why is quantifying uncertainty particularly important in single-cell analyses, and how can it be done?

A: The limited biological material per cell leads to high levels of technical noise and measurement uncertainty [7].

- Impact: This uncertainty can affect downstream analyses, such as cell type identification and differential expression.

- Best Practice: The optimal approach is to use analysis tools that actively quantify and propagate this uncertainty from the raw data through to the final results, providing statistically sound qualifiers for your conclusions. This is crucial for tasks like single nucleotide variation (SNV) calling in scDNA-seq data [7].
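One simple way to attach an uncertainty estimate to a summary statistic is a percentile bootstrap. The sketch below is a generic illustration on simulated counts, not the method of any particular single-cell tool; the Poisson rate, cluster size, and number of resamples are assumptions.

```python
import numpy as np

rng = np.random.default_rng(42)
# Hypothetical UMI counts of one gene across 300 cells in a cluster.
expr = rng.poisson(lam=1.5, size=300)

def bootstrap_ci(values, rng, n_boot=2000, alpha=0.05):
    """Percentile bootstrap confidence interval for the mean of `values`."""
    n = len(values)
    idx = rng.integers(0, n, size=(n_boot, n))  # resample cells with replacement
    means = values[idx].mean(axis=1)
    return np.quantile(means, [alpha / 2, 1 - alpha / 2])

low, high = bootstrap_ci(expr, rng)
print(f"mean = {expr.mean():.2f}, 95% CI = [{low:.2f}, {high:.2f}]")
```

Reporting the interval alongside the point estimate makes it clearer when an apparent difference between clusters is within measurement noise.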

Troubleshooting Guides

Problem: Poor Data Integration After Running an Integration Algorithm

This guide helps diagnose and resolve issues where batches or datasets remain separate after integration.

| Step | Action | Expected Outcome & Diagnostic Tips |

|---|---|---|

| 1. Pre-check Input Data | Ensure the input data (e.g., the PCA embedding) is appropriate and meets the requirements of the integration tool. | The pre-integration embedding should show some overlap or similar structure in cell types across batches. |

| 2. Verify Key Parameters | Check algorithm-specific parameters. For Harmony, this includes the number of clusters and the strength of the integration penalty. | Iteratively adjusting parameters should improve mixing without erasing biological signal. Use LISI metrics to quantify improvement [20]. |

| 3. Assess Integration Metrics | Calculate integration quality metrics like iLISI (for dataset mixing) and cLISI (for cell type separation). | Successful integration shows a high iLISI (datasets are mixed) and a low cLISI (cell types remain distinct) [20]. |

| 4. Check for Underlying Biology | Investigate if persistent "batch" effects represent strong, real biological differences (e.g., major disease states). | Some biological factors may be so strong that full integration is not technically appropriate or may require specialized methods. |

Problem: Computational Memory Failure During Large-Scale Analysis

This guide addresses the "out-of-memory" errors common when analyzing large single-cell datasets.

| Step | Action | Expected Outcome & Diagnostic Tips |

|---|---|---|

| 1. Profile Memory Usage | Identify which step in your workflow (e.g., normalization, clustering, integration) is consuming the most memory. | This helps you target optimization efforts effectively. |

| 2. Switch to Memory-Optimized Tools | Replace the memory-intensive tool. For integration, switch to algorithms like Harmony, which is designed for low-memory operation on large datasets [20]. | Harmony required only 7.2GB of memory on a 500,000-cell dataset, unlike other tools that failed [20]. |

| 3. Utilize Cloud or HPC Resources | Move the analysis to a platform with higher memory capacity, such as a cloud computing environment or a high-performance computing cluster. | Platforms like the 10x Genomics Cloud Analysis are built for processing large single-cell datasets efficiently [21]. |

| 4. Implement Data Downsampling | As a last resort, if the full dataset is too large, use strategic downsampling to create a smaller, representative subset for initial method testing and debugging. | This should only be used for prototyping, as it reduces the overall power and resolution of the analysis. |
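For step 4, one reasonable reading of "strategic downsampling" is to sample the same fraction of cells from every sample or batch, so that small groups are not lost. The following sketch assumes that interpretation; the function name and the patient labels are hypothetical.

```python
import numpy as np

def stratified_subsample(groups, fraction, seed=0):
    """Return sorted indices that keep `fraction` of the cells within each group."""
    rng = np.random.default_rng(seed)
    groups = np.asarray(groups)
    keep = []
    for g in np.unique(groups):
        members = np.flatnonzero(groups == g)
        n_keep = max(1, int(round(fraction * len(members))))  # never drop a group entirely
        keep.append(rng.choice(members, size=n_keep, replace=False))
    return np.sort(np.concatenate(keep))

sample_ids = np.array(["patient_A"] * 1000 + ["patient_B"] * 200 + ["patient_C"] * 20)
idx = stratified_subsample(sample_ids, fraction=0.1)

labels, kept = np.unique(sample_ids[idx], return_counts=True)
print(dict(zip(labels.tolist(), kept.tolist())))
```

Stratifying by cluster label instead of sample would protect rare cell types in the same way, at the cost of requiring a preliminary clustering.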

The following table details key computational tools and resources for addressing single-cell data science challenges.

| Tool/Resource Name | Function | Relevant Challenge |

|---|---|---|

| Harmony [20] | A robust, scalable algorithm for integrating multiple single-cell datasets. It projects cells into a shared embedding where they group by cell type rather than technical source. | Data Integration, Scaling |

| PAGA [7] | A method that generates topologies of cell types and states, representing both discrete clusters and continuous transitions, thus allowing for flexible levels of resolution. | Varying Resolution |

| Cell Ranger [21] | A set of analysis pipelines that process raw Chromium single-cell data (FASTQ files) to perform alignment, generate feature-barcode matrices, and conduct initial clustering. | Scaling, Preprocessing |

| Viz Palette [23] [24] | An online tool to test color palettes for accessibility, simulating how they appear to people with different types of color vision deficiencies (CVD). | Data Visualization |

| LISI Metrics [20] | Quantitative metrics (Local Inverse Simpson's Index) to evaluate the success of data integration, measuring both dataset mixing (iLISI) and cell type separation (cLISI). | Data Integration |

| SoupX / CellBender [21] | Computational tools to estimate and remove the profile of ambient RNA, a common background noise in single-cell experiments, from the gene expression counts of genuine cells. | Data Quality, Scaling |

Experimental Protocols & Workflows

Protocol: Best Practices for scRNA-seq Data Preprocessing and QC

Objective: To establish a standardized workflow for quality control and filtering of single-cell RNA-seq data prior to downstream analysis, ensuring the removal of low-quality cells and technical artifacts [21].

Methodology:

- Initial QC with `cellranger multi` Output: Review the `web_summary.html` file for critical metrics:

  - Confirm the number of recovered cells matches expectations.

  - Check that "Confidently mapped reads in cells" is high (>90%).

  - Verify "Median genes per cell" is within the typical range for your cell type.

  - Inspect the Barcode Rank Plot for a clear "cliff-and-knee" shape.

- Interactive Filtering with Loupe Browser: Open the `.cloupe` file to perform manual filtering:

  - Filter by UMI Counts: Remove cell barcodes with extremely high UMI counts (potential multiplets) and very low UMI counts (potential ambient RNA).

  - Filter by Feature Count: Similarly, remove outliers with very high or low numbers of detected genes.

  - Filter by Mitochondrial Read Percentage: Set a sample-appropriate threshold (e.g., 10% for PBMCs) to remove dead or stressed cells.

- Ambient RNA Removal (Optional but Recommended): For studies of rare cell types or subtle expression patterns, run an ambient RNA correction tool like SoupX or CellBender to computationally subtract background noise [21].

Protocol: A Workflow for Multi-Dataset Integration Using Harmony

Objective: To integrate multiple single-cell datasets (from different batches, technologies, or donors) into a shared embedding, facilitating joint analysis and cell type identification [20].

Methodology:

- Input Preparation: Begin with a low-dimensional embedding of your cells, such as from Principal Components Analysis (PCA). Ensure this embedding meets the requirements for the integration tool.

- Run Harmony: Apply the Harmony algorithm to the PCA embedding, specifying the covariates to integrate over (e.g., dataset, batch).

- Iterative Correction: Harmony iteratively performs the following:

- Soft Clustering: Groups cells into clusters that favor diversity across datasets.

- Correction Factor Calculation: Computes cluster-specific linear correction factors.

- Cell-Specific Correction: Applies a unique, weighted correction factor to each cell.

- Convergence Check: The algorithm iterates until cell cluster assignments are stable.

- Validation with LISI Metrics: Quantify the success of integration by calculating:

- iLISI: Measures the effective number of datasets in a local neighborhood. A higher average value indicates better mixing.

- cLISI: Measures the effective number of cell types in a local neighborhood. A value close to 1 indicates excellent biological signal preservation.
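The soft-clustering and correction steps can be illustrated with a deliberately simplified single pass. This is not Harmony's actual objective: it omits the diversity penalty, the regularized regression, and the iteration to convergence, and the fixed centroids, `sigma`, and toy data are all assumptions made for the sketch.

```python
import numpy as np

def soft_assign(Z, centroids, sigma=1.0):
    """Soft cluster memberships from squared distances (each row sums to 1)."""
    d2 = ((Z[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=-1)
    logits = -d2 / sigma
    logits -= logits.max(axis=1, keepdims=True)  # numerical stability
    R = np.exp(logits)
    return R / R.sum(axis=1, keepdims=True)

def correct_once(Z, batch, centroids, sigma=1.0):
    """One simplified pass: remove per-cluster, per-batch mean offsets."""
    R = soft_assign(Z, centroids, sigma)  # shape (cells, clusters)
    Z_corr = Z.copy()
    for k in range(centroids.shape[0]):
        w = R[:, k]
        cluster_mean = (w[:, None] * Z).sum(axis=0) / w.sum()
        for b in np.unique(batch):
            in_b = batch == b
            batch_mean = (w[in_b, None] * Z[in_b]).sum(axis=0) / w[in_b].sum()
            # Cell-specific correction: the offset is weighted by each cell's membership.
            Z_corr[in_b] -= w[in_b, None] * (batch_mean - cluster_mean)
    return Z_corr

rng = np.random.default_rng(1)
cell_type = np.repeat([0, 1], 100)
batch = np.tile(np.repeat([0, 1], 50), 2)
Z = rng.normal(scale=0.3, size=(200, 2))
Z[:, 0] += 10.0 * cell_type  # cell types differ along axis 0
Z[:, 1] += 3.0 * batch       # batch effect along axis 1
centroids = np.array([[0.0, 1.5], [10.0, 1.5]])  # assumed cluster centers

def batch_gap(X):
    """Distance between the two batch means along the batch-effect axis."""
    return abs(X[batch == 0, 1].mean() - X[batch == 1, 1].mean())

Z_corr = correct_once(Z, batch, centroids)
print("batch gap before:", round(batch_gap(Z), 3), "after:", round(batch_gap(Z_corr), 3))
```

In this toy case the batch offset disappears while the separation between cell types along the first axis is left intact, which is the behavior the LISI metrics are meant to confirm on real data.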

Data Visualization and Conceptual Diagrams

Single-Cell Data Integration Workflow

Mapping Cell Types and States at Varying Resolutions

Computational Toolkits and Analytical Pipelines: From Raw Data to Biological Insights

The primary analysis of single-cell RNA sequencing (scRNA-seq) data, encompassing the computational steps from raw sequencing files (FASTQ) to a gene expression count matrix, forms the foundational layer for all subsequent biological interpretations. This process involves aligning reads to a reference genome, quantifying gene expression, and performing initial quality control to distinguish biological signals from technical artifacts. A robust primary workflow is paramount: technical variances such as amplification bias and batch effects, if not corrected, can confound downstream analyses and lead to inaccurate identification of cell types and states [18]. This guide addresses the specific computational hurdles encountered during this initial phase, providing troubleshooting advice and best practices to ensure the generation of high-quality, reliable data for researchers and drug development professionals.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My dataset has a high percentage of mitochondrial gene counts. What does this indicate and how should I proceed?

- Answer: A high percentage of reads mapping to mitochondrial genes is a strong indicator of cell stress or apoptosis [25]. This is a common quality control metric.

- Action: Filter out cells with mitochondrial counts exceeding a defined threshold. Commonly used cutoffs fall between 5% and 20% of a cell's counts originating from mitochondrial genes [25]. The exact threshold depends on your biological system and the observed distribution of this metric across your cells.

Q2: What are the main causes of the high number of zeros in my count matrix, and how does this impact analysis?

- Answer: The prevalence of zero counts, known as "dropout events," arises from a combination of biological factors (a gene not being expressed in a cell) and technical factors (the transcript being missed during capture or amplification) [18] [26]. This data sparsity poses significant challenges for downstream analyses like clustering and differential expression, as it can obscure true biological variation [22] [27].

- Action: During primary analysis, ensure you use unique molecular identifiers (UMIs) to correct for amplification bias [18]. For downstream analysis, consider specialized imputation or denoising methods (e.g., ZILLNB, scImpute, DCA) that are designed to distinguish technical dropouts from true biological zeros [26].
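The distinction between biological and technical zeros can be made tangible with a small simulation. The sketch below draws hypothetical "true" molecule counts and then keeps each molecule with a fixed capture probability; the gamma and Poisson parameters and the 10% capture rate are assumptions, not estimates from any protocol.

```python
import numpy as np

rng = np.random.default_rng(0)
n_cells, n_genes = 500, 1000

# Hypothetical true molecule counts; many genes are lowly expressed.
gene_means = rng.gamma(shape=0.5, scale=4.0, size=n_genes)
true_counts = rng.poisson(gene_means, size=(n_cells, n_genes))

capture_rate = 0.1  # assumed fraction of molecules that are captured
observed = rng.binomial(true_counts, capture_rate)

biological_zeros = (true_counts == 0).mean()
technical_zeros = ((true_counts > 0) & (observed == 0)).mean()
observed_zeros = (observed == 0).mean()

print(f"biological zeros: {biological_zeros:.1%}")
print(f"technical zeros (dropouts): {technical_zeros:.1%}")
print(f"zeros in observed matrix: {observed_zeros:.1%}")
```

In the observed matrix the two kinds of zero are indistinguishable entry by entry, which is why imputation and denoising methods rely on statistical models of the whole dataset.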

Q3: My analysis shows unexpected cell clustering that seems to be driven by the sample batch rather than biology. How can I correct for this?

- Answer: This is a classic batch effect, a technical variation introduced when samples are processed in different batches, sequencing runs, or using different protocols [18]. It is a major confounder in scRNA-seq analysis.

- Action: Batch correction is typically applied after primary analysis and is a critical step when integrating datasets. Tools such as Harmony, Seurat's CCA, or scVI are designed to integrate datasets and remove these technical artifacts while preserving biological variation [28] [25]. The choice of tool can depend on the dataset size and complexity.

Q4: What is a "doublet" and how can I identify them in my data?

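- Answer: A doublet occurs when two or more cells are captured together and mistakenly labeled as a single cell in a droplet-based system. This can lead to the misidentification of hybrid cell types [18].

- Action: Flag cells with an unusually high number of detected genes as potential doublets during QC filtering, and run a dedicated doublet detection tool such as DoubletFinder to find and remove them [25].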
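Simulation-based doublet detectors typically start by creating artificial doublets from pairs of observed cells. The sketch below shows only that first step on invented data (two hypothetical cell types with made-up expression profiles); it is not a reimplementation of any specific tool, and the scoring step that real detectors add is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
n_genes = 50

# Two hypothetical cell types with different mean expression profiles.
profile_a = rng.gamma(2.0, 1.0, n_genes)
profile_b = rng.gamma(2.0, 1.0, n_genes)
cells_a = rng.poisson(profile_a, size=(100, n_genes))
cells_b = rng.poisson(profile_b, size=(100, n_genes))
singlets = np.vstack([cells_a, cells_b])

def simulate_doublets(counts, n_doublets, rng):
    """Create artificial doublets by summing the counts of two randomly chosen cells."""
    i = rng.integers(0, counts.shape[0], n_doublets)
    j = rng.integers(0, counts.shape[0], n_doublets)
    return counts[i] + counts[j]

doublets = simulate_doublets(singlets, 50, rng)

print("mean total counts, singlets:", singlets.sum(axis=1).mean().round(1))
print("mean total counts, doublets:", doublets.sum(axis=1).mean().round(1))
print("mean genes detected, singlets:", (singlets > 0).sum(axis=1).mean().round(1))
print("mean genes detected, doublets:", (doublets > 0).sum(axis=1).mean().round(1))
```

Simulated doublets carry roughly twice the counts and more detected genes than singlets; real cells that sit close to such simulated profiles are the ones a detector would flag.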

Q5: How do I determine the correct sequencing depth for my scRNA-seq experiment?

- Answer: Sequencing depth is a trade-off between cost and the need to capture lowly expressed genes and rare cell populations. Insufficient depth increases technical noise and dropout rates [18].

- Action: There is no universal answer, as the optimal depth depends on your experimental goals. If the goal is to discover rare cell types, deeper sequencing is required. Standardized pipelines like Cell Ranger from 10x Genomics provide guidelines, and consulting literature on similar biological systems is advisable. Dimensionality reduction techniques can help manage the complexity of deep sequencing data [18].

Common Workflow Errors and Solutions

The table below summarizes frequent issues encountered during the primary analysis workflow, their potential causes, and recommended solutions.

| Error / Issue | Potential Cause | Solution / Best Practice |

|---|---|---|

| Low number of cells recovered | Cell suspension issues, poor viability, clogged microfluidic chip. | Optimize cell dissociation protocol; assess viability before loading; filter out low-quality cells computationally [25] [18]. |

| Low sequencing depth per cell | Inadequate sequencing cycles; overloading the sequencer. | Follow platform-specific recommendations (e.g., from 10x Genomics); ensure proper sample indexing and library quantification [29]. |

| High ambient RNA contamination | Cell rupture during handling, releasing RNA into the solution. | Use computational tools like SoupX to estimate and correct for background RNA contamination [25]. |

| Amplification bias | Stochastic variation during PCR amplification. | Use Unique Molecular Identifiers (UMIs) in your library preparation protocol to tag individual mRNA molecules [18]. |

| Misalignment of reads | Poor quality reference genome or annotation. | Use a standardized alignment workflow (e.g., STAR aligner in Cell Ranger) with a well-curated reference [28]. |
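The amplification-bias row above is easier to appreciate with a worked example of UMI collapsing. This minimal sketch counts unique UMIs per cell and gene; the barcodes, UMIs, and reads are invented, and the sketch skips the sequencing-error correction of UMIs that production pipelines perform.

```python
from collections import defaultdict

# Hypothetical aligned reads as (cell barcode, UMI, gene) tuples.
reads = [
    ("AAAC", "TTGCA", "CD3E"),
    ("AAAC", "TTGCA", "CD3E"),  # PCR duplicate of the read above
    ("AAAC", "GGATC", "CD3E"),  # a second CD3E molecule in the same cell
    ("AAAC", "CCTAG", "LYZ"),
    ("GGTT", "TTGCA", "CD3E"),  # same UMI in a different cell: a separate molecule
    ("GGTT", "ACGTA", "LYZ"),
    ("GGTT", "ACGTA", "LYZ"),
    ("GGTT", "ACGTA", "LYZ"),
]

def umi_counts(reads):
    """Count unique UMIs per (cell, gene); duplicate reads collapse to one molecule."""
    molecules = defaultdict(set)
    for barcode, umi, gene in reads:
        molecules[(barcode, gene)].add(umi)
    return {key: len(umis) for key, umis in molecules.items()}

def read_counts(reads):
    """Count raw reads per (cell, gene), without any deduplication."""
    totals = defaultdict(int)
    for barcode, _umi, gene in reads:
        totals[(barcode, gene)] += 1
    return dict(totals)

by_umi = umi_counts(reads)
by_read = read_counts(reads)
for key in sorted(by_umi):
    print(key, "reads:", by_read[key], "UMIs:", by_umi[key])
```

For LYZ in cell GGTT, three reads collapse to a single molecule, so the UMI count reflects the number of captured transcripts rather than how often one of them was amplified.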

Essential Analysis Workflow: From FASTQ to Count Matrix

The following diagram illustrates the core steps and decision points in the primary bioinformatics workflow for scRNA-seq data.

Primary scRNA-seq Analysis Workflow

Workflow Step Details:

- Quality Control (Raw Data): Using tools like FastQC to assess per-base sequencing quality, adapter contamination, and overall read quality. This step determines the suitability of the raw data for further analysis [29].

- Alignment & Gene Counting: The filtered reads are aligned to a reference genome using splice-aware aligners like STAR. Following alignment, reads are assigned to genes based on genomic annotation (GTF file) to count the number of reads or UMIs per gene per cell. This is often handled in an integrated manner by pipelines like Cell Ranger [28].

- Generate Count Matrix: The output of the previous step is a digital gene expression matrix (DGE), where rows represent genes, columns represent cells, and each entry is the count of transcripts for that gene in that cell.

- Cell-level QC Filtering: This critical step filters the count matrix to remove low-quality cells based on metrics like:

- Number of detected genes: Filter out cells with too few genes (potential empty droplets) or too many genes (potential doublets) [25].

- Total counts per cell: Remove cells with very low total UMI counts.

- Mitochondrial gene ratio: Filter out cells with a high percentage of mitochondrial counts, indicating cell stress [25].

- Normalization & Scaling: This step corrects for technical cell-to-cell variation, such as differences in library size (sequencing depth). Methods like scran use pooling of cells to achieve effective normalization [25]. Scaling transforms the data to give genes equal weight in downstream analyses.
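The normalization and scaling step can be sketched with the simplest common scheme: library-size normalization, a log transform, and per-gene standardization. This is a stand-in for illustration, not the pooling-based deconvolution that scran uses; the toy counts and the target sum of 10,000 are assumptions.

```python
import numpy as np

# Toy counts: 3 cells x 4 genes.
counts = np.array([
    [4, 0, 6, 10],
    [8, 0, 12, 20],  # same composition as the first cell, sequenced twice as deeply
    [2, 12, 1, 5],
], dtype=float)

def normalize_total(counts, target_sum=1e4):
    """Scale every cell (row) to the same total count."""
    return counts / counts.sum(axis=1, keepdims=True) * target_sum

def log_scale(norm):
    """Log-transform, then center each gene and scale it to unit variance."""
    logged = np.log1p(norm)
    mean = logged.mean(axis=0)
    std = logged.std(axis=0)
    std = np.where(std > 0, std, 1.0)  # leave constant genes unscaled
    return (logged - mean) / std

norm = normalize_total(counts)
scaled = log_scale(norm)
print(norm.round(0))
print(scaled.round(2))
```

After normalization the first two cells become identical, showing that the depth difference has been removed; after scaling every gene has mean 0 and unit variance, so highly expressed genes no longer dominate distance calculations.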

The Scientist's Toolkit: Key Research Reagents & Solutions

The table below details essential computational tools and resources that form the core toolkit for scRNA-seq primary analysis.

| Tool / Resource | Function | Key Features |

|---|---|---|

| Cell Ranger [28] | Processing for 10x Genomics Data | End-to-end pipeline that performs alignment, filtering, and count matrix generation using the STAR aligner. Considered the gold standard for 10x data. |

| STAR [28] | Spliced Read Alignment | Accurate and fast aligner for RNA-seq data, capable of handling spliced transcripts. Often used as the core aligner in other pipelines. |

| Scanpy [28] | Python-based Analysis Toolkit | A comprehensive suite for analyzing single-cell data after count matrix generation, including QC, clustering, and trajectory inference. Integrates with scvi-tools. |

| Seurat [28] | R-based Analysis Toolkit | A versatile R package for single-cell genomics. Provides modules for QC, normalization, integration, clustering, and differential expression. |

| DoubletFinder [25] | Doublet Detection | Computational algorithm specifically designed to find and remove doublets in scRNA-seq data. Benchmarked for high accuracy. |

| SoupX [25] | Ambient RNA Correction | A tool to estimate and subtract the background "soup" of ambient RNA contamination from droplet-based scRNA-seq data. |

| scran [25] | Normalization | Uses a pooling-based deconvolution method to compute cell-specific scaling factors, making it effective for normalizing scRNA-seq data. |

The analysis of single-cell RNA sequencing (scRNA-seq) data presents unique computational challenges, including handling cellular heterogeneity, managing technical noise, and integrating multimodal data. Researchers navigating this landscape frequently encounter three dominant computational ecosystems: Scanpy (Python-based), Seurat (R-based), and Bioconductor (R-based). Each ecosystem offers distinct advantages, specialized tools, and workflow philosophies. Scanpy provides a scalable toolkit optimized for large-scale analyses, Seurat offers versatile integration capabilities across multiple data modalities, and Bioconductor emphasizes interoperability and reproducible analysis through coordinated packages. Understanding the technical architecture, capabilities, and optimal use cases for each ecosystem is essential for designing robust analytical pipelines that can address specific research questions in single-cell biology while overcoming common computational challenges.

Ecosystem Comparison: Architectures and Specializations

The table below provides a structured comparison of the three dominant ecosystems, highlighting their core characteristics, strengths, and typical use cases to guide researchers in selecting the appropriate framework.

Table 1: Comparative Overview of Single-Cell Computational Ecosystems

| Feature | Scanpy | Seurat | Bioconductor |

|---|---|---|---|

| Programming Language | Python | R | R |

| Core Data Structure | AnnData object [28] | Seurat object [30] | SingleCellExperiment (SCE) object [28] [31] |

| Primary Strength | Scalability for large datasets (>1 million cells) [28] [32] | Versatility and multi-modal integration [28] [33] | Interoperability and reproducibility [28] [34] |

| Key Packages/Tools | scvi-tools, Squidpy, scVelo [28] [32] | Harmony, Monocle 3 integration [28] [33] | scran, scater, ZINB-WaVE [28] |

| Spatial Transcriptomics | Squidpy [28] [32] | Native support [28] | Various specialized packages |

| Batch Correction | scvi-tools, BBKNN | Harmony, CCA integration [28] [33] | Batchelor, other SCE-compatible methods |

| Typical User | Data scientists scaling to massive datasets | Biologists seeking all-in-one workflow | Method developers, bioinformaticians |

The architectural differences between these ecosystems significantly impact workflow design. Scanpy is built jointly with the anndata library, whose AnnData object optimizes memory usage and enables scalable analyses of very large datasets [28] [32]. Seurat employs a modular workflow where data and analyses are stored within a Seurat object, allowing comprehensive multi-assay investigations [30]. Bioconductor utilizes the SingleCellExperiment (SCE) class as a standardized data container that promotes interoperability between different analytical packages [28] [31]. This fundamental difference in data structures influences how researchers move between tools, with Bioconductor particularly emphasizing seamless transitions between specialized methods.

Troubleshooting Guides and FAQs

Ecosystem Selection and Data Preparation

Q: How do I choose between Scanpy, Seurat, and Bioconductor for my single-cell analysis project? A: The choice depends on your computational environment, dataset size, and analytical needs. Consider the following factors:

- Team Expertise: If your team is proficient in Python, Scanpy offers a more natural fit; for R users, Seurat or Bioconductor would be preferable [35].

- Dataset Scale: For datasets exceeding one million cells, Scanpy's architecture is specifically optimized for such scale [28] [32].

- Analysis Type: For specialized spatial transcriptomics, Scanpy with Squidpy or Seurat with its native functions are excellent choices [28]. For method development or academic benchmarking, Bioconductor's SCE ecosystem provides a robust foundation [28] [31].

- Multi-modal Integration: Seurat provides particularly strong capabilities for integrating RNA with ATAC-seq, CITE-seq, and spatial data within a unified object [28] [33].

Q: What is the fundamental data structure used by each ecosystem, and why does it matter? A: Each ecosystem employs a distinct data structure that determines interoperability:

- Scanpy: Uses the AnnData object, which efficiently handles sparse matrix representations and integrates with the broader Python data science stack [28] [32].

- Seurat: Uses the Seurat object, which stores multiple data types (assays, reductions, projections) in a single container, facilitating complex multi-modal analyses [30].

- Bioconductor: Uses the SingleCellExperiment (SCE) object, which serves as an interoperable container designed specifically for bioinformatics packages to exchange data seamlessly [28] [31].

These structures are not directly compatible without conversion tools, so selecting an ecosystem at the project's start prevents costly data reformatting later.

Preprocessing and Quality Control Challenges

Q: How should I handle high mitochondrial percentage cells in each ecosystem? A: Mitochondrial QC is crucial but implemented differently in each ecosystem:

- Seurat: Calculate the percentage with `PercentageFeatureSet(pbmc, pattern = "^MT-")` and filter using `subset(pbmc, subset = nFeature_RNA > 200 & nFeature_RNA < 2500 & percent.mt < 5)` [30].

- Scanpy: Flag mitochondrial genes in a boolean column of `adata.var` (for example `mt`, based on the `MT-` gene-name prefix), pass that column name to `sc.pp.calculate_qc_metrics` through the `qc_vars` parameter, then filter based on the calculated metrics.

- Bioconductor: Use the `scater` package functions like `addPerCellQC()` and `quickPerCellQC()` to compute and filter based on mitochondrial percentage [28] [31].

Q: What tools effectively address ambient RNA contamination across ecosystems? A: Ambient RNA contamination from droplet-based technologies requires specialized tools:

- CellBender: A deep learning-based tool that effectively removes ambient RNA noise and integrates well with both Scanpy and Seurat workflows [28].

- EmptyDrops: Part of the DropletUtils Bioconductor package, specifically designed to distinguish empty droplets from cell-containing droplets [31].

- SoupX: An R package that estimates and subtracts the ambient RNA profile, compatible with both Seurat and Bioconductor workflows.

Q: How do I address batch effects in integrated datasets across different ecosystems? A: Batch correction methods vary by ecosystem:

- Seurat: Uses reciprocal PCA (RPCA) or canonical correlation analysis (CCA) anchoring through the `IntegrateLayers()` function [33], or external tools like Harmony which integrate directly into Seurat pipelines [28].

- Scanpy: Employs scvi-tools for deep generative modeling-based integration or BBKNN for graph-based batch correction [28] [36].

- Bioconductor: Utilizes the batchelor package, specifically designed for batch correction of single-cell data within the SCE framework [31].

Advanced Analysis and Interpretation

Q: What are the recommended approaches for trajectory inference across ecosystems? A: Trajectory analysis tools have different ecosystem affiliations:

- Monocle 3: Works well with both Seurat and Bioconductor ecosystems, providing robust trajectory inference using graph-based abstraction [28].

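- Velocyto/PAGA: For RNA velocity analysis, Velocyto (often used with Scanpy) quantifies spliced and unspliced transcripts to infer future cell states [28]; PAGA, available in Scanpy, represents both discrete cell types and the continuous trajectories between them [7].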

- Slingshot: A Bioconductor package for lineage inference that works directly with SingleCellExperiment objects [31].

Q: How can I perform differential expression analysis across conditions in each ecosystem? A: Differential expression implementation varies:

- Seurat: Provides `FindConservedMarkers()` for identifying genes conserved across groups, and `FindMarkers()` for standard differential expression testing [33].

- Scanpy: Offers `sc.tl.rank_genes_groups()` for standard differential expression, with integration available for more sophisticated methods like those in scvi-tools [32] [36].

- Bioconductor: Contains multiple specialized packages for differential expression (e.g., scran, limma) that operate directly on SingleCellExperiment objects [31].

Experimental Protocols and Workflows

Standard scRNA-seq Analysis Workflow

The following workflow diagram illustrates the core steps in a typical single-cell RNA-seq analysis, common across all three ecosystems:

Standard scRNA-seq Analysis Workflow

Multi-omics Data Integration Protocol

For researchers working with multi-omics data (e.g., RNA + ATAC), the following protocol outlines key steps for integration:

Table 2: Multi-omics Integration Methods Across Ecosystems

| Step | Scanpy Approach | Seurat Approach | Bioconductor Approach |

|---|---|---|---|

| Data Input | AnnData objects for each modality | Seurat objects with multiple assays | MultiAssayExperiment with SCE objects |

| Dimension Reduction | scVI, TrVAE | CCA, RPCA | Multi-Omics Factor Analysis (MOFA) |

| Anchor Finding | scANVI label transfer | FindIntegrationAnchors() [33] | Matched biological replicates |

| Joint Visualization | UMAP on integrated space | UMAP on integrated.cca [33] | Combined dimension reduction plots |

| Downstream Analysis | Joint clustering, differential analysis | Identify conserved markers [33] | Cross-modal pattern discovery |

Detailed Methodology for Multi-omics Integration:

- Preprocessing: Independently process each modality using standard workflows (RNA-seq: Scanpy/Seurat/Bioconductor; ATAC-seq: Signac/Azurella/ArchR).

- Feature Selection: Identify highly variable features in each modality to reduce dimensionality before integration.

- Anchor Identification: Use mutual nearest neighbors (MNN) in Seurat, scVI in Scanpy, or weighted-nearest neighbor (WNN) approaches to find corresponding cells across modalities [28] [33].

- Integration: Employ methods that project all cells into a shared low-dimensional space while preserving biological variance and removing technical artifacts.

- Validation: Verify integration quality by checking that similar cell types cluster together regardless of modality and that known biological relationships are preserved.
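The anchor-identification step rests on the idea of mutual nearest neighbors, which can be shown in a few lines. This sketch assumes both datasets have already been projected into a shared feature space; the function name, the value of `k`, and the simulated data are hypothetical, and production tools add filtering and scoring of anchors on top of this.

```python
import numpy as np

def mutual_nearest_neighbors(A, B, k=3):
    """Return (i, j) pairs where B[j] is among A[i]'s k nearest cells in B
    and A[i] is among B[j]'s k nearest cells in A."""
    d = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)  # shape (len(A), len(B))
    nn_ab = np.argsort(d, axis=1)[:, :k]    # for each cell in A: nearest cells in B
    nn_ba = np.argsort(d, axis=0)[:k, :].T  # for each cell in B: nearest cells in A
    pairs = []
    for i in range(A.shape[0]):
        for j in nn_ab[i]:
            if i in nn_ba[j]:
                pairs.append((i, int(j)))
    return pairs

rng = np.random.default_rng(0)
type_a = np.repeat([0, 1], 20)  # cell-type labels in dataset A
type_b = np.repeat([0, 1], 20)  # cell-type labels in dataset B
A = rng.normal(scale=0.3, size=(40, 2)) + np.c_[10.0 * type_a, np.zeros(40)]
B = rng.normal(scale=0.3, size=(40, 2)) + np.c_[10.0 * type_b, np.ones(40)]  # shifted batch

pairs = mutual_nearest_neighbors(A, B, k=3)
same_type = [type_a[i] == type_b[j] for i, j in pairs]
print(len(pairs), "anchor pairs; all within the same cell type:", all(same_type))
```

Requiring the neighbor relationship to hold in both directions is what makes the pairs robust: a cell type present in only one dataset tends to find no partner instead of being forced onto the wrong one.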

Spatial Transcriptomics Analysis Workflow

For spatial transcriptomics data, the following diagram illustrates a typical analytical approach:

Spatial Transcriptomics Analysis

Detailed Spatial Analysis Protocol:

- Data Loading: Import spatial data from platforms (10x Visium, MERFISH, Slide-seq) using platform-specific loaders. In Squidpy (Scanpy), use `sq.read.visium()`; in Seurat, use `Load10X_Spatial()`; in Bioconductor, use specialized packages like `SpatialExperiment` [28] [32].

- Quality Control: Calculate spot/cell-level QC metrics including total counts, gene detection, and spatial artifact detection.

- Spatial Analysis:

- Cell-Cell Communication: Infer ligand-receptor interactions using tools like `sq.gr.ligrec()` in Squidpy or `CellChat` in R [28].

- Visualization: Plot spatial expression patterns using `sq.pl.spatial_scatter()` in Squidpy or `SpatialDimPlot()` in Seurat.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Single-Cell Analysis

| Tool/Reagent | Ecosystem | Primary Function | Application Context |

|---|---|---|---|

| Cell Ranger [28] | All | Preprocessing 10x Genomics data | Raw FASTQ to count matrix conversion |

| scvi-tools [28] [36] | Scanpy | Deep generative modeling | Probabilistic modeling, batch correction |

| Harmony [28] | Seurat/Scanpy | Efficient batch correction | Merging datasets across batches/donors |

| CellBender [28] | Seurat/Scanpy | Ambient RNA removal | Deep learning-based background noise removal |

| Velocyto [28] | Scanpy | RNA velocity | Inference of cellular dynamics |

| SingleCellExperiment [28] [31] | Bioconductor | Data container | Interoperable object for Bioconductor packages |

| scran [28] | Bioconductor | Robust normalization | Deconvolution-based normalization for UMI data |

| Monocle 3 [28] | All | Trajectory inference | Pseudotime analysis, lineage tracing |

Navigating the computational challenges of single-cell sequencing data analysis requires careful selection of ecosystems and tools tailored to specific research questions. Scanpy excels in handling massive datasets and deep learning applications, Seurat provides versatile multi-modal integration capabilities, and Bioconductor offers unparalleled interoperability for method development and reproducible research. By understanding the strengths, specialized tools, and troubleshooting approaches for each ecosystem, researchers can design robust analytical pipelines that effectively address the inherent complexities of single-cell data, from quality control through advanced interpretation, ultimately accelerating discoveries in basic biology and drug development.

This technical support center addresses key computational challenges in single-cell sequencing data analysis, focusing on two advanced machine learning methodologies: RNA velocity and deep generative models. As single-cell technologies evolve to profile hundreds of thousands to millions of cells across diverse conditions, researchers face unprecedented data scale and complexity. These tools help recover directed dynamic information and model sample-level heterogeneity, moving beyond static snapshots to predictive understandings of cellular processes like development, disease progression, and treatment response. This guide provides practical troubleshooting and methodological support for implementing these cutting-edge approaches within research and drug development pipelines.

Frequently Asked Questions (FAQs)

RNA Velocity

What is RNA velocity and what biological questions can it address? RNA velocity is the time derivative of a cell's gene expression state. It is estimated by distinguishing unspliced (pre-mRNA) from spliced (mature mRNA) molecules in standard single-cell RNA-sequencing protocols, and it predicts the future state of individual cells on a timescale of hours [37] [38]. It is primarily used to analyze time-resolved phenomena such as embryogenesis, tissue regeneration, and cellular differentiation, enabling the recovery of directed dynamic information from static snapshots [39].
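
The definition above is usually written as a simple per-gene kinetic model. The following is a sketch under the assumption of constant rates; the symbols $\alpha$, $\beta$, and $\gamma$ (transcription, splicing, and degradation rates) and $u$, $s$ (unspliced and spliced abundances) follow common convention and are not notation taken from this article:

```latex
\begin{aligned}
\frac{du}{dt} &= \alpha - \beta\,u, \\
\frac{ds}{dt} &= \beta\,u - \gamma\,s, \\
v &= \frac{ds}{dt}.
\end{aligned}
```

At steady state $ds/dt = 0$, so $u = (\gamma/\beta)\,s$. In the (spliced, unspliced) phase portrait, cells above this line have positive velocity (induction) and cells below it have negative velocity (repression).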

My RNA velocity vector field shows unexpected or biologically implausible directions. What could be wrong? Direction errors can arise from several sources [39]:

- Violation of Model Assumptions: The standard steady-state model assumes constant kinetic rates and the presence of cells observed near steady-state expression. Violations, such as heterogeneous subpopulations with different kinetics or failure to capture intermediate transient states, can lead to erroneous vectors.

- Incomplete Kinetic Curvature: Genes with a very low ratio of splicing-to-degradation rates yield phase portraits with minimal curvature, making it difficult for the model to distinguish the correct direction of regulation.

- Time-Dependent Kinetic Rates: If transcription, splicing, or degradation rates change during the process (e.g., a transcriptional boost during erythroid maturation), it can invert or distort the expected curvature in the phase portrait, leading to direction reversal.

- Mature Cell Populations: Analyzing only terminal cell types (e.g., in PBMCs) where mRNA levels have equilibrated can result in arbitrary, false projections because no true dynamics are being captured.
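
To make the steady-state assumption behind several of these failure modes concrete, the sketch below simulates one gene during induction and then estimates velocity the way a steady-state model would: fit a single slope through the origin using only cells at the extremes of spliced abundance, then take the residual of unspliced abundance from that line. The rates, the quantile, and all function names are illustrative assumptions, not values or APIs from any specific package.

```python
import random


def simulate_cells(n=300, alpha=5.0, beta=1.0, gamma=0.5, dt=0.01, seed=0):
    """Sample cells along one gene's induction trajectory (hypothetical rates).

    Integrates du/dt = alpha - beta*u and ds/dt = beta*u - gamma*s with Euler
    steps from u = s = 0, then draws n random time points as "cells".
    """
    rng = random.Random(seed)
    u, s, path = 0.0, 0.0, []
    for _ in range(2000):  # t from 0 to 20: transient phase, then near steady state
        u, s = u + dt * (alpha - beta * u), s + dt * (beta * u - gamma * s)
        path.append((u, s))
    return [rng.choice(path) for _ in range(n)]


def fit_steady_state_ratio(cells, quantile=0.05):
    """Least-squares slope through the origin of u on s, using only cells in the
    lowest and highest `quantile` of spliced abundance (assumed near steady state)."""
    ranked = sorted(cells, key=lambda c: c[1])
    k = max(1, int(len(ranked) * quantile))
    extreme = ranked[:k] + ranked[-k:]
    num = sum(u * s for u, s in extreme)
    den = sum(s * s for u, s in extreme)
    return num / den


def steady_state_velocity(cells, ratio):
    """Velocity as the residual of unspliced abundance from the steady-state line."""
    return [u - ratio * s for u, s in cells]


cells = simulate_cells()
ratio = fit_steady_state_ratio(cells)          # should land near gamma/beta
velocities = steady_state_velocity(cells, ratio)
print(f"fitted ratio: {ratio:.3f}, max velocity: {max(velocities):.2f}")
```

In this toy setting the fit works because both transient and steady-state cells are present. If only equilibrated cells were sampled, every residual would be close to zero and the resulting arrows would mostly reflect noise, which is the situation described for mature populations above.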

Why do only a subset of genes contribute meaningfully to my velocity analysis? Current RNA velocity models rely on genes that follow simple, interpretable kinetics. In practice, many genes exhibit complex kinetics due to mechanisms like dynamic rate modulation or multiple kinetic regimes across different lineages [39]. Statistical power is also limited to genes where the splicing rate is faster than or comparable to the degradation rate, as this produces the characteristic curvature in the phase portrait necessary for inference. It is normal and recommended to focus on a subset of high-likelihood "dynamical" genes.
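
The dependence of phase-portrait curvature on the splicing-to-degradation ratio can be checked numerically. This is a minimal sketch assuming constant rates and a single induction phase; `max_phase_curvature` is a hypothetical helper that reports the largest normalized gap between the trajectory and the steady-state line.

```python
def max_phase_curvature(beta, gamma, alpha=1.0, dt=0.001, t_end=20.0):
    """Largest gap between the induction trajectory and the steady-state line,
    measured as u/u_inf - s/s_inf, for one gene with constant rates."""
    u_inf, s_inf = alpha / beta, alpha / gamma  # steady-state abundances
    u = s = 0.0
    largest = 0.0
    for _ in range(round(t_end / dt)):
        # Euler step of du/dt = alpha - beta*u and ds/dt = beta*u - gamma*s
        u, s = u + dt * (alpha - beta * u), s + dt * (beta * u - gamma * s)
        largest = max(largest, u / u_inf - s / s_inf)
    return largest


# Compare a slow-splicing gene, a balanced gene, and a fast-splicing gene.
for splicing_rate in (0.1, 1.0, 10.0):
    curvature = max_phase_curvature(beta=splicing_rate, gamma=1.0)
    print(f"beta/gamma = {splicing_rate:>4}: max curvature = {curvature:.2f}")
```

The slow-splicing gene stays close to the steady-state line throughout induction, so its phase portrait is nearly straight and carries little directional information, while the fast-splicing gene bows clearly away from the line.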

Deep Generative Models

What is the advantage of using deep generative models like MrVI over traditional clustering for multi-sample studies? Traditional approaches first cluster cells into predefined states and then compare the frequencies of these clusters across samples. This can oversimplify the data and miss critical effects that manifest only in specific cellular subsets [40]. MrVI, a multi-resolution deep generative model, performs exploratory and comparative analysis without requiring a priori cell clustering. It can de novo identify sample stratifications driven by specific cell subsets and detect differential expression or abundance at single-cell resolution, thereby uncovering effects that would otherwise be overlooked [40].

How can I interpret the latent space of a Variational Autoencoder (VAE) for single-cell data? Standard VAEs are powerful for dimensionality reduction but are often "black boxes." For interpretation, use methods like siVAE (scalable, interpretable VAE), which adds an interpretability regularization term [41]. This enforces a correspondence between the dimensions of the cell-embedding space and a simultaneously learned feature-embedding space (gene loadings). This allows you to identify which genes are most influential along each dimension of the latent space, similar to how PCA loadings are interpreted, but without sacrificing non-linear modeling power [41].

My model fails to integrate data from multiple batches or studies effectively. What strategies can help? Deep generative models like MrVI are explicitly designed to handle technical nuisance covariates, such as batch effects [40]. The key is to use a model that architecturally disentangles these technical effects from the biological variation of interest. In MrVI, this is achieved through a hierarchical model with two latent variables: one represents cell state independent of sample covariates, and the other represents cell state after incorporating the effects of target covariates (such as sample ID), while nuisance factors are explicitly controlled for [40].

Troubleshooting Guides

Problem 1: Poor Quality RNA Velocity Estimates

Symptoms: Weak, noisy, or incoherent velocity vector fields; vectors pointing away from expected developmental trajectories.

Possible Causes and Solutions:

Cause: Low-quality input data or incorrect quantification of spliced/unspliced reads. Solution:

Cause: The data violates the assumptions of the steady-state model. Solution:

- Switch to a generalized dynamical model, such as the one implemented in scVelo, which relaxes the steady-state assumption and learns gene-specific rates from the data using likelihood-based inference [39].

- Visually inspect the phase portraits (unspliced vs. spliced mRNA) for your top genes. Ensure they show clear, directional curvature. Genes with scattered points or straight lines are poor candidates for velocity analysis [39].

Cause: Lack of observable transient states in the dataset. Solution: There is no computational fix for a lack of dynamic information. Re-design the experiment to include more time points or conditions that are likely to capture cells in transition.

Problem 2: Deep Generative Model Fails to Converge or Produces Poor Integrations

Symptoms: Training loss is unstable or does not decrease; the integrated latent space does not align similar cell types from different batches.

Possible Causes and Solutions:

Cause: Improper data pre-processing and normalization. Solution:

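- Normalize for sequencing depth using methods tailored for single-cell data (e.g., scran pooling-based normalization). Avoid simple methods like TPM/FPKM designed for bulk RNA-seq [18]. Note that count-based models in scvi-tools (such as scVI and MrVI) are fit on raw counts, so keep the unnormalized matrix available (for example, in a separate layer).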

- Use highly variable gene selection as a standard pre-processing step before model training.
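
The two pre-processing steps above can be illustrated with a dependency-free sketch. It is a simplified stand-in, not an implementation of scran's pooling-based size factors or of the dispersion-based selection used by Scanpy and Seurat: it scales each cell to a fixed total, applies a log transform, and ranks genes by variance. The function names and the toy matrix are hypothetical.

```python
import math
from statistics import pvariance


def normalize_log(counts, target_sum=1e4):
    """Scale each cell (row) to `target_sum` total counts, then apply log1p."""
    out = []
    for cell in counts:
        total = sum(cell)
        scale = target_sum / total if total > 0 else 0.0
        out.append([math.log1p(c * scale) for c in cell])
    return out


def top_variable_genes(log_expr, n_top):
    """Return indices of the n_top genes (columns) with the highest variance."""
    n_genes = len(log_expr[0])
    variances = [pvariance([cell[g] for cell in log_expr]) for g in range(n_genes)]
    order = sorted(range(n_genes), key=lambda g: variances[g], reverse=True)
    return order[:n_top]


# Toy matrix: 4 cells x 3 genes (rows are cells). Gene 1 switches on and off.
counts = [
    [10, 0, 5],
    [12, 30, 6],
    [9, 1, 5],
    [11, 40, 4],
]
hvg = top_variable_genes(normalize_log(counts), n_top=1)
print("most variable gene index:", hvg)
```

In a real pipeline, the selected gene indices would be used to subset the matrix passed to the model, so that training concentrates on genes carrying biological signal.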

Cause: The model architecture or hyperparameters are unsuitable for the dataset scale. Solution:

Cause: The model is not adequately accounting for batch effects. Solution: Employ a model that explicitly accounts for batch as a nuisance covariate in its generative process. For example, MrVI uses a hierarchical structure and a dedicated decoder conditioned on nuisance covariates to disentangle technical variation from biological signals [40].

Experimental Protocols

Protocol 1: Dynamical RNA Velocity Inference with scVelo

Purpose: To reconstruct cellular dynamics and predict future states using a generalized dynamical model that does not assume steady-state conditions.

Materials: A count matrix of spliced and unspliced transcripts (e.g., from velocyto.py or kallisto|bustools).

Methodology:

Data Preprocessing:

- Filter genes and cells based on quality metrics.

- Normalize total cellular counts to a standard scale.

- Log-transform the spliced and unspliced counts.

Model Fitting and Inference:

- First-Order Moments: Compute moments of spliced/unspliced abundances for each cell within its local neighborhood. This smoothens the data and reduces noise.

- Dynamical Modeling: Use the `scv.tl.recover_dynamics` function to fit a system of differential equations for each gene, learning transcription, splicing, and degradation rates directly from the data.

- Velocity Estimation: Calculate velocities from the learned kinetics, as the predicted rate of change of spliced abundance given each cell's unspliced and spliced levels.

- Projection and Visualization: Project the velocity vectors onto an existing embedding (e.g., UMAP) to generate the vector field using `scv.pl.velocity_embedding_stream`.

Technical Notes: The dynamical model is computationally intensive. Start with a high-confidence subset of genes (e.g., those with high likelihoods from a preliminary fit) for faster iteration. Always validate the inferred directions against known biology.
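
The protocol above can be sketched as a single function. This assumes an `AnnData` object that already contains `spliced` and `unspliced` layers and a precomputed embedding; the wrapper name `run_dynamical_velocity` and the parameter values are illustrative choices rather than recommendations, and newer scVelo releases may expect the neighbor graph to be computed beforehand.

```python
def run_dynamical_velocity(adata, n_top_genes=2000, basis="umap"):
    """Run scVelo's dynamical model on an AnnData object with 'spliced' and
    'unspliced' layers and an existing embedding stored in adata.obsm."""
    import scvelo as scv  # imported lazily so the sketch loads without scVelo installed

    # Preprocessing: filter genes, normalize total counts per cell, log-transform
    scv.pp.filter_and_normalize(adata, min_shared_counts=20, n_top_genes=n_top_genes)

    # First-order moments of spliced/unspliced abundances over nearest neighbors
    scv.pp.moments(adata, n_pcs=30, n_neighbors=30)

    # Fit per-gene transcription, splicing, and degradation rates
    scv.tl.recover_dynamics(adata)

    # Velocities from the learned kinetics, then the cell-to-cell velocity graph
    scv.tl.velocity(adata, mode="dynamical")
    scv.tl.velocity_graph(adata)

    # Project onto the embedding and draw the vector field as streamlines
    scv.pl.velocity_embedding_stream(adata, basis=basis)
    return adata
```

After fitting, scVelo stores a per-gene likelihood in `adata.var["fit_likelihood"]`, which can be sorted to pick the high-confidence gene subset mentioned in the technical notes.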

Protocol 2: Multi-Sample Analysis with MrVI

Purpose: To de novo stratify samples and identify sample-level effects on gene expression and cellular abundance without pre-defined cell clusters.

Materials: A multi-sample single-cell dataset (e.g., from multiple patients or perturbations) with cell-by-gene count matrices and sample-level metadata.

Methodology:

Data Setup: Organize your data into an `AnnData` object where observations are cells and variables are genes. Register the sample ID for each cell and any nuisance covariates (e.g., batch).

Model Initialization and Training:

- Initialize the MrVI model using the `scvi-tools` API, specifying the sample and batch covariates.

- Train the model using stochastic gradient descent to maximize the evidence lower bound (ELBO). Monitor the loss for convergence.

Exploratory and Comparative Analysis:

- Exploratory Analysis (Sample Stratification): Compute a sample-distance matrix for each cell. Use hierarchical clustering on these distances to identify groups of samples that are similar based on specific cellular subpopulations.

- Comparative Analysis (Differential Expression/Abundance): Use the model's counterfactual query functionality. For a given cell, infer its gene expression profile had it come from a different sample group (e.g., case vs. control). Compare these counterfactual profiles to identify genes with significant differential expression.

Technical Notes: MrVI is a hierarchical deep generative model. Its two-level hierarchy disentangles cell-intrinsic variation from sample-level effects, allowing for a nuanced analysis of complex cohort data [40].
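
The setup and training steps of this protocol might look like the sketch below. It assumes a recent scvi-tools release in which MrVI is exposed as `scvi.external.MRVI`; the column names `sample_id` and `batch`, the wrapper name `fit_mrvi`, and the epoch count are hypothetical, and method names or defaults may differ between versions, so check the documentation for the installed release.

```python
def fit_mrvi(adata, sample_key="sample_id", batch_key="batch", max_epochs=400):
    """Register covariates, train MrVI, and store the sample-unaware latent space.

    `adata` is expected to hold raw counts, with `sample_key` and `batch_key`
    present as columns in adata.obs (hypothetical column names).
    """
    from scvi.external import MRVI  # imported lazily; requires scvi-tools

    # Register the target covariate (sample) and the nuisance covariate (batch)
    MRVI.setup_anndata(adata, sample_key=sample_key, batch_key=batch_key)

    # Initialize and train by maximizing the ELBO
    model = MRVI(adata)
    model.train(max_epochs=max_epochs)

    # Cell-state representation that is independent of sample effects
    adata.obsm["X_mrvi_u"] = model.get_latent_representation()
    return model
```

For the exploratory and comparative steps, the scvi-tools documentation describes model methods such as `get_local_sample_distances` and `differential_expression`; their arguments are not shown here because they vary with the study design and library version.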

Key Diagrams and Workflows

Diagram 1: RNA Velocity Transcriptional Kinetics Model

This diagram illustrates the core biochemical model underlying RNA velocity, showing the relationship between unspliced and spliced mRNA states that enables future state prediction.

Diagram 2: MrVI Hierarchical Model Architecture

This diagram outlines the hierarchical deep generative architecture of MrVI, showing how it disentangles cell-state variation from sample-level effects for multi-resolution analysis.

Research Reagent Solutions

Table 1: Essential Computational Tools for Advanced Single-Cell Analysis

| Tool Name | Type | Primary Function | Key Application |
|---|---|---|---|
| Velocyto | Software Pipeline | Quantification of spliced/unspliced reads from scRNA-seq data. | Initial step for all RNA velocity analyses [37]. |
| scVelo | Python Toolkit | Dynamical modeling of RNA velocity; generalizes the steady-state model. | Inferring complex cellular dynamics and latent time [39]. |
| scvi-tools | Python Library | A scalable, open-source library for deep generative models on single-cell data. | Platform for models like MrVI, scVI, and totalVI [40]. |
| MrVI | Deep Generative Model | Multi-resolution variational inference for multi-sample studies. | Exploratory and comparative analysis of cohort-scale single-cell data [40]. |
| siVAE | Interpretable Deep Learning | Interpretable variational autoencoder for single-cell transcriptomes. | Dimensionality reduction with gene-level interpretation of latent dimensions [41]. |
| CellRank | Python Toolkit | Probabilistic modeling of cell fate transitions using RNA velocity and beyond. | Inferring fate probabilities and initial states across trajectories. |

Troubleshooting Guides & FAQs

Trajectory Inference

Q1: My trajectory inference with Slingshot results in an illogical or overly complex branching structure. What are the primary causes and solutions?

A: This is commonly caused by high dimensionality or noise in the input data.

- Cause 1: Highly variable genes not related to the differentiation process are dominating the PCA.

- Solution: Re-run feature selection focusing on genes with biological relevance to the process. Use domain knowledge to filter gene sets.