Optical Extinction Measurements: A Revolutionary Approach for Plasma Protein Detection in Biomedical Research and Drug Development

This article explores the transformative potential of optical extinction measurements for plasma protein analysis, a rapidly advancing field with significant implications for diagnostics and therapeutic development.

Abstract

This article explores the transformative potential of optical extinction measurements for plasma protein analysis, a rapidly advancing field with significant implications for diagnostics and therapeutic development. We first establish the foundational principles of how light interaction with plasma proteins reveals critical conformational and concentration data. The discussion then progresses to methodological innovations and diverse applications, including multi-cancer early detection and neurological disease biomarker discovery. We address key challenges in troubleshooting and optimizing these assays for complex biological samples. Finally, we present a comprehensive validation framework comparing optical methods against established proteomic platforms, providing researchers and drug development professionals with actionable insights for implementing this powerful technology in their work.

Fundamental Principles: How Light Interaction Reveals Plasma Protein Signatures

The study of light interaction with biological fluids, primarily through extinction (absorption and scattering) phenomena, forms the cornerstone of many analytical techniques in biomedical research and clinical diagnostics. Biological fluids like blood plasma are complex mixtures containing proteins, lipids, and various molecular constituents that collectively determine their optical properties. When light passes through such fluids, its intensity is attenuated through absorption by chromophores and scattering by particles and macromolecules. Quantitative understanding of these processes, governed by the Beer-Lambert law, enables researchers to extract valuable information about molecular concentrations, structural changes, and biomolecular interactions. Within the context of plasma protein detection, these optical principles provide a foundation for both conventional analysis and emerging diagnostic technologies that detect disease-specific conformational changes in plasma proteins.

Theoretical Foundations

The Beer-Lambert Law and Extinction Coefficients

The Beer-Lambert law describes the attenuation of light as it passes through a material, establishing a fundamental relationship between the absorbance of a solution and its concentration. The mathematical expression is:

A = ε · c · l

Where:

- A is the measured absorbance (dimensionless)

- ε is the molar extinction coefficient (typically in L·mol⁻¹·cm⁻¹)

- c is the concentration of the absorbing species (mol/L)

- l is the optical path length (cm) [1] [2]

The extinction coefficient (ε) is a crucial physical parameter that quantifies how strongly a substance absorbs light at a specific wavelength. At a fixed concentration and path length, absorbance is directly proportional to ε, which makes it a key parameter in optical experiments and analytical applications across chemistry, biology, and pharmaceutical research [1]. For proteins, the absorbance maximum near 280 nm in the UV spectrum arises primarily from the aromatic amino acids tryptophan and tyrosine, with minor contributions from phenylalanine and disulfide bonds [2].
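
As a minimal sketch of how the Beer-Lambert law is used in practice, the following Python snippet computes absorbance from concentration and inverts the relationship to recover concentration. The function names and the example extinction coefficient are illustrative assumptions, not values taken from the cited sources.

```python
def absorbance(epsilon, concentration, path_length_cm=1.0):
    """Beer-Lambert law: A = epsilon * c * l.

    epsilon: molar extinction coefficient (L mol^-1 cm^-1)
    concentration: molar concentration (mol/L)
    path_length_cm: optical path length (cm)
    """
    return epsilon * concentration * path_length_cm


def concentration_from_absorbance(a, epsilon, path_length_cm=1.0):
    """Invert the Beer-Lambert law to recover concentration (mol/L)."""
    if epsilon <= 0 or path_length_cm <= 0:
        raise ValueError("epsilon and path length must be positive")
    return a / (epsilon * path_length_cm)


# Hypothetical protein with an assumed epsilon_280 of 43,824 M^-1 cm^-1
example_epsilon = 43_824.0
example_conc = concentration_from_absorbance(0.5, example_epsilon)
print(f"Concentration: {example_conc * 1e6:.2f} micromolar")
```

The inversion is only meaningful while the measured absorbance stays within the instrument's linear range.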

Scattering Phenomena in Complex Media

In biological fluids like plasma, scattering events significantly contribute to overall light extinction. The scattering coefficient (μs) represents the probability of photon scattering per unit pathlength, while the reduced scattering coefficient (μs') accounts for anisotropic scattering in diffusive regimes: μs' = μs(1-g), where g is the anisotropy factor representing the average cosine of the scattering angle [3]. In whole blood, scattering originates primarily from refractive index mismatches, especially at plasma-red blood cell interfaces, with reduced scattering coefficients of approximately 13 cm⁻¹ throughout the visible spectrum [4]. The complex interplay between absorption and scattering in biological media necessitates sophisticated computational models, including the radiative transfer equation (RTE) and Monte Carlo simulations, to accurately describe light propagation and interpret measurement data [3].

Quantitative Data on Optical Properties

Table 1: Molar Extinction Coefficients of Aromatic Amino Acids in Proteins at 280 nm

| Amino Acid | Molar Extinction Coefficient (ε) [M⁻¹cm⁻¹] |

|---|---|

| Tryptophan (W) | 5,500 |

| Tyrosine (Y) | 1,490 |

| Phenylalanine (F) | 200 |

| Cystine (C) | 125 (in disulfide bonds) |

Table 2: Typical Absorption and Scattering Parameters of Whole Blood in the Visible-NIR Range

| Parameter | Symbol | Typical Value | Unit |

|---|---|---|---|

| Absorption coefficient (NIR) | μa | ~0.1 | cm⁻¹ |

| Scattering coefficient (NIR) | μs | ~100 | cm⁻¹ |

| Reduced scattering coefficient (NIR) | μs' | ~10 | cm⁻¹ |

| Anisotropy factor | g | ~0.9 | - |

| Reduced scattering mean free path | mfps' | ~1 | mm |
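
The relationships among the Table 2 entries can be checked with a short calculation. The sketch below applies μs' = μs(1 - g) and takes the reduced scattering mean free path as 1/μs'; the function names are illustrative, and the inputs are the approximate table values.

```python
def reduced_scattering(mu_s_per_cm, g):
    """Reduced scattering coefficient: mu_s' = mu_s * (1 - g), in cm^-1."""
    if not -1.0 <= g <= 1.0:
        raise ValueError("anisotropy factor g must lie in [-1, 1]")
    return mu_s_per_cm * (1.0 - g)


def reduced_mean_free_path_mm(mu_s_reduced_per_cm):
    """Reduced scattering mean free path, 1 / mu_s', converted from cm to mm."""
    if mu_s_reduced_per_cm <= 0:
        raise ValueError("mu_s' must be positive")
    return 10.0 / mu_s_reduced_per_cm


# Approximate Table 2 values: mu_s ~ 100 cm^-1, g ~ 0.9
mu_s_reduced = reduced_scattering(100.0, 0.9)
print(f"mu_s' ~ {mu_s_reduced:.1f} cm^-1")
print(f"mean free path ~ {reduced_mean_free_path_mm(mu_s_reduced):.1f} mm")
```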

Experimental Protocols

Protocol: Determination of Protein Extinction Coefficient via Spectrophotometry

Spectrophotometry provides a straightforward method for determining extinction coefficients and protein concentrations [1] [2].

Principle: The extinction coefficient of a protein at 280 nm can be estimated from its amino acid composition using the following formula: ε₂₈₀ = (nW × 5,500) + (nY × 1,490) + (nC × 125) M⁻¹cm⁻¹, where nW and nY are the numbers of tryptophan and tyrosine residues in the protein sequence, and nC is the number of cystines (disulfide bonds), not the total number of cysteine residues [2].
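
The composition-based estimate can be sketched in a few lines of Python. The per-residue values are those in Table 1; the function name, the one-letter sequence input, and the toy sequence are assumptions for illustration. The number of disulfide bonds cannot be read from the sequence alone, so it is passed in explicitly.

```python
EPS_TRP = 5500      # M^-1 cm^-1 per tryptophan at 280 nm
EPS_TYR = 1490      # M^-1 cm^-1 per tyrosine at 280 nm
EPS_CYSTINE = 125   # M^-1 cm^-1 per disulfide bond at 280 nm


def epsilon_280(sequence, n_disulfides=0):
    """Estimate the molar extinction coefficient at 280 nm (M^-1 cm^-1).

    sequence: one-letter amino acid string
    n_disulfides: number of disulfide bonds (cystines), supplied by the user
    """
    if n_disulfides < 0:
        raise ValueError("n_disulfides must be non-negative")
    seq = sequence.upper()
    n_trp = seq.count("W")
    n_tyr = seq.count("Y")
    return n_trp * EPS_TRP + n_tyr * EPS_TYR + n_disulfides * EPS_CYSTINE


# Toy sequence (not a real protein): one Trp, two Tyr, one disulfide bond
toy_sequence = "MKWVTFYCYC"
print(epsilon_280(toy_sequence, n_disulfides=1))
```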

Materials:

- UV-transparent cuvettes (quartz or methacrylate)

- Spectrophotometer (e.g., NanoDrop for microvolume measurements)

- Purified protein sample

- Matching buffer for blank measurement

- Serial dilution of standard protein (e.g., BSA) for calibration curve

Procedure:

- Reagent Preparation: Prepare homogeneous protein solutions free of air bubbles. For absolute concentration determination, prepare a series of standard solutions with known concentrations [1].

- Instrument Setup: Turn on the spectrophotometer and allow it to stabilize. Select the appropriate wavelength (typically 280 nm for proteins). Use a blank solution (solvent only) to calibrate the instrument to zero absorbance [1].

- Standard Solution Measurement: Measure the absorbance of standard solutions with known concentrations. Record the absorbance of each standard at the selected wavelength [1].

- Calibration Curve: Plot a concentration-absorbance calibration curve based on standard measurements. Perform linear regression analysis to determine the slope and intercept [1].

- Sample Measurement: Using the same wavelength, measure the absorbance of the test sample and record the data [1].

- Data Processing and Calculation: Using the calibration curve, calculate the sample concentration, then apply the Beer-Lambert law to determine the extinction coefficient [1].
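
The calibration and calculation steps above can be sketched as an ordinary least-squares fit followed by inversion of the fitted line. The standard concentrations and absorbances below are invented for illustration, and the helper names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def concentration_from_curve(a_sample, slope, intercept):
    """Read a sample concentration off the calibration line."""
    return (a_sample - intercept) / slope


# Hypothetical standards (mg/mL) and their absorbances at 280 nm, 1 cm path
std_conc = [0.25, 0.5, 1.0, 1.5, 2.0]
std_abs = [0.165, 0.33, 0.66, 0.99, 1.32]

slope, intercept = fit_line(std_conc, std_abs)
sample_conc = concentration_from_curve(0.45, slope, intercept)

# With a 1 cm path, the slope is the mass extinction coefficient (mL mg^-1 cm^-1)
print(f"slope = {slope:.4f}, intercept = {intercept:.4f}")
print(f"sample concentration = {sample_conc:.3f} mg/mL")
```

Dividing the fitted slope by the path length gives the extinction coefficient in the concentration units used for the standards.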

Considerations:

- Ensure sample absorbance falls within the instrument's linear dynamic range (typically 0.1-1.0 AU)

- Account for nucleic acid contamination using the correction formula: Protein concentration (mg/mL) = 1.55 × A₂₈₀ - 0.76 × A₂₆₀

- Control environmental factors (temperature, pH, solvent type) that may influence extinction coefficient determination [1]

Protocol: Multi-Distance Fluence Measurements for Scattering Calibration

This method enables calibration of scattering properties in liquid diffusive media using continuous-wave (CW) measurements [5].

Principle: The effective attenuation coefficient (μeff) is determined from multidistance measurements of the fluence rate, Φ(r), inside an infinite medium illuminated by a CW source. For a source of unit power, the solution to the diffusion equation for an infinite medium is Φ(r) = [3μs' / (4πr)] × exp(-μeff r), so ln[rΦ(r)] is linear in r with slope -μeff [5].

Materials:

- CW laser source (e.g., at 750 nm for NIR measurements)

- Multiple optical detectors or movable single detector

- Liquid diffusive medium (e.g., Intralipid-20% suspension)

- Thermostated sample container

- Absorption modifier (e.g., Indian ink)

Procedure:

- Sample Preparation: Prepare aqueous suspensions of diffusive medium at varying volume concentrations (ρil).

- Measurement Setup: Immerse point-like isotropic source and detector in infinite medium configuration.

- Data Collection: Measure fluence rate, Φ(r), at multiple distances (r) from the source for each concentration.

- Linear Fitting: Plot ln[rΦ(r)] as a function of r and perform linear fit; the absolute slope equals μeff.

- Scattering Calculation: For each concentration, determine μeff and plot μ²eff(ρil)/ρil versus ρil. The intercept (Iil) and slope (Sil) of the linear fit relate to the intrinsic optical properties: εs'il = Iil/(3εaH₂O) and εail = Sil/(3εs'il) + εaH₂O, where εaH₂O is the absorption coefficient of water [5].
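
The two fitting steps above can be sketched as follows. This is a minimal illustration on noise-free synthetic data, assuming μeff² = 3μaμs' with μs' proportional to ρil and μa a volume-weighted mix of the suspension and water; all numerical values, units (mm⁻¹), and function names are made up for the example and are not taken from [5].

```python
import math


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fluence(r, mu_s_red, mu_eff):
    """Infinite-medium diffusion solution for a unit-power CW point source."""
    return 3.0 * mu_s_red / (4.0 * math.pi * r) * math.exp(-mu_eff * r)


def mu_eff_from_fluence(r, phi):
    """mu_eff is minus the slope of ln[r * Phi(r)] plotted against r."""
    slope, _ = fit_line(r, [math.log(ri * pi) for ri, pi in zip(r, phi)])
    return -slope


def intrinsic_coefficients(rho, mu_eff, eps_a_water):
    """Fit mu_eff^2 / rho against rho and apply the intercept/slope relations."""
    slope, intercept = fit_line(rho, [m ** 2 / p for m, p in zip(mu_eff, rho)])
    eps_s = intercept / (3.0 * eps_a_water)
    eps_a = slope / (3.0 * eps_s) + eps_a_water
    return eps_s, eps_a


# Synthetic round trip with assumed (illustrative) values in mm^-1
EPS_A_WATER = 0.0026
TRUE_EPS_S, TRUE_EPS_A = 20.0, 0.0010
distances_mm = [5.0, 10.0, 15.0, 20.0]
rho_values = [0.01, 0.02, 0.03, 0.04]

fitted_mu_eff = []
for rho in rho_values:
    mu_a = TRUE_EPS_A * rho + EPS_A_WATER * (1.0 - rho)
    mu_s_red = TRUE_EPS_S * rho
    mu_eff = math.sqrt(3.0 * mu_a * mu_s_red)
    phi = [fluence(r, mu_s_red, mu_eff) for r in distances_mm]
    fitted_mu_eff.append(mu_eff_from_fluence(distances_mm, phi))

eps_s_fit, eps_a_fit = intrinsic_coefficients(rho_values, fitted_mu_eff, EPS_A_WATER)
print(f"recovered eps_s' = {eps_s_fit:.4f}, eps_a = {eps_a_fit:.6f}")
```

With real measurements the fits would carry noise, so the recovered coefficients should be reported with their fit uncertainties.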

Advantages: This approach achieves high accuracy with standard errors smaller than 2% for both reduced scattering and absorption coefficients when the absorption of the dispersion liquid is known with sufficient accuracy [5].

Applications in Plasma Protein Detection Research

Protein Quantification and Biomarker Discovery

Ultraviolet absorbance measurements at 280 nm provide a rapid, straightforward method for determining protein concentration in biochemical experiments [1] [2]. This approach is widely employed during protein expression, purification, and quantification analyses. The precise determination of protein concentration via extinction coefficients is particularly crucial in biomarker discovery studies, where accurate quantification enables reliable comparisons between patient groups. Recent advances in plasma proteomics utilize affinity-based platforms (SomaScan, Olink) and mass spectrometry methods to measure thousands of proteins simultaneously, with studies identifying distinct molecular signatures for conditions like amyotrophic lateral sclerosis (ALS) through differential abundance analysis of 33 plasma proteins [6] [7].

Novel Diagnostic Applications

Innovative approaches are exploiting extinction measurements for diagnostic purposes. The Carcimun test detects conformational changes in plasma proteins through optical extinction measurements at 340 nm, demonstrating significant differences between cancer patients, healthy individuals, and those with inflammatory conditions [8]. In a prospective study, this test achieved 95.4% accuracy, 90.6% sensitivity, and 98.2% specificity in distinguishing these groups, with mean extinction values of 23.9 for healthy controls, 62.7 for inflammatory conditions, and 315.1 for cancer patients [8]. This application highlights how protein conformational changes in pathological states can alter optical properties, providing a foundation for novel diagnostic technologies.

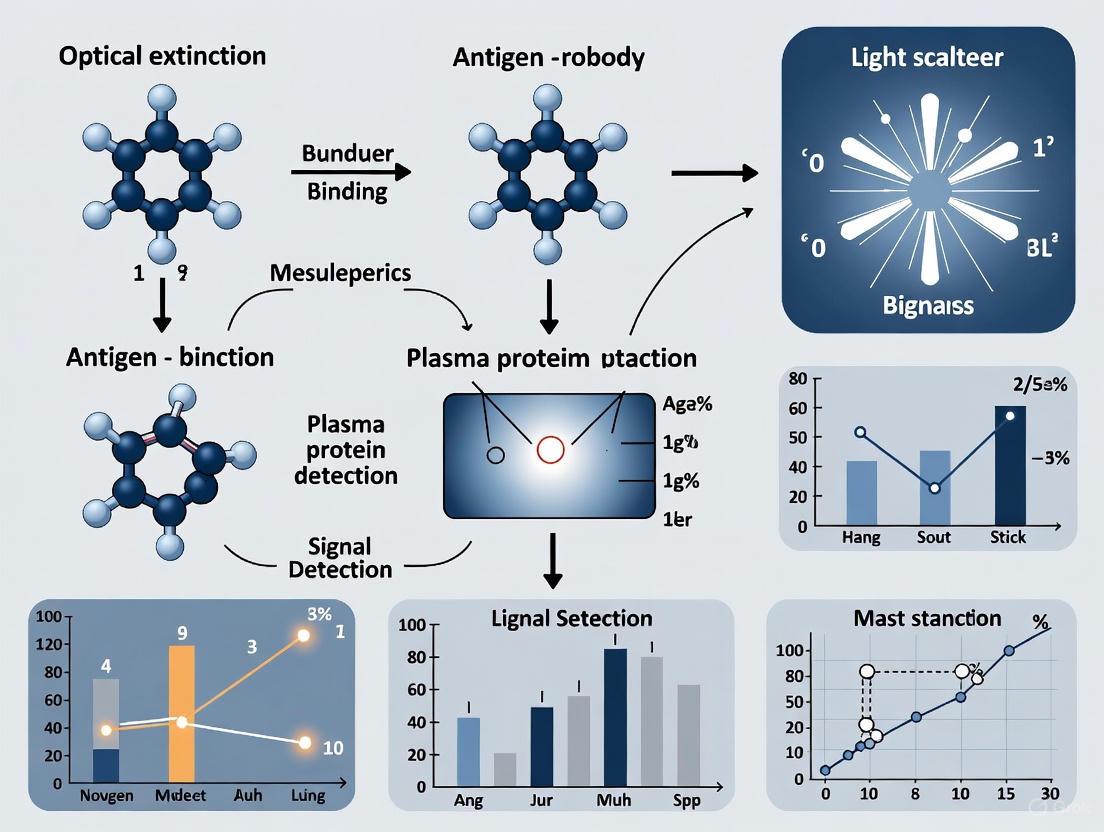

Diagram 1: Experimental workflow for extinction-based analysis of biological fluids, showing main steps from sample collection to result interpretation, with common analytical platforms indicated.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Extinction and Scattering Experiments in Biological Fluids

| Item | Function/Application | Examples/Specifications |

|---|---|---|

| Spectrophotometer | Absorbance measurement for concentration determination | NanoDrop instruments for microvolume measurements; standard UV-Vis with quartz cuvettes [1] [2] |

| Liquid Diffusive Phantoms | Calibration of scattering measurements | Intralipid-20% suspensions; aqueous suspensions with known optical properties [5] |

| Calibrated Absorbers | Absorption coefficient standards | Indian ink solutions; chromophores with characterized extinction coefficients [5] |

| Affinity-Based Proteomic Platforms | High-throughput plasma protein measurement | Olink Explore, SomaScan assays; utilize binding probes (antibodies/aptamers) for specific protein detection [6] |

| Mass Spectrometry Platforms | Untargeted plasma proteome profiling | LC-MS/MS with DIA; nanoparticle-based enrichment (Seer Proteograph) [6] |

| Fluorescent Labels | Specific protein tracking in complex media | Alexa Fluor dyes; covalent labeling for fSPT measurements in serum/plasma [9] |

| Reference Proteins | Calibration standards for quantification | BSA, IgG; solutions with accurately determined concentrations [1] [2] |

The principles of extinction coefficients and scattering provide powerful tools for analyzing biological fluids, particularly in plasma protein detection research. The Beer-Lambert law offers a fundamental framework for quantitative analysis, while sophisticated scattering models enable interpretation of measurements in complex biological media. As proteomic technologies advance, with affinity-based platforms and mass spectrometry methods now capable of measuring thousands of plasma proteins simultaneously, the accurate determination of optical properties remains essential for biomarker discovery and novel diagnostic applications. Emerging technologies that detect disease-specific alterations in plasma protein conformation through optical measurements highlight the continuing relevance of these core physical principles in modern biomedical research and clinical diagnostics.

The detection of protein conformational changes is a cornerstone of modern biochemical research, with profound implications for understanding disease mechanisms, drug action, and fundamental biological processes. Optical spectroscopy methods provide powerful, often label-free tools for probing these structural dynamics in real-time, leveraging the intrinsic relationship between a protein's structure and its interaction with light. Within the broader context of optical extinction measurements for plasma protein detection, these techniques offer exceptional sensitivity for monitoring conformational shifts, binding events, and stability changes under physiologically relevant conditions. This application note details the principles, methodologies, and key applications of contemporary optical techniques for detecting protein conformational changes, with a specific focus on label-free approaches that minimize perturbation to native protein structure and function. We present structured protocols and quantitative comparisons to equip researchers with practical frameworks for implementing these powerful spectroscopic tools in their experimental workflows.

Fundamental Principles of Protein-Light Interactions

Proteins interact with light through several fundamental mechanisms that provide windows into their structural states. The aromatic amino acids tryptophan (Trp), tyrosine (Tyr), and phenylalanine (Phe) possess characteristic ultraviolet absorption spectra due to their π-π* transitions, with molar absorption coefficients that enable concentration determination and environmental sensing [10]. When a protein undergoes conformational changes, the local environment of these chromophores shifts, altering their extinction coefficients and spectral properties [10]. For folded proteins, the absorption spectrum represents the composite contribution of all chromophores within their specific molecular environments, whereas unfolded states typically exhibit spectra predictable from the sum of individual amino acid contributions [10].

Beyond simple absorption, proteins exhibit complex optical behaviors including light scattering, refractive index changes, and anisotropy that correlate with their structural properties. Label-free techniques exploit these intrinsic physical properties by detecting minute changes in scattered light intensity, resonance conditions, or interference patterns that occur when proteins change conformation or engage in interactions [11]. The exceptionally small scattering cross-sections of biomolecules—scaling with the sixth power of particle diameter—present significant detection challenges that have been overcome through advanced enhancement strategies including interference, plasmonics, and optical resonance techniques [11].

Optical Techniques for Detecting Conformational Changes

Interference Microscopy

Interference-based microscopy leverages wave interference between light scattered by a biomolecule and a coherent reference wave to achieve exceptional sensitivity. The foundational equation for interference microscopy is:

\[ I_t = \left|E_r\right|^{2} + \left|E_s\right|^{2} + 2\left|E_r\right|\left|E_s\right|\cos\phi \]

where I_t is the total detected intensity, E_r and E_s are the reference and scattered field amplitudes, and φ is the phase difference between them [11]. For subwavelength particles like proteins, the scattered intensity |E_s|² is negligible, making the interference term the primary signal contributor. Interferometric scattering microscopy (iSCAT) has emerged as a leading interferometric method, achieving sensitivity for single proteins in the tens of kilodalton range by functioning as an optical analog of mass spectrometry [11]. Recent innovations like Nanofluidic Scattering Microscopy (NSM) employ nanochannels to minimize axial displacement of freely diffusing molecules, enabling stable signals for both mass and diffusivity measurements [11].
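
To make the dominance of the interference term concrete, the sketch below evaluates the three terms of the intensity equation for a weak scatterer. The field amplitudes and phase are arbitrary illustrative numbers, and the function names are hypothetical.

```python
import math


def intensity_terms(e_ref, e_scat, phase):
    """Return (|E_r|^2, |E_s|^2, 2|E_r||E_s|cos(phi)) for the detected intensity."""
    reference = e_ref ** 2
    scattered = e_scat ** 2
    cross = 2.0 * e_ref * e_scat * math.cos(phase)
    return reference, scattered, cross


def interferometric_contrast(e_ref, e_scat, phase):
    """Fractional intensity change relative to the reference-only background."""
    reference, scattered, cross = intensity_terms(e_ref, e_scat, phase)
    return (scattered + cross) / reference


# Assumed example: scattered field 1000x weaker than the reference, phase of pi
ref_term, scat_term, cross_term = intensity_terms(1.0, 1e-3, math.pi)
print(f"pure scattering term: {scat_term:.1e}")
print(f"interference term:    {cross_term:.1e}")
print(f"contrast:             {interferometric_contrast(1.0, 1e-3, math.pi):.2e}")
```

Because the cross term scales linearly with the scattered field while the pure scattering term scales quadratically, the interference signal remains measurable for particles whose direct scattering would be lost in the background.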

Plasmonic Sensing

Plasmonic detection operates on refractometric principles, where molecular binding or conformational changes alter the local refractive index, shifting the resonance condition of plasmonic modes [11]. While conventional Surface Plasmon Resonance (SPR) probes micron-scale areas containing thousands of molecules, single-particle plasmonic sensors built on individual metal nanoparticles achieve single-molecule resolution through highly confined sensing volumes, with fields that extend roughly ten times less far from the metal surface [11]. The first real-time monitoring of biomolecular interactions using single particles was demonstrated in 2008, establishing plasmonics as a powerful approach for probing binding events and associated conformational changes [11].

Optical Weak Measurements

Optical weak measurement represents a novel quantum-inspired approach that enables highly sensitive detection of molecular interactions through minimal perturbation. The technique involves three key steps: pre-selection of a suitable quantum state, weak coupling interaction between the measurement device and quantum system, and post-selection to extract measurement information [12]. Applied to protein-polyphenol interactions, this method detects binding through shifts in the central wavelength of the detected spectrum, enabling calculation of binding constants and the number of binding sites with superior sensitivity compared to traditional fluorescence spectroscopy [12]. The technique is particularly valuable for studying non-immobilized biomolecules under native conditions, though it requires careful optimization to mitigate sensitivity to solution fluctuations in complex samples [12].

Single-Molecule Spectroscopy

Single-molecule spectroscopy eliminates ensemble averaging effects, enabling detection of heterogeneities and transient states invisible to conventional measurements. This approach monitors conformational fluctuations through spectral signatures of embedded chromophores whose electronic energy levels respond sensitively to local environmental changes [13]. While room-temperature studies capture proteins under near-native conditions, cryogenic single-molecule spectroscopy narrows absorption bands by freezing out nuclear motions, resolving subtle spectral features that report on protein matrix changes with enhanced sensitivity [13]. The exceptional photostability at low temperatures enables extended observation times, facilitating detection of rare conformational states.

Table 1: Comparison of Optical Techniques for Detecting Protein Conformational Changes

| Technique | Detection Principle | Sensitivity | Temporal Resolution | Key Applications |

|---|---|---|---|---|

| Interference Microscopy (iSCAT) | Interference between scattered and reference light | Single protein molecules (tens of kDa) [11] | Millisecond to second [11] | Real-time molecular tracking, mass profiling, interaction studies [11] |

| Plasmonic Sensing | Refractive index changes affecting resonance conditions | Single molecules (nanoparticle-based) [11] | Real-time (seconds) [11] | Binding kinetics, affinity measurements, conformational shifts [11] |

| Optical Weak Measurements | Quantum weak coupling with pre- and post-selection | High sensitivity for binding events [12] | Real-time monitoring [12] | Protein-polyphenol interactions, binding constants, solution-phase studies [12] |

| Single-Molecule Spectroscopy | Spectral fluctuations of embedded chromophores | Single molecules [13] | Varies (milliseconds at RT; longer at cryogenic) [13] | Conformational dynamics, hidden states, energy landscape mapping [13] |

Quantitative Analysis of Protein Conformational Changes

Spectral Analysis and Protein Concentration Determination

Accurate protein concentration determination forms the foundation for quantitative conformational studies. The established relationship between aromatic amino acid content and UV absorbance enables concentration determination through:

\[ C = \frac{A_{280}}{\varepsilon_{280}\, l} \]

where C is concentration, A280 is absorbance at 280 nm, ε280 is the molar absorption coefficient, and l is pathlength [10]. The molar absorption coefficient can be calculated from primary sequence data using:

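\[ \varepsilon_{280} = n_{\mathrm{Trp}}\,\varepsilon_{\mathrm{Trp}} + n_{\mathrm{Tyr}}\,\varepsilon_{\mathrm{Tyr}} + n_{\mathrm{Cys_2}}\,\varepsilon_{\mathrm{Cys_2}} \]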

where n represents the number of each residue type and ε their respective molar absorption coefficients [10]. For precise quantification, multi-wavelength analysis across 250-350 nm provides superior accuracy compared to single-wavelength measurements, particularly when employing model compounds like N-acetyl-l-tyrosinamide (NAYA) and N-acetyl-l-tryptophanamide (NAWA) that mimic the peptide-bonded environment of aromatic residues in proteins [10].

Binding Parameter Quantification

Optical techniques enable precise determination of binding parameters critical for understanding protein-ligand interactions and associated conformational changes. For protein-polyphenol interactions monitored through optical weak measurements, the binding constant (KA) and number of binding sites (n) can be determined from the relationship between polyphenol concentration and spectral shift [12]. These parameters show consistent trends with traditional fluorescence quenching methods while offering advantages of label-free detection and applicability to non-immobilized biomolecules [12]. Similarly, plasmonic sensors provide real-time binding curves from which affinity and kinetic parameters can be extracted, making them invaluable for drug discovery and biomolecular interaction analysis [11].

Table 2: Quantitative Parameters for Protein Conformational Analysis

| Parameter | Optical Signature | Calculation Method | Information Content |

|---|---|---|---|

| Protein Concentration | Absorbance at 280 nm [10] | Beer-Lambert law with multi-wavelength fitting [10] | Total protein quantity, sample purity |

| Binding Constant (KA) | Spectral shift or resonance change [12] | Nonlinear fitting of binding isotherm [12] | Interaction strength, affinity |

| Binding Sites (n) | Amplitude of spectral response [12] | Linear regression of binding data [12] | Stoichiometry of interaction |

| Protein Lifetime/Turnover | Fluorescence pulse-chase ratio [14] | τ = Δt/log(1/fraction pulse) [14] | Protein stability, degradation kinetics |

| Thermodynamic Parameters | Temperature-dependent spectral changes | Van't Hoff analysis or ITC integration | Interaction forces (hydrophobic, H-bonding) [15] |
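
The lifetime expression in Table 2 can be evaluated directly. The sketch below assumes that "log" denotes the natural logarithm and that decay is single-exponential, so that the remaining pulse fraction after a chase interval Δt is exp(-Δt/τ); both assumptions and the function names are illustrative rather than taken from [14].

```python
import math


def protein_lifetime(delta_t, fraction_pulse):
    """Mean lifetime tau = delta_t / ln(1 / fraction_pulse).

    delta_t: chase interval (any time unit; tau is returned in the same unit)
    fraction_pulse: fraction of pulse-labeled protein remaining, in (0, 1)
    """
    if not 0.0 < fraction_pulse < 1.0:
        raise ValueError("fraction_pulse must lie strictly between 0 and 1")
    return delta_t / math.log(1.0 / fraction_pulse)


def half_life(tau):
    """Half-life corresponding to a single-exponential lifetime tau."""
    return tau * math.log(2.0)


# Assumed example: half of the pulse label remains after a 10-hour chase
tau_hours = protein_lifetime(10.0, 0.5)
print(f"tau = {tau_hours:.2f} h, half-life = {half_life(tau_hours):.2f} h")
```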

Experimental Protocols

Protocol: Interferometric Scattering Microscopy (iSCAT) for Single-Protein Detection

Principle: Detect interference patterns between light scattered from single proteins and a reference wave reflected from a substrate [11].

Materials:

- iSCAT microscope with high-numerical aperture objective

- Laser source (e.g., 405 nm)

- Cover slip with anti-reflective coating

- Protein sample in appropriate buffer

- EMCCD or sCMOS camera

Procedure:

- Sample Preparation: Dilute protein to appropriate concentration (typically pM-nM) in compatible buffer.

- Substrate Preparation: Use cover slips with optimized reflectivity and functionalization if surface immobilization is desired.

- Microscope Alignment: Align interferometer to achieve stable reference wave with optimal phase relationship.

- Background Acquisition: Record reference images without sample for background subtraction.

- Data Acquisition: Illuminate sample and acquire image sequences with appropriate frame rate (typically 0.1-1 kHz).

- Data Analysis:

  - Subtract background reference images

  - Identify single-molecule scattering signals through spatiotemporal filtering

  - Extract intensity traces and compute diffusion coefficients or binding events

  - For mass quantification, calibrate with proteins of known molecular weight [11]

Applications: Real-time tracking of molecular transport, protein-protein interactions, and mass analysis of single proteins [11].

Protocol: Optical Weak Measurements for Protein-Polyphenol Interactions

Principle: Exploit quantum weak measurement principles to detect binding-induced spectral shifts with high sensitivity [12].

Materials:

- Optical weak measurement system with calcite beam splitters

- Tunable light source

- Spectrometer or CCD detector

- Protein and polyphenol solutions

- Phosphate-buffered saline (PBS, 0.01 M)

Procedure:

- System Calibration: Align optical components and calibrate wavelength detection.

- Sample Preparation: Prepare protein solution (e.g., BSA, HSA, or hemoglobin at 5-50 μM) in PBS.

- Baseline Measurement: Acquire spectral center wavelength for protein alone.

- Titration Experiment: Add polyphenol (e.g., chlorogenic acid or tea polyphenols) in increments from 2-14 μM.

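- Spectral Acquisition: After each addition, measure the shift in the central wavelength of the detected spectrum.

- Data Analysis: Determine the binding constant (KA) and number of binding sites (n) from the relationship between polyphenol concentration and spectral shift [12].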

Applications: Label-free detection of biomolecular interactions, binding constant determination, and food chemistry research [12].

Visualization of Experimental Workflows

Interference Microscopy Workflow

Diagram 1: Interference Microscopy Workflow. Illustration of the optical path and detection scheme for interference-based microscopy techniques like iSCAT, showing how reference and scattered waves combine to generate interference patterns at the detector.

Optical Weak Measurement Process

Diagram 2: Optical Weak Measurement Process. Schematic representation of the three-step quantum measurement process (pre-selection, weak coupling, and post-selection) used in optical weak measurements to detect molecular interactions through minimal perturbation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Optical Protein Detection

| Reagent/Material | Function | Application Notes |

|---|---|---|

| N-acetyl-l-tyrosinamide (NAYA) | Tyr model compound for UV spectroscopy [10] | Mimics peptide-bonded tyrosine environment; use for extinction coefficient determination |

| N-acetyl-l-tryptophanamide (NAWA) | Trp model compound for UV spectroscopy [10] | Provides accurate molar absorption coefficients for tryptophan in proteins |

| Janelia Fluor (JF) HaloTag dyes | Protein labeling for turnover studies [14] | Enable pulse-chase experiments; JF669-HTL and JF552-HTL show high bioavailability |

| Chlorogenic acid | Model polyphenol for interaction studies [12] | Representative phenolic acid for protein-polyphenol binding experiments |

| Photoactive Yellow Protein (PYP) variants | Optogenetic control of protein interactions [16] | Enable light-induced domain swapping for controlling protein-protein interactions |

| Guanidine hydrochloride (GdnHCl) | Protein denaturant for unfolding studies [10] | Use at 6.0 M concentration for denatured state spectral measurements |

Optical methods for detecting protein conformational changes through their optical signatures have evolved dramatically, progressing from bulk spectroscopic measurements to single-molecule sensitivity. The techniques detailed in this application note—spanning interference microscopy, plasmonic sensing, optical weak measurements, and single-molecule spectroscopy—provide researchers with powerful, often complementary approaches for probing protein structure and dynamics. When implemented within the framework of optical extinction measurements for plasma protein detection, these methods enable sensitive, label-free assessment of conformational states, binding events, and stability parameters under physiologically relevant conditions. As these optical technologies continue advancing, they promise to unlock deeper understanding of protein function and facilitate development of novel therapeutic interventions targeting specific protein conformational states.

Optical extinction measurements, which quantify the attenuation of light by a sample, provide a foundational tool for detecting and characterizing proteins in plasma. This application note details the journey from established spectrophotometric methods to cutting-edge, high-plex analyzers, providing researchers and drug development professionals with structured protocols and comparative data to guide their experimental design in plasma proteomics. The ability to accurately measure protein concentration and profile complex mixtures is pivotal for biomarker discovery, therapeutic development, and fundamental biophysical studies.

Fundamental Spectrophotometric Methods

Basic spectrophotometric techniques remain widely used for determining total protein concentration due to their simplicity, cost-effectiveness, and rapid turnaround time. These methods can be broadly categorized into ultraviolet (UV) absorption techniques, which exploit the intrinsic properties of proteins, and colorimetric assays, which rely on a chromogenic reaction.

Ultraviolet Absorption Method

The UV absorption method is a direct, non-destructive technique that uses the absorbance of ultraviolet light by aromatic amino acids in proteins, primarily tryptophan and tyrosine, at 280 nm [17] [18]. The absorption spectrum of Human Serum Albumin (HSA), for example, shows a clear absorption maximum at this wavelength [18]. A key advantage is that the sample can often be recovered after measurement. However, the absorbance differs for each protein depending on its specific amino acid composition, and contamination by nucleic acids, which also absorb strongly in the UV region, can interfere with accurate quantitation [18].

Protocol: Protein Quantitation by UV Absorption at 280 nm

- Instrument Calibration: Power on the UV-Visible spectrophotometer (e.g., Jasco V-630 Bio) and allow it to warm up for 15 minutes. Follow the manufacturer's instructions to initialize and perform a baseline correction with an appropriate blank (e.g., buffer or solvent).

- Sample Preparation: Prepare a series of protein standard solutions (e.g., Bovine Serum Albumin, BSA) at known concentrations within the working range of 50 to 2000 µg/mL [18]. Ensure unknown protein samples are diluted within this quantifiable range.

- Measurement: Pipette the standard and unknown samples into a suitable cuvette (e.g., a 10 mm pathlength quartz cell or a micro cell for smaller volumes). Measure the absorbance at 280 nm against the blank.

- Data Analysis: Construct a standard calibration curve by plotting the absorbance of the standard solutions against their known concentrations. Use the linear regression equation of the standard curve to calculate the concentration of the unknown samples.

Table 1: Performance of the UV Absorption Method for Various Proteins

| Protein | Cell Type | Concentration Range | Calibration Curve Formula | Correlation Coefficient |

|---|---|---|---|---|

| Bovine Serum Albumin (BSA) | 10 mm rectangular | to 2 mg/mL | Y = 0.6652X - 0.0130 | 0.9994 |

| Hen Egg Lysozyme (HEL) | 10 mm rectangular | to 0.5 mg/mL | Y = 0.6474X - 0.0150 | 0.9991 |

| α-Chymotrypsin | 10 mm rectangular | to 0.5 mg/mL | Y = 1.904X - 0.0035 | 0.9997 |
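As a worked illustration, the calibration formulas in Table 1 can be inverted to turn a measured absorbance into a concentration. The sketch below assumes that Y is the absorbance at 280 nm and X is the concentration in mg/mL. The table does not state the units of X explicitly, so that is an inference from the concentration-range column. The function name is hypothetical.

```python
# (slope, intercept) pairs copied from Table 1; X is assumed to be in mg/mL.
CALIBRATIONS = {
    "BSA": (0.6652, -0.0130),
    "HEL": (0.6474, -0.0150),
    "alpha-chymotrypsin": (1.904, -0.0035),
}


def concentration_mg_ml(protein, a280):
    """Solve Y = slope * X + intercept for X using the protein's own curve."""
    slope, intercept = CALIBRATIONS[protein]
    return (a280 - intercept) / slope


# Example: a BSA sample reading A280 = 0.652 against the blank.
print(round(concentration_mg_ml("BSA", 0.652), 3))
```

Because each protein has its own slope, a curve built with one protein gives only an approximate result when applied to another.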

Colorimetric Assay Methods

Colorimetric methods involve adding a reagent to the protein sample to produce a colored complex, the intensity of which is proportional to protein concentration. These methods often offer greater sensitivity than direct UV absorption.

Protocol: Protein Quantitation by the Biuret Method

- Reagent Preparation: Prepare Biuret reagent by adding 60 mL of 10% NaOH to an aqueous solution containing 0.3 g CuSO₄ and 1.2 g Rochelle salt. Adjust the final volume to 200 mL with water. The reagent can be stored in a polyethylene bottle [18].

- Sample and Standard Preparation: Prepare protein standard solutions (e.g., BSA) and unknown samples within the working range of 150 to 9000 µg/mL [18].

- Reaction: Add 2.0 mL of Biuret reagent to 500 µL of each protein solution and mix thoroughly [18].

- Incubation: Allow the reaction to proceed for 60 minutes at room temperature for the color to fully develop and stabilize [18].

- Measurement: Transfer the solution to a cuvette and measure the absorbance at 540 nm against a blank prepared with reagent and buffer.

- Data Analysis: Generate a standard curve from the absorbance values of the standards and use it to determine the concentration of unknown samples.

Table 2: Comparison of Common Colorimetric Protein Assays

| Method | Principle | Concentration Range (BSA) | Advantages | Disadvantages |

|---|---|---|---|---|

| Biuret | Polypeptide chain chelates Cu²⁺ in alkaline solution | 150 - 9000 µg/mL [18] | Simple procedure; constant chromogenic rate for most proteins [18] | Low sensitivity; interference from Tris, amino acids, ammonium ions [18] |

| Lowry | Reduction of Folin-Ciocalteu reagent by tyrosine/tryptophan | 5 - 200 µg/mL [18] | High sensitivity; widely used [18] | Lengthy, multi-step procedure; interference from reducing agents [18] |

| BCA | Cu²⁺ reduction and chelation by bicinchoninic acid | 20 - 2000 µg/mL [18] | Simple procedure; high sensitivity; wide range [18] | Interference from thiols, phospholipids, ammonium sulfate [18] |

| Bradford | Shift in Coomassie Brilliant Blue G250 absorption | 10 - 2000 µg/mL [18] | Very simple and rapid; less affected by contaminants [18] | Variable response for different proteins; interference from surfactants [18] |
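The working ranges in Table 2 lend themselves to a simple lookup when planning an assay. The sketch below is illustrative only. The ranges are copied from the table, while the function names and the example concentrations are hypothetical. It ignores interfering substances, which in practice often decide the choice.

```python
# Working ranges in µg/mL (BSA), copied from Table 2.
ASSAY_RANGES = {
    "Biuret": (150, 9000),
    "Lowry": (5, 200),
    "BCA": (20, 2000),
    "Bradford": (10, 2000),
}


def assays_covering(conc_ug_ml):
    """Return the assays whose working range includes the expected concentration."""
    return [name for name, (low, high) in ASSAY_RANGES.items()
            if low <= conc_ug_ml <= high]


def dilution_needed(conc_ug_ml, assay):
    """Smallest dilution factor (>= 1) that brings a sample to the assay's upper limit."""
    _, high = ASSAY_RANGES[assay]
    return max(1.0, conc_ug_ml / high)


print(assays_covering(100))
# A sample at 70 mg/mL (70,000 µg/mL) needs substantial dilution for BCA.
print(dilution_needed(70_000, "BCA"))
```

Diluting only to the upper limit leaves no margin, so a somewhat larger factor that places the sample mid-range is usually preferable.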

Advanced Analytical Platforms for Plasma Proteomics

While fundamental methods are ideal for total protein concentration, advanced platforms are required for the comprehensive, multiplexed analysis of the plasma proteome, which spans an enormous dynamic range of over 10 orders of magnitude [6]. The two primary approaches are affinity-based techniques and mass spectrometry (MS)-based methods.

Affinity-Based Platforms

These platforms use binding reagents like antibodies or aptamers to specifically target and quantify proteins.

- SomaScan: Utilizes single-stranded DNA aptamers (SOMAmers) that bind to specific target proteins. The assay relies on a single high-affinity binder per target, which can sometimes introduce matrix-dependent bias [6].

- Olink Explore: Uses Proximity Extension Assay (PEA) technology, which requires two different antibodies to bind the same target protein in close proximity. This dual recognition enhances specificity and reduces background noise [6].

- NULISA: A newer technology that also employs a dual-antibody recognition system but is engineered for even higher sensitivity and a lower limit of detection, making it suitable for detecting very low-abundance proteins [6].

Mass Spectrometry-Based Platforms

MS platforms identify and quantify proteins by measuring the mass-to-charge ratio of their proteolytic peptides, offering unique specificity and the ability to detect protein isoforms and post-translational modifications [6].

- MS with Nanoparticle Enrichment (e.g., Seer Proteograph): Uses surface-modified magnetic nanoparticles to enrich proteins from complex samples like plasma based on their physicochemical properties, dramatically increasing proteome coverage, particularly for low-abundance species [6].

- MS with High-Abundance Protein (HAP) Depletion (e.g., Biognosys TrueDiscovery): Employs immunoaffinity columns to remove the most abundant plasma proteins (e.g., albumin, immunoglobulins), thereby reducing dynamic range and allowing for better detection of lower-abundance proteins [6].

- Targeted MS (e.g., SureQuant): Considered a "gold standard" for reliable absolute quantification, this method uses internal standard peptides with optimized detection to achieve high precision and accuracy for a predefined set of proteins [6].

Table 3: Direct Comparison of Advanced Plasma Proteomics Platforms

| Platform | Technology Principle | Key Advantage | Key Limitation | Proteins Detected (in study) |

|---|---|---|---|---|

| SomaScan 11K | Aptamer-based affinity binding [6] | High-throughput, ultra-plex (10,776 assays) [6] | Specificity depends on single aptamer [6] | 9,852 [6] |

| Olink Explore 5K | Proximity Extension Assay (dual Ab) [6] | High specificity from dual antibody recognition [6] | Pre-defined target panel | 5,416 [6] |

| NULISA | Dual-antibody recognition [6] | Very high sensitivity and low limit of detection [6] | Lower overall proteome coverage (377 assays) [6] | 325 [6] |

| MS-Nanoparticle | Nanoparticle enrichment + DIA MS [6] | Deep, unbiased coverage; identifies isoforms/PTMs [6] | Limited depth for very low-abundance proteins [6] | 5,943 [6] |

| MS-IS Targeted | Targeted MS with internal standards [6] | "Gold standard" for absolute quantification [6] | Lower throughput, focuses on predefined targets | 551 [6] |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Kits for Protein Analysis

| Item | Function / Application | Example Products / Notes |

|---|---|---|

| UV-Vis Spectrophotometer | Measuring absorbance for protein quantitation [18] | Jasco V-630 Bio; requires quartz or disposable cuvettes [18] |

| Chromogenic Assay Kits | Provide optimized reagents for colorimetric protein quantitation [18] | Pierce BCA Assay Kit; Dojindo Protein Quantification Kits (Bradford, WST) [18] |

| Plasma Preparation Tubes | Collection and preservation of blood plasma samples [6] | Use of specific anticoagulants (e.g., EDTA, Heparin) is critical [6] |

| High-Abundance Protein Depletion Kit | Removing top abundant proteins to enhance detection of low-abundance targets in MS [6] | Used in Biognosys TrueDiscovery platform [6] |

| Nanoparticle Enrichment Kit | Enriching proteins from complex biofluids based on physicochemical properties [6] | Seer Proteograph XT Assay Kit [6] |

| Multiplex Immunoassay Panels | Simultaneously quantifying dozens to thousands of predefined protein targets [6] | Olink Explore Panels; SomaScan Assays; NULISA Panels [6] |

| Internal Standard Peptides | Enabling absolute quantification and improved reliability in targeted MS [6] | Biognosys PQ500 Reference Peptides [6] |

The field of plasma protein analysis offers a tiered toolkit, ranging from simple, cost-effective spectrophotometric methods for total protein concentration to sophisticated, high-plex platforms for deep proteome profiling. The choice of instrument and methodology must be aligned with the specific research question, considering the required sensitivity, specificity, throughput, and depth of coverage. As technologies like label-free single-molecule detection [11] and multiplexed affinity assays continue to evolve, the ability to discover and validate protein biomarkers in plasma will become increasingly powerful, further accelerating drug development and precision medicine.

Optical analysis techniques represent a powerful and versatile toolset in modern diagnostic research, enabling the detection and quantification of biomolecules through their interaction with light. These methods are particularly valuable for developing multi-cancer early detection (MCED) tests and other diagnostic assays, as they can identify disease-specific signatures by measuring conformational changes in plasma proteins or other optically active biomarkers [8]. The fundamental principle involves detecting changes in optical properties—such as absorbance, extinction, or fluorescence—that occur when specific biomolecules interact with light, providing a quantifiable signal correlated to pathological states [19].

The Carcimun test exemplifies this approach, utilizing optical extinction measurements at 340 nm to detect conformational changes in plasma proteins that serve as universal markers for malignancy and acute inflammation [8]. Similarly, novel immunodiagnostic platforms employ fluorogenic labeling of amino acid residues in neat blood plasma to create Amino Acid Concentration Signatures (AACS) that distinguish cancerous from non-cancerous states with high specificity [20]. These optical profiles provide a direct window into disease pathophysiology, revealing alterations in protein structure, immune response patterns, and metabolic disturbances that occur during disease progression.

Data Presentation: Quantitative Findings from Optical Biomarker Studies

Table 1: Performance Metrics of the Carcimun Test in Cancer Detection

| Participant Group | Number of Participants | Mean Extinction Value | Comparison to Healthy | Statistical Significance (p-value) |

|---|---|---|---|---|

| Healthy Individuals | 80 | 23.9 | Reference | N/A |

| Inflammatory Conditions* | 28 | 62.7 | 2.6-fold increase | p<0.001 |

| Cancer Patients | 64 | 315.1 | 13.2-fold increase | p<0.001 |

*Inflammatory conditions included fibrosis, sarcoidosis, pneumonia, and benign tumors [8].
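The fold-increase column follows directly from the group means. A short check, using only the values reported in the table above:

```python
# Mean extinction values as reported in the table above.
mean_extinction = {"healthy": 23.9, "inflammatory": 62.7, "cancer": 315.1}

reference = mean_extinction["healthy"]
fold = {group: value / reference for group, value in mean_extinction.items()}

for group, factor in fold.items():
    print(f"{group}: {factor:.1f}-fold")
```

This reproduces the 2.6-fold and 13.2-fold figures. Note that a ratio of group means says nothing about the overlap between individual values.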

Table 2: Diagnostic Performance of Optical Biomarker Tests

| Test Name | Sensitivity | Specificity | Accuracy | Area Under Curve (AUC) | Conditions Detected |

|---|---|---|---|---|---|

| Carcimun Test | 90.6% | 98.2% | 95.4% | Not specified | Multiple (Pan-cancer) |

| Immunodiagnostic AACS | 78% | 100% (0% FPR) | Not specified | 0.95 | Breast, colorectal, pancreatic, prostate |

| Plasma Proteomics (ALS) | Not specified | Not specified | Not specified | 98.3% | Amyotrophic Lateral Sclerosis |

FPR: False Positive Rate [8] [20] [7].

Experimental Protocols

Protocol 1: Optical Extinction Measurement for Protein Conformational Changes

Principle: This protocol detects cancer-specific conformational changes in plasma proteins through ultraviolet absorbance measurements at 340 nm, utilizing the Carcimun methodology [8].

Materials:

- Indiko Clinical Chemistry Analyzer (Thermo Fisher Scientific) or equivalent UV-Vis spectrophotometer

- Microcentrifuge tubes

- Pipettes and tips (10-1000 μL)

- 0.9% NaCl solution

- Distilled water (aqua dest.)

- 0.4% acetic acid solution (containing 0.81% NaCl)

- Fresh plasma samples (collected in EDTA or heparin tubes)

Procedure:

- Sample Preparation: Add 70 μL of 0.9% NaCl solution to the reaction vessel, followed by 26 μL of blood plasma, resulting in a total volume of 96 μL with a final NaCl concentration of 0.9%.

- Dilution: Add 40 μL of distilled water, increasing the volume to 136 μL and adjusting the NaCl concentration to 0.63%.

- Incubation: Incubate the mixture at 37°C for 5 minutes to achieve thermal equilibration.

- Baseline Measurement: Record a blank measurement at 340 nm to establish a baseline.

- Acidification: Add 80 μL of 0.4% acetic acid solution (containing 0.81% NaCl), resulting in a final volume of 216 μL with 0.69% NaCl and 0.148% acetic acid.

- Final Measurement: Perform the absorbance measurement at 340 nm using the spectrophotometer.

- Data Analysis: Calculate the extinction value using the formula: Extinction = (Final Absorbance - Baseline Absorbance) × Dilution Factor.

Quality Control: All measurements should be performed in a blinded manner, with personnel unaware of the clinical or diagnostic status of the samples. The previously defined cut-off value of 120 should be used to differentiate between healthy and cancer subjects [8].
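The volumes and concentrations quoted in the procedure can be re-derived with simple mixing arithmetic. The sketch below assumes, as the protocol implicitly does, that plasma contributes the equivalent of 0.9% NaCl. The `mix` helper is a hypothetical name. Under that assumption the final NaCl concentration comes out at about 0.70%, marginally above the 0.69% quoted, presumably because of rounding or truncation at an intermediate step.

```python
def mix(vol_a, conc_a, vol_b, conc_b):
    """Return (total volume, concentration) after combining two solutions."""
    total = vol_a + vol_b
    return total, (vol_a * conc_a + vol_b * conc_b) / total


# Sample preparation: 70 µL of 0.9% NaCl + 26 µL plasma (assumed ~0.9% NaCl).
vol, nacl = mix(70, 0.9, 26, 0.9)

# Dilution: add 40 µL of distilled water.
vol, nacl = mix(vol, nacl, 40, 0.0)
nacl_after_water = nacl

# Acidification: add 80 µL of 0.4% acetic acid containing 0.81% NaCl.
vol_before_acid = vol
vol, nacl = mix(vol_before_acid, nacl, 80, 0.81)
_, acetic = mix(vol_before_acid, 0.0, 80, 0.4)

print(f"after water: {nacl_after_water:.3f}% NaCl")
print(f"final: {vol} µL, {nacl:.3f}% NaCl, {acetic:.3f}% acetic acid")
```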

Protocol 2: Fluorogenic Labeling for Amino Acid Concentration Signatures

Principle: This protocol measures total concentrations of specific amino acid residues (cysteine, free cysteine, lysine, tryptophan, tyrosine) in neat blood plasma using targeted fluorogenic labeling, creating an embedding that reflects the fractional composition of plasma proteins [20].

Materials:

- UV-Vis spectrophotometer or fluorescence plate reader

- Bioorthogonal fluorogenic labels specific to target amino acid side chains

- Neat patient blood plasma samples

- Reference standards for each target amino acid

- Dilution buffers (appropriate for each label)

- Black-walled microplates or cuvettes to minimize light scattering

Procedure:

- Autofluorescence Assessment: Perform two-dimensional excitation and emission scans on unreacted neat blood plasma to determine background fluorescence at chosen wavelengths.

- Sample Dilution: Dilute plasma samples according to the theoretical total protein concentration (typically 60-80 mg/mL) to place the total concentration of each target amino acid within the quantitative range of the labeling reactions.

- Labeling Reaction: Add fluorogenic labels directly to diluted plasma samples without purification steps. Labels should react exclusively with the side-chains of their targeted amino acid types.

- Incubation: Incubate the reaction mixture according to optimized conditions for each label (typically 30-60 minutes at room temperature).

- Fluorescence Measurement: Measure fluorescence intensity at predetermined excitation and emission wavelengths.

- Background Subtraction: Subtract the autofluorescence background measured in step 1 from the labeled sample fluorescence.

- Calibration Curve: Generate calibration curves using solutions of known amino acid concentration (known protein concentration times known number of targeted amino acid R-groups within the protein sequence).

- Concentration Calculation: Transform raw fluorescence intensities into amino acid concentrations using the calibration curve.

Validation: Verify that the experimentally measured Amino Acid Concentration Signature matches the theoretical AACS calculated from known concentrations of individual plasma proteins using existing proteomic data [20].
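The background-subtraction and calibration steps of this protocol can be sketched as follows. This is an illustration under simplifying assumptions: a linear fluorescence response, a single constant autofluorescence background, and made-up standard values. The residue count of 10 per protein and all function names are hypothetical.

```python
def residue_concentration(protein_um, residues_per_protein):
    """Amino acid concentration = protein concentration x residues per protein."""
    return protein_um * residues_per_protein


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Placeholder data: standards of a reference protein and their raw signals.
background = 5.0                      # autofluorescence at the chosen wavelengths
standard_protein_um = [1.0, 2.0, 4.0]
residues_per_protein = 10             # hypothetical count of the targeted residue
raw_fluorescence = [105.0, 205.0, 405.0]

x = [residue_concentration(c, residues_per_protein) for c in standard_protein_um]
y = [signal - background for signal in raw_fluorescence]
slope, intercept = fit_line(x, y)


def amino_acid_concentration(raw_signal):
    """Background-subtract a raw reading and convert it via the calibration line."""
    return ((raw_signal - background) - intercept) / slope


print(f"{amino_acid_concentration(255.0):.1f} µM")
```

Repeating this for each targeted amino acid type yields one concentration per residue type, which together form the signature described above.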

Pathway Visualization

[Figure: Optical Biomarker Discovery Pathway]

[Figure: Experimental Workflow Comparison]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Equipment for Optical Biomarker Research

| Item Name | Function/Application | Specifications/Examples |

|---|---|---|

| UV-Vis Spectrophotometer | Measures absorbance/extinction of samples at specific wavelengths | Indiko Clinical Chemistry Analyzer; Ultrospec 3100 Pro UV/Vis Spectrophotometer [8] [21] |

| Fluorogenic Labels | Bioorthogonal labels that react with specific amino acid side chains to generate fluorescence | Labels targeting cysteine, lysine, tryptophan, tyrosine residues [20] |

| Chromogenic Reagents | Enzyme substrates that produce colored products for detection | Thrombin-specific S-2238 for protein C activity; p-nitroaniline (pNA) quantification [21] |

| Protein C Activator | Activates protein C to measure its activity in coagulation cascade | Protac (0.5 units/mL) from Agkistrodon contortrix venom [21] |

| Plasma Preparation Tubes | Collection and processing of plasma samples | EDTA or heparin tubes for blood collection [8] [7] |

| Reaction Buffers | Maintain optimal pH and ionic strength for assays | Tris-HCl buffers (pH 7.0-8.0) with CaCl₂, NaCl, BSA, and surfactants [21] |

| Reference Standards | Calibration and quantification of target analytes | Human protein C, protein S, FVa, FXa, prothrombin for coagulation assays [21] |

Discussion: Connecting Optical Signatures to Disease Mechanisms

Optical biomarker profiles provide direct insights into pathophysiological processes by detecting structural and compositional changes in proteins and other biomolecules. The significant increase in extinction values observed in cancer patients (mean 315.1) compared to healthy individuals (mean 23.9) using the Carcimun test reflects fundamental alterations in plasma protein conformation that occur during malignant transformation [8]. These changes potentially represent the accumulation of misfolded proteins, post-translational modifications, or alterations in plasma protein composition that serve as universal markers of malignancy.

The Amino Acid Concentration Signature approach reveals how the host immune response to tumor development creates distinct patterns in plasma protein composition, detectable through targeted amino acid quantification [20]. This method captures the immunosurveillance changes that occur during cancer development, including immunoglobulin class switching and alterations to approximately 1700 of 3000 host proteins present within plasma. The differential abundance of specific proteins in conditions like amyotrophic lateral sclerosis (ALS), including neurofilament light chain (NEFL) and leukemia inhibitory factor (LIF), further shows that plasma protein profiles can reflect disease-specific pathophysiological processes, although the ALS findings come from plasma proteomics rather than from optical measurements [7].

These optical signatures not only serve as diagnostic tools but also provide windows into the underlying molecular mechanisms of disease, offering potential targets for therapeutic intervention and enabling more personalized treatment approaches based on individual patient biomarker profiles.

Methodological Advances and Translational Applications in Disease Detection

The Carcimun test represents an innovative approach in the landscape of Multi-Cancer Early Detection (MCED) diagnostics, utilizing a unique methodology based on optical extinction measurements of plasma proteins. Unlike genomic-focused liquid biopsy tests that analyze circulating tumor DNA (ctDNA), the Carcimun test detects conformational changes in plasma proteins that occur in the presence of malignancy, offering a distinct complementary technology for cancer screening [22] [23].

This test is grounded in the principle that pathological conditions, including cancer, induce specific alterations in the structural conformation of plasma proteins, which in turn affect how these proteins interact with light. The underlying optical phenomenon follows the Beer-Lambert law, which mathematically describes the relationship between light absorption and the properties of the absorbing material: A = εlc, where A is absorbance, ε is the molar absorptivity (extinction coefficient), l is the path length, and c is the concentration of the absorbing species [2]. In the context of the Carcimun test, the measured "extinction value" reflects changes in the optical properties of plasma proteins resulting from cancer-induced conformational modifications, serving as a universal marker for general malignancy [22] [8].

The test addresses a significant limitation of traditional cancer diagnostic methods, including imaging techniques and tissue biopsies, which are often constrained by invasiveness, cost, and limited sensitivity, particularly for asymptomatic cancers or those located in hard-to-reach anatomical areas [22]. By providing a less invasive blood-based screening option with the potential to detect multiple cancer types simultaneously, the Carcimun test aligns with the growing trend toward comprehensive MCED platforms that can complement existing single-cancer screening methods [23].

Experimental Protocols and Methodologies

Sample Collection and Preparation Protocol

The standardized protocol for the Carcimun test begins with careful sample collection and processing to ensure analytical integrity [22] [8]:

- Blood Collection: Draw approximately 9 mL of venous blood into K3-EDTA tubes for plasma preparation [24].

- Plasma Separation: Centrifuge samples at 3,000 rpm for 5 minutes at room temperature to separate plasma from cellular components [24].

- Sample Aliquoting: Transfer the separated plasma to sterile tubes without additives for analysis [24].

- Blinding Procedure: Code all plasma samples to ensure personnel conducting the measurements remain blinded to the clinical or diagnostic status of samples throughout the testing process [22] [8].
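Because the centrifugation step is specified in rpm, the force actually applied depends on the rotor radius. The standard conversion is RCF = 1.118 × 10⁻⁵ × r × N², with r in centimetres and N in rpm. The sketch below applies it. The 10 cm radius is a hypothetical value chosen for illustration, since the source does not state the rotor used.

```python
def rcf_from_rpm(rpm, radius_cm):
    """Relative centrifugal force (x g) for a given speed and rotor radius."""
    return 1.118e-5 * radius_cm * rpm ** 2


def rpm_from_rcf(rcf, radius_cm):
    """Speed in rpm needed to reach a target relative centrifugal force."""
    return (rcf / (1.118e-5 * radius_cm)) ** 0.5


# 3,000 rpm on a rotor with an assumed 10 cm radius.
print(f"{rcf_from_rpm(3000, 10):.0f} x g")
```

Reporting the force in × g alongside the rpm value makes a protocol transferable between centrifuges.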

Optical Extinction Measurement Procedure

The core analytical protocol for the Carcimun test involves a carefully optimized sequence of reagent additions and measurements [22] [8]:

- Initial Preparation: Add 70 µL of 0.9% NaCl solution to the reaction vessel, followed by 26 µL of blood plasma, achieving a total volume of 96 µL with a final NaCl concentration of 0.9%.

- Dilution: Introduce 40 µL of distilled water (aqua dest.), increasing the total volume to 136 µL and adjusting the NaCl concentration to 0.63%.

- Incubation: Incubate the mixture at 37°C for 5 minutes to achieve thermal equilibration.

- Baseline Measurement: Record a blank measurement at 340 nm to establish a baseline using a clinical chemistry analyzer.

- Acidification: Add 80 µL of 0.4% acetic acid solution (containing 0.81% NaCl), resulting in a final volume of 216 µL with 0.69% NaCl and 0.148% acetic acid.

- Final Measurement: Perform the definitive absorbance measurement at 340 nm using the Indiko Clinical Chemistry Analyzer (Thermo Fisher Scientific, Waltham, MA, USA) [22] [8].

- Quality Control: Analyze all samples in duplicate to ensure measurement reproducibility [24].

Data Analysis and Interpretation

The analytical workflow transforms raw optical measurements into clinically actionable results:

Calculation of Performance Metrics [22] [8]: The test's diagnostic performance is evaluated using standard statistical measures:

- Sensitivity = True Positives / (True Positives + False Negatives)

- Specificity = True Negatives / (True Negatives + False Positives)

- Accuracy = (True Positives + True Negatives) / Total Participants

- Positive Predictive Value (PPV) = True Positives / (True Positives + False Positives)

- Negative Predictive Value (NPV) = True Negatives / (True Negatives + False Negatives)

The predetermined cut-off value of 120 milli extinction units differentiates between healthy individuals (≤120) and cancer patients (>120), as established in prior validation studies [22] [8] [24].
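The cut-off rule and the performance formulas above can be combined in a short sketch. The extinction values and diagnoses below are invented for illustration and are not study data. The function names are hypothetical.

```python
CUTOFF = 120  # milli extinction units, the cut-off quoted in the text


def is_positive(extinction):
    """Values above the cut-off are called positive; values at or below it negative."""
    return extinction > CUTOFF


def confusion_counts(extinctions, has_cancer):
    """Count true/false positives and negatives against the reference diagnosis."""
    tp = fp = tn = fn = 0
    for value, truth in zip(extinctions, has_cancer):
        if is_positive(value):
            if truth:
                tp += 1
            else:
                fp += 1
        elif truth:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def metrics(tp, fp, tn, fn):
    """Standard diagnostic performance measures from the four counts."""
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "ppv": tp / (tp + fp),
        "npv": tn / (tn + fn),
    }


# Invented example data.
extinctions = [15, 40, 110, 130, 95, 300, 450, 118, 125, 700]
has_cancer = [False, False, False, False, True, True, True, False, True, True]

counts = confusion_counts(extinctions, has_cancer)
print(counts, metrics(*counts))
```

PPV and NPV depend on the proportion of cancer cases in the cohort, so values from a case-enriched study do not carry over directly to a screening population.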

Key Research Findings and Performance Data

Cohort Characteristics and Study Design

The validation study for the Carcimun test employed a rigorous prospective, single-blinded design with clearly defined participant groups [22] [8]:

Table 1: Study Cohort Composition

| Participant Group | Number of Participants | Age (Years, Mean ± SD) | Sex (Female/Male) |

|---|---|---|---|

| Healthy Volunteers | 80 | 49.1 ± 5.8 | 37/43 |

| Cancer Patients | 64 | 54.8 ± 6.3 | 28/36 |

| Inflammatory Conditions/Benign Tumors | 28 | 51.3 ± 7.4 | 12/16 |

Cancer diagnoses encompassed multiple types and were confirmed through standard clinical methods including imaging techniques and/or histopathological evaluation. All cancer cases were classified as stages I-III at diagnosis, incorporating both symptomatic and asymptomatic presentations to reflect a broad spectrum of clinical scenarios [22] [8]. The inclusion of participants with inflammatory conditions (fibrosis, sarcoidosis, pneumonia) and benign tumors addressed a significant limitation of previous studies by evaluating the test's performance in clinically challenging scenarios where false positives might occur [22].

Quantitative Performance Results

The Carcimun test demonstrated statistically significant differentiation between participant groups, with particularly high extinction values observed in cancer patients [22] [8]:

Table 2: Optical Extinction Values Across Participant Groups

| Participant Group | Extinction Value Range | Mean Extinction Value ± SD | Fold Increase vs. Healthy |

|---|---|---|---|

| Healthy Volunteers | 1 - 110 | 23.9 ± 23.9 | - |

| Cancer Patients | 34 - 795 | 315.1 ± 188.9 | 13.2-fold |

| Inflammatory Conditions/Benign Tumors | 12 - 351 | 62.7 ± 22.7 | 2.6-fold |

Statistical analysis revealed significant differences between groups (one-way ANOVA: F=128.65, p<0.001) with a large effect size (η²=0.60). Post-hoc analyses confirmed significant differences between healthy participants and cancer patients (p<0.001) as well as between cancer patients and those with inflammatory conditions (p<0.001) [22] [8].
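The reported effect size can be cross-checked against the F statistic. For a one-way ANOVA, η² = (F × df_between) / (F × df_between + df_within). The sketch below assumes that all 172 participants (80 + 64 + 28) in the three groups entered the analysis, which the text implies but does not state outright.

```python
def eta_squared(f_stat, df_between, df_within):
    """Effect size for a one-way ANOVA, recovered from F and its degrees of freedom."""
    return (f_stat * df_between) / (f_stat * df_between + df_within)


n_total = 80 + 64 + 28   # group sizes reported for the cohort
k_groups = 3

eta2 = eta_squared(128.65, k_groups - 1, n_total - k_groups)
print(f"eta squared = {eta2:.2f}")
```

Under that assumption the result agrees with the reported η² of 0.60.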

Table 3: Diagnostic Performance Metrics of the Carcimun Test

| Performance Metric | Value (%) | Interpretation |

|---|---|---|

| Sensitivity | 90.6 | Proportion of cancer patients correctly identified |

| Specificity | 98.2 | Proportion of participants without cancer correctly identified |

| Accuracy | 95.4 | Overall correctness of the test |

| Positive Predictive Value (PPV) | Reported | Likelihood that a positive test indicates cancer |

| Negative Predictive Value (NPV) | Reported | Likelihood that a negative test rules out cancer |

The test maintained high sensitivity and specificity while minimizing both false positives and false negatives, demonstrating robust performance characteristics suitable for screening applications [22] [25] [8].

Research Reagent Solutions and Essential Materials

Implementation of the Carcimun test protocol requires specific reagents and instrumentation to ensure reproducible results:

Table 4: Essential Research Reagents and Materials

| Item | Specifications | Function in Protocol |

|---|---|---|

| Blood Collection Tubes | K3-EDTA tubes (9 mL) | Anticoagulated plasma collection and preservation |

| Sodium Chloride Solution | 0.9% NaCl | Sample dilution and ionic strength adjustment |

| Acetic Acid Solution | 0.4% in 0.81% NaCl | Protein conformational change induction |

| Clinical Chemistry Analyzer | Indiko Clinical Chemistry Analyzer | Precise optical extinction measurement at 340 nm |

| Centrifuge | Capable of 3,000 rpm | Plasma separation from cellular components |

| Temperature-Controlled Incubator | Maintains 37°C ± 0.5°C | Sample thermal equilibration |

Technical Advantages and Methodological Considerations

Comparative Strengths of the Optical Detection Approach

The Carcimun test offers several distinct advantages over alternative MCED methodologies:

- Alternative to ctDNA-Based Approaches: While tests like GRAIL's Galleri analyze ctDNA methylation patterns, the Carcimun test focuses on protein conformational changes, potentially offering complementary detection capabilities for cancers with low ctDNA shedding [22] [23].

- Technical Practicality: The method utilizes standard clinical chemistry analyzers rather than requiring specialized sequencing instrumentation, potentially enhancing accessibility in routine clinical laboratories [22] [8].

- Robust Performance in Inflammatory Conditions: The test maintains specificity in the presence of conditions like fibrosis, sarcoidosis, and pneumonia, which often confound other cancer detection methods [22] [8].

Analytical Considerations for Implementation

Researchers should consider several methodological aspects when implementing this platform:

- Sample Integrity: Plasma separation must occur promptly after blood collection to prevent protein degradation that could affect extinction measurements [24].

- Standardization Needs: Strict adherence to incubation times, temperature controls, and reagent preparation is essential for reproducible results [22] [8].

- Interference Management: While the test demonstrates specificity in inflammatory conditions, extremely high inflammatory states may still require clinical correlation [22].

- Instrument Calibration: Regular calibration of the spectrophotometric system ensures consistent extinction measurements across different analysis batches [2] [26].

The relationship between protein conformation and optical properties offers a promising direction for cancer diagnostics research. The Carcimun test illustrates how fundamental principles of protein physics can be translated into practical clinical tools with potential impact on early cancer detection strategies.

The accurate analysis of plasma proteins is a cornerstone of modern clinical diagnostics and biomedical research, particularly in the pursuit of novel disease biomarkers. Traditional diagnostic methods, such as imaging and tissue biopsies, are often limited by invasiveness, cost, and sensitivity [25] [8]. In recent years, blood-based multi-cancer early detection (MCED) tests have emerged as a less invasive and potentially more comprehensive approach [25] [8] [27]. Among these, innovative techniques utilizing optical extinction measurements have demonstrated significant potential for differentiating conformational changes in plasma proteins associated with malignancy [25] [8]. This application note establishes standardized procedures for plasma sample analysis within this emerging technological context, providing detailed protocols validated in clinical studies for robust and reproducible results.

Theoretical Principles of Optical Analysis

Optical measurements for protein quantification primarily rely on the Beer-Lambert Law, which describes the relationship between light absorption and the properties of the material through which light is traveling [28] [2] [29]. This fundamental principle is expressed as:

A = εlc

Where A is the measured absorbance (optical density), ε is the molar absorptivity or extinction coefficient (M⁻¹cm⁻¹), l is the path length (cm), and c is the concentration of the absorbing species (M) [2] [29].

For protein analysis, absorbance at 280 nm is most commonly utilized due to strong absorption by aromatic amino acids tryptophan and tyrosine [2] [30]. The molar absorptivity at 280 nm (ε₂₈₀) can be predicted from a protein's amino acid sequence using the following formula:

ε₂₈₀ = (nW × 5,500) + (nY × 1,490) + (nC × 125) [2]

Where nW and nY represent the number of tryptophan and tyrosine residues, respectively, and nC represents the number of cystines (disulfide bonds, each formed from two cysteine residues) [2].
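The sequence-based estimate and the Beer-Lambert law can be combined to convert an A280 reading into a molar concentration. The sketch below is illustrative. The residue counts are hypothetical and do not describe a specific protein, and the function names are not from any library.

```python
def epsilon_280(n_w, n_y, n_c):
    """Predicted molar absorptivity at 280 nm (M^-1 cm^-1) from the formula above.

    n_w and n_y are the tryptophan and tyrosine counts; n_c is the count
    used for the nC term of the formula.
    """
    return n_w * 5500 + n_y * 1490 + n_c * 125


def molar_concentration(absorbance, epsilon, path_cm=1.0):
    """Beer-Lambert law rearranged: c = A / (epsilon * l)."""
    return absorbance / (epsilon * path_cm)


# Hypothetical composition: 2 Trp, 20 Tyr, and 17 for the nC term.
eps = epsilon_280(2, 20, 17)
conc = molar_concentration(0.43, eps, path_cm=1.0)
print(f"epsilon_280 = {eps} M^-1 cm^-1, c = {conc * 1e6:.2f} µM")
```

The prediction applies to a single purified protein. For a mixture such as plasma, an A280 reading reflects the combined absorbance of all components and cannot be assigned one extinction coefficient.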

It is critical to distinguish between absorbance and optical density in methodological documentation. While these terms are often used interchangeably, absorbance specifically quantifies light absorption, whereas optical density includes contributions from both absorption and light scattering, particularly relevant in suspensions of particles or cells [28] [29]. This distinction is crucial when analyzing complex biological samples like plasma, where both dissolved proteins and particulate matter may be present.

Materials and Reagents

Research Reagent Solutions

Table 1: Essential reagents and materials for plasma protein analysis via optical extinction measurements.

| Item | Function | Specifications/Considerations |

|---|---|---|

| Sodium Chloride (0.9%) | Sample dilution and ionic strength adjustment | Maintains physiological osmolarity [8] |

| Distilled Water | Reaction volume adjustment | Must be particle-free; resistivity of 18.2 MΩ·cm recommended for trace analysis [31] |

| Acetic Acid (0.4%) | Denaturant for conformational studies | Final concentration of 0.148% in reaction mixture [8] |

| Reference Standards (BSA, IgG) | Quantification calibration and quality control | Use high-purity, characterized standards [2] |

| Nitric Acid | Cleaning and system preparation | High purity for trace element analysis [31] |

| Quartz Cuvettes | Optical measurement | Required for UV range transparency [2] |

Equipment and Instrumentation

Precise optical analysis requires specific instrumentation capable of accurate measurements in the ultraviolet spectrum. The following equipment is essential:

- UV-Vis Spectrophotometer: Must include capability for measurements at 340 nm [8] and 280 nm [2]. Instrument performance verification should be conducted regularly.

- Analytical Balance: Precision of ±0.1 mg for reagent preparation.

- Thermal Incubator: Maintains stable temperature of 37°C±0.5°C for sample incubation [8].

- Microcentrifuges: For plasma separation from whole blood.

- Pipetting Systems: Calibrated for volumes ranging from 10 μL to 1 mL with appropriate precision.

Experimental Protocols

Plasma Sample Preparation Workflow

Detailed Stepwise Procedure

Plasma Separation

- Collect whole blood using appropriate anticoagulant tubes (EDTA, citrate, or heparin).

- Centrifuge at 2000 × g for 10 minutes at 4°C within 1 hour of collection.

- Carefully aspirate the plasma supernatant without disturbing the buffy coat or red blood cells.

- Aliquot plasma into sterile tubes and store at -80°C if not analyzed immediately.

Sample Preparation for Optical Extinction Measurement

- Thaw frozen plasma aliquots on ice if previously stored.

- Add 70 µL of 0.9% NaCl solution to the reaction vessel.

- Add 26 µL of blood plasma to create a total volume of 96 µL.

- Add 40 µL of distilled water, bringing the total volume to 136 µL and adjusting NaCl concentration to 0.63%.

- Incubate the mixture at 37°C for 5 minutes to achieve thermal equilibration [8].

Spectrophotometric Measurement

- Perform a blank measurement at 340 nm to establish baseline.

- Add 80 µL of 0.4% acetic acid solution (containing 0.81% NaCl).

- The final volume will be 216 µL with 0.69% NaCl and 0.148% acetic acid.

- Perform the final absorbance measurement at 340 nm using a clinical chemistry analyzer or spectrophotometer [8].
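
The volumes and concentrations quoted in the steps above can be checked with simple mixing arithmetic. This is a sketch under two stated assumptions: volumes are additive, and plasma is treated as equivalent to 0.9% NaCl. The helper `mix` is a hypothetical name introduced for illustration.

```python
def mix(vol_a, conc_a, vol_b, conc_b):
    """Return (total volume, concentration) after combining two solutions.

    Volumes in µL, concentrations in % (w/v). Assumes additive volumes.
    """
    total = vol_a + vol_b
    return total, (vol_a * conc_a + vol_b * conc_b) / total


PLASMA_NACL = 0.9  # assumption: plasma treated as isotonic with 0.9% NaCl

# Step 1: 70 µL saline + 26 µL plasma
vol, nacl = mix(70, 0.9, 26, PLASMA_NACL)
# Step 2: + 40 µL distilled water (no NaCl)
vol, nacl_after_water = mix(vol, nacl, 40, 0.0)
# Step 3: + 80 µL of 0.4% acetic acid containing 0.81% NaCl
vol_final, nacl_final = mix(vol, nacl_after_water, 80, 0.81)
_, acetic_final = mix(vol, 0.0, 80, 0.4)

print(vol_final, round(nacl_after_water, 3), round(nacl_final, 3), round(acetic_final, 3))
```

Without intermediate rounding, the NaCl concentration is about 0.635% after the water addition and about 0.70% in the final mixture. The quoted 0.63% and 0.69% are consistent with rounding the intermediate value down before the last step. The acetic acid concentration works out to 0.148%, as stated.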

Quality Control Measures

- Include control samples with known extinction values in each run.

- Perform all measurements in duplicate or triplicate.

- Ensure consistent path length; for non-standard cuvettes, apply path length correction [28].

Data Analysis and Interpretation

Quantitative Analysis of Protein Concentrations

For protein quantification using absorbance at 280 nm, apply the Beer-Lambert law:

c = A / (ε × l)

Where c is concentration (M), A is measured absorbance, ε is molar absorptivity (M⁻¹cm⁻¹), and l is path length (cm) [2].

When quantifying proteins in mg/mL, use the relationship:

Protein concentration (mg/mL) = A₂₈₀ × (dilution factor) × (MW / ε₂₈₀) [2]

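Where MW is the molecular weight in Daltons (g/mol) and ε₂₈₀ is in M⁻¹cm⁻¹; this form assumes a 1 cm path length, so divide by l for other path lengths.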

If nucleic acid contamination is suspected, apply the following correction:

Protein concentration (mg/mL) = (1.55 × A₂₈₀) - (0.76 × A₂₆₀) [2]
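
The Beer-Lambert conversion from absorbance to mass concentration can be sketched as follows. The function name and the example values (ε₂₈₀ = 50,000 M⁻¹cm⁻¹, MW = 50,000 Da) are hypothetical round numbers chosen for easy checking, not properties of any real protein.

```python
def protein_mg_per_ml(a280, epsilon_280, mw_da, dilution_factor=1.0, path_cm=1.0):
    """Convert A280 to protein concentration in mg/mL via Beer-Lambert.

    a280: measured absorbance of the (possibly diluted) sample
    epsilon_280: molar absorptivity in M^-1 cm^-1
    mw_da: molecular weight in Da (g/mol)
    dilution_factor: fold dilution applied before measurement
    path_cm: optical path length in cm
    """
    if epsilon_280 <= 0 or path_cm <= 0:
        raise ValueError("epsilon_280 and path_cm must be positive")
    molar = a280 / (epsilon_280 * path_cm)   # c = A / (ε × l), in mol/L
    return molar * mw_da * dilution_factor   # mol/L × g/mol = g/L = mg/mL


# Hypothetical protein measured at A280 = 0.5 after a 10-fold dilution
conc = protein_mg_per_ml(0.5, epsilon_280=50_000, mw_da=50_000, dilution_factor=10)
print(round(conc, 3))  # 5.0
```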

Performance Metrics in Clinical Validation Studies

Table 2: Performance characteristics of the Carcimun test for cancer detection using optical extinction measurements of plasma proteins [25] [8].

| Parameter | Value | Context |
|---|---|---|
| Accuracy | 95.4% | Differentiation between cancer patients, healthy individuals, and those with inflammatory conditions |
| Sensitivity | 90.6% | Proportion of true positives correctly identified |
| Specificity | 98.2% | Proportion of true negatives correctly identified |
| Mean Extinction Value: Cancer Patients | 315.1 | Significantly higher than healthy controls (p<0.001) |
| Mean Extinction Value: Healthy Individuals | 23.9 | Baseline reference value |
| Mean Extinction Value: Inflammatory Conditions | 62.7 | Demonstrates differentiation from malignant conditions |
| Cut-off Value | 120 | Optimal threshold for differentiating healthy and cancer subjects |
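
To illustrate how a cut-off of 120 translates into sensitivity and specificity, the sketch below classifies a handful of extinction values and tallies the outcomes. The sample values and labels are invented for illustration and are not study data. Treating values strictly above the cut-off as positive is also an assumption, since the source does not state how ties are handled.

```python
CUTOFF = 120.0  # cut-off value reported in the table above


def is_positive(extinction, cutoff=CUTOFF):
    # Assumption: strictly greater than the cut-off counts as test-positive.
    return extinction > cutoff


def sensitivity_specificity(values, has_cancer, cutoff=CUTOFF):
    """Return (sensitivity, specificity) for paired values and true labels.

    Requires at least one true positive case and one true negative case.
    """
    tp = fn = tn = fp = 0
    for value, truth in zip(values, has_cancer):
        predicted = is_positive(value, cutoff)
        if truth and predicted:
            tp += 1
        elif truth and not predicted:
            fn += 1
        elif not truth and predicted:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical measurements (not study data)
values = [315.1, 23.9, 62.7, 150.0, 100.0]
labels = [True, False, False, True, True]
sens, spec = sensitivity_specificity(values, labels)
print(round(sens, 3), round(spec, 3))
```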

Technological Applications and Case Studies

Multi-Cancer Early Detection Platform

The Carcimun test represents an innovative application of optical extinction measurements for multi-cancer early detection (MCED). This methodology detects conformational changes in plasma proteins through optical extinction measurements at 340 nm, serving as a universal marker for general malignancy [25] [8]. In a prospective clinical validation study with 172 participants, this approach demonstrated high diagnostic accuracy in differentiating cancer patients from healthy individuals and those with inflammatory conditions including fibrosis, sarcoidosis, and pneumonia [25] [8].

Immunodiagnostic Biomarker Detection

Emerging research demonstrates that cancer-specific immune activation induces measurable changes in plasma protein composition, particularly affecting amino acid residues including cysteine, lysine, tryptophan, and tyrosine [27]. These alterations create a distinct signature detectable through optical methods, providing a novel immunodiagnostic approach with significantly stronger signals than ctDNA-based methods [27]. This methodology has demonstrated capability to identify 78% of cancers with 0% false positive rate in a cohort of 97 participants, achieving an AUROC of 0.95 [27].

Troubleshooting and Technical Considerations

Common Analytical Challenges

- High Background Absorbance: Ensure reagents are of high purity, use appropriate blank corrections, and consider alternative wavelengths if interference persists [28] [31].

- Non-Linearity at High Concentrations: Maintain absorbance readings between 0.1 and 1.0 for optimal accuracy [28]. For values exceeding this range, dilute samples appropriately and apply dilution factors in calculations.

- Light Scattering Effects: For turbid samples, consider centrifugation or filtration to remove particulate matter, or use wavelength correction methods [29].

- Path Length Variability: For non-standard measurement vessels, implement path length correction algorithms to normalize data to 1 cm equivalent [28].
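
Two of the checks above (the working absorbance range and path length normalization) are easy to automate. The following is a minimal sketch; the function names are hypothetical, and the simple division by path length assumes the Beer-Lambert relationship holds for the vessel in question.

```python
def check_reading(absorbance, lower=0.1, upper=1.0):
    """Flag raw instrument readings outside the recommended 0.1-1.0 range."""
    if absorbance > upper:
        return "dilute"      # above range: dilute and re-measure
    if absorbance < lower:
        return "too low"     # below range: near background
    return "ok"


def normalize_to_1cm(absorbance, path_cm):
    """Scale an absorbance reading to its 1 cm path length equivalent."""
    if path_cm <= 0:
        raise ValueError("path_cm must be positive")
    return absorbance / path_cm


# Hypothetical reading of 0.25 in a 0.5 cm vessel
print(check_reading(0.25), normalize_to_1cm(0.25, 0.5))  # ok 0.5
```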

Method Validation Parameters

When implementing these protocols, establish method validation including:

- Linearity: Across the expected concentration range (typically corresponding to absorbance readings of 0.1-1.0 AU)

- Precision: Intra-assay and inter-assay coefficient of variation

- Limit of Detection: Lowest measurable concentration above background

- Robustness: Consistency across operators, instruments, and reagent lots
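
Precision and limit of detection can be estimated from replicate readings. The sketch below uses the coefficient of variation for precision and, as an assumption not taken from the source, the common convention of mean blank plus three standard deviations for the limit of detection. All readings shown are hypothetical.

```python
from statistics import mean, stdev


def cv_percent(replicates):
    """Coefficient of variation (%) of replicate measurements."""
    return stdev(replicates) / mean(replicates) * 100


def limit_of_detection(blank_readings, k=3.0):
    """Signal-level LOD as mean(blank) + k * SD(blank).

    k = 3 is a widely used convention, assumed here for illustration.
    """
    return mean(blank_readings) + k * stdev(blank_readings)


# Hypothetical triplicate sample readings and blank readings
print(round(cv_percent([9.0, 10.0, 11.0]), 2))                 # 10.0
print(round(limit_of_detection([0.010, 0.012, 0.008]), 4))     # 0.016
```

Running `cv_percent` on replicates from a single run gives the intra-assay value; applying it to per-run means across days gives an inter-assay estimate.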

Standardized procedures for plasma sample analysis using optical extinction measurements provide a robust framework for detecting protein conformational changes associated with malignant transformation. The protocols outlined in this document, validated in clinical studies, enable researchers to achieve high sensitivity and specificity in differentiating pathological conditions. The incorporation of stringent quality control measures, appropriate sample handling techniques, and validated analytical procedures ensures the reliability and reproducibility required for both research and clinical applications. As this field advances, these standardized protocols will facilitate broader adoption and validation of optical extinction methodologies for plasma protein analysis in cancer detection and other diagnostic applications.

Optical extinction measurement, which quantifies the reduction of light intensity as it passes through a sample due to absorption and scattering, is emerging as a versatile analytical technique in biomedical research and clinical diagnostics. While its application in multi-cancer early detection (MCED) tests has gained significant attention, its utility also extends to neurodegenerative and inflammatory disease monitoring. This technical note details the experimental protocols, key findings, and reagent solutions for applying optical extinction measurements in these disease areas, providing researchers and drug development professionals with practical frameworks for implementation.

The fundamental principle underpinning this technology is the detection of conformational and compositional changes in plasma proteins, which serve as biomarkers for various pathological states. Unlike technologies reliant on circulating tumor DNA (ctDNA), which can be limited by low analyte abundance in early-stage disease and by analytical sensitivity, the direct assessment of plasma proteomic profiles offers a more universal marker for general malignancy and acute inflammation [8]. Recent advances have demonstrated that optical extinction-based assays can differentiate between healthy individuals, patients with cancer, and those with inflammatory conditions with high accuracy, sensitivity, and specificity [8].

Table 1: Key Advantages of Optical Extinction Measurements in Disease Monitoring

| Feature | Advantage | Application Benefit |
|---|---|---|
| Minimal Sample Volume | Requires only microliters of plasma | Enables high-throughput screening and pediatric applications |
| Rapid Turnaround | Results in <5 minutes per sample [32] | Facilitates near-real-time clinical decision making |
| Cost-Effectiveness | Lower reagent costs and simpler instrumentation than advanced sequencing | Increases accessibility for routine screening |
| Multiplexing Potential | Can detect multiple protein conformations simultaneously | Provides comprehensive biomarker panels from a single assay |
| Non-Invasive Nature | Requires only standard blood draw | Improves patient compliance for serial monitoring |

Optical Extinction Measurements in Neurodegenerative Disease Biomarker Discovery

The application of optical extinction measurements to neurodegenerative disease monitoring focuses primarily on detecting conformational changes in plasma proteins associated with pathological processes. The Carcimun test protocol, reported in the context of cancer detection, illustrates the measurement approach: a straightforward extinction reading at 340 nm used to differentiate protein states, applied here to states indicative of neurodegeneration [8].

Protocol: Plasma Protein Conformational Analysis via Optical Extinction

- Sample Preparation: Combine 70 µL of 0.9% NaCl solution with 26 µL of blood plasma in a reaction vessel, achieving a total volume of 96 µL with a final NaCl concentration of 0.9%.

- Dilution: Add 40 µL of distilled water (aqua dest.), increasing the total volume to 136 µL and adjusting the NaCl concentration to 0.63%.

- Incubation: Incubate the mixture at 37°C for 5 minutes to achieve thermal equilibration.

- Baseline Measurement: Record a blank measurement at 340 nm to establish a baseline reference.

- Acidification: Add 80 µL of 0.4% acetic acid (AA) solution (containing 0.81% NaCl), resulting in a final volume of 216 µL with 0.69% NaCl and 0.148% acetic acid.

- Final Measurement: Perform the definitive absorbance measurement at 340 nm using a clinical chemistry analyzer (e.g., Indiko, Thermo Fisher Scientific) [8].

This protocol capitalizes on the principle that the measured extinction (E) follows the Lambert-Beer law (E = εcd), where ε is the molar extinction coefficient, c is the concentration, and d is the path length [33]. In neurodegenerative diseases, the conformational changes in specific plasma proteins alter their extinction coefficients, enabling detection of pathological states.
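
As an idealized illustration of this reasoning (an assumption made for clarity, not a derivation taken from the cited sources), consider the same protein at fixed concentration c and path length d, with extinction coefficient ε_n in its native state and ε_a in an altered conformation:

```latex
\begin{align}
E_n &= \varepsilon_n \, c \, d, \\
E_a &= \varepsilon_a \, c \, d, \\
\Delta E &= E_a - E_n = (\varepsilon_a - \varepsilon_n)\, c \, d .
\end{align}
```

Under these assumptions, a difference in measured extinction at 340 nm is proportional to the change in extinction coefficient. In real plasma samples, differences in concentration and light scattering also contribute to the measured value.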

Figure 1: Workflow for plasma protein conformational analysis using optical extinction measurements. The protocol involves sequential sample preparation steps followed by spectrophotometric measurement at 340 nm.

Key Biomarkers and Data Analysis

In neurodegenerative disease applications, optical extinction measurements can be integrated with advanced proteomic platforms to identify and validate specific biomarker panels. Recent large-scale studies utilizing multiplex platforms like NULISAseq have identified distinct plasma protein signatures associated with major neurodegenerative conditions, including Alzheimer's disease (AD), Parkinson's disease (PD), Frontotemporal dementia (FTD), and Dementia with Lewy bodies (DLB) [34].

Table 2: Key Plasma Protein Biomarkers in Neurodegenerative Diseases

| Disease | Core Biomarkers | Additional Disease-Specific Proteins | Reported Performance |
|---|---|---|---|
| Alzheimer's Disease (AD) | Aβ42/40, p-tau217, p-tau181, GFAP, NfL | MSLN, SAA1 | AUC 0.81 for AD diagnosis [34] |
| Parkinson's Disease (PD) | SNCA, pSNCA-129 | FLT1, PARK7 | AUC 0.96 for amyloid positivity [34] |
| Dementia with Lewy Bodies (DLB) | p-tau181, NfL, GFAP | Specific signature shared with PD | High similarity to PD profile [34] |
| Frontotemporal Dementia (FTD) | TDP-43, NEFL | Limited distinct proteins (4 identified) | Differentiates from other dementias [34] |
| Amyotrophic Lateral Sclerosis (ALS) | NEFL (log2 fold change = 2.34) | LIF, HSPB6, MEGF10, TPM3 | 98.3% for diagnostic model [7] |

Machine learning approaches significantly enhance the analytical power of extinction-based proteomic data. The Protein-Protein Interaction-based eXplainable Graph Propagational Network (PPIxGPN) represents a novel computational framework that captures synergetic effects between proteins by integrating protein-protein interaction (PPI) networks with independent protein effects [35]. This model utilizes a single graph propagational layer configured in a shallow architecture, ensuring high explainability while effectively capturing the comprehensive properties of the PPI network for neurodegenerative risk prediction [35].
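
The idea of combining independent protein effects with a single graph propagation step can be sketched in a few lines. This is a toy illustration only and is not the PPIxGPN implementation: the neighbour-averaging rule, the logistic output, the function names, and all weights and values are assumptions introduced here.

```python
import math


def propagate(adjacency, features):
    """One propagation step: average each protein with its PPI neighbours."""
    n = len(features)
    out = []
    for i in range(n):
        neighbours = [j for j in range(n) if j != i and adjacency[i][j]]
        total = features[i] + sum(features[j] for j in neighbours)
        out.append(total / (1 + len(neighbours)))
    return out


def risk_score(adjacency, features, w_independent, w_network, bias=0.0):
    """Logistic score from independent terms plus network-propagated terms."""
    propagated = propagate(adjacency, features)
    independent = sum(w * x for w, x in zip(w_independent, features))
    network = sum(w * p for w, p in zip(w_network, propagated))
    return 1.0 / (1.0 + math.exp(-(independent + network + bias)))


# Hypothetical 3-protein network: proteins 0 and 1 interact, protein 2 is isolated
adjacency = [[0, 1, 0],
             [1, 0, 0],
             [0, 0, 0]]
levels = [1.0, 3.0, 5.0]  # hypothetical normalized protein levels
print(propagate(adjacency, levels))  # [2.0, 2.0, 5.0]
```

Because the model has one propagation step and separate weights for the independent and network terms, each protein's contribution to the score can be read off directly, which is the kind of explainability a shallow architecture is meant to preserve.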

Advanced Applications in Inflammatory Disease Monitoring

Protocol for Inflammatory Biomarker Detection