Optimizing Audit and Feedback Systems to Improve Cancer Screening Follow-Up: A Comprehensive Guide for Researchers and Clinicians

Abstract

This article provides a comprehensive analysis of audit and feedback (A&F) systems as a critical strategy for improving follow-up after cancer screening, a key challenge in achieving early cancer detection and reducing mortality. Tailored for researchers, scientists, and drug development professionals, it synthesizes foundational evidence, explores methodological frameworks for implementation, and addresses common optimization challenges. The content further examines validation strategies and compares the effectiveness of A&F against other interventions, drawing on recent studies and real-world implementation data. The goal is to equip biomedical professionals with the knowledge to design, evaluate, and refine A&F systems that enhance the entire cancer screening continuum, from initial participation to diagnostic resolution.

The Critical Role of Audit and Feedback in the Cancer Screening Continuum

Defining Audit and Feedback in the Context of Cancer Screening Follow-Up

Audit and Feedback (A&F) is a systematic implementation strategy designed to improve professional practice and healthcare quality by measuring clinical performance against explicit standards and communicating this information back to healthcare providers [1]. In the specific context of cancer screening follow-up, A&F functions as a critical quality improvement mechanism to identify gaps in care and encourage adherence to evidence-based screening guidelines [2] [3]. The underlying theoretical premise is that highly motivated health professionals, when presented with information showing discrepancies between their actual practice and desired performance standards, will shift attention to areas requiring improvement [1].

A&F operates as a cyclical quality improvement process with five core stages: (1) preparation for audit, (2) selection of criteria based on evidence-based guidelines, (3) measurement of performance, (4) implementation of improvements, and (5) sustaining improvements through repeated cycles [1]. In cancer screening programs, the audit typically identifies patients who are overdue for screening or who have abnormal results requiring follow-up, and this information is then fed back to primary care providers in a structured, actionable format [3] [4].

Key Components and Implementation Protocols

Core Components of A&F Systems

Effective A&F interventions for cancer screening follow-up comprise several essential components, which can be systematically implemented using standardized protocols. The table below outlines the core components and their implementation specifications.

Table 1: Core Components of Audit and Feedback Systems for Cancer Screening

| Component | Description | Implementation Protocol |
|---|---|---|
| Audit Data Collection | Systematic review of performance based on explicit criteria [1] | Extract data from EHRs, administrative databases, or medical registries; use standardized data extraction tools [3] [5] |
| Performance Comparison | Benchmarking against standards or peers [1] | Compare individual/provider performance to evidence-based guidelines (e.g., ACS, USPSTF) or group averages [6] [4] |
| Feedback Delivery | Structured communication of performance data [1] | Utilize emails, portals, or dashboards; employ behavior change techniques in messaging [4] |
| Actionable Recommendations | Specific guidance for quality improvement [3] | Include clear follow-up actions, identify specific overdue patients, provide resource navigation [7] [3] |

Experimental Protocols for A&F Implementation

Based on recent randomized controlled trials, the following protocols detail methodologies for implementing A&F systems in cancer screening contexts.

Protocol 1: Clinical Decision Support System for Abnormal Results Follow-Up

Adapted from Atlas et al. (2025), comparing CDSS for abnormal cervical cancer screening [3]

- Objective: Improve follow-up completion for patients with abnormal cervical cancer screening results.

- Design: Cluster randomized controlled trial with primary care clinics as unit of randomization.

- Intervention Groups:

  - Usual care

  - CDSS alone

  - CDSS with patient outreach (± navigation)

- CDSS Implementation:

  - System A: Uses natural language processing to evaluate extracted data outside the EHR

  - System B: Uses commercial EHR functionality with LOINC-defined result fields

- Outcome Measurement: Completion of recommended follow-up at 120 days post-enrollment

- Data Analysis: Manual chart review to assess CDSS accuracy (true positive rate)
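The two Protocol 1 measurements (follow-up completion at 120 days and CDSS accuracy on chart review) can be sketched in Python. This is a minimal illustration, not the trial's analysis code: the `FlaggedPatient` record, its field names, and the arm labels are hypothetical, and flag accuracy is computed as the share of CDSS-flagged patients confirmed on chart review, which is an assumption about how the reported true positive rate was defined. A real cluster trial would also account for clinic-level clustering rather than compare raw proportions.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional

WINDOW_DAYS = 120  # outcome window stated in Protocol 1


@dataclass
class FlaggedPatient:
    """Hypothetical record for one patient the CDSS flagged as due for follow-up."""
    arm: str                        # e.g. "usual_care", "cdss", "cdss_outreach"
    enrolled: date                  # trial enrollment date
    followup_done: Optional[date]   # date recommended follow-up was completed, if ever
    confirmed_on_review: bool       # manual chart review agreed the flag was correct


def completed_within_window(p: FlaggedPatient, window: int = WINDOW_DAYS) -> bool:
    """True if recommended follow-up was completed within `window` days of enrollment."""
    if p.followup_done is None:
        return False
    return 0 <= (p.followup_done - p.enrolled).days <= window


def completion_by_arm(cohort: List[FlaggedPatient]) -> Dict[str, float]:
    """Unadjusted proportion of each arm completing follow-up within the window."""
    totals: Dict[str, int] = {}
    completed: Dict[str, int] = {}
    for p in cohort:
        totals[p.arm] = totals.get(p.arm, 0) + 1
        completed[p.arm] = completed.get(p.arm, 0) + int(completed_within_window(p))
    return {arm: completed[arm] / totals[arm] for arm in totals}


def confirmed_flag_rate(cohort: List[FlaggedPatient]) -> float:
    """Share of flagged patients whose flag was confirmed by manual chart review."""
    return sum(p.confirmed_on_review for p in cohort) / len(cohort)


# Usage with made-up records
cohort = [
    FlaggedPatient("usual_care", date(2024, 1, 10), None, True),
    FlaggedPatient("usual_care", date(2024, 1, 10), date(2024, 3, 1), True),
    FlaggedPatient("usual_care", date(2024, 1, 10), None, True),
    FlaggedPatient("cdss_outreach", date(2024, 1, 10), date(2024, 2, 1), True),
    FlaggedPatient("cdss_outreach", date(2024, 1, 10), date(2024, 6, 1), False),
]
print(completion_by_arm(cohort))
print(confirmed_flag_rate(cohort))
```

Keeping the window as a parameter makes it easy to rerun the same audit with a different follow-up horizon.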

Protocol 2: Physician Communication and Engagement Strategy

Adapted from Price-Haywood et al. (2014) and Cancer Care Ontario (2018) [2] [4]

- Objective: Increase primary care physician engagement with cancer screening audit reports.

- Design: Factorial randomized experiment (2×2×2) testing email components.

- Participants: Primary care physicians registered for screening activity reports.

- Intervention Components:

  - Anticipated Regret: Induce awareness of future regret about not accessing reports (e.g., "How would you feel if a patient had a poor outcome because you missed an abnormal result?")

  - Material Incentive: Link report use to available monetary bonuses for achieving screening targets

  - Problem Solving: Provide strategies to overcome barriers (e.g., delegate registration, schedule time)

- Outcome Measurement: Email open rates, link click-through rates, report access logs

- Process Evaluation: Semi-structured interviews to understand mechanisms of effect
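The 2×2×2 design crosses three on/off email components, giving eight email versions. The sketch below enumerates those versions and estimates each component's main effect on click-through as the difference in pooled click-through proportions between versions with and without it. The component labels and the count format are hypothetical; with equal numbers of recipients per version this contrast matches the conventional factorial main effect, whereas a full analysis would more typically use logistic regression with all three factors (and their interactions) as covariates.

```python
from itertools import product
from typing import Dict, List, Tuple

# The three email components from Protocol 2 (labels are illustrative).
FACTORS = ("anticipated_regret", "material_incentive", "problem_solving")

Arm = Tuple[int, int, int]  # 1 = component included in the email, 0 = omitted


def factorial_arms() -> List[Arm]:
    """All 2**3 = 8 email versions of the factorial design."""
    return list(product((0, 1), repeat=len(FACTORS)))


def main_effect(counts: Dict[Arm, Tuple[int, int]], factor: str) -> float:
    """Click-through difference (component on minus off), pooling over the other factors.

    `counts` maps each arm to (physicians who clicked the report link, physicians emailed).
    """
    i = FACTORS.index(factor)
    clicked = {0: 0, 1: 0}
    sent = {0: 0, 1: 0}
    for arm, (n_clicked, n_sent) in counts.items():
        clicked[arm[i]] += n_clicked
        sent[arm[i]] += n_sent
    return clicked[1] / sent[1] - clicked[0] / sent[0]


# Usage with invented counts: 100 physicians emailed per version.
counts = {
    arm: (5 + 4 * arm[0] + 1 * arm[1] + 2 * arm[2], 100)
    for arm in factorial_arms()
}
for factor in FACTORS:
    print(factor, round(main_effect(counts, factor), 3))
```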

Quantitative Evidence and Effectiveness Data

Recent studies provide quantitative evidence supporting the effectiveness of A&F strategies for improving cancer screening follow-up. The data below summarize key findings from clinical trials and implementation studies.

Table 2: Effectiveness of Audit and Feedback Interventions in Cancer Screening

| Study/Implementation | Screening Type | Intervention Components | Key Quantitative Findings |
|---|---|---|---|
| Atlas et al. (2025) [3] | Cervical cancer | CDSS with patient outreach ± navigation | Follow-up rates: 23.5% (usual care) vs. 38.2% (CDSS + outreach) (p<0.001); CDSS true positive rate: 61.3-70.4% |
| Price-Haywood et al. (2014) [2] | Colorectal, breast, cervical | Communication training + audit/feedback | Improved patient-centered counseling behaviors; no significant between-group differences in screening rates except mammography |
| Colour-Coding Navigation (2020) [7] | Breast cancer chemotherapy | Triage system (green/yellow/red) + navigation | 80% of non-compliant (Code Red) patients eventually accepted treatment; stratification: 64.8% Green, 27.0% Yellow, 8.2% Red |
| Cancer Care Ontario (2018) [4] | Colorectal, breast, cervical | Monthly email prompts + online audit reports | Baseline: <7% of email recipients clicked link to access reports; association between report use and higher screening rates |
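As a worked example of reading the Atlas et al. row, the two reported follow-up rates can be converted into an absolute difference, a relative rate, and an approximate number needed to treat (NNT). These are crude calculations from the two percentages only; they ignore clinic-level clustering and any covariate adjustment in the trial itself.

$$
\begin{aligned}
\text{Absolute difference} &= 38.2\% - 23.5\% = 14.7 \text{ percentage points} \\
\text{Relative rate} &= \frac{38.2}{23.5} \approx 1.63 \\
\text{NNT} &= \frac{1}{0.147} \approx 6.8
\end{aligned}
$$

Read loosely, and assuming the arms are otherwise comparable, roughly seven patients with abnormal results would need to receive CDSS plus outreach for one additional patient to complete follow-up.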

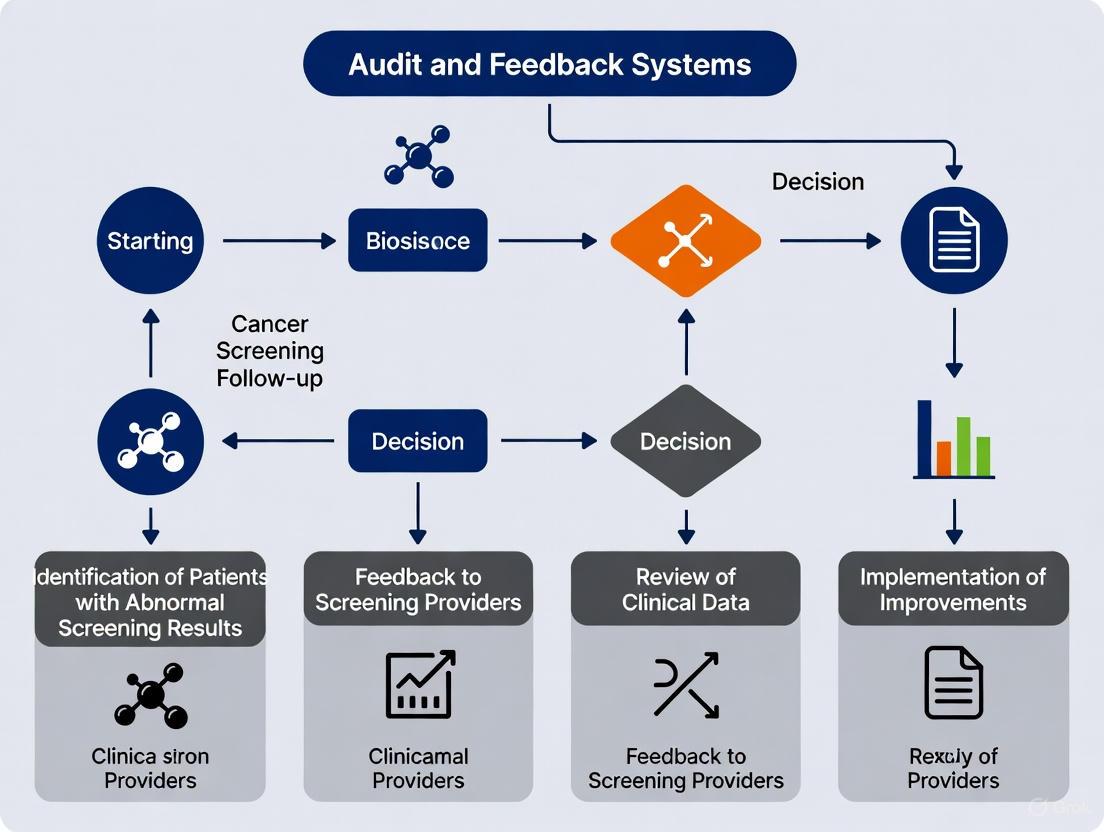

Visualization and Workflow Diagrams

The following diagrams illustrate the theoretical framework and implementation workflows for A&F systems in cancer screening follow-up.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Methods for A&F Implementation

| Tool/Resource | Function/Application | Implementation Example |
|---|---|---|
| Electronic Health Records (EHR) | Data source for identifying overdue screening and abnormal results [3] [5] | Extract structured data using LOINC codes or NLP for unstructured data [3] |
| Clinical Decision Support Systems (CDSS) | Automated identification of patients needing follow-up care [3] | Implement systems with rule-based algorithms for screening guidelines [3] |
| Behavior Change Technique (BCT) Taxonomy | Framework for designing persuasive communication [4] | Apply techniques: anticipated regret, material incentives, problem-solving [4] |
| Data Visualization Platforms | Present performance data in cognitively accessible formats [8] | Create simplified reports with high contrast colors, clear labels, and comparative benchmarks [8] |
| Patient Navigation Systems | Address barriers to care completion [7] [3] | Implement color-coded triage (green/yellow/red) with tailored support [7] |
| Health Information Technology Usability Evaluation Scale (Health-ITUES) | Measure usability of A&F interfaces and reports [8] | Customize 20-item scale with Likert responses for specific A&F context [8] |

Cancer screening represents a foundational public health strategy for reducing cancer-related morbidity and mortality through early detection. However, the effectiveness of any screening program is contingent not just on initial participation but on the complete continuum of care, culminating in timely follow-up for abnormal results. Failures in this follow-up phase substantially increase the likelihood of preventable morbidity and mortality, particularly among vulnerable populations where barriers to care include provider shortages and low health insurance coverage [9]. This application note frames this challenge within the critical context of audit and feedback systems, presenting data, protocols, and implementation frameworks to strengthen this lifesaving intervention.

Research consistently demonstrates that follow-up care after abnormal cancer screening remains suboptimal across multiple cancer types [9] [10]. For example, while over 96% of abnormal breast screens receive timely diagnostic follow-up, rates fall to approximately 76% for both cervical and colorectal cancer screening [10]. This gap represents a critical systems failure and a significant opportunity for quality improvement through structured audit and feedback mechanisms, which the Community Preventive Services Task Force (CPSTF) recommends based on sufficient evidence of effectiveness [11].

Quantitative Landscape: Screening and Follow-Up Metrics

Systematic measurement is the foundation of effective audit and feedback. The following tables present key performance indicators across the cancer screening continuum, derived from population-based research, to enable benchmarking and gap identification.

Table 1: Population-Based Cancer Screening Metrics (2013 Data) [10]

| Metric | Breast Cancer | Cervical Cancer | Colorectal Cancer |
|---|---|---|---|
| Screening-eligible Population | 305,568 | 3,160,128 | 2,363,922 |
| Up-to-Date on Testing | 63.5% | 84.6% | 77.5% |
| Abnormal Screening Rate | 10.7% | 4.4% | 4.5% |
| Timely Diagnostic Follow-Up | 96.8% | 76.2% | 76.3% |
| Cancer Detection (per 1000 screens) | 5.66 | 0.17 | 1.46 |

Table 2: Evidence-Based Interventions to Improve Screening Participation and Follow-Up [11]

| Intervention | Breast Cancer | Cervical Cancer | Colorectal Cancer |
|---|---|---|---|
| Patient Navigation Services | Recommended (Strong) | Recommended (Sufficient) | Recommended (Strong) |
| Provider Assessment & Feedback | Recommended (Sufficient) | Recommended (Sufficient) | Recommended (Sufficient) |
| Provider Reminder & Recall Systems | Recommended (Strong) | Recommended (Strong) | Recommended (Strong) |
| Client Reminders | Recommended (Strong) | Recommended (Strong) | Recommended (Strong) |
| Multicomponent Interventions | Recommended (Strong) | Recommended (Strong) | Recommended (Strong) |

Experimental Protocol: Implementing an Audit and Feedback System for Screening Follow-Up

This protocol provides a detailed methodology for establishing an audit and feedback system to monitor and improve timely follow-up after abnormal cancer screening results, adaptable to various healthcare settings.

Objectives and Scope

- Primary Objective: To increase the proportion of patients receiving guideline-concordant timely follow-up after an abnormal breast, cervical, or colorectal cancer screening result.

- Defined Scope: The system should track the screening continuum from abnormal result identification through diagnostic resolution for the target cancers within a defined patient population.

- Population Identification:

  - Data Sources: Extract data from Electronic Health Records (EHR), regional cancer screening registries (e.g., New Mexico HPV Pap Registry [10]), and pathology reporting systems.

  - Eligibility: Include all screen-eligible individuals per USPSTF guidelines within the defined calendar year.

- Key Variable Abstraction:

  - Patient demographics (age, gender, race/ethnicity, insurance status)

  - Screening test type and date

  - Screening result and date of result communication

  - Date of recommended follow-up procedure

  - Date of completed follow-up procedure (or documentation of refusal/cancellation)

  - Final diagnostic finding (e.g., normal, precancerous lesion, cancer)

- Operational Definitions for Metrics: Use standardized definitions to ensure consistency, as exemplified by the metrics in Table 1.
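An operational definition becomes auditable once it is written as an explicit rule. The sketch below classifies one abnormal screen as timely, late, refused, overdue, or pending relative to an audit date. The per-cancer windows in `TIMELY_WINDOW_DAYS` are placeholders chosen only for illustration, not PROSPR or guideline values, and the function name is hypothetical; a real program would substitute its own standardized definitions.

```python
from datetime import date
from typing import Optional

# PLACEHOLDER windows (days from abnormal result to completed diagnostic
# follow-up). These are illustrative assumptions, not published definitions.
TIMELY_WINDOW_DAYS = {"breast": 60, "cervical": 180, "colorectal": 90}


def followup_status(
    cancer: str,
    result_date: date,
    completed: Optional[date],
    audit_date: date,
    refused: bool = False,
) -> str:
    """Classify one abnormal screen for the audit.

    Returns "timely" or "late" if follow-up was completed, "refused" if the
    patient declined, and otherwise "overdue" or "pending" depending on
    whether the window had elapsed by the audit date.
    """
    window = TIMELY_WINDOW_DAYS[cancer]
    if completed is not None:
        return "timely" if (completed - result_date).days <= window else "late"
    if refused:
        return "refused"
    return "overdue" if (audit_date - result_date).days > window else "pending"


# Usage
audit = date(2024, 6, 1)
print(followup_status("breast", date(2024, 1, 1), date(2024, 2, 15), audit))
print(followup_status("colorectal", date(2024, 1, 1), None, audit))
```

Keeping "refused" separate from "overdue" mirrors the abstraction list above, which records refusal or cancellation explicitly; whether refusals stay in the denominator is itself an operational choice to document.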

Data Analysis and Feedback Reporting

- Calculate Core Metrics: For each cancer type and relevant sub-populations (e.g., by clinic, provider, demographic group), calculate:

  - Proportion of abnormal screens receiving timely diagnostic evaluation.

  - Median/mean time from abnormal result to diagnostic resolution.

  - Cancer detection rates per 1,000 screens.

- Generate Feedback Reports: Create comparative reports for clinical teams and leadership. These should benchmark performance against internal goals, external benchmarks from sources like the PROSPR consortium [10], and prior performance periods.

- Dissemination Schedule: Distribute feedback reports on a quarterly basis to maintain engagement and monitor progress. Supplement with annual comprehensive reports.
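The core metrics and benchmark comparison can be computed per clinic along the following lines. This is a hedged sketch: the `AbnormalScreen` record and `clinic_report` function are hypothetical, each record is assumed to already carry a timely/not-timely flag from the program's operational definition, and the example benchmark of 0.763 simply reuses the colorectal timely follow-up proportion from Table 1 for illustration.

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List, Optional


@dataclass
class AbnormalScreen:
    """Hypothetical audit record for one abnormal screening result."""
    clinic: str
    timely: bool                       # met the program's timely follow-up definition
    days_to_resolution: Optional[int]  # None if not yet diagnostically resolved
    cancer_detected: bool


def clinic_report(
    abnormals: List[AbnormalScreen],
    screens_by_clinic: Dict[str, int],  # all screens performed, normal and abnormal
    benchmark: float,
) -> Dict[str, dict]:
    """Per-clinic proportion timely, median days to resolution, detection per 1,000 screens."""
    by_clinic: Dict[str, List[AbnormalScreen]] = {}
    for a in abnormals:
        by_clinic.setdefault(a.clinic, []).append(a)

    report = {}
    for clinic, rows in by_clinic.items():
        resolved = [r.days_to_resolution for r in rows if r.days_to_resolution is not None]
        pct_timely = sum(r.timely for r in rows) / len(rows)
        cancers = sum(r.cancer_detected for r in rows)
        report[clinic] = {
            "pct_timely": pct_timely,
            "median_days": median(resolved) if resolved else None,
            "detection_per_1000": 1000 * cancers / screens_by_clinic[clinic],
            "below_benchmark": pct_timely < benchmark,
        }
    return report


# Usage with invented data
abnormals = [
    AbnormalScreen("A", True, 20, False),
    AbnormalScreen("A", True, 30, True),
    AbnormalScreen("A", False, 150, False),
    AbnormalScreen("A", False, None, False),
    AbnormalScreen("B", True, 10, False),
    AbnormalScreen("B", True, 40, False),
]
report = clinic_report(abnormals, {"A": 500, "B": 1000}, benchmark=0.763)
for clinic, metrics in report.items():
    print(clinic, metrics)
```

The median is used for time to resolution because a few very delayed cases can dominate a mean; the same records can be regrouped by provider or demographic group for the sub-population reports.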

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Screening Follow-Up Research and Implementation

| Item | Function/Application |
|---|---|
| PROSPR Common Data Elements | Standardized definitions and metrics for harmonized data collection across breast, cervical, and colorectal cancer screening processes, enabling multi-site research and benchmarking [10]. |
| CPSTF Recommended Intervention Guide | Evidence-based repository of effective strategies (e.g., patient navigation, provider reminders) to inform the design of quality improvement initiatives aimed at boosting follow-up rates [11]. |
| Health Information System (HIS) Data | Data extracted from EHRs, claims, and registries used to passively identify cohorts, track outcomes, and automate aspects of the audit and feedback process [10] [12]. |
| Structured Interview Guides | Qualitative data collection tools to understand patient and provider perspectives on barriers and facilitators to follow-up care, essential for tailoring interventions [9]. |
| WCAG Contrast Checker Tool | Accessibility resource to ensure all patient-facing materials (letters, portals) and data visualization dashboards meet contrast standards for readability and inclusivity [13]. |
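Because the WCAG contrast requirement is a formula rather than a judgment call, dashboard and letter color choices can be checked in code as well as with an online checker. The sketch below implements the WCAG 2.x relative-luminance and contrast-ratio definitions and the Level AA thresholds (4.5:1 for normal text, 3:1 for large text); the function names are illustrative and the `#RRGGBB` input format is an assumption.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """WCAG contrast ratio (L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: str, bg: str, large_text: bool = False) -> bool:
    """Level AA: at least 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)


# Usage: checking gray label text on a white dashboard background
print(round(contrast_ratio("#767676", "#FFFFFF"), 2))
print(passes_aa("#777777", "#FFFFFF"))
```

Running such a check over a report template's palette catches failing label or legend colors before reports reach clinicians or patients.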

Integrated Discussion: Synergizing Audit and Feedback with System-Level Interventions

Audit and feedback alone is a necessary but insufficient component for achieving optimal follow-up rates; its impact is greatest when it is integrated within a suite of evidence-based strategies. Recent research on self-sampling modalities shows that high patient satisfaction (mean satisfaction score of 4.0 out of 4.0) and a preference for self-sampling (60% in one study) do not automatically translate into complete follow-up, highlighting the need for proactive system support [9]. This is particularly important for underserved patients, for whom baseline knowledge need not be a prerequisite for accessing follow-up care when robust systems are in place [9].

The organizational determinants of successful screening programs identified in a recent systematic review include centralized coordination, active invitation systems, and integrated quality assurance mechanisms [12]. These features align closely with a comprehensive audit and feedback framework. Combining this framework with patient navigation services, which the CPSTF recommends for all three cancers (on strong evidence for breast and colorectal screening and sufficient evidence for cervical screening), addresses patient-level barriers that audits alone cannot [11]. Digital tools, such as reinforcement learning-based reminders, further enhance this ecosystem when fully integrated [12]. The ultimate goal is a learning health system in which continuous audit informs targeted feedback, which in turn activates multicomponent interventions (e.g., navigation, reminders, and reduced structural barriers), creating a virtuous cycle that closes the follow-up gap and fulfills the public health imperative of cancer screening.

Audit and feedback (A&F) systems represent a cornerstone implementation strategy for improving healthcare quality, including within the critical domain of cancer screening follow-up. The Clinical Performance Feedback Intervention Theory (CP-FIT) provides a theoretical framework for A&F, proposing that it operates through a cyclical feedback process that optimizes individual patient care and modifies organizational care delivery [14]. In cancer screening, where the benefits of early detection can be undermined by failures in the diagnostic cascade after an abnormal result, A&F systems offer a mechanism to ensure completion of the screening process. This application note synthesizes the current evidence base, provides structured experimental protocols, and details essential resources for implementing A&F systems in cancer screening follow-up research, directly supporting the broader thesis that systematically applied A&F significantly improves compliance with recommended pathways.

Quantitative Evidence Base for Audit and Feedback

Robust quantitative evidence supports the efficacy of A&F in clinical settings. A systematic review of A&F involving over 140 randomized trials demonstrates a small to moderate effect (median 4.3% improvement) on professional compliance with desired clinical practice, though the effect size varies widely (from -9% to +70%) depending on design and context [1]. The specific application of A&F within cancer screening systems shows considerable promise. A recent systematic review (2025) on organizational determinants of cancer screening participation found that A&F mechanisms modestly improved adherence, particularly when aligned with quality improvement initiatives [15]. Furthermore, the CanScreen5 global cancer screening repository, encompassing data from 84 countries, underscores the critical importance of robust information systems for tracking performance—a foundational element for effective A&F [16].

Table 1: Documented Efficacy of Audit and Feedback in Healthcare and Cancer Screening

| Context/Study | Reported Effect Size or Outcome | Key Determinants of Success |
|---|---|---|
| General Healthcare (Cochrane Review) | Median 4.3% improvement in compliance; range: -9% to +70% [1] | Focus on poorly performing providers; clear targets and action plans [1] |
| Cancer Screening Programs | Modest improvement in adherence [15] | Alignment with quality improvement initiatives; integration within broader organizational ecosystems [15] |
| Acute Stroke Treatment (tPA) | Development of 5 additional implementation strategies post-feedback (e.g., education, protocol folders, increased access) [14] | Enablement, training, and environmental restructuring as mechanisms of action [14] |
| State-wide Value-Based Healthcare | Operated through 8 mechanistic processes (e.g., ownership, sensemaking, social influence) [17] | Engagement between auditors/clinicians; meaningful indicators; clear improvement plans [17] |

Experimental Protocols for A&F Research

Protocol 1: Multilevel Intervention for Abnormal Cancer Screening Follow-up (mFOCUS)

The multilevel Follow-up of Cancer Screening (mFOCUS) trial provides a rigorous, pragmatic protocol for evaluating A&F in a real-world setting [18].

- Objective: To evaluate the effectiveness of a multilevel intervention on improving the follow-up of abnormal breast, cervical, colorectal, and lung cancer screening results.

- Study Design: A 4-arm, cluster randomized controlled trial (RCT) with primary care sites as the unit of randomization.

- Arms and Interventions:

  - Standard Care: Usual follow-up processes without systematic intervention.

  - Visit-Based Reminders: EHR-based reminders visible to both patients and providers when the record is accessed.

  - Population Health Outreach: Visit-based reminders plus proactive outreach (e.g., letters, calls) from a population health team.

  - Patient Navigation: All of the above, plus patient navigation with systematic screening for and referral to address social barriers to care (e.g., transportation, cost).

- Population: Adults overdue for follow-up of an abnormal screening test for breast, cervical, colorectal, or lung cancer, as defined by specific, guideline-based timeframes (e.g., 6 months overdue for a high-risk cervical abnormality requiring colposcopy) [18].

- Primary Outcome: Completion of appropriate follow-up, specific to the abnormal finding, within 120 days of trial eligibility.

- Implementation Notes: The trial operates as a "fail-safe" system, supplementing rather than replacing standard care. It leverages a single Epic EHR across participating sites and obtained a waiver of informed consent, enhancing its pragmatic nature [18].
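The cumulative arm structure and site-level (cluster) allocation can be sketched as follows. This is an illustrative simple randomization, assuming a plain shuffle-and-deal allocation of sites to the four arms; it is not the mFOCUS trial's actual procedure, which may have used stratification or other constraints, and the site, arm, and component names are hypothetical.

```python
import random
from typing import Dict, List

# Each arm adds one layer to the previous arm, mirroring the protocol text.
ARMS: Dict[str, List[str]] = {
    "standard_care": [],
    "visit_reminders": ["visit_reminder"],
    "population_outreach": ["visit_reminder", "outreach"],
    "patient_navigation": ["visit_reminder", "outreach", "navigation"],
}


def randomize_sites(sites: List[str], seed: int) -> Dict[str, str]:
    """Assign whole sites (clusters) to arms in near-equal numbers.

    Sites are shuffled with a seeded generator, so the allocation can be
    reproduced and audited, and then dealt to the arms in turn.
    """
    rng = random.Random(seed)
    shuffled = list(sites)
    rng.shuffle(shuffled)
    arm_names = list(ARMS)
    return {site: arm_names[i % len(arm_names)] for i, site in enumerate(shuffled)}


# Usage with hypothetical site identifiers
sites = [f"site_{n:02d}" for n in range(1, 9)]
allocation = randomize_sites(sites, seed=2024)
for site in sites:
    arm = allocation[site]
    print(site, arm, ARMS[arm])
```

Randomizing whole sites rather than individual patients matches the protocol's unit of randomization and avoids contamination between arms that share clinicians; the analysis must then account for clustering.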

Protocol 2: Qualitative Investigation of A&F Mechanisms

Understanding how A&F works requires qualitative methodologies to uncover underlying mechanisms [14] [17].

- Objective: To identify the implementation strategies generated by clinical teams in response to A&F and to specify their mechanisms of action.

- Study Design: Qualitative study using semi-structured interviews, analyzed retroductively using a context-mechanism-outcome framework.

- Setting and Participants: Purposive sampling of healthcare providers and administrative staff involved in the clinical area targeted by the A&F intervention (e.g., emergency department staff for acute stroke care).

- Data Collection: Conduct semi-structured interviews grounded in implementation frameworks like the COM-B model or Theoretical Domains Framework. Interviews should continue until thematic saturation is achieved (e.g., 10 interviews) [14].

- Analysis:

  - Transcribe interviews verbatim.

  - Code transcripts to identify emergent implementation strategies developed internally by staff in response to feedback.

  - Analyze the coded strategies to specify the mechanisms of action (e.g., enablement, training, environmental restructuring) through which they are believed to effect change [14].

- Outcome: A refined program theory detailing the causal pathways through which A&F leads to practice improvement in a specific context.

Mechanistic Workflow of Audit and Feedback

The following diagram illustrates the theorized cyclical workflow of an effective audit and feedback intervention, synthesizing elements from CP-FIT and empirical research [1] [14] [17].

A&F Cyclical Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Resources for A&F Cancer Screening Research

| Item/Resource | Function/Application in A&F Research | Exemplars/Specifications |
|---|---|---|
| Electronic Health Record (EHR) System | Primary data source for audit; platform for embedding visit-based reminders. | Epic or similar integrated systems enabling data extraction and clinical decision support [18]. |
| Clinical Data Registry | Centralized repository for tracking screening participants across the entire care continuum (identification, invitation, result, follow-up). | CanScreen5 platform; EU national screening registries [16]. |
| Performance Indicator Set | Standardized metrics for audit, allowing for benchmarking and quality assessment. | 23 priority indicators from CanScreen-ECIS (e.g., detection rate, examination coverage, interval cancer rate) [19]. |
| Feedback Report Template | Structured format for delivering performance data to clinicians and teams. | Incorporates CP-FIT variables: defined goals, peer comparison, clear visualizations (e.g., bar/line graphs), action plan [14]. |
| Qualitative Interview Guides | Tool for investigating the mechanisms of action and contextual factors influencing A&F success. | Guides grounded in COM-B, TDF, or other implementation frameworks [14] [17]. |
| Stakeholder Engagement Framework | Methodology for ensuring clinician buy-in and co-design of the A&F intervention. | APEASE criteria (Affordability, Practicability, Effectiveness and cost-effectiveness, Acceptability, Side-effects/safety, Equity) for strategy selection [14]. |

Audit and Feedback (A&F) systems represent a critical methodology for improving healthcare quality by closing gaps in the cancer screening continuum. This process involves systematically measuring current practices against benchmarks and delivering structured data to providers to prompt performance improvement. The fragmentation of outpatient care makes timely follow-up of abnormal diagnostic findings a persistent challenge, even with advanced electronic medical record (EMR) systems [20]. Research indicates that critical imaging results may not receive timely follow-up actions in 7.7% of cases, even when providers receive and read results in an integrated EMR system [20]. This Application Note provides a detailed framework for implementing A&F systems that map to each step of the cancer screening pathway, from abnormal result to diagnostic resolution, with specific protocols for researchers studying quality improvement in cancer screening follow-up.

Quantitative Foundations of the Screening Pathway

Effective A&F systems require robust baseline metrics to identify gaps and measure improvement. Population-based research provides critical benchmarks for each phase of the screening continuum.

Table 1: Population-Based Cancer Screening Metrics Across the Care Continuum

| Screening Metric | Breast Cancer | Cervical Cancer | Colorectal Cancer |
|---|---|---|---|
| Screening Participation | 63.5% | 84.6% | 77.5% |
| Percent Abnormal Screens | 10.7% | 4.4% | 4.5% |
| Timely Diagnostic Follow-up | 96.8% | 76.2% | 76.3% |
| Cancer Detection Rate (per 1000 screens) | 5.66 | 0.17 | 1.46 |

Source: PROSPR Consortium, 2013 data [10]

The screening process has multiple vulnerable points where breakdowns can occur. For abnormal imaging results, one study found that 18.1% of critical alerts went unacknowledged by providers, with trainees significantly more likely not to acknowledge alerts (OR, 5.58; 95% CI, 2.86-10.89) [20]. Dual communication (alerting multiple providers) was paradoxically associated with a higher risk of lacking timely follow-up (OR, 1.99; 95% CI, 1.06-3.48), possibly because of diffusion of responsibility [20]. These quantitative foundations enable researchers to identify specific leakage points in the screening pathway and target A&F interventions accordingly.

Experimental Protocols for A&F System Implementation

Protocol 1: Tracking Critical Result Follow-up

Objective: To quantify and improve follow-up rates for abnormal cancer screening results.

Materials: EMR with alert tracking capability, standardized reporting codes for abnormal findings, audit tracking software.

Methodology:

- Identify Abnormal Results: Utilize predefined standardized codes for abnormal imaging requiring action [20].

- Transmission Protocol: Configure EMR to transmit electronic alerts to View Alert window of ordering providers [20].

- Acknowledgement Tracking: Implement tracking software to determine whether alerts were acknowledged (provider opened message) within two weeks of transmission [20].

- Follow-up Assessment: Review medical records 4 weeks post-transmission for documented follow-up actions (additional testing, consultations, patient notifications) [20].

- Provider Verification: Contact providers directly when documentation is absent to confirm follow-up status [20].

Key Measurements:

- Alert acknowledgement rate within 14 days

- Timely follow-up action rate within 28 days

- Rate of undocumented follow-up actions
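The three key measurements can be computed from alert-level records as sketched below. The `AlertRecord` fields and `alert_metrics` function are hypothetical; the 14- and 28-day windows come from the protocol, and "undocumented" is interpreted here, as an assumption, as follow-up that was confirmed only by contacting the provider rather than found in the medical record.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

ACK_WINDOW_DAYS = 14       # acknowledgement window from the protocol
FOLLOWUP_WINDOW_DAYS = 28  # follow-up action window from the protocol


@dataclass
class AlertRecord:
    """Hypothetical tracking record for one transmitted critical-result alert."""
    sent: date
    acknowledged: Optional[date]     # date the provider opened the alert, if ever
    followup_action: Optional[date]  # date of first follow-up action, if any
    documented_in_chart: bool        # False if confirmed only by contacting the provider


def _within(start: date, end: Optional[date], days: int) -> bool:
    """True if `end` exists and falls within `days` days after `start`."""
    return end is not None and 0 <= (end - start).days <= days


def alert_metrics(alerts: List[AlertRecord]) -> dict:
    n = len(alerts)
    acted = [a for a in alerts if a.followup_action is not None]
    return {
        "ack_rate_14d": sum(_within(a.sent, a.acknowledged, ACK_WINDOW_DAYS) for a in alerts) / n,
        "timely_followup_rate_28d": sum(
            _within(a.sent, a.followup_action, FOLLOWUP_WINDOW_DAYS) for a in alerts
        ) / n,
        # Among alerts with any follow-up action, share not documented in the chart
        "undocumented_rate": (
            sum(not a.documented_in_chart for a in acted) / len(acted) if acted else 0.0
        ),
    }


# Usage with invented records
alerts = [
    AlertRecord(date(2024, 1, 1), date(2024, 1, 3), date(2024, 1, 10), True),
    AlertRecord(date(2024, 1, 1), None, date(2024, 2, 15), False),
    AlertRecord(date(2024, 1, 1), date(2024, 1, 20), date(2024, 1, 25), False),
    AlertRecord(date(2024, 1, 1), date(2024, 1, 2), None, False),
]
print(alert_metrics(alerts))
```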

Protocol 2: Implementing Multimodal Communication Interventions

Objective: To evaluate the impact of supplemental communication strategies on follow-up rates.

Background: Electronic alerts alone demonstrate significant failure rates, with nearly 10% of unacknowledged alerts lacking timely follow-up [20].

Intervention Components:

- Verbal Communication: Radiologist initiates additional verbal communication for critical findings [20].

- Structured Documentation: Implement standardized fields for documenting follow-up plans in EMR.

- Dual Provider Identification: Designate primary responsibility when multiple providers receive alerts.

Experimental Groups:

- Control: Standard electronic alert system only

- Intervention 1: Electronic alert + verbal communication

- Intervention 2: Electronic alert + structured documentation + clear responsibility assignment

Outcome Measures: Compare rates of timely follow-up across groups using multivariable logistic regression models accounting for clustering effect by providers [20].
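Before fitting the multivariable, cluster-adjusted logistic regression the protocol specifies, a crude odds ratio for each intervention arm versus control is a useful descriptive check. The sketch computes an unadjusted odds ratio with a Woolf (log-based) 95% confidence interval from a 2×2 table. It does not account for clustering by provider, so its interval will tend to be too narrow for this design; the function name and the counts in the usage line are invented.

```python
import math
from typing import Tuple


def odds_ratio_woolf(a: int, b: int, c: int, d: int, z: float = 1.96) -> Tuple[float, float, float]:
    """Unadjusted odds ratio and Woolf confidence interval.

    a: intervention, timely follow-up      b: intervention, no timely follow-up
    c: control, timely follow-up           d: control, no timely follow-up
    """
    if min(a, b, c, d) == 0:
        raise ValueError("zero cell: use a continuity correction or an exact method")
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    return math.exp(log_or), math.exp(log_or - z * se), math.exp(log_or + z * se)


# Usage with invented counts
or_, lower, upper = odds_ratio_woolf(a=90, b=10, c=80, d=20)
print(f"OR {or_:.2f} (95% CI {lower:.2f}-{upper:.2f})")
```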

Visualization of A&F System Implementation

The following workflow diagram maps the complete A&F system implementation pathway from abnormal result identification to diagnostic resolution and system refinement:

Research Reagent Solutions

Table 2: Essential Research Materials for A&F System Implementation

| Research Component | Function | Implementation Example |
|---|---|---|
| EMR Alert System | Transmits critical result notifications | VA CPRS View Alert system [20] |
| Alert Tracking Software | Monitors provider acknowledgment | VistA alert-management-tracking program [20] |
| Standardized Coding System | Classifies abnormal findings requiring action | Radiology predefined alert codes [20] |
| Audit Protocol | Validates data collection methodology | HEDIS Compliance Audit framework [21] |
| Common Data Elements | Ensures consistent metric definition | PROSPR screening continuum metrics [10] |

Regulatory and Accreditation Context

A&F systems must align with evolving regulatory requirements. For 2025 HEDIS reporting, key updates include lowering the starting age of the breast cancer screening measure from 52 to 42 years and transitioning the Childhood Immunization Status measure to Electronic Clinical Data Systems (ECDS) reporting [21]. The Commission on Cancer (CoC) has updated Standard 4.8 for 2025, clarifying that survivorship services must address the needs of patients who have completed their first course of treatment and cannot be single events [22]. HEDIS Compliance Audits require a standardized methodology that provides an independent assessment of information systems, data management processes, and final HEDIS rates [21]. Researchers should incorporate these evolving standards into A&F system design to ensure real-world applicability.

Mapping effective A&F systems to the cancer screening pathway requires meticulous attention to quantitative benchmarks, implementation protocols, and regulatory frameworks. The protocols and visualizations presented herein provide researchers with structured approaches to address the critical gap between abnormal result identification and diagnostic resolution. By implementing these protocols and using the research tools outlined, investigators can develop robust A&F systems that improve timely follow-up rates and ultimately reduce diagnostic delays in cancer care. Future research should focus on optimizing communication systems in increasingly fragmented healthcare environments and on exploring the impact of emerging technologies on reducing follow-up gaps.

Audit and feedback (A/F) systems are integral to improving the quality of cancer screening programs. These tools provide healthcare providers with summaries of their clinical performance over a specified period, aiming to bridge the gap between actual and desired practice. Framed within a broader thesis on A/F systems for cancer screening follow-up research, this application note details key quantitative outcomes, experimental protocols, and essential research tools for evaluating the impact of these systems on follow-up rates and cancer mortality. The insights are critical for researchers, scientists, and drug development professionals working to optimize cancer care pathways and validate the effectiveness of quality improvement interventions.

Key Quantitative Outcomes from Audit and Feedback Systems

Empirical evidence demonstrates that A/F systems can positively influence screening participation, a critical step in the early detection of cancer. Key outcomes from a large-scale evaluation of the Primary Care Screening Activity Report (PCSAR) in Ontario, Canada, are summarized in the table below [23].

Table 1: Impact of Physician Engagement with PCSAR on Cancer Screening Participation

| Cancer Screening Program | Adjusted Odds Ratio (AOR) associated with Physician Registration | Adjusted Odds Ratio (AOR) associated with Physician Log-in |
|---|---|---|
| Colorectal Cancer | 1.06 [1.04; 1.09] | 1.07 [1.03; 1.12] |
| Breast Cancer | 1.15 [1.12; 1.19] | 1.18 [1.14; 1.22] |
| Cervical Cancer | 1.10 [1.08; 1.12] | 1.16 [1.13; 1.19] |

This study found that simply having a physician registered to receive the PCSAR was associated with a statistically significant, though modest, increase in the odds of screening participation across all three cancer types [23]. The association was stronger when physicians actively logged in to view their reports. This suggests that engagement with the A/F tool, rather than registration alone, may be a key driver of improved screening participation.
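To make "modest" concrete, an odds ratio can be translated into an approximate change in participation probability for an assumed baseline. The Python sketch below does this. The 60% baseline is a hypothetical value, not a figure from [23]. Because the AORs are covariate-adjusted, applying them to a single crude baseline is only an approximation.

```python
def probability_with_odds_ratio(baseline_probability: float, odds_ratio: float) -> float:
    """Convert a baseline probability and an odds ratio into the implied new probability."""
    if not 0.0 < baseline_probability < 1.0:
        raise ValueError("baseline_probability must be strictly between 0 and 1")
    baseline_odds = baseline_probability / (1.0 - baseline_probability)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)


# Point estimates from the physician log-in column above; the baseline is hypothetical.
HYPOTHETICAL_BASELINE = 0.60
LOGIN_AORS = {"Colorectal": 1.07, "Breast": 1.18, "Cervical": 1.16}

for program, aor in LOGIN_AORS.items():
    p = probability_with_odds_ratio(HYPOTHETICAL_BASELINE, aor)
    print(f"{program}: {HYPOTHETICAL_BASELINE:.0%} -> {p:.1%} "
          f"({(p - HYPOTHETICAL_BASELINE) * 100:+.1f} percentage points)")
```

At a baseline of this size, odds ratios between 1.07 and 1.18 correspond to changes of only a few percentage points. This is consistent with the "modest" characterization in the text.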

Beyond intermediate outcomes like screening participation, the ultimate goal of screening programs is to reduce cancer-specific mortality. A related body of evidence concerns surveillance after curative-intent treatment rather than follow-up of abnormal screening results. A meta-analysis of follow-up strategies in this setting for common cancers provides relevant insights [24].

Table 2: Impact of Intensive Follow-Up on Survival for Common Cancers (Meta-Analysis of Low Risk of Bias Studies)

| Cancer Type | Impact on Overall Survival (Hazard Ratio, 95% CI) | Impact on Curative Treatment of Recurrences (Relative Risk, 95% CI) |
|---|---|---|
| Colorectal | 0.99 [0.92 - 1.06] | 1.30 [1.05 - 1.61] |
| Breast | 1.06 [0.92 - 1.23] | Not Available |
| Upper Gastro-Intestinal | 0.78 [0.51 - 1.19] | 0.92 [0.47 - 1.81] |
| Prostate | 1.00 [0.86 - 1.16] | Not Available |

The analysis concluded that, for colorectal and breast cancer, high-quality studies do not show a significant effect of intensive follow-up strategies on overall survival [24]. The confidence intervals for upper gastrointestinal and prostate cancer also include 1. However, for colorectal cancer, intensive follow-up was associated with a 30% relative increase in the likelihood that recurrences were treated with curative intent (RR 1.30). This is an important clinical benefit even in the absence of a clear survival signal [24].
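When a study reports only a ratio estimate and its 95% CI, you can back-calculate the standard error on the log scale, and from it an approximate z statistic and two-sided p-value. The sketch below assumes the published CIs are Wald intervals that are symmetric on the log scale. Rounding in published values makes the results approximate.

```python
import math
from statistics import NormalDist


def p_value_from_ratio_ci(estimate, lower, upper, level=0.95):
    """Back-calculate log-scale SE, z, and two-sided p from a ratio and its CI.

    Assumes a Wald interval that is symmetric on the log scale.
    """
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% CI
    se = (math.log(upper) - math.log(lower)) / (2 * z_crit)
    z = math.log(estimate) / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return se, z, p


# Colorectal cancer rows from the table above.
for label, est, lo, hi in [
    ("Overall survival HR", 0.99, 0.92, 1.06),
    ("Curative treatment of recurrence RR", 1.30, 1.05, 1.61),
]:
    se, z, p = p_value_from_ratio_ci(est, lo, hi)
    print(f"{label}: SE(log)={se:.3f}, z={z:.2f}, p~{p:.3f}")
```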

Experimental Protocols for Evaluating A/F Interventions

Protocol 1: Factorial Experiment to Test Email Content for A/F Engagement

Objective: To determine whether emails incorporating specific Behavior Change Techniques (BCTs) increase log-in rates to a web-based A/F tool (the Screening Activity Report, or SAR) [4] [25].

Background: Analytics revealed that 50% of email recipients did not open the original monthly SAR update email, and less than 7% clicked the link to access their report [4]. This protocol was designed to optimize this communication.

Methods:

- Intervention Design:

- A user-centered, co-creation approach was employed, involving focus groups and workshops with both "adopter" (physicians who used the SAR) and "non-adopter" physicians [25].

- Three BCTs from the Behavior Change Technique Taxonomy (v1) were selected for testing [4] [25]:

- Anticipated Regret: Inducing expectations of future regret about not logging in (e.g., "How would you feel if a patient had a poor outcome because you missed an abnormal test result?") [4].

- Material Incentive (Behavior): Linking SAR use to an existing monetary bonus for achieving high screening rates [4].

- Problem Solving: Providing strategies to overcome barriers to access (e.g., tips on delegating access or scheduling time) [4].

- Study Design:

- A pragmatic, 2x2x2 factorial randomized experiment within a Multiphase Optimization Strategy (MOST) framework [4].

- Participants (primary care physicians registered for the SAR) were randomized into one of eight experimental conditions, receiving monthly emails with all possible combinations of the three BCTs (on or off) [4].

- Outcomes: log-in rates to the SAR, the behavior the emails are designed to change.

This protocol provides a robust model for experimentally testing communication strategies to enhance engagement with A/F systems.
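The Python sketch below mirrors the 2x2x2 structure described above. It does three things:

- enumerates the eight on/off combinations of the three BCTs;
- assigns participants using permuted blocks, so arm sizes stay balanced;
- estimates a simple main effect as the difference in log-in proportions between the "on" and "off" levels of one factor.

The factor names, the permuted-block scheme, and the seed are illustrative assumptions, not details of the cited protocol [4]. A full MOST analysis would typically use a regression model with effect-coded factors.

```python
import itertools
import random

# Factor names are illustrative labels for the three BCTs tested.
BCTS = ("anticipated_regret", "material_incentive", "problem_solving")


def factorial_conditions(factors):
    """Return all 2**k on/off combinations of the given factors."""
    return [
        dict(zip(factors, levels))
        for levels in itertools.product((False, True), repeat=len(factors))
    ]


def randomize(participant_ids, n_conditions, seed=0):
    """Assign participants to condition indices using permuted blocks."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    assignment, block = {}, []
    for pid in ids:
        if not block:  # start a new block containing each condition once
            block = list(range(n_conditions))
            rng.shuffle(block)
        assignment[pid] = block.pop()
    return assignment


def main_effect(assignment, conditions, logged_in, factor):
    """Difference in log-in proportion between factor 'on' and 'off' participants."""
    on = [logged_in[p] for p, c in assignment.items() if conditions[c][factor]]
    off = [logged_in[p] for p, c in assignment.items() if not conditions[c][factor]]
    return sum(on) / len(on) - sum(off) / len(off)


conditions = factorial_conditions(BCTS)
assignment = randomize(range(80), len(conditions))
```

With 80 hypothetical participants, each condition receives 10. Each BCT's main effect is still estimated from all 80 participants (40 "on" versus 40 "off"), which is the efficiency argument for factorial screening experiments in MOST.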

Protocol 2: Measuring Mortality Impact - An Alternative to Rate Ratios

Objective: To accurately measure the mortality benefit of cancer screening interventions by using "deaths averted" to avoid dilutional bias inherent in traditional rate ratio analyses [26].

Background: In screening trials, follow-up must continue beyond the active screening period. Follow-up therefore also captures deaths from cancers that became detectable only after screening ended, which are expected to occur at similar rates in the screening and control groups. Including these post-screening cases in a rate ratio calculation dilutes the estimated effect, biasing the result toward unity (no effect) [26].

Methods:

- Data Analysis:

- Calculate the absolute difference in the number of cancer deaths between the screening and control arms. This difference represents the number of deaths averted (DA) [26].

- Adjust for small differences in person-years of follow-up between groups. The expected number of deaths in the screening group (Es) is calculated as: Es = (Observed deaths in control) × (Person-years in screening / Person-years in control) [26].

- DA = Es - Os, where Os is the observed number of deaths in the screening group [26].

- Presentation of Results:

This methodological approach is crucial for researchers aiming to provide an undiluted and more accurate estimate of a screening program's impact on cancer mortality.
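The sketch below implements the DA calculation in Python and computes a rate ratio alongside it, to show the dilution effect. All counts and person-years are hypothetical. The example adds the same number of post-screening deaths to both arms: the rate ratio moves toward 1, while DA is unchanged.

```python
def rate_ratio(screen_deaths, control_deaths, py_screen, py_control):
    """Cancer mortality rate in the screening arm divided by that in the control arm."""
    return (screen_deaths / py_screen) / (control_deaths / py_control)


def deaths_averted(screen_deaths, control_deaths, py_screen, py_control):
    """DA = Es - Os, with Es = control deaths scaled to screening-arm person-years."""
    expected_screen = control_deaths * (py_screen / py_control)  # Es
    return expected_screen - screen_deaths


# Hypothetical trial: equal person-years, 80 vs 100 deaths attributable to the screening period.
PY = 100_000
base = dict(screen_deaths=80, control_deaths=100, py_screen=PY, py_control=PY)
# Add 50 post-screening deaths to each arm, which screening could not have prevented.
diluted = dict(screen_deaths=130, control_deaths=150, py_screen=PY, py_control=PY)

for name, d in [("without post-screening deaths", base), ("with post-screening deaths", diluted)]:
    print(f"{name}: RR={rate_ratio(**d):.3f}, DA={deaths_averted(**d):.1f}")
```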

Workflow and Logical Diagrams

Research and Impact Pathway for A/F Systems

Diagram: the logical pathway from A/F intervention through to its key outcomes, integrating both intermediate clinical actions and ultimate mortality effects.

Experimental Design for BCT Testing

Diagram: the factorial design used to test the different behavior change techniques in email communications.

The Scientist's Toolkit: Research Reagent Solutions

For researchers aiming to replicate or build upon the studies cited, the following table details key resources and their functions.

Table 3: Essential Research Materials and Tools for A/F and Screening Impact Studies

| Item/Tool Name | Type/Model | Primary Function in Research |
|---|---|---|
| Behavior Change Technique Taxonomy (v1) | Classification System | Provides a standardized framework for defining and reporting active components (BCTs) in behavior change interventions, ensuring replicability [4] [25]. |
| Theoretical Domains Framework (TDF) | Analytical Framework | Used to identify potential determinants of behavior (e.g., emotions, beliefs about consequences) that BCTs are designed to target, informing intervention design [4]. |
| Multiphase Optimization Strategy (MOST) | Research Framework | An engineering-inspired framework for optimizing multicomponent behavioral interventions using factorial experiments to identify which components are active [4]. |
| Process Flow Diagrams (Swimlane Maps) | Quality Improvement Tool | Visual tools used to detail the specific steps, decision points, and responsibilities in a complex intervention, aiding in implementation, adaptation, and fidelity tracking [27]. |
| CanScreen5 Data Platform | Global Data Repository | A harmonized platform for collecting and reporting qualitative and quantitative data on cancer screening programs, enabling benchmarking and cross-program performance analysis [28] [16]. |
| Deaths Averted (DA) Metric | Statistical Method | An alternative to rate ratios for analyzing screening trial data; calculates the absolute difference in cancer deaths between arms to avoid dilutional bias from post-screening cases [26]. |

Building Effective Systems: A Step-by-Step Guide to A&F Implementation

Audit and feedback (A&F) systems represent a cornerstone implementation strategy for improving healthcare quality, particularly in cancer screening follow-up research. Defined as the collection and analysis of performance data (audit) followed by the presentation of clinical performance summaries to healthcare professionals (feedback), A&F programs aim to bridge evidence-practice gaps by influencing provider behavior [29]. When effectively designed and implemented, these systems can significantly impact adherence to cancer screening guidelines, though their success depends on a sophisticated interplay of core components. This article delineates the essential elements of a successful A&F program—assessment, reporting, and feedback loops—within the context of cancer screening research, providing researchers and drug development professionals with structured protocols and analytical frameworks to enhance intervention efficacy and sustainability.

Quantitative Foundations of A&F Effectiveness

Robust assessment forms the empirical foundation of any successful A&F program. Quantitative evaluation provides critical evidence of program impact, informs iterative refinements, and demonstrates value to stakeholders. Research across diverse cancer screening contexts reveals measurable effects on both participant engagement and clinical knowledge outcomes.

Table 1: Quantitative Outcomes from Cancer-Focused A&F and Educational Programs

| Program Characteristic | Program A (Tobacco Cessation) | Program B (Colorectal Cancer Screening) | Program C (Prostate Cancer Screening) | Program D (Caregiver Education) |
|---|---|---|---|---|
| Participants | 195 | 45 | 59 | 132 |
| Program Duration | 4 months | 7 months | 9 months | 7 months |
| Session Frequency | 4 monthly sessions | 7 monthly sessions | 9 monthly sessions | 7 monthly sessions |

Pooled across all four programs:

- Participant engagement: average of 20.15 participants per session
- Knowledge increase (5-point scale): +0.84 on average
- Confidence increase (5-point scale): +0.77 on average
- Implementation likelihood: 59% of participants planned to use the information within one month

Source: Adapted from American Cancer Society ECHO Program evaluations [30]

The data in Table 1 illustrate that structured virtual education programs incorporating A&F principles can engage healthcare professionals across multiple cancer domains. Participants reported improvements in both knowledge (+0.84) and confidence (+0.77) on 5-point scales, both essential precursors to behavior change. A majority (59%) intended to implement the strategies they had learned in clinical practice within one month [30]. These quantitative outcomes provide a benchmark for researchers developing A&F interventions targeting cancer screening improvement.

Experimental Protocol: Measuring A&F Impact on Screening Participation

Objective: To quantitatively evaluate the effect of a multimodal A&F intervention on colorectal cancer screening participation rates within primary care practices.

Materials and Reagents:

- Electronic medical record system with screening-eligible patient population

- Data extraction and aggregation software (e.g., SQL databases, Python/R scripts)

- Secure data visualization platform for feedback reports

- Validated survey instruments for measuring knowledge and confidence (5-point Likert scales)

- Statistical analysis software (e.g., SPSS, GraphPad Prism, R)

Methodology:

- Baseline Assessment Phase (Months 1-3):

- Extract retrospective data on screening rates for 12 months pre-intervention

- Identify eligible patient population using established criteria (e.g., age 45-75, no prior screening, no personal history of CRC)

- Administer pre-intervention surveys to assess clinician knowledge, confidence, and perceived barriers regarding CRC screening guidelines

- Intervention Phase (Months 4-9):

- Implement multimodal A&F intervention comprising:

- Monthly performance reports comparing individual and practice-level screening rates to regional benchmarks

- Peer comparison data presented through anonymized rankings

- Actionable recommendations for addressing screening barriers

- Virtual group sessions for case discussion and strategy sharing


- Post-Intervention Assessment (Months 10-12):

- Measure primary outcome: change in screening completion rates

- Administer post-intervention surveys to assess changes in knowledge and confidence

- Conduct semi-structured interviews to identify implementation facilitators and barriers

- Statistical Analysis:

- Use paired t-tests or Wilcoxon signed-rank tests to compare pre- and post-intervention screening rates at the practice or clinician level

- Calculate mean differences in knowledge and confidence scores with 95% confidence intervals

- Perform multivariable regression to identify predictors of screening improvement

This protocol emphasizes the importance of baseline measurement, multimodal intervention components, and mixed-methods assessment to comprehensively evaluate A&F effectiveness in cancer screening contexts [30] [15].
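For the pre-post comparisons in the statistical analysis step, the sketch below computes the mean paired difference and its confidence interval. The same function applies to knowledge and confidence scores. It uses a normal approximation for the interval. With the small clinician samples typical of these studies, a t quantile (e.g., from `scipy.stats.t.ppf`) would be more appropriate. The input values are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def paired_mean_difference(pre, post, level=0.95):
    """Mean of (post - pre) differences with a normal-approximation CI."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [after - before for before, after in zip(pre, post)]
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(len(diffs))
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return d_bar, (d_bar - z * se, d_bar + z * se)


# Hypothetical practice-level CRC screening completion rates before and after A&F.
pre_rates = [0.50, 0.55, 0.60, 0.45]
post_rates = [0.56, 0.60, 0.63, 0.52]
diff, (low, high) = paired_mean_difference(pre_rates, post_rates)
print(f"Mean change: {diff:+.3f} (95% CI {low:+.3f} to {high:+.3f})")
```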

Organizational Frameworks for A&F Implementation

The structural context in which A&F programs operate significantly influences their effectiveness. Research indicates that organizational determinants can either facilitate or impede successful implementation, particularly for complex processes like cancer screening that involve multiple steps and healthcare team members.

Table 2: Organizational Determinants of Successful Cancer Screening Programs

| Organizational Factor | Impact on Screening Participation | Evidence Strength |
|---|---|---|
| Centralized Program Coordination | Higher participation through systematic population management | Strong [15] |
| Active Invitation Systems | 15-30% increase in initial uptake compared to passive approaches | Moderate-Strong [15] |
| Integrated Quality Assurance | Improved adherence through continuous monitoring and improvement | Moderate [15] |
| Community-Based Outreach | Particularly effective for underserved populations (15-25% increases) | Moderate [15] |
| Culturally Tailored Education | Addresses disparities and improves equity in screening access | Moderate [15] |
| Digital Reminder Systems | Significant improvements when integrated with organizational workflows | Moderate [15] |
| Audit and Feedback Mechanisms | Modest improvements, enhanced when aligned with QI initiatives | Moderate [15] |

Organizational infrastructure emerges as a critical determinant of A&F success. As summarized in Table 2, programs with centralized coordination, active outreach, and integrated quality assurance mechanisms achieve substantially better screening participation outcomes [15]. These findings underscore the importance of addressing organizational context before implementing A&F interventions, as even well-designed feedback may fail without supportive structural elements.

A&F Workflow Visualization

A&F Cycle Diagram: This workflow illustrates the continuous quality improvement process central to effective audit and feedback systems, highlighting the interconnected phases of assessment, reporting, and feedback loops.

Optimized Reporting Protocols for Maximum Impact

The design and delivery of feedback reports significantly influence recipient engagement and subsequent behavior change. Research indicates that reports must balance comprehensiveness with usability to effectively communicate performance data while facilitating actionable insights.

Experimental Protocol: User-Centered Design of A&F Reports

Objective: To develop and test cancer screening feedback reports through iterative user-centered design, maximizing usability, comprehension, and actionability for primary care providers.

Materials and Reagents:

- Prototype reports (original and redesigned versions)

- Screen recording software for usability testing

- Think-aloud protocol guidelines

- System Usability Scale (SUS) questionnaire

- Semi-structured interview guides

- Qualitative data analysis software (e.g., NVivo, Dedoose)

Methodology:

- Initial Design Phase:

- Develop prototype reports incorporating evidence-based design principles (peer comparison, visual benchmarks, actionable recommendations)

- Conduct cognitive walkthroughs with design team to identify obvious usability issues

- Iterative Testing Phase:

- Recruit 16-20 family physicians naïve to the reports (non-users)

- Conduct one-on-one usability sessions observing navigation and comprehension

- Employ think-aloud protocol to capture real-time user impressions

- Measure time to complete key tasks (e.g., identify worst-performing metric)

- Administer SUS questionnaire following each session

- Redesign and Refinement:

- Analyze usability data to identify navigation barriers and comprehension gaps

- Implement iterative design changes addressing identified issues

- Improve visual hierarchy, data visualization, and action planning guidance

- Enhance connection between performance data and improvement strategies

- Field Evaluation:

- Deploy redesigned reports to active users (family physicians receiving reports)

- Conduct semi-structured interviews with 17+ participants until thematic saturation

- Assess alignment with clinical expectations and workflow integration

- Identify persistent barriers to engagement and action

- Analysis:

- Calculate SUS scores for quantitative usability assessment

- Employ emergent thematic analysis of interview transcripts

- Code data for expectations, perceived usability, and implementation barriers

This protocol emphasizes the importance of iterative, user-centered design rather than sole reliance on evidence-based guidelines. Research demonstrates that even reports incorporating best practices may fail if they misalign with clinician expectations or workflow realities [29].
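The SUS scores in the analysis step follow a fixed scoring rule:

- each of the 10 items is rated from 1 to 5;
- odd-numbered (positively worded) items contribute the response minus 1;
- even-numbered (negatively worded) items contribute 5 minus the response;
- the sum is multiplied by 2.5, giving a score from 0 to 100.

A minimal Python sketch of that rule, with hypothetical responses in the usage lines:

```python
def sus_score(responses):
    """Score one completed 10-item System Usability Scale questionnaire (0-100)."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS requires 10 responses, each an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:  # odd items are positively worded
            total += response - 1
        else:  # even items are negatively worded
            total += 5 - response
    return total * 2.5


# Usage: average the per-participant scores across one usability round.
round_scores = [sus_score(r) for r in ([4, 2, 4, 2, 5, 1, 4, 2, 4, 2], [3] * 10)]
print(sum(round_scores) / len(round_scores))
```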

Sustaining Effective Feedback Loops

The feedback loop component represents the most dynamic element of A&F systems, transforming static reporting into continuous quality improvement. Effective loops facilitate sense-making, action planning, and iterative refinement in response to observed changes in practice.

Sustainability Framework for A&F Programs

Sustainability Framework: This diagram visualizes the Integrated Sustainability Framework (ISF) applied to A&F programs, highlighting the multi-level determinants necessary for maintaining benefits over time [31].

Research indicates that only 39% of A&F trials substantially address sustainability considerations, with limited detail on how sustainability is planned, implemented, or assessed [31]. The most commonly reported sustainability period in these trials is 12 months, whereas real-world programs require longer-term perspectives. Effective sustainability planning extends beyond simple continuation to encompass adaptation and evolution while maintaining core benefits.

Table 3: Essential Research Reagents for A&F Implementation Science

| Reagent/Resource | Function/Application | Implementation Notes |
|---|---|---|
| Electronic Health Record Systems | Data extraction for audit phase; outcome measurement | Ensure structured data fields for screening metrics [29] |
| Data Visualization Platforms | Feedback report generation; performance trending | Prioritize user-centered design; intuitive interfaces [29] |
| Statistical Analysis Software | Performance calculation; significance testing; benchmarking | GraphPad Prism, R, or Excel for descriptive statistics [30] |
| Quality Indicator Specifications | Standardized metric definitions for reliable audit | HEDIS measures provide validated specifications [21] |
| Survey Instruments | Measurement of knowledge, confidence, and usability | 5-point Likert scales for knowledge/confidence; System Usability Scale [30] [29] |
| Clinical Practice Guidelines | Evidence base for recommendations and action plans | ACS or USPSTF screening guidelines for content validity [15] |
| Secure Communication Platforms | Feedback delivery and follow-up communication | Balance accessibility with data security requirements [29] |

This toolkit provides the foundational elements for implementing rigorous A&F research in cancer screening contexts. Particular attention should be paid to the validity of quality indicators and the usability of data visualization platforms, as these components significantly influence recipient engagement and trust in the feedback provided [21] [29].

Discussion and Implementation Considerations

The core components of successful A&F programs—rigorous assessment, user-centered reporting, and sustainable feedback loops—function as an interdependent system rather than discrete elements. Research consistently demonstrates that effectiveness depends on the careful integration of all three components, with particular attention to contextual factors that influence implementation.

A critical implementation challenge involves balancing data comprehensiveness with report usability. Family physicians have expressed that feedback reports must reflect best practices, demonstrate data validity and accuracy, and focus on clinical activities within their control to change [29]. Furthermore, expectations of feasible quality improvement activities must align between report designers and recipients. Even well-designed reports face implementation barriers when misaligned with workflow realities, competing priorities, time constraints, or limited quality improvement skills among recipients [29].

For cancer screening specifically, researchers should consider incorporating emerging organizational strategies that demonstrate effectiveness, including centralized coordination, active invitation systems, culturally tailored education, and digital reminder systems [15]. These approaches complement A&F interventions by creating supportive structural contexts for behavior change. Additionally, cancer screening measures continue to change. For example, HEDIS MY 2025 lowered the minimum age of the breast cancer screening measure's eligible population from 52 to 42 years. Such changes require ongoing surveillance of measure specifications and timely updates to audit criteria [21].

Future research directions should address the limited empirical understanding of factors impacting A&F sustainability and the development of frameworks that explicitly consider spread and scale mechanisms. Planning for scalability should extend beyond cost and infrastructure to encompass leadership engagement, policy alignment, and communication strategies that support wider adoption [31]. By addressing these gaps while adhering to the core components outlined herein, researchers can advance the effectiveness and impact of A&F programs in critical cancer screening domains.

Robust public health surveillance data is the cornerstone of effective cancer prevention and control programs, enabling the setting of objectives, planning of interventions, and evaluation of progress [32]. For audit and feedback systems specifically focused on cancer screening follow-up, three primary data sources provide complementary insights: Electronic Health Records (EHRs), cancer registries, and administrative claims data. EHRs contain rich clinical information including diagnoses, procedures, lab results, medications, and vitals that can be accessed in near real-time on large convenience samples of in-care patients [32]. Cancer registries provide reliable population-level cancer incidence and prevalence data but often lack comprehensive information about the complete cascade of care from screening through treatment initiation [32]. Administrative claims data offer detailed billing information across healthcare settings but may lack clinical granularity. When strategically integrated, these sources create a powerful infrastructure for monitoring and improving cancer screening follow-up processes, though significant technical and methodological challenges must be addressed.

Table 1: Core Characteristics of Primary Data Sources for Cancer Screening Research

| Data Source | Primary Content | Key Strengths | Major Limitations | Best Applications in Screening Audit |
|---|---|---|---|---|
| EHR Systems | Clinical narratives, lab results, vital signs, medications, structured clinical data [32] | Rich clinical detail, real-time access, direct capture of clinical care | Data fragmentation, interoperability challenges, documentation variability [33] [34] | Measuring screening adherence, identifying follow-up delays, risk factor assessment |
| Cancer Registries | Cancer site, histology, stage at diagnosis, initial treatment, mortality [32] | Population coverage, standardized data collection, longitudinal tracking | Limited prevention/screening data, reporting delays, incomplete treatment data [32] | Benchmarking cancer incidence, measuring diagnostic stage, survival analysis |
| Claims Data | Billing codes, procedures, diagnoses, pharmacy dispensing, provider details | Complete billing history, cross-setting coverage, large populations | Limited clinical context, coding inaccuracies, financial rather than clinical focus | Healthcare utilization patterns, cost analysis, provider payment models |

Electronic Health Records: Protocols for Data Extraction and Validation

EHR Data Extraction Methodology

The organic evolution of EHRs has resulted in significant challenges for data extraction, including lack of interoperability, difficulty locating critical data, and poor organization of information [33] [34]. A national survey of gynecological oncology professionals found that 92% routinely access multiple EHR systems, with 29% using five or more separate systems, and 17% spend more than half their clinical time searching for patient information [34]. To address these challenges, the following structured protocol enables effective EHR data extraction for cancer screening audit and feedback systems.

Protocol 2.1.1: Multi-System EHR Data Aggregation

- Objective: Consolidate structured clinical data from disparate EHR systems across participating healthcare institutions for cancer screening follow-up assessment.

- Materials: EHR access credentials, secure data transfer environment, data mapping specifications, patient matching algorithms.

- Procedure:

- Engage EHR vendors early to understand capabilities, pricing structures, and limitations around extracting clinical data for quality measures [35].

- Request standardized data extracts in QRDA-I, FHIR, C-CDA, or flat-file formats (CSV, JSON) to ensure comprehensive data capture [35].

- Implement extract-transform-load (ETL) pipelines using tools like Apache NiFi, Talend, or Python/Pandas to normalize disparate data structures [35].

- Apply Common Data Models (CDMs) such as OMOP or FHIR to standardize vocabulary and create interoperable data structures across systems [35].

- Create a centralized, standardized data repository that maintains data integrity and clinical context while enabling longitudinal analysis [35].

- Quality Control: Conduct chart reviews to confirm that data extracts accurately reflect clinical documentation, with particular attention to external results not automatically incorporated into the primary EHR [35].
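The ETL normalization step can be illustrated with a small standard-library Python sketch. It maps records from two source systems into one schema and removes duplicates. The source names (`ehr_a`, `ehr_b`), field names, and date formats are hypothetical. The sketch assumes patient identifiers have already been reconciled (e.g., through a master patient index); a production pipeline would use the tools named above.

```python
from datetime import datetime

# Hypothetical per-source mappings from source field names to a common schema.
FIELD_MAPS = {
    "ehr_a": {"mrn": "patient_id", "test": "screening_test",
              "result_dt": "result_date", "flag": "abnormal"},
    "ehr_b": {"PatientID": "patient_id", "Procedure": "screening_test",
              "ResultDate": "result_date", "AbnormalFlag": "abnormal"},
}
DATE_FORMATS = {"ehr_a": "%Y-%m-%d", "ehr_b": "%m/%d/%Y"}
TRUE_VALUES = {"y", "yes", "true", "1", "abnormal"}


def normalize(record, source):
    """Rename fields and harmonize dates, flags, and test names for one record."""
    out = {target: record[src] for src, target in FIELD_MAPS[source].items()}
    out["result_date"] = datetime.strptime(out["result_date"], DATE_FORMATS[source]).date()
    out["abnormal"] = str(out["abnormal"]).strip().lower() in TRUE_VALUES
    out["screening_test"] = out["screening_test"].strip().upper()
    out["source"] = source
    return out


def load(extracts):
    """Normalize all records, keeping the first record per (patient, test, date)."""
    repository = {}
    for source, records in extracts.items():
        for record in records:
            row = normalize(record, source)
            key = (row["patient_id"], row["screening_test"], row["result_date"])
            repository.setdefault(key, row)
    return list(repository.values())
```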

EHR Data Validation and Quality Assessment

A critical challenge in using EHR data for cancer surveillance is ensuring completeness and accuracy. Research indicates that EHR-based measures for risk factor indicators are often similar to estimates from external sources, but cancer screening and vaccination indicators can be substantially underestimated compared to external benchmarks [32]. These discrepancies often occur because screenings and vaccinations may be recorded in sections of the EHR not captured by common data models or because external results are never entered into the primary EHR system [35] [32]. The following protocol addresses these validation challenges.

Protocol 2.2.1: EHR Data Quality Assurance for Cancer Screening Metrics

- Objective: Identify and remediate data quality issues that impact accurate calculation of cancer screening follow-up measures.

- Materials: Source EHR systems, validation sample frames, data quality dashboards, clinical liaisons for chart review.

- Procedure:

- Conduct targeted chart reviews on a representative sample of each provider's patients to confirm that data extracts accurately reflect clinical documentation [35].

- Cross-reference ACO-assigned patient lists from CMS (or other relevant sources) against EHR and billing data to ensure complete coverage and accurate attribution [35].

- Identify common data gaps including missing vital signs, tobacco use not recorded in structured fields, external lab results not entered into primary EHR, and incomplete diagnostic coding [35].

- Work closely with clinical teams to ensure documentation occurs in structured fields rather than solely in narrative notes [35].

- Compare EHR-derived estimates against external benchmarks from established surveillance systems to identify systematic biases [32].

- Quality Control: Establish ongoing data quality monitoring with predefined thresholds for key quality measures, implementing corrective actions when thresholds are not met.
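One way to operationalize the chart-review and threshold steps is to compute, for each provider, the share of chart-confirmed screenings that the EHR extract also captures. Providers below a threshold are then flagged for corrective action. The sketch below assumes this approach; the 0.90 threshold and the record fields (`chart_screened`, `extract_screened`) are hypothetical.

```python
def capture_rate(sample):
    """Share of chart-confirmed screenings that also appear in the EHR extract."""
    confirmed = [r for r in sample if r["chart_screened"]]
    if not confirmed:
        return None  # nothing to validate against for this provider
    return sum(r["extract_screened"] for r in confirmed) / len(confirmed)


def flag_providers(samples_by_provider, threshold=0.90):
    """Return {provider: capture rate} for providers below the hypothetical threshold."""
    flagged = {}
    for provider, sample in samples_by_provider.items():
        rate = capture_rate(sample)
        if rate is not None and rate < threshold:
            flagged[provider] = rate
    return flagged
```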

Table 2: Common EHR Data Quality Issues and Remediation Strategies in Cancer Screening Contexts

| Data Quality Issue | Impact on Screening Metrics | Detection Method | Remediation Strategy |
|---|---|---|---|
| Missing structured data for cancer screenings [32] | Underestimation of screening rates | Compare EHR data with manual chart review | Implement clinical documentation improvement initiatives |
| External results not entered into primary EHR [35] | Incomplete cancer diagnosis and staging information | Identify patients with missing expected follow-up data | Establish health information exchange interfaces |
| Inconsistent diagnostic coding [35] | Inaccurate patient stratification and risk adjustment | Analyze code distribution patterns across providers | Provide coder education and automated coding suggestions |
| Fragmented data across multiple unconnected EHRs [34] | Incomplete clinical picture impacting follow-up decisions | Survey clinicians on time spent searching for information | Implement master patient index and record linkage algorithms |

Cancer Registries: Linkage Protocols for Enhanced Surveillance

Registry-EHR Integration Methodology

Cancer registries provide reliable data on cancer prevalence and incidence but traditionally lack comprehensive information about the full cascade of engagement with the healthcare system [32]. There has been growing support and adoption of using EHRs to automate and standardize reporting to state central cancer registries [32]. The integration of EHR data with traditional registry structures creates powerful opportunities for enhancing cancer screening audit and feedback systems.

Protocol 3.1.1: Registry-EHR Data Linkage for Comprehensive Cancer Surveillance

- Objective: Create enhanced surveillance capabilities by linking EHR clinical data with population-based cancer registry information.
- Materials: Registry data files, EHR extracts, secure linkage environment, probabilistic matching software, privacy-preserving record linkage protocols.
- Procedure:
  1. Extract and standardize patient demographics from both EHR and registry sources, using common formats for the matching variables.
  2. Apply probabilistic linkage methods using patient attributes such as name, date of birth, gender, address, and phone number [35].
  3. Use unique patient identifiers where available to improve linkage accuracy and prevent future duplication.
  4. Manually review edge cases with conflicting or overlapping records that automated matching cannot resolve [35].
  5. Validate linkage quality by sampling matched records and verifying them against source documents.
- Quality Control: Estimate false-match and missed-match (non-match) rates from a rigorously drawn, manually reviewed sample, and accept the linkage only when both fall within predefined thresholds.
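The probabilistic linkage step can be illustrated with the classic Fellegi-Sunter approach, in which each compared field contributes a log-likelihood-ratio weight and the summed weight is compared with two thresholds. The sketch below is illustrative only: the m- and u-probabilities, the field names (with ZIP code standing in for address), and the thresholds are hypothetical values, not estimates from any registry. Production linkage software typically adds blocking, approximate string comparison, and estimation of the m- and u-probabilities from the data (for example, with the EM algorithm). The last function shows how false-match and missed-match rates could be computed from a manually reviewed sample, assuming pairs sent to clerical review have already been resolved.

```python
import math

# Hypothetical (m, u) probabilities per field:
#   m = P(values agree | records belong to the same person)
#   u = P(values agree | records belong to different people)
FIELD_PARAMS = {
    "last_name": (0.95, 0.01),
    "first_name": (0.90, 0.02),
    "dob": (0.97, 0.003),
    "gender": (0.98, 0.5),
    "zip": (0.85, 0.05),  # stand-in for address
}


def normalize(value):
    """Lower-case strings and drop whitespace; leave other types unchanged."""
    return "".join(value.lower().split()) if isinstance(value, str) else value


def match_weight(rec_a: dict, rec_b: dict, params: dict = FIELD_PARAMS) -> float:
    """Composite Fellegi-Sunter weight: sum of per-field log2 likelihood ratios."""
    total = 0.0
    for field, (m, u) in params.items():
        a, b = rec_a.get(field), rec_b.get(field)
        if a is None or b is None:
            continue  # a missing value contributes no evidence either way
        if normalize(a) == normalize(b):
            total += math.log2(m / u)  # agreement weight (positive)
        else:
            total += math.log2((1 - m) / (1 - u))  # disagreement weight (negative)
    return total


def classify(weight: float, upper: float = 10.0, lower: float = 0.0) -> str:
    """Two-threshold decision rule; the thresholds here are hypothetical."""
    if weight >= upper:
        return "match"
    if weight <= lower:
        return "non-match"
    return "clerical review"


def linkage_error_rates(reviewed: list) -> tuple:
    """reviewed: (automated decision, true match per manual review) pairs.

    Returns (false-match rate among declared matches,
             missed-match rate among true matches).
    """
    declared_truth = [truth for decision, truth in reviewed if decision == "match"]
    true_match_decisions = [decision for decision, truth in reviewed if truth]
    false_match = declared_truth.count(False) / len(declared_truth) if declared_truth else 0.0
    missed = (true_match_decisions.count("non-match") / len(true_match_decisions)
              if true_match_decisions else 0.0)
    return false_match, missed
```

Under these parameters, two records that agree on every field score about 25.5 and are declared a match, whereas records that agree on name and gender but differ on date of birth and ZIP code score about 5.3 and are routed to clerical review.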

Administrative Claims Data: Analysis Protocols for Screening Follow-up

Claims-Based Screening Metric Development

Administrative claims data capture billed diagnoses and procedures across care settings, which makes them useful for tracking healthcare utilization along the cancer screening pathway. The following protocol outlines approaches for leveraging claims data to monitor and improve cancer screening processes.

Protocol 4.1.1: Claims Data Analysis for Cancer Screening Quality Measures

- Objective: Use administrative claims data to monitor cancer screening adherence and identify follow-up gaps across healthcare systems.
- Materials: Medical and pharmacy claims files, enrollment data, provider directories, code sets for screening procedures.
- Procedure:
  1. Identify relevant diagnosis and procedure codes (e.g., ICD-10-CM, CPT, and HCPCS codes) for cancer screenings and follow-up procedures [36].
  2. Apply attribution methodologies to assign patients to providers or accountable care organizations for quality measurement.
  3. Calculate screening adherence metrics based on guideline-appropriate intervals for each cancer type.
  4. Flag potential follow-up gaps: abnormal screening results without a subsequent diagnostic procedure within the recommended timeframe.
  5. Analyze patterns of care across demographic groups, providers, and geographic regions to identify disparities.
- Quality Control: Where possible, validate claims-based algorithms against EHR clinical data to assess their accuracy and completeness.
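The adherence calculation and the follow-up gap flag described in this protocol can be sketched as below. This is a minimal illustration under several assumptions: claims have already been mapped from ICD-10-CM, CPT, and HCPCS codes to the hypothetical event categories "screen", "abnormal_screen", and "diagnostic_followup" using a validated value set, and the 90-day follow-up window and 730-day screening interval used in the example are placeholders, since recommended timeframes differ by cancer type and result category. Abnormal screens whose follow-up window has not yet closed are not counted as gaps.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ClaimEvent:
    patient_id: str
    service_date: date
    category: str  # hypothetical: "screen", "abnormal_screen", "diagnostic_followup"


def up_to_date(events, patient_id: str, interval_days: int, as_of: date) -> bool:
    """True if the patient has a screening claim within the look-back interval."""
    start = as_of - timedelta(days=interval_days)
    return any(
        e.patient_id == patient_id and e.category == "screen" and start <= e.service_date <= as_of
        for e in events
    )


def follow_up_gaps(events, as_of: date, window_days: int = 90):
    """List (patient_id, screen_date) for abnormal screens lacking timely follow-up."""
    window = timedelta(days=window_days)
    by_patient = defaultdict(list)
    for e in events:
        by_patient[e.patient_id].append(e)

    gaps = []
    for pid, evs in sorted(by_patient.items()):
        followups = [e.service_date for e in evs if e.category == "diagnostic_followup"]
        for e in sorted(evs, key=lambda ev: ev.service_date):
            if e.category != "abnormal_screen":
                continue
            deadline = e.service_date + window
            if deadline > as_of:
                continue  # window still open: not yet a gap
            if not any(e.service_date <= d <= deadline for d in followups):
                gaps.append((pid, e.service_date))
    return gaps
```

Flagged pairs could then feed patient-level navigation lists, while aggregating them by attributed provider yields the provider-level follow-up metric.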

Integrated Data Systems: Advanced Applications for Audit & Feedback

Multi-Source Data Integration Framework

A more complete approach to cancer screening audit and feedback integrates EHR, registry, and claims data. Because each source captures a different part of the screening continuum, integration provides a more comprehensive view than any single source can. The 2022 National Cancer Policy Forum workshop highlighted opportunities for EHR innovations to substantially benefit patient care, quality improvement efforts, research, and cancer surveillance activities [33]. The following protocol outlines a framework for creating these integrated data systems.

Protocol 5.1.1: Multi-Source Data Integration for Cancer Screening Audit & Feedback

- Objective: Create a unified data infrastructure that leverages the complementary strengths of EHR, registry, and claims data for comprehensive cancer screening monitoring.
- Materials: Data use agreements, secure computing environment, identity management system, data standardization tools.
- Procedure:
  1. Establish governance frameworks addressing data sharing, privacy, security, and appropriate use across participating organizations.
  2. Implement privacy-preserving record linkage to connect patient records across data sources while maintaining confidentiality.
  3. Develop common data models that accommodate structured and unstructured elements from each source while preserving critical clinical context.
  4. Create integrated patient timelines that sequence screening events, results, diagnoses, and treatments across all available data sources.
  5. Build feedback mechanisms that deliver timely, actionable information to clinicians and healthcare systems to improve screening follow-up.
- Quality Control: Implement comprehensive data quality assessment across all integrated sources, with ongoing monitoring of linkage accuracy and completeness.
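The privacy-preserving linkage and timeline steps of this protocol can be sketched together. One simple approach, assumed here, is for a trusted linkage unit to replace direct identifiers with a keyed hash (HMAC-SHA-256) of normalized name and date of birth, so that participating organizations exchange tokens rather than identifiers. This supports exact matching only; fuzzier privacy-preserving schemes, such as Bloom-filter encodings, exist but are not shown. The key, the choice of fields, and the event labels below are hypothetical. Events are then merged per token, events reported by more than one source are collapsed while recording which sources reported them, and each patient's events are sorted by date.

```python
import hashlib
import hmac
from collections import defaultdict
from datetime import date

# Hypothetical secret held by a trusted linkage unit, never by data recipients.
LINKAGE_KEY = b"example-key-do-not-use-in-production"


def linkage_token(last_name: str, first_name: str, dob: str) -> str:
    """Keyed hash of normalized identifiers; identical inputs give identical tokens."""
    normalized = "|".join(part.strip().lower() for part in (last_name, first_name, dob))
    return hmac.new(LINKAGE_KEY, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def build_timelines(records):
    """Merge tokenized events from EHR, registry, and claims into per-patient timelines.

    Each record is a dict with keys: token, source, event_date, event.
    Returns {token: [(event_date, event, sources), ...]} sorted by date.
    """
    merged = defaultdict(set)  # (token, date, event) -> sources reporting it
    for r in records:
        merged[(r["token"], r["event_date"], r["event"])].add(r["source"])

    timelines = defaultdict(list)
    for (token, event_date, event), sources in merged.items():
        timelines[token].append((event_date, event, tuple(sorted(sources))))
    for events in timelines.values():
        events.sort()
    return dict(timelines)
```

Keeping the contributing sources on each event makes it easy to see, for example, that a mammogram appears in both the EHR and claims while the diagnosis comes only from the registry.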

Table 3: Cancer Screening Quality Measures Enabled by Integrated Data Systems

| Quality Measure Domain | EHR Contribution | Registry Contribution | Claims Contribution | Integrated Capability |
|---|---|---|---|---|
| Breast cancer screening follow-up [35] | Screening mammography results, biopsy reports | Cancer diagnosis confirmation, stage at diagnosis | Screening and diagnostic procedure billing codes | Complete pathway from screening to diagnosis and treatment initiation |
| Colorectal cancer screening adherence | Colonoscopy findings, FIT/fecal occult blood results | Colorectal cancer incidence, stage data | Screening modality claims, polyp surveillance | Longitudinal screening history with appropriate follow-up intervals |
| Cervical cancer prevention [32] | Pap and HPV test results, colposcopy findings | Cervical cancer cases, histology | Screening and procedure billing codes | Coordinated prevention and early detection strategies |
| Lung cancer screening [37] | LDCT results, smoking status documentation | Lung cancer incidence, histology | Screening claims, tobacco cessation counseling | Risk-based screening with appropriate diagnostic follow-up |

The Researcher's Toolkit: Essential Solutions for Data Integration

Table 4: Tools and Standards for Cancer Screening Data Integration

| Tool Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| Data Standardization | OMOP Common Data Model, FHIR Resources, CDISC Standards | Standardize vocabulary and structure across disparate data sources | Enables cross-system aggregation and analysis of clinical data [35] |
| Record Linkage | Probabilistic Matching Algorithms, Master Patient Index Systems, Privacy-Preserving Record Linkage | Accurately identify the same patient across different data systems | Essential for creating comprehensive patient timelines from fragmented sources [35] |
| Natural Language Processing | Clinical Text Analytics, Named Entity Recognition, Information Extraction Pipelines | Extract structured information from unstructured clinical narratives | Critical for capturing data documented only in free-text notes [33] [34] |
| Quality Measurement | eCQM Value Sets, QRDA Reporting Tools, FHIR-based Measure Evaluation | Calculate standardized quality metrics from structured clinical data | Supports compliance reporting and quality improvement initiatives [35] |
| Data Visualization | Clinical Dashboards, Quality Performance Displays, Longitudinal Patient Timelines | Present complex clinical data in accessible formats for feedback | Enables effective audit and feedback to clinicians and health systems [34] |

Audit and feedback (A&F) is a cornerstone strategy for improving healthcare quality. It rests on the principle that highly motivated health professionals, when shown that their clinical practice is inconsistent with evidence-based guidelines or peer performance, will focus attention on areas needing improvement [1]. Within cancer screening follow-up research, A&F systems are instrumental in addressing unwarranted clinical variation, that is, underuse, overuse, or misuse of services that cannot be explained by patient preference or medical science [38]. The goal of developing actionable metrics within these systems is to move beyond simple data reporting toward a process that motivates change, closes care gaps, and raises population-level screening rates. Such interventions have been shown to increase screening rates for breast, cervical, and colorectal cancer by a median of 13 percentage points [39].

Theoretical Foundation and Key Mechanisms

Effective A&F is not merely a data delivery system but a complex intervention operating through specific sociological and psychological mechanisms. A 2023 realist study identified eight core mechanisms through which A&F strategies exert their influence, grouped here as facilitating and constraining mechanisms [38]:

Table: Key Mechanisms in Audit and Feedback Implementation

| Facilitating Mechanisms | Constraining Mechanisms |
|---|---|
| 1. Clinician ownership and buy-in | 5. Rationalizing current practice (vs. learning) |
| 2. Ability to make sense of information | 6. Perceptions of unfairness and data integrity concerns |
| 3. Motivation through social influence | 7. Development of unimplemented improvement plans |
| 4. Acceptance of responsibility and accountability | 8. Perceived intrusions on professional autonomy |

The Clinical Performance Feedback Intervention Theory (CP-FIT) describes effective feedback as cyclical and sequential, becoming less effective if any process within the cycle fails [38]. The audit cycle itself typically involves five stages: preparing for audit, selecting criteria, measuring performance, making improvements, and sustaining improvements [1]. Actionable metrics must therefore be designed with these mechanisms in mind at every stage of the cycle.

A Framework for Actionable Metrics

Effective A&F systems require metrics at multiple levels of the care continuum, from broad clinic-level performance to individual provider actions. These metrics should be structured to create a clear pathway from measurement to action.

Table: Actionable Metrics Framework for Cancer Screening Follow-Up

| Level | Metric Category | Specific Metrics | Data Sources | Actionability |
|---|---|---|---|---|
| Clinic-Level | Population Health | % age-eligible patients up-to-date with screening | EHR, Patient Registry (UDS, HEDIS) | Identify system-level care gaps and resource needs |
| | Efficiency | Screening completion rate (% referred who complete test) | EHR, Laboratory data | Assess patient follow-through and system barriers |
| Provider-Level | Clinical Practice | % patients due for screening given a recommendation | EHR, Chart Audit | Target provider communication and recommendation habits |
| | Documentation | % patients with screening test ordered | EHR, Billing Data | Improve adherence to screening protocols and documentation |
| Patient-Level | Outcomes | Diagnostic follow-up rate (% with positive screen completing follow-up) | EHR, Specialist reports | Address care coordination and navigation gaps |
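The metrics in this framework differ mainly in their denominators, so they can be computed with one helper that takes a denominator flag and a numerator flag. The sketch below assumes each patient has already been reduced to a record of boolean flags (all field names are hypothetical) and, as an assumption not stated in the table, uses patients due for screening as the denominator for the "test ordered" metric. Returning None for an empty denominator keeps small panels from reporting a misleading 0%.

```python
from typing import Optional

# metric name -> (denominator flag, numerator flag); field names are hypothetical
METRICS = {
    "pct_up_to_date": ("age_eligible", "up_to_date"),                  # clinic level
    "completion_rate": ("referred", "completed"),                      # clinic level
    "pct_recommended": ("due", "recommended"),                         # provider level
    "pct_ordered": ("due", "ordered"),                                 # provider level
    "diagnostic_followup_rate": ("positive_screen", "followup_done"),  # patient level
}


def rate(records: list, denominator: str, numerator: str) -> Optional[float]:
    """Share of records meeting the numerator among those meeting the denominator."""
    eligible = [r for r in records if r.get(denominator, False)]
    if not eligible:
        return None  # undefined rather than 0% when nobody is in the denominator
    return sum(bool(r.get(numerator, False)) for r in eligible) / len(eligible)


def compute_metrics(records: list) -> dict:
    """Compute every metric in METRICS over one group of patient records."""
    return {name: rate(records, d, n) for name, (d, n) in METRICS.items()}


def metrics_by_provider(records: list) -> dict:
    """Group records by their 'provider' field and compute every metric per group."""
    groups = {}
    for r in records:
        groups.setdefault(r["provider"], []).append(r)
    return {pid: compute_metrics(rs) for pid, rs in sorted(groups.items())}
```

Running `compute_metrics` over the whole clinic panel gives clinic-level figures, while `metrics_by_provider` produces the provider-level view used in individual feedback reports.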

These metrics should prioritize a limited number of indicators that are meaningful to clinicians, with clear targets and action plans specifying the steps needed to reach them [1] [38]. Feedback is also more effective when it targets providers whose baseline performance is poor [1].
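Both design principles above (a limited number of indicators with explicit targets, and attention to providers with poor baseline performance) can be expressed as a simple report-building rule. In the sketch below, which is an illustration rather than a validated method, each provider's feedback is limited to the indicators furthest below target, and providers are ordered by their total shortfall so that the poorest baseline performers appear first. The indicator names, targets, ranking rule, and cap of two indicators are all hypothetical.

```python
def build_feedback(provider_rates: dict, targets: dict, max_indicators: int = 2) -> list:
    """provider_rates: {provider: {indicator: rate}}; targets: {indicator: target rate}.

    Returns one entry per provider who misses at least one target, ordered by
    total shortfall (largest first), each listing at most max_indicators items.
    """
    reports = []
    for pid, rates in provider_rates.items():
        shortfalls = sorted(
            ((round(targets[k] - v, 3), k) for k, v in rates.items()
             if k in targets and v < targets[k]),
            reverse=True,  # largest shortfall first
        )
        if not shortfalls:
            continue  # meets every target: omitted from this prioritized list
        focus = [
            {"indicator": k, "rate": rates[k], "target": targets[k], "gap": gap}
            for gap, k in shortfalls[:max_indicators]
        ]
        total_gap = sum(gap for gap, _ in shortfalls)
        reports.append((total_gap, pid, focus))
    reports.sort(key=lambda item: (-item[0], item[1]))
    return [{"provider": pid, "focus": focus} for _, pid, focus in reports]
```

Capping the indicators per report keeps each message short enough to act on; the cap and the ranking rule are natural parameters to tune with clinician input.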

Experimental Protocols for Implementation and Evaluation

Cluster Randomized Controlled Trial Protocol

This protocol is adapted from Price-Haywood et al.'s study comparing audit-feedback alone with audit-feedback plus communication training [2].

Objective: To evaluate whether training primary care providers (PCPs) in cancer risk communication and shared decision-making, in addition to audit-feedback, improves communication behaviors and increases cancer screening among patients with limited health literacy compared to audit-feedback alone.

Study Design: Four-year cluster randomized controlled trial.

Participants:

- 18 PCPs randomized to intervention or control groups.

- 168 patients with limited health literacy overdue for colorectal, breast, or cervical cancer screening.
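In a cluster randomized design such as this one, the unit of randomization is the provider, and each patient inherits the arm of their PCP. The sketch below shows a simple seeded 1:1 allocation using hypothetical provider and patient identifiers. It illustrates cluster-level allocation in general and is not a reconstruction of the allocation procedure used by Price-Haywood et al., which is not described here. Seeding the random generator makes the allocation reproducible and auditable.

```python
import random


def cluster_randomize(provider_ids: list, patients_by_provider: dict, seed: int = 2024):
    """Allocate whole providers (clusters) 1:1 to two arms; patients follow their provider."""
    rng = random.Random(seed)  # seeded so the allocation can be reproduced
    shuffled = list(provider_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2  # with an odd count, the control arm gets the extra cluster
    arm_of_provider = {
        pid: ("communication" if i < half else "control") for i, pid in enumerate(shuffled)
    }
    arm_of_patient = {
        patient: arm_of_provider[pid]
        for pid, patients in patients_by_provider.items()
        for patient in patients
    }
    return arm_of_provider, arm_of_patient
```

With 18 PCPs this yields 9 clusters per arm; every patient of a given PCP is analyzed in that PCP's arm.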

Interventions:

- Communication Intervention Group: PCPs received skills training including standardized patient (SP) feedback on counseling behaviors.

- Control Group: PCPs received performance feedback only.

- Both Groups: Patients' screening status was audited by chart review semiannually for up to 24 months, and PCPs received two annual performance feedback reports.

Key Measures:

- Process Measures: Unannounced SP encounters rating PCP communication behaviors.

- Outcome Measures: Patient knowledge of cancer screening guidelines over 12 months; patient cancer screening rates over 24 months.
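Because PCPs rather than patients were randomized, between-arm comparisons of screening rates should account for clustering. One simple approach, shown here as an illustration and not as the analysis reported in the trial, is to summarize each PCP's patients as a single screening proportion and compare arms with an exact permutation test that re-assigns the cluster labels. The identifiers and outcomes are hypothetical; with 18 clusters split 9:9, full enumeration covers 48,620 allocations, which is quick to compute.

```python
from itertools import combinations
from statistics import mean


def cluster_rates(outcomes_by_provider: dict) -> dict:
    """Collapse patient-level 0/1 screening outcomes to one proportion per provider."""
    return {pid: sum(v) / len(v) for pid, v in outcomes_by_provider.items() if v}


def permutation_test(rates: dict, arm_of_provider: dict, treated: str = "communication"):
    """Exact two-sided permutation test on the difference in mean cluster rates.

    Returns (observed difference treated - control, p-value).
    """
    ids = sorted(rates)
    treated_ids = [p for p in ids if arm_of_provider[p] == treated]
    control_ids = [p for p in ids if arm_of_provider[p] != treated]
    if not treated_ids or not control_ids:
        raise ValueError("both arms need at least one cluster")
    observed = mean(rates[p] for p in treated_ids) - mean(rates[p] for p in control_ids)

    n, k = len(ids), len(treated_ids)
    total = sum(rates.values())
    as_extreme = 0
    n_allocations = 0
    for combo in combinations(ids, k):  # every possible relabelling of clusters
        s = sum(rates[p] for p in combo)
        diff = s / k - (total - s) / (n - k)
        if abs(diff) >= abs(observed) - 1e-12:  # tolerance for float rounding
            as_extreme += 1
        n_allocations += 1
    return observed, as_extreme / n_allocations
```

Analyzing at the cluster level matches the unit of randomization; patient-level alternatives, such as mixed-effects models, are also common.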

Results Summary: The communication intervention group showed significantly higher ratings in general communication about cancer risks and shared decision-making for colorectal cancer screening. However, there were no between-group differences in screening rates except for mammography, and no improvement in patient cancer screening knowledge [2].

Realist Evaluation Protocol for Mechanism Investigation

This protocol is based on the study by Zurynski et al. investigating A&F for reducing clinical variation at scale [38].

Objective: To identify how, why, and in what contexts A&F strategies contribute to reducing unwarranted variation in care at scale.

Design: Realist study using a context-mechanism-outcome (CMO) framework.

Data Sources:

- Initial program theory development: systematic review, realist review, program document review, stakeholder discussions.

- Theory testing: semi-structured interviews with 56 participants.

- Theory validation: expert panels with senior health leaders (n=19), agency staff (n=11), and ministry of health staff (n=21).

Analysis: Retroductive analysis of transcripts coded into the A&F program theory to identify CMO configurations.
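The coding step of such a realist analysis can be supported by a simple data structure that records, for each coded interview excerpt, the participant and the context, mechanism, and outcome it was coded to. The sketch below, using hypothetical codes and participant IDs, counts how many distinct participants support each CMO configuration. Such tallies can help analysts see where evidence is concentrated or thin, but retroductive analysis remains an interpretive task that counting does not replace.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedExcerpt:
    participant: str
    context: str
    mechanism: str
    outcome: str


def cmo_support(excerpts) -> Counter:
    """Count distinct participants supporting each (context, mechanism, outcome) triple."""
    supporters = {}
    for e in excerpts:
        key = (e.context, e.mechanism, e.outcome)
        supporters.setdefault(key, set()).add(e.participant)  # a participant counts once
    return Counter({cmo: len(people) for cmo, people in supporters.items()})
```

Calling `most_common()` on the result lists configurations from most to least supported.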