Optimizing Biomarker Validation for Clinical Application: A Strategic Framework for Researchers and Drug Developers


Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals navigating the complex journey of biomarker validation. Covering the full spectrum from foundational principles to clinical implementation, it explores the statistical frameworks for distinguishing prognostic from predictive biomarkers, details advanced methodological approaches including multi-omics and AI integration, and addresses critical troubleshooting challenges in standardization and data management. With a focus on practical strategies for navigating regulatory landscapes and leveraging real-world evidence, this guide aims to enhance validation success rates and accelerate the translation of promising biomarkers into clinically actionable tools for precision medicine.

Laying the Groundwork: Biomarker Classifications, Discovery Pipelines, and Clinical Context

Biomarker Definitions and Categories

What is a Biomarker?

A biomarker is a defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention, including therapeutic interventions [1]. Molecular, histologic, radiographic, or physiologic characteristics are all types of biomarkers, which are distinct from assessments of how an individual feels, functions, or survives (known as Clinical Outcome Assessments) [2].

The Seven Biomarker Categories

The FDA-NIH Biomarkers, EndpointS, and other Tools (BEST) resource has defined seven primary biomarker categories based on their specific applications in medical product development and clinical practice [2] [1].

Table: The Seven Primary Biomarker Categories According to FDA-NIH BEST Resource

| Biomarker Category | Definition | Primary Function | Examples |

|---|---|---|---|

| Susceptibility/Risk | Indicates potential for developing a disease or condition | Identifies individuals with genetic predisposition or elevated risk | BRCA1/2 mutations for breast/ovarian cancer [3] |

| Diagnostic | Detects or confirms presence of a disease or condition | Identifies individuals with a disease or disease subtype | PSA for prostate cancer, C-reactive protein for inflammation [3] |

| Monitoring | Assesses disease status or response to therapy over time | Tracks disease progression, relapse, or treatment response | Hemoglobin A1c for diabetes, BNP for heart failure [3] |

| Prognostic | Predicts disease outcome or progression independent of treatment | Identifies disease aggressiveness and likely clinical course | Ki-67 in breast cancer, BRAF mutations in melanoma [3] |

| Predictive | Predicts response to a specific therapeutic intervention | Identifies patients likely to benefit from particular treatments | HER2 status in breast cancer, EGFR mutations in NSCLC [4] [3] |

| Pharmacodynamic/Response | Shows biological response to a drug treatment | Demonstrates mechanism of action and biological activity | LDL cholesterol response to statins, blood pressure response to antihypertensives [3] |

| Safety | Indicates potential for toxicity or adverse effects | Monitors drug-induced injury or side effects | Liver function tests, creatinine clearance [3] |

Clinical Applications and Key Distinctions

Diagnostic Biomarkers in Clinical Practice

Diagnostic biomarkers detect or confirm the presence of a disease or condition of interest, or identify individuals with a subtype of the disease [2]. These biomarkers are particularly valuable as medicine moves toward molecular-based disease classification rather than organ-based schemes.

Critical Considerations for Diagnostic Biomarkers:

- Context of Use: A diagnostic biomarker may be useful in one clinical circumstance but misleading in another [2]

- False-Positive Tolerance: Varies by disease context—low tolerance for psychologically devastating or invasive diagnoses (e.g., pancreatic cancer) versus higher tolerance for screening common diseases (e.g., hypertension) [2]

- Validation Complexity: Requires precise definition of operating characteristics across different patient populations and clinical scenarios [2]

Prognostic vs. Predictive Biomarkers: A Critical Distinction

Understanding the difference between prognostic and predictive biomarkers is essential for appropriate clinical application.

Table: Comparison of Prognostic and Predictive Biomarkers

| Characteristic | Prognostic Biomarkers | Predictive Biomarkers |

|---|---|---|

| Primary Function | Provides information about overall disease outcome regardless of therapy [4] | Informs expected clinical outcome based on specific treatment decisions [4] |

| Identification Method | Main effect test of association between biomarker and outcome [4] | Interaction test between treatment and biomarker in statistical models [4] |

| Study Design | Can be identified in retrospective studies, case-control studies, and single-arm trials [4] | Should be identified through randomized clinical trials [4] |

| Clinical Question | "What is the likely disease course?" | "Will this patient respond to this specific treatment?" |

| Examples | STK11 mutation in non-squamous NSCLC [4] | EGFR mutation status for gefitinib response in NSCLC [4] |

Research Design and Statistical Considerations

Proper identification of prognostic and predictive biomarkers requires distinct research approaches:

- Prognostic Biomarker Identification: Can be properly conducted in retrospective studies using biospecimens from cohorts representing the target population [4]

- Predictive Biomarker Identification: Requires data from randomized clinical trials with interaction testing between treatment and biomarker [4]

The IPASS study exemplifies predictive biomarker validation, demonstrating a significant interaction (P<0.001) between EGFR mutation status and treatment response to gefitinib versus carboplatin plus paclitaxel in advanced pulmonary adenocarcinoma [4].
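
The difference between a main-effect test and an interaction test can be illustrated with a minimal sketch. It assumes a binary response, a binary biomarker, and a two-arm randomized trial, and it compares the treatment log odds ratio between biomarker-positive and biomarker-negative strata with a Wald test. The function names and all counts are hypothetical illustrations; they are not IPASS data and not the analysis used in [4].

```python
import math

def log_odds_ratio(resp_trt, n_trt, resp_ctl, n_ctl):
    """Log odds ratio of response (treatment vs. control) with its Woolf standard error."""
    a, b = resp_trt, n_trt - resp_trt   # treated: responders, non-responders
    c, d = resp_ctl, n_ctl - resp_ctl   # control: responders, non-responders
    log_or = math.log((a * d) / (b * c))
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    return log_or, se

def interaction_test(pos, neg):
    """Wald test for a treatment-by-biomarker interaction on the log-odds scale.

    pos / neg: (resp_trt, n_trt, resp_ctl, n_ctl) for biomarker-positive
    and biomarker-negative patients, respectively.
    """
    lor_pos, se_pos = log_odds_ratio(*pos)
    lor_neg, se_neg = log_odds_ratio(*neg)
    diff = lor_pos - lor_neg                    # difference in treatment effect
    z = diff / math.sqrt(se_pos**2 + se_neg**2)
    p = math.erfc(abs(z) / math.sqrt(2))        # two-sided p-value
    return diff, z, p

# Hypothetical predictive marker: treatment helps marker-positive patients
# and harms marker-negative patients.
predictive = interaction_test(pos=(70, 100, 40, 100), neg=(20, 100, 40, 100))

# Hypothetical purely prognostic marker: marker-positive patients do better
# in both arms, but the treatment odds ratio is identical in both strata.
prognostic = interaction_test(pos=(70, 100, 50, 100), neg=(50, 100, 30, 100))

print("predictive marker:  diff=%.3f z=%.2f p=%.2g" % predictive)
print("prognostic marker:  diff=%.3f z=%.2f p=%.2g" % prognostic)
```

In the second scenario the biomarker is clearly associated with outcome, yet the interaction term is zero, which is why a main-effect association alone cannot establish a biomarker as predictive.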

Biomarker Validation Workflow and Methodologies

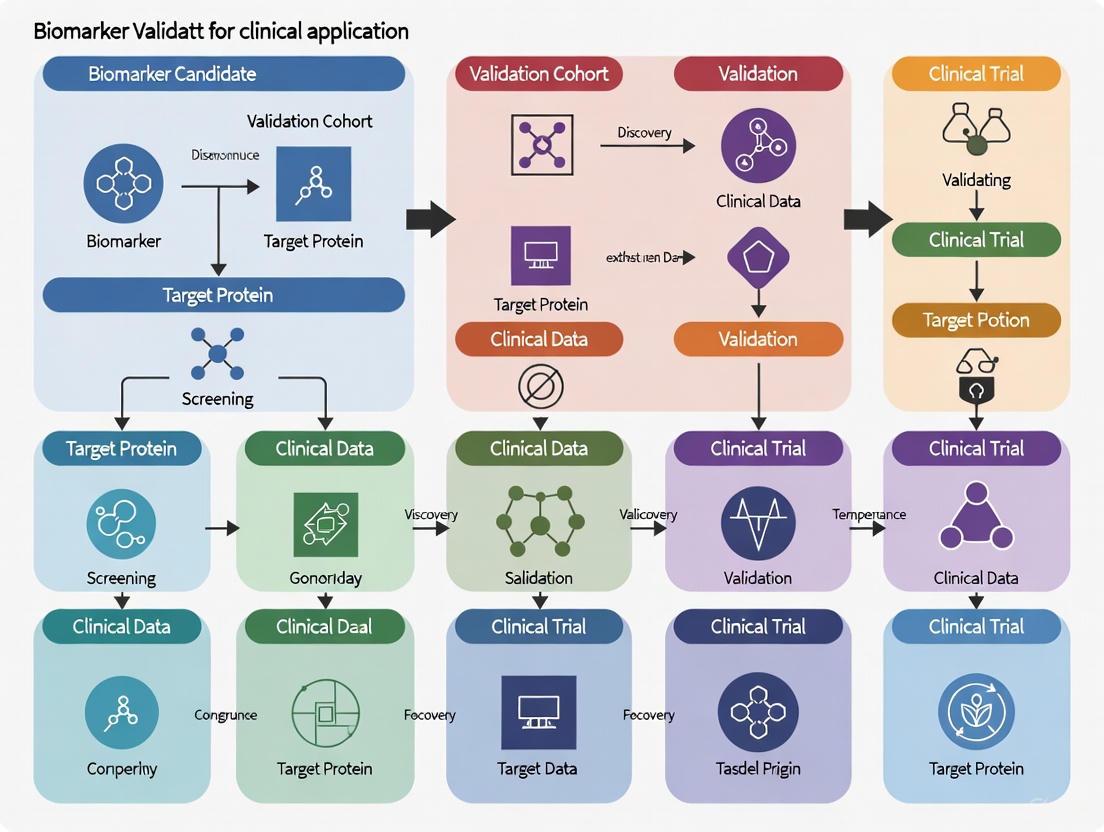

Comprehensive Validation Workflow

The journey from biomarker discovery to clinical implementation follows a structured pathway with multiple validation checkpoints.

Key Validation Metrics and Performance Characteristics

Rigorous statistical evaluation is essential for biomarker validation across multiple performance dimensions.

Table: Essential Biomarker Performance Metrics and Definitions

| Validation Metric | Definition | Interpretation in Clinical Context |

|---|---|---|

| Sensitivity | Proportion of true cases that test positive [4] | Ability to correctly identify individuals with the disease |

| Specificity | Proportion of true controls that test negative [4] | Ability to correctly exclude individuals without the disease |

| Positive Predictive Value | Proportion of test-positive patients who actually have the disease [4] | Clinical utility depends on disease prevalence |

| Negative Predictive Value | Proportion of test-negative patients who truly do not have the disease [4] | Clinical utility depends on disease prevalence |

| Area Under ROC Curve | Measure of discrimination ability ranging from 0.5 (coin flip) to 1.0 (perfect) [4] | Overall performance in distinguishing cases from controls |

| Calibration | How well a marker estimates the risk of disease or event of interest [4] | Agreement between predicted and observed outcomes |
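
As a rough illustration of the metrics in the table above, the following sketch computes sensitivity, specificity, and predictive values from a 2x2 confusion table, and the area under the ROC curve as the probability that a randomly chosen case scores higher than a randomly chosen control. The counts and scores are made-up example values, and the function names are assumptions for illustration.

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Core diagnostic metrics from confusion-table counts."""
    return {
        "sensitivity": tp / (tp + fn),   # true cases that test positive
        "specificity": tn / (tn + fp),   # true controls that test negative
        "ppv": tp / (tp + fp),           # test-positives who have the disease
        "npv": tn / (tn + fn),           # test-negatives who do not
    }

def auc(case_scores, control_scores):
    """AUC as P(case score > control score); ties count as 0.5."""
    wins = 0.0
    for x in case_scores:
        for y in control_scores:
            if x > y:
                wins += 1.0
            elif x == y:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))

metrics = diagnostic_metrics(tp=80, fp=10, tn=90, fn=20)
area = auc([0.9, 0.8, 0.6, 0.4], [0.7, 0.3, 0.2, 0.1])
print(metrics)
print("AUC:", area)   # 14 of 16 case-control pairs are correctly ordered -> 0.875
```

The pairwise AUC definition is quadratic in sample size; it is used here only because it makes the interpretation explicit.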

Troubleshooting Common Biomarker Validation Challenges

Pre-Analytical and Laboratory Issues

Multiple technical factors can compromise biomarker data quality during sample collection and processing.

Table: Common Laboratory Challenges and Quality Control Solutions

| Laboratory Challenge | Impact on Biomarker Data | Recommended Solutions |

|---|---|---|

| Temperature Regulation | Degradation of temperature-sensitive biomarkers (nucleic acids, proteins) leading to unreliable results [5] | Standardized protocols for flash freezing, controlled thawing, consistent cold chain logistics [5] |

| Sample Preparation Variability | Introduction of bias affecting downstream analyses (sequencing, mass spectrometry, PCR) [5] | Standardized extraction methods, validated reagents, rigorous quality control checkpoints [5] |

| Contamination | Skewed biomarker profiles through environmental contaminants, cross-sample transfer, or reagent impurities [5] | Dedicated clean areas, routine equipment decontamination, proper handling procedures [5] |

| Human Error in Data Management | Manual errors in sample processing and data recording compromising data integrity [5] | Laboratory automation, barcode systems, electronic laboratory notebooks, competency assessments [5] |

Statistical and Study Design Challenges

Many biomarker validation failures originate from methodological and statistical shortcomings rather than biological irrelevance.

Common Statistical Pitfalls and Solutions:

- Between-Group Significance vs. Classification Success: A statistically significant result in a between-group hypothesis test often does not translate to successful classification. The critical assessment should be probability of classification error (P_ERROR), not just p-values [6]

- Cross-Validation Misapplication: Standard statistical learning texts specifically identify "The wrong and the right way to do cross-validation." When misapplied, cross-validation can produce impressive performance metrics (e.g., sensitivity >0.95) even with random data [6]

- Inadequate Test-Retest Reliability: Failure to establish rigorous test-retest reliability precludes use in longitudinal monitoring. Linear correlation should not be used to quantify reliability; intraclass correlation coefficient (ICC) with proper version selection is required [6]

- Insufficient Sample Sizes: Sample size requirements for reliability studies and prodrome evaluation are far larger than those computed for hypothesis testing purposes [6]
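
The cross-validation pitfall listed above can be reproduced on pure noise. The sketch below is an illustration under stated assumptions, not the procedure from [6]: it uses NumPy, a simple nearest-centroid classifier, and feature selection by between-class mean difference. In the "wrong" variant, features are selected once using all labels before cross-validation; in the "right" variant, selection is repeated inside each training fold.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p, k_top, n_folds = 50, 5000, 20, 5
X = rng.standard_normal((n, p))      # pure-noise "biomarkers"
y = np.array([0, 1] * (n // 2))      # balanced labels, unrelated to X

def top_features(X, y, k):
    """Indices of the k features with the largest absolute class-mean difference."""
    diff = X[y == 1].mean(axis=0) - X[y == 0].mean(axis=0)
    return np.argsort(-np.abs(diff))[:k]

def nearest_centroid_predict(X_train, y_train, X_test):
    """Assign each test sample to the class with the closer training centroid."""
    c0 = X_train[y_train == 0].mean(axis=0)
    c1 = X_train[y_train == 1].mean(axis=0)
    d0 = ((X_test - c0) ** 2).sum(axis=1)
    d1 = ((X_test - c1) ** 2).sum(axis=1)
    return (d1 < d0).astype(int)

def cv_accuracy(X, y, select_inside_fold):
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    leaky_feats = top_features(X, y, k_top)   # uses ALL labels: leaks test information
    correct = 0
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
        if select_inside_fold:
            feats = top_features(X[train_idx], y[train_idx], k_top)
        else:
            feats = leaky_feats
        pred = nearest_centroid_predict(
            X[train_idx][:, feats], y[train_idx], X[test_idx][:, feats]
        )
        correct += (pred == y[test_idx]).sum()
    return correct / len(y)

wrong_acc = cv_accuracy(X, y, select_inside_fold=False)
right_acc = cv_accuracy(X, y, select_inside_fold=True)
print(f"selection outside CV: {wrong_acc:.2f}")
print(f"selection inside CV:  {right_acc:.2f}")
```

Because the data contain no signal, any accuracy well above 0.5 in the first variant is an artifact of selecting features with knowledge of the held-out labels.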

Experimental Protocols and Research Reagents

Essential Research Reagent Solutions

Successful biomarker research requires carefully selected reagents and platforms tailored to specific biomarker classes.

Table: Key Research Reagent Solutions for Biomarker Analysis

| Reagent/Platform | Primary Function | Application Context |

|---|---|---|

| Omni LH 96 Automated Homogenizer | Standardizes sample disruption parameters, ensures uniform processing [5] | Pre-analytical sample preparation for nucleic acid, protein, and metabolite biomarkers |

| Single-Use Omni Tip Consumables | Eliminates cross-sample contamination during homogenization [5] | Maintaining biomarker integrity in high-throughput workflows |

| High-Sensitivity Troponin Assays | Detection of previously undetectable low-level troponin elevations [2] | Refined diagnosis of small episodes of myocardial necrosis |

| Liquid Biopsy Platforms (ctDNA) | Non-invasive circulating tumor DNA analysis for early disease detection [7] | Oncology applications, expanding to infectious and autoimmune diseases |

| Single-Cell Sequencing Platforms | Examination of individual cells within tumors to assess heterogeneity [7] | Identification of rare cell populations driving disease progression |

Multi-Omics Integration Workflow

Contemporary biomarker discovery increasingly relies on integrated analysis across multiple biological layers.

Frequently Asked Questions (FAQs)

Biomarker Definition and Classification

Q: What exactly distinguishes a biomarker from a clinical endpoint? A: Biomarkers are measured indicators of biological processes, while clinical endpoints are direct measures of how a patient feels, functions, or survives. Biomarkers serve various purposes including predicting clinical endpoints, but only validated biomarkers can serve as surrogate endpoints for regulatory approval [2].

Q: Can a single biomarker fall into multiple classification categories? A: Yes, many biomarkers serve multiple purposes. For example, BRCA1 expression acts as both a prognostic biomarker (indicating disease outcome) and a predictive biomarker (for chemotherapy response) in sporadic epithelial ovarian cancer [8]. However, evidence must be developed for each intended use.

Q: What is the difference between prognostic and predictive biomarkers? A: Prognostic biomarkers provide information about overall disease outcome regardless of therapy, while predictive biomarkers inform the expected clinical outcome based on specific treatment decisions. Prognostic biomarkers answer "What is my disease trajectory?" while predictive biomarkers answer "Will this specific treatment work for me?" [4].

Validation and Implementation Challenges

Q: Why do so many promising biomarkers fail in clinical validation? A: Most failures stem from statistical and methodological issues rather than biological irrelevance. Common problems include: confusion between statistical significance and classification utility, misapplication of cross-validation techniques, inadequate test-retest reliability assessment, and insufficient sample sizes for intended applications [6].

Q: What are the key considerations for diagnostic biomarker validation? A: Diagnostic biomarker validation requires careful attention to context of use, false-positive/false-negative tolerance based on disease prevalence and consequences, and demonstration that the biomarker adds substantial information to change clinical decision-making, not just statistical association with disease [2].

Q: How do regulatory agencies like FDA evaluate biomarkers? A: The FDA Biomarker Qualification Program uses a three-stage process: 1) Letter of Intent assessing potential value and feasibility, 2) Qualification Plan detailing development strategy, and 3) Full Qualification Package with comprehensive evidence. Qualification ensures the biomarker can be relied upon for specific interpretation within a stated Context of Use [1].

Technical and Analytical Considerations

Q: What are the most common laboratory issues affecting biomarker data? A: The primary technical challenges include: temperature regulation affecting biomarker stability, sample preparation variability introducing bias, contamination skewing biomarker profiles, and human errors in data management. These can be addressed through automation, standardized protocols, and rigorous quality control [5].

Q: When should multiple biomarkers be combined into panels? A: Biomarker panels are often necessary to achieve better performance than single biomarkers, despite added measurement complexity. Using each biomarker in its continuous state retains maximal information for model development, and dichotomization for clinical decisions should occur in later validation stages [4].

Q: How are emerging technologies like AI and multi-omics changing biomarker discovery? A: AI and machine learning enable analysis of high-dimensional heterogeneous data, identifying complex biomarker-disease associations traditional methods overlook. Multi-omics approaches provide comprehensive biomarker signatures by integrating genomics, proteomics, metabolomics, and transcriptomics data [9] [7].

Technical Troubleshooting Guides

This section addresses common experimental challenges in high-throughput screening (HTS) and multi-omics data integration, providing root cause analysis and actionable solutions.

HTS Troubleshooting: Addressing False Positives and Negatives

Reported Issue: High rates of false positives and negatives in HTS results, leading to wasted resources and missed opportunities [10].

| Troubleshooting Step | Key Actions | Expected Outcome |

|---|---|---|

| Investigate Variability | Standardize manual protocols; use automated liquid handlers with verification features (e.g., DropDetection) [10]. | Reduced inter-user variability and improved assay reproducibility. |

| Automate Data Handling | Implement automated data management and analytical processes to manage vast, multiparametric data [10]. | More reliable hit identification and faster insights. |

| Verify Liquid Handling | Check precision at low volumes; use non-contact dispensers to minimize cross-contamination [10]. | Confirmed dispensing accuracy and reduced experimental artifacts. |

Multi-Omics Data Integration Troubleshooting: Overcoming Technical Noise

Reported Issue: Difficulty integrating data from different omics layers (e.g., genomics, proteomics) due to technical heterogeneity, leading to misleading conclusions [11].

| Troubleshooting Step | Key Actions | Expected Outcome |

|---|---|---|

| Standardize Pre-processing | Apply tailored normalization and batch effect correction for each data type (e.g., RNA-seq, DNA methylation) [12] [11]. | Harmonized data distributions and reduced technical noise. |

| Select Appropriate Integration Method | Choose method based on data structure and biological question. Test multiple algorithms [11]. | Robust identification of shared biological signals across omics layers. |

| Ensure Metadata Completeness | Document all sample, equipment, and software details. Use domain-specific ontologies [12]. | Improved data interpretability, reproducibility, and reuse. |
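
As a deliberately simple stand-in for the pre-processing step in the table above, the sketch below centers each feature within each batch and then scales features to unit variance. This is a location-only adjustment written with NumPy for illustration; it is not one of the dedicated batch-correction methods used in [11] or [12], and the function names and data are hypothetical.

```python
import numpy as np

def center_by_batch(X, batches):
    """Subtract each batch's per-feature mean (location-only batch adjustment)."""
    X = np.asarray(X, dtype=float).copy()
    for b in np.unique(batches):
        mask = batches == b
        X[mask] -= X[mask].mean(axis=0)
    return X

def zscore_features(X):
    """Scale each feature (column) to zero mean and unit sample variance."""
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0                 # leave constant features unscaled
    return (X - X.mean(axis=0)) / sd

# Toy matrix: 6 samples x 3 features, with batch "B" shifted by +2 on every feature.
rng = np.random.default_rng(1)
expr = rng.normal(size=(6, 3))
batches = np.array(["A", "A", "A", "B", "B", "B"])
expr[batches == "B"] += 2.0

corrected = zscore_features(center_by_batch(expr, batches))
print(corrected[batches == "A"].mean(axis=0))   # approximately zero per feature
print(corrected[batches == "B"].mean(axis=0))   # approximately zero per feature
```

Per-batch centering also removes any biological difference that is confounded with batch, so it is only appropriate when cases and controls are balanced across batches.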

Biomarker Validation Troubleshooting: Ensuring Clinical Relevance

Reported Issue: A discovered biomarker shows statistical significance in group comparisons but fails to classify individual patients accurately [6].

| Troubleshooting Step | Key Actions | Expected Outcome |

|---|---|---|

| Perform Robust Model Validation | Avoid cross-validation misapplication. Use multiple algorithms (e.g., LASSO, random forests) for model selection [6]. | A validated model with a low probability of classification error (P_ERROR). |

| Assess Clinical Utility | Move beyond p-values. Evaluate sensitivity, specificity, positive predictive value, and area under the ROC curve [6]. | Realistic assessment of the biomarker's diagnostic or predictive performance. |

| Establish Test-Retest Reliability | Quantify reliability using the appropriate Intraclass Correlation Coefficient (ICC), not linear correlation [6]. | Confidence that the biomarker can be used for longitudinal monitoring. |
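
To show why linear correlation is a poor reliability measure, the sketch below compares the Pearson correlation with ICC(2,1) (two-way random effects, absolute agreement, single measurement) on toy test-retest data in which the second session is systematically 4 units higher. Choosing ICC(2,1) here is an assumption for illustration; the appropriate ICC form depends on the study design.

```python
import numpy as np

def icc_2_1(ratings):
    """ICC(2,1) from an array of shape (n_subjects, k_sessions)."""
    Y = np.asarray(ratings, dtype=float)
    n, k = Y.shape
    grand = Y.mean()
    ss_rows = k * ((Y.mean(axis=1) - grand) ** 2).sum()   # between subjects
    ss_cols = n * ((Y.mean(axis=0) - grand) ** 2).sum()   # between sessions
    ss_err = ((Y - grand) ** 2).sum() - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)

test = np.array([10.0, 12.0, 14.0, 16.0, 18.0])
retest = test + 4.0                                   # systematic shift between sessions

pearson_r = np.corrcoef(test, retest)[0, 1]
icc_value = icc_2_1(np.column_stack([test, retest]))
print(f"Pearson r = {pearson_r:.3f}")   # 1.000: perfectly linear
print(f"ICC(2,1)  = {icc_value:.3f}")   # 0.556: penalized for the systematic shift
```

The correlation is perfect because the two sessions are linearly related, while the ICC drops because the measurements do not agree in absolute terms.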

Frequently Asked Questions (FAQs)

1. What are the most critical steps to ensure a successful multi-omics data integration project?

Success hinges on three pillars: First, design the resource from the user's perspective, not just the data curator's, by defining real use-case scenarios [12]. Second, rigorously preprocess data through standardization and harmonization to make different data types (e.g., transcriptomics, proteomics) compatible [12]. Third, value metadata by thoroughly documenting samples and processes, as this is essential for data interpretation and reuse [12].

2. How can we improve the reproducibility of our High-Throughput Screening (HTS) assays?

The primary strategy is to integrate automation into your workflow. Automated liquid handlers, robotic arms, and integrated systems standardize processes, thereby reducing human error and inter-user variability [10]. Tools with built-in verification, such as liquid handlers with drop detection, further enhance reproducibility by documenting and correcting dispensing errors [10].

3. We have a multi-omics dataset from the same patient samples. What integration method should we use?

The choice depends on your biological question. For an unsupervised approach to find hidden sources of variation, MOFA is a powerful tool [11]. If your goal is supervised classification based on known patient groups (e.g., healthy vs. disease), DIABLO is designed for this purpose [11]. To identify shared sample similarity patterns across omics layers, Similarity Network Fusion (SNF) is a network-based method. Best practice is to try multiple methods and compare results [11].

4. Why does our biomarker panel perform well in statistical tests but fail in clinical classification?

A statistically significant p-value in a between-group test does not guarantee successful individual classification [6]. The critical metric is the probability of classification error (P_ERROR). A biomarker must undergo rigorous model validation and its clinical utility must be assessed through metrics like sensitivity, specificity, and predictive values, not just p-values [6].
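
A small numerical sketch makes the gap between significance and classification concrete. It assumes two equally sized groups with normally distributed biomarker values, equal variances, and equal prior probabilities, so that the best single-threshold classifier has error Φ(−d/2), where d is the standardized mean difference. These modelling assumptions and the function names are illustrative choices, not the definition of P_ERROR used in [6].

```python
import math

def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * math.erfc(-x / math.sqrt(2))

def two_sample_p(d, n_per_group):
    """Two-sided z-test p-value when the observed standardized difference equals d."""
    z = d * math.sqrt(n_per_group / 2)
    return math.erfc(abs(z) / math.sqrt(2))

def p_error(d):
    """Error rate of the optimal threshold classifier (equal priors, equal variances)."""
    return normal_cdf(-d / 2)

d, n = 0.2, 1000
p_value = two_sample_p(d, n)
error_rate = p_error(d)
print(f"p-value  = {p_value:.1e}")     # far below 0.001
print(f"P_ERROR  = {error_rate:.3f}")  # about 0.46, barely better than chance
```

With a small effect and a large sample, the group difference is highly significant while almost half of individual patients would still be misclassified.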

5. What are the common regulatory challenges in biomarker qualification?

Key challenges include navigating the strict protocols of regulatory agencies like the FDA and managing varying requirements across different international regulators [13]. Furthermore, proving clinical relevance across diverse populations and securing resources for the often lengthy and costly longitudinal studies required for validation are significant hurdles [13].

Methodologies & Data Summaries

Quantitative Analysis of Multi-Omics Integration Methods

The table below summarizes the characteristics of common computational frameworks for integrating matched multi-omics data (where multiple omics layers are measured from the same samples) [11].

| Method | Primary Function | Model Type | Key Output |

|---|---|---|---|

| MOFA | Identifies hidden sources of variation | Unsupervised, Bayesian factorization | Latent factors explaining variance across omics layers [11]. |

| DIABLO | Integrates data for classification | Supervised, multiblock sPLS-DA | Latent components and features predictive of sample groups [11]. |

| SNF | Fuses sample similarity networks | Unsupervised, network-based | A fused network capturing shared patterns across data types [11]. |

| MCIA | Joint analysis of multiple datasets | Unsupervised, multivariate statistics | A shared dimensional space for integrated data visualization [11]. |

Experimental Protocol: Integrating DNA Methylation and RNA-Seq Data

This protocol is adapted from a research study that successfully integrated these data types to identify disease-specific biomarker genes [12].

- Data Acquisition: Retrieve standardized DNA methylation (e.g., beta values of methylated CpG islands) and RNA-seq (e.g., gene expression) data from a public repository like TCGA2BED [12].

- Data Preprocessing: Independently normalize each dataset using standard pipelines for the respective omics type to account for technology-specific noise and batch effects [12] [11].

- Data Integration: Join the two normalized datasets based on common genomic coordinates. For example, map methylation sites at single-nucleotide positions to the genomic regions of genes from the RNA-seq data [12].

- Supervised Analysis: Train multiple supervised classification algorithms (e.g., C4.5, Random Forests) on the integrated dataset to build models that discriminate between case and control samples [12].

- Validation and Documentation: Thoroughly document every step of the analysis and make the software code openly available to ensure reproducibility [12].
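
The data integration step of this protocol (joining on genomic coordinates) can be sketched in a few lines. The example below maps single-nucleotide CpG positions to gene regions and averages the beta values per gene; gene names, coordinates, and values are invented for illustration, and the function is a hypothetical helper rather than the software used in [12].

```python
from collections import defaultdict
from statistics import mean

# (gene, chromosome, start, end, expression) -- toy RNA-seq records
genes = [
    ("GENE_A", "chr1", 1000, 5000, 8.2),
    ("GENE_B", "chr1", 7000, 9000, 3.1),
    ("GENE_C", "chr2", 500, 2500, 5.7),
]
# (chromosome, position, beta value) -- toy methylation records
cpgs = [
    ("chr1", 1200, 0.80), ("chr1", 4800, 0.60), ("chr1", 6000, 0.10),
    ("chr1", 7500, 0.20), ("chr2", 3000, 0.90),
]

def integrate(genes, cpgs):
    """Attach the mean beta value of all CpGs falling inside each gene region."""
    by_chrom = defaultdict(list)
    for chrom, pos, beta in cpgs:
        by_chrom[chrom].append((pos, beta))
    rows = []
    for name, chrom, start, end, expr in genes:
        betas = [b for pos, b in by_chrom[chrom] if start <= pos <= end]
        rows.append({
            "gene": name,
            "expression": expr,
            "mean_beta": mean(betas) if betas else None,  # None: no CpG in region
            "n_cpgs": len(betas),
        })
    return rows

integrated = integrate(genes, cpgs)
for row in integrated:
    print(row)
```

The linear scan per gene is adequate for a toy example; genome-scale data would normally use an interval index or a dedicated genomics library.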

Research Reagent Solutions for Multi-Omics Workflows

| Essential Material / Technology | Primary Function in Discovery Workflow |

|---|---|

| Non-Contact Liquid Handler | Precisely dispenses reagents in HTS and assay preparation, reducing volume use and cross-contamination [10]. |

| Single-Cell Sequencing Platform | Enables high-resolution measurement of transcriptomic, epigenomic, and proteomic data at the single-cell level [9]. |

| Mass Spectrometry | The core technology for identifying and quantifying proteins (proteomics) and metabolites (metabolomics) [9]. |

| DNA Methylation Array | Interrogates epigenomic states by measuring methylation levels at specific CpG sites across the genome [12]. |

Workflow Visualizations

Multi-Omics Integration & Validation Workflow

High-Throughput Screening with Automated Troubleshooting

Core Concepts: Intended Use and Context of Use

In biomarker validation, Intended Use and Context of Use are foundational concepts that dictate every subsequent validation decision. Clearly defining these elements at the outset ensures that your validation efforts are targeted, efficient, and meet regulatory expectations.

- Intended Use: This is a precise statement detailing what the biomarker test measures and the specific clinical or biological question it aims to answer. It defines the test's purpose in the context of patient care or research [14].

- Context of Use: This describes the specific application and conditions under which the biomarker will be employed. It outlines the precise role the biomarker will play in drug development or clinical decision-making, including the specific patient population, clinical setting, and how the results will inform decisions [15] [16].

Why This Step is Non-Negotiable

The intended use statement is the primary factor guiding the appropriate level and scope of validation required. A higher degree of validation evidence is necessary for biomarkers that pose greater patient risk or have more significant clinical consequences. The U.S. Food and Drug Administration (FDA) emphasizes that a biomarker's qualification is specific to its Context of Use, meaning a biomarker validated for one purpose cannot be assumed valid for another without further evidence [15] [16] [14].

Troubleshooting Guide: Defining Your Intended Use and Context of Use

Researchers often encounter challenges when drafting these critical definitions. The following guide addresses common scenarios to help you formulate robust and clear statements.

| Problem Scenario | Question to Ask | Recommended Action |

|---|---|---|

| Unclear Purpose | Is the biomarker for diagnosis, predicting prognosis, monitoring treatment response, or patient stratification? | Draft a single-sentence purpose. Example: "This biomarker test is intended to identify HER2-low expression status in breast cancer patients to determine eligibility for T-DXd therapy." [14] |

| Vague Population | Have I specified the disease stage, prior treatments, demographics, and exclusion criteria? | List all relevant patient characteristics. Example: "Postmenopausal women with radiographically confirmed knee osteoarthritis." [15] [14] |

| Ambiguous Application | Will the biomarker be used for go/no-go decisions in an early clinical trial, or to support a label claim in a Phase III trial? | Detail the drug development phase and decision point. Example: "For use in Phase II trials to enrich the study population for patients likely to respond to Drug X." [15] |

| Undefined Testing Model | Will testing occur in a central lab or be distributed as a kit to multiple sites? | Specify the delivery model early, as it impacts analytical validation requirements and logistics [14]. |

| Ignoring Risks/Benefits | What are the potential risks to patients if the biomarker result is incorrect? | Document potential patient risks and benefits, as this risk/benefit ratio influences the regulatory evidence required [14]. |

Detailed Experimental Protocol: Drafting and Locking Down Your Definitions

A systematic approach to defining Intended Use and Context of Use ensures no critical element is overlooked. The following protocol provides a methodology for establishing this foundation.

Objective: To create a comprehensive and definitive Intended Use and Context of Use statement that will guide all subsequent biomarker validation activities.

Materials:

- Internal team members (clinical, regulatory, biomarker scientists)

- Preclinical and early clinical data

- Draft Target Product Profile (TPP) for the associated therapeutic (if applicable)

- Regulatory guidance documents (e.g., from FDA Biomarker Qualification Program) [16]

Methodology:

- Initial Scoping Workshop: Convene a cross-functional team to brainstorm and draft an initial intended use statement. Address all elements from the table below, acknowledging any known data gaps.

- Evidence Gap Analysis: Compare the drafted statement against existing data. Identify what additional evidence is required to support the claims in the intended use (e.g., "Do we have data showing biomarker performance in the specified sub-population?").

- Stakeholder Review and Iteration: Circulate the draft to key stakeholders, including potential clinical investigators and regulatory consultants, for feedback. Refine the statement based on this input.

- Protocol Alignment: Ensure that the intended use is accurately reflected in the design of the clinical validation study protocols. The patient population, sample type, and endpoints must align perfectly.

- Final Lock-In: Before initiating full-scale validation studies, formally "lock" the intended use and context of use statements. Any subsequent changes may require significant re-validation or bridging studies, adding cost and time [14].

The Scientist's Toolkit: Essential Components for Your Intended Use Statement

A robust intended use statement is built from specific, well-defined components. The table below details the essential elements that must be established.

| Component | Description | Example / Function |

|---|---|---|

| Intended Patient Population | Precise description of the patients for whom the test is designed, including disease stage, demographics, and prior treatment history. | "Patients with metastatic non-small cell lung cancer who have progressed on platinum-based chemotherapy." |

| Test Purpose | The specific clinical or research question the test results will inform. | "To stratify patients as 'responders' or 'non-responders' based on a predefined biomarker threshold for clinical trial enrollment." |

| Specimen Type | The biological matrix required for testing (e.g., serum, tissue biopsy, plasma). | "Formalin-fixed, paraffin-embedded (FFPE) tumor tissue sections." |

| Intended User | The professional who will perform and interpret the test. | "Board-certified pathologists in a clinical laboratory setting." |

| Associated Product | The drug or therapeutic intervention linked to the biomarker, if any. | "For use with investigational drug ABC123." |

| Benefit/Risk Profile | The potential clinical benefit to the patient and the risks associated with an incorrect result. | "Benefit: Identifies patients likely to experience progression-free survival. Risk: False negative may exclude a patient from beneficial therapy." [14] |
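
One way to keep these components together and to make the "lock" step explicit is to record the statement as an immutable structure. The sketch below is only an illustration: the class, its field names, and the completeness check are hypothetical, and the field values reuse the examples from the table above.

```python
from dataclasses import dataclass, fields, FrozenInstanceError

@dataclass(frozen=True)   # frozen: fields cannot be reassigned once created
class IntendedUseStatement:
    patient_population: str
    test_purpose: str
    specimen_type: str
    intended_user: str
    associated_product: str
    benefit_risk: str

    def missing_components(self):
        """Names of components that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

statement = IntendedUseStatement(
    patient_population="Patients with metastatic non-small cell lung cancer "
                       "who have progressed on platinum-based chemotherapy.",
    test_purpose="Stratify patients as responders or non-responders "
                 "for clinical trial enrollment.",
    specimen_type="FFPE tumor tissue sections.",
    intended_user="Board-certified pathologists in a clinical laboratory setting.",
    associated_product="Investigational drug ABC123.",
    benefit_risk="False negative may exclude a patient from beneficial therapy.",
)
print(statement.missing_components())   # [] -> every component is filled in

try:
    statement.specimen_type = "Plasma"  # any change after lock-in is rejected
except FrozenInstanceError:
    print("Statement is locked; a change requires a new, reviewed version.")
```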

Validation Workflow and Regulatory Pathway

The following diagram illustrates how the defined Intended Use and Context of Use influence the entire validation journey, from initial planning to regulatory submission.

Figure 1. The biomarker validation pathway, driven by the initial definition of Intended Use and Context of Use.

Frequently Asked Questions (FAQs)

Q1: Can I proceed with biomarker validation if my intended use is not fully defined? A: No. Attempting validation without a locked intended use statement is a high-risk strategy. The intended use dictates the validation strategy, including the patient cohort, statistical endpoints, and level of evidence required. Proceeding without it often leads to costly re-work, failed studies, and regulatory delays [14].

Q2: How specific does the Context of Use need to be for an early-phase clinical trial? A: Even in early phases, it should be highly specific. For a Phase I trial, you might specify: "For use in assessing target engagement of Drug X in patients with refractory Disease Y, using plasma samples collected at Cmax." This precision ensures the data you collect is fit-for-purpose and can be built upon in later phases [15].

Q3: What is the difference between a "valid biomarker" and a "qualified biomarker"? A: Validation primarily refers to assessing the biomarker's measurement performance characteristics (e.g., accuracy, precision) to ensure it gives reproducible and accurate data. Qualification is the subsequent evidentiary process of linking a biomarker with biological processes and clinical endpoints for a specific Context of Use. You must first have a validated measurement for a biomarker to be considered for qualification [15].

Q4: When is the right time to engage regulators about my intended use? A: Early engagement is strongly recommended. For manufacturers intending to market their device commercially, initiating dialogue with regulatory authorities (e.g., via the FDA's Q-Submission process) early in the development process can provide valuable feedback on the proposed intended use and validation plans, de-risking the later stages of development [14].

Frequently Asked Questions (FAQs)

FAQ 1: What are the core statistical metrics for evaluating a diagnostic biomarker, and how do they interrelate?

The core statistical metrics for evaluating a diagnostic biomarker are sensitivity, specificity, positive predictive value (PPV), and negative predictive value (NPV). These metrics help determine how well a biomarker distinguishes between diseased and non-diseased states [17].

- Sensitivity is the probability that a test result will be positive when the disease is present (true positive rate). It is calculated as: Sensitivity = True Positives / (True Positives + False Negatives) [17] [18].

- Specificity is the probability that a test result will be negative when the disease is not present (true negative rate). It is calculated as: Specificity = True Negatives / (True Negatives + False Positives) [17] [18].

- Positive Predictive Value (PPV) is the probability that the disease is present when the test is positive [17] [18].

- Negative Predictive Value (NPV) is the probability that the disease is not present when the test is negative [17] [18].

It is crucial to understand that PPV and NPV are highly dependent on the prevalence of the disease in the population being tested. A test will have a better PPV and a worse NPV when a disease is highly prevalent [17].

Table 1: Core Statistical Metrics for a Diagnostic Biomarker

| Metric | Definition | Formula |

|---|---|---|

| Sensitivity | True positive rate; ability to correctly identify diseased individuals | True Positives / (True Positives + False Negatives) |

| Specificity | True negative rate; ability to correctly identify healthy individuals | True Negatives / (True Negatives + False Positives) |

| Positive Predictive Value (PPV) | Probability disease is present given a positive test result | (Sensitivity × Prevalence) / [Sensitivity × Prevalence + (1-Specificity)×(1-Prevalence)] |

| Negative Predictive Value (NPV) | Probability disease is absent given a negative test result | (Specificity × (1-Prevalence)) / [(1-Sensitivity) × Prevalence + Specificity × (1-Prevalence)] |
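
The prevalence dependence of PPV and NPV is easier to see with numbers. The following is a minimal sketch of the Table 1 formulas; the function name `predictive_values` and the 90%/90% assay are illustrative assumptions, not values from a real study.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) from sensitivity, specificity, and prevalence (all as fractions)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Hypothetical assay with 90% sensitivity and 90% specificity,
# evaluated at three assumed prevalence levels.
for prevalence in (0.01, 0.10, 0.50):
    ppv, npv = predictive_values(0.90, 0.90, prevalence)
    print(f"prevalence={prevalence:.2f}  PPV={ppv:.3f}  NPV={npv:.3f}")
```

With these assumed inputs, the same assay has a PPV of roughly 8% at 1% prevalence and 90% at 50% prevalence, while the NPV moves in the opposite direction.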

FAQ 2: How do prognostic and predictive biomarkers differ in their clinical application and statistical validation?

Prognostic and predictive biomarkers serve distinct purposes and require different study designs for validation [4].

- A prognostic biomarker provides information about the overall expected clinical outcome (e.g., overall survival) for a patient, regardless of a specific therapy. It informs on the natural history of the disease. For example, an STK11 mutation is associated with a poorer outcome in non-squamous non-small cell lung cancer (NSCLC) [4]. A prognostic biomarker is identified through a test of association between the biomarker and the outcome in a statistical model, and can be identified in properly conducted retrospective studies [4].

- A predictive biomarker informs on the likely response to a specific treatment. It helps determine which therapy a patient should receive. For example, EGFR mutation status in NSCLC predicts a better response to gefitinib compared to carboplatin plus paclitaxel [4]. A predictive biomarker must be identified in secondary analyses of data from a randomized clinical trial, specifically through a statistical test for interaction between the treatment and the biomarker [4].

Table 2: Prognostic vs. Predictive Biomarkers

| Feature | Prognostic Biomarker | Predictive Biomarker |

|---|---|---|

| Clinical Question | What is the patient's overall disease outcome? | Which treatment is the patient likely to respond to? |

| Application | Informs on disease aggressiveness and natural history | Informs treatment selection |

| Study Design for Validation | Retrospective studies from cohorts or single-arm trials | Randomized clinical trials (testing for treatment-by-biomarker interaction) |

| Example | STK11 mutation in NSCLC [4] | EGFR mutation for gefitinib in NSCLC [4] |

FAQ 3: What does "dynamic range" mean in biomarker quantification, and why is it a major technical challenge?

The dynamic range in biomarker quantification refers to the span of concentrations over which an assay can accurately and linearly measure an analyte [19]. The challenge arises because the physiological dynamic range of protein concentrations in human plasma, for example, spans over 10 orders of magnitude (from femtomolar to millimolar), while contemporary detection methods (like mass spectrometry or immunoassays) are typically limited to a quantifiable range of only 3-4 orders of magnitude [19] [20].

This mismatch means that high-abundance proteins (e.g., albumin in plasma) can dominate the analytical signal, suppressing the detection of low-abundance proteins that are often the most biologically relevant as disease biomarkers [20]. This necessitates complex sample handling like splitting samples for differential dilution or amplification, which can introduce variability and non-linear dilution effects, undermining the accuracy and reproducibility of measurements [19].

FAQ 4: How early can biomarker dynamics signal disease onset before clinical symptoms appear?

Longitudinal studies show that biomarker changes can begin decades before clinical symptom onset. In a 30-year study on Alzheimer's disease, change points for core biomarkers were identified many years prior to clinical diagnosis [21]:

- Amyloid-beta (Aβ) pathology accelerated 17.1 years before symptom onset.

- Phosphorylated tau (p-tau) pathology accelerated 15.8 years before onset.

- Neurodegeneration, measured by neurofilament light chain (NfL) and whole-brain white matter volume, accelerated 11.6 years before onset.

- Total ventricle volume acceleration was detected 9.7 years before onset [21].

This supports a temporal sequence in the disease pathological cascade, where certain biomarkers can serve as very early warning signals [21].

Troubleshooting Guides

Problem 1: My biomarker assay lacks sensitivity for low-abundance targets in complex biofluids like plasma.

Solution: This is a common problem due to the high dynamic range of biofluids. Consider these approaches:

- Implement Signal Equalization Strategies: For proximity-based assays (e.g., proximity ligation assay), use tuning mechanisms like probe loading and epitope depletion to adjust the signal output for each analyte individually [19].

  - Probe Loading: Increase the concentration of detection antibodies for low-abundance analytes to shift the binding curve upward, enhancing their signal [19].

  - Epitope Depletion: For high-abundance analytes, add unlabeled "depletant" antibodies to reduce the probability of signal generation, preventing assay saturation and allowing for simultaneous measurement of low- and high-abundance targets in a single sample without dilution [19].

- Use Enrichment Kits: Employ commercial sample preparation technologies designed for deep proteome analysis. These kits can use bead-based enrichment to remove high-abundance proteins, thereby compressing the dynamic range of the sample and increasing the identification of low-abundance proteins. For example, such methods have been shown to yield a 2.2-fold increase in protein identifications from plasma [20].

Diagram 1: Troubleshooting Low Sensitivity

Problem 2: My biomarker panel demonstrates poor specificity in a validation cohort.

Solution: Poor specificity, leading to false positives, can stem from various issues.

- Re-evaluate Your Biomarker Combination:

- Avoid Dichotomization: Using each biomarker in its continuous form retains maximal information for model development, which can lead to better performance than using pre-defined dichotomized (positive/negative) cut-offs [4].

- Incorporate Variable Selection: During statistical model development, use shrinkage methods (e.g., Lasso regression) or other variable selection techniques to minimize overfitting and build a more robust model that generalizes better to new data [4].

- Control for Multiple Comparisons: If you are evaluating a large number of biomarkers (high-dimensional data), it is essential to control for the false discovery rate (FDR). Methods like the Benjamini-Hochberg procedure should be implemented to reduce the chance of falsely identifying significant associations [4].

- Audit Your Cohort Design: Bias in patient selection is a major cause of failure in validation studies [4]. Ensure your validation cohort adequately represents the target population in terms of ancestry, socioeconomic status, and clinical characteristics. Underrepresentation can lead to models that perform poorly in real-world settings [22].
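
The multiple-comparison control mentioned above can be sketched in a few lines. This is a plain-Python illustration of the Benjamini-Hochberg step-up procedure; the function name and the p-values are hypothetical, and a vetted statistics package would normally be preferred in a real analysis.

```python
def benjamini_hochberg(p_values, fdr=0.05):
    """Return a list of booleans, True where the hypothesis is rejected at the given FDR."""
    m = len(p_values)
    # Indices of the p-values, sorted from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Step-up rule: find the largest rank k with p_(k) <= (k / m) * fdr.
    largest_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * fdr:
            largest_rank = rank
    # Reject every hypothesis ranked at or below that k.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= largest_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values from ten candidate biomarkers.
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
significant = benjamini_hochberg(p_values, fdr=0.05)
print(sum(significant), "of", len(p_values), "candidates pass the FDR threshold")
```

Note that five of these hypothetical p-values fall below 0.05 individually, yet only the two smallest survive the correction.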

Problem 3: I need to establish a timeline of biomarker changes for a progressive disease.

Solution: Mapping the temporal sequence of biomarkers requires longitudinal data and specific statistical models.

- Study Design: Collect serial measurements of clinical, cognitive, and biomarker data (from imaging, CSF, or blood) from a cohort over many years. The BIOCARD study, for example, followed participants for 30 years with annual assessments [21].

- Statistical Analysis - Change Point Modeling: Use statistical models like piecewise regression (also known as breakpoint or change point analysis) to analyze longitudinal data. This model fits two or more straight lines to the data sequence and identifies the specific time point (the "change point") where the slope of the trajectory changes most significantly [21].

- Application: This method was used to identify the precise points where the rates of change for Aβ, tau, and MRI volumes accelerated in the years before the clinical onset of Mild Cognitive Impairment (MCI) [21]. Aligning these change points relative to the estimated date of symptom onset allows you to build a timeline of the disease's pathological cascade.
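
The change point idea can be illustrated with a simple grid search. The sketch below fits a continuous two-segment ("hinge") line by least squares for each candidate change point and keeps the best fit. It is an assumption-laden illustration, not the modeling approach used in the cited study: the function names, the synthetic noise-free trajectory, and the candidate grid are all hypothetical.

```python
def solve_3x3(matrix, rhs):
    """Solve a 3x3 linear system by Gaussian elimination with partial pivoting."""
    aug = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, 3):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, 4):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * 3
    for i in reversed(range(3)):
        tail = sum(aug[i][j] * solution[j] for j in range(i + 1, 3))
        solution[i] = (aug[i][3] - tail) / aug[i][i]
    return solution


def fit_hinge(times, values, change_point):
    """Least-squares fit of value = a + b*t + d*max(0, t - change_point).

    Returns ([a, b, d], sum of squared errors). The change point must lie
    strictly inside the observed time range, otherwise the system is singular.
    """
    rows = [(1.0, t, max(0.0, t - change_point)) for t in times]
    gram = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    rhs = [sum(r[i] * v for r, v in zip(rows, values)) for i in range(3)]
    coeffs = solve_3x3(gram, rhs)
    sse = sum((v - sum(c * x for c, x in zip(coeffs, r))) ** 2
              for r, v in zip(rows, values))
    return coeffs, sse


def find_change_point(times, values, candidates):
    """Return (change_point, coeffs, sse) for the candidate with the smallest error."""
    fits = [(cp,) + fit_hinge(times, values, cp) for cp in candidates]
    return min(fits, key=lambda fit: fit[2])


# Synthetic trajectory: years relative to symptom onset, with the slope
# increasing by 0.5 units/year from year -12 onward (illustrative only).
times = [float(t) for t in range(-25, 1)]
values = [5.0 + 0.1 * t + 0.5 * max(0.0, t + 12.0) for t in times]
candidates = [float(c) for c in range(-23, -2)]
change_point, coeffs, sse = find_change_point(times, values, candidates)
print(f"estimated change point: {change_point} years relative to onset")
```

Real longitudinal data would additionally need to account for repeated measures within participants (for example with mixed-effects models) and for uncertainty in the estimated change point, neither of which this sketch attempts.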

Diagram 2: Timeline Establishment

Research Reagent Solutions & Essential Materials

Table 3: Key Reagents and Technologies for Biomarker Research

| Item / Technology | Primary Function in Biomarker Research |

|---|---|

| Next-Generation Sequencing (NGS) | High-throughput DNA/RNA sequencing to identify genetic mutations, rearrangements, and gene expression patterns linked to diseases [4] [23]. |

| Mass Spectrometry-Based Proteomics | Precise identification and quantification of proteins in complex biological samples; central to both top-down (intact protein) and bottom-up (peptide-based) approaches [23]. |

| Lumipulse G1200 Platform | Fully automated chemiluminescent enzyme immunoassay (CLEIA) system for measuring core cerebrospinal fluid (CSF) biomarkers like Aβ40, Aβ42, and p-tau [21]. |

| Quanterix SIMOA HD-X Platform | Ultra-sensitive digital immunoassay platform for measuring very low-abundance biomarkers in blood and CSF, such as Neurofilament Light Chain (NfL) and GFAP [21]. |

| PreOmics ENRICH Technology | A sample preparation kit that uses bead-based enrichment to deplete high-abundance proteins, compressing the dynamic range of plasma and CSF for deeper proteomic coverage [20]. |

| Protein Microarrays | High-throughput tools for simultaneously detecting proteins (analytical arrays) or studying protein interactions (functional arrays) in complex samples [23]. |

| Polyclonal Antibody Pools (for PLA) | Used in proximity ligation assays (PLA) to capture and detect target proteins. Using polyclonal pools increases the likelihood of binding multiple distinct epitopes on a target [19]. |

| Unique Molecular Identifiers (UMIs) | Short DNA barcodes added to detection antibodies in sequencing-based assays (like PLA) to tag individual molecules, reducing PCR amplification bias and enabling absolute quantification [19]. |

Troubleshooting Guides and FAQs

Why does my biomarker fail to validate in independent cohorts?

A common reason is inadequate statistical power or unaddressed bias during the discovery phase.

- Solution: Conduct a priori power calculations to ensure sufficient sample size and number of events [4]. Implement randomization and blinding during specimen analysis to control for technical batch effects and observer bias [4]. Ensure your discovery cohort accurately represents the target clinical population [4].
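
As one illustration of an a priori calculation for a time-to-event endpoint, the sketch below uses Schoenfeld's approximation for the number of events needed to detect a given hazard ratio between biomarker-positive and biomarker-negative patients. The choice of this formula is an assumption made for illustration (the source does not prescribe a method), and the function name and inputs are hypothetical.

```python
from math import ceil, log
from statistics import NormalDist


def required_events(hazard_ratio, prevalence, alpha=0.05, power=0.80):
    """Approximate number of events needed (Schoenfeld's formula, two-sided test).

    prevalence is the assumed fraction of biomarker-positive patients.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha + z_beta) ** 2
    denominator = prevalence * (1 - prevalence) * log(hazard_ratio) ** 2
    return ceil(numerator / denominator)


# Hypothetical planning scenario: hazard ratio of 2.0, half the cohort biomarker-positive.
events_balanced = required_events(hazard_ratio=2.0, prevalence=0.5)
# Same effect size, but only 20% of patients are biomarker-positive.
events_rare = required_events(hazard_ratio=2.0, prevalence=0.2)
print(events_balanced, events_rare)
```

The comparison shows why a rare biomarker subgroup drives up the number of events, and therefore the sample size or follow-up time, needed for the same effect size.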

How do I choose the right validation technology platform?

The choice depends on the biomarker's molecular nature, required sensitivity, and intended clinical use.

- Solution: For single-plex protein analysis, ELISA is a robust standard, but for greater sensitivity and multiplexing, consider Meso Scale Discovery (MSD) or LC-MS/MS [24]. For cellular biomarkers, flow cytometry is often appropriate [25]. A "fit-for-purpose" approach is recommended, where the validation level matches the biomarker's intended use [24].

What are the major regulatory hurdles for biomarker validation?

The primary hurdle is demonstrating analytical and clinical validity to regulatory standards.

- Solution: Early engagement with regulatory guidance is crucial. The FDA and EMA emphasize precision and accuracy benchmarks and require evidence of robust performance across independent sample sets [25] [24]. A review of EMA qualifications found that 77% of biomarker challenges were linked to assay validity issues, highlighting the need for rigorous analytical validation [24].

How can I improve the translational potential of a preclinical biomarker?

Many biomarkers fail due to limited generalizability from model systems to human populations.

- Solution: Use clinically relevant models such as Patient-Derived Xenografts (PDX) and organoids early in discovery [26]. Integrate multi-omics approaches to build comprehensive biomarker signatures and leverage AI-powered analytics to identify patterns with higher predictive value for human biology [7] [26].

Key Experiment Protocols

Protocol 1: Analytical Validation for a Protein Biomarker Assay

This protocol outlines key experiments to establish the analytical validity of an immunoassay, such as an ELISA or MSD, for quantifying a protein biomarker in serum.

1. Precision and Accuracy Profiling

- Method: Run the assay using quality control (QC) samples at low, medium, and high concentrations across multiple days (e.g., 5 days, 3 runs per day) with two replicates each [27].

- Data Analysis: Calculate the intra-assay (within-run) and inter-assay (between-run) Coefficient of Variation (CV). Robust assays are often required to keep the CV below a pre-set limit, typically in the 20-30% range. Assess accuracy by comparing the measured mean concentration to the known spiked concentration [27].

2. Dynamic Range and Sensitivity Determination

- Method: Prepare a standard curve using a serial dilution of the purified biomarker analyte.

- Data Analysis: Fit the standard curve data using a 4- or 5-parameter logistic model. The dynamic range is the concentration interval between the lower and upper limits of quantification (LLOQ and ULOQ). The LLOQ is the lowest concentration that can be quantified with acceptable precision and accuracy (CV <20%) [24].
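
To make the 4-parameter logistic (4PL) model concrete, the sketch below shows the curve and its inverse, which is what is used to back-calculate a sample concentration from a measured signal. The parameter values, LLOQ, and ULOQ are hypothetical; in practice the parameters would come from a curve-fitting routine, which is not shown here.

```python
from math import log10


def four_pl(conc, a, b, c, d):
    """4PL response curve.

    a: response at zero concentration, d: response at infinite concentration,
    c: inflection point (concentration at the half-maximal response), b: slope factor.
    """
    return d + (a - d) / (1.0 + (conc / c) ** b)


def inverse_four_pl(signal, a, b, c, d):
    """Back-calculate the concentration for a signal lying strictly between a and d."""
    return c * ((a - d) / (signal - d) - 1.0) ** (1.0 / b)


# Hypothetical fitted parameters (illustrative only, not from a real assay).
params = dict(a=0.05, b=1.2, c=100.0, d=2.5)

signal_at_50 = four_pl(50.0, **params)
recovered_conc = inverse_four_pl(signal_at_50, **params)

# Hypothetical limits of quantification, in the same units as the standard curve.
lloq, uloq = 1.0, 1000.0
dynamic_range_orders = log10(uloq / lloq)
print(f"signal={signal_at_50:.3f}  back-calculated={recovered_conc:.1f}  "
      f"dynamic range={dynamic_range_orders:.1f} orders of magnitude")
```

Signals at or beyond the asymptotes `a` and `d` cannot be inverted, which is one reason results are only reported between the LLOQ and ULOQ.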

Protocol 2: Confirmatory Analysis for a Predictive Biomarker

This protocol describes the statistical analysis to confirm a biomarker's predictive value using data from a randomized clinical trial (RCT).

1. Interaction Test

- Method: In an RCT, test for a statistically significant interaction between the treatment arm and the biomarker status in a statistical model (e.g., a Cox proportional hazards model for a time-to-event outcome) [4].

- Data Analysis: The model should include terms for treatment, biomarker (as a continuous or binary variable), and the treatment-by-biomarker interaction. A significant interaction term (e.g., p < 0.05) provides evidence that the treatment effect differs by biomarker status [4].

2. Stratified Analysis

- Method: After a significant interaction is found, perform a stratified analysis to estimate the treatment effect (e.g., Hazard Ratio) separately within the biomarker-positive and biomarker-negative subgroups [4].

- Data Analysis: This illustrates the direction and magnitude of the treatment benefit in each subgroup, confirming the biomarker's predictive utility [4].
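
When only subgroup summaries are available (for example from a published trial), a rough check of the interaction can be made by comparing the two subgroup log hazard ratios. The sketch below shows this approximate z-test. It assumes independent subgroups, and it complements rather than replaces the model-based interaction term described in the protocol. All hazard ratios and confidence intervals are hypothetical.

```python
from math import exp, log, sqrt
from statistics import NormalDist


def se_from_ci(lower, upper, level=0.95):
    """Standard error of a log hazard ratio, recovered from its confidence interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return (log(upper) - log(lower)) / (2 * z)


def interaction_z_test(hr_pos, se_pos, hr_neg, se_neg):
    """Compare treatment hazard ratios between biomarker-positive and -negative subgroups."""
    diff = log(hr_pos) - log(hr_neg)
    se_diff = sqrt(se_pos ** 2 + se_neg ** 2)
    z = diff / se_diff
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {"ratio_of_hrs": exp(diff), "z": z, "p_value": p_value}


# Hypothetical subgroup results: treatment HR with 95% CI in each biomarker stratum.
se_pos = se_from_ci(0.35, 0.71)   # biomarker-positive: HR 0.50 (0.35 to 0.71)
se_neg = se_from_ci(0.80, 1.50)   # biomarker-negative: HR 1.10 (0.80 to 1.50)
result = interaction_z_test(0.50, se_pos, 1.10, se_neg)
print(f"ratio of HRs={result['ratio_of_hrs']:.2f}  z={result['z']:.2f}  "
      f"p={result['p_value']:.4f}")
```

A small p-value here suggests that the treatment effect differs between strata, which is the same question the treatment-by-biomarker interaction term answers within the full model.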

Research Reagent Solutions

Table: Essential reagents and technologies for biomarker development.

| Reagent/Technology | Primary Function in Biomarker Workflow |

|---|---|

| U-PLEX Multiplex Assay (MSD) [24] | Simultaneously measure multiple protein analytes from a single, small-volume sample. |

| Liquid Chromatography Tandem Mass Spectrometry (LC-MS/MS) [24] | High-sensitivity, high-specificity identification and quantification of proteins/peptides. |

| Next-Generation Sequencing (NGS) [23] | High-throughput profiling of DNA/RNA for genomic and transcriptomic biomarker discovery. |

| Patient-Derived Organoids [26] | Physiologically relevant 3D in vitro models for biomarker discovery and drug response testing. |

| CRISPR-Based Functional Genomics [26] | Systematically identify genetic biomarkers that influence drug response. |

Workflow Diagrams

Biomarker Development Pipeline

Validation Framework

Advanced Methodologies and Technological Platforms for Robust Biomarker Validation

Core Parameter Definitions and Benchmarks

The validation of biomarker assays relies on a framework of core parameters to ensure data is reliable and clinically meaningful. The FDA's 2025 Biomarker Guidance reiterates that method validation for biomarker assays must address the same fundamental questions as drug assays, with accuracy, precision, sensitivity, selectivity, and specificity being critical characteristics that define the method [28].

The table below summarizes these core parameters and their target performance benchmarks, which are informed by regulatory standards and industry best practices [28] [24].

| Validation Parameter | Definition | Common Performance Targets & Industry Benchmarks |

|---|---|---|

| Precision | The closeness of agreement among a series of measurements obtained from multiple samplings of the same sample. It is typically divided into within-run and between-run precision. | Both within-run and between-run precision should demonstrate a coefficient of variation (CV) of ≤20% (often ≤15% for critical biomarkers) [24]. |

| Accuracy | The closeness of agreement between the measured value and a known accepted reference value. | Mean accuracy should be within ±20% of the theoretical value (±15% is a common, more stringent goal). Recovery of spiked analytes often targeted at 80-120% [24]. |

| Sensitivity | The lowest concentration of an analyte that can be reliably distinguished from zero. Often defined as the Lower Limit of Quantification (LLOQ). | LLOQ should be measurable with defined precision and accuracy (e.g., CV ≤20% and accuracy ±20%). Signal-to-noise ratio of ≥5:1 is a common benchmark [24]. |

| Specificity/Selectivity | The ability to unequivocally assess the analyte in the presence of other components, such as matrix interferents or similar molecules. | The measured concentration should remain within ±20% of the nominal value when interferents are present. No significant signal (e.g., <20% of the LLOQ) should be observed in the matrix blank. |

Troubleshooting Guide: FAQs on Validation Parameter Issues

This section addresses common challenges researchers face when validating these core parameters.

FAQ 1: My precision data shows high CVs. What are the most common sources of this variability? High variability often stems from inconsistencies in pre-analytical and analytical steps [5].

- Check Sample Preparation: Inconsistent sample homogenization or extraction is a primary culprit. Solution: Implement automated homogenization systems (e.g., Omni LH 96) to standardize processing and reduce human error, which can cut manual errors by up to 88% [5].

- Review Reagent Handling: Ensure reagents are prepared and stored consistently. Variations in reagent age, temperature, or pipetting can increase CVs.

- Audit Equipment Calibration: Instrument drift or improper calibration can cause between-run variability. Adhere to a strict maintenance and calibration schedule.

- Control Environmental Factors: Temperature fluctuations in the lab or during sample storage can degrade biomarkers, leading to imprecise results [5].

FAQ 2: My accuracy (recovery) is outside the acceptable range. How can I investigate this? Poor recovery indicates a systematic error in the measurement [24].

- Assay Specificity: Investigate if matrix components (e.g., lipids, hemoglobin) or structurally similar molecules are interfering with the assay. Solution: Use more specific detection methods like LC-MS/MS, which offers superior specificity by separating analytes from interferents through chromatographic retention and mass-to-charge ratio [24].

- Standard Curve Integrity: Verify the integrity of your reference standards and the calibration curve. Prepare fresh standards and ensure the matrix for the standard curve matches your sample matrix as closely as possible.

- Protocol Deviations: Scrutinize the procedure for any deviations, such as incorrect incubation times or temperatures, that could affect the assay's binding or reaction kinetics.

FAQ 3: How can I improve the sensitivity of my biomarker assay? Improving sensitivity allows for the detection of lower-abundance biomarkers [24].

- Adopt Advanced Technologies: Move beyond traditional ELISA. Platforms like Meso Scale Discovery (MSD) that use electrochemiluminescence can offer up to 100 times greater sensitivity and a broader dynamic range [24].

- Optimize Signal Amplification: Evaluate different detection substrates or amplification systems to enhance the signal output relative to background noise.

- Sample Concentration: If possible, concentrate your sample prior to analysis, being mindful of potential effects on the matrix.

FAQ 4: My assay lacks specificity. What strategies can I use? Lack of specificity can lead to false positives or overestimation of analyte concentration [24].

- Cross-Reactivity Testing: Systematically test the assay against known related proteins or metabolites to identify cross-reactivity.

- Antibody Validation: For immunoassays, the antibody is key. Use highly specific, well-validated antibodies. For complex targets, consider switching to an LC-MS/MS-based method, which is less prone to antibody-related cross-reactivity issues [24].

- Sample Clean-Up: Incorporate a sample purification or clean-up step, such as solid-phase extraction, to remove interfering substances before analysis.

FAQ 5: What is the single biggest lab mistake that impacts all these validation parameters? The most significant overarching issue is inconsistent sample handling and preparation, which falls under pre-analytical errors. Studies indicate that pre-analytical errors account for approximately 70% of all laboratory diagnostic mistakes [5]. Inconsistent freezing/thawing cycles, variable processing times, and manual homogenization techniques introduce variability that undermines precision, accuracy, and the reliable detection of true biological signals.

Experimental Protocols for Parameter Validation

Protocol for Precision and Accuracy (Recovery)

This protocol uses Quality Control (QC) samples at low, mid, and high concentrations.

1. Materials:

- Analyte of interest (purified)

- Appropriate biological matrix (e.g., serum, plasma)

- Assay reagents and buffers

- Standard equipment (pipettes, plate reader, LC-MS/MS system)

2. Procedure:

- Prepare QC Samples: Spike the analyte into the biological matrix at three concentrations covering the dynamic range (e.g., near LLOQ, mid-range, and near the top of the range). Prepare a minimum of five replicates per QC level.

- Analyze Samples: Run all QC samples in a single batch for within-run precision. Analyze the same QC samples over at least three different days/batches for between-run precision.

- Calculate Precision: For each QC level, calculate the mean, standard deviation (SD), and coefficient of variation (CV = SD/mean × 100%).

- Calculate Accuracy: For each QC level, calculate the mean measured concentration and express it as a percentage of the nominal (theoretical) concentration: (Mean Measured Concentration / Nominal Concentration) × 100.

3. Interpretation: The assay is acceptable if the CV for precision is ≤20% and the mean accuracy is 80-120% for each QC level (with at least ⅔ of individual samples meeting this criterion) [24].
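
The calculations in this protocol can be sketched as follows. The function name, the replicate values, and the use of the sample standard deviation (n-1 denominator) are assumptions made for illustration; the acceptance limits follow the interpretation criteria stated above.

```python
from statistics import mean, stdev


def qc_summary(measured, nominal, cv_limit=20.0, accuracy_window=(80.0, 120.0)):
    """Summarize precision and accuracy for one QC level.

    measured: replicate concentrations for the QC level.
    nominal: the spiked (theoretical) concentration.
    """
    low, high = accuracy_window
    average = mean(measured)
    cv_percent = stdev(measured) / average * 100.0      # sample SD assumed
    accuracy_percent = average / nominal * 100.0
    # Fraction of individual replicates that fall inside the accuracy window.
    within = [low <= value / nominal * 100.0 <= high for value in measured]
    fraction_within = sum(within) / len(within)
    passes = (cv_percent <= cv_limit
              and low <= accuracy_percent <= high
              and fraction_within >= 2 / 3)
    return {"mean": average, "cv_percent": cv_percent,
            "accuracy_percent": accuracy_percent, "passes": passes}


# Hypothetical mid-level QC, nominal concentration 10 (arbitrary units), five replicates.
good_run = qc_summary([9.0, 10.0, 11.0, 10.5, 9.5], nominal=10.0)
noisy_run = qc_summary([5.0, 10.0, 15.0, 10.0, 10.0], nominal=10.0)
print(good_run)
print(noisy_run)
```

The second run has a mean accuracy of 100% yet fails on precision, which illustrates why both criteria are assessed at every QC level.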

Protocol for Sensitivity (LLOQ Determination)

1. Procedure:

- Prepare Low-Concentration Samples: Prepare a series of samples at progressively lower concentrations in the relevant matrix.

- Analyze Samples: Analyze a minimum of five replicates of each low-concentration sample, including a blank (matrix without analyte).

- Evaluate Performance: The LLOQ is the lowest concentration where the analyte response is distinguishable from the blank with a signal-to-noise ratio of ≥5:1, and which can be measured with an accuracy of 80-120% and a precision (CV) of ≤20% [24].
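
A minimal sketch of the LLOQ decision rule is shown below. It assumes signal-to-noise is computed as the mean sample signal divided by the mean blank signal, which is only one of several conventions, and all data values and names are hypothetical.

```python
from statistics import mean, stdev


def find_lloq(levels, blank_signals, min_sn=5.0, cv_limit=20.0,
              accuracy_window=(80.0, 120.0)):
    """Return the lowest nominal concentration meeting all criteria, or None.

    levels: list of dicts with 'nominal' (spiked concentration), 'signals'
    (raw responses), and 'measured' (back-calculated concentrations).
    """
    low, high = accuracy_window
    blank = mean(blank_signals)
    for level in sorted(levels, key=lambda lv: lv["nominal"]):
        signal_to_noise = mean(level["signals"]) / blank   # assumed S/N definition
        average = mean(level["measured"])
        cv_percent = stdev(level["measured"]) / average * 100.0
        accuracy_percent = average / level["nominal"] * 100.0
        if (signal_to_noise >= min_sn and cv_percent <= cv_limit
                and low <= accuracy_percent <= high):
            return level["nominal"]
    return None


# Hypothetical data: five replicates per level plus five matrix blanks.
blank_signals = [0.050, 0.052, 0.048, 0.051, 0.049]
levels = [
    {"nominal": 0.5, "signals": [0.12, 0.15, 0.10, 0.18, 0.11],
     "measured": [0.30, 0.70, 0.40, 0.80, 0.35]},
    {"nominal": 1.0, "signals": [0.26, 0.27, 0.25, 0.28, 0.26],
     "measured": [0.95, 1.05, 0.90, 1.10, 1.00]},
    {"nominal": 2.0, "signals": [0.51, 0.53, 0.50, 0.52, 0.54],
     "measured": [1.90, 2.10, 2.00, 2.05, 1.95]},
]
lloq = find_lloq(levels, blank_signals)
print("LLOQ:", lloq)
```

In this example the 0.5 level fails on signal-to-noise and precision, so the 1.0 level is reported as the LLOQ. A stricter implementation might also confirm that every level above the chosen one passes.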

Protocol for Specificity/Selectivity

1. Procedure:

- Prepare Interference Samples: Spike the biological matrix with potential interferents (e.g., hemolyzed blood, lipemic serum, or structurally similar molecules) at physiologically relevant high concentrations. Also, prepare control samples with only the analyte and only the interferent.

- Prepare Matrix Blank: Analyze the matrix without any analyte or interferents.

- Analyze and Compare: Analyze all samples and compare the measured values.

  - The blank should show no significant signal (e.g., <20% of LLOQ).

  - The sample with only the interferent should show no signal.

  - The sample with analyte plus interferent should have a measured concentration within ±20% of the sample with only the analyte [24].

Visualization of Workflows and Relationships

Biomarker Validation Workflow

Precision Troubleshooting Logic

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and technologies essential for robust biomarker validation [24] [5].

| Tool / Technology | Function in Validation | Key Application Note |

|---|---|---|

| LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) | Provides high specificity and sensitivity for quantifying biomarkers, especially low-abundance analytes. Superior for multiplexing. | Ideal for overcoming specificity challenges and cross-reactivity. Allows analysis of hundreds to thousands of proteins in a single run [24]. |

| MSD (Meso Scale Discovery) U-PLEX | A multiplexed immunoassay platform using electrochemiluminescence for highly sensitive, simultaneous measurement of multiple biomarkers. | Offers up to 100x greater sensitivity than ELISA and a wider dynamic range. Enables custom biomarker panels, saving sample volume and cost [24]. |

| Automated Homogenizer (e.g., Omni LH 96) | Standardizes the initial sample preparation step, reducing human error and variability in sample disruption. | Critical for ensuring precision. Reduces contamination risk and increases processing efficiency by up to 40%, directly addressing a major source of pre-analytical error [5]. |

| Validated Antibody Pairs | For immunoassays, these are critical reagents that define the assay's specificity, sensitivity, and dynamic range. | Must be rigorously tested for cross-reactivity. A primary cause of assay failure is poor antibody specificity [24]. |

Troubleshooting Guides

Guide 1: Addressing Poor Parallelism in Biomarker Assays

Problem: The measured concentration of your endogenous biomarker shows unacceptably high variability (%CV) upon dilution, and the dilution curve does not run parallel to the standard curve.

Explanation: Poor parallelism indicates that the immunoreactivity of the endogenous biomarker in the patient sample differs from that of the purified standard/calibrator in the substitute matrix [29]. This can be due to the presence of matrix effects (e.g., interfering proteins, salts, pH differences) or intrinsic molecular differences in the biomarker itself (e.g., post-translational modifications, complex formation) that affect antibody binding [29] [30].

Solution Steps:

- Confirm the Result: Repeat the experiment using a fresh aliquot of the original sample. Ensure serial dilutions are performed accurately with the appropriate diluent.

- Investigate the Sample Matrix:

  - Try a Different Diluent: The standard diluent may not be compatible with your sample matrix. Test alternative diluents that more closely match the chemical composition of the natural sample matrix [29].

  - Increase the Minimum Required Dilution (MRD): A higher dilution factor may reduce matrix interference to a level where it no longer impacts the assay [29].

- Verify Assay Selectivity: Investigate if specific components in the sample (like heterophilic antibodies or binding proteins) are causing interference. This may require additional experiments like spike-and-recovery with the specific sample [30].

- Re-evaluate the Standard: If the biomarker is known to have isoforms or modifications, the purified standard may not be an appropriate reference. Where possible, use a native form of the biomarker as a standard [29].

Guide 2: Recovering from Failed Spike-and-Recovery Experiments

Problem: The percentage of recovered analyte spiked into the natural sample matrix falls outside the acceptable range (typically 80-120%) when compared to spike recovery in the standard diluent [29].

Explanation: A failed spike-and-recovery indicates a significant difference between the natural sample matrix and the substitute matrix used for the standard curve. Components in the sample matrix are interfering with the antibody-analyte binding, either by masking epitopes or affecting the assay chemistry [29] [30].

Solution Steps:

- Check Spike Concentration: Ensure the analyte is spiked at a concentration within the assay's dynamic range. A concentration too high or too low can give unreliable recovery values.

- Optimize the Matrix:

  - Modify the Diluent: Adjust the pH, salt concentration, or protein content (e.g., by adding BSA) of the diluent to better mimic the natural sample matrix and reduce interference [29].

  - Use a Surrogate Matrix: If the natural matrix is too complex or variable, consider validating a surrogate matrix (e.g., stripped matrix or artificial buffer) for the standard curve, provided parallelism with the natural matrix is confirmed [31] [30].

- Sample Pre-treatment: In some cases, sample purification or extraction steps may be necessary to remove interfering substances before analysis.
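
For reference, the recovery calculation behind this guide can be sketched as below. The function names and concentrations are hypothetical, and the 80-120% window is the typical range quoted in the problem statement.

```python
def percent_recovery(spiked_result, unspiked_result, spike_added):
    """Recovery of a known spike, corrected for the endogenous (unspiked) level."""
    return (spiked_result - unspiked_result) * 100.0 / spike_added


def recovery_acceptable(recovery, window=(80.0, 120.0)):
    """True when the recovery falls inside the acceptance window (percent)."""
    return window[0] <= recovery <= window[1]


# Hypothetical results in pg/mL for a 100 pg/mL spike.
matrix_recovery = percent_recovery(spiked_result=118.0, unspiked_result=50.0,
                                   spike_added=100.0)
diluent_recovery = percent_recovery(spiked_result=97.0, unspiked_result=0.0,
                                    spike_added=100.0)

print(f"natural matrix: {matrix_recovery:.0f}%  "
      f"acceptable={recovery_acceptable(matrix_recovery)}")
print(f"standard diluent: {diluent_recovery:.0f}%  "
      f"acceptable={recovery_acceptable(diluent_recovery)}")
```

Good recovery in the diluent combined with poor recovery in the natural matrix, as in this made-up example, is the pattern that points to matrix interference rather than a problem with the spike itself.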

Guide 3: Managing Variable Results from Automated Sample Preparation

Problem: Inconsistent biomarker data is generated, potentially due to errors introduced during manual or automated sample preparation.

Explanation: Variability in sample processing (homogenization, liquid handling) can introduce bias and significantly impact downstream analyses, making it difficult to detect true biological signals [5]. Temperature fluctuations during storage or processing can also degrade sensitive biomarkers [5].

Solution Steps:

- Standardize Protocols: Implement and strictly adhere to detailed Standard Operating Procedures (SOPs) for sample preparation, including precise instructions for homogenization speed/duration, extraction methods, and reagent volumes [5].

- Implement Automation: Utilize automated homogenizers and liquid handlers to reduce human error and cross-contamination. One clinical genomics lab reported an 88% decrease in manual errors after automating their workflow [5].

- Control Temperature: Standardize protocols for flash-freezing samples, maintain consistent cold chain logistics, and control thawing conditions to preserve biomarker integrity [5].

- Introduce Quality Controls: Implement rigorous quality control checkpoints, such as using barcoding systems to track samples (reducing mislabeling by up to 85%) and routine equipment calibration [5].

Frequently Asked Questions (FAQs)

Q1: Why is parallelism considered more critical than dilutional linearity for endogenous biomarkers?

A1: While both are important, parallelism directly assesses whether the endogenous biomarker in its natural matrix behaves identically to the purified standard in a substitute matrix across dilutions. Dilutional linearity often uses a sample matrix spiked with the standard, which may not fully capture the complexity of the endogenous biomarker's environment. Parallelism is therefore the more rigorous test of whether the standard curve is truly representative of the endogenous biomarker, which is what accurate quantitation depends on [29] [31].

Q2: What is an acceptable %CV for a parallelism experiment?

A2: There is no universal fixed value; the acceptable %CV should be defined based on the assay's intended use. As a general guide, a %CV at or below a limit in the 20-30% range is considered to demonstrate successful parallelism [29]. The exact acceptance criteria should be justified by the researcher based on the biological variability of the biomarker and the clinical decision points.

Q3: How does the new FDA guidance on biomarker validation view parallelism?

A3: The 2025 FDA Bioanalytical Method Validation for Biomarkers guidance directs the use of ICH M10, which includes a requirement for parallelism assessments when using a surrogate matrix or surrogate analyte approach [31]. This underscores the regulatory expectation for demonstrating that the standard curve is valid for measuring the endogenous biomarker in study samples.

Q4: Our spike-and-recovery results are acceptable, but parallelism fails. What does this mean?

A4: This discrepancy suggests that while your assay can detect the pure, spiked analyte added to the matrix (good recovery), it may not be detecting the native, endogenous form of the biomarker with the same efficiency. This is a strong indicator of a difference in immunoreactivity between the native biomarker and the purified standard, potentially due to post-translational modifications or the biomarker being bound to other molecules in the sample [29]. In this case, the parallelism result takes precedence, and the assay may not be suitable for its intended use without further optimization.

Experimental Data & Protocols

Table 1: Interpretation of Parallelism and Recovery Results

| Experimental Result | Typical Acceptance Criteria | Interpretation | Recommended Action |
|---|---|---|---|
| Parallelism (%CV) | ≤20-30% [29] | Comparable immunoreactivity between endogenous biomarker and standard. | Proceed with assay validation. |
| Parallelism (%CV) | >30% [29] | Significant difference in immunoreactivity or matrix interference. | Investigate matrix, diluent, or standard; do not proceed. |
| Spike-and-Recovery (%) | 80-120% [29] | Minimal matrix interference. Sample matrix and standard diluent are compatible. | Proceed with assay validation. |
| Spike-and-Recovery (%) | <80% or >120% [29] | Significant matrix interference affecting antibody binding. | Optimize sample diluent or minimum required dilution. |
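The decision logic of Table 1, together with the rule from Q4 that a failed parallelism result takes precedence over an acceptable recovery, can be sketched as a small function. This is an illustration only: the function name is hypothetical, and the default limits (30% CV, 80-120% recovery) are the typical values from the table, which should be replaced by each assay's own pre-defined criteria.

```python
def interpret_results(parallelism_cv, recovery_pct,
                      cv_limit=30.0, rec_low=80.0, rec_high=120.0):
    """Map parallelism %CV and spike recovery % to a recommended action."""
    parallel_ok = parallelism_cv <= cv_limit
    recovery_ok = rec_low <= recovery_pct <= rec_high

    if not parallel_ok:
        # Parallelism failure takes precedence, even if recovery looks fine.
        return "investigate matrix, diluent, or standard; do not proceed"
    if not recovery_ok:
        return "optimize sample diluent or minimum required dilution"
    return "proceed with assay validation"


print(interpret_results(parallelism_cv=35.0, recovery_pct=95.0))
print(interpret_results(parallelism_cv=18.0, recovery_pct=70.0))
print(interpret_results(parallelism_cv=18.0, recovery_pct=95.0))
```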

Table 2: Essential Research Reagent Solutions

| Reagent / Solution | Function in Biomarker Assay Validation |
|---|---|
| Sample Diluent | Dilutes samples to a concentration within the assay's dynamic range; its composition is critical for minimizing matrix effects [29]. |
| Surrogate Matrix | A substitute for the natural sample matrix (e.g., buffer, stripped matrix) used to prepare the standard curve when the natural matrix is unavailable or unsuitable [30]. |
| Reference Standard | A highly purified form of the biomarker used to create the calibration curve; its integrity and similarity to the endogenous biomarker are crucial [29]. |
| Quality Control (QC) Samples | Samples with known concentrations (high, mid, low) used to monitor the assay's precision and accuracy during validation and sample analysis [30]. |

Detailed Experimental Protocol: Parallelism Testing

Purpose: To demonstrate that the measured concentration of an endogenous biomarker is consistent across multiple dilutions and that the dilution curve is parallel to the standard curve [29].

Procedure:

- Sample Selection: Identify at least 3 individual samples that have high endogenous concentrations of the biomarker. The concentration of the neat (undiluted) sample should be at or below the assay's upper limit of quantification (ULOQ) so that it can be read from the standard curve [29].

- Serial Dilution: Perform a 1:2 serial dilution of each sample using the validated sample diluent. Continue diluting until the predicted concentration is at or below the lower limit of quantification (LLOQ). A typical series is: Neat, 1:2, 1:4, 1:8, 1:16 [29].

- Assay Analysis: Run all diluted samples and the standard curve in the same assay batch.

- Data Analysis:

  - Calculate the observed concentration for each diluted sample.

  - Multiply each observed concentration by its dilution factor to obtain the back-calculated concentration.

  - For each original sample, calculate the mean and %CV of all back-calculated concentrations (using only those within the standard curve range).

- Interpretation: A %CV within the pre-defined acceptance criteria (e.g., ≤20-30%) indicates successful parallelism [29].
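The data analysis steps above can be sketched as follows for a single sample. The function name, the LLOQ/ULOQ values, and the example readings are assumptions made for illustration; the %CV here uses the sample standard deviation, which the protocol does not specify.

```python
from statistics import mean, stdev


def parallelism_cv(observed, lloq, uloq):
    """Return (mean, %CV) of back-calculated concentrations for one sample.

    observed -- dict mapping dilution factor (1 = neat, 2 = 1:2, ...) to the
                concentration read off the standard curve at that dilution
    lloq, uloq -- limits of quantification of the standard curve
    """
    back_calculated = [
        conc * factor
        for factor, conc in observed.items()
        if lloq <= conc <= uloq  # keep only readings inside the curve range
    ]
    if len(back_calculated) < 2:
        raise ValueError("need at least two in-range dilutions")
    avg = mean(back_calculated)
    return avg, 100.0 * stdev(back_calculated) / avg


# Hypothetical readings (pg/mL) for Neat, 1:2, 1:4, 1:8, 1:16.
readings = {1: 800.0, 2: 410.0, 4: 195.0, 8: 102.0, 16: 4.0}
avg, cv = parallelism_cv(readings, lloq=10.0, uloq=1000.0)
print(f"mean = {avg:.1f} pg/mL, %CV = {cv:.1f}")
```

In this example the 1:16 reading falls below the assumed LLOQ and is excluded, so the %CV is computed from the remaining four dilutions and then compared with the pre-defined acceptance limit.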

Platform Comparison Table

The following table summarizes the key characteristics of each technology platform to guide your selection.

| Platform | Key Principle | Primary Application in Biomarker Validation | Sample Volume | Multiplexing Capacity | Key Advantages | Key Limitations |
|---|---|---|---|---|---|---|
| ELISA | Antibody-based colorimetric detection [32] | Quantifying single soluble proteins (e.g., cytokines) [32] | 50-100 µL [33] | Single-plex [33] | Widely available, simple protocol | Low throughput, limited dynamic range (1-2 logs) [33] |
| MSD | Electrochemiluminescence detection on carbon electrodes [33] | Multiplex protein quantification (e.g., cytokine panels) [34] | 10-25 µL (for up to 10 analytes) [33] | Up to 10 analytes/well [34] | Broader dynamic range (3-4+ logs), low sample volume, reduced matrix effects [33] | Requires specialized instrumentation |
| NGS | High-throughput sequencing of DNA/RNA libraries [35] | Genomic, transcriptomic, and epigenomic biomarker discovery [35] | Varies by input method | Highly multiplexed (thousands of targets) | Unbiased discovery, high multiplexing | Complex data analysis, library prep artifacts (e.g., adapter dimers) [35] |
| Mass Spectrometry | Mass-to-charge ratio analysis of ions | Targeted or untargeted proteomic and metabolomic profiling [9] | Varies | Highly multiplexed (hundreds to thousands) | High specificity, can detect post-translational modifications | Expensive, requires high expertise, complex sample prep |
| Multiplex Immunoassays (Luminex) | Antibody-coupled magnetic beads with fluorescent detection [36] | Simultaneous quantification of multiple analytes in biofluids [36] | <25 µL [36] | Up to 50 analytes/well [34] | High-throughput, saves sample, comprehensive profiling | Potential bead/antibody cross-reactivity, matrix interference [36] |
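To make the sample-volume trade-off in the table concrete, the sketch below estimates how much sample each immunoassay platform would consume for a given panel size. It is a rough illustration under stated assumptions: per-well volumes are taken as the upper figures from the table, one well measures up to the platform's maximum plex, and dead volume and dilution are ignored. The dictionary and function names are hypothetical.

```python
import math

# Upper-bound per-well volume (µL) and maximum analytes per well, from the table.
PLATFORMS = {
    "ELISA": {"max_plex": 1, "ul_per_well": 100},
    "MSD": {"max_plex": 10, "ul_per_well": 25},
    "Luminex": {"max_plex": 50, "ul_per_well": 25},
}


def sample_volume_needed(n_analytes, replicates=1):
    """Estimate total sample volume (µL) per platform for a panel."""
    volumes = {}
    for name, spec in PLATFORMS.items():
        # Number of wells needed to cover the panel, times replicate wells.
        wells = math.ceil(n_analytes / spec["max_plex"]) * replicates
        volumes[name] = wells * spec["ul_per_well"]
    return volumes


# A 12-analyte panel measured in duplicate.
print(sample_volume_needed(12, replicates=2))
# {'ELISA': 2400, 'MSD': 100, 'Luminex': 50}
```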

Troubleshooting Guides & FAQs

ELISA

Problem: Weak or No Signal

- Cause & Solution: Reagents not at room temperature can cause this. Ensure all reagents sit at room temperature for 15-20 minutes before starting the assay [32].

- Cause & Solution: Inaccurate pipetting or incorrect dilution calculations. Check pipetting technique and double-check all calculations [32].

- Cause & Solution: Wells were scratched by pipette or washing tips. Use caution during aspiration and ensure automated washer tips are calibrated correctly [32].

Problem: High Background

- Cause & Solution: Incomplete washing is a common cause. Ensure thorough washing; invert the plate onto absorbent tissue and tap forcefully to remove residual fluid between steps [32].

- Cause & Solution: Plate sealers were not used or were reused, leading to cross-contamination. Always use a fresh sealer for each incubation step [32].

MSD (Meso Scale Discovery)

Q: How does MSD compare directly to ELISA?

A: MSD assays require less sample, provide greater sensitivity and a broader dynamic range, and can multiplex up to 10 analytes simultaneously in a single well. MSD protocols are typically faster with fewer wash steps, and the instruments require minimal maintenance [33].

Q: Can I transfer an existing ELISA to the MSD platform?

A: Yes. Transferring ELISAs to the MSD platform is often straightforward and can be accomplished with minimal optimization, sometimes in less than two days [33].

NGS (Next-Generation Sequencing)

Problem: Low Library Yield After Preparation

- Cause & Solution: Degraded nucleic acid input or contaminants. Re-purify the input sample and use fluorometric quantification (e.g., Qubit) instead of absorbance-only methods for accurate measurement [35].

- Cause & Solution: Inefficient adapter ligation. Titrate the adapter-to-insert molar ratio and ensure fresh ligase and optimal reaction conditions are used [35].

Problem: High Duplicate Read Rates or Adapter Dimers

- Cause & Solution: Over-amplification during the PCR enrichment step. Reduce the number of PCR cycles and use the minimal number necessary for library generation [35].

- Cause & Solution: Inefficient cleanup and size selection. Optimize the bead-to-sample ratio during purification to effectively remove short fragments like adapter dimers [35].
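When titrating the adapter-to-insert molar ratio mentioned above, it helps to convert the insert mass into moles first. The sketch below uses the common approximation of about 660 g/mol per base pair of double-stranded DNA; the function names, the 10:1 ratio, and the 15 µM adapter stock are illustrative assumptions, not recommendations from the cited sources or any specific kit.

```python
def dsdna_pmol(mass_ng, length_bp, g_per_mol_per_bp=660.0):
    """Convert a dsDNA mass in ng to pmol, given the mean fragment length."""
    # ng -> g is 1e-9 and mol -> pmol is 1e12, hence the factor of 1000.
    return mass_ng * 1000.0 / (length_bp * g_per_mol_per_bp)


def adapter_volume_ul(insert_ng, insert_bp, ratio, adapter_stock_um):
    """Volume of adapter stock (µL) giving the requested adapter:insert ratio."""
    adapter_pmol = dsdna_pmol(insert_ng, insert_bp) * ratio
    # A 1 µM solution contains 1 pmol per µL.
    return adapter_pmol / adapter_stock_um


# Hypothetical example: 100 ng of 300 bp inserts, 10:1 ratio, 15 µM adapters.
insert_pmol = dsdna_pmol(100.0, 300)
volume = adapter_volume_ul(100.0, 300, ratio=10, adapter_stock_um=15.0)
print(f"insert = {insert_pmol:.3f} pmol, adapter volume = {volume:.2f} µL")
```

Repeating the calculation for a few candidate ratios gives the volumes to test in a titration series.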

Multiplex Immunoassays (Luminex/xMAP)

Problem: Low Bead Counts

- Cause & Solution: Bead aggregation. Vortex beads for 30 seconds before adding them to the plate and ensure the plate is agitated during incubations [37].

- Cause & Solution: Sample debris. Always thaw samples completely, vortex thoroughly, and centrifuge at 10,000 x g for 5-10 minutes to remove particulates before use [36].

- Cause & Solution: Instrument issues. Clean the instrument regularly and run calibration/verification beads before acquiring the plate [37].

Problem: High Background Signal

- Cause & Solution: Detection antibody or SAPE over-incubation. Adhere strictly to the recommended incubation times in the protocol [36].

- Cause & Solution: Incomplete washing. Use a handheld magnetic separator and ensure the plate is firmly attached. Soak wells for 60 seconds during wash steps to reduce non-specific binding [36].

Essential Research Reagent Solutions

This table outlines key materials and their functions for robust assay performance.

| Reagent/Material | Function | Key Considerations for Biomarker Validation |
|---|---|---|
| Plate Sealers | Prevents well-to-well contamination and evaporation during incubations [32] | Use a fresh sealer for each incubation step to ensure integrity [32] |
| Magnetic Bead Separator | Immobilizes magnetic beads during wash steps in bead-based multiplex assays [36] | Ensure the plate is firmly attached to the magnet during washes to prevent bead loss [36] |
| Wash Buffer (with Detergent) | Removes unbound proteins and reagents to reduce background [32] [37] | Always use the buffer provided with the kit. Do not substitute, as osmolarity is critical [37] |
| Assay Buffer | Diluent for standards and samples; maintains protein stability [36] | Do not confuse with Wash Buffer. Using Wash Buffer as an assay diluent can cause low analyte recovery [36] |
| Standard Curves | A series of known analyte concentrations for sample quantification [32] | Prepare fresh from stock for each assay. Qualify the curve for plateaus or abnormal fits during analysis [37] |

Experimental Workflow & Troubleshooting Diagrams

[Diagrams not reproduced: ELISA Workflow; NGS Library Prep Troubleshooting; Multiplex Assay Key Steps]

Troubleshooting Common Multi-Omics Integration Challenges

FAQ: Why do my integrated results show poor correlation between omics layers, even when they come from the same samples?

This is a frequent issue often stemming from biological and technical disconnects between molecular layers. For instance, high mRNA transcript levels do not always correlate with high protein abundance due to post-transcriptional regulation, varying turnover rates, or limitations in analytical sensitivity [38]. To troubleshoot:

- Verify Technical Consistency: Ensure samples for all omics assays were collected, processed, and stored under identical conditions to minimize pre-analytical variation.

- Check Data Preprocessing: Confirm that appropriate normalization and batch-effect correction have been applied to each dataset individually before integration. A common mistake is applying the same normalization method to all data types without considering their unique statistical distributions [12] [11].

- Assess Sensitivity: Be aware that the depth of coverage or analytical sensitivity can vary greatly between platforms. A gene detected by RNA-seq might be missing in proteomic data because current proteomic methods cover a smaller fraction of expressed genes [38].
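One way to quantify the disconnect between layers before attempting integration is a per-gene rank correlation between mRNA and protein levels across matched samples. The sketch below is a minimal, standard-library-only illustration; the choice of Spearman correlation is an assumption (it is insensitive to the different scales of the two platforms), and the example values are invented.

```python
from statistics import mean


def ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation; returns NaN if either input is constant."""
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = (sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)) ** 0.5
    return num / den if den else float("nan")


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Hypothetical values for one gene across five matched samples.
mrna = [10, 20, 30, 40, 50]            # e.g. normalized transcript counts
protein = [1.0, 1.5, 1.2, 2.0, 2.4]    # e.g. normalized protein intensity
print(round(spearman(mrna, protein), 3))  # 0.9
```

Genes with low or negative correlation across many samples are candidates for post-transcriptional regulation or for platform sensitivity limits rather than integration errors.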

FAQ: How can I handle missing data in my multi-omics dataset?

Missing data is inherent in multi-omics studies. The optimal strategy depends on whether the data is missing completely at random or for a biological/technical reason.