Overcoming Low Input RNA Challenges in Single-Cell Sequencing: Strategies for Enhanced Sensitivity and Reliability

Abstract

Single-cell RNA sequencing (scRNA-seq) has revolutionized biomedical research by revealing cellular heterogeneity, but its application is often constrained by the challenge of low input RNA. This article provides a comprehensive resource for researchers and drug development professionals, addressing foundational principles, optimized methodological protocols, troubleshooting strategies, and comparative platform analyses. We explore innovative solutions ranging from novel nuclei isolation techniques and advanced library preparation kits to sophisticated computational tools for data normalization and batch effect correction. By synthesizing current advancements and practical guidelines, this resource aims to empower scientists to maximize data quality and biological insights from precious, limited samples across diverse fields including cancer research, neurology, and immunology.

The Fundamental Challenge: Why Low RNA Input Compromises scRNA-seq Sensitivity and Data Quality

Defining the Low Input RNA Landscape in Single-Cell Genomics

Troubleshooting Common Technical Challenges

Working with low-input RNA in single-cell genomics presents specific technical hurdles. The table below outlines common issues, their underlying causes, and recommended solutions to ensure data quality and reliability.

| Challenge | Root Cause | Recommended Solution |
|---|---|---|
| Low RNA Input & Coverage [1] | Incomplete reverse transcription and amplification from minimal starting material leads to technical noise. [1] | Standardize cell lysis/RNA extraction. Use pre-amplification methods to increase cDNA. [1] |
| Amplification Bias [1] | Stochastic variation during PCR causes over-representation of certain genes. [1] | Use Unique Molecular Identifiers (UMIs) to tag original molecules and correct bias. [2] [1] |
| High Dropout Events [1] | Transcripts (often low-abundance) fail to be captured or amplified, creating false negatives. [1] | Apply computational imputation methods that use statistical models to predict missing expression. [1] |
| Ribosomal RNA (rRNA) Contamination [2] [3] | Abundant rRNA consumes sequencing reads, reducing coverage of informative transcripts. [3] | Employ efficient rRNA removal kits (e.g., QIAseq FastSelect) before library prep. [3] |
| Batch Effects [1] | Technical variations between experiment runs cause systematic differences in gene expression profiles. [1] | Use batch correction algorithms (e.g., ComBat, Harmony) during data analysis. [1] |
| Cell Doublets [1] | Multiple cells captured in a single droplet misrepresent cell types. [1] | Identify and exclude doublets computationally, or use cell "hashing" techniques. [1] |

Frequently Asked Questions (FAQs)

Q1: How does single-cell RNA-Seq fundamentally differ from bulk or ultra-low-input RNA-Seq?

While bulk and ultra-low-input RNA-Seq provide an average gene expression profile across thousands to millions of cells, single-cell RNA-Seq (scRNA-Seq) resolves the transcriptome of individual cells. [4] [2] This high-resolution view is critical for identifying distinct cellular subpopulations, discovering rare cell types, and understanding cell-to-cell variation within a seemingly homogeneous sample. [4] [2] Standard scRNA-Seq typically requires at least 50,000 cells as input, though 1 million is recommended. [2]

Q2: What are the key considerations for preparing my sample for scRNA-Seq?

Successful sample preparation is crucial. Key considerations include:

- Cell Viability and Stress: The process of dissociating tissue into a single-cell suspension can stress cells and alter their gene expression. Careful optimization of dissociation protocols is essential to minimize these effects and maximize cell viability. [1]

- Sample Type Flexibility: scRNA-Seq can be performed on a wide variety of sample types, including fresh, frozen, or fixed cells and nuclei, as well as cell cultures, blood, and organoids. [4] If using frozen cells, note that freezing can cause cell death and RNA degradation; single-nucleus RNA sequencing (snRNA-Seq) is often preferred for frozen tissues. [4]

- rRNA Removal: For comprehensive transcriptome analysis, efficient ribosomal RNA (rRNA) removal is critical, especially for low-input samples, to increase reads from mRNA and other valuable RNA species. [2] [3]

Q3: What are UMIs and when should I use them?

UMIs (Unique Molecular Identifiers) are short random sequences used to tag individual mRNA molecules during cDNA synthesis. [2] All PCR-amplified copies of that original molecule will carry the same UMI. This allows bioinformatics tools to correct for PCR amplification bias and errors by "deduplicating" the reads, providing a more accurate count of the original number of RNA molecules. [2] [1] UMIs are highly recommended for deep sequencing (>50 million reads/sample) or with low-input samples where amplification bias is a major concern. [2]
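
To make the deduplication step concrete, the sketch below collapses reads that share the same cell barcode, gene and UMI into a single molecule. It assumes reads have already been demultiplexed and assigned to genes; the barcodes, UMIs and gene names are hypothetical. Production pipelines typically also merge UMIs that differ by a single sequencing error, which this exact-match version omits.

```python
from collections import defaultdict


def count_umis(reads):
    """Collapse reads sharing (cell barcode, gene, UMI) into one molecule.

    reads: iterable of (cell_barcode, gene, umi) tuples, one per aligned read.
    Returns {(cell_barcode, gene): number of distinct UMIs}.
    """
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)  # PCR copies share a UMI, so they collapse
    return {key: len(umis) for key, umis in molecules.items()}


# Hypothetical reads: GAPDH in cell AAAC was sequenced 4 times but came from 2 molecules.
reads = [
    ("AAAC", "GAPDH", "TTGCA"),
    ("AAAC", "GAPDH", "TTGCA"),  # PCR duplicate
    ("AAAC", "GAPDH", "TTGCA"),  # PCR duplicate
    ("AAAC", "GAPDH", "CGATA"),
    ("AAAC", "ACTB", "GGTAC"),
]
print(count_umis(reads))  # {('AAAC', 'GAPDH'): 2, ('AAAC', 'ACTB'): 1}
```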

Q4: My scRNA-seq data is very sparse with many zero counts. Is this normal?

Yes, this "sparsity" is a well-known characteristic of scRNA-seq data, primarily caused by dropout events where low-abundance transcripts fail to be detected in individual cells. [1] [5] This can be due to the low starting RNA quantity and technical limitations. Strategies to mitigate this include using more sensitive full-length scRNA-seq methods (e.g., FLASH-seq, Smart-seq3) or targeted approaches like Constellation-Seq, which uses linear amplification to dramatically enrich for specific transcripts of interest and reduce data sparsity. [6] [5]
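
One way to gauge how sparse a dataset is, before choosing a mitigation strategy, is to compute the overall fraction of zero entries and the fraction of cells in which each gene is detected. The sketch below does this for a small, hypothetical cells-by-genes UMI matrix held as plain Python lists. These numbers cannot, on their own, separate technical dropouts from genuine absence of expression.

```python
def sparsity_summary(counts, genes):
    """counts: cells x genes matrix as a list of per-cell lists of UMI counts.
    Returns (fraction of zero entries, {gene: fraction of cells detecting it})."""
    n_cells, n_genes = len(counts), len(genes)
    zeros = sum(1 for row in counts for x in row if x == 0)
    detection = {
        gene: sum(1 for row in counts if row[j] > 0) / n_cells
        for j, gene in enumerate(genes)
    }
    return zeros / (n_cells * n_genes), detection


# Hypothetical 4-cell x 3-gene UMI matrix.
genes = ["ACTB", "CD3E", "FOXP3"]
counts = [
    [12, 1, 0],
    [9, 0, 0],
    [15, 2, 1],
    [11, 0, 0],
]
overall, detection = sparsity_summary(counts, genes)
print(round(overall, 3), detection)  # 0.417 {'ACTB': 1.0, 'CD3E': 0.5, 'FOXP3': 0.25}
```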

Featured Experimental Protocol: Constellation-Seq for Enhanced Sensitivity

For researchers needing extreme sensitivity to detect low-abundance transcripts, Constellation-Seq is a powerful targeted enrichment method compatible with standard scRNA-Seq workflows like Drop-Seq and 10x Chromium. [5]

Objective: To overcome the sensitivity limits and high data sparsity of standard scRNA-Seq by selectively enriching for a pre-defined panel of target genes (e.g., transcription factors, rare population markers). [citation:9]

Key Methodology:

- Primer Design: Create a panel of hybrid primers. Each primer contains a transcript-specific sequence adjacent to a universal handle sequence. [5]

- Linear Amplification: Following initial cDNA synthesis, a single-primer linear amplification step is performed using the hybrid primer panel. This step selectively enriches the cDNA for the target genes. Linear amplification is less biased than exponential PCR, preventing highly abundant transcripts from dominating the sequencing library. [5]

- Library Construction: Proceed with standard library preparation protocols (e.g., Nextera XT for 10x Chromium-derived cDNA). [5]
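
Why linear amplification limits bias can be illustrated with an idealized model. Assume two transcripts start at equal abundance but are copied with slightly different per-cycle efficiencies (the 0.95 and 0.80 values below are hypothetical). In exponential PCR every product is re-amplified, so the efficiency difference compounds each cycle; in single-primer linear amplification only the original template is copied, so yields grow additively. The model ignores reagent exhaustion and saturation and does not describe Constellation-Seq's actual cycling conditions.

```python
def exponential_yield(efficiency, cycles):
    """Copies per starting template when every product is re-amplified (PCR)."""
    return (1 + efficiency) ** cycles


def linear_yield(efficiency, cycles):
    """Copies per starting template when only the original template is copied
    each cycle (single-primer linear amplification)."""
    return 1 + efficiency * cycles


# Hypothetical per-cycle efficiencies for two transcripts of equal starting abundance.
eff_a, eff_b, cycles = 0.95, 0.80, 20

exp_ratio = exponential_yield(eff_a, cycles) / exponential_yield(eff_b, cycles)
lin_ratio = linear_yield(eff_a, cycles) / linear_yield(eff_b, cycles)
print(f"exponential bias: {exp_ratio:.2f}x, linear bias: {lin_ratio:.2f}x")
# exponential bias: 4.96x, linear bias: 1.18x
```

Under these assumptions, a 15-point efficiency gap distorts relative abundance roughly fivefold after 20 exponential cycles, but by less than 20% after 20 linear cycles.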

Performance Metrics: The following table summarizes the performance gains of Constellation-Seq compared to standard methods, based on benchmark data. [5]

| Metric | Standard DropSeq | Constellation-Seq | Improvement |
|---|---|---|---|
| Avg. Counts per Cell (52 targets) | Baseline | 2.7x higher | Significant increase in signal [5] |
| Targets Detected | 41 of 49 | 49 of 49 | Captures all true positives [5] |
| Read Utility | N/A | 93.5% | Vast majority of reads are on-target [5] |
| Sensitivity to Expression Change | Baseline | 1.6x more sensitive | Better resolution of biological responses [5] |

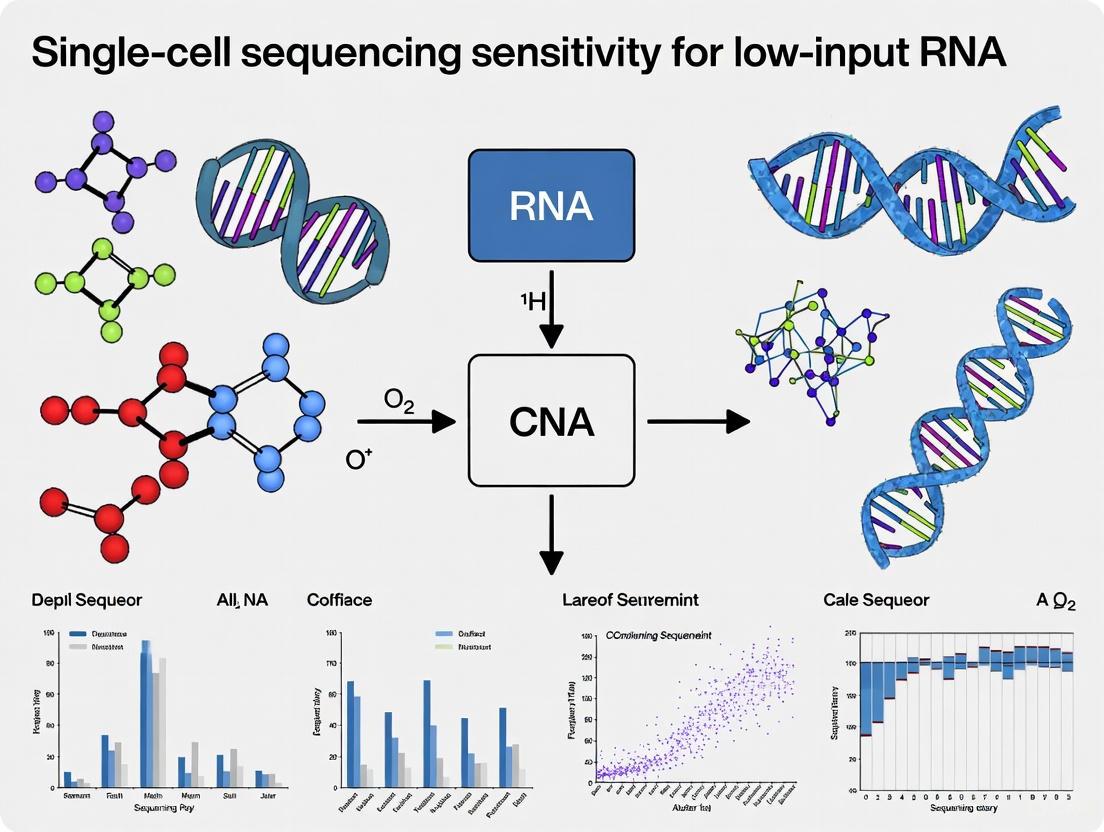

Diagram 1: Constellation-Seq workflow for targeted transcript enrichment.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential reagents and kits that facilitate low-input and single-cell RNA sequencing experiments.

| Item | Function | Example Use Case |
|---|---|---|
| UMIs (Unique Molecular Identifiers) [2] [1] | Tags individual mRNA molecules to correct for PCR amplification bias and enable accurate transcript quantification. | Essential for any low-input or single-cell RNA-seq protocol to ensure quantitative accuracy. [2] |
| ERCC Spike-In Controls [2] | Synthetic RNA molecules of known concentration added to samples to assess technical sensitivity, accuracy, and dynamic range. | Used to standardize and control for technical variation across different experiments or runs. [2] |
| rRNA Depletion Kits [2] [3] | Removes abundant ribosomal RNA (rRNA) to increase the proportion of informative (e.g., mRNA) reads in sequencing. | Critical for samples with degraded RNA (e.g., FFPE) or when studying non-polyadenylated RNAs. [2] [3] |
| Single-Cell Library Prep Kits | Integrated reagents for cell barcoding, reverse transcription, and library construction from single cells. | Kits like the Illumina Single Cell 3' RNA Prep (using PIPseq chemistry) or Parse Biosciences' Evercode kits enable scalable scRNA-seq without specialized microfluidic equipment. [4] [7] |
| Targeted Enrichment Panels | A custom set of probes or primers for selectively amplifying genes of interest to increase sensitivity and reduce cost. | Constellation-Seq uses a custom primer panel for highly sensitive profiling of specific pathways or rare cell markers. [5] |
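
As an illustration of how spike-ins are used to assess sensitivity, the sketch below estimates a per-cell capture efficiency (UMIs observed divided by molecules added), the slope of observed versus expected counts on a log-log scale (a value near 1 indicates a linear response), and the smallest input amount detected. The spike-in names and molecule numbers are hypothetical placeholders, not the concentrations of any real ERCC mix.

```python
import math


def spike_in_summary(expected, observed):
    """expected: spike-in ID -> molecules added per cell (from the dilution).
    observed: spike-in ID -> UMIs counted in that cell.
    Returns (capture efficiency, log-log slope over detected spike-ins,
    smallest input amount that was detected)."""
    capture = sum(observed[s] for s in expected) / sum(expected.values())
    detected = [s for s in expected if observed[s] > 0]
    xs = [math.log10(expected[s]) for s in detected]
    ys = [math.log10(observed[s]) for s in detected]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    # Ordinary least-squares slope of log(observed) on log(expected).
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return capture, slope, min(expected[s] for s in detected)


# Hypothetical spike-in IDs and amounts.
expected = {"spike_A": 1000, "spike_B": 100, "spike_C": 10, "spike_D": 1}
observed = {"spike_A": 100, "spike_B": 10, "spike_C": 1, "spike_D": 0}
capture, slope, limit = spike_in_summary(expected, observed)
print(f"capture {capture:.1%}, slope {slope:.2f}, detection limit {limit} molecules")
# capture 10.0%, slope 1.00, detection limit 10 molecules
```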

Troubleshooting Guides

Problem: Incomplete Reverse Transcription and Low cDNA Yield

Question: Why is my reverse transcription (RT) inefficient, leading to poor cDNA yield and inadequate coverage in my single-cell RNA-seq data?

Answer: Incomplete RT is a primary bottleneck in low-input RNA workflows, often resulting from poor RNA integrity, suboptimal reaction conditions, or the presence of inhibitors. This leads to truncated cDNA fragments, 3' bias, and poor representation of transcript diversity [1] [8].

Diagnostic Table: Common Causes and Verification Methods

| Cause | Symptom | Verification Method |
|---|---|---|
| Degraded RNA | Low RNA Integrity Number (RIN); smeared bioanalyzer profile; 3' bias in coverage | Bioanalyzer/TapeStation; 3'/5' bias analysis in QC software [8] |
| Carryover Inhibitors | Low cDNA yield even with good RNA input; suboptimal UV absorbance ratios (260/230 < 1.8) | UV spectroscopy (NanoDrop); spike-in control assay [9] [8] |
| Suboptimal Primer Annealing | Low coverage of transcript 5' ends; failure to detect non-poly(A) transcripts | Targeted PCR for 5' genes; use of different primer types (e.g., random hexamers vs. oligo-dT) [8] [10] |
| Inefficient Reverse Transcriptase | Short cDNA fragments; low yield across all targets | Comparison with high-performance enzyme kits; processivity assays [8] |
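
The 3'/5' bias check in the first row can be approximated by comparing read depth near the two ends of a transcript. In this sketch the 20% end windows are an arbitrary assumption and the coverage profiles are hypothetical; QC software usually aggregates such profiles over many transcripts rather than inspecting a single one.

```python
def three_prime_bias(coverage, window=0.2):
    """coverage: per-base read depth along a transcript, ordered 5' -> 3'.
    Returns mean depth in the 3'-most window divided by the 5'-most window
    (1.0 = even coverage; values well above 1 suggest 3' bias)."""
    n = max(1, int(len(coverage) * window))
    five_prime = sum(coverage[:n]) / n
    three_prime = sum(coverage[-n:]) / n
    return float("inf") if five_prime == 0 else three_prime / five_prime


intact = [10] * 100                          # even coverage along the transcript
degraded = [1] * 40 + [5] * 40 + [20] * 20   # coverage piles up at the 3' end
print(three_prime_bias(intact), three_prime_bias(degraded))  # 1.0 20.0
```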

Solution Protocol:

- Pre-RT RNA Quality Control: Assess RNA integrity using a bioanalyzer. For single-cell samples, use a fluorescence-based quantification method (e.g., Qubit) instead of UV absorbance for higher accuracy [8].

- RNA Denaturation: Prior to RT, denature secondary structures by heating RNA to 65°C for 5 minutes, then immediately place on ice [8].

- Primer Selection: Use a mix of oligo-dT and random hexamers to ensure coverage of both polyadenylated transcripts and those with strong secondary structures or lacking poly-A tails [8] [11].

- Use a Robust Reverse Transcriptase: Select a high-performance, thermostable reverse transcriptase with high fidelity, processivity, and resistance to common inhibitors. Perform the RT reaction at an elevated temperature (e.g., 50°C) to minimize secondary structures [8].

- Include Controls: Use Unique Molecular Identifiers (UMIs) to correct for amplification bias and enable digital counting of transcripts. Include external RNA controls to monitor RT efficiency [1] [11].

Problem: Amplification Bias and Non-Uniform Genome Coverage

Question: My single-cell whole-genome amplification (scWGA) shows severe allelic imbalance and uneven coverage. How can I mitigate this amplification bias?

Answer: Amplification bias, a major hurdle in single-cell DNA sequencing, results from the stochastic non-uniform amplification of the genome. This leads to Allelic Dropout (ADO), where one allele fails to amplify, and uneven coverage, complicating variant calling and copy number variation analysis [12] [13].

Quantitative Data: scWGA Kit Performance Comparison [13]

| scWGA Kit | Key Principle | Median Loci Covered* | Reproducibility | Key Limitation |
|---|---|---|---|---|
| Ampli1 | Restriction enzyme (MseI) digestion & ligation | 1095.5 | Best | Fails to amplify regions containing 'TTAA' restriction sites |
| RepliG-SC | Multiple Displacement Amplification (MDA) | 918 | Good | Higher error rate and allelic imbalance |
| PicoPlex | PCR-based method | 750 | High (Tightest IQR) | Lower genomic coverage |
| MALBAC | Quasi-linear pre-amplification | 696.5 | Moderate | Complex protocol |
| TruePrime | - | Significantly Lower | Low | Poor overall performance in comparison |

*Data based on targeted sequencing of 1585 X chromosome loci from a single human ES cell clone [13].

Solution Protocol:

- Cell Quality Check: Begin with high-quality, intact single cells. Damaged cells or cells with fragmented DNA will exacerbate amplification bias [12].

- Kit Selection: Choose a scWGA kit based on your primary experimental goal. Use Ampli1 for maximum coverage and reproducibility or PicoPlex for highly consistent results across cells. For a balance of both, RepliG-SC is a suitable MDA-based option [13].

- Quality Control with Shallow Sequencing: Before deep sequencing, perform a low-coverage (e.g., 0.3x) sequencing run. Use bioinformatics tools like Scellector to rank cells based on amplification quality by analyzing the allele frequency distribution of phased heterozygous SNPs. Balanced amplifications show a Gaussian distribution centered around 50% allele frequency [12].

- Bioinformatic Correction: Utilize computational tools designed to identify and correct for allelic imbalance and other WGA artifacts in the downstream analysis [1] [12].
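
The allele-balance idea behind the shallow-sequencing QC step can be sketched as follows: for each cell, compute the alternate-allele fraction at germline heterozygous SNPs, count sites where one allele is missing entirely (allelic dropout), and rank cells by the share of sites whose fraction lies near 50%. This is not Scellector's algorithm; the minimum depth of 4 reads, the 0.3 to 0.7 "balanced" band and the read counts are all illustrative assumptions.

```python
def allele_balance(snps, min_depth=4, band=(0.3, 0.7)):
    """snps: (ref_reads, alt_reads) at germline heterozygous sites in one cell.
    Returns (allelic dropout rate, fraction of sites with a balanced allele
    fraction), or None if no site reaches min_depth."""
    covered = [(r, a) for r, a in snps if r + a >= min_depth]
    if not covered:
        return None
    # A heterozygous site with reads from only one allele counts as dropout.
    dropout = sum(1 for r, a in covered if r == 0 or a == 0) / len(covered)
    balanced = sum(
        1 for r, a in covered if band[0] <= a / (r + a) <= band[1]
    ) / len(covered)
    return dropout, balanced


# Hypothetical read counts at five heterozygous SNPs per cell.
cells = {
    "cell_1": [(5, 5), (6, 4), (4, 6), (7, 4), (5, 6)],
    "cell_2": [(9, 0), (0, 8), (5, 5), (10, 1), (2, 0)],  # last site below min_depth
}
ranked = sorted(cells, key=lambda c: allele_balance(cells[c])[1], reverse=True)
for cell in ranked:
    print(cell, allele_balance(cells[cell]))
# cell_1 (0.0, 1.0)
# cell_2 (0.5, 0.25)
```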

Problem: Low Library Complexity and High Duplicate Rates

Question: My final NGS library has low complexity and a high rate of PCR duplicates, even though I started with a viable single cell. What went wrong?

Answer: Low library complexity indicates an insufficient number of unique DNA molecules in your library, often stemming from sample loss during purification, over-aggressive size selection, or PCR over-amplification. This reduces the effective sequencing depth and biases downstream analysis [9].

Diagnostic Table: Purification and Amplification Pitfalls

| Step | Error | Consequence |
|---|---|---|
| Purification | Incorrect bead-to-sample ratio; over-drying beads | Loss of desired fragments; inefficient adapter dimer removal [9] |
| Size Selection | Overly stringent size cut-offs | Exclusion of valid fragments, reducing complexity [9] |
| Amplification | Too many PCR cycles; inefficient polymerase | Over-representation of easily amplified fragments; high duplicate rate [9] [10] |

Solution Protocol:

- Miniaturize Reactions: Use precision microdispensing technologies to perform library preparation in nanoliter-scale volumes. This increases reagent and template concentration, enhancing reaction efficiency and significantly reducing reagent costs [10].

- Optimize Cleanup: Precisely follow bead-based cleanup protocols regarding bead-to-sample ratios and incubation times. Avoid over-drying the beads, which makes resuspension inefficient [9].

- Limit PCR Cycles: Use the minimum number of PCR cycles necessary to generate sufficient library material. If yield is low, it is better to repeat the amplification from the leftover ligation product than to over-cycle a weak product [9].

- Leverage UMIs: Incorporate Unique Molecular Identifiers during reverse transcription or early in the library prep. UMIs allow bioinformatic tools to distinguish between unique mRNA molecules and PCR duplicates, enabling accurate digital counting of transcripts [1] [10].
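
Library complexity can be quantified from the duplicate rate and from an estimate of how many distinct molecules the library contains. The sketch below assumes the Lander-Waterman model, in which reads sample library molecules uniformly at random (the relationship Picard uses for its library-size estimate), and solves it numerically by bisection; the read counts are hypothetical.

```python
import math


def duplicate_rate(total_reads, unique_reads):
    """Fraction of reads that are duplicates of an already-seen molecule."""
    return 1 - unique_reads / total_reads


def estimate_library_size(total_reads, unique_reads, rel_tol=1e-6):
    """Estimate X, the number of distinct molecules in the library, by solving
    the Lander-Waterman relation C / X = 1 - exp(-N / X) with bisection,
    where N = total reads and C = unique (non-duplicate) reads."""
    n, c = total_reads, unique_reads
    if not 0 < c < n:
        raise ValueError("need 0 < unique_reads < total_reads")

    def f(x):
        return c / x - 1 + math.exp(-n / x)

    lo = hi = float(c)      # f(c) > 0, so the root lies above c
    while f(hi) > 0:        # double until the root is bracketed
        hi *= 2
    while hi - lo > rel_tol * hi:
        mid = (lo + hi) / 2
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


# Hypothetical run: 10 M reads, of which 6 M are unique.
print(f"duplicate rate {duplicate_rate(10_000_000, 6_000_000):.0%}")
print(f"estimated library size {estimate_library_size(10_000_000, 6_000_000):,.0f} molecules")
```

If the estimate is close to the number of unique reads already observed, the library is nearly saturated and deeper sequencing will mostly add duplicates; in that case, improving complexity at the preparation stage is more useful than adding reads.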

Frequently Asked Questions (FAQs)

Q1: What are the specific challenges of working with low-input RNA from complex tissues like tendon?

The dense, collagen-rich extracellular matrix of tendon tissue makes efficient cell dissociation difficult. Harsh mechanical or enzymatic dissociation can induce stress-response genes, altering the transcriptomic profile. Furthermore, the inherent low cellularity of these tissues means that viable cell yields are often very limited, making every cell count and demanding optimized dissociation protocols to preserve both cell viability and transcriptome integrity [14].

Q2: My scRNA-seq data has many "dropout" events (false negatives). How can I address this?

Dropout events, where a transcript is not detected in a cell where it is expressed, are a key challenge. Solutions include:

- Experimental: Targeted RNA-seq approaches, which use hybridization probes to enrich for specific genes, can enhance sensitivity and reduce dropouts for genes of interest [10].

- Computational: Use protocol-specific computational imputation methods. These tools use statistical models to predict the expression of missing genes based on the expression patterns in similar cells [1] [11].
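
To illustrate the imputation idea in its simplest form, the sketch below smooths each cell's profile by averaging raw counts over the cell and its k nearest neighbors (Euclidean distance on log1p-transformed counts). This is a deliberately simplified stand-in, not the algorithm of any specific published imputation tool, and the count matrix is hypothetical. Imputation can blur distinct populations or create spurious correlations, so imputed values are best treated as exploratory.

```python
import math


def knn_smooth(counts, k=2):
    """Return a matrix in which each cell's counts are replaced by the mean raw
    counts of that cell and its k nearest neighbors (Euclidean distance
    computed on log1p-transformed counts)."""
    logged = [[math.log1p(x) for x in row] for row in counts]
    smoothed = []
    for i, row in enumerate(logged):
        neighbors = sorted(
            (math.dist(row, other), j) for j, other in enumerate(logged) if j != i
        )
        members = [i] + [j for _, j in neighbors[:k]]
        smoothed.append(
            [sum(counts[m][g] for m in members) / len(members) for g in range(len(row))]
        )
    return smoothed


# Hypothetical UMI counts: cells 0-2 share a type; cell 1 shows a dropout for gene 1.
counts = [
    [10, 5, 0],
    [11, 0, 3],
    [9, 6, 2],
    [0, 0, 20],
]
smoothed = knn_smooth(counts)
print([round(x, 2) for x in smoothed[1]])  # [10.0, 3.67, 1.67]
```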

Q3: Are there integrated methods to simultaneously profile DNA and RNA from the same single cell?

Yes, emerging technologies like SDR-seq (single-cell DNA–RNA sequencing) are designed for this purpose. SDR-seq combines in situ reverse transcription with multiplexed PCR in droplets to profile hundreds of genomic DNA loci and RNA targets simultaneously in thousands of single cells. This allows for the direct linking of genotypes (e.g., mutations) to transcriptional phenotypes in the same cell, which is crucial for understanding cancer heterogeneity and the functional impact of genetic variants [15].

The Scientist's Toolkit: Essential Reagent Solutions

| Item | Function | Application Note |
|---|---|---|
| High-Performance Reverse Transcriptase | Converts RNA to cDNA with high fidelity, processivity, and inhibitor resistance. | Essential for overcoming RNA secondary structures and ensuring full-length cDNA synthesis from degraded or low-quality samples [8]. |
| Unique Molecular Identifiers (UMIs) | Short random barcodes added to each mRNA molecule during RT. | Allows for the digital counting of original transcripts and correction for PCR amplification bias, leading to accurate quantification [1] [10]. |
| MDA Polymerase (phi29) | Isothermal enzyme for Whole Genome Amplification (WGA). | Provides high yield and long amplicons but is prone to allelic imbalance and coverage unevenness; requires careful QC [12] [13]. |
| Multiplexed PCR Assays | Allows for simultaneous amplification of hundreds to thousands of DNA and RNA targets. | Used in high-throughput targeted single-cell methods like SDR-seq to efficiently profile multiple modalities from the same cell [15]. |
| Bead-Based Cleanup Kits | Size selection and purification of nucleic acids. | Critical for removing primers, adapter dimers, and other contaminants. Precise bead-to-sample ratios are vital to prevent loss of material [9]. |

The Impact of Transcriptional Noise and Stochastic Gene Expression in Limited Samples

Technical FAQs: Understanding Noise in scRNA-seq Data

Q1: In our low-input RNA-seq experiments, we observe high gene expression variability. How can we determine if this is biologically meaningful transcriptional noise or merely technical artifact?

Technical artifacts in single-cell RNA sequencing (scRNA-seq) arise from factors like inefficient mRNA capture, low cDNA conversion efficiency, and amplification biases, especially pronounced in ultra-low-input and single-cell protocols [4]. To distinguish true biological noise:

- Utilize Unique Molecular Identifiers (UMIs): Employ kits that incorporate UMIs for error correction of barcode reads. This allows for digital counting of mRNA molecules, correcting for amplification bias and providing a more accurate estimate of true expression levels [4].

- Apply Expression-Level Adjustment: The raw Coefficient of Variation (CV) can be misleading because it is typically negatively correlated with expression level. Adjust noise estimates for expression level using linear or natural-log polynomial fits, or local fits. This reveals genes with high expression-adjusted transcriptional noise; these differ from the genes with the highest raw CVs and tend to be highly expressed, functionally related, and co-regulated [16].

- Leverage Computational Frameworks: Use tools like the single-cell Stochastic Gene Silencing (scSGS) framework. This method treats instances of zero expression (dropouts) not just as missing data but as potential representations of true transcriptional silencing, allowing for the identification of biologically relevant noise patterns [17].
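
The expression-level adjustment can be sketched with the simplest of the fits mentioned above: a least-squares line through log(CV²) against log(mean) across genes, using each gene's residual as its adjusted noise score. The gene names and values are hypothetical, and a polynomial or local fit would replace the straight line in practice.

```python
import math
from statistics import mean, pstdev


def adjusted_noise(expr):
    """expr: gene -> per-cell normalized expression values (mean > 0, not constant).
    Fits log(CV^2) = a + b*log(mean) across genes by least squares and returns
    each gene's residual: positive values mean noisier than expected for its level."""
    points = {}
    for gene, values in expr.items():
        m = mean(values)
        points[gene] = (math.log(m), math.log((pstdev(values) / m) ** 2))
    xs = [x for x, _ in points.values()]
    ys = [y for _, y in points.values()]
    mx, my = mean(xs), mean(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return {gene: y - (a + b * x) for gene, (x, y) in points.items()}


# Hypothetical genes: "low" has a high raw CV^2 (0.5) mainly because it is lowly
# expressed, while "noisy" is far more variable than expected for its mean.
expr = {
    "low": [0, 1, 2, 1],
    "mid": [8, 10, 12, 10],
    "high": [95, 100, 105, 100],
    "noisy": [2, 18, 2, 18],
}
residuals = adjusted_noise(expr)
print(max(residuals, key=residuals.get))  # noisy
```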

Q2: Which transcription factors are known to regulate noisy gene expression, and how can we map their binding in our limited cell samples?

Studies in yeast have identified specific transcription factors associated with variability and stochastic processes. Key regulators include Msn2p, Msn4p, Hsf1p, and Crz1p [16]. Genes with high transcriptional noise adjusted for expression levels are heavily regulated by these factors. To map TF binding in low-input scenarios, traditional ChIP-seq is often unsuitable due to its high input requirements. Instead, consider:

- DynaTag: This is a recently developed adaptation of CUT&Tag technology. It uses a physiological intracellular salt solution throughout nuclei handling to preserve sensitive TF-DNA interactions, which are lost under high-salt conditions. DynaTag enables robust mapping of TF occupancy (e.g., OCT4, SOX2, NANOG, MYC) in stem cell and cancer models, and is compatible with both bulk low-input samples and single-cell resolution [18].

- CUT&RUN: This is an alternative to ChIP-seq with lower input requirements, though it may not be as sensitive as DynaTag for all TFs and has not been widely applied at single-cell resolution [18].

Q3: Does transcriptional noise have functional significance, and is it conserved?

Yes, transcriptional noise is not merely random error but can be functional and evolutionarily conserved.

- Functional Insight: The scSGS framework demonstrates that cells transiently silenced for a particular gene show significant changes in the expression of related genes. This natural "perturbation" can be used to infer gene function and regulatory relationships, uncovering biological impacts without the survivorship bias of traditional knockout studies [17].

- Evolutionary Conservation: Research in yeast has shown that S. cerevisiae genes with noisy expression tend to have orthologs with similarly noisy gene expression in C. albicans, indicating that transcriptional noise is under evolutionary selection [16].

- Role in Complex Traits: In humans, genetic variants known as expression noise Quantitative Trait Loci (enQTLs) can regulate gene expression noise. These enQTLs are often distinct from variants regulating mean expression levels (eQTLs) and have been implicated in the variation of complex traits and diseases, such as those related to hematopoietic function [19].

Troubleshooting Guides

Guide 1: Mitigating the Impact of Technical Variation in Low-Input RNA-Seq Workflows

Problem: High technical variation is masking biological signal and inflating estimates of transcriptional noise. Solution: Adopt an integrated, optimized workflow designed for low-input samples.

Table: Key Reagents and Solutions for Low-Input RNA-Seq

| Research Reagent Solution | Function | Example/Kits |
|---|---|---|
| Cell Partitioning Technology | Isolates single cells and creates barcoded RNA-seq libraries. | High-throughput (e.g., droplet-based) or low-throughput (e.g., microwell, sorting) methods [4]. |
| Barcoded Beads/Oligos | Enables mRNA capture and cell-specific barcoding during reverse transcription. | Hydrogel beads with barcoded oligonucleotides (e.g., PIPseq chemistry) [4]. |
| Unique Molecular Identifiers (UMIs) | Tags individual mRNA molecules to correct for amplification bias and enable accurate digital counting [4]. | Incorporated into barcoded oligonucleotides on capture beads. |
| Specialized Library Prep Kits | Prepares sequencing libraries from the amplified cDNA. | Illumina Single Cell 3' RNA Prep kit [4]. |
| Physiological Salt Buffers (for TF mapping) | Preserves specific, dynamic TF-DNA interactions during sample preparation for low-input epigenomics. | DynaTag physiological salt buffer (110 mM KCl, 10 mM NaCl, 1 mM MgCl₂) [18]. |

Guide 2: Designing Experiments to Study Biological Noise

Problem: An experimental design that fails to account for sources of variability, leading to confounded results. Solution: Carefully control and document experimental conditions.

- Replicate Strategically: Include biological replicates (different batches, different source materials) to account for technical and biological variability beyond single-cell heterogeneity.

- Control for Covariates: Account for factors known to influence noise, such as cell type, age, and sex. A large-scale enQTL study revealed that transcriptional noise patterns in human immune cells are age- and sex-dependent [19].

- Choose the Right Resolution: For discovering genetic regulators of noise (enQTLs), large-scale single-cell studies from many individuals are required [19]. For inferring gene function from noise patterns, a single wild-type scRNA-seq dataset analyzed with a framework like scSGS may be sufficient [17].

- Validate Findings Orthogonally: Correlate findings from scRNA-seq noise analysis with results from other modalities. For example, correlate genes identified as having high noise with TF binding data from low-input methods like DynaTag [18].

Experimental Protocols

Protocol 1: Single-Cell Stochastic Gene Silencing (scSGS) Analysis

Purpose: To infer gene function and regulatory relationships by leveraging naturally occurring transcriptional silencing in wild-type scRNA-seq data [17].

Methodology:

- Data Preprocessing: Begin with a wild-type scRNA-seq gene expression count matrix. Filter out low-quality cells and genes with low expression to ensure only viable cells are analyzed.

- Cell Type Annotation: Annotate cell types using canonical marker genes (e.g., from the ScType database) and subset the data to the cell type of interest.

- Identify Highly Variable Genes (HVGs): Use a highly variable gene identification algorithm (e.g., a three-dimensional spline-based HVG algorithm) to select genes suitable for analysis.

- Binarize Target Gene Expression: For the target gene 'g' of interest, binarize its expression across all cells. Cells with any expression (count > 0) are classified as active (GBin = 1). Cells with zero expression are classified as silenced (GBin = 0).

- Split Matrix and Compare: Split the preprocessed count matrix into two subsets: the active subset and the silenced subset. Normalize the gene expression profiles and compare them using a non-parametric statistical test like the Wilcoxon rank-sum test. Calculate the average log2 fold change.

- Identify SGS-Responsive Genes: Genes with a significant P-value after multiple-testing correction (e.g., FDR < 0.01) are deemed SGS-responsive.

- Functional Enrichment Analysis: Perform functional enrichment analysis (e.g., GO, KEGG) on the SGS-responsive genes to predict the biological function of the target gene 'g'.
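
The binarize, split-and-compare and FDR steps above can be sketched in plain Python. Assumptions: the input is an already filtered, cell-type-subset, normalized matrix in which normalization preserves zeros (e.g., library-size scaling), so binarizing it matches binarizing raw counts; the Wilcoxon rank-sum test uses a normal approximation without tie correction, so its P-values differ slightly from library implementations such as `scipy.stats.mannwhitneyu`; and the example data are synthetic. This is not the published scSGS implementation.

```python
import math


def rank_sum_pvalue(a, b):
    """Two-sided Wilcoxon rank-sum test (normal approximation, average ranks
    for ties, no tie or continuity correction)."""
    pooled = sorted((v, i) for i, v in enumerate(a + b))
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        for k in range(i, j + 1):           # tied block gets its average rank
            ranks[pooled[k][1]] = (i + j) / 2 + 1
        i = j + 1
    n1, n2 = len(a), len(b)
    w = sum(ranks[:n1])                     # rank sum of the first group
    mu = n1 * (n1 + n2 + 1) / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    return math.erfc(abs((w - mu) / sigma) / math.sqrt(2))


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg FDR-adjusted P-values, returned in input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted, running = [0.0] * m, 1.0
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running = min(running, pvals[i] * m / rank)
        adjusted[i] = running
    return adjusted


def scsgs(norm, genes, target, fdr=0.01, pseudo=1e-3):
    """norm: cells x genes normalized expression (zeros preserved).
    Returns [(gene, log2 fold change active/silenced, FDR)] for SGS-responsive genes."""
    t = genes.index(target)
    active = [row for row in norm if row[t] > 0]     # GBin = 1
    silenced = [row for row in norm if row[t] == 0]  # GBin = 0
    tested, pvals = [], []
    for g, gene in enumerate(genes):
        if g == t:
            continue
        a = [row[g] for row in active]
        s = [row[g] for row in silenced]
        lfc = math.log2((sum(a) / len(a) + pseudo) / (sum(s) / len(s) + pseudo))
        tested.append((gene, lfc))
        pvals.append(rank_sum_pvalue(a, s))
    qvals = benjamini_hochberg(pvals)
    return [(gene, lfc, q) for (gene, lfc), q in zip(tested, qvals) if q < fdr]


# Synthetic example: 40 cells; the target gene is silenced in the last 20.
genes = ["target", "resp", "flat"]
norm = []
for i in range(40):
    active = i < 20
    norm.append([
        1.0 + i % 3 if active else 0.0,            # target gene g
        10.0 + i % 5 if active else 1.0 + i % 5,   # shifts when g is silenced
        float(i % 7),                              # unrelated gene
    ])
hits = scsgs(norm, genes, "target")
print([(gene, round(lfc, 2)) for gene, lfc, q in hits])  # [('resp', 2.0)]
```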

Protocol 2: Mapping Transcription Factors with DynaTag in Low-Input Samples

Purpose: To achieve robust, high-resolution mapping of transcription factor (TF)-DNA interactions in low-input samples and at single-cell resolution [18].

Methodology:

- Sample Preparation: Isolate nuclei from your low-input sample (e.g., sorted cells, small tissue biopsies).

- Antibody Binding: Incubate nuclei with a primary antibody specific to the TF of interest. This is followed by incubation with a secondary antibody.

- pA-Tn5 Binding: Incubate with protein A-Tn5 (pA-Tn5), which binds to the antibody complex.

- Tagmentation in Physiological Buffer: Induce tagmentation (simultaneous cleavage and adapter insertion) by activating pA-Tn5 with Mg²⁺. Crucially, all nuclei handling and wash steps must be performed with the DynaTag physiological salt buffer (110 mM KCl, 10 mM NaCl, 1 mM MgCl₂) to preserve specific TF-DNA interactions.

- DNA Extraction and Purification: After tagmentation, extract and purify the DNA.

- Library Amplification: Amplify the purified DNA with PCR to create the sequencing library.

- Sequencing and Analysis: Sequence the libraries and analyze the data using standard pipelines for TF footprinting and peak calling. The resulting data shows superior signal-to-background ratio and resolution compared to ChIP-seq and CUT&RUN for dynamic TFs [18].

Key Data Tables

Table 1: Key Quantitative Findings from Transcriptional Noise Studies

| Study System | Key Finding | Quantitative Result | Implication |
|---|---|---|---|
| Human Peripheral Blood (1.23M cells) [19] | Identification of genetic loci regulating noise (enQTLs). | 10,770 independent enQTLs for 6,743 genes across 7 immune cell types. | enQTLs are a distinct class of genetic regulator, separate from eQTLs, influencing complex traits. |
| Yeast (S. cerevisiae) [16] | Conservation of transcriptional noise. | Noisy genes in S. cerevisiae have orthologs with noisy expression in C. albicans. | Transcriptional noise is an evolutionarily conserved, selectable feature. |
| Mouse Glioblastoma Model [17] | Validation of scSGS method for gene function (Ccr2). | From 3,048 monocytes, 491 SGS-responsive genes were identified for Ccr2; 72/200 top genes overlapped with in vivo KO DE genes. | Stochastic silencing patterns in wild-type data can reliably reveal gene function. |
| Mouse Embryonic Stem Cells [18] | Performance of DynaTag vs. ChIP-seq/CUT&RUN. | DynaTag showed superior enrichment & resolution at transcription start sites. | Enables precise TF mapping in low-input and single-cell contexts where traditional methods fail. |

Single-cell RNA sequencing (scRNA-seq) has revolutionized genomic research by enabling the examination of gene expression at the resolution of individual cells. Unlike bulk RNA-seq, which averages expression across thousands of cells, scRNA-seq uncovers the cellular heterogeneity within complex tissues, revealing rare cell populations, dynamic transitions, and unique genomic signatures that were previously masked [1] [20]. This high-resolution view is pivotal for breakthroughs in cancer research, immunology, stem cell biology, and drug development. However, the journey from sample preparation to data interpretation is fraught with technical challenges, especially when dealing with the extremely low starting amounts of RNA characteristic of single-cell analysis. This technical support center provides a comprehensive guide to troubleshooting common issues and offers detailed protocols to ensure the success of your scRNA-seq experiments.

Technical Challenges and Troubleshooting Guide

Frequently Asked Questions (FAQs)

Q1: Why does my scRNA-seq data have so many zero values for gene expression, and how can I address this?

The prevalence of zeros, or "dropout events," is a hallmark of scRNA-seq data. These occur when a transcript fails to be captured or amplified in a single cell, leading to a false-negative signal. This is particularly problematic for lowly expressed genes and rare cell populations [1]. Mitigation strategies include:

- Computational Imputation: Use statistical models and machine learning algorithms to predict the expression levels of missing genes based on observed patterns in the data [1] [11].

- Experimental Optimization: Standardize cell lysis and RNA extraction protocols to maximize RNA yield and quality. Employing pre-amplification methods can also increase the amount of cDNA before sequencing [1].
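To illustrate the idea behind computational imputation, here is a minimal k-nearest-neighbour smoothing sketch. It is a simplified stand-in, not the model used by any published imputation tool. It assumes a small cells x genes count matrix, measures similarity by Euclidean distance on log1p values, and fills only zero entries with the average of each cell's k most similar cells. Imputed values are estimates, not measurements, and they can create spurious correlations, so keep the raw counts for differential testing.

```python
import math

def knn_impute(matrix, k=2):
    """Fill zero entries with the mean of the k most similar cells.

    matrix: list of cells, each a list of non-negative expression values
    (cells x genes). Similarity is Euclidean distance on log1p values.
    Non-zero observations are left unchanged.
    """
    logged = [[math.log1p(v) for v in cell] for cell in matrix]
    imputed = []
    for i, cell in enumerate(matrix):
        # Rank every other cell by distance to cell i
        dists = sorted(
            (math.dist(logged[i], logged[j]), j)
            for j in range(len(matrix)) if j != i
        )
        neighbours = [j for _, j in dists[:k]]
        new_cell = []
        for g, value in enumerate(cell):
            if value == 0:
                # Treat the zero as a putative dropout and borrow from neighbours
                value = sum(matrix[j][g] for j in neighbours) / len(neighbours)
            new_cell.append(value)
        imputed.append(new_cell)
    return imputed

counts = [[5, 0, 3], [4, 2, 3], [6, 2, 4], [0, 9, 0]]
out = knn_impute(counts, k=2)
```

The neighbour averages are taken from the original counts, not from already-imputed values, so the result does not depend on the order in which cells are processed.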

Q2: How can I minimize amplification bias in my libraries? Amplification bias arises from stochastic variation during cDNA amplification, leading to a skewed representation of certain genes [1]. The primary solution is to use Unique Molecular Identifiers (UMIs). UMIs are short random barcodes that label each individual mRNA molecule prior to amplification, allowing for accurate quantification and correction for amplification bias during computational analysis [20].
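The core of UMI-based correction is simple: reads that share a cell barcode, gene, and UMI are treated as PCR copies of a single molecule and counted once. The sketch below assumes reads have already been aligned and tagged as (cell_barcode, gene, umi) tuples. The barcodes and gene names are hypothetical. Dedicated tools such as UMI-tools also merge UMIs that differ by sequencing errors, which this exact-match version does not.

```python
from collections import defaultdict

def count_umis(reads):
    """Collapse reads into molecule counts per (cell, gene).

    reads: iterable of (cell_barcode, gene, umi) tuples, one per aligned read.
    Reads sharing the same cell, gene and UMI are PCR copies of one
    original molecule, so they contribute a single count.
    """
    molecules = defaultdict(set)
    for cell, gene, umi in reads:
        molecules[(cell, gene)].add(umi)
    return {key: len(umis) for key, umis in molecules.items()}

reads = [
    ("AAAC", "Actb", "GGTA"),  # molecule 1
    ("AAAC", "Actb", "GGTA"),  # PCR duplicate of molecule 1
    ("AAAC", "Actb", "GGTA"),  # another duplicate
    ("AAAC", "Actb", "TTCA"),  # molecule 2
    ("AAAC", "Cd3e", "CAGT"),
]
counts = count_umis(reads)
print(counts)  # {('AAAC', 'Actb'): 2, ('AAAC', 'Cd3e'): 1}
```

Without UMIs, Actb would be reported with 4 reads. Most of those reads, however, reflect amplification rather than expression.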

Q3: My data shows strong batch effects between different experimental runs. How can I correct for this? Batch effects are technical variations introduced from different sequencing runs or experimental batches, which can confound biological interpretation [1] [21]. Correction methods include:

- Benchmarking Datasets: Use community-driven benchmarking datasets and standards to select and validate a correction strategy [1].

- Computational Batch Correction: Apply algorithms such as ComBat, Harmony, or Scanorama to remove systematic technical variation and improve data comparability [1].
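The simplest form of batch correction removes a per-batch mean shift for each gene. The sketch below does only that, on a small log-normalized matrix. It is not ComBat, which also adjusts per-batch variance with empirical Bayes shrinkage. Nor is it Harmony or Scanorama, which work in reduced-dimensional space. The data and batch labels are hypothetical.

```python
from statistics import mean

def center_batches(expr, batches):
    """Remove per-batch mean shifts gene by gene.

    expr: cells x genes list of log-normalized values.
    batches: batch label for each cell.
    Each gene is shifted so that every batch shares the global gene mean.
    """
    n_genes = len(expr[0])
    global_means = [mean(cell[g] for cell in expr) for g in range(n_genes)]
    batch_means = {}
    for b in set(batches):
        members = [cell for cell, lab in zip(expr, batches) if lab == b]
        batch_means[b] = [mean(cell[g] for cell in members) for g in range(n_genes)]
    return [
        [cell[g] - batch_means[lab][g] + global_means[g] for g in range(n_genes)]
        for cell, lab in zip(expr, batches)
    ]

expr = [[1.0, 2.0], [3.0, 2.0], [2.0, 5.0], [4.0, 5.0]]
batches = ["A", "A", "B", "B"]
corrected = center_batches(expr, batches)
```

Mean-centering assumes each batch contains the same mix of cell types. If one batch is enriched for a cell type, real biological differences are subtracted along with the technical shift, which is one reason model-based methods are preferred in practice.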

Q4: What are the best practices for preparing a high-quality single-cell suspension? The process of tissue dissociation to create single-cell suspensions can induce stress and alter gene expression profiles [1] [20].

- Optimized Dissociation: Perform tissue dissociation at lower temperatures (e.g., 4°C) to minimize "artificial transcriptional stress responses" [20].

- Buffer Compatibility: Ensure cells are suspended in an appropriate buffer. Resuspend and wash cells in EDTA-, Mg²⁺-, and Ca²⁺-free 1X PBS to avoid interfering with downstream reverse transcription reactions [22].

- Consider snRNA-seq: For tissues difficult to dissociate (e.g., brain), single-nucleus RNA sequencing (snRNA-seq) is a robust alternative that minimizes dissociation-induced artifacts [20].

Q5: How can I identify and remove cell doublets from my data? Cell doublets occur when multiple cells are captured in a single droplet, leading to misidentification of cell types [1]. Solutions include:

- Cell Hashing: Using antibody-based barcoding to label cells from different samples, allowing for doublet identification during demultiplexing [1].

- Computational Methods: Tools like DoubletFinder can identify and exclude cell doublets from downstream analysis based on aberrant gene expression profiles [23].
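Cell hashing identifies doublets because a droplet containing cells from two samples carries two hashtags. The sketch below classifies each cell barcode from its hashtag-oligo (HTO) counts using a simple fraction threshold. Both thresholds are illustrative assumptions, and this is not the algorithm of Seurat's HTODemux or any other specific tool. Hashing detects only cross-sample doublets; doublets formed by two cells from the same sample still require computational tools such as DoubletFinder.

```python
def classify_hashtags(hto_counts, min_fraction=0.3, min_total=10):
    """Assign each cell barcode to a sample, 'Doublet' or 'Negative'.

    hto_counts: {cell_barcode: {hashtag_name: count}}.
    A hashtag is called positive when it carries at least min_fraction of
    the cell's hashtag reads. Both thresholds are illustrative assumptions.
    """
    calls = {}
    for cell, counts in hto_counts.items():
        total = sum(counts.values())
        if total < min_total:
            calls[cell] = "Negative"  # too few hashtag reads to decide
            continue
        positive = [tag for tag, n in counts.items() if n / total >= min_fraction]
        if len(positive) == 1:
            calls[cell] = positive[0]
        elif len(positive) > 1:
            calls[cell] = "Doublet"  # two samples in one droplet
        else:
            calls[cell] = "Negative"
    return calls

hto = {
    "cellA": {"HTO1": 95, "HTO2": 3, "HTO3": 2},
    "cellB": {"HTO1": 48, "HTO2": 50, "HTO3": 2},
    "cellC": {"HTO1": 2, "HTO2": 1, "HTO3": 1},
}
calls = classify_hashtags(hto)
```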

Key Technical Challenges and Solutions Table

The table below summarizes major challenges encountered in scRNA-seq experiments and their corresponding solutions.

Table 1: Key Technical Challenges and Solutions in scRNA-seq

| Challenge | Description | Proposed Solutions |
|---|---|---|
| Low RNA Input & Coverage [1] [11] | Incomplete reverse transcription and amplification due to minimal starting material, leading to technical noise. | Standardize lysis/RNA extraction; use pre-amplification methods [1]. |
| Amplification Bias [1] [20] | Stochastic amplification skews representation of specific genes. | Use Unique Molecular Identifiers (UMIs) for correction [1] [20]. |
| Dropout Events [1] [21] | Transcripts fail to be captured/amplified, resulting in false-negative signals (excess zeros). | Apply computational imputation methods to predict missing expression [1]. |
| Batch Effects [1] [21] | Technical variation between experimental batches confounds biological differences. | Use batch correction algorithms (ComBat, Harmony, Scanorama) [1]. |
| Cell Doublets [1] [23] | Multiple cells captured in a single droplet, leading to misidentified cell types. | Employ cell hashing or computational doublet detection tools [1] [23]. |
| Data Normalization [1] [11] | Accounting for differences in sequencing depth and library size without introducing bias. | Use ML-based clustering and repurpose bulk RNA-seq QC tools for accurate normalization [1]. |
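A common baseline for the normalization row above is library-size scaling followed by a log transform. Each cell is scaled to the same total count, commonly 10,000, which is the default scale factor of Seurat's LogNormalize, and then log1p is applied. The sketch below is that baseline in plain Python. It is not the ML-based approach cited in the table, and it assumes empty barcodes have already been filtered out.

```python
import math

def log_normalize(counts, scale_factor=10_000):
    """Library-size normalization followed by log1p, per cell.

    counts: cells x genes raw UMI counts.
    Each cell is scaled to scale_factor total counts so that cells
    sequenced to different depths become comparable.
    """
    normalized = []
    for cell in counts:
        size = sum(cell)
        if size == 0:
            raise ValueError("empty cell; filter it out before normalizing")
        normalized.append([math.log1p(c / size * scale_factor) for c in cell])
    return normalized

# Same relative expression, sequenced to 100 vs 10 total UMIs
norm = log_normalize([[10, 30, 60], [1, 3, 6]])
```

Both cells end up with identical normalized profiles even though one was sequenced ten times deeper. This is the intended effect, but it also means global biological differences in RNA content between cells are removed.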

Experimental Workflow and Critical Control Points

The following diagram outlines a generalized scRNA-seq workflow, highlighting key stages where the challenges from Table 1 most commonly arise and where quality control is crucial.

Essential Methodologies and Protocols

Detailed Protocol: scGRO-seq for Nascent RNA Transcription

Objective: To profile genome-wide nascent transcription at single-cell resolution, capturing active gene and enhancer transcription while accounting for the episodic nature of transcription (bursting) [24].

Workflow Overview:

Step-by-Step Methodology [24]:

- Nuclear Run-On with Modified NTPs: Isolate intact nuclei and perform a nuclear run-on reaction in the presence of 3′-(O-propargyl)-NTPs. This incorporates an alkyne group into nascent RNA molecules actively being transcribed by RNA polymerase.

- Single-Cell Compartmentalization: Sort individual nuclei into the wells of a 96-well plate. Each well contains a unique 5′-Azide Single-Cell Barcoded (5′-AzScBc) DNA molecule.

- Click Chemistry Conjugation: Lyse the nuclear membrane with a urea-based buffer. Subsequently, perform a copper(I)-catalyzed azide-alkyne cycloaddition (CuAAC) "click chemistry" reaction. This covalently links the propargyl-labeled nascent RNA from each nucleus to the unique barcoded DNA molecule in its well.

- Library Construction: Pool the contents from all wells. The barcoded nascent RNAs are then reverse transcribed in the presence of a template switching oligonucleotide (TSO), PCR amplified, and prepared for sequencing.

- Data Analysis: Sequence the library and use computational methods to deconvolute the data based on the single-cell barcodes, allowing for the reconstruction of coordinated transcription and enhancer-gene dynamics across thousands of individual cells.
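Deconvolution starts by assigning each read to a well from its cell barcode. The sketch below is generic and hypothetical. It assumes each read begins with a fixed-length barcode drawn from a known whitelist, and it corrects at most one mismatch when the correction is unambiguous. The actual scGRO-seq read structure and barcode design are not described here, so treat the layout and function names as illustrative.

```python
def hamming(a, b):
    """Number of mismatched positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))

def demultiplex(reads, whitelist, bc_len):
    """Group reads by cell barcode, tolerating one mismatch.

    Assumes (hypothetically) that each read begins with a bc_len-nt cell
    barcode followed by the insert. Reads whose barcode is ambiguous or
    more than one mismatch from every whitelisted barcode are dropped.
    """
    by_cell = {bc: [] for bc in whitelist}
    for read in reads:
        bc, insert = read[:bc_len], read[bc_len:]
        if bc in by_cell:
            by_cell[bc].append(insert)
            continue
        close = [w for w in whitelist if hamming(bc, w) == 1]
        if len(close) == 1:  # unambiguous single-mismatch correction
            by_cell[close[0]].append(insert)
    return by_cell

result = demultiplex(
    ["ACGTGGGCC", "ACGAGGGAA", "TTAGCCC", "GGGGAAA"],
    whitelist=["ACGT", "TTAG"],
    bc_len=4,
)
```

Here "ACGA" is rescued to "ACGT", while "GGGG" is three mismatches from both barcodes and is discarded. Whitelisted barcodes are normally designed to differ at several positions so that single errors can be corrected safely.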

Key Advantages:

- Single-Cell Resolution: Unveils coordinated global transcription and episodic bursting at the level of individual cells.

- Direct Quantification: Estimates transcriptional burst size and frequency by directly quantifying transcribing RNA polymerases.

- Enhanced Insight: Identifies networks of co-transcribed genes and can infer that transcription at super-enhancers often precedes bursting from their associated genes [24].
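For intuition about what "burst size" and "burst frequency" mean, the sketch below uses a generic moment-based estimate for per-cell molecule counts under a bursty (negative binomial) model. Under that model, the Fano factor (variance divided by mean) equals 1 plus the burst size, and the mean equals burst frequency times burst size, with frequency expressed per molecule lifetime. This is a textbook approximation applied to steady-state counts, not scGRO-seq's estimator, which works from counts of transcribing polymerases.

```python
from statistics import mean, variance

def burst_moments(counts):
    """Moment-based burst kinetics under a bursty (negative binomial) model.

    counts: per-cell molecule counts for one gene.
    Returns (burst_size, burst_frequency), with frequency per molecule
    lifetime, or None when the data show no overdispersion.
    """
    mu = mean(counts)
    if mu == 0:
        return None
    fano = variance(counts) / mu  # sample variance / mean
    if fano <= 1:
        return None  # no excess noise over Poisson; bursty model does not apply
    burst_size = fano - 1
    burst_frequency = mu / burst_size
    return burst_size, burst_frequency

# Mostly silent cells with occasional large counts suggest large, rare bursts
estimate = burst_moments([0, 0, 0, 2, 8])
```

Moment estimators are noisy with few cells and are inflated by technical variation such as capture efficiency, so likelihood-based fits are generally preferred for real data.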

Protocol: Sensitive Gene Detection to Improve Clustering

Objective: To identify and manage "sensitive genes"—genes with high cell-to-cell variability that respond to environmental stimuli—which can adversely impact unsupervised clustering and cell type annotation [23].

Methodology [23]:

- Initial Processing & Clustering: Perform standard QC, normalization, and a first round of unsupervised clustering (e.g., using Seurat and the Louvain algorithm) on the dataset to obtain N initial cell clusters.

- Coefficient of Variation (CV) Filtering: For each of the N clusters, calculate the CV for all genes. Retain only those genes that rank in the top 2000 by CV in at least half (≥ N/2) of the clusters.

- Shannon Entropy Calculation: For each gene that passed the CV filter, calculate its average expression within each of the N clusters. Use these values to compute the Shannon entropy, which evaluates the gene's contribution to cluster-to-cluster differences.

- Define Sensitive Genes: Designate genes with both high CV (from step 2) and high Shannon entropy (above the median entropy of all filtered genes) as "sensitive genes."

- Refined Analysis: Remove the identified sensitive genes from the expression matrix. Re-select highly variable genes and re-run the unsupervised clustering. This typically yields results closer to ground-truth cell labels, as it reduces noise from stochastic stress responses [23].
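The CV and entropy steps above can be sketched in plain Python as follows. The sketch takes the initial cluster assignments as given; the Seurat/Louvain clustering is not reproduced. Several details are assumptions because the protocol does not specify them: population standard deviation for the CV, a CV of zero for genes with zero mean, and conversion of cluster means to proportions before computing the entropy (natural log). The `top_n` argument stands in for the protocol's 2000 so the example can run on toy data.

```python
import math
from statistics import mean, median, pstdev

def sensitive_genes(clusters, top_n=2000):
    """Flag 'sensitive genes' with the CV filter + Shannon-entropy recipe.

    clusters: {cluster_id: cells x genes expression matrix (list of lists)}.
    top_n: CV rank cutoff (2000 in the protocol; smaller for toy data).
    Returns the indices of genes flagged as sensitive.
    """
    n_clusters = len(clusters)
    n_genes = len(next(iter(clusters.values()))[0])
    hits = [0] * n_genes
    for cells in clusters.values():
        cvs = []
        for g in range(n_genes):
            values = [cell[g] for cell in cells]
            m = mean(values)
            cvs.append(pstdev(values) / m if m > 0 else 0.0)
        for g in sorted(range(n_genes), key=lambda g: cvs[g], reverse=True)[:top_n]:
            hits[g] += 1
    # CV filter: top_n by CV in at least half of the clusters
    passed = [g for g in range(n_genes) if hits[g] >= n_clusters / 2]

    def entropy(g):
        # Shannon entropy of the gene's mean expression across clusters
        avgs = [mean(cell[g] for cell in cells) for cells in clusters.values()]
        total = sum(avgs)
        return -sum(a / total * math.log(a / total) for a in avgs if a > 0)

    ent = {g: entropy(g) for g in passed}
    if not ent:
        return []
    cutoff = median(ent.values())
    return [g for g in passed if ent[g] > cutoff]

clusters = {
    "A": [[1, 10, 0, 5], [9, 10, 2, 5]],
    "B": [[2, 1, 0, 5], [8, 1, 4, 5]],
}
flagged = sensitive_genes(clusters, top_n=2)
```

In this toy example, gene 0 is highly variable within both clusters but has the same mean in each, giving maximal entropy. It is flagged as sensitive because its variability does not help separate the clusters. Gene 1 differs strongly between clusters but is constant within each, so it never passes the CV filter and is kept as a candidate marker.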

The Scientist's Toolkit: Research Reagent Solutions

This table catalogs essential reagents and their critical functions for successful scRNA-seq experiments, as derived from the cited protocols.

Table 2: Essential Research Reagents for scRNA-seq

| Reagent / Material | Function / Explanation | Key Consideration |
|---|---|---|
| Unique Molecular Identifiers (UMIs) [1] [20] | Short random barcodes that label individual mRNA molecules to correct for amplification bias and enable counting of individual captured molecules. | Essential for the quantitative accuracy of high-throughput droplet-based methods (e.g., 10x Genomics). |
| Template Switching Oligo (TSO) [20] [24] | Facilitates the addition of universal primer sequences during reverse transcription, enabling full-length cDNA amplification. | Critical for SMART-seq-based protocols and the scGRO-seq method. |
| Cell Hashing Antibodies [1] | Antibodies conjugated to sample-specific barcode oligonucleotides allow multiple samples to be pooled before single-cell capture, enabling identification of cross-sample doublets and reducing batch effects. | Improves experimental throughput and cost-effectiveness. |
| Spike-in RNAs [1] | Exogenous RNA controls added in known quantities to the cell lysate. Used to monitor technical variability and normalize data. | Helps distinguish technical noise from biological variation. |
| 3′-(O-propargyl)-NTPs [24] | Modified nucleotides used in run-on assays (e.g., scGRO-seq) to label nascent RNA for subsequent conjugation via click chemistry. | Enables specific capture and barcoding of newly synthesized RNA. |
| 5′-Azide Single-Cell Barcoded DNA [24] | Barcoded DNA molecules that react with propargyl-labeled nascent RNA via click chemistry, assigning a unique cell ID to each cell's transcriptome. | Foundational for single-cell barcoding in plate-based nascent RNA protocols. |

Best Practices for Robust scRNA-seq Experiments

- Conduct Pilot Experiments: Before processing valuable samples, run a pilot study with a few experimental samples and controls. This helps optimize parameters (e.g., PCR cycle number) and identify issues early, saving reagents and time [22].

- Implement Rigorous Controls: Always include positive controls (e.g., 10 pg of control RNA from a cell line similar to your sample) and negative controls (e.g., mock FACS buffer). These are invaluable for troubleshooting cDNA yield and background contamination [22].

- Minimize Handling Time: After cell collection, process samples immediately or snap-freeze them. Rapid handling limits RNA degradation and unwanted changes in the transcriptome [22].

- Maintain a Clean Pre-PCR Workspace: Use dedicated pre- and post-PCR areas, positive air flow, RNase-free reagents, and low-binding plasticware to prevent amplicon and environmental contamination, which is critical when working with ultra-low inputs [22].

- Address Ancestral Diversity in Study Design: For globally impactful research, consciously include ancestrally diverse samples in atlas-building projects (e.g., Human Cell Atlas). This ensures equitable outcomes and a more comprehensive understanding of tissue health and disease [25].

Spatial Context Limitations in Traditional Dissociation-Based Approaches

Frequently Asked Questions (FAQs)

1. What is the core limitation of dissociation-based single-cell RNA sequencing regarding spatial data? Dissociation-based scRNA-seq requires tissue dissociation and cell isolation, which completely removes RNA transcripts from their original spatial context within the tissue. This process destroys all native spatial information about cellular microenvironments, tissue architecture, and cell-cell interactions [26] [27].

2. How does spatial transcriptomics overcome the limitations of traditional scRNA-seq? Spatial transcriptomics technologies measure transcriptomic information while preserving spatial location, allowing researchers to identify RNA molecules in their original spatial context within tissue sections at single-cell or subcellular resolution. This provides valuable insights into tissue organization that are lost with dissociation-based methods [26] [27].

3. What are the main technological categories for spatial transcriptomics?

- Spatial Barcoding: Ligates oligonucleotide barcodes with known spatial locations to RNA molecules prior to sequencing [27].

- In Situ Hybridization: Uses fluorescently-labeled RNA probes to identify complementary sequences while preserving spatial location [27].

- In Situ Sequencing: Employs fluorescent-based direct sequencing to read base pair information from RNA molecules in their original location [27].

4. For low-input RNA research, when should I choose single-nuclei versus single-cell sequencing? For many applications, whole-cell capture is ideal because intact cells contain more mRNA (cytoplasmic plus nuclear). However, single-nuclei sequencing is preferable for difficult-to-isolate cells (such as neurons) and is compatible with multiome studies that combine transcriptomics with open-chromatin (ATAC-seq) analysis [28].

5. What commercial single-cell platforms support fixed cell sequencing? Several platforms now support fixed cells, including 10x Genomics Chromium, BD Rhapsody, Singleron SCOPE-seq, Parse Evercode, and Scale Biosciences, providing flexibility for experimental design [28].

Troubleshooting Guides

Issue: Transcriptional Artifacts Induced by Cell Dissociation

Problem: Cell dissociation protocols can introduce significant transcriptomic stress responses that confound true biological variation, particularly problematic for low-input RNA studies where these artifacts can overwhelm genuine signals [28].

Solutions:

- Perform digestions on ice to mitigate transcriptional stress responses, though this may extend processing times [28].

- Implement fixation-based methods to halt transcriptomic responses immediately after dissociation, using approaches such as acetic acid-methanol maceration (ACME) or reversible dithio-bis(succinimidyl propionate) (DSP) fixation [28].

- Use fluorescence-activated cell sorting with fixed material to eliminate debris while minimizing stress-induced artifacts [28].

Issue: Loss of Spatial Coordination Between Genes

Problem: Dissociation destroys information about transcriptional coordination between neighboring genes, making it impossible to study phenomena like co-bursting of paralogues located in close genomic proximity [29].

Solutions:

- Choose methods suited to the question: NASC-seq2 profiles allele-resolved transcriptional bursting in single cells, while imaging-based platforms such as MERFISH (RNA) and CODEX (protein) preserve tissue architecture [29] [30].

- Use allele-level analyses, as applied with NASC-seq2, to control for spurious correlations arising from cellular heterogeneity when studying gene coordination [29].

Issue: Incomplete Representation of Cellular Diversity

Problem: Dissociation protocols often preferentially lose specific fragile cell types, introducing bias in cellular representation, especially concerning for rare cell populations in low-input research [28].

Solutions:

- Validate cell type recovery using spatial methods like single-molecule RNA fluorescence in situ hybridization (smRNA-FISH) on intact tissue sections [29] [31].

- Compare nuclear and cellular sequencing to identify cell types that show different distributions and optimize protocols accordingly [28].

- Employ tailored dissociation protocols for different tissues when generating comprehensive cell type inventories [28].

Experimental Protocols for Spatial Context Preservation

Protocol 1: NASC-seq2 for Transcriptional Bursting Analysis with Spatial Context

Application: Profiling newly transcribed RNA with allelic resolution to study transcriptional bursting kinetics, including co-bursting of genes in close genomic proximity. Because NASC-seq2 is dissociation-based, the spatial context it captures is genomic rather than tissue-level [29].

Methodology Details:

- Cell Handling: Expose cells to 4-thiouridine (4sU) for 2 hours for metabolic labeling [29].

- Library Construction: Use miniaturized lysis volumes following Smart-seq3xpress methodology, with DMSO-based alkylation in nanoliter volumes [29].

- Sequencing: Employ longer short-read sequencing strategies (PE200) to improve separation of new and old RNAs [29].

- Data Analysis: Apply mixture models to infer probability of 4sU-induced base conversions (Pc) versus library preparation errors (Pe), achieving signal-to-noise ratios of ~20-45 [29].
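The mixture-model idea can be illustrated with a two-component binomial mixture. A read covering n reference T positions shows k T>C conversions. If it came from new (4sU-labeled) RNA, k follows Binomial(n, Pc); if it came from old RNA, k follows Binomial(n, Pe), where Pe is the background error rate. The sketch computes the posterior probability that a read is new, assuming Pc, Pe, and the new-RNA fraction are already known. In practice these parameters are estimated from the data, for example by expectation-maximization. This is a simplified illustration, not NASC-seq2's published model, and the parameter values are hypothetical.

```python
from math import comb

def binom_pmf(k, n, p):
    """Probability of k successes in n Bernoulli(p) trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

def prob_new(k, n, pc, pe, pi_new):
    """Posterior probability that a read comes from 4sU-labeled (new) RNA.

    k: observed T>C conversions; n: T positions covered by the read.
    pc: per-base conversion probability in new RNA (4sU-induced).
    pe: per-base background error rate (library prep / sequencing).
    pi_new: prior fraction of new reads.
    """
    new = pi_new * binom_pmf(k, n, pc)
    old = (1 - pi_new) * binom_pmf(k, n, pe)
    return new / (new + old)

# Hypothetical parameters: 5% conversion in new RNA, 0.1% background errors
p0 = prob_new(0, 40, pc=0.05, pe=0.001, pi_new=0.2)  # no conversions
p3 = prob_new(3, 40, pc=0.05, pe=0.001, pi_new=0.2)  # three conversions
```

A read with no conversions is still not certainly old: with n = 40 and Pc = 0.05, about 13% of new reads carry no conversion. This is why a larger Pc relative to Pe, that is, a higher signal-to-noise ratio, and longer reads that cover more T positions both improve classification.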

Protocol 2: Spatial Domain Identification Using spCLUE Framework

Application: Identifying spatially coherent domains across single or multiple tissue slices using contrastive learning [32].

Methodology Details:

- Graph Construction: Build separate graphs for spatial locations and gene expression data to extract complementary insights [32].

- Multi-view Integration: Employ attention mechanisms to integrate representations without relying on ad hoc fusion strategies [32].

- Contrastive Learning: Combine instance-level contrastive learning with clustering-level modules to encourage distinct spatial domain formation [32].

- Batch Correction: Implement batch prompting module for multi-slice analysis to remove technical variation while preserving biological spatial structure [32].
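The graph-construction step can be illustrated with k-nearest-neighbour graphs built separately from spot coordinates and from expression profiles. The sketch below is a generic construction. spCLUE's actual choice of k, distance metric, and edge weighting is not specified here, so those are assumptions, as are the toy data.

```python
import math

def knn_graph(points, k):
    """Return a symmetric k-nearest-neighbour graph as a set of edges.

    points: list of equal-length coordinate vectors (spot x/y positions,
    or expression profiles). Edges are undirected (i, j) pairs with i < j.
    """
    edges = set()
    for i, p in enumerate(points):
        nearest = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )[:k]
        for _, j in nearest:
            edges.add((min(i, j), max(i, j)))
    return edges

coords = [(0, 0), (0, 1), (5, 5), (5, 6)]  # spot positions
expr = [(9, 1), (1, 9), (9, 0), (1, 8)]    # toy 2-gene profiles
spatial_graph = knn_graph(coords, k=1)
expression_graph = knn_graph(expr, k=1)
```

In this toy example, the two views disagree. Spots 0 and 1 are physical neighbours but have different expression, while spots 0 and 2 are far apart but transcriptionally similar. Keeping the graphs separate, rather than merging them up front, preserves both kinds of information for the downstream integration step.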

Quantitative Data Comparison

Comparison of Single-Cell and Single-Nuclei RNA Sequencing Approaches

Table 1: Technical comparison of dissociation-based approaches for low-input RNA research

| Parameter | Single-Cell RNA-seq | Single-Nuclei RNA-seq |
|---|---|---|
| Starting Material | Intact cells [28] | Isolated nuclei [28] |
| mRNA Content | Higher (cytoplasmic + nuclear) [28] | Lower (nuclear transcripts only) [28] |
| Cell Types Captured | May miss fragile or large cells [28] | Better for difficult-to-isolate cells [28] |
| Multiome Compatibility | Limited | Compatible with ATAC-seq [28] |
| Spatial Context | Lost during dissociation [26] | Lost during dissociation [26] |
| Transcriptomic State | Steady-state expression [26] | Active transcription bias [28] |

Performance Metrics of Spatial Transcriptomics Technologies

Table 2: Key metrics for spatial transcriptomics technologies that preserve spatial context

| Technology Type | Spatial Resolution | Gene Detection Capacity | Tissue Area Coverage | Key Applications |
|---|---|---|---|---|
| In Situ Hybridization | Subcellular (~10 nm) [27] | Targeted (~10,000 genes) [27] | Limited by microscope field-of-view [27] | High-resolution mapping of known targets [27] |
| Spatial Barcoding | Multicellular to subcellular [27] | Whole transcriptome [27] | Larger tissue areas [27] | Discovery-based studies of unknown targets [27] |
| In Situ Sequencing | Subcellular [27] | Targeted [27] | Limited by field-of-view [27] | Direct sequencing in native spatial context [27] |

Research Reagent Solutions

Table 3: Essential research reagents and materials for spatial context preservation studies

| Reagent/Material | Function | Example Application |
|---|---|---|
| 4-thiouridine (4sU) | Metabolic RNA labeling for nascent transcript detection [29] | Temporal tracking of newly transcribed RNA in NASC-seq2 [29] |
| Dithio-bis(succinimidyl propionate) | Reversible crosslinker for cell fixation [28] | Preserving transcriptomic state during dissociation procedures [28] |
| Unique Molecular Identifiers | Barcodes for counting individual molecules [29] | Quantifying absolute transcript numbers in single-cell protocols [29] |
| Fluorescently-labeled RNA Probes | In situ hybridization for target detection [27] | Visualizing specific RNA molecules in tissue sections [31] |
| Oligonucleotide Barcodes with Spatial Coordinates | Linking RNA molecules to physical locations [27] | Spatial transcriptomics with spatial barcoding methods [27] |

Visualization Diagrams

Diagram 1: Information Loss in Dissociation-Based scRNA-seq

Diagram 2: Spatial Transcriptomics Workflow Alternatives

Advanced Protocols and Practical Applications for Maximizing Low Input RNA Recovery

Frequently Asked Questions (FAQs)

Q1: Why is my nuclei yield low from a small piece of cryopreserved tissue? Low yields often stem from incomplete tissue homogenization or nuclei loss during purification. For low-input samples (e.g., 15 mg), the homogenization technique is critical. Use a controlled, tissue-specific Dounce homogenization protocol [33]. The number of strokes and the type of pestle (loose or tight) must be optimized for each tissue type to ensure complete cell lysis while preserving nuclear integrity [33]. Furthermore, incorporating a density gradient centrifugation step with iodixanol can help purify nuclei from cellular debris, reducing losses [33].

Q2: How can I prevent RNA degradation during nuclei isolation? RNA degradation is typically caused by RNase activity or overly aggressive lysis. To prevent this, add an RNase inhibitor to all buffers used after cell lysis [33] [34]. Keep samples consistently on ice and use pre-cooled buffers. Limit lysis time to 5-10 minutes and monitor it carefully; over-lysing can damage nuclei and release RNA [34]. Perform the entire procedure in an RNase-free environment by treating surfaces with a solution like RNaseZap [34].

Q3: My nuclei suspension is clogging the microfluidic chip. What should I do? Clogging is usually due to nuclear aggregates or incomplete tissue debris removal. To solve this, always filter the nuclei suspension through a 30 µm cell strainer after homogenization [33]. If the problem persists, consider using fluorescence-activated nuclei sorting (FANS) to select for single, intact nuclei. This step also further concentrates the sample and removes debris [33]. Avoid using too much starting tissue, as this can lead to incomplete lysis and a higher concentration of aggregates.

Q4: How do I know if my isolated nuclei are of good quality for snRNA-seq? Quality control is essential. Assess nuclei integrity and count manually using a fluorescent nuclear stain like Propidium Iodide (PI) or 7-AAD [33] [34]. Under a microscope, high-quality nuclei appear single, round, and have sharp borders. Avoid samples with blebbing, ruptured membranes, or DNA halos [34]. Flow cytometry can also be used to confirm that the stained events fall within the expected size range for nuclei [33].

Q5: Can I use this protocol for tissues other than the ones listed? The protocol is designed to be versatile. The core method—using a Dounce homogenizer with a customizable lysis buffer—is a strong starting point for various tissues [33]. However, you will likely need to re-optimize the homogenization parameters (pestle type and number of strokes) for your specific tissue, as its biophysical characteristics (e.g., fibrosis, lipid content) will differ [33] [34]. Always run a small pilot experiment first.

Troubleshooting Guide

| Problem | Potential Cause | Solution |
|---|---|---|
| Low Nuclei Yield | Incomplete tissue dissociation, over-lysis, loss during centrifugation | Optimize Dounce homogenization strokes [33]; reduce lysis time; carefully handle pellet during buffer changes. |
| High Background Debris | Incomplete filtration, tissue not fully homogenized | Filter through 30 µm strainer [33]; use density gradient (e.g., iodixanol) purification [33]. |
| Poor RNA Quality in Sequencing | RNase contamination, over-lysed nuclei | Use RNase inhibitors; maintain samples on ice [34]; QC nuclei integrity before sequencing [34]. |
| Nuclear Clumping | Over-concentration of nuclei, insufficient BSA in buffer | Resuspend nuclei at proper concentration; add 0.5-1% BSA to resuspension buffer to prevent adhesion [34]. |
| Incomplete Cell Lysis | Insufficient homogenization, incorrect lysis time/tissue ratio | Re-optimize pestle type and strokes [33]; ensure recommended 5-30 mg tissue size [34]. |

Detailed Experimental Protocol

This protocol is adapted from Segovia et al. (2025) for isolating nuclei from low-input (15 mg) cryopreserved tissues [33].

1. Tissue Preparation and Homogenization

- Starting Material: Begin with a 15 mg piece of cryopreserved tissue. Keep it on dry ice until ready to process.

- Mincing: In a pre-cooled mortar on dry ice, mince the tissue into the finest possible pieces using a scalpel.

- Homogenization: Transfer the minced tissue to a 15 mL tube containing 3 mL of ice-cold lysis buffer (10 mM Tris-HCl pH 7.4, 10 mM NaCl, 3 mM MgCl₂, 0.05% NP-40). Perform homogenization on ice using a Dounce homogenizer.

- The choice of pestle (loose or tight) and number of strokes must be optimized per tissue type. The table below provides guidance based on the original study [33]:

- After homogenization, add another 2 mL of ice-cold lysis buffer and incubate on ice for 5 minutes.

2. Nuclei Purification and Washing

- Stop Lysis: Add 5 mL of ice-cold nuclei washing buffer (0.5X PBS, 5% BSA, 0.25% Glycerol, 40 U/mL RNase inhibitor) to stop the lysis reaction.

- Filter: Pass the entire suspension through a 30 µm MACS strainer to remove large debris and aggregates.

- Centrifuge: Centrifuge the filtered flow-through at 1,000 × g for 10 minutes at 4°C. Carefully decant the supernatant.

- Density Gradient (Optional but Recommended): Resuspend the pellet in 1 mL of nuclei washing buffer. Gently add 1 mL of a 50% iodixanol solution to the suspension. Layer this mixture on top of a 2 mL cushion of 29% iodixanol in a new tube. Centrifuge at 1,000 × g for 10 minutes at 4°C.

- Final Resuspension: The nuclei will form a pellet. Resuspend this final pellet in 300 µL of nuclei washing buffer.
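When preparing the density gradient, check the iodixanol concentration of the layer you load. Mixing equal volumes gives the volume-weighted average of the two concentrations, so the nuclei layer ends up at about 25%, lighter than the 29% cushion it is layered on. The sketch below treats the washing buffer as iodixanol-free, which is an assumption; its small glycerol content is ignored.

```python
def mix_concentration(parts):
    """Final concentration (%) after mixing (volume_mL, concentration_%) parts."""
    total_volume = sum(v for v, _ in parts)
    return sum(v * c for v, c in parts) / total_volume

# 1 mL nuclei in washing buffer (0% iodixanol) + 1 mL of 50% iodixanol
loading_layer = mix_concentration([(1.0, 0.0), (1.0, 50.0)])
print(loading_layer)  # 25.0
```

The same function helps when scaling the protocol. For example, resuspending in a larger buffer volume without increasing the 50% iodixanol volume would lower the loading-layer concentration accordingly.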

3. Nuclei Sorting (FANS) and Quality Control

- Staining: Add a nuclear dye like 7-AAD to the nuclei suspension and incubate for 10 minutes on ice, protected from light.

- Sorting: Use a fluorescence-activated cell sorter (e.g., BD FACSAria Fusion) with a 70 µm nozzle. Gate on the positive, singlet events that fall within the expected size range for nuclei (calibrated with size standards) to collect a pure population of intact nuclei [33].

- QC: Count and assess the quality of the sorted nuclei manually under a microscope with a nuclear stain such as PI or 7-AAD. At least 90% of nuclei should be single, round, and have sharp borders [34]. Proceed to library preparation only with high-quality nuclei.
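A manual count can be turned into a concentration and a pass/fail call with a few lines of arithmetic. The sketch assumes a standard Neubauer hemocytometer. In that chamber, each large 1 mm × 1 mm square holds 0.1 µL, so nuclei per mL equals the mean count per square × dilution factor × 10^4. The 90% intact threshold comes from the QC step above. The counts are hypothetical.

```python
def nuclei_qc(square_counts, intact, total_scored, dilution=1.0, min_intact=0.90):
    """Summarize a manual hemocytometer count of stained nuclei.

    square_counts: nuclei counted in each large (1 mm x 1 mm) square.
    intact / total_scored: nuclei judged single, round and sharp-bordered.
    Assumes a standard Neubauer chamber (0.1 uL per large square), so
    nuclei/mL = mean count x dilution x 10^4.
    """
    per_ml = sum(square_counts) / len(square_counts) * dilution * 1e4
    fraction_intact = intact / total_scored
    return per_ml, fraction_intact, fraction_intact >= min_intact

# Four squares counted on a 1:2 dilution; 184 of 200 nuclei scored as intact
per_ml, frac, ok = nuclei_qc([42, 38, 45, 35], intact=184, total_scored=200, dilution=2)
```

Here the suspension contains 8 × 10^5 nuclei/mL with 92% intact, which passes. Counting at least four squares reduces the sampling error of low counts.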

Tissue-Specific Homogenization Parameters

| Tissue Type | Recommended Pestle | Number of Strokes | Citation |
|---|---|---|---|
| Brain | Loose (Pestle A) | 15 | [33] |
| Bladder | Tight (Pestle B) | 10 | [33] |
| Lung | Loose (Pestle A) | 10 | [33] |
| Prostate | Tight (Pestle B) | 10 | [33] |

The Scientist's Toolkit: Essential Reagents & Materials

| Item | Function | Example/Note |
|---|---|---|
| Dounce Homogenizer | Mechanically disrupts tissue while preserving nuclei | Critical for low-input samples; requires tissue-specific optimization [33]. |
| NP-40 Detergent | Mild, non-ionic detergent that solubilizes plasma membranes without disrupting nuclear envelopes. | Key component of lysis buffer [33]. |
| RNase Inhibitor | Protects RNA from degradation during the isolation process. | Add to all washing and resuspension buffers [33] [34]. |
| Iodixanol (Optiprep) | Forms a density gradient for purifying nuclei away from cellular debris and organelles. | Used for post-lysis purification [33]. |
| 7-AAD / Propidium Iodide (PI) | Fluorescent dyes that stain DNA, allowing for visualization and sorting of nuclei. | Used for quality control and FANS [33] [34]. |
| BSA (Bovine Serum Albumin) | Acts as a carrier protein to reduce nuclei clumping and adhesion to tube walls. | Add 0.5-1% to wash and resuspension buffers [34]. |

Workflow Visualization

The following diagram illustrates the complete experimental workflow for isolating nuclei from low-input cryopreserved tissue:

Diagram Title: Low-Input Nuclei Isolation Workflow

The logic of the quality control check is crucial for a successful experiment. The following chart outlines the decision process:

Diagram Title: Nuclei Quality Control Logic

In sensitive single-cell and low-input RNA research, the choice of library preparation method is paramount. The decision primarily centers on two approaches: full-length transcript protocols (Whole Transcriptome RNA-Seq) that sequence fragments across the entire RNA molecule, and 3'-end counting protocols (3' mRNA-Seq) that focus sequencing on the 3' end of transcripts to quantify gene expression [35] [36]. Each method presents distinct advantages, limitations, and optimal use cases that researchers must carefully consider when designing experiments, particularly when working with precious limited samples where RNA is scarce.

The table below summarizes the core differences between these fundamental approaches:

Table 1: Core Comparison of Full-Length and 3' RNA-Seq Methods

| Feature | Full-Length Transcript (WTS) | 3'-End Counting (3' mRNA-Seq) |
|---|---|---|
| Primary Application | Transcript isoform discovery, splicing analysis, fusion genes, non-coding RNA [35] | Quantitative gene expression profiling, high-throughput screening [35] |
| Sequencing Read Distribution | Reads cover the entire length of the transcript [36] | Reads are localized to the 3' end of the transcript [36] |
| Key Quantitative Bias | Longer transcripts generate more reads, requiring length normalization [35] [36] | One fragment per transcript, enabling direct counting without length normalization [35] [37] |
| Optimal for Single-Cell/Low-Input | Provides isoform-level information from limited material [4] | Highly efficient and cost-effective for quantifying expression from many samples or cells [35] [4] |
| Typical Sequencing Depth | Higher depth required for full transcript coverage (e.g., 20-50 million reads/sample) [35] | Lower depth sufficient for quantification (e.g., 1-5 million reads/sample) [35] |
| Performance with Degraded RNA (e.g., FFPE) | Challenging due to need for full-length transcript integrity [35] | Robust performance, as it only requires intact 3' ends [35] |
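The "Key Quantitative Bias" row can be made concrete with a toy calculation. Two genes have the same number of molecules, but one transcript is four times longer. Under a simplified model with uniform coverage and a fixed, hypothetical number of reads per kilobase per molecule, full-length reads scale with length and must be corrected, for example to transcripts per million (TPM). 3'-end counting yields roughly one fragment per molecule, so its counts are already proportional to abundance.

```python
def tpm(read_counts, lengths_kb):
    """Transcripts per million: divide by length, then scale to sum to 1e6."""
    rpk = [c / l for c, l in zip(read_counts, lengths_kb)]
    total = sum(rpk)
    return [r / total * 1e6 for r in rpk]

molecules = [100, 100]   # true copies of gene A (1 kb) and gene B (4 kb)
lengths_kb = [1.0, 4.0]
reads_per_kb = 5         # hypothetical reads per kb per molecule (uniform coverage)

full_length_reads = [m * l * reads_per_kb for m, l in zip(molecules, lengths_kb)]
three_prime_reads = list(molecules)  # simplified: one fragment per molecule

print(full_length_reads)  # [500.0, 2000.0]: gene B looks 4x more abundant
corrected = tpm(full_length_reads, lengths_kb)  # equal after length correction
```

The raw full-length counts overstate gene B fourfold. The same length dependence is why full-length protocols tend to detect more differentially expressed long transcripts, and why short transcripts can be under-sampled at low depth.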

Diagram 1: Protocol Selection Based on Research Goal

Technical Performance and Data Output Comparison

Understanding the quantitative and qualitative outputs of each method is crucial for experimental design and data interpretation. The fundamental difference in how reads are generated—across the entire transcript versus only at the 3' end—drives significant consequences for data analysis and biological conclusions [36].

Table 2: Experimental Data Output and Performance Characteristics

| Performance Metric | Full-Length Transcript | 3'-End Counting |
|---|---|---|
| Detection of Differentially Expressed Genes (DEGs) | Generally detects more DEGs, with bias toward longer transcripts [36] [38] | Detects fewer total DEGs, but more robust for short transcripts [36] [38] |
| Transcript Length Bias | Strong positive correlation: longer transcripts yield more reads [36] | Minimal length bias: equal reads per transcript regardless of length [36] [37] |
| Detection of Short Transcripts | Less effective, especially at lower sequencing depths [36] | Superior detection, recovering hundreds more short transcripts at low depth [36] |
| Pathway Analysis Concordance | Identifies more enriched pathways; considered the "gold standard" [38] | Captures major biological conclusions and top pathways with high consistency [35] [38] |
| Reproducibility | High reproducibility between biological replicates [36] | Similar high levels of reproducibility [36] |

Troubleshooting Guide: FAQs and Solutions

Library Preparation and Experimental Design

Q: My single-cell RNA-seq data shows high amplification bias and technical noise. How can I improve this?

A: This common challenge in low-input workflows can be addressed both technically and computationally [1]:

- Use Unique Molecular Identifiers (UMIs): Incorporate UMIs during reverse transcription to label each original molecule, allowing bioinformatic correction for amplification bias [1].

- Optimize Pre-Amplification: Carefully control the number of amplification cycles to minimize over-amplification artifacts. Use pre-amplification methods designed to maximize cDNA yield before sequencing [1].

- Employ Spike-In Controls: Use external RNA controls of known concentration to quantify technical variation and normalize data accordingly [1].
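One way spike-ins quantify technical variation is through a per-cell capture efficiency. This is the fraction of spike-in molecules added to the cell that are recovered as UMIs. Dividing an endogenous gene's UMI count by that efficiency gives a rough estimate of its molecule number in the cell. The spike names and molecule numbers below are hypothetical. The sketch also assumes spike-ins are captured as efficiently as endogenous mRNA, which is only approximately true.

```python
def capture_efficiency(observed_spike_umis, expected_spike_molecules):
    """Fraction of added spike-in molecules recovered as UMIs in one cell."""
    return sum(observed_spike_umis.values()) / sum(expected_spike_molecules.values())

# Hypothetical spike-in design: molecules added per cell and UMIs detected
expected = {"spike_1": 200, "spike_2": 50, "spike_3": 10}
observed = {"spike_1": 24, "spike_2": 5, "spike_3": 2}

eff = capture_efficiency(observed, expected)  # 31 / 260, about 12%
gene_umis = 12
estimated_molecules = gene_umis / eff         # roughly 100 molecules in the cell
```

Comparing efficiencies across cells or batches shows whether differences in detected genes reflect biology or uneven capture. Cells with unusually low efficiency are candidates for exclusion.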

Q: I am getting a high rate of adapter dimers in my low-input library preps. What is the cause and solution?

A: Adapter dimers (a sharp peak at ~70-90 bp on a Bioanalyzer trace) indicate inefficient ligation or purification [9]:

- Optimize Adapter:Input Ratio: Titrate adapter concentration to find the optimal molar ratio, avoiding excess adapters that promote dimer formation [9].

- Improve Size Selection: Use bead-based cleanup with optimized bead-to-sample ratios to exclude small fragments effectively. Avoid over-drying beads, which leads to inefficient resuspension and sample loss [9].

- Verify Enzyme Activity: Ensure fresh ligase and appropriate buffer conditions, maintaining optimal temperature during ligation [9].
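Titrating the adapter:input ratio starts with converting mass to moles. The following is a minimal sketch that assumes double-stranded DNA at about 660 g/mol per base pair (a standard approximation) and an adapter stock concentration given in µM. The function names and example numbers are illustrative only and are not kit recommendations.

```python
def dsdna_pmol(mass_ng: float, mean_length_bp: float) -> float:
    """Convert a dsDNA mass (ng) to picomoles, assuming ~660 g/mol per base pair."""
    return mass_ng * 1000.0 / (mean_length_bp * 660.0)


def adapter_volume_ul(insert_ng: float, insert_bp: float,
                      adapter_to_insert: float, adapter_stock_um: float) -> float:
    """Volume of adapter stock (uL) that gives the requested adapter:insert molar ratio.

    1 uM equals 1 pmol/uL, so volume = required pmol / stock concentration in uM.
    """
    adapter_pmol = dsdna_pmol(insert_ng, insert_bp) * adapter_to_insert
    return adapter_pmol / adapter_stock_um


if __name__ == "__main__":
    # Hypothetical titration: 10 ng of ~300 bp insert, 0.5 uM adapter stock.
    for ratio in (5, 10, 20, 50):
        vol = adapter_volume_ul(10, 300, ratio, 0.5)
        print(f"{ratio:>3}:1 adapter:insert -> {vol:.2f} uL adapter")
```

The arithmetic only tells you how much adapter to add for each ratio. Choosing among them is empirical: you run the series and compare how large the ~70-90 bp dimer peak is against library yield.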

Method Selection and Optimization

Q: When should I choose full-length RNA-seq over 3'-end counting?

A: Opt for full-length protocols when your research question requires [35]:

- Discovery of novel transcript isoforms or alternative splicing events

- Detection of gene fusions or structural variants

- Analysis of non-polyadenylated RNAs (e.g., many non-coding RNAs), which requires rRNA depletion rather than poly(A) selection

- Working with non-model organisms with poor 3' annotation

Q: When is 3'-end counting the superior choice for low-input studies?

A: 3'-end counting excels in these scenarios [35] [38]:

- Large-scale screening studies requiring cost-effective processing of hundreds to thousands of samples

- Projects focused purely on quantitative gene expression rather than isoform-level analysis

- Working with degraded or challenging samples like FFPE where only the 3' end may be intact

- Experiments with limited sequencing budget where lower sequencing depth per sample is desirable
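The two FAQ answers above work as a checklist. The sketch below encodes them in Python, using hypothetical field and function names. It does only two things: it reports which protocol the criteria favor, and it flags cases where the study needs fall into both lists. It does not replace judgment about a specific study.

```python
from dataclasses import dataclass


@dataclass
class StudyNeeds:
    # Criteria favoring full-length protocols (first FAQ above).
    isoforms_or_splicing: bool = False
    fusions_or_structural_variants: bool = False
    non_polyadenylated_rna: bool = False
    poor_3prime_annotation: bool = False
    # Criteria favoring 3'-end counting (second FAQ above).
    hundreds_of_samples: bool = False
    gene_level_only: bool = False
    degraded_or_ffpe: bool = False
    tight_sequencing_budget: bool = False


def suggest_protocol(n: StudyNeeds) -> str:
    """Return which protocol the FAQ criteria favor, flagging conflicts."""
    full = any([n.isoforms_or_splicing, n.fusions_or_structural_variants,
                n.non_polyadenylated_rna, n.poor_3prime_annotation])
    three = any([n.hundreds_of_samples, n.gene_level_only,
                 n.degraded_or_ffpe, n.tight_sequencing_budget])
    if full and three:
        return "conflicting needs: weigh the trade-offs"
    if full:
        return "full-length"
    if three:
        return "3'-end counting"
    return "either: decide on cost and sample type"


if __name__ == "__main__":
    print(suggest_protocol(StudyNeeds(degraded_or_ffpe=True, hundreds_of_samples=True)))
    print(suggest_protocol(StudyNeeds(isoforms_or_splicing=True, degraded_or_ffpe=True)))
```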

Q: My 3'-end counting data has low mapping rates. What could be wrong?

A: Low mapping rates in 3'-end counting often trace to annotation issues [35]:

- Verify 3' Annotation Quality: For model organisms, ensure you are using an updated annotation file. For non-model organisms, improved 3' annotation may be necessary, as insufficient transcript end site information dramatically reduces mapping rates [35].

- Check Read Quality: Ensure sequencing quality scores are high and adapter contamination has been properly trimmed.

- Confirm Library Quality: Verify that the library preparation was successful through QC steps such as Bioanalyzer traces before sequencing.
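Reads from oligo(dT)-primed libraries can run into the poly(A) stretch and then the adapter. If that sequence is left untrimmed, the read may fail to align. The sketch below assumes single-end reads stored as plain strings. It removes a full or partial 3' adapter match and then any trailing poly(A) run. The default adapter is the common 13-nt Illumina adapter prefix `AGATCGGAAGAGC`; you should check it against your kit. The overlap and poly(A) length thresholds are illustrative. Dedicated trimmers such as cutadapt also tolerate mismatches and use base qualities, which this sketch does not.

```python
ILLUMINA_ADAPTER_PREFIX = "AGATCGGAAGAGC"  # common Illumina adapter start; verify for your kit


def trim_read(seq: str, adapter: str = ILLUMINA_ADAPTER_PREFIX,
              min_overlap: int = 3, min_polya: int = 6) -> str:
    """Trim a 3' adapter (full or partial), then a trailing poly(A) run."""
    idx = seq.find(adapter)
    if idx != -1:
        seq = seq[:idx]  # full adapter present: cut at its start
    else:
        # Partial adapter at the very end: longest read suffix equal to an adapter prefix.
        for k in range(min(len(adapter) - 1, len(seq)), min_overlap - 1, -1):
            if seq.endswith(adapter[:k]):
                seq = seq[:-k]
                break
    stripped = seq.rstrip("A")
    if len(seq) - len(stripped) >= min_polya:
        seq = stripped  # long A-run: treat as poly(A) tail, not transcript sequence
    return seq


if __name__ == "__main__":
    print(trim_read("ACGTTGCC" + "A" * 8 + "AGATCGGAAGAGCAC"))  # ACGTTGCC
    print(trim_read("ACGTTGCCAGATC"))                          # ACGTTGCC
    print(trim_read("ACGTTGCCAAA"))                            # ACGTTGCCAAA (A-run too short)
```

There is a trade-off in the partial-adapter step. A short `min_overlap` will sometimes clip a genuine 3' end that happens to match the start of the adapter. A long one leaves short adapter fragments in the read.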

Detailed Experimental Protocols

3'-End Counting Protocol (e.g., QuantSeq)

The 3'-end counting approach is designed for efficient, gene-level quantification [35] [36]:

- Poly(A) RNA Selection: Total RNA is reverse transcribed using oligo(dT) primers that bind to the poly(A) tail. This initial priming step simultaneously selects for polyadenylated mRNA and initiates cDNA synthesis.

- Second Strand Synthesis: After the RNA template is removed, second-strand synthesis is performed (in QuantSeq, it is initiated by random priming).

- Library Amplification: The double-stranded cDNA is purified and amplified with a limited number of PCR cycles (typically 10-15) using primers that add platform-specific adapters and sample barcodes.

- Library Purification: The final library is purified using bead-based methods to remove primers, dimers, and other contaminants.

Critical Considerations for Low-Input Applications:

- This protocol is inherently efficient for low-input samples due to its simplicity and minimal number of steps.

- The method generates roughly one library fragment, and therefore one read, per transcript molecule. Read counts therefore track molecule numbers directly and need no normalization for transcript length [37].

- Sequencing depth requirements are modest (1-5 million reads per sample) compared to full-length protocols [35].
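The length-normalization point shows up clearly in a toy comparison. The sketch below makes two simplifying assumptions: full-length read counts scale linearly with transcript length, and 3'-end counting yields about one read per molecule. Real quantifiers use effective lengths and more careful models, so treat the numbers as illustrative. For 3'-end data, library-size scaling (counts per million, CPM) is enough. For full-length data, dividing by length first (transcripts per million, TPM) is what removes the length bias.

```python
from typing import Dict


def cpm(counts: Dict[str, float]) -> Dict[str, float]:
    """Counts per million: library-size scaling only."""
    total = sum(counts.values())
    return {g: c * 1e6 / total for g, c in counts.items()}


def tpm(counts: Dict[str, float], lengths_kb: Dict[str, float]) -> Dict[str, float]:
    """Transcripts per million: divide by length (kb) first, then scale to one million."""
    rate = {g: c / lengths_kb[g] for g, c in counts.items()}
    total = sum(rate.values())
    return {g: r * 1e6 / total for g, r in rate.items()}


if __name__ == "__main__":
    # Hypothetical: two genes with equal molecule numbers; LONG is 4x longer than SHORT.
    lengths_kb = {"SHORT": 1.0, "LONG": 4.0}
    full_length_reads = {"SHORT": 100.0, "LONG": 400.0}  # reads scale with length
    three_prime_reads = {"SHORT": 100.0, "LONG": 100.0}  # ~one read per molecule
    print(cpm(full_length_reads))              # {'SHORT': 200000.0, 'LONG': 800000.0}
    print(tpm(full_length_reads, lengths_kb))  # {'SHORT': 500000.0, 'LONG': 500000.0}
    print(cpm(three_prime_reads))              # {'SHORT': 500000.0, 'LONG': 500000.0}
```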

Full-Length Transcript Protocol (e.g., KAPA Stranded mRNA-Seq)

Whole transcriptome approaches provide comprehensive transcript information through a more complex workflow [36]:

- rRNA Depletion or Poly(A) Selection: Total RNA is processed to remove abundant ribosomal RNA (rRNA), either through poly(A) selection to enrich for mRNA or through targeted rRNA depletion to retain non-polyadenylated RNAs.

- RNA Fragmentation: The purified RNA is fragmented into smaller pieces (typically 200-300 nucleotides) using heat and divalent cations.

- Random Primed Reverse Transcription: First strand cDNA synthesis is performed using random hexamer primers, which bind throughout the transcript length.

- Second Strand Synthesis: Second strand synthesis creates double-stranded cDNA with incorporation of dUTP to maintain strand specificity.

- Library Construction: End repair, A-tailing, and adapter ligation are performed to prepare the fragments for sequencing.

- Library Amplification: The adapter-ligated fragments are amplified with PCR using indexed primers.

Critical Considerations for Low-Input Applications:

- Requires higher sequencing depth (typically 20-50 million reads per sample) to achieve adequate coverage across entire transcripts [35].

- More susceptible to bias from degraded RNA, because even coverage depends on RNA that is intact across the whole transcript body.

- Provides coverage across the entire transcript body, enabling identification of splice variants, sequence polymorphisms, and editing sites [36].
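Both coverage points (degradation sensitivity and whole-body coverage) can be checked with a gene-body profile, similar in spirit to the gene-body coverage plots produced by RNA-seq QC tools. The sketch below rests on three assumptions. Read start positions are already in transcript coordinates, measured from the 5' end. All reads share a single length. The 3'/5' ratio defined here is a hypothetical summary statistic, not a standard metric from any particular tool.

```python
from typing import List, Sequence


def gene_body_profile(read_starts: Sequence[int], read_len: int,
                      tx_len: int, n_bins: int = 10) -> List[float]:
    """Fraction of covered bases in each of n_bins equal slices of a transcript (5' -> 3')."""
    depth = [0] * tx_len
    for start in read_starts:
        for pos in range(max(start, 0), min(start + read_len, tx_len)):
            depth[pos] += 1
    bins = [0.0] * n_bins
    total = sum(depth)
    if total == 0:
        return bins
    for pos, d in enumerate(depth):
        bins[pos * n_bins // tx_len] += d
    return [b / total for b in bins]


def three_to_five_ratio(profile: Sequence[float], k: int = 2) -> float:
    """Coverage in the last k bins divided by coverage in the first k bins."""
    five, three = sum(profile[:k]), sum(profile[-k:])
    return three / five if five > 0 else float("inf")


if __name__ == "__main__":
    uniform = gene_body_profile(range(0, 1000, 100), read_len=100, tx_len=1000)
    degraded = gene_body_profile([800, 900], read_len=100, tx_len=1000)
    print(three_to_five_ratio(uniform))   # 1.0 (even coverage)
    print(three_to_five_ratio(degraded))  # inf (no 5' coverage)
```

A ratio close to 1 is consistent with intact RNA in a full-length library. Ratios that rise across samples suggest degradation, or 3' capture in a library that was meant to be full-length.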

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Solutions for Low-Input RNA-Seq Studies

| Reagent/Solution | Function | Application Notes |
|---|---|---|
| Oligo(dT) Primers | Select for polyadenylated mRNA by binding to the poly(A) tail | Critical for 3'-end counting; determines specificity of reverse transcription [35] |
| Unique Molecular Identifiers (UMIs) | Molecular barcodes that label individual RNA molecules | Essential for correcting amplification bias in single-cell and low-input studies [1] |
| Template-Switching Oligos | Enable full-length cDNA capture in single-cell protocols | Used in Smart-seq2 and related methods for superior transcript coverage [4] |
| Ribonuclease Inhibitors | Protect RNA from degradation during processing | Crucial for maintaining RNA integrity in low-input workflows with extended handling times |
| Magnetic Beads (SPRI) | Size selection and purification of nucleic acids | Workhorse for library cleanup; ratio optimization critical for yield and dimer removal [9] |
| ERCC RNA Spike-In Mix | External RNA controls of known concentration | Enables technical variance quantification and normalization between samples [1] |
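The normalization use of ERCC spike-ins can be sketched briefly. The key assumption is that the same quantity of spike-in was added to every cell or sample. Differences in recovered spike-in counts then reflect technical capture and amplification efficiency, and they can be divided out. This follows the general idea behind spike-in-based size factors. The functions below are a hypothetical minimal version and do not reproduce any specific package.

```python
from typing import Dict, List


def spike_in_size_factors(spike_totals: List[float]) -> List[float]:
    """Per-cell scale factors from total spike-in counts, centred on a mean of 1."""
    if not spike_totals or any(t <= 0 for t in spike_totals):
        raise ValueError("every cell needs a positive spike-in total")
    mean_total = sum(spike_totals) / len(spike_totals)
    return [t / mean_total for t in spike_totals]


def normalize_counts(cells: List[Dict[str, float]],
                     factors: List[float]) -> List[Dict[str, float]]:
    """Divide each cell's endogenous gene counts by that cell's spike-in factor."""
    return [{gene: count / f for gene, count in cell.items()}
            for cell, f in zip(cells, factors)]


if __name__ == "__main__":
    # Hypothetical: same ERCC amount added to both cells; cell B recovered 3x more.
    factors = spike_in_size_factors([1000.0, 3000.0])  # [0.5, 1.5]
    cells = [{"GeneX": 50.0}, {"GeneX": 150.0}]
    print(normalize_counts(cells, factors))  # [{'GeneX': 100.0}, {'GeneX': 100.0}]
```

Unlike total-count scaling, spike-in factors keep real differences in total RNA content between cells. That is one reason for adding them in low-input work, where capture efficiency varies widely.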

Diagram 2: Addressing Low-Input RNA Challenges

The choice between full-length and 3'-end counting protocols ultimately depends on the specific research questions, sample type, and resource constraints. For discovery-focused research requiring comprehensive transcriptome characterization, full-length transcript protocols remain the gold standard. For large-scale quantitative studies, especially with challenging samples or limited resources, 3'-end counting protocols offer a robust, cost-effective alternative that delivers highly reproducible gene expression data [35] [36] [38].

As single-cell and low-input RNA sequencing technologies continue to evolve, both approaches will remain essential, complementary tools, each suited to different biological applications.

SPLiT-seq (Split-Pool Ligation-based Transcriptome sequencing) is a single-cell RNA sequencing (scRNA-seq) method that labels the cellular origin of RNA through combinatorial barcoding [39]. Unlike methods requiring physical compartmentalization of cells, SPLiT-seq uses the cells themselves as compartments during a series of molecular barcoding steps [39]. Its primary advantage lies in its extraordinary scalability and cost-effectiveness, enabling the profiling of hundreds of thousands to millions of cells or nuclei in a single experiment at a reagent cost on the order of 1 cent per cell or less [40]. This protocol is particularly powerful for large-scale studies, such as whole-organism analysis, as demonstrated by the profiling of approximately 380,000 nuclei from a single E16.5 mouse embryo [40]. The method is compatible with fixed cells or nuclei, allows for efficient sample multiplexing, and requires no customized equipment, making advanced single-cell studies accessible to a broad range of researchers [39] [41].
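The scalability comes from multiplication. Each split-pool round multiplies the number of barcode combinations, so three rounds of 96 wells give 96^3 = 884,736 combinations. The Python sketch below assumes three 96-well rounds and uniformly random well assignment; real protocols differ in round count and plate layout. It simulates the barcoding and compares the fraction of cells that share a barcode with another cell against the analytic expectation 1 - (1 - 1/N)^(n - 1), for n cells and N combinations.

```python
import random
from collections import Counter
from math import prod
from typing import List, Sequence, Tuple


def split_pool(n_cells: int, wells_per_round: Sequence[int] = (96, 96, 96),
               seed: int = 0) -> List[Tuple[int, ...]]:
    """Send each cell to one random well per round; its tuple of wells is its barcode."""
    rng = random.Random(seed)
    return [tuple(rng.randrange(w) for w in wells_per_round) for _ in range(n_cells)]


def observed_collision_fraction(barcodes: Sequence[Tuple[int, ...]]) -> float:
    """Fraction of cells whose barcode combination is shared with at least one other cell."""
    counts = Counter(barcodes)
    return sum(c for c in counts.values() if c > 1) / len(barcodes)


def expected_collision_fraction(n_cells: int,
                                wells_per_round: Sequence[int] = (96, 96, 96)) -> float:
    """Probability that a given cell shares its barcode: 1 - (1 - 1/N)^(n - 1)."""
    n_combos = prod(wells_per_round)
    return 1.0 - (1.0 - 1.0 / n_combos) ** (n_cells - 1)


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000):
        sim = observed_collision_fraction(split_pool(n))
        exp = expected_collision_fraction(n)
        print(f"{n:>7} cells: simulated {sim:.4f}, expected {exp:.4f}")
```

The collision fraction grows with the number of cells processed. Adding another barcoding round multiplies N by the number of wells in that round, which is how combinatorial indexing keeps collisions manageable at hundreds of thousands of cells.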

The following diagram illustrates the core split-pool process central to SPLiT-seq and related combinatorial indexing methods.

Technical Troubleshooting Guide

Common experimental challenges in SPLiT-seq and related protocols often stem from sample quality, enzymatic reaction efficiency, and purification steps. The table below summarizes frequent issues, their root causes, and corrective measures [9].

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |
|---|---|---|---|
| Sample Input & Quality | Low starting yield; smear in electropherogram; low library complexity [9]. | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification [9]. | Re-purify input sample; use fluorometric quantification (Qubit) over UV; ensure high purity (260/230 > 1.8) [9]. |
| Fragmentation & Ligation | Unexpected fragment size; inefficient ligation; sharp ~70-90 bp adapter-dimer peaks [9]. | Over-/under-shearing; improper buffer conditions; suboptimal adapter-to-insert ratio [9]. | Optimize fragmentation parameters; titrate adapter:insert ratios; ensure fresh ligase/buffer [9]. |
| Amplification & PCR | Overamplification artifacts; high duplicate rate; sequence bias [9]. | Too many PCR cycles; enzyme inhibitors; primer exhaustion [9]. | Reduce PCR cycles; repeat from leftover ligation product; use high-fidelity polymerase [9]. |
| Purification & Cleanup | Incomplete removal of adapter dimers; high background; significant sample loss [9]. | Incorrect bead:sample ratio; over-dried beads; inadequate washing; pipetting error [9]. | Precisely follow bead cleanup protocols; avoid bead over-drying; implement pipette calibration [9]. |
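The "low library complexity" and "high duplicate rate" signals in the table can be put into numbers. The minimal sketch below assumes you already have total and unique (deduplicated) read counts. Given N total reads and C unique reads, it solves the Lander-Waterman-style relation C/X = 1 - exp(-N/X) for X, the estimated number of distinct molecules in the library. Picard's duplication metrics report a library-size estimate based on the same relation. The function here is an independent, hypothetical illustration and is not Picard's code.

```python
import math


def duplicate_rate(total_reads: int, unique_reads: int) -> float:
    """Fraction of reads that are duplicates of another read."""
    return 1.0 - unique_reads / total_reads


def estimate_library_size(total_reads: int, unique_reads: int,
                          iterations: int = 200) -> float:
    """Solve C/X = 1 - exp(-N/X) for X (distinct molecules) by bisection."""
    n, c = float(total_reads), float(unique_reads)
    if not 0 < c <= n:
        raise ValueError("require 0 < unique_reads <= total_reads")
    if c == n:
        return math.inf  # no duplicates observed: the data cannot bound complexity

    def f(x: float) -> float:
        # f(x) = C/x - 1 + exp(-N/x); expm1 keeps precision when N/x is small.
        return c / x + math.expm1(-n / x)

    lo, hi = c, 2.0 * c  # f(lo) = exp(-N/C) > 0; grow hi until f(hi) <= 0
    while f(hi) > 0:
        hi *= 2.0
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if f(mid) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


if __name__ == "__main__":
    # Hypothetical run: 20 M reads of which 8 M are unique.
    n_total, n_unique = 20_000_000, 8_000_000
    print(f"duplicate rate: {duplicate_rate(n_total, n_unique):.1%}")
    print(f"estimated distinct molecules: {estimate_library_size(n_total, n_unique):,.0f}")
```

A library whose estimated size is not much larger than its unique read count is close to saturation. In that case, sequencing deeper mostly adds duplicates, and the fix lies upstream in input quality or PCR cycling.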

Advanced Problem: Cell Clumping and RNA Capture in Bacteria

Adapting SPLiT-seq to bacteria (microSPLiT) raises two specific challenges: cells clump after reverse transcription, and bacterial mRNA lacks the polyadenylation that poly-dT priming relies on. The optimized microSPLiT protocol found that mild sonication after the RT step was necessary to reliably obtain single-cell suspensions. To enrich for bacterial mRNA, treating fixed and permeabilized cells with E. coli Poly(A) Polymerase I (PAP) was the most effective method, giving about a 2.5-fold enrichment of mRNA reads [42].

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of SPLiT-seq over droplet-based methods?

A1: Extreme scalability, into the millions of cells, and very low cost per cell, because it does not require specialized microfluidic equipment. The entire wet-lab workflow consists of pipetting steps in multi-well plates [39] [43].

Q2: My final library yield is unexpectedly low. What should I check first?

A2: First, verify your input sample quality and concentration using a fluorometric method (e.g., Qubit). Then, trace back through the protocol to check for inefficiencies in ligation or over-aggressive purification. Ensure all enzymes and buffers are fresh and that pipetting is accurate [9].

Q3: I see a large peak around 70-90 bp in my Bioanalyzer trace. What is this?

A3: This is a classic sign of adapter dimers, indicating inefficient ligation of adapters to your target fragments or inadequate cleanup of excess adapters. Titrating your adapter-to-insert ratio and optimizing your bead-based cleanup ratios can resolve this [9].

Q4: How are multiple samples multiplexed in a single SPLiT-seq experiment?

A4: Sample multiplexing is built into the protocol. The barcodes added in the first split-pool round can serve as sample indices, so up to 96 (or 384, with 384-well plates) different biological samples can be combined at the start of the experiment [39].

Q5: My data processing pipeline is struggling to demultiplex the combinatorial barcodes. What are my options?

A5: Several specialized pipelines exist. splitpipe and STARsolo are widely recommended for their speed and accuracy in handling large SPLiT-seq datasets. These tools are designed to correctly handle the complex barcode structure, including data originating from both poly-dT and random hexamer primers [43].
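Part of what these pipelines do is match each observed round barcode to the known barcode list for that round, tolerating sequencing errors, and then use the round-1 barcode to recover the sample (see Q4). The minimal sketch below assumes three barcode rounds already extracted from the read and a tolerance of one mismatch (Hamming distance 1). Barcode sequences, sample names, and function names are all hypothetical. This is not the splitpipe or STARsolo implementation.

```python
from typing import Dict, Optional, Sequence, Tuple


def hamming(a: str, b: str) -> int:
    """Number of mismatched positions between two equal-length strings."""
    return sum(x != y for x, y in zip(a, b))


def correct_barcode(observed: str, whitelist: Sequence[str],
                    max_dist: int = 1) -> Optional[str]:
    """Return the unique whitelist barcode within max_dist mismatches, else None."""
    if observed in whitelist:
        return observed
    hits = [bc for bc in whitelist
            if len(bc) == len(observed) and hamming(observed, bc) <= max_dist]
    return hits[0] if len(hits) == 1 else None  # no match or ambiguous: discard read


def assign_read(observed: Tuple[str, str, str],
                whitelists: Sequence[Sequence[str]],
                sample_by_round1: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return (sample, cell_id) after correcting each round's barcode, or None."""
    corrected = []
    for obs, wl in zip(observed, whitelists):
        bc = correct_barcode(obs, wl)
        if bc is None:
            return None
        corrected.append(bc)
    return sample_by_round1[corrected[0]], "_".join(corrected)


# Hypothetical 8-nt barcode lists (three wells per round, for brevity).
WHITELISTS = [
    ["AACGTGAT", "AAACATCG", "ATGCCTAA"],  # round 1 (also encodes the sample)
    ["AGTGGTCA", "ACCACTGT", "ACATTGGC"],  # round 2
    ["CAGATCTG", "CATCAAGT", "CGCTGATC"],  # round 3
]
SAMPLES = {"AACGTGAT": "sample_A", "AAACATCG": "sample_B", "ATGCCTAA": "sample_C"}

if __name__ == "__main__":
    # Round-1 barcode carries one sequencing error (last base T -> A).
    print(assign_read(("AACGTGAA", "AGTGGTCA", "CAGATCTG"), WHITELISTS, SAMPLES))
```

Error correction at distance 1 is only unambiguous if barcodes in each list differ at three or more positions. The example lists were chosen to meet that condition, and the ambiguity check in `correct_barcode` guards against lists that do not.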

Q6: Can combinatorial indexing be used for targeted RNA or protein analysis?

A6: Yes. Methods like Quantum Barcoding (QBC) use the same split-pool principle to barcode targeted RNAs and oligonucleotide-conjugated antibodies within fixed cells. This allows for ultra-high-throughput simultaneous analysis of dozens of proteins and targeted RNA regions via sequencing [44].

Essential Experimental Workflow

The following diagram details the key procedural steps for a successful SPLiT-seq experiment, from sample preparation to sequencing, incorporating critical troubleshooting checkpoints.

Research Reagent Solutions

A successful SPLiT-seq experiment relies on a core set of reagents and tools. The table below lists essential materials and their critical functions within the protocol.

| Item or Reagent | Function in the Protocol | Key Considerations |
|---|---|---|
| Fixed Cells/Nuclei | The starting biological material for the assay. | Must be fixed and permeabilized. Can be fresh or frozen. At least 3 million cryopreserved cells/nuclei per sample is a common recommendation [41]. |
| Barcoded Primers | Well-specific oligonucleotides for the reverse transcription (Round 1). | Contains a well-specific barcode sequence and a poly-dT and/or random hexamer region for priming [39]. |