Self-Supervised Learning in Computational Pathology: A Foundational Guide to Methods, Models, and Clinical Application

Abstract

The adoption of digital pathology, characterized by massive, annotation-scarce whole-slide images (WSIs), has created a critical need for data-efficient deep learning paradigms. Self-supervised learning (SSL) has emerged as a transformative solution, enabling models to learn powerful feature representations from vast unlabeled image archives. This article provides a comprehensive introduction to SSL for pathology image analysis, tailored for researchers and drug development professionals. We explore the foundational principles of SSL, detailing key methodologies like Masked Image Modeling (MIM) and contrastive learning. The guide covers the practical application of these techniques, from building hybrid frameworks to implementing adaptive, semantic-aware data augmentation. We address common optimization challenges and present troubleshooting strategies for domain-specific issues. Finally, we offer a rigorous validation and comparative analysis of current public foundation models, benchmarking their performance on diverse clinical tasks to illuminate the path toward robust, clinically deployable AI tools in pathology.

The Rise of Self-Supervised Learning: Overcoming Pathology's Data Annotation Bottleneck

The diagnostic, prognostic, and therapeutic decisions in modern medicine rely heavily on the analysis of histopathology images. The digitization of these images creates an unprecedented opportunity to develop artificial intelligence (AI) models that can assist pathologists. However, the prevailing success of deep learning in computer vision has been dominated by supervised learning, a paradigm that requires large-scale, expertly annotated datasets. In histopathology, this requirement becomes a critical bottleneck. Annotating gigapixel Whole Slide Images (WSIs) is time-consuming, cost-prohibitive, and demands rare expertise from pathologists, creating a fundamental limitation on the development of robust AI tools [1].

This annotation challenge is compounded by the inherent complexity of the images. A single WSI, often exceeding several gigabytes in size, can contain billions of pixels and hundreds of thousands of biologically meaningful structures like cells and tissue regions. Annotating such images at a sufficient level of detail for supervised learning is practically infeasible for most clinical tasks and institutions. Furthermore, the limited size and diversity of labeled datasets often result in models that fail to generalize to external data from different hospitals or for rare disease conditions [2].

Self-supervised learning (SSL) presents a paradigm shift to overcome these limitations. By enabling models to learn powerful and transferable feature representations directly from unlabeled data, SSL bypasses the massive annotation requirement. Pathology image archives worldwide contain millions of unlabeled WSIs, making them a prime candidate for SSL. This in-depth technical guide explores the core reasons behind this synergy, surveys current SSL methodologies and benchmarks in pathology, and provides a practical toolkit for researchers embarking on this transformative path.

The Technical Synergy Between SSL and Pathology

The application of SSL to pathology is not merely convenient; it is technically well-founded. The very properties that make pathology images challenging for supervised learning make them ideal for self-supervision.

Inherent Data Redundancy and Multi-Scale Structures

A single WSI possesses a high degree of inherent redundancy. Similar cellular and tissue patterns repeat across different areas of a slide and across slides from different patients. SSL methods, particularly contrastive learning, leverage this by creating different augmented "views" of the same image and training the model to recognize that these views are derived from the same source. The model thus learns to map semantically similar tissue patterns to nearby points in the feature space, without any labels.

Furthermore, histology understanding is inherently multi-scale, ranging from sub-cellular details (at 40x magnification) to tissue architecture (at 5x magnification) to the overall spatial organization of a whole slide. Powerful SSL frameworks like iBOT and DINOv2 are designed to learn features across multiple scales simultaneously, making them exceptionally suited for pathology. They can learn to recognize that a patch showing glandular morphology at 20x and a lower-magnification patch showing the overall distribution of these glands are related, capturing biologically meaningful hierarchical structures [3] [2].

The Weakness of Transfer Learning from Natural Images

A common workaround for the data-labeling bottleneck has been to use models pre-trained on natural image datasets like ImageNet. However, a growing body of evidence confirms that this is suboptimal. Models pre-trained on natural images learn features like edges, textures, and shapes of everyday objects, which have a significant domain gap from histopathological features [3] [4].

As established in several benchmarks, domain-aligned pre-training using SSL on histology data consistently outperforms ImageNet pre-training. This performance gap is observed across standard evaluation settings like linear probing and fine-tuning, and is especially pronounced in low-label regimes. The learned features are more relevant and robust for downstream pathology tasks because they are derived from the target domain itself [4].

Table 1: Benchmark Results Showing Superiority of Domain-Specific SSL over ImageNet Pre-training

| Pre-training Method | Backbone Model | Linear Probing Accuracy (%) | Fine-tuning Accuracy (%) | Accuracy with 10% Labels (%) |
|---|---|---|---|---|
| Supervised (ImageNet) | ResNet-50 | 78.5 | 85.2 | 65.1 |
| MoCo v2 (ImageNet) | ResNet-50 | 80.1 | 86.5 | 68.3 |
| DINO (TCGA Pathology) | ViT-S | 89.3 | 92.7 | 82.4 |
| iBOT (TCGA Pathology) | ViT-B | 91.2 | 94.1 | 85.8 |

Note: Accuracy values are representative averages across multiple tissue classification tasks. Adapted from [3] [4].

Current SSL Methodologies and Foundation Models in Pathology

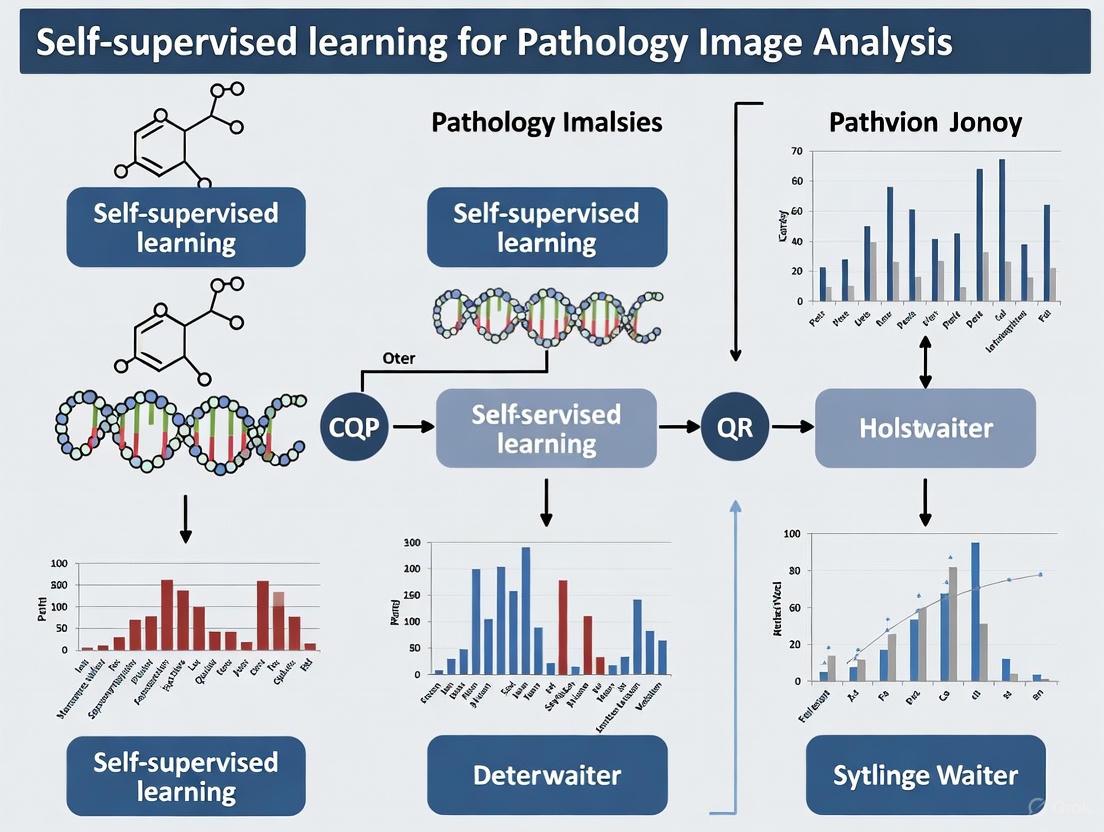

The field has rapidly evolved from generic SSL methods to sophisticated, pathology-specific foundation models. The following experimental workflow outlines the typical pipeline for developing and using a pathology foundation model.

Dominant SSL Algorithms and Adaptation

Several SSL strategies have been successfully adapted for pathology, with contrastive and self-prediction methods currently leading the field.

- Contrastive and Self-Distillation Learning (e.g., MoCo, DINO): Contrastive methods such as MoCo learn representations by contrasting positive pairs (different augmentations of the same image patch) against negative pairs (augmentations of different patches). DINO and its successor DINOv2 instead use knowledge distillation between a student and a teacher network, without explicit negative pairs. DINOv2 has become a particularly popular framework for training state-of-the-art pathology foundation models like Virchow and UNI, demonstrating remarkable performance in capturing visual concepts [3] [1].

- Masked Image Modeling (MIM - e.g., MAE, iBOT): Inspired by language models like BERT, MIM methods randomly mask a portion of the input image and train the model to reconstruct the missing parts. iBOT is a leading example that combines MIM with online tokenization, achieving superior performance on a wide range of downstream tasks. It has been used to train models like Phikon, which show excellent generalization [3] [2].

- Multimodal Learning: The most advanced models, such as TITAN, go beyond vision-only learning. They align image representations with text from pathology reports or synthetic captions generated by AI copilots. This enables powerful capabilities like zero-shot classification, cross-modal retrieval (e.g., finding a slide based on a text description), and even automated report generation [2].
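
To make the zero-shot idea concrete, the following minimal sketch classifies an image embedding by its cosine similarity to text-prompt embeddings. It is not the TITAN or CONCH API: the three-dimensional vectors and the names `zero_shot_classify` and `prompt_embeddings` are hypothetical stand-ins for the outputs of aligned image and text encoders.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def zero_shot_classify(image_embedding, prompt_embeddings):
    """Pick the label whose text embedding is most similar to the image embedding.

    prompt_embeddings maps a class label to the embedding of a text prompt
    describing that class (toy vectors here, an assumption for illustration).
    """
    scores = {label: cosine(image_embedding, emb)
              for label, emb in prompt_embeddings.items()}
    best_label = max(scores, key=scores.get)
    return best_label, scores


# Toy example: no labelled training images are used, only text prompts.
prompts = {"tumor": [1.0, 0.0, 0.0], "normal": [0.0, 1.0, 0.0]}
label, scores = zero_shot_classify([0.9, 0.1, 0.0], prompts)
print(label)
```

Cross-modal retrieval follows the same pattern in reverse: a text query is embedded once and slides are ranked by similarity to it.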

Benchmarking Public Pathology Foundation Models

Recent months have seen the public release of several powerful pathology foundation models. A 2025 clinical benchmark systematically evaluated these models on datasets from three medical centers, covering disease detection and biomarker prediction tasks [3].

Table 2: Overview of Public Pathology Foundation Models (Adapted from [3])

| Model Name | Architecture | SSL Algorithm | Pre-training Dataset Scale | Reported Clinical Performance (Avg. AUC) |
|---|---|---|---|---|
| CTransPath | Hybrid CNN-Swin-T | MoCo v3 | 15.6M tiles, 32k slides | >0.90 (Disease Detection) |
| Phikon | ViT-Base | iBOT | 43.3M tiles, 6k slides | >0.90 (Disease Detection) |
| UNI | ViT-Large | DINOv2 | 100M tiles, 100k slides | >0.90 (Disease Detection) |
| Virchow | ViT-Huge | DINOv2 | 2B tiles, 1.5M slides | State-of-the-art on tile & slide tasks |
| CONCH | ViT-Base | CLIP-style | Not Specified | Excels in cross-modal tasks |
| TITAN | ViT (Custom) | iBOT + V-L Align | 336k WSIs, 423k captions | Superior zero-shot & rare disease retrieval |

The benchmark reveals that all modern SSL pathology models show consistently high performance (AUC > 0.9) on disease detection tasks, significantly outperforming ImageNet-supervised baselines. The key trends indicate that increasing model size (e.g., from ViT-Base to ViT-Huge/Giant) and increasing dataset scale and diversity both lead to better generalization [3] [2].

Experimental Protocols: A Template for SSL in Pathology

For researchers aiming to implement SSL methods for pathology, the following provides a detailed methodological template.

Data Preprocessing and Tiling Protocol

- Whole Slide Image (WSI) Loading: Use libraries like OpenSlide or CuCIM to read WSIs in their native format (e.g., .svs, .ndpi).

- Quality Control: Manually or automatically filter out slides with excessive artifacts, out-of-focus regions, or insufficient tissue.

- Tissue Segmentation: Apply a tissue segmentation algorithm (e.g., Otsu thresholding, U-Net) to identify foreground tissue regions, excluding background.

- Patch Extraction: From the segmented tissue regions, extract non-overlapping image patches (e.g., 256x256 or 512x512 pixels) at a target magnification (typically 20x or 40x). This process can generate millions of tiles from thousands of slides.

- Stain Normalization (Optional but Recommended): Apply stain normalization techniques (e.g., Macenko, Reinhard) to reduce color variance caused by different staining protocols across laboratories. This improves the robustness of the learned features.
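
The tissue segmentation and patch extraction steps above can be sketched without any WSI library. The example below is a minimal, dependency-free illustration that assumes a grayscale thumbnail is already in memory as a 2-D list; in a real pipeline the thumbnail and the tiles themselves would be read with OpenSlide or CuCIM. The function names, the `min_tissue` cut-off of 0.5, and the assumption that stained tissue is darker than the glass background are illustrative choices, not values from the protocol.

```python
def otsu_threshold(gray_values):
    """Return the threshold t (0-255) that maximises between-class variance."""
    hist = [0] * 256
    for v in gray_values:
        hist[v] += 1
    total = len(gray_values)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0, sum0 = 0, 0
    for t in range(256):
        w0 += hist[t]            # number of pixels with value <= t
        sum0 += t * hist[t]
        if w0 == 0:
            continue
        w1 = total - w0          # number of pixels with value > t
        if w1 == 0:
            break
        mean0 = sum0 / w0
        mean1 = (sum_all - sum0) / w1
        between = w0 * w1 * (mean0 - mean1) ** 2
        if between > best_var:
            best_t, best_var = t, between
    return best_t


def select_tiles(thumbnail, downsample, tile_size=256, min_tissue=0.5):
    """Return level-0 (x, y) corners of non-overlapping tiles that are mostly tissue.

    thumbnail: 2-D list of grayscale values, `downsample` times smaller than level 0.
    """
    threshold = otsu_threshold([v for row in thumbnail for v in row])
    step = tile_size // downsample   # tile edge measured in thumbnail pixels
    height, width = len(thumbnail), len(thumbnail[0])
    coords = []
    for ty in range(0, height - step + 1, step):
        for tx in range(0, width - step + 1, step):
            cells = [thumbnail[y][x]
                     for y in range(ty, ty + step)
                     for x in range(tx, tx + step)]
            # Assumption: tissue pixels are at or below the Otsu threshold.
            tissue_fraction = sum(v <= threshold for v in cells) / len(cells)
            if tissue_fraction >= min_tissue:
                coords.append((tx * downsample, ty * downsample))
    return coords


# Toy 8x8 thumbnail: left half tissue (dark), right half glass (bright).
thumbnail = [[60] * 4 + [240] * 4 for _ in range(8)]
print(select_tiles(thumbnail, downsample=64))  # [(0, 0), (0, 256)]
```

Working on a thumbnail keeps the mask computation cheap; only the selected coordinates need to be read at full resolution.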

Self-Supervised Pre-training Setup

- Model Selection: Choose a backbone architecture (e.g., ResNet-50, Vision Transformer) and an SSL algorithm (e.g., DINOv2, iBOT).

- Data Augmentation: Define a strong set of augmentations for creating positive pairs. Standard augmentations include:
  - Color jitter (brightness, contrast, saturation, hue)
  - Gaussian blur
  - Random rotation and flipping
  - Solarization (for DINO-based methods)

- Training Configuration:
  - Optimizer: LARS or AdamW.
  - Learning Rate: A warmup period followed by a cosine decay schedule.
  - Batch Size: Use the largest batch size feasible on your hardware. For contrastive methods, larger batches (e.g., 1024) are beneficial.
  - Training Epochs: Typically 100-300 epochs, though this is dataset-dependent.
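
The warmup-plus-cosine learning rate schedule named in the training configuration can be written as a single function of the step index. This is a sketch under assumptions: linear warmup, a floor of `min_lr`, and the hypothetical name `lr_at`. Frameworks differ in details such as per-step versus per-epoch updates.

```python
import math


def lr_at(step, total_steps, warmup_steps, base_lr, min_lr=0.0):
    """Learning rate at `step` for linear warmup followed by cosine decay."""
    if step < warmup_steps:
        # Linear ramp that reaches base_lr on the last warmup step.
        return base_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


# Example: inspect a few points of a 100-step schedule with 10 warmup steps.
for s in (0, 9, 10, 55, 100):
    print(s, round(lr_at(s, total_steps=100, warmup_steps=10, base_lr=1e-3), 6))
```

The warmup phase avoids large, unstable updates while normalization statistics and optimizer moments are still poorly estimated; the cosine phase then lowers the rate smoothly toward `min_lr`.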

Downstream Task Evaluation

To validate the quality of the learned representations, benchmark them on held-out downstream tasks with limited labels.

- Linear Probing: Freeze the entire pre-trained encoder and train only a single linear layer on top of the extracted features for a specific task (e.g., cancer subtyping). This is a strong indicator of feature quality.

- Fine-Tuning: Unfreeze the entire pre-trained model (or its later layers) and train it end-to-end on the downstream task. This often yields higher performance but is more computationally expensive.

- Few-Shot Learning: Evaluate the model's data efficiency by training it with very few labeled examples per class (e.g., 1, 5, or 10 shots).

- Tasks: Common downstream tasks include tile-level classification (e.g., tumor vs. normal), slide-level classification (using multiple-instance learning), and nuclei instance segmentation.
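
Linear probing, as described above, amounts to fitting one linear layer on features that stay fixed. The sketch below trains a binary logistic-regression probe with plain gradient descent on toy two-dimensional "frozen features". The feature values, the learning rate, and the names `train_linear_probe` and `predict` are assumptions for illustration; real embeddings have hundreds of dimensions and would normally be fitted with an established library.

```python
import math


def train_linear_probe(features, labels, lr=0.1, epochs=200):
    """Fit weights w and bias b of a logistic-regression probe by gradient descent.

    The encoder is never touched: `features` are precomputed, frozen embeddings.
    """
    dim = len(features[0])
    n = len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            logit = sum(wi * xi for wi, xi in zip(w, x)) + b
            p = 1.0 / (1.0 + math.exp(-logit))   # predicted probability of class 1
            for j in range(dim):
                grad_w[j] += (p - y) * x[j]
            grad_b += p - y
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Return 1 if the probe's logit is positive, else 0."""
    return int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0)


# Toy frozen features: class 1 ("tumor") and class 0 ("normal") form two clusters.
features = [[2.0, 1.5], [1.8, 2.2], [2.5, 1.9], [-2.0, -1.7], [-1.5, -2.3], [-2.2, -2.0]]
labels = [1, 1, 1, 0, 0, 0]
w, b = train_linear_probe(features, labels)
accuracy = sum(predict(w, b, x) == y for x, y in zip(features, labels)) / len(labels)
print(accuracy)
```

Because only `w` and `b` are trained, probe accuracy reflects how linearly separable the pre-trained features already are, which is why it is used as a measure of feature quality.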

Table 3: The Scientist's Toolkit: Key Research Reagents and Resources

| Item / Resource | Type | Function / Application | Examples / Notes |
|---|---|---|---|
| TCGA, PAIP | Public Dataset | Source of diverse, multi-organ WSIs for pre-training and benchmarking. | Foundation for models like CTransPath and Phikon [3]. |
| OpenSlide / CuCIM | Software Library | Reading and handling large Whole Slide Images in various formats. | Essential for data loading and patch extraction pipelines. |
| VISSL, nnssl | Code Library | Frameworks providing implementations of major SSL algorithms (MoCo, DINO, etc.). | Accelerates development; nnssl is tailored for 3D medical images [5]. |
| Pre-trained Models (e.g., Phikon, UNI) | Model Weights | Off-the-shelf feature extractors for immediate use on downstream tasks. | Available on GitHub or model hubs; check license (often non-commercial) [3] [4]. |
| RandStainNA | Algorithm | Advanced stain normalization technique to improve model generalization. | Addresses the domain shift problem from different staining protocols [4]. |
| Benchmarking Pipelines (e.g., Lunit's) | Evaluation Code | Standardized frameworks to fairly compare model performance on clinical tasks. | Ensures reproducible and clinically relevant evaluation [3] [4]. |

Self-supervised learning is poised to fundamentally reshape computational pathology by turning the field's greatest challenge—the lack of annotations—into its greatest strength through the utilization of massive unlabeled archives. The technical synergy is clear, and the empirical evidence from recent foundation models is compelling. The path forward involves scaling these models on even larger and more diverse datasets, deepening multimodal integration with clinical reports and genomics, and, most critically, rigorous validation on real-world clinical endpoints to bridge the gap between research and patient care. For researchers and drug development professionals, embracing SSL is no longer an option but a necessity to build the next generation of robust, generalizable, and impactful AI tools in pathology.

Self-supervised learning (SSL) has emerged as a transformative paradigm in computational pathology, directly addressing the critical challenge of limited pixel-level annotations for histopathological images [6]. By creating surrogate pretext tasks from unlabeled data, SSL enables models to learn powerful feature representations without costly manual annotation [7] [8]. This capability is particularly valuable for analyzing gigapixel Whole Slide Images (WSIs), where exhaustive annotation is practically impossible [6]. Among various SSL approaches, Contrastive Learning and Masked Image Modeling (MIM) have established themselves as core methodologies, each with distinct mechanisms and strengths for pathological image analysis [9] [1].

This technical guide provides an in-depth examination of these two paradigms, framing them within the practical context of pathology image analysis research. We detail their fundamental principles, experimental protocols, and performance benchmarks, equipping researchers and drug development professionals with the knowledge to select and implement appropriate SSL strategies for their specific challenges in digital pathology.

Core Paradigm 1: Contrastive Learning

Fundamental Principles and Pretext Task Design

Contrastive learning operates on a core principle: it learns representations by bringing semantically similar data points ("positive pairs") closer together in an embedding space while pushing dissimilar points ("negative pairs") farther apart [1]. The underlying assumption is that variations created through data augmentation do not alter an image's fundamental semantic meaning [1]. In pathology, this means that different augmentations of a tissue patch (e.g., staining variations, rotations, cropping) should maintain the same diagnostic significance.

The learning process is typically guided by a contrastive loss function, such as InfoNCE or the NT-Xent loss used in SimCLR, which formalizes this attraction and repulsion in the latent space [1]. The model is optimized to minimize the distance between augmented versions of the same image (positive pairs) while maximizing the distance between representations of different images (negative pairs). This approach encourages the model to become invariant to semantically irrelevant transformations and focus on diagnostically meaningful features.
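
As a concrete reference for the attraction and repulsion described above, here is a minimal sketch of the NT-Xent loss on toy two-dimensional embeddings. It is written in plain Python for readability rather than speed; the temperature of 0.5 and the function names are assumptions, and a practical implementation would use batched tensor operations.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent loss for N positive pairs (view_a[i], view_b[i]).

    Each of the 2N embeddings serves as an anchor once. Its positive is the
    other view of the same patch; the remaining 2N - 2 embeddings are negatives.
    """
    z = view_a + view_b              # all 2N embeddings
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        pos = (i + n) % (2 * n)      # index of the matching view
        logits = [cosine(z[i], z[k]) / temperature
                  for k in range(2 * n) if k != i]
        log_denominator = math.log(sum(math.exp(l) for l in logits))
        total += log_denominator - cosine(z[i], z[pos]) / temperature
    return total / (2 * n)


# Two patches, two views each. Matching views are close in the first case
# and deliberately mismatched in the second.
patch_views_a = [[1.0, 0.0], [0.0, 1.0]]
aligned = nt_xent(patch_views_a, [[0.9, 0.1], [0.1, 0.9]])
mismatched = nt_xent(patch_views_a, [[0.1, 0.9], [0.9, 0.1]])
print(aligned < mismatched)  # True
```

The loss is lowest when each embedding is most similar to its own augmented view, which is exactly the invariance the pretext task is meant to induce.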

Detailed Experimental Protocol

Implementing contrastive learning for pathology images involves these key methodological steps:

- Patch Extraction: Extract numerous patches from unlabeled WSIs, typically excluding background regions using methods like Otsu's thresholding [10].

- Augmentation Pipeline: Apply a stochastic augmentation strategy to create positive pairs. For pathology images, this should include both general and domain-specific transformations:
  - Color Jitter: Simulates staining variations (H&E stain intensity, color consistency).
  - Random Rotation/Flipping: Enforces rotational invariance in tissue structures.
  - Random Gaussian Blur: Mimics variations in focus and image acquisition.
  - Random Cropping and Resizing: Encourages scale invariance for cellular and tissue structures.

- Encoder Training: Process both augmented views through a shared encoder network (e.g., ResNet or Vision Transformer) to obtain latent representations.

- Projection Head: Map representations to a lower-dimensional space where the contrastive loss is applied.

- Loss Optimization: Use a contrastive loss function (e.g., InfoNCE, SupCon) to train the network. For some pathology applications, available weak labels (e.g., primary site information) can be incorporated to define positive pairs in a supervised contrastive setup [11].
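
The augmentation step of this protocol can be illustrated with a small two-view generator. The sketch below covers only rotation, flipping, and a crude intensity shift that stands in for color jitter on a grayscale patch; blur and random resized cropping are omitted. All function names and the `max_shift` value are hypothetical choices for illustration.

```python
import random


def rot90(patch):
    """Rotate a 2-D patch (list of rows) by 90 degrees clockwise."""
    return [list(row) for row in zip(*patch[::-1])]


def hflip(patch):
    """Mirror a 2-D patch left to right."""
    return [row[::-1] for row in patch]


def jitter(patch, rng, max_shift=20):
    """Shift all intensities by one random offset, clipped to 0-255.

    A simplified stand-in for stain or color jitter on a grayscale patch.
    """
    shift = rng.randint(-max_shift, max_shift)
    return [[min(255, max(0, v + shift)) for v in row] for row in patch]


def random_view(patch, rng):
    """Apply a random rotation (multiple of 90 degrees), flip, and jitter."""
    view = [list(row) for row in patch]
    for _ in range(rng.randrange(4)):
        view = rot90(view)
    if rng.random() < 0.5:
        view = hflip(view)
    return jitter(view, rng)


def positive_pair(patch, seed=None):
    """Return two independently augmented views of the same patch."""
    rng = random.Random(seed)
    return random_view(patch, rng), random_view(patch, rng)


patch = [[10, 20], [30, 40]]
view_1, view_2 = positive_pair(patch, seed=0)
print(view_1, view_2)
```

Rotations and flips are natural choices for histology because tissue sections have no canonical orientation, so these transformations do not change the diagnostic content of a patch.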

Core Paradigm 2: Masked Image Modeling (MIM)

Fundamental Principles and Pretext Task Design

Masked Image Modeling (MIM) is inspired by the success of masked language modeling in Natural Language Processing (NLP) [9] [1]. The core premise involves obscuring a portion of the input data and training a model to predict the missing information based on the surrounding context [9]. For pathology images, this typically means masking random patches of a tissue image and training a model to reconstruct the original pixel values or features of the masked patches.

This approach forces the model to develop a comprehensive understanding of tissue morphology, cellular structures, and their spatial relationships to successfully reconstruct missing regions. Unlike contrastive learning which learns by comparing images, MIM learns by building an internal generative model of tissue structures. Two primary implementations exist: reconstruction-based methods (e.g., Masked Autoencoders - MAE) that directly predict masked content, and contrastive-based methods that compare latent representations of masked and original images [9].

Detailed Experimental Protocol

Implementing MIM for pathology images involves this multi-stage process:

- Patchification and Masking:
  - Divide each tissue image into a grid of non-overlapping patches.
  - Randomly mask a portion of these patches and withhold them from the encoder.

- Encoder Processing:
  - Process only the visible, unmasked patches through an encoder (typically a Vision Transformer).
  - The encoder learns to build contextual representations from the limited visible context.

- Decoder and Reconstruction:
  - A lightweight decoder reassembles the encoded visible patches with mask tokens.
  - The decoder predicts the missing content (pixel values or features) for masked patches.

- Reconstruction Loss Optimization:
  - Compute loss (e.g., Mean Squared Error) between the reconstructed and original patches.
  - The model learns meaningful representations by minimizing this reconstruction error.
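
The data-side parts of this process (patchification, random masking, and a reconstruction loss restricted to masked patches) can be sketched without a neural network. In the example below the "prediction" is a placeholder rather than a decoder output, the 75% mask ratio is the default reported for MAE on natural images rather than a value from this guide, and the function names are hypothetical. The image sides are assumed to be divisible by the patch size.

```python
import random


def patchify(image, patch):
    """Split a 2-D image (list of rows) into flattened, non-overlapping patch x patch blocks."""
    blocks = []
    for y in range(0, len(image), patch):
        for x in range(0, len(image[0]), patch):
            blocks.append([image[y + dy][x + dx]
                           for dy in range(patch) for dx in range(patch)])
    return blocks


def random_mask(num_patches, mask_ratio, rng):
    """Return the sorted indices of the patches hidden from the encoder."""
    num_masked = int(num_patches * mask_ratio)
    return sorted(rng.sample(range(num_patches), num_masked))


def masked_mse(target_blocks, predicted_blocks, masked_idx):
    """Mean squared error computed over the masked patches only."""
    errors = [(p - t) ** 2
              for i in masked_idx
              for p, t in zip(predicted_blocks[i], target_blocks[i])]
    return sum(errors) / len(errors)


# Toy 4x4 "image" split into four 2x2 patches, three of which are masked.
image = [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11], [12, 13, 14, 15]]
blocks = patchify(image, patch=2)
masked = random_mask(len(blocks), mask_ratio=0.75, rng=random.Random(0))
visible = [i for i in range(len(blocks)) if i not in masked]

# Placeholder prediction (all zeros) standing in for the decoder output.
prediction = [[0] * len(b) for b in blocks]
print(visible, masked, masked_mse(blocks, prediction, masked))
```

Restricting the loss to masked patches means the model is rewarded only for inferring content it could not see, which is what drives it to model the surrounding tissue context.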

Comparative Analysis: Performance and Applications

Quantitative Performance Benchmarking

Recent research provides quantitative comparisons of SSL methodologies applied to pathology image analysis. The table below synthesizes performance metrics from recent implementations, highlighting the distinct advantages of each paradigm.

Table 1: Performance Comparison of SSL Paradigms in Pathology Imaging

| Performance Metric | Contrastive Learning | Masked Image Modeling | Hybrid Approach | Notes |
|---|---|---|---|---|
| Data Efficiency | High (reduces annotation needs) [1] | Very High (effective with limited labels) [6] | Exceptional (70% reduction in annotation requirements) [6] | Measured by performance with limited labeled data |
| Dice Coefficient | - | - | 0.825 (4.3% improvement) [6] | Tissue segmentation accuracy |
| mIoU | - | - | 0.742 (7.8% improvement) [6] | Segmentation quality |
| Boundary Error (ASD) | - | - | 9.5% reduction [6] | Boundary delineation accuracy |
| Cross-Dataset Generalization | Good | Very Good | 13.9% improvement [6] | Performance on unseen institutional data |

Comparative Strengths and Applications

Table 2: Strategic Selection Guide for SSL Paradigms in Pathology

| Characteristic | Contrastive Learning | Masked Image Modeling |
|---|---|---|
| Core Mechanism | Learning by comparison | Learning by reconstruction |
| Primary Strength | Robustness to variations; strong feature discrimination [1] | Contextual understanding; fine-grained reconstruction [6] [9] |
| Optimal Application | WSI retrieval, classification, content-based image retrieval [11] [10] | Detailed segmentation tasks, gland/membrane boundary detection [6] |
| Computational Demand | Moderate to high (requires large batch sizes for negative pairs) [1] | Moderate (processes only visible patches) [9] |
| Key Challenge | Requires careful augmentation design; negative sampling strategy [1] | Random masking may obscure critical sparse pathological features [12] |
| Emerging Innovation | Supervised contrastive learning using site information [11] | Semantic-aware masking using domain knowledge [6] [12] |

Integrated and Advanced Approaches

Hybrid Frameworks

The most advanced approaches in computational pathology integrate both contrastive learning and MIM to create hybrid frameworks that capture their complementary strengths [6]. These implementations typically feature:

- Multi-resolution hierarchical architectures designed specifically for gigapixel WSIs that capture both cellular-level details and tissue-level context [6].

- Hybrid self-supervised learning strategies that combine masked autoencoder reconstruction with multi-scale contrastive learning to learn robust feature representations without extensive annotations [6].

- Adaptive semantic-aware data augmentation that preserves histological semantics while maximizing data diversity through learned transformation policies [6].

Research demonstrates that these hybrid frameworks achieve state-of-the-art performance, with one study reporting a Dice coefficient of 0.825 (4.3% improvement), mIoU of 0.742 (7.8% improvement), and significant reductions in boundary error metrics [6]. Notably, these approaches show exceptional data efficiency, requiring only 25% of labeled data to achieve 95.6% of full performance compared to 85.2% for supervised baselines [6].

Domain-Specific Innovations for Pathology

Successful application of SSL in pathology requires domain adaptation beyond generic computer vision approaches:

- Semantic-Aware Masking for MIM: Instead of random masking, methods like MedIM use radiology reports or histological knowledge to guide masking toward diagnostically relevant regions, preventing critical sparse pathological features from being obscured [12].

- Anatomic Site Information for Contrastive Learning: Incorporating available primary site information creates more meaningful positive pairs for contrastive learning, significantly improving WSI retrieval and classification performance [11].

- Multi-Scale Processing: Handling both local cellular details and global tissue architecture through hierarchical frameworks is essential for WSI analysis [6].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Resources for SSL Research in Computational Pathology

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Benchmark Datasets | TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke [6] | Standardized datasets for training and comparative evaluation of SSL models |
| Foundation Models | UNI, Virchow, CONCH, Prov-GigaPath [6] | Large-scale pre-trained models that can be fine-tuned for specific downstream tasks |
| Architecture Frameworks | Vision Transformers (ViT), Masked Autoencoders (MAE), ResNet [6] [9] | Core neural network architectures implementing SSL paradigms |
| Annotation Tools | Digital annotation software, specialized WSI annotation tools [10] | Creating limited labeled data for fine-tuning and evaluation |
| Evaluation Metrics | Dice coefficient, mIoU, Hausdorff Distance, Average Surface Distance [6] | Quantifying segmentation accuracy and boundary delineation performance |
| Computational Resources | High-memory GPU clusters, distributed training frameworks | Handling gigapixel WSIs and large-scale pre-training |

Contrastive learning and Masked Image Modeling represent two powerful, complementary paradigms for self-supervised learning in computational pathology. Contrastive learning excels at learning discriminative features robust to technical variations, making it ideal for classification and retrieval tasks. MIM develops strong contextual understanding through reconstruction, proving particularly effective for detailed segmentation. The most advanced implementations combine these approaches in hybrid frameworks that achieve state-of-the-art performance while dramatically reducing annotation requirements.

As the field evolves, key research directions include developing more sophisticated domain-specific masking strategies, integrating multimodal data (e.g., pathology reports, genomic data), and creating more efficient architectures for processing gigapixel WSIs. These advances will further solidify SSL as a cornerstone technology enabling more accurate, efficient, and accessible computational pathology systems for both research and clinical applications.

The adoption of whole-slide imaging (WSI) has revolutionized pathology by digitizing entire glass slides into high-resolution digital files, enabling new avenues for computational analysis [13] [14]. However, the transition from analyzing small, standardized image patches to processing entire gigapixel WSIs presents a significant scalability problem in computational pathology. Whole-slide images routinely reach resolutions of 100,000 × 100,000 pixels or more, creating files that can exceed several gigabytes in size [15] [14]. This immense scale prevents WSIs from being directly processed using standard deep learning models designed for conventional image sizes, creating a fundamental computational bottleneck [15] [16].

Simultaneously, the field faces a data annotation challenge. Supervised learning approaches require extensive labeled datasets, but annotating gigapixel WSIs at a detailed level demands significant time and expertise from pathologists [17] [18]. Self-supervised learning (SSL) has emerged as a promising solution to this problem by leveraging unlabeled data to pretrain models, substantially reducing the need for task-specific annotations [17] [2]. This technical guide examines the core scalability problem in computational pathology, explores innovative computational frameworks addressing this challenge, and details experimental protocols for implementing these solutions, all within the context of self-supervised learning for pathology image analysis.

The Core Scalability Challenge

Technical Dimensions of the Problem

The scalability problem in WSI analysis manifests across several technical dimensions. First, the memory and computational load is prohibitive; a single WSI cannot be processed in its entirety through standard convolutional neural networks (CNNs) due to GPU memory limitations [15] [16]. Second, there exists a representation learning gap; models must learn meaningful histological features from millions of potential patches while maintaining spatial relationships across tissue structures [2] [16]. Third, multi-scale biological information must be integrated, from cellular-level details to tissue-level architecture and inter-slice relationships [16].

The table below quantifies the core challenges in scaling from patches to whole-slide images:

Table 1: The Scalability Problem: Patches vs. Whole-Slide Images

| Technical Dimension | Standard Image Patches | Whole-Slide Images (WSIs) | Scalability Challenge |
|---|---|---|---|
| Image Resolution | Typically 224×224 to 512×512 pixels [15] | 100,000×100,000+ pixels (gigapixel) [15] [14] | 4-5 orders of magnitude increase in pixel count |
| File Size | Kilobytes to few Megabytes | Several gigabytes per slide [14] | Direct processing impossible with current GPU memory |
| Processing Approach | Direct end-to-end processing | Patch-based extraction & aggregation [15] [16] | Need for complex multi-stage pipelines |
| Spatial Context | Limited field of view | Tissue architecture, tumor microenvironment [13] [16] | Critical biological patterns span millimeters |
| Annotation Granularity | Image-level labels feasible | Pixel-level, region-level, and slide-level annotations needed | Exponentially increasing annotation burden |
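
The scale figures in the table can be checked with a few lines of arithmetic. The sketch below assumes a square 100,000-pixel slide, 8-bit RGB storage without compression, and non-overlapping tiles; the helper names are illustrative.

```python
import math


def orders_of_magnitude(wsi_side, patch_side):
    """log10 of the ratio between WSI pixel count and patch pixel count."""
    return math.log10(wsi_side ** 2 / patch_side ** 2)


def uncompressed_gigabytes(side, channels=3):
    """Size of a square 8-bit image in gigabytes (1 GB = 1e9 bytes)."""
    return side * side * channels / 1e9


def tiles_per_slide(side, tile):
    """Number of whole non-overlapping tiles that fit in a square slide."""
    return (side // tile) ** 2


print(round(orders_of_magnitude(100_000, 224), 1))  # 5.3
print(round(orders_of_magnitude(100_000, 512), 1))  # 4.6
print(uncompressed_gigabytes(100_000))              # 30.0
print(tiles_per_slide(100_000, 256))                # 152100
```

Under these assumptions a single slide holds roughly 30 GB of raw pixel data and more than 150,000 tiles of 256×256 pixels before tissue filtering, which is why slides are stored compressed and processed tile by tile.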

The Self-Supervised Learning Opportunity

Self-supervised learning addresses critical bottlenecks in WSI analysis by creating foundational models pretrained on unlabeled data. SSL methods generate their own supervisory signals from the data itself through pretext tasks, such as predicting image rotations, solving jigsaw puzzles, or using contrastive learning to identify similar and dissimilar patches [17] [18]. This approach is particularly valuable in digital pathology, where vast repositories of unlabeled WSIs are available, but detailed annotations are scarce and costly to obtain [17] [18].

Once pretrained using SSL, these foundation models can be fine-tuned for specific diagnostic tasks with relatively small amounts of labeled data, achieving superior performance compared to models trained from scratch [17] [2]. For example, models like CONCH and TITAN have demonstrated that SSL-pretrained features capture robust morphological patterns that transfer effectively across multiple organs and pathology tasks [2].

Computational Frameworks for WSI Analysis

Multiple Instance Learning (MIL)

Multiple Instance Learning (MIL) represents a fundamental framework for addressing the WSI scalability problem. In this approach, a WSI is treated as a "bag" containing hundreds or thousands of smaller patches ("instances") [15]. The model learns to classify the entire slide based on aggregated information from these patches, without requiring detailed annotations for each individual region.

A key innovation in modern MIL approaches is the incorporation of attention mechanisms, which assign learned weights to each patch based on its importance to the final diagnosis [15]. This allows the model to focus on diagnostically relevant regions (e.g., tumor areas) while ignoring less informative tissue (e.g., background, artifacts). The attention-based MIL framework has proven particularly effective for cancer classification and biomarker prediction tasks [19] [15].
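
A minimal sketch of attention-based pooling follows, using the widely used formulation in which each patch embedding h_k receives a score w · tanh(V h_k) and the scores are normalized with a softmax. The parameters `V` and `w` would be learned during training; here they are fixed toy values, and the function name is hypothetical.

```python
import math


def attention_pool(instances, V, w):
    """Aggregate patch embeddings into one slide embedding with attention weights.

    instances: list of patch embeddings (the "bag").
    V: projection matrix as a list of rows; w: scoring vector.
    Returns (bag_embedding, attention_weights).
    """
    scores = []
    for h in instances:
        hidden = [math.tanh(sum(v_ij * h_j for v_ij, h_j in zip(row, h))) for row in V]
        scores.append(sum(w_i * u_i for w_i, u_i in zip(w, hidden)))
    # Numerically stable softmax over the patches in the bag.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(instances[0])
    bag = [sum(a * h[j] for a, h in zip(weights, instances)) for j in range(dim)]
    return bag, weights


# Toy bag: two low-signal patches and one high-signal patch.
bag_embedding, weights = attention_pool(
    [[0.1, 0.0], [0.0, 0.1], [3.0, 3.0]], V=[[1.0, 1.0]], w=[1.0]
)
print(weights)
```

The returned weights double as a coarse interpretability signal: patches with high attention can be overlaid on the slide as a heatmap of the regions that drove the prediction.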

Graph-Based Representations

Graph-based methods offer an alternative approach that explicitly models spatial relationships between tissue regions. In this framework, a WSI is represented as a graph where nodes correspond to tissue patches or segmented nuclei, and edges represent spatial adjacency or feature similarity [16]. Graph Convolutional Networks (GCNs) can then process these representations to capture both local morphological features and global tissue architecture.
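
One simple way to build such a graph is to connect patches that touch on the tiling grid. The sketch below uses spatial adjacency in an 8-neighborhood as the edge rule; this is one illustrative choice among several (feature-similarity or k-nearest-neighbor edges are alternatives), and the function name is hypothetical.

```python
def build_patch_graph(coords, tile_size):
    """Return sorted undirected edges between spatially adjacent patches.

    coords: list of level-0 (x, y) tile corners on a regular grid.
    Two patches are connected when their grid positions differ by at most
    one tile in each direction (8-neighborhood).
    """
    index = {(x // tile_size, y // tile_size): i for i, (x, y) in enumerate(coords)}
    edges = set()
    for (gx, gy), i in index.items():
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                j = index.get((gx + dx, gy + dy))
                if j is not None and j != i:
                    edges.add((min(i, j), max(i, j)))
    return sorted(edges)


# A 2x2 block of tissue tiles plus one isolated tile far away.
coords = [(0, 0), (256, 0), (0, 256), (256, 256), (1024, 1024)]
print(build_patch_graph(coords, tile_size=256))
# [(0, 1), (0, 2), (0, 3), (1, 2), (1, 3), (2, 3)]
```

The resulting edge list, together with one feature vector per node, is the input a graph neural network needs; the isolated tile has no edges and therefore exchanges no information with the rest of the tissue.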

Recent advances have extended graph-based approaches to leverage inter-slice commonality by connecting graphs across multiple tissue slices from the same biopsy specimen [16]. This method mimics the clinical practice of pathologists who examine multiple slices to reach a comprehensive diagnosis. Research has demonstrated that incorporating these inter-slice relationships significantly improves classification accuracy for stomach and colorectal cancers compared to single-slice analysis [16].
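As a rough illustration of the graph construction described in this subsection, the sketch below connects patches that are neighbours on the tiling grid and applies one round of neighbourhood averaging, which is the aggregation at the heart of a graph convolution with the learned weights left out. The 8-neighbour rule, the non-overlapping tiling, and the function names are assumptions made for the example.

```python
def build_patch_graph(coords, patch_size):
    """Connect patches that are 8-neighbours on the tiling grid.

    coords: list of (x, y) top-left pixel coordinates of tissue patches.
    patch_size: patch side length in pixels (non-overlapping tiling assumed).
    Returns a sorted list of undirected edges (i, j) with i < j.
    """
    index = {(x // patch_size, y // patch_size): i
             for i, (x, y) in enumerate(coords)}
    edges = set()
    for (gx, gy), i in index.items():
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                j = index.get((gx + dx, gy + dy))
                if j is not None and j != i:
                    edges.add((min(i, j), max(i, j)))
    return sorted(edges)

def mean_aggregate(features, edges):
    """Average each node's features with those of its neighbours (self included)."""
    neighbours = {i: [i] for i in range(len(features))}
    for i, j in edges:
        neighbours[i].append(j)
        neighbours[j].append(i)
    dim = len(features[0])
    return [[sum(features[n][d] for n in neighbours[i]) / len(neighbours[i])
             for d in range(dim)]
            for i in range(len(features))]

# Three adjacent patches and one isolated patch far away on the slide.
coords = [(0, 0), (256, 0), (0, 256), (1024, 1024)]
edges = build_patch_graph(coords, patch_size=256)
smoothed = mean_aggregate([[1.0], [2.0], [3.0], [10.0]], edges)
```

Edges based on feature similarity, or links between graphs from different slices of the same specimen, could be added to the same edge list.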

Whole-Slide Foundation Models

The most recent innovation addressing the scalability problem is the development of whole-slide foundation models, such as TITAN (Transformer-based pathology Image and Text Alignment Network) [2]. These models are pretrained on massive datasets of WSIs (e.g., 335,645 slides in TITAN's case) using self-supervised learning objectives, learning general-purpose slide representations that can be applied to diverse downstream tasks without task-specific fine-tuning.

TITAN employs a three-stage pretraining approach: (1) vision-only pretraining on region crops using masked image modeling and knowledge distillation; (2) cross-modal alignment with synthetic fine-grained morphological descriptions; and (3) cross-modal alignment with clinical reports at the slide level [2]. This multi-stage process produces representations that capture both histological patterns and their clinical correlations, enabling strong performance even in low-data regimes and for rare diseases.

Table 2: Comparison of Computational Frameworks for WSI Analysis

| Framework | Core Approach | Key Advantages | Performance Examples |
|---|---|---|---|
| Multiple Instance Learning (MIL) | Treats WSI as "bag" of patches; aggregates patch-level predictions [15] | Does not require detailed annotations; attention mechanisms identify critical regions; computationally efficient | Accuracy: 87.9% (stomach) [16]; AUROC: 96.8% (stomach) [16] |
| Graph-Based Methods | Represents WSI as graph; captures spatial relationships [16] | Explicitly models tissue structure; can integrate multi-slice information; biological interpretability | Accuracy: 91.5% (stomach) [16]; AUROC: 98.8% (stomach) [16] |
| Whole-Slide Foundation Models | Self-supervised pretraining on large WSI datasets; produces general-purpose slide embeddings [2] | Transferable to multiple tasks; excellent few-shot performance; enables cross-modal retrieval | Outperforms ROI and slide foundation models across diverse tasks [2] |

Experimental Protocols and Methodologies

WSI Preprocessing and Patch Extraction

Effective WSI analysis begins with robust preprocessing to handle the immense data volume. The following protocol outlines the essential steps:

Background Removal: Apply algorithms to detect and remove non-tissue background regions. One effective approach converts the WSI to grayscale, creates a binary mask through thresholding, performs morphological operations (hole filling, dilation) to refine the mask, and extracts connected components representing tissue regions [20]. This can reduce storage requirements by 7.11× on average while preserving diagnostic information [20].

Multi-Resolution Patch Extraction: Extract tissue patches at multiple magnification levels (typically 5×, 10×, 20×, and 40×) to capture both contextual and cellular information. For self-supervised pretraining, larger patches (e.g., 8,192 × 8,192 pixels at 20× magnification) are valuable for capturing tissue architecture [2]. For classification tasks, smaller patches (256 × 256 to 512 × 512 pixels) are commonly used [15].

Feature Embedding Extraction: Process each patch through a pretrained encoder (e.g., SSL-pretrained CNN or vision transformer) to extract compact feature representations. These embeddings (typically 512-768 dimensions) dramatically reduce the computational burden compared to processing raw pixels while preserving morphological information [2] [16].
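The first two protocol steps can be illustrated on a tiny synthetic thumbnail. This is a simplified sketch: it covers grayscale conversion, thresholding, and grid-based patch selection, but omits the morphological refinement and connected-component steps. The threshold of 220 and the minimum tissue fraction of 0.5 are illustrative assumptions, not values from the cited protocol.

```python
def tissue_mask(rgb_image, threshold=220):
    """Binary mask: True where the grayscale value is darker than `threshold`."""
    mask = []
    for row in rgb_image:
        # Standard luminance weights for RGB-to-grayscale conversion.
        mask.append([(0.299 * r + 0.587 * g + 0.114 * b) < threshold
                     for r, g, b in row])
    return mask

def select_patches(mask, patch_size, min_tissue_fraction=0.5):
    """Return top-left (x, y) coordinates of grid patches with enough tissue."""
    height, width = len(mask), len(mask[0])
    coords = []
    for y in range(0, height - patch_size + 1, patch_size):
        for x in range(0, width - patch_size + 1, patch_size):
            tissue = sum(mask[yy][xx]
                         for yy in range(y, y + patch_size)
                         for xx in range(x, x + patch_size))
            if tissue / (patch_size * patch_size) >= min_tissue_fraction:
                coords.append((x, y))
    return coords

# Synthetic 4x4 thumbnail: white background, 2x2 pink tissue block at top-left.
WHITE, PINK = (255, 255, 255), (200, 120, 170)
thumb = [[PINK if (x < 2 and y < 2) else WHITE for x in range(4)]
         for y in range(4)]
coords = select_patches(tissue_mask(thumb), patch_size=2)
```

In practice the mask would be computed on a low-magnification thumbnail and the selected coordinates scaled up to the extraction level before the patches are passed to the encoder.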

Self-Supervised Learning Methods for Pathology

Self-supervised learning protocols for pathology images employ specialized pretext tasks designed to capture histologically relevant features:

Contrastive Learning Methods (SimCLR, MoCo): Generate augmented views of the same patch and train the model to produce similar embeddings for these related patches while pushing apart embeddings from different patches [17] [18]. This approach has demonstrated strong performance in breast cancer diagnosis and liver disease classification [17].

Masked Image Modeling: Randomly mask portions of the input patch and train the model to reconstruct the masked regions based on the visible context [2]. This forces the model to learn meaningful representations of tissue morphology and spatial relationships.

Context Restoration Tasks: Divide the WSI into tiles and train the model to predict the correct spatial arrangement of these tiles (jigsaw puzzle task) or to predict morphological features in adjacent regions [17] [18]. These tasks encourage the model to learn structural patterns in tissue organization.
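To make the contrastive objective above concrete, the following sketch computes a SimCLR-style normalized temperature-scaled cross-entropy (NT-Xent) loss for a small batch. It is a plain-Python illustration under stated assumptions: the temperature of 0.5 and the function names are chosen for the example, and a real pipeline would compute this on encoder outputs with an autodiff framework.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nt_xent(view_a, view_b, temperature=0.5):
    """NT-Xent loss averaged over all 2N augmented views.

    view_a[i] and view_b[i] are embeddings of two augmentations of patch i.
    """
    z = view_a + view_b              # 2N embeddings in one list
    n = len(view_a)
    total = 0.0
    for i in range(2 * n):
        pos = (i + n) % (2 * n)      # the other view of the same patch
        # Similarities to every other embedding (self-similarity excluded).
        logits = [cosine(z[i], z[k]) / temperature
                  for k in range(2 * n) if k != i]
        pos_logit = cosine(z[i], z[pos]) / temperature
        total += math.log(sum(math.exp(l) for l in logits)) - pos_logit
    return total / (2 * n)

anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]     # views agree with their anchors
shuffled = [[0.1, 0.9], [0.9, 0.1]]    # views paired with the wrong anchor
loss_aligned = nt_xent(anchors, aligned)
loss_shuffled = nt_xent(anchors, shuffled)
```

The loss is lower when the two views of each patch point in the same direction, which is the behaviour the pretext task rewards.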

Implementation and Optimization Strategies

Successful implementation of WSI analysis pipelines requires careful attention to computational efficiency:

Cloud-Based Scaling: Leverage cloud computing platforms (e.g., AWS) with specialized services for large-scale medical image processing. Implement distributed training across multiple GPUs to handle the computational load of processing thousands of WSIs [19].

Efficient Data Handling: Use optimized file formats (e.g., HDF5) for storing patch features and implement data streaming pipelines to minimize I/O bottlenecks during training [19].

Memory Optimization: Employ gradient checkpointing, mixed-precision training, and model parallelism to fit large models into available GPU memory [2].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for WSI Analysis

| Tool/Category | Specific Examples | Function and Application |
|---|---|---|
| Whole-Slide Scanners | Aperio GT 450, IntelliSite Pathology Solution [14] | Digitizes glass slides into high-resolution WSIs for computational analysis |
| Patch Encoders | CONCH, H-optimus-0, SSL-pretrained ResNet [19] [2] | Extracts meaningful feature representations from image patches for downstream analysis |
| Computational Frameworks | Multiple Instance Learning, Graph Neural Networks, Vision Transformers [15] [16] | Provides algorithmic approaches for aggregating patch-level information into slide-level predictions |
| Cloud AI Infrastructure | Amazon SageMaker, GPU instances (p3.2xlarge, g5.2xlarge) [19] | Offers scalable computing resources for training and deploying large-scale pathology AI models |
| Annotation Platforms | Digital pathology annotation software | Enables pathologists to create labeled datasets for model training and validation |
| Whole-Slide Foundation Models | TITAN, other SSL-pretrained models [2] | Provides general-purpose slide representations transferable to various diagnostic tasks |

The scalability problem in transitioning from patches to whole-slide images represents both a significant challenge and a remarkable opportunity in computational pathology. While the gigapixel scale of WSIs prevents direct application of standard deep learning approaches, innovative computational frameworks—including multiple instance learning, graph-based representations, and whole-slide foundation models—provide effective solutions to this bottleneck. Critically, self-supervised learning has emerged as a powerful paradigm for addressing the annotation burden associated with WSI analysis, enabling models to learn meaningful representations from vast unlabeled image repositories.

As the field advances, the integration of multimodal data (including pathology reports, genomic information, and clinical outcomes) with WSI analysis will likely yield even more powerful diagnostic and prognostic tools. Whole-slide foundation models like TITAN offer a promising direction, demonstrating that general-purpose slide representations can effectively address diverse clinical tasks, particularly in resource-limited scenarios such as rare disease analysis. Through continued methodological innovation and computational optimization, the pathology research community is steadily overcoming the scalability problem, paving the way for more accurate, efficient, and accessible cancer diagnosis and treatment planning.

The digitization of histopathology slides has created unprecedented opportunities for artificial intelligence (AI) to enhance cancer diagnosis, prognosis, and biomarker discovery. Traditional supervised deep learning models in pathology have been constrained by their dependency on vast amounts of expertly annotated data, which is expensive, time-consuming, and often scarce, particularly for rare diseases [21] [22]. Self-supervised learning (SSL) has emerged as a paradigm-shifting approach, enabling models to learn powerful visual representations from the inherent structure of unlabeled data alone [23]. By pre-training on massive collections of unannotated whole slide images (WSIs), SSL produces foundation models (FMs) that generate versatile, general-purpose feature representations (embeddings) adaptable to diverse downstream tasks with minimal task-specific fine-tuning [21] [22].

The development of pathology FMs follows scaling laws observed in other AI domains: performance improves predictably as model size, dataset size, and computational resources increase [21]. This has catalyzed an evolution from smaller, task-specific models to large-scale FMs trained on millions of pathology images. This technical guide examines four pivotal FMs—UNI, Virchow, CONCH, and Phikon—that represent the forefront of this evolution, highlighting their core architectures, training methodologies, and performance across a spectrum of clinical tasks.

Core Technical Concepts of Pathology Foundation Models

Architectural Foundations: From CNNs to Vision Transformers

Foundation models for pathology images primarily utilize two architectural backbones:

- Convolutional Neural Networks (CNNs): Early and some lightweight FMs leverage CNNs, which excel at extracting hierarchical local features (e.g., edges, textures) due to their inductive biases of locality and weight sharing. Models like CTransPath employ hybrid CNN-Transformer architectures [23].

- Vision Transformers (ViTs): Most large-scale FMs are based on the Vision Transformer architecture [21]. ViTs process images as sequences of patches, using self-attention mechanisms to capture global contextual relationships across the entire image. This capability is particularly valuable for histopathology, where understanding tissue architecture and long-range spatial relationships is critical for diagnosis [22] [24].

Key Self-Supervised Learning Algorithms

SSL algorithms generate their own supervisory signals from the data, eliminating the need for manual labels. Major algorithms used in pathology FMs include:

- DINOv2 and DINO: A self-distillation framework that uses a teacher-student network structure. The student network learns to match the output of the teacher network for different augmented views of the same image, encouraging the learning of robust, view-invariant features [22] [25] [23].

- iBOT: Combines masked image modeling (MIM) with online tokenization. The model learns by predicting masked portions of the input image in a self-distillation framework, improving robustness and representation quality [23].

- Contrastive Learning: Used in vision-language models like CONCH, this method learns aligned representations by maximizing the similarity between corresponding image-text pairs and minimizing it for non-corresponding pairs in a shared embedding space [26].
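The teacher-student self-distillation idea shared by the DINO family and iBOT can be sketched as two small functions: a cross-entropy between a centred, sharpened teacher distribution and the student distribution, and an exponential moving average (EMA) update of the teacher weights. The temperatures (0.1 and 0.04) and momentum (0.996) follow commonly reported DINO defaults but should be treated as assumptions here, and multi-crop augmentation and masked-token prediction are omitted.

```python
import math

def softmax(logits, temperature):
    """Temperature-scaled, numerically stable softmax."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, center,
                      t_student=0.1, t_teacher=0.04):
    """Cross-entropy from the centred, sharpened teacher to the student."""
    teacher = softmax([t - c for t, c in zip(teacher_logits, center)], t_teacher)
    student = softmax(student_logits, t_student)
    return -sum(p * math.log(q) for p, q in zip(teacher, student))

def ema_update(teacher_params, student_params, momentum=0.996):
    """Teacher weights follow an exponential moving average of student weights."""
    return [momentum * t + (1.0 - momentum) * s
            for t, s in zip(teacher_params, student_params)]

center = [0.0, 0.0, 0.0]
teacher_out = [2.0, 0.0, 0.0]          # teacher output for one augmented view
agree = distillation_loss([2.0, 0.0, 0.0], teacher_out, center)
disagree = distillation_loss([0.0, 2.0, 0.0], teacher_out, center)
new_teacher = ema_update([1.0, 1.0], [0.0, 2.0], momentum=0.9)
```

Only the student receives gradients; the teacher changes solely through the EMA update, which is what makes the scheme a form of self-distillation.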

Comparative Analysis of Major Pathology Foundation Models

Table 1: Core Specifications of Major Pathology Foundation Models

| Model | Architecture | Parameters | SSL Algorithm | Training Data Scale | Primary Innovation |
|---|---|---|---|---|---|
| UNI [25] [23] | ViT-L/16 | 303 million | DINOv2 | 100M tiles, 100K slides | Early demonstration of large-scale SSL on diverse clinical dataset |
| Virchow [22] [23] | ViT-H | 632 million | DINOv2 | 2B tiles, 1.5M slides | Massive scale training; strong pan-cancer detection |
| CONCH [26] | Vision-Language | Not specified | Contrastive Learning | 1.17M image-caption pairs | Multimodal capabilities linking images with pathological concepts |
| Phikon [23] | ViT-Base | 86 million | iBOT | 43M tiles, 6K slides | Early open-weight FM; strong performance on TCGA tasks |

Table 2: Performance Comparison on Key Benchmarks

| Model | Pan-Cancer Detection (AUC) | Rare Cancer Detection (AUC) | Tile-Level Classification | Biomarker Prediction | Cross-Modal Retrieval |
|---|---|---|---|---|---|
| Virchow | 0.950 [22] | 0.937 [22] | SOTA [24] | Strong [22] | Not specialized |
| UNI | 0.940 [22] | 0.935 (approx) [22] | Strong [25] | Competitive [25] | Not specialized |
| CONCH | Not primary focus | Not primary focus | Strong [26] | Not reported | SOTA [26] |
| Phikon | 0.932 [22] | 0.920 (approx) [22] | Competitive [23] | Competitive [23] | Not specialized |

UNI: Pioneering General-Purpose Pathology FM

UNI represents a significant milestone as one of the first general-purpose pathology FMs trained on a large-scale clinical dataset. Developed by the Mahmood Lab, UNI employs a ViT-L/16 architecture with 303 million parameters pre-trained using the DINOv2 algorithm on 100 million histology image tiles from 100,000 WSIs [25] [23]. The training dataset encompassed 20 major tissue types from Mass General Brigham, providing substantial morphological diversity [23]. UNI's embeddings demonstrated state-of-the-art performance across 33 diverse diagnostic tasks, including tissue classification, segmentation, and weakly-supervised subtyping, establishing that SSL on domain-specific data dramatically outperforms models pre-trained on natural images [25].

Virchow: Scaling to Million-Slide Training

Virchow, named after the father of modern pathology, exemplifies the scaling hypothesis in pathology FM development. With 632 million parameters, Virchow is a ViT-H model trained on an unprecedented dataset of approximately 1.5 million H&E-stained WSIs from 100,000 patients at Memorial Sloan Kettering Cancer Center, representing 4-10 times more data than previous efforts [22] [24]. Using DINOv2 self-supervision, Virchow learns embeddings that capture a wide spectrum of histopathologic patterns across 17 tissue types [22].

Virchow's most notable achievement is enabling high-performance pan-cancer detection, achieving 0.950 specimen-level AUC across 17 cancer types, including 0.937 AUC on 7 rare cancers [22]. This demonstrates remarkable generalization capability, particularly significant for rare malignancies where training data is inherently limited. In benchmarking studies, Virchow consistently outperformed or matched other FMs across both common and rare cancers, with quantitative comparisons showing it achieved 72.5% specificity at 95% sensitivity compared to 68.9% for UNI and 52.3% for CTransPath [22].
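The "specificity at 95% sensitivity" figure quoted above is an operating-point metric: the decision threshold is lowered until the target fraction of cancer specimens is detected, and specificity is then read off at that threshold. A minimal sketch of the calculation follows; the function name and the toy scores are illustrative and are not taken from the cited benchmark.

```python
import math

def specificity_at_sensitivity(labels, scores, target_sensitivity=0.95):
    """Specificity at the highest threshold that reaches the target sensitivity.

    labels: 1 for cancer, 0 for benign. scores: higher means more suspicious.
    Assumes at least one positive and one negative specimen.
    """
    positives = sorted((s for s, y in zip(scores, labels) if y == 1),
                       reverse=True)
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    # Number of positives that must be flagged to reach the target
    # (small epsilon guards against floating-point overshoot in the product).
    needed = max(1, math.ceil(target_sensitivity * len(positives) - 1e-9))
    threshold = positives[needed - 1]   # predict positive when score >= threshold
    true_negatives = sum(1 for s in negatives if s < threshold)
    return true_negatives / len(negatives)

labels = [1, 1, 1, 1, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.4, 0.2, 0.1]
spec_at_75 = specificity_at_sensitivity(labels, scores, target_sensitivity=0.75)
spec_at_100 = specificity_at_sensitivity(labels, scores, target_sensitivity=1.0)
```

In the toy data, catching the last low-scoring cancer case forces the threshold down to 0.3 and halves the specificity, which is why this metric separates models more sharply than AUC alone.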

CONCH: Vision-Language Foundation Model

CONCH (CONtrastive learning from Captions for Histopathology) introduces a crucial innovation—multimodal learning that jointly processes histopathology images and textual descriptions [26]. While most pathology FMs are vision-only, CONCH leverages over 1.17 million histopathology image-caption pairs via contrastive pre-training, learning aligned visual and textual representations in a shared embedding space [26].

This multimodal approach enables unique capabilities not possible with vision-only FMs, including:

- Text-to-image and image-to-text retrieval, allowing pathologists to search for relevant cases using natural language queries

- Automated caption generation for histopathology images

- Zero-shot reasoning about histopathologic entities by connecting visual patterns with textual descriptions

CONCH demonstrates state-of-the-art performance on 14 diverse benchmarks spanning image classification, segmentation, and retrieval tasks, proving particularly valuable for non-H&E stained images like IHC and special stains [26].

Phikon: Publicly Available Foundation Model

Phikon represents an important effort in creating publicly accessible pathology FMs. Based on a ViT-Base architecture with 86 million parameters, Phikon was trained using the iBOT SSL framework on 43.3 million tiles from 6,093 TCGA slides across 13 anatomic sites [23]. As an open-weight model trained on public data, Phikon significantly lowers the barrier to entry for computational pathology research, enabling broader adoption and experimentation.

Despite its smaller scale compared to proprietary FMs like Virchow, Phikon delivers competitive performance across 17 downstream tasks covering cancer subtyping, genomic alteration prediction, and outcome prediction [23]. Phikon-v2, an enhanced version trained with DINOv2 on 460 million tiles from over 50,000 slides, demonstrates performance comparable to leading pathology FMs, highlighting the importance of continued scaling even with public data [23].

Experimental Methodologies and Benchmarking

Standardized Evaluation Protocols

Rigorous benchmarking of pathology FMs employs standardized protocols across multiple task types:

Tile-Level Linear Probing evaluates the quality of frozen features by training a linear classifier on top of fixed embeddings for tasks like tissue classification and nucleus segmentation [22] [23]. This directly assesses the representation quality without confounding factors from fine-tuning.

Slide-Level Aggregation tests embedding utility for whole-slide analysis by aggregating tile-level embeddings (e.g., using attention-based multiple instance learning) to predict slide-level labels for cancer detection, subtyping, or biomarker status [22].

Domain Generalization measures model robustness to technical variations like scanner differences and staining protocols by testing on external datasets not seen during training [27] [22]. Recent studies reveal that even large FMs remain susceptible to scanner-induced domain shift, highlighting an important challenge for clinical deployment [27].

Key Experimental Findings

Comparative analyses reveal several consistent patterns across pathology FM evaluations:

- Scale improves performance: Larger models trained on more data generally outperform smaller counterparts, with Virchow (632M parameters, 1.5M slides) consistently ranking top in benchmarks [22] [23].

- Domain-specific pre-training is crucial: SSL models trained directly on pathology images significantly outperform models pre-trained on natural images (e.g., ImageNet), even when the latter are adapted to pathology through transfer learning [23].

- Generalization to rare cancers: Large-scale FMs demonstrate remarkable capability in detecting rare cancers, with Virchow achieving 0.937 AUC averaged across seven rare cancer types despite limited training examples for these specific malignancies [22].

- Multimodal integration enhances capabilities: Vision-language models like CONCH enable novel applications like cross-modal retrieval and report generation, expanding the utility of FMs beyond classification tasks [26].

Implementation Workflow: From Whole Slide Images to Diagnostic Insights

[Figure: Pathology Foundation Model Workflow]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Resources for Pathology Foundation Model Research

| Resource Category | Specific Examples | Function & Utility |
|---|---|---|
| Pre-Trained Models | UNI [25], Virchow [22], CONCH [26], Phikon [23] | Provide foundational feature extractors for transfer learning; avoid need for expensive pre-training |
| Benchmark Datasets | TCGA [23], PAIP [23], Internal hospital cohorts [22] | Standardized evaluation across institutions; facilitate model comparison and validation |
| SSL Algorithms | DINOv2 [22] [25], iBOT [23], Contrastive Learning [26] | Core frameworks for self-supervised pre-training on unlabeled data |
| Computational Infrastructure | High-performance GPU clusters [27] | Essential for training large-scale FMs; inference requires fewer resources |
| Visualization Tools | Attention mapping, Feature visualization | Interpret model predictions; identify morphological patterns driving decisions |

Emerging Challenges and Future Directions

Despite rapid progress, several challenges remain in the development and deployment of pathology FMs:

Domain Generalization and Scanner Bias: Studies show that even state-of-the-art FMs like UNI and Virchow exhibit performance degradation when applied to images from different scanners, highlighting the need for more robust representation learning [27]. Lightweight frameworks like HistoLite attempt to address this through domain-invariant learning but face trade-offs between accuracy and generalization [27].

Computational Resource Requirements: Training large FMs requires substantial GPU resources inaccessible to many research groups, creating a barrier to entry and innovation [27]. This has spurred interest in more efficient architectures and training methods.

Multimodal Integration: While CONCH demonstrates the power of vision-language alignment, future FMs will need to integrate more diverse data modalities, including genomic profiles, clinical outcomes, and spatial transcriptomics [26] [2].

Whole-Slide Representation Learning: Current FMs primarily operate at the patch level, requiring additional aggregation steps for slide-level predictions. Emerging models like TITAN aim to learn direct slide-level representations through hierarchical transformers and multimodal pre-training with pathology reports [2].

The evolution of UNI, Virchow, CONCH, and Phikon represents a transformative period in computational pathology, establishing a new paradigm where general-purpose AI models can accelerate research and enhance clinical decision-making across a broad spectrum of diagnostic challenges.

Building and Applying SSL Frameworks: From Masked Autoencoders to Multimodal AI

The analysis of gigapixel images, particularly in computational pathology, represents one of the most data-intensive challenges in computer vision. Whole Slide Images (WSIs) in histopathology routinely exceed several gigabytes in size, containing both cellular-level details and tissue-level architectural patterns essential for diagnostic decisions. Traditional convolutional neural networks struggle with such massive inputs due to hardware memory limitations and the fundamental multi-scale nature of biological systems. Within the broader context of self-supervised learning for pathology image analysis, multi-resolution hierarchical networks have emerged as a foundational architecture for overcoming these challenges, enabling models to learn powerful, transferable representations without extensive manual annotation [6] [2].

This technical guide comprehensively examines the architectural principles, methodological implementations, and experimental protocols for multi-resolution hierarchical networks. These architectures explicitly model the hierarchical organization of gigapixel images, from sub-cellular features at the highest magnifications to tissue-level structures and spatial relationships across the entire slide. By integrating self-supervised learning objectives, these systems can leverage vast unlabeled WSI repositories, capturing morphological patterns that generalize across diverse cancer types and clinical tasks [28] [2].

Foundational Principles and Key Challenges

The Multi-Resolution Paradigm

Gigapixel images contain information at multiple spatially-correlated scales. In histopathology, diagnostically relevant features span from nuclear morphology (requiring 20x-40x magnification) to tissue architecture (visible at 5x-10x) and overall slide-level organization. Multi-resolution hierarchical networks explicitly model this structure through parallel or sequential processing paths operating at different magnification levels [6] [29].

A critical challenge in this domain is the gradient conflict problem that arises when optimizing similarity and regularization losses across different resolution levels. Strong regularization preserves global structure but loses fine details, while weak regularization causes instability in global alignment. The Hierarchical Gradient Modulation (HGD) strategy addresses this by introducing a compatibility criterion that analyzes the angle between similarity and regularization loss gradients, applying orthogonal projection for conflicting gradients and averaging for compatible ones [30].
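The compatibility test and projection described above can be illustrated with plain vectors. The sketch below treats a negative dot product (an angle above 90 degrees) as a conflict, removes from each gradient its component along the other, and then averages; compatible gradients are simply averaged. This follows the general pattern of projection-based gradient surgery and is not the cited method's actual implementation; the 90-degree criterion and the function names are assumptions.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def project_out(g, other):
    """Remove from g its component along `other` (orthogonal projection)."""
    scale = dot(g, other) / dot(other, other)
    return [a - scale * b for a, b in zip(g, other)]

def combine_gradients(g_sim, g_reg):
    """Combine similarity and regularization gradients for one resolution level.

    Conflicting gradients (negative dot product) are projected onto each
    other's orthogonal complement before averaging; compatible gradients
    are averaged directly.
    """
    if dot(g_sim, g_reg) < 0.0:
        g_sim, g_reg = project_out(g_sim, g_reg), project_out(g_reg, g_sim)
    return [(a + b) / 2.0 for a, b in zip(g_sim, g_reg)]

conflicting = combine_gradients([1.0, 0.0], [-1.0, 1.0])
compatible = combine_gradients([1.0, 0.0], [0.0, 1.0])
```

After projection the combined update no longer opposes either original gradient, so a step along it does not increase one loss to first order while decreasing the other.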

Self-Supervised Learning for Pathology

Self-supervised learning has revolutionized computational pathology by overcoming the annotation bottleneck. SSL methods formulate pretext tasks that leverage the intrinsic structure of unlabeled WSIs, enabling models to learn transferable visual representations. For multi-resolution networks, this typically involves:

- Masked Image Modeling (MIM): Randomly masking portions of input patches and training the network to reconstruct the missing tissue structures [6] [2].

- Contrastive Learning: Maximizing agreement between differently augmented views of the same tissue region while minimizing agreement with different regions [6].

- Multi-Scale Consistency: Enforcing predictive consistency between features extracted at different magnification levels [29].

Architectural Components and Frameworks

Core Building Blocks

Table 1: Essential Components of Multi-Resolution Hierarchical Networks

| Component | Function | Implementation Examples |
|---|---|---|
| Feature Pyramid Encoder | Extracts features at multiple scales simultaneously | Residual networks with feature pyramid [31] [32] |
| Hierarchical Gradient Modulation | Balances similarity and regularization losses across resolutions | Orthogonal projection for conflicting gradients [30] |
| Cross-Scale Attention | Models interactions between different magnification levels | Parent-child links between coarse (5x) and fine (20x) features [29] |
| Multi-Resolution Fusion | Integrates features from different scales for unified representation | Dual-attention mechanisms with channel grouping shuffle [31] |

Representative Architectural Frameworks

HiVE-MIL Framework: This hierarchical vision-language framework constructs a unified graph connecting visual and textual representations across scales. It establishes parent-child links between coarse (5x) and fine (20x) visual/textual nodes to capture hierarchical relationships, and heterogeneous intra-scale edges linking visual and textual nodes at the same magnification. A text-guided dynamic filtering mechanism removes weakly correlated patch-text pairs, while hierarchical contrastive loss aligns semantics across scales [29].

TITAN Architecture: The Transformer-based pathology Image and Text Alignment Network processes gigapixel WSIs through a Vision Transformer that creates general-purpose slide representations. TITAN employs a three-stage pretraining approach: (1) vision-only unimodal pretraining on region crops, (2) cross-modal alignment with generated morphological descriptions at the region level, and (3) cross-modal alignment with clinical reports at the whole-slide level. To handle extremely long sequences, TITAN uses Attention with Linear Biases (ALiBi) for long-context extrapolation [2].

MRLF Network: Originally designed for remote sensing, the Multi-Resolution Layered Fusion network provides valuable architectural insights for pathology applications. It decomposes input images into low-resolution global structural features and high-resolution local detail features using a hierarchical feature decoupling mechanism. A dual-attention collaborative mechanism dynamically adjusts modal weights and focuses on complementary regions across scales [32].
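The ALiBi mechanism mentioned for TITAN replaces learned positional embeddings with a distance-dependent penalty added to the attention logits. A hedged sketch of a 2D variant for a grid of patch features is shown below. It assumes the penalty is proportional to the Euclidean distance between grid cells, which is one natural way to extend the original 1D formulation; the slope value and the function name are hypothetical, and in ALiBi each attention head uses its own fixed slope.

```python
import math

def alibi_2d_bias(grid_height, grid_width, slope):
    """Additive attention bias: -slope * Euclidean distance between grid cells.

    Returns an (H*W) x (H*W) nested list; entry [i][j] is added to the
    attention logit between patch i and patch j (row-major grid order).
    """
    positions = [(r, c) for r in range(grid_height) for c in range(grid_width)]
    return [[-slope * math.hypot(r1 - r2, c1 - c2) for (r2, c2) in positions]
            for (r1, c1) in positions]

# Bias matrix for a 2x2 feature grid with a single illustrative slope.
bias = alibi_2d_bias(2, 2, slope=0.5)
```

Because the bias depends only on relative distance, the same rule applies unchanged to feature grids larger than those seen during pretraining, which is the basis of the long-context extrapolation property.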

Experimental Protocols and Methodologies

Model Training and Optimization

Training multi-resolution hierarchical networks requires specialized protocols to handle computational constraints while maintaining gradient stability:

Streaming Implementation: For end-to-end training on gigapixel images, StreamingCLAM uses a streaming implementation of convolutional layers that processes portions of the WSI while maintaining contextual awareness. This approach enables training on 4-gigapixel images using only slide-level labels [33].

Progressive Fine-tuning: A progressive fine-tuning protocol starts with low-resolution pretraining, gradually incorporating higher-resolution branches while freezing lower-level parameters. This strategy maintains stable optimization while incrementally increasing model capacity [6].

Multi-Resolution Loss Balancing: The Hierarchical Gradient Modulation method defines a gradient compatibility criterion across resolutions. During backpropagation, it analyzes the angle between similarity and regularization loss gradients, applying orthogonal projection when conflicts exceed a threshold and maintaining dominant gradient directions when compatible [30].

Evaluation Metrics and Benchmarks

Table 2: Quantitative Performance of Multi-Resolution Hierarchical Networks

| Method | Dataset | Key Metrics | Performance | Improvement Over Baselines |
|---|---|---|---|---|
| HGD Registration [30] | Medical, Sonar, Fabric | Registration Accuracy | Superior to baseline methods | Optimal loss balance without extra computation |
| SSL with Adaptive Augmentation [6] | TCGA-BRCA, CAMELYON16 | Dice: 0.825, mIoU: 0.742 | 4.3% Dice, 7.8% mIoU improvement | 70% reduction in annotation requirements |
| HiVE-MIL [29] | TCGA Breast, Lung, Kidney | Macro F1 (16-shot) | Up to 4.1% gain | Outperforms traditional MIL approaches |
| StreamingCLAM [33] | CAMELYON16 | AUC: 0.9757 | Close to fully supervised | Uses only slide-level labels |
| TITAN [2] | Mass-340K (335,645 WSIs) | Slide retrieval, zero-shot classification | Outperforms supervised baselines | Generalizes to rare cancer retrieval |

Implementation Toolkit

Research Reagent Solutions

Table 3: Essential Resources for Multi-Resolution Network Implementation

| Resource | Type | Function | Example Specifications |
|---|---|---|---|
| MSK-SLCPFM Dataset [28] | Pretraining Data | Foundation model development | ~300M images, 39 cancer types, 51,578 WSIs |
| TCGA Datasets [6] [29] | Benchmark Data | Method evaluation | Breast (BRCA), Lung (LUAD), Kidney cancers |
| CAMELYON16 [33] | Evaluation Dataset | Metastasis detection | Lymph node WSIs with slide-level labels |
| CONCH Embeddings [2] | Pretrained Features | Patch representation | 768-dimensional features from visual-language model |
| ALiBi Positional Encoding [2] | Algorithm | Long-sequence handling | Extends to 2D for WSI feature grids |

Architectural Diagrams

[Figure: Multi-Resolution Hierarchical Network Workflow]

[Figure: Hierarchical Gradient Modulation System]

The field of multi-resolution hierarchical networks for gigapixel images continues to evolve rapidly. Promising research directions include more efficient transformer architectures for long sequences, unified visual-language representation learning at multiple scales, and federated learning approaches to leverage distributed WSI repositories while preserving patient privacy [28] [2].

As computational pathology advances, multi-resolution hierarchical networks represent a foundational architectural paradigm that aligns with the hierarchical nature of biological systems. By integrating self-supervised learning objectives with structurally appropriate network designs, these approaches enable more data-efficient, interpretable, and clinically applicable models for cancer diagnosis and research. The continued development of these architectures will play a crucial role in realizing the potential of AI in digital pathology.

The integration of Masked Image Modeling (MIM) and contrastive learning represents a transformative advancement in self-supervised learning for computational pathology. This hybrid approach effectively addresses the critical challenges of annotation scarcity and generalization limitations that have historically constrained the development of robust AI models in histopathology. By combining MIM's strength in reconstructing fine-grained tissue structures with contrastive learning's ability to learn invariant representations across staining and preparation variations, these frameworks achieve superior performance across diverse downstream tasks including segmentation, classification, and slide retrieval. This technical guide comprehensively examines the architectural principles, methodological innovations, and experimental protocols underpinning successful hybrid SSL implementations, providing researchers with practical insights for advancing pathology image analysis.

Computational pathology faces fundamental challenges due to the scarce availability of pixel-level annotations for gigapixel Whole Slide Images (WSIs) and limited model generalization across diverse tissue types and institutional settings [34]. Self-supervised learning has emerged as a promising paradigm to address these limitations by leveraging unlabeled data to learn transferable representations. While individual SSL methods like contrastive learning and masked image modeling have demonstrated considerable success, each possesses distinct limitations when applied in isolation to histopathological data.

Hybrid SSL frameworks strategically integrate complementary learning objectives to overcome the limitations of individual approaches. The synergy between MIM, which excels at capturing fine-grained cellular structures through reconstruction tasks, and contrastive learning, which develops augmentation-invariant representations of tissue morphology, creates more robust feature representations [34] [2]. This integration is particularly valuable in pathology image analysis where both local cellular details and global tissue architecture are diagnostically significant.

The implementation of hybrid SSL strategies has yielded substantial empirical improvements. Recent research reports that combining masked autoencoder reconstruction with multi-scale contrastive learning achieves a Dice coefficient of 0.825 (a 4.3% relative improvement over the supervised baseline) and an mIoU of 0.742 (a 7.8% relative improvement) while significantly reducing boundary error metrics [34]. These approaches are also data-efficient: trained with only 25% of the labeled data, the hybrid model reaches 95.6% of its full-data performance, compared with 85.2% for supervised baselines [34].

Technical Foundations

Masked Image Modeling (MIM) in Pathology

Masked Image Modeling operates by randomly masking portions of an input image and training a model to reconstruct the missing regions based on the visible context. This approach forces the model to learn semantically meaningful representations of tissue structures and their spatial relationships. In pathology-specific implementations, standard random masking strategies are often enhanced with semantic-aware masking that preserves histological integrity during reconstruction [34].
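
The masking-and-reconstruction idea can be illustrated with a minimal NumPy sketch. It assumes the image has already been split into flattened patches, uses plain uniform random masking (not the semantic-aware variant), and substitutes an all-zero array for the decoder output; the function names and the 196-patch, 768-value layout are illustrative choices, not taken from the cited work.

```python
import numpy as np

def random_patch_mask(num_patches: int, mask_ratio: float,
                      rng: np.random.Generator) -> np.ndarray:
    """Return a boolean vector where True marks a masked (hidden) patch."""
    num_masked = int(round(num_patches * mask_ratio))
    mask = np.zeros(num_patches, dtype=bool)
    masked_idx = rng.choice(num_patches, size=num_masked, replace=False)
    mask[masked_idx] = True
    return mask

def masked_mse(pred: np.ndarray, target: np.ndarray, mask: np.ndarray) -> float:
    """Mean squared error computed over masked patches only.

    pred and target have shape (num_patches, patch_dim).
    """
    per_patch = ((pred - target) ** 2).mean(axis=1)
    return float(per_patch[mask].mean())

rng = np.random.default_rng(0)
patches = rng.random((196, 768))          # e.g. a 14x14 grid of 16x16 RGB patches
mask = random_patch_mask(196, 0.75, rng)  # hide 75% of the patches
pred = np.zeros_like(patches)             # stand-in for a decoder's reconstruction
loss = masked_mse(pred, patches, mask)
print(mask.sum(), "of", mask.size, "patches masked")
```

Restricting the loss to masked patches is the usual design choice: the visible patches are given to the encoder, so scoring them would reward copying rather than inference from context.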

The adaptation of MIM for histopathology presents unique considerations. Unlike natural images, WSIs exhibit multi-scale structural hierarchies ranging from sub-cellular features to tissue-level organization. Advanced implementations like the Mask in Mask (MiM) framework address this by introducing multiple levels of granularity for masked inputs, enabling simultaneous reconstruction at both fine and coarse levels [35]. This hierarchical approach is particularly valuable for capturing the nested morphological patterns present in histopathological samples.

Contrastive Learning Principles

Contrastive learning frameworks learn representations by maximizing agreement between differently augmented views of the same image while distinguishing them from other images in the dataset [1]. The core principle relies on constructing positive pairs (different augmentations of the same image) and negative pairs (different images) to train models to be invariant to non-semantic variations while capturing diagnostically relevant features.
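
As a rough illustration of this objective, the sketch below implements a one-directional InfoNCE loss in NumPy. It assumes row i of `z1` and row i of `z2` are embeddings of two augmented views of the same patch, and that every other row in the batch serves as a negative; the temperature value and the random embeddings are placeholders, not settings from the cited frameworks.

```python
import numpy as np

def info_nce(z1: np.ndarray, z2: np.ndarray, temperature: float = 0.1) -> float:
    """One-directional InfoNCE loss for a batch of paired embeddings (N, D)."""
    # Cosine similarity: L2-normalise each embedding first.
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / temperature               # (N, N) similarity matrix
    logits -= logits.max(axis=1, keepdims=True)    # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # Positive pairs sit on the diagonal; all other entries act as negatives.
    return float(-np.mean(np.diag(log_prob)))

rng = np.random.default_rng(0)
z = rng.normal(size=(8, 32))
aligned = info_nce(z, z + 0.01 * rng.normal(size=z.shape))  # near-identical views
unrelated = info_nce(z, rng.normal(size=z.shape))           # views carry no shared signal
print(f"aligned: {aligned:.3f}  unrelated: {unrelated:.3f}")
```

The loss is low when each embedding is closest to its own augmented counterpart and high when the pairing is uninformative, which is the behavior the positive/negative construction is meant to enforce.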

In pathology applications, contrastive learning must account for domain-specific challenges. Standard augmentations used in natural images may compromise histological semantics or introduce biologically implausible artifacts. Domain-adapted approaches like Spatial Guided Contrastive Learning (SGCL) leverage intrinsic properties of WSIs, including spatial proximity priors and multi-object priors, to generate semantically meaningful positive pairs [36]. These methods model intra-invariance within the same WSI and inter-invariance across different WSIs while maintaining biological plausibility.
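
A spatial proximity prior can be sketched very simply: treat a patch and one of its near neighbours on the slide's patch grid as a positive pair. The code below is a simplified illustration of that idea under assumed conventions (integer grid coordinates, a square neighbourhood of hypothetical size `radius`); it is not the sampling procedure of SGCL itself.

```python
import random

def sample_spatial_positive(anchor, grid_shape, radius, rng):
    """Pick a neighbouring patch coordinate within `radius` grid steps of `anchor`.

    anchor: (row, col) of the anchor patch on the WSI patch grid.
    grid_shape: (rows, cols) of the grid; must contain more than one patch.
    """
    rows, cols = grid_shape
    r0, c0 = anchor
    candidates = [
        (r, c)
        for r in range(max(0, r0 - radius), min(rows, r0 + radius + 1))
        for c in range(max(0, c0 - radius), min(cols, c0 + radius + 1))
        if (r, c) != (r0, c0)            # the anchor is not its own positive
    ]
    return rng.choice(candidates)

rng = random.Random(0)
anchor = (5, 5)
positive = sample_spatial_positive(anchor, grid_shape=(10, 10), radius=1, rng=rng)
print("anchor", anchor, "-> positive", positive)
```

In practice the radius trades off two risks: too small and the positive is nearly a duplicate of the anchor, too large and the pair may straddle different tissue compartments.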

Integration Rationale and Synergistic Benefits

The combination of MIM and contrastive learning creates a complementary learning system that addresses the limitations of each approach individually. MIM excels at capturing local structural details through pixel-level reconstruction tasks but may underemphasize global semantic relationships. Contrastive learning yields representations that are invariant to non-semantic variations but may overlook fine-grained morphological patterns. Their integration enables comprehensive feature learning spanning both local and global tissue characteristics.

The hybrid approach demonstrates particular strength in multi-scale feature learning, which is essential for pathology image analysis. MIM components capture cellular-level details, while contrastive objectives encode tissue-level contextual relationships. This synergy is evident in frameworks that report a 13.9% improvement in cross-dataset generalization, compared with 8.7% for contrastive-only and 10.2% for MIM-only pre-training (Table 1) [34].

Table 1: Performance Comparison of SSL Paradigms in Pathology

| Method | Dice Coefficient | mIoU | Data Efficiency (% of full performance) | Cross-Dataset Generalization Improvement |
|---|---|---|---|---|
| Supervised Baseline | 0.791 | 0.688 | 85.2% with 25% labels | Reference |
| Contrastive Only | 0.802 | 0.714 | 90.3% with 25% labels | 8.7% |
| MIM Only | 0.811 | 0.726 | 92.1% with 25% labels | 10.2% |
| Hybrid MIM + Contrastive | 0.825 | 0.742 | 95.6% with 25% labels | 13.9% |
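
The percentage improvements quoted for the hybrid model are relative gains over the supervised baseline row of Table 1. A short check, using only values from the table (the helper name is illustrative):

```python
def relative_gain(value: float, baseline: float) -> float:
    """Relative improvement of `value` over `baseline`, in percent."""
    return 100.0 * (value - baseline) / baseline

dice_gain = relative_gain(0.825, 0.791)   # hybrid vs. supervised Dice
miou_gain = relative_gain(0.742, 0.688)   # hybrid vs. supervised mIoU
print(f"Dice: +{dice_gain:.1f}%  mIoU: +{miou_gain:.1f}%")
# prints: Dice: +4.3%  mIoU: +7.8%
```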

Architectural Framework

Multi-Resolution Hierarchical Architecture

Effective hybrid SSL frameworks for pathology employ multi-resolution architectures specifically designed for gigapixel WSIs [34]. These architectures process images at multiple magnification levels to capture both cellular-level details and tissue-level context simultaneously. The hierarchical design typically consists of parallel encoder pathways operating at different scales, with cross-connections to share information across resolutions.

A critical innovation in these architectures is the adaptive feature fusion mechanism that dynamically integrates information from different scales based on tissue type and morphological characteristics. This approach mirrors the clinical practice of pathologists who routinely adjust magnification levels during slide examination to appreciate both fine cytological details and overall tissue architecture. The multi-resolution design contributes significantly to the documented 10.7% improvement in Hausdorff Distance and 9.5% improvement in Average Surface Distance metrics [34].
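
The source does not specify how the adaptive fusion is computed. One common way to realize input-dependent fusion is a softmax gate over scales, sketched below under that assumption: each magnification contributes one embedding, a gating vector (random here, learned in a real model) scores each scale, and the fused feature is the weighted sum. All names and dimensions are hypothetical.

```python
import numpy as np

def fuse_scales(features: np.ndarray, gate_vector: np.ndarray):
    """Softmax-gated fusion of per-scale embeddings.

    features: (num_scales, dim), one embedding per magnification level.
    gate_vector: (dim,), stands in for learned gating parameters.
    Returns the fused (dim,) embedding and the (num_scales,) weights.
    """
    scores = features @ gate_vector            # one relevance score per scale
    scores = scores - scores.max()             # numerical stability
    weights = np.exp(scores) / np.exp(scores).sum()
    fused = weights @ features                 # convex combination of the scales
    return fused, weights

rng = np.random.default_rng(0)
features = rng.normal(size=(3, 16))   # e.g. the same region at three magnifications
gate_vector = rng.normal(size=16)
fused, weights = fuse_scales(features, gate_vector)
print("scale weights:", np.round(weights, 3))
```

Because the weights depend on the features themselves, different tissue regions can lean on different magnifications, which is the behavior the text attributes to adaptive fusion.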

Hybrid Learning Objectives

The integration of MIM and contrastive learning involves designing composite loss functions that balance both objectives effectively. Typically, these frameworks employ a weighted combination of reconstruction loss (for MIM) and contrastive loss, with the relative weighting often optimized through empirical validation. Advanced implementations may include adaptive weighting schemes that dynamically adjust the contribution of each objective during training based on task complexity or training progress.
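
A weighted combination of the two objectives can be written as L_total = L_rec + λ(t) · L_con. The sketch below pairs that formula with one hypothetical schedule for λ(t), a cosine ramp that increases the contrastive weight over training. The schedule shape and the 0.2 and 1.0 endpoints are assumptions chosen for illustration; the source only states that the weighting is tuned empirically or adapted during training.

```python
import math

def contrastive_weight(step: int, total_steps: int,
                       w_start: float = 0.2, w_end: float = 1.0) -> float:
    """Cosine ramp from w_start (step 0) to w_end (final step)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return w_start + 0.5 * (w_end - w_start) * (1.0 - math.cos(math.pi * progress))

def hybrid_loss(recon_loss: float, contrastive_loss: float,
                step: int, total_steps: int) -> float:
    """Composite objective: reconstruction loss plus weighted contrastive loss."""
    lam = contrastive_weight(step, total_steps)
    return recon_loss + lam * contrastive_loss

for step in (0, 50, 100):
    print(step, round(contrastive_weight(step, 100), 3),
          round(hybrid_loss(1.0, 2.0, step, 100), 3))
```

A fixed λ chosen on a validation set is the simpler alternative; a schedule is only worth the extra hyperparameters if the two losses differ strongly in scale or convergence speed.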

The MIM component typically uses patch-level reconstruction with strategies like semantic-aware masking that prioritize histologically significant regions. Simultaneously, the contrastive component employs multi-scale sampling to generate positive pairs that capture both local and global semantic similarities. This dual objective approach has been shown to learn more balanced representations, excelling in both fine-grained segmentation tasks and whole-slide classification [34] [2].

Adaptive Semantic-Aware Data Augmentation

Hybrid SSL frameworks incorporate adaptive augmentation networks that preserve histological semantics while maximizing data diversity [34]. Unlike traditional augmentation techniques that may introduce biologically implausible artifacts, these learned transformation policies respect the structural integrity of tissue morphology. The augmentation strategies are typically optimized through reinforcement learning or gradient-based methods to maximize downstream task performance.

These adaptive approaches demonstrate particular value in maintaining diagnostic relevance during augmentation by avoiding transformations that alter pathologically significant features. The integration of semantic awareness enables more aggressive augmentation without compromising clinical utility, contributing to the observed improvements in generalization across diverse institutional environments [34].

Diagram 1: Hybrid SSL architecture combining MIM and contrastive learning pathways

Experimental Protocols and Methodologies

Pre-training Implementation Framework

The pre-training phase for hybrid SSL models requires careful configuration of both MIM and contrastive components. For the MIM module, implementations typically employ a high masking ratio of 60-80%, in line with the 75% default used by masked autoencoders on natural images, to force the model to learn robust structural representations of tissue morphology [34] [2]. The masking strategy often incorporates semantic awareness, prioritizing diagnostically relevant regions for reconstruction to enhance clinical utility.
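
One way to bias masking toward diagnostically relevant regions is to sample masked patches with probability proportional to a per-patch relevance score. The sketch below assumes such a score is already available (for example from a tissue detector or nuclei density estimate); how the cited framework derives or uses semantic information is not described in the text, so the function and its `floor` parameter are hypothetical.

```python
import numpy as np

def semantic_aware_mask(relevance, mask_ratio: float,
                        rng: np.random.Generator, floor: float = 1e-3) -> np.ndarray:
    """Sample a patch mask, favouring patches with higher relevance scores.

    relevance: non-negative score per patch (higher = more likely to be masked).
    floor: small constant so zero-score patches can still be selected.
    """
    relevance = np.asarray(relevance, dtype=float)
    num_patches = relevance.size
    num_masked = int(round(num_patches * mask_ratio))
    probs = relevance + floor
    probs = probs / probs.sum()
    idx = rng.choice(num_patches, size=num_masked, replace=False, p=probs)
    mask = np.zeros(num_patches, dtype=bool)
    mask[idx] = True
    return mask

rng = np.random.default_rng(0)
# Hypothetical scores: the first 32 patches are tissue-rich, the last 32 mostly background.
relevance = np.concatenate([np.ones(32), np.full(32, 0.05)])
mask = semantic_aware_mask(relevance, mask_ratio=0.75, rng=rng)
print("masked tissue-rich:", mask[:32].sum(), " masked background:", mask[32:].sum())
```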

The contrastive learning component utilizes domain-specific augmentations that preserve histological semantics. These include stain normalization, elastic deformations, and spatially coherent cropping that maintain tissue structure. Implementations like SGCL explicitly model spatial relationships through spatial proximity priors, where patches from spatially adjacent regions of a slide are treated as positive pairs to incorporate structural context [36]. This approach has been reported to yield 7-12% performance improvements over generic contrastive methods on pathology-specific tasks.

Progressive Fine-tuning Protocol

Successful application of hybrid SSL models employs a progressive fine-tuning approach that gradually adapts the pre-trained representations to downstream tasks [34]. This protocol typically begins with task-agnostic adaptation using a small subset of annotated data, followed by task-specific optimization with the full labeled dataset. The fine-tuning process often employs boundary-focused loss functions that prioritize accurate segmentation of tissue boundaries, addressing a common challenge in histopathology image analysis.

The fine-tuning phase may also incorporate adaptive learning rates for different components of the model, with lower rates for the pre-trained encoder to preserve the learned representations and higher rates for task-specific heads. This strategy balances representation preservation with task adaptation, contributing to the observed 70% reduction in annotation requirements while maintaining 95.6% of full performance [34].
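
Differential learning rates are usually expressed as optimizer parameter groups. The framework-free sketch below builds a list of `{"params", "lr"}` dictionaries, the same shape that PyTorch optimizers accept for per-group options. The `encoder.` name prefix, the base rate, and the 0.1 scale factor are assumptions for illustration, and strings stand in for real parameter tensors.

```python
def build_param_groups(named_params, base_lr: float = 1e-3,
                       encoder_lr_scale: float = 0.1,
                       encoder_prefix: str = "encoder."):
    """Split parameters into a low-LR encoder group and a full-LR head group."""
    encoder_params, head_params = [], []
    for name, param in named_params:
        if name.startswith(encoder_prefix):
            encoder_params.append(param)   # pre-trained weights: update gently
        else:
            head_params.append(param)      # task-specific head: update at full rate
    return [
        {"params": encoder_params, "lr": base_lr * encoder_lr_scale},
        {"params": head_params, "lr": base_lr},
    ]

# Strings stand in for parameter tensors in this illustration.
named = [("encoder.block0.weight", "w0"),
         ("encoder.block1.weight", "w1"),
         ("head.weight", "w2")]
groups = build_param_groups(named)
for g in groups:
    print(len(g["params"]), "params at lr", g["lr"])
```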

Evaluation Metrics and Validation

Comprehensive evaluation of hybrid SSL models extends beyond standard performance metrics to include clinical validation and generalization assessment. Technical metrics typically include Dice coefficient, mIoU, boundary accuracy measures (Hausdorff Distance, Average Surface Distance), and data efficiency curves [34]. Additionally, cross-dataset generalization is quantified through performance consistency across diverse tissue types and institutional sources.
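
For reference, the overlap and boundary metrics named above can be computed as in the following NumPy sketch for a single binary mask pair. Two simplifications are assumed: mIoU would average the IoU over classes, and the Hausdorff distance here is taken over all foreground pixels by brute force rather than over extracted boundaries, which is adequate for small masks only.

```python
import numpy as np

def dice_and_iou(pred: np.ndarray, target: np.ndarray):
    """Dice coefficient and IoU for two binary masks of equal shape."""
    pred, target = pred.astype(bool), target.astype(bool)
    inter = np.logical_and(pred, target).sum()
    total = pred.sum() + target.sum()
    union = total - inter
    dice = 2.0 * inter / total if total else 1.0   # two empty masks count as a perfect match
    iou = inter / union if union else 1.0
    return float(dice), float(iou)

def hausdorff(points_a: np.ndarray, points_b: np.ndarray) -> float:
    """Symmetric Hausdorff distance between two point sets of shape (n, 2)."""
    d = np.linalg.norm(points_a[:, None, :] - points_b[None, :, :], axis=-1)
    return float(max(d.min(axis=1).max(), d.min(axis=0).max()))

pred = np.zeros((4, 4), dtype=bool)
pred[0:2] = True                      # predicted region: rows 0-1
target = np.zeros((4, 4), dtype=bool)
target[1:3] = True                    # ground truth: rows 1-2

dice, iou = dice_and_iou(pred, target)
hd = hausdorff(np.argwhere(pred), np.argwhere(target))
print(f"Dice={dice:.3f}  IoU={iou:.3f}  Hausdorff={hd:.1f} px")
# prints: Dice=0.500  IoU=0.333  Hausdorff=1.0 px
```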

Clinical validation involves expert pathologist assessment of model outputs for diagnostic utility and boundary accuracy. In recent implementations, hybrid SSL frameworks received ratings of 4.3/5.0 for clinical applicability and 4.1/5.0 for boundary accuracy from practicing pathologists [34]. This multi-faceted evaluation approach ensures that technical improvements translate to clinically meaningful advancements.

Table 2: Detailed Performance Metrics Across Cancer Types

| Cancer Type | Dataset | Dice Coefficient | mIoU | Hausdorff Distance | Average Surface Distance |
|---|---|---|---|---|---|
| Breast Cancer | TCGA-BRCA | 0.841 | 0.762 | 9.3 | 8.1 |
| Lung Cancer | TCGA-LUAD | 0.832 | 0.751 | 10.2 | 8.9 |
| Colon Cancer | TCGA-COAD | 0.819 | 0.738 | 11.7 | 9.8 |
| Lymph Node | CAMELYON16 | 0.867 | 0.781 | 8.5 | 7.3 |
| Pan-Cancer | PanNuke | 0.826 | 0.743 | 10.8 | 9.1 |

The implementation of hybrid SSL strategies requires specific computational resources and methodological components. The following table details essential "research reagents" for developing and evaluating these frameworks in computational pathology.

Table 3: Essential Research Reagents for Hybrid SSL Implementation

| Component | Representative Examples | Function | Implementation Considerations |

|---|---|---|---|

| Patch Encoders | CONCH, ViT, UNI [2] [37] | Feature extraction from image patches | Pre-trained on histopathology data; support for multi-scale processing |