Single-Cell Data Normalization and HVG Selection: A Comprehensive Guide for Robust Analysis


Abstract

This article provides a comprehensive guide to single-cell RNA sequencing data normalization and highly variable gene (HVG) selection, critical steps that directly impact all downstream analyses. We explore the foundational concepts of technical and biological variability in scRNA-seq data, detail state-of-the-art methodologies from global scaling to regularized negative binomial regression, and offer practical troubleshooting advice for common pitfalls. By comparing performance validation strategies and benchmarking outcomes, we equip researchers and drug development professionals with the knowledge to make informed decisions, optimize their analysis pipelines, and ensure biologically meaningful results in studies of cellular heterogeneity.

Understanding scRNA-seq Data: From Technical Biases to Biological Variation

Frequently Asked Questions (FAQs)

1. What are "dropouts" in single-cell RNA-seq data? Dropouts are a phenomenon where a gene is expressed at a moderate or low level in a cell but fails to be detected during the sequencing process, resulting in a zero count [1]. This occurs due to the exceptionally low starting amounts of mRNA in individual cells, inefficiencies in mRNA capture, and the inherent stochasticity of gene expression at the single-cell level [1] [2]. In a typical dataset, over 97% of the count matrix can be zeros, a characteristic known as zero-inflation [1].

2. How does technical noise confound single-cell data analysis? Technical noise refers to non-biological fluctuations in the data caused by the entire data generation process. This includes variability in cell lysis, reverse transcription efficiency, amplification, and molecular sampling during sequencing [2] [3]. This noise masks true biological variation, such as subtle cell-to-cell differences in gene expression, and can obscure important biological signals, including tumor-suppressor events in cancer or cell-type-specific transcription factor activities [3].

3. Why can't I just use analysis methods designed for bulk RNA-seq? Bulk RNA-seq measures the average gene expression across thousands of cells, which masks the heterogeneity within a cell population [2]. Single-cell data, in contrast, is defined by its high sparsity (excessive zero counts) and high technical variability, even for genes with medium or high expression levels [2]. The assumptions made for bulk RNA-seq analysis, such as a lower proportion of zeros and different noise structure, often do not hold for single-cell data, leading to misleading results if bulk methods are applied directly [2].

4. My cell clusters are unstable. Could dropouts be the cause? Yes. High dropout rates can break the fundamental assumption that "similar cells are close to each other" in the analytical space [4]. While clusters might still appear homogeneous (containing the same cell type), their stability—whether the same cells are grouped together consistently—decreases as dropout rates increase. This makes it particularly difficult to reliably identify fine-grained subpopulations within broader cell types [4].

5. How does data preprocessing introduce artifacts into my analysis? Many preprocessing methods, particularly imputation tools designed to fill in dropout events, can oversmooth the data [5]. This oversmoothing introduces spurious (false) gene-gene correlations, creating associations that do not exist biologically. One study found that several popular normalization and imputation methods resulted in a noticeable inflation of gene-gene correlation coefficients for gene pairs that are not expected to be co-expressed [5].
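The zero fraction and detection rates discussed in these answers are easy to check on your own data. The sketch below assumes a dense genes x cells NumPy array (real datasets are usually stored as sparse matrices), and `sparsity_summary` is a hypothetical helper name. These statistics describe how sparse a matrix is, but they cannot by themselves tell technical dropouts apart from genuine absence of expression.

```python
import numpy as np


def sparsity_summary(counts):
    """Summarize zero-inflation in a genes x cells count matrix (hypothetical helper)."""
    counts = np.asarray(counts)
    detected = counts > 0
    return {
        # Share of all matrix entries that are zero
        "zero_fraction": float(np.mean(counts == 0)),
        # Per gene: fraction of cells in which the gene has at least one count
        "detection_rate": detected.mean(axis=1),
        # Per cell: number of genes with at least one count
        "genes_per_cell": detected.sum(axis=0),
    }


# Toy matrix: 4 genes x 5 cells
toy = np.array([
    [0, 0, 3, 0, 1],
    [0, 0, 0, 0, 0],
    [5, 2, 7, 4, 6],
    [0, 1, 0, 0, 0],
])
summary = sparsity_summary(toy)
print(summary["zero_fraction"])  # 12 of 20 entries are zero -> 0.6
```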

Troubleshooting Guides

Problem: Poor or Unstable Cell Clustering

Potential Cause: High dropout rates are obscuring the true biological signals needed to distinguish cell types [4].

Solutions:

- Explore Alternative Normalization: Move beyond simple global-scaling normalization. Test methods like SCTransform, which uses regularized negative binomial regression to model technical noise, or Scran, which pools cells to improve size factor estimation [6].

- Leverage Dropout Patterns: Instead of treating all zeros as missing information, consider methods that use the binary (on/off) dropout pattern itself as a signal. Genes in the same pathway can exhibit similar dropout patterns across cell types, which can be used for clustering [1].

- Benchmark Preprocessing Methods: Systematically test different preprocessing pipelines. There is no single best method that works for all datasets and all clustering algorithms [7]. Use the SC3-e algorithm or similar approaches to help identify the optimal preprocessing method for your specific dataset and chosen clustering tool [7].

Problem: Inflated or Spurious Gene-Gene Correlations

Potential Cause: Data imputation or normalization methods have oversmoothed the data, creating artificial correlations [5].

Solutions:

- Apply Noise Regularization: After preprocessing, add a noise-regularization step. This involves adding noise drawn from a uniform distribution that is scaled to the dynamic expression range of each gene. This has been shown to effectively reduce correlation artifacts while preserving true biological associations [5].

- Use High-Dimensional Noise Reduction: Employ tools like RECODE, which are based on high-dimensional statistics to model and reduce technical noise without oversmoothing, thereby preserving the structure of the data for network inference [3].

- Validate with Independent Data: Correlations inferred from preprocessed scRNA-seq data should be treated with caution and validated against known protein-protein interaction databases (e.g., STRING) or other orthogonal data sources [5].
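The noise-regularization step can be sketched in a few lines. The exact noise distribution and scale used in the cited study are not given here, so this sketch assumes that noise for each gene is drawn from a uniform distribution on [0, scale x range), where range is that gene's maximum minus minimum across cells; `noise_regularize` and its default `scale` are hypothetical.

```python
import numpy as np


def noise_regularize(expr, scale=0.1, seed=0):
    """Add per-gene uniform noise to a genes x cells matrix of preprocessed values.

    Assumption: noise for gene g is drawn from U(0, scale * range_g), where
    range_g is that gene's max minus min across cells. `scale` is a
    hypothetical default, not a setting taken from the cited study.
    """
    rng = np.random.default_rng(seed)
    expr = np.asarray(expr, dtype=float)
    # Dynamic range of each gene; genes with zero range receive no noise
    gene_range = expr.max(axis=1, keepdims=True) - expr.min(axis=1, keepdims=True)
    noise = rng.uniform(0.0, 1.0, size=expr.shape) * scale * gene_range
    return expr + noise


# Two genes whose identical profiles stand in for an oversmoothing artifact
smoothed = np.vstack([np.linspace(0.0, 1.0, 50), np.linspace(0.0, 1.0, 50)])
regularized = noise_regularize(smoothed, scale=0.5)
print(np.corrcoef(smoothed)[0, 1], np.corrcoef(regularized)[0, 1])
```

The toy example only shows the mechanics: the perfect correlation between two identical profiles falls below 1 once independent noise is added. The choice of `scale` trades removal of weak, artifactual correlations against attenuation of genuine ones.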

Problem: Batch Effects Masking Biological Variation

Potential Cause: Technical variations between experiments conducted at different times or on different sequencing platforms introduce non-biological variability [3].

Solutions:

- Implement Integrated Noise Reduction: Use a method capable of simultaneously reducing both technical noise (dropouts) and batch effects, such as iRECODE. This approach integrates batch correction within a noise-reduced essential space, preventing the degradation of correction accuracy that can occur with high-dimensional, noisy data [3].

- Choose Appropriate Batch-Correction Algorithms: When using standalone batch correction, select methods that are compatible with single-cell data. Benchmarking suggests that Harmony, MNN-correct, and Scanorama are prominent options, with their performance potentially varying by dataset [3].

Experimental Protocols & Data Presentation

Detailed Methodology: Co-occurrence Clustering Using Dropout Patterns

This protocol is based on a method that clusters cells by treating dropout events as useful biological signals rather than noise [1].

- Data Binarization: Start with the raw scRNA-seq count matrix. Transform this matrix into a binary (0/1) matrix, where 0 represents a dropout (non-detection) and 1 represents a detected gene transcript [1].

- Gene-Gene Co-occurrence Graph Construction:

- For each pair of genes, compute a statistical measure of co-occurrence, which quantifies how often the two genes are simultaneously detected (value of 1) in the same cells.

- Filter and adjust these co-occurrence measures using the Jaccard index to create a weighted gene-gene graph [1].

- Gene Pathway Identification:

- Partition the weighted gene-gene graph into gene clusters, each of which is treated as a putative gene pathway.

- Pathway Activity Space Representation:

- For each gene cluster (pathway) and for each cell, calculate the percentage of genes within that cluster that were detected.

- This generates a low-dimensional representation of the cells, where each dimension corresponds to the activity level of a specific gene pathway [1].

- Cell-Clustering:

- Construct a cell-cell graph using Euclidean distances calculated from the low-dimensional pathway activity matrix.

- Filter this graph with the Jaccard index and apply community detection to partition the cells into clusters [1].

- Cluster Merging and Hierarchical Division:

- Evaluate pairs of cell clusters using metrics like signal-to-noise ratio, mean difference, and mean ratio of pathway activities. Merge clusters if no pathway shows a differential activity above a defined threshold.

- The entire process can be applied iteratively to each new cell cluster in a hierarchical manner to identify finer subpopulations [1].
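The first steps of this protocol (binarization, a gene-gene graph, gene clusters, and pathway activity per cell) can be illustrated with a simplified sketch. Several substitutions are assumptions, not the published method: the raw Jaccard index replaces the statistical co-occurrence measure, a hypothetical cutoff of 0.5 turns it into an unweighted graph, and connected components stand in for community detection. The cell-clustering and merging steps are omitted.

```python
import numpy as np


def binarize(counts):
    """1 = detected (count > 0), 0 = dropout."""
    return (np.asarray(counts) > 0).astype(int)


def gene_jaccard(binary):
    """Jaccard index between every pair of genes (rows) over cells (columns)."""
    b = binary.astype(float)
    both = b @ b.T                                   # cells where both genes are detected
    detected = b.sum(axis=1)
    either = detected[:, None] + detected[None, :] - both
    jac = np.divide(both, either, out=np.zeros_like(both), where=either > 0)
    np.fill_diagonal(jac, 0.0)                       # ignore self-similarity
    return jac


def connected_components(adjacency):
    """Label connected components of an undirected graph (stand-in for community detection)."""
    n = adjacency.shape[0]
    labels = np.full(n, -1)
    current = 0
    for start in range(n):
        if labels[start] >= 0:
            continue
        labels[start] = current
        stack = [start]
        while stack:
            node = stack.pop()
            for neighbor in np.flatnonzero(adjacency[node]):
                if labels[neighbor] < 0:
                    labels[neighbor] = current
                    stack.append(neighbor)
        current += 1
    return labels


def pathway_activity(binary, gene_labels):
    """Rows = gene clusters, columns = cells; value = fraction of the cluster's genes detected."""
    return np.vstack([binary[gene_labels == c].mean(axis=0) for c in np.unique(gene_labels)])


counts = np.array([[1, 2, 1, 0, 0, 0],
                   [3, 1, 2, 0, 0, 0],
                   [0, 0, 0, 2, 1, 4],
                   [0, 0, 0, 1, 1, 1]])
binary = binarize(counts)
gene_labels = connected_components(gene_jaccard(binary) >= 0.5)  # hypothetical cutoff
activity = pathway_activity(binary, gene_labels)
print(gene_labels)  # [0 0 1 1]
print(activity)
```

The resulting activity matrix is the low-dimensional representation described above; a cell-cell graph would then be built from Euclidean distances between its columns.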

Table 1: Comparison of scRNA-seq Normalization Methods

| Method | Principle | Key Features | Considerations |
|---|---|---|---|
| Global Scaling (e.g., in Seurat/Scanpy) | Divides counts by the total counts per cell, multiplies by a scale factor (e.g., 10,000), and log-transforms [6]. | Simple, fast, widely used. | May fail to normalize high-abundance genes effectively; cell order in embeddings can correlate with sequencing depth [6]. |
| SCTransform | Regularized negative binomial regression on UMI counts with sequencing depth as a covariate [6]. | Produces depth-independent Pearson residuals; no assumption of a fixed size factor. | Model-based; can be computationally intensive for very large datasets. |
| Scran | Pools cells and sums expression to estimate pool-based size factors, then solves a linear system to deconvolve cell-specific factors [6]. | Robust to the high number of zero counts; generates individual cell size factors. | Requires a pooling step. |
| BASiCS | Bayesian hierarchical model that jointly quantifies technical noise and biological heterogeneity [6]. | Can use spike-in genes or technical replicates; also identifies highly variable genes. | Requires spike-ins or technical replicates for best performance. |
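The global-scaling row can be written out directly. This is a minimal NumPy sketch on a genes x cells matrix with a hypothetical function name; in Scanpy, where cells are rows, the same operation is roughly `sc.pp.normalize_total(adata, target_sum=1e4)` followed by `sc.pp.log1p(adata)`.

```python
import numpy as np


def log_normalize(counts, target_sum=1e4):
    """Global-scaling normalization of a genes x cells count matrix.

    Each cell's counts are divided by that cell's total, multiplied by
    target_sum (10,000 gives CP10K), and transformed with log(1 + x).
    Assumes cells with zero total counts were already filtered out.
    """
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=0, keepdims=True)   # library size of each cell
    return np.log1p(counts / totals * target_sum)


# Cell 2 has the same composition as cell 1 but twice the sequencing depth
counts = np.array([[1, 2],
                   [3, 6]])
normalized = log_normalize(counts)
print(normalized)
```

Both columns come out identical, which is the intended removal of depth differences; the same scaling would also erase a genuine twofold difference in total RNA content between two cells.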

Table 2: Impact of Data Preprocessing on Gene-Gene Correlation Inference

| Preprocessing Method | Median Spearman Correlation (ρ) | Inflation vs. NormUMI Baseline | Risk of Spurious Correlation |
|---|---|---|---|
| NormUMI (Baseline) | 0.023 | -- | Low |
| NBR | 0.839 | 36x | High |
| MAGIC | 0.789 | 34x | High |
| DCA | 0.770 | 33x | High |
| SAVER | 0.166 | 7x | Moderate |

Data adapted from a benchmarking study on HCA bone marrow data [5].

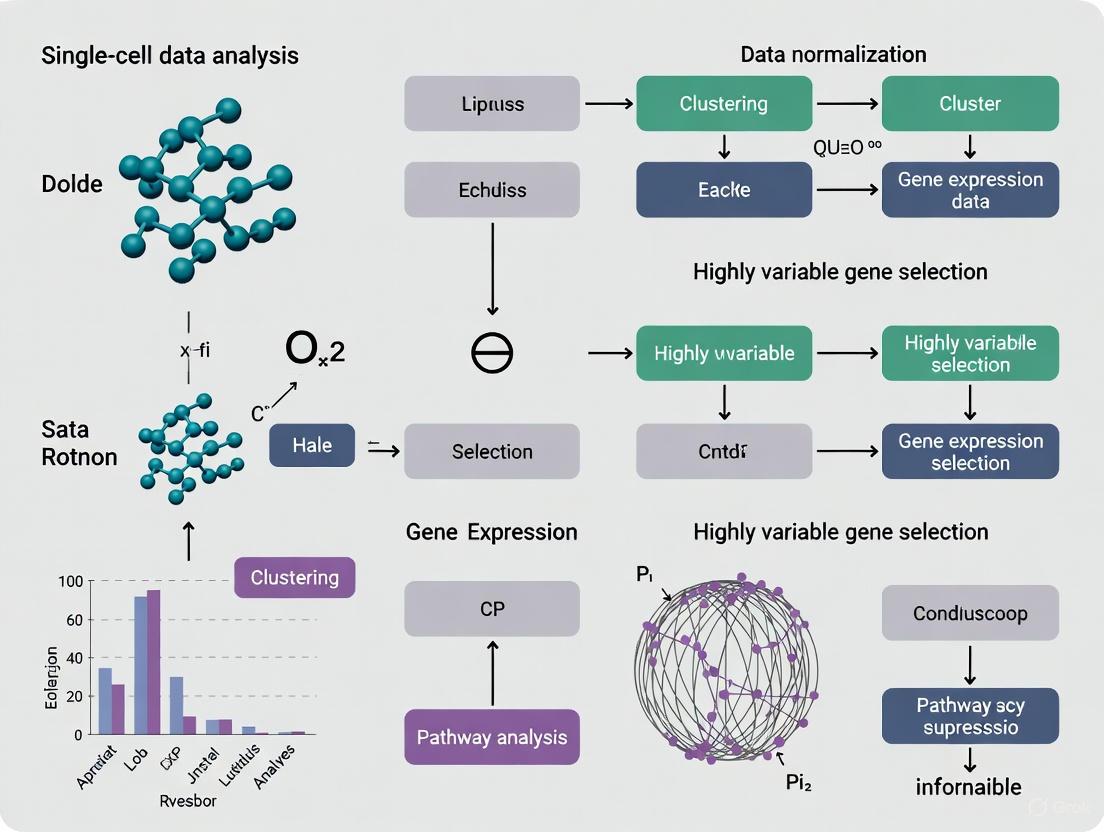

Visualization Diagrams

Diagram 1: The Single-Cell Data Analysis Challenge: From Drops to Data

Diagram 2: Co-occurrence Clustering Workflow Using Dropout Patterns

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for scRNA-seq Data Analysis

| Tool / Resource | Function | Relevance to Sparsity/Dropouts |
|---|---|---|
| Unique Molecular Identifiers (UMIs) | Short nucleotide barcodes that label individual mRNA molecules, allowing for the correction of PCR amplification bias and more accurate transcript counting [8]. | Reduces technical noise from amplification but does not solve pre-capture issues like low mRNA input or reverse transcription inefficiency, which are primary sources of dropouts [2]. |
| Spike-in RNAs (e.g., from the External RNA Controls Consortium, ERCC) | Known quantities of foreign RNA transcripts added to the cell lysate before library preparation [2]. | Provides an external standard to model technical variation and quantify the absolute number of transcript molecules, helping to distinguish technical zeros (dropouts) from true biological absence [2] [6]. |
| 10x Genomics Barcoded Gel Beads | Microfluidic reagents and chips for partitioning thousands of single cells into droplets for parallel library preparation [8]. | Standardizes the initial capture and reverse transcription step for many cells at once, though cell-to-cell variability in capture efficiency remains a key contributor to observed dropout patterns [1] [8]. |
| Bayesian Hierarchical Models (e.g., BASiCS) | Statistical models that jointly quantify technical noise and biological heterogeneity by integrating spike-in controls [6]. | Directly models the sources of technical noise, allowing for a more precise normalization that accounts for the zero-inflated nature of the data [6]. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: What are the main sources of systematic technical bias in scRNA-seq experiments? The primary technical biases originate from three main sources: capture efficiency (varying success in mRNA molecule capture during reverse transcription), amplification bias (non-linear amplification during PCR), and sequencing depth (variable number of reads per cell). These factors introduce cell-specific systematic biases that must be accounted for during normalization [2] [9].

Q2: Why can't I use bulk RNA-seq normalization methods for my scRNA-seq data? Bulk normalization methods assume minimal zero counts and balanced differential expression, which doesn't hold for scRNA-seq data characterized by high sparsity (zero inflation) and substantial technical noise. Using bulk methods can lead to misleading results in downstream analyses like highly variable gene detection and clustering [2].

Q3: How do Unique Molecular Identifiers (UMIs) help with amplification bias? UMIs are random barcodes attached to each molecule during reverse transcription, which allow PCR duplicates to be collapsed bioinformatically. The resulting molecule counts correct for PCR amplification biases, although UMIs cannot account for differences in capture efficiency prior to reverse transcription [2] [9].

Q4: When should I use spike-in RNAs for normalization? Spike-ins are most beneficial when you need to preserve biological differences in total RNA content between cells, such as when studying cellular activation states where transcriptome size changes are biologically meaningful. Spike-in normalization assumes that the same amount of spike-in RNA is added to every cell [10] [11].

Q5: My clustering results seem driven by technical effects rather than biology. How can I address this? This often indicates incomplete normalization. Consider moving beyond simple library size normalization to methods like deconvolution normalization (scran) or regularized negative binomial regression (SCTransform), which better handle composition biases and the mean-variance relationship in single-cell data [10] [6].
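The difference between library-size and spike-in normalization (Q4) shows up in a two-cell toy example. The values are hypothetical: cell B contains twice the endogenous RNA of cell A, and both received the same amount of spike-in RNA, which is the assumption spike-in normalization relies on. Size factors here are simply per-cell totals scaled to a mean of 1.

```python
import numpy as np


def size_factors(totals):
    """Scale per-cell totals so the factors have mean 1."""
    totals = np.asarray(totals, dtype=float)
    return totals / totals.mean()


# Hypothetical data (rows = genes or spike-in transcripts, columns = cells A and B)
endogenous = np.array([[10, 20],
                       [30, 60]], dtype=float)
spike_ins = np.array([[5, 5],
                      [15, 15]], dtype=float)

lib_sf = size_factors(endogenous.sum(axis=0))    # factors from endogenous library size
spike_sf = size_factors(spike_ins.sum(axis=0))   # factors from spike-in totals

lib_norm = endogenous / lib_sf      # columns become identical: the size difference is erased
spike_norm = endogenous / spike_sf  # cell B keeps its twofold higher total
print(lib_norm)
print(spike_norm)
```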

Troubleshooting Common Problems

Problem: High variance in library sizes across cells

Solution: Implement deconvolution normalization using the scran method, which pools cells to estimate size factors more accurately than per-cell library size normalization; this is especially important for heterogeneous cell populations [10].

Problem: Composition biases affecting differential expression results

Solution: Use normalization methods that account for unbalanced differential expression, such as those in DESeq2 or edgeR adapted for single-cell data, or consider spike-in normalization if available [10].

Problem: Inaccurate highly variable gene detection

Solution: Apply variance modeling that separates technical from biological variability using methods like modelGeneVar() or modelGeneVarByPoisson() in scran, which model the mean-variance relationship to identify genuine biological heterogeneity [12].

Normalization Method Comparison

Table 1: Comparison of scRNA-seq Normalization Methods and Their Applications

| Method | Underlying Principle | Handles Composition Bias | Spike-ins Required | Best Use Case |
|---|---|---|---|---|
| Library Size | Scales by total UMI count per cell | No | No | Initial exploration, homogeneous populations [10] |
| Deconvolution (scran) | Pooling across cells to estimate factors | Yes | No | Heterogeneous cell populations, differential expression [10] [6] |
| Spike-in | Normalizes to exogenous RNA controls | Yes | Yes | When total RNA content differences are biologically important [10] |
| SCTransform | Regularized negative binomial regression | Yes | No | Integrated analysis, clustering, addressing overfitting [6] |
| SCnorm | Quantile regression by gene groups | Yes | Optional | Conditions with strong mean-variance dependence [6] |
| BASiCS | Bayesian hierarchical modeling | Yes | Spike-ins or technical replicates | When quantifying technical and biological variation is critical [13] [6] |

Table 2: Qualitative Performance Comparison Across Normalization Methods

| Method | Computational Intensity | Preserves Transcriptome Size | Handles Zeros | Downstream Clustering | Differential Expression |
|---|---|---|---|---|---|
| Library Size | Low | No | Moderate | Good for major types | Limited by composition bias [10] |
| Deconvolution | Medium | Partial | Good | Good | Improved [10] |
| Spike-in | Low | Yes | Good | Good | Good, preserves biological differences [10] [11] |
| SCTransform | Medium-High | No | Good | Excellent | Excellent [6] |
| SCnorm | Medium | No | Good | Good | Good for cross-condition [6] |
| BASiCS | High | Yes | Excellent | Good | Excellent, provides uncertainty [13] [6] |

Experimental Protocols

Protocol 1: Implementing Deconvolution Normalization with Scran

Purpose: To accurately normalize scRNA-seq data from heterogeneous cell populations while mitigating composition biases [10].

- Data Preparation: Load the count data into a SingleCellExperiment object.

- Quick Clustering:

- Perform rapid clustering using quickCluster() to group similar cells.

- This step ensures that normalization is performed within biologically similar subsets; pass the resulting clusters to computeSumFactors() through its clusters argument.

- Size Factor Calculation:

- Apply computeSumFactors() to estimate cell-specific size factors via deconvolution.

- The method solves a system of linear equations built from sums over pools of cells.

- Normalization:

- Apply the size factors using logNormCounts() to obtain log-transformed normalized counts.

- Validation:

- Check that size factors are not correlated with cell type identities

- Verify reduction in technical batch effects
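The deconvolution idea behind the Size Factor Calculation step can be illustrated in NumPy. This is a simplified sketch, not scran's computeSumFactors(): it skips the quickCluster() pre-clustering, uses two hypothetical pool sizes, arranges cells in a ring ordered by library size, and solves the pooled equations with ordinary least squares. It assumes there are more cells than the largest pool size.

```python
import numpy as np


def deconvolution_size_factors(counts, pool_sizes=(20, 21)):
    """Simplified pool-and-deconvolve size factors for a genes x cells count matrix.

    Illustrates the idea behind scran's computeSumFactors(); it is not that
    function's implementation. pool_sizes are hypothetical choices.
    """
    counts = np.asarray(counts, dtype=float)
    n_cells = counts.shape[1]
    # Reference pseudo-cell: average expression of each gene across all cells
    pseudo = counts.mean(axis=1)
    keep = pseudo > 0
    counts, pseudo = counts[keep], pseudo[keep]
    # Arrange cells in a ring ordered by library size so each pool holds similar cells
    order = np.argsort(counts.sum(axis=0))
    rows, rhs = [], []
    for size in pool_sizes:
        for start in range(n_cells):
            members = order[(start + np.arange(size)) % n_cells]
            row = np.zeros(n_cells)
            row[members] = 1.0
            rows.append(row)
            # Pool factor = median ratio of summed pool counts to the pseudo-cell
            pooled = counts[:, members].sum(axis=1)
            rhs.append(np.median(pooled / pseudo))
    # Each pool factor equals the sum of its members' factors: solve by least squares
    factors, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    return factors / factors.mean()


# Simulated Poisson counts with known size factors
rng = np.random.default_rng(1)
true_sf = rng.uniform(0.5, 2.0, size=100)
gene_means = rng.gamma(2.0, 5.0, size=2000)
sim_counts = rng.poisson(np.outer(gene_means, true_sf))
est_sf = deconvolution_size_factors(sim_counts)
print(np.corrcoef(est_sf, true_sf)[0, 1])
```

Pooling is what makes the approach tolerant of zeros: a single cell's median ratio is often zero, whereas the summed counts of 20 cells rarely are.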

Protocol 2: Highly Variable Gene Detection with Variance Modeling

Purpose: To identify genes with genuine biological variability while accounting for technical noise [12].

- Normalization: Begin with properly normalized data (e.g., using log-normalized counts)

- Variance Modeling:

- Use modelGeneVar() to fit a trend to the variance with respect to abundance.

- Alternatively, use modelGeneVarByPoisson() for UMI count data.

- Component Separation:

- Decompose total variance into technical (uninteresting) and biological (interesting) components

- Biological component = Total variance - Technical variance

- Gene Selection:

- Select the top genes based on the biological component using getTopHVGs().

- Typical settings: 1000-5000 HVGs for downstream analysis.

- Validation:

- Check that selected HVGs drive biologically meaningful clustering

- Ensure representation of known cell type markers
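Protocol 2's variance decomposition can be mimicked in NumPy to show what modelGeneVar() and getTopHVGs() compute conceptually. In this sketch a quadratic polynomial fit of variance against mean stands in for scran's trend fitting, and the helper names are hypothetical. Like any trend fitted to all genes, it assumes that most genes are not biologically variable, so the trend approximates the technical component.

```python
import numpy as np


def model_gene_var(logcounts, degree=2):
    """Split each gene's variance into a fitted 'technical' trend and a 'biological' residual."""
    logcounts = np.asarray(logcounts, dtype=float)
    mean = logcounts.mean(axis=1)
    total = logcounts.var(axis=1, ddof=1)
    # Polynomial trend of variance on mean (stand-in for a loess-style fit)
    coeffs = np.polyfit(mean, total, deg=degree)
    tech = np.polyval(coeffs, mean)
    bio = total - tech            # biological component = total - technical
    return mean, total, tech, bio


def top_hvgs(bio, n):
    """Indices of the n genes with the largest biological component."""
    return np.argsort(bio)[::-1][:n]


rng = np.random.default_rng(0)
n_genes, n_cells = 500, 200
gene_means = rng.gamma(2.0, 2.0, size=n_genes)
counts = rng.poisson(np.repeat(gene_means[:, None], n_cells, axis=1))
half = n_cells // 2
# Plant 5 genes that are high in one half of the cells and low in the other
counts[:5, :half] = rng.poisson(30.0, size=(5, half))
counts[:5, half:] = rng.poisson(1.0, size=(5, n_cells - half))
mean, total, tech, bio = model_gene_var(np.log1p(counts))
print(sorted(top_hvgs(bio, 5).tolist()))
```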

Workflow Visualization

scRNA-seq Normalization Decision Framework

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for scRNA-seq Normalization

| Tool/Reagent | Type | Primary Function | Considerations |
|---|---|---|---|
| UMI Barcodes | Wet-bench | Molecular counting by tagging individual molecules | Eliminates PCR duplication bias but doesn't address capture efficiency [2] [9] |
| ERCC Spike-ins | Wet-bench | External RNA controls for technical variation | Requires consistent addition across cells; may not mirror endogenous RNA behavior perfectly [9] [10] |
| 10X Genomics | Platform | Droplet-based single-cell partitioning with UMIs | High throughput, but sequences only the 3' end (or, with 5' kits, the 5' end) of transcripts [9] |
| Smart-seq2/3 | Platform | Full-length transcript sequencing | Lower throughput but enables isoform detection; Smart-seq3 includes UMIs [9] |
| Scran (R) | Software | Deconvolution normalization using cell pooling | Excellent for heterogeneous populations; requires similar cells for pooling [10] [6] |
| SCTransform (R) | Software | Regularized negative binomial regression | Handles overdispersion well; integrated in Seurat pipeline [6] |
| BASiCS (R) | Software | Bayesian hierarchical modeling | Provides uncertainty quantification; computationally intensive [13] [6] |
| Scanpy (Python) | Software | Toolkit including multiple normalization methods | Python alternative to Seurat; includes basic and advanced methods [6] |

Core Concepts in scRNA-seq Normalization

Why is normalization necessary in single-cell RNA-seq analysis?

Normalization is a critical first step in scRNA-seq data analysis because raw gene counts are not directly comparable between cells. Significant differences can exist in the expression distributions of the same gene in the same cell type across different samples due to technology-induced effects like sequencing depth [14]. Normalization removes these technical variations to enable valid biological comparisons.

What fundamental assumption do most normalization methods rely on?

Most normalization methods, notably the widely used global scaling methods, assume that the total RNA output (the transcriptome size) is similar across most cells. Global scaling methods aim to eliminate systematic technical differences by equalizing total read counts across cells [9]. However, this assumption can be problematic when true biological differences in RNA content exist between cell types.

Normalization Methods: Comparison and Applications

What are the primary categories of normalization methods?

The table below summarizes the main categories of normalization methods used in scRNA-seq analysis [9]:

Table 1: Categories of scRNA-seq Normalization Methods

| Method Category | Mathematical Foundation | Key Examples | Primary Use Case |
|---|---|---|---|
| Global Scaling | Multiplicative size factors | CPM, TPM, CP10K | Standardization of library sizes |
| Generalized Linear Models | Regression-based approaches | SCTransform | Accounting for technical covariates |
| Mixed Methods | Combined approaches | - | Complex experimental designs |
| Machine Learning-based | Pattern recognition | - | Large, heterogeneous datasets |

How do common normalization methods compare in practice?

Table 2: Comparative Analysis of scRNA-seq Normalization Methods

| Method | Mechanism | Advantages | Limitations | Impact on Downstream Analysis |
|---|---|---|---|---|
| CP10K/CPM | Scales each cell's counts to a fixed total (10,000 for CP10K; 1,000,000 for CPM) | Simple, interpretable | Assumes constant transcriptome size; distorts biology | Can amplify small and suppress large transcriptomes [14] |
| SCTransform | Pearson residuals from GLM | Models technical noise | Complex implementation | Reduces influence of technical artifacts [15] |
| CLTS | Leverages linear correlation of transcriptome sizes | Preserves biological differences in RNA content | Requires cell type information | Avoids scaling issues; better for cross-sample comparison [14] |
| GLIMES | Uses absolute UMI counts with generalized Poisson/Binomial models | Preserves absolute RNA abundance; improves sensitivity | Newer, less established | Reduces false discoveries; enhances biological interpretability [16] |

Critical Considerations for Experimental Design and Execution

How does feature selection interact with normalization?

Feature selection—identifying highly variable genes (HVGs)—is often performed after normalization but is deeply intertwined with it. The choice of normalization method directly affects which genes are identified as highly variable [17]. Some key considerations include:

- Batch-aware feature selection improves integration when dealing with multiple samples [17]

- The number of selected features significantly impacts downstream integration and mapping quality [17]

- Methods like GLP (LOESS regression with positive ratio) offer robust feature selection by modeling the relationship between gene average expression and detection rate [15]
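Batch-aware feature selection can be sketched by ranking genes within each batch and then preferring genes that are selected in many batches, an approach similar in spirit to Scanpy's highly_variable_genes() with a batch_key. The dispersion score (variance-to-mean ratio), the per-batch cutoff, the index-based tie-breaking, and the helper names below are simplifying assumptions, not that function's exact procedure.

```python
import numpy as np


def per_batch_hvgs(expr, n_top):
    """Rank genes within one batch by variance-to-mean ratio and keep the top n_top."""
    mean = expr.mean(axis=1)
    var = expr.var(axis=1, ddof=1)
    dispersion = np.divide(var, mean, out=np.zeros_like(var), where=mean > 0)
    return np.argsort(dispersion)[::-1][:n_top]


def batch_aware_hvgs(expr, batches, n_top_per_batch, n_final):
    """expr: genes x cells; batches: one batch label per cell."""
    expr = np.asarray(expr, dtype=float)
    batches = np.asarray(batches)
    n_batches_selected = np.zeros(expr.shape[0], dtype=int)
    for b in np.unique(batches):
        selected = per_batch_hvgs(expr[:, batches == b], n_top_per_batch)
        n_batches_selected[selected] += 1
    # Prefer genes variable in many batches; the stable sort breaks ties by gene index
    order = np.argsort(-n_batches_selected, kind="stable")
    return order[:n_final], n_batches_selected


rng = np.random.default_rng(0)
n_genes, n_per_batch = 50, 100
expr = rng.poisson(5.0, size=(n_genes, 2 * n_per_batch)).astype(float)
batches = np.repeat(["A", "B"], n_per_batch)
half = n_per_batch // 2
# Gene 0: two subpopulations within both batches
for offset in (0, n_per_batch):
    expr[0, offset:offset + half] = rng.poisson(20.0, size=half)
    expr[0, offset + half:offset + n_per_batch] = rng.poisson(1.0, size=half)
# Gene 1: two subpopulations in batch A only
expr[1, :half] = rng.poisson(20.0, size=half)
expr[1, half:n_per_batch] = rng.poisson(1.0, size=half)

selected, n_selected = batch_aware_hvgs(expr, batches, n_top_per_batch=3, n_final=2)
print(selected, n_selected[:2])
```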

What experimental factors should be considered during normalization planning?

Table 3: Research Reagent Solutions for scRNA-seq Experiments

| Reagent/Resource | Function | Considerations for Normalization |
|---|---|---|
| UMIs (Unique Molecular Identifiers) | Corrects for PCR amplification bias [9] | Enables molecule-level counting; foundation for UMI-count-based methods like GLIMES [16] |
| Spike-in RNAs (e.g., ERCC) | External controls for technical variation [9] | Creates standard baseline for counting and normalization; not feasible for all platforms |
| Cell Barcodes | Tags sequences from individual cells [9] | Enables multiplexing; helps identify multiplets that can distort normalization |
| Droplet-Based Systems (10X Genomics) | High-throughput single-cell isolation [9] | Generates digital counting data; requires appropriate normalization for 3' bias |

Workflow Integration and Troubleshooting

How can I integrate Seurat with improved normalization methods?

There are two primary approaches for integrating advanced normalization methods like CLTS with popular analysis tools like Seurat [14]:

Traditional Seurat with post-hoc validation: Use Seurat's default CP10K normalization for clustering and initial cell type annotation, then use CLTS-normalized data to validate results and conduct downstream analysis.

CLTS-integrated workflow: Use Leiden or Louvain clustering to identify cell groups, then apply CLTS normalization using these clusters as putative cell types before final annotation and analysis.

The following workflow diagram illustrates the integration of robust normalization practices within a standard scRNA-seq analysis pipeline:

What are common normalization pitfalls and how can they be addressed?

Table 4: Troubleshooting Guide for scRNA-seq Normalization Issues

| Problem | Potential Causes | Diagnostic Checks | Recommended Solutions |
|---|---|---|---|
| Poor cell type separation | Over-correction by normalization; ignoring true biological differences in RNA content | Check if library sizes vary systematically by cell type [16] | Try methods that preserve absolute abundances (CLTS, GLIMES) [14] [16] |
| Batch effects persist | Inadequate normalization for technical variability | Visualize data by batch before and after normalization | Apply batch correction after normalization or use batch-aware methods [17] |
| Inconsistent marker genes | Scaling issues from assuming equal transcriptome size | Check if markers show different patterns in raw vs. normalized data [14] | Validate findings with raw counts or alternative normalization |
| Low concordance with validation data | Normalization distorting biological signals | Compare with bulk RNA-seq or qPCR validation data | Use UMI-preserving methods; avoid over-transformation of counts [16] |

Advanced Applications and Future Directions

How should bulk RNA-seq deconvolution account for transcriptome sizes?

When using scRNA-seq data to deconvolve bulk RNA-seq samples, normalization must consider cell type composition. The transcriptome size of a bulk sample correlates with its cell type composition [14]. For accurate deconvolution:

- Calculate average transcriptome sizes for each cell type from CLTS-normalized scRNA-seq data

- Compute expected transcriptome size for each bulk sample based on its cell type proportions

- Normalize bulk data using the ratio of expected to observed transcriptome sizes

This approach prevents misinterpretation of samples with unusual expression profiles that may actually reflect their cellular composition rather than technical artifacts [14].
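The three deconvolution steps above reduce to a few lines of arithmetic. This sketch makes several assumptions that the text does not specify: the "observed transcriptome size" of a bulk sample is taken to be its total counts, the per-cell-type sizes are simple averages of per-cell totals from already normalized scRNA-seq data, and both are on comparable scales. All function names and numbers are hypothetical.

```python
import numpy as np


def celltype_transcriptome_sizes(cell_totals, cell_types):
    """Step 1: average transcriptome size (total counts) per cell type."""
    cell_totals = np.asarray(cell_totals, dtype=float)
    cell_types = np.asarray(cell_types)
    types = np.unique(cell_types)
    return types, np.array([cell_totals[cell_types == t].mean() for t in types])


def rescale_bulk(bulk_counts, proportions, celltype_sizes):
    """Steps 2-3: rescale one bulk sample by expected / observed transcriptome size."""
    bulk_counts = np.asarray(bulk_counts, dtype=float)
    expected = float(np.dot(proportions, celltype_sizes))   # composition-weighted size
    observed = bulk_counts.sum()                             # assumption: total counts
    return bulk_counts * (expected / observed)


# Hypothetical single-cell totals for two cell types
types, sizes = celltype_transcriptome_sizes([18000, 22000, 4000, 6000], ["T", "T", "B", "B"])
proportions = np.array([0.75, 0.25])        # bulk proportions in the same order as `types`
bulk = np.array([100.0, 300.0, 600.0])
print(types, sizes)
print(rescale_bulk(bulk, proportions, sizes))
```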

The following diagram illustrates the logical relationship between normalization choices and their biological implications, highlighting the central goal of separating technical artifacts from true biological signals:

What Are Highly Variable Genes? Biological Significance and Analytical Importance

In single-cell RNA sequencing (scRNA-seq) analysis, Highly Variable Genes (HVGs) are genes that exhibit significant variation in expression levels across individual cells beyond what is expected from technical noise alone [18]. The identification of HVGs is a critical feature selection step that reduces the dimensionality of the data, enhancing computational efficiency and improving the clarity of downstream analyses such as clustering, dimensionality reduction, and trajectory inference [15] [18]. By focusing on genes that contribute most substantially to biological heterogeneity, researchers can better uncover the cellular diversity, dynamic processes, and molecular mechanisms that define complex biological systems [19] [20].

Biological Significance of Highly Variable Genes

The biological significance of HVGs stems from their close association with the core sources of heterogeneity in a cell population.

- Cellular Identity and Heterogeneity: HVGs are often markers of distinct cell types or cell states within a seemingly homogeneous population. For example, genes specifically expressed in a rare cell type or at a particular stage of a differentiation process will show high variability across the entire dataset [18] [21].

- Key Biological Processes: Genes involved in critical dynamic processes such as the cell cycle, stress response, signal transduction, and metabolic pathways frequently appear as highly variable. Their fluctuating expression reflects active biological regulation rather than a static, housekeeping function [19].

- Regulatory Underpinnings: High variability can be driven by underlying transcriptional regulation, including stochastic transcriptional bursting, which leads to differences in mRNA abundance between cells even in a uniform environment [18].

Analytical Importance of Highly Variable Genes

From a computational perspective, selecting HVGs is a prerequisite for most scRNA-seq analysis workflows.

- Improved Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) rely on dimensions of greatest variance. By focusing on HVGs, the resulting principal components are more likely to represent biologically meaningful structure [18].

- Enhanced Clustering Performance: Clustering algorithms perform better when the data is devoid of non-informative genes. Using HVGs helps to distinguish true cell subpopulations by reducing the obscuring effect of technical noise and low-information genes [17].

- Efficient Data Integration: When integrating multiple scRNA-seq datasets to build a unified atlas, the selection of HVGs is a crucial step. Properly selected HVGs facilitate the correction of technical batch effects while preserving relevant biological variation, leading to higher-quality integrations and more accurate mapping of new query datasets [17].

- Trajectory Inference: In studies of dynamic processes like differentiation, HVGs are essential for reconstructing the sequence of gene expression changes along a biological trajectory [15].

A Comparison of HVG Detection Methods

Multiple computational methods have been developed to identify HVGs, each with different underlying models and assumptions. The table below summarizes some of the prominent approaches.

Table 1: Key Methods for Highly Variable Gene Detection

| Method | Underlying Principle | Key Features | Reference |
|---|---|---|---|
| Brennecke et al. | Models technical noise using spike-in transcripts or a global mean-variance relationship. | Separates technical from biological variation; requires spike-ins or assumes most genes are not biologically variable. | [18] [22] |
| SCTransform | Uses regularized negative binomial regression to model the relationship between gene expression and sequencing depth. | Directly models UMI count data; outputs Pearson residuals that are used for HVG selection and downstream analysis. | [15] [6] |
| Scran | Employs a trend fitting approach to the variance of log-normalized expression values across all cells. | Decomposes total variance into technical and biological components; robust for diverse cell population structures. | [18] [22] |
| GLP (Genes identified through LOESS with Positive Ratio) | Leverages optimized LOESS regression on the relationship between a gene's average expression and its "positive ratio" (fraction of cells where it is detected). | Uses positive ratio as a robust metric; employs a two-step regression to minimize influence of outliers, reducing overfitting. | [15] |
| BASiCS | A Bayesian hierarchical model that jointly quantifies technical noise and cell-to-cell biological heterogeneity. | Can use spike-in genes; provides a rigorous statistical framework for HVG detection and differential expression. | [22] [6] |
| Seurat (VST) | Fits a loess curve to the relationship between log(variance) and log(mean) of expression. | A widely used and accessible method; identifies genes that deviate positively from the fitted trend. | [15] [22] |

Performance Considerations

Benchmarking studies have shown that the choice of HVG method can significantly impact downstream analysis outcomes. A 2025 benchmark evaluating feature selection for data integration and query mapping reinforced that HVG selection is effective for producing high-quality integrations but noted that performance can vary [17]. Similarly, an earlier evaluation of seven HVG methods found that discrepancies exist between tools and that a larger sample size (number of cells) is required for reproducible HVG analysis compared to bulk RNA-seq differential expression analysis [22].

Detailed Experimental Protocol: Investigating HVGs Across Multiple Time Points and Cell Types

The following protocol is adapted from a published workflow for characterizing dynamic HVG expression patterns in time-series scRNA-seq data, using public dengue virus and COVID-19 datasets as examples [19].

Required Materials and Reagents

Table 2: Essential Research Reagents and Computational Tools

| Item | Function / Description | Example / Source |
|---|---|---|
| scRNA-seq Dataset | A count matrix with genes as rows and cells as columns, ideally from a time-series or multi-condition experiment. | Public repositories (e.g., GEO, ArrayExpress). Used dataset: Dengue virus and COVID-19 infection data [19]. |
| Computational Environment | A programming environment for executing the analysis steps. | R or Python. Code availability: https://github.com/vclabsysbio/scRNAseq_DVtimecourse [19]. |
| Normalization Tool | Corrects for technical variations like sequencing depth. | Scran (pooling-based size factors) or SCTransform (regularized negative binomial regression) [23] [6]. |
| HVG Detection Package | Implements statistical models to identify genes with high biological variation. | scran::modelGeneVar(), Seurat::FindVariableFeatures(), or custom GLP script [15] [18]. |
| Pathway Database | A collection of annotated biological pathways for functional interpretation. | KEGG, GO, Reactome. |

Step-by-Step Methodology

Data Pre-processing and Quality Control

- Begin with a raw count matrix. Filter out low-quality cells based on metrics like total counts per cell, number of genes detected per cell, and the fraction of mitochondrial counts [21].

- Perform data normalization to account for differences in cellular sequencing depth. The protocol can utilize methods like scran's pooling-based size factors or SCTransform, which have been shown to effectively handle single-cell data characteristics [23] [6].

HVG Detection

- Apply one or more HVG selection methods to the normalized data. For instance, the modelGeneVar() function in the scran package fits a trend to the per-gene variance with respect to abundance. It then defines the biological component of variation as the difference between the total variance and the fitted technical component [18].

- Alternatively, the GLP method can be used. This involves:

  - For each gene, calculating its average expression (λ) and positive ratio (f), which is the fraction of cells where the gene is expressed [15].

  - Using the Bayesian Information Criterion (BIC) to determine the optimal bandwidth for a LOESS regression that models the relationship between f (independent variable) and λ (dependent variable).

  - Identifying HVGs as those with expression levels significantly higher than the value predicted by the LOESS curve [15].

- Select the top genes ranked by their biological variance score for downstream analysis.
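The GLP idea can be illustrated with a minimal NumPy sketch. It computes λ and f per gene, regresses λ on f, and flags genes whose mean sits well above the fitted curve. This is a simplification, not the published GLP code. A cubic polynomial (`np.polyfit`) replaces GLP's BIC-tuned, two-step LOESS, and a residual cutoff of `z_cut` standard deviations replaces its significance test. The function name `glp_like_hvgs` and the simulated data are hypothetical.

```python
import numpy as np


def glp_like_hvgs(counts, degree=3, z_cut=2.0):
    """GLP-inspired HVG flagging (a simplification, not the published GLP code).

    counts: cells x genes matrix of raw counts.
    A cubic polynomial stands in for GLP's BIC-tuned, two-step LOESS, and a
    residual cutoff of z_cut standard deviations stands in for its test.
    """
    counts = np.asarray(counts, dtype=float)
    lam = counts.mean(axis=0)                 # average expression (lambda)
    f = (counts > 0).mean(axis=0)             # positive ratio (f)
    usable = (f > 0) & (f < 1)                # genes with an informative f
    coef = np.polyfit(f[usable], lam[usable], deg=degree)  # lambda ~ poly(f)
    resid = lam - np.polyval(coef, f)         # observed minus predicted lambda
    cutoff = z_cut * resid[usable].std()
    is_hvg = usable & (resid > cutoff)        # mean well above expectation for its f
    return lam, f, is_hvg


# Simulated data: 200 Poisson genes (no biological variability) plus
# 2 "bursty" genes that are high in 10% of cells and zero elsewhere.
rng = np.random.default_rng(0)
n_cells = 500
means = np.exp(rng.uniform(np.log(0.05), np.log(2.0), size=200))
poisson_genes = rng.poisson(means, size=(n_cells, 200))
bursty = np.zeros((n_cells, 2))
bursty[: n_cells // 10] = 50
counts = np.hstack([poisson_genes, bursty])

lam, f, is_hvg = glp_like_hvgs(counts)
print(np.flatnonzero(is_hvg))  # the bursty genes are columns 200 and 201
```

The bursty genes have a high mean relative to how rarely they are detected, which is the kind of deviation the positive-ratio regression is designed to expose.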

Downstream Analysis and Biological Interpretation

- Use the selected HVGs for dimensionality reduction (e.g., PCA, UMAP) and clustering to reveal cell subpopulations.

- Perform pathway enrichment analysis on the HVGs to explore their involvement in common and cell-type-specific biological processes (e.g., immune response pathways in the context of infection) [19].

- Visualize the dynamic expression patterns of key HVGs across multiple time points and cell types to generate hypotheses about their roles in the biological process under study [19].

FAQs and Troubleshooting Guide

FAQ 1: Why does my HVG list seem to be dominated by very highly expressed genes?

Answer: This is a common issue arising from the strong mean-variance relationship in count data. The variance of a gene is often driven more by its abundance than its biological heterogeneity [18].

Troubleshooting:

- Use a dedicated HVG method: Ensure you are using an HVG method that explicitly models and removes the dependence of variance on mean expression. Methods like scran::modelGeneVar(), SCTransform, and GLP are designed for this purpose [18] [15] [6].

- Avoid simple variance ranking: Do not simply select genes with the highest variance without considering their average expression level, as this will bias selection toward high-abundance genes.
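The bias toward high-abundance genes can be seen in a small simulation. Three Poisson genes carry only sampling noise, and one moderately expressed gene switches between a low and a high state across cells. Ranking by raw variance picks the most abundant Poisson gene. Ranking by variance divided by mean (the Fano factor) picks the switching gene. The Fano factor is used here only as the simplest mean adjustment. It is not what scran, SCTransform, or GLP compute, and the gene setup is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n_cells = 1000
# Three Poisson genes at increasing means: technical (sampling) noise only.
housekeeping = rng.poisson([5.0, 20.0, 80.0], size=(n_cells, 3))
# One gene that is low (mean 1) in half the cells and high (mean 9) in the rest.
switch = np.where(rng.random(n_cells) < 0.5,
                  rng.poisson(1.0, n_cells),
                  rng.poisson(9.0, n_cells))
counts = np.column_stack([housekeeping, switch]).astype(float)

mean = counts.mean(axis=0)
var = counts.var(axis=0, ddof=1)
fano = var / mean  # about 1 for pure Poisson noise, larger with extra variability

print("top by raw variance:", int(np.argmax(var)))    # 2, the mean-80 Poisson gene
print("top by variance/mean:", int(np.argmax(fano)))  # 3, the switching gene
```

Analytically, the switching gene has mean 5 and variance 5 + 16 = 21, well below the Poisson gene's variance of about 80. Its variance is nonetheless about four times its mean.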

FAQ 2: I get different HVG lists when I use different methods or change parameters. How do I know which one to trust?

Answer: Discrepancies between methods are expected due to their different statistical models and assumptions. There is no single "best" method for all datasets [22] [17].

Troubleshooting:

- Benchmark on your data: Evaluate how different HVG lists affect the biological coherence of your downstream analysis (e.g., clustering results, trajectory inference). A robust set of HVGs should lead to stable and interpretable biological findings [17].

- Consider your analysis goal: If your goal is data integration, a benchmark suggests that using a batch-aware variant of HVG selection can be considered good practice [17].

- Start with a consensus: For an initial analysis, you might consider taking the union or intersection of HVGs from two well-established methods (e.g., Scran and Seurat's VST).

FAQ 3: How many HVGs should I select for downstream analysis?

Answer: The optimal number is dataset-dependent. Using too few genes may miss important biological signals, while using too many can introduce noise [17].

Troubleshooting:

- Follow software defaults: Popular packages like Seurat often use a default of 2,000 HVGs, which is a good starting point for many datasets [17].

- Use a statistical cutoff: Some methods, like the one in scran, provide a p-value for the significance of the biological component. You can select all genes with a false discovery rate (FDR) below a certain threshold (e.g., 5%).

- Empirical testing: A 2025 benchmark showed that integration performance generally improves with the number of selected features, with gains plateauing after a certain point. You can test a range of values (e.g., 1,000 to 3,000) and inspect the stability of your downstream clustering [17].
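scran's modelGeneVar() output already includes an FDR column. The sketch below only shows the Benjamini-Hochberg arithmetic behind an FDR cutoff and how genes are then selected at 5%. The p-values are made up for illustration.

```python
import numpy as np


def bh_adjust(pvalues):
    """Benjamini-Hochberg adjusted p-values (estimated FDR per gene)."""
    p = np.asarray(pvalues, dtype=float)
    n = p.size
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    # Enforce monotonicity: each adjusted value is the minimum over larger ranks.
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(n)
    adjusted[order] = np.minimum(ranked, 1.0)
    return adjusted


# Hypothetical per-gene p-values for the biological component of variance.
pvals = np.array([0.001, 0.008, 0.039, 0.041, 0.042,
                  0.06, 0.074, 0.205, 0.212, 0.216])
fdr = bh_adjust(pvals)
selected = np.flatnonzero(fdr < 0.05)
print(selected)  # [0 1]
```

Several raw p-values fall below 0.05, but only the first two genes survive the 5% FDR threshold once the multiple-testing correction is applied.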

FAQ 4: My dataset has multiple experimental batches. How does this affect HVG selection?

Answer: Technical differences between batches can be misinterpreted as biological variation, causing batch-specific genes to be incorrectly selected as HVGs.

Troubleshooting:

- Perform batch-aware HVG selection: Some workflows recommend performing HVG selection separately on each batch and then taking the union of genes, which helps ensure that genes variable in any batch are considered for integration [17].

- Integrate with caution: Be aware that if you select HVGs on the unintegrated data, the selection itself might be biased by batch effects. Using a batch-aware method is a recommended best practice for integration tasks [17].

In single-cell RNA sequencing (scRNA-seq) analysis, selecting highly variable genes (HVGs) is a critical step for identifying biologically interesting genes that drive heterogeneity across cells. However, this process is fundamentally complicated by the mean-variance relationship observed in transcriptomic data. This technical guide addresses common challenges and provides solutions for researchers navigating HVG selection in their single-cell workflows.

FAQs: Understanding HVG Selection Challenges

Why does the mean-variance relationship complicate HVG selection?

The mean-variance relationship presents a fundamental challenge because in single-cell count data, a gene's variance is inherently correlated with its mean expression level [12] [24]. This means that highly expressed genes naturally exhibit higher variance due to technical factors rather than biological interest. Without proper correction, HVG selection would simply identify highly expressed genes rather than those exhibiting genuine biological heterogeneity.

How can I distinguish technical noise from biological variation in HVG selection?

Specialized computational methods model the mean-variance trend to separate technical components from biological variation:

- modelGeneVar(): Fits a trend to the variance with respect to abundance across all genes, assuming most genes are dominated by technical noise [12]

- modelGeneVarWithSpikes(): Uses spike-in transcripts to establish a technical noise trend when available [12]

- modelGeneVarByPoisson(): Applies Poisson-based assumptions about technical noise in UMI count data [12]

The biological component for each gene is calculated as the difference between its total variance and the estimated technical component [12].
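The decomposition can be sketched in a few lines. Fit a trend of per-gene variance against per-gene mean on log-expression, treat the fitted value as the technical component, and take the remainder as biological. This is an illustration under stated assumptions, not scran's implementation. A cubic polynomial stands in for scran's fitted trend, the simulation has equal sequencing depth so no size-factor normalization is applied, and the function name `decompose_variance` is hypothetical. Like modelGeneVar(), it assumes that most genes are not biologically variable.

```python
import numpy as np


def decompose_variance(logexpr, degree=3):
    """Split per-gene variance of log-expression into a fitted 'technical'
    trend and a residual 'biological' component (cells x genes input)."""
    logexpr = np.asarray(logexpr, dtype=float)
    mean = logexpr.mean(axis=0)
    total = logexpr.var(axis=0, ddof=1)
    coef = np.polyfit(mean, total, deg=degree)  # trend of variance vs. mean
    tech = np.polyval(coef, mean)               # fitted technical component
    bio = total - tech                          # biological component
    return mean, total, tech, bio


rng = np.random.default_rng(2)
n_cells = 800
means = np.exp(rng.uniform(np.log(0.1), np.log(20.0), size=300))
counts = rng.poisson(means, size=(n_cells, 300)).astype(float)
# Hypothetical marker gene: low in half of the cells, high in the other half.
marker = np.where(np.arange(n_cells) < n_cells // 2,
                  rng.poisson(0.5, n_cells), rng.poisson(30.0, n_cells))
counts = np.column_stack([counts, marker])

mean, total, tech, bio = decompose_variance(np.log1p(counts))
print(int(np.argmax(bio)))  # 300, the marker gene
```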

What are the consequences of improper mean-variance modeling?

Failure to properly account for the mean-variance relationship can lead to:

- Over-representation of highly expressed genes in HVG lists

- Failure to detect biologically relevant but moderately expressed genes

- Reduced performance in downstream analyses like clustering and trajectory inference

- Inflation of variance estimates in lowly sequenced cells for high-abundance genes [25]

How many HVGs should I select for my analysis?

There is no universal optimal number, but these guidelines apply:

- Typical range: 500-2,000 HVGs sufficiently capture broad heterogeneity [24]

- Robustness principle: Results should be reasonably consistent when selecting between 300-700 HVGs if your initial selection is 500 [24]

- Trade-off consideration: Higher HVG counts can reveal nested cell types but risk incorporating more noise [24]

Troubleshooting Guides

Problem: HVG list dominated by highly expressed housekeeping genes

Solution: Apply variance stabilization transformations before HVG selection

Methodology: Use regularized negative binomial regression (sctransform) that successfully removes technical influences while preserving biological heterogeneity [25]. This method:

- Constructs generalized linear models for each gene with sequencing depth as covariate

- Pools information across genes with similar abundances to obtain stable parameter estimates

- Generates Pearson residuals that represent effectively normalized values [25]

Problem: Inconsistent HVG selection across datasets or protocols

Solution: Implement batch-aware feature selection methods

Methodology: When integrating multiple samples or datasets, use feature selection methods that account for batch effects [17]. Benchmark studies show that batch-aware HVG selection improves integration quality and query mapping performance [17].

Experimental Protocol:

- Normalize each dataset using an appropriate method (sctransform or log-normalization)

- Select HVGs in a batch-aware manner, keeping genes that show consistent biological variation across batches

- Identify integration anchors using the selected features

- Validate the selection by assessing integration metrics (Batch ASW, iLISI) [17]

Problem: Low-resolution clusters in downstream analysis

Solution: Optimize HVG selection parameters for your biological question

Methodology: Adjust HVG stringency based on expected cell population rarity [24]:

- For rare populations: Lower minimum detection threshold to include genes expressed in fewer cells

- For broad classification: Use standard thresholds (e.g., detected in ≥50 cells)

- Validate by assessing whether known marker genes appear in HVG list
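The detection threshold is a simple per-gene count of cells with nonzero expression. The sketch below contrasts a threshold tied to a hypothetical smallest expected population of 40 cells (about 50% of it, as suggested in the parameter table below) with the standard 50-cell threshold. The function name and toy matrix are illustrative.

```python
import numpy as np


def detection_filter(counts, min_cells):
    """Boolean mask of genes detected (count > 0) in at least min_cells cells."""
    n_detected = (np.asarray(counts) > 0).sum(axis=0)
    return n_detected >= min_cells


# Toy matrix (cells x genes).
counts = np.zeros((1000, 3), dtype=int)
counts[:30, 0] = 4     # rare-population marker, detected in 30 cells
counts[:, 1] = 2       # ubiquitous gene
counts[:5, 2] = 1      # detected in only 5 cells

smallest_cluster = 40  # hypothetical size of the rarest expected population
rare_mask = detection_filter(counts, min_cells=smallest_cluster // 2)  # 20 cells
broad_mask = detection_filter(counts, min_cells=50)
print(rare_mask, broad_mask)  # [ True  True False] [False  True False]
```

With the standard threshold, the rare-population marker is discarded before HVG selection can ever consider it.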

Variance Quantification Methods Comparison

| Method | Technical Noise Model | Best For | Limitations |
|---|---|---|---|
| modelGeneVar() | Trend fitted across all genes | Standard datasets without spike-ins | Assumes most genes are not biologically variable |
| modelGeneVarWithSpikes() | Spike-in transcripts | Experiments with spike-ins | Requires spike-in data |
| modelGeneVarByPoisson() | Poisson distribution | UMI-based datasets | May underestimate technical noise that exceeds Poisson sampling |
| sctransform | Regularized NB regression | UMI-based datasets, large studies | Computationally intensive |

HVG Selection Parameters and Impact

| Parameter | Default Setting | Adjustment Guidance | Impact of Mis-setting |
|---|---|---|---|
| Minimum cell detection | Varies by dataset | ~50% of smallest cluster size | May miss rare population markers |
| Number of HVGs (ranked by biological variance) | Top 2,000 genes | 500-2,000 range | Too many: noisy clusters; too few: missed heterogeneity |
| Mean expression cutoff | Typically none | Exclude genes with extremely high means | Housekeeping gene domination |

Research Reagent Solutions

Essential Computational Tools for HVG Analysis

| Tool/Platform | Function | Application in HVG Selection |
|---|---|---|
| Seurat R package | Single-cell analysis | Implements FindVariableFeatures() and SCTransform() |
| Scanpy Python package | Single-cell analysis | Highly variable gene selection |
| SCTransform | Normalization | Regularized negative binomial regression |
| Bioconductor OSCA | Analysis framework | Feature selection methodologies (e.g., scran's modelGeneVar()) |

Experimental Controls for Technical Validation

| Control Type | Purpose | Application in HVG Context |
|---|---|---|
| Spike-in RNAs | Technical noise modeling | Establish mean-variance trend |
| UMIs (Unique Molecular Identifiers) | Molecular counting | Reduces amplification bias [26] |
| Positive control cells | Protocol validation | Identify batch-specific effects |

Advanced Methodologies

Integrated HVG Selection Workflow

For comprehensive HVG analysis, follow this detailed protocol:

Quality Control and Preprocessing

Variance Stabilization

HVG Selection with Optimization

- Calculate per-gene variation using chosen model

- Select genes based on biological component of variation

- Exclude genes in blocklist (histones, ribosomal, mitochondrial) [24]

- Validate selection using known cell-type markers
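The blocklist and top-N steps can be combined as sketched below. The prefixes are assumptions for human gene symbols: "MT-" for mitochondrial genes, "RPL"/"RPS" for ribosomal proteins, and "HIST" for older histone symbols. Current HGNC histone symbols (e.g., the H2AC or H4C families) would need extra prefixes, and other organisms use different conventions. The function name and the example scores are hypothetical.

```python
import numpy as np

# Assumed prefixes for human gene symbols; adjust for your organism/annotation.
BLOCK_PREFIXES = ("MT-", "RPL", "RPS", "HIST")


def select_hvgs(gene_names, bio, n_top, block_prefixes=BLOCK_PREFIXES):
    """Drop blocklisted genes, then keep the n_top genes with the largest
    biological component of variance."""
    names = np.asarray(gene_names)
    bio = np.asarray(bio, dtype=float)
    blocked = np.array([n.upper().startswith(block_prefixes) for n in names])
    candidates = np.flatnonzero(~blocked)
    ranked = candidates[np.argsort(bio[candidates])[::-1]]  # descending bio
    return names[ranked[:n_top]]


genes = ["CD3E", "RPL13", "MT-CO1", "LYZ", "HIST1H4C", "MS4A1"]
bio = np.array([0.9, 2.5, 3.0, 1.4, 1.1, 0.7])
print(select_hvgs(genes, bio, n_top=3))  # ['LYZ' 'CD3E' 'MS4A1']
```

Without the blocklist, MT-CO1 and RPL13 would take the top two slots despite carrying little cell-type information.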

Validation and Benchmarking Framework

After HVG selection, validate your results using these approaches:

- Downstream Analysis Consistency: Check if clustering results are robust to small changes in HVG number [24]

- Biological Plausibility: Verify that selected HVGs include known cell-type markers

- Integration Performance: Assess using metrics like batch correction and biological conservation [17]

- Trajectory Inference Quality: Evaluate whether HVGs support biologically reasonable trajectories [27]

By understanding and properly addressing the mean-variance relationship in HVG selection, researchers can significantly improve the quality and biological relevance of their single-cell RNA-seq analyses.

A Practical Guide to Normalization and HVG Selection Methods

Frequently Asked Questions (FAQs)

Q1: What is the fundamental purpose of log-normalization in single-cell RNA-seq analysis?

Log-normalization aims to remove technical variation, particularly differences in sequencing depth between cells, while preserving biological variation. The standard method involves dividing the raw UMI count for each gene in a cell by the total counts for that cell, multiplying by a scale factor (e.g., 10,000), adding a pseudo-count (typically 1), and then log-transforming the result. This process ensures that gene counts are comparable within and between cells, which is a critical prerequisite for downstream analyses like clustering and differential expression [6].
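The transformation described above is short enough to write out directly. The NumPy sketch below assumes a dense cells x genes matrix and the natural logarithm. For dense input, Scanpy's sc.pp.normalize_total(adata, target_sum=1e4) followed by sc.pp.log1p(adata) should give the same values, but the function below is only an illustration.

```python
import numpy as np


def log_normalize(counts, scale_factor=1e4):
    """counts: cells x genes raw UMI matrix.
    Divide each cell by its total count, multiply by scale_factor, then log(1 + x)."""
    counts = np.asarray(counts, dtype=float)
    totals = counts.sum(axis=1, keepdims=True)  # library size per cell
    return np.log1p(counts / totals * scale_factor)


# Two cells with identical composition; the second is sequenced 10x deeper.
counts = np.array([[1, 3, 0, 6],
                   [10, 30, 0, 60]])
lognorm = log_normalize(counts)
print(np.allclose(lognorm[0], lognorm[1]))  # True
```

After scaling, both cells map to the same profile, so the 10-fold depth difference no longer separates them.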

Q2: When should I use log-normalization versus a method like SCTransform?

Log-normalization is a robust and widely understood method that performs satisfactorily for common analyses like cell type clustering. However, it has known limitations, such as failing to effectively normalize high-abundance genes and creating a dependency between normalized expression values and cellular sequencing depth. SCTransform, which uses regularized negative binomial regression, often provides superior performance for tasks like identifying subtle sub-cell types, as it produces variance-stabilized Pearson residuals that are independent of sequencing depth. Benchmarking studies recommend testing multiple normalization methods and comparing their outcomes in cell clustering, embedding, and differential gene expression analysis [6] [28].

Q3: I've applied log-normalization, but my downstream clustering still seems driven by batch effects. What should I do?

Log-normalization primarily controls for cell-specific technical effects like sequencing depth but is not designed to remove sample-level batch effects. For this, you need data integration or batch-effect correction tools. A simple, lightweight approach is to perform Highly Variable Gene (HVG) selection in a batch-aware manner by setting the batch_key parameter in sc.pp.highly_variable_genes(). This selects genes that are variable across batches, avoiding genes that are batch-specific. For stronger integration, consider using tools like Harmony, which iteratively corrects the PCA embeddings to penalize clusters that are disproportionately composed of cells from a small subset of datasets [29] [30] [28].

Q4: Why does the number of highly variable genes change when I use subset=True with a batch_key?

This is a reported issue in Scanpy: when batch_key is provided and subset=True, the function may not correctly subset the AnnData object, leading to an incorrect number of highly variable genes being retained. The recommended workaround is to run sc.pp.highly_variable_genes() with subset=False first, then manually subset your AnnData object to the highly variable genes using adata[:, adata.var['highly_variable']] [31].

Q5: Can I use the log-normalized counts for differential expression analysis across conditions?

Caution is advised. While log-normalized data corrects for sequencing depth, it may still contain technical artifacts or batch effects that can confound differential expression (DE) analysis. Using these values in a naive DE test (e.g., Wilcoxon rank-sum test) without accounting for these factors can lead to spurious results. For robust DE analysis, it is recommended to use methods that can incorporate batch as a covariate in a generalized linear model (GLM), such as those implemented in diffxpy (Python) or DESeq2 (R) [29].

Troubleshooting Guides

Issue 1: Poor Cell Type Separation After Log-Normalization and Clustering

Problem: After performing log-normalization, highly variable gene selection, PCA, and clustering, the resulting clusters do not align with expected cell types or appear driven by technical factors.

Diagnosis and Solutions:

- Check the Normalization: Verify that the normalization has effectively mitigated the relationship between sequencing depth and gene expression. You can plot the principal components against the total UMI counts per cell. If a strong correlation persists, consider using an alternative normalization method.

- Investigate Highly Variable Gene Selection: The performance of downstream integration and clustering is highly dependent on feature selection [28]. Ensure you are using a sufficient number of HVGs (often 2,000-5,000). Test if using a batch-aware HVG selection method improves results.

- Apply Batch Correction: If your data comes from multiple samples or batches, apply a dedicated integration tool.

Issue 2: Inconsistent Highly Variable Gene Selection with Batches

Problem: When using sc.pp.highly_variable_genes with the batch_key parameter, the resulting list of genes or the behavior of the subset parameter is unexpected.

Understanding the Algorithm: When batch_key is set, the function first selects the top n_top_genes within each batch separately, based on within-batch variability. It then records in how many batches each gene was selected ('highly_variable_nbatches'). For the dispersion-based flavors, genes are ranked primarily by this count, with ties broken by normalized dispersion across batches, and the top n_top_genes of this merged ranking are marked as highly variable. Genes that are variable in many batches are therefore favored over batch-specific ones, and n_top_genes controls both the per-batch selection depth and the size of the final list [30].
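A simplified NumPy sketch of this merge step follows. It assumes a per-batch variability score for every gene is already available and that the merged ranking is truncated to n_top_genes, as described for the dispersion-based flavors. The per-gene mean score across batches stands in for Scanpy's tie-breaking statistic. The function name and scores are hypothetical, and this is not Scanpy's code.

```python
import numpy as np


def batch_aware_hvgs(scores_by_batch, n_top_genes):
    """scores_by_batch: batches x genes array of a variability score
    (higher = more variable). Returns (selected mask, per-gene batch count)."""
    scores = np.asarray(scores_by_batch, dtype=float)
    n_batches, n_genes = scores.shape
    hv_in_batch = np.zeros_like(scores, dtype=bool)
    for b in range(n_batches):
        top = np.argsort(scores[b])[::-1][:n_top_genes]  # top genes in batch b
        hv_in_batch[b, top] = True
    nbatches = hv_in_batch.sum(axis=0)   # analogous to 'highly_variable_nbatches'
    mean_score = scores.mean(axis=0)     # tie-breaker across batches
    # np.lexsort uses the last key as primary: nbatches desc, then mean_score desc.
    order = np.lexsort((-mean_score, -nbatches))
    selected = np.zeros(n_genes, dtype=bool)
    selected[order[:n_top_genes]] = True
    return selected, nbatches


scores = np.array([[5.0, 4.0, 1.0, 0.0, 3.0],
                   [4.0, 0.0, 5.0, 1.0, 3.0]])
selected, nbatches = batch_aware_hvgs(scores, n_top_genes=2)
in_all_batches = nbatches == scores.shape[0]  # genes variable in every batch
print(selected, nbatches, in_all_batches)
```

Gene 0 is selected in both batches and ranks first. Genes 1 and 2 are each selected in one batch, and gene 2 wins the tie on its higher mean score. Only gene 0 passes the "variable in all batches" filter.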

Solution:

- Avoid subset=True with Batches: Due to the reported issue, do not use subset=True when batch_key is provided [31].

- Manual Subsetting Workflow:

  - Perform HVG selection with batch_key and subset=False.

  - Inspect the adata.var['highly_variable'] boolean column or the 'highly_variable_nbatches' column to see in how many batches each gene was selected.

  - Manually subset your data using adata = adata[:, adata.var['highly_variable']].copy().

- Ensure Sufficient Overlap: If you require genes that are variable across all batches, you can filter for genes where 'highly_variable_nbatches' equals the total number of batches.

Performance Comparison of Normalization Methods

The table below summarizes key characteristics of log-normalization and other common methods, based on empirical surveys [6].

Table 1: Comparison of Single-Cell RNA-seq Normalization Methods

| Method | Underlying Principle | Key Features | Pros | Cons |
|---|---|---|---|---|
| Log-Normalization (Global Scaling) | Scales counts by cell-specific size factor (total counts), then log-transforms. | Simple, intuitive, and widely used. Implemented as normalize_total + log1p in Scanpy and NormalizeData in Seurat. | Fast; effective for separating common cell types; satisfactory in many benchmarking studies [6]. | Ineffective normalization for high-abundance genes; normalized data can retain correlation with sequencing depth. |
| SCTransform | Regularized Negative Binomial GLM. | Models count data directly, accounting for sequencing depth. Outputs Pearson residuals. | Residuals are independent of sequencing depth; superior for identifying subtle sub-populations; integrated into Seurat. | More computationally intensive; requires installation of specific R packages. |
| SCnorm | Quantile regression to group genes with similar depth-dependence. | Estimates multiple scale factors for different gene groups, not a single global factor. | Robust to genes with distinct relationships between expression and sequencing depth. | Can be slower than global scaling methods. |
| Scran | Pooling-based size factor estimation. | Deconvolves cell pools to compute cell-specific size factors. | Particularly effective for data with many zero counts. | Computationally more complex than simple scaling. |

Experimental Protocol: Benchmarking Normalization Methods

To empirically determine the best normalization method for your specific dataset, follow this benchmarking protocol [6] [28]:

Apply Multiple Normalization Methods: Process your raw count data using 2-3 different methods. A standard set could include:

- Log-Normalization: Using sc.pp.normalize_total(adata, target_sum=1e4) followed by sc.pp.log1p(adata).

- SCTransform: Using the R Seurat package's SCTransform() function.

- Scran: Using the scran package in R to compute size factors, which can then be used in Scanpy.

Perform Consistent Downstream Analysis: For each normalized dataset, run an identical analysis pipeline:

- Highly Variable Gene selection (using the same n_top_genes).

- Scaling (sc.pp.scale).

- PCA.

- Clustering (e.g., Leiden clustering).

- UMAP visualization.

Evaluate Performance with Metrics: Use a set of metrics to assess the quality of the normalization. Key metrics include [28]:

- Batch Effect Removal: Batch Average Silhouette Width (Batch ASW), Principal Component Regression (Batch PCR).

- Biological Variation Conservation: normalized mutual information (NMI), Adjusted Rand Index (ARI) with known cell type labels.

- Local Structure: Graph connectivity, local density factor difference.
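Of the biological-conservation metrics, the Adjusted Rand Index is easy to compute from a contingency table of known labels against cluster assignments. The NumPy sketch below is for illustration; in practice an established implementation such as scikit-learn's sklearn.metrics.adjusted_rand_score would normally be used. The sketch does not handle the degenerate case where both labelings place all cells in a single group, which would divide by zero.

```python
import numpy as np


def comb2(x):
    """Number of unordered pairs, n choose 2, elementwise."""
    x = np.asarray(x, dtype=float)
    return x * (x - 1) / 2


def adjusted_rand_index(labels_true, labels_pred):
    _, t = np.unique(labels_true, return_inverse=True)
    _, p = np.unique(labels_pred, return_inverse=True)
    table = np.zeros((t.max() + 1, p.max() + 1))
    np.add.at(table, (t, p), 1)                 # contingency table of co-assignments
    sum_cells = comb2(table).sum()
    sum_rows = comb2(table.sum(axis=1)).sum()
    sum_cols = comb2(table.sum(axis=0)).sum()
    expected = sum_rows * sum_cols / comb2(len(t))  # agreement expected by chance
    max_index = (sum_rows + sum_cols) / 2
    return (sum_cells - expected) / (max_index - expected)


# Same partition under different cluster IDs, then a partly split cluster.
print(adjusted_rand_index([0, 0, 1, 1, 2, 2], [5, 5, 7, 7, 9, 9]))  # 1.0
print(adjusted_rand_index([0, 0, 1, 1], [0, 0, 1, 2]))              # 0.5714...
```

ARI depends only on which cells are grouped together, not on cluster IDs. Chance-level agreement scores near 0.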


The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Computational Tools for scRNA-seq Normalization and HVG Selection

| Tool/Reagent | Function | Implementation | Key Reference |
|---|---|---|---|
| Scanpy | A comprehensive toolkit for single-cell analysis in Python. Includes normalize_total, log1p, and highly_variable_genes. | Python | [32] |
| Seurat | An R package for single-cell genomics. Provides NormalizeData and FindVariableFeatures functions. | R | [6] |
| SCTransform | A normalization and variance stabilization method based on regularized negative binomial regression. | R (Seurat) | [6] |
| Scran | Method for pooling across cells to compute size factors, robust to many zero counts. | R | [6] |
| Harmony | Integration algorithm for correcting batch effects in PCA embeddings. | R, Python | [29] |

The computeSumFactors function in Scran implements a deconvolution strategy for scaling normalization. This method is designed to handle the high number of zero counts typical in single-cell RNA-seq (scRNA-seq) data by pooling cells to obtain more robust size factor estimates [33].

The core principle involves creating pools of cells and summing their expression profiles. This summed profile is normalized against an average reference pseudo-cell, constructed from the average counts across all cells. The median ratio of the pool's count sums to this average yields a size factor for the entire pool. The key insight is that the pool's size factor is the sum of the size factors of its constituent cells. By repeating this process with multiple pools, a linear system is built that can be solved to deconvolve the individual cell-based size factors [33]. This pooling approach reduces the impact of stochastic zeros, leading to more accurate size factor estimates than those derived from single cells [6] [33].
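The deconvolution idea can be sketched in NumPy under simplifying assumptions. Cells are ordered by library size and arranged in a ring, and pools are sliding windows along the ring. Each pool's factor is the median ratio of its summed library-size-normalized profile to the average pseudo-cell, and a least-squares solve recovers per-cell factors. Weak extra equations pull each cell factor toward 1 (plain library-size scaling) so the system stays full rank. With fewer cells than the smallest pool size, the sketch falls back to library-size factors. This is an illustration, not scran's computeSumFactors; it omits clustering, min.mean filtering, and scran's exact weighting. The function name and `prior_weight` are hypothetical.

```python
import numpy as np


def deconvolution_size_factors(counts, sizes=range(21, 102, 5), prior_weight=1e-3):
    """Simplified pooling/deconvolution size factors; counts is cells x genes."""
    counts = np.asarray(counts, dtype=float)
    n_cells = counts.shape[0]
    sizes = [s for s in sizes if s <= n_cells]  # no sizes left -> library-size factors
    lib = counts.sum(axis=1)
    norm = counts / lib[:, None]                # library-size-normalized profiles
    ref = norm.mean(axis=0)                     # average reference pseudo-cell
    use = ref > 0
    order = np.argsort(lib)                     # ring of cells ordered by library size
    ring = np.concatenate([order[0::2], order[1::2][::-1]])
    rows, rhs = [], []
    for size in sizes:
        for start in range(n_cells):
            pool = ring[(start + np.arange(size)) % n_cells]
            pooled = norm[pool].sum(axis=0)
            row = np.zeros(n_cells)
            row[pool] = 1.0                     # pool factor = sum of its cells' factors
            rows.append(row)
            rhs.append(np.median(pooled[use] / ref[use]))
    rows.extend(prior_weight * np.eye(n_cells))  # weak "factor ~ 1" equations
    rhs.extend(prior_weight * np.ones(n_cells))
    theta, *_ = np.linalg.lstsq(np.array(rows), np.array(rhs), rcond=None)
    sf = theta * lib                            # undo the library-size scaling
    return sf / sf.mean()                       # centre the size factors at 1


rng = np.random.default_rng(3)
true_sf = np.exp(rng.normal(0.0, 0.5, size=200))
gene_means = np.exp(rng.uniform(np.log(0.5), np.log(5.0), size=500))
counts = rng.poisson(np.outer(true_sf, gene_means))
est = deconvolution_size_factors(counts)
print(np.corrcoef(est, true_sf)[0, 1])  # close to 1 in this DE-free simulation
```

Because this simulation has no differential expression, plain library sizes would also work well. The pooling only pays off when many zeros make per-cell ratios unstable.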

Frequently Asked Questions (FAQs)

1. Why am I getting negative size factors, and how can I resolve this?

Negative size factors are nonsensical and usually indicate an issue with the input data or parameters. They most commonly occur with low-quality cells that have very few expressed genes, or when insufficient filtering of low-abundance genes has been performed [33].

Troubleshooting Steps:

- Increase Stringency of Quality Control: Remove suspected low-quality cells more aggressively.

- Filter More Low-Abundance Genes: Use the min.mean argument to filter out genes with low average counts. The default is often too low; increasing this threshold (e.g., to 0.1 or 1) can prevent negative estimates [23] [33].

- Increase the Number of Pool Sizes: Use a wider range of values in the sizes argument to improve estimation precision.

- Last Resort: The function defaults to positive=TRUE, which coerces negative values to positive. You can set positive=FALSE to inspect the raw output and perform your own diagnostics [33].

2. My dataset has multiple distinct cell types. Should I adjust the normalization process?

Yes. The deconvolution method assumes most genes are not differentially expressed (DE) across the cells being pooled. If you pool highly different cell types, this assumption is violated. To handle this, perform normalization within clusters of biologically similar cells [33].

Protocol: Normalization with Clustering

- Generate a Rough Clustering: Use a quick, preliminary clustering on the cells. The quickCluster function from Scran is designed for this purpose, though any reasonable clustering can be used [33].

- Run computeSumFactors with Clusters: Provide the cluster information to the clusters argument. Scran will perform deconvolution normalization separately within each cluster [23] [33].

- Rescale Between Clusters: Scran automatically rescales the size factors from different clusters to be comparable. By default, it selects the cluster with the most non-zero counts as a reference for this rescaling [33].

3. What should I do if my dataset is very small (e.g., fewer than 100 cells)?

The deconvolution method requires a sufficient number of cells for effective pooling. The official documentation recommends having at least 100 cells for the algorithm to work well. If you have fewer cells than the smallest window size (default is 21), the function will naturally degrade to performing library size normalization, which is the same as using librarySizeFactors [33].

Key Parameters and Troubleshooting

The table below summarizes critical parameters for computeSumFactors and how to adjust them to resolve common issues.

| Parameter | Default Value | Description | Common Troubleshooting Adjustments |
|---|---|---|---|
| sizes | seq(21, 101, 5) | A numeric vector of pool sizes (number of cells per pool). | For small datasets, use smaller sizes. For instability, use a wider range of sizes [33]. |
| clusters | NULL (all cells in one group) | A factor specifying cell clusters for within-cluster normalization. | Essential for heterogeneous datasets. Provide a cluster factor to avoid violating the non-DE assumption [33]. |
| min.mean | NULL | A numeric scalar for the minimum average count of genes used in normalization. | Increase to fix negative size factors. A higher value (e.g., 0.1, 1) filters out more low-abundance genes [23] [33]. |
| positive | TRUE | A logical scalar indicating whether to enforce positive estimates. | Set to FALSE for debugging to see unconverted negative values [33]. |
| max.cluster.size | 3000 | The maximum number of cells in a cluster for the linear system solver. | Generally does not need adjustment. Prevents computational issues with very large clusters [33]. |

Experimental Protocol

Here is a detailed step-by-step protocol for performing normalization with Scran in an R-based analysis environment.

Step 1: Load Packages and Data

Step 2: Preliminary Clustering (Recommended for Heterogeneous Data)

Step 3: Compute Pooling-Based Size Factors

Step 4: Generate and Inspect Size Factors

The Scientist's Toolkit

| Essential Material / Tool | Function in the Experiment |
|---|---|
| SingleCellExperiment Object | The standard data structure in R/Bioconductor for holding single-cell genomics data, including the raw count matrix and associated metadata [12]. |
| Scran R Package | Provides the computeSumFactors function and auxiliary tools like quickCluster for pooling-based normalization and modelGeneVar for highly variable gene selection [12] [33]. |
| Cell Clusters (Factor) | A vector defining groups of biologically similar cells. Crucial for ensuring the deconvolution method's "non-DE majority" assumption is met in complex datasets [33]. |
| High-Performance Computing (HPC) Node | The deconvolution calculation, especially on large datasets or with many pool sizes, can be computationally intensive. The BPPARAM argument allows for parallel processing to speed up computation [33]. |

Single-cell RNA sequencing (scRNA-seq) data normalization presents significant challenges due to substantial technical variation, particularly in sequencing depth, which can confound biological heterogeneity [25]. Traditional normalization methods that apply a single scaling factor per cell often fail to effectively normalize genes across different abundance levels, especially high-abundance genes [25] [6]. This technical guide focuses on SCTransform, a computational method that utilizes regularized negative binomial regression to normalize and variance-stabilize UMI-based scRNA-seq data, effectively addressing these limitations within the broader context of single-cell data normalization research [25] [34].

The SCTransform Workflow

The SCTransform function implements a three-step procedure for normalizing scRNA-seq data:

Initial Negative Binomial Regression: A generalized linear model (GLM) with negative binomial error distribution is fitted independently for each gene, using cellular sequencing depth (total UMI count) as a covariate [25] [35]. This model estimates parameters for each gene: intercept (β₀), slope (β₁) for sequencing depth effect, and dispersion (θ).
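In equation form, the per-gene model can be written as below, with i indexing genes, j indexing cells, and m_j denoting the total UMI count of cell j. This follows the original sctransform formulation, in which sequencing depth enters on a log10 scale through a log link; the last line is the standard negative binomial mean-variance relationship governed by θ_i. The 'v2' flavor listed in Table 1 instead treats depth as an offset and learns only the intercept and θ, so read this as the v1-style model.

\( x_{ij} \sim \mathrm{NB}(\mu_{ij}, \theta_i) \)

\( \log \mu_{ij} = \beta_{0i} + \beta_{1i} \log_{10} m_j \)

\( \mathrm{Var}(x_{ij}) = \mu_{ij} + \mu_{ij}^2 / \theta_i \)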

Parameter Regularization: To prevent overfitting—a common issue when modeling sparse scRNA-seq data—parameter estimates are regularized by sharing information across genes with similar abundances [25] [35]. Kernel regression captures global trends between parameter values and gene means, stabilizing estimates particularly for low-abundance genes.
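The regularization step can be sketched as kernel smoothing of per-gene estimates against gene abundance. The Python below is a simplified illustration, not sctransform's code: the function name is hypothetical, and the Gaussian kernel with a fixed bandwidth is an assumption (sctransform chooses its bandwidth from the data). It shows how an outlying estimate for one low-abundance gene is pulled toward the values of genes with similar mean expression.

```python
import math


def kernel_regularize(log_means, estimates, bandwidth):
    """Nadaraya-Watson smoothing of per-gene estimates against log10 mean expression.

    Each gene's regularized value is a weighted average of all genes' raw estimates,
    with Gaussian weights that decay with distance in log10 mean expression.
    """
    smoothed = []
    for x0 in log_means:
        weights = [math.exp(-0.5 * ((x - x0) / bandwidth) ** 2) for x in log_means]
        total = sum(weights)
        smoothed.append(sum(w * y for w, y in zip(weights, estimates)) / total)
    return smoothed


if __name__ == "__main__":
    log10_mean = [-2.0, -1.9, -1.8, -1.7, 0.5, 0.6]
    raw_log_theta = [0.1, 0.2, 3.0, 0.15, 1.0, 1.1]  # third gene looks overfit
    print([round(v, 2) for v in kernel_regularize(log10_mean, raw_log_theta, 0.3)])
```

Genes far apart in abundance receive negligible weight, so the high-abundance genes in the example are essentially unaffected by the low-abundance outlier.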

Residual Calculation: Pearson residuals are computed using the regularized parameters, transforming observed UMI counts into normalized values from which the effect of sequencing depth has been largely removed [25] [34]. The formula for Pearson residuals is:

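\( z_{ij} = \frac{x_{ij} - \mu_{ij}}{\sigma_{ij}}, \qquad \sigma_{ij} = \sqrt{\mu_{ij} + \mu_{ij}^2 / \theta_i} \)

where \( x_{ij} \) is the observed UMI count of gene i in cell j, \( \mu_{ij} \) is the expected count under the regularized model, and \( \sigma_{ij} \) is the expected standard deviation under the negative binomial model with regularized dispersion \( \theta_i \) [35].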
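The residual formula translates directly into code. This minimal Python sketch assumes that μ and θ have already been estimated; the function names are hypothetical. The clipping helper applies the ±sqrt(n/30) bound that Seurat's SCTransform uses by default, where n is the number of cells.

```python
import math


def pearson_residual(x, mu, theta):
    """Pearson residual of count x under a negative binomial with mean mu and dispersion theta."""
    sigma = math.sqrt(mu + mu * mu / theta)  # NB standard deviation
    return (x - mu) / sigma


def clip(z, n_cells):
    """Clip a residual to [-sqrt(n/30), sqrt(n/30)]."""
    bound = math.sqrt(n_cells / 30)
    return max(-bound, min(bound, z))


if __name__ == "__main__":
    z = pearson_residual(x=10, mu=5.0, theta=10.0)
    extreme = pearson_residual(x=100, mu=1.0, theta=100.0)
    print(round(z, 4), clip(extreme, n_cells=300))
```

A count equal to its expected value gives a residual of zero, while a single extreme count is capped so that it cannot dominate downstream PCA.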

Key Advantages Over Traditional Methods

- Eliminates Heuristic Steps: Replaces pseudocount addition and log-transformation with a statistically grounded approach [25] [34]

- Preserves Biological Variance: Effectively removes technical variation while maintaining biologically relevant heterogeneity [25]

- Comprehensive Processing: Replaces multiple steps in the standard Seurat workflow (NormalizeData, FindVariableFeatures, and ScaleData) with a single function call [34]

Experimental Protocols

Basic SCTransform Implementation in Seurat

Advanced Parameter Configuration

Downstream Analysis Integration

SCTransform Workflow Diagram

Key Parameters and Default Settings

Table 1: Essential SCTransform Parameters for Experimental Design

| Parameter | Default Value | Description | Experimental Impact |
|---|---|---|---|
| variable.features.n | 3000 | Number of variable features to identify | Affects feature selection for downstream analysis; increasing it may capture subtler biological signals [36] [34] |
| vars.to.regress | NULL | Variables to regress out (e.g., percent.mito, cell cycle scores) | Removes confounding sources of variation and can improve biological interpretation [36] [34] |
| vst.flavor | 'v2' | Method flavor ('v1' or 'v2') | 'v2' sets method = glmGamPoi_offset, n_cells = 2000, and exclude_poisson = TRUE for improved performance [36] |
| do.correct.umi | TRUE | Store the corrected UMI matrix in the counts slot of the SCT assay | Allows these values to be read as "corrected" counts, i.e., the counts expected if all cells had been sequenced to the same depth [36] [34] |
| ncells | 5000 | Number of cells used for parameter estimation | Balances computational efficiency against parameter accuracy [36] |
| clip.range | c(-sqrt(n/30), sqrt(n/30)), where n is the number of cells | Range to which residuals are clipped | Prevents extreme outliers from dominating downstream analysis [36] |
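The variable.features.n setting acts on a ranking: SCTransform ranks genes by the variance of their Pearson residuals and keeps the top-ranked ones. The plain-Python sketch below shows only that ranking step; the function name and the toy residuals are hypothetical, and the use of population variance is an assumption (the ranking is the same with the sample variance).

```python
import statistics


def top_variable_genes(residuals, n_features):
    """Rank genes by residual variance and keep the top n_features.

    residuals: dict mapping gene name -> list of Pearson residuals across cells.
    """
    variances = {gene: statistics.pvariance(z) for gene, z in residuals.items()}
    ranked = sorted(variances, key=lambda g: variances[g], reverse=True)
    return ranked[:n_features]


if __name__ == "__main__":
    residuals = {
        "GeneA": [0.1, -0.2, 0.0, 0.1],   # near-constant: consistent with technical noise
        "GeneB": [3.0, -2.5, 2.8, -3.1],  # large spread: candidate biological signal
        "GeneC": [1.0, -1.0, 0.9, -1.1],
    }
    print(top_variable_genes(residuals, n_features=2))
```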

Troubleshooting Guides and FAQs

Common Error Messages and Solutions

Table 2: Frequently Encountered SCTransform Issues and Resolutions

| Error Message or Symptom | Potential Cause | Solution |
|---|---|---|
| contrasts can be applied only to factors with 2 or more levels | A categorical variable in vars.to.regress (e.g., batch or sample ID) has only one level across the cells being normalized | Check the metadata for covariates with a single value; remove them from vars.to.regress or include cells spanning more than one level [37] |
| Excessive memory usage | Large dataset with all genes being processed | Set conserve.memory = TRUE so that the full residual matrix is never created; this increases runtime but reduces the memory footprint [36] |
| Long computation time | Large dataset or complex model | Install the glmGamPoi package, which substantially reduces computation time [34] |
| Warnings about model convergence | Problematic genes or extreme outlier cells | Review the warning messages; consider pre-filtering low-abundance genes or extreme cells [25] |
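The first check in the table amounts to looking for covariates with a single distinct value. In R this would be done on the Seurat object's metadata data frame; the plain-Python sketch below only illustrates the logic, and its function name and dictionary-of-columns layout are hypothetical.

```python
def single_level_covariates(metadata, vars_to_regress):
    """Return the covariates in vars_to_regress that take only one distinct value.

    metadata: dict mapping column name -> list of per-cell values.
    """
    return [v for v in vars_to_regress if len(set(metadata[v])) < 2]


if __name__ == "__main__":
    meta = {
        "batch": ["b1", "b1", "b1"],     # single level: would trigger the contrasts error
        "percent.mt": [1.2, 3.4, 0.9],
    }
    print(single_level_covariates(meta, ["batch", "percent.mt"]))
```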

Frequently Asked Questions

Q: How does SCTransform compare to traditional log-normalization? A: SCTransform outperforms log-normalization by effectively removing the relationship between gene variance and sequencing depth, particularly for high-abundance genes. Traditional methods often fail to normalize high-abundance genes effectively, which can disproportionately influence downstream analysis [25] [6]. SCTransform also enables the use of more principal components in dimensionality reduction as technical effects are more effectively removed [34].

Q: When should I use SCTransform versus traditional normalization? A: SCTransform is generally recommended for most UMI-based datasets, particularly when seeking finer biological resolution (e.g., identifying subpopulations within cell types). It provides sharper biological distinctions and makes downstream results less sensitive to parameter choices [34]. Traditional methods may still be adequate for basic cell type identification but can retain confounding technical effects [25].

Q: How are the results of SCTransform stored in Seurat objects?

A: SCTransform creates a new assay called "SCT" with three key components. For a Seurat object named pbmc, as in the Seurat tutorials: (1) pbmc[["SCT"]]$scale.data contains the Pearson residuals used for dimensionality reduction; (2) pbmc[["SCT"]]$counts contains the "corrected" UMI counts; and (3) pbmc[["SCT"]]$data contains log-normalized versions of the corrected counts for visualization [34].

Q: Can SCTransform be applied to non-UMI data? A: The method was designed specifically for UMI-based data, in which molecule counts can be modeled with a negative binomial distribution. Applying it to non-UMI data, such as read counts from full-length protocols or already-normalized values like TPM or FPKM, may not be appropriate without methodological adjustments [25].

Q: What evidence supports the choice of negative binomial over Poisson distribution? A: Extensive benchmarking across 59 datasets demonstrated that while Poisson models may appear adequate for sparsely sequenced datasets, negative binomial models are necessary to capture overdispersion present in most scRNA-seq data, particularly for genes with sufficient sequencing depth [38]. The degree of overdispersion varies across datasets, supporting SCTransform's data-driven approach to parameter estimation [38].
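A quick way to see overdispersion in a single gene is to compare its variance with the Poisson expectation (variance equal to the mean) and solve variance = mean + mean²/θ for θ. The sketch below uses this method-of-moments estimate, which is not the estimator SCTransform uses; the function name is hypothetical, and because it ignores differences in sequencing depth between cells (which themselves inflate variance), it is only a rough diagnostic.

```python
import math
import statistics


def overdispersion_check(counts):
    """Return (mean, sample variance, theta) for one gene's counts across cells.

    theta is the method-of-moments negative binomial dispersion, or math.inf when
    the variance does not exceed the mean (i.e., the data look Poisson-like).
    """
    mean = statistics.mean(counts)
    var = statistics.variance(counts)
    excess = var - mean
    theta = mean ** 2 / excess if excess > 0 else math.inf
    return mean, var, theta


if __name__ == "__main__":
    print(overdispersion_check([0, 0, 2, 4, 6, 12]))  # variance well above the mean
    print(overdispersion_check([3, 3, 3, 3]))         # no excess variance
```

Smaller θ means stronger overdispersion; a Poisson model corresponds to the limit θ → ∞.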

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Computational Tools for SCTransform Implementation

| Tool/Resource | Function | Implementation Notes |
|---|---|---|
| Seurat R package | Single-cell analysis platform with SCTransform integration | Primary environment for SCTransform implementation; provides comprehensive downstream analysis capabilities [39] [34] |
| sctransform R package | Core normalization engine | Directly implements regularized negative binomial regression; now integrated into Seurat [25] |
| glmGamPoi R package | Accelerated GLM fitting | Significantly improves SCTransform computation speed; recommended for large datasets [34] |
| 10x Genomics Cell Ranger | UMI count matrix generation | Produces raw count matrices from Chromium platforms that serve as SCTransform input [39] [6] |

Statistical Foundations

Theoretical Basis for Regularized Negative Binomial Regression

The statistical foundation of SCTransform addresses a key challenge in scRNA-seq analysis: unconstrained negative binomial models tend to overfit the data, particularly for low-abundance genes, resulting in unstable parameter estimates [25]. This overfitting dampens biological variance and reduces the ability to detect true biological signals.

SCTransform overcomes this through information sharing across genes. By regularizing parameters based on the relationship between parameter values and gene abundance, the method achieves stable estimates while preserving biological heterogeneity [25] [35]. This approach was inspired by bulk RNA-seq methods like DESeq2 and edgeR, but adapted specifically for the unique characteristics of single-cell data [35].

The output Pearson residuals represent normalized values that are approximately normally distributed and exhibit constant variance across the dynamic range of expression, making them suitable for standard downstream analysis techniques like PCA [25]. This variance stabilization is crucial for ensuring that both lowly and highly expressed genes can contribute meaningfully to the definition of cellular states.

SCTransform provides a statistically grounded framework for removing technical variation while preserving biological heterogeneity. By using regularized negative binomial regression, it can improve the identification of cell populations and biological signals, particularly subtle subpopulations that technical artifacts might otherwise obscure. Because overdispersion becomes more apparent as sequencing depth increases [38], the modeling principles underlying SCTransform are likely to remain relevant as single-cell technologies move toward deeper sequencing.

Single-cell RNA sequencing (scRNA-seq) reveals cellular heterogeneity but introduces significant technical noise. BASiCS (Bayesian Analysis of Single-Cell Sequencing Data) tackles this challenge through integrated spike-in normalization, providing a robust framework for quantifying biological variability separate from technical artifacts.

BASiCS uses a hierarchical model that jointly analyzes endogenous genes and spike-in transcripts, allowing precise normalization and technical noise quantification without assuming that most genes are not differentially expressed [6]. This makes it particularly valuable for heterogeneous cell populations, where that assumption, which underlies standard scaling normalization, often fails [40].
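The role of spike-ins can be illustrated with a deliberately simplified model. If each spike-in count in cell j were Poisson with mean ν_j times the known number of input molecules, the maximum-likelihood estimate of the cell's technical scaling factor ν_j would be its total spike-in count divided by the total input. The Python below computes only that point estimate; the function names are hypothetical. BASiCS itself infers these quantities jointly with cell-specific normalization and biological over-dispersion parameters by Markov chain Monte Carlo and reports posterior uncertainty rather than a single value.

```python
def spike_in_scaling(spike_counts, spike_input):
    """Per-cell technical scaling factors from spike-ins (Poisson MLE).

    spike_counts: one list per cell of observed counts for each spike-in transcript.
    spike_input: known number of input molecules for each spike-in transcript.
    """
    total_input = sum(spike_input)
    return [sum(cell) / total_input for cell in spike_counts]


def normalize_by_spikes(endogenous_counts, nu):
    """Divide each cell's endogenous counts by its estimated scaling factor."""
    return [[x / n for x in cell] for cell, n in zip(endogenous_counts, nu)]


if __name__ == "__main__":
    nu = spike_in_scaling([[50, 24, 6], [200, 100, 20]], spike_input=[100, 50, 10])
    print(nu, normalize_by_spikes([[10, 4], [40, 16]], nu))
```

Because spike-ins are added in the same amount to every cell, differences in their recovered counts reflect technical efficiency rather than biology, which is why they can anchor normalization without the non-DE majority assumption.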

The core strength of BASiCS lies in its ability to propagate statistical uncertainty throughout the analysis pipeline, from normalization to downstream inferences about highly variable genes and differential expression [41].

Frequently Asked Questions (FAQs)

What are the primary applications of BASiCS in single-cell analysis?

BASiCS specializes in identifying highly variable genes within homogeneous cell populations, detecting changes in expression variability between cell populations, and performing differential expression testing while accounting for technical noise. It is particularly useful for studying subtle variability within seemingly homogeneous populations, which often precedes cell fate decisions or reflects stochastic transcriptional events [41].

How does BASiCS differ from standard global scaling normalization methods?

Global scaling methods estimate a single size factor per cell and then treat it as fixed. BASiCS instead implements a joint hierarchical model that simultaneously normalizes the data and quantifies technical variation using spike-in controls. This approach avoids the pitfalls of applying bulk RNA-seq normalization methods to single-cell data, which can lead to misleading results in downstream analyses such as highly variable gene detection [2]. Because BASiCS propagates statistical uncertainty through all analysis stages, it provides more reliable variability estimates [41].

When should researchers consider using BASiCS over other normalization methods?

BASiCS is particularly recommended when: (1) studying transcriptionally heterogeneous populations where the "non-DE majority" assumption fails; (2) investigating subtle variability within seemingly homogeneous cell populations; (3) precise quantification of technical noise is essential; and (4) spike-in controls have been incorporated in the experimental design [40] [41].

What are the critical requirements for successful BASiCS implementation?

Successful implementation requires: (1) high-quality spike-in data with known concentrations added during cell lysis; (2) proper quality control to validate spike-in coverage; (3) sufficient sequencing depth for both endogenous and spike-in transcripts; and (4) appropriate computational resources for Bayesian inference. If spike-in controls are unavailable, BASiCS can instead leverage technical replicates, in which cells from a population are randomly allocated to multiple independent experimental replicates [6].

Troubleshooting Common BASiCS Implementation Issues

Poor Spike-in Coverage or High Variability

Table: Troubleshooting Spike-in Related Issues