Spatial Feature Extraction from MRI with Convolutional Neural Networks: Foundations, Applications, and Advanced Architectures

Abstract

This article provides a comprehensive analysis of Convolutional Neural Networks (CNNs) for spatial feature extraction from Magnetic Resonance Imaging (MRI) data, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of CNN architectures, detailing their evolution and core components for analyzing neurological disorders and oncology. The scope extends to advanced methodological applications, including hybrid models and transfer learning, followed by a critical examination of optimization strategies for computational efficiency and data scarcity. The review culminates in a comparative validation of state-of-the-art models, discussing performance metrics, generalizability, and their integration into clinical and research pipelines to enhance diagnostic accuracy and biomarker discovery.

Core Principles and Architectural Evolution of CNNs for Medical Imaging

Core Architectural Components of CNNs

Convolutional Neural Networks (CNNs) have revolutionized the field of medical image analysis by enabling automated learning of hierarchical features from complex datasets [1]. Their architecture is fundamentally composed of three types of layers that work in concert to transform input images into increasingly abstract representations for classification tasks.

Convolutional Layers

Convolutional layers form the foundational building blocks of CNNs, responsible for detecting spatial hierarchies in images [1]. These layers apply learned filters (kernels) to input data through a mathematical convolution operation. Each filter scans across the input image, producing a feature map that highlights specific visual patterns such as edges and textures in early layers or more complex shapes in deeper layers. The key advantage of this operation is parameter sharing: the same filter weights are used across all spatial locations, significantly reducing the number of parameters compared to fully connected networks. In medical imaging, particularly for MRI analysis, these layers excel at identifying subtle tissue changes and morphological patterns essential for detecting pathological conditions [2].
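The sliding-filter operation described above can be sketched in a few lines of plain Python. This is a minimal illustration, not code from any cited study: the function name `conv2d_valid`, the toy 4×4 image, and the hand-picked vertical-edge kernel are all assumptions made for the example, and the loop computes cross-correlation (no kernel flip), which is what deep learning frameworks conventionally call convolution.

```python
def conv2d_valid(image, kernel):
    """Slide one kernel over a 2D image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # The same kernel weights are reused at every (i, j): parameter sharing.
            acc = 0.0
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        feature_map.append(row)
    return feature_map


# Toy 4x4 "image" with a vertical intensity edge between columns 1 and 2.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-picked vertical-edge kernel; a trained CNN would learn these weights.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
feature_map = conv2d_valid(image, kernel)
print(feature_map)  # every 3x3 window straddles the edge, so all responses are equal
```

Only nine weights are involved regardless of the image size, which is the parameter saving the paragraph refers to.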

Pooling Layers

Pooling layers are strategically inserted between convolutional layers to reduce the spatial dimensions of feature maps while preserving critical features [3]. The most common approach, max-pooling, selects the maximum value from a set of inputs within a defined window, effectively highlighting the most prominent features and providing translational invariance. By progressively downsampling feature maps, pooling layers enhance computational efficiency, control overfitting, and increase the receptive field of subsequent layers. This allows the network to build robustness to small spatial variations in medical images, which is particularly valuable given the anatomical variability present in MRI scans across different patients [4].
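As a rough sketch of the max-pooling step, the snippet below applies a 2×2 window with stride 2 to a small feature map. The function name `max_pool2d` and the input values are illustrative assumptions, not taken from the cited work.

```python
def max_pool2d(feature_map, window=2, stride=2):
    """Keep the maximum of each window; halves each dimension for 2x2/stride 2."""
    pooled = []
    for i in range(0, len(feature_map) - window + 1, stride):
        row = []
        for j in range(0, len(feature_map[0]) - window + 1, stride):
            patch = [
                feature_map[i + di][j + dj]
                for di in range(window)
                for dj in range(window)
            ]
            row.append(max(patch))
        pooled.append(row)
    return pooled


# Illustrative 4x4 feature map (values are made up).
feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 8],
]
pooled = max_pool2d(feature_map)
print(pooled)
```

Because only the largest value in each window survives, moving a strong activation by one pixel inside its window leaves the pooled output unchanged, which is the small-shift robustness described above.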

Fully Connected Layers

Fully connected (dense) layers typically form the final stage of a CNN architecture, where all neurons are connected to all activations from the previous layer [3]. These layers synthesize the high-level features extracted by the convolutional and pooling layers into final predictions. Each neuron in a fully connected layer computes a weighted sum of its inputs followed by a non-linear activation function, commonly ReLU in hidden layers and softmax at the output layer for multi-class classification. In medical diagnosis applications, these layers integrate the spatially distributed feature information to produce probability distributions over target classes (e.g., tumor types or healthy vs. pathological) [2] [5].
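A minimal sketch of this final stage, assuming a two-feature input, one hidden dense layer with ReLU, and a two-class softmax output. All weights and the helper names `dense`, `relu`, and `softmax` are hypothetical values chosen for readability.

```python
import math


def dense(inputs, weights, biases):
    """Weighted sum per neuron: one weight row and one bias per output unit."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def relu(values):
    return [max(0.0, v) for v in values]


def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


features = [1.0, 2.0]                      # flattened features (made up)
hidden = relu(dense(features, [[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]))
logits = dense(hidden, [[1.0, 2.0], [-1.0, 0.0]], [0.0, 0.0])
probs = softmax(logits)                    # probabilities over two classes
print(hidden, logits, probs)
```

The softmax output sums to one, so it can be read as a probability distribution over the target classes.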

CNN Applications in MRI Feature Extraction

The hierarchical feature learning capability of CNNs has demonstrated remarkable success in MRI-based brain tumor analysis. The complementary functions of convolutional, pooling, and fully connected layers enable these networks to extract both fine-grained and high-level tumor features from complex magnetic resonance imaging data [2].

Table 1: Performance of CNN Architectures in Brain Tumor Classification from MRI

| Architecture | Accuracy (%) | Precision (%) | Recall (%) | Specificity (%) | F1-Score (%) |
|---|---|---|---|---|---|
| D²CBTN [2] | 98.81 | 97.69 | 97.75 | 99.18 | 97.70 |
| Lightweight CNN [3] | 99.00 | 98.75 | 99.20 | - | 98.87 |
| CNN from Scratch [6] | 99.17 | - | - | - | - |
| Modified EfficientNetB0 [6] | 99.83 | - | - | - | - |
| Ensemble Model [7] | 86.17 | - | - | - | - |
| Hybrid CNN-Transformer [2] | 98.70 | - | - | - | - |

In practical applications, researchers have developed specialized CNN architectures that leverage these core components for enhanced MRI analysis. The Dual Deep Convolutional Brain Tumor Network (D²CBTN) combines a pre-trained VGG-19 model with a custom-designed CNN to extract both fine-grained and high-level tumor features [2]. Similarly, lightweight CNN implementations demonstrate that carefully optimized architectures with just three convolutional layers, two pooling layers, and a fully connected dense layer can achieve 99% accuracy in brain tumor detection even with limited training data [3]. These implementations highlight how the strategic arrangement of core CNN components can yield highly effective diagnostic tools for clinical applications.

Experimental Protocols for MRI-Based Brain Tumor Classification

Data Preparation and Preprocessing Protocol

Dataset Acquisition:

- Utilize publicly available brain tumor MRI datasets from repositories such as Kaggle (e.g., Brain Tumor MRI Dataset comprising glioma, meningioma, pituitary tumors, and non-tumor cases) [5].

- Ensure the dataset includes a balanced representation across classes (e.g., 1000 tumor and 1000 non-tumor images) to mitigate classification bias [5].

- For enhanced generalization, incorporate multiple datasets such as BR35H containing 1500 positive and 1500 negative cases [6].

Image Preprocessing Pipeline:

- Conversion to Grayscale: Transform all images to single-channel to reduce computational complexity while preserving essential intensity information [6].

- Noise Reduction: Apply Gaussian filters to diminish high-frequency noise and enhance relevant features [5].

- Intensity Normalization: Rescale pixel values to [0,1] range to standardize inputs and improve training stability [6].

- Spatial Standardization: Resize all images to consistent dimensions (e.g., 32×32, 64×64, or 224×224 depending on architecture) [6].

- Data Augmentation: Address limited data availability using techniques like random rotation, flipping, and CutMix to improve model robustness [2] [8].
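Three of the preprocessing steps listed above (grayscale conversion, intensity normalization, and flip augmentation) can be sketched without any imaging library. This is an illustration under stated assumptions: images are nested lists of RGB tuples, the grayscale weights are the standard ITU-R BT.601 luma coefficients, and normalization is done by min-max rescaling, whereas the cited studies may simply divide by 255. Gaussian filtering and resizing are omitted because they are normally delegated to an image library.

```python
def to_grayscale(rgb_image):
    """Collapse RGB to one channel using ITU-R BT.601 luma weights."""
    return [
        [0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
        for row in rgb_image
    ]


def min_max_normalize(image):
    """Rescale intensities to [0, 1]; a constant image maps to all zeros."""
    flat = [v for row in image for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:
        return [[0.0 for _ in row] for row in image]
    return [[(v - lo) / (hi - lo) for v in row] for row in image]


def horizontal_flip(image):
    """Simple augmentation: mirror each row left to right."""
    return [list(reversed(row)) for row in image]


# Tiny 2x2 RGB example (values are made up).
rgb = [
    [(0, 0, 0), (255, 255, 255)],
    [(128, 128, 128), (64, 64, 64)],
]
normalized = min_max_normalize(to_grayscale(rgb))
flipped = horizontal_flip(normalized)
print(normalized, flipped)
```

Whether left-right flipping is appropriate depends on the task, since some pathologies are lateralized; that choice is left to the experimenter.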

Table 2: Essential Research Reagents and Computational Resources

| Resource Category | Specific Examples | Function in CNN Research |
|---|---|---|
| Programming Frameworks | TensorFlow, TFlearn [3] | Provide high-level APIs for implementing and training CNN architectures |
| Computational Hardware | GPUs [2] | Accelerate training of deep neural networks through parallel processing |
| Public Datasets | Kaggle Brain Tumor MRI [5], BR35H [6] | Supply annotated medical images for training and validation |
| Pre-trained Models | VGG-19 [2], ResNet50 [6], DenseNet121 [6] | Serve as feature extractors or starting points for transfer learning |
| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, ROC-AUC [3] | Quantify model performance for clinical reliability assessment |

CNN Implementation and Training Protocol

Architecture Configuration:

- Implement a sequential model with alternating convolutional and pooling layers, culminating in fully connected layers for classification [3].

- For convolutional layers, use 3×3 or 5×5 filter sizes with ReLU activation functions to introduce non-linearity [6].

- Configure max-pooling layers with 2×2 windows to progressively reduce spatial dimensions while retaining salient features [3].

- Include batch normalization layers to stabilize training and accelerate convergence [6].

- Add dropout layers before fully connected layers to prevent overfitting (typically 0.2-0.5 dropout rate) [6].
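The batch normalization and dropout items in the list above can be illustrated for a single feature across a mini-batch. This is a simplified sketch: real framework layers also learn `gamma` and `beta`, track running statistics for inference, and disable dropout at test time. The function names and the sample activations are assumptions made for the example.

```python
import random


def batch_norm(values, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [gamma * (v - mean) / (var + eps) ** 0.5 + beta for v in values]


def dropout(values, rate, rng):
    """Inverted dropout: zero each unit with probability `rate`, rescale the rest."""
    keep = 1.0 - rate
    return [v / keep if rng.random() < keep else 0.0 for v in values]


activations = [2.0, 4.0, 6.0, 8.0]          # one feature over a batch of four
normalized = batch_norm(activations)        # roughly zero mean, unit variance
rng = random.Random(0)                      # seeded only to make the demo repeatable
dropped = dropout(activations, rate=0.5, rng=rng)
print(normalized, dropped)
```

Rescaling the surviving units by 1/(1 - rate) keeps the expected activation unchanged, so no extra scaling is needed when dropout is switched off for inference.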

Training Procedure:

- Parameter Initialization: Initialize weights using He or Xavier initialization strategies.

- Optimization: Utilize Adam optimizer with default parameters (learning rate=0.001, β1=0.9, β2=0.999) [3].

- Loss Function: Employ sparse categorical cross-entropy for multi-class classification problems [6].

- Training Regimen: Train for 10-100 epochs with batch sizes of 32-64, monitoring validation loss for early stopping [3].

- Validation: Implement k-fold cross-validation (typically k=10) to ensure robust performance estimation [2].
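To make the optimizer settings above concrete, the following sketch applies the Adam update rule with the listed defaults (learning rate 0.001, β1 = 0.9, β2 = 0.999) to a single scalar parameter. The toy objective f(w) = (w - 3)², the epsilon value of 1e-8, and the function name `adam_step` are assumptions for illustration; a real training loop would apply the same rule to every network weight via the framework's optimizer.

```python
def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter at (1-based) step t."""
    m = beta1 * m + (1 - beta1) * grad          # first-moment (mean) estimate
    v = beta2 * v + (1 - beta2) * grad * grad   # second-moment estimate
    m_hat = m / (1 - beta1 ** t)                # bias correction
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (v_hat ** 0.5 + eps)
    return param, m, v


# Toy objective f(w) = (w - 3)^2, whose gradient is 2 * (w - 3).
w, m, v = 0.0, 0.0, 0.0
history = []
for t in range(1, 101):
    grad = 2.0 * (w - 3.0)
    w, m, v = adam_step(w, grad, m, v, t)
    history.append(w)
print(history[0], history[-1])
```

With a consistent gradient sign each step moves the parameter by roughly the learning rate, which is why 100 steps at 0.001 only cover a small part of the distance to the minimum.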

Evaluation Framework:

- Calculate standard classification metrics including accuracy, precision, recall, specificity, and F1-score [2].

- Generate confusion matrices to visualize performance across different tumor classes.

- Utilize Grad-CAM++ or similar explainable AI techniques to generate heatmaps highlighting regions influencing classification decisions [7].
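The classification metrics named above all derive from the four cells of a binary confusion matrix. The sketch below computes them from raw counts; the counts themselves are invented for illustration and do not come from any cited study. For multi-class tumor problems the same function can be applied per class in a one-vs-rest fashion.

```python
def binary_metrics(tp, fp, tn, fn):
    """Standard metrics from confusion-matrix counts (assumes non-zero denominators)."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)                 # also called sensitivity
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "specificity": tn / (tn + fp),
        "f1": 2 * precision * recall / (precision + recall),
    }


# Hypothetical counts: 100 tumor and 100 non-tumor test images.
metrics = binary_metrics(tp=90, fp=5, tn=95, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```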

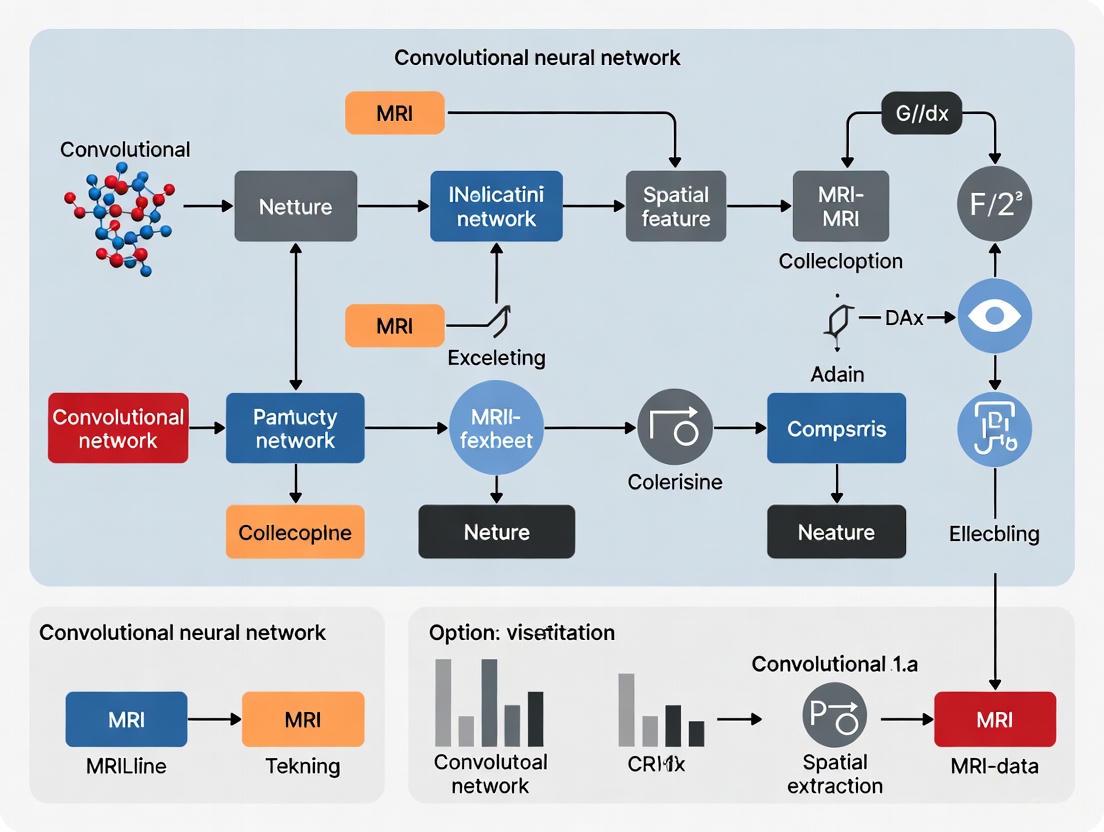

Workflow Visualization

CNN Hierarchical Feature Learning for MRI Analysis

The diagram illustrates the progressive transformation of MRI data through CNN layers. Input images first undergo feature detection in convolutional layers, followed by dimensionality reduction in pooling layers. This sequence repeats, building spatial hierarchies, before high-level features are integrated by fully connected layers for final classification.

Experimental Protocol for MRI Classification

This workflow outlines the systematic process for developing CNN-based MRI classification systems, from data acquisition through clinical interpretation, highlighting the comprehensive methodology required for robust medical image analysis.

The hierarchical architecture of CNNs, comprising convolutional, pooling, and fully connected layers, provides a powerful framework for spatial feature extraction from MRI data. Through their coordinated functions—local feature detection, spatial hierarchy building, and global feature integration—these networks achieve exceptional performance in brain tumor classification tasks, with recent models reporting accuracy exceeding 98% [2]. The experimental protocols outlined herein offer researchers a methodological foundation for implementing these architectures, while the visualization of workflows and component relationships enhances understanding of how CNNs progressively transform medical images into diagnostic predictions. As research advances, the integration of these core components with emerging techniques like attention mechanisms and explainable AI will further strengthen their utility in clinical neurosciences.

The Role of Spatial Feature Extraction in MRI Analysis for Neurology and Oncology

Spatial feature extraction is a foundational process in medical image analysis that identifies and isolates meaningful patterns or structures within spatial data [9]. In the context of magnetic resonance imaging (MRI), this involves detecting edges, textures, shapes, and other attributes that define spatial relationships and hierarchical patterns within neurological and oncological images [10]. The growing application of Convolutional Neural Networks (CNNs) has revolutionized this domain, enabling automated learning of spatial hierarchies through multiple building blocks including convolution layers, pooling layers, and fully connected layers [10]. This capability is particularly valuable for analyzing the complex and diverse structures of brain tumors and neurological disorders, where accurate identification of spatial features directly impacts diagnosis, treatment planning, and therapeutic monitoring [11] [2] [12].

Within neuro-oncology, the central role of MRI is undisputed, serving as a primary tool for diagnosis, monitoring disease activity, supporting treatment decisions, and evaluating treatment response [13] [12]. The integration of advanced spatial feature extraction techniques, particularly through deep learning approaches, is addressing critical limitations of conventional MRI, including difficulty discerning the full extent of infiltrative tumors and distinguishing between neoplastic and non-neoplastic processes in post-treatment scenarios [12]. This article examines the technical protocols, applications, and emerging frontiers of spatial feature extraction in MRI analysis, with specific focus on implementations for neurology and oncology.

Fundamentals of Spatial Feature Extraction in MRI

Spatial feature extraction in MRI involves converting raw image data into structured, machine-readable features that represent clinically relevant patterns [9]. CNNs automatically and adaptively learn spatial hierarchies of features through backpropagation using multiple building blocks: convolution layers, pooling layers, and fully connected layers [10]. The process begins with convolution operations where kernels (small arrays of numbers) are applied across input image tensors to create feature maps that highlight specific patterns [10]. These features become progressively more complex through successive layers, enabling the network to evolve from detecting simple edges to identifying complex pathological structures.

Key Technical Components:

- Convolution Layers: Perform feature extraction through linear operations optimized by learnable kernels that scan input images [10]. Key hyperparameters include kernel size (typically 3×3, 5×5, or 7×7), number of kernels, padding (typically zero padding to maintain dimensions), and stride (usually 1 for detailed scanning) [10].

- Non-linear Activation Functions: Introduce non-linearity to the system, with Rectified Linear Unit (ReLU) being most common due to its computational efficiency [10].

- Pooling Layers: Provide downsampling operations that reduce feature map dimensionality while introducing translation invariance to small shifts and distortions. Max pooling (2×2 with stride of 2) is most popular, while global average pooling is sometimes applied before fully connected layers [10].

The fundamental advantage of CNN-based spatial feature extraction lies in weight sharing, where kernels are shared across all image positions, allowing detection of learned local patterns regardless of their location while significantly reducing parameters compared to fully connected networks [10].
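The effect of the hyperparameters listed above, and the parameter saving from weight sharing, can be checked with simple arithmetic. The sketch uses the standard output-size relation floor((n + 2p - k) / s) + 1; the layer sizes (a 224×224 single-channel input, 32 filters, 32 dense units) are illustrative assumptions rather than a configuration from the cited work.

```python
def conv_output_size(n, kernel, padding=0, stride=1):
    """Spatial size after a convolution or pooling window along one axis."""
    return (n + 2 * padding - kernel) // stride + 1


def conv_params(in_channels, out_channels, kernel):
    """Weights plus one bias per filter; independent of the image size."""
    return out_channels * (in_channels * kernel * kernel + 1)


def dense_params(n_inputs, n_outputs):
    """Weights plus one bias per output unit; grows with the image size."""
    return n_outputs * (n_inputs + 1)


same = conv_output_size(224, kernel=3, padding=1, stride=1)   # zero padding keeps 224
pooled = conv_output_size(224, kernel=2, padding=0, stride=2) # 2x2 max pool halves it
shared = conv_params(in_channels=1, out_channels=32, kernel=3)
unshared = dense_params(n_inputs=224 * 224, n_outputs=32)
print(same, pooled, shared, unshared)
```

Thirty-two 3×3 filters need a few hundred parameters, while a dense layer with the same number of outputs over the raw pixels needs more than a million.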

Spatial Feature Extraction in Neuro-Oncology

Clinical Application Domains

In neuro-oncology, spatial feature extraction enables precise tumor classification, segmentation, and characterization. Malignant brain tumors can be categorized as either metastatic tumors (originating outside the brain) or primary tumors (originating within brain tissue and meninges), with gliomas representing approximately 80% of malignant primary brain tumors [12]. Accurate spatial feature analysis is crucial for differentiating tumor types and grades, guiding treatment decisions, and monitoring therapeutic response.

Table 1: Performance of Advanced Spatial Feature Extraction Models in Brain Tumor Classification

| Model Architecture | Dataset | Accuracy | Sensitivity/Specificity | Key Spatial Features Extracted |
|---|---|---|---|---|
| ResNet-152 with EChOA feature selection [11] | Figshare dataset | 98.85% | Not specified | Hierarchical texture and shape features optimized via modified chimp algorithm |
| Dual Deep Convolutional Brain Tumor Network (D²CBTN) [2] | Kaggle brain tumor dataset | 98.81% | 97.75% recall, 99.18% specificity | Combined fine-grained and high-level tumor features |
| VGG-19 + Custom CNN [2] | Kaggle brain tumor dataset | 98.81% | 97.69% precision, 97.70% F1-score | Global and local tumor morphological patterns |
| nnU-Net for MS lesion segmentation [14] | 103 patient FLAIR MRI dataset | 83% (slice level) | 100% sensitivity, 75% PPV | MS lesion boundaries and spatial distribution |

Advanced models like the Dual Deep Convolutional Brain Tumor Network (D²CBTN) demonstrate how combining pre-trained networks (VGG-19) with custom CNNs can extract complementary feature sets—global contextual features and localized detailed patterns—significantly enhancing classification accuracy for complex brain tumor types including glioma, meningioma, pituitary tumors, and non-tumor cases [2].

Technical Approaches and Architectures

Contemporary research employs sophisticated architectures for spatial feature extraction. Residual networks like ResNet-152 leverage skip connections to enable training of very deep networks, capturing complex spatial hierarchies while avoiding vanishing gradient problems [11]. The integration of optimization algorithms such as the Enhanced Chimpanzee Optimization Algorithm (EChOA) further improves feature selection by minimizing redundant features and enhancing discriminative spatial patterns [11].

For segmentation tasks, nnU-Net frameworks automatically configure themselves for specific medical imaging datasets and have been used to segment Multiple Sclerosis (MS) lesions from FLAIR MRI images with Dice Similarity Coefficients of 70-75% [14]. This capability is particularly valuable for quantifying disease burden and monitoring progression in demyelinating disorders.

Spatial Feature Extraction in Neurological Disorders

Beyond oncology, spatial feature extraction plays a crucial role in diagnosing and monitoring neurodegenerative disorders. In cognitive impairment, CNN algorithms applied to structural MRI (sMRI) data have demonstrated significant capability in differentiating between Alzheimer's Disease (AD), Mild Cognitive Impairment (MCI), and normal cognition (NC) [15].

Table 2: CNN Performance in Differentiating Cognitive Impairment Categories Using Structural MRI

| Comparison | Pooled Sensitivity | Pooled Specificity | Clinical Utility |
|---|---|---|---|
| AD vs. NC [15] | 0.92 | 0.91 | High accuracy for definitive diagnosis |
| MCI vs. NC [15] | 0.74 | 0.79 | Moderate accuracy for early detection |
| AD vs. MCI [15] | 0.73 | 0.79 | Moderate differentiation capability |
| pMCI vs. sMCI [15] | 0.69 | 0.81 | Challenging but clinically valuable progression prediction |

The meta-analysis of 21 studies comprising 16,139 participants revealed that CNN algorithms achieve highest accuracy in distinguishing AD from normal cognition, with pooled sensitivity of 0.92 and specificity of 0.91 [15]. This performance reflects the distinct spatial patterns of cortical atrophy, ventricular enlargement, and hippocampal shrinkage characteristic of advanced AD that CNNs can effectively extract and recognize.

For Multiple Sclerosis, spatial feature extraction focuses on detecting demyelinating lesions in white matter, with FLAIR MRI sequences being particularly valuable [14]. The nnU-Net architecture has demonstrated robust performance in this domain, achieving 83% accuracy in slice-level classification and 100% sensitivity in lesion detection on internal test sets [14]. This high sensitivity is clinically crucial as missing lesions could lead to underestimation of disease burden.

Advanced Imaging Modalities and Hybrid Approaches

Beyond conventional MRI, advanced imaging modalities are creating new frontiers for spatial feature extraction. The integration of positron emission tomography (PET) with MRI combines exceptional structural detail with metabolic and functional information, providing a multidimensional view of brain pathology [12]. Amino acid PET tracers like [¹⁸F]FET offer better visualization of tumor borders compared to traditional glucose analogs, as normal brain tissue doesn't exhibit increased amino acid uptake [12].

Table 3: Advanced Imaging Modalities for Enhanced Spatial Feature Extraction

| Imaging Modality | Key Spatial Features | Clinical Advantages | Limitations |
|---|---|---|---|
| Amino Acid PET (e.g., [¹⁸F]FET) [12] | Tumor metabolism, infiltration boundaries | Superior tumor margin delineation, independent of blood-brain barrier disruption | Limited availability, higher cost |
| MR Perfusion Imaging [12] | Vascular density, blood flow characteristics | Differentiates tumor grade, identifies angiogenesis | Requires contrast administration, analysis complexity |
| MR Fingerprinting [12] | Simultaneous quantitative tissue parameter mapping | Rapid multi-parametric quantitative assessment | Emerging technology, validation ongoing |
| MR Elastography [12] | Tissue stiffness, mechanical properties | Differentiates tumor consistency pre-surgery, planning guidance | Motion sensitivity, technical expertise required |
| MR Spectroscopy [12] | Metabolic profiles, chemical composition | Identifies metabolic signatures of specific tumors | Limited spatial resolution, complex interpretation |

Radiomics represents another advanced frontier, converting medical images into mineable high-dimensional data to discover radiomic signatures of disease states [13]. This approach extracts vast numbers of quantitative spatial features—including texture, shape, and intensity patterns—that may not be visually perceptible but contain prognostic and predictive information [13]. When combined with CNN-based deep learning, radiomics enables discovery of complex spatial biomarkers for precision neuro-oncology.

Experimental Protocols and Methodologies

Protocol 1: Brain Tumor Classification with Integrated CNN Architecture

Objective: To implement a dual deep convolutional network for precise classification of brain tumor types from MRI scans [2].

Dataset Preparation:

- Utilize the Kaggle brain tumor classification dataset comprising glioma, no tumor, meningioma, and pituitary categories [2].

- Implement data augmentation using the Keras ImageDataGenerator class to address class imbalance [2].

- Apply preprocessing including normalization, skull stripping, and resizing to 224×224 pixels [2].

Experimental Setup:

- Architecture: Dual Deep Convolutional Brain Tumor Network (D²CBTN) integrating pre-trained VGG-19 with custom CNN [2].

- Feature Fusion: Implement "Add" layer to combine global features from VGG-19 and localized features from custom CNN [2].

- Training: 10-fold cross-validation to ensure robust performance estimation [2].

- Optimization: Adam optimizer with categorical cross-entropy loss function [2].
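The "Add" fusion step in the setup above amounts to element-wise addition of two feature vectors of equal length. The sketch below contrasts it with concatenation, a common alternative; the vector length and values are invented, and how D²CBTN projects the two branches to a common size is not specified in the text, so equal lengths are simply assumed here.

```python
def add_fusion(global_features, local_features):
    """Element-wise sum; both branches must already share the same length."""
    if len(global_features) != len(local_features):
        raise ValueError("Add fusion requires feature vectors of equal length")
    return [g + l for g, l in zip(global_features, local_features)]


def concat_fusion(global_features, local_features):
    """Alternative shown for contrast: keeps both vectors side by side."""
    return list(global_features) + list(local_features)


vgg_branch = [0.2, 0.0, 1.5, 0.7]      # hypothetical pre-trained branch output
custom_branch = [0.1, 0.9, 0.0, 0.3]   # hypothetical custom CNN branch output
fused = add_fusion(vgg_branch, custom_branch)
stacked = concat_fusion(vgg_branch, custom_branch)
print(len(fused), len(stacked))
```

Addition keeps the fused vector the same size as each branch, so the classifier head does not grow, whereas concatenation doubles its input width.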

Evaluation Metrics:

- Primary: Accuracy, Precision, Recall, Specificity, F1-score [2].

- Validation: Comparative analysis against ResNet152, EfficientNetB0, DenseNet121, and transformer models [2].

Protocol 2: Multiple Sclerosis Lesion Segmentation with nnU-Net

Objective: To develop an automated system for segmenting MS lesions from FLAIR MRI images using nnU-Net architecture [14].

Dataset Preparation:

- Collect FLAIR MRI images from 103 MS patients with 512×512 pixel resolution [14].

- Acquire external validation set of 10 patients from additional centers [14].

- Obtain expert radiologist annotations using the Pixlr Suite program to create ground truth masks [14].

Preprocessing Pipeline:

- Skull stripping to remove non-brain tissue [14].

- Intensity normalization to standardize value ranges [14].

- Entropy-based exclusion to filter non-informative slices [14].

- Data augmentation including rotation, flipping, and intensity variations [14].
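One plausible reading of the entropy-based exclusion step is to compute the Shannon entropy of each slice's intensity histogram and drop slices that fall below a threshold. The sketch below follows that reading; the exact criterion and threshold used in [14] are not given in the text, so `shannon_entropy`, `keep_informative`, and the threshold of 1.0 bit are assumptions.

```python
import math
from collections import Counter


def shannon_entropy(slice_pixels):
    """Entropy (in bits) of the intensity distribution of one 2D slice."""
    flat = [v for row in slice_pixels for v in row]
    counts = Counter(flat)
    total = len(flat)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def keep_informative(slices, threshold):
    """Keep only slices whose entropy reaches the (hypothetical) threshold."""
    return [s for s in slices if shannon_entropy(s) >= threshold]


blank = [[0, 0], [0, 0]]        # uniform slice, e.g. empty background
textured = [[0, 1], [2, 3]]     # four distinct intensities
kept = keep_informative([blank, textured], threshold=1.0)
print(shannon_entropy(blank), shannon_entropy(textured), len(kept))
```

A uniform slice carries zero bits of entropy and is filtered out, while a slice with varied intensities is retained.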

Model Configuration:

- Architecture: nnU-Net framework configured for 2D slices [14].

- Training: Fivefold cross-validation approach [14].

- Hardware: NVIDIA GeForce RTX 3090 GPU for accelerated training [14].
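The fivefold cross-validation mentioned above can be sketched as an index split. The example assumes the split is made over the 103 patients rather than over individual slices, which avoids placing slices from one patient in both training and validation sets; the text does not state which level was used, and in practice the indices would be shuffled first. The function name `k_fold_indices` is hypothetical.

```python
def k_fold_indices(n_samples, k):
    """Return k (train_indices, val_indices) pairs covering every sample once."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    indices = list(range(n_samples))
    folds, start = [], 0
    for size in fold_sizes:
        val = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        folds.append((train, val))
        start += size
    return folds


folds = k_fold_indices(n_samples=103, k=5)
for fold_id, (train, val) in enumerate(folds, start=1):
    print(f"fold {fold_id}: {len(train)} train patients, {len(val)} validation patients")
```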

Evaluation Framework:

- Slice-level metrics: Accuracy, Sensitivity, Positive Predictive Value (PPV), Negative Predictive Value (NPV) [14].

- Voxel-level metrics: Dice Similarity Coefficient (DSC) for segmentation overlap [14].
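The two levels of evaluation above can be sketched as follows: the Dice Similarity Coefficient compares a predicted and a reference binary mask, and PPV/NPV are computed from slice-level counts. The masks and counts are invented for illustration, and returning 1.0 when both masks are empty is a convention assumed here.

```python
def dice_coefficient(pred_mask, true_mask):
    """DSC = 2 * |A and B| / (|A| + |B|) for flat binary masks."""
    intersection = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    total = sum(1 for p in pred_mask if p) + sum(1 for t in true_mask if t)
    if total == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * intersection / total


def ppv_npv(tp, fp, tn, fn):
    """Positive and negative predictive value from slice-level counts."""
    return tp / (tp + fp), tn / (tn + fn)


pred = [1, 1, 0, 0, 1, 0]   # hypothetical predicted lesion voxels
true = [1, 0, 0, 1, 1, 0]   # hypothetical expert annotation
dsc = dice_coefficient(pred, true)
ppv, npv = ppv_npv(tp=30, fp=10, tn=50, fn=0)   # made-up counts, no missed slices
print(round(dsc, 4), ppv, npv)
```

With no false negatives, sensitivity and NPV are both 1.0 even though PPV is lower, which mirrors the kind of trade-off reported for slice-level lesion detection.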

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Materials for MRI Spatial Feature Extraction Experiments

| Research Component | Specifications | Function/Purpose |
|---|---|---|
| MRI Dataset [11] [14] [2] | Figshare, Kaggle brain tumor dataset, or institutional FLAIR MRI collections | Ground truth data for model training and validation |
| Annotation Software [14] | Pixlr Suite or equivalent medical image annotation tools | Expert labeling of regions of interest for supervised learning |
| Deep Learning Framework [11] [2] | Python with TensorFlow/PyTorch, nnU-Net for medical segmentation | Implementation of CNN architectures and training pipelines |
| Computational Hardware [14] | NVIDIA GeForce RTX 3090 GPU or equivalent high-performance computing | Accelerated model training and inference for large volumetric data |
| Data Augmentation Tools [2] | ImageDataGenerator or custom augmentation pipelines | Address dataset imbalance and improve model generalization |
| Optimization Algorithms [11] | Enhanced Chimpanzee Optimization Algorithm (EChOA) or genetic algorithms | Feature selection and dimensionality reduction |
| Evaluation Metrics [14] [2] | Accuracy, Sensitivity, Specificity, Dice Score, F1-score | Quantitative performance assessment and model comparison |

Spatial feature extraction using CNN-based methodologies has fundamentally advanced MRI analysis in neurology and oncology, enabling unprecedented accuracy in tumor classification, lesion segmentation, and disease characterization. The integration of dual-network architectures, advanced optimization algorithms, and comprehensive validation frameworks has yielded systems capable of exceeding 98% accuracy in specific classification tasks [11] [2]. These technological advances are transitioning neuro-imaging from qualitative subjective interpretation to quantitative analytical approaches that enhance diagnostic precision and clinical decision-making.

Future directions in spatial feature extraction research include several critical frontiers. First, the development of explainable AI methodologies is essential to enhance clinical trust and adoption by providing interpretable visualizations of which spatial features drive specific classifications [2]. Second, technical validation and biological correlation remain challenging, requiring rigorous multi-institutional studies to establish reliable imaging biomarkers [13]. Third, the integration of multi-modal data—combining structural MRI with advanced sequences, PET metabolic information, and clinical parameters—will enable more comprehensive disease characterization [12]. Finally, distinguishing between active and non-active lesions in Multiple Sclerosis and differentiating true tumor progression from treatment-related changes represent particularly valuable clinical targets for next-generation spatial feature extraction algorithms [14] [12].

As these advancements mature, spatial feature extraction will increasingly serve as the foundation for precision neuro-oncology, enabling earlier detection, personalized treatment strategies, and more sensitive monitoring of therapeutic response—ultimately improving outcomes for patients with neurological and oncological disorders.

The evolution of Convolutional Neural Network (CNN) architectures has fundamentally transformed the landscape of medical image analysis, particularly in the domain of magnetic resonance imaging (MRI). From the pioneering AlexNet to the sophisticated ResNet and DenseNet, each architectural innovation has addressed specific challenges in model performance, training efficiency, and feature extraction capability. Within MRI spatial feature extraction research, these architectures enable the identification of complex, hierarchical patterns essential for precise diagnosis and therapeutic development. The progression from simple stacked layers to complex residual and densely connected pathways represents a paradigm shift in how deep learning models capture and represent spatial information from volumetric medical data. This evolution is particularly critical for neuroimaging applications, where subtle anatomical variations and pathological signatures require models capable of extracting both local texture details and global contextual information across three-dimensional spatial domains.

Architectural Evolution: From Foundation to Innovation

AlexNet: The Pioneering Architecture

AlexNet marked a watershed moment in deep learning, demonstrating at ImageNet scale that complex hierarchical features could be learned directly from image data with an eight-layer architecture. The network employed five convolutional layers followed by three fully connected layers, using techniques that would become standard in subsequent architectures [16] [17]. For MRI research, AlexNet-style networks enabled automated feature extraction from medical images, reducing reliance on manual feature engineering. Its architectural innovations included ReLU activation functions, which mitigate vanishing gradients and accelerate training; overlapping max-pooling, which reduces spatial dimensions while preserving spatial information; and dropout regularization, which limits overfitting on small datasets such as those common in medical imaging [17]. Though shallow by modern standards, AlexNet, which takes 227×227×3 inputs, established the fundamental blueprint for deep CNN architectures in medical image analysis and showed that learned hierarchical features could outperform hand-crafted features for complex visual recognition tasks.

VGGNet: The Power of Depth and Simplicity

VGGNet advanced CNN architecture through a systematic investigation of network depth, demonstrating that progressive layers of small 3×3 filters could significantly enhance feature learning capabilities [18] [19]. The VGG-16 and VGG-19 configurations implemented a uniform architecture throughout the network, with stacked convolutional layers followed by spatial reduction via max-pooling. This design created a natural feature hierarchy where early layers captured simple spatial patterns like edges and textures, while deeper layers assembled these into complex anatomical structures - a property particularly valuable for MRI analysis where pathologies often manifest at multiple spatial scales [18]. The VGG architecture's strength in transfer learning is evidenced by its continued application in medical imaging research, such as in the Dual Deep Convolutional Brain Tumor Network (D²CBTN), where VGG-19 serves as a robust feature extractor for classifying brain tumors from MRI scans [2]. However, VGG's computational requirements (138 million parameters in VGG-16) and sensitivity to vanishing gradients in very deep configurations present practical limitations for large-scale volumetric MRI analysis [19].
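The benefit of stacking small 3×3 filters can be checked with simple arithmetic: stacked stride-1 layers reach the same receptive field as one larger filter while using fewer weights. The sketch below assumes stride 1, no bias terms, and the same number of input and output channels (64 is an illustrative choice); the function names are hypothetical.

```python
def stacked_receptive_field(num_layers, kernel=3):
    """Receptive field of `num_layers` stacked stride-1 convolutions."""
    return 1 + num_layers * (kernel - 1)


def conv_weight_count(num_layers, kernel, channels):
    """Weights (no biases) when every layer maps `channels` to `channels`."""
    return num_layers * kernel * kernel * channels * channels


channels = 64
two_3x3 = conv_weight_count(num_layers=2, kernel=3, channels=channels)
one_5x5 = conv_weight_count(num_layers=1, kernel=5, channels=channels)
three_3x3 = conv_weight_count(num_layers=3, kernel=3, channels=channels)
one_7x7 = conv_weight_count(num_layers=1, kernel=7, channels=channels)

print(stacked_receptive_field(2), two_3x3, one_5x5)    # 5x5 field either way
print(stacked_receptive_field(3), three_3x3, one_7x7)  # 7x7 field either way
```

The stacked version also inserts a non-linearity after each 3×3 layer, which a single large filter does not provide.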

ResNet: Overcoming the Depth Barrier

The Residual Network (ResNet) architecture represented a fundamental breakthrough in enabling extremely deep networks through the introduction of skip connections and residual learning [20]. Prior architectures, including VGG, faced the degradation problem, in which accuracy saturates and then declines as depth increases. Because training error also rises, this is not explained by overfitting; it indicates that deeper plain networks are harder to optimize. ResNet addressed this by reframing the learning objective: instead of expecting stacked layers to directly learn a desired underlying mapping H(x), they learn a residual function F(x) = H(x) - x, with the original input x passed forward via identity skip connections so that each block outputs F(x) + x [20] [21]. This allows gradients to flow directly backward through the network during training, mitigating the vanishing gradient problem and enabling the successful training of networks with up to 152 layers on 2D images, with the same residual design also applied to volumetric medical data [20].
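The residual formulation can be sketched with two small linear layers standing in for the convolutional layers of a real block. This is a simplified illustration: the weights are made up, the final ReLU that the original ResNet applies after the addition is omitted, and the helper names are hypothetical.

```python
def linear(x, weights):
    """Matrix-vector product: one weight row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def relu(x):
    return [max(0.0, v) for v in x]


def residual_block(x, w1, w2):
    """Compute H(x) = F(x) + x, where F is two layers with a ReLU between them."""
    fx = linear(relu(linear(x, w1)), w2)        # residual function F(x)
    return [xi + fi for xi, fi in zip(x, fx)]   # identity skip connection


x = [2.0, -4.0]
zeros = [[0.0, 0.0], [0.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
half = [[0.5, 0.0], [0.0, 0.5]]

unchanged = residual_block(x, zeros, zeros)     # F(x) = 0, so the block is the identity
adjusted = residual_block(x, identity, half)    # F(x) adds a small correction to x
print(unchanged, adjusted)
```

When the residual weights are zero the block passes its input through unchanged, which is why adding such blocks should not make a network harder to fit than its shallower counterpart.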

For MRI feature extraction, ResNet's residual blocks prove particularly valuable in capturing multi-scale spatial features across large volumetric datasets. The architecture's ability to maintain feature propagation through deep networks enables learning of complex hierarchical representations essential for distinguishing subtle pathological patterns in neuroimaging. Variants like Wide ResNet challenge the assumption that depth alone is optimal, instead increasing width within residual blocks to enhance feature reuse and computational efficiency - an approach particularly beneficial when working with limited medical data [21]. Similarly, ResNeXt introduces cardinality (parallel pathways within blocks) as an additional dimension, creating models that capture diverse feature representations more efficiently than simply increasing depth or width [21].

DenseNet: Maximizing Feature Reuse with Dense Connections

DenseNet represents a further evolution of connectivity patterns by introducing direct connections between all layers in a dense block, with each layer receiving feature maps from all preceding layers and passing its own feature maps to all subsequent layers [8] [22]. This dense connectivity pattern yields several advantages for medical image analysis: it strengthens feature propagation throughout the network, encourages feature reuse, and reduces the number of parameters, because each layer only has to contribute a small number of new feature maps [22]. In MRI research, where datasets are often limited and computational resources may be constrained, DenseNet's parameter efficiency enables the development of high-capacity models without proportional increases in computational requirements.

The feature concatenation approach in DenseNet ensures that both low-level spatial information from early layers and high-level semantic information from deeper layers remain accessible throughout the network, preserving spatial details that might be lost in other architectures through successive pooling operations. This property is particularly valuable for segmentation tasks and lesion detection in MRI, where precise spatial localization is critical. Research has demonstrated DenseNet's effectiveness in medical applications, with studies employing DenseNet-121 as part of hybrid deep learning frameworks for Alzheimer's disease classification from MRI data, achieving high accuracy in delineating cognitive impairment stages [8].
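The concatenation pattern can be sketched in NumPy as follows. This is a toy illustration under stated assumptions: a random 1×1 channel mix replaces the BN-ReLU-Conv sequence of a real DenseNet layer, and `dense_block` is a hypothetical helper. The chosen sizes (64 input channels, 6 layers, growth rate 32) correspond to the first dense block of DenseNet-121.

```python
import numpy as np


def dense_block(x, num_layers, growth_rate, rng):
    """Toy dense block on a (channels, height, width) feature map.

    Each layer sees the concatenation of all earlier feature maps and
    contributes growth_rate new channels. A random 1x1 convolution
    (a per-pixel channel mix) stands in for the BN-ReLU-Conv of a real layer.
    """
    features = [x]
    for _ in range(num_layers):
        stacked = np.concatenate(features, axis=0)          # all previous maps
        weights = rng.standard_normal((growth_rate, stacked.shape[0]))
        new_maps = np.maximum(np.tensordot(weights, stacked, axes=1), 0.0)
        features.append(new_maps)
    return np.concatenate(features, axis=0)


rng = np.random.default_rng(0)
x = rng.standard_normal((64, 16, 16))     # 64 input channels, 16x16 spatial grid
out = dense_block(x, num_layers=6, growth_rate=32, rng=rng)
print(out.shape)                          # (256, 16, 16): 64 + 6 * 32 channels
print(np.array_equal(out[:64], x))        # the input maps are carried through intact
```

The first 64 output channels are the unmodified input, so low-level information stays available to every later layer.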

Comparative Analysis of CNN Architectures

Table 1: Architectural Specifications and Performance Characteristics

| Architecture | Depth (Layers) | Key Innovation | Parameters | Medical Imaging Applications | Strengths for MRI Analysis |

|---|---|---|---|---|---|

| AlexNet | 8 | First successful deep CNN; ReLU & dropout | 62 million | Foundational feature extraction | Demonstrated automated feature learning from medical images |

| VGG-16/VGG-19 | 16/19 | Small 3×3 filters; depth increase | 138/144 million | Brain tumor classification [2] | Hierarchical feature learning; transfer learning capability |

| ResNet | 34-152+ | Skip connections; residual learning | ~21-60 million | Alzheimer's classification [8] | Enables very deep networks; mitigates vanishing gradients |

| DenseNet | 121-264 | Dense inter-layer connectivity | ~8-30 million | Multi-class MRI classification [22] | Feature reuse; parameter efficiency; gradient flow |

Table 2: Experimental Performance on Medical Imaging Tasks

| Architecture | Dataset/Task | Reported Performance | Computational Considerations |

|---|---|---|---|

| VGG-19 | Brain tumor classification (Kaggle dataset) | 98.81% accuracy, 97.69% precision [2] | High memory footprint (528MB); suitable for transfer learning |

| ResNet-152 | CheXpert chest X-ray classification | AUROC 0.882 for multi-label classification [22] | Bottleneck design reduces parameters while maintaining depth |

| DenseNet-121 | OASIS-1 (Alzheimer's classification) | 91.67% accuracy as part of hybrid framework [8] | Parameter efficiency enables training on limited medical data |

| Custom Lightweight CNN | MRI brain tumor classification | 99.54% accuracy with only 1.8M parameters [23] | Optimized for clinical deployment with limited resources |

Experimental Protocols for MRI Feature Extraction

Protocol 1: Transfer Learning for Medical Image Classification

Purpose: To adapt pre-trained CNN architectures for MRI-based classification tasks using transfer learning.

Materials and Reagents:

- Pre-trained Models: ImageNet-trained weights for standard architectures (VGG, ResNet, DenseNet)

- MRI Dataset: Curated collection with appropriate class labels (e.g., ADNI for Alzheimer's, BraTS for tumors)

- Data Augmentation Pipeline: Techniques including random rotation (±10°), flipping, zooming (up to 110%), and intensity variation [2] [22]

- Computational Resources: GPU-enabled workstation (e.g., NVIDIA Titan series with ≥11GB RAM)

Procedure:

- Data Preprocessing: Convert volumetric MRI data to appropriate 2D slice format (224×224 for VGG, 227×227 for AlexNet) with normalization to [0,1] range

- Architecture Modification: Replace final classification layer (1000-class ImageNet output) with task-specific outputs (e.g., 4 classes for tumor types)

- Progressive Training:

- Phase 1: Freeze convolutional base, train only classification head (5-8 epochs)

- Phase 2: Unfreeze entire network, fine-tune with reduced learning rate (1e-5 to 1e-6) for 3-5 additional epochs [22]

- Validation: Implement k-fold cross-validation (typically k=10) to ensure robustness and assess generalization [2]

Troubleshooting: For class imbalance, employ weighted loss functions or oversampling. For overfitting, increase dropout rates or employ additional regularization.
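The data preprocessing step of this protocol can be sketched with NumPy alone. The example below is a simplified illustration rather than a production pipeline. It assumes min-max scaling over the whole volume, nearest-neighbour resizing (a real pipeline would more likely use an interpolating resize from an imaging library), and replication of the grey channel to three channels for ImageNet-pretrained networks. The function name `volume_to_slices` and the synthetic volume are hypothetical.

```python
import numpy as np


def volume_to_slices(volume, size=224, axis=2):
    """Turn a 3D volume into normalized 2D slices of shape (n, size, size, 3)."""
    vol = volume.astype(np.float32)
    lo, hi = vol.min(), vol.max()
    vol = (vol - lo) / (hi - lo) if hi > lo else np.zeros_like(vol)   # [0, 1]

    slices = np.moveaxis(vol, axis, 0)                  # (n_slices, rows, cols)
    rows = np.arange(size) * slices.shape[1] // size    # nearest-neighbour rows
    cols = np.arange(size) * slices.shape[2] // size    # nearest-neighbour cols
    resized = slices[:, rows][:, :, cols]
    # ImageNet-pretrained networks expect 3 channels, so repeat the grey slice.
    return np.repeat(resized[..., np.newaxis], 3, axis=-1)


rng = np.random.default_rng(0)
fake_volume = rng.integers(0, 4096, size=(160, 192, 40))   # synthetic intensities
batch = volume_to_slices(fake_volume)
print(batch.shape, batch.min(), batch.max())
```

Normalizing per volume rather than per slice keeps relative intensities consistent across the slices of one scan. Whether that is preferable depends on the dataset and is a design choice, not something the protocol prescribes.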

Protocol 2: Hybrid 3D CNN for Volumetric MRI Analysis

Purpose: To extract spatiotemporal features from volumetric MRI data using 3D CNN architectures.

Materials and Reagents:

- Volumetric Data: Preprocessed 3D MRI scans (e.g., 1mm³ isotropic resolution)

- Architecture Framework: 3D CNN implementation (e.g., 3D DenseNet) with self-attention mechanisms [8]

- Memory Optimization: Gradient checkpointing and mixed-precision training for large volumes

Procedure:

- Volumetric Preprocessing: Skull stripping, intensity normalization, and registration to standard space (e.g., MNI152)

- Architecture Configuration: Implement 3D convolutional layers with kernel sizes (3×3×3) and residual/dense connectivity patterns

- Multi-scale Feature Extraction: Incorporate parallel pathways at different resolutions to capture local and global context

- Attention Integration: Augment with self-attention blocks to enhance volumetric brain feature extraction and capture long-range dependencies [8]

- Regularization Strategy: Employ spatial dropout, label smoothing, and early stopping to improve generalization

Applications: Particularly effective for longitudinal MRI analysis and tracking disease progression over time [8].

Visualization of Architectural Evolution

Table 3: Critical Research Reagents and Computational Resources for CNN MRI Research

| Resource Category | Specific Examples | Function/Application | Implementation Notes |

|---|---|---|---|

| Benchmark Datasets | OASIS-1 (cross-sectional), OASIS-2 (longitudinal) [8] | Model training and validation for neuroimaging | Standardized pre-processing essential for cross-study comparison |

| Data Augmentation Tools | ImageDataGenerator (Keras), RandomRotation, CutMix [8] [2] | Address class imbalance and improve generalization | Particularly critical for medical data with limited samples |

| Regularization Techniques | Dropout (rate=0.5), Label Smoothing, Early Stopping [8] [17] | Prevent overfitting on limited medical data | Dropout rate of 0.5 first introduced in AlexNet [17] |

| Optimization Algorithms | SGD with Momentum, Adaptive Learning Rates [16] [22] | Stabilize training and accelerate convergence | Learning rate typically between 1e-5 and 1e-6 for fine-tuning [22] |

| Computational Infrastructure | NVIDIA Titan/RTX Series (≥11GB RAM) [22] [19] | Enable training of deep architectures on volumetric data | Memory constraints often dictate batch size and input dimensions |

| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, AUROC [8] [2] | Comprehensive performance assessment | Medical applications often prioritize sensitivity for screening |

Emerging Trends and Future Directions

The evolution of CNN architectures continues to advance MRI spatial feature extraction research through several promising directions. Hybrid architectures that combine the strengths of CNNs with attention mechanisms are demonstrating remarkable performance, such as frameworks integrating DenseNet with self-attention mechanisms for Alzheimer's disease classification that achieve up to 97.33% accuracy on longitudinal MRI data [8]. The development of lightweight customized networks represents another significant trend, with research showing that optimized compact models of just 1.8 million parameters can achieve 99.54% accuracy on brain tumor classification while requiring minimal computational resources [23]. These efficient architectures facilitate clinical deployment in resource-constrained environments.

Future architectural innovations will likely focus on multi-modal integration, combining MRI with complementary data sources like genetic markers or clinical history for more comprehensive diagnostic models. Additionally, explainable AI techniques are becoming increasingly important for clinical adoption, providing interpretable visualizations of the spatial features driving model decisions. As architectural complexity grows, efficient volumetric processing methods will be essential for handling high-resolution 3D MRI data without prohibitive computational requirements. The continued evolution of CNN architectures promises to further enhance their capability to extract clinically relevant spatial features from complex medical imaging data, ultimately advancing precision medicine and therapeutic development.

Why CNNs Excel at Interpreting Brain Anatomy and Pathological Patterns in MRI

Convolutional Neural Networks (CNNs) have revolutionized the interpretation of brain Magnetic Resonance Imaging (MRI) by providing an automated, highly accurate framework for analyzing complex neuroanatomical patterns. Their architectural properties align exceptionally well with the spatial hierarchies and structural relationships inherent in brain imaging data. Unlike traditional machine learning approaches that rely on handcrafted features, CNNs autonomously learn hierarchical discriminative patterns directly from raw MRI pixels, capturing nuanced biomarkers that may be missed by conventional metrics [24]. This capability is particularly valuable in clinical neuroscience, where subtle morphological changes often represent the earliest indicators of pathological processes.

The fundamental strength of CNNs lies in their ability to preserve critical spatial hierarchies through convolutional layers, maintaining relationships between adjacent brain regions that are vital for interpreting sMRI data [24]. This spatial awareness enables CNNs to detect early multifocal atrophy patterns in neurodegenerative diseases and precisely delineate tumor boundaries in neuro-oncology. Furthermore, CNN architectures efficiently manage the high-dimensional nature of MRI data (typically 180 × 210 × 180 voxels) through pooling layers that reduce dimensionality without sacrificing diagnostically critical information [24]. These capabilities make CNNs uniquely suited to harness the full spatial richness of MRI data across diverse clinical applications.

Fundamental Architectural Advantages of CNNs for MRI Analysis

Hierarchical Feature Learning Mirroring Brain Organization

CNN architectures excel at MRI interpretation because their fundamental design principles mirror the structural organization of neuroimaging data. The hierarchical feature learning in CNNs progresses from simple edges and textures in early layers to complex morphological patterns in deeper layers, effectively capturing the multi-scale nature of brain anatomy and pathology [25] [1]. This compositional hierarchy allows CNNs to detect everything from local texture variations in tissue microstructure to global volumetric changes in brain regions, providing a comprehensive analytical framework for MRI interpretation.

The spatial invariance achieved through shared weight convolutions and pooling operations enables CNNs to recognize pathological patterns regardless of their location in the brain, a crucial advantage for analyzing tumors and lesions that may appear in diverse neuroanatomical contexts [1]. Furthermore, the translation equivariance property of convolutional operations ensures that spatial relationships between brain structures are preserved throughout the network, allowing the model to learn clinically relevant contextual patterns such as the differential atrophy of hippocampal subfields in early Alzheimer's disease [24]. These intrinsic architectural properties make CNNs uniquely capable of extracting biologically meaningful representations from complex MRI data without requiring explicit spatial priors or manual feature engineering.
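The difference between equivariance and invariance can be checked numerically. The sketch below uses wrap-around (circular) borders so that the equality holds exactly. Real CNN layers typically use zero padding, in which case it holds only away from the image borders. All names are illustrative.

```python
import numpy as np


def conv2d_circular(image, kernel):
    """Cross-correlation with wrap-around borders, as CNN layers compute it."""
    out = np.zeros_like(image, dtype=float)
    kh, kw = kernel.shape
    for i in range(kh):
        for j in range(kw):
            offset = (-(i - kh // 2), -(j - kw // 2))
            out += kernel[i, j] * np.roll(image, shift=offset, axis=(0, 1))
    return out


rng = np.random.default_rng(0)
image = rng.random((32, 32))
kernel = rng.standard_normal((3, 3))
shift = (5, -3)      # move the pattern 5 rows down and 3 columns left

# Equivariance: filtering a shifted image equals shifting the filtered image.
a = conv2d_circular(np.roll(image, shift, axis=(0, 1)), kernel)
b = np.roll(conv2d_circular(image, kernel), shift, axis=(0, 1))
print(np.allclose(a, b))

# Invariance: a global max-pool of the feature map ignores the shift entirely.
print(np.isclose(a.max(), conv2d_circular(image, kernel).max()))
```

Convolution alone moves the response along with the pattern. It is the subsequent pooling that discards the position, which is why classification heads are position-tolerant while segmentation networks keep the spatial maps.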

Comparative Advantages Over Traditional Methods

Table 1: Comparative Analysis of MRI Interpretation Methods

| Analytical Approach | Feature Representation | Spatial Context Preservation | Adaptability to Complex Patterns | Dependency on Domain Expertise |

|---|---|---|---|---|

| Traditional Machine Learning (e.g., SVM, Random Forests) | Handcrafted features (volumetrics, cortical thickness) | Limited (flattened vectors) | Moderate (requires explicit feature engineering) | High (manual feature selection) |

| Convolutional Neural Networks | Self-learned hierarchical features | Excellent (convolutional operations maintain spatial relationships) | High (automatic pattern discovery) | Low (end-to-end learning) |

| Hybrid CNN-Transformer Models [26] | Local and global contextual features | Superior (combines spatial and long-range dependencies) | Very High (multi-scale representation) | Moderate (architecture design) |

CNNs demonstrate distinct advantages over traditional machine learning models in deciphering complex neuroimaging patterns [24]. Unlike support vector machines or decision trees that rely on handcrafted features derived from prior knowledge, CNNs autonomously learn hierarchical discriminative patterns directly from raw sMRI pixels [27]. This end-to-end feature learning mitigates bias from incomplete manual feature engineering and captures nuanced biomarkers, such as microstructural changes in the entorhinal cortex that may be missed by conventional metrics [24].

Quantitative Performance Across Clinical Applications

Neurodegenerative Disease Classification

Table 2: CNN Performance in Neurodegenerative Disease Classification from sMRI

| Classification Task | Pooled Sensitivity | Pooled Specificity | Number of Studies | Participants | Key Regional Biomarkers |

|---|---|---|---|---|---|

| Alzheimer's Disease (AD) vs. Normal Cognition (NC) | 0.92 | 0.91 | 21 | 16,139 | Medial temporal lobe, hippocampal atrophy |

| Mild Cognitive Impairment (MCI) vs. NC | 0.74 | 0.79 | 21 | 16,139 | Hippocampal and entorhinal cortex atrophy |

| AD vs. MCI | 0.73 | 0.79 | 21 | 16,139 | Differential atrophy patterns across cortex |

| Progressive MCI vs. Stable MCI | 0.69 | 0.81 | 21 | 16,139 | Complex, multi-regional degeneration patterns |

CNNs demonstrate promising diagnostic performance in differentiating Alzheimer's disease, mild cognitive impairment, and normal cognition using structural MRI data [24]. The highest accuracy is observed in distinguishing AD from normal cognition, while the classification of progressive MCI versus stable MCI presents greater challenges, reflecting the subtlety of early neurodegenerative changes [24]. This performance spectrum underscores the CNN's sensitivity to both overt atrophy in established disease and subtle morphological changes in prodromal stages.

Brain Tumor Analysis and Segmentation

Table 3: CNN Performance in Brain Tumor Analysis

| Application | Model Architecture | Key Metrics | Dataset | Clinical Utility |

|---|---|---|---|---|

| Tumor Classification [2] | Dual Deep Convolutional Brain Tumor Network (D²CBTN) | Accuracy: 98.81%, Precision: 97.69%, Recall: 97.75%, Specificity: 99.18% | Kaggle Brain Tumor Classification Dataset | Differential diagnosis of glioma, meningioma, pituitary tumors |

| Tumor Segmentation [28] | AG-MS3D-CNN (Attention-Guided Multiscale 3D CNN) | Dice Scores: Whole Tumor: 0.91, Tumor Core: 0.87, Enhancing Tumor: 0.84 | BraTS 2021 | Surgical planning, treatment monitoring |

| Lightweight Tumor Detection [25] [3] | 5-layer CNN | Accuracy: 99%, Precision: 98.75%, Recall: 99.20%, F1-score: 98.87% | 189 grayscale brain MRI images | Accessible diagnosis with limited data |

| Multi-class Tumor Classification [29] | CNN with Firefly Optimization | Average Accuracy: 98.6% | BraTS 2018 | Tumor subtype characterization |

CNNs have revolutionized brain tumor analysis by automating the detection and segmentation processes that traditionally required extensive manual effort by neuroradiologists [30]. The AG-MS3D-CNN model incorporates attention mechanisms and multiscale feature extraction to enhance boundary delineation, particularly for infiltrative tumors with ambiguous margins [28]. This capability is crucial for surgical planning and treatment monitoring in neuro-oncology, where precise volumetric assessment directly impacts clinical decision-making.

Brain Age Estimation and Connectivity Analysis

Emerging CNN applications extend beyond disease classification to quantitative brain aging assessment. The NeuroAgeFusionNet framework demonstrates how hybrid architectures integrating CNNs with transformers and graph neural networks can achieve state-of-the-art performance in brain age estimation, with an MAE of 2.30 years, Pearson correlation of 0.97, and R² score of 0.96 on the UK Biobank dataset [26]. This precise age estimation provides a valuable biomarker for detecting accelerated brain aging associated with various neurological and psychiatric conditions.

Experimental Protocols for CNN Implementation in MRI Analysis

Protocol 1: CNN Pipeline for Brain Tumor Segmentation

Objective: Implement an automated segmentation pipeline for brain tumor subregions using multimodal MRI sequences.

Materials and Equipment:

- Hardware: GPU-enabled workstation (≥8GB VRAM)

- Software: Python 3.8+, TensorFlow 2.8+ or PyTorch 1.12+

- Data: BraTS 2021 dataset (T1, T1c, T2, FLAIR sequences)

Procedure:

- Data Preprocessing:

- Co-register all modalities to a common space

- Apply N4 bias field correction

- Normalize intensity values (zero mean, unit variance)

- Extract patches of size 128×128×128 centered on tumor regions

Model Configuration (AG-MS3D-CNN) [28]:

- Implement a 3D U-Net backbone with residual connections

- Integrate spatial attention gates at skip connections

- Add Monte Carlo dropout for uncertainty estimation

- Use deep supervision at multiple scales

Training Protocol:

- Loss function: Compound loss (Dice + Cross-Entropy + Boundary-aware term)

- Optimizer: Adam (lr=1e-4, β₁=0.9, β₂=0.999)

- Batch size: 2 (limited by GPU memory)

- Training duration: 300 epochs with early stopping

Evaluation Metrics:

- Compute Dice Similarity Coefficient for enhancing tumor, tumor core, and whole tumor

- Calculate Hausdorff Distance for boundary accuracy

- Generate uncertainty maps using Monte Carlo dropout (50 iterations)
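The Dice evaluation for the three nested tumor regions can be sketched as below. The label coding (1 = necrotic core, 2 = edema, 4 = enhancing tumor) is an assumption based on the commonly used BraTS convention and should be checked against the dataset release in use. The helper names and the tiny synthetic volume are illustrative.

```python
import numpy as np

# Assumed BraTS label convention: 1 = necrotic core, 2 = edema, 4 = enhancing.
REGIONS = {
    "whole_tumor": (1, 2, 4),
    "tumor_core": (1, 4),
    "enhancing_tumor": (4,),
}


def dice(pred_mask, true_mask, eps=1e-8):
    """Dice = 2|A and B| / (|A| + |B|) for two boolean masks."""
    intersection = np.logical_and(pred_mask, true_mask).sum()
    return (2.0 * intersection + eps) / (pred_mask.sum() + true_mask.sum() + eps)


def brats_dice(pred_labels, true_labels):
    """Dice score per nested tumor region from integer label maps."""
    return {
        name: dice(np.isin(pred_labels, labels), np.isin(true_labels, labels))
        for name, labels in REGIONS.items()
    }


# Tiny synthetic example: a 4x4x1 "volume" with one mislabeled voxel.
truth = np.zeros((4, 4, 1), dtype=int)
truth[1:3, 1:3, 0] = [[4, 1], [2, 2]]
pred = truth.copy()
pred[1, 1, 0] = 1          # enhancing voxel predicted as necrotic core

scores = brats_dice(pred, truth)
for region, score in scores.items():
    print(f"{region}: {score:.3f}")
```

In this example the single confusion between two core labels leaves the whole-tumor and tumor-core scores at 1.0 but drops the enhancing-tumor score to zero. This shows why the three regions are reported separately.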

Protocol 2: CNN for Alzheimer's Disease Classification

Objective: Develop a CNN model to discriminate between Alzheimer's disease, mild cognitive impairment, and normal cognition based on structural MRI.

Materials and Equipment:

- Hardware: GPU with ≥12GB VRAM

- Software: Python 3.7+, Keras 2.4+ with TensorFlow backend

- Data: ADNI dataset (T1-weighted MRI)

Procedure:

- Data Preprocessing:

- Perform anterior commissure - posterior commissure (AC-PC) alignment

- Apply skull stripping using BET (FSL) or HD-BET

- Segment into gray matter, white matter, and CSF using SPM or FSL

- Normalize to MNI space using non-linear registration

- Apply data augmentation (rotation, scaling, flipping)

Model Architecture:

- Implement a 3D CNN with 4 convolutional blocks

- Each block: 3D convolution (3×3×3), BatchNorm, ReLU, MaxPooling (2×2×2)

- Final layers: Global average pooling, fully connected layer (512 units), softmax output

- Add L2 regularization (λ=0.001) and dropout (rate=0.5)

Training Protocol:

- Loss function: Categorical cross-entropy

- Optimizer: Adam (lr=1e-5)

- Batch size: 8

- Training-validation split: 80-20 with stratified sampling

- Apply learning rate reduction on plateau

Performance Assessment:

- Calculate sensitivity, specificity, accuracy, and AUC-ROC

- Perform 10-fold cross-validation

- Generate saliency maps for model interpretability
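The performance assessment step can be illustrated for one pairwise comparison (for example AD versus normal cognition). The sketch below uses only the standard library. The labels are hypothetical and the function name is illustrative.

```python
def binary_metrics(y_true, y_pred):
    """Sensitivity, specificity and accuracy for labels coded 1 (disease) / 0."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else float("nan"),
        "specificity": tn / (tn + fp) if tn + fp else float("nan"),
        "accuracy": (tp + tn) / len(pairs),
    }


# Hypothetical predictions for 10 held-out scans (1 = AD, 0 = normal cognition).
y_true = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

For the three-class setting described in this protocol, the same computation would be applied one class against the rest, or to each pair of classes, depending on how the results are to be reported.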

Protocol 3: Lightweight CNN for Tumor Detection with Limited Data

Objective: Create an efficient CNN model for binary tumor classification when limited training data is available.

Materials and Equipment:

- Hardware: CPU or GPU with ≥4GB VRAM

- Software: TensorFlow 2.5+, TFlearn

- Data: 189 grayscale brain MRI images (98 tumor, 91 non-tumor)

Procedure:

- Data Preparation:

- Resize all images to 256×256 pixels

- Normalize pixel values to [0,1] range

- Apply data augmentation: rotation (±15°), zoom (±10%), horizontal flipping

- Use class weighting to address imbalance

- Model Architecture:

- Input: 256×256 grayscale images

- Conv2D (32 filters, 3×3, ReLU) → MaxPooling (2×2)

- Conv2D (64 filters, 3×3, ReLU) → MaxPooling (2×2)

- Conv2D (128 filters, 3×3, ReLU) → GlobalAveragePooling

- Fully connected (64 units, ReLU) → Dropout (0.5)

- Output: Sigmoid activation (binary classification)

Training Protocol:

- Loss function: Binary cross-entropy

- Optimizer: Adam (lr=0.001)

- Batch size: 16

- Epochs: 10 with early stopping

- Validation split: 20%

Evaluation:

- Compute accuracy, precision, recall, F1-score, ROC-AUC

- Generate confusion matrix

- Plot learning curves for training and validation
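To give a sense of how small the network listed in this protocol is, its trainable parameters can be counted by hand. The calculation below assumes a single-channel input, biases on every layer, and no additional layers beyond those listed. The helper names are illustrative.

```python
def conv2d_params(in_ch, out_ch, k=3):
    return k * k * in_ch * out_ch + out_ch      # weights + biases


def dense_params(n_in, n_out):
    return n_in * n_out + n_out


layers = {
    "conv1 (1 -> 32)": conv2d_params(1, 32),
    "conv2 (32 -> 64)": conv2d_params(32, 64),
    "conv3 (64 -> 128)": conv2d_params(64, 128),
    # Global average pooling collapses each of the 128 maps to one value,
    # so the dense layer sees 128 inputs whatever the image size.
    "dense (128 -> 64)": dense_params(128, 64),
    "output (64 -> 1)": dense_params(64, 1),
}
for name, count in layers.items():
    print(f"{name:20s} {count:>8,}")
total_params = sum(layers.values())
print(f"{'total':20s} {total_params:>8,}")
```

The total is about 101,000 parameters. A Flatten layer in place of the global average pooling would instead feed a 64×64×128 map (assuming 'same' padding) into the dense layer, which alone would need over 33 million weights. This makes the pooling choice the main reason the model stays lightweight.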

Table 4: Key Research Resources for CNN-Based MRI Analysis

| Resource Category | Specific Tools/Datasets | Application | Key Features | Access Information |

|---|---|---|---|---|

| Public MRI Datasets | BraTS (Brain Tumor Segmentation) | Tumor segmentation, classification | Multimodal scans with expert annotations | [28] |

| Public MRI Datasets | ADNI (Alzheimer's Disease Neuroimaging Initiative) | Neurodegenerative disease classification | Longitudinal data with clinical correlates | [24] |

| Public MRI Datasets | UK Biobank | Brain age estimation, population studies | Large-scale dataset (N=500,000) | [26] |

| Public MRI Datasets | Kaggle Brain Tumor Dataset | Method development, benchmarking | Curated classification dataset | [25] [3] |

| Software Frameworks | TensorFlow, PyTorch | Model development, training | Flexible deep learning frameworks | Open source |

| Software Frameworks | MONAI | Medical imaging-specific tools | Domain-specific optimizations | Open source |

| Software Frameworks | SPM, FSL | Medical image preprocessing | Established neuroimaging tools | Academic licenses |

| Validation Tools | QUADAS-2 | Quality assessment of diagnostic studies | Standardized methodology evaluation | [24] |

| Validation Tools | METRICS (Methodological Radiomics Quality Score) | Radiomics methodology quality | Comprehensive quality scoring | [24] |

Advanced Architectures and Future Directions

Hybrid Models and Emerging Paradigms

While standard CNNs provide strong performance for many neuroimaging tasks, recent research has focused on hybrid architectures that address specific limitations of conventional approaches. The NeuroAgeFusionNet framework exemplifies this trend by integrating CNNs with transformers and graph neural networks to capture complementary information types [26]. This ensemble approach leverages CNNs for spatial feature extraction, transformers for long-range contextual dependencies, and GNNs for structural connectivity patterns, resulting in more robust brain age estimation.

Attention mechanisms have emerged as particularly valuable enhancements to CNN architectures, improving model interpretability and performance for complex segmentation tasks. The AG-MS3D-CNN model demonstrates how attention gates can enhance boundary delineation in brain tumor segmentation by selectively emphasizing relevant spatial locations while suppressing irrelevant regions [28]. This capability is especially valuable for infiltrative tumors where precise margin identification directly impacts surgical planning and treatment outcomes.

Uncertainty Quantification and Domain Adaptation

For clinical translation, reliable uncertainty estimation is essential. Monte Carlo dropout integration in models like AG-MS3D-CNN provides confidence measures for segmentation outputs, allowing clinicians to identify regions where model predictions may be less reliable [28]. This transparency builds trust in AI systems and supports informed clinical decision-making.
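The idea behind Monte Carlo dropout can be sketched without a deep learning framework. In the toy example below, a single linear layer with a sigmoid stands in for the segmentation network. The point being illustrated is only that dropout stays active at inference and that the spread across repeated passes serves as the uncertainty estimate. The function name, feature sizes, and the choice of variance as the uncertainty measure are assumptions made for illustration.

```python
import numpy as np


def mc_dropout_predict(features, weights, n_passes=50, drop_rate=0.5, seed=0):
    """Mean prediction and per-voxel variance over stochastic forward passes.

    A single linear layer followed by a sigmoid stands in for the network;
    dropout stays active at inference, which is the core of the method.
    """
    rng = np.random.default_rng(seed)
    probs = []
    for _ in range(n_passes):
        keep = rng.random(features.shape) >= drop_rate        # fresh mask per pass
        dropped = features * keep / (1.0 - drop_rate)         # inverted dropout
        logits = dropped @ weights
        probs.append(1.0 / (1.0 + np.exp(-logits)))
    probs = np.stack(probs)                 # (n_passes, n_voxels)
    return probs.mean(axis=0), probs.var(axis=0)


rng = np.random.default_rng(1)
features = rng.standard_normal((1000, 16))   # 1000 voxels, 16 features each
weights = rng.standard_normal(16)
mean_prob, uncertainty = mc_dropout_predict(features, weights)
print(mean_prob.shape, uncertainty.shape, float(uncertainty.max()))
```

Reshaping `uncertainty` back to the volume grid would give a map of the kind a clinician could overlay on the segmentation to see where the model disagrees with itself.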

Domain adaptation techniques address another critical challenge: performance degradation when models are applied to data from different scanners or acquisition protocols. Incorporating domain adaptation modules enhances model robustness, ensuring consistent performance across diverse clinical environments [28]. This capability is particularly important for real-world deployment where MRI protocols vary significantly between institutions.

CNNs have fundamentally transformed MRI analysis by providing an automated, accurate, and scalable framework for extracting clinically relevant information from complex neuroimaging data. Their architectural properties—hierarchical feature learning, spatial relationship preservation, and translation invariance—align exceptionally well with the analytical requirements of brain image interpretation. The demonstrated success across diverse applications including tumor analysis, neurodegenerative disease classification, and brain age estimation underscores the versatility and power of these approaches.

Future advancements will likely focus on enhancing model interpretability, improving generalization across diverse populations and imaging protocols, and integrating multi-modal data for more comprehensive brain analysis. As CNN architectures continue to evolve, their role in clinical neuroscience will expand, ultimately contributing to more precise diagnosis, personalized treatment planning, and improved patient outcomes in neurological disorders.

Advanced Architectures and Practical Implementation for MRI Analysis

The application of Convolutional Neural Networks (CNNs) to Magnetic Resonance Imaging (MRI) data is a cornerstone of modern computational neuroimaging. In the context of brain tumor classification, these architectures excel at extracting hierarchical spatial features (from simple edges and textures in initial layers to complex morphological patterns in deeper layers) that are critical for distinguishing pathological tissue from healthy structures and for differentiating between various tumor subtypes [25] [3]. Among the many available architectures, EfficientNet, VGG, and ResNet have emerged as dominant backbones for research and clinical translation. Their widespread adoption stems from their complementary strengths: VGG provides a robust foundational design, ResNet enables the training of very deep networks through residual connections, and EfficientNet optimizes the trade-off between model performance and computational efficiency through compound scaling [31] [32]. This document details the application, performance, and experimental protocols for these key architectures, providing a structured resource for researchers and drug development professionals engaged in neuro-oncology and medical image analysis.
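Compound scaling, mentioned above, ties depth, width, and input resolution to one coefficient. The sketch below uses the base constants reported in the original EfficientNet paper (α = 1.2, β = 1.1, γ = 1.15). They are not given in this article and are included here as an assumption for illustration. The released B1 to B7 models use rounded settings that do not follow these powers exactly.

```python
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15   # depth, width, resolution bases


def compound_scale(phi):
    """Multipliers for depth, width and input resolution at coefficient phi."""
    return ALPHA ** phi, BETA ** phi, GAMMA ** phi


# FLOPs grow roughly with depth * width^2 * resolution^2, so each unit of phi
# costs about this factor (chosen in the paper to be close to 2).
flops_per_step = ALPHA * BETA ** 2 * GAMMA ** 2
print(f"FLOPs factor per unit phi: {flops_per_step:.2f}")

for phi in range(4):
    d, w, r = compound_scale(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, resolution x{r:.2f}")
```

Because the cost roughly doubles per step, the coefficient gives a simple way to match a model to a compute budget, which is relevant when the same pipeline must run on both research clusters and clinical workstations.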

The following tables summarize the quantitative performance of key architectures as reported in recent, high-quality studies focused on brain tumor classification using MRI data.

Table 1: Performance of Dominant Architectures in Brain Tumor Classification

| Model Architecture | Reported Accuracy | Key Strengths | Notable Variants/Applications | Citation |

|---|---|---|---|---|

| EfficientNet | 98.33% - 98.6% | High parameter efficiency, compound scaling, strong performance on multi-class tasks. | EfficientNet-B9, Improved EfficientNet for multi-grade classification. | [33] [32] |

| VGG | 98.69% - 99.46% | Simple, sequential design; strong transfer learning performance; excellent feature extraction. | VGG-16, VGG-19, Hybrid VGG-16 + FTVT-b16. | [34] [35] |

| ResNet | 99.15% - 99.66% | Very deep networks via skip connections; mitigates vanishing gradient; high accuracy. | ResNet-34, ResNet-50, Fine-tuned ResNet34 with Ranger optimizer. | [35] |

| Dual Deep Convolutional Network (D²CBTN) | 98.81% | Combines pre-trained VGG-19 and custom CNN; extracts both fine-grained and high-level features. | Integrated feature fusion via an "Add" layer. | [2] |

| Lightweight Custom CNN | 99% | Minimal computational footprint; effective with limited data and for binary classification. | Five-layer architecture (3 convolutional, 2 pooling, 1 dense). | [25] [3] |

Table 2: Model Complexity and Resource Requirements

| Model Architecture | Parameter Count (Approx.) | Training Time (per epoch) | Inference Throughput (images/sec) | Citation |

|---|---|---|---|---|

| VGG-19 | ~171 million | High | Moderate | [31] |

| ResNet-50 | ~25.6 million | Moderate | High | [31] |

| EfficientNetB0 | ~5.9 million | 25.4 seconds | 226 | [31] |

| MobileNet | ~3.2 million | 23.7 seconds | 226 | [31] |

| Custom Lightweight CNN | Very Low | Very Low | Very High (234.37) | [25] [31] |

Detailed Experimental Protocols

Protocol 1: Implementing a Fine-Tuned ResNet34 for High-Accuracy Classification

This protocol outlines the methodology for achieving state-of-the-art classification accuracy (99.66%) using a fine-tuned ResNet34 architecture [35].

- Dataset Preparation and Preprocessing

- Source: Utilize the public Brain Tumor MRI Dataset (e.g., from Figshare) containing T1-weighted, T2-weighted, and contrast-enhanced MRI volumes.

- Data Curation: Employ the MD5 hashing algorithm to identify and remove duplicate images, mitigating overfitting risk.

- Preprocessing: Resize all 2D slice images to 256x256 pixels. Normalize pixel intensities using the mean and standard deviation from the ImageNet dataset. During training, apply a random 224x224 crop to each image to introduce scale and position variance.

- Data Augmentation Strategy

To enhance model robustness and generalizability, apply on-the-fly data augmentation during training. Recommended transformations include:

- Vertical Flipping: To account for orientation variability in clinical scans.

- Random Rotation: ±20 degrees.

- Random Zoom: Up to 20%.

- Brightness Adjustment: Maximum delta of 0.4 to simulate scanner setting variations.

- Model Architecture and Training Configuration

- Backbone: Initialize the model with weights from a ResNet34 pre-trained on ImageNet.

- Custom Classification Head: Replace the final fully connected layer with a new head tailored to the number of tumor classes (e.g., 4 classes: glioma, meningioma, pituitary, no tumor). This head can include additional Batch Normalization and Dropout layers for regularization.

- Optimizer: Use the Ranger optimizer, which combines RAdam (Rectified Adam) and Lookahead, for stable and efficient convergence. A common initial learning rate is 1e-4.

- Training: Use a batch size of 32 or 64. Monitor validation loss for early stopping to prevent overfitting.
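The data curation step of this protocol (duplicate removal via MD5) can be sketched with the Python standard library. This is a minimal illustration, not the cited study's code: the function name, the file pattern, and the throwaway demo files are assumptions. Hashing file bytes only detects exact copies, so resized or re-encoded duplicates would need a perceptual comparison instead.

```python
import hashlib
import tempfile
from pathlib import Path


def find_duplicates(image_dir, pattern="*.jpg"):
    """Group files by the MD5 digest of their bytes; return (kept, duplicates)."""
    seen = {}
    duplicates = []
    for path in sorted(Path(image_dir).rglob(pattern)):
        digest = hashlib.md5(path.read_bytes()).hexdigest()
        if digest in seen:
            duplicates.append(path)       # byte-identical to seen[digest]
        else:
            seen[digest] = path
    return list(seen.values()), duplicates


# Demo on throwaway files: b.jpg is a byte-for-byte copy of a.jpg.
with tempfile.TemporaryDirectory() as tmp:
    demo_files = [("a.jpg", b"slice-1"), ("b.jpg", b"slice-1"), ("c.jpg", b"slice-2")]
    for name, payload in demo_files:
        (Path(tmp) / name).write_bytes(payload)
    kept, dupes = find_duplicates(tmp)
    print([p.name for p in kept], [p.name for p in dupes])
```

Deduplication should run before the train/validation split. Otherwise identical slices can land on both sides and inflate the reported accuracy.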

Protocol 2: Developing a Hybrid VGG-16 and Vision Transformer Model

This protocol describes the procedure for building a hybrid model that leverages both the local feature extraction of CNNs and the global contextual understanding of Transformers, achieving 99.46% accuracy [34].

- Dataset and Preprocessing

- Utilize a multi-class dataset (e.g., 7,023 MRI scans across glioma, meningioma, pituitary, and no tumor).

- Standardize image size to 224x224 pixels to match the input requirements of both VGG-16 and the Vision Transformer.

- Model Architecture and Fusion

- Feature Extraction Branches:

- Branch 1 (VGG-16): Use a pre-trained VGG-16 (without its top classifier) to process the input images and extract hierarchical convolutional features.

- Branch 2 (Fine-Tuned Vision Transformer - FTVT-b16): Use a pre-trained Vision Transformer (ViT-B/16), fine-tuned on the target medical dataset. A custom classifier head with Batch Normalization (BN), ReLU, and Dropout can be added.

- Feature Fusion: Combine the feature maps or embeddings from both branches using an "Add" layer or concatenation. This fused feature set captures both local texture/shape details (from VGG-16) and global spatial dependencies (from FTVT).

- Classification Head: Attach a final fully connected layer with softmax activation on top of the fused features to perform the tumor classification.

- Training Strategy

- Use transfer learning for both VGG-16 and FTVT components, potentially freezing the early layers during initial training phases.

- Employ the Adam optimizer and train the combined model end-to-end after the individual branches have been preliminarily fine-tuned.
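The two fusion options named above, an "Add" layer and concatenation, differ mainly in their shape requirements. The NumPy sketch below uses random arrays in place of real network outputs and assumes 512-dimensional pooled VGG-16 features and 768-dimensional ViT-B/16 embeddings. The projection matrices, the shared dimension of 256, and the function names are illustrative assumptions; the source does not specify how the branch dimensions are aligned before addition.

```python
import numpy as np


def softmax(logits: np.ndarray) -> np.ndarray:
    """Row-wise softmax with the usual max-subtraction for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def fuse_concat(cnn_feat: np.ndarray, vit_feat: np.ndarray) -> np.ndarray:
    """Concatenation keeps both feature sets; widths may differ."""
    return np.concatenate([cnn_feat, vit_feat], axis=1)


def fuse_add(cnn_feat, vit_feat, w_cnn, w_vit) -> np.ndarray:
    """Addition needs equal widths, so each branch is first projected."""
    return cnn_feat @ w_cnn + vit_feat @ w_vit


rng = np.random.default_rng(0)
batch, n_classes, shared_dim = 4, 4, 256
cnn_feat = rng.standard_normal((batch, 512))   # stand-in for pooled VGG-16 features
vit_feat = rng.standard_normal((batch, 768))   # stand-in for ViT-B/16 embeddings
w_cnn = rng.standard_normal((512, shared_dim)) * 0.01  # learned in practice
w_vit = rng.standard_normal((768, shared_dim)) * 0.01  # learned in practice

fused_cat = fuse_concat(cnn_feat, vit_feat)            # shape (4, 1280)
fused_add = fuse_add(cnn_feat, vit_feat, w_cnn, w_vit) # shape (4, 256)

# Final fully connected layer with softmax over the four tumor classes.
w_head = rng.standard_normal((fused_cat.shape[1], n_classes)) * 0.01
probs = softmax(fused_cat @ w_head)
```

Concatenation leaves it to the classification head to weight the two branches, at the cost of a wider input. Addition yields a more compact representation but forces both branches into a common feature space.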

Protocol 3: Building a Lightweight CNN for Data-Constrained Environments

This protocol is designed for scenarios with limited data (e.g., a few hundred images) or computational resources, where a simple 5-layer CNN can achieve 99% accuracy for binary classification [25] [3].

- Data Preparation

- Use a balanced dataset of tumor and non-tumor grayscale MRI images.

- Resize images to a consistent, manageable size (e.g., 128x128 or 256x256).

- Model Architecture

- Layer 1: A convolutional layer with 32 filters of size 3x3, followed by ReLU activation and a 2x2 max-pooling layer.

- Layer 2: A convolutional layer with 64 filters of size 3x3, followed by ReLU activation and a 2x2 max-pooling layer.

- Layer 3: A convolutional layer with 128 filters of size 3x3, followed by ReLU activation.

- Classification Block: Flatten the output and connect it to a fully connected (dense) layer (e.g., 64 units), followed by a final output layer with sigmoid (for binary) or softmax (for multi-class) activation.

- Training

- Use the Adam optimizer with default parameters.

- Train for a limited number of epochs (e.g., 10-50), monitoring for overfitting due to the small dataset size.
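A short calculation shows how the feature-map sizes and parameter counts of this architecture work out. The figures depend on assumptions the protocol leaves open: a single-channel 128x128 input, unpadded stride-1 convolutions, a 64-unit dense layer, and a single sigmoid output unit. The helper names are hypothetical.

```python
def conv_out(size: int, kernel: int = 3, padding: int = 0, stride: int = 1) -> int:
    """Spatial size after a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_params(c_in: int, c_out: int, kernel: int = 3) -> int:
    """Weights plus one bias per output filter."""
    return c_out * (c_in * kernel * kernel + 1)


def lightweight_cnn_summary(input_size: int = 128, in_channels: int = 1,
                            dense_units: int = 64):
    """Trace spatial size and parameter counts through the five layers."""
    params = {}
    size = conv_out(input_size)                 # Layer 1 conv: 128 -> 126
    params["conv1"] = conv_params(in_channels, 32)
    size //= 2                                  # 2x2 max-pool: 126 -> 63
    size = conv_out(size)                       # Layer 2 conv: 63 -> 61
    params["conv2"] = conv_params(32, 64)
    size //= 2                                  # 2x2 max-pool: 61 -> 30
    size = conv_out(size)                       # Layer 3 conv: 30 -> 28
    params["conv3"] = conv_params(64, 128)
    flat = size * size * 128                    # flattened feature vector
    params["dense"] = flat * dense_units + dense_units
    params["output"] = dense_units + 1          # single sigmoid unit
    return size, flat, params


size, flat, params = lightweight_cnn_summary()
total = sum(params.values())
```

Under these assumptions the final feature map is 28x28x128, and the first dense layer holds roughly 98% of the network's parameters. That concentration is worth keeping in mind when monitoring overfitting on a dataset of a few hundred images.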

Workflow Visualization

The following diagram illustrates the logical workflow for model selection and application, integrating the protocols described above.

Model Selection Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Solutions

| Tool/Resource | Type | Function/Application | Example/Reference |
|---|---|---|---|
| Br35H & Figshare Datasets | Public Dataset | Benchmarking and training models for brain tumor detection and multi-class classification. | [33] [31] [35] |
| Pre-trained Models (ImageNet) | Software Model | Provides powerful feature extractors for transfer learning, significantly reducing required data and training time. | VGG-16, ResNet-34, EfficientNet-B0 [31] [35] |
| Data Augmentation Generators | Software Library | Synthetically expands training datasets to improve model generalization and combat overfitting. | ImageDataGenerator (Keras) [2] |
| Gradient-weighted Class Activation Mapping (Grad-CAM) | Software Tool | Provides visual explanations for model decisions (Explainable AI), highlighting tumor regions in MRIs. | [32] [34] |
| Ranger Optimizer | Software Tool | Combines RAdam and Lookahead optimizers for faster, more stable convergence during model training. | [35] |
| Hybrid Loss Functions (ACL + FL) | Software Tool | Improves segmentation accuracy by combining boundary delineation (ACL) and handling class imbalance (FL). | Active Contour Loss (ACL) & Focal Loss (FL) [36] |

The analysis of Magnetic Resonance Imaging (MRI) data presents a unique computational challenge, requiring the effective integration of spatial, temporal, and structural information. Convolutional Neural Networks (CNNs) have become the cornerstone for spatial feature extraction from medical images due to their exceptional ability to recognize patterns and hierarchical structures in complex image data [4] [37]. These networks utilize a series of convolutional and pooling layers that progressively identify features from simple edges to complex morphological characteristics, making them particularly suited for analyzing anatomical structures in MRI [3]. However, standard 2D CNNs processing individual slices may overlook crucial volumetric context, while 3D CNNs, though more comprehensive, demand significantly greater computational resources and are more challenging to optimize, especially with limited datasets [37].

The integration of CNNs with specialized architectures for sequence modeling and graph-based analysis has emerged as a powerful paradigm to overcome these limitations. Hybrid models leverage the spatial feature extraction capabilities of CNNs while incorporating temporal dependencies and relational reasoning through Long Short-Term Memory (LSTM) networks, Gated Recurrent Units (GRUs), and Spatial-Temporal Graph Networks (STGNs) [4] [38]. These architectures are particularly valuable for dynamic MRI analysis, disease progression monitoring, and capturing complex inter-regional brain connectivity patterns that are inaccessible to purely spatial models. The fusion of these capabilities enables more accurate classification, segmentation, and predictive modeling in neuroimaging, facilitating advances in personalized medicine and treatment planning [37].

Hybrid Model Architectures: Principles and Applications

CNN-LSTM Networks

CNN-LSTM architectures are designed to model spatial-temporal data by processing spatial features extracted by CNNs across sequential time points. The CNN component acts as a feature extractor that identifies relevant spatial patterns from individual MRI slices or volumes, while the LSTM component models temporal dependencies across sequential slices or longitudinal scans [4]. This architecture is particularly effective for tasks such as analyzing 4D functional MRI (fMRI) data, monitoring tumor evolution across multiple time points, and predicting disease progression from longitudinal studies.

Notably, a 3D CNN-LSTM model developed for Alzheimer's disease classification demonstrated the capability to extract spatiotemporal features from resting-state fMRI data with minimal preprocessing, successfully differentiating between Alzheimer's disease, Mild Cognitive Impairment (MCI) stages, and healthy controls [39]. The model architecture began with 1×1×1 convolutional kernels to capture temporal features across the BOLD signal, followed by spatial convolutional layers at multiple scales to integrate spatial information, effectively learning both the when and where of neurologically relevant signals [39].

CNN-GRU Networks

CNN-GRU networks represent an evolution of the hybrid approach, leveraging the simplified gating mechanism of GRUs to reduce computational complexity while maintaining competitive performance in capturing temporal dependencies. The GRU's streamlined architecture, with fewer gates than LSTM, often leads to faster training times and reduced parameter counts, making it particularly suitable for scenarios with limited computational resources or smaller datasets [38].
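The parameter saving follows directly from the number of weight blocks in each cell. The sketch below uses the textbook formulation with one bias vector per block, and the input and hidden sizes are arbitrary example values. Frameworks that keep two bias vectors per block report slightly higher totals, so the absolute numbers are illustrative; the 3:4 ratio is the point.

```python
def recurrent_params(input_dim: int, hidden: int, blocks: int) -> int:
    """Parameters of one recurrent layer with `blocks` gate/candidate blocks.

    Each block has an input weight matrix (hidden x input_dim), a recurrent
    weight matrix (hidden x hidden), and one bias vector (hidden).
    """
    return blocks * (hidden * (input_dim + hidden) + hidden)


input_dim, hidden = 512, 128          # example sizes, not taken from the source

# LSTM: input, forget, and output gates plus the cell candidate -> 4 blocks.
lstm_params = recurrent_params(input_dim, hidden, blocks=4)
# GRU: reset and update gates plus the hidden candidate -> 3 blocks.
gru_params = recurrent_params(input_dim, hidden, blocks=3)

saving = 1 - gru_params / lstm_params  # fraction of parameters saved by the GRU
```

For any choice of input and hidden size, this formulation gives the GRU exactly three quarters of the LSTM's parameters.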

A related Vision Transformer-GRU (ViT-GRU) model applies the same recurrent design with a transformer in place of the CNN, achieving 98.97% accuracy in brain tumor classification using MRI scans [38]. In this architecture, the Vision Transformer component extracts spatial features through self-attention, capturing global contextual information often missed by traditional CNNs. The GRU layer then processes the sequence of extracted features, modeling their interdependencies to enhance classification performance. This combination of global spatial attention and sequence modeling addresses both feature representation and sequential relationships in medical image analysis [38].

CNN with Spatial-Temporal Graph Networks

Spatial-Temporal Graph Networks (STGNs) extend the hybrid approach to the analysis of brain network dynamics. These models combine CNN-based feature extraction with graph neural networks that model the brain as a complex network of interconnected regions. The CNN processes structural or functional imaging data to extract node features, while the graph component models information propagation and functional connectivity between different brain regions [2].

A hybrid model combining Graph Neural Networks (GNNs) and CNNs demonstrated the potential of this approach, leveraging GNNs to capture relational dependencies among image regions while utilizing CNNs to extract spatial features [2]. Though this particular implementation achieved 93.68% accuracy and faced challenges in capturing intricate patterns, the architecture illustrates a promising direction for modeling complex brain network interactions that underlie neurological disorders and tumor characterization.

Table 1: Performance Comparison of Hybrid Models in Medical Imaging Tasks

| Model Architecture | Application Domain | Dataset | Key Performance Metrics | Reference |
|---|---|---|---|---|
| 3D CNN-LSTM | Alzheimer's Disease Classification | ADNI fMRI (120 subjects) | High accuracy in multi-class classification of AD, MCI stages, CN | [39] |
| ViT-GRU | Brain Tumor Classification | BrTMHD-2023 Primary Dataset | 98.97% accuracy with AdamW optimizer | [38] |
| GNN-CNN hybrid | Brain Tumor Classification | Multiple MRI Datasets | 93.68% accuracy, challenges with intricate patterns | [2] |
| Hybrid CNN | Alzheimer's Disease Classification | ADNI MRI (1296 scans) | 99.13% accuracy in 5-class classification | [40] |
| Lightweight CNN | Brain Tumor Detection | Kaggle/UCI (189 images) | 99% accuracy, precision: 98.75%, recall: 99.20% | [3] |

Experimental Protocols and Implementation

Data Preparation and Preprocessing Pipeline

Consistent and thorough data preprocessing is essential for training effective hybrid models. The standard pipeline begins with medical image acquisition using appropriate MRI sequences (T1-weighted, T2-weighted, FLAIR, etc.), followed by motion correction to address patient movement artifacts [39]. Intensity normalization ensures consistent signal ranges across different scanners and protocols, while coregistration aligns all images to a standard space such as the Montreal Neurological Institute (MNI) atlas, ensuring uniform spatial dimensions [39].

For temporal data analysis, temporal filtering removes low-frequency drifts and high-frequency noise from fMRI time series. Data augmentation techniques are crucial for addressing limited dataset sizes; these include random rotations, flips, intensity variations, and synthetic sample generation using Generative Adversarial Networks (GANs) [38] [2]. For graph-based approaches, brain parcellation defines nodes based on anatomical or functional atlases, while connectivity matrix construction establishes edges based on structural or functional connectivity measures.
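For the graph-based branch, the connectivity matrix step can be sketched as follows. The example assumes that parcellation has already reduced the fMRI data to one mean time series per region, uses Pearson correlation as the functional connectivity measure, and binarises edges with an arbitrary threshold of 0.3. None of these choices are prescribed by the source, and `connectivity_graph` is a hypothetical name.

```python
import numpy as np


def connectivity_graph(roi_timeseries: np.ndarray, threshold: float = 0.3):
    """Build a functional connectivity graph from regional time series.

    roi_timeseries has shape (n_timepoints, n_regions). Returns the Pearson
    correlation matrix and a binary adjacency matrix without self-loops.
    """
    # rowvar=False treats each column (region) as one variable.
    corr = np.corrcoef(roi_timeseries, rowvar=False)
    adjacency = (np.abs(corr) >= threshold).astype(np.float32)
    np.fill_diagonal(adjacency, 0.0)  # a region is not its own neighbour
    return corr, adjacency
```

The correlation matrix can serve as weighted edges directly, while the thresholded version gives the sparse graph that many graph neural network layers expect. A region with a constant time series would produce undefined correlations and would need to be excluded beforehand.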

Model Implementation Framework

Implementing a CNN-LSTM hybrid model for fMRI classification follows a structured sequence. In the input preparation phase, the 4D fMRI data are partitioned into overlapping sub-sequences of consecutive volumes to increase the number of training samples [39]. The CNN backbone typically begins with 1×1×1 convolutional kernels to capture temporal BOLD signal patterns, followed by 3D convolutional layers with increasing kernel sizes (3×3×3, 5×5×5) to extract spatial features at multiple scales [40] [39]. Batch normalization and leaky ReLU activations (negative slope of 0.1) stabilize training, while 3D max-pooling layers (2×2×2) progressively reduce spatial dimensions [39].
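The input preparation step amounts to a sliding window over the time axis. The sketch below assumes the scan is stored as a NumPy array with time as the first dimension; the window length and stride are free parameters that the source does not specify, and `sliding_windows` is a hypothetical helper.

```python
import numpy as np


def sliding_windows(volumes: np.ndarray, window: int, stride: int) -> np.ndarray:
    """Split a 4D scan of shape (T, X, Y, Z) into overlapping sub-sequences.

    Returns an array of shape (n_windows, window, X, Y, Z). Windows overlap
    whenever stride < window.
    """
    n_timepoints = volumes.shape[0]
    if window > n_timepoints:
        raise ValueError("window is longer than the time series")
    starts = range(0, n_timepoints - window + 1, stride)
    return np.stack([volumes[s:s + window] for s in starts])
```

Since all windows cut from one scan are highly correlated, the train/validation split should be made at the subject level before windowing. Otherwise sub-sequences from the same scan can land on both sides of the split and inflate validation accuracy.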

The temporal modeling component flattens CNN outputs and reshapes them into sequence format for LSTM layers, which typically employ 128-256 units to capture long-range dependencies. The classification head consists of fully connected layers with dropout regularization (0.3-0.5 rate) followed by a softmax output layer for multi-class prediction [39]. Training uses the Adam optimizer with learning rate scheduling and a categorical cross-entropy loss, with gradient clipping to guard against exploding gradients in deep architectures.
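Gradient clipping is commonly implemented by rescaling all gradients together when their combined norm exceeds a limit. The NumPy sketch below shows that global-norm variant. The source does not say which clipping scheme or threshold was used, so both the scheme and the name `clip_by_global_norm` are assumptions.

```python
import numpy as np


def clip_by_global_norm(grads: list[np.ndarray], max_norm: float):
    """Rescale a list of gradient arrays so their joint L2 norm is at most max_norm.

    Returns the (possibly rescaled) gradients and the norm before clipping.
    """
    total_norm = float(np.sqrt(sum(np.sum(g ** 2) for g in grads)))
    # The scale is 1.0 when the norm is already within the limit.
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return [g * scale for g in grads], total_norm
```

Scaling every array by the same factor preserves the direction of the update and only shortens the step, which is why this form is often preferred to clipping each value independently.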

Diagram 1: CNN-LSTM fMRI analysis workflow

Training and Optimization Strategies

Effective training of hybrid models requires specialized strategies to address convergence challenges. Transfer learning leverages pre-trained CNN weights (from ImageNet or medical imaging tasks) to initialize the spatial feature extractor, significantly reducing training time and improving performance, especially with limited data [3] [2]. Multi-stage training approaches first train the CNN component separately, then freeze CNN weights while training the LSTM/GRU component, and finally fine-tune the entire network end-to-end with a reduced learning rate [38].