Spatio-Temporal Feature Extraction in Medical Imaging: AI-Driven Methods for Enhanced Diagnosis and Drug Development

Abstract

This article provides a comprehensive exploration of spatio-temporal feature extraction and its transformative impact on medical imaging analysis. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of capturing dynamic physiological processes across space and time. The scope spans methodological advances in deep learning architectures like 3D CNNs, hybrid CNN-LSTMs, and Transformers, their application in disease diagnosis from Alzheimer's to cancer, and the optimization of these models to overcome data and computational challenges. A critical validation framework is presented, comparing model performance, clinical applicability, and future directions, including integration with spatiotemporally controlled drug delivery systems for personalized medicine.

The Core Principles of Spatio-Temporal Data in Medicine

The extraction of spatio-temporal features represents a cornerstone of modern medical imaging research, providing critical insights into dynamic physiological and pathological processes that static images cannot capture. In functional and dynamic imaging modalities, spatial features delineate the anatomical location, extent, and morphology of physiological phenomena, while temporal features capture the evolution, kinetics, and dynamic relationships of these phenomena over time. The integration of these dimensions enables researchers to construct comprehensive models of biological systems in health and disease. This whitepaper focuses on two pivotal imaging techniques where spatio-temporal feature extraction has proven particularly transformative: functional Magnetic Resonance Imaging (fMRI), specifically through Blood Oxygen Level Dependent (BOLD) signals, and Dynamic Contrast-Enhanced MRI (DCE-MRI) kinetics.

Within the broader context of medical imaging research, spatio-temporal analysis forms the foundation for understanding complex biological systems. The spatio-temporal feature extraction frameworks discussed herein are not merely technical procedures but constitute a philosophical approach to interpreting biological complexity through its manifestation in space and time. For fMRI, this involves decoding neural activity patterns and functional connectivity networks; for DCE-MRI, it quantifies tissue perfusion, permeability, and vascular heterogeneity. These applications share common mathematical foundations in kinetic modeling, signal processing, and multivariate statistics, yet each has developed specialized analytical frameworks tailored to its specific biological questions and technical constraints.

Spatio-Temporal Features in fMRI BOLD Signals

Fundamental Principles of BOLD fMRI

The Blood Oxygen Level Dependent (BOLD) signal forms the basis of most functional MRI studies, providing an indirect measure of neural activity through coupled hemodynamic changes. The BOLD effect originates from magnetic susceptibility differences between oxygenated and deoxygenated hemoglobin, with local increases in neural activity triggering a hemodynamic response that typically peaks 4-6 seconds after stimulus onset [1]. This hemodynamic response function (HRF) represents the fundamental temporal feature in BOLD fMRI, while its spatial distribution maps functional specialization across brain regions.

The spatio-temporal characteristics of BOLD signals enable researchers to investigate both the location and timing of neural processes. Traditional analytical approaches, particularly the General Linear Model (GLM), assume a fixed HRF shape and linear relationships between stimulus and response [1]. However, these assumptions are problematic when HRF shapes vary across regions, subjects, or cortical layers, or when nonlinearities exist between stimulus and BOLD response, particularly for paradigms with short inter-trial intervals or brief stimuli [1]. These limitations have driven the development of more flexible, model-free approaches for spatio-temporal feature extraction.
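The fixed-HRF assumption behind the GLM can be made concrete with a small sketch. The double-gamma shape and its parameters (`peak_shape`, `under_shape`, `ratio`) are a commonly used convention assumed here for illustration, not values taken from [1]; the point is only that a fixed kernel convolved with the stimulus yields the predicted BOLD regressor.

```python
import math

def gamma_pdf(t, shape, scale=1.0):
    """Gamma probability density at time t (seconds); zero for t <= 0."""
    if t <= 0:
        return 0.0
    return (t ** (shape - 1) * math.exp(-t / scale)) / (math.gamma(shape) * scale ** shape)

def double_gamma_hrf(t, peak_shape=6.0, under_shape=16.0, ratio=1.0 / 6.0):
    """Main response minus a smaller, later undershoot (assumed parameters)."""
    return gamma_pdf(t, peak_shape) - ratio * gamma_pdf(t, under_shape)

def convolve(stimulus, kernel):
    """Causal discrete convolution, truncated to the stimulus length."""
    out = []
    for i in range(len(stimulus)):
        acc = 0.0
        for j in range(min(i + 1, len(kernel))):
            acc += stimulus[i - j] * kernel[j]
        out.append(acc)
    return out

dt = 0.1                                   # sampling step in seconds
times = [i * dt for i in range(320)]       # 32 s of kernel support
hrf = [double_gamma_hrf(t) for t in times]
peak_time = times[max(range(len(hrf)), key=hrf.__getitem__)]

# A brief stimulus at t = 2 s gives a regressor that peaks peak_time later.
stimulus = [0.0] * 400
stimulus[20] = 1.0
regressor = convolve(stimulus, hrf)
regressor_peak = max(range(len(regressor)), key=regressor.__getitem__) * dt
```

If the true response in a region peaks earlier or later than this kernel, the regressor is misaligned with the data, which is the limitation that motivates the model-free approaches.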

Advanced Analytical Frameworks for BOLD Signals

Information theory provides a powerful model-free framework for analyzing BOLD signals without assumptions about HRF shape or linearity. This approach enables whole-brain visualization of voxels most involved in coding specific task conditions, the time at which they are most informative, and their average amplitude at that preferred time [1]. In motor learning tasks, this method has revealed that BOLD responses in unimodal motor cortical areas precede responses in higher-order multimodal association areas, including posterior parietal cortex, while areas associated with reduced activity during learning are informative about the task at significantly later times [1].

Latency structure analysis represents another model-free approach that characterizes the temporal sequencing of brain activity. By calculating lagged cross-covariance of time series between brain regions, researchers can map the propagation of intrinsic brain activity across neural networks [2]. Recent advances have linked these latency structures to fundamental neural parameters through biophysical models, revealing significant correlations with excitatory and inhibitory synaptic gating, recurrent connection strength, and excitation/inhibition balance [2]. These latency eigenvectors align with established models of cortical hierarchy and intrinsic neural signaling, providing a bridge between macroscopic fMRI signals and underlying neurophysiology.
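As a minimal sketch of the lagged cross-covariance idea, the snippet below estimates the delay between two synthetic regional time series at integer-sample resolution. The signals, the function names, and the search range `max_lag` are illustrative assumptions; published latency analyses typically refine the peak to sub-sample precision, which is omitted here.

```python
import math

def lagged_cross_covariance(x, y, lag):
    """Covariance between x[t] and y[t + lag] over the overlapping samples."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    pairs = [(x[t], y[t + lag]) for t in range(n) if 0 <= t + lag < n]
    return sum((a - mean_x) * (b - mean_y) for a, b in pairs) / len(pairs)

def estimate_latency(x, y, max_lag):
    """Lag in samples that maximises the cross-covariance; positive means y follows x."""
    lags = range(-max_lag, max_lag + 1)
    return max(lags, key=lambda lag: lagged_cross_covariance(x, y, lag))

# Synthetic example: region_b is region_a delayed by three samples.
n_samples, true_lag = 200, 3
region_a = [
    math.sin(2 * math.pi * t / 40) + 0.5 * math.sin(2 * math.pi * t / 17)
    for t in range(n_samples)
]
region_b = [0.0] * true_lag + region_a[:-true_lag]
estimated = estimate_latency(region_a, region_b, max_lag=8)
```

Repeating this for every pair of regions gives a lag matrix, from which the latency structure described above can be derived.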

Table 1: Key Spatio-Temporal Features in fMRI BOLD Signals

| Feature Category | Specific Features | Analytical Methods | Biological Interpretation |
|---|---|---|---|
| Temporal Features | Hemodynamic Response Function (HRF) shape | General Linear Model (GLM) | Neurovascular coupling efficiency |
| Temporal Features | Response latency | Information theory analysis [1] | Relative timing of regional engagement |
| Temporal Features | Intrinsic Neural Timescale (INT) | Autocorrelation decay [2] | Temporal receptive window, information integration capacity |
| Spatial Features | Activation maps | Voxel-wise statistical testing | Functional specialization localization |
| Spatial Features | Functional connectivity | Correlation/Coherence analysis [3] | Network organization and integration |
| Spatial Features | Latency eigenvectors | Principal Component Analysis [2] | Large-scale spatio-temporal propagation patterns |
| Integrated Spatio-Temporal Features | Information time maps | Mutual information calculation [1] | Spatio-temporal patterns of task-related information coding |
| Integrated Spatio-Temporal Features | Dynamic functional connectivity | Sliding window correlation | Time-varying network interactions |

Experimental Protocols for BOLD Spatio-Temporal Analysis

Motor learning paradigms provide an excellent experimental framework for investigating spatio-temporal dynamics in BOLD signals. A typical protocol involves subjects performing a bimanual serial reaction-time task while learning a novel sequence during fMRI acquisition [1]. The experimental design should include sufficient trials and counterbalancing to separate learning-related effects from performance effects. For data acquisition, a repetition time (TR) of 1-2 seconds provides adequate temporal resolution to capture HRF dynamics, with whole-brain coverage achieved through multi-slice acquisition protocols.

For model-free information theory analysis, the processing pipeline involves several stages. First, pre-processing (motion correction, spatial smoothing, temporal filtering) standardizes the data. Next, mutual information between the task condition and BOLD signal is computed at multiple time shifts for each voxel, generating spatio-temporal information maps that identify when and where the signal contains the most information about the task condition [1]. The time shift with maximal mutual information represents the preferred time for each voxel, while the amplitude at that time reflects response magnitude. This approach enables estimation of relative delays between brain regions without prior knowledge of the experimental design, suggesting a general method applicable to natural, uncontrolled conditions [1].
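The mutual-information step can be sketched for a single voxel as follows. Everything here is an assumption made for illustration: the equal-width binning, the block design, the bounded sinusoidal fluctuation standing in for noise, and the helper names. It is not the estimator used in [1], only an instance of computing information at several time shifts and picking the preferred time.

```python
import math
from collections import Counter

def mutual_information(labels, values, n_bins=4):
    """Mutual information in bits between discrete labels and equal-width binned values."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0
    bins = [min(int((v - lo) / width), n_bins - 1) for v in values]
    n = len(labels)
    joint = Counter(zip(labels, bins))
    count_label = Counter(labels)
    count_bin = Counter(bins)
    mi = 0.0
    for (lab, b), count in joint.items():
        p_xy = count / n
        mi += p_xy * math.log2(p_xy / ((count_label[lab] / n) * (count_bin[b] / n)))
    return mi

def information_time_course(condition, signal, max_shift):
    """Information between condition[t] and signal[t + shift] for each shift."""
    course = []
    for shift in range(max_shift + 1):
        labels = condition[: len(condition) - shift]
        course.append(mutual_information(labels, signal[shift:]))
    return course

# Synthetic voxel: responds to the condition four samples late.
n_samples, true_delay = 200, 4
condition = [(t // 10) % 2 for t in range(n_samples)]
bold = [
    (condition[t - true_delay] if t >= true_delay else 0) + 0.2 * math.sin(1.7 * t)
    for t in range(n_samples)
]

info = information_time_course(condition, bold, max_shift=8)
preferred_shift = max(range(len(info)), key=info.__getitem__)

# Amplitude at the preferred time: mean response difference between conditions.
shifted = bold[preferred_shift:]
on = [v for c, v in zip(condition, shifted) if c == 1]
off = [v for c, v in zip(condition, shifted) if c == 0]
amplitude = sum(on) / len(on) - sum(off) / len(off)
```

Running the same computation over all voxels would produce one map of preferred times and one of amplitudes.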

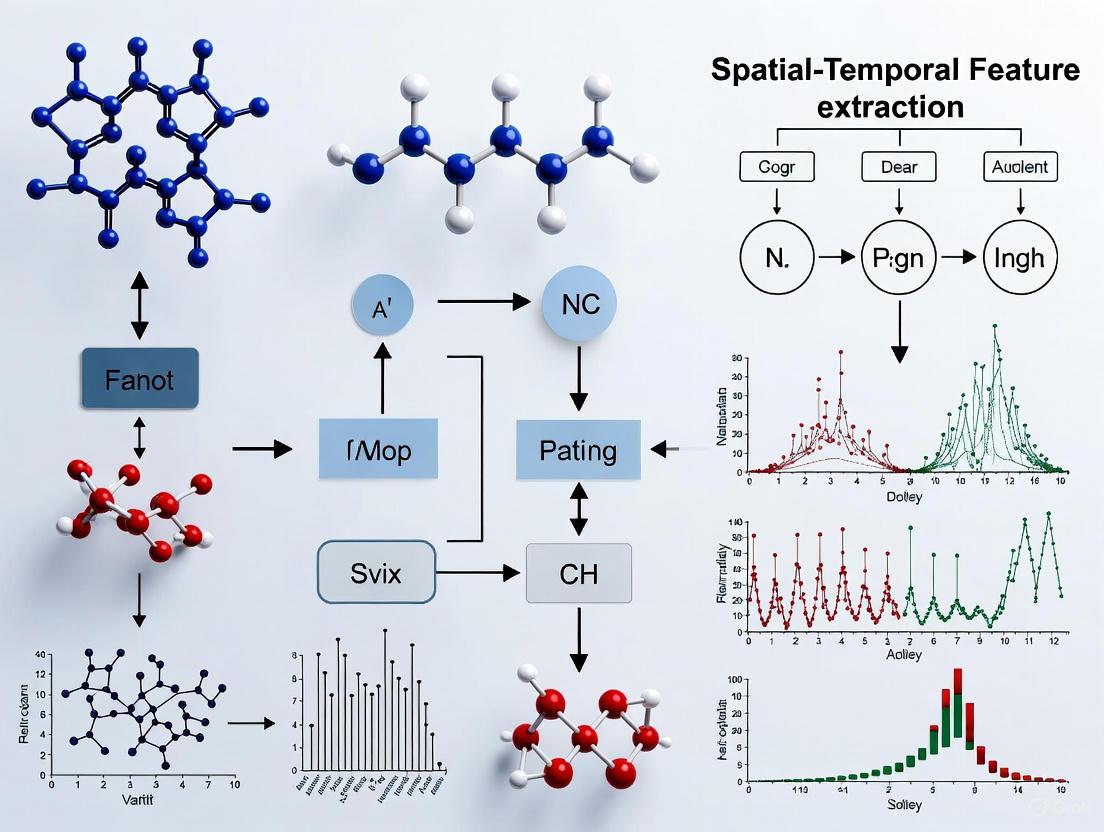

Figure 1: Analytical Framework for Spatio-Temporal Feature Extraction from BOLD fMRI Signals

Spatio-Temporal Features in DCE-MRI Kinetics

Pharmacokinetic Modeling in DCE-MRI

Dynamic Contrast-Enhanced MRI (DCE-MRI) tracks the temporal evolution of contrast agent distribution through tissues, providing quantitative measures of tissue vascularity, perfusion, and permeability. Unlike BOLD fMRI, which reflects hemodynamic changes coupled to neural activity, DCE-MRI directly characterizes vascular properties through kinetic modeling of contrast agent concentration time courses. The fundamental spatio-temporal feature in DCE-MRI is the contrast agent concentration curve, which captures the inflow, distribution, and washout of contrast agent in each voxel over time.

The Tofts model represents the most widely used pharmacokinetic model for DCE-MRI analysis, conceptualizing tissue as comprising two compartments: the vascular space (plasma) and the extravascular extracellular space (EES) [4]. The model defines three primary kinetic parameters: Ktrans (volume transfer constant between blood plasma and EES), ve (fractional volume of EES), and kep (rate constant between EES and blood plasma, defined as Ktrans/ve) [4]. These parameters are derived by fitting the model to measured contrast concentration curves using nonlinear least squares estimation, typically on a voxel-wise basis to generate parametric maps that spatially represent kinetic properties.
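A minimal sketch of the forward model may help. It evaluates the standard Tofts relation, in which tissue concentration equals Ktrans times the convolution of the plasma concentration with exp(-kep·t), using a trapezoidal rule on uniformly sampled data. The constant plasma input and the parameter values are illustrative assumptions, and the nonlinear least-squares fit itself is not shown.

```python
import math

def tofts_concentration(times, plasma_conc, ktrans, ve):
    """Tissue concentration under the standard Tofts model.

    C_t(t) = Ktrans * integral_0^t C_p(u) * exp(-kep * (t - u)) du, with kep = Ktrans / ve.
    times are in minutes and uniformly spaced; ktrans is in 1/min.
    """
    kep = ktrans / ve
    dt = times[1] - times[0]
    tissue = []
    for i, t in enumerate(times):
        integrand = [plasma_conc[j] * math.exp(-kep * (t - times[j])) for j in range(i + 1)]
        area = sum((integrand[j] + integrand[j + 1]) * dt / 2 for j in range(i))
        tissue.append(ktrans * area)
    return tissue

# Illustrative values only: 3 s sampling for 5 minutes and a constant plasma level.
times = [i * 0.05 for i in range(101)]
plasma = [1.0] * len(times)          # mM, idealised step input rather than a measured AIF
ktrans, ve = 0.25, 0.30
tissue = tofts_concentration(times, plasma, ktrans, ve)

# For a constant input the model has a closed form, useful as a sanity check.
analytic_end = ve * (1 - math.exp(-(ktrans / ve) * times[-1]))
```

In a voxel-wise fit, an optimiser would adjust `ktrans` and `ve` until this predicted curve matches the measured concentration curve.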

Quantitative and Semi-Quantitative Parameters in DCE-MRI

DCE-MRI analysis occurs at three levels of complexity with corresponding spatio-temporal features. Qualitative assessment involves visual inspection of contrast enhancement patterns, while semi-quantitative analysis extracts features directly from the concentration-time curve without physiological modeling. Key semi-quantitative parameters include Time-To-Peak (TTP), initial rate of enhancement (IRE), and maximum enhancement ratio [5]. These features provide robust, model-free characterization of contrast dynamics but have limited physiological specificity.
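The semi-quantitative features can be read directly off a sampled curve, as in the sketch below. Definitions vary between studies, so the choices here are assumptions: onset is taken as the last baseline sample, the initial rate of enhancement as the steepest rise between consecutive samples before the peak, and the initial area under the curve over a fixed window after onset.

```python
def semi_quantitative_features(times, curve, baseline_points=3, iauc_window=20.0):
    """Model-free descriptors of an enhancement curve (assumed definitions)."""
    baseline = sum(curve[:baseline_points]) / baseline_points
    enhancement = [c - baseline for c in curve]
    onset_index = baseline_points - 1
    onset_time = times[onset_index]
    peak_index = max(range(len(curve)), key=enhancement.__getitem__)

    # Steepest rise between consecutive samples from onset to peak.
    slopes = (
        (enhancement[i + 1] - enhancement[i]) / (times[i + 1] - times[i])
        for i in range(onset_index, peak_index)
    )
    initial_rate = max(slopes, default=0.0)

    # Trapezoidal area under the enhancement curve within the window after onset.
    iauc = 0.0
    for i in range(onset_index, len(times) - 1):
        if times[i + 1] > onset_time + iauc_window:
            break
        iauc += (enhancement[i] + enhancement[i + 1]) * (times[i + 1] - times[i]) / 2

    return {
        "time_to_peak": times[peak_index] - onset_time,
        "initial_rate": initial_rate,
        "max_enhancement_ratio": enhancement[peak_index] / baseline if baseline else float("nan"),
        "iauc": iauc,
    }

# Illustrative signal-intensity curve sampled every 5 s.
times = list(range(0, 55, 5))
curve = [100, 100, 100, 130, 180, 220, 240, 230, 220, 210, 205]
features = semi_quantitative_features(times, curve)
```

Because no physiological model is fitted, these values depend on acquisition settings such as temporal resolution and contrast dose, which is one reason their physiological specificity is limited.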

Quantitative analysis through pharmacokinetic modeling generates parameters with specific physiological interpretations. Ktrans reflects both blood flow and permeability: it approximates plasma flow when permeability is high (the flow-limited regime) and the permeability-surface area product when flow is high relative to permeability (the permeability-limited regime) [4]. The ve parameter indicates the fractional volume of the extravascular extracellular space, often increased in tumors due to disrupted tissue architecture and expanded interstitial space. These quantitative parameters enable more precise characterization of tissue properties but require accurate measurement of the arterial input function (AIF) and more complex modeling approaches.

Table 2: Key Spatio-Temporal Features in DCE-MRI Kinetics

| Parameter Type | Specific Parameters | Calculation Method | Physiological Interpretation |
|---|---|---|---|
| Semi-Quantitative Parameters | Time To Peak (TTP) | Time from onset to maximum concentration | Perfusion and permeability composite |
| Semi-Quantitative Parameters | Initial Rate of Enhancement (IRE) | Slope of initial uptake phase | Tissue perfusion and inflow |
| Semi-Quantitative Parameters | Maximum Enhancement | Peak concentration value | Vascular density and volume |
| Semi-Quantitative Parameters | Initial Area Under the Curve (iAUC) | Integration of early concentration curve | Composite perfusion-permeability measure |
| Quantitative Parameters | Ktrans | Pharmacokinetic modeling (Tofts model) | Volume transfer constant (flow/permeability) |
| Quantitative Parameters | ve | Pharmacokinetic modeling (Tofts model) | Extravascular extracellular volume fraction |
| Quantitative Parameters | kep | Pharmacokinetic modeling (Ktrans/ve) | Rate constant from EES to plasma |
| Quantitative Parameters | vp | Expanded pharmacokinetic modeling | Blood plasma volume fraction |
| Vascular Morphology Features | Plasma Flow (Fp) | Distributed parameter models | Capillary blood flow |
| Vascular Morphology Features | Permeability-Surface Area (PS) | Tissue homogeneity models | Vascular permeability |
| Vascular Morphology Features | Mean Transit Time (MTT) | Bolus tracking methods [6] | Average capillary transit time |

Experimental Protocols for DCE-MRI Spatio-Temporal Analysis

A comprehensive DCE-MRI protocol for spatio-temporal feature extraction requires meticulous attention to acquisition parameters and modeling approaches. For prostate cancer characterization, as exemplified in recent research, patients undergo multiparametric MRI prior to intervention, including T2-weighted, diffusion-weighted imaging (DWI), and DCE-MRI sequences [5]. The DCE-MRI acquisition uses a 3D spoiled gradient echo sequence with high temporal resolution (3-7 second intervals) repeated 60-120 times after contrast administration (0.1 mmol/kg of gadoterate meglumine) [5]. Pre-contrast T1 mapping with variable flip angles enables quantitative concentration calculations.
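To illustrate how variable flip angle data lead to concentration, the sketch below uses the standard spoiled gradient echo signal equation and its linearised form to recover T1, then converts the change in relaxation rate to concentration with a linear relaxivity relation. The flip angles, TR, T1 values, and the relaxivity `r1` are illustrative assumptions, not the settings of [5].

```python
import math

def spgr_signal(m0, t1, tr, flip_deg):
    """Steady-state spoiled gradient echo signal (T2* decay ignored)."""
    e1 = math.exp(-tr / t1)
    a = math.radians(flip_deg)
    return m0 * math.sin(a) * (1 - e1) / (1 - e1 * math.cos(a))

def t1_from_variable_flip_angles(signals, flip_degs, tr):
    """Linearised fit: S/sin(a) = E1 * S/tan(a) + M0 * (1 - E1); the slope gives E1."""
    xs = [s / math.tan(math.radians(a)) for s, a in zip(signals, flip_degs)]
    ys = [s / math.sin(math.radians(a)) for s, a in zip(signals, flip_degs)]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    return -tr / math.log(slope)

def concentration(t1_post, t1_pre, r1):
    """Contrast agent concentration (mM) from the change in relaxation rate 1/T1."""
    return (1.0 / t1_post - 1.0 / t1_pre) / r1

# Illustrative, noiseless example with times in seconds.
tr, true_t1 = 0.005, 1.5
flip_degs = [2, 5, 10, 15]
signals = [spgr_signal(1000.0, true_t1, tr, a) for a in flip_degs]
t1_estimate = t1_from_variable_flip_angles(signals, flip_degs, tr)

# r1 = 3.5 per mM per second is an assumed placeholder relaxivity.
conc = concentration(t1_post=0.8, t1_pre=t1_estimate, r1=3.5)
```

With real data the fit is sensitive to noise and flip-angle inaccuracy, so the noiseless recovery shown here is a best case.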

For quantitative analysis, the arterial input function (AIF) must be accurately characterized, either using population-based models or patient-specific measurement from an arterial region. The Parker AIF has demonstrated superior performance compared to the Weinmann AIF in discriminating tumor and benign tissue [5]. Following concentration calculation, voxel-wise fitting to the Tofts model or other pharmacokinetic models generates parametric maps of Ktrans, ve, and kep. Validation against histopathological specimens from radical prostatectomy confirms the biological relevance of these spatio-temporal features, with studies showing that DCE-MRI parameters combined with DWI and T2w imaging improve tumor detection accuracy to 78% for low-grade tumors and 85% for high-grade tumors compared to 58% and 72%, respectively, without DCE parameters [5].

Figure 2: DCE-MRI Spatio-Temporal Feature Extraction Workflow

Comparative Analysis and Integrative Approaches

Methodological Comparisons Across Modalities

While fMRI BOLD and DCE-MRI focus on different physiological processes, their analytical frameworks for spatio-temporal feature extraction share fundamental similarities. Both modalities employ kinetic modeling approaches to derive physiologically relevant parameters from dynamic image series, and both generate parametric maps that spatially represent temporal features. However, important distinctions exist in their temporal scales, contrast mechanisms, and modeling assumptions.

BOLD fMRI typically operates at faster temporal scales (one volume roughly every 0.5-2 seconds) than DCE-MRI (one dynamic frame roughly every 3-10 seconds), reflecting their different physiological targets. The BOLD signal represents an indirect, complex function of cerebral blood flow, volume, and oxygen consumption, while DCE-MRI directly tracks contrast agent concentration. From a modeling perspective, DCE-MRI pharmacokinetic models have more established physiological interpretations, whereas BOLD models remain more empirically derived despite recent advances in biophysical modeling [2].

Emerging Integrative Frameworks

The integration of multiple imaging modalities provides unprecedented opportunities for comprehensive tissue characterization. Combined fMRI-DCE-MRI studies enable correlation of vascular and neural features, particularly valuable in oncology where tumor vascular properties may influence peritumoral neural function. Similarly, the integration of DCE-MRI parameters with diffusion-weighted imaging and T2-weighted imaging significantly improves tumor detection and characterization accuracy compared to any single parameter alone [5].

Advanced machine learning approaches, particularly spatio-temporal deep learning frameworks, represent the frontier of integrative analysis. Methods like the global attention convolutional recurrent neural network (globAttCRNN) combine spatial feature extraction through convolutional neural networks with temporal modeling through recurrent networks with attention mechanisms [7]. The temporal attention module prioritizes informative time points, enabling the model to capture key spatio-temporal features while ignoring irrelevant information [7]. Such approaches have demonstrated superior performance in tasks like lung nodule classification from longitudinal CT scans, achieving AUC-ROC of 0.954 by effectively leveraging both spatial and temporal information [7].
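The role of a temporal attention module can be sketched as softmax-weighted pooling over per-time-point feature vectors. This is a generic illustration and not the globAttCRNN architecture of [7]: the feature vectors stand in for CNN outputs, and the `query` vector stands in for parameters that would be learned during training.

```python
import math

def softmax(scores):
    """Numerically stable softmax."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def temporal_attention_pool(features, query):
    """Weight each time point by softmax(features . query) and sum the features."""
    scores = [sum(f * q for f, q in zip(vec, query)) for vec in features]
    weights = softmax(scores)
    dim = len(features[0])
    pooled = [sum(w * vec[d] for w, vec in zip(weights, features)) for d in range(dim)]
    return pooled, weights

# Three scans of one nodule, each summarised by a 2-element feature vector (made up).
scan_features = [[0.1, 0.0], [0.2, 0.1], [0.9, 0.8]]
query = [2.0, 2.0]                      # hypothetical learned scoring vector
pooled, weights = temporal_attention_pool(scan_features, query)
```

Here the third scan receives most of the weight, so the pooled representation is dominated by the time point the scoring vector finds most informative.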

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Spatio-Temporal Feature Extraction Studies

| Category | Specific Item | Function/Application | Representative Examples |
|---|---|---|---|
| Imaging Equipment | High-Field MRI Scanner | Image acquisition with high spatial-temporal resolution | 3T Siemens MAGNETOM Prisma/Skyra [5] [8] |
| Imaging Equipment | Multi-Channel Receive Coil | Signal reception with improved SNR | 32-channel head coil [8] |
| Imaging Equipment | Physiological Monitoring System | Monitoring of physiological confounds | Photoplethysmography, capnography, beat-to-beat blood pressure [8] |
| Contrast Agents | Gadolinium-Based Contrast | DCE-MRI tracer for pharmacokinetic modeling | Dotarem (gadoterate meglumine) [5] |
| Analysis Software | Pharmacokinetic Modeling Tools | Quantitative parameter estimation | Tofts model implementation [4] |
| Analysis Software | Statistical Parametric Mapping | Voxel-wise statistical analysis | SPM, FSL [1] |
| Analysis Software | Independent Component Analysis | Blind source separation of spatio-temporal features | MELODIC ICA [3] |
| Computational Resources | High-Performance Computing | Processing of large spatio-temporal datasets | Cluster computing for population studies |
| Computational Resources | Deep Learning Frameworks | Implementation of spatio-temporal networks | TensorFlow, PyTorch for globAttCRNN [7] |
| Experimental Apparatus | Response Devices | Behavioral monitoring during fMRI | 5-fingered response box for motor tasks [1] |
| Experimental Apparatus | Physiological Challenge Equipment | Controlled perturbation of physiological state | Thigh-cuff release system [8] |

Spatio-temporal feature extraction represents a powerful paradigm for extracting biologically meaningful information from dynamic medical imaging data. In fMRI BOLD analysis, model-free approaches based on information theory and latency analysis enable mapping of neural processing sequences without assumptions about hemodynamic response shape, revealing hierarchical temporal organization across brain networks [1] [2]. In DCE-MRI, quantitative pharmacokinetic parameters derived from contrast agent kinetics provide precise measures of tissue vascular properties that significantly improve diagnostic accuracy when combined with structural and diffusion imaging [5] [4].

The continued advancement of spatio-temporal feature extraction methodologies will undoubtedly enhance our understanding of biological systems in health and disease. Future directions include the development of more sophisticated biophysical models that bridge spatial and temporal scales, the application of attention-based deep learning architectures that automatically prioritize informative spatio-temporal features [7], and the integration of multimodal data to construct comprehensive models of physiological and pathological processes. As these techniques mature, they will increasingly inform clinical decision-making and drug development by providing quantitative, spatially-resolved measures of treatment response and disease progression.

In medical imaging research, the transition from three-dimensional (3D) static snapshots to four-dimensional (4D) spatiotemporal analysis represents a fundamental paradigm shift, moving from visualizing structure to understanding function and dynamics. A 4D dataset incorporates three spatial dimensions plus the critical fourth dimension of time, enabling researchers to capture and quantify dynamic processes as they unfold. This capability is not merely an incremental improvement but a clinical imperative for understanding a vast range of physiological and pathological processes, from the beating heart and blood flow to the dynamic neural activation patterns in the brain and the progression of neurodegenerative diseases. The spatio-temporal feature extraction discussed in this whitepaper is the computational foundation that makes this advanced analysis possible, transforming raw 4D data into quantifiable biomarkers for research and drug development.

The limitations of static, 3D imaging become acutely apparent when studying dynamic physiological systems. Traditional methods often rely on template-dependent approaches or separate processing of spatial and temporal components, which can lack inter-subject specificity, discard temporal continuity, and compromise the fidelity of the underlying dynamic process [9]. In contrast, joint 4D spatiotemporal modeling preserves the intrinsic, continuous nature of biological systems, offering a more accurate and comprehensive basis for analysis. This whitepaper details the technical methodologies, experimental validations, and essential tools that establish 4D analysis as the indispensable standard for investigating dynamic processes in medical research.

Quantitative Evidence: Performance Benchmarks of 4D Analysis

The superiority of 4D analytical approaches is demonstrated by concrete performance metrics across various clinical applications. The following tables summarize key quantitative findings from recent seminal studies.

Table 1: Classification Performance of 4D Analysis in Neurological and Cardiac Applications

| Pathology / Application | Dataset | Methodology | Key Performance Metric | Result |
|---|---|---|---|---|
| Early Mild Cognitive Impairment (eMCI) | ADNI (324 subjects) | Axial Slice-Centric 4D fMRI Model [9] | Classification Accuracy | 97% |
| Disorder of Consciousness | Private Dataset (164 subjects) | Axial Slice-Centric 4D fMRI Model [9] | Classification Accuracy | Outperformed state-of-the-art by 5% |
| Cardiac & Knee Joint Dynamics | ACDC & Dynamic Knee Joint Datasets | TSSC-Net (Diffusion-based Temporal Super-Resolution) [10] | Temporal Super-Resolution Factor | 6x increase |
| Longitudinal Image Prediction | Public Longitudinal Datasets (Cardiac, Stroke, Glioblastoma) | Temporal Flow Matching (TFM) [11] | Prediction Accuracy vs. LCI Baseline | Consistently Surpassed |

Table 2: Operational Advantages of 4D Analysis and Visualization

| Domain | Technology | Advantage | Impact |
|---|---|---|---|
| 4D Surgical Visualization | 4D Microscope-Integrated OCT (MIOCT) [12] | Imaging Rate | Up to 10 volumes/second |
| 4D Surgical Visualization | 4D MIOCT in Mock Trials [12] | Surgical Outcome | Enhanced suturing accuracy and instrument control |
| Market Adoption | Advanced 3D/4D Visualization Systems Market [13] | Projected Growth (2025-2035) | 4.6% CAGR, from USD 799M to USD 1.2B |
| Respiratory Diagnostics | 4DMedical CT:VQ [14] | Addressable U.S. Market | USD 1.6B per annum |

Experimental Protocols: Methodologies for 4D Spatiotemporal Analysis

Protocol 1: Efficient 4D fMRI for Brain Disorder Classification

This protocol outlines the methodology for a template-free analysis of 4D functional MRI (fMRI) data to classify neurological disorders such as early mild cognitive impairment [9].

- Objective: To classify brain disorders by jointly modeling spatiotemporal representations from native 4D fMRI data, eliminating dependency on fixed brain atlases and preserving intrinsic, individualized brain activity patterns.

- Procedure:

  - Data Decomposition: The 4D fMRI data (x, y, z, t) is decomposed into 3D spatiotemporal manifolds along the axial axis (z-axis).

  - Hierarchical Feature Extraction: A hierarchical encoder extracts local spatiotemporal interactions within each axial slice. The information is progressively aggregated to capture multi-granularity neural patterns.

  - Adaptive Sampling: A differentiable TopK operation is applied to adaptively select the most informative slices and time points. This balances computational efficiency with the need to model long-range temporal dependencies.

  - Model Training & Evaluation: The model is trained end-to-end. Performance is evaluated on standard datasets like ADNI for classification accuracy, computational complexity (FLOPs), and the clinical relevance of visualized biomarker attention maps.

- Clinical Relevance: This approach achieved 97% accuracy in classifying eMCI vs. normal controls on the ADNI dataset and identified biomarkers consistent with established research, demonstrating its template-free diagnostic capability [9].
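The decomposition and selection steps of this protocol can be sketched on nested lists. This is a simplified illustration rather than the model in [9]: slices are scored by summed temporal variance, which is an assumed stand-in for a learned score, and the selection is a hard top-k, whereas the protocol uses a differentiable TopK so that selection can be trained end-to-end.

```python
def axial_slices(volume):
    """Split volume[x][y][z][t] into one (x, y, t) block per axial position z."""
    nx, ny, nz = len(volume), len(volume[0]), len(volume[0][0])
    return [[[volume[x][y][z] for y in range(ny)] for x in range(nx)] for z in range(nz)]

def temporal_variance(series):
    """Variance of one voxel's time series."""
    mean = sum(series) / len(series)
    return sum((v - mean) ** 2 for v in series) / len(series)

def slice_score(slice_xyt):
    """Assumed informativeness score: total temporal variance within the slice."""
    return sum(temporal_variance(series) for row in slice_xyt for series in row)

def top_k_slices(volume, k):
    """Indices and data of the k highest-scoring axial slices, in anatomical order."""
    slices = axial_slices(volume)
    ranked = sorted(range(len(slices)), key=lambda z: slice_score(slices[z]), reverse=True)
    keep = sorted(ranked[:k])
    return keep, [slices[z] for z in keep]

# Synthetic 2 x 2 x 4 x 6 volume whose fluctuation amplitude depends on z.
amplitudes = [0.1, 2.0, 0.5, 1.0]
volume = [
    [[[amplitudes[z] * (-1) ** t for t in range(6)] for z in range(4)] for y in range(2)]
    for x in range(2)
]
keep, selected = top_k_slices(volume, k=2)
```

Each selected block keeps its full time axis, so spatial and temporal structure within a slice stay together for the downstream encoder.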

Protocol 2: Diffusion-Driven Temporal Super-Resolution for 4D MRI

This protocol describes a framework for enhancing the temporal resolution of dynamic 4D MRI, crucial for capturing fast, large-amplitude motion in organs like the heart and joints [10].

- Objective: To generate high-fidelity intermediate frames between acquired 3D volumes in a time series, thereby increasing temporal resolution and preserving spatial consistency across slices.

- Procedure:

  - Temporal Super-Resolution: A diffusion-based network generates multiple intermediate frames using the start frame (I₀) and end frame (I₁) of a motion sequence as key references. This model is trained to achieve a 6x increase in temporal resolution in a single inference step.

  - Spatial Consistency Enhancement: The generated frame sequences are processed by a tri-directional Mamba-based module. This module leverages long-range contextual information from three volumetric directions (axial, coronal, sagittal) to resolve spatial inconsistencies and cross-slice misalignments, ensuring volumetric coherence.

  - Loss Function Optimization: The model is trained using a combined loss function that includes Mean Squared Error (MSE) for voxel-wise accuracy, Wavelet Transform loss to preserve high-frequency details, and Total Variation (TV) Smoothness loss to ensure anatomical realism.

- Clinical Relevance: Validated on the ACDC cardiac MRI and a dynamic knee joint dataset, TSSC-Net successfully handled large deformations where traditional registration-based interpolation methods fail, producing high-resolution dynamic MRI with structural fidelity [10].
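The three loss terms named in this protocol can be combined as in the sketch below, shown on small 2D arrays for brevity. The one-level Haar transform, the weights `w_wavelet` and `w_tv`, and the exact form of each term are assumptions for illustration; the formulation used by TSSC-Net [10] may differ.

```python
def mse(pred, target):
    """Mean squared error over all pixels."""
    n = len(pred) * len(pred[0])
    return sum((p - t) ** 2 for pr, tr in zip(pred, target) for p, t in zip(pr, tr)) / n

def haar_details(img):
    """One-level 2D Haar detail coefficients, three per non-overlapping 2x2 block."""
    details = []
    for i in range(0, len(img) - 1, 2):
        for j in range(0, len(img[0]) - 1, 2):
            a, b, c, d = img[i][j], img[i][j + 1], img[i + 1][j], img[i + 1][j + 1]
            details.extend([(a - b + c - d) / 2, (a + b - c - d) / 2, (a - b - c + d) / 2])
    return details

def wavelet_loss(pred, target):
    """Mean absolute difference between high-frequency (detail) coefficients."""
    d_pred, d_target = haar_details(pred), haar_details(target)
    return sum(abs(p - t) for p, t in zip(d_pred, d_target)) / len(d_pred)

def total_variation(img):
    """Mean absolute difference between neighbouring pixels (smoothness penalty)."""
    rows, cols = len(img), len(img[0])
    vertical = sum(abs(img[i + 1][j] - img[i][j]) for i in range(rows - 1) for j in range(cols))
    horizontal = sum(abs(img[i][j + 1] - img[i][j]) for i in range(rows) for j in range(cols - 1))
    return (vertical + horizontal) / (rows * cols)

def combined_loss(pred, target, w_wavelet=0.1, w_tv=0.01):
    """Weighted sum of the three terms; the weights are assumed, not published values."""
    return mse(pred, target) + w_wavelet * wavelet_loss(pred, target) + w_tv * total_variation(pred)

# A sharp edge as target, and a blurred version of it as an imperfect prediction.
target = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
blurred = [[0.0, 0.25, 0.75, 1.0] for _ in range(4)]
loss_perfect = combined_loss(target, target)
loss_blurred = combined_loss(blurred, target)
```

Note that the TV term is nonzero even for a perfect prediction of an edge, which is why it is usually given a small weight relative to the fidelity terms.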

Protocol 3: Temporal Flow Matching for Longitudinal Disease Progression

This protocol details a generative approach for modeling the temporal evolution of 3D anatomical structures from sparse and irregularly sampled longitudinal scans [11].

- Objective: To learn the underlying temporal distribution of anatomical changes from a set of context images (e.g., prior scans) in order to predict a future 3D state (e.g., tumor growth, brain atrophy).

- Procedure:

  - Problem Formulation: For a given patient, a variable number of context 3D images \( \mathcal{I} = \{I_1, \dots, I_T\} \), acquired at possibly irregular time points \( \mathcal{T} = \{t_1, \dots, t_T\} \), are used to predict a target image \( I_{\text{target}} \) at a future time \( t_{\text{target}} \).

  - Difference Modeling: The Temporal Flow Matching (TFM) model is designed to learn only the changes between scans. It defines a trajectory between a source state (e.g., the most recent context image) and the target state, modeling the velocity field of this transformation.

  - Sparsity Handling: Missing context images due to irregular sampling are set to zero. The model architecture is designed to be robust to this sparse and incomplete data.

  - Training and Inference: The model is trained to regress the velocity field \( u_\tau \) that governs the transformation. It can natively handle 3D volumetric time series of variable length and context, making it agnostic to specific diseases or imaging modalities.

- Clinical Relevance: TFM established a new state-of-the-art in predicting future medical images across heterogeneous applications, including cardiac function (Cine-MRI), stroke progression (perfusion CT), and glioblastoma growth (MRI) [11].
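The idea of regressing a velocity field between a source and a target state can be sketched with a straight-line path, a common choice in flow matching that is assumed here and is not confirmed as the exact path used in [11]. Images are flattened to short lists, an oracle function stands in for the trained network, and the `mask` returned by `pad_context` is an illustrative addition.

```python
def interpolate(x0, x1, tau):
    """Point on the straight path from source x0 (tau = 0) to target x1 (tau = 1)."""
    return [(1 - tau) * a + tau * b for a, b in zip(x0, x1)]

def target_velocity(x0, x1):
    """Along a straight path the velocity is constant: the change between scans."""
    return [b - a for a, b in zip(x0, x1)]

def flow_matching_loss(predicted_velocity, x0, x1):
    """Mean squared error between a predicted velocity and the target velocity."""
    u = target_velocity(x0, x1)
    return sum((p - q) ** 2 for p, q in zip(predicted_velocity, u)) / len(u)

def integrate(x0, velocity_fn, steps=10):
    """Euler integration of dx/dtau = velocity_fn(x, tau) from tau = 0 to 1."""
    x, dtau = list(x0), 1.0 / steps
    for k in range(steps):
        v = velocity_fn(x, k * dtau)
        x = [xi + dtau * vi for xi, vi in zip(x, v)]
    return x

def pad_context(scans_by_time, time_grid, n_voxels):
    """Fill missing time points with zeros; the mask marks which scans are real."""
    padded = [scans_by_time.get(t, [0.0] * n_voxels) for t in time_grid]
    mask = [1 if t in scans_by_time else 0 for t in time_grid]
    return padded, mask

# Toy three-voxel "images": the most recent scan and the future state to predict.
latest_scan = [0.0, 1.0, 2.0]
future_scan = [0.5, 1.0, 3.0]
u = target_velocity(latest_scan, future_scan)

# An oracle velocity function stands in for the trained network.
predicted = integrate(latest_scan, lambda x, tau: u)
padded, mask = pad_context({0.0: [0.0, 1.0, 1.5], 2.0: latest_scan}, [0.0, 1.0, 2.0], 3)
```

In training, a network would receive the padded context and an interpolated state and be optimised with `flow_matching_loss`; at inference, integrating its output from the latest scan yields the predicted future image.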

The logical workflow for implementing a 4D analysis pipeline, synthesizing the core concepts from these protocols, is illustrated below.

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful execution of 4D medical imaging research requires a suite of specialized software, data, and computational resources. The following table details key components of the research toolkit.

Table 3: Essential Research Reagents and Solutions for 4D Medical Imaging Analysis

| Tool Category | Specific Tool / Solution | Function / Application | Source / Reference |
|---|---|---|---|
| Public Datasets | ADNI (Alzheimer's Disease Neuroimaging Initiative) | Provides longitudinal MRI/fMRI data for modeling disease progression and validating classification algorithms [9] [15]. | https://adni.loni.usc.edu/ |
| Public Datasets | ACDC (Automated Cardiac Diagnosis Challenge) | Offers cardiac cine-MRI data for developing and benchmarking 4D dynamic heart analysis models [10] [11]. | https://www.creatis.insa-lyon.fr/Challenge/acdc/ |
| Software & Libraries | Spaco / SpacoR | A spatially-aware colorization protocol for optimizing categorical data visualization in spatial plots (e.g., cell types in transcriptomics) [16]. | GitHub: BrainStOrmics/Spaco |
| Software & Libraries | Temporal Flow Matching (TFM) Code | A unified generative model for learning spatio-temporal trajectories in 4D longitudinal medical imaging [11]. | GitHub: MIC-DKFZ/Temporal-Flow-Matching |
| AI Models | 4D Convolutional Neural Network (4D CNN) | Employs 4D joint temporal-spatial kernels to capture spatiotemporal dynamics in fMRI data for tasks like Alzheimer's classification [15]. | Custom Implementation |
| AI Models | Tri-directional Mamba Module | Leverages a state-space model for efficient long-range context modeling to resolve spatial inconsistencies in volumetric data [10]. | Custom Implementation |
| Computational Hardware | High-Performance GPUs | Accelerates training of deep learning models and enables real-time rendering of large 4D datasets (e.g., volumetric rendering at 10 vols/s) [12]. | Industry Standard (e.g., NVIDIA) |

The evidence reviewed here points in one direction: the dynamic nature of physiological and pathological processes demands analytical methods that are themselves dynamic. 4D spatiotemporal analysis is not a niche specialization but a foundational toolset for modern medical research and drug development. As the field advances, the integration of generative AI models like Temporal Flow Matching and diffusion models is expected to further enhance our ability to predict disease trajectories and synthesize high-fidelity 4D data. The convergence of 4D imaging with other technologies, such as real-time rendering, cloud-based visualization, and AI-driven predictive analytics, should open new opportunities in personalized medicine [13] [17].

For researchers and drug development professionals, mastering 4D spatial-temporal feature extraction is no longer optional but a clinical imperative. It is the key to transforming transient, dynamic biological events into stable, quantifiable biomarkers that can power early diagnosis, precise treatment planning, and the development of next-generation therapeutics.

The advancement of medical imaging and microscopy is intrinsically linked to the ability to capture and analyze changes across both space and time. Spatiotemporal feature extraction represents a core methodology in modern biomedical research, enabling the quantification of dynamic biological processes, from cellular reactions to whole-organ function and network-level brain activity. This technical guide provides an in-depth examination of four pivotal data modalities—fMRI, DCE-MRI, Ultrasound, and Multi-Time-Point Microscopy—focusing on their roles in capturing dynamic data, the quantitative parameters they yield, and their applications in therapeutic development. Within drug discovery and development, these modalities provide a critical bridge between preclinical research and clinical application, offering non-invasive, quantitative biomarkers for understanding disease mechanisms, assessing treatment efficacy, and guiding therapeutic decisions [18]. The integration of artificial intelligence and radiomics with these imaging data further accelerates the extraction of meaningful biological insights, pushing the frontiers of personalized medicine [18].

Functional Magnetic Resonance Imaging (fMRI)

Functional Magnetic Resonance Imaging (fMRI) is a non-invasive technique that measures brain activity indirectly by detecting changes in blood flow and oxygenation. Its high spatial resolution and whole-brain coverage, combined with temporal sampling on the order of seconds, make it a central tool for mapping neural networks and identifying biomarkers of neurological disorders.

Spatiotemporal Features and Analysis Protocols

Traditional fMRI analysis often relies on template-dependent methods that map data to a standard brain atlas, which can lack inter-subject specificity. Emerging template-free models directly process native 4D fMRI data (three spatial dimensions plus time), preserving individual brain architecture and intrinsic temporal dynamics [9]. One advanced analytical framework involves:

- Axial Slice-Centric Decomposition: The 4D fMRI data is decomposed into 3D spatiotemporal manifolds along the axial axis, enabling joint learning of spatial and temporal features without separating them [9].

- Hierarchical Spatiotemporal Encoder: This architecture extracts local spatiotemporal interactions within each slice and progressively aggregates information to capture multi-granularity neural patterns [9].

- Differentiable TopK Operation: An adaptive mechanism that selects the most informative slices and time points, balancing computational efficiency with the preservation of long-range temporal dependencies, which are crucial for understanding connected neural dynamics [9].
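
The decomposition and selection steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the cited model: the array layout `(X, Y, Z, T)`, the function names, and the variance-based slice score are all assumptions, and the hard top-k used here stands in for the learned, differentiable TopK described in [9].

```python
import numpy as np

def axial_slices(volume_4d):
    """Split an (X, Y, Z, T) array into Z slice-wise arrays of shape (X, Y, T)."""
    # Move the axial (Z) axis to the front so each item is one slice over time.
    return np.moveaxis(volume_4d, 2, 0)

def select_top_k_slices(volume_4d, k):
    """Keep the k axial slices with the largest mean temporal variance."""
    slices = axial_slices(volume_4d)                 # (Z, X, Y, T)
    scores = slices.var(axis=-1).mean(axis=(1, 2))   # one score per slice
    keep = np.sort(np.argsort(scores)[-k:])          # hard top-k, anatomical order kept
    return slices[keep], keep

rng = np.random.default_rng(0)
fmri = rng.normal(size=(8, 8, 6, 20))  # toy volume: 6 axial slices, 20 time points
fmri[:, :, 2, :] *= 5.0                # make slices 2 and 4 clearly more variable
fmri[:, :, 4, :] *= 3.0
selected, indices = select_top_k_slices(fmri, k=2)
```

In a trainable model the score would come from the network and the selection would be relaxed so gradients can flow through it; the hard `argsort` here only shows the data flow.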

Table 1: Key Spatiotemporal Features in Template-Free 4D fMRI Analysis

| Feature Category | Specific Features | Biological Significance |
|---|---|---|
| Spatial Features | Local Spatiotemporal Interactions, Multi-granularity Neural Patterns | Captures localized brain activity and hierarchical organization of neural circuits. |
| Temporal Features | Temporal Continuity, Long-range Temporal Dependencies | Reflects the smooth, correlated nature of neural dynamics over time. |
| Composite Features | Slice-level Attention Maps, Axial Manifold Representations | Identifies biomarkers and regions of significance without pre-defined anatomical priors. |

Experimental Protocol for Neurological Disorder Classification

A representative protocol for classifying brain disorders such as early mild cognitive impairment (eMCI) using template-free 4D fMRI analysis is as follows [9]:

- Data Acquisition: Collect native 4D fMRI data from participants (e.g., 324 subjects in the cited eMCI vs. normal control study) without applying spatial normalization to a standard template.

- Preprocessing: Perform standard preprocessing steps including motion correction, slice-timing correction, and band-pass filtering.

- Model Training: Implement the axial slice-centric model with a hierarchical encoder and differentiable TopK operation for end-to-end feature learning and subject classification.

- Validation: Evaluate model performance using metrics like classification accuracy and computational efficiency (e.g., floating-point operations). The cited study achieved 97% accuracy in classifying eMCI with a 25% reduction in computational operations compared to baseline methods [9].

- Biomarker Visualization: Generate and interpret slice-level attention maps to identify neural patterns consistent with known disease biomarkers, validating the model's clinical relevance.

Figure 1: Template-Free 4D fMRI Analysis Workflow

Dynamic Contrast-Enhanced Magnetic Resonance Imaging (DCE-MRI)

DCE-MRI tracks the passage of a contrast agent through tissue to quantify microvascular properties, providing critical insights into perfusion, capillary permeability, and vascular volume in oncology and other fields.

Key Kinetic Parameters and Analysis Methods

DCE-MRI data analysis can be performed using semi-quantitative (model-free) or quantitative (model-based) approaches, each yielding specific parameters [19].

Semi-quantitative Analysis: This method derives parameters directly from the Time-Intensity Curve (TIC) without complex modeling. It is robust and independent of the Arterial Input Function (AIF) but lacks direct physiological correlates and can be sensitive to variations in acquisition protocols [19]. Key parameters include:

- Maximum Enhancement (ME): The peak signal intensity post-contrast.

- Wash-in Slope: The initial rate of signal increase.

- Wash-out Slope: The rate of signal decrease after the peak.

- Time to Peak (TTP): The time taken to reach maximum enhancement.

- Initial Area Under the Curve (iAUC): The area under the curve within a specified early time period (e.g., 60 seconds), reflecting the total inflow of contrast [20].

- Maximum Slope (MS): The highest tangent value for the slope of the kinetic curve, indicative of the most rapid uptake [21] [20].
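
As a rough sketch of how the parameters listed above can be read off a time-intensity curve, the function below computes them with finite differences and the trapezoid rule. The baseline definition (first sample), the slope conventions, and the toy curve are assumptions for illustration; published studies define wash-in and wash-out in several different ways.

```python
import numpy as np

def tic_parameters(t, s, baseline_points=1, iauc_window=60.0):
    """Semi-quantitative parameters from a time-intensity curve.

    t: acquisition times in seconds (increasing); s: signal intensity at each time.
    """
    t = np.asarray(t, dtype=float)
    s = np.asarray(s, dtype=float)
    enh = s - s[:baseline_points].mean()        # enhancement above baseline
    peak = int(np.argmax(enh))
    slopes = np.diff(enh) / np.diff(t)          # slope of each sampling interval
    early = t <= t[0] + iauc_window             # samples inside the iAUC window
    e, te = enh[early], t[early]
    iauc = float(np.sum(0.5 * (e[1:] + e[:-1]) * np.diff(te)))  # trapezoid rule
    return {
        "max_enhancement": float(enh[peak]),
        "time_to_peak": float(t[peak] - t[0]),
        # Mean slope from the first sample to the peak (one possible convention).
        "wash_in_slope": float(enh[peak] / (t[peak] - t[0])) if peak > 0 else 0.0,
        # Mean slope from the peak to the last sample.
        "wash_out_slope": float((enh[-1] - enh[peak]) / (t[-1] - t[peak])) if peak < len(t) - 1 else 0.0,
        "max_slope": float(slopes.max()),
        "iauc": iauc,
    }

t = np.arange(0, 100, 10.0)  # toy curve sampled every 10 s
s = np.array([100, 100, 140, 180, 200, 195, 190, 185, 180, 175], dtype=float)
params = tic_parameters(t, s)
```

For this toy curve the peak enhancement is 100 signal units at 40 s, the steepest interval rises by 4 units per second, and the 60-second iAUC is 3600 unit-seconds.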

Quantitative Pharmacokinetic Modeling: This approach uses mathematical models to derive absolute physiological parameters. The most common models are the Standard Tofts and Extended Tofts models, which conceptualize tissue as comprising blood plasma and the extravascular extracellular space (EES) [22]. Key parameters include:

- Ktrans: The volume transfer constant between blood plasma and the EES, reflecting blood flow and capillary permeability.

- kep: The rate constant between the EES and blood plasma (kep = Ktrans/ve).

- ve: The volume of the EES per unit tissue volume.

- vp: The blood plasma volume per unit tissue volume (included in the Extended Tofts model).
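
To make the relationship between these parameters concrete, the following is a minimal forward-model sketch of the Tofts equations, in which tissue concentration is the plasma term plus Ktrans times the convolution of the plasma curve with an exponential governed by kep. The uniform time grid, the rectangle-rule convolution, and the constant plasma input are simplifying assumptions; real analyses use a measured or population arterial input function and fit the parameters to data.

```python
import numpy as np

def extended_tofts(t, cp, ktrans, ve, vp=0.0):
    """Tissue concentration from the (Extended) Tofts model.

    C_t(t) = vp * C_p(t) + Ktrans * integral_0^t C_p(u) * exp(-kep * (t - u)) du,
    with kep = Ktrans / ve. Setting vp = 0 gives the Standard Tofts model.
    t must be uniformly spaced (in minutes if Ktrans is in 1/min).
    """
    t = np.asarray(t, dtype=float)
    cp = np.asarray(cp, dtype=float)
    dt = t[1] - t[0]
    kep = ktrans / ve
    kernel = np.exp(-kep * (t - t[0]))
    # Rectangle-rule approximation of the convolution integral.
    conv = np.convolve(cp, kernel)[: len(t)] * dt
    return vp * cp + ktrans * conv

t = np.arange(0.0, 60.0, 0.01)   # minutes
cp = np.ones_like(t)             # hypothetical constant plasma concentration
ct = extended_tofts(t, cp, ktrans=0.25, ve=0.3, vp=0.05)
```

With a constant plasma concentration of 1, the curve settles near `vp + ve` (0.35 here), which is a quick sanity check that kep = Ktrans/ve is wired in correctly.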

Table 2: Core DCE-MRI Kinetic Parameters and Their Interpretations

| Parameter | Type | Physiological Interpretation | Typical Application Context |
|---|---|---|---|
| Ktrans (min⁻¹) | Quantitative | Rate of contrast transfer from plasma to EES; reflects perfusion & permeability. | Oncology (tumor characterization), therapy monitoring. |
| ve | Quantitative | Fractional volume of extravascular extracellular space. | Assessing tissue cellularity and necrosis. |
| vp | Quantitative | Fractional plasma volume. | Measuring vascularity. |
| kep (min⁻¹) | Quantitative | Rate constant from EES back to plasma. | Often correlated with Ktrans. |
| Maximum Slope (%/s) | Semi-quantitative | Maximum rate of contrast uptake. | Ultrafast DCE-MRI for lesion differentiation [21] [20]. |
| iAUC (mM·s) | Semi-quantitative | Total contrast inflow over a defined time. | Early response assessment, ultrafast imaging [20]. |
| Time to Peak (s) | Semi-quantitative | Time from contrast arrival to peak enhancement. | General perfusion assessment. |

Experimental Protocol for Ultrafast DCE-MRI in Breast Lesion Characterization

Ultrafast DCE-MRI captures the very early kinetics of contrast uptake with high temporal resolution, showing high diagnostic value. A protocol for optimizing scan duration in breast imaging is detailed below [20]:

- Patient Population: Recruit patients with suspicious breast findings (BI-RADS ≥4) for preoperative MRI. Exclusions include prior breast surgery, biopsy, or treatment before the MRI.

- Image Acquisition:

  - Use a 3 Tesla MRI system with a dedicated breast coil.

  - Acquire a T1 map prior to contrast injection using a two-flip angle VIBE sequence.

  - Perform ultrafast DCE-MRI with a compressed sensing-accelerated T1-weighted sequence. Key parameters: temporal resolution = 4.5 s/phase, one pre-contrast and 29 post-contrast phases (total potential duration 135 s).

  - Inject gadolinium-based contrast agent (0.1 mmol/kg) at 2.0 mL/s.

- Data Analysis:

  - Create multiple dynamic datasets from the acquired data, corresponding to different scan durations (e.g., from 40.5 s to 135 s in 8 intervals).

  - For each dataset and lesion, draw a volume of interest (VOI) on a post-processing workstation.

  - Extract key ultrafast parameters like Maximum Slope (MS) and Initial Area Under the Curve (iAUC) for each scan duration.

- Statistical Analysis and Outcome: Compare MS and iAUC between benign and malignant lesions for each scan duration. Use Receiver Operating Characteristic (ROC) analysis to determine the diagnostic performance (AUC) of the parameters at each duration. The study found that a scan duration of 67.5 seconds was optimal, providing high diagnostic accuracy (AUC for MS = 0.804) without significant improvement from longer acquisitions [20].
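
The duration sweep and the ROC comparison in this protocol can be sketched as follows. Two things here are assumptions rather than details from [20]: the truncated datasets are taken to be evenly spaced in number of phases (which yields 40.5 s, 54 s, 67.5 s, and so on up to 135 s), and the Maximum Slope values are invented purely to exercise the AUC function.

```python
import numpy as np

def scan_durations(temporal_resolution=4.5, first_phases=9, last_phases=30, n_datasets=8):
    """Durations (s) of truncated datasets, evenly spaced in number of phases (assumed)."""
    phases = np.linspace(first_phases, last_phases, n_datasets)
    return phases * temporal_resolution

def roc_auc(benign, malignant):
    """AUC as the probability that a malignant value exceeds a benign one (ties count 0.5)."""
    b = np.asarray(benign, dtype=float)[:, None]
    m = np.asarray(malignant, dtype=float)[None, :]
    return float(((m > b).sum() + 0.5 * (m == b).sum()) / (b.size * m.size))

durations = scan_durations()

# Hypothetical Maximum Slope values (%/s), for illustration only.
ms_benign = [2.1, 3.0, 3.4, 4.2, 5.0]
ms_malignant = [3.2, 4.8, 5.5, 6.1, 7.3]
auc = roc_auc(ms_benign, ms_malignant)
```

In a full analysis, MS and iAUC would be recomputed from each truncated dataset and `roc_auc` evaluated once per duration, so the shortest duration with stable AUC can be identified.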

Multi-Institutional Considerations and Clinical Applications

A critical challenge in quantitative DCE-MRI is inter-algorithm variability. A multi-institutional comparison of 11 different algorithms implementing Tofts models found that while most could correctly order parameter values from digital reference objects, there was low consistency in classifying patients above or below median values [23]. This highlights that DCE-MRI results may not be directly comparable or combinable when derived from different software implementations, necessitating careful cross-algorithm quality assurance [23].

DCE-MRI has diverse and growing applications:

- Oncology: Predicting pathological complete response (pCR) to neoadjuvant chemotherapy in breast cancer. For instance, in HER2-enriched subtypes, a low initial enhancement and low angiovolume on pre-treatment DCE-MRI were associated with a higher likelihood of pCR [21].

- Locoregional Therapy for HCC: In hepatocellular carcinoma, DCE-MRI is being investigated to guide thermal ablation by mapping tumor microvasculature, assess treatment efficacy after transarterial chemoembolization (TACE), and refine dosimetry for radioembolization (TARE) by mapping tumor blood flow [22].

- Peripheral Perfusion: Applied to evaluate microvascular status in the extremities, useful in areas like fracture healing and rheumatoid illnesses [19].

Figure 2: DCE-MRI Data Processing Workflow

Ultrasound Imaging

Ultrasound has a well-established role in spatiotemporal feature extraction. Clinical and pre-clinical ultrasound systems can capture dynamic processes in real time.

Spatiotemporal Features and Analysis

Advanced ultrasound techniques leverage both the spatial distribution and temporal changes of signals.

- Contrast-Enhanced Ultrasound (CEUS): Utilizes microbubble contrast agents to visualize and quantify blood flow dynamics. Time-Intensity Curves (TICs) derived from CEUS provide semi-quantitative parameters like peak enhancement, wash-in/wash-out rates, and time-to-peak, analogous to DCE-MRI.

- Doppler Imaging: Spectral and Color Doppler assess blood flow velocity and direction over time, useful for evaluating vascular stenosis and cardiac function.

- Elastography: Measures tissue stiffness by tracking the propagation of shear waves through tissue over time, providing a mechanical property map.

- Ultrasound Localization Microscopy: A super-resolution technique that tracks the movement of individual microbubbles over thousands of frames to map microvascular networks at a resolution beyond the diffraction limit.

Multi-Time-Point Microscopy

Multi-Time-Point Microscopy encompasses a range of optical imaging techniques that monitor biological processes at the cellular and sub-cellular level over time. This modality is a cornerstone of spatiotemporal analysis in preclinical drug discovery [18].

Spatiotemporal Features and Workflow

This modality captures the dynamics of live cells and organisms, enabling the study of complex processes such as cell migration, proliferation, differentiation, and intracellular signaling.

- Key Features: Commonly extracted features include cell trajectory, velocity, and mean squared displacement for migration studies; fluorescence intensity and localization for signaling and expression dynamics; and morphological changes (e.g., shape, size) for cell state and health.

- Workflow Integration: In the drug discovery pipeline, these assays are used for high-content screening to understand disease mechanisms, identify new pharmacological targets, and assess the efficacy and potential toxicity of new drug candidates [18]. The integration with artificial intelligence and radiomics allows for the automated extraction of complex patterns from large-scale microscopy data [18].
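
As an illustration of the migration features mentioned above, the sketch below computes mean squared displacement and mean speed from a single tracked trajectory. The track format (one row of coordinates per frame) and the 5-minute frame interval are assumptions for the example.

```python
import numpy as np

def mean_squared_displacement(track, max_lag=None):
    """MSD for lags 1..max_lag from a (T, 2) or (T, 3) array of positions."""
    track = np.asarray(track, dtype=float)
    max_lag = max_lag or len(track) - 1
    msd = np.empty(max_lag)
    for lag in range(1, max_lag + 1):
        disp = track[lag:] - track[:-lag]            # displacements at this lag
        msd[lag - 1] = np.mean(np.sum(disp ** 2, axis=1))
    return msd

def mean_speed(track, dt):
    """Average frame-to-frame speed, given the time between frames."""
    steps = np.linalg.norm(np.diff(np.asarray(track, dtype=float), axis=0), axis=1)
    return float(steps.mean() / dt)

# Toy trajectory: a cell moving 2 units per frame along x (directed motion).
track = np.column_stack([2.0 * np.arange(10), np.zeros(10)])
msd = mean_squared_displacement(track, max_lag=3)
speed = mean_speed(track, dt=5.0)  # hypothetical 5-minute frame interval
```

For purely directed motion the MSD grows with the square of the lag (4, 16, 36 here), whereas random, diffusive motion would give roughly linear growth; that difference is what makes MSD useful for classifying migration modes.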

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Materials for Spatiotemporal Imaging Modalities

| Item Name | Primary Function | Application Context |
|---|---|---|
| Gadolinium-Based Contrast Agent (e.g., Gadoterate Meglumine) | Shortens T1 relaxation time of tissues, causing signal enhancement on T1-weighted MRI. | Essential for DCE-MRI studies across all applications (oncology, neurology, etc.) [21] [19]. |
| Dedicated Phased-Array Coil | Improves signal-to-noise ratio (SNR) by using multiple receiver channels close to the region of interest. | Critical for high-resolution fMRI and DCE-MRI (e.g., 16-channel breast coil, head coil) [21] [20]. |
| Arterial Spin Labeling (ASL) MRI Sequence | Labels arterial blood water magnetically as an endogenous tracer to measure perfusion without external contrast. | Used as an alternative to DCE-MRI for quantitative blood flow measurement, particularly in the brain [22] [19]. |
| Open-Source or In-House DCE-MRI Analysis Software | Performs pharmacokinetic model fitting (e.g., Tofts model) to derive quantitative parameters like Ktrans and ve. | Enables quantitative analysis of DCE-MRI data; many current studies use in-house or open-source solutions [22]. |
| Microbubble Ultrasound Contrast Agent | Intravenous microbubbles oscillate in an ultrasound field, enhancing the backscattered signal from blood. | Required for Contrast-Enhanced Ultrasound (CEUS) to visualize and quantify tissue perfusion and vascularity. |
| Live-Cell Imaging Media | Provides a physiologically stable environment that maintains pH, osmolality, and nutrient supply for cells during extended imaging. | Essential for Multi-Time-Point Microscopy to ensure cell viability and normal function throughout the experiment. |
| Fluorescent Probes/Dyes (e.g., for Ca²⁺, specific proteins) | Binds to specific ions or molecules, emitting fluorescence at a characteristic wavelength upon excitation. | Allows visualization and tracking of dynamic biochemical events within live cells in Multi-Time-Point Microscopy. |

The integration of spatial hierarchies with temporal dynamics represents a frontier challenge in computational analysis, particularly within medical imaging research. Spatial-temporal feature extraction has emerged as a critical paradigm for diagnosing complex diseases, monitoring treatment efficacy, and advancing drug development. This whitepaper provides an in-depth technical examination of methodologies, architectures, and experimental protocols that effectively fuse multi-dimensional data across spatial and temporal domains. By synthesizing cutting-edge research from deep learning architectures and multimodal fusion frameworks, this guide establishes a foundational roadmap for researchers and scientists tackling the complexities of dynamic biological systems in medical imaging.

Spatial-temporal feature extraction addresses a fundamental challenge in modern medical imaging: biological systems are intrinsically dynamic, yet diagnostic imaging often captures only static snapshots. The integration of spatial hierarchies—from cellular structures to organ-level systems—with temporal dynamics reflecting disease progression or treatment response enables a more comprehensive physiological understanding. This fusion is technically challenging due to differing data resolutions, dimensional mismatches, and the complex, often non-linear, relationships between spatial features and their temporal evolution.

The clinical imperative for this integration is particularly evident in applications such as cardiac function analysis, tumor progression monitoring, and neural dynamics mapping. For instance, in cardiac magnetic resonance imaging (MRI), myocardial spatial–temporal morphology features extracted from cine images have demonstrated diagnostic value in differentiating etiologies of left ventricular hypertrophy (LVH), including cardiac amyloidosis, hypertrophic cardiomyopathy, and hypertensive heart disease [24]. Similarly, in dynamic contrast-enhanced MRI (DCE-MRI) of breast tumors, capturing both 3D spatial structures and multi-phase hemodynamic features significantly improves segmentation accuracy and diagnostic precision [25].

This technical guide examines core architectural patterns, detailed experimental methodologies, and practical implementation tools for addressing the spatial-temporal fusion challenge within medical imaging research.

Core Methodologies and Architectures

Hybrid Deep Learning Architectures

Advanced deep learning architectures that combine complementary neural network components have proven highly effective for spatial-temporal fusion. These models typically employ parallel encoders for multi-modal input processing, temporal modeling layers for sequence analysis, and fusion mechanisms for feature integration.

The DuSTiLNet (Dual-time point Space–Time fusion LSTM Network) architecture, developed for remote sensing change detection, exemplifies this approach. It processes two time points with parallel convolutional encoders that extract deep features independently [26]. The encodings are concatenated and passed through Long Short-Term Memory (LSTM) layers to model temporal dependencies, and a decoder then performs space–time feature fusion that combines spectral, spatial, and temporal details [26]. On benchmark change detection datasets, the architecture achieved an overall accuracy of 97.4%, an F1 Score of 89%, and an intersection over union (IoU) of 86.7% [26].

For medical imaging applications, ConvLSTM (Convolutional Long Short-Term Memory) units have been successfully employed to handle spatial–temporal features extracted from time-dependent slices in cardiac cine MR images [24]. This approach preserves spatial information while modeling temporal sequences, enabling analysis of dynamic physiological processes.

Frequency-Domain Fusion Techniques

An alternative approach to spatial-temporal fusion operates in the frequency domain, offering computational advantages and unique capabilities for capturing complex relationships. The Spatiotemporal Fourier Knowledge Tracing (STFKT) model demonstrates this paradigm, processing spatiotemporal fusion features in the frequency domain through Fourier Graph Neural Networks (FourierGNN) [27]. This method captures complex spatiotemporal relationships while significantly reducing computational complexity through matrix operations in the frequency domain.

In medical contexts where physiological processes often exhibit characteristic frequency signatures (e.g., cardiac rhythms, neural oscillations), frequency-domain analysis can reveal patterns obscured in time-domain representations. This approach naturally handles periodicity and can efficiently model long-range dependencies in temporal sequences.
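
A small example of the frequency-signature idea: the sketch below recovers the dominant rhythm of a noisy periodic trace with a real-valued FFT. The sampling rate, the 1.2 Hz rhythm (roughly 72 beats per minute), and the noise level are all hypothetical values chosen for illustration; this is plain spectral analysis, not the FourierGNN method from [27].

```python
import numpy as np

def dominant_frequency(signal, fs):
    """Frequency (Hz) of the largest non-DC peak in the amplitude spectrum."""
    signal = np.asarray(signal, dtype=float)
    spectrum = np.abs(np.fft.rfft(signal - signal.mean()))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    return float(freqs[1:][np.argmax(spectrum[1:])])  # skip the DC bin

fs = 50.0                          # hypothetical sampling rate in Hz
t = np.arange(500) / fs            # 10 s of samples
rng = np.random.default_rng(0)
trace = np.sin(2 * np.pi * 1.2 * t) + 0.3 * rng.normal(size=t.size)
f0 = dominant_frequency(trace, fs)
```

The periodic component is hard to pick out sample by sample in the noisy trace, but it appears as a single sharp peak in the spectrum, which is the practical appeal of frequency-domain features for rhythmic physiology.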

Multi-Branch Networks with Attention Mechanisms

Multi-branch neural network architectures with integrated attention mechanisms have shown particular effectiveness in capturing subtle variations in spatial-temporal patterns. These architectures typically employ dedicated branches for processing different aspects of the data (spatial, temporal, spectral), with attention mechanisms dynamically weighting the importance of different features, time points, or spatial locations.
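
The dynamic weighting idea can be illustrated with a minimal attention-pooling sketch over time points. The feature matrix and query vector are random stand-ins for quantities a trained network would learn, and the function names are hypothetical.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1D array."""
    e = np.exp(x - x.max())
    return e / e.sum()

def temporal_attention_pool(features, query):
    """Weight (T, D) per-time-point features by their similarity to a (D,) query."""
    scores = features @ query / np.sqrt(features.shape[1])
    weights = softmax(scores)            # one positive weight per time point, summing to 1
    return weights @ features, weights   # weighted average feature vector, and the weights

rng = np.random.default_rng(0)
features = rng.normal(size=(6, 4))   # 6 time points, 4 features each
query = rng.normal(size=4)           # stands in for a learned parameter
pooled, weights = temporal_attention_pool(features, query)
```

The returned weights are what attention visualizations typically display: they indicate which time points contributed most to the pooled representation.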

The FN-SSIR (Feature Fusion Network with Spatial-Temporal-Enhanced Strategy and Information Reconstruction) algorithm combines a multi-scale spatial-temporal convolution module with a spatial-temporal-enhanced strategy, a convolutional auto-encoder for information reconstruction, and long short-term memory with self-attention [28]. This comprehensive approach enables the extraction and fusion of dynamic features across fine-grained time-frequency variations and spatial-temporal patterns, achieving 86.7% classification accuracy in motor imagery tasks with force intensity variation [28].

Table 1: Performance Comparison of Spatial-Temporal Fusion Architectures

| Architecture | Application Domain | Key Innovation | Reported Performance |
|---|---|---|---|
| DuSTiLNet [26] | Remote Sensing Change Detection | Parallel encoders with LSTM temporal modeling | Accuracy: 97.4%, F1 Score: 89.0%, IoU: 86.7% |
| Multi-channel RNN with ConvLSTM [24] | Cardiac MRI (LVH Etiology Classification) | Multi-sequence temporal feature integration | Overall Accuracy: 77.4%, AUCs: 0.848-0.983 |
| Spatial-Temporal Mamba Network [25] | Breast DCE-MRI Tumor Segmentation | 4D encoder with spatial-temporal modules | Superior DSC and HD metrics vs. state-of-the-art |
| FN-SSIR [28] | Motor Imagery EEG Classification | Multi-scale convolution with self-attention LSTM | Accuracy: 86.7% on force variation dataset |
| STFKT [27] | Knowledge Tracing | FourierGNN for frequency-domain processing | AUC improvement: 19.53%-38.68% |

Experimental Protocols and Methodologies

Data Preparation and Preprocessing

Robust spatial-temporal analysis requires meticulous data preparation to address dimensional consistency across modalities and time points. For medical imaging applications, core preprocessing steps typically include:

- Temporal Alignment: Synchronizing data acquisition time points across modalities and subjects, particularly critical for dynamic studies (e.g., DCE-MRI, cardiac cine) [24]

- Spatial Normalization: Standardizing image resolutions and orientations across datasets, often through registration to common anatomical templates [29]

- Feature Standardization: Normalizing feature values to consistent scales across different measurement modalities

In the cardiac MRI study for LVH etiology classification, researchers extracted regions of interest (ROIs) containing the left ventricular myocardium from two-chamber, four-chamber, and short-axis cine images, with all images reconstructed to a standardized resolution of 1 mm × 1 mm × 1 mm before model development [24].

Model Training and Validation

Effective spatial-temporal model training requires specialized validation approaches to address temporal dependencies and prevent data leakage:

- Temporal Cross-Validation: Implementing time-aware splits where earlier time points train models tested on later time points

- Multi-Cohort Validation: Utilizing separate cohorts for training, validation, internal testing, and external testing to assess generalizability [24]

- Ablation Studies: Systematically removing architectural components to quantify their contribution to overall performance
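
The time-aware split described above, along with a patient-level split that is a common companion safeguard against leakage, might look like the following. The function names and toy data are hypothetical, and the patient-level grouping is an added assumption rather than a step taken from the cited studies.

```python
import numpy as np

def temporal_split(timestamps, test_fraction=0.25):
    """Time-aware split: the earliest samples train, the latest samples test."""
    order = np.argsort(timestamps, kind="stable")
    n_test = max(1, int(round(len(order) * test_fraction)))
    return order[:-n_test], order[-n_test:]

def patient_level_split(patient_ids, test_patients):
    """Keep every scan of a patient on one side of the split to avoid leakage."""
    is_test = np.isin(np.asarray(patient_ids), list(test_patients))
    return np.flatnonzero(~is_test), np.flatnonzero(is_test)

timestamps = np.array([5, 1, 9, 3, 7, 2, 8, 4])   # toy acquisition times
train_idx, test_idx = temporal_split(timestamps)

patients = ["a", "a", "b", "c", "c", "c", "d", "d"]  # toy patient label per scan
tr, te = patient_level_split(patients, {"c"})
```

Random shuffling of individual scans would let near-duplicate time points or repeat scans of the same patient land in both sets, which inflates test performance; both helpers exist to rule that out.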

In the LVH classification study, researchers employed a rigorous multi-cohort approach with 302 patients as the primary cohort (split into training, validation, and internal test sets) plus 53 additional patients from multiple centers as an external test dataset [24]. This design robustly assessed model generalizability across different populations and imaging protocols.

Performance Evaluation Metrics

Comprehensive evaluation of spatial-temporal fusion models requires multiple complementary metrics:

- Spatial Accuracy Measures: Dice Similarity Coefficient (DSC), Hausdorff Distance (HD) for segmentation tasks [25]

- Temporal Alignment Metrics: Dynamic Time Warping (DTW) distance for assessing temporal pattern fidelity

- Classification Performance: Area Under the Curve (AUC), overall accuracy, and per-class metrics for diagnostic tasks [24]

- Fusion-Specific Metrics: Metrics assessing information preservation from all input modalities
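
Two of the metrics listed above are simple enough to sketch directly: the Dice Similarity Coefficient for binary masks and a basic Dynamic Time Warping distance for 1D curves. These are textbook formulations written for clarity; the DTW loop is quadratic and uses absolute difference as the local cost, which is one common choice among several.

```python
import numpy as np

def dice_coefficient(pred, truth):
    """Dice Similarity Coefficient between two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    denom = pred.sum() + truth.sum()
    if denom == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return float(2.0 * np.logical_and(pred, truth).sum() / denom)

def dtw_distance(a, b):
    """Dynamic Time Warping distance between two 1D sequences."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    n, m = len(a), len(b)
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of the three allowed predecessor alignments.
            cost[i, j] = d + min(cost[i - 1, j], cost[i, j - 1], cost[i - 1, j - 1])
    return float(cost[n, m])

pred = np.array([[1, 1, 0], [0, 1, 0]])
truth = np.array([[1, 0, 0], [0, 1, 1]])
dsc = dice_coefficient(pred, truth)
dtw = dtw_distance([0, 1, 2, 1, 0], [0, 0, 1, 2, 1, 0])
```

The two toy curves have the same shape with one repeated sample, so their DTW distance is zero even though a pointwise comparison would penalize the shift; that tolerance to timing differences is why DTW suits temporal pattern fidelity.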

Table 2: Experimental Protocol Overview for Spatial-Temporal Fusion Studies

| Experimental Phase | Key Considerations | Medical Imaging Specific Adaptations |
|---|---|---|
| Data Collection | Multi-temporal alignment, spatial resolution consistency | Protocol standardization across scanners, contrast agent timing |
| Preprocessing | Spatial normalization, temporal interpolation | Anatomical template registration, physiological noise removal |
| Feature Extraction | Multi-scale spatial features, temporal dynamics encoding | Disease-specific feature prioritization (e.g., texture, shape, kinetics) |
| Model Training | Temporal cross-validation, regularization for small datasets | Transfer learning from larger datasets, data augmentation |
| Validation | Independent temporal test sets, external cohorts | Multi-center trials, clinical benchmark comparison |
| Interpretation | Visualization of spatial-temporal saliency | Clinical correlation with pathology, outcome data |

Visualization of Architectural Patterns

The following diagrams illustrate key architectural patterns for spatial-temporal fusion identified across the research literature.

Diagram 1: Parallel Encoding Architecture for Spatial-Temporal Fusion

Diagram 2: Medical Imaging Spatial-Temporal Analysis Workflow

The Scientist's Toolkit: Research Reagent Solutions

Implementing effective spatial-temporal fusion in medical imaging research requires both computational frameworks and domain-specific analytical tools. The following table details essential components of the spatial-temporal fusion research toolkit.

Table 3: Essential Research Reagents and Tools for Spatial-Temporal Fusion

| Research Tool | Function | Application Example |
|---|---|---|
| ConvLSTM Units | Captures spatiotemporal correlations in image sequences | Cardiac cine MRI analysis for tracking myocardial motion patterns [24] |
| Multi-scale Convolutional Kernels | Extracts features at different spatial scales | Tumor heterogeneity characterization in DCE-MRI [25] |
| Attention Mechanisms | Dynamically weights important spatial and temporal features | Highlighting critical brain regions in motor imagery EEG analysis [28] |
| Fourier Graph Neural Networks | Processes spatiotemporal relationships in frequency domain | Modeling long-range dependencies in physiological time series [27] |
| Parallel Encoder Architectures | Processes multi-modal or multi-temporal inputs simultaneously | Dual-time point analysis for change detection in longitudinal studies [26] |
| Residual Dense Blocks (RDB) | Enhances feature propagation and reuse | Preserving spatial details while modeling temporal dynamics [30] |
| Bayesian Fusion Frameworks | Combines multiple data sources with uncertainty quantification | Integrating EEG and fMRI data with reliability estimates [29] |
| 3D/4D Convolutional Networks | Processes volumetric data across time dimensions | Breast tumor segmentation in multi-phase DCE-MRI [25] |

Spatial-temporal feature extraction represents a paradigm shift in medical imaging analysis, moving beyond static snapshots to dynamic, integrated models of disease progression and treatment response. The architectures and methodologies detailed in this whitepaper provide a technical foundation for addressing the core challenge of integrating spatial hierarchies with temporal dynamics. As these approaches mature, they promise to enhance diagnostic precision, accelerate drug development, and ultimately advance personalized medicine through more comprehensive characterization of complex biological systems across spatial and temporal dimensions. Future directions include developing more computationally efficient models, improving interpretability for clinical translation, and establishing standardized validation frameworks for spatial-temporal fusion in medical applications.

Advanced Architectures and Clinical Implementation

The evolution of deep learning has fundamentally transformed medical image analysis, moving beyond static image interpretation to dynamic spatio-temporal feature extraction. This paradigm shift is critical in clinical practice, where disease progression, physiological motion, and procedural navigation unfold over time. Traditional 2D convolutional neural networks (CNNs), while powerful for spatial feature extraction, often overlook the rich temporal dependencies inherent in medical video sequences, dynamic scans, and longitudinal studies. This technical guide examines three advanced architectures redefining spatio-temporal analysis in medical imaging: 3D CNNs, hybrid CNN-Long Short-Term Memory (LSTM) networks, and Transformer-based models. By capturing both spatial patterns and temporal evolution, these architectures enable more accurate disease classification, progression tracking, and treatment monitoring, thereby supporting enhanced clinical decision-making and drug development research.

Architectural Foundations and Comparative Analysis

3D Convolutional Neural Networks (3D CNNs)

3D CNNs extend traditional convolutional operations to the temporal dimension, directly learning spatio-temporal features from volumetric data. Unlike 2D CNNs that process individual frames, 3D convolutions apply 3D kernels that slide through width, height, and time, simultaneously capturing spatial features and their temporal evolution. This architecture is particularly suited for medical video analysis, including endoscopic procedures, ultrasound, and 4D medical imaging (e.g., dynamic MRI, cardiac CT).

A novel 3D CNN framework for gastrointestinal (GI) endoscopic video classification demonstrates this approach, utilizing a 3D version of the parallel spatial and channel squeeze-and-excitation (P-scSE) module and a proposed residual with parallel attention (RPA) block. To address computational complexity, the model employs (2+1)D convolution, decomposing 3D convolution into spatial 2D convolution followed by temporal 1D convolution. This architecture achieved an average accuracy of 93.3%, precision of 93.2%, recall of 94.4%, F1-score of 93.5%, and AUC of 93.3% on the hyperKvasir dataset, with the P-scSE3D integration increasing the F1-score by 7% [31].
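
The saving from the (2+1)D factorization can be seen with simple weight counting. The sketch below compares a full 3D kernel with a spatial 2D convolution followed by a temporal 1D convolution; the channel widths, including the intermediate width `c_mid`, are hypothetical, since the cited model's exact layer sizes are not given here.

```python
def conv3d_params(c_in, c_out, k=3, t=3):
    """Weights in a full t x k x k 3D convolution (bias ignored)."""
    return t * k * k * c_in * c_out

def conv2plus1d_params(c_in, c_out, c_mid, k=3, t=3):
    """Weights in a 1 x k x k spatial conv followed by a t x 1 x 1 temporal conv."""
    spatial = k * k * c_in * c_mid    # 2D convolution applied frame by frame
    temporal = t * c_mid * c_out      # 1D convolution across frames
    return spatial + temporal

full = conv3d_params(64, 64)                      # 27 * 64 * 64
factored = conv2plus1d_params(64, 64, c_mid=64)   # 9 * 64 * 64 + 3 * 64 * 64
```

With 64 channels throughout and 3 x 3 x 3 kernels, the factored layer needs 49,152 weights against 110,592 for the full 3D layer, and it also inserts an extra nonlinearity between the spatial and temporal steps when an activation is placed there.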

Hybrid CNN-LSTM Architectures

Hybrid CNN-LSTM networks combine the strengths of CNNs for spatial feature extraction with LSTMs for modeling temporal sequences. The CNN backbone processes individual frames to extract discriminative spatial features, which are then fed into LSTM layers that learn temporal dependencies and long-range relationships across frames. This separation of spatial and temporal processing provides flexibility in handling variable-length sequences and capturing complex temporal dynamics.

The MediVision framework exemplifies this approach, integrating a vision backbone based on CNNs for feature extraction, LSTM for identifying sequential dependencies to recognize disease progression, and an attention mechanism that selectively focuses on salient features. Additionally, it utilizes skip connections and Grad-CAM heatmaps to visualize important regions in medical images. Tested on ten diverse medical image datasets, MediVision consistently achieved classification accuracies above 95%, with a peak of 98% [32].

For ECG arrhythmia classification, a hybrid CNN-Bidirectional LSTM (BLSTM) architecture demonstrates the power of this approach. The CNN layers autonomously learn morphological features from raw ECG waveforms, while the BLSTM layers model sequential and temporal dependencies in both forward and backward directions. Incorporating the Mish activation function for enhanced training stability, this model achieved remarkable performance: 99.52% accuracy, 99.48% sensitivity, and 99.85% specificity on the MIT-BIH Arrhythmia Database and clinical ECG recordings [33].

Transformer-Based Architectures

Vision Transformers (ViTs) process images as sequences of patches, utilizing self-attention mechanisms to capture global dependencies across the entire image. Unlike CNNs with their inductive biases toward locality and translation invariance, Transformers learn relationships between any patches regardless of their spatial separation, enabling more comprehensive context modeling. This capability is particularly valuable for medical images where global context influences local interpretations.
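
The patch-sequence view and the global attention it enables can be sketched with NumPy alone. This is a single-head toy version with random projection matrices standing in for learned weights; positional embeddings, multiple heads, and the rest of a real ViT are deliberately omitted.

```python
import numpy as np

def patchify(image, patch):
    """Split an (H, W) image into flattened, non-overlapping patch x patch tokens.

    H and W must be divisible by patch.
    """
    h, w = image.shape
    grid = image.reshape(h // patch, patch, w // patch, patch)
    return grid.transpose(0, 2, 1, 3).reshape(-1, patch * patch)

def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product self-attention over a token sequence."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(k.shape[1])
    scores = scores - scores.max(axis=1, keepdims=True)         # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=1, keepdims=True)       # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
image = rng.normal(size=(8, 8))
tokens = patchify(image, patch=4)   # 4 tokens, each of length 16
# Random stand-ins for learned projection weights.
wq, wk, wv = (rng.normal(size=(16, 8)) * 0.1 for _ in range(3))
out, attn = self_attention(tokens, wq, wk, wv)
```

Every row of `attn` spans all patches, so a patch in one corner can draw on a patch in the opposite corner in a single layer, whereas a convolution would need many stacked layers to connect them.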

The TransBreastNet framework represents a sophisticated hybrid approach, combining CNNs for spatial encoding of lesions with Transformer-based modules for temporal encoding of lesion progression, alongside dense metadata encoders for patient-specific clinical information. This multimodal, multitask framework simultaneously predicts breast cancer subtype and disease stage from mammogram images, achieving a macro accuracy of 95.2% for subtype classification and 93.8% for stage prediction [34].

For medical image segmentation, the FE-SwinUper model integrates a feature enhancement Swin Transformer (FE-ST) backbone with UPerNet. The FE-ST module utilizes self-attention to extract rich spatial and contextual features across different scales, while an adaptive feature fusion (AFF) module optimizes multi-scale feature integration. This architecture achieves Dice scores of 91.58% on the Synapse multi-organ segmentation dataset and 90.15% on the ACDC cardiac segmentation dataset [35].
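The Dice scores quoted above measure overlap between predicted and reference masks. A minimal sketch of the metric on flattened label lists follows; the helper names are illustrative, and averaging protocols (per case, per organ) vary between benchmarks and are not taken from the cited work.

```python
def dice_score(pred, target):
    """Dice = 2 * |P and T| / (|P| + |T|) for flat binary masks of 0s and 1s."""
    intersection = sum(p * t for p, t in zip(pred, target))
    total = sum(pred) + sum(target)
    if total == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return 2.0 * intersection / total


def mean_dice(pred_labels, target_labels, classes):
    """Average the binary Dice score over the given foreground classes."""
    scores = []
    for c in classes:
        p = [1 if v == c else 0 for v in pred_labels]
        t = [1 if v == c else 0 for v in target_labels]
        scores.append(dice_score(p, t))
    return sum(scores) / len(scores)
```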

Comparative Performance Analysis

Table 1: Quantitative Performance Comparison Across Architectures

| Architecture | Application Domain | Key Metrics | Performance | Dataset Used |
|---|---|---|---|---|
| 3D CNN with P-scSE3D | GI Endoscopic Video Classification | Accuracy/F1-Score | 93.3% / 93.5% | hyperKvasir [31] |
| Hybrid CNN-LSTM (MediVision) | Multi-Domain Medical Image Classification | Peak Accuracy | 98.0% | 10 Diverse Datasets [32] |
| Hybrid CNN-BLSTM | ECG Arrhythmia Classification | Accuracy/Sensitivity/Specificity | 99.52% / 99.48% / 99.85% | MIT-BIH & Clinical ECGs [33] |
| CNN-Transformer (TransBreastNet) | Breast Cancer Subtype & Stage Classification | Macro Accuracy | 95.2% (Subtype) / 93.8% (Stage) | Public Mammogram Dataset [34] |
| Transformer (FE-SwinUper) | Multi-Organ & Cardiac Segmentation | Dice Score | 91.58% / 90.15% | Synapse & ACDC [35] |
| ResNet-50 (Baseline) | Chest X-ray Pneumonia Detection | Accuracy | 98.37% | Chest X-ray Dataset [36] |

Table 2: Strengths and Limitations by Architecture

| Architecture | Strengths | Limitations | Ideal Use Cases |
|---|---|---|---|
| 3D CNNs | Native spatio-temporal processing; Unified feature learning | Computationally intensive; High parameter count | Short-range temporal modeling; Volumetric data |
| Hybrid CNN-LSTMs | Powerful temporal dynamics modeling; Flexible sequence handling | Separate spatial/temporal processing; Complex training | Longitudinal analysis; Time-series data |
| Transformers | Global context capture; Superior scalability with data | Data-hungry; Computationally expensive for high resolution | Large-scale datasets; Global dependency tasks |
| Hybrid CNN-Transformers | Balanced local-global feature learning; State-of-the-art performance | Architectural complexity; Training challenges | Multi-scale segmentation; Comprehensive analysis |

Experimental Protocols and Methodologies

Data Preparation and Preprocessing

Robust experimental protocols begin with meticulous data preparation. For spatio-temporal medical data, standard practices include:

- Temporal Sampling: For video data, frame sampling at consistent intervals (e.g., 1 frame per second for endoscopic videos) ensures manageable sequence lengths while preserving critical temporal information [31].

- Spatial Standardization: Image resizing to uniform dimensions (commonly 224×224 or 256×256) facilitates batch processing and architectural compatibility [34].

- Data Augmentation: Temporal and spatial augmentation techniques, including random temporal cropping, frame shuffling, spatial rotations, and flipping, enhance dataset diversity and model generalization [32].

- Sequence Formation: For CNN-LSTM models, sequences of 16-64 frames are typical, with the CNN processing individual frames and the LSTM handling the resulting feature sequences [37].
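The temporal sampling and sequence formation steps above can be sketched as index arithmetic. This is an illustrative sketch only: the function names, the 25 fps example, and the non-overlapping default stride are assumptions, not settings reported by the cited studies.

```python
def sample_frame_indices(num_frames, native_fps, target_fps=1.0):
    """Indices of frames to keep when downsampling a video to target_fps."""
    step = native_fps / target_fps
    indices = []
    k = 0
    while True:
        idx = int(round(k * step))
        if idx >= num_frames:
            break
        indices.append(idx)
        k += 1
    return indices


def make_sequences(items, seq_len=16, stride=None):
    """Group sampled frames into fixed-length windows; incomplete tails are dropped."""
    stride = stride or seq_len  # default: non-overlapping windows
    return [items[s:s + seq_len]
            for s in range(0, len(items) - seq_len + 1, stride)]
```

For example, a 100-frame clip recorded at 25 fps and sampled at 1 frame per second keeps frames 0, 25, 50, and 75. A stride smaller than `seq_len` produces overlapping windows, which increases the number of training sequences at the cost of correlated samples.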

Implementation and Training Details

- Optimization Algorithms: AdamW (an adaptive optimizer) and SGD with momentum are prevalent, often paired with cosine annealing learning rate schedules for stable convergence [32] [33].

- Loss Functions: Task-specific loss functions include categorical cross-entropy for classification, Dice loss for segmentation, and composite losses for multi-task learning [34] [35].

- Regularization Strategies: Weight decay, dropout, and label smoothing are commonly employed to prevent overfitting, particularly important for data-hungry architectures like Transformers [36].

- Validation Protocols: K-fold cross-validation (typically 5-fold) and strict train-validation-test splits (e.g., 70%-15%-15%) ensure reliable performance estimation [32].
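Two of the items above reduce to short formulas: the cosine annealing schedule and the data splits. The sketch below shows both in plain Python. The function names and default values are illustrative, and the simple interleaved k-fold assignment is an assumption made for brevity (practical pipelines typically shuffle and stratify, and split by patient rather than by image).

```python
import math
import random


def cosine_annealing_lr(step, total_steps, lr_max, lr_min=0.0):
    """Learning rate that decays from lr_max to lr_min along half a cosine wave."""
    progress = min(step, total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


def train_val_test_split(ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle ids once and cut them into train / validation / test partitions."""
    ids = list(ids)
    random.Random(seed).shuffle(ids)
    n_train = int(round(fractions[0] * len(ids)))
    n_val = int(round(fractions[1] * len(ids)))
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]


def k_fold_indices(n, k=5):
    """Return k (train_indices, val_indices) pairs; each index is validated exactly once."""
    folds = [list(range(i, n, k)) for i in range(k)]
    splits = []
    for i in range(k):
        train = [j for f_idx, fold in enumerate(folds) if f_idx != i for j in fold]
        splits.append((train, folds[i]))
    return splits
```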

Architectural Visualizations

Figure: Spatio-Temporal Architecture Comparison

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Resources for Spatio-Temporal Medical Imaging Research

| Resource Category | Specific Examples | Function in Research | Implementation Notes |
|---|---|---|---|
| Medical Imaging Datasets | hyperKvasir (GI endoscopy), MIT-BIH Arrhythmia, BreaKHis, INbreast, ACDC, Synapse | Benchmark training and validation | Address class imbalance via oversampling or weighted loss functions [31] [33] [34] |
| Deep Learning Frameworks | PyTorch, TensorFlow, MONAI | Model implementation and training | MONAI provides medical imaging-specific transforms and network architectures |
| Pretrained Models | ImageNet-pretrained CNNs, Clinical-Trials-in-Progress | Transfer learning initialization | Critical for data-scarce medical domains; improves convergence [32] |
| Attention Mechanisms | Squeeze-and-Excitation, Multi-Head Self-Attention, Grad-CAM | Feature emphasis and model interpretability | Identifies clinically relevant regions; enhances trust [32] [34] |
| Optimization Tools | AdamW, SGD with Momentum, Cosine Annealing | Model parameter optimization | Adaptive learning rates prevent overshooting in early training |
| Computational Hardware | NVIDIA GPUs (e.g., A100, V100, RTX 4090) | Accelerate model training and inference | 3D CNNs and Transformers require substantial VRAM for medical volumes |
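The table's note on class imbalance mentions weighted loss functions. One common way to set such weights, shown here as a sketch rather than the cited studies' procedure, is inverse class frequency; the label names and counts in the usage note are hypothetical.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}


def weighted_cross_entropy(probs, label, weights):
    """Cross-entropy for one sample, scaled by the weight of its true class."""
    return -weights[label] * math.log(probs[label])
```

With 90 "normal" and 10 "polyp" samples, the rare class receives a weight of 5.0 and the common class about 0.56, so errors on the rare class contribute more to the loss.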

The evolution of deep learning architectures for spatio-temporal feature extraction has substantially changed medical image analysis. Each architectural family offers distinct advantages: 3D CNNs provide native volumetric processing, hybrid CNN-LSTMs excel at modeling complex temporal dynamics, and Transformers capture global context across an entire image or sequence. The emerging trend toward hybrid architectures, such as CNN-Transformer combinations, reflects an effort to combine these complementary strengths. As these technologies continue to evolve, their integration into clinical workflows promises to enhance diagnostic precision, enable personalized treatment planning, and accelerate therapeutic development. Future research directions include developing more computationally efficient architectures, improving model interpretability for clinical trust, and creating standardized evaluation frameworks for spatio-temporal medical imaging applications.

Alzheimer's disease (AD) is a progressive neurodegenerative disorder and a leading cause of dementia worldwide, characterized by memory impairment and cognitive decline. Early diagnosis is crucial for timely intervention and management of the disease. Resting-state functional magnetic resonance imaging (rs-fMRI) has emerged as a powerful, non-invasive tool for detecting functional brain changes associated with AD, capturing spontaneous neural activity through blood oxygen level-dependent (BOLD) signals. Unlike structural MRI, rs-fMRI provides insights into brain network connectivity and dynamics, offering potential biomarkers for early AD detection.

The analysis of rs-fMRI data presents significant computational challenges due to its four-dimensional (4D) nature: three spatial dimensions plus time. Traditional analytical approaches often separate spatial and temporal processing, potentially discarding critical information embedded in their continuous interaction. Within this context, 3D Convolutional Neural Networks (3D CNNs) have shown considerable potential for extracting spatially rich features from neuroimaging data. This case study explores the application of 3D CNN architectures for AD classification from rs-fMRI data, framed within the broader research theme of spatial-temporal feature extraction in medical imaging.

Technical Background and Literature Review

The Spatial-Temporal Challenge in fMRI Analysis

Rs-fMRI generates 4D data (x, y, z, time) that captures both the spatial organization and temporal dynamics of brain activity. Traditional analytical methods can be broadly categorized as template-dependent or template-free approaches. Template-dependent methods rely on predefined brain atlases for Region of Interest (ROI) analysis but lack inter-subject specificity and generalizability due to fixed anatomical priors. Template-free models process native fMRI data directly but have often separated spatial and temporal processing, which discards temporal continuity, that is, the smooth and correlated nature of neural dynamics over time [9].

Functional connectivity (FC) analysis, which measures the temporal correlation between different brain regions, has been widely used to identify network disruptions in AD, particularly within the default mode network (DMN) [38]. More recently, interest has shifted beyond traditional FC analyses toward more physiologically informative metrics like brain entropy mapping, which estimates the complexity of fMRI-BOLD signals and is hypothesized to reflect the brain's capacity for information processing and cognitive flexibility [39].
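Functional connectivity as described above is, in its simplest form, a matrix of Pearson correlations between regional BOLD time series. The sketch below assumes the ROI time series have already been extracted and preprocessed; the function names and the region labels in the usage note are illustrative.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length time series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def functional_connectivity(roi_series):
    """Map every ordered pair of ROI names to the correlation of their time series."""
    names = list(roi_series)
    return {(a, b): pearson(roi_series[a], roi_series[b])
            for a in names for b in names}
```

For example, `functional_connectivity({"PCC": pcc_ts, "mPFC": mpfc_ts})` returns a symmetric mapping whose off-diagonal entry estimates coupling between two default mode network regions.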

Evolution of Deep Learning in AD Diagnosis

Deep learning approaches have progressively advanced in their capacity to handle neuroimaging data. Initial studies utilized 2D CNN architectures applied to slices of MRI data, but these methods often suffered from data leakage issues due to high similarity between adjacent slices and failed to capture comprehensive spatial information [40]. This limitation prompted the development of 3D CNN models that process full volumetric brain data, preserving spatial context and preventing information loss during dimensionality reduction [41].
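The leakage problem arises when slices from one subject land in both the training and test sets. A minimal sketch of the usual remedy, splitting by subject before collecting slices, is given below. The record layout (a dict with a `"subject"` key) and the function name are assumptions made for illustration.

```python
import random


def subject_level_split(slice_records, test_fraction=0.2, seed=0):
    """Assign whole subjects to train or test so no subject appears in both."""
    subjects = sorted({r["subject"] for r in slice_records})
    random.Random(seed).shuffle(subjects)
    n_test = max(1, int(round(test_fraction * len(subjects))))
    test_subjects = set(subjects[:n_test])
    train = [r for r in slice_records if r["subject"] not in test_subjects]
    test = [r for r in slice_records if r["subject"] in test_subjects]
    return train, test
```

Splitting at the slice level instead would let near-identical adjacent slices from the same brain appear on both sides of the split, inflating reported accuracy.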

More recent innovations include hybrid architectures that combine the strengths of CNNs for spatial feature extraction with transformers for global context modeling. For instance, the 3D-CNN-VSwinFormer model integrates a 3D CNN with a Convolutional Block Attention Module (CBAM) and a Video Swin Transformer, achieving an accuracy of 92.92% and AUC of 0.966 in differentiating AD patients from cognitively normal individuals [40]. Similarly, novel frameworks have emerged that jointly model spatiotemporal representations through end-to-end processing of native 4D fMRI data, eliminating template dependency while preserving intrinsic brain activity patterns [9].

3D CNN Architectures for fMRI Analysis

Core Architectural Components

3D CNN architectures for fMRI analysis typically incorporate several key components designed to handle the unique characteristics of neuroimaging data:

Volumetric Convolutional Layers: These layers apply 3D kernels that slide through the spatial dimensions of the fMRI volume, extracting features that preserve the volumetric context of brain structures. This differs from 2D approaches that process individual slices independently [41].
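To make the sliding 3D kernel concrete, here is a naive, single-channel sketch using nested lists, with no padding and unit stride. It computes cross-correlation, which is what deep learning libraries conventionally call convolution. It is written for clarity, not speed, and the function name is illustrative.

```python
def conv3d_valid(volume, kernel):
    """Slide a 3D kernel over a 3D volume (no padding, stride 1)."""
    depth, height, width = len(volume), len(volume[0]), len(volume[0][0])
    kd, kh, kw = len(kernel), len(kernel[0]), len(kernel[0][0])
    out = []
    for z in range(depth - kd + 1):
        plane = []
        for y in range(height - kh + 1):
            row = []
            for x in range(width - kw + 1):
                acc = 0.0
                # Each output voxel sums over a kd x kh x kw neighbourhood,
                # so it sees context from adjacent slices as well as in-plane.
                for dz in range(kd):
                    for dy in range(kh):
                        for dx in range(kw):
                            acc += volume[z + dz][y + dy][x + dx] * kernel[dz][dy][dx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out
```

A 4×4×4 volume convolved with a 3×3×3 kernel yields a 2×2×2 output, and each output value depends on three consecutive slices, which a slice-wise 2D convolution cannot provide.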

Attention Mechanisms: Modules like the 3D Convolutional Block Attention Module (CBAM) help the model capture crucial features in volumetric data and weight information from different regions, improving its ability to detect localized features in brain MRI scans [40].

Temporal Integration Components: To handle the temporal dimension of fMRI data, architectures may incorporate recurrent layers (e.g., LSTMs) or transformer modules that model dependencies across time points [9] [26].

Specialized Architectures for 4D fMRI

Recent research has introduced specialized architectures that address the unique challenges of 4D fMRI data. Zeng et al. proposed an axial slice-centric model that redefines 4D fMRI analysis by decomposing it into 3D spatiotemporal manifolds along the axial axis, enabling joint learning of spatial and temporal features while preserving individualized structure organization [9]. Their framework employs a hierarchical encoder to extract local spatiotemporal interactions within each slice, progressively aggregating information to capture multi-granularity neural patterns.
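As a rough illustration of the decomposition step only, the sketch below regroups a 4D array indexed as (x, y, z, t) into one (x, y, t) block per axial position z. This reflects one reading of the description above, not the implementation of Zeng et al.; the hierarchical encoder is not shown, and the function name and index order are assumptions.

```python
def axial_slices(volume_4d):
    """Regroup data indexed [x][y][z][t] into a list over z of [x][y][t] blocks."""
    size_x = len(volume_4d)
    size_y = len(volume_4d[0])
    size_z = len(volume_4d[0][0])
    return [[[list(volume_4d[x][y][z]) for y in range(size_y)]
             for x in range(size_x)]
            for z in range(size_z)]
```

Each returned block keeps the full time course of every in-plane voxel, so spatial and temporal structure within a slice remain together for joint processing.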

Another approach utilizes brain entropy mapping via rs-fMRI as a marker of impaired brain function related to tauopathy. This method applies 3D CNN models to entropy maps, achieving up to 84% accuracy in classifying cognitive impairment using complexity measures derived from fMRI data [39].
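The text does not specify which entropy estimator is used. As one plausible example, the sketch below computes sample entropy for a single voxel's time series; applying it voxel by voxel would produce an entropy map. The parameters `m=2` and `r_factor=0.2` are conventional defaults chosen here as assumptions, not values from the cited study.

```python
import math
import statistics


def sample_entropy(series, m=2, r_factor=0.2):
    """Sample entropy: -ln(A / B), where B and A count template pairs of
    length m and m + 1 whose Chebyshev distance is within tolerance r."""
    n = len(series)
    r = r_factor * statistics.pstdev(series)

    def count_matches(length):
        # Use the same n - m starting points for both template lengths.
        templates = [series[i:i + length] for i in range(n - m)]
        matches = 0
        for i in range(len(templates)):
            for j in range(i + 1, len(templates)):
                dist = max(abs(a - b) for a, b in zip(templates[i], templates[j]))
                if dist <= r:
                    matches += 1
        return matches

    b = count_matches(m)
    a = count_matches(m + 1)
    if a == 0 or b == 0:
        return float("inf")  # too few matches to estimate
    return -math.log(a / b)
```

Regular signals such as a sine wave give low values, while irregular signals such as white noise give high values, which is the sense in which entropy indexes signal complexity.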

Table 1: Performance Comparison of 3D CNN-based Approaches for AD Classification

| Study | Architecture | Dataset | Classification Task | Accuracy | AUC |
|---|---|---|---|---|---|
| 3D-CNN-VSwinFormer [40] | 3D CNN + Video Swin Transformer | ADNI | AD vs CN | 92.92% | 0.966 |
| Spatio-temporal Screening [9] | Axial Slice-Centric CNN | ADNI | EMCI vs NC | 97% | N/A |
| fMRI Entropy Classifier [39] | 3D CNN on Entropy Maps | ADNI | CN vs MCI/AD | 84% | 0.73 |
| Template-free 4D fMRI [9] | Hierarchical Spatiotemporal Encoder | ADNI + Private Dataset | EMCI vs NC | 97% | N/A |