Strategic Vigilance: Advancing Early Detection Through Risk-Based Surveillance

Abstract

This article provides a comprehensive analysis of risk-based surveillance strategies for the early detection of threats in biomedical and clinical research. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles and ethical imperatives of risk-based monitoring, details innovative methodological applications from drug safety to infectious diseases, addresses critical implementation challenges in diverse settings, and presents rigorous validation frameworks. By synthesizing insights from clinical development, public health, and regulatory science, this review serves as a strategic guide for enhancing the sensitivity, efficiency, and global harmonization of early warning systems.

The Bedrock of Vigilance: Principles and Imperatives of Risk-Based Surveillance

The journey from the Hippocratic Oath to modern International Council for Harmonisation (ICH) guidelines represents a profound evolution in medical ethics and regulatory science. This transition reflects a shift from individual physician virtue to systematically implemented, risk-proportioned oversight frameworks that protect patients and ensure data integrity across global clinical research. The Hippocratic Oath, formulated around 400 BC, established the fundamental ethical principle of non-maleficence: to "do no harm or injustice" to patients [1] [2]. For centuries, this oath served as the primary ethical compass for physicians, emphasizing beneficence, patient confidentiality, gratitude to teachers, and the sanctity of the patient-physician relationship [1].

In contemporary medicine, these foundational principles have been systematically codified into regulatory frameworks that govern clinical research worldwide. The ICH Good Clinical Practice (GCP) guidelines, particularly the upcoming E6(R3) revision scheduled for implementation in 2025, represent the modern embodiment of these ethical commitments [3] [4]. This evolution addresses complex challenges in global drug development, digital health technologies, and risk-based surveillance strategies that were unimaginable in Hippocrates' era. The progression from personal ethical commitment to structured regulatory oversight demonstrates how medicine has maintained its ethical foundations while adapting to enormous scientific and societal changes.

Historical Foundations: From Hippocratic Oath to Modern Bioethics

Core Principles of the Hippocratic Oath

The Hippocratic Oath established several enduring ethical principles that continue to resonate in modern medical practice. Its directives include confidentiality ("Whatever I see or hear in the lives of my patients... I will keep secret"), beneficence ("I will use treatment to help the sick according to my ability and judgment"), and non-maleficence ("I will do no harm or injustice to them") [1] [2]. The oath also emphasized respect for teachers and the sharing of medical knowledge, establishing a culture of mentorship and continuous learning within the profession. These principles formed the ethical bedrock of medicine for centuries, creating a foundation of trust between physicians and patients.

Limitations in Modern Contexts

Despite its enduring values, the original Hippocratic Oath faces significant limitations in contemporary medical practice. The oath was created for a paternalistic model of medicine where physicians made decisions with minimal patient input, failing to address modern concepts of patient autonomy and informed consent [1]. Its prohibitions against abortion and euthanasia conflict with legal medical practices in many jurisdictions, where abortion is legal under specific conditions and euthanasia or physician-assisted dying is permitted in several countries [1]. The oath's exclusion of women from medical practice (it was originally intended for male physicians only) and its swearing to Greek gods make it culturally problematic in today's multicultural, pluralistic societies [1] [5]. Furthermore, it provides no guidance on contemporary challenges such as digital health technologies, health insurance systems, corporate influences on medicine, or legal liabilities that modern physicians navigate regularly [1].

Key Historical Developments in Research Ethics

The 20th century witnessed crucial developments that exposed the limitations of relying solely on individual physician ethics and catalyzed the creation of systematic research regulations. The Nuremberg Code (1947) established the fundamental requirement of voluntary informed consent following the atrocities of Nazi medical experiments [6]. The Declaration of Helsinki (1964) further developed principles for human research ethics, emphasizing subject welfare and risk-benefit assessment [6]. The Belmont Report (1979) articulated three core ethical principles: respect for persons, justice, and beneficence, providing the foundation for modern institutional review boards (IRBs) [6]. These developments reflected growing recognition that individual ethical commitments, while necessary, required supplementation with structured oversight systems to protect vulnerable populations in increasingly complex research environments.

Table 1: Historical Evolution of Medical Ethics and Regulations

| Time Period | Key Document/Event | Core Ethical Principles | Regulatory Impact |
|---|---|---|---|
| 400 BC | Hippocratic Oath | Beneficence, Confidentiality, Non-maleficence | Individual physician commitment |
| 1947 | Nuremberg Code | Voluntary consent, Avoid unnecessary suffering | Foundation for research ethics |
| 1964 | Declaration of Helsinki | Risk-benefit assessment, Subject welfare | International research standards |
| 1979 | Belmont Report | Respect for persons, Justice, Beneficence | IRB requirements |
| 1996 | ICH E6(R1) GCP | Data quality, Subject protection | Harmonized global clinical trials |
| 2016 | ICH E6(R2) GCP | Risk-based monitoring, Quality management | Enhanced oversight efficiency |
| 2025 (anticipated) | ICH E6(R3) GCP | Proportionality, Digital trials, Data governance | Modernized for current research landscape |

The Rise of Risk-Based Approaches in Surveillance and Monitoring

Fundamental Principles of Risk-Based Surveillance

Risk-based surveillance represents a strategic methodology that directs monitoring resources toward the most critical elements that impact patient safety and data quality. This approach acknowledges that not all processes, data, or sites carry equal risk in clinical research or disease surveillance. The fundamental principle involves identifying critical-to-quality factors that are essential to trial integrity and participant safety, then allocating monitoring resources proportionately to these risks [3] [7]. This represents a significant shift from traditional "one-size-fits-all" monitoring approaches toward targeted, efficient oversight that enhances detection capabilities while optimizing resource utilization.

Risk-based methodologies have demonstrated particular value in early detection systems for emerging threats. In plant pathology, sophisticated risk-based surveillance optimization has shown that concentrating solely on the highest-risk sites may be suboptimal; instead, strategically distributing resources across multiple locations accounting for spatial correlations in risk can significantly improve detection probability [8] [9]. This "don't put all your eggs in one basket" approach has relevance for clinical trial monitoring, where diversifying surveillance strategies may provide more robust safety detection than focusing exclusively on presumed highest-risk sites.
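The intuition can be checked with a few lines of arithmetic. The sketch below is a toy model rather than the method of [8] [9]: it assumes a threat is introduced into exactly one of two regions, that surveillance units in the same region observe the same introduction event, and that each unit detects it independently with the same sensitivity. The region names, introduction probabilities, and sensitivity value are illustrative assumptions.

```python
def detection_probability(intro_prob, units, sensitivity):
    """Probability that at least one unit detects a threat that is
    introduced into exactly one region (toy model, assumed inputs)."""
    total = 0.0
    for region, p_intro in intro_prob.items():
        n_units = units.get(region, 0)
        # Units in the same region are redundant looks at the same event.
        p_detect_given_intro = 1.0 - (1.0 - sensitivity) ** n_units
        total += p_intro * p_detect_given_intro
    return total


# Hypothetical inputs: region A is the higher-risk region.
intro_prob = {"A": 0.6, "B": 0.4}
sensitivity = 0.8

concentrated = detection_probability(intro_prob, {"A": 2}, sensitivity)
distributed = detection_probability(intro_prob, {"A": 1, "B": 1}, sensitivity)

print(f"both units in A: {concentrated:.3f}")
print(f"one unit each:   {distributed:.3f}")
```

Under these assumed numbers, placing both units in the higher-risk region gives a detection probability of about 0.58, while one unit per region gives 0.80, because a second unit in region A adds little once the first is in place.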

Application in Clinical Trial Monitoring

The implementation of risk-based monitoring (RBM) in clinical trials represents a practical application of these surveillance principles. RBM employs centralized monitoring activities complemented by targeted on-site monitoring focused on critical data and processes [10] [7]. This approach utilizes key risk indicators (KRIs) and quality tolerance limits (QTLs) to identify sites or processes deviating from expected patterns, enabling proactive intervention before issues impact patient safety or data integrity [3]. The U.S. Food and Drug Administration (FDA) has explicitly endorsed this approach through its guidance on "Oversight of Clinical Investigations—A Risk-Based Approach to Monitoring," emphasizing that sponsors should focus monitoring activities on the most important aspects of study conduct and reporting [10].

The advantages of risk-based monitoring are substantial. Studies have demonstrated that RBM can reduce monitoring costs by 15-30% while simultaneously improving data quality and patient safety oversight [7]. This efficiency gain comes from eliminating redundant source document verification, focusing on-site visits on higher-risk activities, and leveraging centralized statistical surveillance to identify anomalous patterns across sites. Furthermore, the risk-based approach creates a systematic framework for prioritizing monitoring activities based on their potential impact on human subject protection and trial conclusions, moving beyond tradition-based monitoring schedules toward scientifically justified oversight strategies.
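As an illustration of centralized statistical surveillance, the following minimal sketch flags sites whose adverse-event reporting rate deviates from the pooled rate across all sites. It is one simple option among many, not a method prescribed by the cited guidance: it assumes a normal approximation to the binomial, includes each site in its own pooled comparison, and uses hypothetical site counts and a hypothetical threshold of |z| >= 2.

```python
import math


def flag_outlier_sites(site_counts, z_threshold=2.0):
    """Return {site: z-score} for sites whose event rate deviates from
    the pooled rate. site_counts maps site -> (events, participants)."""
    total_events = sum(events for events, _ in site_counts.values())
    total_n = sum(n for _, n in site_counts.values())
    pooled = total_events / total_n

    flagged = {}
    for site, (events, n) in site_counts.items():
        # Standard error of a proportion under the pooled rate.
        se = math.sqrt(pooled * (1.0 - pooled) / n)
        z = (events / n - pooled) / se
        if abs(z) >= z_threshold:
            flagged[site] = round(z, 2)
    return flagged


# Hypothetical data: (participants with >= 1 adverse event, participants enrolled)
site_counts = {
    "A": (12, 40),
    "B": (10, 38),
    "C": (1, 45),   # unusually low rate: possible under-reporting
    "D": (14, 42),
}

flagged = flag_outlier_sites(site_counts)
print(flagged)
```

With these made-up counts only site C is flagged, with a strongly negative z-score. A flag of this kind is a trigger for targeted follow-up, not evidence of a problem in itself.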

Implementation Framework for Risk-Based Surveillance

Implementing an effective risk-based surveillance system requires a structured approach. The process begins with risk identification—systematically assessing all trial processes to determine which elements are most critical to patient safety and data reliability. This is followed by risk evaluation—assessing the likelihood and impact of potential errors or safety issues. Based on this evaluation, organizations develop mitigation strategies including tailored monitoring plans, targeted training, and specialized procedures for high-risk activities. Finally, continuous risk review ensures the surveillance strategy evolves as new risks emerge and existing risks change throughout the trial lifecycle [10] [3].

Table 2: Core Components of Risk-Based Surveillance Systems

| Component | Description | Application in Clinical Research | Application in Pathogen Surveillance |
|---|---|---|---|
| Risk Assessment | Systematic identification and evaluation of risks | Identify critical data points, vulnerable populations | Identify high-risk introduction pathways, susceptible hosts |
| Resource Allocation | Direction of surveillance resources based on risk | Increased monitoring at sites with protocol deviations | Enhanced sampling in areas with high introduction probability |
| Detection Methodologies | Sensitivity-specificity optimization | Statistical surveillance, centralized monitoring | Diagnostic tools with appropriate sensitivity for early detection |
| Adaptive Strategy | Evolution based on emerging data | Protocol amendments, monitoring plan updates | Surveillance strategy refinement as epidemiological understanding improves |
| Quality Indicators | Metrics to evaluate surveillance effectiveness | Quality tolerance limits, key risk indicators | Detection probability, time to detection, false positive rates |

ICH E6(R3): The Modern Implementation of Ethical Principles

Key Updates and Structural Changes

ICH E6(R3), scheduled for implementation in July 2025, represents the most significant revision of Good Clinical Practice guidelines in nearly a decade. This update fundamentally restructures the guideline into an overarching Principles document accompanied by annexes addressing specific trial types [3]. The structural reorganization includes Annex 1 for interventional clinical trials and a planned Annex 2 for "non-traditional" trial designs such as decentralized, adaptive, and platform trials [3] [4]. This modular approach provides a more flexible framework that can adapt to evolving methodological innovations while maintaining core ethical principles.

A central theme of E6(R3) is the embrace of media-neutral language that facilitates the integration of digital health technologies into clinical research [3]. The guidelines explicitly recognize electronic informed consent (eConsent), wearable devices, telemedicine visits, and electronic source documentation (eSource) as valid components of clinical trials. This modernization acknowledges the technological transformation of clinical research while maintaining rigorous standards for data quality and participant protection. By removing media-specific requirements, the guidelines encourage innovation while focusing on functional outcomes—ensuring that regardless of the technology used, the rights, safety, and well-being of trial participants remain protected.

Enhanced Ethical Protections in E6(R3)

ICH E6(R3) strengthens several ethical dimensions of clinical research that echo concerns first articulated in the Hippocratic Oath. The guideline introduces richer informed consent requirements, specifically mandating that participants receive information about data handling after withdrawal, results communication, storage duration, and safeguards protecting secondary data use [4]. This enhanced transparency respects participant autonomy in an era of increasingly complex data flows, addressing modern challenges to confidentiality that Hippocrates could not have imagined yet upholding his principle of protecting patient information.

The revision also elevates data governance from a technical concern to an ethical imperative. Section 4 of Annex 1 in E6(R3) establishes an integrated framework encompassing audit trails, metadata integrity, access controls, and end-to-end data retention [4]. This formalizes sponsor responsibilities for data quality and security while empowering ethics committees to interrogate these controls as they relate to participant rights and welfare. The guidelines further signal an ethical shift through terminology changes, replacing "trial subject" with "trial participant" throughout the document to emphasize partnership and respect for autonomy [4]. This linguistic evolution reflects deeper ethical commitments to recognizing research participants as active collaborators rather than passive subjects.

Risk-Proportionate Implementation

A cornerstone of ICH E6(R3) is the principle of risk-proportionate oversight, which applies the risk-based surveillance approach to ethics review and trial conduct. The guideline explicitly encourages ethics committees to set continuing review frequency according to actual participant risk rather than calendar defaults [4]. This enables more efficient allocation of committee resources to higher-risk studies while reducing unnecessary administrative burdens on minimal-risk research. The risk-proportionate approach extends to monitoring strategies, documentation requirements, and safety reporting, creating a cohesive framework that scales oversight activities to match the specific risks of each trial.

The implementation of risk-proportionate oversight requires sophisticated risk assessment methodologies that can systematically evaluate and categorize studies based on their potential harms and vulnerabilities. Ethics committees must develop criteria for determining review intensity, considering factors such as intervention novelty, population vulnerability, endpoint criticality, and procedural complexity. This nuanced approach represents a maturation from standardized checklists toward context-sensitive ethical oversight that can more effectively protect participants while facilitating efficient research conduct.

Experimental Protocols for Risk-Based Surveillance Implementation

Protocol 1: Spatial Optimization for Early Detection Surveillance

Purpose: This protocol provides a methodology for optimizing surveillance site selection to maximize early detection probability for emerging threats, adapting approaches successfully used in plant disease surveillance [8] [9] to clinical safety monitoring.

Materials and Reagents:

- Geographic information system (GIS) software with spatial analysis capabilities

- Historical introduction risk data (e.g., prior safety events, protocol deviations)

- Host susceptibility landscape (e.g., patient population distribution, site capabilities)

- Pathogen spread parameters (e.g., communication patterns between sites, referral networks)

- Detection sensitivity specifications for monitoring methodologies

Methodology:

- Model Development: Create a spatially explicit stochastic model of threat introduction and spread through the research network, incorporating between-site transmission probabilities.

- Simulation Execution: Run multiple iterations (n≥10,000), each simulating threat spread from a random introduction point until a predefined prevalence threshold is exceeded.

- Detection Modeling: Calculate detection probabilities for potential surveillance site arrangements using statistical detection models that account for sampling frequency and diagnostic sensitivity.

- Optimization Algorithm: Apply stochastic optimization (e.g., simulated annealing) to identify surveillance site arrangements that maximize detection probability before threshold prevalence.

- Validation: Compare optimized surveillance strategy performance against conventional risk-based targeting using holdout simulation data.

Implementation Considerations:

- Optimal surveillance arrangements account for spatial correlations in risk—distributing resources across multiple moderate-risk sites often outperforms concentration solely on highest-risk locations [8].

- Surveillance strategy should be dynamically updated as new risk information emerges throughout the trial lifecycle.

- The balance between surveillance sensitivity and resource constraints should be explicitly quantified to support rational resource allocation decisions.
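The steps of Protocol 1 can be sketched in code. The example below is a simplified illustration, not the implementation used in [8] [9]. It assumes a hypothetical ring network of 12 sites, fixed spread probability and step count in place of a prevalence threshold, and a detection model in which each monitored affected site detects independently with the same sensitivity. It uses 1,000 simulations instead of the n≥10,000 the protocol calls for, to keep the run short, and omits holdout validation. All function names and parameter values are assumptions.

```python
import math
import random


def simulate_outbreaks(neighbors, intro_weights, p_spread, n_steps, n_sims, rng):
    """Simulate introductions and spread; return one set of affected sites per run."""
    sites = list(range(len(neighbors)))
    outbreaks = []
    for _ in range(n_sims):
        affected = {rng.choices(sites, weights=intro_weights)[0]}
        for _ in range(n_steps):
            new = {nb for s in sorted(affected) for nb in neighbors[s]
                   if nb not in affected and rng.random() < p_spread}
            affected |= new
        outbreaks.append(affected)
    return outbreaks


def detection_probability(monitored, outbreaks, sensitivity):
    """Mean probability that at least one monitored site detects the threat."""
    total = 0.0
    for affected in outbreaks:
        hits = len(monitored & affected)
        total += 1.0 - (1.0 - sensitivity) ** hits
    return total / len(outbreaks)


def anneal(start, n_sites, outbreaks, sensitivity, rng,
           n_iter=1000, temp=0.05, cooling=0.99):
    """Simulated annealing over site arrangements, swapping one site per step."""
    current = set(start)
    current_score = detection_probability(current, outbreaks, sensitivity)
    best, best_score = set(current), current_score
    for _ in range(n_iter):
        out_site = rng.choice(sorted(current))
        in_site = rng.choice(sorted(set(range(n_sites)) - current))
        candidate = (current - {out_site}) | {in_site}
        score = detection_probability(candidate, outbreaks, sensitivity)
        delta = score - current_score
        # Always accept improvements; accept worse moves with shrinking probability.
        if delta >= 0 or rng.random() < math.exp(delta / temp):
            current, current_score = candidate, score
            if score > best_score:
                best, best_score = set(candidate), score
        temp *= cooling
    return best, best_score


rng = random.Random(42)
n_sites = 12
neighbors = [((i - 1) % n_sites, (i + 1) % n_sites) for i in range(n_sites)]
intro_weights = [5, 4] + [1] * 10          # hypothetical introduction risk per site
outbreaks = simulate_outbreaks(neighbors, intro_weights,
                               p_spread=0.3, n_steps=3, n_sims=1000, rng=rng)

# Conventional targeting: monitor the three sites with the highest introduction risk.
baseline = set(sorted(range(n_sites), key=lambda s: -intro_weights[s])[:3])
baseline_score = detection_probability(baseline, outbreaks, sensitivity=0.8)
best, best_score = anneal(baseline, n_sites, outbreaks, 0.8, rng)

print("risk-ranked sites:", sorted(baseline), round(baseline_score, 3))
print("optimized sites:  ", sorted(best), round(best_score, 3))
```

Starting the search from the risk-ranked arrangement is a deliberate design choice: on the simulated outbreaks, the optimized arrangement can never score worse than the conventional one. Whether that advantage holds on new data is what the validation step is meant to test.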

Figure: Spatial Optimization Surveillance Workflow

Protocol 2: Risk-Based Quality Tolerance Limit Implementation

Purpose: To establish and monitor Quality Tolerance Limits (QTLs) for critical trial parameters, enabling proactive risk-based surveillance focused on variables most impacting participant safety and data reliability.

Materials and Reagents:

- Clinical trial protocol with identified critical data and processes

- Historical benchmark data from similar trials

- Statistical process control software

- Centralized monitoring platform with visualization capabilities

- Predefined key risk indicators (KRIs)

Methodology:

- Criticality Assessment: Identify critical-to-quality factors essential to participant safety and trial conclusions through systematic process mapping and risk assessment.

- QTL Establishment: Define acceptable variability ranges for each parameter using historical data, therapeutic area standards, and clinical rationale.

- Monitoring Framework: Implement statistical surveillance to track parameter performance against QTLs, with automated alerts for breaches or trend violations.

- Escalation Protocol: Establish graded response procedures for QTL breaches based on severity, including root cause analysis and corrective/preventive actions.

- Effectiveness Evaluation: Periodically assess QTL performance in identifying meaningful issues, refining limits based on accumulating trial experience.

Implementation Considerations:

- QTLs should focus on parameters with direct impact on participant safety or trial conclusions rather than convenient but non-critical metrics.

- Tolerance limits should balance sensitivity (detecting real problems) with specificity (avoiding excessive false alarms) to maintain monitoring efficiency.

- The QTL framework should be dynamic, with periodic reassessment of limit appropriateness as trial experience accumulates.
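A minimal sketch of the monitoring and escalation steps follows. It assumes a single trial-level QTL expressed as a proportion, plus a secondary warning threshold set at a fraction of the limit. The class name, the 80% warning fraction, the 5% limit, and the snapshot data are all hypothetical choices for illustration, not values taken from any guideline.

```python
from dataclasses import dataclass


@dataclass
class QualityToleranceLimit:
    name: str
    limit: float                    # maximum acceptable proportion
    warning_fraction: float = 0.8   # assumed: warn at 80% of the limit

    def status(self, events: int, enrolled: int) -> str:
        """Classify the cumulative rate against the limit."""
        if enrolled == 0:
            return "no data"
        rate = events / enrolled
        if rate > self.limit:
            return "breach"
        if rate >= self.warning_fraction * self.limit:
            return "warning"
        return "within limits"


# Hypothetical QTL: at most 5% of enrolled participants with an eligibility violation.
qtl = QualityToleranceLimit(name="eligibility violations", limit=0.05)

# Hypothetical cumulative snapshots: (violations, participants enrolled).
snapshots = [(1, 50), (5, 110), (11, 200)]
statuses = [qtl.status(events, enrolled) for events, enrolled in snapshots]

for (events, enrolled), status in zip(snapshots, statuses):
    print(f"{events}/{enrolled} = {events / enrolled:.3f} -> {status}")
```

The warning band gives the study team a chance to investigate a trend before the limit itself is crossed. A breach would then feed the graded escalation procedure described above.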

Table 3: Risk-Based Monitoring Implementation Toolkit

| Tool Category | Specific Tools/Methods | Primary Function | Implementation Considerations |
|---|---|---|---|
| Risk Assessment Tools | Risk Assessment Categorization Tool (RACT), Failure Mode Effects Analysis (FMEA) | Systematic risk identification and prioritization | Involve multidisciplinary team; focus on patient safety and data integrity |
| Centralized Monitoring Tools | Statistical surveillance algorithms, Data visualization dashboards | Remote detection of anomalous patterns across sites | Validate against known issues; establish clear triggers for on-site follow-up |
| Key Risk Indicators | Screening failures, Protocol deviations, Informed consent errors | Early warning of emerging site issues | Benchmark against similar studies; adjust for site-specific factors |
| Quality Tolerance Limits | Eligibility violations, Primary endpoint data quality, SAE reporting timeliness | Define acceptable performance variability | Establish based on scientific rationale; review periodically for appropriateness |
| Source Document Verification Tools | Targeted SDV planners, Risk-based SDV algorithms | Focus verification on critical data elements | Identify critical observations; avoid 100% SDV unless justified |

The Scientist's Toolkit: Essential Reagents and Materials

Implementing effective risk-based surveillance requires both methodological frameworks and practical tools. This toolkit summarizes essential components for establishing modern, ethics-aligned surveillance systems in clinical research and early detection contexts.

Table 4: Essential Research Reagent Solutions for Risk-Based Surveillance

| Reagent/Material | Specifications | Functional Role | Application Context |
|---|---|---|---|
| Spatial Modeling Software | GIS capabilities, Stochastic simulation, Network analysis | Models threat introduction and spread pathways | Optimizing surveillance site selection for early detection |
| Statistical Process Control Tools | Real-time analytics, Visualization dashboards, Alert algorithms | Monitors key risk indicators and quality tolerance limits | Centralized monitoring of clinical trial parameters |
| Diagnostic Sensitivity Standards | Validated detection limits, Quantitative performance metrics | Establishes minimum performance requirements for detection methods | Ensuring surveillance methods can identify threats at acceptable prevalence |
| Data Governance Framework | Audit trail specifications, Access control protocols, Retention policies | Ensures data integrity, confidentiality, and reliability | Implementing ICH E6(R3) data governance requirements |
| Risk Assessment Categorization Tool | Risk scoring algorithm, Criticality weighting factors | Systematically identifies and prioritizes risks | Initial risk assessment for clinical trial monitoring planning |
| Digital Health Technologies | eConsent platforms, Wearable sensors, Telemedicine interfaces | Enables decentralized trial conduct and remote data collection | Implementing patient-centric, efficient trial designs |

The evolution from the Hippocratic Oath to ICH E6(R3) guidelines demonstrates how medicine has maintained its ethical foundation while systematically addressing increasingly complex challenges. The core commitment to patient benefit articulated in ancient Greece remains recognizable in modern risk-based surveillance approaches, though now implemented through sophisticated methodological frameworks. Contemporary clinical research oversight has transformed the physician's individual ethical commitment into systematically implemented, evidence-based surveillance strategies that protect participants across global research networks.

The successful implementation of risk-based surveillance requires both methodological rigor and ethical commitment. As demonstrated in the experimental protocols, optimal surveillance strategies often diverge from intuitive approaches—sometimes distributing resources across multiple moderate-risk locations outperforms concentration solely on highest-risk sites [8]. This underscores the value of evidence-based surveillance optimization compared to tradition-based monitoring approaches. Furthermore, the integration of ethical considerations throughout surveillance design ensures that efficiency gains do not come at the cost of participant protection or data integrity.

Looking forward, the principles of risk-based surveillance will continue to evolve alongside technological and methodological innovations. The implementation of ICH E6(R3) in 2025 represents not an endpoint but a milestone in the ongoing refinement of research oversight. As decentralized trials, digital health technologies, and novel methodologies advance, surveillance strategies must adapt while maintaining their foundational commitment to the ethical principles first articulated millennia ago. This continuous evolution ensures that medical research can efficiently generate reliable evidence while steadfastly protecting those who volunteer to participate in advancing medical knowledge.

Effective risk-based surveillance is fundamental to modern drug development, enabling the proactive detection of safety signals and ensuring public health. This framework is built on three interdependent core principles: risk assessment, the systematic process of identifying and analyzing potential risks; risk control, the measures implemented to modify those risks; and risk communication, the strategic sharing of information about risks to guide decision-making. Together, these principles form a continuous cycle that allows researchers and regulatory scientists to monitor products throughout their lifecycle, from clinical trials to post-market surveillance. A robust understanding of these elements is crucial for developing effective early detection research strategies that can adapt to emerging data in a dynamic regulatory environment [11] [12].

Risk Assessment: The Foundational Element

Definition and Purpose

Risk assessment is the structured process of identifying, analyzing, and evaluating potential uncertainties that could impact an organization's objectives, operations, or assets [13] [14] [15]. In the context of drug surveillance, it involves the evaluation of risks considering potential direct and indirect consequences of an incident, known vulnerabilities, and general or specific threat information [11]. This process provides the evidence base for proactive planning, allowing research scientists to allocate resources effectively and respond with agility rather than reacting to crises [15]. A well-executed assessment is documented, reproducible, and defensible to ensure transparency and practicality for stakeholders and decision-makers [11].

Core Components and Process

The risk assessment process consists of three core components executed through a series of defined steps.

Core Components:

- Risk Identification: The process of discovering what could go wrong, considering prime assets and how they could be impacted [14]. Techniques include stakeholder interviews, data classification analysis, and reviewing threat intelligence feeds [14].

- Risk Analysis: A deeper investigation into the level of risk associated with each identified threat using qualitative or quantitative methods to estimate likelihood and impact [14].

- Risk Evaluation: Determining how each identified risk should be handled by comparing potential impact and likelihood against the organization's risk tolerance to decide if it requires immediate mitigation [14].

Process Workflow:

The logical sequence of the risk assessment process is systematically mapped from context establishment through to mitigation planning. This workflow establishes the foundation for all subsequent risk management activities by transforming identified risks into actionable treatment strategies.

Quantitative and Qualitative Methodologies

Researchers must select appropriate assessment methodologies based on data availability, regulatory requirements, and the nature of risks. The following table compares the primary approaches:

Table: Comparison of Risk Assessment Methodologies

| Methodology | Definition / Approach | Best Application | Key Trade-Offs |
|---|---|---|---|
| Qualitative Assessment | Uses descriptive labels (High, Medium, Low) based on subjective judgment and expert opinion [14] [15]. | Situations with limited data or when quick prioritization is needed; effective for hard-to-quantify risks like reputational damage [15]. | Simple and fast but lacks precision; results can be subjective and potentially inconsistent [15]. |
| Quantitative Assessment | Uses numerical data, mathematical models (e.g., Monte Carlo simulations), and statistical methods to calculate risks [14] [15]. | Mature risk environments with sufficient data for detailed financial or statistical modeling; ideal for cost-benefit analysis [15]. | Highly precise and transparent but requires reliable data and technical modeling expertise; can be time-consuming [15]. |
| Semi-Quantitative Assessment | Blends numeric scoring (e.g., 1-10 scales) with qualitative judgment; often visualized via risk matrices [15]. | Teams needing more structure than qualitative offers but lacking resources for full quantitative analysis [15]. | More standardized than qualitative alone but still involves subjective bias; may create false sense of precision [15]. |
| Scenario Analysis (What-If) | Structured brainstorming to develop threat/hazard scenarios and assess likelihood and consequences [11]. | Developing strategy for managing risk from identified scenarios; useful for unusual or emerging risks [11]. | Creative and comprehensive but can be time-intensive; dependent on participant expertise [11]. |
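To make the quantitative approach concrete, the following sketch uses a Monte Carlo simulation to estimate the probability that a trial observes at least a given number of serious events when the per-participant event probability is itself uncertain. It is an illustration under stated assumptions only: the triangular distribution for the event probability, its bounds, the trial size, and the function name are all hypothetical.

```python
import random


def prob_events_at_least(k, n_participants, low, mode, high,
                         n_sims=2000, seed=0):
    """Monte Carlo estimate of P(number of events >= k)."""
    rng = random.Random(seed)
    exceed = 0
    for _ in range(n_sims):
        # Draw an uncertain per-participant event probability (assumed triangular).
        p = rng.triangular(low, high, mode)
        # Simulate each participant as an independent Bernoulli trial.
        events = sum(rng.random() < p for _ in range(n_participants))
        if events >= k:
            exceed += 1
    return exceed / n_sims


# Hypothetical inputs: 200 participants, event probability between 1% and 8%,
# most likely around 3%.
estimate = prob_events_at_least(k=10, n_participants=200,
                                low=0.01, mode=0.03, high=0.08)
print(f"Estimated P(at least 10 events): {estimate:.3f}")
```

The estimate is only as good as the assumed input distribution, which reflects the trade-off noted in the table: the method is transparent and repeatable but depends on reliable data and modeling expertise.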

Experimental Protocol: Conducting a Qualitative Risk Assessment for a Clinical Trial

Protocol Title: Qualitative Risk Assessment for Clinical Trial Safety Surveillance

Purpose: To systematically identify, analyze, and prioritize potential safety risks in a clinical trial setting using expert judgment to inform monitoring strategies.

Materials and Reagents:

- Risk Register Template: Digital or physical template for logging identified risks with descriptions, categories, and proposed controls [14].

- Risk Assessment Matrix: A visual grid tool (typically 5x5) that categorizes risks based on likelihood and impact [14].

- Stakeholder List: Comprehensive list of clinical, regulatory, and scientific experts to interview.

- Data Sources: Previous clinical trial data, literature on similar compounds, pre-clinical findings, and known class effects.

Procedure:

- Establish Context: Define the assessment's scope (e.g., Phase III trial for novel oncology therapeutic), objectives, and key stakeholders including pharmacovigilance, clinical development, and biostatistics team members [14].

- Risk Identification:
  a. Conduct structured interviews and workshops with stakeholders.
  b. Document all potential safety risks (e.g., specific adverse events, drug interactions, population-specific risks) in the risk register.
  c. Utilize "what-if" analysis for unusual or emerging risks not evident from historical data [11].

- Risk Analysis:
  a. For each identified risk, convene an expert panel to score likelihood (e.g., Rare to Almost Certain) and impact (e.g., Insignificant to Catastrophic) using predefined scales.
  b. Plot each risk on the risk matrix to determine its initial rating (e.g., Low, Medium, High, Extreme) [14].

- Risk Evaluation:
  a. Prioritize risks based on their matrix positioning.
  b. Compare prioritized risks against the organization's risk appetite to determine which require immediate control measures [14] [12].

- Documentation:
  a. Record all findings, including the rationale for scores and priorities.
  b. Present the finalized risk register and matrix to decision-makers for resource allocation [14].
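The analysis and evaluation steps can be sketched as a small scoring routine over a risk register. The example below is illustrative only: the intermediate scale labels, the multiplicative score, the rating cut-offs, and the example risks are assumptions, since the protocol specifies only the scale endpoints and the rating names.

```python
# Five-point scales; only the endpoints come from the protocol, the
# intermediate labels are assumed for illustration.
LIKELIHOOD = ["Rare", "Unlikely", "Possible", "Likely", "Almost Certain"]
IMPACT = ["Insignificant", "Minor", "Moderate", "Major", "Catastrophic"]


def rate_risk(likelihood, impact):
    """Return (score, rating) for a position on the 5x5 matrix."""
    score = (LIKELIHOOD.index(likelihood) + 1) * (IMPACT.index(impact) + 1)
    # Hypothetical cut-offs; an organization would set its own.
    if score >= 15:
        rating = "Extreme"
    elif score >= 10:
        rating = "High"
    elif score >= 5:
        rating = "Medium"
    else:
        rating = "Low"
    return score, rating


# Hypothetical risk register entries: (description, likelihood, impact).
register = [
    ("Injection-site reaction", "Likely", "Minor"),
    ("Hepatotoxicity", "Possible", "Major"),
    ("Interaction with anticoagulants", "Unlikely", "Catastrophic"),
]

# Score every entry, then prioritize from highest to lowest score.
scored = [(name, *rate_risk(likelihood, impact))
          for name, likelihood, impact in register]
prioritized = sorted(scored, key=lambda row: row[1], reverse=True)

for name, score, rating in prioritized:
    print(f"{score:>2}  {rating:<8} {name}")
```

Multiplying the two scale positions is a common convention but not the only one. The rationale behind each expert score should still be recorded in the register, as the documentation step requires.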

Risk Control: Implementing Protective Measures

Definition and Purpose

Risk control encompasses the strategies, procedures, and measures utilized to modify risk by reducing its likelihood, impact, or velocity (the speed at which a risk escalates) [16] [17]. These are essential measures that an organization implements to minimize, mitigate, or manage risk levels, enabling operations within established risk appetite boundaries [12]. Controls actively intervene in risk factors that could impact an organization's objectives, with the goal of either decreasing the likelihood of risks occurring or minimizing their potential impact [16]. In pharmaceutical surveillance, effective controls are critical for ensuring that potential safety issues are contained before they can affect patient populations.

Types of Risk Controls

Controls are categorized based on their point of application in the risk lifecycle, each serving a distinct function in modifying risk characteristics:

Primary Control Categories: Controls are classified as preventive, detective, or reactive [16] [17].

Comprehensive Control Approaches: Beyond the primary categories, organizations employ several strategic approaches to risk control:

- Risk Avoidance: Eliminating exposure to a risk factor entirely, such as halting a clinical trial due to emerging safety signals [12].

- Loss Prevention: Implementing measures to reduce and prevent losses, such as surveillance protocols and systematic data monitoring [12].

- Loss Reduction: Limiting the extent of loss that may occur, exemplified by implementing additional patient monitoring for known adverse events [12].

- Risk Separation: Limiting risk exposure spread across locations, such as geographic diversification of manufacturing sites to ensure supply continuity [12].

Experimental Protocol: Developing a Control Register for Drug Safety Surveillance

Protocol Title: Creation and Maintenance of a Risk Control Register for Pharmacovigilance

Purpose: To systematically document, track, and test controls implemented to mitigate identified drug safety risks, ensuring they remain effective and aligned with risk appetite.

Materials and Reagents:

- Control Register Template: Digital repository (e.g., GRC software or database) for recording control details [12].

- Risk Register: Previously documented list of identified and prioritized risks [12].

- Testing Framework: Questionnaires, inspection checklists, and data analysis procedures for control validation.

- Effectiveness Metrics: Key Risk Indicators (KRIs) and operational data to measure control performance.

Procedure:

- Control Identification: a. For each risk in the risk register, identify existing and proposed controls. b. Categorize each control as preventive, detective, or reactive [16] [17]. c. Limit registered controls to key and medium controls (typically 2-4 per risk) to maintain focus [17].

- Control Documentation: a. In the control register, capture for each control: name, description, type, owner, purpose, related risk, frequency of application, implementation status, and testing frequency [12]. b. Ensure each control is directly mapped to the specific risk(s) it modifies [12].

- Effectiveness Testing: a. Establish a regular schedule for control testing based on the organization's needs and risk nature [12]. b. Employ multiple testing methods: questionnaires, observations, inspections, and review of relevant documents and records [12]. c. Document testing results and any identified weaknesses.

- Performance Monitoring: a. Leverage operational data (e.g., adverse event reports, compliance metrics) to assess if controls are maintaining risk within tolerable levels [12]. b. Use integrated GRC platforms to map controls to incident data and KRIs for real-time monitoring [12].

- Optimization: a. Analyze control strengths and weaknesses alongside cost-effectiveness [12]. b. Adjust or replace ineffective controls based on testing results and performance data. c. Regularly reassess the control register to reflect changes in the risk landscape.
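A control register of the kind described in this procedure can be represented as a small data structure. The sketch below is illustrative only: the field names follow the attributes listed in the documentation step, but the `Control` class, the `review` helper, and the example entries are hypothetical and do not correspond to any particular GRC product.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

CONTROL_TYPES = {"preventive", "detective", "reactive"}


@dataclass
class Control:
    name: str
    control_type: str
    owner: str
    related_risk: str
    testing_interval_days: int
    last_tested: Optional[date] = None
    last_result_effective: Optional[bool] = None

    def __post_init__(self):
        if self.control_type not in CONTROL_TYPES:
            raise ValueError(f"unknown control type: {self.control_type}")

    def test_due(self, today: date) -> bool:
        # A control never tested, or tested longer ago than its interval, is due.
        if self.last_tested is None:
            return True
        return today - self.last_tested >= timedelta(days=self.testing_interval_days)


def review(register, today):
    """Return names of controls due for testing and of controls that failed their last test."""
    due = [c.name for c in register if c.test_due(today)]
    failed = [c.name for c in register if c.last_result_effective is False]
    return due, failed


# Hypothetical entries for illustration only.
example_register = [
    Control("Monthly liver-function review", "detective", "Safety physician",
            "Hepatotoxicity", 30, date(2024, 1, 1), True),
    Control("Site training on dosing", "preventive", "Clinical operations",
            "Dosing error", 180, date(2024, 1, 15), False),
]

due, failed = review(example_register, today=date(2024, 3, 1))
print("Due for testing:", due)
print("Failed last test:", failed)
```

Separating "due for testing" from "failed last test" mirrors the distinction the procedure draws between scheduled effectiveness testing and the optimization step that replaces ineffective controls.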

Risk Communication: Strategic Information Exchange

Definition and Purpose

Risk communication is a strategic, two-way process of sharing information about risks and benefits to facilitate optimal decision-making [18]. For regulatory agencies and pharmaceutical companies, it involves communicating "frequently and clearly about risks and benefits—and about what organizations and individuals can do to minimize risk" [18]. In drug development, this means providing healthcare professionals, patients, and consumers with the information they need about regulated products in an accessible format and timely manner to ensure appropriate use [18]. Effective communication is not merely about disseminating information but ensuring comprehension and enabling informed choices that protect public health.

Core Principles and Strategic Framework

Effective risk communication follows several guiding principles: it must be integral to organizational mission, adapted to various audience needs, and continuously evaluated for optimal effectiveness [18]. The U.S. FDA's Strategic Plan for Risk Communication outlines a comprehensive framework built on three pillars with associated strategies:

Table: Strategic Framework for Risk Communication

| Strategic Area | Key Strategies |

|---|---|

| Strengthening Science | 1. Identify and fill gaps in risk communication knowledge. 2. Evaluate effectiveness of risk communication activities. 3. Translate knowledge gained through research into practice [18]. |

| Expanding Capacity | 1. Streamline message development and coordination. 2. Plan for crisis communications. 3. Improve two-way communication through enhanced partnerships. 4. Increase staff with behavioral science expertise [18]. |

| Optimizing Policy | 1. Develop principles for consistent and easily understood communications. 2. Identify consistent criteria for when and how to communicate emerging risk information. 3. Assess and improve communication policies in high public health impact areas [18]. |

Communication Channels and Tools

Pharmaceutical companies and regulators employ multiple channels to communicate risk information, each serving distinct purposes and audiences:

- Labeling Tools: Summary of Product Characteristics (SmPC), package inserts, patient information leaflets (PILs), and carton labeling represent primary, regulated communication channels [19].

- Direct Communications: "Dear Healthcare Professional Communications" disseminate important safety information directly to prescribers [19].

- Public Health Advisories: FDA Drug Safety Communications provide timely information about new safety issues to patients and healthcare professionals to support informed treatment decisions [20].

- Digital Platforms: Web sites and web tools serve as primary mechanisms for communicating with different stakeholders, requiring continuous optimization for usability and accessibility [18].

Experimental Protocol: Developing a Risk Communication Strategy for an Emerging Safety Signal

Protocol Title: Protocol for Developing a Targeted Risk Communication Plan

Purpose: To create and evaluate a strategic communication plan for conveying emerging risk information about a medicinal product to relevant stakeholders, maximizing comprehension and appropriate action.

Materials and Reagents:

- Audience Analysis Templates: Profiles for healthcare professionals, patients, and caregivers detailing information needs, literacy levels, and preferred channels.

- Message Mapping Tools: Frameworks for developing consistent, clear, and concise core messages.

- Testing Materials: Draft communications, survey questionnaires, and focus group guides for message testing.

- Dissemination Checklist: Inventory of communication channels (regulatory documents, direct communications, public announcements, digital platforms).

Procedure:

- Situation Analysis: a. Constitute a dedicated communications group with relevant expertise to coordinate strategy [19]. b. Gather all available data on the emerging safety signal, including evidence quality, populations at risk, and clinical implications.

- Audience Assessment: a. Identify and segment target audiences (e.g., prescribing specialists, primary care physicians, patients with specific comorbidities). b. For each segment, analyze their specific information needs, pre-existing knowledge, and potential concerns [18].

- Message Development: a. Develop core messages explaining the risk, context, evidence strength, and recommended actions. b. Apply principles for consistent and easily understood communications, avoiding absolute terms and explaining the benefit-risk context [18]. c. Test draft messages with representative audience samples and refine based on feedback [18].

- Channel Selection and Dissemination: a. Select appropriate channels for each audience (e.g., Drug Safety Communications for HCPs, patient-friendly materials for the public) [20]. b. Coordinate internal reviews and simultaneous release to ensure message consistency [19]. c. Implement dissemination according to the plan, utilizing multiple channels for reinforcement.

- Effectiveness Evaluation: a. Monitor audience reaction through surveys, media analysis, and tracking of relevant health metrics [18] [19]. b. Assess comprehension levels and behavioral changes following communication. c. Adjust future communications based on evaluation findings and emerging data.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Resources for Risk-Based Surveillance Research

| Tool / Resource | Function / Purpose | Application Context |

|---|---|---|

| Risk Register Template | Digital or physical template for systematically logging identified risks, with descriptions, categories, and proposed controls [14]. | Serves as the central repository during risk identification and assessment phases across all research domains. |

| Risk Assessment Matrix | A visual grid tool (typically 5x5) that categorizes risks based on their assessed likelihood and impact [14]. | Used during risk analysis and evaluation to determine risk priority levels and inform resource allocation decisions. |

| GRC Software | Governance, Risk, and Compliance platforms provide a centralized system for managing risks, controls, assessments, and incident data [12]. | Automates the risk management process, enables real-time monitoring, and facilitates reporting and visualization for stakeholders. |

| Control Register | A log used to document and track controls across an enterprise, directly linked to the organization's risk register [12]. | Essential for control management, testing scheduling, and maintaining an audit trail of risk mitigation efforts. |

| Stakeholder List | Comprehensive inventory of clinical, regulatory, and scientific experts, along with patients or community representatives. | Used throughout the risk management process to ensure appropriate input, validation, and communication with all relevant parties. |

| Message Testing Materials | Draft communications, survey questionnaires, and focus group guides for evaluating message clarity and effectiveness [18]. | Critical for pre-launch validation of risk communications to ensure target audiences correctly interpret safety information. |

In the fields of public health, plant protection, and clinical drug development, early detection of adverse events is paramount. Traditionally, surveillance has operated on a model of standardized, periodic monitoring. However, this approach is increasingly being supplanted by risk-based paradigms that strategically focus resources where they are most likely to detect problems. A traditional surveillance system is characterized by its reactive, scheduled, and broad-based application, whereas a risk-based system is proactive, adaptive, and targeted [21]. This shift is driven by the recognition that uniform surveillance is often inefficient and can miss early warning signs in populations or processes with elevated risk. The consequences of delayed detection can be severe, ranging from uncontrolled disease outbreaks in animal populations [21] and devastated agricultural industries [9] to compromised data integrity and patient safety in clinical trials [22].

The core thesis of this proactive shift is that risk-based surveillance strategies significantly enhance the sensitivity and efficiency of early detection systems. By integrating quantitative risk assessments and dynamic resource allocation, these paradigms offer a more powerful framework for identifying threats before they escalate. This document provides detailed application notes and experimental protocols to guide researchers and drug development professionals in implementing and optimizing these advanced surveillance strategies.

Comparative Analysis: Traditional vs. Risk-Based Surveillance

The following table summarizes the fundamental contrasts between the two surveillance paradigms, highlighting the operational and philosophical differences.

Table 1: Core Contrasts Between Traditional and Risk-Based Surveillance Paradigms

| Feature | Traditional Surveillance | Risk-Based Surveillance |

|---|---|---|

| Core Philosophy | Reactive, uniform coverage | Proactive, targeted based on threat |

| Resource Allocation | Fixed, evenly distributed | Dynamic, focused on high-risk units |

| Data Utilization | Relies on scheduled data collection | Leverages real-time data and risk indicators |

| Key Strength | Simple to design and implement | Higher sensitivity and efficiency for early detection |

| Primary Limitation | Can miss emerging threats in blind spots | Requires sophisticated risk assessment and analysis |

| Example in Clinical Trials | 100% Source Data Verification (SDV) | Centralized monitoring focused on critical data points [22] |

| Example in Pathogen Detection | Periodic, random sampling across a landscape | Surveillance optimized to maximize probability of detecting an invading pathogen [9] |

Quantitative evidence demonstrates the rapid adoption and efficacy of risk-based approaches. In clinical trials, implementation of at least one Risk-Based Quality Management (RBQM) component surged from 53% of trials in 2019 to 88% in 2021 [22]. This shift is driven by the ability of risk-based methods to improve trial outcomes, enhance data quality, and optimize resource allocation in an increasingly complex clinical research landscape [23].

Application Note: Implementing Risk-Based Surveillance for Early Detection

Core Principles and Workflow

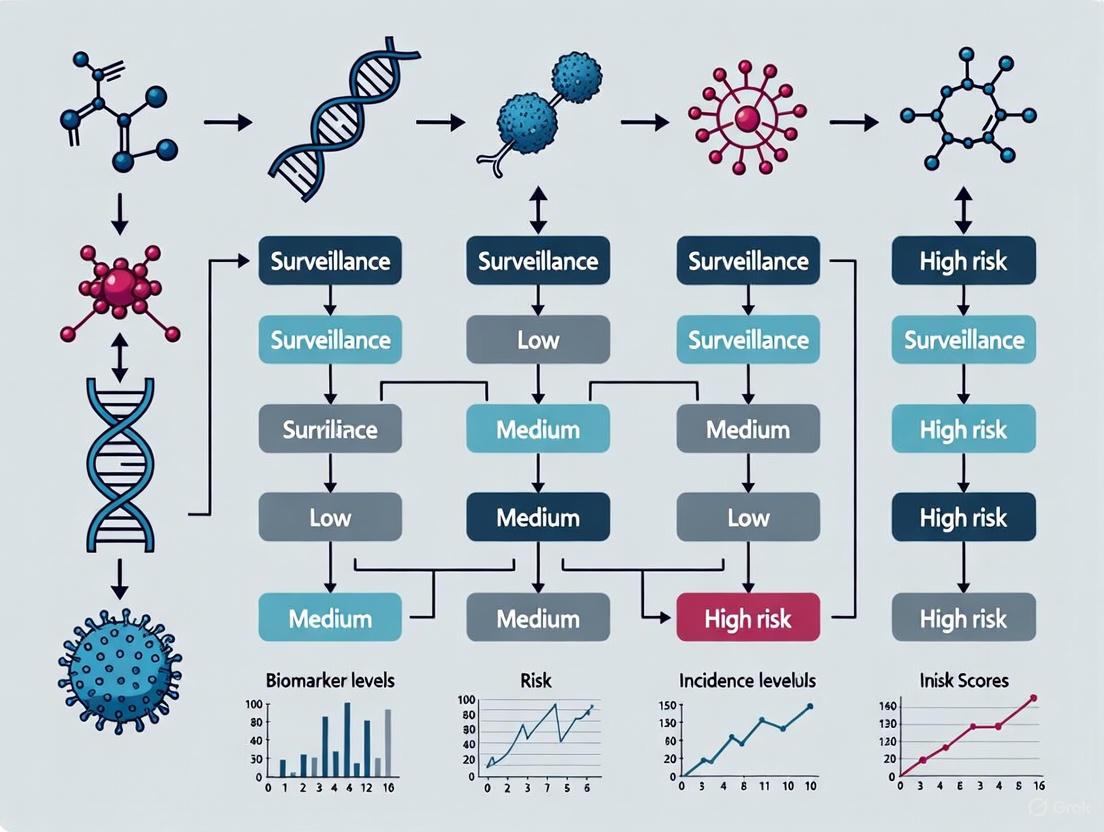

Implementing a risk-based surveillance system is a structured process that moves from risk identification to continuous optimization. The core workflow can be visualized as a cycle of key activities, as shown in the following diagram.

Diagram 1: The RBQM Continuous Cycle. This workflow illustrates the iterative process of risk-based quality management, from defining objectives to continuous optimization based on feedback.

The foundation of this paradigm is the identification of Critical to Quality (CTQ) factors—the processes and data points most essential to patient safety and data integrity [23]. This is followed by continuous risk assessment and targeted resource deployment. A critical insight from epidemiological modelling is that optimal surveillance does not always mean focusing solely on the very highest-risk site. When risk is spatially correlated, "putting all your eggs in one basket" can be suboptimal; spreading resources according to a calculated strategy can maximize the overall probability of detection [9].

Quantitative Framework for Sensitivity

A key advantage of the risk-based paradigm is the ability to quantify surveillance system sensitivity (SSe). For early disease detection, SSe can be conceptualized as a function of three components [21]:

- Population Coverage: The proportion of the population under surveillance.

- Temporal Coverage: The frequency of observation or testing.

- Detection Sensitivity: The ability to identify the target once a unit is surveyed.

This quantitative framework allows for direct comparison of different surveillance system designs. Expert elicitation panels can be used to weight specific system traits—such as observation frequency, clarity of reporting guidance, and observer incentives—to build a replicable model for estimating SSe in observational surveillance [24]. This model can then inform both early detection design and the confidence in disease freedom provided by negative surveillance reports.
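One simple way to make such a comparison concrete is sketched below. It is an illustrative assumption, not the model used in [21] or [24]: population coverage multiplied by detection sensitivity is treated as the per-round probability of detecting a given infected unit, temporal coverage is treated as the number of independent survey rounds, and the two are combined as 1 - (1 - p)^rounds. The function name and the example values are hypothetical.

```python
def surveillance_sensitivity(population_coverage, detection_sensitivity, rounds):
    """Probability of detecting an infected unit at least once (assumed model)."""
    for value in (population_coverage, detection_sensitivity):
        if not 0.0 <= value <= 1.0:
            raise ValueError("coverage and sensitivity must lie in [0, 1]")
    if rounds < 0:
        raise ValueError("rounds must be non-negative")
    # Chance that one survey round both includes the unit and detects it.
    per_round = population_coverage * detection_sensitivity
    # Complement of missing the unit in every independent round.
    return 1.0 - (1.0 - per_round) ** rounds


# Two hypothetical designs with different coverage and frequency trade-offs.
designs = {
    "annual, broad coverage": surveillance_sensitivity(0.50, 0.90, rounds=1),
    "quarterly, narrower coverage": surveillance_sensitivity(0.20, 0.90, rounds=4),
}
for name, sse in designs.items():
    print(f"{name}: SSe = {sse:.2f}")
```

The independence assumption between rounds is a simplification; weights elicited from an expert panel, as described above, could replace the fixed inputs.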

Experimental Protocols

Protocol 1: Designing a Risk-Based Surveillance Network for Pathogen Detection

This protocol provides a methodology for optimizing the physical arrangement of surveillance sites to maximize the probability of early pathogen detection, adaptable for plant, animal, or public health applications.

Research Reagent Solutions

Table 2: Essential Materials for Spatial Surveillance Optimization

| Item | Function |

|---|---|

| Spatially Explicit Host Data | Provides a landscape map of host population density and distribution, the foundation for modeling spread and risk. |

| Pathogen Entry & Spread Model | A stochastic, simulation model to replicate the introduction and spread of the pathogen through the host landscape. |

| Stochastic Optimization Routine | A computational algorithm (e.g., Monte Carlo) to identify surveillance site arrangements that maximize detection probability. |

| Diagnostic Sensitivity Parameter | The known probability that a test will correctly identify an infected host, used to calibrate the detection model. |

Methodology

Model Parameterization:

- Acquire or develop a high-resolution, spatially explicit map of the host population (e.g., citrus tree density for Huanglongbing surveillance) [9].

- Develop a stochastic model simulating pathogen entry at likely introduction points (e.g., ports, high-traffic areas) and subsequent spread through the host landscape. The model should run until a predefined, maximum acceptable prevalence is exceeded.

Integration of Detection Module:

- Couple the epidemiological model with a statistical detection module. This module should incorporate logistical parameters, including the number of surveillance sites, survey frequency, and the diagnostic sensitivity of the detection method.

Optimization Execution:

- Use a stochastic optimization algorithm to test thousands of potential surveillance site arrangements.

- The algorithm should select the arrangement of sites that maximizes the probability of detecting the pathogen before the outbreak reaches the maximum acceptable prevalence threshold.

Validation and Comparison:

- Compare the performance (probability of detection and cost) of the optimized surveillance network against conventional, static risk-based targeting.

- Validate the model's recommendations against historical outbreak data, if available.

The following workflow diagram illustrates the key computational steps in this protocol.

Diagram 2: Pathogen Surveillance Optimization. A computational workflow for designing a surveillance network that maximizes early detection probability for an invading pathogen.
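The computational steps of this protocol can be illustrated with a toy example. The sketch below is not the model from [9]: it assumes a small hypothetical grid, nearest-neighbour spread with a fixed probability, two assumed entry cells, and a plain random search over candidate site arrangements as a stand-in for the stochastic optimization routine. All constants and function names are illustrative.

```python
import random

GRID = 6                          # hypothetical 6 x 6 landscape of host cells
ENTRY_CELLS = [(0, 0), (0, 5)]    # assumed likely introduction points (e.g., ports)
SPREAD_PROB = 0.3                 # assumed per-step chance of infecting a neighbour
MAX_INFECTED = 12                 # stand-in for the maximum acceptable prevalence
SENSITIVITY = 0.8                 # assumed diagnostic sensitivity
SURVEY_INTERVAL = 2               # survey every second time step


def neighbours(cell):
    r, c = cell
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < GRID and 0 <= nc < GRID:
            yield (nr, nc)


def detected_in_time(sites, rng):
    """Simulate one outbreak; True if a surveyed site detects it before the threshold."""
    infected = {rng.choice(ENTRY_CELLS)}
    step = 0
    while len(infected) <= MAX_INFECTED:
        step += 1
        if step % SURVEY_INTERVAL == 0:
            for site in sites:
                if site in infected and rng.random() < SENSITIVITY:
                    return True
        # Sorted iteration keeps runs reproducible for a given seed.
        newly = {n for cell in sorted(infected) for n in neighbours(cell)
                 if n not in infected and rng.random() < SPREAD_PROB}
        infected |= newly
    return False


def detection_probability(sites, runs, rng):
    """Monte Carlo estimate of the probability of detection before the threshold."""
    return sum(detected_in_time(sites, rng) for _ in range(runs)) / runs


def random_search(n_sites, n_candidates, runs, seed=0):
    """Evaluate random site arrangements and keep the best-performing one."""
    rng = random.Random(seed)
    cells = [(r, c) for r in range(GRID) for c in range(GRID)]
    best_sites, best_p = None, -1.0
    for _ in range(n_candidates):
        sites = rng.sample(cells, n_sites)
        p = detection_probability(sites, runs, rng)
        if p > best_p:
            best_sites, best_p = sites, p
    return best_sites, best_p


sites, p = random_search(n_sites=3, n_candidates=20, runs=100)
print("Best arrangement found:", sites, "estimated detection probability:", p)
```

A real application would replace the grid and spread rule with the parameterized host map and epidemiological model, and would typically use a more efficient optimizer than random search.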

Protocol 2: Implementing a Risk-Based Quality Management (RBQM) System in Clinical Trials

This protocol outlines the steps for integrating an RBQM system into a clinical trial, aligning with ICH E6(R2) and E8(R1) guidelines and modern regulatory expectations [22] [23].

Research Reagent Solutions

Table 3: Essential Components for a Clinical RBQM System

| Item | Function |

|---|---|

| Centralized Monitoring Platform | A technology platform that enables remote, real-time oversight of clinical site data, facilitating risk identification across sites. |

| Risk Assessment Tool | A standardized framework (e.g., weighted checklist, software) for conducting initial and ongoing cross-functional risk assessments. |

| Key Risk Indicator (KRI) Dashboard | A visual interface that tracks predefined metrics (KRIs) to signal potential emerging issues at clinical sites. |

| Quality Tolerance Limit (QTL) Framework | Predefined boundaries for acceptable variation in critical study parameters, used to trigger corrective actions. |

Methodology

Initial Cross-Functional Risk Assessment:

- Convene a team including representatives from clinical operations, data management, biostatistics, and safety.

- Identify Critical to Quality (CTQ) factors: Determine the critical processes and data points essential to trial integrity and participant safety (e.g., primary endpoint data, informed consent process, adherence to inclusion/exclusion criteria) [23].

- Identify and evaluate risks: For each CTQ factor, brainstorm potential risks. Evaluate each risk based on its probability of occurrence and its impact on patient safety and data reliability.

- Develop mitigation strategies: For each high-risk item, define a proactive mitigation plan. This may include specialized training, enhanced monitoring, or protocol clarification.

Define Monitoring Triggers:

- Establish Quality Tolerance Limits (QTLs): Set statistical boundaries for key study metrics (e.g., screen failure rate, rate of protocol deviations). Exceeding a QTL triggers a formal investigation.

- Define Key Risk Indicators (KRIs): Identify leading indicators of potential problems (e.g., rate of data entry lag, frequency of specific adverse events) and configure the centralized monitoring platform to track them via a dashboard.
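The two kinds of trigger can be sketched as simple checks. This is a minimal illustration under stated assumptions: the site-level KRI is flagged when it lies more than a chosen number of standard deviations above the cross-site mean, and the study-level QTL is a fixed rate limit. The function names, the threshold of 2.0, the 10% limit, and the example data are hypothetical, and real systems often use more robust statistics.

```python
from statistics import mean, pstdev


def flag_sites_by_kri(kri_by_site, z_threshold=2.0):
    """Return sites whose KRI value is unusually high relative to all sites."""
    values = list(kri_by_site.values())
    centre, spread = mean(values), pstdev(values)
    if spread == 0:
        return []  # all sites identical, nothing stands out
    return sorted(site for site, value in kri_by_site.items()
                  if (value - centre) / spread > z_threshold)


def qtl_breached(events, participants, limit):
    """True when the study-level event rate exceeds the predefined tolerance limit."""
    return events / participants > limit


# Hypothetical KRI: median data-entry lag in days per site.
data_entry_lag = {"S01": 3, "S02": 4, "S03": 2, "S04": 15, "S05": 3, "S06": 4}
print("Sites flagged for follow-up:", flag_sites_by_kri(data_entry_lag))

# Hypothetical QTL: protocol deviation rate must not exceed 10% of participants.
print("QTL breached:", qtl_breached(events=12, participants=100, limit=0.10))
```

A flagged site would feed the targeted on-site monitoring described in the next step, while a QTL breach would trigger the formal investigation.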

Execute Centralized and Targeted On-Site Monitoring:

- Reduce Source Data Verification (SDV): Move away from 100% SDV. Instead, use centralized monitoring to review all site data and target SDV to critical data points or sites flagged by KRIs/QTLs [22].

- Focus on-site visits: Use insights from centralized monitoring to plan targeted, on-site visits that investigate root causes of issues rather than performing exhaustive data checks.

Ongoing Review and Adaptation:

- Hold regular, cross-functional meetings to review KRIs, QTL status, and the overall risk landscape.

- Adapt the risk management plan as the trial progresses and new risks are identified, ensuring the system remains dynamic and responsive.

The logical flow of risk identification, control, and review in an RBQM system is depicted below.

Diagram 3: Clinical RBQM Logic. The continuous loop of risk management in clinical trials, from initial assessment to adaptive control.

The evidence from diverse fields—clinical research, animal health, and plant pathology—converges on a single conclusion: the proactive, risk-based paradigm is fundamentally superior to traditional surveillance for the purpose of early detection. The shift from a reactive, uniform approach to a dynamic, targeted strategy represents a maturation of surveillance science. It is a shift from merely looking to seeing, and from simply collecting data to deriving intelligence.

The protocols outlined herein provide a tangible roadmap for researchers and drug development professionals to implement this paradigm. By quantifying system sensitivity, strategically allocating resources, and leveraging continuous feedback loops, risk-based surveillance enhances our capacity to detect threats at the earliest possible moment. This not only safeguards health and health data but also yields efficiency gains and cost savings. As clinical trials grow more complex and global pathogen pressures intensify, the adoption of these surveillance strategies moves from a best practice to an indispensable component of responsible research and public health protection.

Global Regulatory Alignment and Remaining Discrepancies

Risk-based surveillance represents a strategic approach to early disease detection in which resources are preferentially allocated to subpopulations, geographical areas, or pathways classified as high-risk for disease introduction or spread [8] [25]. This methodology intentionally introduces selection bias to optimize the probability of detecting diseases or infections when resources are limited [25] [26]. For researchers and drug development professionals, understanding the interplay between evolving global regulatory frameworks and the epidemiological principles of risk-based surveillance is critical for designing effective early detection systems for emerging health threats.

The fundamental objective of risk-based surveillance is to achieve higher benefit-cost ratios with existing or reduced resources by focusing on units with the greatest likelihood of disease presence [26]. These systems apply risk assessment methods at various stages of traditional surveillance design to enhance early detection and management of diseases or hazards [26]. In practice, this requires navigating an increasingly complex global regulatory environment characterized by both harmonization initiatives and persistent jurisdictional fragmentation.

Current Regulatory Frameworks: Alignment Initiatives and Persistent Gaps

International Regulatory Convergence

Global regulatory alignment has seen significant advances through international organizations and agreements that establish shared standards and principles. The World Trade Organization (WTO) remains the primary mediator of trade between nations, balancing exports and imports, while the International Monetary Fund (IMF) establishes frameworks for international economic cooperation [27]. The Organisation for Economic Co-operation and Development (OECD) addresses economic and social challenges through policy coordination, and regional blocs like the European Union and USMCA have strengthened policies to promote fairer trade [27].

Substantive alignment has occurred in several key areas:

- Financial Action Task Force (FATF) Recommendations: Provide near-global standards for anti-money laundering and counter-terrorist financing practices, though implementation varies [28]

- Basel III frameworks: International banking regulations that have achieved significant cross-border adoption [28]

- EU AI Act: Establishing a risk-based framework for artificial intelligence with tiered obligations based on system classification [27]

Areas of Significant Regulatory Divergence

Despite convergence in certain domains, significant regulatory fragmentation persists across jurisdictions, creating operational complexity for global research and surveillance initiatives.

Table 1: Key Areas of Regulatory Divergence Impacting Global Surveillance

| Domain | Nature of Divergence | Impact on Surveillance Programs |

|---|---|---|

| Artificial Intelligence Governance | EU adopts comprehensive risk-based framework (AI Act) while other regions implement sector-specific guidelines [27] | Creates compliance complexity for AI-powered diagnostic tools and surveillance algorithms |

| Data Privacy & Transfer | GDPR-inspired regulations versus region-specific frameworks (e.g., India's DPDPA-2023) with differing data localization requirements [27] [28] | Restricts cross-border data sharing essential for global disease surveillance |

| Anti-Money Laundering (AML) | Each country maintains unique requirements despite FATF guidelines, creating overlapping and sometimes contradictory rulesets [28] | Complicates financial transactions supporting international research collaborations |

| Digital Assets | Fragmented rulebooks with differing regional priorities and no coordinated implementation [28] | Hinders development of blockchain-based surveillance data systems |

This regulatory fragmentation creates substantial operational challenges for organizations implementing global surveillance systems. Companies face duplicated compliance efforts across jurisdictions, manual and inconsistent regulatory interpretations, language barriers requiring local expertise, and difficulty maintaining centralized views of global obligations [28]. The financial impact includes costs for hiring legal experts in each market, implementing compliance tracking systems, continuous employee training, and third-party audits [27].

Methodological Framework: Risk-Based Surveillance Protocols

Core Principles and Definitions

Risk-based surveillance systems are defined as those that apply risk assessment methods in different steps of traditional surveillance design for early detection and management of diseases or hazards [26]. The principal objectives include identifying surveillance needs to protect health, setting priorities, and allocating resources effectively and efficiently [26].

The epidemiological foundation relies on appropriate risk quantification. For risk-based surveillance, crude (unadjusted) relative risk or odds ratio estimates are preferable to adjusted estimates, as units are selected based on the presence of specific risk factors regardless of other potential confounders [25]. This represents the total (unadjusted) risk encompassing both causal and non-causal associations relevant to practical sampling situations [25].
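The crude measures referred to here come directly from an unstratified 2x2 table. The sketch below shows the standard formulas; the function name and the example counts are hypothetical and are not taken from [25].

```python
def crude_measures(exposed_cases, exposed_noncases, unexposed_cases, unexposed_noncases):
    """Return the crude relative risk and odds ratio from a 2x2 table.

    No stratification or adjustment is applied. A zero count in a
    denominator raises ZeroDivisionError.
    """
    a, b, c, d = exposed_cases, exposed_noncases, unexposed_cases, unexposed_noncases
    risk_exposed = a / (a + b)        # risk among units with the risk factor
    risk_unexposed = c / (c + d)      # risk among units without it
    relative_risk = risk_exposed / risk_unexposed
    odds_ratio = (a * d) / (b * c)
    return relative_risk, odds_ratio


# Hypothetical counts: 100 units with the risk factor, 200 without.
rr, odds = crude_measures(30, 70, 10, 190)
print(f"Crude relative risk: {rr:.2f}, crude odds ratio: {odds:.2f}")
```

Because sampling units are chosen on the visible risk factor alone, this unadjusted estimate is the quantity that describes how much more likely a sampled high-risk unit is to be positive.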

Experimental Protocol: Optimizing Spatial Surveillance Design

The following protocol provides a methodology for designing risk-based surveillance systems that explicitly account for pathogen entry and spread dynamics, adapted from Mastin et al.'s approach for detecting invasive plant pathogens [8] [9].

Materials and Equipment

Table 2: Research Reagent Solutions for Surveillance Optimization

| Item | Specification | Function/Application |

|---|---|---|

| Spatial Host Density Data | High-resolution geographical data on host distribution (e.g., citrus density maps for HLB surveillance) [8] | Informs risk model parameterization and surveillance site selection |

| Pathogen Dispersal Kernel | Exponential or power-law models parameterized from empirical spread data [8] | Predicts spatial spread patterns from introduction points |

| Diagnostic Sensitivity Parameters | Test performance characteristics (probability of detection) for available diagnostic methods [8] [29] | Informs sampling intensity requirements and detection probabilities |

| Stochastic Optimization Algorithm | Computational method (e.g., simulated annealing) for site selection [8] | Identifies surveillance arrangements maximizing detection probability |

| Risk-Based Sampling Framework | Protocol for preferential sampling of high-risk strata [25] [26] | Enhances detection probability through targeted resource allocation |

Procedure

Step 1: Landscape Parameterization

- Obtain or develop a gridded landscape (e.g., 1 km × 1 km cells) containing density data for relevant hosts [8]

- Parameterize secondary spread using an exponential dispersal kernel fitted to available pathogen spread data [8]

- Define pathogen introduction rates and locations based on known risk pathways (e.g., human movement patterns from infected areas) [8]
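The landscape parameterization can be illustrated with an exponential dispersal kernel applied to gridded host densities. This is a sketch under assumptions: the kernel is unnormalized, distances are Euclidean distances between cell coordinates in kilometres, and infection pressure is weighted by host density in both the source and target cells. The `scale_km` and `beta` parameters and the example landscape are hypothetical, not fitted values from [8].

```python
import math


def exponential_kernel(distance_km, scale_km):
    """Unnormalized exponential dispersal kernel: weight decays with distance."""
    return math.exp(-distance_km / scale_km)


def infection_pressure(target, infected_cells, host_density, scale_km, beta):
    """Relative force of infection on a target cell from all infected cells."""
    total = 0.0
    for cell in infected_cells:
        distance = math.dist(target, cell)
        total += host_density[cell] * exponential_kernel(distance, scale_km)
    # More hosts in the target cell means more opportunities for infection.
    return beta * host_density[target] * total


# Hypothetical landscape: cell coordinates in km mapped to host density.
host_density = {(0, 0): 10, (1, 0): 8, (5, 0): 8}
infected = [(0, 0)]

near = infection_pressure((1, 0), infected, host_density, scale_km=2.0, beta=0.01)
far = infection_pressure((5, 0), infected, host_density, scale_km=2.0, beta=0.01)
print(f"Pressure on near cell: {near:.3f}, on far cell: {far:.3f}")
```

A power-law kernel could be substituted by changing only `exponential_kernel`, which keeps the choice of dispersal model separate from the landscape data.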

Step 2: Simulation Model Implementation

- Develop a spatially explicit, stochastic model simulating pathogen entry and spread through the landscape

- Run multiple simulations (minimum 1,000 iterations) until a predefined maximum acceptable prevalence threshold is exceeded [8]

- Record timing and location of infections for each simulation run

Step 3: Detection Modeling

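- Incorporate statistical models of detection that account for logistical parameters such as the number of surveillance sites, survey frequency, and diagnostic sensitivity

- Model the increase in detectability following infection as a deterministic process based on known pathogen dynamics [8]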

Step 4: Surveillance Optimization

- Use stochastic optimization (e.g., simulated annealing) to identify surveillance site arrangements (Ω) that maximize probability of detection before prevalence threshold is reached [8]

- Constrain the optimization by practical parameters, such as the number of sites that can be surveyed

- Validate that optimal strategy avoids over-concentration on single highest-risk sites when spatial correlation exists [8]

Step 5: Performance Evaluation

- Compare optimized surveillance strategy performance against conventional risk-based approaches [8]

- Calculate performance gain and potential cost savings [8]

- Conduct sensitivity analysis on key parameters (introduction location, diagnostic sensitivity, sampling frequency) [8]
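The optimization in Step 4 can be sketched with a generic simulated annealing loop over stored simulation outputs. This is an illustration, not the implementation from [8]: each simulated outbreak is reduced to the set of cells infected before the prevalence threshold, detection at each surveyed infected cell is assumed independent, and the cooling schedule, step count, and toy data are hypothetical.

```python
import math
import random


def detection_probability(sites, outbreaks, sensitivity):
    """Mean probability that at least one surveyed site detects each outbreak."""
    total = 0.0
    for infected in outbreaks:
        hits = sum(1 for site in sites if site in infected)
        total += 1.0 - (1.0 - sensitivity) ** hits
    return total / len(outbreaks)


def anneal(cells, outbreaks, n_sites, sensitivity=0.8, steps=2000,
           t_start=0.1, t_end=0.001, seed=0):
    """Search for a site arrangement that maximizes detection probability."""
    rng = random.Random(seed)
    current = rng.sample(cells, n_sites)
    current_p = detection_probability(current, outbreaks, sensitivity)
    best, best_p = list(current), current_p
    for i in range(steps):
        temp = t_start * (t_end / t_start) ** (i / steps)  # geometric cooling
        # Propose swapping one surveyed site for a currently unsurveyed cell.
        candidate = list(current)
        unsurveyed = [c for c in cells if c not in current]
        candidate[rng.randrange(n_sites)] = rng.choice(unsurveyed)
        cand_p = detection_probability(candidate, outbreaks, sensitivity)
        # Always accept improvements; accept worse moves with Metropolis probability.
        if cand_p >= current_p or rng.random() < math.exp((cand_p - current_p) / temp):
            current, current_p = candidate, cand_p
            if current_p > best_p:
                best, best_p = list(current), current_p
    return best, best_p


# Toy data: cells 0 and 1 tend to be infected in the same simulated outbreaks.
cells = list(range(10))
outbreaks = [{0, 1}, {0, 1}, {0, 2}, {5, 6}, {5, 6}, {7}]
best, best_p = anneal(cells, outbreaks, n_sites=2, sensitivity=1.0)
print("Selected sites:", sorted(best), "detection probability:", round(best_p, 3))
```

In the toy data, surveying both cell 0 and cell 1 adds little because they are infected together, so an arrangement that spreads sites across uncorrelated cells scores higher. This is the over-concentration effect the validation bullet in Step 4 asks the analyst to check.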

Workflow Visualization

Diagram 1: Risk-based surveillance design workflow

Regulatory Navigation Protocol: Managing Cross-Border Compliance

Technology-Enabled Compliance Framework

Modern regulatory change management requires systematic processes to monitor, assess, adapt to, and comply with evolving international requirements [27]. The following protocol leverages RegTech solutions to maintain compliance across fragmented regulatory landscapes.

Materials

- Governance, Risk, and Compliance (GRC) software with API access to 400+ global data sources [28]

- Automated regulatory tracking systems monitoring specialized websites, agency portals, and regulator communications [27]

- AI-powered platforms capable of parsing regulatory documents across multiple languages [28]

- Control mapping systems linking requirements across frameworks [27]

Procedure

Step 1: Regulatory Change Monitoring

- Implement automated monitoring of regulatory updates across all relevant jurisdictions [27]

- Configure AI systems to extract obligations with context from regulatory documents [28]

- Establish knowledge graphs connecting obligations to internal policies, controls, and products [28]

Step 2: Impact Assessment and Gap Analysis

- Conduct thorough analysis of operational, procedural, and compliance implications for each regulatory change [27]

- Perform gap analysis comparing existing policies with new requirements [27]

- Document discrepancies and associated risks, prioritizing by risk level [27]

Step 3: Control Mapping and Implementation

- Map controls across multiple regulatory frameworks to eliminate redundancies [27]

- Develop action plans with resource allocation, timelines, and priorities based on urgency [27]

- Update internal policies and controls to address identified gaps [27]
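A minimal sketch of the gap analysis and control mapping in Steps 2 and 3 is given below. The framework names, requirement identifiers, control identifiers, and the 1 to 3 risk scale are all hypothetical; a production GRC system would hold this information in its own data model.

```python
from collections import defaultdict

# Hypothetical requirement register: each requirement lists the internal
# controls (if any) that currently satisfy it
requirements = [
    {"id": "A-1", "framework": "Framework A", "risk": 3,
     "controls": {"CTRL-ENCRYPT"}},
    {"id": "B-7", "framework": "Framework B", "risk": 2,
     "controls": {"CTRL-ENCRYPT"}},
    {"id": "A-2", "framework": "Framework A", "risk": 1, "controls": set()},
    {"id": "B-9", "framework": "Framework B", "risk": 3, "controls": set()},
]


def map_controls(reqs):
    """Invert the register: which requirements does each control satisfy?"""
    by_control = defaultdict(set)
    for req in reqs:
        for control in req["controls"]:
            by_control[control].add(req["id"])
    return dict(by_control)


def prioritized_gaps(reqs):
    """Requirements with no control, highest risk first."""
    gaps = [r for r in reqs if not r["controls"]]
    return [r["id"] for r in sorted(gaps, key=lambda r: r["risk"],
                                    reverse=True)]


shared = map_controls(requirements)
gaps = prioritized_gaps(requirements)
```

A control that appears against requirements from more than one framework, such as `CTRL-ENCRYPT` here, only needs to be implemented and evidenced once, which is how control mapping removes redundancy.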

Step 4: Continuous Compliance Monitoring

- Implement automated dashboards providing real-time compliance status [27]

- Establish continuous monitoring systems flagging non-compliance proactively [27]

- Maintain comprehensive documentation for regulatory reporting and audits [27]

Cross-Border Material Transfer Protocol

Diagram 2: Cross-border material transfer compliance

Discussion: Strategic Implications for Surveillance Research

The integration of risk-based surveillance principles with adaptive regulatory compliance creates powerful frameworks for early detection of emerging health threats. Research demonstrates that optimal surveillance strategies must account for complex spatial dynamics rather than simply targeting the highest-risk sites [8]. Specifically, spatial correlation in risk can make it suboptimal to focus solely on the highest-risk locations, necessitating strategic distribution of surveillance resources across multiple potential introduction sites [8].

The regulatory landscape continues to evolve toward what experts term a "regulatory tsunami," with increasingly stringent requirements across sectors [27]. While some regional harmonization occurs, geopolitical factors are driving further divergence in many domains [28]. Successful navigation of this environment requires organizations to invest in regulatory agility—the ability to adapt quickly to regulatory changes regardless of their origin [28].

For researchers developing surveillance systems, key considerations include:

- Diagnostic sensitivity impact: The optimal surveillance strategy differs depending on available detection methods and their sensitivity [8]

- Resource allocation trade-offs: The number of survey sites, sampling frequency, and samples per site interact to determine overall system performance [8] [29]

- Validation requirements: Risk-based surveillance systems must demonstrate efficacy equal to or higher than that of traditional systems, at higher efficiency (benefit-cost ratio) [26]

- Cross-border data sharing: Regulatory restrictions on data transfer may limit the effectiveness of international surveillance networks [27] [28]

Future developments in AI-powered regulatory technology show promise for alleviating compliance burdens through automated monitoring, control mapping, and continuous compliance assessment [27] [28]. However, the fundamental tension between globalized health threats and jurisdictional regulatory sovereignty will continue to present challenges for international surveillance initiatives.

From Theory to Practice: Implementing Advanced Risk-Based Frameworks

Risk-Based Quality Management (RBQM) is a systematic, proactive framework for managing quality in clinical trials by focusing efforts on factors critical to human subject protection and the reliability of trial results. The implementation of RBQM is championed by global regulatory bodies, including the FDA and EMA, and is codified in the ICH E6 (R2) and the upcoming ICH E6 (R3) guidelines [30] [31]. This approach represents a fundamental shift from reactive, error-correction models to a preventive strategy that prioritizes "errors that matter," thereby optimizing resource allocation and enhancing the overall quality and efficiency of clinical research [30].

Within the broader thesis of risk-based surveillance for early detection, RBQM provides a powerful operational model. Just as surveillance strategies in other fields (e.g., infectious disease control or cancer recurrence monitoring) aim to allocate resources based on risk to achieve early detection [32] [33], RBQM applies the same paradigm to clinical trial oversight. It advocates for a surveillance system within the trial that is adaptive, targeted, and data-driven, moving away from a one-size-fits-all frequency of monitoring visits and 100% data verification towards a model where oversight activities are continuously calibrated to the evolving risks of the study [34] [31]. This ensures that the greatest oversight is directed at the processes and data most critical to patient safety and the robustness of the trial's conclusions.

The Regulatory and Quantitative Landscape of RBQM Adoption

The regulatory impetus for RBQM began with the ICH E6 (R2) addendum, which introduced new sections on quality management and risk-based monitoring [31]. This addendum mandates that sponsors implement a quality management system where critical processes and data are identified, and risks are assessed and mitigated [34]. The forthcoming ICH E6 (R3) is expected to provide even greater support for these RBQM principles throughout the clinical trial lifecycle [35].

A recent Tufts Center for the Study of Drug Development (CSDD) survey provides a quantitative snapshot of RBQM adoption across the industry. The study, which assessed 32 distinct RBQM practices, found that, on average, companies have implemented RBQM in 57% of their clinical trials [35]. However, adoption varies significantly based on organizational size and experience.

Table 1: Adoption of RBQM Components in Clinical Trials (Based on Tufts CSDD Survey) [35]

| RBQM Component Category | Examples of Specific Components | Implementation Notes |
|---|---|---|
| Planning & Design | Identification of Critical-to-Quality factors, Risk Assessment, Protocol Complexity Assessment | Foundational activities; ~80% of trials implement the initial risk assessment [30]. |
| Execution | Key Risk Indicators (KRIs), Quality Tolerance Limits (QTLs), Statistical Data Monitoring, Reduced Source Data Verification (SDV) | Centralized monitoring techniques are key; adoption of other components beyond risk assessment is lower, ranging from 22-43% [30]. |
| Documentation & Resolution | Identification and Evaluation of Risks and QTL deviations, Updates to monitoring plans | Critical for continuous learning and system improvement. |

Table 2: Barriers to RBQM Adoption and Potential Mitigations [30] [35]

| Barrier Category | Specific Challenges | Potential Mitigation Strategies |
|---|---|---|
| Organizational & Knowledge | Lack of organizational knowledge and awareness, mixed perceptions of value proposition. | Secure executive sponsorship, appoint RBQM champions, and invest in cross-functional training [30]. |
| Process & Change Management | Poor change management planning, complexity of available solutions. | Follow a structured implementation roadmap (e.g., a 10-step process), start with pilot studies [30]. |
| Operational | Difficulties in integrating processes and technology across functions. | Select flexible, interoperable technology platforms and foster cross-functional ownership of RBQM [30]. |

Application Notes: Core Principles and Implementation Protocol

Successful implementation of RBQM relies on a cross-functional team and a structured, iterative process. The following protocol outlines the key stages.

Foundational Protocol: A Cross-Functional RBQM Implementation Workflow

This workflow details the continuous cycle of risk management in a clinical trial, from initial design through study closeout.

Phase 1: Pre-Study Planning & Risk Assessment

- Step 1: Define Critical-to-Quality (CtQ) Factors. A cross-functional team (e.g., clinical, data management, biostatistics, medical) must identify the few data and processes that are most critical to the scientific validity of the trial and to patient safety [30] [34]. These are the "errors that matter."

- Step 2: Conduct Risk Assessment. For each CtQ factor, the team identifies what could go wrong (risks), evaluates the likelihood and impact of those risks, and prioritizes them [34] [31]. A common tool for this is a risk log or a failure mode and effects analysis (FMEA).

- Step 3: Develop the RBQM Plan. This living document details:

  - Risk Control Strategies: Actions to prevent or mitigate prioritized risks.

  - Monitoring Strategy: The mix of centralized and on-site monitoring activities.

  - Key Risk Indicators (KRIs): Proactive, quantifiable measures to monitor risk exposure (e.g., data entry timeliness, query rates, protocol deviation rates) [34].

  - Quality Tolerance Limits (QTLs): Predefined thresholds for key study variables that, if breached, trigger a formal evaluation and action [34].
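The risk assessment in Step 2 is often recorded in a scored risk log. The sketch below assumes a simple likelihood × impact score on hypothetical 1 to 5 scales and an arbitrary action threshold of 10; the example risks are invented for illustration, and a full FMEA would typically add a detectability dimension.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    ctq_factor: str
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    impact: int      # 1 (negligible) to 5 (critical), assumed scale

    @property
    def score(self):
        return self.likelihood * self.impact


def prioritize(risks, threshold=10):
    """Rank risks by score and assign a handling category."""
    ranked = sorted(risks, key=lambda r: r.score, reverse=True)
    return [(r.description, r.score,
             "mitigate" if r.score >= threshold else "monitor")
            for r in ranked]


# Hypothetical risk log entries
risk_log = [
    Risk("Primary endpoint", "Inconsistent endpoint assessment", 3, 5),
    Risk("Informed consent", "Outdated consent form version used", 2, 4),
    Risk("Drug accountability", "Temperature excursion in storage", 4, 3),
]

for description, score, action in prioritize(risk_log):
    print(f"{score:>2}  {action:<8}  {description}")
```

Risks at or above the threshold feed the risk control strategies in the RBQM plan, while lower-scoring risks are candidates for tracking through KRIs.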

Phase 2: Study Execution & Centralized Monitoring

- Step 4: Implement Centralized Monitoring. This is the remote, timely evaluation of accumulating data from all sites [31]. It employs:

- Step 5: Conduct Targeted On-Site Monitoring. On-site activities are no longer focused on 100% Source Data Verification (SDV). Instead, visits are targeted based on triggers from centralized monitoring (e.g., a site with a KRI alert) and focus on verifying critical data and processes, training site staff, and investigating root causes of issues [30] [31].

Phase 3: Ongoing Review & Adaptive Action

- Step 6: Perform Periodic Cross-Functional Review. The study team regularly reviews data from centralized monitoring, KRIs, and QTLs to assess if the risk profile has changed and if control measures are effective [34] [35].

- Step 7: Trigger Corrective and Preventive Actions (CAPA). When a QTL is breached or a significant risk is identified, a root cause analysis is performed, and appropriate CAPA is implemented. This may include updating the RBQM plan, providing additional site training, or modifying data collection tools [31].
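The QTL check that feeds Steps 6 and 7 can be sketched as a comparison of a study-level rate against its predefined limit. The optional secondary limit, which gives early warning before a breach, is an assumption added here for illustration, as are the numeric thresholds and status labels.

```python
def evaluate_qtl(n_events, n_total, limit, secondary_limit=None):
    """Compare a study-level rate with its Quality Tolerance Limit.

    Returns (rate, status), where status is "within", "approaching",
    or "breached".
    """
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    rate = n_events / n_total
    if rate > limit:
        status = "breached"      # triggers formal evaluation and CAPA
    elif secondary_limit is not None and rate > secondary_limit:
        status = "approaching"   # flag for the next cross-functional review
    else:
        status = "within"
    return rate, status


# Hypothetical QTL: no more than 5% of participants with a missing
# primary endpoint, with an early-warning level at 4%
for missing, enrolled in [(3, 100), (45, 1000), (6, 100)]:
    rate, status = evaluate_qtl(missing, enrolled, limit=0.05,
                                secondary_limit=0.04)
    print(f"{missing}/{enrolled}: {rate:.1%} -> {status}")
```

A "breached" status corresponds to the point at which root cause analysis and CAPA are initiated; the intermediate status simply gives the study team time to act before that point.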

The Scientist's Toolkit: Essential Reagents for RBQM Implementation

Table 3: Key Research Reagent Solutions for RBQM

| Tool / Reagent | Category | Primary Function in the RBQM Experiment |
|---|---|---|
| RBQM Software Platform | Technology | Provides an integrated environment for risk planning, KRI/QTL tracking, statistical data monitoring, and generating centralized monitoring reports [30]. |
| Electronic Data Capture (EDC) | Technology | The primary system for clinical data collection; allows for real-time data validation and is a core data source for KRIs and centralized analyses [34]. |
| Clinical Trial Management System (CTMS) | Technology | Provides operational data (e.g., site activation, enrollment rates) that can be integrated into KRIs for comprehensive risk oversight [34]. |
| Risk Management Plan Template | Document | A standardized template (often an SOP) for documenting the initial risk assessment, CtQ factors, KRIs, QTLs, and mitigation strategies [34] [36]. |
| Key Risk Indicators (KRIs) | Metric | Quantifiable measures (e.g., query aging, screening failure rate) that act as early warning signals for emerging operational and data quality risks [34]. |
| Quality Tolerance Limits (QTLs) | Metric | Predefined study-level thresholds for critical data and processes (e.g., rate of primary endpoint misclassification) that signal a potential threat to trial integrity [30] [34]. |

Advanced Methodologies: Detailed Experimental Protocols for Key RBQM Components

Protocol for Developing and Implementing Key Risk Indicators (KRIs)

Objective: To proactively identify and monitor operational and data quality risks through specific, measurable, and actionable indicators.

Materials: Clinical trial protocol, RBQM plan, EDC and CTMS systems, data visualization or RBQM software.

Methodology:

- KRI Identification: Based on the pre-study risk assessment, define 5-10 core KRIs that are strong predictors of the prioritized risks. Common KRIs in Clinical Data Management include [34]:

  - Data Entry Timeliness: Days between patient visit and data entry into EDC.

  - Query Rate: Number of data queries raised per data point or per site.

  - Protocol Deviation Rate: Frequency and severity of deviations from the protocol.

  - Missing Data Proportion: Percentage of missing values in critical data fields.

- KRI Specification: Ensure each KRI is Specific, Measurable, Actionable, Relevant, and Timely (SMART) [34]. For example: "The percentage of case report form pages not entered within 3 days of a patient's visit must be below 10% for each site."
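The example KRI above (case report form pages entered within 3 days, with fewer than 10% late per site) can be computed along the following lines. The record layout, site identifiers, and dates are hypothetical; in practice the visit and entry dates would come from EDC audit trail data.

```python
from collections import defaultdict
from datetime import date


def late_entry_rate(pages, max_days=3):
    """Per-site proportion of CRF pages entered more than max_days after the visit.

    pages: iterable of (site_id, visit_date, entry_date) tuples
    """
    counts = defaultdict(lambda: [0, 0])  # site -> [late pages, all pages]
    for site, visit, entered in pages:
        counts[site][1] += 1
        if (entered - visit).days > max_days:
            counts[site][0] += 1
    return {site: late / total for site, (late, total) in counts.items()}


def flag_sites(rates, limit=0.10):
    """Sites whose late-entry rate is not below the KRI limit."""
    return sorted(site for site, rate in rates.items() if rate >= limit)


# Hypothetical EDC extract
pages = [
    ("101", date(2024, 3, 1), date(2024, 3, 2)),
    ("101", date(2024, 3, 1), date(2024, 3, 4)),
    ("101", date(2024, 3, 8), date(2024, 3, 9)),
    ("101", date(2024, 3, 8), date(2024, 3, 11)),
    ("102", date(2024, 3, 1), date(2024, 3, 3)),
    ("102", date(2024, 3, 1), date(2024, 3, 9)),
    ("102", date(2024, 3, 8), date(2024, 3, 10)),
    ("102", date(2024, 3, 8), date(2024, 3, 20)),
]

rates = late_entry_rate(pages)
flagged = flag_sites(rates)
```

Because the KRI is stated as "must be below 10%", a site sitting exactly at the limit is treated as flagged here; teams may prefer a different convention, provided it is fixed in the RBQM plan before the study starts.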