Transforming Oncology: AI-Powered Strategies for Early Cancer Detection and Precision Diagnostics

Abstract

This article provides a comprehensive analysis of the transformative role of Artificial Intelligence (AI) in early cancer detection for a specialized audience of researchers, scientists, and drug development professionals. It explores the foundational principles of AI, including machine and deep learning models, and details their application across diverse modalities such as medical imaging, liquid biopsies, and multi-omics data integration. The content critically examines the methodological challenges of data quality, model interpretability, and clinical integration, while presenting advanced optimization strategies like federated learning and explainable AI (XAI). Furthermore, it synthesizes evidence from recent validation studies and meta-analyses comparing AI performance to clinical experts, offering a realistic assessment of current capabilities and future pathways for clinical translation and regulatory approval.

The New Frontier: Core AI Principles and Their Revolutionary Potential in Early Oncology

Artificial intelligence (AI) is fundamentally reshaping the landscape of oncological research and clinical practice, offering unprecedented capabilities for early cancer detection. By leveraging sophisticated algorithms to analyze complex datasets, AI architectures demonstrate transformative potential in identifying malignancies across diverse imaging and molecular modalities [1]. The integration of machine learning (ML) and deep learning (DL) within oncology represents a paradigm shift, enabling researchers and clinicians to detect patterns imperceptible to human observation, thereby facilitating earlier diagnosis and improved patient outcomes [2]. This technical guide examines the core AI architectures driving this revolution, with a specific focus on their implementation, performance, and experimental protocols in early cancer detection research.

The market expansion of AI in oncology, projected to grow from $1.9 billion in 2023 to approximately $17.9 billion by 2032, underscores the rapid adoption and immense potential of these technologies [3]. This growth is fueled by converging advancements in three critical areas: development of novel algorithms and training methods, evolution of specialized computing hardware, and increased accessibility to large-scale cancer datasets encompassing imaging, genomics, and clinical information [1]. For researchers and drug development professionals, understanding these architectural foundations is essential for leveraging AI capabilities in their investigative workflows and therapeutic development pipelines.

Foundational AI Concepts: A Hierarchical Framework

At its core, artificial intelligence enables computational systems to learn from data, recognize complex patterns, and make data-driven decisions with minimal human intervention [1]. Within this broad field, several specialized architectures have emerged, each with distinct capabilities and applications in cancer research:

Machine Learning (ML) represents a fundamental approach where algorithms identify patterns and relationships within data without explicit programming for each task. ML encompasses various techniques including support vector machines (SVMs), random forests, and decision trees, which are particularly effective for structured data analysis, biomarker discovery, and predictive modeling using clinical and molecular datasets [3].

Deep Learning (DL), a specialized subset of machine learning, utilizes multi-layered neural networks to model abstract representations from large-scale, high-dimensional data. DL architectures have demonstrated remarkable proficiency in processing medical images, genomic sequences, and other complex data modalities prevalent in cancer research [1].

Neural Networks serve as the fundamental building blocks of deep learning, loosely inspired by biological neural networks. These interconnected nodes or "neurons" process information through layered transformations, enabling the identification of hierarchical features essential for cancer detection and classification [3].

Table 1: Core AI Architectures in Cancer Detection Research

| Architecture Type | Key Examples | Strengths | Common Cancer Applications |
|---|---|---|---|
| Machine Learning | Support Vector Machines (SVM), Random Forests, Gradient Boosting (XGBoost) | Effective with structured data, interpretable models, works well with smaller datasets | Molecular diagnostics, risk prediction, biomarker identification [3] |
| Deep Learning | Convolutional Neural Networks (CNNs), Vision Transformers (ViTs), Artificial Neural Networks (ANNs) | Superior with unstructured data, automatic feature extraction, high accuracy with large datasets | Medical image analysis, histopathology classification, genomic sequencing [4] [5] |
| Hybrid Approaches | Deep Support Vector Machines, Ensemble Methods, CLAM | Combines strengths of multiple architectures, improves generalization | Whole Slide Image analysis, multi-modal data integration [3] |

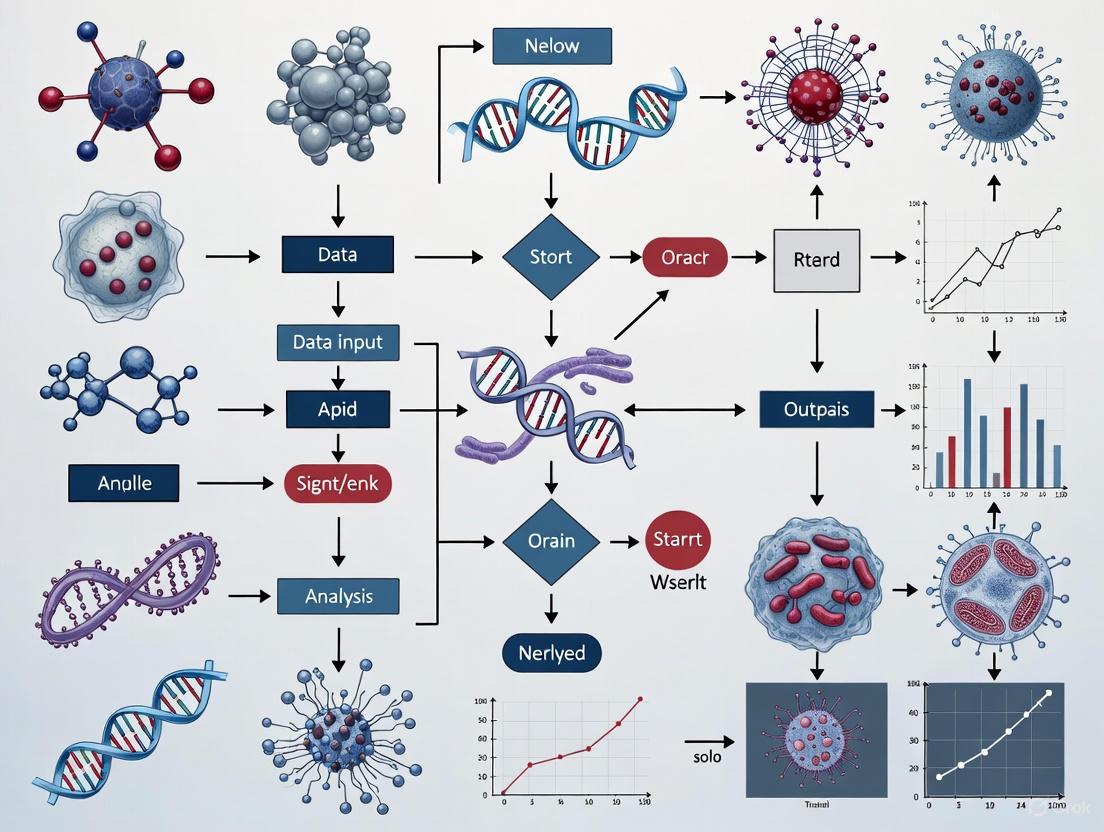

Figure 1: AI Architecture Hierarchy: This diagram illustrates the hierarchical relationship between artificial intelligence, machine learning, deep learning, and specific neural network architectures used in cancer detection research.

Deep Learning Architectures: Technical Foundations and Cancer Applications

Convolutional Neural Networks (CNNs) in Medical Imaging

Convolutional Neural Networks (CNNs) represent the cornerstone of image-based cancer detection, employing specialized layers to automatically learn hierarchical features from medical images. The fundamental strength of CNNs lies in their ability to preserve spatial relationships while progressively extracting more abstract features through multiple layers of processing [4]. In practice, CNNs process input images through convolutional layers that detect low-level features like edges and textures, followed by pooling layers that reduce dimensionality while preserving essential features, and finally fully-connected layers that perform classification based on the extracted features [5].
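The convolution, activation, and pooling stages described above can be sketched in a few lines of plain Python. This is an illustrative toy, not code from any cited study: the 5×5 image, the hand-written edge kernel, and the function names are all assumptions, and a trained CNN would learn its kernel weights from data rather than use fixed ones.

```python
from typing import List

Image = List[List[float]]

def conv2d_valid(image: Image, kernel: Image) -> Image:
    """Slide the kernel over the image (no padding, stride 1) and sum the products.

    Like most deep learning frameworks, this computes cross-correlation
    (the kernel is not flipped).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def relu(feature_map: Image) -> Image:
    """Keep positive responses and zero out the rest."""
    return [[max(0.0, v) for v in row] for row in feature_map]

def max_pool2x2(feature_map: Image) -> Image:
    """Non-overlapping 2x2 max pooling; a trailing odd row or column is dropped."""
    h = len(feature_map) // 2 * 2
    w = len(feature_map[0]) // 2 * 2
    return [
        [
            max(feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1])
            for c in range(0, w, 2)
        ]
        for r in range(0, h, 2)
    ]

# Toy 5x5 "image": dark left columns, bright right columns (a vertical edge).
toy_image = [[0.0, 0.0, 1.0, 1.0, 1.0] for _ in range(5)]
# Hypothetical fixed kernel that responds to dark-to-bright vertical edges.
edge_kernel = [[-1.0, 0.0, 1.0] for _ in range(3)]

edge_map = relu(conv2d_valid(toy_image, edge_kernel))  # low-level feature map
pooled = max_pool2x2(edge_map)                         # reduced-size summary
```

In a full network, many such kernels run in parallel, several convolution and pooling stages are stacked, and the final pooled features feed the fully-connected classification layers.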

Multiple CNN architectures have been extensively validated for cancer detection. The DenseNet architecture, characterized by dense connections between layers, promotes feature reuse and mitigates the vanishing gradient problem, achieving remarkable performance in multi-cancer classification. In a comprehensive study evaluating seven cancer types (brain, oral, breast, kidney, Acute Lymphocytic Leukemia, lung and colon, and cervical cancer), DenseNet121 achieved a validation accuracy of 99.94% with exceptionally low loss (0.0017) and RMSE values (0.036056 for training, 0.045826 for validation) [5]. The ResNet architecture addresses degradation in deep networks through skip connections that enable alternative pathways for gradient flow, proving particularly effective for analyzing complex imaging datasets like Digital Breast Tomosynthesis (DBT) [4]. In bone cancer detection using CT images, AlexNet demonstrated exceptional performance with training accuracy of 98%, validation accuracy of 98%, and testing accuracy of 100% [6].

Table 2: Performance Comparison of CNN Architectures in Cancer Detection

| Architecture | Cancer Type | Imaging Modality | Accuracy | Specificity | Sensitivity/Recall | Dataset |
|---|---|---|---|---|---|---|
| DenseNet121 | Multi-Cancer (7 types) | Histopathology | 99.94% | - | - | Multiple public datasets [5] |
| AlexNet | Bone Cancer | CT | 98% (training), 100% (testing) | - | - | 1141 CT images (530 cancer, 511 normal) [6] |
| ResNet50 | Breast Cancer | Pathological tissue | 99.2% (AUC: 0.999) | 99.6% | - | BreakHis v1 [7] |
| ConvNeXT | Breast Cancer | Pathological tissue | 99.2% | 99.6% | - | BreakHis v1 [7] |
| Multiple CNNs | Lung Cancer | Multiple | 77.8%-100% | 0.46-1.00 | 0.81-0.99 | Multi-study analysis [8] |

Vision Transformers (ViTs) and Advanced Architectures

Vision Transformers (ViTs) represent a groundbreaking shift in medical image analysis by replacing traditional convolutional operations with self-attention mechanisms that simultaneously capture local and global contextual information [4]. Unlike CNNs, which excel at detecting localized patterns, ViTs divide images into patches and process them as sequences, making them particularly effective for analyzing complex morphological and spatial relationships in cancer imaging [4]. This architecture demonstrates exceptional proficiency in identifying subtle lesions such as microcalcifications and masses, enhancing early-stage breast cancer detection capabilities.
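To make the patch-and-attend idea concrete, the following is a minimal sketch of the two core steps: splitting an image into a sequence of patch tokens, then applying scaled dot-product self-attention so that every patch can weigh every other patch. It deliberately omits what a real ViT adds (learned query/key/value projections, positional embeddings, multiple heads, and a classification token); the toy image and the function names are assumptions made for illustration.

```python
import math

def image_to_patches(image, patch_size):
    """Split an HxW image into non-overlapping tiles, each flattened to one token."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    return [
        [image[r + i][c + j] for i in range(patch_size) for j in range(patch_size)]
        for r in range(0, height, patch_size)
        for c in range(0, width, patch_size)
    ]

def softmax(values):
    """Numerically stable softmax over a list of scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention using the tokens themselves as Q, K, and V."""
    dim = len(tokens[0])
    scale = math.sqrt(dim)
    attention, outputs = [], []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in tokens]
        weights = softmax(scores)  # how much this patch attends to each patch
        attention.append(weights)
        outputs.append(
            [sum(w * value[j] for w, value in zip(weights, tokens)) for j in range(dim)]
        )
    return outputs, attention

# Toy 4x4 image with intensities scaled to [0, 1].
toy_image = [[(4 * r + c) / 15 for c in range(4)] for r in range(4)]
tokens = image_to_patches(toy_image, patch_size=2)  # 4 tokens of dimension 4
outputs, attention = self_attention(tokens)
```

Each row of `attention` sums to 1 and spans all patches, which is the mechanism that lets a token draw on distant image regions in a single layer.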

The performance of ViTs in cancer detection has been remarkable across multiple modalities. In histopathology analysis, fine-tuned ViTs achieved 99.99% accuracy on the BreakHis dataset, while in medical image retrieval, ViT-based hashing methods reached MAP scores of 98.9% [4]. For breast ultrasound classification, specialized implementations like BU ViTNet utilizing multistage transfer learning have demonstrated performance comparable to or surpassing state-of-the-art CNNs [4]. The integration of self-supervised learning has further enhanced ViT utility by enabling pre-training on vast unlabeled medical image datasets, a significant advantage in oncology where annotated data is often scarce and costly to produce [4].

Specialized Architectures for Oncology Applications

Beyond general-purpose architectures, several specialized DL approaches have emerged to address unique challenges in cancer detection:

Generative Adversarial Networks (GANs) employ a dual-network structure with generators that create synthetic images and discriminators that distinguish real from generated images. In cancer research, GANs primarily address data scarcity through realistic synthetic data generation and image enhancement techniques such as virtual staining and mitotic cell detection [4] [3].

Clustering-constrained Attention Multiple Instance Learning (CLAM) is a specialized approach for analyzing Whole Slide Images (WSIs), which are high-resolution digital scans of tissue sections. CLAM is weakly supervised: it needs only slide-level labels rather than region-level annotations. It segments each WSI into patches, encodes them with a pre-trained CNN, and uses attention mechanisms to rank regions by their diagnostic importance [3]. This method is particularly valuable in histopathology, where detailed annotations are impractical because of the massive size of WSIs, which can exceed 100,000 × 100,000 pixels [3].
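The attention-based ranking of patches can be illustrated with a simplified attention-pooling sketch. It is not the CLAM implementation: CLAM uses a gated attention network and an additional clustering objective, whereas this example scores each patch with a single hypothetical weight vector (`score_weights`) and made-up two-dimensional embeddings.

```python
import math

def attention_pool(patch_embeddings, score_weights):
    """Weight patches by softmax attention and aggregate them into one slide vector."""
    # Raw attention score per patch: dot product with a (normally learned) weight vector.
    scores = [sum(e * w for e, w in zip(emb, score_weights)) for emb in patch_embeddings]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    attention = [e / total for e in exps]

    # Slide-level representation: attention-weighted sum of patch embeddings.
    dim = len(patch_embeddings[0])
    slide_embedding = [
        sum(a * emb[j] for a, emb in zip(attention, patch_embeddings)) for j in range(dim)
    ]
    # Patch indices ordered from most to least attended.
    ranking = sorted(range(len(attention)), key=lambda i: attention[i], reverse=True)
    return slide_embedding, attention, ranking

# Hypothetical embeddings for four patches of one slide.
patches = [[0.1, 0.0], [0.9, 0.2], [0.2, 0.1], [0.8, 0.9]]
slide_embedding, attention, ranking = attention_pool(patches, score_weights=[2.0, 1.0])
```

Only the slide-level label is needed to train such a model, because the loss is computed on the aggregated slide embedding; the attention weights then indicate which patches drove the prediction.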

Experimental Protocols and Methodologies

Standardized Workflow for AI-Based Cancer Detection

Implementing AI architectures for cancer detection follows a systematic experimental pipeline that ensures robustness and reproducibility. The following protocol outlines key methodological steps validated across multiple cancer types:

1. Data Acquisition and Curation: Source relevant medical images from public repositories (The Cancer Imaging Archive, Radiopaedia) or institutional databases. For multi-cancer classification studies, ensure representation across target cancer types (e.g., brain, breast, kidney, lung, oral, cervical cancers) [5]. Dataset sizes vary significantly, with studies utilizing between 1,000 and 3,000 images for model training and validation [5] [6].

2. Image Pre-processing: Apply standardized pre-processing techniques including grayscale conversion, noise reduction using median filters, and intensity normalization [6]. For bone cancer detection in CT images, median filters have demonstrated superior performance for noise reduction while preserving critical edge information [6].

3. Segmentation and Feature Extraction: Implement segmentation algorithms to isolate regions of interest. K-means clustering combined with Canny edge detection has proven effective for segmenting cancer regions in CT images [6]. Following segmentation, extract contour features including perimeter, area, and epsilon parameters to quantify morphological characteristics of potential malignancies [5].

4. Model Training with Cross-Validation: Partition datasets into training (70-80%), validation (10-20%), and testing (10-20%) subsets [5] [6]. Utilize transfer learning by initializing models with weights pre-trained on natural image datasets (e.g., ImageNet), then fine-tune on medical imaging data. Implement k-fold cross-validation to ensure robustness and mitigate overfitting.

5. Performance Evaluation: Assess model performance using comprehensive metrics including accuracy, precision, recall (sensitivity), F1-score, specificity, and area under the receiver operating characteristic curve (AUC-ROC) [7]. Compute 95% confidence intervals for key metrics to quantify uncertainty in performance estimates.
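For the evaluation step, a minimal sketch of the confusion-matrix metrics and a 95% confidence interval might look like the following. The labels and predictions are invented for illustration, and the Wilson score interval is one common choice for a proportion; the cited studies may have used other interval methods.

```python
import math

def binary_metrics(y_true, y_pred):
    """Confusion-matrix metrics for labels coded 1 (cancer) and 0 (normal).

    Assumes both classes are present and at least one positive prediction exists.
    """
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    precision = tp / (tp + fp)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "tp": tp, "tn": tn, "fp": fp, "fn": fn,
        "accuracy": (tp + tn) / len(y_true),
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95% coverage)."""
    if n == 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half

# Invented test-set labels and predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
metrics = binary_metrics(y_true, y_pred)
# Interval for sensitivity: true positives out of all actual positives.
sens_low, sens_high = wilson_interval(metrics["tp"], metrics["tp"] + metrics["fn"])
```

With only four positive cases, the interval around sensitivity is very wide, which illustrates why small test sets can make headline accuracy figures hard to interpret.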

Figure 2: AI Cancer Detection Workflow: This diagram illustrates the standardized experimental pipeline for implementing AI architectures in cancer detection research, from data acquisition through clinical deployment.

Experimental Protocol for Multi-Cancer Classification

A comprehensive study published in Scientific Reports (2024) detailed an experimental protocol for multi-cancer classification using histopathology images across seven cancer types: brain, oral, breast, kidney, Acute Lymphocytic Leukemia, lung and colon, and cervical cancer [5]. The methodology encompassed the following key stages:

Image Pre-processing and Segmentation:

- Converted all images to grayscale to standardize input format

- Applied Otsu binarization for initial segmentation

- Implemented noise removal algorithms to clean images

- Utilized watershed transformation for precise boundary detection

- Performed contour feature extraction computing parameters including perimeter, area, and epsilon
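Of the steps listed above, Otsu binarization is compact enough to sketch directly. The version below is a plain-Python illustration on a flat list of 8-bit grayscale values; it is an assumption about how the step could be implemented, not the study's code, and production pipelines would typically rely on an image-processing library instead.

```python
def otsu_threshold(pixels, levels=256):
    """Return the intensity t that maximizes between-class variance.

    Pixels with value <= t are treated as background, the rest as foreground.
    """
    histogram = [0] * levels
    for value in pixels:
        histogram[value] += 1

    total = len(pixels)
    sum_all = sum(level * count for level, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    weight_bg, sum_bg = 0, 0
    for t in range(levels):
        weight_bg += histogram[t]
        if weight_bg == 0:
            continue              # no background pixels yet
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break                 # every pixel is already background
        sum_bg += t * histogram[t]
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        between = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if between > best_variance:
            best_variance, best_threshold = between, t
    return best_threshold

# Invented grayscale values: a dark cluster (background) and a bright cluster (tissue).
pixels = [10] * 6 + [12] * 2 + [200] * 5 + [210] * 3
threshold = otsu_threshold(pixels)
mask = [value > threshold for value in pixels]  # True marks foreground pixels
```

The resulting binary mask is the kind of initial segmentation that later stages, such as watershed and contour extraction, would refine.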

Model Training and Evaluation:

- Evaluated ten CNN architectures: DenseNet121, DenseNet201, Xception, InceptionV3, MobileNetV2, NASNetLarge, NASNetMobile, InceptionResNetV2, VGG19, and ResNet152V2

- Employed transfer learning with models pre-trained on ImageNet

- Split datasets with standard partitioning: training (70-80%), validation (10-20%), testing (10-20%)

- Assessed performance using multiple metrics: accuracy, precision, recall, F1-score, RMSE, and loss

- Conducted statistical analysis to compare model performance across cancer types
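The dataset partitioning step can be sketched as a stratified split, which keeps the class proportions similar across the training, validation, and test subsets. The 70/15/15 fractions, the fixed seed, and the labels below are assumptions chosen from within the ranges quoted above, not the study's actual settings.

```python
import random
from collections import defaultdict

def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split sample indices into train/val/test while preserving class proportions."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(fractions[0] * len(indices))
        n_val = round(fractions[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Invented labels for 100 images.
labels = ["benign"] * 60 + ["malignant"] * 40
train_idx, val_idx, test_idx = stratified_split(labels)
```

For medical images, the split is usually best done at the patient level rather than the image level, so that images from one patient never appear in both training and test sets; this sketch would need patient identifiers to enforce that.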

This protocol established that DenseNet121 achieved superior performance with 99.94% validation accuracy, underscoring the effectiveness of densely connected architectures for complex multi-cancer classification tasks [5].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Computational Tools for AI Cancer Detection

| Resource Category | Specific Examples | Function in Research | Application Context |
|---|---|---|---|
| Public Image Datasets | BreakHis v1, Clear Cell Renal Cell Carcinoma dataset, Cancer Imaging Archive | Provides standardized datasets for model training and validation | Benchmarking algorithm performance across institutions [7] [3] |
| Pre-trained Models | ImageNet weights, Foundation Models (UNI, DINOV2), Prov-GigaPath | Transfer learning initialization, feature extraction | Reducing training time and computational requirements [7] |
| Annotation Tools | Whole Slide Imaging (WSI) platforms, Segmentation software | Enables data labeling for supervised learning | Creating ground truth datasets for model training [3] |
| Computational Frameworks | TensorFlow, PyTorch, Keras | Provides environment for model development and training | Implementing and customizing deep learning architectures [5] |
| Performance Metrics | Accuracy, AUC-ROC, Sensitivity, Specificity, F1-score | Quantifies model performance and clinical utility | Standardized reporting and comparison across studies [7] |

Challenges and Future Directions in AI Cancer Detection

Despite remarkable progress, several significant challenges impede the widespread clinical adoption of AI architectures for cancer detection. Model generalizability remains a persistent concern, as performance often diminishes when applied to external datasets from different institutions due to variations in population characteristics, imaging equipment, and acquisition protocols [4]. Additionally, issues of interpretability, data privacy, regulatory compliance, and potential algorithmic biases require concerted attention from the research community [4] [2].

Future advancements will likely focus on several key areas. Federated learning approaches enable model training across decentralized data sources without transferring sensitive patient information, addressing critical privacy concerns while expanding available training data [2]. Explainable AI (XAI) methodologies enhance model transparency by providing interpretable rationales for predictions, building clinician trust and facilitating regulatory approval [2]. The emergence of foundation models pre-trained on massive diverse datasets demonstrates exceptional generalization capabilities, with architectures like UNI achieving 95.5% accuracy in complex eight-class breast cancer classification tasks following fine-tuning [7]. Multimodal integration represents another promising frontier, combining imaging data with genomic, transcriptomic, proteomic, and clinical information to enable comprehensive cancer detection and risk stratification [1].
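The federated learning idea mentioned above can be illustrated with the aggregation step of federated averaging (FedAvg), one widely used scheme: each site trains locally and shares only model parameters, which a coordinator averages in proportion to local dataset size. The three sites, their parameter vectors, and their sample counts are hypothetical, and a real deployment would add secure communication and repeated training rounds.

```python
def federated_average(client_weights, client_sizes):
    """Average parameter vectors across sites, weighted by local sample counts."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(weights[j] * size for weights, size in zip(client_weights, client_sizes)) / total
        for j in range(n_params)
    ]

# Hypothetical two-parameter models trained at three hospitals.
site_weights = [[0.2, 1.0], [0.4, 0.0], [0.6, 2.0]]
site_sizes = [100, 300, 600]  # number of local training cases per site
global_weights = federated_average(site_weights, site_sizes)
```

Only `site_weights` and `site_sizes` leave each institution in this scheme; the patient-level images and records stay where they were collected.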

As these architectures evolve, rigorous validation through multi-site prospective trials, standardized reporting frameworks, and ongoing monitoring for algorithmic drift will be essential to ensure sustained safety, efficacy, and equity in AI-enabled cancer detection systems [4]. The continued collaboration between AI researchers, clinical oncologists, and drug development professionals will ultimately determine the translational impact of these transformative technologies on patient outcomes across the cancer care continuum.

The integration of artificial intelligence (AI) into oncology represents a paradigm shift in cancer research and clinical practice. AI's capacity to analyze complex, high-dimensional data is particularly suited to addressing the challenges of early cancer detection, where subtle patterns often elude conventional analysis [1]. The efficacy of these AI-driven approaches hinges on the sophisticated fusion of three core data modalities: medical imaging, genomics, and clinical records. Individually, each modality provides a unique window into the disease; together, they offer a comprehensive view of a patient's health status, enabling the development of robust predictive models [9] [10]. This whitepaper provides an in-depth technical examination of these foundational data types, detailing their individual characteristics, the methodologies for their integration, and their collective power in advancing AI for early cancer detection. Framed within the context of a broader thesis on AI for oncology, this guide is structured to equip researchers and drug development professionals with a clear understanding of the current landscape, practical experimental protocols, and the essential tools required to drive innovation in this rapidly evolving field.

Core Data Modalities in AI-Driven Oncology

The successful application of AI in early cancer detection relies on the strategic acquisition and processing of diverse data types. These modalities provide complementary biological information, and their integration is key to overcoming the limitations of any single source.

Medical Imaging

Medical imaging provides non-invasive, high-resolution anatomical and functional information critical for locating and characterizing tumors. AI, particularly deep learning models like Convolutional Neural Networks (CNNs), has demonstrated exceptional proficiency in analyzing these images [1].

Data Sources and AI Applications: Common imaging modalities include X-ray mammography for breast cancer, low-dose computed tomography (LDCT) for lung cancer, and MRI and ultrasound for various solid tumors. AI applications are diverse, encompassing automated tumor detection (computer-aided detection, or CADe), segmentation, classification of malignancy (computer-aided diagnosis, or CADx), and prediction of treatment response [11] [1]. For instance, a deep learning system developed for lung cancer screening demonstrated accuracy matching or exceeding expert radiologists in detecting early-stage malignancies from LDCT scans [11].

Quantitative Imaging (Radiomics): Beyond visual assessment, the field of radiomics uses computational methods to extract hundreds of quantitative features from standard medical images. These features, which describe tumor intensity, shape, and texture, can reveal patterns of tumor heterogeneity that are invisible to the human eye. AI models leverage these radiomic features to predict molecular subtypes, gene mutations, and patient prognosis, thereby bridging anatomical imaging with underlying tumor biology [11].
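As a rough illustration of what a radiomic feature is, the sketch below computes a few first-order intensity statistics from the pixel values inside a region of interest. It is a simplified stand-in written for this example: it does not reproduce the API or the exact feature definitions of any radiomics package, and the bin count and ROI values are assumptions.

```python
import math
from collections import Counter

def first_order_features(roi, n_bins=8):
    """Simple intensity statistics for a flat list of ROI pixel values."""
    n = len(roi)
    mean = sum(roi) / n
    variance = sum((x - mean) ** 2 for x in roi) / n
    low, high = min(roi), max(roi)
    # Fixed-count binning; fall back to width 1.0 when all values are identical.
    width = (high - low) / n_bins or 1.0
    counts = Counter(min(int((x - low) / width), n_bins - 1) for x in roi)
    # Shannon entropy of the binned intensity histogram (higher = more heterogeneous).
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {"mean": mean, "variance": variance, "range": high - low, "entropy": entropy}

# Invented ROIs: one uniform lesion and one with widely varying intensities.
homogeneous_roi = [100] * 16
heterogeneous_roi = [10, 50, 90, 130, 170, 210, 250, 30,
                     70, 110, 150, 190, 230, 20, 60, 100]
homogeneous = first_order_features(homogeneous_roi)
heterogeneous = first_order_features(heterogeneous_roi)
```

Shape and texture features follow the same pattern, a deterministic formula applied to the segmented region, and the resulting feature vector becomes the tabular input to a downstream model.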

Genomics

Genomic data reveals the molecular blueprint of cancer, detailing the somatic and germline mutations, gene expression patterns, and other molecular alterations that drive carcinogenesis. The analysis of this data is central to precision oncology.

Data Sources and Technologies: Next-Generation Sequencing (NGS) is the primary technology, enabling the high-throughput analysis of DNA and RNA. Common data types include:

- Whole Genome/Exome Sequencing: Identifies mutations across the entire genome or protein-coding regions.

- Targeted Gene Panels: Focuses on a curated set of cancer-related genes for efficient clinical profiling [12].

- RNA Sequencing: Reveals gene expression levels, which can be used for cancer subtyping.

AI Applications in Genomics: Machine learning algorithms are used to distinguish driver mutations from passenger mutations, classify cancer subtypes based on gene expression, and predict therapeutic susceptibility. Broadly consented genomic reference resources, such as the sequencing data for a pancreatic cancer cell line released by the National Institute of Standards and Technology (NIST), provide critical material for training and validating these AI models [13]. Furthermore, AI-powered comprehensive genomic profiling panels are becoming mainstream in oncology, allowing clinicians to routinely use information from hundreds of genes to guide diagnosis and treatment [12].

Clinical Records

Clinical records encompass the longitudinal data collected during patient care, providing essential context for imaging and genomic findings. This modality includes structured data (e.g., lab values, vitals, prescribed treatments) and unstructured data (e.g., pathology reports, physician notes).

- Data Complexity and NLP: The unstructured nature of clinical text requires sophisticated Natural Language Processing (NLP) techniques for information extraction. Modern AI, including Large Language Models (LLMs), can mine these records to identify patients at high risk for cancer, extract key biomarkers (e.g., estrogen receptor status from pathology reports), and integrate disparate data points to form a comprehensive patient profile [9] [1]. This helps flag individuals for earlier screening and provides a richer dataset for predictive modeling.
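As a deliberately simple baseline for the biomarker-extraction task mentioned above, a rule-based pattern can pull estrogen receptor status from report text. This is a sketch, not a clinical tool: the pattern, the 40-character window, and the sample sentences are assumptions, and the NLP and LLM approaches described in the text exist precisely because rules like this miss negation, hedging, and varied phrasing.

```python
import re

# Match "estrogen receptor" or the standalone abbreviation "ER", then the nearest
# "positive"/"negative" within the same sentence (at most 40 characters away).
ER_PATTERN = re.compile(
    r"\b(?:estrogen receptor|ER)\b[^.\n]{0,40}?\b(positive|negative)\b",
    re.IGNORECASE,
)

def extract_er_status(report_text):
    """Return 'positive', 'negative', or None when no ER statement is found."""
    match = ER_PATTERN.search(report_text)
    return match.group(1).lower() if match else None

# Invented report snippets.
report_a = "Invasive ductal carcinoma. Estrogen receptor: POSITIVE (95% of cells)."
report_b = "ER is strongly negative by IHC. HER2 equivocal."
report_c = "No hormone receptor studies were performed."

status_a = extract_er_status(report_a)
status_b = extract_er_status(report_b)
status_c = extract_er_status(report_c)
```

A phrase such as "ER not positive" would be misread by this pattern, which is the kind of failure that motivates context-aware models for clinical text.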

Table 1: Core Data Modalities for AI in Cancer Detection

| Modality | Key Data Types | Primary AI Techniques | Main Applications in Cancer Detection |
|---|---|---|---|
| Medical Imaging | Mammograms, CT, MRI, PET, digital pathology slides | Deep Learning (CNNs), Radiomics | Tumor detection, segmentation, classification, treatment response monitoring |
| Genomics | DNA Sequence (WGS, Gene Panels), RNA Expression (RNA-Seq) | Machine Learning (ML), Deep Learning (RNNs, Transformers) | Mutation identification, cancer subtyping, biomarker discovery, predicting drug response |
| Clinical Records | Lab results, pathology reports, physician notes, medication history | Natural Language Processing (NLP), Large Language Models (LLMs) | Risk stratification, data integration for holistic profiling, outcome prediction |

Multimodal Data Fusion: Methodologies and Experimental Protocols

A primary challenge and opportunity in AI-driven oncology is the effective integration of the core data modalities. This process, known as multimodal data fusion, is where the most significant gains in predictive accuracy are often realized.

Fusion Strategies

The strategy for combining data profoundly impacts model performance and is typically categorized into three levels, with late fusion showing particular promise for heterogeneous biomedical data [10].

Diagram: Multimodal data fusion strategies for AI in oncology. Early fusion combines raw data, intermediate fusion integrates processed features, and late fusion aggregates predictions from separate models.

- Early Fusion (Data-Level): Raw data from different modalities are combined into a single input vector before being fed into a machine learning model. This approach can capture complex, low-level interactions but is highly susceptible to the "curse of dimensionality" and overfitting, especially with high-dimensional genomic or imaging data [10].

- Intermediate Fusion (Feature-Level): This strategy involves extracting features from each modality separately and then combining these feature sets into a joint representation before the final prediction layer. It offers a balance, allowing the model to learn cross-modal interactions while mitigating some dimensionality challenges.

- Late Fusion (Decision-Level): Separate AI models are trained independently on each data modality. Their predictions are then combined, often using a meta-learner, to produce a final output. This approach is highly robust, as it avoids diluting strong unimodal signals with noisy or high-dimensional data from other modalities. Research has shown that late fusion models consistently outperform single-modality approaches in tasks like overall survival prediction [10].
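The difference between data-level and decision-level fusion can be shown with a short sketch. Early fusion concatenates per-modality feature vectors into one model input, while late fusion combines per-modality predictions. The text describes a trained meta-learner for the latter; as a simpler stand-in, this example weights each unimodal prediction by a hypothetical validation score. All feature values, predictions, and scores are invented.

```python
def early_fusion(*modality_features):
    """Data-level fusion: concatenate feature vectors into one input vector."""
    return [value for features in modality_features for value in features]

def late_fusion(unimodal_predictions, validation_scores):
    """Decision-level fusion: average predictions, weighted by validation performance.

    A fixed weighting is used here in place of a trained meta-learner.
    """
    total = sum(validation_scores[m] for m in unimodal_predictions)
    return sum(
        unimodal_predictions[m] * validation_scores[m] / total
        for m in unimodal_predictions
    )

# Invented per-patient inputs.
imaging_features = [0.12, 0.80, 0.33]
genomic_features = [1.0, 0.0, 0.0, 1.0]
clinical_features = [63.0, 1.0]
joint_input = early_fusion(imaging_features, genomic_features, clinical_features)

# Invented risk predictions from three separately trained models,
# and each model's (hypothetical) validation score.
predictions = {"imaging": 0.8, "genomics": 0.6, "clinical": 0.4}
scores = {"imaging": 0.7, "genomics": 0.6, "clinical": 0.7}
fused_risk = late_fusion(predictions, scores)
```

The early-fusion vector grows with every added modality, which is where the dimensionality problem arises, whereas the late-fusion input stays at one value per modality regardless of how many raw features each model consumed.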

Detailed Experimental Protocol for Multimodal Fusion

The following protocol, based on contemporary research, outlines a standardized pipeline for developing and validating a late fusion model for cancer patient survival prediction [10]. This serves as a template that can be adapted for other objectives like early detection.

Table 2: Experimental Protocol for Multimodal Survival Prediction

| Stage | Action | Details & Techniques |
|---|---|---|
| 1. Data Curation | Acquire multimodal data from cohorts like TCGA. | Collect transcripts, protein data, metabolites, and clinical factors for a specific cancer type (e.g., lung, breast). |
| 2. Preprocessing & Imputation | Clean and normalize each modality; handle missing data. | Apply modality-specific normalization (e.g., for gene expression); use imputation methods for missing clinical values. |
| 3. Feature Selection | Perform dimensionality reduction on each modality. | Use linear (Pearson) or monotonic (Spearman) correlation with the outcome (e.g., survival time) to select top features. |
| 4. Unimodal Model Training | Train a separate predictive model on each modality's features. | Use ensemble survival models like Gradient Boosting or Random Forests, which are effective for tabular omics data. |
| 5. Late Fusion | Combine predictions from all unimodal models. | Use a meta-learner (e.g., a linear model) to integrate the predictions and generate a final, robust survival risk score. |
| 6. Validation | Rigorously evaluate model performance. | Use multiple random train/test splits; report C-index with confidence intervals; compare against unimodal baselines. |
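The C-index used in the validation stage measures how often a model ranks patients correctly: among comparable pairs, the patient who died earlier should have the higher predicted risk. A minimal sketch of Harrell's concordance index follows; the survival times, event indicators, and risk scores are invented, and pairs with tied survival times are simply skipped here.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index for right-censored survival data.

    events[i] is 1 when the event (e.g., death) was observed and 0 when censored.
    A pair (i, j) is comparable when i had an observed event before time j.
    """
    concordant, comparable = 0.0, 0
    n = len(times)
    for i in range(n):
        if events[i] != 1:
            continue  # a censored patient cannot be the earlier member of a pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0   # earlier event had the higher predicted risk
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5   # tied predictions count as half
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Invented cohort: survival time in months, event indicator, predicted risk.
times = [5, 10, 15, 20]
events = [1, 1, 0, 1]
risk_scores = [0.9, 0.4, 0.5, 0.1]
c_index = concordance_index(times, events, risk_scores)
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking; repeating the calculation across multiple random train/test splits gives the spread needed for a confidence interval.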

The Scientist's Toolkit: Essential Research Reagents and Materials

To implement the experimental protocols outlined, researchers require access to specific datasets, computational tools, and analytical pipelines. The following table details key resources that constitute the essential toolkit for work in this domain.

Table 3: Research Reagent Solutions for AI Oncology

| Resource Category | Item | Function / Application |
|---|---|---|
| Reference Datasets | The Cancer Genome Atlas (TCGA) | Provides curated, multi-platform molecular data (genomics, transcriptomics, epigenomics) and clinical data for over 20,000 primary cancers across 33 cancer types. Essential for training and validating models [10]. |
| Reference Datasets | NIST "Genome in a Bottle" Cancer Cell Line | Provides a deeply sequenced, broadly consented pancreatic cancer cell line (with matched normal). Serves as a gold-standard reference for benchmarking genomic sequencing platforms and AI mutation-calling algorithms [13]. |
| Analytical Pipelines | AZ-AI Multimodal Pipeline | A Python library for multimodal feature integration and survival prediction. It provides functionalities for preprocessing, dimensionality reduction, and training/evaluating survival models with various fusion strategies [10]. |
| Analytical Pipelines | Radiomics Software (e.g., PyRadiomics) | Enables the extraction of a large number of quantitative features from medical images, which can be used as inputs for AI models to predict clinical outcomes [11]. |
| Instrumentation & Assays | EXPLORER Total-Body PET Scanner | A first-of-its-kind platform that enables dynamic imaging with unprecedented sensitivity. Used to validate novel AI-driven imaging techniques like PET-enabled Dual-Energy CT [14]. |
| Instrumentation & Assays | Handheld Raman Spectrometer | Used in research to acquire molecular spectroscopic data (e.g., SERS spectra from pleural effusions) that can be fused with clinical biomarkers for cancer detection in liquid biopsies [15]. |
| Biomarkers | Serum Carcinoembryonic Antigen (CEA) | A common clinical tumor marker. In research settings, its quantitative values can be digitally merged with other data types (e.g., spectral data) in a mid-level fusion strategy to improve diagnostic accuracy for lung cancer [15]. |

Experimental Workflow: From Data to Diagnostic Insight

The journey from raw data to a validated AI model involves a series of critical, interconnected steps. The following diagram maps this workflow, highlighting the parallel processing of different data modalities and their ultimate fusion.

Diagram: AI workflow for multi-modal data fusion in oncology. The process involves parallel processing of imaging, genomic, and clinical data, followed by model training and late-stage fusion to generate clinical insights.

The confluence of medical imaging, genomics, and clinical records provides the foundational substrate for the next generation of AI tools in early cancer detection. As this whitepaper has detailed, the power of these modalities is not merely additive but multiplicative when integrated through sophisticated fusion strategies like late fusion, which has been shown to yield more accurate and robust predictions than single-source models [10]. The field is supported by a growing ecosystem of high-quality reference data, such as the consented genomes from NIST, and versatile computational pipelines that enable rigorous development and testing [10] [13]. For researchers and drug developers, the path forward requires a concerted focus on overcoming challenges related to data quality, standardization, and model interpretability. By systematically harnessing the complementary strengths of each data modality through the methodologies and tools outlined herein, the research community can accelerate the translation of AI from a research novelty to a clinical reality, ultimately fulfilling the promise of precise, proactive, and personalized cancer care.

Cancer remains one of the most pressing public health challenges worldwide, and its incidence continues to rise. Current statistics reveal the sobering scale of this disease: in the United States alone, approximately 2.0 million people will be diagnosed with cancer in 2025, and an estimated 618,120 will die of the disease [16]. The global outlook is equally concerning, with projections estimating 35 million cases by 2050, representing a 47% increase from 2020 figures [17] [18]. This escalating burden underscores the critical limitations of conventional diagnostic approaches and the urgent need for innovative solutions that can transform cancer detection paradigms.

The most prevalent cancer types highlight the diverse diagnostic challenges facing clinicians and researchers. As shown in Table 1, breast cancer leads in incidence with 319,750 new cases expected in 2025, followed closely by prostate cancer (313,780 cases) and then by lung and bronchus cancer (226,650 cases) [16]. Despite having the third-highest incidence, lung and bronchus cancer is responsible for the most deaths (124,730), more than double the mortality of colorectal cancer (52,900 deaths), the second deadliest cancer [16]. This disparity between incidence and mortality for specific cancer types points to significant shortcomings in early detection capabilities, particularly for malignancies with non-specific early symptoms or inaccessible anatomical locations.

Table 1: Projected US Cancer Incidence and Mortality for 2025 (Top Cancers)

| Cancer Site | Estimated New Cases | Estimated Deaths | 5-Year Relative Survival (%) |
|---|---|---|---|
| Breast | 319,750 | 42,680 | 91.6 |
| Prostate | 313,780 | 35,770 | 97.9 |
| Lung & Bronchus | 226,650 | 124,730 | 28.1 |
| Colorectum | 154,270 | 52,900 | 65.4 |
| Pancreas | 67,440 | 51,980 | 13.3 |
| Bladder | 84,870 | 17,420 | 79.0 |

Source: SEER Cancer Stat Facts (2025) [16]
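The gap between incidence and mortality in Table 1 can be made concrete with a little arithmetic. The sketch below computes a crude deaths-per-100-new-cases ratio from the table values. This is only an illustration: projected deaths in a given year largely arise from cases diagnosed in earlier years, so the ratio is not a case-fatality rate. The function and variable names are illustrative choices, not part of the cited source.

```python
# Figures copied from Table 1 (projected US values for 2025): (new cases, deaths).
TABLE_1 = {
    "Breast": (319_750, 42_680),
    "Prostate": (313_780, 35_770),
    "Lung & Bronchus": (226_650, 124_730),
    "Colorectum": (154_270, 52_900),
    "Pancreas": (67_440, 51_980),
    "Bladder": (84_870, 17_420),
}


def deaths_per_100_cases(new_cases: int, deaths: int) -> float:
    """Crude ratio of projected deaths to projected new cases, per 100 cases."""
    return 100.0 * deaths / new_cases


ratios = {site: deaths_per_100_cases(*counts) for site, counts in TABLE_1.items()}

# How many times larger lung mortality is than colorectal mortality.
lung_vs_colorectal_deaths = TABLE_1["Lung & Bronchus"][1] / TABLE_1["Colorectum"][1]

# Print sites from the highest to the lowest crude ratio.
for site, ratio in sorted(ratios.items(), key=lambda item: item[1], reverse=True):
    print(f"{site:<16} {ratio:5.1f} deaths per 100 new cases")
print(f"Lung vs colorectal deaths: {lung_vs_colorectal_deaths:.2f}x")
```

On this crude measure, pancreatic cancer ranks first and lung and bronchus cancer second, which matches the low five-year survival figures in the last column of the table.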

The limitations of current diagnostic methodologies are particularly evident for certain high-mortality cancers. Pancreatic cancer, with a devastating five-year survival rate of just 13.3%, exemplifies this critical need for innovation [16]. Traditional detection methods often identify these cancers only at advanced stages, when treatment options are limited and less effective. Similarly, liver cancer maintains a persistently low survival rate of 22.0%, further highlighting the inadequacy of existing diagnostic paradigms [16]. These statistics collectively frame an urgent mandate for the oncology research community: to develop and implement next-generation diagnostic technologies capable of detecting cancer at its earliest, most treatable stages.

Limitations of Current Diagnostic Modalities

Conventional cancer detection methods face significant constraints that impact their effectiveness across the cancer continuum. Standard approaches including tissue biopsy, medical imaging, and laboratory tests each present distinct limitations that contribute to diagnostic delays, invasive procedures, and missed early detection opportunities.

Tissue biopsy, long considered the diagnostic gold standard, presents several critical limitations. As an invasive procedure, it carries inherent risks including bleeding, infection, and patient discomfort. From a diagnostic perspective, biopsies suffer from sampling bias, where the collected tissue may not represent the full heterogeneity of a tumor [18]. This is particularly problematic for complex or heterogeneous cancers where molecular characteristics vary significantly across different tumor regions. Additionally, tissue biopsies are anatomically constrained, making them unsuitable for repeated monitoring or for cancers in surgically challenging locations.

Medical imaging technologies including MRI, CT, and mammography have revolutionized cancer detection but face their own constraints. Image interpretation remains subjective, and the resulting inter-observer variability can undermine diagnostic consistency. Slide-based analysis is similarly labor-intensive: current state-of-the-art methods require trained specialists to manually review thousands to millions of cells on a slide, a process that can take many hours and introduces human fatigue and variability into the diagnostic equation [19]. Liquid biopsies, which detect cancer cells or DNA circulating in blood, offer a promising alternative, but even these modern approaches have traditionally required extensive human intervention and expertise [19].

The workflow challenges in conventional cancer diagnostics are substantial and multifaceted. The process typically involves sequential assessment steps that create significant time delays between initial suspicion and confirmed diagnosis. The resource-intensive nature of these procedures, requiring specialized equipment and highly trained personnel, further limits their scalability and accessibility, particularly in resource-constrained settings. These limitations collectively represent a critical innovation gap that artificial intelligence is uniquely positioned to address through automated, rapid, and highly accurate diagnostic solutions.

AI-Driven Innovations in Cancer Diagnostics

Artificial intelligence is fundamentally transforming cancer diagnostics through novel approaches that overcome the limitations of conventional methodologies. These innovations span multiple domains, from image analysis to genomic interpretation, offering unprecedented capabilities for early detection and accurate diagnosis.

Advanced Algorithmic Approaches

The RED (Rare Event Detection) algorithm represents a novel approach to liquid biopsy analysis. Developed by researchers at USC, this AI tool automates the detection of cancer cells in blood samples in as little as 10 minutes, compared with the many hours required by manual review [19]. Unlike traditional computational tools that require human intervention and rely on known features of cancer cells, RED uses a deep learning approach to identify unusual patterns without prior knowledge of what the "needle" in the haystack looks like [19]. The algorithm ranks cells by rarity, allowing the most unusual findings to rise to the top for further investigation. In validation, this method detected 99% of added epithelial cancer cells and 97% of added endothelial cells while reducing the data requiring human review by a factor of 1,000 [19].

Another significant innovation comes from the field of multi-modal imaging integration. The Adaptive Multi-Resolution Imaging Network (AMRI-Net) framework incorporates advanced capabilities for analyzing medical images across different resolutions and modalities [20]. Combined with the Explainable Domain-Adaptive Learning (EDAL) strategy, this approach enhances domain generalizability while providing interpretable results that build clinical trust—a critical factor in healthcare adoption. Experimental results demonstrate the framework's exceptional performance, achieving classification accuracies up to 94.95% and F1-Scores up to 94.85% across multi-modal medical imaging datasets [20].

Integration of Multi-Modal Data

AI systems excel at integrating diverse data types that traditionally exist in separate diagnostic silos. Modern AI models can simultaneously process radiological images, pathological slides, genomic data, and clinical records to generate comprehensive diagnostic assessments [18]. This integrated approach enables more accurate and holistic cancer profiling than single-modality analysis.

Deep learning architectures are particularly suited for this multi-modal challenge. Convolutional Neural Networks (CNNs) extract spatial features from imaging data, while transformer models and recurrent neural networks handle sequential data such as genomic sequences and clinical notes [17] [18]. Graph neural networks further extend these capabilities by capturing spatial relationships across regions of interest, providing broader context over entire images and tissue samples [18].

Table 2: AI Model Applications in Cancer Diagnostics

| AI Model Type | Primary Data Modalities | Diagnostic Applications | Key Advantages |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Radiology images, histopathology slides | Tumor detection, segmentation, and grading | Superior spatial pattern recognition; automated feature extraction |
| Transformer Models | Genomic sequences, clinical notes | Biomarker discovery, EHR mining | Captures long-range dependencies in sequential data |
| Graph Neural Networks (GNNs) | Spatial omics, tissue morphology | Tumor microenvironment analysis, cancer subtyping | Models complex spatial relationships between biological entities |
| Large Language Models (LLMs) | Scientific literature, clinical text | Hypothesis generation, trial matching, data extraction | Processes unstructured text; accelerates knowledge synthesis |
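One simple way to combine the outputs of modality-specific models such as those in Table 2 is late fusion, in which each model scores a patient independently and the scores are merged afterwards. The sketch below is a minimal illustration of weighted late fusion, assuming each model has already produced a malignancy probability. The function name, modality keys, probabilities, and weights are all hypothetical, and it is not the fusion scheme of any specific cited system.

```python
def late_fusion(probabilities, weights=None):
    """Weighted average of per-modality malignancy probabilities.

    `probabilities` maps a modality name to that model's predicted probability.
    Only modalities present in `probabilities` contribute, so a patient with a
    missing modality can still be scored from the remaining ones.
    """
    if weights is None:
        weights = {modality: 1.0 for modality in probabilities}
    total_weight = sum(weights[modality] for modality in probabilities)
    weighted_sum = sum(probabilities[m] * weights[m] for m in probabilities)
    return weighted_sum / total_weight


# Hypothetical per-modality outputs for a single patient.
per_modality = {"imaging": 0.80, "genomics": 0.60, "clinical": 0.40}

fused_equal = late_fusion(per_modality)
fused_weighted = late_fusion(
    per_modality, weights={"imaging": 2.0, "genomics": 1.0, "clinical": 1.0}
)
print(f"equal weights: {fused_equal:.2f}, imaging-weighted: {fused_weighted:.2f}")
```

In practice the weights would usually be learned or tuned on a validation set rather than fixed by hand, and the individual models would need to be calibrated before their probabilities are averaged.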

Enhanced Diagnostic Accuracy and Efficiency

In several published evaluations, AI-driven diagnostic tools have matched or exceeded the performance of human readers. In breast cancer detection, an ensemble of three deep learning models applied to mammography data improved on human readers, with absolute sensitivity gains of 2.7 percentage points in UK data and 9.4 percentage points in US data, alongside specificity gains of 1.2 and 5.7 percentage points, respectively [17]. Similarly, a progressively trained RetinaNet with multi-scale prediction for digital breast tomosynthesis demonstrated a 14.2% absolute increase in detection sensitivity at average reader specificity [17].

These improvements extend beyond raw accuracy metrics to encompass critical workflow enhancements. AI systems can process and analyze data orders of magnitude faster than human experts, dramatically reducing the time between sample collection and diagnostic reporting. This acceleration enables earlier intervention and treatment initiation, particularly valuable for aggressive cancer types where time is critical. Furthermore, AI systems maintain consistent performance without suffering from fatigue or cognitive biases that can affect human diagnosticians, especially during extended review sessions.
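The reader-comparison figures in this section are absolute differences in sensitivity and specificity. As a reminder of how such differences are derived, the sketch below computes both metrics from confusion-matrix counts and reports the gain in percentage points. The counts are hypothetical and chosen only to make the arithmetic easy to follow; they are not taken from the cited studies.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """Share of cancers that were correctly flagged."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """Share of cancer-free exams that were correctly cleared."""
    return true_neg / (true_neg + false_pos)


def point_gain(model_rate: float, reader_rate: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return 100.0 * (model_rate - reader_rate)


# Hypothetical counts on the same 100 cancers and 1,000 cancer-free exams.
reader = {"tp": 80, "fn": 20, "tn": 900, "fp": 100}
model = {"tp": 85, "fn": 15, "tn": 920, "fp": 80}

sens_gain = point_gain(
    sensitivity(model["tp"], model["fn"]), sensitivity(reader["tp"], reader["fn"])
)
spec_gain = point_gain(
    specificity(model["tn"], model["fp"]), specificity(reader["tn"], reader["fp"])
)
print(f"sensitivity gain: {sens_gain:+.1f} points")
print(f"specificity gain: {spec_gain:+.1f} points")
```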

Experimental Protocols and Methodologies

The validation of AI-driven cancer diagnostics requires rigorous experimental frameworks and standardized methodologies. This section details key protocols from groundbreaking studies, providing researchers with reproducible templates for further innovation.

Rare Event Detection in Liquid Biopsies

The RED algorithm validation followed a comprehensive experimental protocol to establish its diagnostic capabilities [19]:

Sample Preparation and Data Acquisition:

- Collected blood samples from patients with advanced breast cancer and from healthy controls

- Spiked normal blood samples with known quantities of epithelial and endothelial cancer cells

- Prepared standard blood smears on slides for imaging

- Acquired high-resolution digital images of entire slides, capturing millions of cells per sample

Algorithm Training and Validation:

- Implemented a deep learning architecture based on outlier detection principles

- Trained the model using a contrastive learning approach to identify unusual cellular patterns

- Employed two validation strategies:

  - Testing on blood samples from known patients with advanced breast cancer

  - Testing on contrived samples with added cancer cells in normal blood

- Compared algorithm performance against standard pathological review by trained specialists

Performance Metrics and Analysis:

- Calculated sensitivity and specificity for detecting cancer cells

- Measured data reduction factor indicating how much data required human review

- Compared cell detection rates between RED and conventional analysis methods

- Assessed processing time from sample to result

This methodology established that RED could identify 99% of added epithelial cancer cells and 97% of added endothelial cells while reducing the data requiring human review by a factor of 1,000 and finding twice as many interesting cells as conventional approaches [19].
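The idea of ranking cells by rarity and passing only the top of the list to a human reviewer can be illustrated with a much simpler stand-in. The sketch below is not the RED algorithm, which relies on a deep learning model. It assumes each cell has already been summarized as a small numeric feature vector and scores rarity as the sum of squared z-scores across features. All names and the toy data are hypothetical.

```python
from statistics import mean, pstdev


def rarity_scores(features):
    """Score each cell by its summed squared z-scores across all features."""
    columns = list(zip(*features))
    centers = [mean(column) for column in columns]
    # Fall back to 1.0 when a feature has zero spread, to avoid dividing by zero.
    spreads = [pstdev(column) or 1.0 for column in columns]
    return [
        sum(((x - c) / s) ** 2 for x, c, s in zip(row, centers, spreads))
        for row in features
    ]


def top_rare(features, k):
    """Return the indices of the k most unusual cells, most unusual first."""
    scores = rarity_scores(features)
    order = sorted(range(len(features)), key=lambda i: scores[i], reverse=True)
    return order[:k]


# Toy data: four similar cells and one clear outlier at index 4.
cells = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.05], [5.0, 4.0]]
shortlist = top_rare(cells, k=1)
reduction_factor = len(cells) / len(shortlist)
print(f"cells for review: {shortlist}, data reduction: {reduction_factor:.0f}x")
```

The data reduction factor reported in the protocol corresponds to the ratio computed on the last lines: the number of cells imaged divided by the number that still need human review.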

Multi-Modal Imaging Integration

The AMRI-Net and EDAL framework development followed a rigorous experimental protocol [20]:

Dataset Curation and Preprocessing:

- Collected multi-modal medical imaging datasets including X-rays, CT, and MRI scans

- Incorporated both radiology and pathology images for integrated analysis

- Applied standardized preprocessing including normalization and augmentation

- Established ground truth labels through consensus reading by multiple specialists

Model Architecture and Training:

- Implemented AMRI-Net with multi-resolution feature extraction branches

- Incorporated attention-guided fusion mechanisms to integrate features across scales

- Designed task-specific decoders for different diagnostic outcomes

- Applied EDAL strategy with domain alignment techniques

- Integrated uncertainty-aware learning to prioritize high-confidence predictions

- Employed transfer learning from larger non-medical image datasets

Validation and Interpretation:

- Conducted comprehensive experiments on multi-modal medical imaging datasets

- Compared performance against state-of-the-art baselines

- Evaluated domain adaptation capabilities across different imaging devices and protocols

- Applied attention-based interpretability tools to highlight critical image regions

- Assessed clinical utility through collaboration with practicing radiologists and pathologists

This rigorous methodology resulted in classification accuracies reaching 94.95% and F1-Scores up to 94.85%, while providing transparent, interpretable results for clinical decision-making [20].
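The protocol above mentions attention-guided fusion of features extracted at several resolutions. The published AMRI-Net design is not reproduced here. The sketch below only illustrates the general idea under simple assumptions: each resolution branch yields a feature vector of equal length plus a scalar relevance score, the scores are turned into weights with a softmax, and the fused representation is the weighted sum. All names and numbers are hypothetical.

```python
import math


def softmax(scores):
    """Convert raw relevance scores into weights that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(branch_features, branch_scores):
    """Fuse equal-length feature vectors using softmax attention weights."""
    weights = softmax(branch_scores)
    dim = len(branch_features[0])
    fused = [
        sum(w * features[d] for w, features in zip(weights, branch_features))
        for d in range(dim)
    ]
    return fused, weights


# Hypothetical features from low-, mid-, and high-resolution branches.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
fused_uniform, w_uniform = attention_fuse(features, [0.0, 0.0, 0.0])
fused_peaked, w_peaked = attention_fuse(features, [0.0, 0.0, 5.0])
print(w_uniform, fused_uniform)
print(w_peaked, fused_peaked)
```

The attention weights themselves offer a coarse form of interpretability, since they indicate which resolution contributed most to a given prediction.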

The Scientist's Toolkit: Research Reagent Solutions

Implementing AI-driven cancer diagnostics requires specialized reagents, computational tools, and data resources. This section details essential research solutions that enable the development and validation of innovative diagnostic approaches.

Table 3: Essential Research Reagents and Resources for AI-Enhanced Cancer Diagnostics

| Resource Category | Specific Tools/Reagents | Research Application | Key Features |
|---|---|---|---|
| Algorithmic Frameworks | RED (Rare Event Detection) | Liquid biopsy analysis | Identifies unusual cellular patterns without predefined features; processes samples in ~10 minutes |
| Integrated AI Models | AMRI-Net with EDAL | Multi-modal image integration | Combines multi-resolution feature extraction with explainable domain adaptation; achieves 94.95% accuracy |
| Data Resources | fastMRI Dataset | AI-driven image reconstruction | Large open-source collection of deidentified MRI data for algorithm development and validation |
| Genomic Analysis | DeepHRD | HRD detection from biopsy slides | Deep learning tool detects homologous recombination deficiency; 3x more accurate than current tests |
| Clinical Validation | Prov-GigaPath, Owkin Models | Cancer detection imaging | Validated AI models for biomarker identification and cancer subtyping from pathological images |
| Liquid Biopsy | Targeted Methylation Analysis | Multi-cancer early detection | ML-based analysis of cell-free DNA for detecting and localizing multiple cancer types with high specificity |

These research tools collectively enable the development of comprehensive AI-driven diagnostic systems. The RED algorithm addresses the critical challenge of rare cell detection in liquid biopsies, while frameworks like AMRI-Net with EDAL facilitate the integration of multi-modal data sources [19] [20]. The availability of large, curated datasets such as the fastMRI collection provides essential training resources for developing robust algorithms [21]. Specialized tools like DeepHRD extend AI capabilities into genomic analysis, detecting homologous recombination deficiency characteristics with significantly higher accuracy than conventional genomic tests [22].

For researchers implementing these solutions, several practical considerations are essential. Computational infrastructure must support both training and inference phases, with GPU acceleration critical for processing high-resolution medical images. Data management systems should handle diverse formats including DICOM for medical images, FASTQ for genomic data, and structured formats for clinical information. Quality control protocols must be established for each data modality, ensuring that input quality meets the requirements of AI algorithms. Finally, interpretability frameworks should be integrated to provide transparent results that build clinical trust and facilitate adoption.

The integration of artificial intelligence into cancer diagnostics represents a fundamental shift in how we detect, characterize, and monitor malignant disease. The innovations detailed in this whitepaper—from rare event detection in liquid biopsies to multi-modal data integration—demonstrate the transformative potential of AI to address critical limitations in conventional diagnostic approaches. These technologies offer not merely incremental improvements but paradigm-shifting advances that can detect cancer earlier, with greater accuracy, and less invasively than previously possible.

The research community stands at a pivotal moment, with the opportunity to accelerate the development and validation of these AI-driven solutions. Through rigorous experimentation, standardized validation protocols, and collaborative innovation across disciplines, we can translate these technological advances into tangible improvements in patient outcomes. The tools, methodologies, and frameworks presented here provide a foundation for this important work, enabling researchers to build upon current breakthroughs and drive the next wave of diagnostic innovation. As these technologies mature and gain clinical adoption, they hold the promise of fundamentally altering the cancer landscape, moving us toward a future where early detection is routine, accurate, and accessible to all populations.

Artificial intelligence (AI) is fundamentally reshaping the landscape of early cancer detection research. By leveraging machine learning (ML) and deep learning (DL) algorithms, AI offers powerful new capabilities to analyze complex biomedical data, identify subtle patterns, and support critical clinical decisions. This technical guide provides an in-depth examination of AI's role across four key application domains: screening, diagnosis, risk stratification, and biomarker discovery. Within the context of a broader thesis on AI for early cancer detection, this document serves as a comprehensive resource for researchers, scientists, and drug development professionals, detailing current methodologies, performance metrics, and experimental protocols that are advancing the frontier of oncological research and precision medicine.

AI in Cancer Screening and Early Detection

Cancer screening aims to identify cancer in asymptomatic populations, and AI significantly enhances the speed, accuracy, and reliability of various screening modalities [17]. These technologies are particularly valuable for analyzing the extensive datasets generated by modern screening programs.

Imaging-Based Screening

AI algorithms, particularly convolutional neural networks (CNNs), demonstrate remarkable proficiency in analyzing medical images to detect early signs of cancer.

Lung Cancer: In low-dose CT screening for lung cancer, a primary challenge is the high false-positive rate associated with pulmonary nodule assessment. A recent deep learning tool was trained on data from the National Lung Screening Trial (16,077 nodules, 1,249 malignant) and externally validated on three European trials (Danish, Italian, and Dutch-Belgian) [23]. The algorithm achieved an area under the curve (AUC) of 0.98 for cancers diagnosed within one year and 0.94 throughout screening. Crucially, at 100% sensitivity, it classified 68.1% of benign cases as low risk compared to 47.4% using the established PanCan model, representing a 39.4% relative reduction in false positives [23].
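The 39.4% figure follows directly from the two low-risk fractions quoted for the lung screening model. At 100% sensitivity, every benign case not classified as low risk counts as a false positive, so the false-positive fractions are 1 - 0.681 for the deep learning model and 1 - 0.474 for PanCan. The short sketch below reproduces the calculation; the function name is an illustrative choice.

```python
def relative_fp_reduction(benign_low_risk_new: float, benign_low_risk_ref: float) -> float:
    """Relative reduction in false positives versus a reference model.

    Each argument is the fraction of benign cases a model classifies as low
    risk; the remaining benign cases are treated as false positives.
    """
    fp_new = 1.0 - benign_low_risk_new
    fp_ref = 1.0 - benign_low_risk_ref
    return (fp_ref - fp_new) / fp_ref


# Deep learning model (68.1%) versus the PanCan model (47.4%).
reduction = relative_fp_reduction(0.681, 0.474)
print(f"relative reduction in false positives: {reduction:.1%}")
```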

Breast Cancer: DL models applied to mammography have shown performance comparable to or exceeding human radiologists. An ensemble of three DL models demonstrated a significant increase in sensitivity (+9.4%) and specificity (+5.7%) compared to radiologists in US datasets [17]. For challenging early cases, AI systems have detected cancers in retrospectively analyzed "negative" exams taken 12-24 months prior to diagnosis, with a 17.5% absolute increase in detection rate at average reader specificity [17].

Colorectal Cancer: AI systems like CRCNet have been developed for malignancy detection during colonoscopy. In testing across three independent cohorts involving 2,263 patients, the system achieved sensitivities between 82.9% and 96.5%, outperforming skilled endoscopists in two of the three test sets [17].

Liquid Biopsy and Molecular Screening

Beyond imaging, AI plays a crucial role in analyzing molecular biomarkers for non-invasive cancer detection.

The MIGHT (Multidimensional Informed Generalized Hypothesis Testing) algorithm represents a significant advancement for analyzing circulating cell-free DNA (ccfDNA) fragmentation patterns in blood samples [24]. This method addresses the critical challenge of false positives by incorporating data from non-cancerous conditions that produce similar signals. When applied to 1,000 individuals (352 cancer patients, 648 controls), MIGHT achieved a sensitivity of 72% at 98% specificity using aneuploidy-based features [24]. A companion algorithm, CoMIGHT, was further developed to combine multiple biological variable sets, showing particular promise for detecting early-stage breast and pancreatic cancers [24].
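Reporting sensitivity at a fixed specificity, as in the 72% at 98% figure above, implies choosing a score cutoff from the control group first and then counting how many cancer cases exceed it. The sketch below shows that procedure on toy scores. It is not the MIGHT algorithm, and the function names, scores, and cohort sizes are hypothetical.

```python
import math


def threshold_at_specificity(control_scores, target_specificity):
    """Lowest observed cutoff that keeps the target share of controls negative."""
    ranked = sorted(control_scores)
    # round() guards against float noise such as 0.07 * 100 == 7.000000000000001.
    needed = math.ceil(round(target_specificity * len(ranked), 9))
    return ranked[max(needed, 1) - 1]


def specificity_at(threshold, control_scores):
    """Fraction of controls scoring at or below the cutoff (called negative)."""
    return sum(s <= threshold for s in control_scores) / len(control_scores)


def sensitivity_at(threshold, case_scores):
    """Fraction of cancer cases scoring above the cutoff (called positive)."""
    return sum(s > threshold for s in case_scores) / len(case_scores)


# Toy scores: 100 controls spread evenly over [0, 0.99], and five cancer cases.
controls = [i / 100 for i in range(100)]
cases = [0.50, 0.975, 0.99, 0.995, 1.20]

cutoff = threshold_at_specificity(controls, 0.98)
print(f"cutoff={cutoff}, specificity={specificity_at(cutoff, controls):.2f}, "
      f"sensitivity={sensitivity_at(cutoff, cases):.2f}")
```

To keep the estimate honest, the cutoff should be fixed on data that is not reused to report sensitivity, for example through cross-validation or a held-out control set.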

Table 1: Performance Metrics of AI Algorithms in Cancer Screening

| Cancer Type | Screening Modality | AI System | Sensitivity | Specificity | AUC | Dataset Size |
|---|---|---|---|---|---|---|
| Lung Cancer | Low-dose CT | Deep Learning Model | 100% (1-year) | 68.1% (Benign classified as low risk) | 0.94 (Overall screening) | 16,077 nodules (Training); 4,146 participants (Validation) |
| Multiple Cancers | Liquid Biopsy (ccfDNA) | MIGHT | 72% | 98% | NR | 1,000 individuals |
| Breast Cancer | 2D Mammography | Ensemble DL Model | +9.4% vs radiologists | +5.7% vs radiologists | 0.8107 (US dataset) | 25,856 women (UK); 3,097 women (US) |
| Colorectal Cancer | Colonoscopy | CRCNet | 82.9%-96.5% (across cohorts) | 85.3%-99.2% (across cohorts) | 0.867-0.882 (across cohorts) | 2,263 patients (Testing) |

Experimental Protocol: AI-Enhanced Liquid Biopsy Analysis

Objective: To detect cancer early from blood samples using ccfDNA fragmentation patterns while minimizing false positives from non-cancerous conditions.

Methodology:

- Sample Collection: Collect blood samples from individuals with and without cancer (including those with inflammatory conditions like autoimmune diseases).

- DNA Extraction and Sequencing: Isolate ccfDNA and perform shallow whole-genome sequencing to assess fragmentation patterns and chromosomal abnormalities.

- Data Processing: Generate 44 different variable sets consisting of biological features such as DNA fragment lengths and chromosomal abnormalities.

- Model Training: Implement the MIGHT algorithm, which builds tens of thousands of decision trees, fine-tunes itself on real data, and checks its accuracy on different data subsets [24].

- Model Enhancement: Apply CoMIGHT to determine whether combining multiple variable sets improves detection performance for specific cancer types.

- Validation: Validate performance on held-out test sets, measuring sensitivity, specificity, and AUC.
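As an illustration of the kind of fragment-length feature the data processing step might produce, the sketch below computes the ratio of short to long ccfDNA fragments for a sample. The size bins (100 to 150 bp for short, 151 to 220 bp for long) are an assumption borrowed from common fragmentomics practice; they are not specified in the protocol above, and the 44 variable sets used with MIGHT are not reproduced here. The function name and toy lengths are hypothetical.

```python
def short_long_ratio(fragment_lengths, short=(100, 150), long=(151, 220)):
    """Ratio of short to long cell-free DNA fragments in one sample.

    `short` and `long` are inclusive (min, max) size bins in base pairs.
    The default bins are assumed values chosen for illustration.
    """
    n_short = sum(short[0] <= n <= short[1] for n in fragment_lengths)
    n_long = sum(long[0] <= n <= long[1] for n in fragment_lengths)
    if n_long == 0:
        raise ValueError("no fragments fall in the long bin")
    return n_short / n_long


# Toy sample: two short fragments, four long ones, one outside both bins.
sample_lengths = [120, 140, 160, 167, 170, 180, 300]
ratio = short_long_ratio(sample_lengths)
print(f"short/long ratio: {ratio:.2f}")
```

A per-sample feature like this, computed genome-wide or per genomic window, is the sort of input a tree-based classifier could then be trained on.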

AI in Cancer Diagnosis and Risk Stratification

Following detection, accurate diagnosis and risk stratification are essential for determining appropriate treatment strategies. AI excels at analyzing complex histopathological and radiological data to predict disease aggressiveness and guide clinical decisions.

Lymph Node Metastasis Prediction in Colorectal Cancer

Predicting lymph node metastasis (LNM) is critical for treatment planning in early-stage colorectal cancer. A recent meta-analysis of 9 studies involving 8,540 patients evaluated the diagnostic accuracy of AI-based models for predicting LNM in T1 and T2 CRC lesions [25]. The analysis found that DL and ML techniques demonstrated a pooled sensitivity of 0.87 (95% CI: 0.76-0.93) and specificity of 0.69 (95% CI: 0.52-0.82), with an AUC of 0.88 (95% CI: 0.84-0.90) [25]. The pooled sensitivity exceeds that reported for traditional imaging methods such as MRI (sensitivity 0.73, specificity 0.74) and CT (sensitivity 0.786, specificity 0.75), although the pooled specificity of 0.69 is lower than both [25].
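Pooled sensitivity and specificity are easier to interpret clinically once converted into likelihood ratios and predictive values. The sketch below does this for the pooled estimates of 0.87 and 0.69. The 10% prevalence of lymph node metastasis is an assumed value used only to illustrate the calculation; it is not taken from the meta-analysis, and the function names are illustrative.

```python
def likelihood_ratios(sens: float, spec: float):
    """Return (positive, negative) likelihood ratios."""
    return sens / (1.0 - spec), (1.0 - sens) / spec


def predictive_values(sens: float, spec: float, prevalence: float):
    """Return (PPV, NPV) for a given disease prevalence, via Bayes' rule."""
    true_pos = sens * prevalence
    false_pos = (1.0 - spec) * (1.0 - prevalence)
    false_neg = (1.0 - sens) * prevalence
    true_neg = spec * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos), true_neg / (true_neg + false_neg)


# Pooled estimates from the text; the prevalence is an assumption.
lr_pos, lr_neg = likelihood_ratios(0.87, 0.69)
ppv, npv = predictive_values(0.87, 0.69, prevalence=0.10)
print(f"LR+ = {lr_pos:.2f}, LR- = {lr_neg:.2f}")
print(f"PPV = {ppv:.1%}, NPV = {npv:.1%} at 10% assumed prevalence")
```

Under this assumed prevalence, the negative predictive value is high while the positive predictive value is modest, which suggests such models may be better suited to ruling out metastasis than to confirming it.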

Addressing Diagnostic Variability

Traditional histopathological assessment of high-risk features including vascular invasion, tumor budding, and deep submucosal invasion suffers from substantial interobserver variability, with kappa values for tumor budding assessment ranging between 0.077 and 0.357 [25]. AI models address this challenge by providing consistent, quantitative assessments of histopathological features, reducing subjectivity in diagnosis and risk stratification.
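The kappa values quoted above measure agreement between observers after correcting for the agreement expected by chance. The sketch below computes Cohen's kappa for two raters; the ten tumor budding grades are hypothetical and were chosen so that the result falls within the reported range.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same cases.

    Undefined (division by zero) when chance agreement equals 1, which happens
    only if both raters assign a single identical label to every case.
    """
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(rater_a) | set(rater_b)
    # Chance agreement: product of each rater's marginal label frequencies.
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    return (observed - expected) / (1.0 - expected)


# Hypothetical tumor budding grades from two pathologists on ten cases.
pathologist_1 = ["high", "high", "low", "low", "low", "high", "low", "low", "high", "low"]
pathologist_2 = ["high", "low", "low", "high", "low", "high", "low", "low", "low", "low"]

kappa = cohens_kappa(pathologist_1, pathologist_2)
print(f"kappa = {kappa:.3f}")
```

In this toy example the raters agree on 7 of 10 cases, yet kappa is only about 0.35 because 54% agreement would be expected by chance alone.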

Table 2: AI Performance in Cancer Diagnosis and Risk Stratification

| Diagnostic Task | Cancer Type | AI System Type | Sensitivity (95% CI) | Specificity (95% CI) | AUC (95% CI) | Reference Standard |
|---|---|---|---|---|---|---|
| Lymph Node Metastasis Prediction | Colorectal Cancer | DL/ML Models | 0.87 (0.76-0.93) | 0.69 (0.52-0.82) | 0.88 (0.84-0.90) | Histopathology |
| Histological Classification of Polyps | Colorectal Cancer | Real-time image recognition system with SVM classifier | 95.9% (neoplastic lesions) | 93.3% (nonneoplastic lesions) | NR | Histopathology by GI pathologist |
| Malignancy Risk Estimation | Lung Cancer | Deep Learning Algorithm | 100% (1-year) | 68.1% (benign as low risk) | 0.94 (throughout screening) | Diagnosis within screening period |

Experimental Protocol: AI for Lymph Node Metastasis Prediction

Objective: To develop an AI model for predicting lymph node metastasis in T1/T2 colorectal cancer using histopathological images.

Methodology:

- Data Curation: Collect digitized histopathology slides from surgical specimens of T1/T2 colorectal cancer patients with confirmed lymph node status.

- Annotation: An expert pathologist annotates regions of interest highlighting tumor morphology, tumor budding, lymphovascular invasion, and other relevant features.

- Preprocessing: Apply color normalization to reduce stain variability, and data augmentation techniques (rotation, flipping) to increase dataset diversity and reduce overfitting.

- Model Architecture: Implement a convolutional neural network (CNN) with attention mechanisms, trained using a multi-instance learning framework.

- Training: Use a balanced training set with appropriate loss functions (e.g., focal loss) to address class imbalance between metastatic and non-metastatic cases.

- Validation: Evaluate model performance using cross-validation and external test sets, comparing predictions to established clinicopathological risk factors.
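The training step above names focal loss as one option for handling the imbalance between metastatic and non-metastatic cases. The sketch below implements the standard binary focal loss for a single prediction in plain Python, so that the effect of its two parameters can be inspected without a deep learning framework. The default values gamma = 2.0 and alpha = 0.25 follow the commonly used settings from the original focal loss paper and are assumptions here, not values reported by the studies in the meta-analysis.

```python
import math


def focal_loss(p: float, y: int, gamma: float = 2.0, alpha: float = 0.25) -> float:
    """Binary focal loss for one example.

    p is the predicted probability of the positive class (e.g. metastasis),
    y is the true label (1 or 0). gamma down-weights easy examples, and alpha
    weights the positive class (1 - alpha weights the negative class).
    """
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    p_t = min(max(p_t, 1e-12), 1.0)  # clamp to avoid log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# A confidently correct positive contributes far less than a badly missed one.
easy = focal_loss(0.9, 1)
hard = focal_loss(0.1, 1)
print(f"easy positive: {easy:.5f}, hard positive: {hard:.5f}")

# With gamma = 0 and alpha = 0.5 the loss reduces to half the cross-entropy.
half_cross_entropy = focal_loss(0.5, 1, gamma=0.0, alpha=0.5)
```

In a real training loop this would be applied per slide or per patch and averaged over a batch, typically using a framework implementation rather than a hand-written one.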

AI in Biomarker Discovery

AI accelerates the discovery and validation of novel cancer biomarkers by mining complex multi-omics datasets to identify hidden patterns and biological signatures that may elude conventional analysis.

Multi-Omics Integration

AI algorithms excel at integrating diverse data modalities including genomics, transcriptomics, proteomics, and metabolomics to identify novel biomarker signatures. This approach is particularly valuable for developing multi-cancer early detection (MCED) tests that aim to identify multiple cancer types from a single sample [26]. For instance, CancerSEEK combines mutations in circulating DNA with protein biomarkers to detect multiple cancer types simultaneously [26]. The Galleri test, currently undergoing clinical trials, analyzes methylation patterns in cell-free DNA to detect over 50 cancer types and illustrates the potential of AI-driven biomarker discovery [26].

Addressing Biological Complexity

A critical challenge in biomarker development is ensuring cancer specificity. Research has revealed that ccfDNA fragmentation signatures previously believed to be specific to cancer also occur in patients with autoimmune conditions (lupus, systemic sclerosis) and vascular diseases [24]. Subsequent analysis found increased inflammatory biomarkers across all these patient groups, suggesting that inflammation—rather than cancer specifically—contributes to these fragmentation signals [24]. AI approaches like MIGHT address this by incorporating characteristic inflammatory patterns into training data, thereby reducing false positives from non-cancerous conditions [24].

Emerging Biomarker Classes

AI facilitates the discovery and validation of various emerging biomarker classes:

- Circulating Tumor DNA (ctDNA): AI analyzes fragmentation patterns, methylation status, and mutations in cell-free DNA [27].

- Exosomes and Extracellular Vesicles: ML algorithms identify protein and nucleic acid signatures in tumor-derived vesicles [26].

- MicroRNAs (miRNAs): DL models detect specific miRNA expression patterns associated with early carcinogenesis [27].

- Immunotherapy Biomarkers: AI helps identify predictive biomarkers for immune checkpoint inhibitor response, such as PD-L1 expression patterns and tumor mutational burden [26].

Table 3: AI-Driven Biomarker Discovery Platforms and Applications

| Biomarker Class | Data Type | AI Methods | Clinical Applications | Key Challenges |
|---|---|---|---|---|
| Circulating Tumor DNA (ctDNA) | Genomic sequencing data (mutations, methylation, fragmentation patterns) | CNNs, RNNs, Transformers | Multi-cancer early detection, treatment monitoring, minimal residual disease detection | Low concentration in early-stage disease, non-cancerous sources of fragmentation signals |
| Exosomes/Extracellular Vesicles | Protein arrays, RNA sequencing | SVM, Random Forests, DL | Early detection, cancer subtyping, therapeutic response prediction | Complex isolation procedures, standardization |
| MicroRNAs (miRNAs) | RNA sequencing, qPCR data | DL, ML classifiers | Early diagnosis, prognostic stratification, treatment selection | Inter-patient variability, tissue specificity |
| Immunotherapy Biomarkers (PD-L1, TMB) | Immunohistochemistry, whole exome sequencing | CNNs, NLP for pathology reports | Predicting response to immune checkpoint inhibitors | Spatial heterogeneity, dynamic changes during treatment |

Experimental Protocol: AI-Driven Multi-Omics Biomarker Discovery

Objective: To identify novel biomarker signatures for early cancer detection by integrating multi-omics data using AI.

Methodology:

- Data Collection: Acquire multi-omics data including whole-genome sequencing, DNA methylation arrays, transcriptomics, and proteomics from cancer and normal samples.

- Data Preprocessing: Normalize data across platforms, handle missing values, and perform quality control using automated pipelines.

- Feature Selection: Apply dimensionality reduction (e.g., PCA) and feature importance algorithms to identify the most predictive features; t-SNE can be used to visualize the resulting structure.

- Model Training: Implement ensemble methods or neural networks to integrate multi-omics data and identify complex interactions.

- Biological Validation: Perform pathway analysis and functional annotation to interpret discovered biomarkers in biological context.

- Clinical Validation: Validate biomarker panels in independent cohorts using appropriate statistical methods to assess clinical utility.
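The preprocessing and integration steps of this protocol can be sketched in a few lines. The example below assumes each omics platform delivers a list of feature columns with one value per sample, fills missing values with the column median, z-scores each column so that platforms with different units become comparable, and concatenates everything into one sample-by-feature matrix (a simple early-integration strategy). The function names, platform names, and values are hypothetical.

```python
from statistics import mean, median, pstdev


def impute_and_scale(column):
    """Fill missing values (None) with the median, then z-score the column."""
    observed = [v for v in column if v is not None]
    fill = median(observed)
    filled = [fill if v is None else v for v in column]
    mu, sigma = mean(filled), pstdev(filled)
    # A constant column carries no information; map it to zeros.
    return [(v - mu) / sigma if sigma else 0.0 for v in filled]


def integrate(blocks):
    """Concatenate scaled feature columns from all platforms, one row per sample."""
    columns = [impute_and_scale(col) for cols in blocks.values() for col in cols]
    return [list(row) for row in zip(*columns)]


# Hypothetical data for four samples: one methylation and one protein feature.
omics = {
    "methylation": [[0.1, 0.5, None, 0.9]],
    "proteomics": [[10.0, 20.0, 30.0, 40.0]],
}
matrix = integrate(omics)
for row in matrix:
    print([round(value, 3) for value in row])
```

In a real study, the imputation values and scaling parameters should be estimated on the training cohort only and then applied to validation cohorts, so that no information leaks from the data used to assess performance.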

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Platforms for AI-Enhanced Cancer Detection Research

| Reagent/Platform | Function | Application Examples |
|---|---|---|
| Circulating Cell-Free DNA (ccfDNA) Extraction Kits | Isolation of cell-free DNA from blood plasma | Liquid biopsy development, fragmentation pattern analysis [24] |
| Next-Generation Sequencing (NGS) Platforms | Comprehensive genomic, epigenomic, and transcriptomic profiling | Mutation detection, methylation analysis, transcriptome sequencing [26] |
| Multiplex Immunoassay Panels | Simultaneous measurement of multiple protein biomarkers | Validation of protein biomarkers, inflammatory signature profiling [24] |
| Digital Pathology Scanners | High-resolution digitization of histopathology slides | AI model training for histopathological image analysis [25] |
| AI Frameworks (TensorFlow, PyTorch) | Development and training of custom deep learning models | Implementation of MIGHT, CoMIGHT, and other AI algorithms [24] |
| Liquid Biopsy Reference Standards | Controlled materials with known biomarker concentrations | Method validation, quality control, assay standardization [27] |

AI technologies are fundamentally transforming the landscape of early cancer detection across screening, diagnosis, risk stratification, and biomarker discovery. The methodologies and performance metrics detailed in this technical guide demonstrate the substantial progress achieved in applying ML and DL algorithms to complex oncological challenges. As these technologies continue to evolve, their integration into standard research protocols and clinical workflows promises to accelerate the development of more sensitive, specific, and accessible approaches to cancer detection. For researchers and drug development professionals, understanding these AI applications is crucial for advancing the field and ultimately improving patient outcomes through earlier cancer diagnosis and intervention. Future directions will likely focus on enhancing algorithm interpretability, validating performance in diverse populations, and establishing standardized frameworks for clinical implementation.

From Algorithm to Action: Cutting-Edge AI Applications in Clinical Cancer Detection

Artificial intelligence (AI) is reshaping oncologic medical imaging and creating new opportunities for earlier cancer detection. Advances in deep-learning algorithms, specialized computational hardware, and the growing availability of large, annotated imaging datasets have moved AI to the forefront of cancer diagnostics [17]. In histopathology, radiology, and mammography, AI applications can detect, characterize, and quantify tumors, which may improve patient outcomes through earlier intervention. This technical review examines the current state of AI implementation across these imaging modalities, presenting performance metrics, experimental methodologies, and an analysis of the computational frameworks behind them. As these technologies move from research into clinical use, their technical specifications, validation protocols, and integration challenges become essential knowledge for researchers, scientists, and drug development professionals working at the intersection of AI and oncology.

AI in Mammography

Performance Metrics and Clinical Impact

Mammography stands at the forefront of AI integration in medical imaging, with numerous studies demonstrating measurable improvements in diagnostic performance, particularly for less experienced radiologists. Recent evidence spans from controlled reader studies to large-scale real-world implementations, providing a comprehensive view of AI's potential impact on breast cancer screening.

Table 1: Performance Metrics of AI in Mammography Screening

| Study Type | Sample Size | AI System | Key Findings | Performance Metrics |
|---|---|---|---|---|
| Multicenter Reader Study [28] | 500 cases (250 cancer) | FxMammo | AI improved performance for residents; greatest gains in dense breasts | Junior residents: AUROC increased from 0.84 to 0.86 (P=0.38); Senior residents: 0.85 to 0.88 (P=0.13) |
| Nationwide Implementation [29] | 463,094 women (260,739 AI-supported) | Vara MG | AI-supported screening detected more cancers without increasing recall rate | Detection rate: 6.7 vs 5.7 per 1000 (+17.6%); Recall rate: 37.4 vs 38.3 per 1000 |
| Eye-Tracking Study [30] | 150 women (75 cancer) | Not specified | AI guided radiologists' attention to suspicious areas | Increased accuracy with AI; no significant difference in sensitivity, specificity, or reading time |
| Comparative Performance [31] | 617 mammograms (104 cancer) | Lunit INSIGHT | Radiologists more sensitive; AI more specific, especially in non-dense breasts | Radiologist sensitivity: 98% vs AI: 87%; Radiologist specificity: 17% vs AI: 44.4% |
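As a worked check on the figures in Table 1, the short sketch below recomputes the relative change in detection and recall rates from the per-1,000 values, and derives the positive predictive value (PPV) implied by the reported sensitivity and specificity in the comparative study. The function names are illustrative. The PPV values are derived here rather than reported in the source, and they apply only to that cancer-enriched case set (104 of 617), not to a screening population.

```python
def relative_change(new, old):
    """Percentage change of `new` relative to `old`."""
    return (new - old) / old * 100.0

def implied_ppv(n_total, n_cancer, sensitivity, specificity):
    """PPV implied by sensitivity and specificity at the study's case mix."""
    n_benign = n_total - n_cancer
    true_pos = sensitivity * n_cancer
    false_pos = (1.0 - specificity) * n_benign
    return true_pos / (true_pos + false_pos)

# Nationwide implementation [29]: rates per 1,000 women screened (rounded values).
detection_change = relative_change(6.7, 5.7)
recall_change = relative_change(37.4, 38.3)

# Comparative performance [31]: 617 mammograms, 104 with cancer.
ppv_radiologist = implied_ppv(617, 104, sensitivity=0.98, specificity=0.17)
ppv_ai = implied_ppv(617, 104, sensitivity=0.87, specificity=0.444)

print(f"Detection rate change: {detection_change:+.1f}%")
print(f"Recall rate change:    {recall_change:+.1f}%")
print(f"Implied PPV, radiologist: {ppv_radiologist:.3f}; AI: {ppv_ai:.3f}")
```

From the rounded rates, the detection gain comes out at roughly 17.5%, marginally below the tabulated +17.6%, which was presumably calculated from unrounded rates. The implied PPV is about 0.19 for radiologists and 0.24 for the AI system, which illustrates how the AI's higher specificity offsets its lower sensitivity in this case set.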

The integration of AI into mammography workflows demonstrates particular utility in addressing variability in radiologist experience and challenging anatomical scenarios. A Singapore-based study revealed that with AI assistance, senior residents approached consultant-level performance (AUROC difference 0.02; P=.051), suggesting AI's potential to narrow experience-based performance gaps [28]. Diagnostic gains with AI were most pronounced in women with dense breasts and among less experienced radiologists, addressing two persistent challenges in breast cancer screening.

Eye-tracking research provides mechanistic insights into how AI improves radiologist performance. When AI support was available, radiologists spent more time examining regions containing actual lesions and adjusted their reading behavior based on the AI's level of suspicion [30]. The AI's region markings functioned as visual cues, guiding radiologists' attention to potentially suspicious areas, essentially serving as an additional set of eyes during interpretation.

Experimental Protocols in AI Mammography Research

The methodology for evaluating AI systems in mammography typically follows rigorous reader study designs or large-scale implementation frameworks:

Multi-Reader Multi-Case (MRMC) Study Design: The Singapore study exemplifies a rigorous MRMC approach where 17 radiologists (4 consultants, 4 senior residents, and 9 junior residents) interpreted 500 mammography cases over two reading sessions—one without and one with AI assistance, separated by a 1-month washout period [28]. Each case included four standard views (craniocaudal and mediolateral oblique for each breast). The AI system (FxMammo) provided heatmaps and malignancy risk scores (0-100%) to support decision-making, with the highest risk score from each examination determining the overall patient-level risk.
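The aggregation rule described above, where the highest view-level score sets the patient-level risk, can be written as a short sketch. The view names and the dictionary input are assumptions made for illustration and do not reflect the FxMammo interface.

```python
# The four standard views: craniocaudal (CC) and mediolateral oblique (MLO)
# for the left (L) and right (R) breast. Key names are hypothetical.
VIEWS = ("L-CC", "L-MLO", "R-CC", "R-MLO")

def patient_level_risk(view_scores):
    """Return (risk, view) where risk is the maximum score over the four views.

    `view_scores` maps each view name to a malignancy risk score in percent.
    """
    missing = [view for view in VIEWS if view not in view_scores]
    if missing:
        raise ValueError(f"missing views: {missing}")
    for view in VIEWS:
        if not 0.0 <= view_scores[view] <= 100.0:
            raise ValueError(f"score for {view} is outside 0-100%")
    top_view = max(VIEWS, key=lambda view: view_scores[view])
    return view_scores[top_view], top_view

risk, driving_view = patient_level_risk(
    {"L-CC": 12.0, "L-MLO": 8.5, "R-CC": 64.0, "R-MLO": 71.5}
)
```

Taking the maximum rather than the mean keeps a single suspicious view from being diluted by three unremarkable ones, at the cost of making the patient-level score sensitive to one false-positive view.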

Real-World Implementation Framework: The PRAIM study in Germany used a prospective, observational design embedded within the country's organized breast cancer screening program [29]. It implemented a decision-referral approach in which the AI preclassified examinations as "normal" (56.7% of cases) or "suspicious" and issued a safety-net alert whenever a radiologist interpreted an AI-highlighted case as unsuspicious. The study involved 119 radiologists at 12 screening sites using mammography hardware from five different vendors, which supports the generalizability of its findings to routine practice.
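The decision-referral logic described in this paragraph can be sketched as follows. This is a simplified illustration that follows the two-class description in the text. The score scale, the threshold value, and all names are hypothetical, since the operating points of the deployed system are not given here.

```python
from dataclasses import dataclass

# Hypothetical operating point; the text does not report the real threshold.
SUSPICIOUS_THRESHOLD = 0.5

@dataclass
class Exam:
    exam_id: str
    ai_score: float               # AI malignancy score in [0, 1] (assumed scale)
    radiologist_suspicious: bool  # the radiologist's own interpretation

def preclassify(exam):
    """AI preclassification of an examination as 'normal' or 'suspicious'."""
    return "suspicious" if exam.ai_score >= SUSPICIOUS_THRESHOLD else "normal"

def needs_safety_net_alert(exam):
    """Alert when the AI flags an exam that the radiologist read as unsuspicious."""
    return preclassify(exam) == "suspicious" and not exam.radiologist_suspicious

exams = [
    Exam("A", 0.02, False),
    Exam("B", 0.10, False),
    Exam("C", 0.85, True),
    Exam("D", 0.92, False),   # AI-flagged, read as unsuspicious: triggers the alert
    Exam("E", 0.30, False),
]

labels = {exam.exam_id: preclassify(exam) for exam in exams}
alerts = [exam.exam_id for exam in exams if needs_safety_net_alert(exam)]
normal_fraction = sum(label == "normal" for label in labels.values()) / len(exams)
```

The radiologist keeps the final decision in this design: the alert prompts a second look at the flagged examination and does not override the read.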

Performance Validation Metrics: Standard evaluation includes area under the receiver operating characteristic curve (AUROC) with confidence intervals, sensitivity, specificity, positive predictive value (PPV), and cancer detection rates per 1,000 screens. Statistical analyses typically employ propensity score weighting to control for confounders and establish non-inferiority or superiority margins [29].
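The metrics listed above can be computed directly from model scores and ground-truth labels. The sketch below uses the rank-based definition of AUROC (the probability that a randomly chosen cancer case scores higher than a randomly chosen non-cancer case, with ties counted as one half) and a single decision threshold for the other metrics. The toy scores, labels, and threshold are invented for illustration, and confidence intervals and propensity score weighting are deliberately left out.

```python
def auroc(scores, labels):
    """AUROC as the fraction of (cancer, non-cancer) pairs ranked correctly."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

def screening_metrics(scores, labels, threshold):
    """Sensitivity, specificity, PPV and detection rate at one threshold."""
    calls = [s >= threshold for s in scores]
    tp = sum(c and y == 1 for c, y in zip(calls, labels))
    fp = sum(c and y == 0 for c, y in zip(calls, labels))
    fn = sum((not c) and y == 1 for c, y in zip(calls, labels))
    tn = sum((not c) and y == 0 for c, y in zip(calls, labels))
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "ppv": tp / (tp + fp),
        "detection_per_1000": 1000.0 * tp / len(labels),
    }

# Toy example: 8 screens, 3 cancers (label 1).
scores = [0.90, 0.80, 0.35, 0.70, 0.30, 0.20, 0.10, 0.40]
labels = [1, 1, 1, 0, 0, 0, 0, 0]

area = auroc(scores, labels)
metrics = screening_metrics(scores, labels, threshold=0.5)
```

The pairwise AUROC loop is quadratic in the number of cases, which is fine for a reader study of a few hundred cases. A sorted-rank implementation would be preferable for screening cohorts of the size reported in [29].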

AI in Histopathology

Digital Transformation and AI Integration

The field of histopathology has undergone a remarkable transformation from its origins in microscopic tissue examination to today's AI-powered diagnostic platforms. This evolution began with fundamental breakthroughs in tissue processing, including the development of microtomes for precise sectioning, paraffin embedding by Edwin Klebs in 1869, and hematoxylin staining by Franz Böhmer in 1865, which remains a cornerstone of histopathology [32]. The advent of immunohistochemistry in the 1960s further revolutionized diagnostics by enabling targeted antigen localization in tissues.

The digital pathology revolution commenced in 1994 with James Bacus's development of the BLISS system, the first commercial slide scanner [32]. This innovation paved the way for whole-slide imaging (WSI), which converts physical glass slides into high-resolution digital images and serves as the essential technological foundation for AI integration. Digital pathology addresses numerous limitations of conventional microscopy by enabling remote consultations, electronic storage, automated measurement, and creating virtual slide libraries for education.

Table 2: AI Platforms in Digital Pathology

| AI Platform | Developer | Regulatory Status | Function | Performance Evidence |
|---|---|---|---|---|
| Paige Prostate Detect | Paige AI | FDA-cleared | Prostate cancer detection | 7.3% reduction in false negatives [32] |
| PanCancer Detect | Paige | FDA Breakthrough Device Designation | Multi-site cancer detection | Under investigation [32] |
| MSIntuit CRC | Owkin | Not specified | Triage for microsatellite instability | Prioritizes cases for confirmatory testing [32] |
| UNICORN | Multiple | Research phase | Multiple tasks across pathology/radiology | Testing 20 tasks [33] |

AI systems in pathology increasingly demonstrate diagnostic capabilities that approach, and sometimes surpass, those of human pathologists. For instance, one study reported that an AI system achieved a sensitivity of 95.9% for detecting neoplastic lesions in colorectal cancer and a specificity of 93.3% for identifying nonneoplastic lesions [17]. These systems typically employ convolutional neural networks (CNNs) trained on large datasets of annotated whole-slide images to recognize patterns indicative of malignancy, tumor grade, and specific molecular subtypes.

Experimental Framework for Pathology AI Validation

Whole-Slide Imaging and Data Preparation: The technical workflow begins with high-resolution scanning of glass slides using specialized slide scanners capable of capturing images at 20× to 40× magnification [32]. Resulting whole-slide images (WSIs) are stored in specialized formats optimized for rapid retrieval and processing. Data preprocessing includes color normalization to address staining variability, tissue segmentation to identify diagnostically relevant regions, and patch extraction for computational efficiency.
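The tissue segmentation and patch extraction steps can be illustrated with a minimal sketch that works on a low-resolution binary tissue mask and returns the full-resolution coordinates of patches containing enough tissue. The mask, downsample factor, patch size, and 50% tissue cutoff are assumptions chosen for the example. Reading the slide itself and color normalization are outside its scope.

```python
def tissue_fraction(mask, x0, y0, size):
    """Fraction of tissue cells (value 1) in a size-by-size window of the mask."""
    cells = [mask[y][x] for y in range(y0, y0 + size) for x in range(x0, x0 + size)]
    return sum(cells) / len(cells)

def select_patches(mask, downsample, patch_px, min_tissue=0.5):
    """Top-left coordinates, in full-resolution pixels, of tissue-rich patches.

    mask:       2D list of 0/1 values at thumbnail resolution
    downsample: full-resolution pixels per mask cell along one axis
    patch_px:   patch edge length in full-resolution pixels
    """
    step = patch_px // downsample          # patch edge measured in mask cells
    n_rows, n_cols = len(mask), len(mask[0])
    coords = []
    for my in range(0, n_rows - step + 1, step):      # non-overlapping grid
        for mx in range(0, n_cols - step + 1, step):
            if tissue_fraction(mask, mx, my, step) >= min_tissue:
                coords.append((mx * downsample, my * downsample))
    return coords

# Hypothetical 4 x 6 tissue mask; each cell covers 128 x 128 slide pixels.
tissue_mask = [
    [0, 0, 1, 1, 0, 0],
    [0, 0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 0, 1, 0, 0, 0],
]

patch_coords = select_patches(tissue_mask, downsample=128, patch_px=256)
```

Filtering on the thumbnail mask first means that background regions, which often make up most of a slide, are never read at full resolution.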

AI Model Architecture and Training: Deep learning approaches in pathology predominantly utilize CNN-based architectures such as ResNet, Inception, and custom networks designed for gigapixel image analysis [32] [33]. Training typically employs weakly supervised methods when slide-level labels are available but pixel-level annotations are scarce. Advanced approaches include multiple instance learning frameworks where slides are treated as bags of patches with slide-level labels. Recent foundation models are being developed to handle multiple tasks across different tissue types and staining modalities.
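To make the bag-of-patches idea concrete, the sketch below shows the forward pass of a simplified attention-pooling step for multiple instance learning. Each patch embedding receives a score, the scores are normalized with a softmax, and the slide representation is the weighted average of the patches. The linear scoring function, fixed weights, and two-dimensional embeddings are assumptions for illustration. In practice the embeddings come from a CNN and all weights are learned from slide-level labels, and this is not the specific architecture of any system cited above.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(patch_embeddings, attention_weights):
    """Pool a bag of patch embeddings into one slide-level embedding."""
    logits = [sum(w * h for w, h in zip(attention_weights, emb))
              for emb in patch_embeddings]
    alphas = softmax(logits)               # one attention weight per patch
    dim = len(patch_embeddings[0])
    slide_embedding = [sum(a * emb[d] for a, emb in zip(alphas, patch_embeddings))
                       for d in range(dim)]
    return slide_embedding, alphas

def slide_probability(slide_embedding, classifier_weights, bias):
    """Logistic classifier applied to the pooled slide embedding."""
    z = sum(w * h for w, h in zip(classifier_weights, slide_embedding)) + bias
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical bag: two background-like patches and one tumor-like patch.
bag = [[0.1, 0.0], [0.2, 0.1], [2.0, 1.5]]
slide_emb, alphas = attention_pool(bag, attention_weights=[1.0, 1.0])
probability = slide_probability(slide_emb, classifier_weights=[1.0, 1.0], bias=-1.0)
```

Because only the slide-level probability is compared with a label during training, no pixel-level annotation is needed, and the attention weights can be inspected afterwards to see which patches drove the prediction.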

Validation Methodologies: Rigorous validation follows TRIPOD (Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis) guidelines, incorporating external validation on datasets from different institutions to assess generalizability [32]. Performance metrics include area under the curve (AUC), sensitivity, specificity, and in some cases, time-based measures such as review time reduction. The FDA-cleared Paige Prostate underwent validation demonstrating statistically significant improvement in sensitivity with reduced false negatives [32].

AI in Radiology Beyond Mammography

Advanced Imaging Applications

Beyond mammography, AI applications in radiology span diverse modalities including CT, MRI, and ultrasound, addressing multiple cancer types through specialized detection, characterization, and quantification algorithms. The RSNA (Radiological Society of North America) has organized numerous AI challenges to spur innovation in these areas, focusing on tasks such as detection, localization, and categorization of abnormal features across various anatomical sites [34].

The 2025 RSNA Intracranial Aneurysm Detection AI Challenge exemplifies current priorities, tasking researchers with building models that can detect and localize intracranial aneurysms across multiple medical imaging modalities, including CT angiography, MR angiography, and MRI [34]. Previous challenges have addressed abdominal trauma detection (2023), cervical spine fractures (2022), COVID-19 detection (2021), brain tumors (2021), pulmonary embolism (2020), intracranial hemorrhage (2019), and pneumonia detection (2018), establishing standardized benchmarks for AI performance across diverse radiological tasks.

Technical Frameworks and Validation

Data Challenges and Benchmarking: RSNA-style challenges typically involve two main phases: training and evaluation [34]. In the training phase, researchers develop models using provided labeled datasets with expert annotations. In the evaluation phase, models are assessed against reserved portions of the dataset without labels, with winners determined based on standardized performance metrics. These challenges address critical needs for substantial volumes of expertly annotated imaging data required for training robust AI systems.

Radiomics and Quantitative Imaging: AI-based radiomics extracts quantitative data from medical images beyond what conventional visual interpretation provides [35]. This paradigm uses advanced image analysis to capture spatial, temporal, and textural tumor characteristics, providing comprehensive tumor profiling. The integration of AI adds machine learning and deep learning algorithms capable of processing large volumes of complex imaging data to identify subtle patterns imperceptible to human observers.
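As a small illustration of what extracting quantitative data means in practice, the sketch below computes a few first-order radiomic features from the intensities inside a tumor region of interest (ROI). The feature set, the fixed bin width used for the entropy histogram, and the toy intensities are assumptions for the example. Textural and spatial features, such as those from gray-level co-occurrence matrices, require the voxel layout and are not shown.

```python
import math
from collections import Counter

def first_order_features(roi_intensities, bin_width=25):
    """Mean, variance, skewness and histogram entropy of ROI intensities."""
    n = len(roi_intensities)
    mean = sum(roi_intensities) / n
    variance = sum((v - mean) ** 2 for v in roi_intensities) / n
    sd = math.sqrt(variance)
    third_moment = sum((v - mean) ** 3 for v in roi_intensities) / n
    skewness = third_moment / sd ** 3 if sd > 0 else 0.0

    # Discretize intensities into fixed-width bins before computing entropy;
    # floor division keeps negative values (e.g. CT Hounsfield units) ordered.
    bins = Counter(int(v // bin_width) for v in roi_intensities)
    entropy = -sum((c / n) * math.log2(c / n) for c in bins.values())

    return {"mean": mean, "variance": variance,
            "skewness": skewness, "entropy": entropy}

# Hypothetical ROI intensities (arbitrary units).
features = first_order_features([10, 20, 30, 40], bin_width=25)
```

Feature vectors of this kind, computed per lesion, are what downstream machine learning models consume. The choice of bin width affects entropy and texture features, so it should be fixed and reported when results are compared across studies.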