Unsupervised Learning in Digital Pathology: How Foundation Models Decode Tissue Morphology Without Labels

Abstract

This article explores the paradigm shift in computational pathology driven by self-supervised foundation models that learn powerful histopathological representations from vast unlabeled image datasets. We examine the core principles, including masked image modeling and contrastive learning, that enable models like TITAN and BEPH to capture diagnostically relevant features without manual annotation. The content details their application across cancer diagnosis, subtyping, and survival prediction, while critically addressing current challenges in robustness, generalization, and clinical deployment. Designed for researchers and drug development professionals, this review synthesizes methodological innovations, empirical validations, and future directions for creating clinically viable AI tools in pathology.

The New Paradigm: Learning Histopathology Without Human Supervision

Computational pathology is being reshaped by foundation models that learn powerful representations from histopathology images without manual annotation. Current clinical practice, which relies on manual examination of tissue slides, faces fundamental limits in scalability, reproducibility, and the ability to extract the full spectrum of morphological information embedded in gigapixel whole-slide images (WSIs). Traditional supervised deep learning approaches have struggled to address these challenges because they depend on extensive labeled datasets, which are costly and time-consuming to produce and prone to inter-observer variability [1]. Self-supervised learning (SSL) represents a paradigm shift: it leverages the inherent structure of unlabeled histopathology data to learn transferable representations, mirroring the impact of foundation models in natural language processing and computer vision.

The promise of SSL extends far beyond mere automation of pathological tasks. By learning from massive volumes of unannotated WSIs, SSL-based foundation models capture the fundamental principles of tissue architecture, cellular morphology, and spatial relationships that underlie disease processes. These models have demonstrated remarkable capabilities across diverse clinical applications, from cancer subtyping and biomarker prediction to rare disease identification and prognosis estimation, often matching or exceeding the performance of supervised counterparts while requiring only a fraction of the labeled data [1] [2]. This technical guide explores the architectural foundations, methodological innovations, and clinical applications of self-supervised learning in pathology, providing researchers and drug development professionals with a comprehensive framework for understanding and leveraging these transformative technologies.

Core SSL Paradigms and Architectural Foundations

Representation Learning Strategies for Histopathology

Self-supervised learning in pathology primarily employs three interconnected paradigms: contrastive learning, masked image modeling, and multimodal alignment. Each approach leverages different aspects of histological data to learn meaningful representations without manual labels.

Contrastive learning frameworks, such as DINO and MoCo v3, learn representations by maximizing agreement between differently augmented views of the same image while distinguishing them from other images in the dataset [2]. This paradigm has proven particularly effective for histopathology due to its ability to learn invariant features across staining variations, tissue preparation artifacts, and magnification differences. For instance, the Virchow model, a ViT-huge architecture trained with DINOv2 on 2 billion tiles from 1.5 million slides, demonstrates how contrastive learning at scale can capture morphological features relevant for diverse downstream tasks [2].

Masked image modeling (MIM), inspired by language modeling in natural language processing, learns representations by reconstructing randomly masked portions of input images. Methods like iBOT combine masked modeling with online tokenization to learn features that capture both local cellular structures and global tissue architecture [3] [1]. The MIRROR framework extends this approach by integrating pathological and transcriptomic data through modality alignment and retention modules, demonstrating how MIM can bridge histological and molecular representations [4].

Multimodal alignment strategies create shared embedding spaces for histology images and associated clinical data, particularly pathology reports. TITAN (Transformer-based pathology Image and Text Alignment Network) exemplifies this approach, leveraging 335,645 whole-slide images aligned with corresponding pathology reports and 423,122 synthetic captions to learn representations that enable cross-modal retrieval and report generation [3]. This alignment enables zero-shot capabilities where models can perform classification tasks without explicit training on labeled examples for those specific tasks.

Architectural Innovations for Whole-Slide Images

The gigapixel nature of WSIs presents unique computational challenges that have driven architectural innovations in SSL for pathology. Unlike natural images, WSIs cannot be processed directly by standard neural architectures, necessitating specialized approaches.

Hierarchical processing represents the dominant architectural pattern, where models first encode small tissue patches (typically 256×256 or 512×512 pixels at 20× magnification) then aggregate these patch-level representations into slide-level embeddings [3] [5]. The UNI model exemplifies this approach, using a ViT-large architecture to process 100 million tiles from 100,000 diagnostic slides across 20 major tissue types [2]. This hierarchical processing mirrors the clinical practice of pathologists who examine tissue at multiple magnifications.
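The two-step pattern described above (tile, then aggregate) can be sketched in a few lines. This is a minimal illustration, not any specific model's pipeline: the function names `tile_coordinates` and `mean_pool` are hypothetical, the patch features are toy values standing in for encoder outputs, and simple averaging stands in for the learned aggregators used in practice.

```python
from typing import Iterator, List, Sequence, Tuple


def tile_coordinates(width: int, height: int, patch: int = 256) -> Iterator[Tuple[int, int]]:
    """Yield top-left (x, y) corners of non-overlapping patches that fit inside the slide."""
    for y in range(0, height - patch + 1, patch):
        for x in range(0, width - patch + 1, patch):
            yield x, y


def mean_pool(patch_features: Sequence[Sequence[float]]) -> List[float]:
    """Aggregate patch-level vectors into one slide-level vector by averaging (assumed aggregator)."""
    n = len(patch_features)
    dim = len(patch_features[0])
    return [sum(f[d] for f in patch_features) / n for d in range(dim)]


# Toy "slide" of 1024 x 768 pixels: 4 columns x 3 rows of 256-pixel patches.
coords = list(tile_coordinates(1024, 768, patch=256))

# Stand-in for a patch encoder: here each feature is just the patch corner.
toy_features = [[float(x), float(y)] for x, y in coords]
slide_embedding = mean_pool(toy_features)
```

In a real pipeline the toy features would be replaced by the output of a pretrained patch encoder, and the averaging step by attention-based pooling or a slide-level transformer.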

Multi-resolution architectures capture complementary information at different spatial scales, from cellular details at high magnification to tissue architecture at lower magnifications. Recent frameworks incorporate dedicated modules to fuse features across resolutions, enabling simultaneous modeling of nuclear morphology and tissue microenvironment [1]. The Virchow2 model extends this further by incorporating multiple magnifications (5×, 10×, 20×, and 40×) during pretraining, significantly enhancing performance on tasks requiring both local and global context [2].

Transformer-based slide encoders have emerged as powerful alternatives to multiple instance learning for WSI-level representation. Models like TITAN process sequences of patch features using Vision Transformers with specialized positional encodings that preserve spatial relationships across the tissue [3]. To handle the long sequences inherent to WSIs (often >10,000 patches), TITAN employs Attention with Linear Biases (ALiBi), a positional scheme originally developed for large language models, and adapts it to two-dimensional feature grids so that the encoder can extrapolate to large slide contexts [3].
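To make the idea of a two-dimensional ALiBi concrete, the sketch below computes a distance-based attention bias over a small feature grid. The head slopes follow the geometric sequence from the original ALiBi recipe; using the Euclidean distance between grid cells as the 2D extension is an assumption made here for illustration, and the function names are hypothetical rather than taken from the TITAN code.

```python
import math
from typing import List


def alibi_slopes(num_heads: int) -> List[float]:
    """Geometric per-head slopes from the original ALiBi recipe (num_heads a power of two)."""
    return [2.0 ** (-8.0 * (h + 1) / num_heads) for h in range(num_heads)]


def alibi_bias_2d(rows: int, cols: int, slope: float) -> List[List[float]]:
    """Bias added to attention logits: -slope * distance between grid cells (assumed Euclidean)."""
    positions = [(r, c) for r in range(rows) for c in range(cols)]
    return [[-slope * math.dist(p, q) for q in positions] for p in positions]


slopes = alibi_slopes(4)
# Bias matrix for a 2 x 2 feature grid (4 tokens) under the first head's slope.
bias = alibi_bias_2d(2, 2, slopes[0])
```

Because the bias depends only on relative distance, the same function applies to grids larger than those seen in pretraining, which is the property that allows extrapolation to large slides.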

Performance Benchmarking and Comparative Analysis

Quantitative Performance Across Clinical Tasks

Table 1: Performance of SSL Models on Histopathology Tasks

| Task Category | Specific Task | SSL Model | Performance | Comparison to Supervised Baseline |
|---|---|---|---|---|
| Cancer Subtyping | Breast Cancer Classification | UNI | AUC: 96% [5] | +4.3% improvement [1] |
| Biomarker Prediction | EGFR Mutation in NSCLC | Phikon | Sensitivity: 80%, Specificity: 77% [6] | Comparable to molecular testing |
| Survival Prediction | Pan-Cancer Survival | Prov-GigaPath | C-index: 0.72 [2] | +0.08 over clinical variables |
| Rare Cancer Detection | Low-Prevalence Cancers | Virchow | AUROC: 0.93 [2] | Enables detection in resource-limited settings |
| Segmentation | Tissue Substructure | Hybrid SSL Framework | Dice: 0.825, mIoU: 0.742 [1] | +7.8% enhancement over supervised |
| RNA Expression Prediction | Spatial Gene Localization | RNAPath | 5,156 genes significantly predicted [7] | Recapitulates known spatial specificity |

SSL foundation models demonstrate particularly strong performance in low-data regimes, a critical advantage for clinical applications involving rare diseases or specialized biomarkers. The SSL framework evaluated in [1] is highly data-efficient: with only 25% of the labeled data it reaches 95.6% of full performance, compared to 85.2% for supervised baselines, which the authors report as a 70% reduction in annotation requirements [1]. This efficiency stems from the rich morphological knowledge encoded during large-scale pretraining, which provides strong inductive biases for downstream tasks with limited labeled examples.

Comparative Analysis of Public Foundation Models

Table 2: Characteristics of Publicly Available Pathology Foundation Models

| Model | Parameters | SSL Algorithm | Training Data | Key Capabilities |
|---|---|---|---|---|
| CTransPath | 28M | SRCL (MoCo v3) | 15.6M tiles, 32K slides [2] | Strong performance on segmentation and retrieval |
| Phikon | 86M | iBOT | 43M tiles, 6K TCGA slides [2] | Excellence in mutation prediction |
| UNI | 303M | DINOv2 | 100M tiles, 100K slides [2] | General-purpose across 33 tasks |
| Virchow | 631M | DINOv2 | 2B tiles, 1.5M slides [2] | State-of-the-art on rare cancer detection |
| Prov-GigaPath | 1.135B | DINOv2 + MAE | 1.3B tiles, 171K slides [2] | Superior genomic prediction |
| TITAN | Not specified | iBOT + VLA | 335K WSIs + reports [3] | Multimodal capabilities, report generation |

Benchmarking studies reveal that SSL-trained pathology models consistently outperform models pretrained on natural images like ImageNet, with performance gaps widening on specialized tasks requiring domain-specific morphological knowledge [2]. The representation quality of SSL models demonstrates significant scaling behavior, with larger models trained on more diverse datasets showing improved performance across tasks and better generalization to external validation cohorts [2]. For instance, Virchow2 and Virchow2G, trained on 1.7B and 1.9B tiles respectively from 3.1M histopathology slides, establish new state-of-the-art performance on 12 tile-level tasks, surpassing earlier models like UNI and Phikon [2].

Experimental Protocols and Methodologies

Large-Scale Pretraining Implementation

The pretraining of pathology foundation models follows meticulously optimized protocols to handle the computational challenges of gigapixel WSIs while maximizing representation quality.

Data curation and preprocessing begins with quality control to exclude slides with excessive artifacts, blurring, or insufficient tissue content. The TITAN framework uses a three-stage pretraining approach: (1) vision-only unimodal pretraining on region crops using iBOT, (2) cross-modal alignment of generated morphological descriptions at ROI-level, and (3) cross-modal alignment at WSI-level with clinical reports [3]. This progressive training strategy first establishes strong visual representations then grounds them in clinical context.

Feature extraction and augmentation strategies are specifically designed for histological data. TITAN constructs input embeddings by dividing each WSI into non-overlapping 512×512 pixel patches at 20× magnification, extracting 768-dimensional features for each patch using CONCHv1.5 [3]. To address large and irregularly shaped WSIs, the model creates views by randomly cropping the 2D feature grid, sampling region crops of 16×16 features covering 8,192×8,192 pixels. From these region crops, multiple global (14×14) and local (6×6) crops are extracted for self-supervised pretraining [3].
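The cropping arithmetic above can be checked with a short sketch: a 16×16 region of features, each covering a 512-pixel patch, spans 16 × 512 = 8,192 pixels per side. The helper `random_crop`, the toy grid size, and the number of global and local views (2 and 4) are illustrative assumptions and are not taken from the TITAN implementation.

```python
import random
from typing import List

PATCH_PX = 512          # pixels per patch side (from the text)
REGION = 16             # region crop side, in features (from the text)
GLOBAL, LOCAL = 14, 6   # global and local crop sides, in features (from the text)


def random_crop(grid: List[List[int]], size: int, rng: random.Random) -> List[List[int]]:
    """Return a random size x size window of a 2D grid."""
    rows, cols = len(grid), len(grid[0])
    top = rng.randint(0, rows - size)
    left = rng.randint(0, cols - size)
    return [row[left:left + size] for row in grid[top:top + size]]


rng = random.Random(0)

# Toy 40 x 50 feature grid; each cell holds the index of its patch feature.
grid = [[r * 50 + c for c in range(50)] for r in range(40)]

region = random_crop(grid, REGION, rng)
global_views = [random_crop(region, GLOBAL, rng) for _ in range(2)]  # view count is hypothetical
local_views = [random_crop(region, LOCAL, rng) for _ in range(4)]    # view count is hypothetical

region_px = REGION * PATCH_PX  # side length of the region in pixels
```

Cropping on the feature grid rather than on pixels means every view reuses patch features that were extracted once, which keeps slide-level pretraining tractable.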

Multimodal alignment incorporates both real pathology reports and synthetic captions generated using PathChat, a multimodal generative AI copilot for pathology [3]. The synthetic captions provide fine-grained morphological descriptions at the region-of-interest level, enabling precise localization of visual-textual correspondences. The alignment objective maximizes similarity between image features and corresponding text embeddings while minimizing similarity with non-matching pairs, creating a joint embedding space that supports cross-modal retrieval.

Downstream Task Adaptation

The utility of SSL foundation models is realized through their adaptation to downstream clinical tasks, which employs specialized fine-tuning protocols.

Linear probing evaluates representation quality by training a linear classifier on top of frozen features, which isolates the quality of the representation from the adaptation process. SSL models consistently outperform supervised pretraining in linear evaluation, and the framework evaluated in [1] reports a 4.3% improvement in Dice coefficient on segmentation tasks compared to supervised baselines [1].
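As a minimal sketch of linear probing, the example below fits a logistic-regression classifier on fixed feature vectors with plain gradient descent. The toy features stand in for frozen encoder outputs, and the function names, learning rate, and epoch count are illustrative assumptions; only the linear layer's weights and bias are updated.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_linear_probe(features: Sequence[Sequence[float]], labels: Sequence[int],
                     lr: float = 0.5, epochs: int = 200) -> Tuple[List[float], float]:
    """Train only a linear layer (w, b); the features themselves are never modified."""
    dim, n = len(features[0]), len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for d in range(dim):
                grad_w[d] += err * x[d]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    return int(sum(wi * xi for wi, xi in zip(w, x)) + b > 0)


# Toy frozen features for four patches and their binary labels.
frozen_features = [[0.9, 0.1], [0.8, 0.2], [0.2, 0.9], [0.1, 0.8]]
labels = [1, 1, 0, 0]

w, b = fit_linear_probe(frozen_features, labels)
preds = [predict(w, b, x) for x in frozen_features]
```

Because the encoder is frozen, any difference in probe accuracy between two pretrained models reflects the features they produce rather than how well they fine-tune.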

Few-shot and zero-shot learning protocols are particularly relevant for clinical applications with limited annotated data. TITAN's multimodal training enables zero-shot classification by leveraging natural language descriptions of pathological entities, achieving competitive performance without task-specific fine-tuning [3]. For few-shot scenarios, models like Virchow demonstrate the ability to learn from very limited examples (as few as 10-20 slides per class) while maintaining diagnostic accuracy [2].
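Zero-shot classification in a joint image-text embedding space can be illustrated as a nearest-prompt lookup under cosine similarity. The embeddings below are made-up three-dimensional vectors, and `zero_shot_classify` is a hypothetical helper; in a real system both the slide and prompt embeddings would come from the aligned vision and text encoders.

```python
import math
from typing import Dict, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_classify(image_embedding: Sequence[float],
                       prompt_embeddings: Dict[str, Sequence[float]]) -> str:
    """Return the class whose text-prompt embedding is most similar to the image embedding."""
    return max(prompt_embeddings,
               key=lambda name: cosine(image_embedding, prompt_embeddings[name]))


# Toy text-prompt embeddings, one per candidate diagnosis (values are illustrative).
prompt_embeddings = {
    "adenocarcinoma": [0.9, 0.1, 0.0],
    "squamous cell carcinoma": [0.1, 0.9, 0.1],
}
slide_embedding = [0.8, 0.3, 0.1]

predicted = zero_shot_classify(slide_embedding, prompt_embeddings)
```

No labeled slides are used at any point: adding a new class only requires writing a new text prompt and embedding it.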

Cross-modal retrieval evaluation measures the model's ability to retrieve relevant histology slides given text queries and vice versa. This capability has direct clinical utility for search and reference within large pathology archives. TITAN establishes new state-of-the-art on cross-modal retrieval benchmarks, enabling pathologists to find morphologically similar cases or generate descriptive reports for unfamiliar morphologies [3].

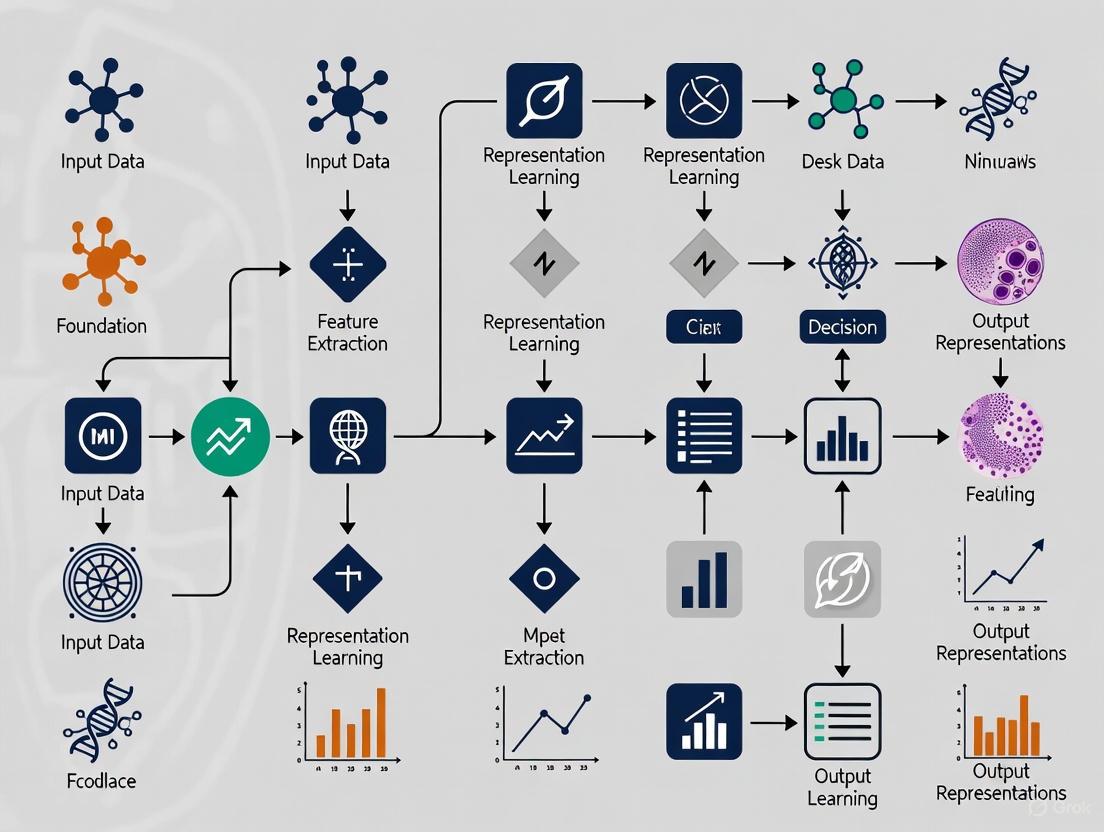

Visualization of SSL Workflows in Pathology

Figure 1: End-to-End SSL Workflow in Computational Pathology

The workflow begins with gigapixel whole-slide images, which are divided into smaller patches for manageable processing. These patches undergo multi-view augmentation, creating global and local crops that enable the self-supervised objectives to learn scale-invariant and context-aware representations. The core pretraining combines contrastive learning and masked image modeling to build a general-purpose foundation model. The resulting model serves as a frozen encoder for various downstream tasks and can be integrated into multimodal frameworks aligning histology with clinical reports and molecular data.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents and Computational Resources

| Resource Category | Specific Tool/Model | Function/Purpose | Access Information |
|---|---|---|---|
| Public Foundation Models | CTransPath, Phikon | Feature extraction from histology patches | GitHub repositories with pretrained weights |
| Benchmarking Frameworks | HistoPathExplorer | Performance evaluation across tasks | www.histopathexpo.ai [5] |
| SSL Algorithms | DINOv2, iBOT, MAE | Self-supervised pretraining | Open-source implementations |
| Whole-Slide Datasets | TCGA, GTEx | Large-scale pretraining and validation | Controlled access repositories |
| Multimodal Resources | TITAN, CONCH | Vision-language pathology AI | Research publications with methodology |
| Specialized Architectures | MIRROR, RNAPath | Multimodal integration, spatial prediction | Code available in research papers |

The implementation of SSL in pathology requires both computational resources and domain-specific data. Public foundation models like CTransPath and Phikon provide readily available feature extractors that can be applied to diverse histology images without extensive retraining [2]. Benchmarking platforms like HistoPathExplorer offer interactive dashboards for evaluating model performance across cancer types and clinical tasks, enabling researchers to identify state-of-the-art approaches for their specific applications [5]. Multimodal resources such as TITAN and CONCH extend capabilities beyond visual analysis to include textual reports and molecular data, creating opportunities for more comprehensive tissue analysis [3].

Future Directions and Clinical Translation

The trajectory of self-supervised learning in pathology points toward increasingly integrated, multimodal foundation models capable of supporting comprehensive diagnostic workflows. Several key frontiers are emerging that will shape the next generation of SSL approaches.

Whole-slide foundation models represent the evolution from patch-level encoders to slide-level representations that explicitly model long-range spatial dependencies and tissue architecture. TITAN demonstrates this direction with its transformer-based slide encoder that processes sequences of patch features while preserving spatial context through specialized positional encodings [3]. This shift enables more holistic analysis that captures tissue microenvironment and spatial relationships between different histological structures.

Multimodal integration extends beyond vision-language pairs to include genomics, transcriptomics, and proteomics data. RNAPath exemplifies this direction, predicting spatial RNA expression patterns directly from H&E histology and validating predictions with matched immunohistochemistry [7]. Similarly, MIRROR integrates histopathology and transcriptomics through modality alignment and retention modules, demonstrating superior performance in cancer subtyping and survival analysis [4]. These multimodal approaches create bridges between morphological phenotypes and molecular mechanisms, offering unprecedented opportunities for biomarker discovery and mechanistic understanding.

Clinical deployment strategies must address challenges of robustness, interpretability, and integration into diagnostic workflows. SSL models demonstrate promising generalization across institutions and scanner types, with frameworks like SSRDL (Self-Supervised Representation Distribution Learning) specifically designed to enhance robustness through representation sampling and data augmentation [8]. The emerging capability for cross-modal retrieval and report generation positions SSL models as collaborative tools that can enhance, rather than replace, pathologist expertise by providing morphological similarities, differential diagnoses, and automated documentation.

As these technologies mature, the promise of self-supervised learning in pathology extends beyond automation to fundamentally new capabilities in morphological analysis—discovering previously unrecognized patterns, predicting molecular alterations from routine histology, and personalizing cancer therapy through comprehensive tissue profiling. The convergence of large-scale self-supervision, multimodal integration, and clinical validation heralds a new era in computational pathology, transforming the microscopic examination of tissue into a quantitative, predictive science.

Foundation models are revolutionizing computational pathology by learning versatile and transferable feature representations from histopathology images without manual annotation. This capability is crucial in a field where expert labels are scarce, costly, and subject to inter-observer variability. Self-supervised learning provides the foundational framework enabling this breakthrough, with three core techniques—contrastive learning, masked image modeling, and self-distillation—driving recent advances. These methods leverage the vast quantities of unlabeled whole slide images to learn powerful representations that capture essential morphological patterns in tissues and cells, forming the basis for downstream clinical tasks including cancer diagnosis, prognosis, and biomarker prediction.

Core SSL Techniques in Histopathology

Contrastive Learning

Contrastive learning operates on a principle of instance discrimination, training models to recognize similarities and differences between data points. In computational pathology, this technique learns representations by maximizing agreement between differently augmented views of the same histopathology image while distinguishing them from other images in a dataset.

Key Mechanism: The core objective is to learn an embedding space where similar sample pairs are positioned close together while dissimilar pairs are far apart. This is typically achieved using a contrastive loss function such as InfoNCE, which pulls positive pairs (different views of the same image) together in the embedding space while pushing apart negative pairs (views from different images).
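The InfoNCE objective described above can be written out for a single anchor as a softmax cross-entropy over one positive and several negatives. This is a generic sketch under stated assumptions: cosine similarity is used as the similarity measure, the default temperature of 0.1 is illustrative, and the function name `info_nce` is hypothetical.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def info_nce(anchor: Sequence[float], positive: Sequence[float],
             negatives: Sequence[Sequence[float]], temperature: float = 0.1) -> float:
    """InfoNCE for one anchor: -log softmax probability assigned to the positive pair."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    # Numerically stable log-sum-exp over the positive and all negatives.
    m = max(logits)
    log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_denominator - logits[0]


anchor = [1.0, 0.0]

# Positive view lies close to the anchor: the loss is near zero.
good_loss = info_nce(anchor, [0.9, 0.1], [[0.0, 1.0], [-1.0, 0.0]])

# "Positive" is far from the anchor while a negative is close: the loss is large.
bad_loss = info_nce(anchor, [0.0, 1.0], [[0.9, 0.1], [-1.0, 0.0]])
```

Lowering the temperature sharpens the softmax, so hard negatives that sit close to the anchor contribute more strongly to the gradient.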

Histopathology Adaptations: Standard contrastive approaches developed for natural images require significant adaptation for histopathology data. Unlike object-centric natural images, histopathology images display different characteristics including repetitive tissue patterns, fine-grained morphological features, and hierarchical structures from cellular to tissue-level organization. Researchers have developed specialized view generation strategies that account for these unique characteristics, including multi-scale patches that capture both cellular details and tissue architecture, and environment-aware cropping that preserves spatial context around cells.

The VOLTA framework exemplifies advanced contrastive learning adapted for histopathology, incorporating environment-aware cell representation learning. As illustrated in the workflow below, VOLTA uses a two-branch architecture that maximizes mutual information between cells and their surrounding microenvironment while masking out other cells to prevent bias.

Figure 1: Contrastive Learning Workflow in VOLTA. The framework processes both cell crops and their surrounding environment patches through separate encoders, using contrastive learning to align their representations.

Masked Image Modeling

Masked image modeling has emerged as a powerful self-supervised pre-training approach where models learn to reconstruct randomly masked portions of input images. This technique forces the model to develop a comprehensive understanding of tissue morphology and spatial relationships by predicting missing content based on surrounding context.

Key Mechanism: MIM operates by randomly masking a significant portion (e.g., 60-80%) of input image patches and training a model to reconstruct the missing visual content. The training objective typically combines reconstruction loss with a feature-level distillation component, enabling the model to learn rich, contextualized representations that capture both local cellular features and global tissue architecture.
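The masking step and a reconstruction loss restricted to masked positions can be sketched as follows. This is a simplified illustration: each patch is reduced to a single scalar, the loss is plain mean squared error, the 60% ratio is one value from the range quoted above, and the function names are hypothetical.

```python
import random
from typing import List, Sequence


def random_mask(num_patches: int, mask_ratio: float, rng: random.Random) -> List[bool]:
    """Return a boolean mask with round(num_patches * mask_ratio) positions set to True."""
    num_masked = round(num_patches * mask_ratio)
    masked = set(rng.sample(range(num_patches), num_masked))
    return [i in masked for i in range(num_patches)]


def masked_mse(prediction: Sequence[float], target: Sequence[float],
               mask: Sequence[bool]) -> float:
    """Mean squared error computed only over masked positions."""
    errors = [(p - t) ** 2 for p, t, m in zip(prediction, target, mask) if m]
    return sum(errors) / len(errors)


rng = random.Random(0)
num_patches = 14 * 14                      # a 14 x 14 grid of patches
mask = random_mask(num_patches, 0.6, rng)  # mask 60% of the patches

target = [1.0] * num_patches               # toy per-patch targets
perfect = masked_mse(target, target, mask)
all_zero = masked_mse([0.0] * num_patches, target, mask)
```

Computing the loss only on masked positions prevents the model from being rewarded for copying visible patches and forces it to infer content from context.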

Histopathology Implementation: In histopathology, MIM has been successfully implemented using the iBOT framework, which employs a teacher-student architecture with masked image modeling. The student network processes masked views of the input images, while the teacher network, whose weights are an exponential moving average of the student's, sees the unmasked views and provides the prediction targets. This approach has demonstrated strong performance across diverse cancer types and tasks.

The TITAN model exemplifies sophisticated MIM implementation for whole-slide images, utilizing a vision transformer architecture pretrained on 335,645 whole-slide images. As shown below, TITAN's pretraining pipeline employs knowledge distillation and masked image modeling on region-of-interest crops to learn powerful slide-level representations.

Figure 2: Masked Image Modeling in TITAN. The framework processes feature grids from whole slide images through random cropping and masked image modeling with teacher-student knowledge distillation.

Self-Distillation

Self-distillation represents a specialized SSL approach where a model learns by distilling knowledge from itself, typically through a teacher-student architecture where both networks share identical parameters initially and evolve through training.

Key Mechanism: Self-distillation frameworks maintain two neural networks with identical architecture: a teacher and a student. The teacher network produces targets for the student to learn from, and its parameters are updated as an exponential moving average of the student parameters. This creates a self-supervised feedback loop where the model continuously improves its own representations without external labels.
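The exponential-moving-average update of the teacher can be shown on a flat list of parameters. This is a minimal sketch: real implementations apply the same rule tensor by tensor, the momentum of 0.996 is an illustrative default, and `ema_update` is a hypothetical helper name.

```python
from typing import List, Sequence


def ema_update(teacher: Sequence[float], student: Sequence[float],
               momentum: float = 0.996) -> List[float]:
    """Move each teacher parameter a small step toward the matching student parameter."""
    return [momentum * t + (1.0 - momentum) * s for t, s in zip(teacher, student)]


teacher = [1.0, -2.0]
student = [0.0, 0.0]   # held fixed here; in training it changes at every gradient step

after_one_step = ema_update(teacher, student)

# Repeating the update lets the teacher drift slowly toward the student.
for _ in range(2000):
    teacher = ema_update(teacher, student)
```

A momentum close to 1 makes the teacher a slowly changing average of past students, which is what gives the targets their stability.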

Histopathology Applications: In histopathology, self-distillation has been successfully implemented in frameworks like DINO and iBOT, where it enables learning of robust visual features without human supervision. The GPFM model further advanced this approach by incorporating unified knowledge distillation with both expert and self-knowledge distillation components, enabling local-global feature alignment across diverse pathology tasks.

The self-distillation process creates a self-reinforcing learning cycle where the teacher network provides increasingly better targets for the student network, leading to continuous improvement in representation quality without manual annotation.

Comparative Analysis of SSL Techniques

The table below summarizes the key characteristics, advantages, and limitations of the three core SSL techniques as applied in histopathology.

Table 1: Comparative Analysis of Core SSL Techniques in Histopathology

| Technique | Key Mechanism | Representative Models | Advantages | Limitations |
|---|---|---|---|---|
| Contrastive Learning | Maximizes agreement between augmented views of same image while distinguishing from different images | VOLTA [9], SimCLR [10] | Effective for capturing cellular heterogeneity; Strong performance on cell classification tasks | Requires careful negative sampling; Sensitive to augmentation strategies |
| Masked Image Modeling | Reconstructs randomly masked portions of input images | TITAN [3], iBOT [11] | Learns rich contextual representations; Excellent transfer performance across tasks | Computationally intensive; Requires careful masking strategy design |
| Self-Distillation | Knowledge distillation from teacher to student network with identical architecture | GPFM [12], DINO [13] | Creates stable training dynamics; Learns semantically meaningful features | Can collapse to trivial solutions without proper regularization |

Performance Comparison of Foundation Models

Recent comprehensive benchmarking studies have evaluated the performance of various foundation models across multiple histopathology tasks. The table below summarizes key findings from these evaluations, particularly focusing on ovarian carcinoma subtyping performance.

Table 2: Foundation Model Performance on Ovarian Carcinoma Subtyping Tasks [14]

| Model | Backbone | Training Data Scale | Balanced Accuracy (%) | Key Strengths |
|---|---|---|---|---|
| H-optimus-0 | Vision Transformer | Not specified | 89% (internal), 97% (Transcanadian), 74% (OCEAN) | Best overall performance across validation sets |
| UNI | Vision Transformer | 100M patches | Similar to H-optimus-0 at ¼ computational cost | Computational efficiency with strong performance |
| ImageNet Pretrained ResNet | CNN | 1.4M natural images | Lower than specialized foundation models | General-purpose features, suboptimal for histopathology |
| GPFM | Vision Transformer | Multi-source distillation | Ranked 1st in 42 of 72 tasks in comprehensive benchmark [12] | Superior generalization across diverse task types |

Experimental Protocols and Methodologies

Contrastive Learning Protocol (VOLTA Framework)

The VOLTA framework implements a sophisticated environment-aware contrastive learning approach with the following key methodological components [9]:

Cell and Environment Processing:

- Input images are processed to extract individual cell crops (centered on nuclei) and corresponding environment patches (256×256 pixels surrounding each cell)

- Environment patches undergo cell masking to remove other visible cells, preventing the model from biasing toward cell density patterns

- Two sets of augmentations are applied to create visually distinct perspectives of each cell

Training Configuration:

- Model architecture: Dual-branch network with ResNet-50 backbone

- Optimization: Adam optimizer with learning rate of 0.0003 and weight decay of 10^-4

- Batch size: 512 distributed across multiple GPUs

- Temperature parameter: τ = 0.1 for contrastive loss scaling

- Training epochs: 400 with linear learning rate decay

Loss Function:

The framework combines standard contrastive loss for cell views with environment-aware contrastive loss:

L_total = L_contrastive(cell_view1, cell_view2) + λ * L_InfoNCE(cell, environment)

where λ balances the two objectives and is typically set to 0.5.

Masked Image Modeling Protocol (TITAN Framework)

The TITAN framework implements large-scale masked image modeling for whole-slide images with the following methodology [3]:

Multi-Stage Pretraining:

- Stage 1: Vision-only unimodal pretraining on 335,645 WSIs using iBOT framework

- Stage 2: Cross-modal alignment with 423,122 synthetic ROI captions

- Stage 3: Cross-modal alignment with 182,862 clinical pathology reports

Architecture Specifications:

- Patch feature extraction: CONCHv1.5 encoder generating 768-dimensional features from 512×512 patches

- Transformer architecture: ViT-Base with 86M parameters

- Masking strategy: 60% random masking of patch features

- Positional encoding: Attention with Linear Biases for long-context extrapolation

Training Configuration:

- Optimization: AdamW with learning rate of 1.5e-4

- Batch size: 4,096 region crops (16×16 features each)

- Cropping strategy: Random global (14×14) and local (6×6) crops from region features

- Augmentations: Vertical/horizontal flipping with posterization feature augmentation

Self-Distillation Protocol (GPFM Framework)

The Generalizable Pathology Foundation Model implements unified knowledge distillation with the following experimental approach [12]:

Dual Knowledge Distillation Framework:

- Expert knowledge distillation: Learning from multiple pre-trained expert models

- Self knowledge distillation: Local-global alignment through self-supervised learning

Training Methodology:

- Teacher-student architecture with momentum encoder (momentum=0.996)

- Multi-crop strategy with 2 global and 8 local views

- Loss function: Combination of cross-entropy and similarity matching losses

- Optimization: LARS optimizer with learning rate warmup and cosine decay
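The learning-rate schedule named in the list above (linear warmup followed by cosine decay) can be sketched as a pure function of the training step. The step counts and base learning rate below are hypothetical values chosen for illustration, not settings reported for GPFM, and `lr_at_step` is an assumed helper name.

```python
import math


def lr_at_step(step: int, total_steps: int, warmup_steps: int,
               base_lr: float, min_lr: float = 0.0) -> float:
    """Linear warmup to base_lr, then cosine decay from base_lr down to min_lr."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


# Hypothetical run: 100 steps in total, the first 10 used for warmup.
schedule = [lr_at_step(s, total_steps=100, warmup_steps=10, base_lr=1.0) for s in range(101)]
```

Warmup avoids large, noisy updates while the teacher and student are still nearly random, and the cosine tail lets the model settle with progressively smaller steps.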

Research Reagent Solutions

The table below outlines essential computational tools and resources referenced in the surveyed literature for developing SSL-based foundation models in histopathology.

Table 3: Essential Research Reagents for SSL in Histopathology

| Resource | Type | Function | Example Implementations |
|---|---|---|---|
| Whole Slide Image Datasets | Data | Pre-training and evaluation | TCGA (The Cancer Genome Atlas), Mass-340K (335,645 WSIs) [3], In-house institutional archives |
| Patch Feature Extractors | Algorithm | Converting image patches to feature vectors | CONCH [3], ImageNet-pretrained ResNets [14], Self-supervised vision transformers |
| SSL Frameworks | Software | Implementing self-supervised learning algorithms | iBOT [3] [11], DINO [13], SimCLR [10], MoCo-v2 |
| Computational Infrastructure | Hardware | Model training and inference | High-performance GPU clusters, Cloud computing resources (required for models with 80M+ parameters) |
| Evaluation Benchmarks | Methodology | Standardized performance assessment | Multi-task benchmarks (72 tasks across 6 types) [12], Domain shift evaluation frameworks [13] |

Contrastive learning, masked image modeling, and self-distillation represent the three pillars of self-supervised learning that enable foundation models to learn powerful histopathological representations without manual labels. Each technique offers distinct advantages: contrastive learning excels at capturing cellular heterogeneity, masked image modeling learns rich contextual representations, and self-distillation provides stable training dynamics for semantically meaningful features. The ongoing development of models like TITAN, VOLTA, and GPFM demonstrates how these core SSL techniques can be adapted to address the unique challenges of histopathology data, from gigapixel whole-slide images to fine-grained cellular morphology. As these methods continue to evolve, they pave the way for more accessible, robust, and generalizable computational pathology tools that can enhance diagnostic accuracy and accelerate biomedical discovery.

The field of computational pathology is undergoing a transformative shift with the emergence of foundation models trained on massive datasets of whole-slide images (WSIs). These models, inspired by successes in natural language processing and computer vision, are learning powerful, transferable representations of histopathological morphology without relying on manually curated labels. This paradigm is particularly crucial in digital pathology, where the gigapixel size of WSIs and the prohibitive cost of expert annotation have historically constrained the development of robust artificial intelligence (AI) tools. By leveraging self-supervised learning (SSL) on millions of unlabeled images, these foundation models capture the complex visual semantics of tissue microenvironments, providing a versatile starting point for diverse downstream clinical tasks such as cancer subtyping, prognosis prediction, and biomarker identification [3] [15].

The core challenge in computational pathology is the immense scale of the data. A single WSI can be over 100,000 × 100,000 pixels, containing billions of pixels and representing a complex spatial organization of cells and tissue structures [16]. Traditional supervised deep learning approaches, which require vast amounts of labeled data, are often impractical. Foundation models overcome this bottleneck by using SSL to learn from the inherent structure and patterns within the data itself. This guide details the methodologies, architectural innovations, and experimental protocols that enable effective model pretraining on millions of WSIs, framing these advances within the broader thesis of how foundation models learn histopathological representations without labels.

Core Methodologies for Self-Supervised Pretraining

The pretraining of foundation models in computational pathology primarily follows two complementary paradigms: visual self-supervised learning and vision-language pretraining. These approaches allow models to learn from the intrinsic structure of image data and the rich, albeit noisy, supervisory signal contained in paired clinical reports.

Visual Self-Supervised Learning

Visual SSL methods treat each WSI as a complex, structured data source and construct learning objectives directly from the image content without human-provided labels. A common and highly effective strategy is intraslide contrastive learning. This method creates multiple augmented views of a WSI and trains the model to recognize that these views originate from the same source slide while being distinct from patches taken from other slides [3]. The process typically involves:

- Patch Feature Extraction: A WSI is divided into thousands of non-overlapping image patches (e.g., 256x256 or 512x512 pixels at 20x magnification). Each patch is processed by a pre-trained patch encoder (like CTransPath or CONCH) to extract a compact feature vector, creating a 2D feature map of the entire slide [3] [17].

- View Construction: Multiple "views" of the slide are created by randomly cropping regions from this 2D feature grid. These crops can be at different scales; for instance, large "global" crops (e.g., 14x14 features) that capture tissue architecture and smaller "local" crops (e.g., 6x6 features) that focus on cellular details [3].

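- Contrastive Objective: The model is trained using an objective function like that in iBOT or DINOv2, which encourages the representations of different views from the same WSI to agree [3] [17] [18]. Strictly speaking, these are self-distillation objectives: a student network matches the output of a momentum teacher, and collapse is avoided through mechanisms such as centering and sharpening of the teacher outputs rather than through explicit "negative pairs" drawn from other WSIs, as in SimCLR- or MoCo-style contrastive learning. Either way, the process teaches the model to be invariant to irrelevant augmentations and to focus on diagnostically relevant morphological features.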
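The view-construction step can be illustrated with a minimal sketch. It assumes the slide has already been reduced to a 2D grid of patch features; the function names, the number of views, and the toy 32x32 grid are illustrative assumptions, and only the 14x14 and 6x6 crop sizes come from the text above.

```python
import random

def random_crop(grid, size, rng):
    """Cut a size x size window from a 2D grid stored as a list of rows."""
    rows, cols = len(grid), len(grid[0])
    if size > rows or size > cols:
        raise ValueError("crop is larger than the feature grid")
    top = rng.randrange(rows - size + 1)
    left = rng.randrange(cols - size + 1)
    return [row[left:left + size] for row in grid[top:top + size]]

def make_views(grid, n_global=2, n_local=4, global_size=14, local_size=6, seed=0):
    """Sample global and local crops of the same slide (view counts are assumed)."""
    rng = random.Random(seed)
    global_views = [random_crop(grid, global_size, rng) for _ in range(n_global)]
    local_views = [random_crop(grid, local_size, rng) for _ in range(n_local)]
    return global_views, local_views

# Toy feature grid: each cell stands in for a patch feature vector and
# stores its own (row, col) position so the crops are easy to inspect.
feature_grid = [[(r, c) for c in range(32)] for r in range(32)]
global_views, local_views = make_views(feature_grid)
```

All views drawn this way come from one slide, so a training objective can treat them as different looks at the same tissue.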

Another powerful technique is masked image modeling, where random portions of the WSI's feature grid are masked, and the model is tasked with reconstructing the missing features based on the surrounding context [17]. This forces the model to learn robust, contextual representations of histology.

Vision-Language Pretraining

Vision-language pretraining aligns visual features from WSIs with textual features from corresponding pathology reports, creating a shared representation space. This multimodal approach enables capabilities like cross-modal retrieval and zero-shot classification.

- Data Pairs: Models are trained on large datasets of WSI and report pairs, for example, 335,645 WSIs paired with 182,862 medical reports [3].

- Contrastive Alignment: A contrastive loss function (e.g., InfoNCE) is used to pull the feature representations of a WSI and its corresponding report closer in a shared embedding space while pushing them apart from non-matching WSIs and reports [3] [18].

- Synthetic Data Augmentation: To enhance the granularity of language supervision, some frameworks like TITAN generate synthetic, fine-grained captions for specific regions of interest using a multimodal generative AI copilot, creating hundreds of thousands of additional image-text pairs for training [3].
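As a rough illustration of the contrastive alignment step, the sketch below computes a symmetric InfoNCE loss over a batch of matched slide and report embeddings. It is a generic formulation rather than the exact loss of any model discussed here; the temperature of 0.07 and the two-dimensional toy embeddings are assumptions.

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def info_nce(slide_embs, report_embs, temperature=0.07):
    """Symmetric InfoNCE; row i of each input is assumed to be a matched pair."""
    s = [l2_normalize(v) for v in slide_embs]
    t = [l2_normalize(v) for v in report_embs]
    n = len(s)
    # Cosine similarity of every slide with every report, scaled by temperature.
    logits = [[sum(a * b for a, b in zip(s[i], t[j])) / temperature
               for j in range(n)] for i in range(n)]

    def cross_entropy_diag(mat):
        # Mean cross-entropy where the correct class for row i is column i.
        total = 0.0
        for i, row in enumerate(mat):
            m = max(row)
            log_z = m + math.log(sum(math.exp(x - m) for x in row))
            total += log_z - row[i]
        return total / len(mat)

    transposed = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(transposed))

aligned = info_nce([[1, 0], [0, 1]], [[1, 0], [0, 1]])
shuffled = info_nce([[1, 0], [0, 1]], [[0, 1], [1, 0]])
```

The loss is near zero when each slide is closest to its own report (`aligned`) and large when the pairing is scrambled (`shuffled`), which is the signal that pulls matched pairs together during training.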

Architectural Innovations for Whole-Slide Modeling

A key innovation enabling WSI-scale foundation models is the development of architectures capable of processing the long sequences of data representing a full slide.

Hierarchical and Transformer-Based Encoders

Modern pathology foundation models employ a two-stage encoding process to handle the gigapixel scale:

- Tile Encoder: A vision transformer (ViT) or convolutional neural network (CNN) first processes individual image tiles, converting each into a feature vector or "visual token" [17].

- Slide Encoder: A second transformer model then processes the entire sequence of tile features from one WSI. To handle sequences that can contain tens of thousands of tiles, specialized techniques are required. LongNet and its dilated self-attention mechanism have been successfully used in models like Prov-GigaPath, as they reduce the computational complexity of modeling these ultra-long sequences [17].

Positional Encoding and Context

Preserving the spatial relationships between tiles is critical. Models use 2D positional encodings to ensure the slide encoder understands the original spatial layout of the tissue [3]. Some approaches, like TITAN, adapt Attention with Linear Biases (ALiBi) from natural language processing to the 2D domain, using the Euclidean distance between tiles in the feature grid to inform the self-attention mechanism, which improves extrapolation to large contexts during inference [3].
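A minimal sketch of a distance-based attention bias in the spirit of 2D ALiBi follows. It assumes a single attention head with a fixed slope; the slope value and the function name are hypothetical, and actual implementations typically assign a different slope to each head.

```python
import math

def alibi_2d_bias(grid_h, grid_w, slope):
    """Additive attention bias of shape (N x N) with N = grid_h * grid_w.

    Entry [i][j] is -slope times the Euclidean distance between tiles i and j
    in the feature grid, so distant tiles are penalised more strongly.
    """
    coords = [(r, c) for r in range(grid_h) for c in range(grid_w)]
    return [[-slope * math.dist(p, q) for q in coords] for p in coords]

# Assumed toy setting: a 3x3 grid of tiles and a slope of 0.5.
bias = alibi_2d_bias(3, 3, slope=0.5)
```

Because the bias depends only on relative distance, the same rule applies unchanged to grids larger than those seen in training, which is the property that supports extrapolation to long contexts.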

Table 1: Overview of Major Whole-Slide Foundation Models and Their Pretraining Data Scale

| Model Name | Core Architecture | Pretraining Dataset Scale | Key Pretraining Methodology |
|---|---|---|---|
| TITAN [3] | Vision Transformer (ViT) | 335,645 WSIs; 423,122 synthetic captions | Visual SSL (iBOT) & Vision-Language Alignment |
| Prov-GigaPath [17] | Hierarchical ViT with LongNet | 171,189 WSIs; ~1.3 billion image tiles | DINOv2 (tile) & Masked Autoencoder (slide) |
| CS-CO [19] | Hybrid CNN | Not specified in detail | Hybrid Generative & Discriminative SSL |
| HIPT [17] | Hierarchical ViT | ~30,000 WSIs (TCGA) | Self-Supervised Hierarchical Pretraining |

Experimental Protocols and Benchmarking

Rigorous evaluation on diverse, clinically relevant tasks is essential to validate the effectiveness of foundation models. The standard protocol involves pretraining on a large, unlabeled dataset followed by transfer learning on smaller, labeled datasets for specific tasks.

Transfer Learning Evaluation Protocols

- Linear Probing: The pretrained foundation model is frozen, and only a simple linear classifier is trained on top of its output features for a new task. This tests the quality of the representations learned during pretraining [3].

- End-to-End Fine-Tuning: The entire model (or a significant portion of it) is further trained on the downstream task. This typically yields higher performance but is more computationally intensive and requires careful regularization to avoid overfitting [17].

- Few-Shot and Zero-Shot Learning: These protocols evaluate the model's ability to generalize with very limited labeled data (few-shot) or even no labeled examples (zero-shot). Zero-shot is typically enabled by vision-language models that can classify images based on their similarity to textual descriptions of disease classes [3] [17].
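The zero-shot protocol can be sketched as a nearest-prompt lookup in the shared embedding space. The embeddings below are made-up three-dimensional vectors standing in for the outputs of a slide encoder and a text encoder, and the class names are only examples.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def zero_shot_classify(slide_emb, prompt_embs):
    """Pick the class whose text-prompt embedding is most similar to the slide."""
    scores = {label: cosine(slide_emb, emb) for label, emb in prompt_embs.items()}
    return max(scores, key=scores.get), scores

# Hypothetical prompt embeddings (in practice produced by the text encoder).
prompt_embs = {
    "lung adenocarcinoma": [0.9, 0.1, 0.0],
    "lung squamous cell carcinoma": [0.1, 0.9, 0.0],
}
label, scores = zero_shot_classify([0.8, 0.3, 0.1], prompt_embs)
```

No labeled slides are used at any point: the class set is defined entirely by the text prompts, which is why this protocol depends on vision-language pretraining.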

Key Performance Benchmarks

Foundation models are evaluated on a wide range of tasks to demonstrate their generalizability. The table below summarizes benchmark results for leading models, highlighting the performance gains enabled by large-scale pretraining.

Table 2: Benchmarking Performance on Key Computational Pathology Tasks

| Task Category | Example Task | Best Performing Model | Reported Metric & Performance |
|---|---|---|---|
| Cancer Subtyping [17] | Classifying cancer subtypes across 9 types | Prov-GigaPath | State-of-the-art (SOTA) on all 9; significantly better on 6 |
| Mutation Prediction [17] | Predicting EGFR mutation status from WSIs | Prov-GigaPath | 23.5% AUROC improvement over second-best |
| Biomarker Prediction [20] | Predicting BRAF-V600 status in melanoma | Prov-GigaPath + XGBoost | AUC of 0.824 (TCGA), 0.772 (independent test set) |
| Rare Cancer Retrieval [3] | Retrieving similar WSIs for rare diseases | TITAN | Outperformed existing slide and region-of-interest (ROI) models |
| Prognosis Prediction [21] | Predicting patient survival outcomes | WSINet | Compelling performance in end-to-end survival prediction |

[Figure: Model Architecture Flow]

The Scientist's Toolkit: Essential Research Reagents

Implementing and experimenting with whole-slide foundation models requires a suite of computational tools and data resources. The following table details key components.

Table 3: Essential Research Reagents for Whole-Slide Foundation Model Research

| Reagent / Solution | Function / Description | Example Implementations / Sources |
|---|---|---|
| Patch Encoder | Pre-trained network to convert image patches into feature vectors. Provides the foundational visual vocabulary. | CTransPath, CONCH, HoVer-Net, ImageNet-pretrained CNNs [3] [22] [15] |
| Slide Encoder | Model architecture that aggregates patch features into a slide-level representation. Handles long sequences. | Vision Transformer (ViT), LongNet, Hierarchical Image Pyramid Transformer (HIPT) [3] [17] |
| Self-Supervised Learning Framework | Software library providing implementations of SSL algorithms. | iBOT, DINOv2, Masked Autoencoder (MAE) [3] [17] |
| Whole-Slide Image Datasets | Large-scale collections of WSIs for pretraining and benchmarking. | Prov-Path, The Cancer Genome Atlas (TCGA), internal hospital archives [3] [17] |
| Synthetic Data Generator | Generates realistic synthetic histology images to augment training data. | StyleGAN2 with Adaptive Discriminator Augmentation (ADA) [22] |
| Computational Backend | Hardware and software infrastructure for distributed training on gigapixel images. | High-Performance Computing (HPC) clusters, NVIDIA GPUs, PyTorch, MONAI [17] |

[Figure: End-to-End Workflow]

Training foundation models on millions of unlabeled whole-slide images represents a paradigm shift in computational pathology. By leveraging scalable self-supervised and multimodal learning techniques, coupled with innovative architectures like LongNet, these models learn powerful, general-purpose representations of histopathological morphology. The resulting models, such as TITAN and Prov-GigaPath, establish new state-of-the-art performance across a wide spectrum of clinical tasks, from cancer subtyping to mutation prediction, demonstrating the profound effectiveness of data scaling in this domain. This approach directly addresses the long-standing challenges of label scarcity and gigapixel complexity, paving the way for more robust, data-efficient, and clinically impactful AI tools in pathology. The continued expansion of diverse WSI datasets and the refinement of pretraining methodologies will further solidify the role of foundation models as the cornerstone of next-generation computational pathology.

The analysis of whole slide images (WSIs) in computational histopathology presents a unique computational challenge: these images are gigapixel in size, often exceeding 100,000 pixels in each dimension, making them impossible to process directly on standard hardware [23]. This technical constraint, combined with the prohibitive cost and time required for detailed expert annotations, has driven the development of innovative weakly-supervised learning approaches. These methods operate under the paradigm that while detailed, patch-level annotations may be unavailable, slide-level or patient-level labels—such as cancer diagnosis, molecular subtypes, or patient survival data—can be utilized to train models that simultaneously learn both localized features and global predictions [23]. The fundamental computational strategy involves breaking WSIs into smaller patches for processing, then developing intelligent aggregation methods to reconstruct slide-level predictions from these patch-level representations.

The emergence of foundation models pretrained using self-supervised learning (SSL) has dramatically accelerated progress in this field. These models learn domain-specific morphological features from vast amounts of unlabeled histopathology data, capturing essential patterns in tissue structure and cellular organization without requiring manual annotations [24] [3]. This pretraining approach has proven particularly valuable in histopathology, where the complexity and variability of tissue morphology benefit from models that have learned general-purpose representations before being fine-tuned for specific diagnostic tasks. The transition from patch-level to slide-level analysis while maintaining morphological context across multiple magnifications represents the central challenge in scaling representation learning for digital pathology.

Technical Foundations: From Patch Processing to Whole-Slide Analysis

Patch Sampling Strategies

The first critical step in WSI analysis is sampling representative patches from the gigapixel image. Multiple strategies have been developed to address the dual challenges of computational efficiency and morphological representativeness:

Table 1: Patch Sampling Strategies for Whole Slide Image Analysis

| Strategy | Methodology | Advantages | Limitations |
|---|---|---|---|
| Random Selection | Random sampling of patches during each training epoch [23] | Computational simplicity; no prior knowledge required | May sample uninformative regions; inefficient for sparse phenotypes |
| Tumor-First Selection | Pathologist annotation or cancer detection algorithm identifies tumor regions before sampling [23] | Focuses computational resources on diagnostically relevant areas | Requires preliminary annotation or model; may miss important microenvironment cues |
| Clustering-Based Selection | Patches clustered by appearance features; sampling ensures morphological diversity [23] | Captures comprehensive tissue heterogeneity; avoids redundant sampling | Increased computational overhead for clustering |
| Pyramid Tiling with Overlap (PTO) | Extracts multiple resolution views of image subsections using sliding window [25] | Maintains spatial context across magnifications; enables multi-scale feature learning | Computationally intensive; requires specialized architecture |

Feature Extraction and Compression

Once patches are selected, feature extraction transforms the high-dimensional image data into compact, meaningful representations. Transfer learning from models pretrained on natural image datasets like ImageNet has been widely used, but recent advances demonstrate the superiority of models pretrained specifically on histopathology data [23]. Self-supervised learning approaches have proven particularly effective for this domain-specific pretraining:

- DINO (self-distillation with no labels): A framework that knowledge-distills features from a teacher network to a student network using different augmentations of the same image, effectively learning morphological representations without labels [24]

- Masked Image Modeling (MIM): Techniques like iBOT randomly mask portions of input patches and train models to reconstruct the missing content, learning robust representations of tissue structure [3]

- Contrastive Learning: Methods like SimCLR and MoCo learn representations by maximizing agreement between differently augmented views of the same patch while distinguishing them from other patches [24]

Feature and Prediction Aggregation Methods

The core challenge in slide-level analysis lies in effectively aggregating patch-level information to make global predictions. Multiple instance learning (MIL) provides the theoretical framework for this process, where each WSI is treated as a "bag" containing multiple "instances" (patches) [23].

Table 2: Feature Aggregation Methods for Whole Slide Images

| Method | Mechanism | Interpretability | Best-Suited Tasks |
|---|---|---|---|
| Max/Mean Pooling | Simple statistical aggregation across patches [23] | Limited; provides no patch-level weighting | Diffuse disease patterns; robust to noise |
| Attention Mechanisms | Learned weights for weighted sum of patch features [23] [3] | High; attention weights highlight diagnostically relevant regions | Tasks with spatially sparse phenotypes |
| Quantile Aggregation | Characterizes distribution of patch predictions using quantile functions [23] | Moderate; shows prediction distribution across slide | Tasks where prevalence of features matters |
| Graph Neural Networks | Models spatial relationships between patches as graph connections [23] | Moderate; reveals architectural tissue patterns | Tasks where tissue architecture is diagnostically relevant |
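To make the contrast between mean pooling and attention-based aggregation concrete, here is a small sketch of attention pooling over a bag of patch features, using the common tanh scoring form. The projection matrix `V`, the scoring vector `w`, and the three toy patches are hand-picked assumptions; in a trained model these parameters would be learned from slide-level labels.

```python
import math

def attention_pool(patch_feats, V, w):
    """Weighted sum of patch features with softmax attention weights.

    score_k = w . tanh(V h_k); V is (hidden x dim), w has length hidden.
    """
    scores = []
    for h in patch_feats:
        hidden = [math.tanh(sum(v * x for v, x in zip(row, h))) for row in V]
        scores.append(sum(wi * zi for wi, zi in zip(w, hidden)))
    m = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(patch_feats[0])
    pooled = [sum(a * h[d] for a, h in zip(weights, patch_feats)) for d in range(dim)]
    return pooled, weights

def mean_pool(patch_feats):
    n = len(patch_feats)
    return [sum(col) / n for col in zip(*patch_feats)]

# Two near-empty patches and one strongly activated patch (toy values).
patches = [[0.0, 0.1], [0.1, 0.0], [3.0, 2.5]]
V = [[1.0, 0.0], [0.0, 1.0]]
w = [2.0, 2.0]
pooled, weights = attention_pool(patches, V, w)
```

In this toy bag the third patch receives most of the attention weight, so the slide representation stays close to it, whereas mean pooling dilutes it with the two uninformative patches. The weights themselves are what is usually rendered as an attention heatmap.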

Advanced Architectures for Slide-Level Representation Learning

Hierarchical Vision Transformers for Multi-Scale Phenotyping

The CypherViT architecture represents a significant advancement in patch-level representation learning by incorporating a hierarchical Vision Transformer (ViT) with multiple class tokens to capture both coarse and fine-grained histopathological features [24]. This model employs a feature agglomerative attention module that enables the model to learn representations at multiple biological scales—from subcellular features to tissue-level patterns. When trained within the DINO self-supervised framework on breast cancer histopathology images, CypherViT demonstrated remarkable transfer learning capabilities, effectively generalizing to colorectal cancer images without additional fine-tuning [24]. The model achieved state-of-the-art performance on patch-level tissue phenotyping tasks across four public datasets, outperforming both traditional ImageNet-based transfer learning and other SSL approaches.

Whole-Slide Foundation Models: The TITAN Framework

Translating patch-level representations to whole-slide analysis requires specialized architectures capable of processing extremely long sequences of patch features. The TITAN (Transformer-based pathology Image and Text Alignment Network) framework addresses this challenge through a multimodal approach that processes entire WSIs [3]. Key innovations in TITAN include:

- Multi-stage pretraining: The model undergoes three distinct phases: (1) vision-only pretraining on region crops, (2) cross-modal alignment with generated morphological descriptions at region-level, and (3) cross-modal alignment at slide-level with clinical reports [3]

- Handling variable-length sequences: Using Attention with Linear Biases (ALiBi) enables extrapolation to long contexts at inference time, crucial for gigapixel images [3]

- Leveraging synthetic data: The incorporation of 423,122 synthetic captions generated by a multimodal AI copilot enhances training without requiring manual annotation [3]

Trained on 335,645 WSIs across 20 organ types, TITAN generates general-purpose slide representations applicable to diverse clinical tasks including cancer subtyping, biomarker prediction, and outcome prognosis, outperforming supervised baselines without requiring task-specific fine-tuning [3].

Environment-Aware Cell Representation Learning

While most approaches focus on tissue patches, the VOLTA (enVironment-aware cOntrastive ceLl represenTation leArning) framework operates at the cellular level, learning cell representations that incorporate microenvironmental context [9]. This approach recognizes that cells are fundamentally influenced by their surrounding tissue architecture. VOLTA employs a two-branch architecture:

- Cell Block: Processes augmented views of individual cells

- Environment Block: Incorporates contextual information from the surrounding tissue while masking other cells to prevent bias [9]

When evaluated on datasets comprising over 800,000 cells across six cancer types, VOLTA demonstrated superior performance in unsupervised cell clustering, achieving approximately twice the performance of baseline methods on metrics like adjusted mutual information (AMI) and adjusted Rand index (ARI) [9].

Experimental Protocols and Methodologies

Implementation Framework for Whole-Slide Analysis

A robust experimental pipeline for WSI analysis requires careful attention to data preprocessing, model architecture, and training protocols:

Data Preparation and Augmentation

- Tissue Detection: Initial filtering to remove background regions and artifacts using tissue coverage thresholds (e.g., 70% minimum tissue coverage) [24]

- Patch Extraction: Extraction of patches at appropriate magnification (typically 20×) with strategic overlap to ensure comprehensive coverage

- Data Augmentation: Application of histopathology-specific transformations including rotation (90°, 180°), vertical and horizontal flipping, and stain normalization [24]

Model Training Protocols

- Self-Supervised Pretraining: Unsupervised learning on large-scale unlabeled datasets (300,000+ patches) using contrastive or masked image modeling objectives [24]

- Weakly-Supervised Fine-tuning: Training with slide-level labels using multiple instance learning frameworks

- Validation Strategies: Rigorous cross-validation with patient-level splits to prevent data leakage and ensure clinical applicability
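The patient-level splitting mentioned under validation strategies can be sketched as follows. The slide and patient identifiers are hypothetical; the point is that all slides from one patient are assigned to the same fold, so no patient contributes to both training and evaluation.

```python
import random
from collections import defaultdict

def patient_level_folds(slide_to_patient, n_folds=5, seed=0):
    """Assign slides to folds so that each patient appears in exactly one fold."""
    by_patient = defaultdict(list)
    for slide_id, patient_id in slide_to_patient.items():
        by_patient[patient_id].append(slide_id)
    patients = sorted(by_patient)           # sort first for reproducibility
    random.Random(seed).shuffle(patients)
    folds = [[] for _ in range(n_folds)]
    for i, patient_id in enumerate(patients):
        folds[i % n_folds].extend(by_patient[patient_id])
    return folds

# Hypothetical cohort: 10 patients with 3 slides each.
slide_to_patient = {f"slide_{i}": f"patient_{i // 3}" for i in range(30)}
folds = patient_level_folds(slide_to_patient, n_folds=5)
```

Splitting at the slide level instead would let near-duplicate tissue from one patient leak across the train/test boundary and inflate reported performance.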

Quantitative Benchmarking Results

Comprehensive evaluation across multiple datasets and tasks demonstrates the effectiveness of self-supervised approaches for histopathology representation learning:

Table 3: Performance Comparison of Self-Supervised Learning Models on Histopathology Tasks

| Model | Training Data | Task | Performance | Benchmark |
|---|---|---|---|---|
| CypherViT [24] | 300K breast cancer patches | Colorectal cancer patch classification | Superior to SSL baselines | Accuracy on CRC dataset: >85% |
| TITAN [3] | 336K WSIs across 20 organs | Slide-level subtyping | Outperforms supervised baselines | AUC: 0.91-0.96 across cancer types |
| VOLTA [9] | 800K+ cells across 6 cancer types | Unsupervised cell clustering | ~2× baseline performance | AMI: 0.61 vs 0.29-0.35 for baselines |
| HipoMap [26] | TCGA lung cancer WSIs | Survival prediction | 3.5% improvement in c-index | c-index: 0.787 vs 0.760 baselines |

Successful implementation of representation learning for histopathology requires both computational resources and carefully curated data resources:

Table 4: Essential Research Reagents and Resources for Histopathology Representation Learning

| Resource Type | Examples | Function | Access |
|---|---|---|---|
| Public Datasets | TCGA (The Cancer Genome Atlas), PANNUKE [24], CoNSeP [9] | Benchmarking model performance across tissue types and cancer types | Publicly available with data use agreements |
| Annotation Tools | ASAP, QuPath, HistoQC | Slide visualization, patch extraction, and manual annotation | Open source |
| Computational Frameworks | PyTorch, TensorFlow, MONAI, TIAToolbox | Model development, training, and inference | Open source |
| Whole Slide Image Storage | DICOM WG26 Standard, Cloud Archives | Scalable storage and retrieval of gigapixel images | Institutional infrastructure |
| SSL Frameworks | DINO [24], iBOT [3], MoCo, SimCLR [9] | Self-supervised pretraining of foundation models | Open source implementations |

Visualizing Workflows: Architectural Diagrams

[Figure: Whole Slide Image Analysis Pipeline]

[Figure: Self-Supervised Learning with Environmental Context]

The transition from patch-level to slide-level representation learning marks a pivotal advancement in computational pathology, enabling models that can interpret histological patterns at both cellular and architectural scales. Self-supervised learning has emerged as the foundational paradigm for this progress, allowing models to learn domain-specific morphological features without extensive manual annotation. The development of specialized architectures like hierarchical Vision Transformers, whole-slide foundation models, and environment-aware cellular models has addressed the unique challenges of gigapixel image analysis while maintaining biological relevance.

Future research directions will likely focus on several key areas: (1) improved multimodal integration combining histology with genomic, transcriptomic, and clinical data; (2) more efficient attention mechanisms for processing ultra-long sequences of patch features; (3) standardized benchmarking across diverse tissue types and disease states; and (4) development of explainability frameworks that connect model predictions to biologically interpretable features. As these models continue to mature, they hold the potential to not only augment pathological diagnosis but also to discover novel morphological biomarkers that predict therapeutic response and disease progression, ultimately advancing personalized cancer care and drug development.

Architectures in Action: Technical Implementation and Real-World Applications

The application of deep learning to computational pathology represents a paradigm shift in cancer diagnosis and treatment planning. However, the gigapixel size of Whole Slide Images (WSIs) presents a fundamental challenge for conventional vision models, which are typically designed for standard-resolution natural images [27]. Vision Transformers (ViTs), renowned for their global reasoning capabilities, are computationally overwhelmed when applied directly to WSIs due to the quadratic complexity of self-attention relative to token sequence length [28]. This technical constraint has spurred the development of innovative hierarchical architectures that make ViTs tractable for histopathology. These architectures enable foundation models to learn powerful, clinically relevant histopathological representations from vast repositories of unlabeled data, effectively addressing the critical bottleneck of manual annotation in medical imaging [27] [29] [30].

Core Architectural Principles for Gigapixel Adaptation

Hierarchical Representation Learning

Hierarchical Vision Transformers build multi-resolution feature pyramids through stage-wise processing, mirroring the feature extraction principles of Convolutional Neural Networks (CNNs) while preserving the global contextual capabilities of transformers [28]. This approach initiates processing by dividing the input image into small non-overlapping patches, or "tokens." These tokens undergo successive stages of transformation, where each stage applies a local self-attention operation within spatially contiguous regions, followed by a patch merging operation that reduces spatial resolution while increasing the channel dimension [28]. Formally, this process can be represented as:

$$F^{(s)} = M^{(s)}\left(A_{\text{local}}^{(s)}\left(F^{(s-1)}\right)\right)$$

Where $F^{(s)}$ is the feature representation at stage $s$, $A_{\text{local}}^{(s)}$ is the local attention function, and $M^{(s)}$ is the merging/downsampling operation [28]. This hierarchical pyramid structure provides computational efficiency while capturing both cellular-level details and tissue-level context, which is essential for accurate pathological assessment [27].

Efficient Attention Mechanisms

To address the prohibitive computational complexity of global self-attention, adapted ViTs employ localized attention mechanisms. The shifted-window mechanism has proven particularly effective, where the feature map is divided into non-overlapping windows and self-attention is computed within each window [28]. In alternating layers, window partitions are spatially shifted by an offset (typically half the window size), enabling cross-window communication and allowing the model to progressively build global receptive fields without the computational burden of global attention [28]. The attention within each window w is computed as:

$$\text{Attention}(Q_w, K_w, V_w) = \text{SoftMax}\left(\frac{Q_w K_w^{\top}}{\sqrt{d}} + B\right) V_w$$

Where $Q_w$, $K_w$, and $V_w$ are the query, key, and value matrices of the tokens in window $w$, $d$ is the query/key dimension, and $B$ is a relative position bias term that preserves spatial structure [28]. For extremely high-resolution WSIs, group window attention further optimizes computation by dynamically partitioning sparse tokens into optimally sized groups, framed as a knapsack problem solvable via dynamic programming to minimize overall FLOPs [28].
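The saving from window-based attention can be checked with a short calculation. The sketch below uses the per-layer cost expressions given in the Swin Transformer paper for a feature map of h x w tokens with C channels and window size M; the concrete values (56 x 56 tokens, 96 channels, 7 x 7 windows) are an assumed example configuration, not figures from the histopathology models discussed here.

```python
def global_attention_flops(h, w, c):
    """Cost of global multi-head self-attention: 4*h*w*C^2 + 2*(h*w)^2*C."""
    n = h * w
    return 4 * n * c**2 + 2 * n**2 * c

def window_attention_flops(h, w, c, m):
    """Cost of window attention with M x M windows: 4*h*w*C^2 + 2*M^2*h*w*C."""
    n = h * w
    return 4 * n * c**2 + 2 * m**2 * n * c

# Assumed example: 56 x 56 tokens, 96 channels, 7 x 7 windows.
g_small = global_attention_flops(56, 56, 96)
w_small = window_attention_flops(56, 56, 96, 7)

# Doubling each side of the feature map quadruples the token count.
g_large = global_attention_flops(112, 112, 96)
w_large = window_attention_flops(112, 112, 96, 7)
```

With four times as many tokens the windowed cost grows by exactly four, while the global cost grows by far more because of its quadratic term. This gap is what makes the approach viable at WSI scale.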

Hybrid Convolutional-Transformer Integration

Successful adaptation of ViTs to histopathology often involves strategic integration of convolutional operations to inject valuable spatial priors. Key hybridization strategies include:

- Convolutional Embedding modules that stack convolutional layers before token projection in each stage, providing multi-scale local feature extraction [28].

- Hybrid CNN-Transformer architectures where CNN stages extract hierarchical features that are then transformed into tokens for transformer processing [28].

- Depthwise separable convolutions operating in parallel with attention mechanisms, enhancing feature richness and positional sensitivity without requiring explicit position embeddings [28].

These convolutional integrations improve data efficiency and local structure representation, which is particularly valuable for identifying fine-grained histological patterns [28].

Key Architectures and Methodologies

Hierarchical Image Pyramid Transformer (HIPT)

The HIPT architecture represents a seminal approach specifically designed for gigapixel WSIs, employing a three-stage hierarchical structure that formulates WSIs as nested sequences of visual tokens [31]. The architecture operates as follows:

- Stage 1 (Cell Level): A Vision Transformer (ViT-16) processes non-overlapping 16×16 pixel patches from 256×256 image regions, generating feature representations for cellular structures [31].

- Stage 2 (Patch Level): A Vision Transformer (ViT-256) processes the [CLS] tokens from Stage 1, arranged in a 16×16 grid (from 4096×4096 regions), capturing tissue-level patterns [31].

- Stage 3 (Region Level): A Vision Transformer (ViT-4K) processes the sequence of [CLS] tokens from Stage 2, one per 4096×4096 region of the slide, enabling slide-level representation learning [31].

This nested attention mechanism enables the model to capture dependencies across multiple biological scales, from subcellular features to tissue architecture, while maintaining computational tractability through localized attention windows [31]. The model employs a self-supervised pretraining approach using DINO, applied recursively at each hierarchical level to learn robust feature representations without manual annotations [31].
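The token counts implied by the three stages can be worked out directly. The sketch below uses the patch and region sizes stated above; the 100,000 × 100,000 pixel slide is an assumed example, and the Stage 3 count is an upper bound because background regions are normally discarded before encoding.

```python
import math

def tokens_per_region(region_px, patch_px):
    """Number of non-overlapping patch_px tiles inside a square region."""
    if region_px % patch_px:
        raise ValueError("region size must be a multiple of patch size")
    side = region_px // patch_px
    return side * side

def max_regions_per_slide(slide_w, slide_h, region_px=4096):
    """Upper bound on Stage 3 tokens: regions needed to cover the full slide."""
    return math.ceil(slide_w / region_px) * math.ceil(slide_h / region_px)

stage1_tokens = tokens_per_region(256, 16)      # 16x16-pixel tokens per 256x256 patch
stage2_tokens = tokens_per_region(4096, 256)    # 256x256 patch tokens per 4096x4096 region
stage3_tokens = max_regions_per_slide(100_000, 100_000)  # assumed slide size
```

Each transformer therefore sees at most a few hundred tokens, which is how the hierarchy keeps attention tractable even though the slide as a whole contains billions of pixels.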

Multi-Resolution Hybrid Self-Supervised Framework

Recent advancements integrate masked image modeling with contrastive learning in a unified framework specifically optimized for histopathology segmentation [27]. This approach features three key innovations:

- Multi-Resolution Hierarchical Architecture: Specifically designed for gigapixel WSIs, capturing both cellular-level details and tissue-level context through progressive downsampling [27].

- Hybrid Self-Supervised Learning: Combines masked autoencoder reconstruction with multi-scale contrastive learning to learn robust feature representations without extensive annotations [27].

- Adaptive Semantic-Aware Augmentation: Learns content-specific transformations that preserve histological integrity while maximizing data diversity through learned transformation policies [27].

The framework employs a progressive fine-tuning protocol with semantic-aware masking strategies and boundary-focused loss functions optimized for dense prediction tasks [27].
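The hybrid objective can be pictured with a small sketch. It is not the implementation from [27]: the 75% mask ratio, the additive weighting in `hybrid_loss`, and all function names are assumptions chosen for illustration. The one standard ingredient is that masked-autoencoder reconstruction error is measured only on the patches that were hidden from the encoder.

```python
import random

def random_mask(num_patches, mask_ratio, rng):
    """Pick which patch indices are hidden from the encoder."""
    num_masked = int(num_patches * mask_ratio)
    return sorted(rng.sample(range(num_patches), num_masked))

def masked_mse(target, prediction, masked_idx):
    """Mean squared error computed over the masked patches only."""
    total, count = 0.0, 0
    for i in masked_idx:
        for t, p in zip(target[i], prediction[i]):
            total += (t - p) ** 2
            count += 1
    return total / count

def hybrid_loss(recon_loss, contrastive_loss, weight=1.0):
    """Assumed combination: reconstruction term plus a weighted contrastive term."""
    return recon_loss + weight * contrastive_loss

# Toy usage: 16 patches with 4 pixel values each, and a predictor that outputs zeros.
rng = random.Random(0)
patches = [[float(i)] * 4 for i in range(16)]
prediction = [[0.0] * 4 for _ in range(16)]
masked = random_mask(len(patches), 0.75, rng)
recon = masked_mse(patches, prediction, masked)
print("masked patches:", len(masked))
print("total loss:", hybrid_loss(recon, contrastive_loss=0.5, weight=0.1))
```

In a real pipeline the contrastive term would come from comparing embeddings of augmented views at several resolutions; here it is passed in as a plain number to keep the sketch self-contained.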

Table 1: Performance Comparison of Hierarchical Vision Transformer Architectures

| Architecture | Primary Application | Key Innovation | Reported Performance | Computational Efficiency |
|---|---|---|---|---|
| HIPT [31] | Cancer subtyping, survival prediction | Three-stage hierarchical self-supervised learning | RCC subtyping: Matches supervised CLAM-SB with no labels | Attention only in local windows; enables slide-level representation |
| Multi-Resolution Hybrid [27] | Histopathology image segmentation | Combines MIM with contrastive learning + adaptive augmentation | Dice: 0.825 (4.3% improvement); mIoU: 0.742 (7.8% improvement) | 70% reduction in annotation requirements; 25% labeled data achieves 95.6% of full performance |
| Swin Transformer [28] | General vision backbone; adapted to medical imaging | Shifted window attention mechanism | ImageNet-1K: 87.3% top-1; COCO: 58.7 box AP | Linear computational complexity with image size |

Table 2: Quantitative Performance Metrics on Histopathology Tasks

| Metric | Baseline Performance | Hierarchical ViT Performance | Relative Change | Dataset |
|---|---|---|---|---|
| Dice Coefficient | 0.791 | 0.825 | +4.3% | TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke [27] |
| mIoU | 0.688 | 0.742 | +7.8% | TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke [27] |
| Hausdorff Distance (lower is better) | Baseline | Improved | -10.7% | TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke [27] |
| Average Surface Distance (lower is better) | Baseline | Improved | -9.5% | TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke [27] |
| Cross-Dataset Generalization | Baseline | Improved | +13.9% | Cross-dataset evaluation [27] |
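The Dice and mIoU percentages in Table 2 are relative gains over the baseline rather than absolute differences in score. The short check below recomputes them from the two absolute values listed in the table; the function name `relative_change` is only an illustrative helper.

```python
def relative_change(baseline, new):
    """Percentage change of `new` with respect to `baseline`."""
    return (new - baseline) / baseline * 100.0

dice_gain = relative_change(0.791, 0.825)
miou_gain = relative_change(0.688, 0.742)

print(f"Dice: {dice_gain:+.1f}%  mIoU: {miou_gain:+.1f}%")
```

Read as absolute differences, the same results would be 3.4 Dice points and 5.4 mIoU points, so it matters which convention a paper uses when comparing across studies.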

Self-Supervised Learning with Barlow Twins for Feature Discovery

An alternative approach leverages the Barlow Twins self-supervised method to learn non-redundant image features from unannotated WSIs [29]. This method employs a siamese network architecture that maximizes similarity between embeddings of distorted versions of the same image while minimizing redundancy between components of the embedding vectors [29]. The objective function evaluates the cross-correlation matrix between the embeddings of two identical backbone networks fed distorted variants of image tiles, optimized by minimizing the deviation of this matrix from the identity matrix [29]. This approach has successfully discovered clinically relevant histomorphological phenotype clusters (HPCs) in colon cancer, with 47 distinct HPCs identified that correlate with patient survival and treatment response [29].
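The objective described above can be written down in a few lines. The following is a minimal pure-Python sketch, not the code used in [29]: embeddings are plain lists of lists, and the off-diagonal weight `lambda_offdiag=5e-3` is an assumed default that would need tuning in practice. Each embedding dimension is standardized across the batch, the cross-correlation matrix between the two views is formed, and the loss penalizes its deviation from the identity matrix.

```python
import math

def _standardize_columns(z):
    """Return one list per embedding dimension, zero-mean and unit-variance over the batch."""
    n, d = len(z), len(z[0])
    columns = []
    for j in range(d):
        col = [row[j] for row in z]
        mean = sum(col) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in col) / n)
        columns.append([(v - mean) / (std + 1e-12) for v in col])
    return columns

def barlow_twins_loss(z_a, z_b, lambda_offdiag=5e-3):
    """Deviation of the cross-correlation matrix of two views from the identity."""
    n = len(z_a)
    a = _standardize_columns(z_a)
    b = _standardize_columns(z_b)
    on_diag, off_diag = 0.0, 0.0
    for i in range(len(a)):
        for j in range(len(b)):
            c_ij = sum(x * y for x, y in zip(a[i], b[j])) / n
            if i == j:
                on_diag += (1.0 - c_ij) ** 2   # invariance: same dimension should agree
            else:
                off_diag += c_ij ** 2          # redundancy: different dimensions should decorrelate
    return on_diag + lambda_offdiag * off_diag

# Toy usage: two identical views with decorrelated dimensions versus
# two identical views whose dimensions carry the same information.
decorrelated = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
redundant = [[1.0, 1.0], [1.0, 1.0], [-1.0, -1.0], [-1.0, -1.0]]
print("decorrelated:", barlow_twins_loss(decorrelated, decorrelated))
print("redundant:", barlow_twins_loss(redundant, redundant))
```

The redundant case is penalized even though the two views agree perfectly, which is the property that pushes the encoder toward non-redundant features.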

Experimental Protocols and Methodologies

Self-Supervised Pretraining with iBOT

The Phikon model, developed by Owkin, demonstrates a standardized protocol for self-supervised pretraining on histopathology data [30]:

- Training Data: 40 million patches extracted from WSIs in The Cancer Genome Atlas (TCGA) database.

- Algorithm: iBOT self-supervised learning method with masked image modeling.

- Infrastructure: 32 NVIDIA V100 GPUs with 32GB memory each.

- Training Duration: 1,200 GPU hours in total (under two days of wall-clock time when spread across all 32 GPUs).

- Model Architecture: Vision Transformer Base configuration.

- Cost Estimation: Several thousand dollars for complete training [30].

This protocol shows that effective self-supervised pretraining demands substantial computational resources, yet the resulting foundation model can be reused across multiple downstream tasks.

Histomorphological Phenotype Clustering

A comprehensive methodology for discovering interpretable tissue patterns from unlabeled WSIs involves:

- Feature Extraction: A Barlow Twins encoder processes 224×224 pixel tiles at 10× magnification, generating 128-dimensional feature vectors for each tile [29].

- Graph Construction: A nearest neighbor graph is built from the tile vector representations.

- Community Detection: The Leiden community detection algorithm clusters tiles with similar vector representations into Histomorphological Phenotype Clusters (HPCs) [29].

- Histopathological Validation: Expert pathologists independently assess each HPC, scoring tissue types (tumor epithelium, stroma, immune cells) and morphological features [29].

- Clinical Correlation: HPCs are linked to patient outcomes, molecular profiles, and treatment responses through statistical analysis [29].

This protocol successfully identified 47 clinically relevant HPCs in colon cancer, grouped into eight super-clusters representing distinct tissue types and architectural patterns [29].
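The graph construction step in the protocol above can be sketched as follows. This is a brute-force illustration in pure Python, assuming Euclidean distance and a hypothetical neighbor count `k`; it is not the pipeline from [29], and a real run over millions of tiles would need an approximate nearest-neighbor index. The resulting edge list is what a Leiden implementation from a graph library would then partition into HPCs.

```python
import math

def knn_graph(vectors, k):
    """Map each tile index to the indices of its k nearest tiles (Euclidean distance)."""
    graph = {}
    for i, v in enumerate(vectors):
        dists = [(math.dist(v, w), j) for j, w in enumerate(vectors) if j != i]
        dists.sort()
        graph[i] = [j for _, j in dists[:k]]
    return graph

def to_undirected_edges(graph):
    """Collapse directed neighbor lists into a sorted list of unique undirected edges."""
    edges = set()
    for i, neighbors in graph.items():
        for j in neighbors:
            edges.add((min(i, j), max(i, j)))
    return sorted(edges)

# Toy usage: six 2-D "tile embeddings" forming two well-separated groups.
tiles = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
edges = to_undirected_edges(knn_graph(tiles, k=2))
print(edges)
```

In this toy case no edge crosses between the two groups, so any community detection method would recover them as separate clusters; real tile embeddings are far less cleanly separated, which is why the pathologist review step matters.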

Adaptive Semantic-Aware Data Augmentation

To address the challenge of limited annotated data while preserving histological semantics, advanced augmentation strategies include:

- Learned Transformation Policies: Meta-learning discovers augmentation policies tailored to histopathological data [27].

- Semantic Preservation Constraints: Ensure transformations maintain diagnostically relevant tissue structures [27].

- Class Mix-Up Color Augmentation: Randomly transfers color distributions between tissue classes to improve model robustness to staining variations [32].

- Center-Loss Optimization: Enforces compact feature representations for normal tissue classes in the embedding space, improving anomaly detection performance [32].

With these techniques, the multi-resolution hybrid framework is reported to reach 95.6% of full performance using only 25% of the labeled data, which the authors describe as a 70% reduction in annotation requirements relative to supervised baselines [27].
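The center-loss idea from the list above can be illustrated with a toy example. This is a simplified sketch rather than the method of [32]: the center is computed as the plain mean of the normal-tissue embeddings (in training it would typically be a learned or running estimate), and using distance to the center as an anomaly score is an assumption made for illustration.

```python
import math

def class_center(embeddings):
    """Mean embedding of a class, used here as its center."""
    n, d = len(embeddings), len(embeddings[0])
    return [sum(e[j] for e in embeddings) / n for j in range(d)]

def center_loss(embeddings, center):
    """Half the mean squared Euclidean distance of embeddings to the center."""
    total = sum(sum((x - c) ** 2 for x, c in zip(e, center)) for e in embeddings)
    return 0.5 * total / len(embeddings)

def anomaly_score(embedding, center):
    """Distance from the normal-tissue center; larger means more unusual."""
    return math.dist(embedding, center)

# Toy usage: four "normal tissue" embeddings and one outlier.
normal = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
center = class_center(normal)
print("center loss:", center_loss(normal, center))
print("outlier score:", anomaly_score([3.0, 4.0], center))
```

Minimizing this term pulls normal-tissue embeddings into a tight cluster, so that tiles far from the center stand out more clearly.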

Implementation and Workflow

Diagram 1: Hierarchical Processing Workflow for Gigapixel WSIs

Diagram 2: Self-Supervised Learning with Barlow Twins

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Resources

| Resource | Specifications | Application in Research |
|---|---|---|
| Whole Slide Images | TCGA cohorts (e.g., COAD, BRCA, LUAD), CAMELYON16, PanNuke, institutional collections [27] [29] | Primary data for model training and validation; diverse tissue types and cancer subtypes improve model generalization |
| High-Performance Computing | 32+ NVIDIA A100/A6000 GPUs with 32GB+ memory each [30] | Enables self-supervised pretraining on millions of image patches; critical for foundation model development |
| Vision Transformer Architectures | ViT-Small, ViT-Base configurations; hierarchical variants (HIPT, Swin) [28] [31] | Backbone models for feature extraction; hierarchical designs optimized for multi-scale pathology images |
| Self-Supervised Learning Algorithms | DINO, iBOT, Barlow Twins, Masked Autoencoders [27] [29] [31] | Learn powerful representations without manual annotations; overcome labeling bottleneck in medical imaging |
| Clustering and Visualization | Leiden community detection, UMAP, t-SNE [29] | Identify histomorphological patterns in learned embeddings; enable discovery of novel phenotype clusters |
| Pathology Assessment Tools | Standardized scoring sheets for tissue composition, cellular features, architectural patterns [29] | Validate clinical relevance of discovered patterns; establish ground truth for model interpretation |

Hierarchical Vision Transformer architectures offer a practical route to analyzing gigapixel histopathology images, enabling foundation models to learn clinically relevant representations without extensive manual annotation. Through multi-scale processing, localized attention mechanisms, and self-supervised learning objectives, these models capture the biological hierarchy from subcellular features to tissue architecture. The studies reviewed here report improved cross-dataset generalization and a substantially reduced dependency on labeled data. As these architectures continue to evolve, they could accelerate drug development, support precision medicine initiatives, and help uncover novel histomorphological biomarkers across diverse disease states.

The development of artificial intelligence (AI) in computational pathology has long been constrained by the scarcity of expertly annotated histopathology images. Foundation models that can learn powerful representations without extensive manual labeling are revolutionizing this field. By aligning visual features from tissue samples with pathology reports and synthetically generated captions, these models are unlocking new capabilities in diagnosis, prognosis, and biomarker discovery. This technical guide explores the core methodologies, experimental protocols, and reagent solutions driving innovation in label-free representation learning for histopathology, providing researchers and drug development professionals with practical insights into implementing these cutting-edge approaches.

Histopathology, the microscopic examination of tissue to study disease manifestations, forms the cornerstone of cancer diagnosis and numerous other medical conditions. The digitization of histology slides has created unprecedented opportunities for AI to transform pathology practice. However, the traditional paradigm of training specialized models for individual tasks requires vast amounts of labeled data, creating a significant bottleneck for medical AI development [33].

Foundation models pretrained on massive datasets through self-supervised objectives represent a paradigm shift. These models learn general-purpose representations that can be adapted to diverse downstream tasks with minimal or no additional labeled examples. A key innovation in this space is multimodal learning, which aligns visual patterns in whole-slide images (WSIs) with textual information from pathology reports and synthetically generated captions [3] [33]. This approach mirrors how pathologists naturally correlate visual morphology with descriptive language, enabling models to capture rich semantic relationships between tissue features and diagnostic concepts without explicit manual annotation.

Core Methodologies for Multimodal Alignment

Visual-Language Foundation Models

Visual-language foundation models employ contrastive learning to create a shared embedding space where images and their corresponding text descriptions are closely aligned. The CONCH (Contrastive Learning from Captions for Histopathology) model exemplifies this approach, having been pretrained on over 1.17 million image-caption pairs gathered from diverse sources [33]. The model architecture typically comprises three core components:

- Image Encoder: A vision transformer (ViT) that processes histopathology image patches and generates visual feature representations.

- Text Encoder: A transformer-based model that processes textual descriptions and generates textual feature representations.

- Multimodal Fusion Decoder: A component that integrates information from both modalities for generative tasks.

These models are trained using a combination of contrastive losses that pull matching image-text pairs closer in the embedding space while pushing non-matching pairs apart, and captioning losses that learn to generate accurate textual descriptions from visual inputs [33].
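The contrastive half of this objective can be sketched as a symmetric cross-entropy over an image-text similarity matrix. The code below is a pure-Python illustration and not CONCH's implementation: the temperature of 0.07 and the function names are assumptions, and the captioning loss is omitted. Matching pairs are assumed to share the same index in both lists.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy_row(logits, target):
    """Negative log-softmax of the target entry, computed stably."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum - logits[target]

def contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Average of image-to-text and text-to-image cross-entropy losses."""
    img = [l2_normalize(v) for v in image_emb]
    txt = [l2_normalize(v) for v in text_emb]
    n = len(img)
    logits = [[sum(a * b for a, b in zip(img[i], txt[j])) / temperature
               for j in range(n)] for i in range(n)]
    image_to_text = sum(cross_entropy_row(logits[i], i) for i in range(n)) / n
    text_to_image = sum(cross_entropy_row([logits[i][j] for i in range(n)], j)
                        for j in range(n)) / n
    return 0.5 * (image_to_text + text_to_image)

# Toy usage: correctly paired embeddings versus the same embeddings with captions swapped.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned = contrastive_loss(images, [[1.0, 0.0], [0.0, 1.0]])
swapped = contrastive_loss(images, [[0.0, 1.0], [1.0, 0.0]])
print("aligned:", aligned, "swapped:", swapped)
```

The loss is near zero when each image is most similar to its own caption and grows when captions are mismatched, which is what pulls matching pairs together and pushes non-matching pairs apart.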

Table 1: Key Visual-Language Foundation Models in Histopathology

| Model | Training Data Scale | Core Architecture | Key Capabilities |
|---|---|---|---|
| CONCH [33] | 1.17M image-text pairs | ViT + Text Transformer + Multimodal Decoder | Zero-shot classification, cross-modal retrieval, image captioning |
| TITAN [3] [34] | 335,645 WSIs + 423K synthetic captions | ViT with ALiBi position encoding | Slide-level representation, report generation, rare cancer retrieval |
| PathDiff [35] | Unpaired text and mask conditions | Diffusion framework | Histopathology image synthesis, data augmentation |
| Quilt-1M Tuned Models [36] | 1M image-text pairs | CLIP-based architecture | Zero-shot classification, linear probing, cross-modal retrieval |

Whole-Slide Foundation Models with Synthetic Data Integration

The TITAN (Transformer-based pathology Image and Text Alignment Network) framework introduces a sophisticated three-stage pretraining approach specifically designed for whole-slide images [3] [34]:

Stage 1 - Vision-Only Pretraining: The model processes 335,645 WSIs using a teacher-student knowledge distillation framework with masked image modeling. Patches of 512×512 pixels are extracted at 20× magnification, and features are encoded using specialized histopathology encoders like CONCHv1.5.

Stage 2 - ROI-Level Visual-Language Alignment: The model aligns high-resolution regions of interest (8,192×8,192 pixels) with 423,122 synthetically generated fine-grained captions produced by PathChat, a multimodal generative AI copilot for pathology [3].