User-Centered Design in Cancer Care: A Framework for Developing Effective Quality Improvement Tools

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying user-centered design (UCD) to create impactful cancer quality improvement tools. It explores the foundational need for UCD in oncology, details practical methodological approaches like co-design and iterative prototyping, addresses key implementation challenges including ethics and interoperability, and presents robust validation strategies. By synthesizing current evidence and real-world case studies, this resource aims to bridge the gap between technological innovation and clinical utility, ultimately fostering the development of digital tools that are both scientifically sound and readily adopted in cancer care.

The Critical Need for User-Centered Design in Modern Cancer Care

Cancer remains a leading global health challenge, consistently ranking as a major cause of mortality worldwide [1]. Despite significant advancements, conventional diagnostic and therapeutic methods frequently lack the precision and adaptability required for complex cancer care environments [2]. The standard toolkit—comprising surgery, chemotherapy, radiation therapy, and imaging-based diagnostics—often falls short due to intrinsic limitations such as lack of personalization, collateral damage to healthy tissues, and inability to address dynamic tumor heterogeneity [1] [2].

Traditional diagnostics relying on symptoms, basic imaging, and biopsies often detect cancer at advanced stages, while treatments like chemotherapy and radiation struggle with toxicity, resistance, and imprecise targeting [1] [2]. These foundational limitations create critical gaps in patient care, prompting the oncology field to develop more sophisticated, user-centered tools that integrate molecular insights, artificial intelligence, and participatory design principles to overcome these challenges [3] [4] [5].

Quantitative Analysis of Traditional vs. Emerging Approaches

Table 1: Performance Comparison of Diagnostic Modalities in Oncology

| Diagnostic Method | Sensitivity (95% CI) | Specificity (95% CI) | Area Under Curve (AUC, 95% CI) | Key Limitations |
|---|---|---|---|---|
| Standard Imaging (CT, X-ray) | Not quantified | Not quantified | Not quantified | Fails to detect ~20% of breast cancers in dense tissue; high false positives for PSA tests [2] |
| Traditional Biopsy | Gold standard | Gold standard | Gold standard | Invasive, time-consuming, limited by tumor location/accessibility [2] [4] |
| AI-Based Lung Cancer Detection | 0.86 (0.84-0.87) | 0.86 (0.84-0.87) | 0.92 (0.90-0.94) | Requires large, high-quality datasets; integration challenges [4] |
| AI for EGFR Mutation Prediction | 0.78 (0.75-0.81) | 0.81 (0.77-0.84) | 0.86 (0.83-0.89) | Limited by imaging quality and algorithm transparency [4] |

Table 2: Limitations of Traditional Cancer Treatment Modalities

| Treatment Modality | Key Advancements | Persistent Challenges | Impact on Patient Outcomes |
|---|---|---|---|
| Surgery | Fluorescence-guided systems, robotic assistance, minimally invasive techniques [1] | Minimal residual disease (MRD), tumor heterogeneity, post-surgical metastatic progression [1] | Recurrence due to MRD; immunosuppression facilitating evasion [1] |
| Radiation Therapy | SBRT, IMRT, FLASH radiotherapy, radiation protectors/sensitizers [1] | Precision limitations, immune suppression, regional access issues [1] | Damage to healthy tissues; recurrence due to radioresistant cells [1] |
| Chemotherapy | Synthesized derivatives with amplified cytotoxicity [1] | Drug resistance, toxicity to healthy cells, limited efficacy [1] | Severe side effects; treatment failure due to resistance mechanisms [1] |
| Hormonal Therapy | Targeted approaches for hormone-dependent cancers [1] | Resistance development, quality of life impacts [1] | Limited long-term efficacy; adverse effects on wellbeing [1] |

Experimental Protocols for Evaluating Next-Generation Oncology Tools

Protocol: Co-Design Framework for Digital Health Applications in Supportive Cancer Care

Background: Digital health tools can potentially revolutionize supportive cancer care but often face implementation challenges due to limited stakeholder involvement in development [3]. This protocol outlines a participatory design methodology for creating patient-centered digital health applications.

Materials:

- Participant Recruitment Materials: Informed consent forms, screening questionnaires

- Data Collection Tools: Audio recording equipment, transcription services, qualitative interview guides

- Design Materials: Scoring cards, prototyping software, think-aloud protocol instructions

- Analysis Software: Qualitative data analysis packages (e.g., NVivo), statistical software

Methodology:

- Predesign Phase: Context mapping through systematic literature review and stakeholder analysis of supportive care challenges at the implementation site [3].

- Participant Recruitment: Employ purposive sampling to recruit key stakeholders:

  - Patients with cancer (current treatment, age ≥18 years)

  - Healthcare professionals (oncologists, nurses, supportive care specialists)

  - Survivors of cancer and patient advocates [3]

- Generative Phase: Conduct collaborative workshops using:

  - Scoring cards to prioritize functionalities

  - Focus groups for brainstorming digital solutions

  - Individual qualitative interviews to explore unmet needs [3]

- Prototyping Phase: Iterative development with continuous stakeholder feedback.

- Evaluation: Assess usability using validated instruments:

  - System Usability Scale (SUS)

  - User Experience Questionnaire (UEQ)

  - Post-Study System Usability Questionnaire (PSSUQ) [6]

Expected Outcomes: Identification of critical user needs; development of a user-centered digital health application with improved adoption potential; comprehensive understanding of implementation facilitators and barriers [3].
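
The Evaluation step above lists the System Usability Scale among its instruments. As a minimal sketch of how one participant's SUS questionnaire is conventionally scored (ten items rated 1 to 5, odd-numbered items worded positively, even-numbered items worded negatively, result scaled to 0-100), the function below may help when tabulating pilot data. The function name `sus_score` and the sample responses are illustrative assumptions, not part of the cited protocol.

```python
def sus_score(responses):
    """Return the 0-100 SUS score for one participant's ten 1-5 responses."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: contribution = response - 1
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: contribution = 5 - response
            total += 5 - response
    # The summed contributions (0-40) are scaled to a 0-100 range.
    return total * 2.5


if __name__ == "__main__":
    # Hypothetical responses from a single participant.
    print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

Scores are usually averaged across participants; a single participant's score is rarely interpreted on its own.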

Protocol: Validation of AI Algorithms for Lung Cancer Diagnosis and Prognosis

Background: Artificial intelligence shows promise for addressing limitations in traditional cancer diagnosis but requires rigorous validation [4]. This protocol provides a framework for evaluating image-based AI tools in lung cancer management.

Materials:

- Imaging Data: CT and PET scans from multiple institutions

- Computational Resources: High-performance computing infrastructure

- AI Algorithms: Deep learning and machine learning models for image analysis

- Validation Datasets: Independent cohorts with confirmed diagnoses and outcomes

Methodology:

- Data Collection and Curation:

  - Collect retrospective imaging studies from multiple centers (n≥18,905 records initially)

  - Apply inclusion/exclusion criteria: confirmed lung cancer diagnosis, available imaging, outcome data

  - Exclude poor-quality images and standardize preprocessing [4]

- Region of Interest Identification:

  - Employ manual or semi-automated segmentation to extract nodules

  - Utilize data augmentation techniques to expand raw data volumes [4]

- Model Development and Training:

  - Implement both deep learning (DL) and machine learning (ML) approaches

  - For ML: extract and select handcrafted radiomic features

  - For DL: integrate feature engineering into learning step [4]

- Validation:

  - Conduct external validation using out-of-sample datasets

  - Compare performance across different clinical objectives:

    - Lung cancer detection (n=128 studies)

    - Histological subtyping (adenocarcinoma [ADC] vs. squamous cell carcinoma [SCC], n=19 studies)

    - EGFR mutation prediction (n=46 studies) [4]

- Statistical Analysis:

  - Calculate pooled sensitivity, specificity, and AUC with 95% confidence intervals

  - Assess heterogeneity using I² statistics

  - Perform subgroup analyses based on algorithm type, study objectives, and validation cohorts [4]

Quality Assessment:

- Apply QUADAS-AI tool for diagnostic accuracy studies

- Use Newcastle-Ottawa Scale for prognostic studies

- Document risk of bias across patient selection, index testing, reference standard, and flow/timing [4]

Expected Outcomes: Quantified performance metrics for AI algorithms in lung cancer diagnosis; identification of optimal AI approaches for specific clinical questions; framework for multicenter validation of AI tools [4].
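
The Statistical Analysis step of this protocol calls for pooled estimates with 95% confidence intervals and an I² heterogeneity statistic. The sketch below shows one simplified way to compute them: inverse-variance (fixed-effect) pooling of logit-transformed proportions, with Cochran's Q and I² = max(0, (Q − df)/Q) × 100. This is an illustrative assumption rather than the method of the cited work; diagnostic accuracy meta-analyses commonly use bivariate random-effects models instead. The function name and the example study counts are hypothetical.

```python
import math


def pool_logit_proportions(studies, z=1.96):
    """Pool per-study proportions (e.g. sensitivity = true positives / diseased).

    studies: list of (events, total) tuples, one per study.
    Returns the pooled proportion, its confidence interval, Cochran's Q and I² (%).
    """
    effects, weights = [], []
    for events, total in studies:
        # A 0.5 continuity correction keeps the logit finite when a cell is 0.
        hits = events + 0.5
        misses = (total - events) + 0.5
        effects.append(math.log(hits / misses))             # logit of the proportion
        weights.append(1.0 / (1.0 / hits + 1.0 / misses))   # inverse of its variance

    weight_sum = sum(weights)
    pooled_logit = sum(w * y for w, y in zip(weights, effects)) / weight_sum
    standard_error = math.sqrt(1.0 / weight_sum)

    def expit(x):
        return 1.0 / (1.0 + math.exp(-x))

    # Cochran's Q: weighted squared deviations from the pooled estimate.
    q = sum(w * (y - pooled_logit) ** 2 for w, y in zip(weights, effects))
    df = len(studies) - 1
    i_squared = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0

    return {
        "pooled": expit(pooled_logit),
        "ci_low": expit(pooled_logit - z * standard_error),
        "ci_high": expit(pooled_logit + z * standard_error),
        "q": q,
        "i_squared": i_squared,
    }


if __name__ == "__main__":
    # Hypothetical (true positives, diseased patients) counts from three studies.
    result = pool_logit_proportions([(86, 100), (170, 200), (40, 50)])
    print({key: round(value, 3) for key, value in result.items()})
```

The same function can be applied to specificity by passing (true negatives, non-diseased) counts.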

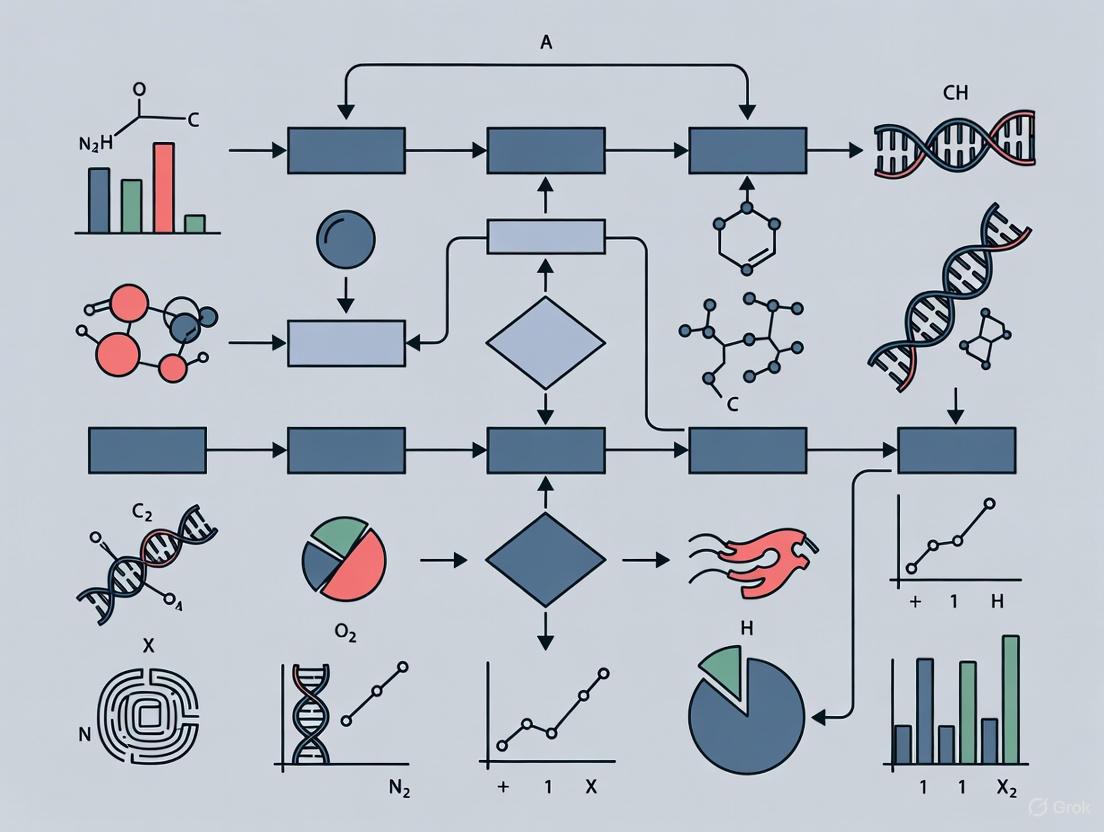

Visualization of Key Workflows and Signaling Pathways

Figure: Biomarker-Driven Precision Oncology Workflow

Figure: Co-Design Process for Digital Health Tools

Research Reagent Solutions for Advanced Oncology Studies

Table 3: Essential Research Reagents and Platforms for Modern Oncology Investigations

| Research Reagent/Platform | Function | Application in Addressing Traditional Gaps |
|---|---|---|
| Next-Generation Sequencing (NGS) | Comprehensive genomic profiling to identify targetable mutations [2] [7] | Enables precision medicine by moving beyond one-size-fits-all treatment approaches [2] |
| Circulating Tumor DNA (ctDNA) Assays | Non-invasive liquid biopsy for monitoring treatment response and minimal residual disease [1] [5] | Overcomes limitations of traditional tissue biopsies; enables dynamic treatment adaptation [1] |
| DeepHRD | AI tool detecting homologous recombination deficiency from standard biopsy slides [7] | Identifies patients for PARP inhibitors; more accurate than current genomic tests with lower failure rates [7] |
| Prov-GigaPath, Owkin's Models | AI-powered diagnostic tools for cancer detection imaging [7] | Improves early detection accuracy beyond conventional imaging and human interpretation [4] [7] |
| Spatial Transcriptomics | High-resolution mapping of gene expression within tumor microenvironment [5] | Reveals tumor heterogeneity and therapy resistance mechanisms invisible to traditional histology [5] |
| Patient-Reported Outcome (PRO) Systems | Digital platforms for symptom monitoring and quality of life assessment [3] [6] | Addresses supportive care gaps by capturing patient experiences in real-world settings [3] |
| CAR T-Cells with Boolean Logic | Engineered cellular therapies with multiple receptors for enhanced cancer cell specificity [5] | Reduces off-target effects common in traditional chemotherapy; targets cancer stem cells [5] |

In cancer quality improvement research, the methodology used to develop tools and interventions significantly influences their ultimate efficacy and adoption. Although the terms are often used interchangeably, user-centered design (UCD), co-design, and participatory development are distinct yet complementary approaches with different philosophical and practical implications. For researchers, scientists, and drug development professionals working in oncology, understanding these differences is critical for selecting the appropriate methodological framework. This application note delineates these core principles, provides structured protocols for their implementation, and contextualizes their application within cancer research, from clinical trial design to patient care tool development.

Defining the Conceptual Frameworks

User-Centered Design (UCD)

User-centered design is an iterative design process in which designers focus on the users and their needs in each phase of the design process [8]. UCD employs a mixture of investigative methods and tools (e.g., surveys, interviews) and generative ones (e.g., brainstorming) to develop an understanding of user needs [8]. The ultimate aim is to create highly usable and accessible products that address real user requirements [8].

Core Principles of UCD [9] [10] [11]:

- User Focus: Putting the user first and figuring out what they need and want

- User Involvement: Asking end-users for their thoughts and ideas throughout the design process

- Usability: Designing solutions that are intuitive, efficient, and enjoyable

- Iterative Design: Continually revising and improving based on user feedback

- Empathetic Design: Understanding users' emotions and experiences

Co-Design

Co-design represents a more collaborative approach, positioning itself as a structured process for involving patients throughout all stages of quality improvement [12]. In healthcare contexts, co-design captures the patient's lived experience, aims to understand these experiences, and implements improvements based on this understanding [12]. This approach has been characterized as involving six key phases: engage, plan, explore, develop, decide, and change [12].

In cancer care specifically, co-design has been successfully implemented to develop interventions such as information resource booklets and films [13], where patients and clinicians work collaboratively to identify improvement priorities and develop solutions.

Participatory Development

Participatory development serves as an umbrella term encompassing various collaborative approaches. In cancer research, it often manifests as community-based participatory research (CBPR), which forms partnerships between researchers and communities to address disparities [14]. This approach is particularly valuable when developing interventions for populations experiencing cancer health disparities, as it ensures cultural relevance and community ownership [14].

Table 1: Comparative Analysis of Design Approaches in Cancer Research

| Dimension | User-Centered Design (UCD) | Co-Design | Participatory Development |
|---|---|---|---|
| Primary Focus | User needs and usability | Shared creation process | Community empowerment and capacity building |
| Typical Participant Role | Informant and tester | Active co-creator | Partner and decision-maker |
| Power Dynamic | Researcher-led | Shared ownership | Community-led |
| Key Strength | Optimizing usability and user experience | Leveraging lived experience for innovation | Ensuring cultural relevance and sustainability |
| Common Methods | Usability testing, interviews, personas | Joint workshops, prototyping | Community advisory boards, partnership development |
| Typical Output | Refined product or tool | Co-created intervention | Community-owned program |

Methodological Protocols for Cancer Research Applications

Protocol 1: UCD for Digital Health Tool Development

This protocol outlines a systematic approach for developing digital health tools for cancer patients, such as symptom tracking applications or educational platforms.

Phase 1: Context Analysis and User Research [10]

- Objective: Understand user characteristics, contexts, and needs

- Procedure:

- Conduct semi-structured interviews with cancer patients, caregivers, and clinicians (30-60 minutes each)

- Administer validated psychosocial scales (e.g., FACT-G, PRO-CTCAE) to quantify patient experiences

- Develop detailed user personas including demographics, goals, frustrations, and technical proficiency

- Deliverable: User needs assessment report with prioritized requirements

Phase 2: Requirement Specification [10]

- Objective: Align user needs with technical and clinical constraints

- Procedure:

- Convene stakeholder workshop including patients, clinicians, developers, and researchers

- Map HEART framework metrics (Happiness, Engagement, Adoption, Retention, Task Success) to specific user goals

- Establish minimum viable product (MVP) specifications and success criteria

- Deliverable: Requirements specification document with validated success metrics
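
The HEART mapping in Phase 2 can be kept as a small structured record so that gaps are visible before the requirements document is signed off. The sketch below is one possible representation; the `HeartMetric` class, the `missing_dimensions` helper, and every example goal, metric, and target are hypothetical illustrations for a symptom-tracking application, not values from the cited sources.

```python
from dataclasses import dataclass

HEART_DIMENSIONS = ("Happiness", "Engagement", "Adoption", "Retention", "Task Success")


@dataclass(frozen=True)
class HeartMetric:
    dimension: str   # one of HEART_DIMENSIONS
    user_goal: str   # what the user is trying to achieve
    metric: str      # how progress toward the goal is measured
    target: str      # success criterion agreed in the stakeholder workshop


def missing_dimensions(metrics):
    """Return the HEART dimensions that have no metric mapped to them yet."""
    covered = {m.dimension for m in metrics}
    unknown = covered - set(HEART_DIMENSIONS)
    if unknown:
        raise ValueError(f"unknown HEART dimension(s): {sorted(unknown)}")
    return [d for d in HEART_DIMENSIONS if d not in covered]


if __name__ == "__main__":
    # Hypothetical draft mapping produced during a requirements workshop.
    draft = [
        HeartMetric("Happiness", "Feel supported between visits", "Mean SUS score", ">= 70"),
        HeartMetric("Engagement", "Report symptoms regularly", "Reports per patient per week", ">= 2"),
        HeartMetric("Adoption", "Start using the tool", "Share of invited patients enrolling", ">= 60%"),
        HeartMetric("Task Success", "Log a symptom unaided", "Task completion rate", ">= 90%"),
    ]
    print(missing_dimensions(draft))  # Retention has not been mapped in this draft
```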

Phase 3: Iterative Prototyping and Evaluation [8] [10]

- Objective: Develop and refine the digital tool through iterative testing

- Procedure:

- Create low-fidelity wireframes and conduct cognitive walkthroughs with 5-8 users

- Develop high-fidelity interactive prototype and conduct usability testing with 10-15 users

- Implement A/B testing for critical interface elements (e.g., navigation, data entry)

- Measure task success rates, time-on-task, and error rates

- Deliverable: Refined digital tool with usability report
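
The Phase 3 measures (task success rate, time-on-task, and error rate) can be summarized per task from a simple log of usability sessions. The following is a minimal sketch under stated assumptions: the `TaskAttempt` record and its field names are hypothetical, and time-on-task is averaged over all attempts, although some teams prefer to average over successful attempts only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskAttempt:
    participant: str
    task: str
    completed: bool   # did the participant finish the task unaided?
    seconds: float    # time-on-task for this attempt
    errors: int       # number of observed errors (e.g. wrong taps)


def summarize(attempts):
    """Return per-task success rate, mean time-on-task and errors per attempt."""
    by_task = {}
    for attempt in attempts:
        by_task.setdefault(attempt.task, []).append(attempt)

    summary = {}
    for task, rows in by_task.items():
        summary[task] = {
            "success_rate": sum(r.completed for r in rows) / len(rows),
            "mean_seconds": mean(r.seconds for r in rows),
            "errors_per_attempt": sum(r.errors for r in rows) / len(rows),
        }
    return summary


if __name__ == "__main__":
    # Hypothetical observations from two usability sessions.
    log = [
        TaskAttempt("P01", "report pain level", True, 30.0, 0),
        TaskAttempt("P02", "report pain level", False, 50.0, 2),
        TaskAttempt("P01", "view symptom history", True, 20.0, 1),
    ]
    for task, stats in summarize(log).items():
        print(task, stats)
```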

Figure 1: UCD Iterative Process for Digital Health Tool Development

Protocol 2: Co-Design for Cancer Care Intervention

This protocol details the experience-based co-design (EBCD) approach for developing cancer care interventions, adapted from successful implementations in oncology settings [12] [13].

Phase 1: Project Establishment and Ethical Approval

- Objective: Establish project foundation and governance

- Procedure:

- Secure institutional approvals and clinical leadership support

- Establish project advisory group including patient advocates

- Develop participant recruitment materials and consent forms

- Define scope and resource allocation for co-design activities

- Deliverable: Project charter with governance structure

Phase 2: Experience Exploration [13]

- Objective: Understand patient and clinician experiences in depth

- Procedure:

- Conduct ethnographic observations in clinical settings (20-30 hours)

- Perform narrative interviews with patients (n=15-20) and clinicians (n=8-12)

- Create a "touchpoint film" by editing patient interviews to highlight key moments

- Conduct separate patient and clinician workshops to identify improvement priorities

- Deliverable: Experience mapping report and touchpoint film

Phase 3: Co-Design Workshops [12] [13]

- Objective: Collaboratively develop interventions based on identified priorities

- Procedure:

- Convene joint patient-clinician workshop to review findings and establish shared priorities

- Form small co-design teams (3-5 participants each) to address specific priorities

- Conduct 4-6 iterative workshop sessions to develop and refine interventions

- Utilize creative methods (storyboarding, prototyping, role-playing) to generate ideas

- Deliverable: Prototype interventions with design rationale

Phase 4: Implementation and Reflection [13]

- Objective: Finalize interventions and plan for implementation

- Procedure:

- Host celebration event to share co-design outcomes

- Develop implementation plan with resource requirements

- Establish evaluation framework with process and outcome measures

- Conduct reflective sessions with co-design participants to capture insights

- Deliverable: Finalized intervention package with implementation guide

Table 2: Co-design Workshop Structure for Cancer Care Interventions

| Session | Duration | Participants | Key Activities | Materials |
|---|---|---|---|---|
| Introduction | 90 minutes | Patients, clinicians, facilitators | Project overview, confidentiality agreement, establishing group norms | Project information sheets, consent forms |
| Experience Sharing | 120 minutes | Patients, clinicians, facilitators | Watching touchpoint film, shared reflection, identifying key moments | Touchpoint film, audio recording equipment |
| Priority Setting | 90 minutes | Patients, clinicians, facilitators | Dot voting, discussion, consensus building on improvement areas | Voting materials, flip charts, colored markers |
| Ideation | 120 minutes | Patients, clinicians, facilitators | Brainstorming, storyboarding, concept development | Storyboard templates, sticky notes, prototyping materials |
| Refinement | 120 minutes | Patients, clinicians, facilitators | Prototype testing, feedback cycles, iteration | Prototypes, feedback forms, recording devices |
| Action Planning | 90 minutes | Patients, clinicians, facilitators | Implementation planning, responsibility assignment, evaluation planning | Action plan templates, implementation guides |

Protocol 3: Participatory Development for Cancer Health Disparity Interventions

This protocol adapts the community-based participatory research (CBPR) framework for developing interventions to reduce cancer health disparities, drawing parallels to therapeutic drug development [14].

Stage 1: Community Engagement and Partnership Building [14]

- Objective: Establish authentic community partnerships and identify disparities

- Procedure:

- Conduct community landscape analysis to identify key organizations and leaders

- Form community advisory board with representation from affected populations

- Jointly conduct needs assessment to identify and prioritize cancer disparities

- Develop formal partnerships through memoranda of understanding

- Deliverable: Community partnership structure with identified disparities

Stage 2: Intervention Development and Adaptation [14]

- Objective: Develop culturally appropriate interventions using community wisdom

- Procedure:

- Conduct focus groups (4-6 groups, 6-8 participants each) to explore intervention ideas

- Utilize culturally appropriate methods (photovoice, community dialogues, storytelling)

- Adapt evidence-based interventions to local cultural context

- Establish community-approved metrics for success

- Deliverable: Culturally adapted intervention protocol

Stage 3: Implementation and Evaluation [14]

- Objective: Implement and evaluate the intervention within the community context

- Procedure:

- Train community health workers to deliver the intervention

- Implement with tracking of reach, adoption, and implementation fidelity

- Collect pre-post data on primary outcomes (knowledge, behavior, screening rates)

- Compare with historical controls or matched comparison communities

- Deliverable: Implementation report with outcome data
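
The Stage 3 evaluation combines reach tracking with a pre-post comparison against a matched community. As a rough sketch of the arithmetic involved, the code below computes reach as a proportion and contrasts the pre-post change in screening rate between an intervention and a comparison community. The contrast used here is a simple difference-in-differences, which is an assumption introduced for illustration; the source does not prescribe a specific estimator, and a real analysis would also need confidence intervals and adjustment for confounding. All counts are hypothetical.

```python
def proportion(events, total):
    """Return events / total, rejecting empty or inconsistent denominators."""
    if total <= 0 or not 0 <= events <= total:
        raise ValueError("need 0 <= events <= total and total > 0")
    return events / total


def pre_post_change(pre_events, pre_total, post_events, post_total):
    """Absolute change in a rate (e.g. screening uptake) from pre to post."""
    return proportion(post_events, post_total) - proportion(pre_events, pre_total)


def difference_in_differences(intervention, comparison):
    """Change in the intervention community minus change in the comparison one.

    Each argument is a (pre_events, pre_total, post_events, post_total) tuple.
    """
    return pre_post_change(*intervention) - pre_post_change(*comparison)


if __name__ == "__main__":
    # Hypothetical counts: residents screened / residents eligible.
    reach = proportion(320, 800)  # participants reached / eligible residents
    effect = difference_in_differences(
        intervention=(40, 100, 55, 100),
        comparison=(42, 100, 45, 100),
    )
    print(f"reach = {reach:.0%}, difference-in-differences = {effect:+.2f}")
```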

Stage 4: Sustainability and Scaling [14]

- Objective: Ensure intervention sustainability and adapt for broader dissemination

- Procedure:

- Develop sustainability plan with community partners

- Identify potential funding mechanisms for ongoing delivery

- Adapt intervention for other cultural contexts or geographic areas

- Work toward adoption as standard practice within healthcare systems

- Deliverable: Sustainability plan and adaptation toolkit

Figure 2: Participatory Development Framework for Cancer Disparity Interventions

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Methodological Reagents for Design Research in Cancer Quality Improvement

| Research Reagent | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| Semi-structured Interview Guides | Elicit rich qualitative data on experiences and needs | UCD, Co-Design, Participatory Development | Must be adapted to cultural context and health literacy levels |
| Experience-Based Co-Design Toolkit | Facilitate collaborative design sessions | Co-Design | Requires trained facilitation; includes touchpoint films, journey mapping templates |
| HEART Framework Metrics | Align user experience goals with measurable outcomes | UCD | Must be customized for cancer-specific contexts (e.g., treatment adherence, symptom management) |
| Community Advisory Board | Ensure cultural relevance and community ownership | Participatory Development | Requires budget for stipends, transportation; must represent diversity of affected population |
| Usability Testing Protocol | Identify interface problems and usability issues | UCD | Should include cancer patients with varying levels of technological proficiency and health status |
| Co-Design Workshop Materials | Support creative collaboration and idea generation | Co-Design | Includes prototyping materials, journey mapping templates, voting materials |
| Cultural Adaptation Framework | Modify evidence-based interventions for specific cultural contexts | Participatory Development | Must address language, values, traditions, and historical trauma |
| Stakeholder Engagement Plan | Manage involvement of diverse stakeholders across project lifecycle | All approaches | Identifies key stakeholders, engagement frequency, methods, and communication channels |

The selection of UCD, co-design, or participatory development approaches in cancer quality improvement research should be guided by project goals, context, and desired outcomes. UCD excels when optimizing usability and user experience of existing tools or developing new digital health technologies. Co-design proves particularly valuable when leveraging lived experience to innovate cancer care services and interventions. Participatory development emerges as essential when addressing cancer health disparities and ensuring cultural relevance and community ownership.

Each approach demands distinct resources, timelines, and expertise, but all share the fundamental principle of engaging end-users in the development process. By applying these structured protocols and utilizing the provided toolkit, cancer researchers and drug development professionals can enhance the relevance, effectiveness, and adoption of quality improvement tools and interventions across the cancer care continuum.

The development and implementation of successful digital health tools in oncology depend on systematically identifying and engaging a diverse ecosystem of stakeholders. Human-centered design (HCD) methodologies provide a critical framework for ensuring that cancer quality improvement tools address the authentic needs of all end-users [15]. These approaches—including participatory design, co-design, and design thinking—prioritize the needs, desires, and behaviors of the people central to the problem being solved [16]. When applied to cancer care, this means actively involving patients, clinicians, and healthcare systems throughout the development process to create solutions that are not only technically robust but also clinically relevant, usable, and sustainable [3] [15].

The consequences of excluding key stakeholders are significant, leading to digital health tools with poor adoption, limited effectiveness, and ultimately, technological waste [15]. This application note provides a structured approach to stakeholder identification and engagement, framed within the context of cancer quality improvement research.

Mapping the Stakeholder Ecosystem

The stakeholder ecosystem for cancer quality improvement tools comprises three primary groups, each with distinct roles, needs, and influences. A comprehensive mapping is essential for targeted engagement strategies.

Table 1: Key Stakeholder Groups in Cancer Quality Improvement

| Stakeholder Group | Specific Roles | Primary Needs & Motivations | Influence on Implementation |
|---|---|---|---|
| Patients & Caregivers | End-users of digital tools; provide lived experience; report outcomes and symptoms | Access to clear information and support [17]; streamlined communication with care team [3]; self-efficacy and empowerment in care [3] [18] | Ultimate determinants of adoption and engagement; provide crucial feedback on usability and acceptability |
| Clinicians & Care Teams | Primary facilitators of digital tool use; interpret patient data and act on alerts; integrate tools into clinical workflow | Tools that save time and reduce administrative burden [18]; seamless integration with existing EHR systems [19]; clear clinical decision support [20] | Gatekeepers to clinical integration; crucial for championing the tool within the organization |
| Healthcare Systems & Leadership | Provide infrastructure and resources; establish governance and policies; manage financial sustainability | Improved patient outcomes and satisfaction [21]; operational efficiency and cost-effectiveness [21]; alignment with value-based care models and reimbursement [21] | Create enabling environment (funding, IT, policy); drive organization-wide adoption and scaling |

Beyond these primary groups, other important stakeholders include payers who influence reimbursement models, regulators who ensure safety and efficacy, and health technology vendors who partner on development and integration [18] [22].

Methodologies for Stakeholder Engagement: A Phased Approach

A phased, iterative approach to stakeholder engagement ensures that input is gathered meaningfully throughout the development lifecycle, from initial problem definition to post-implementation refinement.

Phase 1: Discover and Define

The initial phase focuses on building empathy and deeply understanding the problem context from all stakeholder perspectives.

- Methods: Semi-structured interviews, focus groups, and observational studies are highly effective for qualitative insight gathering [3] [17]. User personas and journey maps are powerful tools to synthesize findings and create empathy within the design team [16].

- Protocol - Conducting a Stakeholder Focus Group:

  - Recruitment: Purposively sample 6-10 participants from a single stakeholder group (e.g., patients) to ensure psychological safety and open discussion [17].

  - Moderator Guide: Develop a guide with open-ended questions. For patients: "Can you walk us through a challenge you faced when managing your symptoms at home?"

  - Environment: Conduct in a comfortable, private setting; offer virtual participation to improve accessibility.

  - Execution: A skilled moderator leads the discussion while a note-taker documents non-verbal cues. The session should be audio-recorded and transcribed verbatim for analysis.

  - Analysis: Use framework analysis to identify recurring themes and unmet needs across transcripts [17].

Phase 2: Ideation and Co-Design

This phase translates insights into tangible solutions by collaboratively generating and refining ideas with stakeholders.

- Methods: Co-design workshops bring patients, clinicians, and designers together to brainstorm concepts [16]. Brainstorming sessions using "How Might We..." questions foster creative problem-solving. Low-fidelity prototyping with wireframes or mock-ups allows for early feedback without significant investment [3].

- Protocol - Facilitating a Co-Design Workshop:

  - Preparation: Create a diverse team of 5-8 participants, including patients, caregivers, nurses, and physicians. Prepare provocative design prompts based on Phase 1 insights.

  - Idea Generation: Use structured activities like "mind washing" or "brainwriting" to ensure all participants contribute equally [16].

  - Concept Development: Groups sketch out ideas and create simple storyboards or paper prototypes for their top concepts.

  - Prioritization: Use scoring cards or feasibility-impact matrices to collectively prioritize which concepts to advance, based on desirability, feasibility, and viability [3].
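
The prioritization step above can be tallied with a short script once scoring cards are collected. The sketch below assumes each participant rates every concept from 1 to 5 on desirability, feasibility, and viability, and that the three criteria are weighted equally; both the rating scale and the equal weighting are illustrative assumptions, as are the concept names.

```python
from statistics import mean

CRITERIA = ("desirability", "feasibility", "viability")


def rank_concepts(score_cards):
    """Rank concepts by the mean of their per-criterion mean ratings.

    score_cards: {concept name: [one {criterion: rating} dict per participant]}
    Returns a list of (concept, overall score, per-criterion means), best first.
    """
    ranked = []
    for concept, cards in score_cards.items():
        criterion_means = {c: mean(card[c] for card in cards) for c in CRITERIA}
        overall = mean(criterion_means.values())
        ranked.append((concept, round(overall, 2), criterion_means))
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


if __name__ == "__main__":
    # Hypothetical scoring cards from two workshop participants.
    cards = {
        "symptom diary": [
            {"desirability": 5, "feasibility": 4, "viability": 3},
            {"desirability": 4, "feasibility": 4, "viability": 4},
        ],
        "chatbot triage": [
            {"desirability": 3, "feasibility": 2, "viability": 2},
            {"desirability": 4, "feasibility": 2, "viability": 2},
        ],
    }
    for concept, overall, detail in rank_concepts(cards):
        print(f"{concept}: {overall} {detail}")
```

Keeping the per-criterion means alongside the overall score lets the group see when a highly desirable concept is ranked low mainly because of feasibility, which is often a useful discussion point in the workshop.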

Phase 3: Prototyping and Testing

Stakeholders evaluate functional prototypes to identify usability issues and assess real-world fit before full-scale development.

- Methods: Think-aloud protocols where users verbalize their thoughts while interacting with a prototype are invaluable for usability testing [3]. Pilot studies and usability surveys (e.g., System Usability Scale - SUS) provide structured quantitative and qualitative feedback [23].

- Protocol - Usability Testing with a Think-Aloud Protocol:

  - Setup: Recruit 5-8 users per stakeholder group. Prepare a prototype and a set of core tasks (e.g., "Report your pain level for today").

  - Briefing: Instruct the participant to use the prototype and continuously think aloud. Assure them that the prototype is being tested, not their skills.

  - Session: A facilitator observes, takes notes, and may ask probing questions ("What are you thinking right now?"). The session is recorded.

  - Analysis: Review recordings and notes to identify usability pain points, navigation errors, and comprehension issues. Iterate the prototype to address these findings.

The following diagram visualizes this iterative, multi-phase engagement process and its key outputs.

Implementation Protocol: Integrating Patient-Reported Outcomes (PROs) in Cancer Care

The following detailed protocol is adapted from successful implementations of Patient-Reported Outcome (PRO) systems in oncology, which demonstrate high-impact stakeholder engagement [20]. PROs are a critical cancer quality improvement tool, and their implementation exemplifies the principles discussed.

Table 2: Implementation Protocol for PRO Integration in Cancer Care

| Implementation Step | Stakeholder Engagement Activities | Key Outputs | Rationale & Evidence |

|---|---|---|---|

| 1. Needs Assessment & Planning | Clinician input: Focus groups to identify key symptoms for monitoring (e.g., pain, fatigue) [20]. Patient input: Interviews to determine PRO acceptability, burden, and meaningful triggers for alerts [20]. | List of target symptoms and PRO measures. Defined thresholds for "concerning" PRO responses that trigger clinical review. | Ensures clinical relevance and patient acceptability, increasing long-term adoption [3] [20]. |

| 2. Development of Clinical Decision Support (CDS) | Multidisciplinary team input: Oncology nurses, physicians, and pharmacists collaborate to develop evidence-based care pathways for concerning PROs [20]. | PRO-based CDS tools (e.g., automated symptom management guidelines linked to specific PRO scores). | Standardizes care, supports nurses in managing alerts, and translates data into actionable clinical guidance [19] [20]. |

| 3. Training & Integration | Role-specific training: Hands-on sessions for clinicians (interpreting PROs) and staff/CRAs (software use) [20]. Workflow integration: Map PRO review into existing clinical workflows (e.g., during pre-visit huddles) [3]. | Trained clinical team. Integrated workflow schematic. Technical support plan. | Embeds the tool into routine practice, minimizing disruption and building clinician confidence [19] [20]. |

| 4. Pilot Testing & Iteration | Stakeholder feedback: SUS surveys and interviews with patients and clinicians on usability and perceived value [23]. Workload assessment: Monitor alert frequency and nursing response time [20]. | Refined PRO platform and CDS. Understanding of resource needs for full implementation. | Identifies and resolves unforeseen technical and workflow issues in a controlled setting [3] [16]. |

| 5. Full Implementation & Sustainment | Ongoing support: Dedicated coordinator for technical and logistical issues [19]. Feedback loops: Regular meetings with stakeholder champions to review metrics and address concerns. | Fully operational PRO system. Plan for continuous quality improvement. | Facilitates long-term sustainability and allows the system to adapt to evolving needs [18] [19]. |
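The thresholds defined in step 1 can be expressed as a small rule set. The sketch below is illustrative only: the symptom names, the 0-10 rating scale, and the cut-off values are assumptions, and real thresholds would come from the clinician and patient input described in the table.

```python
# Hypothetical thresholds on a 0-10 numeric rating scale; real cut-offs
# would be agreed with clinicians and patients during needs assessment.
ALERT_THRESHOLDS = {"pain": 7, "fatigue": 7, "nausea": 5}

def concerning_symptoms(report, thresholds=ALERT_THRESHOLDS):
    """Return the symptoms in one PRO report that meet or exceed their threshold."""
    return sorted(
        symptom
        for symptom, score in report.items()
        if symptom in thresholds and score >= thresholds[symptom]
    )

def needs_clinical_review(report, thresholds=ALERT_THRESHOLDS):
    """True when at least one symptom should be routed to a nurse for review."""
    return bool(concerning_symptoms(report, thresholds))

report = {"pain": 8, "fatigue": 4, "nausea": 5}
flagged = concerning_symptoms(report)
```

Keeping the thresholds in one table-like structure makes them easy to revise during pilot testing, for example if alert frequency proves too high for the nursing team to handle.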

The Scientist's Toolkit: Essential Reagents for Stakeholder Engagement

Successful stakeholder engagement requires both methodological and practical tools. The following table details key "research reagents" for designing and executing this work.

Table 3: Essential Reagents for Stakeholder-Engaged Research

| Category & Item | Specification / Example | Primary Function in Research |

|---|---|---|

| Recruitment & Ethics | | |

| Purposive Sampling Framework | A predefined matrix to ensure diversity in stakeholder recruitment (e.g., by cancer type, treatment stage, clinical role) [17]. | Ensures a wide range of perspectives are captured, improving the validity and generalizability of findings. |

| Informed Consent Materials | Documents explaining study procedures, risks, benefits, and data handling in clear, accessible language. | Protects participant rights and meets ethical requirements for research. |

| Data Collection & Analysis | | |

| Semi-Structured Interview Guides | Question prompts tailored to each stakeholder group (patients, clinicians, etc.) [17] [23]. | Ensures consistent coverage of key topics while allowing flexibility to explore emergent themes. |

| Thematic Analysis Framework | A coding framework (e.g., based on Fitch's Supportive Care Framework) to analyze qualitative data [17]. | Provides a systematic method for identifying, analyzing, and reporting patterns (themes) across qualitative data sets. |

| Design & Evaluation | | |

| System Usability Scale (SUS) | A standardized 10-item questionnaire with a 5-point Likert scale [23]. | Provides a quick, reliable, and validated measure of a system's perceived usability from the user's perspective. |

| Low-Fidelity Prototyping Tools | Paper wireframes or digital mock-up tools (e.g., Figma, Adobe XD). | Allows for rapid, low-cost visualization of ideas for early-stage feedback and iteration before costly development. |

| Co-Design Workshop Kits | Physical (post-its, markers, printouts) or digital (Miro, Jamboard) tools for collaborative idea generation. | Facilitates creative collaboration and ensures all stakeholder voices are heard during the ideation phase [16]. |
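A purposive sampling framework can be checked programmatically during recruitment. The sketch below assumes a hypothetical two-dimension matrix (stakeholder role by clinic); it lists the cells that still have fewer recruits than the chosen minimum.

```python
from collections import Counter
from itertools import product

def coverage_gaps(participants, dimensions, minimum=1):
    """List the cells of the sampling matrix with fewer than `minimum` recruits.

    `dimensions` maps an attribute name to its allowed values;
    `participants` is a list of dicts holding one value per attribute.
    """
    names = list(dimensions)
    counts = Counter(tuple(p[n] for n in names) for p in participants)
    return [
        cell
        for cell in product(*(dimensions[n] for n in names))
        if counts[cell] < minimum
    ]

# Hypothetical matrix and recruitment list, for illustration only.
dimensions = {
    "role": ["patient", "nurse"],
    "clinic": ["breast", "lung"],
}
recruited = [
    {"role": "patient", "clinic": "breast"},
    {"role": "patient", "clinic": "lung"},
    {"role": "nurse", "clinic": "breast"},
]
gaps = coverage_gaps(recruited, dimensions)
```

Here `gaps` contains the single unfilled cell, `("nurse", "lung")`, which tells the team where to focus remaining recruitment effort.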

Navigating the complex stakeholder landscape of cancer care is not a peripheral activity but a core component of developing effective, adoptable, and sustainable quality improvement tools. A structured, phased approach to engagement—Discover and Define, Ideation and Co-Design, and Prototyping and Testing—ensures that the resulting interventions are deeply rooted in the real-world needs of patients, clinicians, and healthcare systems. The provided protocols, visualization, and toolkit offer a practical starting point for researchers to design their own stakeholder engagement strategies, ultimately contributing to a more responsive and patient-centered oncology care ecosystem.

User-Centered Design (UCD) has emerged as a critical methodology for developing effective digital health tools in oncology. By prioritizing the needs, preferences, and workflows of end-users throughout the design process, UCD significantly enhances tool adoption, usability, and ultimately, clinical outcomes. This approach is particularly vital in cancer care, where digital tools must address complex patient needs and integrate seamlessly into clinical workflows. This application note synthesizes current evidence and provides structured protocols for implementing UCD in oncology quality improvement research.

The imperative for UCD in oncology is underscored by documented challenges with existing digital systems. Electronic Health Records (EHRs), for instance, often demonstrate significant usability failures, with physicians rating them in the bottom 9% of all software systems, a factor linked to increased burnout risk [24]. UCD directly addresses these shortcomings by ensuring digital tools are intuitive, efficient, and aligned with user expectations.

Quantitative Evidence: UCD Impact in Oncology

Research demonstrates that UCD methodologies lead to tangible improvements in usability metrics and implementation success. The following table summarizes key quantitative findings from recent studies.

Table 1: Quantitative Evidence for UCD in Oncology Digital Health Tools

| Digital Tool / Study | UCD Methodology | Key Usability & Outcome Metrics | Result |

|---|---|---|---|

| Step Proactive (AE Management Software) [25] | Usability testing with 6 patients & 6 HCPs; Iterative design per IEC 62366-1:2015 | System Usability Scale (SUS) Score; Scenario Completion Rate | SUS score in the 90th percentile (Grade A); 100% task completion rate |

| OncoSupport+ (Supportive Care App) [3] | Participatory co-design; Workshops, interviews, and focus groups with patients and HCPs | Identification of Critical Adoption Factors | Facilitators: Ease of use, workflow integration, professional introduction |

| Digital PRO Assessment (University Cancer Center) [26] | Interdisciplinary project group; Involvement of patient advisory board | Feasibility of Clinical Implementation | Successful development of a modular, clinically integrated PRO system |

| Young Adult Needs Assessment (NA-SB Intervention) [27] | Three-phase UCD: usability testing, contextual inquiry, prototyping | Optimization for Implementation | Intervention designed for implementation and scale-up across varied contexts |

UCD's impact extends beyond initial usability. The harmonization of evidence-based practices, implementation context, and implementation strategies through UCD methods potentially minimizes the need for elaborate, burdensome implementation strategies later, promoting sustainment [27].

Application Protocols: Implementing UCD in Oncology Research

This section provides detailed methodological protocols for key UCD experiments and processes cited in the evidence base.

Protocol: Co-Designing a Digital Health App for Supportive Care

Based on: Difrancesco et al. (2025), JMIR Human Factors [3]

Objective: To collaboratively design and develop a digital health application for supportive cancer care with patients and healthcare professionals.

Methodology Overview: A participatory study divided into three sequential phases: Predesign, Generative, and Prototyping.

Table 2: Phases of the Co-Design Protocol

| Phase | Primary Activities | Stakeholders Involved | Key Outcomes |

|---|---|---|---|

| Predesign | Understand context, challenges, and needs in supportive care. | Patients, survivors, HCPs, researchers. | Mapped challenges and needs at the clinical site. |

| Generative | Brainstorm app functionalities; Identify adoption facilitators/barriers. | Patients, survivors, HCPs. | Prioritized app features and implementation factors. |

| Prototyping | Iterative development of the app prototype and interface. | Patients, nurses. | A high-fidelity, user-validated app prototype (OncoSupport+). |

Detailed Procedures:

- Stakeholder Recruitment: Recruit patients currently in treatment, survivors (as patient advocates), and relevant HCPs (oncologists, nurses). Inclusion criteria should ensure participants can meaningfully engage (e.g., language proficiency).

- Predesign - Contextual Inquiry: Conduct qualitative interviews and ethnographic observation to understand the current supportive care workflow, pain points, and unmet needs from multiple perspectives.

- Generative - Idea Co-Creation: Facilitate collaborative workshops using scoring cards and focus groups to brainstorm and prioritize desired app functionalities.

- Prototyping - Iterative Feedback: Develop interactive wireframes and prototypes. Use "think-aloud" protocols and structured usability tests with patients and nurses to gather feedback on navigation, layout, and content, refining the design through multiple cycles.

Protocol: Usability Testing of a Clinical Decision Support System

Based on: PMC Article 11924132 (2025) [25]

Objective: To assess the usability and user-friendliness of a medical device software for managing adverse events in oncology.

Methodology Overview: A multi-method usability test conforming to international standard IEC 62366-1:2015, involving both patients and healthcare professionals.

Detailed Procedures:

- Participant Recruitment: Recruit a diverse group of end-users (e.g., n=6 patients, n=6 HCPs). Patients should be adults without cognitive impairments.

- Test Environment & Scenario Design: Conduct tests in a controlled environment. Participants are asked to complete specific, realistic tasks (e.g., "log in and report a new symptom" for patients; "identify a high-risk patient from the dashboard" for HCPs).

- Data Collection:

- Observation & Qualitative Feedback: Researchers observe participants, noting difficulties, errors, and non-verbal cues. Qualitative feedback is collected through open-ended questions.

- System Usability Scale (SUS): After the tasks, participants complete the standardized SUS questionnaire, which provides a quantitative usability score.

- Task Success Rate: The percentage of correctly completed tasks without assistance is recorded.

- Data Analysis & Iteration:

- Analyze SUS scores; a score above about 80 (roughly the 90th percentile) is commonly graded "excellent" (Grade A).

- Compile errors and qualitative feedback to identify specific design flaws.

- The development team uses these insights to implement refinements, and the testing cycle is repeated to validate improvements.
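The two quantitative measures in this protocol are simple to compute. The sketch below applies the standard SUS scoring rule (odd items contribute the response minus 1, even items contribute 5 minus the response, and the sum is multiplied by 2.5) and a plain task success percentage. The example responses are invented.

```python
def sus_score(responses):
    """Convert ten SUS item responses (1-5) into the 0-100 SUS score.

    Odd-numbered items are positively worded and contribute (response - 1);
    even-numbered items are negatively worded and contribute (5 - response).
    The summed contributions are multiplied by 2.5.
    """
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten responses, each between 1 and 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        total += (response - 1) if item_number % 2 == 1 else (5 - response)
    return total * 2.5

def task_success_rate(outcomes):
    """Percentage of tasks completed correctly without assistance."""
    return 100.0 * sum(outcomes) / len(outcomes)

# Invented responses from one participant, items 1 through 10.
example_sus = sus_score([5, 1, 5, 2, 4, 1, 5, 1, 4, 2])
example_rate = task_success_rate([True, True, False, True])
```

Per-participant SUS scores are usually averaged across the sample before being compared with a benchmark; with small samples such as n=6 per group, the mean should be read as indicative rather than precise.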

Protocol: Harmonizing EBP, Context, and Implementation via UCD

Based on: Hussaini et al. (2021), Implementation Science Communications [27]

Objective: To design a care coordination intervention and its implementation strategy to ensure fit with the clinical context.

Methodology Overview: A three-phase UCD process modeled as an iterative cycle of harmonization.

Diagram 1: The iterative UCD process for harmonization.

Detailed Procedures:

- Usability Testing (Refining the Evidence-Based Practice): Select an existing evidence-based practice (e.g., a patient-reported outcome measure) and conduct usability testing to identify and fix issues related to length, wording, and layout, thereby optimizing it for real-world use.

- Ethnographic Contextual Inquiry (Preparing the Context): Use ethnographic methods (e.g., shadowing, informal interviews) to deeply understand the clinical context, including workflows, culture, and potential barriers to implementation. This prepares the context for the new intervention.

- Iterative Prototyping with a Multidisciplinary Team (Threading the Needle): Form a design team including clinicians, administrators, researchers, and patients. Collaboratively create prototypes of both the intervention and the implementation strategies, iterating based on continuous feedback. This ensures the final product is designed for implementation from the outset.

The Scientist's Toolkit: Essential Reagents for UCD in Oncology

Table 3: Key Research Reagents and Solutions for UCD Experiments

| Item / Solution | Function / Description | Application in Protocol |

|---|---|---|

| System Usability Scale (SUS) | A reliable, 10-item Likert scale providing a global view of subjective usability assessments. | Quantitative usability assessment post-testing [25]. |

| Think-Aloud Protocol | A qualitative method where users verbalize their thoughts, feelings, and opinions while interacting with a prototype. | Identifying usability issues and understanding the user's mental model during prototyping [3]. |

| Interactive Prototyping Software | Tools (e.g., Figma, Adobe XD) to create high-fidelity, clickable mockups of digital applications. | Creating realistic prototypes for iterative testing in the prototyping phase without writing code [3] [27]. |

| IEC 62366-1:2015 Standard | International standard specifying a process for a manufacturer to analyze, design, develop and evaluate usability of a medical device. | Ensuring usability testing meets regulatory requirements for medical device software [25]. |

| Multidisciplinary Design Team | A core group including clinical experts (MDs, RNs), patients, designers, and software developers. | Ensuring all perspectives are integrated throughout the co-design and prototyping process [3] [27] [26]. |

The structured application of User-Centered Design is no longer optional but essential for developing successful digital health tools in oncology. The evidence demonstrates that UCD directly addresses the critical challenges of poor adoption and usability plaguing many healthcare technologies. By employing the detailed protocols and tools outlined in this document, researchers and drug development professionals can significantly enhance the implementation, effectiveness, and impact of their oncology quality improvement initiatives, ensuring that new technologies truly meet the needs of patients and clinicians.

Practical Frameworks and Co-Design Methods for Cancer Tool Development

Application Notes: Iterative UCD in Digital Cancer Tool Development

The development of digital tools for cancer care has seen a significant shift towards methodologies that prioritize end-user needs through iterative design and evaluation. The following applications demonstrate the practical implementation of these approaches.

Lion-App: A Smartphone Application for Quality of Life Assessment in Oncology

The Lion-App project exemplifies a rigorous, multi-stage iterative development process for a smartphone application that enables cancer patients to autonomously measure their quality of life (QoL). This research involved patients in a 3-stage process from conceptualization to deployment on private devices [28].

Key Quantitative Outcomes: Usability was evaluated in each development phase with the User Experience Questionnaire+ (UEQ+) Key Performance Indicator (KPI), which ranges from -3 to +3. The mean KPI rose between the two usability tests and was lower, though still clearly positive, in the beta test [28].

Table 1: Usability Evaluation Metrics Across Lion-App Development Cycles

| Development Phase | Participants (N) | Mean KPI (SD) | Key Findings |

|---|---|---|---|

| Usability Test 1 | 18 | 2.12 (0.64) | 94% response rate on UEQ+ |

| Usability Test 2 | 14 | 2.28 (0.49) | Improvement from previous cycle |

| Beta Test | 19 | 1.78 (0.84) | 74% UEQ+ response rate; age-dependent usage patterns |

The iterative refinements based on user feedback included restructuring the patient diary and integrating a shorter questionnaire for QoL assessment, demonstrating responsive adaptation to user needs [28]. The study found that age influenced engagement, with response rates decreasing with increasing age (P=.02), while sex demonstrated minimal influence on usability perceptions [28].

OncoSupport+: A Co-Designed Digital Health App for Supportive Cancer Care

The development of OncoSupport+ employed a participatory study design with stakeholders at the University Hospital Zurich, integrating patients with cancer, survivors, and healthcare professionals throughout the development process [3].

Methodological Framework: The co-design process was structured into three distinct phases:

- Predesign Phase: Focused on understanding the context of supportive cancer care, including challenges faced by cancer nurses and patients

- Generative Phase: Brainstormed digital health app functionalities and identified factors impacting future technology uptake

- Prototyping Phase: Iteratively developed the app prototype by gathering continuous user feedback [3]

The resulting application featured two integrated components: (1) a patient dashboard for recording patient-reported outcome measures (PROMs) and accessing personalized supportive care information, and (2) a nurse dashboard for visualizing patient data during nursing consultations [3].

Cancer Prevention Web Application: Usability-Focused Development

A German research team applied iterative development and early user testing to create a cancer prevention web application, demonstrating the value of formative evaluations during prototyping [29].

Usability Outcomes: The graphical user interface test yielded a System Usability Scale (SUS) score of 69.7/100 and a usefulness score of 75.8, indicating acceptable usability, though the learnability score was lower at 48.4, suggesting potential challenges in user understanding and satisfaction [29].

Table 2: Usability and Functional Assessment of Cancer Prevention Web Application

| Assessment Domain | Score/Outcome | Interpretation |

|---|---|---|

| Overall Usability (SUS) | 69.7/100 | Acceptable range |

| Usefulness | 75.8 | Above average |

| Learnability | 48.4 | Needs improvement |

| Identified Issues | 8 UX/UI categories | 1 severe, 3 moderate issues |

The qualitative feedback highlighted strengths in navigation, information presentation, and interactive features, particularly the risk simulation tool [29]. The identified usability issues primarily related to data input, user guidance, and risk visualization, providing clear direction for future iterations.

Experimental Protocols

Protocol 1: Multi-Stage Usability Testing for Cancer Care Applications

This protocol outlines the structured approach for iterative usability testing of digital health applications for cancer care, derived from the Lion-App development process [28].

2.1.1 Objectives

- To evaluate and enhance usability through iterative development cycles

- To identify and address age-related and sex-related usage patterns

- To progressively refine application features based on direct user feedback

2.1.2 Materials and Equipment

- Functional application prototypes (varying maturity levels)

- Private mobile devices for testing (personal smartphones)

- User Experience Questionnaire+ (UEQ+) assessment tool

- Recording equipment for focus group sessions

- Structured interview guides

2.1.3 Procedure

Phase 1: Focus Groups

- Recruit participants from relevant cancer support groups (target N=21)

- Conduct moderated focus groups with a note-taker present to produce the transcript

- Gather perceptions regarding eHealth apps and user needs

- Analyze transcripts to identify core user requirements

Phase 2: Initial Usability Testing

- Develop initial prototype incorporating focus group findings

- Recruit participants (N=18) for individual usability tests

- Administer UEQ+ questionnaire to assess usability metrics

- Calculate Key Performance Indicator (KPI) from UEQ+ data

Phase 3: Iterative Refinement and Beta Testing

- Refine prototype based on initial usability test findings

- Conduct second usability test with new participants (N=14)

- Deploy beta version on participants' private devices (N=19)

- Monitor usage rates over extended period (2 months)

- Administer final UEQ+ assessment and analyze age-dependent usage patterns

2.1.4 Data Analysis

- Calculate mean KPI scores for each development phase

- Perform statistical analysis of age-dependent response patterns

- Conduct thematic analysis of qualitative feedback

- Compare usability metrics across iterative cycles
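The KPI calculation can be sketched as an importance-weighted average of scale means. This is one common formulation and rests on assumptions: the scale names are placeholders, importance ratings are assumed to be coded 1 to 7, and the exact procedure used in the cited study is not described here.

```python
from statistics import mean

def participant_kpi(scale_means, importance):
    """Importance-weighted average of one participant's UEQ+ scale means.

    `scale_means` maps scale name -> mean item score on the -3..+3 range.
    `importance` maps scale name -> importance rating, assumed coded 1..7.
    """
    total_importance = sum(importance[s] for s in scale_means)
    weighted = sum(scale_means[s] * importance[s] for s in scale_means)
    return weighted / total_importance

def mean_kpi(participants):
    """Average the per-participant KPI across one test phase."""
    return mean(participant_kpi(p["scales"], p["importance"]) for p in participants)

# Invented data for two participants and two placeholder scales.
phase = [
    {"scales": {"efficiency": 2.0, "perspicuity": 1.0},
     "importance": {"efficiency": 6, "perspicuity": 2}},
    {"scales": {"efficiency": 3.0, "perspicuity": 2.0},
     "importance": {"efficiency": 4, "perspicuity": 4}},
]
phase_kpi = mean_kpi(phase)
```

Because the weights sum to one for each participant, the KPI stays within the same -3 to +3 range as the underlying scale means, which is what allows values from different phases to be compared directly.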

Protocol 2: Collaborative Co-Design for Supportive Cancer Care Applications

This protocol details the participatory approach for engaging multiple stakeholders in the design of digital health applications for supportive cancer care [3].

2.2.1 Objectives

- To understand supportive care challenges within specific clinical contexts

- To identify essential functionalities through stakeholder engagement

- To explore factors influencing technology adoption and implementation

2.2.2 Participant Recruitment

Table 3: Stakeholder Inclusion Criteria for Co-Design Studies

| Stakeholder Group | Inclusion Criteria | Recruitment Source |

|---|---|---|

| Patients with Cancer | Current cancer treatment at participating clinic; Age ≥18 years; Language proficiency | Oncologic day clinic of Department of Oncology and Hematology |

| Patient Advocates | History of cancer; Age ≥18 years; Language proficiency | Swiss Group for Clinical Cancer Research (SAKK) |

| Cancer Nurses | Employment at participating institution; Direct patient care experience | Department of Oncology and Hematology |

| Supportive Care Specialists | Multidisciplinary expertise (nutrition, physiotherapy, psychology) | Comprehensive Cancer Center |
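For the patient and patient-advocate groups, the criteria in the table above can be written as a screening check. The field names below are hypothetical, and the sketch deliberately leaves out the professional groups, whose criteria depend on employment records rather than self-report.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    group: str                            # "patient" or "advocate" in this sketch
    age: int
    language_proficient: bool
    in_treatment_at_clinic: bool = False  # relevant for patients
    history_of_cancer: bool = False       # relevant for patient advocates

def is_eligible(candidate):
    """Apply the patient and patient-advocate inclusion criteria."""
    if candidate.age < 18 or not candidate.language_proficient:
        return False
    if candidate.group == "patient":
        return candidate.in_treatment_at_clinic
    if candidate.group == "advocate":
        return candidate.history_of_cancer
    return False  # other stakeholder groups are not modelled here

screened = [
    is_eligible(Candidate("patient", 54, True, in_treatment_at_clinic=True)),
    is_eligible(Candidate("patient", 17, True, in_treatment_at_clinic=True)),
    is_eligible(Candidate("advocate", 61, True, history_of_cancer=True)),
    is_eligible(Candidate("advocate", 40, False, history_of_cancer=True)),
]
```

Writing the criteria down in this form before recruitment starts helps keep screening decisions consistent across the people doing the recruiting.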

2.2.3 Procedure

Predesign Phase (Context Understanding)

- Conduct collaborative workshops with all stakeholder groups

- Map current supportive care workflows and challenges

- Identify specific barriers to supportive care access

- Document existing technology infrastructure and limitations

Generative Phase (Functionality Brainstorming)

- Facilitate focus groups with cancer nurses using structured guides

- Conduct qualitative interviews with patients and patient advocates

- Employ scoring cards to prioritize potential app functionalities

- Identify potential adoption facilitators and barriers

Prototyping Phase (Iterative Development)

- Develop initial prototype incorporating prior phase findings

- Conduct think-aloud protocols with representative users

- Iteratively refine interface and functionality based on feedback

- Validate technical feasibility with development team

2.2.4 Data Analysis

- Thematic analysis of qualitative data from workshops and interviews

- Prioritization matrix of app functionalities based on scoring card data

- Identification of implementation facilitators and barriers

- Usability assessment of final prototype

Visualization of Iterative UCD Workflow

Diagram 1: Iterative UCD Process

Research Reagent Solutions

Table 4: Essential Research Instruments and Tools for UCD Studies in Digital Health

| Research Reagent | Function/Application | Implementation Example |

|---|---|---|

| User Experience Questionnaire+ (UEQ+) | Modular assessment of usability metrics; calculates Key Performance Indicator (KPI) | Lion-App evaluation across development phases; KPI range -3 to +3 [28] |

| System Usability Scale (SUS) | Standardized 10-item scale for global usability assessment; scores 0-100 | Cancer prevention web app evaluation; overall score 69.7/100 [29] |

| Think-Aloud Protocols | Qualitative method where users verbalize thoughts while interacting with system | OncoSupport+ prototype testing; identification of UX/UI issues [3] |

| Focus Groups | Structured group discussions to gather perceptions and needs | Lion-App initial requirement gathering; 3 groups with 21 total participants [28] |

| Scoring Cards | Participatory prioritization technique for functionality ranking | OncoSupport+ co-design workshops; stakeholder-driven feature selection [3] |

| Patient-Reported Outcome Measures (PROMs) | Standardized instruments to capture patient health status | EORTC QLQ-C30 integration in Lion-App and OncoSupport+ for QoL assessment [28] [3] |

Within the paradigm of user-centered design, the development of effective cancer quality improvement tools hinges on a foundational principle: meaningful engagement with stakeholders. This includes patients, caregivers, healthcare professionals, and community partners. Moving beyond tokenistic involvement, structured engagement strategies are crucial for ensuring that tools and interventions are relevant, usable, and impactful. This application note details three core methodologies—charrettes, workshops, and qualitative interviews—providing protocols and data to guide their implementation in cancer research. These approaches are framed within the broader thesis that user-centered design is not merely an additive step but an integral component of rigorous, translational cancer research that ultimately enhances patient-centered care and tool efficacy.

Methodological Protocols and Applications

This section provides a detailed exploration of three key stakeholder engagement methods, including experimental protocols and quantitative outcomes.

The Community-Based Participatory Research (CBPR) Charrette

The CBPR Charrette model is an intensive, facilitated workshop designed to rapidly develop and strengthen collaborative research partnerships between community, academic, and medical stakeholders [30]. Its structured process fosters transparency and collective negotiation.

Experimental Protocol: Implementing the CBPR Charrette

- Objective: To establish a robust, equitable CBPR partnership for a cancer research study, clearly defining goals, roles, and communication structures.

- Stakeholder Recruitment: Recruit a diverse group of 10-15 participants representing all key stakeholder groups (e.g., patients, caregivers, community advocates, academic researchers, clinical providers) [30]. Recruitment can be done through existing community networks, clinical partners, and patient advocacy groups.

- Materials:

- Facilitator's guide.

- Consent forms.

- Audio recording equipment.

- Large writing surfaces (whiteboards, flip charts) or digital equivalents (e.g., Miro board).

- Name tags and session agendas.

- Procedure:

- Pre-Session Briefing (30 minutes): Co-facilitators meet with the research team to review goals and logistics.

- Introduction and Ground Rules (20 minutes): Facilitators welcome participants, establish a respectful environment, and outline the charrette's objectives.

- Strengths, Needs, and Challenges Brainstorming (60 minutes): Stakeholders engage in a guided discussion to identify partnership assets, resource gaps, and anticipated obstacles. Insights are recorded in real-time.

- Collective Negotiation (60 minutes): The group negotiates and defines specific project goals, implementation plans, roles, responsibilities, and compensation structures for community partners [30].

- Expert Consultation (30 minutes): Community and academic experts with CBPR experience provide external feedback and recommendations on the partnership's plans.

- Action Planning and Conclusion (50 minutes): The group synthesizes discussions into a concrete action plan, establishing timelines and communication protocols for the partnership's next steps.

- Analysis: Thematic analysis of the session transcripts and notes is conducted to extract key partnership agreements, identified challenges, and co-developed solutions.
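Adding up the segment durations listed in the procedure gives a sense of the time commitment being asked of participants. The short sketch below builds a running schedule from those durations; treating the segments as back-to-back with no breaks is an assumption.

```python
# Segment names and durations are taken from the procedure above.
AGENDA = [
    ("Pre-Session Briefing", 30),
    ("Introduction and Ground Rules", 20),
    ("Strengths, Needs, and Challenges Brainstorming", 60),
    ("Collective Negotiation", 60),
    ("Expert Consultation", 30),
    ("Action Planning and Conclusion", 50),
]

def build_schedule(agenda):
    """Return (segment, start_minute, end_minute) tuples, counting from zero."""
    schedule, clock = [], 0
    for segment, minutes in agenda:
        schedule.append((segment, clock, clock + minutes))
        clock += minutes
    return schedule

schedule = build_schedule(AGENDA)
total_minutes = schedule[-1][2]
# The briefing involves only the facilitators and research team, so the
# stakeholder-facing portion excludes the first segment.
stakeholder_minutes = total_minutes - AGENDA[0][1]
```

The full agenda runs 250 minutes (4 hours 10 minutes), of which 220 minutes involve the stakeholder group, so planners may want to add breaks and budget compensation accordingly.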

Diagram: Logical workflow and participant interactions in a CBPR Charrette.

Application in Cancer Research: The CHAMPS (Cancer Health Accountability for Managing Pain and Symptoms) Study leveraged the CBPR Charrette to develop its partnership, which led to greater transparency, accountability, and trust among community, academic, and medical partners [30]. The process served as a catalyst for capacity building and allowed for the exploration of challenges with expert support.

Structured Stakeholder Workshops

Stakeholder workshops are organized meetings for collaborative discussion, education, and problem-solving around specific topics in cancer care. Examples include those conducted by the EMA Cancer Medicines Forum (CMF) and programs that follow the Extension for Community Healthcare Outcomes (ECHO) model [31] [32].

Experimental Protocol: Conducting a Virtual Training Workshop (e.g., ACS ECHO Model)

- Objective: To increase healthcare professionals' knowledge and confidence in a specific area of cancer care (e.g., tobacco cessation, colorectal cancer screening) via virtual telementoring [32].

- Stakeholder Recruitment: Participants are recruited through direct outreach to health system partners or via open registration. Programs can be "public" (open access) or "private" (invitation-only with attendance requirements) [32].

- Materials:

- Virtual meeting platform (e.g., iECHO).

- Pre- and post-program surveys (digital, e.g., Microsoft Forms).

- Presentation slides for didactic sessions.

- Standardized case presentation templates.

- Procedure:

- Pre-Program Assessment: Distribute a pre-program survey to collect demographic data and baseline self-reported knowledge and confidence using 5-point Likert scales [32].

- Session Execution: Conduct a series of monthly virtual sessions (e.g., 4-9 sessions). Each session follows the ECHO Model:

- Didactic Presentation (20-30 minutes): A subject matter expert presents on a key topic.

- Case-Based Discussion (30-40 minutes): Participants present de-identified patient cases for group discussion and expert guidance [32].

- Post-Session Data Collection: After each session, distribute a survey to gauge the likelihood of participants using the information presented.

- Post-Program Assessment: At the program's conclusion, redistribute the knowledge and confidence survey to measure change.

- Analysis: Quantitative data analysis includes calculating descriptive statistics and mean differences between pre- and post-program scores for knowledge and confidence.
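The mean-difference calculation in the analysis step can be sketched as follows. The ratings are invented, and the sketch assumes unpaired responses, which is typical when pre- and post-program surveys are anonymous and respondents cannot be matched.

```python
from statistics import mean

def mean_change(pre, post):
    """Difference between post- and pre-program means on a 5-point Likert item.

    The two lists need not be paired or equal in length, which suits
    anonymous surveys where respondents cannot be matched.
    """
    return round(mean(post) - mean(pre), 2)

# Invented self-reported knowledge ratings (1 = low, 5 = high).
pre_knowledge = [2, 3, 3, 2, 4]
post_knowledge = [4, 4, 3, 3, 5]
knowledge_change = mean_change(pre_knowledge, post_knowledge)
```

If respondents can be matched across the two surveys, a paired analysis of individual change scores would use the data more efficiently than this comparison of group means.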

Quantitative Outcomes from ACS ECHO Programs: The table below summarizes aggregated quantitative data from four distinct ACS ECHO programs, demonstrating the model's effectiveness in engaging professionals and improving self-reported outcomes [32].

Table 1: Quantitative Outcomes from ACS ECHO Cancer Care Programs (2023-2024)

| Metric | Program A (Tobacco Cessation) | Program B (Colorectal Cancer Screening) | Program C (Prostate Cancer Screening) | Program D (Caregiving) | Aggregated Average |

|---|---|---|---|---|---|

| Unique Participants | 195 | 45 | 59 | 132 | 108 |

| Session Count | 4 | 7 | 9 | 7 | 6.75 |

| Participants Likely to Use Information | Not reported | Not reported | Not reported | Not reported | 59% |

| Mean Knowledge Increase | Not reported | Not reported | Not reported | Not reported | +0.84 |

| Mean Confidence Increase | Not reported | Not reported | Not reported | Not reported | +0.77 |

Data sourced from a quantitative evaluation of four ACS ECHO programs [32]. Likelihood to use, knowledge, and confidence data are reported as averages across all programs.
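The aggregated averages for participants and session count can be reproduced from the per-program figures in the table, as the short check below shows. The dictionary keys are just the program letters used in the column headers.

```python
# Per-program figures copied from the table above.
participants = {"A": 195, "B": 45, "C": 59, "D": 132}
sessions = {"A": 4, "B": 7, "C": 9, "D": 7}

def simple_average(values_by_program):
    """Unweighted mean across programs."""
    return sum(values_by_program.values()) / len(values_by_program)

avg_participants = simple_average(participants)   # 431 / 4
avg_sessions = simple_average(sessions)           # 27 / 4
```

The participant average works out to 107.75, which the table rounds to 108, and the session average is 6.75. Both are unweighted means, so the largest program (A) counts no more than the smallest (B).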

Qualitative Patient Interviews

Qualitative interviews provide an in-depth understanding of patients' lived experiences, unmet needs, and perspectives on interventions, which is critical for developing truly patient-centered tools.

Experimental Protocol: A Two-Stage Qualitative Interview Study

- Objective: To explore patients' experiences with current clinical practice and their views on how a new tool (e.g., an electronic Patient-Reported Outcome Measure [ePROM]) might enhance patient-centered follow-up [33] [34].

- Stakeholder Recruitment: A purposeful sampling strategy is used. Clinicians identify eligible patients (e.g., by diagnosis, treatment status) and provide study information. Researchers then contact interested individuals to confirm eligibility and obtain informed consent [34].

- Materials:

- Semi-structured and structured interview guides.

- Informed consent documents.

- Audio recorder and transcription service.

- Conceptual prototype of the tool (e.g., presented via PowerPoint) [34].

- Procedure:

- Stage 1 - Exploring Experiences: Conduct semi-structured individual interviews with patients (e.g., n=8) to understand their experiences with symptom management and patient-centered care, including challenges and unmet needs [34].

- Data Analysis: Transcribe interviews and analyze data using reflexive thematic analysis to identify initial themes.

- Stage 2 - Evaluating Solutions: Conduct structured interviews with a participant subgroup (e.g., n=6), presenting a conceptual version of the tool. Elicit feedback on its perceived usefulness, content, design, and potential role in care [34].

- Integrated Analysis: Synthesize data from both stages to develop overarching themes that span patient experiences and potential solutions.

- Analysis: Reflexive thematic analysis is used to identify, analyze, and report patterns (themes) within the data.

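The two-stage workflow proceeds in sequence: Stage 1 semi-structured interviews, reflexive thematic analysis of the transcripts, Stage 2 structured interviews with a participant subgroup using the conceptual prototype, and an integrated analysis across both stages.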

Key Findings from an ePROM Study: A Norwegian study employing this method revealed two central themes: 1) Symptom management in the shadow of disease-centered care, where patients felt personally responsible for bringing symptoms to clinicians' attention, and 2) ePROMs: bridging holistic care and disease management, where patients viewed ePROMs as a promising tool to amplify their voice and enable more holistic, responsive follow-up [33] [34].

The Scientist's Toolkit: Essential Reagents for Engagement

Successful execution of these engagement strategies requires a suite of conceptual and practical "research reagents." The following table details key tools and their functions.

Table 2: Key Research Reagent Solutions for Stakeholder Engagement

| Item | Function/Application in Engagement Research |

|---|---|

| Semi-Structured Interview Guide | Ensures consistent coverage of key topics (e.g., patient experiences, unmet needs) while allowing flexibility to explore novel participant-led insights [34]. |

| System Usability Scale (SUS) | A ten-item, Likert-scale questionnaire used to quickly and reliably assess the perceived usability of a tool or system prototype [23]. |

| Conceptual Prototype (e.g., PowerPoint, wireframe) | A low-fidelity visualization of a proposed tool used in interviews or workshops to elicit concrete, actionable feedback from stakeholders before significant resources are invested in development [34]. |

| CBPR Charrette Facilitator's Guide | A structured protocol for guiding the intensive partnership workshop, ensuring all critical elements (strengths, challenges, goals, roles) are addressed collaboratively [30]. |

| Pre-/Post-Program Survey (5-point Likert scale) | A quantitative instrument for measuring changes in stakeholders' (e.g., clinicians') self-reported knowledge and confidence before and after an educational workshop or intervention [32]. |

Charrettes, workshops, and qualitative interviews are not merely data collection techniques; they are foundational processes for embedding user-centered design into cancer quality improvement research. The structured protocols and supporting data presented here provide a roadmap for researchers to implement these methods effectively. By rigorously engaging stakeholders through these tailored approaches, the field can ensure that the resulting tools (from clinical databases and ePROM systems to professional education programs) are grounded in real-world needs, thereby enhancing their relevance, adoption, and ultimate impact on patient care.

In the development of digital tools for cancer quality improvement (QI), the transition from abstract requirements to a functional prototype is a critical phase. For researchers, scientists, and drug development professionals, this process must be rigorous, evidence-based, and efficient. Unvalidated, feature-heavy software can lead to clinician burnout, data integrity issues, and failed implementation. This document outlines application notes and protocols for wireframing, creating low-fidelity mockups, and employing structured feature prioritization, all framed within a user-centered design (UCD) methodology essential for creating effective cancer QI tools.

Experimental Protocols for User-Centered Design

Protocol: Rapid Wireframing for Clinical Workflow Integration

Objective: To quickly visualize and validate the core layout and navigation of a cancer QI tool (e.g., an Adverse Event Management System) with clinical stakeholders.

Materials:

- Whiteboard or digital collaboration tool (e.g., Miro, FigJam).

- Markers or digital stylus.

- User stories derived from ethnographic research in oncology clinics.

Methodology:

- Define User Stories: Based on observational studies, formulate specific user stories. Example: "As an oncologist, I need to report a Grade 3 neutropenia event for a patient on a clinical trial in less than 2 minutes."

- Sketched Wireframing: For each user story, create a series of hand-sketched wireframes.

- Focus on structure and layout, not visual design.

- Use simple placeholders for text, images, and buttons (e.g., lines for text, boxes for buttons).

- Diagram the flow between screens to represent the user's task sequence.

- Stakeholder Walkthrough: Present the wireframe flow to a small group of clinical stakeholders (e.g., 2-3 oncologists, 1 research nurse).

- Cognitive Debriefing: Ask stakeholders to "think aloud" as they navigate the wireframes to complete the user story. Prompt for feedback on layout logic, missing elements, and workflow efficiency.

- Iterate: Revise the wireframes based on feedback. A minimum of two iteration cycles is recommended before proceeding.

Table 1: Quantitative Feedback Summary from Wireframe Walkthroughs (n=5 Clinical Stakeholders)

| Feedback Metric | Pre-Iteration (Average Score 1-5) | Post-Iteration 1 (Average Score 1-5) | Post-Iteration 2 (Average Score 1-5) |

|---|---|---|---|

| Ease of Navigation | 2.8 | 3.6 | 4.4 |

| Clarity of Layout | 3.0 | 3.8 | 4.6 |

| Alignment with Clinical Workflow | 2.6 | 3.8 | 4.4 |

| Overall Usability Perception | 2.8 | 3.7 | 4.5 |

Protocol: Low-Fidelity Interactive Mockup Usability Testing

Objective: To assess the usability and functional logic of an interactive, low-fidelity prototype before any code is written.

Materials:

- Prototyping software (e.g., Figma, Adobe XD) with an interactive low-fidelity mockup.

- A defined testing script and scenarios.

- Screen and audio recording software.

- Consent forms for participants.

Methodology:

- Prototype Fidelity: Develop a clickable mockup using the validated wireframes. Use a grayscale color palette and standard UI elements to maintain low-fidelity focus.

- Participant Recruitment: Recruit 5-7 end-users (e.g., clinical research coordinators, pharmacists) who were not involved in the wireframing phase.

- Testing Session:

- Introduce the session, emphasizing the prototype's unfinished nature.

- Provide participants with realistic scenarios (e.g., "Find patient John Doe and update his treatment cycle status.").

- Instruct participants to complete the tasks while using the "think-aloud" protocol.

- The facilitator observes without guiding, noting points of confusion, errors, and task completion time.

- Data Analysis: Analyze recordings and notes to identify usability issues. Calculate quantitative metrics like Task Success Rate and Single Ease Question (SEQ) score.

Table 2: Usability Test Results for Low-Fidelity Prototype (n=6 Participants)

| Task Description | Success Rate (%) | Avg. SEQ (1-7) | Critical Errors Encountered |

|---|---|---|---|

| Log a new patient symptom | 100% | 6.5 | 0 |

| Report a CTCAE-gradeable adverse event | 83% | 5.2 | 1 (Difficulty finding grading scale) |

| Generate a standard therapy response report | 67% | 4.3 | 2 (Confusion over report parameters) |
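The two quantitative metrics named in the data analysis step can be computed from per-participant session records. The sketch below assumes each record is a `(completed, seq_score)` pair. The function name `task_metrics` and the individual scores are hypothetical, chosen so that the result lines up with the adverse event row of the table (83%, 5.2).

```python
from statistics import mean


def task_metrics(results):
    """Compute Task Success Rate (%) and mean SEQ for one task.

    results: list of (completed: bool, seq_score: int 1-7), one per participant.
    """
    if not results:
        raise ValueError("results must not be empty")
    for _, seq in results:
        if not 1 <= seq <= 7:
            raise ValueError("SEQ scores must be between 1 and 7")
    successes = sum(1 for completed, _ in results if completed)
    return {
        "success_rate_pct": round(100 * successes / len(results)),
        "avg_seq": round(mean([seq for _, seq in results]), 1),
    }


# Hypothetical records for six participants on a single task
# (illustrative values, not study data).
adverse_event_task = [
    (True, 6), (True, 5), (True, 6), (False, 3), (True, 6), (True, 5),
]

print(task_metrics(adverse_event_task))
```

With only six participants, each failure moves the success rate by roughly 17 percentage points, so these figures are better read as signals of where to look in the recordings than as precise estimates.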

Feature Prioritization Framework: The RICE Model for Strategic Development

Given the constrained resources in clinical research, a systematic approach to feature prioritization is paramount. The RICE scoring model provides a quantitative framework.

RICE Score = (Reach × Impact × Confidence) / Effort

- Reach: The number of users or events affected by the feature per time period (e.g., 50 oncologists per quarter).

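- Impact: A multiplier from 0.25 to 3 (0.25 = Minimal, 0.5 = Low, 1 = Medium, 2 = High, 3 = Massive) reflecting the feature's effect on the user's goal.

- Confidence: A percentage (50%, 80%, 100%) representing certainty in the estimates; it enters the formula as a fraction (e.g., 80% = 0.8).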

- Effort: The total "person-months" required to implement the feature.

Table 3: RICE Prioritization for a Hypothetical Cancer QI Tool

| Feature Idea | Reach (users/quarter) | Impact (0.25-3 scale) | Confidence (%) | Effort (person-months) | RICE Score |

|---|---|---|---|---|---|

| Automated CTCAE v6.0 grading | 500 | 3.0 | 100% | 4 | 375.0 |

| EHR Bi-directional Integration | 500 | 2.0 | 80% | 12 | 66.7 |

| Patient-Reported Outcome (PRO) Portal | 1000 | 2.0 | 100% | 8 | 250.0 |

| Customizable Dashboard Widgets | 200 | 1.0 | 50% | 3 | 33.3 |
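The RICE scores in the table can be reproduced and ranked with a short script. This is a minimal sketch: the `Feature` class and its field names are illustrative, and confidence is entered as a fraction (0.8 for 80%) so that the formula can be applied directly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    reach: float       # users per quarter
    impact: float      # 0.25, 0.5, 1, 2, or 3
    confidence: float  # fraction, e.g. 0.8 for 80%
    effort: float      # person-months

    def rice(self) -> float:
        """RICE Score = (Reach x Impact x Confidence) / Effort."""
        if self.effort <= 0:
            raise ValueError("effort must be positive")
        return self.reach * self.impact * self.confidence / self.effort


# Inputs copied from the hypothetical prioritization table above.
features = [
    Feature("Automated CTCAE v6.0 grading", 500, 3.0, 1.0, 4),
    Feature("EHR Bi-directional Integration", 500, 2.0, 0.8, 12),
    Feature("Patient-Reported Outcome (PRO) Portal", 1000, 2.0, 1.0, 8),
    Feature("Customizable Dashboard Widgets", 200, 1.0, 0.5, 3),
]

# Highest score first: the order in which features would be built.
ranked = sorted(features, key=lambda f: f.rice(), reverse=True)
for feature in ranked:
    print(f"{feature.name}: {feature.rice():.1f}")
```

Sorting by score puts automated CTCAE grading first and the PRO portal second. EHR integration ranks third despite its high reach because its 12 person-month effort sits in the denominator.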

Visual Workflows and Logical Diagrams

[Diagram not reproduced: UCD Workflow: Wireframing to Prototype]

[Diagram not reproduced: RICE Scoring Model Components]

[Diagram not reproduced: MoSCoW Prioritization Framework]

The Scientist's Toolkit: Essential Research Reagents for Digital Prototyping

Table 4: Key Research Reagent Solutions for Prototyping Cancer QI Tools

| Item / Solution | Function / Explanation |

|---|---|

| Figma / FigJam | A collaborative, web-based platform for creating wireframes, low-fidelity mockups, and interactive prototypes. Essential for distributed team collaboration. |

| User Story Map | A visual artifact that organizes user stories into a logical workflow model, ensuring feature development aligns with the complete user journey. |

| RICE Scoring Sheet | A spreadsheet-based quantitative model for prioritizing features by Reach, Impact, Confidence, and Effort, reducing subjective bias. |

| MoSCoW Method | A prioritization framework for categorizing features into Must-haves, Should-haves, Could-haves, and Won't-haves, crucial for managing scope. |

| Think-Aloud Protocol | A usability testing methodology where participants verbalize their thought process, providing direct insight into user cognition and interface problems. |

| System Usability Scale (SUS) | A reliable, 10-item questionnaire for measuring the perceived usability of a system. Provides a quick, standardized usability score. |
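For readers who have not scored the SUS before, the standard procedure converts ten 1-5 responses into a 0-100 score: odd-numbered (positively worded) items contribute the response minus 1, even-numbered (negatively worded) items contribute 5 minus the response, and the sum is multiplied by 2.5. The sketch below applies that rule to one respondent. The function name `sus_score` and the sample responses are illustrative.

```python
def sus_score(responses):
    """Score one respondent's ten SUS answers (each 1-5) on the 0-100 scale."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(not 1 <= r <= 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd items: positively worded
        else:
            total += 5 - response   # even items: negatively worded
    return total * 2.5


# Hypothetical responses from a single participant.
print(sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2]))  # 75.0
```

A study-level SUS result is typically the mean of the individual respondents' scores.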

Application Note: Quantitative Frameworks in Cancer Quality Improvement

This document presents a synthesis of successful applications of user-centered design principles in cancer quality improvement (QI) tools. Framed within a broader thesis on user-centered design, these case studies demonstrate how quantitative evaluation, structured implementation, and accessible design are critical for developing effective cancer care tools for researchers, scientists, and drug development professionals. The integration of rigorous data collection and adherence to usability standards ensures that these tools meet the complex needs of both clinicians and patients.

Case Study 1: Project ECHO for Cancer Survivorship and Professional Education

The Extension for Community Healthcare Outcomes (ECHO) model, developed by the University of New Mexico, was utilized by the American Cancer Society (ACS) to address cancer-related knowledge gaps among healthcare professionals in underserved communities. This virtual telementoring program creates a collaborative "all-teach, all-learn" environment that connects community providers with specialist mentors, thereby increasing local expertise and improving patient care without requiring patient travel [32].

Experimental Protocol and Methodology