Validating Emergent Behavior in Biomedical Research: A Histopathology-Driven Framework for Discovery and Translation

Abstract

This article provides a comprehensive framework for leveraging advanced histopathology to validate emergent biological behaviors in disease models and drug development. It explores the foundational principles of emergent feature discovery in tissue samples, details cutting-edge methodological applications of digital pathology and artificial intelligence, and addresses critical troubleshooting and optimization strategies for robust analysis. By presenting rigorous validation and comparative approaches, this resource equips researchers and drug development professionals with the knowledge to objectively quantify complex phenotypic changes, thereby enhancing the predictive power of preclinical research and accelerating the translation of findings into clinical applications.

Unveiling the Blueprint: Core Concepts of Emergent Behavior in Tissue Morphology

Emergent behavior in pathology represents a paradigm shift in understanding cancer progression, where complex tissue-level organization arises from seemingly chaotic molecular interactions. This guide compares three leading computational approaches—neural network control, data-driven system identification, and histo-genomic integration—for quantifying and validating these emergent phenomena. By framing each methodology within experimental protocols and providing structured performance comparisons, we equip researchers with a practical framework for investigating pathological emergence, ultimately advancing predictive oncology and personalized treatment strategies.

Emergent behavior describes the phenomenon where complex, coordinated patterns arise at a macroscopic level from relatively simple interactions at a microscopic level, without central coordination. In pathological contexts, this translates to tissue-level organizational signatures—such as tumor morphology, immune spatial distributions, and stromal architecture—emerging from subcellular molecular chaos. The clinical significance lies in correlating these emergent histological patterns with clinical outcomes, drug response, and disease progression.

The foundational principle, derived from complex systems physics, is that these macroscopic transitions often occur suddenly at critical points, following mathematical patterns similar to phase transitions [1]. In cancer systems, molecular interactions create a self-organizing system that exhibits emergent capabilities not predictable from individual components alone. The integration of high-resolution molecular data (OMICs) with spatial histological context through digital pathology enables researchers to visualize and quantify this emergence, providing a critical layer of information for precision medicine [2].

Comparative Analysis of Research Approaches

Table 1: Methodological Comparison for Studying Emergent Behavior in Pathology

| Research Approach | Primary Application | Data Requirements | Key Measurable Outputs | Technical Implementation Complexity |
|---|---|---|---|---|
| Neural Network Control of Emergence [3] | Guiding collective motion patterns in agent-based systems | Agent trajectory data (e.g., GPS tracking, cell migration paths) | Transition timing, cluster size control, pattern stability metrics | High (requires neural network architecture design and training) |
| Data-Driven System Identification [4] | Discovering interaction rules from observed dynamics | Short-time trajectory observations of agent-based systems | Estimated interaction kernels, trajectory prediction accuracy, emergent behavior reproduction | Medium-High (requires specialized algorithms for nonparametric inference) |
| Histo-Genomic Integration [2] | Spatial context for molecular data in digital pathology | OMICs data paired with digitally-scanned tumor samples | Spatial biomarker expression patterns, host immune response mapping, radio-histomic correlations | Medium (requires digital slide scanning and image analysis expertise) |
| Bibliometric Network Visualization [5] | Mapping scientific landscapes and research trends | Publication data from bibliographic databases | Journal/researcher networks, citation relationships, co-occurrence term networks | Low-Medium (tool-assisted with minimal coding) |
| Text Analysis & Word Clouds [6] | Quantitative analysis of document collections | Text files for analysis | Word frequency counts, vocabulary density, interactive visualizations | Low (web-based tool with simple interface) |

Table 2: Performance Comparison in Predicting System Behaviors

| Approach | Short-Term Prediction Accuracy | Long-Term Emergent Behavior Reproduction | Scalability to Large Systems | Interpretability of Results |
|---|---|---|---|---|
| Neural Network Control | High (within training interval) | Moderate (often extends beyond training data) | Memory-efficient implementations available | Low (black-box nature of neural networks) |
| Data-Driven System Identification | High (near-optimal regression rates) | High (demonstrates same emergent behaviors) | Scalable to large data sets with many agents | Medium (visualizable interaction kernels) |
| Histo-Genomic Integration | Context-dependent on cancer type | High (captures spatial-temporal progression) | Technical challenges in digital slide storage | High (direct spatial visualization) |
| Bibliometric Network Visualization | Not predictive | Identifies emerging research trends | Handles large publication datasets | High (visual network representations) |
| Text Analysis & Word Clouds | Not predictive | Identifies thematic patterns | Limited by text processing capabilities | Medium (quantitative summary with visualizations) |

Experimental Protocols & Methodologies

Neural Network Control Framework for Emergent Behavior

Protocol Objective: Employ deep neural networks to control the emergence of complex collective motions at desired moments with intended global patterns.

Methodology Details:

- System Configuration: Define agent-based system with N interacting entities (cells, particles, or organisms)

- Neural Network Architecture: Implement physics-informed neural networks that obey dynamical laws

- Training Data Collection: Gather trajectory data (e.g., GPS data from bird flocks, cell migration paths)

- Interaction Rule Learning: Train network to find inter-agent interaction rules that produce desired collective structures

- Validation: Test learned rules in confined or obstacle-filled environments to verify robust emergence maintenance

Key Parameters Monitored:

- Average radius and cluster size in vortical swarms

- Transition timing from random to ordered states

- Pattern stability under environmental perturbations

This approach has demonstrated capability in reproducing real-world bird flock dynamics by learning directly from observational GPS data [3].

Data-Driven Discovery of Interaction Kernels

Protocol Objective: Infer governing equations of collective dynamics from observational data without prior knowledge of interaction rules.

Methodology Details:

- Data Collection: Observe short-time trajectories of agent-based dynamical systems

- System Modeling: Represent system as first- or second-order collective dynamics model:

  - First-order model: $\dot{x}_i = \frac{1}{N} \sum_{i'=1}^{N} \phi(\lVert x_{i'} - x_i \rVert)\,(x_{i'} - x_i)$

  - Second-order model: Incorporates acceleration terms

- Estimation Algorithm: Apply regularized least-squares estimators to learn interaction kernels ϕ

- Nonparametric Inference: Construct estimators without assuming parametric form of interactions

- Prediction Validation: Test estimated kernels on new initial conditions, both within and beyond training time interval

Application Example: Planetary motion analysis successfully rediscovered Newton's law of universal gravitation (1/r² form) without parametric assumptions or elliptical orbit presuppositions [4].
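The estimation step can be illustrated with a minimal sketch. It assumes one-dimensional agents, directly observed velocities, and a piecewise-constant basis for the kernel ϕ over distance bins; the function names (`design_row`, `estimate_kernel`), the bin edges, the ridge weight, and the synthetic data are all hypothetical and are not taken from [4]. The first-order model is linear in the basis coefficients, so a ridge-regularized least-squares fit recovers them.

```python
import random

def design_row(xs, i, edges):
    """Regression row for agent i: one entry per distance bin of the kernel."""
    n = len(xs)
    row = [0.0] * (len(edges) - 1)
    for j, xj in enumerate(xs):
        if j == i:
            continue
        diff = xj - xs[i]
        r = abs(diff)
        for k in range(len(edges) - 1):
            if edges[k] <= r < edges[k + 1]:
                row[k] += diff / n  # (1/N) * (x_j - x_i) for pairs in bin k
                break
    return row

def solve(a, b):
    """Solve a small dense system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def estimate_kernel(snapshots, velocities, edges, ridge=1e-8):
    """Ridge-regularized least squares: solve (A^T A + ridge * I) c = A^T v."""
    k = len(edges) - 1
    ata = [[ridge if p == q else 0.0 for q in range(k)] for p in range(k)]
    atv = [0.0] * k
    for xs, vs in zip(snapshots, velocities):
        for i in range(len(xs)):
            row = design_row(xs, i, edges)
            for p in range(k):
                atv[p] += row[p] * vs[i]
                for q in range(k):
                    ata[p][q] += row[p] * row[q]
    return solve(ata, atv)

# Synthetic ground truth (hypothetical): strong short-range, weak long-range attraction.
def true_phi(r):
    return 2.0 if r < 1.0 else 0.5

def velocity(xs, i):
    return sum(true_phi(abs(xj - xs[i])) * (xj - xs[i]) for xj in xs) / len(xs)

random.seed(0)
snapshots = [[random.uniform(0.0, 1.9) for _ in range(5)] for _ in range(40)]
velocities = [[velocity(xs, i) for i in range(len(xs))] for xs in snapshots]
coeffs = estimate_kernel(snapshots, velocities, edges=[0.0, 1.0, 2.0])
print(coeffs)  # estimated kernel value in each distance bin
```

Because the synthetic kernel lies exactly in the span of the basis, the fit should return values close to the true bin heights. With real trajectories, velocities would have to be approximated from finite differences, and the choice of basis and regularization would need validation on held-out initial conditions.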

Histo-Genomic Integration Protocol

Protocol Objective: Spatially contextualize molecular data within histological tissue architecture.

Methodology Details:

- Sample Preparation: Process tumor tissue sections for digital scanning

- Digital Pathology: Perform whole-slide imaging at high resolution

- OMICs Data Integration: Map genomic, transcriptomic, and proteomic data onto digital pathology images

- Spatial Analysis: Quantify biomarker expression patterns within specific histological regions

- Multi-dimensional Correlation: Integrate radiological images with digital pathological images, genomics, and clinical data (radio-histomics)

This approach enables a four-dimensional (temporal/spatial) analysis of cancer progression, essential for understanding evolution patterns and tailoring individual treatment plans [2].

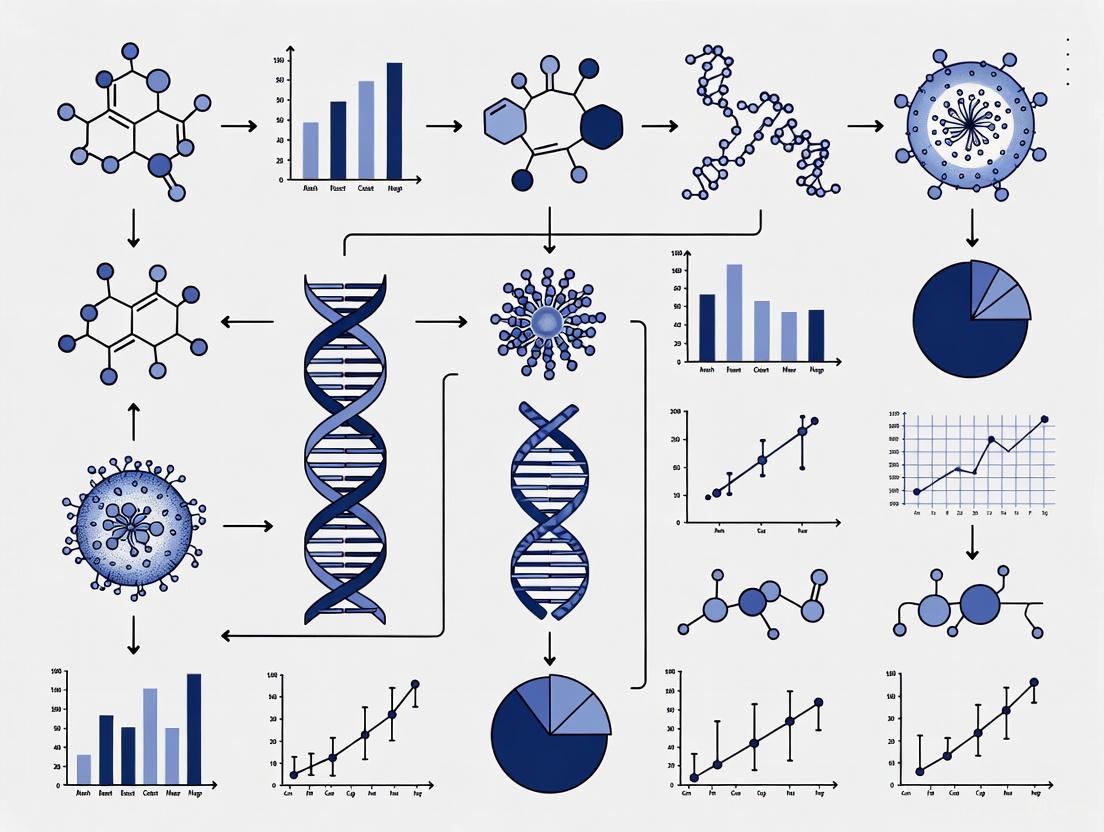

Visualization of Research Workflows

Data-Driven Emergence Discovery Workflow

Diagram 1: Data-driven discovery workflow for emergent behavior.

Histo-Genomic Integration Framework

Diagram 2: Histo-genomic integration for spatial analysis.

The Scientist's Toolkit: Essential Research Solutions

Table 3: Research Reagent Solutions for Emergent Behavior Studies

| Tool/Category | Specific Examples | Primary Function | Implementation Considerations |
|---|---|---|---|
| Data Visualization Platforms | Tableau, RAW, Plot.ly | Interactive visualization of complex datasets | Tableau Public (free), Tableau Desktop (student license available) |
| Programming Environments | Processing, D3.js | Custom visualization coding and implementation | Processing designed for coding beginners in visual arts context |
| Bibliometric Analysis | VOSviewer | Constructing and visualizing bibliometric networks | Supports citation, co-citation, co-authorship relations |
| Text Analysis Tools | Voyant Word Cloud | Quantitative text analysis and visualization | Free online web application for document collections |
| Digital Pathology Infrastructure | Whole-slide scanners, Image analysis software | Digitizing pathological samples for spatial analysis | Technical challenges in storage and processing of large image files |
| Statistical Learning Libraries | Custom MATLAB/Python implementations | Nonparametric inference of interaction kernels | Requires specialized algorithms for system identification |

This comparison guide demonstrates that multiple complementary approaches exist for investigating emergent behavior in pathological contexts. Neural network methods offer direct control over emergent patterns, data-driven system identification provides mathematical rigor for discovering fundamental interaction rules, and histo-genomic integration creates essential spatial context for molecular data. The choice of methodology depends on research goals, data availability, and technical implementation capabilities. As the field advances, integrating these approaches will be crucial for unraveling the complex emergence of tissue-level organization from molecular interactions, ultimately enhancing diagnostic precision and therapeutic targeting in oncology.

In biomedical research and drug development, the accurate characterization of disease states is paramount. Histopathology, the microscopic examination of tissue, has long served as the gold standard for diagnosis and validation, providing an essential bridge between observable clinical symptoms and underlying molecular mechanisms. This guide explores how advanced computational methods are correlating intricate microscopic phenotypes from tissue samples with macroscopic disease presentations, thereby validating complex emergent behaviors in biological systems. The integration of artificial intelligence with traditional histology is revolutionizing our approach to disease classification, prognostic prediction, and therapeutic development, creating a more nuanced understanding of pathological processes across multiple disease contexts.

Experimental Approaches for Phenotype-Disease Correlation

Automated Cellular Phenotyping Using Multiplexed Immunofluorescence

Experimental Protocol: This approach establishes reliable ground truth for cell type identification by combining multiplexed immunofluorescence (mIF) with H&E-stained whole slide images (WSIs) from the same tissue section [7]. The workflow begins with mIF staining of formalin-fixed paraffin-embedded (FFPE) tumor samples using antibodies against cell lineage protein markers (e.g., pan-CK, CD3, CD20, CD66b, CD68), followed by H&E staining of the identical tissue section [7]. After imaging both modalities, researchers apply co-registration algorithms to align the mIF and H&E images at the single-cell level, transferring cell type labels derived from protein marker expression to the corresponding cells on the H&E images [7]. This generates a high-quality dataset for training deep learning models to classify major cell types (tumor cells, lymphocytes, neutrophils, macrophages) in standard H&E images, with a reported accuracy of 86-89% [7].
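The label-transfer step can be sketched as a nearest-centroid match between already co-registered images. This is only an illustration under stated assumptions: the matching rule, the `max_dist_um` tolerance, the function name `transfer_labels`, and the coordinates are hypothetical, and the registration method used in [7] is not reproduced here.

```python
import math

def transfer_labels(he_centroids, mif_cells, max_dist_um=5.0):
    """Give each H&E cell the type of its nearest mIF cell, or None if none is close enough."""
    labels = []
    for he in he_centroids:
        best = min(mif_cells, key=lambda m: math.dist(he, m["centroid"]), default=None)
        if best is not None and math.dist(he, best["centroid"]) <= max_dist_um:
            labels.append(best["cell_type"])
        else:
            labels.append(None)  # unmatched cells stay unlabeled rather than guessed
    return labels

# Hypothetical centroids in micrometres, after co-registration.
he_centroids = [(10.0, 10.0), (50.0, 52.0), (200.0, 200.0)]
mif_cells = [
    {"centroid": (11.0, 10.0), "cell_type": "tumor"},
    {"centroid": (49.0, 50.0), "cell_type": "lymphocyte"},
]
transferred = transfer_labels(he_centroids, mif_cells)
print(transferred)
```

Leaving distant cells unlabeled keeps registration errors out of the training set, at the cost of a smaller dataset.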

Key Applications:

- Spatial biomarker discovery in the tumor microenvironment

- Prediction of patient response to immunotherapy

- Analysis of cellular interactions underlying disease progression

Unsupervised Identification of Disease States from Histopathological Profiles

Experimental Protocol: This methodology applies unsupervised machine learning to identify novel disease states from high-dimensional physiological and histopathological data [8]. Researchers begin by collecting physiology data (blood chemistry, body/tissue weights) and histology data from H&E-stained tissue sections, with the latter recorded using standard constrained terminology by expert pathologists [8]. The protocol involves visualizing treatment conditions using t-distributed stochastic neighbor embedding (t-SNE) to highlight dissimilarities in high-dimensional physiological data, followed by computation of histopathology severity scores based on the number of abnormal histology phenotypes observed [8]. Density-based clustering algorithms are then applied to identify discrete disease state clusters, with consensus clustering performed across multiple iterations to ensure robustness [8]. Finally, researchers characterize each disease state by its distinctive physiological and histopathological features and correlate these with molecular biomarkers through subsequent gene expression analysis [8].
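A minimal sketch of two steps in this protocol follows: a severity score counted from recorded abnormal phenotypes, and a density-based grouping of samples. The compact DBSCAN-style routine stands in for whichever density-based algorithm the study used. The sample records, the `alt_fold` feature, and the `eps` and `min_pts` values are hypothetical. A real analysis would scale the features and repeat the clustering for consensus.

```python
import math

def severity_score(findings):
    """Number of distinct abnormal histology phenotypes recorded for a sample."""
    return len(set(findings))

def dbscan(points, eps, min_pts):
    """Label each point with a cluster id (0, 1, ...) or -1 for noise."""
    def neighbors(i):
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    labels = [None] * len(points)
    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:
            labels[i] = -1  # provisional noise; may become a border point later
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            nj = neighbors(j)
            if len(nj) >= min_pts:
                queue.extend(nj)  # j is a core point, keep expanding
    return labels

# Hypothetical samples: pathologist findings plus one blood chemistry fold change.
samples = [
    {"findings": [], "alt_fold": 1.0},
    {"findings": [], "alt_fold": 1.1},
    {"findings": ["hypertrophy"], "alt_fold": 1.2},
    {"findings": ["necrosis", "inflammation", "vacuolation", "hypertrophy"], "alt_fold": 4.0},
    {"findings": ["necrosis", "inflammation", "vacuolation", "fibrosis"], "alt_fold": 4.3},
    {"findings": ["necrosis", "inflammation", "vacuolation", "hypertrophy", "fibrosis"],
     "alt_fold": 4.5},
    {"findings": ["necrosis", "inflammation", "vacuolation", "hypertrophy", "fibrosis",
                  "hemorrhage", "bile duct hyperplasia"], "alt_fold": 12.0},
]
scores = [severity_score(s["findings"]) for s in samples]
points = [(float(score), s["alt_fold"]) for score, s in zip(scores, samples)]
states = dbscan(points, eps=1.5, min_pts=3)
print(scores, states)
```

In this toy data the first three samples form one group, the next three a second, and the last sample is left as noise, which is how an isolated, severe phenotype would surface for manual review.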

Key Applications:

- Discovery of novel toxin-induced disease states

- Identification of tolerance mechanisms in toxicological response

- Analysis of inter-tissue communication pathways

2.5D Pathology and Volumetric Tissue Analysis

Experimental Protocol: This framework enhances traditional 2D histopathology by capturing volumetric tissue information through alignment of serial sections [9]. The process involves extracting individual tissue ribbons from serial section WSIs using morphological labeling, followed by both rigid and non-rigid co-registration of corresponding high-resolution WSIs (up to 20× magnification) [9]. Researchers employ the VALIS framework with SuperGlue Graph Neural Network keypoint matching for initial alignment, then use SimpleElastix to perform non-rigid registration based on ribbon boundaries to preserve nuclei and glandular morphology [9]. The resulting 2.5D cores are processed using video transformer models pretrained with a modified DINO contrastive learning framework, treating sequential tissue sections as video frames to capture spatial dependencies across depth [9]. These models can then be applied to tasks such as cancer grade classification using attention-based multiple instance learning [9].

Key Applications:

- Improved prostate cancer grading

- Enhanced visualization of complex 3D tissue structures

- Better characterization of spatial distribution of pathological structures

Generative AI for Histopathology Report Generation

Experimental Protocol: The HistoGPT framework represents a vision-language model that generates comprehensive pathology reports from multiple gigapixel-sized WSIs [10]. The methodology involves training on paired WSIs and corresponding pathology reports (15,129 images from 6,705 patients), with models incorporating a vision module (CTransPath or UNI) and a language module (BioGPT) integrated through cross-attention mechanisms [10]. During inference, the model uses an Ensemble Refinement method to sample multiple reports focusing on different aspects of the WSIs, which are then aggregated using general-purpose LLMs [10]. The framework operates in either unguided mode or "Expert Guidance" mode where the correct diagnosis is provided, enabling interactive use with pathologists [10]. Performance validation includes both natural language processing metrics and blinded domain expert evaluations comparing generated reports with human-written ones [10].

Key Applications:

- Automated draft report generation for common malignancies

- Tumor subtype classification and margin assessment

- Standardization of pathology reporting

Comparative Performance Data

Diagnostic Performance of Imaging Modalities Against Histopathology Gold Standard

Table 1: Comparison of diagnostic performance for various modalities using histopathology as reference standard

| Imaging Modality | Clinical Application | Sensitivity | Specificity | Diagnostic Accuracy | Study Details |
|---|---|---|---|---|---|
| 18F-fluorocholine PET-CT | Primary hyperparathyroidism localization | 93.1% | - | 78.8% | 245 patients; detected smaller glands with chief-cell predominance [11] |
| 99mTc-methoxy-isobutyl-isonitrile SPECT-CT | Primary hyperparathyroidism localization | 70.4% | - | 60.7% | 245 patients; higher uptake in oxyphilic/oncocytic adenomas [11] |
| Two-dimensional ultrasonography | Axillary lymph node metastasis in breast cancer | 41.9% | 60.6% | 52.0% | 175 patients; moderate diagnostic value [12] |
| Elastosonography | Axillary lymph node metastasis in breast cancer | 58.0% | 45.7% | 51.4% | 175 patients; higher sensitivity but more false positives [12] |
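Sensitivity, specificity, and diagnostic accuracy in such comparisons are derived from a 2x2 table of imaging calls against the histopathology reference. The sketch below shows the standard definitions; the counts are hypothetical and are not taken from [11] or [12].

```python
def diagnostic_metrics(tp, fp, tn, fn):
    """Standard 2x2 metrics, with histopathology as the reference standard."""
    sensitivity = tp / (tp + fn)                # true positives among diseased cases
    specificity = tn / (tn + fp)                # true negatives among disease-free cases
    accuracy = (tp + tn) / (tp + fp + tn + fn)  # all correct calls among all cases
    return sensitivity, specificity, accuracy

# Hypothetical counts for illustration only.
sens, spec, acc = diagnostic_metrics(tp=45, fp=10, tn=40, fn=5)
print(f"sensitivity={sens:.1%} specificity={spec:.1%} accuracy={acc:.1%}")
```

Specificity cannot be computed when a study has no histologically confirmed negatives, which is one possible reason a table reports "-" in that column.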

Performance of Computational Pathology Models

Table 2: Performance metrics of AI-based histopathology classification models

| Model Name | Task Description | Performance Metrics | Key Advantages |
|---|---|---|---|
| CancerDet-Net | Multi-cancer classification across 9 histopathological subtypes from 4 cancer types | 98.51% accuracy | Explainable AI visualizations; web and mobile deployment [13] |
| HistoGPT | Dermatopathology report generation from whole slide images | ~67% keyword coverage; high semantic similarity to human reports | Generates comprehensive reports from multiple WSIs; zero-shot prediction of tumor subtypes/thickness [10] |
| Automated Cell Classification | Classification of 4 cell types on H&E images | 86-89% overall accuracy | Eliminates error-prone human annotations; enables spatial biomarker discovery [7] |
| 2.5D Prostate Cancer Grading | Prostate cancer grading using sequential sections | - | Captures 3D tissue context; improves grading accuracy [9] |

Signaling Pathways and Molecular Correlations

Genomic Correlates of Nuclear Morphology

Experimental Protocol: This approach quantitatively links nuclear morphological features with gene expression patterns across multiple healthy tissues [14]. Researchers extract parenchymal regions from H&E-stained WSIs of 13 organs from the GTEx database, then perform nucleus segmentation using the Efficient Deep Equilibrium Model (EDEM) to precisely segment nuclei in parenchymal regions [14]. The protocol involves computing quantitative nuclear morphological features (size, shape, texture) for each segmented nucleus, followed by identification of differentially expressed genes across tissues and correlation analysis with nuclear features [14]. Finally, pathway enrichment analysis reveals biological processes associated with nuclear morphology gene sets, including cell growth, development, metabolism, and immunity [14].

Key Findings: Differences in nuclear morphological features across healthy organs are associated with differential RNA expression patterns, revealing connections between gene expression and cellular phenotypes at the organ level [14].

Ferroptosis in Toxin-Induced Tissue Injury

Experimental Protocol: Analysis of gene expression signatures associated with machine-identified disease states reveals molecular mechanisms of toxin response [8]. Researchers perform differential gene expression analysis for each unsupervised-identified disease state, followed by gene set enrichment analysis to identify pathways associated with specific disease states, including xenobiotic metabolism and ferroptosis pathways [8]. The protocol includes validation of ferroptosis sensitivity biomarkers through correlation with disease state transitions, particularly in tolerance induction [8]. Investigation of inter-tissue communication involves analysis of hepatokine expression (Gdf15, Igf1) and correlation with body weight changes during toxin exposure [8].

Key Findings: Unsupervised analysis identified nine discrete toxin-induced disease states, with tolerance induction correlated with upregulation of xenobiotic defense genes and desensitization to ferroptosis, suggesting ferroptosis as a druggable driver of tissue pathophysiology [8].

Visualization of Key Methodologies

Diagram 1: Automated cell classification workflow for spatial biomarker discovery.

Diagram 2: Unsupervised disease state identification from physiological and histopathological profiles.

Research Reagent Solutions

Table 3: Essential research reagents and computational tools for histopathology correlation studies

| Reagent/Tool | Function | Application Example |
|---|---|---|
| Multiplexed Immunofluorescence Panel (pan-CK, CD3, CD20, CD66b, CD68) | Definitive cell type identification based on protein markers | Automated cell annotation for H&E image classification [7] |
| Hematoxylin and Eosin (H&E) Stain | Standard tissue staining for nuclear and cytoplasmic visualization | Gold standard for histopathological assessment across all studies |
| VALIS Framework with SuperGlue GNN | Tissue section co-registration and alignment | Construction of 2.5D biopsy cores from serial sections [9] |
| DINO Contrastive Learning Framework | Self-supervised pretraining for feature extraction | Video transformer training for 2.5D core analysis [9] |
| Attention-Based Multiple Instance Learning (ABMIL) | Weakly supervised learning for slide-level classification | Cancer grading with slide-level labels only [9] |
| t-Distributed Stochastic Neighbor Embedding (t-SNE) | Dimensionality reduction for high-dimensional data | Visualization of physiology and histopathology relationships [8] |
| Leiden Clustering Algorithm | Unsupervised cell population identification | Cell type definition from protein marker expression [7] |

The correlation of microscopic phenotypes with macroscopic disease states represents a fundamental paradigm in biomedical research, with histopathology maintaining its position as the indispensable gold standard. The experimental approaches detailed in this guide—from automated cellular phenotyping and unsupervised disease state identification to volumetric analysis and generative AI—demonstrate how computational advances are enhancing rather than replacing traditional histopathological assessment. As these methodologies continue to evolve, they promise to deepen our understanding of emergent behaviors in complex disease systems, ultimately accelerating drug development and improving patient outcomes through more precise disease classification and biomarker discovery. The integration of these technologies into standardized research workflows will be essential for realizing the full potential of histopathology as both a validation tool and a discovery platform.

The integration of artificial intelligence and digital pathology is fundamentally transforming histopathology, shifting the field from qualitative, subjective assessment to robust, data-driven quantitative analysis [15] [16]. This evolution enables the extraction of vast feature sets from gigapixel whole slide images (WSIs), uncovering subtle morphological patterns that may elude human observation [14]. Within the broader context of validating emergent behavior in histopathology research, these quantitative features provide the empirical foundation for identifying complex, system-level phenomena that arise from interactions within tissue microenvironments. This guide systematically details the core quantitative feature subsets—color, texture, shape, and topology—providing researchers and drug development professionals with standardized frameworks for computational histopathology.

Quantitative Feature Subsets in Histopathological Image Analysis

The quantitative analysis of histological images involves calculating specific, numerically-represented characteristics from distinct tissue structures. The table below summarizes the primary feature categories and their biological significance.

Table 1: Core Quantitative Feature Subsets in Histopathology

| Feature Subset | Representative Metrics | Biological Correlates | Common Applications |
|---|---|---|---|
| Color | Stain intensity (Hematoxylin, Eosin) [17], Positive Pixel Count [17], Color deconvolution values [17] | Protein expression, cellular metabolism, fibrosis, nucleic acid density [18] [17] | Biomarker quantification, fibrosis assessment [17] |
| Texture | Haralick features (Contrast, Correlation, Energy, Homogeneity) [14], Graph-based features, Local Binary Patterns (LBP) | Tissue architecture, nuclear chromatin distribution, stromal organization [14] | Cancer grading, tumor-stroma characterization, prognosis prediction [14] |
| Shape | Area, Perimeter, Circularity, Eccentricity, Solidity, Major/Minor axis length [18] [14] | Nuclear pleomorphism, cellular hypertrophy, cytoskeletal organization [18] [14] | Nuclear grading, detection of cellular hypertrophy [18] |
| Topology | Cell density, Nearest Neighbor distances, Graph networks (Voronoi, Delaunay) [14], Spatial arrangement | Tissue microenvironment, cell-cell interactions, spatial heterogeneity, tumor infiltrating lymphocytes (TILs) [17] | Analysis of tumor immune contexture, tissue organization [14] [17] |
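Two of the topology metrics in the table, cell density and nearest-neighbor distance, can be computed directly from cell centroids. The sketch below assumes centroids in micrometres and a known region-of-interest area; the function names and coordinates are hypothetical.

```python
import math

def nearest_neighbor_distances(centroids):
    """Distance from each cell to its closest neighbor (brute force, O(n^2))."""
    return [
        min(math.dist(p, q) for j, q in enumerate(centroids) if j != i)
        for i, p in enumerate(centroids)
    ]

def cell_density(centroids, roi_area_mm2):
    """Cells per square millimetre within the region of interest."""
    return len(centroids) / roi_area_mm2

# Hypothetical nuclear centroids (micrometres) inside a 0.5 mm^2 region.
centroids = [(0.0, 0.0), (3.0, 4.0), (3.0, 0.0), (10.0, 0.0)]
nn = nearest_neighbor_distances(centroids)
density = cell_density(centroids, roi_area_mm2=0.5)
print(nn, density)
```

The brute-force search is adequate for a small region; whole-slide analyses with many thousands of cells would normally use a spatial index such as a k-d tree instead.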

Experimental Protocols for Feature Extraction

Reproducible extraction of quantitative features requires standardized computational workflows. The following protocols are adapted from large-scale studies and open-source software documentation.

Protocol for Nuclear Morphometric Analysis

This protocol is designed for quantifying nuclear shape and size across multiple healthy or diseased tissues, based on methodologies from the Genotype-Tissue Expression (GTEx) project analysis [14].

- Parenchyma Extraction: For organs with complex structures (e.g., breast, kidney, esophagus), first extract the parenchymal region to avoid confounding signals from heterogeneous stromal tissues. Methods include:

- Clustering-based extraction: For scattered parenchyma with high color contrast (e.g., breast mammary tissue) [14].

- Threshold-based extraction: For parenchyma with continuous distribution and clear boundaries (e.g., adrenal gland, heart). Use mean R, G, B pixel values from reference patches to define selection ranges [14].

- Deep learning segmentation: For complex structures with low color contrast (e.g., kidney glomeruli), fine-tune pre-trained models like Se-ResNext101 for pixel-level accuracy [14].

- Nucleus Segmentation: Utilize a high-accuracy, generalizable segmentation model such as the Efficient Deep Equilibrium Model (EDEM) to precisely identify nuclear boundaries within the parenchymal regions. This model integrates classical methods with deep learning for stability across varied tissue types [14].

- Shape Feature Computation: For each segmented nucleus, calculate morphometric descriptors, including:

- Area: The cross-sectional area of the nucleus.

- Perimeter: The length of the nuclear boundary.

- Circularity: Calculated as $(4\pi \cdot \text{Area}) / \text{Perimeter}^2$.

- Eccentricity: The ratio of the distance between the foci of the best-fit ellipse and its major axis length [14].

- Data Aggregation: Compute summary statistics (median, interquartile range) for each shape feature per WSI or patient for downstream association analysis with molecular data [14].
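The shape descriptors and the aggregation step above can be sketched as follows. The eccentricity is computed from the fitted ellipse's axis lengths, which is equivalent to the focal distance divided by the major axis length. The per-nucleus measurements are hypothetical values standing in for segmentation output.

```python
import math
import statistics

def circularity(area, perimeter):
    """4 * pi * area / perimeter^2; equals 1.0 for a perfect circle."""
    return 4.0 * math.pi * area / perimeter ** 2

def eccentricity(major_axis, minor_axis):
    """Focal distance over major axis length of the best-fit ellipse."""
    return math.sqrt(1.0 - (minor_axis / major_axis) ** 2)

def summarize(values):
    """Median and interquartile range of one feature across nuclei."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    return {"median": statistics.median(values), "iqr": q3 - q1}

# Hypothetical measurements per segmented nucleus (square micrometres / micrometres).
nuclei = [
    {"area": 50.0, "perimeter": 26.0, "major": 9.0, "minor": 7.0},
    {"area": 42.0, "perimeter": 24.0, "major": 8.0, "minor": 6.5},
    {"area": 65.0, "perimeter": 31.0, "major": 11.0, "minor": 7.5},
    {"area": 38.0, "perimeter": 23.0, "major": 7.5, "minor": 6.5},
    {"area": 80.0, "perimeter": 36.0, "major": 13.0, "minor": 8.0},
]
slide_summary = {
    "area": summarize([n["area"] for n in nuclei]),
    "circularity": summarize([circularity(n["area"], n["perimeter"]) for n in nuclei]),
    "eccentricity": summarize([eccentricity(n["major"], n["minor"]) for n in nuclei]),
}
print(slide_summary)
```

Median and interquartile range are used rather than mean and standard deviation because a few mis-segmented nuclei can otherwise dominate a slide-level summary.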

Protocol for Color-Based Vacuole Quantification

This protocol details the steps for identifying and quantifying cytoplasmic vacuoles (e.g., lipid droplets) in H&E-stained images, such as in liver tissue, using standard image analysis software [18].

- Image Preprocessing: Extract a region of interest (ROI) from the WSI that represents the tissue structure under investigation.

- Vacuole Identification: Define vacuoles as circular, unstained objects within the cytoplasm. Use color and morphological criteria to detect them:

- Area: Set a threshold to exclude non-vacuole objects (e.g., 1–500 μm²).

- Roundness: Set a threshold to select circular objects (e.g., 1–1.5 units) [18].

- Object Separation: If vacuoles are densely packed and overlapping, apply morphological processing or use a Watershed Split algorithm to separate touching objects without altering their original forms [18].

- Quantitative Measurement: For each detected vacuole, measure:

- Vacuole Area: The cross-sectional area of each individual vacuole.

- Total Vacuolated Area: The sum of all vacuole areas within the ROI.

- Vacuole Count: The total number of vacuoles in the ROI [18].
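The filtering and measurement steps can be sketched as below. Roundness is assumed here to be perimeter² / (4π · area), which is 1 for a perfect circle and grows for elongated objects; image analysis packages define roundness differently, so this definition, the function names, and the candidate objects are hypothetical.

```python
import math

def roundness(area, perimeter):
    """Assumed definition: perimeter^2 / (4 * pi * area); 1.0 for a perfect circle."""
    return perimeter ** 2 / (4.0 * math.pi * area)

def quantify_vacuoles(objects, min_area=1.0, max_area=500.0, max_roundness=1.5):
    """Keep objects that pass the area and roundness thresholds, then summarize them."""
    kept = [
        o for o in objects
        if min_area <= o["area_um2"] <= max_area
        # Under the assumed definition roundness is never below 1,
        # so only the upper limit needs checking.
        and roundness(o["area_um2"], o["perimeter_um"]) <= max_roundness
    ]
    areas = [o["area_um2"] for o in kept]
    return {"count": len(kept), "total_area_um2": sum(areas), "areas_um2": areas}

# Hypothetical unstained objects detected in one region of interest.
candidates = [
    {"area_um2": 50.0, "perimeter_um": 26.0},   # small and round: kept
    {"area_um2": 600.0, "perimeter_um": 90.0},  # too large: excluded
    {"area_um2": 40.0, "perimeter_um": 40.0},   # elongated: excluded
    {"area_um2": 120.0, "perimeter_um": 40.0},  # round: kept
]
result = quantify_vacuoles(candidates)
print(result)
```

The thresholds default to the example ranges given in the protocol and would need tuning per tissue type and magnification.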

The analytical workflow for quantitative histopathology integrates these specific protocols into a broader pipeline, from tissue preparation to statistical modeling, as visualized below.

Figure 1: Histopathology Image Analysis Workflow. This diagram outlines the standard computational pipeline for extracting quantitative features from histological images.

The Scientist's Toolkit: Essential Research Reagents & Software

Successful implementation of quantitative histopathology relies on a suite of robust, often open-source, software tools and libraries.

Table 2: Essential Open-Source Software for Histological Image Analysis

| Tool Name | Primary Function | Key Strengths | Application in Feature Extraction |
|---|---|---|---|
| HistomicsTK [17] | Python library for WSI analysis | Modular, scalable; offers preprocessing, segmentation, and feature extraction; can be containerized via DSA. | Color deconvolution, nuclei segmentation, positive pixel count for fibrosis. |
| QuPath [16] [19] | Bioimage analysis software | User-friendly interface, robust WSI support, strong machine learning integration for detection and classification. | Interactive nucleus detection, cell counting, shape and topology analysis. |
| CellProfiler [17] [19] | Cell image analysis platform | High-throughput quantitative analysis, designed for cell biology applications, pipeline-based workflow. | High-throughput measurement of cell shape, texture, and intensity. |
| Ilastik [16] [19] | Interactive segmentation tool | User-friendly pixel classification using machine learning without requiring coding expertise. | Semi-automatic segmentation of tissue regions and structures for subsequent feature extraction. |
| ImageJ/Fiji [19] | General-purpose image analysis | Vast ecosystem of plugins, highly customizable, extensive community support. | Fundamental shape and color measurements, manual and semi-automated analysis. |

Comparative Performance of Open-Source Tools

The selection of an appropriate software tool depends on the specific analytical task, scale of data, and user expertise. The following table compares the performance of key open-source tools across critical dimensions relevant to research and drug development.

Table 3: Tool Performance Comparison for Key Analytical Tasks

| Analytical Task | Recommended Tools | Performance Notes & Supporting Data |
|---|---|---|
| Nuclear Shape & Size Quantification | QuPath [19], CellProfiler [19], HistomicsTK [17] | QuPath and CellProfiler provide accurate, high-throughput nucleus detection and measurement. HistomicsTK's EDEM model offers high-accuracy segmentation for complex nuclei [14] [17]. |
| Color-Based Analysis (Stain Intensity) | HistomicsTK [17], ImageJ [19] | HistomicsTK provides specialized algorithms for color deconvolution and positive pixel count, successfully used to quantify fibrosis in kidney allografts and IHC staining [17]. |
| Texture & Topology Analysis | Ilastik [19], Custom Python scripts | Ilastik's pixel classification excels at segmenting tissue regions based on textural differences. Graph-based topological features are often extracted via custom scripts built on libraries like scikit-image [14]. |
| Handling Gigapixel WSIs | QuPath [16], Cytomine [16], HistomicsTK [17] | QuPath and Cytomine are specifically designed to handle large WSIs (>40 GB). HistomicsTK is architected to be agnostic to image size, handling tiling and stitching for gigapixel images [16] [17]. |
| Integration with ML/AI Pipelines | HistomicsTK [17], QuPath [16], CellProfiler [16] | All three support machine learning integration. HistomicsTK serves as a baseline for model comparison (e.g., CellViT++), while QuPath allows training of custom object classifiers [16] [17]. |

The mining of histological images for quantitative color, texture, shape, and topology features represents a cornerstone of modern computational pathology. This guide provides a structured overview of the feature subsets, detailed experimental protocols, and a comparative analysis of the open-source toolkit available to researchers. The rigorous application of these methodologies is critical for validating the complex, emergent behaviors observed in tissue systems, ultimately accelerating biomarker discovery and therapeutic development in precision medicine. As the field evolves with trends like foundation models and multimodal integration, the standardized extraction of these quantitative features will continue to be fundamental to unlocking the rich biological information embedded within histopathological images [15].

The histopathological classification of renal cell carcinomas (RCC) represents a dynamic field where traditional morphological assessment increasingly integrates with molecular insights to define tumor entities with greater precision. The World Health Organization (WHO) classification of urinary and male genital tumours, updated in 2022, reflects this evolving understanding through significant revisions that impact diagnostic criteria, prognostic stratification, and therapeutic decision-making [20]. These changes occur within the broader thesis that emergent behavioral patterns in renal neoplasia can be validated through systematic histopathology research, creating a foundation for more personalized patient management.

Recent developments in the WHO classification include substantive adjustments to histomorphologically defined tumor types. Notably, papillary renal cell carcinoma is no longer categorized into two distinct subtypes, recognizing the limited clinical utility of this histological subdivision [20]. Furthermore, the classification now acknowledges the benign nature of clear cell papillary tumors, which have been reclassified as clear cell papillary renal cell tumors (ccpRCT) rather than carcinomas [20] [21]. These revisions demonstrate how continual refinement of diagnostic criteria emerges from accumulating clinicopathological evidence.

Simultaneously, computational approaches to histological image analysis have revealed that specific morphological features tend to emerge as part of optimal diagnostic models for particular cancer endpoints [22]. This data-mining methodology applied to renal tumor tissue samples demonstrates that comprehensive image feature sets can uncover biological clues for disease diagnosis, creating bridges between visual pattern recognition and molecular underpinnings of renal neoplasia.

Current WHO Classification Framework for Renal Tumors

Key Updates in the 2022 WHO Classification

The 2022 WHO classification introduced several critical revisions that refine how renal epithelial tumors are categorized (Table 1). These changes reflect the growing understanding of the clinical behavior and molecular features of various renal tumor subtypes.

Table 1: Key Updates in the 2022 WHO Classification of Renal Tumors

| Tumor Type | Classification Change | Clinical Significance |
|---|---|---|
| Papillary RCC | No longer subdivided into Type 1 and Type 2 | Recognizes limited clinical utility of histological subtyping |
| Clear Cell Papillary Tumor | Reclassified from carcinoma to tumor | Acknowledges benign clinical behavior with minimal metastatic potential |
| Emerging Entities | Introduction of several provisional categories | Identifies newly characterized tumors requiring further validation |

The most significant nomenclature change affects clear cell papillary renal cell tumors, which are now recognized as distinct from malignant carcinomas due to their highly favorable outcomes [21]. This reclassification emerged from studies demonstrating that ccpRCT patients typically present with lower grade (G1/G2) and lower stage (I/II) disease, exhibiting prolonged overall survival (OS) and disease-specific survival (DSS) compared to clear cell RCC (ccRCC) and papillary RCC (pRCC) patients [21].

Clinicopathological Features of Major Renal Tumor Subtypes

Different renal tumor subtypes demonstrate characteristic clinicopathological features that inform prognosis and management strategies (Table 2). Understanding these patterns is essential for accurate diagnosis and risk stratification.

Table 2: Clinicopathological Features of Major Renal Tumor Subtypes

| Tumor Type | Frequency | Characteristic Morphology | Typical Behavior | Key Molecular Features |
|---|---|---|---|---|
| Clear Cell RCC | 65-70% of RCC [23] | Clear cytoplasm, nested growth with delicate vasculature | Aggressive, metastatic potential | VHL inactivation, chromosome 3p loss [23] |
| Papillary RCC | ~15% of RCC [24] | Papillary architecture, foamy macrophages | Variable prognosis | No longer subtyped [20] |
| Chromophobe RCC | ~5% of RCC [24] | Plant cell-like cells with pale cytoplasm and thick, prominent cell membranes | Generally favorable prognosis | – |
| Clear Cell Papillary RCT | 2-4% of RCC [21] | Papillae lined by clear cells, nuclei aligned away from the basement membrane (reverse polarity) | Benign behavior, minimal metastatic risk | – |

Clear cell RCC, the most common malignant renal epithelial tumor, typically presents as a solitary cortical mass with a characteristic golden yellow variegated cut surface [23]. Histologically, it demonstrates diverse architectural patterns, primarily solid and nested, with tumor cells containing clear or granular eosinophilic cytoplasm intersected by a prominent but delicate capillary network. The vast majority (95%) occur sporadically, with a peak incidence in the sixth to seventh decade, and show a male predominance (M:F = 1.5:1) [23].

Computational Histopathology: Mining Emergent Feature Patterns

Comprehensive Image Feature Analysis Framework

Advanced computational approaches now enable systematic mining of histological image features to identify optimal diagnostic patterns for renal tumor classification and grading. One comprehensive methodology extracts 2,671 distinct features from renal tissue images, categorized into 12 specialized subsets that quantify different morphological properties [22]. This feature extraction framework processes histological images through multiple analytical pathways to capture color, texture, topological, and shape characteristics.

The analytical workflow begins with image preprocessing and segmentation, identifying key histological structures including nuclear, cytoplasmic, and glandular components. Feature subsets are then calculated to capture specific tissue properties: Color features quantify intensity distributions across RGB channels; Texture features include Haralick, Gabor, wavelet, and fractal dimensions; Shape features describe morphological properties of cellular structures; and Topology features characterize spatial relationships between cells and tissue structures [22]. This multi-faceted approach ensures comprehensive quantification of histopathological patterns.
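As a rough illustration of what the color and texture subsets measure, the sketch below computes per-channel intensity statistics and three Haralick-style descriptors from a gray-level co-occurrence matrix (GLCM). It is a minimal, standard-library example and not the feature code from [22]; the function names, the single pixel offset, and the choice of descriptors are assumptions made for illustration.

```python
from collections import Counter
from statistics import mean, pstdev


def color_features(pixels):
    """Mean and population standard deviation per RGB channel.

    `pixels` is a list of (R, G, B) tuples (hypothetical input format).
    """
    channels = zip(*pixels)  # regroup pixel tuples into three channel sequences
    return {name: (mean(vals), pstdev(vals)) for name, vals in zip("RGB", channels)}


def glcm(gray, dx=1, dy=0):
    """Normalized co-occurrence probabilities for one pixel offset (dx, dy).

    `gray` is a 2D list of quantized gray levels.
    """
    counts = Counter()
    rows, cols = len(gray), len(gray[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                counts[(gray[r][c], gray[r2][c2])] += 1
    total = sum(counts.values())
    return {pair: n / total for pair, n in counts.items()}


def haralick_subset(p):
    """Contrast, energy, and homogeneity from a normalized GLCM."""
    return {
        "contrast": sum(prob * (i - j) ** 2 for (i, j), prob in p.items()),
        "energy": sum(prob ** 2 for prob in p.values()),
        "homogeneity": sum(prob / (1 + abs(i - j)) for (i, j), prob in p.items()),
    }


# A uniform patch has no contrast; a checkerboard patch has maximal local contrast.
uniform = haralick_subset(glcm([[1, 1], [1, 1]]))
checker = haralick_subset(glcm([[0, 1], [1, 0]]))
```

In practice these descriptors would be computed over several offsets and quantization levels, which is one reason texture subsets grow to hundreds of features.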

Emergent Feature Patterns for Renal Tumor Classification

When applied to renal tumor classification, this computational approach reveals that specific feature subsets emerge as optimal predictors for different diagnostic endpoints. Research demonstrates that for the six renal tumor subtype classification endpoints analyzed, distinct feature combinations consistently produce the most accurate diagnostic models [22]. These emergent feature patterns provide biological insights into the distinctive morphological characteristics of each tumor subtype.

The experimental protocol for identifying these diagnostic patterns employs a rigorous validation framework. Researchers evaluate classification models across 12 binary endpoints (comparing pairs of tumor subtypes or grades) using multiple classification methods (Bayesian, Logistic Regression, k-NN, and Linear SVM) with various parameters [22]. Feature selection techniques include t-test, Wilcoxon rank sum test, Significance Analysis of Microarrays (SAM), and minimum redundancy and maximum relevance (mRMR) approaches. Optimal models are identified through stratified nested cross-validation with 10 iterations and 5 folds in both the feature selection and classification stages [22].
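The nested structure of this validation scheme can be sketched as follows. The example only generates the index splits (10 iterations of 5 stratified outer folds, each with 5 stratified inner folds on the training portion); how [22] assigns samples to folds is not specified here, so the round-robin assignment, the function names, and the seed are assumptions.

```python
import random
from collections import defaultdict


def stratified_folds(indices, labels, k, rng):
    """Split `indices` into k folds with roughly equal class proportions."""
    by_class = defaultdict(list)
    for i in indices:
        by_class[labels[i]].append(i)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        for pos, i in enumerate(members):
            folds[pos % k].append(i)  # deal each class round-robin across folds
    return folds


def nested_cv_splits(labels, iterations=10, k=5, seed=0):
    """Yield (iteration, outer_fold, train, test, inner_splits).

    `inner_splits` is a list of (inner_train, validation) pairs drawn only
    from `train`, so feature selection and parameter tuning never see `test`.
    """
    rng = random.Random(seed)
    everything = list(range(len(labels)))
    for it in range(iterations):
        outer = stratified_folds(everything, labels, k, rng)
        for f, test in enumerate(outer):
            train = [i for g, fold in enumerate(outer) if g != f for i in fold]
            inner = stratified_folds(train, labels, k, rng)
            inner_splits = [
                ([i for h, fold in enumerate(inner) if h != g for i in fold], val)
                for g, val in enumerate(inner)
            ]
            yield it, f, train, test, inner_splits
```

The point of the inner loop is that the feature selection method, feature count, and classifier parameters are chosen using only the inner splits, while the outer test fold is reserved for estimating the performance of the selected model.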

Computational Histopathology Workflow: From image processing to tumor classification

Prognostic Stratification: Integration of Morphological and Clinical Features

Grading and Staging Systems in Renal Cell Carcinoma

The prognostic assessment of renal cell carcinomas relies on integrated evaluation of histological grade, tumor stage, and specific morphological features. The WHO/International Society of Urological Pathology (ISUP) grading system has replaced the Fuhrman system and defines four prognostic tiers: grades 1 to 3 are assigned by nucleolar prominence, while grade 4 is reserved for extreme nuclear pleomorphism or sarcomatoid or rhabdoid differentiation [23]. This system applies to clear cell and papillary RCC but not to chromophobe RCC, which has its own prognostic assessment framework.

The TNM staging system (8th edition) provides critical prognostic information, with tumor confinement to the kidney (pT1 and pT2) associated with more favorable outcomes. pT1 tumors are further subdivided by size (pT1a ≤4 cm; pT1b >4 cm to 7 cm), while pT2 tumors represent larger lesions still confined to the kidney [23]. Advanced disease (pT3) involves regional extrarenal spread into perinephric fat, renal sinus fat, venous structures, or the pelvicalyceal system. Application of these updated systems has demonstrated significant impact on prognostic accuracy, with one study showing restaging of 59% of cases and identification of sarcomatoid and rhabdoid differentiation in 7% of tumors upon re-evaluation [24].
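The size and extent rules described above can be written as a small decision function. This is a deliberately simplified sketch that covers only the categories named in this section (pT1a, pT1b, pT2, pT3); it omits pT2 subdivisions, pT4, and nodal or metastatic status, and the function name and parameters are hypothetical.

```python
def pt_category(size_cm: float, extrarenal_spread: bool = False) -> str:
    """Map tumor size and regional spread to the pT categories described in the text.

    Assumption: `extrarenal_spread` is True when the tumor extends into
    perinephric fat, renal sinus fat, venous structures, or the
    pelvicalyceal system.
    """
    if size_cm <= 0:
        raise ValueError("size_cm must be positive")
    if extrarenal_spread:
        return "pT3"
    if size_cm <= 4:
        return "pT1a"
    if size_cm <= 7:
        return "pT1b"
    return "pT2"  # larger than 7 cm but still confined to the kidney
```

Restaging a cohort under updated criteria, as in the study cited above, amounts to re-deriving such inputs from the pathology report and comparing the new category with the originally assigned one.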

Comparative Survival Analysis Across Renal Tumor Subtypes

Clear cell papillary renal cell tumors demonstrate distinctly favorable outcomes compared to other RCC subtypes. A comprehensive analysis of 59,076 RCC patients revealed that ccpRCT patients were characterized by younger median age (63 years), lower male predominance (54.1%), and more favorable tumor features including higher rates of low-grade tumors (G1‒G2) and lower incidence of advanced stage disease [21]. These patients exhibited prolonged overall survival and disease-specific survival compared to both ccRCC and pRCC patients.

Multivariate Cox regression analysis identified that age at diagnosis and treatment type were crucial prognostic factors for both OS and DSS in ccpRCT patients [21]. Surgical intervention was associated with improved outcomes, with 96.4% of ccpRCT patients undergoing surgery compared to 90.8% of ccRCC and 92.5% of pRCC patients. The highly favorable prognosis of ccpRCT validates its reclassification as a tumor rather than carcinoma, though the authors note these tumors have "low rather than no malignant potential" [21].

Table 3: Essential Research Reagents for Renal Tumor Pathology Studies

| Reagent/Resource | Application | Utility in Renal Tumor Research |
|---|---|---|
| CAIX (Carbonic Anhydrase IX) | Immunohistochemistry | Identifies "box-like" pattern in clear cell RCC; "cup-shaped" in clear cell papillary tumors [24] |
| CK7 (Cytokeratin 7) | Immunohistochemistry | Differentiates chromophobe RCC and oncocytic tumors; ~50% of oncocytosis tumors show positivity [25] |
| CD117 (c-kit) | Immunohistochemistry | Characteristic staining pattern in chromophobe RCC [24] |
| BCOR | Immunohistochemistry | Supports diagnosis of clear cell sarcoma of kidney [26] |
| FH (Fumarate Hydratase) | Immunohistochemistry | Identifies FH-deficient RCC, a recently recognized subtype [24] |
| SDH (Succinate Dehydrogenase) | Immunohistochemistry | Detects SDH-deficient RCC included in current WHO classification [24] |
| H&E Staining | Histology | Fundamental morphological assessment for architecture and cytology [22] |
| Molecular Panels | Genetic Analysis | Identifies characteristic alterations including VHL, TFE3, TFEB, BCOR, TSC mutations [26] [23] [25] |

This curated toolkit enables comprehensive characterization of renal tumors according to contemporary classification standards. The strategic application of these reagents facilitates accurate subtyping, particularly for morphologically overlapping entities, and provides insights into the molecular mechanisms driving tumor development and progression.

Signaling Pathways and Molecular Mechanisms in Renal Tumorigenesis

Renal tumorigenesis involves distinct molecular pathways that correlate with histological subtypes and clinical behavior. Clear cell RCC characteristically shows inactivation of the VHL gene, located on chromosome 3p25, in 50-82% of cases [23]. Loss of VHL protein function leads to accumulation of hypoxia-inducible factor 1-alpha (HIF1α), which drives transcription of hypoxia-associated genes including VEGF, PDGFβ, GLUT1, TGFα, CAIX, and EPO [23].

Emerging renal tumor entities demonstrate unique molecular alterations that distinguish them from established subtypes. Eosinophilic solid and cystic RCC (ESC RCC) harbors TSC mutations in both sporadic cases and those associated with tuberous sclerosis complex [25]. Clear cell sarcoma of the kidney, a rare pediatric malignant mesenchymal tumor, is characterized by molecular alterations leading to oncogenic upregulation of BCOR, a component of noncanonical PRC1 [26]. These include internal tandem duplication affecting exon 15 of the BCOR gene, YWHAE::NUTM2 gene fusion, or BCOR::CCNB3 gene fusion [26].

Molecular Pathways in Renal Tumor Subtypes: Distinct alterations drive different tumor entities

Discussion: Clinical Implications and Future Directions

The evolving classification of renal tumors reflects an ongoing integration of morphological patterns with molecular insights, enabling more precise diagnosis and prognostication. The emergent feature patterns identified through computational histopathology and validated by clinical outcome studies demonstrate the power of systematic analysis to uncover biologically significant characteristics. This approach facilitates the development of diagnostic models that optimize feature selection for specific classification endpoints, potentially leading to more reproducible and accurate pathological assessment.

The reclassification of clear cell papillary renal cell carcinoma to clear cell papillary renal cell tumor exemplifies how long-term clinical validation can reshape diagnostic categories. This modification acknowledges the indolent nature of these neoplasms while recognizing that they maintain low malignant potential [21]. Similarly, the elimination of papillary RCC subtyping reflects growing evidence that the historical Type 1/Type 2 distinction lacks clinical utility for prognostication or therapeutic decision-making [20]. These refinements ensure that the classification system remains clinically relevant while accommodating new insights.

Future directions in renal tumor pathology will likely include increased incorporation of molecular markers into diagnostic algorithms, potentially enhancing classification systems beyond pure morphology. Emerging entities such as eosinophilic solid and cystic RCC, thyroid-like follicular RCC, and biphasic squamoid alveolar RCC continue to be characterized [25], with some likely to achieve formal recognition in future WHO classifications. Additionally, computational pathology approaches will probably expand, potentially incorporating artificial intelligence and machine learning to identify subtle morphological patterns not readily apparent through conventional microscopic examination.

The continued validation of emergent feature patterns through histopathology research creates a virtuous cycle of refinement, where computational identification of diagnostically significant characteristics informs biological investigation, which in turn enhances diagnostic precision. This integrative approach promises to advance our understanding of renal tumor biology while simultaneously improving patient care through more accurate diagnosis, prognostication, and personalized therapeutic approaches.

In contemporary biomedical research, the reductionist approach—explaining whole systems by their constituent parts—has been powerfully advanced by molecular profiling technologies. However, this approach faces limits when confronting complex biological systems where emergent properties arise from nonlinear interactions between components, creating behaviors that cannot be predicted from individual parts alone [27]. In pathology, this concept manifests as diagnostic features that emerge from complex interactions across molecular, cellular, and tissue levels.

This guide compares three technological approaches for detecting and interpreting these emergent features: histopathological image analysis, AI-based digital pathology, and liquid biopsy profiling. By objectively evaluating their performance characteristics, experimental requirements, and clinical applications, we provide researchers with a framework for selecting appropriate methodologies for investigating emergent biological phenomena in disease states.

Comparative Analysis of Emergent Feature Detection Technologies

The table below summarizes the core performance characteristics and applications of three primary technologies for emergent feature detection.

Table 1: Performance Comparison of Emergent Feature Detection Technologies

| Technology | Primary Data Source | Key Performance Metrics | Detectable Emergent Features | Clinical Applications |
|---|---|---|---|---|
| Histopathological Image Feature Mining | H&E stained tissue sections | Diagnostic accuracy: 81.5-97.5% across renal tumor subtypes [22] | Nuclear morphology, tissue texture, architectural patterns | Tumor classification, grading, stromal characterization |
| AI-Based Digital Pathology | Whole Slide Images (WSIs) | AUC: 0.746-0.999 for lung cancer subtyping; Sensitivity: 82-87%, Specificity: 77-94% for melanoma diagnosis [28] [29] | Tumor-infiltrating lymphocyte patterns, spatial relationships, molecular surrogates | Diagnostic classification, prognosis prediction, mutation prediction |
| Liquid Biopsy Profiling | Circulating tumor DNA (ctDNA) | Emergent alterations detected in 63% of refractory GI cancers; VAF detection threshold: 0.01% [30] | Resistance mutations, clonal evolution patterns, dynamic TMB | Therapy resistance monitoring, minimal residual disease, treatment selection |

Experimental Protocols for Emergent Feature Detection

Protocol 1: Comprehensive Histopathological Image Feature Mining

This methodology enables quantitative analysis of emergent morphological patterns in tissue samples through extensive feature extraction [22].

- Sample Preparation: Hematoxylin and eosin (H&E) stained tissue sections are digitized using whole slide scanners at 40x magnification. For renal tumor analysis, 48-58 images across subtypes (chromophobe, clear cell, papillary, oncocytoma) and Fuhrman grades are typically required [22].

- Image Processing: From each whole slide image, multiple 512×512 pixel non-overlapping tiles are extracted from the central portion. Color normalization and quantization are applied using self-organizing maps with 64 levels. Automatic stain segmentation separates nuclear, cytoplasmic, and glandular structures [22].

- Feature Extraction: A comprehensive set of 2,671 features is extracted, including:

  - Color features: 48 features capturing RGB channel intensity distributions

  - Texture features: 565 global texture features using Haralick, Gabor, wavelet, and fractal algorithms

  - Shape features: 249 morphological features describing object area, perimeter, solidity, and fractal dimensions

  - Topology features: 56 spatial relationship features via Delaunay triangulation and Voronoi diagrams [22]

- Classification Pipeline: Twelve distinct binary classification endpoints are evaluated using nested cross-validation with 10 iterations and 5 folds. For each endpoint, 9,675 models are tested combining four classifiers (Bayesian, Logistic Regression, k-NN, Linear SVM) with five feature selection methods (t-test, Wilcoxon, SAM, mRMR) across 45 feature sizes [22].
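The tiling step of this protocol can be sketched as a coordinate calculation. The source states only that 512×512 non-overlapping tiles are taken from the central portion of each image; the `central_fraction` parameter, which defines how much of the image counts as "central", and the function name are assumptions introduced for this example.

```python
def central_tile_origins(width, height, tile=512, central_fraction=0.5):
    """Top-left (x, y) origins of non-overlapping tiles in the central region.

    `central_fraction` (hypothetical) is the share of each image dimension
    treated as the central portion.
    """
    region_w = int(width * central_fraction)
    region_h = int(height * central_fraction)
    cols, rows = region_w // tile, region_h // tile
    # Center the tile grid within the full image.
    x0 = (width - cols * tile) // 2
    y0 = (height - rows * tile) // 2
    return [(x0 + c * tile, y0 + r * tile) for r in range(rows) for c in range(cols)]


# Example: a 4096 x 4096 image yields a 4 x 4 grid of tiles around the center.
origins = central_tile_origins(4096, 4096)
```

Each origin could then be passed to whatever image reader is in use to crop the corresponding tile before normalization, segmentation, and feature extraction.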

Protocol 2: AI-Based Digital Pathology Classification

Deep learning systems detect emergent diagnostic patterns in whole slide images through automated feature learning [29] [31].

- Data Set Preparation: Whole slide images are partitioned into training (typically 60-70%), validation (15-20%), and test (15-20%) sets. For melanoma diagnosis, datasets should include diverse subtypes (e.g., superficial spreading, nodular, acral lentiginous) and benign mimics (e.g., nevi, dysplastic nevi) [29].

- Pre-processing: Techniques are applied to address challenges specific to digital pathology images.

- Model Architecture: Convolutional Neural Networks (e.g., U-Net, Mask R-CNN) process image tiles of 256×256 to 960×960 pixels. Multi-scale analysis incorporates information from different magnification levels (e.g., 5x, 10x, 20x, 40x) [29] [31].

- Validation: External validation is performed on datasets from different institutions to assess generalizability. Performance metrics including AUC, sensitivity, specificity, F1-score, and accuracy are calculated [28].
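The validation metrics named above can be computed from a held-out set as sketched below. This is a minimal standard-library example for binary labels (1 = positive, 0 = negative); it assumes both classes are present in the evaluation set, and the function names are illustrative rather than taken from [28].

```python
def classification_metrics(y_true, y_pred):
    """Sensitivity, specificity, F1-score, and accuracy for binary predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return {
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
        "f1": 2 * tp / (2 * tp + fp + fn),
        "accuracy": (tp + tn) / len(pairs),
    }


def auc(y_true, scores):
    """AUC as the probability that a random positive outscores a random negative.

    Ties count as half a win (the Mann-Whitney formulation).
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Reporting these metrics separately for internal test data and for each external institution makes any drop in generalizability visible.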

Protocol 3: Longitudinal Liquid Biopsy Profiling

This approach captures emergent molecular features through serial monitoring of circulating tumor DNA [30].

- Sample Collection: Peripheral blood samples (typically 10-20mL) are collected in cell-free DNA collection tubes at baseline and progression timepoints. For gastrointestinal cancers, sampling is aligned with radiographic assessments using RECIST 1.1 criteria [30].

- ctDNA Analysis: Plasma is separated by centrifugation, and cell-free DNA is then extracted. Next-generation sequencing is performed using FDA-approved panels (e.g., Guardant360). Sequencing coverage of ~15,000x enables detection of variants at 0.01% variant allele frequency [30].

- Variant Categorization: Somatic alterations are classified by dynamic behavior:

  - Emergent: Not detected at baseline, present at progression

  - Increasing: VAF increased >25% from baseline

  - Stable: VAF change <25% from baseline

  - Decreasing: VAF decreased >25% from baseline

  - Lost: Present at baseline, undetectable at progression [30]

- Resistance Alteration Identification: Pathogenic mutations classified as "emergent," "increasing," or "stable" are considered potential resistance mechanisms. Genes with statistically significant enrichment for resistance-associated dynamics are identified [30].
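The variant categorization and resistance filtering steps can be expressed as a short rule set. The sketch below assumes that the 25% threshold refers to relative change in VAF from baseline, that an undetected variant is represented as `None` or 0, and that a change of exactly 25% is treated as stable (the source does not define the boundary). The dictionary keys and function names are hypothetical.

```python
def classify_dynamics(baseline_vaf, progression_vaf, threshold=0.25):
    """Return the dynamic category of one variant between two timepoints.

    VAFs may be fractions or percentages as long as both use the same unit.
    `None` or 0 means the variant was not detected at that timepoint.
    """
    at_baseline = bool(baseline_vaf)
    at_progression = bool(progression_vaf)
    if not at_baseline and not at_progression:
        raise ValueError("variant not detected at either timepoint")
    if not at_baseline:
        return "emergent"
    if not at_progression:
        return "lost"
    change = (progression_vaf - baseline_vaf) / baseline_vaf  # relative change (assumption)
    if change > threshold:
        return "increasing"
    if change < -threshold:
        return "decreasing"
    return "stable"


# Categories treated as potential resistance mechanisms in the protocol above.
RESISTANCE_DYNAMICS = {"emergent", "increasing", "stable"}


def candidate_resistance_genes(variants):
    """Genes with pathogenic variants whose dynamics suggest resistance.

    Each variant is a dict with keys "gene", "pathogenic", "baseline_vaf",
    and "progression_vaf" (hypothetical schema).
    """
    return [
        v["gene"]
        for v in variants
        if v["pathogenic"]
        and classify_dynamics(v["baseline_vaf"], v["progression_vaf"]) in RESISTANCE_DYNAMICS
    ]
```

Testing for statistically significant enrichment of these dynamics per gene is a separate step that would be applied across the whole cohort.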

Visualizing Emergent Feature Detection Workflows

The following diagrams illustrate the core workflows for detecting and interpreting emergent features across the three technologies.

Diagram 1: Histopathological Image Feature Mining. This workflow illustrates the process from image acquisition through biological interpretation, highlighting the comprehensive feature extraction and selection steps crucial for identifying emergent morphological patterns.

Diagram 2: AI-Based Digital Pathology Workflow. This diagram outlines the process for developing and validating AI systems that detect emergent diagnostic patterns in whole slide images, emphasizing the importance of external validation.

Diagram 3: Longitudinal Liquid Biopsy Profiling. This workflow shows the process for detecting emergent molecular features through serial ctDNA monitoring, highlighting how dynamic variant categorization enables identification of resistance mechanisms.

The Scientist's Toolkit: Essential Research Reagents and Platforms

The table below details key reagents, platforms, and computational tools required for implementing the described experimental protocols.

Table 2: Essential Research Reagents and Platforms for Emergent Feature Detection

| Category | Specific Tools/Platforms | Primary Function | Key Considerations |
|---|---|---|---|
| Sample Processing | H&E staining reagents, cell-free DNA collection tubes (e.g., Streck), DNA extraction kits | Tissue preservation and nucleic acid stabilization | Pre-analytical variables significantly impact downstream feature detection |
| Imaging & Sequencing | Whole slide scanners (e.g., Aperio, Hamamatsu), NGS platforms (e.g., Illumina, Thermo Fisher) | Digital image acquisition and high-throughput sequencing | Scanner resolution and sequencing depth determine feature detection sensitivity |
| Computational Platforms | Python, R, TensorFlow, PyTorch, OpenCV, QuPath, Docker | Image processing, feature extraction, and model development | Containerization ensures reproducibility across research environments |
| Feature Extraction | Custom feature extraction algorithms, pre-trained CNN models (e.g., VGG16, ResNet) | Quantitative characterization of morphological and molecular patterns | Comprehensive feature sets (2,671+ features) enable discovery of emergent properties [22] |
| Data Resources | The Cancer Genome Atlas, public WSI repositories, FAIR data platforms | Training datasets for algorithm development | Diverse, multi-center datasets improve model generalizability [32] |

Biological Interpretation of Emergent Features

The fundamental value of emergent features lies in their ability to reveal higher-order biological organization that cannot be observed through reductionist approaches alone. In cancer biology, tumor development demonstrates "vertical emergence" where systemic properties cannot be deduced from the properties of the system's parts [27]. This manifests through sequential state shifts: from inflammatory response to chronic inflammation, then to pre-cancerous cells, and finally to established tumors with metastatic potential [27].

At the molecular level, emergent ctDNA alterations in refractory gastrointestinal cancers reveal evolutionary pressures under therapy. TP53, KRAS, and PIK3CA mutations are significantly associated with treatment resistance, while alterations in genes like FGFR2 show polyclonal emergence consistent with acquired resistance to targeted therapies [30].

In histopathological analysis, emergent computational features map to biologically meaningful tissue patterns. Nuclear shape and topology features correlate with chromatin organization and nuclear envelope integrity, while glandular architectural features reflect epithelial-stromal interactions and tissue organization [22]. These emergent features provide a quantitative bridge between tissue morphology and underlying molecular mechanisms.

The technologies compared in this guide provide complementary approaches for detecting and interpreting emergent features across biological scales. Histopathological image feature mining offers high interpretability for morphological patterns, AI-based digital pathology enables automated discovery of complex diagnostic features, and liquid biopsy profiling captures dynamic molecular evolution.

Each methodology demonstrates that disease states represent emergent properties of complex biological systems, where nonlinear interactions between components give rise to features that cannot be predicted from individual elements alone [27] [33] [34]. This understanding enables a more comprehensive approach to disease diagnosis and mechanism elucidation, moving beyond reductionist models to embrace the complex, hierarchical nature of biological systems.

Future directions will require increased integration of these technologies, creating multidimensional maps of emergent features across molecular, cellular, tissue, and organismal levels. Such integrated approaches will advance both fundamental understanding of disease mechanisms and clinical capabilities for diagnosis, prognosis, and therapeutic intervention.

The Next-Generation Toolbox: Methodologies for Capturing and Quantifying Emergent Phenomena

Scanner Performance and Throughput: A Comparative Analysis

The transition to a digital workflow hinges on the performance of whole-slide imaging (WSI) scanners. Throughput—encompassing scanning speed, capacity, and automation—is a critical differentiator for high-throughput operations. The table below summarizes experimental performance data for various scanner models, highlighting the significant speed differences that impact large-scale studies. [35]

Table 1: Comparative Whole-Slide Scanner Performance Data

| Scanner Model | Approx. Capacity | Avg. Scan Time for Resection (s) | Avg. Scan Time for Biopsy (s) | Avg. Scan Time for IHC (s) | Normalized Time for 15x15 mm area (s) |
|---|---|---|---|---|---|
| Hamamatsu NanoZoomer S360 | 360 slides | 73.3 | 30.0 | 119.7 | 39.7 |
| Roche VENTANA DP200 | 6 slides | 241.3 | 98.7 | 123.7 | 123.7 |
| Hamamatsu NanoZoomer S210 | 210 slides | 615.7 | 242.0 | 525.0 | 227.8 |
| Zeiss AxioScan Z1 | 100 slides | 1025.7 | 301.7 | 647.0 | 729.6 |

Data adapted from a 2022 study testing nine sample slides (3 resections, 3 biopsies, 3 IHC) on four different scanners. Pixel size ranged from 0.22 to 0.25 μm per pixel. Normalized time represents the estimated time to scan a 225 mm² area. [35]

Key Performance Insights: The data demonstrates that modern high-throughput scanners like the Hamamatsu NanoZoomer S360 achieve significantly faster scan times, particularly for larger resection specimens. This speed is a function of the scanner's architecture (e.g., line scanning vs. tile scanning) and its processing software. The normalized time metric is crucial, as it corrects for variations in tissue size on the slide, providing a standardized basis for comparison. For high-throughput environments, a scanner's batch capacity is equally important; larger capacities (hundreds of slides) enable unattended operation and greater workflow efficiency. [35]
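To make the normalization concrete, the sketch below rescales a measured scan time to the 225 mm² reference area and compares the normalized times from Table 1. The linear scaling with scanned area is an assumption about how the normalized metric is derived (the study's exact procedure is not given here), and the throughput estimate ignores slide loading, focusing, and file transfer overhead.

```python
REFERENCE_AREA_MM2 = 15 * 15  # 225 mm^2 reference area used in Table 1


def normalized_scan_time(scan_time_s, scanned_area_mm2, reference_area_mm2=REFERENCE_AREA_MM2):
    """Rescale a scan time to the reference area, assuming time scales linearly with area."""
    return scan_time_s * reference_area_mm2 / scanned_area_mm2


# Normalized times (seconds) as reported in Table 1.
NORMALIZED_TIME_S = {
    "Hamamatsu NanoZoomer S360": 39.7,
    "Roche VENTANA DP200": 123.7,
    "Hamamatsu NanoZoomer S210": 227.8,
    "Zeiss AxioScan Z1": 729.6,
}


def slowdown_vs_fastest(times):
    """Ratio of each scanner's normalized time to the fastest scanner's time."""
    fastest = min(times.values())
    return {model: t / fastest for model, t in times.items()}


def slides_per_hour(normalized_time_s):
    """Upper-bound throughput for reference-sized slides, scan time only."""
    return 3600 / normalized_time_s


ratios = slowdown_vs_fastest(NORMALIZED_TIME_S)
```

On these figures the slowest scanner needs roughly 18 times as long as the fastest to cover the same area, which compounds quickly across studies involving thousands of slides.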

Diagnostic and AI Model Performance: Validating the Digital Equivalency

The core thesis of digital pathology's value rests on its diagnostic equivalency to traditional microscopy and its enhancement through artificial intelligence (AI). Recent large-scale studies provide robust experimental data to validate this.

Diagnostic Concordance in Clinical Practice

A 2025 validation study at a large tertiary academic center followed guidelines from the College of American Pathologists (CAP) and others. In a blinded review of 60 retrospective cases per pathologist, the study demonstrated a 99% diagnostic concordance between digital and physical glass slide diagnoses. Furthermore, the transition to a digital workflow reduced the time to sign out a case by almost a minute, indicating tangible efficiency gains. Pathologists reported increased flexibility and satisfaction, though challenges with specific findings like detecting H. pylori and color oversaturation were noted. [36]

Meta-Analysis of AI Diagnostic Accuracy

A 2024 systematic review and meta-analysis of 100 studies evaluated the diagnostic test accuracy of AI in digital pathology. The findings, summarized below, confirm the high potential of AI as a tool for quantitative analysis. [37]

Table 2: AI in Digital Pathology - Diagnostic Test Accuracy Meta-Analysis

| Metric | Performance Value | Confidence Interval (CI) | Number of Studies Analyzed |
|---|---|---|---|
| Mean Sensitivity | 96.3% | 94.1% - 97.7% | 48 |
| Mean Specificity | 93.3% | 90.5% - 95.4% | 48 |
| F1 Score Range | 0.43 to 1.0 | - | 48 |
| Mean F1 Score | 0.87 | - | 48 |

The meta-analysis included over 152,000 Whole Slide Images (WSIs) across various diseases. The largest subgroups of studies were in gastrointestinal, breast, and urological pathology. [37]

Performance Context and Limitations: Despite high aggregate accuracy, the review highlighted significant heterogeneity in study design. Nearly all studies (99%) had at least one area at high or unclear risk of bias, often due to non-consecutive case selection or unclear separation of training and testing data. This underscores the need for rigorous, transparent experimental protocols when developing and validating AI models for clinical or research use. [37]
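For readers reproducing such metrics on their own test sets, the quantities in the table follow directly from a binary confusion matrix. The sketch below uses the standard definitions; the function name and the example counts are hypothetical and are not taken from [37].

```python
def diagnostic_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Sensitivity, specificity, and F1 score from confusion-matrix counts."""
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one positive and one negative case")
    sensitivity = tp / (tp + fn)   # true positive rate
    specificity = tn / (tn + fp)   # true negative rate
    # F1 is the harmonic mean of precision and sensitivity,
    # which simplifies to 2TP / (2TP + FP + FN).
    f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
    return {"sensitivity": sensitivity, "specificity": specificity, "f1": f1}


# Hypothetical held-out test set: 100 positive and 100 negative slides
metrics = diagnostic_metrics(tp=90, fp=20, tn=80, fn=10)
print({name: round(value, 3) for name, value in metrics.items()})
```

Because F1 ignores true negatives, it shifts with the proportion of positive cases in the test set, which is one plausible reason a pooled F1 range can be much wider than the sensitivity and specificity intervals.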

Foundation Models and Emerging Capabilities

Beyond task-specific AI, foundation models pre-trained on massive datasets are pushing the boundaries of computational pathology. Prov-GigaPath, an open-weight foundation model pre-trained on 1.3 billion image tiles from 171,189 whole slides, represents a significant advance. It uses a novel architecture (GigaPath) adapted from LongNet to model entire gigapixel slides, capturing both local and global context. [38]

In a benchmark of 26 tasks, including cancer subtyping and mutation prediction, Prov-GigaPath achieved state-of-the-art performance on 25. For example, it attained a 23.5% improvement in AUROC for EGFR mutation prediction in lung cancer compared to the next best model, demonstrating the power of whole-slide context and large-scale real-world data for predictive tasks in histopathology. [38]

Experimental Protocols for Digital Pathology Workflow

For researchers seeking to implement or validate digital pathology workflows, the following methodologies provide a foundational framework.

Protocol for High-Throughput Slide Digitization

This protocol is designed for the efficient digitization of large slide cohorts, critical for AI/ML development. [35]

- Slide Curation and Preparation: Prioritize slides based on research needs (e.g., process biopsies first as they scan faster). During tissue embedding, place fragments closer together and centrally on the slide to minimize scan area and time. [36]

- Scanner Setup and Calibration: Follow manufacturer guidelines for daily calibration to ensure color fidelity and focus accuracy. For high-throughput scans, configure automated settings for tissue detection and focus.

- Batch Loading and Scanning: Utilize the scanner's full batch capacity to minimize manual intervention. A high-throughput scanner should be defined by fast scan speed (e.g., under 2 minutes per slide for most tissues), large capacity (hundreds of slides), and a high degree of automation. [35]

- Post-Scan Processing and Transfer: Automated file post-processing and transfer to a secure server or cloud storage are essential. File management includes applying appropriate compression (lossy or lossless) based on the trade-off between file size and image quality requirements. [39]

- Quality Control (QC): Implement a systematic QC check to ensure the entire tissue section is captured and the image is in focus. This can be automated with informatics tools or performed via manual sampling.
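The focus part of the QC step can be automated in many ways. One common heuristic is the variance of a Laplacian filter response, where low variance suggests a blurred region. The sketch below is a dependency-free illustration of that heuristic, not the QC method of any particular scanner or informatics tool; the function names, the tile representation (a 2D list of grayscale values), and the threshold are all assumptions.

```python
from statistics import pvariance


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian over the interior pixels of a tile."""
    rows, cols = len(gray), len(gray[0])
    if rows < 3 or cols < 3:
        raise ValueError("tile must be at least 3x3 pixels")
    responses = [
        gray[r - 1][c] + gray[r + 1][c] + gray[r][c - 1] + gray[r][c + 1]
        - 4 * gray[r][c]
        for r in range(1, rows - 1)
        for c in range(1, cols - 1)
    ]
    return pvariance(responses)


def flag_blurry_tiles(tiles, threshold):
    """Return the ids of tiles whose focus score falls below the threshold."""
    return [tile_id for tile_id, gray in tiles.items()
            if laplacian_variance(gray) < threshold]


# Toy tiles: a high-contrast checkerboard versus a featureless gray patch
sharp = [[255 if (r + c) % 2 == 0 else 0 for c in range(4)] for r in range(4)]
flat = [[128] * 4 for _ in range(4)]
print(flag_blurry_tiles({"sharp": sharp, "flat": flat}, threshold=100.0))
```

In practice the threshold would need tuning per stain and scanner, and background or empty glass should be excluded first, since it also produces low scores without indicating a focus failure.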

Protocol for Digital vs. Analog Diagnostic Validation

For institutions validating digital slides for primary diagnosis, a phased approach aligned with CAP guidelines is recommended. [36]

- Training Phase: Each pathologist reviews 15-30 of their own recent cases digitally immediately after conventional sign-out. The objective is to gain familiarity with digital morphology and the viewing software. Feedback on image quality and workflow should be collected.

- Validation Phase: Each pathologist reviews a larger set (e.g., 60 cases) of their own historical cases blinded to the original diagnosis. A "wash-out" period (e.g., 9 months) should be enforced to reduce recall bias. Cases are selected to represent a typical spectrum of their workload.

- Data Analysis: Compare the digital diagnosis with the original glass slide diagnosis. Discrepancies are classified as:

  - No Discrepancy: Reports are identical.

  - Minor Discrepancy: Differ in descriptive text but not final diagnosis.

  - Major Discrepancy: Differ in the final diagnosis, which must be reconciled.

- Workflow Integration: After successful validation, progressively reduce the number of physical slides delivered to pathologists, moving toward a fully digital workflow. [36]
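The data analysis step of this protocol can be tallied with a short script once each case has a recorded glass and digital result. The sketch below is illustrative only: the tuple layout, the function names, and the rule that "no" and "minor" discrepancies both count as concordant are assumptions, and exact string comparison stands in for what would normally be adjudication by a pathologist.

```python
from collections import Counter
from typing import Iterable, Tuple

# (glass final diagnosis, digital final diagnosis, glass report text, digital report text)
Case = Tuple[str, str, str, str]


def classify_discrepancy(case: Case) -> str:
    """Map one case to 'none', 'minor', or 'major' using the protocol's categories."""
    glass_dx, digital_dx, glass_text, digital_text = (s.strip().lower() for s in case)
    if glass_dx != digital_dx:
        return "major"   # final diagnoses differ
    if glass_text != digital_text:
        return "minor"   # same diagnosis, different descriptive text
    return "none"


def summarize(cases: Iterable[Case]):
    """Count discrepancy categories and compute the concordance rate."""
    cases = list(cases)
    if not cases:
        raise ValueError("no cases to summarize")
    counts = Counter(classify_discrepancy(c) for c in cases)
    # Assumption: cases without a major discrepancy are treated as concordant.
    concordance = (counts["none"] + counts["minor"]) / len(cases)
    return counts, concordance


# Hypothetical cases for illustration
demo_cases = [
    ("tubular adenoma", "tubular adenoma", "low-grade dysplasia", "low-grade dysplasia"),
    ("chronic gastritis", "chronic gastritis", "mild inflammation", "mild chronic inflammation"),
    ("hyperplastic polyp", "sessile serrated lesion", "no dysplasia", "no dysplasia"),
]
counts, concordance = summarize(demo_cases)
print(dict(counts), f"concordance = {concordance:.2f}")
```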

Workflow and System Architecture

The transition to a high-throughput digital pathology operation involves a fundamental shift from interactive to automated workflows. The diagram below contrasts these two paradigms.

The high-throughput workflow leverages automation at every stage, from batch loading and automated tissue detection to informatics-driven quality control and data management. This reduces manual intervention, increases consistency, and enables the processing of large slide volumes necessary for robust quantitative analysis and AI development. [35]

The architecture of modern AI models for pathology further builds on this automated data stream. The diagram below illustrates the structure of a whole-slide foundation model like Prov-GigaPath, which is designed to handle the computational challenge of gigapixel images.

This architecture addresses the key challenge of modeling slide-level context by first encoding individual image tiles and then using a specialized transformer (LongNet) to process the ultra-long sequence of tile embeddings. The output is a single, contextualized slide embedding that can be used for a wide variety of prediction tasks, from classic cancer subtyping to predicting genetic mutations directly from histology. [38]
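The two-stage structure described above (encode tiles, then aggregate the tile sequence into one slide embedding) can be sketched in a few lines. This is a toy illustration and not the Prov-GigaPath API: the 256-pixel tile size, the function names, and the placeholder encoder are assumptions, and simple mean pooling stands in for the LongNet-based slide encoder.

```python
from typing import Callable, List, Tuple

Vector = List[float]
Origin = Tuple[int, int]


def tile_origins(width: int, height: int, tile: int = 256) -> List[Origin]:
    """Top-left corners of non-overlapping tiles that fit fully inside the slide."""
    return [(x, y)
            for y in range(0, height - tile + 1, tile)
            for x in range(0, width - tile + 1, tile)]


def slide_embedding(origins: List[Origin],
                    encode_tile: Callable[[Origin], Vector]) -> Vector:
    """Stage 1: embed every tile. Stage 2: pool the sequence into one vector.

    Mean pooling is a stand-in here; a real whole-slide model would replace it
    with a sequence model that attends across tile embeddings.
    """
    embeddings = [encode_tile(origin) for origin in origins]
    if not embeddings:
        raise ValueError("slide produced no tiles")
    dim = len(embeddings[0])
    return [sum(e[i] for e in embeddings) / len(embeddings) for i in range(dim)]


def toy_encoder(origin: Origin) -> Vector:
    """Hypothetical placeholder: a real encoder would read pixels at this origin."""
    x, y = origin
    return [float(x), float(y)]


origins = tile_origins(width=1024, height=512)
print(len(origins), slide_embedding(origins, toy_encoder))
```

Mean pooling discards tile order and spatial relationships, which is precisely the information a long-sequence transformer is meant to retain; the sketch is useful mainly for seeing where that component plugs in.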

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key hardware, software, and reagent solutions essential for establishing a digital pathology workflow for high-throughput quantitative analysis.

Table 3: Essential Digital Pathology Research Toolkit

| Tool Category | Specific Product/Type Examples | Primary Function in Research |
|---|---|---|
| Whole-Slide Scanners | Aperio GT450Dx (Leica), Hamamatsu NanoZoomer, Roche VENTANA DP200 | High-speed, automated digitization of glass slides into whole-slide images (WSIs). |
| Medical Grade Displays | 27-32 inch, DICOM-compliant, calibrated displays (e.g., from Barco, Eizo) | Ensure diagnostic-grade color accuracy and resolution for reliable digital interpretation. |
| Image Management Software | Proprietary vendor software, MSK/TUM slide viewer, PACS systems | Organize, store, retrieve, and view large WSI repositories. |
| Digital Image Analysis (DIA) Software | Open-source (QuPath, ImageJ) & commercial platforms | Quantitative analysis of biomarkers, cell counting, tissue classification. |
| AI/ML Development Platforms | Prov-GigaPath, HIPT, CTransPath (foundation models) | Serve as a base for developing custom AI models for prediction and discovery. |
| Staining Reagents & Kits | H&E, Immunohistochemistry (IHC), Multiplexed IHC/IF kits | Generate contrast and specific biomarker signals in tissue samples for quantification. |
| Laboratory Information System (LIS) | Nexus Pathology, other commercial LIS | Integrate digital pathology images and data with clinical and specimen metadata. |
| Cloud Storage & Computing | AWS, Google Cloud, Azure HIPAA-compliant services | Scalable storage for large WSI files and computational power for training AI models. |

Scanner selection should be based on throughput needs (speed and capacity), image quality, and compatibility with existing lab systems. [35] [36] The choice between displays involves balancing size (27-32 inches is optimal for most diagnostic work), resolution (4K/8K), and mandatory DICOM compliance for color consistency. [40] Software tools range from vendor-specific applications to open-source solutions like QuPath, which are invaluable for developing custom analysis pipelines. [41] Finally, foundation models like the open-weight Prov-GigaPath are emerging as a powerful new tool, providing a pre-trained base that can be fine-tuned for specific research tasks with limited labeled data, dramatically accelerating AI development in histopathology. [38]

The integration of artificial intelligence (AI) into cancer histopathology represents a paradigm shift in oncology research and clinical practice. The ability of deep learning models to extract subtle morphological features from standard hematoxylin and eosin (H&E)-stained whole-slide images (WSIs) has opened new frontiers for pan-cancer analysis. This approach moves beyond traditional cancer-specific diagnostic models toward unified systems capable of detection, grading, and outcome prediction across multiple cancer types from a single architecture [42] [43]. These advances are particularly valuable for drug development, enabling more precise patient stratification and biomarker discovery through analysis of routinely acquired tissue samples.

The emergence of pan-cancer AI models addresses critical limitations in traditional histopathology analysis, including inter-observer variability, diagnostic fatigue, and the inability to consistently identify complex prognostic patterns across diverse cancer types [44] [45]. Furthermore, by leveraging digitized histology slides already available in clinical workflows, these AI tools offer a scalable and cost-effective alternative to molecular assays that require additional tissue processing and specialized laboratory techniques [42] [46]. This review provides a comprehensive comparison of state-of-the-art AI methodologies for pan-cancer analysis, with detailed experimental protocols and performance benchmarks to guide researchers and drug development professionals in evaluating these rapidly evolving technologies.

Performance Comparison of Pan-Cancer AI Models

Quantitative Performance Metrics

Table 1: Performance comparison of pan-cancer prognostic models

| Model Name | Primary Function | Cancer Types Validated | Key Metrics | Data Modalities | Validation Scope |
|---|---|---|---|---|---|
| PROGPATH [42] | Survival prediction | 12 cancer types across 17 external cohorts | Mean C-index: 0.725 (TCGA) | Histopathology + clinical variables | 7,374 WSIs from 4,441 patients (external) |
| UMPSNet [47] [48] | Survival prediction | 5 TCGA cancers + zero-shot transfer to pancreatic cancer | Mean C-index: 0.725; Zero-shot: 0.652 | Histopathology, genomic, clinical (text) | 3,523 WSIs (n=2,831) + 392 WSIs (n=66) external |
| EfficientNet-B6 [45] | Bladder cancer classification | Multi-institutional (5 institutions) | Accuracy: 0.913; AUC: 0.983; Sensitivity: 0.909; Specificity: 0.956 | Histopathology only | 12,500 WSIs |
| Deep Learning IHC Prediction [49] | IHC biomarker prediction | Gastrointestinal cancers | AUC: 0.90-0.96; Accuracy: 83.04-90.81% | H&E to predict IHC status | 134 WSIs for training; 150 WSIs for clinical validation |
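Since the survival models in this table are compared by concordance index (C-index), a small worked example may help when interpreting the values. The sketch below implements Harrell's C-index in its basic form for right-censored data; it is a generic illustration, not the evaluation code of any cited model, and the example values are hypothetical.

```python
from typing import Sequence


def concordance_index(times: Sequence[float], events: Sequence[int],
                      risks: Sequence[float]) -> float:
    """Harrell's C-index: fraction of comparable pairs ranked correctly.

    A pair (i, j) is comparable when patient i had an observed event
    (events[i] == 1) strictly before patient j's follow-up time. The pair is
    concordant when the model gave patient i the higher risk score; ties in
    risk count as half.
    """
    concordant = 0.0
    comparable = 0
    for i in range(len(times)):
        if not events[i]:
            continue  # censored patients cannot anchor a comparable pair
        for j in range(len(times)):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical cohort: follow-up in months, event flag (1 = death), predicted risk
times = [2, 4, 6, 8]
events = [1, 1, 0, 1]
risks = [0.9, 0.4, 0.6, 0.1]
print(concordance_index(times, events, risks))
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking, so the C-index values reported here are best read as the share of comparable patient pairs that a model orders correctly.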

Cross-Cancer Generalization Capabilities

Table 2: Generalization performance across cancer types and institutions

| Model | Training Data Scope | External Validation Results | Strengths | Limitations |
|---|---|---|---|---|
| PROGPATH [42] | 7,999 WSIs from 6,670 patients across 15 cancer types | Consistent superior performance vs. state-of-the-art across 17 cohorts; Robust in stratified subgroups | Integrates routinely available clinical data; Strong interpretability features | Requires clinical data for optimal performance |
| UMPSNet [47] | 5 TCGA cancer types (BLCA, BRCA, GBMLGG, LUAD, UCEC) | Zero-shot transfer to pancreatic cancer (C-index: 0.652) without fine-tuning | Handles multiple data modalities; Effective for unseen cancer types | Complex architecture requiring multiple data types |
| EfficientNet-B6 [45] | 12,500 WSIs from 5 institutions | Maintained high accuracy (0.913) across institutions | Specialized for bladder cancer classification; High specificity (0.956) | Limited to bladder cancer applications |
| AI-IHC Prediction [49] | 134 WSIs with H&E-IHC pairs | MRMC study showed 70-100% consistency with conventional IHC across markers | Reduces need for actual IHC staining; Automates biomarker assessment | Variable performance across markers (P53: 70% consistency) |

Experimental Protocols and Methodologies

PROGPATH Architecture and Workflow