Validating Standardized Indicators for Next-Generation Cancer Surveillance: A Framework for Researchers and Drug Developers

Abstract

This article provides a comprehensive framework for the validation of standardized epidemiological indicators in cancer surveillance systems, tailored for researchers and drug development professionals. It explores the foundational need for standardization to ensure data comparability across diverse healthcare settings. The piece details methodological approaches for developing and applying validated checklists, integrating advanced analytics like GIS and predictive modeling. It addresses common challenges in data quality and harmonization, offering optimization strategies from leading global registries. Finally, it presents rigorous validation techniques and comparative evaluations of existing systems, underscoring the critical role of robust, validated data in accelerating epidemiological research and therapeutic development.

The Critical Need for Standardization in Cancer Surveillance

Epidemiological indicators are fundamental metrics used to quantify the burden of cancer in populations, track trends over time, and evaluate the impact of prevention and treatment strategies. In cancer surveillance research, these indicators provide the evidentiary foundation for public health decision-making, resource allocation, and scientific inquiry. Standardized definitions and consistent measurement methodologies are crucial for ensuring valid comparisons across different populations, geographic regions, and time periods. This guide examines six core indicators—incidence, prevalence, mortality, survival, Years of Life Lost (YLL), and Years Lived with Disability (YLD)—within the specific context of cancer research, providing researchers, scientists, and drug development professionals with a structured comparison of their definitions, calculations, applications, and data sources.

The validation of these standardized indicators relies on robust data collection systems, with programs like the Surveillance, Epidemiology, and End Results (SEER) program serving as authoritative sources for cancer statistics in the United States [1]. SEER collects demographic, clinical, and outcome data on all malignancies diagnosed in representative geographic regions and subpopulations, encompassing approximately 48% of the total U.S. cancer population [1]. Such population-based cancer registries provide the critical infrastructure for calculating comparable and reliable epidemiological indicators that drive cancer surveillance research and public health practice.

Defining the Core Indicators

Table of Core Epidemiological Indicators

The following table provides a comprehensive overview of the six core epidemiological indicators, their definitions, core functions, and primary data sources in cancer research.

Table 1: Core Epidemiological Indicators for Cancer Surveillance

| Indicator | Definition | Core Function in Cancer Research | Typical Data Sources |
|---|---|---|---|
| Incidence | The number of newly diagnosed cases during a specific time period [2]. | Measures disease occurrence and risk; identifies trends and clusters. | Cancer registries (e.g., SEER), public health surveillance systems [3]. |
| Prevalence | The number of new and pre-existing cases for people alive on a certain date [2]. | Quantifies the total disease burden; informs healthcare resource planning. | Cancer registries, population health surveys, analysis of incidence and survival data. |
| Mortality | The number of deaths during a specific time period [2]. | Tracks lethality and effectiveness of health interventions at a population level. | Vital statistics systems, death certificates, cancer registries [4]. |
| Survival | The proportion of patients alive at some point subsequent to the diagnosis of their cancer [2]. | Assesses prognosis and evaluates treatment effectiveness over time. | Cancer registry data with patient follow-up (e.g., SEER) [1] [4]. |
| YLL (Years of Life Lost) | Years of life lost due to premature mortality, calculated from a standard life expectancy. | Quantifies the impact of premature death; prioritizes causes of early death. | Mortality data, life tables, cancer registry data. |
| YLD (Years Lived with Disability) | Years of life lived with less-than-optimal health, weighted for severity of disability. | Measures the burden of living with illness and long-term sequelae of cancer/treatment. | Population health studies, patient-reported outcomes, quality-of-life research. |

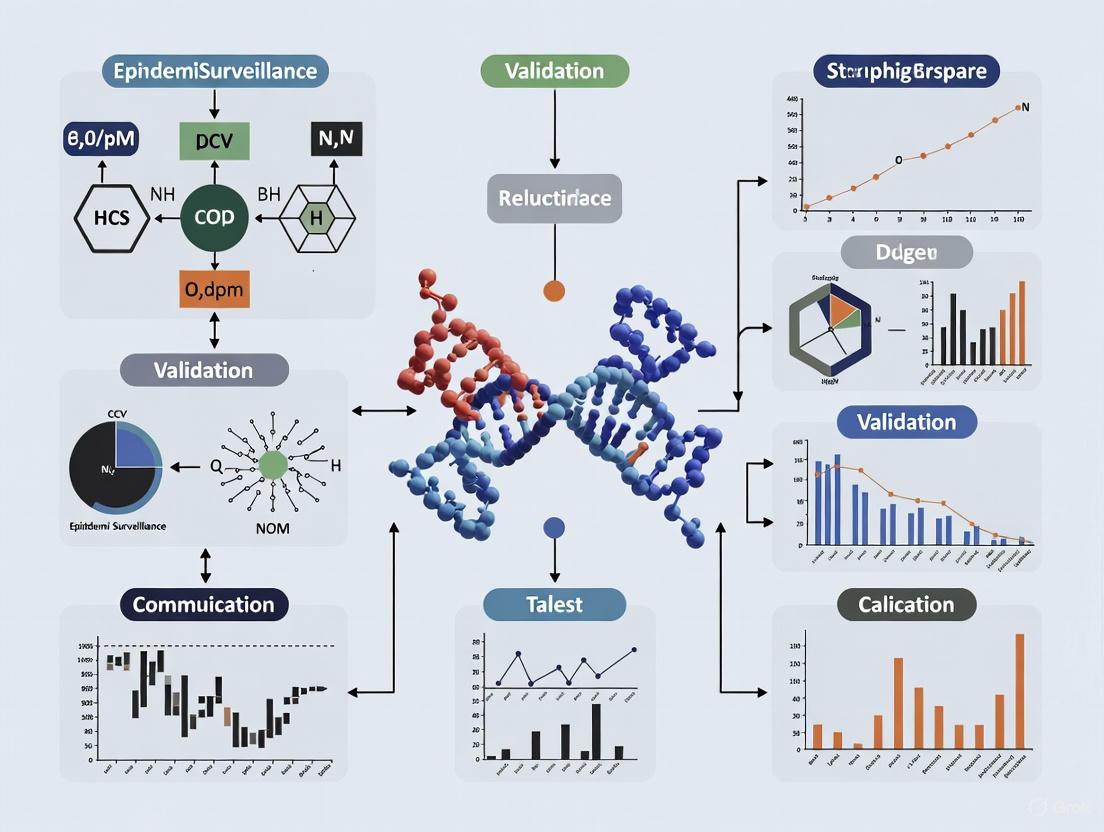

Visualizing the Interrelationships of Epidemiological Indicators

The following diagram illustrates the logical relationships and flow between these core indicators in describing the cancer burden continuum, from new cases to outcomes of survival and mortality.

Methodologies and Data Analysis Protocols

The accurate calculation of core indicators depends on high-quality, standardized data collection systems. The Surveillance, Epidemiology, and End Results (SEER) program is a prime example of such an infrastructure, providing comprehensive population-based data that are critical for cancer research [1]. SEER data encompass patient demographics, socioeconomic and geographic characteristics, primary tumor locations, tumor morphologies and biomarkers, cancer stage at diagnosis, first-course treatment regimens, and detailed follow-up for vital status [1]. This rich, multi-faceted data source allows researchers to compute and cross-reference incidence, prevalence, mortality, and survival statistics with a high degree of reliability.

Other essential data sources include the National Vital Statistics System for mortality data, the CDC's National Program of Cancer Registries, and tracking networks that integrate cancer incidence data with environmental data for ecological studies [3] [5]. The ongoing modernization of public health data infrastructure, as outlined in the U.S. Public Health Data Strategy, aims to strengthen these core data sources by making them more complete, timely, and interoperable. Key initiatives include expanding electronic case reporting, automating hospital data feeds, and implementing faster mortality data exchange [5]. For researchers, understanding the provenance, granularity, and potential biases of these data sources is a fundamental first step in any analytical protocol.

Analytical and Statistical Approaches

Different indicators require specific statistical methodologies for calculation and analysis. The SEER program and similar registries employ a standard set of analytical tools to generate core statistics.

Table 2: Key Analytical Methods for Core Indicators

| Indicator | Common Analytical Methods | Key Output Metrics | Application Example |
|---|---|---|---|
| Incidence & Mortality | Age-standardization (to a standard population), calculation of crude and specific rates. | Rate per 100,000 population [4] [3]. | Comparing cancer diagnosis rates between countries or over time. |
| Survival | Cox Proportional-Hazards Model [1], Actuarial/Life-table methods. | Hazard Ratio, 1-, 5-, and 10-year survival percentages [4]. | Evaluating if a new treatment improves 5-year survival, adjusting for patient age and stage. |
| Prevalence | Counting method (from registries), Mathematical modeling (using incidence/survival data). | Count or Proportion of the population alive with a cancer history. | Estimating the number of people needing long-term follow-up care. |
| YLL & YLD | Summary measures of population health methodology, incorporating life tables and disability weights. | Number of years or rate per 100,000. | Assessing the comprehensive burden of lung cancer versus breast cancer. |

For short-term outcomes (e.g., one-month mortality post-surgery), logistic regression is frequently used. This model calculates the probability of a binary outcome and reports Odds Ratios to identify significant risk factors [1]. In contrast, for analyzing the time until an event like death or recurrence, the Cox proportional-hazards model is the most widely used regression method. It correlates multiple risk variables with survival time and produces Hazard Ratios, which indicate the relative risk of an event occurring at any given time [1].
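As a minimal sketch of what an Odds Ratio summarizes, the example below computes an unadjusted odds ratio and a Woolf (log-based) 95% confidence interval from a 2x2 table. For a single binary risk factor this matches the point estimate a logistic regression would report; adjusting for several covariates requires a full regression fit in a statistics package. The function name and all counts are hypothetical and chosen only for illustration.

```python
import math


def odds_ratio(exposed_events, exposed_nonevents,
               unexposed_events, unexposed_nonevents, z=1.96):
    """Unadjusted odds ratio with a Woolf (log-based) confidence interval."""
    a, b, c, d = (exposed_events, exposed_nonevents,
                  unexposed_events, unexposed_nonevents)
    if min(a, b, c, d) <= 0:
        raise ValueError("all four cells must be positive for this estimator")
    estimate = (a * d) / (b * c)
    # Standard error of log(OR) is the root of the summed reciprocal cell counts.
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(estimate) - z * se_log)
    upper = math.exp(math.log(estimate) + z * se_log)
    return estimate, lower, upper


# Hypothetical counts: one-month deaths after emergency vs. elective surgery.
or_estimate, ci_lower, ci_upper = odds_ratio(20, 180, 10, 290)
print(f"OR = {or_estimate:.2f} (95% CI {ci_lower:.2f} to {ci_upper:.2f})")
```

A confidence interval that excludes 1 is the usual informal signal that the factor is associated with the outcome in this unadjusted comparison.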

The following diagram outlines a standard workflow for a cancer registry-based study, from data collection to the calculation of core indicators and final analysis.

Successful epidemiological research relies on both data resources and methodological tools. The following table lists essential components for conducting studies on core cancer indicators.

Table 3: Essential Research Resources for Cancer Indicator Studies

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| SEER*Explorer [6] | Database Interface | Interactive tool to query and visualize SEER cancer statistics. |
| SEER Database [1] | Population-based Data | Primary data source for incidence, survival, prevalence; used for prognostic studies. |
| CDC Tracking Network [3] | Data Repository | Provides data on cancer types potentially linked with environmental risk factors. |
| Cox Regression Model [1] | Statistical Method | Primary analysis for survival data; identifies factors influencing survival time. |
| Logistic Regression Model [1] | Statistical Method | Analyzes binary short-term outcomes (e.g., 1-month mortality). |
| NHANES/NVSS | Data Source | Provides complementary data on risk factors (NHANES) and mortality (NVSS). |

Comparative Analysis and Application of Indicators

Strengths, Limitations, and Complementary Use

Each epidemiological indicator provides a distinct perspective on the cancer burden, and understanding their individual strengths and limitations is crucial for accurate interpretation.

Incidence is a direct measure of new disease events and is therefore critical for etiological research and monitoring the effectiveness of primary prevention programs. However, it does not reflect the future outcomes of diagnosed individuals.

Mortality indicates the fatality of cancer and is a key measure of public health success in reducing cancer deaths. A key limitation is that it is influenced not only by the disease's lethality but also by its incidence; a decline in mortality could be due to fewer people getting cancer, more people being cured, or a combination of both.

Survival is the primary indicator for evaluating progress in cancer treatment and early detection. A common pitfall in interpretation is that improving survival rates do not necessarily mean that the cure rate has increased. For instance, lead-time bias (where earlier diagnosis lengthens the measured survival time without delaying the time of death) can inflate survival statistics independent of any true therapeutic benefit.
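Lead-time bias can be made concrete with a small deterministic sketch. The cohort, the assumed three-year lead time, and the function names below are hypothetical; ages at death are held fixed so that any change in 5-year survival comes only from moving the diagnosis date earlier.

```python
def is_five_year_survivor(age_at_diagnosis, age_at_death, horizon=5):
    """True if the patient is still alive `horizon` years after diagnosis."""
    return (age_at_death - age_at_diagnosis) >= horizon


def five_year_survival(cohort, lead_time=0):
    """Proportion surviving 5 years when diagnosis is advanced by `lead_time` years."""
    survivors = sum(
        is_five_year_survivor(diagnosis - lead_time, death)
        for diagnosis, death in cohort
    )
    return survivors / len(cohort)


# Hypothetical cohort: (age at symptomatic diagnosis, age at death).
cohort = [(67, 70), (66, 69), (64, 72), (68, 71)]
LEAD_TIME_YEARS = 3  # assumed screening lead time, for illustration only

survival_without_screening = five_year_survival(cohort)
survival_with_screening = five_year_survival(cohort, LEAD_TIME_YEARS)
print(survival_without_screening, survival_with_screening)
```

In this toy cohort the measured 5-year survival rises from 25% to 100% even though no patient dies a day later, which is why mortality rates are usually examined alongside survival when screening is introduced.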

Prevalence is indispensable for health services planning, as it defines the population requiring care, follow-up, and support resources. High prevalence can be a marker of success (people are living longer with cancer) but also indicates a significant burden on the healthcare system.

YLL and YLD move beyond simple counts of events to capture the comprehensive burden of disease in terms of both premature death and reduced quality of life. YLL emphasizes diseases that cause early death, while YLD highlights conditions that cause significant long-term disability. Their sum is the Disability-Adjusted Life Year (DALY), a summary measure that allows the burden of diverse diseases to be compared.
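The arithmetic behind these summary measures can be sketched as follows. This is a simplified illustration, assuming YLL is deaths multiplied by the remaining standard life expectancy at the age of death and YLD is prevalent cases multiplied by a disability weight (a prevalence-based formulation); the life expectancies, the weight of 0.3, and all counts are hypothetical.

```python
def years_of_life_lost(deaths_by_age, remaining_life_expectancy):
    """Sum of deaths times remaining standard life expectancy at each age of death."""
    return sum(
        count * remaining_life_expectancy[age]
        for age, count in deaths_by_age.items()
    )


def years_lived_with_disability(prevalent_cases, disability_weight):
    """Prevalence-based YLD: cases times a weight between 0 (full health) and 1 (death)."""
    if not 0.0 <= disability_weight <= 1.0:
        raise ValueError("disability weight must lie between 0 and 1")
    return prevalent_cases * disability_weight


# Hypothetical inputs for one cancer site in one year.
deaths_by_age = {50: 10, 60: 20, 70: 30}
remaining_life_expectancy = {50: 35.0, 60: 26.0, 70: 18.0}  # illustrative values

yll = years_of_life_lost(deaths_by_age, remaining_life_expectancy)
yld = years_lived_with_disability(prevalent_cases=500, disability_weight=0.3)
daly = yll + yld
print(f"YLL={yll:.0f}, YLD={yld:.0f}, DALY={daly:.0f}")
```

Real burden-of-disease studies use published standard life tables and condition-specific disability weights in place of these placeholder numbers.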

In practice, these indicators are most powerful when used together. For example, a researcher might find that the incidence of a certain cancer is stable, but survival is improving, and as a result, the prevalence is increasing. This combined finding would suggest that therapeutic advances are allowing patients to live longer, thereby increasing the need for long-term care resources—a conclusion that could not be drawn from any single indicator alone.
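The reasoning in this example follows from a standard steady-state approximation: for a relatively rare disease in a stable population, prevalence is roughly incidence multiplied by mean disease duration. The sketch below applies that approximation with hypothetical numbers; it is not a substitute for the registry-based counting or modeling methods listed earlier.

```python
def steady_state_prevalence(incidence_rate, mean_duration_years):
    """Approximate prevalence proportion as incidence rate times mean duration.

    Assumes a stable population and a rare disease; both are simplifications.
    """
    return incidence_rate * mean_duration_years


# Hypothetical: incidence stays at 50 per 100,000 person-years,
# while mean time lived with the disease rises from 4 to 6 years.
INCIDENCE = 50 / 100_000

prevalence_before = steady_state_prevalence(INCIDENCE, 4)
prevalence_after = steady_state_prevalence(INCIDENCE, 6)
print(f"{prevalence_before * 100_000:.0f} -> {prevalence_after * 100_000:.0f} per 100,000")
```

With incidence unchanged, longer survival alone raises the approximate prevalence by half in this illustration.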

Contextual Interpretation for Research and Public Health

The interpretation of these indicators must always consider the context. For example, a "5-year survival" statistic of 70% does not mean that 70% of patients are cured or that their disease will not recur. It is an estimate of the proportion of people with that cancer who are alive 5 years after diagnosis, irrespective of whether they are in remission, disease-free, or still in treatment [4]. Furthermore, statistics are group-level measures and cannot predict the outcome for an individual patient, whose unique circumstances, including cancer stage, molecular pathology, comorbidities, and treatment response, will determine their personal prognosis [4].

For public health planning, these indicators help identify disparities and prioritize actions. The PAHO Core Indicators Dashboard, for instance, allows for the comparison of over 140 health indicators across countries, enabling the identification of nations with unusually high cancer mortality or low early detection rates [7]. This facilitates targeted interventions and resource allocation. Similarly, the validation of an epidemiological risk score for neonatal death, which combines individual and municipal-level data, demonstrates how core indicators and risk factors can be synthesized into practical tools for clinical prioritization and resource allocation [8], an approach that can be adapted to cancer care.

Addressing Global Gaps in Data Comparability and Interoperability

The escalating global burden of cancer necessitates robust surveillance systems to generate accurate, comprehensive data for effective public health interventions. Despite significant advancements, substantial gaps persist in data standardization, interoperability, and adaptability across diverse healthcare settings, which severely limits the comparability and utility of cancer data for research and clinical care. The current state of oncology data interoperability remains far from optimal; foundational data types—including cancer staging, biomarker status, adverse events, and patient outcomes—are often captured within Electronic Health Records (EHRs) in non-computable form, trapped within unstructured clinical notes and documents [9]. This lack of standardization poses a significant barrier to aggregating data for large-scale research, developing evidence-based policies, and ultimately improving cancer care outcomes on a global scale.

The core of the problem lies in the lack of standardization in data collection, classification, and coding practices. Variations in the adoption of standard populations for calculating metrics like Age-Standardized Rates (ASRs) and a frequent failure to integrate disability-adjusted measures, such as Years Lived with Disability (YLD) and Years of Life Lost (YLL), further complicate cross-regional comparisons and a holistic assessment of the cancer burden [10]. This article provides a comparative analysis of emerging standards and frameworks designed to bridge these gaps, with a specific focus on validating standardized epidemiological indicators for cancer surveillance research. It is intended to equip researchers, scientists, and drug development professionals with a clear understanding of the available tools and methodologies to enhance data consistency, comparability, and interoperability in their work.

Comparative Analysis of Standardization Frameworks

A systematic review and comparative evaluation of international cancer surveillance systems reveals critical gaps and emerging solutions. The following section objectively compares two key approaches: a consensus-based data standard and a comprehensive surveillance framework.

The Minimal Common Oncology Data Elements (mCODE) Standard

mCODE is a consensus data standard developed to facilitate the transmission of structured, computable data of patients with cancer between EHRs and other systems [9].

- Development and Governance: Initiated in 2018 by the American Society of Clinical Oncology (ASCO) and collaborators, including MITRE, the Alliance for Clinical Trials in Oncology, and the US Food and Drug Administration. The standard was balloted and approved by Health Level Seven International (HL7), with its first version formally published on March 18, 2020 [9].

- Technical Specifications: The standard is structured around six primary domains: Patient, Laboratory/Vital, Disease, Genomics, Treatment, and Outcome. These domains encompass 23 profiles composed of 90 discrete data elements [9].

- Primary Use Case: mCODE is designed to enable the seamless exchange of core oncology data, directly addressing the interoperability shortcomings of modern EHRs which often relegate complex oncology data to unstructured text [9].

Comprehensive Framework for Cancer Surveillance Systems (CSS)

A recent systematic review proposed a validated framework to address limitations in existing Cancer Surveillance Systems (CSS), emphasizing global applicability and regional relevance [10].

- Development Method: The framework was developed through a systematic review of 13 studies (from an initial pool of 1,085 articles) and a comparative evaluation of 13 international CSS, including the Global Cancer Observatory (GCO) and the European Cancer Information System (ECIS). A researcher-designed checklist was validated through expert consultation, achieving high reliability (Cronbach’s alpha = 0.849) [10].

- Framework Components: It integrates a comprehensive set of epidemiological indicators—incidence, prevalence, mortality, survival rates, YLD, and YLL—calculated using multiple standard populations for ASRs. It also incorporates key demographic filters (age, sex, geographic location) for stratified analyses and mandates cancer type classification based on ICD-O standards for precision and consistency [10].

- Primary Use Case: This framework is designed to serve as a structured, adaptable model for national and regional cancer surveillance systems, enhancing public health decision-making and resource allocation by ensuring data is both globally comparable and locally relevant [10].
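The reliability statistic cited for the checklist, Cronbach's alpha, can be computed from a matrix of expert ratings. The sketch below uses the standard formula (number of items, summed item variances, and variance of the total score); the ratings are hypothetical and are not the data behind the reported value of 0.849.

```python
from statistics import variance


def cronbach_alpha(ratings):
    """Cronbach's alpha for a list of rows, one row of item scores per rater."""
    n_items = len(ratings[0])
    if n_items < 2 or len(ratings) < 2:
        raise ValueError("need at least two items and two raters")
    # Sample variance of each item (column) across raters.
    item_variances = [variance(column) for column in zip(*ratings)]
    # Sample variance of each rater's total score.
    total_variance = variance([sum(row) for row in ratings])
    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical ratings: five experts scoring three checklist items on a 1-5 scale.
expert_ratings = [
    [4, 5, 4],
    [3, 4, 3],
    [5, 5, 5],
    [2, 3, 2],
    [4, 4, 5],
]
alpha = cronbach_alpha(expert_ratings)
print(f"Cronbach's alpha = {alpha:.3f}")
```

Values above roughly 0.8 are conventionally read as good internal consistency, which is the sense in which the framework's checklist is described as highly reliable.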

Table 4: Comparative Analysis of Standardization Frameworks

| Feature | mCODE Standard | Comprehensive CSS Framework |
|---|---|---|
| Primary Objective | Enable interoperability of patient-level data between EHRs and systems [9] | Standardize population-level data collection and analysis for public health surveillance [10] |
| Scope & Granularity | 90 data elements across 6 clinical domains [9] | Broad epidemiological indicators and demographic stratifiers [10] |
| Technical Foundation | HL7 FHIR implementation guide [9] | Consolidated data elements and methodological practices from global systems analysis [10] |
| Key Indicators | Staging, biomarkers, treatments, outcomes [9] | Incidence, prevalence, mortality, survival, YLD, YLL [10] |
| Validation Method | HL7 balloting process and pilot implementations [9] | Systematic review and expert validation (Cronbach’s alpha = 0.849) [10] |

Experimental Protocols for Validation Studies

Validating data elements and ensuring the accuracy of aggregated information is paramount for reliable cancer surveillance and research. The following protocols outline established methodologies for this critical process.

Protocol for Validation of Epidemiological Data

This protocol is designed to assess the quality and accuracy of data within a surveillance system or research dataset.

- Objective: To estimate the Positive Predictive Value (PPV), sensitivity, and specificity of key cancer variables (e.g., diagnosis, stage, histology) within a dataset [11].

- Materials:
  - The cancer dataset under validation (e.g., registry data, EHR extracts).
  - A verified "gold standard" reference dataset (e.g., detailed chart abstraction by trained tumor registrars, pathology reports, clinical trial data).

- Methodology:
  - Sampling: Define a representative sample from the target dataset. This can be a random sample or may oversample specific rare cancer types to ensure adequate precision [11].
  - Data Abstraction: For each record in the sample, abstract the corresponding variables from the pre-defined "gold standard" source.
  - Comparison: Create a 2x2 contingency table for each variable of interest, comparing its status in the target dataset against the gold standard.
  - Calculation:
    - PPV = (True Positives) / (True Positives + False Positives)
    - Sensitivity = (True Positives) / (True Positives + False Negatives)
    - Specificity = (True Negatives) / (True Negatives + False Positives) [11]

- Considerations: When using external validation studies, the generalizability of the PPV, sensitivity, and specificity must be carefully considered. PPV depends directly on disease prevalence in the source population, and all three metrics can shift with its case mix and data collection practices [11].
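The comparison and calculation steps of this protocol can be sketched in a few lines. The example below tallies a 2x2 table from paired flags (dataset value, gold-standard value) and applies the three formulas given above; the function name and the sample counts are hypothetical.

```python
def validation_metrics(paired_flags):
    """Compute PPV, sensitivity, and specificity from (dataset, gold_standard) booleans."""
    tp = fp = fn = tn = 0
    for in_dataset, in_gold_standard in paired_flags:
        if in_dataset and in_gold_standard:
            tp += 1
        elif in_dataset and not in_gold_standard:
            fp += 1
        elif not in_dataset and in_gold_standard:
            fn += 1
        else:
            tn += 1

    def ratio(numerator, denominator):
        # Return None instead of dividing by zero when a margin is empty.
        return numerator / denominator if denominator else None

    return {
        "ppv": ratio(tp, tp + fp),
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
    }


# Hypothetical sample of 100 records checked against chart abstraction.
sample = (
    [(True, True)] * 45      # true positives
    + [(True, False)] * 5    # false positives
    + [(False, True)] * 10   # false negatives
    + [(False, False)] * 40  # true negatives
)
metrics = validation_metrics(sample)
print({name: round(value, 3) for name, value in metrics.items()})
```

In practice each estimate would be reported with a confidence interval, since validation samples are often small for rare cancer types.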

Protocol for Implementing the mCODE Standard

This protocol describes the steps for implementing and testing the mCODE standard within a clinical data system.

- Objective: To enable the structured capture and FHIR-based exchange of mCODE data elements for a cohort of cancer patients.

- Materials:
  - Access to an EHR or clinical database with cancer patient data.
  - mCODE FHIR Implementation Guide (IG).
  - A FHIR server or API-enabled infrastructure.

- Methodology:
  - Mapping: Identify corresponding source data fields within the local EHR or database for each of the 90 mCODE data elements.
  - Profiling: Create FHIR profiles based on the mCODE IG, defining constraints and terminology bindings (e.g., to SNOMED CT, LOINC) for each element.
  - Implementation: Develop or configure the system's API to generate mCODE-compliant FHIR resources for patients, conditions, observations, and medication statements.
  - Pilot Testing:
    - Execute a test for a defined patient cohort (e.g., all new lung cancer diagnoses in a 6-month period).
    - Extract data and format it into mCODE FHIR resources.
    - Validate the output against the logical and terminology requirements specified in the mCODE IG.

- Success Metrics: Measure the percentage of mCODE data elements that can be successfully populated with structured, computable data compared to the baseline [9].
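The success metric described above can be sketched as a simple completeness calculation over a pilot cohort. The element labels below are hypothetical placeholders, not official mCODE element identifiers, and the records are invented; a real implementation would iterate over the locally mapped elements and would also validate resources against the IG rather than only checking for non-empty values.

```python
# Hypothetical element labels for illustration; replace with locally mapped elements.
ELEMENTS = ["cancer_condition", "stage_group", "tumor_marker", "performance_status"]


def is_populated(value):
    """Treat None and blank strings as missing; anything else counts as structured."""
    return value is not None and str(value).strip() != ""


def element_completeness(records, elements=ELEMENTS):
    """Return {element: percent of records with a populated value}."""
    if not records:
        raise ValueError("at least one record is required")
    return {
        name: 100.0 * sum(is_populated(r.get(name)) for r in records) / len(records)
        for name in elements
    }


# Invented pilot cohort; values are placeholders, not real codes.
pilot_cohort = [
    {"cancer_condition": "dx-code", "stage_group": "IIIA", "tumor_marker": "pos", "performance_status": "1"},
    {"cancer_condition": "dx-code", "stage_group": "IB", "tumor_marker": None, "performance_status": ""},
    {"cancer_condition": "dx-code", "stage_group": "", "tumor_marker": None, "performance_status": "0"},
    {"cancer_condition": "dx-code", "stage_group": "IV"},
]

completeness = element_completeness(pilot_cohort)
overall = sum(completeness.values()) / len(completeness)
print(completeness, f"overall={overall:.1f}%")
```

Running the same calculation on the pre-implementation extract gives the baseline against which the pilot is compared.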

Visualization of Standardization Workflows

The following diagrams, created using Graphviz DOT language, illustrate the logical relationships and workflows described in the comparative analysis and experimental protocols.

mCODE Development and Implementation Workflow

CSS Framework Validation Methodology

The Scientist's Toolkit: Essential Research Reagents and Solutions

For researchers embarking on studies involving cancer data interoperability and validation, the following tools and resources are essential.

Table 5: Key Research Reagent Solutions for Data Interoperability and Validation

| Item | Function & Application |
|---|---|
| HL7 FHIR R4.0.1+ | The underlying interoperability standard required by US regulation, upon which profiles like mCODE are built. Provides the core data models and API specifications for exchanging healthcare data electronically [9]. |
| mCODE FHIR Implementation Guide | The definitive specification for implementing the Minimal Common Oncology Data Elements standard. It provides the structure, definitions, and terminology bindings for creating mCODE-compliant data [9]. |
| Standard Terminologies (SNOMED CT, LOINC, ICD-O-3) | Controlled vocabularies essential for ensuring semantic interoperability. They provide standardized codes for representing clinical concepts, laboratory observations, and cancer morphology/topography, enabling consistent data interpretation across systems [9] [10]. |
| US Core Data for Interoperability (USCDI) | A standardized set of health data classes that must be accessible via FHIR APIs under US regulation. Cancer-specific standards like mCODE often extend the USCDI to meet specialized oncology needs [9]. |
| Validation "Gold Standard" Datasets | Curated, high-quality data sources (e.g., detailed chart abstractions, central pathology review reports) used as a benchmark to calculate PPV, sensitivity, and specificity when assessing the quality of a larger, automated dataset [11]. |
| Statistical Software (R, Python, SAS) | Essential for performing validation calculations, analyzing epidemiological trends, and calculating advanced metrics such as Age-Standardized Rates (ASRs), YLD, and YLL [10]. |

In the rigorous field of cancer surveillance research, the validation of epidemiological indicators hinges upon a foundation of standardized tools. Two cornerstones of this foundation are the International Classification of Diseases for Oncology, Third Edition (ICD-O-3), which provides a consistent language for describing the characteristics of tumors, and the use of standard populations, which enable the calculation of comparable age-adjusted rates. Together, these classifications form an indispensable toolkit for researchers, scientists, and drug development professionals. They allow for the valid comparison of cancer incidence, mortality, and survival across diverse geographic regions, over time, and between different racial, ethnic, and demographic groups. Without such standards, the detection of meaningful trends in cancer burden, the assessment of screening program effectiveness, and the evaluation of therapeutic advances would be mired in confounding and bias. This guide objectively compares the specific applications and products of these standardized systems, providing the experimental data and protocols that underpin their critical role in producing robust, comparable cancer statistics.

Understanding ICD-O-3: The Coding Framework for Cancer Morphology and Topography

The ICD-O-3 is a specialized classification system used by cancer registries worldwide to code the site (topography) and microscopic type (morphology) of a tumor, as well as its behavior (e.g., malignant, benign). Its primary function is to ensure that every cancer diagnosis is recorded in a consistent and unambiguous manner. This consistency is vital for aggregating data, grouping cases for analysis, and monitoring trends for specific cancer types. The system is continuously refined to incorporate the latest diagnostic and pathological understandings.

Comparative Analysis of ICD-O-3 Implementation and Evolution

The implementation of ICD-O-3 is not static; it evolves through initiatives like the National Cancer Institute's Cancer PathCHART (Cancer Pathology Coding and Histopathology Terminology). The table below summarizes key comparative features of the ICD-O-3 system and its contemporary updates, demonstrating its dynamic nature.

Table: Comparison of ICD-O-3 Standards and Cancer PathCHART Updates

| Feature | Traditional ICD-O-3 Standards | Cancer PathCHART Updates (2024-2026) |
|---|---|---|
| Primary Function | Code tumor site, morphology, and behavior [12] | Validate and refine site-morphology combinations based on expert pathology review [13] |
| Coding Source | International Classification of Diseases for Oncology, Third Edition [12] | ICD-O-3.2, incorporating new WHO Classification of Tumours, 5th Edition terms [13] |
| Validity Status | Classifies tumors as valid, unlikely, or impossible combinations | Updates validity status post-pathologist review (Newly Valid, Impossible, Unlikely) [13] |
| Implementation | Phased review of organ systems; pre-2024 standards used for historical cases [13] | Mandatory for cases diagnosed January 1, 2024, and forward; annual version releases (e.g., V2026) [13] |
| Reviewed Sites (Example) | Varies by year of diagnosis | 2024: Bone, Breast, Digestive, Female/Male Genital, Urinary; 2025: Respiratory, CNS, Soft Tissue; 2026: Head and Neck [13] |

Experimental Protocol for ICD-O-3 Coding and Validation

The process of coding and validating cancer registry data using ICD-O-3 is methodical and involves multiple steps to ensure data quality and accuracy.

- Case Ascertainment and Abstraction: Reporting sources, such as hospitals and treatment centers, are required to submit all newly diagnosed cancer cases to central cancer registries (e.g., the Pennsylvania Cancer Registry) [12]. Trained registrars abstract key information from medical records, including primary tumor site and histology.

- ICD-O-3 Coding: The abstracted information is coded according to ICD-O-3. Topography codes describe the organ of origin (e.g., C34.1 for upper lobe of lung), while morphology codes describe the cell type and behavior (e.g., 8140/3 for adenocarcinoma, malignant) [12].

- PathCHART Validation Edit: For cases diagnosed on or after January 1, 2024, the coded data is run against the Cancer PathCHART ICD-O-3 Site Morphology Validation Lists (CPC SMVLs) [13]. This edit checks for valid, unlikely, and impossible site, histology, and behavior code combinations based on the latest expert pathology review.

- Data Quality Assurance: Comprehensive evaluation activities are conducted by programs like the CDC's National Program of Cancer Registries (NPCR) and the NCI's Surveillance, Epidemiology, and End Results (SEER) Program to find missing cases or identify errors in the data [14]. This may involve re-abstraction of a sample of records to ensure consistency and completeness.

- Analysis and Grouping: For statistical analysis, individual ICD-O-3 codes are grouped into meaningful categories (e.g., "lung and bronchus" or "prostate") using schemes like the SEER Site Recode [15] to calculate incidence, mortality, and survival statistics.
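The coding and validation steps above can be illustrated with a small parser for the two code formats used in the examples (a topography code such as C34.1 and a morphology code such as 8140/3). The behavior-digit labels follow the ICD-O-3 convention; the validity lookup is a one-entry hypothetical stand-in and does not reproduce the actual Cancer PathCHART validation lists.

```python
import re

# ICD-O-3 behavior digit (the fifth digit, after the slash in a morphology code).
BEHAVIOR_LABELS = {
    "0": "benign",
    "1": "uncertain whether benign or malignant",
    "2": "carcinoma in situ",
    "3": "malignant, primary site",
    "6": "malignant, metastatic site",
    "9": "malignant, uncertain whether primary or metastatic site",
}

TOPOGRAPHY_RE = re.compile(r"^C\d{2}\.\d$")
MORPHOLOGY_RE = re.compile(r"^(\d{4})/(\d)$")

# Hypothetical one-entry lookup for illustration; not a real validation list.
EXAMPLE_VALIDITY = {("C34", "8140"): "valid"}


def parse_icdo3(topography, morphology):
    """Split ICD-O-3 codes into site, histology, and behavior, with a toy validity check."""
    if not TOPOGRAPHY_RE.match(topography):
        raise ValueError(f"unexpected topography format: {topography!r}")
    match = MORPHOLOGY_RE.match(morphology)
    if match is None or match.group(2) not in BEHAVIOR_LABELS:
        raise ValueError(f"unexpected morphology format: {morphology!r}")
    histology, behavior_digit = match.groups()
    site = topography.split(".")[0]
    return {
        "site": site,
        "subsite": topography,
        "histology": histology,
        "behavior": BEHAVIOR_LABELS[behavior_digit],
        "validity": EXAMPLE_VALIDITY.get((site, histology), "not in example list"),
    }


coded_case = parse_icdo3("C34.1", "8140/3")
print(coded_case)
```

A production edit would load the published validation lists for the relevant diagnosis year and distinguish valid, unlikely, and impossible combinations instead of using this placeholder dictionary.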

Standard Populations for Age Adjustment: Enabling Comparative Epidemiology

Cancer risk varies dramatically with age. To compare cancer rates between two populations that have different age structures—such as Florida versus Utah, or the United States versus Nigeria—epidemiologists must remove the confounding effect of age. This is achieved through age-adjustment (or age-standardization), a statistical process that applies observed age-specific rates to a standard population distribution.

Comparative Analysis of Major Standard Populations

Different standard populations are used for different comparative purposes. The choice of standard can affect the absolute value of the reported rate, which is why it is critical to use the same standard when comparing rates. The following table provides a structured comparison of the most commonly used standard populations in cancer surveillance research.

Table: Comparison of Standard Populations for Age-Adjusting Cancer Rates

| Standard Population | Primary Use Case | Temporal/Geographic Focus | Key Characteristics |
|---|---|---|---|
| 2000 U.S. Standard Population [16] [14] | U.S. national and state-level cancer incidence and mortality reporting | Contemporary U.S. comparisons; default for SEER and CDC | Reflects an older age structure than earlier U.S. standards (1940, 1970); recommended by NCHS [14] |
| World (WHO 2000-2025) Standard [16] [17] | International comparisons of cancer incidence and mortality | Global health studies and worldwide comparisons | Designed to represent an average global population age structure for the early 21st century [16] |
| European Standard (EU-27 plus EFTA 2011-2030) [16] [17] | Health statistics within European nations | Intra-European and Europe-specific comparisons | Based on contemporary and projected demographic structures of European Union and EFTA countries [16] |
| World Cancer Patient Population (WCPP) [18] | Age-standardisation of cancer survival estimates | International benchmarking of cancer survival | A patient-based standard with three sets of weights for cancers with different age profiles (e.g., pediatric, young adult, older adult) |

Experimental Protocol for Calculating Age-Adjusted Rates

The direct method of age-adjustment is the standard protocol for calculating comparable cancer rates. The following workflow visualizes this multi-step process, from data collection to the final age-adjusted rate.

Diagram 1: Workflow for Direct Age-Adjustment of Cancer Rates. This diagram outlines the key steps researchers use to calculate age-adjusted rates, allowing for unbiased comparisons between populations with different age structures.

The methodology for direct age-adjustment, as referenced in the technical notes of the Pennsylvania Cancer Dashboard and the CDC, involves the following detailed steps [12] [14]:

- Stratify and Calculate Age-Specific Rates: Both the number of cancer cases (or deaths) and the corresponding population-at-risk are divided into the same age groups (e.g., 0-4, 5-9, ..., 85+). For each age group \( i \), an age-specific rate \( R_i \) per 100,000 is calculated as \( R_i = \frac{\text{Number of Cases}_i}{\text{Population}_i} \times 100{,}000 \).

- Apply to Standard Population: Each age-specific rate \( R_i \) is applied to the number of individuals in the same age group within a chosen standard population \( S_i \). This gives the number of cases that would be "expected" in the standard population if it experienced the same age-specific rates as the study population: \( \text{Expected Cases}_i = \frac{R_i \times S_i}{100{,}000} \).

- Sum Expected Cases: The total number of expected cases across all age groups is calculated: \( \text{Total Expected Cases} = \sum_i \text{Expected Cases}_i \).

- Calculate Final Age-Adjusted Rate: The total expected cases are divided by the total standard population and multiplied by 100,000 to produce the final age-adjusted rate: \( \text{Age-Adjusted Rate} = \frac{\text{Total Expected Cases}}{\sum_i S_i} \times 100{,}000 \). When the standard is expressed as proportions that sum to 1, this reduces to \( \sum_i R_i S_i \). This rate represents what the overall cancer rate would have been in the study population if it had the same age distribution as the standard population.
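The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not the SEER*Stat implementation: the function name `age_adjusted_rate`, the three age groups, and all counts are hypothetical. Age-specific rates are kept as plain proportions and scaled once at the end, which is arithmetically equivalent to working per 100,000 throughout.

```python
from typing import Sequence


def age_adjusted_rate(
    cases: Sequence[float],
    population: Sequence[float],
    standard: Sequence[float],
    per: float = 100_000,
) -> float:
    """Directly age-adjusted rate per `per` persons (hypothetical helper)."""
    if not (len(cases) == len(population) == len(standard)):
        raise ValueError("all inputs must use the same age groups")
    # Step 1: age-specific rates as proportions (scaled in the last step).
    rates = [c / p for c, p in zip(cases, population)]
    # Steps 2-3: expected cases in each standard-population group, summed.
    expected_total = sum(r * s for r, s in zip(rates, standard))
    # Step 4: divide by the total standard population and scale.
    return expected_total / sum(standard) * per


# Illustrative numbers only: three broad age groups, young to old.
cases = [10, 50, 200]
population = [100_000, 80_000, 40_000]
standard = [400_000, 350_000, 250_000]  # counts or proportions both work

crude = sum(cases) / sum(population) * 100_000
adjusted = age_adjusted_rate(cases, population, standard)
print(f"crude: {crude:.1f}, age-adjusted: {adjusted:.1f} per 100,000")
```

In this made-up example the standard population is older than the study population, so the adjusted rate comes out higher than the crude rate. Because the function divides by the sum of the standard weights, it accepts the standard either as counts or as proportions.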

The Scientist's Toolkit: Essential Reagents for Standardized Cancer Surveillance

This table details key resources and methodologies that form the essential "research reagents" for conducting standardized cancer surveillance and epidemiology research.

Table: Essential Research Reagents and Resources for Cancer Surveillance

| Tool/Resource | Function in Research | Application Context |

|---|---|---|

| SEER*Stat Software [16] [12] | Statistical software for analyzing cancer incidence, mortality, survival, and prevalence data. | The primary tool used by SEER and NPCR to calculate age-adjusted rates, trends, and survival statistics. Provides access to public-use data. |

| Joinpoint Regression Model [12] [15] | A statistical algorithm that fits trend data and identifies points (joinpoints) where the trend changes significantly. | Used to analyze cancer trends over time. It calculates the Annual Percent Change (APC) for each segment and the Average Annual Percent Change (AAPC) over a fixed interval [15]. |

| Standard Population Data Files [16] | Provides the age-distribution weights (e.g., 2000 U.S., World WHO) needed for age-adjustment calculations. | Essential for ensuring comparability when calculating incidence or mortality rates. The 2000 U.S. Standard Population is the current default for U.S. reporting [14]. |

| Pohar-Perme Estimator [12] [17] | A statistical method for calculating net survival, which estimates survival in a hypothetical scenario where cancer is the only possible cause of death. | Used in population-based survival studies to account for background mortality, providing a standardized measure of cancer survival unbiased by other causes of death. |

| Cancer PathCHART SMVLs [13] | Site Morphology Validation Lists that define valid, unlikely, and impossible combinations of tumor site and morphology codes. | Used as an edit check for data quality control in cancer registries for cases diagnosed 2024 onward, ensuring pathological consistency. |
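As an illustration of the Annual Percent Change listed in the table above, the sketch below fits a single log-linear segment by ordinary least squares and converts the slope to an APC via (e^slope - 1) × 100. It is a simplified stand-in under stated assumptions: the Joinpoint software additionally searches for the joinpoints themselves and offers weighted fits, none of which is reproduced here. The function name and the rate series are hypothetical.

```python
import math
from typing import Sequence


def annual_percent_change(years: Sequence[float], rates: Sequence[float]) -> float:
    """APC for one trend segment from a least-squares fit of ln(rate) on year."""
    n = len(years)
    log_rates = [math.log(r) for r in rates]
    mean_x = sum(years) / n
    mean_y = sum(log_rates) / n
    sxx = sum((x - mean_x) ** 2 for x in years)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, log_rates))
    slope = sxy / sxx  # change in ln(rate) per calendar year
    return (math.exp(slope) - 1) * 100


# Hypothetical age-adjusted rates that fall by exactly 2% each year.
years = [2015, 2016, 2017, 2018, 2019, 2020]
rates = [100 * 0.98 ** i for i in range(len(years))]
apc = annual_percent_change(years, rates)
print(f"APC: {apc:.2f}% per year")
```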

The rigorous application of ICD-O-3 and standard populations is not merely an administrative exercise in data management; it is the very framework that enables the validation of standardized epidemiological indicators. As demonstrated through the comparative data and experimental protocols, these tools provide the consistent definitions and methodological rigor required to generate reliable, comparable cancer statistics. For researchers, scientists, and drug development professionals, understanding and correctly applying these standards is fundamental. They allow for the accurate monitoring of cancer burden, the objective assessment of progress against cancer, and the identification of disparities that require intervention. As cancer diagnostics evolve, so too will these standards—as evidenced by the continuous updates to ICD-O-3 through Cancer PathCHART and the refinement of age groups for standardization. This ongoing process ensures that the global cancer research community remains equipped with a validated and unified toolkit for surveillance, ultimately accelerating the translation of data into knowledge and public health action.

Robust cancer surveillance systems are fundamental to public health, providing the data necessary to track epidemiological trends, guide resource allocation, and evaluate the success of cancer control interventions [10]. The global burden of cancer necessitates reliable, comparable data to inform policy and clinical research. However, significant challenges persist in achieving standardization across different systems, including variations in data collection practices, classification codes, and the adoption of key epidemiological indicators [10]. This guide objectively compares the performance and methodologies of major international cancer surveillance systems—the Global Cancer Observatory (GCO), the U.S. Surveillance, Epidemiology, and End Results (SEER) Program, the National Program of Cancer Registries (NPCR), and European registries—within the critical context of validating standardized epidemiological indicators for cancer research.

Comparative Analysis of Major Cancer Surveillance Systems

The following table summarizes the core characteristics, strengths, and data quality approaches of the four major systems under review.

Table 1: Comparative Overview of International Cancer Surveillance Systems

| System | Geographic Scope & Governance | Core Data Elements & Standardization | Key Strengths | Documented Data Quality Focus |

|---|---|---|---|---|

| Global Cancer Observatory (GCO) | Global; International Agency for Research on Cancer (IARC)/WHO [10]. | Incidence, prevalence, mortality, survival; ICD-O standards; multiple standard populations for ASRs [10]. | Comprehensive global coverage; interactive visualization tools; essential for international policy [10]. | Relies on aggregation of national data; quality can be limited in low-resource settings [19]. |

| SEER Program | United States (specific regions, ~48% population coverage); National Cancer Institute (NCI) [20]. | Incidence, mortality, survival, stage; ICD-O-3; delay-adjusted incidence rates [20]. | High-quality, validated data with deep historical data (since 1973); detailed patient and tumor characteristics [20]. | Uses statistical models (e.g., Joinpoint) for trends; adjusts for reporting delays; high reliability for research [20]. |

| National Program of Cancer Registries (NPCR) | United States (complementary coverage to SEER, ~99.7% population coverage); Centers for Disease Control and Prevention (CDC) [20]. | Incidence, mortality; data compiled with SEER for national estimates; ICD-O-3 [20]. | Achieves near-complete national population coverage through partnership with SEER [20]. | Data contributed to national statistics undergoes quality control and delay-adjustment [20]. |

| European Cancer Registries (e.g., via ECIS) | European Union; European Network of Cancer Registries (ENCR) & Joint Research Centre (JRC) [21] [22]. | Incidence, mortality, survival; ICD-O-3; data from 130+ population-based registries [21]. | Strong focus on harmonization and data quality indicators across diverse member states [22]. | Systematically monitors quality indicators: completeness (M:I ratio), validity (MV%, DCO%), timeliness [21]. |

Methodological Frameworks and Data Quality Protocols

A critical differentiator among surveillance systems is their methodological rigor in ensuring data quality, validity, and comparability. The following section details the specific experimental and quality control protocols employed.

Experimental Protocols for Data Quality Evaluation

European registries, coordinated through the ENCR and JRC, have established a robust, quantitative framework for assessing data quality. A 2023 study of 130 registries defined and evaluated the following key indicators, which serve as a benchmark for surveillance systems [21] [22]:

- Completeness (M:I Ratio): Calculated by dividing the number of deaths by the number of incident cases for a specific cancer site over the same period. A ratio that is too high may indicate under-ascertainment of incident cases [21].

- Validity - Microscopic Verification (MV%): The proportion of cases confirmed by cytology, histology of a primary tumour, or histology of a metastasis. This confirms diagnostic accuracy [21].

- Validity - Death Certificate Only (DCO%): The proportion of cases registered based on a death certificate without a prior incidence report. A high DCO% suggests incomplete case finding from other sources [21] [22].

- Validity - Unspecified Morphology (UM%): The proportion of cases with non-specific morphology codes (e.g., 8000-8005 for solid tumours), indicating a lack of precise pathological classification [21].

- Timeliness: Measured as the median difference (in days) between the date of incidence and the date of registration in the database [21].
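The indicators listed above can be computed from case-level records roughly as follows. This is a sketch under assumptions: the `CaseRecord` fields and the `quality_indicators` function are hypothetical and do not reflect the ENCR/JRC data model, and the four records are invented. The unspecified-morphology range 8000-8005 is taken from the definition above.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional, Sequence


@dataclass
class CaseRecord:
    """Hypothetical minimal registry record; field names are illustrative."""
    incidence_date: Optional[date]
    registration_date: Optional[date]
    microscopically_verified: bool
    death_certificate_only: bool
    morphology_code: int  # ICD-O-3 morphology code, e.g. 8140


def quality_indicators(cases: Sequence[CaseRecord], deaths: int) -> dict:
    """Return M:I ratio, MV%, DCO%, UM% and median timeliness for one site."""
    n = len(cases)
    if n == 0:
        raise ValueError("at least one incident case is required")
    # Days from incidence to registration, for records with both dates.
    lags = [
        (c.registration_date - c.incidence_date).days
        for c in cases
        if c.incidence_date and c.registration_date
    ]
    return {
        "mi_ratio": deaths / n,
        "mv_pct": 100 * sum(c.microscopically_verified for c in cases) / n,
        "dco_pct": 100 * sum(c.death_certificate_only for c in cases) / n,
        "um_pct": 100 * sum(8000 <= c.morphology_code <= 8005 for c in cases) / n,
        "timeliness_days": median(lags) if lags else None,
    }


# Invented records for illustration only.
cases = [
    CaseRecord(date(2012, 1, 10), date(2013, 1, 10), True, False, 8140),
    CaseRecord(date(2012, 3, 1), date(2013, 3, 1), True, False, 8070),
    CaseRecord(date(2013, 5, 5), date(2014, 5, 5), False, True, 8000),
    CaseRecord(date(2013, 6, 1), date(2015, 6, 1), True, False, 8010),
]
result = quality_indicators(cases, deaths=2)
print(result)
```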

Table 2: Experimental Data Quality Benchmarks from European Registries (2010-2014)

| Cancer Site | DCO% (Total) | MV% (Total) | UM% (Total) | M:I Ratio (Total) | Timeliness (Days, Total) |

|---|---|---|---|---|---|

| Lip, Oral Cavity, Pharynx | 2.0% | 95.0% | 3.8% | 0.38 | 650 |

| Oesophagus | 3.3% | 88.9% | 6.7% | 0.90 | 394 |

| Stomach | 6.3% | 86.0% | 11.5% | 0.73 | 690 |

| Colon & Rectum | 3.4% | 89.9% | 6.8% | Information Missing | Information Missing |

Source: Adapted from [21]. Data is for all age groups (20+) across the study period.

Advanced System Design and Integration Protocols

Next-generation surveillance systems are incorporating advanced protocols for spatial analysis and predictive modeling. A 2025 study on a GIS-integrated system for Iran detailed a multi-phase development protocol [19]:

- Requirement Analysis: A systematic review (PRISMA guidelines) of 1,085 articles and evaluation of 13 international CSS to define critical data elements and standardization practices [19].

- System Design & Architecture: Use of Unified Modeling Language (UML) for data flow, use-case, and sequence diagrams. Implementation of a modular architecture using Django and Vue.js frameworks, with a relational database and API for data exchange [19].

- Evaluation: Usability assessment using Nielsen’s Heuristic Evaluation, achieving resolution of 85% of identified issues and high reliability (Cronbach’s alpha = 0.849) [19].

Diagram 1: Workflow for Developing Advanced Cancer Surveillance Systems. This protocol integrates systematic review, technical design, and rigorous validation [19].

The Researcher's Toolkit: Essential Reagents for Surveillance Research

This table catalogues key methodological "reagents" and their functions in cancer surveillance research, as evidenced by the comparative analysis.

Table 3: Essential Research Reagents for Cancer Surveillance Methodology

| Reagent / Methodological Component | Function in Surveillance Research | Exemplar System(s) |

|---|---|---|

| ICD-O-3 Classification | Ensures standardized coding of cancer topography and morphology, enabling consistent data collection and international comparability [10] [21]. | GCO, SEER, NPCR, European (ECIS) |

| Standard Populations (e.g., WHO, Segi) | Allows for the calculation of Age-Standardized Rates (ASRs), which are essential for comparing incidence and mortality across populations with different age structures [10]. | GCO, SEER |

| Joinpoint Regression Analysis | A statistical method used to quantify trends in cancer rates (Annual Percent Change, APC) and identify significant points where the trend changes direction [20]. | SEER |

| Data Quality Indicators (MV%, DCO%, M:I) | Quantitative metrics that function as internal controls, validating the completeness and diagnostic accuracy of the registry data [21] [22]. | European Registries (ENCR) |

| Delay-Adjustment Modeling | A statistical correction applied to account for lags in case reporting, which is particularly important for the most recent data years and certain cancers [20]. | SEER, NPCR |

| GIS (Geographic Information Systems) | Enables spatial analysis and mapping of cancer incidence, helping to identify geographic hotspots and disparities for targeted interventions [19]. | Advanced/Next-Gen Systems |

The comparative analysis reveals that while systems like GCO provide indispensable global breadth, regional systems like SEER and the European network offer greater depth and proven rigor in data validation protocols. The future of cancer surveillance lies in integrating the strengths of these systems: adopting the comprehensive indicator frameworks and quality benchmarks of European registries, leveraging the advanced statistical modeling of SEER, and utilizing the spatial and predictive capabilities of next-generation systems. For researchers and drug development professionals, this synthesis underscores that rigorous, comparable cancer research depends on a foundation of standardized epidemiological indicators, whose validation is paramount for accurate progress tracking and equitable resource allocation worldwide.

Building and Implementing a Standardized Validation Framework

Systematic Development of a Standardized Data Checklist

This guide compares methodologies for developing standardized data checklists, with a specific focus on validating epidemiological indicators for cancer surveillance research. It is designed to assist researchers, scientists, and drug development professionals in selecting and applying rigorous checklist development protocols.

Methodological Approaches to Checklist Development

The systematic development of a standardized data checklist is a multi-stage process essential for ensuring transparency, reproducibility, and utility in research. This section compares the core methodologies identified from current literature, detailing their protocols and key differentiators.

Table 1: Comparative Evaluation of Checklist Development Methodologies

| Development Method | Key Characteristics | Primary Applications | Validation Approach | Included Sources |

|---|---|---|---|---|

| Guidelines 2.0 Framework [23] | Iterative development; 18 topics & 146 items; "guidelines for guidelines" | Health care guideline planning, formulation, implementation, and evaluation | Expert feedback via iterative consultation rounds | Manuals from international guideline developers, methodology reports |

| Systematic Review & Expert Consensus [10] | Multi-phase design; PRISMA-guided review; comparative system evaluation | Developing comprehensive frameworks for cancer surveillance systems (CSS) | Content Validity Ratio (CVR); Cronbach's alpha (α=0.849); expert panel (82% response rate) | 13 studies from 1,085 articles; 13 international CSS |

| ACCORD Roadmap [24] | Translates systematic review gaps into reporting checklist items | Enhancing quality and transparency in reporting guideline development | Flexibility in search strategies and data extraction; panelist feedback | Systematic review findings; EQUATOR network toolkit |

| GUIDES Checklist [25] | 16-factor checklist across 4 domains (context, content, system, implementation) | Improving successful use of guideline-based computerised clinical decision support (CDS) | International expert panel (90%+ response); pilot testing with 30 trial reports; patient feedback | 71 papers from 5,347 screened; 21 frameworks; 16 systematic reviews |

Detailed Experimental Protocols

Protocol for Systematic Review & Expert Consensus (as used in CSS framework development) [10]:

- Search Strategy: Execute systematic searches across major databases (e.g., PubMed, Embase, Scopus, Web of Science, IEEE) using predefined keywords and Boolean operators. The search should be confined to a specific timeframe (e.g., 2000-2023) and peer-reviewed articles.

- Screening Process: Adhere to PRISMA guidelines. Initially screen titles and abstracts against eligibility criteria, followed by a full-text review of shortlisted articles.

- Data Extraction: Systematically extract key data elements, indicators, and methodological practices from included studies into a standardized form.

- Comparative Evaluation: Analyze existing international systems (e.g., GCO, ECIS, NordCan) to identify universal data elements and best practices.

- Checklist Validation:

  - Content Validity: Calculate the Content Validity Ratio (CVR) with an expert panel to assess the necessity of each item.

  - Reliability: Measure internal consistency using Cronbach's alpha (a value > 0.8 indicates high reliability [10]).

Protocol for Iterative Framework Development (as used in the GUIDES checklist) [25]:

- Systematic Review: Conduct a sensitive review of evidence and frameworks to identify success factors.

- Synthesis and Drafting: Extract identified factors to create a preliminary checklist.

- Expert Consultation: Engage an international, multidisciplinary expert panel for structured feedback over multiple rounds, assessing factors against desirable framework attributes.

- Pilot Testing: Apply the checklist to a sample of trial reports and use it in focus group discussions with end-users (e.g., clinicians, patients) to evaluate practicality and identify omitted factors.

Technical Implementation and Visualization

The following workflow and diagram synthesize the core process for developing a standardized checklist, integrating elements from the methodologies compared in Table 1.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential methodological components for developing and validating a standardized checklist in cancer surveillance.

Table 2: Essential Reagents and Resources for Checklist Development

| Research Reagent / Resource | Function / Application in Development | Exemplar Use Case |

|---|---|---|

| PRISMA Guidelines [10] | Ensures transparent and complete reporting of systematic reviews, which form the evidence base for checklist items. | Guided the systematic review in CSS framework development [10]. |

| Content Validity Ratio (CVR) [10] | Statistically quantifies expert consensus on the necessity of each proposed checklist item. | Validated critical data elements for cancer surveillance with expert panel [10]. |

| Cronbach's Alpha [10] | Measures the internal consistency and reliability of the checklist items as a scale. | Achieved high reliability (α=0.849) for the CSS checklist [10]. |

| International Expert Panel | Provides multidisciplinary feedback on draft checklist items, ensuring relevance and practicality. | Used in both the GUIDES and CSS frameworks to refine factors and items [25] [10]. |

| Pilot Testing Protocol | Evaluates the real-world usability and effectiveness of the checklist in a controlled setting. | Involved applying the GUIDES checklist to 30 trial reports and focus groups [25]. |

| Standardized Data Models (e.g., ICD-O-3) [10] | Provides a common language for data elements, ensuring consistency and interoperability in the resulting checklist. | Incorporated into the CSS framework for precise cancer type classification [10]. |

In the field of cancer surveillance research, the accuracy and reliability of data collection instruments are paramount. Robust validation techniques ensure that epidemiological indicators accurately capture the complex constructs they are designed to measure, such as cancer incidence, prevalence, survival rates, and years of life lost. Within this context, content validity determines whether an instrument adequately covers all relevant aspects of the construct, while reliability assesses the consistency of measurements. Two fundamental metrics used in this validation process are the Content Validity Ratio (CVR) and Cronbach's Alpha.

Content validity evaluates how well an instrument covers all relevant parts of the construct it aims to measure [26]. In cancer surveillance, this ensures that all essential epidemiological indicators—such as incidence, mortality, survival rates, and disability-adjusted measures—are sufficiently represented in data collection tools [27]. Content Validity Ratio (CVR) provides a quantitative measure of content validity, specifically assessing whether individual items in an instrument are essential for measuring the construct [28]. Meanwhile, Cronbach's Alpha serves as a crucial measure of reliability, specifically internal consistency, indicating how closely related a set of items are as a group [29] [30]. For researchers developing and validating standardized epidemiological indicators for cancer surveillance, understanding the complementary applications of these two metrics is essential for creating robust, scientifically sound measurement instruments.

Theoretical Foundations: CVR vs. Cronbach's Alpha

Conceptual Definitions and Distinctions

Content Validity Ratio (CVR) and Cronbach's Alpha represent fundamentally different aspects of measurement quality, though both are essential in the development and validation of epidemiological instruments. CVR is primarily concerned with content validity—the degree to which elements of an assessment instrument are relevant to and representative of the targeted construct for a particular assessment purpose [28] [31]. In contrast, Cronbach's Alpha measures internal consistency reliability, which assesses the extent to which items in a test or instrument measure the same underlying construct [29] [32].

This distinction is crucial in cancer surveillance research, where instruments must not only measure constructs consistently (reliability) but must also ensure those constructs comprehensively represent the multidimensional nature of cancer epidemiology (validity). For instance, a cancer surveillance instrument might demonstrate high internal consistency (high Cronbach's Alpha) while failing to capture important aspects of cancer burden, such as years lived with disability or geographic disparities—a limitation that would be identified through content validity assessment using CVR [27].

Relationship to Other Validity Types

Both CVR and Cronbach's Alpha function within a broader validity framework that includes multiple validation approaches:

- Face Validity: Assesses whether the test appears suitable for its aims at surface level [26]

- Criterion Validity: Evaluates how well results measure the concrete outcome they're designed to measure [26]

- Construct Validity: Determines whether the test measures the theoretical concept it intends to measure [26]

Content validity, measured by CVR, is considered a prerequisite for other forms of validity [28]. Without adequate content validity, even instruments with high internal consistency (Cronbach's Alpha) may lack meaningfulness for their intended purpose in cancer surveillance.

Table 1: Key Characteristics of CVR and Cronbach's Alpha

| Feature | Content Validity Ratio (CVR) | Cronbach's Alpha |

|---|---|---|

| Primary Focus | Content representation and relevance | Internal consistency and reliability |

| Measurement Scale | -1 to +1 | Typically 0 to 1 (negative values are possible when items are negatively correlated) |

| Key Interpretation | Values above critical threshold indicate essential items | Higher values indicate greater internal consistency |

| Dependence on Test Length | Independent | Increases with more items |

| Expert Involvement | Requires subject matter experts | Does not require expert judgment |

| Stage of Use | Early instrument development | Later validation stages |

Content Validity Ratio (CVR): Methodology and Application

Theoretical Basis and Calculation

The Content Validity Ratio, developed by Lawshe, provides a quantitative approach to content validity assessment that systematically incorporates judgments from subject matter experts (SMEs) [28] [31]. The CVR methodology is particularly valuable in cancer surveillance research, where accurate representation of complex epidemiological constructs is essential. The process begins with assembling a panel of SMEs who evaluate each item in an instrument based on its necessity for measuring the target construct. Each expert classifies items as "essential," "useful but not essential," or "not necessary" [26] [28].

The CVR for each item is calculated using the formula:

CVR = (nₑ - N/2) / (N/2)

Where:

- nₑ = number of panelists rating the item "essential"

- N = total number of panelists

This formula yields values ranging from -1 to +1. A value of +1 indicates that all panelists rated the item essential; -1 indicates that no panelist rated it essential; and 0 indicates that exactly half of the panelists rated it essential [28].
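As a small worked illustration of the formula, the sketch below computes the CVR for a single item from the count of "essential" ratings. The function name and the 14-member panel are assumptions chosen for the example.

```python
def content_validity_ratio(n_essential: int, n_panelists: int) -> float:
    """Lawshe's CVR for one item: (n_e - N/2) / (N/2)."""
    if n_panelists <= 0 or not 0 <= n_essential <= n_panelists:
        raise ValueError("need 0 <= n_essential <= n_panelists and n_panelists > 0")
    half = n_panelists / 2
    return (n_essential - half) / half


# Hypothetical panel of 14 experts rating four different items.
for n_essential in (14, 11, 7, 0):
    cvr = content_validity_ratio(n_essential, 14)
    print(f"{n_essential}/14 rated essential -> CVR = {cvr:+.3f}")
```

The loop covers the boundary cases: unanimous "essential" ratings give +1, an even split gives 0, and no "essential" ratings give -1.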

Critical Values and Statistical Significance

To determine whether agreement among experts exceeds chance levels, Lawshe established critical values for CVR based on the number of experts participating [26]. The following table presents these critical values:

Table 2: Lawshe's Critical Values for Content Validity Ratio

| Number of Panelists | Critical Value |

|---|---|

| 5 | 0.99 |

| 6 | 0.99 |

| 7 | 0.99 |

| 8 | 0.75 |

| 9 | 0.78 |

| 10 | 0.62 |

| 11 | 0.59 |

| 12 | 0.56 |

| 20 | 0.42 |

| 30 | 0.33 |

| 40 | 0.29 |

Items with CVR values below the critical value for the corresponding number of experts should be revised or eliminated from the instrument [26].

Content Validity Index (CVI)

To assess the overall content validity of an entire instrument, the Content Validity Index (CVI) is calculated as the average of all CVR scores for items retained after the initial evaluation [26]. The CVI provides a single value representing the overall content validity of the instrument, with values closer to 1.0 indicating stronger content validity [26].
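The screening rule and the CVI can be combined in a short sketch. The critical values are copied from Table 2; the item names, the rating counts, and the helper `screen_items` are hypothetical. Panel sizes that are not tabulated raise a `KeyError` rather than being interpolated.

```python
from statistics import mean
from typing import Dict, Optional, Tuple

# Critical values from Table 2 (number of panelists -> minimum CVR).
LAWSHE_CRITICAL = {5: 0.99, 6: 0.99, 7: 0.99, 8: 0.75, 9: 0.78, 10: 0.62,
                   11: 0.59, 12: 0.56, 20: 0.42, 30: 0.33, 40: 0.29}


def cvr(n_essential: int, n_panelists: int) -> float:
    half = n_panelists / 2
    return (n_essential - half) / half


def screen_items(
    essential_counts: Dict[str, int], n_panelists: int
) -> Tuple[Dict[str, float], Optional[float]]:
    """Keep items whose CVR meets the critical value; CVI is their mean CVR."""
    critical = LAWSHE_CRITICAL[n_panelists]  # KeyError if size is not tabulated
    scores = {item: cvr(n, n_panelists) for item, n in essential_counts.items()}
    retained = {item: s for item, s in scores.items() if s >= critical}
    cvi = mean(list(retained.values())) if retained else None
    return retained, cvi


# Hypothetical "essential" counts from a 10-member panel.
counts = {"item_1": 10, "item_2": 9, "item_3": 8, "item_4": 6}
retained, cvi = screen_items(counts, n_panelists=10)
print(retained, cvi)
```

With ten panelists the critical value is 0.62, so in this invented example only the first two items are retained and the CVI is the mean of their CVR scores.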

Diagram 1: Content Validity Ratio Assessment Workflow

Cronbach's Alpha: Methodology and Interpretation

Theoretical Basis and Calculation

Cronbach's Alpha, developed by Lee Cronbach in 1951, is the most widely used measure of internal consistency reliability [29]. It assesses the extent to which items in an instrument measure the same underlying construct, based on the average inter-item correlations and the number of items [30] [32]. In cancer surveillance research, this is particularly important for ensuring that multi-item scales designed to measure complex constructs like healthcare quality or patient-centered communication produce consistent results.

The formula for Cronbach's Alpha is:

α = (k / (k-1)) * (1 - (∑σ²ᵢ / σ²ₜ))

Where:

- k = number of items

- ∑σ²ᵢ = sum of variances of each item

- σ²ₜ = variance of the total test scores [32]

Alternatively, it can be expressed as:

α = (k * c̄) / (v̄ + (k-1) * c̄)

Where:

- k = number of items

- c̄ = average covariance between item pairs

- v̄ = average variance of items [30] [32]

Interpretation Guidelines

Interpretation of Cronbach's Alpha values follows generally accepted guidelines, though context should be considered:

Table 3: Interpreting Cronbach's Alpha Values

| Alpha Coefficient | Interpretation | Recommendation |

|---|---|---|

| α < 0.5 | Unacceptable | Substantive revision required |

| 0.5 ≤ α < 0.6 | Poor | Consider revision |

| 0.6 ≤ α < 0.7 | Questionable | May be acceptable for exploratory research |

| 0.7 ≤ α < 0.8 | Acceptable | Suitable for applied research |

| 0.8 ≤ α < 0.9 | Good | Appropriate for high-stakes decisions |

| α ≥ 0.9 | Excellent | Possible item redundancy [29] [30] [32] |

It's important to note that alpha is sensitive to the number of items in the scale—adding more items tends to increase alpha, potentially leading to inflated values when items are redundant [29] [33]. Conversely, scales with too few items may underestimate reliability [29].
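The dependence on scale length can be made concrete with the standardized form of alpha, which follows from the covariance expression when every item has unit variance, so that v̄ = 1 and c̄ equals the mean inter-item correlation r̄. The sketch below holds r̄ fixed at a hypothetical 0.3 and varies only the number of items; the function name is illustrative.

```python
def standardized_alpha(k: int, mean_r: float) -> float:
    """Standardized alpha: k * r_bar / (1 + (k - 1) * r_bar)."""
    if k < 2:
        raise ValueError("alpha requires at least two items")
    return k * mean_r / (1 + (k - 1) * mean_r)


# Same average inter-item correlation, increasing scale length.
alphas = {k: standardized_alpha(k, 0.3) for k in (3, 5, 10, 20)}
for k, a in alphas.items():
    print(f"k = {k:2d} items -> alpha = {a:.3f}")
```

With the same modest inter-item correlation, alpha moves from the "poor" band at three items into the "good" band at ten, which is why a high alpha on a long scale should not be read as evidence of strong item coherence on its own.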

Limitations and Misconceptions

Despite its widespread use, several limitations and misconceptions surround Cronbach's Alpha:

- Not a Measure of Unidimensionality: A high alpha does not prove that a scale measures a single construct; multidimensional scales can also produce high alpha values [30] [32]

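- Assumption of Tau-Equivalence: Alpha assumes all items measure the same underlying construct on the same scale with equal true-score loadings, which may not always hold true [29]; when the assumption fails, alpha generally underestimates reliability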

- Context-Dependent: Alpha is a property of scores from a specific sample, not the test itself, and should be calculated each time the test is administered [29]

- Potential for Misinterpretation: Very high alpha values (>0.95) may indicate redundant items rather than excellent reliability [29]

Diagram 2: Cronbach's Alpha Assessment Workflow

Comparative Application in Cancer Surveillance Research

Practical Implementation in Epidemiological Studies

In cancer surveillance research, CVR and Cronbach's Alpha play complementary but distinct roles throughout the instrument development and validation process. A recent systematic review aimed at developing a comprehensive framework for cancer surveillance systems demonstrated this integrated approach, where a researcher-designed checklist consolidating essential data elements was "validated through expert consultation with a response rate of 82% (n = 14), achieving high reliability (Cronbach's alpha = 0.849)" [27]. This exemplifies how both content validity and internal consistency reliability are established in rigorous instrument development.

Similarly, in the development of a GIS-integrated cancer surveillance system, researchers reported that the system "incorporated critical data elements validated with CVR (> 0.51) and Cronbach's alpha (0.849)" [19]. This demonstrates the sequential application of these metrics—first establishing content validity through CVR, then assessing internal consistency through Cronbach's Alpha.

Comparative Strengths and Limitations

Table 4: Direct Comparison of CVR and Cronbach's Alpha in Research Context

| Aspect | Content Validity Ratio (CVR) | Cronbach's Alpha |

|---|---|---|

| Primary Research Question | "Do these items adequately represent the construct domain?" | "Do these items consistently measure the same construct?" |

| Stage of Application | Early content development phase | Later validation phase |

| Data Source | Expert judgments | Participant responses |

| Resource Requirements | Access to subject matter experts | Access to target population sample |

| Key Strengths | Ensures comprehensive content coverage; Identifies redundant or missing content | Quantifies measurement consistency; Assesses scale coherence |

| Key Limitations | Dependent on expert selection; Does not assess actual performance | Does not ensure content validity; Sensitive to number of items |

| Complementary Role | Establishes foundational validity | Confirms measurement reliability |

Integrated Validation Framework

For comprehensive instrument validation in cancer surveillance research, CVR and Cronbach's Alpha should be employed sequentially within a broader validation framework:

- Construct Definition: Clearly define the epidemiological construct (e.g., cancer burden, healthcare quality, surveillance completeness)

- Content Validation (CVR): Engage subject matter experts to evaluate item relevance and representativeness

- Instrument Refinement: Revise based on CVR results to ensure content validity

- Reliability Assessment (Cronbach's Alpha): Administer to pilot sample to assess internal consistency

- Dimensionality Analysis: Conduct factor analysis to verify underlying structure

- Final Validation: Establish criterion and construct validity through hypothesis testing

This integrated approach ensures that cancer surveillance instruments are both comprehensive in their content coverage and consistent in their measurement properties.
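The two statistics at the centre of this framework can be computed in a few lines. The following is a minimal sketch, assuming Lawshe's standard formula CVR = (n_e - N/2) / (N/2) and the usual variance-based form of Cronbach's alpha; the function names and the toy data are illustrative and do not come from the cited studies.

```python
from statistics import variance


def content_validity_ratio(n_essential: int, n_experts: int) -> float:
    """Lawshe's CVR = (n_e - N/2) / (N/2), ranging from -1 to +1.

    n_essential: experts who rated the item "essential" (n_e)
    n_experts:   total number of experts on the panel (N)
    """
    if n_experts <= 0 or not 0 <= n_essential <= n_experts:
        raise ValueError("need 0 <= n_essential <= n_experts and n_experts > 0")
    half = n_experts / 2
    return (n_essential - half) / half


def cronbach_alpha(responses: list[list[float]]) -> float:
    """alpha = k/(k-1) * (1 - sum(item variances) / variance(total scores)).

    responses: one row per respondent, one column per item.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least 2 items and 2 respondents")
    item_columns = list(zip(*responses))
    item_variance_sum = sum(variance(col) for col in item_columns)
    total_variance = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


# Illustrative use: keep an item only if its CVR clears the chosen cutoff.
# The 0.51 cutoff is the one quoted in the text; Lawshe's critical value
# depends on panel size, so treat it as a parameter rather than a constant.
CVR_CUTOFF = 0.51
keep_item = content_validity_ratio(n_essential=12, n_experts=14) > CVR_CUTOFF
```

Keeping the cutoff as a parameter makes the content-validation step reusable across panels of different sizes, while the alpha function can be applied unchanged to the pilot-sample responses collected in the reliability step.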

Essential Research Reagents and Materials

Table 5: Essential Research Reagents for Validation Studies

| Resource Category | Specific Examples | Research Function |

|---|---|---|

| Expert Panel Resources | Subject Matter Experts (SMEs) in oncology, epidemiology, public health; Lay experts from target population | Provide essential judgments for content validity assessment (CVR) |

| Statistical Analysis Software | SPSS, Stata, R (with packages such as psy and psych), Python | Facilitate statistical analysis including Cronbach's Alpha calculation |

| Validation Protocols | Lawshe's CVR protocol; Factor analysis procedures; Cognitive interviewing guides | Standardize implementation of validation methodologies |

| Reference Standards | ICD-O-3 classification; Standard populations (Segi, WHO); Epidemiological guidelines | Ensure alignment with established classification and reporting systems |

| Sample Populations | Pilot participants representing target demographic and clinical characteristics | Provide data for reliability testing and instrument refinement |

In the rigorous field of cancer surveillance research, robust validation of measurement instruments is not merely methodological refinement but a scientific necessity. The Content Validity Ratio (CVR) and Cronbach's Alpha offer complementary approaches to establishing different aspects of measurement quality—CVR ensuring comprehensive content coverage and relevance, and Cronbach's Alpha confirming internal consistency and reliability. Rather than viewing these metrics as alternatives, researchers should employ them sequentially within an integrated validation framework.

The application of these techniques in recent cancer surveillance research demonstrates their practical utility in developing standardized epidemiological indicators [27] [19]. By systematically implementing both CVR and Cronbach's Alpha throughout the instrument development process, researchers can create measurement tools that are both comprehensive in their coverage of complex cancer-related constructs and consistent in their measurement properties. This rigorous approach to validation strengthens the scientific foundation of cancer surveillance systems, ultimately enhancing the quality of data that informs public health decision-making and cancer control strategies globally.

The escalating global burden of cancer necessitates a transformation in public health surveillance, moving from static reporting to dynamic, predictive systems capable of informing targeted interventions. Robust cancer surveillance systems (CSS) are indispensable for tracking epidemiological trends, allocating resources, and guiding evidence-based cancer control policies [19] [10]. However, traditional systems often lack on-demand analytics, spatial visualization, and predictive modeling, limiting their utility in addressing critical healthcare disparities [19]. The integration of Geographic Information Systems (GIS) mapping and predictive modeling represents a paradigm shift, enabling a more nuanced understanding of cancer patterns and their underlying drivers. This guide objectively compares the performance of various technological approaches and methodological frameworks employed in modern cancer surveillance, providing researchers and drug development professionals with validated experimental data and protocols to advance the field of epidemiological indicator validation.

Comparative Evaluation of Predictive Modeling Approaches

Performance Metrics of Spatial Prediction Models

Selecting an appropriate predictive model is crucial for accurate cancer surveillance and risk mapping. The following table summarizes the performance of different machine learning models as evaluated in recent spatial epidemiological studies.

Table 1: Performance Comparison of Machine Learning Models in Cancer Spatial Prediction

| Model Name | Application Context | Performance Metrics | Key Strengths | Reference Study |

|---|---|---|---|---|

| Random Forest (RF) | Predicting Cholangiocarcinoma (CCA) Age-Standardized Rates (ASR) in Thailand | Training R² = 72.07%; Testing R² = 71.66% | Superior overall prediction performance, handled non-linear relationships well | [34] |

| Random Forest (RF) | Analyzing geospatial & socioeconomic disparities in US breast cancer screening | R² = 64.53%; RMSE = 2.06 | Outperformed Linear Regression and Support Vector Machine models | [35] |

| Extreme Gradient Boosting (XGBoost) | Predicting Cholangiocarcinoma (CCA) ASR in Thailand (regional variation) | Best performance in central and southern regions of Thailand | Regional variation in performance; excelled in specific geographical contexts | [34] |

| Linear Regression | Predicting Cholangiocarcinoma (CCA) ASR in Thailand (baseline comparison) | Lower performance compared to tree-based models | Served as a baseline; assumes linear relationships between variables | [34] |

Methodologies for Model Development and Evaluation

The experimental protocols for developing and validating these models are critical for ensuring reproducible results.

Data Preparation and Preprocessing

- Data Splitting: Models were typically trained and tested on a 70:30 or 75:25 train-test split to evaluate generalization performance [35] [34].

- Handling Missing Data: For the breast cancer screening study, census tracts with missing mammography data were excluded. Missing independent variables were imputed using the mean (for numerical data) or mode (for binary data) from the 20 closest neighboring records [35].

- Variable Transformation: In the CCA study, variables with skewed distributions were log-transformed before being passed to the machine learning models [34].
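The preprocessing steps listed above can be sketched as follows. This is a minimal illustration under stated assumptions, not the cited studies' code: "closest" is taken to mean Euclidean distance between hypothetical `x`/`y` coordinates, `log1p` is used instead of a plain logarithm so that zero values are handled, and all function and field names are invented for the example.

```python
import math
import random
from statistics import mean, mode


def impute_from_neighbors(records, field, k=20, binary=False):
    """Fill missing (None) values of `field` from the k nearest observed records.

    Uses the neighbours' mean for numerical fields and their mode for binary
    fields. Distance is Euclidean on the assumed "x" and "y" keys.
    """
    observed = [r for r in records if r[field] is not None]
    if not observed:
        raise ValueError(f"no observed values for {field!r}")
    filled = []
    for rec in records:
        new = dict(rec)  # copy, so the input records are left untouched
        if rec[field] is None:
            nearest = sorted(
                observed,
                key=lambda o: math.dist((rec["x"], rec["y"]), (o["x"], o["y"])),
            )[:k]
            values = [o[field] for o in nearest]
            new[field] = mode(values) if binary else mean(values)
        filled.append(new)
    return filled


def log_transform(values):
    """Reduce right skew; log1p (an assumption here) maps 0 to 0."""
    return [math.log1p(v) for v in values]


def train_test_split(records, test_fraction=0.30, seed=0):
    """Shuffle reproducibly and return (train, test), e.g. a 70:30 split."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]
```

Setting `test_fraction=0.25` gives the 75:25 variant. Imputation is applied per field, so numerical and binary predictors can be filled in separate calls with the appropriate `binary` flag.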

Model Training and Validation

- Hyperparameter Tuning: A systematic hyperparameter search was conducted for the Random Forest model in the breast cancer study. A predefined grid of values for the number of trees and variables per split was explored [35].

- Cross-Validation: Five-fold cross-validation was used to score each combination of hyperparameters and to select the configuration that minimized the Root Mean Squared Error (RMSE) [35].

- Variable Importance Analysis: Shapley Additive Explanations (SHAP) values were used in the breast cancer screening study to assess the significance of variables and the direction of their influence, moving beyond simple predictive accuracy to model interpretability [35].
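The tuning loop described above (a predefined grid, 5-fold cross-validation, selection by lowest RMSE) can be sketched with the standard library alone. To keep the example self-contained, a tiny nearest-neighbour regressor stands in for the Random Forest used in the cited study, and its single hyperparameter `n_neighbors` stands in for the number of trees and variables per split; only the search procedure itself is meant to carry over.

```python
import math
from statistics import mean


def rmse(y_true, y_pred):
    """Root Mean Squared Error."""
    return math.sqrt(mean((t - p) ** 2 for t, p in zip(y_true, y_pred)))


def knn_predict(train_x, train_y, x, n_neighbors):
    """Stand-in model: average the targets of the closest training points."""
    order = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
    return mean(train_y[i] for i in order[:n_neighbors])


def k_fold_indices(n, k=5):
    """Yield (train_idx, valid_idx); fold j holds out indices j, j+k, j+2k, ..."""
    for j in range(k):
        valid = list(range(j, n, k))
        held_out = set(valid)
        train = [i for i in range(n) if i not in held_out]
        yield train, valid


def grid_search_cv(xs, ys, grid, k=5):
    """Return (best_value, {value: mean cross-validated RMSE}) over the grid."""
    scores = {}
    for n_neighbors in grid:
        fold_scores = []
        for train, valid in k_fold_indices(len(xs), k):
            tx = [xs[i] for i in train]
            ty = [ys[i] for i in train]
            preds = [knn_predict(tx, ty, xs[i], n_neighbors) for i in valid]
            fold_scores.append(rmse([ys[i] for i in valid], preds))
        scores[n_neighbors] = mean(fold_scores)
    best = min(scores, key=scores.get)  # configuration with the lowest RMSE
    return best, scores
```

With a real model, only `knn_predict` and the grid change: each grid entry becomes a combination of hyperparameters, and the fold loop and RMSE-based selection stay the same. Cross-validation should be run on the training portion only, so that the held-out test set remains untouched for the final performance estimate.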

A Framework for Advanced Cancer Surveillance Systems

Essential Data Elements and Standardization

A comprehensive, validated framework is foundational for any CSS integrating advanced capabilities. A systematic review and comparative evaluation of 13 international systems identified critical, standardized data elements required for a robust CSS [19] [10].

Table 2: Standardized Data Framework for Advanced Cancer Surveillance

| Category | Specific Data Elements | Standardization & Function |

|---|---|---|

| Core Epidemiological Indicators | Incidence, Prevalence, Mortality, Survival Rates | Tracks burden and outcomes; enables trend analysis. |

| Disability-Adjusted Measures | Years Lived with Disability (YLD), Years of Life Lost (YLL) | Captures societal and economic impact of cancer. |

| Demographic Stratification | Age, Sex, Geographic Location | Enables identification of disparities and targeted interventions. |

| Cancer Classification | ICD-O-3 morphology and topography codes | Ensures precision, consistency, and global comparability. |

| Age-Standardized Rates (ASR) | Uses Segi, WHO, or national standard populations | Allows for valid cross-regional and temporal comparisons. |

This framework, validated with high reliability (Cronbach’s alpha = 0.849) and expert consensus (Content Validity Ratio > 0.51), ensures data consistency and interoperability, which are vital for multi-site research and drug development trials [19] [10].
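To make the role of the standard population concrete, the sketch below computes a directly age-standardized rate as the weighted average of age-specific rates, ASR = Σ(w_i · r_i) / Σ(w_i). The two age bands and their weights are toy values chosen for the example; they are not the Segi or WHO weights, and the function name is illustrative.

```python
def age_standardized_rate(cases, person_years, standard_weights, per=100_000):
    """Direct standardization: weighted average of age-specific rates.

    cases[i] / person_years[i] is the rate in age band i;
    standard_weights[i] is that band's share of the standard population.
    """
    if not (len(cases) == len(person_years) == len(standard_weights)):
        raise ValueError("all inputs must cover the same age bands")
    weighted = sum(
        w * (c / py) for c, py, w in zip(cases, person_years, standard_weights)
    )
    return per * weighted / sum(standard_weights)


def crude_rate(cases, person_years, per=100_000):
    """Unadjusted rate, which depends on the population's age structure."""
    return per * sum(cases) / sum(person_years)


# Toy example: two regions with identical age-specific rates but different
# age structures (region B is older). Weights are illustrative only.
toy_weights = [3, 1]                      # younger band, older band
region_a = ([10, 50], [100_000, 100_000])
region_b = ([5, 100], [50_000, 200_000])
```

In this toy setup the crude rates of the two regions differ because region B has more person-years in the older band, while their age-standardized rates coincide. That is the property that makes ASRs suitable for cross-regional and temporal comparison.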

System Architecture and Implementation

The technological implementation of an advanced CSS requires a modular and scalable architecture. One exemplar system was built using Django (a Python-based back-end framework) and Vue.js (a front-end JavaScript framework), creating a responsive platform capable of handling over 20 million records [19]. The design process utilized Unified Modeling Language (UML) for data flow, use-case, sequence, and activity diagrams to ensure robust data integration and intuitive user workflows. An Application Programming Interface (API) was implemented for seamless data exchange, and Role-Based Access Control (RBAC) was defined to manage different user permissions [19]. In a usability evaluation based on Nielsen's heuristics, 85% of the identified issues were resolved, supporting the system's functionality and user satisfaction [19].
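The idea behind RBAC can be illustrated in a framework-independent way. The sketch below is hypothetical: the role names, permission names, and the `is_allowed` helper are invented for illustration and do not describe the cited system's actual roles or its Django implementation.

```python
from enum import Enum


class Permission(Enum):
    # Hypothetical permissions for a surveillance platform.
    VIEW_AGGREGATES = "view_aggregates"
    RUN_ANALYTICS = "run_analytics"
    EDIT_RECORDS = "edit_records"
    MANAGE_USERS = "manage_users"


# Hypothetical roles; each maps to the set of permissions it grants.
ROLE_PERMISSIONS = {
    "viewer": {Permission.VIEW_AGGREGATES},
    "analyst": {Permission.VIEW_AGGREGATES, Permission.RUN_ANALYTICS},
    "registrar": {Permission.VIEW_AGGREGATES, Permission.EDIT_RECORDS},
    "admin": set(Permission),
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: unknown roles receive no permissions."""
    return permission in ROLE_PERMISSIONS.get(role, set())
```

Centralizing the role-to-permission mapping in one table means that an API endpoint only needs to call a single check, and access rules can be audited without reading the endpoint code.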

Implementing GIS and predictive modeling in cancer research requires a suite of methodological tools and data resources.

Table 3: Essential Research Reagent Solutions for GeoAI and Predictive Modeling

| Tool/Resource | Category | Primary Function |

|---|---|---|

| ICD-O-3 Coding | Data Standardization | Standardized classification of cancer morphology and topography for consistent data aggregation and international comparison. |

| UML Diagrams | System Design | Visualizes system architecture, data flows, and user interactions during the CSS design phase to ensure robustness. |

| Random Forest / XGBoost | Predictive Analytics | Machine learning algorithms for predicting cancer incidence, screening rates, and identifying high-risk spatial clusters. |

| Getis-Ord Gi* Statistic | Spatial Analysis | Identifies statistically significant hotspots and coldspots of cancer incidence or screening rates from geospatial data. |

| Shapley Additive Explanations (SHAP) | Model Interpretation | Explains the output of machine learning models, showing how each input variable contributes to the prediction. |

| Django & Vue.js | Software Development | Frameworks for building scalable, modular web applications for surveillance systems with real-time analytics. |

| Behavioral Risk Factor Surveillance System (BRFSS) | Data Source | Provides population-level data on health behaviors, including cancer screening uptake, used as model input. |

Workflow and System Integration

The integration of diverse data sources and analytical components into a cohesive surveillance system follows a structured workflow. The diagram below illustrates the logical pathway from data collection to actionable public health insights.

Figure: Surveillance System Workflow

This workflow underpins advanced surveillance platforms. For instance, a GIS-integrated CSS in Iran demonstrated the capability for on-demand monitoring, spatial analysis, and risk factor evaluation, forecasting cancer trends over 5-, 10-, and 20-year horizons [19]. Similarly, a US study used this logical flow to first process data, then perform spatial clustering to identify low-screening regions in the Midwest, and finally use a Random Forest model to identify key predictive variables like the percentage of the Black population and the number of nearby mammography facilities [35]. This end-to-end integration bridges the gap between raw data and evidence-based intervention strategies.
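The spatial clustering step in such a workflow is commonly carried out with a hot spot statistic. The sketch below computes the Getis-Ord Gi* z-score for each area from a list of values and a spatial weights matrix, using the standard formula. The weights matrix layout (row `i` holds the weights of every area relative to area `i`, including `i` itself) and the function name are assumptions made for the example, not details taken from the cited studies.

```python
import math


def getis_ord_gi_star(values, weights):
    """Return the Gi* z-score for each area.

    values:  list of n observations (e.g. screening rates per tract)
    weights: n x n matrix; weights[i][j] is the spatial weight between
             areas i and j, with the diagonal (i == j) included.
    """
    n = len(values)
    x_bar = sum(values) / n
    s = math.sqrt(sum(v * v for v in values) / n - x_bar ** 2)
    if s == 0:
        raise ValueError("values are constant; Gi* is undefined")
    z_scores = []
    for i in range(n):
        row = weights[i]
        sum_w = sum(row)
        sum_w2 = sum(w * w for w in row)
        numerator = sum(w * x for w, x in zip(row, values)) - x_bar * sum_w
        denominator = s * math.sqrt((n * sum_w2 - sum_w ** 2) / (n - 1))
        # A zero denominator occurs when an area neighbours every area with
        # equal weight; report 0.0 (no local deviation) in that case.
        z_scores.append(numerator / denominator if denominator else 0.0)
    return z_scores


def contiguity_weights_1d(n):
    """Toy binary weights for n areas on a line: self plus adjacent areas."""
    return [[1 if abs(i - j) <= 1 else 0 for j in range(n)] for i in range(n)]
```

Large positive z-scores mark clusters of high values (hotspots) and large negative z-scores mark clusters of low values (coldspots). In practice the scores are compared against normal critical values, usually with a correction for multiple testing.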

Leveraging Real-Time Data Extraction from Electronic Health Records (EHRs)

The escalating global burden of cancer necessitates advanced surveillance methodologies capable of leveraging the vast data resources contained within Electronic Health Records (EHRs). Traditional cancer registry systems often operate with significant time lags, limiting their utility for real-time public health intervention and clinical research [19]. The emergence of sophisticated data extraction technologies, including automated harmonization systems and artificial intelligence (AI), is transforming EHRs from static digital repositories into dynamic sources of real-world evidence. This guide objectively compares the performance of contemporary real-time EHR data extraction systems and their validation within cancer surveillance research, providing researchers, scientists, and drug development professionals with a critical analysis of technological alternatives and their experimental underpinnings.

Performance Comparison of EHR Data Extraction Systems

The evaluation of systems designed for EHR data extraction reveals significant variations in architectural approach, technological implementation, and performance metrics. The table below provides a structured comparison of contemporary solutions based on recent validation studies.

Table 1: Performance Comparison of Real-Time EHR Data Extraction and Harmonization Systems

| System / Approach | Primary Technology | Key Validation Metric | Performance Outcome | Cancer Types Validated |

|---|---|---|---|---|

| Datagateway (NCR) [36] | Automated ETL, Common Data Model | Diagnosis Concordance | 100% | Acute Myeloid Leukemia, Multiple Myeloma, Lung Cancer, Breast Cancer |

| | | New Diagnosis Accuracy | 95% | |

| | | Treatment Regimen Accuracy | >95% | |

| Flatiron Health LLM [37] | Large Language Model (Anthropic Claude) | F1 Score (Progression Event Extraction) | Similar to Expert Human Abstractors | 14 Cancer Types |

| | | Real-world Progression-Free Survival Estimate Concordance | Nearly Identical to Manual Abstraction | |