Validating TME-Related Gene Signatures: From Biomarker Discovery to Clinical Application in Cancer


Abstract

This comprehensive review explores the evolving landscape of tumor microenvironment (TME) gene signature validation for cancer research and therapeutic development. We examine foundational concepts of TME heterogeneity across cancer types including NSCLC, cholangiocarcinoma, gastric cancer, and osteosarcoma, then detail methodological frameworks integrating multi-omics data, machine learning, and spatial transcriptomics for signature development. The article addresses critical troubleshooting aspects including batch effect correction, feature selection challenges, and model overfitting, while providing rigorous validation frameworks encompassing external cohort testing, clinical correlation analysis, and comparative performance assessment against established biomarkers. This resource equips researchers and drug development professionals with validated approaches for translating TME signatures into reliable prognostic tools and predictive biomarkers for immunotherapy response.

Decoding the Tumor Microenvironment: Cellular Heterogeneity and Prognostic Significance

This technical support center provides resources for researchers validating Tumor Microenvironment (TME)-related gene signatures. The TME is a complex ecosystem of cancerous and non-cancerous cells that evolves throughout cancer progression and critically influences tumor behavior, metastasis, and therapy response [1]. Accurately defining its components—immune cells, stromal elements, and vascular networks—is foundational for building robust prognostic and predictive molecular models [2].

Core TME Components: Definitions and Functions

The TME consists of cellular and non-cellular components that interact dynamically with tumor cells. Its composition varies by tumor type, stage, and patient characteristics [1].

- Diagram: Core Composition of the Tumor Microenvironment (TME)

Immune Cells

Immune cells within the TME exhibit a functional dichotomy, capable of both suppressing and promoting tumor growth [3]. Their spatial distribution defines critical tumor immunophenotypes: immune-inflamed (cells infiltrated throughout), immune-excluded (cells trapped at the periphery), and immune-desert (minimal to no infiltration) [3] [4].

Key Immune Cell Types and Functions:

- Cytotoxic T-cells (CD8+): Recognize and kill tumor cells; their presence is generally associated with a favorable prognosis [3].

- Regulatory T-cells (Tregs): Suppress anti-tumor immune responses, promoting tumor progression [3] [5].

- Tumor-Associated Macrophages (TAMs): Often polarized to a pro-tumor (M2) state, supporting angiogenesis, matrix remodeling, and immunosuppression. High density frequently correlates with poor prognosis [3] [5].

- Myeloid-Derived Suppressor Cells (MDSCs): Suppress T-cell and NK cell activity, facilitating immune evasion [5].

- B-cells: Can have pro- or anti-tumor roles via antibody production, antigen presentation, and cytokine secretion [3].

- Natural Killer (NK) Cells: Mediate direct killing of tumor cells, though their activity is often suppressed within the core TME [3].

Table 1: Major Immune Cell Populations in the TME

| Cell Type | Key Subsets | Primary Functions in TME | General Prognostic Association |
|---|---|---|---|
| T Lymphocytes | Cytotoxic (CD8+), Helper (CD4+), Regulatory (Treg) | Direct tumor killing, immune coordination, immune suppression | Favorable (CD8+), Variable/Poor (High Treg) [3] [4] |
| B Lymphocytes | Regulatory B cells (Bregs), Plasma cells | Antibody production, antigen presentation, cytokine secretion | Context-dependent (pro- or anti-tumor) [3] |
| Innate Immune Cells | M1/M2 Macrophages, Neutrophils, MDSCs, Dendritic Cells | Phagocytosis, matrix remodeling, angiogenesis, antigen presentation, immune suppression | Often poor (High M2, MDSCs) [3] [5] |
| Natural Killer Cells | Various cytotoxic subsets | Direct tumor cell lysis, cytokine secretion | Favorable [3] |

Stromal Elements

Stromal cells provide structural and functional support to the tumor. They are recruited or co-opted from host tissues and become activated, playing critical roles in tumor progression and therapy resistance [6].

- Cancer-Associated Fibroblasts (CAFs): The most abundant stromal cell type. CAFs are highly heterogeneous and can originate from resident fibroblasts, mesenchymal stem cells, or even endothelial cells [6]. They promote tumor growth by remodeling the extracellular matrix (ECM), secreting pro-tumorigenic cytokines (e.g., CXCL12, IL-6), and inducing therapy resistance [7] [6].

- Mesenchymal Stem Cells (MSCs): Can differentiate into various stromal cells, contribute to the pre-metastatic niche, and modulate immune responses [6] [5].

- Adipocytes: In tumors arising in or invading adipose-rich tissue, they provide metabolic support and secrete hormones and cytokines that promote progression [6] [5].

- Extracellular Matrix (ECM): A non-cellular network of proteins (collagens, fibronectin) and polysaccharides. Tumor ECM is often denser and stiffer due to increased collagen deposition and cross-linking, which activates pro-invasive signaling pathways in cancer cells (e.g., via TWIST1) [7]. This stiffness is a key biophysical property that influences tumor cell behavior [7].

Table 2: Key Stromal Components in the TME

| Component | Origin | Key Functions & Influences | Experimental Markers |
|---|---|---|---|
| CAFs | Resident fibroblasts, MSCs, endothelial cells | ECM remodeling, cytokine secretion, immune modulation, drug resistance | α-SMA, FAP, PDGFR-α/β [6] |
| Mesenchymal Stem Cells (MSCs) | Bone marrow, adipose tissue | Differentiation into stromal cells, immunomodulation, niche formation | CD73, CD90, CD105 [6] |
| Extracellular Matrix (ECM) | Secreted by stromal/cancer cells | Structural scaffold, biophysical cues (stiffness), stores growth factors | Collagen I/III, Fibronectin, Laminin [7] |
| Adipocytes | Adipose tissue | Energy storage, secretion of adipokines and hormones | FABP4, Adiponectin [6] [5] |

Vascular Networks

Tumor blood vessels form to supply oxygen and nutrients. This process, angiogenesis, is driven primarily by hypoxia-inducible factors (HIFs) and by Vascular Endothelial Growth Factor (VEGF) signaling [3].

- Endothelial Cells: Line blood vessels. In tumors, they are often abnormal—leaky, disorganized, and poorly perfused—contributing to hypoxia and high interstitial fluid pressure [3].

- Pericytes: Cells that provide stability to vessel walls. Their coverage is often loose in tumors, exacerbating vessel abnormality [6].

- Lymphatic Vessels: Facilitate immune cell trafficking and can serve as conduits for metastatic spread [5].

The resulting hypoxic and acidic conditions within the TME are potent drivers of immune evasion, genomic instability, and therapy resistance, making hypoxia-related genes critical components of many TME signatures [2] [5].

TME Gene Signature Validation: A Technical Workflow

Gene signatures quantify TME states by measuring the expression of curated gene sets. The validation of such signatures is a multi-step process critical for establishing clinical utility [2].

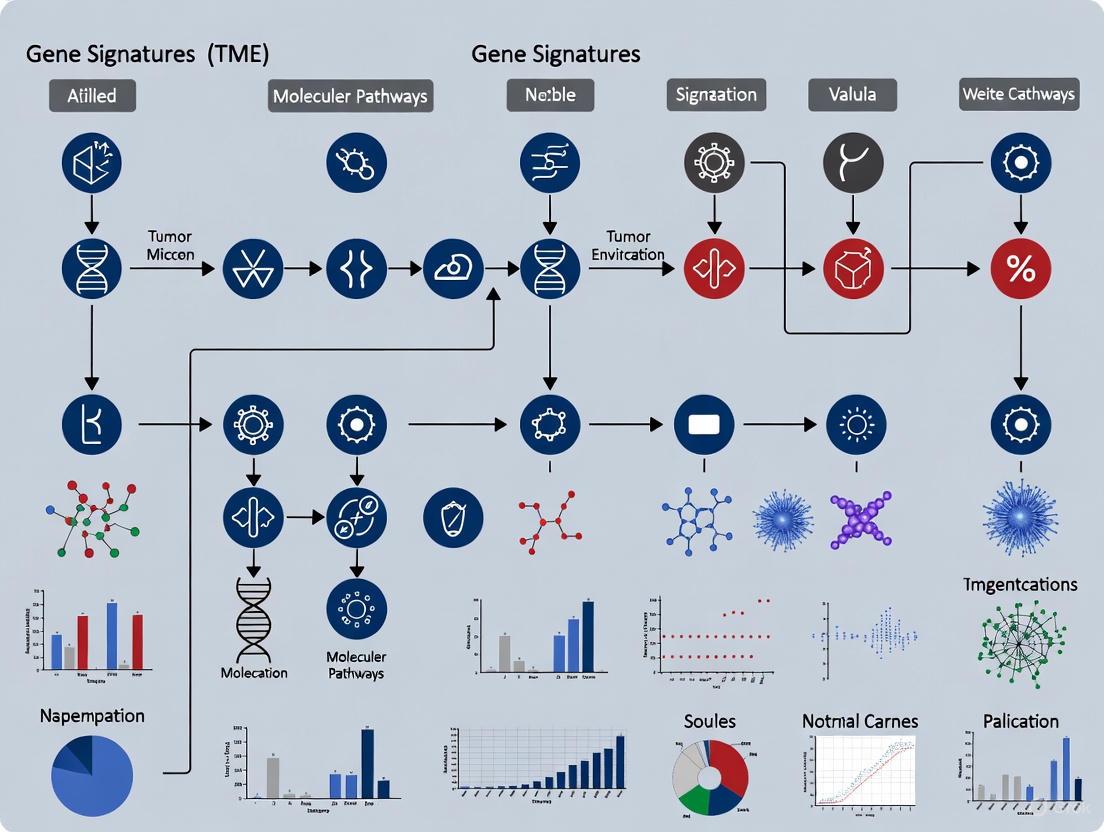

- Diagram: Workflow for Developing & Validating a TME Gene Signature

Detailed Experimental Protocol (Based on a Hypoxia-Immune Signature Study [2]):

- Data Acquisition: Obtain transcriptomic data (RNA-seq or microarray) with matched clinical information (e.g., survival, stage) from public repositories like The Cancer Genome Atlas (TCGA) and Gene Expression Omnibus (GEO). This serves as the training cohort.

- Gene Curation: Compile a candidate gene list related to TME processes of interest (e.g., hypoxia, immune response) from specialized databases such as MSigDB and ImmPort [2].

- Feature Selection: Perform univariate Cox proportional hazards regression to identify genes with significant prognostic value. Refine the list using LASSO (Least Absolute Shrinkage and Selection Operator) regression. LASSO penalizes coefficient size and shrinks the coefficients of uninformative genes to zero, which reduces overfitting and yields a final, compact gene set [2].

- Model Construction: Calculate a risk score for each patient. A common formula is:

$$\text{Risk Score} = \sum_{i=1}^{n} \text{Expression}_i \times \text{Coefficient}_i$$

where the sum runs over the n genes in the signature. Patients are stratified into high-risk and low-risk groups based on the median or an optimal cut-off score.

- Internal Validation: Assess the model's performance on the training cohort using:

- Kaplan-Meier Analysis: Log-rank test to compare survival curves between risk groups.

- Time-Dependent ROC Curves: Evaluate the model's predictive accuracy at 1, 3, and 5 years (e.g., AUC of 0.65 indicates fair predictive power) [2].

- Multivariate Cox Regression: Confirm the risk score is an independent prognostic factor after adjusting for clinical variables like age and stage [2].

- External Validation: Apply the exact same risk score formula and cut-off to an independent validation cohort (e.g., from a different GEO dataset) to verify generalizability.

- Biological Correlation: Use algorithms (e.g., CIBERSORT, ESTIMATE) on the transcriptomic data to infer immune cell infiltration scores. Correlate these with the risk score to confirm the signature captures the intended TME biology (e.g., high-risk score correlates with immunosuppression) [2].
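The model-construction and external-validation steps above can be sketched in Python. This is a minimal illustration that rests on three assumptions. First, the gene names and coefficients are hypothetical placeholders, not the published signature. Second, expression values are normalized and comparable across cohorts. Third, the median training score serves as the cut-off. The key point is that the formula and cut-off are frozen on the training cohort before the validation cohort is scored.

```python
from statistics import median

# Hypothetical signature: gene -> regression coefficient (placeholders, not a published model).
COEFFICIENTS = {"GENE_A": 0.42, "GENE_B": 0.18, "GENE_C": -0.35}


def risk_score(expression: dict[str, float], coefficients: dict[str, float] = COEFFICIENTS) -> float:
    """Risk score = sum over signature genes of expression_i * coefficient_i."""
    missing = set(coefficients) - set(expression)
    if missing:
        raise KeyError(f"signature genes missing from sample: {sorted(missing)}")
    return sum(expression[gene] * coef for gene, coef in coefficients.items())


def fit_cutoff(training_cohort: list[dict[str, float]]) -> float:
    """Derive the cut-off from the training cohort only (median risk score)."""
    return median(risk_score(sample) for sample in training_cohort)


def stratify(cohort: list[dict[str, float]], cutoff: float) -> list[str]:
    """Apply the locked formula and cut-off; a score equal to the cut-off counts as low risk."""
    return ["high" if risk_score(sample) > cutoff else "low" for sample in cohort]


training = [
    {"GENE_A": 1.0, "GENE_B": 2.0, "GENE_C": 0.5},
    {"GENE_A": 2.0, "GENE_B": 1.0, "GENE_C": 1.0},
    {"GENE_A": 0.5, "GENE_B": 0.5, "GENE_C": 2.0},
]
cutoff = fit_cutoff(training)  # frozen before the validation cohort is touched
validation = [
    {"GENE_A": 3.0, "GENE_B": 0.0, "GENE_C": 0.0},
    {"GENE_A": 0.0, "GENE_B": 0.0, "GENE_C": 1.0},
]
print(stratify(validation, cutoff))  # ['high', 'low']
```

Raising an error for missing genes is deliberate. Silently scoring a validation sample on a subset of the signature would change the model being validated.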

Table 3: Example Performance Metrics from a Validated TME Signature Study [2]

| Validation Metric | Cohort | Result / Value | Interpretation |
|---|---|---|---|
| Signature Genes | TCGA NSCLC | 8 genes (e.g., AKAP12, SERPINE1, CD79A) | Compact, biologically relevant gene set. |
| Risk Score HR (Multivariate) | TCGA NSCLC | HR = 1.82 (95% CI: 1.44-2.30, P<0.001) | Risk score is a strong, independent prognostic factor. |
| Prediction AUC (1/3/5-year) | TCGA NSCLC | 0.643 / 0.649 / 0.620 | Consistent, fair predictive accuracy over time. |
| Survival Difference (Log-rank P) | TCGA & GEO | P < 0.001 | Highly significant separation of risk groups. |
| Immune Correlation | TCGA NSCLC | High immune activity linked to better survival | Signature reflects immunogenic TME state. |
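The log-rank test used to compare risk groups works by comparing observed and expected event counts at each event time. The standard-library sketch below is for illustration only. In practice an established survival package, such as R's survival package or Python's lifelines, would be used. The follow-up data in the example are hypothetical.

```python
import math


def logrank_test(times_a, events_a, times_b, events_b):
    """Two-group log-rank test. Events are 1 (event observed) or 0 (censored).

    Returns (chi-square statistic, p-value from a chi-square with 1 degree of freedom).
    """
    data = [(t, e, 0) for t, e in zip(times_a, events_a)]
    data += [(t, e, 1) for t, e in zip(times_b, events_b)]
    event_times = sorted({t for t, e, _ in data if e})
    o_minus_e = 0.0  # observed minus expected events in group A
    variance = 0.0
    for t in event_times:
        at_risk = [(ti, e, g) for ti, e, g in data if ti >= t]
        n = len(at_risk)
        n_a = sum(1 for _, _, g in at_risk if g == 0)
        d = sum(1 for ti, e, _ in at_risk if ti == t and e)
        d_a = sum(1 for ti, e, g in at_risk if ti == t and e and g == 0)
        o_minus_e += d_a - d * n_a / n
        if n > 1:
            # Hypergeometric variance of the group-A event count at this time
            variance += d * (n_a / n) * ((n - n_a) / n) * (n - d) / (n - 1)
    if variance == 0:
        raise ValueError("no informative event times; test undefined")
    chi2 = o_minus_e ** 2 / variance
    p_value = math.erfc(math.sqrt(chi2 / 2))  # upper tail of chi-square with 1 df
    return chi2, p_value


# Hypothetical follow-up (months): high-risk patients all fail before low-risk patients.
chi2, p = logrank_test([1, 2, 3, 4, 5], [1, 1, 1, 1, 1], [6, 7, 8, 9, 10], [1, 1, 1, 1, 1])
print(round(chi2, 2), p)
```

The p-value uses the identity that a 1-df chi-square tail equals a two-sided normal tail. This keeps the sketch free of third-party dependencies.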

Technical Support Center: Troubleshooting TME Research

Troubleshooting Guides & FAQs

Q1: Our TME gene signature performs well in the training cohort but fails in the validation cohort. What could be the cause? A: This is often due to batch effects or cohort-specific heterogeneity.

- Solution: Apply batch correction algorithms (e.g., ComBat) before model building, but keep the validation cohort from shaping the model. Harmonize validation data toward the training cohort as a fixed reference batch, rather than fitting the signature on a jointly corrected dataset. Ensure the validation cohort represents a similar patient population (same cancer subtype, stage). Consider using multiple independent cohorts for validation to ensure robustness [2].
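The reference-batch idea can be illustrated with a simplified per-gene location/scale adjustment. This sketch is not ComBat. ComBat additionally shrinks batch parameters with empirical Bayes and can preserve biological covariates. The sketch assumes log-scale expression and purely technical batch differences. The input layout (each gene mapped to a list of per-sample values) is a hypothetical choice.

```python
from statistics import mean, stdev


def align_to_reference(
    reference: dict[str, list[float]], new_batch: dict[str, list[float]]
) -> dict[str, list[float]]:
    """Shift and rescale each gene in new_batch to the reference batch's mean and SD.

    Both inputs map gene -> per-sample expression values (at least two samples each).
    The reference (training) batch is not modified, so a model and cut-off trained
    on it stay fixed while the new cohort is harmonized toward it.
    """
    adjusted: dict[str, list[float]] = {}
    for gene, values in new_batch.items():
        if gene not in reference:
            raise KeyError(f"{gene} is absent from the reference batch")
        ref_mu, ref_sd = mean(reference[gene]), stdev(reference[gene])
        new_mu, new_sd = mean(values), stdev(values)
        if new_sd == 0:
            # Constant gene in the new batch: only the location can be aligned.
            adjusted[gene] = [ref_mu] * len(values)
        else:
            adjusted[gene] = [(v - new_mu) / new_sd * ref_sd + ref_mu for v in values]
    return adjusted


reference = {"G1": [1.0, 2.0, 3.0]}
print(align_to_reference(reference, {"G1": [10.0, 20.0, 30.0]}))
```

The new batch's mean and SD are estimated from the validation samples themselves. As a result, a validation cohort whose case mix genuinely differs from training (e.g., more advanced-stage patients) will have part of that biological difference removed. This is the main caveat of any location/scale correction.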

Q2: How do we account for the spatial heterogeneity of the TME when using bulk RNA-seq data to develop a signature? A: Bulk RNA-seq averages signals across all cells in a sample.

- Solution: Acknowledge this limitation in your study. Use deconvolution algorithms (e.g., CIBERSORTx, MCP-counter) to estimate constituent cell type proportions from bulk data. Whenever feasible, validate key findings using spatial transcriptomics or multiplex immunohistochemistry on a subset of samples to confirm spatial localization [8].

Q3: We are trying to isolate CAFs from tumor tissue, but our cultures seem contaminated with other cell types. How can we improve purity? A: CAFs are highly heterogeneous and lack a single unique marker [6].

- Solution: Use fluorescence-activated cell sorting (FACS) with a combination of positive surface markers (e.g., FAP, PDGFR-β) and negative markers (exclude CD31 for endothelial cells, CD45 for immune cells, EpCAM for epithelial cells). α-SMA is intracellular, so it is better suited to confirming a myofibroblastic phenotype after sorting than to live-cell sorting. Employ serial passaging, as CAFs often outgrow other stromal cells over time, but be aware this may alter their phenotype [6].
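The positive/negative gating logic described above can be written as a simple rule applied to each cytometry event. This is a sketch only. The thresholds and the dictionary-per-event layout are hypothetical, and real gates would be set from fluorescence-minus-one (FMO) or isotype controls.

```python
# Hypothetical gate thresholds in arbitrary fluorescence units.
THRESHOLDS = {"FAP": 1000.0, "PDGFRB": 1000.0, "CD31": 500.0, "CD45": 500.0, "EPCAM": 500.0}
NEGATIVE_MARKERS = ("CD31", "CD45", "EPCAM")  # endothelial, immune, epithelial lineages


def is_candidate_caf(event: dict[str, float], thresholds: dict[str, float] = THRESHOLDS) -> bool:
    """Lineage-negative and positive for at least one surface CAF marker (FAP or PDGFR-beta)."""
    caf_positive = event["FAP"] > thresholds["FAP"] or event["PDGFRB"] > thresholds["PDGFRB"]
    lineage_negative = all(event[m] <= thresholds[m] for m in NEGATIVE_MARKERS)
    return caf_positive and lineage_negative


def gate(events: list[dict[str, float]]) -> list[dict[str, float]]:
    """Keep only events that pass the CAF gate."""
    return [e for e in events if is_candidate_caf(e)]
```

The "either marker" rule is a design choice meant to retain heterogeneous CAF subsets. Requiring both FAP and PDGFR-β would raise purity at the cost of losing some subsets.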

Q4: When analyzing immune checkpoint inhibitor (ICI) response, is tissue-based PD-L1 testing sufficient as a biomarker? A: PD-L1 expression on tumor tissue has limitations, including heterogeneity and dynamic change during therapy [9].

- Solution: Adopt an integrated biomarker approach. Complement tissue PD-L1 with:

- Peripheral blood biomarkers: e.g., dynamic changes in absolute lymphocyte count (ALC), myeloid-derived suppressor cells (MDSCs), or soluble checkpoint proteins, which offer real-time monitoring [9] [4].

- Tumor Mutational Burden (TMB) and gene expression signatures of T-cell inflammation.

- Computational models that integrate multiple data streams to predict response [8].

Q5: How can we functionally validate that a specific TME component (e.g., a CAF subset) is responsible for a phenotype predicted by our gene signature? A: Move from correlation to causation using co-culture or in vivo models.

- Solution: Isolate the cell population of interest (e.g., FAP+ CAFs). In 2D or 3D co-culture with tumor cells, assess changes in tumor cell proliferation, invasion, or drug sensitivity. For in vivo validation, use xenograft models where tumor cells are injected alone or mixed with the candidate stromal cells, and measure tumor growth and metastasis.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents for TME Component Analysis

| Research Goal | Key Reagents & Tools | Primary Function | Considerations |
|---|---|---|---|
| Immune Cell Profiling | Fluorescent-conjugated antibodies (CD45, CD3, CD8, CD4, FOXP3, CD68, CD163), CIBERSORTx software | Identify, quantify, and spatially resolve immune cell subsets via flow cytometry or IHC. | Panel design must account for spectral overlap. Deconvolution tools require a validated reference signature [3] [4]. |
| CAF Isolation & Study | Antibodies for FACS (FAP, PDGFR-β) and intracellular staining (α-SMA), Recombinant TGF-β, Collagen I-coated plates | Isolate CAFs, activate fibroblast-to-CAF differentiation in vitro, mimic stiff ECM conditions. | CAF markers are context-dependent; use combinations. α-SMA staining requires fixation and permeabilization. TGF-β is a key driver of CAF activation [6]. |
| Hypoxia Modeling | Hypoxia chambers or chamber kits, Cobalt Chloride (CoCl₂), Dimethyloxalylglycine (DMOG), HIF-1α antibodies | Induce and stabilize HIF-1α in vitro to study hypoxia-driven gene expression and pathways. | Chemical inducers (CoCl₂) may have off-target effects. Physiological hypoxia (low O₂ chamber) is preferred [2]. |
| Extracellular Matrix Analysis | Collagen I/III antibodies, Masson's Trichrome Stain, recombinant MMPs, TGF-β inhibitors | Visualize and quantify ECM deposition and remodeling (fibrosis). Modulate ECM stiffness and turnover. | Trichrome staining provides a broad measure of collagen. Antibodies allow for specific isoform analysis [7]. |
| Angiogenesis Assay | Recombinant VEGF, Matrigel, Tube formation assay kits, CD31 antibodies | Stimulate vessel growth, provide a basement membrane matrix for in vitro tube formation, label endothelial cells. | Matrigel is a complex, tumor-derived mixture. Factor-reduced versions are available for specific studies [3]. |
| Spatial Transcriptomics | GeoMx (NanoString) or Visium (10x Genomics) platforms, PanCK/CD45/other morphology markers | Preserve spatial context while obtaining transcriptome data from specific tissue regions or cell populations. | High cost. Requires specialized expertise and analysis pipelines. Ideal for validating bulk RNA-seq findings [8]. |

Advanced Considerations: Heterogeneity and Modeling

- Diagram: Heterogeneity and Interactions in the CAF Population

TME Heterogeneity: Beyond inter-patient differences, there is significant intra-tumor spatial heterogeneity. For example, CAFs exist in multiple functional subtypes (e.g., myofibroblastic (myCAFs), inflammatory (iCAFs)), each with distinct roles [6]. Similarly, immune cell densities and types can vary radically between the tumor core, invasive margin, and tertiary lymphoid structures [4]. This heterogeneity is a major challenge for biomarker development and necessitates technologies that preserve spatial information [9] [8].

Computational Integration: The future of TME signature validation lies in integrating multi-omics data with advanced computational models. Agent-based models (ABMs) and hybrid AI-mechanistic models can simulate the dynamic interactions within the TME, generating testable hypotheses about therapy response and resistance [8]. The concept of creating patient-specific "digital twins" using these models represents a cutting-edge approach for personalized therapy prediction [8]. Validating a gene signature by showing its output aligns with the predictions of such a biologically grounded model adds a powerful layer of confirmation.

Within the framework of validating Tumor Microenvironment (TME)-related gene signatures, a central technical challenge is the profound biological heterogeneity observed across and within cancer types. This heterogeneity manifests in cellular composition, genomic drivers, and immune contexture, directly impacting the performance and generalizability of predictive signatures. This technical support center addresses common experimental and analytical obstacles encountered when studying the TME in three distinct cancers: Non-Small Cell Lung Cancer (NSCLC), Cholangiocarcinoma (CCA), and Gastric Cancer (GC). The guidance is rooted in a thesis focused on developing robust, cross-validated gene signatures that can account for such variability to improve prognostic and predictive accuracy in oncology research and drug development.

Troubleshooting Guide: Common Experimental Pitfalls & Solutions

Table: Common Technical Issues in TME Research Across Cancer Types

| Problem Area | Specific Issue | Probable Cause | Recommended Solution |
|---|---|---|---|
| Sample & Profiling | scRNA-seq data shows high stromal/immune cell content, masking cancer cell signals. | Biopsy site bias (inflammatory margin vs. tumor core); inherent desmoplasia (especially in CCA) [10] [11]. | Perform multi-region sampling where possible; use cell type deconvolution tools (e.g., CIBERSORT, xCell) on bulk data to estimate proportions [12]. |
| Data Analysis | A gene signature validated in NSCLC adenocarcinoma fails in squamous cell carcinoma. | High intertumoral heterogeneity between histological/molecular subtypes [10] [13]. | Subtype-specific signature training and validation. Always stratify analysis by key subtypes (e.g., LUAD vs. LUSC, iCCA vs. eCCA, GC molecular subtypes) [14] [15]. |
| Signature Validation | A prognostic immune signature is predictive in MSI-H GC but not in CIN or GS subtypes. | Fundamental differences in TME immune infiltration and T cell spatial distribution between subtypes [16] [14]. | Avoid pan-cancer or pan-subtype signatures. Develop and validate signatures within defined molecular contexts. Integrate spatial transcriptomics to account for T cell exclusion [16]. |
| Functional Assay | In vitro co-culture assays do not replicate in vivo immunosuppressive phenotypes. | Over-simplified system lacking critical TME components (e.g., CAFs, complex myeloid subsets, extracellular matrix) [11] [16]. | Employ patient-derived organoid (PDO) co-culture systems with autologous immune components or CAFs to better mimic the native TME [15]. |

Frequently Asked Questions (FAQs)

Q1: Our single-cell analysis of advanced NSCLC reveals extreme patient-to-patient variability in TME composition. How do we distinguish biologically significant heterogeneity from technical noise or sampling bias? A: This is a core observation. To address it:

- Increase Cohort Size: Ensure your cohort (e.g., >40 patients) captures the known spectrum of disease (stage, subtype, treatment history) [10].

- Leverage Public Data: Use large public datasets (e.g., TCGA bulk RNA-seq cohorts or published scRNA-seq atlases from independent studies) as a reference to see if your patient clusters align with established subgroups [10] [13].

- Correlate with Outcome: The most critical validation is clinical correlation. Test if specific TME compositions (e.g., high T cell vs. neutrophil infiltration) are consistently associated with progression-free or overall survival in your cohort and independent datasets [10].

- Multi-region Sequencing: Where feasible, perform sequencing on multiple biopsy regions from the same tumor to assess intra-tumoral heterogeneity and distinguish it from inter-patient differences [17].
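When several regions per tumor are profiled, a simple sum-of-squares split shows how much of the variation in a TME score lies between patients versus within each patient. The sketch below is a one-way ANOVA-style decomposition, not a formal mixed-effects model. It assumes a hypothetical region-level score, for example a CD8+ T-cell infiltration score computed per biopsy region.

```python
from statistics import mean


def variance_components(scores_by_patient: dict[str, list[float]]) -> tuple[float, float, float]:
    """One-way sum-of-squares split of a TME score measured in several regions per patient.

    Returns (between-patient SS, within-patient SS, fraction of total SS between patients).
    """
    all_scores = [s for regions in scores_by_patient.values() for s in regions]
    grand_mean = mean(all_scores)
    ss_between = sum(len(r) * (mean(r) - grand_mean) ** 2 for r in scores_by_patient.values())
    ss_within = sum((s - mean(r)) ** 2 for r in scores_by_patient.values() for s in r)
    total = ss_between + ss_within
    if total == 0:
        raise ValueError("all scores are identical; nothing to decompose")
    return ss_between, ss_within, ss_between / total


# Hypothetical region-level scores for two patients
print(variance_components({"P1": [1.0, 1.2, 0.9], "P2": [3.1, 2.8, 3.0]}))
```

A fraction near 1 indicates that regions from the same tumor agree and patients differ. A low fraction means single-biopsy scores may be dominated by intra-tumoral sampling.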

Q2: For cholangiocarcinoma, a highly desmoplastic cancer, how can we accurately profile the cancer cell-specific transcriptome amidst a dominant stroma? A: This requires a combined wet-lab and computational strategy:

- Computational Sorting: Use tools like inferCNV on scRNA-seq data to separate malignant epithelial cells (which show copy number alterations) from non-malignant epithelial and stromal cells based on genomic signatures, not just transcriptomic markers [15].

- Physical Enrichment: Prior to sequencing, consider methods like laser-capture microdissection (LCM) to isolate regions of interest, though this may compromise cell viability for live-cell assays.

- Focus on Programs: Instead of just cell types, analyze the functional "meta-programs" (e.g., senescence, IFN-response) within cancer cells. These programs can be conserved and clinically relevant even across heterogeneous samples [15].

- Spatial Validation: Validate findings using spatial transcriptomics or multiplex immunofluorescence to confirm the localization and interaction of identified cancer cell states within the dense stroma.
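The core idea behind CNV inference from scRNA-seq can be sketched as follows: average each cell's expression relative to reference non-malignant cells over windows of genomically adjacent genes. This is a deliberately simplified illustration, not the inferCNV algorithm. It assumes log-scale expression, genes already sorted by genomic position on a single chromosome, and a small hypothetical window size. The real tool adds further steps, such as handling chromosome boundaries and denoising.

```python
from statistics import mean


def relative_cnv_profile(
    cell_expr: list[float], reference_cells: list[list[float]], window: int = 3
) -> list[float]:
    """Moving average of a cell's expression minus the mean of reference cells.

    All vectors are log-scale and ordered by genomic position (one chromosome).
    """
    n_genes = len(cell_expr)
    ref_mean = [mean(cell[i] for cell in reference_cells) for i in range(n_genes)]
    relative = [cell_expr[i] - ref_mean[i] for i in range(n_genes)]
    half = window // 2
    profile = []
    for i in range(n_genes):
        lo, hi = max(0, i - half), min(n_genes, i + half + 1)
        profile.append(mean(relative[lo:hi]))  # window is truncated at the ends
    return profile


def cnv_burden(profile: list[float]) -> float:
    """Mean squared deviation; higher values suggest large-scale gains or losses."""
    return mean(x * x for x in profile)


reference = [[1.0] * 6, [1.0] * 6]  # hypothetical fibroblast/immune reference cells
gain_cell = [1.0, 1.0, 1.0, 3.0, 3.0, 3.0]  # hypothetical cell with a gain in the second half
print(relative_cnv_profile(gain_cell, reference))
```

Smoothing over neighboring genes is what turns noisy single-gene differences into a segment-level signal. This is why the genes must be ordered by genomic position first.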

Q3: In gastric cancer, we see that a "high immune score" does not always correlate with response to immunotherapy. What are the critical TME features beyond overall lymphocyte infiltration that we should measure? A: Simply quantifying total immune infiltration is insufficient. Your analysis must capture spatial and functional heterogeneity:

- Spatial Distribution: Determine if CD8+ T cells are infiltrated throughout the tumor center or merely confined to the invasive margin. The latter ("immune-excluded" phenotype) is common in CIN-type GC and is associated with poor ICI response [14].

- Functional State: Assess T cell exhaustion markers (e.g., PD-1, LAG3, TIM3) and check for the presence of regulatory T cells (Tregs). A signature of exhausted CD8+ T cells in metastatic sites may predict poor outcomes [16].

- Tertiary Lymphoid Structures (TLS): Identify the presence of TLS, which are organized immune aggregates associated with better prognosis and response in GS-type GC [14].

- Myeloid Context: Characterize tumor-associated macrophages (TAMs). An M2-polarized, immunosuppressive macrophage population can dominate the TME and inhibit cytotoxic function, even in the presence of T cells [16] [14].
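The spatial distinction between inflamed, excluded, and desert phenotypes can be expressed as a simple rule on CD8+ T-cell densities in the tumor center and invasive margin. The threshold below is a hypothetical placeholder. Validated cut-offs depend on the cancer type, the assay, and how regions are annotated.

```python
def classify_immunophenotype(cd8_center: float, cd8_margin: float, threshold: float = 100.0) -> str:
    """Classify a tumor from CD8+ T-cell density (cells/mm^2) in the center and invasive margin.

    The default threshold is hypothetical and for illustration only.
    """
    if cd8_center < 0 or cd8_margin < 0:
        raise ValueError("densities must be non-negative")
    if cd8_center >= threshold:
        return "inflamed"  # T cells penetrate the tumor center
    if cd8_margin >= threshold:
        return "excluded"  # T cells held at the invasive margin
    return "desert"  # few T cells in either compartment


print(classify_immunophenotype(cd8_center=20.0, cd8_margin=400.0))  # excluded
```

A single threshold applied to both compartments keeps the rule transparent. Published schemes may use separate cut-offs per region.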

Detailed Experimental Protocols

Protocol 1: Single-Cell RNA Sequencing (scRNA-seq) Workflow for TME Deconstruction

This protocol outlines the key steps for generating a cell atlas from solid tumor biopsies, based on established methods [10] [15].

- Sample Acquisition & Dissociation: Obtain fresh tumor tissue (e.g., biopsy or resection). Mince the tissue and dissociate it using a validated, gentle enzymatic cocktail (e.g., collagenase/hyaluronidase/DNase mix) to preserve live cell integrity and surface markers. Pass the suspension through a cell strainer.

- Cell Viability & Selection: Remove debris and dead cells using a density gradient centrifugation or a dead cell removal kit. Aim for >80% viability. For immune cell-focused studies, consider negative or positive selection (e.g., CD45+ selection) prior to loading.

- Library Preparation & Sequencing: Use a droplet-based system (e.g., 10x Genomics Chromium). Load cells according to the manufacturer's protocol to achieve a target of 3,000-10,000 cells per sample. Generate single-cell 3' gene expression libraries. Sequence on an Illumina platform to a recommended depth of >50,000 reads per cell.

- Primary Computational Analysis: Process raw sequencing data with the platform's toolkit (e.g., Cell Ranger). Align reads to the reference genome, quantify gene expression, and generate a feature-barcode matrix.

- Downstream Analysis in R/Python: Using Seurat or Scanpy, perform quality control (filter by genes/cell, UMIs/cell, mitochondrial percentage), normalize data, identify highly variable features, scale data, and perform linear dimensional reduction (PCA). Cluster cells using a graph-based algorithm (e.g., Louvain) and visualize with UMAP/t-SNE. Annotate cell types using canonical marker genes.
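The per-cell quality-control filter in the downstream analysis step can be sketched as below. The thresholds are hypothetical defaults and are tissue-dependent. In a real pipeline these metrics would come from Seurat or from Scanpy (e.g., `sc.pp.calculate_qc_metrics`) rather than being entered by hand.

```python
from dataclasses import dataclass


@dataclass
class CellQC:
    barcode: str
    n_genes: int  # genes detected in the cell
    n_umis: int  # total UMI count
    pct_mito: float  # percentage of UMIs from mitochondrial genes


def passes_qc(cell: CellQC, min_genes: int = 200, max_genes: int = 6000,
              min_umis: int = 500, max_pct_mito: float = 20.0) -> bool:
    """Low gene counts suggest empty droplets or damaged cells, very high counts
    suggest doublets, and high mitochondrial fractions suggest dying cells."""
    return (min_genes <= cell.n_genes <= max_genes
            and cell.n_umis >= min_umis
            and cell.pct_mito <= max_pct_mito)


def filter_cells(cells: list[CellQC], **thresholds) -> list[str]:
    """Return barcodes of cells passing QC; keyword arguments override the default thresholds."""
    return [c.barcode for c in cells if passes_qc(c, **thresholds)]


cells = [
    CellQC("AAAC-1", 3000, 10000, 5.0),   # typical cell
    CellQC("AAAG-1", 150, 400, 3.0),      # too few genes: likely empty droplet
    CellQC("AACT-1", 2500, 8000, 35.0),   # high mitochondrial fraction
    CellQC("ACGT-1", 8000, 60000, 4.0),   # too many genes: possible doublet
]
print(filter_cells(cells))  # ['AAAC-1']
```

Thresholds are exposed as keyword arguments because tumor types differ. For example, some tumor cells legitimately carry high mitochondrial content.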

Protocol 2: Computational Deconvolution of Bulk RNA-seq to Infer TME Composition

This protocol describes how to estimate cellular abundances from bulk tumor transcriptomic data, a cost-effective method for large cohorts [12].

- Data Input: Prepare your bulk RNA-seq data as a normalized gene expression matrix (e.g., TPM, FPKM).

- Signature Selection: Choose a deconvolution method. For a comprehensive view, use a signature-based method like xCell. xCell uses a curated set of 64 immune and stromal cell type signatures and performs a spillover compensation step to improve accuracy [12].

- Running Deconvolution: Use the xCell R package and pass your expression matrix to its main function, xCellAnalysis. The function returns an enrichment score for each cell type per sample.

- Validation & Interpretation: Correlate xCell scores with matched histopathological data (e.g., CD8 IHC scores) or scRNA-seq-derived proportions if available. Use the scores as continuous variables in survival analysis (Cox regression) or to classify samples (e.g., "immune-hot" vs. "immune-cold").
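The "immune-hot" versus "immune-cold" classification can be illustrated with a simple marker-mean score and a cohort median split. This is not xCell. xCell scores cell types with ssGSEA-based enrichment followed by spillover compensation, which this sketch does not reproduce. The marker sets and sample values below are illustrative assumptions, and expression is assumed to be on a log scale.

```python
from statistics import mean, median

# Illustrative marker sets only; these are NOT the xCell signatures.
MARKERS = {"CD8_T": ["CD8A", "CD8B"], "Fibroblast": ["COL1A1", "COL1A2"]}


def marker_scores(sample_expr: dict[str, float], markers: dict[str, list[str]] = MARKERS) -> dict[str, float]:
    """Mean log-scale expression of each cell type's marker genes in one sample."""
    return {cell_type: mean(sample_expr[g] for g in genes) for cell_type, genes in markers.items()}


def label_hot_cold(cohort: dict[str, dict[str, float]], cell_type: str = "CD8_T") -> dict[str, str]:
    """Median split of one cell-type score across the cohort (ties go to 'immune-cold')."""
    scores = {sid: marker_scores(expr)[cell_type] for sid, expr in cohort.items()}
    cut = median(scores.values())
    return {sid: ("immune-hot" if s > cut else "immune-cold") for sid, s in scores.items()}


cohort = {
    "S1": {"CD8A": 5.0, "CD8B": 7.0, "COL1A1": 2.0, "COL1A2": 2.0},
    "S2": {"CD8A": 1.0, "CD8B": 1.0, "COL1A1": 6.0, "COL1A2": 6.0},
    "S3": {"CD8A": 3.0, "CD8B": 3.0, "COL1A1": 4.0, "COL1A2": 4.0},
}
print(label_hot_cold(cohort))
```

A cohort-relative median split means labels are not portable across cohorts. For validation, the cut-off should be fixed on the training cohort.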

Diagram Title: Integrated Workflow for TME Profiling via scRNA-seq and Computational Deconvolution

Table: Essential Research Reagents and Tools for TME Studies

| Reagent/Resource | Primary Function | Application Example | Key Consideration |
|---|---|---|---|
| 10x Genomics Chromium Single Cell 3' Kit | Partitioning single cells, barcoding, and preparing sequencing libraries for scRNA-seq. | Generating transcriptomic profiles of thousands of individual cells from an NSCLC biopsy to map cancer and immune cell heterogeneity [10] [15]. | Optimize cell loading concentration to balance cell recovery and doublet rate. |
| Anti-CD45 Magnetic Beads | Positive or negative selection of leukocytes (immune cells) from a heterogeneous cell suspension. | Enriching for immune cells from a CCA sample prior to scRNA-seq to deepen sequencing coverage of rare T cell subsets [11]. | Can be used for both pre-enrichment and downstream functional assays like flow cytometry. |
| xCell R Package | Computational deconvolution of bulk RNA-seq data to infer the relative abundance of 64 immune and stromal cell types. | Estimating changes in TME composition (e.g., macrophage score, CD8+ T cell score) across hundreds of GC samples from TCGA for survival analysis [14] [12]. | Results are enrichment scores, not absolute cell counts. Validate with orthogonal methods. |
| inferCNV Software | Inferring copy number variations (CNVs) from scRNA-seq read counts to distinguish malignant from non-malignant epithelial cells. | Identifying tumor cell clusters in eCCA scRNA-seq data dominated by stromal cells, based on large-scale chromosomal gains/losses [15]. | Requires a set of reference "normal" cells (e.g., fibroblasts, immune cells) from the same sample for comparison. |
| Multiplex IHC/IF Panels (e.g., CD8, CD68, CK, PD-L1) | Spatial profiling of multiple cell types and functional markers within intact tumor tissue sections. | Validating the spatial relationship between exhausted CD8+ T cells (PD-1+) and immunosuppressive M2 macrophages (CD163+) in the GC TME [16] [14]. | Requires careful antibody validation and spectral unmixing for fluorescence-based panels. |

Technical Support Center: TME Gene Signature Validation

Welcome to the Technical Support Center for Tumor Microenvironment (TME) Research. This resource is designed to assist researchers, scientists, and drug development professionals in navigating the technical challenges of developing and validating gene signatures related to hypoxia, immune activity, and cellular senescence within the TME. The following troubleshooting guides, FAQs, and detailed protocols are framed within the critical context of a broader thesis on validating TME-related biomarkers for prognostic and predictive applications [18] [19] [20].

Troubleshooting Guides & FAQs

This section addresses common experimental and analytical challenges encountered in TME gene signature research, offering targeted solutions and best practices.

FAQ 1: Why does my TME signature perform well in the discovery cohort but poorly in independent validation cohorts?

This is a common issue often rooted in biases introduced during study design or analysis. A biomarker's validity is contingent on it being "fit for purpose," and rigorous technical and analytical validation is essential to ensure generalizability [19] [21].

- Troubleshooting Steps:

- Audit Cohort Design: Ensure your validation cohort matches the intended-use population of your signature (e.g., same cancer type, stage, prior treatment). Performance can drift if cohorts over-represent specific populations [22].

- Check for Batch Effects: Confounding technical variation (batch effects) is a major cause of failure. During discovery, use randomization and blinding when processing samples to control for non-biological experimental effects [19]. For public dataset validation, apply batch correction algorithms like ComBat before analysis [18].

- Re-evaluate Pre-analytical Variables: For wet-lab validation (e.g., qPCR, IHC), pre-analytical factors are the "weakest link" [21]. Inconsistent tissue collection, delays before fixation (ischemia time), fixation duration, and fixation methods can dramatically alter gene and protein expression metrics, especially for phosphoproteins or other labile biomarkers [21].

- Scrutinize Model Overfitting: Using too many genes relative to patient samples leads to overfitting. Employ shrinkage methods like LASSO Cox regression during signature construction to penalize non-contributing genes and build a more generalizable model [18] [23].
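The way an L1 penalty removes non-contributing genes can be seen in a minimal cyclic coordinate-descent LASSO for a linear (least-squares) model. This is a sketch under stated assumptions. A LASSO-Cox model instead penalizes the Cox partial likelihood and would normally be fitted with an established package (e.g., R glmnet with `family = "cox"`). Here, features and outcome are assumed centered, so no intercept is fitted. The data are hypothetical.

```python
def soft_threshold(value: float, penalty: float) -> float:
    """Shrink value toward zero by penalty, returning exactly 0 inside [-penalty, penalty]."""
    if value > penalty:
        return value - penalty
    if value < -penalty:
        return value + penalty
    return 0.0


def lasso_coordinate_descent(X: list[list[float]], y: list[float], lam: float, n_iter: int = 200) -> list[float]:
    """Minimize (1 / 2n) * ||y - X b||^2 + lam * ||b||_1 by cyclic coordinate descent.

    X is a list of samples (rows) by features (columns); columns and y are assumed centered.
    """
    n, p = len(X), len(X[0])
    beta = [0.0] * p
    residual = list(y)  # y - X @ beta while beta is all zeros
    col_sq = [sum(row[j] ** 2 for row in X) / n for j in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            if col_sq[j] == 0:
                continue  # a constant feature carries no information
            # Correlation of feature j with the residual left after all other features' fits
            rho = sum(X[i][j] * (residual[i] + X[i][j] * beta[j]) for i in range(n)) / n
            new_beta = soft_threshold(rho, lam) / col_sq[j]
            delta = new_beta - beta[j]
            if delta != 0.0:
                for i in range(n):
                    residual[i] -= X[i][j] * delta
                beta[j] = new_beta
    return beta


# Hypothetical data: the outcome depends only on the first gene; the second is unrelated.
X = [[-1.5, 1.0], [-0.5, -1.0], [0.5, -1.0], [1.5, 1.0]]
y = [-3.0, -1.0, 1.0, 3.0]
print(lasso_coordinate_descent(X, y, lam=0.1))   # first coefficient shrunk below 2.0, second exactly 0.0
print(lasso_coordinate_descent(X, y, lam=10.0))  # [0.0, 0.0]: a large penalty removes every gene
```

The soft-threshold step is what produces exact zeros, and this is why LASSO performs feature selection rather than only shrinking coefficients. In practice the penalty strength is chosen by cross-validation.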

FAQ 2: How do I determine if my TME signature is prognostic, predictive, or both?

Clarifying the clinical application of your signature is a fundamental first step that dictates the required validation study design [19].

- Diagnostic Flow & Key Differences: The table below outlines the core distinctions and validation pathways.

Table 1: Distinguishing and Validating Prognostic vs. Predictive Biomarkers

| Aspect | Prognostic Biomarker | Predictive Biomarker |
|---|---|---|
| Core Question | Does it inform about likely disease outcome independent of therapy? | Does it inform about likely benefit from a specific therapy? |
| Typical Use | Stratifies patient risk (e.g., high vs. low risk of recurrence). | Identifies patients likely to benefit from a given drug (e.g., immune checkpoint inhibitors). |
| Validation Study Design | Can be assessed in a single-arm cohort or untreated patient groups [19]. | Must be assessed using data from a randomized clinical trial (RCT) to compare outcomes between treatment arms within biomarker groups [19]. |
| Key Statistical Test | Main effect test of association between biomarker and outcome (e.g., Kaplan-Meier, univariate Cox). | Interaction test between treatment and biomarker in a statistical model [19]. |
| Example from Literature | A TMEscore predicting overall survival in bladder cancer patients from a retrospective cohort [18]. | EGFR mutation status predicting superior progression-free survival for gefitinib vs. chemotherapy in NSCLC, proven in the IPASS RCT [19]. |
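For a binary response in a two-arm RCT, the treatment-by-biomarker interaction test can be sketched with 2x2 tables. The sketch compares the treatment odds ratio in biomarker-positive patients with that in biomarker-negative patients. In a saturated logistic model, the interaction coefficient equals the difference of the two log odds ratios, and its Wald variance is the sum of reciprocal cell counts. Time-to-event endpoints would instead use an interaction term in a Cox model. The trial counts below are hypothetical.

```python
import math


def _log_odds_ratio(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Log odds ratio of response (treated vs control) and its Woolf variance."""
    if min(a, b, c, d) == 0:
        raise ValueError("zero cell count; use a continuity correction or an exact method")
    return math.log((a * d) / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def interaction_test(biomarker_pos, biomarker_neg):
    """Treatment-by-biomarker interaction for a binary response in a randomized trial.

    Each argument is (responders_treated, nonresponders_treated,
                      responders_control, nonresponders_control).
    Returns (interaction on the log-odds-ratio scale, z statistic, two-sided p-value).
    """
    lor_pos, var_pos = _log_odds_ratio(*biomarker_pos)
    lor_neg, var_neg = _log_odds_ratio(*biomarker_neg)
    interaction = lor_pos - lor_neg  # interaction coefficient of a saturated logistic model
    z = interaction / math.sqrt(var_pos + var_neg)
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return interaction, z, p_value


# Hypothetical trial: the drug helps biomarker-positive patients only.
print(interaction_test((40, 10, 10, 40), (25, 25, 25, 25)))
```

A purely prognostic biomarker would show similar treatment odds ratios in both groups, giving an interaction near zero even when the biomarker strongly separates outcomes.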

FAQ 3: What are the best practices for transitioning a signature from bulk RNA-seq to a clinically applicable assay?

Translating a multi-gene signature from discovery platforms to a robust clinical test involves strategic simplification and rigorous technical validation.

- Troubleshooting Steps:

- Signature Refinement: Reduce the gene list to the minimum core set that retains predictive power using feature selection algorithms (e.g., Boruta) [24] or LASSO [18] [23]. Aim for a targeted panel (e.g., NanoString) or a multiplex qPCR assay.

- Lock Down the Assay Protocol: Define and standardize every step, from nucleic acid extraction and input amount to reagent lots and instrument settings. "For a test to be 'fit for purpose,' the testing... must be based on a reliable and robust technology." [21]

- Establish Analytical Validity: Before clinical validation, you must demonstrate:

- Precision: Reproducibility across replicates, operators, days, and sites.

- Accuracy: Concordance with a gold-standard method (if one exists).

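- Analytical Sensitivity and Specificity: The assay's own detection limits (e.g., minimum RNA input) and freedom from interference or cross-reactivity, as distinct from clinical sensitivity and specificity [22].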

- Use Appropriate Controls: Include positive and negative controls in every run. For IHC, use cell line pellets or tissue controls with known status [21]. For gene expression, use synthetic RNA controls or validated reference samples.
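As a hedged illustration of two analytical-validity metrics, the sketch below computes the per-sample coefficient of variation (CV) across replicate runs as a precision measure, and Lin's concordance correlation coefficient (CCC) against a reference method as an accuracy measure. The function names and toy scores are hypothetical; acceptance thresholds for CV or concordance must come from your own fit-for-purpose validation plan.

```python
import numpy as np


def percent_cv(replicates):
    """Per-sample %CV; rows = samples, columns = replicate runs."""
    reps = np.asarray(replicates, dtype=float)
    return 100.0 * reps.std(axis=1, ddof=1) / reps.mean(axis=1)


def lins_ccc(x, y):
    """Lin's concordance correlation coefficient (population moments)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    cov = np.mean((x - x.mean()) * (y - y.mean()))
    return 2 * cov / (x.var() + y.var() + (x.mean() - y.mean()) ** 2)


# Hypothetical signature scores: 3 samples x 3 replicate runs
runs = np.array([[5.1, 5.0, 5.2],
                 [7.9, 8.1, 8.0],
                 [3.0, 3.1, 2.9]])
print(percent_cv(runs).round(2))

# Hypothetical new assay vs. reference method on the same 4 samples
new_assay = np.array([5.1, 8.0, 3.0, 6.2])
reference = np.array([5.0, 8.2, 3.1, 6.0])
print(round(lins_ccc(new_assay, reference), 3))
```

Unlike a Pearson correlation, the CCC also penalizes systematic offsets between methods, which matters when a locked cut-off will be applied to the new assay's scores.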

FAQ 4: How can I use single-cell RNA-seq (scRNA-seq) data to improve or validate a bulk tissue-derived TME signature?

scRNA-seq data is invaluable for deconvoluting bulk signatures and understanding cellular mechanisms.

- Troubleshooting Steps:

- Deconvolution & Cellular Attribution: Use your signature genes to see which cell types express them. For example, machine learning applied to scRNA-seq can identify if predictive signal comes from specific T-cell states, macrophages, or even tumor cells [24]. This biological insight strengthens the rationale for your signature.

- Identify Context-Specific Interactions: Advanced analysis like SHAP (SHapley Additive exPlanations) on scRNA-seq models can reveal non-linear and context-dependent interactions between genes in your signature, explaining why simple additive models might fail in some patients [24].

- Refine Signature Components: If certain genes in your bulk signature are expressed broadly or only in non-relevant cells, consider replacing them with more specific markers identified from the single-cell atlas.

- Validate Cellular Hypotheses: If your signature implies high cytotoxic immune activity, use scRNA-seq data from independent samples to confirm the presence of activated CD8+ T cells; if spatial transcriptomics data are available, also check whether these cells co-localize with tumor cells.
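A minimal sketch of the cellular-attribution step, assuming you already have a normalized cells-by-genes expression matrix and an annotated cell type per cell (for example exported from Seurat). For each signature gene it reports the mean expression and the percentage of expressing cells within each cell type. The function name, gene list, and toy values are hypothetical.

```python
import numpy as np


def attribute_signature(expr, genes, cell_types, signature):
    """Per cell type: (mean expression, % of cells with expression > 0) per gene."""
    expr = np.asarray(expr, dtype=float)
    cell_types = np.asarray(cell_types)
    cols = [genes.index(g) for g in signature]   # column index of each signature gene
    summary = {}
    for ct in np.unique(cell_types):
        sub = expr[cell_types == ct][:, cols]
        summary[str(ct)] = {
            gene: (float(sub[:, k].mean()), float((sub[:, k] > 0).mean() * 100))
            for k, gene in enumerate(signature)
        }
    return summary


genes = ["CD8A", "GZMB", "CD68", "EPCAM"]
cell_types = ["T cell", "T cell", "Macrophage", "Tumor"]
expr = np.array([[2.0, 1.5, 0.0, 0.0],
                 [1.8, 0.0, 0.0, 0.0],
                 [0.0, 0.0, 2.2, 0.0],
                 [0.0, 0.0, 0.0, 3.1]])
res = attribute_signature(expr, genes, cell_types, ["GZMB", "CD68"])
for ct, stats in res.items():
    print(ct, stats)
```

Genes whose expression is spread evenly across many cell types in this summary are candidates for replacement with more specific markers.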

Detailed Experimental Protocols

This section provides step-by-step methodologies for key experiments cited in TME signature research.

Objective: To develop a multi-gene prognostic signature from transcriptomic data using bioinformatics and validate it in independent cohorts.

Workflow Overview:

Step-by-Step Procedure:

Data Acquisition and Preprocessing:

- Download transcriptome data (e.g., FPKM or TPM) and clinical survival data for a discovery cohort (e.g., TCGA-BLCA) [18].

- Download independent validation datasets from GEO (e.g., GSE13507) [18].

- Normalize data (e.g., convert FPKM to TPM, perform quantile normalization on microarray data) and correct for batch effects between datasets using the ComBat algorithm [18].
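The two normalization operations named above can be sketched in NumPy as follows. FPKM and TPM differ only by a per-sample scaling factor, so conversion is a column-wise rescaling to one million; quantile normalization forces every sample to share the same empirical distribution. The toy matrices are hypothetical, ties in the quantile step are broken by order rather than averaged, and batch correction itself is not reimplemented here (in R it is commonly done with `ComBat` from the sva package).

```python
import numpy as np


def fpkm_to_tpm(fpkm):
    """Convert a genes x samples FPKM matrix to TPM (each column sums to 1e6)."""
    fpkm = np.asarray(fpkm, dtype=float)
    return fpkm / fpkm.sum(axis=0, keepdims=True) * 1e6


def quantile_normalize(mat):
    """Give every column (sample) the same distribution: the mean of sorted columns."""
    mat = np.asarray(mat, dtype=float)
    order = np.argsort(mat, axis=0)
    mean_sorted = np.sort(mat, axis=0).mean(axis=1)   # reference distribution
    out = np.empty_like(mat)
    for j in range(mat.shape[1]):
        out[order[:, j], j] = mean_sorted             # assign by within-sample rank
    return out


fpkm = np.array([[10.0, 20.0],
                 [30.0, 5.0],
                 [60.0, 75.0]])
tpm = fpkm_to_tpm(fpkm)
print(tpm.sum(axis=0))

array_log2 = np.array([[5.0, 4.0, 3.0],     # hypothetical log2 microarray intensities
                       [2.0, 1.0, 4.0],
                       [3.0, 4.5, 6.0],
                       [4.0, 2.0, 8.0]])
qn = quantile_normalize(array_log2)
print(qn)
```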

Identification of Prognostic Differentially Expressed Genes (DEGs):

- Obtain a list of TME-related genes (TMRGs) from databases like MSigDB [18].

- Using the limma R package, identify TMRGs differentially expressed between tumor and normal tissue (FDR < 0.05, |log2FC| > 1) [18].

- Perform univariate Cox regression analysis on these DEGs to select those with significant association with overall survival (OS) (p < 0.01) [18].
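The thresholding step above can be sketched as follows, assuming per-gene p-values and log2 fold changes have already been produced (for example by limma, whose `topTable` reports Benjamini-Hochberg adjusted p-values by default). The helper names and toy values are hypothetical; `bh_adjust` follows the same definition as R's `p.adjust(method = "BH")`.

```python
import numpy as np


def bh_adjust(pvals):
    """Benjamini-Hochberg FDR-adjusted p-values."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    ranked = p[order] * m / np.arange(1, m + 1)
    # enforce monotonicity, working down from the largest p-value
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    q = np.empty(m)
    q[order] = np.minimum(ranked, 1.0)
    return q


def select_degs(genes, log2fc, pvals, fdr=0.05, min_abs_lfc=1.0):
    """Keep genes with FDR < fdr and |log2FC| > min_abs_lfc."""
    q = bh_adjust(pvals)
    keep = (q < fdr) & (np.abs(np.asarray(log2fc, dtype=float)) > min_abs_lfc)
    return [g for g, k in zip(genes, keep) if k]


genes = ["G1", "G2", "G3", "G4"]
log2fc = [2.5, -1.8, 0.4, -3.0]
pvals = [0.01, 0.04, 0.03, 0.005]
print(bh_adjust(pvals), select_degs(genes, log2fc, pvals))
```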

Molecular Clustering (Optional but Recommended):

- Use consensus clustering (e.g., via the CancerSubtypes R package) on the expression of prognostic TMRGs to identify distinct TME subtypes [18]. Validate that clusters have different clinicopathological features and survival outcomes.

Signature Construction with LASSO Cox Regression:

- To reduce overfitting, apply Least Absolute Shrinkage and Selection Operator (LASSO) Cox regression (using the glmnet R package) on the prognostic DEGs [18] [23].

- Use 10-fold cross-validation to select the optimal penalty parameter (λ) that minimizes partial likelihood deviance. The genes with non-zero coefficients at this λ are retained for the signature.
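The λ-selection rule can be sketched independently of the model fit. Given cross-validated deviance for each fold and each candidate λ (in practice produced by `cv.glmnet`), the sketch below picks the deviance-minimizing λ and, as a common sparser alternative, the 1-SE λ; these approximately mirror the `lambda.min` and `lambda.1se` values that `cv.glmnet` reports. The deviance numbers are invented for illustration.

```python
import numpy as np


def select_lambda(lambdas, fold_deviance):
    """Pick lambda_min and lambda_1se from a (folds x lambdas) deviance matrix."""
    lambdas = np.asarray(lambdas, dtype=float)
    dev = np.asarray(fold_deviance, dtype=float)
    cvm = dev.mean(axis=0)                                   # mean CV deviance per lambda
    cvsd = dev.std(axis=0, ddof=1) / np.sqrt(dev.shape[0])   # its standard error
    i_min = int(np.argmin(cvm))
    lambda_min = lambdas[i_min]
    # 1-SE rule: the largest (most penalized) lambda within one SE of the minimum
    lambda_1se = lambdas[cvm <= cvm[i_min] + cvsd[i_min]].max()
    return float(lambda_min), float(lambda_1se)


lambdas = np.array([1.0, 0.5, 0.25, 0.1, 0.05])
base = np.array([10.0, 8.0, 6.95, 6.9, 7.2])                  # hypothetical deviances
fold_dev = base + np.array([0.2, -0.2, 0.1, -0.1])[:, None]   # 4 folds
lam_min, lam_1se = select_lambda(lambdas, fold_dev)
print(lam_min, lam_1se)
```

Choosing the 1-SE λ trades a small, statistically indistinguishable loss in deviance for fewer genes, which can simplify later translation to a targeted assay.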

Build the Prognostic Model:

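- Perform multivariable Cox regression on the LASSO-selected genes to obtain their final coefficients.

- Calculate a risk score for each patient: $\text{Risk score} = \sum_{i=1}^{n} \text{Expr}_i \times \text{Coef}_i$, where $\text{Expr}_i$ is the expression of signature gene $i$, $\text{Coef}_i$ is its Cox coefficient, and $n$ is the number of signature genes.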

- Dichotomize patients into high-risk and low-risk groups using the median risk score or an optimal cut-off determined from the discovery cohort.
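A minimal sketch of the scoring and dichotomization steps, assuming expression values are on the same normalized scale in both cohorts. The coefficients and expression values are hypothetical; the key design point is that the cut-off is fixed on the discovery cohort and then reused unchanged in the validation cohort.

```python
import numpy as np


def risk_scores(expr, coefs):
    """Risk score = sum_i expression_i * coefficient_i (rows = patients)."""
    return np.asarray(expr, dtype=float) @ np.asarray(coefs, dtype=float)


def assign_groups(scores, cutoff):
    """Label patients above the cut-off as high risk."""
    return np.where(np.asarray(scores) > cutoff, "high", "low")


coefs = np.array([0.42, -0.31, 0.18])          # hypothetical Cox coefficients
train = np.array([[1.2, 0.5, 2.0],
                  [0.3, 1.1, 0.4],
                  [2.5, 0.2, 1.0],
                  [0.8, 0.9, 1.5]])
cutoff = np.median(risk_scores(train, coefs))  # locked on the discovery cohort

valid = np.array([[2.0, 0.1, 1.2],
                  [0.1, 1.5, 0.3]])
groups = assign_groups(risk_scores(valid, coefs), cutoff)
print(cutoff, groups)
```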

Internal and External Validation:

- Apply the same formula to calculate risk scores in the validation cohorts.

- Use Kaplan-Meier survival analysis and log-rank tests to compare OS between risk groups.

- Assess the signature's discriminative accuracy using time-dependent Receiver Operating Characteristic (ROC) curve analysis at clinically relevant time points (e.g., 1, 3, and 5 years) and calculate the Area Under the Curve (AUC) [19] [23].
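The validation statistics above can be sketched in NumPy on simulated data: a two-group log-rank test, and a deliberately naive cumulative/dynamic AUC(t) in which cases are patients with an event by time t and controls are patients still event-free after t. The naive AUC simply drops patients censored before t, which can bias it; for reporting, an inverse-probability-of-censoring-weighted estimator (e.g., the timeROC R package) is preferable, and in R the log-rank test is available as `survival::survdiff`. Function names and simulated data are hypothetical.

```python
import math

import numpy as np


def logrank_test(time, event, group):
    """Two-group log-rank test; returns (chi-square statistic, p-value)."""
    time = np.asarray(time, dtype=float)
    event = np.asarray(event, dtype=bool)
    group = np.asarray(group, dtype=bool)
    obs_minus_exp, var = 0.0, 0.0
    for t in np.unique(time[event]):
        at_risk = time >= t
        n, n1 = at_risk.sum(), (at_risk & group).sum()
        d = (event & (time == t)).sum()
        d1 = (event & (time == t) & group).sum()
        obs_minus_exp += d1 - d * n1 / n
        if n > 1:
            var += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)
    chi2 = obs_minus_exp ** 2 / var
    return chi2, math.erfc(math.sqrt(chi2 / 2))   # upper tail of chi-square, 1 df


def naive_auc_at(time, event, score, t):
    """Cumulative/dynamic AUC(t) without censoring weights."""
    time, event, score = (np.asarray(a) for a in (time, event, score))
    cases = score[(time <= t) & event.astype(bool)]
    controls = score[time > t]
    diff = cases[:, None] - controls[None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))


rng = np.random.default_rng(1)
score = rng.normal(size=300)                        # hypothetical risk scores
event_time = rng.exponential(1.0 / np.exp(score))   # higher score, higher hazard
censor_time = rng.exponential(3.0, size=300)
time = np.minimum(event_time, censor_time)
event = event_time <= censor_time
high = score > np.median(score)

chi2, p = logrank_test(time, event, high)
auc = naive_auc_at(time, event, score, t=np.median(time))
print(f"log-rank chi2 = {chi2:.1f}, p = {p:.2g}; naive AUC(t) = {auc:.2f}")
```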

Mechanistic and Functional Exploration:

- Use single-sample GSEA (ssGSEA) to estimate the abundance of immune cell infiltrates in high- vs. low-risk groups [18].

- Perform functional enrichment analysis (GO, KEGG) on genes differentially expressed between risk groups.

- Explore associations with tumor mutation burden (TMB) and predicted response to immunotherapy using algorithms like TIDE [18].

Objective: To validate a TME-based signature (e.g., IKCscore) as a predictive biomarker for response to immune checkpoint inhibitors (ICB).

Workflow Overview:

Step-by-Step Procedure:

Cohort and Response Definition:

- Assemble a cohort of patients with advanced cancer treated with ICB, with available pre-treatment tumor RNA-seq data and radiological response assessment.

- Classify patients based on best objective response using RECIST criteria: Responders (R) = Complete Response (CR) + Partial Response (PR); Non-Responders (NR) = Stable Disease (SD) + Progressive Disease (PD) [20].

Calculate Predictive Signature Score:

Assess Predictive Capacity:

- Compare signature scores between R and NR groups using the Wilcoxon rank-sum test.

- Perform ROC analysis to predict response (R vs. NR) and determine the AUC. An AUC > 0.75 is often described as good discriminatory power, but it should be interpreted alongside its confidence interval and the cohort size.
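A short sketch linking the RECIST grouping to the AUC: the ROC AUC for R vs. NR equals the Mann-Whitney probability that a randomly chosen responder scores higher than a randomly chosen non-responder (ties count one half), i.e., the Wilcoxon rank-sum statistic divided by the number of R/NR pairs. It assumes a higher score indicates a higher chance of response and that non-evaluable patients were excluded beforehand (the mapping below raises a `KeyError` for any other label). Scores and responses are hypothetical.

```python
import numpy as np

# RECIST best overall response -> responder group, as defined above
RESPONDER = {"CR": "R", "PR": "R", "SD": "NR", "PD": "NR"}


def response_auc(scores, best_response):
    """ROC AUC for R vs. NR, computed as the Mann-Whitney probability."""
    scores = np.asarray(scores, dtype=float)
    labels = np.array([RESPONDER[r] for r in best_response])
    r, nr = scores[labels == "R"], scores[labels == "NR"]
    diff = r[:, None] - nr[None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))


scores = [2.1, 1.7, 0.4, 1.9, 0.2, -0.3]
bor = ["CR", "PR", "SD", "PD", "PD", "SD"]
auc = response_auc(scores, bor)
print(auc)
```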

Survival Analysis:

- Divide patients into high- and low-score groups (median cut-off or optimal ROC cut-off).

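- Perform Kaplan-Meier analysis and log-rank tests to compare Progression-Free Survival (PFS) or Overall Survival (OS) between groups. A significant p-value (< 0.05) and a hazard ratio below 1 for the high-score group (when a high score is expected to be favorable) support an association with outcome under ICB [20]. Because such cohorts are usually single-arm, this alone cannot separate predictive from prognostic value; that requires the treatment-by-biomarker interaction test summarized in "Table 1: Distinguishing and Validating Prognostic vs. Predictive Biomarkers".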

Comparison with Established Biomarkers:

- Statistically compare the predictive performance (AUC) of your signature with standard biomarkers like PD-L1 expression (by IHC) and Tumor Mutational Burden (TMB) [20].

Independent and Pan-Cancer Validation:

This table details critical reagents, algorithms, and databases essential for TME gene signature research.

Table 2: Essential Research Reagent Solutions for TME Signature Validation

| Item / Resource | Function / Purpose | Key Considerations & Examples |
|---|---|---|
| LASSO Cox Regression | Statistical Method: Constructs a parsimonious prognostic gene signature by applying a penalty that shrinks coefficients of non-informative genes to zero, effectively selecting the most relevant features and reducing overfitting [18] [23]. | Implemented in R glmnet package. The optimal penalty parameter (λ) is chosen via cross-validation. |
| Single-sample GSEA (ssGSEA) | Computational Algorithm: Quantifies the enrichment level of a specific gene set (e.g., immune cells, hypoxia pathway) in an individual sample. Used to calculate signature scores and estimate immune cell infiltration from bulk RNA-seq data [18] [20]. | Foundation for scores like TMEscore and IKCscore. Available in R packages like GSVA. |
| ESTIMATE Algorithm | Computational Tool: Infers the fraction of stromal and immune cells in tumor samples (StromalScore, ImmuneScore) and calculates a combined ESTIMATEScore, which inversely correlates with tumor purity [23]. | Useful for initial TME characterization and identifying stromal/immune-related DEGs. |
| TIDE Algorithm | Computational Framework: (Tumor Immune Dysfunction and Exclusion) Models tumor immune evasion to predict potential response to immune checkpoint blockade therapy [18]. | A useful comparator for validating the predictive value of novel immunotherapy signatures. |
| Boruta Feature Selection | Machine Learning Wrapper: Identifies all relevant features (genes) by comparing original feature importance with importance of randomized "shadow" features. Used with models like XGBoost on complex data (e.g., scRNA-seq) [24]. | More robust than simple importance ranking; helps build interpretable, high-performance signatures (AUC ~0.89) [24]. |
| Formalin-Fixed, Paraffin-Embedded (FFPE) Tissue Controls | Experimental Control: Essential for validating immunohistochemistry (IHC) assays. Includes cell line pellets with known biomarker status or tissue microarrays (TMAs) with annotated cores [21]. | Critical for establishing antibody specificity and assay sensitivity. Concordance between TMA and whole-section results must be verified [21]. |
| shRNA/siRNA Knockdown Systems | Functional Validation: Used to create isogenic negative controls in cell lines for antibody validation (Western blot, IHC) and to perform in vitro phenotypic assays (migration, invasion) to confirm the functional role of a candidate gene from the signature [18] [25]. | Provides direct causal evidence linking a signature gene to a cancer-relevant biological process. |

Essential Concepts and Quantitative Classifications

This technical support center is designed within the context of a broader thesis on validating Tumor Microenvironment (TME)-related gene signatures. A core challenge in this field is the accurate classification of tumors into immunologically "cold" or "hot" phenotypes, a critical determinant of clinical outcomes and therapeutic response [26] [27]. The following section defines these phenotypes and presents key quantitative data to guide your experimental design and analysis.

Cold Tumors are characterized by an immunosuppressive TME with minimal cytotoxic immune cell infiltration, leading to poor responses to immunotherapies like immune checkpoint inhibitors (ICIs) [26] [27]. Hot Tumors, in contrast, exhibit robust immune infiltration and a pro-inflammatory environment, correlating with better prognosis and ICI sensitivity [26] [27].

Table 1: Defining Characteristics of Cold vs. Hot Tumor Phenotypes

| Characteristic | Cold Tumor Phenotype | Hot Tumor Phenotype |
|---|---|---|
| Immune Cell Infiltration | Sparse; limited CD8+ T cells and NK cells [28]. | Abundant cytotoxic CD8+ T cells and NK cells [28]. |
| Key Immune Players | Dominated by M2-type macrophages, Tregs, MDSCs [29] [28]. | Presence of activated dendritic cells, M1-type macrophages, and T helper cells [28]. |
| Common Features | Low tumor mutational burden (TMB), defective antigen presentation, hypoxic, dense stroma [30] [27]. | High TMB, functional antigen presentation, presence of Tertiary Lymphoid Structures (TLS) [27]. |
| Response to ICIs | Poor or non-responsive [26] [27]. | More likely to respond favorably [26] [27]. |
| Clinical Outcome | Generally associated with poorer prognosis [28]. | Generally associated with improved prognosis [28]. |

Biomarkers derived from TME gene signatures must be rigorously validated for a specific Context of Use (COU). The FDA-NIH BEST (Biomarkers, EndpointS, and other Tools) resource categorizes biomarkers, and your validation strategy must align with the intended category [31].

Table 2: Biomarker Categories and Their Role in TME Phenotype Research

| Biomarker Category | Primary Use in Drug Development/TME Research | Example in Oncology |
|---|---|---|
| Diagnostic | Identify or confirm the presence of a disease or subtype. | Classifying a tumor as "hot" based on a gene signature. |
| Prognostic | Identify likelihood of a clinical event, recurrence, or progression. | Gene signature indicating "cold" phenotype linked to poorer survival [28]. |
| Predictive | Identify individuals more likely to experience a favorable or unfavorable effect from a specific therapeutic intervention. | Signature predicting response to immune checkpoint blockade. |
| Pharmacodynamic/Response | Show a biological response has occurred in an individual who has been exposed to a medical product. | Change in immune gene expression after administering a TME-reprogramming agent. |
| Safety | Measure the presence or extent of toxicity related to an intervention. | Signature for cytokine release syndrome following adoptive T-cell therapy. |

Troubleshooting Guides & FAQs

FAQ 1: What are the most common reasons for inconsistent or weak classification of tumor samples into cold/hot phenotypes using gene signatures?

- Problem: Your gene expression-based classifier yields ambiguous or low-confidence calls.

- Solution & Checklist:

- Verify Input Data Quality: Ensure your RNA-seq or microarray data is properly normalized and free of batch effects. Low sequencing depth or poor RNA quality can obscure true biological signals.

- Audit Your Signature Gene List: The signature must be robust. Consult recent literature (e.g., [28]) for validated gene sets. Common pitfalls include using signatures derived from a single cancer type on a different one, or signatures contaminated with housekeeping genes.

- Check for Stromal Contamination: A high stromal content (e.g., from cancer-associated fibroblasts) can dilute the immune signal, making a "hot" tumor appear "colder." Use deconvolution and scoring tools to estimate stromal and immune fractions, for example ESTIMATE or MCP-counter for stromal content and CIBERSORT [28] for immune cell subsets.

- Consider Spatial Heterogeneity: A bulk RNA sample averages the entire tumor. An "immune-excluded" phenotype, where T cells are trapped in the stroma, may yield a moderate immune score that doesn't reflect the functionally "cold" tumor core [29] [27]. Correlate with spatial transcriptomics or multiplex IHC if possible.

- Validate with a Complementary Method: Always confirm computational calls with an orthogonal method. The most direct validation is multiplex immunohistochemistry (mIHC) for key cellular markers (e.g., CD8, CD68, FOXP3) on the same patient samples [28].

FAQ 2: Our in vitro or in vivo model is not recapitulating the expected "cold" to "hot" transition after treatment with a TME-reprogramming agent. What could be wrong?

- Problem: Experimental models fail to show immune activation upon treatment.

- Solution & Checklist:

- Confirm Target Engagement: First, verify that your drug or therapeutic agent is hitting its intended target in your model. Use pharmacodynamic biomarkers (e.g., phosphorylation status, metabolite levels) to confirm biological activity [31].

- Re-evaluate Your Model System: Standard immunocompetent mouse models (e.g., MC38, CT26) are inherently more "hot" than many human cancers. Consider using genetically engineered mouse models (GEMMs) or patient-derived xenografts (PDXs) in humanized mice that better mimic immunosuppressive, "cold" human TMEs [30].

- Assess the Timeframe: Immune recruitment and activation are not instantaneous. The "hotting" effect may peak days or weeks after the initial treatment. Conduct a time-course experiment instead of a single endpoint analysis.

- Look for Compensatory Immunosuppression: The treatment may activate one arm of immunity (e.g., CD8+ T cells) while simultaneously upregulating a compensatory checkpoint (e.g., LAG-3, TIM-3) or recruiting Tregs [27]. Profile a broad panel of immune inhibitors and suppressive cell markers.

- Address Hypoxia: Hypoxia is a master regulator of immunosuppression [30]. If your treatment does not alleviate hypoxia, the TME may remain "cold." Measure tumor oxygenation or HIF-1α levels post-treatment.

FAQ 3: We have a promising TME-related gene signature, but how do we navigate the path for regulatory acceptance to use it in clinical trials?

- Problem: Uncertainty about translating a research-grade signature into a regulatory-grade tool.

- Solution & Pathways:

- Define a Precise Context of Use (COU): This is the critical first step [31]. Is your signature a prognostic biomarker (identifying high-risk "cold" tumors), a predictive biomarker (selecting patients for a specific TME-targeting therapy), or a pharmacodynamic biomarker (measuring drug effect)? The COU dictates the validation strategy.

- Pursue Fit-for-Purpose Analytical Validation: Develop an assay (e.g., RNA-seq panel, NanoString) and rigorously validate its analytical performance (precision, accuracy, sensitivity, reproducibility) for the intended COU [31].

- Engage Early with Regulators: The FDA encourages early dialogue. You can discuss biomarker validation plans through:

- Pre-IND Meetings: For biomarkers tied to a specific drug development program [31].

- Biomarker Qualification Program (BQP): For biomarkers intended for broader use across multiple drug development programs. Be aware that the BQP process is lengthy (median Qualification Plan development takes ~32 months) and has seen limited success for novel response biomarkers [32].

- Generate Robust Clinical Validation Data: Demonstrate that the biomarker reliably correlates with or predicts the clinical endpoint specified in your COU, using well-designed retrospective studies and, eventually, prospective studies [31].

Detailed Experimental Protocols

Protocol 1: Computational Pan-Cancer Identification of Hot/Cold Phenotypes Using TCGA Data

This protocol is adapted from the methodology used in [28] to classify tumors based on immune composition.

Objective: To reproducibly classify tumors from TCGA or similar transcriptomic datasets into immunologically hot and cold subtypes.

Materials & Software:

- Data: TCGA transcriptomic data (e.g., TPM values) for your cancer(s) of interest.

- R Software with packages: IOBR (for CIBERSORT), ConsensusClusterPlus, GSVA, survival.

Procedure:

- Immune Deconvolution: Use the CIBERSORT algorithm via the IOBR package to estimate the relative fractions of 22 immune cell types in each tumor sample [28].

- Immune Functional Scoring: Calculate scores for critical immune functions using single-sample Gene Set Enrichment Analysis (ssGSEA). Key gene sets include those for cytolytic activity and T cell proliferation, which are used in the phenotype assignment step below.

- Unsupervised Clustering: Perform consensus clustering on the combined matrix of immune cell fractions and functional scores using ConsensusClusterPlus. Determine the optimal number of clusters (k) based on consensus cumulative distribution function (CDF) plots.

- Phenotype Assignment: Label the clusters as "Hot-Immune" or "Cold-Immune" based on the following criteria [28]:

- Hot: High infiltration of CD8+ T cells and activated NK cells, high cytolytic activity and T cell proliferation scores, and low infiltration of M2-type macrophages.

- Cold: The inverse pattern—low CD8+ T cells, low cytolytic activity, and high M2 macrophages.

- Survival Analysis: Validate the clinical relevance of your classification by performing Kaplan-Meier and Cox proportional hazards survival analysis comparing the "Hot" and "Cold" groups using the survival package [28].

- Hub Gene Identification: Perform correlation analysis to identify immune regulatory genes (e.g., checkpoint genes like PDCD1 (PD-1), CD276 (B7-H3), NT5E (CD73)) that are most strongly associated with the "Cold" phenotype across clusters [28].
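The phenotype-assignment step can be sketched as a simple rule over cluster-level means. Two substitutions are made here and should be read as assumptions: cytolytic activity is computed as the widely used CYT score (geometric mean of GZMA and PRF1 expression, with a small offset) instead of an ssGSEA score, and clusters are ranked by a hypothetical equal-weight composite of z-scored CD8+ T cell, activated NK cell, CYT, and M2 macrophage values rather than by the exact criteria of [28]. All input values are toy numbers standing in for CIBERSORT fractions and TPM values.

```python
import numpy as np


def cytolytic_activity(gzma_tpm, prf1_tpm, offset=0.01):
    """CYT score: geometric mean of GZMA and PRF1 expression (TPM + small offset)."""
    gzma = np.asarray(gzma_tpm, dtype=float) + offset
    prf1 = np.asarray(prf1_tpm, dtype=float) + offset
    return np.sqrt(gzma * prf1)


def label_clusters(features, clusters):
    """Label clusters Hot / Cold / Intermediate with a hypothetical composite rule.

    features: dict of per-sample arrays keyed 'cd8', 'nk_activated', 'cyt', 'm2'.
    """
    z = {}
    for name, values in features.items():
        v = np.asarray(values, dtype=float)
        z[name] = (v - v.mean()) / v.std()          # z-score across all samples
    composite = z["cd8"] + z["nk_activated"] + z["cyt"] - z["m2"]
    clusters = np.asarray(clusters)
    ids = np.unique(clusters).tolist()
    means = {c: composite[clusters == c].mean() for c in ids}
    hot = max(means, key=means.get)
    cold = min(means, key=means.get)
    return {c: "Hot" if c == hot else "Cold" if c == cold else "Intermediate"
            for c in ids}


features = {
    "cd8": [0.20, 0.25, 0.02, 0.03, 0.10, 0.12],
    "nk_activated": [0.05, 0.06, 0.01, 0.00, 0.03, 0.02],
    "cyt": cytolytic_activity([40, 55, 2, 3, 12, 10], [30, 45, 1, 2, 9, 11]),
    "m2": [0.05, 0.04, 0.30, 0.35, 0.15, 0.18],
}
labels = label_clusters(features, [1, 1, 2, 2, 3, 3])
print(labels)
```

Whatever rule is used, it should be fixed before looking at survival data so that the subsequent Kaplan-Meier comparison is not circular.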

Protocol 2: Validation of Phenotype and Hub Genes by Multiplex Immunohistochemistry (mIHC)

Objective: To spatially validate computational predictions of hot/cold phenotypes and the expression of hub genes (e.g., NT5E/CD73) at the protein level in tumor tissue sections [28].

Materials:

- Formalin-fixed, paraffin-embedded (FFPE) tumor tissue sections.

- Primary antibodies: e.g., anti-CD8, anti-CD68 (for macrophages), anti-CD163 (M2 marker), anti-NT5E/CD73.

- Multiplex IHC staining kit (e.g., Opal, CODEX, or fluorescent tyramide signal amplification).

- Fluorescent microscope or scanner for imaging.

Procedure:

- Tissue Preparation: Cut 4-5 μm sections from FFPE blocks. Bake and deparaffinize using standard protocols.

- Multiplex Staining Cycle: Perform sequential rounds of staining. Each round includes: a. Antigen retrieval (specific to the target antigen). b. Blocking of endogenous peroxidase and nonspecific sites. c. Incubation with a primary antibody from your panel. d. Incubation with a horseradish peroxidase (HRP)-conjugated secondary polymer. e. Application of a fluorescent tyramide (Opal) reagent, unique for that antibody. f. Heat-based antibody stripping to remove the primary-secondary complex, preparing the slide for the next round.

- Counterstaining and Mounting: After all markers are stained, apply a nuclear counterstain (e.g., DAPI). Apply an anti-fade mounting medium and a coverslip.

- Image Acquisition & Analysis: Scan slides using a fluorescent whole-slide scanner. Use digital image analysis software to: a. Identify and segment different cell types based on marker positivity (e.g., CD8+ cells, CD68+CD163+ M2 macrophages). b. Quantify the density and spatial distribution of these cells (e.g., intratumoral vs. stromal). c. Measure expression intensity of the hub gene target (e.g., NT5E) on specific cell populations.

- Correlation: Statistically correlate the mIHC-derived metrics (e.g., CD8+/M2 macrophage ratio, NT5E intensity) with the computational phenotype calls and patient survival data [28].
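A minimal sketch of the correlation step, assuming per-patient cell densities have already been exported from the image-analysis software. It forms a CD8+/M2 macrophage density ratio (with a +1 pseudocount, an assumption to avoid division by zero) and computes a Spearman correlation with a computational phenotype score, using average ranks for ties. All values are hypothetical.

```python
import numpy as np


def average_ranks(x):
    """Ranks starting at 1, with tied values given their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    sorted_x = x[order]
    ranks = np.empty(len(x))
    i = 0
    while i < len(x):
        j = i
        while j + 1 < len(x) and sorted_x[j + 1] == sorted_x[i]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman correlation = Pearson correlation of the ranks."""
    return float(np.corrcoef(average_ranks(x), average_ranks(y))[0, 1])


cd8_density = np.array([410, 35, 220, 60, 510])     # CD8+ cells/mm^2 (hypothetical)
m2_density = np.array([80, 300, 120, 260, 90])      # CD68+CD163+ cells/mm^2
ratio = (cd8_density + 1) / (m2_density + 1)
hot_score = np.array([1.8, -1.2, 0.6, -0.9, 2.1])   # computational phenotype score
rho = spearman(ratio, hot_score)
print(round(rho, 2))
```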

Key Signaling and Workflow Visualizations

Table 3: Key Resources for TME Phenotype Research

| Category | Item/Resource | Function & Application | Example/Reference |
|---|---|---|---|
| Computational Tools | CIBERSORT/xCell/… | Deconvolutes bulk RNA-seq data to estimate relative immune cell abundances. Critical for phenotype scoring. | [28] |
| | ssGSEA/GSVA | Calculates enrichment scores for gene signatures (e.g., cytolytic activity) at the single-sample level. | [28] |
| | The Cancer Genome Atlas (TCGA) | Public repository of multi-omics data from >30 cancer types. Primary source for discovery and validation. | [28] |
| Laboratory Reagents | Multiplex IHC Kits | Enable simultaneous detection of 4+ protein markers on one FFPE section for spatial validation of phenotypes. | Opal, CODEX [28] |
| | Hypoxia Probes | Chemical probes (e.g., pimonidazole) to detect hypoxic regions in tumors, a key feature of "cold" TME. | [30] |
| | Recombinant Cytokines/Growth Factors | Used in in vitro assays to polarize macrophages (M1/M2), differentiate MDSCs, or study T cell function. | [29] |
| Experimental Models | Humanized Mouse Models | Immunodeficient mice engrafted with human immune cells and PDX tumors. Model human-specific TME interactions. | [29] |
| | 3D Spheroid/Organoid Co-cultures | In vitro systems incorporating tumor cells with fibroblasts, immune cells to study TME crosstalk. | N/A |
| Reference Databases | FDA-NIH BEST Resource | Definitive glossary for biomarker definitions and categories. Essential for planning validation studies. | [31] |
| | Immune Gene Signatures | Curated lists of genes representing cell types or functions (e.g., MSigDB, literature-derived lists). | [28] |

Technical Support & Troubleshooting Center

This technical support center provides targeted troubleshooting guides, detailed protocols, and curated resources for researchers employing single-cell RNA sequencing (scRNA-seq) to identify and validate high-stemness cell clusters within the tumor microenvironment (TME). The content is framed within a broader thesis on validating TME-related gene signatures for prognostic and therapeutic insight.

Detailed Experimental Protocols for Key Analyses

Researchers investigating stemness often integrate the following core computational and analytical protocols. The table below summarizes their purpose and key tools.

Table: Core Analytical Protocols for Stemness & TME Research

| Protocol Name | Primary Purpose | Key Tools/Packages | Typical Output |
|---|---|---|---|
| mRNAsi Calculation [33] | Quantifies transcriptomic stemness of samples or single cells. | OCLR algorithm [34], gelnet R package [35] | Stemness index score per sample/cell. |
| Malignant Cell Identification [35] | Distinguishes tumor cells from stromal/immune cells in scRNA-seq data. | CopyKAT (inference of copy number variations) [35] | Classification of cells as "aneuploid" (malignant) or "diploid". |
| Developmental Trajectory & Stemness State [33] | Orders cells along a pseudo-temporal continuum of differentiation. | CytoTRACE [33] | Trajectory plot positioning high-stemness cells. |
| Intercellular Communication Analysis [33] | Infers signaling interactions between cell clusters (e.g., high vs. low stemness). | CellChat, CellCall [33] | Network diagrams and enriched signaling pathways. |
| Prognostic Model Construction [33] [36] | Builds a multi-gene signature predictive of patient survival from stemness-related genes. | Integrative machine learning (e.g., CoxBoost, RSF, LASSO) [33] [36] | Risk score model and validated hub genes. |

Protocol 1: Calculating the mRNA Stemness Index (mRNAsi)

The mRNAsi quantifies oncogenic dedifferentiation using a machine learning model trained on pluripotent stem cell data [34].

- Model Training: Use the One-Class Logistic Regression (OCLR) algorithm implemented in the gelnet R package. Train the model on gene expression data from the Progenitor Cell Biology Consortium (PCBC), using only pluripotent stem cells as the positive class [35].

- Signature Extraction: Retain the 500 genes with the highest absolute weight coefficients from the trained OCLR model as the stemness signature [35].

- Score Calculation: For each cell or bulk sample, calculate the mRNAsi as the Spearman correlation coefficient between its gene expression profile and the stemness signature vector [35].
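The scoring step can be sketched as follows, assuming the OCLR weight vector has already been obtained and the expression matrix rows are aligned to the same genes. Each sample's mRNAsi is the Spearman correlation between its expression profile and the weights; the optional min-max rescaling to the 0 to 1 range across the cohort is a common convention, not a requirement of the protocol above. The four-gene weights and expression values are hypothetical stand-ins for the 500-gene signature.

```python
import numpy as np


def average_ranks(x):
    """Ranks starting at 1, with tied values given their average rank."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, kind="mergesort")
    sorted_x = x[order]
    ranks = np.empty(len(x))
    i = 0
    while i < len(x):
        j = i
        while j + 1 < len(x) and sorted_x[j + 1] == sorted_x[i]:
            j += 1
        ranks[order[i:j + 1]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def mrnasi(expr, weights):
    """Raw and min-max scaled mRNAsi; expr is genes x samples, rows match weights."""
    expr = np.asarray(expr, dtype=float)
    w_rank = average_ranks(weights)
    raw = np.array([np.corrcoef(average_ranks(expr[:, j]), w_rank)[0, 1]
                    for j in range(expr.shape[1])])
    scaled = (raw - raw.min()) / (raw.max() - raw.min())   # optional 0-1 rescaling
    return raw, scaled


weights = np.array([0.9, 0.5, -0.2, -0.8])   # hypothetical OCLR weights
expr = np.array([[10, 1, 5],
                 [6, 2, 7],
                 [2, 6, 3],
                 [1, 10, 4]])
raw, scaled = mrnasi(expr, weights)
print(raw.round(2), scaled.round(2))
```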

Protocol 2: Identifying High-Stemness Clusters via CytoTRACE

CytoTRACE predicts the differentiation state of individual cells based on the diversity of expressed genes.

- Data Input: Use a normalized count matrix (e.g., from Seurat) of identified malignant epithelial or aneuploid cells [33] [35].

- Run Analysis: Execute the CytoTRACE algorithm. It will calculate a score for each cell, where a higher score indicates greater stemness (less differentiated) [33].

- Stratification: Divide cells into "high stemness" and "low stemness" groups based on the median CytoTRACE score [33].

- Visualization: Overlay the CytoTRACE scores onto UMAP embeddings and pseudo-temporal trajectory plots to confirm that high-stemness cells are concentrated at the start or end of differentiation trajectories [33].
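CytoTRACE builds on the observation that less differentiated cells tend to express more distinct genes. The sketch below uses the raw number of detected genes per cell only as a crude proxy to illustrate that idea and the median split described above; it is not CytoTRACE, and in practice the split should be applied to real CytoTRACE scores. The count matrix is hypothetical, and cells exactly at the median are assigned to the low group (an assumption).

```python
import numpy as np


def gene_counts(counts):
    """Number of detected genes (count > 0) per cell; rows = cells."""
    return (np.asarray(counts) > 0).sum(axis=1)


def median_split(score):
    """Label cells above the median score as high stemness."""
    score = np.asarray(score, dtype=float)
    return np.where(score > np.median(score), "high stemness", "low stemness")


counts = np.array([[3, 0, 1, 2, 5],
                   [0, 0, 1, 0, 0],
                   [1, 2, 0, 4, 1],
                   [0, 1, 0, 0, 2]])
gc = gene_counts(counts)
groups = median_split(gc)
print(gc, groups)
```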

Troubleshooting Guides

Issue Category 1: scRNA-seq Data Pre-processing & Quality Control

- Problem: Low Cell Viability or High Ambient RNA Contaminating Library.

- Problem: Inability to Confidently Identify Malignant Cells from TME Stromal/Immune Cells.

Issue Category 2: Stemness Analysis & Interpretation

- Problem: mRNAsi Scores Show Minimal Variance Across Samples or Cells.

- Problem: High-Stemness Cell Cluster Shows Weak or No Association with Poor Clinical Prognosis.

- Solution: 1) Re-evaluate cluster definition thresholds (e.g., median vs. quartile split of mRNAsi). 2) Intersect cluster marker genes with established stemness pathways (WNT, NOTCH, HIPPO) [33] [38] for biological validation. 3) Perform survival analysis on external bulk RNA-seq cohorts using the high-stemness cluster gene signature, not just the cluster's existence [33].

Issue Category 3: TME & Therapy Response Validation

- Problem: Constructed Stemness Gene Signature Fails to Predict Immunotherapy Response.

- Solution: Beyond standard immune deconvolution, calculate specific immunotherapy response scores. Use the Tumor Immune Dysfunction and Exclusion (TIDE) algorithm and Immunophenoscore (IPS) to quantitatively predict potential response to immune checkpoint blockade [18] [39]. Validate the signature in dedicated immunotherapy cohorts (e.g., IMvigor210) [36] [18].

- Problem: Difficulty Linking Stemness Clusters to Specific TME Interactions.

Frequently Asked Questions (FAQs)

Q1: What is the most reliable method to define "stemness" in scRNA-seq data from human tumors? A1: There is no single gold-standard method. A robust approach is to employ a multi-algorithm consensus. The computational mRNAsi (via OCLR) provides a transcriptome-wide quantitative index [34]. This should be combined with a tool like CytoTRACE, which predicts differentiation state based on transcriptional diversity, to identify high-stemness clusters [33]. Functional validation, such as examining enrichment for known stemness pathways (HIPPO, Notch) or association with a dedifferentiated cell state at the end of a pseudo-temporal trajectory, is essential [33] [38].

Q2: How can I transition my scRNA-seq-derived stemness signature into a validated prognostic model for patient stratification? A2: This requires an integrated analysis pipeline:

- Identify Candidate Genes: Obtain differentially expressed genes (DEGs) between your high- and low-stemness scRNA-seq clusters [35].

- Bulk Tissue Validation & Modeling: Test these candidate genes in bulk RNA-seq cohorts (e.g., TCGA) with clinical outcomes. Use machine learning algorithms (LASSO, CoxBoost, Random Survival Forest) to shrink the gene list and build a multivariate prognostic risk model [33] [36].

- Independent Validation: Validate the model's performance in independent GEO datasets [33] [40].

- Link to Therapy: Correlate the model's risk score with TME features (immune infiltration, TIDE score) [18] [39] and drug sensitivity predictions from databases like GDSC [36].

Q3: Why might high-stemness tumor cells be associated with resistance to immunotherapy, and how can I test this? A3: High-stemness cells can create an immunosuppressive TME by recruiting regulatory immune cells, expressing immune checkpoints, and promoting T-cell exclusion [33] [34]. To test this in your data:

- In silico: Use your stemness signature or risk score to stratify patients in immunotherapy cohorts (e.g., IMvigor210). Compare TIDE scores, IPS, and actual response rates between high- and low-stemness groups [36] [18].

- In your scRNA-seq data: Perform differential expression analysis on high-stemness malignant cells to check for overexpression of immunosuppressive ligands (e.g., CD274/PD-L1). Use cell communication analysis (CellChat) to infer interactions between these cells and inhibitory immune cells like Tregs or M2 macrophages [33] [41].

Visualizing Key Concepts & Workflows

Diagram 1: Integrated scRNA-seq Workflow for Stemness Cluster Identification. This flowchart outlines the stepwise analytical process from raw data to validated biological insight, highlighting the core stemness scoring step.

Diagram 2: Core Stemness Pathways and Their Functional Impact on CSCs. This diagram illustrates how dysregulated developmental pathways converge to drive the defining properties of cancer stem cells, including therapy resistance and TME modulation.

Table: Key Resources for scRNA-seq-Based Stemness and TME Research

| Category | Item/Resource | Function/Purpose | Example/Note |
|---|---|---|---|
| Wet-Lab Consumables | Viability Stain & Dead Cell Removal Kits | Ensures high-quality input for scRNA-seq by removing dead cells which increase background noise [37]. | Propidium iodide, DAPI; Magnetic bead-based removal kits. |
| scRNA-seq Platform | 10x Genomics Chromium System | Enables high-throughput, barcoded single-cell library preparation via droplet microfluidics [37]. | Standard for capturing thousands of cells; includes cell & UMI barcoding. |
| Core Software Packages | Seurat (R) | Comprehensive toolkit for scRNA-seq QC, integration, clustering, and differential expression [33] [35]. | Industry standard for analysis and visualization. |
| | CellChat / CellCall (R) | Infers and analyzes intercellular communication networks from scRNA-seq data [33]. | Critical for studying how high-stemness cells interact with the TME. |
| Specialized Algorithms | CopyKAT (R) | Identifies malignant cells from scRNA-seq data by inferring genomic copy number variations [35]. | Essential for accurately isolating the tumor cell population for stemness analysis. |
| | CytoTRACE (R/Python) | Predicts cellular differentiation state and orders cells along a developmental trajectory [33]. | Used to validate and complement mRNAsi-based stemness ordering. |
| Reference Databases | The Cancer Genome Atlas (TCGA) | Source of bulk RNA-seq and clinical data for validating scRNA-seq-derived signatures and building prognostic models [33] [18]. | |
| | Gene Expression Omnibus (GEO) | Repository for independent scRNA-seq and bulk expression datasets used for validation [33] [35]. | |
| | MSigDB | Curated database of gene sets for pathway (e.g., senescence, stemness) enrichment analysis [36] [18]. | |

Advanced Computational Approaches for TME Signature Development

This technical support center provides troubleshooting guidance and best practices for researchers developing and validating Tumor Microenvironment (TME)-related gene signatures. The FAQs below address common experimental and analytical challenges in feature selection with LASSO, Cox regression, and machine learning.

Frequently Asked Questions

Core Concepts & Strategy

Q1: In the context of validating a TME gene signature for cancer prognosis, what are the fundamental strengths of LASSO-Cox regression compared to traditional statistical methods? LASSO-Cox regression is particularly powerful for TME signature validation because it performs variable selection and model fitting simultaneously. It works in high-dimensional settings where the number of candidate genes (predictors) far exceeds the number of patients [42]. Its key strength is the L1 regularization penalty, which shrinks the coefficients of irrelevant or redundant genes to exactly zero. This yields a sparse, interpretable model of the most prognostic genes [18] [42]. This is crucial for TME research, because the method can distill hundreds of candidate genes from databases like MSigDB into a parsimonious signature (e.g., a 5- or 9-gene model) that can be used directly for survival prediction [18] [43]. Compared with univariate filtering or stepwise selection, the penalty reduces overfitting and improves the model's generalizability to external validation cohorts [44].
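The simplest setting shows why the L1 penalty produces exact zeros. The sketch below assumes linear regression with orthonormal (uncorrelated, unit-variance) predictors. In that case the LASSO solution is just the soft-thresholded least-squares estimate. Cox models are fitted iteratively instead (glmnet, for example, runs coordinate descent on a quadratic approximation of the partial likelihood), but each coordinate update still applies a soft-thresholding step. The gene names and coefficient values are hypothetical.

```python
def soft_threshold(z, lam):
    """Solution of min_b 0.5 * (b - z)**2 + lam * |b|.

    Values with |z| <= lam become exactly zero; larger ones shrink toward zero by lam.
    """
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def lasso_orthonormal(ols_coefficients, lam):
    """LASSO estimates under an orthonormal design: soft-threshold each OLS estimate."""
    return {gene: soft_threshold(b, lam) for gene, b in ols_coefficients.items()}


# Hypothetical unpenalized estimates for four candidate genes
ols = {"GENE_A": 0.8, "GENE_B": -0.05, "GENE_C": 0.3, "GENE_D": 0.02}
lasso = lasso_orthonormal(ols, lam=0.1)
selected = [g for g, b in lasso.items() if b != 0.0]
print(selected)  # ['GENE_A', 'GENE_C']
```

Raising `lam` removes more genes. This is why the penalty strength, chosen by cross-validation, determines the signature size.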

Q2: Our goal is to build a prognostic TME signature. What is a robust, step-by-step workflow that integrates LASSO-Cox and machine learning? A robust, widely published workflow involves sequential data integration and analytical filtering [18] [43]:

- Data Acquisition & TME Gene Compilation: Obtain transcriptomic (e.g., RNA-seq) and clinical survival data from public repositories (TCGA, GEO). Compile a list of TME-related genes from resources like MSigDB [18].

- Initial Filtering: Identify differentially expressed TME genes (DETMRGs) between tumor and normal tissues. Perform univariate Cox regression for an initial screen of prognosis-associated genes [18].

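- Dimensionality Reduction with LASSO-Cox: Apply LASSO-Cox regression to the filtered gene list. Use 10-fold cross-validation to choose the penalty (lambda) value. The usual choice is either the lambda that minimizes the cross-validated error (for Cox models, typically the partial-likelihood deviance) or the more conservative lambda.1se (see Q4). Genes with non-zero coefficients at the chosen lambda form the final signature [18] [45].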

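- Risk Model Construction: Calculate a risk score for each patient as $\text{Risk Score} = \sum_{i=1}^{n} \text{Expr}_i \times \beta_i$. Here $\text{Expr}_i$ is the expression of signature gene $i$, $\beta_i$ is its LASSO-Cox coefficient, and $n$ is the number of signature genes. Patients are dichotomized into high- and low-risk groups using the median or an optimal cut-off value [45] [43].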

- Validation & Evaluation:

  - Internal Validation: Assess the signature's prognostic power in the training cohort using Kaplan-Meier (KM) survival curves (log-rank test) and time-dependent Receiver Operating Characteristic (ROC) curves [18]. Performance measured on the same data used to select the genes is optimistic, so bootstrap or cross-validation resampling gives a more honest internal estimate.

  - External Validation: Test the risk model on independent datasets (e.g., from GEO or ICGC) to verify its robustness [43].

- Machine Learning Enhancement: Use the risk score as a key input feature for machine learning classifiers (e.g., Random Forest) or ensemble models to further improve prognostic or therapeutic response classification [46].

- Biological & Clinical Interpretation: Conduct functional enrichment analysis (GO, KEGG) on the signature genes. Correlate the risk score with immune cell infiltration (using ssGSEA or CIBERSORT), tumor mutation burden, and immunotherapy response indicators (e.g., TIDE score) [18] [45].
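The risk-score formula and the dichotomization step in the workflow above can be written as a short function. This is a sketch that assumes expression values are already normalized (e.g., log2-transformed) and that the coefficients come from the fitted LASSO-Cox model. The gene names and numbers are hypothetical. The function returns the cut-off so that, as one common choice, the training-cohort threshold can be applied unchanged to external cohorts instead of recomputing a median in each dataset.

```python
from statistics import median


def risk_scores(expression, coefficients):
    """Risk score = sum over signature genes of expression * coefficient.

    expression: {patient_id: {gene: normalized expression}}
    coefficients: {gene: LASSO-Cox coefficient}
    """
    return {
        pid: sum(coef * genes[gene] for gene, coef in coefficients.items())
        for pid, genes in expression.items()
    }


def dichotomize(scores, cutoff=None):
    """Label patients high/low risk; defaults to the median score as the cut-off."""
    if cutoff is None:
        cutoff = median(scores.values())
    labels = {pid: ("high" if s > cutoff else "low") for pid, s in scores.items()}
    return labels, cutoff


# Hypothetical two-gene signature and training cohort
coefs = {"GENE_A": 0.5, "GENE_B": -0.25}
train = {
    "P1": {"GENE_A": 2.0, "GENE_B": 4.0},
    "P2": {"GENE_A": 4.0, "GENE_B": 0.0},
    "P3": {"GENE_A": 1.0, "GENE_B": 0.0},
    "P4": {"GENE_A": 0.0, "GENE_B": 4.0},
}
train_labels, train_cut = dichotomize(risk_scores(train, coefs))

# Reuse the training cut-off in a (hypothetical) validation cohort
valid = {"V1": {"GENE_A": 3.0, "GENE_B": 1.0}}
valid_labels, _ = dichotomize(risk_scores(valid, coefs), cutoff=train_cut)
print(train_cut, train_labels, valid_labels)
```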

Diagram 1: TME Signature Development & Validation Workflow

Q3: When should I consider advanced regularization methods like the Fused Sparse-Group Lasso (FSGL) over standard LASSO for survival analysis? Consider FSGL when analyzing multi-state models of complex disease pathways (e.g., transitions from diagnosis to remission, relapse, or death), a common scenario in cancer progression studies [47]. Standard LASSO performs selection independently for each transition. FSGL is better suited to two situations. The first is when prior knowledge suggests that certain biomarkers have similar effects across related transitions (fused effect), e.g., from complete remission to either relapse or death. The second is when a gene should be selected as relevant only if it affects a specific group of transitions (grouping effect) [47]. The method combines sparsity, fusion, and grouping penalties, which produces a more structured and biologically plausible model from high-dimensional data. For a standard single-endpoint overall survival analysis, regular LASSO-Cox is usually sufficient [42].
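For orientation, the penalized objective behind FSGL can be written in a generic form. Here $\beta_{jq}$ is the coefficient of gene $j$ on transition $q$ of the multi-state model. $\ell(\beta)$ is the sum of the transition-specific Cox log partial likelihoods. $\mathcal{F}$ is the set of transition pairs whose effects are pushed toward equality (fusion). $\mathcal{G}$ is the set of transition groups used for group selection. This is a schematic sketch; the exact parameterization used in [47], such as group weights, may differ.

$$
\hat{\beta} = \arg\min_{\beta} \Big\{ -\ell(\beta) + \lambda_1 \sum_{j=1}^{p} \sum_{q=1}^{Q} |\beta_{jq}| + \lambda_2 \sum_{j=1}^{p} \sum_{(q,q') \in \mathcal{F}} |\beta_{jq} - \beta_{jq'}| + \lambda_3 \sum_{j=1}^{p} \sum_{g \in \mathcal{G}} \lVert \beta_{j,g} \rVert_2 \Big\}
$$

Setting $\lambda_2 = \lambda_3 = 0$ recovers a standard LASSO fitted separately to each transition with a common $\lambda_1$. The extra tuning parameters are the source of the computational cost noted in Table 1.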

Implementation & Troubleshooting

Q4: During LASSO-Cox regression, how do I choose between lambda.min and lambda.1se for the final model, and what are the practical implications?

This choice balances model complexity against generalizability [42].

- lambda.min: The lambda value that gives the minimum mean cross-validated error. It selects the model with the best average cross-validated performance but often retains more genes, which carries a somewhat higher risk of overfitting.

- lambda.1se: The largest lambda value whose cross-validated error is within one standard error of the minimum (the "one standard error rule"). It selects a more parsimonious model with fewer genes, prioritizing simplicity and often generalizing better to new data.

Recommendation: For discovery-phase biomarker research where sensitivity is key, consider lambda.min. For building a clinically applicable, robust prognostic signature, lambda.1se is often preferred as it yields a sparser, more stable model [42].
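Both rules can be reproduced from a cross-validation curve. The sketch assumes you already have the mean cross-validated error and its standard error for each candidate lambda. For Cox models, glmnet's default error measure is the partial-likelihood deviance. The numbers below are hypothetical. The logic mirrors how cv.glmnet reports lambda.min and lambda.1se, but it is an illustration, not that package's code.

```python
def select_lambdas(lambdas, cv_mean, cv_se):
    """Return (lambda_min, lambda_1se) from a cross-validation curve.

    lambda_min: lambda with the smallest mean CV error (largest lambda on ties).
    lambda_1se: largest lambda whose mean CV error is within one standard
    error of that minimum (the "one standard error rule").
    """
    best_err = min(cv_mean)
    lambda_min = max(l for l, e in zip(lambdas, cv_mean) if e == best_err)
    i_min = lambdas.index(lambda_min)
    threshold = cv_mean[i_min] + cv_se[i_min]
    lambda_1se = max(l for l, e in zip(lambdas, cv_mean) if e <= threshold)
    return lambda_min, lambda_1se


# Hypothetical CV curve (larger lambda = stronger penalty = fewer genes)
lambdas = [0.5, 0.2, 0.1, 0.05, 0.01]
cv_mean = [10.0, 9.2, 9.0, 9.1, 9.4]
cv_se = [0.3, 0.3, 0.3, 0.3, 0.3]
print(select_lambdas(lambdas, cv_mean, cv_se))  # (0.1, 0.2)
```

Because lambda.1se is always at least as large as lambda.min, it can only keep the same number of genes or fewer.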

Q5: My LASSO-Cox model yields a risk score, but the Kaplan-Meier curves for high/low-risk groups are not statistically significant (p > 0.05). What could be wrong? This common issue has several potential causes and solutions:

- Poor Gene Signature: The selected genes may not be strongly prognostic. Solution: Revisit the initial TME gene list and differential expression filters. Consider incorporating biological prior knowledge or using more advanced feature selection (FS) methods such as copula entropy-based selection, which captures interaction gains between genes [48].

- Suboptimal Cut-off: Using the median risk score as the cut-off is arbitrary and may not separate prognostic groups well. Solution: Use the surv_cutpoint function from the survminer R package to determine the risk-score threshold that maximizes the survival difference [43]. Because this threshold is data-driven, the resulting p-value is optimistic. Fix the cut-off in the training cohort and apply it unchanged to validation cohorts.

- Cohort Heterogeneity: The patient cohort may be too heterogeneous (e.g., mixed stages or subtypes). Solution: Perform consensus clustering based on TME gene expression to identify molecular subtypes first, then build or validate the signature within more homogeneous clusters [18].

- Inadequate Power: The number of "events" (e.g., deaths) may be too low for reliable model estimation. Solution: Ensure you have at least 5-10 events per candidate predictor variable before analysis [42].
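The cut-off and power checks above can be made concrete with a self-contained log-rank test. This is a sketch, not survminer's implementation. surv_cutpoint relies on maximally selected rank statistics from the maxstat package. This example instead scans each observed score as a candidate threshold and keeps the one with the largest log-rank chi-square. It requires each group to hold at least 10% of patients, which mirrors surv_cutpoint's default minprop of 0.1. The function names and follow-up data are hypothetical. The threshold is chosen to maximize separation, so the p-value at the selected cut-off is optimistic.

```python
import math
from statistics import median


def logrank_chi2(times, events, in_group):
    """Two-group log-rank chi-square statistic (1 degree of freedom).

    times: follow-up times; events: 1 = event observed, 0 = censored;
    in_group: True for patients in the group of interest (e.g., high risk).
    """
    o_minus_e, variance = 0.0, 0.0
    for t in sorted({ti for ti, e in zip(times, events) if e}):
        n = sum(1 for ti in times if ti >= t)  # number at risk overall
        n1 = sum(1 for ti, g in zip(times, in_group) if ti >= t and g)  # at risk in group
        d = sum(1 for ti, e in zip(times, events) if ti == t and e)  # events at t
        d1 = sum(1 for ti, e, g in zip(times, events, in_group) if ti == t and e and g)
        o_minus_e += d1 - d * n1 / n  # observed minus expected events in the group
        if n > 1:
            variance += d * (n1 / n) * (1 - n1 / n) * (n - d) / (n - 1)
    return o_minus_e ** 2 / variance if variance > 0 else 0.0


def logrank_p(chi2):
    """Upper-tail p-value for chi-square with 1 df: erfc(sqrt(chi2 / 2))."""
    return math.erfc(math.sqrt(chi2 / 2))


def scan_cutpoint(scores, times, events, minprop=0.1):
    """Return (cutoff, chi2) maximizing the log-rank statistic over observed scores."""
    n = len(scores)
    best = None
    for c in sorted(set(scores)):
        high = [s > c for s in scores]
        n_high = sum(high)
        if min(n_high, n - n_high) < minprop * n:
            continue  # skip splits that leave a group too small
        chi2 = logrank_chi2(times, events, high)
        if best is None or chi2 > best[1]:
            best = (c, chi2)
    return best


def events_per_variable(events, n_candidate_genes):
    """Events-per-variable ratio; the rule of thumb above asks for at least 5-10."""
    return sum(events) / n_candidate_genes


# Hypothetical cohort: higher score -> earlier death, no censoring
times = [1, 2, 3, 4, 5, 6, 7, 8]
events = [1, 1, 1, 1, 1, 1, 1, 1]
scores = [8, 7, 6, 5, 4, 3, 2, 1]

med = median(scores)
chi2_median = logrank_chi2(times, events, [s > med for s in scores])
cutoff, chi2_best = scan_cutpoint(scores, times, events)
print(f"median split: chi2={chi2_median:.2f}, p={logrank_p(chi2_median):.3f}")
print(f"scanned cut-off {cutoff}: chi2={chi2_best:.2f} (p-value optimistic)")
print("events per variable:", events_per_variable(events, 4))
```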

Q6: How can I use machine learning to improve my TME signature, and how do I interpret "black box" models in a biological context? Machine learning (ML) models like Random Forest (RF) or XGBoost can be used in two key ways: 1) as advanced feature selectors to complement LASSO, or 2) as powerful classifiers that use your TME risk score and other clinicopathological features to predict outcomes or therapy response [46]. To tackle the "black box" problem, use Explainable AI (XAI) techniques:

- SHAP (SHapley Additive exPlanations): This method assigns each feature (gene) an importance value for a specific prediction. It can reveal non-linear relationships and interactions. You can use SHAP values post-hoc to identify the top genes driving the ML model's decisions, creating a shortlist of high-confidence biomarkers [46]. For instance, one study used SHAP to reduce over 21,000 features to a coherent list of 172 unique influential genes for cancer classification [46].

- Strategy: Use LASSO-Cox to get a preliminary, interpretable prognostic signature. Then, use an ML model (with SHAP explanation) on a broader set of genes to identify additional interactive or non-linear biomarkers that may have been missed, enriching your biological insight [46].
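To show what a SHAP value measures, the sketch below computes exact Shapley values for a toy model. It enumerates every feature coalition and replaces "absent" features with a baseline (reference) value. The model, feature values, and baseline are hypothetical. The shap library's explainers, such as TreeExplainer for Random Forest or XGBoost, use faster model-specific algorithms rather than this exponential enumeration, which is feasible only for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values of predict at x, relative to a baseline input.

    Features outside a coalition are set to their baseline value. Cost grows
    as 2**n_features, so this is only for toy illustrations.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(len(others) + 1):
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical model: additive effect of gene 0 plus an interaction of genes 1 and 2
def model(z):
    return 2 * z[0] + z[1] * z[2]


x = [1, 1, 1]         # patient's (scaled) expression values
baseline = [0, 0, 0]  # reference expression profile
phi = shapley_values(model, x, baseline)
print([round(v, 3) for v in phi])  # [2.0, 0.5, 0.5]
```

The interaction's contribution is split evenly between genes 1 and 2. The three values sum to `model(x) - model(baseline)`, which illustrates how SHAP attributes interactions that a linear LASSO-Cox signature cannot represent.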

Diagram 2: Feature Selection Strategy Relationships

Advanced Analysis & Validation

Q7: Beyond prognostic prediction, how can I validate that my TME signature is biologically relevant and has potential therapeutic implications? Technical validation must be complemented by functional and immunological analysis [18]:

- Immune Infiltration Correlation: Use ssGSEA or CIBERSORT to quantify immune cell abundances, and correlate these with your risk score. A valid TME signature is expected to show strong associations (e.g., a low risk score correlating with higher CD8+ T cell infiltration) [18] [45].

- Immunotherapy Response Prediction: Calculate TIDE score or Immunophenoscore (IPS). A well-constructed signature should show lower TIDE scores (indicating a lower likelihood of immune evasion) in the favorable prognostic group (e.g., low-risk), suggesting potential responsiveness to immune checkpoint inhibitors [18].

- Functional Enrichment Analysis: Perform GSEA or GSVA on all genes correlated with the risk score. The high-risk group is often enriched in pathways such as epithelial-mesenchymal transition (EMT) or extracellular matrix (ECM) remodeling, while the low-risk group may be enriched in immune activation pathways [18] [43].

- In Vitro/In Vivo Validation: This is the gold standard. Select a key gene from your signature (e.g., SERPINB3 in a bladder cancer study) and perform functional experiments (knockdown/overexpression) in relevant cell lines or animal models to validate its role in migration, invasion, or drug sensitivity [18].
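The immune-infiltration check in the first bullet usually comes down to a rank correlation between the risk score and each cell-type abundance estimate. Below is a minimal sketch of Spearman's correlation: tied values get average ranks, then the Pearson correlation of the ranks is computed. Choosing Spearman is an assumption of this example, and the per-patient values are hypothetical. In practice, the abundances would come from ssGSEA or CIBERSORT output.

```python
import math


def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1  # extend the run of tied values
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def spearman(x, y):
    """Spearman's rho: Pearson correlation of average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical risk scores and CD8+ T cell abundance estimates for five patients
risk = [1.2, 0.4, 2.5, -0.3, 0.9]
cd8 = [0.10, 0.35, 0.05, 0.50, 0.20]
print(round(spearman(risk, cd8), 3))  # -1.0
```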

Q8: What are the critical experimental protocols for the initial bioinformatics steps in building a TME signature? The foundational computational steps require rigorous protocols:

- Data Preprocessing: For TCGA RNA-seq data in FPKM format, convert to TPM (Transcripts Per Million) and apply a log2 transformation (commonly log2(TPM + 1)). For microarray data from GEO, use RMA, which performs background correction, quantile normalization, and probe-set summarization. Use the ComBat algorithm to remove batch effects when merging datasets [18].

- Differential Expression Analysis: Use the limma R package with thresholds of |log2FC| > 1 and FDR (False Discovery Rate) < 0.05 to identify TME-related differentially expressed genes (DETMRGs) between tumor and normal samples [18].

- Consensus Clustering: Use the CancerSubtypes R package with 1000 iterations to identify stable TME-related molecular subtypes. Validate clusters by assessing significant differences in survival and clinicopathological features [18].

- Functional Analysis: Use the clusterProfiler R package for Gene Ontology (GO) and KEGG pathway enrichment analysis of signature genes or risk-correlated genes. Use the GSVA package for pathway activity estimation [18] [45].
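Two of the numerical steps above are easy to get wrong, so they are sketched directly here. The first converts one sample's FPKM values to TPM by rescaling so the sample sums to one million, then applies log2(TPM + 1). The second applies Benjamini-Hochberg FDR adjustment before the |log2FC| > 1 and FDR < 0.05 filter. This is an illustrative sketch with hypothetical gene names and values. It assumes the raw p-values and fold changes come from limma, and it does not reproduce limma's moderated statistics or ComBat. The pseudocount of 1 is an assumed convention.

```python
import math


def fpkm_to_tpm(fpkm):
    """Convert one sample's FPKM values to TPM: value / sample total * 1e6."""
    total = sum(fpkm.values())
    return {gene: value / total * 1e6 for gene, value in fpkm.items()}


def log2_transform(tpm, pseudocount=1.0):
    """log2(TPM + pseudocount); the pseudocount of 1 is an assumed convention."""
    return {gene: math.log2(value + pseudocount) for gene, value in tpm.items()}


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values (step-up FDR control)."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i], reverse=True)
    adjusted = [0.0] * m
    running_min = 1.0
    for position, i in enumerate(order):
        rank = m - position  # ascending rank of p-value i (1 = smallest)
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def select_degs(genes, log2fc, pvalues, fc_cut=1.0, fdr_cut=0.05):
    """Keep genes with |log2FC| > fc_cut and BH FDR < fdr_cut."""
    fdr = benjamini_hochberg(pvalues)
    return [g for g, fc, q in zip(genes, log2fc, fdr) if abs(fc) > fc_cut and q < fdr_cut]


# Hypothetical values
tpm = fpkm_to_tpm({"GENE_A": 1.0, "GENE_B": 3.0})
log_tpm = log2_transform(tpm)
degs = select_degs(["G1", "G2", "G3", "G4"], [2.0, -1.5, 0.5, 3.0], [0.001, 0.01, 0.0001, 0.2])
print(tpm, degs)
```

G3 passes the FDR threshold but fails the fold-change filter, while G4 has a large fold change but an FDR of 0.2. Both criteria must hold.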

Comparative Analysis of Feature Selection Methods

Table 1: Comparison of Feature Selection Methods for TME Signature Development

| Method | Core Principle | Best For / Key Strength | Primary Limitation | Example in TME Research |
|---|---|---|---|---|
| LASSO-Cox Regression [18] [42] | L1 regularization shrinks coefficients to zero. | High-dimensional survival data (p >> n). Produces sparse, interpretable models. | Assumes linear effects. May select one from a group of correlated genes arbitrarily. | Selecting a 9-gene prognostic signature for bladder cancer from 133 candidates [18]. |
| Fused Sparse-Group Lasso (FSGL) [47] | Combines L1 penalty with fusion and group penalties. | Multi-state survival models where biomarkers have similar effects across related transitions. | Computationally intensive. Requires careful tuning of multiple penalty parameters. | Modeling effects of biomarkers on transitions between remission, relapse, and death in AML. |
| Copula Entropy (CEFS+) [48] | Information-theoretic; maximizes relevance, minimizes redundancy, captures interaction gain. | High-dimensional genetic data where gene-gene interactions are important. | Computationally heavy for extremely large feature sets. Relatively new method. | Selecting feature subsets that capture non-linear interactions between genes in expression data. |
| SHAP-based Selection [46] | Post-hoc explanation of ML models using Shapley values. | Interpreting "black box" ML models (RF, XGBoost) to identify influential features. | Dependent on the underlying ML model's performance and stability. | Identifying 172 key genes from 21,480 for classifying five female cancers using Random Forest. |