Virchow, CONCH, and UNI: A Comparative Overview of Foundation Models Revolutionizing Computational Pathology

This article provides a comprehensive overview of leading foundation models in computational pathology—Virchow, CONCH, and UNI.


Abstract

This article provides a comprehensive overview of leading foundation models in computational pathology—Virchow, CONCH, and UNI. It explores the core architectures and self-supervised learning approaches that underpin these models, detailing their applications in critical tasks such as pan-cancer detection, biomarker prediction, and rare disease diagnosis. The content further addresses practical challenges in implementation, including data scarcity and computational demands, and presents a rigorous comparative analysis of model performance based on recent independent benchmarking studies. Aimed at researchers, scientists, and drug development professionals, this review synthesizes current capabilities and future trajectories of these transformative AI tools in biomedical research and clinical practice.

Understanding Pathology Foundation Models: Core Architectures and Self-Supervised Learning

The field of computational pathology is undergoing a fundamental transformation driven by the emergence of foundation models. These models represent a seismic shift from traditional task-specific artificial intelligence systems toward general-purpose representations that can be adapted to a wide range of downstream clinical and research applications. Foundation models are defined as large-scale AI models trained on broad data using self-supervision at scale that can be adapted to a wide range of downstream tasks [1]. This transition mirrors developments in natural language processing and computer vision but presents unique challenges and opportunities due to the complex, multi-scale nature of histopathology data.

The limitations of traditional task-specific models have become increasingly apparent as the field advances. Earlier approaches in computational pathology relied heavily on supervised learning, requiring extensive manual annotation by expert pathologists for each specific task, whether cancer detection, biomarker prediction, or grading. The average cost of pathologist annotation alone is approximately $12 per slide when calculated at standard rates, creating significant bottlenecks in model development [1]. Furthermore, these specialized models often struggled to generalize across different tissue types, cancer variants, and institution-specific slide preparations. Foundation models address these limitations by learning universal feature representations from massive datasets without task-specific labels, capturing the fundamental morphological patterns that underlie pathological assessment across diverse diseases and tissue types.

The Evolution: From Task-Specific Models to Foundation Models

The progression from task-specific AI to foundation models in computational pathology represents not merely an incremental improvement but a fundamental rearchitecture of how AI systems are developed and deployed in histopathology. Traditional deep learning models in pathology were characterized by their narrow focus—typically excelling at a single diagnostic task such as cancer grading, mitotic figure counting, or specific biomarker detection. These models were usually trained on limited, carefully annotated datasets using supervised learning approaches, which constrained their applicability and required extensive relabeling for each new clinical task.

Foundation models differ from their predecessors across several critical dimensions, as summarized in Table 1. The most significant distinction lies in their training paradigm: rather than being trained with labeled data for a specific task, foundation models leverage self-supervised learning on massive, diverse datasets of histopathology images. This allows them to learn the fundamental language of tissue morphology—capturing patterns across cellular structures, tissue architecture, staining characteristics, and spatial relationships without human-provided labels. The resulting models exhibit remarkable versatility, enabling application to numerous downstream tasks including cancer detection, subtyping, biomarker prediction, prognosis estimation, and even cross-modal applications linking images with pathological reports or genomic data.

Table 1: Fundamental Differences Between Traditional AI Models and Foundation Models in Computational Pathology

| Characteristics | Foundation Models | Traditional AI Models |
|---|---|---|
| Model Architecture | (Mainly) Transformer | Convolutional Neural Network |
| Model Size | Very large (hundreds of millions to billions of parameters) | Medium to large |
| Applicable Tasks | Many diverse tasks | Single specific task |
| Performance on Adapted Tasks | State-of-the-art (SOTA) | High to SOTA |
| Performance on Untrained Tasks | Medium to high | Low |
| Data Amount for Training | Very large (millions of images) | Medium to large |
| Use of Labeled Data for Training | No (self-supervised) | Yes (supervised) |

The scaling laws observed in other AI domains have also proven relevant to computational pathology. Model performance depends strongly on both dataset size and model architecture complexity [2]. Early foundation models in pathology were trained on limited public datasets such as The Cancer Genome Atlas (TCGA), which contains approximately 29,000 whole slide images (WSIs). Contemporary foundation models now leverage proprietary datasets more than an order of magnitude larger. Virchow was trained on 1.5 million WSIs, while UNI2 was trained on over 200 million pathology images sampled from more than 350,000 diverse whole slide images [3] [4]. This massive scale, combined with advanced self-supervised learning techniques like contrastive learning and masked image modeling, enables the models to capture the rich diversity of morphological patterns present across different tissue types, disease states, and laboratory preparations.

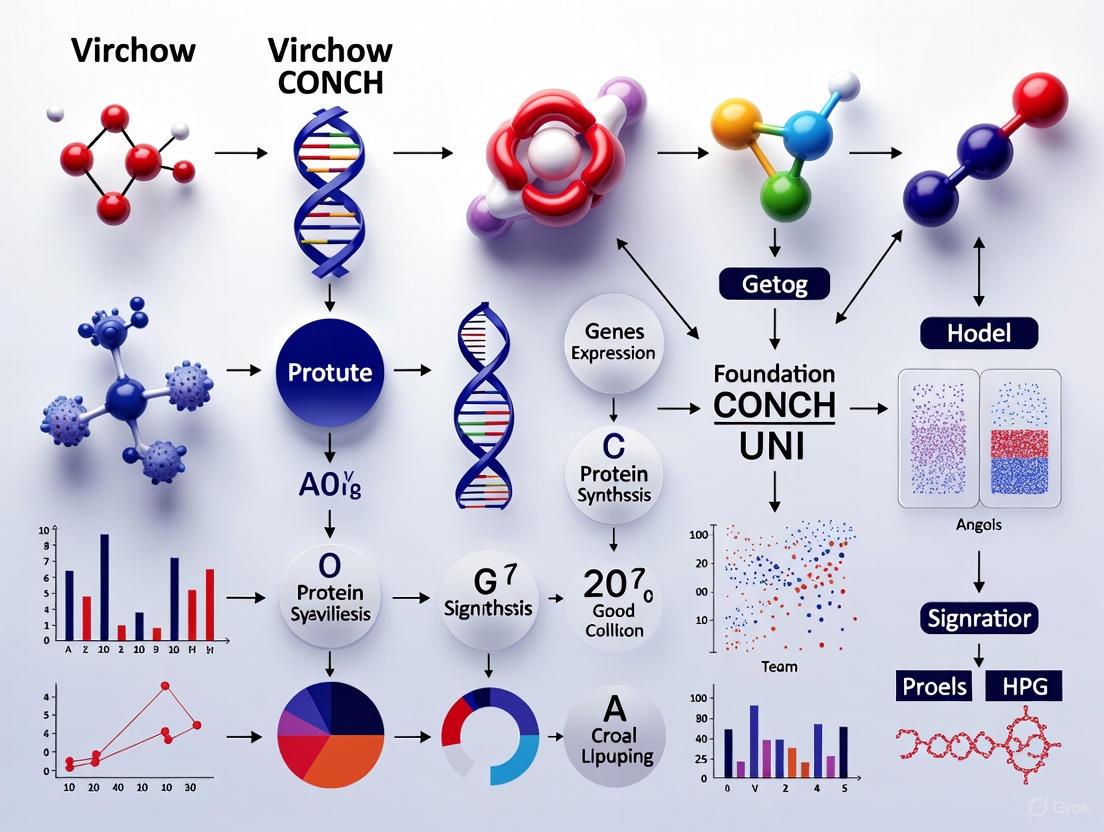

The following diagram illustrates the key evolutionary pathway from task-specific models to general-purpose foundation models in computational pathology:

Major Foundation Models: Architectures and Capabilities

Virchow: Scaling Vision-Only Pathology Models

Virchow represents a landmark in vision-only foundation models for computational pathology. Developed by Paige and Memorial Sloan Kettering Cancer Center, this model is a 632-million-parameter vision transformer (ViT) trained on an unprecedented dataset of 1.5 million H&E-stained whole slide images from approximately 100,000 patients [2] [4]. The model employs the DINOv2 self-supervised learning algorithm, which uses both global and local views of tissue tiles to learn rich embeddings that capture morphological patterns at multiple scales. The training dataset encompassed 17 different tissue types, including both cancerous and benign tissues, collected via biopsy (63%) and resection (37%) procedures, providing exceptional diversity in morphological representation.

The clinical utility of Virchow has been demonstrated through its application to pan-cancer detection, where it achieved a remarkable specimen-level area under the receiver operating characteristic curve (AUROC) of 0.95 across nine common and seven rare cancer types [2]. Particularly noteworthy is its performance on rare cancers—defined by the National Cancer Institute as having an annual incidence of fewer than 15 people per 100,000—where it maintained an AUROC of 0.937, demonstrating robust generalization to uncommon morphological patterns. When compared to specialized clinical-grade AI products, the Virchow-based pan-cancer model performed nearly as well as these targeted systems overall and actually outperformed them on some rare cancer variants, highlighting the value of learning from massively diverse datasets.

CONCH: Vision-Language Pretraining for Histopathology

CONCH (CONtrastive learning from Captions for Histopathology) is a leading vision-language foundation model in computational pathology. Unlike vision-only approaches, CONCH was trained on 1.17 million image-caption pairs, learning to align visual histological patterns with textual descriptions [5]. This multimodal approach mirrors how human pathologists learn and communicate, associating visual morphological patterns with descriptive terminology. The model architecture enables a wide range of applications, including image classification, segmentation, captioning, text-to-image retrieval, and image-to-text retrieval, without requiring task-specific fine-tuning.
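To illustrate how a vision-language model can classify images without task-specific fine-tuning, the sketch below performs CLIP-style zero-shot classification. Each class is described by a text prompt, and the image is assigned to the class whose prompt embedding has the highest cosine similarity to the image embedding. The embeddings are hypothetical toy vectors. In practice they would come from CONCH's image and text encoders, whose loading API is not shown or assumed here.

```python
import numpy as np

def l2_normalize(x: np.ndarray) -> np.ndarray:
    """Scale each row to unit length so dot products equal cosine similarity."""
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_classify(image_emb, prompt_embs, class_names):
    """Return the best-matching class name and softmax scores over classes.

    image_emb:   (d,) embedding of one image patch
    prompt_embs: (n_classes, d) embeddings of one text prompt per class
    """
    img = l2_normalize(image_emb[None, :])   # (1, d)
    txt = l2_normalize(prompt_embs)          # (n_classes, d)
    sims = (img @ txt.T)[0]                  # cosine similarity to each class prompt
    probs = np.exp(sims - sims.max())
    probs /= probs.sum()                     # softmax over classes (no learned logit scale)
    return class_names[int(np.argmax(sims))], probs

# Hypothetical 3-dimensional embeddings standing in for real encoder outputs
class_names = ["invasive ductal carcinoma", "normal breast tissue"]
prompt_embs = np.array([[0.9, 0.1, 0.0],
                        [0.1, 0.9, 0.2]])
image_emb = np.array([0.8, 0.2, 0.1])
label, probs = zero_shot_classify(image_emb, prompt_embs, class_names)
print(label)  # invasive ductal carcinoma
```

CLIP-style models usually multiply the similarities by a learned temperature before the softmax. That step is omitted here, so the probabilities are softer than a trained model would produce, although the predicted class is unaffected.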

In comprehensive benchmarking studies, CONCH demonstrated exceptional performance across multiple domains. For morphology-related tasks, it achieved a mean AUROC of 0.77, the highest among 19 foundation models evaluated [6]. Across 19 biomarker-related tasks, CONCH and Virchow2 both achieved the highest mean AUROCs of 0.73, while in prognostic-related tasks, CONCH again led with a mean AUROC of 0.63 [6]. The model's vision-language capabilities make it particularly valuable for applications requiring joint understanding of visual patterns and textual context, such as generating pathological descriptions or retrieving cases based on textual queries.

UNI: Towards General-Purpose Pathology Representations

UNI represents another significant advancement in general-purpose representations for computational pathology. Developed by the Mahmood Lab, the original UNI model used a ViT-L/16 architecture, while the more recent UNI2 employs a larger ViT-H/14-reg8 architecture trained on over 200 million H&E and IHC pathology images sampled from more than 350,000 diverse whole slide images [3]. This scale of training data enables the model to learn highly transferable representations applicable to diverse downstream tasks with minimal adaptation.

The UNI framework emphasizes task-agnostic pretraining followed by efficient adaptation to specific clinical applications. This approach has been widely adopted in the research community, with numerous studies demonstrating its effectiveness for tasks ranging from cancer subtyping and biomarker prediction to survival analysis and tissue segmentation [3]. The model's representations have proven particularly valuable in low-data regimes, where limited annotated examples are available for specific rare conditions or specialized tasks.

Emerging Architectures: TITAN and Multimodal Approaches

The field continues to evolve rapidly with newer architectures addressing limitations of earlier approaches. TITAN (Transformer-based pathology Image and Text Alignment Network) represents a recent advancement in whole-slide foundation models that processes entire slides rather than isolated patches [7]. This model employs a three-stage pretraining strategy: vision-only unimodal pretraining on region-of-interest crops, cross-modal alignment of generated morphological descriptions at the ROI level, and cross-modal alignment at the whole-slide level with clinical reports.

TITAN was trained on 335,645 whole-slide images aligned with corresponding pathology reports and 423,122 synthetic captions generated from a multimodal generative AI copilot for pathology [7]. This approach enables the model to generate general-purpose slide representations that can be directly applied to slide-level tasks without additional aggregation steps. The model has demonstrated strong performance in few-shot and zero-shot settings, particularly for challenging scenarios such as rare disease retrieval and cancer prognosis with limited labeled data.

Table 2: Comparative Analysis of Major Pathology Foundation Models

| Model | Architecture | Training Data Scale | Training Method | Key Strengths | Performance Highlights |
|---|---|---|---|---|---|
| Virchow | ViT-H (632M params) | 1.5M WSIs | Self-supervised (DINOv2) | Pan-cancer detection, rare cancer identification | 0.95 AUROC pan-cancer detection, 0.937 AUROC rare cancers |
| CONCH | Vision-language | 1.17M image-caption pairs | Contrastive learning | Multimodal applications, captioning, retrieval | 0.77 mean AUROC on morphology tasks; joint-highest mean biomarker AUROC (0.73, tied with Virchow2) |
| UNI/UNI2 | ViT-L/16 → ViT-H/14 | UNI: 100M tiles from 100K WSIs; UNI2: 200M+ images from 350K+ WSIs | Self-supervised | General-purpose representations, transfer learning | State-of-the-art on multiple tissue classification tasks |
| TITAN | Slide-level ViT | 335K WSIs + 423K synthetic captions | Multimodal self-supervised | Whole-slide representation, report generation | Strong few-shot/zero-shot performance, rare disease retrieval |

Benchmarking and Experimental Evaluation

Comprehensive Performance Benchmarking

Independent benchmarking studies provide crucial insights into the relative strengths and limitations of different foundation models. A comprehensive evaluation of 19 foundation models across 31 clinically relevant tasks revealed important patterns in model performance [6]. This study utilized 6,818 patients and 9,528 slides from lung, colorectal, gastric, and breast cancers, assessing tasks related to morphology, biomarkers, and prognostication. When averaged across all tasks, CONCH and Virchow2 achieved the highest AUROCs of 0.71, followed closely by Prov-GigaPath and DinoSSLPath with AUROCs of 0.69.

The benchmarking revealed that different models excel in different domains. For morphology-related tasks, CONCH achieved the highest mean AUROC of 0.77, followed by Virchow2 and DinoSSLPath with 0.76 [6]. In biomarker prediction, Virchow2 and CONCH both led with AUROCs of 0.73, while for prognostic tasks CONCH again achieved the highest performance (0.63 AUROC) [6]. These results suggest, without establishing, that vision-language pretraining may give models like CONCH an advantage on tasks requiring conceptual understanding of tissue morphology. Strong vision-only models such as Virchow2 nonetheless remain highly competitive, matching CONCH on biomarker prediction and trailing it by only 0.01 on morphology.

Experimental Protocols for Model Evaluation

Standardized evaluation protocols are essential for meaningful comparison across foundation models. The typical benchmarking workflow involves multiple stages: feature extraction, aggregation, and task-specific evaluation. For feature extraction, whole slide images are first tessellated into small, non-overlapping patches, typically at 20× magnification [6]. Each patch is then processed through the foundation model to generate embedding vectors that capture the morphological features of that tissue region.
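The tessellation step can be sketched as computing a grid of non-overlapping patch coordinates. The sketch assumes a slide scanned at 40× (a common but not universal base magnification) and a patch size of 224 pixels at 20×, so each patch spans 448 pixels at full resolution. Both values are illustrative assumptions. A real pipeline would read slide dimensions and magnification from the slide file (for example with OpenSlide) rather than hard-coding them.

```python
def patch_grid(width, height, base_mag=40.0, target_mag=20.0, patch_px=224):
    """Yield (x, y, size) full-resolution coordinates of non-overlapping patches.

    width, height: slide dimensions in pixels at the base (scan) magnification.
    patch_px:      patch edge length in pixels at the target magnification.
    Partial patches at the right and bottom edges are skipped.
    """
    scale = base_mag / target_mag            # 40x -> 20x gives a factor of 2.0
    size = int(round(patch_px * scale))      # patch edge length at full resolution
    for y in range(0, height - size + 1, size):
        for x in range(0, width - size + 1, size):
            yield x, y, size

# Example: a 2,000 x 1,000 pixel region scanned at 40x
coords = list(patch_grid(2000, 1000))
print(len(coords))  # 8 patches: 4 columns x 2 rows of 448-pixel tiles
```

Each 448-pixel region would then be downsampled to 224 × 224 pixels before being passed to the encoder.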

For slide-level prediction tasks, these patch embeddings must be aggregated into a slide-level representation. In the benchmark, transformer-based multiple instance learning (MIL) aggregators slightly outperformed traditional attention-based MIL, with an average AUROC difference of only 0.01 across tasks [6]. The aggregated representations are then used to train task-specific classifiers, typically with weakly supervised learning that requires only slide-level labels rather than detailed patch-level annotations.
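The aggregation step can be illustrated with attention-based MIL pooling in the style of Ilse et al. (2018). Each patch embedding receives a learned score, the scores are softmax-normalized across the slide, and the slide representation is the attention-weighted average of the patch embeddings. This is a forward-pass sketch only. The weights are randomly initialized stand-ins for trained parameters, and the patch features are random rather than real foundation-model embeddings. Transformer-based aggregators replace this weighted average with self-attention across patches.

```python
import numpy as np

def attention_mil_forward(patch_embs, V, w, W_cls):
    """Attention-MIL pooling followed by a linear slide-level classifier.

    patch_embs: (n_patches, d) embeddings from a frozen foundation model
    V:          (d, h) projection used by the attention network
    w:          (h,)   attention scoring vector
    W_cls:      (d, n_classes) slide-level classifier weights
    """
    scores = np.tanh(patch_embs @ V) @ w     # (n_patches,) raw attention scores
    attn = np.exp(scores - scores.max())
    attn /= attn.sum()                       # softmax over the patches of one slide
    slide_emb = attn @ patch_embs            # (d,) attention-weighted average
    logits = slide_emb @ W_cls               # (n_classes,) slide-level prediction
    return logits, attn

rng = np.random.default_rng(0)
d, h, n_classes = 16, 8, 2
patch_embs = rng.normal(size=(500, d))       # stand-in for one slide's patch features
V = rng.normal(size=(d, h)) * 0.1
w = rng.normal(size=h)
W_cls = rng.normal(size=(d, n_classes)) * 0.1
logits, attn = attention_mil_forward(patch_embs, V, w, W_cls)
print(logits.shape, attn.shape)
```

The attention weights are also commonly visualized as heatmaps, since they indicate which patches contributed most to the slide-level prediction.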

Evaluation in low-data scenarios is particularly important for assessing clinical utility. Studies have examined model performance with varying training set sizes (75, 150, and 300 patients) while maintaining similar ratios of positive samples [6]. Interestingly, performance metrics remained relatively stable between 75 and 150 patient cohorts, suggesting that foundation models can maintain effectiveness even with limited fine-tuning data. This has important implications for rare conditions where large annotated datasets are unavailable.
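A low-data experiment of this kind can be set up by drawing patient subsets of fixed size while keeping the positive-class ratio close to that of the full cohort. The sketch below shows one way to do this, assuming binary patient-level labels and a hypothetical cohort. It is not the sampling code used in the cited study.

```python
import random

def stratified_subsample(patients, labels, n, seed=0):
    """Draw n patients so the positive fraction matches the full cohort (up to rounding).

    patients: list of patient IDs; labels: parallel list of 0/1 labels.
    """
    rng = random.Random(seed)
    pos = [p for p, y in zip(patients, labels) if y == 1]
    neg = [p for p, y in zip(patients, labels) if y == 0]
    n_pos = round(n * len(pos) / len(patients))   # preserve the positive ratio
    return rng.sample(pos, n_pos) + rng.sample(neg, n - n_pos)

# Hypothetical cohort: 400 patients, 25% positive
patients = [f"P{i:03d}" for i in range(400)]
labels = [1 if i % 4 == 0 else 0 for i in range(400)]
label_of = dict(zip(patients, labels))
for n in (75, 150, 300):
    subset = stratified_subsample(patients, labels, n)
    print(n, sum(label_of[p] for p in subset))   # training-set size, number of positives
```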

The following diagram illustrates the standard benchmarking workflow used to evaluate pathology foundation models:

Clinical Applications and Implementation

Diagnostic Applications and Biomarker Prediction

Foundation models are enabling transformative applications across the diagnostic spectrum. In cancer detection, models like Virchow have demonstrated the capability to identify both common and rare cancers across diverse tissue types with high accuracy [2]. This pan-cancer capability is particularly valuable for screening applications and for cases with atypical presentations. For biomarker prediction, foundation models can infer molecular alterations directly from H&E-stained images, potentially reducing reliance on expensive additional testing. Studies have successfully predicted biomarkers including BRAF mutations, microsatellite instability (MSI), and CpG island methylator phenotype (CIMP) status from routine histology images [6] [2].

The ability to predict biomarkers from standard H&E stains has significant implications for precision oncology. By identifying patients likely to have specific molecular alterations, foundation models can help prioritize cases for confirmatory testing and enable earlier treatment decisions. In the benchmark described above, the best models reached mean AUROCs of 0.73 across biomarker prediction tasks [6]. Performance at this level supports triage and prioritization from routinely available tissue sections, rather than replacement of dedicated molecular tests.

Prognostication and Therapeutic Response Prediction

Beyond diagnostic classification, foundation models show increasing promise for prognostic prediction and therapeutic response forecasting. By capturing subtle morphological patterns associated with disease aggressiveness and tumor microenvironment composition, these models can stratify patients according to likely clinical outcomes. In benchmarking, prognostic tasks proved the most difficult. The best-performing model, CONCH, achieved a mean AUROC of only 0.63 [6], demonstrating modest but meaningful predictive value for outcomes such as survival and recurrence risk.

The multimodal capabilities of models like CONCH and TITAN could enable particularly sophisticated applications in this domain. By integrating histological patterns with clinical data, pathology reports, and eventually genomic information, such systems could provide comprehensive prognostic assessments that account for multiple dimensions of disease biology. This approach aligns with the trend toward multidimensional classification in oncology, where treatment decisions incorporate histological, molecular, and clinical factors.

Implementation Considerations and Clinical Translation

The translation of foundation models from research tools to clinical practice requires careful consideration of multiple factors. Computational efficiency remains a significant challenge, as processing gigapixel whole slide images demands substantial resources [8]. Some studies have reported prohibitive computational overhead when applying certain foundation models to large slide repositories, highlighting the need for optimization in real-world deployment.

Generalization across diverse patient populations and laboratory protocols is another critical consideration. While foundation models trained on large datasets demonstrate better generalization than earlier approaches, performance variations across different demographic groups and institution-specific preparations have been observed [8]. Continuous monitoring and potential recalibration may be necessary when deploying these models across varied clinical settings.

Regulatory approval pathways for foundation models in pathology are still evolving. The adaptability that makes these models powerful—their application to multiple tasks with minimal modification—presents challenges for traditional regulatory frameworks that typically evaluate medical AI systems for specific intended uses. Developing appropriate validation frameworks that preserve the flexibility of foundation models while ensuring safety and efficacy for each application represents an important frontier in clinical translation.

Table 3: Research Reagent Solutions for Pathology Foundation Model Research

| Resource Category | Specific Examples | Function and Application | Access Information |
|---|---|---|---|
| Pretrained Models | Virchow, CONCH, UNI, UNI2, TITAN | Feature extraction, transfer learning, few-shot applications | GitHub repositories, Hugging Face, institutional collaborations |
| Benchmark Datasets | TCGA, CPTAC, PANDA, CRC100K | Model evaluation, comparative performance assessment | Public repositories, controlled access platforms |
| Evaluation Frameworks | Linear probing, KNN classification, few-shot evaluation | Standardized performance assessment across models and tasks | Custom implementations, research code repositories |
| Computational Infrastructure | High-memory GPUs, distributed computing systems | Handling gigapixel whole slide images and large model architectures | Institutional HPC resources, cloud computing platforms |
| Annotation Tools | Digital pathology annotation software | Generating labeled datasets for fine-tuning and evaluation | Commercial and open-source pathology viewing platforms |
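Of the evaluation frameworks listed in Table 3, KNN classification is the simplest to illustrate. Embeddings from a frozen encoder are compared by cosine similarity, and each test tile takes the majority label of its k nearest training tiles. The sketch below uses synthetic clustered embeddings as a stand-in for foundation-model features. The choices of k = 5 and cosine similarity are illustrative, not a prescribed benchmark setting.

```python
import numpy as np
from collections import Counter

def knn_predict(train_embs, train_labels, test_embs, k=5):
    """Majority-vote KNN using cosine similarity between embeddings."""
    tr = train_embs / np.linalg.norm(train_embs, axis=1, keepdims=True)
    te = test_embs / np.linalg.norm(test_embs, axis=1, keepdims=True)
    sims = te @ tr.T                                  # (n_test, n_train) cosine similarities
    nearest = np.argsort(-sims, axis=1)[:, :k]        # indices of the k most similar tiles
    return np.array([Counter(train_labels[idx]).most_common(1)[0][0]
                     for idx in nearest])

rng = np.random.default_rng(0)
# Two synthetic "tissue classes" whose embeddings cluster around different directions
centers = np.array([[5.0, 0.0, 0.0, 0.0],
                    [0.0, 5.0, 0.0, 0.0]])
train_labels = np.repeat([0, 1], 50)
train_embs = centers[train_labels] + rng.normal(size=(100, 4))
test_labels = np.repeat([0, 1], 10)
test_embs = centers[test_labels] + rng.normal(size=(20, 4))
pred = knn_predict(train_embs, train_labels, test_embs)
print("accuracy:", (pred == test_labels).mean())
```

Because the encoder stays frozen and KNN has no trainable parameters, this evaluation measures the quality of the embeddings themselves rather than the capacity of a downstream head.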

Challenges and Future Directions

Despite remarkable progress, several challenges remain in the development and deployment of pathology foundation models. Data diversity and representation continue to be concerns, as even large-scale training datasets may not fully capture the morphological spectrum across different populations, specimen types, and laboratory protocols [8]. The replicability of results across institutions also requires further investigation, with some studies reporting mixed success in reproducing published findings when using different datasets and computational environments [8].

The scaling laws observed in foundation models suggest that continued increases in data and model size may yield further performance improvements. However, the optimal balance between data quantity, diversity, and quality remains an open research question. Some evidence suggests that data diversity may outweigh sheer volume, with models trained on more heterogeneous datasets sometimes outperforming those trained on larger but more homogeneous collections [6].

Future development will likely focus on several key areas: whole-slide modeling approaches that better capture long-range spatial relationships across tissue sections; improved multimodal integration combining histology with genomic, transcriptomic, and clinical data; more efficient architectures that reduce computational requirements; and enhanced interpretability methods that make model predictions transparent to pathologists. As these technical advances progress, parallel efforts will be needed to establish appropriate validation frameworks, regulatory pathways, and clinical integration strategies to ensure that foundation models fulfill their potential to enhance pathological practice and patient care.

The emergence of generalist medical AI systems that integrate pathology foundation models with models from other medical domains represents a particularly promising direction [1]. Such systems could provide comprehensive diagnostic support by combining information from histology, radiology, laboratory medicine, and clinical notes, moving closer to the holistic assessment approaches used by expert clinicians. Realizing this vision will require not only technical innovation but also careful attention to workflow integration, usability, and the development of appropriate trust mechanisms between pathologists and AI systems.

The field of computational pathology has been transformed by the advent of foundation models, which are large-scale AI models trained on broad data that can be adapted to a wide range of downstream tasks [1]. These models address critical challenges in pathology AI development, notably the high cost and time required for pathologists to annotate data and the need for models that generalize across diverse tissue types and cancer variants [1] [9]. Prior to Virchow, pathology foundation models were trained on significantly smaller datasets, ranging from tens to hundreds of thousands of slides [9] [2]. Virchow represents a substantial scaling in both data and model size, trained on 1.5 million hematoxylin and eosin (H&E) stained whole slide images (WSIs) using the self-supervised learning algorithm DINOv2 [10] [2]. This massive scale is crucial for capturing the immense diversity of morphological patterns in histopathology and enables robust performance, particularly on rare cancers where labeled data is scarce [2].

Model Architecture & Training Methodology

Core Architectural Framework

Virchow is built on the Vision Transformer (ViT) architecture [10] [2]. The model comprises 632 million parameters, classifying it as a ViT-huge model [9] [2]. The input to the model consists of tissue tiles extracted from gigapixel whole-slide images. The fundamental components and training data are summarized in the table below.

Table 1: Virchow Model Architecture and Training Data Specifications

| Component | Specification | Details |
|---|---|---|
| Model Architecture | Vision Transformer (ViT) | 632 million parameters (ViT-huge) [10] [2] |
| Training Algorithm | DINOv2 | Self-distillation with no labels (SSL) [2] |
| Training Dataset | 1.5 million H&E WSIs | Sourced from ~100,000 patients at MSKCC; includes biopsies (63%) and resections (37%) [2] |
| Tissue Coverage | 17 high-level tissues | Cancerous and benign tissues [2] |
| Input Processing | Tissue Tiles | Extracted from WSIs; uses global and local views for self-supervised learning [2] |

DINOv2 Self-Supervised Training

The DINOv2 (self-DIstillation with NO labels) algorithm is central to Virchow's training [2]. This method employs a student-teacher network structure where both are fed different augmented views of the same image tiles. The student network is trained to match the output of the teacher network. The teacher's weights are an exponential moving average (EMA) of the student's weights, which stabilizes training. This process allows the model to learn versatile visual representations without any manual annotations by leveraging the inherent structure of the data itself.
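The student-teacher mechanics can be sketched in a few lines, following the original DINO recipe (Caron et al., 2021). The teacher's output is centered and sharpened with a low temperature, the student is scored by cross-entropy against it, and the teacher's weights are updated as an EMA of the student's. This is a simplified NumPy sketch with linear "networks" and no gradient step. DINOv2, as used for Virchow, adds further components (for example an iBOT-style masked-image-modeling loss) that are omitted here. The temperatures and momentum values are typical DINO defaults, not Virchow's reported hyperparameters.

```python
import numpy as np

def softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def dino_loss(student_out, teacher_out, center, t_student=0.1, t_teacher=0.04):
    """Cross-entropy between centered, sharpened teacher targets and student predictions."""
    targets = softmax(teacher_out - center, t_teacher)          # teacher: center, then sharpen
    log_probs = np.log(softmax(student_out, t_student) + 1e-12)
    return -(targets * log_probs).sum(axis=-1).mean()

def ema_update(teacher_w, student_w, momentum=0.996):
    """Teacher weights track an exponential moving average of the student weights."""
    return momentum * teacher_w + (1.0 - momentum) * student_w

def update_center(center, teacher_out, momentum=0.9):
    """Running mean of teacher outputs, subtracted to discourage collapse."""
    return momentum * center + (1.0 - momentum) * teacher_out.mean(axis=0)

rng = np.random.default_rng(0)
dim_in, K, batch = 32, 64, 8
student_w = rng.normal(size=(dim_in, K)) * 0.1                  # toy linear "student network"
teacher_w = student_w.copy()                                     # teacher starts as a copy
center = np.zeros(K)

view_a = rng.normal(size=(batch, dim_in))                        # stand-in for augmented view A
view_b = view_a + 0.1 * rng.normal(size=(batch, dim_in))         # view B of the same tiles

teacher_out = view_b @ teacher_w                                 # teacher sees view B
loss = dino_loss(view_a @ student_w, teacher_out, center)        # student sees view A
# (a gradient step on student_w would be taken here; omitted in this sketch)
center = update_center(center, teacher_out)
teacher_w = ema_update(teacher_w, student_w)
print(round(float(loss), 4))
```

Only the student receives gradients. The teacher changes solely through `ema_update`, which is what stabilizes training.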

Diagram 1: DINOv2 Training Workflow for Virchow. The diagram illustrates the self-supervised learning process where the student network learns to match the output of a teacher network fed with different augmented views of the same pathology image tiles. The teacher's weights are an exponential moving average (EMA) of the student's weights. This process creates general-purpose visual embeddings without manual labels.

Key Experiments & Performance Benchmarks

Pan-Cancer Detection

A primary application of Virchow is pan-cancer detection, which involves training a single model to identify cancer across multiple tissue types. A weakly supervised aggregator model uses Virchow's tile embeddings to make slide-level predictions.

Table 2: Pan-Cancer Detection Performance (Specimen-Level AUC) [2]

| Cancer Category | Virchow | UNI | Phikon | CTransPath |
|---|---|---|---|---|
| Overall (16 cancers) | 0.950 | 0.940 | 0.932 | 0.907 |
| Rare Cancers (7 types) | 0.937 | Not Reported | Not Reported | Not Reported |
| Common Cancers (9 types) | >0.950 (avg) | >0.940 (avg) | >0.932 (avg) | >0.907 (avg) |
| Bone Cancer | 0.841 | 0.813 | 0.822 | 0.728 |
| Cervix Cancer | 0.875 | 0.830 | 0.810 | 0.753 |

The pan-cancer detector demonstrated strong generalization on rare cancers and out-of-distribution data from external institutions. At a high sensitivity of 95%, the model using Virchow embeddings achieved a specificity of 72.5%, outperforming other foundation models [2].
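Both metrics quoted above can be computed from specimen-level scores. AUROC is computed here via its rank-based (Mann-Whitney) interpretation: the probability that a randomly chosen positive specimen scores higher than a randomly chosen negative one. Specificity is read off at the highest threshold that still reaches the target sensitivity. The scores and labels are invented toy values, not Virchow outputs.

```python
def auroc(scores, labels):
    """Probability a random positive scores above a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def specificity_at_sensitivity(scores, labels, target_sens=0.95):
    """Specificity at the highest threshold whose sensitivity is >= target_sens."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    for thr in sorted(set(scores), reverse=True):    # lower the threshold step by step
        preds = [s >= thr for s in scores]
        tp = sum(p and y == 1 for p, y in zip(preds, labels))
        if tp / n_pos >= target_sens:
            tn = sum((not p) and y == 0 for p, y in zip(preds, labels))
            return tn / n_neg
    return 0.0

# Toy specimen-level cancer scores (1 = cancer present)
scores = [0.95, 0.9, 0.85, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 0, 1, 1, 0, 1, 0, 0, 0]
print(auroc(scores, labels))                       # 0.84
print(specificity_at_sensitivity(scores, labels))  # 0.6
```

With only five positives in this toy set, 95% sensitivity requires catching all of them. That forces the threshold down to 0.4 and costs two false positives.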

Tile-Level Benchmarks and Biomarker Prediction

Virchow's embeddings were also evaluated on tile-level classification tasks, where they achieved state-of-the-art performance on internal and external benchmarks [10]. Furthermore, the model showed strong capabilities in predicting biomarkers directly from routine H&E images, potentially reducing the need for additional specialized testing. Virchow outperformed other models in predicting key gene mutations, such as in lung adenocarcinoma [2].

Comparative Analysis of Pathology Foundation Models

The landscape of pathology foundation models has expanded rapidly. The table below contextualizes Virchow among other notable models.

Table 3: Comparative Analysis of Public Pathology Foundation Models [9]

| Model | Parameters | Training Slides | Training Tiles | Architecture | SSL Algorithm |
|---|---|---|---|---|---|
| Virchow | 631 M | 1.5 M | 2.0 B | ViT-H | DINOv2 |
| Prov-GigaPath | 1135 M | 171 k | 1.3 B | LongNet | DINOv2 + MAE |
| UNI | 303 M | 100 k | 100 M | ViT-L | DINOv2 |
| Phikon | 86 M | 6 k | 43 M | ViT-B | iBOT |
| CTransPath | 28 M | 32 k | 16 M | Swin Transformer + CNN | MoCo v2 |

This comparison highlights Virchow's position as a model trained on an exceptionally large slide dataset. Other models take a different approach. CONCH, for example, is a visual-language foundation model pretrained on 1.17 million image-caption pairs, enabling tasks like image classification, captioning, and cross-modal retrieval [5].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Implementing Pathology Foundation Models

| Resource / Solution | Function / Purpose | Example / Note |
|---|---|---|
| Whole-Slide Scanners | Digitizes glass slides into gigapixel WSIs | Various vendors and models; introduces variability [11] |
| Tile Extraction Pipeline | Divides WSIs into smaller, manageable patches | Requires tissue detection & background filtering [11] [9] |
| Self-Supervised Learning (SSL) Frameworks | Enables training on unlabeled image data | DINOv2, iBOT, MAE [9] [2] |
| Multiple Instance Learning (MIL) | Aggregates tile-level features for slide-level prediction | Attention-based MIL networks [11] [9] |
| Vision Transformer (ViT) Architecture | Neural network backbone for processing image sequences | Scales to hundreds of millions of parameters [10] [2] |
| Public Model Weights | Provides pre-trained models for transfer learning | Virchow, UNI, CONCH, and CTransPath weights are publicly available [3] [9] [5] |

Experimental Protocol: Downstream Task Fine-Tuning

To adapt Virchow for a specific downstream task (e.g., cancer subtyping or biomarker prediction), the following methodology is employed:

- Feature Extraction: A downstream whole-slide image (WSI) is processed by first removing background regions using a tissue detection mask. The remaining tissue is divided into non-overlapping patches (e.g., 224 × 224 pixels at 20× magnification). Each patch is then encoded into a feature vector using the pre-trained Virchow encoder, generating hundreds to thousands of feature vectors per slide [9].

- Model Training for Slide-Level Prediction: These feature vectors are aggregated using an attention-based multiple instance learning (MIL) network. The MIL model learns to weight the importance of different tiles and produces a final slide-level prediction [11] [9]. This approach is summarized in the following diagram:

Diagram 2: Downstream Task Fine-Tuning. This workflow shows how the pre-trained Virchow model is used as a feature extractor for a downstream task like cancer detection. Features from individual image tiles are aggregated by a multiple instance learning (MIL) model to produce a final slide-level prediction.
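The background-removal step in the feature-extraction protocol is often performed on a low-resolution thumbnail. A minimal sketch follows. It assumes an RGB thumbnail stored as a NumPy array, a simple saturation threshold (stained tissue is more saturated than the near-white glass background), and an arbitrary 50% tissue-fraction cutoff per patch. These thresholds are illustrative assumptions. Production pipelines typically use more robust methods such as Otsu thresholding combined with artifact filtering.

```python
import numpy as np

def tissue_mask(rgb, sat_thresh=0.15):
    """Boolean mask of pixels whose HSV-style saturation exceeds sat_thresh."""
    rgb = rgb.astype(np.float64) / 255.0
    cmax = rgb.max(axis=-1)
    cmin = rgb.min(axis=-1)
    sat = (cmax - cmin) / np.maximum(cmax, 1e-8)   # avoid division by zero on black pixels
    return sat > sat_thresh

def tissue_patches(mask, patch, min_frac=0.5):
    """Return (row, col) thumbnail coordinates of patch-sized cells with enough tissue.

    Coordinates are in thumbnail pixels and must be rescaled to full resolution.
    """
    keep = []
    h, w = mask.shape
    for r in range(0, h - patch + 1, patch):
        for c in range(0, w - patch + 1, patch):
            if mask[r:r + patch, c:c + patch].mean() >= min_frac:
                keep.append((r, c))
    return keep

# Toy thumbnail: near-white background with a pink "tissue" block in the top-left corner
thumb = np.full((64, 64, 3), 245, dtype=np.uint8)
thumb[:32, :32] = (200, 120, 170)
print(tissue_patches(tissue_mask(thumb), patch=16))
# [(0, 0), (0, 16), (16, 0), (16, 16)]
```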

Virchow establishes that scaling up training data to millions of whole-slide images enables the creation of a powerful foundation model capable of robust pan-cancer detection and biomarker prediction. Its success, along with that of other models like UNI, CONCH, and Prov-GigaPath, underscores a paradigm shift in computational pathology towards large-scale, self-supervised learning. These models form a foundational toolkit for researchers and drug development professionals, accelerating tasks ranging from rare cancer diagnosis to the discovery of novel morphological biomarkers.

The field of computational pathology is undergoing a transformation driven by artificial intelligence and the emergence of foundation models. These models, pre-trained on vast datasets, can be adapted to a wide range of downstream tasks with minimal fine-tuning. Among these, CONCH (CONtrastive learning from Captions for Histopathology) represents a significant advancement as a visual-language foundation model specifically designed for histopathology. Unlike vision-only models, CONCH leverages both histopathology images and corresponding textual descriptions, mirroring how pathologists teach and reason about histopathologic entities. This approach enables a single model to perform diverse tasks without task-specific training, addressing critical challenges of label scarcity in the medical domain and the impracticality of training separate models for every possible diagnostic scenario [12].

The Landscape of Pathology Foundation Models

Several foundation models have been developed for computational pathology, each with distinct architectures, training datasets, and capabilities. The table below summarizes three leading models: CONCH, Virchow, and UNI.

Table 1: Comparison of Major Pathology Foundation Models

| Feature | CONCH | Virchow | UNI |
|---|---|---|---|
| Model Type | Visual-Language [12] | Vision-Only [2] | Vision-Only [9] |
| Core Architecture | ViT-B/16 vision encoder & 12-layer text encoder [13] | ViT-H (632M parameters) [2] [10] | ViT-L (303M parameters) [9] |
| Pre-training Algorithm | Contrastive Learning & Captioning (CoCa) [12] | Self-supervised Learning (DINOv2) [2] [10] | Self-supervised Learning (DINOv2) [9] |
| Training Data Scale | 1.17M image-caption pairs [5] [12] | ~1.5M whole slide images (WSIs) [2] | 100M tiles from 100K WSIs [9] |
| Key Capabilities | Image & text encoding, zero-shot classification, cross-modal retrieval, captioning [12] | Pan-cancer detection, biomarker prediction [2] | Tile and slide-level classification [9] |
| Primary Application | Multimodal tasks involving images and text [5] | High-performance cancer detection across common and rare types [2] | General-purpose visual feature extraction [9] |

CONCH Architecture and Pre-training Methodology

Model Design and Components

CONCH is built upon the CoCa (Contrastive Captioner) framework, which integrates three core components: a vision encoder, a text encoder, and a multimodal fusion decoder. The vision encoder is a Vision Transformer (ViT-B/16) with 90 million parameters, processing histopathology image patches. The text encoder is a transformer-based model (L12-E768-H12) with 110 million parameters, handling textual input. During pre-training, the model is trained using a combination of an image-text contrastive loss, which aligns visual and textual representations in a shared embedding space, and a captioning loss, which teaches the model to generate descriptive captions for given images [12] [13]. This dual objective ensures the model learns both discriminative and generative capabilities.
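The image-text contrastive term can be illustrated with a symmetric InfoNCE loss of the kind used in CLIP-style training: in a batch of matched pairs, each image should score highest against its own caption and vice versa. This stdlib-only sketch assumes toy embeddings and an illustrative temperature of 0.07 (not necessarily CONCH's setting), and it omits the captioning loss and the rest of CoCa.

```python
import math
from typing import List

Vector = List[float]


def l2_normalize(v: Vector) -> Vector:
    norm = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / norm for x in v]


def log_softmax_at(logits: List[float], idx: int) -> float:
    """log(softmax(logits)[idx]), computed stably."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return logits[idx] - lse


def clip_contrastive_loss(img: List[Vector], txt: List[Vector], temperature: float = 0.07) -> float:
    """Symmetric InfoNCE: (image i, caption i) is the positive pair and every
    other item in the batch acts as a negative. Temperature is illustrative."""
    img = [l2_normalize(v) for v in img]
    txt = [l2_normalize(v) for v in txt]
    n = len(img)
    # logits[i][j] = cosine(image_i, text_j) / temperature
    logits = [[sum(a * b for a, b in zip(iv, tv)) / temperature for tv in txt] for iv in img]
    img_to_txt = -sum(log_softmax_at(logits[i], i) for i in range(n)) / n
    txt_to_img = -sum(log_softmax_at([logits[j][i] for j in range(n)], i) for i in range(n)) / n
    return (img_to_txt + txt_to_img) / 2
```

Averaging both directions keeps the shared embedding space usable for image-to-text and text-to-image queries alike.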

Pre-training Dataset and Regime

CONCH was pre-trained on a diverse collection of 1.17 million histopathology image-caption pairs, the largest such dataset for pathology at the time of its development. The data was sourced from publicly available PubMed Central Open Access (PMC-OA) articles and internally curated sources. The images encompass various stain types, including Hematoxylin and Eosin (H&E), immunohistochemistry (IHC), and special stains, contributing to the model's robustness. Training was conducted using mixed precision (fp16) on 8 Nvidia A100 GPUs for approximately 21.5 hours [13]. A key advantage noted by the developers is that CONCH was not pre-trained on large public slide collections like TCGA, PAIP, or GTEx, minimizing the risk of data contamination when evaluating on popular public benchmarks [5] [13].

Diagram 1: CONCH pre-training workflow.

Experimental Protocols and Performance Benchmarks

CONCH was rigorously evaluated against other visual-language models, including PLIP, BiomedCLIP, and OpenAI CLIP, across 14 diverse benchmarks covering tasks like classification, segmentation, and retrieval [12].

Zero-shot Classification

Methodology: For zero-shot region-of-interest (ROI) classification, class names are converted into a set of text prompts (e.g., "a histology image of invasive lobular carcinoma"). The image is encoded by the vision encoder, and the text prompts are encoded by the text encoder. The classification is performed by computing the cosine similarity between the image embedding and each text prompt embedding in the shared multimodal space, selecting the class with the highest similarity score [12]. For whole-slide image (WSI) classification, the MI-Zero method is employed: the gigapixel WSI is divided into smaller tiles, each tile is classified via zero-shot prediction, and the individual tile-level scores are aggregated into a final slide-level prediction [12].
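The zero-shot rule and an MI-Zero-style slide aggregation can be sketched with toy embeddings. Assumptions: the vectors are hand-written stand-ins for CONCH image and prompt embeddings, each class's prompt embeddings are averaged into one class embedding (prompt ensembling), and slide scores use top-K mean pooling of tile similarities, one pooling choice for MI-Zero-style aggregation. All helper names are hypothetical.

```python
import math
from typing import Dict, List

Vector = List[float]


def cosine(a: Vector, b: Vector) -> float:
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    return sum(x * y for x, y in zip(a, b)) / (na * nb)


def class_embeddings(prompts: Dict[str, List[Vector]]) -> Dict[str, Vector]:
    """Average the embeddings of all prompts written for each class (assumed ensembling)."""
    return {c: [sum(col) / len(vs) for col in zip(*vs)] for c, vs in prompts.items()}


def zero_shot_roi(image: Vector, classes: Dict[str, Vector]) -> str:
    """ROI-level zero-shot: the class whose text embedding is most similar wins."""
    return max(classes, key=lambda c: cosine(image, classes[c]))


def zero_shot_slide(tiles: List[Vector], classes: Dict[str, Vector], k: int = 2) -> str:
    """Slide-level zero-shot: score every tile against every class, then
    average the top-k tile scores per class (top-k pooling is an assumption)."""
    slide_scores = {}
    for c, emb in classes.items():
        sims = sorted((cosine(t, emb) for t in tiles), reverse=True)
        top = sims[:k]
        slide_scores[c] = sum(top) / len(top)
    return max(slide_scores, key=slide_scores.get)
```

Top-k pooling lets a slide be called for a class when only a minority of its tiles show that pattern, which mirrors how a small tumor focus can determine a diagnosis.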

Results: CONCH demonstrated state-of-the-art performance. The table below summarizes its zero-shot classification results across several slide-level and ROI-level tasks.

Table 2: Zero-shot Classification Performance of CONCH

| Task | Dataset | Primary Metric | CONCH Performance | Next-Best Model Performance |
|---|---|---|---|---|
| NSCLC Subtyping | TCGA NSCLC | Balanced Accuracy | 90.7% [12] | 78.7% (PLIP) [12] |
| RCC Subtyping | TCGA RCC | Balanced Accuracy | 90.2% [12] | 80.4% (PLIP) [12] |
| BRCA Subtyping | TCGA BRCA | Balanced Accuracy | 91.3% [12] | 55.3% (BiomedCLIP) [12] |
| Gleason Grading | SICAP | Quadratic Weighted Kappa (κ) | 0.690 [12] | 0.550 (BiomedCLIP) [12] |
| Colorectal Tissue Classification | CRC100k | Balanced Accuracy | 79.1% [12] | 67.4% (PLIP) [12] |
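Table 2 mixes two metrics. As a sketch of their standard definitions (not code from the CONCH evaluation), balanced accuracy is the mean per-class recall, and quadratic weighted kappa compares observed disagreement against chance-expected disagreement, weighting errors by squared distance so that it suits ordinal labels such as Gleason grades.

```python
from collections import Counter
from typing import Sequence


def balanced_accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Mean of per-class recall, so a majority class cannot dominate the score."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == c]
        recalls.append(sum(y_pred[i] == c for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


def quadratic_weighted_kappa(y_true: Sequence[int], y_pred: Sequence[int], n_classes: int) -> float:
    """Cohen's kappa with disagreement weights (i - j)^2 / (n_classes - 1)^2."""
    n = len(y_true)
    observed = [[0.0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        observed[t][p] += 1
    hist_t, hist_p = Counter(y_true), Counter(y_pred)
    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            w = (i - j) ** 2 / (n_classes - 1) ** 2
            expected = hist_t[i] * hist_p[j] / n  # chance agreement from the marginals
            num += w * observed[i][j]
            den += w * expected
    return 1.0 - num / den
```

A κ of 1.0 means perfect agreement, 0 means chance-level agreement, and negative values mean systematic disagreement.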

Cross-Modal Retrieval and Other Tasks

Image-to-Text and Text-to-Image Retrieval: Cross-modal retrieval is a core strength of CONCH. Given a query image, the model can retrieve the most relevant text description from a database, and vice versa. This is achieved by computing the cosine similarity between the query's embedding and the embeddings of all candidates in the database [12]. This capability is crucial for tasks like knowledge search and case retrieval in clinical and research settings.
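Cross-modal retrieval reduces to ranking candidates by cosine similarity, and Recall@K checks whether the true match lands in the top K. A stdlib-only sketch, assuming toy embeddings and the convention (for illustration only) that query i's correct match is candidate i:

```python
import math
from typing import List

Vector = List[float]


def normalize(v: Vector) -> Vector:
    n = math.sqrt(sum(x * x for x in v)) or 1.0
    return [x / n for x in v]


def rank_candidates(query: Vector, candidates: List[Vector]) -> List[int]:
    """Candidate indices sorted by descending cosine similarity to the query."""
    q = normalize(query)
    sims = [sum(a * b for a, b in zip(q, normalize(c))) for c in candidates]
    return sorted(range(len(candidates)), key=lambda i: sims[i], reverse=True)


def recall_at_k(queries: List[Vector], candidates: List[Vector], k: int) -> float:
    """Fraction of queries whose paired candidate (same index) is in the top k."""
    hits = sum(i in rank_candidates(q, candidates)[:k] for i, q in enumerate(queries))
    return hits / len(queries)
```

The same functions work in either direction: pass image embeddings as queries and text embeddings as candidates for image-to-text retrieval, or swap them.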

Segmentation and Captioning: CONCH can also be adapted for semantic segmentation tasks. Using a method similar to ClusterFit, the model generates patch-level features that are clustered to create pseudo-masks, which are then used to train a segmentation decoder [12]. Although the publicly released weights do not include the multimodal decoder (to prevent potential leakage of private data), the original model was also trained with a captioning objective, enabling it to generate descriptive captions for histopathology images [13].
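The clustering step can be sketched with plain k-means over patch features, where each patch's cluster id acts as its pseudo-mask label. This is a generic illustration under assumptions (toy 2-D features, deterministic initialization from the first k points, a fixed number of iterations), not the exact ClusterFit-like procedure used with CONCH.

```python
from typing import List, Tuple

Vector = List[float]


def sq_dist(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(features: List[Vector], k: int, iters: int = 10) -> Tuple[List[int], List[Vector]]:
    """Cluster patch features; the returned labels serve as pseudo-mask ids."""
    centroids = [list(f) for f in features[:k]]  # simple deterministic init (assumption)
    labels = [0] * len(features)
    for _ in range(iters):
        # Assignment step: each feature goes to its nearest centroid
        labels = [min(range(k), key=lambda c: sq_dist(f, centroids[c])) for f in features]
        # Update step: centroid = mean of its members (unchanged if a cluster empties)
        for c in range(k):
            members = [f for f, lab in zip(features, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids
```

Mapping each label back to its patch's grid position yields a coarse pseudo-mask that a segmentation decoder can then be trained against.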

Practical Implementation Guide

Access and Setup

CONCH is available for non-commercial academic research under a CC-BY-NC-ND 4.0 license. Access is gated; researchers must request access through the Hugging Face model repository using an official institutional email address [13].

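Installation and Basic Usage:

- Install the CONCH package:

```bash
pip install git+https://github.com/Mahmoodlab/CONCH.git
```

- After receiving access, load the model using your Hugging Face access token.

- Encode an image for linear probing or multiple instance learning.

- For retrieval tasks, use the normalized projections [13]:

```python
import torch

# Assumes `model` was loaded as described above, `image` is a preprocessed
# image tensor with a batch dimension, and `tokenized_prompts` holds the
# tokenized text queries.
with torch.inference_mode():
    # proj_contrast=True projects into the shared image-text space;
    # normalize=True L2-normalizes the image embedding
    image_embs = model.encode_image(image, proj_contrast=True, normalize=True)
    text_embs = model.encode_text(tokenized_prompts)
    # One similarity score per text prompt
    similarity_scores = (image_embs @ text_embs.T).squeeze(0)
```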

The Researcher's Toolkit

Table 3: Essential Research Reagents and Resources for CONCH

| Item / Resource | Description & Function |
|---|---|
| CONCH Model Weights | Pre-trained parameters for the vision and text encoders. Used as a foundational feature extractor or for zero-shot inference [13]. |
| Python `conch` Package | The official software library that provides the model architecture and necessary functions to load and run the CONCH model [5] [13]. |
| High-Performance GPU (e.g., Nvidia A100) | Graphics processing unit essential for efficient model inference and fine-tuning, reducing computation time from hours to minutes [13]. |
| PyTorch & Hugging Face Transformers | Core machine learning frameworks used for model implementation, training, and tokenization [13]. |
| Institutional Hugging Face Account | A mandatory requirement for accessing the gated model repository, ensuring compliance with the license terms [13]. |

Diagram 2: CONCH zero-shot WSI classification process.

CONCH establishes a new paradigm in computational pathology by effectively bridging visual and linguistic domains. Its ability to perform zero-shot classification, cross-modal retrieval, and other diverse tasks with state-of-the-art accuracy demonstrates the power of visual-language pre-training. By providing a versatile and powerful foundation, CONCH has the potential to accelerate research and development across a wide spectrum of pathology applications, from diagnostic support and educational tools to biomarker discovery. Its availability to academic researchers under a non-commercial license further catalyzes innovation, paving the way for more generalized and impactful AI tools in histopathology.

The field of computational pathology has been transformed by foundation models that encode histopathology regions-of-interest (ROIs) into versatile and transferable feature representations via self-supervised learning [7]. Within this landscape, UNI is a general-purpose, vision-only foundation model: a ViT-L encoder pretrained with self-supervised learning on roughly 100 million tiles from 100,000 whole-slide images [9]. Unlike traditional AI models that require extensive labeled data for each specific task, foundation models like UNI are trained on broad data using self-supervision at scale and can be adapted to a wide range of downstream tasks [14]. This capability is particularly valuable in histopathology, where obtaining large-scale annotated datasets imposes a substantial labeling burden on pathologists, limiting the practical benefits of AI-assisted diagnostics [15].

Patch-level vision encoders of this kind are frequently used as feature extractors inside larger multimodal foundation models (MFMs), which integrate data modalities such as language, image, and bioinformatics [14]. Compared with traditional deep learning models, MFMs offer greater expressiveness and scalability, owing to large model architectures, extensive training data, and parallelizable training methods [14]. The cross-modal alignment, slide-level encoding, and report-generation capabilities discussed in the rest of this section draw on such multimodal frameworks [7] [16] rather than on the original vision-only UNI encoder. Models of this kind address a critical need in pathology for more accurate and efficient AI tools that can reduce pathologist workload and support treatment decisions while handling the complex morphological patterns found in histology images [7] [14].

Core Architectural Framework and Technical Specifications

Model Architecture and Design Principles

UNI employs a transformer-based architecture that has proven effective in handling the complex visual and linguistic representations required for pathology tasks. The model utilizes dedicated encoders to extract comprehensive feature representations for each modality, enabling seamless integration between phenotype patterns and molecular profiles [16]. This architectural approach allows UNI to process whole slide images (WSIs) at multiple resolutions, capturing both cellular-level details and tissue-level architectural patterns essential for accurate pathological assessment.

The model's design incorporates several innovative components to address domain-specific challenges in computational pathology. Unlike conventional multi-modal integration methods that primarily emphasize modality alignment, UNI's framework is designed to foster both modality alignment and retention [16]. This dual approach is crucial because histopathology and other biomedical data modalities exhibit pronounced heterogeneity, offering orthogonal yet complementary insights. While histopathology data provides morphological and spatial context elucidating tissue architecture and cellular topology, other modalities like transcriptomics delineate molecular signatures through gene expression patterns [16].

Technical Specifications and Implementation

UNI processes input data through a pipeline that handles the unique characteristics of pathological images. The model constructs its input embedding space by dividing each WSI into non-overlapping patches, typically at 20× magnification, and then extracting a feature vector for each patch with a pre-trained patch encoder [7]. To manage the computational complexity caused by long input sequences in gigapixel WSIs, UNI employs efficient attention mechanisms and feature compression techniques.
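Tiling a WSI into non-overlapping patches is mostly coordinate arithmetic. A sketch, assuming the slide dimensions are first converted to the target magnification (for example, from a 40× scan to 20×) and that partial patches at the right and bottom borders are dropped; the 512-pixel patch size is an illustrative choice, and both helper names are hypothetical.

```python
from typing import List, Tuple


def downscale_dims(width: int, height: int, from_mag: float, to_mag: float) -> Tuple[int, int]:
    """Slide dimensions after resampling, e.g. a 40x scan read at 20x."""
    f = to_mag / from_mag
    return int(width * f), int(height * f)


def patch_grid(width: int, height: int, patch: int = 512) -> List[Tuple[int, int]]:
    """Top-left (x, y) of every full, non-overlapping patch in row-major order.
    Partial patches at the right/bottom borders are discarded (assumption)."""
    cols, rows = width // patch, height // patch
    return [(c * patch, r * patch) for r in range(rows) for c in range(cols)]
```

Keeping the row-major order makes it easy to reshape per-patch features back into a 2D grid for the spatially aware steps that follow.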

Table 1: UNI Model Specifications and Key Technical Features

| Component | Specification | Function |
|---|---|---|
| Input Processing | Non-overlapping patches at 20× magnification | Divides WSIs into manageable units for processing |
| Feature Extraction | Pre-trained encoders (e.g., CONCH-based) | Converts image patches into feature representations |
| Modality Integration | Cross-attention mechanisms with alignment and retention modules | Fuses information from different data sources |
| Positional Encoding | Attention with linear bias (ALiBi) extended to 2D | Preserves spatial relationships between patches |
| Output Representation | Multi-scale feature embeddings | Captures both local cellular and global tissue patterns |
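The 2D ALiBi entry can be illustrated as follows: instead of adding positional embeddings, each attention logit receives a penalty proportional to the distance between the two patches on the feature grid, so nearby patches attend to each other more strongly by default. A minimal sketch, assuming Euclidean grid distance and a single head with an illustrative slope; multi-head ALiBi typically assigns each head its own slope, and the exact variant in [7] may differ.

```python
import math
from typing import List, Tuple


def alibi_2d_bias(coords: List[Tuple[int, int]], slope: float) -> List[List[float]]:
    """bias[i][j] = -slope * Euclidean distance between grid positions i and j
    (distance metric and single slope are assumptions)."""
    return [[-slope * math.dist(p, q) for q in coords] for p in coords]


def attention_weights(logits: List[List[float]], bias: List[List[float]]) -> List[List[float]]:
    """Row-wise softmax of (logits + bias): the bias is added before normalization."""
    out = []
    for row_l, row_b in zip(logits, bias):
        z = [a + b for a, b in zip(row_l, row_b)]
        m = max(z)
        e = [math.exp(v - m) for v in z]
        s = sum(e)
        out.append([v / s for v in e])
    return out
```

Because the bias depends only on relative distance, the same rule applies to grids of any size, which is useful for slides of varying dimensions.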

A critical innovation in UNI's architecture is its approach to handling variable-sized WSIs. The model creates views of a WSI by randomly cropping 2D feature grids, sampling region crops of specific dimensions from the WSI feature grid [7]. From these region crops, multiple global and local crops are sampled for self-supervised pretraining. This approach enables the model to learn robust representations that capture both fine-grained cellular details and broader tissue organizational patterns.
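The cropping scheme can be sketched on a 2D grid of patch features. Assumptions: each grid cell holds a patch id instead of a feature vector, the region, global, and local sizes in the test (16, 14, and 6 patches) are illustrative rather than the values specified in [7], and a seeded `random.Random` keeps the views reproducible.

```python
import random
from typing import List, Tuple

Grid = List[List[int]]  # stand-in: each cell holds a patch id instead of a feature vector


def random_crop(grid: Grid, size: int, rng: random.Random) -> Grid:
    """Crop a size x size window at a random position inside the grid."""
    rows, cols = len(grid), len(grid[0])
    if size > rows or size > cols:
        raise ValueError("crop larger than grid")
    r0 = rng.randint(0, rows - size)
    c0 = rng.randint(0, cols - size)
    return [row[c0:c0 + size] for row in grid[r0:r0 + size]]


def make_views(grid: Grid, region: int, global_size: int, local_size: int,
               n_global: int, n_local: int, seed: int = 0) -> Tuple[List[Grid], List[Grid]]:
    """Sample one region crop from the slide grid, then global and local
    crops from that region to serve as self-supervised views."""
    rng = random.Random(seed)
    reg = random_crop(grid, region, rng)
    global_views = [random_crop(reg, global_size, rng) for _ in range(n_global)]
    local_views = [random_crop(reg, local_size, rng) for _ in range(n_local)]
    return global_views, local_views
```

Cropping in feature space rather than pixel space is what keeps this cheap: the patch encoder runs once per slide, and views are just slices of the cached grid.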

Cross-Modal Alignment Methodology

Technical Framework for Modality Alignment

UNI implements a sophisticated modality alignment module that dynamically draws paired data from different modalities into closer proximity in the embedding space while dispersing unrelated samples [16]. This alignment is achieved through contrastive learning objectives that maximize the similarity between corresponding image-text pairs while minimizing similarity for non-corresponding pairs. The alignment process operates at multiple levels, including ROI-level alignment with fine-grained morphological descriptions and slide-level alignment with clinical reports [7].

The alignment methodology addresses the fundamental challenge of bridging the semantic gap between visual pathological findings and their corresponding textual descriptions. Unlike conventional multi-modal inputs that often share highly overlapping features, histopathology and associated textual data exhibit significant heterogeneity, operating at different biological scales and encoding distinct yet complementary dimensions of disease-related information [16]. UNI's alignment framework is specifically designed to handle this heterogeneity while identifying and leveraging the shared correlations between modalities.

Implementation and Training Approach

UNI's cross-modal alignment is typically implemented through a multi-stage pretraining strategy. The initial stage involves vision-only unimodal pretraining on large datasets of histopathology images, enabling the model to learn fundamental visual representations of pathological structures [7]. Subsequent stages introduce cross-modal alignment, first at the ROI-level with generated morphological descriptions, and then at the whole-slide level with clinical reports [7].

Table 2: Cross-Modal Alignment Training Strategy in UNI

| Training Stage | Data Input | Objective | Outcome |
|---|---|---|---|
| Stage 1: Vision Pretraining | Diverse WSIs across multiple organ types | Self-supervised learning via masked image modeling and knowledge distillation | Robust visual feature extraction for pathological structures |
| Stage 2: ROI-Level Alignment | 8K×8K ROIs paired with synthetic captions | Contrastive learning between image patches and fine-grained textual descriptions | Fine-grained understanding of localized pathological features |
| Stage 3: Slide-Level Alignment | Complete WSIs paired with pathology reports | Global alignment between entire slides and comprehensive diagnostic text | Slide-level diagnostic reasoning and report generation capabilities |

This staged approach allows UNI to progressively build its cross-modal understanding, from localized cellular and tissue patterns to broader diagnostic concepts and relationships. The model leverages both real pathology reports and synthetically generated fine-grained descriptions to create a comprehensive alignment between visual patterns and their semantic representations [7].

Transfer Learning Capabilities and Performance

Adaptability to Diverse Pathology Tasks

UNI demonstrates exceptional transfer learning capabilities across a wide spectrum of pathology tasks without requiring extensive task-specific fine-tuning. The model can be effectively applied to histology image classification, segmentation, captioning, text-to-image retrieval, and image-to-text retrieval tasks [5]. This versatility stems from the rich, general-purpose representations learned during pretraining, which capture fundamental morphological patterns relevant across different organs, disease types, and staining protocols.

The model's architecture enables multiple transfer learning paradigms, including linear probing (training a simple classifier on frozen features), few-shot learning (adapting with very limited labeled examples), and zero-shot learning (performing tasks without any task-specific training) [7]. Particularly impressive is UNI's performance in low-data regimes, where it outperforms supervised baselines and previous foundation models, making it highly valuable for rare diseases and specialized applications where labeled data is scarce [7].

Quantitative Performance Benchmarks

UNI has been rigorously evaluated on diverse benchmarks demonstrating state-of-the-art performance across multiple domains. The model shows particular strength in few-shot and zero-shot classification scenarios, where it achieves competitive performance with only minimal training examples. In slide retrieval tasks, UNI enables effective retrieval of similar cases based on visual similarity or textual queries, facilitating comparative pathology and decision support [7].

Table 3: UNI Performance Across Key Pathology Tasks

| Task Category | Specific Applications | Key Performance Metrics | Comparative Advantage |
|---|---|---|---|
| Image Classification | Cancer subtyping, grading, biomarker prediction | Top-1 accuracy, AUC-ROC | Reduces annotation requirements by up to 90% in few-shot settings |
| Segmentation | Tissue and cellular segmentation | Dice coefficient, IoU | Generalizes across stain types and tissue preparations |
| Captioning & Report Generation | Automated pathology report generation | BLEU scores, clinical accuracy | Generates clinically relevant descriptions from WSIs |
| Cross-Modal Retrieval | Text-to-image, image-to-text retrieval | Recall@K, mean average precision | Enables content-based search in large pathology archives |
| Survival Analysis | Patient outcome prediction | Concordance index, log-rank p-values | Integrates morphological patterns with clinical data |

The model's strong performance across these diverse tasks demonstrates its effectiveness as a general-purpose feature extractor for computational pathology. By capturing biologically meaningful representations, UNI reduces the dependency on large annotated datasets and accelerates the development of AI tools for specialized pathological applications.

Experimental Protocols and Methodologies

Standardized Evaluation Framework

To ensure rigorous assessment of UNI's capabilities, researchers have established comprehensive evaluation protocols covering multiple downstream tasks and data modalities. The standard evaluation framework typically involves 5-fold cross-validation on multiple cohorts from large-scale datasets such as The Cancer Genome Atlas (TCGA), focusing on critical downstream tasks including cancer subtyping and survival analysis [16]. This approach ensures robust performance estimation across different tissue sites and patient populations.
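A sketch of a 5-fold split, assuming (as is common practice, though not stated in [16]) that folds are formed at the patient level so that slides from one patient never appear in both the training and test folds. The slide and patient ids in the test are hypothetical.

```python
import random
from typing import Dict, List, Tuple


def patient_kfold(slide_to_patient: Dict[str, str], k: int = 5,
                  seed: int = 0) -> List[Tuple[List[str], List[str]]]:
    """Return k (train_slides, test_slides) pairs with patient-level grouping."""
    patients = sorted(set(slide_to_patient.values()))
    random.Random(seed).shuffle(patients)
    folds = [patients[i::k] for i in range(k)]  # round-robin assignment of patients
    splits = []
    for test_patients in folds:
        test_set = set(test_patients)
        train = sorted(s for s, p in slide_to_patient.items() if p not in test_set)
        test = sorted(s for s, p in slide_to_patient.items() if p in test_set)
        splits.append((train, test))
    return splits
```

Grouping by patient matters because multiple slides from one patient share morphology; splitting them across folds would leak information and inflate the reported performance.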

The evaluation incorporates both linear probing and few-shot learning settings to comprehensively assess the model's performance and generalizability [16]. In linear probing evaluations, a simple classifier is trained on top of frozen features extracted by UNI, testing the quality of the representations without task-specific adaptation. In few-shot learning scenarios, the model is adapted with very limited labeled examples (typically ranging from 1 to 16 examples per class) to simulate real-world conditions where extensive annotations are unavailable.
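The few-shot setting can be illustrated by sampling K labeled examples per class from frozen features and assigning new samples to the nearest class centroid. This prototype (SimpleShot-style) classifier is an assumption chosen for brevity; the protocols in [16] may instead train a linear or MIL head on the K examples. The class labels in the test are hypothetical.

```python
import math
import random
from typing import Dict, List, Tuple

Vector = List[float]


def sample_k_shot(data: List[Tuple[Vector, str]], k: int, seed: int = 0) -> List[Tuple[Vector, str]]:
    """Draw k labeled examples per class to form the support set."""
    rng = random.Random(seed)
    by_class: Dict[str, List[Vector]] = {}
    for x, y in data:
        by_class.setdefault(y, []).append(x)
    return [(x, y) for y, xs in by_class.items() for x in rng.sample(xs, k)]


def fit_prototypes(support: List[Tuple[Vector, str]]) -> Dict[str, Vector]:
    """Class prototype = mean of the frozen features of its support examples."""
    groups: Dict[str, List[Vector]] = {}
    for x, y in support:
        groups.setdefault(y, []).append(x)
    return {y: [sum(col) / len(xs) for col in zip(*xs)] for y, xs in groups.items()}


def predict(x: Vector, prototypes: Dict[str, Vector]) -> str:
    """Assign x to the class with the nearest prototype (Euclidean distance)."""
    return min(prototypes, key=lambda y: math.dist(x, prototypes[y]))
```

Because nothing is trained except class means, this isolates representation quality, which makes it a useful companion to linear probing.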

Implementation Protocols for Key Applications

For cancer subtyping and classification, the standard protocol involves extracting features from WSIs using UNI, then training a classifier on these features using a limited set of labeled examples. The model processes input WSIs by dividing them into patches, encoding each patch, and aggregating the patch-level representations into a slide-level embedding that captures both local and global pathological patterns.

For survival analysis and prognosis prediction, UNI features are used in Cox proportional hazards models or other survival analysis frameworks to predict patient outcomes based on histomorphological patterns. The model's ability to capture prognostically relevant tissue and cellular characteristics enables accurate risk stratification without requiring explicit annotation of histological features.
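Risk predictions for survival are commonly scored with Harrell's concordance index: among comparable patient pairs, the fraction in which the patient with the higher predicted risk had the earlier observed event. A stdlib-only sketch of that definition, with two simplifications: ties in predicted risk count as 0.5, and pairs with tied times are skipped.

```python
from typing import Sequence


def concordance_index(times: Sequence[float], events: Sequence[int], risks: Sequence[float]) -> float:
    """Harrell's C-index. Pair (i, j) is comparable when times[i] < times[j]
    and patient i had an observed event (events[i] == 1)."""
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        if not events[i]:
            continue  # a censored patient cannot anchor a comparable pair
        for j in range(n):
            if times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable
```

A C-index of 0.5 corresponds to random risk ordering and 1.0 to perfect ordering, which is why it pairs naturally with Cox models that output a relative risk score.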

For cross-modal retrieval tasks, the standard protocol involves encoding both images and text into a shared embedding space, then measuring similarity using cosine distance or other metrics. This enables bidirectional retrieval, where text queries can retrieve relevant images and vice versa, facilitating knowledge discovery and comparative analysis.

Research Reagent Solutions and Computational Tools

The effective implementation and application of UNI requires specific computational tools and resources that form the essential "research reagents" for working with this foundation model.

Table 4: Essential Research Reagent Solutions for UNI Implementation

| Tool Category | Specific Solutions | Function in Research Pipeline |
|---|---|---|
| Whole Slide Image Management | OMERO, Digital Slide Archive | Hosts and manages large-scale WSI datasets with appropriate metadata |
| Pathology Data Annotation | QuPath, ImageJ | Enables region-of-interest annotation and ground truth generation |
| Computational Pathology Frameworks | CONCH ecosystem, TIAToolbox | Provides pretrained models and standardized processing pipelines |
| Multi-Modal Learning Platforms | MIRROR framework, CLIP-based adaptations | Facilitates alignment between histopathology and other data modalities |
| Visualization and Analysis | Comparative Pathology Workbench (CPW) | Enables interactive visual analytics and collaborative interpretation |

These tools collectively support the end-to-end workflow for applying UNI to diverse pathology tasks, from data management and preprocessing to model implementation, evaluation, and visualization of results. The Comparative Pathology Workbench (CPW) deserves special mention as it provides a web-browser-based visual analytics platform offering shared access to an interactive "spreadsheet" style presentation of images and associated analysis data [17]. This facilitates direct and dynamic comparison of images at various magnifications, selected regions of interest, and results of image analysis or other data analyses such as scRNA-seq [17].

Workflow and Integration Diagrams

UNI Cross-Modal Alignment and Transfer Learning Workflow

The following diagram illustrates the complete workflow for UNI's cross-modal alignment and transfer learning capabilities:

Multi-Modal Alignment and Retention Mechanism

The following diagram details UNI's core innovation in balancing modality alignment with modality-specific retention:

Future Directions and Clinical Translation

The development of UNI represents a significant milestone in computational pathology, but several challenges remain for widespread clinical adoption. Future research directions focus on enhancing interpretability and explainability of model predictions to build trust among pathologists and clinicians. Additional efforts are needed to improve model robustness across diverse tissue preparation protocols, staining variations, and scanner types commonly encountered in real-world clinical settings.

A promising direction is the development of generalist medical AI systems that integrate pathology foundation models with FMs from other medical domains [14]. Such integrated systems could provide comprehensive diagnostic support by combining pathological findings with radiological, genomic, and clinical data, ultimately promoting precision and personalized medicine. As noted by researchers, "In the future, the development of generalist medical AI, which integrates pathology FMs with FMs from other medical domains, is expected to progress, effectively utilizing AI in real clinical settings to promote precision and personalized medicine" [14].

The clinical translation of UNI and similar foundation models also requires addressing regulatory considerations, standardization of deployment pipelines, and validation in multi-center trials. As these models continue to evolve, they hold tremendous potential to transform pathological practice by augmenting human expertise, reducing diagnostic variability, and uncovering novel morphological biomarkers that predict disease behavior and treatment response.

The Role of Self-Supervised Learning in Leveraging Unlabeled Whole Slide Images

The analysis of histopathological images, particularly whole slide images (WSIs), is fundamental to cancer diagnosis, prognosis prediction, and treatment formulation. However, this field faces a critical challenge: the complex morphology of tissues, inconsistency of staining protocols, and, most importantly, the scarcity of pixel-level annotations required by supervised deep learning methods [18]. Annotating gigapixel WSIs is costly, time-consuming, and requires skilled pathologists, making it a significant bottleneck [18]. This limitation has motivated the exploration of alternative learning paradigms that can leverage the vast amounts of unlabeled histopathological images available in clinical archives [18].

Self-supervised learning (SSL) has emerged as a powerful solution to this annotation bottleneck [18]. SSL processes large quantities of unlabeled data by leveraging intrinsic data structures to create its own supervisory signals, learning robust feature representations without extensive manual labeling [19]. Recent advances in masked image modeling (MIM) and contrastive learning have shown remarkable success in natural image domains, and these benefits are now being effectively adapted to medical imaging tasks [18]. Within the context of major histopathology foundation models like Virchow, CONCH, and UNI, SSL provides the foundational pretraining that enables these models to achieve state-of-the-art performance across diverse downstream tasks with minimal fine-tuning [20]. This technical guide explores the core SSL methodologies, their implementation in leading foundation models, and their practical applications in histopathology research.

Core Self-Supervised Learning Approaches for WSIs

Technical Foundations and Methodologies

Self-supervised learning for histopathology primarily utilizes two complementary approaches: contrastive learning and masked image modeling. These methods learn robust feature representations by solving pretext tasks designed to capture essential histological features without manual labels.

Contrastive Learning aims to learn an embedding space where similar sample pairs are positioned close together while dissimilar pairs are far apart. In digital histopathology, this approach has been successfully applied at scale. One large-scale study pretrained models on 57 histopathology datasets without labels, finding that combining multiple multi-organ datasets with different staining and resolution properties improved learned feature quality [19]. The study also revealed that using more images for pretraining leads to better downstream task performance, albeit with diminishing returns after approximately 50,000 images [19]. This approach enables models to learn features invariant to technical variations like staining protocols while capturing biologically relevant morphological patterns.

Masked Image Modeling (MIM) has recently shown remarkable success in histopathology. Inspired by language modeling in natural language processing, MIM randomly masks portions of input images and trains models to reconstruct the missing content. This approach forces the model to learn meaningful representations of tissue structures and cellular relationships. For histopathology, domain-specific knowledge can be incorporated into the masking strategy to produce more meaningful self-supervised representations [18]. The GMIM framework extends this concept with adaptive and hierarchical masked image modeling, bringing the benefits of masked modeling to volumetric medical images [18].
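The masking step at the heart of MIM can be sketched on a sequence of patch tokens: choose a random subset of positions, hide them, and compute the reconstruction loss only on the hidden positions. Assumptions: a 75% mask ratio (an illustrative value popularized by MAE-style training), a zero vector as the mask token, and predictions supplied directly in place of a real model's output.

```python
import random
from typing import List, Set

Vector = List[float]


def random_mask(n_tokens: int, ratio: float, seed: int = 0) -> Set[int]:
    """Pick round(ratio * n_tokens) token positions to hide."""
    n_mask = round(ratio * n_tokens)
    return set(random.Random(seed).sample(range(n_tokens), n_mask))


def apply_mask(tokens: List[Vector], masked: Set[int]) -> List[Vector]:
    """Replace masked tokens with a zero 'mask token' (placeholder choice)."""
    dim = len(tokens[0])
    return [[0.0] * dim if i in masked else list(t) for i, t in enumerate(tokens)]


def masked_mse(predictions: List[Vector], targets: List[Vector], masked: Set[int]) -> float:
    """Mean squared reconstruction error over masked positions only."""
    errs = [
        sum((p - t) ** 2 for p, t in zip(predictions[i], targets[i])) / len(targets[i])
        for i in masked
    ]
    return sum(errs) / len(errs)
```

Restricting the loss to masked positions is the key design choice: the model gains nothing by copying visible tokens and must infer hidden tissue structure from its context.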

Hybrid approaches that combine multiple SSL techniques have demonstrated superior performance. One novel framework integrates masked image modeling with contrastive learning and adaptive semantic-aware data augmentation [18]. This hybrid approach leverages MIM to reconstruct fine-grained tissue structures while using contrastive learning to enforce feature invariance across scales and staining variations [18]. The combination is particularly suited to multi-scale WSI analysis, where capturing both local cellular details and global tissue context is essential.

Quantitative Performance of SSL Methods

Recent comprehensive evaluations demonstrate the substantial improvements achieved by advanced SSL methods over traditional supervised approaches across multiple histopathology datasets. The following table summarizes key performance metrics:

Table 1: Performance Comparison of SSL Methods on Histopathology Segmentation Tasks

| Method | Dice Coefficient | mIoU | Hausdorff Distance | Annotation Efficiency |
|---|---|---|---|---|
| Proposed Hybrid SSL Framework [18] | 0.825 (4.3% improvement) | 0.742 (7.8% enhancement) | 10.7% reduction | 95.6% performance with only 25% labels |
| Supervised Baselines [18] | 0.791 | 0.688 | Baseline | 85.2% performance with 25% labels |
| Cross-Dataset Generalization [18] | - | - | - | 13.9% improvement over existing approaches |
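For reference, the overlap metrics in the table have simple definitions on masks: Dice = 2|A∩B| / (|A| + |B|), IoU = |A∩B| / |A∪B|, and mIoU averages one-vs-rest IoU over classes. A stdlib-only sketch on flattened masks (Hausdorff distance is omitted; returning 1.0 for two empty masks is a convention chosen here):

```python
from typing import Sequence


def dice(pred: Sequence[int], target: Sequence[int]) -> float:
    """Dice coefficient for binary masks (1 = foreground)."""
    inter = sum(p and t for p, t in zip(pred, target))
    total = sum(pred) + sum(target)
    return 1.0 if total == 0 else 2 * inter / total


def iou(pred: Sequence[int], target: Sequence[int]) -> float:
    """Intersection over union for binary masks."""
    inter = sum(p and t for p, t in zip(pred, target))
    union = sum(p or t for p, t in zip(pred, target))
    return 1.0 if union == 0 else inter / union


def mean_iou(pred: Sequence[int], target: Sequence[int], n_classes: int) -> float:
    """mIoU: average one-vs-rest IoU across classes of a multi-class label map."""
    return sum(
        iou([int(p == c) for p in pred], [int(t == c) for t in target])
        for c in range(n_classes)
    ) / n_classes
```

For a single binary mask the two scores are linked by Dice = 2·IoU / (1 + IoU), so Dice is always at least as large as IoU.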

Additional studies have confirmed these advantages. Models based on self-supervised contrastive learning have demonstrated excellent results on most primary sites and cancer subtypes, achieving state-of-the-art performance on validation tasks such as lung cancer classification [21]. Furthermore, linear classifiers trained on top of features learned from SSL pretraining on digital histopathology datasets perform significantly better than ImageNet-pretrained networks, boosting task performances by more than 28% in F1 scores on average [19].

Foundation Models: Architectural Frameworks and Implementation

Major Foundation Models in Histopathology

The field has recently witnessed the emergence of powerful foundation models pretrained using SSL on massive histopathology datasets. These models serve as versatile feature extractors adaptable to various downstream tasks. The following table compares key foundation models:

Table 2: Comparison of Major Histopathology Foundation Models

| Foundation Model | Training Data Scale | SSL Methodology | Key Capabilities | Clinical Applications |
|---|---|---|---|---|
| CONCH [5] | 1.17M image-caption pairs | Contrastive learning from captions | Image classification, segmentation, captioning, cross-modal retrieval | Diagnosis, biomarker prediction, treatment response prediction |
| UNI [18] | 100M+ images from 100,000+ WSIs | Self-supervised learning | General-purpose feature extraction across 20+ tissue types | Cancer subtyping, rare cancer detection, prognostic analysis |
| Virchow [18] | 1.5M WSIs from 100,000 patients | Self-supervised pretraining | Rare cancer detection, clinical-grade diagnostics | Surpasses supervised methods in low-resource settings |
| TITAN [7] | 335,645 WSIs + synthetic captions | Multimodal self-supervision & vision-language alignment | Slide representation, report generation, zero-shot classification | Rare disease retrieval, cancer prognosis, cross-modal search |
| Prov-GigaPath [18] | 1.3B pathology images | Masked autoencoding | Whole-slide feature learning | Generalizes across hundreds of cancer types and tasks |

These foundation models overcome critical limitations of earlier approaches. Unlike traditional supervised models that require extensive labeling for each specific task, foundation models leverage SSL to learn general-purpose representations that can be adapted to diverse applications with minimal fine-tuning [20]. For instance, CONCH shows how aligning visual and textual information through contrastive learning yields a richer, language-grounded understanding of histopathological entities [5].

The S3L Framework: A Unified SSL Approach for WSIs

The S3L (Self-Supervised Whole Slide Learning) framework provides a general, flexible, and lightweight approach for gigapixel-scale self-supervision of WSIs [22]. S3L treats gigapixel WSIs as sequences of patch tokens and applies domain-informed transformations borrowed from vision and language modeling, including splitting, cropping, and masking, to generate high-quality views for self-supervised training [22].
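To make "a WSI as a sequence of patch tokens" concrete, here is a hypothetical NumPy sketch of generating training views with splitting, cropping, and masking. The function names, the 50% crop fraction, the 25% mask ratio, and the 384-dimensional token size are illustrative assumptions, not S3L's published settings.

```python
import numpy as np

def split_view(tokens, rng):
    """Split the token sequence into two disjoint random halves, one per view."""
    perm = rng.permutation(len(tokens))
    half = len(tokens) // 2
    return tokens[np.sort(perm[:half])], tokens[np.sort(perm[half:])]

def crop_view(tokens, rng, frac=0.5):
    """Keep a contiguous window covering `frac` of the sequence."""
    length = max(1, int(len(tokens) * frac))
    start = rng.integers(0, len(tokens) - length + 1)
    return tokens[start:start + length]

def mask_view(tokens, rng, ratio=0.25):
    """Zero out a random fraction of tokens, in the spirit of masked language modeling."""
    masked = tokens.copy()  # leave the input untouched
    idx = rng.choice(len(tokens), size=int(len(tokens) * ratio), replace=False)
    masked[idx] = 0.0
    return masked

rng = np.random.default_rng(0)
slide_tokens = rng.normal(size=(1000, 384))   # 1,000 patch embeddings (hypothetical sizes)
view_a, view_b = split_view(slide_tokens, rng)
view_c = mask_view(crop_view(slide_tokens, rng), rng)
print(view_a.shape, view_b.shape, view_c.shape)  # (500, 384) (500, 384) (500, 384)
```

Because the transformations act on precomputed patch embeddings rather than on pixels, generating many views of a gigapixel slide stays cheap.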

The framework employs a two-stage architecture:

- A pre-trained patch encoder that transforms individual image patches into feature representations

- A transformer-based whole-slide encoder that aggregates patch-level features into comprehensive slide-level representations [22]
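The two-stage design above can be sketched in NumPy as follows. A frozen random projection stands in for the pretrained patch encoder, and a single self-attention layer with a [CLS] token stands in for the transformer slide encoder. All sizes and weights are hypothetical; a real slide encoder would stack several layers with MLPs, normalization, and usually positional information.

```python
import numpy as np

rng = np.random.default_rng(0)

def patch_encoder(patches, proj):
    """Stage 1 stand-in: a frozen linear projection of flattened pixels to features.
    A real pipeline would use a pretrained encoder (e.g. a ViT) here."""
    return patches.reshape(len(patches), -1) @ proj

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def slide_encoder(patch_feats, cls_token, Wq, Wk, Wv):
    """Stage 2: one self-attention layer over [CLS] + patch tokens.
    The [CLS] output serves as the slide-level representation."""
    tokens = np.vstack([cls_token, patch_feats])
    q, k, v = tokens @ Wq, tokens @ Wk, tokens @ Wv
    attn = softmax(q @ k.T / np.sqrt(k.shape[1]), axis=-1)  # (N+1, N+1) attention weights
    out = tokens + attn @ v                                  # residual connection
    return out[0]

dim = 64
patches = rng.random((200, 16, 16, 3))   # 200 small RGB patches (hypothetical size)
proj = rng.normal(scale=0.02, size=(16 * 16 * 3, dim))
feats = patch_encoder(patches, proj)     # (200, 64) patch-level features
cls = rng.normal(scale=0.02, size=(1, dim))
Wq, Wk, Wv = (rng.normal(scale=dim ** -0.5, size=(dim, dim)) for _ in range(3))
slide_embedding = slide_encoder(feats, cls, Wq, Wk, Wv)
print(feats.shape, slide_embedding.shape)  # (200, 64) (64,)
```

Without positional encodings, the [CLS] output is invariant to the order of patches, which is why slide encoders that need spatial context add position information on top of this basic aggregation.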

This approach effectively leverages the inherent regional heterogeneity, histologic feature variability, and information redundancy within WSIs to learn high-quality representations without extensive annotations [22]. Benchmarking experiments demonstrate that S3L significantly outperforms WSI baselines for cancer diagnosis and genetic mutation prediction, achieving good performance with both in-domain and out-of-distribution patch encoders [22].

Diagram 1: S3L Framework for Whole Slide Images

Experimental Protocols and Methodologies

Implementation of Hybrid SSL Frameworks

Comprehensive experimental evaluations demonstrate the effectiveness of SSL approaches. One recently proposed framework integrates three key innovations: a multi-resolution hierarchical architecture for gigapixel WSIs, a hybrid SSL strategy combining masked autoencoder reconstruction with multi-scale contrastive learning, and an adaptive augmentation network that preserves histological semantics [18].
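A hybrid objective of this kind can be written as a weighted sum, L = L_rec + λ·L_con. The NumPy sketch below assumes an MAE-style mean-squared error computed on masked tokens only, plus an InfoNCE loss between paired view embeddings averaged over scales (for example, one pair per magnification). The weight λ, the temperature τ, and all names are illustrative assumptions rather than the settings reported in [18], and the adaptive augmentation network is not modeled.

```python
import numpy as np

def masked_reconstruction_loss(pred, target, mask):
    """MAE-style loss: mean squared error over masked tokens only (mask == True)."""
    per_token = ((pred - target) ** 2).mean(axis=1)
    return per_token[mask].mean()

def info_nce(z1, z2, tau=0.1):
    """InfoNCE: row i of z1 should match row i of z2 against all other rows of z2."""
    z1 = z1 / np.linalg.norm(z1, axis=1, keepdims=True)
    z2 = z2 / np.linalg.norm(z2, axis=1, keepdims=True)
    logits = z1 @ z2.T / tau
    logits -= logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_probs))

def hybrid_loss(pred, target, mask, view_pairs, lam=0.5, tau=0.1):
    """Reconstruction loss plus lam times the contrastive loss averaged over scales."""
    l_rec = masked_reconstruction_loss(pred, target, mask)
    l_con = np.mean([info_nce(a, b, tau) for a, b in view_pairs])
    return l_rec + lam * l_con

rng = np.random.default_rng(0)
target = rng.normal(size=(64, 32))                  # 64 patch tokens, 32-dim (hypothetical)
pred = target + 0.1 * rng.normal(size=target.shape)  # imperfect reconstruction
mask = rng.random(64) < 0.75                        # ~75% of tokens masked
# One embedding pair per scale (e.g. 20x, 10x, 5x); the second view is a noisy copy here.
pairs = []
for _ in range(3):
    z = rng.normal(size=(16, 32))
    pairs.append((z, z + 0.05 * rng.normal(size=z.shape)))
print(round(float(hybrid_loss(pred, target, mask, pairs)), 4))
```

The reconstruction term rewards recovering local tissue detail, while the contrastive term rewards embeddings that stay consistent across views; λ trades the two off.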

The experimental protocol involves:

Data Preparation and Preprocessing:

- Collect WSIs and patch-level datasets from diverse sources (TCGA-BRCA, TCGA-LUAD, TCGA-COAD, CAMELYON16, PanNuke)

- Extract non-overlapping patches at multiple magnifications (e.g., 20x, 10x, 5x)

- Apply stain normalization to address technical variations
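The patch-extraction step can be sketched as computing non-overlapping tile coordinates at several magnifications. This sketch assumes the slide was scanned at 40× and that a target magnification m corresponds to a downsample factor of 40/m relative to full resolution; the function name and the 256-pixel tile size are hypothetical. Reading the pixels themselves (for example with OpenSlide's `read_region`) and stain normalization are not shown.

```python
def tile_grid(slide_w, slide_h, base_mag, target_mag, tile=256):
    """Return full-resolution (x, y) origins of non-overlapping tiles at `target_mag`.

    Each tile is `tile` pixels wide at the target magnification, which covers
    tile * (base_mag / target_mag) pixels at full resolution. Partial tiles at
    the right and bottom edges are dropped.
    """
    scale = base_mag / target_mag
    step = int(round(tile * scale))  # tile footprint in full-resolution pixels
    return [(x, y)
            for y in range(0, slide_h - step + 1, step)
            for x in range(0, slide_w - step + 1, step)]

# Hypothetical 40x slide of 40,000 x 30,000 pixels.
for mag in (20, 10, 5):
    coords = tile_grid(40_000, 30_000, base_mag=40, target_mag=mag)
    print(f"{mag}x: {len(coords)} tiles")
# 20x: 4524 tiles
# 10x: 1131 tiles
# 5x: 266 tiles
```

Each halving of magnification roughly quarters the tile count, which is why lower magnifications are cheap to process but carry less cellular detail.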

Progressive Fine-tuning Protocol:

- Pretraining Phase: Train on large unlabeled dataset using hybrid SSL objectives

- Adaptation Phase: Fine-tune on limited labeled data with task-specific heads

- Evaluation Phase: Comprehensive benchmarking against supervised baselines

Evaluation Metrics:

- Segmentation performance: Dice coefficient, mIoU

- Boundary accuracy: Hausdorff Distance, Average Surface Distance

- Data efficiency: Performance with reduced annotation budgets

- Generalization: Cross-dataset transfer learning capability
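The segmentation and boundary metrics listed above can be computed from binary masks as in the NumPy sketch below. Boundary pixels are taken as foreground pixels with at least one background 4-neighbor, and distances are brute-force Euclidean, which is fine for small masks only. Definitions of average surface distance vary between papers; this sketch uses the symmetric version pooled over both boundaries, which may differ from the variant used in [18].

```python
import numpy as np

def dice(pred, gt):
    """Dice = 2|A∩B| / (|A| + |B|) for binary masks."""
    inter = np.logical_and(pred, gt).sum()
    total = pred.sum() + gt.sum()
    return 1.0 if total == 0 else 2.0 * inter / total

def iou(pred, gt):
    """Intersection over union for binary masks."""
    inter = np.logical_and(pred, gt).sum()
    union = np.logical_or(pred, gt).sum()
    return 1.0 if union == 0 else inter / union

def mean_iou(pred_labels, gt_labels, num_classes):
    """mIoU: average of per-class IoU over integer label maps."""
    return float(np.mean([iou(pred_labels == c, gt_labels == c) for c in range(num_classes)]))

def boundary_points(mask):
    """Coordinates of foreground pixels that have at least one background 4-neighbor."""
    padded = np.pad(mask, 1)  # pads with False
    interior = (padded[1:-1, 1:-1] & padded[:-2, 1:-1] & padded[2:, 1:-1]
                & padded[1:-1, :-2] & padded[1:-1, 2:])
    return np.argwhere(mask & ~interior)

def _nearest(a_pts, b_pts):
    """For each point in a_pts, distance to the nearest point in b_pts."""
    return np.linalg.norm(a_pts[:, None, :] - b_pts[None, :, :], axis=-1).min(axis=1)

def hausdorff(a_pts, b_pts):
    """Symmetric Hausdorff distance: worst-case boundary disagreement."""
    return max(_nearest(a_pts, b_pts).max(), _nearest(b_pts, a_pts).max())

def average_surface_distance(a_pts, b_pts):
    """Symmetric average surface distance, pooled over both boundaries."""
    return np.concatenate([_nearest(a_pts, b_pts), _nearest(b_pts, a_pts)]).mean()

gt = np.zeros((8, 8), dtype=bool)
gt[2:6, 2:6] = True                 # 4x4 square
pred = np.zeros((8, 8), dtype=bool)
pred[2:6, 3:7] = True               # same square shifted right by one pixel
print(dice(pred, gt), iou(pred, gt))  # 0.75 0.6
print(hausdorff(boundary_points(pred), boundary_points(gt)))  # 1.0
```

The example shows why both kinds of metric are reported: a one-pixel shift already costs 25% Dice on a small object, while the boundary metrics express the same error directly in pixels.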

This framework demonstrated substantial improvements, achieving a Dice coefficient of 0.825 (4.3% improvement) and an mIoU of 0.742 (7.8% improvement), with significant reductions in boundary error (10.7% in Hausdorff Distance and 9.5% in Average Surface Distance) [18]. Notably, the method was highly data-efficient: with only 25% of the labeled data it reached 95.6% of its full-data performance, whereas supervised baselines reached 85.2% under the same budget, representing a 70% reduction in annotation requirements [18].
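The relative improvements quoted above can be checked against the results table, assuming they are measured relative to the supervised baseline (Dice 0.791, mIoU 0.688):

```latex
\[
\Delta_{\text{Dice}} = \frac{0.825 - 0.791}{0.791} \approx 4.3\%,
\qquad
\Delta_{\text{mIoU}} = \frac{0.742 - 0.688}{0.688} \approx 7.8\%
\]
```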

Multimodal Pretraining with TITAN

The TITAN (Transformer-based pathology Image and Text Alignment Network) framework implements a sophisticated multimodal pretraining approach for whole-slide images [7]. The experimental protocol consists of three distinct stages:

Stage 1: Vision-Only Unimodal Pretraining

- Pretrain on the Mass-340K dataset (335,645 WSIs) using the iBOT framework

- Construct the input embedding space by dividing WSIs into non-overlapping 512×512-pixel patches at 20× magnification

- Extract a 768-dimensional feature for each patch using the CONCHv1.5 encoder

- Create views by randomly cropping the 2D feature grid into global (14×14) and local (6×6) crops

- Apply feature augmentations (vertical/horizontal flipping, posterization)
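Because TITAN's views are formed from a 2D grid of patch features rather than from pixels, cropping and flipping operate on feature vectors. With 512×512-pixel patches, a 14×14 global crop spans 7,168 × 7,168 pixels at 20×. The NumPy sketch below uses the 14×14, 6×6, and 768-dimensional sizes from the text; the grid size, the number of local crops (four here), and the function names are assumptions, and posterization is omitted.

```python
import numpy as np

def random_crop(grid, size, rng):
    """Randomly crop a size x size window from an (H, W, D) feature grid."""
    h, w, _ = grid.shape
    top = rng.integers(0, h - size + 1)
    left = rng.integers(0, w - size + 1)
    return grid[top:top + size, left:left + size]

def random_flip(crop, rng):
    """Apply independent horizontal and vertical flips, each with probability 0.5."""
    if rng.random() < 0.5:
        crop = crop[:, ::-1]
    if rng.random() < 0.5:
        crop = crop[::-1, :]
    return crop

rng = np.random.default_rng(0)
# Hypothetical slide: a 40 x 60 grid of patches, each encoded as a 768-dim feature vector.
feature_grid = rng.normal(size=(40, 60, 768)).astype(np.float32)

global_views = [random_flip(random_crop(feature_grid, 14, rng), rng) for _ in range(2)]
local_views = [random_flip(random_crop(feature_grid, 6, rng), rng) for _ in range(4)]
print(global_views[0].shape, local_views[0].shape)  # (14, 14, 768) (6, 6, 768)
```

Flipping a feature grid only permutes which patch vector sits at which position; the vectors themselves are unchanged, so the augmentation preserves the patch encoder's output exactly.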

Stage 2: ROI-Level Cross-Modal Alignment

- Align region-of-interest (ROI) features with generated morphological descriptions

- Utilize 423k pairs of 8k×8k ROIs and synthetic captions generated via PathChat

- Implement contrastive learning to align visual and textual representations

Stage 3: WSI-Level Cross-Modal Alignment

- Align representations at whole-slide level using 183k pairs of WSIs and clinical reports

- Enable cross-modal retrieval and zero-shot classification capabilities
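Once slide and text embeddings share a space, zero-shot classification reduces to choosing the class prompt whose text embedding is most similar to the slide embedding, and retrieval to ranking items by that same similarity. In this minimal NumPy sketch, random vectors stand in for encoder outputs and the prompt strings are hypothetical; the contrastive training that produces the shared space is not shown.

```python
import numpy as np

def l2_normalize(x):
    return x / np.linalg.norm(x, axis=-1, keepdims=True)

def zero_shot_classify(slide_emb, class_text_embs):
    """Index of the class whose text embedding has the highest cosine similarity."""
    sims = l2_normalize(class_text_embs) @ l2_normalize(slide_emb)
    return int(np.argmax(sims)), sims

def retrieve(query_emb, gallery_embs, k=3):
    """Indices of the k gallery items (e.g. slides or reports) most similar to the query."""
    sims = l2_normalize(gallery_embs) @ l2_normalize(query_emb)
    return np.argsort(-sims)[:k]

rng = np.random.default_rng(0)
prompts = ["lung adenocarcinoma", "lung squamous cell carcinoma", "normal lung tissue"]
text_embs = rng.normal(size=(len(prompts), 512))        # stand-in for text-encoder outputs
slide_emb = text_embs[1] + 0.3 * rng.normal(size=512)   # a slide resembling class 1
pred, sims = zero_shot_classify(slide_emb, text_embs)
print(prompts[pred])  # lung squamous cell carcinoma
```

No labeled training examples are involved: the class set is defined entirely by the text prompts, so it can be changed at inference time.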

This multi-stage pretraining strategy enables TITAN to extract general-purpose slide representations that outperform both ROI-level and slide-level foundation models across diverse clinical tasks and evaluation settings, including linear probing, few-shot and zero-shot classification, rare cancer retrieval, and pathology report generation [7].

Diagram 2: TITAN Multi-stage Pretraining Pipeline

Implementing SSL approaches for histopathology requires specific computational frameworks and data resources. The following table details key components of the research toolkit:

Table 3: Essential Research Reagents and Computational Resources for SSL in Histopathology

| Resource Category | Specific Examples | Function & Application | Availability |
|---|---|---|---|
| Foundation Models | CONCH, UNI, Virchow, TITAN | Pre-trained feature extractors for transfer learning | Weights released with gated access (e.g., on Hugging Face); license terms vary by model |
| SSL Frameworks | S3L, HIPT, Giga-SSL | Implement self-supervised learning for WSIs | GitHub repositories (e.g., S3L framework) |
| Patch Encoders | CONCHv1.5, DINOv2, ResNet | Encode individual image patches into feature representations | Open-source implementations |
| Histopathology Datasets | TCGA, CAMELYON, PanNuke | Benchmark datasets for training and evaluation | Publicly available with restrictions |
| Computational Resources | GPUs (≥16 GB VRAM), high-CPU servers | Handle computational demands of gigapixel images | Research computing clusters |
| Digital Pathology Tools | QuPath, HALO, whole-slide scanners | Slide digitization, annotation, and preliminary analysis | Commercial and open-source options |

These resources enable researchers to implement and experiment with SSL approaches for histopathology. For instance, CONCH's code is publicly available on GitHub and can be installed via pip, giving researchers direct access to state-of-the-art vision-language capabilities for histopathology [5]. The model is reported to be particularly useful for non-H&E stained images such as IHC and special stains, and it can be used for diverse tasks involving histopathology images and text [5].

Self-supervised learning has fundamentally transformed the analysis of whole slide images in computational pathology. By effectively leveraging vast amounts of unlabeled data, SSL approaches address the critical annotation bottleneck that has long constrained the development of robust AI systems for histopathology. Through techniques like contrastive learning, masked image modeling, and their hybrid implementations, SSL enables models to learn rich, transferable representations of histological features that form the foundation for diverse downstream tasks.

The emergence of powerful foundation models like CONCH, UNI, Virchow, and TITAN represents a paradigm shift in computational pathology. These models, pretrained using SSL on massive datasets, demonstrate exceptional versatility across classification, segmentation, retrieval, and generative tasks while significantly reducing annotation requirements. The integration of multimodal capabilities, particularly vision-language alignment, further enhances their utility in clinical and research settings.

Future research directions include developing more efficient architectures for processing gigapixel images, improving model interpretability for clinical adoption, and enhancing generalization across diverse patient populations and tissue types. As SSL methodologies continue to evolve alongside foundation models, they hold tremendous potential to accelerate histopathology research, support clinical decision-making, and ultimately advance precision medicine through more accessible and powerful computational tools.

The emergence of foundation models is fundamentally transforming computational pathology by enabling the development of artificial intelligence (AI) tools that can interpret gigapixel whole-slide images (WSIs) for tasks ranging from cancer diagnosis and biomarker prediction to prognosis estimation [7] [9]. Unlike earlier AI models designed for a single, specific task, foundation models are trained on massive, diverse datasets without explicit labels, learning general-purpose representations of histopathology images. These representations can then be adapted with high efficiency to a wide array of downstream clinical and research applications, even those with very limited annotated data [2] [23]. The performance and generalizability of these models are critically dependent on their pretraining—the initial phase where the model learns the fundamental patterns of histology data. This phase varies significantly across models in terms of the scale of data, the learning algorithms employed, and the modalities used (e.g., images alone or images paired with text) [9].

This technical guide provides a comparative analysis of three pivotal foundation models—Virchow, CONCH, and UNI—each representing a distinct paradigm in pretraining strategy. Virchow exemplifies the "scale-up" approach, leveraging millions of WSIs for self-supervised visual learning [2] [24]. CONCH pioneers the vision-language pathway, aligning histopathology images with textual descriptions to capture semantic concepts [12]. UNI establishes a general-purpose visual encoder by exploring scaling laws and demonstrating robust performance across dozens of clinical tasks [23]. Understanding their core architectures, training data, and experimental benchmarks is essential for researchers and drug development professionals aiming to leverage these models in oncology and anatomic pathology.

Core Model Architectures & Pretraining Strategies

Virchow and Virchow 2: Scaling Visual Self-Supervision

The Virchow model family is architected around the principle of scaling both data and model size using visual self-supervised learning. The original Virchow is a 632-million-parameter Vision Transformer (ViT) trained on approximately 1.5 million H&E-stained WSIs, corresponding to roughly 2 billion image tiles [2] [9]. Its successor, Virchow 2, scales this further to 3.1 million WSIs and introduces a giant 1.85-billion-parameter variant (Virchow 2G), trained on a mixed-magnification dataset that includes both H&E and immunohistochemistry (IHC) stains [24].

The pretraining methodology for Virchow employs DINOv2 (self-DIstillation with NO labels), a robust self-supervised algorithm. DINOv2 uses a student-teacher network structure in which the student learns to match the teacher's output when the two are presented with different augmented "views" of the same image tile. This process encourages the model to learn representations that are invariant to perturbations such as staining variations and cropping, focusing on biologically relevant morphological features [2] [24]. A key domain-specific adaptation in Virchow 2 is a modified DINOv2 augmentation policy. It omits image solarization, which can produce unrealistic color profiles in pathology images, and carefully tunes the random crop-and-resize operation to minimize unwanted distortions of critical cellular and tissue structures [24].
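The student-teacher mechanism can be sketched as follows. The teacher's outputs are centered and sharpened with a low temperature, the student is trained with cross-entropy against them across different views, and the teacher's weights are an exponential moving average (EMA) of the student's. This NumPy sketch covers only the DINO-style image-level loss, centering, and EMA update; DINOv2 adds further components (such as an iBOT masked-token loss and a KoLeo regularizer) that are omitted. The temperatures and momentum values are typical illustrative choices, not Virchow's reported hyperparameters.

```python
import numpy as np

def softmax(x, temp):
    z = x / temp
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def dino_loss(student_out, teacher_out, center, t_student=0.1, t_teacher=0.04):
    """Cross-entropy between centered, sharpened teacher targets and student predictions.

    teacher_out: list of (batch, K) scores for the global views.
    student_out: list of (batch, K) scores; the first len(teacher_out) entries are the
    same global views, followed by local views. Same-view pairs are skipped.
    """
    total, n_terms = 0.0, 0
    for ti, t in enumerate(teacher_out):
        targets = softmax(t - center, t_teacher)  # teacher targets (no gradient in practice)
        for si, s in enumerate(student_out):
            if si == ti:
                continue
            log_p = np.log(softmax(s, t_student) + 1e-12)
            total += -(targets * log_p).sum(axis=-1).mean()
            n_terms += 1
    return total / n_terms

def update_center(center, teacher_out, momentum=0.9):
    """Running mean of teacher outputs; subtracting it discourages collapse to one prototype."""
    batch_mean = np.concatenate(teacher_out).mean(axis=0)
    return momentum * center + (1 - momentum) * batch_mean

def ema_update(teacher_params, student_params, momentum=0.996):
    """Teacher weights track an exponential moving average of the student weights."""
    return {k: momentum * teacher_params[k] + (1 - momentum) * student_params[k]
            for k in teacher_params}

rng = np.random.default_rng(0)
K = 16  # number of prototypes (hypothetical)
teacher = [rng.normal(size=(8, K)) for _ in range(2)]            # 2 global views
student = teacher + [rng.normal(size=(8, K)) for _ in range(4)]  # same globals + 4 local views
center = np.zeros(K)
loss = dino_loss(student, teacher, center)
center = update_center(center, teacher)
teacher_w = ema_update({"w": np.ones(3)}, {"w": np.zeros(3)})
print(round(float(loss), 3), teacher_w["w"])
```

The augmentation policy discussed above only changes how the views are produced; the loss and the EMA update stay the same.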

CONCH: Vision-Language Alignment via Contrastive Learning

CONCH (CONtrastive learning from Captions for Histopathology) represents a paradigm shift by integrating language and vision. It is a visual-language foundation model pretrained on over 1.17 million histopathology image-caption pairs, one of the largest such datasets in the domain [12] [5].

The model's architecture is based on the CoCa (Contrastive Captioners) framework. It consists of three core components:

- An image encoder (a Vision Transformer) that processes histology image tiles.

- A text encoder that processes corresponding text captions.

- A multimodal fusion decoder that attends to both image and text features to generate captions.
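CoCa-style training combines two losses: a symmetric image-text contrastive loss on the outputs of the image and text encoders, and a captioning loss (next-token cross-entropy) on the multimodal decoder's predictions. The NumPy sketch below computes both from precomputed arrays; the encoders and decoder are not modeled, and the weighting λ and the temperature are illustrative assumptions rather than CONCH's published settings.

```python
import numpy as np

def log_softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def symmetric_contrastive_loss(img_emb, txt_emb, temperature=0.07):
    """CLIP-style loss: matched image/caption pairs lie on the diagonal of the similarity matrix."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature
    i2t = -np.mean(np.diag(log_softmax(logits, axis=1)))  # each image picks its caption
    t2i = -np.mean(np.diag(log_softmax(logits, axis=0)))  # each caption picks its image
    return (i2t + t2i) / 2

def captioning_loss(token_logits, target_ids):
    """Mean next-token cross-entropy; token_logits: (batch, seq, vocab), target_ids: (batch, seq)."""
    log_probs = log_softmax(token_logits, axis=-1)
    picked = np.take_along_axis(log_probs, target_ids[..., None], axis=-1)[..., 0]
    return -picked.mean()

def coca_loss(img_emb, txt_emb, token_logits, target_ids, lam=1.0):
    """Total objective: contrastive alignment plus lam times captioning."""
    return symmetric_contrastive_loss(img_emb, txt_emb) + lam * captioning_loss(token_logits, target_ids)

rng = np.random.default_rng(0)
img = rng.normal(size=(4, 32))
txt = img + 0.1 * rng.normal(size=(4, 32))  # captions roughly aligned with their images
logits = rng.normal(size=(4, 10, 100))      # decoder scores over a 100-token vocabulary
targets = rng.integers(0, 100, size=(4, 10))
print(round(float(coca_loss(img, txt, logits, targets)), 3))
```

The contrastive term is what enables retrieval and zero-shot classification, while the captioning term trains the fusion decoder to generate text conditioned on the image.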