Zero-Shot Visual-Language Foundation Models in Pathology: A New Paradigm for AI-Driven Diagnosis


Abstract

This article explores the transformative potential of visual-language foundation models (VLFMs) for zero-shot classification in computational pathology. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how models like CONCH and TITAN are overcoming the critical challenge of label scarcity by learning from image-text pairs. The scope ranges from foundational concepts and core architectures to advanced fine-tuning methodologies, performance optimization strategies, and rigorous benchmarking against traditional deep learning and human experts. By synthesizing the latest research, this review serves as a strategic guide for developing and deploying robust, generalizable AI tools that can accelerate diagnostic workflows, enhance personalized medicine, and fuel drug discovery.

Understanding Visual-Language Foundation Models and Their Role in Pathology

The Problem of Label Scarcity in Computational Pathology

The development of robust artificial intelligence (AI) models for computational pathology has been fundamentally constrained by the pervasive challenge of label scarcity. The acquisition of high-quality, expert-annotated data for whole-slide images (WSIs) is labor-intensive, time-consuming, and cost-prohibitive, making it difficult to scale across the thousands of possible diagnoses and rare diseases encountered in pathology practice [1]. This scarcity severely limits the development of task-specific supervised learning models, particularly for rare diseases or complex tasks requiring specialized expertise [1] [2].

Visual-language foundation models represent a paradigm shift in addressing these limitations. By leveraging task-agnostic pretraining on diverse sources of histopathology images paired with biomedical text, these models learn rich, aligned representations of visual and linguistic concepts in pathology [1] [3]. The resulting models can be applied to a wide array of downstream tasks—including classification, segmentation, and retrieval—in a zero-shot manner, requiring minimal or no further labeled data for effective deployment [1]. This approach mirrors how human pathologists teach and reason about histopathologic entities using both visual cues and descriptive language [1].

Quantitative Performance of Zero-Shot Models

Evaluation across multiple benchmarks demonstrates that visual-language foundation models achieve state-of-the-art performance on diverse pathology tasks without task-specific training. The table below summarizes the zero-shot classification performance of CONCH, a leading visual-language foundation model, across multiple tissue and disease types.

Table 1: Zero-shot classification performance of CONCH across diverse diagnostic tasks

| Task Description | Dataset | Disease/Cancer Type | Primary Metric | Performance |
|---|---|---|---|---|
| Slide-level Subtyping | TCGA NSCLC | Non-small cell lung cancer | Balanced Accuracy | 90.7% [1] |
| Slide-level Subtyping | TCGA RCC | Renal cell carcinoma | Balanced Accuracy | 90.2% [1] |
| Slide-level Subtyping | TCGA BRCA | Invasive breast carcinoma | Balanced Accuracy | 91.3% [1] |
| ROI-level Classification | CRC100k | Colorectal cancer | Balanced Accuracy | 79.1% [1] |
| ROI-level Classification | WSSS4LUAD | Lung adenocarcinoma | Balanced Accuracy | 71.9% [1] |
| Gleason Pattern Classification | SICAP | Prostate cancer | Quadratic Weighted κ | 0.690 [1] |

Comparative studies reveal that CONCH substantially outperforms concurrent visual-language models such as PLIP and BiomedCLIP across these tasks, often by large margins: roughly 10 to 12 percentage points in balanced accuracy on TCGA NSCLC and RCC subtyping, and about 36 percentage points on TCGA BRCA subtyping [1]. This performance establishes a strong baseline for clinical applications, especially when training labels are scarce.
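The two metrics reported in Table 1 can be illustrated with a short sketch. This is a minimal standard-library implementation written for this article, not the evaluation code used in [1]; the function names are illustrative, and labels are assumed to be integers starting at 0 with at least two distinct classes present.

```python
from collections import defaultdict


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so rare classes count as much as common ones."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for t, p in zip(y_true, y_pred):
        total[t] += 1
        correct[t] += int(t == p)
    return sum(correct[c] / total[c] for c in total) / len(total)


def quadratic_weighted_kappa(y_true, y_pred, n_classes):
    """Cohen's kappa with quadratic penalties for ordinal labels 0..n_classes-1."""
    n = len(y_true)
    observed = [[0.0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        observed[t][p] += 1
    row = [sum(observed[i]) for i in range(n_classes)]
    col = [sum(observed[i][j] for i in range(n_classes)) for j in range(n_classes)]
    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            # Disagreement weight grows with the squared distance between grades.
            w = (i - j) ** 2 / (n_classes - 1) ** 2
            num += w * observed[i][j]           # observed disagreement
            den += w * row[i] * col[j] / n      # disagreement expected by chance
    return 1.0 - num / den


# Toy example: an imbalanced two-class task and a four-grade ordinal task.
print(balanced_accuracy([0, 0, 0, 1], [0, 0, 0, 0]))
print(quadratic_weighted_kappa([0, 1, 2, 3], [0, 1, 2, 2], n_classes=4))
```

Balanced accuracy is the natural choice for subtyping cohorts with unequal class sizes, while the quadratic weighting penalizes a Gleason prediction more heavily the further it lands from the true grade.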

Beyond classification, these models enable cross-modal retrieval, allowing pathologists to search for similar image cases using textual descriptions or generate descriptive captions for histopathology images, thereby enhancing diagnostic workflows and educational applications [1].

Experimental Protocols for Zero-Shot Evaluation

Protocol 1: Zero-Shot Tile and WSI Classification

This protocol details the methodology for applying a pre-trained visual-language foundation model to classify tissue regions or entire whole-slide images without task-specific training [1].

Table 2: Key reagents and computational tools for zero-shot classification

| Item | Specification/Version | Function/Purpose |
|---|---|---|
| Pre-trained VLM Weights | CONCH (Hugging Face) | Provides foundational image and text encoders for zero-shot inference [3]. |
| Whole-Slide Image Data | SICAP, TCGA, CRC100k | Forms the input image data for evaluation; should represent target diseases [1]. |
| Text Prompt Templates | Custom-defined ensemble | Converts class names into multiple textual descriptions to enhance prediction robustness [1]. |
| Computational Framework | PyTorch/TensorFlow | Enables model loading, inference, and similarity score calculation. |

Procedure:

- Task Definition and Prompt Engineering: Define the classification task and corresponding class names (e.g., "invasive ductal carcinoma" or "normal colon mucosa"). Create an ensemble of text prompts for each class to account for variations in terminology, for example "This is a histology image of {classname}" and "A micrograph showing {classname}" [1] [4].

- Text Feature Embedding: Use the pre-trained model's text encoder to generate feature embeddings for all text prompts in the ensemble. This projects the textual concepts into the shared visual-text representation space [1].

- Image Preprocessing and Tiling: For whole-slide images, segment the WSI into smaller, manageable tiles at a specified magnification (e.g., 20×). Apply standard normalization to each tile [1] [5].

- Image Feature Embedding: Process each image tile through the pre-trained model's image encoder to obtain visual feature embeddings [1].

- Similarity Calculation and Aggregation: Compute the cosine similarity between each image tile embedding and all text prompt embeddings. For WSI-level prediction, aggregate tile-level similarity scores (e.g., by averaging or using attention mechanisms) to produce a slide-level classification [1] [5].

- Visualization: Generate heatmaps overlaying the WSI, where each tile's color intensity represents its similarity score to the predicted class text prompt, providing visual interpretability for model predictions [1].
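The similarity and aggregation steps of this protocol can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: the three-dimensional vectors are toy stand-ins for real encoder outputs, the function names are hypothetical, and simple mean pooling over tiles is used for the slide-level score (the cited work also describes other aggregation schemes).

```python
import math


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean_vector(vectors):
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def class_prototypes(prompt_embeddings):
    """Prompt ensembling: average each class's normalized prompt embeddings."""
    return {
        name: l2_normalize(mean_vector([l2_normalize(e) for e in embs]))
        for name, embs in prompt_embeddings.items()
    }


def classify_slide(tile_embeddings, prototypes):
    """Mean-pool tile-to-class cosine similarities; return (label, scores)."""
    tiles = [l2_normalize(t) for t in tile_embeddings]
    scores = {
        name: sum(dot(t, proto) for t in tiles) / len(tiles)
        for name, proto in prototypes.items()
    }
    return max(scores, key=scores.get), scores


# Toy embeddings standing in for text-encoder and image-encoder outputs.
prompt_embeddings = {
    "adenocarcinoma": [[0.9, 0.1, 0.0], [0.8, 0.2, 0.1]],
    "squamous cell carcinoma": [[0.1, 0.9, 0.0], [0.0, 0.8, 0.2]],
}
tile_embeddings = [[0.7, 0.2, 0.1], [0.6, 0.3, 0.0], [0.2, 0.7, 0.1]]

prototypes = class_prototypes(prompt_embeddings)
label, scores = classify_slide(tile_embeddings, prototypes)
print(label, scores)
```

The per-tile similarities computed inside `classify_slide` are the same values one would color-code to draw the heatmap described in the visualization step.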

Protocol 2: Systematic Prompt Engineering for Diagnostic Pathology

This protocol, derived from recent investigational studies, provides a structured framework for optimizing text prompts to maximize zero-shot diagnostic accuracy [4] [6].

Procedure:

- Dimensional Analysis: Systematically vary prompts along four key dimensions:

  - Domain Specificity (DS): Incorporate low, medium, or high levels of domain-specific terminology [6].

  - Anatomical Precision (AP): Specify anatomical references with low, medium, or high precision (e.g., from "this tissue" to "colonic mucosa") [6].

  - Instructional Framing (IF): Frame the prompt using different styles: Expert (positioning model as expert), Minimal (basic instruction), or Task-oriented [6].

  - Output Constraints (OC): Explicitly define the output format and length, or use implicit, flexible constraints [6].

- Template Design and Testing: Create multiple prompt templates that combine different levels of these dimensions. For example, a high-DS, high-AP, Expert-framed prompt with Explicit output constraints [6].

- Model Inference and Evaluation: Execute zero-shot inference using each prompt template across the target dataset.

- Performance Analysis: Evaluate model performance (e.g., accuracy, F1-score) for each prompt variant. Identify the optimal combination of dimensions for the specific diagnostic task [4].

Recent findings indicate that precise anatomical references and moderate-to-high domain specificity significantly enhance performance, with the CONCH model showing particular sensitivity to these dimensions [4] [6].
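One way to organize the dimensional analysis is to enumerate every combination of levels programmatically. The sketch below is illustrative only: the wording assigned to each level is hypothetical and is not taken from the templates used in [4] or [6]; only the four dimension names come from the protocol above.

```python
from itertools import product

# Hypothetical wording for each level of the four dimensions.
DOMAIN_SPECIFICITY = {
    "low": "abnormal tissue",
    "medium": "a malignant tumor",
    "high": "invasive adenocarcinoma",
}
ANATOMICAL_PRECISION = {
    "low": "this tissue",
    "medium": "colon tissue",
    "high": "colonic mucosa",
}
INSTRUCTIONAL_FRAMING = {
    "expert": "As an expert pathologist, assess whether {anatomy} shows {finding}.",
    "minimal": "{anatomy}: {finding}?",
    "task": "Classify the image: does {anatomy} show {finding}?",
}
OUTPUT_CONSTRAINTS = {
    "explicit": " Answer with 'yes' or 'no' only.",
    "implicit": "",
}


def build_prompt_grid():
    """Return one prompt per (DS, AP, IF, OC) combination of levels."""
    grid = {}
    for ds, ap, framing, oc in product(
        DOMAIN_SPECIFICITY, ANATOMICAL_PRECISION, INSTRUCTIONAL_FRAMING, OUTPUT_CONSTRAINTS
    ):
        body = INSTRUCTIONAL_FRAMING[framing].format(
            anatomy=ANATOMICAL_PRECISION[ap], finding=DOMAIN_SPECIFICITY[ds]
        )
        grid[(ds, ap, framing, oc)] = body + OUTPUT_CONSTRAINTS[oc]
    return grid


grid = build_prompt_grid()
print(len(grid))
print(grid[("high", "high", "expert", "explicit")])
```

Keying each prompt by its dimension levels makes it straightforward to group accuracy or F1 results by a single dimension during the performance analysis step.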

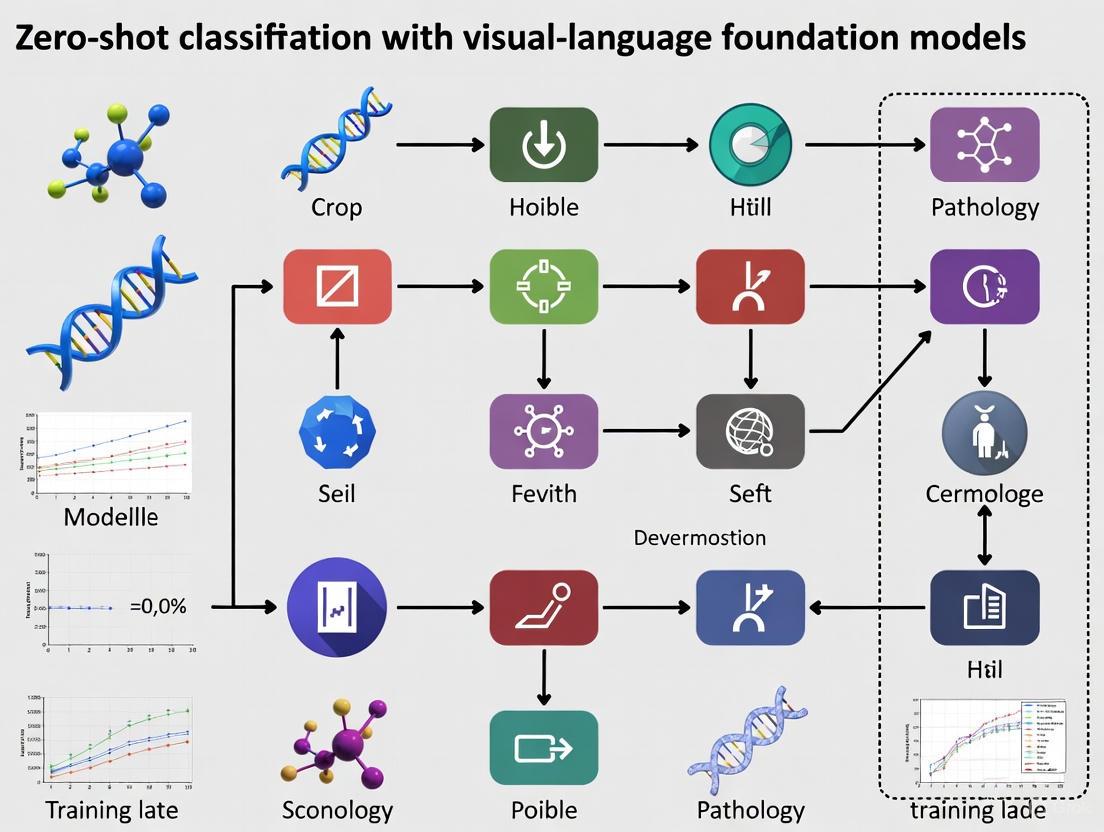

Workflow Visualization of Zero-Shot Classification

The following diagram illustrates the integrated workflow for zero-shot classification in computational pathology, combining the protocols for model inference and prompt engineering.

Diagram 1: Zero-shot classification workflow for computational pathology, integrating visual and textual processing pipelines.

Essential Research Reagent Solutions

The successful implementation of zero-shot classification in computational pathology relies on several key computational and data resources, as previously referenced in the experimental protocols.

Table 3: Essential research reagents and resources for zero-shot pathology

| Category | Resource | Function in Research |
|---|---|---|
| Foundation Models | CONCH [1] [3] | Pre-trained visual-language foundation model for pathology; enables zero-shot transfer to various tasks without fine-tuning. |
| Foundation Models | PLIP [1] | Pathology-language image pretraining model; serves as a baseline for comparative performance studies. |
| Foundation Models | BiomedCLIP [1] | Biomedical-specific CLIP model; provides domain-adapted visual-language representations. |
| Computational Frameworks | MI-Zero [1] [5] | Framework combining visual-language models with multiple instance learning for WSI-level zero-shot classification. |
| Data Resources | TCGA (The Cancer Genome Atlas) [1] [2] | Provides extensive, well-characterized WSI datasets across multiple cancer types for model evaluation. |
| Data Resources | Grand Challenge Datasets [2] | Source of public pathology image datasets (e.g., PANDA, CAMELYON) for benchmarking and validation. |

What Are Visual-Language Foundation Models? From CLIP to Pathology-Specific Architectures

Visual-Language Foundation Models (VLFMs) represent a transformative class of artificial intelligence systems pre-trained on vast datasets containing both images and associated textual information. Unlike traditional computer vision models trained for specific tasks, foundation models learn generalized representations that can be adapted to numerous downstream applications with minimal task-specific modification [7]. These models fundamentally change how AI systems understand and process visual information by creating a shared embedding space where both images and text can be compared and related through a common representation [8]. This capability is particularly valuable in medical domains like pathology, where diagnostic reasoning inherently combines visual pattern recognition with conceptual knowledge typically expressed in natural language.

The core innovation of VLFMs lies in their ability to perform cross-modal alignment—learning relationships between visual patterns in images and semantic concepts in text. During pre-training, these models process millions of image-text pairs, adjusting their parameters so that embeddings for a histopathology image showing, for instance, invasive ductal carcinoma become positioned close to the text embedding for "invasive ductal carcinoma" in the shared feature space [1]. This training paradigm enables remarkable capabilities including zero-shot classification, where models can recognize categories they were never explicitly trained to identify, by simply comparing image features with text descriptions of the target classes [9].

Evolution of Architectural Paradigms

Foundational Architectures: CLIP and Beyond

The development of VLFMs began with architectures like CLIP (Contrastive Language-Image Pre-training), which established the dual-encoder paradigm that remains influential today [9]. CLIP employs separate image and text encoders trained jointly using a contrastive learning objective that maximizes agreement between matching image-text pairs while minimizing agreement for non-matching pairs [8]. This architecture maps both visual and textual inputs into a shared embedding space where semantic similarity can be measured with cosine similarity [9].

Subsequent models like ALIGN scaled this approach using noisier but larger datasets, while CoCa (Contrastive Captioners) incorporated both contrastive and generative objectives, adding a text decoder to enable caption generation capabilities [1]. The transformer architecture has been particularly instrumental in advancing VLFMs, with its attention mechanism allowing models to focus on relevant regions of both images and text, capturing long-range dependencies essential for understanding complex histopathological images [7].

Pathology-Specific Adaptations

While general-purpose VLFMs demonstrated promising capabilities, their application to pathology required significant domain adaptation to address the unique challenges of computational pathology, including gigapixel whole-slide images (WSIs), subtle morphological features, and specialized domain knowledge [8]. This led to the development of pathology-specific VLFMs including:

- CONCH (CONtrastive learning from Captions for Histopathology): Trained on 1.17 million histopathology image-caption pairs, CONCH incorporates both contrastive alignment and captioning objectives, enabling state-of-the-art performance on classification, segmentation, and retrieval tasks [1].

- TITAN (Transformer-based pathology Image and Text Alignment Network): A multimodal whole-slide foundation model pretrained on 335,645 whole-slide images with corresponding pathology reports and synthetic captions. TITAN can extract general-purpose slide representations and generate pathology reports without requiring fine-tuning [10].

- PLIP (Pathology Language Image Pre-training): A community-built VLFM developed using medical Twitter data that serves as an open-source resource for the computational pathology community [8].

- QuiltNet & Quilt-LLaVA: QuiltNet adapts the CLIP framework for pathology using 1M histopathology image-text pairs, while Quilt-LLaVA extends this approach by integrating a large language model for enhanced conversational capabilities [9].

Table 1: Comparison of Pathology-Specific Visual-Language Foundation Models

| Model | Architecture | Training Data | Key Capabilities | Parameters |
|---|---|---|---|---|
| CONCH | CoCa-based | 1.17M image-caption pairs | Classification, segmentation, captioning, retrieval | ~200M [9] |
| TITAN | Vision Transformer | 335,645 WSIs + reports | Slide representation, report generation, rare cancer retrieval | Not specified [10] |
| PLIP | CLIP-based | Medical Twitter data | Zero-shot classification, image-text retrieval | ~150M [8] |
| QuiltNet | CLIP-based | 1M image-text pairs | Contrastive learning, feature alignment | ~150M [9] |
| Quilt-LLaVA | LLaVA-based | 107K Q&A pairs | Visual question answering, conversational AI | ~7B [9] |

Quantitative Performance Comparison

Evaluation of VLFMs in pathology applications demonstrates their growing capabilities across diverse tasks. On slide-level classification benchmarks, CONCH achieved zero-shot accuracies of 90.7% for NSCLC subtyping and 90.2% for RCC subtyping, outperforming other models by significant margins [1]. On the more challenging task of invasive breast carcinoma (BRCA) subtyping, CONCH achieved 91.3% accuracy while other models performed at near-random levels [1].

The TITAN model has shown particular strength in low-data regimes and rare disease applications, demonstrating robust performance in cancer prognosis and rare cancer retrieval without requiring fine-tuning [10]. In comprehensive evaluations across 14 diverse benchmarks, CONCH consistently outperformed other VLFMs including PLIP, BiomedCLIP, and general-purpose models like OpenAI CLIP, establishing new state-of-the-art performance across classification, segmentation, captioning, and retrieval tasks [1].

Table 2: Zero-Shot Classification Performance of CONCH Across Pathology Tasks [1]

| Task | Dataset | Evaluation Metric | CONCH Performance | Next Best Model |
|---|---|---|---|---|
| NSCLC Subtyping | TCGA NSCLC | Balanced Accuracy | 90.7% | 78.7% (PLIP) |
| RCC Subtyping | TCGA RCC | Balanced Accuracy | 90.2% | 80.4% (PLIP) |
| BRCA Subtyping | TCGA BRCA | Balanced Accuracy | 91.3% | 55.3% (BiomedCLIP) |
| Gleason Grading | SICAP | Quadratic Weighted κ | 0.690 | 0.550 (BiomedCLIP) |
| Tissue Classification | CRC100K | Balanced Accuracy | 79.1% | 67.4% (PLIP) |

Experimental Protocols for Zero-Shot Classification in Pathology

Protocol 1: Whole-Slide Image Classification Using TITAN

Purpose: To perform slide-level classification of whole-slide images without task-specific fine-tuning.

Materials and Reagents:

- TITAN model (pretrained weights)

- Whole-slide images (WSIs) in standard formats (SVS, NDPI, etc.)

- Computational environment with GPU acceleration (≥16GB VRAM recommended)

- Python libraries: PyTorch, OpenSlide, Whole-Slide Data Loader

Procedure:

- WSI Preprocessing: Load WSIs and extract representative regions of interest (ROIs) at 20× magnification. Convert each ROI to a feature grid using a pretrained patch encoder [10].

- Feature Grid Construction: Spatially arrange patch features in a 2D grid replicating their positions in the original tissue. Apply random cropping to generate views of 16×16 features covering regions of 8,192×8,192 pixels [10].

- Embedding Extraction: Process feature grids through TITAN's vision transformer encoder, which uses Attention with Linear Biases (ALiBi) for long-context extrapolation. Extract slide-level embeddings from the [CLS] token or via pooling operations [10].

- Text Prompt Preparation: Create descriptive text prompts for each target class (e.g., "histopathology image of invasive ductal carcinoma"). Multiple prompt variations can be ensembled for improved performance [9].

- Similarity Calculation: Encode text prompts using TITAN's text encoder. Compute cosine similarity between slide embeddings and all text prompt embeddings.

- Classification: Assign the class label corresponding to the highest similarity score. Generate confidence scores based on similarity values.
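The feature grid construction and random cropping steps can be illustrated with plain data structures. This is a sketch under stated assumptions, not TITAN's implementation: patch features are assumed to be keyed by the top-left pixel coordinates of non-overlapping 512-pixel patches (so that 16 × 16 cells span 8,192 pixels per side), and the function names are hypothetical.

```python
import random


def build_feature_grid(patch_features, patch_size=512):
    """Arrange patch features on a 2D grid that mirrors their tissue positions.

    patch_features maps (x, y) top-left pixel coordinates to a feature vector.
    Grid cells with no extracted patch (background) stay None.
    """
    cols = [x // patch_size for x, _ in patch_features]
    rows = [y // patch_size for _, y in patch_features]
    min_c, min_r = min(cols), min(rows)
    width, height = max(cols) - min_c + 1, max(rows) - min_r + 1
    grid = [[None] * width for _ in range(height)]
    for (x, y), feat in patch_features.items():
        grid[y // patch_size - min_r][x // patch_size - min_c] = feat
    return grid


def random_crop(grid, size=16, rng=random):
    """Sample a size x size window of grid cells as one training or inference view."""
    height, width = len(grid), len(grid[0])
    if height < size or width < size:
        raise ValueError("grid is smaller than the requested crop")
    top = rng.randrange(height - size + 1)
    left = rng.randrange(width - size + 1)
    return [row[left:left + size] for row in grid[top:top + size]]


# Toy slide: 20 rows x 24 columns of patches, each "feature" records its position.
patch_features = {
    (c * 512, r * 512): [float(r), float(c)] for r in range(20) for c in range(24)
}
grid = build_feature_grid(patch_features)
crop = random_crop(grid, rng=random.Random(0))
print(len(grid), len(grid[0]), len(crop), len(crop[0]))
```

Keeping the spatial layout intact is what allows a slide-level encoder to reason about neighboring regions rather than treating patches as an unordered bag.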

Troubleshooting Tips:

- For large WSIs exceeding memory constraints, implement sliding window inference with aggregation.

- If performance is suboptimal, refine text prompts to include more specific pathological terminology.

- Ensure consistent magnification levels during feature extraction to maintain morphological context.

Protocol 2: Fine-Grained Patch Alignment for Brain Tumor Subclassification

Purpose: To enhance zero-shot classification of subtle brain tumor subtypes using patch-level feature alignment.

Materials and Reagents:

- Pretrained VLFMs (CONCH or similar)

- Whole-slide images of brain tumor specimens

- Computational environment with GPU acceleration

- Python libraries: PyTorch, Transformers, OpenSlide

Procedure:

- Patch Sampling: Extract patches of 512×512 pixels at 20× magnification from diagnostically relevant regions of WSIs, ensuring coverage of diverse morphological patterns [11].

- Local Feature Refinement: Process patches through VLFM image encoder. Model spatial relationships between patches using self-attention mechanisms to enhance feature discriminability [11].

- Fine-Grained Text Generation: Leverage large language models (e.g., GPT-4) to generate detailed, pathology-aware descriptions for each tumor subtype. Include specific morphological features (e.g., "nuclear atypia," "serpentine necrosis," "microvascular proliferation") [11].

- Patch-Text Alignment: Compute similarity between refined patch features and text embeddings of class descriptions. Apply transformer-based cross-attention to model fine-grained correspondences [11].

- Uncertainty-Aware Aggregation: Aggregate patch-level predictions using uncertainty-weighted voting, prioritizing patches with higher prediction confidence [11].

- Slide-Level Classification: Generate final slide-level prediction based on aggregated patch scores. Visualize attention maps to highlight diagnostically relevant regions.
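The uncertainty-aware aggregation step can be sketched as follows. The weighting scheme here (one minus normalized softmax entropy) is an assumption chosen for illustration; the method in [11] may define uncertainty differently. The temperature value and function names are likewise hypothetical, and at least two classes are assumed.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs):
    return -sum(p * math.log(p) for p in probs if p > 0)


def aggregate_patches(patch_similarities, temperature=0.1):
    """Average patch class probabilities, weighting confident patches more.

    Each patch weight is 1 - H(p) / log(n_classes): 1 for a one-hot
    prediction, 0 for a uniform one.
    """
    n_classes = len(patch_similarities[0])
    max_entropy = math.log(n_classes)
    weighted = [0.0] * n_classes
    weight_sum = 0.0
    for sims in patch_similarities:
        probs = softmax(sims, temperature)
        weight = 1.0 - entropy(probs) / max_entropy
        weight_sum += weight
        for c, p in enumerate(probs):
            weighted[c] += weight * p
    if weight_sum == 0.0:  # every patch was maximally uncertain
        return [1.0 / n_classes] * n_classes
    return [w / weight_sum for w in weighted]


# One confident patch for class 0 and three near-ties leaning toward class 1.
patch_similarities = [[0.30, 0.10], [0.11, 0.12], [0.11, 0.12], [0.10, 0.11]]
slide_probs = aggregate_patches(patch_similarities)
print(slide_probs)
```

In this toy case a plain majority vote would favor class 1, whereas the weighted average follows the single confident patch, which is the behavior the protocol step describes.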

Validation:

- Compare predictions with ground truth annotations from certified pathologists.

- Perform qualitative analysis of attention maps to ensure alignment with known pathological features.

- Evaluate robustness through cross-validation across multiple institutions and scanner types.

Research Reagent Solutions

Table 3: Essential Computational Tools for VLFM Research in Pathology

| Tool Name | Type | Function | Availability |
|---|---|---|---|
| CONCH | Vision-Language Model | Feature extraction, zero-shot classification, retrieval | Public [1] |
| TITAN | Whole-Slide Foundation Model | Slide-level representation, report generation | Upon request [10] |
| PLIP | Pathology Language-Image Model | Open-source visual-language pretraining | Public [8] |
| Quilt-LLaVA | Large Multimodal Model | Visual question answering, conversational AI | Public [9] |
| FM² | Model Fusion Framework | Disentangled consensus-divergence representation | Public [8] |
| FG-PAN | Fine-Grained Alignment | Patch-text alignment for subtle subtypes | Public [11] |

Workflow Visualization

Diagram 1: Workflow of Visual-Language Foundation Models in Pathology. This diagram illustrates the complete pipeline from multimodal data input through feature extraction, cross-modal alignment, and diverse zero-shot applications in computational pathology.

Diagram 2: Architectural Paradigms of Pathology VLMs. This diagram compares three predominant architectural frameworks for visual-language foundation models in pathology, highlighting their distinctive components and learning objectives.

Future Directions and Challenges

The development of VLFMs for pathology faces several important challenges that represent active research directions. Computational efficiency remains a significant constraint, as processing gigapixel whole-slide images requires substantial memory and processing resources [10]. Current approaches address this through hierarchical processing and feature distillation, but more efficient architectures are needed for clinical deployment. Interpretability and reliability are particularly crucial in medical applications, where model decisions must be explainable and trustworthy [7]. Techniques like attention visualization and uncertainty quantification are being integrated into modern VLFMs to address these concerns.

Future research directions include the development of specialized foundation models for rare diseases, where limited training data creates particular challenges for general-purpose models [10]. The integration of multimodal patient data beyond images and text—including genomic profiles, clinical variables, and longitudinal outcomes—represents another promising frontier [7]. Finally, federated learning approaches are being explored to enable model training across multiple institutions while preserving data privacy, which is essential for advancing model generalizability while complying with healthcare regulations [7].

As VLFMs continue to evolve, they hold the potential to fundamentally transform pathology practice, not by replacing pathologists but by augmenting their capabilities through powerful tools for pattern recognition, data integration, and knowledge retrieval. The progression from general-purpose models like CLIP to specialized pathology architectures represents an important step toward clinically relevant AI systems that understand both the visual language of histopathology and the semantic language of human diagnosis.

Contrastive learning is a self-supervised machine learning paradigm that trains models to distinguish between similar (positive) and dissimilar (negative) data pairs. In computational pathology, this framework is powerfully applied to create a unified embedding space where histopathology images and textual descriptions can be directly compared. The core objective is to maximize agreement between matching image-text pairs while minimizing agreement between non-matching pairs. This enables models to learn rich, transferable representations of histopathological tissue morphology without relying on extensive manual annotations, thereby directly facilitating zero-shot classification capabilities where models can recognize and categorize pathological concepts without task-specific training data.

Visual-language foundation models like CONCH (Contrastive learning from Captions for Histopathology) exemplify this approach, having been pretrained on over 1.17 million histopathology image-caption pairs to create a joint embedding space where semantically similar images and texts are positioned close together regardless of whether they were explicitly seen during training [12]. This architecture enables powerful applications including cross-modal retrieval, where a text query can find relevant images or vice versa, and zero-shot classification, where natural language descriptions of diseases can be used to categorize unseen histopathology images without additional training.

Key Technical Principles and Architectures

Core Architectural Frameworks

Visual-language foundation models in pathology typically employ dual-encoder architectures consisting of separate image and text encoders trained with contrastive objectives:

- Image Encoder: A Vision Transformer (ViT) processes tissue regions-of-interest (ROIs) or whole-slide images, converting visual patterns into embedding vectors. Models like CONCH use a ViT-B architecture, while others like UNI employ ViT-H [13].

- Text Encoder: A transformer-based language model processes descriptive text, such as pathology reports or synthetic captions, converting natural language into the same embedding space.

- Contrastive Objective: The training maximizes cosine similarity between corresponding image-text pairs while minimizing similarity for non-corresponding pairs. For a batch of N image-text pairs, the contrastive loss function treats the N matched pairs as positives and the N²-N mismatched pairs as negatives.
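The contrastive objective described above can be written out as a symmetric cross-entropy over the N × N similarity matrix. The sketch below is a plain-Python illustration of that CLIP-style loss, not the training code of any model discussed here; the temperature of 0.07 is an assumed value, and the function names are illustrative.

```python
import math


def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cross_entropy_diag(logits):
    """Mean cross-entropy where row i's correct class is column i."""
    loss = 0.0
    for i, row in enumerate(logits):
        m = max(row)
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in row))
        loss += log_sum_exp - row[i]
    return loss / len(logits)


def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric image-to-text and text-to-image loss for N matched pairs.

    The N diagonal entries of the similarity matrix are the positives;
    the N*N - N off-diagonal entries act as negatives.
    """
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    logits = [
        [sum(a * b for a, b in zip(i, t)) / temperature for t in txts] for i in imgs
    ]
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))


# Aligned pairs should score a much lower loss than mismatched ones.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned = contrastive_loss(images, [[1.0, 0.0], [0.0, 1.0]])
shuffled = contrastive_loss(images, [[0.0, 1.0], [1.0, 0.0]])
print(aligned, shuffled)
```

Minimizing this quantity pulls each image toward its own caption and pushes it away from the other captions in the batch, which is what makes later zero-shot comparison against class prompts meaningful.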

Multimodal Alignment Strategies

Advanced models employ sophisticated alignment techniques to bridge visual and linguistic domains:

- Cross-modal Attention: Models like TITAN (Transformer-based pathology Image and Text Alignment Network) use attention mechanisms to align image patches with relevant text tokens, enabling fine-grained correspondence between morphological features and descriptive concepts [10] [14].

- Knowledge Distillation: Some frameworks leverage pretrained patch encoders like CONCHv1.5 to extract visual features before multimodal alignment, creating hierarchical representations [10].

- Synthetic Data Augmentation: TITAN incorporates 423,122 synthetic captions generated from a multimodal generative AI copilot to enhance training diversity and robustness [10] [14].

Table 1: Contrastive Learning Architectures in Computational Pathology

| Model | Architecture | Training Data | Embedding Dimension | Parameters |
|---|---|---|---|---|
| CONCH | ViT-B + Text Encoder | 1.17M image-text pairs | 512 | ~200M [13] [12] |
| TITAN | ViT + Transformer | 335,645 WSIs + 423K synthetic captions | 768 | Not specified [10] |
| Quilt-Net | ViT-B/32 + GPT-2 | 1M image-text samples | 512 | ~150M [9] |
| Quilt-LLaVA | Visual encoder + LLM | ~107K QA pairs | Not specified | ~7B [9] |

Quantitative Benchmarking of Model Performance

Zero-Shot Classification Capabilities

Comprehensive evaluations demonstrate the practical efficacy of contrastively trained visual-language models across diverse pathological tasks. In systematic assessments of diagnostic accuracy across 3,507 digestive system whole-slide images, the CONCH model achieved highest accuracy when provided with precise anatomical references, with performance consistently degrading when anatomical precision was reduced [9]. This highlights the critical importance of prompt design and anatomical context in leveraging these models for zero-shot histopathological image analysis.

The TITAN model demonstrates exceptional versatility in zero-shot settings, outperforming both region-of-interest and slide foundation models across multiple machine learning settings including linear probing, few-shot and zero-shot classification, rare cancer retrieval, cross-modal retrieval and pathology report generation [10] [14]. This generalizability is particularly valuable for resource-limited clinical scenarios involving rare diseases with limited training data.

Cross-Modal Retrieval Performance

The joint embedding space enables powerful retrieval capabilities between visual and textual domains:

- Image-to-Text Retrieval: Given a histopathology image, models can retrieve relevant pathological descriptions or generate comprehensive reports.

- Text-to-Image Retrieval: Natural language queries describing morphological findings can retrieve visually similar tissue regions from large databases.

Table 2: Performance Benchmarks of Visual-Language Models in Pathology

| Model | Zero-shot Classification | Retrieval Performance | Training Paradigm | Report Generation Quality |
|---|---|---|---|---|
| CONCH | 15-20% gain over non-VLMs [13] | State-of-the-art [12] | Vision-Language Contrastive | Not specified |
| TITAN | Outperforms ROI/slide models [10] | Enables rare cancer retrieval [10] | Multimodal SSL + V-L Alignment | Generates clinically relevant reports [10] |
| Quilt-Net | Varies with prompt design [9] | Not specified | CLIP-based fine-tuning | Not applicable |
| PLIP | Competitive on subtype classification [13] | Effective on public datasets [13] | Vision-Language Contrastive | Not applicable |

Experimental Protocols for Zero-Shot Classification

Protocol: Zero-Shot Diagnostic Classification Using Pre-trained VLMs

Purpose: To evaluate the zero-shot classification performance of visual-language foundation models on histopathology whole-slide images without task-specific fine-tuning.

Materials:

- Pre-trained visual-language model (CONCH, TITAN, or PLIP)

- Whole-slide images (WSIs) for evaluation

- Textual prompts describing target diseases/conditions

- Computational resources (GPU recommended)

- Python environment with necessary libraries (PyTorch, Transformers, OpenSlide)

Procedure:

- Image Preprocessing:

- Prompt Engineering:

  - Design text prompts incorporating domain-specific terminology, anatomical precision, and instructional framing [9].

  - Example prompts: "H&E stain of colon tissue showing invasive adenocarcinoma with desmoplastic reaction" or "Lymph node tissue with metastatic carcinoma deposits".

  - Generate text embeddings using the model's text encoder.

- Similarity Calculation:

  - Compute cosine similarity between image embeddings and all candidate text prompt embeddings in the joint space.

  - For patch-level features, aggregate similarities across all patches in a WSI using attention pooling or max pooling.

- Classification:

  - Assign the class label corresponding to the text prompt with the highest similarity score.

  - Set confidence thresholds based on similarity scores for reliable predictions.

- Validation:

  - Compare predictions with ground truth diagnoses from pathologists.

  - Calculate standard metrics (accuracy, F1-score, AUC-ROC) across different tissue types and disease conditions.

  - Perform qualitative analysis of attention maps to verify diagnostically relevant regions [9].
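The patch aggregation and confidence threshold steps can be sketched together. The example uses top-k pooling, which reduces to max pooling when k = 1, and abstains when the margin between the two best classes is small. The margin rule, the default values, and the function names are assumptions made for illustration; the protocol itself does not prescribe a specific threshold.

```python
def topk_pool(patch_scores, k):
    """Average the k highest patch similarities per class (k=1 is max pooling)."""
    n_classes = len(patch_scores[0])
    pooled = []
    for c in range(n_classes):
        top = sorted((row[c] for row in patch_scores), reverse=True)[:k]
        pooled.append(sum(top) / len(top))
    return pooled


def predict_with_threshold(patch_scores, class_names, k=5, min_margin=0.02):
    """Return (label, pooled scores); label is None when the call is too close."""
    pooled = topk_pool(patch_scores, k)
    ranked = sorted(range(len(pooled)), key=pooled.__getitem__, reverse=True)
    margin = pooled[ranked[0]] - pooled[ranked[1]]
    label = class_names[ranked[0]] if margin >= min_margin else None
    return label, pooled


class_names = ["tumor", "normal"]

# Clear case: two patches strongly match the "tumor" prompt.
clear = [[0.30, 0.10], [0.28, 0.12], [0.05, 0.11]]
print(predict_with_threshold(clear, class_names, k=2))

# Ambiguous case: pooled scores are nearly identical, so no label is assigned.
ambiguous = [[0.20, 0.19], [0.18, 0.19]]
print(predict_with_threshold(ambiguous, class_names, k=2))
```

Returning no label for low-margin slides gives a natural hook for routing uncertain cases to a pathologist instead of forcing a prediction.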

Protocol: Cross-Modal Retrieval for Rare Cancer Identification

Purpose: To retrieve histologically similar cases from a database using text descriptions for rare cancer subtyping.

Materials:

- Database of whole-slide images with diagnostic annotations

- Pre-trained multimodal foundation model (TITAN recommended for slide-level retrieval)

- Clinical descriptions of rare cancer phenotypes

Procedure:

Database Indexing:

- Precompute and index visual embeddings for all WSIs in the database using the model's image encoder.

- Store embeddings in a search-efficient data structure (e.g., FAISS index).

Query Processing:

- Encode the textual description of the rare cancer using the model's text encoder.

- Alternatively, use a representative image patch as the query if the textual description is insufficient.

Similarity Search:

- Perform k-nearest neighbor search in the joint embedding space.

- Retrieve top-k most similar cases based on cosine similarity.

Validation:

- Assess retrieval precision using expert-annotated ground truth.

- Evaluate clinical utility through pathologist concordance studies [9].
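
A brute-force version of the retrieval and validation steps is sketched below. It is an illustration under stated assumptions: the 2-dimensional vectors and case identifiers are made up, `top_k` and `precision_at_k` are hypothetical helper names, and the exhaustive scan stands in for the approximate index (such as FAISS) that a large database would use.

```python
import heapq
import math

def unit(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def top_k(query, index, k=2):
    """Return the k (case_id, cosine similarity) pairs closest to the query.

    Assumes every embedding stored in `index` is already unit-normalized.
    """
    q = unit(query)
    scored = ((cid, sum(a * b for a, b in zip(q, emb))) for cid, emb in index.items())
    return heapq.nlargest(k, scored, key=lambda item: item[1])

def precision_at_k(retrieved_ids, relevant_ids):
    """Fraction of retrieved cases that experts marked as relevant."""
    hits = sum(1 for cid in retrieved_ids if cid in relevant_ids)
    return hits / len(retrieved_ids)

# Toy slide embeddings; in practice these come from the image encoder.
raw = {
    "case_a": [0.9, 0.1],
    "case_b": [0.8, 0.3],
    "case_c": [0.1, 0.9],
    "case_d": [-0.5, 0.5],
}
index = {cid: unit(vec) for cid, vec in raw.items()}

query = [1.0, 0.1]  # stand-in for an encoded text description
results = top_k(query, index, k=2)
retrieved = [cid for cid, _ in results]
print(retrieved, precision_at_k(retrieved, {"case_a", "case_c"}))
```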

Implementation Workflows and Signaling Pathways

Diagram: Contrastive Learning Workflow for Pathology Images and Text

Diagram: Zero-Shot Classification Pipeline for Pathology

Research Reagent Solutions for Implementation

Table 3: Essential Research Reagents for Visual-Language Pathology Research

| Resource Category | Specific Examples | Function/Application | Availability |

|---|---|---|---|

| Pre-trained Models | CONCH, TITAN, PLIP, Quilt-Net | Provide foundation for zero-shot classification and retrieval | Publicly available on GitHub, Hugging Face [12] |

| Pathology Datasets | TCGA, Quilt-1M, OpenPath, PMC-Patients-DD | Benchmark model performance and train custom encoders | Public access with restrictions [9] [13] |

| Annotation Tools | QuPath, ImageJ, ASAP | Manual annotation for validation studies | Open source |

| Computational Frameworks | PyTorch, Transformers, FAISS | Model implementation, training, and efficient similarity search | Open source |

| Visualization Libraries | matplotlib, Plotly, SlideMap | Embedding visualization and result interpretation | Open source |

The diagnosis of diseases from tissue samples, or histopathology, is undergoing a revolutionary transformation through artificial intelligence. Foundation models, pre-trained on vast datasets, are enabling new capabilities in computational pathology. Among these, visual-language models such as CONCH (CONtrastive learning from Captions for Histopathology) and TITAN (Transformer-based pathology Image and Text Alignment Network) represent a paradigm shift. By learning from both histopathology images and their corresponding textual descriptions, these models achieve remarkable zero-shot classification capabilities—performing diagnostic tasks without task-specific training data. This application note details the technical specifications, performance benchmarks, and experimental protocols for leveraging CONCH and TITAN in pathology research and drug development.

CONCH and TITAN are visual-language foundation models specifically designed for computational pathology, but they employ distinct architectural approaches and training methodologies.

CONCH is a vision-language foundation model pre-trained on 1.17 million histopathology image-caption pairs, the largest such dataset at its time of development [1] [15]. Its architecture is based on the CoCa (Contrastive Captioner) framework, integrating an image encoder, a text encoder, and a multimodal fusion decoder [1]. The model is trained using a combination of contrastive alignment objectives that align image and text modalities in a shared representation space, and a captioning objective that learns to generate captions corresponding to images [1]. This dual approach enables CONCH to perform both image-text retrieval and classification tasks effectively.

TITAN represents a more recent advancement as a multimodal whole-slide foundation model pretrained on 335,645 whole-slide images [10]. Its pretraining strategy consists of three stages: (1) vision-only unimodal pretraining on region-of-interest (ROI) crops, (2) cross-modal alignment of generated morphological descriptions at the ROI level using 423,122 synthetic captions, and (3) cross-modal alignment at the whole-slide image level with corresponding pathology reports [10]. A cornerstone of TITAN's architecture is its use of a Vision Transformer (ViT) that operates on pre-extracted patch features from whole-slide images, employing Attention with Linear Biases (ALiBi) for long-context extrapolation [10].

Table 1: Technical Specifications of CONCH and TITAN

| Specification | CONCH | TITAN |

|---|---|---|

| Model Type | Visual-language encoders | Multimodal whole-slide Vision Transformer |

| Primary Innovation | Contrastive learning from captions | Hierarchical whole-slide encoding with synthetic data |

| Training Data | 1.17M image-caption pairs [1] | 335,645 WSIs + 423K synthetic captions + 183K reports [10] |

| Vision Encoder | ViT-B/16 (90M params) [15] | Vision Transformer (ViT) |

| Text Encoder | L12-E768-H12 (110M params) [15] | Integrated transformer architecture |

| Multimodal Alignment | Image-text contrastive + captioning loss [1] | Vision-language alignment at ROI and WSI levels [10] |

| Key Capabilities | Classification, segmentation, retrieval, captioning | Slide representation, report generation, rare cancer retrieval |

Performance Benchmarks and Comparative Analysis

Both CONCH and TITAN have demonstrated state-of-the-art performance across diverse pathology tasks, particularly in zero-shot settings where no task-specific training is required.

Zero-Shot Classification Performance

In comprehensive evaluations, CONCH has shown superior zero-shot classification capabilities compared to other visual-language foundation models. On slide-level cancer subtyping tasks, CONCH achieved remarkable accuracy: 90.7% on non-small cell lung cancer (NSCLC) subtyping, 90.2% on renal cell carcinoma (RCC) subtyping, and 91.3% on invasive breast carcinoma (BRCA) subtyping [1]. This represents a performance improvement of 9.8-35% over other models like PLIP and BiomedCLIP across these tasks [1]. On the more challenging lung adenocarcinoma (LUAD) pattern classification task, CONCH achieved a Cohen's κ of 0.200, outperforming other models [1].

TITAN has demonstrated exceptional performance in resource-limited clinical scenarios, including rare disease retrieval and cancer prognosis [10]. In evaluations across diverse clinical tasks, TITAN outperformed both region-of-interest and slide foundation models across multiple machine learning settings, including linear probing, few-shot, and zero-shot classification [10]. The model's pretraining with synthetic fine-grained morphological descriptions has shown particular utility for rare cancer retrieval tasks [10].

Performance on Non-Neoplastic Pathology

Recent benchmarking studies have evaluated how these pathology foundation models generalize beyond cancer to non-neoplastic diseases. In placental pathology benchmarks—doubly out-of-distribution for these models as placental data was largely absent from their training—pathology foundation models still outperformed general-purpose models [16]. However, the performance gap between pathology and non-pathology models diminished in tasks related to inflammation, suggesting areas for future improvement [16].

Table 2: Zero-Shot Classification Performance Across Pathology Tasks

| Task/Dataset | Model | Performance Metric | Score | Comparative Advantage |

|---|---|---|---|---|

| TCGA NSCLC Subtyping | CONCH | Balanced Accuracy | 90.7% [1] | +12.0% over next best (PLIP) |

| TCGA RCC Subtyping | CONCH | Balanced Accuracy | 90.2% [1] | +9.8% over next best (PLIP) |

| TCGA BRCA Subtyping | CONCH | Balanced Accuracy | 91.3% [1] | ~35% over other models |

| SICAP (Gleason Patterns) | CONCH | Quadratic κ | 0.690 [1] | +0.140 over BiomedCLIP |

| Rare Cancer Retrieval | TITAN | Retrieval Accuracy | Superior performance [10] | Outperforms other slide foundation models |

| Placental Gestational Age | Pathology FMs | KNN Regression | Best performance [16] | Outperforms non-pathology models |
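
For readers less familiar with the metrics in Table 2, the sketch below computes balanced accuracy (the mean of per-class recall) and quadratic-weighted Cohen's κ from label lists. The function names and the toy labels are illustrative assumptions; established libraries provide equivalent, better-tested implementations.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so rare classes count as much as common ones."""
    recalls = []
    for cls in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == cls]
        recalls.append(sum(1 for i in idx if y_pred[i] == cls) / len(idx))
    return sum(recalls) / len(recalls)

def quadratic_weighted_kappa(y_true, y_pred, n_classes):
    """Cohen's kappa with quadratic weights; labels are integers 0..n_classes-1."""
    n = len(y_true)
    observed = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        observed[t][p] += 1
    row = [sum(r) for r in observed]
    col = [sum(observed[i][j] for i in range(n_classes)) for j in range(n_classes)]
    num = den = 0.0
    for i in range(n_classes):
        for j in range(n_classes):
            weight = (i - j) ** 2 / (n_classes - 1) ** 2
            num += weight * observed[i][j]           # observed disagreement
            den += weight * row[i] * col[j] / n      # disagreement expected by chance
    return 1.0 - num / den

# Toy ordinal grades (e.g., three pattern grades), not real results.
truth = [0, 0, 1, 1, 2, 2]
preds = [0, 1, 1, 1, 2, 1]
kappa = quadratic_weighted_kappa(truth, preds, n_classes=3)
bal_acc = balanced_accuracy(truth, preds)
print(round(bal_acc, 3), round(kappa, 3))
```

Quadratic weighting penalizes a prediction two grades away four times as much as one that is a single grade away, which is why it is commonly used for ordinal grading tasks.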

Experimental Protocols and Workflows

Protocol 1: Zero-Shot Whole-Slide Image Classification

Principle: Leverage pretrained visual-language models to classify entire gigapixel whole-slide images without task-specific training.

Procedure:

- WSI Tiling: Divide the whole-slide image into smaller, manageable patches at 20× magnification (e.g., 256×256 or 512×512 pixels) [1].

- Feature Extraction: For each patch, extract image embeddings using the vision encoder of either CONCH or TITAN.

- Prompt Engineering: Create a set of text prompts describing the candidate diagnostic classes. Employ prompt ensembles with multiple phrasings for each class to enhance robustness [1] [4].

- Text Embedding: Encode all text prompts using the model's text encoder to obtain text embeddings in the shared vision-language space.

- Similarity Calculation: Compute cosine similarity scores between each image patch embedding and all text embeddings.

- Score Aggregation: Use an aggregation strategy (e.g., mean, max, or attention-based pooling) across all patches to generate a slide-level prediction [1] [17].
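
The prompt-ensemble and score-aggregation steps can be sketched as follows. This is an assumption-laden toy, not the published CONCH or TITAN pipeline: the 2-dimensional vectors stand in for encoder outputs, averaging unit-normalized prompt embeddings is one common way to build a class embedding, and `slide_scores` is a hypothetical helper that supports mean pooling or mean-of-top-k pooling.

```python
import math

def unit(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def ensemble_class_embedding(prompt_embeddings):
    """Average unit-normalized prompt embeddings, then renormalize."""
    units = [unit(e) for e in prompt_embeddings]
    dim = len(units[0])
    mean = [sum(u[d] for u in units) / len(units) for d in range(dim)]
    return unit(mean)

def slide_scores(patch_embeddings, class_embeddings, top_k=None):
    """Per class, average the top_k patch similarities (all patches if None)."""
    scores = {}
    for label, class_emb in class_embeddings.items():
        sims = sorted(
            (sum(a * b for a, b in zip(unit(p), class_emb)) for p in patch_embeddings),
            reverse=True,
        )
        kept = sims[:top_k] if top_k else sims
        scores[label] = sum(kept) / len(kept)
    return scores

# Two phrasings per class, represented by toy text embeddings.
class_embeddings = {
    "LUAD": ensemble_class_embedding([[1.0, 0.1], [0.9, 0.2]]),
    "LUSC": ensemble_class_embedding([[0.1, 1.0], [0.2, 0.9]]),
}
patches = [[1.0, 0.0], [0.9, 0.3], [0.2, 1.0]]

mean_scores = slide_scores(patches, class_embeddings)
top1_scores = slide_scores(patches, class_embeddings, top_k=1)
print(max(mean_scores, key=mean_scores.get), max(top1_scores, key=top1_scores.get))
```

In this toy case mean pooling favors the class supported by most patches, while top-1 pooling follows the single strongest patch, so the two strategies disagree. That sensitivity is one reason to validate the aggregation choice per task.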

Protocol 2: Cross-Modal Slide Retrieval

Principle: Retrieve the most relevant whole-slide images based on textual queries, or generate textual descriptions based on slide content.

Procedure:

- Database Building: Extract and store feature embeddings for all whole-slide images in the target database using the model's vision encoder.

- Query Processing: For text-to-slide retrieval, encode the natural language query using the model's text encoder. For slide-to-text retrieval, use the slide's feature embedding.

- Similarity Search: Compute similarity scores (cosine similarity) between the query embedding and all database embeddings.

- Result Ranking: Rank database entries by similarity scores and return the top-k matches.

- Report Generation (TITAN-specific): Leverage TITAN's capability to generate pathology reports from whole-slide images by decoding from the aligned multimodal representation [10].

Protocol 3: Fine-Grained Patch-Text Alignment for Challenging Subtypes

Principle: Enhance zero-shot classification of morphologically similar subtypes through localized patch-text alignment and spatial reasoning.

Procedure:

- Local Feature Refinement: Implement a local feature refinement module (e.g., using local window attention) to enhance patch-level visual features by modeling spatial relationships among representative patches [17].

- Fine-Grained Text Generation: Leverage large language models (LLMs) to generate pathology-aware, fine-grained class descriptions that capture subtle morphological distinctions [17].

- Patch-Text Alignment: Align refined visual features with LLM-generated fine-grained descriptions in the shared embedding space.

- Uncertainty-Aware Aggregation: Aggregate patch-level predictions using uncertainty-aware strategies to generate slide-level diagnoses [17].
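
One possible reading of the uncertainty-aware aggregation step is to weight each patch's class probabilities by how confident that patch is. The sketch below uses normalized entropy for this; the weighting rule, the temperature of 0.1, and the function names are assumptions for illustration, and the method in [17] may define uncertainty differently.

```python
import math

def softmax(logits, temperature=1.0):
    peak = max(logits)
    exps = [math.exp((x - peak) / temperature) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy(probs):
    return -sum(p * math.log(p) for p in probs if p > 0)

def uncertainty_weighted_vote(patch_logits, temperature=0.1):
    """Average patch probabilities, weighting each patch by 1 - normalized entropy."""
    n_classes = len(patch_logits[0])
    max_entropy = math.log(n_classes)
    slide = [0.0] * n_classes
    total_weight = 0.0
    for logits in patch_logits:
        probs = softmax(logits, temperature)
        weight = max(0.0, 1.0 - entropy(probs) / max_entropy)  # confident patches weigh more
        total_weight += weight
        for c in range(n_classes):
            slide[c] += weight * probs[c]
    if total_weight <= 1e-12:  # every patch was maximally uncertain
        return [1.0 / n_classes] * n_classes
    return [s / total_weight for s in slide]

# Rows are patches, columns are patch-to-class similarities (toy values).
patch_logits = [[0.9, 0.1], [0.5, 0.5], [0.45, 0.55]]
slide_probs = uncertainty_weighted_vote(patch_logits)
print([round(p, 3) for p in slide_probs])
```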

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Pathology Visual-Language Research

| Tool/Resource | Function | Access Information |

|---|---|---|

| CONCH Model Weights | Pre-trained weights for the CONCH model for feature extraction and zero-shot inference | Available via Hugging Face Hub after request and approval [15] |

| TITAN Framework | Architecture and methodology for whole-slide representation learning | Implementation details in original publication [10] |

| Whole-Slide Image Datasets | Benchmark datasets for model evaluation (e.g., TCGA, CAMELYON) | Publicly available with appropriate data use agreements |

| Pathology Language Prompts | Curated text prompts for pathological concepts and diagnoses | Generated using domain knowledge and LLMs [17] |

| Synthetic Caption Generators | Tools for generating fine-grained morphological descriptions | PathChat and other multimodal generative AI copilots [10] |

Implementation Considerations and Best Practices

Prompt Engineering for Optimal Performance

Prompt design has a significant effect on zero-shot performance; in reported experiments, CONCH achieved its highest accuracy when prompts included precise anatomical references [4]. Key strategies include:

- Anatomical Precision: Incorporate specific anatomical references relevant to the diagnostic task

- Domain Specificity: Use appropriate pathological terminology and nomenclature

- Prompt Ensembling: Employ multiple phrasings for the same concept to enhance robustness

- Instructional Framing: Structure prompts with clear instructional context when appropriate

Computational Infrastructure Requirements

Implementing these models requires substantial computational resources, particularly for whole-slide image analysis:

- Hardware: GPU acceleration (e.g., NVIDIA A100 or equivalent) is essential for efficient inference

- Memory: Large RAM capacity (≥64GB) is recommended for handling gigapixel whole-slide images

- Storage: High-speed SSD storage facilitates efficient loading and processing of large whole-slide image files
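
A rough calculation shows why memory matters here. The slide dimensions below (100,000 × 100,000 pixels) are an illustrative assumption, as are 8-bit RGB storage and float32 features; the 512-pixel patch size and 768-dimensional features follow the TITAN configuration described elsewhere in this article.

```python
def uncompressed_rgb_bytes(width_px, height_px, channels=3, bytes_per_channel=1):
    """Size of a fully decoded image held in memory."""
    return width_px * height_px * channels * bytes_per_channel

# Hypothetical gigapixel slide at full resolution.
slide_bytes = uncompressed_rgb_bytes(100_000, 100_000)

# The same slide as a grid of 512x512 patches with 768-d float32 features.
patches = (100_000 // 512) ** 2
embedding_bytes = patches * 768 * 4

print(f"decoded pixels: {slide_bytes / 1e9:.0f} GB")
print(f"patch features: {embedding_bytes / 1e6:.0f} MB for {patches} patches")
```

Under these assumptions the decoded pixels alone would occupy about 30 GB, while the extracted features fit in roughly 117 MB. This is why pipelines typically read slides tile by tile and cache patch features instead of holding whole images in memory.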

Integration with Existing Pathology Workflows

Successful deployment requires thoughtful integration with existing digital pathology infrastructure:

- DICOM Compatibility: Ensure compatibility with standard whole-slide image formats

- LIS Integration: Develop interfaces with Laboratory Information Systems for seamless clinical workflow integration

- Validation Frameworks: Implement rigorous validation protocols against ground truth pathological diagnoses

The development of CONCH and TITAN represents a significant milestone in computational pathology, demonstrating that visual-language foundation models can achieve remarkable zero-shot classification capabilities across diverse pathological diagnoses. These models offer particular promise for rare diseases and low-resource scenarios where annotated training data is scarce.

Future research directions include expansion to non-neoplastic pathologies, integration with multi-omics data, development of interactive agentic systems for slide navigation [18], and advancement of prompt optimization techniques. As these technologies mature, they hold potential to augment pathological diagnosis, enhance diagnostic consistency, and accelerate drug development workflows.

The protocols and guidelines presented in this application note provide researchers with practical methodologies for leveraging these pioneering models in their computational pathology research.

Visual-language foundation models represent a paradigm shift in computational pathology, moving from single-task, supervised models to versatile, general-purpose tools. Their key advantages of generalizability and task agnosticism are primarily enabled through large-scale, self-supervised pretraining on diverse multimodal datasets [10] [19]. These models demonstrate robust performance across unseen tasks without task-specific fine-tuning, making them particularly valuable for research and drug development applications where labeled data is scarce and hypothesis generation is critical [20]. The following application note details the quantitative performance, experimental protocols, and practical implementation of these capabilities, with a specific focus on zero-shot classification scenarios.

Quantitative Performance Benchmarking

Foundation models consistently outperform traditional supervised approaches, especially in low-data regimes and zero-shot settings. The table below summarizes key performance metrics across diverse pathology tasks.

Table 1: Performance Benchmarking of Pathology Foundation Models

| Model | Pretraining Data | Task Type | Performance Metric | Result | Significance |

|---|---|---|---|---|---|

| TITAN [10] | 335,645 WSIs + 423k synthetic captions | Rare Cancer Retrieval | Not specified | Outperforms baselines | Generalizability to rare diseases with limited data |

| TITAN [10] | 335,645 WSIs + pathology reports | Zero-shot Classification | Not specified | Superior to ROI/slide models | Effective without clinical labels or fine-tuning |

| CONCH [9] | 1.17M image-text pairs [21] | Cancer Invasiveness (Zero-shot) | Accuracy | Highest with precise prompts | Demonstrates critical impact of prompt design |

| Prov-GigaPath [19] | 1.3B patches from 171k slides | Pan-Cancer Subtyping | Tasks with state-of-the-art results | 25/26 | Generalist capability across cancers and institutions |

| Virchow [19] | Millions of tissue images | Tumor Detection (9 common & 7 rare cancers) | AUC | 0.95 | Label efficiency and generalization to rare cancers |

Experimental Protocols for Zero-Shot Classification

Protocol: Zero-Shot Slide-Level Classification Using Prompt Ensembling

This protocol enables disease classification without task-specific model retraining by leveraging the model's inherent semantic understanding [9].

I. Research Reagent Solutions

Table 2: Essential Components for Zero-Shot Classification

| Component | Function | Example / Specification |

|---|---|---|

| Visual-Language Model (VLM) | Encodes images and text into a shared embedding space. | CONCH [9] [21] or TITAN [10] pretrained weights. |

| Whole-Slide Image (WSI) | The input gigapixel digital pathology image. | Formalin-fixed, paraffin-embedded (FFPE) tissue section, H&E stained. |

| Text Prompt Templates | Define the classification classes in natural language. | "A whole-slide image of [ANATOMY] with [CLASS_LABEL]." |

| Prompt Engineering Framework | Systematically varies prompt structure to improve robustness. | Modulates domain specificity, anatomical precision, and instructional framing [9]. |

II. Procedure

- WSI Preprocessing: Segment the gigapixel WSI into smaller, non-overlapping patches (e.g., 512x512 pixels at 20x magnification) [10]. Extract feature embeddings for each patch using the model's vision encoder.

- Prompt Design: For each potential class (e.g., "invasive carcinoma," "high-grade dysplasia," "normal tissue"), generate multiple text prompts. Systematically vary:

- Anatomical Precision: "colon tissue" vs. "muscular colon wall."

- Domain Specificity: "showing signs of malignancy" vs. "with adenocarcinoma."

- Instructional Framing: "Is this image representative of [CLASS]?" vs. "This is a histology image showing [CLASS]." [9]

- Feature Encoding: Process all text prompts through the model's text encoder to obtain a set of text embeddings, \(E_{\text{text}}^{1}, E_{\text{text}}^{2}, \ldots, E_{\text{text}}^{N}\), where \(N\) is the number of class-prompt combinations.

- Similarity Calculation: For a given WSI, compute the similarity between the aggregate WSI image embedding and each text embedding. This is typically done using cosine similarity in the shared multimodal embedding space.

- Classification: The final prediction is the class associated with the text prompt achieving the highest similarity score. For robustness, aggregate results across an ensemble of the best-performing prompts [9].
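
The prompt design step can be made systematic by expanding templates over the axes being varied. The sketch below is a minimal illustration: the anatomy terms and class names are taken from the examples above, while the second framing template and the `build_prompts` helper are hypothetical additions.

```python
from itertools import product

ANATOMY = ["colon tissue", "muscular colon wall"]
FRAMINGS = [
    "This is a histology image of {anatomy} showing {label}.",
    "An H&E image of {anatomy} with {label}.",  # assumed extra phrasing
]
CLASSES = ["invasive carcinoma", "high-grade dysplasia", "normal tissue"]

def build_prompts(classes, anatomy_terms, framings):
    """Return {class label: list of prompt strings} for every anatomy/framing pair."""
    return {
        label: [
            framing.format(anatomy=anatomy, label=label)
            for anatomy, framing in product(anatomy_terms, framings)
        ]
        for label in classes
    }

prompts = build_prompts(CLASSES, ANATOMY, FRAMINGS)
for label, variants in prompts.items():
    print(label, len(variants), variants[0])
```

Each class ends up with the same number of variants, which keeps the ensemble balanced when similarities are later averaged per class.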

Diagram 1: Zero-Shot Classification Workflow.

Protocol: Cross-Modal Retrieval for Rare Cancer Identification

This protocol identifies histologically similar cases or relevant reports from a database, crucial for rare disease research and drug target identification [10] [19].

I. Research Reagent Solutions

Table 3: Essential Components for Cross-Modal Retrieval

| Component | Function | Example / Specification |

|---|---|---|

| Multimodal Embedding Database | A searchable repository of feature vectors from WSIs and reports. | Vector database (e.g., FAISS) containing TITAN-generated slide and text embeddings [10]. |

| Query (Image or Text) | The input used to search the database. | A WSI of a rare cancer subtype or a free-text morphological description. |

| Similarity Metric | Algorithm to find the closest matches in the embedding space. | Cosine similarity or Euclidean distance. |

II. Procedure

- Database Construction: Process a large repository of WSIs and their corresponding pathology reports using a multimodal foundation model (e.g., TITAN) to generate paired image and text embeddings. Store these embeddings in an indexed vector database [10].

- Query Processing: For a novel WSI (image query) or a text-based morphological description (text query), process it with the same model to generate its representative embedding.

- Nearest Neighbor Search: Execute a search in the vector database to find the pre-computed embeddings with the smallest distance to the query embedding.

- Result Retrieval: Return the top-k most similar WSIs or pathology reports based on the similarity metric. This enables researchers to find rare cases with similar morphological phenotypes or clinical descriptions, aiding in hypothesis generation and cohort building [10] [19].
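
The choice between cosine similarity and Euclidean distance matters less than it may appear: for unit-normalized embeddings, the squared Euclidean distance equals 2 − 2·cos, so both metrics produce the same ranking. The sketch below checks this on toy vectors; the slide names and values are made up for illustration.

```python
import math

def unit(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def cosine(a, b):
    """Dot product; equals cosine similarity because inputs are unit vectors."""
    return sum(x * y for x, y in zip(a, b))

raw = {
    "slide_1": [1.0, 0.0, 0.0],
    "slide_2": [0.6, 0.8, 0.0],
    "slide_3": [0.0, 0.0, 1.0],
    "slide_4": [0.5, 0.5, 0.7],
}
database = {name: unit(vec) for name, vec in raw.items()}
query = unit([0.7, 0.6, 0.1])

by_cosine = sorted(database, key=lambda k: cosine(query, database[k]), reverse=True)
by_euclid = sorted(database, key=lambda k: math.dist(query, database[k]))

# Largest deviation from the identity ||q - x||^2 = 2 - 2 * cos(q, x).
identity_gap = max(
    abs(math.dist(query, emb) ** 2 - (2 - 2 * cosine(query, emb)))
    for emb in database.values()
)
print(by_cosine, by_euclid)
```

The equivalence holds only after normalization; with raw embeddings of varying length, the two metrics can rank cases differently.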

Implementation and Integration

The generalizability of foundation models translates into practical advantages for the research and drug development pipeline. They function as a single, reusable backbone, drastically reducing the need for annotated data and specialized model development for each new task [19] [20]. For instance, a model like UNI, pretrained on 100 million patches, can be adapted to 34 different clinical tasks, from cancer subtyping to inflammation analysis, outperforming task-specific models [19]. This "pretrain-once, adapt-to-many" approach accelerates iterative research cycles. Furthermore, their task-agnostic nature is key for discovering novel morphological biomarkers; by analyzing tissue at scale, models can identify subtle patterns and correlations with molecular data (e.g., inferring MSI status from H&E images) that are imperceptible to the human eye, thus opening new avenues for drug target discovery and patient stratification [19] [20].

Implementing Zero-Shot Classification: Architectures, Training, and Real-World Applications

Visual-language foundation models represent a transformative advancement in computational pathology by learning to associate histopathological imagery with descriptive clinical text. These models develop a shared semantic space where visual patterns from Whole Slide Images (WSIs) and textual concepts from pathology reports can be directly compared and integrated. This capability is particularly valuable for zero-shot classification, where models can recognize and categorize pathological findings without task-specific training data by leveraging their cross-modal understanding. The architecture of these systems typically comprises three core components: image encoders that process gigapixel WSIs, text encoders that interpret clinical language, and fusion mechanisms that create aligned representations across modalities. Current research demonstrates that models like TITAN (Transformer-based pathology Image and Text Alignment Network) can generate general-purpose slide representations applicable to diverse clinical scenarios including rare disease retrieval and cancer prognosis without requiring fine-tuning or clinical labels [10] [14].

Model Architectures: Components and Functionality

Image Encoders for Whole Slide Images

Image encoders for computational pathology must address the significant challenge of processing gigapixel Whole Slide Images (WSIs) that can exceed 1GB in size while preserving critical diagnostic information at multiple scales. The predominant approach involves a two-stage feature extraction process that first encodes local regions then aggregates these into slide-level representations.

The TITAN model employs a Vision Transformer (ViT) architecture that operates on pre-extracted patch features rather than raw pixels. The input embedding space is constructed by dividing each WSI into non-overlapping patches of 512×512 pixels at 20× magnification, with 768-dimensional features extracted for each patch using specialized histopathology encoders like CONCHv1.5. To handle computational complexity, TITAN creates views of a WSI by randomly cropping the 2D feature grid, sampling region crops of 16×16 features covering 8,192×8,192 pixel regions. For self-supervised pretraining, it samples two random global (14×14) and ten local (6×6) crops from these region crops [10].
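
The geometry described above can be checked with a short sketch. The patch size, region size, and view sizes come from the text; the slide dimensions, the random seed, and the `sample_crop` helper are assumptions, and details of the real pipeline such as background filtering and augmentation are omitted.

```python
import random

PATCH_PX = 512        # patch edge length at 20x
REGION_FEATS = 16     # region crop edge, in features
GLOBAL_FEATS = 14     # global view edge, in features
LOCAL_FEATS = 6       # local view edge, in features

def feature_grid_shape(width_px, height_px, patch_px=PATCH_PX):
    """Rows and columns of the 2D feature grid for non-overlapping patches."""
    return height_px // patch_px, width_px // patch_px

def sample_crop(grid_rows, grid_cols, size, rng):
    """Pick a random size x size window; returns (top, left, size) in grid units."""
    top = rng.randrange(grid_rows - size + 1)
    left = rng.randrange(grid_cols - size + 1)
    return top, left, size

rng = random.Random(0)
rows, cols = feature_grid_shape(81_920, 40_960)  # hypothetical slide size in pixels
region = sample_crop(rows, cols, REGION_FEATS, rng)

# View coordinates are relative to the sampled region crop.
global_views = [sample_crop(REGION_FEATS, REGION_FEATS, GLOBAL_FEATS, rng) for _ in range(2)]
local_views = [sample_crop(REGION_FEATS, REGION_FEATS, LOCAL_FEATS, rng) for _ in range(10)]

region_px = REGION_FEATS * PATCH_PX
print((rows, cols), region, region_px)
```

The last value confirms the arithmetic in the text: 16 features × 512 pixels gives the 8,192-pixel region edge.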

MPath-Net utilizes Multiple Instance Learning (MIL) for WSI feature extraction, treating each slide as a "bag" of patch-level instances. This approach leverages attention-based aggregation methods such as ABMIL, ACMIL, TransMIL, and DSMIL to combine patch information without requiring localized annotations. These methods identify diagnostically relevant regions and weight their contributions accordingly, enabling slide-level classification from weakly supervised data [22].
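
Attention-based MIL pooling in the ABMIL style can be written compactly: each instance h_k receives a score w · tanh(V h_k), the scores are softmax-normalized into attention weights, and the slide embedding is the weighted sum of instances. The sketch below uses hand-set toy values for `V` and `w`, which in practice are learned; it illustrates the pooling rule only and is not the MPath-Net implementation.

```python
import math

def attention_pool(instances, V, w):
    """ABMIL-style pooling: a_k = softmax_k(w . tanh(V h_k)); z = sum_k a_k h_k."""
    scores = []
    for h in instances:
        hidden = [math.tanh(sum(v_row[d] * h[d] for d in range(len(h)))) for v_row in V]
        scores.append(sum(w_i * h_i for w_i, h_i in zip(w, hidden)))
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    attn = [e / total for e in exps]
    dim = len(instances[0])
    pooled = [sum(a * h[d] for a, h in zip(attn, instances)) for d in range(dim)]
    return pooled, attn

# Three toy patch features; the second one carries the strongest signal.
instances = [[0.1, 0.0], [2.0, 0.1], [0.0, 0.2]]
V = [[1.0, 0.0], [0.0, 1.0]]  # assumed projection, normally learned
w = [3.0, 0.0]                # assumed attention vector, normally learned

pooled, attn = attention_pool(instances, V, w)
print([round(a, 3) for a in attn], [round(x, 3) for x in pooled])
```

The attention weights double as a rough relevance map, since patches with high weight contribute most to the slide-level representation.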

For nuclei segmentation in pathology images, advanced encoder architectures incorporate modules like Dense-CA (Dense Channel Attention) within U-Net based encoder-decoder frameworks. This module improves feature extraction in complex backgrounds by adaptively emphasizing relevant cellular features and reducing information loss during downsampling. The Multi-scale Transformer Attention (MSTA) module further enhances boundary segmentation by fusing features between encoder and decoder using Transformer-based feature fusion across different scales [23].

Table 1: Comparative Analysis of Image Encoder Architectures in Pathology

| Architecture | Input Processing | Key Components | Output Representation | Computational Considerations |

|---|---|---|---|---|

| TITAN ViT | 512×512 patches → 768D features | Vision Transformer, ALiBi positional encoding, knowledge distillation | General-purpose slide embeddings | Handles long sequences (>10⁴ tokens), random feature cropping |

| MPath-Net MIL | Patch-level feature extraction | Attention-based pooling, instance-level weighting | Slide-level classification scores | Reduces annotation dependency, memory-efficient |

| Dense-CA/MSTA | Full resolution patches | Dense Channel Attention, Multi-scale Transformer | Nuclei segmentation masks | Optimized for boundary accuracy, handles density |

Text Encoders for Pathology Reports

Text encoders process clinical narratives from pathology reports to extract semantically meaningful representations that can be aligned with visual features. These encoders must handle specialized medical terminology, variable reporting styles, and the implicit relationships between morphological descriptions and diagnostic conclusions.

In the MPath-Net framework, Sentence-BERT (Bidirectional Encoder Representations from Transformers) generates embeddings from pathology report text. This approach leverages transfer learning from biomedical literature to create 512-dimensional text representations that capture clinical semantics. The encoder remains frozen during multimodal training to preserve pretrained contextual representations while enabling effective integration with visual features [22].

TITAN employs a two-stage alignment process that first associates generated morphological descriptions at the region-of-interest (ROI) level, then performs cross-modal alignment at the whole-slide level. The model was fine-tuned using 423,122 synthetic captions generated from PathChat, a multimodal generative AI copilot for pathology, in addition to 182,862 medical reports. This approach enables the model to learn fine-grained correspondences between visual patterns and textual descriptions [10].

Specialized biomedical language models like ClinicalBERT have also been applied to pathology report encoding, demonstrating superior performance on medical concept extraction compared to general-domain models. These models are pretrained on large corpora of clinical text, allowing them to capture nuances of medical documentation, including abbreviations, differential diagnoses, and structured reporting elements [22].

Multimodal Fusion Mechanisms

Multimodal fusion mechanisms integrate visual and textual representations to create a shared semantic space where cross-modal reasoning and retrieval can occur. The design of these fusion components critically impacts model performance on downstream tasks like zero-shot classification and cross-modal retrieval.

TITAN employs vision-language alignment through contrastive learning at both region and slide levels. The model aligns image and text representations by maximizing the similarity between matching pairs while minimizing similarity for non-matching pairs. This approach enables zero-shot capabilities where text prompts can be directly matched with visual patterns without task-specific training. The alignment is performed after the vision-only pretraining stage, allowing the model to first learn robust visual representations before incorporating linguistic information [10] [14].
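
The idea of pulling matching pairs together and pushing non-matching pairs apart is commonly implemented as a symmetric, CLIP-style contrastive loss. The sketch below shows that generic formulation on toy vectors; it is not taken from the TITAN paper, and the temperature of 0.07 and the function names are assumptions.

```python
import math

def unit(vec):
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def log_softmax(row):
    peak = max(row)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in row))
    return [x - log_sum for x in row]

def contrastive_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric cross-entropy over the image-text similarity matrix.

    Pair k (image k, text k) is treated as the positive; all other
    combinations in the batch act as negatives.
    """
    imgs = [unit(v) for v in image_embs]
    txts = [unit(v) for v in text_embs]
    n = len(imgs)
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts] for i in imgs]
    image_to_text = -sum(log_softmax(logits[k])[k] for k in range(n)) / n
    columns = [[logits[r][c] for r in range(n)] for c in range(n)]
    text_to_image = -sum(log_softmax(columns[k])[k] for k in range(n)) / n
    return (image_to_text + text_to_image) / 2

images = [[1.0, 0.0], [0.0, 1.0]]
captions = [[0.9, 0.1], [0.1, 0.9]]

aligned_loss = contrastive_loss(images, captions)
swapped_loss = contrastive_loss(images, captions[::-1])
print(round(aligned_loss, 6), round(swapped_loss, 3))
```

The loss is near zero when each image is closest to its own caption and large when the pairing is swapped, which is the signal that drives the two encoders toward a shared space.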

MPath-Net utilizes feature-level fusion (a form of intermediate fusion) where 512-dimensional image and text embeddings are concatenated and passed through trainable layers that learn cross-modal interactions. This approach allows joint reasoning over both visual and textual signals while maintaining the integrity of modality-specific representations. The framework employs an end-to-end training process where the image classifier and downstream fusion layers are trained jointly, enabling the model to learn synergistic representations across modalities [22].

Advanced fusion strategies also include transformer-based fusion where cross-attention mechanisms allow elements from each modality to attend to relevant parts of the other modality. This approach enables fine-grained alignment between specific visual features and textual concepts, which is particularly valuable for interpretability and localization of diagnostically relevant regions [24].

Experimental Protocols and Benchmarking

Model Training and Implementation

The development of effective visual-language models for pathology requires carefully designed training protocols that address data limitations, computational constraints, and clinical requirements.

TITAN Pretraining Protocol: The TITAN model undergoes a three-stage pretraining process on the Mass-340K dataset comprising 335,645 WSIs and 182,862 medical reports across 20 organ types. Stage 1 involves vision-only unimodal pretraining using the iBOT framework (masked image modeling and knowledge distillation) on region crops. Stage 2 performs cross-modal alignment of generated morphological descriptions at the ROI-level using 423,122 synthetic caption-ROI pairs. Stage 3 conducts cross-modal alignment at the WSI-level using slide-report pairs. The model uses Attention with Linear Biases (ALiBi) for long-context extrapolation, with linear bias based on relative Euclidean distance between features in the 2D feature grid [10].
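
The distance-based bias described above can be sketched as follows. In ALiBi, a penalty proportional to the distance between two tokens is added to their attention logit before the softmax; here the distance is the Euclidean distance between positions in the 2D feature grid. The single `slope` value and the function name are assumptions for illustration (ALiBi normally uses a different slope per attention head), and this is not TITAN's code.

```python
import math

def alibi_2d_bias(grid_rows, grid_cols, slope):
    """Bias matrix B[i][j] = -slope * Euclidean distance between grid cells i and j.

    Cells are flattened in row-major order; B is added to attention logits.
    """
    coords = [(r, c) for r in range(grid_rows) for c in range(grid_cols)]
    return [[-slope * math.dist(a, b) for b in coords] for a in coords]

bias = alibi_2d_bias(2, 2, slope=0.5)
for row in bias:
    print([round(x, 3) for x in row])
```

Because the bias depends only on relative distance, the same rule applies to grids larger than those seen during training, which is what allows extrapolation to longer contexts.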

MPath-Net Training Protocol: Implementation begins with feature extraction, where WSIs are processed using a Multiple Instance Learning approach and pathology reports are encoded using Sentence-BERT. The model then concatenates the 512-dimensional image and text embeddings and passes them through custom fine-tuning layers for tumor classification. Training uses joint optimization: the image encoder is initialized with self-supervised weights and remains trainable, while the text encoder stays frozen to preserve its linguistic representations. The framework was evaluated on a TCGA dataset (1,684 cases: 916 kidney, 768 lung) using standard cross-validation protocols [22].

PathPT Few-shot Adaptation: For rare cancer subtyping with limited data, PathPT implements spatially-aware visual aggregation and task-specific prompt tuning. This approach converts WSI-level supervision into fine-grained tile-level guidance by leveraging the zero-shot capabilities of vision-language models. The method preserves localization on cancerous regions and enables cross-modal reasoning through prompts aligned with histopathological semantics, addressing the key limitation of conventional MIL methods which overlook cross-modal knowledge [24].

Table 2: Performance Benchmarks of Multimodal Pathology Models

| Model | Training Data | Zero-shot Accuracy | Few-shot Accuracy | Cross-modal Retrieval | Report Generation |
|---|---|---|---|---|---|
| TITAN | 335,645 WSIs + 423K synthetic captions + 183K reports | Superior to ROI and slide foundation models across settings | Outperforms baselines in low-data regimes | Enables slide-report retrieval | Generates pathological descriptions |
| MPath-Net | TCGA (1,684 cases) | Not reported | 94.65% accuracy, 0.9553 precision, 0.9472 recall, 0.9473 F1-score | Not primary focus | Not supported |
| PathPT | 2,910 WSIs across 56 rare cancer subtypes | Leverages VL foundation models | Substantial gains in subtyping accuracy and region grounding | Preserves cross-modal localization | Not primary focus |

Evaluation Metrics and Benchmarks

Rigorous evaluation of visual-language models in pathology requires diverse assessment strategies across multiple clinical tasks and data regimes.

Zero-shot and Few-shot Evaluation: Models are tested on their ability to recognize novel disease categories without task-specific training (zero-shot) or with very limited examples (few-shot). TITAN was evaluated across diverse clinical tasks including cancer subtyping, biomarker prediction, and outcome prognosis, demonstrating superior performance compared to both region-of-interest and slide foundation models. In few-shot settings, the model maintained strong performance with limited training samples, particularly valuable for rare diseases [10] [14].

Cross-modal Retrieval: This evaluation measures the model's ability to retrieve relevant pathology reports given a query WSI (and vice versa). TITAN demonstrated effective cross-modal retrieval capabilities, enabling clinicians to find similar cases based on either visual or textual queries. This functionality supports diagnostic decision-making by identifying clinically comparable cases [10].
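Retrieval of this kind is commonly scored by ranking candidates by cosine similarity and reporting Recall@K. The sketch below illustrates that general procedure with toy three-dimensional vectors; the embeddings, function names, and the choice of Recall@K as the metric are assumptions made for illustration and are not taken from the TITAN evaluation.

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine(a, b):
    return sum(x * y for x, y in zip(l2_normalize(a), l2_normalize(b)))

def retrieve(query_emb, candidate_embs, k):
    """Return the indices of the k candidates most similar to the query."""
    scores = [cosine(query_emb, c) for c in candidate_embs]
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]

def recall_at_k(query_embs, candidate_embs, k):
    """Fraction of queries whose paired candidate (same index) appears in the top k."""
    hits = sum(i in retrieve(q, candidate_embs, k) for i, q in enumerate(query_embs))
    return hits / len(query_embs)

# Toy embeddings: slide i is paired with report i.
slide_embs = [[1.0, 0.1, 0.0], [0.0, 1.0, 0.2], [0.1, 0.0, 1.0]]
report_embs = [[0.9, 0.0, 0.1], [0.7, 0.8, 0.0], [0.0, 0.2, 0.9]]

top1 = retrieve(slide_embs[0], report_embs, k=1)                   # slide-to-report
r_at_1 = recall_at_k(slide_embs, report_embs, k=1)
report_to_slide = retrieve(report_embs[1], slide_embs, k=2)        # report-to-slide
```

The same two functions serve both directions: swapping the query and candidate sets turns slide-to-report retrieval into report-to-slide retrieval.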

Rare Cancer Subtyping: PathPT was benchmarked on eight rare cancer datasets (four adult and four pediatric) spanning 56 subtypes and 2,910 WSIs. The framework consistently outperformed conventional MIL frameworks and vision-language models, achieving substantial gains in subtyping accuracy and in the grounding of cancerous regions [24].

Architectural Visualizations

[Figure: TITAN Model Architecture]

[Figure: MPath-Net Multimodal Fusion Framework]

Table 3: Essential Research Resources for Pathology Visual-Language Models

| Resource Category | Specific Examples | Function in Research | Key Characteristics |
|---|---|---|---|
| WSI Datasets | Mass-340K (335,645 WSIs), TCGA (1,684 kidney/lung cases), Rare Cancer Benchmarks (8 datasets, 56 subtypes) | Model pretraining and evaluation | Multi-organ, diverse stains, scanner variants, rare disease representation |
| Text Corpora | Pathology reports (183K), Synthetic captions (423K via PathChat) | Cross-modal alignment, report generation | Clinical narratives, fine-grained morphological descriptions |
| Image Encoders | CONCHv1.5, Self-supervised patch encoders, DenseNet variants | Feature extraction from histology patches | 768D feature output, pretrained on histology data |
| Text Encoders | Sentence-BERT, ClinicalBERT, Specialized biomedical PLMs | Text representation learning | Domain-specific pretraining, clinical concept capture |
| Fusion Frameworks | TITAN, MPath-Net, PathPT | Multimodal integration, zero-shot transfer | Cross-modal attention, feature concatenation, prompt tuning |
| Evaluation Suites | Cancer subtyping tasks, Rare cancer retrieval, Cross-modal retrieval | Performance benchmarking | Multiple few-shot settings, diverse cancer types |

Visual-language foundation models represent a paradigm shift in computational pathology, enabling zero-shot classification and cross-modal retrieval without extensive task-specific training. The architectural principles embodied in models like TITAN, MPath-Net, and PathPT demonstrate that effective integration of image encoders, text encoders, and fusion mechanisms can yield powerful general-purpose representations applicable across diverse clinical scenarios. These approaches are particularly valuable for addressing the critical challenge of rare disease diagnosis where limited training data constrains conventional deep learning methods.

Future research directions include scaling pretraining with synthetic data, developing more efficient architectures for gigapixel image processing, enhancing interpretability through better alignment between visual and textual concepts, and expanding clinical validation across broader disease spectra. As these models mature, they hold significant potential to reduce diagnostic variability, support pathologists in challenging cases, and ultimately improve patient care through more accurate and accessible computational pathology tools.

The development of robust visual-language foundation models for computational pathology is fundamentally constrained by the scarcity of large-scale, expertly annotated medical imaging datasets. Traditional supervised learning approaches require extensive domain expertise for data labeling and are often limited to specific tasks and diseases, hindering their broad applicability across the diverse landscape of pathology research and drug development [3] [25]. Zero-shot classification, wherein a model can recognize categories it was never explicitly trained on, presents a promising alternative. This capability is critically dependent on pretraining strategies that effectively align visual representations with rich semantic concepts from text. Leveraging large-scale image-caption pairs extracted from biomedical literature and educational resources offers a powerful pathway to build models that encapsulate the vast, nuanced knowledge of the pathology domain without relying on manual, pathology-specific annotations [26] [27]. These strategies mitigate the data bottleneck and produce models with superior generalization capabilities across a wide array of downstream tasks, from histology image classification to image-text retrieval [3].

This document outlines the core application notes and experimental protocols for leveraging these data sources to pretrain visual-language foundation models, with a specific focus on enabling zero-shot classification in pathology research.

The pretraining ecosystem for biomedical vision-language models primarily relies on large-scale datasets curated from scientific literature, particularly the PubMed Central Open Access (PMC-OA) subset. The table below summarizes the primary data sources and their quantitative significance.

Table 1: Key Large-Scale Biomedical Image-Caption Datasets

| Dataset Name | Source | Scale (Image-Caption Pairs) | Domain Focus | Key Features |
|---|---|---|---|---|
| BIOMEDICA [27] | PMC-OA | > 24 Million | Pan-biomedical (Pathology, Radiology, Cell Biology, etc.) | Extracts all figures & captions; rich metadata (MeSH terms, licenses); expert-guided image content annotations. |
| PMC-15M [27] | PMC-OA | ~15 Million | Pan-medical | A large-scale collection from 3 million articles; used for training models like BiomedCLIP. |
| ROCOv2 [28] | PMC-OA | ~116,000 | Radiology | A curated dataset of radiology images; serves as a benchmark for tasks like concept detection and caption prediction in ImageCLEFmed. |
| CONCH Pretraining Data [3] [25] | Diverse Sources | > 1.17 Million | Histopathology | Includes histopathology images, biomedical text, and image-caption pairs from educational and literature sources. |

A critical insight from recent work is the move towards domain-agnostic data collection. Rather than pre-filtering data solely for specific domains like radiology, archives like BIOMEDICA advocate for extracting the entirety of available scientific figures. This approach captures the full spectrum of biomedical knowledge, from radiology and pathology to molecular biology and genetics, thereby creating a more comprehensive and powerful knowledge base for foundation models [27]. The resulting models demonstrate improved performance not only in broad benchmarks but also in specialized tasks, as they can leverage interconnected biological concepts.

Foundational Models and Architectures

The dominant architectural paradigm for zero-shot classification is the CLIP (Contrastive Language-Image Pre-training) framework and its derivatives. These models jointly train an image encoder and a text encoder to maximize the similarity between the embeddings of matched image-text pairs while minimizing the similarity for non-matched pairs within a batch [26].
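Once the two encoders are aligned, zero-shot classification reduces to comparing an image embedding with one text-prompt embedding per candidate class. The sketch below illustrates this under stated assumptions: the three-dimensional vectors stand in for real encoder outputs, the prompt wording is hypothetical, and the temperature of 0.07 is a conventional CLIP-style value rather than a setting reported for any model discussed here.

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def zero_shot_classify(image_emb, class_text_embs, temperature=0.07):
    """Score an image against one text-prompt embedding per class.

    Returns (best-matching prompt, dict mapping each prompt to its probability).
    """
    img = l2_normalize(image_emb)
    names = list(class_text_embs)
    sims = [sum(a * b for a, b in zip(img, l2_normalize(class_text_embs[n]))) for n in names]
    probs = softmax([s / temperature for s in sims])
    best = max(range(len(names)), key=lambda i: probs[i])
    return names[best], dict(zip(names, probs))

# Stand-in vectors; in practice these come from the trained text and image encoders.
class_text_embs = {
    "an H&E image of adenocarcinoma": [0.9, 0.1, 0.0],
    "an H&E image of squamous cell carcinoma": [0.1, 0.9, 0.1],
    "an H&E image of normal tissue": [0.0, 0.1, 0.9],
}
image_emb = [0.2, 0.8, 0.1]

label, probs = zero_shot_classify(image_emb, class_text_embs)
```

No classifier weights are trained for the target task; adding a new class only requires writing a new prompt and embedding it, which is why prompt wording has a direct effect on zero-shot accuracy.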

Several specialized models have been developed using the data sources described above:

- CONCH (Contrastive learning from Captions for Histopathology): A visual-language foundation model specifically designed for computational pathology. CONCH is pretrained on a diverse set of histopathology images and biomedical text, enabling state-of-the-art performance on zero-shot classification, segmentation, captioning, and retrieval across a suite of 14 diverse benchmarks [3] [25].

- BMCA-CLIP: A suite of CLIP-style models continually pretrained on the massive BIOMEDICA dataset. These models achieve state-of-the-art zero-shot performance across 40 tasks spanning pathology, radiology, ophthalmology, and cell biology, demonstrating an average performance improvement of 6.56% while using 10 times less compute than prior efforts [27].

- BiomedCLIP: A domain-adapted CLIP model pretrained on the PMC-15M dataset, which has shown strong zero-shot capabilities on various biomedical image classification tasks [27].

Experimental Protocols for Pretraining and Fine-Tuning

Protocol 1: Large-Scale Contrastive Pretraining from Scratch

This protocol outlines the process for training a CLIP-style model on a large-scale biomedical image-caption corpus, such as BIOMEDICA or PMC-15M.

Objective: To learn aligned visual and textual representations from a massive collection of biomedical image-caption pairs, creating a foundation model for zero-shot transfer.

Research Reagent Solutions:

Table 2: Essential Reagents for Large-Scale Pretraining

| Reagent / Resource | Function / Description | Example / Source |
|---|---|---|
| Image-Caption Dataset | The core training data. A large-scale collection of figures and captions from biomedical literature. | BIOMEDICA (24M pairs) [27], PMC-15M (15M pairs) [27] |
| Image Encoder | A neural network that converts an image into a feature vector. | Vision Transformer (ViT) [27], ResNet-50 [29] |
| Text Encoder | A neural network that converts a text caption into a feature vector. | Transformer-based models (e.g., BioBERT, DeBERTa) [27] [28] |
| Contrastive Loss Function | The objective function that pulls positive pairs together and pushes negative pairs apart. | InfoNCE (NT-Xent) loss [26] |
| High-Performance Compute | Clusters of GPUs or TPUs are required for training on large datasets. | NVIDIA A100/A6000, Google Cloud TPU |
| High-Throughput Data Loader | Software to efficiently stream and preprocess large datasets during training. | WebDataset format for 3x-10x higher I/O rates [27] |

Procedure:

- Data Acquisition and Streaming: Download or directly stream the dataset in a high-throughput format like WebDataset to avoid local storage bottlenecks [27].

- Preprocessing:

- Images: Resize images to a uniform resolution (e.g., 224x224 or 384x384). Apply standard augmentation techniques (e.g., random cropping, horizontal flipping, color jitter).

- Text: Tokenize captions using the text encoder's tokenizer. A common practice is to prepend a prompt like "A biomedical image of" to the original caption to improve semantic alignment [26].

- Model Initialization: Initialize the image and text encoders, either from scratch or from weights pretrained on general-domain data (e.g., OpenAI CLIP or OpenCLIP checkpoints).

- Contrastive Training:

- For a batch of N image-caption pairs, compute the L2-normalized image embeddings \( I \in \mathbb{R}^{N \times d} \) and text embeddings \( T \in \mathbb{R}^{N \times d} \), where \( d \) is the embedding dimension.

- Compute the cosine similarity matrix \( S \in \mathbb{R}^{N \times N} \), where \( S_{i,j} = I_i \cdot T_j \).

- Optimize the symmetric InfoNCE loss. The image-to-text loss component is \[ \mathcal{L}_{i2t} = -\frac{1}{N} \sum_{i=1}^{N} \log \frac{\exp(S_{i,i} / \tau)}{\sum_{j=1}^{N} \exp(S_{i,j} / \tau)} \] where \( \tau \) is a learnable temperature parameter. The text-to-image loss \( \mathcal{L}_{t2i} \) is computed analogously by normalizing over the images for each caption (that is, over the columns of \( S \)), and the total loss is \( \mathcal{L} = (\mathcal{L}_{i2t} + \mathcal{L}_{t2i})/2 \) [26].

- Validation: Monitor training using a downstream zero-shot classification task on a held-out validation set not seen during training.
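The contrastive training step in the procedure above can be sketched in plain Python as follows. This is an illustrative sketch rather than a training-ready implementation: the temperature is held fixed at an assumed value of 0.07 instead of being learned, embeddings are short hand-written lists, and a real pipeline would compute the same quantities with an array library on accelerators.

```python
import math

def l2_normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def log_softmax_row(row):
    """Numerically stable log-softmax of one row of logits."""
    m = max(row)
    log_z = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_z for x in row]

def symmetric_info_nce(image_embs, text_embs, tau=0.07):
    """Symmetric InfoNCE over a batch in which pair i is (image_embs[i], text_embs[i])."""
    img = [l2_normalize(v) for v in image_embs]
    txt = [l2_normalize(v) for v in text_embs]
    n = len(img)
    # logits[i][j] = cosine similarity between image i and text j, divided by tau.
    logits = [[sum(a * b for a, b in zip(img[i], txt[j])) / tau for j in range(n)]
              for i in range(n)]
    logits_t = [list(col) for col in zip(*logits)]  # transpose for the text-to-image direction
    loss_i2t = -sum(log_softmax_row(logits[i])[i] for i in range(n)) / n
    loss_t2i = -sum(log_softmax_row(logits_t[i])[i] for i in range(n)) / n
    return (loss_i2t + loss_t2i) / 2

# Two orthogonal unit vectors: correctly matched pairs versus deliberately swapped pairs.
aligned = [[1.0, 0.0], [0.0, 1.0]]
loss_matched = symmetric_info_nce(aligned, aligned)
loss_swapped = symmetric_info_nce(aligned, aligned[::-1])
```

With correctly matched pairs the loss is close to zero, while swapping the captions makes it large. When every embedding in the batch is identical the loss equals log N, the value expected from an uninformative model.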

Protocol 2: Fine-Tuning for Enhanced Zero-Shot Pathology Classification

This protocol describes a specialized fine-tuning strategy to adapt a pre-trained visual-language model for improved zero-shot pathology classification, addressing unique challenges in medical data.

Objective: To enhance a pre-trained model's zero-shot performance on pathology tasks by incorporating domain-specific adaptations, such as handling multi-labeled image-report pairs and dense medical captions.

Research Reagent Solutions:

Table 3: Essential Reagents for Fine-Tuning

| Reagent / Resource | Function / Description | Example / Source |

|---|---|---|

| Pre-trained VLM | The base model to be adapted. | CONCH [3], BMCA-CLIP [27], General-domain CLIP |